Eddies Continuously Adaptive Query Processing Based on a

Eddies: Continuously Adaptive Query Processing Based on a SIGMOD’ 2002 paper and talk by Avnur and Hellerstein.

State-of-Art in Query Optimization • • • Given: 1. Database state and statistics known a-priori 2. One (short) user query to process 3. Query may be run only once Query Processing: 1. A-priori decide on a (static) query plan 2. Run query using this one plan Also: 1. Possibly update statistics sometimes (in steady state)

Adaptive Systems: General Flavor Repeat: 1. Observe (model) environment 2. Use observation to choose behavior 3. Take action

Adaptivity in Current DBs • Limited & coarse grain Repeat: 1. Observe (model) environment – runstats (once per week!!): model changes in data 2. Use observation to choose behavior – query optimization: fixes a single static query plan 3. Take action – query execution: blindly follow plan

Query Optimization – Adaptivity at a per-week frequency! • Not suited for volatile environments

A Networking Problem!? • Networks do dataflow! • Significant history of adaptive techniques – E. g. TCP congestion control – E. g. routing • But traditionally much lower function – Ship bitstreams – Minimal, fixed code • Lately, moving up the foodchain? – app-level routing – active networks

Varying … • Computing resources – Data flows unpredictably from sources – Code performs unpredictably along flows – Continuous volatility due to many decentralized systems • Data Characteristics – Distributions – Burstiness • User preferences – What get fast – How much data

Toward Continuous Adaptivity • Need much more frequent adaptivity – Goal: adapt per tuple of each relation? ? – The traditional runstats-optimize-execute loop is far too coarse-grained – So, continuously perform all 3 functions, at runtime – Aim for adaptivity over best-case performance (as the later never exists for long)

Road Map • Adaptive Query Processing • Intra-join adaptivity – Synchronization Barriers – Moments of Symmetry • Eddies – Encapsulated, adaptive dataflow

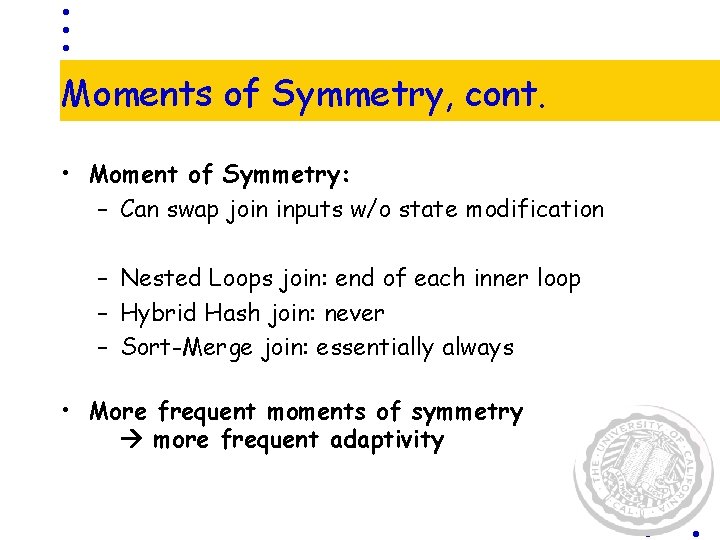

Adaptable Operators and Plans • Moments of symmetry = query processing stage during which pipelined query operators or inputs can be easily reordered (with no or minimal state management) • Synchronization barriers = require inputs from different sources to be coordinated and possibly restricted to the rate of the slower input • We need “good” operators.

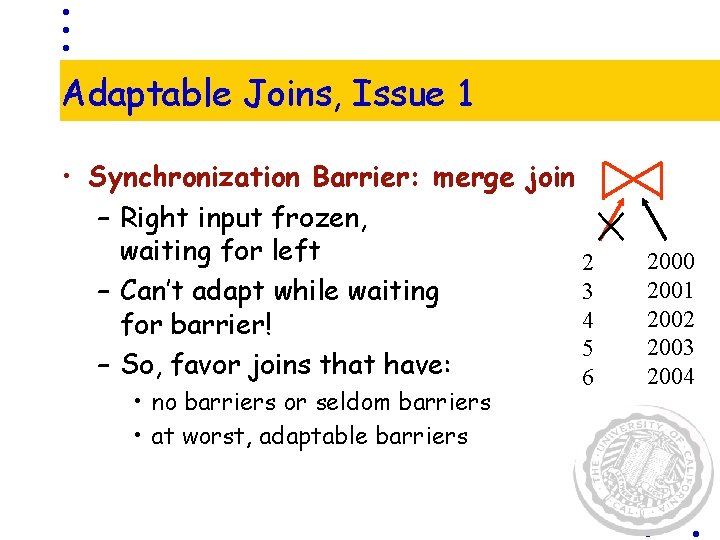

Adaptable Joins, Issue 1 • Synchronization Barrier: merge join – Right input frozen, waiting for left 2 3 – Can’t adapt while waiting 4 for barrier! 5 – So, favor joins that have: 6 • no barriers or seldom barriers • at worst, adaptable barriers 2000 2001 2002 2003 2004

Adaptable Joins, Issue 2 • Would like to reorder in-flight (pipelined) joins • Base case: swap inputs to a join ? ? • Moment of symmetry: – inputs can be swapped with no/little state management • Aim for frequent moments of symmetry more frequent adaptivity

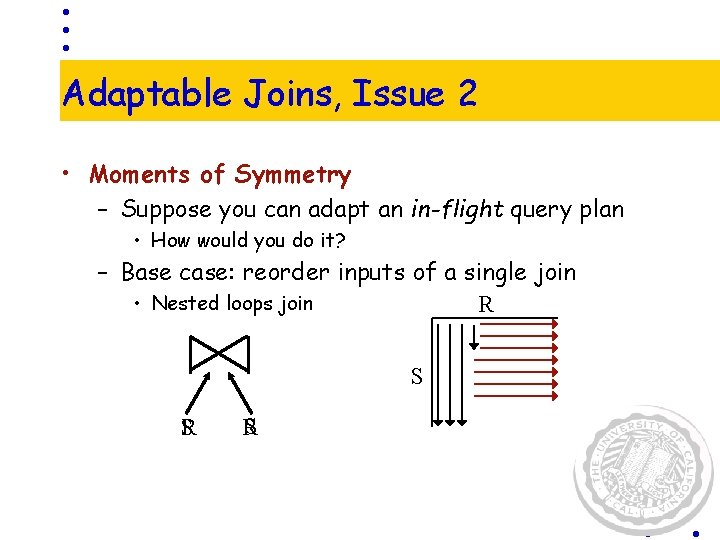

Adaptable Joins, Issue 2 • Moments of Symmetry – Suppose you can adapt an in-flight query plan • How would you do it? – Base case: reorder inputs of a single join • Nested loops join R S S R

Adaptable Joins, Issue 2 • Moments of Symmetry – Suppose you can adapt an in-flight query plan • How would you do it? – Base case: reorder inputs of a single join • Nested loops join R • Cleaner if you wait til end of inner loop S

Adaptable Joins, Issue 2 • Moments of Symmetry – Suppose you can adapt an in-flight query plan • How would you do it? – Base case: reorder inputs of a single join • Nested loops join R • Cleaner if you wait til end of inner loop – Hybrid Hash • Reorder while “building”? S

Moments of Symmetry, cont. • Moment of Symmetry: – Can swap join inputs w/o state modification – Nested Loops join: end of each inner loop – Hybrid Hash join: never – Sort-Merge join: essentially always • More frequent moments of symmetry more frequent adaptivity

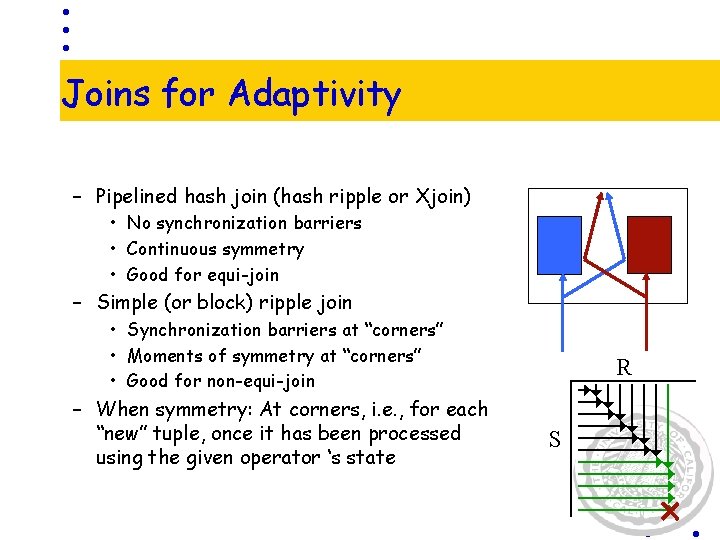

Joins for Adaptivity – Pipelined hash join (hash ripple or Xjoin) • No synchronization barriers • Continuous symmetry • Good for equi-join – Simple (or block) ripple join • Synchronization barriers at “corners” • Moments of symmetry at “corners” • Good for non-equi-join – When symmetry: At corners, i. e. , for each “new” tuple, once it has been processed using the given operator ‘s state R S

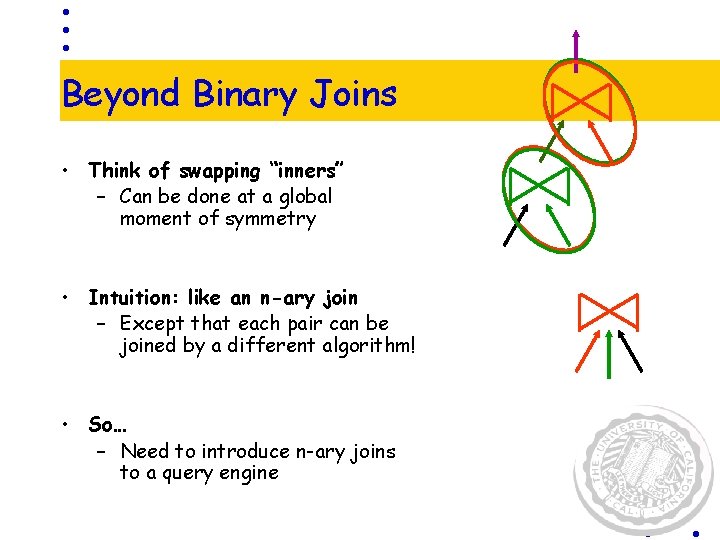

Beyond Binary Joins • Think of swapping “inners” – Can be done at a global moment of symmetry • Intuition: like an n-ary join – Except that each pair can be joined by a different algorithm! • So… – Need to introduce n-ary joins to a query engine

Need well-behaved join algorithms – Pipelining – Avoid synch barriers – Frequent moments of symmetry

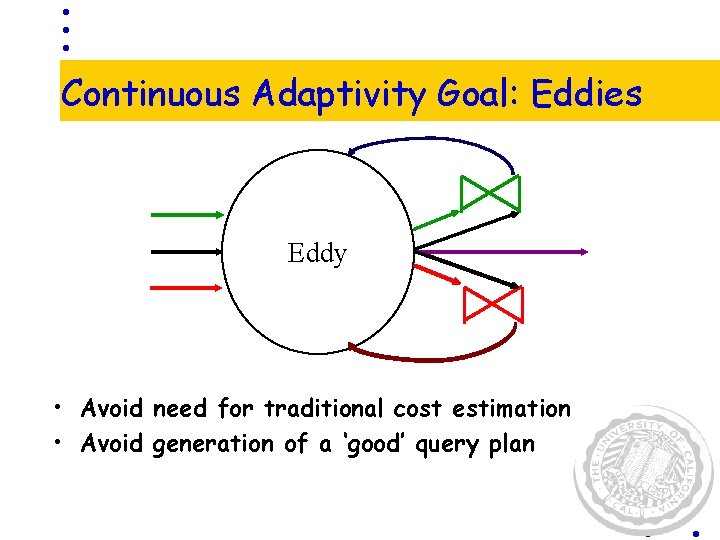

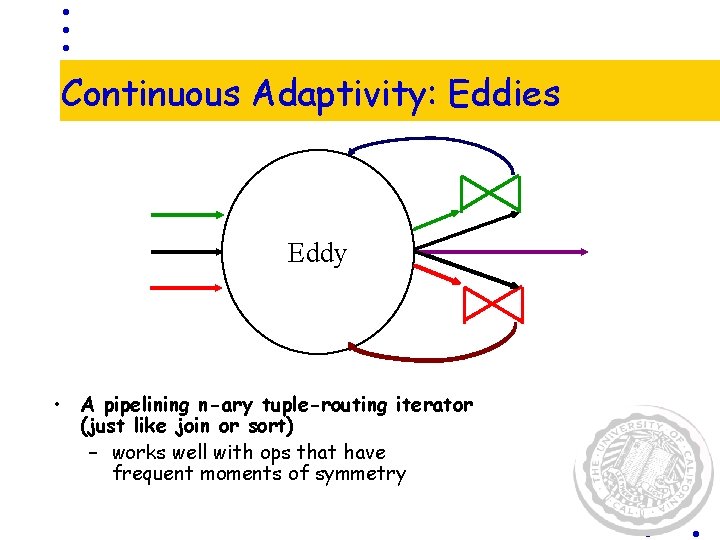

Continuous Adaptivity Goal: Eddies Eddy • Avoid need for traditional cost estimation • Avoid generation of a ‘good’ query plan

Continuous Adaptivity: Eddies Eddy • A pipelining n-ary tuple-routing iterator (just like join or sort) – works well with ops that have frequent moments of symmetry

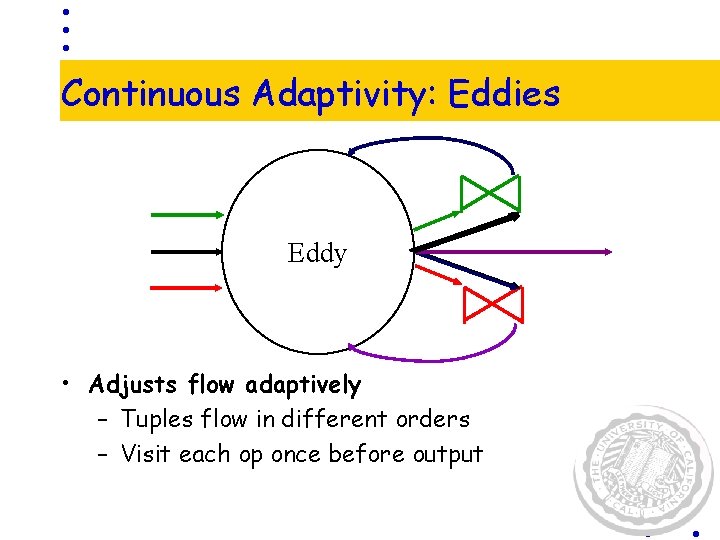

Continuous Adaptivity: Eddies Eddy • Adjusts flow adaptively – Tuples flow in different orders – Visit each op once before output

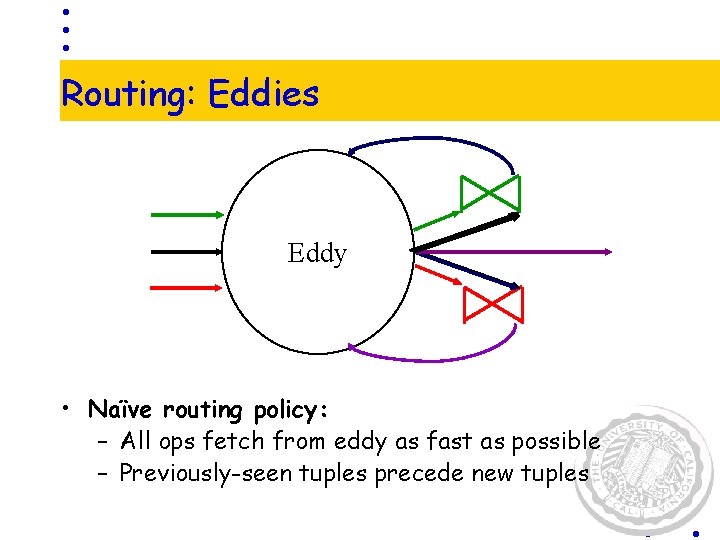

Routing: Eddies Eddy • Naïve routing policy: – All ops fetch from eddy as fast as possible – Previously-seen tuples precede new tuples

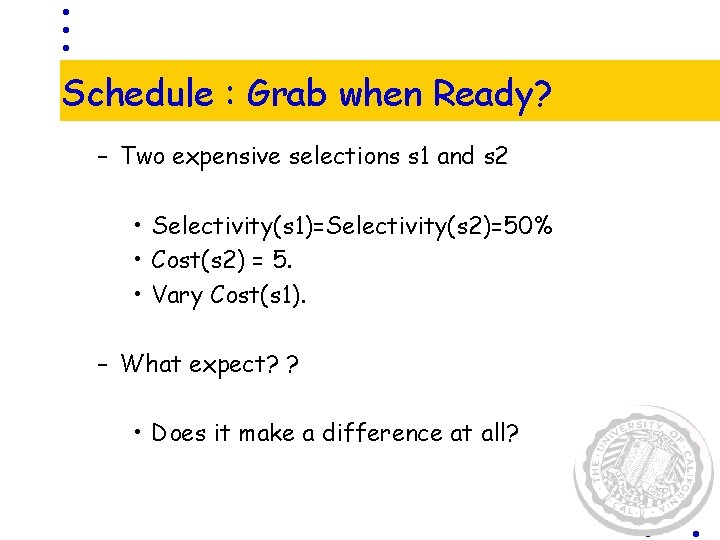

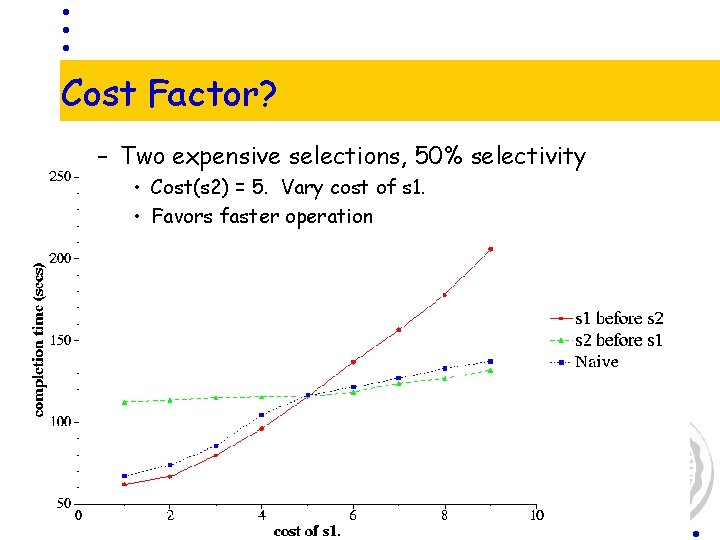

Schedule : Grab when Ready? – Two expensive selections s 1 and s 2 • Selectivity(s 1)=Selectivity(s 2)=50% • Cost(s 2) = 5. • Vary Cost(s 1). – What expect? ? • Does it make a difference at all?

Cost Factor? – Two expensive selections, 50% selectivity • Cost(s 2) = 5. Vary cost of s 1. • Favors faster operation

But is it Enough? – Given two expensive selections: • Cost same, say cost(s 1)=cost(s 2)=5 • Selectivity(s 2) = 50%. • Vary selectivity of s 1. – Does that make a difference?

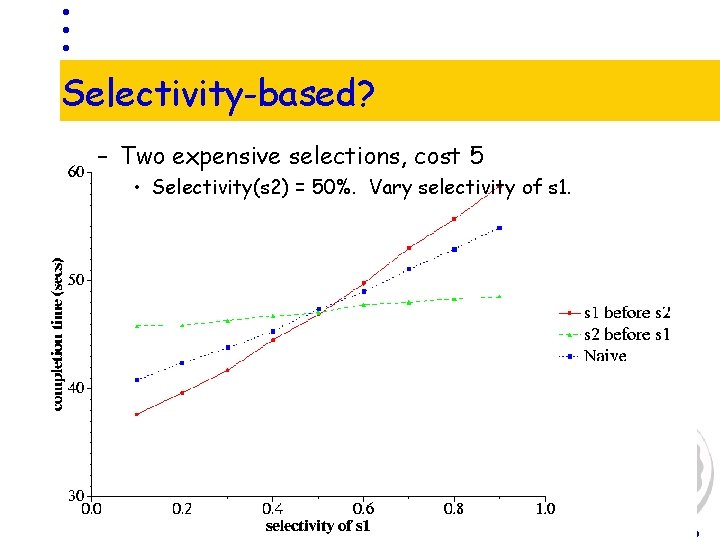

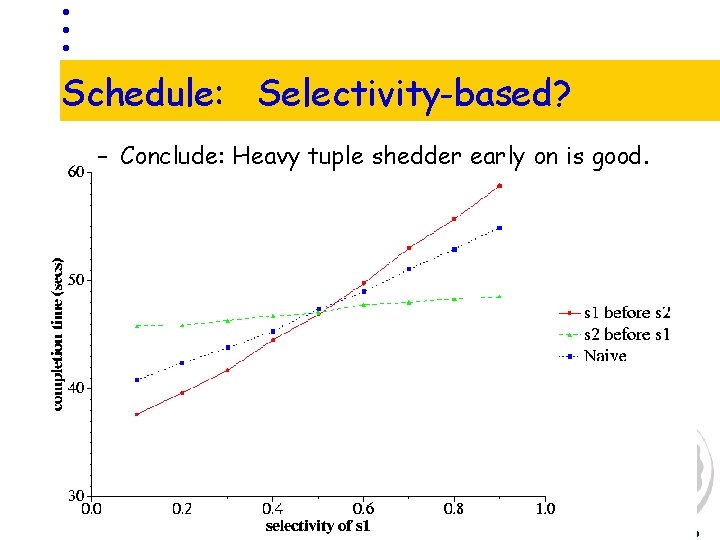

Selectivity-based? – Two expensive selections, cost 5 • Selectivity(s 2) = 50%. Vary selectivity of s 1.

Schedule: Selectivity-based? – Conclude: Heavy tuple shedder early on is good.

How to choose? • If we knew all selectivities and all costs (and they were static), maybe we could pick the best overall “schedule” here. • Otherwise, we need a cheap means to observe their changes • And, we need a means to react in a simply manner based on those perceived changes

An Aside: How to choose? • A machine learning problem? – Each agent pays off differently – Explore Or Exploit? – Heuristics ? • Sometimes want to randomly choose one • Usually want to go with the best • If probabilities are stationary, dampen exploration over time

Eddies with Lottery Scheduling • Operator gets 1 ticket when it takes a tuple – Favor operators that run fast (low cost) • Operator loses a ticket when it returns a tuple – Favor operators that drop tuples (low selectivity) • Winner? – Large number of tickets == measure of goodness • Lottery Scheduling: – When two operators vie for the same tuple, hold a lottery – Never let any operator go to zero tickets • Support occasional random “exploration”

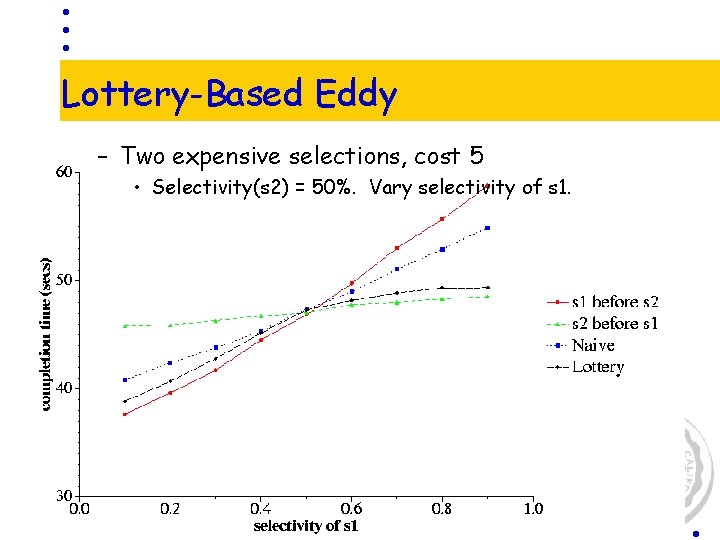

Lottery-Based Eddy – Two expensive selections, cost 5 • Selectivity(s 2) = 50%. Vary selectivity of s 1.

Summary • Eddies: Continuously Adaptive Dataflow – Suited for volatile performance environments • Changes in operator/machine peformance • Changes in selectivities (e. g. with sorted inputs) • Changes in data delivery – Currently adapts join order • Competitive methods to adapt access & join methods? • Requires well-behaved join algorithms – Pipelining – Avoid synch barriers – Frequent moments of symmetry • The end of the runstats/optimizer/executor boundary! – At best, System R is good for “hints” on initial ticket distribution

- Slides: 33