Meta-analytic corrections for publication bias

YOU CAN’T SQUEEZE BLOOD OUT OF A TURNIP

Gather your supplies

- Install R: https://cran.r-project.org/
- Install RStudio: https://www.rstudio.com/
- Grab our R script: https://osf.io/qn8fa/

Why worry about bias?

Promises of meta-analysis

- Hypothesis testing with great power
- Effect estimation with great precision
- Differences between studies:
  ◦ Describe heterogeneity
  ◦ Explain it with moderators

One study

Meta-analysis (in theory)

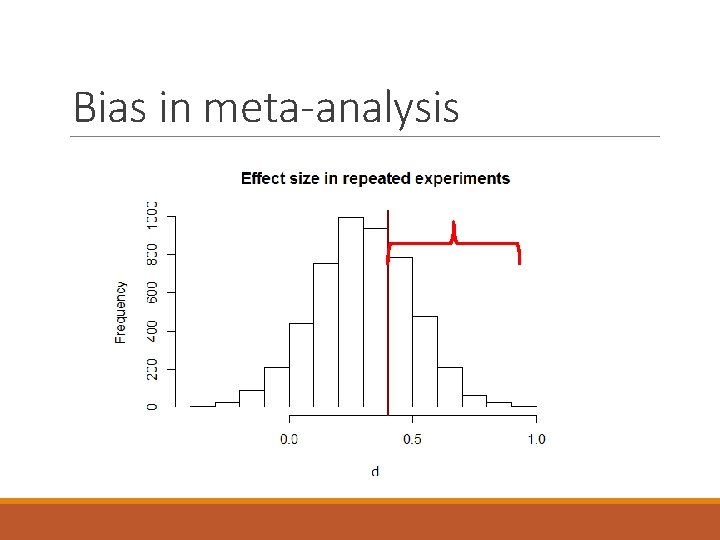

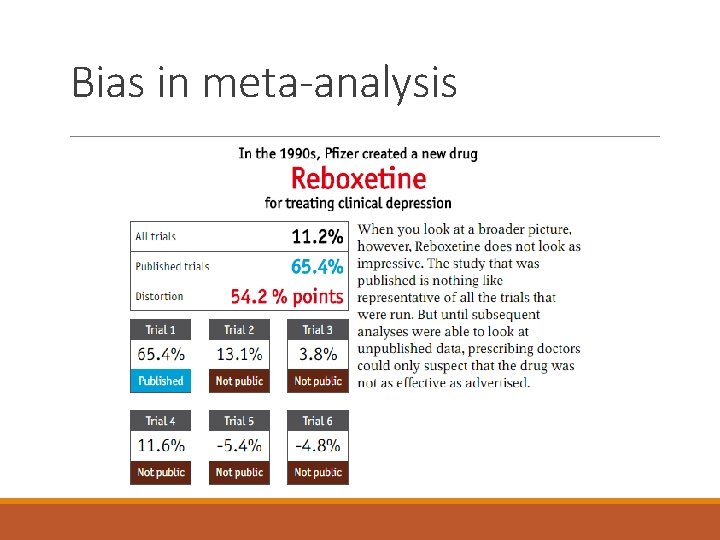

Bias in meta-analysis


Meta-analysis (in practice)

Early theorists hoped study biases would cancel out. Instead, publication bias accumulates and is magnified in meta-analysis.

Can we adjust for bias?

- Hagger et al. (2010): Ego depletion is real (d = 0.62)
- Carter & McCullough (2014): Don’t be so sure (d ≈ 0)
- Hagger et al. (2016) RRR: Uh oh (d = 0.04)
- Vohs (2018): super tiny, if anything

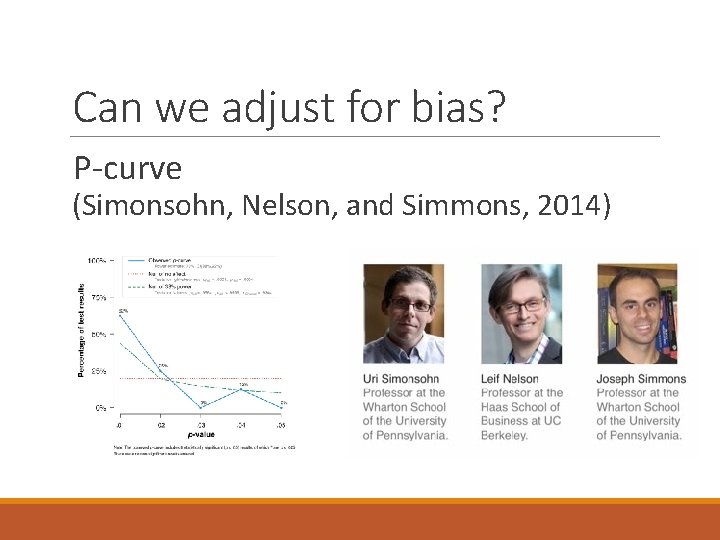

Can we adjust for bias? P-curve (Simonsohn, Nelson, and Simmons, 2014)

Many potential techniques

- Fail-safe N
- Trim-and-fill
- Egger’s test
- PET-PEESE
- P-curve / p-uniform
- Selection models
- ?

About this workshop

Workshop goals

- Introduce you to the available methods for handling publication bias.
- Show you how to use and inspect them, hands on, in R.
- Talk you through the strengths and weaknesses of these methods so you write better metas and peer reviews.

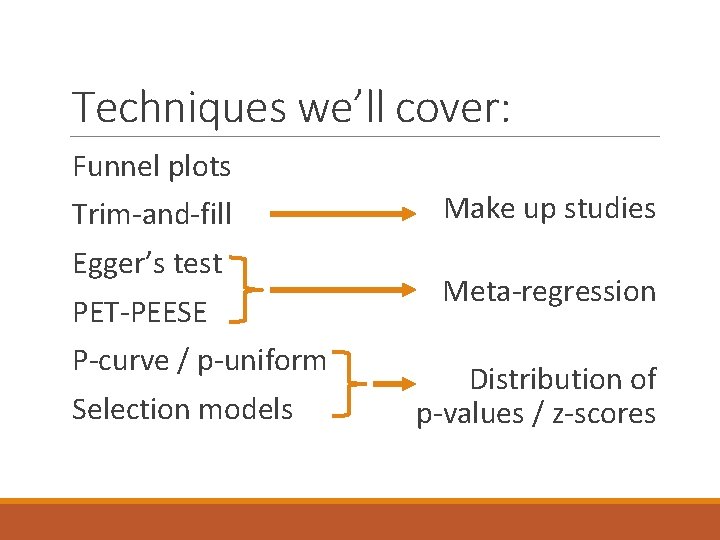

Techniques we’ll cover:

- Funnel plots
- Trim-and-fill
- Egger’s test
- PET-PEESE
- P-curve / p-uniform
- Selection models

The approaches they rely on:

- Make up studies
- Meta-regression
- Distribution of p-values / z-scores

Our demo dataset Anderson et al. (2010)

Our simulation study

Felix Schönbrodt, Evan Carter, Will Gervais, and me

Our simulation study

Preprint at https://psyarxiv.com/9h3nu

Simulation study

- Size of true effect
- Degree of heterogeneity
- Number of studies
- Degree of publication bias
- P-hacking behavior

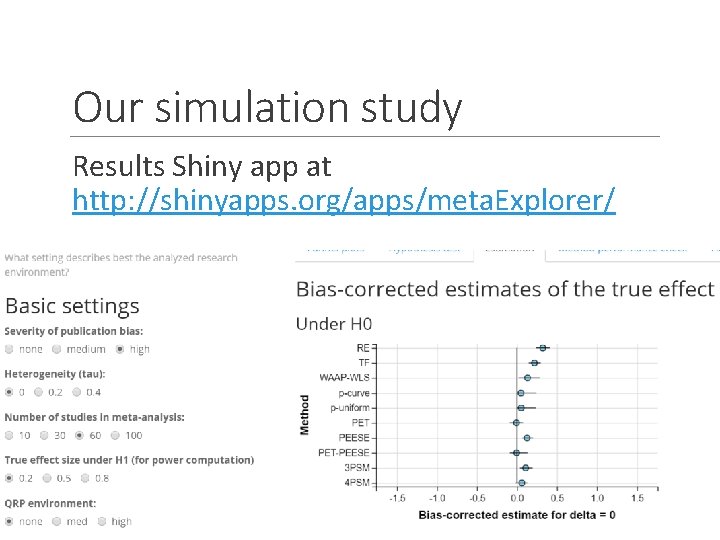

Our simulation study

Results Shiny app at http://shinyapps.org/apps/metaExplorer/

Structure of this workshop

1. We’ll talk about what each technique does in theory…
2. Fit it to our demo dataset and see what the technique does in application…
3. Then talk about how the technique behaves in simulation studies.

How this will work:

I’ve prepared an R Markdown file. Each step in the analysis is one “chunk”. As we work through, I’ll ask you to run the file one chunk at a time.

If you fall behind, you can catch up: restart R, go to the chunk we’re discussing, and hit “Run all chunks above” (CTRL-ALT-P).

Let’s start with a basic meta

Our working example: data from Anderson et al. (2010) on the effects of violent video games on aggressive behavior.

Our data

- Fisher.s.Z: Effect size from each study, extracted as Pearson r and transformed to Fisher’s Z.
- Std.Err: Standard error of Fisher’s Z.
- Best.: Do the authors think this study used best practices? “y” or “n”
- SEX, AGE, PERSON, East.West: Moderator variables

Meta-analysis without adjustment

    rma(yi = Fisher.s.Z, sei = Std.Err, data = dat)
    rma(yi = Fisher.s.Z, sei = Std.Err, data = dat.best)
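To make concrete what an unadjusted random-effects fit computes, here is a rough base-R sketch that pools Fisher's z values by hand with the DerSimonian-Laird estimator. The data are simulated stand-ins (not the Anderson et al. dataset) and `dl_meta` is a hypothetical helper. metafor's `rma()` defaults to REML rather than DerSimonian-Laird, so its numbers would differ slightly.

```r
# Simulated stand-in data (hypothetical values, NOT the Anderson et al. data)
set.seed(1)
k  <- 30
n  <- sample(30:300, k, replace = TRUE)      # per-study sample sizes
se <- 1 / sqrt(n - 3)                         # standard error of Fisher's z
z  <- rnorm(k, mean = 0.20, sd = sqrt(0.05^2 + se^2))  # true mean 0.20, tau 0.05

# Hypothetical helper: DerSimonian-Laird random-effects pooling
dl_meta <- function(yi, sei) {
  wi     <- 1 / sei^2                         # fixed-effect (inverse-variance) weights
  fe     <- sum(wi * yi) / sum(wi)            # fixed-effect estimate
  Q      <- sum(wi * (yi - fe)^2)             # heterogeneity statistic
  C      <- sum(wi) - sum(wi^2) / sum(wi)
  tau2   <- max(0, (Q - (length(yi) - 1)) / C)  # between-study variance
  wr     <- 1 / (sei^2 + tau2)                # random-effects weights
  est    <- sum(wr * yi) / sum(wr)
  est_se <- sqrt(1 / sum(wr))
  c(estimate = est, se = est_se, tau2 = tau2,
    z = est / est_se, p = 2 * pnorm(-abs(est / est_se)))
}

fit <- dl_meta(z, se)
round(fit, 3)
```

With no selection on the simulated studies, the pooled estimate should land close to the true mean. That is the "in theory" case the adjustment methods try to recover.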

Fail-Safe N

Fail-Safe N

Ego depletion meta-analysis (Hagger et al., 2010): a fail-safe N of 50,000!

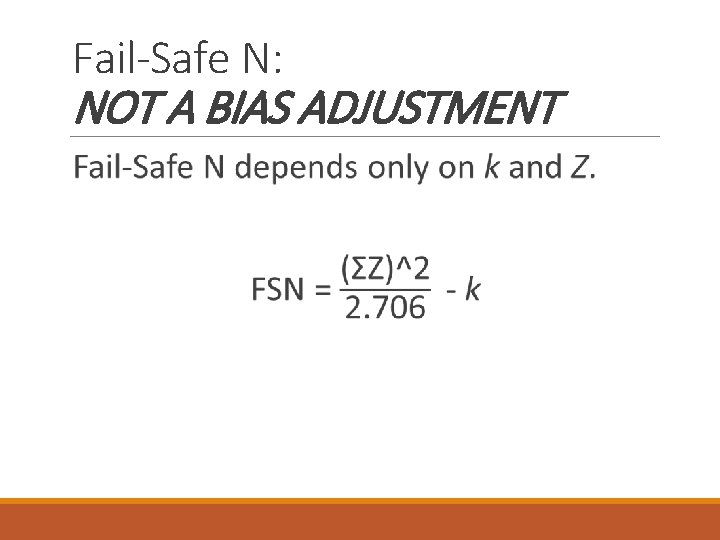

Fail-Safe N: NOT A BIAS ADJUSTMENT

“In a world without p-hacking, how many studies with effect size = 0 would have to be in file-drawers to end up with p > .05?”

The following scenarios can all have the same FSN:

- A few honestly reported studies on a moderate effect.
- A lot of honest studies on a teeny-tiny effect.
- A single study with a very significant result.
- A dozen p-hacked studies on a null effect.
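A minimal sketch of why this happens, assuming Rosenthal's version of the fail-safe N with a one-tailed criterion: the statistic depends only on the sum of the studies' z-scores and the number of studies, so very different literatures can produce similar values. `fail_safe_n` and the example z-scores are hypothetical.

```r
# Hypothetical helper: Rosenthal's fail-safe N.
# Solves sum(z) / sqrt(k + N) = z_crit for N, the number of added z = 0 studies.
fail_safe_n <- function(z, alpha = 0.05) {
  z_crit <- qnorm(1 - alpha)        # one-tailed critical value
  sum(z)^2 / z_crit^2 - length(z)
}

# Made-up z-scores for three very different literatures
few_moderate <- rep(2.5, 8)         # a few studies, each clearly significant
one_huge     <- 20                  # a single study with an enormous z
many_small   <- rep(0.5, 40)        # many studies, none individually significant

sapply(list(few_moderate = few_moderate,
            one_huge     = one_huge,
            many_small   = many_small),
       fail_safe_n)
```

Nothing in the calculation looks at how the z-scores were produced, which is why it cannot separate an honest literature from a p-hacked one.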

What is our question?

- Is there p-hacking? How much?
- Is there publication bias? How much?
- What is the effect size without p-hacking or publication bias?

Funnel plot methods

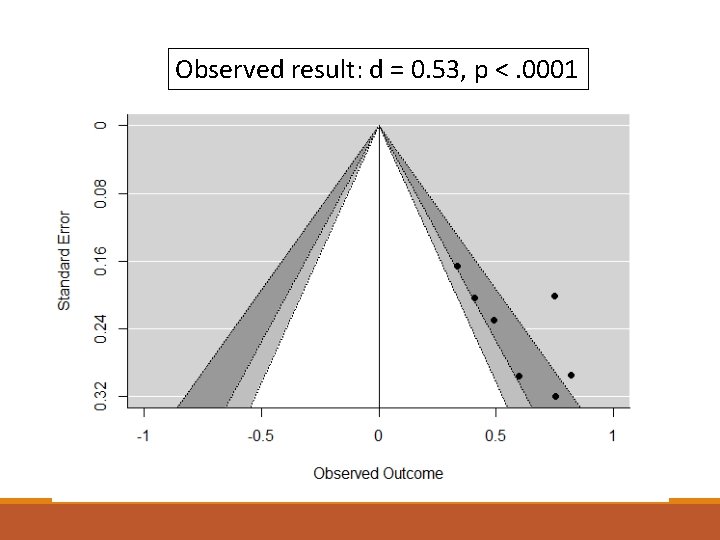

Funnel plot

A scatterplot: effect size on the X-axis, standard error on the (inverted) Y-axis.

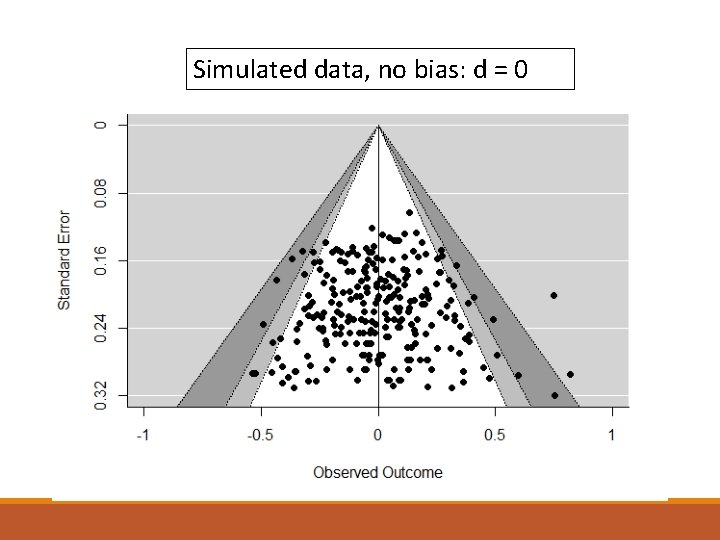

Simulated data, no bias: d = 0

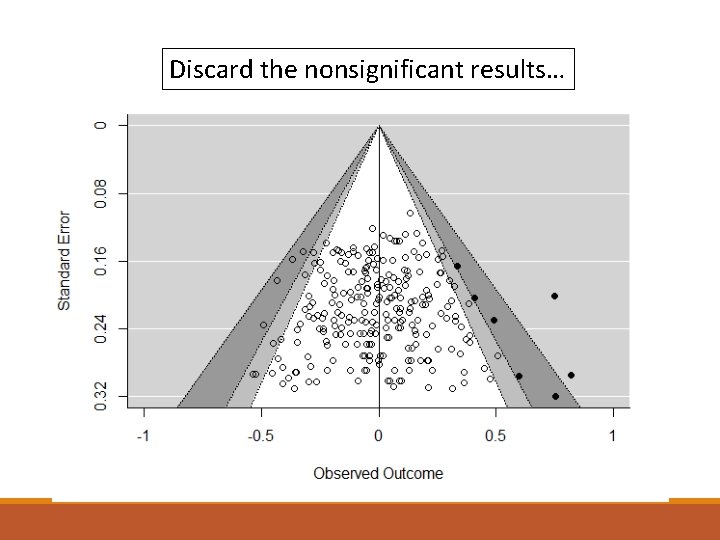

Discard the nonsignificant results…

Observed result: d = 0.53, p < .0001
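The demonstration can be reproduced in spirit with a few lines of base R. This is a sketch under simplifying assumptions (a normal approximation to d with SE = sqrt(2/n), and publication of positive significant results only), so it will not reproduce the exact figures on the slide. `pool` is a hypothetical helper.

```r
set.seed(42)
k  <- 2000
n  <- sample(20:100, k, replace = TRUE)   # per-group sample sizes
se <- sqrt(2 / n)                          # approximate SE of d when d is near 0
d  <- rnorm(k, mean = 0, sd = se)          # the true effect is exactly zero

# Hypothetical helper: inverse-variance (fixed-effect) pooling
pool <- function(d, se) {
  w <- 1 / se^2
  c(est = sum(w * d) / sum(w), se = sqrt(1 / sum(w)))
}

sig <- d / se > qnorm(0.975)               # significant in the positive direction

all_fit <- pool(d, se)                     # every study: estimate near zero
pub_fit <- pool(d[sig], se[sig])           # "published" studies only
rbind(all_studies = all_fit, published_only = pub_fit)
```

Every surviving study has d of at least 1.96 times its standard error, so the pooled estimate of the published subset is pushed well above zero even though no effect exists.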

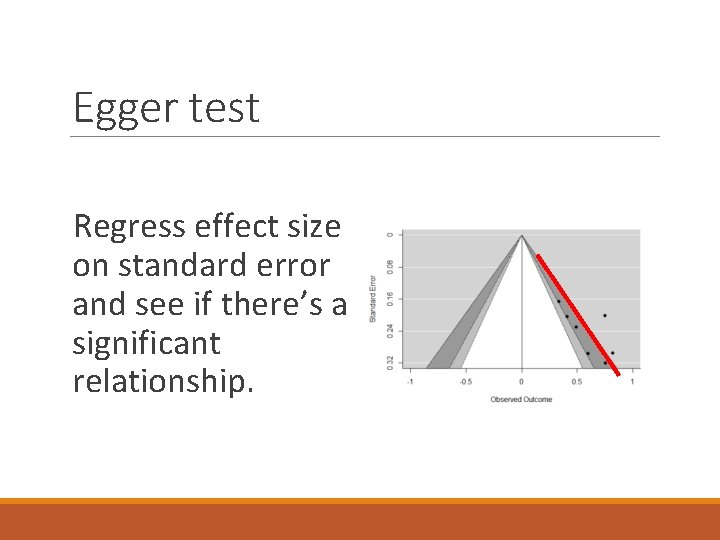

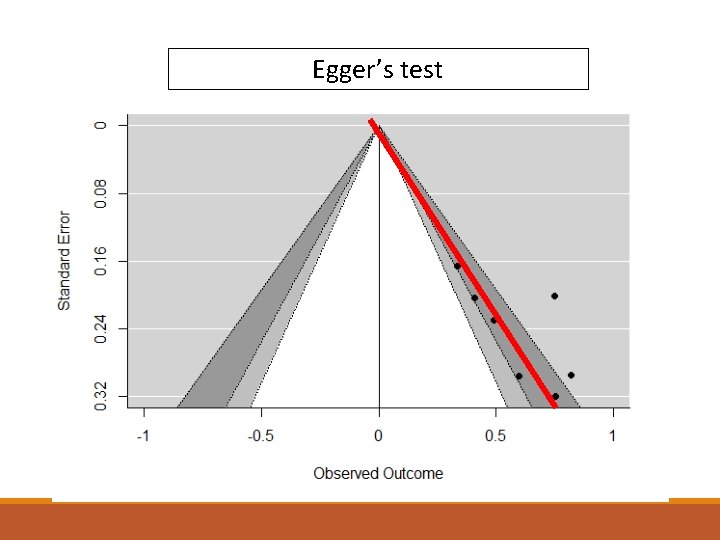

Egger’s test

Regress effect size on standard error and see whether there’s a significant relationship.
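One way to sketch this regression in base R is weighted least squares with inverse-variance weights (an assumption here; packaged versions such as metafor's `regtest()` offer several variants). The data are simulated with a true effect of zero and only positive significant results published. `egger_test` is a hypothetical helper.

```r
set.seed(123)
k   <- 2000
n   <- sample(20:100, k, replace = TRUE)
se  <- sqrt(2 / n)                         # approximate SE of d
d   <- rnorm(k, mean = 0, sd = se)         # true effect is zero
pub <- d / se > qnorm(0.975)               # only significant results get published

# Hypothetical helper: regress effect size on standard error.
# Weights of 1/SE^2 are an assumption (inverse-variance weighting).
egger_test <- function(yi, sei) {
  fit <- lm(yi ~ sei, weights = 1 / sei^2)
  coef(summary(fit))["sei", ]              # slope, its SE, t value, p value
}

res <- egger_test(d[pub], se[pub])
res
```

A positive, significant slope means smaller studies report larger effects, which is the small-study effect the funnel plot shows visually.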

Egger’s test

Funnels and Egger: A Cautionary Note

There can be harmless reasons for small-study effects. For example, big expensive interventions might have larger effects and smaller sample sizes. Look for these potential moderators.

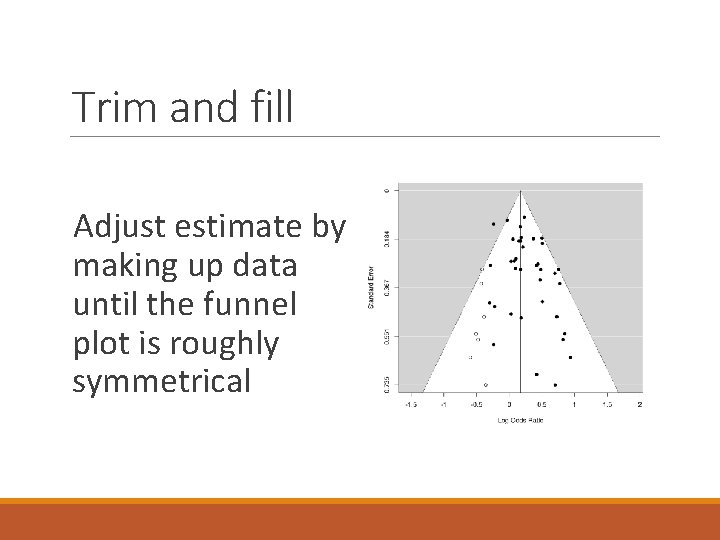

Trim and fill

Adjust the estimate by making up data until the funnel plot is roughly symmetrical.

Trim and fill: Simulation performance

- Type I error: Type I error rates remain high; improvement is minimal when bias or questionable research practices (QRPs) are strong.
- Power: Loss of power is very small.
- Bias: Tends to shave off about d = 0.10 from the naïve estimate; not enough when bias is strong.
- RMSE: Usually a slight improvement relative to RE.
- Heterogeneity: Heterogeneity doesn’t seem to particularly screw it up.
- QRPs: Just add to the total bias.

But see Brunwasser, Gillham, and Kim (2009): d = 0.11 [0.01, 0.20] goes to d = 0.09 [-0.01, 0.19]

Top Ten / WAAP: Simulation performance

- Type I error: Can help to cut Type I error rates, but they are still high (60+%).
- Power: Some noticeable loss of power.
- Bias: Still biased upwards; can be better than trim-and-fill if k is large.
- RMSE: If there’s bias, WAAP can improve RMSE slightly. If there’s no bias, it harms it slightly.
- Heterogeneity: Not particularly sensitive to heterogeneity.
- QRPs: Increase total bias.

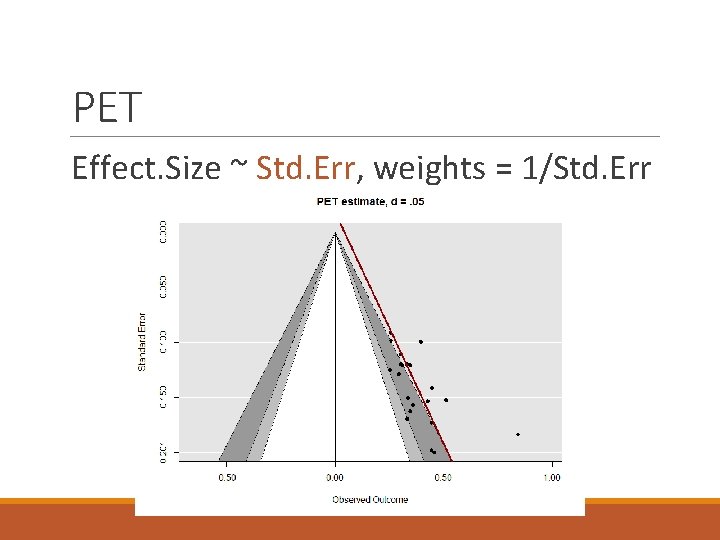

PET

    Effect.Size ~ Std.Err, weights = 1/Std.Err

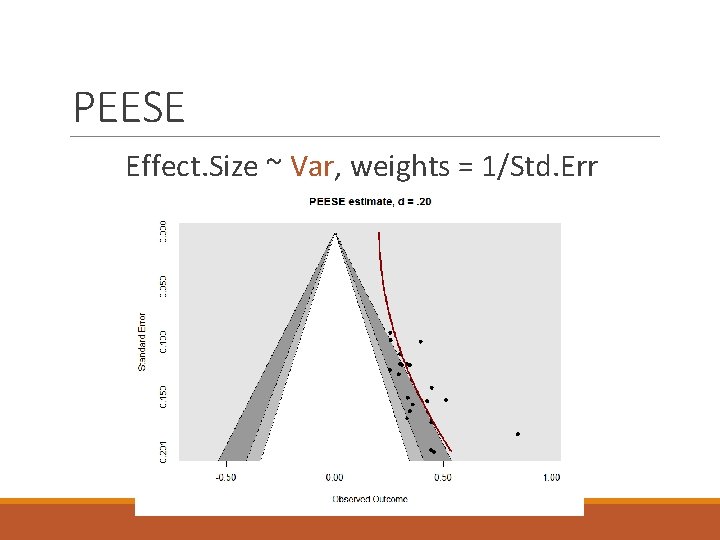

PEESE

    Effect.Size ~ Var, weights = 1/Std.Err
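The two regressions can be sketched with `lm()` as below. Several things here are assumptions rather than the workshop's own script: the weights are inverse-variance (1/SE^2), the choice between PET and PEESE follows the commonly described conditional rule (use PEESE only when the PET intercept is significantly positive, tested two-tailed here), and the data are simulated with a true effect of 0.5 and no publication bias. `pet_peese` is a hypothetical helper.

```r
set.seed(2024)
k  <- 200
n  <- sample(20:200, k, replace = TRUE)
se <- sqrt(2 / n)                          # simplified SE of d
d  <- rnorm(k, mean = 0.5, sd = se)        # true effect 0.5, no selection

# Hypothetical helper: conditional PET-PEESE estimate
pet_peese <- function(yi, sei, alpha = 0.05) {
  w     <- 1 / sei^2                       # assumed inverse-variance weights
  pet   <- lm(yi ~ sei,      weights = w)  # PET: effect size on standard error
  peese <- lm(yi ~ I(sei^2), weights = w)  # PEESE: effect size on variance
  b0    <- coef(summary(pet))["(Intercept)", ]
  use_peese <- unname(b0["Estimate"] > 0 && b0["Pr(>|t|)"] < alpha)
  list(pet      = unname(coef(pet)[1]),    # intercept = estimate at SE = 0
       peese    = unname(coef(peese)[1]),
       method   = if (use_peese) "PEESE" else "PET",
       estimate = unname(if (use_peese) coef(peese)[1] else coef(pet)[1]))
}

res <- pet_peese(d, se)
str(res)
```

In both models the intercept is the quantity of interest: the predicted effect for a hypothetical study with a standard error of zero.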

PET-PEESE: Simulation performance

- Type I error: Good Type I error rates under homogeneity.
- Power: Loses a little power.
- ME (mean error): Inherits a little of PET’s downward bias. Gets better with more k.
- RMSE: If there’s no bias, it increases RMSE. If there is bias and decent k, it improves RMSE.
- Heterogeneity: Can cause upward bias under H0 or worsen downward bias under H1.
- QRPs: Nudge the mean error downwards.
- Sometimes the combination of QRPs and pub bias can make PET think the effect is significantly negative.

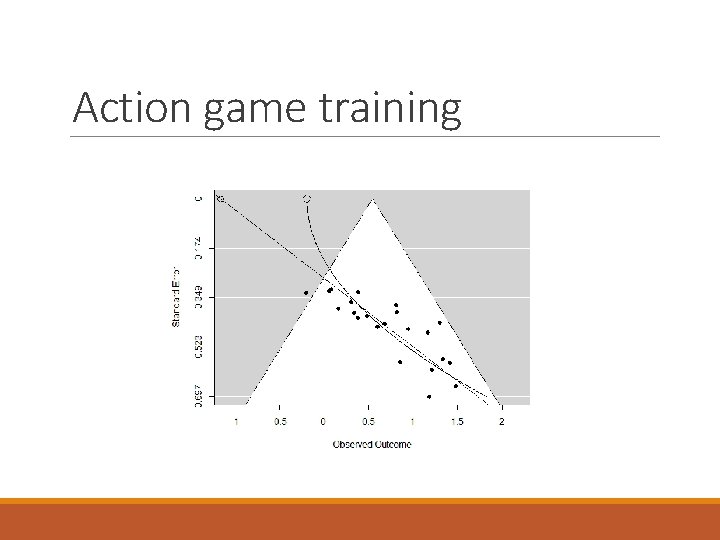

Action game training

SELECTION MODELS

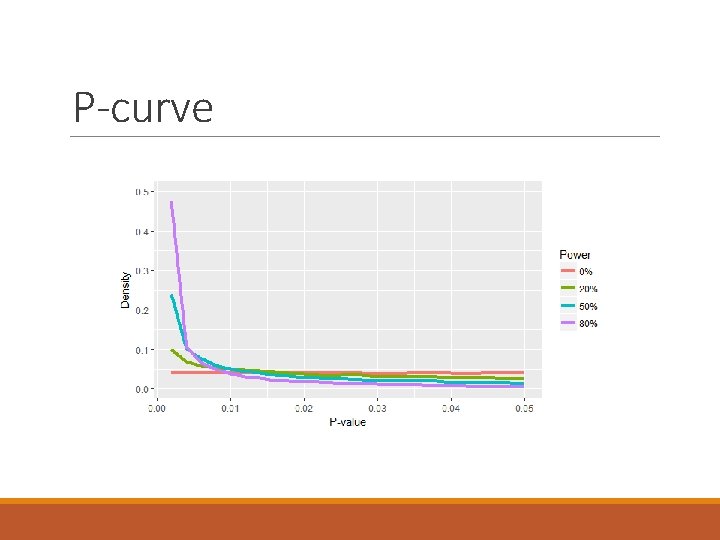

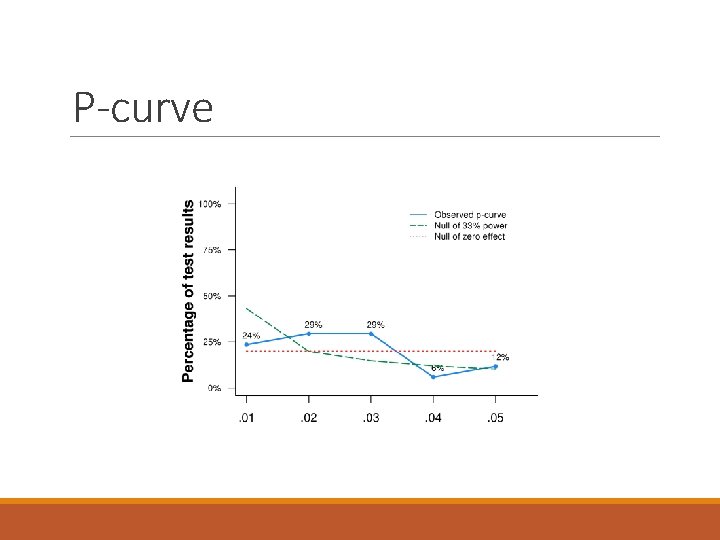

P-curve

P-curve: Simulation performance

- If there’s no effect and no pub bias, p-curve can fail to run: not enough p < .05.
- Type I error: Great, given homogeneity.
- Type II error: Poor power without pub bias; good power with pub bias.
- Bias: When δ = 0, some upward bias because p-curve does not return negative estimates.
- RMSE: Improves RMSE given δ = 0 or large k.
- Heterogeneity: Causes upward bias. With as little as τ = 0.2, a Type I error can approach inevitability.
- Simonsohn et al. argue that p-curve isn’t trying to recover δ; it’s trying to recover the average effect of the observed studies (Data Colada 67). See Data Colada 70 and 33 for their point of view: there is no true effect size, and studies are not a random sample.
- QRPs: Cause lower mean estimates. This can cause downward bias under homogeneity, or offset the upward bias from heterogeneity.

Selection models

Try to recreate the true value by estimating the strength of bias against p > .05.

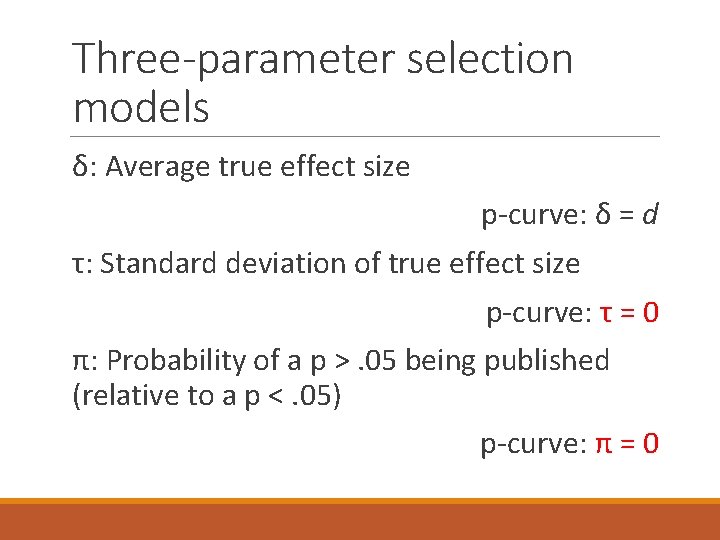

Three-parameter selection models

- δ: Average true effect size (p-curve: δ = d)
- τ: Standard deviation of true effect size (p-curve: τ = 0)
- π: Probability of a p > .05 being published, relative to a p < .05 (p-curve: π = 0)
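The three parameters can be estimated by maximum likelihood. The sketch below is a simplified base-R version under stated assumptions: selection is one-sided (a result counts as significant when it exceeds 1.96 standard errors in the positive direction), the data are simulated with δ = 0.2, τ = 0.1, and π = 0.2, and `negloglik` is a hypothetical helper. Packaged implementations (for example `weightfunct()` in weightr or `selmodel()` in metafor) are what you would normally use.

```r
set.seed(7)
k    <- 5000
se   <- sqrt(2 / sample(20:100, k, replace = TRUE))
d    <- rnorm(k, mean = 0.2, sd = sqrt(0.1^2 + se^2))  # delta = 0.2, tau = 0.1
sig  <- d / se > qnorm(0.975)
keep <- sig | runif(k) < 0.2        # nonsignificant results survive with pi = 0.2
d    <- d[keep]
se   <- se[keep]

# Hypothetical helper: negative log-likelihood of the three-parameter model.
# par = (delta, log tau, logit pi), so the optimizer is unconstrained.
negloglik <- function(par, yi, sei) {
  delta <- par[1]
  tau   <- exp(par[2])
  pi_   <- plogis(par[3])
  sd_i  <- sqrt(tau^2 + sei^2)      # marginal SD of each observed effect
  crit  <- qnorm(0.975) * sei       # smallest effect that reaches significance
  p_sig <- pnorm(crit, mean = delta, sd = sd_i, lower.tail = FALSE)
  w     <- ifelse(yi > crit, 1, pi_)   # relative publication probability
  # density of a published result = weight * normal density / normalizing constant
  -sum(log(w) + dnorm(yi, delta, sd_i, log = TRUE) -
         log(p_sig + pi_ * (1 - p_sig)))
}

fit <- optim(c(0, log(0.1), 0), negloglik, yi = d, sei = se,
             control = list(maxit = 2000))
est <- c(delta = fit$par[1], tau = exp(fit$par[2]), pi = plogis(fit$par[3]))
round(est, 3)
```

Fixing τ = 0 and π = 0 in this likelihood leaves a model fit only to significant results, which is the sense in which p-curve is a special case.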

Selection models: Simulation performance

- Type I error: Still elevated, and gets worse with k.
- Power: Quite good.
- ME: Overestimates the true effect.
- RMSE: Good improvements overall.
- Heterogeneity: Handles heterogeneity just fine.
- QRPs: Generally move ME downwards.

Selection models vs. p-curve

- “An unknown filter is impossible to correct for.” (Leif Nelson, Data Colada 70)
- “All models are wrong, but some are useful.” (George Box)

Results

- Given bias, naïve analysis is badly misleading, and Type I error is certain.
- Trim-and-fill shaves off only a little bias (≈ .05 units of d).
- PET is biased down under H1, PEESE is biased up under H0, and both suffer from poor efficiency.
- P-curve and p-uniform need lots of significant results and have poor efficiency.
- 3PSM has good performance but can still be biased upwards by violations of the model.
- P-hacking can increase bias when there’s medium publication bias; when there’s strong publication bias, it just increases k.
- P-hacking biases many adjustments downward.
- In the case of PET and PEESE, the combination of publication bias and p-hacking leads to wildly negative estimates.

Results

See more at http://www.shinyapps.org/apps/metaExplorer/

Discussion

The historically popular adjustments for publication bias don’t work.

◦ Trim-and-fill is much too weak to recover H0
◦ Fail-Safe N isn’t even a measure of bias!

What to do

- Don’t accept fail-safe N and trim-and-fill.
- Request the data. Make graphs. Ask for more.
- Use selection models, but beware heterogeneity and p-hacking.
- Keep doing everything you can to ensure the quality of the original literature.

No silver bullet

“[p-curve analyses] indicate studies examining elderly priming are p-hacked, while studies examining professor priming contain evidential value.” Daniel Lakens (2017)

Future directions?

- None of these techniques are designed to deal with QRPs.
- None of these techniques are suitable for hierarchically structured data (e.g., several related outcomes per study).
- None of these techniques correct for attenuation due to unreliable measurement.

Thanks

- Illinois State University
  ◦ Olivia Cody
  ◦ Hyunji Suh
  ◦ Conrad Niederhauer
- University of Missouri
  ◦ Bruce Bartholow
  ◦ Jeffrey Rouder
  ◦ Chris Engelhardt
- Meta-analysts
  ◦ Felix Schönbrodt
  ◦ Evan Carter
  ◦ Will Gervais
  ◦ Marcel van Assen
  ◦ Uri Simonsohn
  ◦ Tom Stanley

Questions?

Joseph Hilgard, Assistant Professor, Illinois State University
jhilgard@gmail.com
@JoeHilgard
www.github.com/Joe-Hilgard
