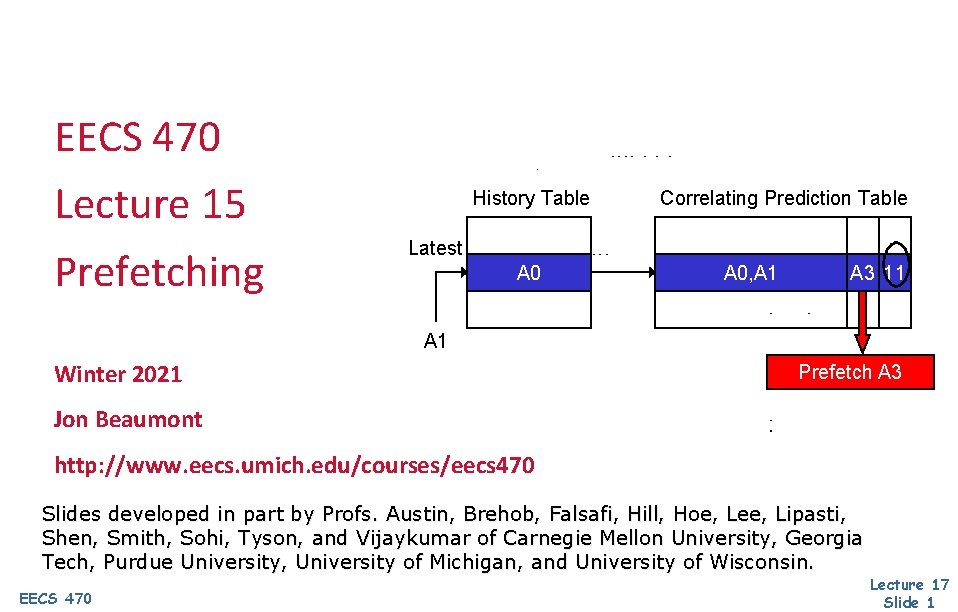

EECS 470 Lecture 15: Prefetching

Winter 2021
Jon Beaumont
http://www.eecs.umich.edu/courses/eecs470

[Figure: history table and correlating prediction table, with latest address and prefetch labels]

Slides developed in part by Profs. Austin, Brehob, Falsafi, Hill, Hoe, Lee, Lipasti, Shen, Smith, Sohi, Tyson, and Vijaykumar of Carnegie Mellon University, Georgia Tech, Purdue University, University of Michigan, and University of Wisconsin.

EECS 470 Lecture 17 Slide 1

Administrative

HW #4 due Friday (4/2)
Let me know if there are any issues with other HW on Gradescope

Milestone III next week
• No submissions needed
• Should target having simple programs (including memory ops) running correctly
• Remaining couple of weeks should focus on testing and optimizing

Last time

Cache techniques to reduce cache misses and cache miss penalties

Today

• Finish up cache enhancements
• Reduce number of cache misses through prefetching

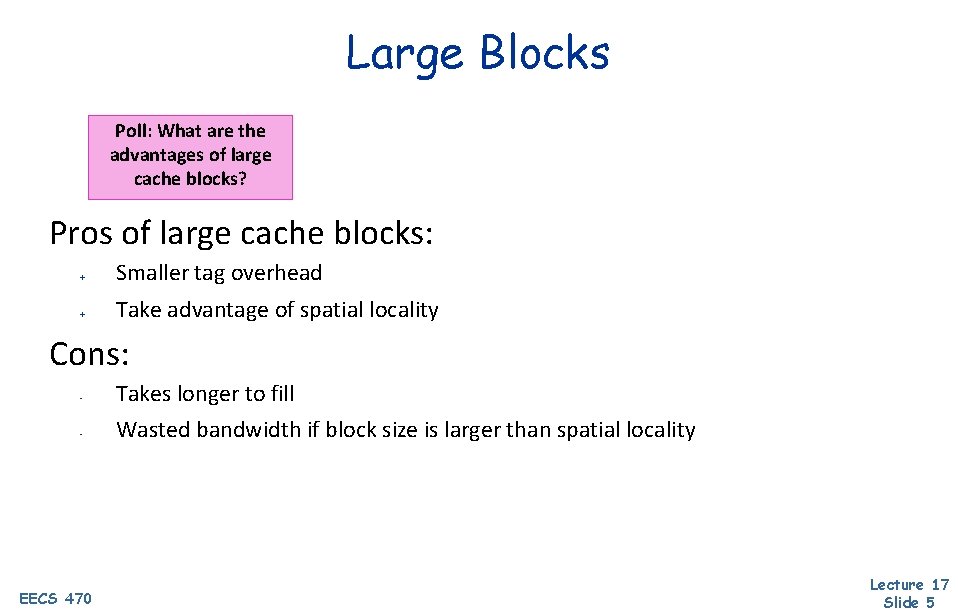

Large Blocks

Poll: What are the advantages of large cache blocks?

Pros of large cache blocks:
+ Smaller tag overhead
+ Take advantage of spatial locality

Cons:
- Takes longer to fill
- Wasted bandwidth if block size is larger than spatial locality

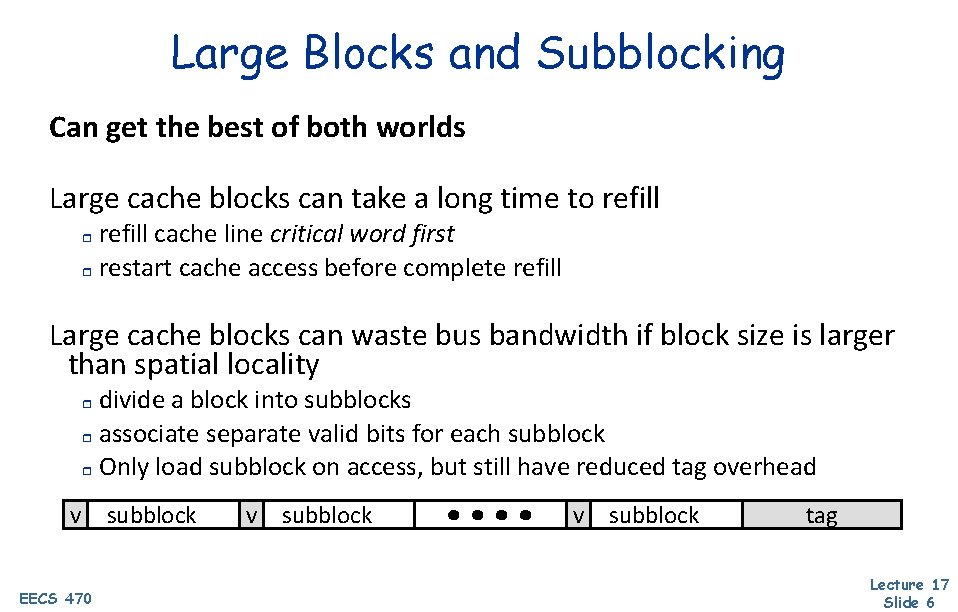

Large Blocks and Subblocking

Can get the best of both worlds

Large cache blocks can take a long time to refill
• refill cache line critical word first
• restart cache access before complete refill

Large cache blocks can waste bus bandwidth if block size is larger than spatial locality
• divide a block into subblocks
• associate separate valid bits for each subblock
• only load subblock on access, but still have reduced tag overhead

[Figure: one tag followed by subblocks, each with its own valid bit (v)]
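To make the per-subblock valid bits concrete, here is a minimal sketch of a single subblocked cache line. The class name `SubblockedLine`, the choice of four subblocks, and the policy of clearing all valid bits on a tag change are assumptions for illustration, not something the slide specifies.

```python
class SubblockedLine:
    """One cache line: a single tag shared by several subblocks (hypothetical model)."""

    def __init__(self, num_subblocks=4):
        self.tag = None
        self.valid = [False] * num_subblocks  # one valid bit per subblock

    def access(self, tag, subblock):
        """Return True on a hit; on a miss, load only the requested subblock."""
        if self.tag != tag:
            # New block takes over the line: one tag update, all subblocks invalid.
            self.tag = tag
            self.valid = [False] * len(self.valid)
        if self.valid[subblock]:
            return True
        self.valid[subblock] = True  # fetch just this subblock from memory
        return False


line = SubblockedLine()
results = [line.access(7, 0), line.access(7, 0), line.access(7, 1), line.access(9, 0)]
```

Only one tag is stored per line, yet each miss moves a single subblock over the bus.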

Multi-Level Caches

Processors getting faster w.r.t. main memory
• larger caches to reduce frequency of more costly misses
• but larger caches are too slow for processor
=> gradually reduce miss cost with multiple levels

$t_{avg} = t_{hit} + \text{miss ratio} \times t_{miss}$

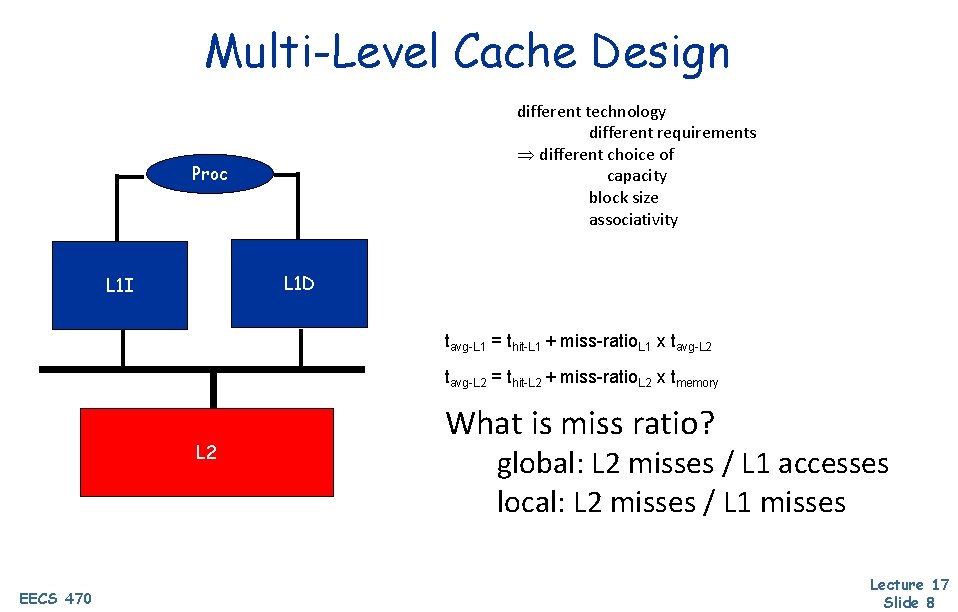

Multi-Level Cache Design

• different technology
• different requirements
• different choice of capacity, block size, associativity

[Figure: Proc connected to L1-D and L1-I, both backed by L2]

$t_{avg\text{-}L1} = t_{hit\text{-}L1} + \text{miss-ratio}_{L1} \times t_{avg\text{-}L2}$

$t_{avg\text{-}L2} = t_{hit\text{-}L2} + \text{miss-ratio}_{L2} \times t_{memory}$

What is miss ratio?
• global: L2 misses / L1 accesses
• local: L2 misses / L1 misses
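As a worked example of the two-level average access time, the sketch below evaluates both equations and relates the local and global L2 miss ratios. The function names and all numeric values are hypothetical. The L2 equation is evaluated with the local miss ratio, since only L1 misses ever reach L2.

```python
def amat_two_level(t_hit_l1, miss_ratio_l1, t_hit_l2, local_miss_ratio_l2, t_memory):
    """Return (t_avg_L1, t_avg_L2) in cycles for a two-level hierarchy."""
    t_avg_l2 = t_hit_l2 + local_miss_ratio_l2 * t_memory
    t_avg_l1 = t_hit_l1 + miss_ratio_l1 * t_avg_l2
    return t_avg_l1, t_avg_l2


def global_l2_miss_ratio(miss_ratio_l1, local_miss_ratio_l2):
    """L2 misses / L1 accesses = (L1 misses / L1 accesses) * (L2 misses / L1 misses)."""
    return miss_ratio_l1 * local_miss_ratio_l2


# Made-up numbers: 2-cycle L1, 10% L1 miss ratio, 20-cycle L2,
# 25% local L2 miss ratio, 200-cycle memory.
t_avg_l1, t_avg_l2 = amat_two_level(2, 0.10, 20, 0.25, 200)
global_ratio = global_l2_miss_ratio(0.10, 0.25)
```

With these numbers, L2 averages about 70 cycles per access and L1 about 9, while only about 2.5% of all L1 accesses go to memory.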

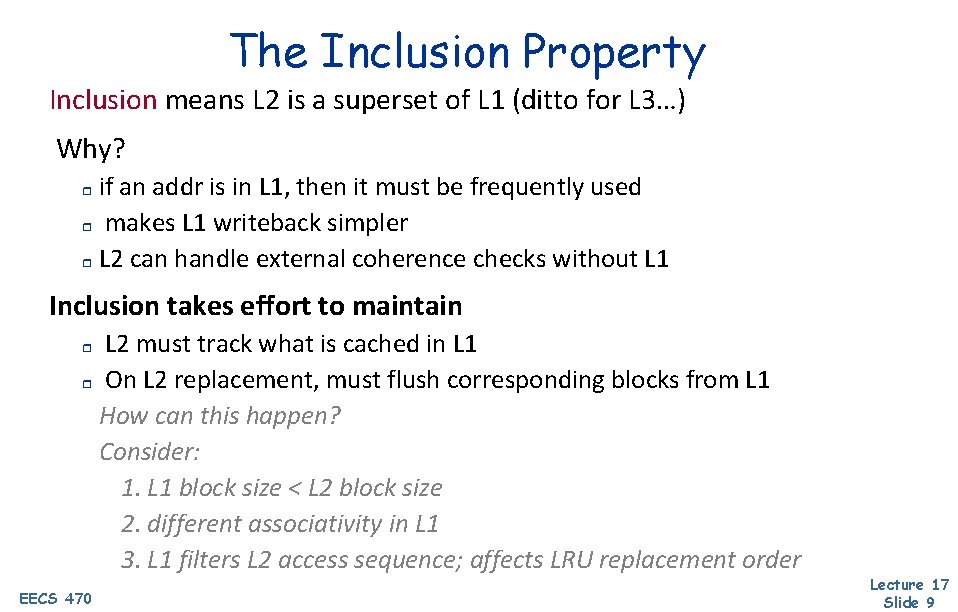

The Inclusion Property

Inclusion means L2 is a superset of L1 (ditto for L3…)

Why?
• if an addr is in L1, then it must be frequently used
• makes L1 writeback simpler
• L2 can handle external coherence checks without L1

Inclusion takes effort to maintain
• L2 must track what is cached in L1
• On L2 replacement, must flush corresponding blocks from L1

How can L2 end up replacing a block that is still in L1? Consider:
1. L1 block size < L2 block size
2. different associativity in L1
3. L1 filters L2 access sequence; affects LRU replacement order

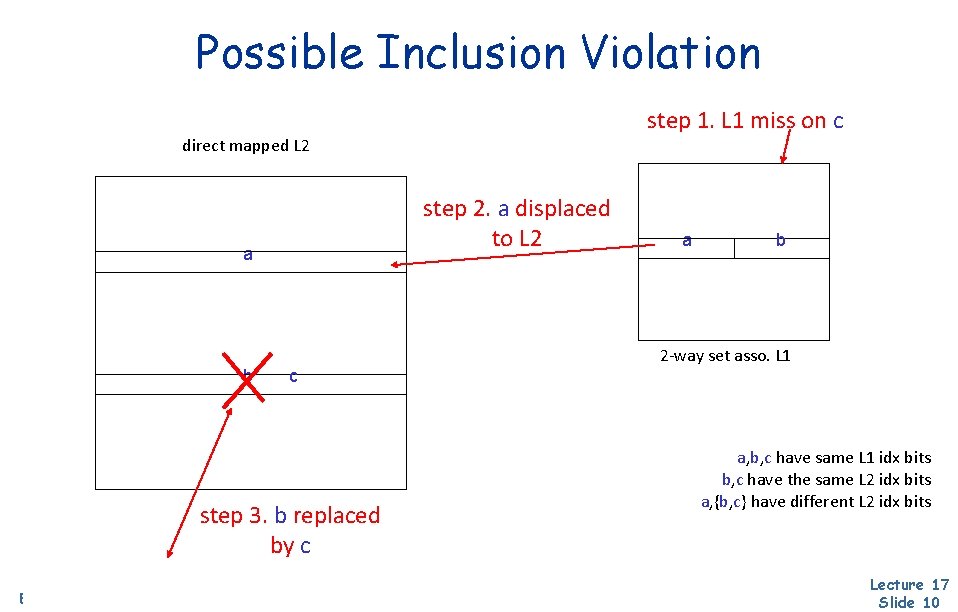

Possible Inclusion Violation

Setup: 2-way set associative L1 holding a and b; direct mapped L2
• a, b, c have the same L1 idx bits
• b, c have the same L2 idx bits
• a, {b, c} have different L2 idx bits

step 1. L1 miss on c
step 2. a displaced to L2
step 3. b replaced by c

Result: b is still in L1 but no longer in L2, so inclusion is violated unless L1 is told to flush b.
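The three steps can be replayed in a few lines. This is a minimal sketch under assumptions: the index mapping `L2_INDEX`, the initial contents (a and b in both levels, a being LRU in L1), and the absence of any back-invalidation are chosen to match the scenario on the slide, and the function name is hypothetical.

```python
from collections import OrderedDict

# Assumed mapping: a, b, c share one L1 set; b and c share an L2 index; a maps elsewhere.
L2_INDEX = {"a": 0, "b": 1, "c": 1}


def run_scenario():
    l1_set = OrderedDict([("a", True), ("b", True)])  # LRU first, so a is the LRU block
    l2 = {0: "a", 1: "b"}  # direct mapped: index -> resident block

    # Step 1: L1 miss on c; the 2-way set is full, so evict the LRU block.
    victim, _ = l1_set.popitem(last=False)
    l1_set["c"] = True
    # Step 2: the victim (a) is displaced to L2.
    l2[L2_INDEX[victim]] = victim
    # Step 3: c fills L2 and replaces b; nothing tells L1 to drop b.
    l2[L2_INDEX["c"]] = "c"

    violations = [blk for blk in l1_set if l2.get(L2_INDEX[blk]) != blk]
    return list(l1_set), l2, violations


l1_blocks, l2_blocks, violations = run_scenario()
```

After the sequence, L1 holds b and c while L2 holds a and c, so b is the block that breaks inclusion.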

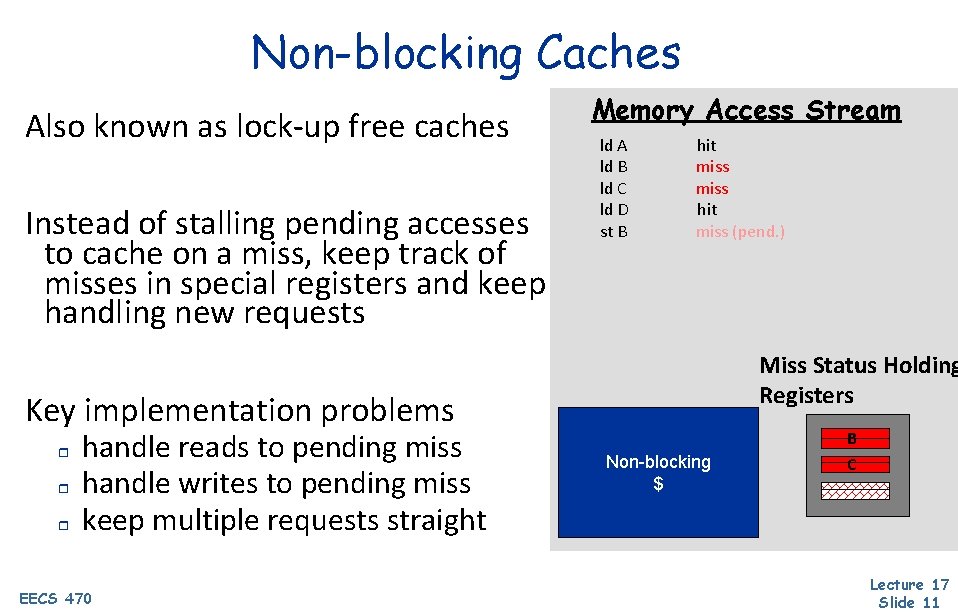

Non-blocking Caches

Also known as lock-up free caches

Instead of stalling pending accesses to cache on a miss, keep track of misses in special registers and keep handling new requests

[Figure: memory access stream (ld A, ld B, ld C, ld D, st B) entering a non-blocking $ with Miss Status Holding Registers holding B and C; accesses are labeled hit, miss, or pending]

Key implementation problems
• handle reads to pending miss
• handle writes to pending miss
• keep multiple requests straight
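One way to picture the bookkeeping is a table of miss status holding registers keyed by block address. The sketch below is a simplified model under assumptions: the class name, the four outcome strings, and the access stream outcomes are hypothetical, and timing, data, and replacement are ignored.

```python
class NonBlockingCache:
    """Toy lock-up free cache: tracks outstanding misses instead of stalling."""

    def __init__(self, num_mshrs, resident=()):
        self.resident = set(resident)  # blocks currently in the cache
        self.num_mshrs = num_mshrs
        self.mshrs = {}  # block -> list of ops waiting on that block

    def access(self, op, block):
        if block in self.resident:
            return "hit"
        if block in self.mshrs:
            # Read or write to a pending miss: merge into the existing MSHR.
            self.mshrs[block].append(op)
            return "pending"
        if len(self.mshrs) == self.num_mshrs:
            return "stall"  # no free MSHR; the request must retry later
        self.mshrs[block] = [op]  # primary miss: allocate an MSHR
        return "miss"

    def fill(self, block):
        """Memory returned the block: install it and release waiting ops in order."""
        waiting = self.mshrs.pop(block)
        self.resident.add(block)
        return waiting


cache = NonBlockingCache(num_mshrs=2, resident={"A"})
stream = [("ld", "A"), ("ld", "B"), ("ld", "C"), ("ld", "D"), ("st", "B")]
outcomes = [cache.access(op, blk) for op, blk in stream]
released = cache.fill("B")
retry = cache.access("ld", "D")
```

Keeping the waiting ops in arrival order per block is one simple way to "keep multiple requests straight".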

EECS 470 Roadmap

[Diagram nodes: Speedup Programs, Reduce Instruction Latency, Parallelize, Reduce average memory latency, Caching, Reduce number of instructions, Instruction Level Parallelism, Memory Flow, Instruction Flow, Prefetching]

The memory wall

[Figure: Processor and Memory curves. Source: Hennessy & Patterson, Computer Architecture: A Quantitative Approach, 4th ed.]

Today: 1 mem access ≈ 500 arithmetic ops

How to reduce memory stalls for existing SW?

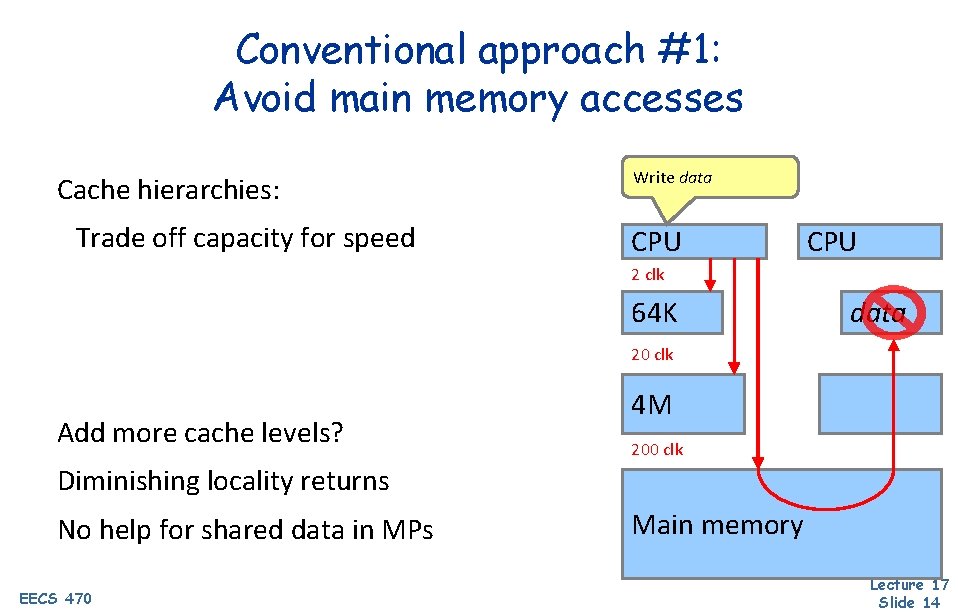

Conventional approach #1: Avoid main memory accesses

Cache hierarchies: trade off capacity for speed

[Figure: CPU, 64K cache, 4M cache, and main memory in a hierarchy, with latency labels 2 clk, 20 clk, and 200 clk]

Add more cache levels?
• Diminishing locality returns
• No help for shared data in MPs

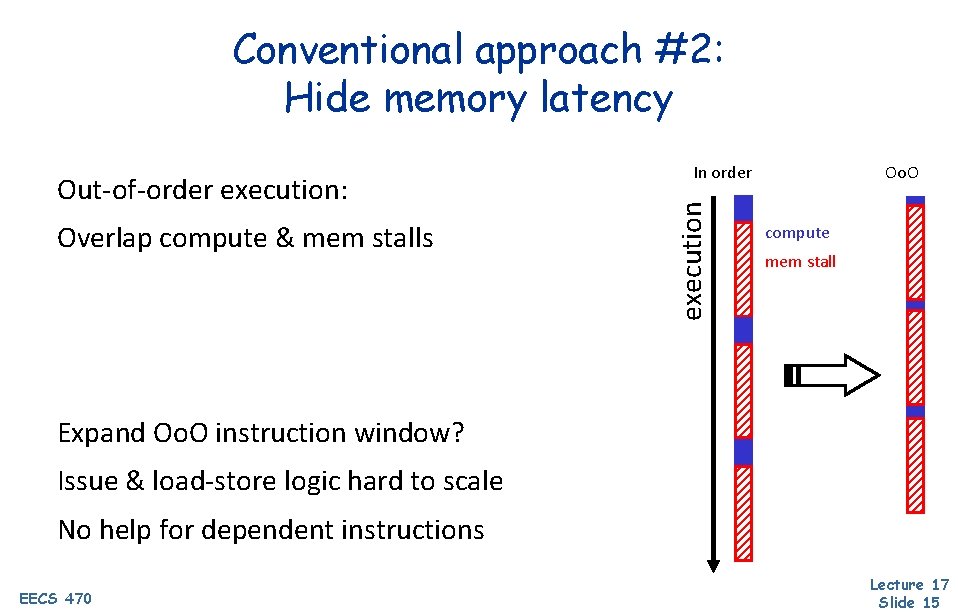

Conventional approach #2: Hide memory latency

Out-of-order execution: overlap compute & mem stalls

[Figure: execution timelines for in-order and OoO, showing compute and mem stall segments]

Expand OoO instruction window?
• Issue & load-store logic hard to scale
• No help for dependent instructions

What is Prefetching?

• Fetch memory before it's needed
• Targets compulsory, capacity, & coherence misses

Big challenges:
1. knowing “what” to fetch
  • Fetching useless info wastes valuable resources
2. “when” to fetch it
  • Fetching too early clutters storage
  • Fetching too late defeats the purpose of “pre”-fetching

Software Prefetching

Compiler/programmer places prefetch instructions
• requires ISA support
• why not use regular loads?
• found in recent ISAs such as SPARC V-9

Prefetch into
• register (binding)
• caches (non-binding)

Software Prefetching (Cont.)

e.g.,

    for (I = 1; I < rows; I++)
        for (J = 1; J < columns; J++) {
            prefetch(&x[I+1][J]);    /* request the element one row ahead */
            sum = sum + x[I][J];
        }

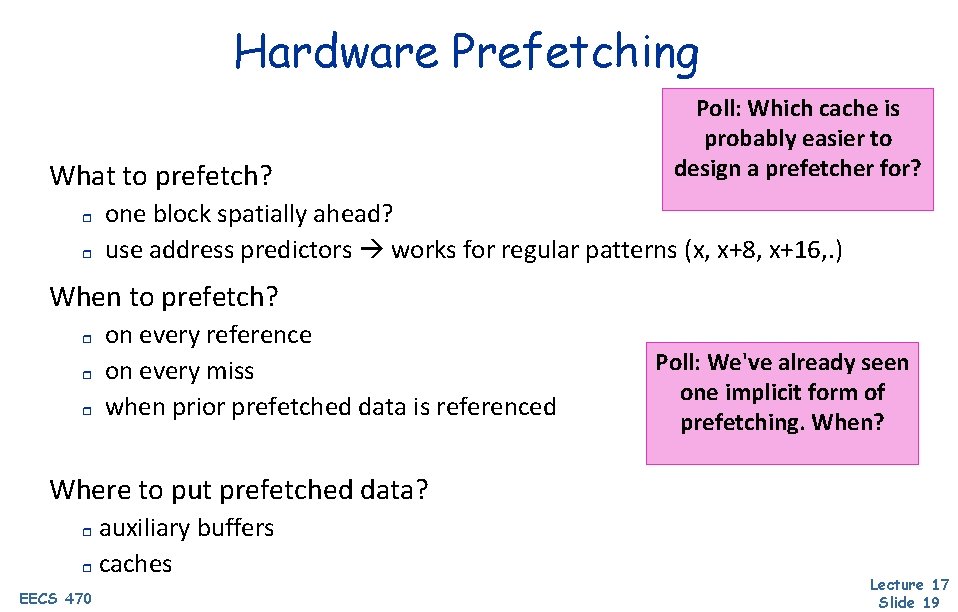

Hardware Prefetching

Poll: Which cache is probably easier to design a prefetcher for?

What to prefetch?
• one block spatially ahead?
• use address predictors: works for regular patterns (x, x+8, x+16, ...)

When to prefetch?
• on every reference
• on every miss
• when prior prefetched data is referenced

Poll: We've already seen one implicit form of prefetching. When?

Where to put prefetched data?
• auxiliary buffers
• caches

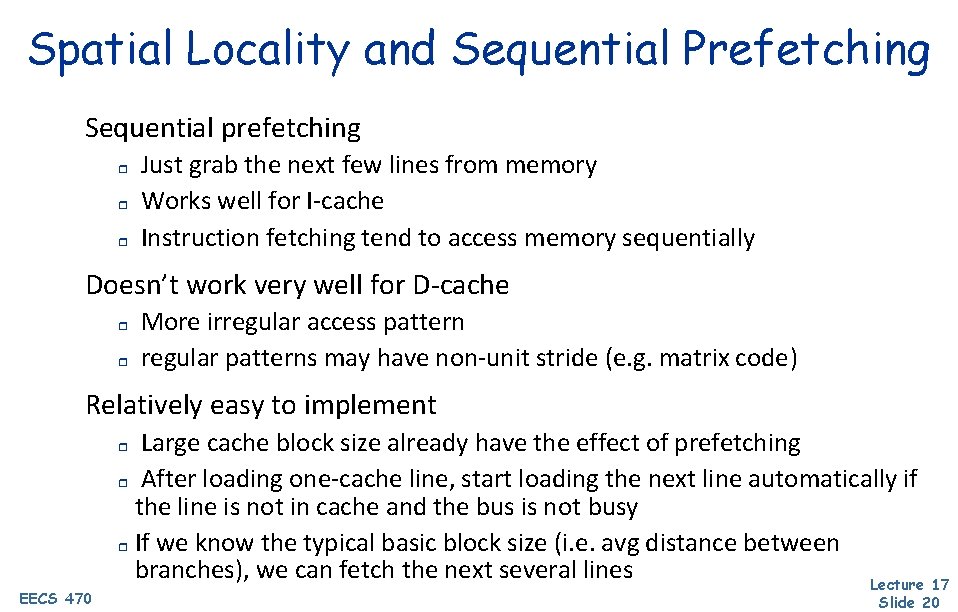

Spatial Locality and Sequential Prefetching

Sequential prefetching
• Just grab the next few lines from memory

Works well for I-cache
• Instruction fetching tends to access memory sequentially

Doesn’t work very well for D-cache
• More irregular access pattern
• regular patterns may have non-unit stride (e.g. matrix code)

Relatively easy to implement
• Large cache block size already has the effect of prefetching
• After loading one cache line, start loading the next line automatically if the line is not in cache and the bus is not busy
• If we know the typical basic block size (i.e. avg distance between branches), we can fetch the next several lines
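A rough way to see why next-line prefetching helps sequential streams but not strided ones is to count misses in a toy model. The sketch assumes an unbounded cache, prefetches that always arrive in time, prefetch-on-miss only, and 64-byte lines; the function name and the `degree` parameter (number of next lines fetched per miss) are hypothetical.

```python
def count_misses(addresses, line_size=64, degree=0):
    """Count demand misses with a next-`degree`-lines prefetch issued on every miss."""
    cache = set()  # unbounded set of resident line numbers (a simplification)
    misses = 0
    for addr in addresses:
        line = addr // line_size
        if line not in cache:
            misses += 1
            cache.add(line)
            for k in range(1, degree + 1):
                cache.add(line + k)  # sequential prefetch of the following lines
    return misses


sequential = range(0, 64 * 16, 4)    # word-by-word walk over 16 lines
strided = range(0, 256 * 16, 256)    # one access every 4 lines

seq_misses = [count_misses(sequential, degree=d) for d in (0, 1, 3)]
strided_misses = [count_misses(strided, degree=d) for d in (0, 1, 3)]
```

In this model the sequential walk drops from 16 misses to 8 and then 4, while the strided walk stays at 16 because the prefetched lines are never touched.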

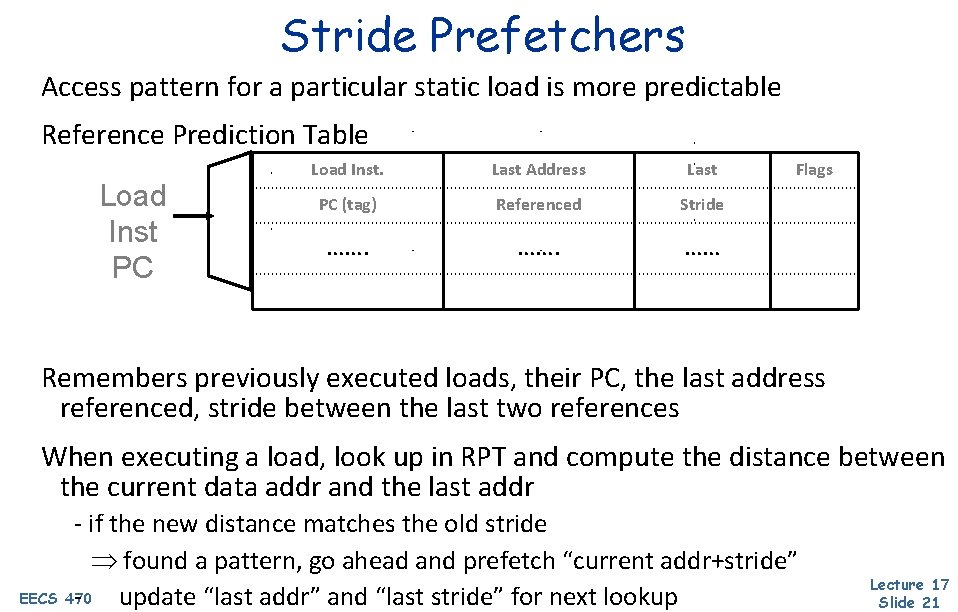

Stride Prefetchers

Access pattern for a particular static load is more predictable

Reference Prediction Table

| Load Inst. PC (tag) | Last Address Referenced | Last Stride | Flags |
| --- | --- | --- | --- |
| … | … | … | … |

Remembers previously executed loads, their PC, the last address referenced, stride between the last two references

When executing a load, look up in RPT and compute the distance between the current data addr and the last addr
- if the new distance matches the old stride => found a pattern, go ahead and prefetch “current addr+stride”
- update “last addr” and “last stride” for next lookup
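The lookup-and-update rule can be sketched as a small table keyed by load PC. This is an illustrative model under assumptions: the class name is hypothetical, the table is unbounded, the Flags column is left out, and a zero stride is treated as not worth prefetching.

```python
class ReferencePredictionTable:
    """Toy RPT: per-load-PC last address and last stride (no Flags state machine)."""

    def __init__(self):
        self.entries = {}  # pc -> (last_addr, last_stride)

    def access(self, pc, addr):
        """Record this load and return an address to prefetch, or None."""
        if pc not in self.entries:
            self.entries[pc] = (addr, None)  # first sighting: no stride yet
            return None
        last_addr, last_stride = self.entries[pc]
        stride = addr - last_addr
        self.entries[pc] = (addr, stride)  # update last addr and last stride
        if stride == last_stride and stride != 0:
            return addr + stride  # same stride twice in a row: pattern found
        return None


rpt = ReferencePredictionTable()
load_pc = 0x400  # hypothetical PC of one static load
prefetches = [rpt.access(load_pc, a) for a in (100, 108, 116, 124, 200)]
```

The first two accesses only train the entry; the prefetches start once the stride of 8 repeats, and the jump to 200 breaks the pattern.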

![Stream Buffers [Jouppi]](http://slidetodoc.com/presentation_image_h2/395bb00eeccaf7495cf618713f52e582/image-22.jpg)

Stream Buffers [Jouppi]

Each stream buffer holds one stream of sequentially prefetched cache lines
• No cache pollution

On a load miss check the head of all stream buffers for an address match
• if hit, pop the entry from FIFO, update the cache with data
• if not, allocate a new stream buffer to the new miss address (may have to recycle a stream buffer following LRU policy)

Stream buffer FIFOs are continuously topped-off with subsequent cache lines whenever there is room and the bus is not busy

Stream buffers can incorporate stride prediction mechanisms to support non-unit-stride streams

[Figure: DCache backed by several stream buffer FIFOs, all connected to the memory interface]
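The head-check, pop, and recycle behavior might be modeled as below. This is a sketch under assumptions: the class name is hypothetical, buffers hold line numbers only, top-off is instantaneous (no bus model), and list position is used as a stand-in for LRU order.

```python
from collections import deque


class StreamBuffers:
    """Toy stream buffers: each FIFO holds the next sequential line numbers of a stream."""

    def __init__(self, num_buffers=2, depth=4):
        self.depth = depth
        self.buffers = [deque() for _ in range(num_buffers)]  # index 0 is the LRU buffer

    def on_cache_miss(self, line):
        """Return True if the missing line is at the head of some stream buffer."""
        for i, fifo in enumerate(self.buffers):
            if fifo and fifo[0] == line:
                fifo.popleft()  # the line moves into the cache
                fifo.append((fifo[-1] if fifo else line) + 1)  # top off the FIFO
                self.buffers.append(self.buffers.pop(i))  # mark as most recently used
                return True
        # No head matched: recycle the LRU buffer for a new stream after `line`.
        self.buffers.pop(0)
        self.buffers.append(deque(range(line + 1, line + 1 + self.depth)))
        return False


sb = StreamBuffers(num_buffers=2, depth=4)
hits = [sb.on_cache_miss(line) for line in (10, 11, 12, 50, 13)]
```

The miss on line 50 starts a second stream without disturbing the first, so the later miss on line 13 still hits its buffer head.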

Generalized Access Pattern Prefetchers

How do you prefetch
1. Heap data structures?
2. Indirect array accesses?
3. Generalized memory access patterns?

Current proposals:
• Precomputation prefetchers (runahead execution)
• Address correlating prefetchers (temporal memory streaming)
• Spatial pattern prefetchers (spatial memory streaming)

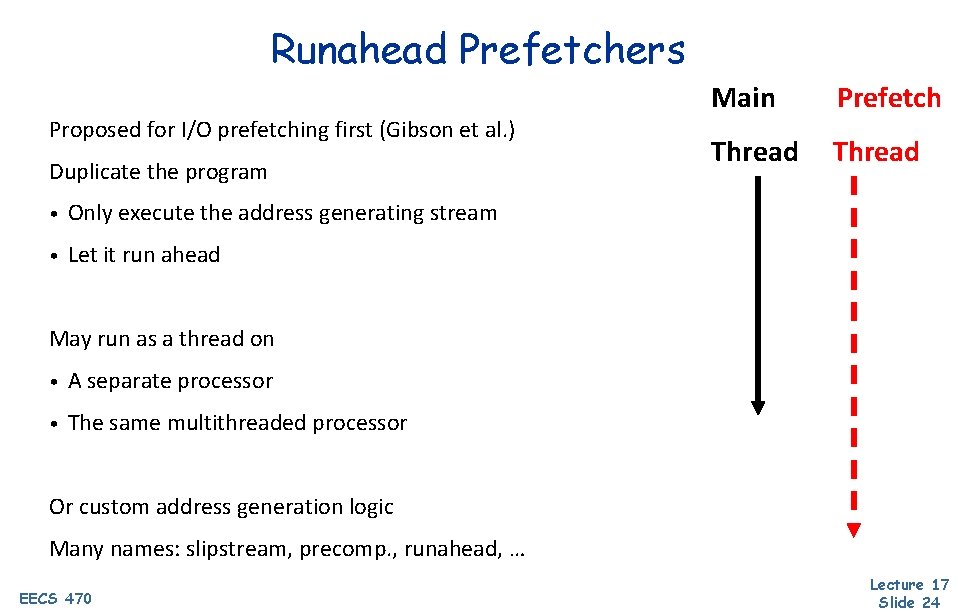

Runahead Prefetchers

Proposed for I/O prefetching first (Gibson et al.)

Duplicate the program
• Only execute the address generating stream
• Let it run ahead

May run as a thread on
• A separate processor
• The same multithreaded processor

Or custom address generation logic

Many names: slipstream, precomp., runahead, …

[Figure: main thread and prefetch thread]

Runahead Prefetcher

To get ahead:
• Must avoid waiting
• Must compute less

Predict
1. Control flow thru branch prediction
2. Data flow thru value prediction
3. Address generation computation only

+ Prefetch any pattern (need not be repetitive)
- Prediction only as good as branch + value prediction

How much prefetch lookahead?

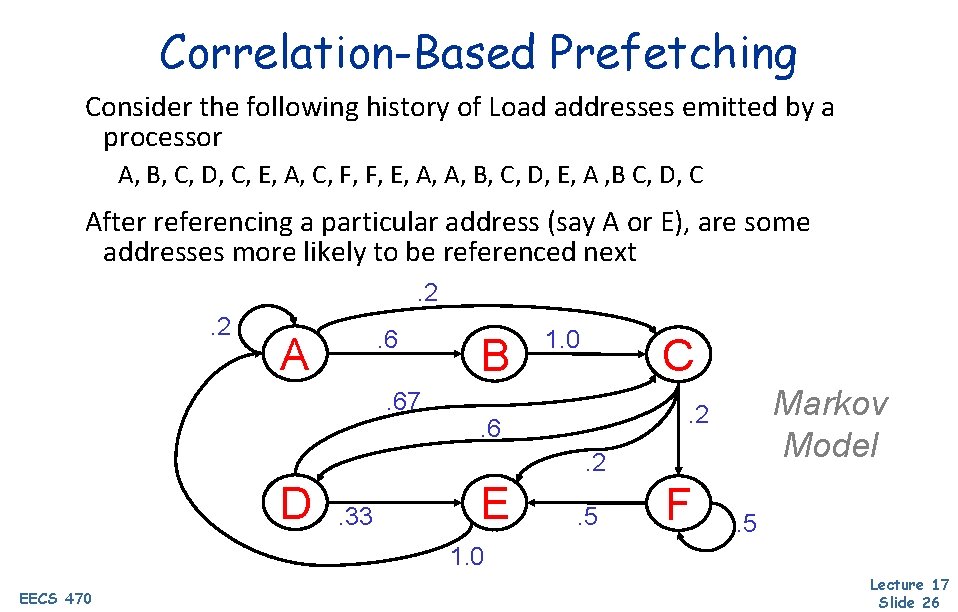

Correlation-Based Prefetching

Consider the following history of load addresses emitted by a processor:
A, B, C, D, C, E, A, C, F, F, E, A, A, B, C, D, E, A, B, C, D, C

After referencing a particular address (say A or E), are some addresses more likely to be referenced next?

Markov Model (probability of the next address, given the current one):
• A: A .2, B .6, C .2
• B: C 1.0
• C: D .6, E .2, F .2
• D: C .67, E .33
• E: A 1.0
• F: E .5, F .5
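The Markov model probabilities can be recomputed from the address history by counting which address follows which. The sketch below assumes the history reads A, B, C, D, C, E, A, C, F, F, E, A, A, B, C, D, E, A, B, C, D, C (with a comma between the final B and C); the function and variable names are hypothetical.

```python
from collections import Counter, defaultdict

HISTORY = "A B C D C E A C F F E A A B C D E A B C D C".split()


def transition_probabilities(history):
    """Estimate P(next | current) from consecutive pairs in the history."""
    counts = defaultdict(Counter)
    for cur, nxt in zip(history, history[1:]):
        counts[cur][nxt] += 1
    return {
        cur: {nxt: n / sum(successors.values()) for nxt, n in successors.items()}
        for cur, successors in counts.items()
    }


probs = transition_probabilities(HISTORY)
after_a = probs["A"]  # B is the most likely successor of A
after_d = {nxt: round(p, 2) for nxt, p in probs["D"].items()}
```

For this history, B and E each have a single successor, so a prefetcher keyed on either of them would always pick the right next address.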

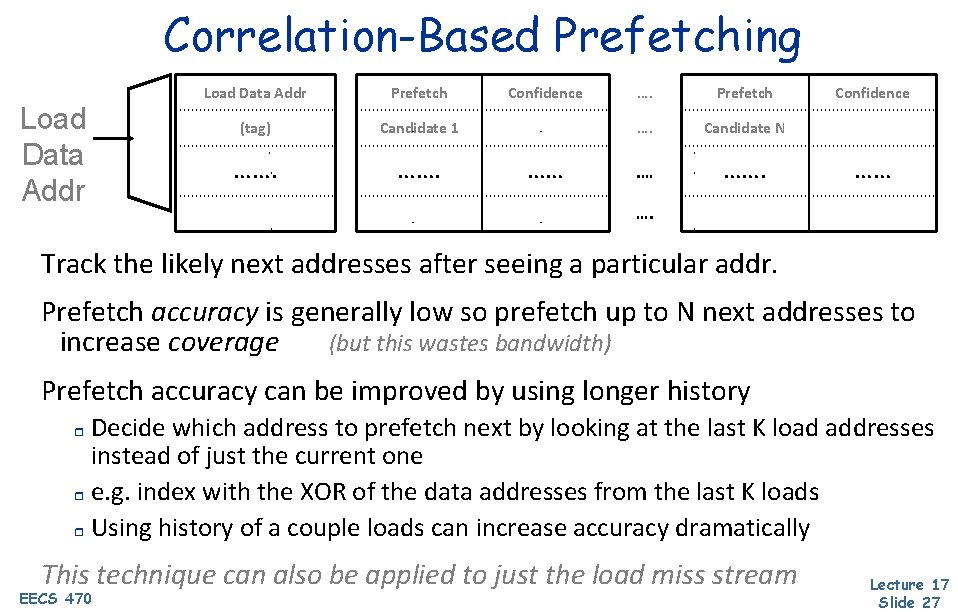

Correlation-Based Prefetching

| Load Data Addr (tag) | Prefetch Candidate 1 | Confidence | … | Prefetch Candidate N | Confidence |
| --- | --- | --- | --- | --- | --- |
| … | … | … | … | … | … |

Track the likely next addresses after seeing a particular addr.

Prefetch accuracy is generally low so prefetch up to N next addresses to increase coverage (but this wastes bandwidth)

Prefetch accuracy can be improved by using longer history
• Decide which address to prefetch next by looking at the last K load addresses instead of just the current one
• e.g. index with the XOR of the data addresses from the last K loads
• Using history of a couple loads can increase accuracy dramatically

This technique can also be applied to just the load miss stream
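The effect of a longer history can be tried on a short symbolic address stream. This is a sketch under assumptions: the example stream, the function names, and the use of raw occurrence counts as the confidence value are all hypothetical, and the table is indexed by the tuple of the last K addresses rather than by their XOR, which keeps the example readable but is not the hashing scheme named above.

```python
from collections import Counter, defaultdict

HISTORY = "A B C D C E A C F F E A A B C D E A B C D C".split()


def train(history, k):
    """Build a table: last k addresses -> counts of the address that followed."""
    table = defaultdict(Counter)
    for i in range(k, len(history)):
        table[tuple(history[i - k:i])][history[i]] += 1
    return table


def candidates(table, recent, n):
    """Return up to n prefetch candidates, most frequently seen successor first."""
    return [addr for addr, _count in table.get(tuple(recent), Counter()).most_common(n)]


one_load = train(HISTORY, k=1)
two_loads = train(HISTORY, k=2)

after_c = one_load[("C",)]                     # C alone has three different successors
after_bc = candidates(two_loads, ["B", "C"], 1)
after_dc = candidates(two_loads, ["D", "C"], 1)
after_ac = candidates(two_loads, ["A", "C"], 1)
```

With K=1 the entry for C is split across D, E, and F, while with K=2 each of the three contexts ending in C has a single successor in this stream.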

More info on Prefetching?

Professor Wenisch (professor here, currently working at Google) wrote a great summary of the state of the art (available through umich IP addresses):
https://www.morganclaypool.com/doi/abs/10.2200/S00581ED1V01Y201405CAC028

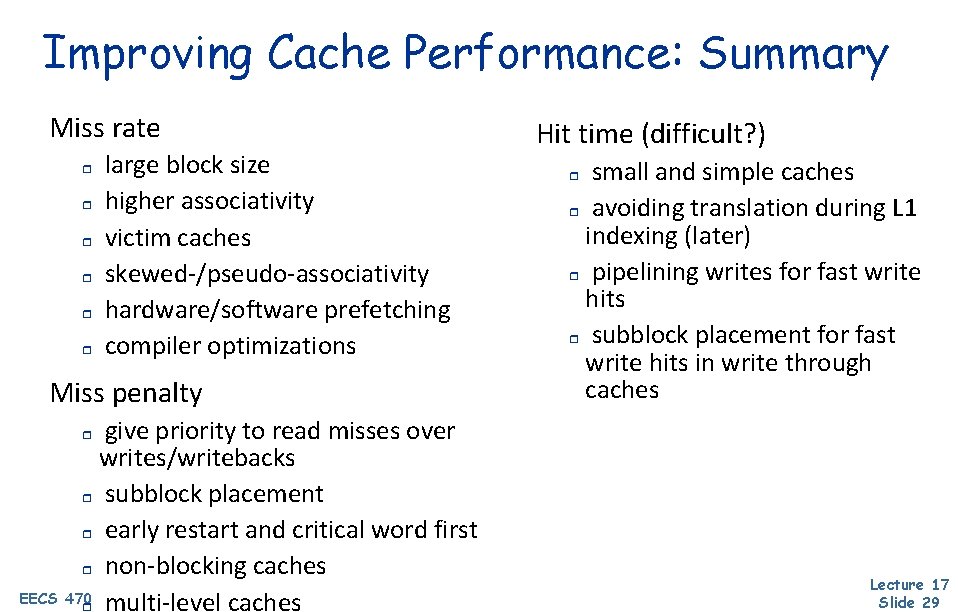

Improving Cache Performance: Summary

Miss rate
• large block size
• higher associativity
• victim caches
• skewed-/pseudo-associativity
• hardware/software prefetching
• compiler optimizations

Miss penalty
• give priority to read misses over writes/writebacks
• subblock placement
• early restart and critical word first
• non-blocking caches
• multi-level caches

Hit time (difficult?)
• small and simple caches
• avoiding translation during L1 indexing (later)
• pipelining writes for fast write hits
• subblock placement for fast write hits in write through caches

Next Time

Multicore!

Lingering questions / feedback? I'll include an anonymous form at the end of every lecture: https://bit.ly/3oSr5FD
