
Codebook-based Feature Compensation for Robust Speech Recognition Shih-Hsiang Lin (林士翔) Graduate Student National Taiwan Normal University, Taipei, Taiwan 2007/02/08

Outline
• Introduction
• Codebook-based Cepstral Compensation
  – With Both Clean and Noisy (Stereo) Data
    • Stereo-based Piecewise Linear Compensation (SPLICE)
    • SNR Dependent Cepstral Normalization (SDCN)
    • Codeword Dependent Cepstral Normalization (CDCN)
    • Probabilistic Optimum Filtering (POF)
  – With Only Noisy Data
    • Maximum Likelihood based Stochastic Vector Mapping (ML-SVM)
    • Maximum Mutual Information-SPLICE (MMI-SPLICE)
    • Minimum Classification Error based SVM (MCE-SVM)
    • Stochastic Matching
• Conclusions

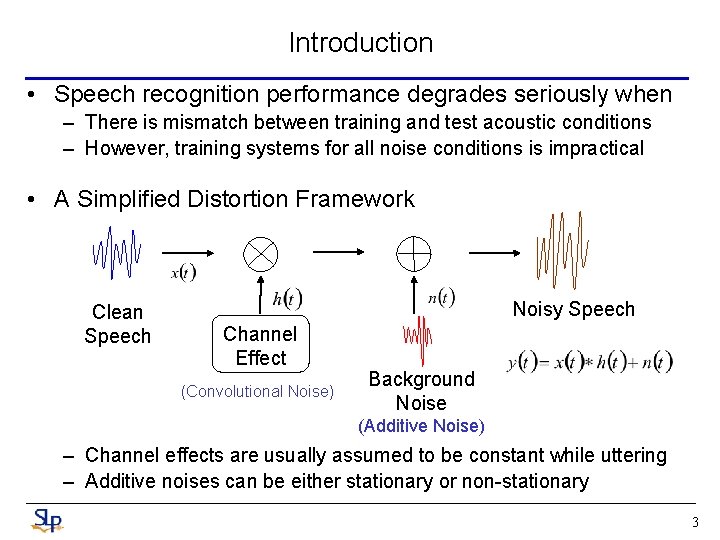

Introduction
• Speech recognition performance degrades seriously when there is a mismatch between training and test acoustic conditions
  – Training systems for all possible noise conditions is impractical
• A simplified distortion framework
  – [Diagram: clean speech passes through a channel effect (convolutional noise) and is corrupted by background noise (additive noise), yielding noisy speech]
  – Channel effects are usually assumed to be constant within an utterance
  – Additive noise can be either stationary or non-stationary

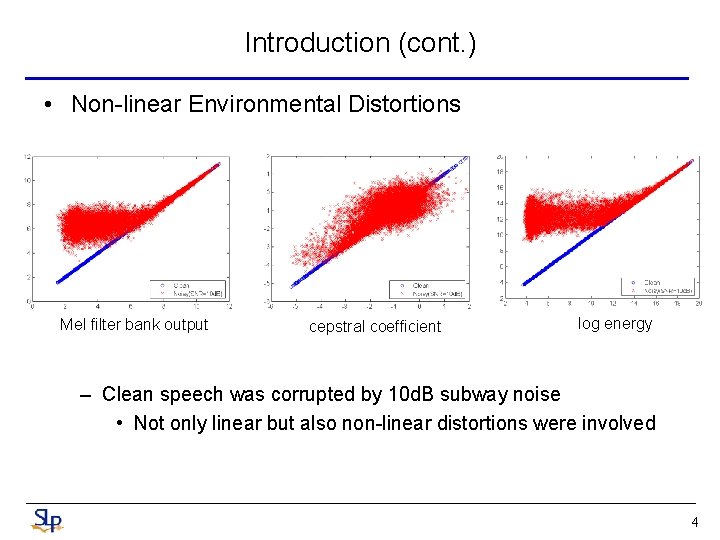

Introduction (cont.)
• Non-linear environmental distortions
  – [Figure: Mel filter bank output, log energy, and cepstral coefficient of clean speech corrupted by 10 dB subway noise]
  – Not only linear but also non-linear distortions are involved

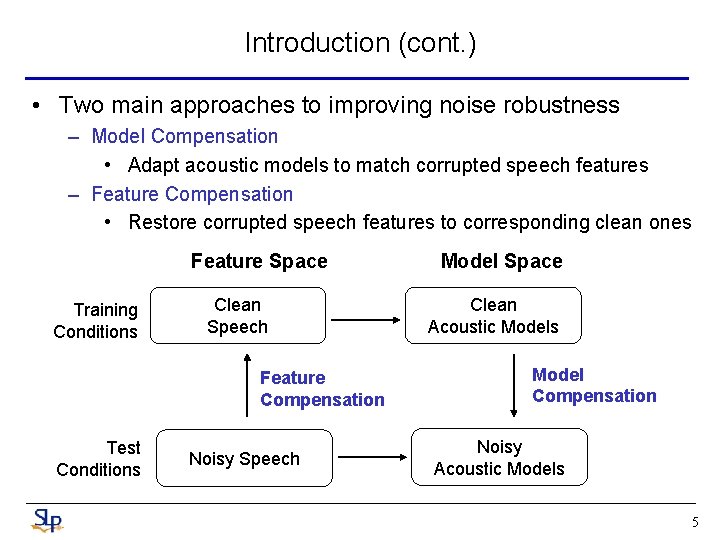

Introduction (cont.)
• Two main approaches to improving noise robustness
  – Model compensation: adapt acoustic models to match corrupted speech features
  – Feature compensation: restore corrupted speech features to the corresponding clean ones
• [Diagram: in the feature space, feature compensation maps noisy speech (test conditions) to clean speech (training conditions); in the model space, model compensation maps clean acoustic models to noisy acoustic models]

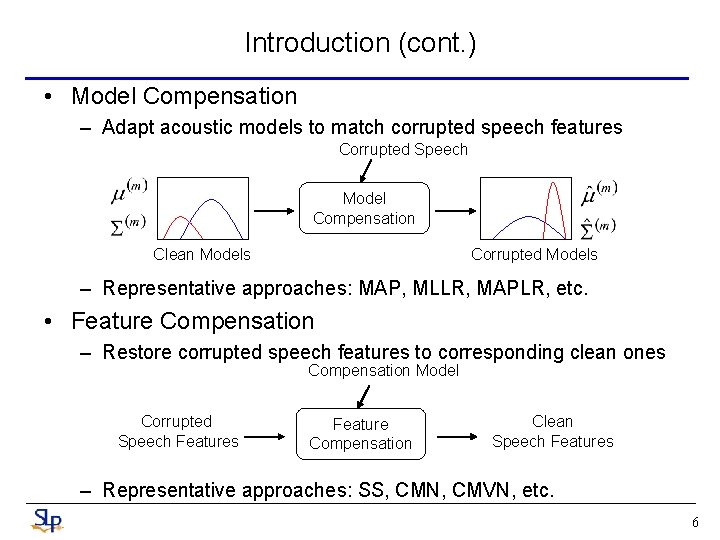

Introduction (cont.)
• Model Compensation
  – Adapt acoustic models to match corrupted speech features
  – [Diagram: corrupted speech drives model compensation, which turns clean models into corrupted models]
  – Representative approaches: MAP, MLLR, MAPLR, etc.
• Feature Compensation
  – Restore corrupted speech features to the corresponding clean ones
  – [Diagram: a compensation model drives feature compensation, which turns corrupted speech features into clean speech features]
  – Representative approaches: SS, CMN, CMVN, etc.

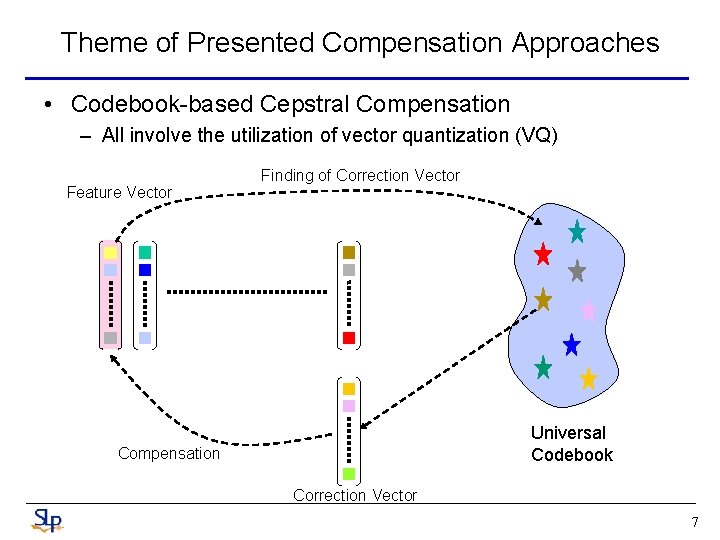

Theme of Presented Compensation Approaches
• Codebook-based Cepstral Compensation
  – All approaches make use of vector quantization (VQ)
  – [Diagram: a feature vector is matched against a universal codebook to find a correction vector, which is then used to compensate the feature vector]

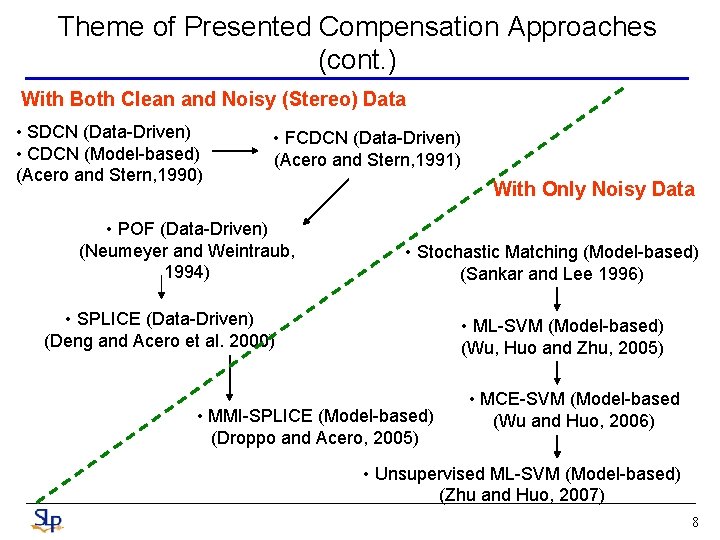

Theme of Presented Compensation Approaches (cont.)
• With Both Clean and Noisy (Stereo) Data
  – SDCN (Data-Driven)
  – CDCN (Model-based) (Acero and Stern, 1990)
  – FCDCN (Data-Driven) (Acero and Stern, 1991)
  – POF (Data-Driven) (Neumeyer and Weintraub, 1994)
  – SPLICE (Data-Driven) (Deng and Acero et al., 2000)
• With Only Noisy Data
  – Stochastic Matching (Model-based) (Sankar and Lee, 1996)
  – ML-SVM (Model-based) (Wu, Huo and Zhu, 2005)
  – MMI-SPLICE (Model-based) (Droppo and Acero, 2005)
  – MCE-SVM (Model-based) (Wu and Huo, 2006)
  – Unsupervised ML-SVM (Model-based) (Zhu and Huo, 2007)

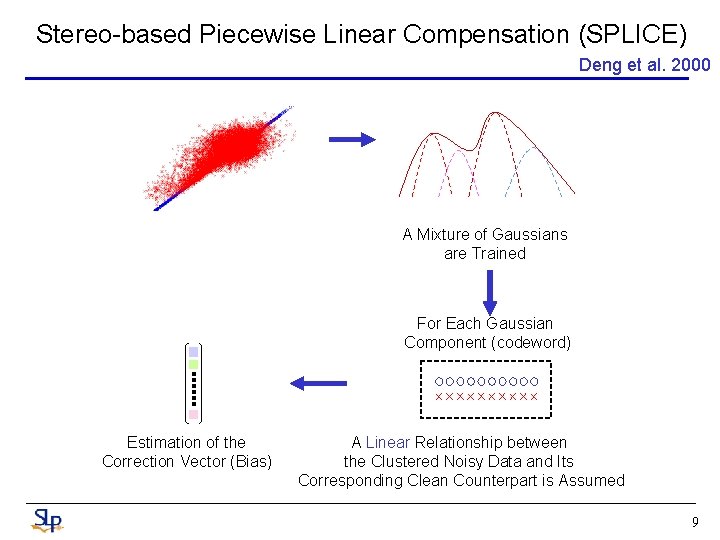

Stereo-based Piecewise Linear Compensation (SPLICE) (Deng et al., 2000)
• A mixture of Gaussians is trained on the noisy data
• For each Gaussian component (codeword), a correction vector (bias) is estimated
• A linear relationship between the clustered noisy data and its corresponding clean counterpart is assumed

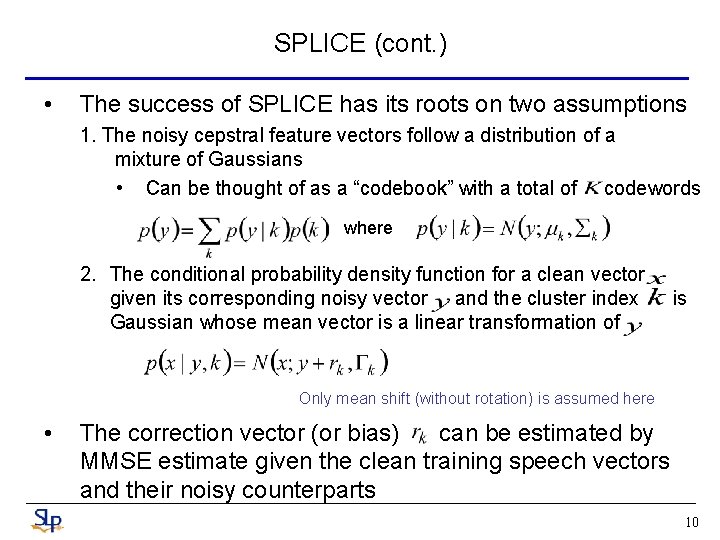

SPLICE (cont.)
• The success of SPLICE rests on two assumptions
  1. The noisy cepstral feature vectors follow a mixture-of-Gaussians distribution
     • The mixture can be thought of as a "codebook" in which each Gaussian component is one codeword
  2. The conditional probability density function of a clean vector, given its corresponding noisy vector and the cluster index, is Gaussian, with a mean vector that is a linear transformation of the noisy vector
     • Only a mean shift (without rotation) is assumed here
• The correction vector (or bias) of each codeword can be obtained as an MMSE estimate from the clean training speech vectors and their noisy counterparts

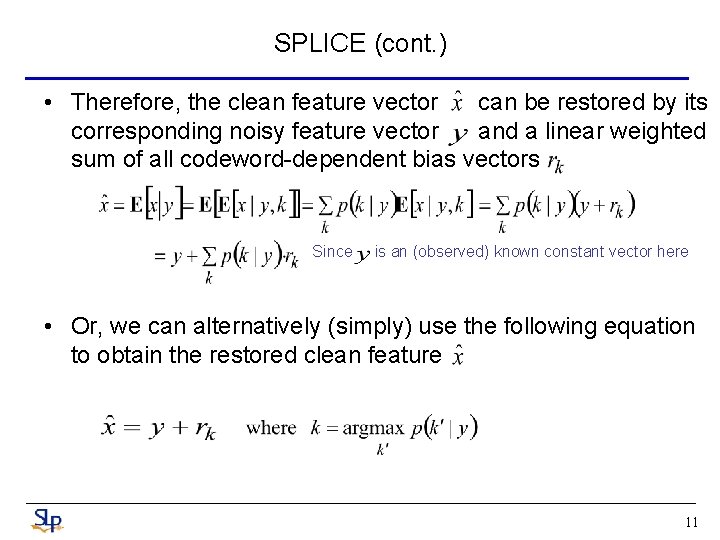

SPLICE (cont.)
• Therefore, the clean feature vector can be restored from its corresponding noisy feature vector plus a linear weighted sum of all codeword-dependent bias vectors
  – The noisy vector can be taken outside the expectation because it is an observed (known) constant vector here
• Alternatively, a simpler equation can be used to obtain the restored clean feature
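
The restoration rule on this slide can be written out as follows. This is a reconstruction from the standard SPLICE formulation, not a copy of the slide's equations; the symbols $\mathbf{y}$ (noisy vector), $\mathbf{x}$ (clean vector), $k$ (codeword index), $K$ (number of codewords), $\mathbf{r}_k$ (bias vector), and $\boldsymbol{\Gamma}_k$ (conditional covariance) are assumed notation.

```latex
\begin{align}
p(\mathbf{y}) &= \sum_{k=1}^{K} p(k)\,
   \mathcal{N}(\mathbf{y};\,\boldsymbol{\mu}_k,\,\boldsymbol{\Sigma}_k) \\
p(\mathbf{x}\mid\mathbf{y},k) &=
   \mathcal{N}(\mathbf{x};\,\mathbf{y}+\mathbf{r}_k,\,\boldsymbol{\Gamma}_k) \\
\hat{\mathbf{x}} = E[\mathbf{x}\mid\mathbf{y}]
   &= \sum_{k=1}^{K} p(k\mid\mathbf{y})\,E[\mathbf{x}\mid\mathbf{y},k]
    = \mathbf{y} + \sum_{k=1}^{K} p(k\mid\mathbf{y})\,\mathbf{r}_k \\
\hat{\mathbf{x}} &\approx \mathbf{y} + \mathbf{r}_{\hat{k}},
   \qquad \hat{k} = \arg\max_{k}\, p(k\mid\mathbf{y})
\end{align}
```

The third line uses the fact that the posteriors $p(k\mid\mathbf{y})$ sum to one, so $\mathbf{y}$ factors out of the weighted sum; the last line is the usual single-codeword approximation.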

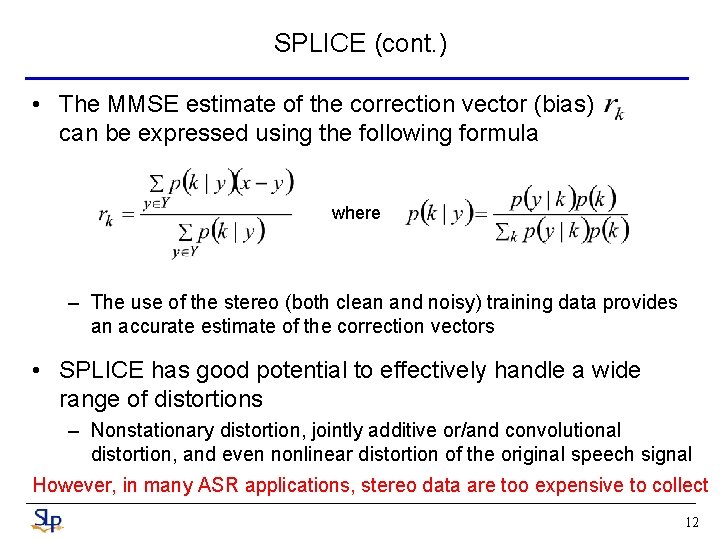

SPLICE (cont.)
• The MMSE estimate of the correction vector (bias) is computed from the stereo training data
  – Using stereo (both clean and noisy) training data provides an accurate estimate of the correction vectors
• SPLICE has good potential to handle a wide range of distortions effectively
  – Nonstationary distortion, joint additive and/or convolutional distortion, and even nonlinear distortion of the original speech signal
• However, in many ASR applications, stereo data are too expensive to collect
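
A minimal sketch of SPLICE bias estimation and restoration, assuming a diagonal-covariance GMM over the noisy features has already been trained. The function names and the list-of-lists data layout are hypothetical, and the bias formula used (a posterior-weighted average of the clean-minus-noisy differences) is the commonly cited form of the MMSE estimate rather than a transcription of the slide.

```python
import math


def log_gauss_diag(y, mean, var):
    """Log density of a diagonal-covariance Gaussian evaluated at vector y."""
    return sum(-0.5 * (math.log(2.0 * math.pi * v) + (a - m) ** 2 / v)
               for a, m, v in zip(y, mean, var))


def posteriors(y, weights, means, variances):
    """Return p(k | y) for every codeword k of the noisy-speech GMM."""
    logs = [math.log(w) + log_gauss_diag(y, m, v)
            for w, m, v in zip(weights, means, variances)]
    top = max(logs)  # subtract the maximum for numerical stability
    exps = [math.exp(value - top) for value in logs]
    total = sum(exps)
    return [e / total for e in exps]


def estimate_biases(clean, noisy, weights, means, variances):
    """r_k = sum_t p(k|y_t) (x_t - y_t) / sum_t p(k|y_t), from stereo frames."""
    num_codewords, dim = len(weights), len(noisy[0])
    num = [[0.0] * dim for _ in range(num_codewords)]
    den = [0.0] * num_codewords
    for x, y in zip(clean, noisy):
        post = posteriors(y, weights, means, variances)
        for k, p in enumerate(post):
            den[k] += p
            for d in range(dim):
                num[k][d] += p * (x[d] - y[d])
    # A codeword that received no posterior mass keeps a zero bias.
    return [[n / den[k] if den[k] > 0.0 else 0.0 for n in num[k]]
            for k in range(num_codewords)]


def splice_restore(y, biases, weights, means, variances):
    """x_hat = y + sum_k p(k|y) r_k."""
    post = posteriors(y, weights, means, variances)
    return [y[d] + sum(p * b[d] for p, b in zip(post, biases))
            for d in range(len(y))]
```

With a single codeword the estimate reduces to the average clean-minus-noisy offset, which is a convenient sanity check before moving to a larger codebook.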

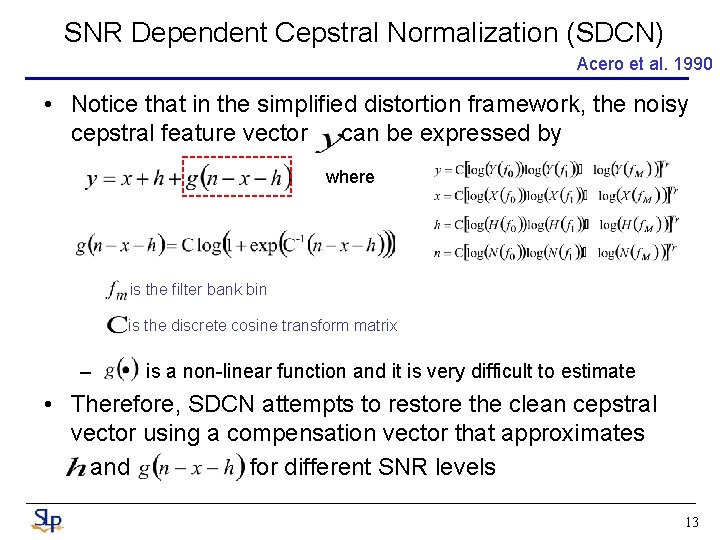

SNR Dependent Cepstral Normalization (SDCN) (Acero et al., 1990)
• In the simplified distortion framework, the noisy cepstral feature vector can be expressed as the clean cepstral vector plus a channel term and a noise-dependent correction term, written in terms of the filter bank bins and the discrete cosine transform matrix
  – The correction term is a non-linear function and is very difficult to estimate
• Therefore, SDCN attempts to restore the clean cepstral vector using a compensation vector that approximates the channel and correction terms for different SNR levels
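
For reference, the distortion model behind this slide is usually written as below. This is a sketch in assumed notation ($\mathbf{z}$ noisy cepstrum, $\mathbf{x}$ clean cepstrum, $\mathbf{q}$ channel, $\mathbf{n}$ noise, $\mathbf{C}$ the DCT matrix, $\mathbf{w}$ the SDCN compensation vector); the slide's own symbols were not preserved, and $\mathbf{C}^{-1}$ should be read as a pseudo-inverse when the cepstrum is truncated.

```latex
\begin{align}
\mathbf{z} &= \mathbf{x} + \mathbf{q} + \mathbf{r}(\mathbf{x},\mathbf{n},\mathbf{q}) \\
\mathbf{r}(\mathbf{x},\mathbf{n},\mathbf{q}) &=
   \mathbf{C}\,\ln\!\Bigl(\mathbf{1} +
   \exp\!\bigl(\mathbf{C}^{-1}(\mathbf{n}-\mathbf{q}-\mathbf{x})\bigr)\Bigr) \\
\hat{\mathbf{x}} &= \mathbf{z} - \mathbf{w}[\mathrm{SNR}],
   \qquad \mathbf{w}[\mathrm{SNR}] \approx \mathbf{q} + \mathbf{r}
\end{align}
```

The logarithm and exponential act element-wise on the filter bank bins. The second line follows from adding the filtered clean power and the noise power in each bin and then taking the logarithm.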

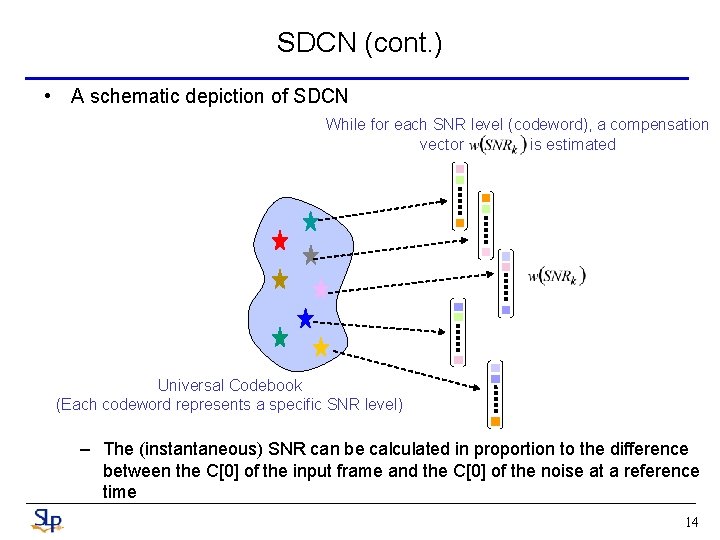

SDCN (cont.)
• A schematic depiction of SDCN
  – [Diagram: a universal codebook in which each codeword represents a specific SNR level; a compensation vector is estimated for each SNR level (codeword)]
  – The (instantaneous) SNR can be calculated in proportion to the difference between C[0] of the input frame and C[0] of the noise at a reference time

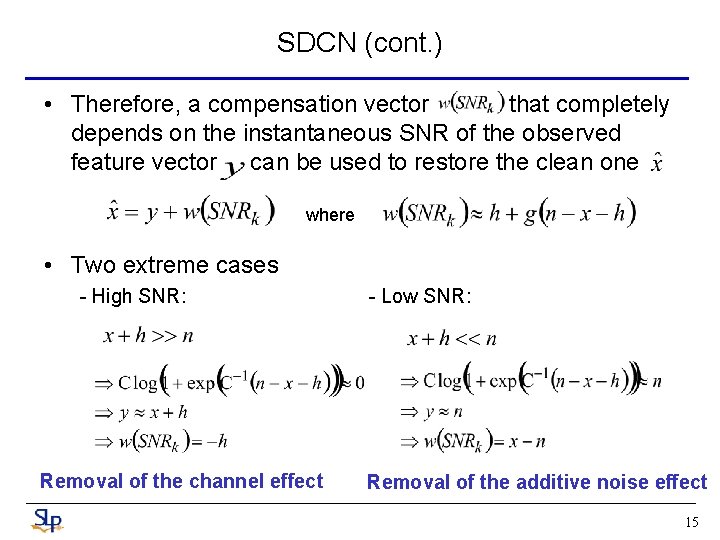

SDCN (cont.)
• Therefore, a compensation vector that depends solely on the instantaneous SNR of the observed feature vector can be used to restore the clean one
• Two extreme cases
  – High SNR: removal of the channel effect
  – Low SNR: removal of the additive noise effect

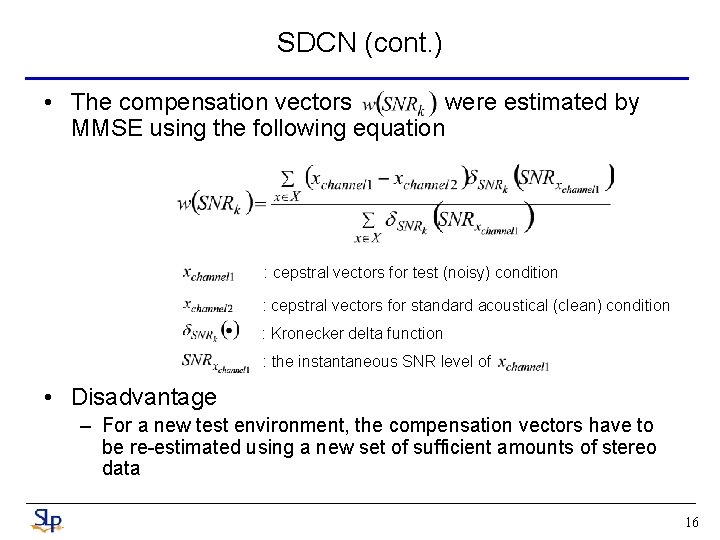

SDCN (cont.)
• The compensation vectors are estimated by MMSE from the cepstral vectors for the test (noisy) condition and the cepstral vectors for the standard acoustical (clean) condition, with a Kronecker delta function selecting the frames whose instantaneous SNR falls at each level
• Disadvantage
  – For a new test environment, the compensation vectors have to be re-estimated using a sufficient amount of new stereo data
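
The estimation described above amounts to averaging the noisy-minus-clean difference over all stereo frames that fall in the same SNR bin. The sketch below assumes a uniform quantization of the C[0] difference; the function names, the `step` and `num_levels` parameters, and the data layout are hypothetical choices for illustration.

```python
def snr_level(z, noise_c0, step=1.0, num_levels=30):
    """Quantize the instantaneous SNR, taken as proportional to C[0] - noise C[0]."""
    level = int((z[0] - noise_c0) / step)
    return min(max(level, 0), num_levels - 1)  # clamp to the codebook range


def estimate_sdcn_vectors(clean, noisy, noise_c0, step=1.0, num_levels=30):
    """w[i] = average of (z_t - x_t) over stereo frames whose SNR level is i."""
    dim = len(noisy[0])
    sums = [[0.0] * dim for _ in range(num_levels)]
    counts = [0] * num_levels
    for x, z in zip(clean, noisy):
        i = snr_level(z, noise_c0, step, num_levels)
        counts[i] += 1
        for d in range(dim):
            sums[i][d] += z[d] - x[d]
    # Levels that were never observed keep a zero compensation vector.
    return [[s / counts[i] if counts[i] else 0.0 for s in sums[i]]
            for i in range(num_levels)]


def sdcn_restore(z, vectors, noise_c0, step=1.0):
    """x_hat = z - w[SNR level of z]."""
    i = snr_level(z, noise_c0, step, len(vectors))
    return [a - w for a, w in zip(z, vectors[i])]
```

Because the table is indexed only by SNR, it has to be rebuilt from new stereo data whenever the test environment changes, which is the disadvantage noted on the slide.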

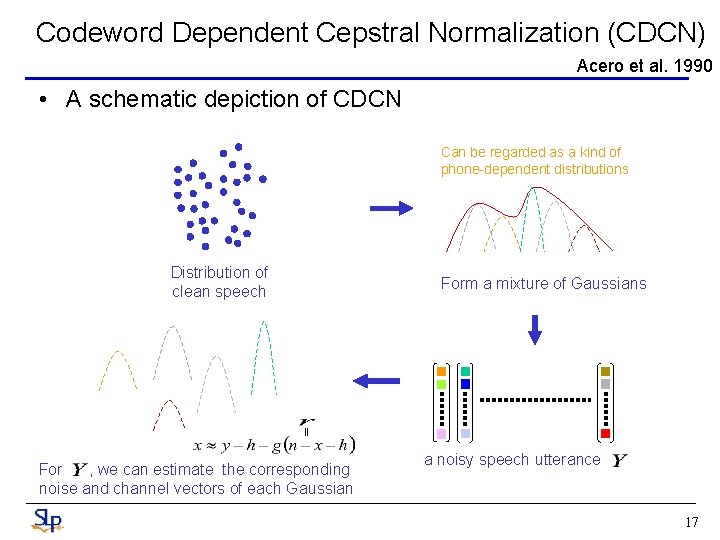

Codeword Dependent Cepstral Normalization (CDCN) (Acero et al., 1990)
• A schematic depiction of CDCN
  – [Diagram: the distribution of clean speech forms a mixture of Gaussians, which can be regarded as a kind of phone-dependent distribution; for a noisy speech utterance, the corresponding noise and channel vectors of each Gaussian can be estimated]

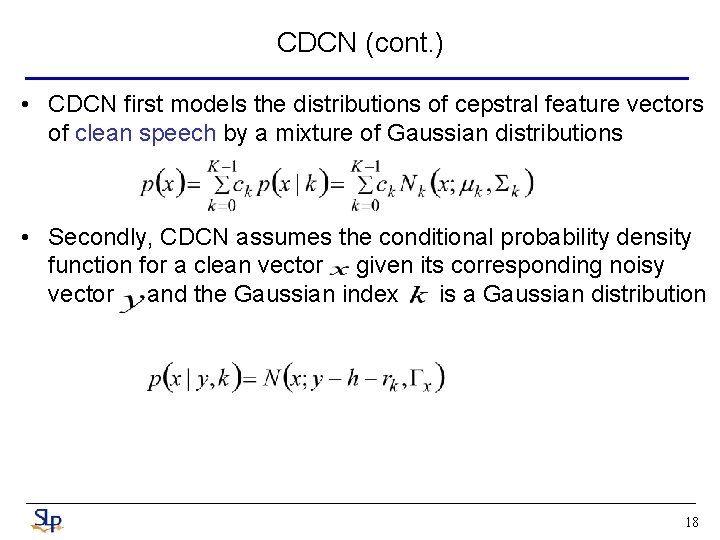

CDCN (cont.)
• First, CDCN models the distribution of the cepstral feature vectors of clean speech by a mixture of Gaussian distributions
• Second, CDCN assumes that the conditional probability density function of a clean vector, given its corresponding noisy vector and the Gaussian index, is a Gaussian distribution

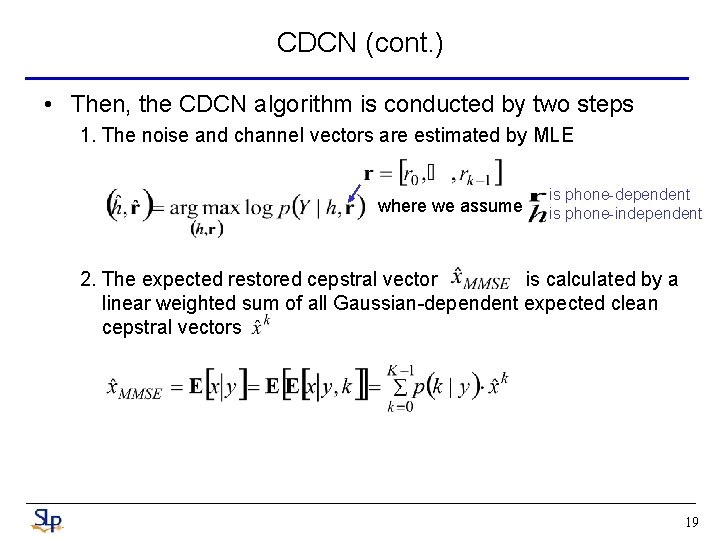

CDCN (cont.)
• The CDCN algorithm is then carried out in two steps
  1. The noise and channel vectors are estimated by MLE, where one term is assumed to be phone-dependent and the other phone-independent
  2. The expected restored cepstral vector is calculated as a linear weighted sum of all Gaussian-dependent expected clean cepstral vectors

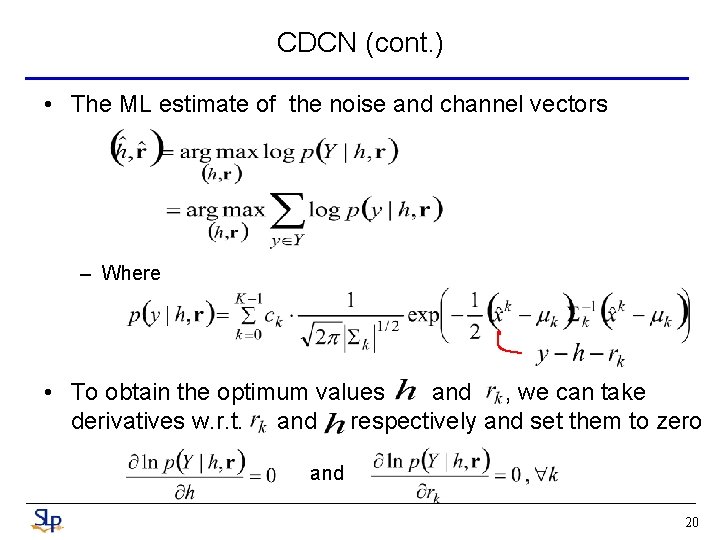

CDCN (cont.)
• The ML estimate of the noise and channel vectors
  – To obtain the optimum values, we take the derivatives of the likelihood with respect to the noise vector and the channel vector, respectively, and set them to zero

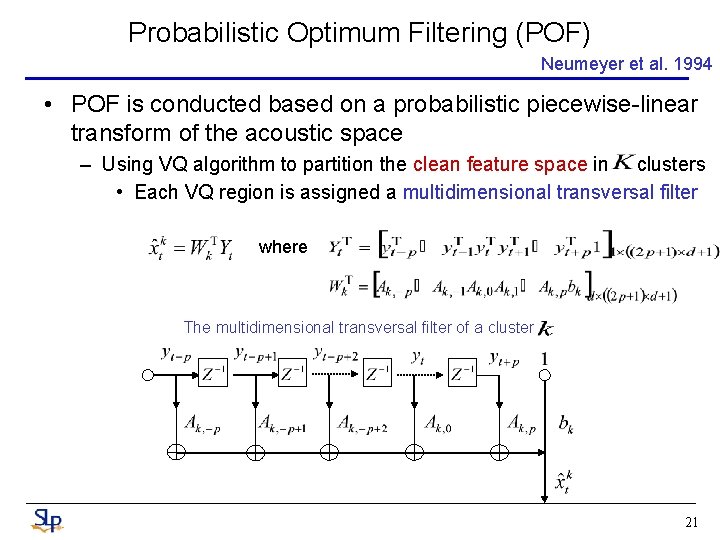

Probabilistic Optimum Filtering (POF) (Neumeyer et al., 1994)
• POF is based on a probabilistic piecewise-linear transformation of the acoustic space
  – A VQ algorithm is used to partition the clean feature space into clusters
• Each VQ region is assigned a multidimensional transversal filter

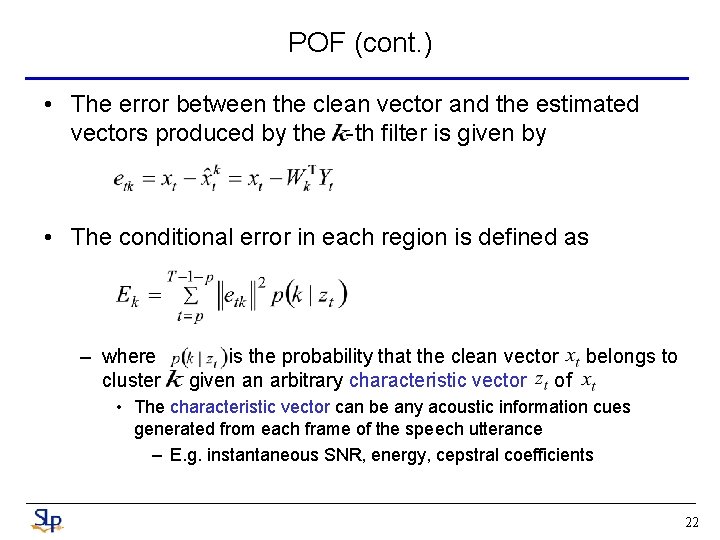

POF (cont.)
• An error is defined between the clean vector and the estimated vector produced by the filter of each cluster
• The conditional error in each region weights this error by the probability that the clean vector belongs to the cluster, given an arbitrary characteristic vector of the frame
• The characteristic vector can be any acoustic information cue generated from each frame of the speech utterance
  – E.g. instantaneous SNR, energy, cepstral coefficients

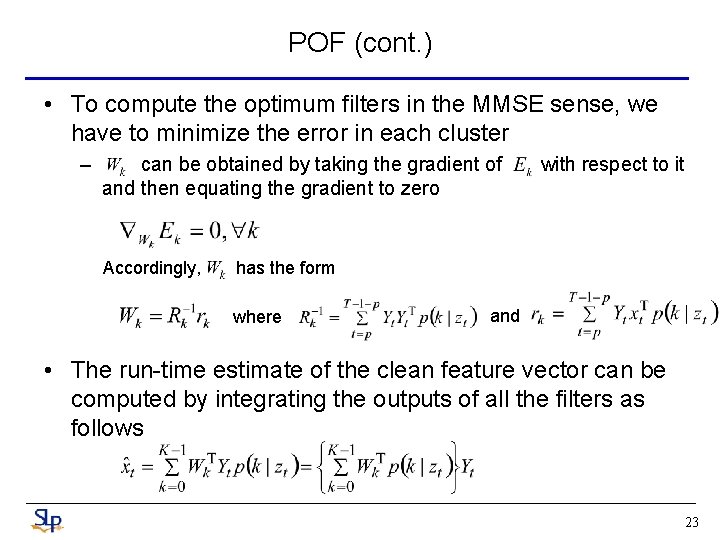

POF (cont.)
• To compute the optimum filters in the MMSE sense, we have to minimize the conditional error in each cluster
  – The optimum filter can be obtained by taking the gradient of the conditional error with respect to the filter and then equating the gradient to zero
• The run-time estimate of the clean feature vector is computed by combining the outputs of all the filters
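
The derivation on the POF slides can be sketched as follows. The notation is assumed, since the slide equations were not preserved: $\mathbf{Y}_n$ stacks the noisy frames in a window of $2p+1$ frames plus a constant 1, $\mathbf{W}_i$ is the filter of cluster $g_i$, $\mathbf{x}_n$ is the clean vector, and $\mathbf{z}_n$ is the characteristic vector of frame $n$.

```latex
\begin{align}
\mathbf{Y}_n &= \bigl[\mathbf{y}_{n-p}^{\top},\,\dots,\,\mathbf{y}_{n+p}^{\top},\,1\bigr]^{\top} \\
E_i &= \sum_{n} \bigl\lVert \mathbf{x}_n - \mathbf{W}_i^{\top}\mathbf{Y}_n \bigr\rVert^{2}\,
       p(g_i \mid \mathbf{z}_n) \\
\nabla_{\mathbf{W}_i} E_i = \mathbf{0}
   \;&\Rightarrow\; \mathbf{W}_i = \mathbf{R}_i^{-1}\,\mathbf{r}_i \\
\mathbf{R}_i &= \sum_{n} p(g_i \mid \mathbf{z}_n)\,\mathbf{Y}_n\mathbf{Y}_n^{\top},
\qquad
\mathbf{r}_i = \sum_{n} p(g_i \mid \mathbf{z}_n)\,\mathbf{Y}_n\mathbf{x}_n^{\top} \\
\hat{\mathbf{x}}_n &= \sum_{i} p(g_i \mid \mathbf{z}_n)\,\mathbf{W}_i^{\top}\mathbf{Y}_n
\end{align}
```

Each filter is therefore a weighted least-squares solution, and the run-time estimate blends the filter outputs with the same cluster probabilities used in training.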

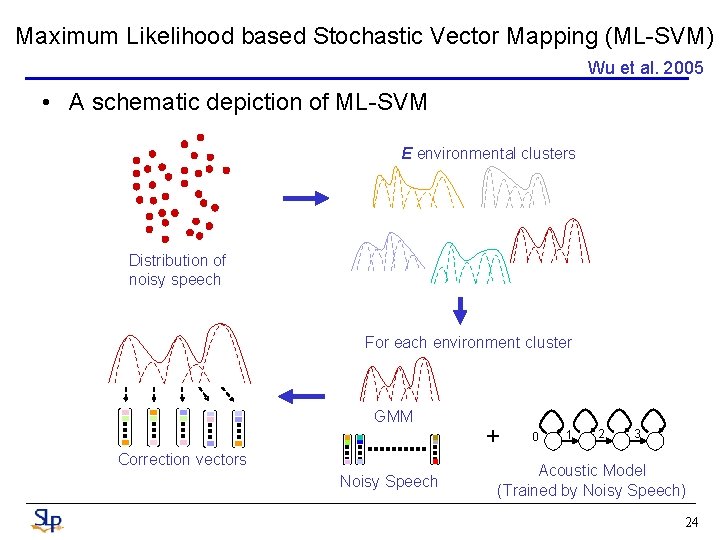

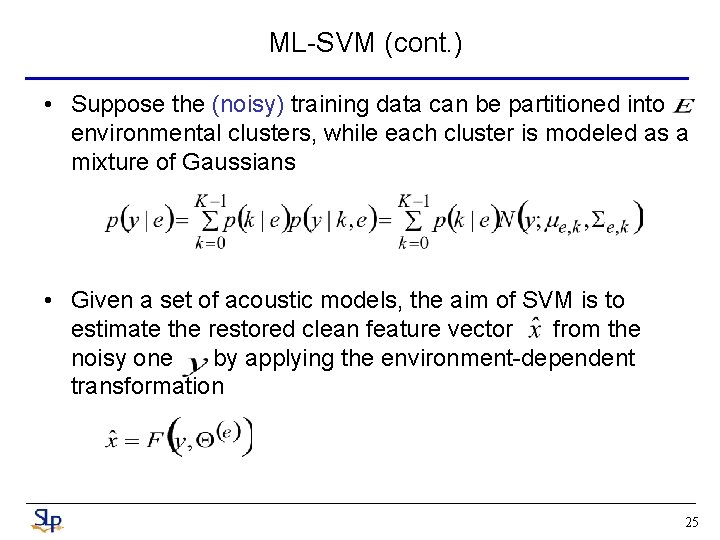

Maximum Likelihood based Stochastic Vector Mapping (ML-SVM) (Wu et al., 2005)
• A schematic depiction of ML-SVM
  – [Diagram: the distribution of noisy speech is divided into E environmental clusters; each environment cluster has a GMM and a set of correction vectors; the selected correction is added to the noisy speech, and the result is passed to an acoustic model trained on noisy speech]

ML-SVM (cont.)
• Suppose the (noisy) training data can be partitioned into a number of environmental clusters, with each cluster modeled as a mixture of Gaussians
• Given a set of acoustic models, the aim of SVM is to estimate the restored clean feature vector from the noisy one by applying an environment-dependent transformation

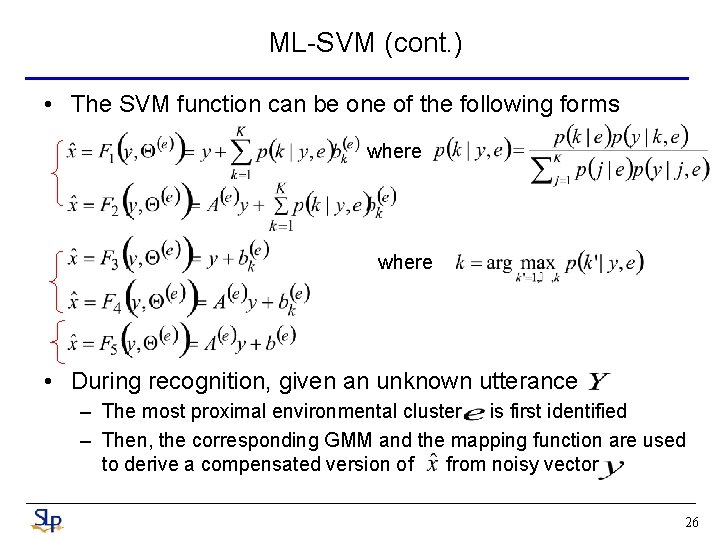

ML-SVM (cont.)
• The SVM function can take one of several forms
• During recognition, given an unknown utterance
  – The most proximal environmental cluster is first identified
  – Then, the corresponding GMM and mapping function are used to derive a compensated version of each noisy vector
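
Two mapping forms commonly used for stochastic vector mapping are a posterior-weighted sum of bias vectors and a single bias selected by the most likely Gaussian. The sketch below assumes these are the forms intended on the slide, and that the posteriors of the selected environment's GMM have already been computed; the function names are hypothetical.

```python
def svm_map_soft(y, posteriors, biases):
    """x_hat = y + sum_k P(k | y, e) * b_k for the selected environment e."""
    return [y[d] + sum(p * b[d] for p, b in zip(posteriors, biases))
            for d in range(len(y))]


def svm_map_hard(y, posteriors, biases):
    """x_hat = y + b_k*, where k* is the Gaussian with the highest posterior."""
    best = max(range(len(posteriors)), key=lambda k: posteriors[k])
    return [a + b for a, b in zip(y, biases[best])]
```

For example, with posteriors `[0.25, 0.75]` and biases `[[4.0, 0.0], [0.0, -4.0]]`, the soft form shifts `[1.0, 2.0]` by a blend of both biases, while the hard form applies only the second one.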

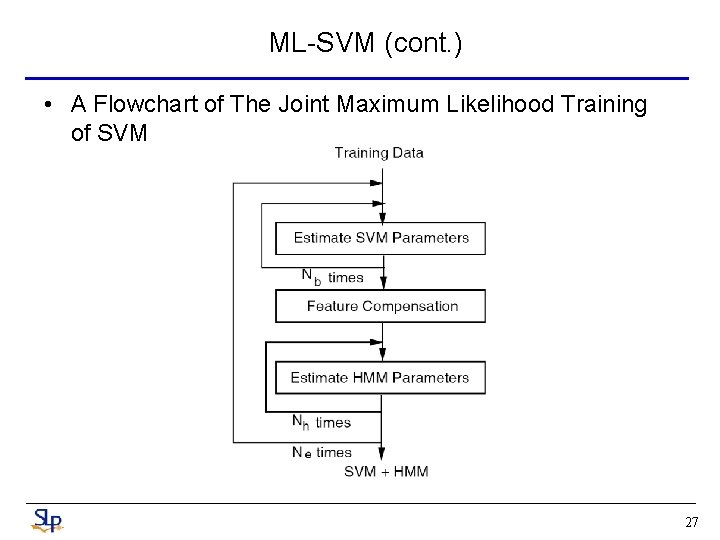

ML-SVM (cont.)
• A flowchart of the joint maximum likelihood training of SVM

ML-SVM (cont.)
• The procedures depicted in the flowchart are as follows
  – Step 1: Initialization
    A set of HMM acoustic models with diagonal covariance matrices, trained on multi-condition training data, is used as the initial models, and the initial bias vectors are set to zero vectors
  – Step 2: Estimating SVM function parameters
    Given the HMM acoustic model parameters, for each environmental class, Nb iterations of EM training are performed to estimate the environment-dependent mapping function parameters so as to increase the likelihood function

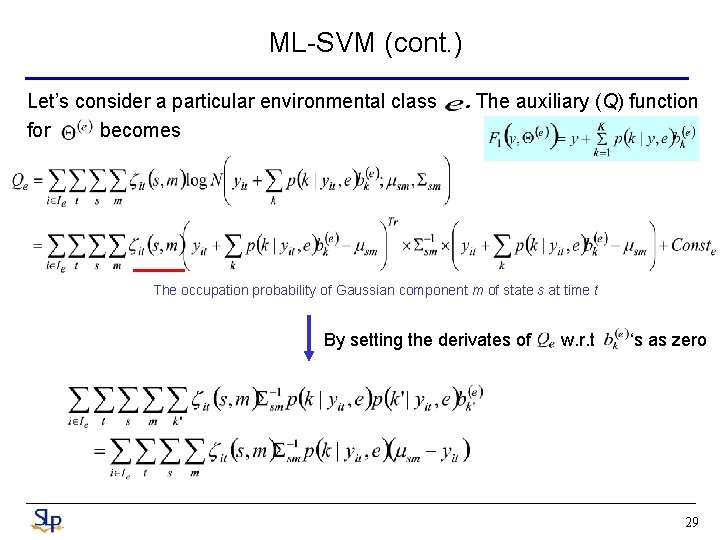

ML-SVM (cont.)
• Consider a particular environmental class and write the auxiliary (Q) function for it
  – The auxiliary function involves the occupation probability of Gaussian component m of state s at time t
  – The update is obtained by setting the derivatives of the auxiliary function with respect to the bias vectors to zero

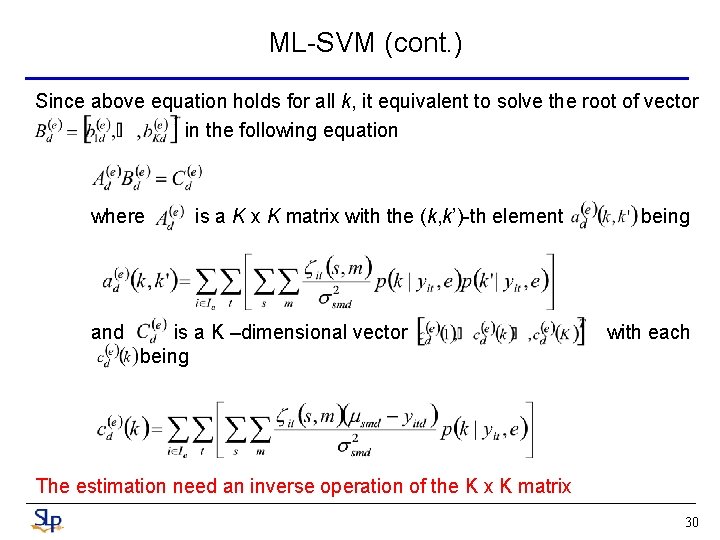

ML-SVM (cont.)
• Since the above equation holds for all k, it is equivalent to solving a linear system for the vector of unknowns, involving a K × K matrix defined by its (k, k')-th elements and a K-dimensional vector
• The estimation requires inverting the K × K matrix

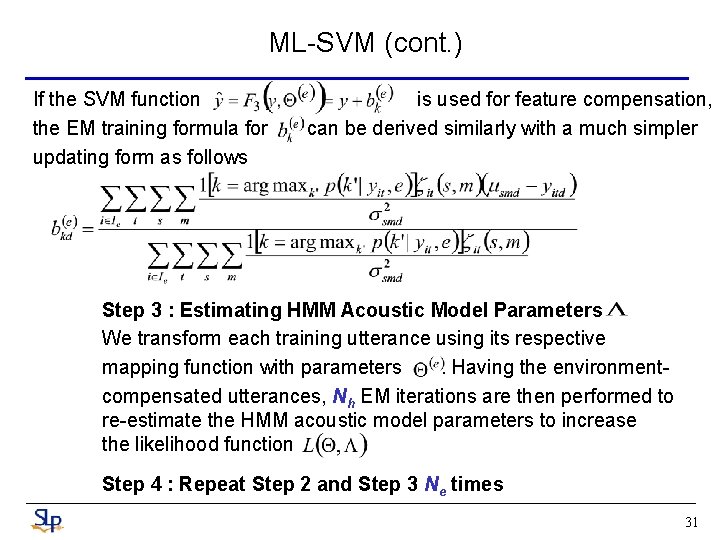

ML-SVM (cont.)
• If the alternative SVM function is used for feature compensation, the EM training formula for updating its parameters can be derived similarly and has a much simpler form
  – Step 3: Estimating HMM acoustic model parameters
    Each training utterance is transformed using its respective mapping function. Given the environment-compensated utterances, Nh EM iterations are then performed to re-estimate the HMM acoustic model parameters so as to increase the likelihood function
  – Step 4: Repeat Step 2 and Step 3 Ne times
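
The control flow of Steps 1 to 4 can be sketched as an alternating loop. This is only a skeleton: `update_svm`, `transform`, and `update_hmm` are hypothetical callables standing in for one EM iteration of the mapping parameters, the feature mapping itself, and one EM iteration of the HMM parameters.

```python
def joint_ml_training(hmm, svm_params, utterances,
                      update_svm, transform, update_hmm,
                      Ne=2, Nb=1, Nh=1):
    """Alternate SVM and HMM re-estimation, starting from the Step 1 models."""
    for _ in range(Ne):                      # Step 4: repeat Steps 2 and 3
        for _ in range(Nb):                  # Step 2: Nb EM iterations for the SVM
            svm_params = update_svm(hmm, svm_params, utterances)
        # Step 3: compensate every utterance with the current mapping ...
        compensated = [transform(u, svm_params) for u in utterances]
        for _ in range(Nh):                  # ... then Nh EM iterations for the HMMs
            hmm = update_hmm(hmm, compensated)
    return hmm, svm_params
```

Passing the update rules in as callables keeps the loop independent of how the HMMs and mapping parameters are represented.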

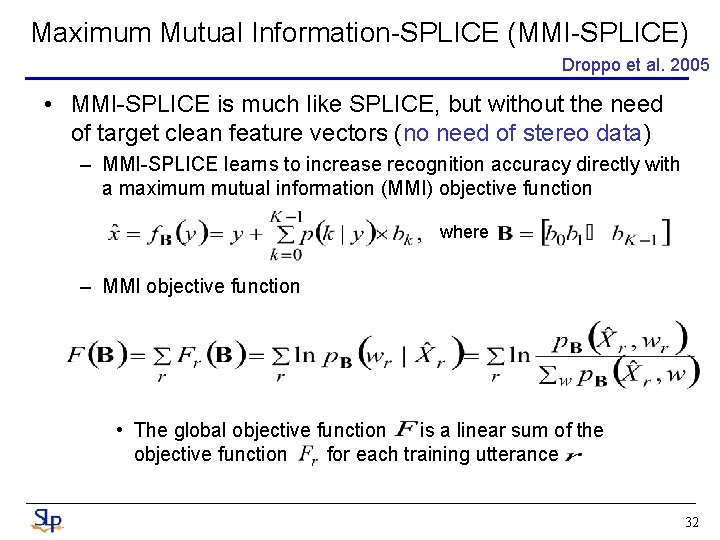

Maximum Mutual Information-SPLICE (MMI-SPLICE) (Droppo et al., 2005)
• MMI-SPLICE is much like SPLICE, but does not need target clean feature vectors (no stereo data required)
  – MMI-SPLICE learns to increase recognition accuracy directly with a maximum mutual information (MMI) objective function
• The global objective function is a linear sum of the objective functions of the individual training utterances
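
A common way to write the MMI objective is given below. The notation is assumed, since the slide equation was not preserved: $\mathbf{X}_r$ is the compensated feature sequence of training utterance $r$, $w_r$ is its reference transcription, and the denominator sums over competing word sequences $w$.

```latex
\begin{align}
F &= \sum_{r} F_r \\
F_r &= \ln p(w_r \mid \mathbf{X}_r)
     = \ln \frac{p(\mathbf{X}_r \mid w_r)\,p(w_r)}
                {\sum_{w} p(\mathbf{X}_r \mid w)\,p(w)}
\end{align}
```

Maximizing $F$ raises the posterior of the correct transcription relative to its competitors, which is why no clean target features are needed.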

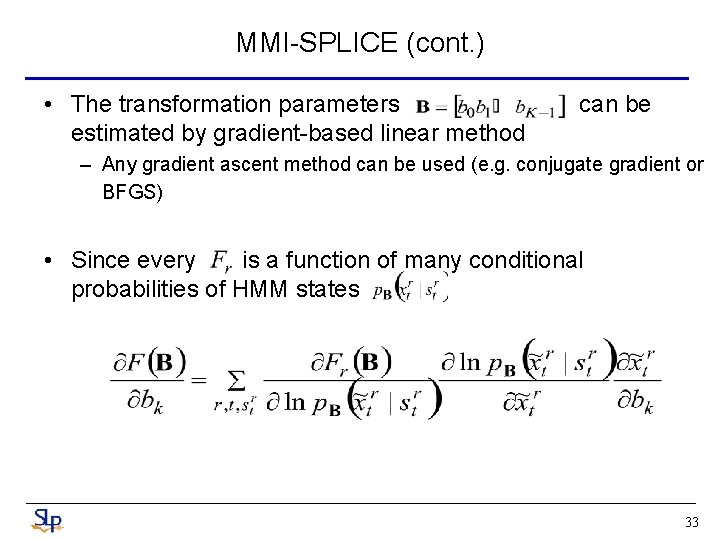

MMI-SPLICE (cont.)
• The transformation parameters can be estimated by a gradient-based method
  – Any gradient ascent method can be used (e.g. conjugate gradient or BFGS)
• Every per-utterance objective function is a function of many conditional probabilities of HMM states

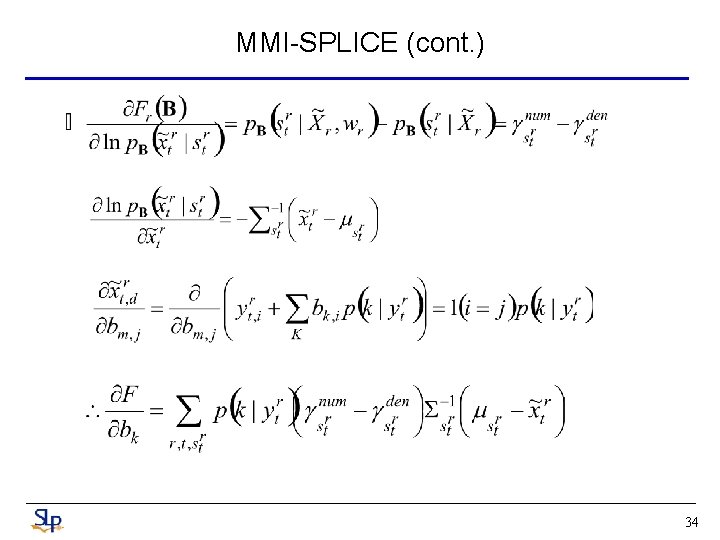

MMI-SPLICE (cont.)

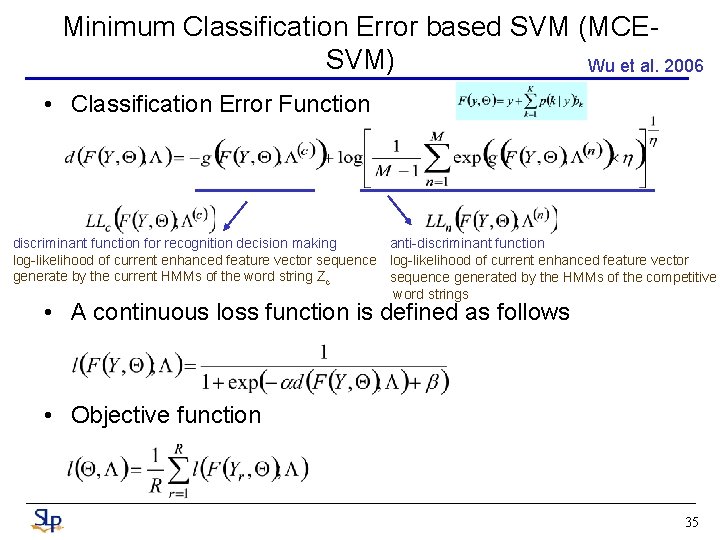

Minimum Classification Error based SVM (MCE-SVM) (Wu et al., 2006)
• Classification error function, built from
  – A discriminant function for recognition decision making: the log-likelihood of the current enhanced feature vector sequence generated by the current HMMs of the word string Zc
  – An anti-discriminant function: the log-likelihood of the current enhanced feature vector sequence generated by the HMMs of the competitive word strings
• A continuous loss function is defined on the classification error function
• Objective function
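
The loss on this slide can be sketched with the formulation commonly used in MCE training: a misclassification measure equal to the anti-discriminant minus the discriminant, passed through a sigmoid. The smoothing constant `eta`, the sigmoid parameters `alpha` and `beta`, and the function names are assumptions; the slide's own definitions were not preserved.

```python
import math


def anti_discriminant(competitor_scores, eta=1.0):
    """G = (1/eta) * log( mean_n exp(eta * g_n) ) over competing word strings."""
    top = max(competitor_scores)  # factor out the maximum for stability
    mean_exp = sum(math.exp(eta * (g - top))
                   for g in competitor_scores) / len(competitor_scores)
    return top + math.log(mean_exp) / eta


def misclassification_measure(correct_score, competitor_scores, eta=1.0):
    """d = -g + G; a positive d means the competitors outscore the correct string."""
    return -correct_score + anti_discriminant(competitor_scores, eta)


def sigmoid_loss(d, alpha=1.0, beta=0.0):
    """Continuous loss 1 / (1 + exp(-alpha * d + beta)), computed stably."""
    z = alpha * d - beta
    if z >= 0.0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)
```

As `eta` grows, the anti-discriminant approaches the score of the single best competitor, so the measure focuses on the most confusable word string.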

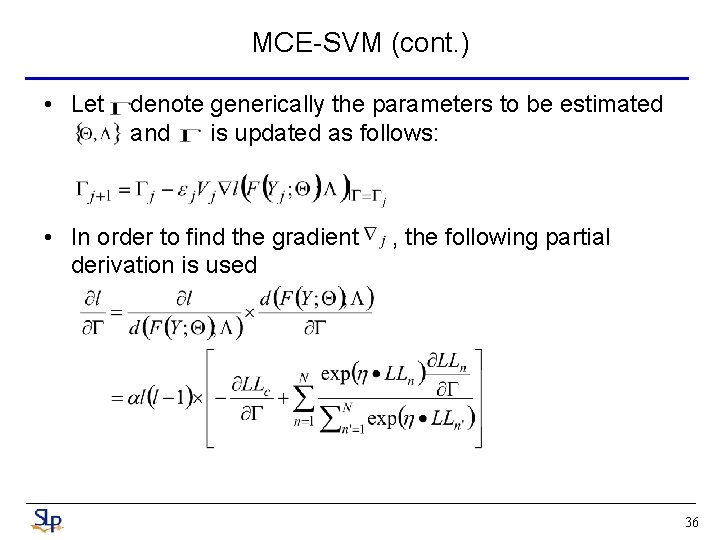

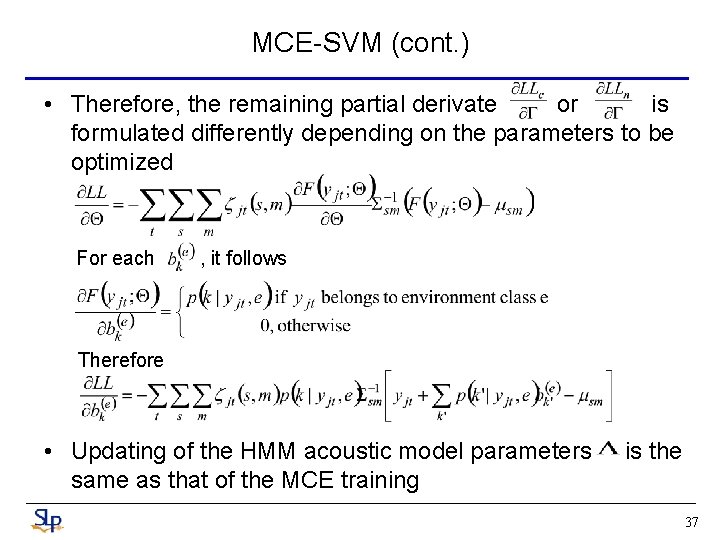

MCE-SVM (cont.)
• The parameters to be estimated are denoted generically by a single symbol and are updated iteratively
• To find the gradient, a partial-derivative expansion is used

MCE-SVM (cont.)
• The remaining partial derivative is formulated differently depending on the parameters to be optimized
• The updating of the HMM acoustic model parameters is the same as that of MCE training

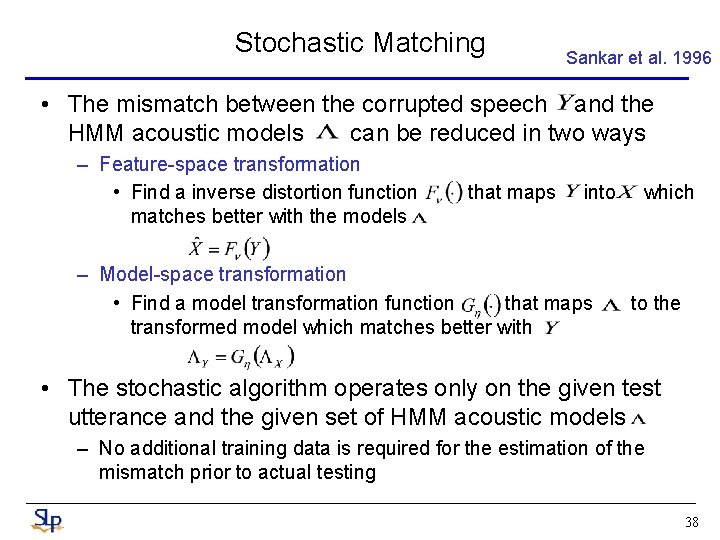

Stochastic Matching (Sankar et al., 1996)
• The mismatch between the corrupted speech and the HMM acoustic models can be reduced in two ways
  – Feature-space transformation
    • Find an inverse distortion function that maps the corrupted speech into features which match better with the models
  – Model-space transformation
    • Find a model transformation function that maps the models to transformed models which match better with the corrupted speech
• The stochastic matching algorithm operates only on the given test utterance and the given set of HMM acoustic models
  – No additional training data is required for the estimation of the mismatch prior to actual testing

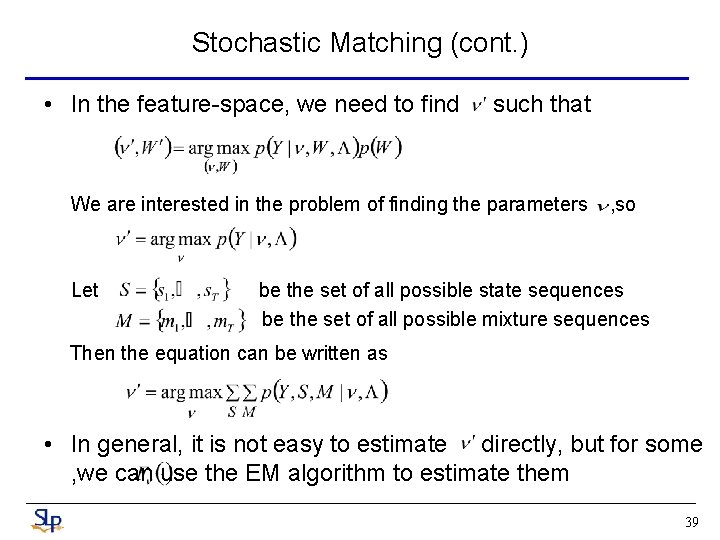

Stochastic Matching (cont.)
• In the feature space, we need to find the parameters of the inverse distortion function that best match the test utterance to the models
  – Using the set of all possible state sequences and the set of all possible mixture sequences, the equation can be written as a sum over state and mixture sequences
• In general, it is not easy to estimate the parameters directly, but for some forms of the distortion function, we can use the EM algorithm to estimate them

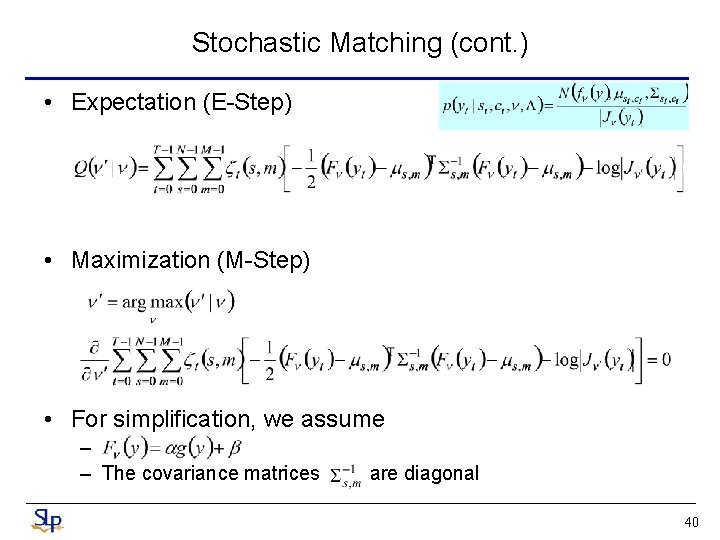

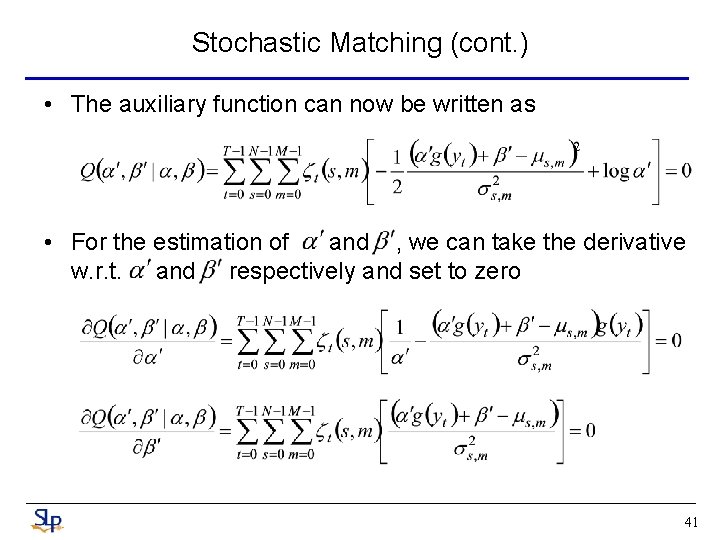

Stochastic Matching (cont.)
• Expectation (E-step)
• Maximization (M-step)
• For simplification, we assume
  – The covariance matrices are diagonal

Stochastic Matching (cont.)
• The auxiliary function can now be written out explicitly
• To estimate the two transformation parameters, we take the derivative of the auxiliary function with respect to each of them and set it to zero

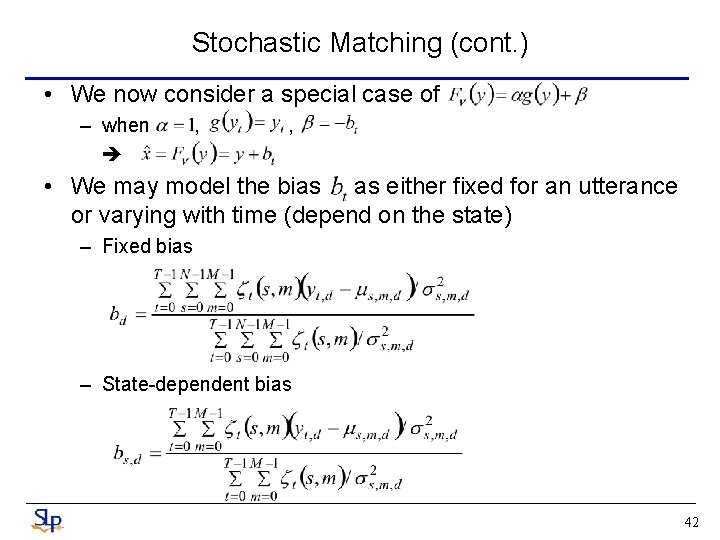

Stochastic Matching (cont.)
• We now consider a special case of the transformation
• We may model the bias as either fixed for an utterance or varying with time (depending on the state)
  – Fixed bias
  – State-dependent bias
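
For the fixed-bias case, the M-step has a closed form when the covariances are diagonal. The sketch below assumes the transformation subtracts a single bias from every frame and that the Gaussian occupation probabilities from the E-step are already available; the function names and the flat indexing of Gaussians (states and mixtures merged into one index) are hypothetical.

```python
def estimate_fixed_bias(frames, gammas, means, variances):
    """One M-step for a single utterance-level bias b.

    b_i = sum_t sum_j gamma_t(j) (y_ti - mu_ji) / var_ji
          / sum_t sum_j gamma_t(j) / var_ji
    """
    dim = len(frames[0])
    num = [0.0] * dim
    den = [0.0] * dim
    for y, gamma in zip(frames, gammas):
        for j, g in enumerate(gamma):
            for i in range(dim):
                num[i] += g * (y[i] - means[j][i]) / variances[j][i]
                den[i] += g / variances[j][i]
    return [n / d for n, d in zip(num, den)]


def remove_bias(frames, bias):
    """Apply the feature-space transformation x_t = y_t - b to every frame."""
    return [[a - b for a, b in zip(y, bias)] for y in frames]
```

In a full EM loop, the occupation probabilities would be recomputed on the bias-removed frames before the next call. A state-dependent bias follows the same formula with the sums restricted to the Gaussians of one state.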

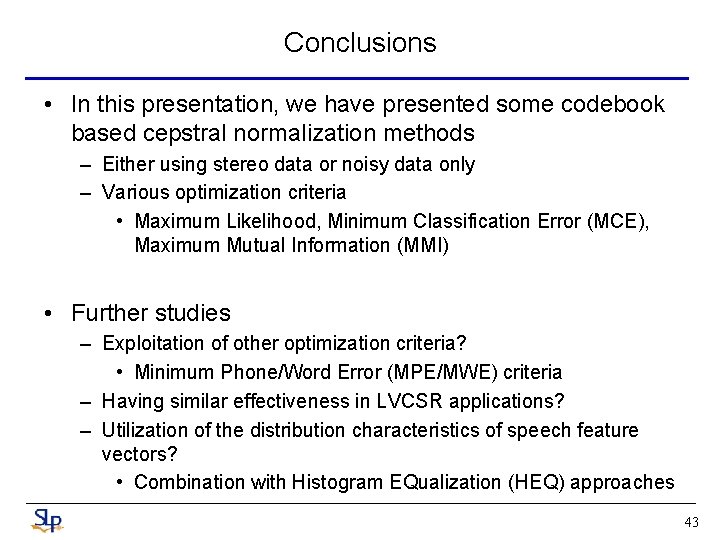

Conclusions
• In this presentation, we have presented several codebook-based cepstral normalization methods
  – Using either stereo data or noisy data only
  – Using various optimization criteria
    • Maximum Likelihood (ML), Minimum Classification Error (MCE), Maximum Mutual Information (MMI)
• Further studies
  – Exploitation of other optimization criteria?
    • Minimum Phone/Word Error (MPE/MWE) criteria
  – Similar effectiveness in LVCSR applications?
  – Utilization of the distribution characteristics of speech feature vectors?
    • Combination with Histogram EQualization (HEQ) approaches

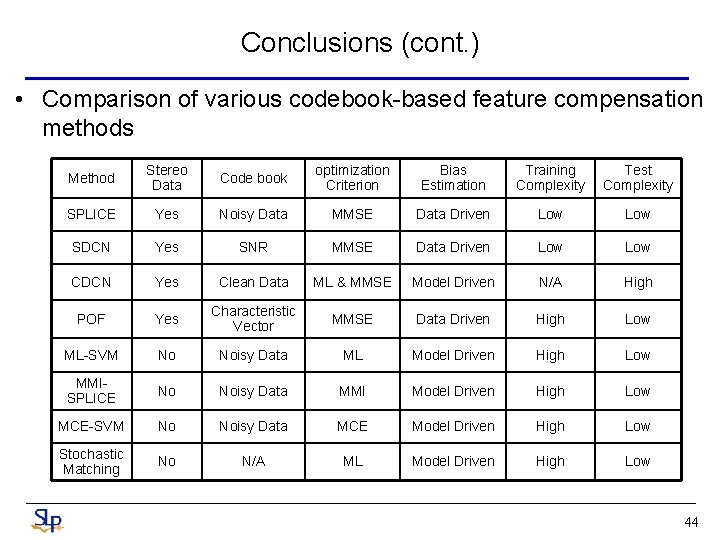

Conclusions (cont.)
• Comparison of various codebook-based feature compensation methods

| Method | Stereo Data | Codebook | Optimization Criterion | Bias Estimation | Training / Test Complexity |
|---|---|---|---|---|---|
| SPLICE | Yes | Noisy Data | MMSE | Data Driven | Low |
| SDCN | Yes | SNR | MMSE | Data Driven | Low |
| CDCN | Yes | Clean Data | ML & MMSE | Model Driven | N/A / High |
| POF | Yes | Characteristic Vector | MMSE | Data Driven | High / Low |
| ML-SVM | No | Noisy Data | ML | Model Driven | High / Low |
| MMI-SPLICE | No | Noisy Data | MMI | Model Driven | High / Low |
| MCE-SVM | No | Noisy Data | MCE | Model Driven | High / Low |
| Stochastic Matching | No | N/A | ML | Model Driven | High / Low |

References
• SDCN, CDCN, FCDCN
  – A. Acero and R. M. Stern, "Environmental Robustness in Automatic Speech Recognition," in Proc. ICASSP 1990
  – A. Acero and R. M. Stern, "Robust Speech Recognition by Normalization of the Acoustic Space," in Proc. ICASSP 1991
• POF
  – L. Neumeyer and M. Weintraub, "Probabilistic Optimum Filtering for Robust Speech Recognition," in Proc. ICASSP 1994
• SPLICE
  – L. Deng, A. Acero, M. Plumpe and X. Huang, "Large-Vocabulary Speech Recognition under Adverse Acoustic Environments," in Proc. ICSLP 2000
  – L. Deng, A. Acero, L. Jiang, J. Droppo, and X.-D. Huang, "High-performance robust speech recognition using stereo training data," in Proc. ICASSP 2002

References (cont.)
  – J. Droppo, A. Acero and L. Deng, "Evaluation of the SPLICE Algorithm on the Aurora 2 Database," in Proc. Eurospeech 2001
• Stochastic Matching
  – A. Sankar and C. H. Lee, "A Maximum-Likelihood Approach to Stochastic Matching for Robust Speech Recognition," IEEE Trans. Speech and Audio Processing, 1996
• ML-SVM
  – J. Wu, Q. Huo, D. Zhu, "An Environment Compensated Maximum Likelihood Training Approach Based on Stochastic Vector Mapping," in Proc. ICASSP 2005
  – Q. Huo and D. Zhu, "A Maximum Likelihood Training Approach to Irrelevant Variability Compensation Based on Piecewise Linear Transformations," in Proc. ICSLP 2006
  – D. Zhu and Q. Huo, "A Maximum Likelihood Approach to Unsupervised Online Adaptation of Stochastic Vector Mapping Function for Robust Speech Recognition," in Proc. ICASSP 2007

References (cont.)
• MCE-SVM
  – J. Wu and Q. Huo, "An Environment-Compensated Minimum Classification Error Training Approach Based on Stochastic Vector Mapping," IEEE Trans. Audio, Speech and Language Processing 14(6), 2006
• MMI-SPLICE
  – J. Droppo and A. Acero, "Maximum Mutual Information SPLICE Transform for Seen and Unseen Conditions," in Proc. Eurospeech 2005
  – J. Droppo, M. Mahajan, A. Gunawardana and A. Acero, "How to Train a Discriminative Front End with Stochastic Gradient Descent and Maximum Mutual Information," in Proc. ASRU 2005
• Others
  – H. Liao and M. J. F. Gales, "Joint Uncertainty Decoding for Noise Robust Speech Recognition," in Proc. Eurospeech 2005
