Robust Real-Time Object Detection
Paul Viola & Michael Jones

Introduction
- Frontal face detection is achieved with
  - Comparatively satisfactory detection rates
  - Efficient decrease in false positive rate
  - Extremely rapid operation
    - 384 x 288 pixel images are processed at 15 frames per second

Contribution of The Paper
- Integral image
  - A new image representation
- AdaBoost
  - Effective classifier selection
- Cascade structure of complex classifiers
  - Dramatic decrease in detection time

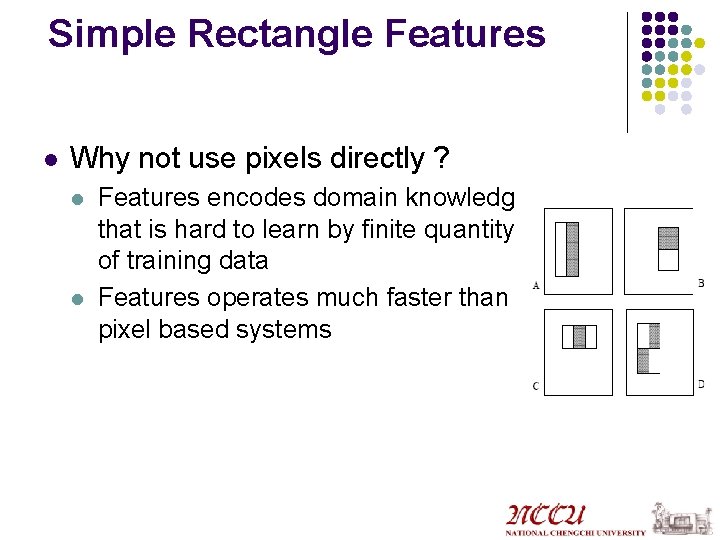

Simple Rectangle Features
- Why not use pixels directly?
  - Features encode domain knowledge that is hard to learn from a finite quantity of training data
  - Feature-based systems operate much faster than pixel-based systems

Integral Image
- Double integral of the original image
- A new image representation for fast calculation of rectangle features

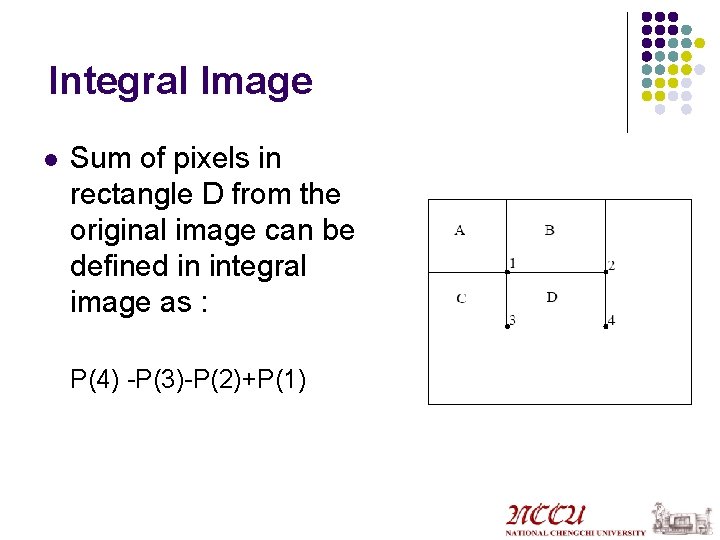

Integral Image
- The sum of pixels in rectangle D of the original image can be computed from the integral image as P(4) - P(3) - P(2) + P(1)
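
The four-lookup rule above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function names `integral_image` and `rect_sum` are made up for this example, and the integral image is padded with an extra zero row and column (an assumption that keeps the corner lookups free of boundary checks).

```python
def integral_image(img):
    """Return ii with one extra zero row and column, so that
    ii[y][x] holds the sum of all pixels above and to the left of (y, x)."""
    h, w = len(img), len(img[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0  # cumulative sum of the current row
        for x in range(w):
            row_sum += img[y][x]
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def rect_sum(ii, top, left, height, width):
    """Sum of the pixels in a rectangle: P(4) - P(3) - P(2) + P(1)."""
    bottom, right = top + height, left + width
    p1 = ii[top][left]      # corner above-left of the rectangle
    p2 = ii[top][right]     # corner above-right
    p3 = ii[bottom][left]   # corner below-left
    p4 = ii[bottom][right]  # bottom-right corner
    return p4 - p3 - p2 + p1


image = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
]
ii = integral_image(image)
print(rect_sum(ii, 1, 1, 2, 2))  # 5 + 6 + 8 + 9 = 28
```

The cost of `rect_sum` does not depend on the rectangle size, which is what makes large features as cheap as small ones.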

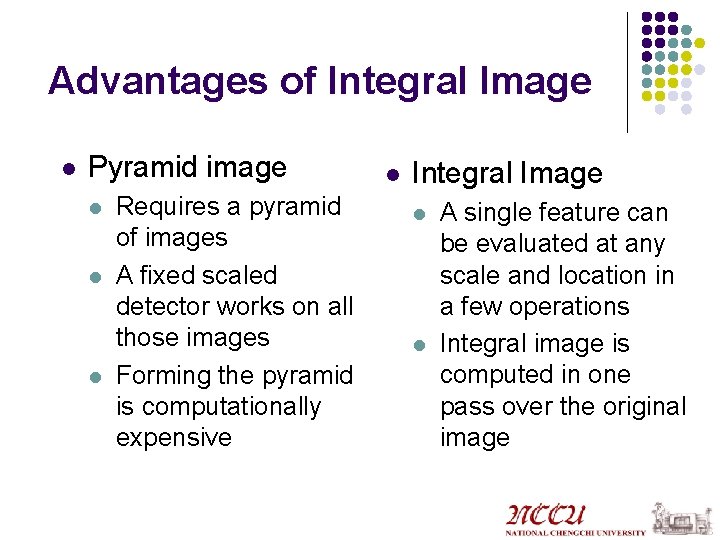

Advantages of Integral Image
- Pyramid image
  - Requires a pyramid of images
  - A fixed-scale detector works on all those images
  - Forming the pyramid is computationally expensive
- Integral image
  - A single feature can be evaluated at any scale and location in a few operations
  - The integral image is computed in one pass over the original image
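
To make the "any scale and location" point concrete, here is a hedged sketch of a two-rectangle feature evaluated at two different sizes from the same integral image. The helper names and the sign convention (right half minus left half) are assumptions for illustration; the slides do not fix them.

```python
def integral_image(img):
    """Zero-padded integral image: ii[y][x] = sum of img[0:y][0:x]."""
    h, w = len(img), len(img[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        for x in range(w):
            ii[y + 1][x + 1] = img[y][x] + ii[y][x + 1] + ii[y + 1][x] - ii[y][x]
    return ii


def rect_sum(ii, top, left, height, width):
    """Rectangle sum from four integral-image lookups."""
    b, r = top + height, left + width
    return ii[b][r] - ii[b][left] - ii[top][r] + ii[top][left]


def two_rect_feature(ii, top, left, height, half_width):
    """Horizontal two-rectangle feature: right half minus left half
    (sign convention assumed for this sketch)."""
    left_sum = rect_sum(ii, top, left, height, half_width)
    right_sum = rect_sum(ii, top, left + half_width, height, half_width)
    return right_sum - left_sum


# 4 x 8 test image: dark left half (0), bright right half (10)
image = [[0] * 4 + [10] * 4 for _ in range(4)]
ii = integral_image(image)

small = two_rect_feature(ii, 1, 3, 2, 1)  # 2 x 2 feature straddling the edge
large = two_rect_feature(ii, 0, 0, 4, 4)  # 4 x 8 feature covering the image
print(small, large)
```

Both calls perform the same number of lookups, so no image pyramid is needed to change the feature size.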

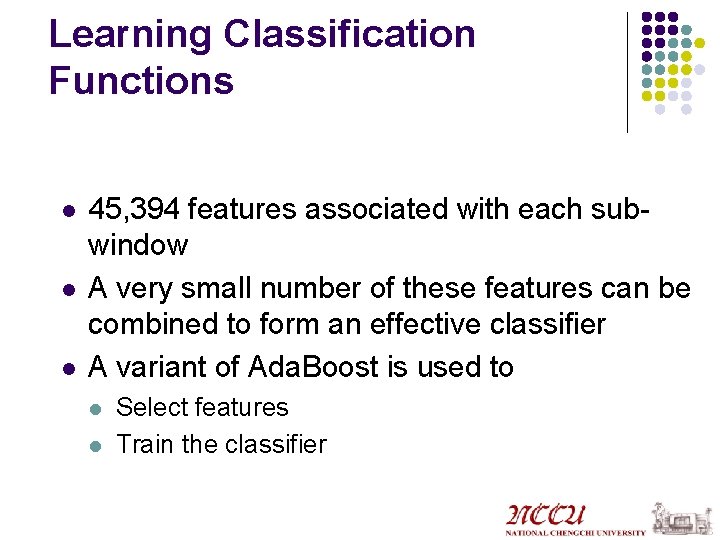

Learning Classification Functions
- 45,394 features are associated with each sub-window
- A very small number of these features can be combined to form an effective classifier
- A variant of AdaBoost is used to
  - Select features
  - Train the classifier

How does AdaBoost work?
- Combines a mixture of weak classifiers to form a strong one
- The perceptron algorithm returns the weak classifier with the minimum classification error
- The examples are re-weighted according to the accuracy of the first classifier
- The final strong classifier is a weighted combination of weak classifiers
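
The loop described above (pick the lowest-error weak classifier, re-weight the examples, combine with weights) can be sketched as follows. This is a minimal discrete AdaBoost using single-feature threshold classifiers as the weak learners. It is an illustrative assumption, not necessarily the exact variant used on the slides, and all function names and the toy data are hypothetical.

```python
import math


def stump_predict(row, j, thr, pol):
    """Weak classifier: threshold on feature j, polarity flips the inequality."""
    return 1 if pol * row[j] < pol * thr else 0


def best_stump(X, y, w):
    """Return (weighted error, feature, threshold, polarity) with the lowest error."""
    best = None
    for j in range(len(X[0])):
        for thr in sorted({row[j] for row in X}):
            for pol in (1, -1):
                err = sum(wi for row, yi, wi in zip(X, y, w)
                          if stump_predict(row, j, thr, pol) != yi)
                if best is None or err < best[0]:
                    best = (err, j, thr, pol)
    return best


def adaboost(X, y, rounds):
    n = len(X)
    w = [1.0 / n] * n
    strong = []
    for _ in range(rounds):
        total = sum(w)
        w = [wi / total for wi in w]          # normalize the weights
        err, j, thr, pol = best_stump(X, y, w)
        err = max(err, 1e-10)                 # guard against log(0)
        beta = err / (1.0 - err)
        strong.append((math.log(1.0 / beta), j, thr, pol))
        # shrink the weight of correctly classified examples
        w = [wi * (beta if stump_predict(row, j, thr, pol) == yi else 1.0)
             for row, yi, wi in zip(X, y, w)]
    return strong


def strong_predict(strong, row):
    """Weighted vote of the weak classifiers against half the total weight."""
    score = sum(a * stump_predict(row, j, thr, pol) for a, j, thr, pol in strong)
    return 1 if score >= 0.5 * sum(a for a, *_ in strong) else 0


# Toy data: two feature values per example, label 1 = "face"
X = [[1, 5], [2, 1], [3, 4], [4, 2], [5, 6], [6, 3]]
y = [0, 0, 0, 1, 1, 1]
strong = adaboost(X, y, rounds=3)
print([strong_predict(strong, row) for row in X])
```

Because each round picks one feature, the number of rounds doubles as the number of selected features, which is how boosting serves as a feature selector here.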

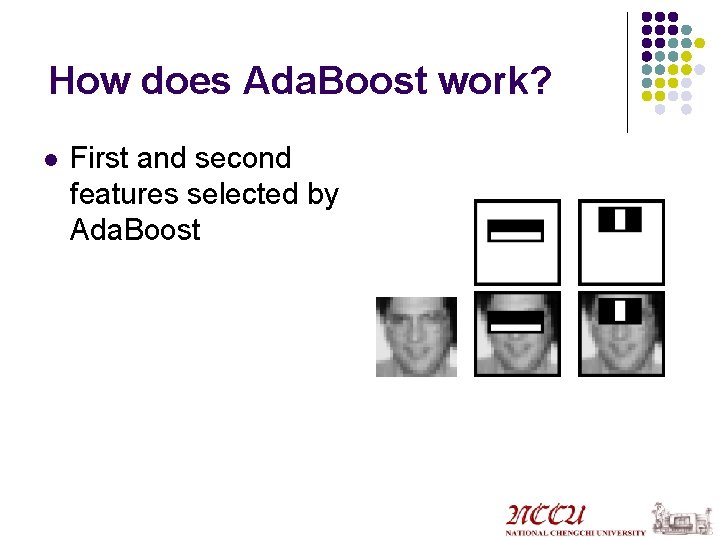

How does AdaBoost work?
- First and second features selected by AdaBoost

Attentional Cascade
- Increases detection performance and reduces computation time
- Simpler classifiers are called before complex ones
- A simple classifier example (two-feature):
  - 100% detection rate
  - 40% false positives
  - 60 microprocessor instructions (very efficient)
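
The early-rejection idea can be sketched as a loop that stops at the first stage that says "not a face". The stage tests below are arbitrary placeholders invented for this example, not real face classifiers, and `calls` exists only to show which stages actually ran.

```python
def cascade_classify(window, stages):
    """Run stages from simplest to most complex; reject at the first failure."""
    for stage in stages:
        if not stage(window):
            return False  # rejected early, later stages never run
    return True


calls = []  # records which stages were evaluated (for illustration only)


def make_stage(name, test):
    """Wrap a test so that every evaluation is logged."""
    def stage(window):
        calls.append(name)
        return test(window)
    return stage


# Hypothetical stages, ordered from cheap to costly
stages = [
    make_stage("cheap", lambda w: sum(w) > 10),
    make_stage("medium", lambda w: max(w) - min(w) > 3),
    make_stage("costly", lambda w: w[0] < w[-1]),
]

print(cascade_classify([1, 1, 1], stages), calls)  # only "cheap" runs
calls.clear()
print(cascade_classify([2, 5, 9], stages), calls)  # all three stages run
```

Since most sub-windows in an image are not faces, the average cost per window is dominated by the first, cheapest stages.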

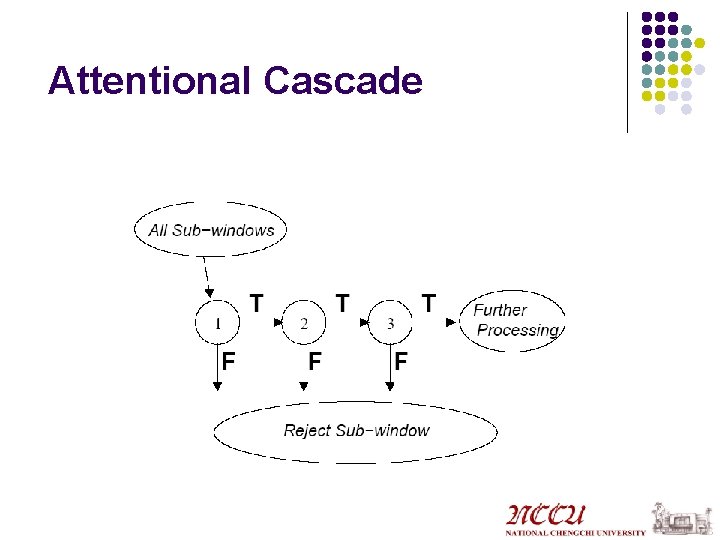

Attentional Cascade

Training of Cascade of Classifiers
- The deeper classifiers are trained with harder examples
- Simple classifiers in the first stages, complex ones in the deeper parts of the cascade
- Complex classifiers take more time to compute

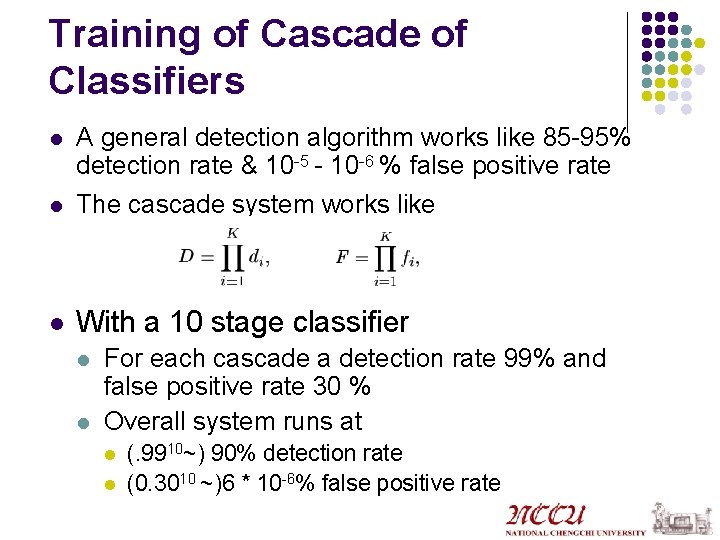

Training of Cascade of Classifiers
- A general detection algorithm achieves an 85-95% detection rate and a false positive rate of 10^-5 to 10^-6
- The cascade system works as follows
  - With a 10-stage classifier
    - Each stage has a detection rate of 99% and a false positive rate of 30%
  - The overall system runs at
    - 90% detection rate (0.99^10 ≈ 0.90)
    - 6 x 10^-6 false positive rate (0.30^10 ≈ 6 x 10^-6)
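
The overall rates follow from multiplying the per-stage rates, on the assumption that a window must pass every stage to be reported as a face. A quick check of the arithmetic, with variable names chosen for this example:

```python
stages = 10
d_stage = 0.99  # per-stage detection rate
f_stage = 0.30  # per-stage false positive rate

overall_detection = d_stage ** stages
overall_false_positive = f_stage ** stages

print(f"overall detection rate:      {overall_detection:.3f}")
print(f"overall false positive rate: {overall_false_positive:.1e}")
```

Each stage may therefore be quite permissive about false positives, as long as it almost never rejects a true face.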

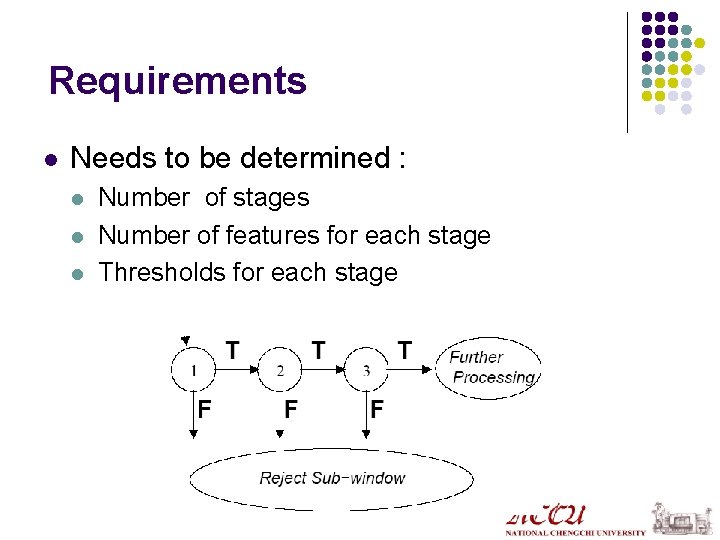

Requirements
- Need to be determined:
  - Number of stages
  - Number of features for each stage
  - Thresholds for each stage

Practical Implementation
- The user selects acceptable f_i (false positive rate) and d_i (detection rate) for each layer
- Each layer is trained by AdaBoost
- The number of features is increased until the target f_i and d_i are met for this level
- If the overall targets F and D are not met for the system, a new level is added to the cascade
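
The procedure above can be sketched as two nested loops. Everything here is a simplified assumption: `train_layer` is a hypothetical callback standing in for "train one AdaBoost layer with n features and measure its rates on validation data", and `toy_train_layer` is a made-up stand-in whose false positive rate simply falls as features are added. Threshold tuning and the collection of harder negative examples are left out.

```python
def build_cascade(f_max, d_min, F_target, train_layer,
                  max_layers=40, max_features=200):
    """Add layers until the overall false positive rate F reaches F_target.

    train_layer(layer_index, n_features) -> (f_i, d_i) is a hypothetical
    callback; the caps on layers and features only guarantee termination.
    """
    layers = []      # number of features used by each layer
    F, D = 1.0, 1.0  # overall false positive and detection rates so far
    while F > F_target and len(layers) < max_layers:
        # grow the layer until it meets the per-layer targets
        for n_features in range(1, max_features + 1):
            f_i, d_i = train_layer(len(layers), n_features)
            if f_i <= f_max and d_i >= d_min:
                break
        layers.append(n_features)
        F *= f_i
        D *= d_i
    return layers, F, D


def toy_train_layer(layer_index, n_features):
    """Made-up behaviour: more features give fewer false positives."""
    return 0.9 ** n_features, 0.99


layers, F, D = build_cascade(f_max=0.3, d_min=0.99, F_target=1e-3,
                             train_layer=toy_train_layer)
print(layers, F, D)
```

In a real system the callback would retrain on the false positives that survive the current cascade, which is why deeper layers see harder examples.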

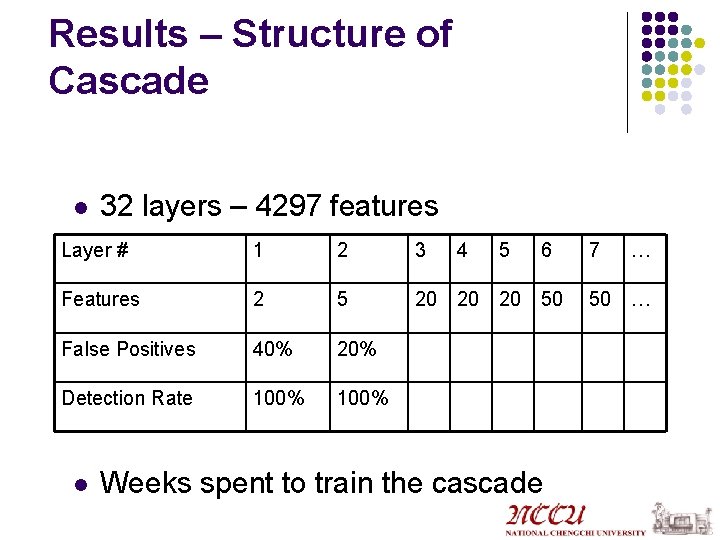

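Results – Structure of Cascade
- 32 layers, 4297 features
- Weeks spent to train the cascade

| Layer # | 1 | 2 | 3 | 4 | 5 | 6 | 7 | … |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Features | 2 | 5 | 20 | 20 | 20 | 50 | 50 | … |
| False positives | 40% | 20% | | | | | | |
| Detection rate | 100% | | | | | | | |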

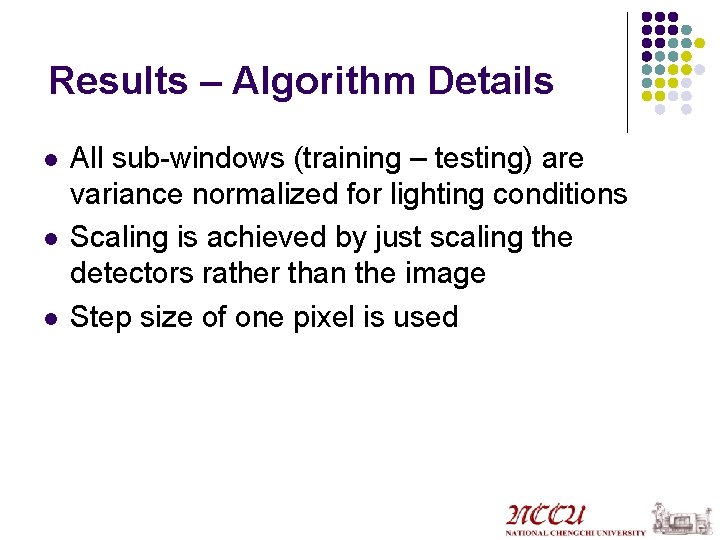

Results – Algorithm Details
- All sub-windows (training and testing) are variance normalized to compensate for lighting conditions
- Scaling is achieved by scaling the detector rather than the image
- A step size of one pixel is used
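
One common reading of variance normalization is to subtract the window mean and divide by the window standard deviation. The sketch below follows that assumption and computes the statistics directly from the pixels; the function name is hypothetical, and the slides do not spell out the exact formula.

```python
import math


def variance_normalize(window):
    """Return a zero-mean, unit-variance copy of a 2-D window (list of rows)."""
    pixels = [p for row in window for p in row]
    n = len(pixels)
    mean = sum(pixels) / n
    var = sum(p * p for p in pixels) / n - mean * mean  # E[x^2] - (E[x])^2
    std = math.sqrt(max(var, 0.0)) or 1.0  # flat window: avoid dividing by zero
    return [[(p - mean) / std for p in row] for row in window]


dim = [[10, 20], [30, 40]]
bright = [[2 * p + 50 for p in row] for row in dim]  # same pattern, more light

print(variance_normalize(dim))
print(variance_normalize(bright))
```

The two windows differ only by a change of brightness and contrast, and they normalize to the same values up to floating-point rounding.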

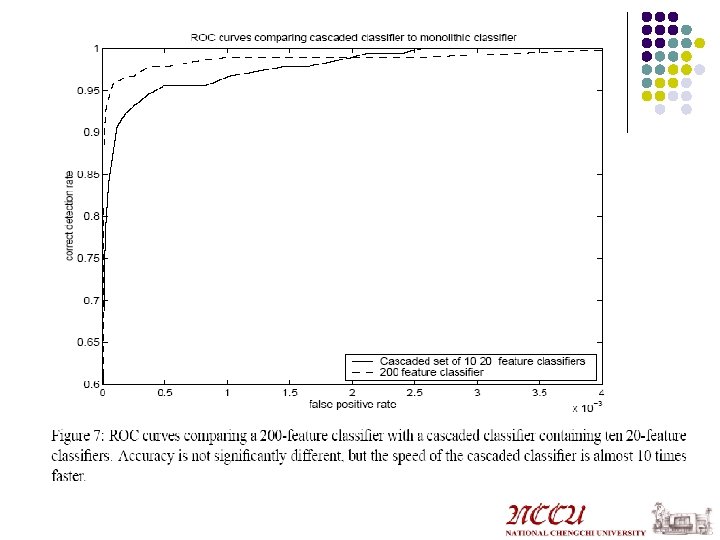

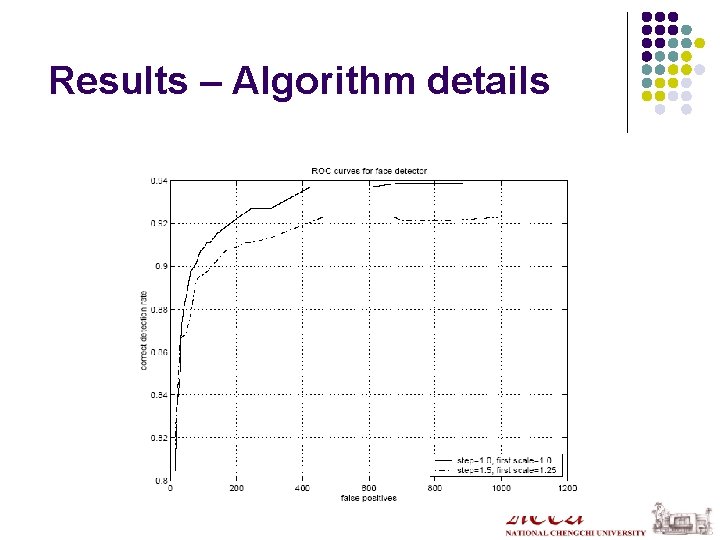

Results – Algorithm details

Results
- Most of the windows are rejected in the first and second stages of the cascade
- Face detection on a 384 x 288 image runs in about 0.067 seconds
  - 15 times faster than Rowley-Baluja-Kanade
  - 600 times faster than Schneiderman-Kanade

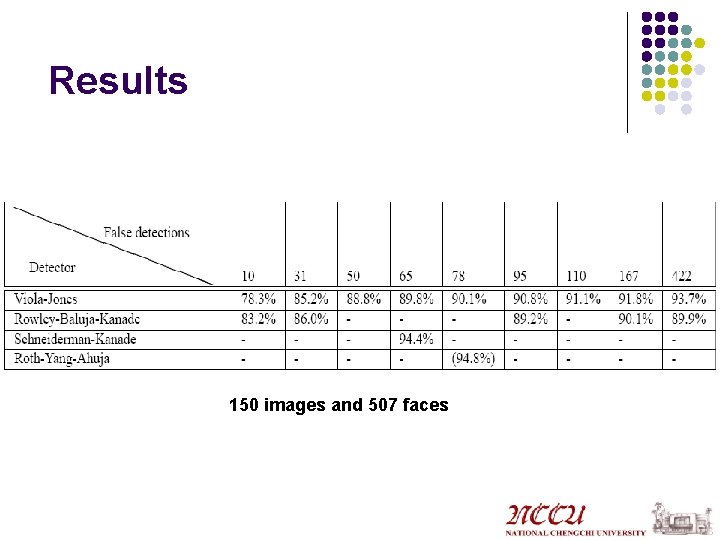

Results
- 150 images and 507 faces
