CernVM Software Appliance

Predrag Buncic (CERN/PH-SFT), Predrag.Buncic@cern.ch
OSG All Hands Meeting, Fermilab, March 8, 2010

Overview
• Introduction
  § CernVM project goals
  § CernVM architecture
• CVMFS
  § Architecture
  § Features
  § Scalability
• CernVM@Grid
• Status and Plans
• Conclusions

What is CernVM?
Linux used for Large Hadron Collider project
“According to InternetNews.com, the Large Hadron Collider project that we’ve been hearing so much about runs a customized version of Linux called CernVM. Apparently it ran Vista at first, but the Aero interface kept slowing down the proton acceleration” (http://www.crunchgear.com/2008/09/11/linux-used-for-large-hadroncollider-project/)

CernVM is NOT...
• … the Linux that is used to run the Large Hadron Collider project
  § That is Scientific Linux 4/5
• … related to IBM VM/370 that used to be installed at CERN
  § but we do use http://cernvm.cern.ch for our Web site
• … a hypervisor (or Virtual Machine Monitor)
  § it is a Virtual Machine that requires a hypervisor
• … based on the SL 4/5 distribution
  § but it is binary compatible with SL 4, and future versions will be based on upstream SL 5 packages repackaged for CernVM using the conary package manager
• … a project dealing with VM deployment
  § There are many open source and commercial projects doing that

CernVM@CERN
• R&D (WP 9) Project in the Physics Department (SFT Group)
• The same group that takes care of ROOT & Geant, looks for common projects and seeks synergy between experiments
• CernVM Project started on 01/01/2007, funded for 4 years

CernVM Project
• Aims to provide a complete, portable and easy-to-configure user environment in the form of a Virtual Machine for developing and running LHC data analysis locally and on the Grid, independent of the physical software and hardware platform (Linux, Windows, MacOS)
  § Code check-out, editing, compilation, local small test, debugging, …
  § Grid submission, data access…
  § Event displays, interactive data analysis, …
  § Suspend, resume…
• Decouple application lifecycle from evolution of system infrastructure
• Reduce effort to install, maintain and keep up to date the experiment software
• Web site: http://cernvm.cern.ch

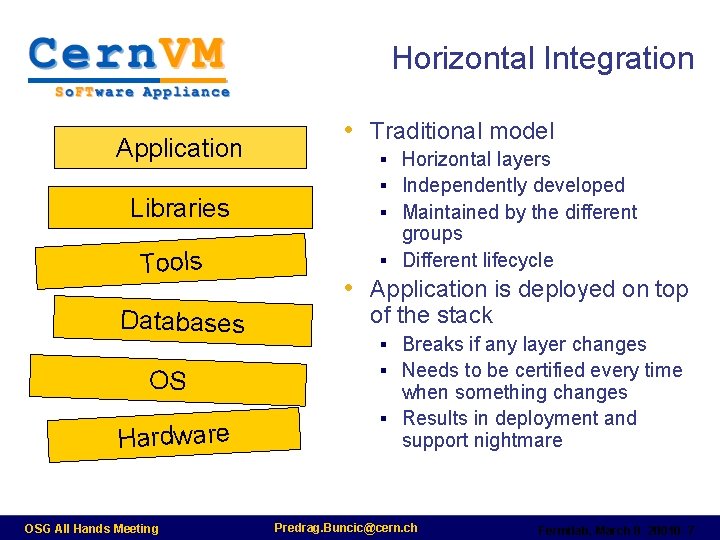

Horizontal Integration
Stack layers: Application, Libraries, Tools, Databases, OS, Hardware
• Traditional model
  § Horizontal layers
  § Independently developed
  § Maintained by different groups
  § Different lifecycle
• Application is deployed on top of the stack
  § Breaks if any layer changes
  § Needs to be certified every time something changes
  § Results in a deployment and support nightmare

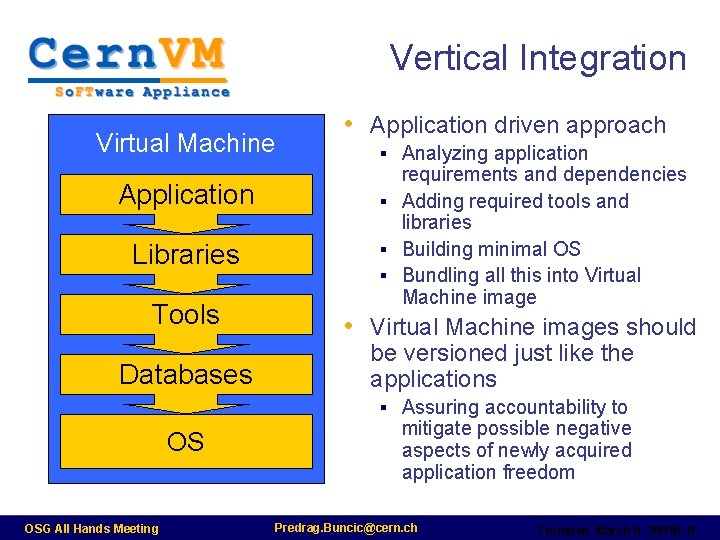

Vertical Integration
Virtual Machine contents: Application, Libraries, Tools, Databases, OS
• Application driven approach
  § Analyzing application requirements and dependencies
  § Adding required tools and libraries
  § Building minimal OS
  § Bundling all this into a Virtual Machine image
• Virtual Machine images should be versioned just like the applications
  § Assuring accountability to mitigate possible negative aspects of newly acquired application freedom
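The application driven approach above can be illustrated with a small Python sketch: starting from the application, follow its dependencies and keep only what is reachable. The dependency table and package names are hypothetical and serve only as an illustration; this is not how the CernVM image builder is implemented.

```python
from collections import deque

# Hypothetical dependency table: package -> packages it needs directly.
DEPENDS = {
    "analysis-app": ["root", "python"],
    "root": ["libstdc++", "zlib"],
    "python": ["zlib", "glibc"],
    "libstdc++": ["glibc"],
    "zlib": ["glibc"],
    "glibc": [],
    "x11-desktop": ["glibc"],  # available, but the application never asks for it
}


def minimal_package_set(app, depends):
    """Return every package reachable from `app` (breadth-first closure)."""
    needed = {app}
    queue = deque([app])
    while queue:
        pkg = queue.popleft()
        for dep in depends.get(pkg, []):
            if dep not in needed:
                needed.add(dep)
                queue.append(dep)
    return needed


# Everything that would go into the image for this one application.
image_contents = sorted(minimal_package_set("analysis-app", DEPENDS))
print(image_contents)
```

Anything outside the closure (here the hypothetical `x11-desktop`) stays out of the image, which is the sense in which the OS is "minimal".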

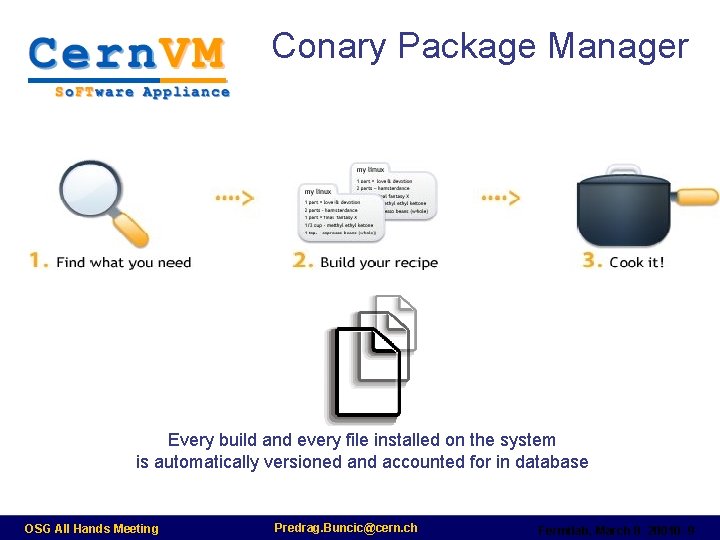

Conary Package Manager
Every build and every file installed on the system is automatically versioned and accounted for in a database

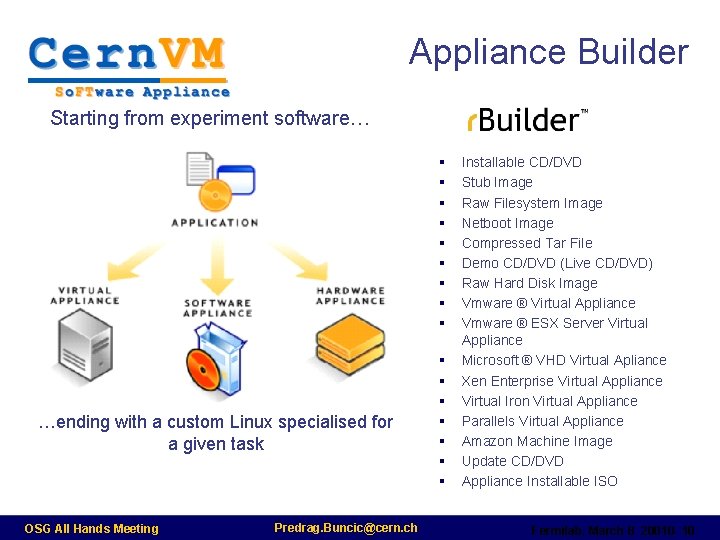

Appliance Builder
Starting from experiment software… …ending with a custom Linux specialised for a given task
Output formats:
  § Installable CD/DVD
  § Stub Image
  § Raw Filesystem Image
  § Netboot Image
  § Compressed Tar File
  § Demo CD/DVD (Live CD/DVD)
  § Raw Hard Disk Image
  § VMware® Virtual Appliance
  § VMware® ESX Server Virtual Appliance
  § Microsoft® VHD Virtual Appliance
  § Xen Enterprise Virtual Appliance
  § Virtual Iron Virtual Appliance
  § Parallels Virtual Appliance
  § Amazon Machine Image
  § Update CD/DVD
  § Appliance Installable ISO

Why appliance?

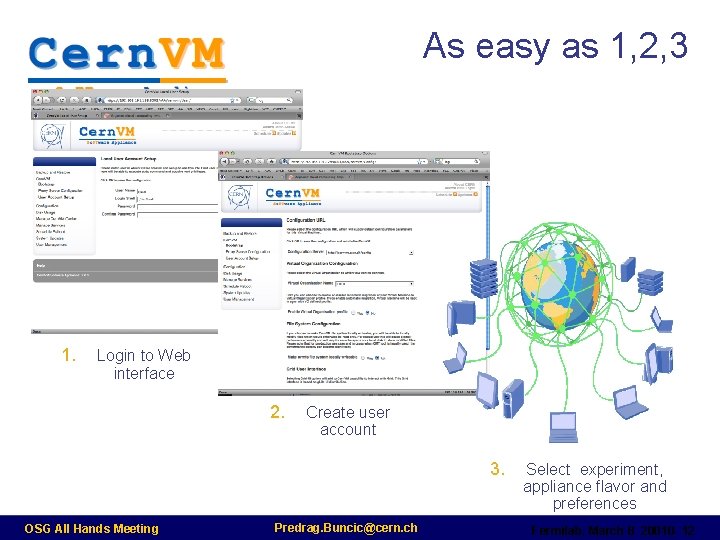

As easy as 1, 2, 3
1. Login to Web interface
2. Create user account
3. Select experiment, appliance flavor and preferences

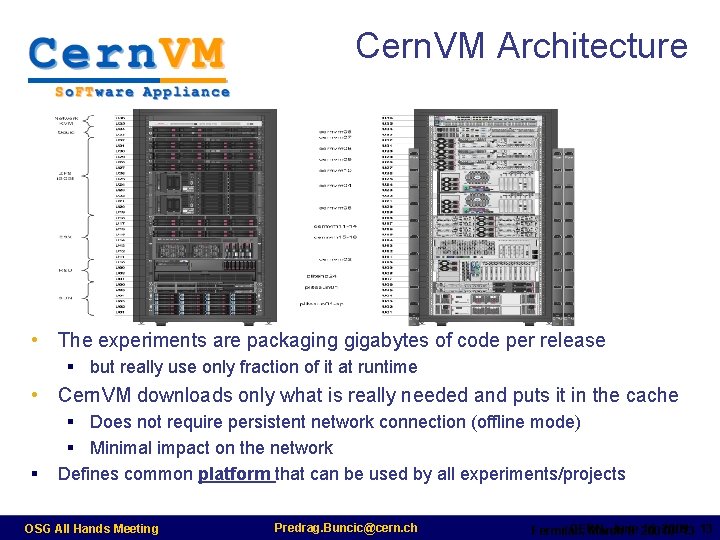

CernVM Architecture
• The experiments are packaging gigabytes of code per release
  § but really use only a fraction of it at runtime
• CernVM downloads only what is really needed and puts it in the cache
  § Does not require persistent network connection (offline mode)
  § Minimal impact on the network
  § Defines a common platform that can be used by all experiments/projects
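A minimal Python sketch of the "download only what is needed, then serve from cache" idea follows. The class name, the file names and the `fetch` callable are assumptions made for illustration; the real client is a file system module, not this code.

```python
import os
import tempfile


class OnDemandCache:
    """Fetch a file on first access only and keep it in a local cache directory."""

    def __init__(self, cache_dir, fetch):
        self.cache_dir = cache_dir
        self.fetch = fetch      # hypothetical callable: path -> bytes (e.g. an HTTP GET)
        self.downloads = 0

    def _local(self, path):
        # Flatten the path into a single file name (good enough for a sketch).
        return os.path.join(self.cache_dir, path.strip("/").replace("/", "_"))

    def read(self, path):
        local = self._local(path)
        if not os.path.exists(local):       # cache miss: download once
            data = self.fetch(path)
            with open(local, "wb") as f:
                f.write(data)
            self.downloads += 1
        with open(local, "rb") as f:         # cache hit: no network needed
            return f.read()


# Hypothetical release content: two files published, only one ever used.
RELEASE = {
    "/opt/lcg/lib/libCore.so": b"core",
    "/opt/lcg/lib/libUnused.so": b"unused",
}


def fetch_from_server(path):
    return RELEASE[path]


def offline(path):
    raise ConnectionError("no network")


cache_dir = tempfile.mkdtemp()
cache = OnDemandCache(cache_dir, fetch_from_server)
first = cache.read("/opt/lcg/lib/libCore.so")
second = cache.read("/opt/lcg/lib/libCore.so")   # served from cache
cache.fetch = offline                            # simulate losing the network
third = cache.read("/opt/lcg/lib/libCore.so")    # still works offline
```

Only the file that was actually opened ends up in the cache directory, and reads of cached files keep working after the network is gone.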

CernVM File System (CVMFS)

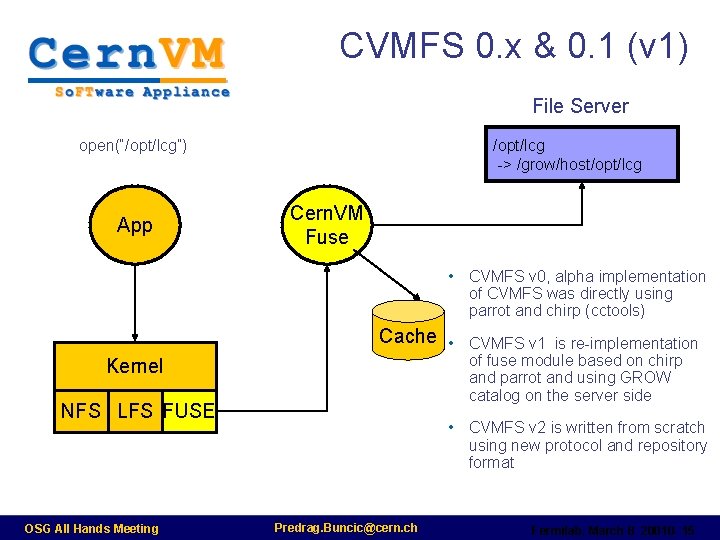

CVMFS 0.x & 0.1 (v1)
Diagram labels: App, open(“/opt/lcg”), /opt/lcg -> /grow/host/opt/lcg, CernVM Fuse, Cache, Kernel, NFS, LFS, FUSE, File Server
• CVMFS v0, the alpha implementation of CVMFS, was directly using parrot and chirp (cctools)
• CVMFS v1 is a re-implementation of the fuse module based on chirp and parrot, using the GROW catalog on the server side
• CVMFS v2 is written from scratch using a new protocol and repository format

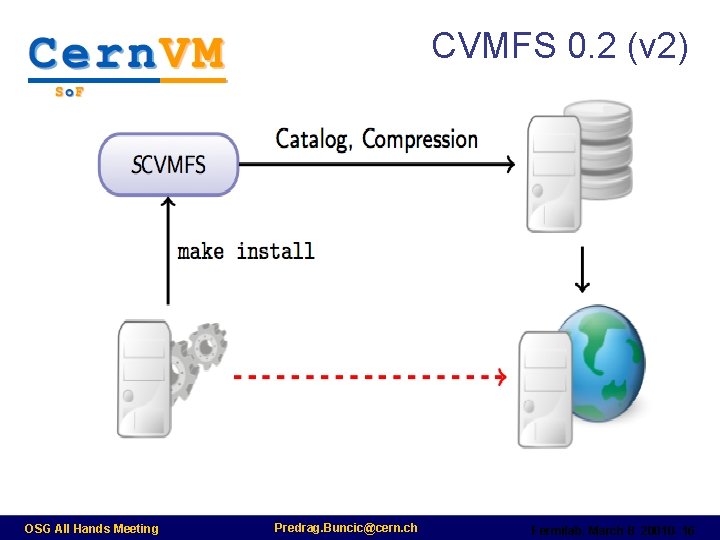

CVMFS 0.2 (v2)

Catalog Update

CVMFS Components
CVMFS v2 uses standard libraries and tools as building blocks

Security
File integrity is verified on download using a SHA-1 checksum
Catalogs can be signed with an X.509 certificate
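The download-time integrity check can be sketched in Python with the standard `hashlib` module. The function and exception names are hypothetical, and the expected checksum is assumed to come from the file catalog; certificate verification of the catalog itself is not shown.

```python
import hashlib


class IntegrityError(Exception):
    """Raised when downloaded data does not match the expected checksum."""


def verify_download(data: bytes, expected_sha1: str) -> bytes:
    """Return `data` only if its SHA-1 digest matches `expected_sha1`."""
    actual = hashlib.sha1(data).hexdigest()
    if actual != expected_sha1:
        raise IntegrityError(f"expected {expected_sha1}, got {actual}")
    return data


payload = b"experiment software chunk"
# Assumption: in a real client this value would be read from the catalog.
catalog_checksum = hashlib.sha1(payload).hexdigest()

ok = verify_download(payload, catalog_checksum)

try:
    verify_download(payload + b" tampered", catalog_checksum)
    tamper_detected = False
except IntegrityError:
    tamper_detected = True
```

Because every file is checked against the catalog, signing the catalog is enough to extend trust to the files it lists.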

CVMFS v2 main features
• Requires only outgoing HTTP(S) connection, i.e. works with practically every Internet connection
  § Supports HTTP proxies
• Transparent file compression
• Integrity checks using checksums, signed file catalog
• Catalogs are given a time to live, which allows for automatic updates
• Support for nested catalogs
• Client uses failover mechanism for chain of forward/reverse proxy servers
• Possibility to pre-load cache
• Support for managed file cache with quotas
• Offline mode
• Trace file system operations
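The proxy failover feature can be sketched as a loop over an ordered proxy list. This Python example uses a simulated transport; the proxy URLs, `fetch_with_failover` and `fake_fetch_via` are hypothetical names for illustration, not the CVMFS client API.

```python
def fetch_with_failover(url, proxies, fetch_via):
    """Try each proxy in order; return (proxy, data) from the first that works."""
    errors = []
    for proxy in proxies:
        try:
            return proxy, fetch_via(proxy, url)
        except OSError as exc:          # ConnectionError is a subclass of OSError
            errors.append((proxy, str(exc)))
    raise ConnectionError(f"all proxies failed for {url}: {errors}")


# Simulated transport: the first proxy in the chain is unreachable.
DOWN = {"http://proxy1.example.org:3128"}


def fake_fetch_via(proxy, url):
    if proxy in DOWN:
        raise ConnectionError(f"{proxy} unreachable")
    return f"content of {url}".encode()


proxies = [
    "http://proxy1.example.org:3128",
    "http://proxy2.example.org:3128",
    "DIRECT",
]
used, data = fetch_with_failover(
    "http://server.example.org/catalog", proxies, fake_fetch_via
)
print(used)
```

The transport is passed in as a callable so the same loop works for forward proxies, reverse proxies or a direct connection.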

Scalability

Where are our users?
~1000 different IP addresses
~2000 different IP addresses

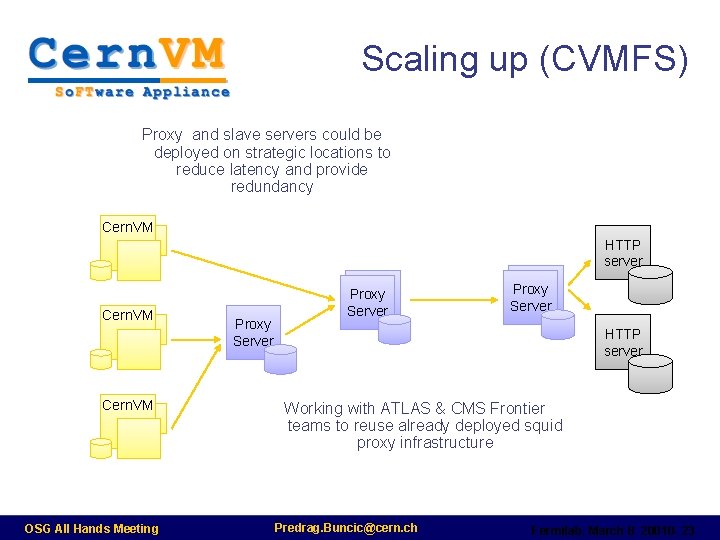

Scaling up (CVMFS)
Diagram labels: CernVM, Proxy Server, HTTP server
Proxy and slave servers could be deployed at strategic locations to reduce latency and provide redundancy
Working with ATLAS & CMS Frontier teams to reuse already deployed squid proxy infrastructure

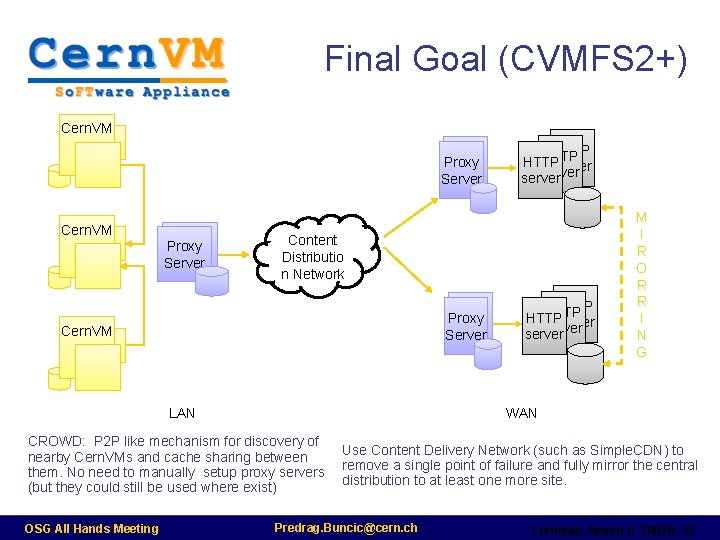

Final Goal (CVMFS 2+)
Diagram labels: CernVM, Proxy Server, Content Distribution Network, HTTP server, Mirroring, LAN, WAN
CROWD: P2P-like mechanism for discovery of nearby CernVMs and cache sharing between them. No need to manually set up proxy servers (but they could still be used where they exist)
Use a Content Delivery Network (such as SimpleCDN) to remove a single point of failure and fully mirror the central distribution to at least one more site.

Online replication
Anybody interested?

CVMFS Servers

Virtualized Infrastructure

CernVM, Cloud & Grid

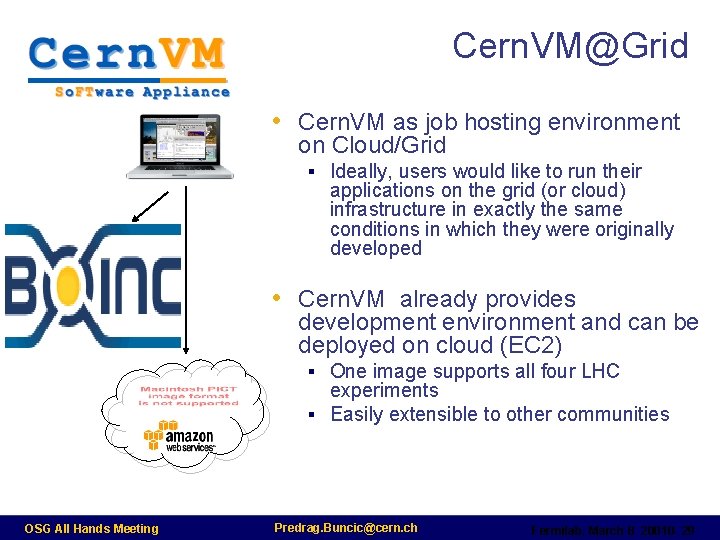

CernVM@Grid
• CernVM as job hosting environment on Cloud/Grid
  § Ideally, users would like to run their applications on the grid (or cloud) infrastructure in exactly the same conditions in which they were originally developed
• CernVM already provides a development environment and can be deployed on cloud (EC2)
  § One image supports all four LHC experiments
  § Easily extensible to other communities

Advantages
• Exactly the same environment for development and job execution
• Software can be efficiently installed using CVMFS
  § HTTP proxy assures very fast access to software even if VM cache is cleared
• Can accommodate multi-core jobs
• Deployment on EC2 or alternative clusters
  § Nimbus, Elastic

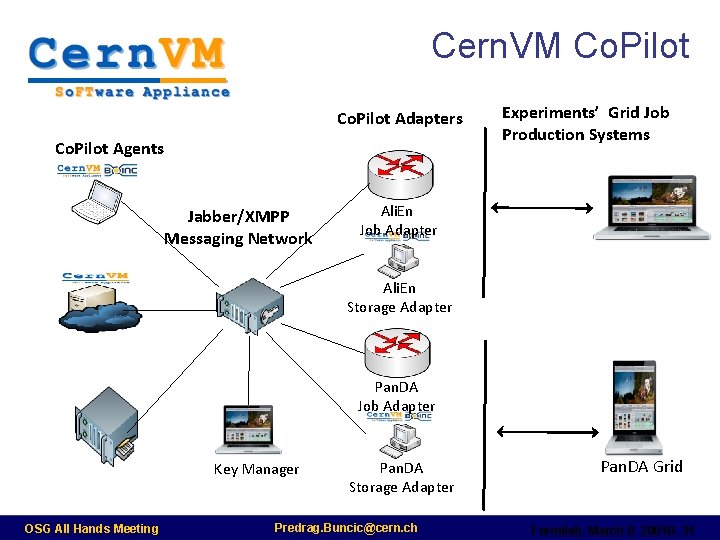

CernVM CoPilot
Diagram labels:
  § CoPilot Agents
  § Jabber/XMPP Messaging Network
  § Adapters: AliEn Job Adapter, AliEn Storage Adapter, PanDA Job Adapter, PanDA Storage Adapter
  § Key Manager
  § Experiments’ Grid Job Production Systems
  § PanDA Grid

CoPilot for PANDA

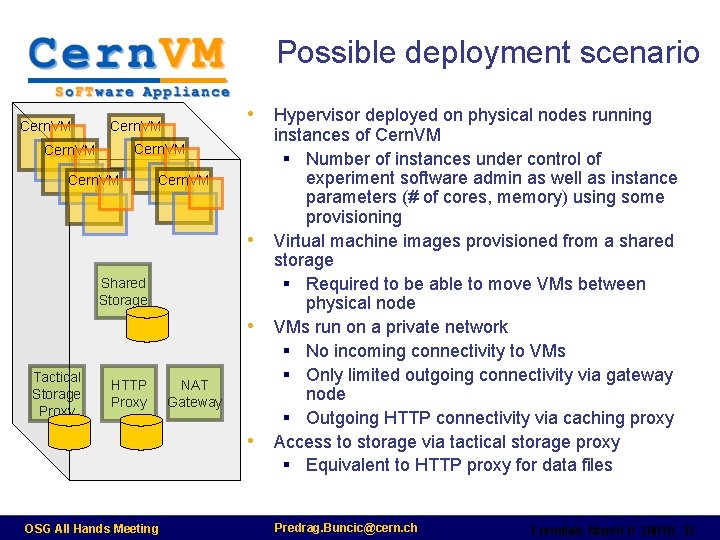

Possible deployment scenario
Diagram labels: CernVM, Shared Storage, Tactical Storage Proxy, HTTP Proxy, NAT Gateway
• Hypervisor deployed on physical nodes running instances of CernVM
  § Number of instances under control of experiment software admin as well as instance parameters (# of cores, memory) using some provisioning
• Virtual machine images provisioned from a shared storage
  § Required to be able to move VMs between physical nodes
• VMs run on a private network
  § No incoming connectivity to VMs
  § Only limited outgoing connectivity via gateway node
  § Outgoing HTTP connectivity via caching proxy
• Access to storage via tactical storage proxy
  § Equivalent to HTTP proxy for data files

Status & Plans

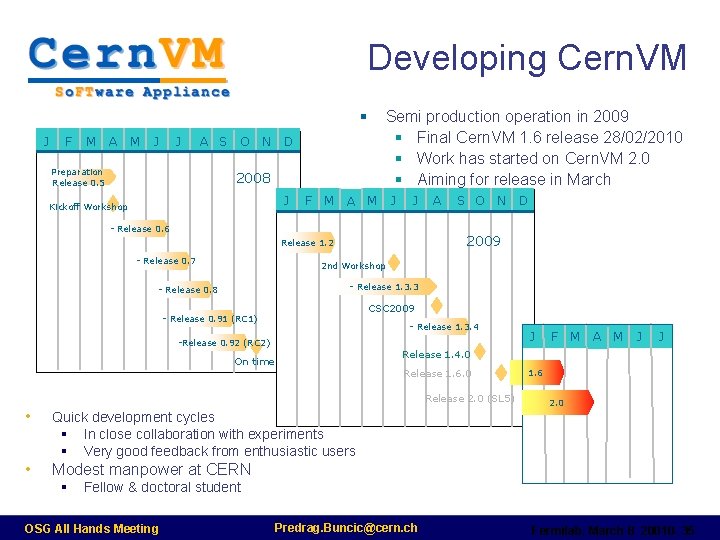

Developing CernVM
Timeline (figure, 2008 onward): Preparation; Kickoff Workshop; Releases 0.5, 0.6, 0.7, 0.8, 0.91 (RC1), 0.92 (RC2); 2nd Workshop; Releases 1.2, 1.3.3, 1.3.4; CSC 2009; Releases 1.4.0, 1.6.0, 2.0 (SL5). On time!
• Semi production operation in 2009
  § Final CernVM 1.6 release 28/02/2010
  § Work has started on CernVM 2.0
  § Aiming for release in March
• Quick development cycles
  § In close collaboration with experiments
  § Very good feedback from enthusiastic users
• Modest manpower at CERN
  § Fellow & doctoral student

Transition from R&D to Service

Other possibilities
§ One day our laptops and desktops will have more cores than we could possibly use...
§ Start CernVM, tick “Share my CPU” in Preferences
§ Enable your colleagues to discover your CernVM and use your spare cores to set up an ad hoc
  § Condor cluster
  § PROOF cluster for analysis
  § Parallel build cluster
  § Parallel python (pyserver) …
§ All this could be based on the same foundation of protocols developed for CernVM/CVMFS
  § Software distribution and file sharing, caching, messaging, discovery and auto configuration

Conclusions
• CernVM Software Appliance
  § Used by ATLAS, LHCb and to a lesser extent NA61, ALICE and CMS
  § Based on an innovative second generation package manager
  § Provides versioning of every build and of the build products installed on the system
• Initially developed as user interface for laptop/desktop
  § Already deployable on the cloud (EC2, Nimbus)
  § Can be deployed on unmanaged infrastructure like BOINC
  § Ongoing effort to connect existing Pilot Job frameworks (AliEn, PanDA, DIRAC, Condor glidein) using the CoPilot framework
• CVMFS
  § Being used not only for software distribution but also for calibration data (ATLAS)
  § Performs very well but it is still in development
  § Also available as a standalone package outside CernVM
