ALICE Status Report
Predrag Buncic

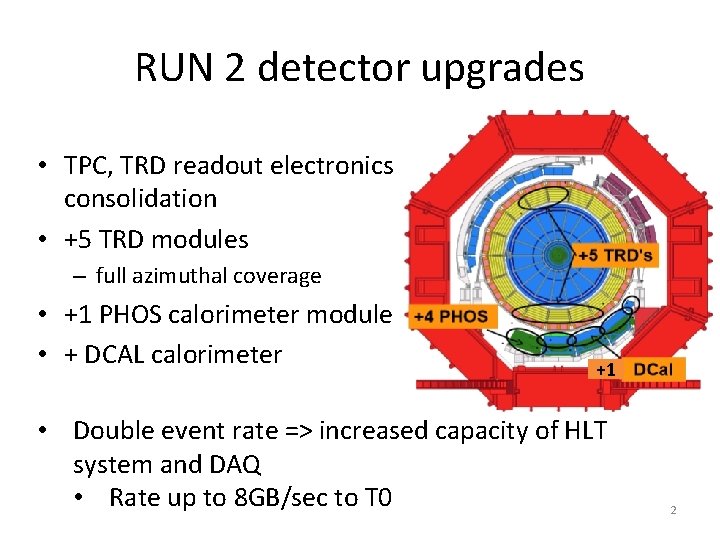

RUN 2 detector upgrades
• TPC, TRD readout electronics consolidation
• +5 TRD modules: full azimuthal coverage
• +1 PHOS calorimeter module
• + DCAL calorimeter
• Double event rate => increased capacity of HLT system and DAQ
• Rate up to 8 GB/sec to T0
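To put the 8 GB/sec figure in perspective, the short calculation below converts a constant transfer rate into a daily volume. It is illustrative arithmetic only, under two assumptions that are not on the slide: decimal units (1 TB = 1000 GB) and a rate held constant for the whole period. The slide quotes 8 GB/sec as an upper bound, so the result is a ceiling, not an expected volume. The function name `daily_volume_tb` is hypothetical.

```python
def daily_volume_tb(rate_gb_per_s: float, hours: float = 24.0) -> float:
    """Volume in TB moved at a constant rate over `hours` (1 TB = 1000 GB)."""
    seconds = hours * 3600.0
    return rate_gb_per_s * seconds / 1000.0


peak_rate = 8.0  # GB/s, the upper bound quoted on the slide
print(f"Ceiling at {peak_rate} GB/s: {daily_volume_tb(peak_rate):.1f} TB/day")
```

At the quoted ceiling this comes to roughly 0.7 PB per day of uninterrupted transfer.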

Preparations for Run 2
• 25% larger raw event size due to the additional detectors
• Higher track multiplicity with increased beam energy and event pileup
• Concentrated and successful effort to improve performance of ALICE reconstruction software
• Improved TPC-TRD alignment
• TRD points used in track fit in order to improve momentum resolution for high pT tracks
• Factor 2 speed-up of magnetic field calculation
• Streamlined calibration procedure
• HLT tracks can be used as seeds for offline reconstruction
• Reduced memory requirements during reconstruction and calibration (~500 MB; resident memory is below 1.6 GB and virtual memory below 2.4 GB)

Simulation
Geant4 v10
• Physics validation has started
• CPU performance still 2x worse compared to simulation with G3
• First test production (done): Pythia 6, pp, 7 TeV
• QA in progress
• Some gains that we made with G4 v9.6 are gone with v10
• But we can use G4 multithreaded capabilities to get our hands on resources that would otherwise be out of reach
Next step
• Comprehensive comparison of detector response with data

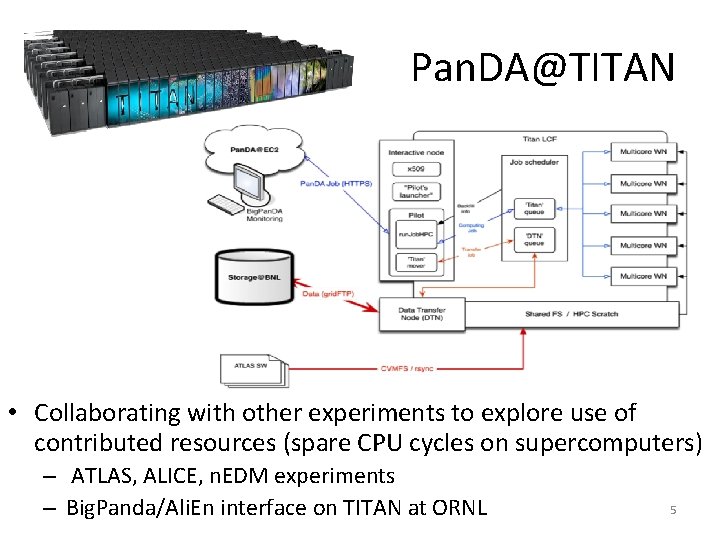

PanDA@TITAN
• Collaborating with other experiments to explore use of contributed resources (spare CPU cycles on supercomputers)
  – ATLAS, ALICE, nEDM experiments
  – BigPanda/AliEn interface on TITAN at ORNL

ALICE/ATLAS tests on TITAN
• In May 2014 we ran the first 24 hour continuous job submission test via PanDA@EC2 with pilot in backfill mode, with MPI wrappers for two workloads from ATLAS and ALICE
  – Stable operations, ~22k core hours collected in 24 hours
  – Observed encouragingly short job wait time on Titan, ~4 minutes
• Ran second set of tests in July 2014, with pilot modifications that were based on information obtained from the first test
  – Job wait time limit introduced: 5 minutes
  – 145763 core hours collected, average wait time ~70 sec
• Final tests have been conducted in August
  – Were able to collect ~200,000 core hours
• Work continues with testing performance of multithreaded G4 in this environment
A. Klimentov
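The May figures imply an average occupancy that is easy to check: core hours divided by wall-clock hours gives the mean number of cores held at any moment. The sketch below does only that arithmetic. The helper name `average_cores` is hypothetical, and the same calculation cannot be repeated for the July and August tests because the slide does not state how long they ran.

```python
def average_cores(core_hours: float, wall_hours: float) -> float:
    """Mean number of cores in use, given core hours collected over a wall-clock window."""
    if wall_hours <= 0:
        raise ValueError("wall_hours must be positive")
    return core_hours / wall_hours


# May 2014 test: ~22k core hours collected in 24 hours (numbers from the slide).
may_average = average_cores(22_000, 24)
print(f"Average cores in use during the May test: about {may_average:.0f}")
```

So the backfill pilot held on the order of 900 cores on average during the first test.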

Use of HLT farm for Offline processing
• ALICE HLT is relatively small compared to other experiments
  – New HLT farm was commissioned just 2 weeks ago
  – After upgrade, it could provide additional 3% CPU resources
  – Ongoing activity to add these resources to ALICE grid pool
• OpenStack installation on top of HLT farm at P2
• Side by side operation with HLT
  – HLT has priority, VMs are suspended if needed
  – Using CernVM, CernVM Online and CVMFS to configure virtual cluster running Condor
  – Dedicated VOBOX acts as a gateway between HLT and public network in order to present virtual cluster as standard AliEn site
• Similar approach successfully used to create on-demand release validation cluster on CERN Agile Infrastructure
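The "HLT has priority, VMs are suspended if needed" rule can be pictured as a simple core budget: whatever HLT asks for is reserved first, and offline VMs keep running only while they fit in the remainder. The sketch below is a toy model of that policy, not the actual OpenStack or Condor configuration. The `VM` class, the `rebalance` function and the core counts are all hypothetical, and the greedy first-fit order is an assumption.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class VM:
    name: str
    cores: int
    suspended: bool = False


def rebalance(vms: List[VM], total_cores: int, hlt_demand: int) -> int:
    """Suspend or resume VMs so HLT always gets its cores; return cores left to VMs."""
    budget = max(total_cores - hlt_demand, 0)  # cores available for offline VMs
    used = 0
    for vm in vms:  # greedy first-fit in list order (an assumed policy)
        if used + vm.cores <= budget:
            vm.suspended = False
            used += vm.cores
        else:
            vm.suspended = True
    return used


farm = [VM("vm-a", 8), VM("vm-b", 8), VM("vm-c", 8)]
rebalance(farm, total_cores=24, hlt_demand=10)
print([(vm.name, "suspended" if vm.suspended else "running") for vm in farm])
```

Calling `rebalance` again with a lower `hlt_demand` resumes suspended VMs, which mirrors the side by side operation described on the slide.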

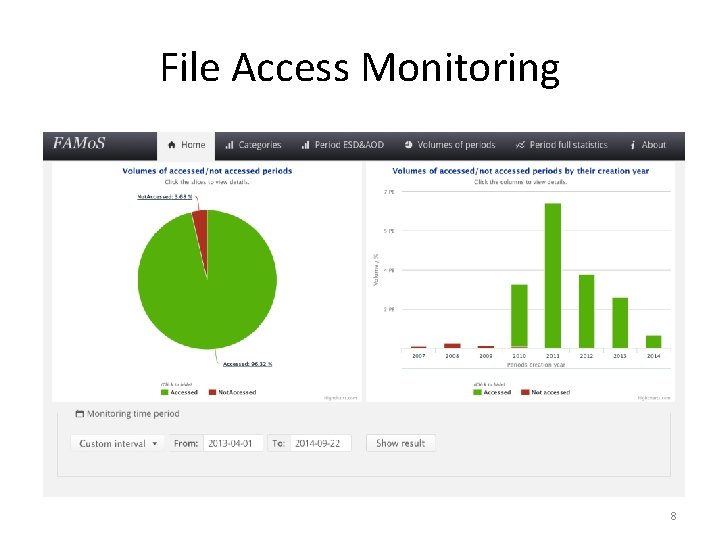

File Access Monitoring

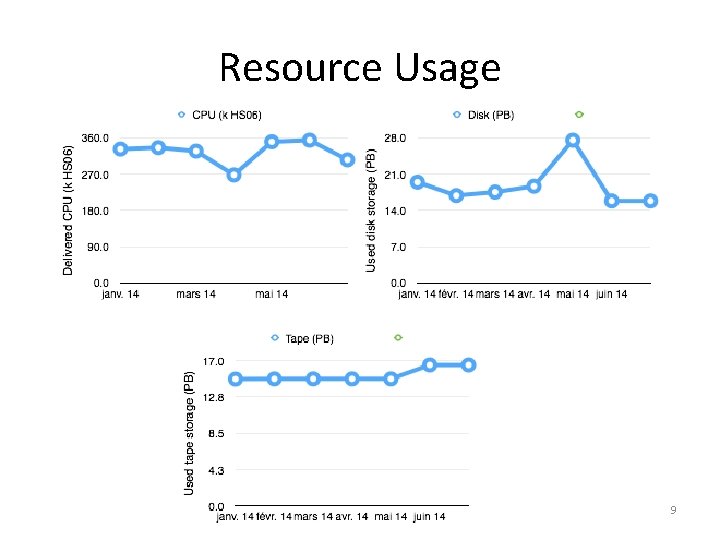

Resource Usage

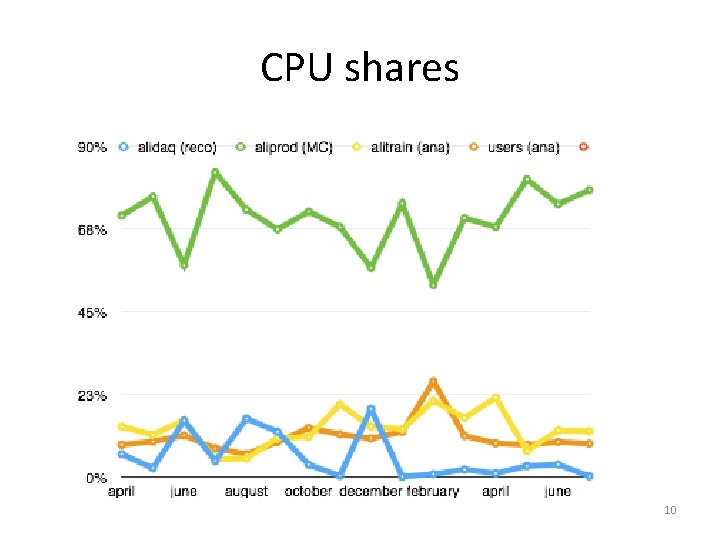

CPU shares

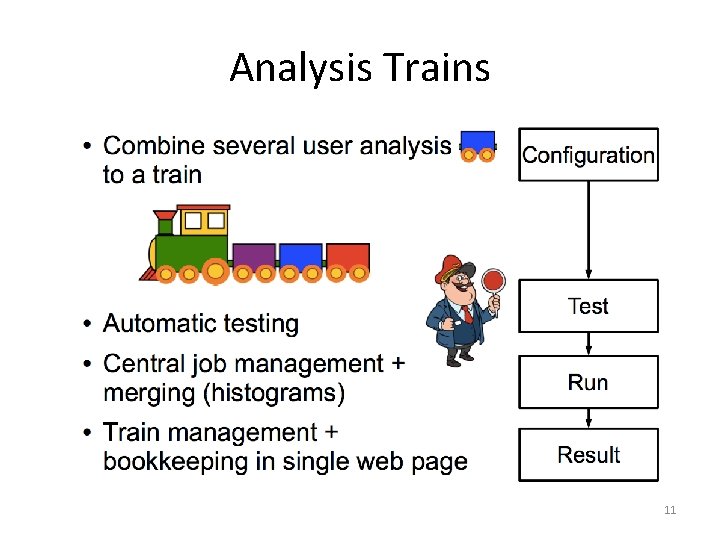

Analysis Trains

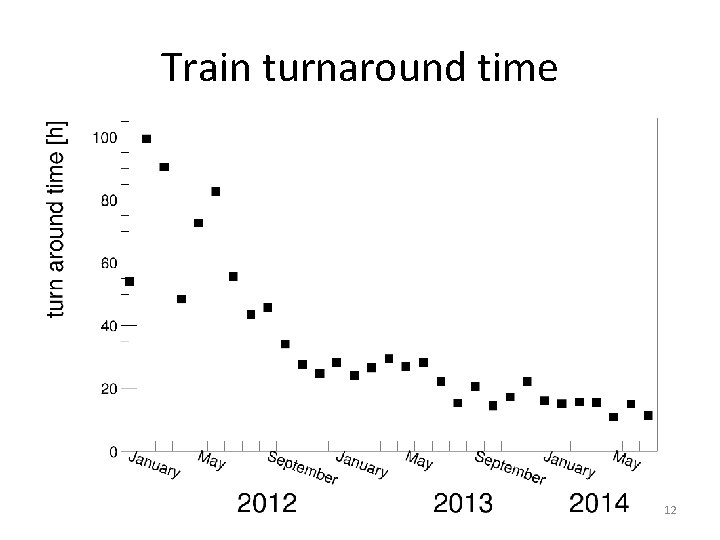

Train turnaround time

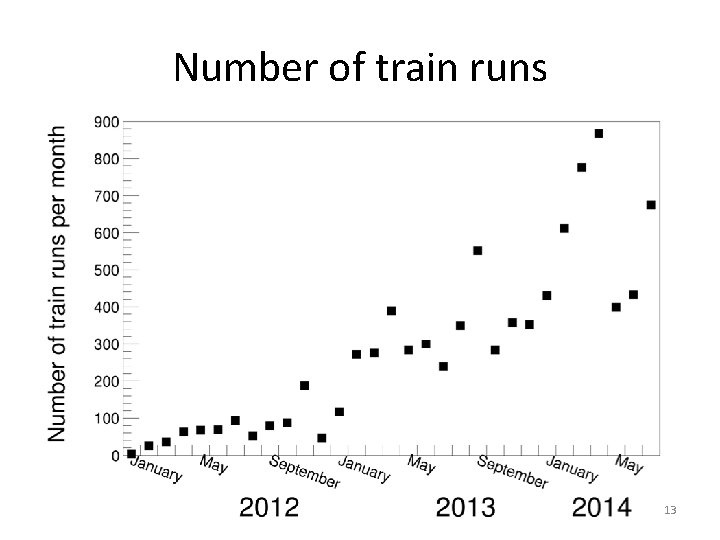

Number of train runs

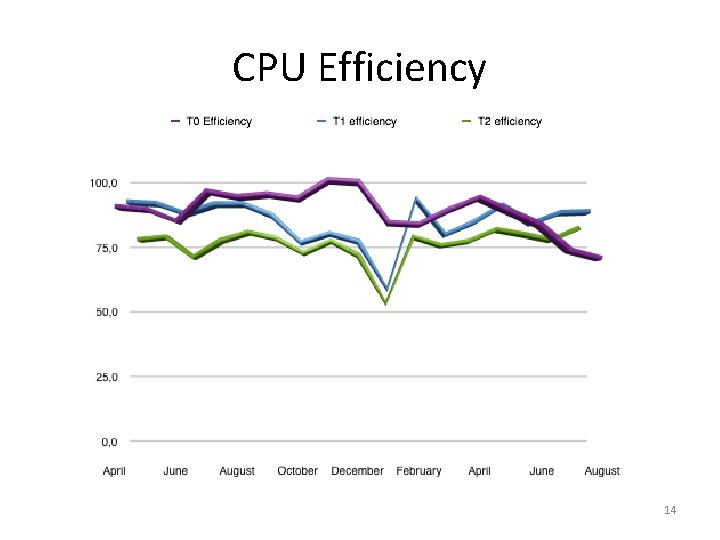

CPU Efficiency

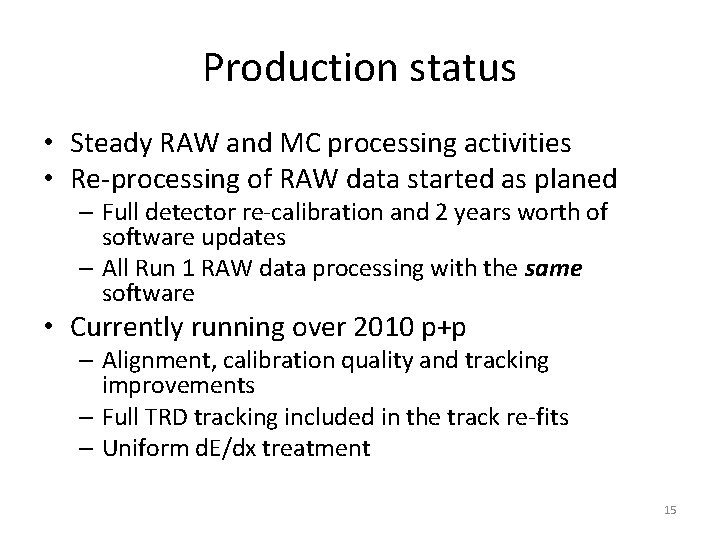

Production status
• Steady RAW and MC processing activities
• Re-processing of RAW data started as planned
  – Full detector re-calibration and 2 years' worth of software updates
  – All Run 1 RAW data processing with the same software
• Currently running over 2010 p+p
  – Alignment, calibration quality and tracking improvements
  – Full TRD tracking included in the track re-fits
  – Uniform dE/dx treatment

Plans for the next 6 months
• Data processing
  – Continue RAW data reconstruction on 2011 and 2012/2013 data (p+p and p+Pb)
  – Run associated MC, general and PWG specific
  – The reprocessing campaign will be completed by April 2015
• September 2014 onward
  – ALICE recommissioning
  – Test of upgraded detectors readout, Trigger, DAQ, new HLT farm
  – Full data recording chain, with conditions data gathering
  – November-December: cosmics trigger data taking with Offline processing

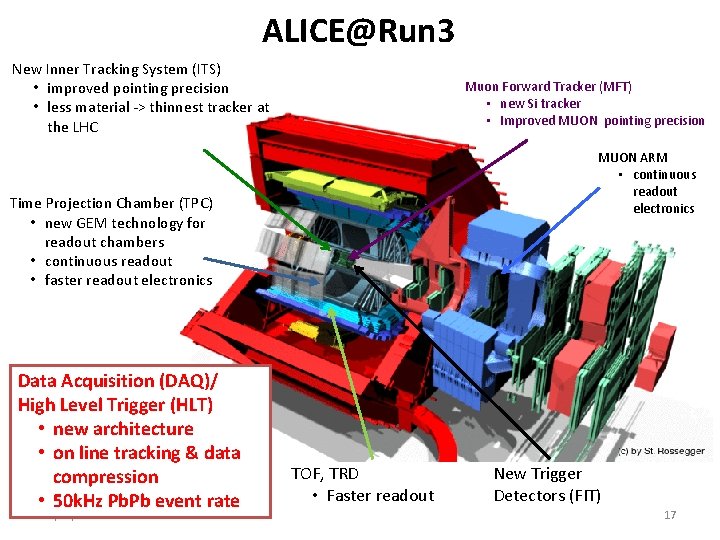

ALICE@Run 3
New Inner Tracking System (ITS)
• improved pointing precision
• less material -> thinnest tracker at the LHC
Muon Forward Tracker (MFT)
• new Si tracker
• improved MUON pointing precision
MUON ARM
• continuous readout electronics
Time Projection Chamber (TPC)
• new GEM technology for readout chambers
• continuous readout
• faster readout electronics
Data Acquisition (DAQ) / High Level Trigger (HLT)
• new architecture
• online tracking & data compression
• 50 kHz PbPb event rate
TOF, TRD
• faster readout
New Trigger Detectors (FIT)

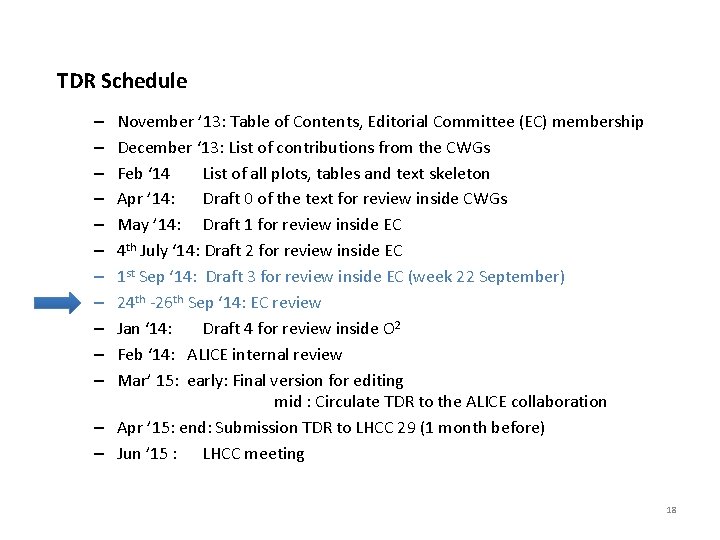

TDR Schedule
– November ’13: Table of Contents, Editorial Committee (EC) membership
– December ’13: List of contributions from the CWGs
– Feb ’14: List of all plots, tables and text skeleton
– Apr ’14: Draft 0 of the text for review inside CWGs
– May ’14: Draft 1 for review inside EC
– 4th July ’14: Draft 2 for review inside EC
– 1st Sep ’14: Draft 3 for review inside EC (week 22 September)
– 24th-26th Sep ’14: EC review
– Jan ’15: Draft 4 for review inside O2
– Feb ’15: ALICE internal review
– Mar ’15: early: Final version for editing; mid: Circulate TDR to the ALICE collaboration
– Apr ’15: end: Submission of TDR to LHCC 29 (1 month before)
– Jun ’15: LHCC meeting

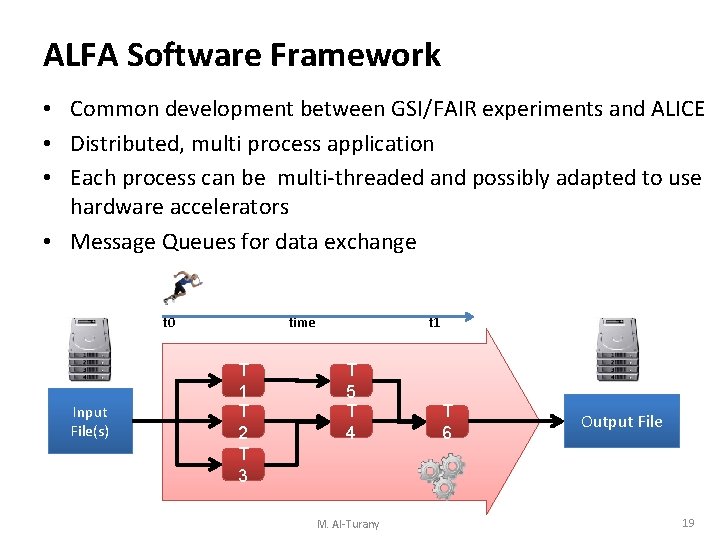

ALFA Software Framework
• Common development between GSI/FAIR experiments and ALICE
• Distributed, multi process application
• Each process can be multi-threaded and possibly adapted to use hardware accelerators
• Message Queues for data exchange
[Diagram: tasks T1 to T6 connecting Input File(s) to Output File along a time axis from t0 to t1]
M. Al-Turany
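The idea of independent tasks that exchange data only through message queues can be sketched in a few lines. This is a minimal illustration, not ALFA or FairMQ code. It uses Python's standard `queue` and `threading` modules, with threads standing in for the separate processes and an in-memory queue standing in for a network transport. The names `stage`, `run_pipeline` and `STOP` are hypothetical.

```python
import queue
import threading

STOP = None  # sentinel marking the end of the stream (so payloads must not be None)


def stage(func, inbox, outbox):
    """One pipeline task: read a message, transform it, forward the result."""
    while True:
        msg = inbox.get()
        if msg is STOP:
            outbox.put(STOP)  # propagate shutdown to the next task
            break
        outbox.put(func(msg))


def run_pipeline(items, funcs):
    """Chain one worker per function, connected by queues, and collect the output."""
    queues = [queue.Queue() for _ in range(len(funcs) + 1)]
    workers = [
        threading.Thread(target=stage, args=(f, queues[i], queues[i + 1]))
        for i, f in enumerate(funcs)
    ]
    for w in workers:
        w.start()
    for item in items:
        queues[0].put(item)
    queues[0].put(STOP)

    results = []
    while True:
        msg = queues[-1].get()
        if msg is STOP:
            break
        results.append(msg)
    for w in workers:
        w.join()
    return results


if __name__ == "__main__":
    print(run_pipeline([1, 2, 3], [lambda x: x + 1, lambda x: x * 10]))
```

Because each task only sees its input and output queue, a task can be replaced, multi-threaded internally, or moved to another host without touching its neighbours, which is the property the slide attributes to the message-queue design.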

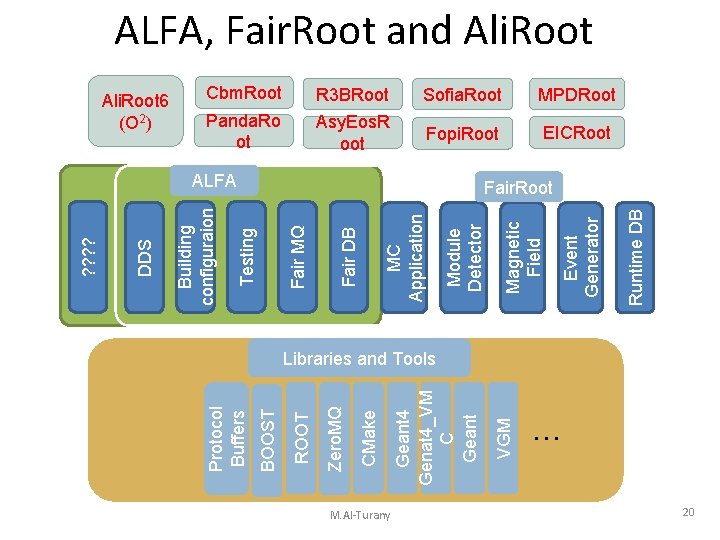

ALFA, FairRoot and AliRoot
[Diagram: layered software stack. Labels recovered from the figure:]
• CbmRoot, R3BRoot, SofiaRoot, MPDRoot, PandaRoot, AsyEosRoot, FopiRoot, EICRoot, AliRoot 6 (O2)
• FairRoot: Runtime DB, Event Generator, Magnetic Field, Module Detector, MC Application, FairDB, FairMQ, Testing, Building configuration
• ALFA: DDS, ?, ?
• Libraries and Tools: VGM, Geant4, Geant4_VMC, Geant, CMake, ZeroMQ, ROOT, BOOST, Protocol Buffers, …
M. Al-Turany

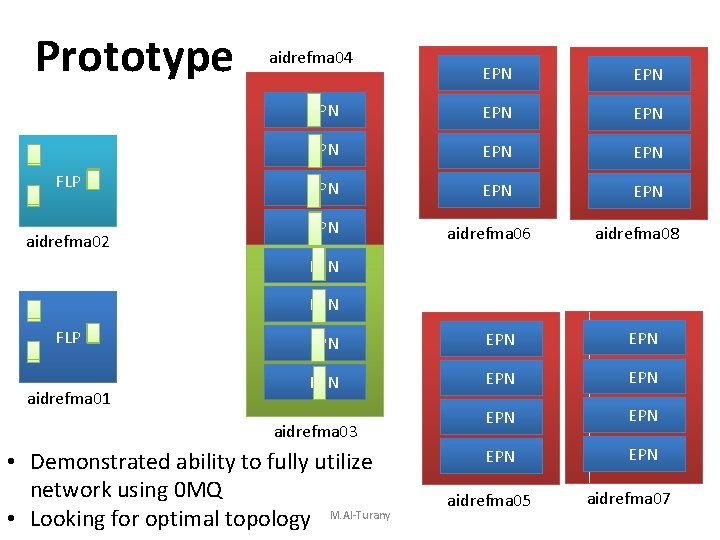

Prototype
[Diagram: FLP and EPN nodes running on hosts aidrefma01 to aidrefma08]
• Demonstrated ability to fully utilize network using 0MQ
• Looking for optimal topology
M. Al-Turany

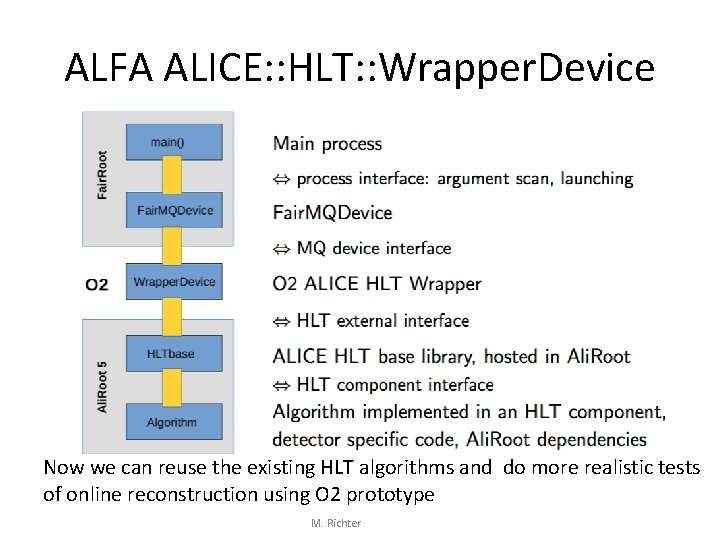

ALFA
ALICE::HLT::WrapperDevice
Now we can reuse the existing HLT algorithms and do more realistic tests of online reconstruction using the O2 prototype
M. Richter

Long Term Data Preservation
• Goals
  – Public: provide open access to scientific data, including software and documentation
  – Private: assure reproducibility of ALICE data processing and analysis at any time by anybody
• Ongoing effort to make our analysis internally reproducible
• ALICE Master Classes available via common Open Access portal
  – Currently strange particles counting, RAA…
  – Along with minimal data sample to support these exercises
• Need to solve technical issues (data access) before larger dataset can be published

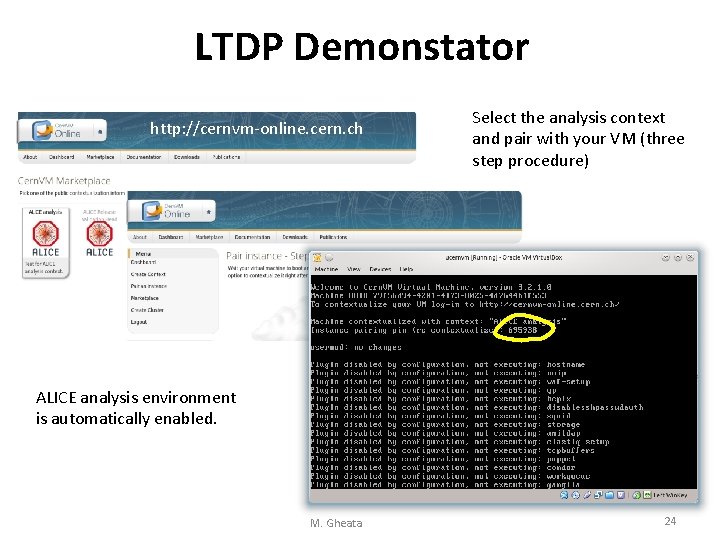

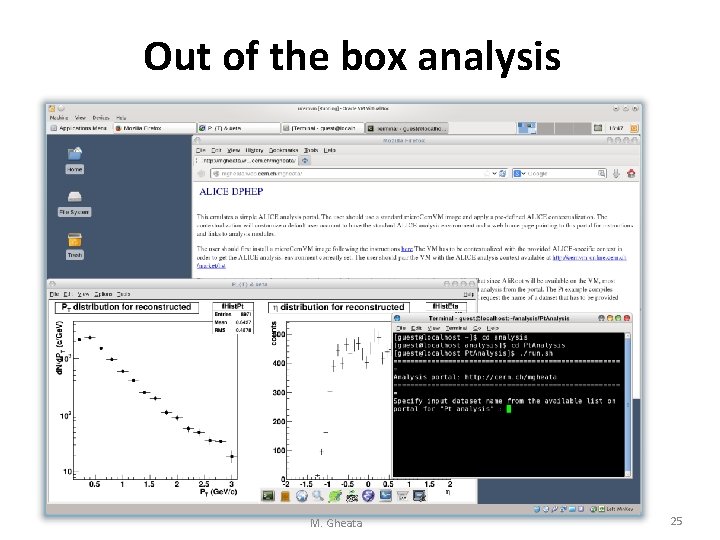

LTDP Demonstrator
http://cernvm-online.cern.ch
Select the analysis context and pair with your VM (three step procedure). ALICE analysis environment is automatically enabled.
M. Gheata

Out of the box analysis
M. Gheata

Summary
– Run 2
  • Computing resources are used efficiently and outlook for Run 2 is good
  • Analysis trains have significantly improved analysis efficiency and turnaround time
  • Ongoing collaboration with ATLAS on effective use of HPC resources for simulation
– Run 3
  • O2 TDR is well in progress, meeting of editorial board this week
  • Ongoing collaboration between ALICE and FAIR on future common software framework (ALFA)
– Long Term Data Preservation
  • Example analysis available via common portal
