Bayesian models of human inductive learning
Josh Tenenbaum
MIT Department of Brain and Cognitive Sciences, Computer Science and AI Lab (CSAIL)

Collaborators: Pat Shafto, Charles Kemp, Vikash Mansinghka, Tom Griffiths, Chris Baker, Lauren Schmidt, Takeshi Yamada, Naonori Ueda
Funding: US NSF, AFOSR, ONR, DARPA, NTT Communication Sciences Laboratories, Schlumberger, Eli Lilly & Co., James S. McDonnell Foundation

The probabilistic revolution in AI
• Principled and effective solutions for inductive inference from ambiguous data:
– Vision
– Robotics
– Machine learning
– Expert systems / reasoning
– Natural language processing
• Standard view: no necessary connection to how the human brain solves these problems.

Bayesian models of cognition
– Visual perception [Weiss, Simoncelli, Adelson, Richards, Freeman, Feldman, Kersten, Knill, Maloney, Olshausen, Jacobs, Pouget, …]
– Language acquisition and processing [Brent, de Marken, Niyogi, Klein, Manning, Jurafsky, Keller, Levy, Hale, Johnson, Griffiths, Perfors, Tenenbaum, …]
– Motor learning and motor control [Ghahramani, Jordan, Wolpert, Kording, Kawato, Doya, Todorov, Shadmehr, …]
– Associative learning [Dayan, Daw, Kakade, Courville, Touretzky, Kruschke, …]
– Memory [Anderson, Schooler, Shiffrin, Steyvers, Griffiths, McClelland, …]
– Attention [Mozer, Huber, Torralba, Oliva, Geisler, Yu, Itti, Baldi, …]
– Categorization and concept learning [Anderson, Nosofsky, Rehder, Navarro, Griffiths, Feldman, Tenenbaum, Rosseel, Goodman, Kemp, Mansinghka, …]
– Reasoning [Chater, Oaksford, Sloman, McKenzie, Heit, Tenenbaum, Kemp, …]
– Causal inference [Waldmann, Sloman, Steyvers, Griffiths, Tenenbaum, Yuille, …]
– Decision making and theory of mind [Lee, Stankiewicz, Rao, Baker, Goodman, Tenenbaum, …]

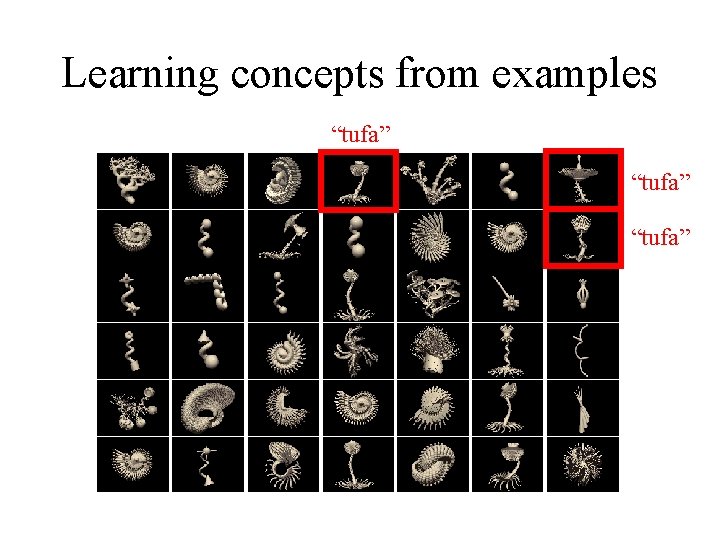

Everyday inductive leaps How can people learn so much about the world from such limited evidence? – Learning concepts from examples “horse”

Learning concepts from examples “tufa”

Everyday inductive leaps
How can people learn so much about the world from such limited evidence?
– Kinds of objects and their properties
– The meanings of words, phrases, and sentences
– Cause-effect relations
– The beliefs, goals and plans of other people
– Social structures, conventions, and rules

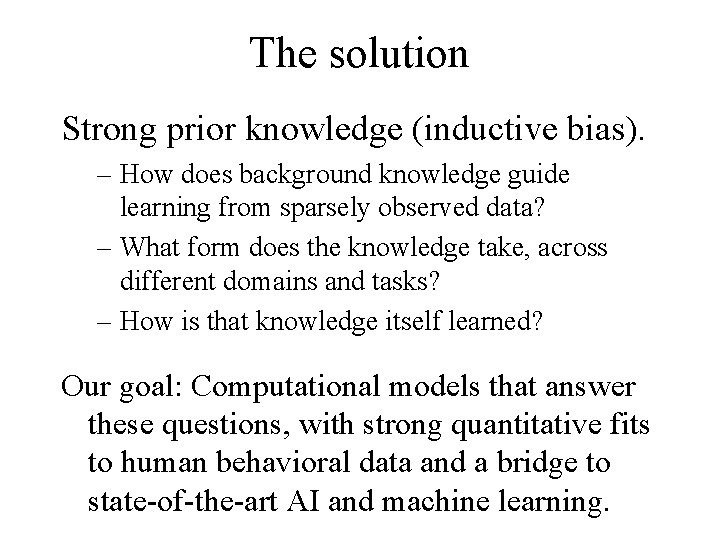

The solution Strong prior knowledge (inductive bias).

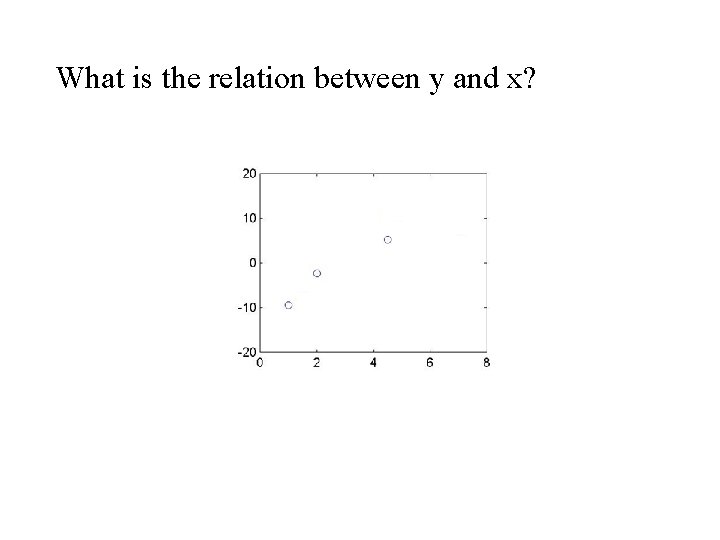

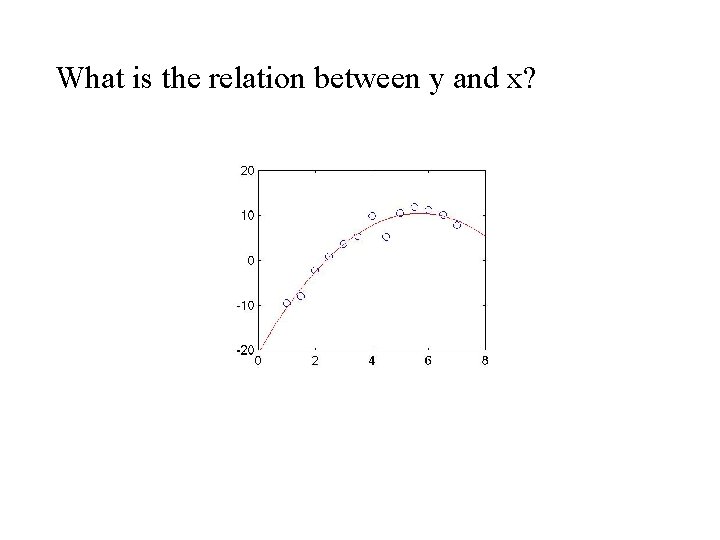

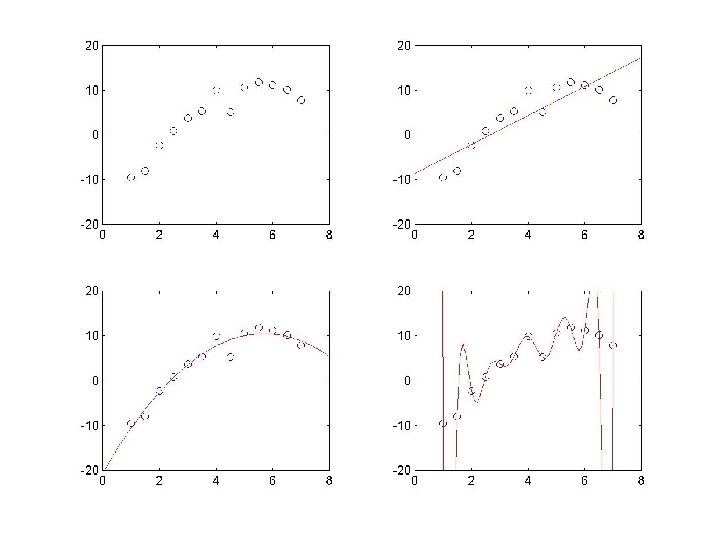

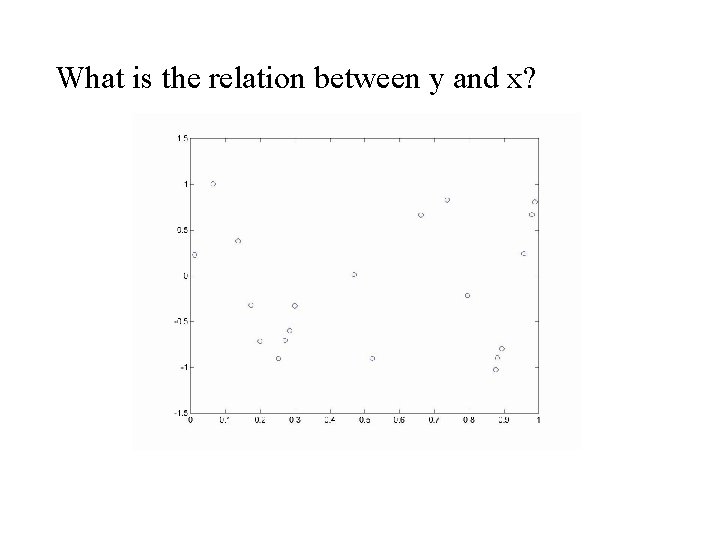

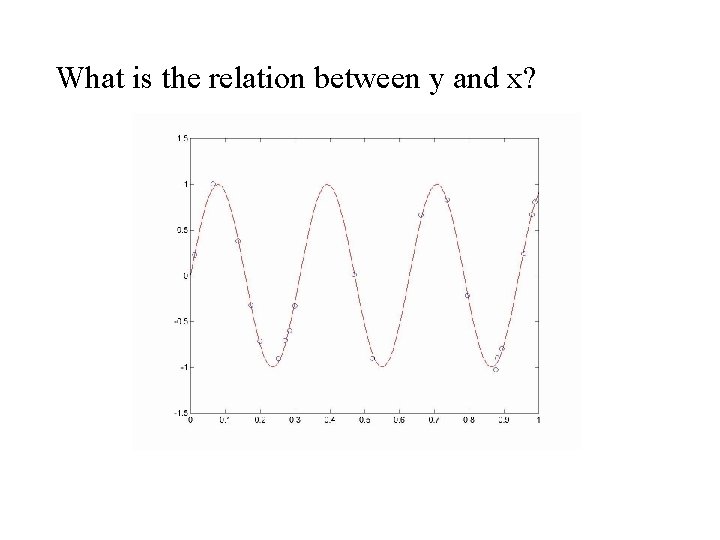

What is the relation between y and x?


The solution
Strong prior knowledge (inductive bias).
– How does background knowledge guide learning from sparsely observed data?
– What form does the knowledge take, across different domains and tasks?
– How is that knowledge itself learned?
Our goal: Computational models that answer these questions, with strong quantitative fits to human behavioral data and a bridge to state-of-the-art AI and machine learning.

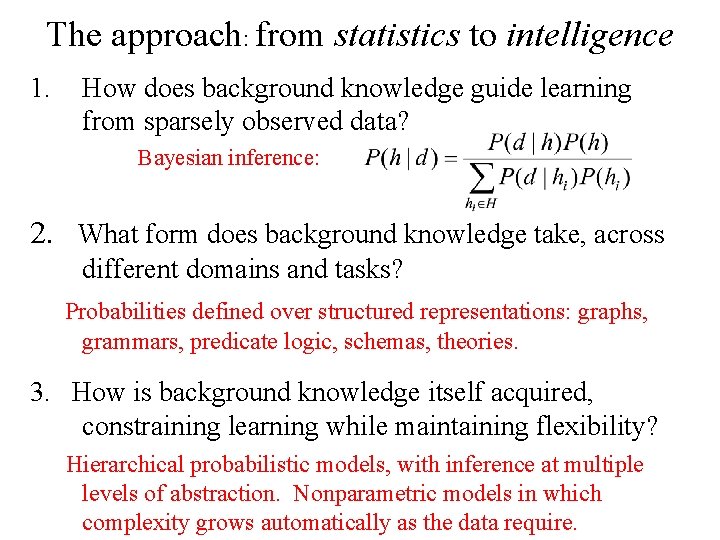

The approach: from statistics to intelligence
1. How does background knowledge guide learning from sparsely observed data?
Bayesian inference: P(h | d) ∝ P(d | h) P(h).
2. What form does background knowledge take, across different domains and tasks?
Probabilities defined over structured representations: graphs, grammars, predicate logic, schemas, theories.
3. How is background knowledge itself acquired, constraining learning while maintaining flexibility?
Hierarchical probabilistic models, with inference at multiple levels of abstraction. Nonparametric models in which complexity grows automatically as the data require.

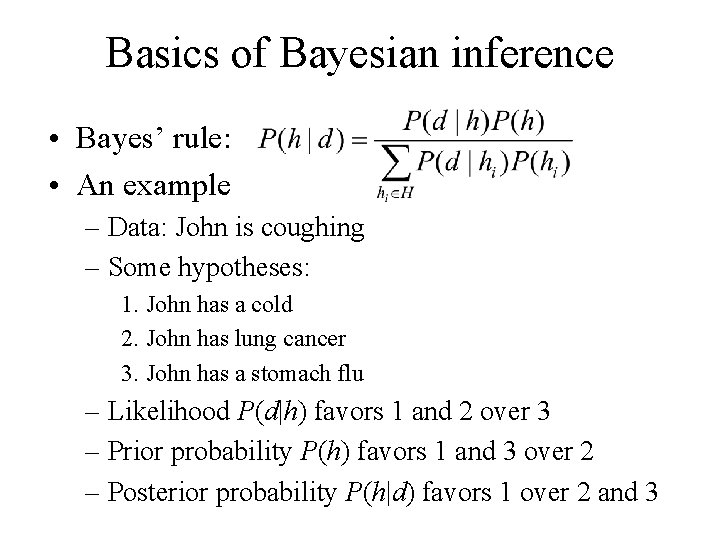

Basics of Bayesian inference
• Bayes’ rule: P(h | d) = P(d | h) P(h) / Σ_h′ P(d | h′) P(h′)
• An example
– Data: John is coughing
– Some hypotheses:
1. John has a cold
2. John has lung cancer
3. John has a stomach flu
– Likelihood P(d|h) favors 1 and 2 over 3
– Prior probability P(h) favors 1 and 3 over 2
– Posterior probability P(h|d) favors 1 over 2 and 3
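The coughing example can be worked through numerically. The sketch below is only illustrative: the prior and likelihood values are assumptions chosen to respect the qualitative orderings on the slide, not numbers from the talk.

```python
# Hypothetical numbers: only their ordering follows the slide.
priors = {"cold": 0.60, "lung cancer": 0.01, "stomach flu": 0.39}    # P(h)
likelihoods = {"cold": 0.8, "lung cancer": 0.9, "stomach flu": 0.1}  # P(coughing | h)

def posterior(priors, likelihoods):
    """Apply Bayes' rule over a finite set of hypotheses."""
    joint = {h: priors[h] * likelihoods[h] for h in priors}
    evidence = sum(joint.values())  # P(d), the normalizing constant
    return {h: joint[h] / evidence for h in joint}

post = posterior(priors, likelihoods)
for h, p in sorted(post.items(), key=lambda kv: -kv[1]):
    print(f"P({h} | coughing) = {p:.3f}")
```

With these assumed values the cold hypothesis dominates, because it is the only one favored by both the prior and the likelihood.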

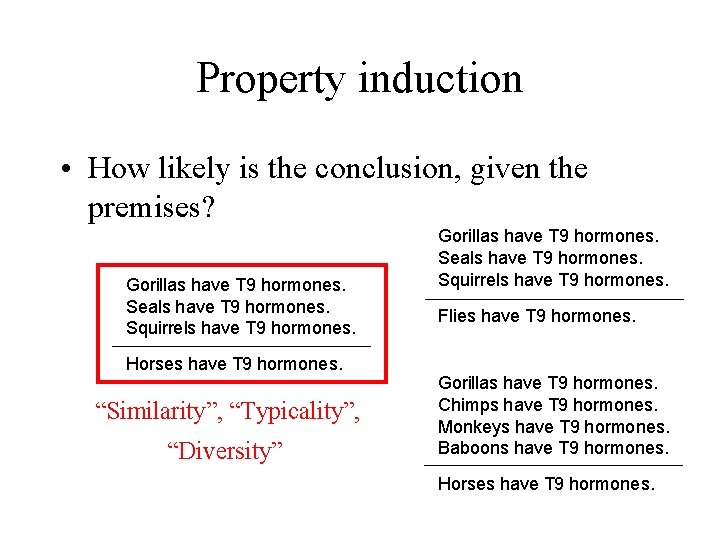

Property induction
• How likely is the conclusion, given the premises?
“Similarity”, “Typicality”, “Diversity”
– Premises: Gorillas have T9 hormones. Seals have T9 hormones. Squirrels have T9 hormones. Conclusion: Horses have T9 hormones.
– Premises: Gorillas have T9 hormones. Seals have T9 hormones. Squirrels have T9 hormones. Conclusion: Flies have T9 hormones.
– Premises: Gorillas have T9 hormones. Chimps have T9 hormones. Monkeys have T9 hormones. Baboons have T9 hormones. Conclusion: Horses have T9 hormones.

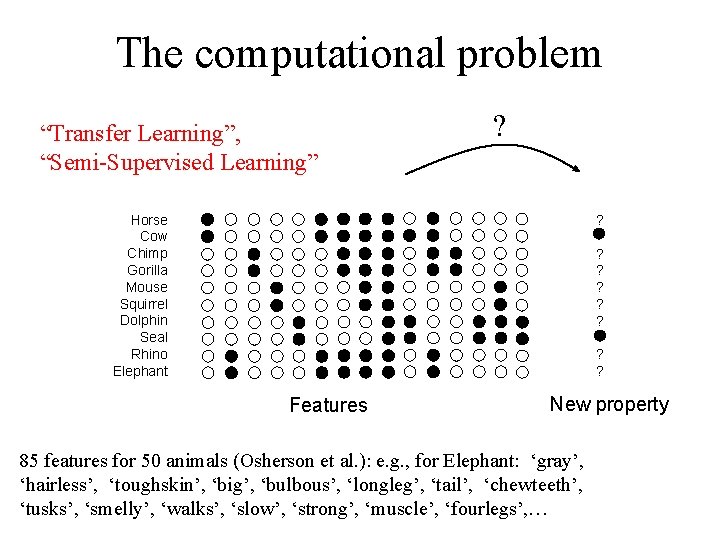

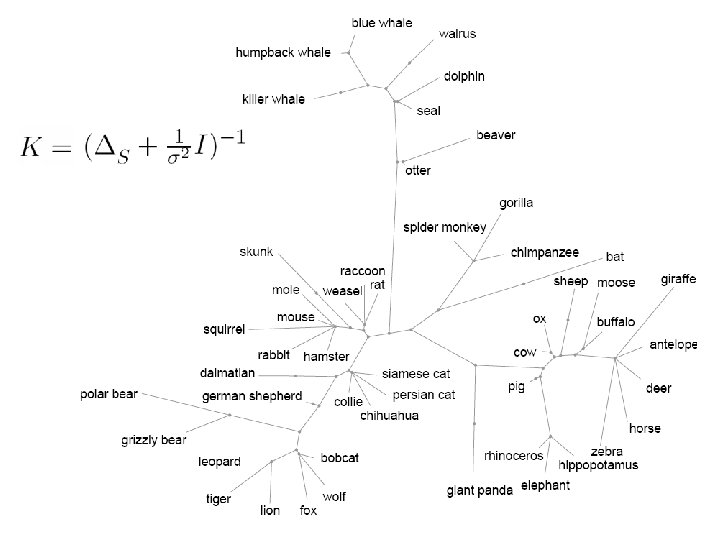

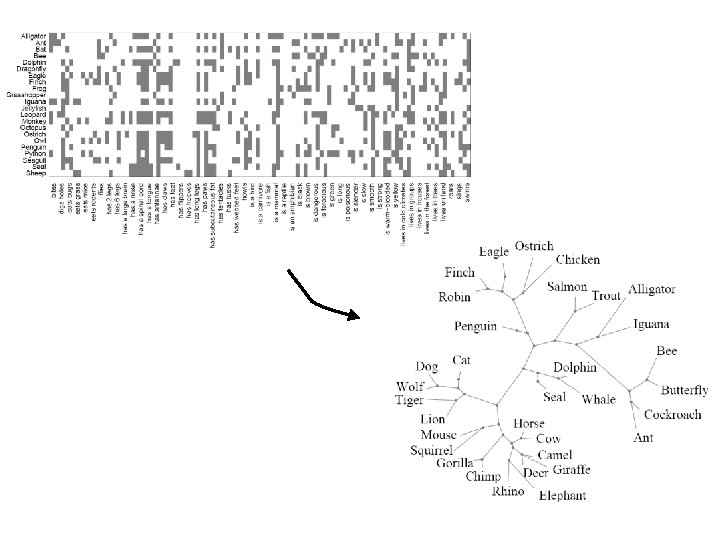

The computational problem
“Transfer Learning”, “Semi-Supervised Learning”
Figure: a species-by-feature matrix (rows: Horse, Cow, Chimp, Gorilla, Mouse, Squirrel, Dolphin, Seal, Rhino, Elephant; columns: Features) with an added “New property” column whose entries are unknown (?).
85 features for 50 animals (Osherson et al.): e.g., for Elephant: ‘gray’, ‘hairless’, ‘toughskin’, ‘big’, ‘bulbous’, ‘longleg’, ‘tail’, ‘chewteeth’, ‘tusks’, ‘smelly’, ‘walks’, ‘slow’, ‘strong’, ‘muscle’, ‘fourlegs’, …

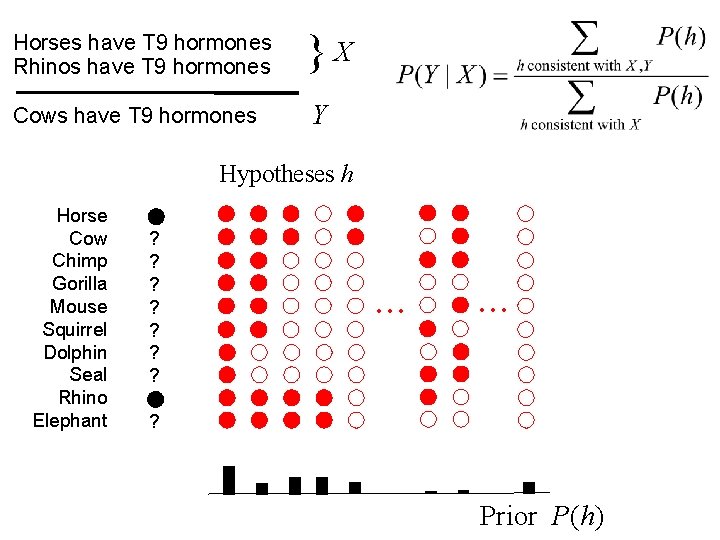

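Premises X: Horses have T9 hormones. Rhinos have T9 hormones. Conclusion Y: Cows have T9 hormones.
Figure: hypotheses h, each a candidate extension of the property over the species (Horse, Cow, Chimp, Gorilla, Mouse, Squirrel, Dolphin, Seal, Rhino, Elephant), weighted by the prior P(h).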

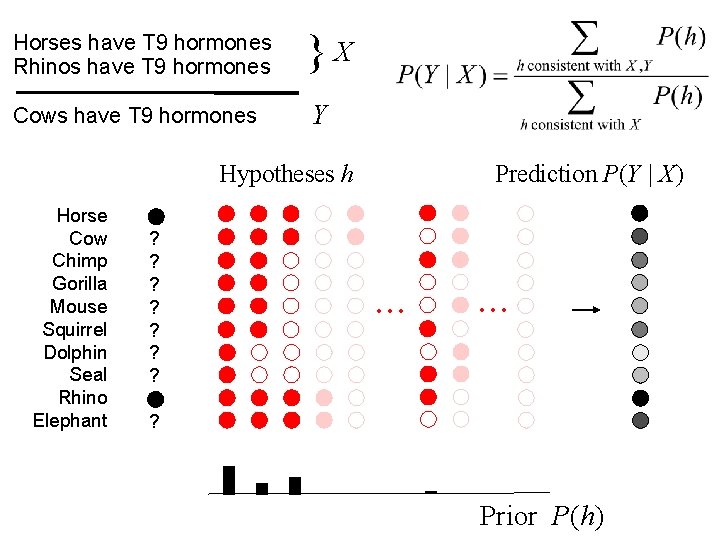

Premises X: Horses have T9 hormones. Rhinos have T9 hormones. Conclusion Y: Cows have T9 hormones.
Figure: hypotheses h over the species (Horse, Cow, Chimp, Gorilla, Mouse, Squirrel, Dolphin, Seal, Rhino, Elephant), weighted by the prior P(h), and the resulting prediction P(Y | X).
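One common way to compute a prediction such as P(Y | X) from a prior over hypotheses is Bayesian hypothesis averaging. The sketch below assumes each hypothesis is a set of species that have the property and uses a simple 0/1 likelihood (a hypothesis is either consistent with the premises or not). The toy prior weights are hypothetical.

```python
def predict(prior, premises, conclusion):
    """P(conclusion species has the property | premise species have it).

    prior: dict mapping frozenset-of-species hypotheses to prior probability.
    Assumes a 0/1 likelihood: a hypothesis is consistent with the premises
    iff it contains every premise species.
    """
    consistent = {h: p for h, p in prior.items() if premises <= h}
    total = sum(consistent.values())
    if total == 0:
        raise ValueError("no hypothesis is consistent with the premises")
    return sum(p for h, p in consistent.items() if conclusion in h) / total

# Toy prior (hypothetical weights) over a few candidate extensions.
toy_prior = {
    frozenset({"horse", "rhino", "cow"}): 0.4,
    frozenset({"horse", "rhino"}): 0.2,
    frozenset({"horse", "rhino", "cow", "elephant"}): 0.3,
    frozenset({"mouse", "squirrel"}): 0.1,
}
p_cow = predict(toy_prior, frozenset({"horse", "rhino"}), "cow")
print(round(p_cow, 3))
```

All the inductive work is done by the prior: changing which extensions receive high prior weight changes the generalization, which is why the following slides focus on where P(h) comes from.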

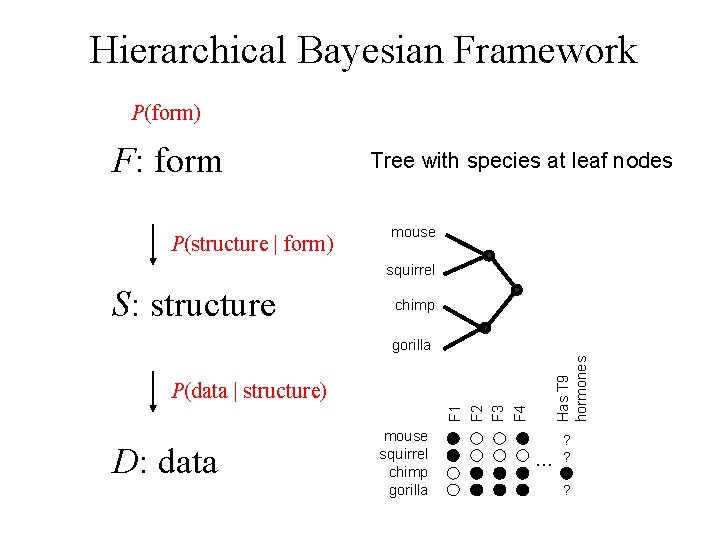

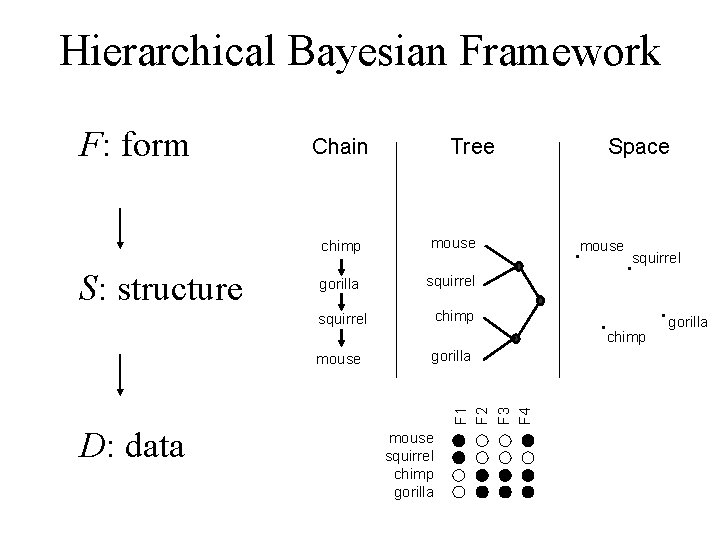

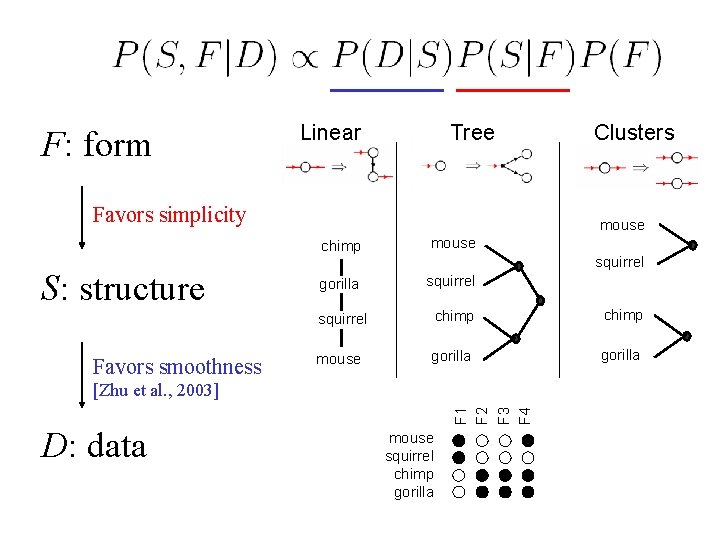

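Hierarchical Bayesian Framework
– F: form, with prior P(form). Example: tree with species at leaf nodes.
– S: structure, with P(structure | form). Example: a tree over mouse, squirrel, chimp, gorilla.
– D: data, with P(data | structure). Example: features F1, F2, F3, F4 for mouse, squirrel, chimp, gorilla, plus a new property “Has T9 hormones” with unknown entries (?).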
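Written out, the three levels of the framework correspond to a factorized joint distribution, and inference about structure and form follows from Bayes' rule. This is a restatement in the slide's notation, assuming the data depend on the form only through the structure:

```latex
P(F, S, D) = P(F)\, P(S \mid F)\, P(D \mid S),
\qquad
P(S, F \mid D) \propto P(D \mid S)\, P(S \mid F)\, P(F)
```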

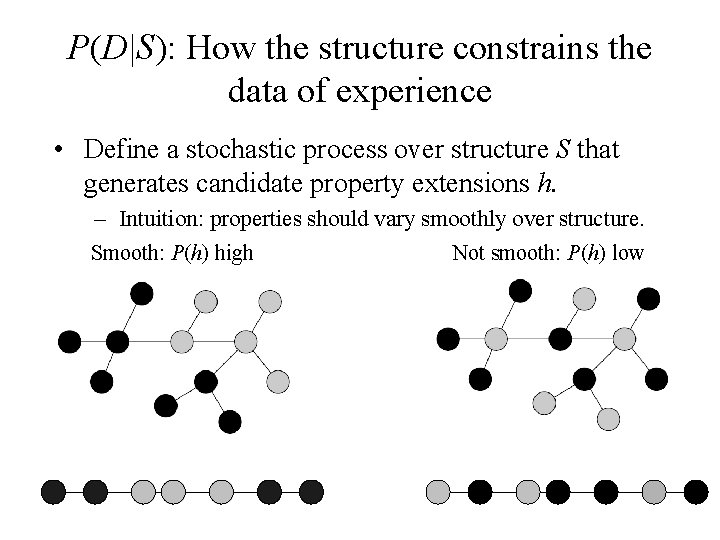

P(D|S): How the structure constrains the data of experience
• Define a stochastic process over structure S that generates candidate property extensions h.
– Intuition: properties should vary smoothly over structure.
– Smooth: P(h) high. Not smooth: P(h) low.
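The smoothness intuition can be illustrated with a deliberately simplified stand-in for the stochastic process: a prior that penalizes each edge of the structure whose endpoints disagree. This is not the Gaussian process used in the talk. The tree, the internal node names, and the penalty value are all assumptions made for the sketch.

```python
import itertools
import math

# Hypothetical four-leaf tree; internal nodes "A", "B" and "root" are assumptions.
edges = [("root", "A"), ("root", "B"),
         ("A", "mouse"), ("A", "squirrel"),
         ("B", "chimp"), ("B", "gorilla")]
nodes = sorted({n for e in edges for n in e})
leaves = ["mouse", "squirrel", "chimp", "gorilla"]

def smoothness_weight(labels, penalty=1.5):
    """Unnormalized weight exp(-penalty * number of edges whose endpoints disagree)."""
    disagreements = sum(labels[u] != labels[v] for u, v in edges)
    return math.exp(-penalty * disagreements)

# Enumerate every binary labeling of all nodes and compute the normalizer.
labelings = [dict(zip(nodes, bits))
             for bits in itertools.product([0, 1], repeat=len(nodes))]
z = sum(smoothness_weight(l) for l in labelings)

def leaf_prior(extension):
    """P(h): probability that exactly the leaves in `extension` have the property."""
    return sum(smoothness_weight(l) for l in labelings
               if all(l[x] == (x in extension) for x in leaves)) / z

smooth = leaf_prior({"mouse", "squirrel"})  # respects the tree
rough = leaf_prior({"mouse", "chimp"})      # cuts across the tree
print(smooth, rough)
```

An extension that follows a branch of the tree receives more prior mass than one that cuts across branches, which is the qualitative behavior the slide describes.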

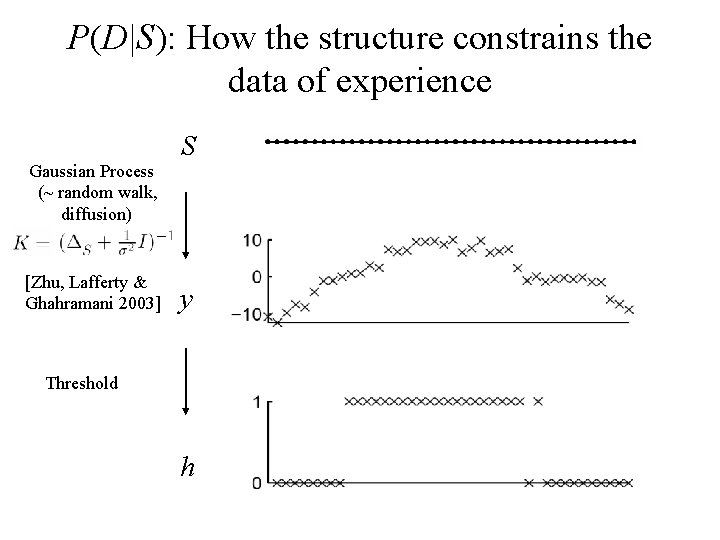

P(D|S): How the structure constrains the data of experience
Gaussian Process (~ random walk, diffusion) [Zhu, Lafferty & Ghahramani 2003]
Figure: a continuous function y defined over structure S is thresholded to give the binary property h.
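A rough sketch of the "diffuse, then threshold" idea, assuming a small hypothetical tree: a latent value y performs a Gaussian random walk from the root down to the leaves, and the binary property h is obtained by thresholding y. This is only one simple instance of a diffusion over a structure. It does not reproduce the graph-based covariance of Zhu, Lafferty & Ghahramani.

```python
import random

# Hypothetical tree: each entry maps a node to its parent (the root has none).
parent = {"A": "root", "B": "root",
          "mouse": "A", "squirrel": "A",
          "chimp": "B", "gorilla": "B"}
leaves = ["mouse", "squirrel", "chimp", "gorilla"]

def sample_property(rng, step_sd=1.0, threshold=0.0):
    """Diffuse a latent value y down the tree, then threshold it to get binary h."""
    y = {"root": rng.gauss(0.0, step_sd)}
    for node in ["A", "B", "mouse", "squirrel", "chimp", "gorilla"]:  # parents first
        y[node] = y[parent[node]] + rng.gauss(0.0, step_sd)
    return {leaf: int(y[leaf] > threshold) for leaf in leaves}

def agreement_rate(a, b, n=5000, seed=0):
    """Fraction of sampled properties on which species a and b agree."""
    rng = random.Random(seed)
    return sum(h[a] == h[b] for h in (sample_property(rng) for _ in range(n))) / n

print(agreement_rate("mouse", "squirrel"), agreement_rate("mouse", "chimp"))
```

Species that are close in the tree share more of the random walk, so sampled properties agree on them more often than on distant species.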

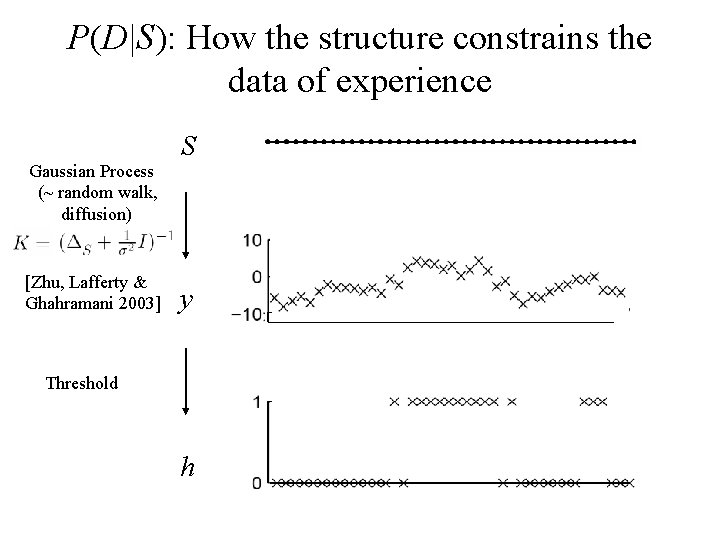


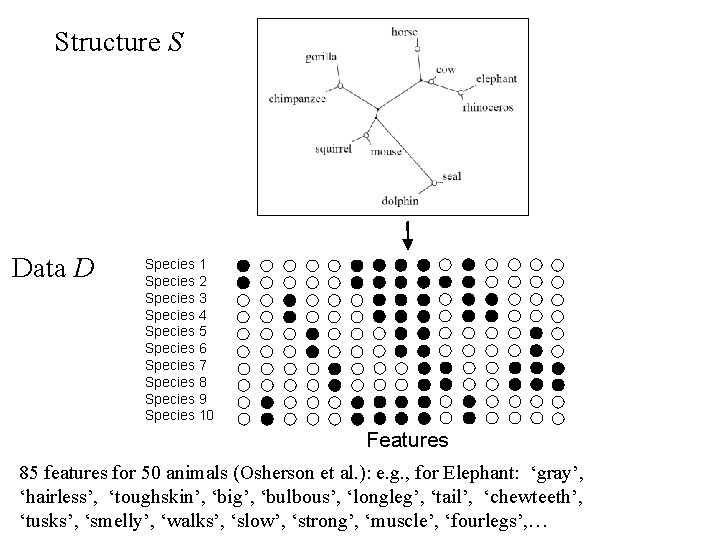

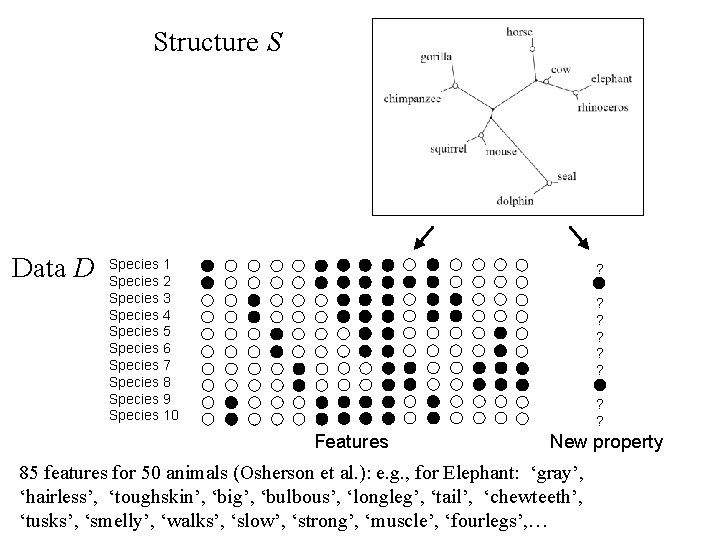

Figure: structure S over Species 1 to Species 10, and data D as a species-by-feature matrix.
85 features for 50 animals (Osherson et al.): e.g., for Elephant: ‘gray’, ‘hairless’, ‘toughskin’, ‘big’, ‘bulbous’, ‘longleg’, ‘tail’, ‘chewteeth’, ‘tusks’, ‘smelly’, ‘walks’, ‘slow’, ‘strong’, ‘muscle’, ‘fourlegs’, …

[cf. Lawrence, 2004; Smola & Kondor, 2003]

Figure: structure S over Species 1 to Species 10, and data D as a species-by-feature matrix with an added “New property” column whose entries are unknown (?).
85 features for 50 animals (Osherson et al.): e.g., for Elephant: ‘gray’, ‘hairless’, ‘toughskin’, ‘big’, ‘bulbous’, ‘longleg’, ‘tail’, ‘chewteeth’, ‘tusks’, ‘smelly’, ‘walks’, ‘slow’, ‘strong’, ‘muscle’, ‘fourlegs’, …

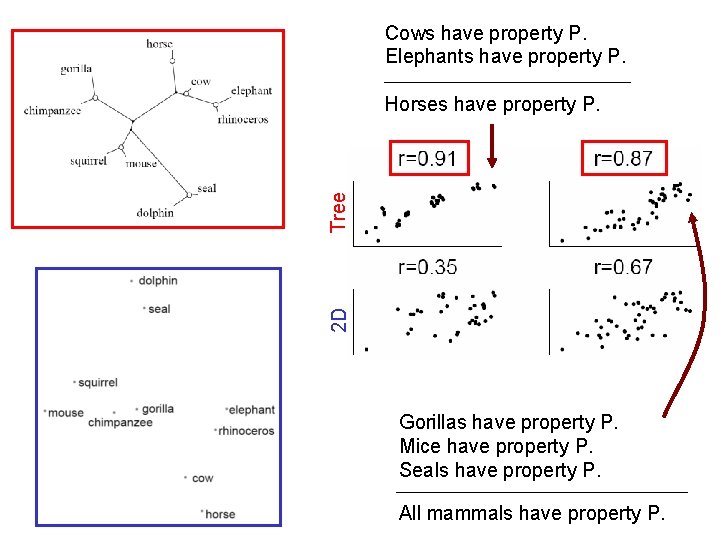

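Figure: model predictions (Tree, 2D) for two kinds of argument.
– Premises: Cows have property P. Elephants have property P. Conclusion: Horses have property P.
– Premises: Gorillas have property P. Mice have property P. Seals have property P. Conclusion: All mammals have property P.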

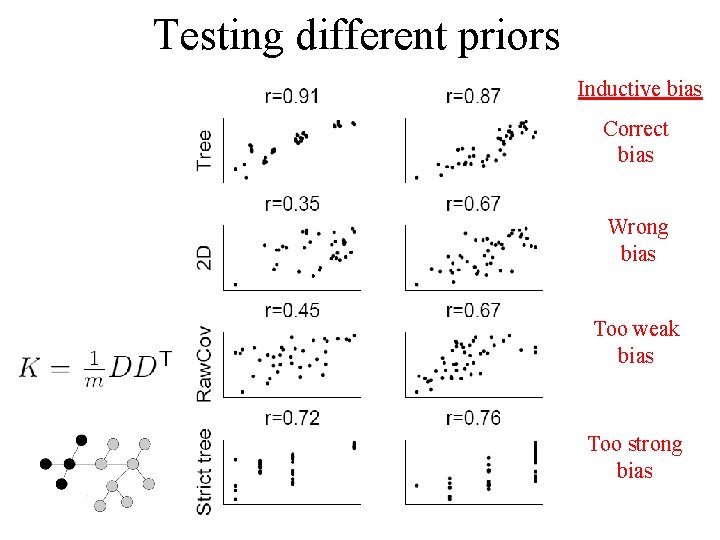

Testing different priors
Inductive bias:
– Correct bias
– Wrong bias
– Too weak bias
– Too strong bias

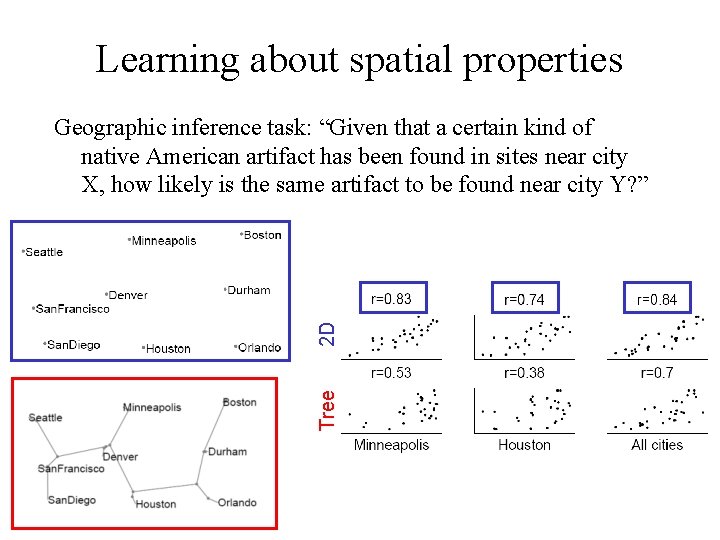

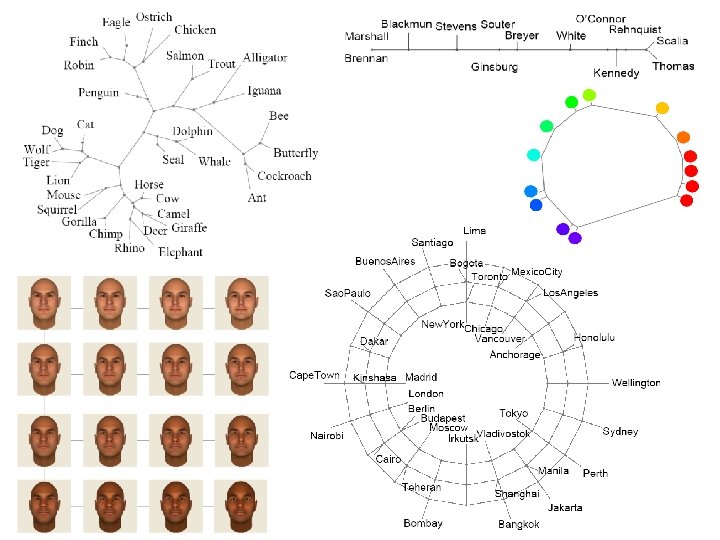

Learning about spatial properties
Models compared: Tree, 2D.
Geographic inference task: “Given that a certain kind of Native American artifact has been found in sites near city X, how likely is the same artifact to be found near city Y?”

Hierarchical Bayesian Framework
– F: form (Chain, Tree, Space)
– S: structure (an instance of each form over mouse, squirrel, chimp, gorilla)
– D: data (features F1, F2, F3, F4 for mouse, squirrel, chimp, gorilla)

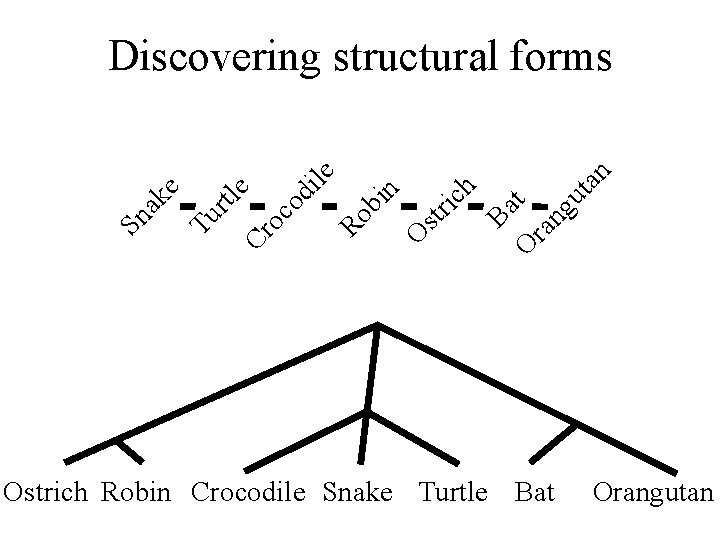

Discovering structural forms
Figure labels: Ostrich, Robin, Crocodile, Snake, Turtle, Bat, Orangutan.

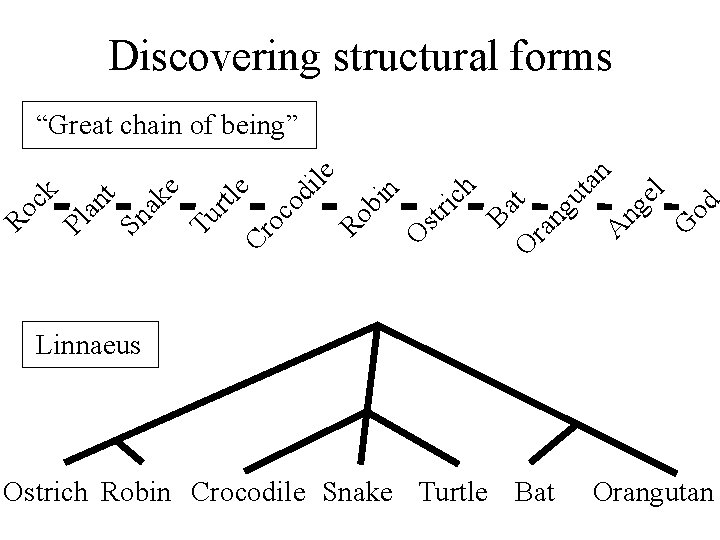

Discovering structural forms
– “Great chain of being” (figure labels: Crocodile, Robin, Ostrich, Bat, Orangutan, Angel, God, Turtle, Snake, Plant, Rock)
– Linnaeus (figure labels: Ostrich, Robin, Crocodile, Snake, Turtle, Bat, Orangutan)

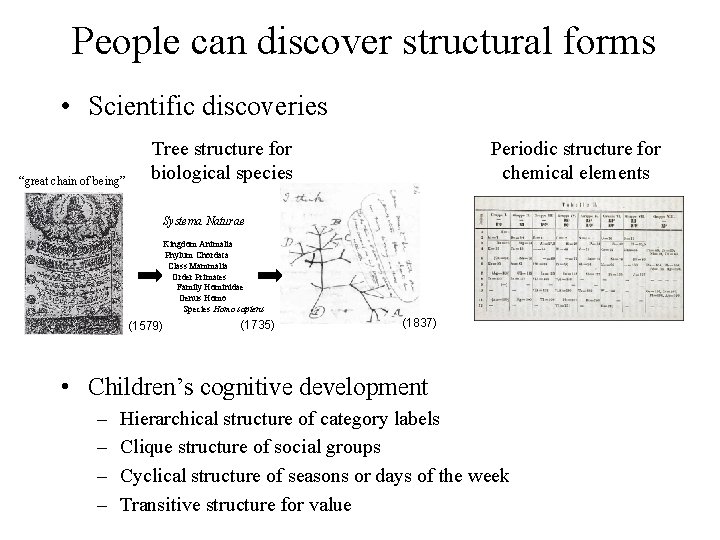

People can discover structural forms
• Scientific discoveries
– “Great chain of being”
– Tree structure for biological species (Systema Naturae: Kingdom Animalia, Phylum Chordata, Class Mammalia, Order Primates, Family Hominidae, Genus Homo, Species Homo sapiens)
– Periodic structure for chemical elements
(Dates shown on the slide: 1579, 1735, 1837)
• Children’s cognitive development
– Hierarchical structure of category labels
– Clique structure of social groups
– Cyclical structure of seasons or days of the week
– Transitive structure for value

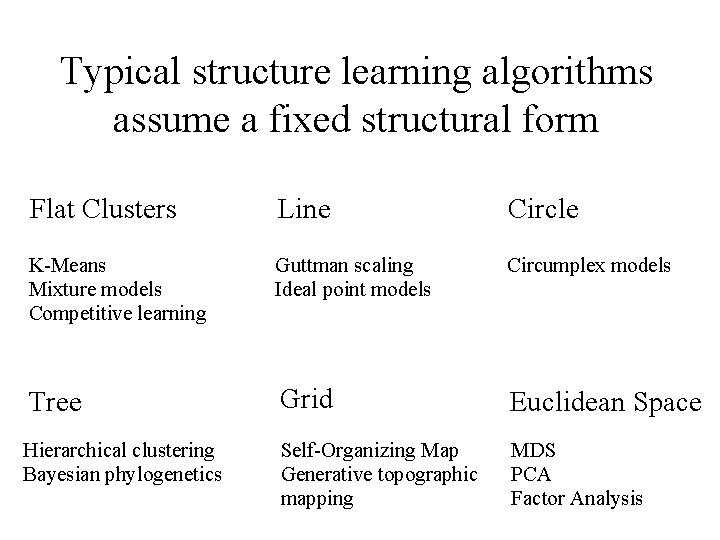

Typical structure learning algorithms assume a fixed structural form
– Flat clusters: K-Means, mixture models, competitive learning
– Line: Guttman scaling, ideal point models
– Circle: circumplex models
– Tree: hierarchical clustering, Bayesian phylogenetics
– Grid: Self-Organizing Map, generative topographic mapping
– Euclidean space: MDS, PCA, factor analysis

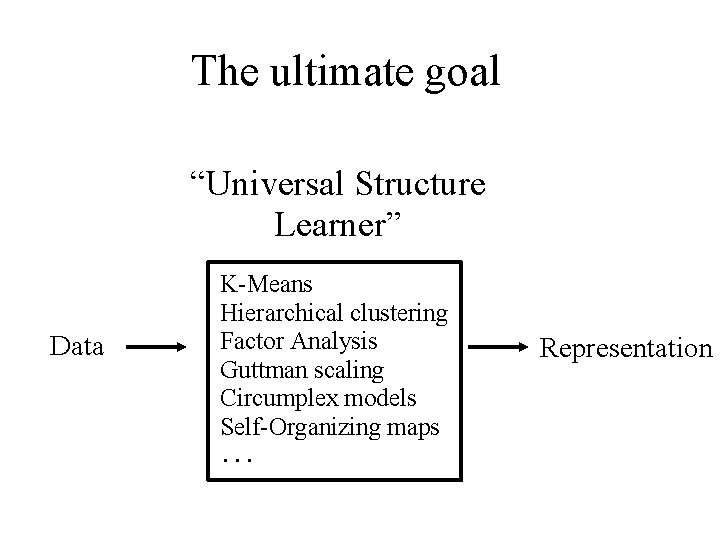

The ultimate goal
“Universal Structure Learner”: Data → (K-Means, hierarchical clustering, factor analysis, Guttman scaling, circumplex models, Self-Organizing Maps, ···) → Representation

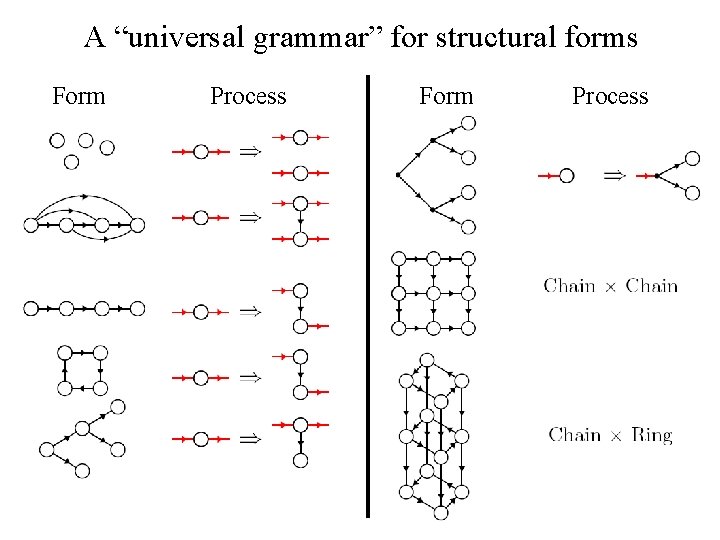

A “universal grammar” for structural forms Form Process

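– F: form (Linear, Tree, Clusters). The prior over structures given a form favors simplicity.
– S: structure (over mouse, squirrel, chimp, gorilla). The likelihood of data given a structure favors smoothness [Zhu et al., 2003].
– D: data (features F1, F2, F3, F4 for mouse, squirrel, chimp, gorilla)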

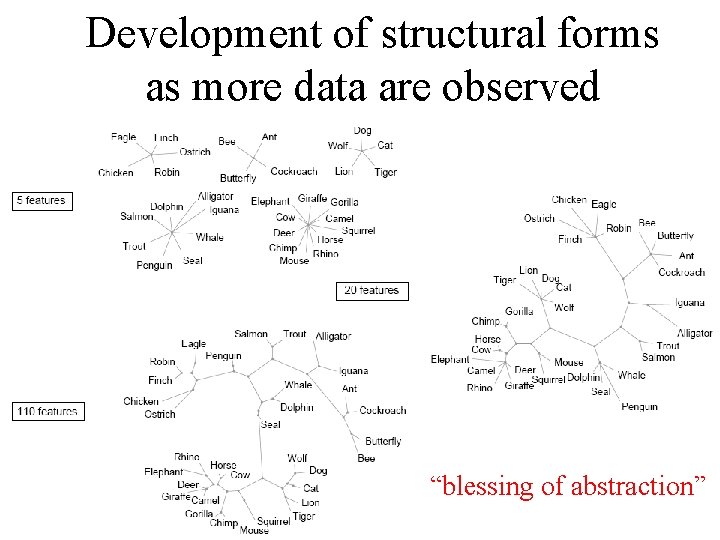

Development of structural forms as more data are observed “blessing of abstraction”

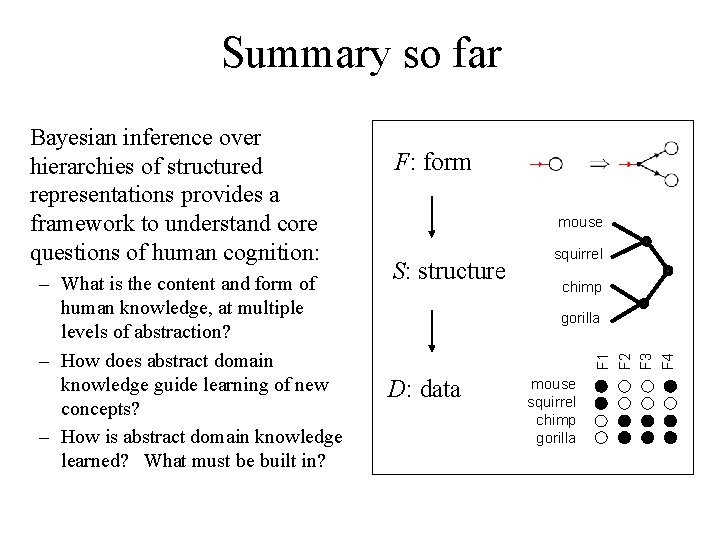

Summary so far
Bayesian inference over hierarchies of structured representations provides a framework to understand core questions of human cognition:
– What is the content and form of human knowledge, at multiple levels of abstraction?
– How does abstract domain knowledge guide learning of new concepts?
– How is abstract domain knowledge learned? What must be built in?
Figure: F: form → S: structure (mouse, squirrel, chimp, gorilla) → D: data (features F1, F2, F3, F4).

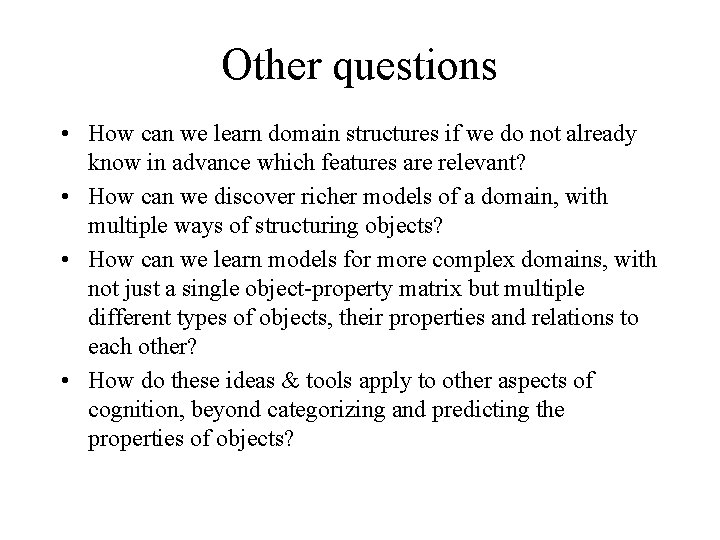

Other questions
• How can we learn domain structures if we do not already know in advance which features are relevant?
• How can we discover richer models of a domain, with multiple ways of structuring objects?
• How can we learn models for more complex domains, with not just a single object-property matrix but multiple different types of objects, their properties and relations to each other?
• How do these ideas and tools apply to other aspects of cognition, beyond categorizing and predicting the properties of objects?

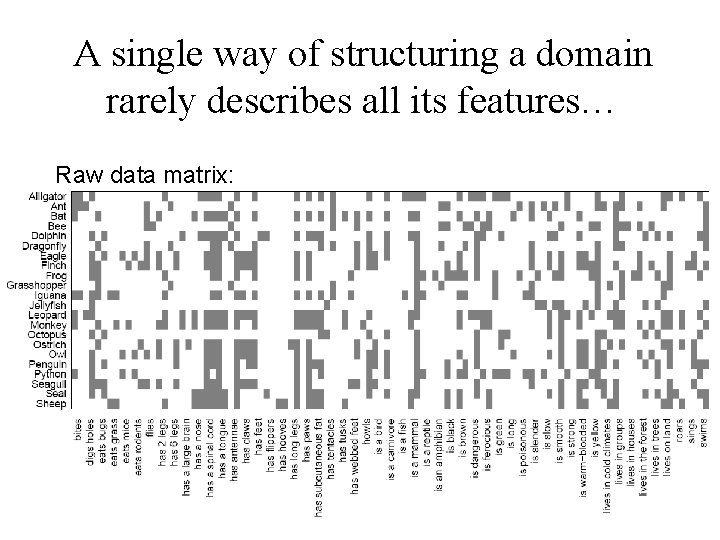

A single way of structuring a domain rarely describes all its features… Raw data matrix:

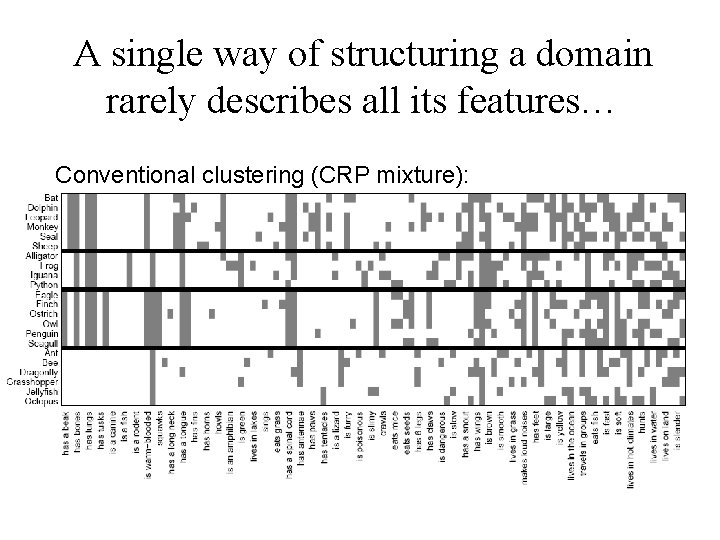

A single way of structuring a domain rarely describes all its features… Conventional clustering (CRP mixture):
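For readers unfamiliar with the CRP (Chinese restaurant process) named on this slide, the sketch below samples a partition from the CRP prior alone. It is a minimal illustration, not a mixture-model fit: no likelihood or data are involved, and the concentration parameter `alpha` and item count are arbitrary choices.

```python
import random

def sample_crp(n_items, alpha, rng):
    """Sample a partition of n_items from a Chinese restaurant process.

    Each item joins an existing cluster with probability proportional to that
    cluster's current size, or starts a new one with probability proportional
    to alpha.
    """
    assignments = []  # cluster index for each item
    sizes = []        # current size of each cluster
    for _ in range(n_items):
        weights = sizes + [alpha]
        k = rng.choices(range(len(weights)), weights=weights)[0]
        if k == len(sizes):
            sizes.append(1)  # open a new cluster
        else:
            sizes[k] += 1
        assignments.append(k)
    return assignments

z = sample_crp(n_items=20, alpha=1.0, rng=random.Random(0))
print(z)
```

Because the number of clusters is not fixed in advance, a prior of this kind lets the number of categories grow with the data, which is the sense of "nonparametric" used elsewhere in the talk.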

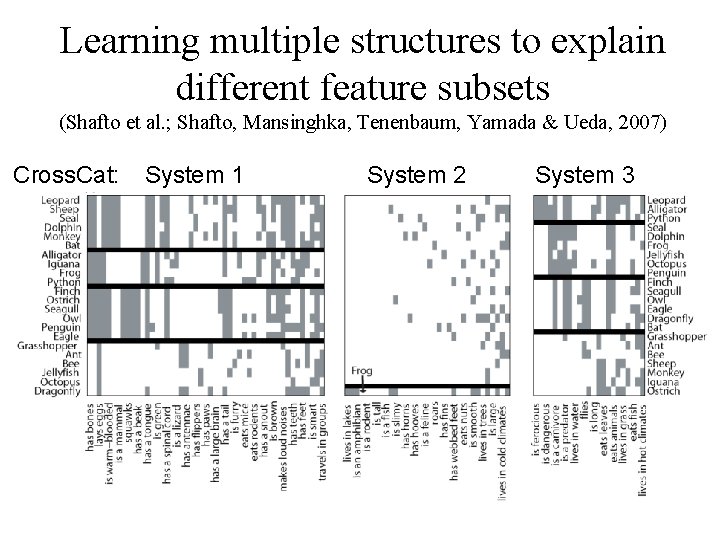

Learning multiple structures to explain different feature subsets (Shafto et al.; Shafto, Mansinghka, Tenenbaum, Yamada & Ueda, 2007)
CrossCat: System 1, System 2, System 3

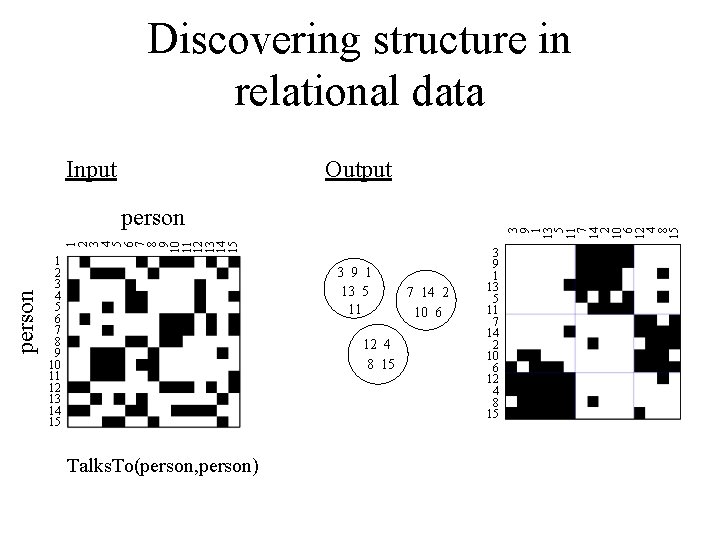

Discovering structure in relational data
Figure: Input is a TalksTo(person, person) matrix over persons 1 to 15 in their original order. Output is the same matrix with persons reordered as 3 9 1 13 5 11 7 14 2 10 6 12 4 8 15.

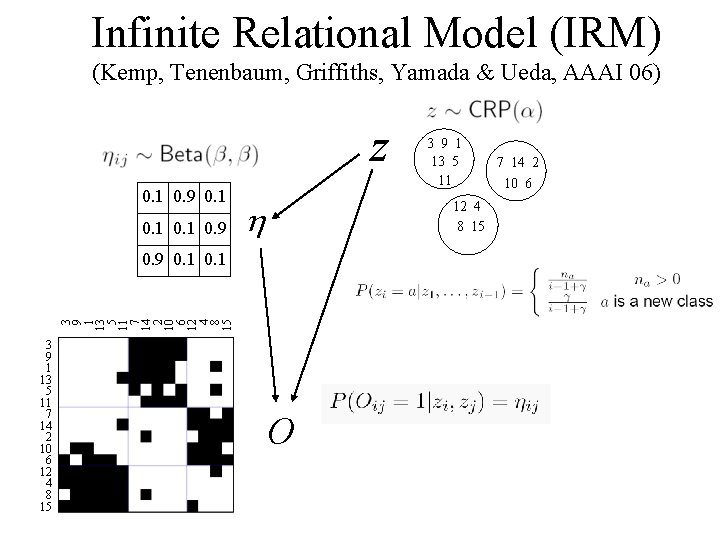

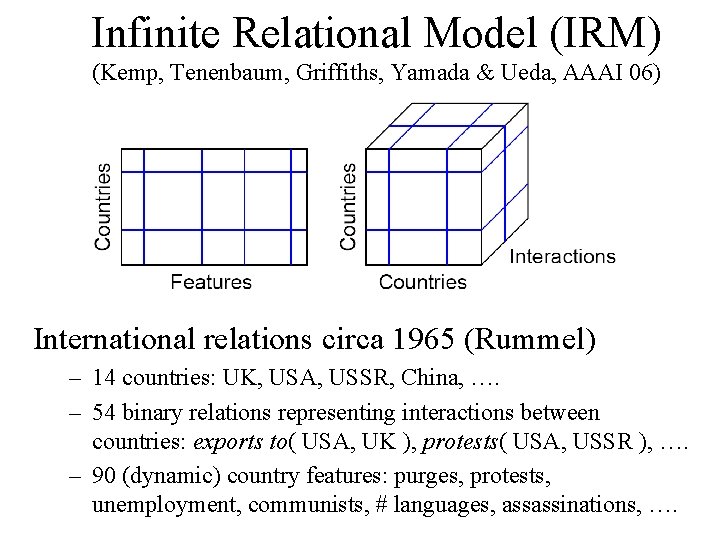

Infinite Relational Model (IRM) (Kemp, Tenenbaum, Griffiths, Yamada & Ueda, AAAI 06)
Figure: cluster assignments z, a cluster-by-cluster matrix η of link probabilities (entries 0.1 and 0.9), and the observed relation O over persons ordered 3 9 1 13 5 11 7 14 2 10 6 12 4 8 15.
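The likelihood part of a block model of this kind can be sketched as follows: given cluster assignments z and a matrix η of cluster-to-cluster link probabilities, each entry of the relation is an independent Bernoulli draw. This is only a partial sketch. The full IRM also places priors on z and η and infers z from data, which is not shown. The six-person setup is hypothetical, and only the 0.1 / 0.9 values echo the figure.

```python
import math
import random

def sample_relation(z, eta, rng):
    """Sample a binary relation with R[i][j] ~ Bernoulli(eta[z[i]][z[j]])."""
    n = len(z)
    return [[int(rng.random() < eta[z[i]][z[j]]) for j in range(n)]
            for i in range(n)]

def log_likelihood(relation, z, eta):
    """log P(relation | z, eta) under the same Bernoulli model."""
    total = 0.0
    for i, row in enumerate(relation):
        for j, r in enumerate(row):
            p = eta[z[i]][z[j]]
            total += math.log(p if r else 1.0 - p)
    return total

# Hypothetical setup: 6 people in 2 clusters.
z_true = [0, 0, 0, 1, 1, 1]
z_shuffled = [0, 1, 0, 1, 0, 1]
eta = [[0.9, 0.1],
       [0.1, 0.9]]
R = sample_relation(z_true, eta, random.Random(0))
print(log_likelihood(R, z_true, eta), log_likelihood(R, z_shuffled, eta))
```

A partition that matches the block structure of the relation typically scores a higher likelihood than one that ignores it, which is what drives the discovery of clusters.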

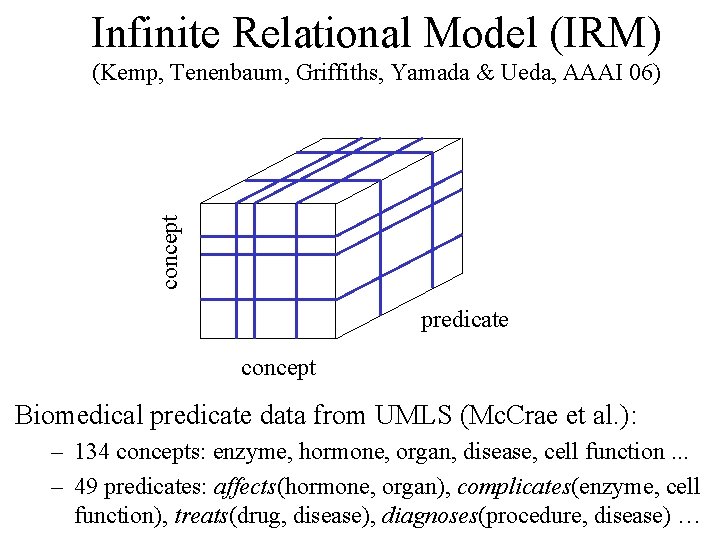

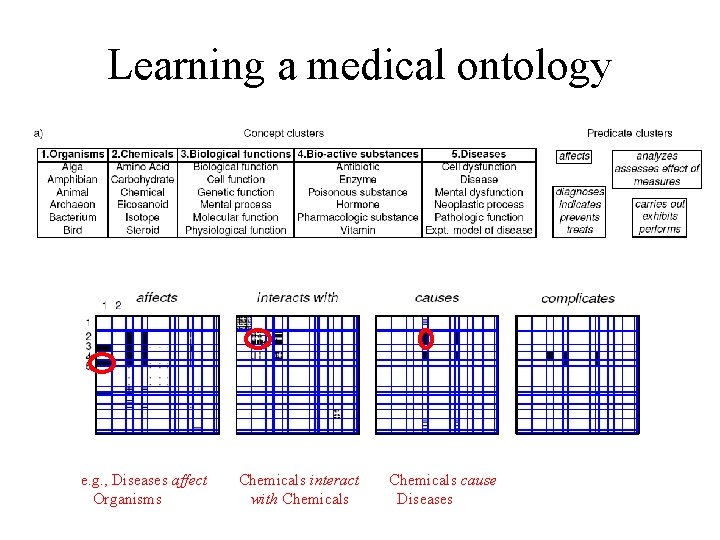

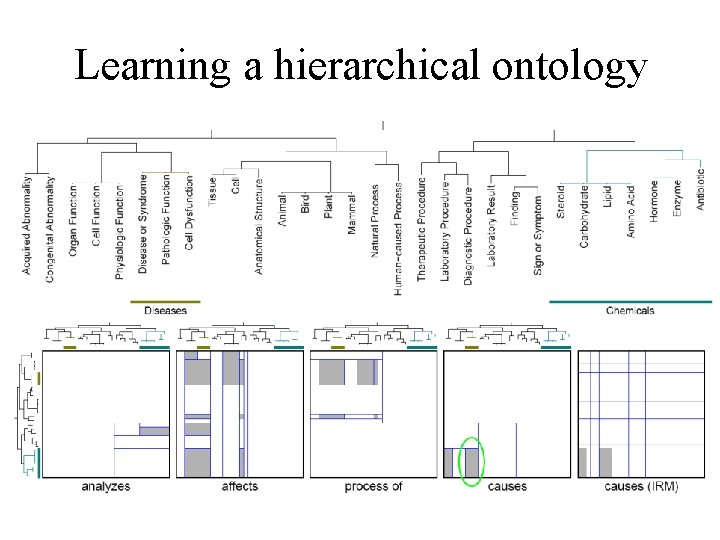

Infinite Relational Model (IRM) (Kemp, Tenenbaum, Griffiths, Yamada & Ueda, AAAI 06)
Figure axes: concept × concept × predicate.
Biomedical predicate data from UMLS (McCray et al.):
– 134 concepts: enzyme, hormone, organ, disease, cell function, ...
– 49 predicates: affects(hormone, organ), complicates(enzyme, cell function), treats(drug, disease), diagnoses(procedure, disease), …

Learning a medical ontology
e.g., Diseases affect Organisms; Chemicals interact with Chemicals; Chemicals cause Diseases

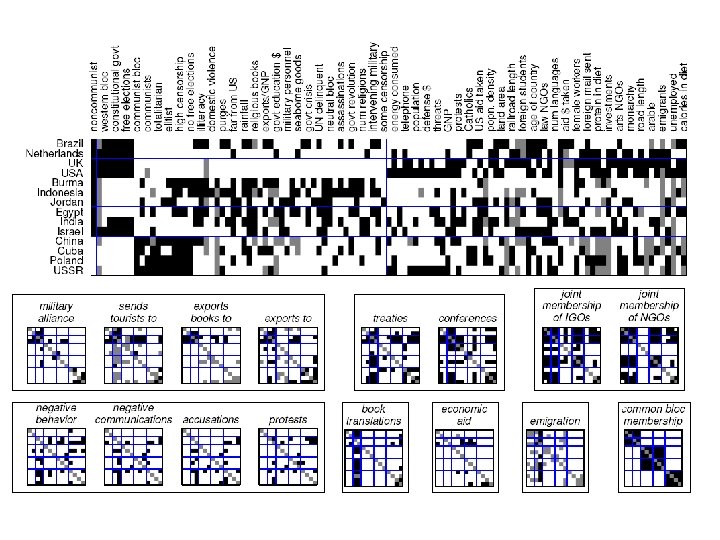

Infinite Relational Model (IRM) (Kemp, Tenenbaum, Griffiths, Yamada & Ueda, AAAI 06)
International relations circa 1965 (Rummel)
– 14 countries: UK, USA, USSR, China, …
– 54 binary relations representing interactions between countries: exports to(USA, UK), protests(USA, USSR), …
– 90 (dynamic) country features: purges, protests, unemployment, communists, # languages, assassinations, …

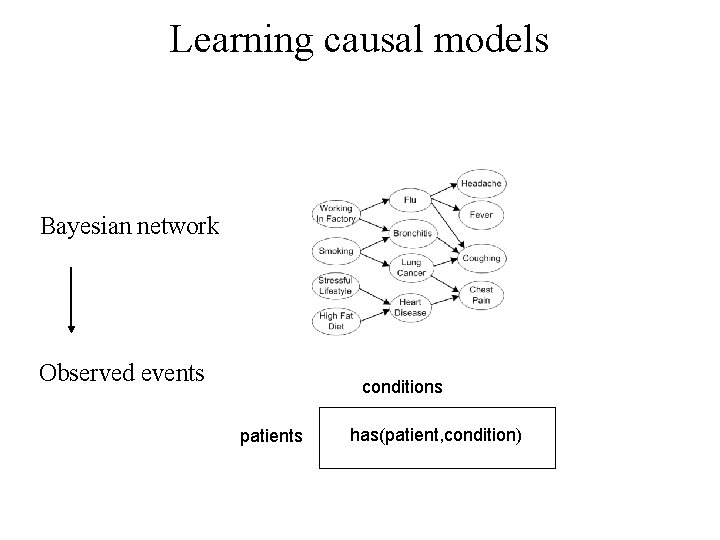

Learning causal models
Figure: a Bayesian network over conditions, and observed events as a patients-by-conditions matrix has(patient, condition).

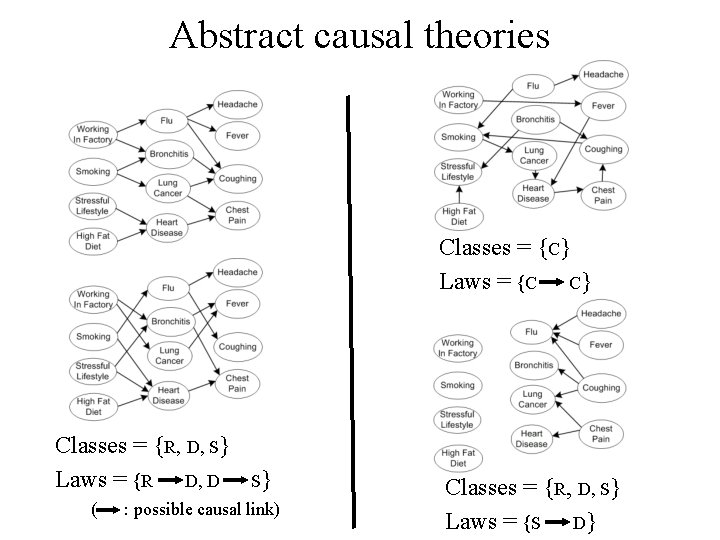

Abstract causal theories
(→ : possible causal link)
– Classes = {C}; Laws = {C → C}
– Classes = {R, D, S}; Laws = {R → D, D → S}
– Classes = {R, D, S}; Laws = {S → D}

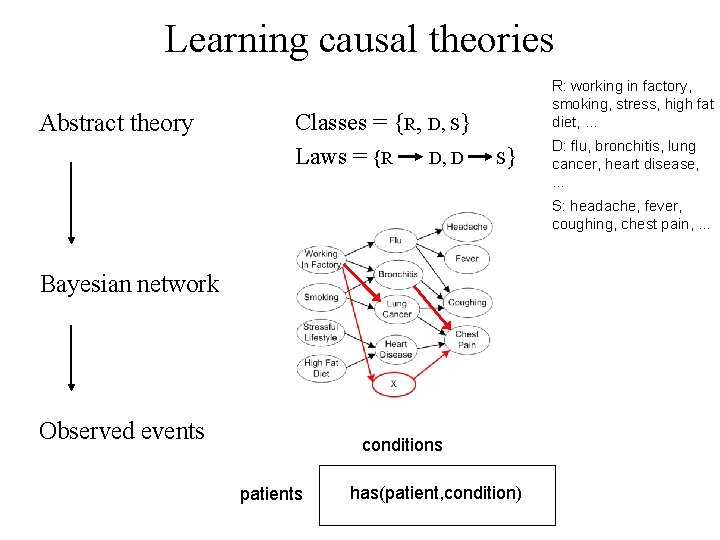

Learning causal theories
Abstract theory: Classes = {R, D, S}; Laws = {R → D, D → S}
– R: working in factory, smoking, stress, high fat diet, …
– D: flu, bronchitis, lung cancer, heart disease, …
– S: headache, fever, coughing, chest pain, …
Bayesian network over conditions.
Observed events: patients-by-conditions matrix has(patient, condition).
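One way to see how an abstract theory constrains the space of Bayesian networks is to enumerate the variable-level edges that the class-level laws permit. The sketch below uses the classes and laws from the slide (R → D, D → S). Picking two example variables per class is an illustrative simplification.

```python
# Class membership for a few of the slide's example variables (illustrative subset).
classes = {
    "smoking": "R", "stress": "R",
    "bronchitis": "D", "lung cancer": "D",
    "coughing": "S", "chest pain": "S",
}
laws = {("R", "D"), ("D", "S")}  # allowed class-to-class causal links

def allowed_edges(classes, laws):
    """All variable-level edges a -> b permitted by the abstract theory."""
    return sorted((a, b) for a in classes for b in classes
                  if a != b and (classes[a], classes[b]) in laws)

edges = allowed_edges(classes, laws)
n_possible = len(classes) * (len(classes) - 1)
print(len(edges), "of", n_possible, "possible directed edges are allowed")
```

Even in this toy case the theory rules out most candidate edges, so far fewer observations are needed to decide among the remaining networks.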

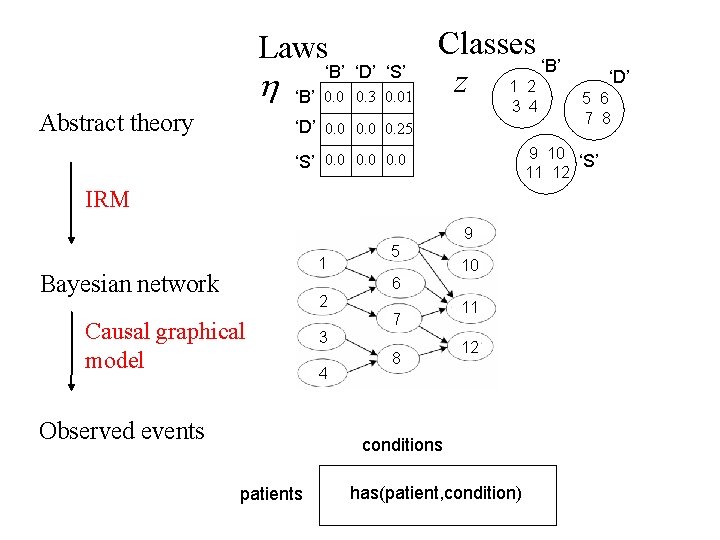

Figure: learning an abstract theory with the IRM.
– Abstract theory: classes z (‘B’, ‘D’, ‘S’) and laws η, a class-by-class matrix of causal link probabilities.
– Causal graphical model (Bayesian network) over variables 1 to 12.
– Observed events: patients-by-conditions matrix has(patient, condition).

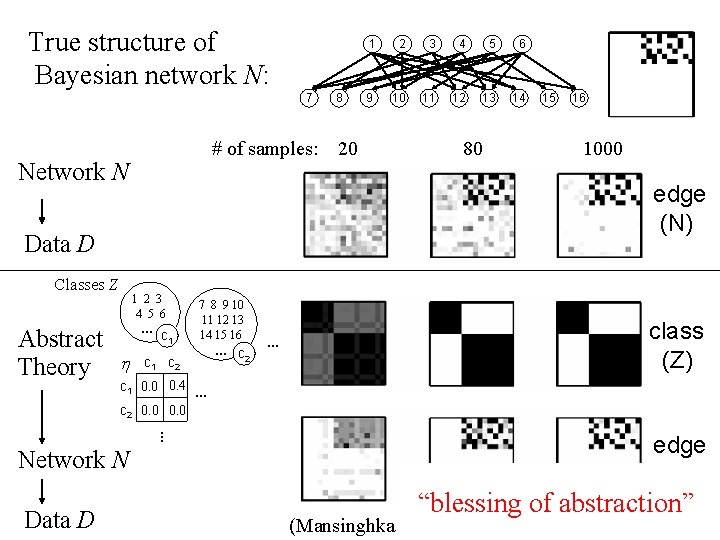

“Blessing of abstraction” (Mansinghka, Kemp, Tenenbaum, Griffiths, UAI 06)
Figure: true structure of Bayesian network N over 16 variables in classes c1 and c2, with recovered edges (N) and classes (Z) for # of samples: 20, 80, 1000. Two models are shown: Network N → Data D, and Abstract Theory → Classes Z → Network N → Data D.

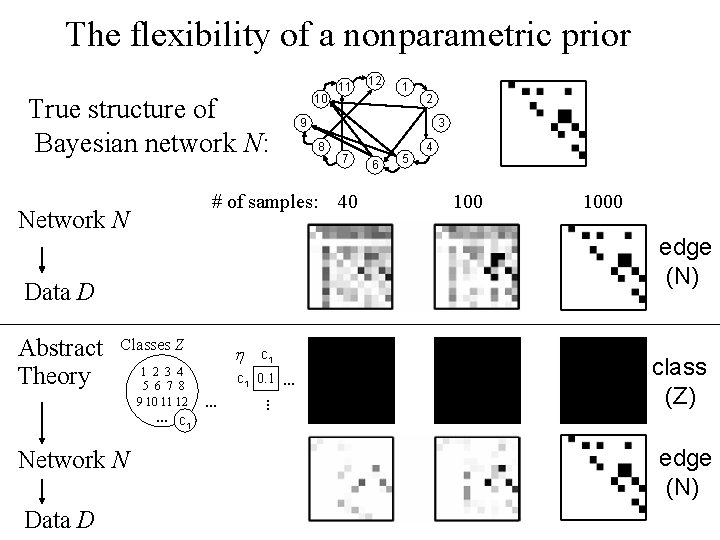

The flexibility of a nonparametric prior
Figure: true structure of Bayesian network N over 12 variables, with recovered edges (N) and classes (Z) for # of samples: 40, 1000. Two models are shown: Network N → Data D, and Abstract Theory → Classes Z → Network N → Data D.

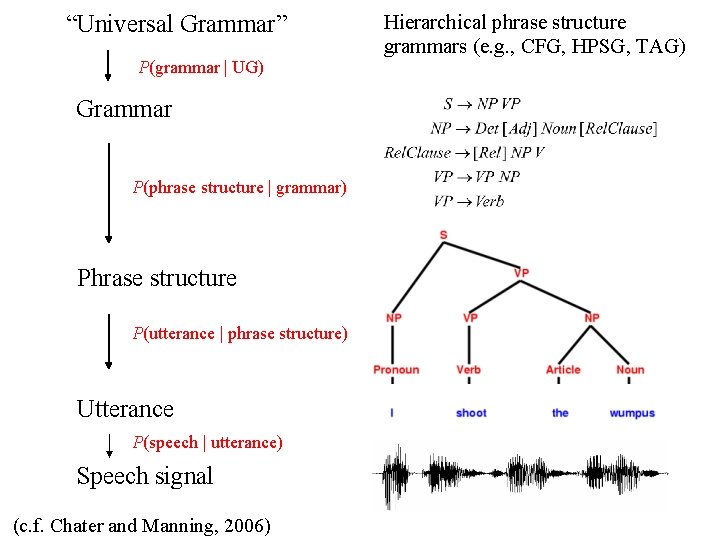

“Universal Grammar”: hierarchical phrase structure grammars (e.g., CFG, HPSG, TAG)
– P(grammar | UG) → Grammar
– P(phrase structure | grammar) → Phrase structure
– P(utterance | phrase structure) → Utterance
– P(speech | utterance) → Speech signal
(cf. Chater and Manning, 2006)

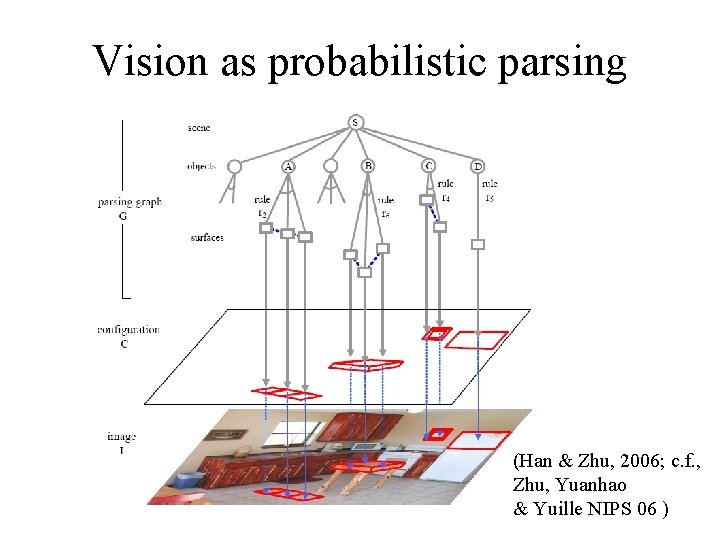

Vision as probabilistic parsing (Han & Zhu, 2006; cf. Zhu, Yuanhao & Yuille, NIPS 06)

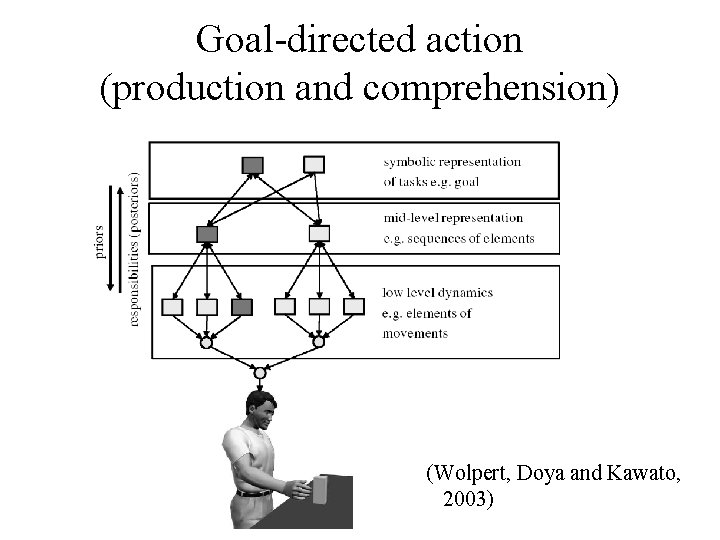

Goal-directed action (production and comprehension) (Wolpert, Doya and Kawato, 2003)

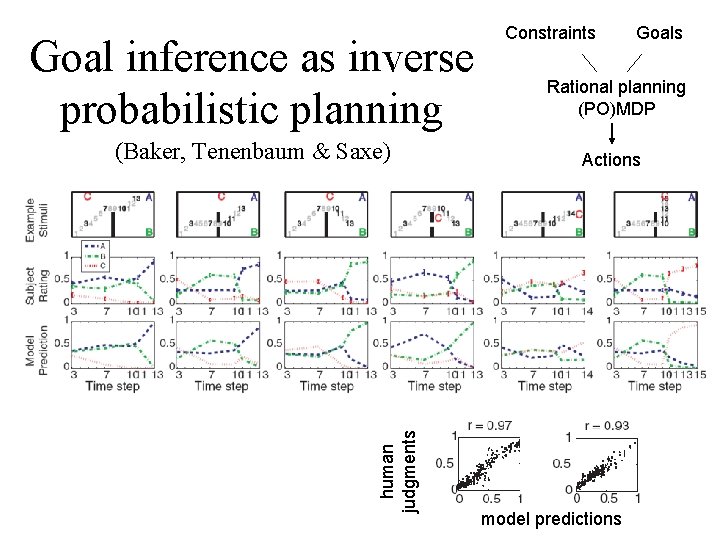

Goal inference as inverse probabilistic planning (Baker, Tenenbaum & Saxe)
Model: Constraints and Goals → Rational planning ((PO)MDP) → Actions.
Figure: human judgments compared with model predictions.
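The core idea of inverse planning can be sketched in a few lines: assume the agent picks actions that tend to bring it closer to its goal, then use Bayes' rule to infer the goal from observed actions. This is a toy stand-in, not the model from the cited work: the one-dimensional corridor, the one-step softmax policy (in place of full (PO)MDP planning), and the rationality parameter `beta` are all assumptions.

```python
import math

def action_prob(state, action, goal, beta=2.0):
    """Softmax-rational policy: actions that reduce distance to the goal are more likely."""
    def utility(a):
        return -abs((state + a) - goal)
    weights = {a: math.exp(beta * utility(a)) for a in (-1, +1)}
    return weights[action] / sum(weights.values())

def goal_posterior(start, actions, goals, prior=None, beta=2.0):
    """P(goal | actions) ∝ P(actions | goal) P(goal), with a uniform prior by default."""
    prior = prior or {g: 1.0 / len(goals) for g in goals}
    scores = {}
    for g in goals:
        state, likelihood = start, 1.0
        for a in actions:
            likelihood *= action_prob(state, a, g, beta)
            state += a
        scores[g] = likelihood * prior[g]
    z = sum(scores.values())
    return {g: s / z for g, s in scores.items()}

# An agent starting at position 5 takes three steps to the right.
post = goal_posterior(start=5, actions=[+1, +1, +1], goals=[0, 10])
print(post)
```

After three consistent steps toward position 10, almost all posterior mass sits on that goal. Lowering `beta` models a noisier agent and makes the inference less certain.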

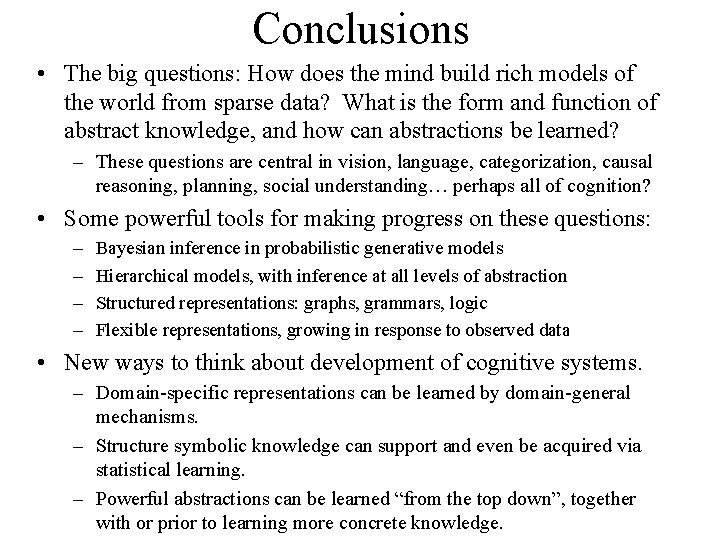

Conclusions
• The big questions: How does the mind build rich models of the world from sparse data? What is the form and function of abstract knowledge, and how can abstractions be learned?
– These questions are central in vision, language, categorization, causal reasoning, planning, social understanding… perhaps all of cognition?
• Some powerful tools for making progress on these questions:
– Bayesian inference in probabilistic generative models
– Hierarchical models, with inference at all levels of abstraction
– Structured representations: graphs, grammars, logic
– Flexible representations, growing in response to observed data
• New ways to think about development of cognitive systems.
– Domain-specific representations can be learned by domain-general mechanisms.
– Structured symbolic knowledge can support and even be acquired via statistical learning.
– Powerful abstractions can be learned “from the top down”, together with or prior to learning more concrete knowledge.

Extra slides

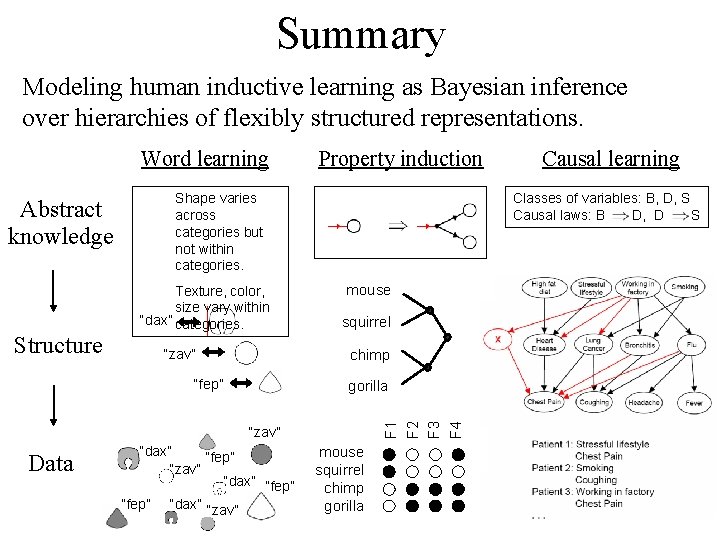

Summary
Modeling human inductive learning as Bayesian inference over hierarchies of flexibly structured representations.
– Word learning. Abstract knowledge: shape varies across categories but not within categories; texture, color, size vary within categories. Data: objects labeled “dax”, “zav”, “fep”.
– Causal learning. Abstract knowledge: classes of variables B, D, S; causal laws B → D, D → S.
– Property induction. Structure: tree over mouse, squirrel, chimp, gorilla. Data: features F1, F2, F3, F4.

Conclusions
• Learning algorithms for discovering domain structure, given feature or relational data.
• Broader themes
– Combining structured representations with statistical inference yields powerful knowledge discovery tools.
– Hierarchical Bayesian modeling allows us to learn domain structure at multiple levels of abstraction.
– Nonparametric Bayesian formulations allow the complexity of representations to be determined automatically and on the fly, growing as the data require.

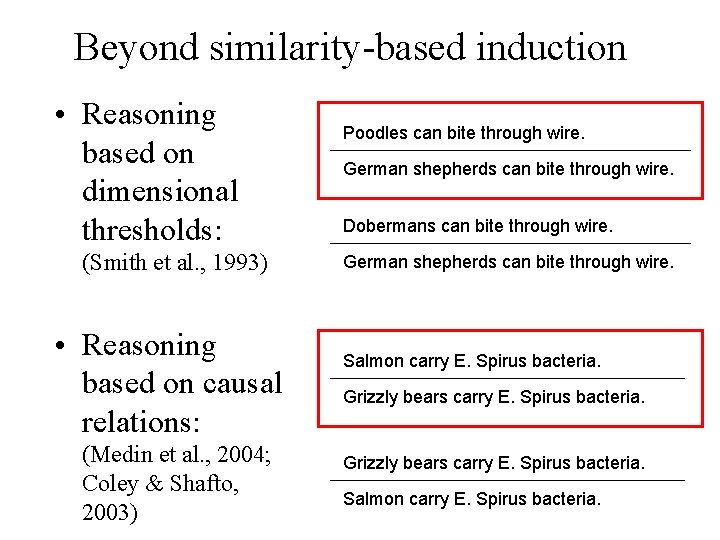

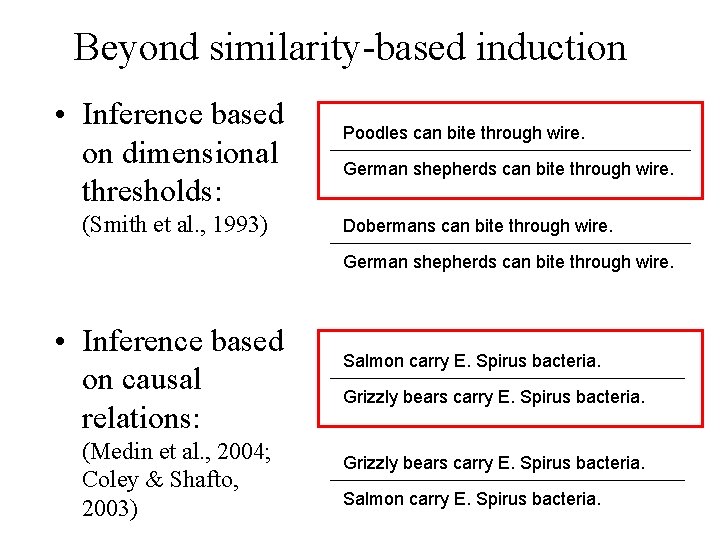

Beyond similarity-based induction
• Reasoning based on dimensional thresholds: (Smith et al., 1993)
– Premise: Poodles can bite through wire. Conclusion: German shepherds can bite through wire.
– Premise: Dobermans can bite through wire. Conclusion: German shepherds can bite through wire.
• Reasoning based on causal relations: (Medin et al., 2004; Coley & Shafto, 2003)
– Premise: Salmon carry E. Spirus bacteria. Conclusion: Grizzly bears carry E. Spirus bacteria.
– Premise: Grizzly bears carry E. Spirus bacteria. Conclusion: Salmon carry E. Spirus bacteria.

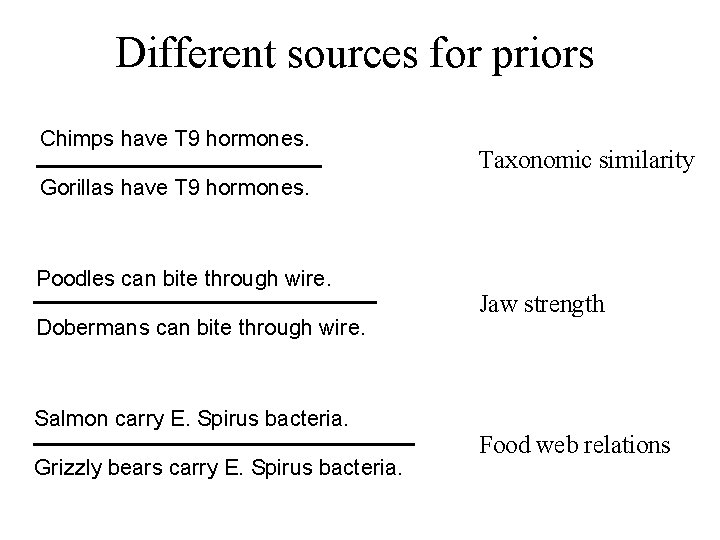

Different sources for priors
– Taxonomic similarity. Premise: Chimps have T9 hormones. Conclusion: Gorillas have T9 hormones.
– Jaw strength. Premise: Poodles can bite through wire. Conclusion: Dobermans can bite through wire.
– Food web relations. Premise: Salmon carry E. Spirus bacteria. Conclusion: Grizzly bears carry E. Spirus bacteria.

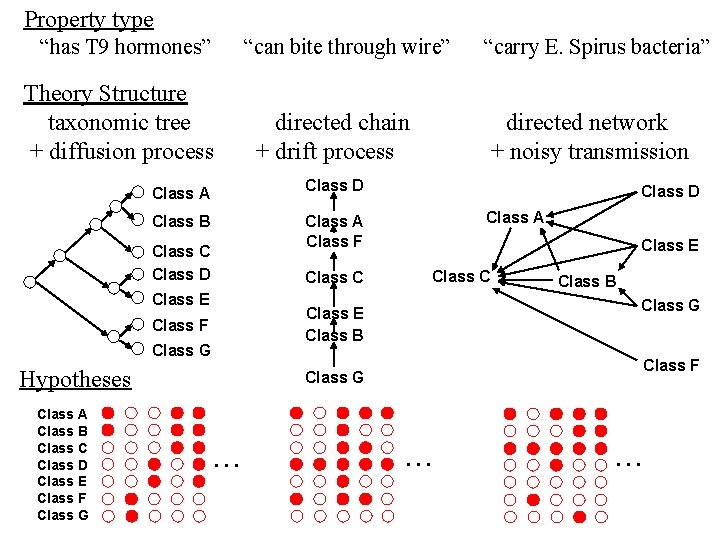

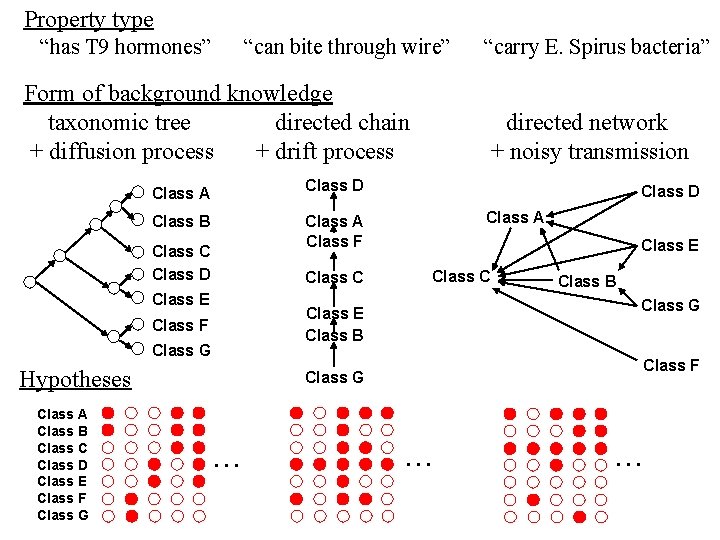

Property type, with theory structure:
– “has T9 hormones”: taxonomic tree + diffusion process
– “can bite through wire”: directed chain + drift process
– “carry E. Spirus bacteria”: directed network + noisy transmission
Figure: each structure is drawn over Classes A to G, with example hypotheses (property extensions over Classes A to G) generated by each theory.

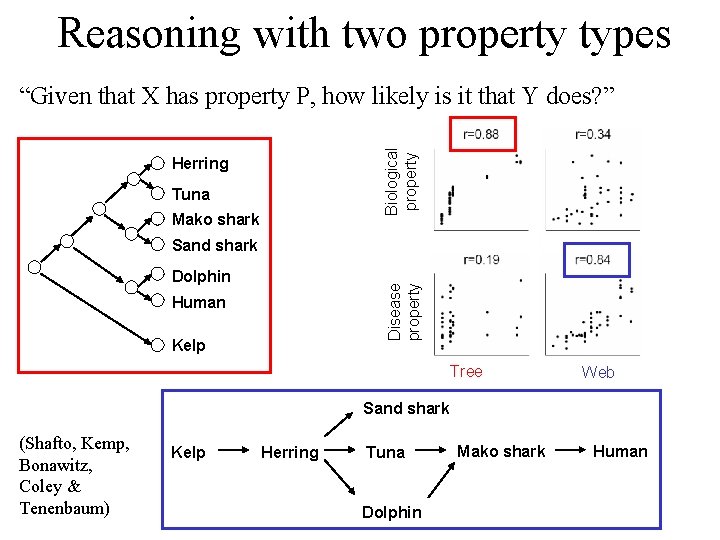

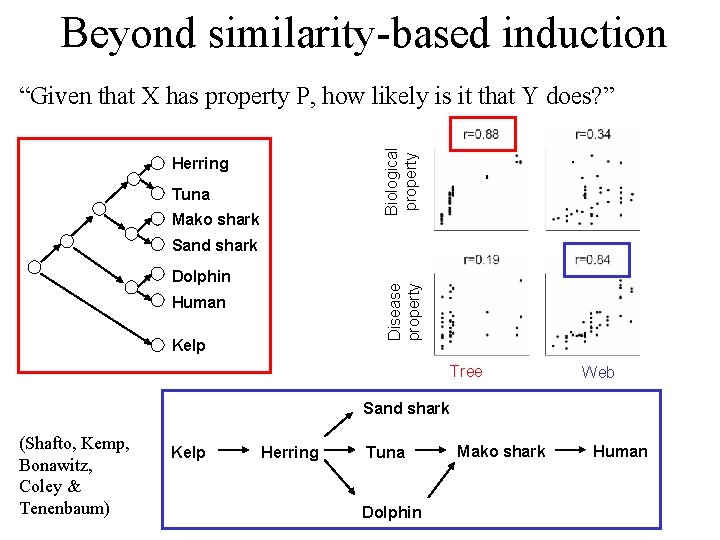

Reasoning with two property types (Shafto, Kemp, Bonawitz, Coley & Tenenbaum)
“Given that X has property P, how likely is it that Y does?”
– Property types: biological property, disease property
– Structures: Tree, Web
– Species: Kelp, Herring, Tuna, Mako shark, Sand shark, Dolphin, Human

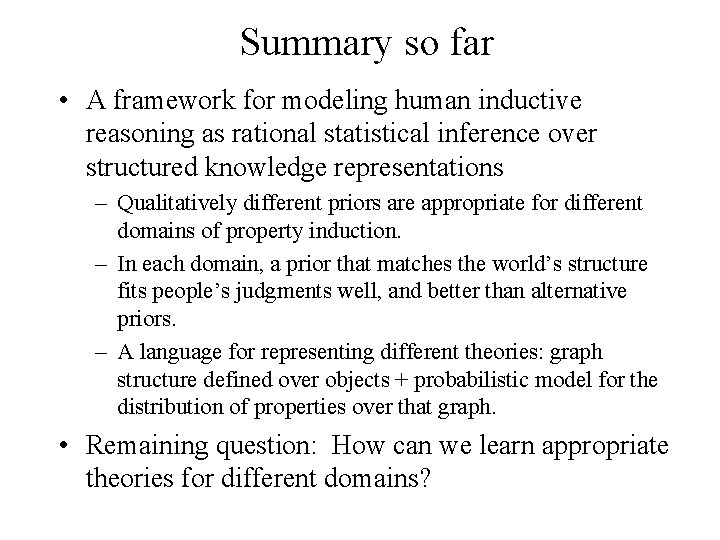

Summary so far
• A framework for modeling human inductive reasoning as rational statistical inference over structured knowledge representations
– Qualitatively different priors are appropriate for different domains of property induction.
– In each domain, a prior that matches the world’s structure fits people’s judgments well, and better than alternative priors.
– A language for representing different theories: graph structure defined over objects + probabilistic model for the distribution of properties over that graph.
• Remaining question: How can we learn appropriate theories for different domains?

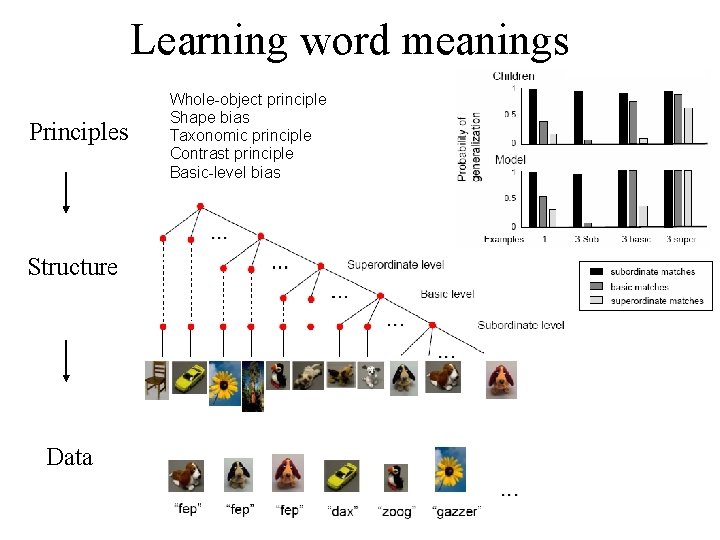

Learning word meanings
Levels: Principles → Structure → Data
Principles: whole-object principle, shape bias, taxonomic principle, contrast principle, basic-level bias

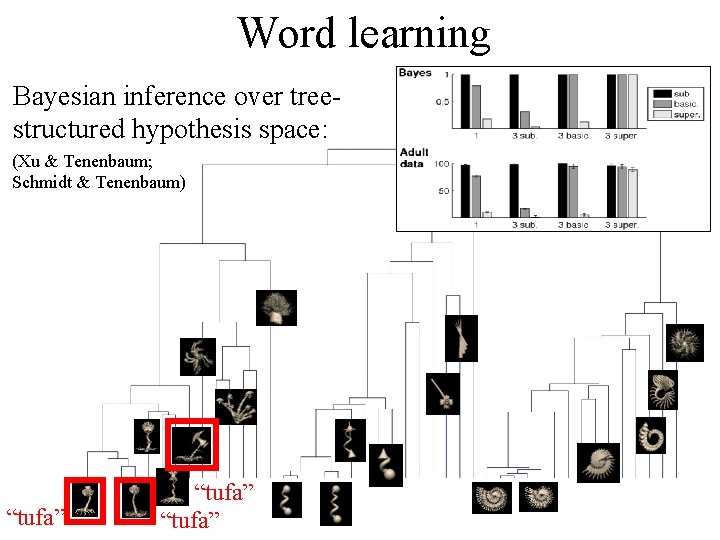

Word learning
Bayesian inference over tree-structured hypothesis space: (Xu & Tenenbaum; Schmidt & Tenenbaum)
“tufa”

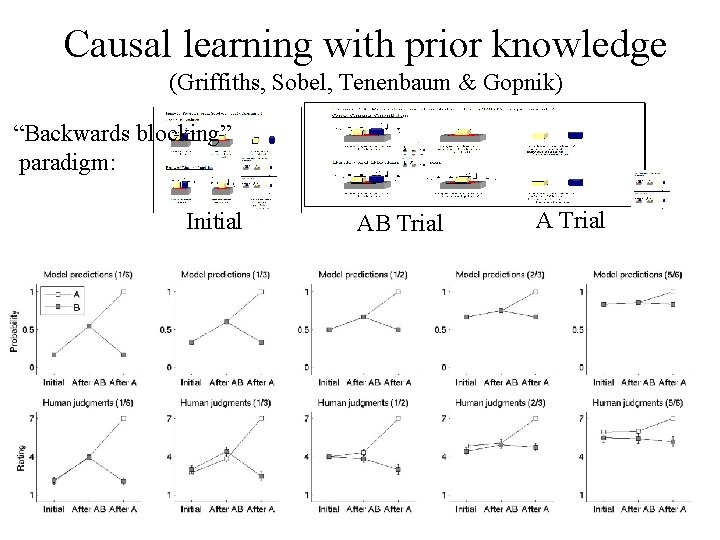

Causal learning with prior knowledge (Griffiths, Sobel, Tenenbaum & Gopnik)
“Backwards blocking” paradigm: Initial, AB Trial, A Trial

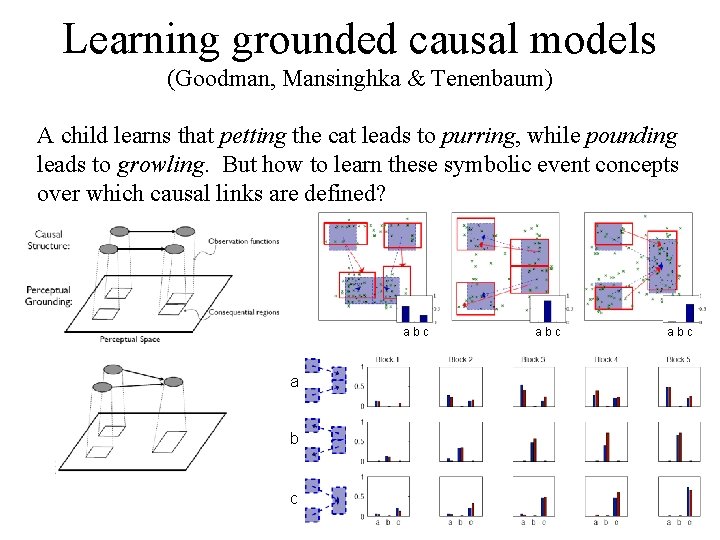

Learning grounded causal models (Goodman, Mansinghka & Tenenbaum)
A child learns that petting the cat leads to purring, while pounding leads to growling. But how to learn these symbolic event concepts over which causal links are defined?

The big picture
• What we need to understand: the mind’s ability to build rich models of the world from sparse data.
– Learning about objects, categories, and their properties
– Causal inference
– Understanding other people’s actions, plans, thoughts, goals
– Language comprehension and production
– Scene understanding
• What do we need to understand these abilities?
– Bayesian inference in probabilistic generative models
– Hierarchical models, with inference at all levels of abstraction
– Structured representations: graphs, grammars, logic
– Flexible representations, growing in response to observed data

Overhypotheses
• Syntax: Universal Grammar (Chomsky)
• Phonology: faithfulness constraints, markedness constraints (Prince, Smolensky)
• Word learning: shape bias, principle of contrast, whole object bias (Markman)
• Folk physics: objects are unified, bounded and persistent bodies (Spelke)
• Predicability: M-constraint (Keil)
• Folk biology: taxonomic principle (Atran)
...

Beyond similarity-based induction
• Inference based on dimensional thresholds: (Smith et al., 1993)
– Premise: Poodles can bite through wire. Conclusion: German shepherds can bite through wire.
– Premise: Dobermans can bite through wire. Conclusion: German shepherds can bite through wire.
• Inference based on causal relations: (Medin et al., 2004; Coley & Shafto, 2003)
– Premise: Salmon carry E. Spirus bacteria. Conclusion: Grizzly bears carry E. Spirus bacteria.
– Premise: Grizzly bears carry E. Spirus bacteria. Conclusion: Salmon carry E. Spirus bacteria.

Property type, with form of background knowledge:
– “has T9 hormones”: taxonomic tree + diffusion process
– “can bite through wire”: directed chain + drift process
– “carry E. Spirus bacteria”: directed network + noisy transmission
Figure: each structure is drawn over Classes A to G, with example hypotheses (property extensions over Classes A to G) generated from each form of background knowledge.

Beyond similarity-based induction (Shafto, Kemp, Bonawitz, Coley & Tenenbaum)
“Given that X has property P, how likely is it that Y does?”
– Property types: biological property, disease property
– Structures: Tree, Web
– Species: Kelp, Herring, Tuna, Mako shark, Sand shark, Dolphin, Human

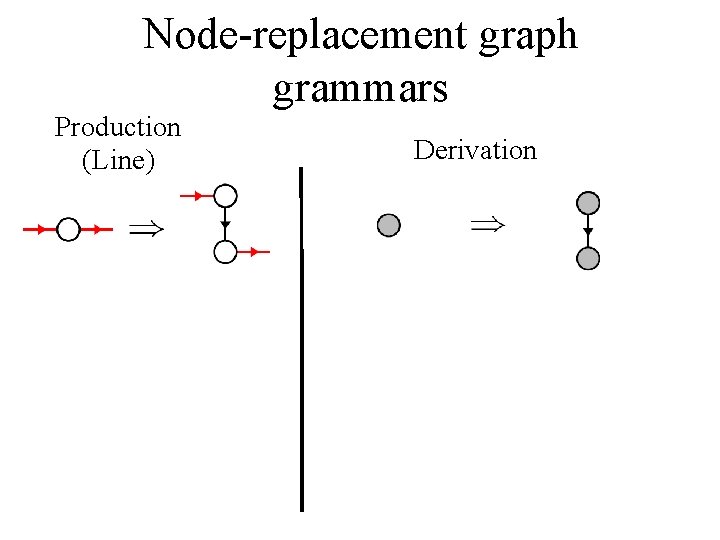

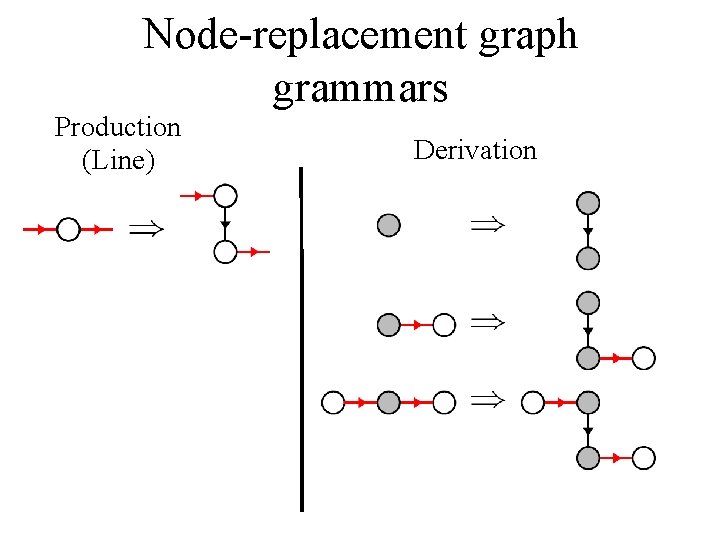

Node-replacement graph grammars Production (Line) Derivation


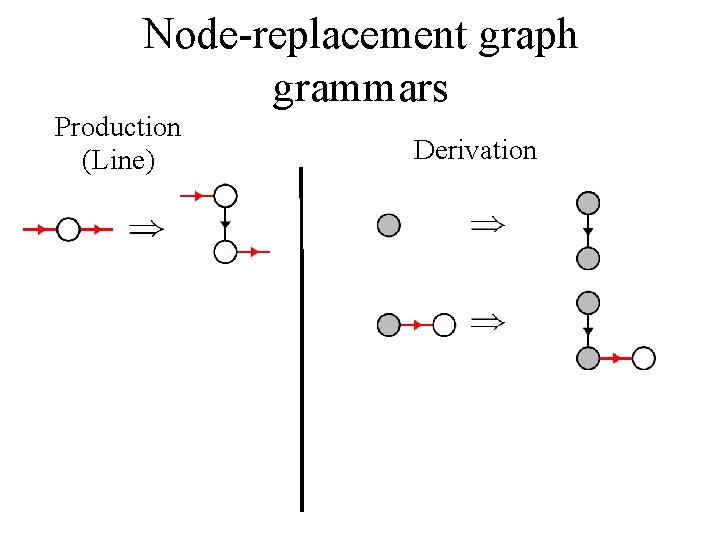


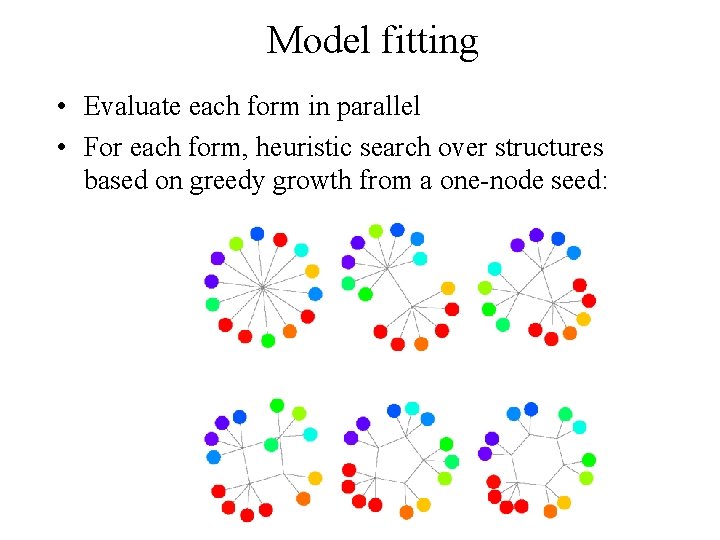

Model fitting
• Evaluate each form in parallel
• For each form, heuristic search over structures based on greedy growth from a one-node seed:

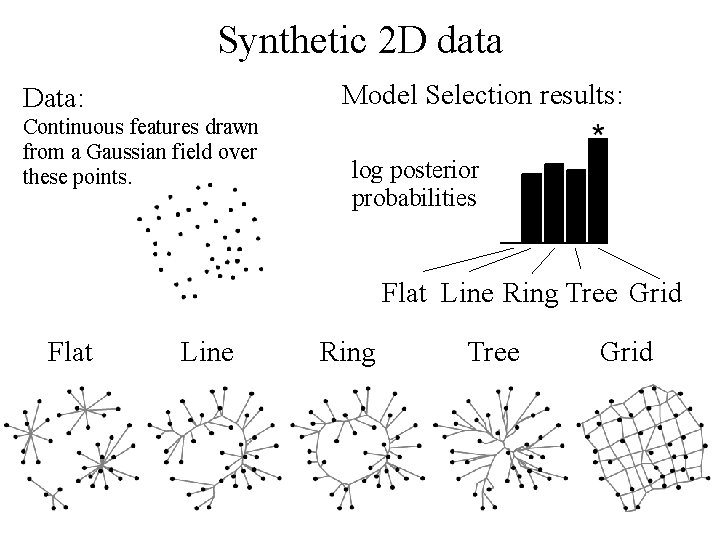

Synthetic 2D data
Data: Continuous features drawn from a Gaussian field over these points.
Model selection results: log posterior probabilities for each form (Flat, Line, Ring, Tree, Grid).

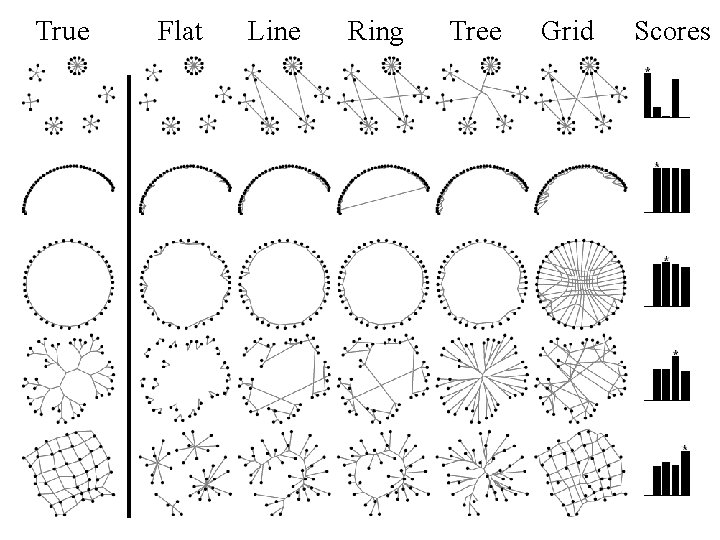

Figure: scores of each candidate form (Flat, Line, Ring, Tree, Grid) alongside the true structure.

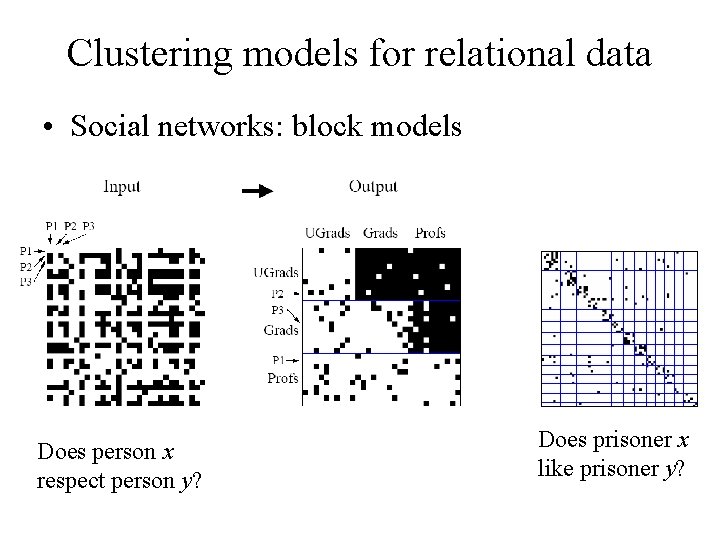

Clustering models for relational data
• Social networks: block models
– Does person x respect person y?
– Does prisoner x like prisoner y?

Learning a hierarchical ontology

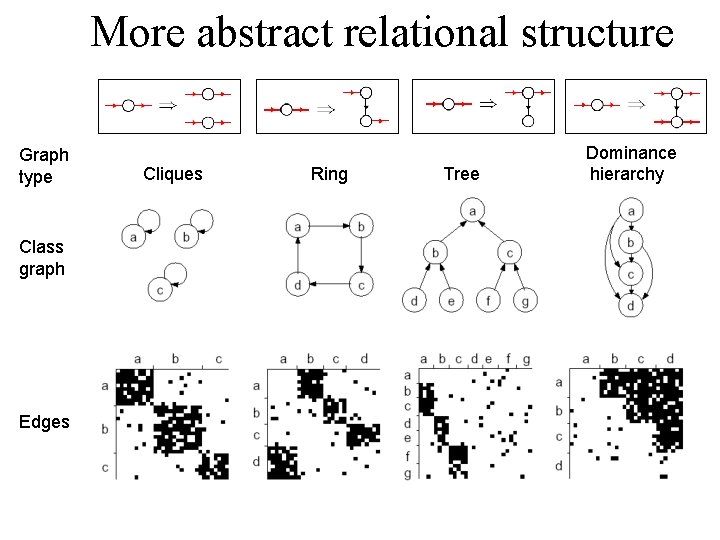

More abstract relational structure
Figure columns: Graph type, Class graph, Edges.
Graph types: Cliques, Ring, Tree, Dominance hierarchy

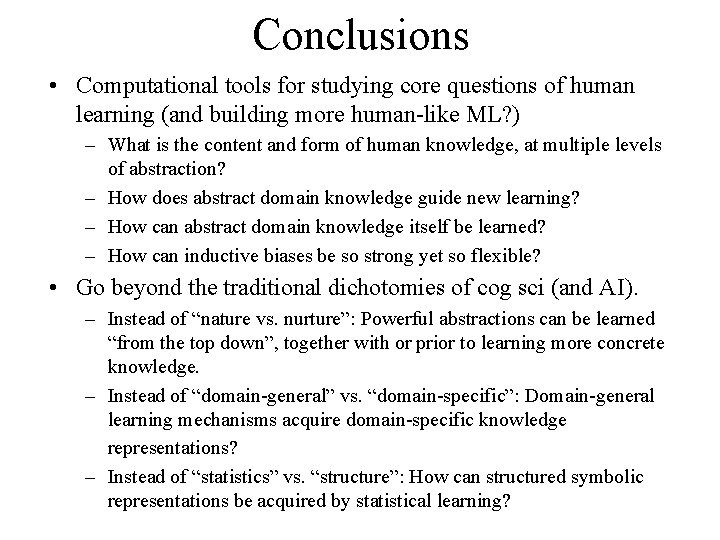

Conclusions
• Computational tools for studying core questions of human learning (and building more human-like ML?)
– What is the content and form of human knowledge, at multiple levels of abstraction?
– How does abstract domain knowledge guide new learning?
– How can abstract domain knowledge itself be learned?
– How can inductive biases be so strong yet so flexible?
• Go beyond the traditional dichotomies of cog sci (and AI).
– Instead of “nature vs. nurture”: Powerful abstractions can be learned “from the top down”, together with or prior to learning more concrete knowledge.
– Instead of “domain-general” vs. “domain-specific”: Domain-general learning mechanisms acquire domain-specific knowledge representations?
– Instead of “statistics” vs. “structure”: How can structured symbolic representations be acquired by statistical learning?
