Using the Bayesian Information Criterion to Judge Models

Using the Bayesian Information Criterion to Judge Models and Statistical Significance. Paul Millar, University of Calgary

Problems • Choosing the “best” model • Aside from OLS, few recognized standards • Few ways to judge if adding an explanatory variable is justified by the additional explained variance • Conventional p-values are problematic • Very large or very small N • Potential unrecognized relationships between explanatory variables • Random associations not always detected

Judging Models • Explanatory Framework • Need to find the “best” or most likely model, given the data • Two aspects • Which variables should comprise the model? • Which form should the model take? • Predictive Framework • Of the potential variables and model forms, which best predicts the outcome?

Bayesian Approach • Origins (Bayes 1763) • Bayes Factors (Jeffreys 1935) • BIC (Schwarz 1978) • Variable Significance (Raftery 1995) • Judging Variables and Models • Stata Commands

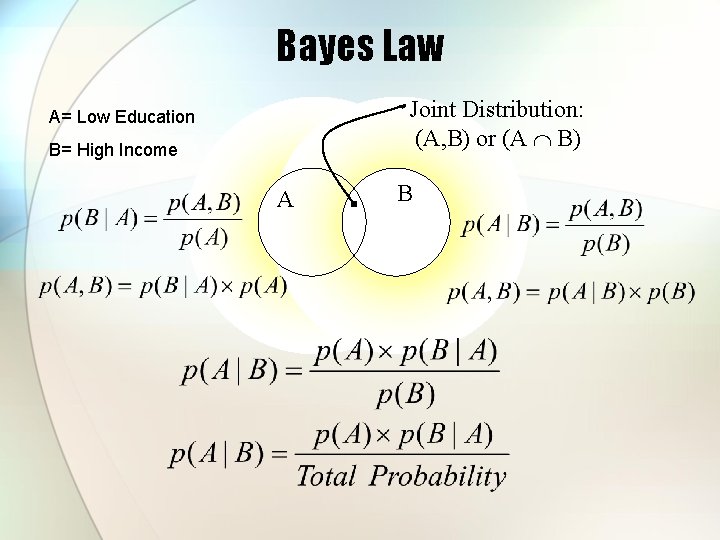

Bayes Law • Joint Distribution: (A, B) or (A ∩ B) • A = Low Education • B = High Income

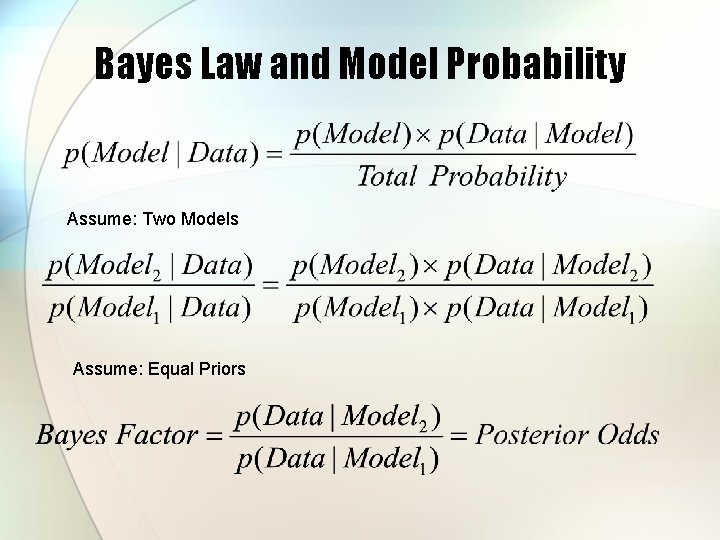
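For reference, Bayes' law for the two events on this slide can be written out as follows. The notation is the standard one and is not taken from the slide image itself.

$$
P(A \mid B) = \frac{P(A \cap B)}{P(B)} = \frac{P(B \mid A)\,P(A)}{P(B)}
$$

Here $P(A \mid B)$ is the probability of low education given high income, and $P(A \cap B)$ is the joint probability of both.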

Bayes Law and Model Probability Assume: Two Models Assume: Equal Priors

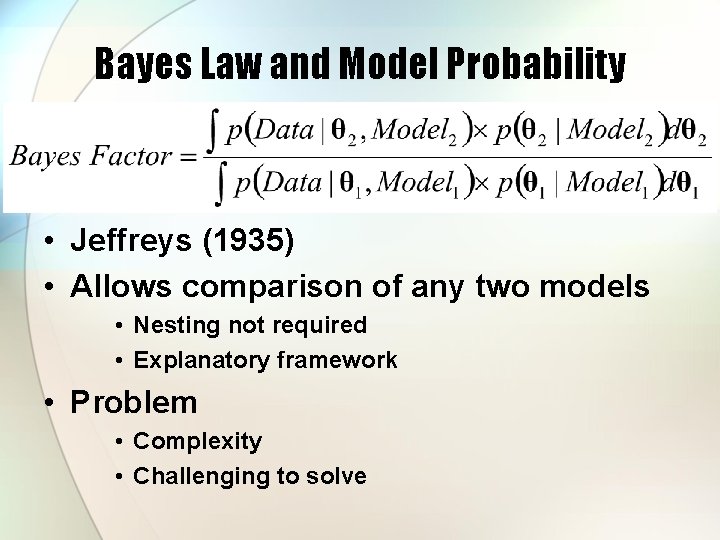
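A sketch of how the two assumptions combine, using notation introduced here for illustration ($M_1$, $M_2$ for the two models, $D$ for the data, $B_{12}$ for the Bayes factor):

$$
P(M_1 \mid D) = \frac{P(D \mid M_1)\,P(M_1)}{P(D \mid M_1)\,P(M_1) + P(D \mid M_2)\,P(M_2)}
$$

With equal priors, $P(M_1) = P(M_2)$, the priors cancel:

$$
\frac{P(M_1 \mid D)}{P(M_2 \mid D)} = \frac{P(D \mid M_1)}{P(D \mid M_2)} = B_{12},
\qquad
P(M_1 \mid D) = \frac{B_{12}}{1 + B_{12}}
$$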

Bayes Law and Model Probability • Jeffreys (1935) • Allows comparison of any two models • Nesting not required • Explanatory framework • Problem • Complexity • Challenging to solve

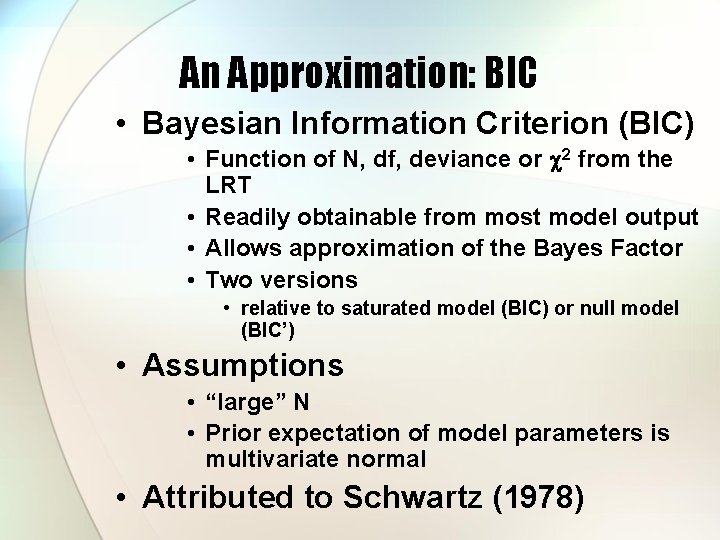
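The complexity arises because each term in the Bayes factor is a marginal likelihood, which integrates over the model's parameters. In the notation assumed here ($\theta_k$ for the parameters of model $M_k$):

$$
P(D \mid M_k) = \int P(D \mid \theta_k, M_k)\,P(\theta_k \mid M_k)\,d\theta_k
$$

This integral rarely has a closed form, which motivates an approximation.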

An Approximation: BIC • Bayesian Information Criterion (BIC) • Function of N, df, deviance or χ² from the LRT • Readily obtainable from most model output • Allows approximation of the Bayes Factor • Two versions • relative to saturated model (BIC) or null model (BIC’) • Assumptions • “large” N • Prior expectation of model parameters is multivariate normal • Attributed to Schwarz (1978)
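A minimal sketch of the null-model version, assuming the form given by Raftery (1995): BIC’ = -χ² + p·ln(N), where χ² is the likelihood-ratio statistic against the null model and p is the number of explanatory variables. The function names and the numbers in the example are hypothetical, chosen only for illustration.

```python
import math


def bic_prime(lr_chi2: float, n_params: int, n_obs: int) -> float:
    """BIC' relative to the null model: -chi2 + p * ln(N)."""
    return -lr_chi2 + n_params * math.log(n_obs)


def approx_bayes_factor(bic_a: float, bic_b: float) -> float:
    """Approximate Bayes factor for model A over model B.

    Uses 2 * ln(B_ab) ~= BIC_b - BIC_a, so lower BIC means more support.
    """
    return math.exp((bic_b - bic_a) / 2.0)


def posterior_prob_a(bic_a: float, bic_b: float) -> float:
    """P(A | data) when A and B are the only candidates with equal priors."""
    bf = approx_bayes_factor(bic_a, bic_b)
    return bf / (1.0 + bf)


# Hypothetical example: model A has 3 variables, model B adds a fourth.
bic_a = bic_prime(lr_chi2=30.0, n_params=3, n_obs=1000)
bic_b = bic_prime(lr_chi2=32.0, n_params=4, n_obs=1000)
print(posterior_prob_a(bic_a, bic_b))
```

In this made-up case the extra variable raises χ² by only 2, less than the ln(1000) penalty, so the smaller model is preferred.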

An Alternative to the t-test • The t-test produces over-confident results for large datasets • Random relationships sometimes pass the test • Widely varying results possible when combined with stepwise regression • The only other significance testing method (re-sampling) provides no guidance on form or content of model

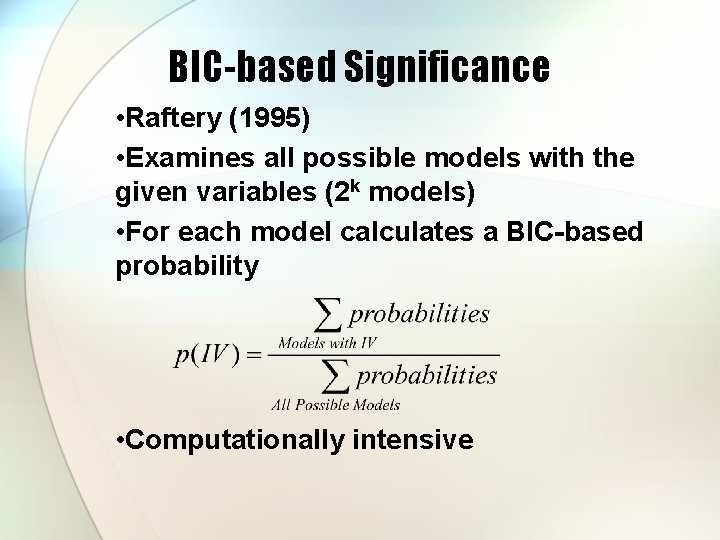

BIC-based Significance • Raftery (1995) • Examines all possible models with the given variables (2^k models) • For each model calculates a BIC-based probability • Computationally intensive

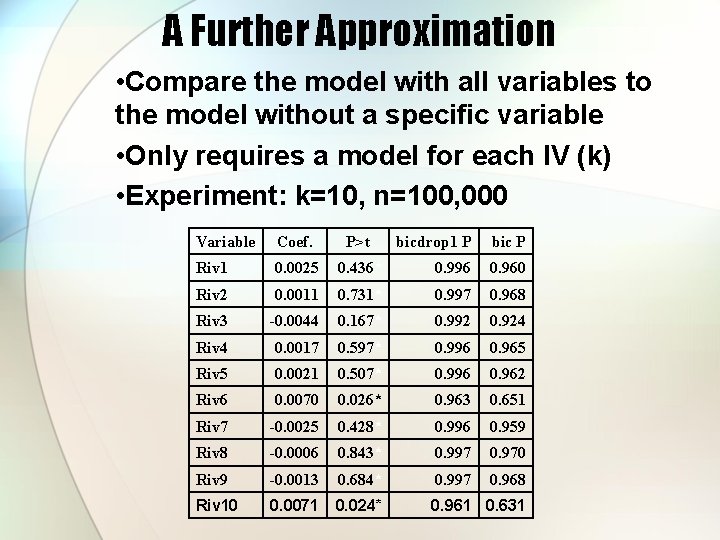
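The all-subsets idea can be sketched for ordinary least squares as follows. This is an illustration under stated assumptions, not the -bic- command itself: it uses the linear-regression form BIC’ = n·ln(1 - R²) + p·ln(n), equal prior weight on every model, and simulated data. All function and variable names are hypothetical.

```python
import itertools

import numpy as np


def r_squared(y, columns):
    """R^2 of an OLS fit of y on an intercept plus the given columns."""
    if not columns:
        return 0.0  # the intercept-only (null) model explains nothing
    X = np.column_stack([np.ones(len(y))] + columns)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    tss = np.sum((y - y.mean()) ** 2)
    return 1.0 - (resid @ resid) / tss


def inclusion_probabilities(y, X):
    """Sum BIC-based model probabilities over all 2^k subsets of columns."""
    n, k = X.shape
    subsets, bics = [], []
    for size in range(k + 1):
        for subset in itertools.combinations(range(k), size):
            r2 = r_squared(y, [X[:, j] for j in subset])
            bics.append(n * np.log(1.0 - r2) + size * np.log(n))
            subsets.append(subset)
    bics = np.array(bics)
    # Subtract the minimum before exponentiating for numerical stability.
    weights = np.exp(-0.5 * (bics - bics.min()))
    weights /= weights.sum()
    probs = np.zeros(k)
    for subset, w in zip(subsets, weights):
        for j in subset:
            probs[j] += w  # variable j gets credit from every model containing it
    return probs


# Simulated data: only the first of four variables affects y.
rng_all = np.random.default_rng(0)
X_all = rng_all.normal(size=(500, 4))
y_all = 1.0 * X_all[:, 0] + rng_all.normal(size=500)
probs_all = inclusion_probabilities(y_all, X_all)
print(probs_all)
```

The loop visits 2^k models, which is why the approach becomes expensive as k grows.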

A Further Approximation • Compare the model with all variables to the model without a specific variable • Only requires a model for each IV (k) • Experiment: k=10, n=100,000

| Variable | Coef. | P>t | bicdrop1 P | bic P |
|---|---|---|---|---|
| Riv1 | 0.0025 | 0.436* | 0.996 | 0.960 |
| Riv2 | 0.0011 | 0.731* | 0.997 | 0.968 |
| Riv3 | -0.0044 | 0.167* | 0.992 | 0.924 |
| Riv4 | 0.0017 | 0.597* | 0.996 | 0.965 |
| Riv5 | 0.0021 | 0.507* | 0.996 | 0.962 |
| Riv6 | 0.0070 | 0.026* | 0.963 | 0.651 |
| Riv7 | -0.0025 | 0.428* | 0.996 | 0.959 |
| Riv8 | -0.0006 | 0.843* | 0.997 | 0.970 |
| Riv9 | -0.0013 | 0.684* | 0.997 | 0.968 |
| Riv10 | 0.0071 | 0.024* | 0.961 | 0.631 |
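A sketch of the drop-one comparison for a linear model, under the assumption that the reported probability is that of the model without the variable, given equal priors on the two models. That reading is inferred rather than stated on the slide; it yields values near 1 for variables that are probably not needed. The function names and simulated data are hypothetical.

```python
import numpy as np


def residual_ss(y, X):
    """Residual sum of squares from an OLS fit of y on X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return float(resid @ resid)


def drop_one_probabilities(y, X):
    """For each column j, P(model without j | data) versus the full model."""
    n, k = X.shape
    full = np.column_stack([np.ones(n), X])  # column 0 is the intercept
    rss_full = residual_ss(y, full)
    probs = np.empty(k)
    for j in range(k):
        reduced = np.delete(full, j + 1, axis=1)
        # BIC(full) - BIC(reduced) for a Gaussian linear model:
        # the fit term is <= 0, the ln(n) term is the one-parameter penalty.
        delta = n * np.log(rss_full / residual_ss(y, reduced)) + np.log(n)
        # Logistic function of delta / 2, written with tanh to avoid overflow.
        probs[j] = 0.5 * (1.0 + np.tanh(delta / 4.0))
    return probs


# Simulated data: only the first of three variables affects y.
rng_drop = np.random.default_rng(1)
X_drop = rng_drop.normal(size=(1000, 3))
y_drop = 2.0 * X_drop[:, 0] + rng_drop.normal(size=1000)
probs_drop = drop_one_probabilities(y_drop, X_drop)
print(probs_drop)
```

Only k + 1 models are fitted here, compared with 2^k for the exhaustive procedure.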

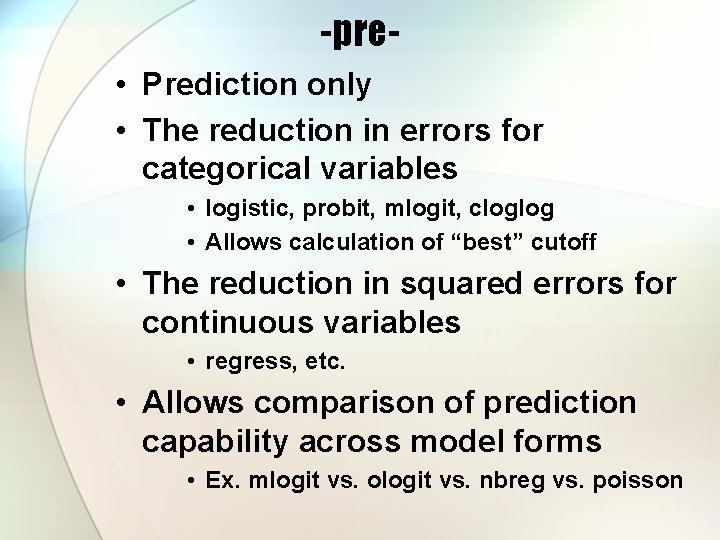

-pre- • Prediction only • The reduction in errors for categorical variables • logistic, probit, mlogit, cloglog • Allows calculation of “best” cutoff • The reduction in squared errors for continuous variables • regress, etc. • Allows comparison of prediction capability across model forms • Ex. mlogit vs. ologit vs. nbreg vs. poisson
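One common way to define a proportional reduction in error is sketched below. The slide does not give the formula used by -pre-, so the baselines here (always guessing the modal category, always predicting the mean) and the grid search for the cutoff are assumptions, and all names and data are hypothetical.

```python
import numpy as np


def pre_categorical(y_true, p_hat, cutoff=0.5):
    """Reduction in classification errors relative to guessing the modal class."""
    y_true = np.asarray(y_true)
    predicted = (np.asarray(p_hat) >= cutoff).astype(int)
    errors_model = np.sum(predicted != y_true)
    modal_class = int(y_true.mean() >= 0.5)
    errors_null = np.sum(y_true != modal_class)  # must be > 0 for PRE to exist
    return (errors_null - errors_model) / errors_null


def best_cutoff(y_true, p_hat, grid=None):
    """Return the first cutoff on the grid that maximizes PRE, and that PRE."""
    if grid is None:
        grid = np.linspace(0.05, 0.95, 19)
    scores = [pre_categorical(y_true, p_hat, c) for c in grid]
    best = int(np.argmax(scores))
    return float(grid[best]), float(scores[best])


def pre_continuous(y_true, y_hat):
    """Reduction in squared error relative to predicting the mean."""
    y_true = np.asarray(y_true, dtype=float)
    sse = np.sum((y_true - np.asarray(y_hat, dtype=float)) ** 2)
    sst = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - sse / sst


# Hypothetical outcomes and predicted probabilities from a binary model.
y_obs = [0, 0, 0, 0, 0, 0, 1, 1, 1, 1]
p_obs = [0.1, 0.2, 0.2, 0.3, 0.4, 0.62, 0.4, 0.7, 0.8, 0.9]
print(pre_categorical(y_obs, p_obs))
print(best_cutoff(y_obs, p_obs))
```

Because both measures are on the same 0-to-1 scale of error reduction, they can be compared across model forms that share an outcome variable.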

-bicdrop1- • Used when -bic- takes too long or when comparisons to the AIC are desired

-bic- • Reports probability for each variable using Raftery’s procedure • Also reports pseudo-R², pre, bicdrop1 results • Reports most likely models, given theory and data (hence a form of stepwise)

Further Development • “-pre-”-wise regression • Find the combination of IVs and model specification that best predict the outcome variable • Variable significance ignored • Bayesian cross-model comparisons • Safer than stepwise • Bayes Factors • Requires development of reasonable empirical solutions to integrals
