
15-744: Computer Networking L-7 Software Forwarding

Software-Based Routers • Motivation • Enabling innovation in networking research • Software data planes • Readings: • OpenFlow: Enabling Innovation in Campus Networks • The Click Modular Router • Optional reading • RouteBricks: Exploiting Parallelism To Scale Software Routers

Active Networking Recap • Network API exposes capabilities • Processing, queues, storage • Custom code/functions run on each packet • E.g., conventional IP is best effort and destination-based • When could this be insufficient?

Two models of active networks • “Capsule” • Packet carries code! • Programmable router • Operator installs modules on router • Pros/cons?

Criticisms • Too far removed from conventional networks • Upgrade/deployability? • Capsule was considered insecure • No killer apps (continues to be a problem) • Performance?

Three logical stages (more hindsight) • Active networking era • Case for “programmable” network devices • “Separation” of control vs. data era • Specifically about routing etc. • OpenFlow/Network OS era

Network Management • Traffic Engineering • Performance • Security • Compliance • Resilience

Problem: Toolbox is bad! • Traffic Engineering • Performance • Security • Compliance • Resilience

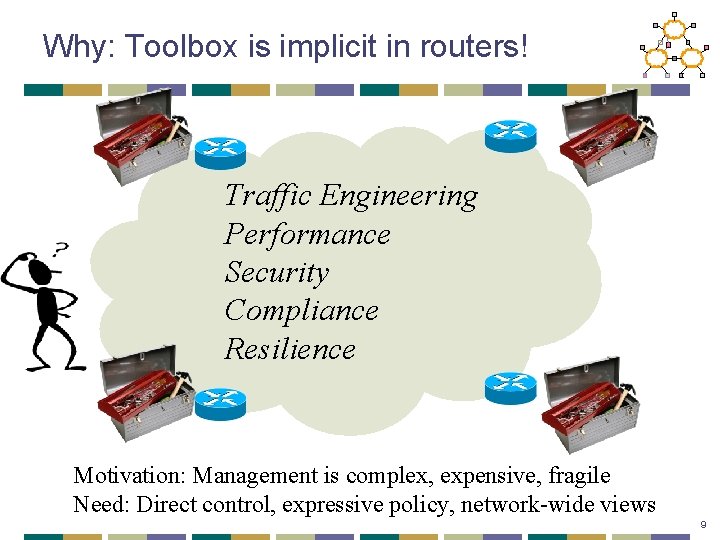

Why: Toolbox is implicit in routers! • Traffic Engineering • Performance • Security • Compliance • Resilience • Motivation: Management is complex, expensive, fragile • Need: Direct control, expressive policy, network-wide views

Solution • Separate out the “data” and the “control” • Open interface between control/data planes • Logically centralized views • Simplifies optimization/policy management • Network-wide visibility

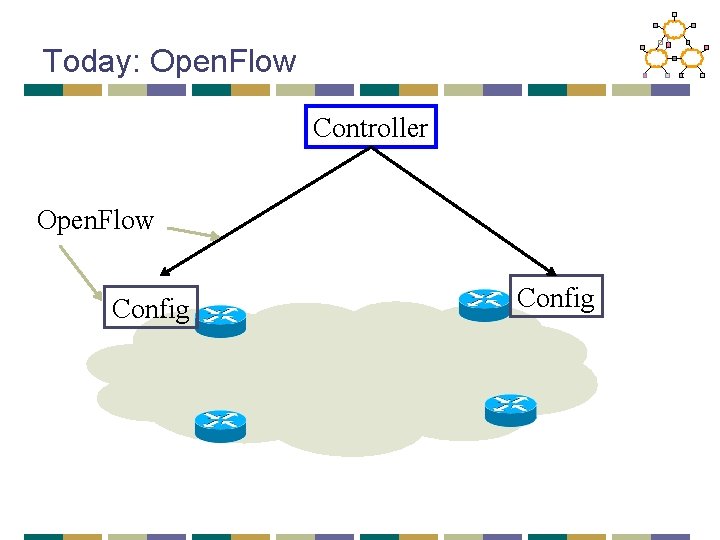

Today: OpenFlow • Controller • OpenFlow • Config

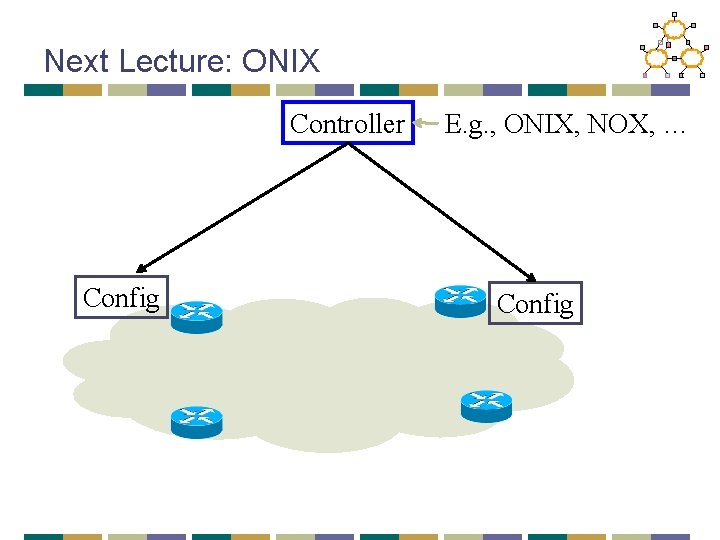

Next Lecture: ONIX • Controller • Config • E.g., ONIX, NOX, … • Config

OpenFlow: Motivation • The Internet is a “success disaster” • Many successful applications • Critical for the economy as a whole • Too huge a vested infrastructure • Vendors loath to change anything • Fear in community: “ossification” • New ideas cannot get deployed

Driving questions • Get our own operators comfortable with running network experiments • Isolate experimental traffic from production traffic • What is the functionality that enables innovation?

Rejected alternatives • Get vendors to support • Use PC/Linux based network elements • Existing research prototypes for programmable elements

Their Path • “Pragmatic compromise” • Sacrifice generality for: • Performance • Cost • Vendor “buy-in”

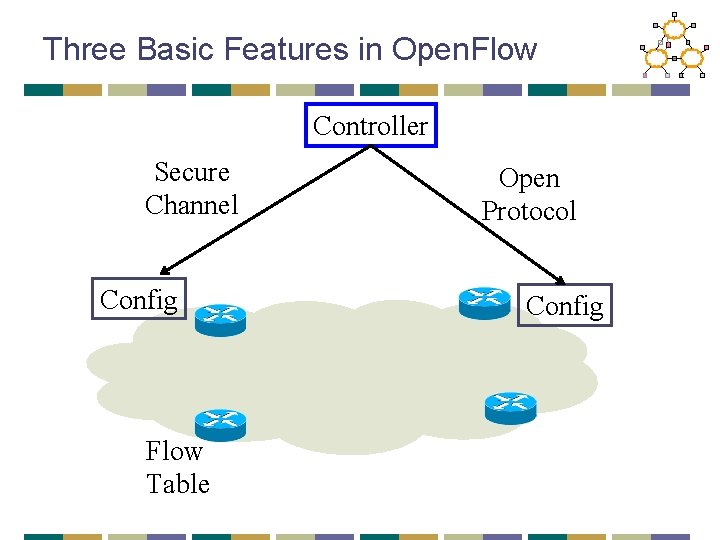

Three Basic Features in OpenFlow • Controller • Secure Channel • Config • Flow Table • Open Protocol • Config

Flow Table Actions • Forward on specific port/interface • Forward to controller (encapsulated) • Drop • Forward through the legacy (normal) processing pipeline • Future support: counters, modifiers
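The match-then-act behavior of a flow table can be sketched in a few lines of Python. This is a minimal illustration, not the OpenFlow specification: the class names, header field names (`ip_dst`, `tcp_dst`), and action strings are hypothetical, and sending a table miss to the controller is an assumption about the default behavior.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class FlowEntry:
    # Header field -> required value; fields not listed act as wildcards.
    match: Dict[str, object]
    # One of "forward", "controller", "drop", "normal" (hypothetical names).
    action: str
    out_port: Optional[int] = None

    def matches(self, headers: Dict[str, object]) -> bool:
        return all(headers.get(k) == v for k, v in self.match.items())


class FlowTable:
    def __init__(self) -> None:
        self.entries: List[FlowEntry] = []

    def add(self, entry: FlowEntry) -> None:
        self.entries.append(entry)

    def process(self, headers: Dict[str, object]) -> Tuple[str, Optional[int]]:
        # The first matching entry wins (entries are kept in insertion order).
        for entry in self.entries:
            if entry.matches(headers):
                if entry.action == "forward":
                    return ("forward", entry.out_port)
                return (entry.action, None)
        # Assumption: a packet that matches nothing goes to the controller.
        return ("controller", None)
```

For example, a table holding a "forward on port 3" entry for one destination and a "drop" entry for TCP port 23 returns `("forward", 3)`, `("drop", None)`, or `("controller", None)` depending on which entry, if any, a packet's headers match.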

What is nice • Fits well with the TCAM abstraction • Most vendors already have this • They can just expose this without exposing internals

Example Apps • Ethane • Amy’s own OSPF • VLAN • VoIP for Mobile • Support for non-IP

Driving questions: Did it achieve this? • Get operators comfortable with running experiments? • Isolate experimental traffic from production traffic? • What is the functionality that can enable innovation?

Software-Based Routers • Enabling innovation in networking research • Software data planes • Readings: • OpenFlow: Enabling Innovation in Campus Networks • The Click Modular Router • Optional reading • RouteBricks: Exploiting Parallelism To Scale Software Routers

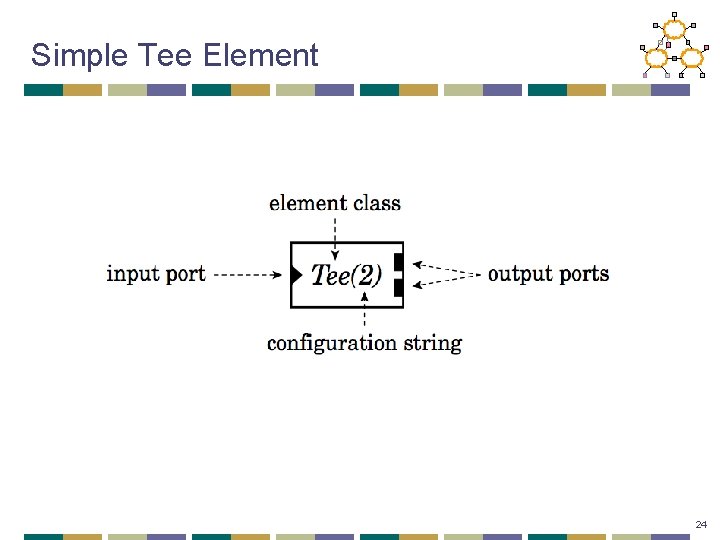

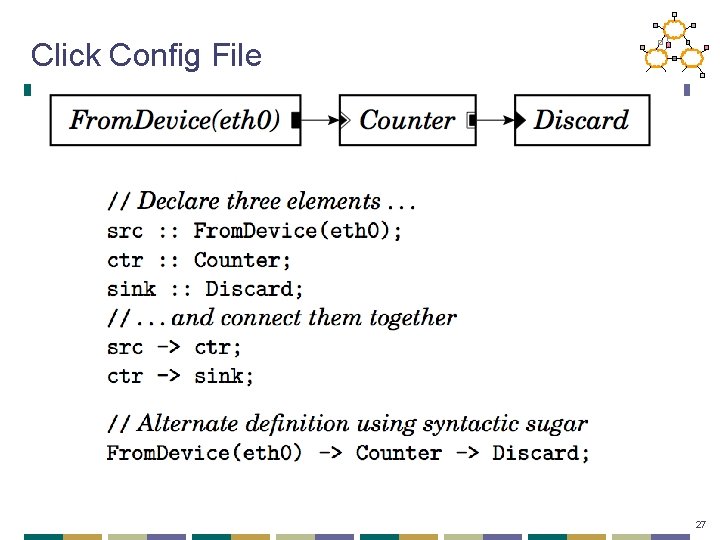

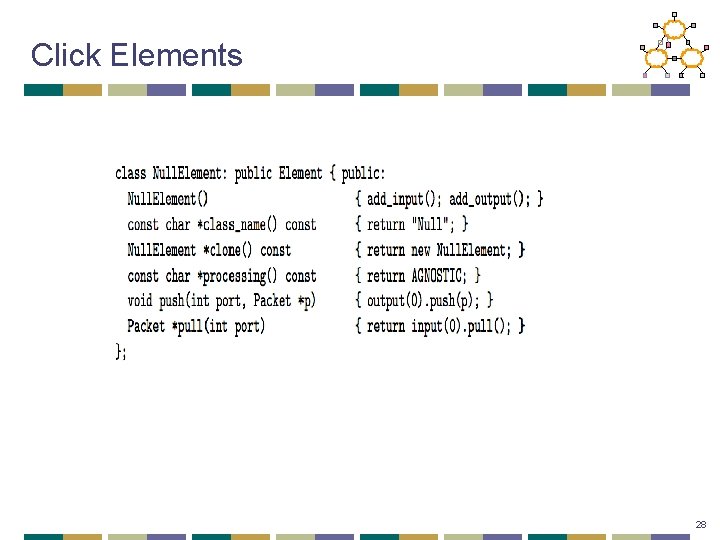

Click overview • Modular architecture • Router = composition of modules • Router = data flow graph • An element is the basic unit of processing • Three key components of each element: • Ports • Configuration • Method interfaces

Simple Tee Element

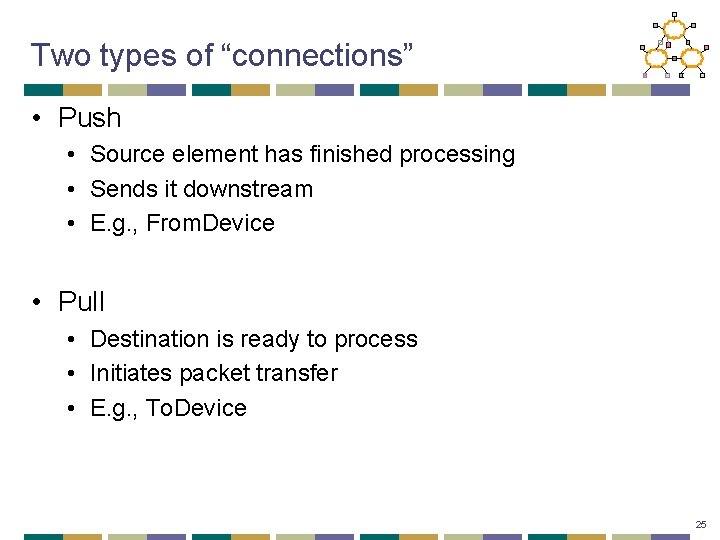

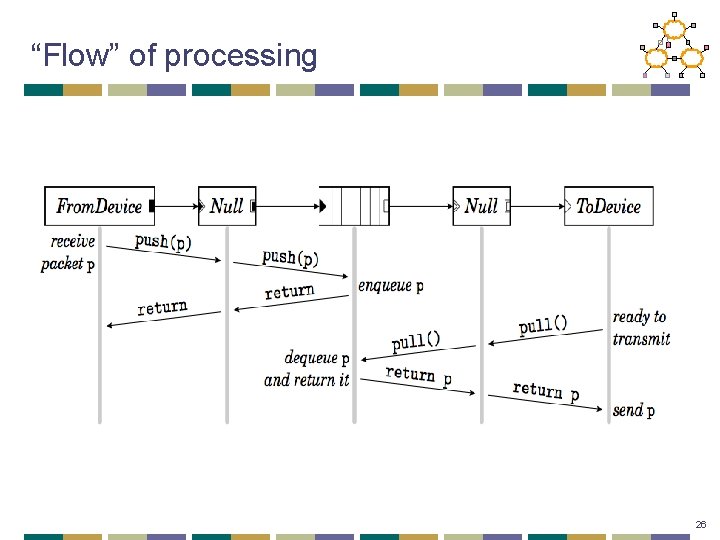

Two types of “connections” • Push • Source element has finished processing • Sends it downstream • E.g., FromDevice • Pull • Destination is ready to process • Initiates packet transfer • E.g., ToDevice
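The push and pull connection types can be illustrated with a toy element graph. This is a Python sketch of the idea only: real Click elements are C++ classes, and although `FromDevice`, `Tee`, `Queue`, and `ToDevice` are names of Click elements, the method names and constructor arguments here are hypothetical. A queue sits at the boundary: packets are pushed into it and pulled out of it.

```python
from collections import deque


class Queue:
    """Push input, pull output: the boundary between push and pull paths."""

    def __init__(self, capacity=4):
        self.capacity = capacity
        self.packets = deque()
        self.drops = 0

    def push(self, packet):
        # Upstream initiated the transfer; drop if the queue is full.
        if len(self.packets) >= self.capacity:
            self.drops += 1
        else:
            self.packets.append(packet)

    def pull(self):
        # Downstream initiated the transfer; None means "nothing ready".
        return self.packets.popleft() if self.packets else None


class Tee:
    """Push element that hands each packet to every output."""

    def __init__(self, *outputs):
        self.outputs = list(outputs)

    def push(self, packet):
        for out in self.outputs:
            out.push(packet)  # packets are immutable bytes here, so no clone


class FromDevice:
    """Source: pushes each received packet downstream."""

    def __init__(self, output):
        self.output = output

    def receive(self, packet):
        self.output.push(packet)


class ToDevice:
    """Sink: pulls a packet from upstream whenever the device is ready."""

    def __init__(self, upstream):
        self.upstream = upstream
        self.sent = []

    def ready(self):
        packet = self.upstream.pull()
        if packet is not None:
            self.sent.append(packet)
        return packet
```

Wiring `FromDevice(Tee(q1, q2))` and `ToDevice(q1)` gives a push path from the source into both queues and a pull path from `q1` to the sink.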

“Flow” of processing

Click Config File

Click Elements

Other elements • Packet Classification • Scheduling • Queueing • Routing • What you write…

Idea: Polling • Under heavy load, disable the network card’s interrupts • Use polling instead • Ask if there is more work once you’ve done the first batch • The Click paper we read does pure polling
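The interrupt-then-poll idea can be sketched with a simulated NIC. This is an illustrative Python model under stated assumptions, not driver code: the `Nic` class, its fields, and the `budget` parameter are hypothetical. The first packet raises an interrupt; the handler disables interrupts, drains the receive ring in batches, and re-enables interrupts only once a poll finds no more work.

```python
from collections import deque


class Nic:
    """Toy NIC: a receive ring plus an interrupt-enable flag."""

    def __init__(self):
        self.rx_ring = deque()
        self.interrupts_enabled = True
        self.interrupts_raised = 0

    def arrive(self, packet):
        """Packet arrival; returns True if an interrupt was raised."""
        self.rx_ring.append(packet)
        if self.interrupts_enabled:
            self.interrupts_raised += 1
            return True
        return False

    def poll(self, budget):
        """Return up to `budget` packets from the ring."""
        batch = []
        while self.rx_ring and len(batch) < budget:
            batch.append(self.rx_ring.popleft())
        return batch


def service(nic, handle, budget=8):
    """Interrupt handler: switch to polling until the ring is empty."""
    nic.interrupts_enabled = False
    processed = 0
    while True:
        batch = nic.poll(budget)
        if not batch:  # no more work: fall back to interrupts
            break
        for packet in batch:
            handle(packet)
        processed += len(batch)
    nic.interrupts_enabled = True
    return processed
```

Under load, many packets are handled per interrupt. A pure-polling design, as in the Click paper, would never re-enable interrupts and would simply call `poll` in a loop.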

Takeaways • Click is a flexible modular router • Shows that software on x86 can get pretty good performance • Extensible/modular • Widely used in academia/research • Play with it!

Software-Based Routers • Enabling innovation in networking research • Software data planes • Readings: • OpenFlow: Enabling Innovation in Campus Networks • The Click Modular Router • Optional reading • RouteBricks: Exploiting Parallelism To Scale Software Routers

Building routers • Fast • Programmable • custom statistics • filtering • packet transformation • … RouteBricks slides: Katerina Argyraki, 2009

Why programmable routers • New ISP services • intrusion detection, application acceleration • Simpler network monitoring • measure link latency, track down traffic • New protocols • IP traceback, Trajectory Sampling, … • Enable flexible, extensible networks

Today: fast or programmable • Fast “hardware” routers • throughput: Tbps • little programmability • Programmable “software” routers • processing by general-purpose CPUs • throughput < 10 Gbps

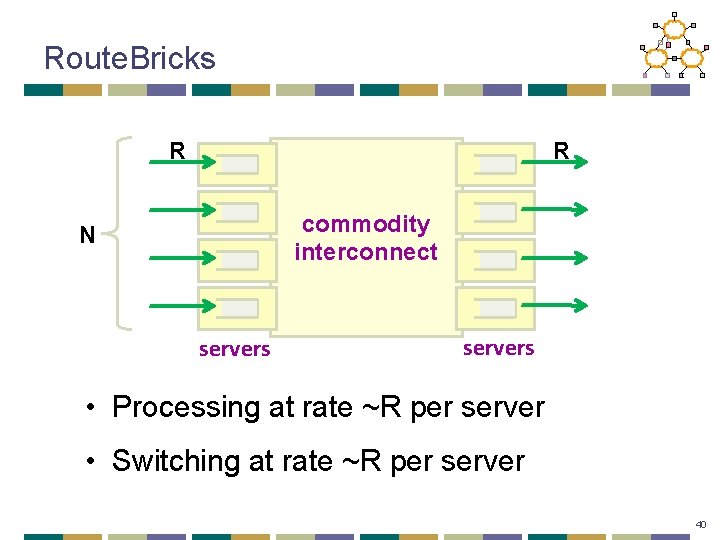

RouteBricks • A router out of off-the-shelf PCs • familiar programming environment • large-volume manufacturing • Can we build a Tbps router out of PCs?

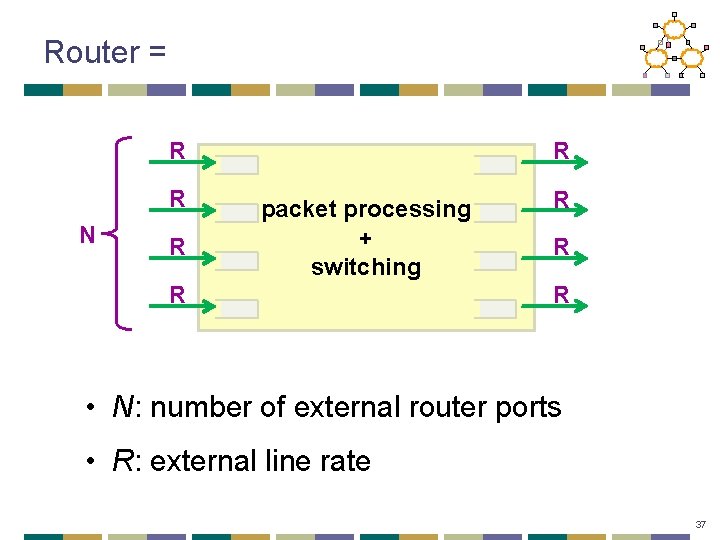

Router = packet processing + switching • N: number of external router ports • R: external line rate

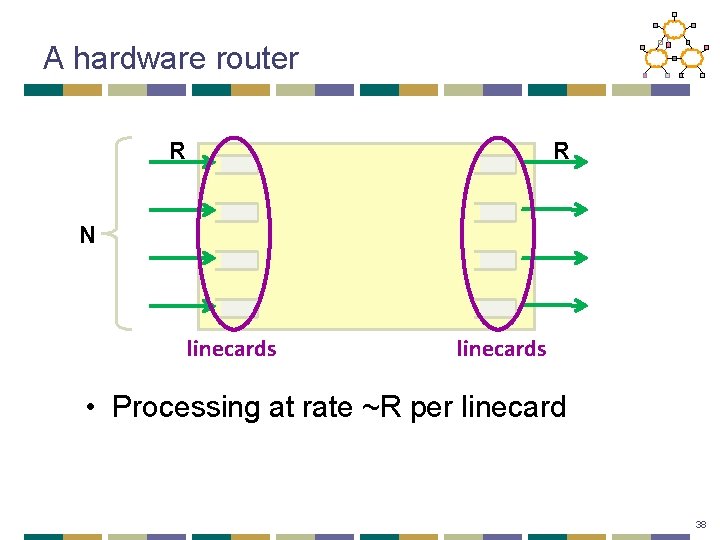

A hardware router • N linecards • Processing at rate ~R per linecard

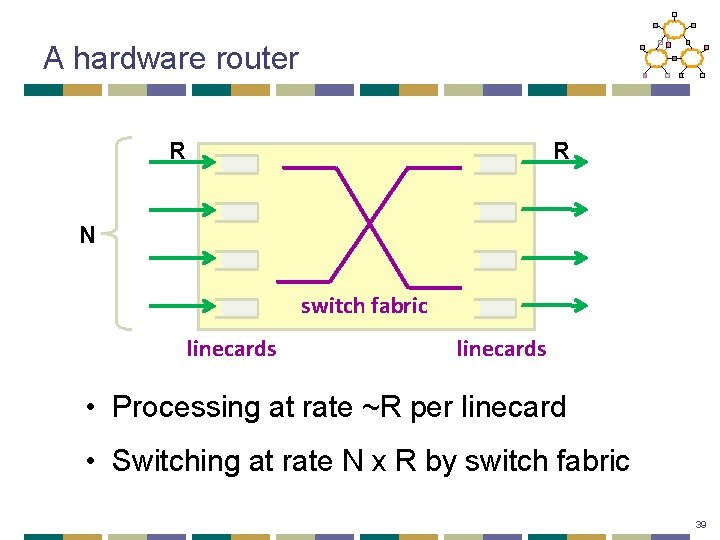

A hardware router • N linecards, switch fabric • Processing at rate ~R per linecard • Switching at rate N × R by switch fabric

RouteBricks • N servers, commodity interconnect • Processing at rate ~R per server • Switching at rate ~R per server

Outline • Interconnect • Server optimizations • Performance

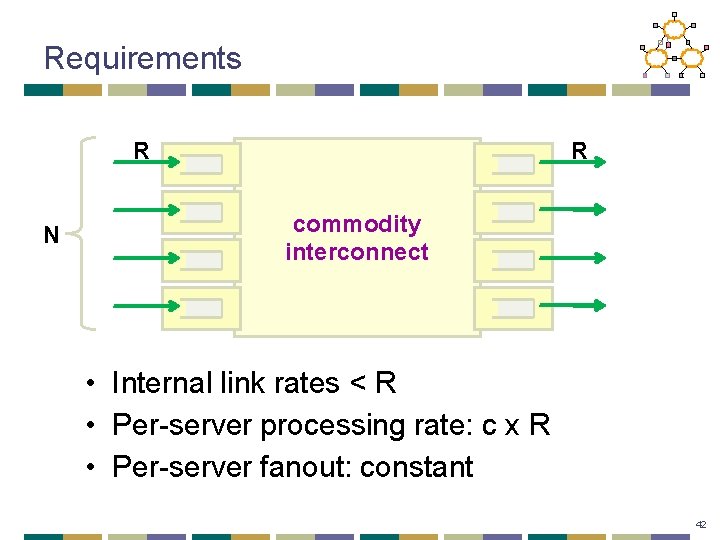

Requirements (commodity interconnect) • Internal link rates < R • Per-server processing rate: c × R • Per-server fanout: constant

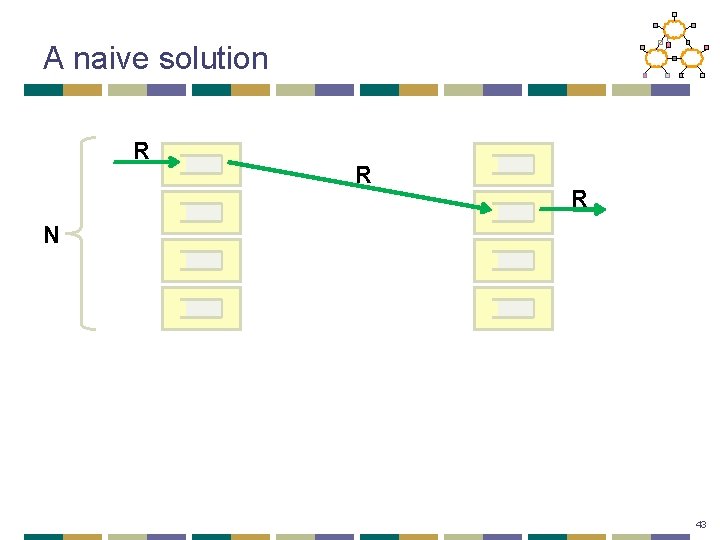

A naive solution

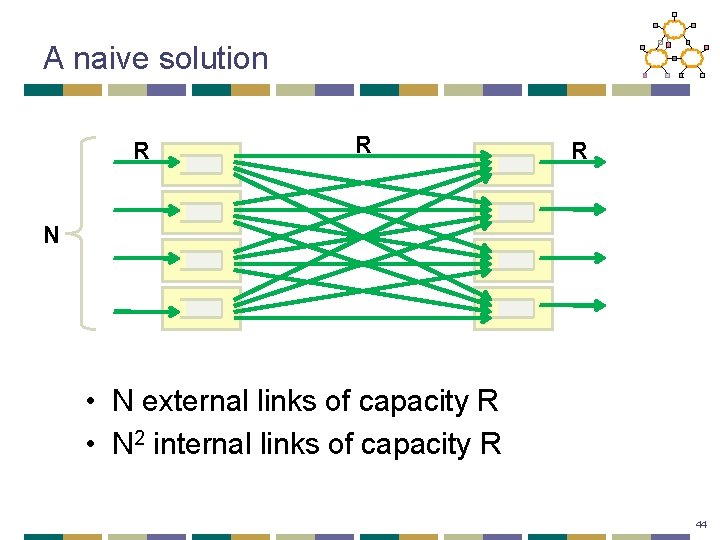

A naive solution • N external links of capacity R • N² internal links of capacity R

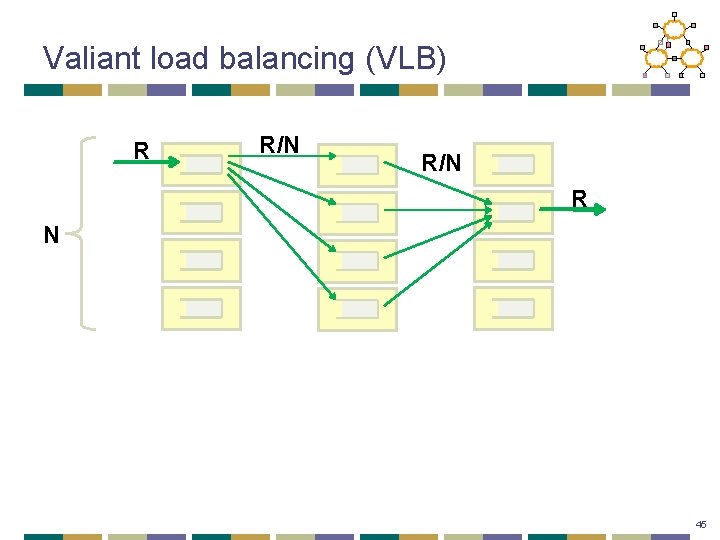

Valiant load balancing (VLB) (figure labels: R, R/N, N)

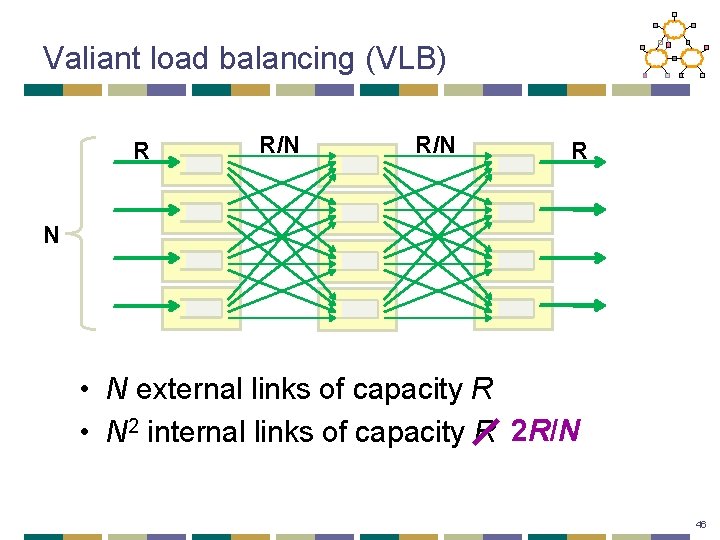

Valiant load balancing (VLB) • N external links of capacity R • N² internal links of capacity 2R/N (instead of R)

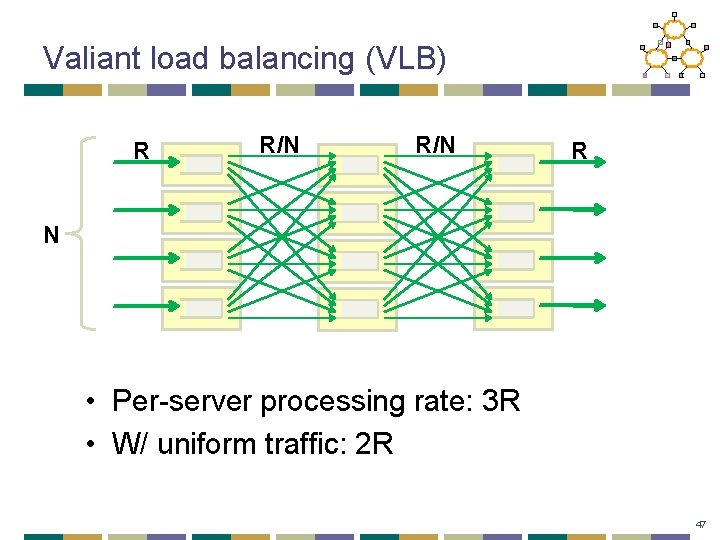

Valiant load balancing (VLB) • Per-server processing rate: 3R • With uniform traffic: 2R
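The link and processing rates on the naive and VLB slides can be checked with a little arithmetic. The Python sketch below uses hypothetical function names. It counts the N(N-1) directed links of a full mesh, which the slides round to N², and reads the 3R figure as the sum of a server's ingress, intermediate, and egress roles at rate R each. That reading is an assumption about how the slide's number is derived.

```python
def naive_full_mesh(n, r):
    """Direct routing: one input may send its whole rate R to one output,
    so every internal link must be provisioned for R."""
    return {"internal_links": n * (n - 1), "link_capacity": r}


def vlb_full_mesh(n, r, uniform_traffic=False):
    """Valiant load balancing over a full mesh.

    Phase 1 spreads each server's input evenly over all servers (R/N per
    link); phase 2 delivers to the output server (another R/N per link).
    """
    return {
        "internal_links": n * (n - 1),
        "link_capacity": 2 * r / n,
        # 3R in general; 2R when traffic is already uniform.
        "per_server_processing": (2 if uniform_traffic else 3) * r,
    }
```

For N = 8 servers at R = 10 Gbps, the naive mesh needs 10 Gbps internal links, while VLB needs 2.5 Gbps internal links at the cost of up to 30 Gbps of processing per server.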

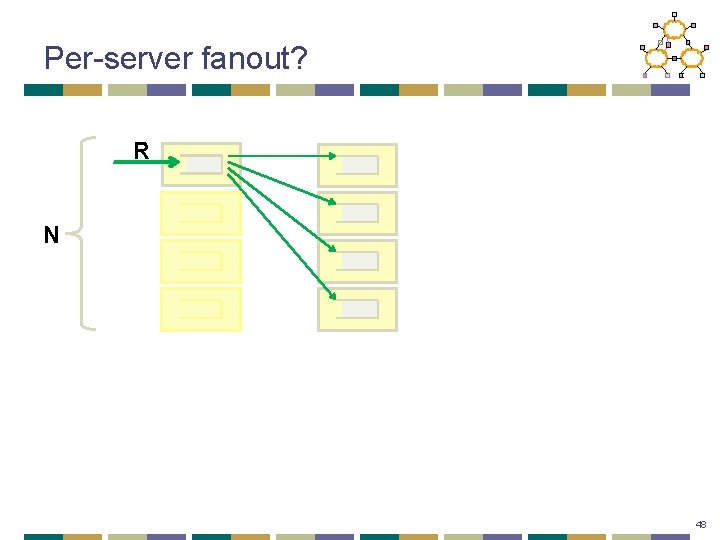

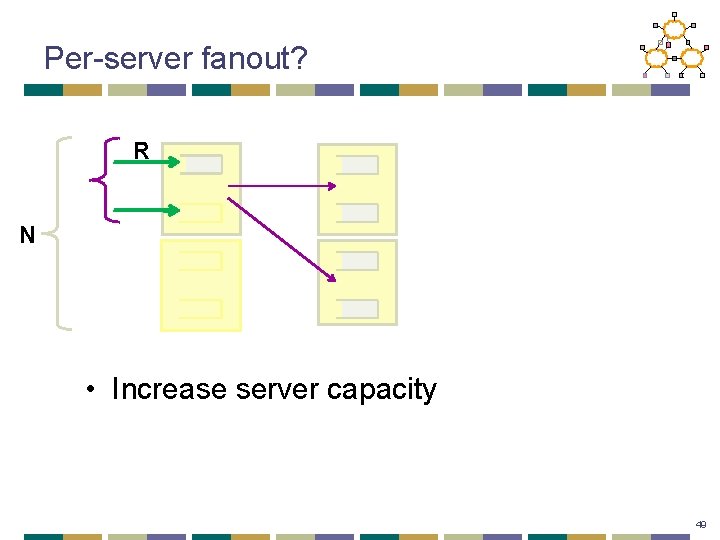

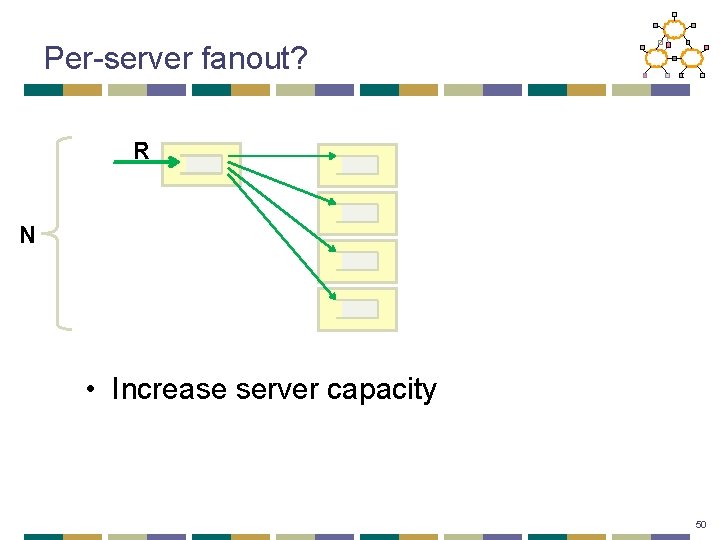

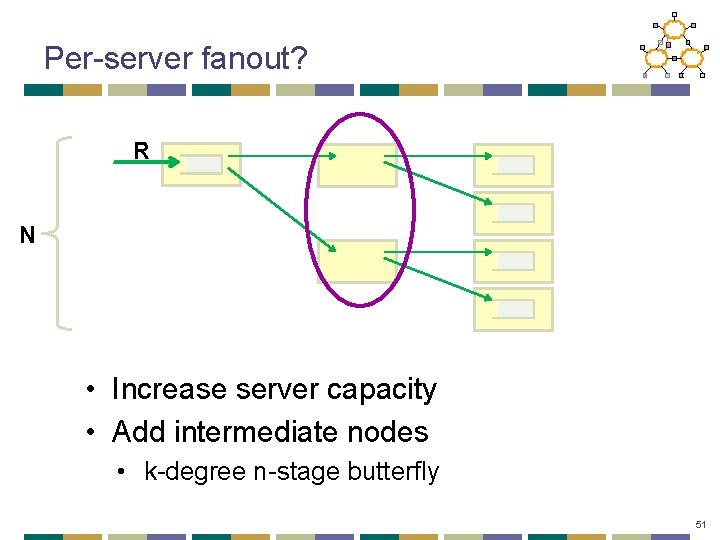

Per-server fanout?

Per-server fanout? • Increase server capacity


Per-server fanout? • Increase server capacity • Add intermediate nodes • k-degree n-stage butterfly

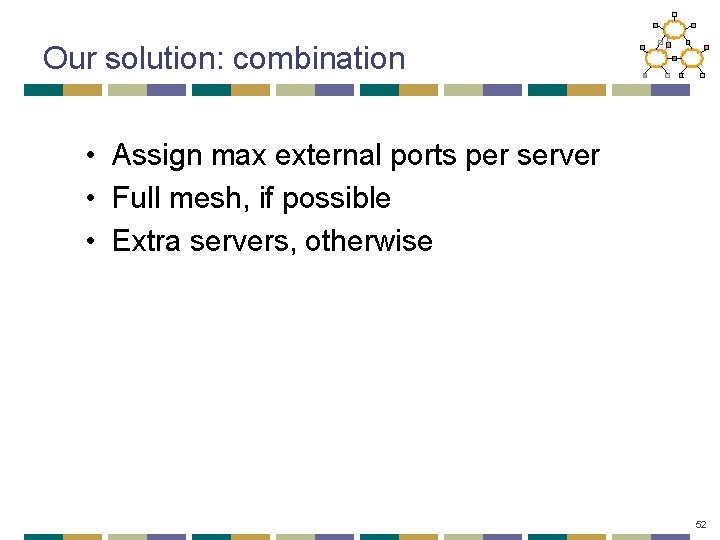

Our solution: combination • Assign max external ports per server • Full mesh, if possible • Extra servers, otherwise

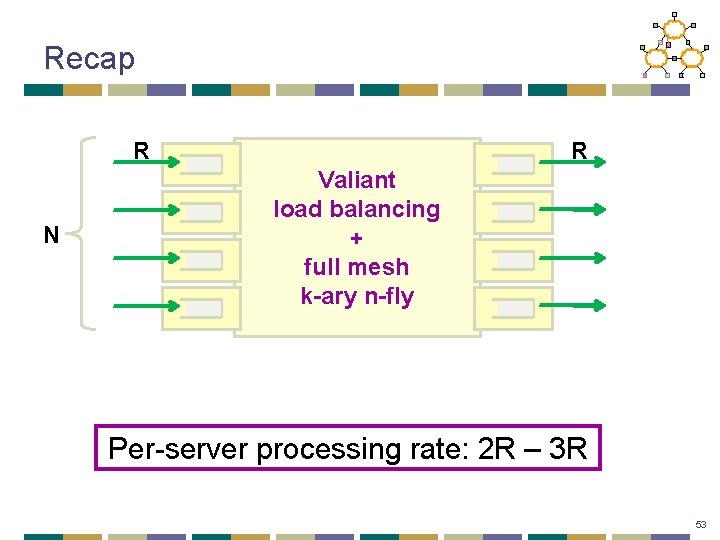

Recap • Valiant load balancing + full mesh / k-ary n-fly • Per-server processing rate: 2R–3R

Outline • Interconnect • Server optimizations • Performance

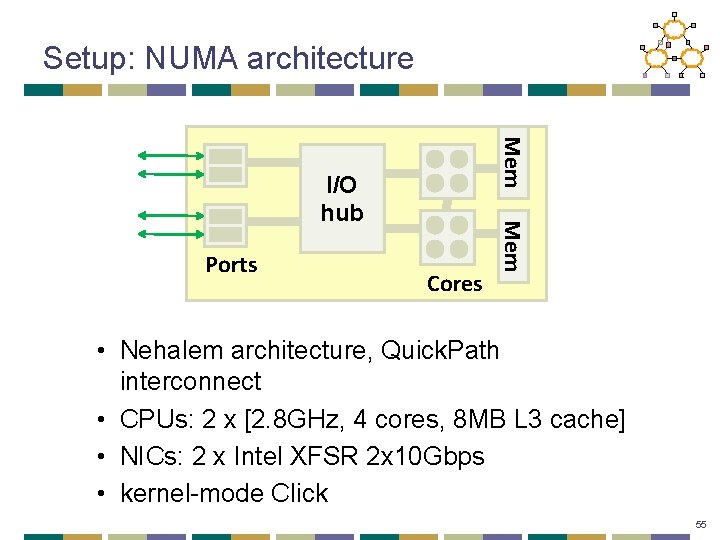

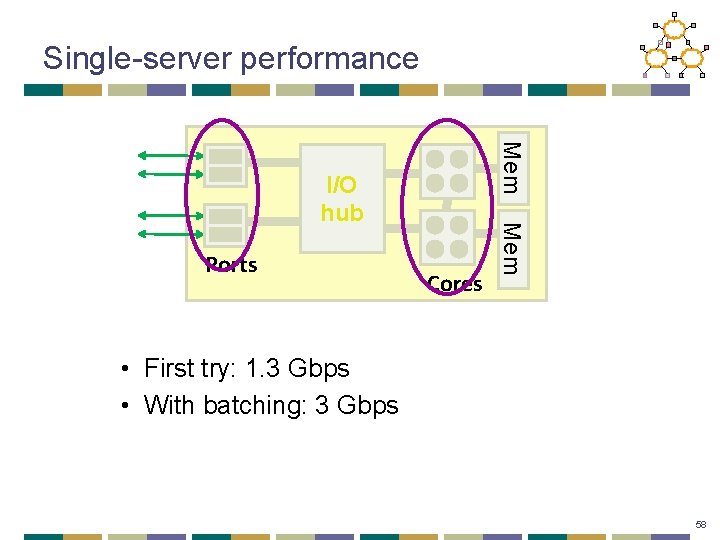

Setup: NUMA architecture (figure labels: Mem, Cores, I/O hub, Ports) • Nehalem architecture, QuickPath interconnect • CPUs: 2 × [2.8 GHz, 4 cores, 8 MB L3 cache] • NICs: 2 × Intel XFSR 2 × 10 Gbps • kernel-mode Click

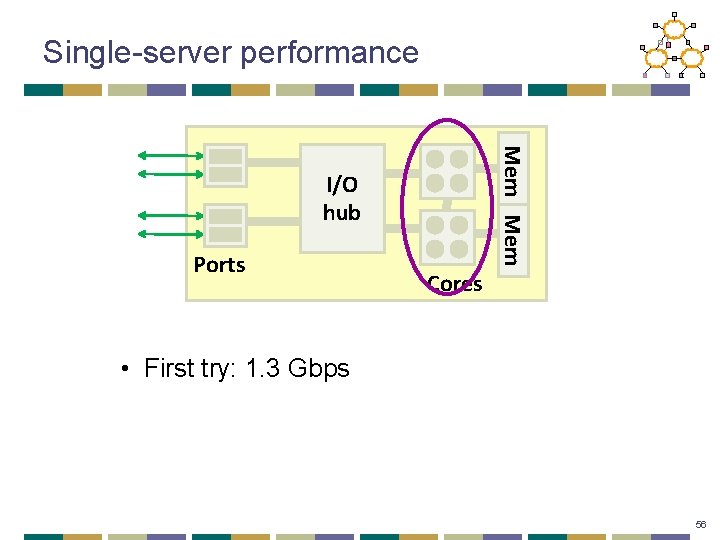

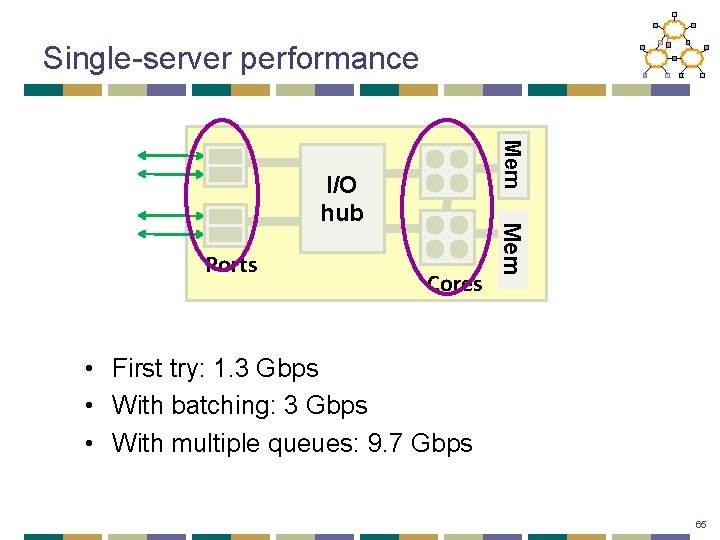

Single-server performance • First try: 1.3 Gbps

Problem #1: book-keeping • Managing packet descriptors • moving between NIC and memory • updating descriptor rings • Solution: batch packet operations • NIC batches multiple packet descriptors • CPU polls for multiple packets

Single-server performance • First try: 1.3 Gbps • With batching: 3 Gbps

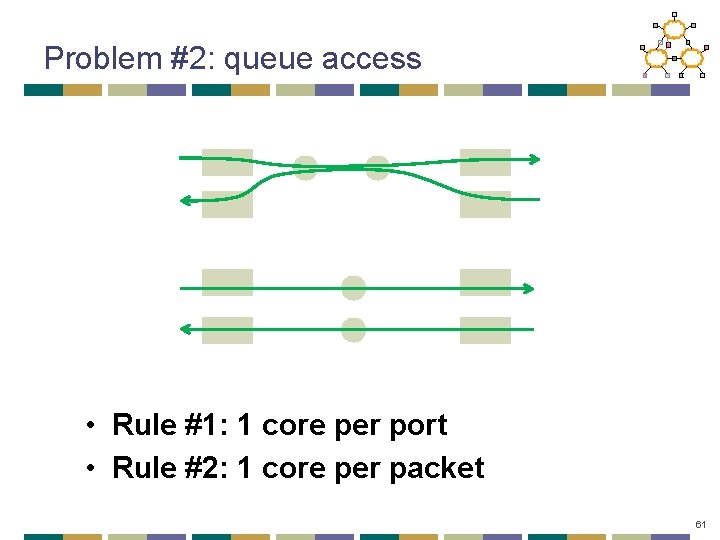

Problem #2: queue access

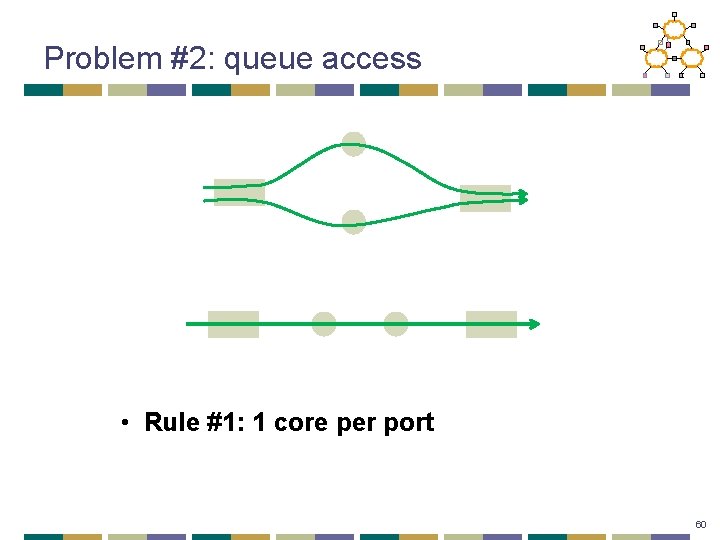

Problem #2: queue access • Rule #1: 1 core per port

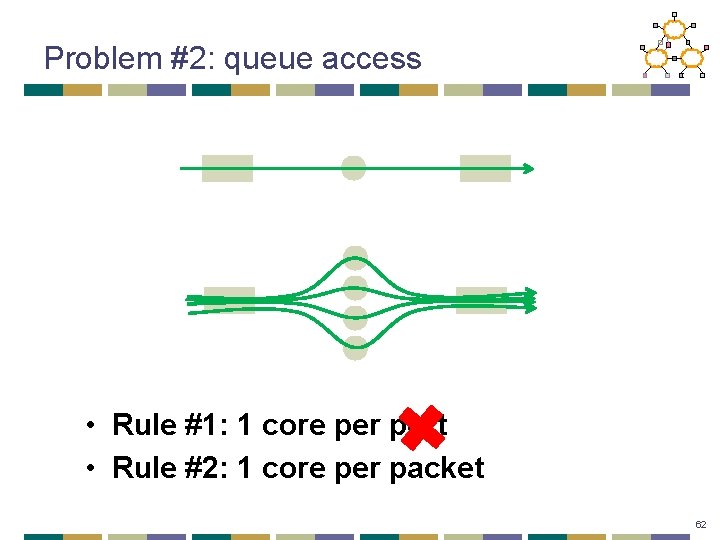

Problem #2: queue access • Rule #1: 1 core per port • Rule #2: 1 core per packet

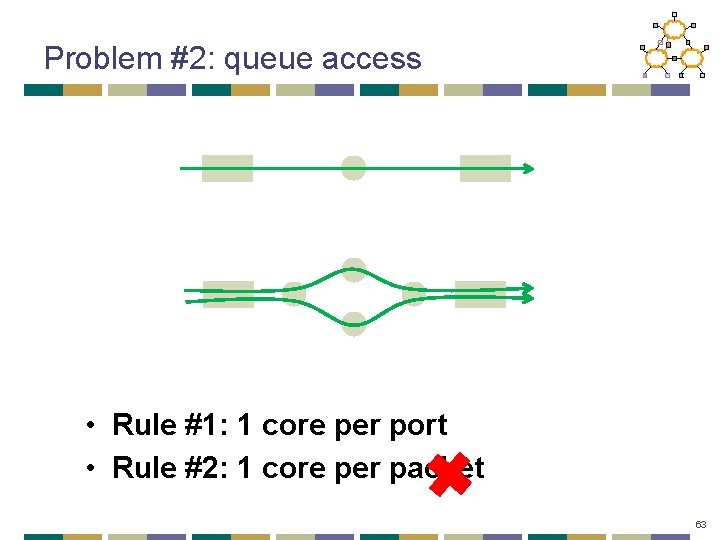


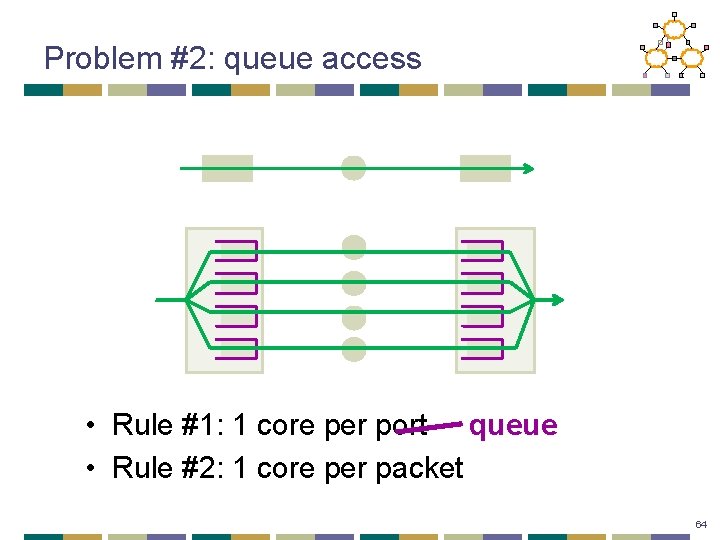

Problem #2: queue access • Rule #1: 1 core per queue (refined from “per port” once NICs expose multiple queues) • Rule #2: 1 core per packet
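The two rules, one core per queue and one core per packet, can be sketched as a queue-to-core assignment. The Python below is illustrative only: the function names are hypothetical, and selecting the receive queue by hashing the flow's 5-tuple is an assumption about how a multi-queue NIC spreads traffic, not something stated on the slides.

```python
import zlib


def rx_queue_for(flow, num_queues):
    """Pick a receive queue by hashing the flow 5-tuple (assumed policy),
    so packets of one flow always land on the same queue."""
    key = "|".join(str(field) for field in flow).encode()
    return zlib.crc32(key) % num_queues


def assign_queues_to_cores(num_queues, num_cores):
    """Rule #1: every queue has exactly one owning core, so no two cores
    contend for the same queue. A core may own several queues."""
    return {queue: queue % num_cores for queue in range(num_queues)}


def core_for_packet(flow, num_queues, queue_owner):
    """Rule #2: the core that owns the packet's queue handles the packet
    from reception to transmission; it is never handed to another core."""
    return queue_owner[rx_queue_for(flow, num_queues)]
```

With 8 queues and 4 cores, each core owns two queues, and every packet of a given flow is processed by a single core.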

Single-server performance • First try: 1.3 Gbps • With batching: 3 Gbps • With multiple queues: 9.7 Gbps

Recap • State-of-the-art hardware • NUMA architecture, multi-queue NICs • Modified NIC driver • batching • Careful queue-to-core allocation • one core per queue, per packet

Outline • Interconnect • Server optimizations • Performance

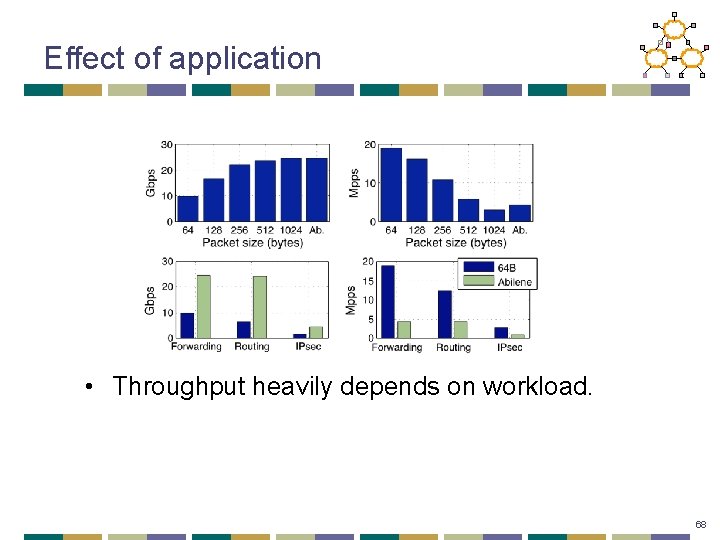

Effect of application • Throughput heavily depends on workload.

Summary • Vision of active networking • Separating data plane and control plane • Building software routers by starting with: • closed, commercial routers vs. • commodity PCs • Pros and cons?

Next Lecture • Software-Defined Networking • Readings: • 4D: Read in full • Onix: Read intro • Ethane: Optional reading
