Sampling Bayesian Networks ICS 295 2008 1 Algorithm

Sampling Bayesian Networks ICS 295 2008 1

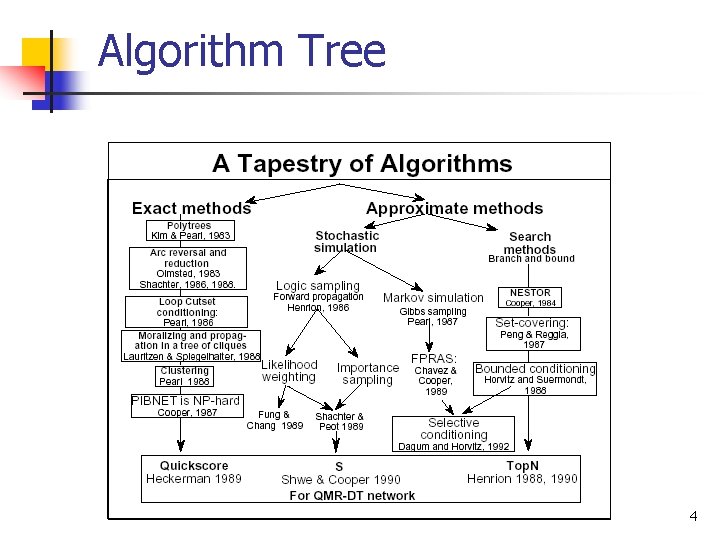

Algorithm Tree 4

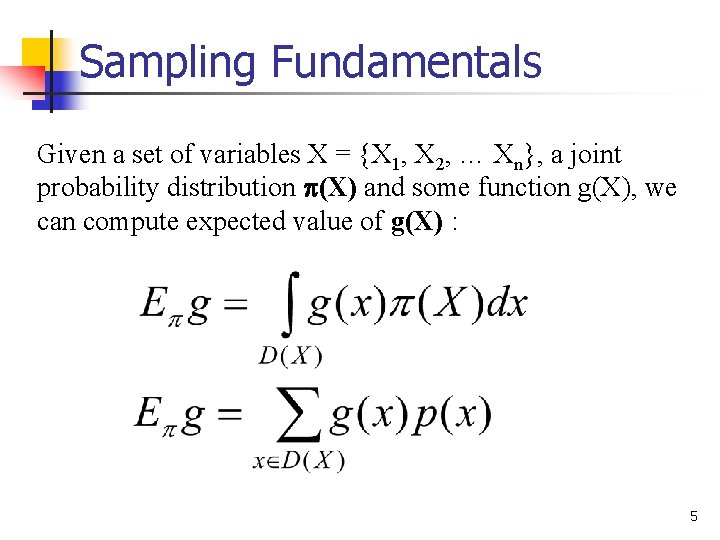

Sampling Fundamentals Given a set of variables X = {X 1, X 2, … Xn}, a joint probability distribution (X) and some function g(X), we can compute expected value of g(X) : 5

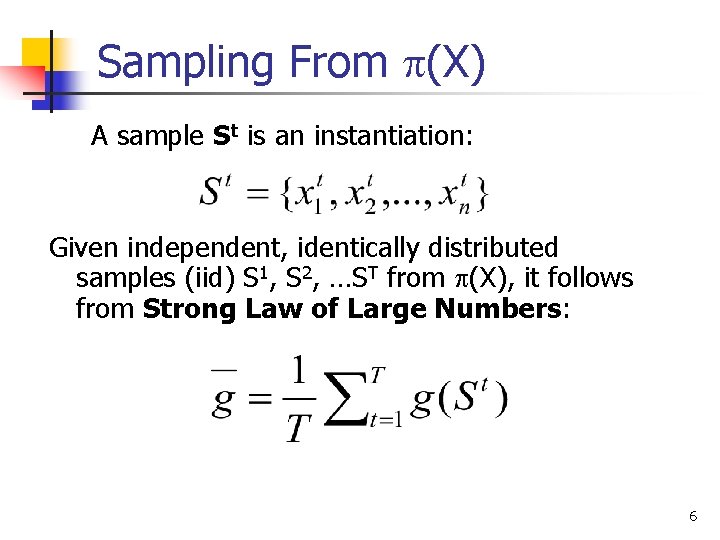

Sampling From (X) A sample St is an instantiation: Given independent, identically distributed samples (iid) S 1, S 2, …ST from (X), it follows from Strong Law of Large Numbers: 6

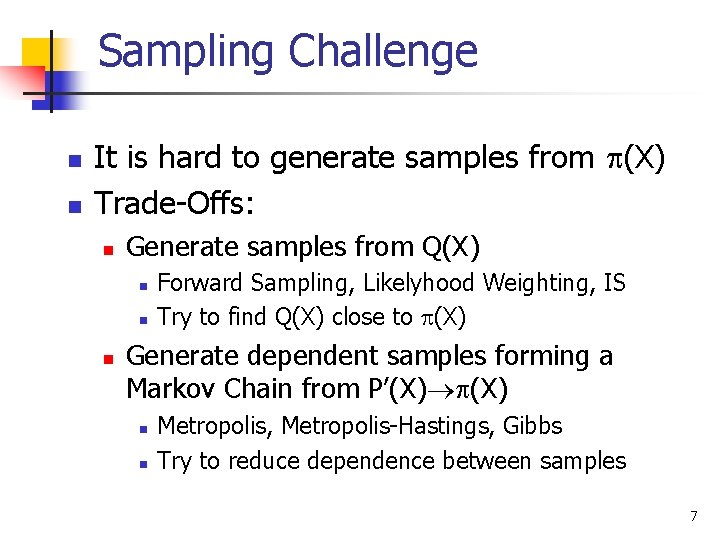

Sampling Challenge n n It is hard to generate samples from (X) Trade-Offs: n Generate samples from Q(X) n n n Forward Sampling, Likelyhood Weighting, IS Try to find Q(X) close to (X) Generate dependent samples forming a Markov Chain from P’(X) n n Metropolis, Metropolis-Hastings, Gibbs Try to reduce dependence between samples 7

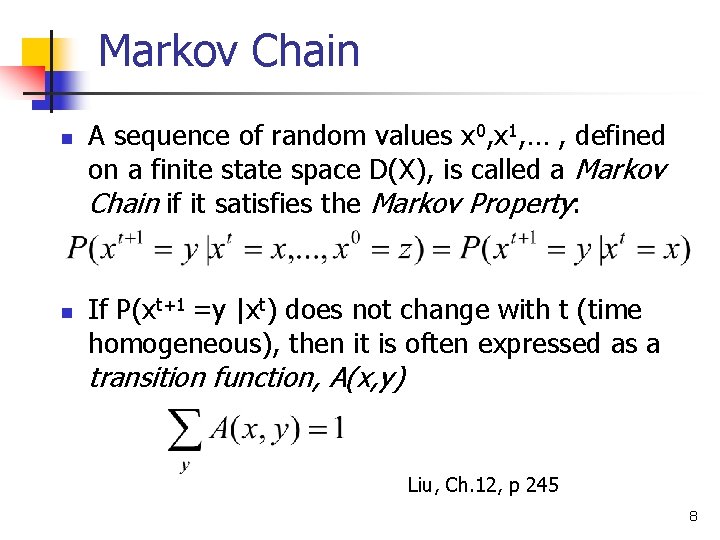

Markov Chain n n A sequence of random values x 0, x 1, … , defined on a finite state space D(X), is called a Markov Chain if it satisfies the Markov Property: If P(xt+1 =y |xt) does not change with t (time homogeneous), then it is often expressed as a transition function, A(x, y) Liu, Ch. 12, p 245 8

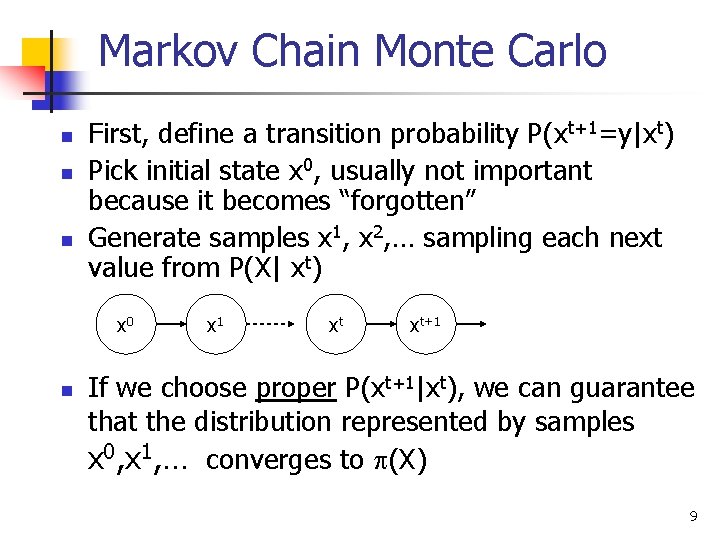

Markov Chain Monte Carlo n n n First, define a transition probability P(xt+1=y|xt) Pick initial state x 0, usually not important because it becomes “forgotten” Generate samples x 1, x 2, … sampling each next value from P(X| xt) x 0 n x 1 xt xt+1 If we choose proper P(xt+1|xt), we can guarantee that the distribution represented by samples x 0, x 1, … converges to (X) 9

Markov Chain Properties n n n Irreducibility Periodicity Recurrence Revercibility Ergodicity Stationary Distribution 10

Irreducible n n A station x is said to be irreducible if under the transition rule one has nonzero probability of moving from x to any other state and then coming back in a finite number of steps. If on state is irreducible, then all the sates must be irreducible. Liu, Ch. 12, pp. 249, Def. 12. 1. 1 11

Aperiodic n n A state x is aperiodic if the greatest common divider of {n : An(x, x) > 0} is 1. If state x is aperiodic and the chain is irreducible, then every state must be aperiodic. Liu, Ch. 12, pp. 240 -250, Def. 12. 1. 1 12

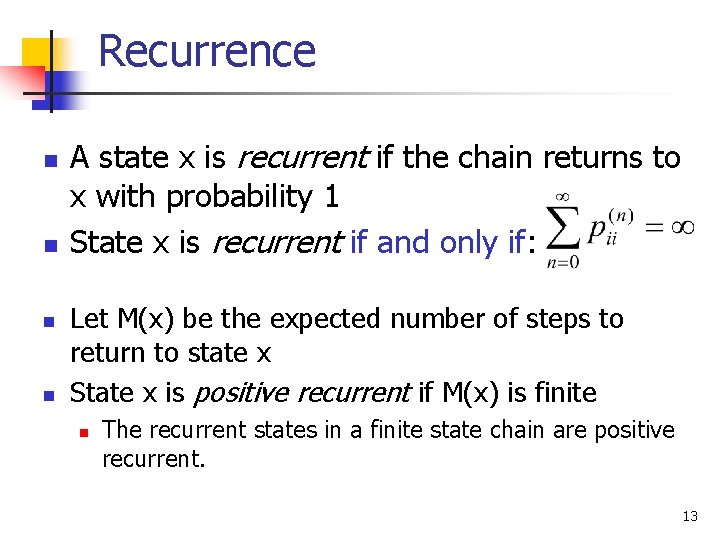

Recurrence n n A state x is recurrent if the chain returns to x with probability 1 State x is recurrent if and only if: Let M(x) be the expected number of steps to return to state x State x is positive recurrent if M(x) is finite n The recurrent states in a finite state chain are positive recurrent. 13

Ergodicity A state x is ergodic if it is aperiodic and positive recurrent. n If all states in a Markov chain are ergodic then the chain is ergodic. n 14

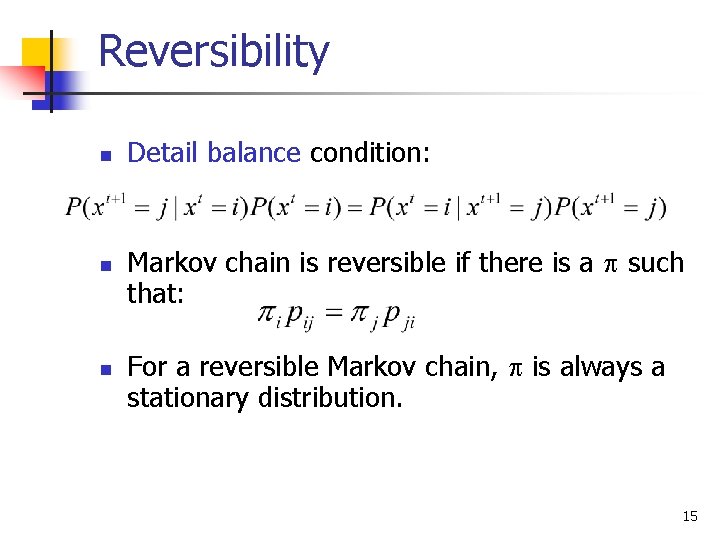

Reversibility n n n Detail balance condition: Markov chain is reversible if there is a such that: For a reversible Markov chain, is always a stationary distribution. 15

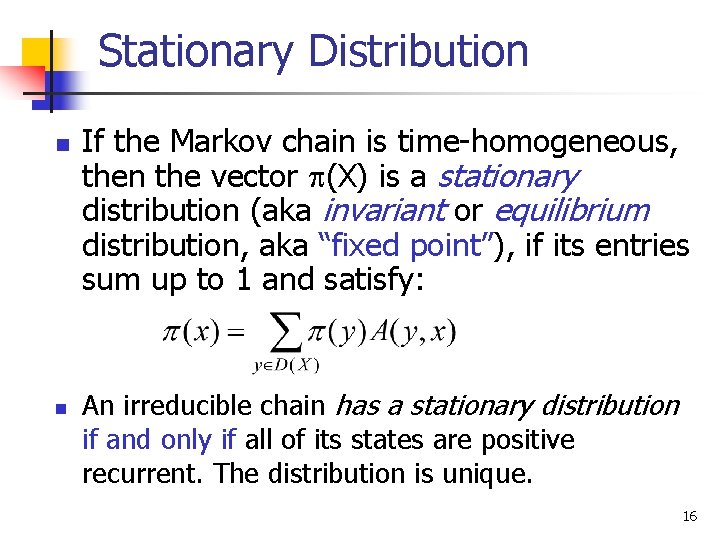

Stationary Distribution n n If the Markov chain is time-homogeneous, then the vector (X) is a stationary distribution (aka invariant or equilibrium distribution, aka “fixed point”), if its entries sum up to 1 and satisfy: An irreducible chain has a stationary distribution if and only if all of its states are positive recurrent. The distribution is unique. 16

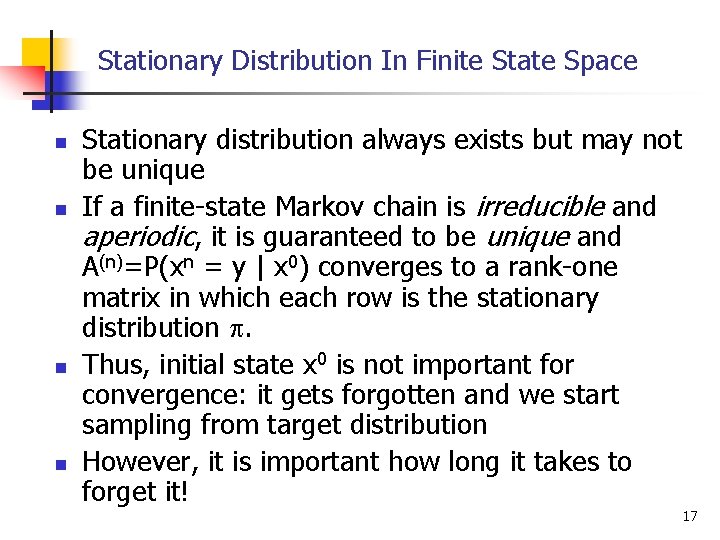

Stationary Distribution In Finite State Space n n Stationary distribution always exists but may not be unique If a finite-state Markov chain is irreducible and aperiodic, it is guaranteed to be unique and A(n)=P(xn = y | x 0) converges to a rank-one matrix in which each row is the stationary distribution . Thus, initial state x 0 is not important for convergence: it gets forgotten and we start sampling from target distribution However, it is important how long it takes to forget it! 17

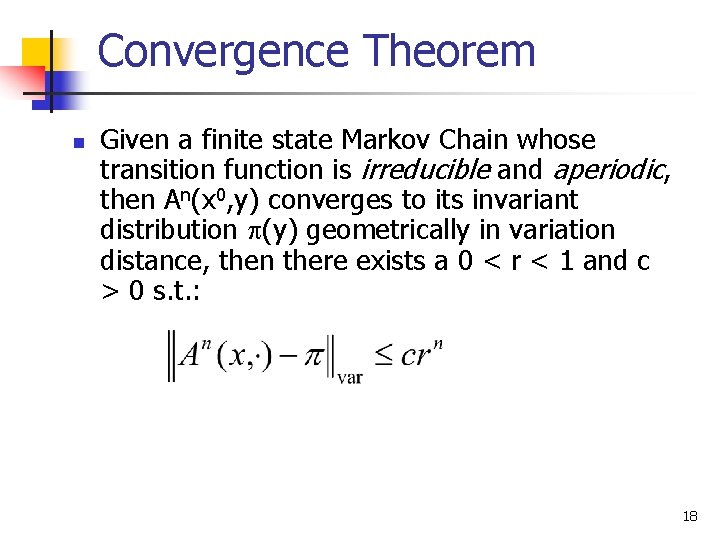

Convergence Theorem n Given a finite state Markov Chain whose transition function is irreducible and aperiodic, then An(x 0, y) converges to its invariant distribution (y) geometrically in variation distance, then there exists a 0 < r < 1 and c > 0 s. t. : 18

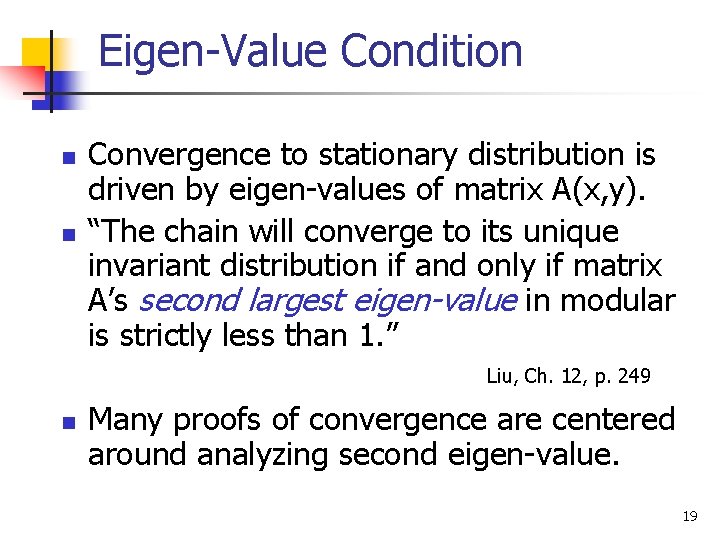

Eigen-Value Condition n n Convergence to stationary distribution is driven by eigen-values of matrix A(x, y). “The chain will converge to its unique invariant distribution if and only if matrix A’s second largest eigen-value in modular is strictly less than 1. ” Liu, Ch. 12, p. 249 n Many proofs of convergence are centered around analyzing second eigen-value. 19

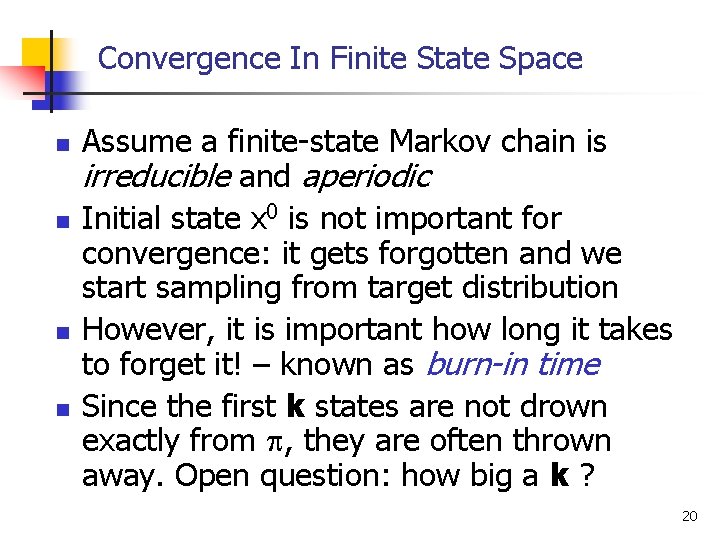

Convergence In Finite State Space n n Assume a finite-state Markov chain is irreducible and aperiodic Initial state x 0 is not important for convergence: it gets forgotten and we start sampling from target distribution However, it is important how long it takes to forget it! – known as burn-in time Since the first k states are not drown exactly from , they are often thrown away. Open question: how big a k ? 20

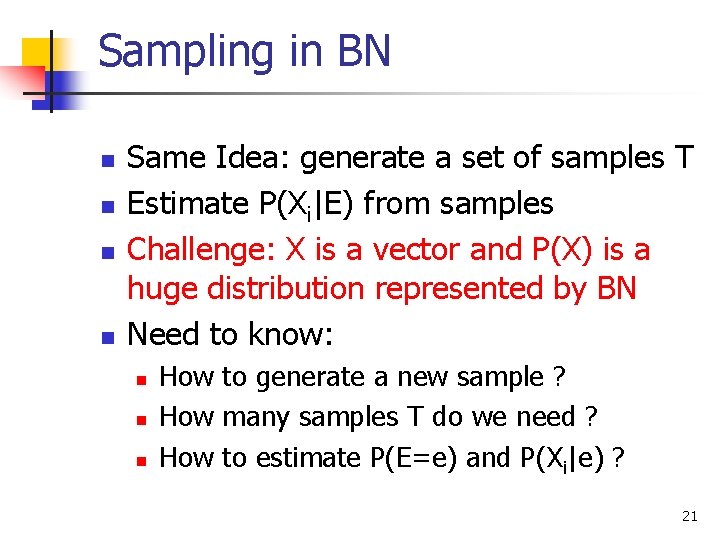

Sampling in BN n n Same Idea: generate a set of samples T Estimate P(Xi|E) from samples Challenge: X is a vector and P(X) is a huge distribution represented by BN Need to know: n n n How to generate a new sample ? How many samples T do we need ? How to estimate P(E=e) and P(Xi|e) ? 21

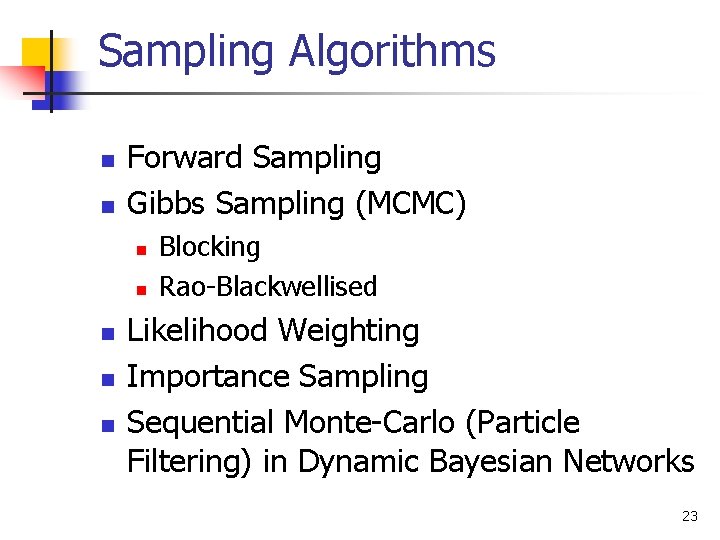

Sampling Algorithms n n Forward Sampling Gibbs Sampling (MCMC) n n n Blocking Rao-Blackwellised Likelihood Weighting Importance Sampling Sequential Monte-Carlo (Particle Filtering) in Dynamic Bayesian Networks 23

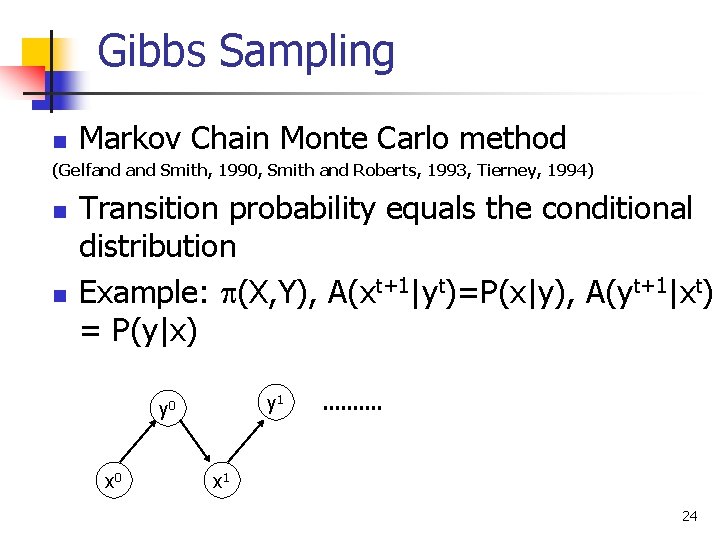

Gibbs Sampling n Markov Chain Monte Carlo method (Gelfand Smith, 1990, Smith and Roberts, 1993, Tierney, 1994) n n Transition probability equals the conditional distribution Example: (X, Y), A(xt+1|yt)=P(x|y), A(yt+1|xt) = P(y|x) y 1 y 0 x 1 24

Gibbs Sampling for BN n n Samples are dependent, form Markov Chain Sample from P’(X|e) which converges to P(X|e) Guaranteed to converge when all P > 0 Methods to improve convergence: n n n Blocking Rao-Blackwellised Error Bounds n n Lag-t autocovariance Multiple Chains, Chebyshev’s Inequality 25

![Gibbs Sampling (Pearl, 1988) n n A sample t [1, 2, …], is an Gibbs Sampling (Pearl, 1988) n n A sample t [1, 2, …], is an](http://slidetodoc.com/presentation_image_h2/007f5701fe5e94923e297572b25c17a8/image-23.jpg)

Gibbs Sampling (Pearl, 1988) n n A sample t [1, 2, …], is an instantiation of all variables in the network: Sampling process n n Fix values of observed variables e Instantiate node values in sample x 0 at random Generate samples x 1, x 2, …x. T from P(x|e) Compute posteriors from samples 26

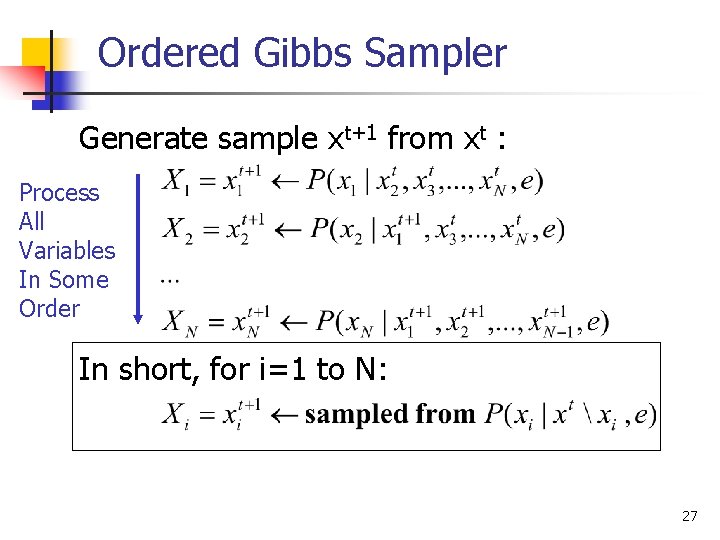

Ordered Gibbs Sampler Generate sample xt+1 from xt : Process All Variables In Some Order In short, for i=1 to N: 27

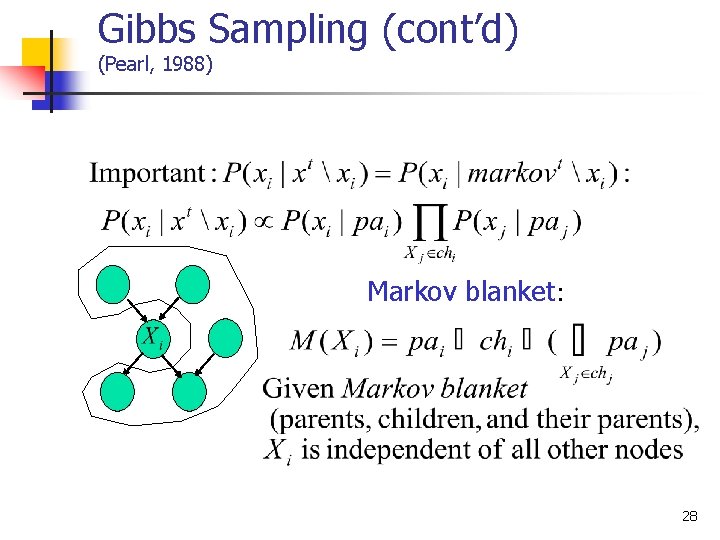

Gibbs Sampling (cont’d) (Pearl, 1988) Markov blanket: 28

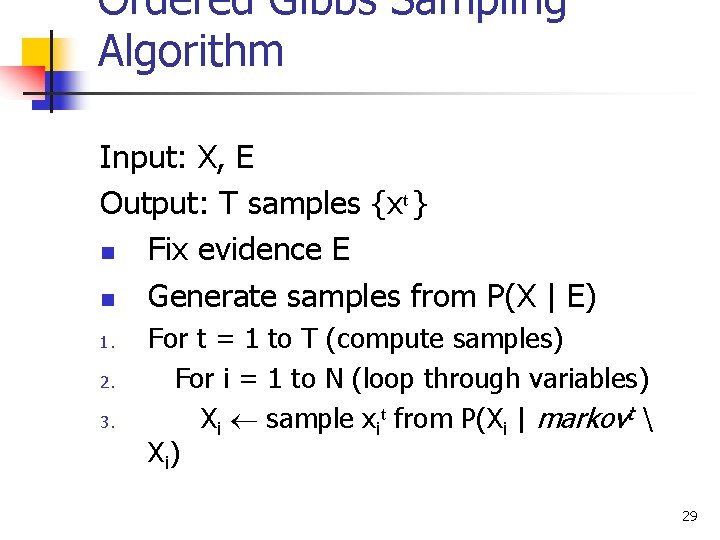

Ordered Gibbs Sampling Algorithm Input: X, E Output: T samples {xt } n Fix evidence E n Generate samples from P(X | E) 1. 2. 3. For t = 1 to T (compute samples) For i = 1 to N (loop through variables) Xi sample xit from P(Xi | markovt X i) 29

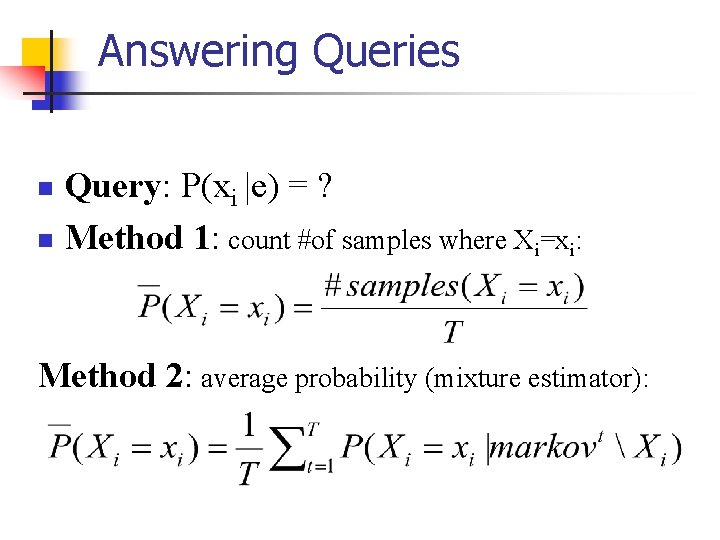

Answering Queries Query: P(xi |e) = ? n Method 1: count #of samples where Xi=xi: n Method 2: average probability (mixture estimator):

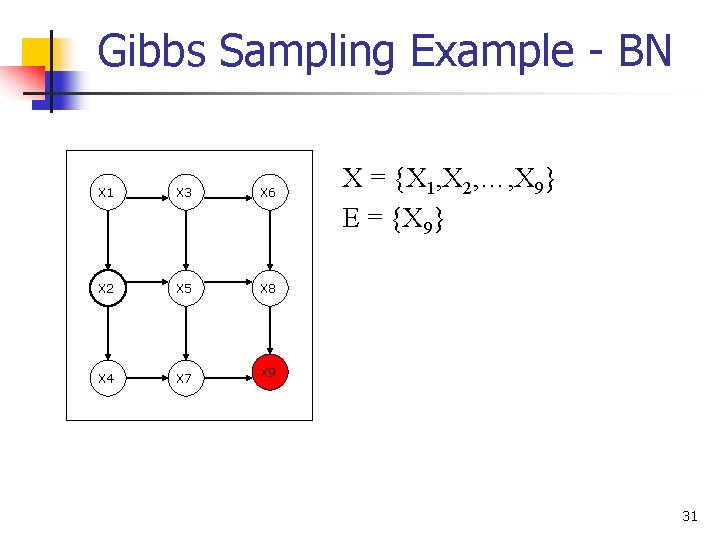

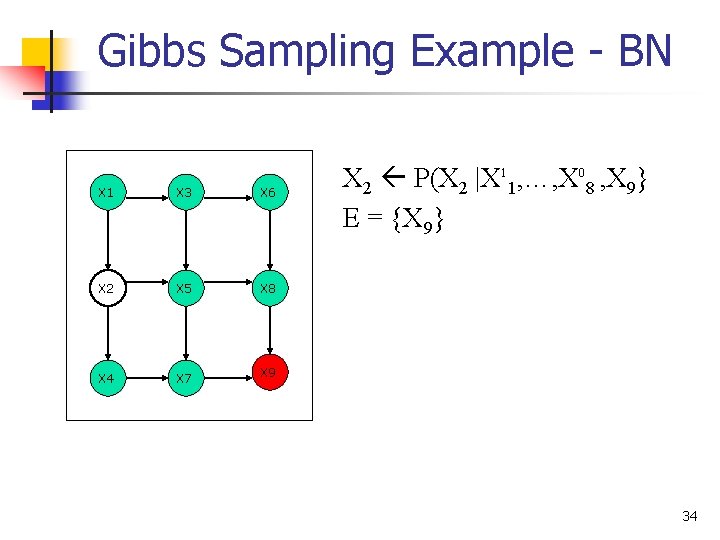

Gibbs Sampling Example - BN X 1 X 3 X 6 X 2 X 5 X 8 X 4 X 7 X 9 X = {X 1, X 2, …, X 9} E = {X 9} 31

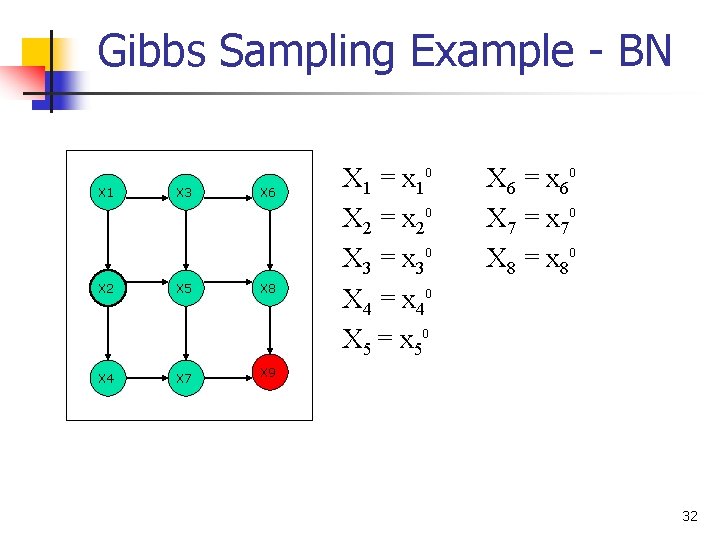

Gibbs Sampling Example - BN X 1 X 3 X 6 X 2 X 5 X 8 X 4 X 7 X 9 X 1 = x 10 X 2 = x 20 X 3 = x 30 X 4 = x 40 X 5 = x 50 X 6 = x 60 X 7 = x 70 X 8 = x 80 32

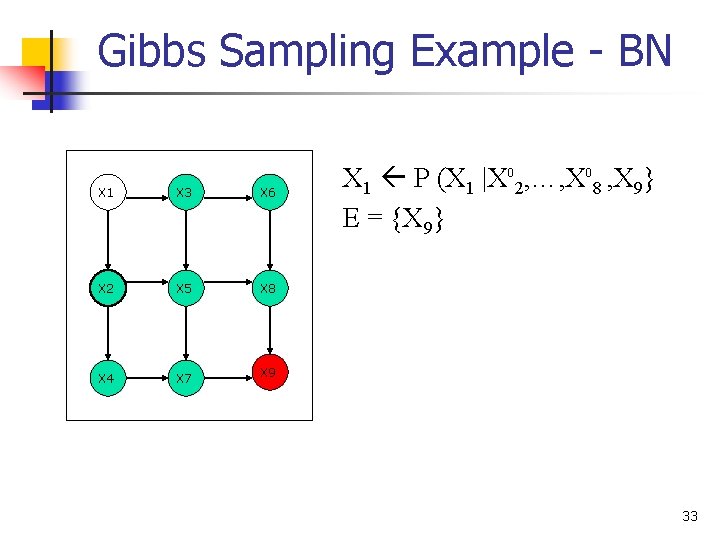

Gibbs Sampling Example - BN X 1 X 3 X 6 X 2 X 5 X 8 X 4 X 7 X 9 X 1 P (X 1 |X 02, …, X 08 , X 9} E = {X 9} 33

Gibbs Sampling Example - BN X 1 X 3 X 6 X 2 X 5 X 8 X 4 X 7 X 9 X 2 P(X 2 |X 11, …, X 08 , X 9} E = {X 9} 34

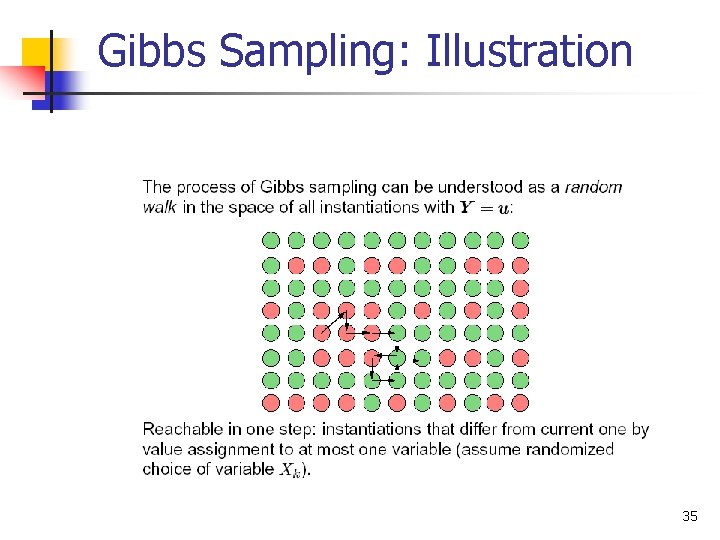

Gibbs Sampling: Illustration 35

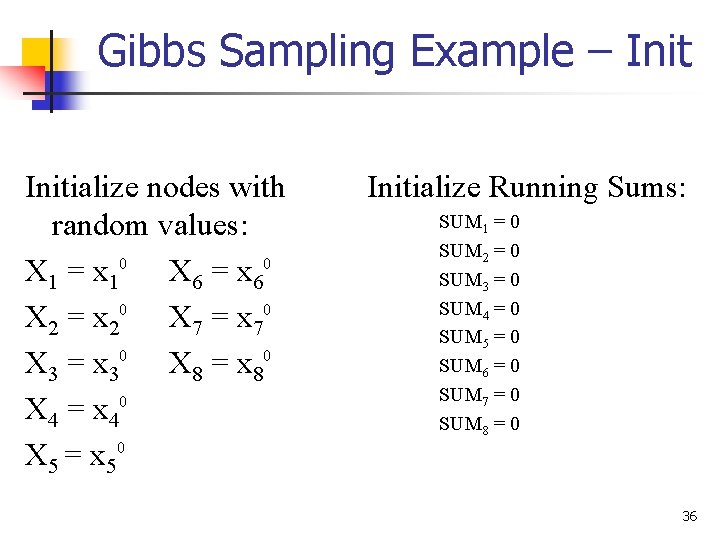

Gibbs Sampling Example – Initialize nodes with random values: X 1 = x 10 X 6 = x 60 X 2 = x 20 X 7 = x 70 X 3 = x 30 X 8 = x 80 X 4 = x 40 X 5 = x 50 Initialize Running Sums: SUM 1 = 0 SUM 2 = 0 SUM 3 = 0 SUM 4 = 0 SUM 5 = 0 SUM 6 = 0 SUM 7 = 0 SUM 8 = 0 36

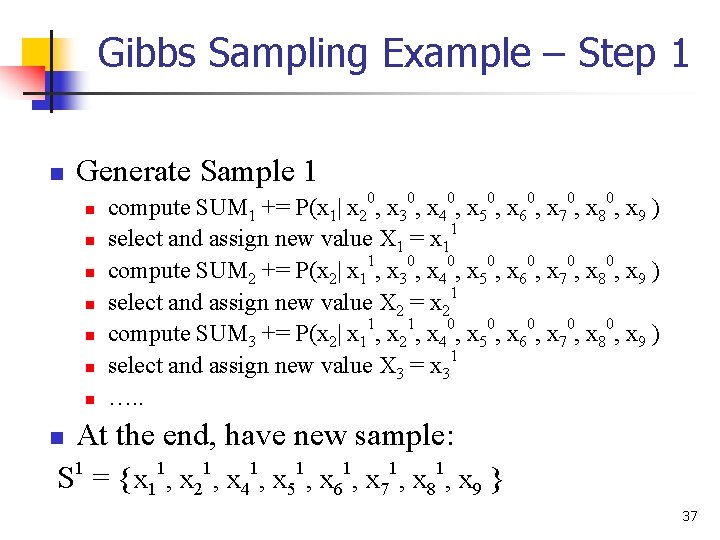

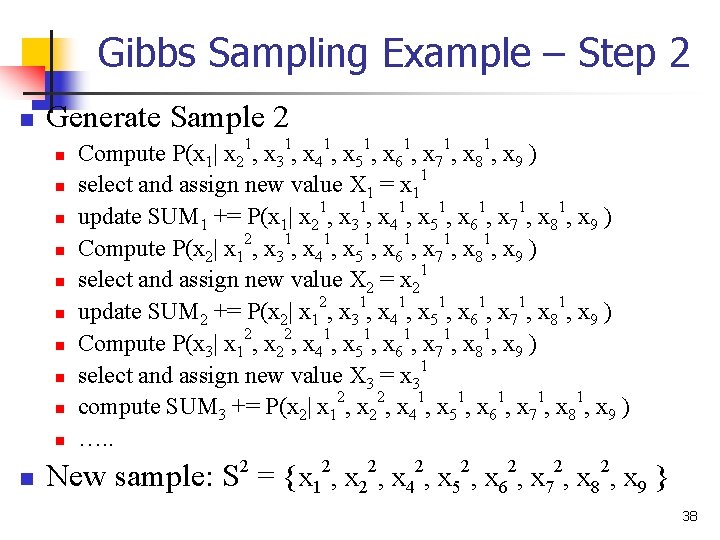

Gibbs Sampling Example – Step 1 n Generate Sample 1 n n n n compute SUM 1 += P(x 1| x 20, x 30, x 40, x 50, x 60, x 70, x 80, x 9 ) select and assign new value X 1 = x 11 compute SUM 2 += P(x 2| x 11, x 30, x 40, x 50, x 60, x 70, x 80, x 9 ) select and assign new value X 2 = x 21 compute SUM 3 += P(x 2| x 11, x 21, x 40, x 50, x 60, x 70, x 80, x 9 ) select and assign new value X 3 = x 31 …. . At the end, have new sample: S 1 = {x 11, x 21, x 41, x 51, x 61, x 71, x 81, x 9 } n 37

Gibbs Sampling Example – Step 2 n Generate Sample 2 n n n Compute P(x 1| x 21, x 31, x 41, x 51, x 61, x 71, x 81, x 9 ) select and assign new value X 1 = x 11 update SUM 1 += P(x 1| x 21, x 31, x 41, x 51, x 61, x 71, x 81, x 9 ) Compute P(x 2| x 12, x 31, x 41, x 51, x 61, x 71, x 81, x 9 ) select and assign new value X 2 = x 21 update SUM 2 += P(x 2| x 12, x 31, x 41, x 51, x 61, x 71, x 81, x 9 ) Compute P(x 3| x 12, x 22, x 41, x 51, x 61, x 71, x 81, x 9 ) select and assign new value X 3 = x 31 compute SUM 3 += P(x 2| x 12, x 22, x 41, x 51, x 61, x 71, x 81, x 9 ) …. . New sample: S 2 = {x 12, x 22, x 42, x 52, x 62, x 72, x 82, x 9 } 38

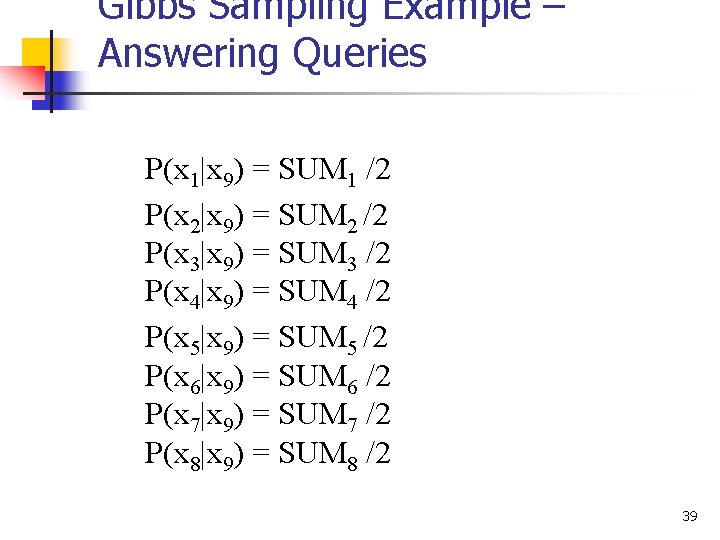

Gibbs Sampling Example – Answering Queries P(x 1|x 9) = SUM 1 /2 P(x 2|x 9) = SUM 2 /2 P(x 3|x 9) = SUM 3 /2 P(x 4|x 9) = SUM 4 /2 P(x 5|x 9) = SUM 5 /2 P(x 6|x 9) = SUM 6 /2 P(x 7|x 9) = SUM 7 /2 P(x 8|x 9) = SUM 8 /2 39

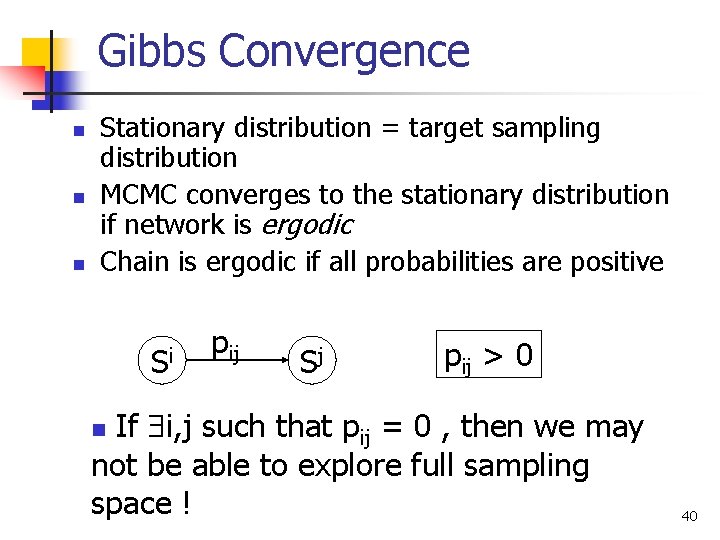

Gibbs Convergence n n n Stationary distribution = target sampling distribution MCMC converges to the stationary distribution if network is ergodic Chain is ergodic if all probabilities are positive Si pij Sj pij > 0 If i, j such that pij = 0 , then we may not be able to explore full sampling space ! n 40

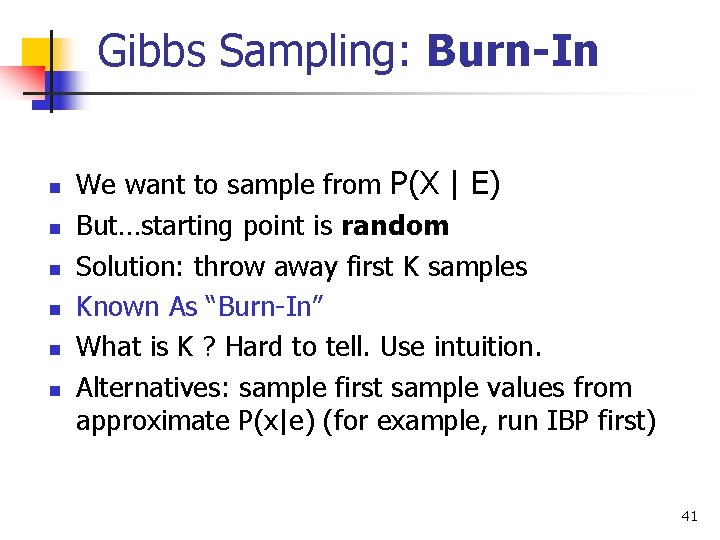

Gibbs Sampling: Burn-In n n n We want to sample from P(X | E) But…starting point is random Solution: throw away first K samples Known As “Burn-In” What is K ? Hard to tell. Use intuition. Alternatives: sample first sample values from approximate P(x|e) (for example, run IBP first) 41

Gibbs Sampling: Performance +Advantage: guaranteed to converge to P(X|E) -Disadvantage: convergence may be slow Problems: n n Samples are dependent ! Statistical variance is too big in highdimensional problems 42

Gibbs: Speeding Convergence Objectives: 1. Reduce dependence between samples (autocorrelation) n n 2. Skip samples Randomize Variable Sampling Order Reduce variance n n Blocking Gibbs Sampling Rao-Blackwellisation 43

Skipping Samples Pick only every k-th sample (Gayer, 1992) Can reduce dependence between samples ! Increases variance ! Waists samples ! n 44

Randomized Variable Order Random Scan Gibbs Sampler Pick each next variable Xi for update at random with probability pi , i pi = 1. (In the simplest case, pi are distributed uniformly. ) In some instances, reduces variance (Mac. Eachern, Peruggia, 1999 “Subsampling the Gibbs Sampler: Variance Reduction”) 45

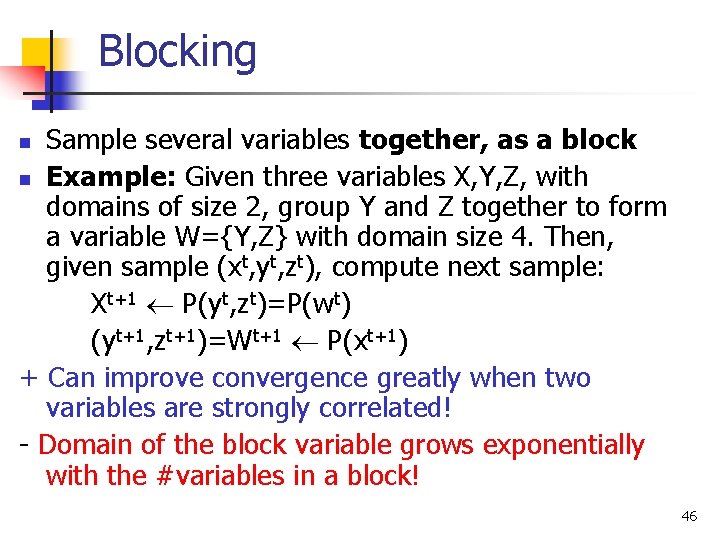

Blocking Sample several variables together, as a block n Example: Given three variables X, Y, Z, with domains of size 2, group Y and Z together to form a variable W={Y, Z} with domain size 4. Then, given sample (xt, yt, zt), compute next sample: Xt+1 P(yt, zt)=P(wt) (yt+1, zt+1)=Wt+1 P(xt+1) + Can improve convergence greatly when two variables are strongly correlated! - Domain of the block variable grows exponentially with the #variables in a block! n 46

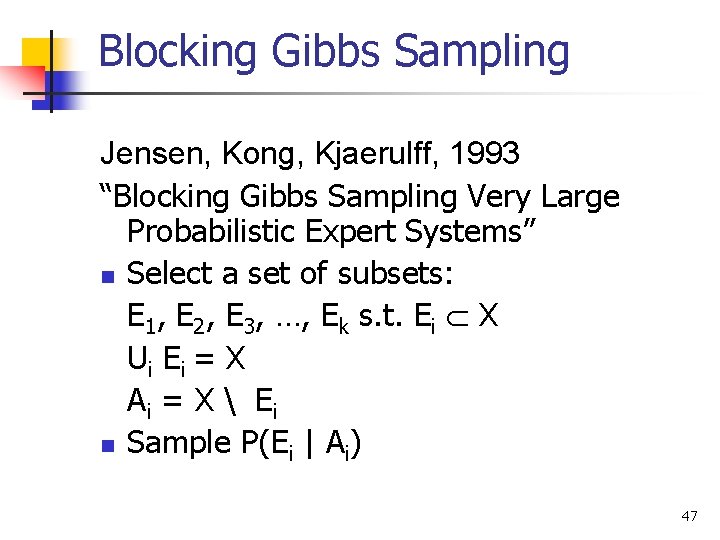

Blocking Gibbs Sampling Jensen, Kong, Kjaerulff, 1993 “Blocking Gibbs Sampling Very Large Probabilistic Expert Systems” n Select a set of subsets: E 1, E 2, E 3, …, Ek s. t. Ei X U i Ei = X Ai = X Ei n Sample P(Ei | Ai) 47

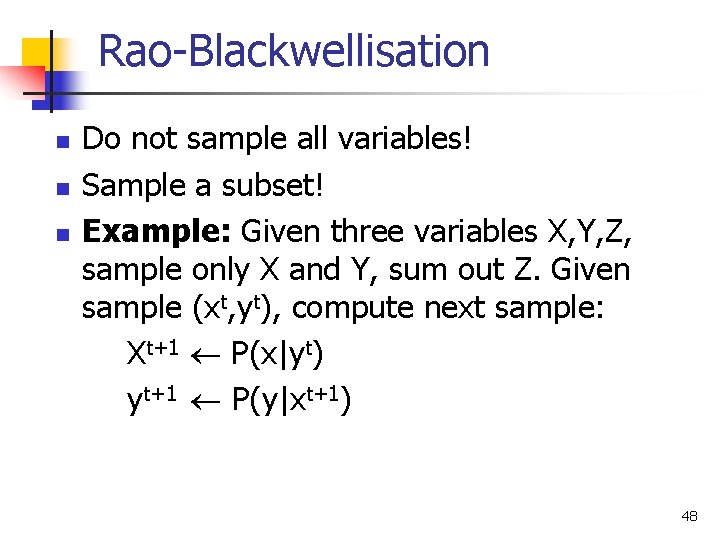

Rao-Blackwellisation n Do not sample all variables! Sample a subset! Example: Given three variables X, Y, Z, sample only X and Y, sum out Z. Given sample (xt, yt), compute next sample: Xt+1 P(x|yt) yt+1 P(y|xt+1) 48

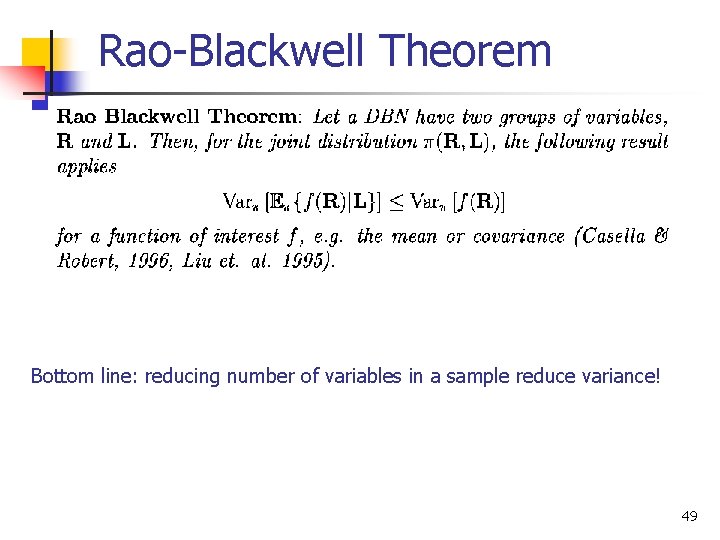

Rao-Blackwell Theorem Bottom line: reducing number of variables in a sample reduce variance! 49

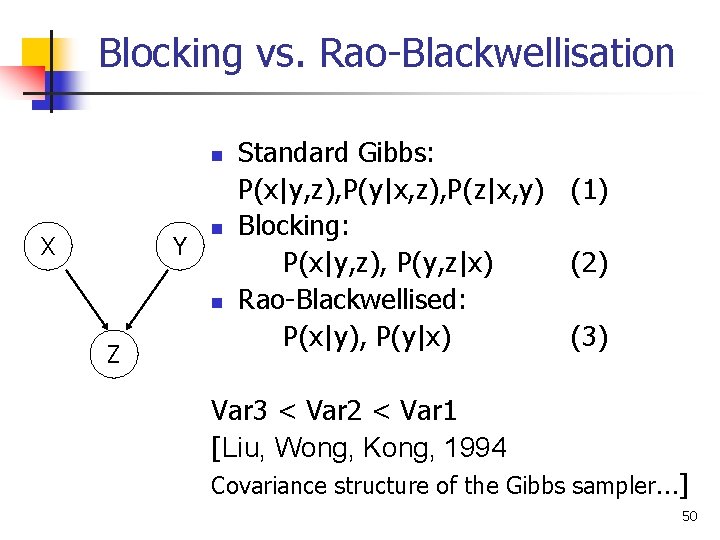

Blocking vs. Rao-Blackwellisation n X Y n n Z Standard Gibbs: P(x|y, z), P(y|x, z), P(z|x, y) (1) Blocking: P(x|y, z), P(y, z|x) (2) Rao-Blackwellised: P(x|y), P(y|x) (3) Var 3 < Var 2 < Var 1 [Liu, Wong, Kong, 1994 Covariance structure of the Gibbs sampler…] 50

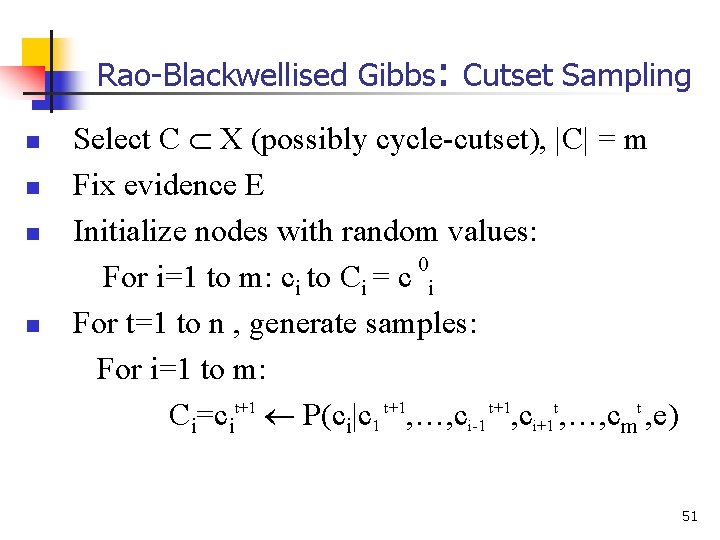

Rao-Blackwellised Gibbs: Cutset Sampling n n Select C X (possibly cycle-cutset), |C| = m Fix evidence E Initialize nodes with random values: 0 For i=1 to m: ci to Ci = c i For t=1 to n , generate samples: For i=1 to m: Ci=cit+1 P(ci|c 1 t+1, …, ci-1 t+1, ci+1 t, …, cmt , e) 51

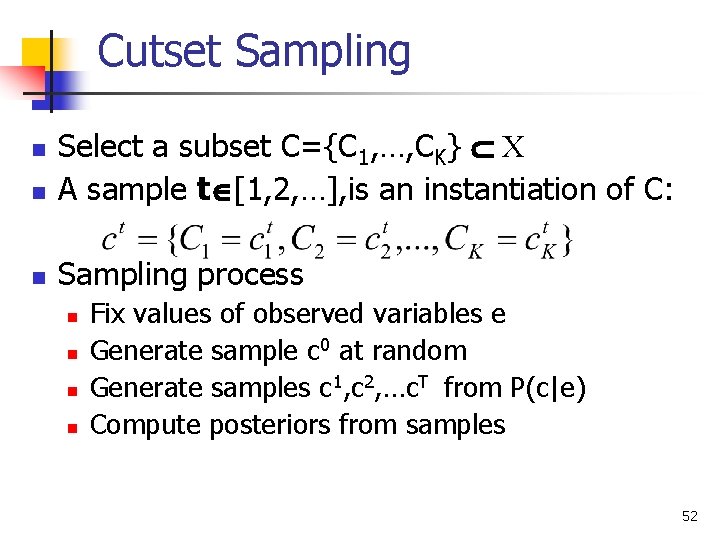

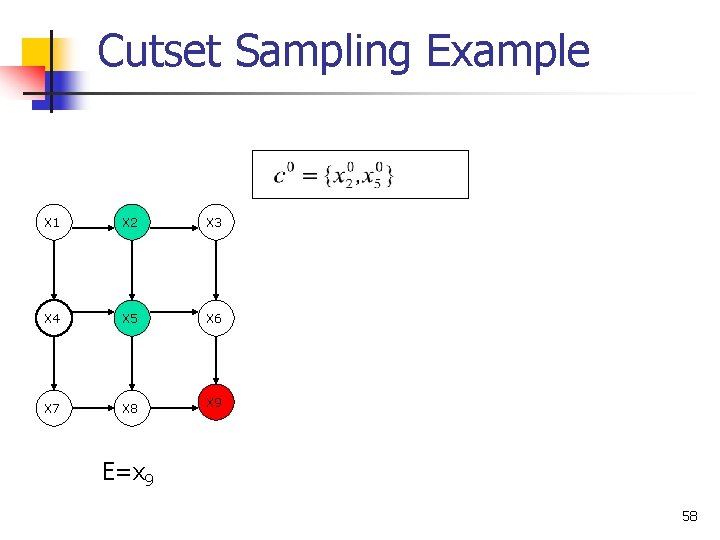

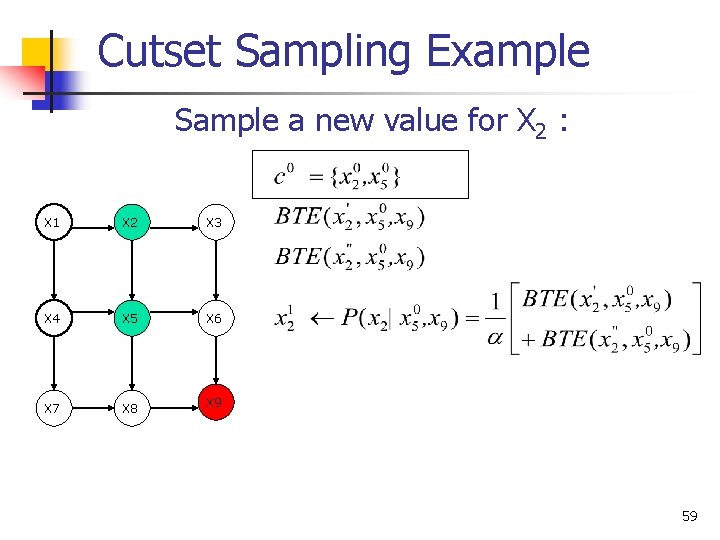

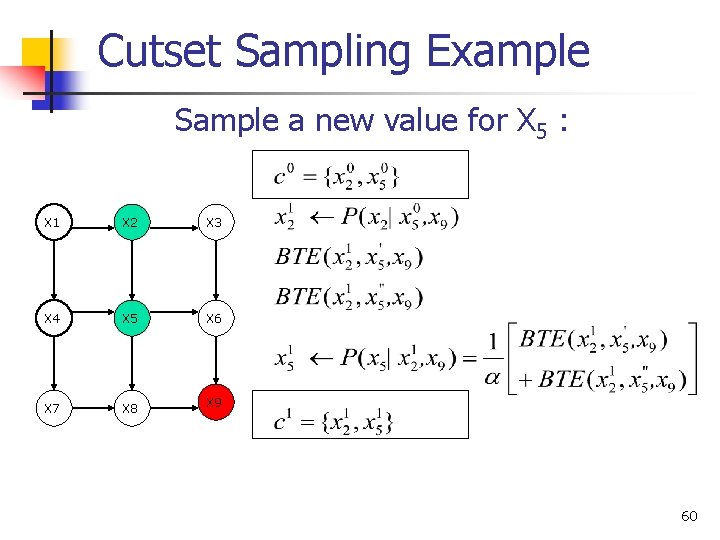

Cutset Sampling n Select a subset C={C 1, …, CK} X A sample t [1, 2, …], is an instantiation of C: n Sampling process n n n Fix values of observed variables e Generate sample c 0 at random Generate samples c 1, c 2, …c. T from P(c|e) Compute posteriors from samples 52

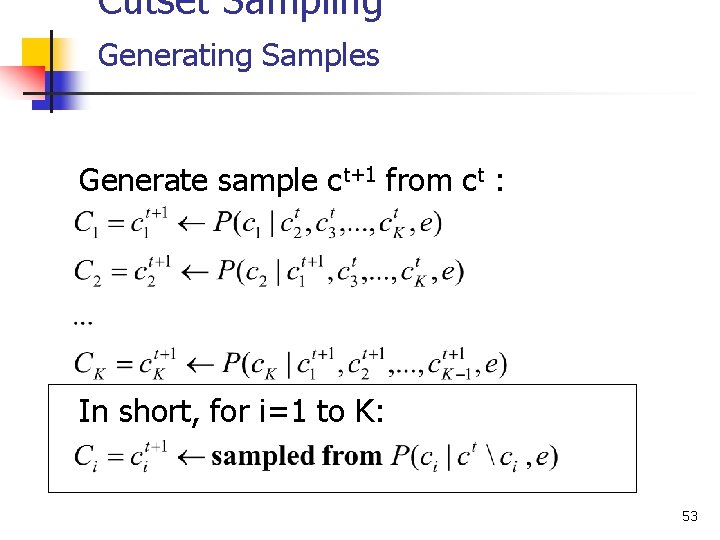

Cutset Sampling Generating Samples Generate sample ct+1 from ct : In short, for i=1 to K: 53

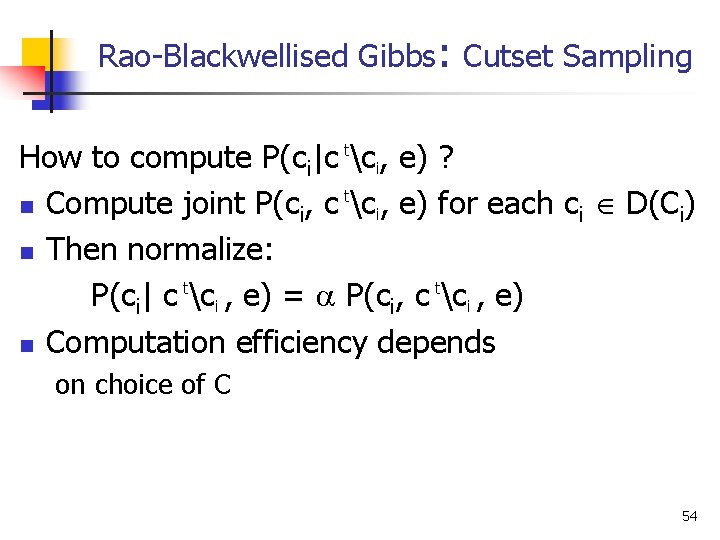

Rao-Blackwellised Gibbs: Cutset Sampling How to compute P(ci|c tci, e) ? t n Compute joint P(ci, c ci, e) for each ci D(Ci) n Then normalize: P(ci| c tci , e) = P(ci, c tci , e) n Computation efficiency depends on choice of C 54

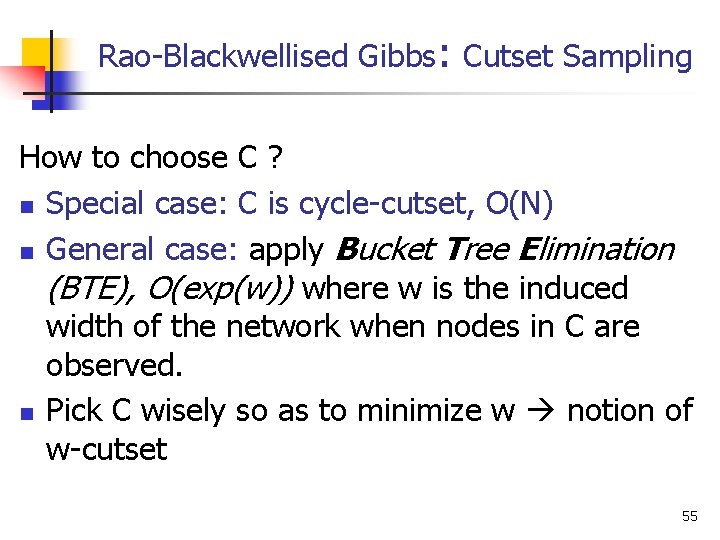

Rao-Blackwellised Gibbs: Cutset Sampling How to choose C ? n Special case: C is cycle-cutset, O(N) n General case: apply Bucket Tree Elimination (BTE), O(exp(w)) where w is the induced width of the network when nodes in C are observed. n Pick C wisely so as to minimize w notion of w-cutset 55

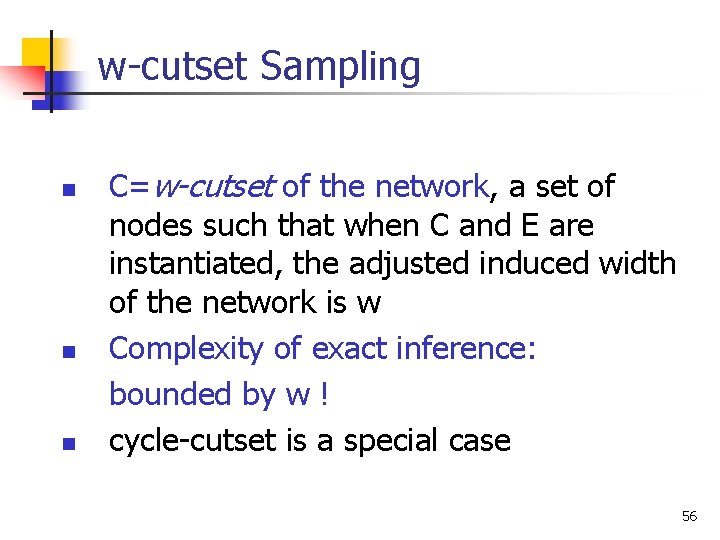

w-cutset Sampling n n n C=w-cutset of the network, a set of nodes such that when C and E are instantiated, the adjusted induced width of the network is w Complexity of exact inference: bounded by w ! cycle-cutset is a special case 56

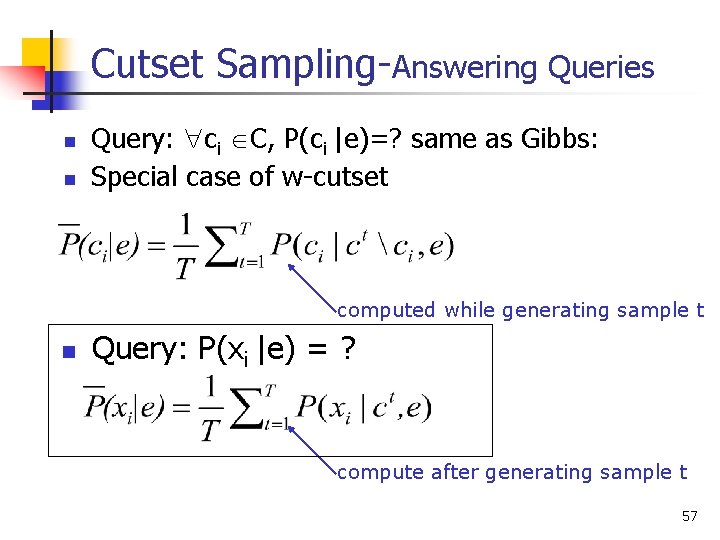

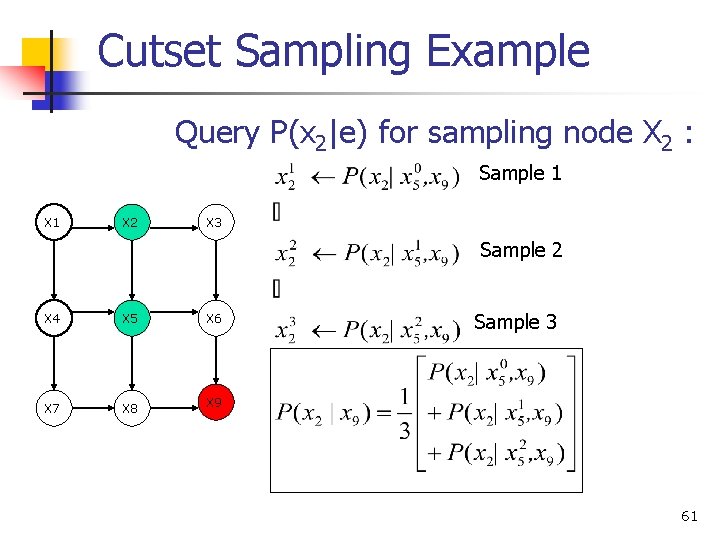

Cutset Sampling-Answering Queries n n Query: ci C, P(ci |e)=? same as Gibbs: Special case of w-cutset computed while generating sample t n Query: P(xi |e) = ? compute after generating sample t 57

Cutset Sampling Example X 1 X 2 X 3 X 4 X 5 X 6 X 7 X 8 X 9 E=x 9 58

Cutset Sampling Example Sample a new value for X 2 : X 1 X 2 X 3 X 4 X 5 X 6 X 7 X 8 X 9 59

Cutset Sampling Example Sample a new value for X 5 : X 1 X 2 X 3 X 4 X 5 X 6 X 7 X 8 X 9 60

Cutset Sampling Example Query P(x 2|e) for sampling node X 2 : Sample 1 X 2 X 3 Sample 2 X 4 X 5 X 6 X 7 X 8 X 9 Sample 3 61

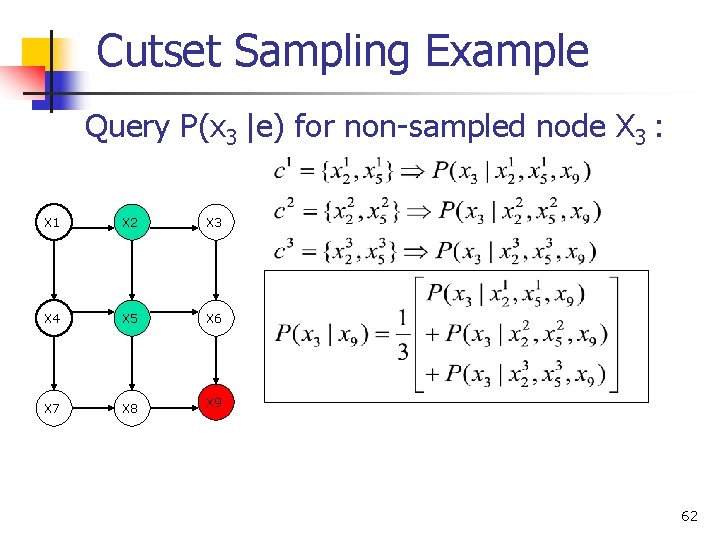

Cutset Sampling Example Query P(x 3 |e) for non-sampled node X 3 : X 1 X 2 X 3 X 4 X 5 X 6 X 7 X 8 X 9 62

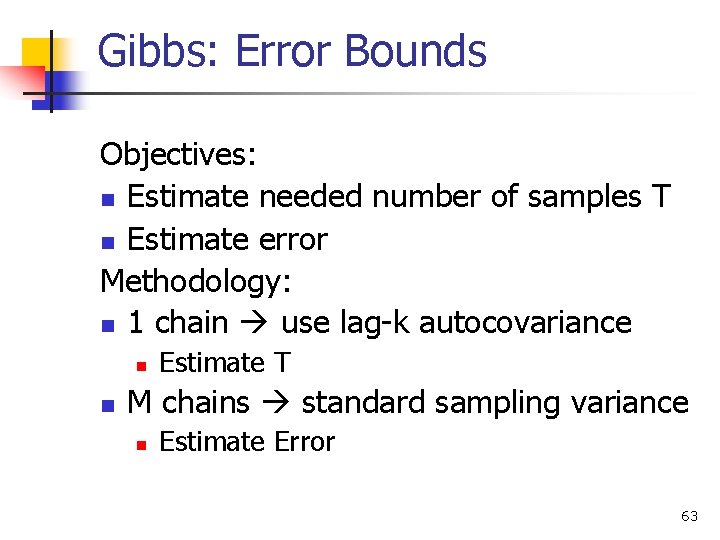

Gibbs: Error Bounds Objectives: n Estimate needed number of samples T n Estimate error Methodology: n 1 chain use lag-k autocovariance n n Estimate T M chains standard sampling variance n Estimate Error 63

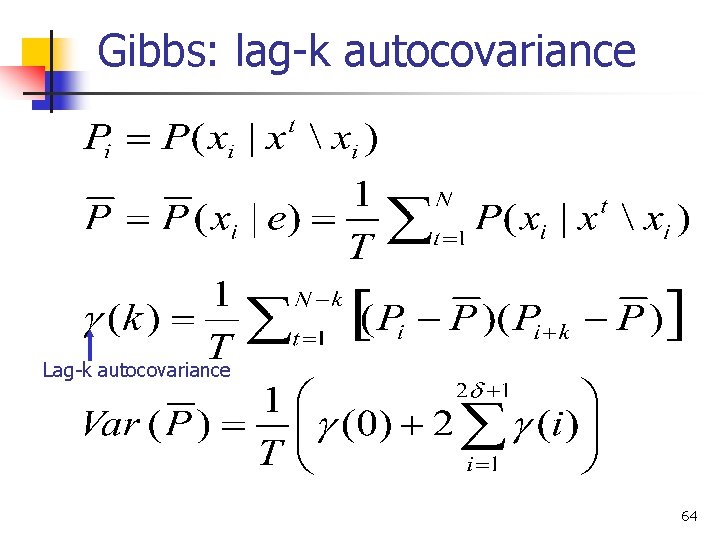

Gibbs: lag-k autocovariance Lag-k autocovariance 64

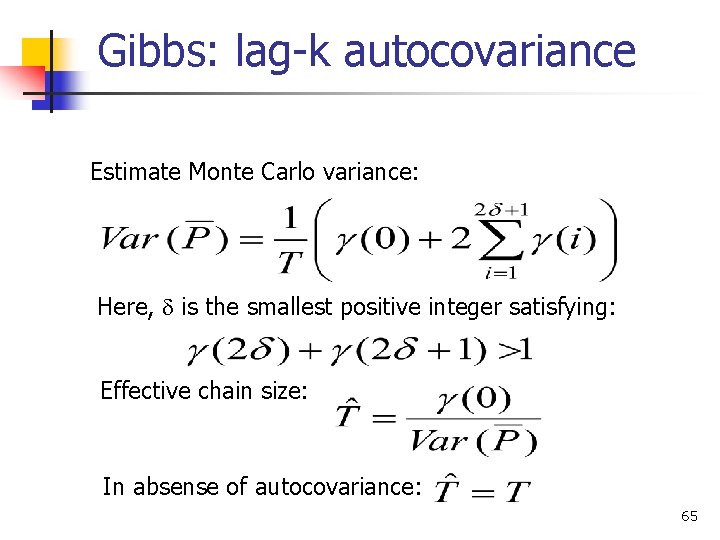

Gibbs: lag-k autocovariance Estimate Monte Carlo variance: Here, is the smallest positive integer satisfying: Effective chain size: In absense of autocovariance: 65

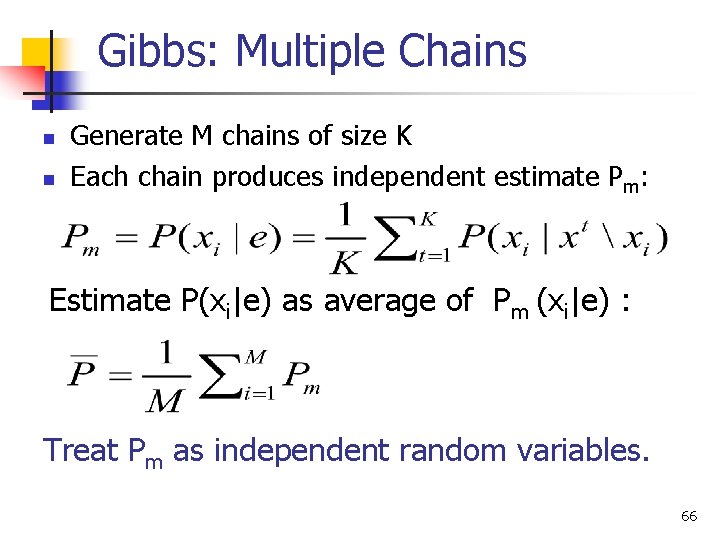

Gibbs: Multiple Chains n n Generate M chains of size K Each chain produces independent estimate Pm: Estimate P(xi|e) as average of Pm (xi|e) : Treat Pm as independent random variables. 66

Gibbs: Multiple Chains { Pm } are independent random variables Therefore: 67

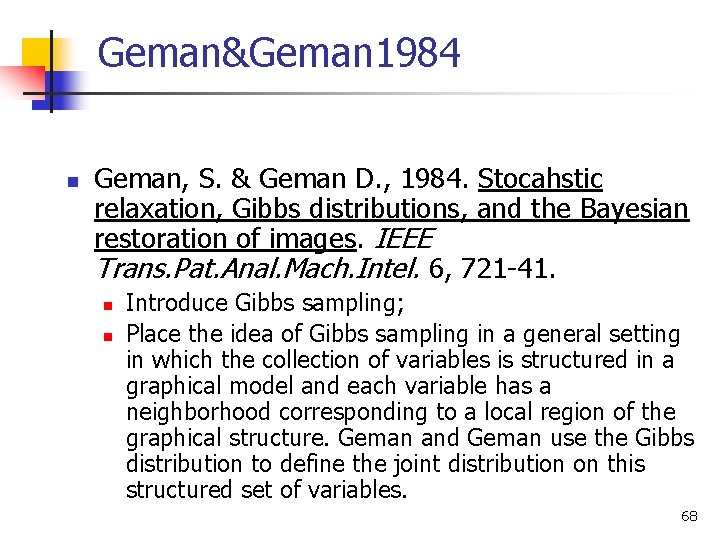

Geman&Geman 1984 n Geman, S. & Geman D. , 1984. Stocahstic relaxation, Gibbs distributions, and the Bayesian restoration of images. IEEE Trans. Pat. Anal. Mach. Intel. 6, 721 -41. n n Introduce Gibbs sampling; Place the idea of Gibbs sampling in a general setting in which the collection of variables is structured in a graphical model and each variable has a neighborhood corresponding to a local region of the graphical structure. Geman and Geman use the Gibbs distribution to define the joint distribution on this structured set of variables. 68

Tanner&Wong 1987 n Tanner and Wong (1987) n n Data-augmentation Convergence Results 69

Pearl 1988 n Pearl, 1988. Probabilistic Reasoning in Intelligent Systems, Morgan-Kaufmann. n In the case of Bayesian networks, the neighborhoods correspond to the Markov blanket of a variable and the joint distribution is defined by the factorization of the network. 70

Gelfand&Smith, 1990 n Gelfand, A. E. and Smith, A. F. M. , 1990. Sampling-based approaches to calculating marginal densities. J. Am. Statist. Assoc. 85, 398 -409. n Show variance reduction in using mixture estimator for posterior marginals. 71

Neal, 1992 n R. M. Neal, 1992. Connectionist learning of belief networks, Artifical Intelligence, v. 56, pp. 71 -118. n Stochastic simulation in Noisy-Or networks. 72

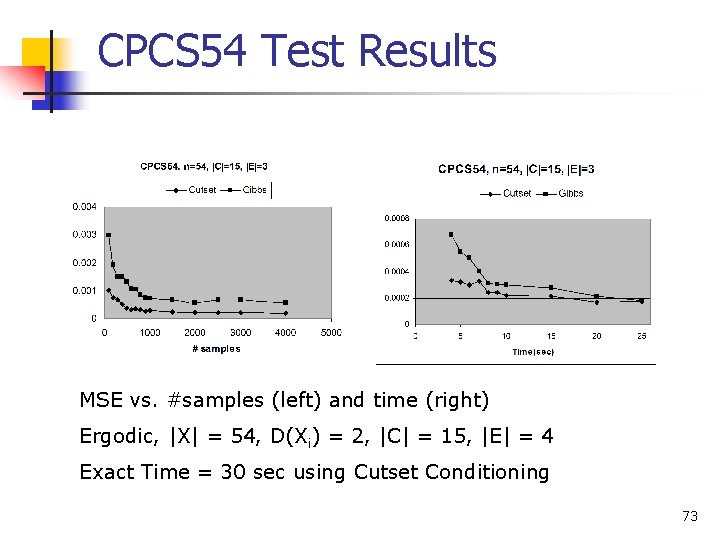

CPCS 54 Test Results MSE vs. #samples (left) and time (right) Ergodic, |X| = 54, D(Xi) = 2, |C| = 15, |E| = 4 Exact Time = 30 sec using Cutset Conditioning 73

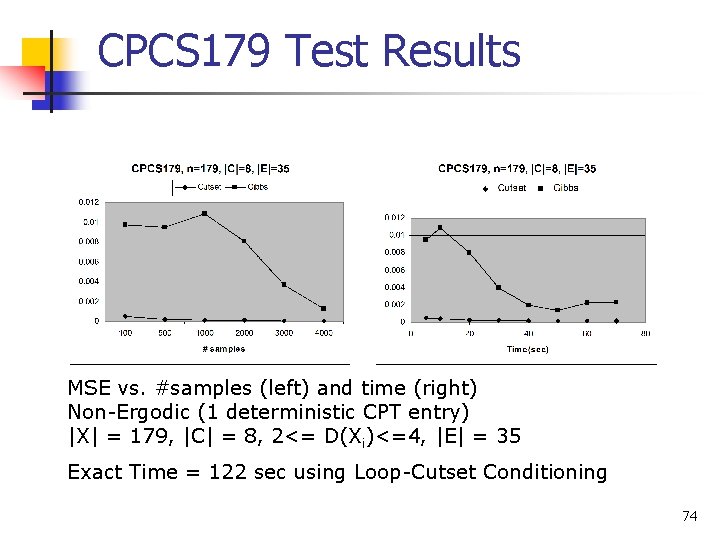

CPCS 179 Test Results MSE vs. #samples (left) and time (right) Non-Ergodic (1 deterministic CPT entry) |X| = 179, |C| = 8, 2<= D(Xi)<=4, |E| = 35 Exact Time = 122 sec using Loop-Cutset Conditioning 74

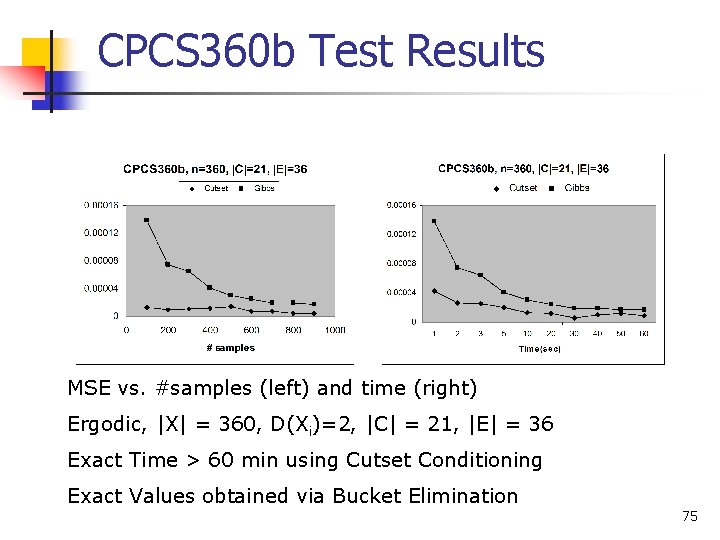

CPCS 360 b Test Results MSE vs. #samples (left) and time (right) Ergodic, |X| = 360, D(Xi)=2, |C| = 21, |E| = 36 Exact Time > 60 min using Cutset Conditioning Exact Values obtained via Bucket Elimination 75

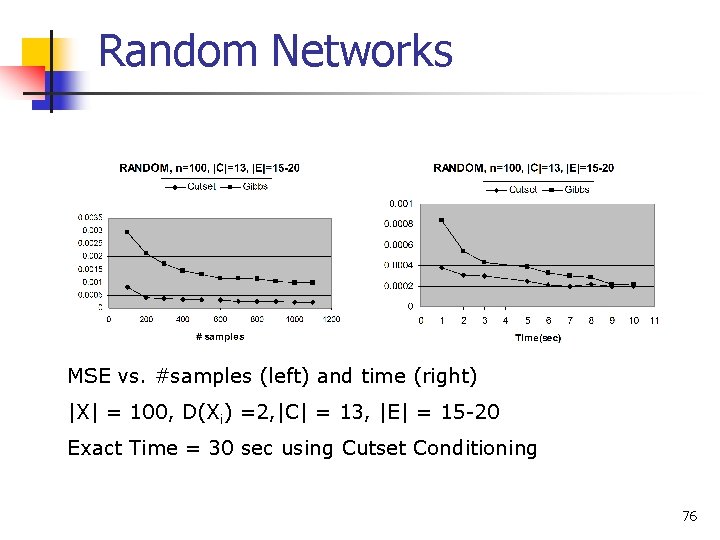

Random Networks MSE vs. #samples (left) and time (right) |X| = 100, D(Xi) =2, |C| = 13, |E| = 15 -20 Exact Time = 30 sec using Cutset Conditioning 76

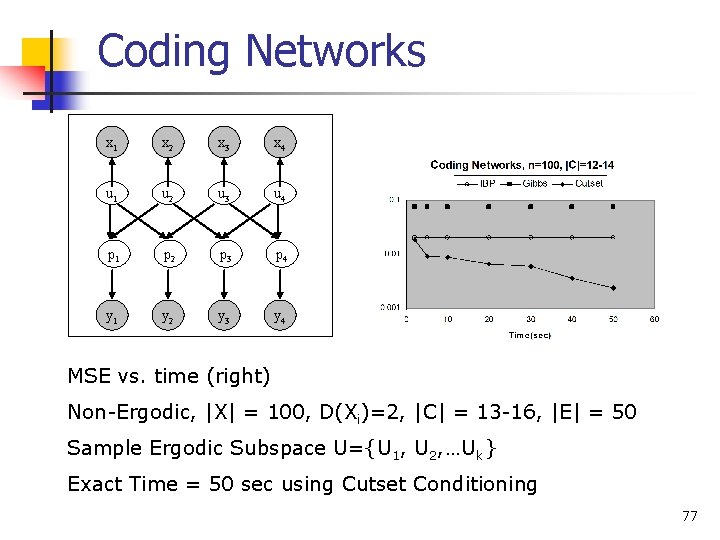

Coding Networks x 1 x 2 x 3 x 4 u 1 u 2 u 3 u 4 p 1 p 2 p 3 p 4 y 1 y 2 y 3 y 4 MSE vs. time (right) Non-Ergodic, |X| = 100, D(Xi)=2, |C| = 13 -16, |E| = 50 Sample Ergodic Subspace U={U 1, U 2, …Uk} Exact Time = 50 sec using Cutset Conditioning 77

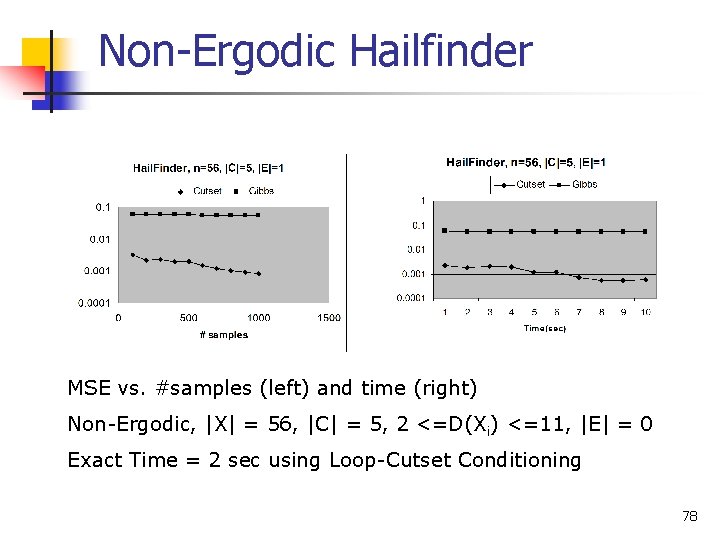

Non-Ergodic Hailfinder MSE vs. #samples (left) and time (right) Non-Ergodic, |X| = 56, |C| = 5, 2 <=D(Xi) <=11, |E| = 0 Exact Time = 2 sec using Loop-Cutset Conditioning 78

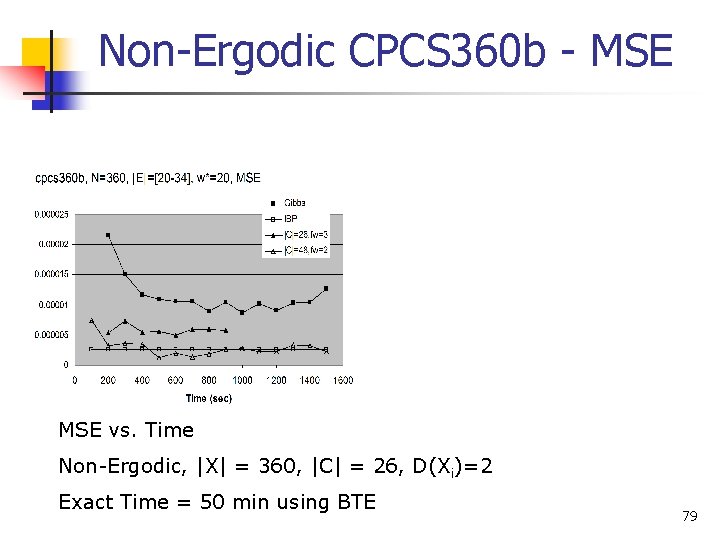

Non-Ergodic CPCS 360 b - MSE vs. Time Non-Ergodic, |X| = 360, |C| = 26, D(Xi)=2 Exact Time = 50 min using BTE 79

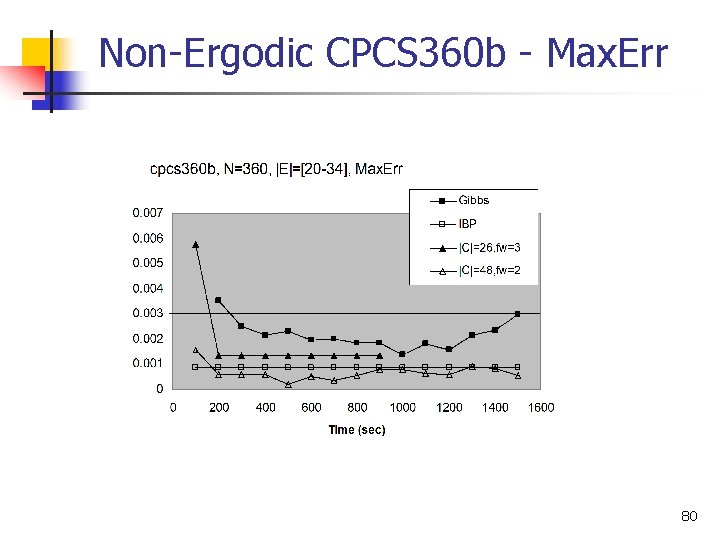

Non-Ergodic CPCS 360 b - Max. Err 80

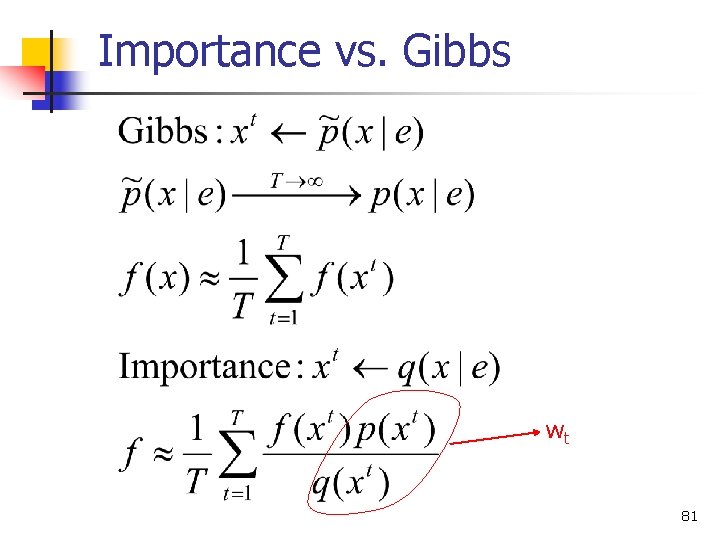

Importance vs. Gibbs wt 81

- Slides: 78