Sampling Bayesian Networks ICS 275 b 2005 1

Sampling Bayesian Networks ICS 275 b 2005 1

Approximation Algorithms Structural Approximations n Eliminate some dependencies n n Remove edges Mini-Bucket Approach Search Approach for optimization tasks: MPE, MAP Sampling Generate random samples and compute values of interest from samples, not original network 2

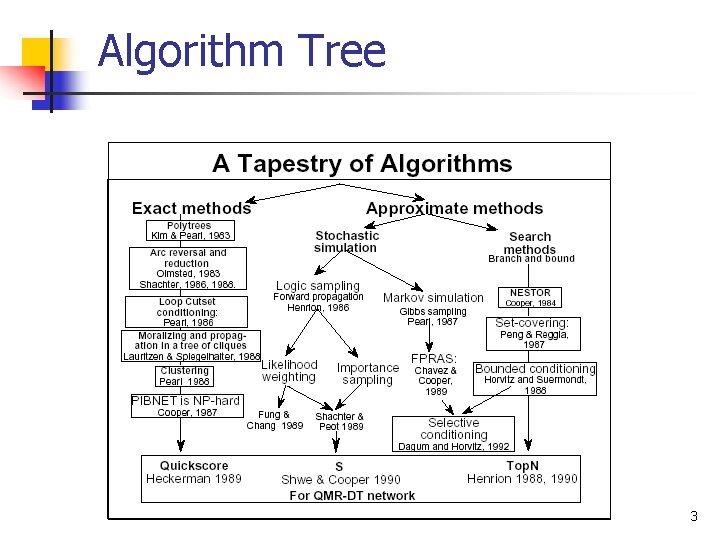

Algorithm Tree 3

Sampling n n n Input: Bayesian network with set of nodes X Sample = a tuple with assigned values s=(X 1=x 1, X 2=x 2, … , Xk=xk) Tuple may include all variables (except evidence) or a subset Sampling schemas dictate how to generate samples (tuples) Ideally, samples are distributed according to P(X|E) 4

Sampling n n n Idea: generate a set of samples T Estimate P(Xi|E) from samples Need to know: n n n How to generate a new sample ? How many samples T do we need ? How to estimate P(Xi|E) ? 5

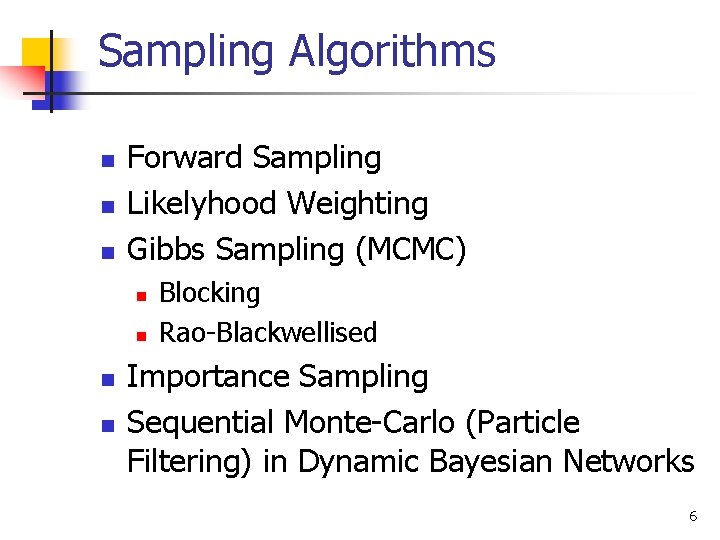

Sampling Algorithms n n n Forward Sampling Likelyhood Weighting Gibbs Sampling (MCMC) n n Blocking Rao-Blackwellised Importance Sampling Sequential Monte-Carlo (Particle Filtering) in Dynamic Bayesian Networks 6

Forward Sampling n n n Case with No evidence Case with Evidence N and Error Bounds 7

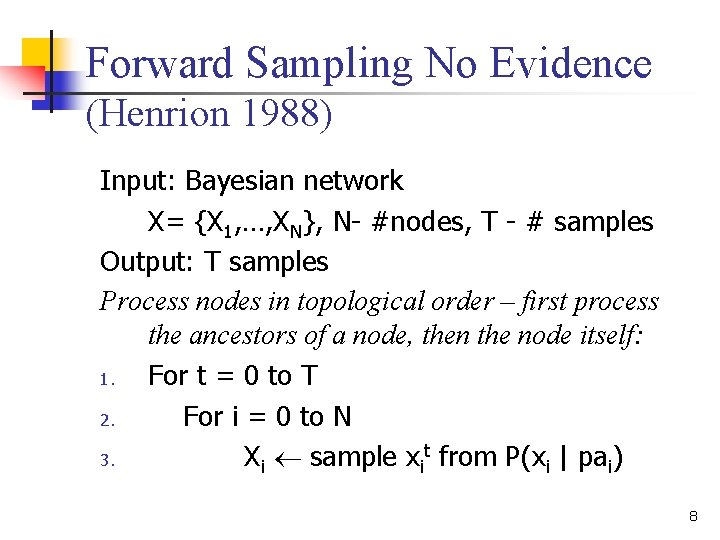

Forward Sampling No Evidence (Henrion 1988) Input: Bayesian network X= {X 1, …, XN}, N- #nodes, T - # samples Output: T samples Process nodes in topological order – first process the ancestors of a node, then the node itself: 1. For t = 0 to T 2. For i = 0 to N 3. Xi sample xit from P(xi | pai) 8

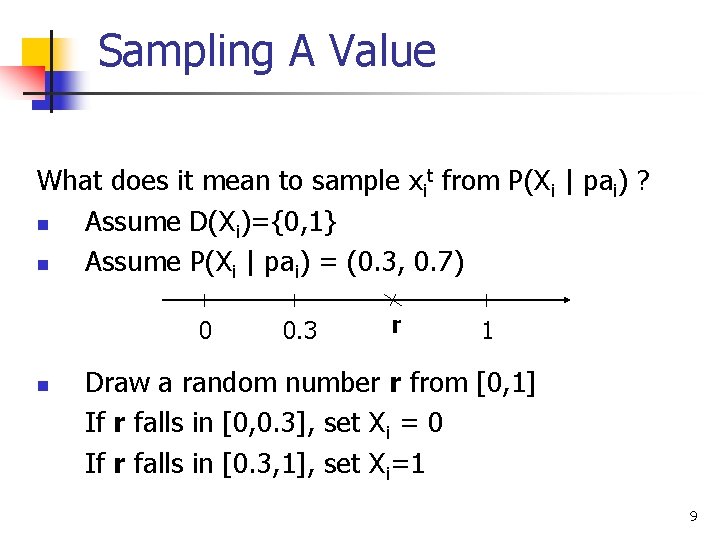

Sampling A Value What does it mean to sample xit from P(Xi | pai) ? n Assume D(Xi)={0, 1} n Assume P(Xi | pai) = (0. 3, 0. 7) 0 n 0. 3 r 1 Draw a random number r from [0, 1] If r falls in [0, 0. 3], set Xi = 0 If r falls in [0. 3, 1], set Xi=1 9

Sampling a Value n n n When we sample xit from P(Xi | pai), most of the time, will pick the most likely value of Xi occasionally, will pick the unlikely value of Xi We want to find high-probability tuples But!!!…. Choosing unlikely value allows to “cross” the low probability tuples to reach the high probability tuples ! 10

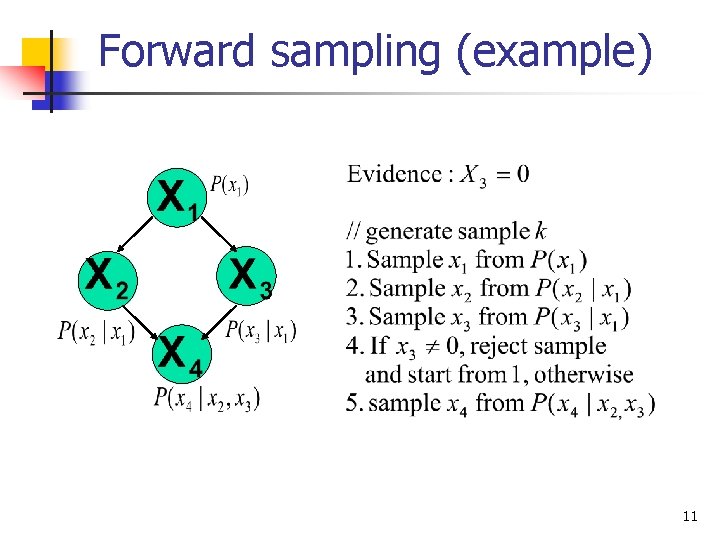

Forward sampling (example) 11

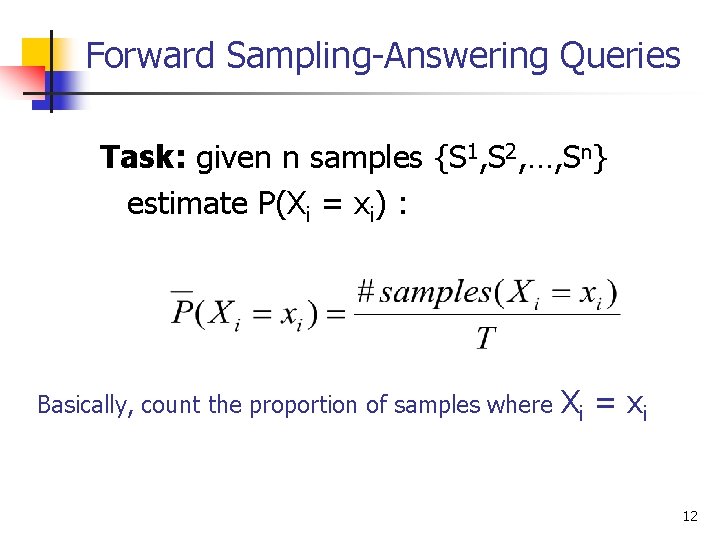

Forward Sampling-Answering Queries Task: given n samples {S 1, S 2, …, Sn} estimate P(Xi = xi) : Basically, count the proportion of samples where Xi = x i 12

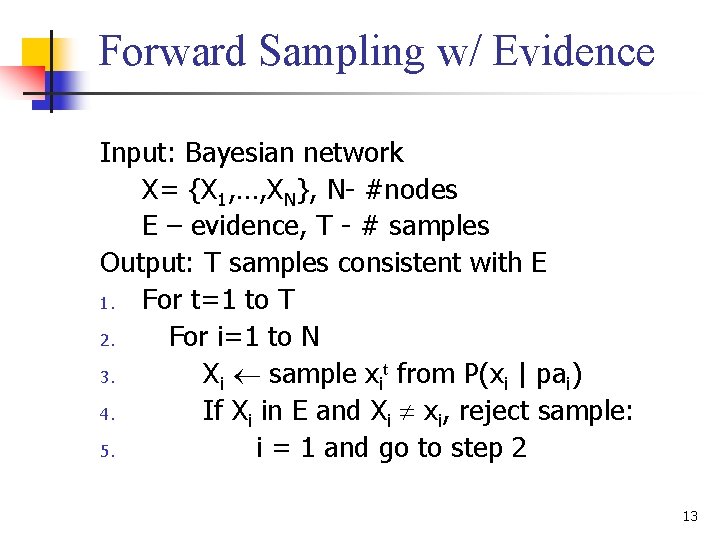

Forward Sampling w/ Evidence Input: Bayesian network X= {X 1, …, XN}, N- #nodes E – evidence, T - # samples Output: T samples consistent with E 1. For t=1 to T 2. For i=1 to N 3. Xi sample xit from P(xi | pai) 4. If Xi in E and Xi xi, reject sample: 5. i = 1 and go to step 2 13

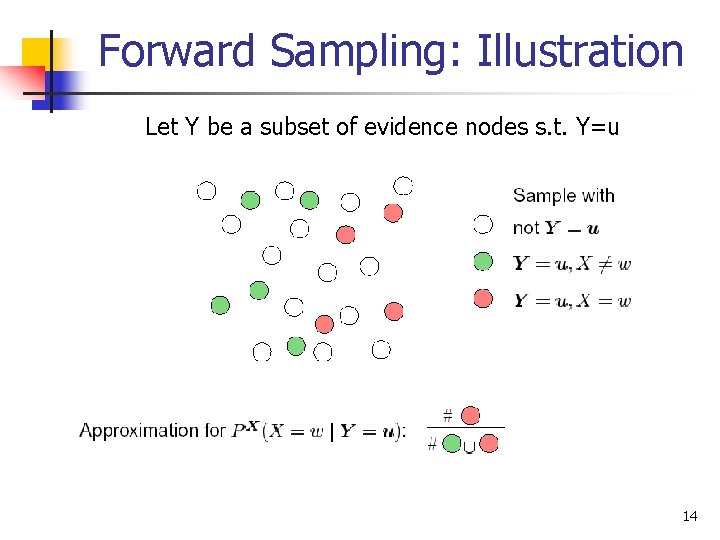

Forward Sampling: Illustration Let Y be a subset of evidence nodes s. t. Y=u 14

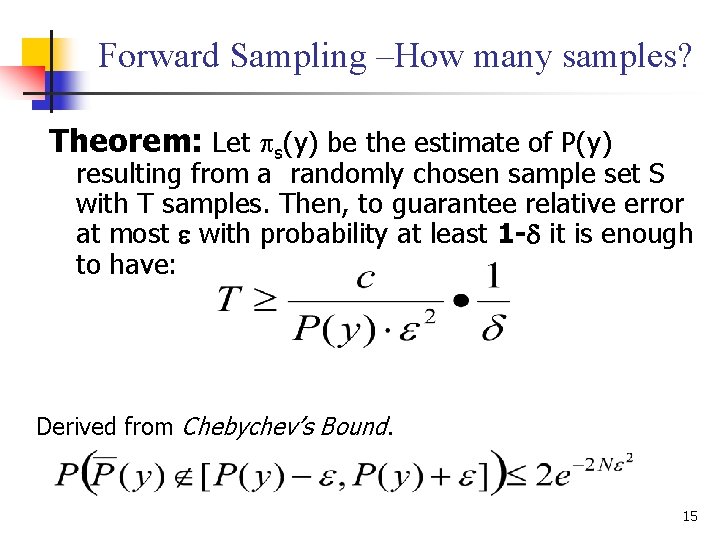

Forward Sampling –How many samples? Theorem: Let s(y) be the estimate of P(y) resulting from a randomly chosen sample set S with T samples. Then, to guarantee relative error at most with probability at least 1 - it is enough to have: Derived from Chebychev’s Bound. 15

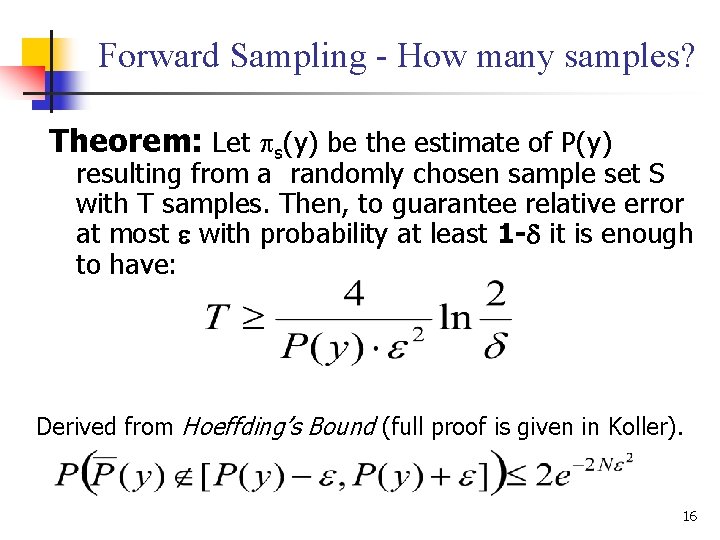

Forward Sampling - How many samples? Theorem: Let s(y) be the estimate of P(y) resulting from a randomly chosen sample set S with T samples. Then, to guarantee relative error at most with probability at least 1 - it is enough to have: Derived from Hoeffding’s Bound (full proof is given in Koller). 16

Forward Sampling: Performance Advantages: n P(xi | pa(xi)) is readily available n Samples are independent ! Drawbacks: n If evidence E is rare (P(e) is low), then we will reject most of the samples! n Since P(y) in estimate of N is unknown, must estimate P(y) from samples themselves! n If P(e) is small, T will become very big! 17

Problem: Evidence n Forward Sampling n n High Rejection Rate Fix evidence values n n n Gibbs sampling (MCMC) Likelyhood Weighting Importance Sampling 18

Forward Sampling Bibliography n {henrion 88} M. Henrion, "Propagating uncertainty in Bayesian networks by probabilistic logic sampling”, Uncertainty in AI, pp. = 149 -163, 1988 19

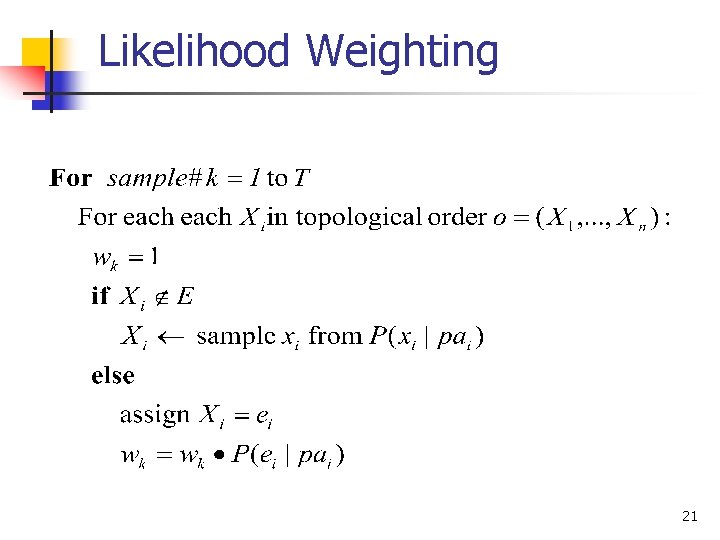

Likelihood Weighting (Fung and Chang, 1990; Shachter and Peot, 1990) “Clamping” evidence+ forward sampling+ weighing samples by evidence likelihood Works well for likely evidence! 20

Likelihood Weighting 21

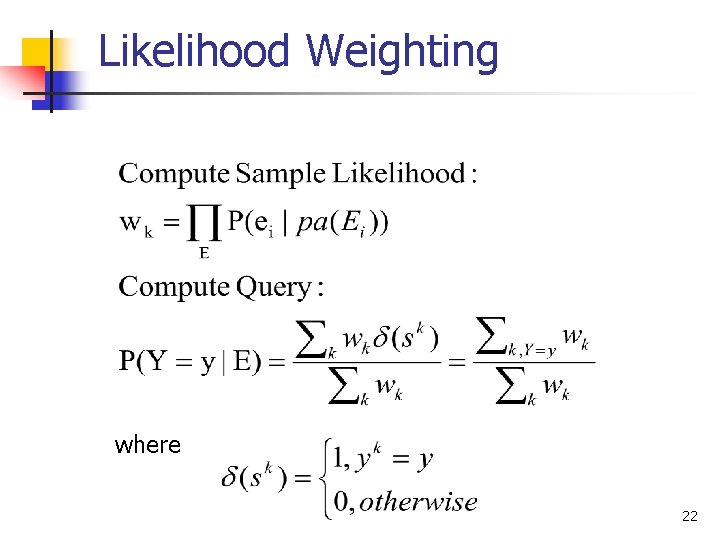

Likelihood Weighting where 22

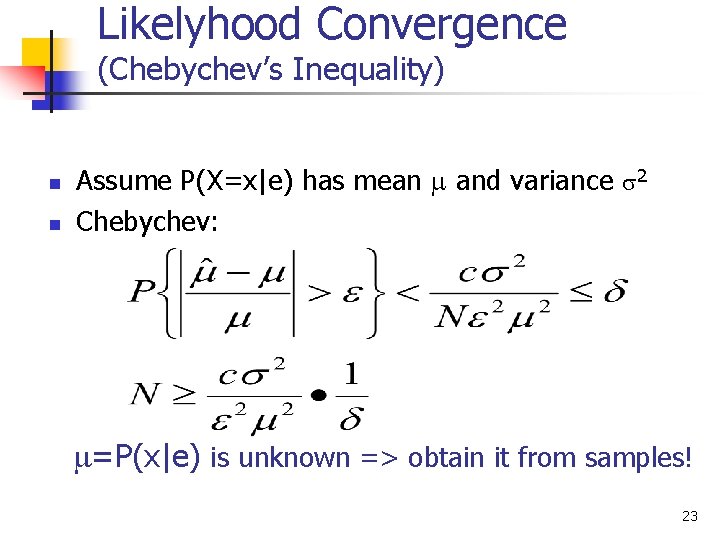

Likelyhood Convergence (Chebychev’s Inequality) n n Assume P(X=x|e) has mean and variance 2 Chebychev: =P(x|e) is unknown => obtain it from samples! 23

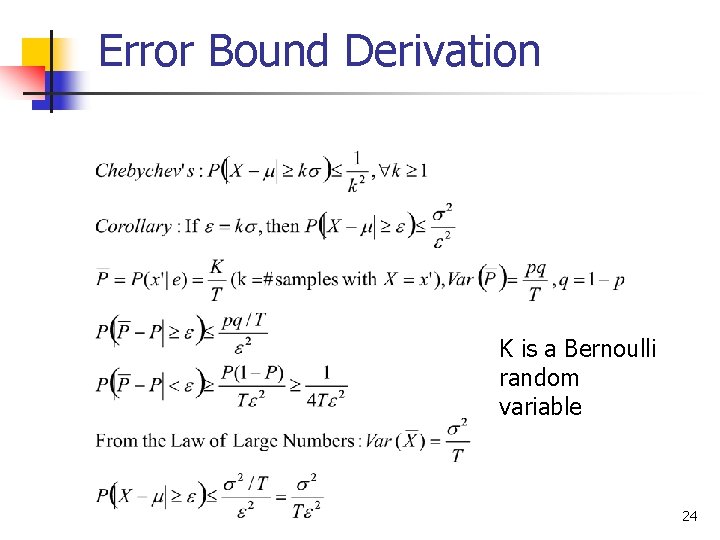

Error Bound Derivation K is a Bernoulli random variable 24

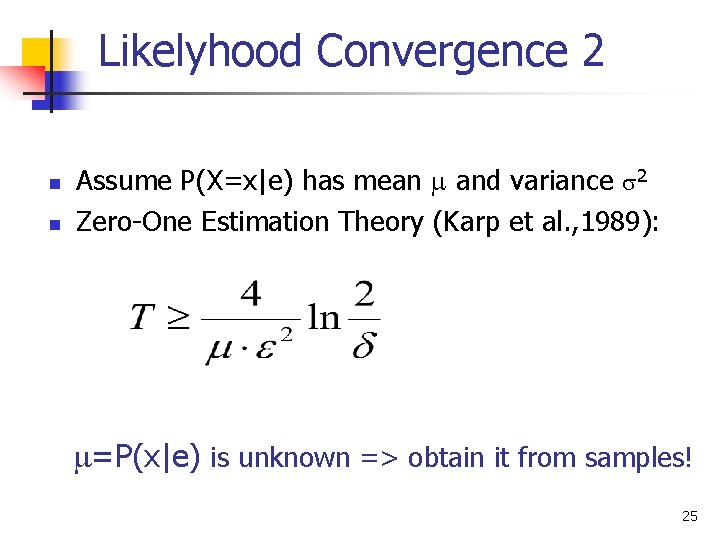

Likelyhood Convergence 2 n n Assume P(X=x|e) has mean and variance 2 Zero-One Estimation Theory (Karp et al. , 1989): =P(x|e) is unknown => obtain it from samples! 25

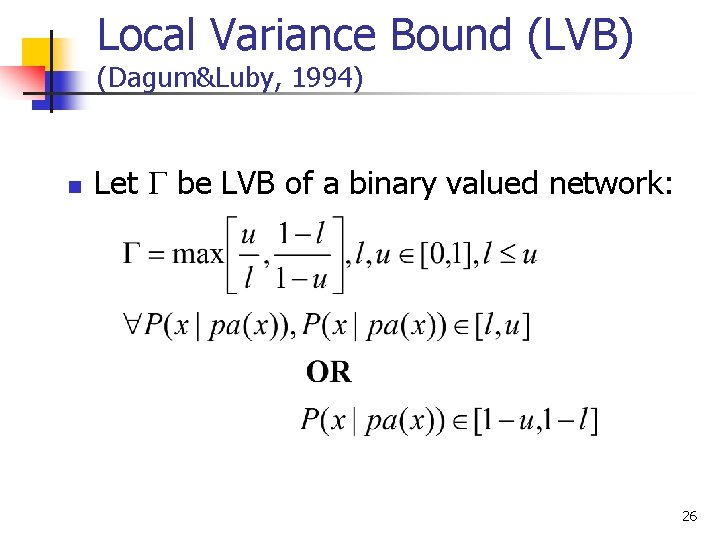

Local Variance Bound (LVB) (Dagum&Luby, 1994) n Let be LVB of a binary valued network: 26

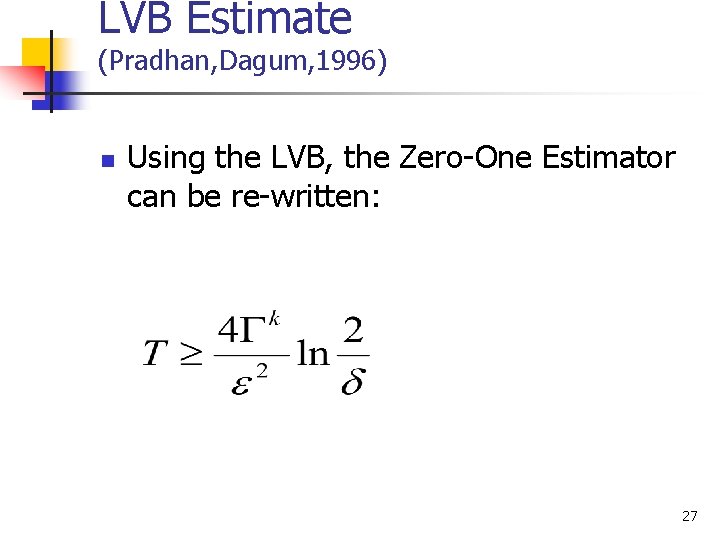

LVB Estimate (Pradhan, Dagum, 1996) n Using the LVB, the Zero-One Estimator can be re-written: 27

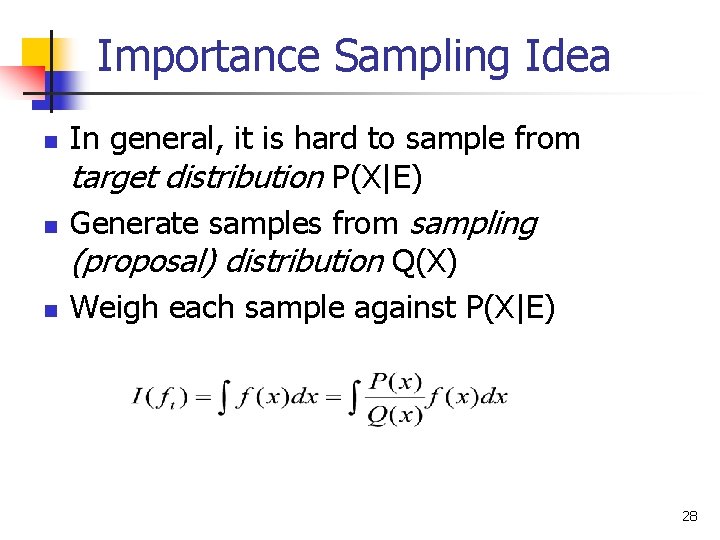

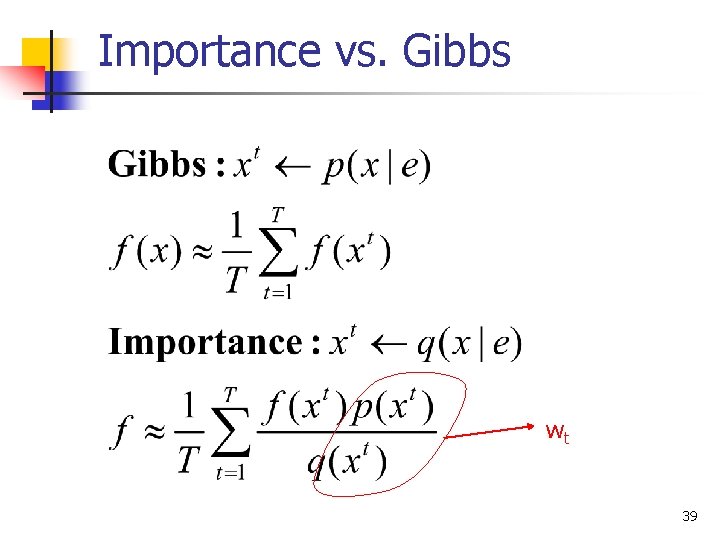

Importance Sampling Idea n n n In general, it is hard to sample from target distribution P(X|E) Generate samples from sampling (proposal) distribution Q(X) Weigh each sample against P(X|E) 28

Importance Sampling Variants Importance sampling: forward, non-adaptive n Nodes sampled in topological order n Sampling distribution (for non-instantiated nodes) equal to the prior conditionals Importance sampling: forward, adaptive n Nodes sampled in topological order n Sampling distribution adapted according to average importance weights obtained in previous samples [Cheng, Druzdzel 2000] 29

AIS-BN n n The most efficient variant of importance sampling to-date is AIS-BN – Adaptive Importance Sampling for Bayesian networks. Jian Cheng and Marek J. Druzdzel. AIS-BN: An adaptive importance sampling algorithm for evidential reasoning in large Bayesian networks. Journal of Artificial Intelligence Research (JAIR), 13: 155 -188, 2000. 30

Gibbs Sampling n Markov Chain Monte Carlo method (Gelfand Smith, 1990, Smith and Roberts, 1993, Tierney, 1994) n n Samples are dependent, form Markov Chain Samples directly from P(X|e) Guaranteed to converge when all P > 0 Methods to improve convergence: n n n Blocking Rao-Blackwellised Error Bounds n n Lag-t autocovariance Multiple Chains, Chebyshev’s Inequality 31

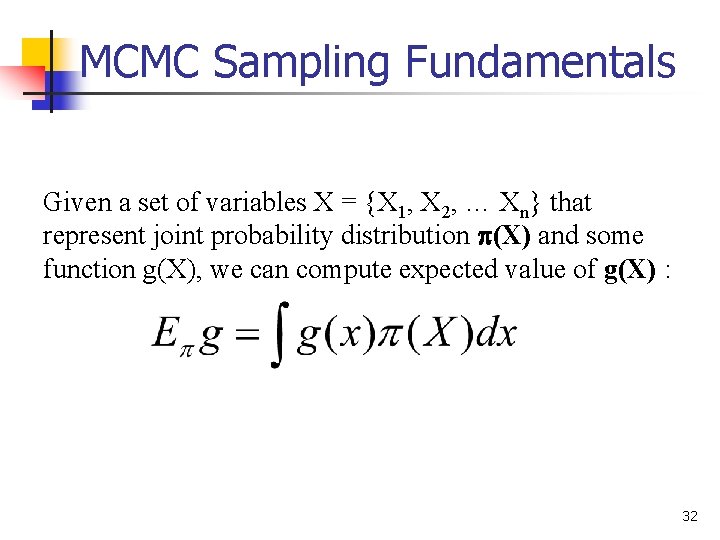

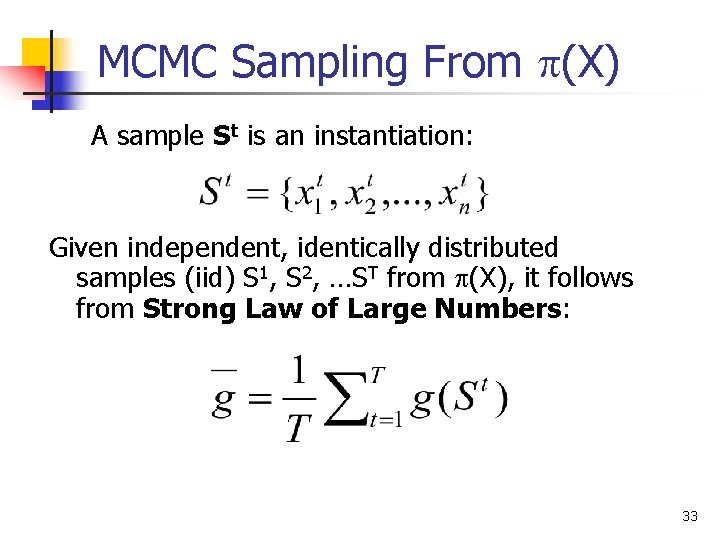

MCMC Sampling Fundamentals Given a set of variables X = {X 1, X 2, … Xn} that represent joint probability distribution (X) and some function g(X), we can compute expected value of g(X) : 32

MCMC Sampling From (X) A sample St is an instantiation: Given independent, identically distributed samples (iid) S 1, S 2, …ST from (X), it follows from Strong Law of Large Numbers: 33

![Gibbs Sampling (Pearl, 1988) n n A sample t [1, 2, …], is an Gibbs Sampling (Pearl, 1988) n n A sample t [1, 2, …], is an](http://slidetodoc.com/presentation_image_h/fbbb72f908f0807d8f5474758f1dd8a1/image-34.jpg)

Gibbs Sampling (Pearl, 1988) n n A sample t [1, 2, …], is an instantiation of all variables in the network: Sampling process n n Fix values of observed variables e Instantiate node values in sample x 0 at random Generate samples x 1, x 2, …x. T from P(x|e) Compute posteriors from samples 34

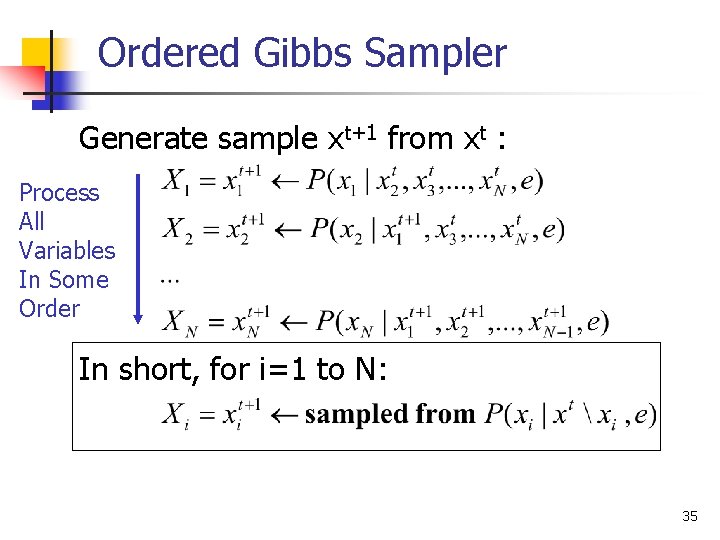

Ordered Gibbs Sampler Generate sample xt+1 from xt : Process All Variables In Some Order In short, for i=1 to N: 35

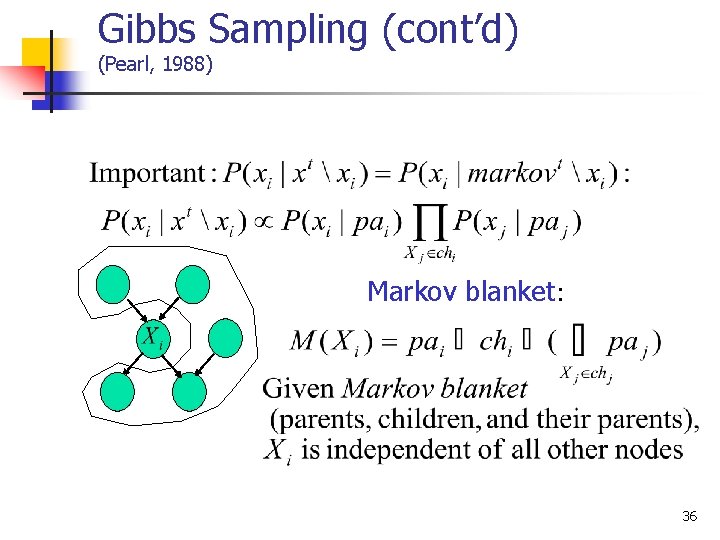

Gibbs Sampling (cont’d) (Pearl, 1988) Markov blanket: 36

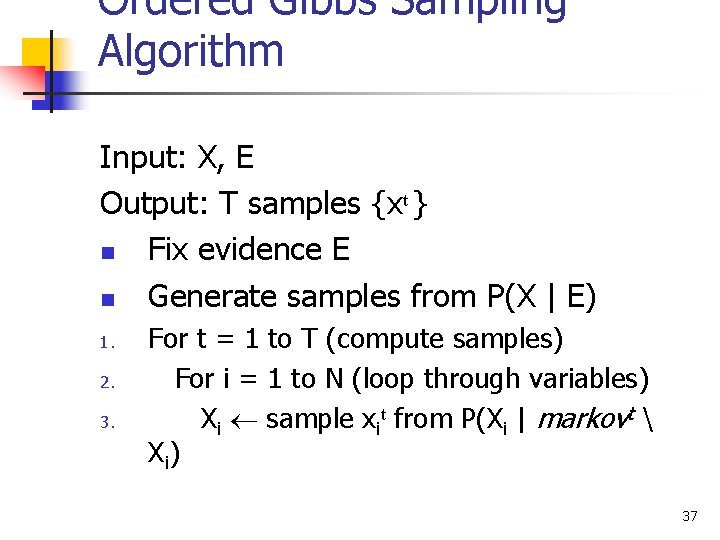

Ordered Gibbs Sampling Algorithm Input: X, E Output: T samples {xt } n Fix evidence E n Generate samples from P(X | E) 1. 2. 3. For t = 1 to T (compute samples) For i = 1 to N (loop through variables) Xi sample xit from P(Xi | markovt X i) 37

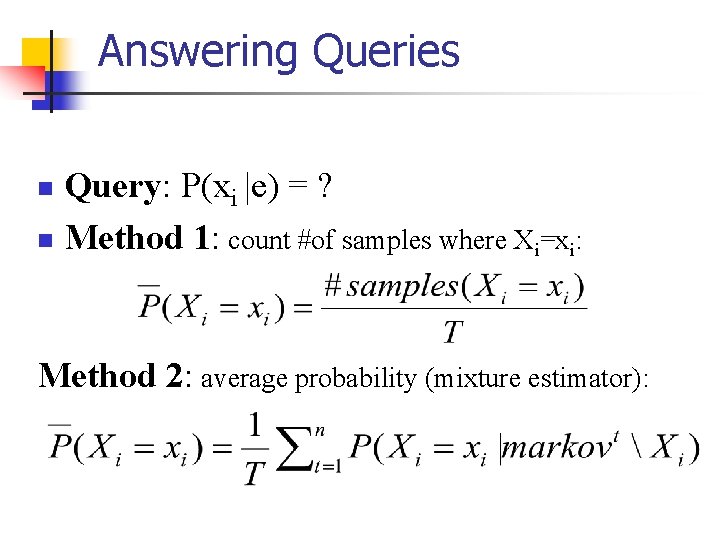

Answering Queries Query: P(xi |e) = ? n Method 1: count #of samples where Xi=xi: n Method 2: average probability (mixture estimator):

Importance vs. Gibbs wt 39

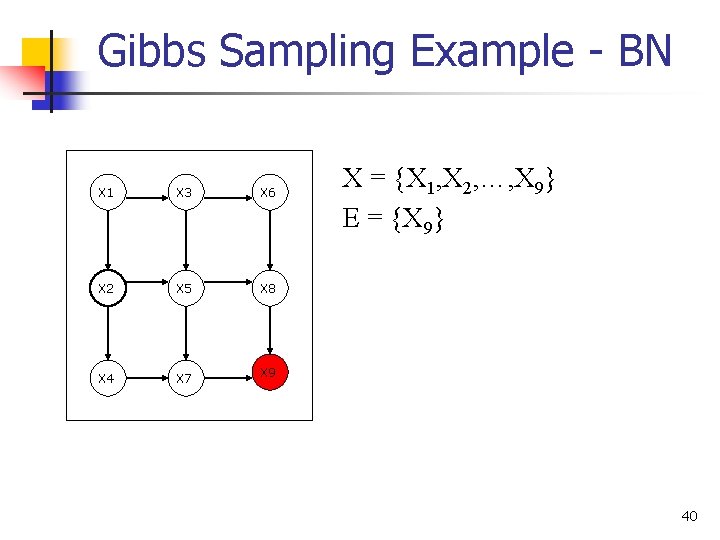

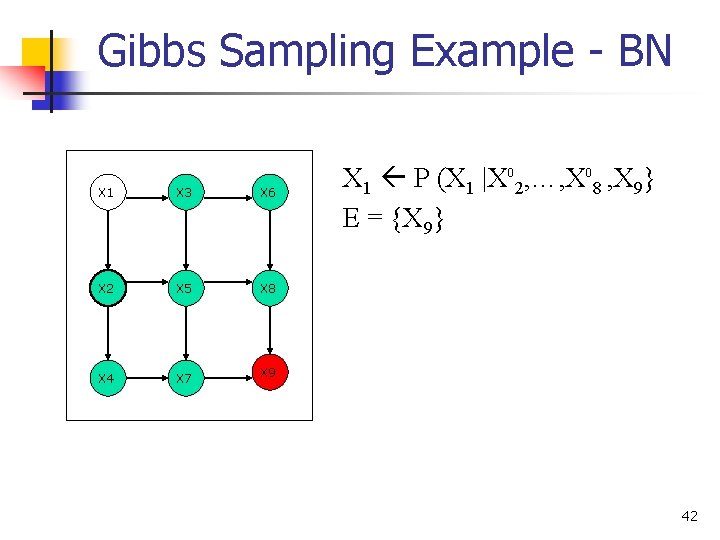

Gibbs Sampling Example - BN X 1 X 3 X 6 X 2 X 5 X 8 X 4 X 7 X 9 X = {X 1, X 2, …, X 9} E = {X 9} 40

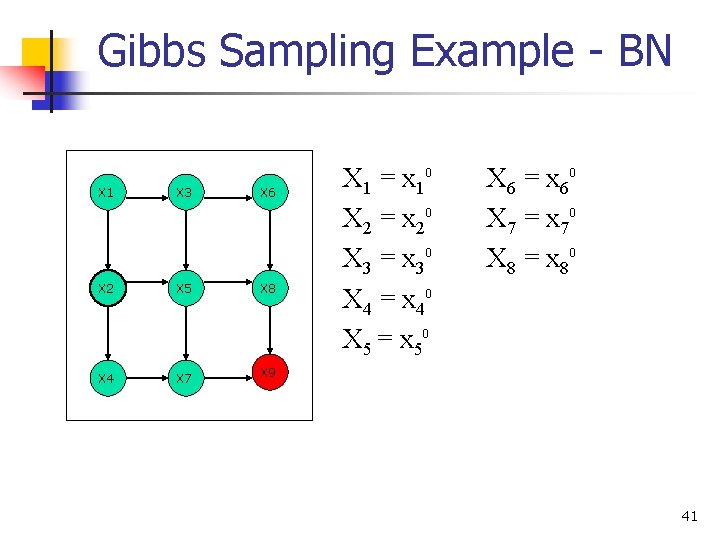

Gibbs Sampling Example - BN X 1 X 3 X 6 X 2 X 5 X 8 X 4 X 7 X 9 X 1 = x 10 X 2 = x 20 X 3 = x 30 X 4 = x 40 X 5 = x 50 X 6 = x 60 X 7 = x 70 X 8 = x 80 41

Gibbs Sampling Example - BN X 1 X 3 X 6 X 2 X 5 X 8 X 4 X 7 X 9 X 1 P (X 1 |X 02, …, X 08 , X 9} E = {X 9} 42

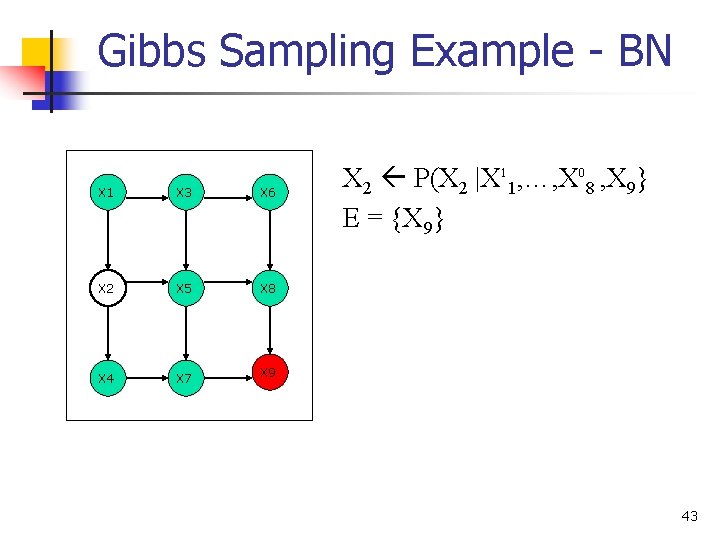

Gibbs Sampling Example - BN X 1 X 3 X 6 X 2 X 5 X 8 X 4 X 7 X 9 X 2 P(X 2 |X 11, …, X 08 , X 9} E = {X 9} 43

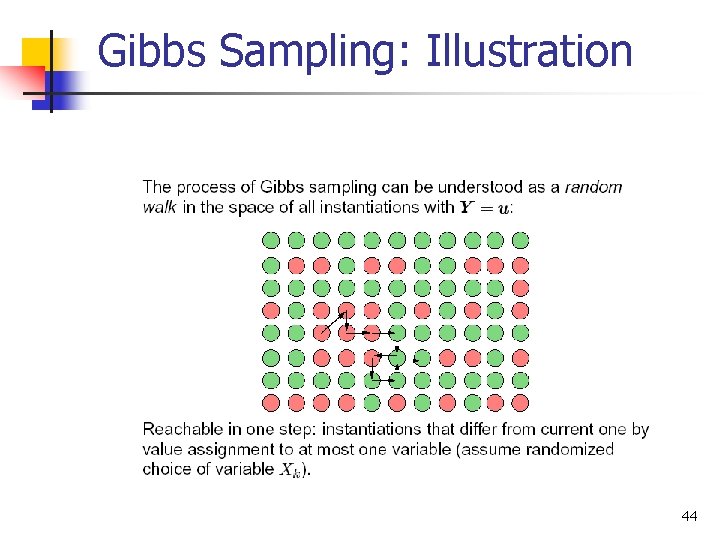

Gibbs Sampling: Illustration 44

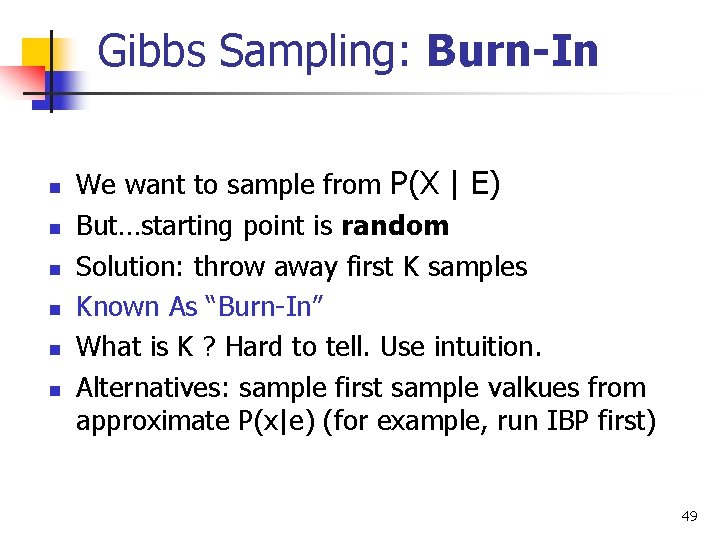

Gibbs Sampling: Burn-In n n n We want to sample from P(X | E) But…starting point is random Solution: throw away first K samples Known As “Burn-In” What is K ? Hard to tell. Use intuition. Alternatives: sample first sample valkues from approximate P(x|e) (for example, run IBP first) 49

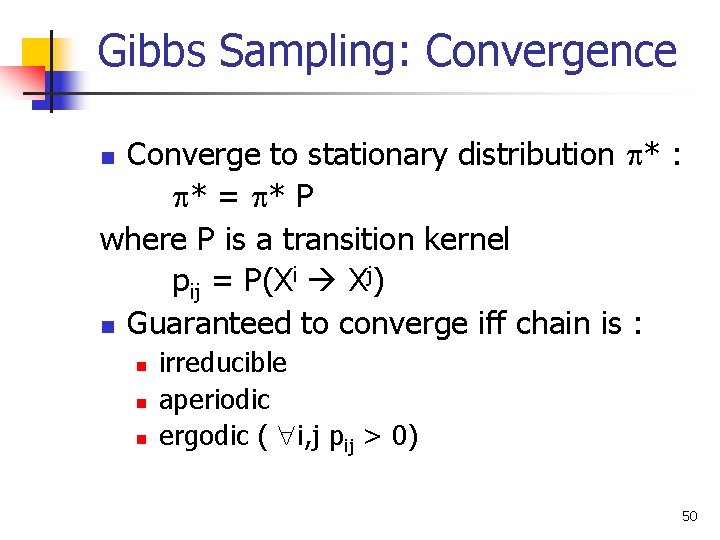

Gibbs Sampling: Convergence Converge to stationary distribution * : * = * P where P is a transition kernel pij = P(Xi Xj) n Guaranteed to converge iff chain is : n n irreducible aperiodic ergodic ( i, j pij > 0) 50

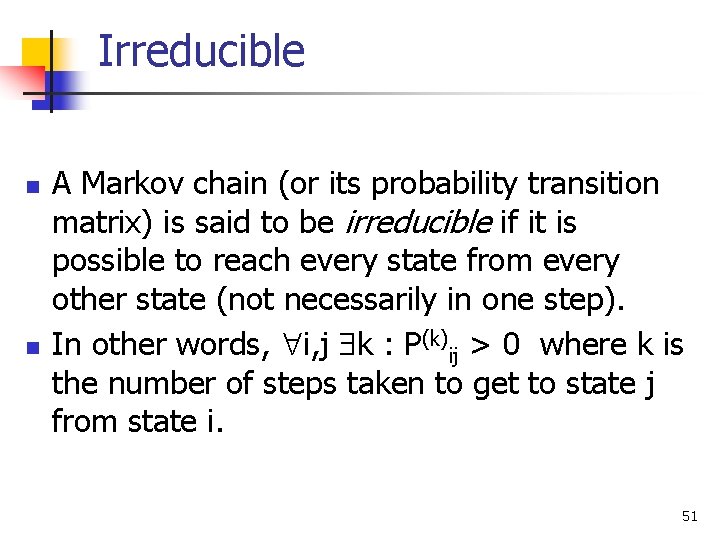

Irreducible n n A Markov chain (or its probability transition matrix) is said to be irreducible if it is possible to reach every state from every other state (not necessarily in one step). In other words, i, j k : P(k)ij > 0 where k is the number of steps taken to get to state j from state i. 51

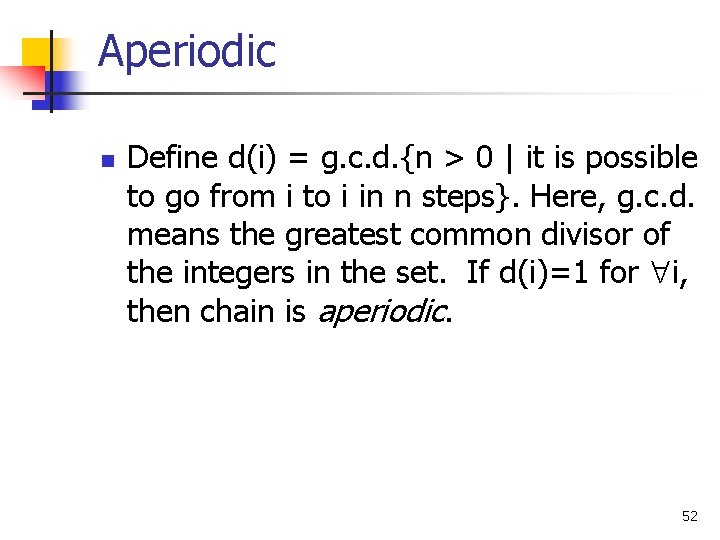

Aperiodic n Define d(i) = g. c. d. {n > 0 | it is possible to go from i to i in n steps}. Here, g. c. d. means the greatest common divisor of the integers in the set. If d(i)=1 for i, then chain is aperiodic. 52

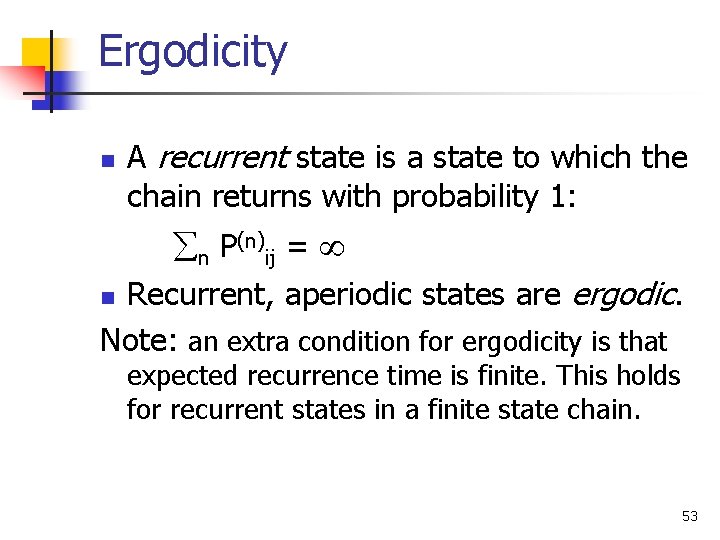

Ergodicity n A recurrent state is a state to which the chain returns with probability 1: n P(n)ij = Recurrent, aperiodic states are ergodic. Note: an extra condition for ergodicity is that n expected recurrence time is finite. This holds for recurrent states in a finite state chain. 53

Gibbs Convergence n n Gibbs convergence is generally guaranteed as long as all probabilities are positive! Intuition for ergodicity requirement: if nodes X and Y are correlated s. t. X=0 Y=0, then: n n once we sample and assign X=0, then we are forced to assign Y=0; once we sample and assign Y=0, then we are forced to assign X=0; we will never be able to change their values again! n Another problem: it can take a very long time to converge! 55

Gibbs Sampling: Performance +Advantage: guaranteed to converge to P(X|E) -Disadvantage: convergence may be slow Problems: n n Samples are dependent ! Statistical variance is too big in highdimensional problems 56

Gibbs: Speeding Convergence Objectives: 1. Reduce dependence between samples (autocorrelation) n n 2. Skip samples Randomize Variable Sampling Order Reduce variance n n Blocking Gibbs Sampling Rao-Blackwellisation 57

Skipping Samples Pick only every k-th sample (Gayer, 1992) Can reduce dependence between samples ! Increases variance ! Waists samples ! n 58

Randomized Variable Order Random Scan Gibbs Sampler Pick each next variable Xi for update at random with probability pi , i pi = 1. (In the simplest case, pi are distributed uniformly. ) In some instances, reduces variance (Mac. Eachern, Peruggia, 1999 “Subsampling the Gibbs Sampler: Variance Reduction”) 59

Blocking Sample several variables together, as a block n Example: Given three variables X, Y, Z, with domains of size 2, group Y and Z together to form a variable W={Y, Z} with domain size 4. Then, given sample (xt, yt, zt), compute next sample: Xt+1 P(yt, zt)=P(wt) (yt+1, zt+1)=Wt+1 P(xt+1) + Can improve convergence greatly when two variables are strongly correlated! - Domain of the block variable grows exponentially with the #variables in a block! n 60

Blocking Gibbs Sampling Jensen, Kong, Kjaerulff, 1993 “Blocking Gibbs Sampling Very Large Probabilistic Expert Systems” n Select a set of subsets: E 1, E 2, E 3, …, Ek s. t. Ei X U i Ei = X Ai = X Ei n Sample P(Ei | Ai) 61

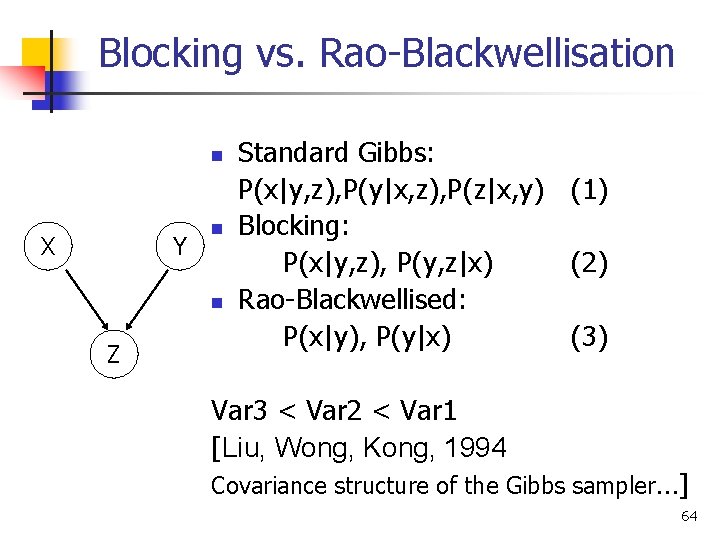

Rao-Blackwellisation n Do not sample all variables! Sample a subset! Example: Given three variables X, Y, Z, sample only X and Y, sum out Z. Given sample (xt, yt), compute next sample: Xt+1 P(yt) yt+1 P(xt+1) 62

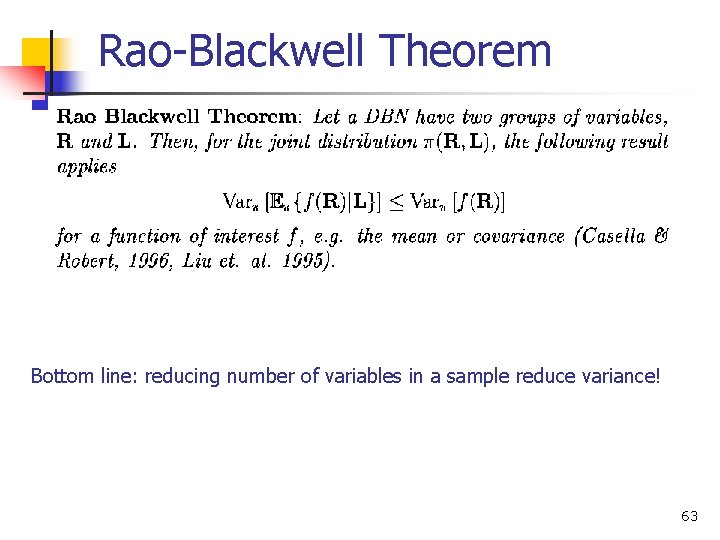

Rao-Blackwell Theorem Bottom line: reducing number of variables in a sample reduce variance! 63

Blocking vs. Rao-Blackwellisation n X Y n n Z Standard Gibbs: P(x|y, z), P(y|x, z), P(z|x, y) (1) Blocking: P(x|y, z), P(y, z|x) (2) Rao-Blackwellised: P(x|y), P(y|x) (3) Var 3 < Var 2 < Var 1 [Liu, Wong, Kong, 1994 Covariance structure of the Gibbs sampler…] 64

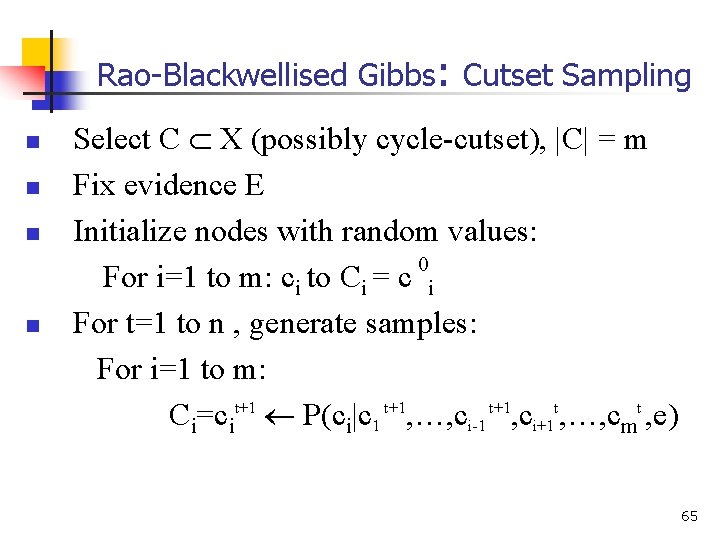

Rao-Blackwellised Gibbs: Cutset Sampling n n Select C X (possibly cycle-cutset), |C| = m Fix evidence E Initialize nodes with random values: 0 For i=1 to m: ci to Ci = c i For t=1 to n , generate samples: For i=1 to m: Ci=cit+1 P(ci|c 1 t+1, …, ci-1 t+1, ci+1 t, …, cmt , e) 65

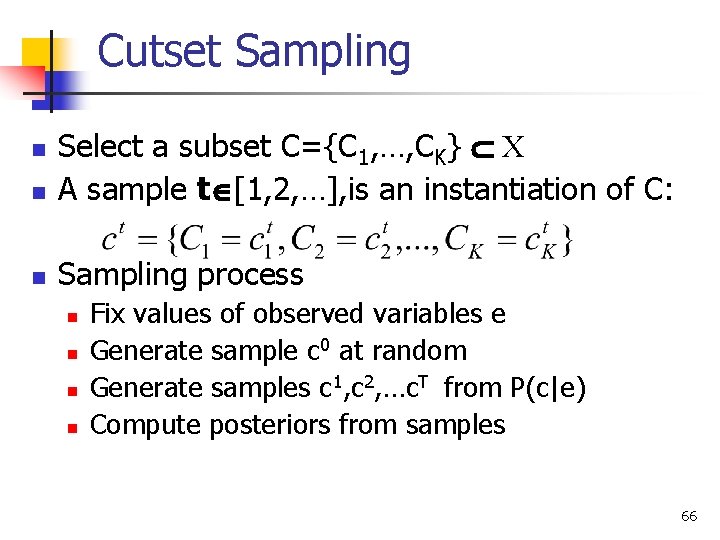

Cutset Sampling n Select a subset C={C 1, …, CK} X A sample t [1, 2, …], is an instantiation of C: n Sampling process n n n Fix values of observed variables e Generate sample c 0 at random Generate samples c 1, c 2, …c. T from P(c|e) Compute posteriors from samples 66

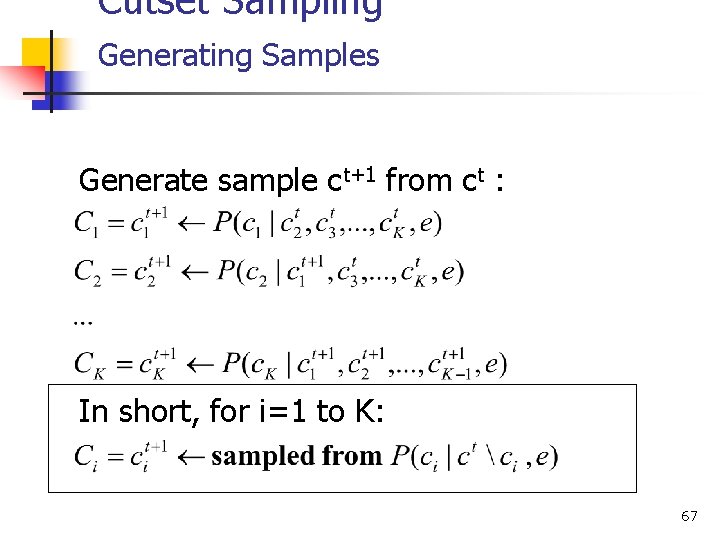

Cutset Sampling Generating Samples Generate sample ct+1 from ct : In short, for i=1 to K: 67

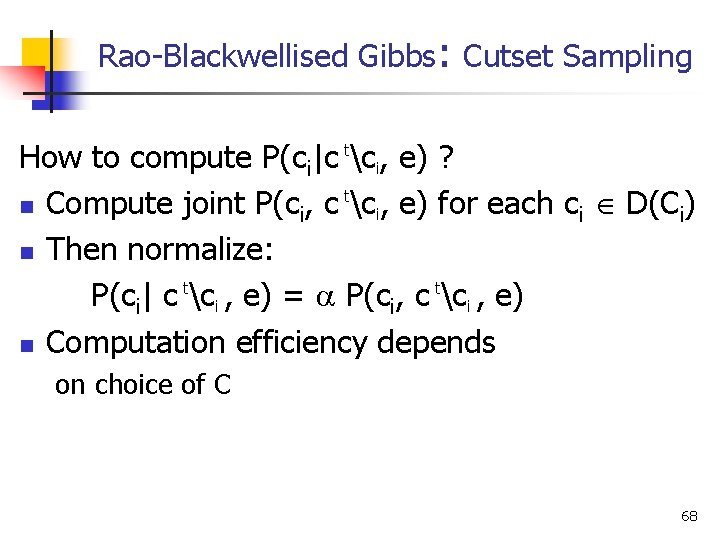

Rao-Blackwellised Gibbs: Cutset Sampling How to compute P(ci|c tci, e) ? t n Compute joint P(ci, c ci, e) for each ci D(Ci) n Then normalize: P(ci| c tci , e) = P(ci, c tci , e) n Computation efficiency depends on choice of C 68

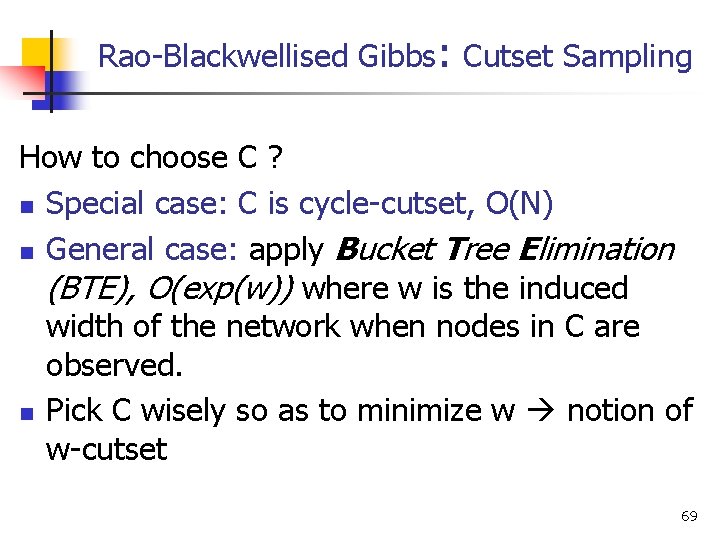

Rao-Blackwellised Gibbs: Cutset Sampling How to choose C ? n Special case: C is cycle-cutset, O(N) n General case: apply Bucket Tree Elimination (BTE), O(exp(w)) where w is the induced width of the network when nodes in C are observed. n Pick C wisely so as to minimize w notion of w-cutset 69

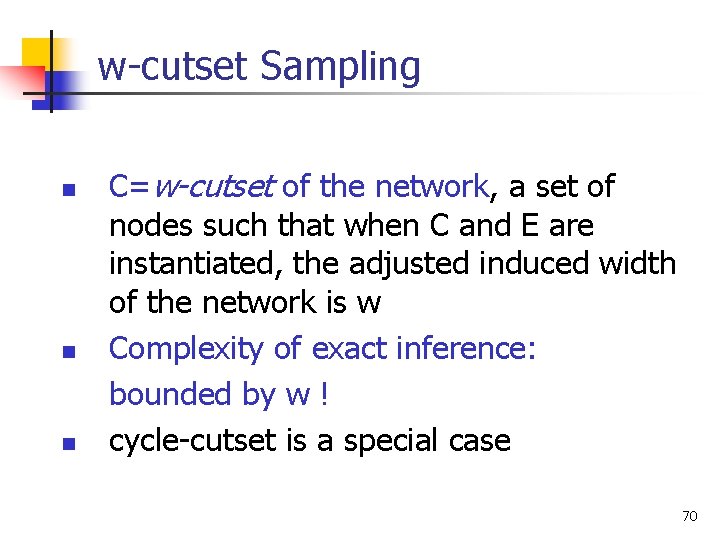

w-cutset Sampling n n n C=w-cutset of the network, a set of nodes such that when C and E are instantiated, the adjusted induced width of the network is w Complexity of exact inference: bounded by w ! cycle-cutset is a special case 70

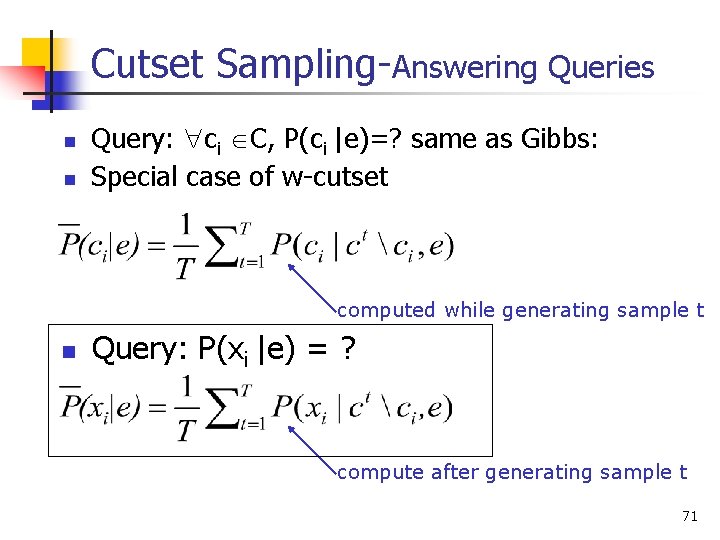

Cutset Sampling-Answering Queries n n Query: ci C, P(ci |e)=? same as Gibbs: Special case of w-cutset computed while generating sample t n Query: P(xi |e) = ? compute after generating sample t 71

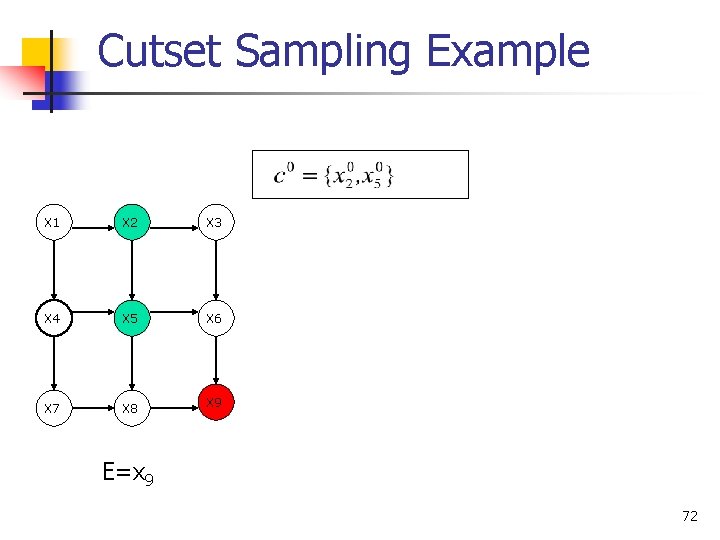

Cutset Sampling Example X 1 X 2 X 3 X 4 X 5 X 6 X 7 X 8 X 9 E=x 9 72

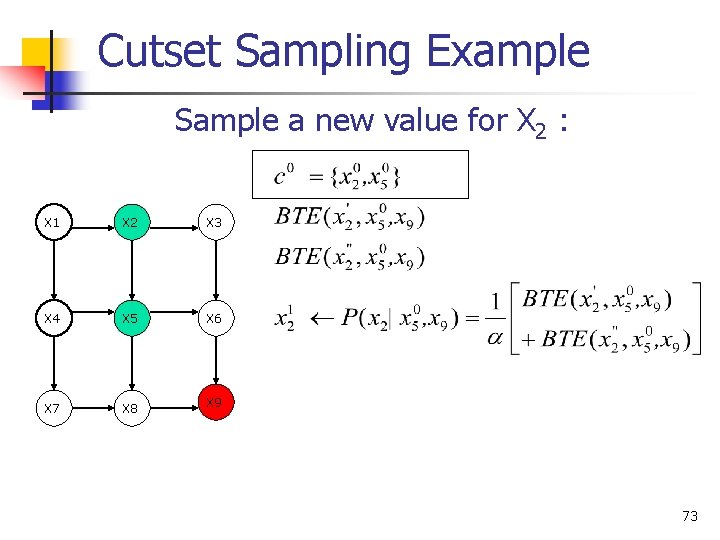

Cutset Sampling Example Sample a new value for X 2 : X 1 X 2 X 3 X 4 X 5 X 6 X 7 X 8 X 9 73

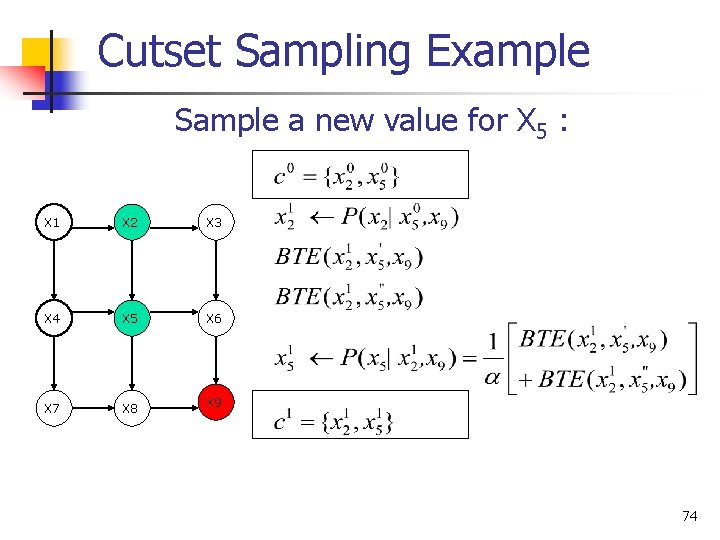

Cutset Sampling Example Sample a new value for X 5 : X 1 X 2 X 3 X 4 X 5 X 6 X 7 X 8 X 9 74

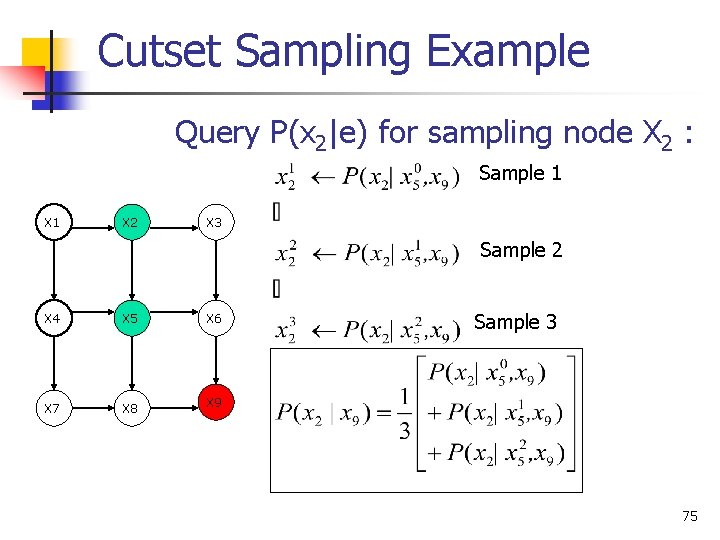

Cutset Sampling Example Query P(x 2|e) for sampling node X 2 : Sample 1 X 2 X 3 Sample 2 X 4 X 5 X 6 X 7 X 8 X 9 Sample 3 75

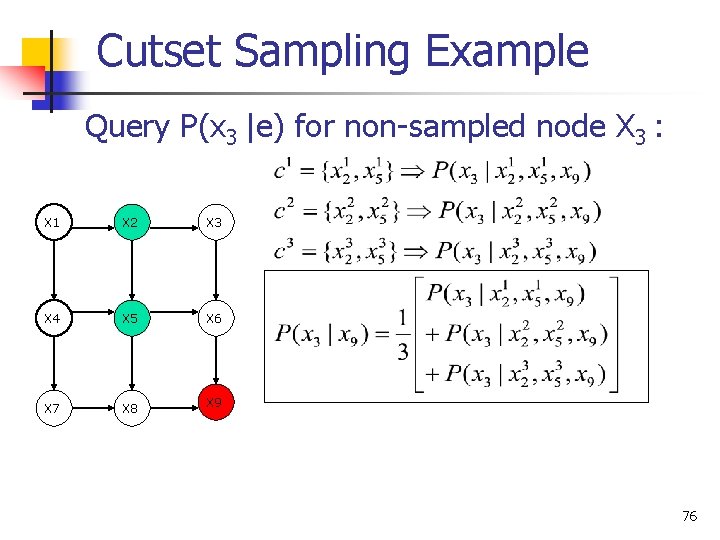

Cutset Sampling Example Query P(x 3 |e) for non-sampled node X 3 : X 1 X 2 X 3 X 4 X 5 X 6 X 7 X 8 X 9 76

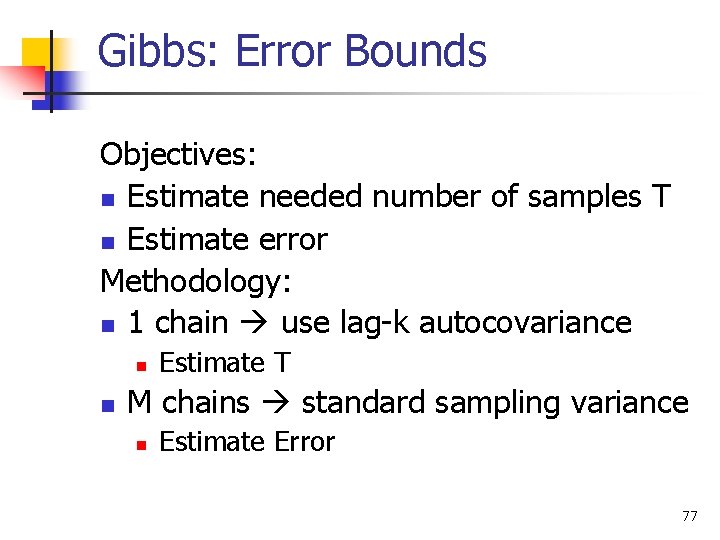

Gibbs: Error Bounds Objectives: n Estimate needed number of samples T n Estimate error Methodology: n 1 chain use lag-k autocovariance n n Estimate T M chains standard sampling variance n Estimate Error 77

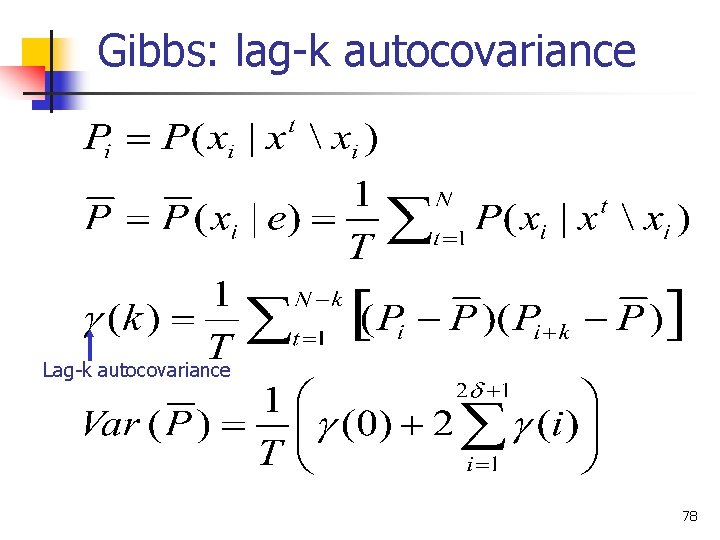

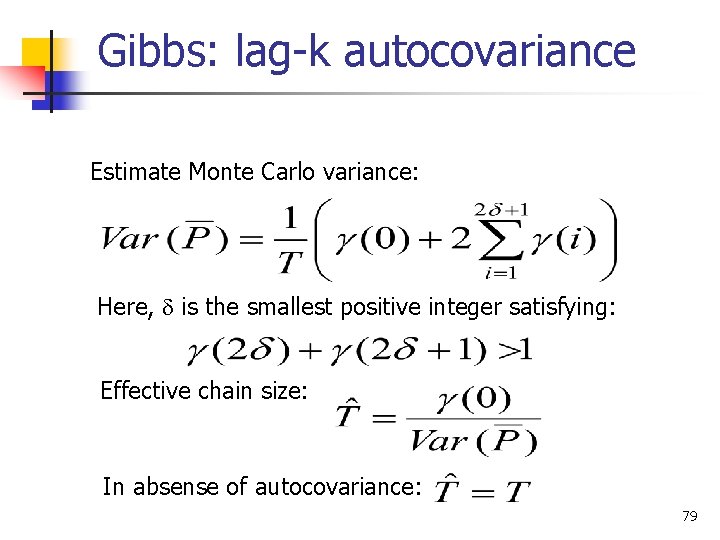

Gibbs: lag-k autocovariance Lag-k autocovariance 78

Gibbs: lag-k autocovariance Estimate Monte Carlo variance: Here, is the smallest positive integer satisfying: Effective chain size: In absense of autocovariance: 79

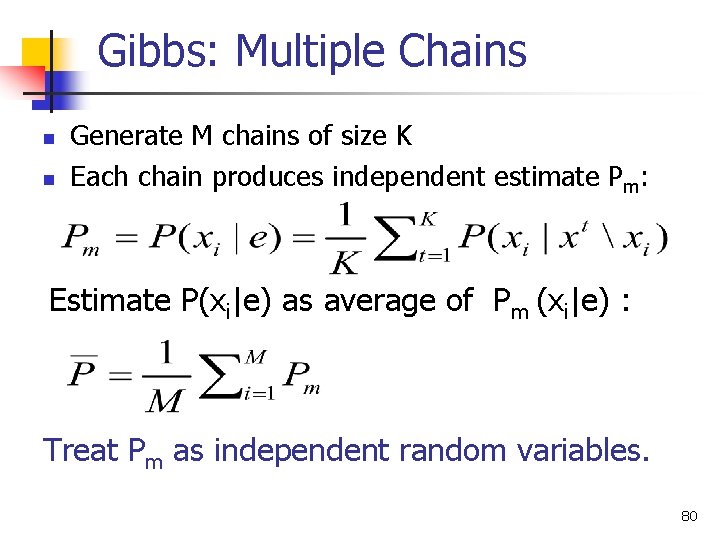

Gibbs: Multiple Chains n n Generate M chains of size K Each chain produces independent estimate Pm: Estimate P(xi|e) as average of Pm (xi|e) : Treat Pm as independent random variables. 80

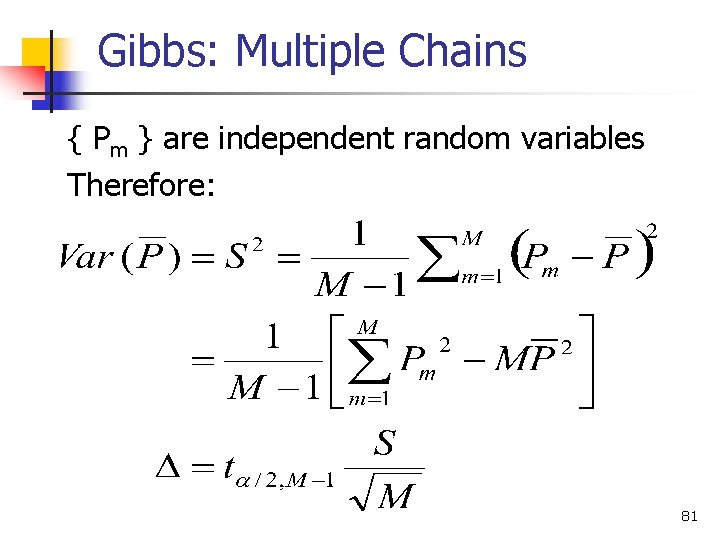

Gibbs: Multiple Chains { Pm } are independent random variables Therefore: 81

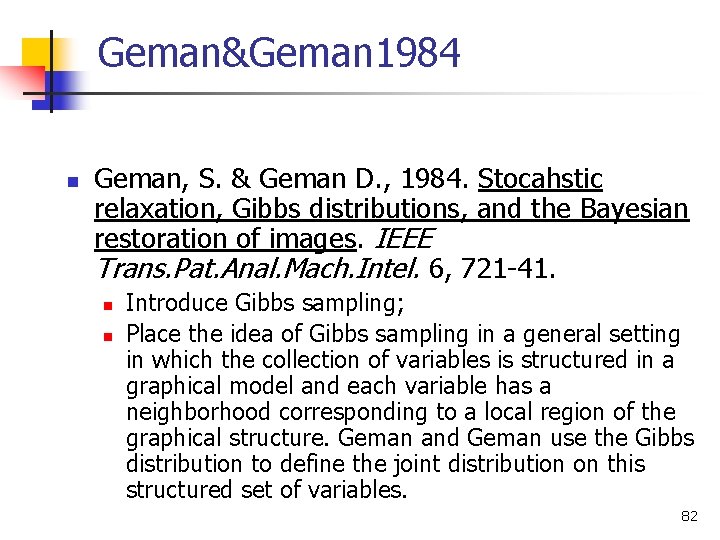

Geman&Geman 1984 n Geman, S. & Geman D. , 1984. Stocahstic relaxation, Gibbs distributions, and the Bayesian restoration of images. IEEE Trans. Pat. Anal. Mach. Intel. 6, 721 -41. n n Introduce Gibbs sampling; Place the idea of Gibbs sampling in a general setting in which the collection of variables is structured in a graphical model and each variable has a neighborhood corresponding to a local region of the graphical structure. Geman and Geman use the Gibbs distribution to define the joint distribution on this structured set of variables. 82

Tanner&Wong 1987 n Tanner and Wong (1987) n n Data-augmentation Convergence Results 83

Pearl 1988 n Pearl, 1988. Probabilistic Reasoning in Intelligent Systems, Morgan-Kaufmann. n In the case of Bayesian networks, the neighborhoods correspond to the Markov blanket of a variable and the joint distribution is defined by the factorization of the network. 84

Gelfand&Smith, 1990 n Gelfand, A. E. and Smith, A. F. M. , 1990. Sampling-based approaches to calculating marginal densities. J. Am. Statist. Assoc. 85, 398 -409. n Show variance reduction in using mixture estimator for posterior marginals. 85

Neal, 1992 n R. M. Neal, 1992. Connectionist learning of belief networks, Artifical Intelligence, v. 56, pp. 71 -118. n Stochastic simulation in noisy-or networks. 86

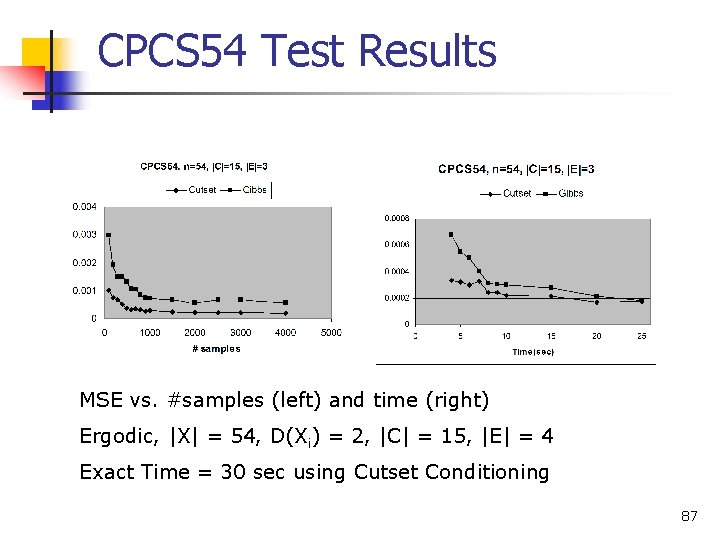

CPCS 54 Test Results MSE vs. #samples (left) and time (right) Ergodic, |X| = 54, D(Xi) = 2, |C| = 15, |E| = 4 Exact Time = 30 sec using Cutset Conditioning 87

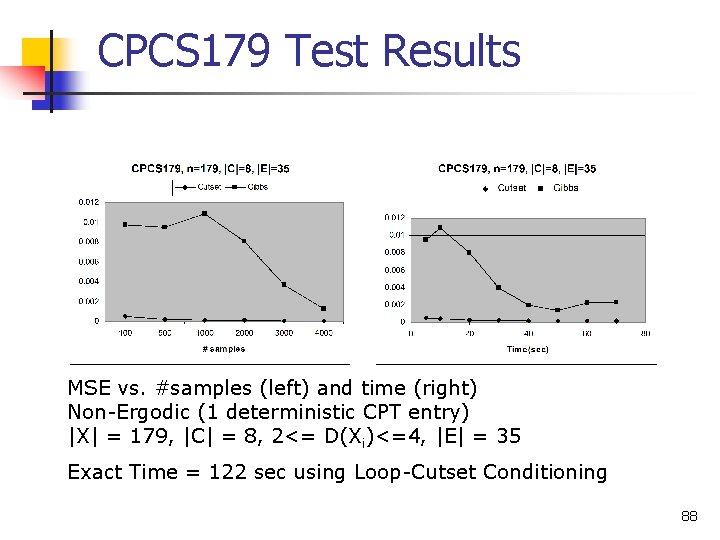

CPCS 179 Test Results MSE vs. #samples (left) and time (right) Non-Ergodic (1 deterministic CPT entry) |X| = 179, |C| = 8, 2<= D(Xi)<=4, |E| = 35 Exact Time = 122 sec using Loop-Cutset Conditioning 88

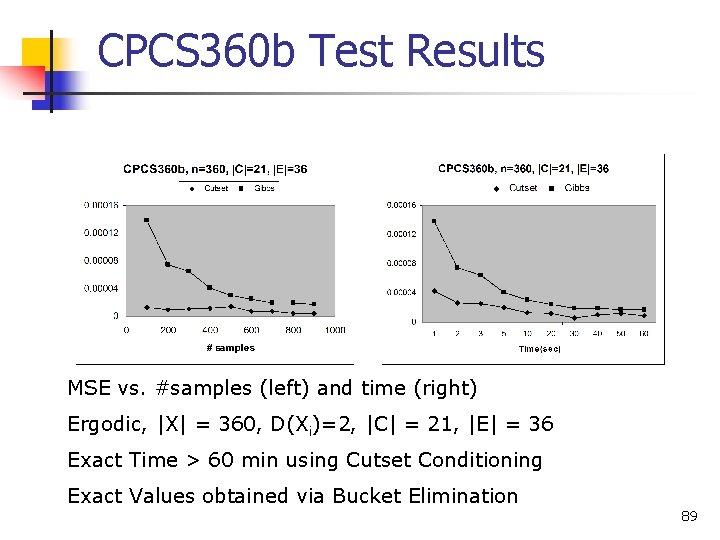

CPCS 360 b Test Results MSE vs. #samples (left) and time (right) Ergodic, |X| = 360, D(Xi)=2, |C| = 21, |E| = 36 Exact Time > 60 min using Cutset Conditioning Exact Values obtained via Bucket Elimination 89

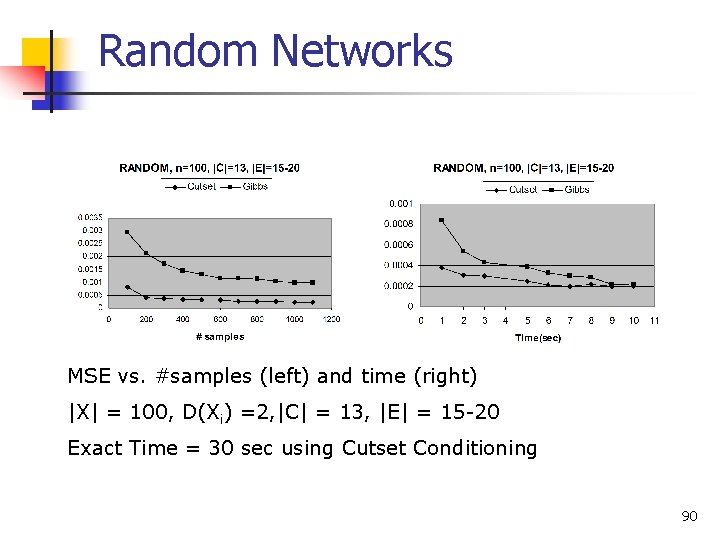

Random Networks MSE vs. #samples (left) and time (right) |X| = 100, D(Xi) =2, |C| = 13, |E| = 15 -20 Exact Time = 30 sec using Cutset Conditioning 90

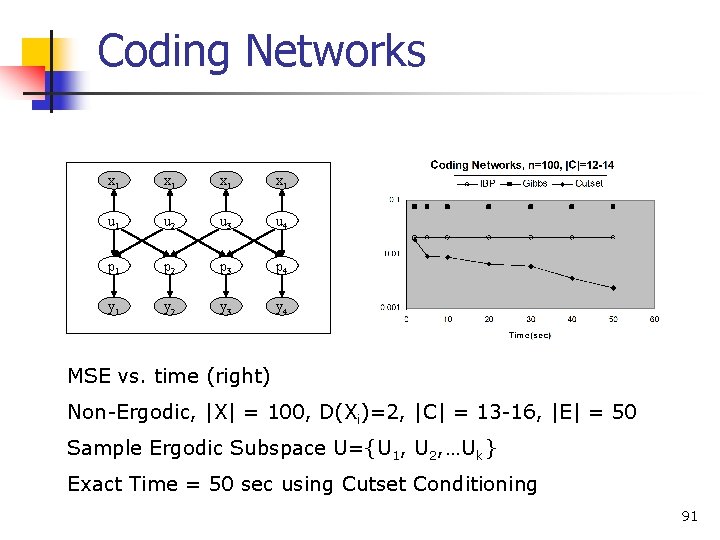

Coding Networks x 1 x 1 u 1 u 2 u 3 u 4 p 1 p 2 p 3 p 4 y 1 y 2 y 3 y 4 MSE vs. time (right) Non-Ergodic, |X| = 100, D(Xi)=2, |C| = 13 -16, |E| = 50 Sample Ergodic Subspace U={U 1, U 2, …Uk} Exact Time = 50 sec using Cutset Conditioning 91

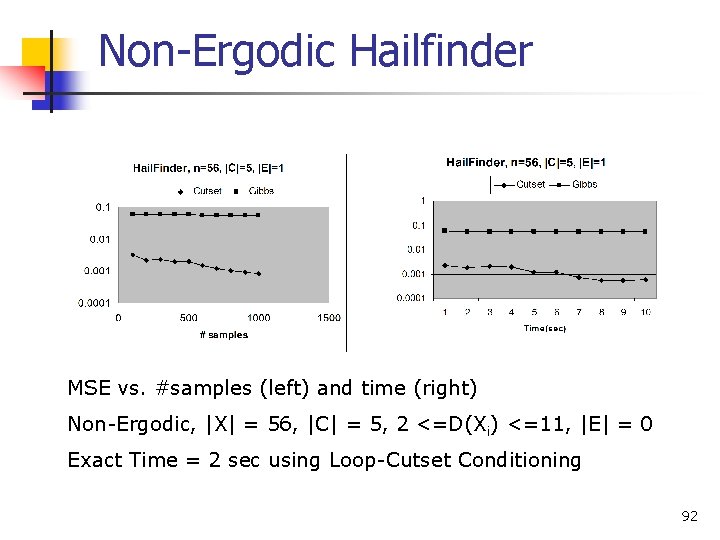

Non-Ergodic Hailfinder MSE vs. #samples (left) and time (right) Non-Ergodic, |X| = 56, |C| = 5, 2 <=D(Xi) <=11, |E| = 0 Exact Time = 2 sec using Loop-Cutset Conditioning 92

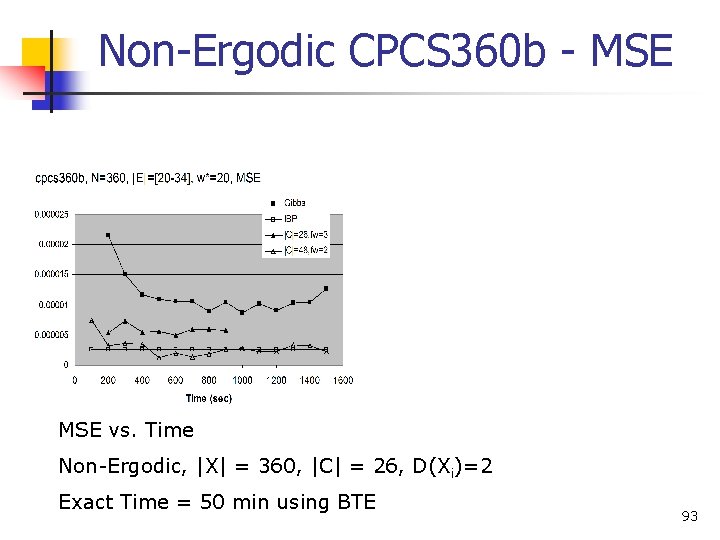

Non-Ergodic CPCS 360 b - MSE vs. Time Non-Ergodic, |X| = 360, |C| = 26, D(Xi)=2 Exact Time = 50 min using BTE 93

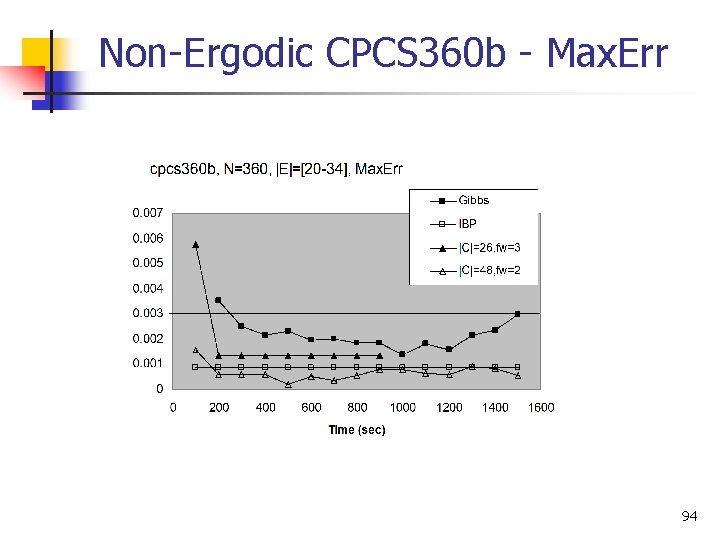

Non-Ergodic CPCS 360 b - Max. Err 94

- Slides: 89