HPC@UAntwerp introduction Stefan Becuwe, Franky Backeljauw, Kurt Lust, Bert Tijskens

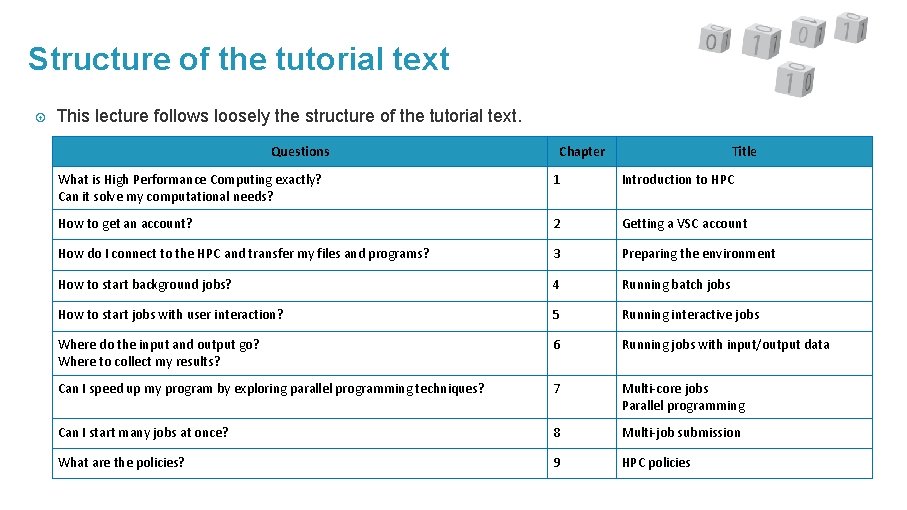

Structure of the tutorial text

This lecture loosely follows the structure of the tutorial text.

| Questions | Chapter | Title |
|---|---|---|
| What is High Performance Computing exactly? Can it solve my computational needs? | 1 | Introduction to HPC |
| How to get an account? | 2 | Getting a VSC account |
| How do I connect to the HPC and transfer my files and programs? | 3 | Preparing the environment |
| How to start background jobs? | 4 | Running batch jobs |
| How to start jobs with user interaction? | 5 | Running interactive jobs |
| Where do the input and output go? Where to collect my results? | 6 | Running jobs with input/output data |
| Can I speed up my program by exploring parallel programming techniques? | 7 | Multi-core jobs / Parallel programming |
| Can I start many jobs at once? | 8 | Multi-job submission |
| What are the policies? | 9 | HPC policies |

Introduction to the VSC (CalcUA)

VSC: Flemish Supercomputer Center

Vlaams Supercomputer Centrum (VSC)

- Established in December 2007
- Partnership between 5 university associations: Antwerp, Brussels, Ghent, Hasselt, Leuven
- Funded by the Flemish Government through the FWO (Research Fund – Flanders)
- Goal: make HPC available to all researchers in Flanders (academic and industrial)
- Local infrastructure (Tier-2) in Antwerp: HPC core facility CalcUA

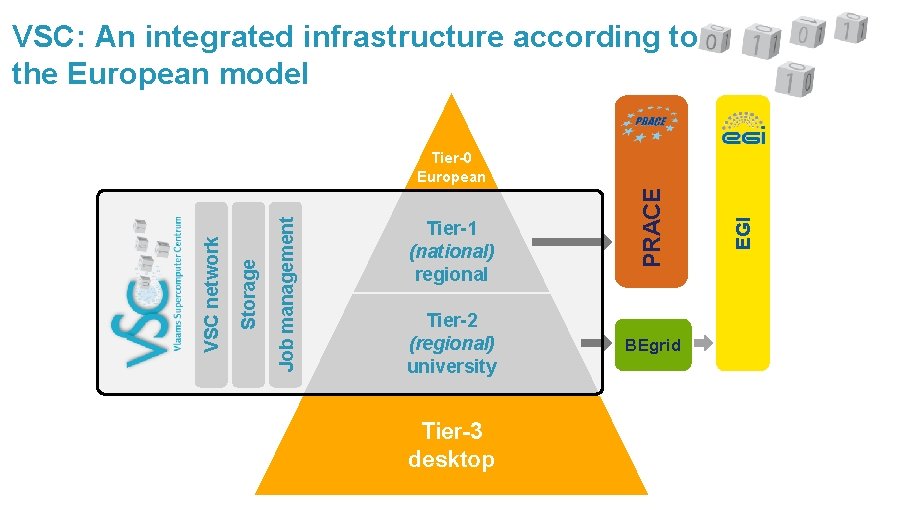

VSC: An integrated infrastructure according to the European model

[Figure: pyramid of tiers. Tier-3: desktop. Tier-2 (regional): university. Tier-1 (national): regional. Tier-0: European. Other labels in the figure: BEgrid, EGI, PRACE, job management, storage, VSC network.]

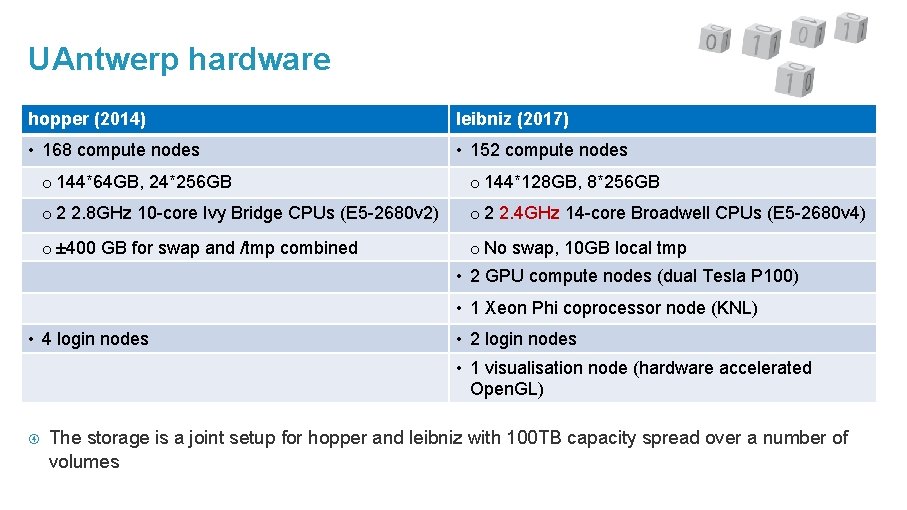

UAntwerp hardware

| | hopper (2014) | leibniz (2017) |
|---|---|---|
| Compute nodes | 168 | 152 |
| Memory per node | 144*64 GB, 24*256 GB | 144*128 GB, 8*256 GB |
| CPUs per node | 2 2.8 GHz 10-core Ivy Bridge CPUs (E5-2680 v2) | 2 2.4 GHz 14-core Broadwell CPUs (E5-2680 v4) |
| Local disk | ±400 GB for swap and /tmp combined | No swap, 10 GB local tmp |
| Accelerator nodes | | 2 GPU compute nodes (dual Tesla P100), 1 Xeon Phi coprocessor node (KNL) |
| Login nodes | 4 | 2 |
| Visualisation | | 1 visualisation node (hardware accelerated OpenGL) |

The storage is a joint setup for hopper and leibniz with 100 TB capacity spread over a number of volumes.

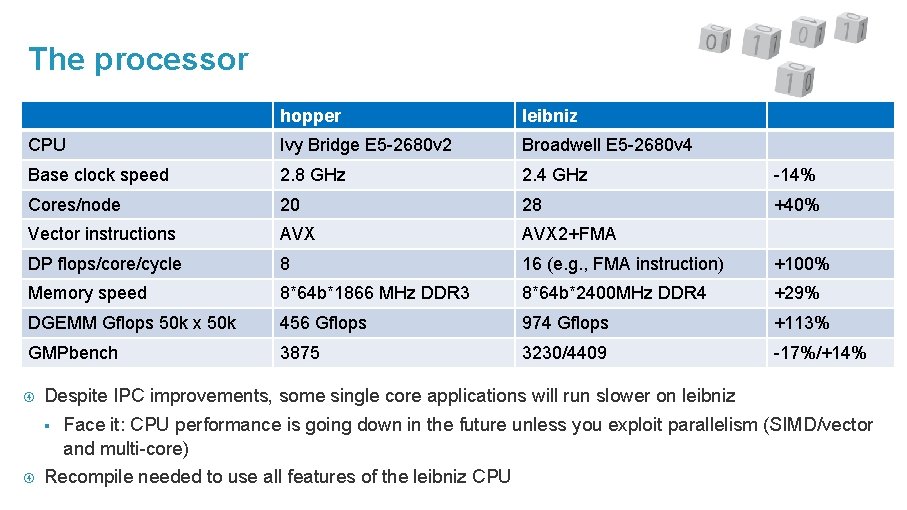

The processor

| | hopper | leibniz | Change |
|---|---|---|---|
| CPU | Ivy Bridge E5-2680 v2 | Broadwell E5-2680 v4 | |
| Base clock speed | 2.8 GHz | 2.4 GHz | -14% |
| Cores/node | 20 | 28 | +40% |
| Vector instructions | AVX | AVX2+FMA | |
| DP flops/core/cycle | 8 | 16 (e.g., FMA instruction) | +100% |
| Memory speed | 8*64b*1866 MHz DDR3 | 8*64b*2400 MHz DDR4 | +29% |
| DGEMM Gflops, 50k x 50k | 456 Gflops | 974 Gflops | +113% |
| GMPbench | 3875 | 3230/4409 | -17%/+14% |

- Despite IPC improvements, some single-core applications will run slower on leibniz
  - Face it: CPU performance is going down in the future unless you exploit parallelism (SIMD/vector and multi-core)
- A recompile is needed to use all features of the leibniz CPU

Our hardware

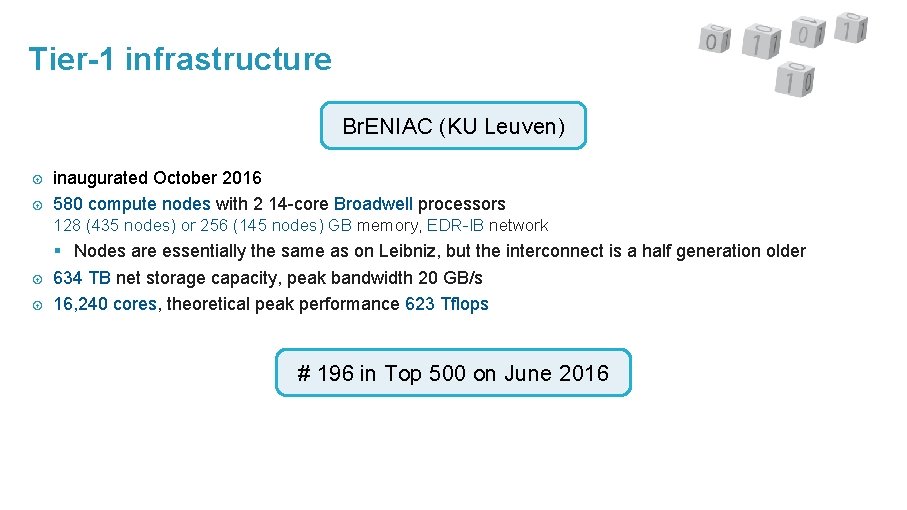

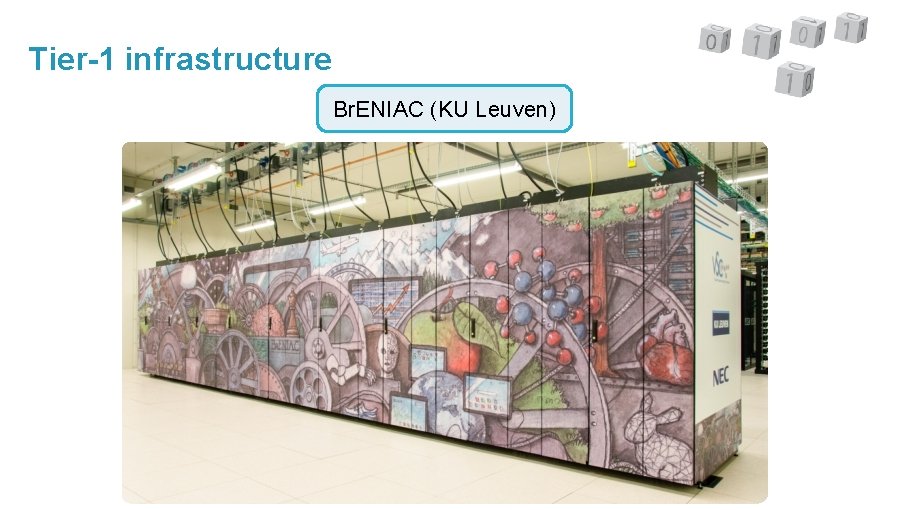

Tier-1 infrastructure

BrENIAC (KU Leuven), inaugurated October 2016

- 580 compute nodes with 2 14-core Broadwell processors
- 128 GB (435 nodes) or 256 GB (145 nodes) memory, EDR-IB network
  - Nodes are essentially the same as on leibniz, but the interconnect is half a generation older
- 634 TB net storage capacity, peak bandwidth 20 GB/s
- 16,240 cores, theoretical peak performance 623 Tflops
- #196 in the Top500 of June 2016

Characteristics of an HPC cluster

- Shared infrastructure, used by multiple users simultaneously
  - So you have to request the amount of resources you need
  - And you may have to wait a little
- Expensive infrastructure
  - So software efficiency matters
  - Can't afford idling for user input
- Therefore mostly for batch applications rather than interactive applications
- Built for parallel jobs. No parallelism = no supercomputing. Not meant for running a single-core job
- Remote use model
  - But you're running (a different kind of) remote applications all the time on your phone or web browser…
- Linux-based
  - so no Windows or macOS software,
  - but most standard Linux applications will run.

Getting a VSC account (CalcUA): Tutorial Chapter 2

SSH keys

- Almost all communication with the cluster happens through SSH = Secure SHell
- We use public/private key pairs rather than passwords to enhance security
  - The private key is never passed over the internet if you do things right
- Keys:
  - The private key is the part of the key pair that you have to keep very secure
    - Protect it with a passphrase!
  - The public key is put on the computer you want to access
  - A hacker can do nothing with your public key without the private key

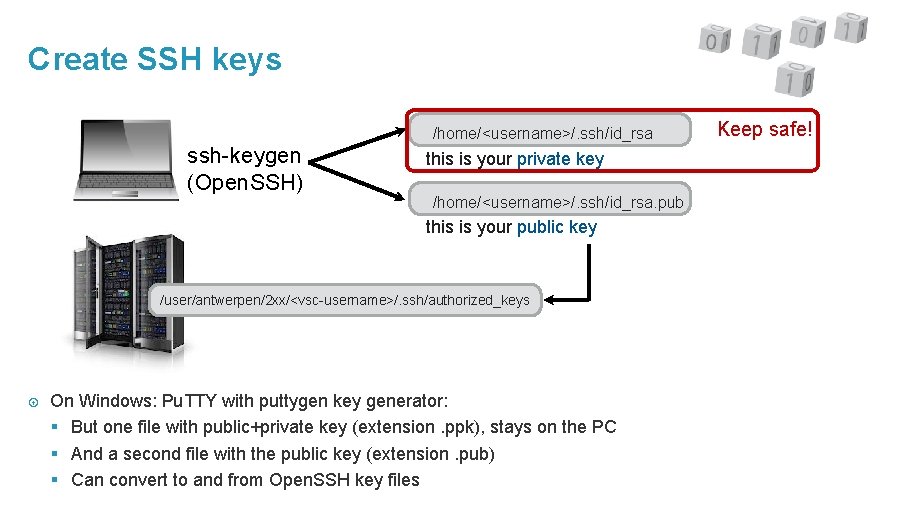

Create SSH keys

- With ssh-keygen (OpenSSH):
  - /home/<username>/.ssh/id_rsa : this is your private key. Keep safe!
  - /home/<username>/.ssh/id_rsa.pub : this is your public key
- On the cluster the public key goes into /user/antwerpen/2xx/<vsc-username>/.ssh/authorized_keys
- On Windows: PuTTY with the puttygen key generator:
  - Produces one file with both the public and the private key (extension .ppk), which stays on the PC
  - And a second file with the public key (extension .pub)
  - Can convert to and from OpenSSH key files

Register your account

- Web-based procedure
- Your VSC username is vsc20xxx

Connecting to the cluster (CalcUA): Tutorial Chapter 3

A typical workflow

1. Connect to the cluster
2. Transfer your files to the cluster
3. Select software and build your environment
4. Define and submit your job
5. Wait while
   - your job gets scheduled
   - your job gets executed
   - your job finishes
6. Move your results

Connecting to the cluster

- Required software:
  - Windows: PuTTY graphical SSH client, or Linux emulation + ssh command
  - macOS and Linux: Terminal window + ssh command
  - You may need a VPN client to connect to the UAntwerpen network
- A cluster has
  - login nodes: the part of the cluster to which you connect and where you launch your jobs
  - compute nodes: the part of the cluster where the actual work is done
  - storage and management nodes: as a regular user, you don't get on them
- Generic names of the login nodes:
  - VSC standard: login.hpc.uantwerpen.be => hopper
  - Hopper: login-hopper.uantwerpen.be
  - Leibniz: login-leibniz.uantwerpen.be

Connecting to the cluster

- Windows:
  - Open PuTTY
  - Follow the instructions on the VSC web site to fill in all fields
- macOS:
  - Open a terminal (Terminal, which comes with macOS, or iTerm2)
  - Log in via secure shell:

        $ ssh vsc2xxxx@login.hpc.uantwerpen.be

- Linux:
  - Open a terminal window (Ubuntu: Applications > Accessories > Terminal or CTRL+Alt+T)
  - Log in via secure shell:

        $ ssh vsc2xxxx@login.hpc.uantwerpen.be

The login nodes

- Currently restricted access
  - only accessible from within the campus network
  - external access (e.g., from home) is possible using UA-VPN (the VPN requirement will likely be dropped in the future)
- Use for:
  - editing and submitting jobs, also via some GUI applications
  - small compile jobs; submit large compiles as a regular job
  - visualisation (we even have a special login node for that purpose)
- Do not use the login nodes for running your jobs

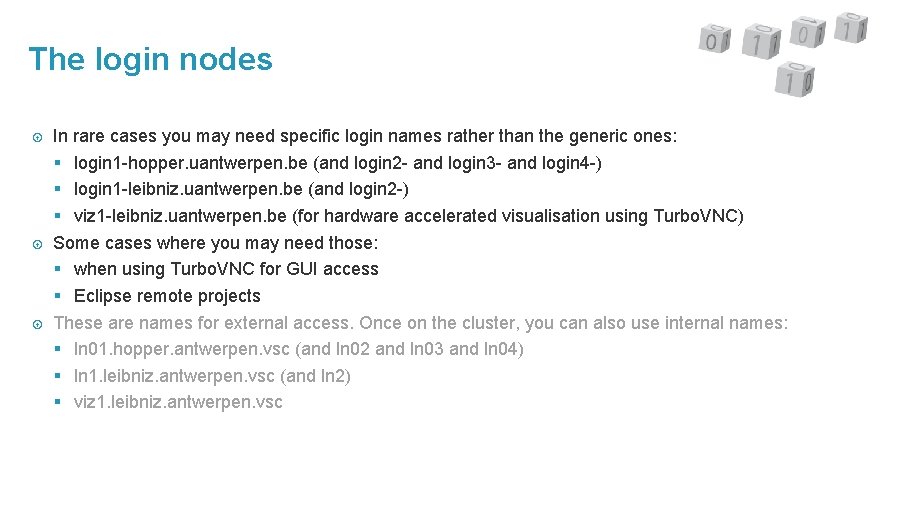

The login nodes

- In rare cases you may need specific login names rather than the generic ones:
  - login1-hopper.uantwerpen.be (and login2-, login3- and login4-)
  - login1-leibniz.uantwerpen.be (and login2-)
  - viz1-leibniz.uantwerpen.be (for hardware accelerated visualisation using TurboVNC)
- Some cases where you may need those:
  - when using TurboVNC for GUI access
  - Eclipse remote projects
- These are names for external access. Once on the cluster, you can also use internal names:
  - ln01.hopper.antwerpen.vsc (and ln02, ln03 and ln04)
  - ln1.leibniz.antwerpen.vsc (and ln2)
  - viz1.leibniz.antwerpen.vsc

Transfer your files

- sftp protocol: an ftp-like protocol based on ssh, using the same keys
- Graphical ssh/sftp file managers for Windows, macOS, Linux
  - Windows: PuTTY, WinSCP (PuTTY keys)
  - Multiplatform: FileZilla (OpenSSH keys)
  - macOS: Cyberduck
- Command line: scp (secure copy)
  - copy from the local computer to the cluster

        $ scp file.ext vsc2xxxx@login.hpc.uantwerpen.be:

  - copy from the cluster to the local computer (the trailing dot is the current local directory)

        $ scp vsc2xxxx@login.hpc.uantwerpen.be:file.ext .

System software

- Operating system: CentOS 7.4 (leibniz, hopper update) or Scientific Linux 6.9 (hopper)
  - Red Hat Enterprise Linux (RHEL) 6/7 clones
  - Hopper upgrade to CentOS 7.4 ongoing
- Resource management and job scheduling
  - Torque: resource manager (based on PBS)
  - MOAB: job scheduler and management tools

Development software

- C/C++/Fortran compilers: Intel Parallel Studio XE Cluster Edition and GCC
  - The Intel compiler comes with several tools for performance analysis and assistance in vectorisation/parallelisation of code
  - Fairly up-to-date OpenMP support in both compilers
  - Support for other shared memory parallelisation technologies such as Cilk Plus, other sets of directives, etc. to a varying level in both compilers
  - PGAS Co-Array Fortran support in the Intel compiler (and some in GNU Fortran)
  - Due to some VSC policies, support for the latest versions may be lacking, but you can request them if you need them
- Message passing libraries: Intel MPI, Open MPI (standard and Mellanox)
- Mathematical libraries: Intel MKL, OpenBLAS, FFTW, MUMPS, GSL, …
- Many other libraries, including HDF5, NetCDF, Metis, ...
- Python

Application software

- ABINIT, CP2K, OpenMX, QuantumESPRESSO, Gaussian
- VASP, CHARMM, GAMESS-US, Siesta, Molpro, CPMD
- LAMMPS, Gromacs
- Telemac
- SAMtools, TopHat, Bowtie, BLAST, MaSuRCA
- Gurobi
- Comsol, R, MATLAB, …

Often multiple versions of the same package are installed. Additional software can be installed on demand.

Application software: the fine print

- Restrictions on commercial software
  - 5 licenses for Intel Parallel Studio
  - MATLAB: toolboxes limited to those in the campus agreement
  - Other licenses are paid for and provided by the users; the owner of the license decides who can use it (see next slide)
- Typical user applications don't come as part of the system software, but
  - Additional software can be installed on demand
  - Take into account the system requirements
  - Provide working build instructions and licenses
  - Since we cannot have domain knowledge in all science domains, users have to do the testing with us
- Compilation support is limited
  - Best effort, no code fixing
- Installed in /apps/antwerpen

Using licensed software

- VSC or campus-wide license: you can just use the software
  - Some restrictions may apply if you don't work at UAntwerp but at one of the institutions we have agreements with (ITG, VITO) or at a company
- Restricted licenses
  - Typically licenses paid for by one or more research groups, sometimes just other license restrictions that must be respected
  - Talk to the owner of the license first. If no one in your research group is using a particular package, your group probably doesn't contribute to the license cost.
  - Access is controlled via UNIX groups
    - E.g., avasp: VASP users from UAntwerp
    - To get access: request group membership via account.vscentrum.be, "New/Join group" menu item. The group moderator will then grant or refuse access

Building your environment: The module concept

- Dynamic software management through modules
  - Lets you select the version that you need and avoids version conflicts
  - Loading a module sets some environment variables:
    - $PATH, $LD_LIBRARY_PATH, ...
    - Application-specific environment variables
    - VSC-specific variables that you can use to point to the location of a package (typically start with EB)
  - Automatically pulls in required dependencies
- Software on our system is organised in toolchains
  - System toolchain: some packages are compiled against system libraries or installed from binaries
  - Compiler toolchains: most packages are compiled from source with up-to-date compilers for better performance
  - Packages in the same compiler toolchain will interoperate and can be combined with packages from the system toolchain

Building your environment: Toolchains

- Compiler toolchains:
  - "intel" toolchain: Intel and GNU compilers, Intel MPI and math libraries.
  - "foss" toolchain: GNU compilers, Open MPI, OpenBLAS, FFTW, ...
  - VSC-wide and refreshed twice per year with more recent compiler versions: 2016b, 2017a, ...
    - We sometimes do silent updates within a toolchain at UAntwerp
- intel/2017a is our main toolchain and is kept synchronous on all systems.
  - We have skipped 2017b at UAntwerp as it offers no advantages over our 2017a toolchain
  - Software that did not compile correctly with the 2017a toolchain, or showed a large performance regression, is installed in the 2016b toolchain
  - There is a page listing all software installed in the 2017a toolchains

Building your environment: The module command

- A module name is typically of the form <name of package>/<version>-<toolchain info>-<additional info>, e.g.
  - intel/2016a : the Intel toolchain package, version 2016a
  - PLUMED/2.1.0-intel-2014a : PLUMED library, version 2.1.0, built with the intel/2014a toolchain
  - SuiteSparse/4.5.5-intel-2017a-ParMETIS-4.0.3 : SuiteSparse library, version 4.5.5, built with the Intel compiler, and using ParMETIS 4.0.3.
    - In <additional info> we don't list all additional components, only those that matter to distinguish between the available modules for the same package.
- No two modules with the same name can be loaded simultaneously (exception: hopper SL6)

Building your environment: Two kinds of modules

- Cluster modules:
  - leibniz/supported: enables all currently supported application modules: up to 4 toolchain versions and the system toolchain modules
  - hopper/2017a: enables only the 2017a compiler toolchain modules and the system toolchain modules
  - So it is good practice to always load the appropriate cluster module first, before loading any other module!
  - Hint: module load $VSC_INSTITUTE_CLUSTER/supported
- 2 types of application modules
  - Built with a specific version of a compiler toolchain, e.g., 2017a
    - Modules in a subdirectory that contains the toolchain name
    - We try to support a toolchain for 2 years
  - Applications installed in the system toolchain
    - Modules in subdirectory centos7

Building your environment: The module command

- module avail : list installed software packages
- module avail intel : list all available versions of the intel package
- module spider matlab : search for all modules whose name contains matlab, not case-sensitive
- module spider MATLAB/R2017a : display additional information about the MATLAB/R2017a module, including other modules you may need to load
- module help : display help about the module command
- module help foss : show help about a given module
- module list : list all loaded modules in the current session
- module load hopper/2016b : load a list of modules
- module load MATLAB/R2017a : load a specific version
- module unload MATLAB : unload MATLAB settings
- module purge : unload all modules (incl. dependencies and cluster module)
- module -t av |& sort -f : produce a sorted list of all modules, case-insensitive
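
The commands above are usually combined into a short session. The following is a minimal sketch, assuming a login node where the module command and $VSC_INSTITUTE_CLUSTER are defined; the function name module_session_demo is made up for this illustration, and the guard only makes the sketch harmless on a machine without modules.

```shell
#!/bin/bash
# Sketch of a typical module session. The function name is hypothetical;
# the module names are the ones used as examples on the slides.
module_session_demo() {
    if type module >/dev/null 2>&1; then
        module purge                                     # start from a clean environment
        module load "$VSC_INSTITUTE_CLUSTER/supported"   # cluster module first
        module avail MATLAB                              # which versions are installed?
        module load MATLAB/R2017a                        # load one specific version
        module list                                      # check what is loaded now
    else
        echo "module command not found: run this on a cluster login node"
    fi
}

module_session_demo
```

Loading the cluster module right after the purge matters, because module purge also unloads the cluster module.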

Exercises

- Getting ready (see also § 3.1):
  - Log on to the cluster
  - Go to your data directory:

        cd $VSC_DATA

  - Copy the examples for the tutorial to that directory:

        cp -r /apps/antwerpen/tutorials/Intro-HPC/examples .

- Experiment with the module command as in § 3.3 "Preparing your environment: Modules"
- Which module offers support for VNC? Which command(s) does that module offer?

Starting jobs (CalcUA): Tutorial Chapter 4

Why batch?

- A cluster is a large and expensive machine
  - So the cluster has to be used as efficiently as possible
  - Which implies that we cannot lose time waiting for input as in an interactive program
- And few programs can use the whole capacity (this also depends on the problem to solve)
  - So the cluster is a shared resource; each simultaneous user gets a fraction of the machine depending on his/her requirements
- Moreover, there are a lot of users, so one sometimes has to wait a little.
- Hence batch jobs (a script with resource specifications) submitted to a queueing system, with a scheduler that selects the next job in a fair way based on available resources and scheduling policies.

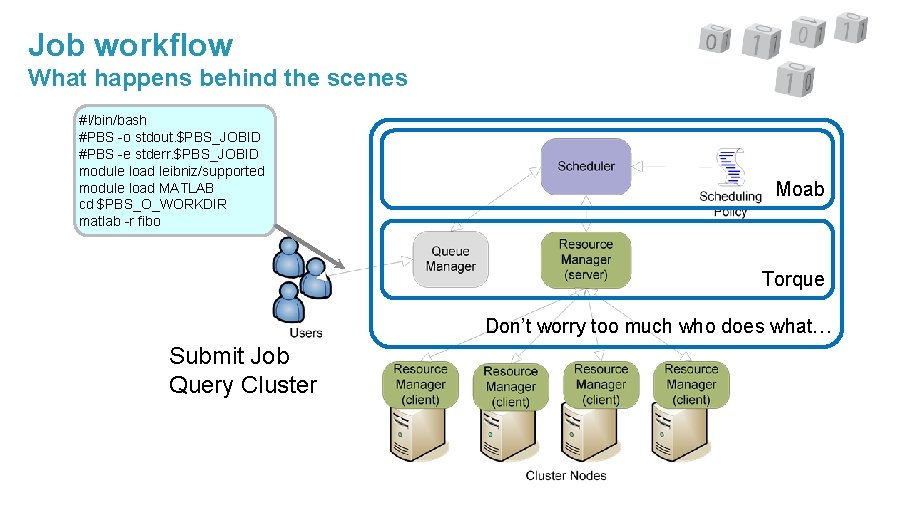

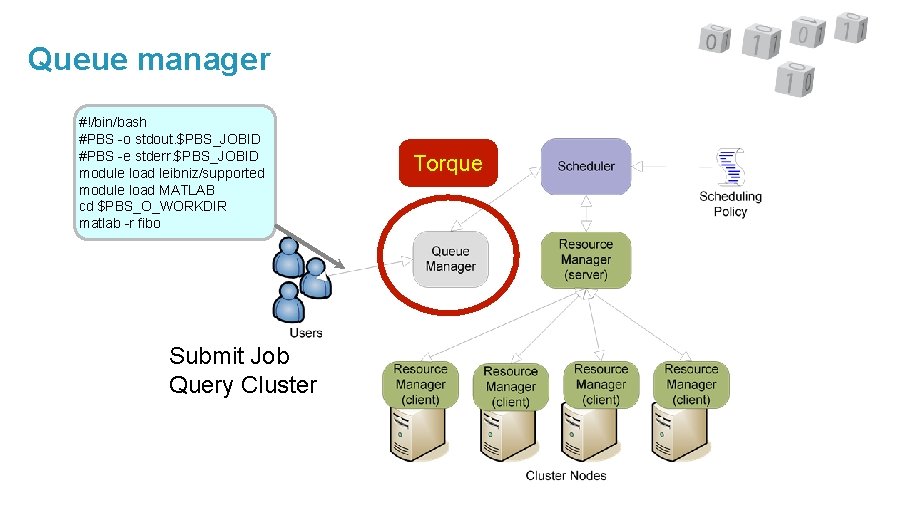

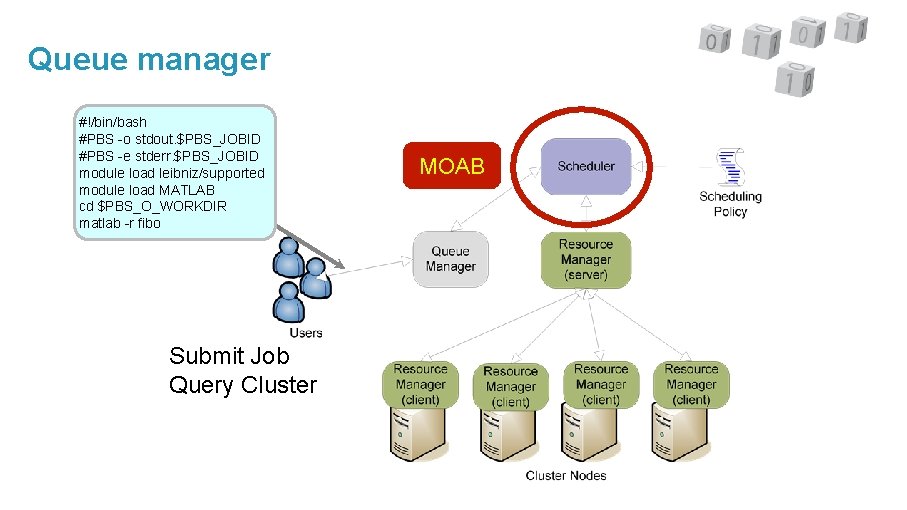

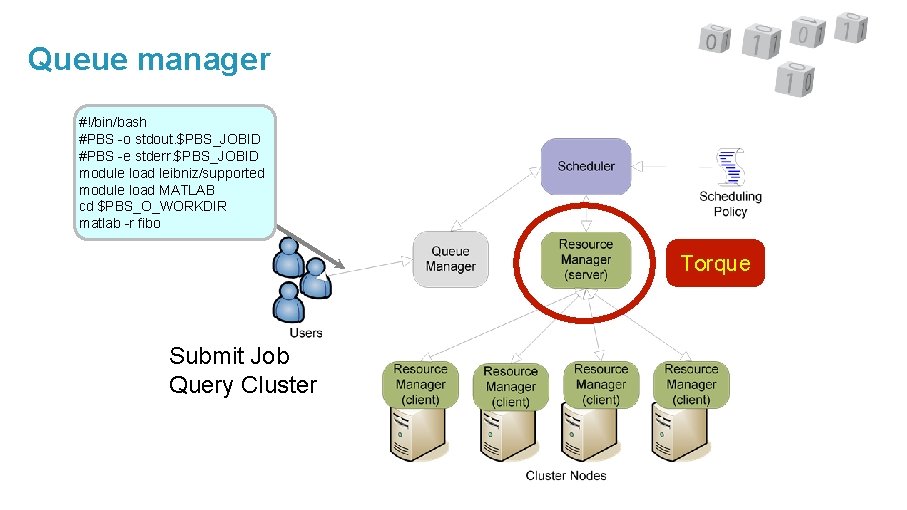

Job workflow: what happens behind the scenes

    #!/bin/bash
    #PBS -o stdout.$PBS_JOBID
    #PBS -e stderr.$PBS_JOBID
    module load leibniz/supported
    module load MATLAB
    cd $PBS_O_WORKDIR
    matlab -r fibo

[Diagram labels: Submit Job, Query Cluster, Moab, Torque]

Don't worry too much who does what…

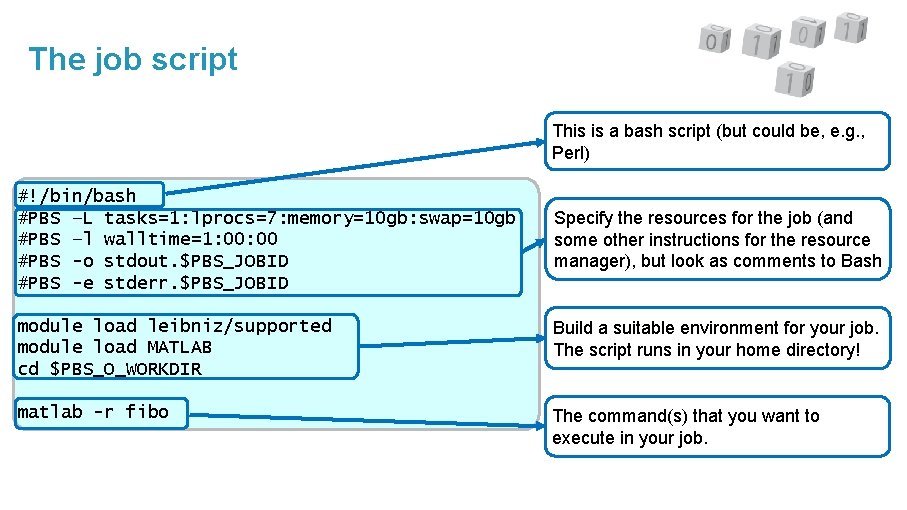

The job script

- Specifies the resources needed for the job and other instructions for the resource manager:
  - Number and type of CPUs
  - How much compute time?
  - How much memory?
  - Output files (stdout and stderr), optional
  - Can instruct the resource manager to send mail when a job starts or ends
- Followed by a sequence of commands to run on the compute node
  - The script runs in your home directory, so don't forget to go to the appropriate directory
  - Load the modules you need for your job
  - Then specify all the commands you want to execute
  - And these are really all the commands that you would also use interactively or in a regular script to perform the tasks you want to perform

The job script

This is a bash script (but it could be, e.g., Perl).

    #!/bin/bash
    #PBS -L tasks=1:lprocs=7:memory=10gb:swap=10gb
    #PBS -l walltime=1:00
    #PBS -o stdout.$PBS_JOBID
    #PBS -e stderr.$PBS_JOBID

The #PBS lines specify the resources for the job (and some other instructions for the resource manager), but look like comments to Bash. Type the option dashes as plain hyphens: the long dashes that slide software substitutes are not recognised as options.

    module load leibniz/supported
    module load MATLAB
    cd $PBS_O_WORKDIR

These lines build a suitable environment for your job. The script runs in your home directory!

    matlab -r fibo

The command(s) that you want to execute in your job.

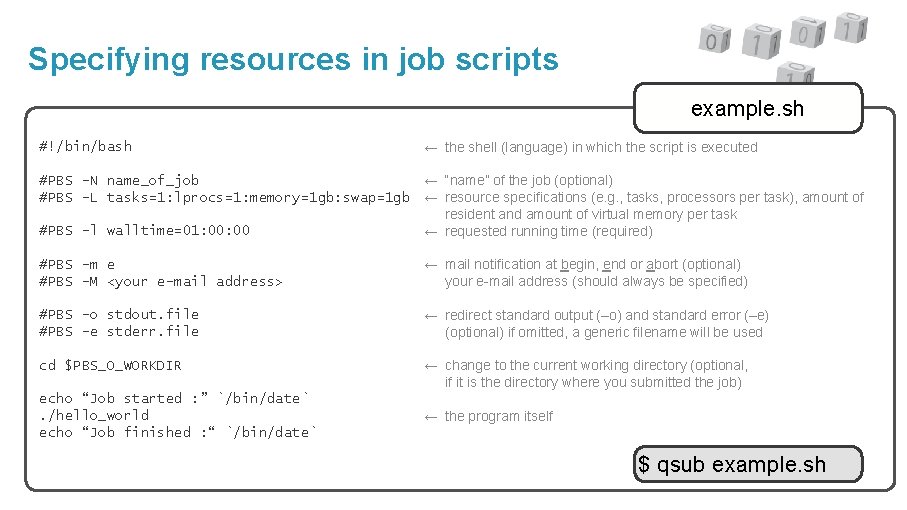

Specifying resources in job scripts

example.sh:

    #!/bin/bash
    # The first line names the shell (language) in which the script is executed.
    # "Name" of the job (optional)
    #PBS -N name_of_job
    # Resource specifications (e.g., tasks, processors per task),
    # amount of resident and amount of virtual memory per task
    #PBS -L tasks=1:lprocs=1:memory=1gb:swap=1gb
    # Requested running time (required)
    #PBS -l walltime=01:00
    # Mail notification at begin, end or abort (optional)
    #PBS -m e
    # Your e-mail address (should always be specified)
    #PBS -M <your e-mail address>
    # Redirect standard output (-o) and standard error (-e) (optional);
    # if omitted, a generic filename will be used
    #PBS -o stdout.file
    #PBS -e stderr.file

    # Change to the working directory (optional), here the directory
    # where you submitted the job
    cd $PBS_O_WORKDIR

    echo "Job started : " `/bin/date`
    # The program itself
    ./hello_world
    echo "Job finished : " `/bin/date`

Submit it with:

    $ qsub example.sh

Intermezzo: Why do Torque commands start with PBS?

In the 1990s there was a resource manager called Portable Batch System, developed by a contractor for NASA. It was open-sourced. That company was then acquired by another company, which sold the rights to Altair Engineering, which in turn evolved the product into the closed-source PBSpro (open-source again since the summer of 2016).

The open-source version was forked by what is now Adaptive Computing and became Torque, which remained open-source. Torque = Terascale Open-source Resource and QUEue manager. The name was changed, but the commands remained.

Likewise, Moab evolved from MAUI, an open-source scheduler. Adaptive Computing, the company behind Moab, contributed a lot to MAUI but then decided to start over with a closed-source product. They still offer MAUI on their website though.

- MAUI used to be widely used in large USA supercomputer centres, but most now throw their weight behind SLURM, with or without another scheduler.

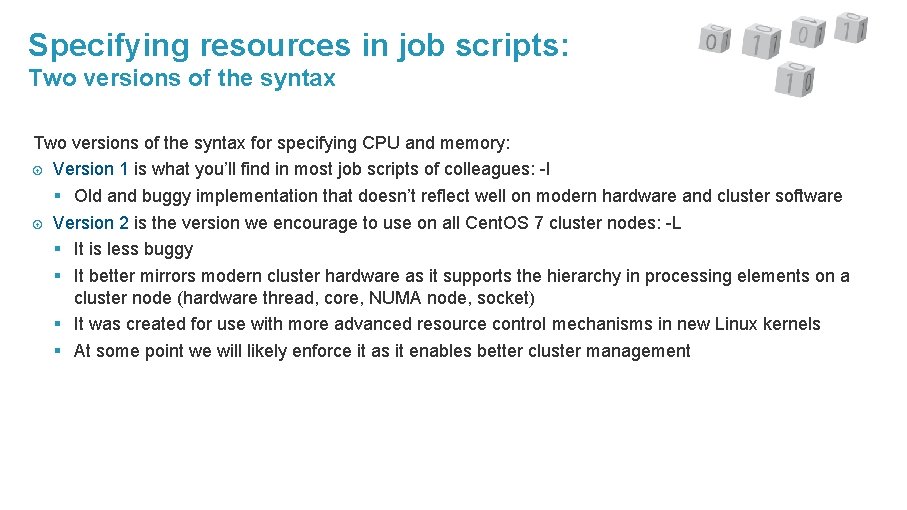

Specifying resources in job scripts: Two versions of the syntax

There are two versions of the syntax for specifying CPU and memory:

- Version 1 is what you'll find in most job scripts of colleagues: -l
  - Old and buggy implementation that doesn't reflect well on modern hardware and cluster software
- Version 2 is the version we encourage you to use on all CentOS 7 cluster nodes: -L
  - It is less buggy
  - It better mirrors modern cluster hardware, as it supports the hierarchy in processing elements on a cluster node (hardware thread, core, NUMA node, socket)
  - It was created for use with more advanced resource control mechanisms in new Linux kernels
  - At some point we will likely enforce it, as it enables better cluster management

Specifying resources in job scripts: Version 2 concepts

- Task: a job consists of a number of tasks.
  - Think of it as a process (e.g., one rank of an MPI job)
  - Must run in a single node (exception: SGI shared memory systems). In fact: must run in a single OS instance
- Logical processor: each task uses a number of logical processors
  - Hardware threads or cores
  - Specified per task
  - In a system with hyperthreading enabled, an additional parameter lets you specify whether the system should use all hardware threads or run one lproc per core.
- Placement: set the locality of hardware resources for a task
- Feature: each compute node can have one or more special features. We use this for nodes with more memory

Specifying resources in job scripts: Version 2 concepts

- Physical/resident memory: RAM per task
  - In our setup, not enforced on a per-task but on a per-node basis
  - Processes won't crash if you exceed this, but will start to use swap space
- Virtual memory: RAM + swap space, again per task
  - In our setup, not enforced on a per-task but on a per-node basis
  - Processes will crash if you exceed this.
  - We will enforce the use of the parameter related to virtual memory

Specifying resources in job scripts: Version 2 syntax: Cores

Specifying tasks and lprocs:

    #PBS -L tasks=4:lprocs=7

- Requests 4 tasks using 7 lprocs each (cores in the current setup of hopper and leibniz)
- The scheduler should be clever enough to put these 4 tasks on a single 28-core node and assign cores to each task in a clever way

Some variants:

- #PBS -L tasks=1:lprocs=all:place=node : will give you all cores on a node.
  - Does not work without place=node!
- #PBS -L tasks=4:lprocs=7:usecores : stresses that cores should be used instead of hardware threads
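
To see how a tasks/lprocs request maps onto nodes, the arithmetic can be sketched as below. This is only an illustration under two assumptions taken from the slides: one lproc corresponds to one core, and a task never spans nodes. The helper name nodes_needed is made up, and the real scheduler may place tasks differently.

```shell
#!/bin/bash
# nodes_needed TASKS LPROCS CORES_PER_NODE
# Prints the minimum number of nodes for a "-L tasks=...:lprocs=..." request,
# assuming one lproc per core and tasks that cannot span nodes.
# (Hypothetical helper, not part of Torque or Moab.)
nodes_needed() {
    local tasks=$1 lprocs=$2 cores_per_node=$3
    if (( lprocs > cores_per_node )); then
        echo "error: a task with ${lprocs} lprocs does not fit on a ${cores_per_node}-core node" >&2
        return 1
    fi
    local tasks_per_node=$(( cores_per_node / lprocs ))
    # Integer ceiling of tasks / tasks_per_node
    echo $(( (tasks + tasks_per_node - 1) / tasks_per_node ))
}

nodes_needed 4 7 28   # leibniz: four 7-core tasks fill exactly one 28-core node
nodes_needed 4 7 20   # hopper: only two such tasks fit on a 20-core node
```

The two calls print 1 and 2: the same request that fills one leibniz node exactly needs two hopper nodes and leaves 6 cores idle on each.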

Specifying resources in job scripts: Version 2 syntax: Memory

Adding physical memory:

    #PBS -L tasks=4:lprocs=7:memory=20gb

- Requests 4 tasks using 7 lprocs each and 20 GB memory per task

Adding virtual memory:

    #PBS -L tasks=4:lprocs=7:memory=20gb:swap=25gb

- Confusing name! This actually requests 25 GB of virtual memory, not 25 GB of swap space (which would mean 20 GB + 25 GB = 45 GB of virtual memory).
- We have no swap space on leibniz, so it is best to take swap=memory (at least if there is enough physical memory).
- And in this case there is no need to specify memory, as the default is memory=swap:

      #PBS -L tasks=4:lprocs=7:swap=20gb

- Specifying swap will be enforced!
  - Available memory: roughly 56 GB per node on hopper, 112 GB per node on leibniz, 240 GB for the 256 GB nodes

Specifying resources in job scripts: Version 2 syntax: Additional features

Adding additional features:

    #PBS -L tasks=4:lprocs=7:swap=50gb:feature=mem256

- Requests 4 tasks using 7 lprocs each and 50 GB virtual memory per task, and instructs the scheduler to use nodes with 256 GB of memory, so that all 4 tasks can run on the same node rather than on two regular nodes with half of the cores left empty.
- The most important features are listed on the VSC website (UAntwerp hardware pages)
- Specifying multiple features is possible, both in an "and" or an "or" combination (see the manual)
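
Whether a request needs the mem256 feature comes down to simple arithmetic: tasks times virtual memory per task, compared with the usable memory of a node. The sketch below uses the rough leibniz figures quoted earlier (112 GB on regular nodes, 240 GB on the 256 GB nodes); the function name pick_node_type and its output labels are made up for this illustration.

```shell
#!/bin/bash
# pick_node_type TASKS SWAP_GB_PER_TASK
# Prints which kind of leibniz node can hold all tasks of a job at once.
# (Hypothetical helper; the limits are the approximate usable amounts of
# memory per node and may change when the hardware changes.)
pick_node_type() {
    local tasks=$1 swap_gb=$2
    local regular_gb=112 large_gb=240
    local total_gb=$(( tasks * swap_gb ))
    if (( total_gb <= regular_gb )); then
        echo "regular"
    elif (( total_gb <= large_gb )); then
        echo "mem256"
    else
        echo "multiple-nodes"
    fi
}

pick_node_type 4 20   # 4 x 20 GB =  80 GB in total
pick_node_type 4 50   # 4 x 50 GB = 200 GB in total
```

The first call prints regular and the second mem256, which matches the feature=mem256 request shown above for 4 tasks of 50 GB each.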

Specifying resources in job scripts: Requesting cores or memory

- What happens if you don't use all memory and cores on a node?
  - Other jobs, but only yours, can use them
    - so that other users cannot accidentally crash your job
    - and so that you have a clean environment for benchmarking without unwanted interference
  - You're likely wasting expensive resources! Don't complain that you have to wait too long until your job runs if you don't use the cluster efficiently.
- If you really require a special setup where no other jobs of yours run on the nodes allocated to you, contact user support (hpc@uantwerpen.be).
- If you submit a lot of 1-core jobs that use more than 2.75 GB each on hopper or more than 4 GB each on leibniz: please specify the "mem256" feature so that they get scheduled on nodes with more memory and also fill up as many cores as possible
  - And a program requiring 16 GB of memory but using just a single thread does not make any sense on today's computers

Specifying resources in job scripts: Single node per user policy

- Policy motivation:
  - parallel jobs can suffer badly if they don't have exclusive access to the full node
  - sometimes the wrong job gets killed if a user uses more memory than requested and a node runs out of memory
  - a user may accidentally spawn more processes/threads and use more cores than expected (depends on the OS and how the process was started)
    - typical case: a user does not realize that a program is an OpenMP or hybrid MPI/OpenMP program, resulting in running 28 processes with 28 threads each.
- Remember:
  - the scheduler will (try to) fill up a node with several jobs from the same user, but could use some help from time to time
  - if you don't have enough work for a single node, you need a good PC/workstation and not a supercomputer
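
One common way to avoid the accidental oversubscription described above is to set the standard OpenMP variable OMP_NUM_THREADS explicitly in the job script. The sketch below assumes a hypothetical request of 4 tasks with 7 lprocs each; whether a given program honours OMP_NUM_THREADS depends on that program.

```shell
#!/bin/bash
# Hypothetical request this script would be submitted with:
#   #PBS -L tasks=4:lprocs=7
tasks=4
lprocs=7

# Ask the OpenMP runtime for one thread per logical processor of a task,
# instead of its default, which is often one thread per core of the node.
export OMP_NUM_THREADS=$lprocs

echo "threads in total: $(( tasks * OMP_NUM_THREADS ))"
```

With these values the job runs 4 x 7 = 28 threads, one per core of a leibniz node, instead of the 28 x 28 threads of the typical case above.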

Specifying resources in job scripts: Syntax 1.0 (deprecated) - Requesting cores

- #PBS -l nodes=2:ppn=20 : request 2 nodes, using 20 cores (in fact, hardware threads) per node
  - Or in the terminology of the Torque manual: 2 tasks with 20 lprocs (logical processors) each.
  - Different node types have a different number of cores per node. On hopper, all nodes have 20 cores; on leibniz, nodes have 28 cores.
- #PBS -l nodes=2:ppn=20:ivybridge : request 2 nodes, using 20 cores per node, and those nodes should have the property "ivybridge" (which is currently all nodes on hopper)
- See the "UAntwerpen clusters" page in the "Available hardware" section of the User Portal on the VSC website for an overview of properties for various node types.

Specifying resources in job scripts: Syntax 1.0 (deprecated) - Requesting memory

- As with the version 2 syntax, there are 2 kinds of memory:
  - Resident memory (RAM)
  - Virtual memory (RAM + swap space)
- But now there are 2 ways of requesting memory:
  - Specify the quantity for the job as a whole and let Torque distribute it evenly across tasks/lprocs (or cores): mem for resident memory and vmem for virtual memory (the Torque manual advises against its use, but it often works best...).
  - Specify the amount of memory per lproc/core: pmem for resident memory and pvmem for virtual memory.
- It is best not to mix both options (total and per core). However, if both are specified, the Torque manual promises to take the least restrictive of (mem, pmem) for resident memory and of (vmem, pvmem) for virtual memory.
- However, the implementation is buggy and Torque doesn't keep its promises...

Specifying resources in job scripts: Syntax 1.0 (deprecated) - Requesting memory

- Guidelines:
  - If you want to run a single multithreaded process: mem and vmem will work for you (e.g., Gaussian).
  - If you want to run an MPI job with single-threaded processes (#cores = #MPI ranks): any of the above will work most of the time, but remember that mem/vmem is for the whole job, not for one node!
  - Hybrid MPI/OpenMP job: best to use mem/vmem
  - However, in more complicated resource requests than explained here, mem/vmem may not work as expected either
- So when enforcing, we'll likely enforce vmem.
- Source of trouble:
  - Linux has two mechanisms to enforce memory limits:
    - ulimit: the older way, per process on most systems
    - cgroups: the more modern and robust mechanism, for a whole process hierarchy
  - Torque often sets ulimit -m and -v to a value that is only relevant for non-threaded jobs, or even smaller (the latter due to another bug), and smaller than the (correct) values for the cgroups.

Specifying resources in job scripts: Syntax 1.0 (deprecated) - Requesting memory

- Examples:
  - #PBS -l pvmem=2gb : this job needs 2 GB of virtual memory per core
- See the "UAntwerpen clusters" page in the "Available hardware" section of the User Portal on the VSC website for the usable amounts of memory for each node type (which is always less than the physical amount of memory in the node)
- As swapping makes no sense (definitely not on leibniz), it is best to set pvmem = pmem or mem = vmem, but you still need to specify both parameters.

Specifying resources in job scripts: Requesting compute time

Same procedure for both versions of the resource syntax:

- #PBS -l walltime=30:25:55 : this job is expected to run for 30h25m55s.
- #PBS -l walltime=1:6:25:55 : the same amount of time, written as 1 day, 6h25m55s.

What happens if I underestimate the time?

- Bad luck, your job will be killed when the wall time has expired.

What happens if I overestimate the time? Not much, but…

- Your job may have a lower priority for the scheduler, or may not run because we limit the number of very long jobs that can run simultaneously.
- The estimates for the start time of other jobs that the scheduler produces will be even worse than they are.

Maximum wall time is 3 days on leibniz and 7 days on hopper.

- But remember what we said in last week's lecture about reliability?
- Longer jobs need a checkpoint-and-restart strategy
  - And most applications can restart from intermediate data
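
The two walltime notations above describe the same duration, which is easy to check by converting both to seconds. The function below is a sketch written for this purpose only (walltime_to_seconds is not a Torque command); it assumes the fields are read from right to left as seconds, minutes, hours and days.

```shell
#!/bin/bash
# walltime_to_seconds [[DD:]HH:]MM:SS
# Converts a walltime string to seconds. (Hypothetical helper.)
walltime_to_seconds() {
    local IFS=':'
    local -a parts
    read -r -a parts <<< "$1"
    local n=${#parts[@]}
    if (( n < 1 || n > 4 )); then
        echo "error: expected [[DD:]HH:]MM:SS, got '$1'" >&2
        return 1
    fi
    # Weights of the fields, counted from the right: s, min, h, days
    local -a weights=(1 60 3600 86400)
    local total=0 i
    for (( i = 0; i < n; i++ )); do
        # 10# forces base 10, so that a field like "08" is not read as octal
        total=$(( total + 10#${parts[n-1-i]} * weights[i] ))
    done
    echo "$total"
}

walltime_to_seconds 30:25:55     # 30 h 25 min 55 s
walltime_to_seconds 1:6:25:55    # 1 day 6 h 25 min 55 s
```

Both calls print 109555. The maximum wall times of 3 and 7 days correspond to 259200 and 604800 seconds.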

Specifying resources in job scripts

- All resource specifications start with #PBS -l or #PBS -L
- Do these #PBS lines look suspiciously like command line arguments? That's because they can also be specified on the command line of qsub.
- Full list of options in the Torque manual: search for "Requesting resources" and "-L NUMA Resource Request"
  - We discussed the most common ones.
- Remember that available resources change over time as new hardware is installed and old hardware is decommissioned.
  - Share your scripts
  - But understand what those scripts do (and how they do it)
  - And don't forget to check the resources from time to time!
  - And remember from yesterday's lecture: the optimal set of resources also depends on the size of the problem you are solving!
- Share experience

Non-resource-related #PBS parameters: Redirecting I/O

- The output to stdout and stderr is redirected to files by the resource manager
  - Place: by default in the directory where you submitted the job ("qsub test.pbs"), when the job finishes and if you have enough quota
  - Default naming convention
    - stdout: <job-name>.o<job-ID>
    - stderr: <job-name>.e<job-ID>
  - The default job name is the name of the job script.
- To change it: #PBS -N my_job : change the job name to my_job
  - stdout: my_job.o<job-ID>
  - stderr: my_job.e<job-ID>
- Rename output files:
  - #PBS -o stdout.file : redirect stdout to the file stdout.file instead
  - #PBS -e stderr.file : redirect stderr to the file stderr.file instead
- Other files are put in the current working directory. This is your home directory when your job starts!

Non-resource-related #PBS parameters: Notifications

- The cluster can notify you by mail when a job starts, ends or is aborted.
  - #PBS -m abe -M my.mail@uantwerpen.be : send mail when the job is aborted (a), begins (b) or ends (e) to the given mail address.
  - Without -M, mail will be sent to the e-mail address linked to your VSC account
  - You may be bombarded with mails if you submit a lot of jobs, but it is useful if you need to monitor a job
- All other qsub command line arguments can also be specified in the job script, but these are the most useful ones.
  - See the qsub manual page in the Torque manual.
- Example job scripts are in /apps/antwerpen/examples

Submitting jobs
If all resources are specified in the job script, submitting a job is as simple as
$ qsub jobscript.sh
qsub returns a unique job ID, consisting of a unique number that you’ll need for further commands and a cluster name.
All parameters specified in #PBS lines in the job script can also be specified on the command line (and then override those in the job script), e.g.,
$ qsub -L tasks=x:lprocs=y:swap=zgb example.sh
§ x : the number of tasks
§ y : the number of logical cores per task (maximum: 20 for hopper, 28 for leibniz until we enable hyperthreading)
§ z : the amount of virtual memory needed per task
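Since later commands need the job ID, it is convenient to capture it when submitting. The sketch below assumes the usual Torque form of the ID, a number followed by a dot and the cluster name; the ID in the fallback branch is made up so that the script also runs on a machine without qsub.

```shell
#!/bin/bash
# job_number JOBID: strip the cluster name, keeping only the leading number
job_number() {
  echo "${1%%.*}"
}

if command -v qsub >/dev/null 2>&1; then
  jobid=$(qsub jobscript.sh)      # on the cluster: really submit the job
else
  jobid="123456.leibniz"          # made-up job ID for a dry run elsewhere
fi

echo "full job ID: $jobid"
echo "job number:  $(job_number "$jobid")"
```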

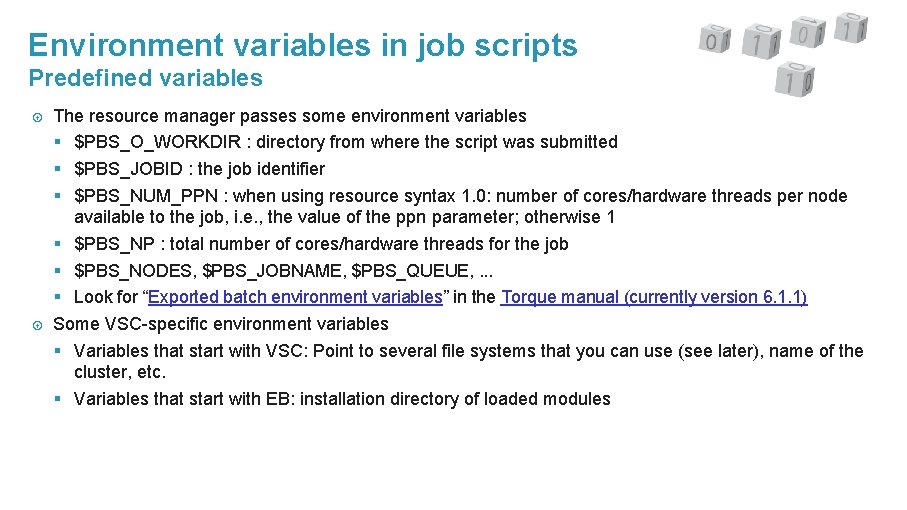

Environment variables in job scripts: Predefined variables
The resource manager passes some environment variables to the job
§ $PBS_O_WORKDIR : directory from where the script was submitted
§ $PBS_JOBID : the job identifier
§ $PBS_NUM_PPN : when using resource syntax 1.0: number of cores/hardware threads per node available to the job, i.e., the value of the ppn parameter; otherwise 1
§ $PBS_NP : total number of cores/hardware threads for the job
§ $PBS_NODES, $PBS_JOBNAME, $PBS_QUEUE, ...
§ Look for “Exported batch environment variables” in the Torque manual (currently version 6.1.1)
Some VSC-specific environment variables
§ Variables that start with VSC: point to several file systems that you can use (see later), name of the cluster, etc.
§ Variables that start with EB: installation directory of loaded modules
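A quick way to see what a job actually receives is to print these variables at the start of the job script. The function name show_pbs_env is made up for this sketch, and the list of variables is just the subset named on the slide.

```shell
#!/bin/bash
# show_pbs_env: print selected batch variables, marking those that are unset
show_pbs_env() {
  local name
  for name in PBS_O_WORKDIR PBS_JOBID PBS_JOBNAME PBS_QUEUE PBS_NUM_PPN PBS_NP; do
    # ${!name} is bash indirect expansion: the value of the variable called $name
    echo "${name}=${!name:-<unset>}"
  done
}

show_pbs_env

# List the VSC- and EB-prefixed variables, if there are any
env | grep -E '^(VSC|EB)' || echo "no VSC or EB variables set"
```

Outside a job every PBS variable is reported as unset, which is also a simple check that a script is really running under the resource manager.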

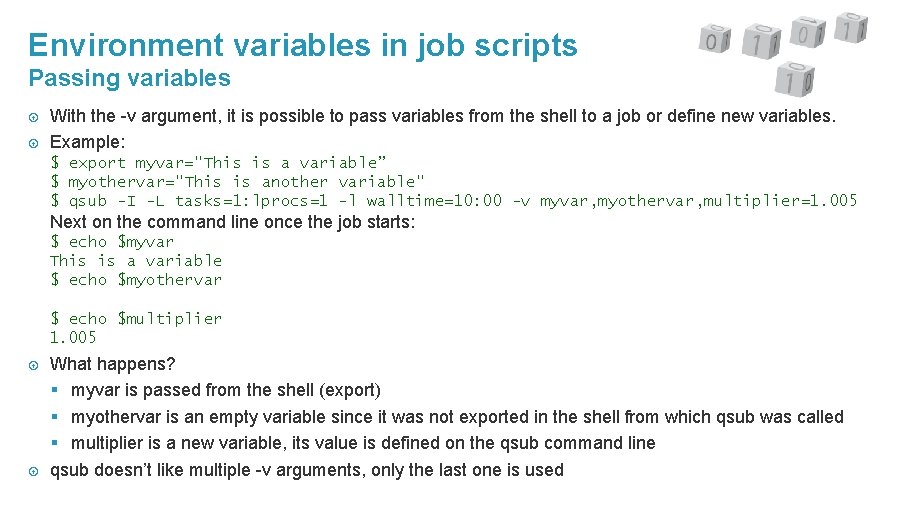

Environment variables in job scripts: Passing variables
With the -v argument, it is possible to pass variables from the shell to a job or define new variables.
Example:
$ export myvar="This is a variable"
$ myothervar="This is another variable"
$ qsub -I -L tasks=1:lprocs=1 -l walltime=10:00 -v myvar,myothervar,multiplier=1.005
Next, on the command line once the job starts:
$ echo $myvar
This is a variable
$ echo $myothervar

$ echo $multiplier
1.005
What happens?
§ myvar is passed from the shell (export)
§ myothervar is an empty variable since it was not exported in the shell from which qsub was called
§ multiplier is a new variable, its value is defined on the qsub command line
qsub doesn’t like multiple -v arguments: only the last one is used
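The difference between myvar and myothervar is ordinary shell behaviour and can be tried without the cluster. The sketch below is an analogy in plain bash, not qsub itself: a child shell stands in for the job, and the per-command assignment stands in for defining multiplier after -v.

```shell
#!/bin/bash
export myvar="This is a variable"        # exported: visible to child processes
myothervar="This is another variable"    # not exported: stays in this shell

# multiplier is defined for the child only, like multiplier=1.005 after -v
child_output=$(multiplier=1.005 bash -c \
  'echo "myvar=[$myvar] myothervar=[$myothervar] multiplier=[$multiplier]"')

echo "$child_output"
# prints: myvar=[This is a variable] myothervar=[] multiplier=[1.005]
```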

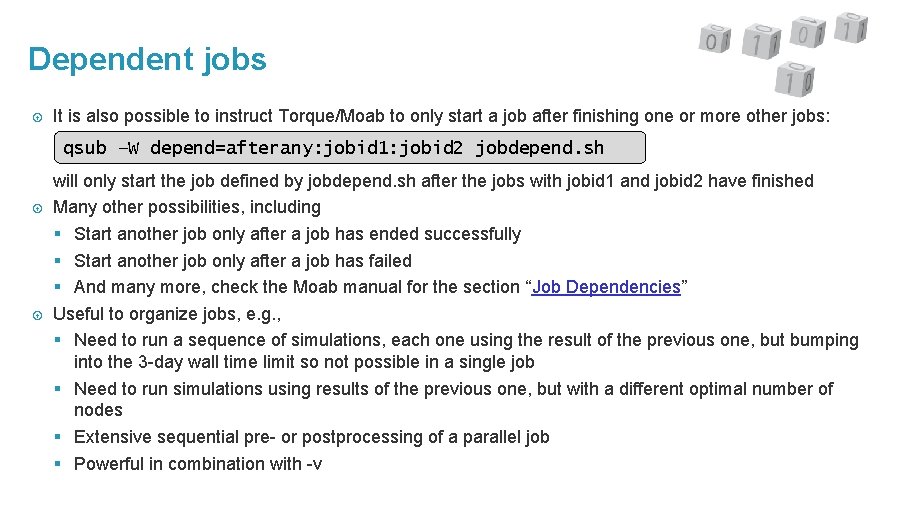

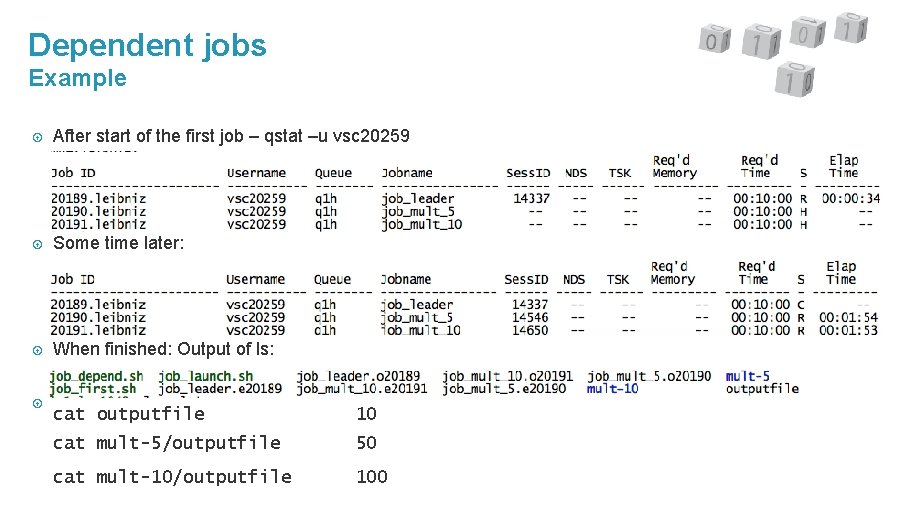

Dependent jobs
It is also possible to instruct Torque/Moab to only start a job after finishing one or more other jobs:
qsub -W depend=afterany:jobid1:jobid2 jobdepend.sh
will only start the job defined by jobdepend.sh after the jobs with jobid1 and jobid2 have finished
Many other possibilities, including
§ Start another job only after a job has ended successfully
§ Start another job only after a job has failed
§ And many more, check the Moab manual for the section “Job Dependencies”
Useful to organize jobs, e.g.,
§ Need to run a sequence of simulations, each one using the result of the previous one, but bumping into the 3-day wall time limit so not possible in a single job
§ Need to run simulations using results of the previous one, but with a different optimal number of nodes
§ Extensive sequential pre- or postprocessing of a parallel job
§ Powerful in combination with -v
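The “sequence of simulations” case can be sketched as a loop in which every job waits for the previous one. The function names and the job script simulate.sh are made up for this illustration; afterok is the dependency type that starts a job only after the previous one ended successfully.

```shell
#!/bin/bash
# depend_args PREVIOUS_JOBID: print the qsub dependency option,
# or nothing at all for the first job in the chain
depend_args() {
  if [ -n "$1" ]; then
    echo "-W depend=afterok:$1"
  fi
}

# submit_chain SCRIPT COUNT: submit SCRIPT COUNT times, each job waiting
# for the previous one; prints the job ID of the last job
submit_chain() {
  local script=$1 count=$2 prev="" step
  for step in $(seq 1 "$count"); do
    # $(depend_args ...) is left unquoted on purpose so it splits into two words
    prev=$(qsub -N "chain_$step" -v step="$step" $(depend_args "$prev") "$script") || return 1
  done
  echo "$prev"
}

# Usage on the cluster (simulate.sh is a hypothetical job script):
#   submit_chain simulate.sh 3
```

Passing the step number with -v lets one job script serve every link of the chain, which is the combination the last bullet above hints at.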

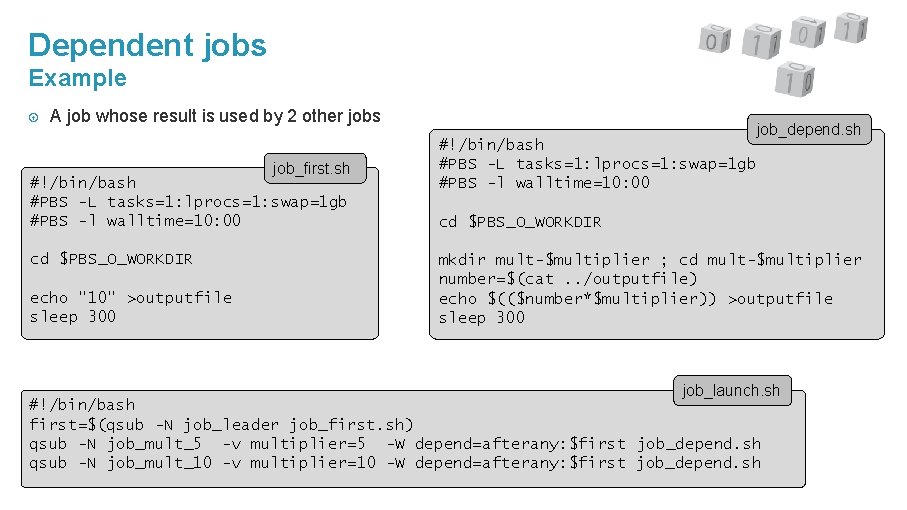

Dependent jobs: Example
A job whose result is used by 2 other jobs

job_first.sh:
    #!/bin/bash
    #PBS -L tasks=1:lprocs=1:swap=1gb
    #PBS -l walltime=10:00
    cd $PBS_O_WORKDIR
    echo "10" >outputfile
    sleep 300

job_depend.sh:
    #!/bin/bash
    #PBS -L tasks=1:lprocs=1:swap=1gb
    #PBS -l walltime=10:00
    cd $PBS_O_WORKDIR
    mkdir mult-$multiplier ; cd mult-$multiplier
    number=$(cat ../outputfile)
    echo $(($number*$multiplier)) >outputfile
    sleep 300

job_launch.sh:
    #!/bin/bash
    first=$(qsub -N job_leader job_first.sh)
    qsub -N job_mult_5 -v multiplier=5 -W depend=afterany:$first job_depend.sh
    qsub -N job_mult_10 -v multiplier=10 -W depend=afterany:$first job_depend.sh

Dependent jobs: Example
After start of the first job: qstat -u vsc20259
Some time later:
When finished:
Output of ls:
    $ cat outputfile
    10
    $ cat mult-5/outputfile
    50
    $ cat mult-10/outputfile
    100

Queue manager
    #!/bin/bash
    #PBS -o stdout.$PBS_JOBID
    #PBS -e stderr.$PBS_JOBID
    module load leibniz/supported
    module load MATLAB
    cd $PBS_O_WORKDIR
    matlab -r fibo
[Diagram labels: Submit Job, Query Cluster, Torque]

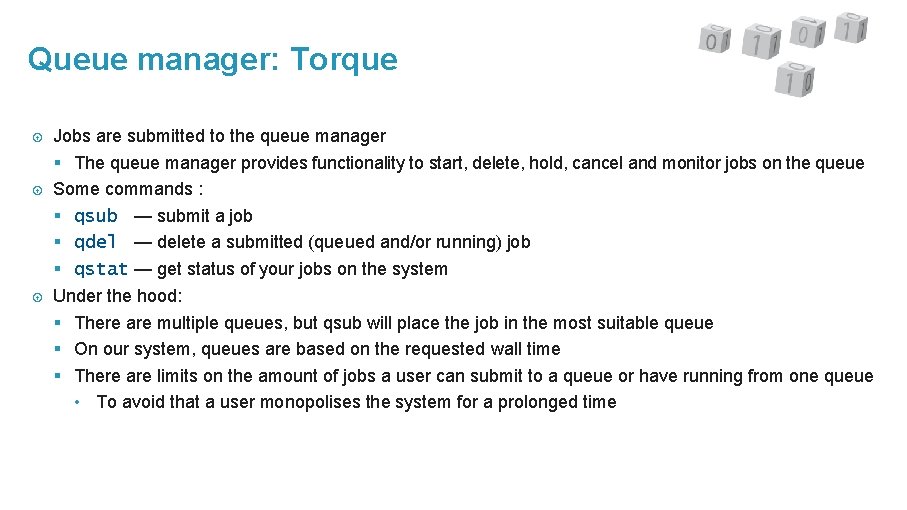

Queue manager: Torque
Jobs are submitted to the queue manager
§ The queue manager provides functionality to start, delete, hold, cancel and monitor jobs on the queue
Some commands:
§ qsub : submit a job
§ qdel : delete a submitted (queued and/or running) job
§ qstat : get status of your jobs on the system
Under the hood:
§ There are multiple queues, but qsub will place the job in the most suitable queue
§ On our system, queues are based on the requested wall time
§ There are limits on the number of jobs a user can submit to a queue or have running from one queue
  • To prevent a single user from monopolising the system for a prolonged time

[Diagram: the same job script as on the earlier “Queue manager” slide, with the labels Submit Job, Query Cluster, and Moab]

Job scheduling: Moab
Job scheduler
§ Decides which job will run next based on (continuously evolving) priorities
§ Works with the queue manager
§ Works with the resource manager to check available resources
Tries to optimise (long-term) resource use, keeping fairness in mind
§ The scheduler associates a priority number to each job
  • the highest priority job will (usually) be the next one to run, not first come, first served
  • jobs with negative priority will (temporarily) be blocked
§ backfill: allows some jobs to be run “out of order” with respect to the assigned priorities provided they do not delay the highest priority jobs in the queue
Lots of other features: QoS, reservations, ...

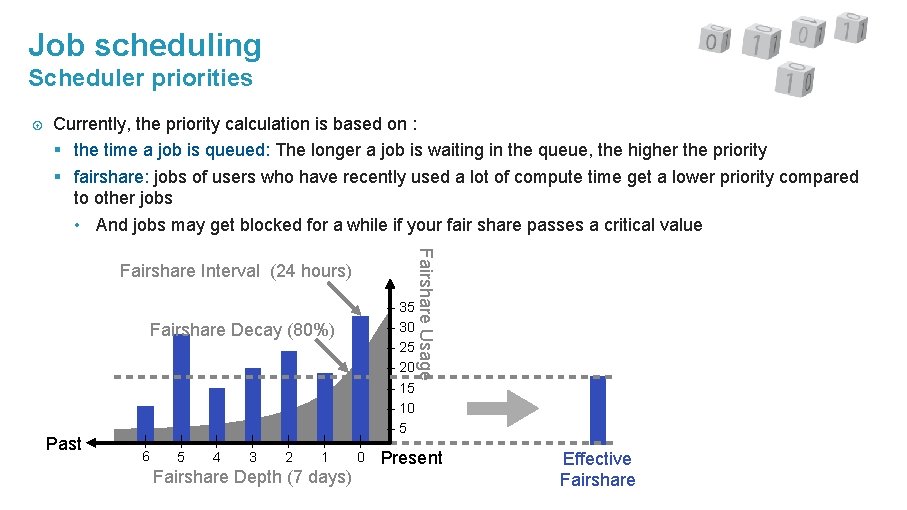

Job scheduling: Scheduler priorities
Currently, the priority calculation is based on:
§ the time a job is queued: the longer a job is waiting in the queue, the higher the priority
§ fairshare: jobs of users who have recently used a lot of compute time get a lower priority compared to other jobs
  • And jobs may get blocked for a while if your fair share passes a critical value
[Figure: effective fairshare built from the fairshare usage in consecutive fairshare intervals of 24 hours, numbered from 0 (present) to 6 (past); fairshare depth 7 days, fairshare decay 80%]
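The effect of the decay can be illustrated with a small calculation. This is only a sketch: it assumes that each older interval is weighted by one more factor of the decay (0.8 here), and the usage numbers are invented; the real scheduler configuration may differ in detail.

```shell
#!/bin/bash
# effective_fairshare DECAY USAGE_0 USAGE_1 ...: decay-weighted sum of usage,
# where USAGE_0 is the current interval and later arguments are older intervals
effective_fairshare() {
  local decay=$1
  shift
  printf '%s\n' "$@" | LC_ALL=C awk -v decay="$decay" '
    BEGIN { weight = 1 }
    { total += $1 * weight; weight *= decay }
    END { printf "%.4f\n", total }'
}

# 10 units of compute time used today count in full ...
effective_fairshare 0.8 10 0 0 0 0 0 0    # prints 10.0000
# ... the same 10 units used six intervals ago count for much less
effective_fairshare 0.8 0 0 0 0 0 0 10    # prints 2.6214
```

Under this assumption, heavy use from a week ago still weighs on your priority, but only at about a quarter of its original value.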

Getting information from the scheduler
showq:
§ Displays information about the queue as the scheduler sees it (i.e., taking into account the priorities assigned by the scheduler)
§ 3 categories: starting/running jobs, eligible jobs and blocked jobs
§ You can only see your own jobs!
checkjob: detailed status report for the specified job
showstart: show an estimate of when the job can/will start
§ This estimate is notoriously inaccurate!
§ A job can start earlier than expected because other jobs take less time than requested
§ But it can also start later if a higher-priority job enters the queue
showbf: shows the resources that are immediately available to you (for backfill) should you submit a job that matches those resources

Information shown by showq
active jobs
§ are running or starting and have resources assigned to them
eligible jobs
§ are queued and are considered eligible for scheduling and backfilling
blocked jobs
§ jobs that are ineligible to be run or queued
§ job states in the “STATE” column:
  • idle : job violates a fairness policy
  • userhold or systemhold : user or administrative hold
  • deferred : a temporary hold when unable to start after a specified number of attempts
  • batchhold : requested resources are not available, or repeated failure to start the job
  • notqueued : e.g., a dependency for a job is not yet fulfilled when using job chains

[Diagram: the same job script as on the earlier “Queue manager” slide, with the labels Torque, Submit Job, and Query Cluster]

Resource manager
The resource manager manages the cluster resources (e.g., CPUs, memory, ...)
This includes the actual job handling at the compute nodes
§ Start, hold, cancel and monitor jobs on the compute nodes
§ Should enforce resource limits (e.g., available memory for your job, ...)
§ Logging and accounting of used resources
Consists of a server component and a small daemon process that runs on each compute node
2 very technical but sometimes useful resource manager commands:
§ pbsnodes : returns information on the configuration of all nodes
§ tracejob : looks back into the log files for information about the job and can be used to get more information about job failures

Working interactively
Tutorial Chapter 5 and more

Interactive jobs
Starting an interactive job:
§ qsub -I : open a shell on a compute node
  • And add the other resource parameters (wall time, number of processors)
§ qsub -I -X : open a shell with X support
  • you must log in to the cluster with X forwarding enabled (ssh -X) or start from a VNC session
  • works only when you are requesting a single node
When to start an interactive job
§ to test and debug your code
§ to use (graphical) input/output at runtime
§ only for short duration jobs
§ to compile on a specific architecture
We (and your fellow users) do not appreciate idle nodes waiting for interactive commands while other jobs are waiting to get compute time!

GUI programs
The cluster is not meant to be your primary GUI working platform. However, sometimes running GUI programs is necessary:
§ Licensed program with a GUI for setting parameters of a run that cannot be run locally due to licensing restrictions (e.g., NUMECA FINE-Marine)
§ Visualisations that need to be done while a job runs or of data that is too large to transfer to a local machine
Leibniz has one visualisation node with a GPU and a VNC & VirtualGL-based setup
§ VNC is the software to create a remote desktop.
§ Simple desktop environment with the xfce desktop/window manager.
§ VirtualGL: to redirect OpenGL calls to the graphics accelerator and send the resulting image to the VNC software. Start OpenGL programs via vglrun.
The same software stack, minus the OpenGL libraries, is available on all login nodes of hopper and leibniz.
You need a VNC client on your PC. TurboVNC gives good results.

GUI programs
How does VNC work?
§ Basic idea: don’t send graphics commands over the network, but render the image on the cluster and send images.
§ Step 1: Load the vsc-vnc module and start the (Turbo)VNC server with the vnc-xfce command. You can then log out again.
§ Step 2: Create an SSH tunnel to the port given when starting the server.
§ Step 3: Connect to the tunnel with your VNC client.
§ Step 4: When you’ve finished, don’t forget to kill the VNC server. Disconnecting will not kill the server!
  • We’ve configured the desktop in such a way that logging out on the desktop will also kill the VNC server, at least if you started through the vnc-* commands shown in the help of the module (vnc-xfce).
Full instructions: page “Remote visualization @ UAntwerp” on the VSC web site (https://www.vscentrum.be/infrastructure/hardware-ua/visualization)
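Step 2 can be sketched as follows. The user name, host name, and port are placeholders, and forwarding to localhost assumes that the VNC server runs on the node you connect to; take the real values from the output of the VNC start command and from the full instructions on the VSC web site.

```shell
#!/bin/bash
# tunnel_command USER HOST PORT: print an ssh command that forwards local PORT
# to the same PORT on the remote machine (all three values are placeholders)
tunnel_command() {
  local user=$1 host=$2 port=$3
  echo "ssh -L ${port}:localhost:${port} ${user}@${host}"
}

# Hypothetical values; run the printed command on your own PC
tunnel_command vsc2xxyy login.example.org 5901
```

With the tunnel open, the VNC client in step 3 connects to localhost on the forwarded port rather than to the cluster directly.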

GUI programs
Using OpenGL:
§ The VNC server currently emulates an X server without the GLX extension, so OpenGL programs will not run right away.
§ On the visualisation node: start OpenGL programs through the command vglrun
  • vglrun will redirect OpenGL commands from the application to the GPU on the node
  • and transfer the rendered 3D image to the VNC server
§ E.g.: vglrun glxgears will run a sample OpenGL program
Our default desktop for VNC is xfce. GNOME is not supported due to compatibility problems.

Interactive jobs
Do the exercises in Chapter 5 “Interactive jobs”
§ Files in examples/Running-interactive-jobs
§ Text example in § 5.2
§ GUI program in § 5.3 (assuming you have an X server installed)

Files on the cluster
Tutorial Chapter 6

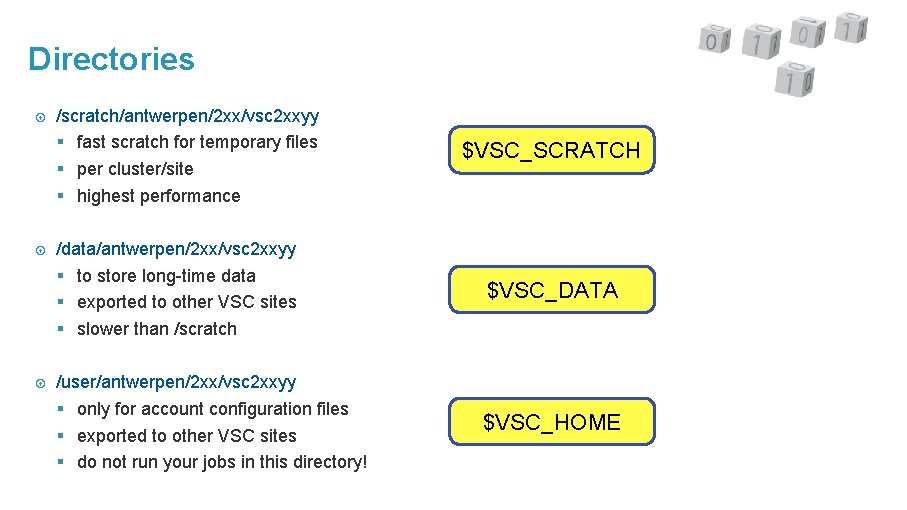

Directories
$VSC_SCRATCH : /scratch/antwerpen/2xx/vsc2xxyy
§ fast scratch for temporary files
§ per cluster/site
§ highest performance
$VSC_DATA : /data/antwerpen/2xx/vsc2xxyy
§ to store long-term data
§ exported to other VSC sites
§ slower than /scratch
$VSC_HOME : /user/antwerpen/2xx/vsc2xxyy
§ only for account configuration files
§ exported to other VSC sites
§ do not run your jobs in this directory!

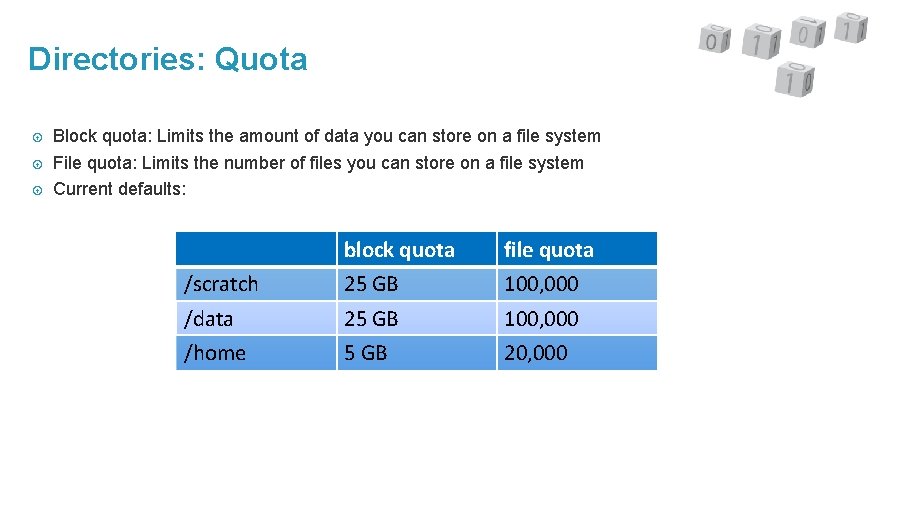

Directories: Quota
Block quota: limits the amount of data you can store on a file system
File quota: limits the number of files you can store on a file system
Current defaults:

| File system | Block quota | File quota |
|---|---|---|
| /scratch | 25 GB | 100,000 |
| /data | 25 GB | 100,000 |
| /home | 5 GB | 20,000 |
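To get a rough idea of how close a directory tree is to these limits, the standard find and du tools are enough. This is a generic sketch, not the cluster’s own quota report: the function names are made up, and the file count ignores directories, which a file quota may also count.

```shell
#!/bin/bash
# count_files DIR: number of regular files below DIR
count_files() {
  find "$1" -type f | wc -l | tr -d ' '
}

# usage_kb DIR: disk usage of DIR in kilobytes
usage_kb() {
  du -sk "$1" | awk '{print $1}'
}

# Report on the three VSC directories; variables that are unset (for example
# outside the cluster) are skipped
for var in VSC_HOME VSC_DATA VSC_SCRATCH; do
  dir=${!var}
  [ -d "$dir" ] || continue
  echo "$var: $(count_files "$dir") files, $(usage_kb "$dir") kB"
done
```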

Best practices for file storage
The cluster is not for long-term file storage. You have to move your results back to your laptop or to a server in your department.
§ There is a backup for the home and data file systems. We can only continue to offer this if these file systems are used in the proper way, i.e., not for very volatile data
§ Old data on scratch can be deleted if scratch fills up (so far this has never happened at UAntwerp, but it is done regularly on some other VSC clusters)
Remember that the cluster is optimised for parallel access to big files, not for using tons of small files (e.g., one per MPI process).
§ If you run out of block quota, you’ll get more without too much motivation.
§ If you run out of file quota, you’ll have a lot of explaining to do before getting more.
Text files are good for summary output while your job is running, or for data that you’ll read into a spreadsheet, but not for storing 1000 x 1000 matrices. Use binary files for that!

Exercises
Do the exercises in Chapter 6 “Jobs with input/output”.
§ Files in examples/Running-jobs-with-input-output-data
§ § 6.3, Writing output files: optional
§ § 6.4, Reading an input file: homework, not in this session (and most useful if you understand Python scripts)

Multi-core jobs
Tutorial Chapter 7

Why parallel computing?
1. Faster time to solution
§ distributing code over N cores
§ hope for a speedup by a factor of N
2. Larger problem size
§ distributing your code over N nodes
§ increase the available memory by a factor N
§ hope to tackle problems which are N times bigger
3. In practice
§ gain limited due to communication and memory overhead and sequential fractions in the code
§ optimal number of nodes is problem-dependent
§ but no escape possible as computers don’t really become faster for serial code…
Parallel computing is here to stay.

Starting a shared memory job
Shared memory programs start like any other program, but you may need to tell the program how many threads it can use.
§ Depends on the program, and the autodetect feature of a program usually only works when the program gets the whole node.
  • e.g., Matlab: use maxNumCompThreads(N)
§ Many OpenMP programs use the environment variable OMP_NUM_THREADS: export OMP_NUM_THREADS=7 will tell the program to use 7 threads.
§ Check the manual of the program you use!
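A job script for such a program could look like the sketch below. The executable omp_program and the memory request are made up; the point is only that the thread count is set by hand to match the number of logical cores requested with lprocs.

```shell
#!/bin/bash
#PBS -L tasks=1:lprocs=7:swap=4gb
#PBS -l walltime=10:00

# The ":-." fallback only matters when the script is run outside a job
cd "${PBS_O_WORKDIR:-.}" || exit 1

# Keep this equal to lprocs above; the two are not linked automatically
export OMP_NUM_THREADS=7

# ./omp_program is a hypothetical OpenMP executable
if [ -x ./omp_program ]; then
  ./omp_program
else
  echo "omp_program not found; it would run with $OMP_NUM_THREADS threads"
fi
```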

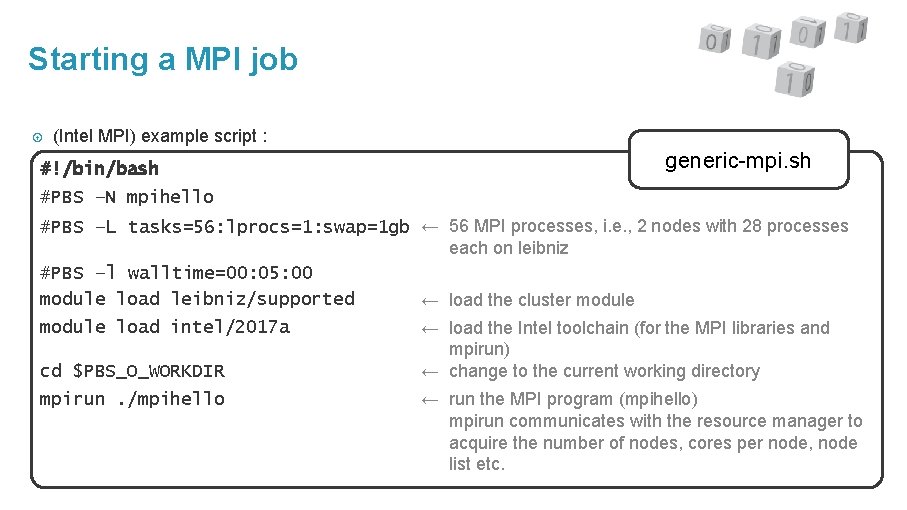

Starting an MPI job (Intel MPI)
Example script (generic-mpi.sh):
    #!/bin/bash
    #PBS -N mpihello
    # 56 MPI processes, i.e., 2 nodes with 28 processes each on leibniz
    #PBS -L tasks=56:lprocs=1:swap=1gb
    #PBS -l walltime=00:05:00
    # load the cluster module
    module load leibniz/supported
    # load the Intel toolchain (for the MPI libraries and mpirun)
    module load intel/2017a
    # change to the directory from which the job was submitted
    cd $PBS_O_WORKDIR
    # run the MPI program (mpihello)
    mpirun ./mpihello
mpirun communicates with the resource manager to acquire the number of nodes, cores per node, node list, etc.

Starting an MPI job
The module vsc-mympirun contains an alternative mpirun command (mympirun) that makes it easier to start hybrid programs
§ Command line option --hybrid=X to specify the number of processes per node
§ Check mympirun --help
§ Not the ideal solution but the best we have right now since Torque doesn’t communicate enough information to the MPI starter.
  • It may be best to set tasks to the number of nodes and lprocs to the number of cores you want, just to be sure that you get full nodes

Exercises
Do the exercises in Chapter 7 “Multi-core jobs”
§ Scripts in examples/Multi-core-jobs-Parallel-Computing
§ § 7.2, Example pthreads program (shared memory)
§ § 7.3, Example OpenMP program (shared memory): only look at the first example (omp1.c)
§ § 7.4, Example MPI program (distributed memory)

Policies
Tutorial Chapter 9

Some site policies
Our policies on the cluster:
§ Single user per node policy
§ Priority-based scheduling (so not first come, first served). We reserve the freedom to change the parameters of the priority computation at will to ensure the cluster is used well
§ Fairshare mechanism: make sure one user cannot monopolise the cluster
§ Crediting system: dormant, not enforced
Implicit user agreement:
§ The cluster is valuable research equipment
§ Do not use it for other purposes than your research for the university
  • No SETI@home and similar initiatives
  • Not for private use
§ And you have to acknowledge the VSC in your publications (see user portal on the VSC web site)
§ Don’t share your account

Credits
At UAntwerp we monitor cluster use and send periodic reports to group leaders.
On the Tier-1 system at KU Leuven, you get a compute time allocation (number of node days). This is enforced through project credits (Moab Accounting Manager).
§ A free test ride (motivation required)
§ Requesting compute time through a proposal
At KU Leuven Tier-2, you need compute credits, which are enforced as on the Tier-1.
§ Credits can be bought via the KU Leuven Charge policy (subject to change), based upon:
  • fixed start-up cost
  • used resources (number and type of nodes)
  • actual duration (used wall time)

Advanced topics
Part B

Agenda part B
10. Multi-job submission
11. Compiling
12. Program examples
13. Good practices

Multi-job submission
Tutorial Chapter 10

Worker framework
Many users run many serial jobs, e.g., for a parameter exploration
Problem: submitting many short jobs to the queueing system puts quite a burden on the scheduler, so try to avoid it
Solution: Worker framework
§ parameter sweep : many small jobs and a large number of parameter variations. Parameters are passed to the program through command line arguments.
§ job arrays : many jobs with different input/output files
Alternatives
§ Torque array jobs
§ Torque pbsdsh command
§ GNU parallel (module name: parallel)
  • We may not be able to support GNU parallel in the future for jobs that span multiple nodes

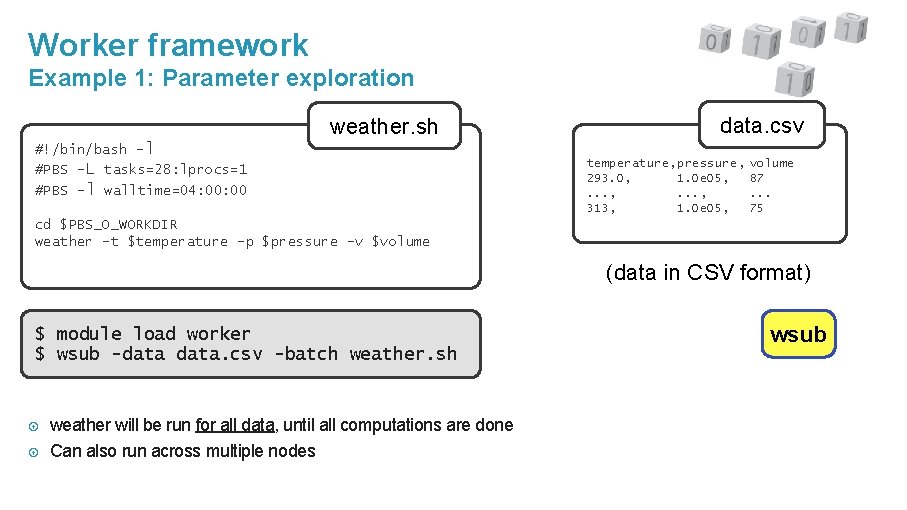

Worker framework
Example 1: Parameter exploration

weather.sh:
    #!/bin/bash -l
    #PBS -L tasks=28:lprocs=1
    #PBS -l walltime=04:00
    cd $PBS_O_WORKDIR
    weather -t $temperature -p $pressure -v $volume

data.csv (data in CSV format):
    temperature, pressure, volume
    293.0, 1.0e05, 87
    ..., ..., ...
    313, 1.0e05, 75

Submit with wsub:
    $ module load worker
    $ wsub -data data.csv -batch weather.sh

weather will be run for all data, until all computations are done. It can also run across multiple nodes.
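What the parameter sweep does to the job script can be imitated in plain bash: every data row becomes one run, with one variable per CSV column. The sketch below is a serial emulation for understanding only, not how Worker is implemented; run_sweep is a made-up name, and the CSV is assumed to have no spaces around the commas.

```shell
#!/bin/bash
# run_sweep CSV_FILE COMMAND...: run COMMAND once per data row, with one
# environment variable per CSV column, named after the header row
run_sweep() {
  local csv=$1
  shift
  local header line i
  local -a names values
  {
    IFS= read -r header
    IFS=',' read -r -a names <<< "$header"
    while IFS= read -r line || [ -n "$line" ]; do
      [ -z "$line" ] && continue          # skip empty lines
      IFS=',' read -r -a values <<< "$line"
      (
        # Subshell: the exported variables do not leak into the next run
        for i in "${!names[@]}"; do
          export "${names[$i]}=${values[$i]}"
        done
        "$@" < /dev/null                  # keep COMMAND from eating the CSV
      )
    done
  } < "$csv"
}

# Demonstration with two rows; echo stands in for the weather program
csv=$(mktemp)
printf '%s\n' 'temperature,pressure,volume' '293.0,1.0e05,87' '313,1.0e05,75' > "$csv"
run_sweep "$csv" sh -c 'echo "weather -t $temperature -p $pressure -v $volume"'
rm -f "$csv"
```

Worker runs the rows in parallel over the cores of the job instead of one after another, which is where the benefit comes from.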

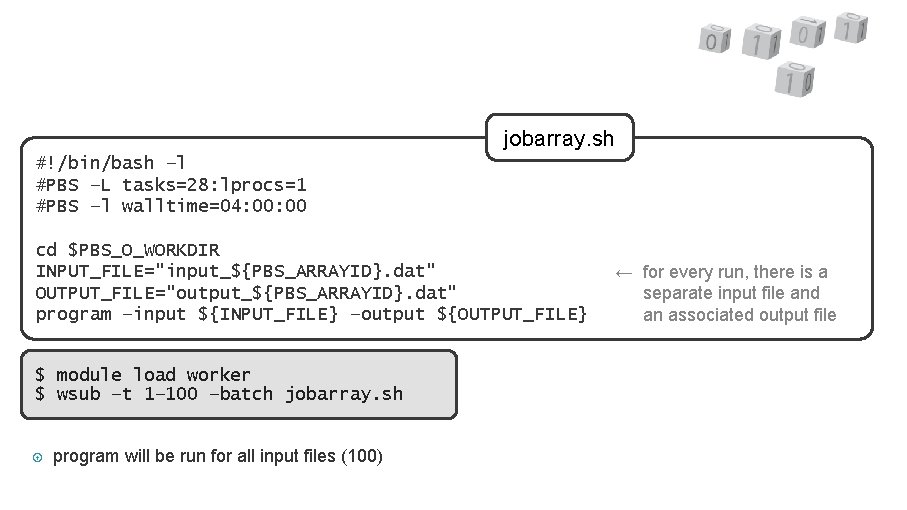

jobarray.sh:
    #!/bin/bash -l
    #PBS -L tasks=28:lprocs=1
    #PBS -l walltime=04:00
    cd $PBS_O_WORKDIR
    # for every run, there is a separate input file and an associated output file
    INPUT_FILE="input_${PBS_ARRAYID}.dat"
    OUTPUT_FILE="output_${PBS_ARRAYID}.dat"
    program -input ${INPUT_FILE} -output ${OUTPUT_FILE}

Submit with wsub:
    $ module load worker
    $ wsub -t 1-100 -batch jobarray.sh

program will be run for all input files (100).
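Before submitting a job array it is worth checking that every input file really exists, since a missing file only shows up as a failed run later. A minimal sketch, using the input_<id>.dat naming of the script above; the function name missing_inputs is made up.

```shell
#!/bin/bash
# missing_inputs FIRST LAST: print the name of every input_<id>.dat file in
# the current directory that does not exist, for id = FIRST..LAST
missing_inputs() {
  local first=$1 last=$2 id
  for id in $(seq "$first" "$last"); do
    [ -f "input_${id}.dat" ] || echo "input_${id}.dat"
  done
}

# Count the gaps for the range used with wsub -t 1-100
echo "missing input files: $(missing_inputs 1 100 | wc -l | tr -d ' ')"
```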

Exercises
Do the exercises in Chapter 10 “Multi-job submission”
§ Files in the subdirectories of examples/Multi-job-submission
§ Have a look at the parameter sweep example “weather” from § 10.1 (subdirectory par_sweep)
§ Have a look at the job array example from § 10.2 (subdirectory job_array)
§ (Time left: have a look at the map-reduce example from § 10.3 in subdirectory map_reduce)

Best practices
Tutorial Chapter 13

Debug & test
Before starting you should always check:
§ are there any errors in the script?
§ are the required modules loaded?
§ is the correct executable used?
Check your jobs at runtime
§ log in on the node and use, e.g., “top”, “htop”, “vmstat”, ...
§ monitor command (see User Portal information on running jobs)
§ showq -r (data not always 100% reliable)
§ alternatively: run an interactive job (“qsub -I”) for the first run of a set of similar runs
If you will be doing a lot of runs with a package, try to benchmark the software for
§ scaling issues when using MPI
§ I/O issues
  • If you see that the CPU is idle most of the time, that might be the problem

Hints & tips
The job logs on to the first assigned compute node and starts executing the commands in the job script
§ it starts in your home directory ($VSC_HOME), so go to the submission directory with “cd $PBS_O_WORKDIR”
§ don’t forget to load the software with “module load”
Why is my job not running?
§ use “checkjob”
§ commands might time out when the scheduler is overloaded
Submit your job and wait (be patient) …
Using $VSC_SCRATCH_NODE (/tmp on the compute node) might be beneficial for jobs with many small files.
§ But that scratch space is node-specific and hence will disappear when your job ends
§ So copy what you need to your data directory or to the shared scratch
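The node-local scratch advice can be sketched as a three-step pattern: work in a private directory on the node, pack the results, and copy one archive to shared storage before the job ends. The workload below is a stand-in that writes three small files, and the fallbacks to /tmp and the current directory are only there so the sketch runs outside a job.

```shell
#!/bin/bash
dest=${PBS_O_WORKDIR:-$(pwd)}                  # where the results must end up
scratch_root=${VSC_SCRATCH_NODE:-/tmp}         # node-local scratch space
work=$(mktemp -d "$scratch_root/job.XXXXXX")   # private directory on the node

# Stand-in for a program that writes many small files (hypothetical workload)
for i in 1 2 3; do
  echo "result $i" > "$work/part_$i.txt"
done

# Pack the small files into ONE archive on shared storage, then clean up
tar -czf "$dest/results.tar.gz" -C "$work" .
rm -rf "$work"

echo "results saved in $dest/results.tar.gz"
```

Storing one archive instead of many small files also keeps the file quota on the shared file systems under control.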

Warnings
Avoid submitting many small jobs by grouping them
§ using a job array
§ or using the Worker framework
Runtime is limited by the maximum wall time of the queues
§ for longer wall time, use checkpointing
Requesting many processors could imply long waiting times (though we’re still trying to favour parallel jobs)
What a cluster is not: a computer that will automatically run the code of your (PC) application much faster or for much bigger problems

User support

User support
Try to get a bit self-organized
§ limited resources for user support
§ best effort, no code fixing
§ contact hpc@uantwerpen.be, and be as precise as possible
VSC documentation: vscentrum.be/en/user-portal
External documentation: docs.adaptivecomputing.com
§ manuals of Torque and Moab: we’re currently at version 6.1.1 of Torque and version 9.1.1 of Moab
§ mailing-lists
VSC: www.vscentrum.be
CalcUA: uantwerpen.be/hpc

User support
Mailing list for announcements: calcua-announce@sympa.uantwerpen.be (for official announcements and communications)
Contacts:
§ e-mail: hpc@uantwerpen.be
§ phone: 3860 (Stefan), 3855 (Franky), 3852 (Kurt), 3879 (Bert)
§ office: G.309-311 (CMI)
Don’t be afraid to ask, but being as precise as possible when specifying your problem definitely helps in getting a quick answer.
