Dimensions of Neural Networks
Ali Akbar Darabi, Ghassem Mirroshandel, Hootan Nokhost

Outline
- Motivation
- Neural Networks Power
- Kolmogorov Theory
- Cascade Correlation

Motivation
- Suppose you are an engineer familiar with artificial neural networks (ANNs).
- You encounter a problem that cannot be solved with common analytical approaches.
- You decide to use an ANN.

But…
- Some questions arise:
  - Is this problem solvable using an ANN?
  - How many neurons are needed?
  - How many layers are needed?
  - …

Two Approaches
- Fundamental analyses
  - Kolmogorov theory
- Adaptive networks
  - Cascade correlation


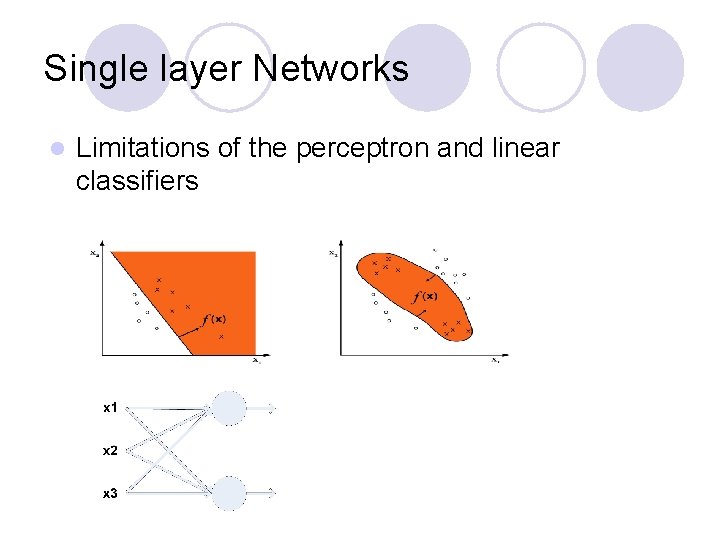

Single-Layer Networks
- Limitations of the perceptron and linear classifiers

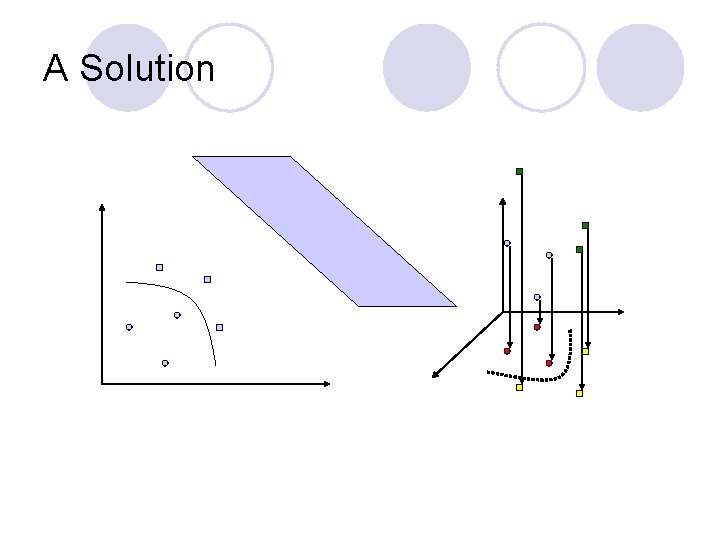

A Solution

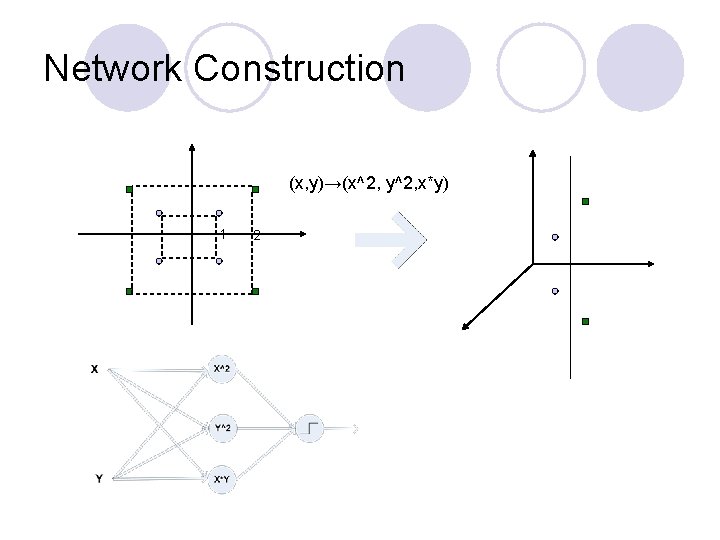

Network Construction
- Map the inputs to a higher-dimensional feature space: (x, y) → (x², y², xy)

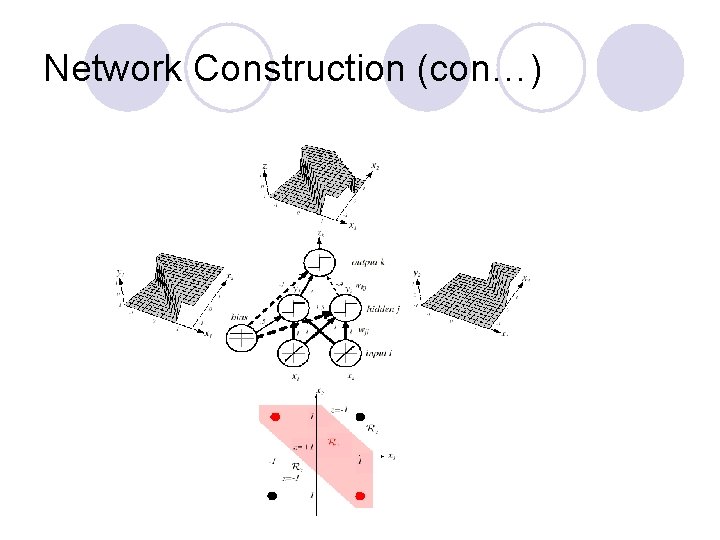
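The effect of this feature map can be checked directly on XOR. The sketch below is an illustration, not the slides' own network: it assumes 0/1 inputs and uses hand-picked weights (1, 1, -2) with bias -0.5; the names `features` and `classify` are hypothetical. For binary inputs x² = x and y² = y, so x² + y² - 2xy equals XOR, and a single linear threshold unit in the mapped space separates the classes, whereas no single line in the original (x, y) plane can.

```python
# Sketch: one linear threshold unit acting on the mapped features (x^2, y^2, x*y).
# Weights (1, 1, -2) and bias -0.5 are hand-picked for illustration (assumption).

def features(x, y):
    """Feature map from the slide: (x, y) -> (x^2, y^2, x*y)."""
    return (x * x, y * y, x * y)


def classify(x, y, weights=(1.0, 1.0, -2.0), bias=-0.5):
    """Linear threshold unit in feature space; returns 1 or 0."""
    s = sum(w * f for w, f in zip(weights, features(x, y))) + bias
    return 1 if s > 0 else 0


xor_table = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}
for (x, y), target in xor_table.items():
    print((x, y), "->", classify(x, y), "target", target)
```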

Network Construction (cont.)

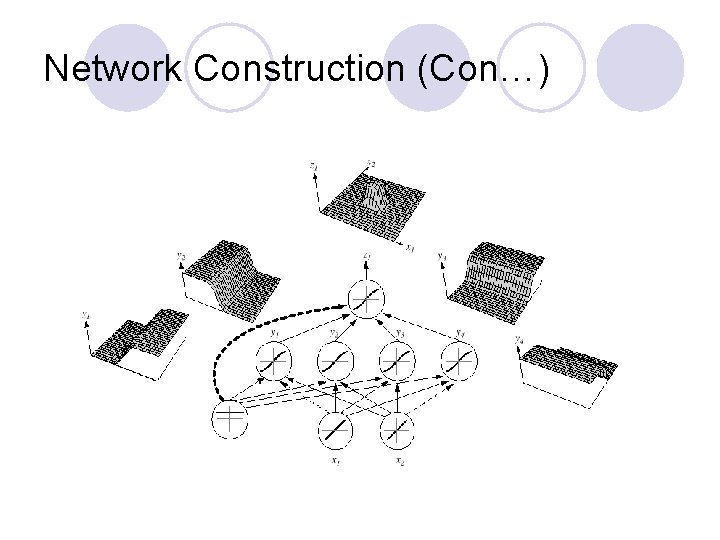

Network Construction (cont.)

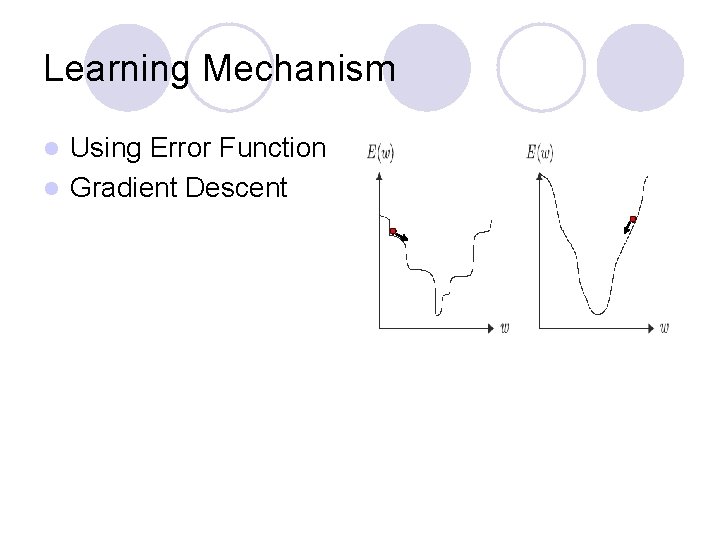

Learning Mechanism
- Using an error function
- Gradient descent
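A minimal sketch of learning by gradient descent on an error function, assuming a single linear output unit on the features (1, x², y², xy), the squared error E = ½ Σ_p (t_p - o_p)², batch updates, a learning rate of 0.1 and 2000 epochs; all of these, and the names `phi` and `train`, are illustrative choices rather than details from the slides. On the XOR data the weights converge to roughly (0, 1, 1, -2).

```python
def phi(x, y):
    """Bias term plus the mapped features (x^2, y^2, x*y)."""
    return [1.0, x * x, y * y, x * y]


def train(data, lr=0.1, epochs=2000):
    """Batch gradient descent on E = 1/2 * sum_p (t_p - w . phi_p)^2 for a linear unit."""
    w = [0.0] * 4
    for _ in range(epochs):
        grad = [0.0] * 4
        for (x, y), t in data:
            f = phi(x, y)
            err = t - sum(wi * fi for wi, fi in zip(w, f))  # t_p - o_p
            for i in range(4):
                grad[i] -= err * f[i]  # dE/dw_i = -sum_p (t_p - o_p) * phi_i
        w = [wi - lr * gi for wi, gi in zip(w, grad)]  # step against the gradient
    return w


data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
w = train(data)
print([round(v, 3) for v in w])  # approximately [0, 1, 1, -2]
```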


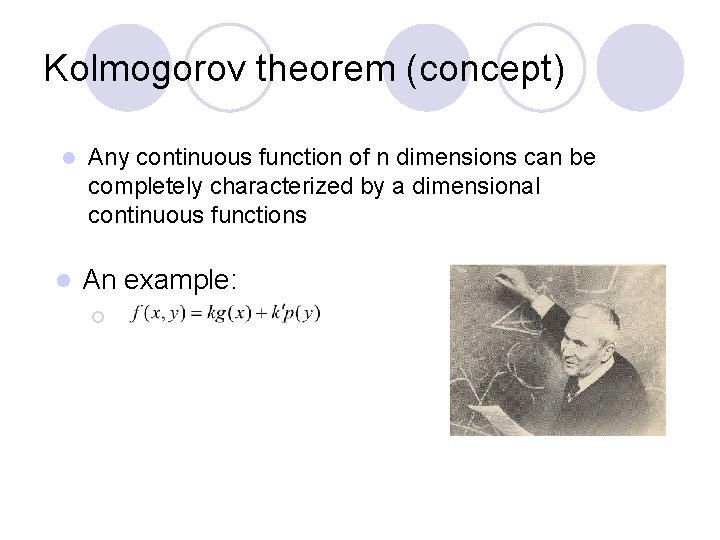

Kolmogorov Theorem (Concept)
- Any continuous function of n dimensions can be completely characterized by one-dimensional continuous functions.
- An example:

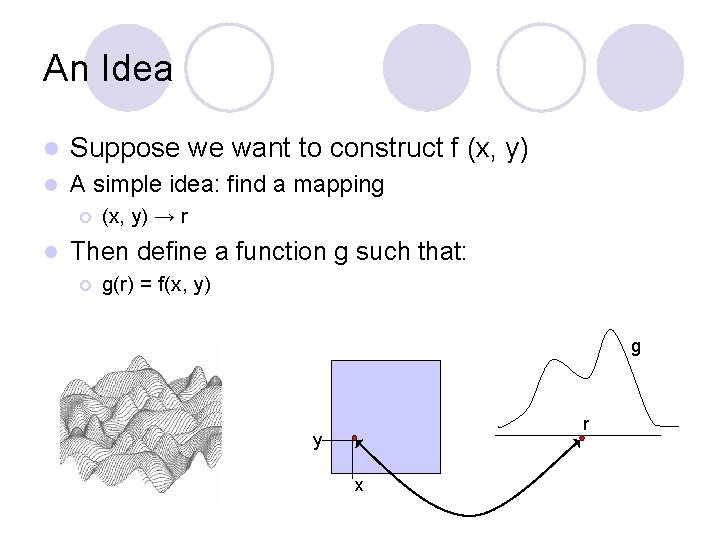
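For reference, the standard statement of the Kolmogorov (Kolmogorov-Arnold) representation theorem is given below; it is quoted from the general literature, not transcribed from the slide, whose own formula is in the figure. Every continuous f on the n-dimensional unit cube can be written with 2n + 1 continuous one-variable outer functions Φ_q and n(2n + 1) continuous one-variable inner functions ψ_{p,q}; the inner functions can be chosen independently of f.

```latex
f(x_1, \dots, x_n) = \sum_{q=0}^{2n} \Phi_q\!\left( \sum_{p=1}^{n} \psi_{p,q}(x_p) \right),
\qquad f \in C\big([0,1]^n\big)
```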

An Idea
- Suppose we want to construct f(x, y).
- A simple idea: find a mapping
  - (x, y) → r
- Then define a function g such that:
  - g(r) = f(x, y)

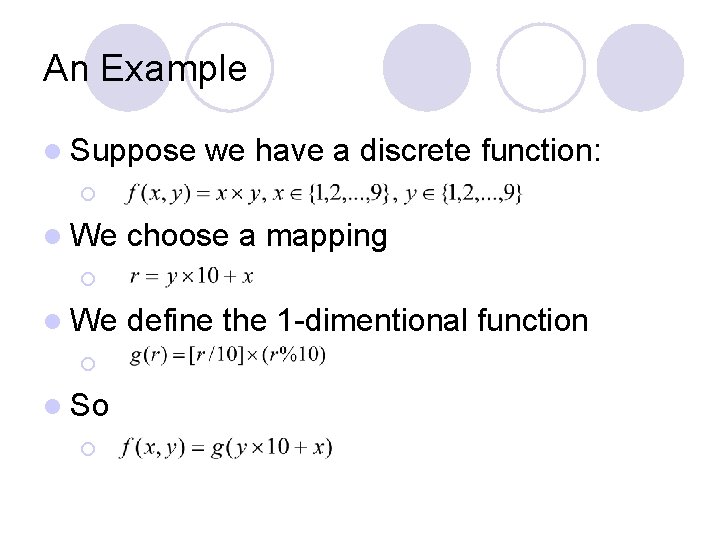

An Example
- Suppose we have a discrete function:
- We choose a mapping:
- We define the one-dimensional function:
- So:

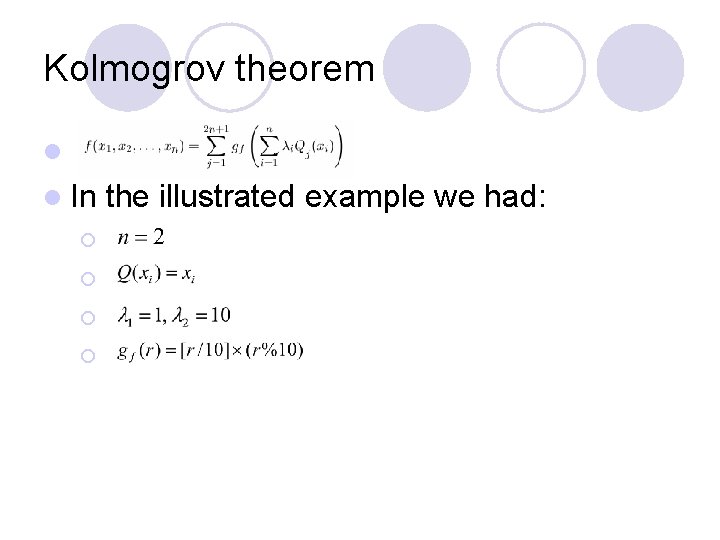
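The discrete example can be sketched in a few lines. The function values below are hypothetical (not the slide's data), and the mapping r = x + N·y is one possible one-to-one choice on an integer grid. Note that this trick relies on the domain being discrete: no continuous one-to-one map from the plane to the line exists, which is why the theorem itself is phrased with sums of one-dimensional functions.

```python
# Hypothetical discrete function on a 3x3 grid of integer points (not the slide's data).
f = {(x, y): x * x + 2 * y for x in range(3) for y in range(3)}

N = 3  # grid width along x; any N greater than the largest x keeps r one-to-one


def r(x, y):
    """One-to-one mapping (x, y) -> r for integer grid points."""
    return x + N * y


# One-dimensional function g defined point by point so that g(r(x, y)) = f(x, y).
g = {r(x, y): value for (x, y), value in f.items()}

print(all(g[r(x, y)] == f[(x, y)] for (x, y) in f))  # True
```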

Kolmogorov Theorem
- In the illustrated example we had:

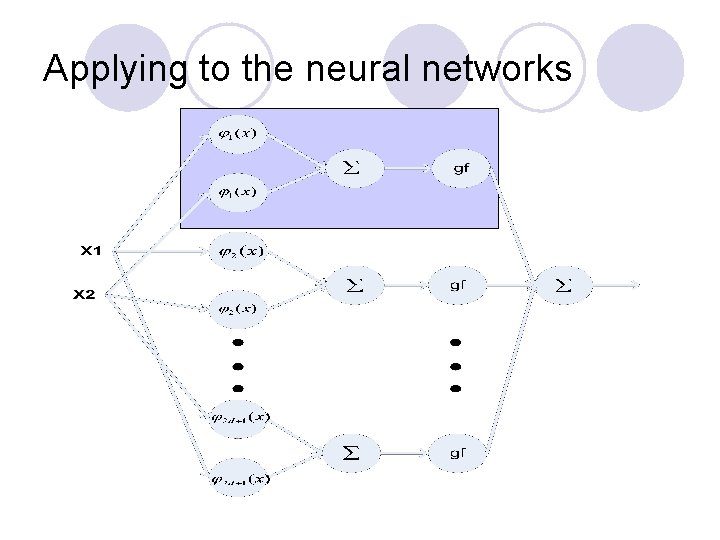

Applying the Theorem to Neural Networks

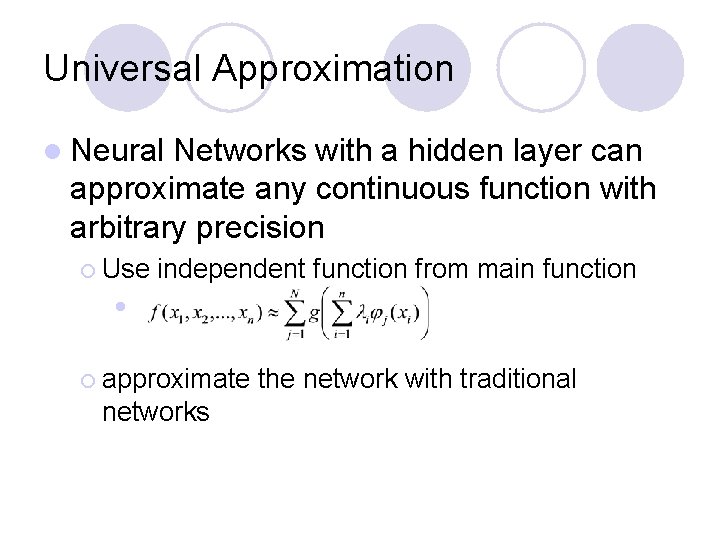

Universal Approximation
- Neural networks with one hidden layer can approximate any continuous function with arbitrary precision
  - Use functions that are independent of the function being approximated
- Approximate the network with traditional networks

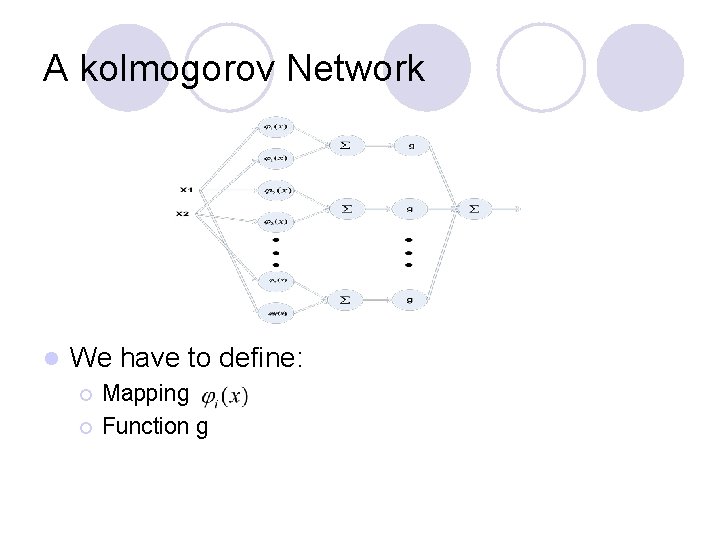
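A constructive sketch (not a proof, and not the construction used in the slides) of one-hidden-layer approximation: the weights are set by hand rather than trained. Hidden unit k is a steep sigmoid that switches on halfway between knots x_{k-1} and x_k, and its output weight is the jump f(x_k) - f(x_{k-1}), so the network is a smoothed staircase. The target sin on [0, π], the steepness factor 20 and the names `build_network` and `max_error` are illustrative assumptions; the printed error shrinks as hidden units are added.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def build_network(f, a, b, n_hidden, steepness=20.0):
    """Hand-set one-hidden-layer sigmoid network approximating f on [a, b]."""
    h = (b - a) / n_hidden
    knots = [a + k * h for k in range(n_hidden + 1)]
    centers = [(knots[k - 1] + knots[k]) / 2 for k in range(1, n_hidden + 1)]
    heights = [f(knots[k]) - f(knots[k - 1]) for k in range(1, n_hidden + 1)]
    slope = steepness / h  # steeper units give a sharper staircase
    bias = f(knots[0])

    def net(x):
        # output = bias + sum of hidden activations weighted by the jump sizes
        return bias + sum(v * sigmoid(slope * (x - c)) for v, c in zip(heights, centers))

    return net


def max_error(f, net, a, b, samples=1001):
    xs = [a + (b - a) * i / (samples - 1) for i in range(samples)]
    return max(abs(f(x) - net(x)) for x in xs)


for n in (5, 20, 100):
    net = build_network(math.sin, 0.0, math.pi, n)
    print(n, "hidden units, max error", round(max_error(math.sin, net, 0.0, math.pi), 4))
```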

A Kolmogorov Network
- We have to define:
  - the mapping
  - the function g

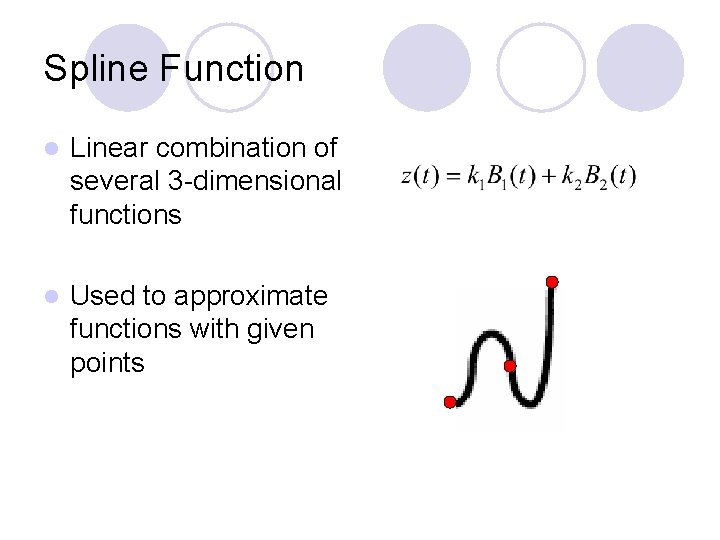

Spline Function
- A linear combination of several third-degree (cubic) functions
- Used to approximate a function from a given set of points

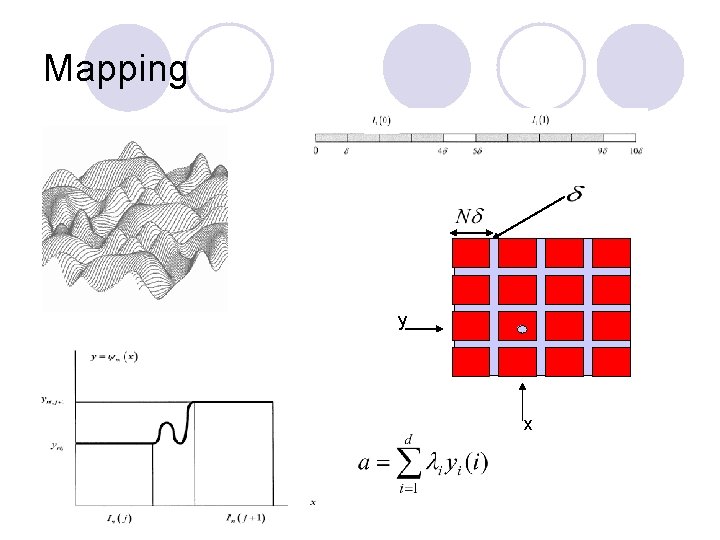
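As one concrete instance, here is a minimal natural cubic spline (second derivative zero at both ends); the slides do not say which spline variant is used, so this choice and the name `natural_cubic_spline` are assumptions. The interior second derivatives come from a tridiagonal system solved with the Thomas algorithm, and each interval is then a cubic that passes through its two knots.

```python
from bisect import bisect_right


def natural_cubic_spline(xs, ys):
    """Return s(x) interpolating (xs[i], ys[i]) with a natural cubic spline."""
    n = len(xs) - 1
    if n < 1 or any(xs[i + 1] <= xs[i] for i in range(n)):
        raise ValueError("need at least two strictly increasing knots")
    h = [xs[i + 1] - xs[i] for i in range(n)]
    m = [0.0] * (n + 1)  # second derivatives at the knots; natural: m[0] = m[n] = 0
    if n >= 2:
        # Tridiagonal system for m[1..n-1]: sub, diag, sup coefficients and right-hand side.
        sub = [h[i - 1] for i in range(1, n)]
        diag = [2.0 * (h[i - 1] + h[i]) for i in range(1, n)]
        sup = [h[i] for i in range(1, n)]
        rhs = [6.0 * ((ys[i + 1] - ys[i]) / h[i] - (ys[i] - ys[i - 1]) / h[i - 1])
               for i in range(1, n)]
        size = n - 1
        for k in range(1, size):  # Thomas algorithm: forward elimination
            w = sub[k] / diag[k - 1]
            diag[k] -= w * sup[k - 1]
            rhs[k] -= w * rhs[k - 1]
        sol = [0.0] * size
        sol[-1] = rhs[-1] / diag[-1]
        for k in range(size - 2, -1, -1):  # back substitution
            sol[k] = (rhs[k] - sup[k] * sol[k + 1]) / diag[k]
        m[1:n] = sol

    def s(x):
        # interval i with xs[i] <= x <= xs[i+1], clamped to the first/last piece
        i = min(max(bisect_right(xs, x) - 1, 0), n - 1)
        a, b, hi = xs[i + 1] - x, x - xs[i], h[i]
        return ((m[i] * a ** 3 + m[i + 1] * b ** 3) / (6.0 * hi)
                + (ys[i] / hi - m[i] * hi / 6.0) * a
                + (ys[i + 1] / hi - m[i + 1] * hi / 6.0) * b)

    return s


s = natural_cubic_spline([0.0, 1.0, 2.0, 3.0], [0.0, 1.0, 0.0, 1.0])
print([round(s(x), 3) for x in (0.0, 0.5, 1.0, 2.5, 3.0)])
```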

Mapping
(Figure axes: x, y)

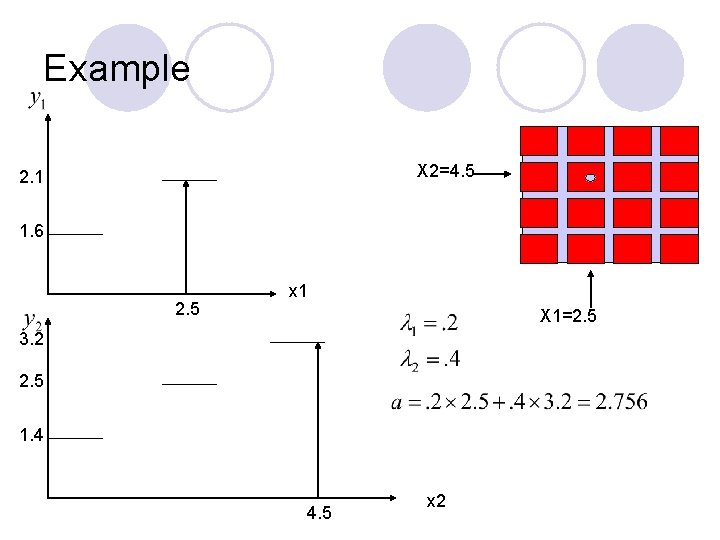

Example
(Figure over the (x1, x2) plane; labeled values X1 = 2.5, X2 = 4.5)

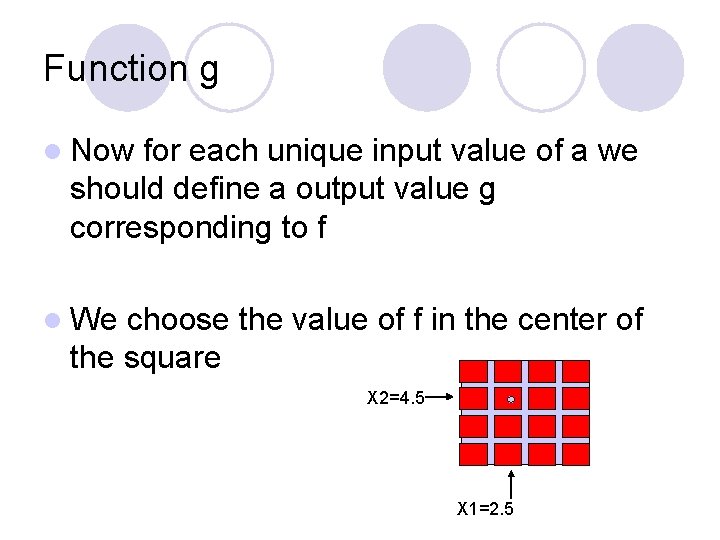

Function g
- For each unique input value a, we define an output value g(a) corresponding to f.
- We choose the value of f at the center of the square.
(Figure labels: X1 = 2.5, X2 = 4.5)

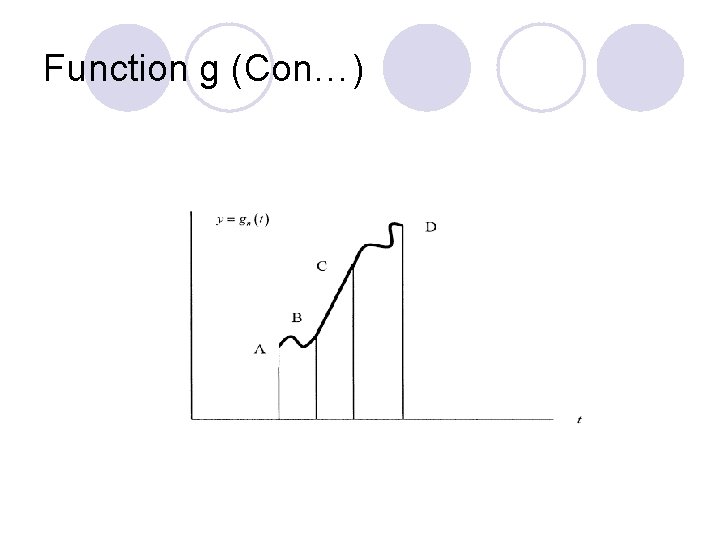

Function g (cont.)

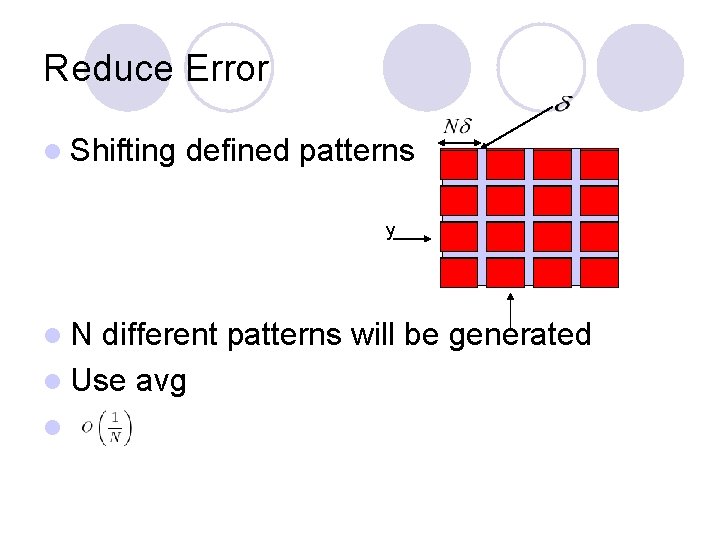
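The square-center rule can be sketched as follows, assuming the domain is the unit square split into k × k squares and square (i, j) gets the label a = i·k + j; this labeling and the names `build_g`, `label` and `max_error` are hypothetical, since the slides' own mapping is shown only in the figures. The test function f is also an arbitrary choice. A finer grid gives a smaller maximum error.

```python
import math


def build_g(f, k):
    """Give square (i, j) of a k x k grid on [0,1]^2 the label a = i*k + j, and store
    g(a) = f(center of that square)."""
    h = 1.0 / k
    return {i * k + j: f((i + 0.5) * h, (j + 0.5) * h) for i in range(k) for j in range(k)}


def label(x, y, k):
    """Mapping (x, y) -> a: label of the square containing the point (clamped at the edges)."""
    i = min(int(x * k), k - 1)
    j = min(int(y * k), k - 1)
    return i * k + j


def max_error(f, k, samples=101):
    g = build_g(f, k)
    pts = [m / (samples - 1) for m in range(samples)]
    return max(abs(f(x, y) - g[label(x, y, k)]) for x in pts for y in pts)


def f(x, y):
    return math.sin(3 * x) * y  # arbitrary smooth test function


for k in (5, 10, 40):
    print(k, "x", k, "squares, max error", round(max_error(f, k), 4))
```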

Reduce Error
- Shift the defined patterns.
- N different patterns will be generated.
- Use the average.

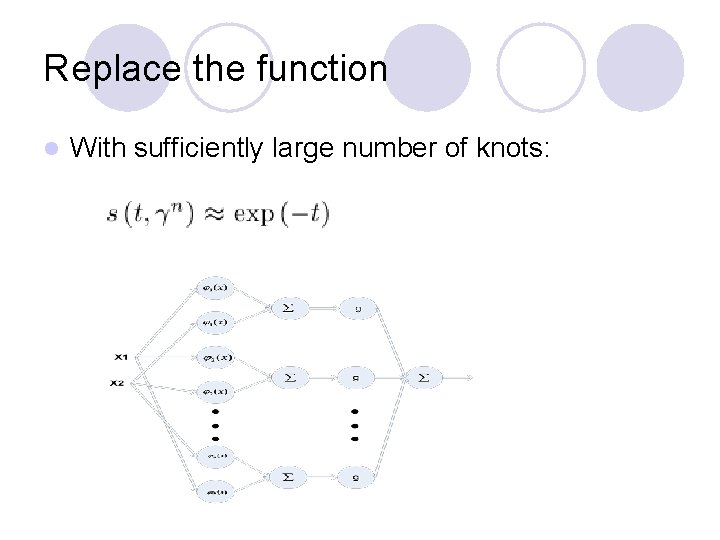
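A sketch of error reduction by averaging shifted patterns, under stated assumptions: pattern t is the same square grid shifted by t·h/N along both axes (a diagonal shift chosen for illustration), each pattern uses the square-center value as before, and f must be defined slightly beyond the unit square because shifted centers can fall outside it. The names `shifted_estimate` and `max_error` are hypothetical. Averaging N = 5 patterns cancels most of the first-order error of a single grid.

```python
import math


def shifted_estimate(f, x, y, k, n_shifts):
    """Average of n_shifts square-center estimates; grid t is shifted by t*h/n_shifts."""
    h = 1.0 / k
    total = 0.0
    for t in range(n_shifts):
        d = t * h / n_shifts
        i = math.floor((x + d) / h)  # square index in the shifted grid
        j = math.floor((y + d) / h)
        cx = (i + 0.5) * h - d       # center of that shifted square
        cy = (j + 0.5) * h - d
        total += f(cx, cy)           # value of f at the square center
    return total / n_shifts


def max_error(f, k, n_shifts, samples=50):
    pts = [(m + 0.5) / samples for m in range(samples)]
    return max(abs(f(x, y) - shifted_estimate(f, x, y, k, n_shifts)) for x in pts for y in pts)


def linear(x, y):
    return x + y


def curved(x, y):
    return math.sin(3 * x) * y


for fn in (linear, curved):
    print(fn.__name__, "1 pattern:", round(max_error(fn, 10, 1), 4),
          "5 patterns:", round(max_error(fn, 10, 5), 4))
```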

Replace the Function
- With a sufficiently large number of knots:


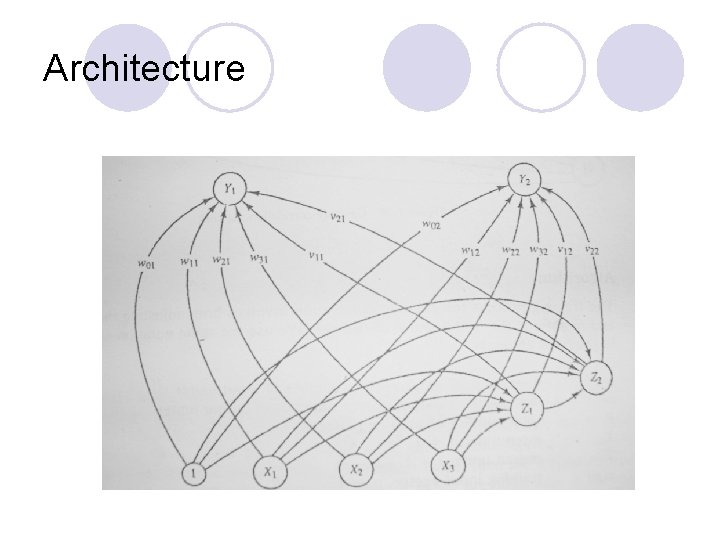

Cascade Correlation
- Dynamic size, depth, and topology
- Single-layer learning in spite of the multilayer structure
- Fast learning

Architecture

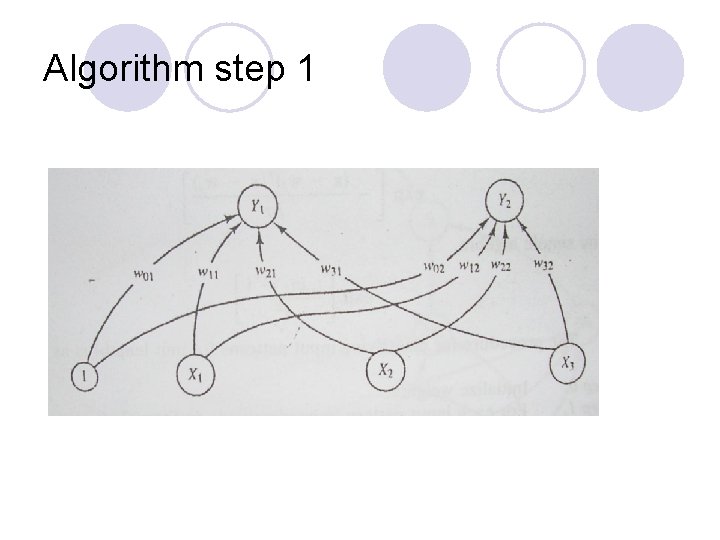
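The cascade architecture can be sketched as a forward pass in which every new hidden unit receives the bias, all network inputs and the outputs of all earlier hidden units, and every output unit sees all of them. This is an illustrative sketch: tanh hidden units, linear output units and the name `cascade_forward` are assumptions, and the weights would come from the training steps of the algorithm.

```python
import math


def cascade_forward(x, hidden_weights, output_weights):
    """hidden_weights[k] covers [bias, inputs..., h_0 .. h_{k-1}];
    output_weights[o] covers [bias, inputs..., every hidden unit]."""
    features = [1.0] + list(x)  # bias + raw inputs
    for k, w in enumerate(hidden_weights):
        if len(w) != len(features):
            raise ValueError(f"hidden unit {k} needs {len(features)} weights")
        # each new hidden unit sees the inputs and every earlier hidden unit
        features.append(math.tanh(sum(wi * fi for wi, fi in zip(w, features))))
    outputs = []
    for o, w in enumerate(output_weights):
        if len(w) != len(features):
            raise ValueError(f"output unit {o} needs {len(features)} weights")
        outputs.append(sum(wi * fi for wi, fi in zip(w, features)))  # linear output
    return outputs


# Two cascaded hidden units: unit 1 reads unit 0, the output reads unit 1 only.
print(cascade_forward([1.0], [[0.0, 1.0], [0.0, 0.0, 1.0]], [[0.0, 0.0, 0.0, 1.0]]))
```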

Algorithm Step 1

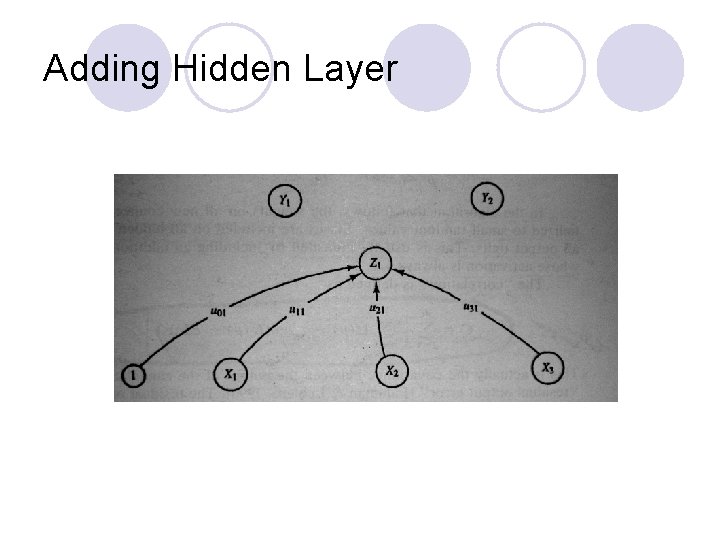

Adding Hidden Layer

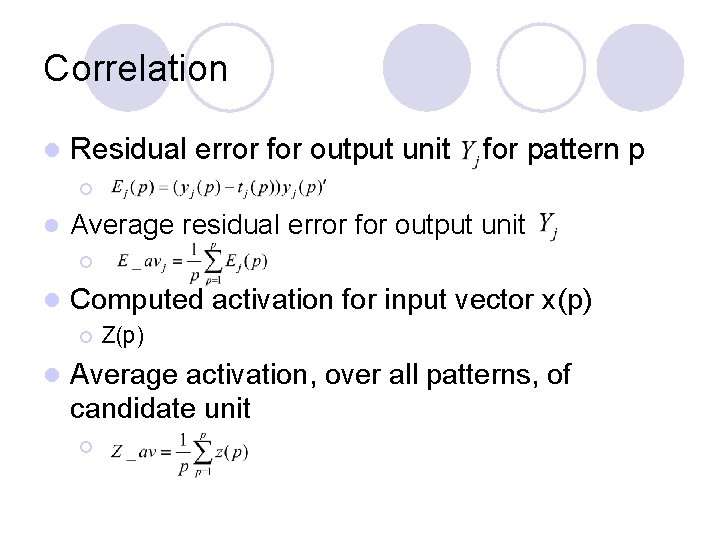

Correlation
- Residual error at an output unit for pattern p
- Average residual error at an output unit
- Activation of the candidate unit computed for input vector x(p): Z(p)
- Average activation of the candidate unit over all patterns

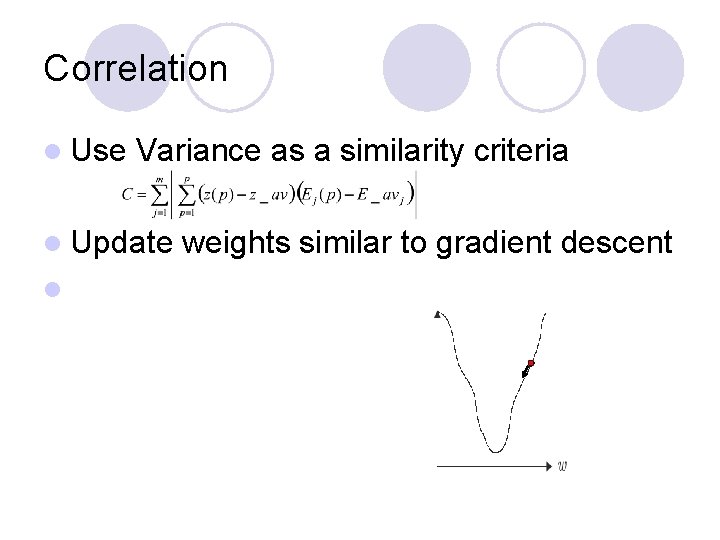

Correlation
- Use the covariance as the similarity criterion
- Update the weights in a manner similar to gradient descent (ascending the covariance rather than descending an error)

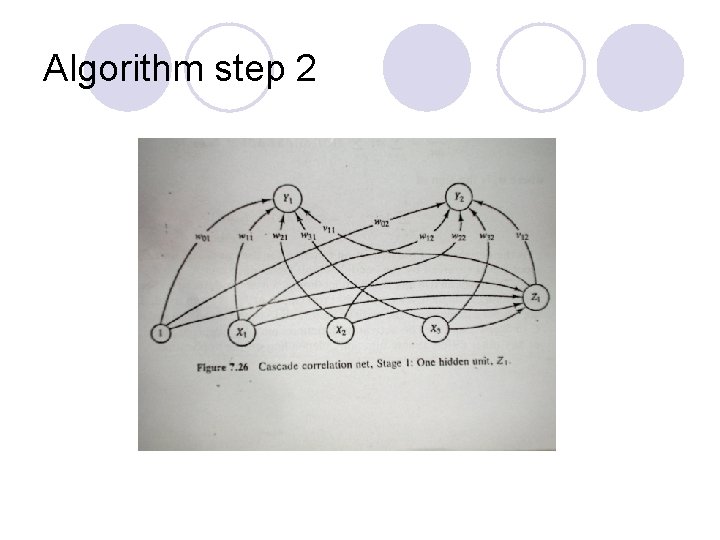
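A sketch of candidate training, assuming a tanh candidate unit and the usual cascade-correlation score S = Σ_o |Σ_p (V_p - V̄)(E_{p,o} - Ē_o)|, where V_p is the candidate's activation (Z(p) above) and E_{p,o} the residual error. Because Σ_p (E_{p,o} - Ē_o) = 0, the dependence of V̄ on the weights drops out and the gradient is ∂S/∂w_i = Σ_{o,p} sign_o (E_{p,o} - Ē_o) f'_p x_{p,i}. The data, starting weights, learning rate and the names `candidate_score` and `train_candidate` are hypothetical.

```python
import math


def candidate_score(w, inputs, errors):
    """S = sum_o |sum_p (V_p - mean V)(E_{p,o} - mean E_o)| for a tanh candidate unit.
    inputs[p]: candidate inputs for pattern p; errors[p][o]: residual error (held fixed)."""
    n_p, n_o = len(inputs), len(errors[0])
    v = [math.tanh(sum(wi * xi for wi, xi in zip(w, x))) for x in inputs]
    v_mean = sum(v) / n_p
    e_mean = [sum(errors[p][o] for p in range(n_p)) / n_p for o in range(n_o)]
    cov = [sum((v[p] - v_mean) * (errors[p][o] - e_mean[o]) for p in range(n_p))
           for o in range(n_o)]
    return sum(abs(c) for c in cov), v, cov, e_mean


def train_candidate(w, inputs, errors, lr=0.1, steps=500):
    """Gradient ascent on S (only the candidate's incoming weights change)."""
    w = list(w)
    for _ in range(steps):
        _, v, cov, e_mean = candidate_score(w, inputs, errors)
        grad = [0.0] * len(w)
        for o, c in enumerate(cov):
            sign = 1.0 if c >= 0 else -1.0
            for p, x in enumerate(inputs):
                common = sign * (errors[p][o] - e_mean[o]) * (1.0 - v[p] ** 2)  # tanh' = 1 - V^2
                for i, xi in enumerate(x):
                    grad[i] += common * xi
        w = [wi + lr * gi for wi, gi in zip(w, grad)]  # ascent: move up the gradient
    return w


# Hypothetical data: bias + two binary inputs; residual errors of one output on XOR.
inputs = [(1.0, 0.0, 0.0), (1.0, 0.0, 1.0), (1.0, 1.0, 0.0), (1.0, 1.0, 1.0)]
errors = [[-0.5], [0.5], [0.5], [-0.5]]
w0 = [-0.5, 0.4, 0.3]
s_before = candidate_score(w0, inputs, errors)[0]
s_after = candidate_score(train_candidate(w0, inputs, errors), inputs, errors)[0]
print(round(s_before, 4), round(s_after, 4))
```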

Algorithm Step 2

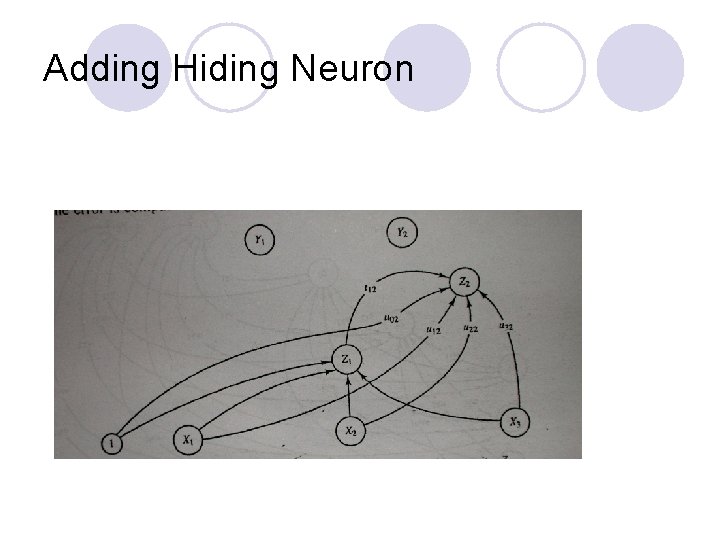

Adding a Hidden Neuron

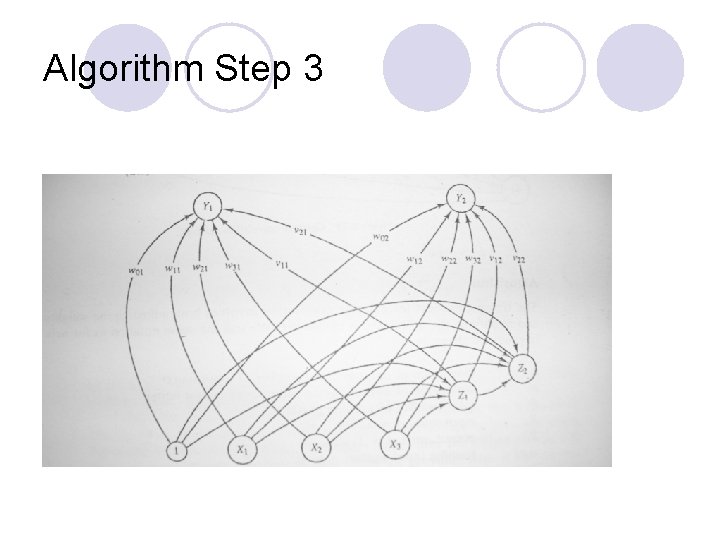

Algorithm Step 3

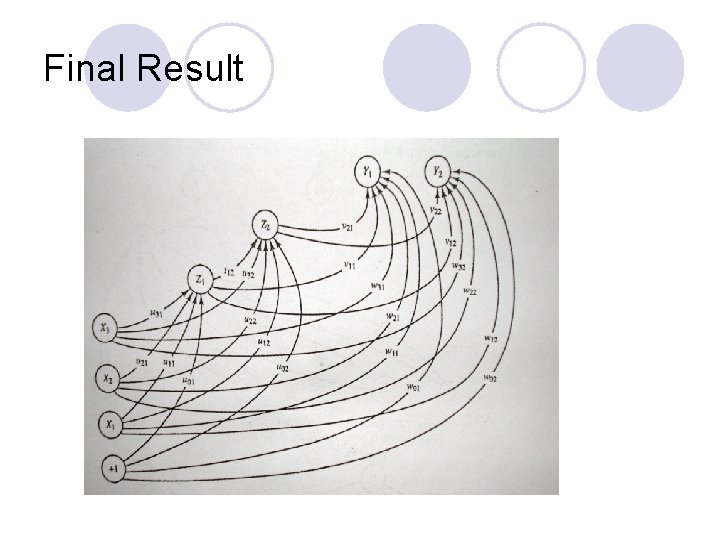

Final Result

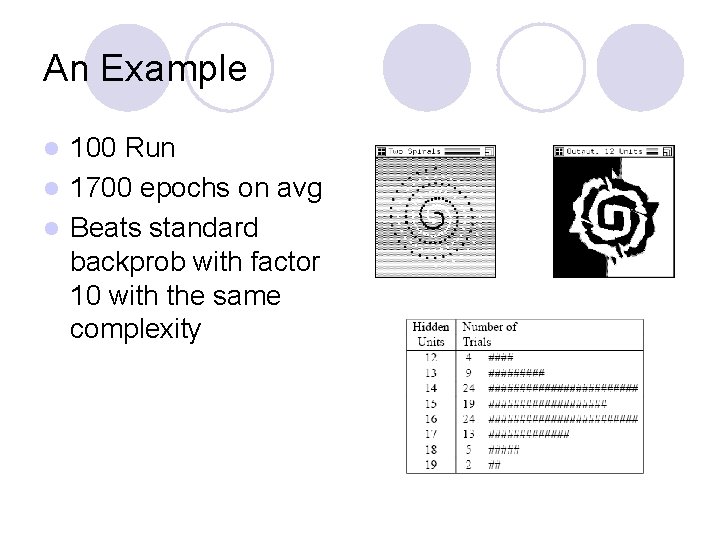

An Example
- 100 runs
- 1700 epochs on average
- Beats standard backpropagation by a factor of 10 with the same complexity

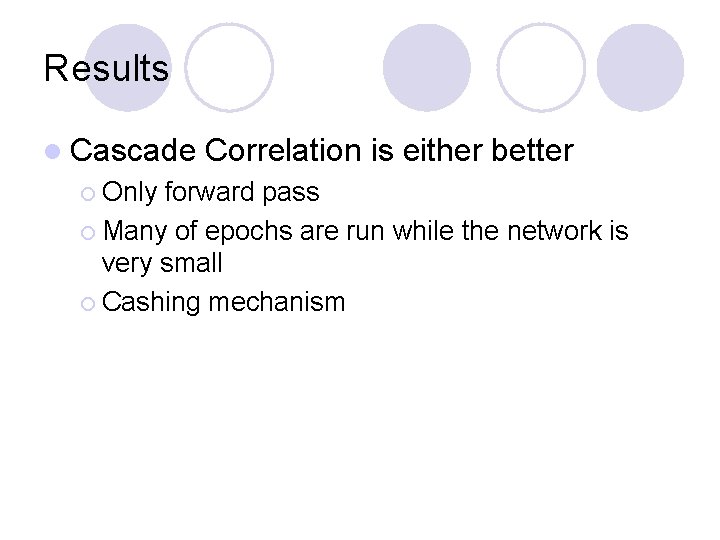

Results
- Cascade correlation is better:
  - Only a forward pass is needed
  - Many of the epochs are run while the network is still very small
  - A caching mechanism can be used

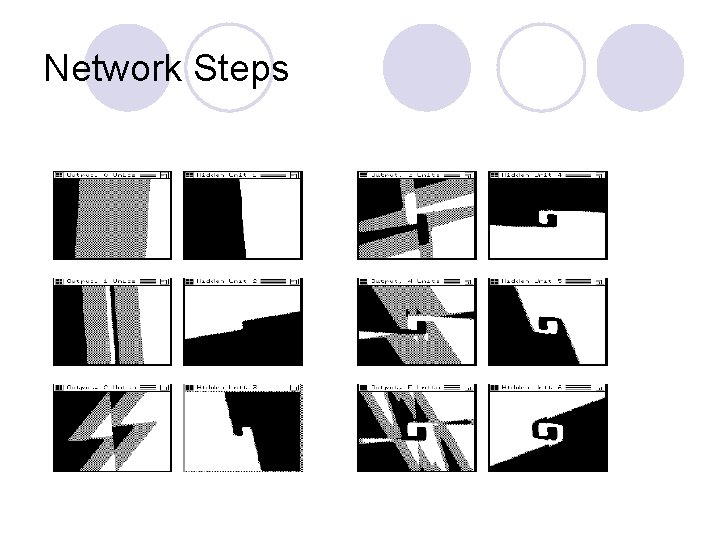
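The caching idea can be sketched as follows: once a hidden unit's incoming weights are frozen, its activation for each training pattern never changes, so it can be computed once and stored, and later training epochs only read the stored values. The class `CachedHiddenActivations`, its evaluation counter and the example weights are hypothetical illustrations.

```python
import math


class CachedHiddenActivations:
    """Per-pattern feature cache for a cascade network with frozen hidden units."""

    def __init__(self, patterns):
        # bias + raw inputs for every pattern; extended as hidden units are installed
        self.features = [[1.0] + list(x) for x in patterns]
        self.unit_evaluations = 0  # how many hidden-unit activations were computed

    def install_unit(self, weights):
        """Freeze a new hidden unit and cache its activation for every pattern."""
        for f in self.features:
            if len(weights) != len(f):
                raise ValueError("weight vector must match the current feature length")
            f.append(math.tanh(sum(w * v for w, v in zip(weights, f))))
            self.unit_evaluations += 1


patterns = [(0, 0), (0, 1), (1, 0), (1, 1)]
cache = CachedHiddenActivations(patterns)
cache.install_unit([0.0, 1.0, 1.0])        # unit 1 sees bias + 2 inputs
cache.install_unit([0.0, 1.0, -1.0, 2.0])  # unit 2 also sees unit 1
out_w = [0.1, 0.2, 0.3, 0.4, 0.5]          # hypothetical output weights
for _ in range(100):
    # each output-training epoch is a dot product with cached features only
    outputs = [sum(w * v for w, v in zip(out_w, f)) for f in cache.features]
print(cache.unit_evaluations)  # 8: each frozen unit evaluated once per pattern
```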

Network Steps

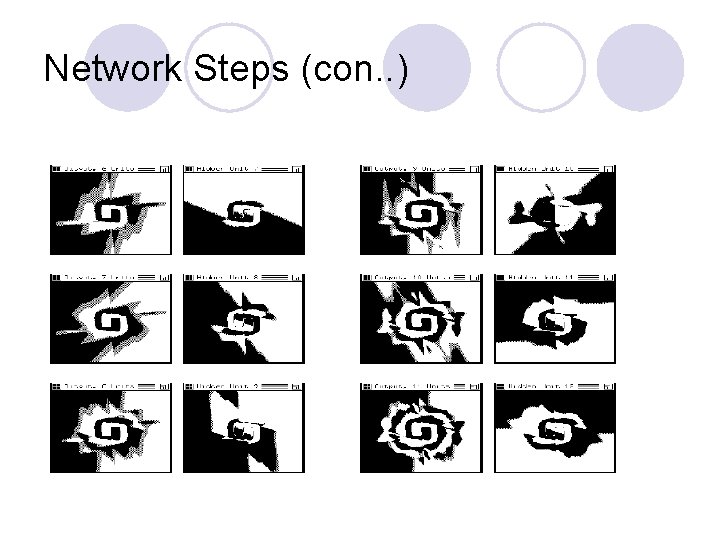

Network Steps (cont.)

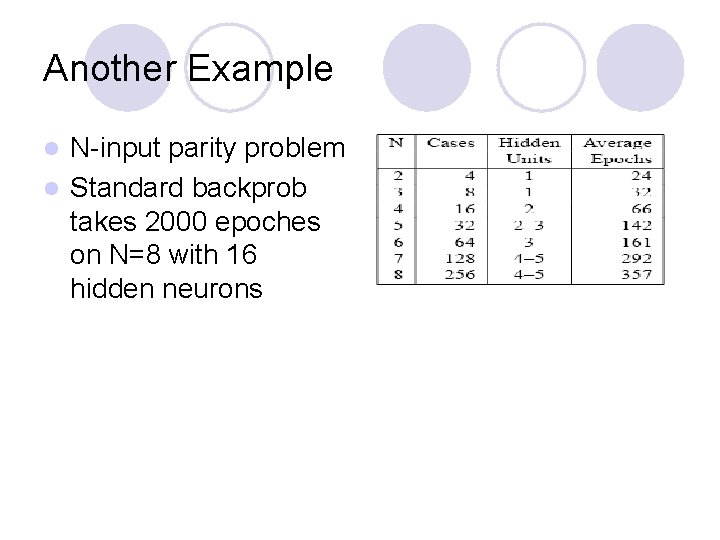

Another Example: N-Input Parity Problem
- Standard backpropagation takes 2000 epochs for N = 8 with 16 hidden neurons
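For concreteness, the N-input parity task can be generated as below: every binary input vector of length N, with target 1 when the number of ones is odd. The 0/1 target encoding and the name `parity_dataset` are assumptions; implementations often use ±1 instead.

```python
from itertools import product


def parity_dataset(n):
    """All 2**n binary input vectors, each paired with its parity bit (1 if odd)."""
    return [(bits, sum(bits) % 2) for bits in product((0, 1), repeat=n)]


data = parity_dataset(8)
print(len(data))  # 256 patterns for N = 8
```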

Discussion
- There is no need to guess the size, depth, and connectivity pattern of the network
- Learns fast
- Can build deep networks (high-order feature detectors)
- Avoids the herd effect
- Results can be cached

Conclusion
- A network with a hidden layer can define complex decision boundaries and can approximate any continuous function
- The number of neurons in the hidden layer determines the accuracy of the approximation
- Dynamic networks

- Slides: 45