Analysis of Sparse Convolutional Neural Networks

Sabareesh Ganapathy, Manav Garg, Prasanna Venkatesh Srinivasan
UNIVERSITY OF WISCONSIN-MADISON

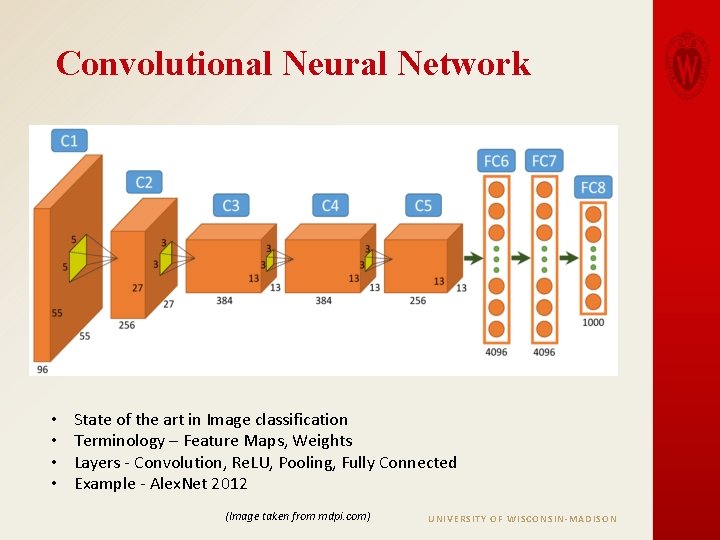

Convolutional Neural Network
• State of the art in image classification.
• Terminology: feature maps, weights.
• Layers: convolution, ReLU, pooling, fully connected.
• Example: AlexNet, 2012. (Image taken from mdpi.com)

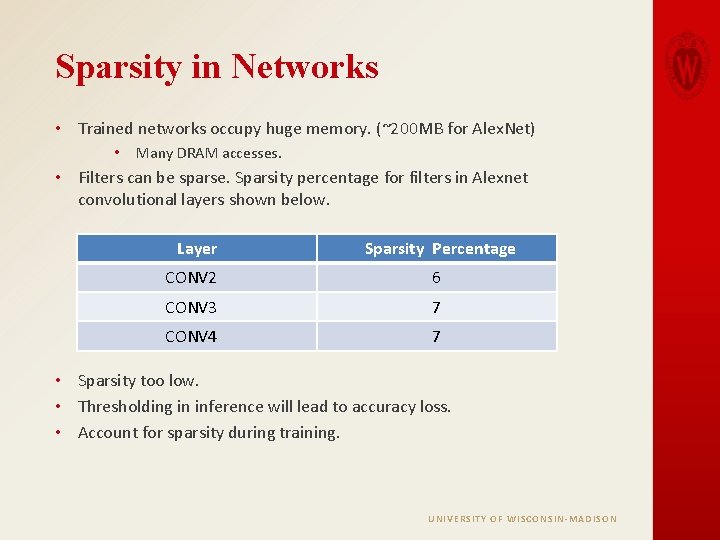

Sparsity in Networks
• Trained networks occupy a large amount of memory (~200 MB for AlexNet).
• Many DRAM accesses.
• Filters can be sparse. Sparsity percentages for filters in the AlexNet convolutional layers are shown below.

| Layer | Sparsity Percentage |
|---|---|
| CONV 2 | 6 |
| CONV 3 | 7 |
| CONV 4 | 7 |

• This sparsity is too low to exploit.
• Thresholding during inference will lead to accuracy loss.
• Sparsity has to be accounted for during training.

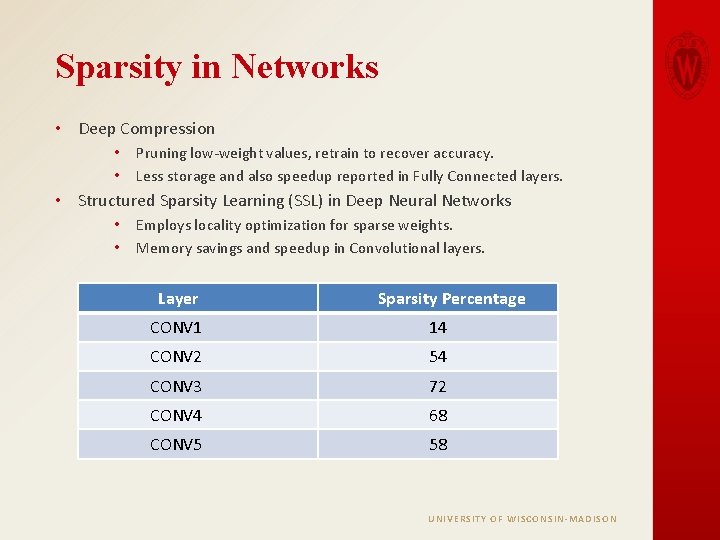

Sparsity in Networks
• Deep Compression
  • Prunes low-weight values, then retrains to recover accuracy.
  • Less storage, and speedup reported in Fully Connected layers.
• Structured Sparsity Learning (SSL) in Deep Neural Networks
  • Employs locality optimization for sparse weights.
  • Memory savings and speedup in Convolutional layers.

| Layer | Sparsity Percentage |
|---|---|
| CONV 1 | 14 |
| CONV 2 | 54 |
| CONV 3 | 72 |
| CONV 4 | 68 |
| CONV 5 | 58 |

Caffe Framework
• Open-source framework to build and run convolutional neural nets.
• Provides Python and C++ interfaces for inference.
• Source code in C++ and CUDA.
• Employs efficient data structures for feature maps and weights.
  • Blob data structure
• Caffe Model Zoo: repository of CNN models for analysis.
• Pretrained models for base and compressed versions of AlexNet are available in the Model Zoo.

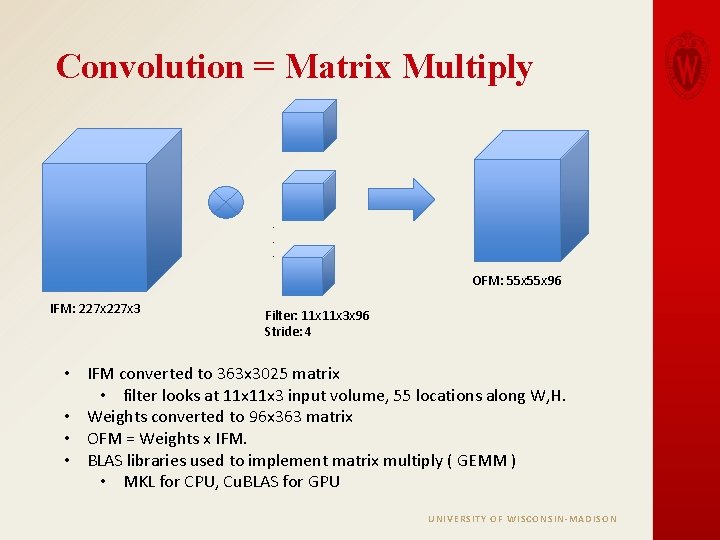

Convolution = Matrix Multiply
IFM: 227 x 227 x 3, Filter: 11 x 11 x 3 x 96, Stride: 4, OFM: 55 x 55 x 96
• IFM is converted to a 363 x 3025 matrix.
  • Each filter looks at an 11 x 11 x 3 input volume (363 values) at 55 locations along each of W and H (55 x 55 = 3025 locations).
• Weights are converted to a 96 x 363 matrix.
• OFM = Weights x IFM.
• BLAS libraries are used to implement the matrix multiply (GEMM).
  • MKL for CPU, cuBLAS for GPU.
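
The shape arithmetic on this slide can be checked with a small sketch. This is an illustration in plain Python, not Caffe's im2col code; the function names `conv_as_gemm_shapes` and `im2col` are hypothetical, and zero padding is assumed.

```python
def conv_as_gemm_shapes(ifm_size, channels, filter_size, num_filters, stride):
    """Shapes of (weights, lowered IFM, OFM) when a convolution is run as GEMM.

    Assumes a square input, square filters, and no padding.
    """
    out = (ifm_size - filter_size) // stride + 1   # output locations per axis
    k = filter_size * filter_size * channels       # values seen by one filter
    n = out * out                                  # total output locations
    return (num_filters, k), (k, n), (num_filters, n)


def im2col(ifm, filter_size, stride):
    """Lower ifm[c][y][x] to a matrix with one column per output location."""
    channels, height, width = len(ifm), len(ifm[0]), len(ifm[0][0])
    out_h = (height - filter_size) // stride + 1
    out_w = (width - filter_size) // stride + 1
    rows = []
    for c in range(channels):
        for fy in range(filter_size):
            for fx in range(filter_size):
                rows.append([ifm[c][oy * stride + fy][ox * stride + fx]
                             for oy in range(out_h) for ox in range(out_w)])
    return rows


# AlexNet CONV1 numbers from the slide.
print(conv_as_gemm_shapes(227, 3, 11, 96, 4))
# ((96, 363), (363, 3025), (96, 3025))

# Tiny example: one 3 x 3 channel, 2 x 2 filter, stride 1.
tiny = [[[1, 2, 3], [4, 5, 6], [7, 8, 9]]]
for row in im2col(tiny, 2, 1):
    print(row)
```

Each column of the lowered matrix holds the input patch for one output location, so multiplying the 96 x 363 weight matrix by it yields all 96 output feature maps at once.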

Sparse Matrix Multiply
• Weight matrix can be represented in sparse format for sparse networks.
• Compressed Sparse Row (CSR) format: the matrix is converted to three arrays that represent its non-zero values.
  • Array A: contains the non-zero values.
  • Array JA: column index of each element in A.
  • Array IA: cumulative sum of the number of non-zero values in previous rows.
• Sparse representation saves memory and could result in efficient computation.
• Wen-Wei: new Caffe branch for sparse convolution
  • Represents convolutional layer weights in CSR format.
  • Uses sparse matrix multiply routines (CSRMM).
  • Weights in sparse format, IFM in dense format; output is dense.
  • MKL library for CPU, cuSPARSE for GPU.
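
A minimal sketch of the CSR arrays and a sparse x dense multiply, written in plain Python for illustration. It is not the MKL or cuSPARSE routine; the function names `dense_to_csr` and `csrmm` and the example matrices are made up for this sketch.

```python
def dense_to_csr(matrix):
    """Convert a dense row-major matrix to the CSR arrays (A, JA, IA)."""
    a, ja, ia = [], [], [0]
    for row in matrix:
        for col, value in enumerate(row):
            if value != 0:
                a.append(value)   # non-zero value
                ja.append(col)    # its column index
        ia.append(len(a))         # non-zeros seen up to the end of this row
    return a, ja, ia


def csrmm(a, ja, ia, dense, n_cols):
    """Multiply a CSR matrix by a dense matrix; the result is dense."""
    n_rows = len(ia) - 1
    out = [[0] * n_cols for _ in range(n_rows)]
    for r in range(n_rows):
        for k in range(ia[r], ia[r + 1]):   # only the non-zeros of row r
            value, col = a[k], ja[k]
            for j in range(n_cols):
                out[r][j] += value * dense[col][j]
    return out


weights = [[0, 0, 3, 0],
           [0, 0, 0, 0],
           [2, 0, 0, 1]]
ifm = [[1, 2], [3, 4], [5, 6], [7, 8]]

a, ja, ia = dense_to_csr(weights)
print(a, ja, ia)                  # [3, 2, 1] [2, 0, 3] [0, 1, 1, 3]
print(csrmm(a, ja, ia, ifm, 2))   # [[15, 18], [0, 0], [9, 12]]
```

The inner loop touches only stored non-zeros, which is where any compute saving would come from; the indirect access through JA is also one reason a tuned dense GEMM can still win.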

Analysis Framework
• gem5-gpu was initially planned as the simulation framework, but gem5 turned out to be very slow due to the large size of deep neural networks.
• Analysis was performed by running Caffe and CUDA programs on native hardware.
• For CPU analysis, an AWS system with 2 Intel Xeon cores running at 2.4 GHz was used.
• For GPU analysis, the dodeca system with an NVIDIA GeForce GTX 1080 GPU was used.

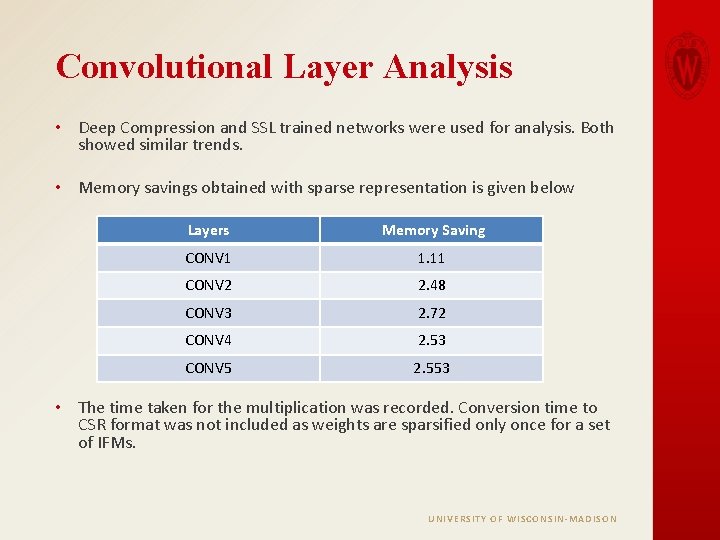

Convolutional Layer Analysis
• Deep Compression and SSL trained networks were used for analysis. Both showed similar trends.
• Memory savings obtained with the sparse representation are given below.

| Layer | Memory Saving |
|---|---|
| CONV 1 | 1.11 |
| CONV 2 | 2.48 |
| CONV 3 | 2.72 |
| CONV 4 | 2.53 |
| CONV 5 | 2.553 |

• The time taken for the multiplication was recorded. Conversion time to CSR format was not included, as weights are sparsified only once for a set of IFMs.

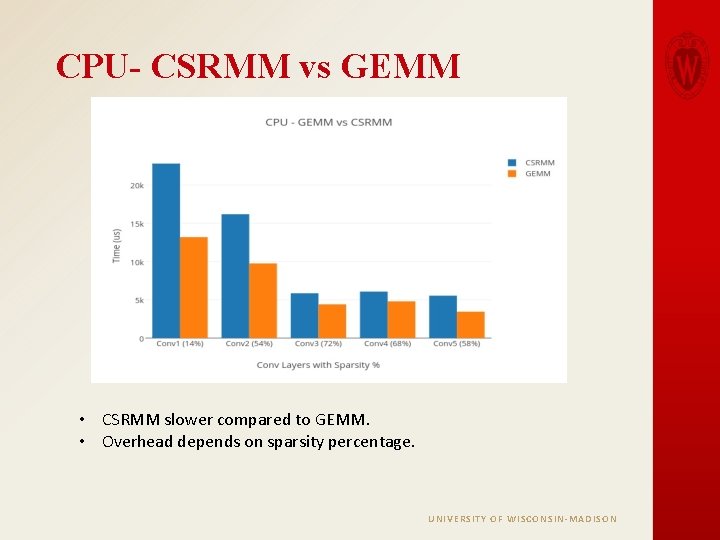

CPU: CSRMM vs GEMM
• CSRMM is slower than GEMM.
• The overhead depends on the sparsity percentage.

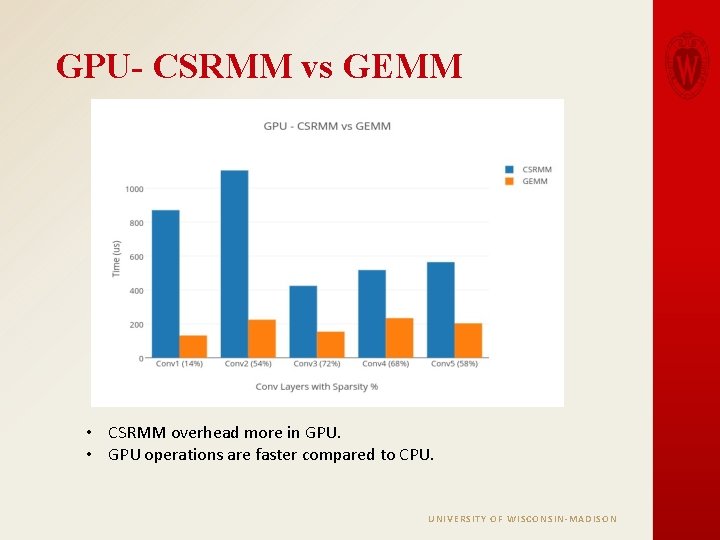

GPU: CSRMM vs GEMM
• CSRMM overhead is larger on the GPU.
• GPU operations are faster than CPU operations.

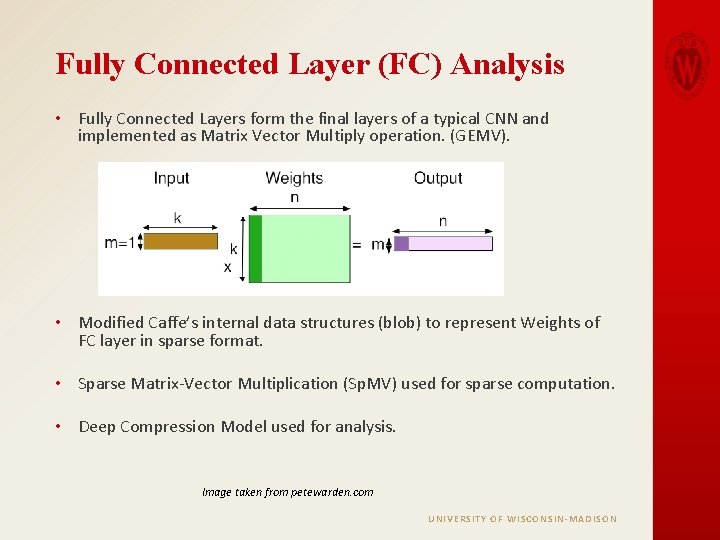

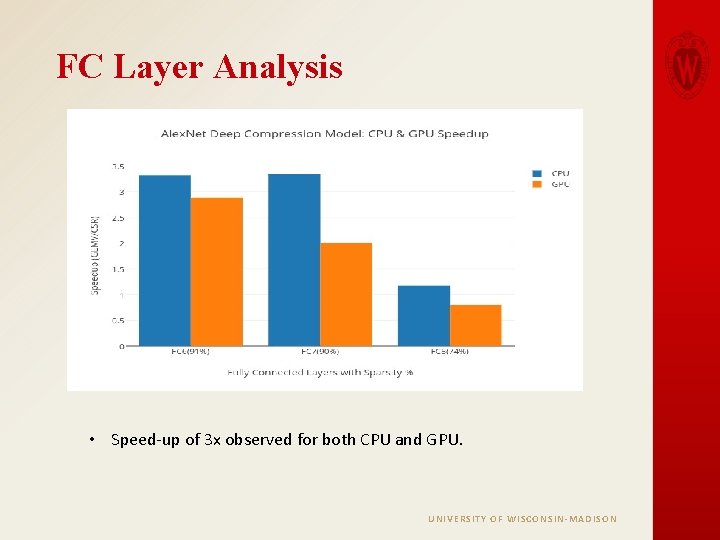

Fully Connected Layer (FC) Analysis
• Fully Connected layers form the final layers of a typical CNN and are implemented as a matrix-vector multiply operation (GEMV).
• Caffe's internal data structures (blob) were modified to represent the weights of FC layers in sparse format.
• Sparse Matrix-Vector Multiplication (SpMV) was used for sparse computation.
• The Deep Compression model was used for analysis.
(Image taken from petewarden.com)
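
To make the GEMV versus SpMV contrast concrete, here is a small plain-Python sketch. It is not the modified Caffe code; the names `gemv` and `spmv_csr` and the example weights are hypothetical, and the weights are assumed to be already stored as CSR arrays (values, column indices, row pointers).

```python
def gemv(weights, x):
    """Dense matrix-vector multiply: one multiply-add per matrix entry."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def spmv_csr(values, col_idx, row_ptr, x):
    """CSR matrix-vector multiply: one multiply-add per stored non-zero."""
    y = []
    for r in range(len(row_ptr) - 1):
        acc = 0.0
        for k in range(row_ptr[r], row_ptr[r + 1]):
            acc += values[k] * x[col_idx[k]]
        y.append(acc)
    return y


# A 3 x 4 FC weight matrix with 3 non-zeros, in dense and CSR form.
dense_w = [[0.0, 2.0, 0.0, 0.0],
           [0.0, 0.0, 0.0, 0.0],
           [1.0, 0.0, 0.0, 3.0]]
values, col_idx, row_ptr = [2.0, 1.0, 3.0], [1, 0, 3], [0, 1, 1, 3]
x = [1.0, 2.0, 3.0, 4.0]

print(gemv(dense_w, x))                          # [4.0, 0.0, 13.0]
print(spmv_csr(values, col_idx, row_ptr, x))     # [4.0, 0.0, 13.0]
print("dense multiply-adds:", 3 * 4, "sparse multiply-adds:", len(values))
```

The operation counts only indicate how much arithmetic is skipped; measured speedup also depends on memory access patterns and the library implementation.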

FC Layer Analysis
• Speedup of 3x observed for both CPU and GPU.

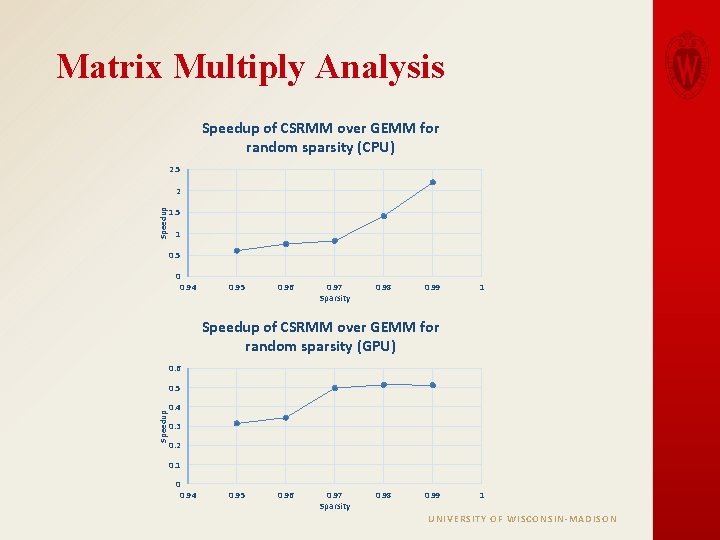

Matrix Multiply Analysis
• Custom C++ and CUDA programs were written to measure the time taken to execute only the matrix multiplication routines.
• This allowed us to vary the sparsity of the weight matrix and find the break-even point beyond which CSRMM is faster than GEMM.
• The size of the weight matrix was chosen to be equal to that of the largest AlexNet CONV layer.
• The zeros were distributed randomly in the weight matrix.
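
The setup above can be sketched as follows. This is a plain-Python illustration, not the authors' C++/CUDA programs: the helper names are hypothetical, the 96 x 363 size is borrowed from the CONV1 example earlier in the deck as a stand-in, and the timed workload is a placeholder for the real GEMM and CSRMM calls.

```python
import random
import time


def random_sparse_matrix(rows, cols, sparsity, seed=0):
    """Matrix whose zeros are placed uniformly at random at a target sparsity."""
    rng = random.Random(seed)
    total = rows * cols
    n_zero = round(total * sparsity)
    flat = [0.0] * n_zero + [rng.uniform(0.1, 1.0) for _ in range(total - n_zero)]
    rng.shuffle(flat)                       # scatter the zeros randomly
    return [flat[r * cols:(r + 1) * cols] for r in range(rows)]


def measured_sparsity(matrix):
    """Fraction of entries that are exactly zero."""
    total = sum(len(row) for row in matrix)
    zeros = sum(1 for row in matrix for v in row if v == 0.0)
    return zeros / total


def time_call(fn, *args, repeats=3):
    """Best-of-N wall-clock time of fn(*args), in seconds."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        best = min(best, time.perf_counter() - start)
    return best


for sparsity in (0.94, 0.96, 0.98, 0.99):
    weights = random_sparse_matrix(96, 363, sparsity)
    # Placeholder workload; swap in the dense and sparse multiply under test.
    elapsed = time_call(measured_sparsity, weights)
    print(f"target={sparsity:.2f} actual={measured_sparsity(weights):.4f} "
          f"time={elapsed * 1e6:.0f} us")
```

Timing both kernels on the same matrix at each sparsity level, and dividing the GEMM time by the CSRMM time, gives the speedup curve whose crossing of 1 is the break-even point.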

Matrix Multiply Analysis
[Figure: Speedup of CSRMM over GEMM for random sparsity (CPU). x-axis: sparsity, 0.94 to 1; y-axis: speedup, 0 to 2.5.]
[Figure: Speedup of CSRMM over GEMM for random sparsity (GPU). x-axis: sparsity, 0.94 to 1; y-axis: speedup, 0 to 0.6.]

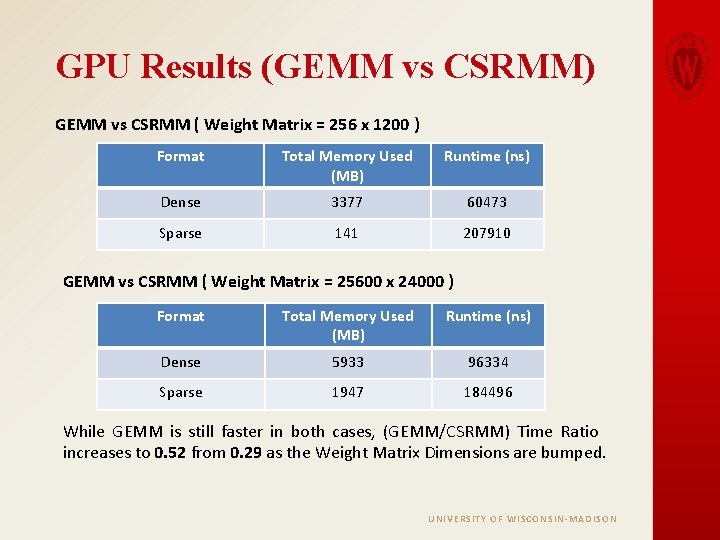

GPU Memory Scaling Experiment
Motivation: As the sparse matrix representation occupies significantly less space than the dense representation, a larger working set can fit in the cache/memory system in the sparse case.
Implementation: As the weight matrix was the one being passed in sparse format, we increased its size.

GPU Results (GEMM vs CSRMM)

GEMM vs CSRMM (Weight Matrix = 256 x 1200)

| Format | Total Memory Used (MB) | Runtime (ns) |
|---|---|---|
| Dense | 3377 | 60473 |
| Sparse | 141 | 207910 |

GEMM vs CSRMM (Weight Matrix = 25600 x 24000)

| Format | Total Memory Used (MB) | Runtime (ns) |
|---|---|---|
| Dense | 5933 | 96334 |
| Sparse | 1947 | 184496 |

While GEMM is still faster in both cases, the GEMM/CSRMM time ratio increases from 0.29 to 0.52 as the weight matrix dimensions are increased.
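
The quoted ratios follow directly from the tabulated numbers. A short sketch of the arithmetic (the dictionary layout and key names are just for illustration):

```python
# Measurements copied from the two tables on this slide.
results = {
    "256 x 1200": {"dense_mb": 3377, "dense_ns": 60473,
                   "sparse_mb": 141, "sparse_ns": 207910},
    "25600 x 24000": {"dense_mb": 5933, "dense_ns": 96334,
                      "sparse_mb": 1947, "sparse_ns": 184496},
}


def ratios(entry):
    """Return (GEMM/CSRMM time ratio, dense/sparse memory ratio)."""
    time_ratio = entry["dense_ns"] / entry["sparse_ns"]
    memory_ratio = entry["dense_mb"] / entry["sparse_mb"]
    return time_ratio, memory_ratio


for shape, entry in results.items():
    time_ratio, memory_ratio = ratios(entry)
    print(f"{shape}: time ratio {time_ratio:.2f}, memory ratio {memory_ratio:.2f}")
# 256 x 1200: time ratio 0.29, memory ratio 23.95
# 25600 x 24000: time ratio 0.52, memory ratio 3.05
```

A time ratio below 1 means GEMM is faster; the ratio moving from 0.29 toward 1 is the trend the slide points to.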

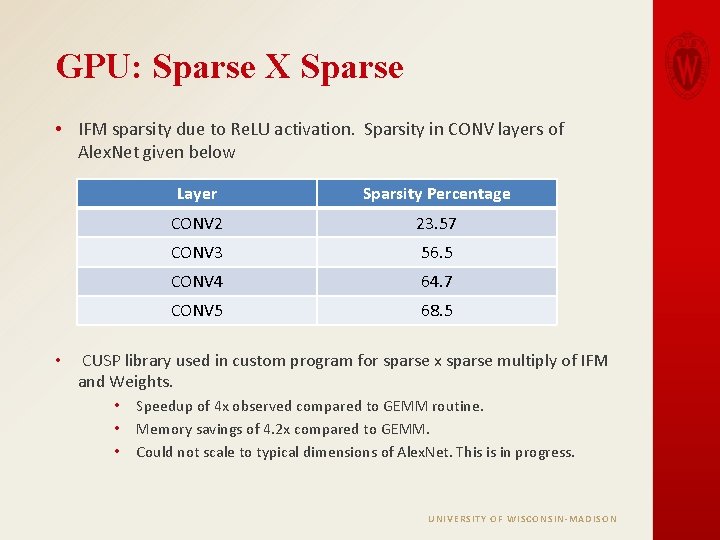

GPU: Sparse x Sparse
• IFM sparsity is due to ReLU activation. Sparsity in the CONV layers of AlexNet is given below.

| Layer | Sparsity Percentage |
|---|---|
| CONV 2 | 23.57 |
| CONV 3 | 56.5 |
| CONV 4 | 64.7 |
| CONV 5 | 68.5 |

• CUSP library used in a custom program for sparse x sparse multiply of IFM and weights.
• Speedup of 4x observed compared to the GEMM routine.
• Memory savings of 4.2x compared to GEMM.
• Could not scale to typical dimensions of AlexNet. This is in progress.
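
As an illustration of what a sparse x sparse multiply does, the sketch below multiplies two CSR matrices row by row (a Gustavson-style formulation) in plain Python. It is not CUSP's implementation or API; the function names and the tiny matrices are hypothetical.

```python
def dense_to_csr(matrix):
    """Convert a dense matrix to CSR arrays (values, column indices, row pointers)."""
    values, col_idx, row_ptr = [], [], [0]
    for row in matrix:
        for col, v in enumerate(row):
            if v != 0:
                values.append(v)
                col_idx.append(col)
        row_ptr.append(len(values))
    return values, col_idx, row_ptr


def spgemm_csr(a, b):
    """Multiply two CSR matrices; the result is also in CSR form."""
    a_vals, a_cols, a_ptr = a
    b_vals, b_cols, b_ptr = b
    c_vals, c_cols, c_ptr = [], [], [0]
    for r in range(len(a_ptr) - 1):
        acc = {}                                   # column -> partial sum for row r
        for k in range(a_ptr[r], a_ptr[r + 1]):
            mid = a_cols[k]                        # row of B selected by this non-zero
            for j in range(b_ptr[mid], b_ptr[mid + 1]):
                col = b_cols[j]
                acc[col] = acc.get(col, 0.0) + a_vals[k] * b_vals[j]
        for col in sorted(acc):
            c_cols.append(col)
            c_vals.append(acc[col])
        c_ptr.append(len(c_vals))
    return c_vals, c_cols, c_ptr


weights = dense_to_csr([[0.0, 2.0], [1.0, 0.0]])
ifm = dense_to_csr([[0.0, 3.0], [4.0, 0.0]])      # zeros as left behind by ReLU
print(spgemm_csr(weights, ifm))                    # ([8.0, 3.0], [0, 1], [0, 1, 2])
```

Work is done only where a non-zero weight meets a non-zero input, so both the pruned weights and the ReLU-induced input sparsity reduce the arithmetic.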

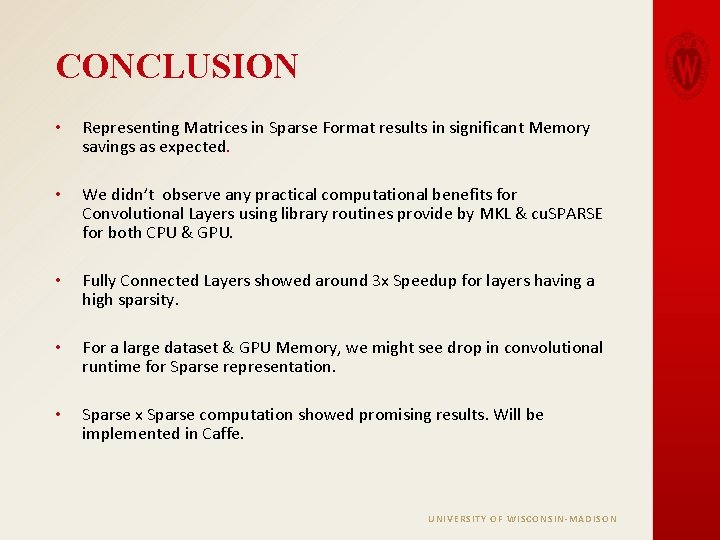

CONCLUSION
• Representing matrices in sparse format results in significant memory savings, as expected.
• We did not observe any practical computational benefit for Convolutional layers using the library routines provided by MKL and cuSPARSE, on either CPU or GPU.
• Fully Connected layers showed around 3x speedup for layers with high sparsity.
• With a larger dataset and GPU memory footprint, we might see a drop in convolution runtime for the sparse representation.
• Sparse x sparse computation showed promising results and will be implemented in Caffe.

THANK YOU
QUESTIONS?

- Slides: 20