Signal Modeling: From Convolutional Sparse Coding to Convolutional Neural Networks

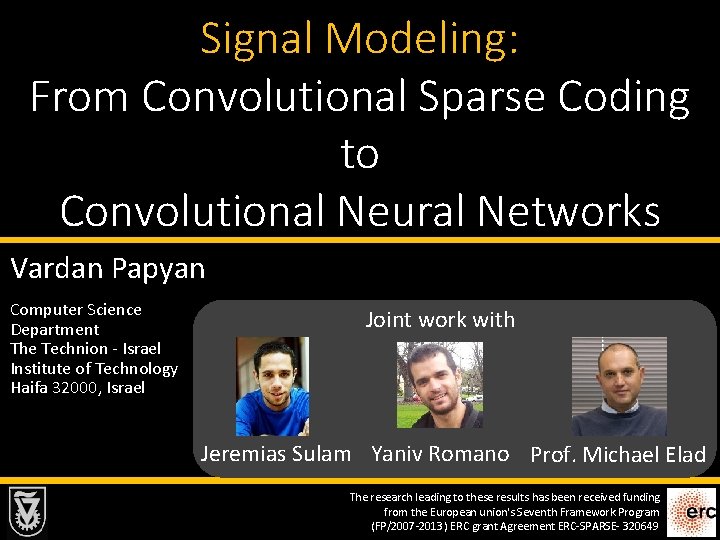

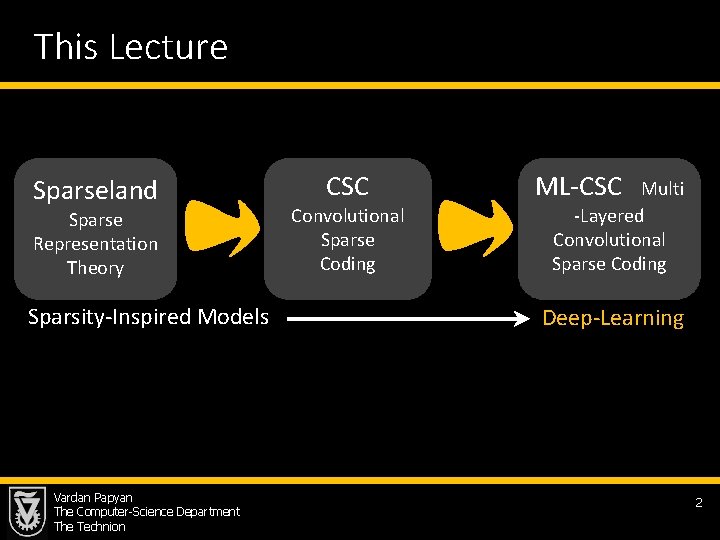

Signal Modeling: From Convolutional Sparse Coding to Convolutional Neural Networks. Vardan Papyan, Computer Science Department, The Technion - Israel Institute of Technology, Haifa 32000, Israel. Joint work with Jeremias Sulam, Yaniv Romano and Prof. Michael Elad. The research leading to these results has received funding from the European Union's Seventh Framework Program (FP/2007-2013), ERC grant agreement ERC-SPARSE-320649.

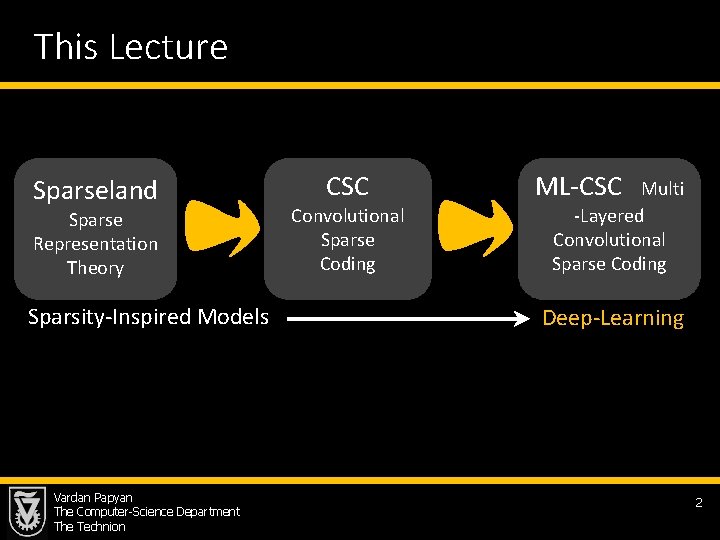

This Lecture: Sparseland (Sparse Representation Theory, Sparsity-Inspired Models); CSC (Convolutional Sparse Coding); ML-CSC (Multi-Layered Convolutional Sparse Coding); Deep Learning. Vardan Papyan, The Computer-Science Department, The Technion

Multi-Layered Convolutional Sparse Modeling

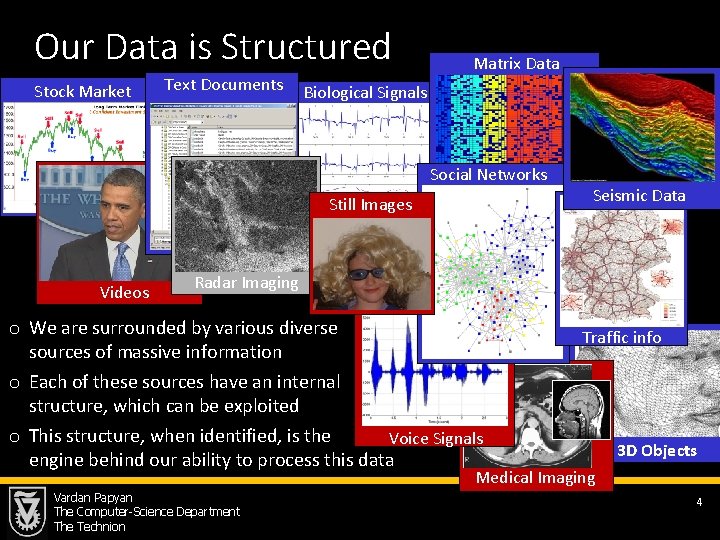

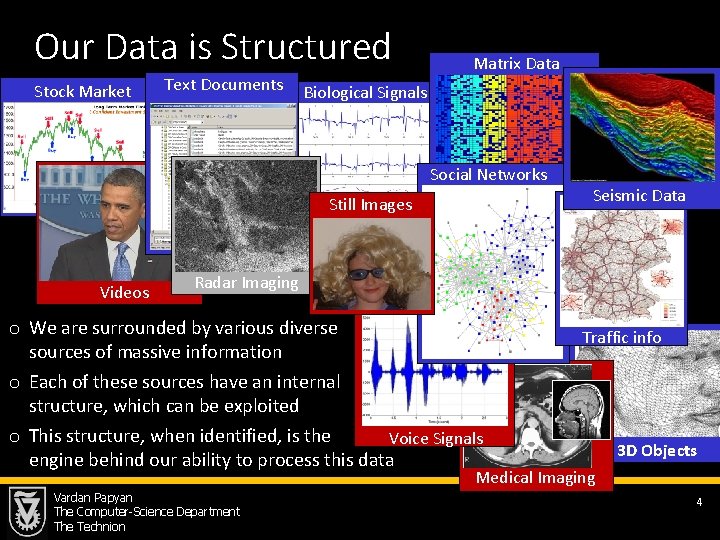

Our Data is Structured
- Stock market, text documents, matrix data, biological signals, social networks, still images, videos, seismic data, radar imaging, voice signals, traffic info, 3D objects, medical imaging
- We are surrounded by diverse sources of massive information
- Each of these sources has an internal structure, which can be exploited
- This structure, when identified, is the engine behind our ability to process this data

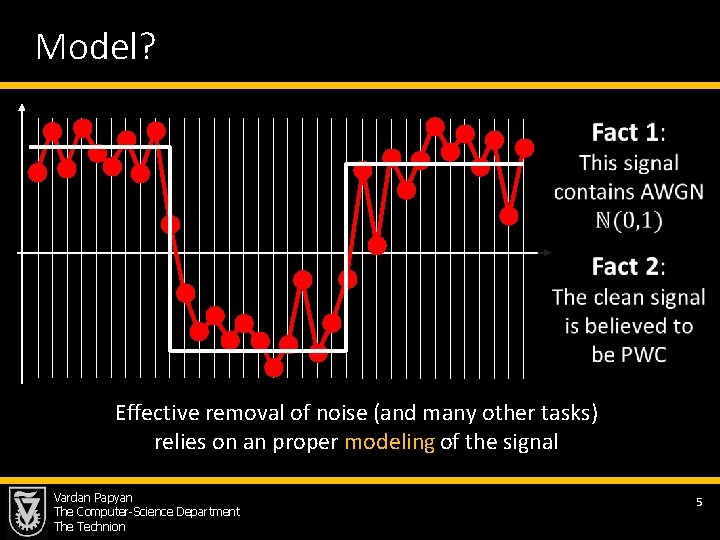

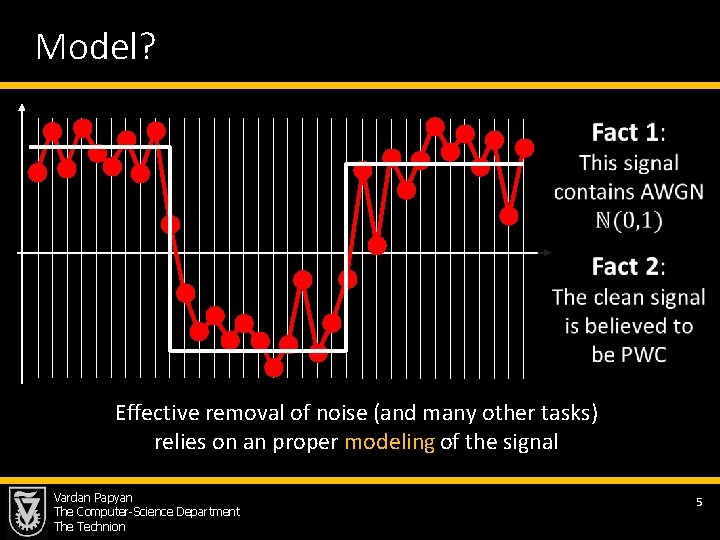

Model? Effective removal of noise (and many other tasks) relies on proper modeling of the signal.

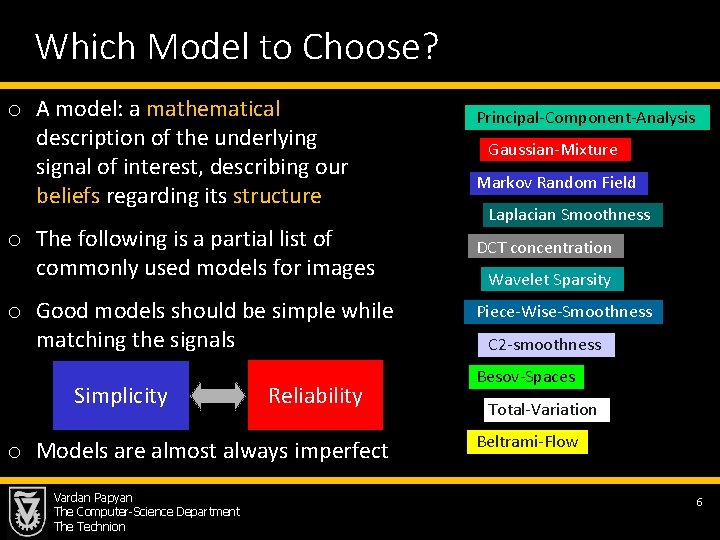

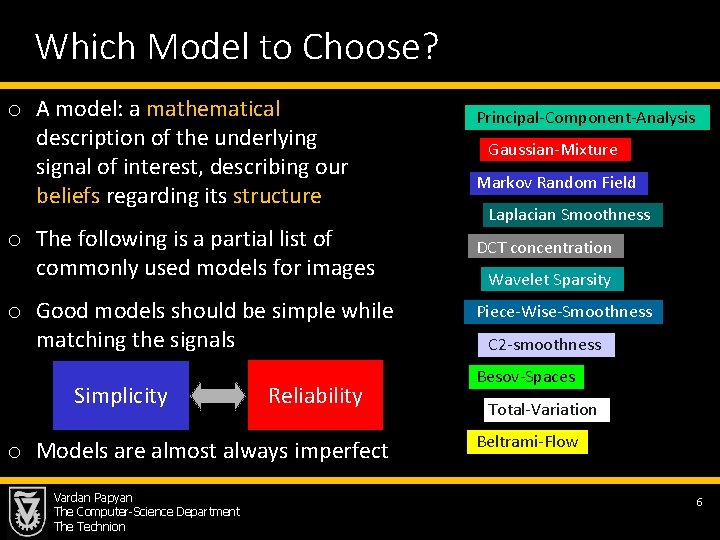

Which Model to Choose?
- A model: a mathematical description of the underlying signal of interest, describing our beliefs regarding its structure
- The following is a partial list of commonly used models for images: Principal-Component-Analysis, Gaussian-Mixture, Markov Random Field, Laplacian Smoothness, DCT concentration, Wavelet Sparsity, Piece-Wise-Smoothness, C²-smoothness, Besov-Spaces, Total-Variation, Beltrami-Flow
- Good models should be simple while matching the signals (simplicity vs. reliability)
- Models are almost always imperfect

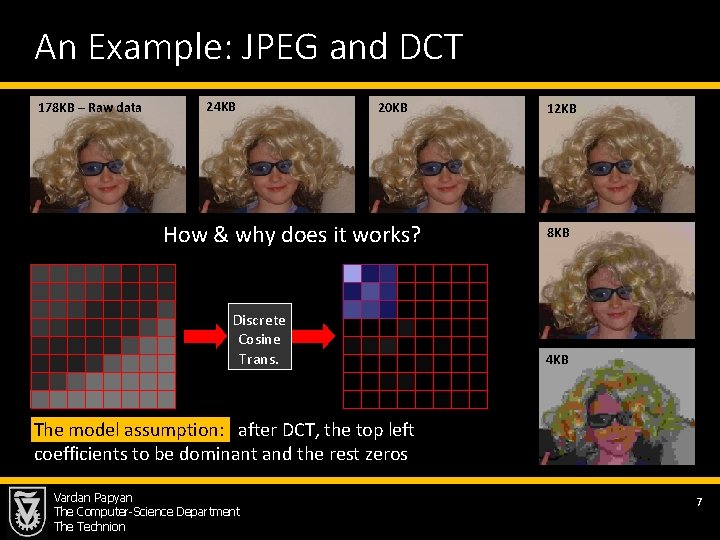

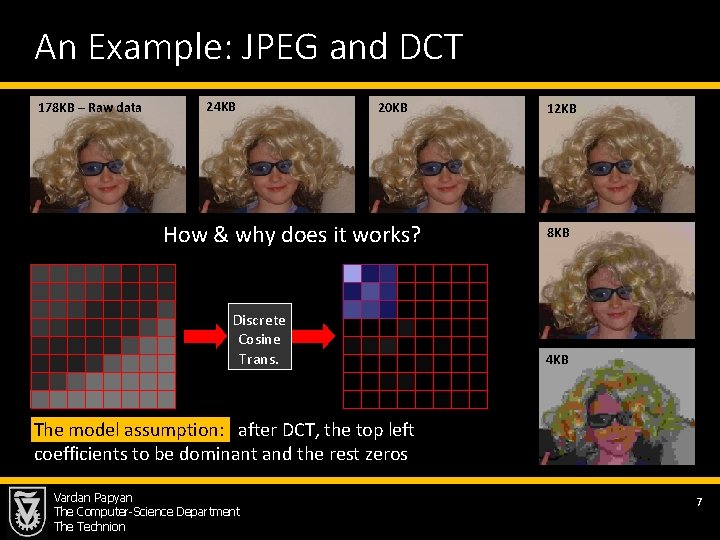

An Example: JPEG and DCT
- (Figure: an image at 178 KB as raw data, and compressed to 24 KB, 20 KB, 12 KB, 8 KB and 4 KB)
- How and why does it work? The Discrete Cosine Transform (DCT)
- The model assumption: after the DCT, the top-left coefficients are dominant and the rest are zero
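To make the DCT model assumption concrete, here is a minimal Python/NumPy sketch. It is not JPEG itself: there is no quantization, zig-zag scan or entropy coding. The sketch builds an orthonormal 8×8 DCT-II matrix by hand, transforms a synthetic smooth patch, keeps only the top-left 3×3 coefficients, and measures how much of the energy survives. The patch values and the choice `k = 3` are illustrative assumptions.

```python
import numpy as np

def dct_matrix(n: int) -> np.ndarray:
    """Orthonormal DCT-II matrix C, so that C @ v is the 1-D DCT of v."""
    k = np.arange(n)[:, None]          # frequency index (rows)
    i = np.arange(n)[None, :]          # sample index (columns)
    C = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    C[0, :] /= np.sqrt(2.0)            # rescale the DC row for orthonormality
    return C

n = 8
C = dct_matrix(n)

# A smooth synthetic 8x8 patch: a gentle ramp plus a low-frequency bump
r, c = np.meshgrid(np.arange(n), np.arange(n), indexing="ij")
patch = 100 + 5 * r + 3 * c + 10 * np.cos(np.pi * r / n)

coeffs = C @ patch @ C.T               # separable 2-D DCT

# Keep only the top-left k x k block of coefficients and zero the rest
k = 3
mask = np.zeros_like(coeffs)
mask[:k, :k] = 1
approx = C.T @ (coeffs * mask) @ C     # inverse 2-D DCT of the truncated block

energy_kept = np.sum((coeffs * mask) ** 2) / np.sum(coeffs ** 2)
rel_error = np.linalg.norm(patch - approx) / np.linalg.norm(patch)
print(f"energy in top-left {k}x{k}: {energy_kept:.4f}, relative error: {rel_error:.4f}")
```

For smooth content, 9 of the 64 coefficients carry almost all of the energy. That concentration is exactly what the compression exploits.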

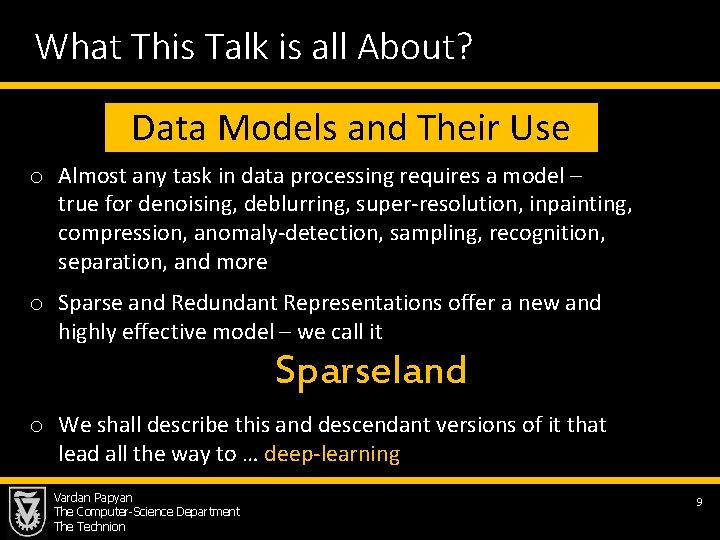

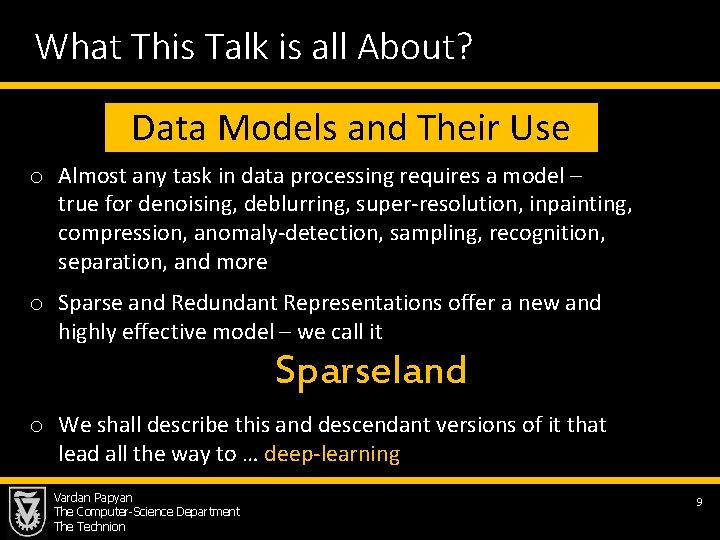

What Is This Talk All About? Data Models and Their Use
- Almost any task in data processing requires a model. This is true for denoising, deblurring, super-resolution, inpainting, compression, anomaly detection, sampling, recognition, separation, and more
- Sparse and Redundant Representations offer a new and highly effective model; we call it Sparseland
- We shall describe this model and its descendants, which lead all the way to … deep learning

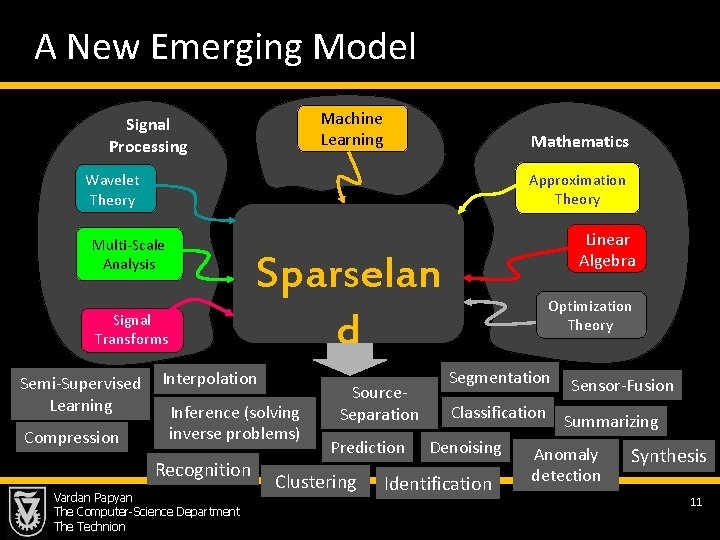

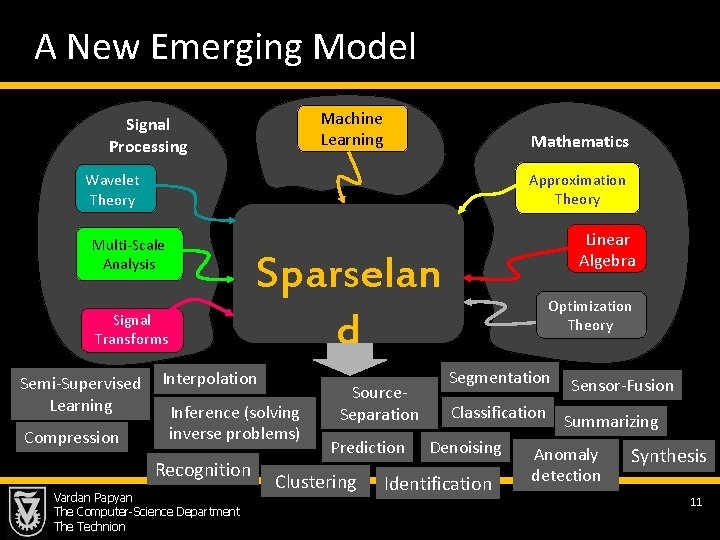

A New Emerging Model: Sparseland, shown at the center of a word cloud spanning Machine Learning, Signal Processing and Mathematics
- Approximation Theory, Wavelet Theory, Multi-Scale Analysis, Signal Transforms, Semi-Supervised Learning, Linear Algebra, Optimization Theory
- Compression, Interpolation, Inference (solving inverse problems), Recognition, Source Separation, Prediction, Clustering, Segmentation, Sensor-Fusion, Classification, Summarizing, Denoising, Anomaly detection, Identification, Synthesis

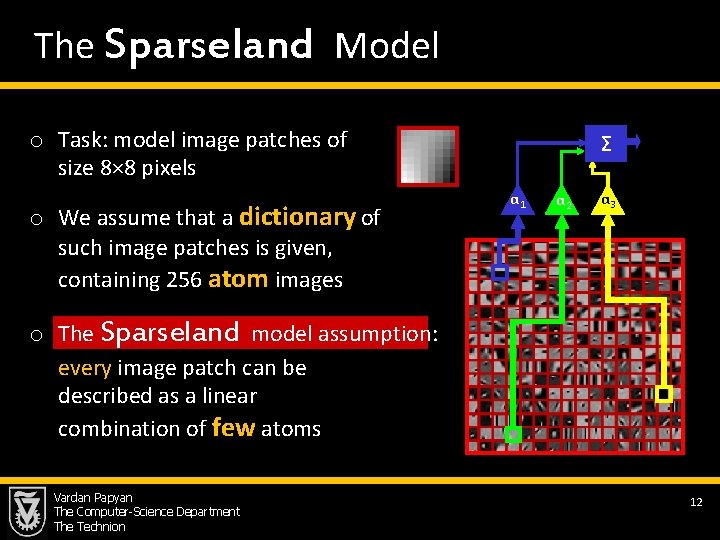

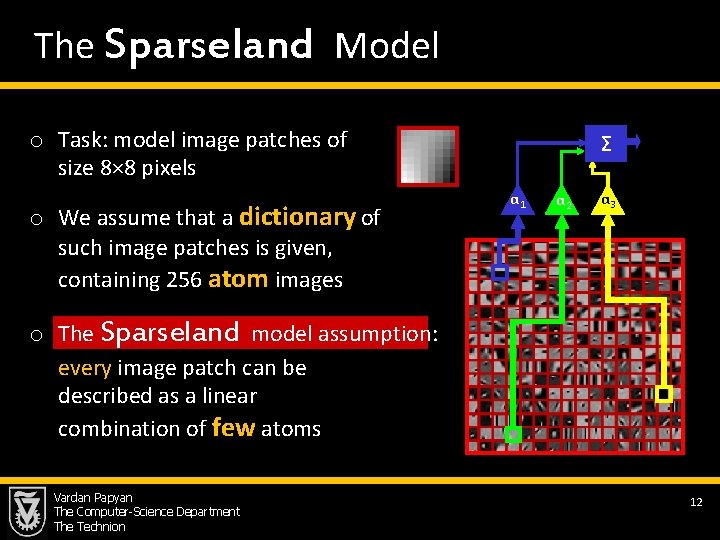

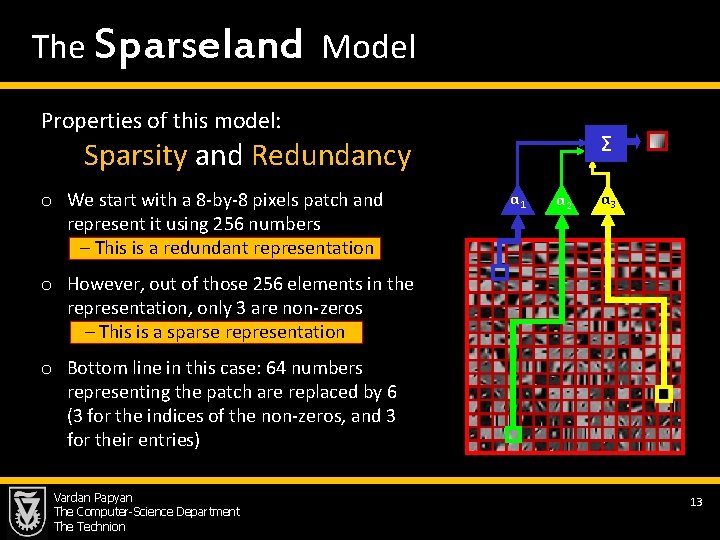

The Sparseland Model
- Task: model image patches of size 8×8 pixels
- We assume that a dictionary of such image patches is given, containing 256 atom images
- The Sparseland model assumption: every image patch can be described as a linear combination of a few atoms (figure: a patch formed as $\sum$ of three atoms weighted by $\alpha_1, \alpha_2, \alpha_3$)

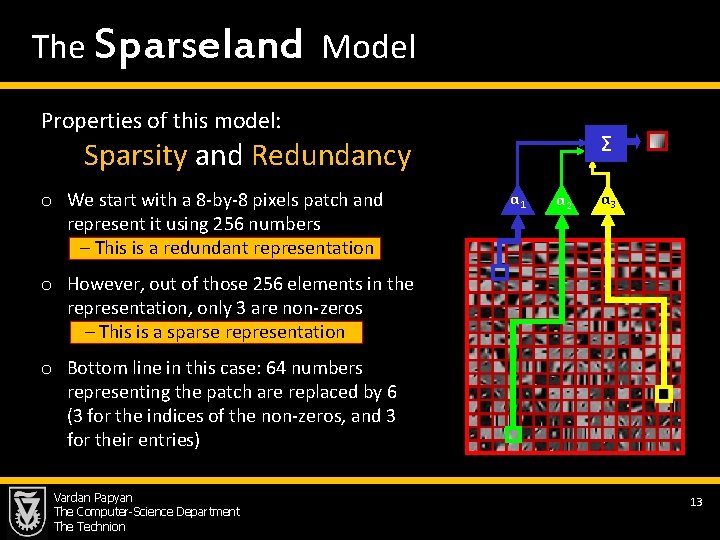

The Sparseland Model
Properties of this model: Sparsity and Redundancy
- We start with an 8-by-8 pixel patch and represent it using 256 numbers. This is a redundant representation
- However, out of those 256 elements in the representation, only 3 are non-zeros. This is a sparse representation
- Bottom line in this case: the 64 numbers representing the patch are replaced by 6 (3 for the indices of the non-zeros, and 3 for their entries)
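The patch counting above can be sketched in a few lines of NumPy. The random Gaussian dictionary `D` below is a hypothetical stand-in for the given 64×256 dictionary of 8×8 atoms. Only the sizes (64, 256, 3) come from the slides.

```python
import numpy as np

rng = np.random.default_rng(0)

n, m, k = 64, 256, 3        # 8x8 patches, 256 atoms, 3 non-zeros (as on the slide)

# Hypothetical dictionary: random Gaussian atoms with unit-norm columns
D = rng.standard_normal((n, m))
D /= np.linalg.norm(D, axis=0)

# Sparse representation: k non-zeros at random locations with random values
gamma = np.zeros(m)
support = rng.choice(m, size=k, replace=False)
gamma[support] = rng.standard_normal(k)

x = D @ gamma               # the patch, flattened to length 64
patch = x.reshape(8, 8)

# Storage: the k indices plus the k values, instead of the 64 pixel values
idx = np.sort(support)
compact = (idx, gamma[idx])
numbers_stored = len(compact[0]) + len(compact[1])
print(f"{n} pixel values replaced by {numbers_stored} numbers")

# The patch is rebuilt exactly from the compact form
x_rebuilt = D[:, compact[0]] @ compact[1]
```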

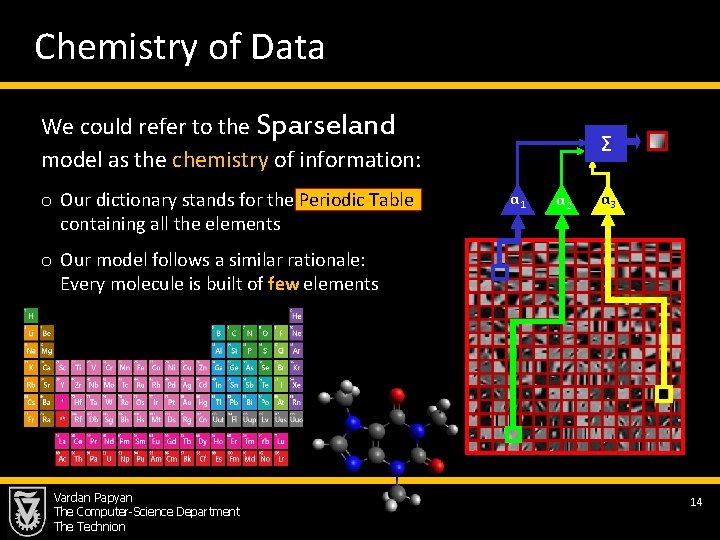

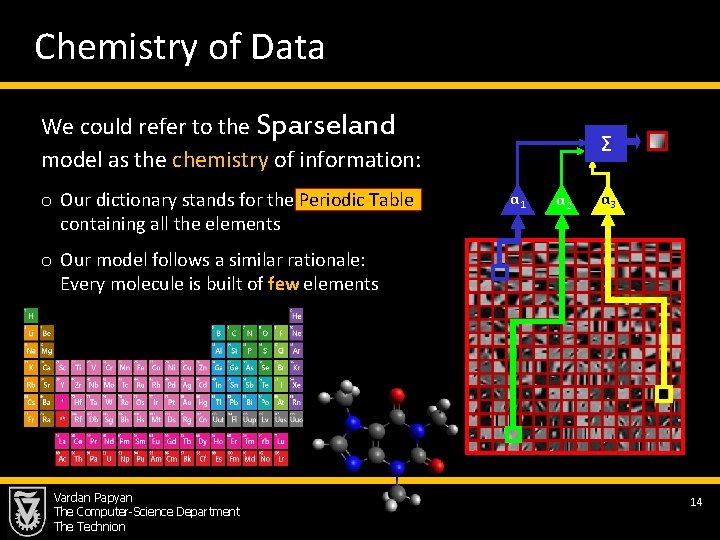

Chemistry of Data
We could refer to the Sparseland model as the chemistry of information:
- Our dictionary stands for the Periodic Table containing all the elements
- Our model follows a similar rationale: every molecule is built of a few elements

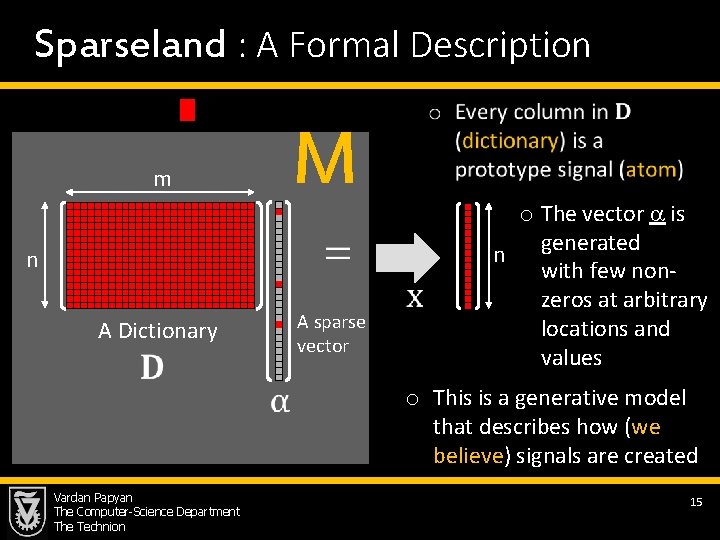

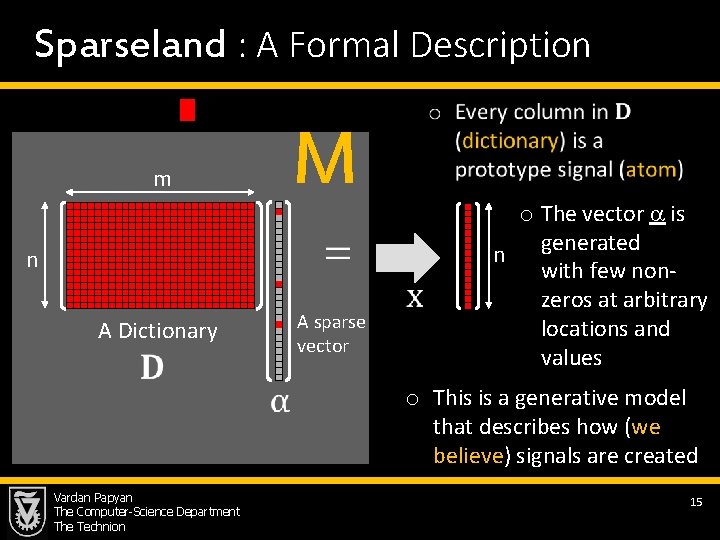

Sparseland: A Formal Description
- A signal $x \in \mathbb{R}^n$ is generated by the model $\mathcal{M}$ as $x = D\gamma$, where $D \in \mathbb{R}^{n \times m}$ ($m > n$) is a dictionary and $\gamma \in \mathbb{R}^m$ is a sparse vector
- The vector $\gamma$ is generated with few nonzeros at arbitrary locations and values
- This is a generative model that describes how (we believe) signals are created

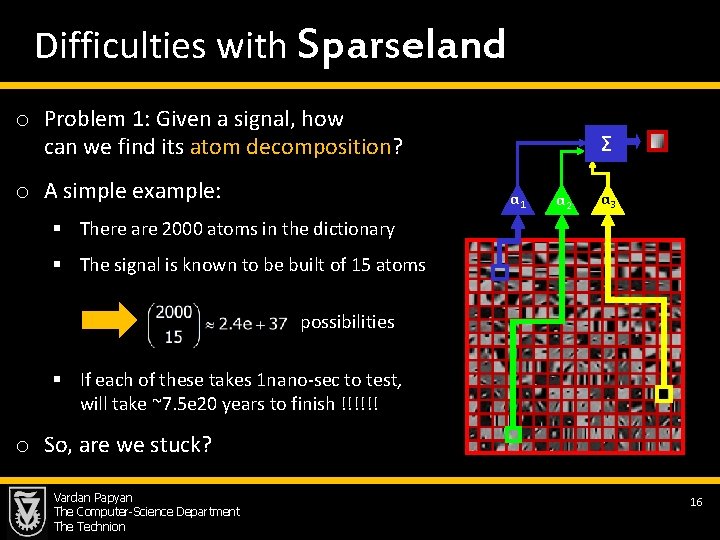

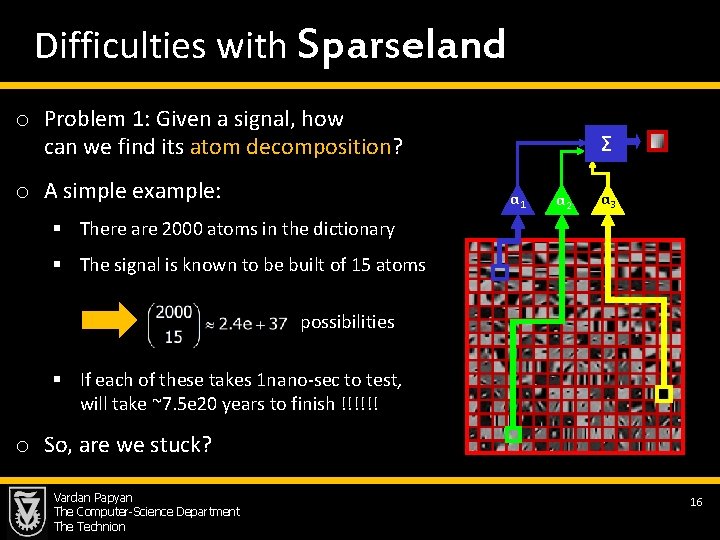

Difficulties with Sparseland
- Problem 1: Given a signal, how can we find its atom decomposition?
- A simple example:
  - There are 2000 atoms in the dictionary
  - The signal is known to be built of 15 atoms, which gives $\binom{2000}{15} \approx 2.4 \times 10^{37}$ possibilities
  - If each of these takes 1 nano-sec to test, this will take ~7.5e20 years to finish!!!
- So, are we stuck?
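The count of possibilities and the running time can be checked directly with Python's `math.comb`. The sketch assumes one test per nanosecond and a 365.25-day year.

```python
import math

atoms, k = 2000, 15
supports = math.comb(atoms, k)           # number of candidate supports of size 15
seconds = supports * 1e-9                # 1 nanosecond per candidate
years = seconds / (365.25 * 24 * 3600)   # seconds in a (Julian) year
print(f"{supports:.3e} supports -> {years:.2e} years")
```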

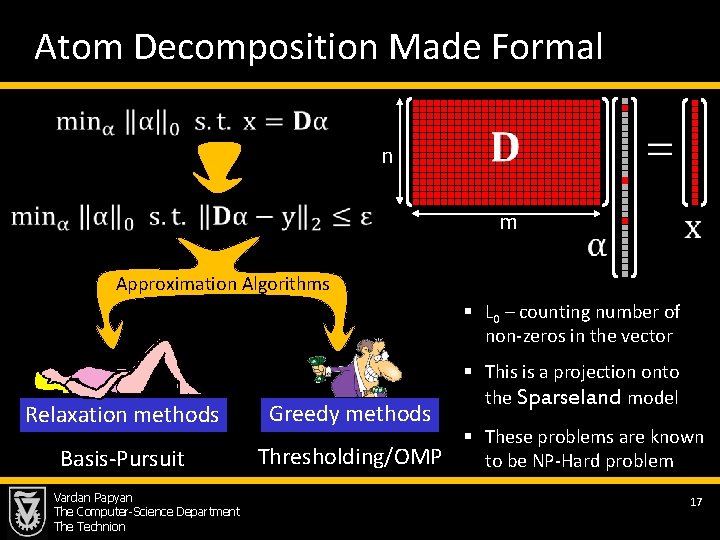

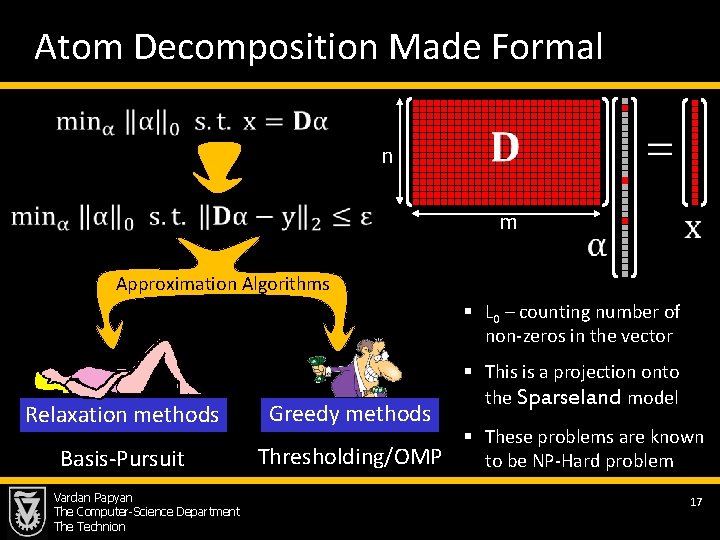

Atom Decomposition Made Formal
- The problem: $\min_{\gamma} \|\gamma\|_0 \ \text{s.t.}\ x = D\gamma$, or, for a noisy signal $y$, $\min_{\gamma} \|\gamma\|_0 \ \text{s.t.}\ \|y - D\gamma\|_2 \le \epsilon$
  - $L_0$: counting the number of non-zeros in the vector
  - This is a projection onto the Sparseland model
  - These problems are known to be NP-Hard
- Approximation algorithms: relaxation methods (Basis-Pursuit) and greedy methods (Thresholding/OMP)

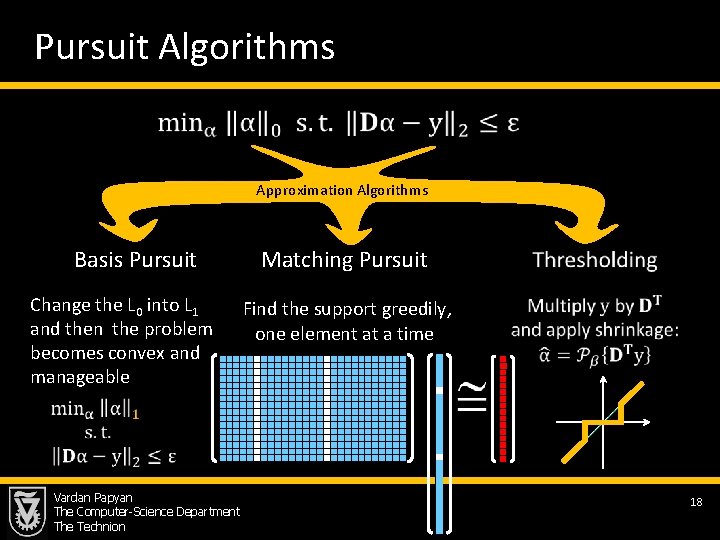

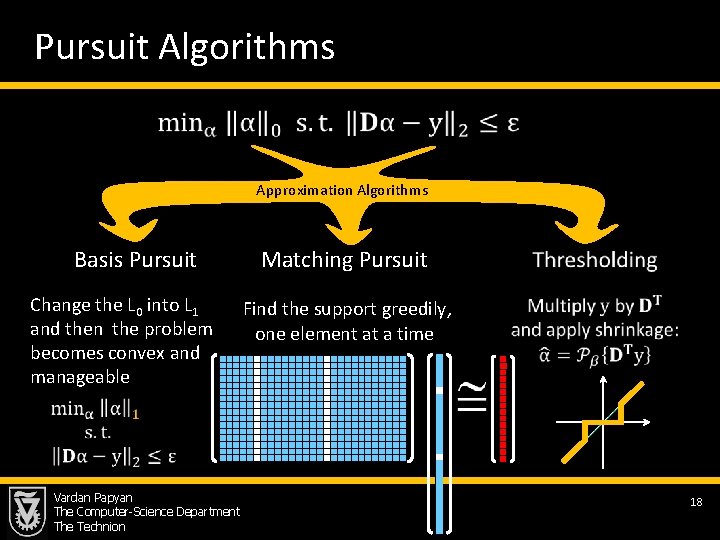

Pursuit Algorithms: Approximation Algorithms
- Basis Pursuit: change the $L_0$ into $L_1$, and the problem becomes convex and manageable
- Matching Pursuit: find the support greedily, one element at a time
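Here is a minimal NumPy sketch of the greedy route, Orthogonal Matching Pursuit. It assumes unit-norm columns and a known number of atoms `k`. The function name `omp` and the toy problem are illustrative, not the authors' code.

```python
import numpy as np

def omp(D: np.ndarray, y: np.ndarray, k: int) -> np.ndarray:
    """Orthogonal Matching Pursuit: grow the support greedily, one atom at a time.

    Assumes D has unit-norm columns; stops after k atoms or when y is explained.
    """
    residual = y.copy()
    support: list[int] = []
    coeffs = np.zeros(0)
    for _ in range(k):
        if np.linalg.norm(residual) < 1e-10:
            break
        # 1. pick the atom most correlated with the current residual
        j = int(np.argmax(np.abs(D.T @ residual)))
        if j not in support:
            support.append(j)
        # 2. re-fit all chosen coefficients by least squares (the "orthogonal" step)
        coeffs, *_ = np.linalg.lstsq(D[:, support], y, rcond=None)
        # 3. update the residual
        residual = y - D[:, support] @ coeffs
    gamma = np.zeros(D.shape[1])
    gamma[support] = coeffs
    return gamma

rng = np.random.default_rng(1)
n, m, k = 64, 256, 3
D = rng.standard_normal((n, m))
D /= np.linalg.norm(D, axis=0)
gamma_true = np.zeros(m)
gamma_true[[5, 80, 200]] = [1.0, -2.0, 1.5]
y = D @ gamma_true                      # noiseless signal built from 3 atoms
gamma_hat = omp(D, y, k)
```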

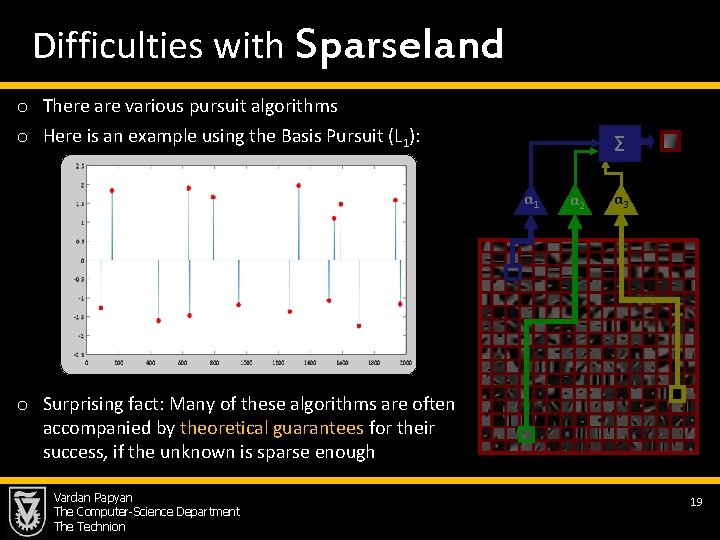

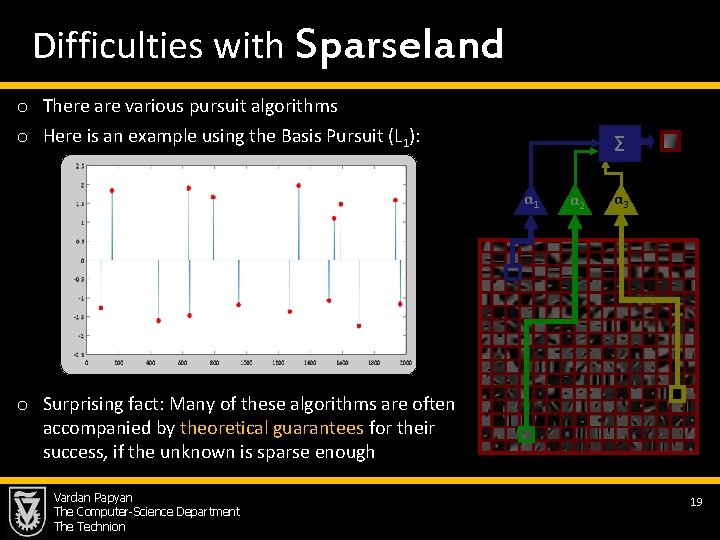

Difficulties with Sparseland
- There are various pursuit algorithms
- Here is an example using the Basis Pursuit ($L_1$)
- Surprising fact: many of these algorithms are often accompanied by theoretical guarantees for their success, if the unknown is sparse enough
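For the convex route, Basis Pursuit with noisy data is often solved in its Lagrangian form $\min_\gamma \tfrac12\|y - D\gamma\|_2^2 + \lambda\|\gamma\|_1$. The sketch below uses ISTA (iterative soft thresholding). ISTA is one standard solver, chosen here for brevity, and is not necessarily the one used in the talk. The values of `lam`, the iteration count and the toy data are all assumptions.

```python
import numpy as np

def soft_threshold(z: np.ndarray, t: float) -> np.ndarray:
    """Proximal operator of t * ||.||_1: shrink every entry toward zero by t."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)

def bp_ista(D: np.ndarray, y: np.ndarray, lam: float, iters: int = 500) -> np.ndarray:
    """Approximate min_g 0.5*||y - D g||_2^2 + lam*||g||_1 with ISTA."""
    L = np.linalg.norm(D, 2) ** 2          # Lipschitz constant of the quadratic term's gradient
    gamma = np.zeros(D.shape[1])
    for _ in range(iters):
        grad = D.T @ (D @ gamma - y)       # gradient of 0.5*||y - D g||^2
        gamma = soft_threshold(gamma - grad / L, lam / L)
    return gamma

rng = np.random.default_rng(2)
n, m = 64, 256
D = rng.standard_normal((n, m))
D /= np.linalg.norm(D, axis=0)
gamma_true = np.zeros(m)
gamma_true[[10, 100, 150]] = [2.0, -1.5, 1.0]
y = D @ gamma_true + 0.01 * rng.standard_normal(n)   # mildly noisy measurement

gamma_hat = bp_ista(D, y, lam=0.05)
top3 = set(np.argsort(-np.abs(gamma_hat))[:3].tolist())
```

The $L_1$ penalty shrinks the recovered values slightly toward zero, so the estimates are biased but the support is found.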

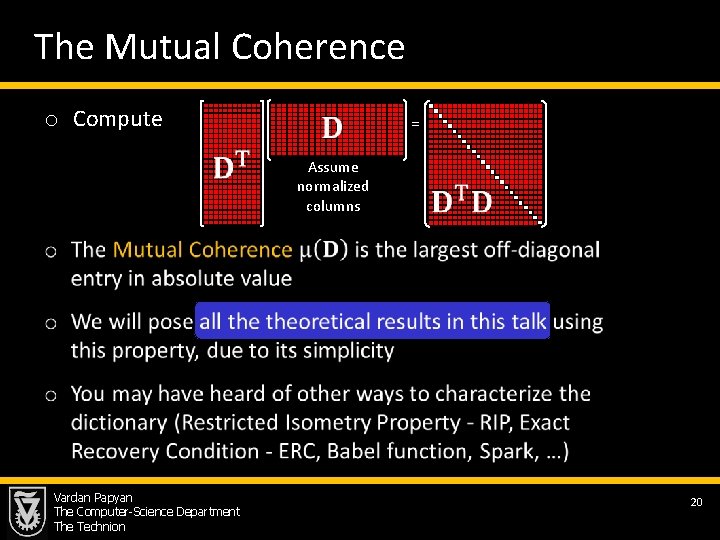

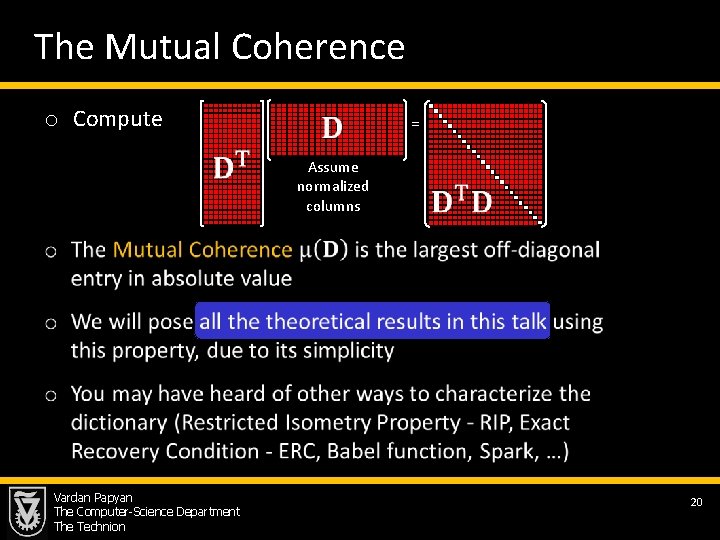

The Mutual Coherence
- Compute the Gram matrix $G = D^T D$, assuming normalized columns
- The mutual coherence $\mu(D)$ is the largest off-diagonal entry of $G$ in absolute value: $\mu(D) = \max_{i \ne j} |d_i^T d_j|$
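Computing $\mu(D)$ takes one Gram matrix. As an example, the sketch uses a two-ortho dictionary (the 64×64 identity next to the orthonormal DCT basis), which is a choice made here for illustration. It also evaluates the classical noiseless bound $\|\gamma\|_0 < \tfrac12(1 + 1/\mu(D))$. Under that bound, $\gamma$ is the unique sparsest representation, and both OMP and BP are known to recover it. This is the standard noiseless result, not the noisy theorem cited on the next slide.

```python
import numpy as np

def mutual_coherence(D: np.ndarray) -> float:
    """Largest absolute inner product between two distinct normalized columns of D."""
    Dn = D / np.linalg.norm(D, axis=0)     # normalize the columns
    G = np.abs(Dn.T @ Dn)                  # absolute Gram matrix
    np.fill_diagonal(G, 0.0)               # ignore each atom's product with itself
    return float(G.max())

# Example dictionary: identity concatenated with the orthonormal DCT basis (n = 64)
n = 64
k = np.arange(n)[:, None]
i = np.arange(n)[None, :]
C = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
C[0, :] /= np.sqrt(2.0)
D = np.hstack([np.eye(n), C.T])           # 64 x 128 two-ortho dictionary

mu = mutual_coherence(D)
bound = 0.5 * (1 + 1 / mu)                # sparsity level below which recovery is guaranteed
print(f"mu = {mu:.4f}, guaranteed for ||gamma||_0 < {bound:.2f}")
```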

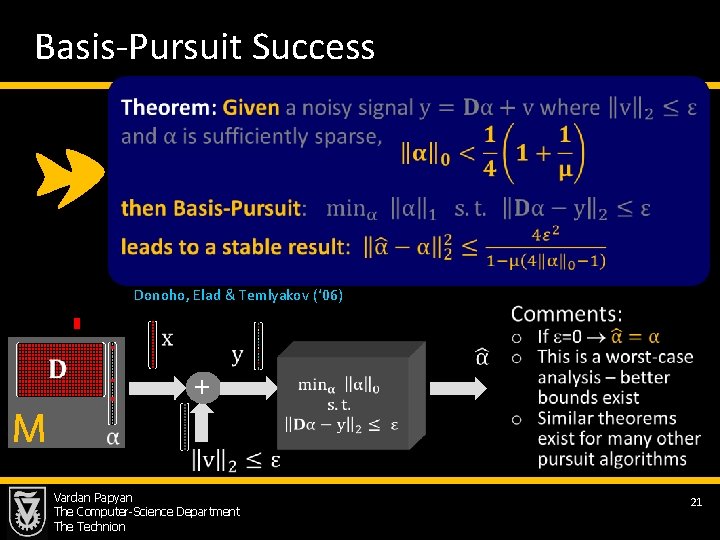

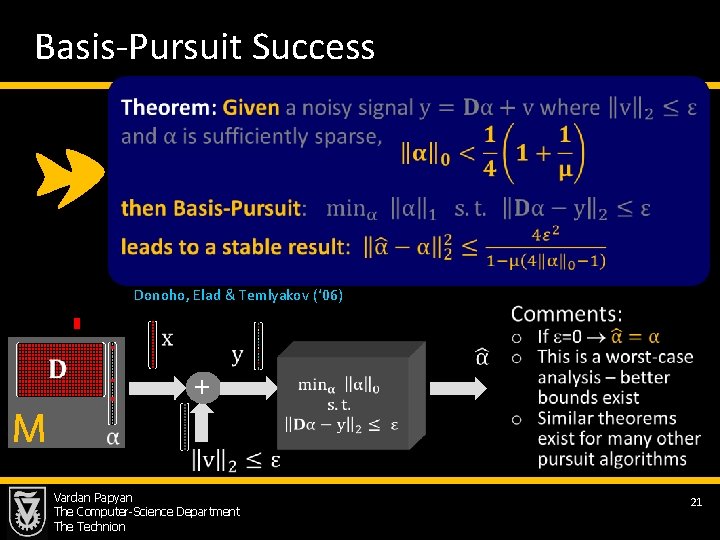

Basis-Pursuit Success: Donoho, Elad & Temlyakov ('06)

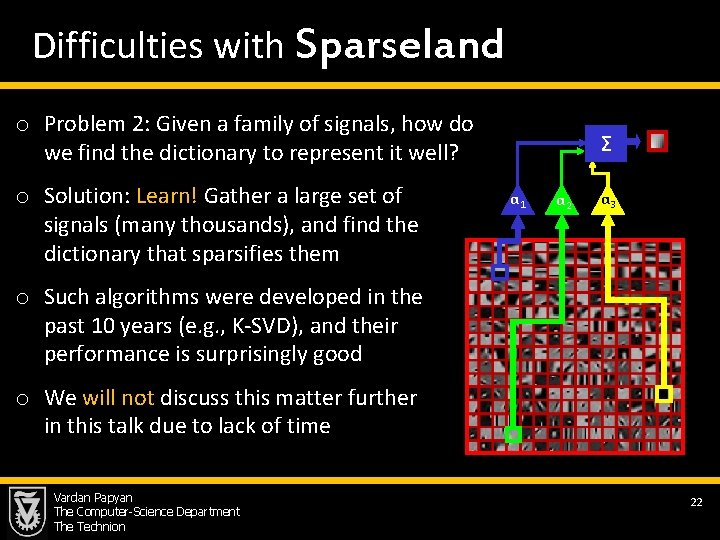

Difficulties with Sparseland
- Problem 2: Given a family of signals, how do we find the dictionary to represent it well?
- Solution: Learn! Gather a large set of signals (many thousands), and find the dictionary that sparsifies them
- Such algorithms were developed in the past 10 years (e.g., K-SVD), and their performance is surprisingly good
- We will not discuss this matter further in this talk due to lack of time

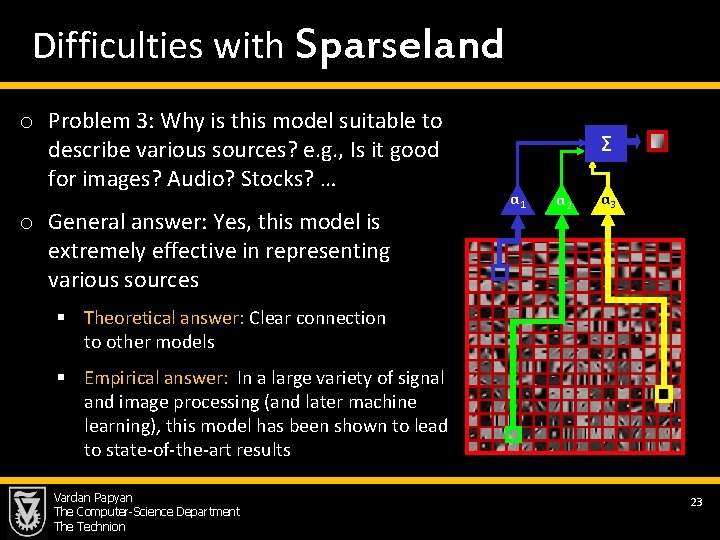

Difficulties with Sparseland
- Problem 3: Why is this model suitable to describe various sources? E.g., is it good for images? Audio? Stocks? …
- General answer: Yes, this model is extremely effective in representing various sources
  - Theoretical answer: a clear connection to other models
  - Empirical answer: in a large variety of signal and image processing (and later machine learning) tasks, this model has been shown to lead to state-of-the-art results

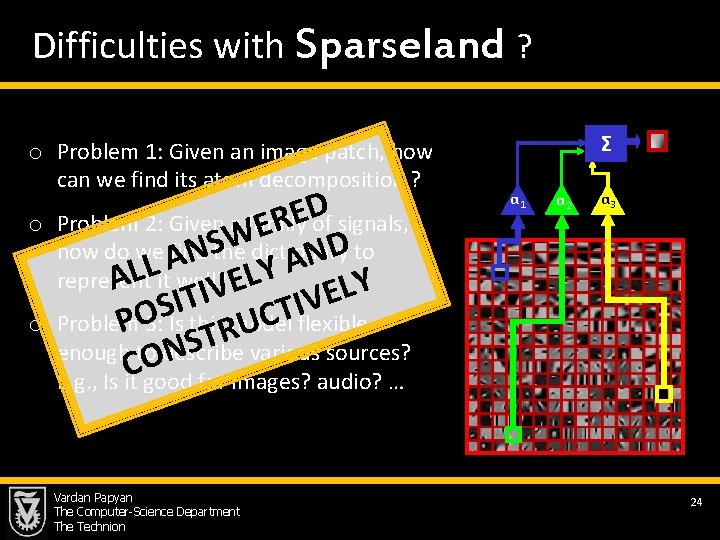

Difficulties with Sparseland?
- Problem 1: Given an image patch, how can we find its atom decomposition?
- Problem 2: Given a family of signals, how do we find the dictionary to represent it well?
- Problem 3: Is this model flexible enough to describe various sources? E.g., is it good for images? Audio? …

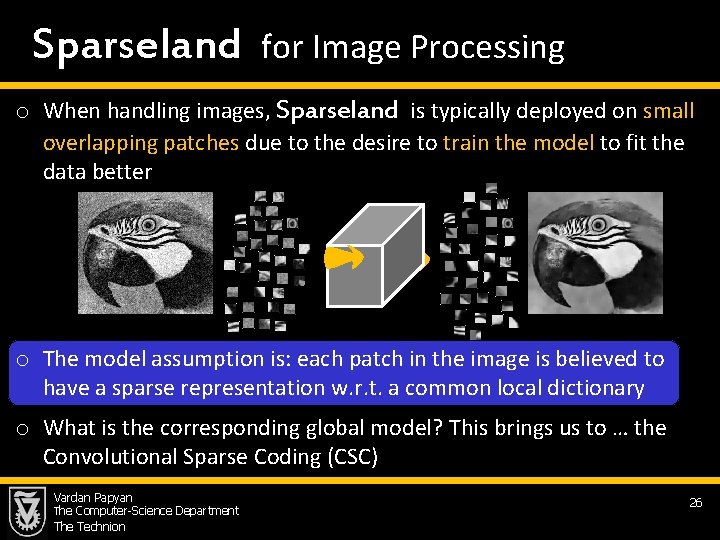

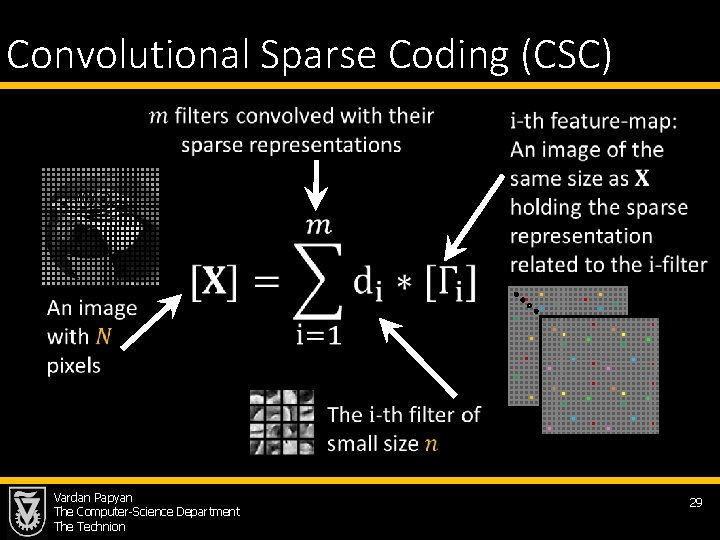

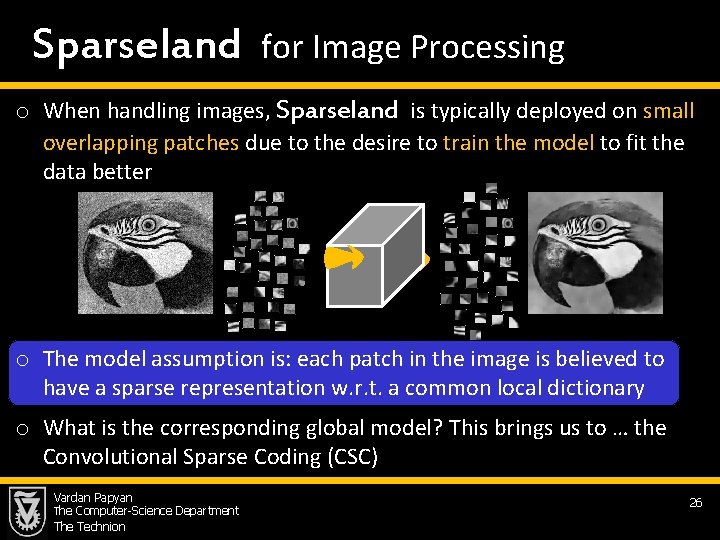

Sparseland for Image Processing
- When handling images, Sparseland is typically deployed on small overlapping patches, due to the desire to train the model to fit the data better
- The model assumption is that each patch in the image has a sparse representation w.r.t. a common local dictionary
- What is the corresponding global model? This brings us to … the Convolutional Sparse Coding (CSC)

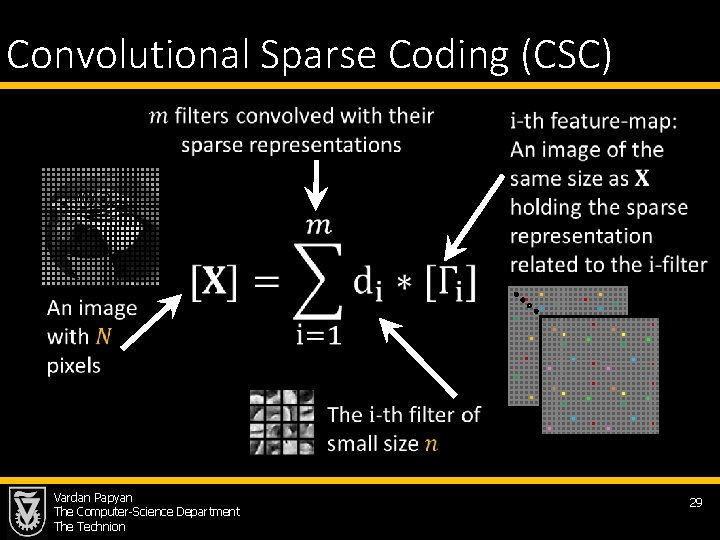

Convolutional Sparse Coding (CSC)

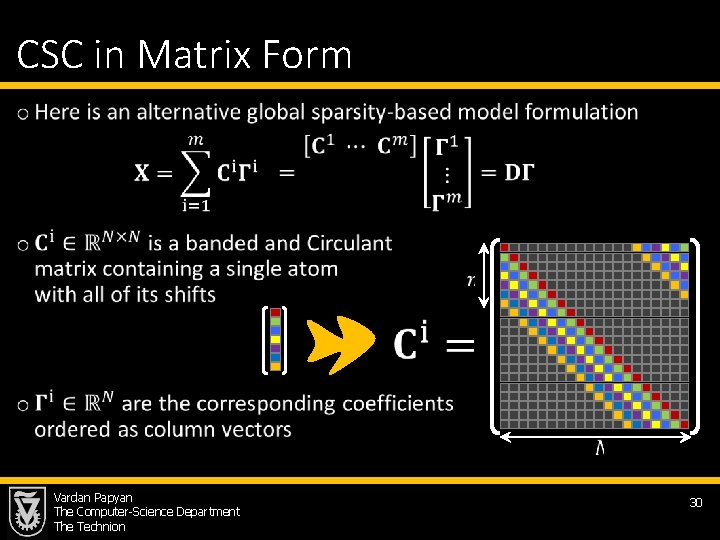

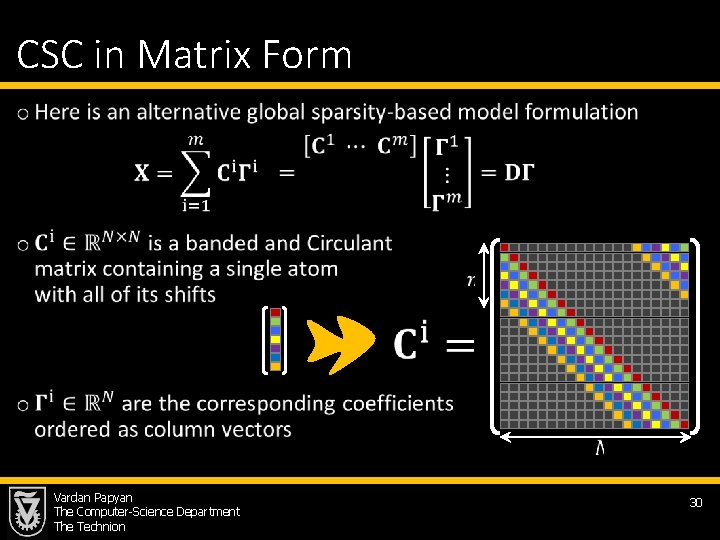

CSC in Matrix Form

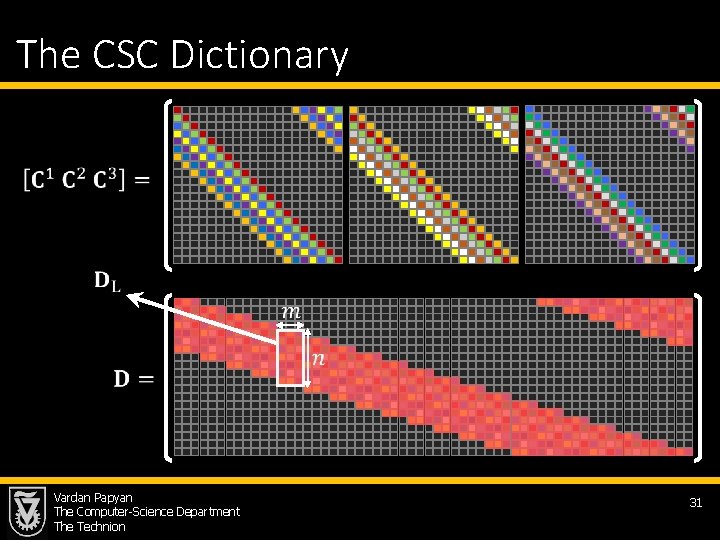

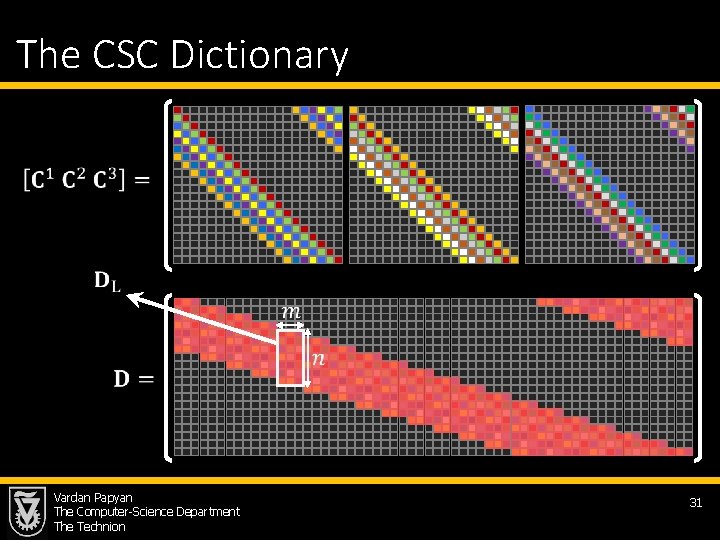

The CSC Dictionary
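The matrix form of CSC can be sketched in 1-D. The global dictionary stacks every shift of every local atom, so multiplying it by a sparse vector is the same as summing convolutions of the filters with sparse feature maps. This NumPy sketch assumes a 1-D signal with cyclic boundaries and small hypothetical sizes (`N = 32`, `n = 5`, `m = 4`). `global_conv_dictionary` is a helper introduced here.

```python
import numpy as np

def global_conv_dictionary(D_L: np.ndarray, N: int) -> np.ndarray:
    """Build the N x (N*m) global CSC dictionary from an n x m local dictionary D_L.

    Column i*m + j holds local atom j placed at position i, wrapping around
    cyclically (an assumption made so that every shift fits inside the signal).
    """
    n, m = D_L.shape
    D = np.zeros((N, N * m))
    for i in range(N):
        rows = (i + np.arange(n)) % N          # the n samples covered by a shift-i atom
        D[rows, i * m:(i + 1) * m] = D_L
    return D

rng = np.random.default_rng(3)
N, n, m = 32, 5, 4                             # illustrative sizes (hypothetical)
D_L = rng.standard_normal((n, m))
D = global_conv_dictionary(D_L, N)

# Sparse global representation Gamma, viewed as N "needles" of length m
Gamma = np.zeros(N * m)
Gamma[rng.choice(N * m, size=6, replace=False)] = rng.standard_normal(6)
X = D @ Gamma                                  # matrix form of the CSC model

# The same signal as a sum of cyclic convolutions, one per local filter
maps = Gamma.reshape(N, m)                     # maps[i, j]: weight of filter j at shift i
X_conv = np.zeros(N)
for j in range(m):
    d_pad = np.zeros(N)
    d_pad[:n] = D_L[:, j]
    X_conv += np.real(np.fft.ifft(np.fft.fft(d_pad) * np.fft.fft(maps[:, j])))
```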

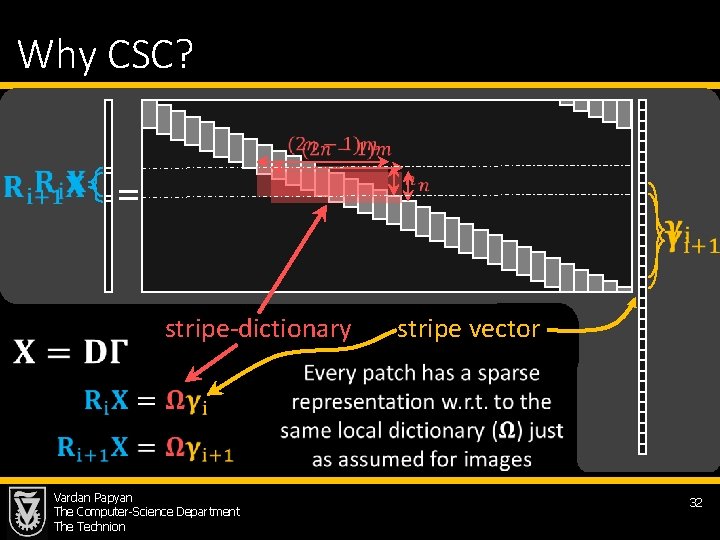

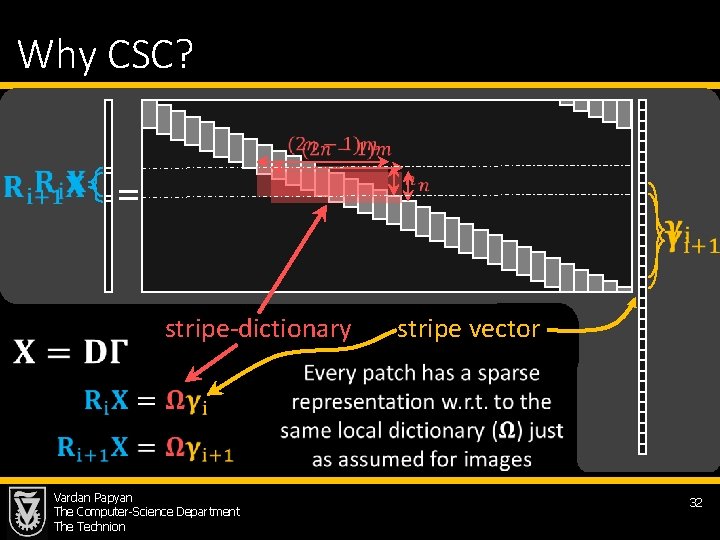

Why CSC? (Figure: a patch = stripe-dictionary × stripe vector)
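The stripe picture can be checked numerically. An $n$-sample patch of the global signal depends only on the $2n-1$ needles whose atoms overlap it. It therefore equals a fixed stripe dictionary $\Omega$ (of size $n \times (2n-1)m$) times the stripe vector. This sketch assumes a 1-D cyclic signal and illustrative sizes. `stripe_dictionary` is a hypothetical helper.

```python
import numpy as np

def stripe_dictionary(D_L: np.ndarray) -> np.ndarray:
    """Omega, of size n x (2n-1)m: block s holds the local atoms shifted by s-(n-1)
    relative to the patch start, cropped to the n-sample window."""
    n, m = D_L.shape
    Omega = np.zeros((n, (2 * n - 1) * m))
    for s in range(2 * n - 1):
        shift = s - (n - 1)                    # atom start relative to the patch start
        for r in range(n):
            k = r - shift                      # filter tap that lands on patch row r
            if 0 <= k < n:
                Omega[r, s * m:(s + 1) * m] = D_L[k, :]
    return Omega

rng = np.random.default_rng(4)
N, n, m = 32, 5, 4                             # illustrative sizes (hypothetical)
D_L = rng.standard_normal((n, m))

# Global signal: a sum of cyclically shifted atoms weighted by sparse maps
maps = np.zeros((N, m))                        # maps[i, j]: weight of atom j at position i
maps.flat[rng.choice(N * m, size=8, replace=False)] = rng.standard_normal(8)
X = np.zeros(N)
for i in range(N):
    for j in range(m):
        X[(i + np.arange(n)) % N] += maps[i, j] * D_L[:, j]

i = 10                                         # patch position
patch = X[(i + np.arange(n)) % N]              # the patch R_i X
stripe = maps[(i + np.arange(-(n - 1), n)) % N].reshape(-1)   # 2n-1 needles around i
Omega = stripe_dictionary(D_L)
```

The check `Omega @ stripe == patch` is the local relation behind the stripe-based analysis: each patch is a small Sparseland signal over $\Omega$.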

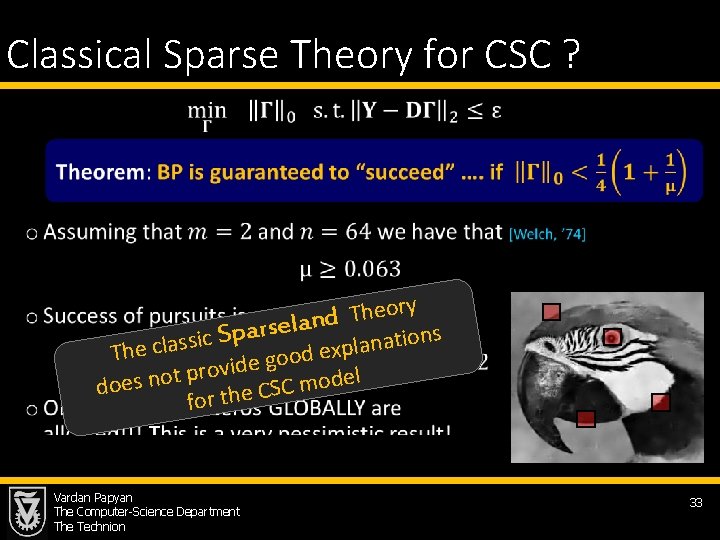

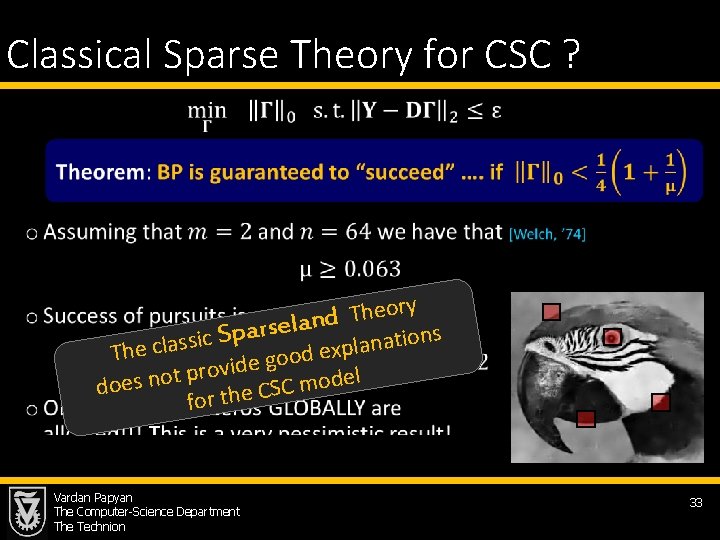

Classical Sparse Theory for CSC? The classic Sparseland theory does not provide good explanations for the CSC model.

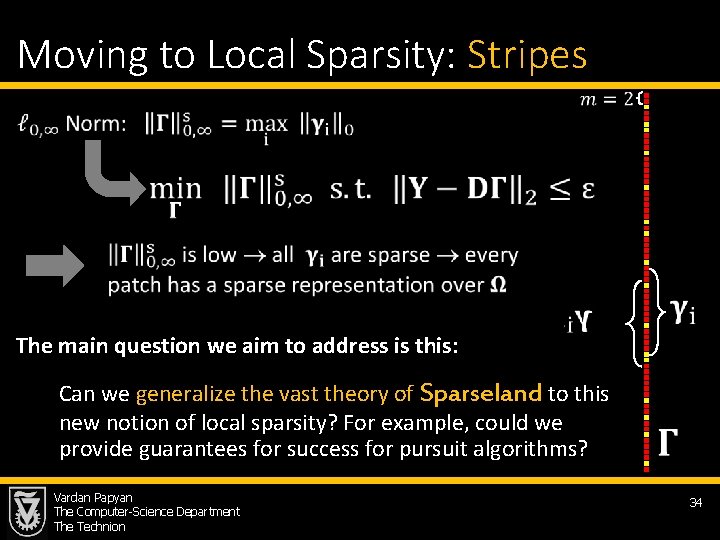

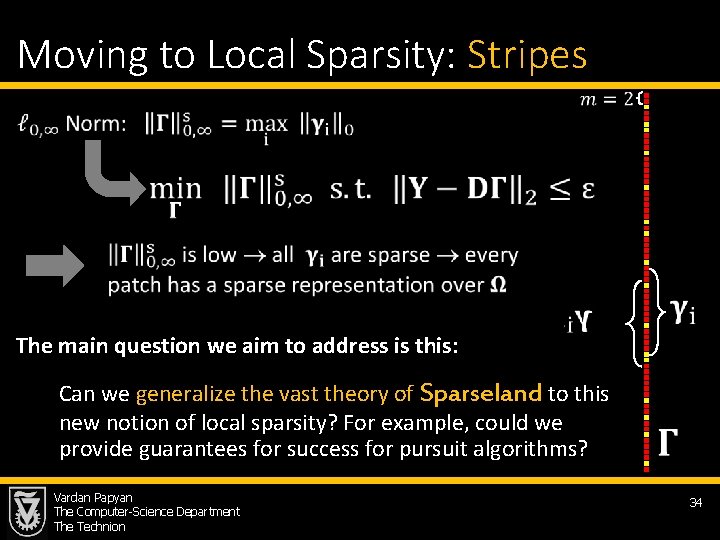

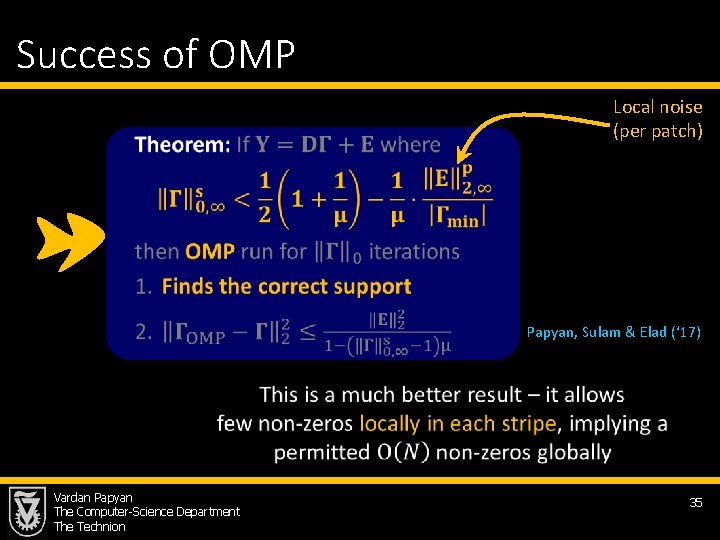

Moving to Local Sparsity: Stripes
The main question we aim to address is this: can we generalize the vast theory of Sparseland to this new notion of local sparsity? For example, could we provide guarantees of success for pursuit algorithms?
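Local sparsity is measured by the $\ell_{0,\infty}$ norm used in the cited CSC analysis. It is the maximum number of non-zeros in any stripe, rather than in the whole vector. This minimal sketch assumes a 1-D cyclic layout, where `maps[i, j]` is the coefficient of filter `j` at position `i`. `l0_inf` is a name introduced here. The point is that the global count grows with the signal length while the stripe count does not.

```python
import numpy as np

def l0_inf(maps: np.ndarray, n: int) -> int:
    """Largest number of non-zeros in any stripe of a cyclic CSC representation.

    Stripe i gathers the needles at positions i-(n-1), ..., i+(n-1).
    """
    N = maps.shape[0]
    nnz_per_needle = np.count_nonzero(maps, axis=1)
    best = 0
    for i in range(N):
        window = (i + np.arange(-(n - 1), n)) % N
        best = max(best, int(nnz_per_needle[window].sum()))
    return best

N, n, m = 100, 5, 4
maps = np.zeros((N, m))
maps[::20, 0] = 1.0          # one non-zero every 20 positions: 5 in total, far apart
global_nnz = np.count_nonzero(maps)
local_nnz = l0_inf(maps, n)  # each stripe spans 2n - 1 = 9 positions, so it sees at most one

maps_close = maps.copy()
maps_close[3, 1] = 1.0       # a second non-zero only 3 positions away from the one at 0
```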

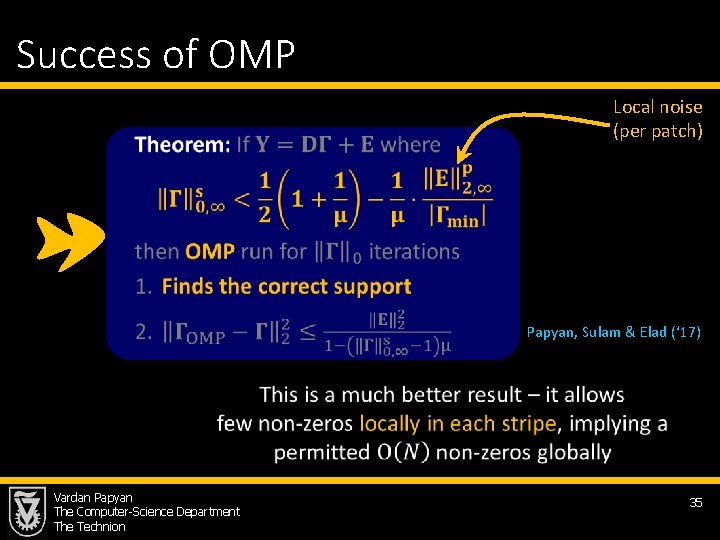

Success of OMP: local noise (per patch). Papyan, Sulam & Elad ('17)

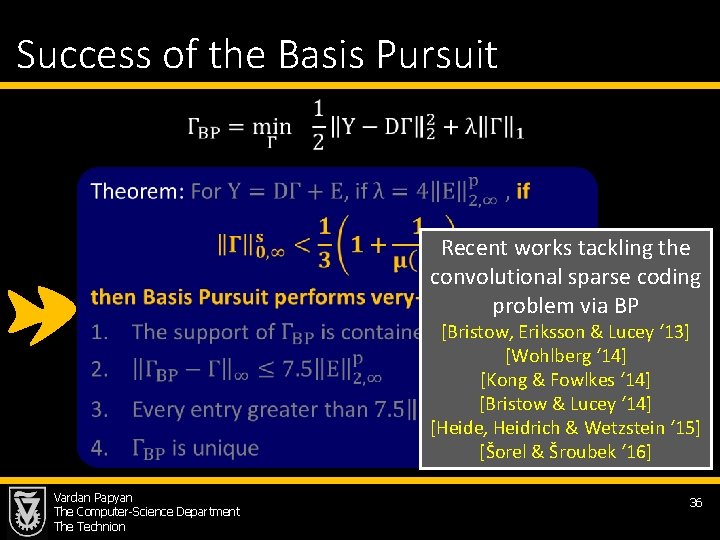

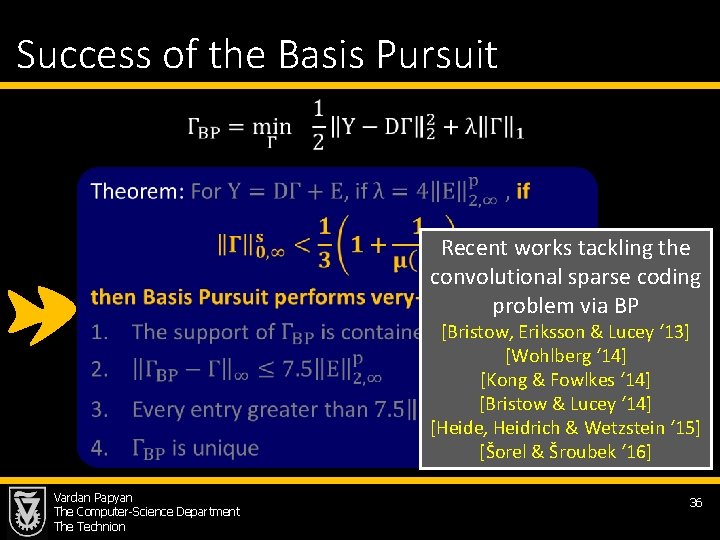

Success of the Basis Pursuit: Papyan, Sulam & Elad ('17). Recent works tackling the convolutional sparse coding problem via BP: [Bristow, Eriksson & Lucey '13] [Wohlberg '14] [Kong & Fowlkes '14] [Bristow & Lucey '14] [Heide, Heidrich & Wetzstein '15] [Šorel & Šroubek '16]

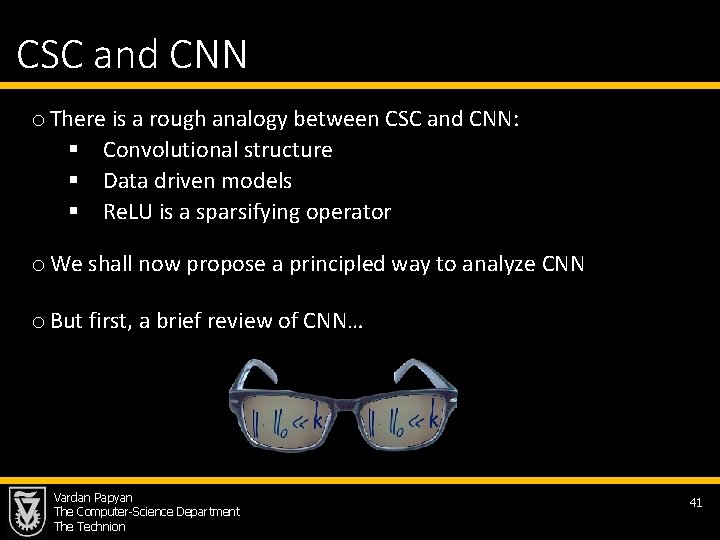

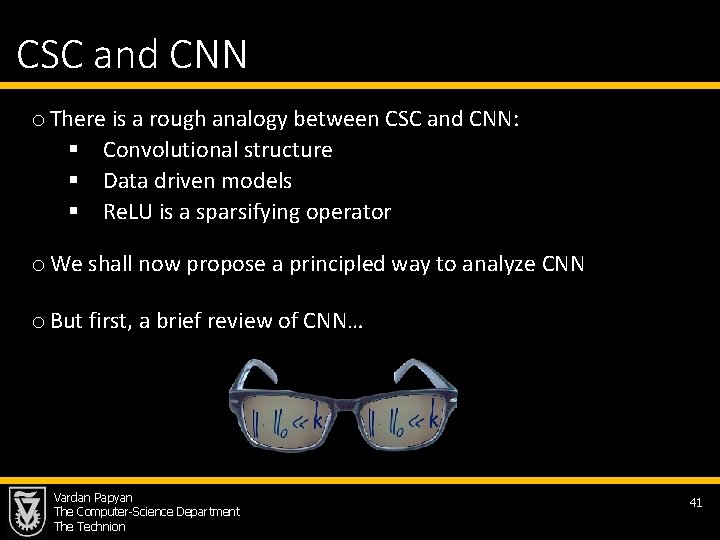

CSC and CNN
- There is a rough analogy between CSC and CNN:
  - Convolutional structure
  - Data-driven models
  - ReLU is a sparsifying operator
- We shall now propose a principled way to analyze CNN
- But first, a brief review of CNN…

![CNN architecture diagram](https://slidetodoc.com/presentation_image_h/c1a75330057ff1ee333d822b4ee17840/image-36.jpg)

CNN [LeCun, Bottou, Bengio and Haffner '98] [Krizhevsky, Sutskever & Hinton '12] [Simonyan & Zisserman '14] [He, Zhang, Ren & Sun '15] (figure label: ReLU)

![CNN architecture diagram](https://slidetodoc.com/presentation_image_h/c1a75330057ff1ee333d822b4ee17840/image-37.jpg)

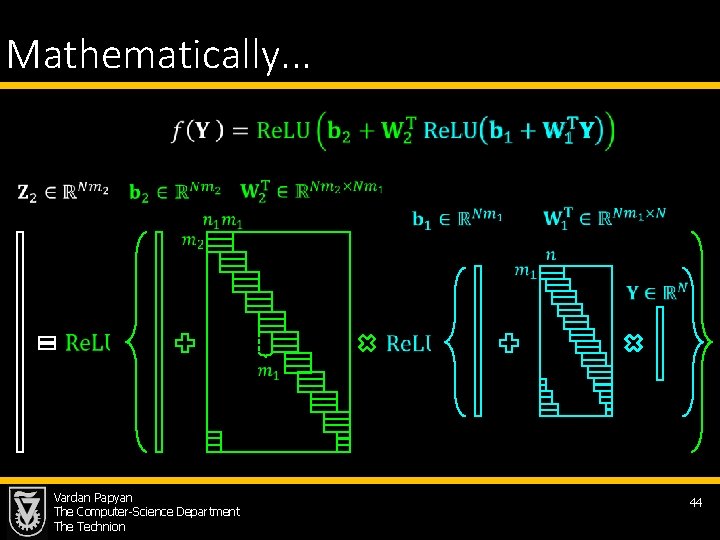

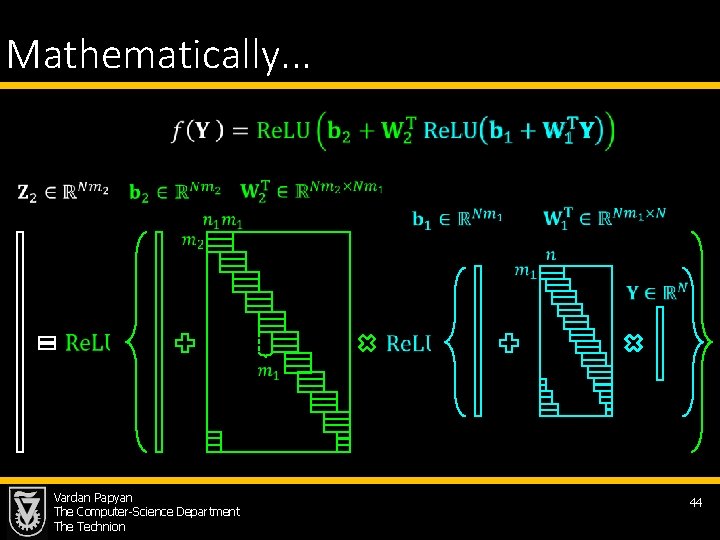

Mathematically...

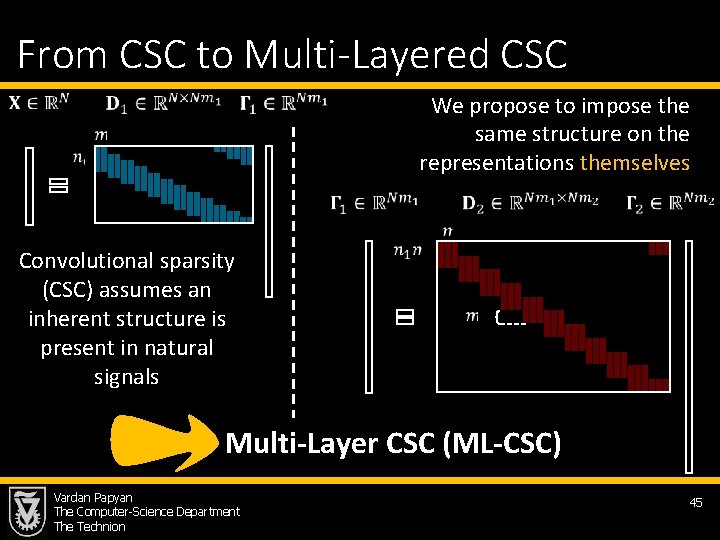

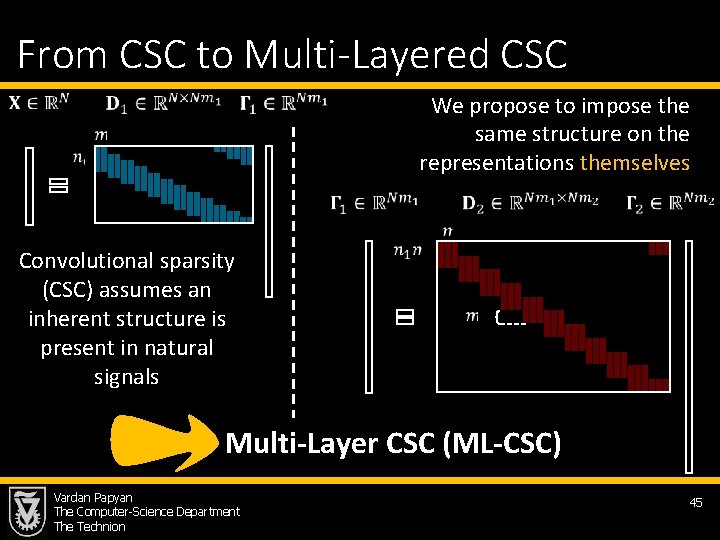

From CSC to Multi-Layered CSC
- Convolutional sparsity (CSC) assumes an inherent structure is present in natural signals
- We propose to impose the same structure on the representations themselves: Multi-Layer CSC (ML-CSC)
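In equations, ML-CSC assumes $X = D_1\Gamma_1$, $\Gamma_1 = D_2\Gamma_2$, and so on, with every $\Gamma_i$ sparse. Hence $X = (D_1 D_2)\Gamma_2$, where the columns of $D_1 D_2$ are molecules assembled from the atoms of $D_1$. For readability, the sketch below uses small dense matrices instead of convolutional ones (an assumption). It also makes `D2` sparse so that $\Gamma_1$ stays sparse. All sizes are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(5)
n0, m1, m2 = 64, 128, 256     # sizes of X, Gamma_1, Gamma_2 (illustrative, non-convolutional)

D1 = rng.standard_normal((n0, m1))
# Sparse D2 (about 3% non-zeros) so that Gamma_1 = D2 @ Gamma_2 stays sparse
D2 = rng.standard_normal((m1, m2)) * (rng.random((m1, m2)) < 0.03)

Gamma2 = np.zeros(m2)
Gamma2[rng.choice(m2, size=3, replace=False)] = rng.standard_normal(3)
Gamma1 = D2 @ Gamma2          # the first-layer representation is itself sparsely represented
X = D1 @ Gamma1

D_eff = D1 @ D2               # effective dictionary: molecules built from atoms
print(np.count_nonzero(Gamma2), np.count_nonzero(Gamma1))
```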

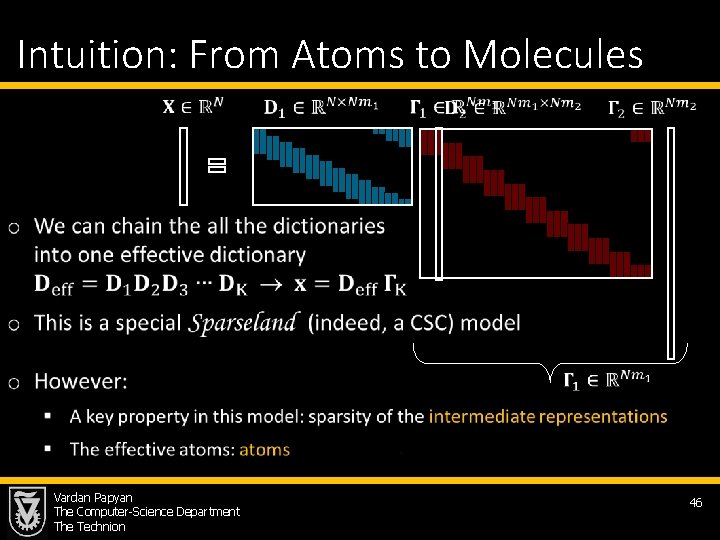

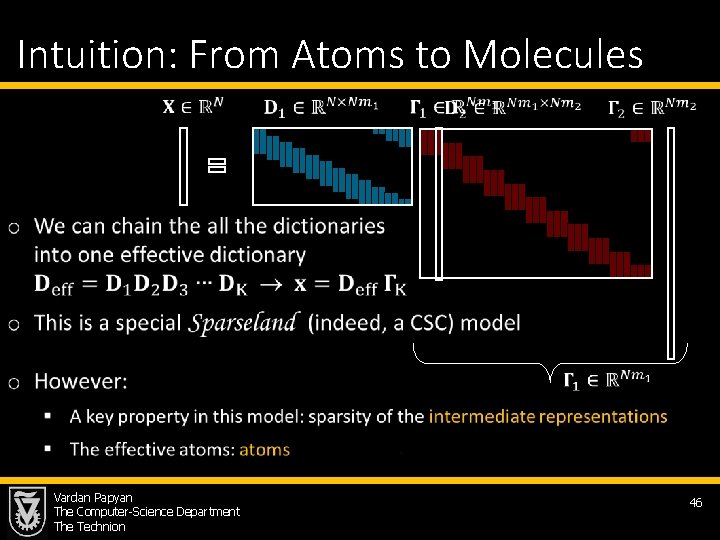

Intuition: From Atoms to Molecules

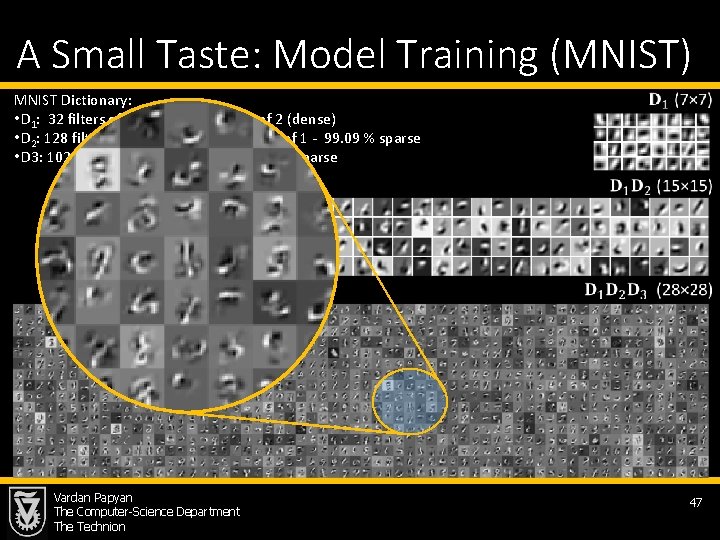

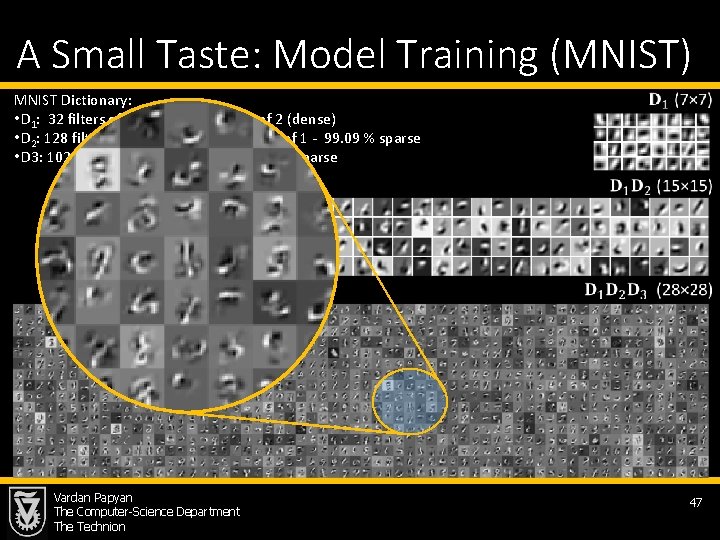

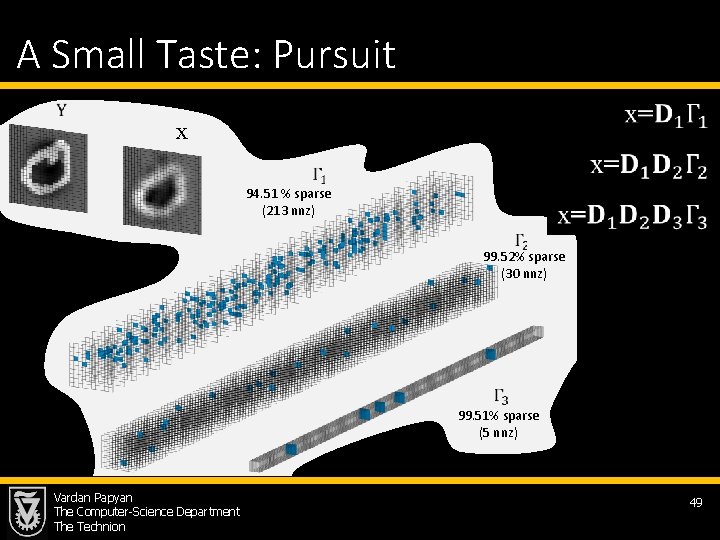

A Small Taste: Model Training (MNIST)
MNIST dictionary:
- $D_1$: 32 filters of size 7×7, with stride of 2 (dense)
- $D_2$: 128 filters of size 5×5×32, with stride of 1; 99.09% sparse
- $D_3$: 1024 filters of size 7×7×128; 99.89% sparse

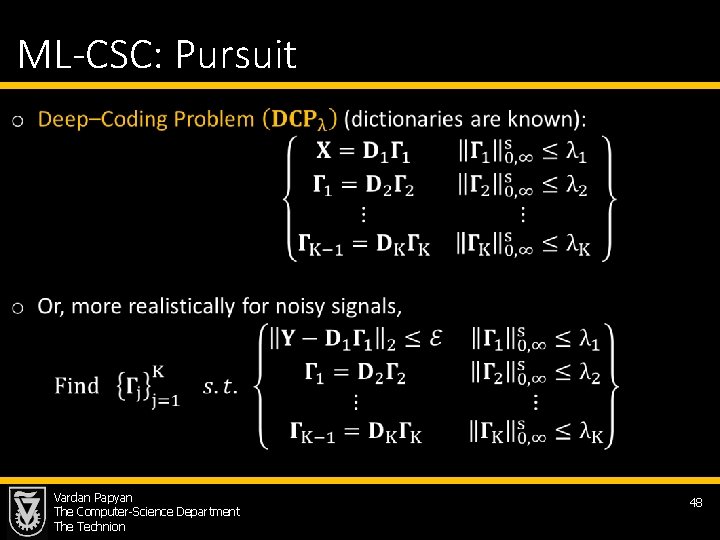

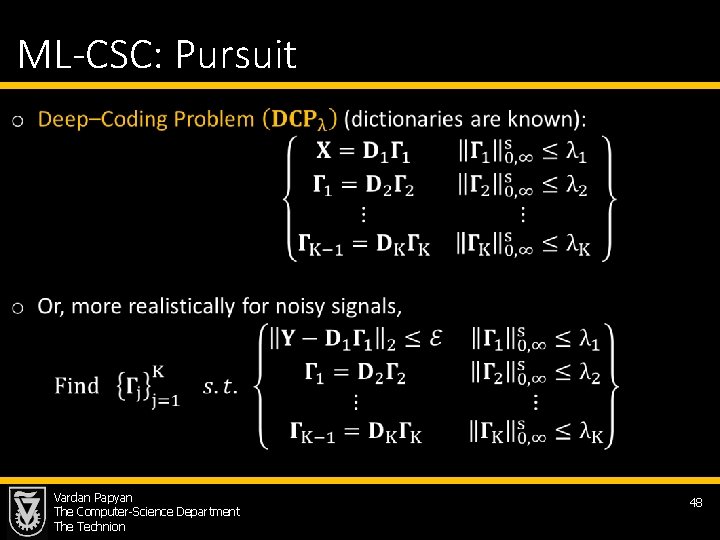

ML-CSC: Pursuit

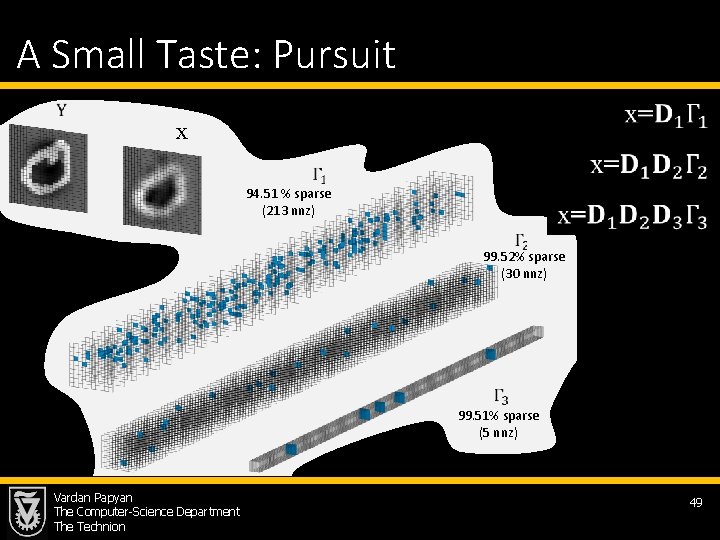

A Small Taste: Pursuit (figure: an input $x$ and the representations estimated at the three layers: 94.51% sparse (213 nnz), 99.52% sparse (30 nnz), 99.51% sparse (5 nnz))

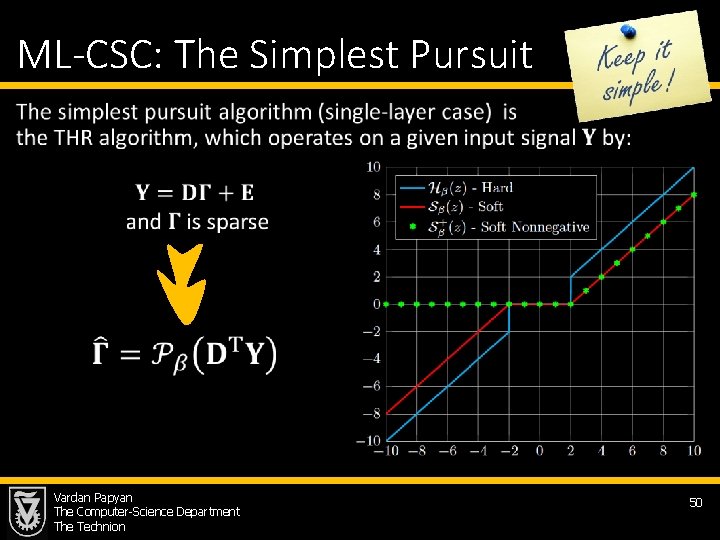

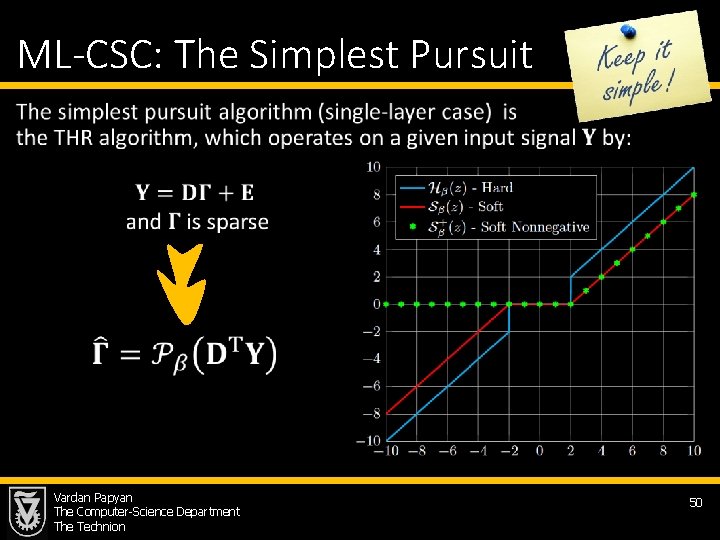

ML-CSC: The Simplest Pursuit

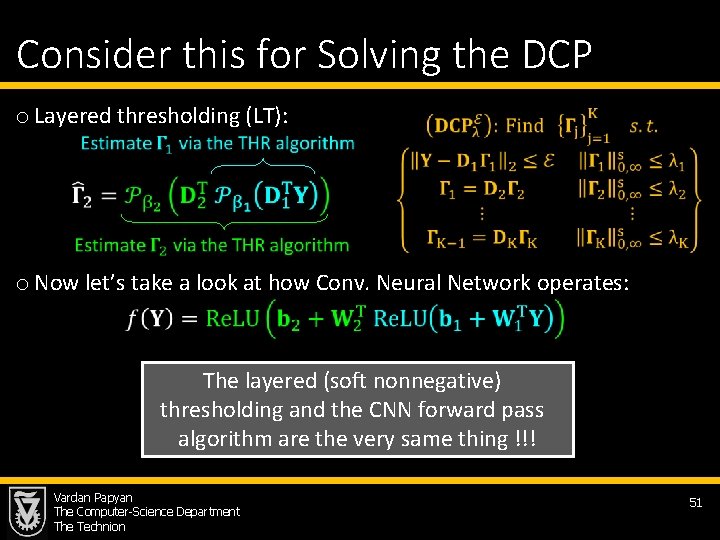

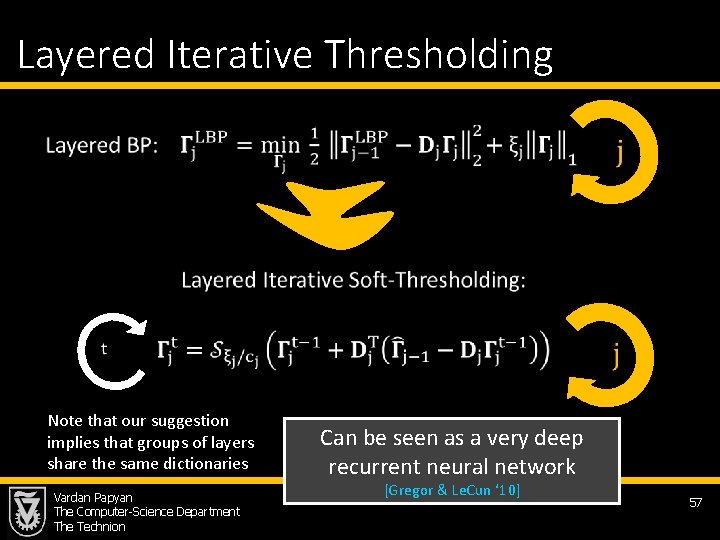

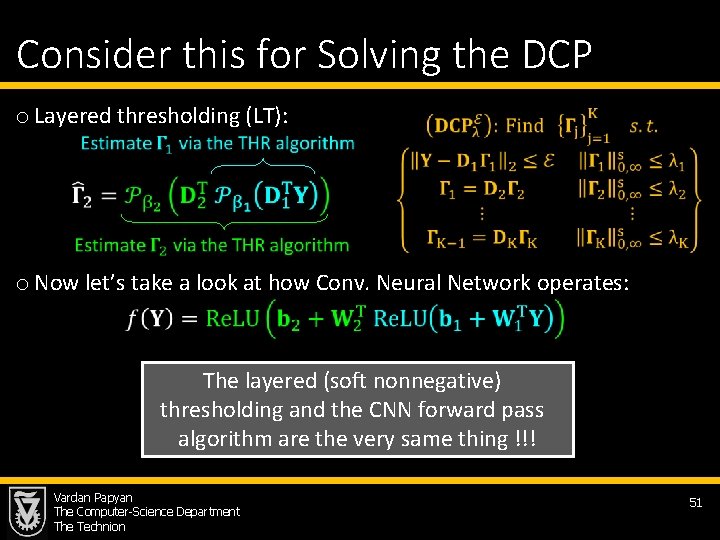

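Consider this for Solving the DCP
- Layered thresholding (LT): $\hat\Gamma_2 = \mathcal{S}^+_{\beta_2}\big(D_2^T\, \mathcal{S}^+_{\beta_1}(D_1^T Y)\big)$
- Now let's take a look at how a convolutional neural network operates: $f(Y) = \mathrm{ReLU}\big(b_2 + W_2^T\, \mathrm{ReLU}(b_1 + W_1^T Y)\big)$
- The layered (soft nonnegative) thresholding and the CNN forward pass algorithm are the very same thing, with $W_i = D_i$ and $b_i = -\beta_i$!!!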
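The equivalence can be verified numerically. This minimal sketch uses dense matrices in place of the convolutional dictionaries (an assumption). The soft non-negative threshold $\mathcal{S}^+_\beta(z) = \max(z - \beta, 0)$ equals $\mathrm{ReLU}(z + b)$ with $b = -\beta$. Setting $W_i = D_i$ and $b_i = -\beta_i$ therefore makes the two procedures identical. Function names and sizes are illustrative.

```python
import numpy as np

def soft_nonneg_threshold(z: np.ndarray, beta: np.ndarray) -> np.ndarray:
    """S+_beta(z) = max(z - beta, 0): soft thresholding restricted to non-negative outputs."""
    return np.maximum(z - beta, 0.0)

def relu(z: np.ndarray) -> np.ndarray:
    return np.maximum(z, 0.0)

def layered_thresholding(y, dictionaries, thresholds):
    """Estimate the representations one layer at a time: Gamma_i = S+_{beta_i}(D_i^T Gamma_{i-1})."""
    gamma = y
    for D, beta in zip(dictionaries, thresholds):
        gamma = soft_nonneg_threshold(D.T @ gamma, beta)
    return gamma

def cnn_forward(y, weights, biases):
    """Forward pass Z_i = ReLU(W_i^T Z_{i-1} + b_i)."""
    z = y
    for W, b in zip(weights, biases):
        z = relu(W.T @ z + b)
    return z

rng = np.random.default_rng(6)
D1 = rng.standard_normal((64, 128))
D2 = rng.standard_normal((128, 256))
beta1 = np.full(128, 0.5)
beta2 = np.full(256, 0.5)
y = rng.standard_normal(64)

g = layered_thresholding(y, [D1, D2], [beta1, beta2])
z = cnn_forward(y, [D1, D2], [-beta1, -beta2])   # W_i = D_i, b_i = -beta_i
```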

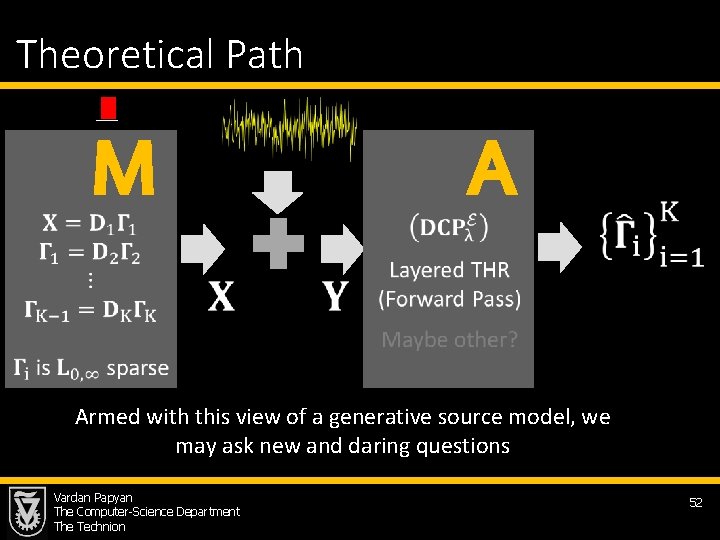

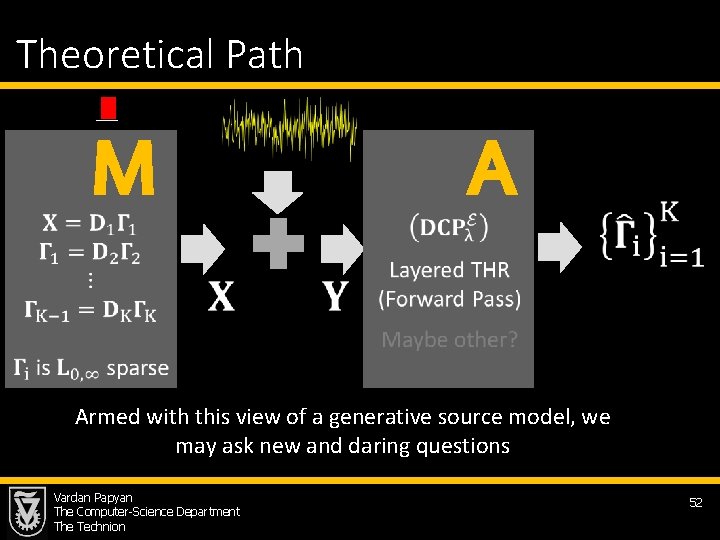

Theoretical Path: Armed with this view of a generative source model, we may ask new and daring questions

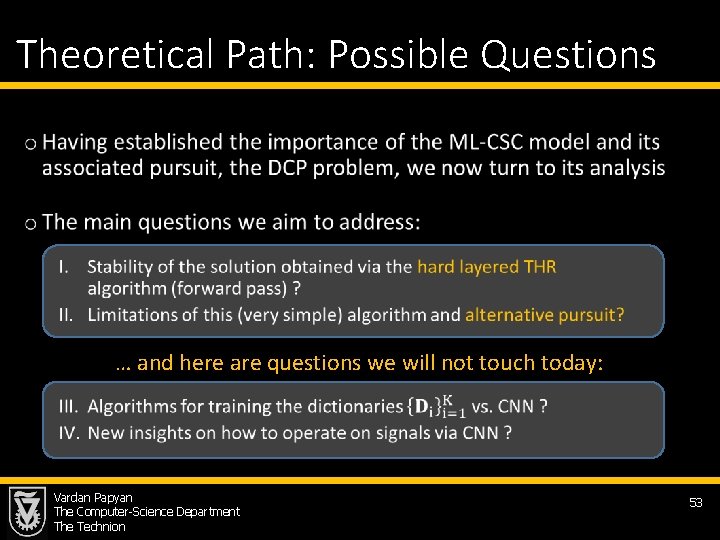

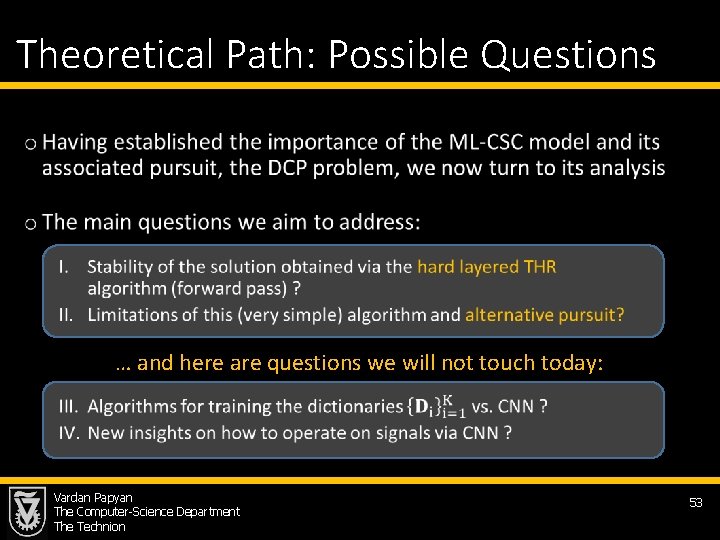

Theoretical Path: Possible Questions
… and here are questions we will not touch today:

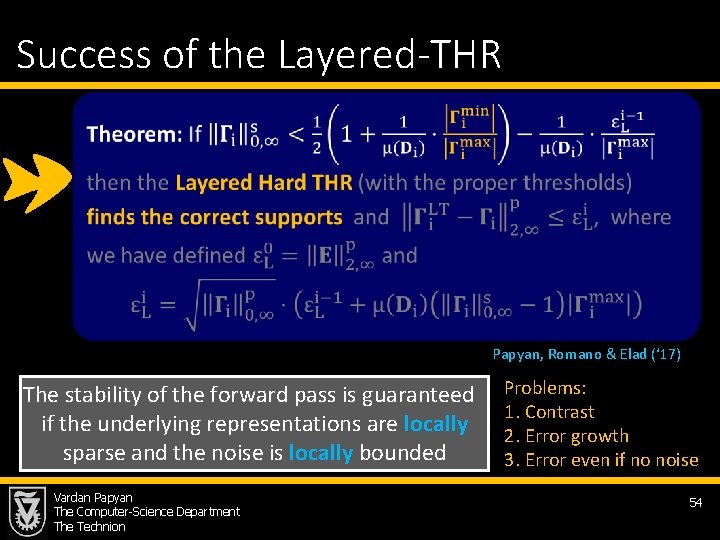

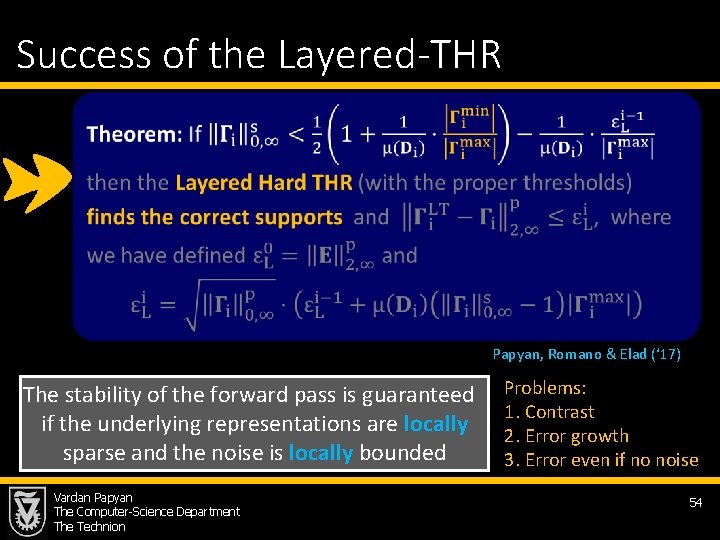

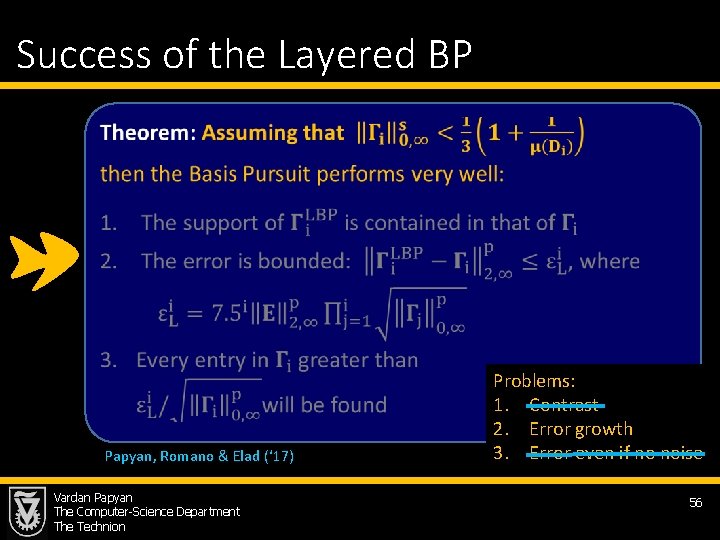

Success of the Layered-THR: Papyan, Romano & Elad ('17)
The stability of the forward pass is guaranteed if the underlying representations are locally sparse and the noise is locally bounded.
Problems:
1. Contrast
2. Error growth
3. Error even if no noise

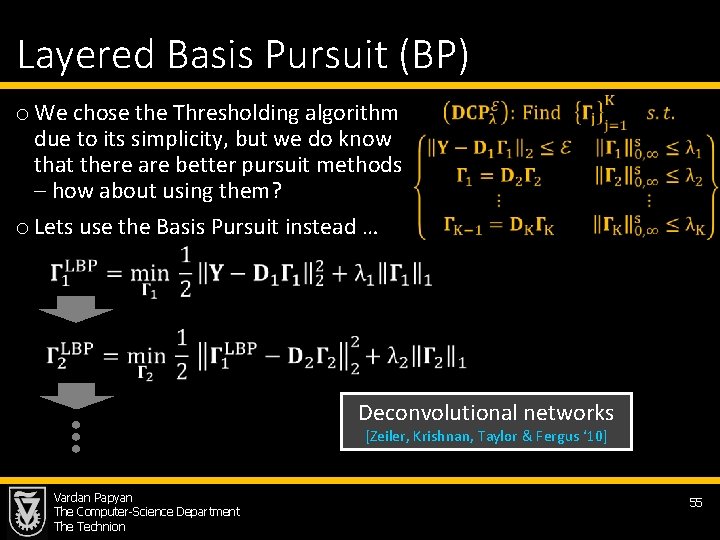

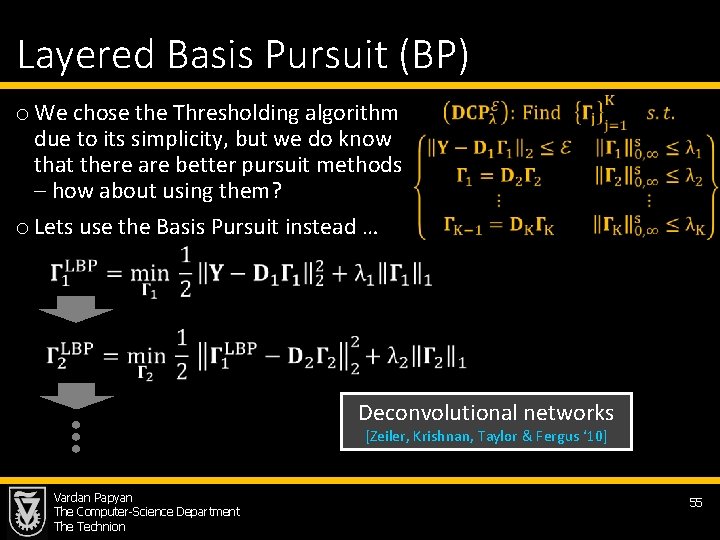

Layered Basis Pursuit (BP)
- We chose the Thresholding algorithm due to its simplicity, but we do know that there are better pursuit methods. How about using them?
- Let's use the Basis Pursuit instead … Deconvolutional networks [Zeiler, Krishnan, Taylor & Fergus '10]

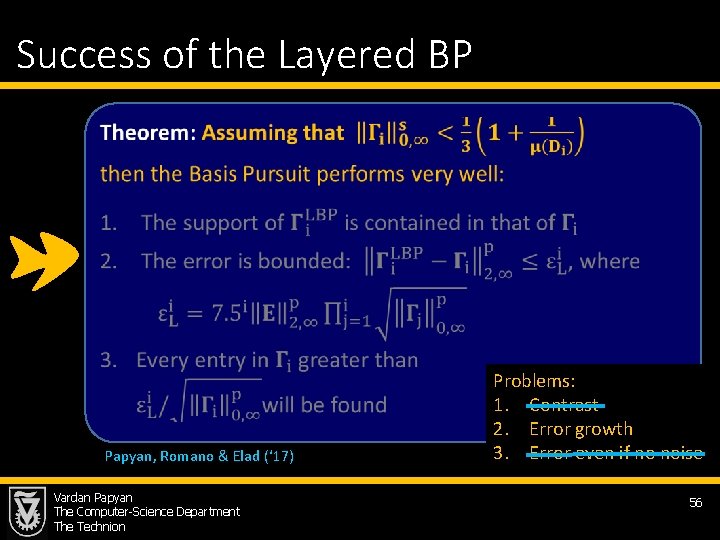

Success of the Layered BP: Papyan, Romano & Elad ('17)
Problems:
1. Contrast
2. Error growth
3. Error even if no noise

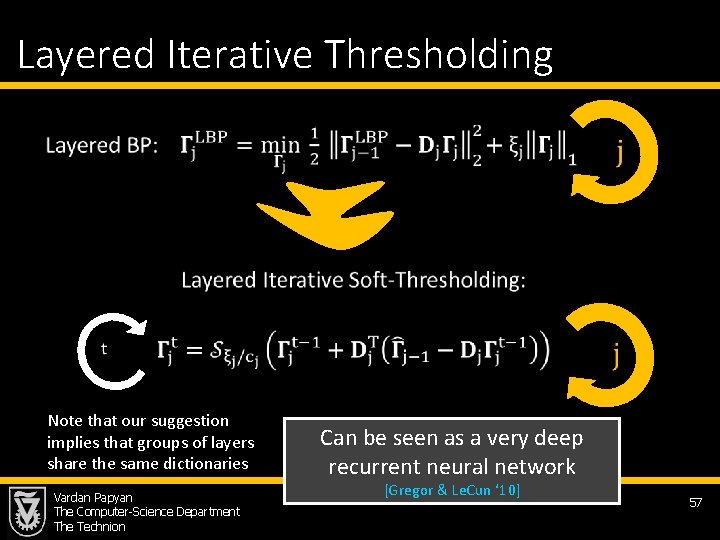

Layered Iterative Thresholding
Note that our suggestion implies that groups of layers share the same dictionaries. This can be seen as a very deep recurrent neural network [Gregor & LeCun '10].
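One way to read this is that each layer is estimated by iterative thresholding. Unrolling its iterations yields a group of network layers that re-use the same $D_i$. The sketch below assumes non-negative ISTA on the objective $\tfrac12\|y - D\gamma\|_2^2 + \lambda\sum_j\gamma_j$ with dense matrices; the exact update used in the talk is not given in the text. A single iteration from zero with unit step reduces to layered thresholding. More iterations, with step $1/L$, keep lowering the objective. All names and sizes are hypothetical.

```python
import numpy as np

def nonneg_ista(D, y, lam, iters, step):
    """Proximal-gradient (ISTA) iterations for
    min_{g >= 0} 0.5 * ||y - D g||_2^2 + lam * sum(g), started from g = 0."""
    g = np.zeros(D.shape[1])
    for _ in range(iters):
        # gradient step on the quadratic term, then the non-negative soft threshold
        g = np.maximum(g + step * (D.T @ (y - D @ g)) - step * lam, 0.0)
    return g

def layered_ista(y, dictionaries, lams, iters, step=None):
    """Layer-by-layer pursuit: the estimate of one layer is the input of the next.

    Unrolling the `iters` iterations of a layer gives `iters` network layers that
    all re-use the same D_i, i.e. a group of layers with shared weights.
    """
    gamma = y
    for D, lam in zip(dictionaries, lams):
        t = step if step is not None else 1.0 / np.linalg.norm(D, 2) ** 2
        gamma = nonneg_ista(D, gamma, lam, iters, t)
    return gamma

def objective(D, y, g, lam):
    return 0.5 * np.sum((y - D @ g) ** 2) + lam * np.sum(g)

rng = np.random.default_rng(7)
D1 = rng.standard_normal((64, 128)); D1 /= np.linalg.norm(D1, axis=0)
D2 = rng.standard_normal((128, 256)); D2 /= np.linalg.norm(D2, axis=0)
y = rng.standard_normal(64)

# One iteration from zero with step 1 is exactly layered soft non-negative thresholding
one_step = layered_ista(y, [D1, D2], [0.1, 0.1], iters=1, step=1.0)
layered_thr = np.maximum(D2.T @ np.maximum(D1.T @ y - 0.1, 0.0) - 0.1, 0.0)

# With the safe step 1/L, extra iterations do not increase the first layer's objective
t1 = 1.0 / np.linalg.norm(D1, 2) ** 2
g_few = nonneg_ista(D1, y, 0.1, 1, t1)
g_many = nonneg_ista(D1, y, 0.1, 200, t1)
```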

Time to Conclude

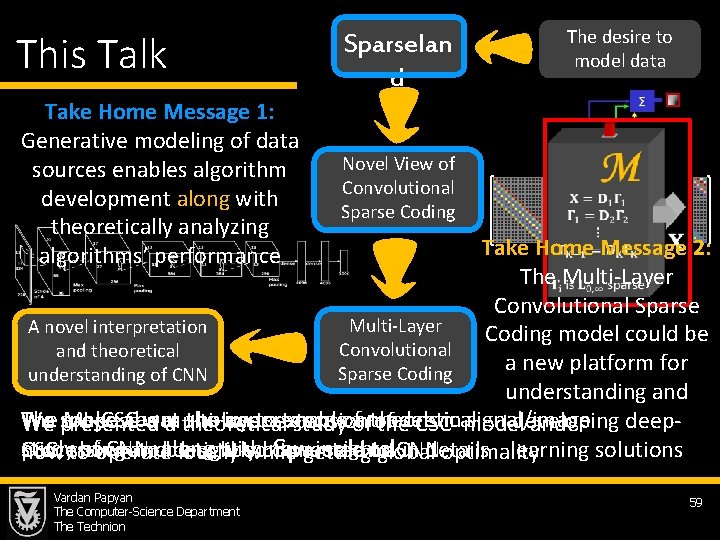

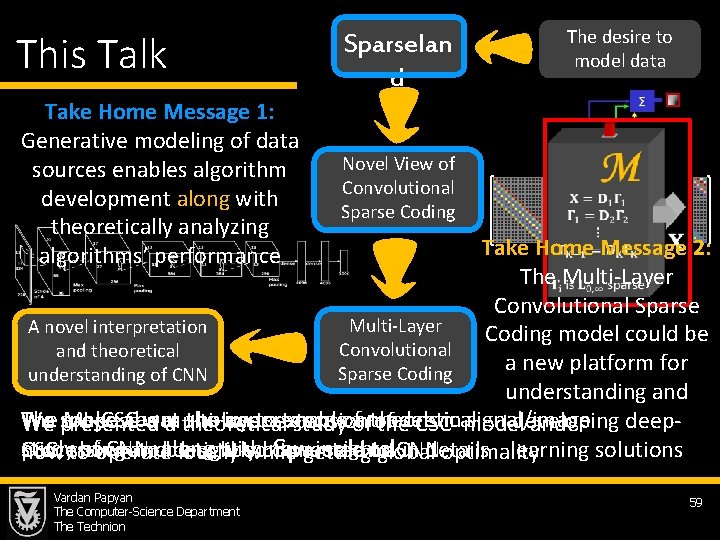

This Talk (driven by the desire to model data)
- Sparseland: we spoke about the importance of models in signal/image processing and described Sparseland in detail
- Novel View of Convolutional Sparse Coding: we presented a theoretical study of the CSC model and how to operate locally while getting global optimality
- Multi-Layer Convolutional Sparse Coding: we proposed a multi-layer extension of CSC, shown to be tightly connected to CNN
- A novel interpretation and theoretical understanding of CNN: the ML-CSC was shown to enable a theoretical study of CNN, along with new insights

Take Home Message 1: Generative modeling of data sources enables algorithm development along with theoretically analyzing algorithms' performance.

Take Home Message 2: The Multi-Layer Convolutional Sparse Coding model could be a new platform for understanding and developing deep-learning solutions.

Questions? More on these (including these slides and the relevant papers) can be found at http://vardanp.cswp.cs.technion.ac.il/

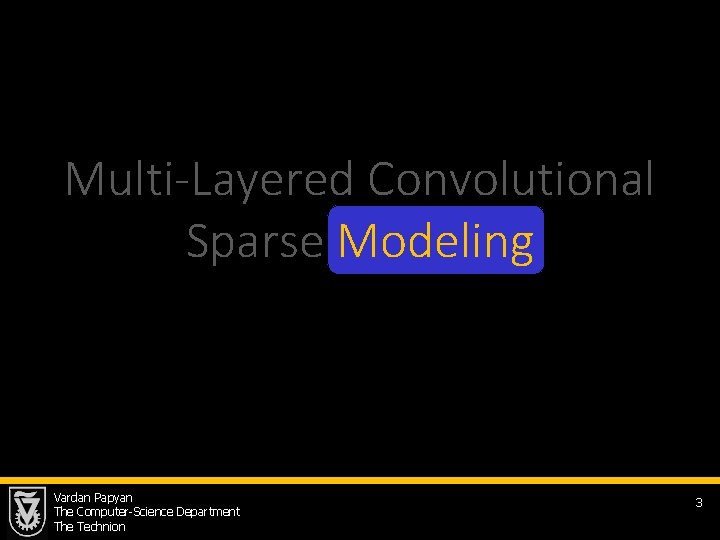

Coming Up: A Massive Open Online Course