Neural Networks Slides by Megan Vasta

Neural Networks • Biologically inspired approach to AI • First proposed in 1943 (McCulloch and Pitts) • Composed of one or more layers of neurons • Several types exist; we’ll focus on feed-forward networks

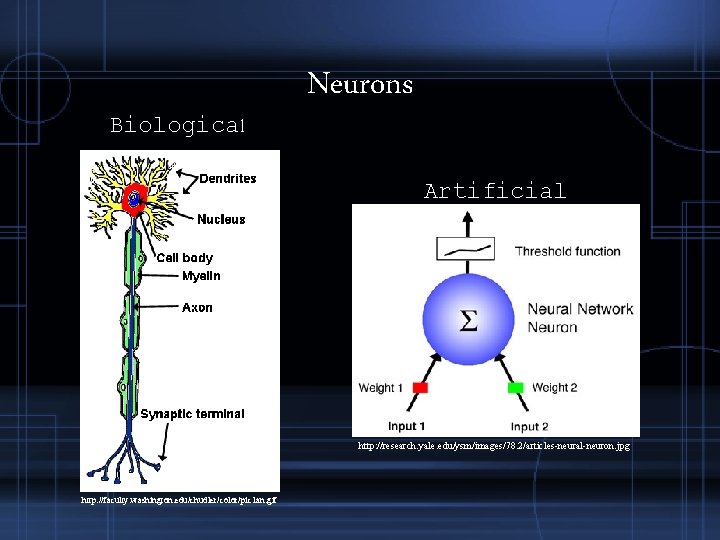

Biological Neurons Artificial http://research.yale.edu/ysm/images/78.2/articles-neural-neuron.jpg http://faculty.washington.edu/chudler/color/pic1an.gif

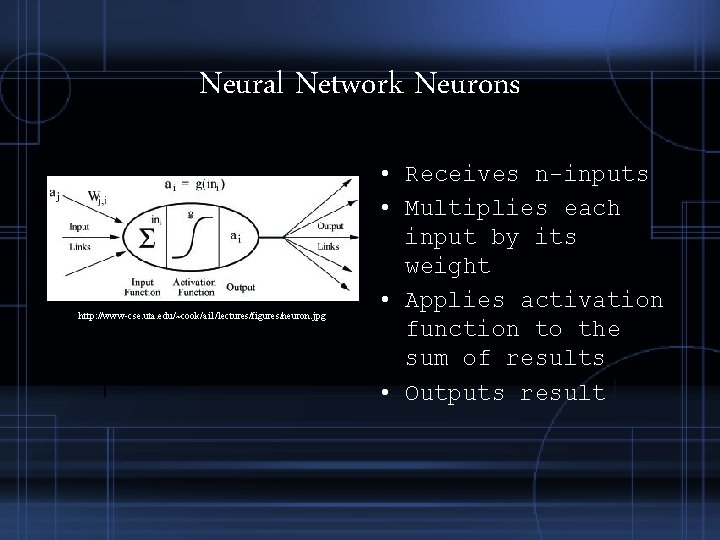

Neural Network Neurons http://www-cse.uta.edu/~cook/ai1/lectures/figures/neuron.jpg • Receives n inputs • Multiplies each input by its weight • Applies the activation function to the sum of the weighted inputs • Outputs the result
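The four steps above can be sketched in a few lines of Python. This is only an illustration: the function name `neuron_output`, the `step` activation, and the sample inputs and weights are assumptions, not taken from the slides.

```python
def neuron_output(inputs, weights, activation):
    """Weighted sum of the inputs passed through an activation function."""
    if len(inputs) != len(weights):
        raise ValueError("need exactly one weight per input")
    # Multiply each input by its weight and add up the results.
    total = sum(x * w for x, w in zip(inputs, weights))
    # The activation function turns the sum into the neuron's output.
    return activation(total)


def step(s):
    """Hypothetical activation: 1 for a positive sum, 0 otherwise."""
    return 1 if s > 0 else 0


# Weighted sum is 1.0 * 0.5 + 2.0 * 0.25 = 1.0, so the neuron outputs 1.
print(neuron_output([1.0, 2.0], [0.5, 0.25], step))
```

Passing the activation in as a parameter keeps the neuron independent of any particular activation function.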

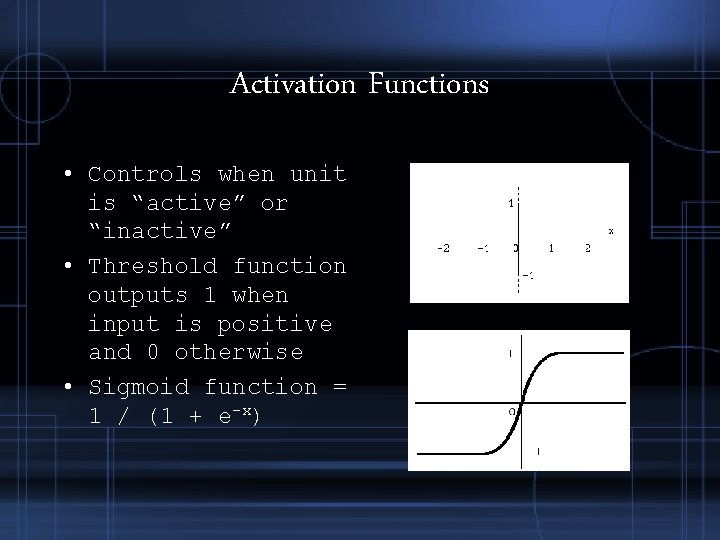

Activation Functions • Control when a unit is “active” or “inactive” • Threshold function outputs 1 when its input is positive and 0 otherwise • Sigmoid function: g(x) = 1 / (1 + e^(-x))
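Both activation functions are short enough to write out directly. The sketch below assumes plain Python floats and moderate input values; the function names are illustrative.

```python
import math


def threshold(x):
    """Outputs 1 when the input is positive and 0 otherwise."""
    return 1 if x > 0 else 0


def sigmoid(x):
    """Smooth alternative to the threshold: 1 / (1 + e^(-x))."""
    # Note: math.exp can overflow for very large negative x; fine for a sketch.
    return 1.0 / (1.0 + math.exp(-x))


for x in (-2.0, 0.0, 2.0):
    print(x, threshold(x), round(sigmoid(x), 3))
```

The threshold jumps from 0 to 1 at zero, while the sigmoid passes smoothly through 0.5 at zero, which is what makes it differentiable and usable with back-propagation.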

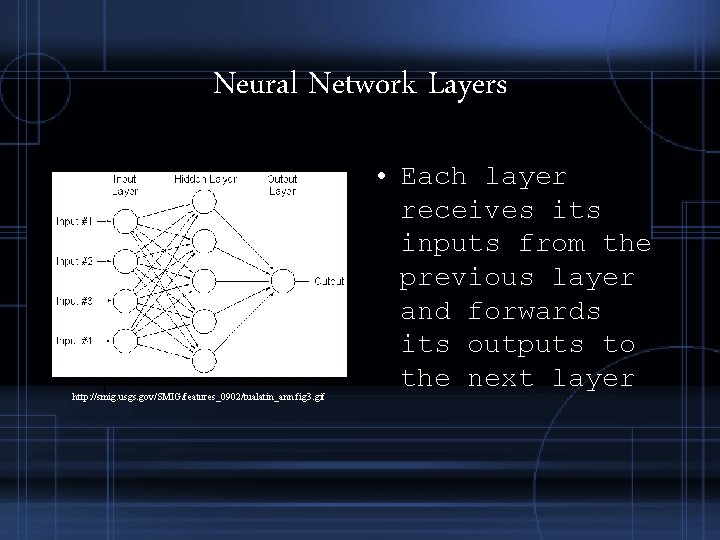

Neural Network Layers http://smig.usgs.gov/SMIG/features_0902/tualatin_ann.fig3.gif • Each layer receives its inputs from the previous layer and forwards its outputs to the next layer
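A minimal sketch of this layer-to-layer flow, assuming threshold units and one extra bias weight per neuron (the bias is an assumption; the slides do not mention it). The hand-picked `XOR_LAYERS` weights are a hypothetical example chosen so the result is easy to check by hand.

```python
def step(x):
    return 1 if x > 0 else 0


def layer_output(inputs, layer):
    """Each row of `layer` holds one neuron's input weights followed by a bias weight."""
    extended = list(inputs) + [1.0]  # constant input paired with the bias weight
    return [step(sum(w * a for w, a in zip(row, extended))) for row in layer]


def feed_forward(inputs, layers):
    """Feed each layer's outputs to the next layer and return the final outputs."""
    activations = inputs
    for layer in layers:
        activations = layer_output(activations, layer)
    return activations


# Hypothetical 2-2-1 network that computes XOR.
XOR_LAYERS = [
    [[1.0, 1.0, -0.5],    # hidden unit 1 fires for x1 OR x2
     [1.0, 1.0, -1.5]],   # hidden unit 2 fires for x1 AND x2
    [[1.0, -1.0, -0.5]],  # output fires for OR but not AND
]

for a in (0, 1):
    for b in (0, 1):
        print(a, b, feed_forward([a, b], XOR_LAYERS))
```

XOR is a useful test case because a single neuron cannot compute it; the hidden layer is what makes it possible.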

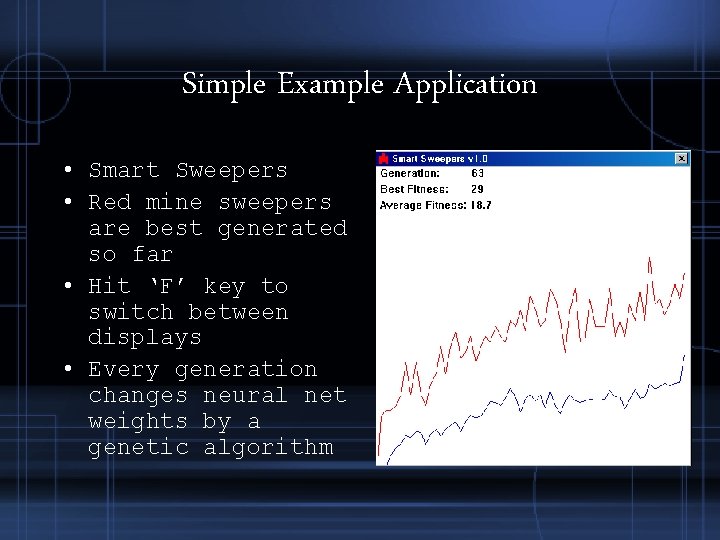

Simple Example Application • Smart Sweepers • Red mine sweepers are the best generated so far • Press the ‘F’ key to switch between displays • Each generation, a genetic algorithm changes the neural net weights
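One way a genetic algorithm can evolve network weights from one generation to the next is sketched below. This is not the Smart Sweepers implementation: the function names, the single-point crossover, the elitism step, and all parameters are assumptions made for illustration. Each individual is a flat list of network weights.

```python
import random


def crossover(mom, dad, rng):
    """Single-point crossover: the start of one parent plus the end of the other."""
    point = rng.randrange(1, len(mom))
    return mom[:point] + dad[point:]


def mutate(weights, rate, max_change, rng):
    """Perturb each weight with probability `rate` by at most `max_change`."""
    return [w + rng.uniform(-max_change, max_change) if rng.random() < rate else w
            for w in weights]


def next_generation(population, fitnesses, rate, max_change, rng):
    """Breed a new population of weight lists from the fitter half of the old one."""
    ranked = [w for _, w in sorted(zip(fitnesses, population),
                                   key=lambda pair: pair[0], reverse=True)]
    parents = ranked[:max(2, len(ranked) // 2)]
    children = [ranked[0]]  # elitism: carry the best individual over unchanged
    while len(children) < len(population):
        mom, dad = rng.sample(parents, 2)
        children.append(mutate(crossover(mom, dad, rng), rate, max_change, rng))
    return children


rng = random.Random(0)
population = [[rng.uniform(-1, 1) for _ in range(6)] for _ in range(4)]
fitnesses = [3, 7, 1, 5]  # hypothetical scores, e.g. mines collected
population = next_generation(population, fitnesses, 0.1, 0.3, rng)
```

No gradient is needed here: the only feedback is the fitness score, which is why this approach suits agents whose "correct" outputs are unknown.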

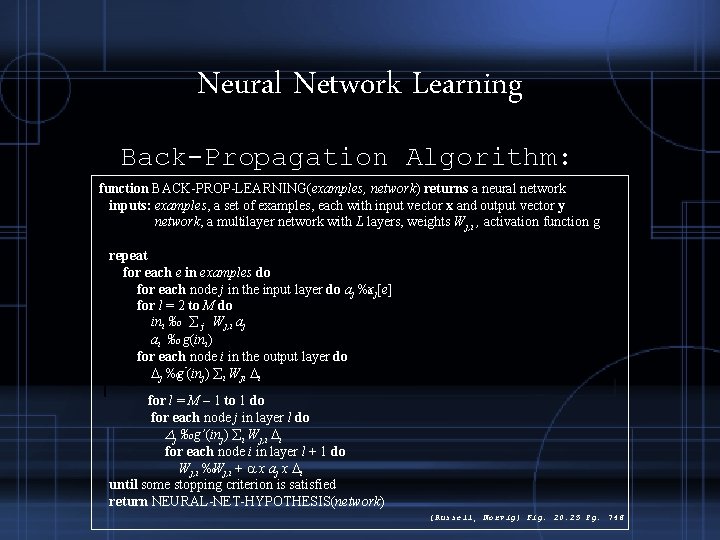

Neural Network Learning Back-Propagation Algorithm:

    function BACK-PROP-LEARNING(examples, network) returns a neural network
      inputs: examples, a set of examples, each with input vector x and output vector y
              network, a multilayer network with M layers, weights W_j,i, activation function g
      repeat
        for each e in examples do
          for each node j in the input layer do a_j ← x_j[e]
          for l = 2 to M do
            in_i ← Σ_j W_j,i a_j
            a_i ← g(in_i)
          for each node i in the output layer do
            Δ_i ← g′(in_i) × (y_i[e] − a_i)
          for l = M − 1 to 1 do
            for each node j in layer l do
              Δ_j ← g′(in_j) Σ_i W_j,i Δ_i
              for each node i in layer l + 1 do
                W_j,i ← W_j,i + α × a_j × Δ_i
      until some stopping criterion is satisfied
      return NEURAL-NET-HYPOTHESIS(network)

[Russell, Norvig] Fig. 20.25, p. 746
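The inner loop of the algorithm (one example, one weight update) can be sketched in plain Python. This is a sketch under assumptions, not the textbook's code: it uses the sigmoid for g, stores a bias weight as the last entry of each neuron's weight row, and indexes weights as `weights[l][i][j]` (from node j in layer l to node i in layer l + 1). All names and starting weights are illustrative.

```python
import math


def g(x):
    return 1.0 / (1.0 + math.exp(-x))


def g_prime(x):
    s = g(x)
    return s * (1.0 - s)


def forward(weights, x):
    """Return the weighted sums and activations of every layer."""
    ins, acts = [None], [list(x)]
    for layer in weights:
        prev = acts[-1] + [1.0]  # constant input for the bias weight
        ins.append([sum(w * a for w, a in zip(row, prev)) for row in layer])
        acts.append([g(v) for v in ins[-1]])
    return ins, acts


def error(weights, x, y):
    _, acts = forward(weights, x)
    return 0.5 * sum((t - a) ** 2 for t, a in zip(y, acts[-1]))


def backprop_step(weights, x, y, alpha):
    """Update `weights` in place for one example (x, y)."""
    ins, acts = forward(weights, x)
    # Output layer: delta_i = g'(in_i) * (y_i - a_i)
    deltas = [g_prime(v) * (t - a) for v, t, a in zip(ins[-1], y, acts[-1])]
    for l in range(len(weights) - 1, -1, -1):
        prev = acts[l] + [1.0]
        if l > 0:
            # Hidden layer: delta_j = g'(in_j) * sum_i W_j,i * delta_i (old weights)
            hidden = [g_prime(ins[l][j]) *
                      sum(weights[l][i][j] * deltas[i] for i in range(len(deltas)))
                      for j in range(len(acts[l]))]
        for i, row in enumerate(weights[l]):
            for j in range(len(row)):
                row[j] += alpha * prev[j] * deltas[i]  # W_j,i += alpha * a_j * delta_i
        if l > 0:
            deltas = hidden


# Hypothetical 2-2-1 network and a single training example.
net = [[[0.1, -0.2, 0.0], [0.3, 0.4, 0.0]], [[0.5, -0.5, 0.0]]]
before = error(net, [1.0, 0.0], [1.0])
backprop_step(net, [1.0, 0.0], [1.0], 0.5)
print(before, error(net, [1.0, 0.0], [1.0]))
```

A full trainer would wrap `backprop_step` in the outer `repeat ... until` loop over all examples, with a stopping criterion such as a maximum epoch count or an error threshold.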

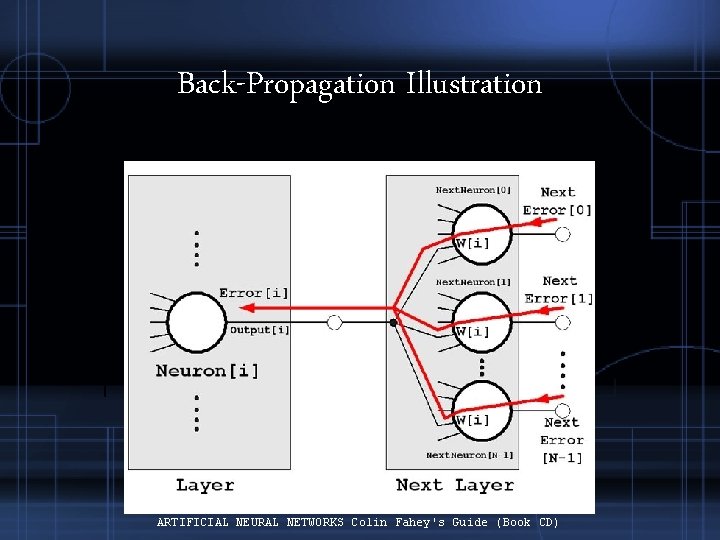

Back-Propagation Illustration ARTIFICIAL NEURAL NETWORKS Colin Fahey's Guide (Book CD)

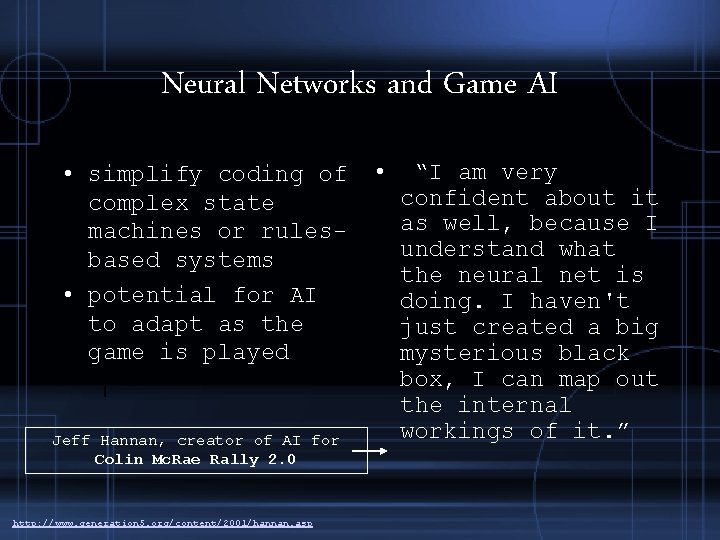

Neural Networks and Game AI • Simplify coding of complex state machines or rule-based systems • Potential for AI to adapt as the game is played • Jeff Hannan, creator of AI for Colin McRae Rally 2.0 (http://www.generation5.org/content/2001/hannan.asp): “I am very confident about it as well, because I understand what the neural net is doing. I haven't just created a big mysterious black box, I can map out the internal workings of it.”

Colin McRae Rally 2.0 • Used a standard feed-forward multilayer perceptron neural network • Constructed with the simple aim of keeping the car on the racing line http://www.generation5.org/content/2001/hannan.asp

BATTLECRUISER: 3000 AD http://www.3000ad.com/shots/bc3k.shtml http://www.gameai.com/games.html • 1998 space simulation game • 'I am a Gammulan Criminal up against a Terran EarthCOM ship. My ship is 50% damaged but my weapon systems are fully functional and my goal is still unresolved' what do I do?

Black and White • Uses neural networks to teach the creature behaviors • “Tickling” increases certain weights • Hitting reduces certain weights

Usefulness in Games • Control – motor controller, for example, control of a race car or airplane • Threat Assessment – input number of enemy ground units, aerial units, etc. • Attack or Flee – input health, distance to enemy, class of enemy, etc. • Anticipation – predict the player’s next move: input previous moves, output prediction http://www.onlamp.com/pub/a/onlamp/2004/09/30/AIforGameDev.html

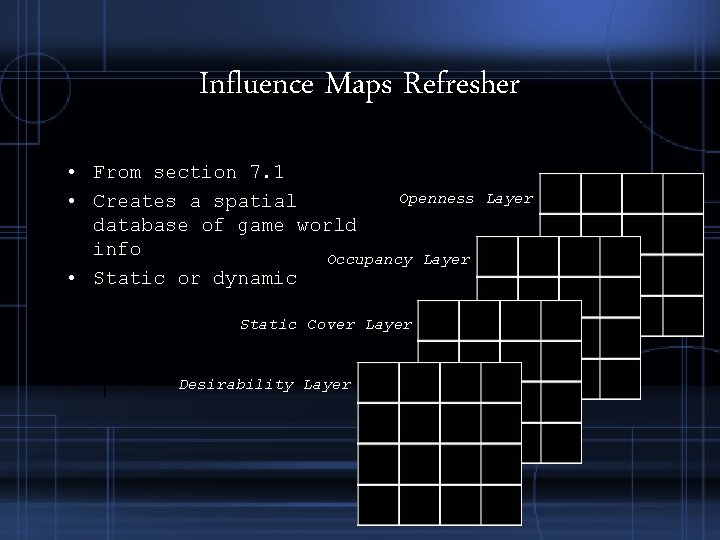

Influence Maps Refresher • From Section 7.1 • Creates a spatial database of game world info • Static or dynamic • Layers shown: Openness, Occupancy, Static Cover, Desirability

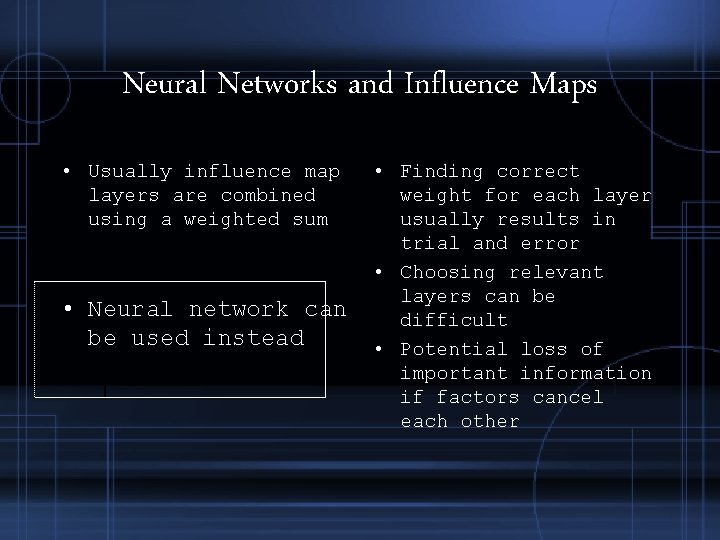

Neural Networks and Influence Maps • Influence map layers are usually combined using a weighted sum • A neural network can be used instead • Finding the correct weight for each layer usually comes down to trial and error • Choosing relevant layers can be difficult • Important information can be lost if factors cancel each other out
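The weighted-sum approach, and the cancellation problem it suffers from, can be shown with two tiny grids. The layer names, grid values, and weights below are hypothetical.

```python
def combine_layers(layers, weights):
    """Cell-by-cell weighted sum of equally sized influence map layers."""
    rows, cols = len(layers[0]), len(layers[0][0])
    return [[sum(w * layer[r][c] for w, layer in zip(weights, layers))
             for c in range(cols)]
            for r in range(rows)]


# Hypothetical 2x2 layers: friendly strength counts for, enemy strength against.
friendly = [[1.0, 0.0],
            [0.0, 0.0]]
enemy = [[1.0, 0.0],
         [0.0, 2.0]]

combined = combine_layers([friendly, enemy], [1.0, -1.0])
print(combined)  # [[0.0, 0.0], [0.0, -2.0]]
```

The top-left cell is contested (both sides present) while the top-right cell is empty, yet both combine to 0.0. A neural network that receives the layers as separate inputs can still tell the two situations apart.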

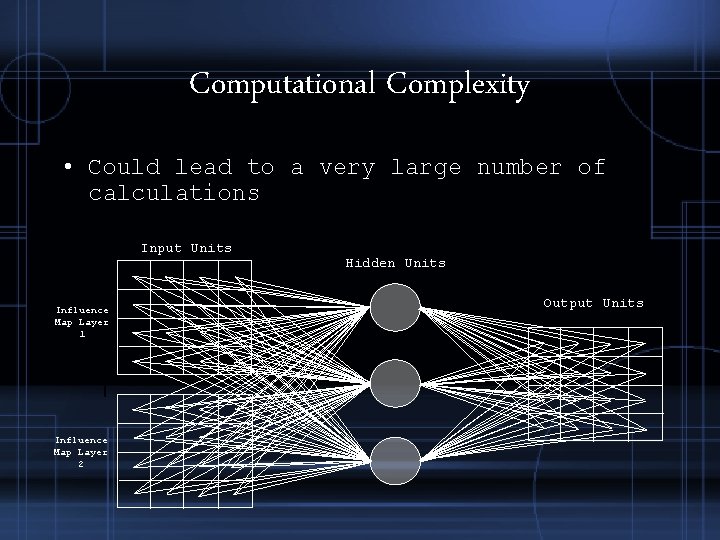

Computational Complexity • Could lead to a very large number of calculations • Figure labels: Influence Map Layer 1, Influence Map Layer 2, Input Units, Hidden Units, Output Units

Optimizations • Only analyze relevant portion of map • Reduce grid resolution • Train network during development, not in-game

Design Details • Need an input for each cell in each layer of the influence map • Need one output for each cell on the map • The number of hidden units is arbitrary, usually 10-20, pruned by guess and test
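A quick count shows how fast this design grows. The sketch assumes a fully connected network with one hidden layer and ignores bias weights; the 32x32 grid, two layers, and 15 hidden units are hypothetical numbers.

```python
def network_size(width, height, num_layers, hidden_units):
    """Return (inputs, outputs, weights) for a one-hidden-layer network."""
    cells = width * height
    inputs = cells * num_layers   # one input per cell per influence map layer
    outputs = cells               # one output per map cell
    weights = inputs * hidden_units + hidden_units * outputs
    return inputs, outputs, weights


print(network_size(32, 32, 2, 15))  # (2048, 1024, 46080)
print(network_size(16, 16, 2, 15))  # (512, 256, 11520)
```

Halving the grid resolution in each dimension cuts the number of weights, and so the multiplications per evaluation, to a quarter.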

Different Decisions and Personalities • One network for each decision • Implemented as one network with a different array of weights for each decision • Different personalities can have multiple arrays of weights for each decision
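One way to organize this is a table of weight arrays keyed by personality and decision, all evaluated by the same network code. In the sketch below a single sigmoid unit stands in for the shared network to keep it short; the personalities, decisions, inputs, and weight values are all hypothetical.

```python
import math


def evaluate(weights, inputs):
    """Stand-in for the shared network: one sigmoid unit, last weight is the bias."""
    total = sum(w * x for w, x in zip(weights, inputs)) + weights[-1]
    return 1.0 / (1.0 + math.exp(-total))


# One weight array per (personality, decision) pair; the network code is shared.
WEIGHTS = {
    "aggressive": {"attack": [2.0, -1.0, 0.5], "flee": [-2.0, 1.0, -0.5]},
    "cautious": {"attack": [1.0, -2.0, -0.5], "flee": [-1.0, 2.0, 0.5]},
}


def decide(personality, decision, inputs):
    return evaluate(WEIGHTS[personality][decision], inputs)


situation = [0.5, 0.5]  # hypothetical inputs: own health, enemy strength
for personality in WEIGHTS:
    print(personality, round(decide(personality, "attack", situation), 3))
```

Swapping a weight array changes the behavior without touching the evaluation code, so adding a personality only means training and storing another set of arrays.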

Training • Datasets from human vs. human gaming sessions are best • Must decide whether training will occur in-game, during development, or both • Learning during play allows the AI to adapt to individual players

Conclusions • Neural networks can provide more human-like AI • They take rough approximations and hard-coded reactions (i.e., rules and FSMs) out of AI design • They still require a lot of fine-tuning during development

References • Four Cool Ways to Use Neural Networks in Games • Interview with Jeff Hannan, creator of AI for Colin McRae Rally 2.0 • Interview with Derek Smart, creator of AI for Battlecruiser: 3000 AD • Neural Netware, a tutorial on neural networks • Sweetser, Penny. “Strategic Decision-Making with Neural Networks and Influence Maps”, AI Game Programming Wisdom 2, Section 7.7 (439–46) • Russell, Stuart and Norvig, Peter. Artificial Intelligence: A Modern Approach, Section 20.5 (736–48)