VM-FEX Configuration and Best Practice for Multiple Hypervisors

Timothy Ma, Technical Marketing - UCS
© 2010 Cisco and/or its affiliates. All rights reserved. Cisco Confidential

Agenda: What This Session Will Cover
§ VM-FEX Overview
§ VM-FEX vs Nexus 1000V
§ VM-FEX Operational Model
§ UCS VM-FEX General Baseline Configuration for hypervisor
§ VM-FEX Implementation on VMware ESX
§ VM-FEX Implementation with Hyper-V on UCS
§ VM-FEX Implementation with KVM on UCS
§ Summary

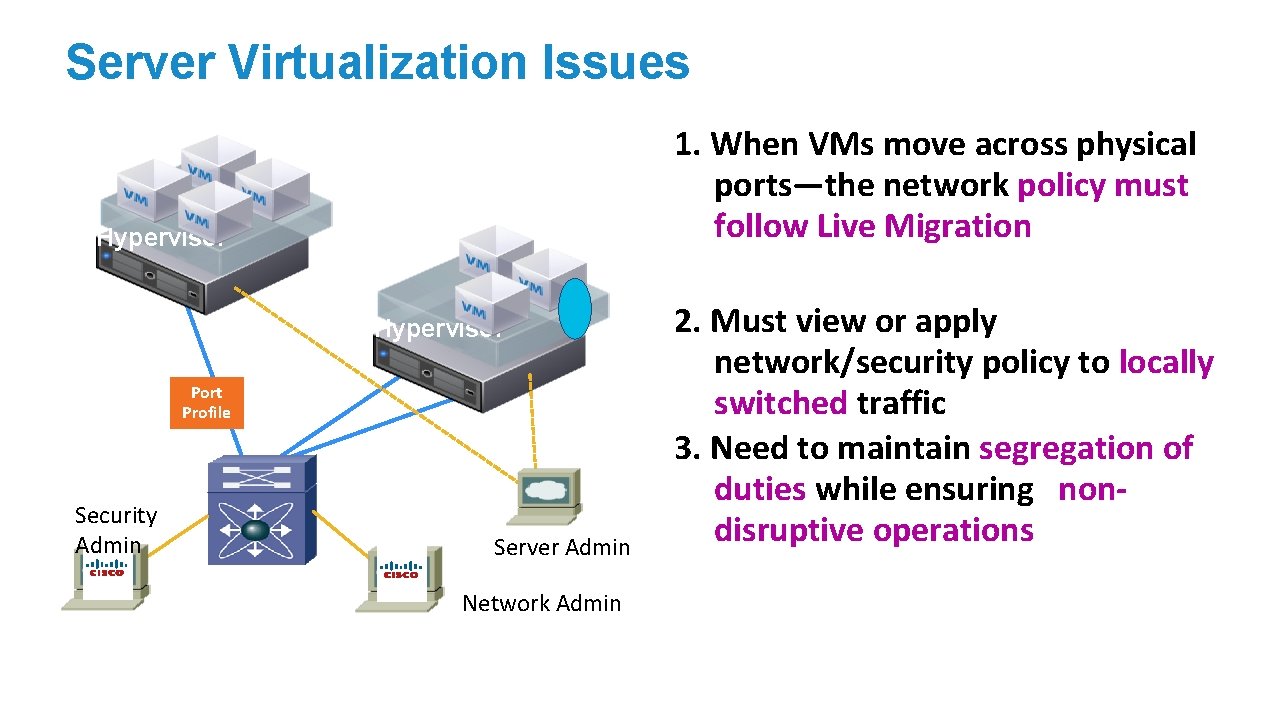

Server Virtualization Issues
1. When VMs move across physical ports (Live Migration), the network policy must follow
2. Must view or apply network/security policy to locally switched traffic
3. Need to maintain segregation of duties while ensuring nondisruptive operations
Diagram labels: Hypervisor, Port Profile, Security Admin, Server Admin, Network Admin

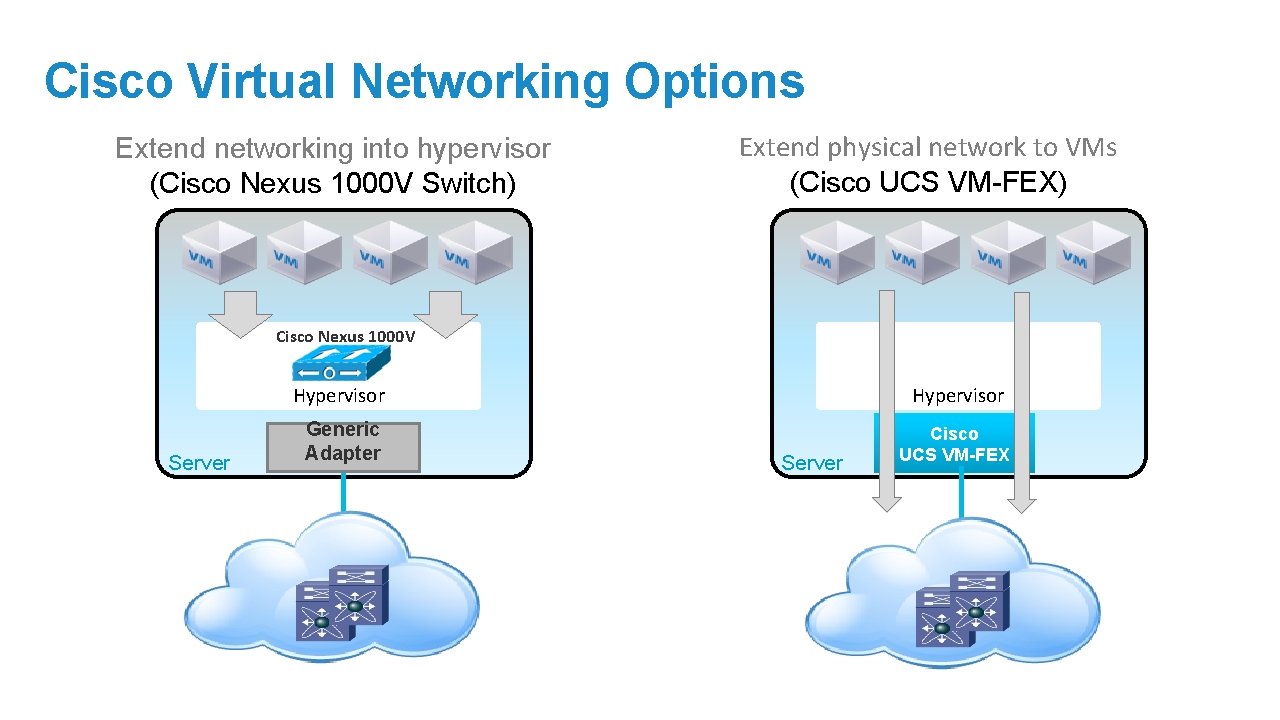

Cisco Virtual Networking Options
§ Extend networking into the hypervisor (Cisco Nexus 1000V Switch)
§ Extend the physical network to VMs (Cisco UCS VM-FEX)
Diagram labels: Cisco Nexus 1000V on a server with hypervisor and generic adapter; Cisco UCS VM-FEX on a server


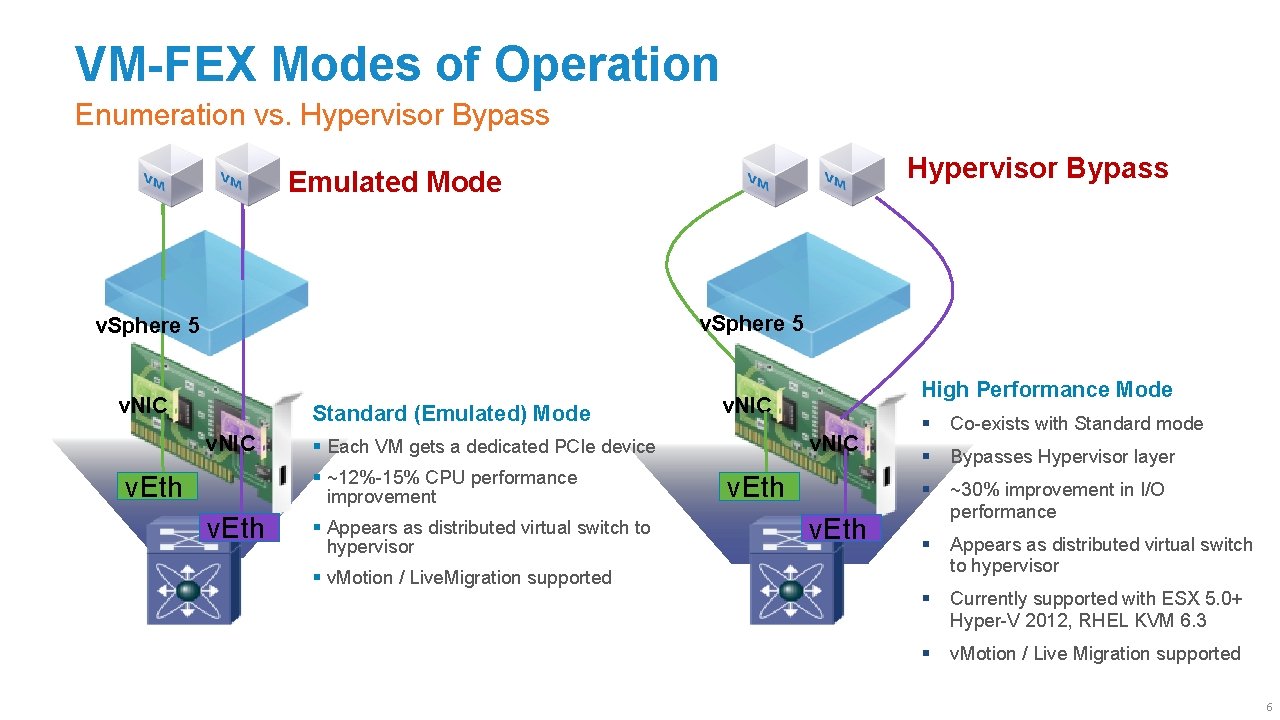

VM-FEX Modes of Operation: Emulation vs. Hypervisor Bypass
Standard (Emulated) Mode
§ Each VM gets a dedicated PCIe device
§ ~12%-15% CPU performance improvement
§ Appears as a distributed virtual switch to the hypervisor
§ vMotion / Live Migration supported
High Performance Mode
§ Co-exists with Standard mode
§ Bypasses the hypervisor layer
§ ~30% improvement in I/O performance
§ Appears as a distributed virtual switch to the hypervisor
§ Currently supported with ESX 5.0+, Hyper-V 2012, RHEL KVM 6.3
§ vMotion / Live Migration supported
Diagram labels: vSphere 5, vNIC, vEth

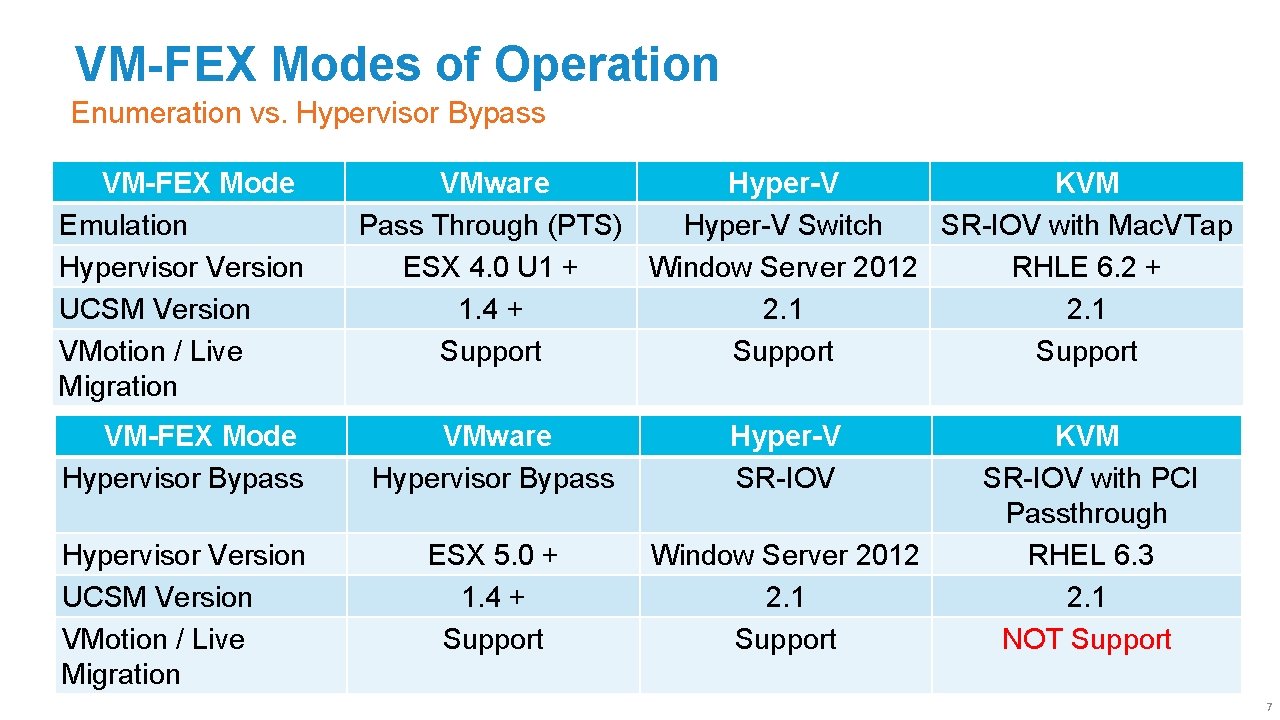

VM-FEX Modes of Operation: Emulation vs. Hypervisor Bypass

| VM-FEX Mode | Hypervisor | Implementation | Hypervisor Version | UCSM Version | vMotion / Live Migration |
| --- | --- | --- | --- | --- | --- |
| Emulation | VMware | Pass Through (PTS) | ESX 4.0 U1+ | 1.4+ | Supported |
| Emulation | Hyper-V | Hyper-V Switch | Windows Server 2012 | 2.1 | Supported |
| Emulation | KVM | SR-IOV with MacVTap | RHEL 6.2+ | 2.1 | Supported |
| Hypervisor Bypass | VMware | Hypervisor Bypass | ESX 5.0+ | 1.4+ | Supported |
| Hypervisor Bypass | Hyper-V | SR-IOV | Windows Server 2012 | 2.1 | Supported |
| Hypervisor Bypass | KVM | SR-IOV with PCI Passthrough | RHEL 6.3 | 2.1 | Not supported |

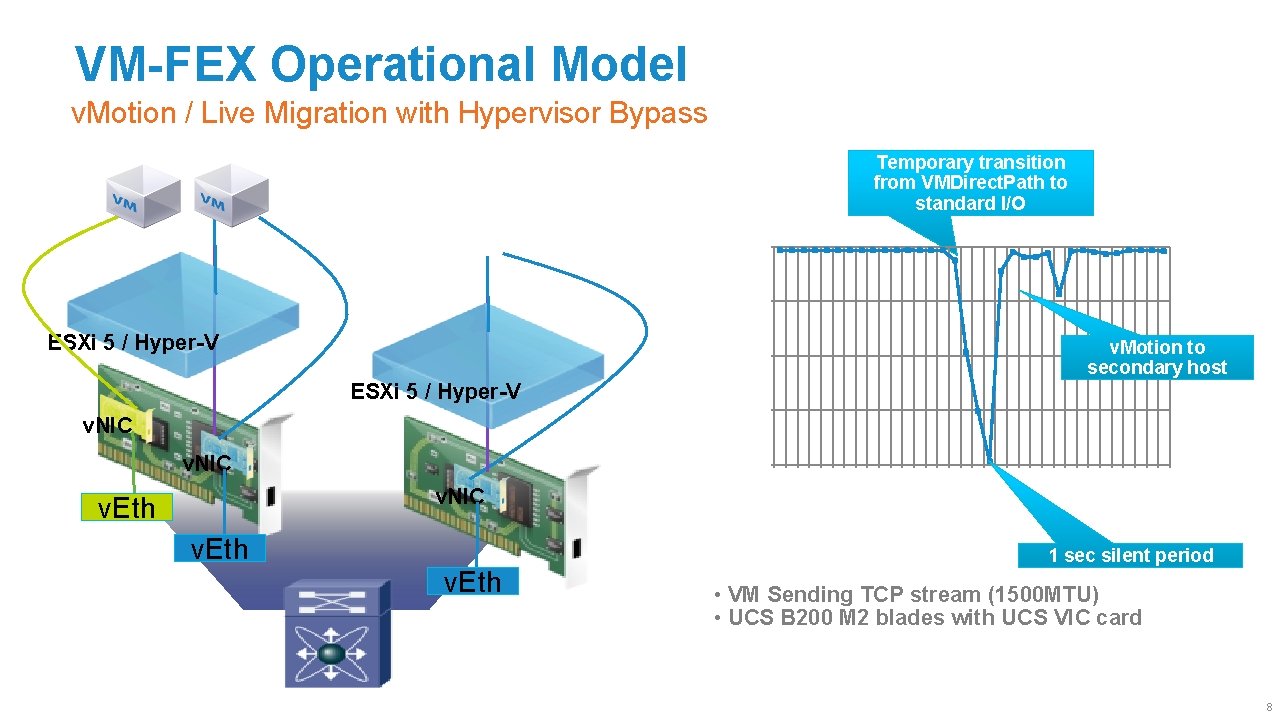

VM-FEX Operational Model: vMotion / Live Migration with Hypervisor Bypass
Temporary transition from VMDirectPath to standard I/O
[Chart: throughput in Mbps (0 to 10000) against time (secs), 19:06:19 to 19:06:52, during vMotion to a secondary host (ESXi 5 / Hyper-V); 1 sec silent period]
• VM sending TCP stream (1500 MTU)
• UCS B200 M2 blades with UCS VIC card

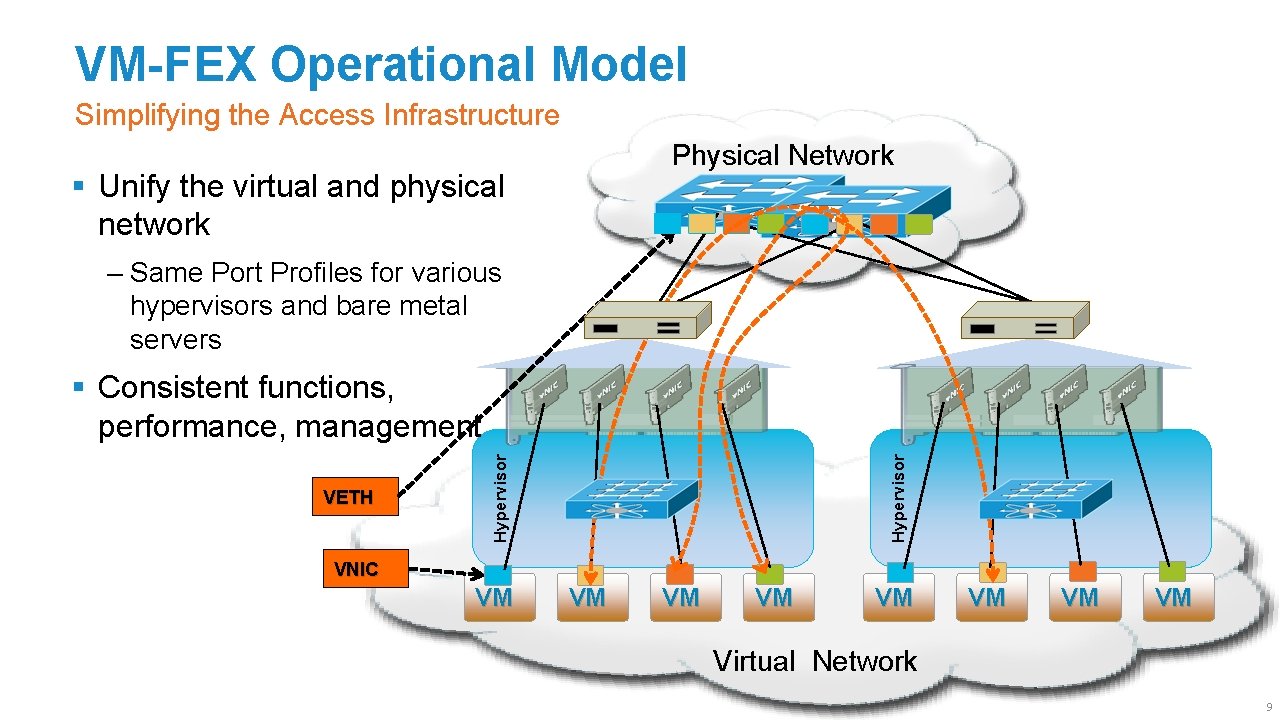

VM-FEX Operational Model: Simplifying the Access Infrastructure
§ Unify the virtual and physical network
‒ Same Port Profiles for various hypervisors and bare metal servers
§ Consistent functions, performance, management
Diagram labels: Physical Network, Virtual Network, Hypervisor, VETH, VNIC, VM

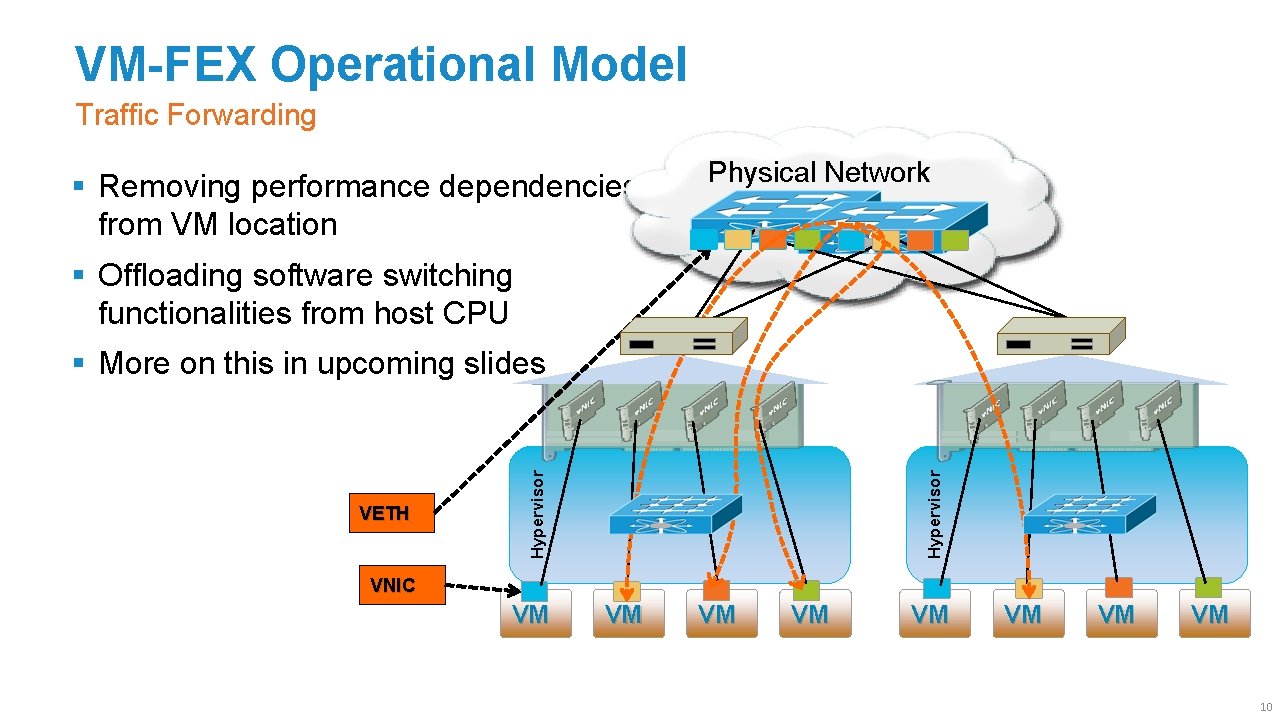

VM-FEX Operational Model: Traffic Forwarding
§ Removing performance dependencies from VM location
§ Offloading software switching functionalities from host CPU
§ More on this in upcoming slides
Diagram labels: Physical Network, Hypervisor, VETH, VNIC, VM

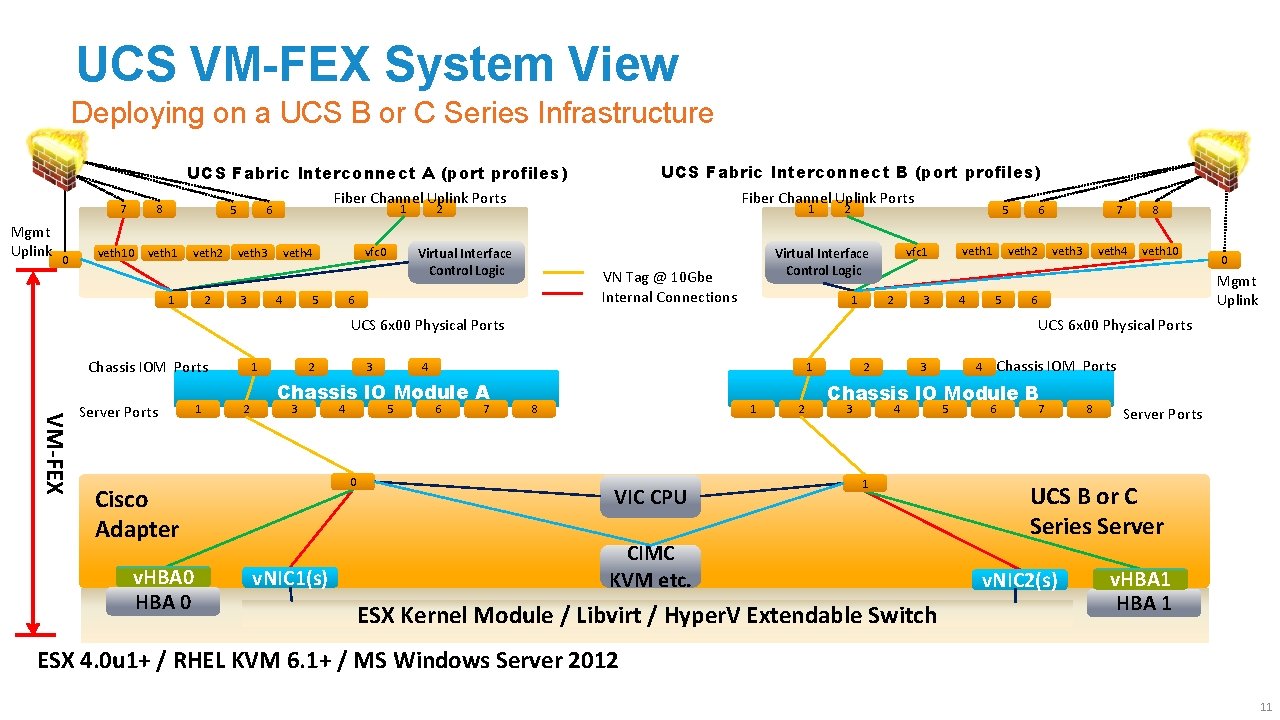

UCS VM-FEX System View: Deploying on a UCS B or C Series Infrastructure
[Diagram: UCS Fabric Interconnects A and B (port profiles), each with UCS 6x00 physical ports, a management uplink, Fibre Channel uplink ports, server ports, and virtual interface control logic hosting veth and vfc interfaces; VN-Tag over 10 GbE internal connections to Chassis IO Modules A and B (chassis IOM ports); a UCS B or C Series server with a Cisco adapter (VIC) presenting vNIC 1(s), vNIC 2(s), vHBA 0 and vHBA 1, plus CIMC, KVM, etc.; ESX kernel module / libvirt / Hyper-V Extensible Switch on the host]
Supported hosts: ESX 4.0 U1+ / RHEL KVM 6.1+ / MS Windows Server 2012

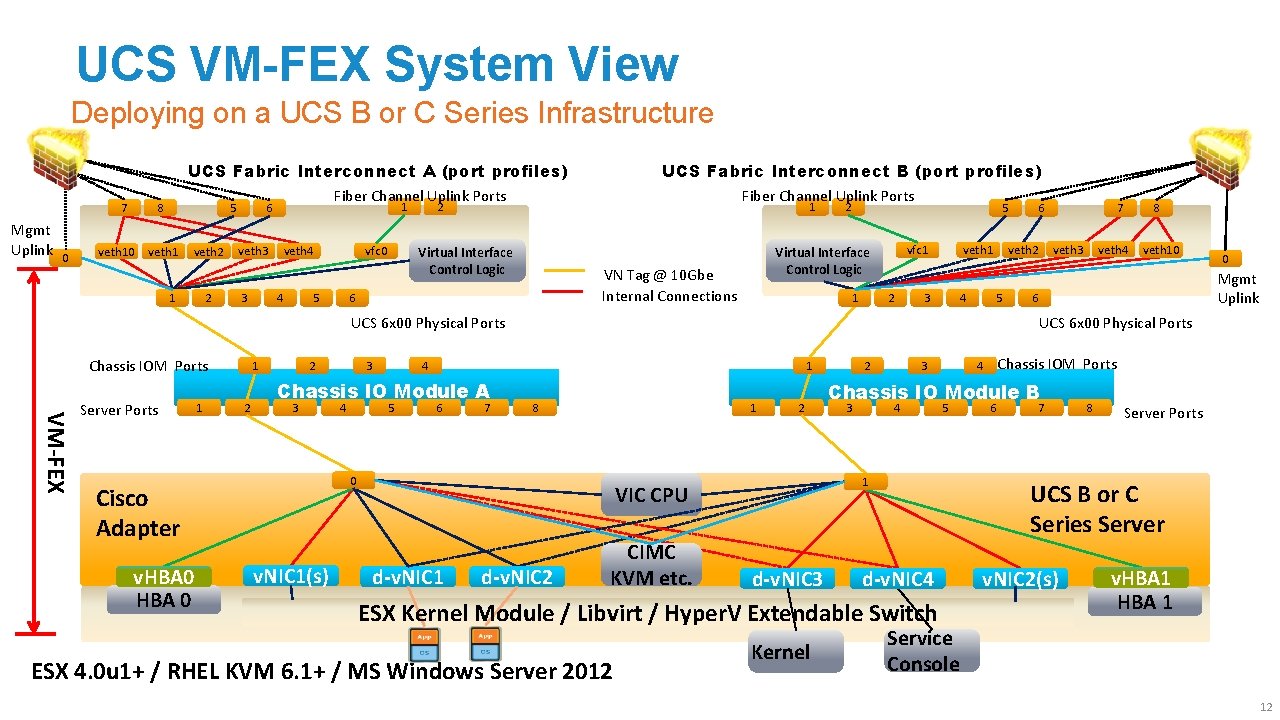

UCS VM-FEX System View: Deploying on a UCS B or C Series Infrastructure
[Diagram: the system view again, now with dynamic vNICs (d-vNIC 1 to d-vNIC 4) on the Cisco adapter alongside the static vNIC 1(s) and vNIC 2(s), vHBA 0 and vHBA 1; host labels: Kernel, Service Console, ESX kernel module / libvirt / Hyper-V Extensible Switch]
Supported hosts: ESX 4.0 U1+ / RHEL KVM 6.1+ / MS Windows Server 2012


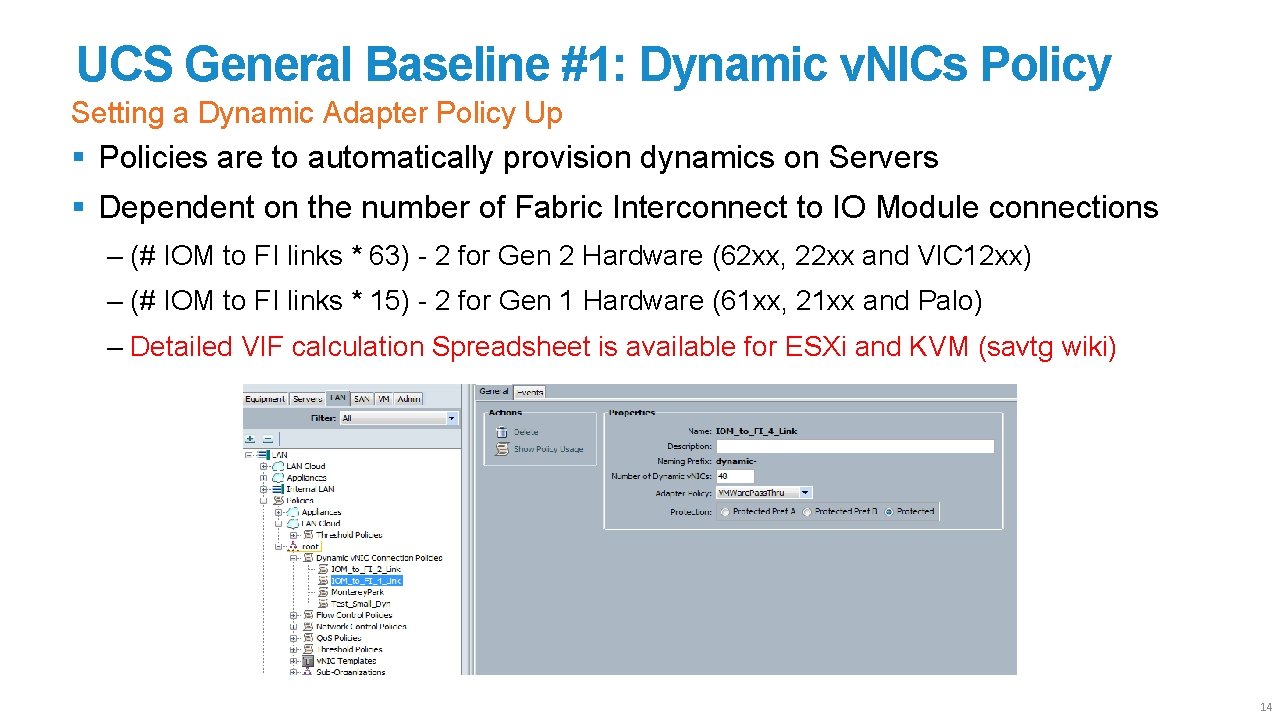

UCS General Baseline #1: Dynamic vNICs Policy
Setting a Dynamic Adapter Policy Up
§ Policies are used to automatically provision dynamics on servers
§ The number of dynamics depends on the number of Fabric Interconnect to IO Module connections
‒ (# IOM to FI links * 63) - 2 for Gen 2 hardware (62xx, 22xx and VIC 12xx)
‒ (# IOM to FI links * 15) - 2 for Gen 1 hardware (61xx, 21xx and Palo)
‒ A detailed VIF calculation spreadsheet is available for ESXi and KVM (savtg wiki)
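As a quick check of the two sizing formulas on this slide, the arithmetic can be sketched in Python. The function name and the `generation` argument are illustrative only and are not part of any Cisco tool; other platform limits may apply on top of the figure this returns.

```python
# Sketch of the slide's sizing formulas:
#   Gen 2 (62xx, 22xx, VIC 12xx): (IOM-to-FI links * 63) - 2
#   Gen 1 (61xx, 21xx, Palo):     (IOM-to-FI links * 15) - 2
VIFS_PER_LINK = {1: 15, 2: 63}


def max_dynamic_vnics(iom_to_fi_links: int, generation: int) -> int:
    """Return the dynamic vNIC count given by the slide's formula."""
    if generation not in VIFS_PER_LINK:
        raise ValueError("generation must be 1 or 2")
    if iom_to_fi_links < 1:
        raise ValueError("at least one IOM-to-FI link is required")
    return iom_to_fi_links * VIFS_PER_LINK[generation] - 2


for links in (1, 2, 4):
    print(links, max_dynamic_vnics(links, 1), max_dynamic_vnics(links, 2))
```

For example, two IOM-to-FI links give 28 dynamics on Gen 1 hardware and 124 on Gen 2 hardware.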

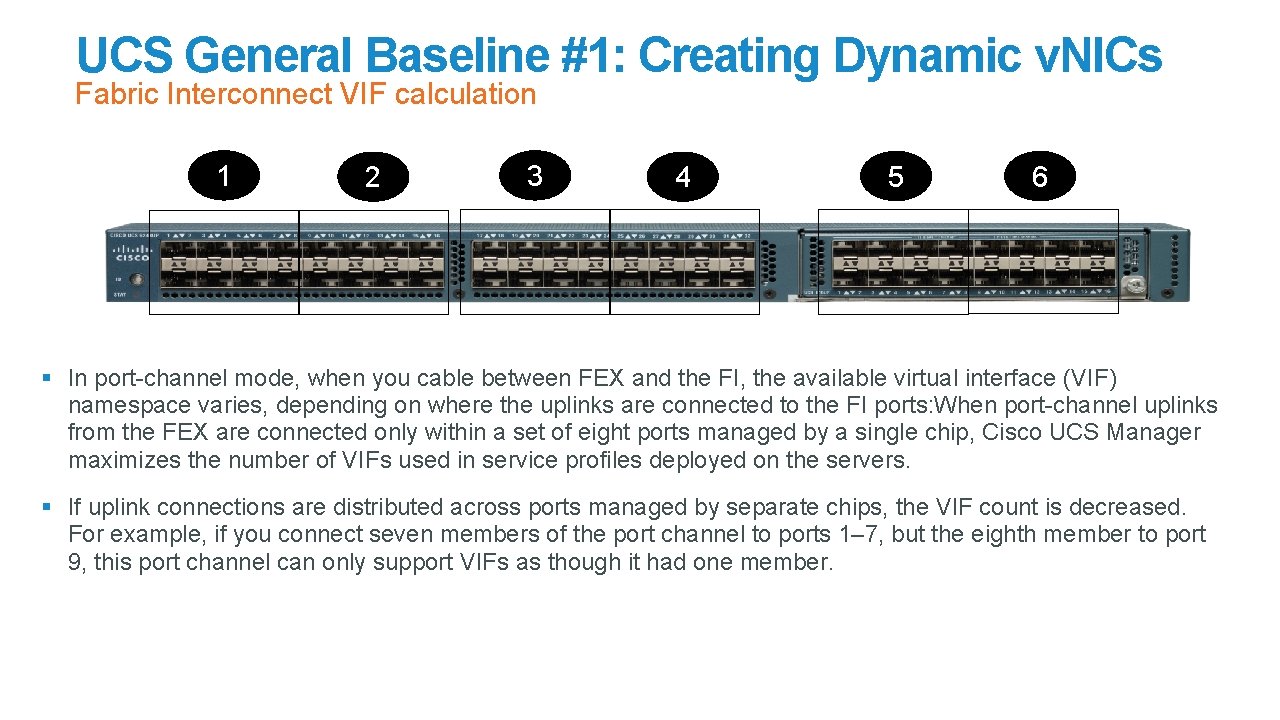

UCS General Baseline #1: Creating Dynamic vNICs
Fabric Interconnect VIF calculation
§ In port-channel mode, when you cable between the FEX and the FI, the available virtual interface (VIF) namespace varies depending on where the uplinks are connected to the FI ports. When port-channel uplinks from the FEX are connected only within a set of eight ports managed by a single chip, Cisco UCS Manager maximizes the number of VIFs used in service profiles deployed on the servers.
§ If uplink connections are distributed across ports managed by separate chips, the VIF count is decreased. For example, if you connect seven members of the port channel to ports 1-7, but the eighth member to port 9, this port channel can only support VIFs as though it had one member.
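The cabling rule above can be checked before deployment with a small helper. This is only a sketch under two assumptions that the slide does not state outright: that each chip manages a contiguous block of eight ports (1-8, 9-16, and so on), and that any port channel spanning more than one block is counted as a single member, which generalizes the slide's one example. The function names are hypothetical.

```python
def chip_group(port: int, ports_per_chip: int = 8) -> int:
    """Index of the (assumed) eight-port block that a FI port belongs to."""
    if port < 1:
        raise ValueError("FI port numbers start at 1")
    return (port - 1) // ports_per_chip


def effective_members(uplink_ports, ports_per_chip: int = 8) -> int:
    """Port-channel members that count toward the VIF namespace.

    Assumption: uplinks split across blocks count as one member.
    """
    ports = set(uplink_ports)
    if not ports:
        raise ValueError("a port channel needs at least one uplink")
    groups = {chip_group(p, ports_per_chip) for p in ports}
    return len(ports) if len(groups) == 1 else 1


print(effective_members(range(1, 9)))              # all uplinks in ports 1-8
print(effective_members([1, 2, 3, 4, 5, 6, 7, 9]))  # the slide's example
```

The second call reproduces the slide's case: seven uplinks on ports 1-7 plus one on port 9 count as a single member.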

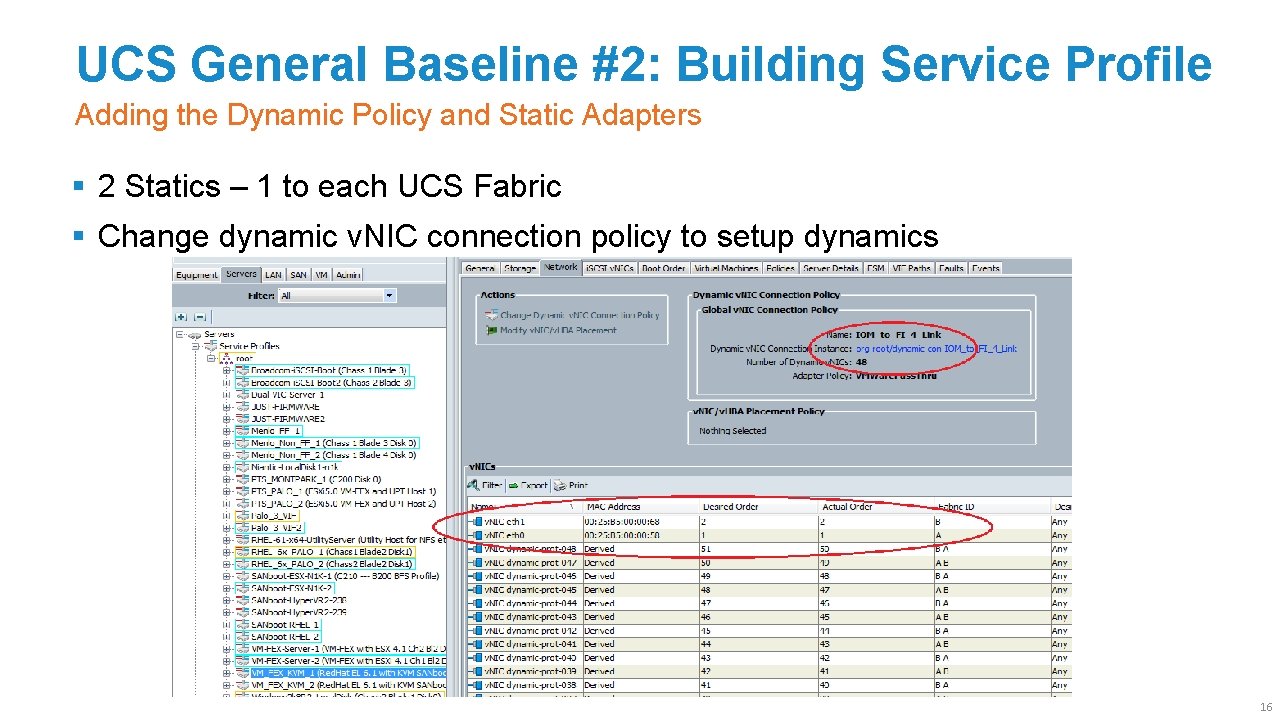

UCS General Baseline #2: Building Service Profile
Adding the Dynamic Policy and Static Adapters
§ 2 statics, 1 to each UCS fabric
§ Change the dynamic vNIC connection policy to set up dynamics

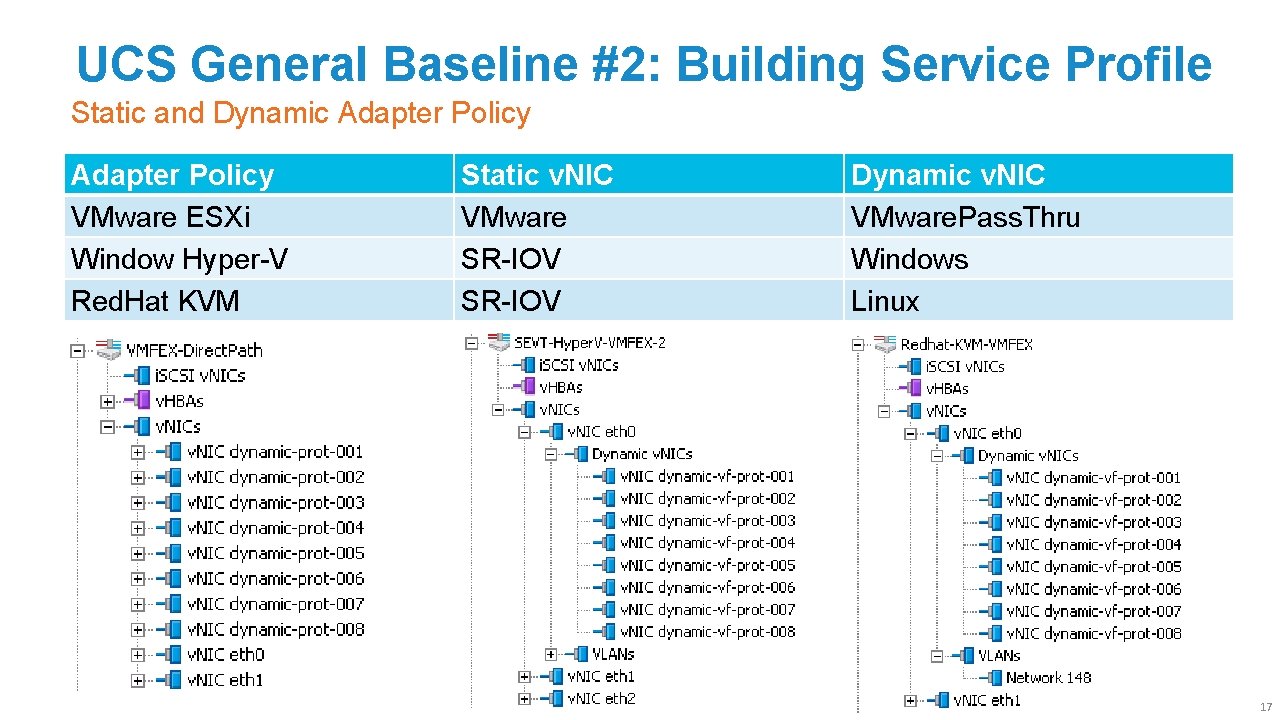

UCS General Baseline #2: Building Service Profile
Static and Dynamic Adapter Policy

| Adapter policy | VMware ESXi | Windows Hyper-V | Red Hat KVM |
| --- | --- | --- | --- |
| Static vNIC | VMware | SR-IOV | SR-IOV |
| Dynamic vNIC | VMwarePassThru | Windows | Linux |

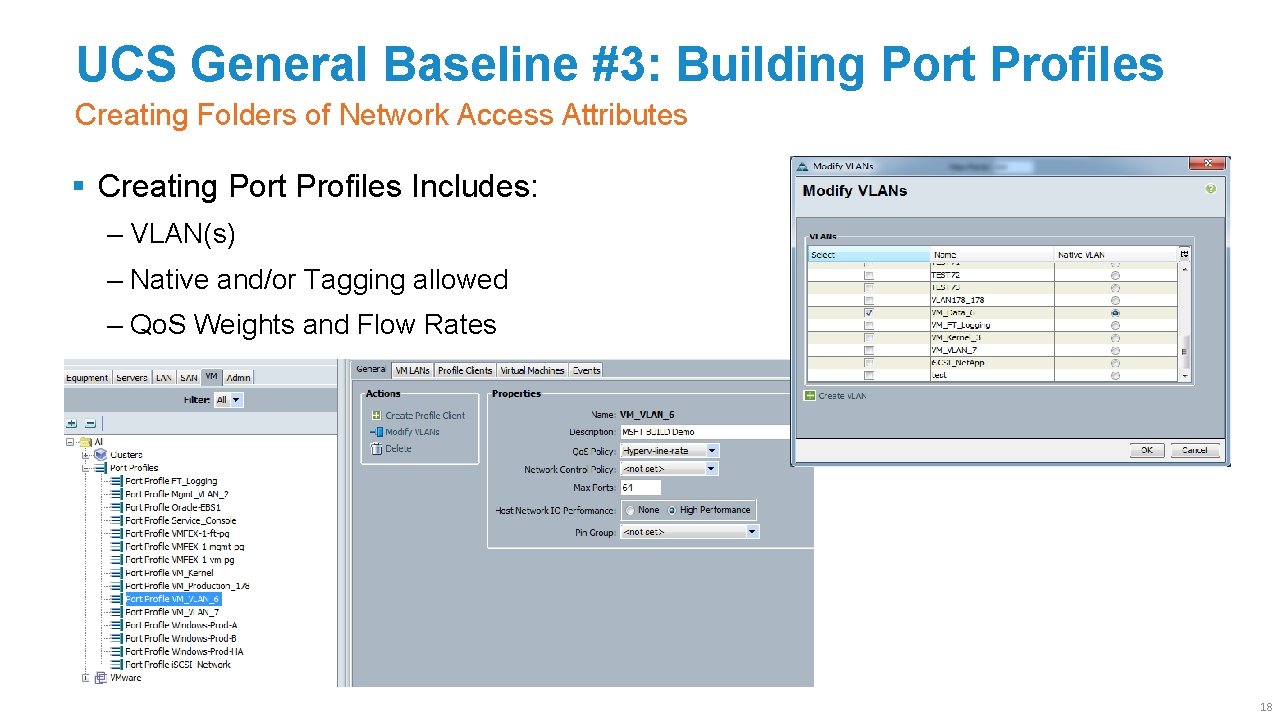

UCS General Baseline #3: Building Port Profiles
Creating Folders of Network Access Attributes
§ Creating Port Profiles includes:
‒ VLAN(s)
‒ Native and/or tagging allowed
‒ QoS weights and flow rates
‒ Upstream ports to always use

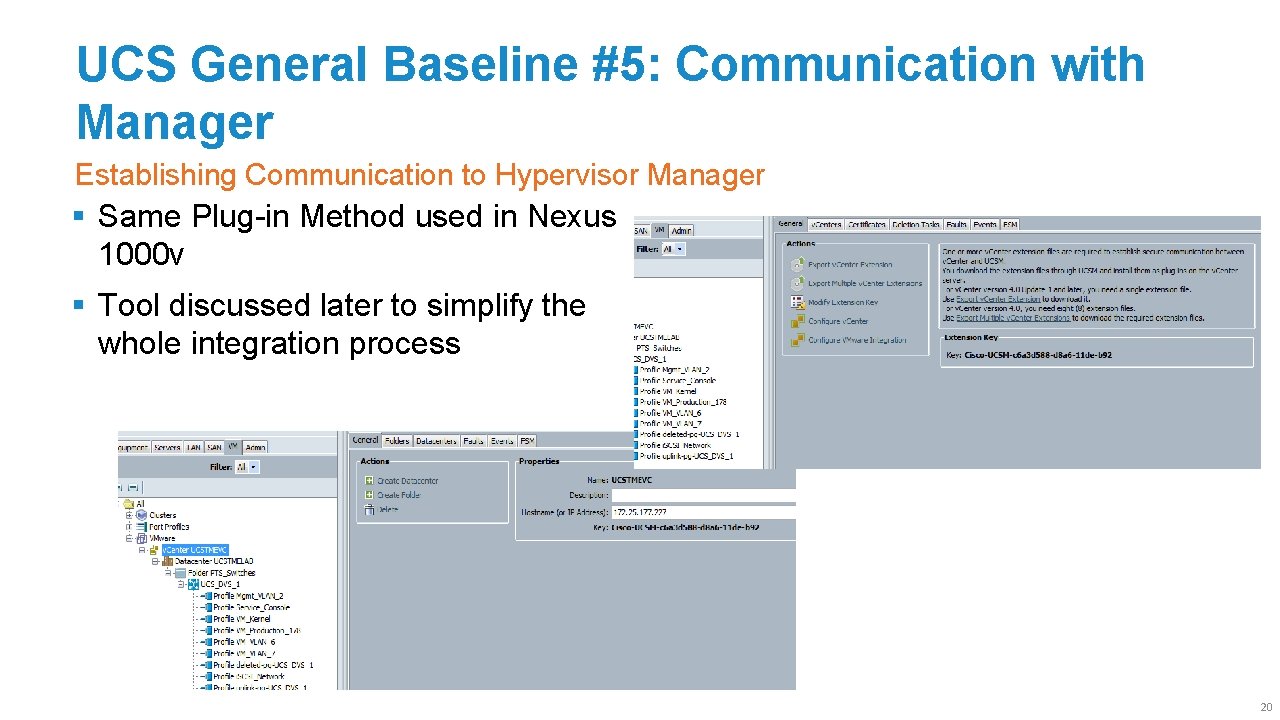

UCS General Baseline #5: Communication with Manager
Establishing Communication to Hypervisor Manager
§ Same plug-in method used in Nexus 1000V
§ A tool discussed later simplifies the whole integration process

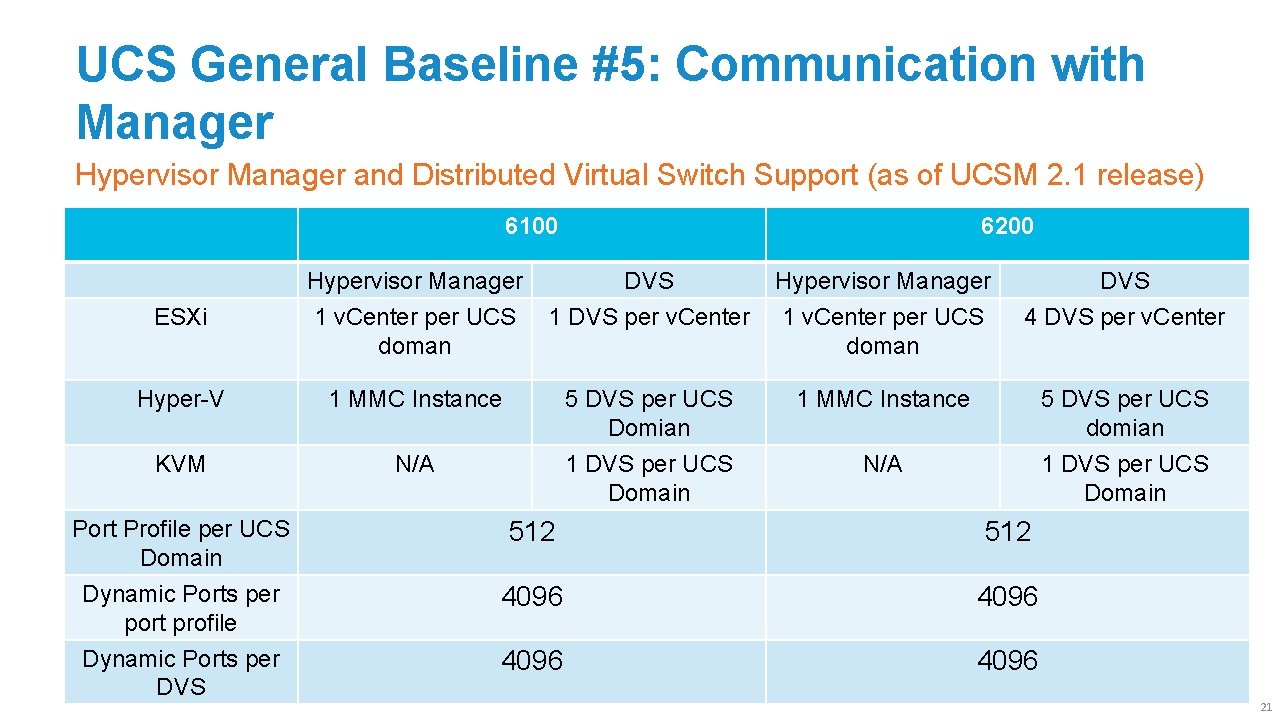

UCS General Baseline #5: Communication with Manager
Hypervisor Manager and Distributed Virtual Switch Support (as of UCSM 2.1 release)

| Hypervisor | 6100 | 6200 |
| --- | --- | --- |
| ESXi | Hypervisor Manager: 1 vCenter per UCS domain; DVS: 1 DVS per vCenter | Hypervisor Manager: 1 vCenter per UCS domain; DVS: 4 DVS per vCenter |
| Hyper-V | 1 MMC instance; 5 DVS per UCS domain | 1 MMC instance; 5 DVS per UCS domain |
| KVM | N/A | 1 DVS per UCS domain |

Additional rows: Port Profile per UCS Domain; Dynamic Ports per port profile; Dynamic Ports per DVS. Values shown: 512, 4096.

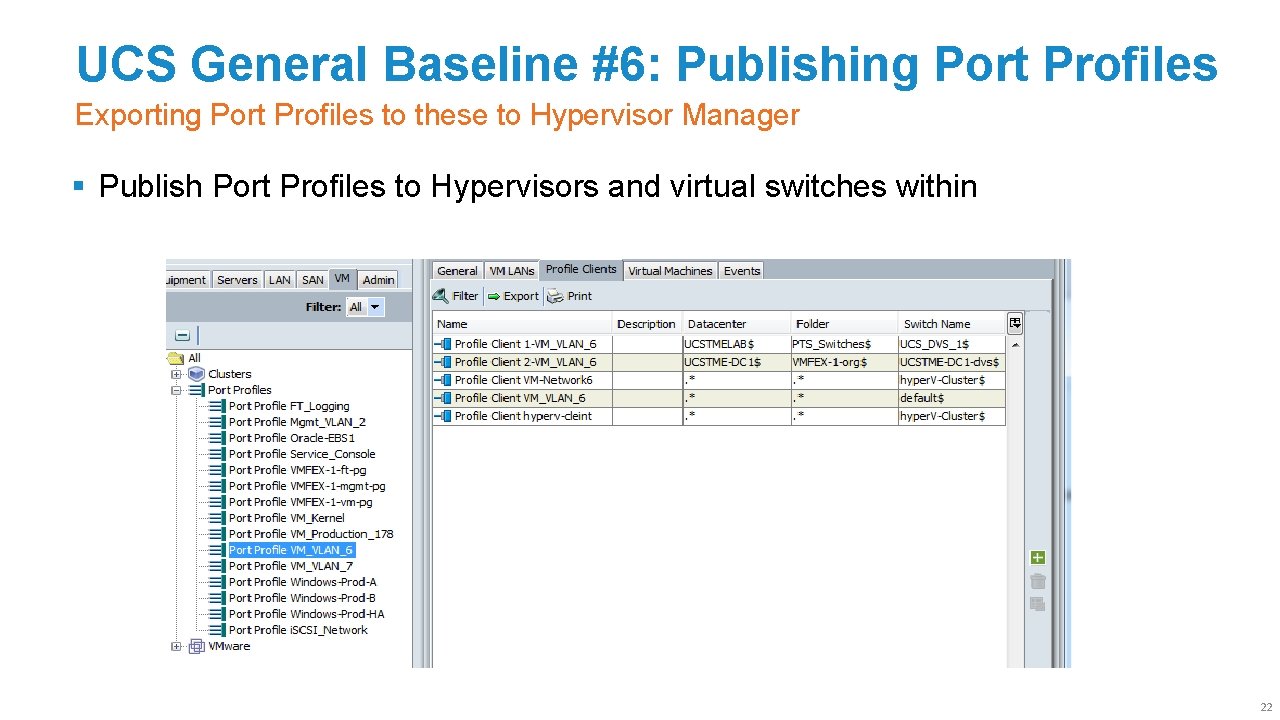

UCS General Baseline #6: Publishing Port Profiles
Exporting Port Profiles to the Hypervisor Manager
§ Publish Port Profiles to hypervisors and the virtual switches within them


VMware VM-FEX: Infrastructure Requirements
Versions, Licenses, etc.
§ Enterprise Plus license required on the host (as for any DVS)
§ Standard license or above is required for vCenter
§ vCenter plug-in download and install method (unless the Easy VM-FEX tool is used)
§ Hosts then use VUM depots to install the ESX module when bringing a host into the UCS DVS (unless the Easy VM-FEX tool is used)
§ vMotion fully supported for both emulated and hypervisor bypass modes
§ VMDirectPath (Hypervisor Bypass) with VM-FEX is supported with ESXi 5.0+
§ VM-FEX upgrade is supported from ESXi 4.x to ESXi 5.x with a customized ISO and VMware Update Manager

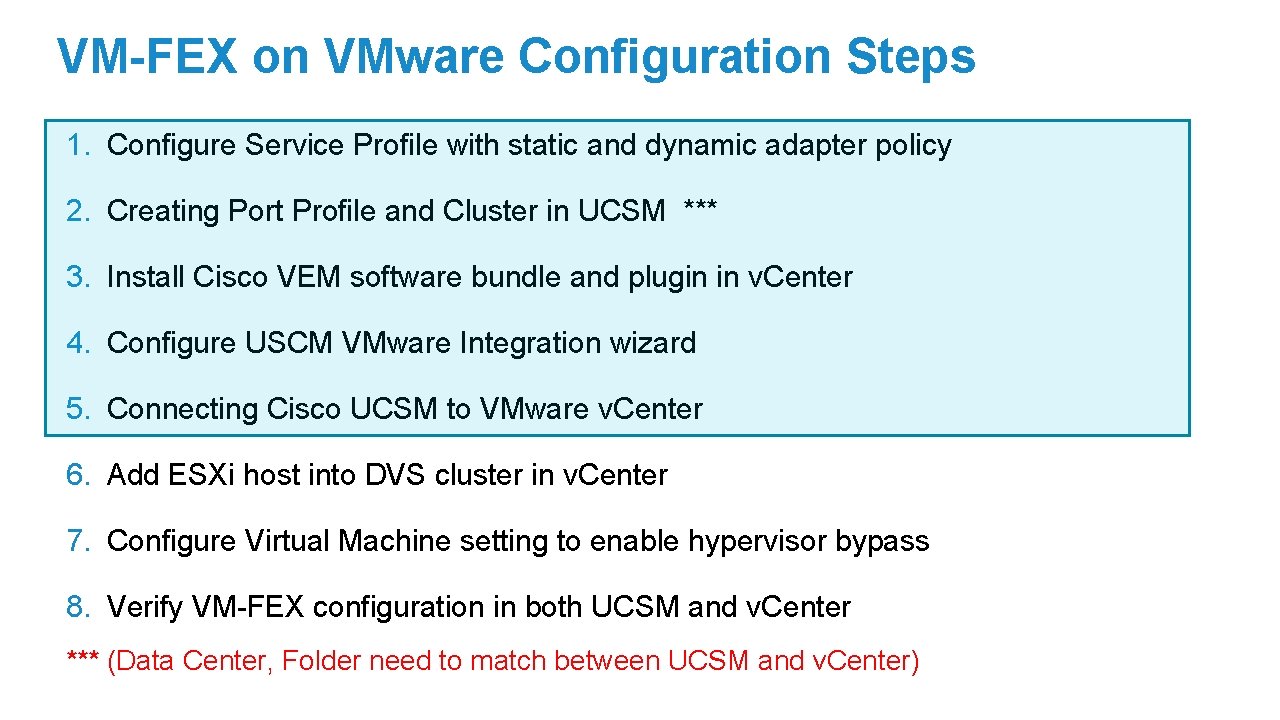

VM-FEX on VMware Configuration Steps
1. Configure the Service Profile with static and dynamic adapter policy
2. Create the Port Profile and Cluster in UCSM ***
3. Install the Cisco VEM software bundle and plug-in in vCenter
4. Configure the UCSM VMware Integration wizard
5. Connect Cisco UCSM to VMware vCenter
6. Add the ESXi host into the DVS cluster in vCenter
7. Configure the Virtual Machine settings to enable hypervisor bypass
8. Verify the VM-FEX configuration in both UCSM and vCenter
*** (Data Center and Folder need to match between UCSM and vCenter)

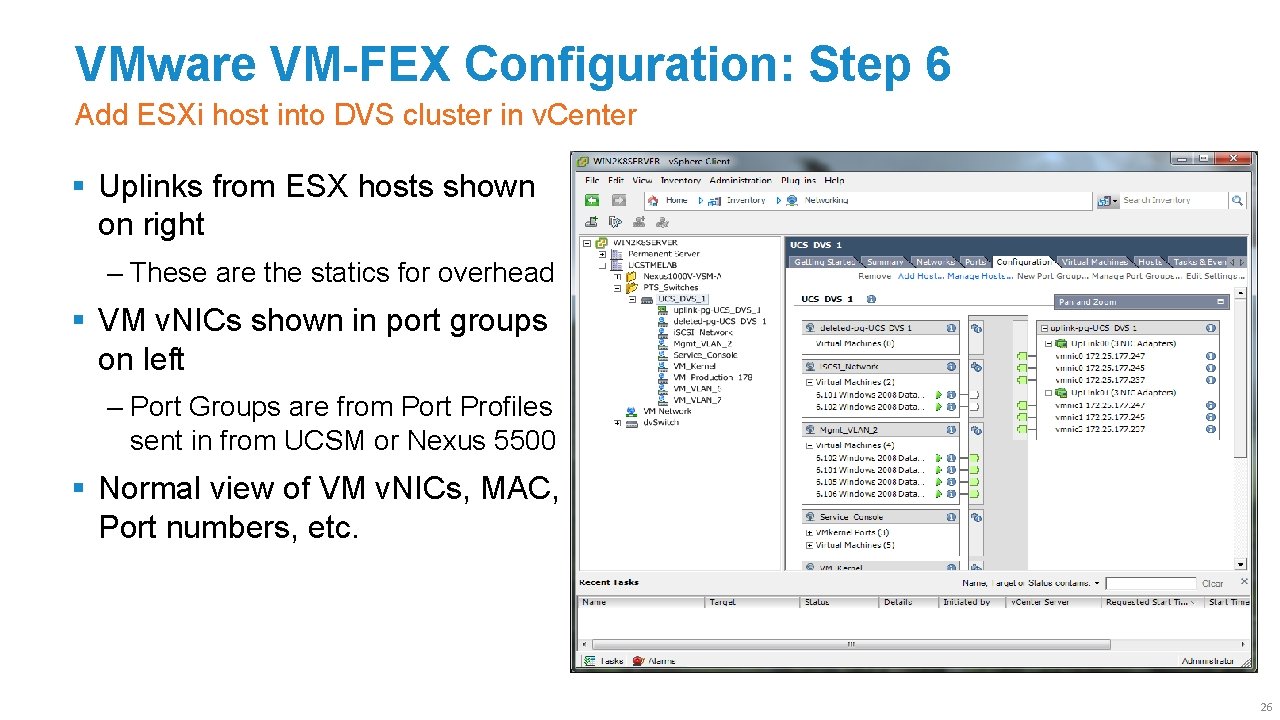

VMware VM-FEX Configuration: Step 6
Add ESXi host into DVS cluster in vCenter
§ Uplinks from ESX hosts shown on right
‒ These are the statics for overhead
§ VM vNICs shown in port groups on left
‒ Port Groups are from Port Profiles sent in from UCSM or Nexus 5500
§ Normal view of VM vNICs, MAC, port numbers, etc.

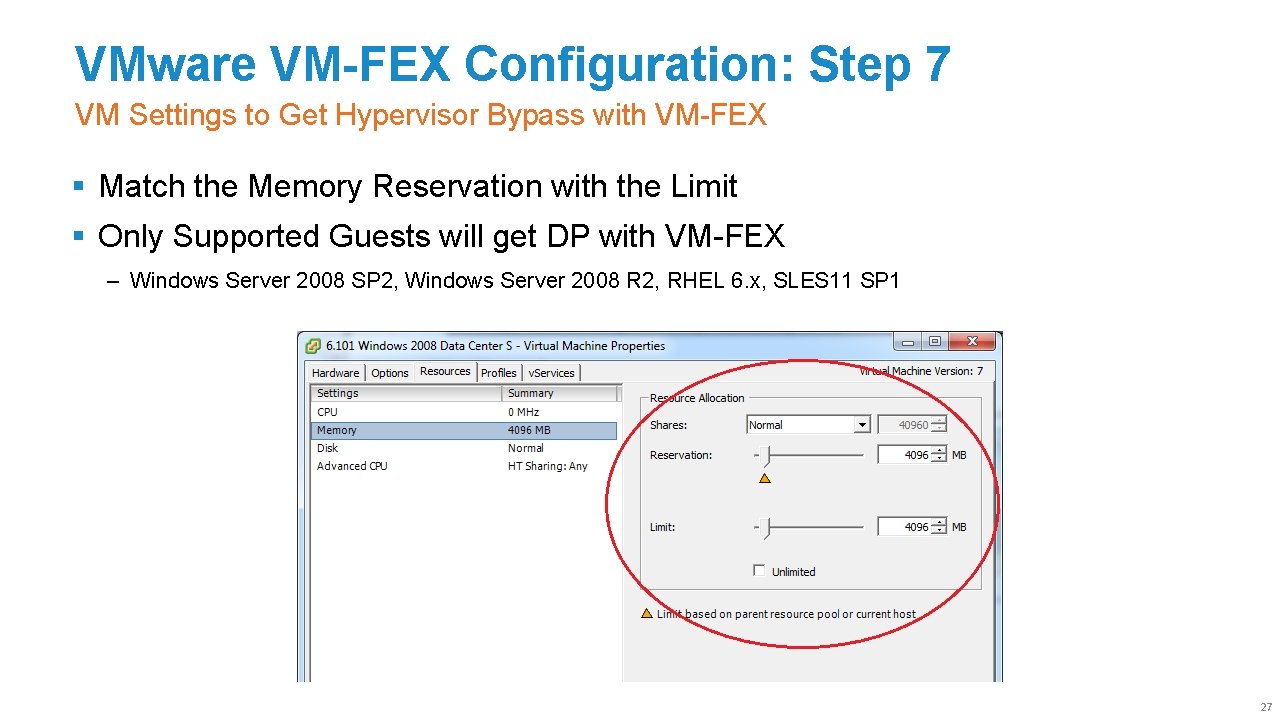

VMware VM-FEX Configuration: Step 7
VM Settings to Get Hypervisor Bypass with VM-FEX
§ Match the Memory Reservation with the Limit
§ Only supported guests will get DirectPath (DP) with VM-FEX
‒ Windows Server 2008 SP2, Windows Server 2008 R2, RHEL 6.x, SLES 11 SP1

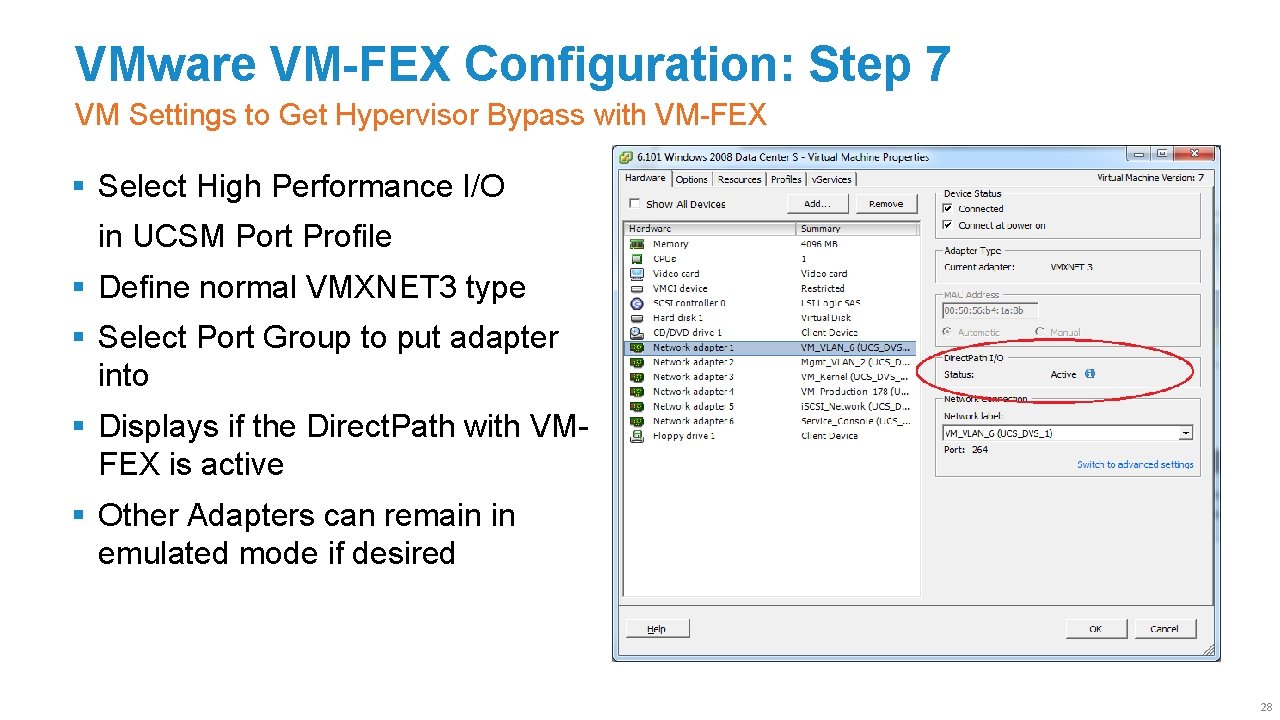

VMware VM-FEX Configuration: Step 7
VM Settings to Get Hypervisor Bypass with VM-FEX
§ Select High Performance I/O in the UCSM Port Profile
§ Define normal VMXNET3 type
§ Select Port Group to put adapter into
§ Displays if DirectPath with VM-FEX is active
§ Other adapters can remain in emulated mode if desired

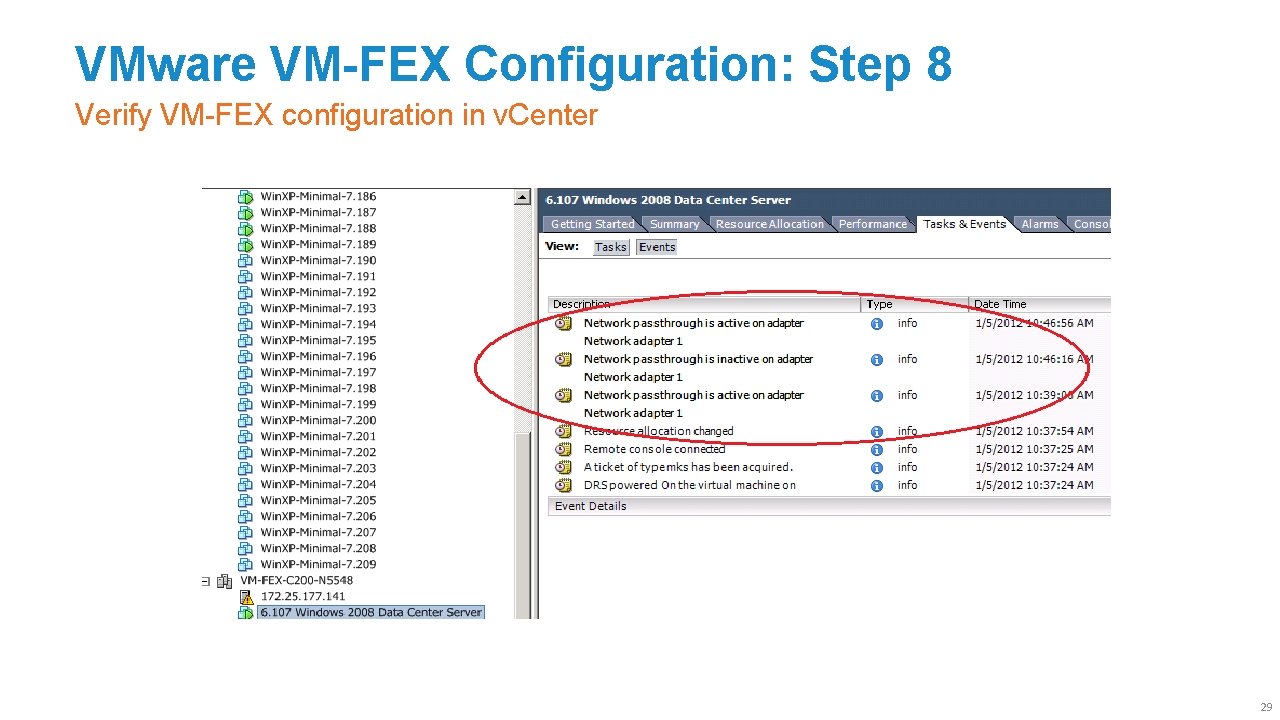

VMware VM-FEX Configuration: Step 8
Verify VM-FEX configuration in vCenter

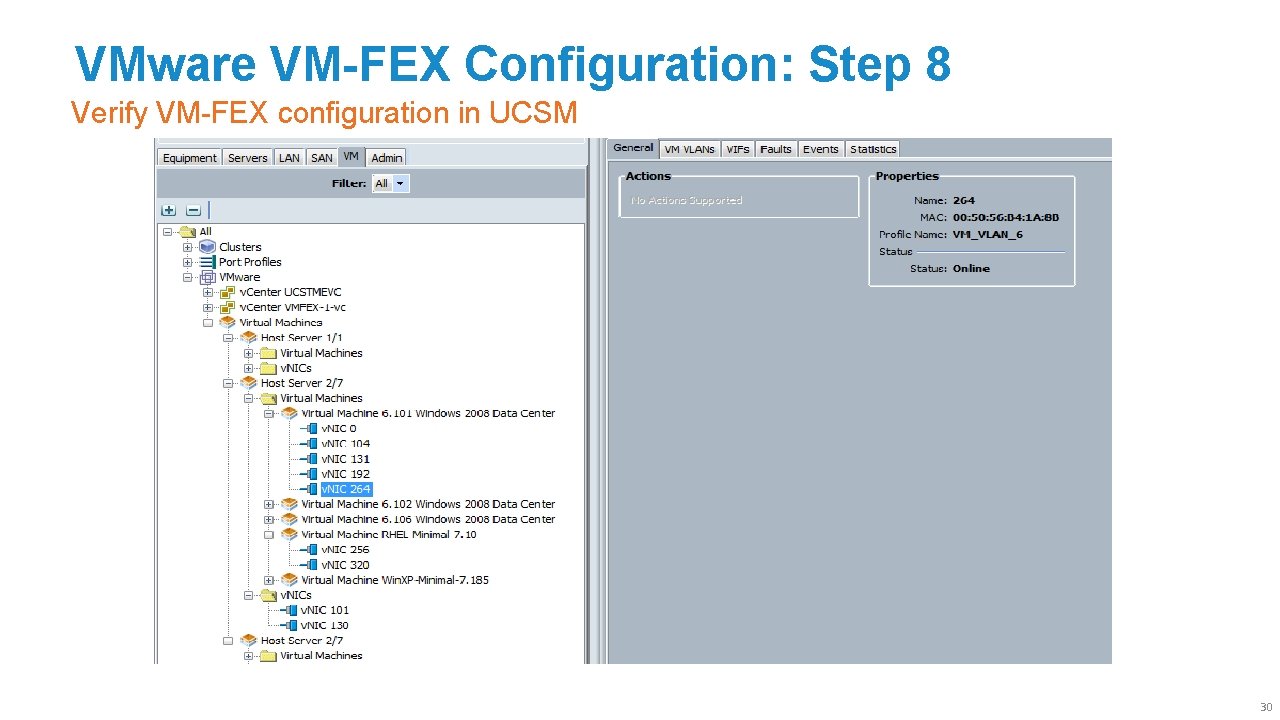

VMware VM-FEX Configuration: Step 8
Verify VM-FEX configuration in UCSM

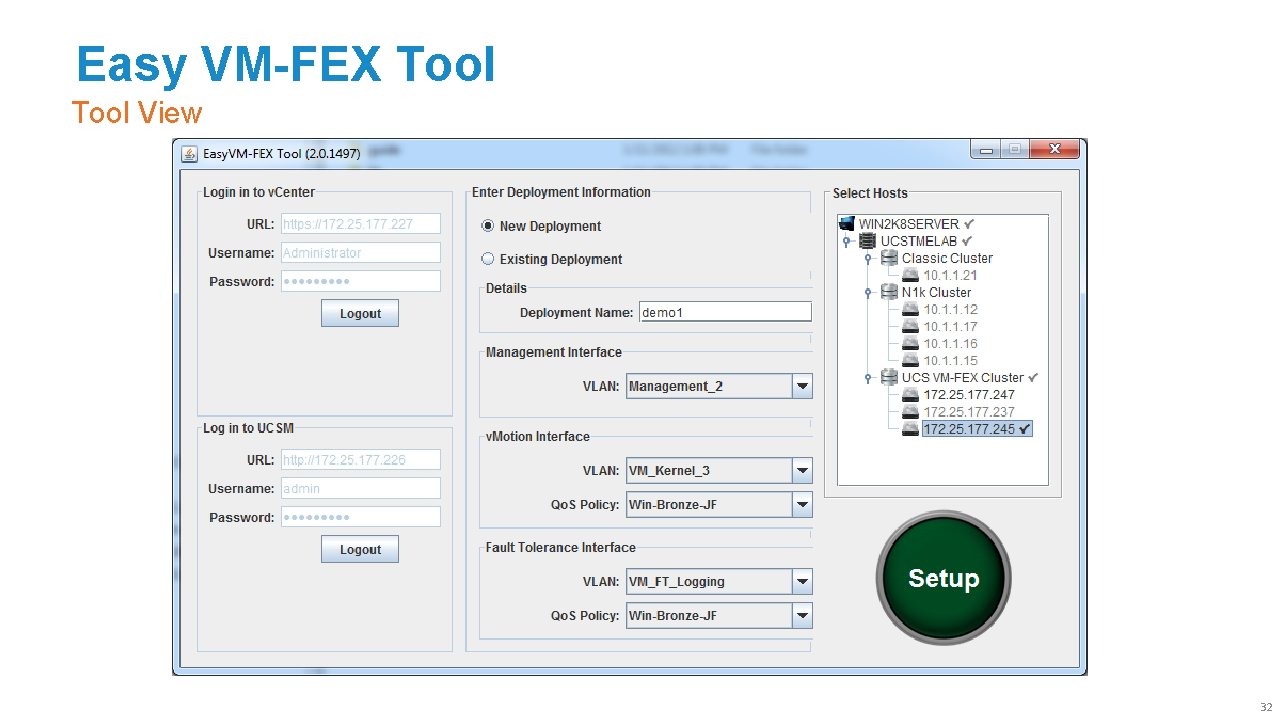

Easy VM-FEX Tool View

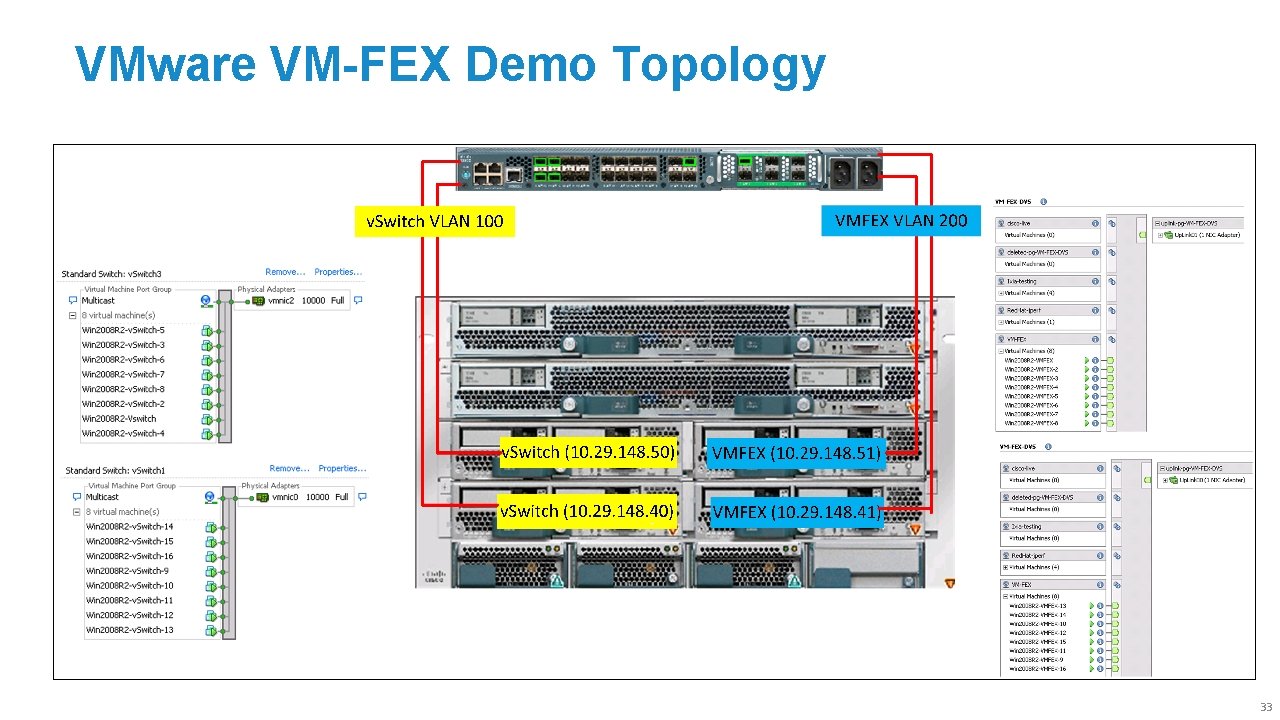

VMware VM-FEX Demo Topology


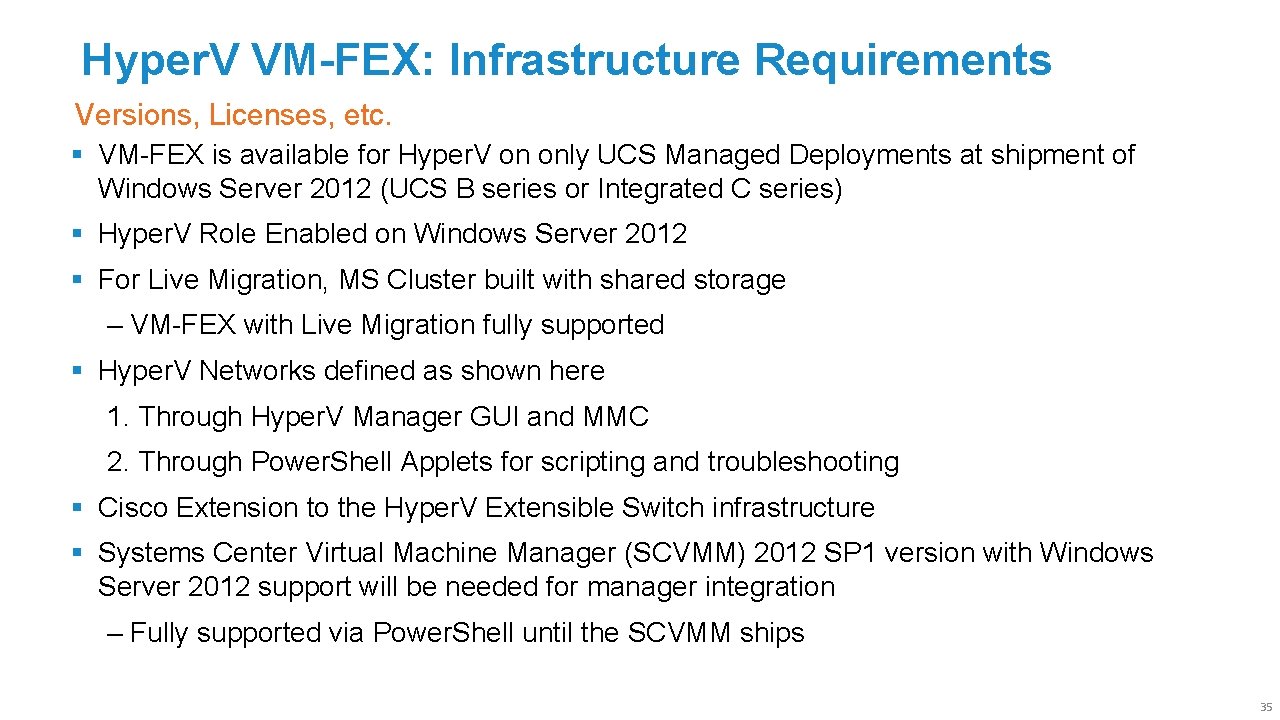

Hyper-V VM-FEX: Infrastructure Requirements
Versions, Licenses, etc.
§ VM-FEX is available for Hyper-V only on UCS-managed deployments at shipment of Windows Server 2012 (UCS B Series or integrated C Series)
§ Hyper-V role enabled on Windows Server 2012
§ For Live Migration, an MS cluster built with shared storage
‒ VM-FEX with Live Migration fully supported
§ Hyper-V networks are defined in one of two ways
1. Through the Hyper-V Manager GUI and MMC
2. Through PowerShell cmdlets for scripting and troubleshooting
§ Cisco extension to the Hyper-V Extensible Switch infrastructure
§ System Center Virtual Machine Manager (SCVMM) 2012 SP1 with Windows Server 2012 support will be needed for manager integration
‒ Fully supported via PowerShell until SCVMM ships

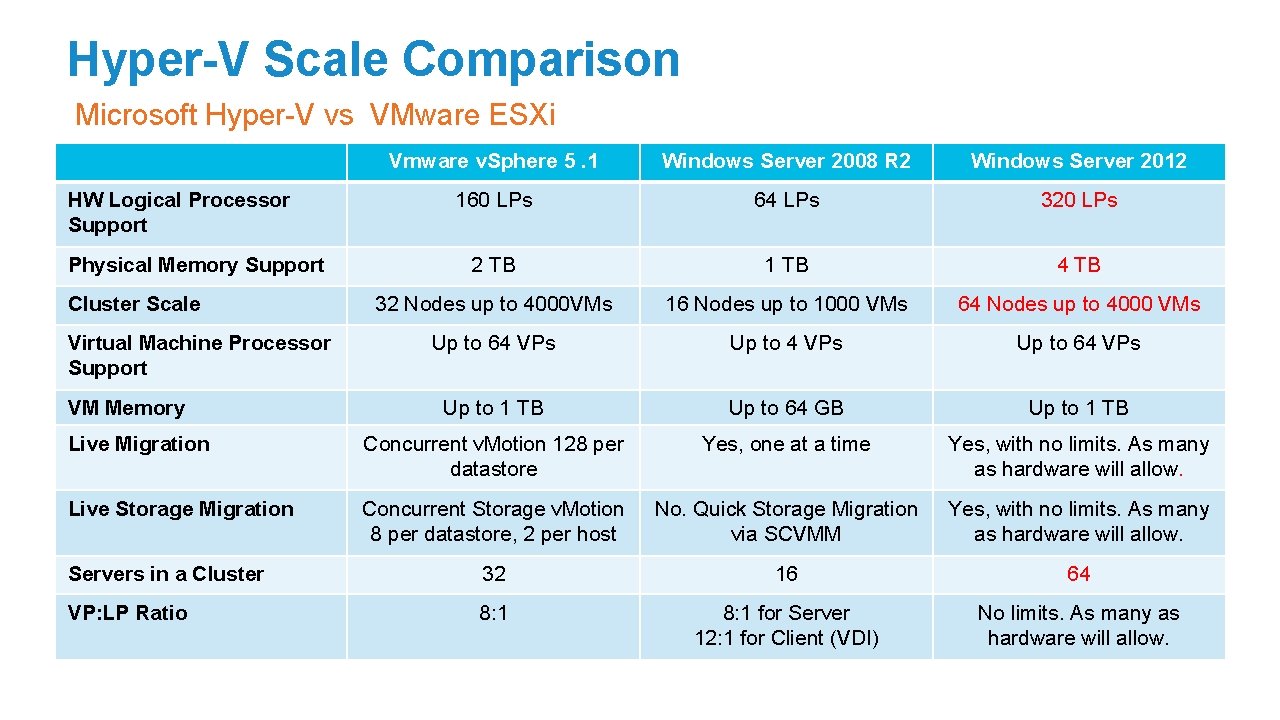

Hyper-V Scale Comparison: Microsoft Hyper-V vs VMware ESXi

| | VMware vSphere 5.1 | Windows Server 2008 R2 | Windows Server 2012 |
| --- | --- | --- | --- |
| HW Logical Processor Support | 160 LPs | 64 LPs | 320 LPs |
| Physical Memory Support | 2 TB | 1 TB | 4 TB |
| Cluster Scale | 32 nodes, up to 4000 VMs | 16 nodes, up to 1000 VMs | 64 nodes, up to 4000 VMs |
| Virtual Machine Processor Support | Up to 64 VPs | | |
| VM Memory | Up to 1 TB | Up to 64 GB | Up to 1 TB |
| Live Migration | Concurrent vMotion, 128 per datastore | Yes, one at a time | Yes, with no limits. As many as hardware will allow. |
| Live Storage Migration | Concurrent Storage vMotion, 8 per datastore, 2 per host | No. Quick Storage Migration via SCVMM | Yes, with no limits. As many as hardware will allow. |
| Servers in a Cluster | 32 | 16 | 64 |
| VP:LP Ratio | | 8:1 for Server, 12:1 for Client (VDI) | No limits. As many as hardware will allow. |

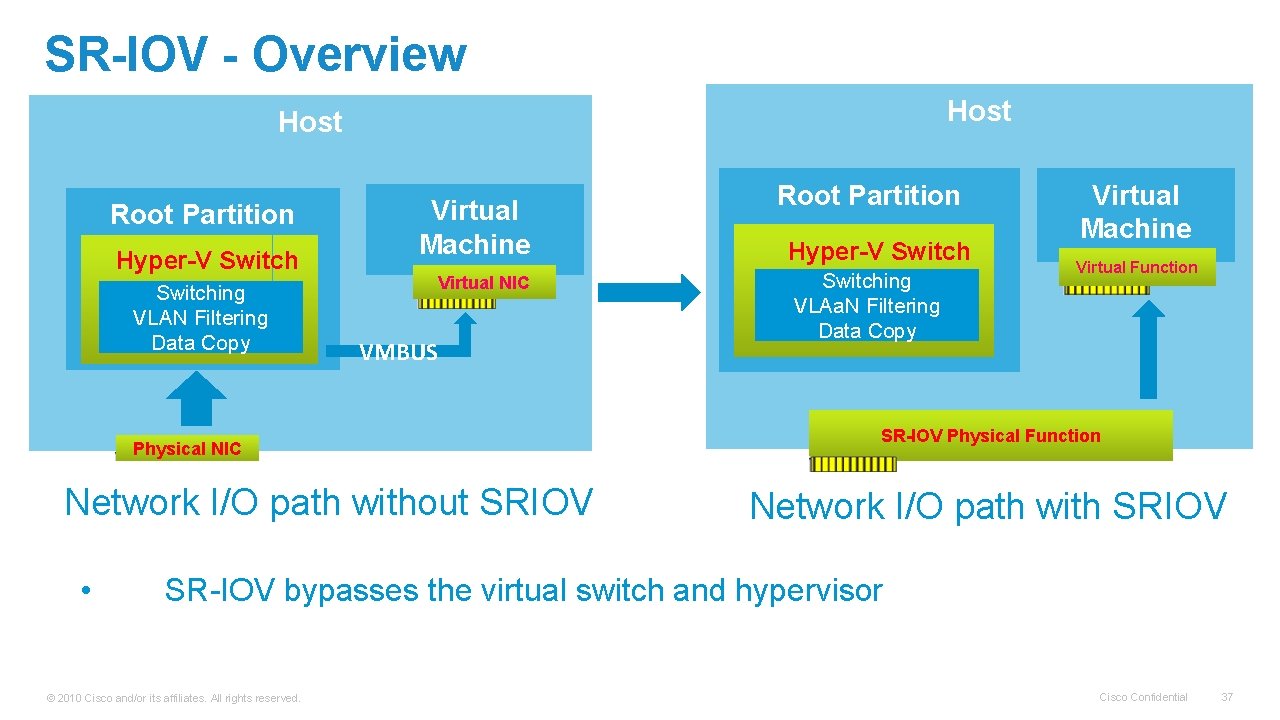

SR-IOV - Overview
§ Network I/O path without SR-IOV: Physical NIC; Root Partition (Hyper-V switching, VLAN filtering, data copy); VMBus; Virtual NIC in the virtual machine
§ Network I/O path with SR-IOV: SR-IOV Physical NIC (Physical Function); Virtual Function in the virtual machine; the Root Partition functions (Hyper-V switching, VLAN filtering, data copy) are outside the data path
§ SR-IOV bypasses the virtual switch and hypervisor

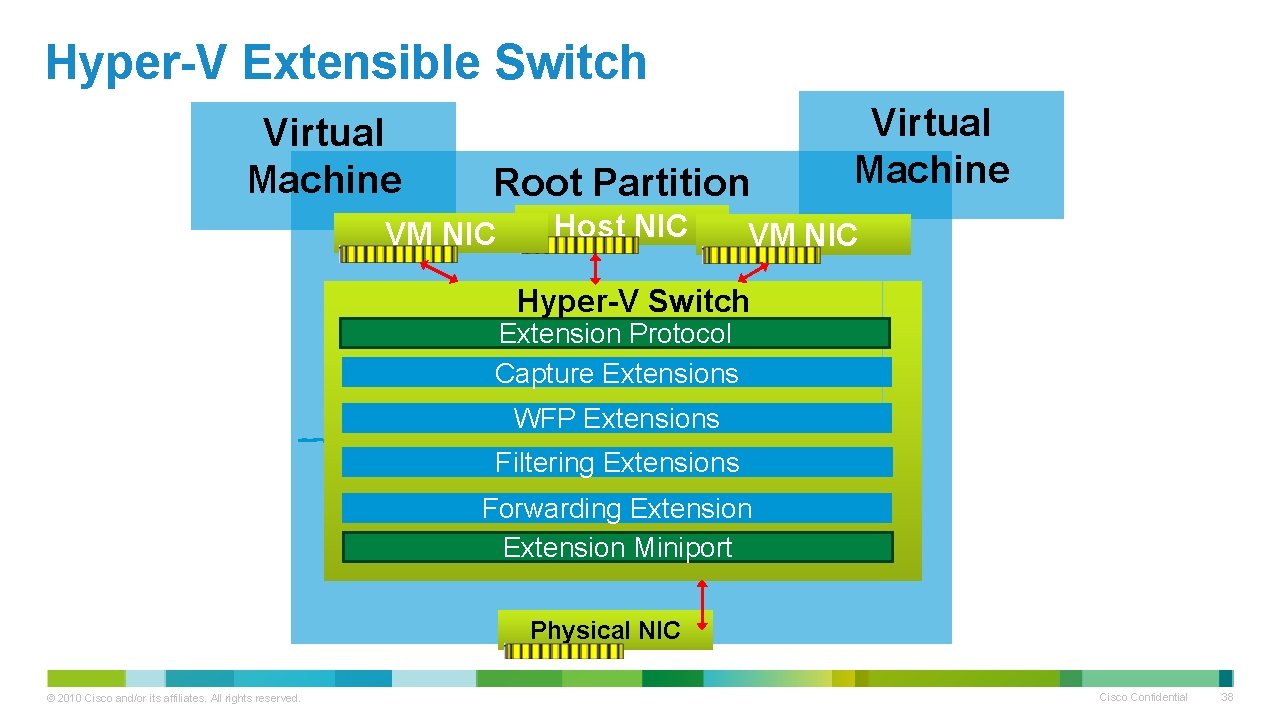

Hyper-V Extensible Switch
Diagram labels: Root Partition with Host NIC; Virtual Machines with VM NICs; Hyper-V Switch with Extension Protocol and Extension Miniport; Capture Extensions, WFP Extensions, Filtering Extensions, Forwarding Extension; Physical NIC

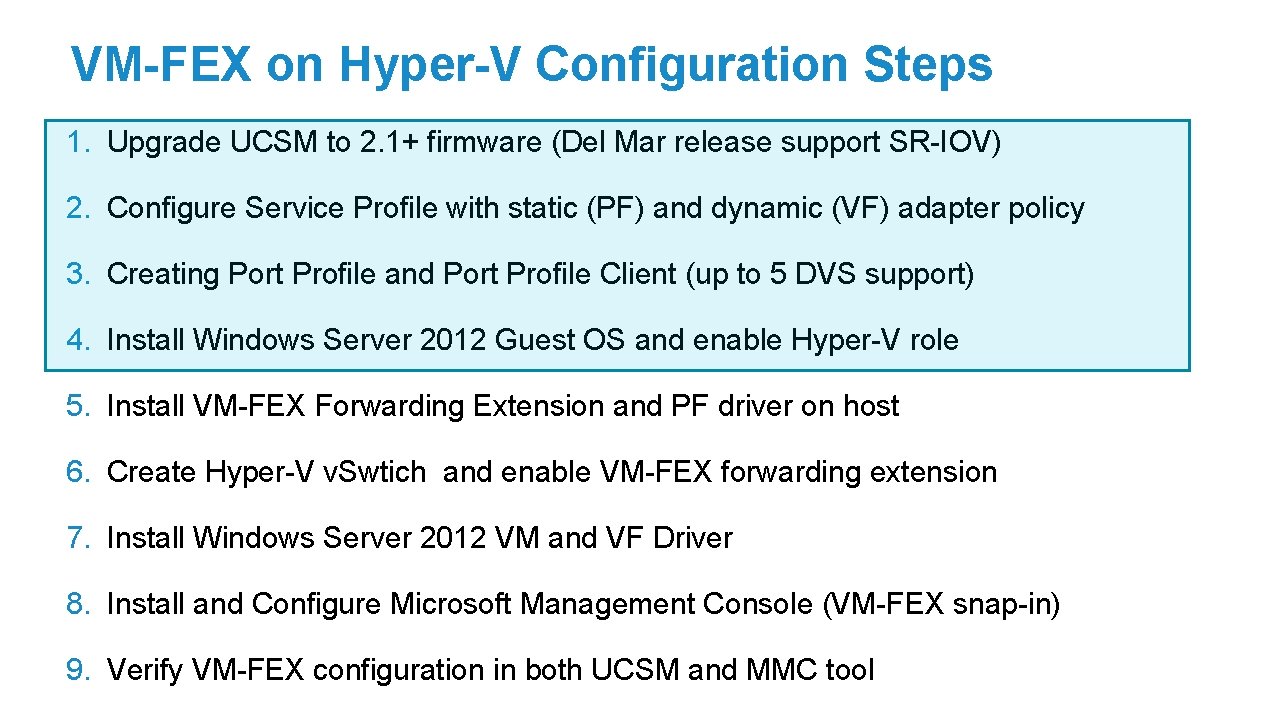

VM-FEX on Hyper-V Configuration Steps
1. Upgrade UCSM to 2.1+ firmware (the Del Mar release supports SR-IOV)
2. Configure the Service Profile with static (PF) and dynamic (VF) adapter policy
3. Create the Port Profile and Port Profile Client (up to 5 DVS supported)
4. Install Windows Server 2012 Guest OS and enable the Hyper-V role
5. Install the VM-FEX Forwarding Extension and PF driver on the host
6. Create the Hyper-V vSwitch and enable the VM-FEX forwarding extension
7. Install the Windows Server 2012 VM and VF driver
8. Install and configure Microsoft Management Console (VM-FEX snap-in)
9. Verify the VM-FEX configuration in both UCSM and the MMC tool

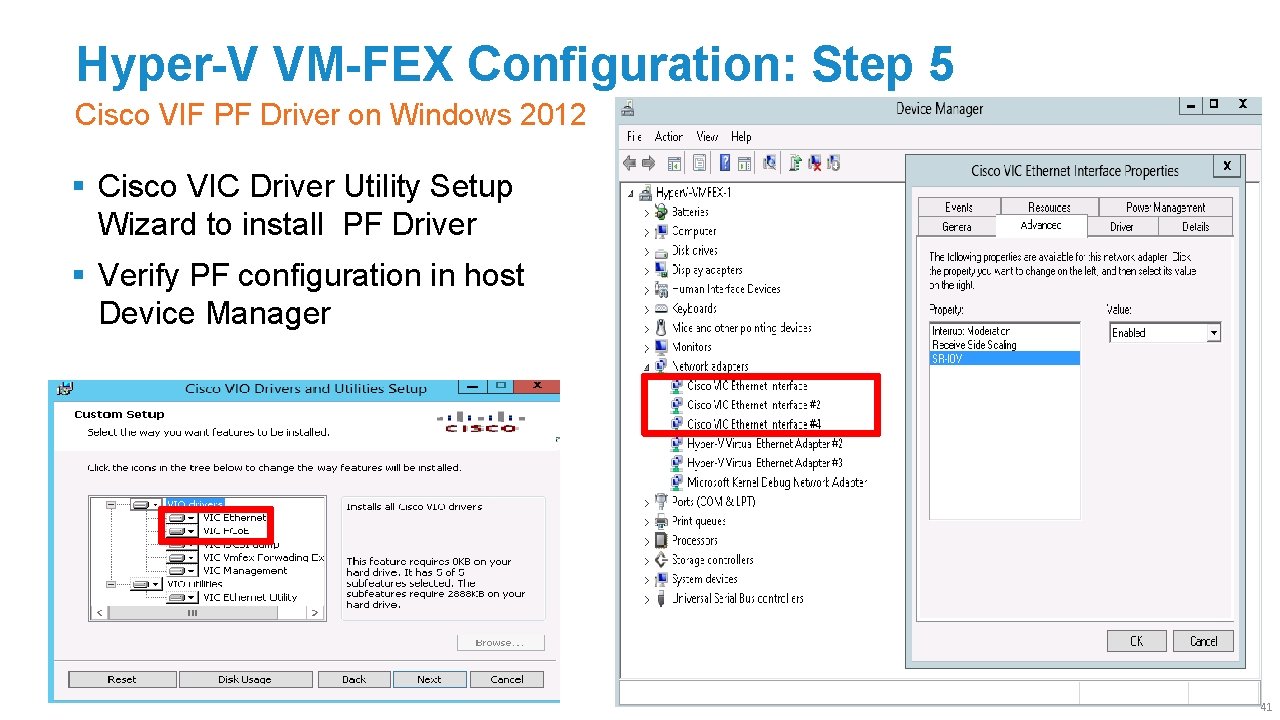

Hyper-V VM-FEX Configuration: Step 5
Cisco VIF PF Driver on Windows 2012
§ Use the Cisco VIC Driver Utility Setup Wizard to install the PF driver
§ Verify the PF configuration in the host Device Manager

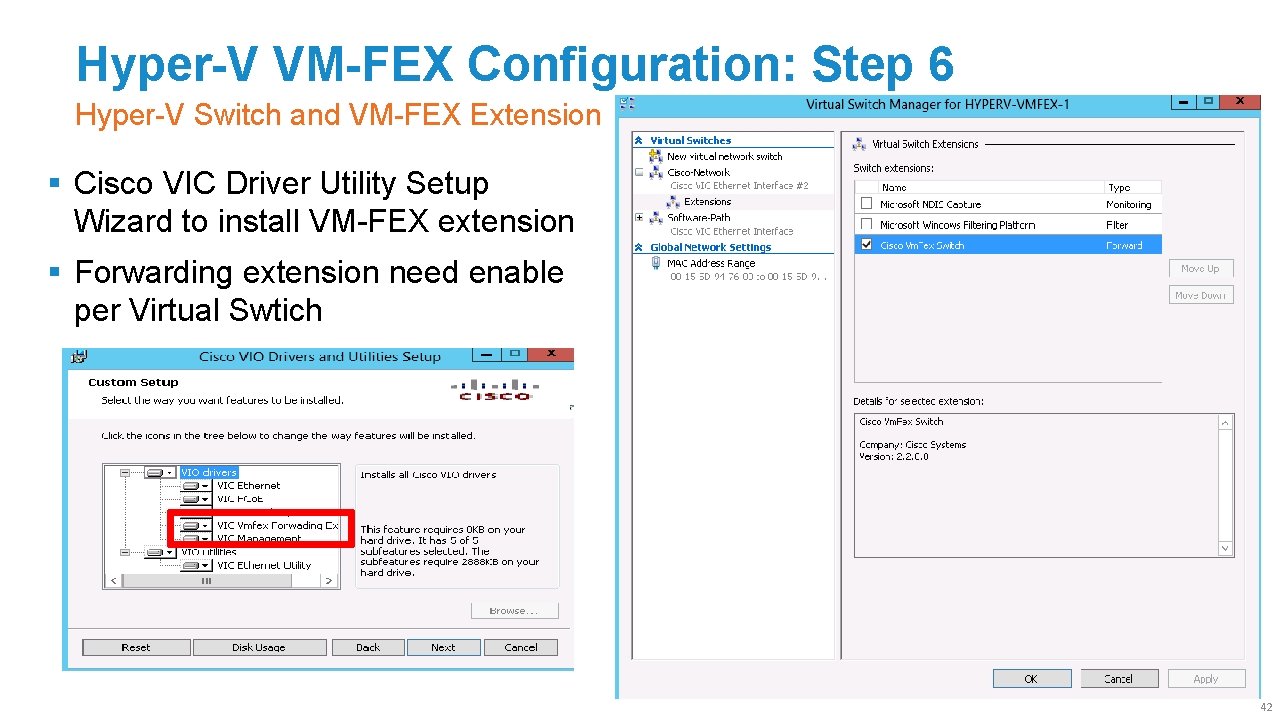

Hyper-V VM-FEX Configuration: Step 6
Hyper-V Switch and VM-FEX Extension
§ Use the Cisco VIC Driver Utility Setup Wizard to install the VM-FEX extension
§ The forwarding extension needs to be enabled per virtual switch

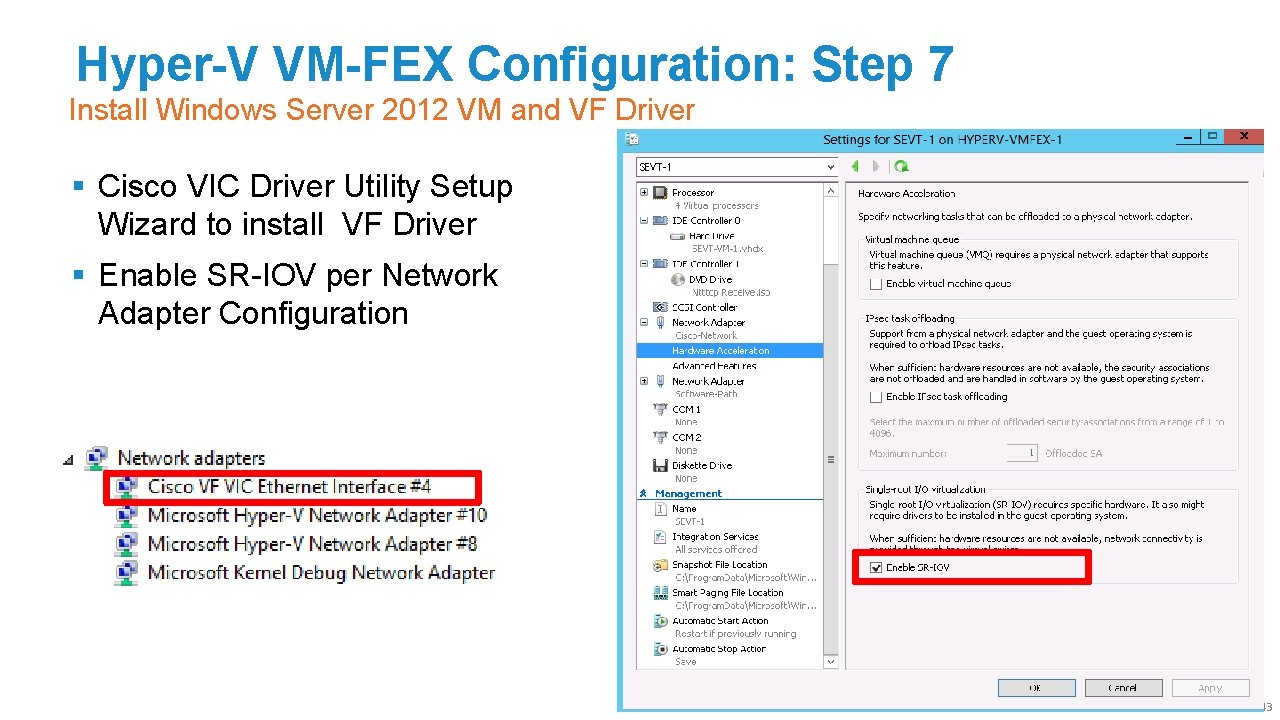

Hyper-V VM-FEX Configuration: Step 7
Install Windows Server 2012 VM and VF Driver
§ Use the Cisco VIC Driver Utility Setup Wizard to install the VF driver
§ Enable SR-IOV in each network adapter's configuration

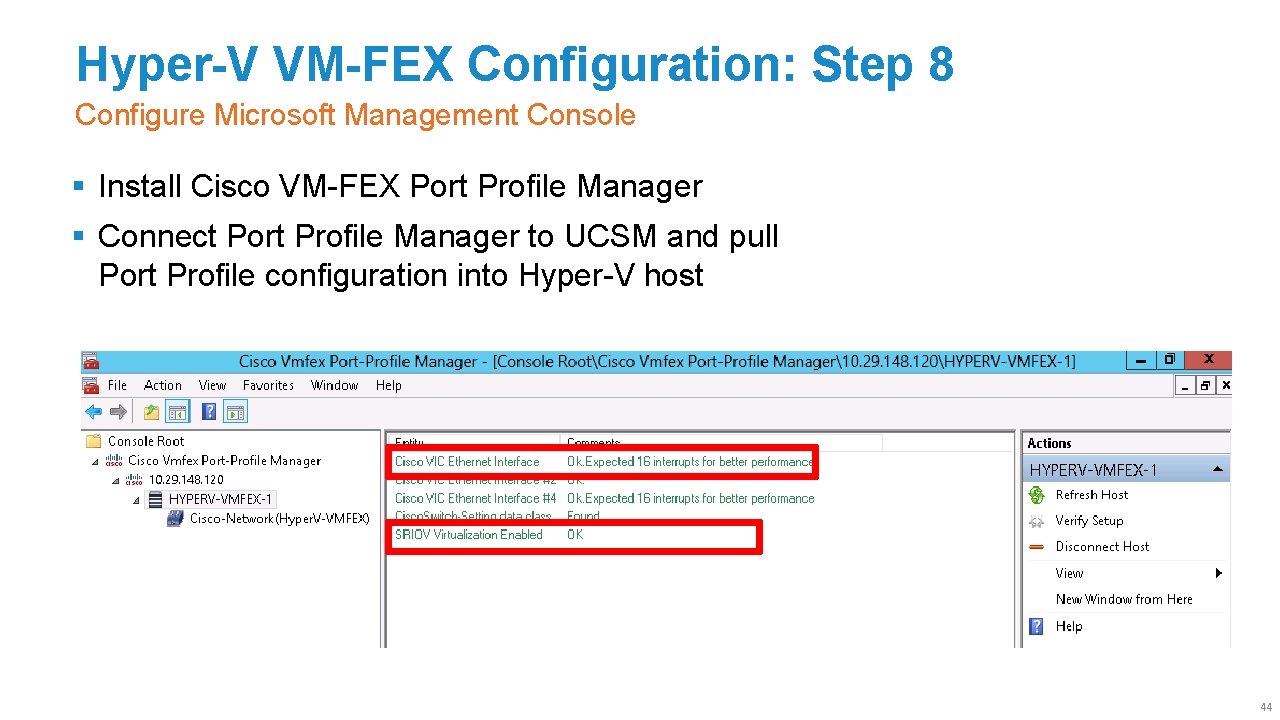

Hyper-V VM-FEX Configuration: Step 8
Configure Microsoft Management Console
§ Install Cisco VM-FEX Port Profile Manager
§ Connect Port Profile Manager to UCSM and pull the Port Profile configuration into the Hyper-V host

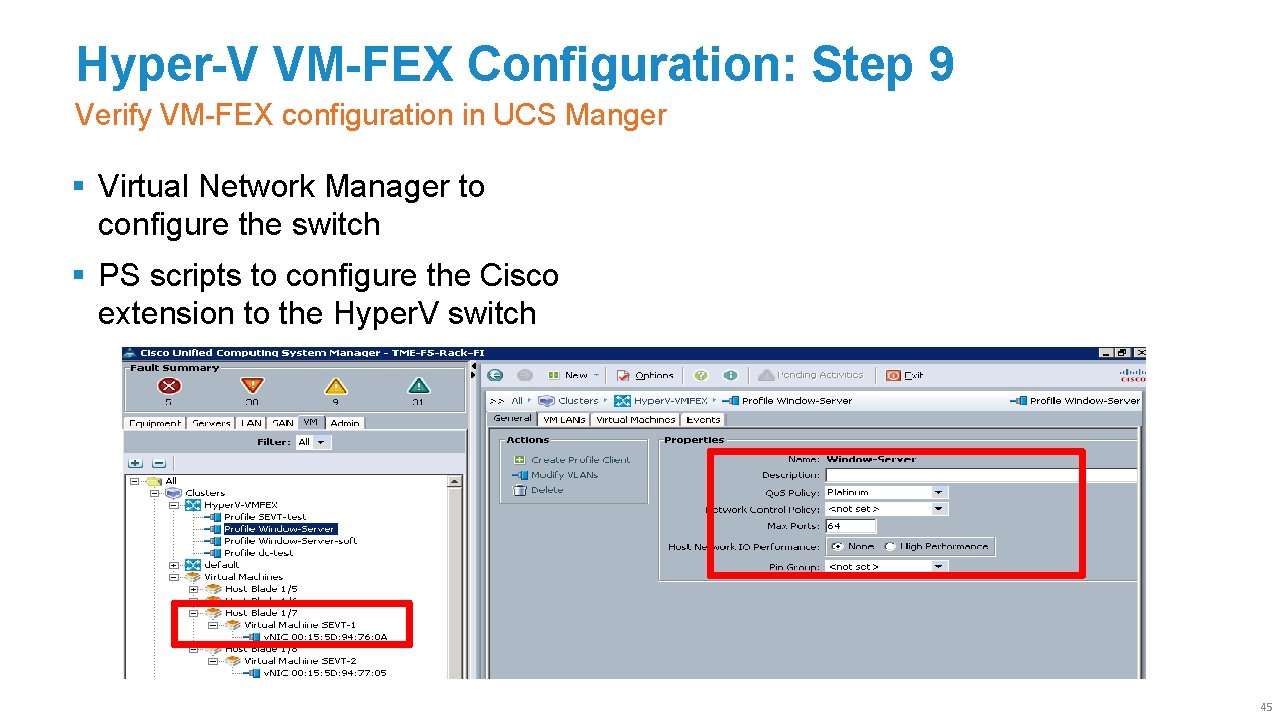

Hyper-V VM-FEX Configuration: Step 9
Verify VM-FEX configuration in UCS Manager
§ Virtual Network Manager to configure the switch
§ PowerShell scripts to configure the Cisco extension to the Hyper-V switch

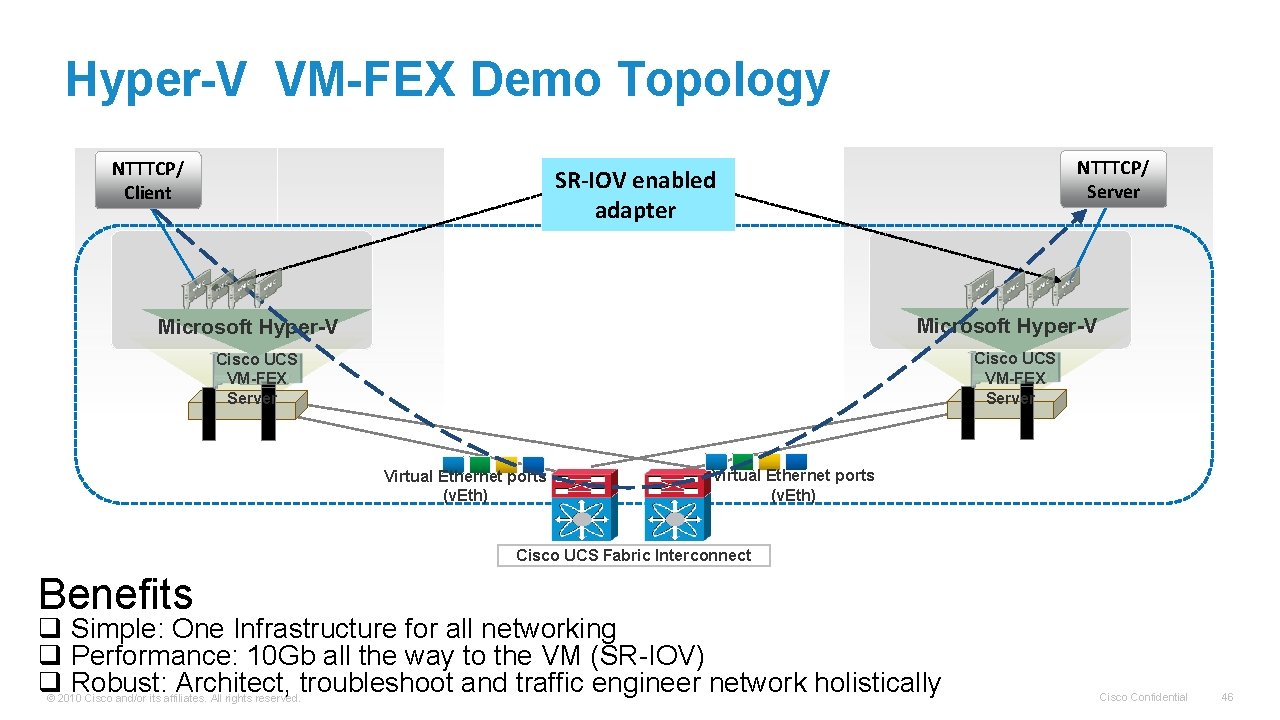

Hyper-V VM-FEX Demo Topology
Diagram labels: NTTTCP client, NTTTCP server, SR-IOV enabled adapter, Microsoft Hyper-V, Cisco UCS VM-FEX server, virtual Ethernet ports (vEth), Cisco UCS Fabric Interconnect
Benefits
§ Simple: One infrastructure for all networking
§ Performance: 10 Gb all the way to the VM (SR-IOV)
§ Robust: Architect, troubleshoot and traffic engineer the network holistically


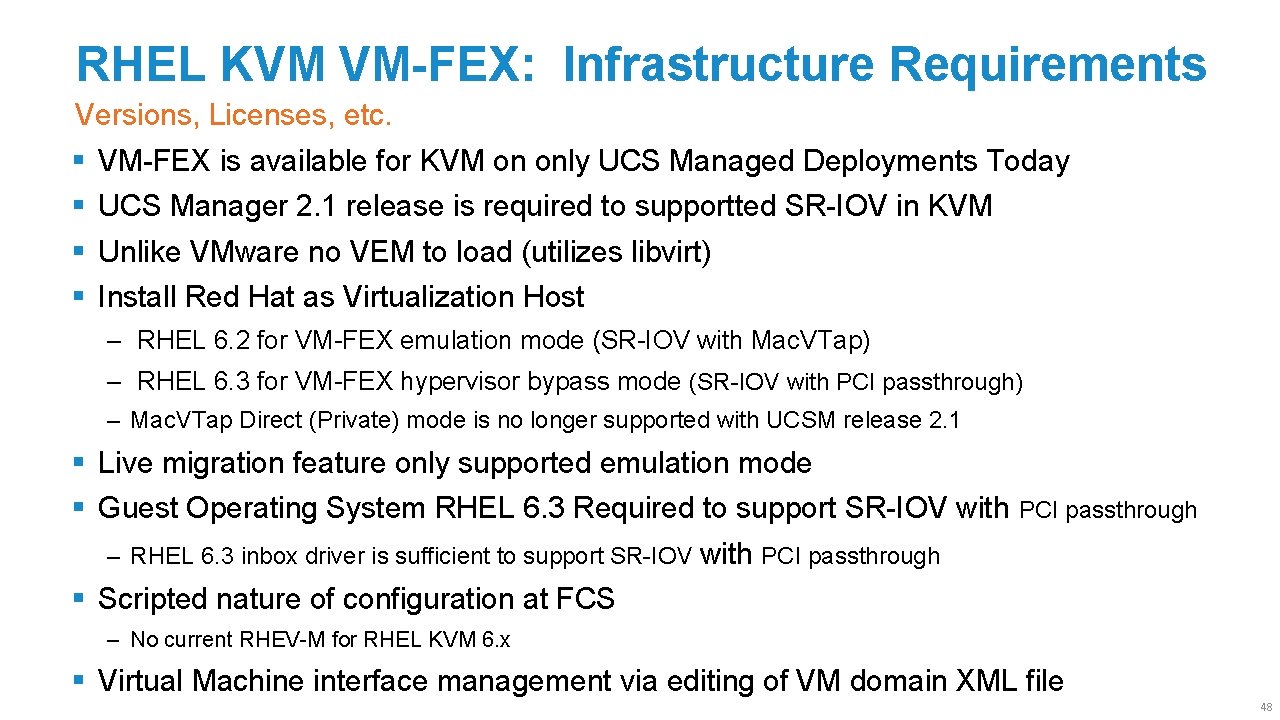

RHEL KVM VM-FEX: Infrastructure Requirements
Versions, Licenses, etc.
§ VM-FEX is available for KVM only on UCS-managed deployments today
§ UCS Manager 2.1 release is required to support SR-IOV in KVM
§ Unlike VMware, there is no VEM to load (utilizes libvirt)
§ Install Red Hat as Virtualization Host
‒ RHEL 6.2 for VM-FEX emulation mode (SR-IOV with MacVTap)
‒ RHEL 6.3 for VM-FEX hypervisor bypass mode (SR-IOV with PCI passthrough)
‒ MacVTap Direct (Private) mode is no longer supported with UCSM release 2.1
§ Live migration is supported only in emulation mode
§ Guest operating system RHEL 6.3 is required to support SR-IOV with PCI passthrough
‒ The RHEL 6.3 inbox driver is sufficient to support SR-IOV with PCI passthrough
§ Scripted nature of configuration at FCS
‒ No current RHEV-M for RHEL KVM 6.x
§ Virtual machine interface management via editing of the VM domain XML file

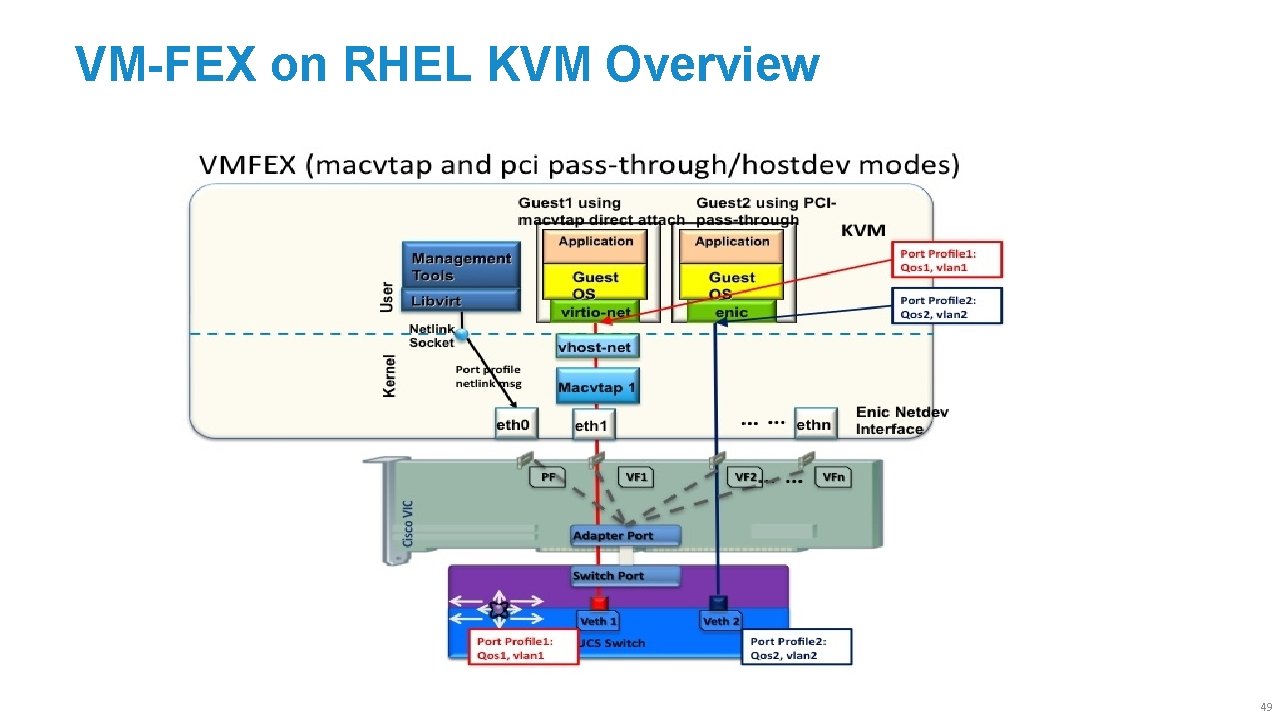

VM-FEX on RHEL KVM Overview

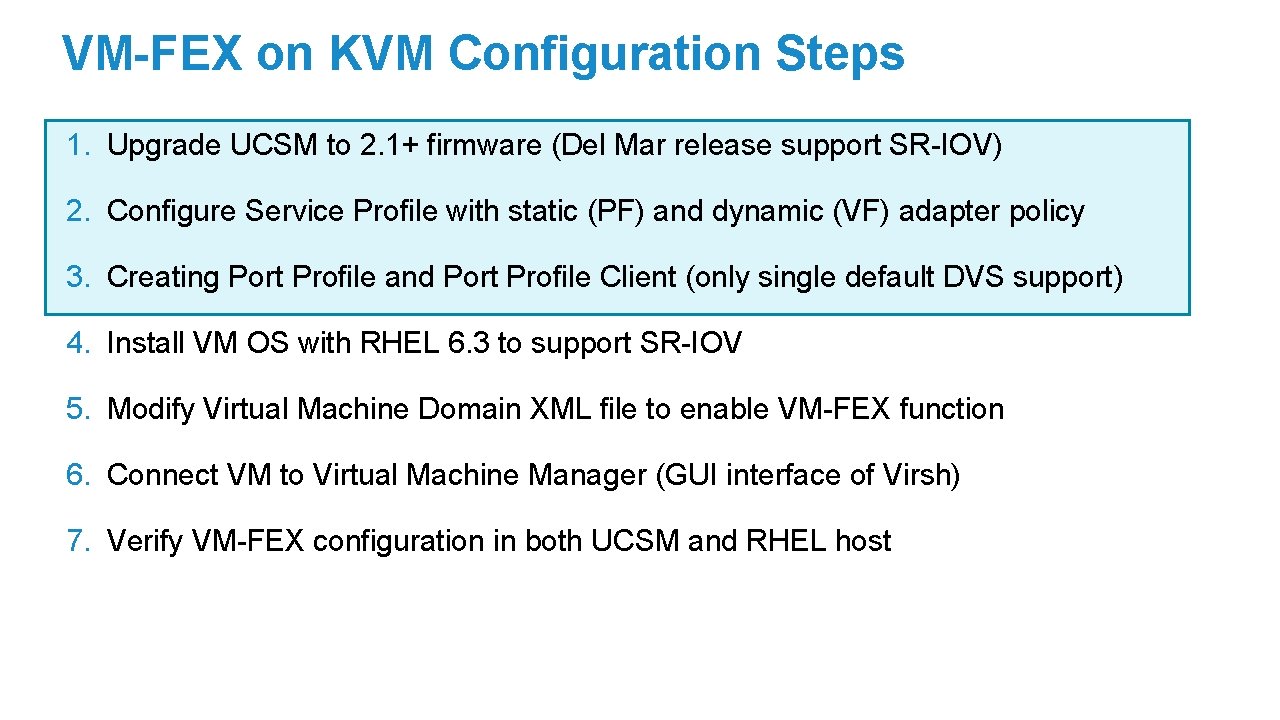

VM-FEX on KVM Configuration Steps
1. Upgrade UCSM to 2.1+ firmware (the Del Mar release supports SR-IOV)
2. Configure the Service Profile with static (PF) and dynamic (VF) adapter policy
3. Create the Port Profile and Port Profile Client (only a single default DVS is supported)
4. Install the VM OS with RHEL 6.3 to support SR-IOV
5. Modify the Virtual Machine Domain XML file to enable the VM-FEX function
6. Connect the VM to Virtual Machine Manager (GUI interface of virsh)
7. Verify the VM-FEX configuration in both UCSM and the RHEL host

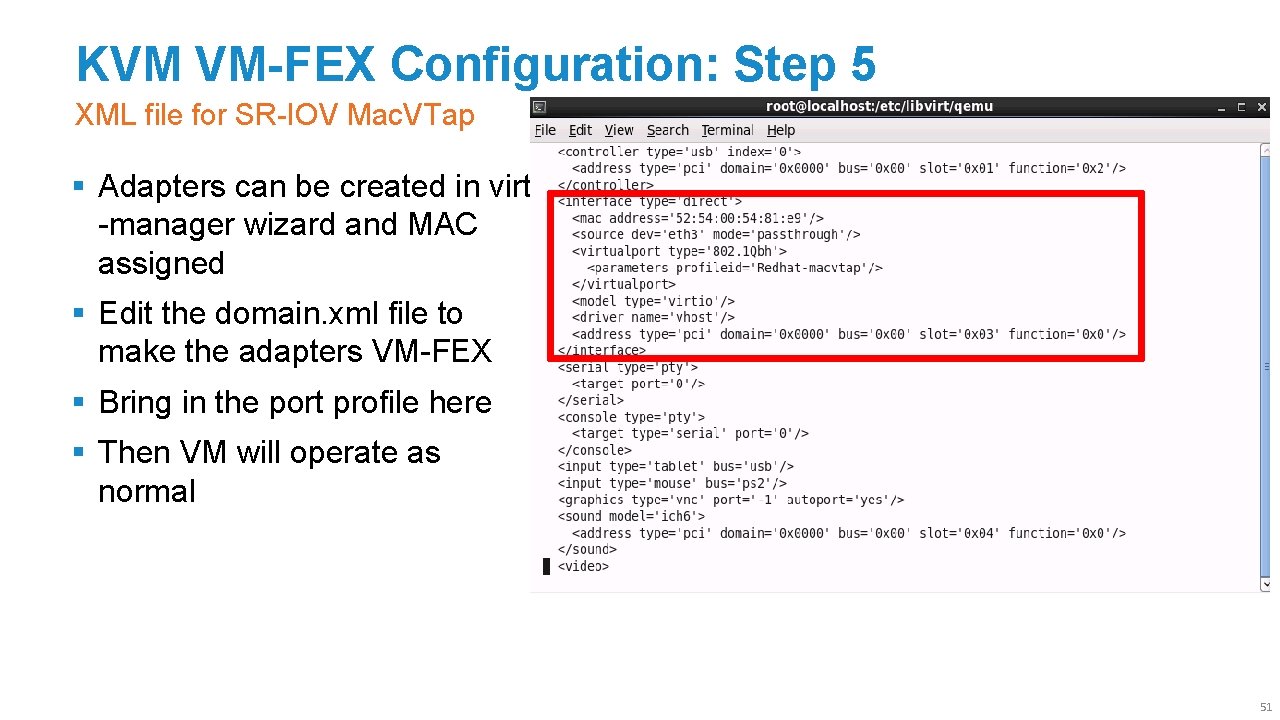

KVM VM-FEX Configuration: Step 5
XML file for SR-IOV MacVTap
§ Adapters can be created in the virt-manager wizard and a MAC assigned
§ Edit the domain XML file to make the adapters VM-FEX
§ Bring in the port profile here
§ Then the VM will operate as normal
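The slide shows the edited XML only as a screenshot. As a rough sketch of what such an adapter definition can look like, the Python snippet below builds a libvirt `<interface type='direct'>` element with an 802.1Qbh virtual port that names the port profile. The device name `eth4`, the profile name `web-vlan100`, the MAC address, passthrough mode and the virtio model are assumptions chosen for illustration; take the real values from the host and from UCSM.

```python
import xml.etree.ElementTree as ET


def build_macvtap_interface(source_dev, profile_id, mac=None):
    """Build a libvirt <interface> element for a MacVTap adapter.

    The 802.1Qbh <virtualport> carries the port profile name.
    """
    iface = ET.Element("interface", {"type": "direct"})
    if mac:
        ET.SubElement(iface, "mac", {"address": mac})
    # 'passthrough' is an assumption; the slides only rule out private mode.
    ET.SubElement(iface, "source", {"dev": source_dev, "mode": "passthrough"})
    vport = ET.SubElement(iface, "virtualport", {"type": "802.1Qbh"})
    ET.SubElement(vport, "parameters", {"profileid": profile_id})
    ET.SubElement(iface, "model", {"type": "virtio"})
    return iface


# Hypothetical values, for illustration only.
element = build_macvtap_interface("eth4", "web-vlan100", mac="52:54:00:12:34:56")
print(ET.tostring(element, encoding="unicode"))
```

The printed element is what would be placed under `<devices>` in the domain XML, for example through `virsh edit`.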

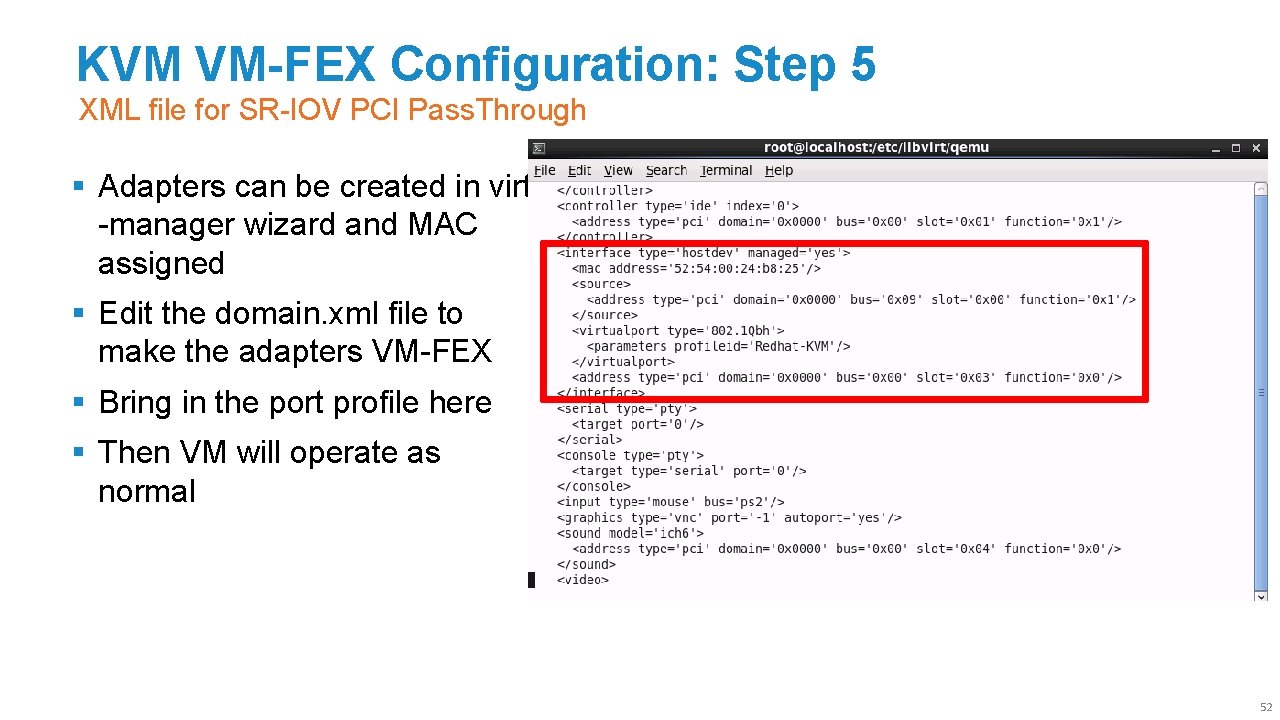

KVM VM-FEX Configuration: Step 5
XML file for SR-IOV PCI Passthrough
§ Adapters can be created in the virt-manager wizard and a MAC assigned
§ Edit the domain XML file to make the adapters VM-FEX
§ Bring in the port profile here
§ Then the VM will operate as normal
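For the PCI passthrough case, libvirt describes the adapter as an `<interface type='hostdev'>` that points at the PCI address of a virtual function. The sketch below generates such an element in Python. The PCI address, the profile name `db-vlan200` and the helper name are hypothetical; the actual VF address has to be read from the host (for example with `lspci`).

```python
import xml.etree.ElementTree as ET


def build_hostdev_interface(bus, slot, function, profile_id, domain=0, mac=None):
    """Build a libvirt <interface type='hostdev'> element for an SR-IOV VF.

    The 802.1Qbh <virtualport> carries the port profile name.
    """
    iface = ET.Element("interface", {"type": "hostdev", "managed": "yes"})
    source = ET.SubElement(iface, "source")
    ET.SubElement(source, "address", {
        "type": "pci",
        "domain": f"0x{domain:04x}",
        "bus": f"0x{bus:02x}",
        "slot": f"0x{slot:02x}",
        "function": f"0x{function:x}",
    })
    if mac:
        ET.SubElement(iface, "mac", {"address": mac})
    vport = ET.SubElement(iface, "virtualport", {"type": "802.1Qbh"})
    ET.SubElement(vport, "parameters", {"profileid": profile_id})
    return iface


# Hypothetical VF address 0000:09:00.1, for illustration only.
element = build_hostdev_interface(0x09, 0x00, 0x1, "db-vlan200")
print(ET.tostring(element, encoding="unicode"))
```

As noted on the requirements slide, live migration is not available in this mode, so this form suits VMs that stay on one host.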

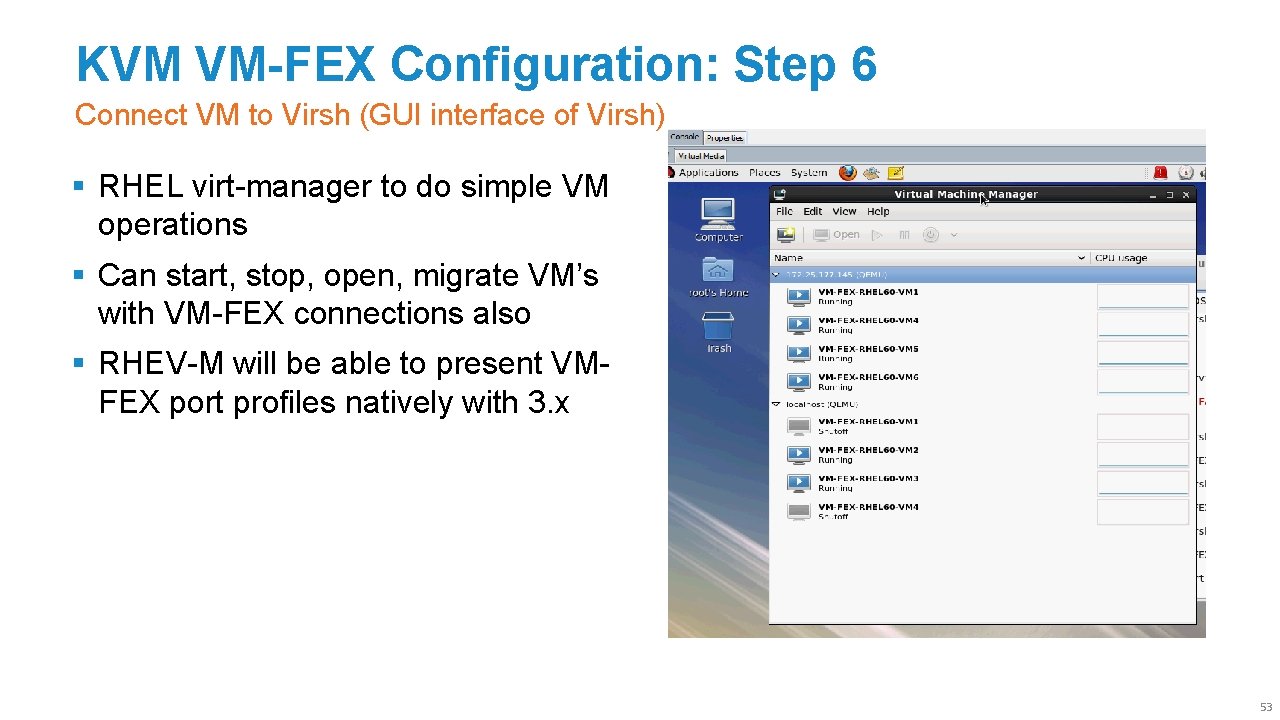

KVM VM-FEX Configuration: Step 6
Connect VM to Virtual Machine Manager (GUI interface of virsh)
§ Use RHEL virt-manager for simple VM operations
§ Can also start, stop, open and migrate VMs with VM-FEX connections
§ RHEV-M will be able to present VM-FEX port profiles natively with 3.x

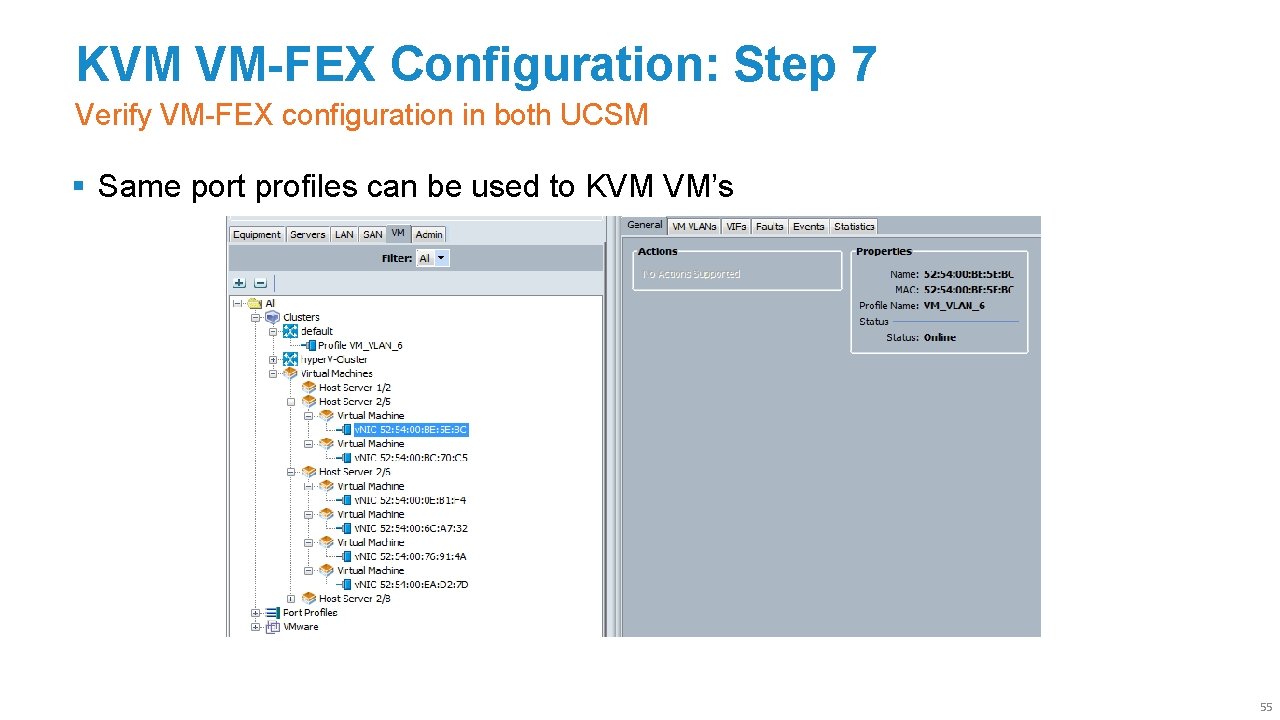

KVM VM-FEX Configuration: Step 7
Verify VM-FEX configuration in both UCSM and the RHEL host
§ The same port profiles can be used for KVM VMs

VM-FEX Advantages
Contrasting VM-FEX to Virtualised Switching Layers
§ Simpler Deployments
‒ Unifying the virtual and physical network
‒ Consistency in functionality, performance and management
§ Robustness
‒ Programmability of the infrastructure
‒ Troubleshooting and traffic engineering of virtual and physical together
§ Performance
‒ Near bare metal I/O performance
‒ Improved jitter, latency, throughput and CPU utilization

Thank you.
