Research on Embedded Hypervisor Scheduler Techniques Project overview

2014/10/20

Background
• Asymmetric multi-core platforms are becoming increasingly popular compared with homogeneous multi-core systems.
  ◦ An asymmetric multi-core platform consists of cores with different capabilities, for example:
  ◦ ARM: big.LITTLE architecture.
  ◦ Qualcomm: asynchronous Symmetric Multi-Processing (aSMP).
  ◦ Nvidia: variable Symmetric Multiprocessing (vSMP).
  ◦ …etc.

ARM big.LITTLE Core
• Introduced by ARM in October 2011.
• Combines two kinds of architecturally compatible cores
  ◦ to create a multi-core processor that adjusts better to dynamic computing needs and uses less power than clock scaling alone.
  ◦ big cores are more powerful but power-hungry, while LITTLE cores are low-power but (relatively) slower.

Three Types of Models
• Cluster migration
• CPU migration (In-Kernel Switcher)
• Heterogeneous multi-processing (global task scheduling)

Motivation
• Scheduling goals differ between homogeneous and asymmetric multi-core platforms.
  ◦ Homogeneous multi-core: load balancing, i.e. distribute workloads evenly to obtain maximum performance.
  ◦ Asymmetric multi-core: maximize power efficiency with modest performance sacrifices.

Motivation (Cont.)
• Asymmetric multi-core platforms need new scheduling strategies.
  ◦ Power and computing characteristics vary between core types.
  ◦ The scheduler must take these differences into account.

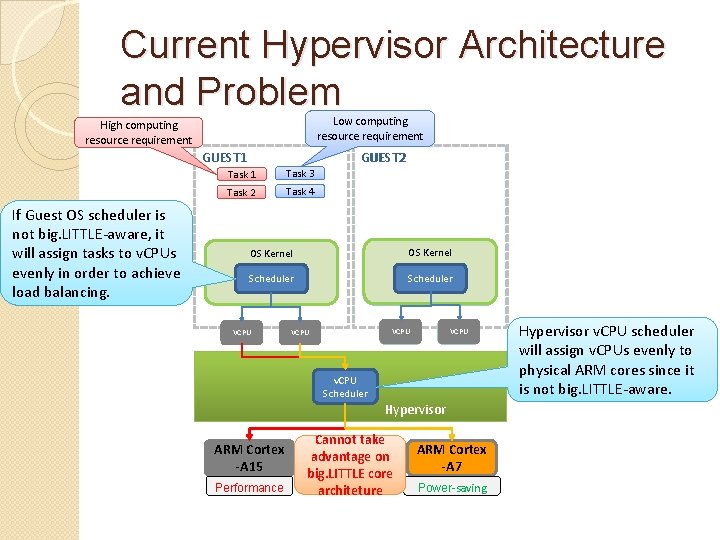

Current Hypervisor Architecture and Problem
[Figure: GUEST 1 and GUEST 2 running tasks (Tasks 1 to 4) with high and low computing resource requirements; each guest OS kernel scheduler maps tasks to vCPUs, and the hypervisor vCPU scheduler maps vCPUs to an ARM Cortex-A15 (performance) and an ARM Cortex-A7 (power-saving).]
• If the guest OS scheduler is not big.LITTLE-aware, it assigns tasks to vCPUs evenly to achieve load balancing.
• The hypervisor vCPU scheduler likewise assigns vCPUs evenly to physical ARM cores, since it is not big.LITTLE-aware either.
• As a result, the system cannot take advantage of the big.LITTLE architecture.

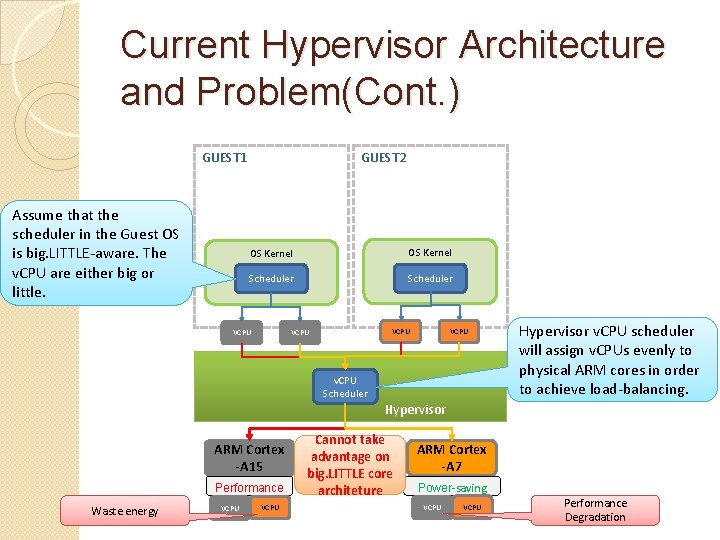

Current Hypervisor Architecture and Problem (Cont.)
[Figure: GUEST 1 and GUEST 2 with big.LITTLE-aware kernel schedulers, each owning big and LITTLE vCPUs; the hypervisor vCPU scheduler spreads the vCPUs over ARM Cortex-A15 (performance) and Cortex-A7 (power-saving) cores.]
• Assume that the scheduler in the guest OS is big.LITTLE-aware, so each vCPU is either big or LITTLE.
• The hypervisor vCPU scheduler still assigns vCPUs evenly to physical ARM cores to achieve load balancing.
• A LITTLE vCPU placed on an A15 wastes energy, and a big vCPU placed on an A7 suffers performance degradation, so the system cannot take advantage of the big.LITTLE architecture.

Project Goal
• Research the current scheduling algorithms for homogeneous and asymmetric multi-core architectures.
• Design and implement a hypervisor scheduler for asymmetric multi-core platforms.
  ◦ Assign virtual cores to physical cores for execution.
  ◦ Minimize power consumption while guaranteeing performance.

Challenge
• The hypervisor scheduler cannot take advantage of the big.LITTLE architecture if the scheduler inside the guest OS is not big.LITTLE-aware.

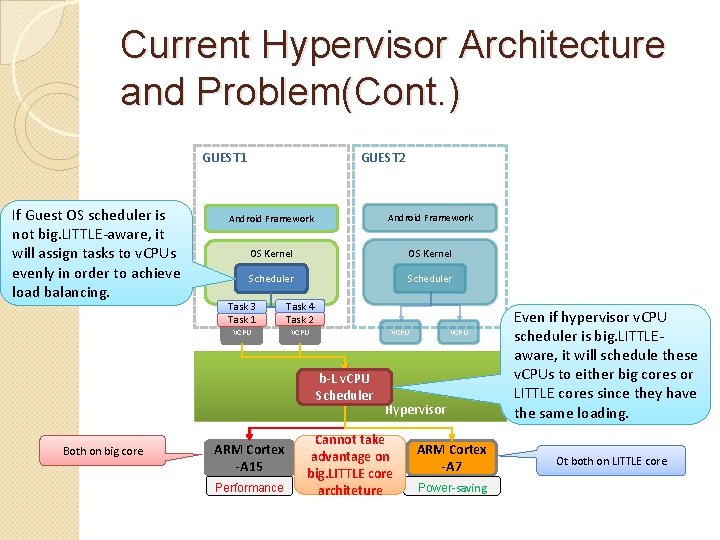

Current Hypervisor Architecture and Problem (Cont.)
[Figure: GUEST 1 and GUEST 2 (GUEST 2 running the Android framework), each guest kernel scheduler spreading Tasks 1 to 4 over its vCPUs; a big.LITTLE-aware (b-L) vCPU scheduler in the hypervisor; ARM Cortex-A15 (performance) and Cortex-A7 (power-saving) cores.]
• If the guest OS scheduler is not big.LITTLE-aware, it assigns tasks to vCPUs evenly to achieve load balancing.
• Even if the hypervisor vCPU scheduler is big.LITTLE-aware, it will schedule these vCPUs either both on big cores or both on LITTLE cores, since they have the same load.
• The system still cannot take advantage of the big.LITTLE architecture.

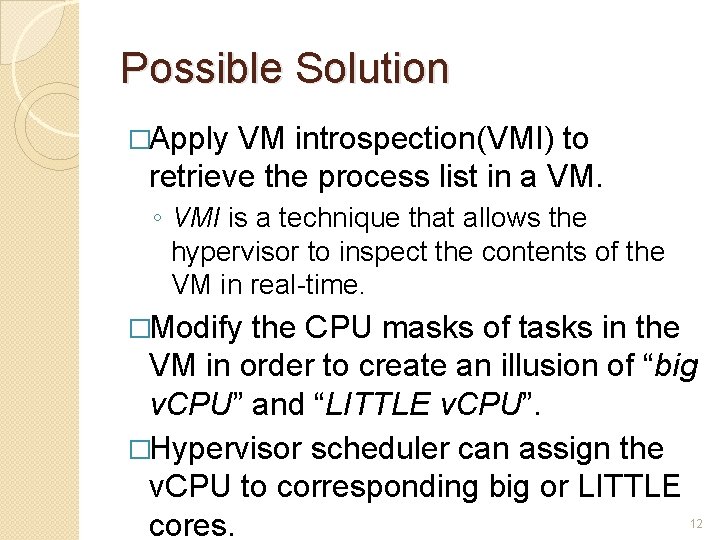

Possible Solution
• Apply VM introspection (VMI) to retrieve the process list of a VM.
  ◦ VMI is a technique that allows the hypervisor to inspect the contents of a VM in real time.
• Modify the CPU masks of the tasks in the VM to create the illusion of a “big vCPU” and a “LITTLE vCPU”.
• The hypervisor scheduler can then assign each vCPU to the corresponding big or LITTLE cores.
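The mask-rewriting idea can be sketched as follows. This is a minimal Python model, not code from the project: it assumes the introspector already yields each guest task's recent load as a fraction of one vCPU, uses a hypothetical 0.5 threshold to separate high- and low-demand tasks, and assumes vCPU 0 plays the "big" role. Masks are written in the slides' [vCPU0|vCPU1] notation, so [1|0] allows only vCPU 0. Writing the masks back into the guest kernel is out of scope here.

```python
from dataclasses import dataclass

# Hypothetical convention: vCPU 0 is presented as "big", vCPU 1 as "LITTLE".
# Masks use the slides' [vCPU0|vCPU1] notation: 1 = task may run on that vCPU.
BIG_ONLY = (1, 0)
LITTLE_ONLY = (0, 1)


@dataclass(frozen=True)
class TaskInfo:
    pid: int
    name: str
    load: float  # share of one vCPU used in the last window (assumed VMI output)


def assign_masks(tasks, threshold=0.5):
    """Return {pid: mask}, pinning high-demand tasks to the big vCPU and
    low-demand tasks to the LITTLE vCPU. The threshold is an assumption."""
    masks = {}
    for task in tasks:
        masks[task.pid] = BIG_ONLY if task.load >= threshold else LITTLE_ONLY
    return masks


if __name__ == "__main__":
    tasks = [TaskInfo(101, "video_decode", 0.85), TaskInfo(102, "mail_sync", 0.05)]
    print(assign_masks(tasks))
```

A fixed threshold is the simplest possible classifier; any load estimate the introspector can provide could replace it.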

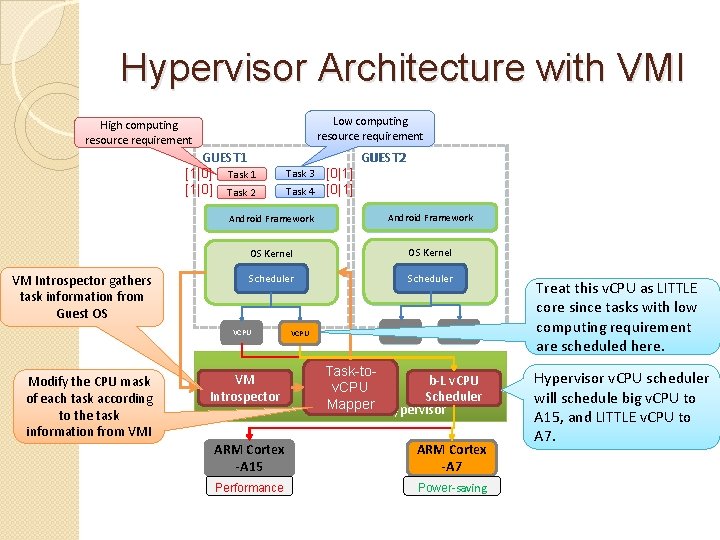

Hypervisor Architecture with VMI
[Figure: GUEST 1 and GUEST 2 (Android framework; Linux and Linaro kernels shown), each with a VM Introspector; guest tasks with high and low computing resource requirements annotated with CPU masks such as [1|0] and [0|1]; a Task-to-b-L Mapper and a b-L vCPU scheduler in the hypervisor; ARM Cortex-A15 (performance) and Cortex-A7 (power-saving) cores.]
• The VM Introspector gathers task information from the guest OS.
• The CPU mask of each task is modified according to the task information from VMI.
• A vCPU is treated as a LITTLE core when the tasks scheduled on it have low computing requirements.
• The hypervisor vCPU scheduler schedules big vCPUs to the A15 and LITTLE vCPUs to the A7.

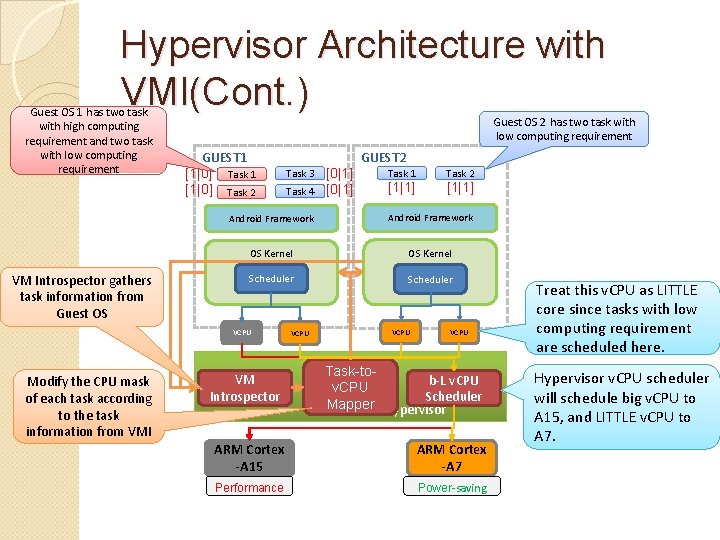

Hypervisor Architecture with VMI (Cont.)
[Figure: same architecture as the previous slide; GUEST 1 tasks carry masks [1|0] and [0|1], GUEST 2 tasks carry mask [1|1].]
• Guest OS 1 has two tasks with high computing requirements and two tasks with low computing requirements.
• Guest OS 2 has two tasks with low computing requirements.
• The VM Introspector gathers task information from each guest OS, and the CPU mask of each task is modified accordingly.
• A vCPU is treated as a LITTLE core when only tasks with low computing requirements are scheduled on it; the hypervisor vCPU scheduler schedules big vCPUs to the A15 and LITTLE vCPUs to the A7.
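The hypervisor-side rule on these slides (a vCPU that only receives low-demand tasks is treated as LITTLE; big vCPUs go to the A15, LITTLE vCPUs to the A7) might look like this. The rule "a vCPU is big if any high-demand task may run on it" is our reading of the figure, and the cluster names are only labels.

```python
def place_vcpus(tasks, num_vcpus):
    """tasks: list of (mask, high_demand) pairs for one guest, where mask[k] == 1
    means the task may run on vCPU k. Returns {vcpu: cluster}."""
    placement = {}
    for k in range(num_vcpus):
        # A vCPU counts as "big" if any high-demand task is allowed on it.
        is_big = any(high and mask[k] for mask, high in tasks)
        placement[k] = "Cortex-A15" if is_big else "Cortex-A7"
    return placement


if __name__ == "__main__":
    # Guest 1: two high-demand tasks pinned to vCPU 0, two low-demand ones to vCPU 1.
    guest1 = [((1, 0), True), ((1, 0), True), ((0, 1), False), ((0, 1), False)]
    # Guest 2: two low-demand tasks allowed on both vCPUs.
    guest2 = [((1, 1), False), ((1, 1), False)]
    print(place_vcpus(guest1, 2))
    print(place_vcpus(guest2, 2))
```

With these inputs, Guest 1's vCPU 0 lands on the A15 and its vCPU 1 on the A7, while both of Guest 2's vCPUs land on the A7.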

Hypervisor Scheduler
• Assigns the virtual cores to physical cores for execution.
  ◦ Determines the execution order and the amount of time assigned to each virtual core according to a scheduling policy.
  ◦ Xen: credit-based scheduler.
  ◦ KVM: Completely Fair Scheduler (CFS) of the Linux host.

Virtual Core Scheduling Problem
• For every time period, the hypervisor scheduler is given a set of virtual cores.
• Given the operating frequency of each virtual core, the scheduler generates a scheduling plan such that power consumption is minimized and performance is guaranteed.

Scheduling Plan
• Must satisfy three constraints:
  ◦ Each virtual core should run on each physical core for a certain amount of time to satisfy its workload requirement.
  ◦ A virtual core can run on only one physical core at any time.
  ◦ A virtual core should not switch among physical cores frequently, to reduce overhead.
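The first two constraints can be checked mechanically once a plan is written as a grid. A minimal sketch, assuming a plan is stored as plan[i][t] (the vCPU run on physical core i in slice t, or None when idle) and the required time as a matrix a[i][j] of slice counts; these representations are ours, not the project's. The third constraint is a soft goal handled later by Phase 3, so it is not checked here.

```python
def violations(plan, a):
    """Check a plan against the first two scheduling-plan constraints.

    plan[i][t]: vCPU run on physical core i in slice t (None = idle).
    a[i][j]: slices vCPU j must receive on physical core i.
    Returns a list of human-readable problems (empty if the plan is valid).
    """
    problems = []
    n = len(plan)
    # Constraint 1: each vCPU gets its required time on each physical core.
    for i in range(n):
        for j, need in enumerate(a[i]):
            got = plan[i].count(j)
            if got != need:
                problems.append(f"pCPU {i} gives vCPU {j} {got} slices, needs {need}")
    # Constraint 2: a vCPU runs on at most one physical core in any slice.
    for t in range(len(plan[0])):
        running = [plan[i][t] for i in range(n) if plan[i][t] is not None]
        for v in sorted({v for v in running if running.count(v) > 1}):
            problems.append(f"vCPU {v} runs on several pCPUs in slice {t}")
    return problems
```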

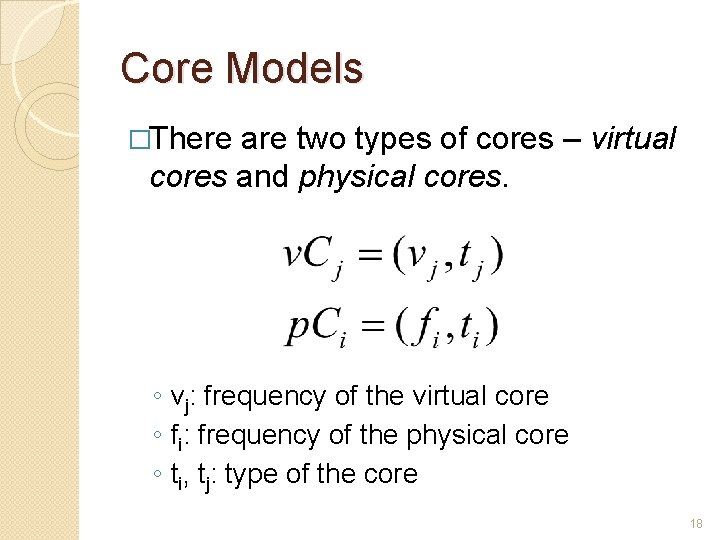

Core Models
• There are two types of cores: virtual cores and physical cores.
  ◦ v_j: frequency of virtual core j
  ◦ f_i: frequency of physical core i
  ◦ t_i, t_j: type of the core (e.g. big or LITTLE)

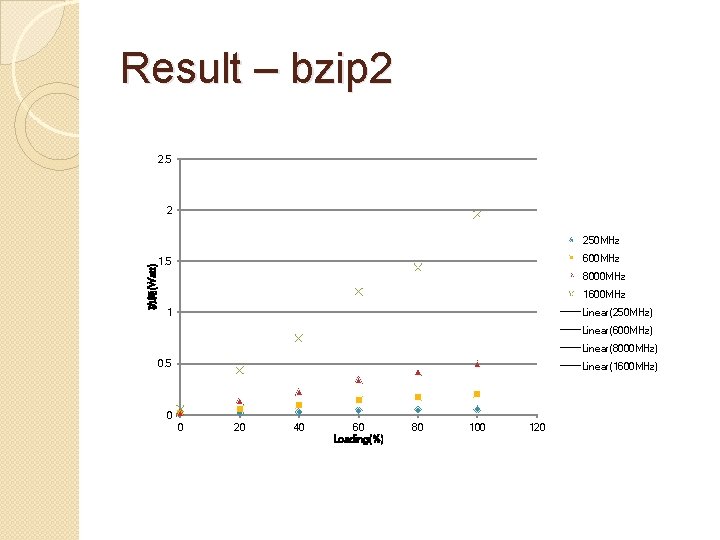

Power Model
• To build the power model, we ran preliminary experiments measuring the power consumption of the cores.
  ◦ On an ODROID-XU board.

Result: bzip2
[Figure: power consumption (Watt, 0 to 2.5) versus load (%, 0 to 100) while running bzip2 at 250 MHz, 600 MHz, 800 MHz, and 1600 MHz, each series with a linear trend line.]

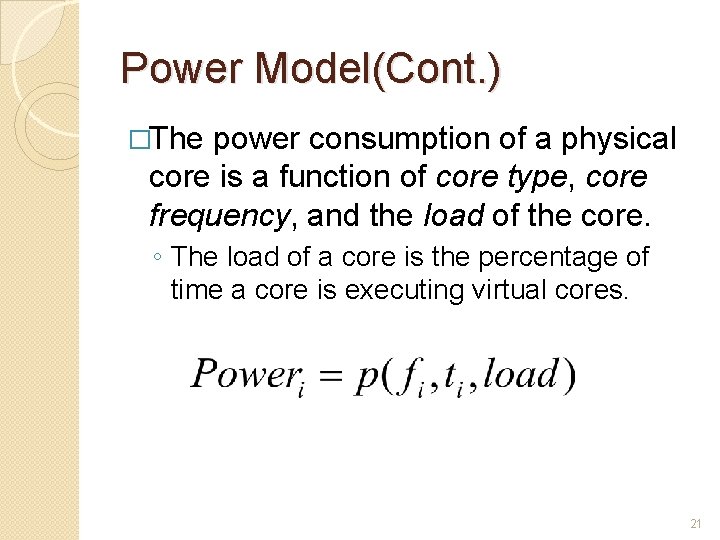

Power Model (Cont.)
• The power consumption of a physical core is a function of the core type, the core frequency, and the load of the core.
  ◦ The load of a core is the percentage of time the core spends executing virtual cores.
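One way to encode this three-argument power function is a table of per-(core type, frequency) linear fits, in line with the linear trend lines in the bzip2 measurements. This is a sketch: the LinearFit fields and the example numbers are hypothetical placeholders, not values measured on the ODROID-XU.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinearFit:
    idle_watts: float  # fitted power at 0% load
    busy_watts: float  # fitted power at 100% load


def core_power(fits, core_type, freq_mhz, load):
    """Power (W) of one physical core as a function of type, frequency, and load.

    fits maps (core_type, freq_mhz) -> LinearFit; load is a fraction in [0, 1].
    """
    if not 0.0 <= load <= 1.0:
        raise ValueError("load must be between 0 and 1")
    fit = fits[(core_type, freq_mhz)]
    return fit.idle_watts + (fit.busy_watts - fit.idle_watts) * load


def core_load(slices_used, slices_per_interval):
    """Load = fraction of the interval the core spends running virtual cores."""
    return slices_used / slices_per_interval


if __name__ == "__main__":
    # Placeholder numbers for illustration only, not measurements.
    fits = {("A7", 600): LinearFit(0.10, 0.30), ("A15", 1600): LinearFit(0.40, 2.00)}
    print(core_power(fits, "A15", 1600, core_load(5, 10)))
```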

Performance
• The ratio of the computing resources assigned to the computing resources requested.
  ◦ Example: a virtual core running at 800 MHz runs on a physical core of 1200 MHz for 60% of a time interval.
  ◦ The performance of this virtual core is 0.6 × 1200 / 800 = 0.9.
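The ratio extends naturally to a virtual core whose time is split across several physical cores, assuming the cycles delivered by each physical core simply add up (our assumption; the slide only shows the single-core case).

```python
def performance(vcpu_freq_mhz, runs, interval=1.0):
    """Ratio of computing resource assigned to computing resource requested.

    runs: list of (pcpu_freq_mhz, time) pairs, the time the virtual core spends
    on each physical core within one interval of length `interval`.
    """
    assigned = sum(freq * time for freq, time in runs)  # cycles delivered
    requested = vcpu_freq_mhz * interval                 # cycles requested
    return assigned / requested


if __name__ == "__main__":
    # Slide example: 800 MHz vCPU, 60% of the interval on a 1200 MHz core.
    print(performance(800, [(1200, 0.6)]))
```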

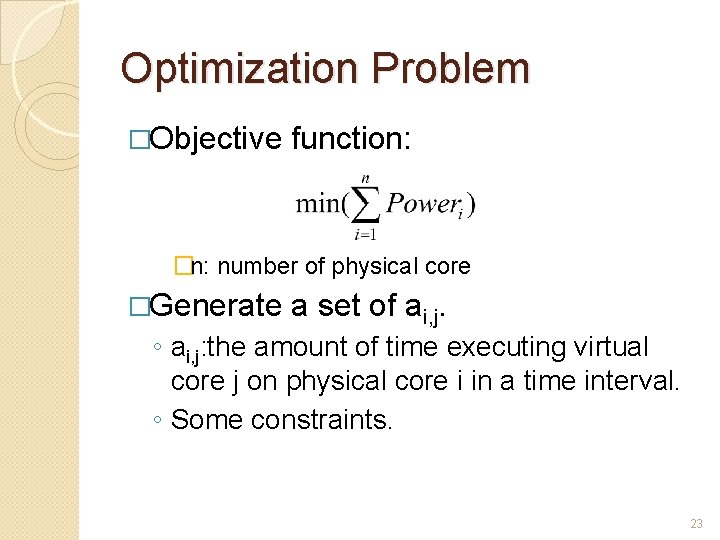

Optimization Problem
• Objective function: minimize the total power consumption of the n physical cores.
  ◦ n: number of physical cores
• Generate a set of a_{i,j}.
  ◦ a_{i,j}: the amount of time executing virtual core j on physical core i in a time interval.
  ◦ Subject to some constraints.

Constraints
• Equal performance for every virtual core.
  ◦ Resources sufficient: all virtual cores have performance = 1.
  ◦ Resources insufficient: all virtual cores have the same performance, less than 1.
• The time assigned to a virtual core should not exceed one time interval.

Constraints (Cont.)
• A physical core has a fixed amount of computing resources in a time interval.
  ◦ Load of a physical core ≤ 100%.
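Putting the pieces together, one plausible formulation is sketched below. It is our reconstruction from the definitions on these slides, not the formula in the slide image: it assumes m virtual cores, a time interval of length T, the power function P(t_i, f_i, L_i) from the power model, and the performance ratio defined above; p is the common performance level, equal to 1 when resources suffice and otherwise below 1 (presumably as large as possible).

$$
\begin{aligned}
\min_{a_{i,j} \ge 0} \quad & \sum_{i=1}^{n} P\left(t_i, f_i, L_i\right), \qquad L_i = \frac{1}{T} \sum_{j=1}^{m} a_{i,j} \\
\text{s.t.} \quad & \frac{1}{T\, v_j} \sum_{i=1}^{n} a_{i,j}\, f_i = p \quad \text{for all } j \\
& \sum_{i=1}^{n} a_{i,j} \le T \quad \text{for all } j \\
& L_i \le 1 \quad \text{for all } i
\end{aligned}
$$

For the slide's example, a single term with a = 0.6T, f = 1200, and v = 800 gives p = 0.6 × 1200 / 800 = 0.9.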


Three-phase Solution
• [Phase 1] Generate the amount of time each virtual core should run on each physical core.
• [Phase 2] Determine the execution order of the virtual cores on each physical core.
• [Phase 3] Exchange the order of execution slices to reduce the number of core switches.

Phase 1
• Given the objective function and the constraints, we can use integer programming to find a_{i,j}.
  ◦ a_{i,j}: the number of time slices virtual core j should run on physical core i.
• Divide a time interval into time slices.
  ◦ Integer programming can find a feasible solution in a short time when the numbers of vCPUs and pCPUs are small constants.

Phase 1 (Cont.)
• If the relationship between power and load is linear, use a greedy algorithm instead.
  ◦ Assign each virtual core to the physical core with the lowest power/instruction ratio whose load is still under 100%.
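A minimal sketch of this greedy step follows. Assumptions not in the slides: each physical core is described by (frequency in MHz, power-per-instruction ratio); a virtual core's demand over the interval is v_j times the number of slices; virtual cores are handled in input order; and the function simply returns None when the demand cannot be met, instead of lowering every vCPU to a common performance below 1. Like any greedy, it can reject instances the integer program would solve, for example when a fast vCPU is first packed onto a slow core.

```python
import math


def greedy_phase1(vcpu_freqs, pcores, num_slices):
    """Greedy Phase 1 for a linear power model.

    vcpu_freqs: requested frequency v_j of each virtual core (MHz).
    pcores: list of (f_i in MHz, power-per-instruction ratio) per physical core.
    Returns a[i][j] = slices of vCPU j on pCPU i, or None if demand cannot be met.
    """
    n, m = len(pcores), len(vcpu_freqs)
    a = [[0] * m for _ in range(n)]
    free = [num_slices] * n  # unused slices per physical core (load < 100%)
    cheapest_first = sorted(range(n), key=lambda i: pcores[i][1])
    for j, v in enumerate(vcpu_freqs):
        demand = v * num_slices  # work requested over the interval (MHz x slices)
        used = 0                 # slices already given to vCPU j on any core
        for i in cheapest_first:
            if demand <= 0:
                break
            freq = pcores[i][0]
            # Round up so the vCPU receives at least its requested work.
            k = min(free[i], math.ceil(demand / freq), num_slices - used)
            if k <= 0:
                continue  # core full, or vCPU j has no time left in the interval
            a[i][j] += k
            free[i] -= k
            used += k
            demand -= k * freq
        if demand > 0:
            return None  # resources insufficient for this simple greedy
    return a
```

For example, with a big core (1600 MHz, ratio 2.0), a LITTLE core (800 MHz, ratio 1.0), and 10 slices, two 400 MHz vCPUs both fit on the LITTLE core at 100% load.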

Phase 2
• Using the output of Phase 1, the scheduler determines the execution order of the virtual cores on each physical core.
  ◦ A virtual core cannot appear on two or more physical cores at the same time.

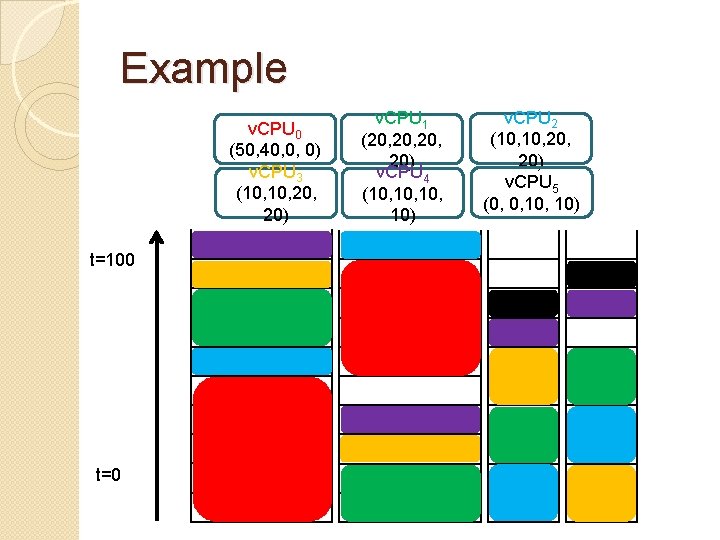

Example (time interval from t = 0 to t = 100)
• vCPU 0: (50, 40, 0, 0)
• vCPU 1: (20, 20, 20)
• vCPU 2: (10, 20, 20)
• vCPU 3: (10, 20, 20)
• vCPU 4: (10, 10, 10)
• vCPU 5: (0, 0, 10)

Phase 2 (Cont.)
• We can formulate the problem as an open-shop scheduling problem (OSSP).
  ◦ OSSP with preemption can be solved in polynomial time [1].
[1] T. Gonzalez and S. Sahni. Open shop scheduling to minimize finish time. J. ACM, 23(4):665–679, Oct. 1976.
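For integer slice counts, a valid Phase 2 order can be built by repeated bipartite matching. This is not the Gonzalez-Sahni algorithm cited above; it is a simpler, swapped-in construction: pad the matrix a_{i,j} so that every row and column sums to the number of slices, then peel off one perfect matching per slice (König's theorem guarantees one exists). It assumes the Phase 1 output already respects both the per-core and the per-vCPU limits; the function names are ours.

```python
def _perfect_matching(M):
    """Kuhn's augmenting-path algorithm on the positive entries of square M.
    Returns match[r] = column matched to row r."""
    size = len(M)
    owner = [-1] * size  # owner[c] = row currently matched to column c

    def augment(r, seen):
        for c in range(size):
            if M[r][c] > 0 and not seen[c]:
                seen[c] = True
                if owner[c] == -1 or augment(owner[c], seen):
                    owner[c] = r
                    return True
        return False

    for r in range(size):
        if not augment(r, [False] * size):
            raise ValueError("no perfect matching: check row/column sums")
    match = [0] * size
    for c, r in enumerate(owner):
        match[r] = c
    return match


def phase2_order(a, num_slices):
    """a[i][j]: slices of vCPU j on pCPU i. Returns plan[i][t] (vCPU or None)."""
    n, m = len(a), len(a[0])
    row = [sum(a[i]) for i in range(n)]                       # busy slices per pCPU
    col = [sum(a[i][j] for i in range(n)) for j in range(m)]  # slices per vCPU
    if max(row + col) > num_slices:
        raise ValueError("a core would need more slices than the interval has")
    size = n + m
    M = [[0] * size for _ in range(size)]
    for i in range(n):
        for j in range(m):
            M[i][j] = a[i][j]          # pCPU i runs vCPU j
            M[n + j][m + i] = a[i][j]  # mirror block keeps the dummy sums balanced
        M[i][m + i] = num_slices - row[i]  # pCPU i idle
    for j in range(m):
        M[n + j][j] = num_slices - col[j]  # vCPU j not running
    plan = [[None] * num_slices for _ in range(n)]
    for t in range(num_slices):
        match = _perfect_matching(M)  # one slice: each pCPU and vCPU used once
        for r in range(size):
            M[r][match[r]] -= 1
        for i in range(n):
            plan[i][t] = match[i] if match[i] < m else None
    return plan
```

Because each slice is a matching, no vCPU can be assigned to two physical cores in the same slice, and after all slices every a_{i,j} has been used up exactly.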

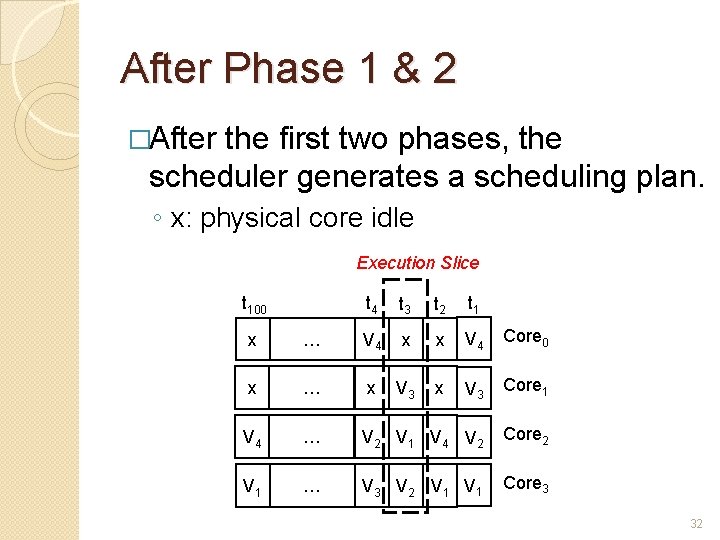

After Phase 1 & 2
• After the first two phases, the scheduler has generated a scheduling plan.
  ◦ x: physical core idle
[Figure: example scheduling plan with rows Core 0 to Core 3 and columns execution slices t1, t2, t3, t4, …, t100; each cell holds the vCPU (V1 to V4) run in that slice, or x when the core is idle.]

Phase 3
• Migrating a virtual core between physical cores incurs overhead.
• Reduce this overhead by exchanging the order of execution slices to minimize the number of core switches.
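The cost being minimized can be counted directly. The definition used here (a switch is counted whenever a vCPU runs on a different physical core than the one it last ran on) is inferred from the worked example on the following slides, whose final order has 7 switches. Each slice is a tuple giving the vCPU on each physical core, with None for idle.

```python
def count_switches(order):
    """Number of core switches in an ordered list of execution slices."""
    last_core = {}  # vCPU -> physical core it most recently ran on
    switches = 0
    for slice_ in order:
        for core, vcpu in enumerate(slice_):
            if vcpu is None:
                continue  # idle core
            if vcpu in last_core and last_core[vcpu] != core:
                switches += 1
            last_core[vcpu] = core
    return switches
```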

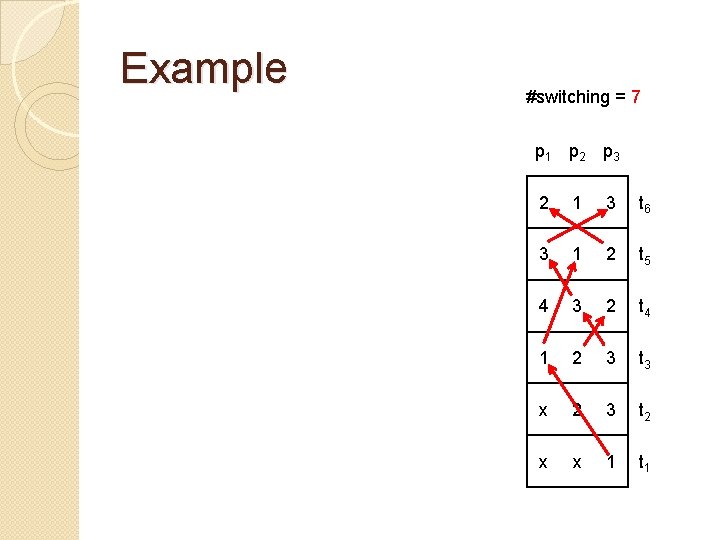

Number of Switching Minimization Problem
• Given a scheduling plan, find an order of the execution slices that minimizes the cost (the number of core switches).
  ◦ The problem is NP-complete, by reduction from the Hamiltonian Path Problem.
  ◦ We propose a greedy heuristic.
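A sketch of a greedy ordering heuristic: repeatedly append the remaining slice that adds the fewest switches, using the same switch definition as the worked example. The slides do not state a tie-breaking rule; preferring slices with fewer busy cores and then the original position is our assumption, chosen because it reproduces the order shown in the example slides.

```python
def added_switches(slice_, last_core):
    """Switches caused by running slice_ next, given each vCPU's last core."""
    return sum(1 for core, v in enumerate(slice_)
               if v is not None and v in last_core and last_core[v] != core)


def greedy_order(slices):
    """Return (order, total_switches) built greedily from a list of slices."""
    remaining = list(enumerate(slices))  # keep original positions for tie-breaks
    last_core, order, total = {}, [], 0
    while remaining:
        best = min(remaining, key=lambda item: (
            added_switches(item[1], last_core),   # fewest new switches first
            sum(v is not None for v in item[1]),  # then fewer busy cores (assumed)
            item[0],                              # then original position (assumed)
        ))
        remaining.remove(best)
        chosen = best[1]
        total += added_switches(chosen, last_core)
        for core, v in enumerate(chosen):
            if v is not None:
                last_core[v] = core
        order.append(chosen)
    return order, total
```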

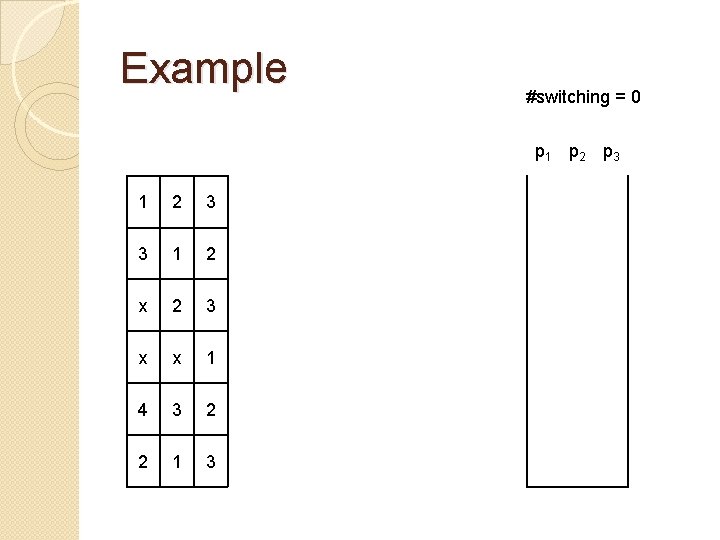

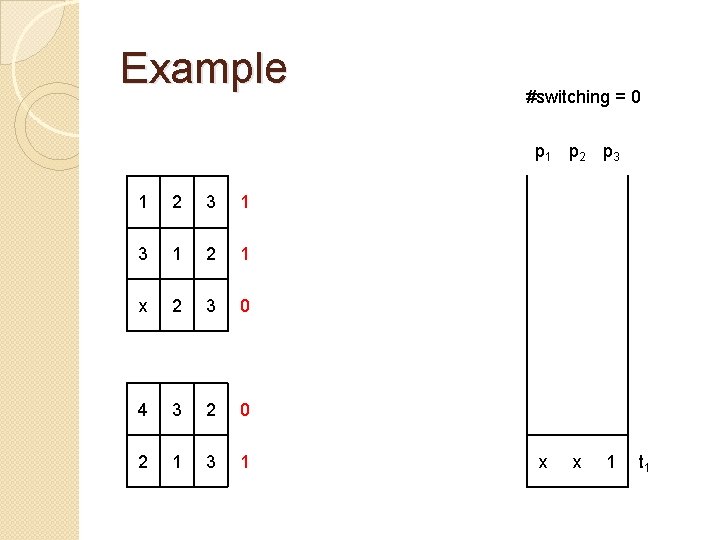

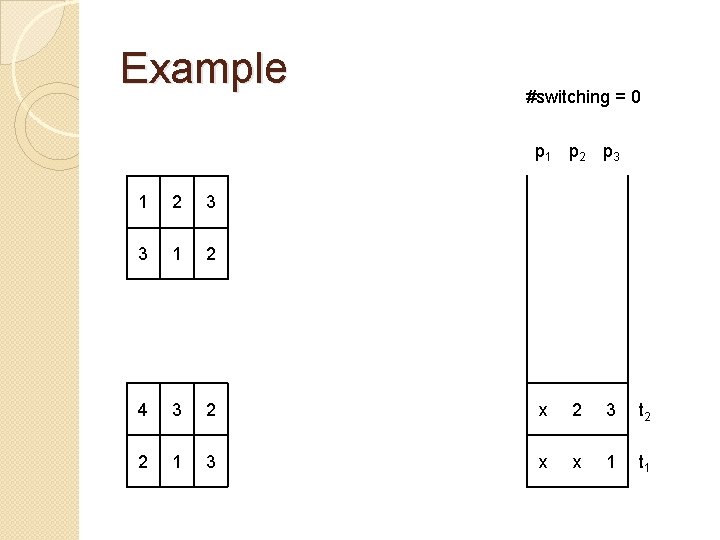

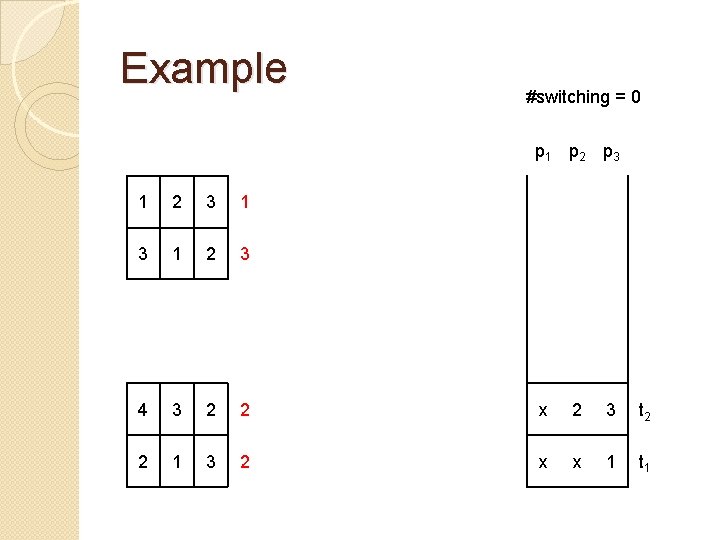

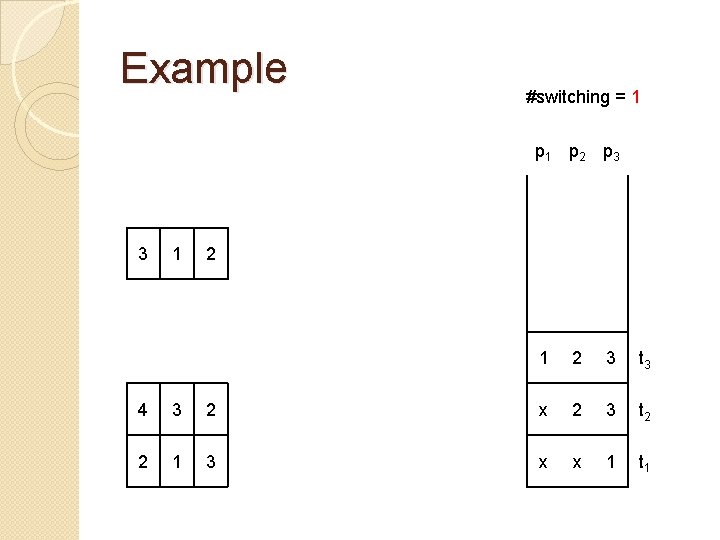

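Example (#switching = 0)
Scheduling plan before reordering; each tuple is one execution slice listing the vCPU run on (p1, p2, p3), and x means the core is idle:
(1, 2, 3), (3, 1, 2), (x, 2, 3), (x, x, 1), (4, 3, 2), (2, 1, 3)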

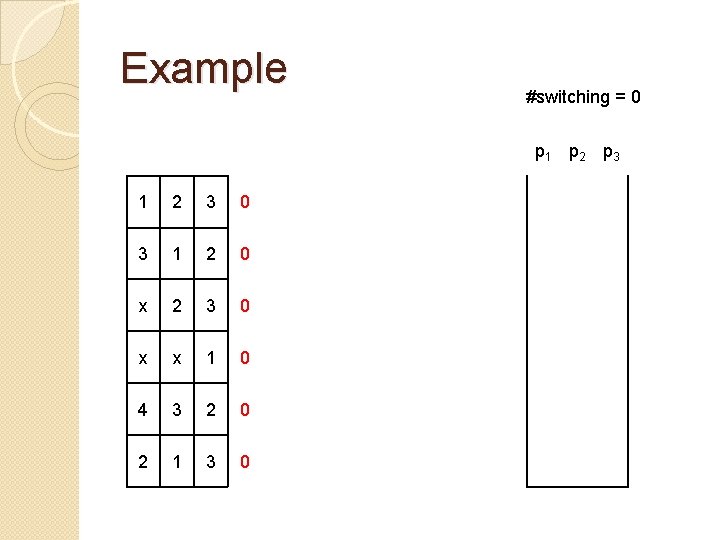

Example (#switching = 0)
Switches each slice would add if placed next: (1, 2, 3): 0, (3, 1, 2): 0, (x, 2, 3): 0, (x, x, 1): 0, (4, 3, 2): 0, (2, 1, 3): 0.

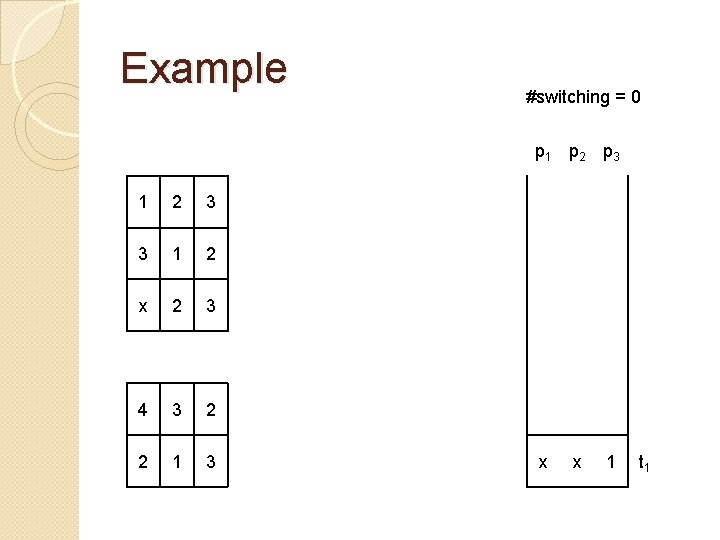

Example (#switching = 0)
t1 = (x, x, 1). Remaining slices: (1, 2, 3), (3, 1, 2), (x, 2, 3), (4, 3, 2), (2, 1, 3).

Example (#switching = 0)
t1 = (x, x, 1). Switches each remaining slice would add at t2: (1, 2, 3): 1, (3, 1, 2): 1, (x, 2, 3): 0, (4, 3, 2): 0, (2, 1, 3): 1.

Example (#switching = 0)
t1 = (x, x, 1), t2 = (x, 2, 3). Remaining slices: (1, 2, 3), (3, 1, 2), (4, 3, 2), (2, 1, 3).

Example (#switching = 0)
t1 = (x, x, 1), t2 = (x, 2, 3). Switches each remaining slice would add at t3: (1, 2, 3): 1, (3, 1, 2): 3, (4, 3, 2): 2, (2, 1, 3): 2.

Example (#switching = 1)
t1 = (x, x, 1), t2 = (x, 2, 3), t3 = (1, 2, 3). Remaining slices: (3, 1, 2), (4, 3, 2), (2, 1, 3).

Example (#switching = 7)
Final order: t1 = (x, x, 1), t2 = (x, 2, 3), t3 = (1, 2, 3), t4 = (4, 3, 2), t5 = (3, 1, 2), t6 = (2, 1, 3).

XEN HYPERVISOR SCHEDULER: CODE STUDY

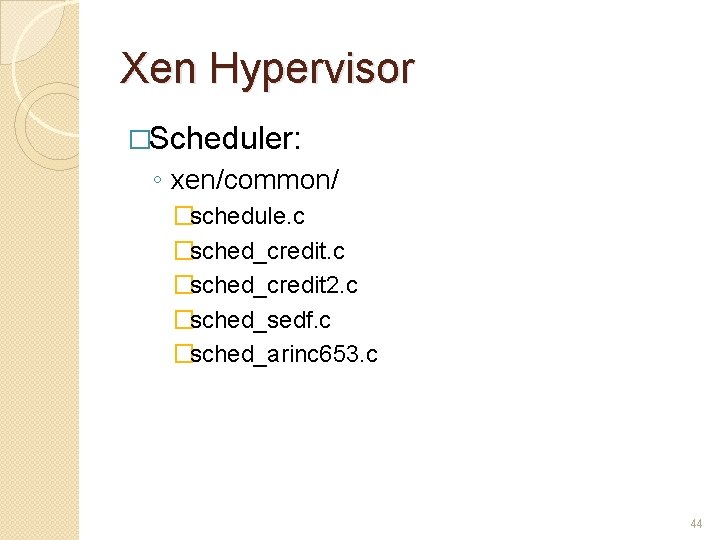

Xen Hypervisor
• Scheduler source files in xen/common/:
  ◦ schedule.c
  ◦ sched_credit2.c
  ◦ sched_sedf.c
  ◦ sched_arinc653.c

xen/common/schedule.c
• Generic CPU scheduling code.
  ◦ Implements support functionality for the Xen scheduler API.
  ◦ Scheduler: defaults to the credit-based scheduler.
• static void schedule(void)
  ◦ De-schedules the current domain.
  ◦ Picks a new domain.

Scheduling Steps
• Xen calls do_schedule() of the current scheduler on each physical CPU (PCPU).
• The scheduler selects a virtual CPU (VCPU) from the run queue and returns it to the Xen hypervisor.
• The Xen hypervisor deploys the VCPU on the current PCPU.

Adding Our Scheduler
• Our scheduler periodically generates a scheduling plan.
• It organizes the run queue of each physical core according to the scheduling plan.
• The Xen hypervisor assigns VCPUs to PCPUs according to the run queues.
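The control flow on this slide can be modelled in a few lines: a periodic routine rebuilds per-core run queues from the plan, and each call to the per-PCPU scheduling hook pops the next entry. This is a Python model for illustration only; Xen's real hook is a C callback registered by the scheduler, and build_run_queues and the do_schedule function below are hypothetical stand-ins that do not reproduce its signature.

```python
from collections import deque


def build_run_queues(plan):
    """plan[i][t]: vCPU for pCPU i in slice t, or None for idle.
    Returns one FIFO run queue per physical core, in slice order."""
    return [deque(row) for row in plan]


def do_schedule(run_queues, pcpu):
    """Model of a per-pCPU scheduling hook: return the vCPU for the next slice.
    None means idle, either because the plan says so or because this interval's
    plan is used up and the periodic routine has not rebuilt the queues yet."""
    queue = run_queues[pcpu]
    return queue.popleft() if queue else None


if __name__ == "__main__":
    queues = build_run_queues([[0, None, 1], [1, 0, None]])
    for t in range(3):
        print(t, [do_schedule(queues, p) for p in range(2)])
```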

Current Status
• Implementing our scheduler on Xen.
• TODOs:
  ◦ Scheduler.
  ◦ Fetch virtual core frequency in Xen.
  ◦ Insert a periodic routine in Xen.
  ◦ Virtual core idle and wake-up mechanism in our scheduler.
