Virtual Machine Fabric Extension VMFEX Bringing the Virtual

Virtual Machine Fabric Extension (VM-FEX): Bringing the Virtual Machines Directly on the Network
BRKCOM-2005
Dan Hanson, Technical Marketing Manager, Data Center Group, CCIE #4482
Timothy Ma, Technical Marketing Engineer, Data Center Group

The Session will Cover
Ø FEX Overview & History
Ø VM-FEX Introduction
Ø VM-FEX Operational Model
Ø VM-FEX General Baseline on UCS
Ø VM-FEX with VMware on UCS
Ø VM-FEX with Hyper-V on UCS
Ø VM-FEX with KVM on UCS
Ø VM-FEX General Details on Nexus 5500
Ø Summary
BRKCOM-2005 © 2013 Cisco and/or its affiliates. All rights reserved. Cisco Public 3

FEX Overview & History

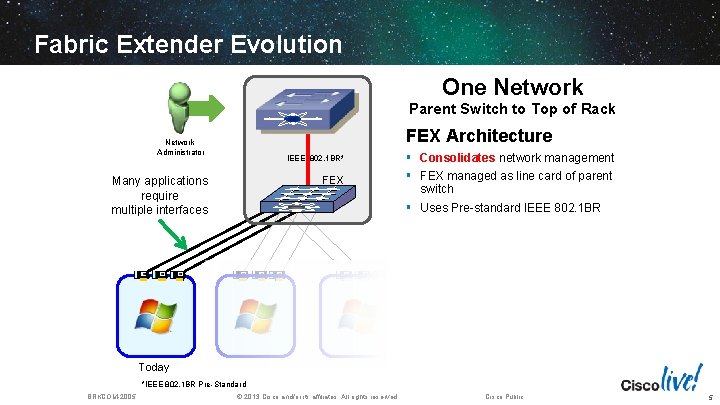

Fabric Extender Evolution: One Network, Parent Switch to Top of Rack
FEX Architecture
§ Consolidates network management
§ FEX managed as line card of parent switch
§ Uses pre-standard IEEE 802.1BR
Diagram labels: Network Administrator; FEX (IEEE 802.1BR*); Today; "Many applications require multiple interfaces"
*IEEE 802.1BR pre-standard

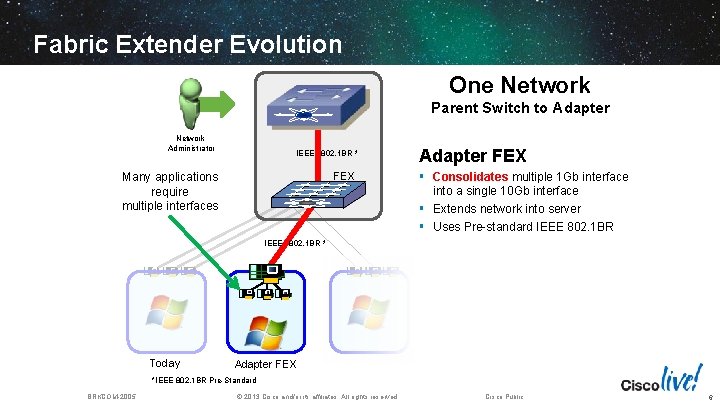

Fabric Extender Evolution: One Network, Parent Switch to Adapter
Adapter FEX
§ Consolidates multiple 1 Gb interfaces into a single 10 Gb interface
§ Extends network into server
§ Uses pre-standard IEEE 802.1BR
Diagram labels: Network Administrator; FEX (IEEE 802.1BR*); Adapter FEX (IEEE 802.1BR*); Today; "Many applications require multiple interfaces"
*IEEE 802.1BR pre-standard

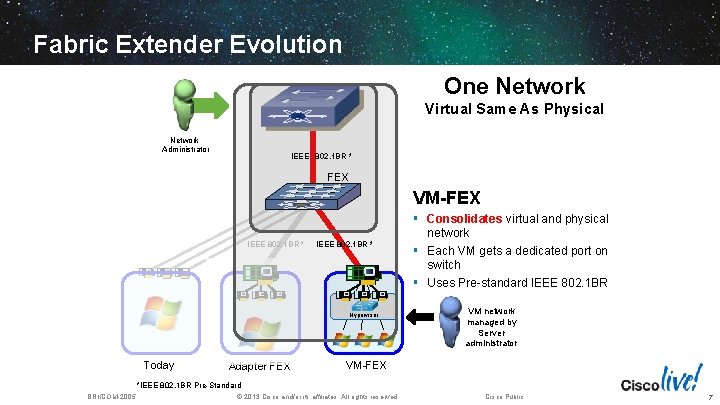

Fabric Extender Evolution: One Network, Virtual Same As Physical
VM-FEX
§ Consolidates virtual and physical network
§ Each VM gets a dedicated port on switch
§ Uses pre-standard IEEE 802.1BR
Diagram labels: Network Administrator; FEX (IEEE 802.1BR*); Adapter FEX; VM-FEX (IEEE 802.1BR*); Hypervisor; Today; "VM network managed by Server administrator"
*IEEE 802.1BR pre-standard

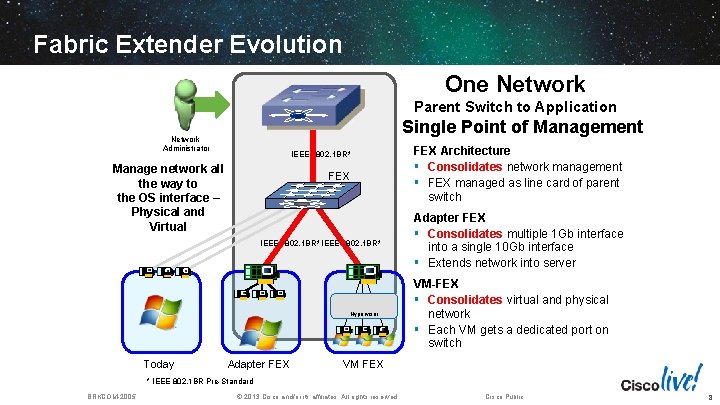

Fabric Extender Evolution: One Network, Parent Switch to Application
Single Point of Management: manage network all the way to the OS interface, physical and virtual
FEX Architecture
§ Consolidates network management
§ FEX managed as line card of parent switch
Adapter FEX
§ Consolidates multiple 1 Gb interfaces into a single 10 Gb interface
§ Extends network into server
VM-FEX
§ Consolidates virtual and physical network
§ Each VM gets a dedicated port on switch
Diagram labels: Network Administrator; FEX (IEEE 802.1BR*); Adapter FEX; VM-FEX (IEEE 802.1BR*); Hypervisor; Today
*IEEE 802.1BR pre-standard

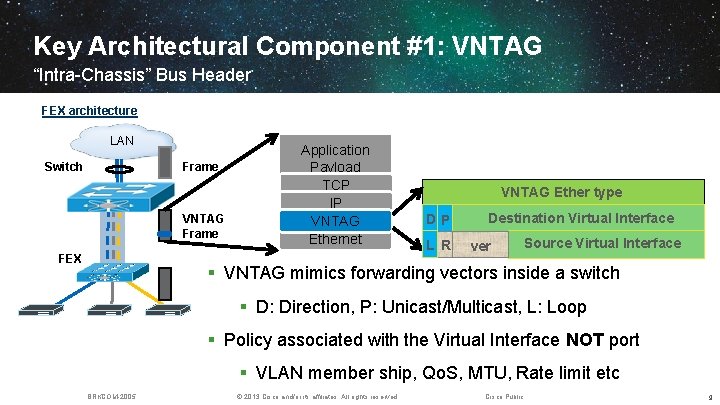

Key Architectural Component #1: VNTAG
"Intra-Chassis" Bus Header
§ VNTAG mimics forwarding vectors inside a switch
§ D: Direction, P: Unicast/Multicast, L: Loop
§ Policy is associated with the Virtual Interface, NOT the port
§ VLAN membership, QoS, MTU, rate limit, etc.
Frame layout between LAN switch and FEX: Ethernet, VNTAG, IP, TCP, Application Payload
VNTAG fields shown: VNTAG EtherType, D, P, L, R, Destination Virtual Interface, ver, Source Virtual Interface
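The field list on this slide can be sketched as a small pack/unpack helper. This is only an illustration: the slide names the fields but not their widths or bit positions, so the EtherType value (`0x8926`), the bit widths (14-bit destination VIF, 2-bit version, 12-bit source VIF) and the bit ordering below are assumptions, and the class name `VNTag` is hypothetical.

```python
import struct
from dataclasses import dataclass

# Assumption: EtherType value and all bit widths/positions are not given on the slide.
VNTAG_ETHERTYPE = 0x8926


@dataclass
class VNTag:
    direction: int  # D: direction bit
    pointer: int    # P: unicast/multicast bit
    dst_vif: int    # Destination Virtual Interface (assumed 14 bits)
    looped: int     # L: loop bit
    reserved: int   # R: reserved bit
    version: int    # ver (assumed 2 bits)
    src_vif: int    # Source Virtual Interface (assumed 12 bits)

    def pack(self) -> bytes:
        """Serialize to 6 bytes: 2-byte EtherType plus a 4-byte tag word."""
        word = (
            ((self.direction & 0x1) << 31)
            | ((self.pointer & 0x1) << 30)
            | ((self.dst_vif & 0x3FFF) << 16)
            | ((self.looped & 0x1) << 15)
            | ((self.reserved & 0x1) << 14)
            | ((self.version & 0x3) << 12)
            | (self.src_vif & 0xFFF)
        )
        return struct.pack("!HI", VNTAG_ETHERTYPE, word)

    @classmethod
    def unpack(cls, data: bytes) -> "VNTag":
        """Parse the first 6 bytes of data as a tag in the assumed layout."""
        ethertype, word = struct.unpack("!HI", data[:6])
        if ethertype != VNTAG_ETHERTYPE:
            raise ValueError("not a VN-Tag header")
        return cls(
            direction=(word >> 31) & 0x1,
            pointer=(word >> 30) & 0x1,
            dst_vif=(word >> 16) & 0x3FFF,
            looped=(word >> 15) & 0x1,
            reserved=(word >> 14) & 0x1,
            version=(word >> 12) & 0x3,
            src_vif=word & 0xFFF,
        )
```

The point of the sketch is that forwarding policy keys off the virtual interface identifiers carried in the tag rather than the physical port the frame arrived on.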

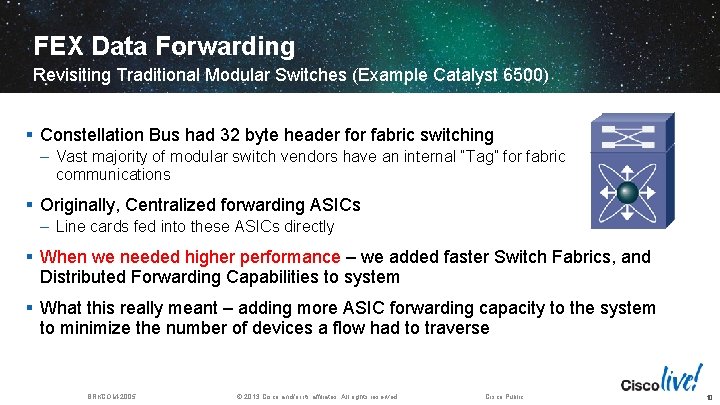

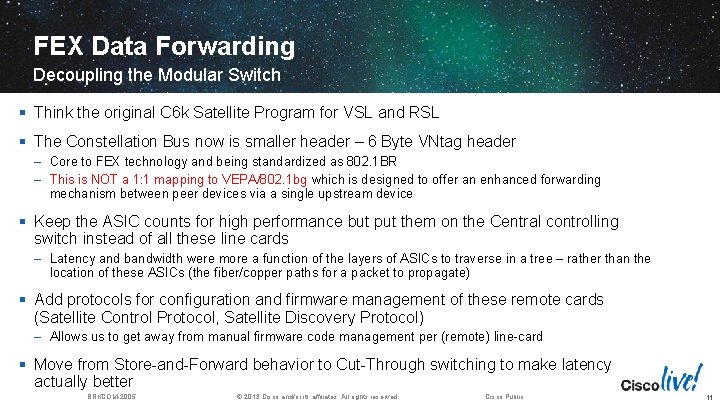

FEX Data Forwarding: Revisiting Traditional Modular Switches (Example: Catalyst 6500)
§ Constellation Bus had a 32 byte header for fabric switching
– Vast majority of modular switch vendors have an internal "Tag" for fabric communications
§ Originally, centralized forwarding ASICs
– Line cards fed into these ASICs directly
§ When we needed higher performance, we added faster Switch Fabrics and Distributed Forwarding Capabilities to the system
§ What this really meant: adding more ASIC forwarding capacity to the system to minimize the number of devices a flow had to traverse

FEX Data Forwarding: Decoupling the Modular Switch
§ Think of the original C6k Satellite Program for VSL and RSL
§ The Constellation Bus now has a smaller header: the 6 byte VNTag header
– Core to FEX technology and being standardized as 802.1BR
– This is NOT a 1:1 mapping to VEPA/802.1Qbg, which is designed to offer an enhanced forwarding mechanism between peer devices via a single upstream device
§ Keep the ASIC counts for high performance but put them on the central controlling switch instead of on all these line cards
– Latency and bandwidth were more a function of the layers of ASICs to traverse in a tree than of the location of these ASICs (the fiber/copper paths for a packet to propagate)
§ Add protocols for configuration and firmware management of these remote cards (Satellite Control Protocol, Satellite Discovery Protocol)
– Allows us to get away from manual firmware code management per (remote) line card
§ Move from Store-and-Forward behavior to Cut-Through switching to make latency actually better

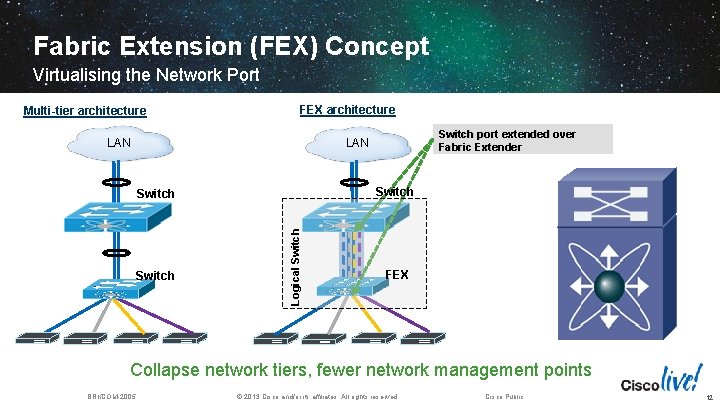

Fabric Extension (FEX) Concept: Virtualising the Network Port
§ Switch port extended over Fabric Extender
§ Collapse network tiers, fewer network management points
Diagram labels: Multi-tier architecture (LAN, Switch); FEX architecture (LAN, Switch, FEX, Logical Switch)

FEX Technology for Unified I/O
§ Virtual Switch Ports, Cables, and NIC Ports
§ Mapping of Ethernet and FC Wires over Ethernet
§ Service Level enforcement
§ Multiple data types (jumbo, lossless, FC)
§ Individual link-states
§ Fewer Cables
§ Multiple Ethernet traffic co-exist on same cable
§ Fewer adapters needed
§ Overall less power
§ Interoperates with existing Models
§ Management remains constant for system admins and LAN/SAN admins
§ Possible to take these links further upstream for aggregation
Diagram labels: DCB Ethernet; Blade Management Channels (KVM, USB, CDROM, Adapters); Individual Ethernets; Individual Storage (iSCSI, NFS, FC)

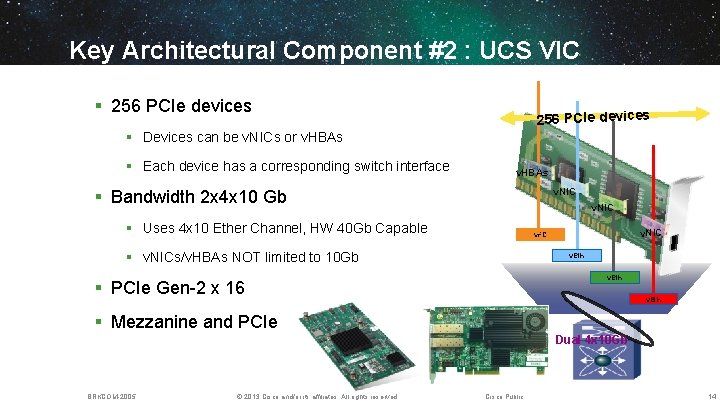

Key Architectural Component #2: UCS VIC
§ 256 PCIe devices
§ Devices can be vNICs or vHBAs
§ Each device has a corresponding switch interface
§ Bandwidth 2 x 4 x 10 Gb
§ Uses 4 x 10 EtherChannel, HW 40 Gb capable
§ vNICs/vHBAs NOT limited to 10 Gb
§ PCIe Gen-2 x16
§ Mezzanine and PCIe
Diagram labels: vHBAs, vNIC, vFC, vEth, Dual 4 x 10 Gb

VM-FEX Introduction

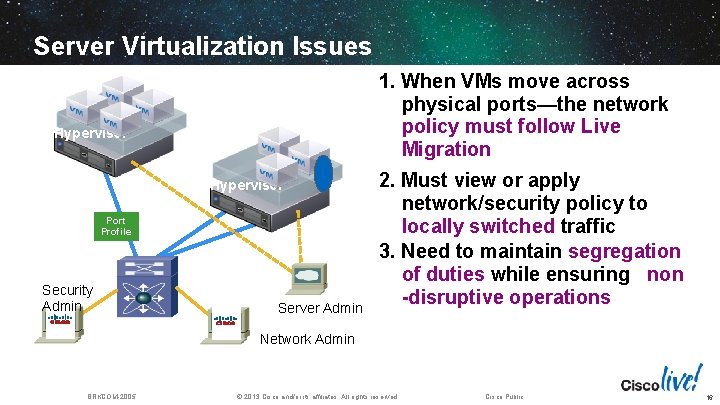

Server Virtualization Issues
1. When VMs move across physical ports, the network policy must follow (Live Migration)
2. Must view or apply network/security policy to locally switched traffic
3. Need to maintain segregation of duties while ensuring non-disruptive operations
Diagram labels: Hypervisor; Port Profile; Security Admin; Server Admin; Network Admin

Cisco Virtual Networking Options
§ Extend networking into hypervisor (Cisco Nexus 1000V Switch)
§ Extend physical network to VMs (Cisco UCS VM-FEX)
Diagram labels: Cisco Nexus 1000V (Server, Hypervisor, Generic Adapter); Cisco UCS VM-FEX (Server)

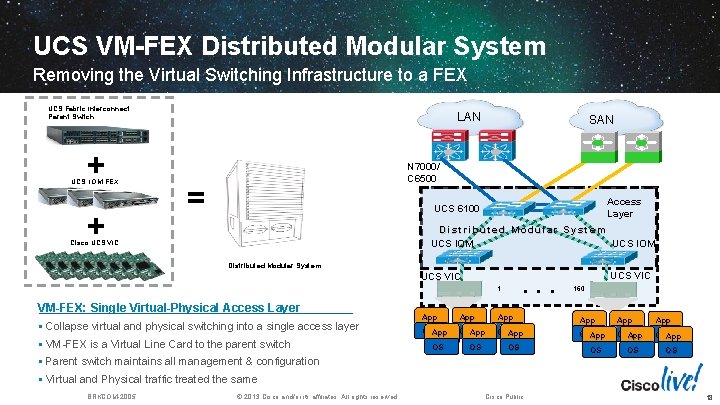

UCS VM-FEX Distributed Modular System: Removing the Virtual Switching Infrastructure to a FEX
UCS Fabric Interconnect (parent switch) + UCS IOM-FEX + Cisco UCS VIC = Distributed Modular System
VM-FEX: Single Virtual-Physical Access Layer
§ Collapse virtual and physical switching into a single access layer
§ VM-FEX is a Virtual Line Card to the parent switch
§ Parent switch maintains all management & configuration
§ Virtual and Physical traffic treated the same
Diagram labels: LAN; SAN; MDS; N7000/C6500; Access Layer; UCS 6100; UCS IOM; UCS VIC 1 ... UCS VIC 160; App/OS (VMs)

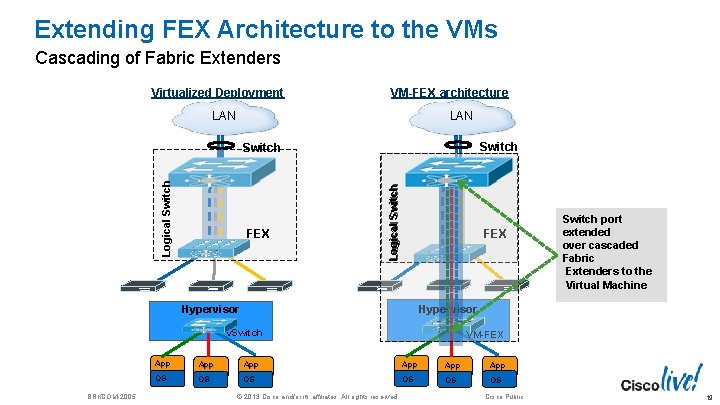

Extending FEX Architecture to the VMs: Cascading of Fabric Extenders
§ Switch port extended over cascaded Fabric Extenders to the Virtual Machine
Diagram labels: Virtualized Deployment (LAN, Switch, FEX, Hypervisor, vSwitch); VM-FEX architecture (LAN, Switch, FEX, Hypervisor, VM-FEX, Logical Switch); App/OS (VMs)

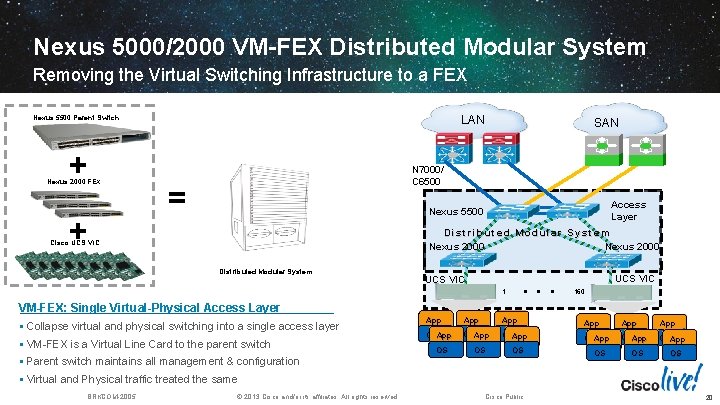

Nexus 5000/2000 VM-FEX Distributed Modular System: Removing the Virtual Switching Infrastructure to a FEX
Nexus 5500 (parent switch) + Nexus 2000 FEX + Cisco UCS VIC = Distributed Modular System
VM-FEX: Single Virtual-Physical Access Layer
§ Collapse virtual and physical switching into a single access layer
§ VM-FEX is a Virtual Line Card to the parent switch
§ Parent switch maintains all management & configuration
§ Virtual and Physical traffic treated the same
Diagram labels: LAN; SAN; MDS; N7000/C6500; Access Layer; Nexus 5500; Nexus 2000; UCS VIC ... UCS VIC 160; App/OS (VMs)

Nexus 5000 + Fabric Extender: Single Access Layer
Nexus 5000 (parent switch) + Cisco Nexus® 2000 FEX = Distributed Modular System
§ Nexus 2000 FEX is a Virtual Line Card to the Nexus 5000
§ Nexus 5000 maintains all management & configuration
§ No Spanning Tree between FEX & Nexus 5000
§ Over 6000 production customers
§ Over 5 million Nexus 2000 ports deployed
Diagram labels: LAN; SAN; MDS; N7000/C6500; Access Layer; N5000; N2232 1 ... N2232 12

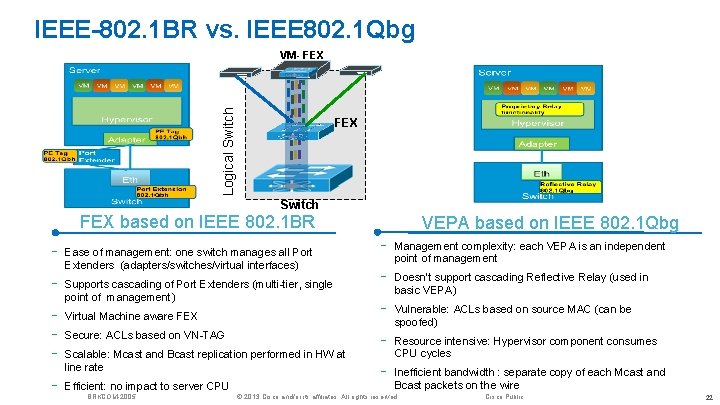

IEEE 802.1BR vs. IEEE 802.1Qbg
FEX based on IEEE 802.1BR
- Ease of management: one switch manages all Port Extenders (adapters/switches/virtual interfaces)
- Supports cascading of Port Extenders (multi-tier, single point of management)
- Virtual Machine aware FEX
- Efficient: no impact to server CPU
- Secure: ACLs based on VN-TAG
- Scalable: Mcast and Bcast replication performed in HW at line rate
VEPA based on IEEE 802.1Qbg
- Management complexity: each VEPA is an independent point of management
- Doesn't support cascading
- Vulnerable: ACLs based on source MAC (can be spoofed)
- Resource intensive: Hypervisor component consumes CPU cycles
- Inefficient bandwidth: separate copy of each Mcast and Bcast packet on the wire
Diagram labels: FEX; Logical Switch; VM-FEX; Switch; Reflective Relay (used in basic VEPA)

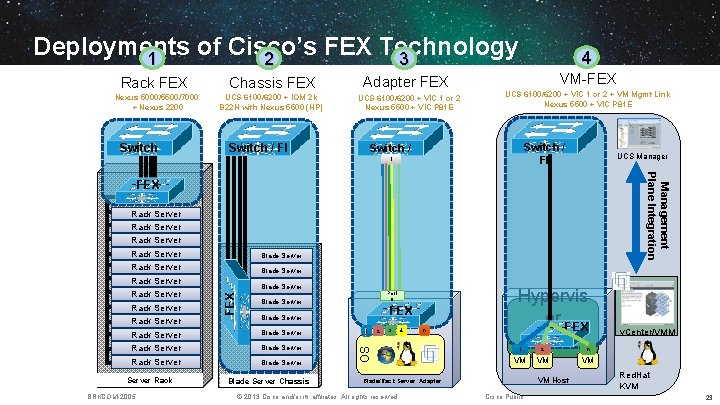

Deployments of Cisco's FEX Technology
1. Rack FEX: Nexus 5000/5500/7000 + Nexus 2200
2. Chassis FEX: UCS 6100/6200 + IOM 2k; B22H with Nexus 5500 (HP)
3. Adapter FEX: UCS 6100/6200 + VIC 1 or 2; Nexus 5500 + VIC P81E
4. VM-FEX: UCS 6100/6200 + VIC 1 or 2 + VM Mgmt Link; Nexus 5500 + VIC P81E
Diagram labels: Switch / FI; UCS Manager; Management Plane Integration; vCenter/VMM; RedHat KVM; Rack (FEX, Rack Servers); Chassis (FEX, Blade Servers); Blade/Rack Server (Adapter FEX, OS); Host (Hypervisor, FEX, VMs)

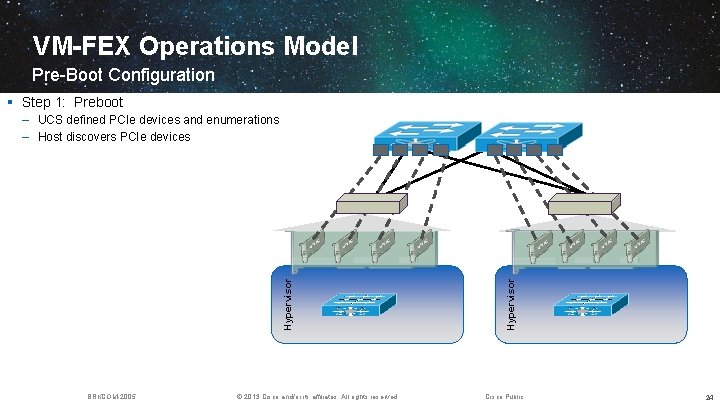

VM-FEX Operational Model: Pre-Boot Configuration
§ Step 1: Preboot
– UCS defined PCIe devices and enumerations
– Host discovers PCIe devices

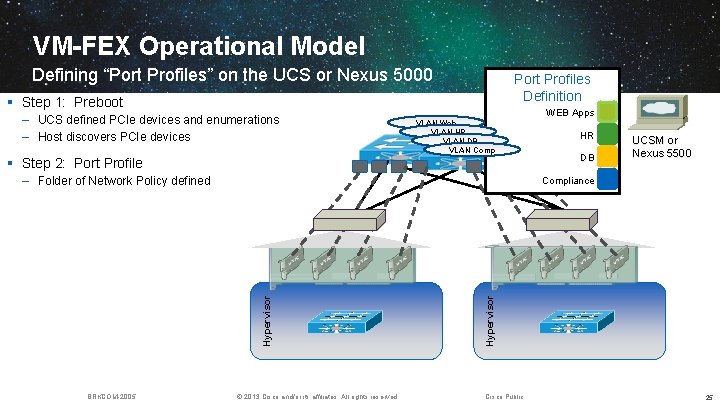

VM-FEX Operational Model: Defining "Port Profiles" on the UCS or Nexus 5000
§ Step 1: Preboot
– UCS defined PCIe devices and enumerations
– Host discovers PCIe devices
§ Step 2: Port Profile
– Folder of Network Policy defined
Diagram labels: Port Profiles Definition on UCSM or Nexus 5500: WEB Apps (VLAN Web), HR (VLAN HR), DB (VLAN DB), Compliance (VLAN Comp); Hypervisor

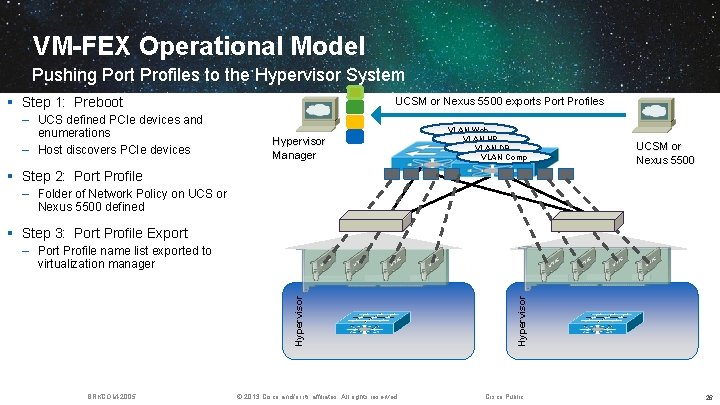

VM-FEX Operational Model: Pushing Port Profiles to the Hypervisor System
§ Step 1: Preboot
– UCS defined PCIe devices and enumerations
– Host discovers PCIe devices
§ Step 2: Port Profile
– Folder of Network Policy on UCS or Nexus 5500 defined
§ Step 3: Port Profile Export
– Port Profile name list exported to virtualization manager
Diagram labels: UCSM or Nexus 5500 exports Port Profiles (VLAN Web, VLAN HR, VLAN DB, VLAN Comp) to the Hypervisor Manager; Hypervisor

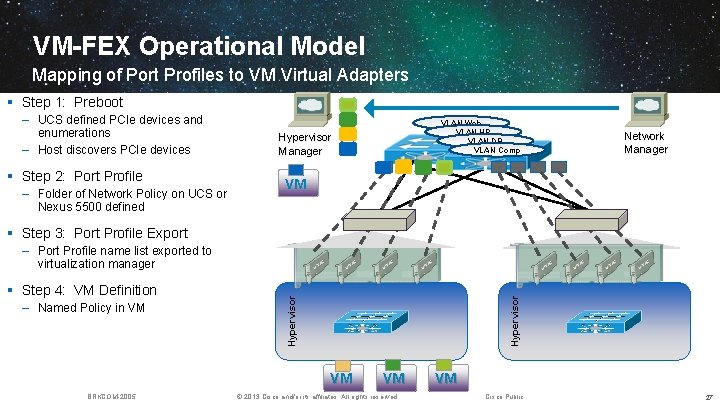

VM-FEX Operational Model: Mapping of Port Profiles to VM Virtual Adapters
§ Step 1: Preboot
– UCS defined PCIe devices and enumerations
– Host discovers PCIe devices
§ Step 2: Port Profile
– Folder of Network Policy on UCS or Nexus 5500 defined
§ Step 3: Port Profile Export
– Port Profile name list exported to virtualization manager
§ Step 4: VM Definition
– Named Policy in VM
Diagram labels: Network Manager; Hypervisor Manager; port profiles (VLAN Web, VLAN HR, VLAN DB, VLAN Comp); Hypervisor; VMs
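Steps 2 to 4 can be modelled in a few lines to make the division of labour visible. This is a toy sketch, not UCSM or vCenter code: the classes `PortProfile`, `ParentSwitch` and `HypervisorManager` and all their methods are hypothetical names introduced for illustration. The network side owns the policy, only profile names cross to the hypervisor manager, and a VM adapter simply references a name.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PortProfile:
    """Step 2: a named 'folder' of network policy (only a VLAN here)."""
    name: str
    vlan: str


@dataclass
class ParentSwitch:
    """Hypothetical stand-in for UCSM or a Nexus 5500."""
    profiles: dict = field(default_factory=dict)

    def define(self, profile: PortProfile) -> None:
        self.profiles[profile.name] = profile

    def export_names(self) -> list:
        # Step 3: only the list of profile names is exported.
        return sorted(self.profiles)


@dataclass
class HypervisorManager:
    """Hypothetical stand-in for the virtualization manager."""
    known_profiles: list = field(default_factory=list)
    vm_adapters: dict = field(default_factory=dict)

    def import_names(self, names: list) -> None:
        self.known_profiles = list(names)

    def attach(self, vm_adapter: str, profile_name: str) -> None:
        # Step 4: the VM definition refers to the policy by name only.
        if profile_name not in self.known_profiles:
            raise KeyError(f"unknown port profile: {profile_name}")
        self.vm_adapters[vm_adapter] = profile_name


switch = ParentSwitch()
switch.define(PortProfile("WEB", "VLAN Web"))
switch.define(PortProfile("HR", "VLAN HR"))

manager = HypervisorManager()
manager.import_names(switch.export_names())
manager.attach("vm1-nic0", "WEB")
```

In this model the server administrator never edits VLAN settings; changing the policy behind `WEB` stays a network-side operation.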

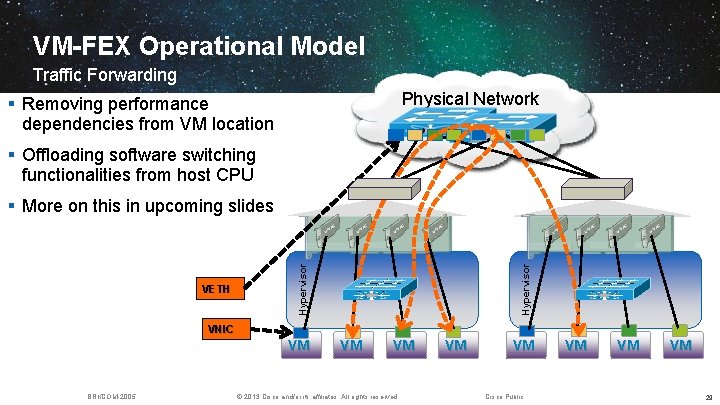

VM-FEX Operational Model: Simplifying the Access Infrastructure
§ Unify the virtual and physical network
– Same Port Profiles for various hypervisors and bare metal servers
§ Consistent functions, performance, management
Diagram labels: Physical Network; VETH; Hypervisor; VNIC; VM; Virtual Network

VM-FEX Operational Model: Traffic Forwarding
§ Removing performance dependencies from VM location
§ Offloading software switching functionalities from host CPU
§ More on this in upcoming slides
Diagram labels: Physical Network; VETH; Hypervisor; VNIC; VM

VM-FEX Operational Model

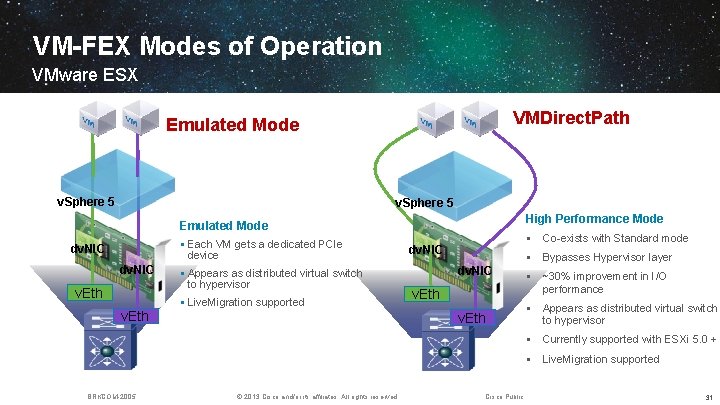

VM-FEX Modes of Operation: VMware ESX
Emulated Mode
§ Each VM gets a dedicated PCIe device
§ Appears as distributed virtual switch to hypervisor
§ LiveMigration supported
High Performance Mode (VMDirectPath, vSphere 5)
§ Co-exists with Standard mode
§ Bypasses Hypervisor layer
§ ~30% improvement in I/O performance
§ Appears as distributed virtual switch to hypervisor
§ Currently supported with ESXi 5.0+
§ LiveMigration supported
Diagram labels: dvNIC; vEth

VMDirectPath: How It Works
Diagram labels: VM (OS PCI subsystem, Vmxnet3 driver / Ethernet device driver); Emulation <-> PT transitions; Vmxnet3; Port Events; Cisco DVS; PCIe events; Data Path; Control Path; Dynamic VIC device
§ Config: used by PCI mgmt layer
§ Mgmt BAR: used by Vmkernel and PTS
§ Data BAR: Vmxnet3 compliant rings and registers

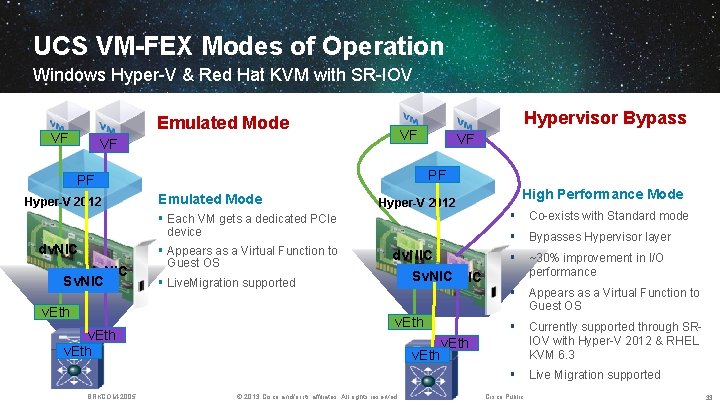

UCS VM-FEX Modes of Operation: Windows Hyper-V & Red Hat KVM with SR-IOV
Emulated Mode (Hyper-V 2012)
§ Each VM gets a dedicated PCIe device
§ Appears as a Virtual Function to Guest OS
§ Live Migration supported
High Performance Mode (Hypervisor Bypass, Hyper-V 2012)
§ Co-exists with Standard mode
§ Bypasses Hypervisor layer
§ ~30% improvement in I/O performance
§ Appears as a Virtual Function to Guest OS
§ Currently supported through SR-IOV with Hyper-V 2012 & RHEL KVM 6.3
§ Live Migration supported
Diagram labels: VF; PF; dvNIC; SvNIC; vEth

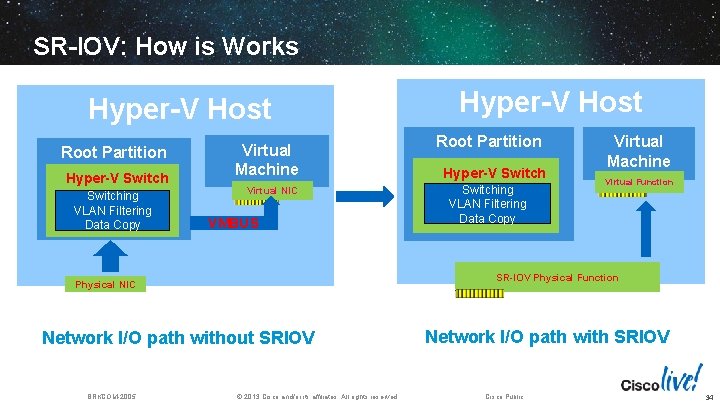

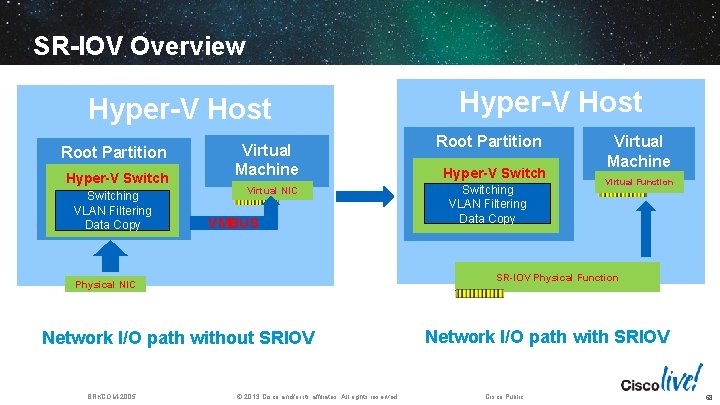

SR-IOV: How It Works
Network I/O path without SR-IOV: Hyper-V Host; Root Partition (Hyper-V Switching, VLAN Filtering, Data Copy); Virtual Machine; Virtual NIC; VMBUS; Physical NIC
Network I/O path with SR-IOV: Hyper-V Host; Root Partition (Hyper-V Switching, VLAN Filtering, Data Copy); Virtual Machine; Virtual Function; SR-IOV Physical NIC; Physical Function

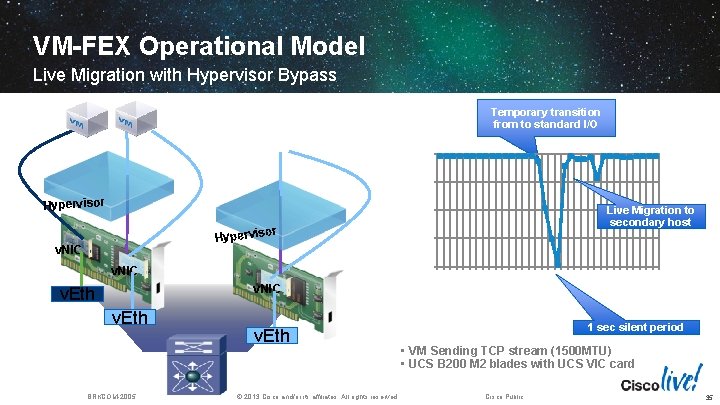

VM-FEX Operational Model: Live Migration with Hypervisor Bypass
§ Temporary transition from hypervisor bypass to standard I/O
§ Live Migration to secondary host
§ 1 sec silent period
• VM sending TCP stream (1500 MTU)
• UCS B200 M2 blades with UCS VIC card
[Graph: throughput in Mbps (0 to 10000) against time in seconds (19:06:19 to 19:06:52) during the migration; diagram shows vNIC and vEth pairs on each hypervisor]

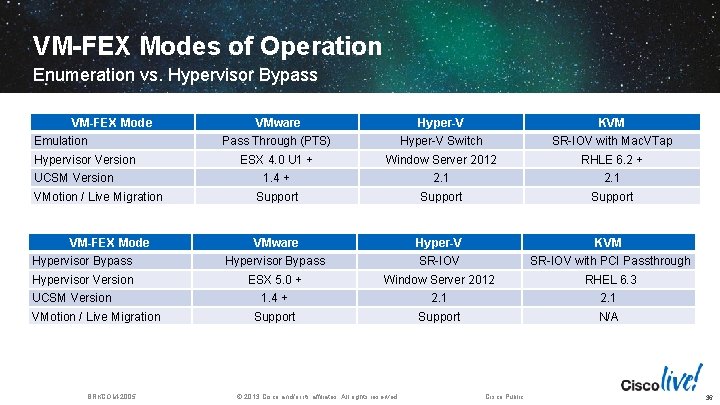

VM-FEX Modes of Operation: Enumeration vs. Hypervisor Bypass
Columns: VMware; Hyper-V; KVM
Emulation
– VM-FEX Mode: Pass Through (PTS); Hyper-V Switch; SR-IOV with MacVTap
– Hypervisor Version: ESX 4.0 U1+; Windows Server 2012; RHEL 6.2+
– UCSM Version: 1.4+; 2.1
– VMotion / Live Migration: Support
Hypervisor Bypass
– VM-FEX Mode: Hypervisor Bypass; SR-IOV with PCI Passthrough
– Hypervisor Version: ESX 5.0+; Windows Server 2012; RHEL 6.3
– UCSM Version: 1.4+; 2.1
– VMotion / Live Migration: Support; N/A

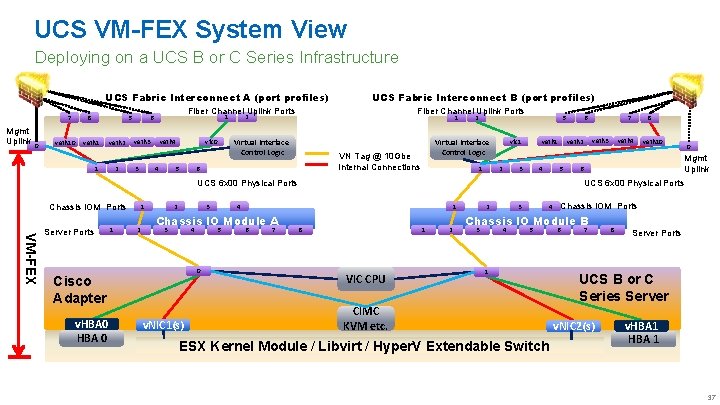

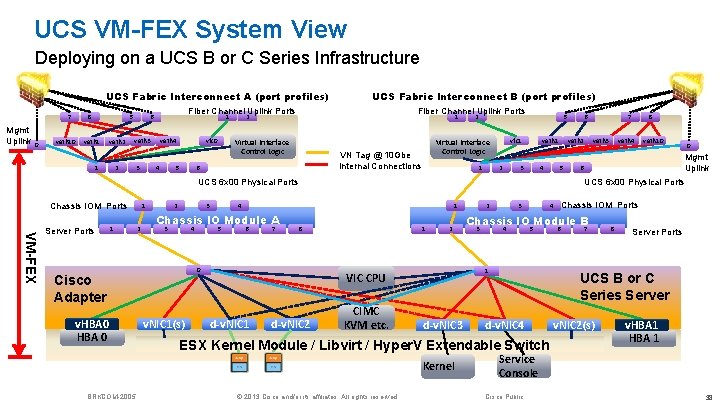

UCS VM-FEX System View: Deploying on a UCS B or C Series Infrastructure
[Diagram: UCS Fabric Interconnects A and B (port profiles, Virtual Interface Control Logic, veth and vfc interfaces, Mgmt and Fibre Channel uplink ports, UCS 6x00 physical ports) connect over VN-Tag at 10 GbE internal connections to Chassis IO Modules A and B (chassis IOM ports, server ports). The IO modules connect to the Cisco adapter (VIC CPU, vHBA 0, vHBA 1, vNIC 1(s), vNIC 2(s), CIMC, KVM etc.) in a UCS B or C Series server running the ESX Kernel Module / Libvirt / Hyper-V Extensible Switch.]

UCS VM-FEX System View: Deploying on a UCS B or C Series Infrastructure
[Diagram: same topology as the previous view, with dynamic vNICs added. UCS Fabric Interconnects A and B (port profiles, Virtual Interface Control Logic, veth and vfc interfaces, Mgmt and Fibre Channel uplink ports, UCS 6x00 physical ports) connect over VN-Tag at 10 GbE internal connections to Chassis IO Modules A and B. The Cisco adapter (VIC CPU, vHBA 0, vHBA 1, vNIC 1(s), vNIC 2(s), CIMC, KVM etc.) now also presents d-vNIC 1 to d-vNIC 4 to the ESX Kernel Module / Libvirt / Hyper-V Extensible Switch; Kernel and Service Console are shown on the server.]

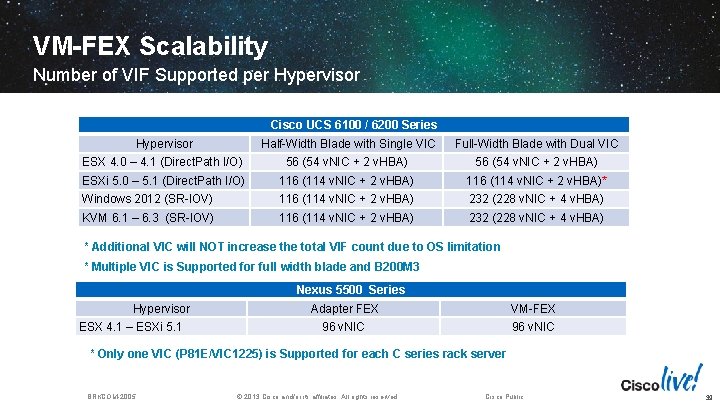

VM-FEX Scalability: Number of VIF Supported per Hypervisor
Cisco UCS 6100 / 6200 Series (half-width blade with single VIC; full-width blade with dual VIC)
§ ESX 4.0 – 4.1 (DirectPath I/O): 56 (54 vNIC + 2 vHBA)
§ ESXi 5.0 – 5.1 (DirectPath I/O): 116 (114 vNIC + 2 vHBA)*
§ Windows 2012 (SR-IOV): 116 (114 vNIC + 2 vHBA) with single VIC; 232 (228 vNIC + 4 vHBA) with dual VIC
§ KVM 6.1 – 6.3 (SR-IOV): 116 (114 vNIC + 2 vHBA) with single VIC; 232 (228 vNIC + 4 vHBA) with dual VIC
* Additional VIC will NOT increase the total VIF count due to OS limitation
* Multiple VICs are supported for full-width blades and the B200 M3
Nexus 5500 Series
§ Hypervisor ESX 4.1 – ESXi 5.1, Adapter FEX, VM-FEX: 96 vNIC
* Only one VIC (P81E / VIC 1225) is supported for each C series rack server

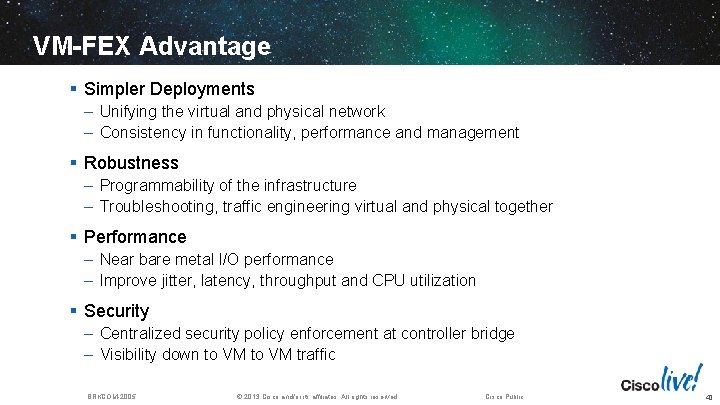

VM-FEX Advantage
§ Simpler Deployments
– Unifying the virtual and physical network
– Consistency in functionality, performance and management
§ Robustness
– Programmability of the infrastructure
– Troubleshooting, traffic engineering virtual and physical together
§ Performance
– Near bare metal I/O performance
– Improve jitter, latency, throughput and CPU utilization
§ Security
– Centralized security policy enforcement at controller bridge
– Visibility down to VM traffic

VM-FEX General Baseline on UCS

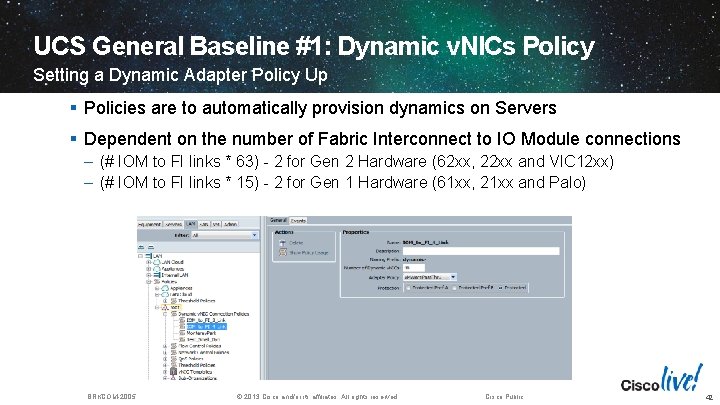

UCS General Baseline #1: Dynamic vNICs Policy
Setting Up a Dynamic Adapter Policy
§ Policies automatically provision dynamic vNICs on servers
§ Dependent on the number of Fabric Interconnect to IO Module connections
– (# IOM to FI links * 63) - 2 for Gen 2 hardware (62xx, 22xx and VIC 12xx)
– (# IOM to FI links * 15) - 2 for Gen 1 hardware (61xx, 21xx and Palo)
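The two formulas above are easy to get wrong by one link, so a small helper can make the arithmetic explicit. The function name `max_vifs` and the generation keys `"gen1"` / `"gen2"` are illustrative choices, not UCSM terminology; the multipliers and the subtraction of 2 come straight from the slide.

```python
# Per-link multipliers from the slide; the dictionary keys are illustrative labels.
LINK_MULTIPLIER = {
    "gen2": 63,  # 62xx, 22xx and VIC 12xx
    "gen1": 15,  # 61xx, 21xx and Palo
}


def max_vifs(iom_to_fi_links: int, generation: str) -> int:
    """Return (# IOM to FI links * multiplier) - 2 for the given hardware generation."""
    if iom_to_fi_links < 1:
        raise ValueError("at least one IOM to FI link is required")
    if generation not in LINK_MULTIPLIER:
        raise ValueError(f"unknown hardware generation: {generation}")
    return iom_to_fi_links * LINK_MULTIPLIER[generation] - 2
```

For example, two links on Gen 2 hardware give 2 * 63 - 2 = 124, while two links on Gen 1 hardware give 2 * 15 - 2 = 28. Per-hypervisor limits listed on the scalability slide apply separately.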

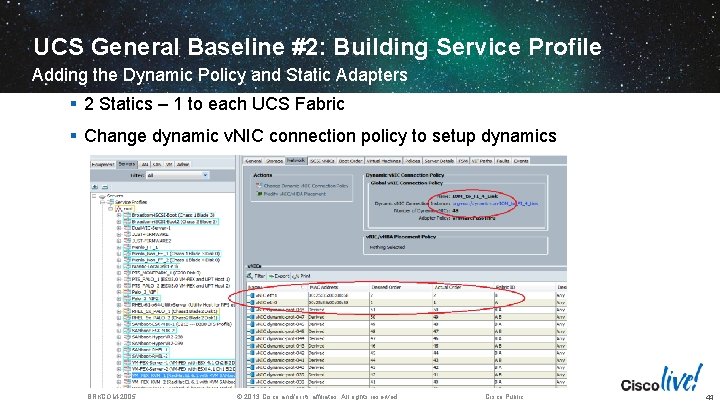

UCS General Baseline #2: Building Service Profile
Adding the Dynamic Policy and Static Adapters
§ 2 statics, 1 to each UCS Fabric
§ Change dynamic vNIC connection policy to set up dynamics

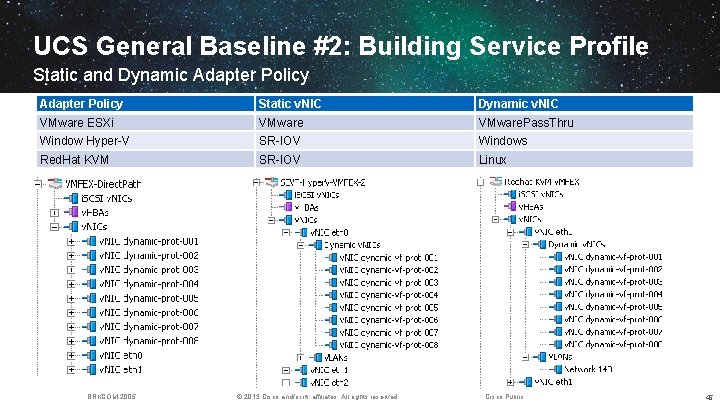

UCS General Baseline #2: Building Service Profile
Static and Dynamic Adapter Policy (columns: Static vNIC; Dynamic vNIC)
§ VMware ESXi: VMwarePassThru
§ Windows Hyper-V: SR-IOV; Windows
§ RedHat KVM: SR-IOV; Linux

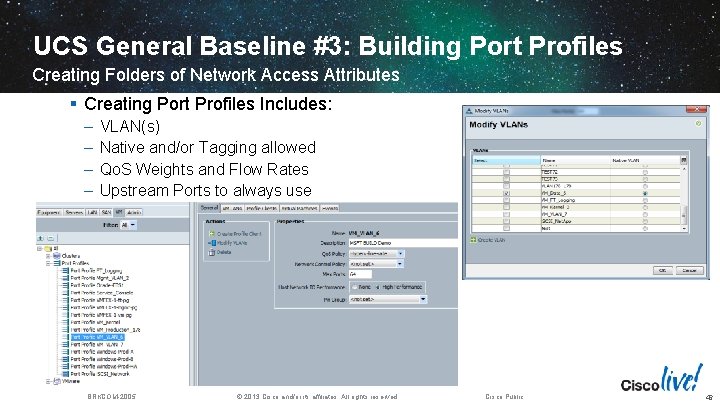

UCS General Baseline #3: Building Port Profiles
Creating Folders of Network Access Attributes
§ Creating Port Profiles includes:
– VLAN(s)
– Native and/or tagging allowed
– QoS weights and flow rates
– Upstream ports to always use

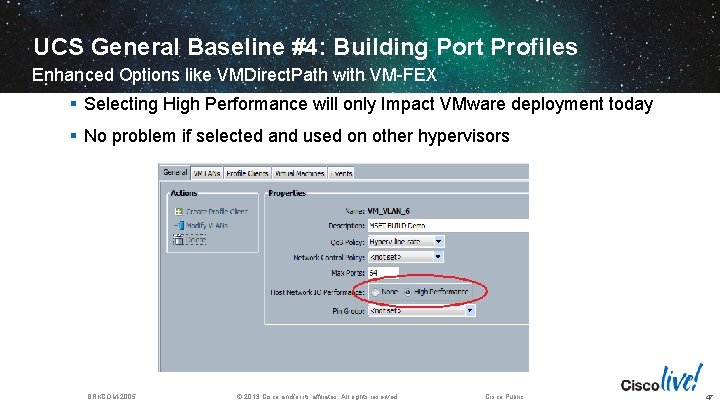

UCS General Baseline #4: Building Port Profiles
Enhanced Options like VMDirectPath with VM-FEX
§ Selecting High Performance only impacts VMware deployments today
§ No problem if selected and used on other hypervisors

UCS General Baseline #5: Communication with Manager
Establishing Communication to Hypervisor Manager
§ Tool discussed later to simplify the whole integration process

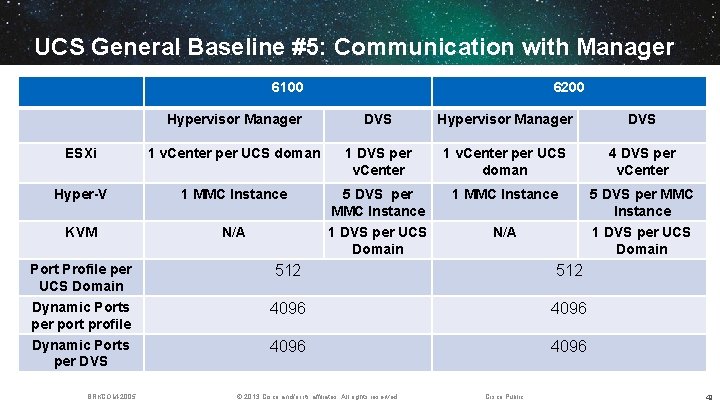

UCS General Baseline #5: Communication with Manager
Columns: 6100; 6200. Rows: Hypervisor Manager; DVS
§ ESXi: 1 vCenter per UCS domain, 1 DVS per vCenter; 1 vCenter per UCS domain, 4 DVS per vCenter
§ Hyper-V: 1 MMC Instance; 5 DVS per MMC Instance
§ KVM: N/A; 1 DVS per UCS Domain
§ Port Profile per UCS Domain; Dynamic Ports per port profile; Dynamic Ports per DVS: N/A; 512; 4096

UCS General Baseline #6: Publishing Port Profiles
Exporting Port Profiles to the Hypervisor Manager
§ Publish Port Profiles to Hypervisors and virtual switches within

VM-FEX Implementation with VMware on UCS

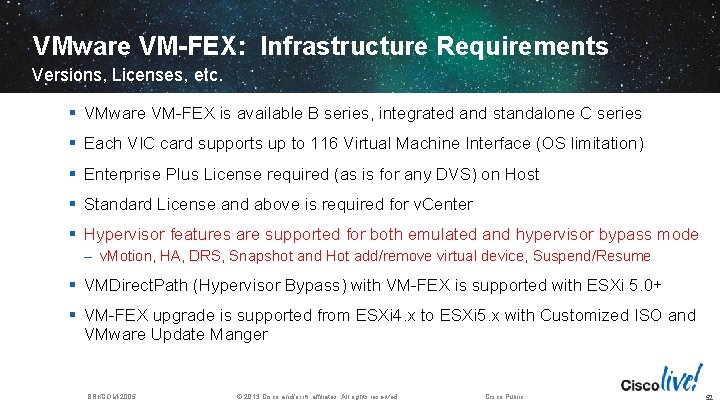

VMware VM-FEX: Infrastructure Requirements
Versions, Licenses, etc.
§ VMware VM-FEX is available on B series, integrated C series and standalone C series
§ Each VIC card supports up to 116 Virtual Machine interfaces (OS limitation)
§ Enterprise Plus License required on the host (as for any DVS)
§ Standard License or above is required for vCenter
§ Hypervisor features are supported for both emulated and hypervisor bypass mode
– vMotion, HA, DRS, Snapshot, hot add/remove of virtual devices, Suspend/Resume
§ VMDirectPath (Hypervisor Bypass) with VM-FEX is supported with ESXi 5.0+
§ VM-FEX upgrade is supported from ESXi 4.x to ESXi 5.x with a customized ISO and VMware Update Manager

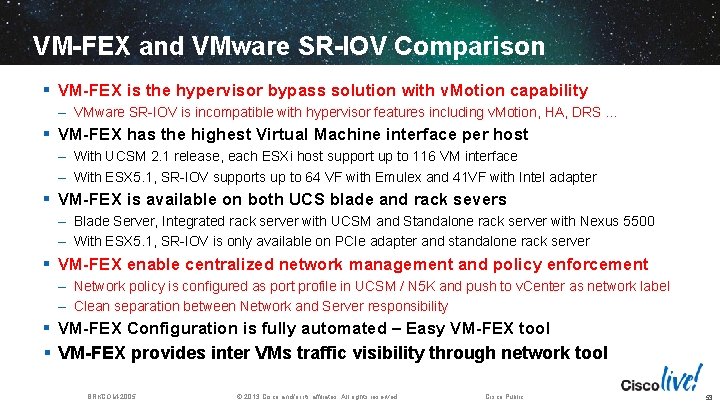

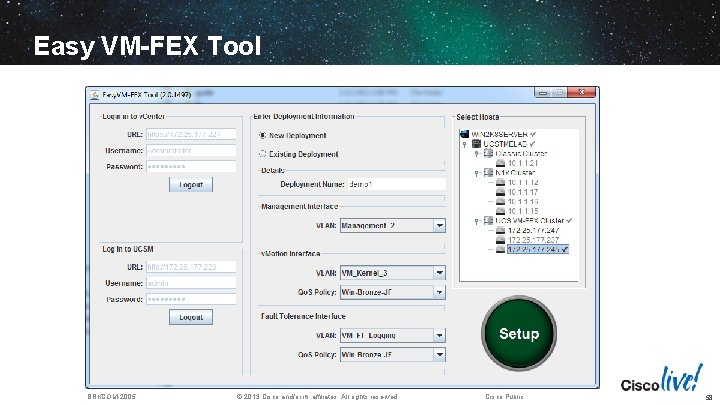

VM-FEX and VMware SR-IOV Comparison
§ VM-FEX is the hypervisor bypass solution with vMotion capability
– VMware SR-IOV is incompatible with hypervisor features including vMotion, HA, DRS …
§ VM-FEX has the highest number of Virtual Machine interfaces per host
– With the UCSM 2.1 release, each ESXi host supports up to 116 VM interfaces
– With ESX 5.1, SR-IOV supports up to 64 VFs with an Emulex adapter and 41 VFs with an Intel adapter
§ VM-FEX is available on both UCS blade and rack servers
– Blade server, integrated rack server with UCSM and standalone rack server with Nexus 5500
– With ESX 5.1, SR-IOV is only available on PCIe adapters and standalone rack servers
§ VM-FEX enables centralized network management and policy enforcement
– Network policy is configured as a port profile in UCSM / N5K and pushed to vCenter as a network label
– Clean separation between Network and Server responsibility
§ VM-FEX configuration is fully automated
– Easy VM-FEX tool
§ VM-FEX provides inter-VM traffic visibility through network tools

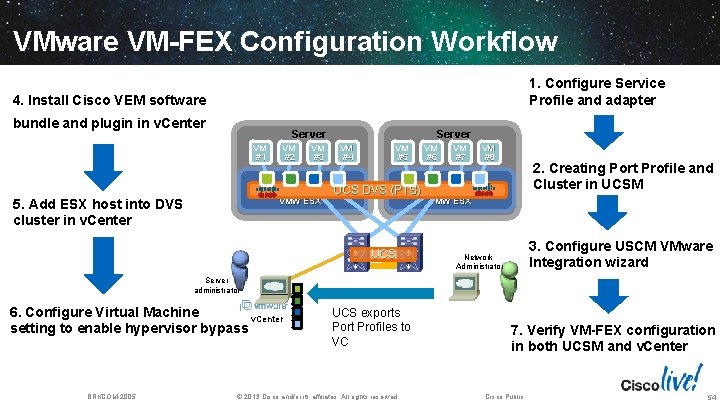

VMware VM-FEX Configuration Workflow
1. Configure Service Profile and adapter
2. Create Port Profile and Cluster in UCSM
3. Configure UCSM VMware Integration wizard
4. Install Cisco VEM software bundle and plugin in vCenter
5. Add ESX host into DVS cluster in vCenter
6. Configure Virtual Machine setting in vCenter to enable hypervisor bypass
7. Verify VM-FEX configuration in both UCSM and vCenter
Diagram labels: Network Administrator; Server administrator; UCS exports Port Profiles to VC; UCS DVS (PTS); two VMW ESX servers hosting VM #1 to VM #8

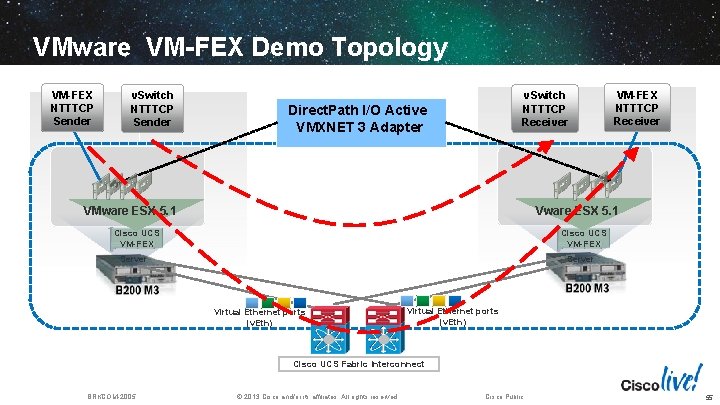

VMware VM-FEX Demo Topology
Diagram labels: VM-FEX NTTTCP Sender; vSwitch NTTTCP Receiver; VM-FEX NTTTCP Receiver; DirectPath I/O Active; VMXNET3 Adapter; two VMware ESX 5.1 hosts; Cisco UCS VM-FEX Server; Virtual Ethernet ports (vEth); Cisco UCS Fabric Interconnect

VMware VM-FEX Demo

VMware VM-FEX Best Practice
§ Pre-provision the number of dynamic vNICs for future usage
– Changing the quantity or the adapter policy requires a server reboot
§ Select "High Performance Mode" in the Port Profile to enable hypervisor bypass
§ Utilize the ESX native VMXNET3 driver
– User configurable parameters including queue, interrupt and ring size through policy
– Recommend Num(vCPU) = Num(TQ) = Num(RQ) to enable DirectPath I/O
§ Other considerations when deploying VM-FEX
– ESX heap memory size: MTU size
– ESX available interrupt vectors: Guest OS and adapter policy
– Dedicated spreadsheet for VM-FEX calculation and sizing
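The queue recommendation above reduces to a simple equality check that could be run against planned VM and adapter-policy settings before deployment. The function below is a hypothetical helper, not part of any Cisco or VMware tool, and it checks only this one recommendation; it does not model heap memory or interrupt vector limits.

```python
def queues_match_vcpus(num_vcpu: int, num_tq: int, num_rq: int) -> bool:
    """Check the recommendation Num(vCPU) = Num(TQ) = Num(RQ).

    num_tq and num_rq are the transmit and receive queue counts
    from the adapter policy; num_vcpu is the VM's vCPU count.
    """
    return num_vcpu == num_tq == num_rq
```

A VM with 4 vCPUs and a policy of 4 transmit and 4 receive queues satisfies the check; the same VM with 8 receive queues does not.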

Easy VM-FEX Tool

VM-FEX Implementation with Nexus 5K

Nexus VM-FEX System View: Deploying on a UCS C Series with Nexus 5500 Infrastructure
[Diagram: Nexus 55xx A and B (port profiles, Virtual Interface Control Logic, veth and vfc interfaces, Mgmt and Fibre Channel uplink ports, Nexus 55xx physical ports, vPC connections) connect over VN-Tag at 10 GbE internal connections to 2232 FEX A and B (fabric ports, server ports). The FEXes connect to the Cisco adapter (VIC CPU, vHBA 0, vHBA 1, vNIC 1(s), vNIC 2(s), CIMC, KVM etc.) in a UCS C Series server running the ESX Kernel Pass Through Module.]

Nexus VM-FEX System View: Deploying on a UCS C Series with Nexus 5500 Infrastructure
[Diagram: same topology as the previous view, with dynamic vNICs added. Nexus 55xx A and B connect over VN-Tag at 10 GbE internal connections to 2232 FEX A and B; vPC connections are shown, with the note "veth's not a vPC at FCS". The Cisco adapter (VIC CPU, vHBA 0, vHBA 1, vNIC 1(s), vNIC 2(s), CIMC, KVM etc.) now also presents d-vNIC 1 to d-vNIC 4 to the ESX Kernel Pass Through Module; Kernel and Service Console are shown on the UCS C Series server.]

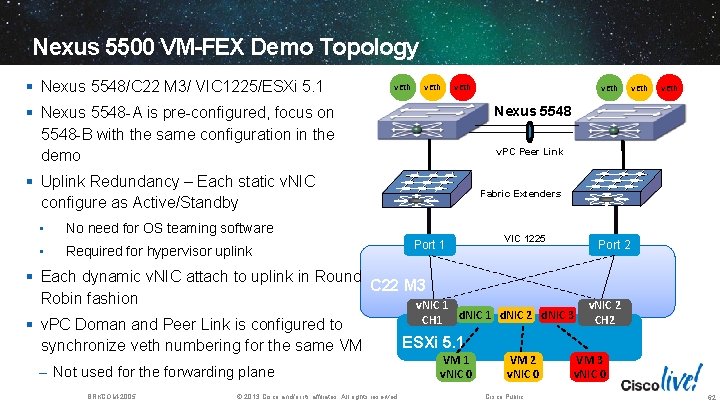

Nexus 5500 VM-FEX Demo Topology
§ Nexus 5548 / C22 M3 / VIC 1225 / ESXi 5.1
§ Each dynamic vNIC attaches to an uplink in round-robin fashion
§ vPC Domain and Peer Link are configured to synchronize veth numbering for the same VM
§ Uplink Redundancy
– Each static vNIC configured as Active/Standby
• No need for OS teaming software
• Required for hypervisor uplink, not used for the forwarding plane
§ Nexus 5548-A is pre-configured; the demo focuses on 5548-B with the same configuration
Diagram labels: Nexus 5548 pair with vPC Peer Link; Fabric Extenders; vEth; VIC 1225 (Port 1 / CH 1, Port 2 / CH 2); C22 M3; vNIC 1; vNIC 2; dNIC 1 to dNIC 3; ESXi 5.1; VM 1 to VM 3 (vNIC 0 each)

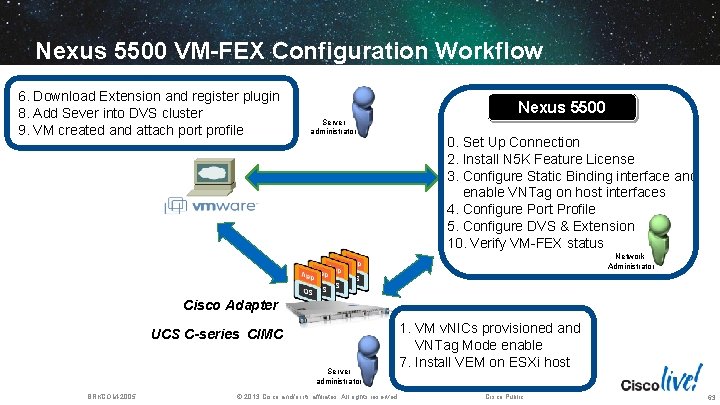

Nexus 5500 VM-FEX Configuration Workflow
0. Set up connection
1. VM vNICs provisioned and VNTag mode enabled
2. Install N5K feature license
3. Configure static binding interface and enable VNTag on host interfaces
4. Configure Port Profile
5. Configure DVS & Extension
6. Download extension and register plugin
7. Install VEM on ESXi host
8. Add server into DVS cluster
9. VM created and port profile attached
10. Verify VM-FEX status
Diagram labels: Network Administrator; Server administrator; Nexus 5500; Cisco Adapter; UCS C-series CIMC

Nexus 5K VM-FEX Demo

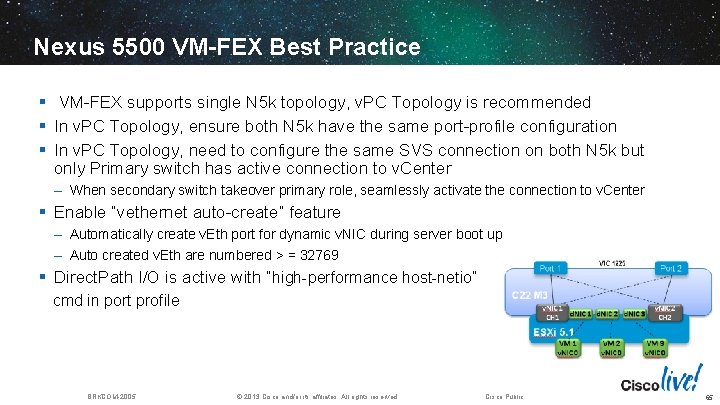

Nexus 5500 VM-FEX Best Practice
§ VM-FEX supports a single N5k topology; vPC topology is recommended
§ In a vPC topology, ensure both N5ks have the same port-profile configuration
§ In a vPC topology, configure the same SVS connection on both N5ks; only the primary switch has an active connection to vCenter
– When the secondary switch takes over the primary role, it seamlessly activates the connection to vCenter
§ Enable the "vethernet auto-create" feature
– Automatically creates a vEth port for each dynamic vNIC during server boot-up
– Auto-created vEths are numbered >= 32769
§ DirectPath I/O is active with the "high-performance host-netio" command in the port profile

VM-FEX Implementation with Hyper-V on UCS

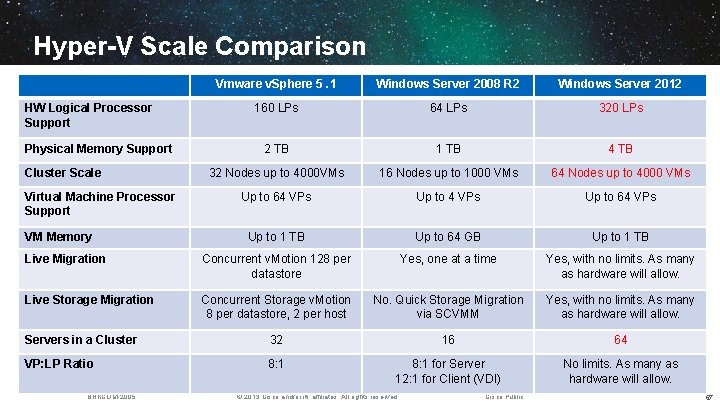

Hyper-V Scale Comparison
Columns: VMware vSphere 5.1; Windows Server 2008 R2; Windows Server 2012
§ HW Logical Processor Support: 160 LPs; 64 LPs; 320 LPs
§ Physical Memory Support: 2 TB; 1 TB; 4 TB
§ Cluster Scale: 32 nodes, up to 4000 VMs; 16 nodes, up to 1000 VMs; 64 nodes, up to 4000 VMs
§ Virtual Machine Processor Support: Up to 64 VPs
§ VM Memory: Up to 1 TB; Up to 64 GB; Up to 1 TB
§ Live Migration: Concurrent vMotion, 128 per datastore; Yes, one at a time; Yes, with no limits. As many as hardware will allow.
§ Live Storage Migration: Concurrent Storage vMotion, 8 per datastore, 2 per host; No. Quick Storage Migration via SCVMM; Yes, with no limits. As many as hardware will allow.
§ Servers in a Cluster: 32; 16; 64
§ VP:LP Ratio: 8:1 for Server, 12:1 for Client (VDI); No limits. As many as hardware will allow.

SR-IOV Overview
Diagram: two Hyper-V hosts compared side by side.
– Network I/O path without SR-IOV: Virtual Machine (Virtual NIC), VMBus, Root Partition (Hyper-V switching, VLAN filtering, data copy), Physical NIC
– Network I/O path with SR-IOV: Virtual Machine (Virtual Function), SR-IOV Physical NIC (Physical Function), Root Partition (Hyper-V switching, VLAN filtering, data copy)

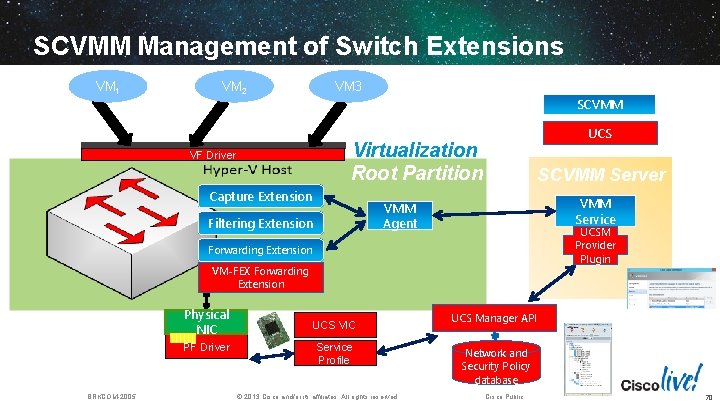

Hyper-V Extensible Switch Architecture
§ The Hyper-V extensible switch architecture is an open API model that enhances vSwitch features
§ Three types of extensions are defined by Hyper-V
– Capture Extension
– Filtering Extension (Windows Filtering Platform)
– Forwarding Extension (VM-FEX)
§ Multiple extensions are allowed
– Compatibility still needs to be verified with the vendor
– Several extensions are incompatible with WFP
§ Extension state is unique for each vSwitch
– Leverage SCVMM to centrally configure extensions
§ Cisco also provides both PF and VF drivers
Diagram: Virtual Machines (VM NIC, VF Driver) and Root Partition (Host NIC) attach to the Hyper-V Switch, whose extension stack runs from the Extension Protocol through Capture Extensions, Filtering (WFP) Extensions, and the VM-FEX Forwarding Extension to the Extension Miniport, above the Physical NIC with its PF Driver.

SCVMM Management of Switch Extensions
Diagram: VM 1, VM 2, and VM 3 (VF Driver) run beside the Root Partition, which hosts the Capture Extension, Filtering Extension, VM-FEX Forwarding Extension, and the PF Driver for the Physical NIC (UCS VIC). The SCVMM Server (VMM Service, VMM Agent) uses the UCSM Provider Plugin to reach the UCS Manager API, which fronts the Service Profile and the network and security policy database.

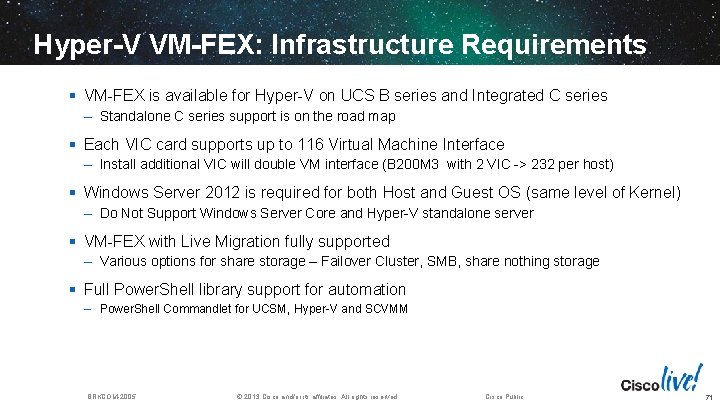

Hyper-V VM-FEX: Infrastructure Requirements
§ VM-FEX is available for Hyper-V on UCS B-series and integrated C-series
– Standalone C-series support is on the roadmap
§ Each VIC card supports up to 116 virtual machine interfaces
– Installing an additional VIC doubles the VM interface count (B200 M3 with 2 VICs -> 232 per host)
§ Windows Server 2012 is required for both host and guest OS (same kernel level)
– Windows Server Core and standalone Hyper-V Server are not supported
§ VM-FEX with Live Migration is fully supported
– Various options for shared storage: Failover Cluster, SMB, shared-nothing storage
§ Full PowerShell library support for automation
– PowerShell cmdlets for UCSM, Hyper-V, and SCVMM
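The per-host interface arithmetic on this slide is simple enough to check in code. The sketch below uses only the 116-per-VIC figure from the slide; the function names and the host-count helper are illustrative additions for capacity planning, not part of any Cisco tool.

```python
import math

# From the slide: each VIC supports up to 116 VM interfaces.
VM_INTERFACES_PER_VIC = 116


def max_vm_interfaces(vic_count: int) -> int:
    """Upper bound on VM interfaces for a host with the given number of VICs."""
    if vic_count < 0:
        raise ValueError("vic_count must be non-negative")
    return vic_count * VM_INTERFACES_PER_VIC


def hosts_needed(total_vm_interfaces: int, vics_per_host: int) -> int:
    """Minimum number of hosts needed to provide the requested VM interfaces."""
    per_host = max_vm_interfaces(vics_per_host)
    if per_host == 0:
        raise ValueError("each host needs at least one VIC")
    return math.ceil(total_vm_interfaces / per_host)


print(max_vm_interfaces(2))   # B200 M3 with 2 VICs
print(hosts_needed(1000, 2))  # hypothetical sizing question
```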

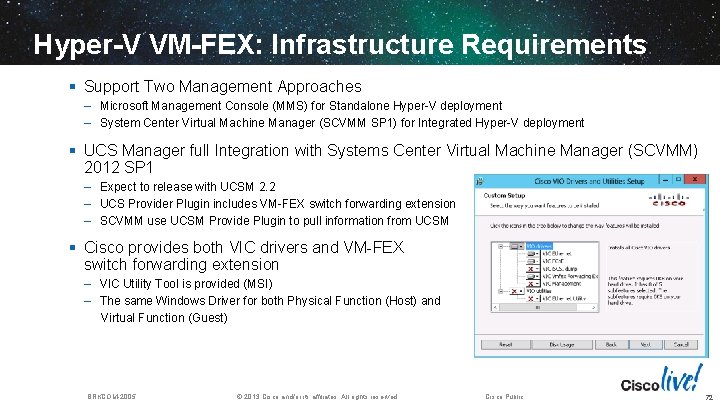

Hyper-V VM-FEX: Infrastructure Requirements
§ Supports two management approaches
– Microsoft Management Console (MMC) for standalone Hyper-V deployment
– System Center Virtual Machine Manager (SCVMM SP1) for integrated Hyper-V deployment
§ UCS Manager full integration with System Center Virtual Machine Manager (SCVMM) 2012 SP1
– Expected to release with UCSM 2.2
– UCS Provider Plugin includes the VM-FEX switch forwarding extension
– SCVMM uses the UCSM Provider Plugin to pull information from UCSM
§ Cisco provides both VIC drivers and the VM-FEX switch forwarding extension
– VIC Utility Tool is provided (MSI)
– The same Windows driver serves both Physical Function (host) and Virtual Function (guest)

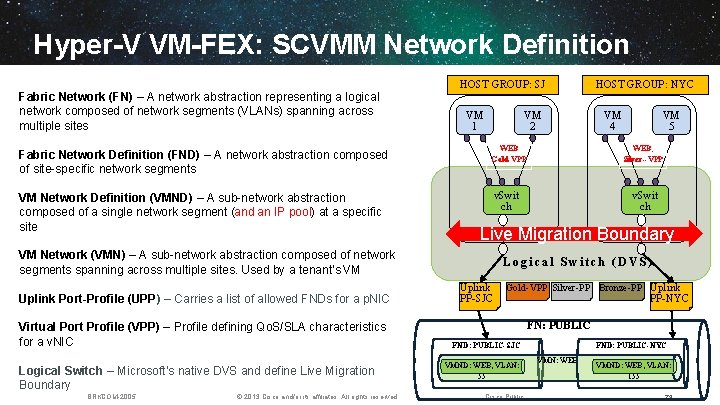

Hyper-V VM-FEX: SCVMM Network Definition
§ Fabric Network (FN) – A network abstraction representing a logical network composed of network segments (VLANs) spanning multiple sites
§ Fabric Network Definition (FND) – A network abstraction composed of site-specific network segments
§ VM Network Definition (VMND) – A sub-network abstraction composed of a single network segment (and an IP pool) at a specific site
§ VM Network (VMN) – A sub-network abstraction composed of network segments spanning multiple sites; used by a tenant’s VMs
§ Virtual Port Profile (VPP) – Profile defining QoS/SLA characteristics for a vNIC
§ Uplink Port Profile (UPP) – Carries a list of allowed FNDs for a pNIC
§ Logical Switch – Microsoft’s native DVS; defines the Live Migration boundary
Diagram: host groups SJ and NYC attach to one Logical Switch (DVS) through Uplink PP-SJC and Uplink PP-NYC, with virtual port profiles Gold-VPP, Silver-PP, and Bronze-PP. FN PUBLIC contains FND PUBLIC-SJC (VMND WEB, VLAN 55) and FND PUBLIC-NYC (VMND WEB, VLAN 155); VMN WEB spans both. VMs attach as, for example, WEB with Gold-VPP or WEB with Silver-VPP, and the vSwitch marks the Live Migration boundary.
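One way to read these definitions is as a small hierarchy: an FN holds site-specific FNDs, each FND holds VMNDs, and an uplink port profile decides which FNDs a host can reach. The Python sketch below models that reading with the names from the slide's example. The class and function names are hypothetical, and the pairing of Uplink PP-SJC with PUBLIC-SJC (and NYC likewise) is an assumption drawn from the diagram labels; this is not the SCVMM object model.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class VMNetworkDefinition:       # VMND: a single segment at a specific site
    name: str
    vlan: int


@dataclass
class FabricNetworkDefinition:   # FND: site-specific network segments
    name: str
    site: str
    vmnds: List[VMNetworkDefinition] = field(default_factory=list)


@dataclass
class FabricNetwork:             # FN: logical network spanning sites
    name: str
    fnds: List[FabricNetworkDefinition] = field(default_factory=list)


@dataclass
class UplinkPortProfile:         # UPP: list of allowed FNDs for a pNIC
    name: str
    allowed_fnds: List[str]


def vlan_for(fn: FabricNetwork, upp: UplinkPortProfile,
             vm_network: str) -> Optional[int]:
    """Resolve the VLAN a VM network maps to behind a given uplink profile."""
    for fnd in fn.fnds:
        if fnd.name not in upp.allowed_fnds:
            continue
        for vmnd in fnd.vmnds:
            if vmnd.name == vm_network:
                return vmnd.vlan
    return None


# Example values taken from the slide's diagram labels.
public = FabricNetwork("PUBLIC", [
    FabricNetworkDefinition("PUBLIC-SJC", "SJ", [VMNetworkDefinition("WEB", 55)]),
    FabricNetworkDefinition("PUBLIC-NYC", "NYC", [VMNetworkDefinition("WEB", 155)]),
])
uplink_sjc = UplinkPortProfile("Uplink PP-SJC", ["PUBLIC-SJC"])
uplink_nyc = UplinkPortProfile("Uplink PP-NYC", ["PUBLIC-NYC"])

print(vlan_for(public, uplink_sjc, "WEB"))
print(vlan_for(public, uplink_nyc, "WEB"))
```

The same VM network name resolves to a different VLAN per site, which is the point of separating the VM Network from its site-specific definitions.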

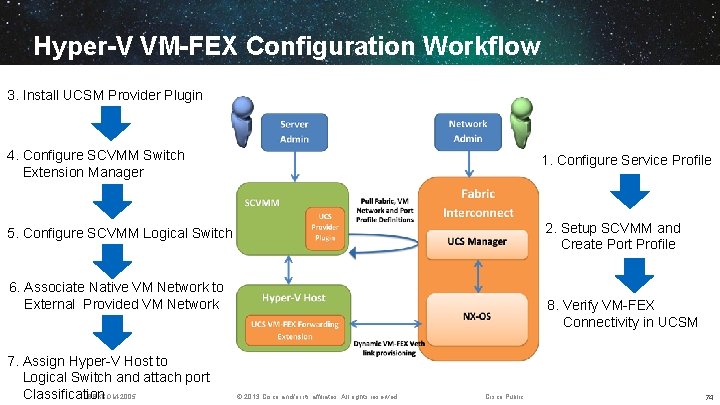

Hyper-V VM-FEX Configuration Workflow
1. Configure Service Profile
2. Set up SCVMM and create Port Profile
3. Install UCSM Provider Plugin
4. Configure SCVMM Switch Extension Manager
5. Configure SCVMM Logical Switch
6. Associate native VM Network to externally provided VM Network
7. Assign Hyper-V host to Logical Switch and attach port classification
8. Verify VM-FEX connectivity in UCSM

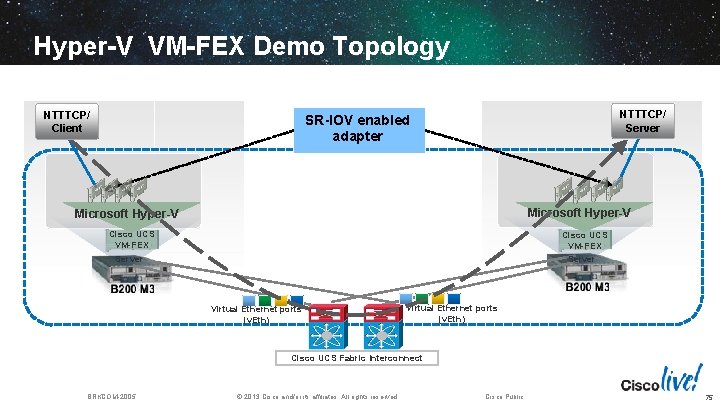

Hyper-V VM-FEX Demo Topology
Diagram: an NTttcp client and an NTttcp server run on Microsoft Hyper-V on a Cisco UCS VM-FEX server with an SR-IOV enabled adapter, connected to virtual Ethernet ports (vEth) on the Cisco UCS Fabric Interconnect.

Hyper-V VM-FEX Demo

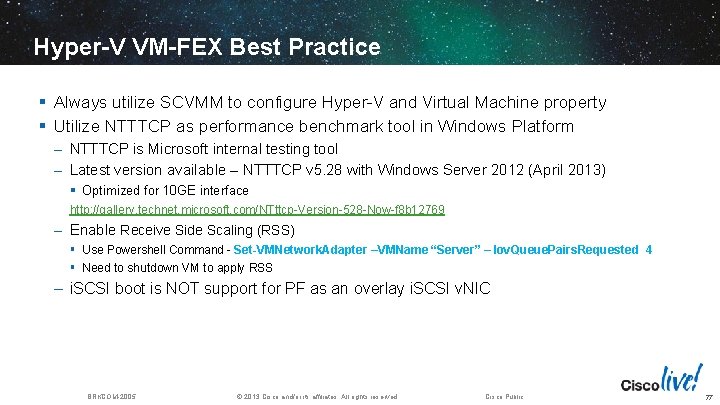

Hyper-V VM-FEX Best Practice
§ Always use SCVMM to configure Hyper-V and virtual machine properties
§ Use NTttcp as the performance benchmark tool on the Windows platform
– NTttcp is a Microsoft internal testing tool
– Latest version available: NTttcp v5.28 with Windows Server 2012 (April 2013), optimized for 10 GE interfaces: http://gallery.technet.microsoft.com/NTttcp-Version-528-Now-f8b12769
– Enable Receive Side Scaling (RSS) with the PowerShell command Set-VMNetworkAdapter -VMName "Server" -IovQueuePairsRequested 4
– The VM must be shut down to apply RSS
§ iSCSI boot is NOT supported for PF as an overlay iSCSI vNIC

VM-FEX Implementation with KVM on UCS

RHEL KVM VM-FEX: Infrastructure Requirements
§ VM-FEX is available for KVM on UCS B-series and integrated C-series
– Standalone C-series support is on the roadmap
§ Each VIC card supports up to 116 virtual machine interfaces
– Installing an additional VIC doubles the VM interface count (B200 M3 with 2 VICs -> 232 per host)
§ UCS Manager release 2.1 is required to support SR-IOV in KVM
§ Install Red Hat as the virtualization host
– RHEL 6.2 for VM-FEX emulation mode (SR-IOV with MacVTap)
– RHEL 6.3 for VM-FEX hypervisor bypass mode (SR-IOV with PCI passthrough)
– MacVTap Direct (Private) mode is no longer supported with UCSM release 2.1
§ Live migration is supported only in emulation mode
§ Guest operating system RHEL 6.3 is required to support SR-IOV with PCI passthrough
– RHEL 6.3 inbox driver supports SR-IOV with PCI passthrough
§ Scripted nature of configuration at FCS
– No current RHEV-M for RHEL KVM 6.x
§ Virtual machine interfaces are managed by editing the VM domain XML file
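The mode constraints above (which RHEL release pairs with which mode, and which mode keeps live migration) can be captured as a small lookup. The sketch below is illustrative only: the names are hypothetical, and treating the RHEL release listed for each mode as a minimum host release is an assumption the slide does not state explicitly.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class KvmVmFexMode:
    name: str
    host_rhel: Tuple[int, int]  # RHEL release the slide pairs with this mode
    attachment: str
    live_migration: bool        # slide: live migration only in emulation mode


MODES = [
    KvmVmFexMode("emulation", (6, 2), "SR-IOV with MacVTap", True),
    KvmVmFexMode("hypervisor bypass", (6, 3), "SR-IOV with PCI passthrough", False),
]


def usable_modes(host_rhel: Tuple[int, int],
                 need_live_migration: bool = False) -> List[str]:
    """Names of modes usable on a host, assuming listed releases are minimums."""
    return [
        mode.name for mode in MODES
        if host_rhel >= mode.host_rhel
        and (mode.live_migration or not need_live_migration)
    ]


print(usable_modes((6, 3)))
print(usable_modes((6, 3), need_live_migration=True))
```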

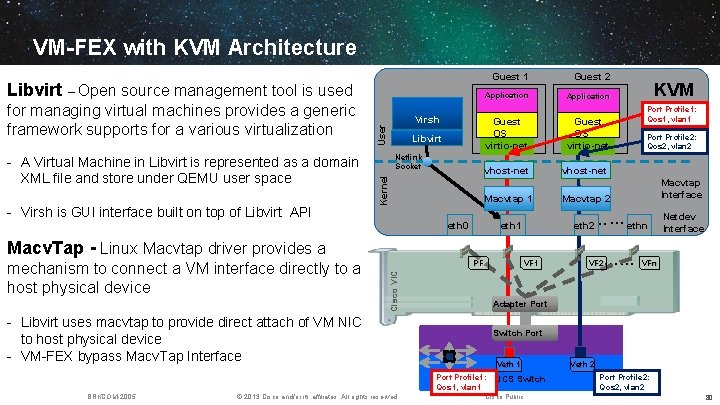

VM-FEX with KVM Architecture
§ Libvirt – Open source management tool for managing virtual machines; provides a generic framework that supports various virtualization technologies
– A virtual machine in Libvirt is represented as a domain XML file and stored under QEMU user space
– Virsh is a command-line interface built on top of the Libvirt API
§ MacVTap – The Linux macvtap driver provides a mechanism to connect a VM interface directly to a host physical device
– Libvirt uses macvtap to attach a VM NIC directly to a host physical device
Diagram: in user space, Virsh and Libvirt reach the kernel over a Netlink socket. Guests (application, guest OS, virtio-net) connect through vhost-net and Macvtap interfaces (Macvtap 1, Macvtap 2) to netdev interfaces (eth0, eth1, eth2 ... ethn) on the Cisco VIC (PF, VF1, VF2 ... VFn); a VM-FEX bypass path is also shown. Each adapter port maps to a switch port on the UCS switch: Veth1 with Port Profile 1 (QoS 1, VLAN 1) and Veth2 with Port Profile 2 (QoS 2, VLAN 2).

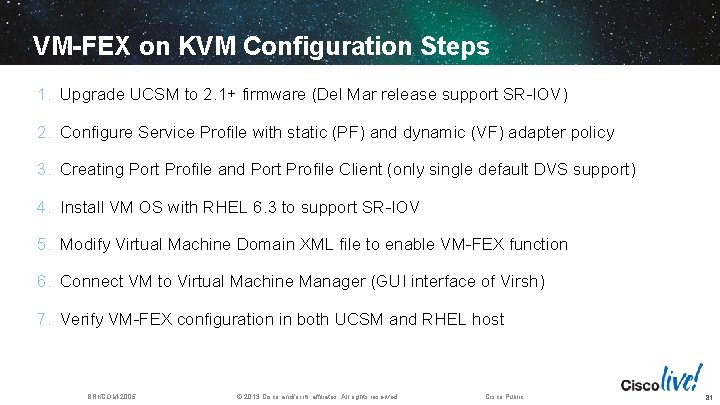

VM-FEX on KVM Configuration Steps
1. Upgrade UCSM to 2.1+ firmware (Del Mar release supports SR-IOV)
2. Configure Service Profile with static (PF) and dynamic (VF) adapter policy
3. Create Port Profile and Port Profile Client (only a single default DVS is supported)
4. Install VM OS with RHEL 6.3 to support SR-IOV
5. Modify the virtual machine domain XML file to enable VM-FEX
6. Connect the VM to Virtual Machine Manager (GUI front end for Libvirt)
7. Verify VM-FEX configuration in both UCSM and the RHEL host
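The domain XML edit in step 5 might look like the fragment generated below. This is a minimal sketch for emulation mode (macvtap), based on libvirt's documented `<interface type='direct'>` element with an `802.1Qbh` virtual port whose `profileid` names the port profile. The host device name, port-profile name, and MAC address are placeholders, and the exact element set Cisco recommends for a given release should be taken from the UCS VM-FEX for KVM configuration guide.

```python
import xml.etree.ElementTree as ET


def vmfex_interface_xml(host_dev: str, port_profile: str, mac: str) -> str:
    """Build a libvirt <interface> element for a macvtap-attached VM NIC.

    host_dev, port_profile, and mac are caller-supplied placeholders.
    """
    iface = ET.Element("interface", {"type": "direct"})
    ET.SubElement(iface, "mac", {"address": mac})
    # macvtap attachment to a host netdev; passthrough mode is assumed here
    ET.SubElement(iface, "source", {"dev": host_dev, "mode": "passthrough"})
    # 802.1Qbh virtual port carrying the port-profile name
    vport = ET.SubElement(iface, "virtualport", {"type": "802.1Qbh"})
    ET.SubElement(vport, "parameters", {"profileid": port_profile})
    ET.SubElement(iface, "model", {"type": "virtio"})
    return ET.tostring(iface, encoding="unicode")


# Hypothetical values for illustration only.
print(vmfex_interface_xml("eth1", "Gold-VPP", "52:54:00:aa:bb:01"))
```

The generated element would be placed inside the `<devices>` section of the domain XML, for example through `virsh edit`.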

KVM VM-FEX Demo

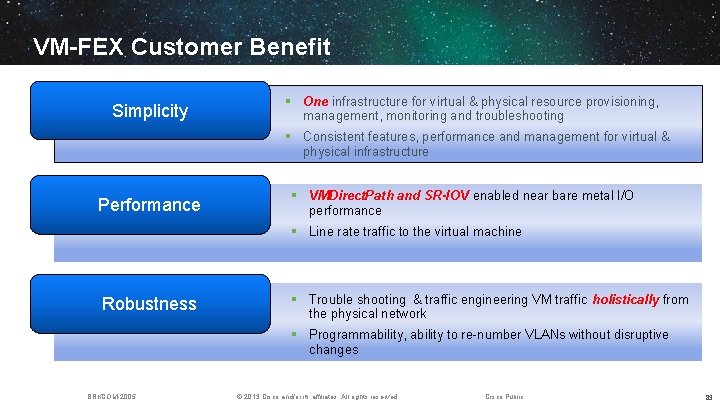

VM-FEX Customer Benefit
Simplicity
§ One infrastructure for virtual and physical resource provisioning, management, monitoring, and troubleshooting
§ Consistent features, performance, and management for virtual and physical infrastructure
Performance
§ VMDirectPath and SR-IOV enable near bare-metal I/O performance
§ Line-rate traffic to the virtual machine
Robustness
§ Troubleshooting and traffic engineering of VM traffic holistically from the physical network
§ Programmability, with the ability to renumber VLANs without disruptive changes

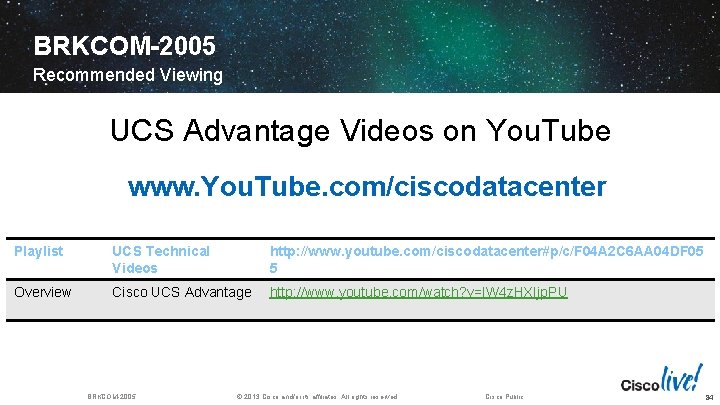

BRKCOM-2005 Recommended Viewing
UCS Advantage Videos on YouTube: www.YouTube.com/ciscodatacenter
§ Playlist, UCS Technical Videos: http://www.youtube.com/ciscodatacenter#p/c/F04A2C6AA04DF055
§ Overview, Cisco UCS Advantage: http://www.youtube.com/watch?v=IW4zHXIjpPU

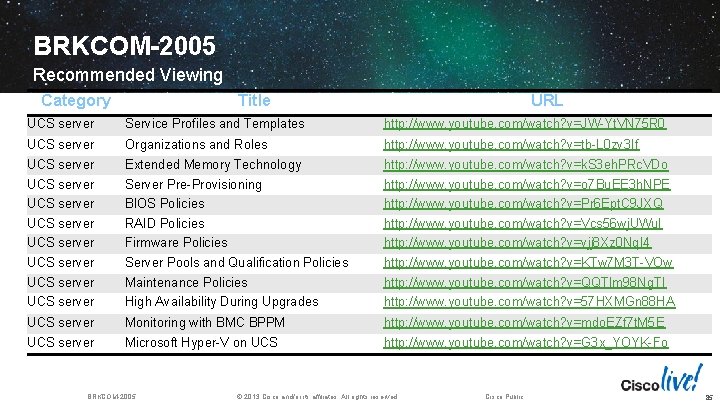

BRKCOM-2005 Recommended Viewing

| Category | Title | URL |
| --- | --- | --- |
| UCS server | Service Profiles and Templates | http://www.youtube.com/watch?v=JW-YtVN75R0 |
| UCS server | Organizations and Roles | http://www.youtube.com/watch?v=tb-L0zv3If |
| UCS server | Extended Memory Technology | http://www.youtube.com/watch?v=kS3ehPRcVDo |
| UCS server | Server Pre-Provisioning | http://www.youtube.com/watch?v=o7BuEE3hNPE |
| UCS server | BIOS Policies | http://www.youtube.com/watch?v=Pr6EptC9JXQ |
| UCS server | RAID Policies | http://www.youtube.com/watch?v=Vcs56wjUWuI |
| UCS server | Firmware Policies | http://www.youtube.com/watch?v=vjj8Xz0NqI4 |
| UCS server | Server Pools and Qualification Policies | http://www.youtube.com/watch?v=KTw7M3T-VOw |
| UCS server | Maintenance Policies | http://www.youtube.com/watch?v=QQTlm98NgTI |
| UCS server | High Availability During Upgrades | http://www.youtube.com/watch?v=57HXMGn88HA |
| UCS server | Monitoring with BMC BPPM | http://www.youtube.com/watch?v=mdoEZf7tM5E |
| UCS server | Microsoft Hyper-V on UCS | http://www.youtube.com/watch?v=G3x_YOYK-Fo |

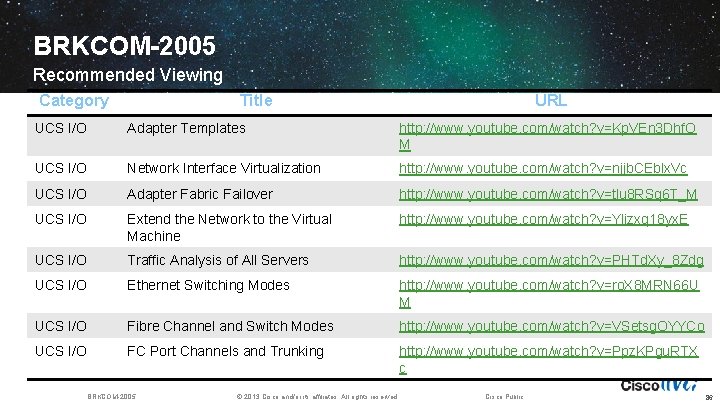

BRKCOM-2005 Recommended Viewing

| Category | Title | URL |
| --- | --- | --- |
| UCS I/O | Adapter Templates | http://www.youtube.com/watch?v=KpVEn3DhfOM |
| UCS I/O | Network Interface Virtualization | http://www.youtube.com/watch?v=njjbCEblxVc |
| UCS I/O | Adapter Fabric Failover | http://www.youtube.com/watch?v=tlu8RSq6T_M |
| UCS I/O | Extend the Network to the Virtual Machine | http://www.youtube.com/watch?v=Ylizxq18yxE |
| UCS I/O | Traffic Analysis of All Servers | http://www.youtube.com/watch?v=PHTdXy_8Zdg |
| UCS I/O | Ethernet Switching Modes | http://www.youtube.com/watch?v=roX8MRN66UM |
| UCS I/O | Fibre Channel and Switch Modes | http://www.youtube.com/watch?v=VSetsgOYYCo |
| UCS I/O | FC Port Channels and Trunking | http://www.youtube.com/watch?v=PpzKPguRTXc |

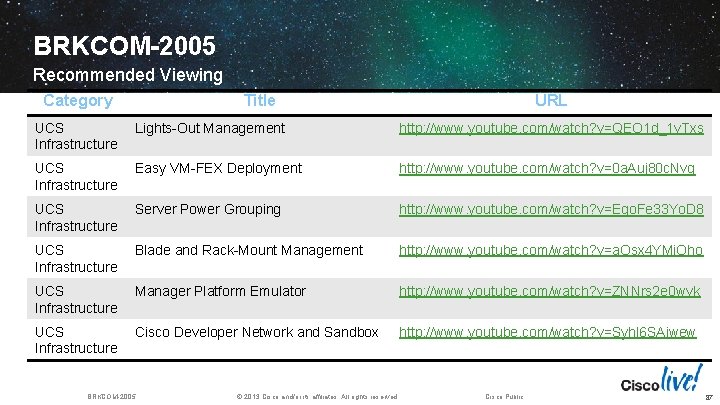

BRKCOM-2005 Recommended Viewing

| Category | Title | URL |
| --- | --- | --- |
| UCS Infrastructure | Lights-Out Management | http://www.youtube.com/watch?v=QEO1d_1vTxs |
| UCS Infrastructure | Easy VM-FEX Deployment | http://www.youtube.com/watch?v=0aAuj80cNvg |
| UCS Infrastructure | Server Power Grouping | http://www.youtube.com/watch?v=EgoFe33YoD8 |
| UCS Infrastructure | Blade and Rack-Mount Management | http://www.youtube.com/watch?v=aOsx4YMiOho |
| UCS Infrastructure | Manager Platform Emulator | http://www.youtube.com/watch?v=ZNNrs2e0wvk |
| UCS Infrastructure | Cisco Developer Network and Sandbox | http://www.youtube.com/watch?v=Syhl6SAiwew |


- Slides: 88