Parallel Computing 18: CUDA - I, Mohamed Zahran, NYU


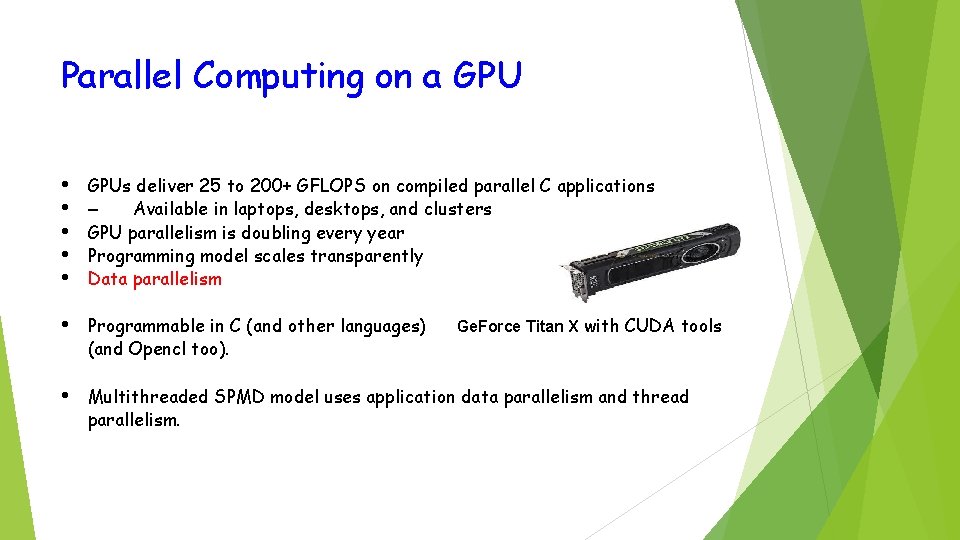

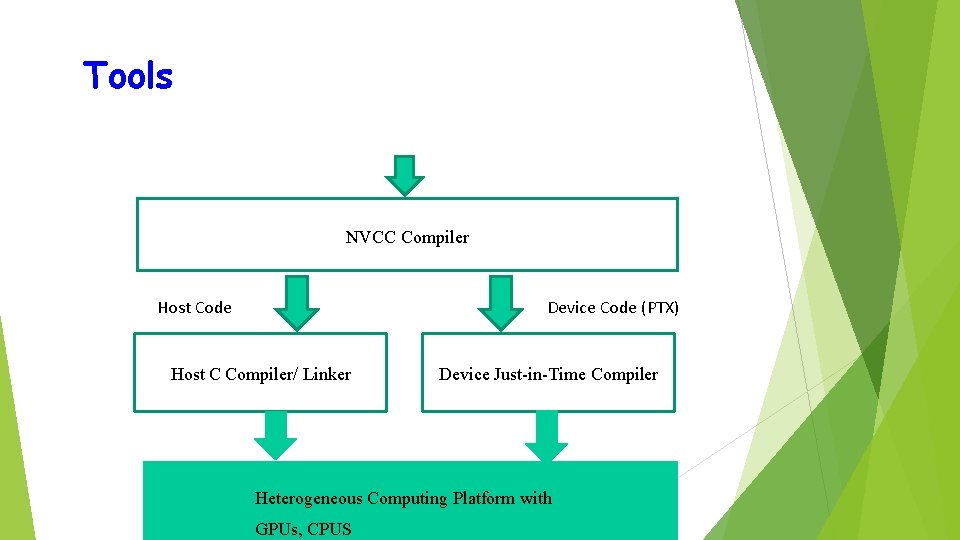

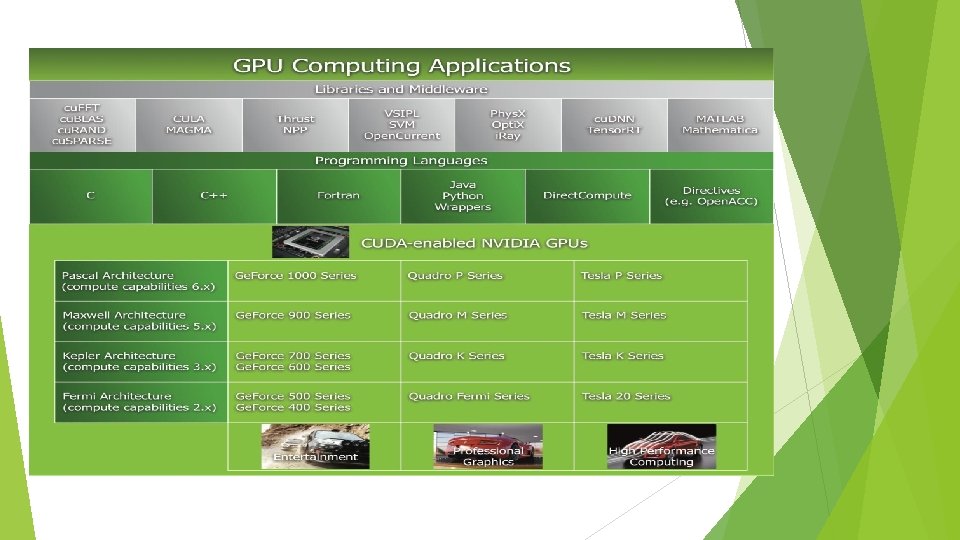

Parallel Computing on a GPU

- GPUs deliver 25 to 200+ GFLOPS on compiled parallel C applications
  - Available in laptops, desktops, and clusters
- GPU parallelism is doubling every year
- Programming model scales transparently
- Data parallelism
- Programmable in C (and other languages), and in OpenCL too
- Multithreaded SPMD model uses application data parallelism and thread parallelism

GeForce Titan X with CUDA tools

![SPMD Single Program Multiple Data Source NVIDIA CUDA C Programming [SPMD = Single Program Multiple Data] Source: NVIDIA CUDA C Programming](https://slidetodoc.com/presentation_image/0a4d184758b0736bff4c3b1c13d7b05d/image-3.jpg)

[SPMD = Single Program Multiple Data] Source: NVIDIA CUDA C Programming

Source: Multicore and GPU Programming: An Integrated Approach by G. Barlas

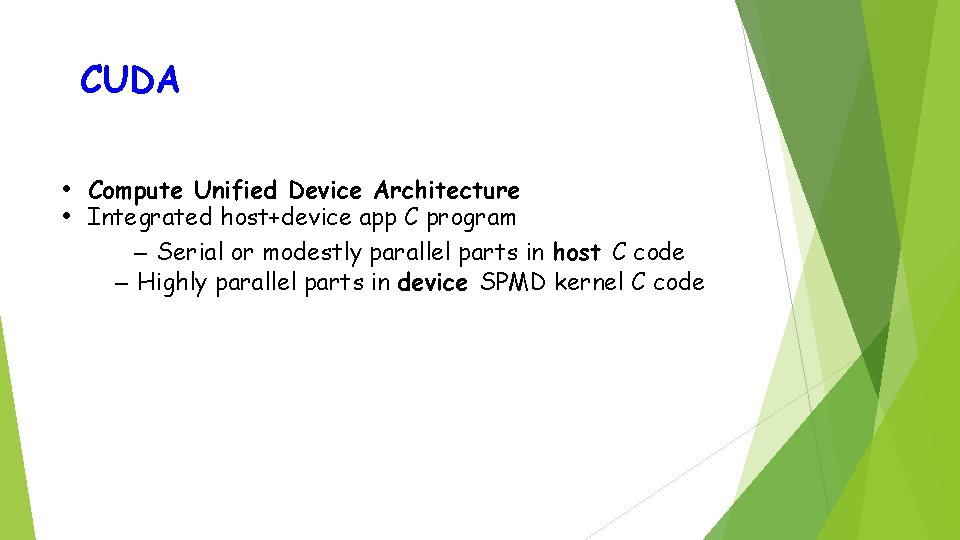

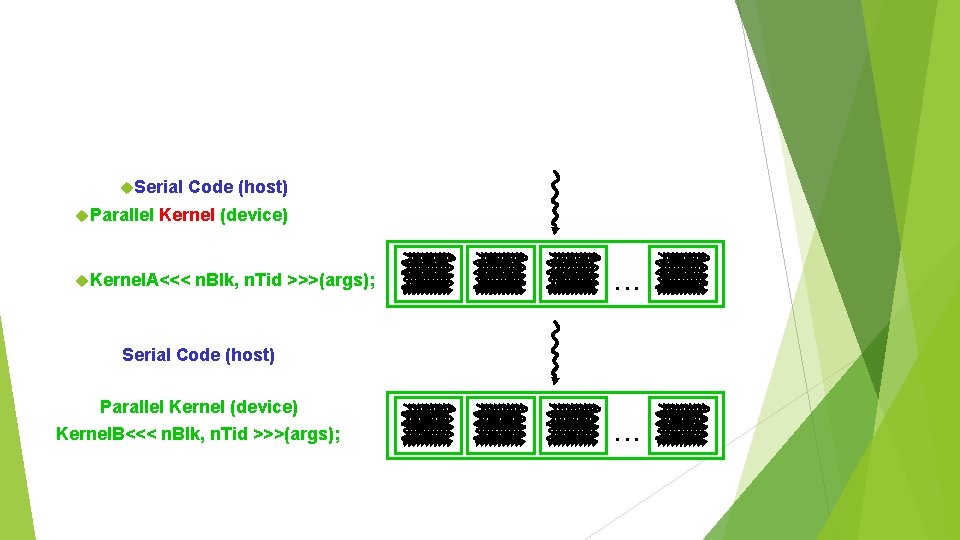

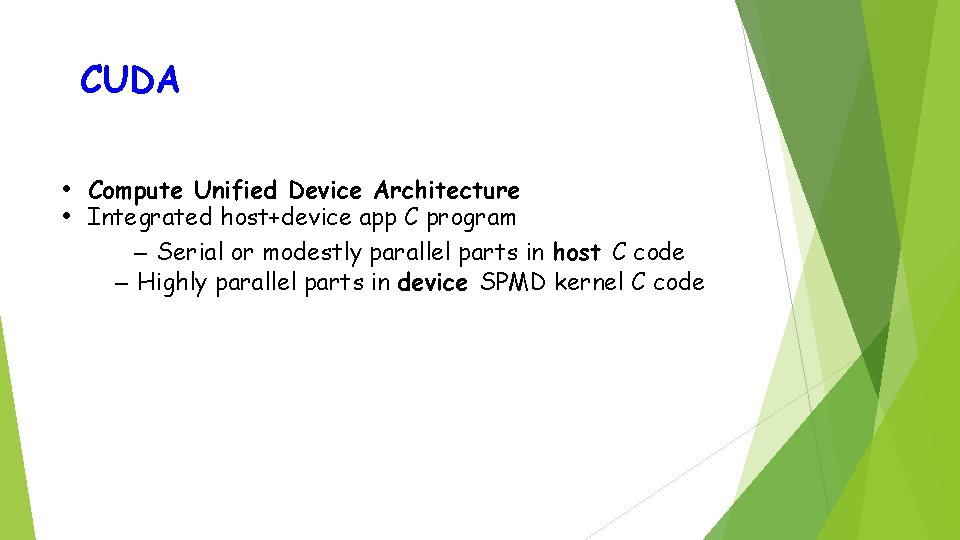

CUDA

- Compute Unified Device Architecture
- Integrated host+device app C program
  - Serial or modestly parallel parts in host C code
  - Highly parallel parts in device SPMD kernel C code

Execution alternates between the host and the device:

    Serial Code (host)
    Parallel Kernel (device)
    KernelA<<< nBlk, nTid >>>(args);
    . . .
    Serial Code (host)
    Parallel Kernel (device)
    KernelB<<< nBlk, nTid >>>(args);
    . . .

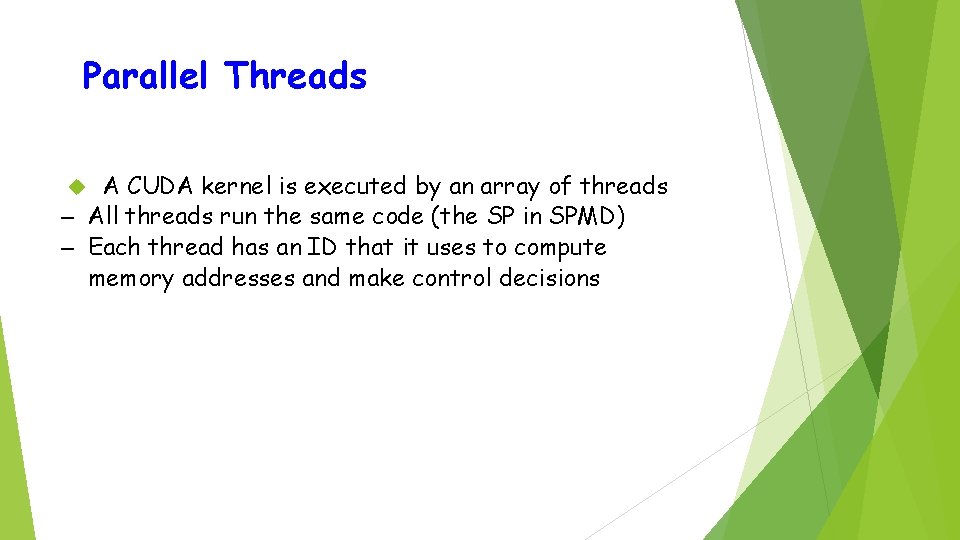

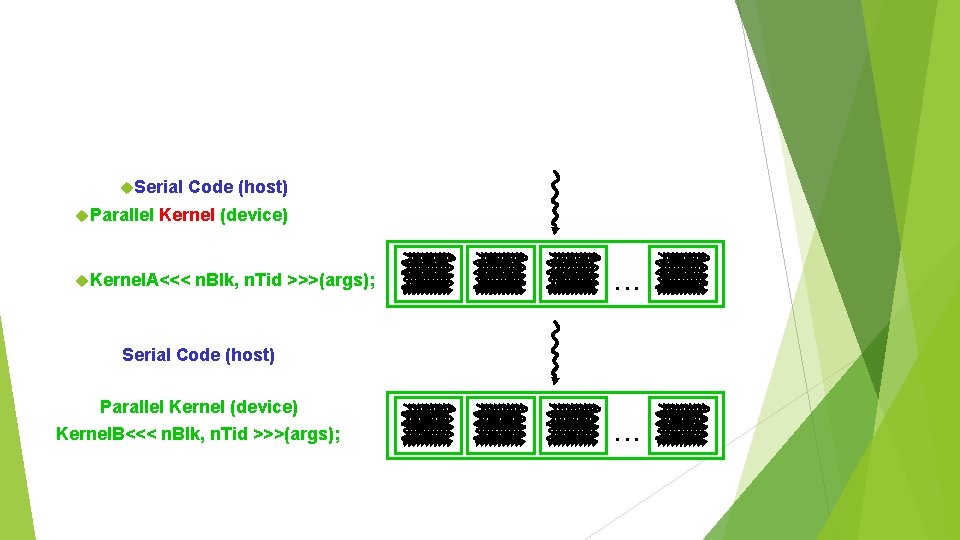

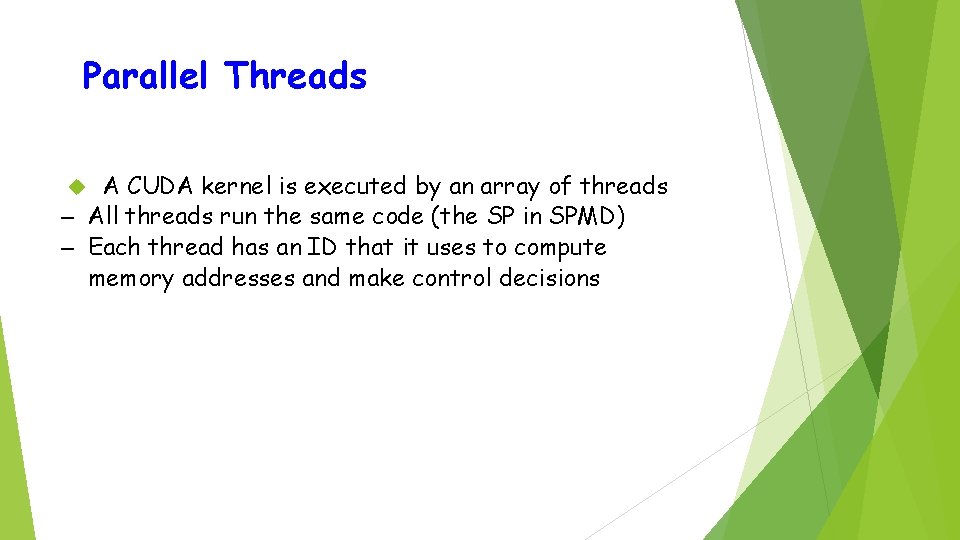

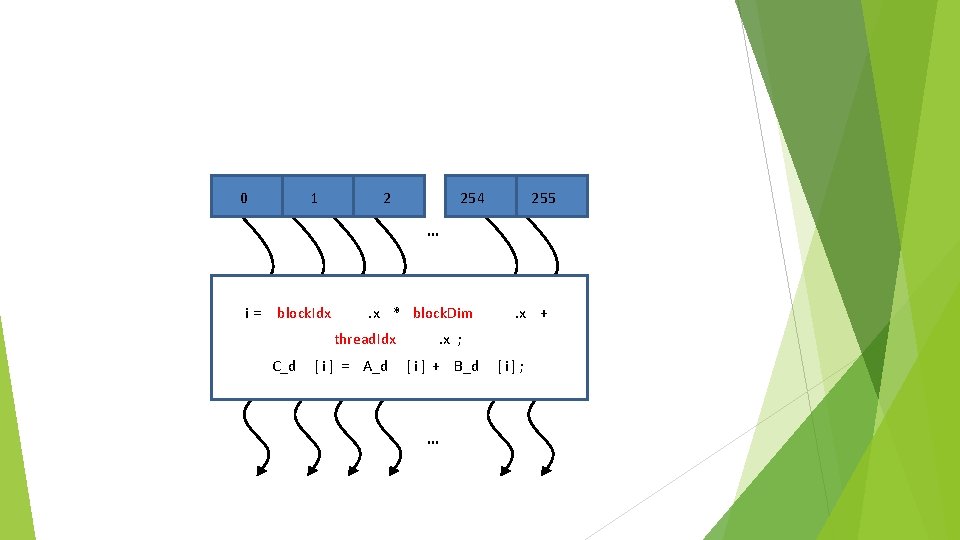

Parallel Threads

A CUDA kernel is executed by an array of threads.

- All threads run the same code (the SP in SPMD)
- Each thread has an ID that it uses to compute memory addresses and make control decisions

Threads 0, 1, 2, …, 254, 255 each execute:

    i = blockIdx.x * blockDim.x + threadIdx.x;
    C_d[i] = A_d[i] + B_d[i];

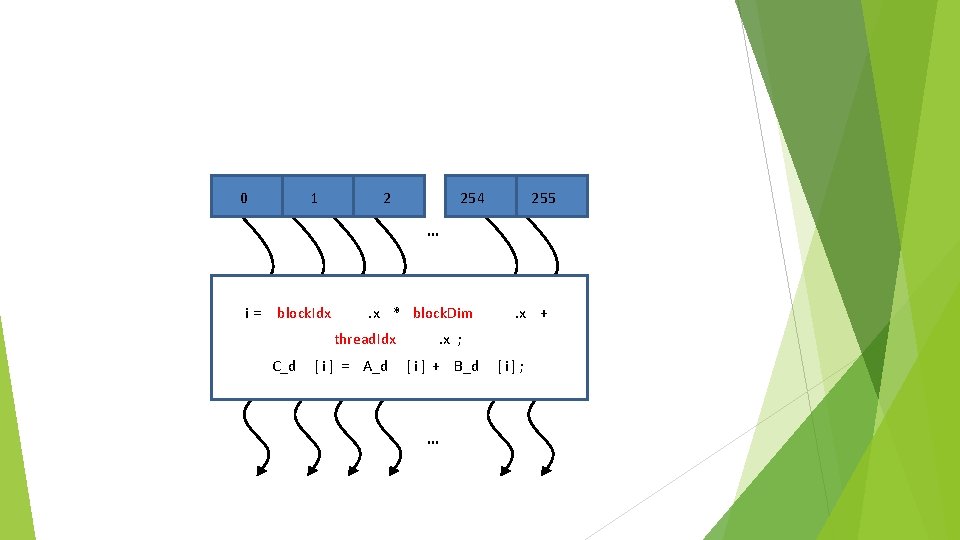

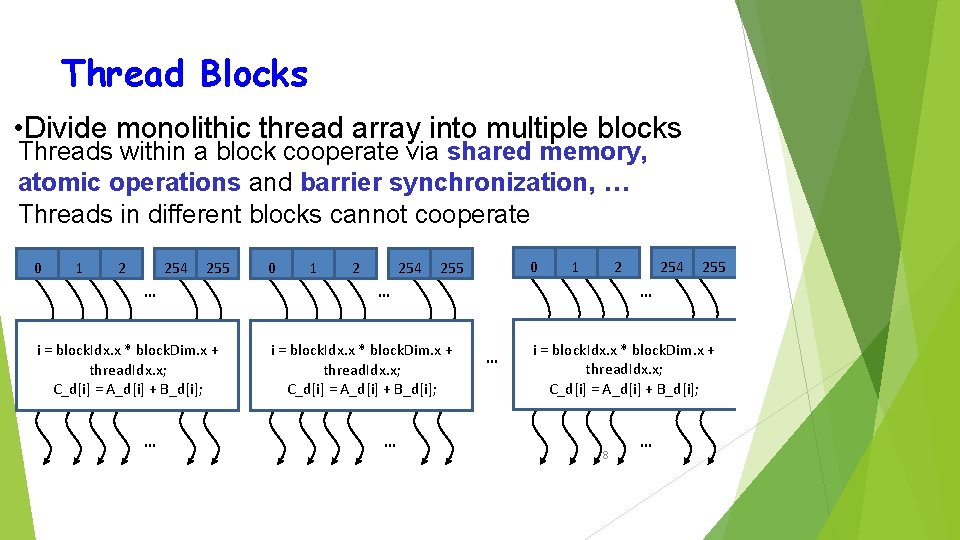

Thread Blocks

- Divide the monolithic thread array into multiple blocks
  - Threads within a block cooperate via shared memory, atomic operations, and barrier synchronization
  - Threads in different blocks cannot cooperate

Every block holds threads 0, 1, 2, …, 254, 255, and each thread in each block executes:

    i = blockIdx.x * blockDim.x + threadIdx.x;
    C_d[i] = A_d[i] + B_d[i];
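To make the cooperation inside a block concrete, here is a minimal sketch of a per-block sum that uses shared memory and barrier synchronization. The names `blockSumKernel`, `sumOnDevice`, and `THREADS_PER_BLOCK` are hypothetical and do not come from the slides, and the block size of 256 is an assumption that matches the figure. Because threads in different blocks cannot cooperate, the per-block results are combined on the host.

```cuda
#include <cuda_runtime.h>
#include <stdlib.h>

#define THREADS_PER_BLOCK 256  // assumed block size; must be a power of two here

// Each block sums its own slice of `in` and writes one partial sum
// to out[blockIdx.x].
__global__ void blockSumKernel(const float* in, float* out, int n)
{
    __shared__ float partial[THREADS_PER_BLOCK];  // visible to this block only

    int i = blockIdx.x * blockDim.x + threadIdx.x;
    partial[threadIdx.x] = (i < n) ? in[i] : 0.0f;
    __syncthreads();  // barrier: every thread has stored its element

    // Tree reduction inside the block.
    for (int stride = blockDim.x / 2; stride > 0; stride /= 2) {
        if (threadIdx.x < stride)
            partial[threadIdx.x] += partial[threadIdx.x + stride];
        __syncthreads();  // barrier after every reduction step
    }

    if (threadIdx.x == 0)
        out[blockIdx.x] = partial[0];
}

// Hypothetical host helper: returns the sum of n floats,
// or -1.0f if an allocation or a CUDA call fails.
float sumOnDevice(const float* in_h, int n)
{
    if (n <= 0) return 0.0f;

    int nBlocks = (n + THREADS_PER_BLOCK - 1) / THREADS_PER_BLOCK;
    float* in_d = NULL;
    float* out_d = NULL;
    float* out_h = (float*)malloc(nBlocks * sizeof(float));
    float total = -1.0f;

    if (out_h != NULL &&
        cudaMalloc((void**)&in_d, n * sizeof(float)) == cudaSuccess &&
        cudaMalloc((void**)&out_d, nBlocks * sizeof(float)) == cudaSuccess &&
        cudaMemcpy(in_d, in_h, n * sizeof(float),
                   cudaMemcpyHostToDevice) == cudaSuccess) {
        blockSumKernel<<<nBlocks, THREADS_PER_BLOCK>>>(in_d, out_d, n);
        if (cudaMemcpy(out_h, out_d, nBlocks * sizeof(float),
                       cudaMemcpyDeviceToHost) == cudaSuccess) {
            total = 0.0f;
            for (int b = 0; b < nBlocks; ++b)
                total += out_h[b];  // blocks are combined on the host
        }
    }

    cudaFree(in_d);
    cudaFree(out_d);
    free(out_h);
    return total;
}
```

Calling `sumOnDevice(data, n)` from host code returns the total. The kernel itself only ever combines values that belong to the same block.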

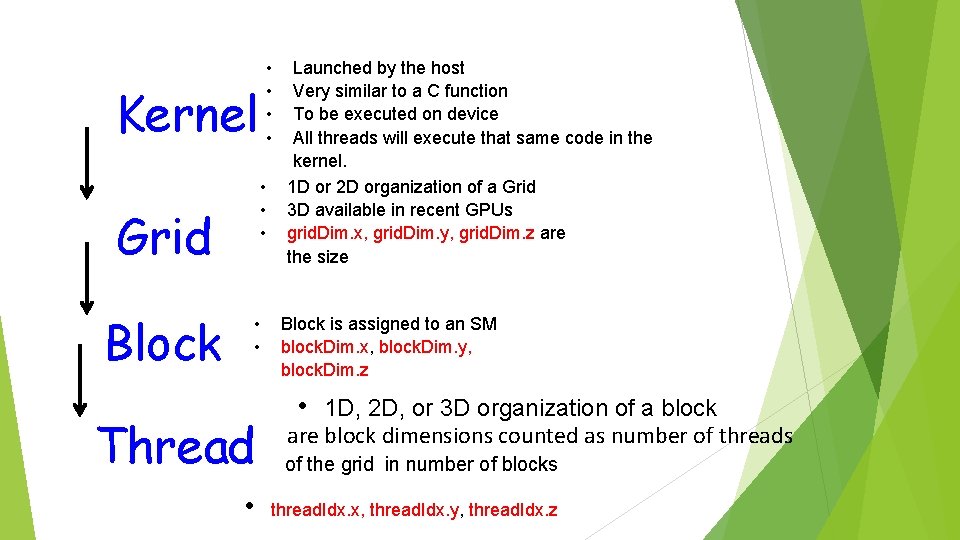

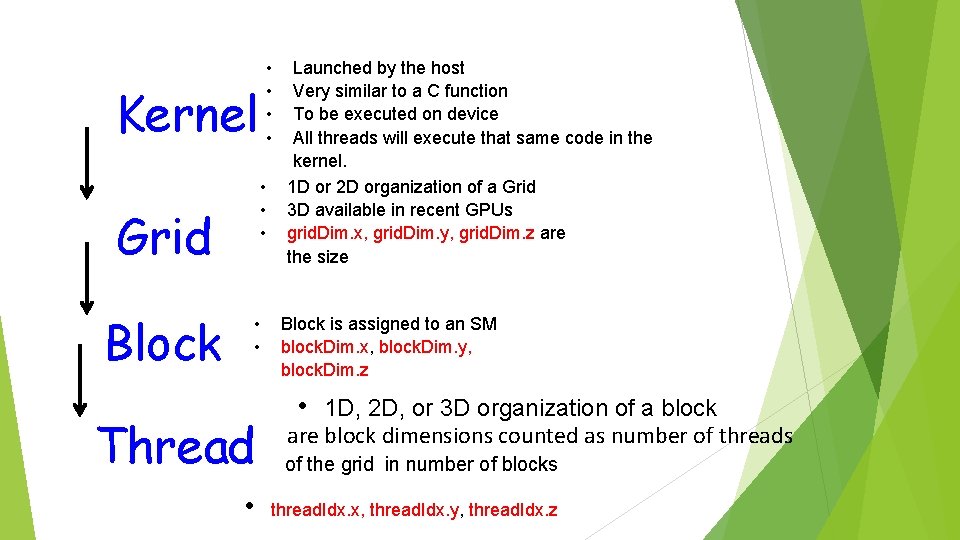

Kernel

- Launched by the host
- Very similar to a C function
- To be executed on device
- All threads will execute that same code in the kernel.

Grid

- 1D or 2D organization of a grid (3D available in recent GPUs)
- gridDim.x, gridDim.y, gridDim.z are the size of the grid in number of blocks

Block

- 1D, 2D, or 3D organization of a block
- Block is assigned to an SM
- blockDim.x, blockDim.y, blockDim.z are block dimensions counted as number of threads

Thread

- threadIdx.x, threadIdx.y, threadIdx.z

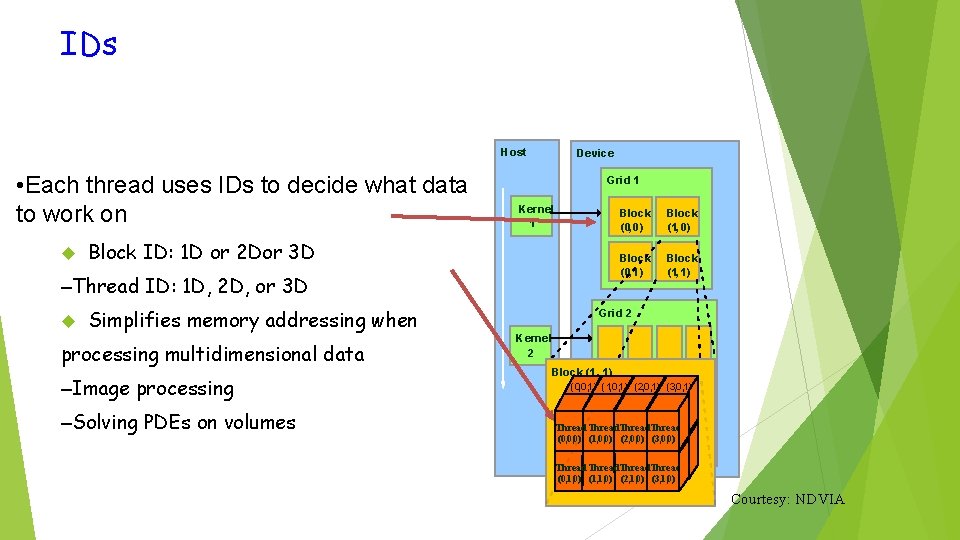

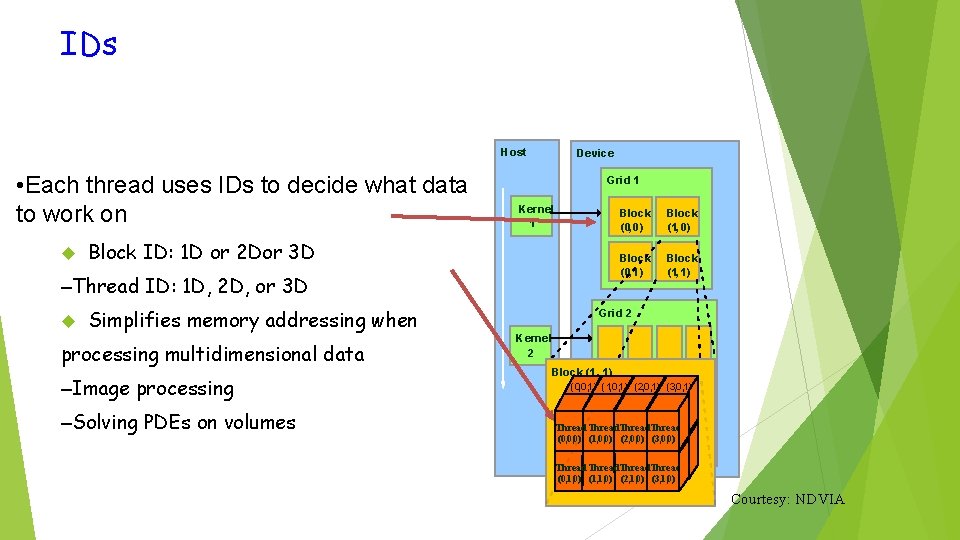

IDs

- Each thread uses IDs to decide what data to work on
  - Block ID: 1D, 2D, or 3D
  - Thread ID: 1D, 2D, or 3D
- Simplifies memory addressing when processing multidimensional data
  - Image processing
  - Solving PDEs on volumes

[Figure: the host launches Kernel 1 on Grid 1 and Kernel 2 on Grid 2 on the device. Grid 1 contains Block (0, 0), Block (1, 0), Block (0, 1), and Block (1, 1). Block (1, 1) is expanded to show its threads, indexed from Thread (0, 0, 0) to Thread (3, 1, 0) and (0, 0, 1) to (3, 0, 1). Courtesy: NVIDIA]

![A Simple Example Vector Addition vector A A0 A1 A2 A3 A4 AN1 A Simple Example: Vector Addition vector A A[0] A[1] A[2] A[3] A[4] … A[N-1]](https://slidetodoc.com/presentation_image/0a4d184758b0736bff4c3b1c13d7b05d/image-13.jpg)

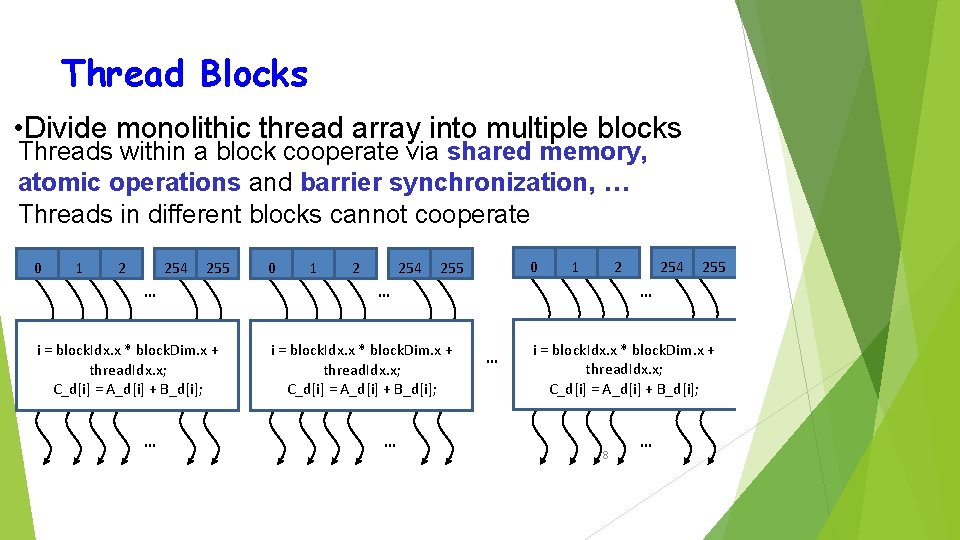

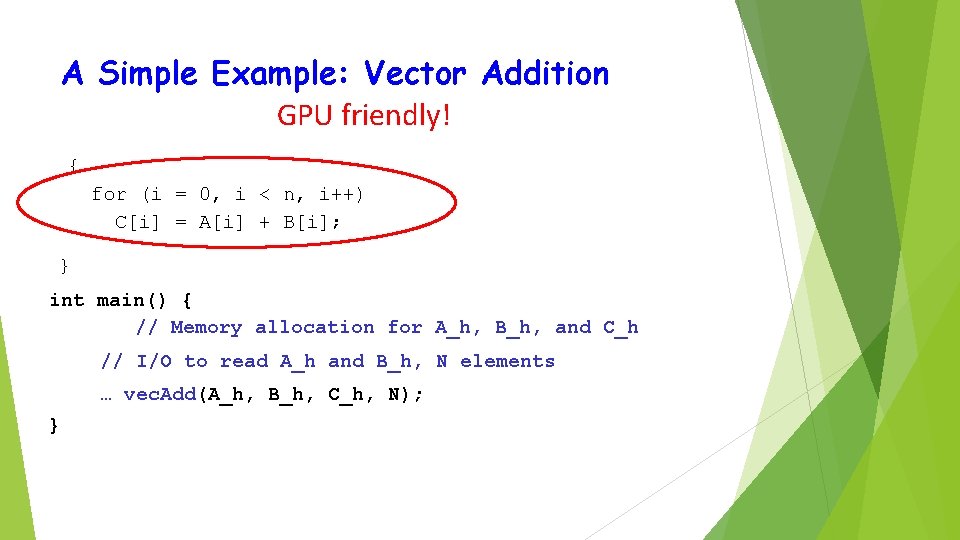

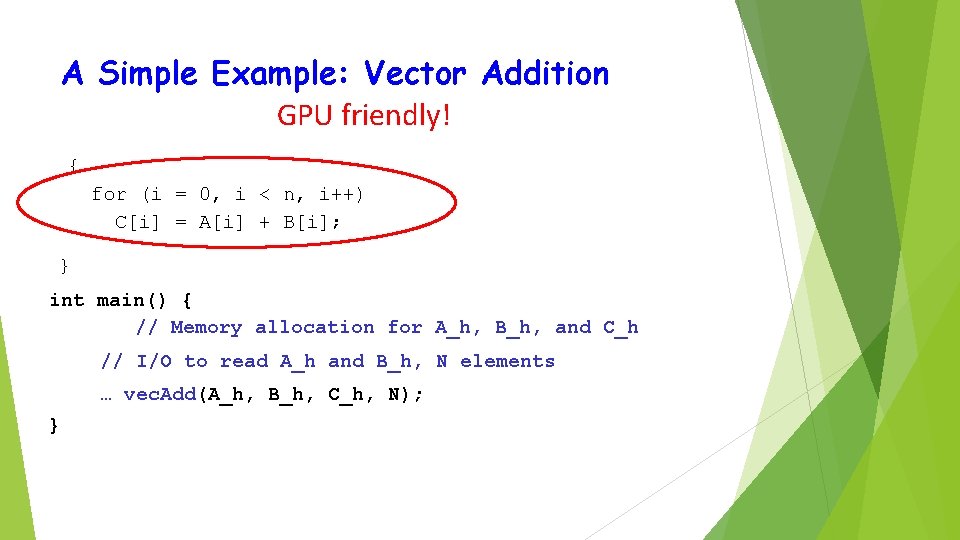

A Simple Example: Vector Addition

[Figure: vector A (A[0] … A[N-1]) and vector B (B[0] … B[N-1]) are added element by element to produce vector C (C[0] … C[N-1]).]

GPU friendly!

    // Compute vector sum C = A+B
    void vecAdd(float* A, float* B, float* C, int n)
    {
        for (int i = 0; i < n; i++)
            C[i] = A[i] + B[i];
    }

    int main()
    {
        // Memory allocation for A_h, B_h, and C_h
        // I/O to read A_h and B_h, N elements
        …
        vecAdd(A_h, B_h, C_h, N);
    }

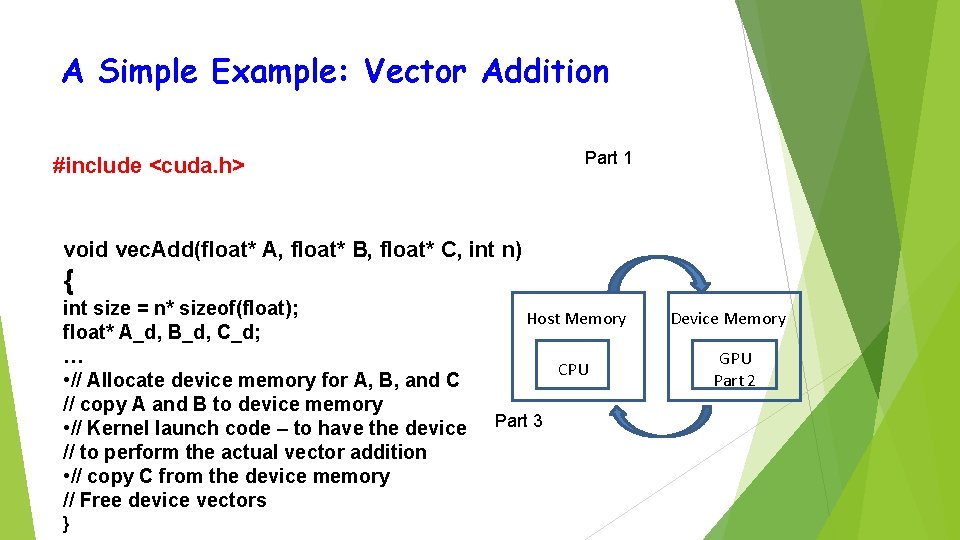

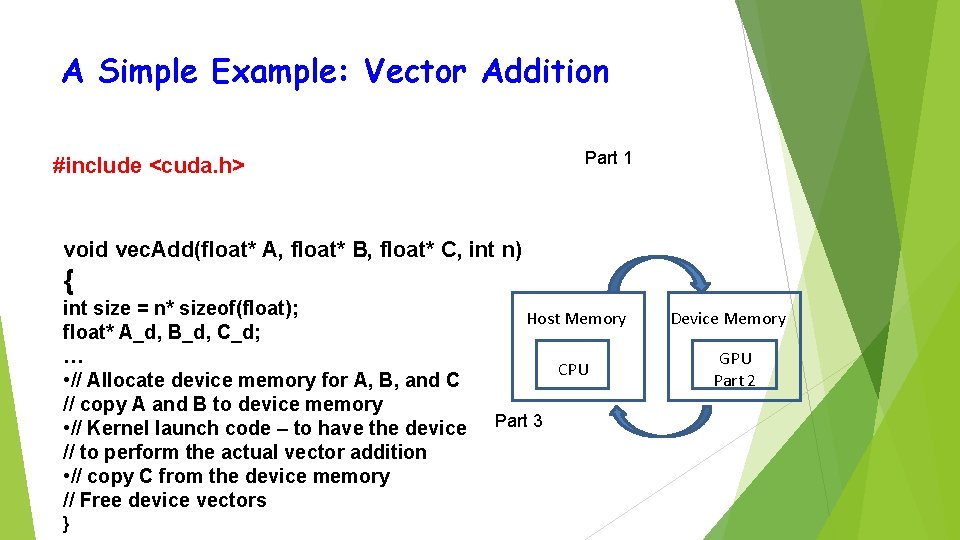

A Simple Example: Vector Addition

    #include <cuda.h>

    void vecAdd(float* A, float* B, float* C, int n)
    {
        int size = n * sizeof(float);
        float *A_d, *B_d, *C_d;
        …
        // Part 1: allocate device memory for A, B, and C,
        //         and copy A and B to device memory

        // Part 2: kernel launch code, to have the device
        //         perform the actual vector addition

        // Part 3: copy C from the device memory,
        //         and free the device vectors
    }

[Figure: Part 1 moves data from host memory (CPU) to device memory (GPU), Part 2 runs on the GPU, and Part 3 moves the result back to host memory.]

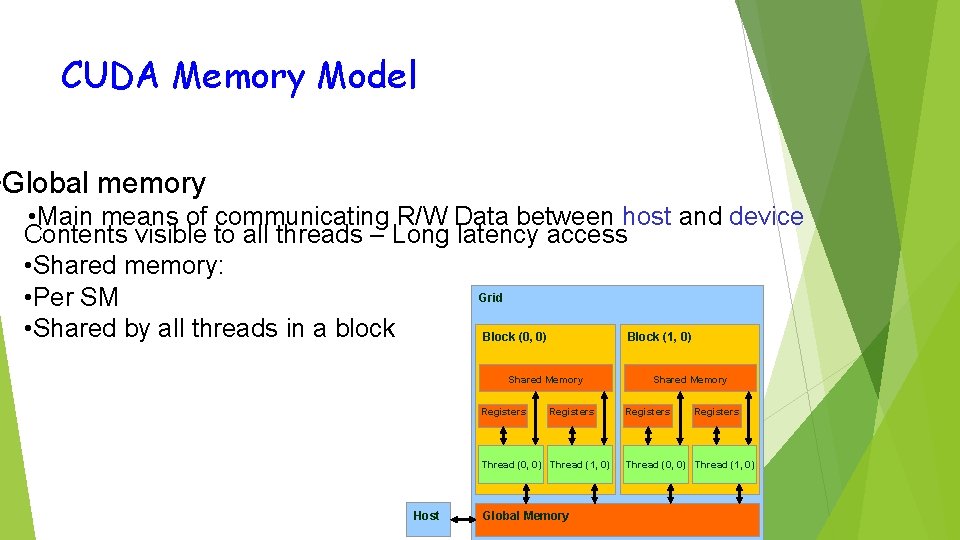

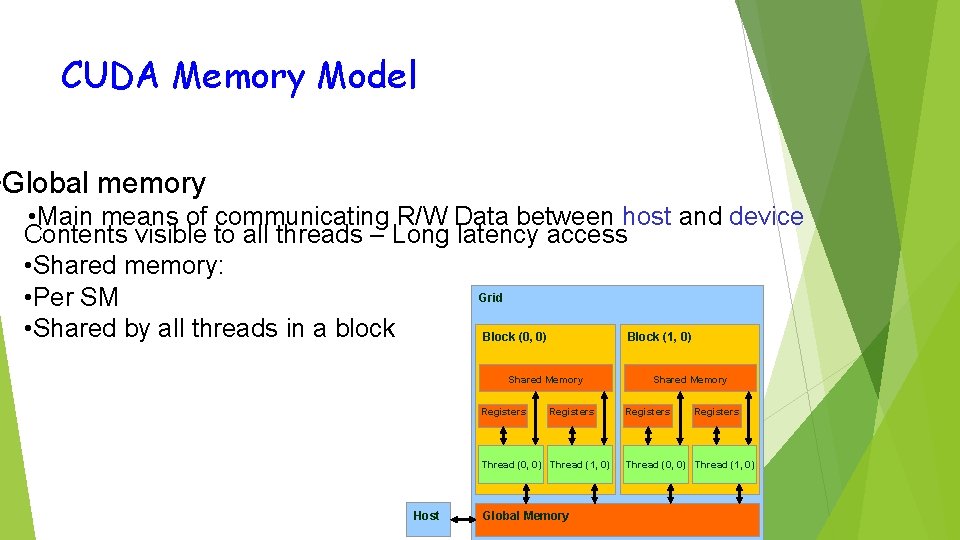

CUDA Memory Model

- Global memory
  - Main means of communicating R/W data between host and device
  - Contents visible to all threads
  - Long latency access
- Shared memory
  - Per SM
  - Shared by all threads in a block

[Figure: a grid with Block (0, 0) and Block (1, 0). Each block has its own shared memory, and each of its threads, Thread (0, 0) and Thread (1, 0), has its own registers. The host and all blocks access global memory.]

CPU & GPU Memory

- In CUDA, host and devices have separate memory spaces.
  - But in recent GPUs we have Unified Memory Access
- If the GPU and CPU are on the same chip, then they share a memory space → fusion
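As an illustration of the unified memory point, the sketch below allocates one buffer with `cudaMallocManaged()` and touches it from both the host and a kernel without any explicit copy. The kernel `incrementKernel` and the helper `managedIncrementWorks` are hypothetical names used only for this example, and the code assumes a GPU and driver that support managed memory.

```cuda
#include <cuda_runtime.h>

// Adds 1 to every element of data.
__global__ void incrementKernel(int* data, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) data[i] += 1;
}

// Hypothetical helper: returns 1 if the host sees the values written
// by the device through the same pointer, 0 otherwise.
int managedIncrementWorks(int n)
{
    int* data = NULL;
    if (cudaMallocManaged((void**)&data, n * sizeof(int)) != cudaSuccess)
        return 0;

    for (int i = 0; i < n; ++i)
        data[i] = i;  // the host writes directly, no cudaMemcpy

    incrementKernel<<<(n + 255) / 256, 256>>>(data, n);

    // Wait for the kernel before the host reads the buffer again.
    if (cudaDeviceSynchronize() != cudaSuccess) {
        cudaFree(data);
        return 0;
    }

    int ok = 1;
    for (int i = 0; i < n; ++i)
        if (data[i] != i + 1) ok = 0;

    cudaFree(data);
    return ok;
}
```

The explicit `cudaMalloc()` and `cudaMemcpy()` calls used elsewhere in these slides remain the baseline model; managed memory trades that explicit control for convenience.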

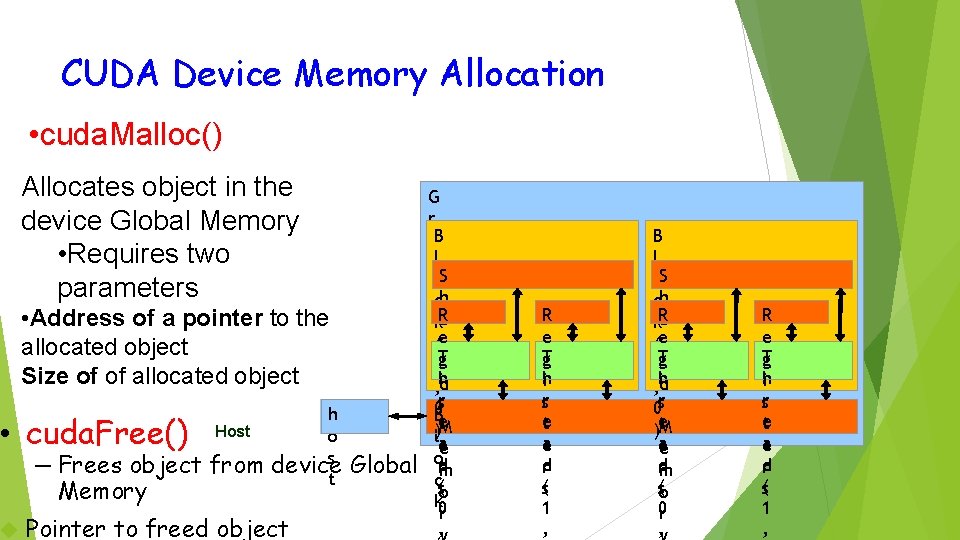

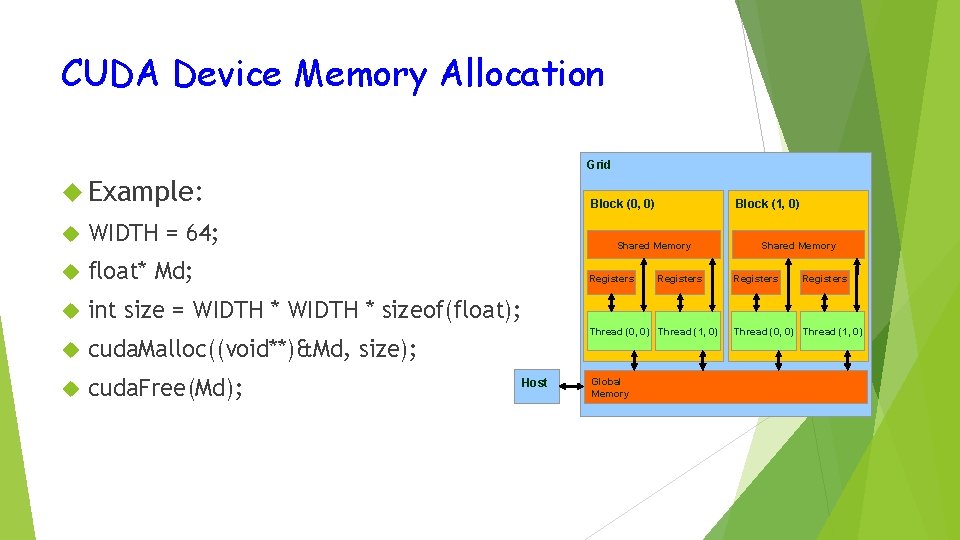

CUDA Device Memory Allocation

- cudaMalloc()
  - Allocates an object in the device global memory
  - Requires two parameters
    - Address of a pointer to the allocated object
    - Size of the allocated object
- cudaFree()
  - Frees an object from device global memory
    - Pointer to the freed object

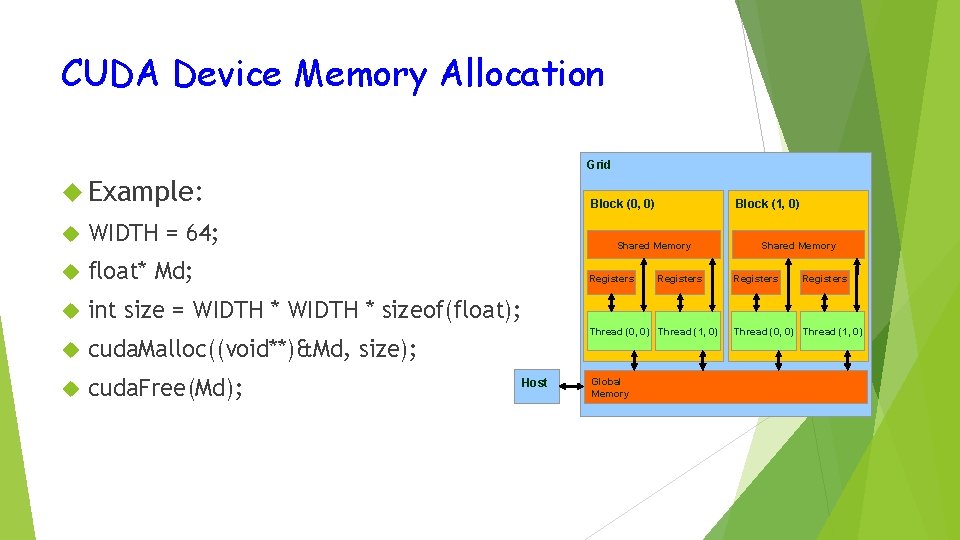

CUDA Device Memory Allocation

Example:

    int WIDTH = 64;
    float* Md;
    int size = WIDTH * sizeof(float);

    cudaMalloc((void**)&Md, size);
    cudaFree(Md);

[Figure: the memory model diagram, with global memory reachable from the host and from both blocks.]

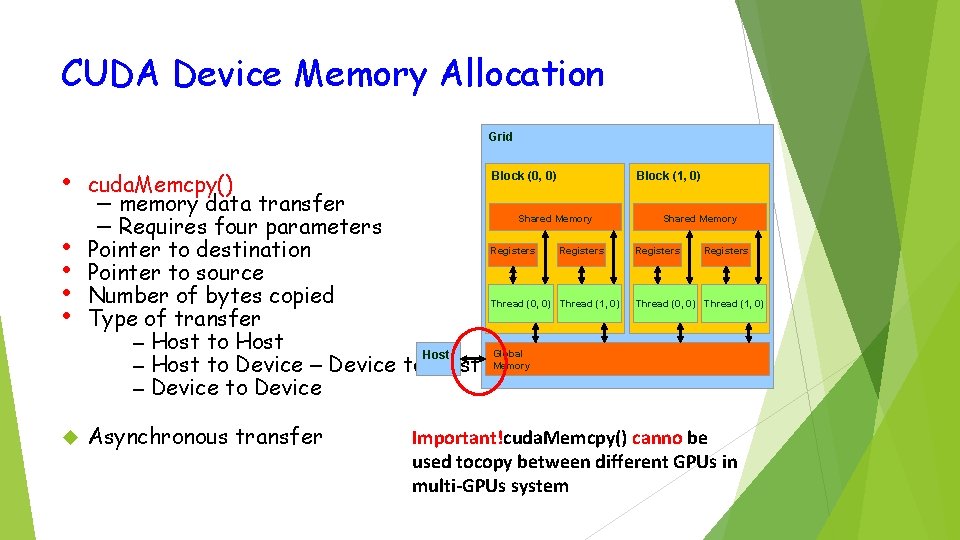

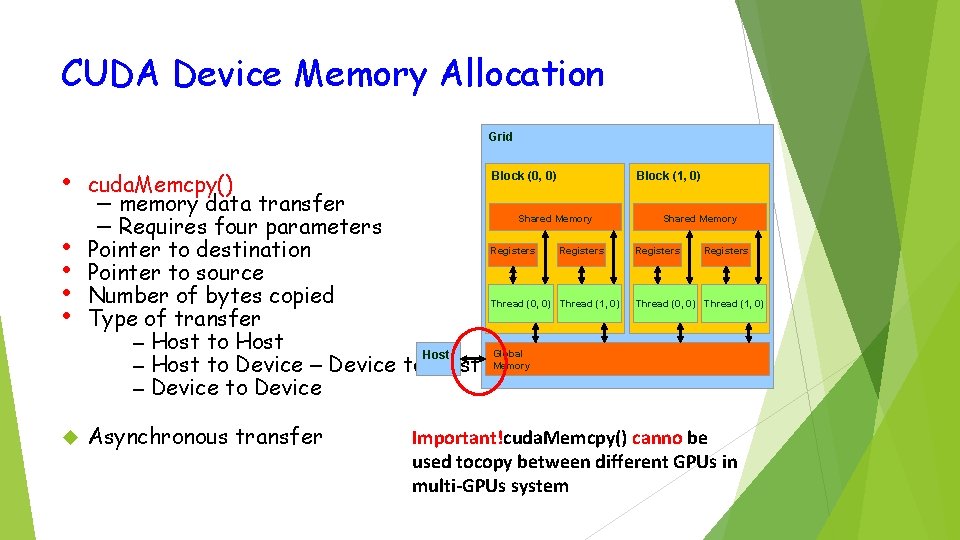

CUDA Device Memory Allocation

- cudaMemcpy()
  - Memory data transfer
  - Requires four parameters
    - Pointer to destination
    - Pointer to source
    - Number of bytes copied
    - Type of transfer
      - Host to Host
      - Host to Device
      - Device to Host
      - Device to Device
- Asynchronous transfer

Important! cudaMemcpy() cannot be used to copy between different GPUs in a multi-GPU system.

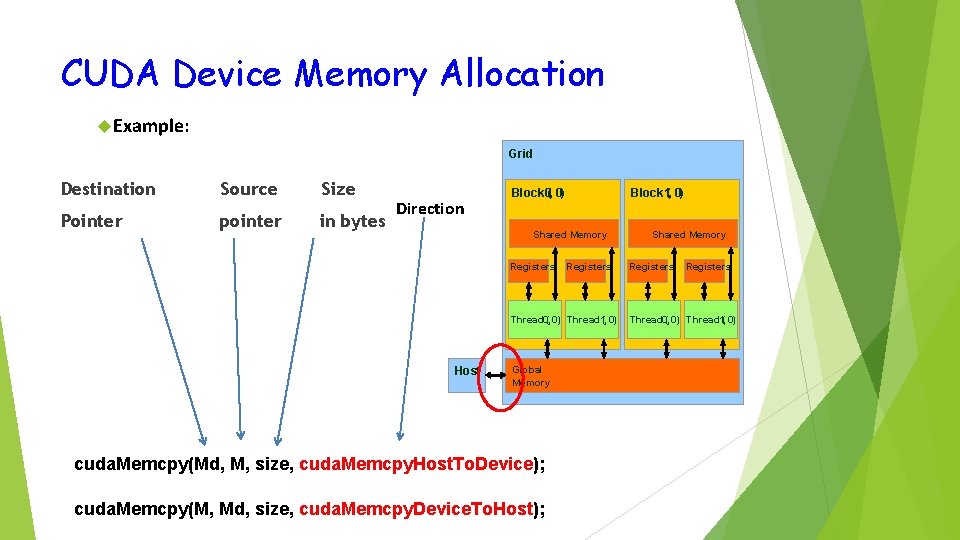

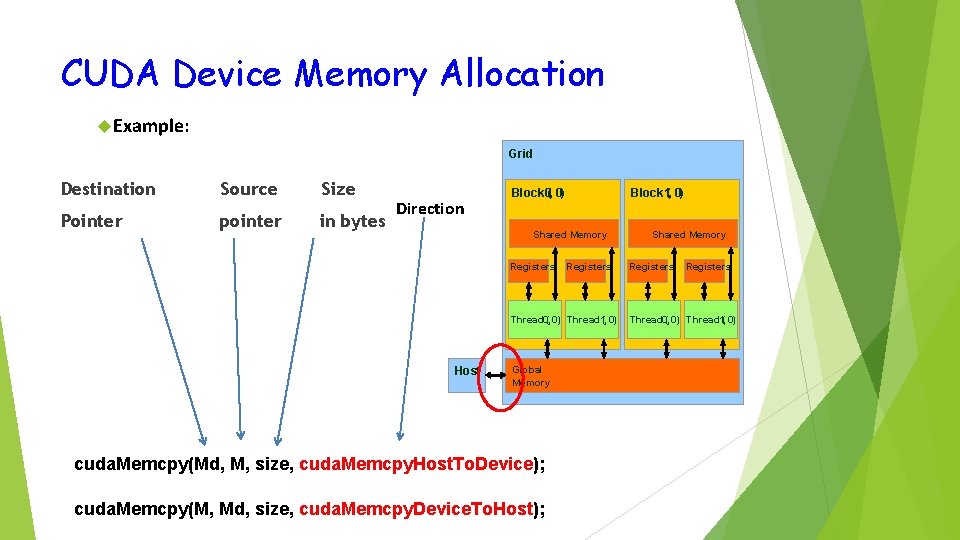

CUDA Device Memory Allocation

Example:

    // cudaMemcpy(destination pointer, source pointer, size in bytes, direction)
    cudaMemcpy(Md, M, size, cudaMemcpyHostToDevice);
    cudaMemcpy(M, Md, size, cudaMemcpyDeviceToHost);

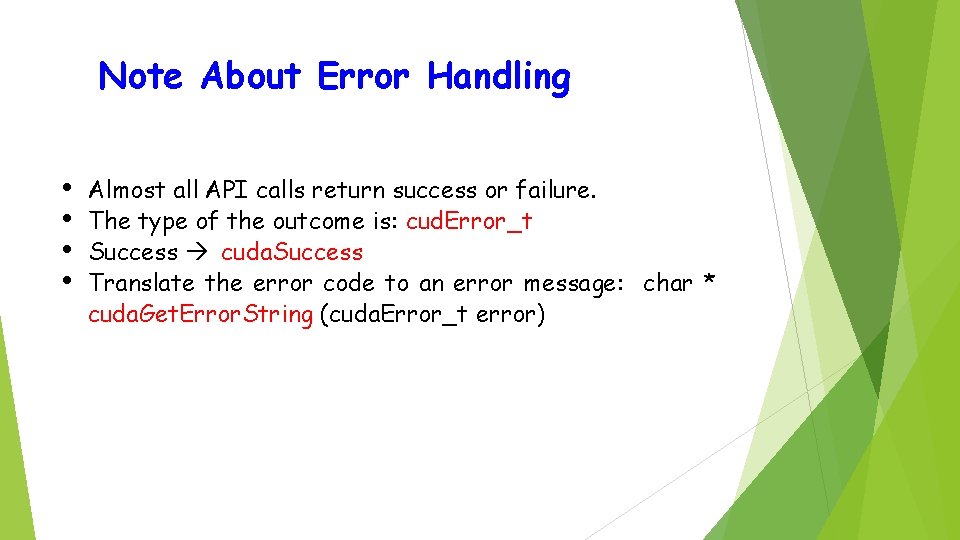

Note About Error Handling

- Almost all API calls return success or failure.
- The type of the outcome is: cudaError_t
- Success → cudaSuccess
- Translate the error code to an error message: const char* cudaGetErrorString(cudaError_t error)
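One possible way to apply this in practice is to route every API call through a small checking helper. The sketch below does that for an allocate, copy in, copy out, free sequence. The helper `cudaOk` and the function `roundTrip` are hypothetical names introduced for illustration; only `cudaMalloc`, `cudaMemcpy`, `cudaFree`, and `cudaGetErrorString` are CUDA runtime calls.

```cuda
#include <cuda_runtime.h>
#include <stdio.h>

// Hypothetical helper: reports a failed CUDA call and returns 0,
// or returns 1 when the call succeeded.
static int cudaOk(cudaError_t err, const char* what)
{
    if (err != cudaSuccess) {
        fprintf(stderr, "%s failed: %s\n", what, cudaGetErrorString(err));
        return 0;
    }
    return 1;
}

// Copies n floats host -> device -> host. Returns 1 on success, 0 on failure.
int roundTrip(const float* src_h, float* dst_h, int n)
{
    size_t size = n * sizeof(float);
    float* buf_d = NULL;

    // The && chain stops at the first call that fails.
    int ok = cudaOk(cudaMalloc((void**)&buf_d, size), "cudaMalloc")
          && cudaOk(cudaMemcpy(buf_d, src_h, size, cudaMemcpyHostToDevice),
                    "cudaMemcpy (host to device)")
          && cudaOk(cudaMemcpy(dst_h, buf_d, size, cudaMemcpyDeviceToHost),
                    "cudaMemcpy (device to host)");

    cudaFree(buf_d);  // freeing a NULL pointer is allowed
    return ok;
}
```

After a successful `roundTrip(src, dst, n)`, `dst` holds the same values as `src`, and any failure along the way is printed with its error string.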

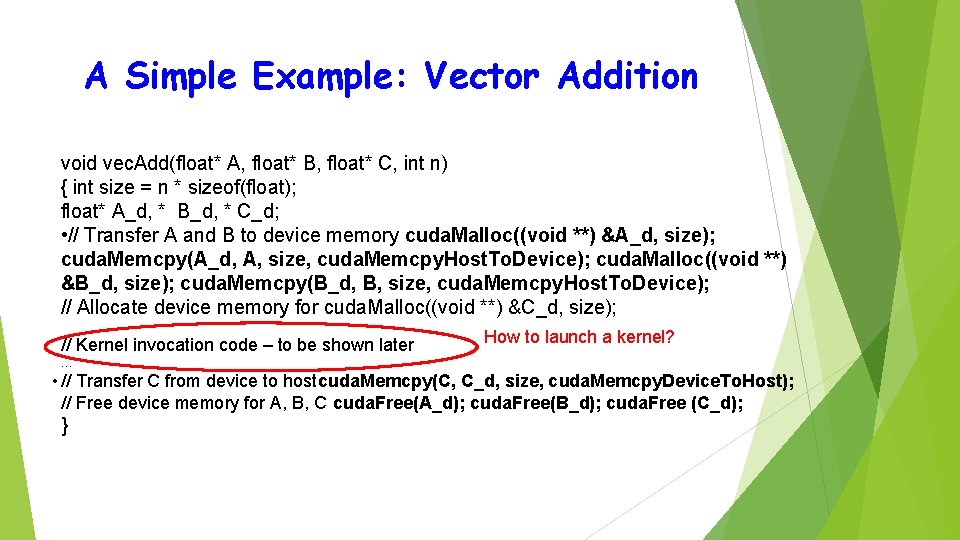

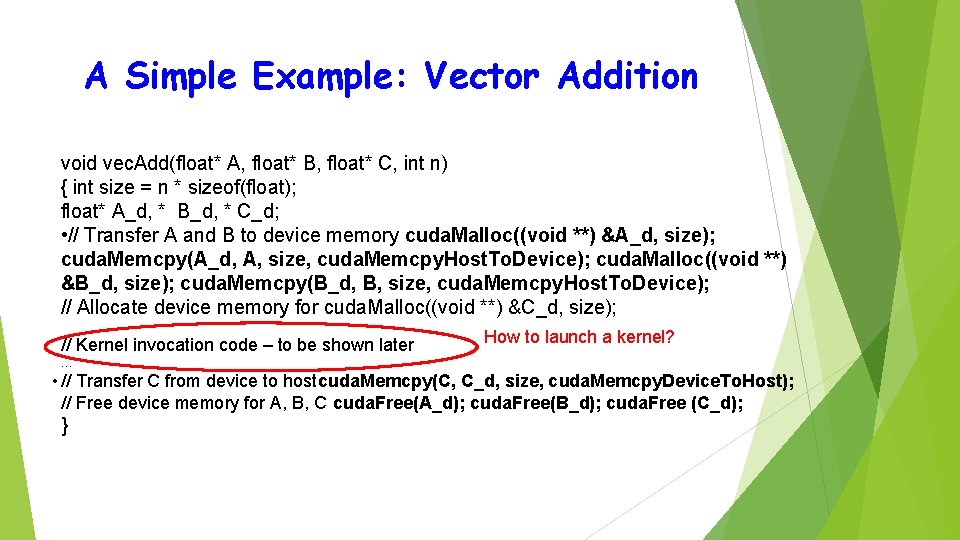

A Simple Example: Vector Addition

    void vecAdd(float* A, float* B, float* C, int n)
    {
        int size = n * sizeof(float);
        float *A_d, *B_d, *C_d;

        // Transfer A and B to device memory
        cudaMalloc((void **) &A_d, size);
        cudaMemcpy(A_d, A, size, cudaMemcpyHostToDevice);
        cudaMalloc((void **) &B_d, size);
        cudaMemcpy(B_d, B, size, cudaMemcpyHostToDevice);

        // Allocate device memory for C
        cudaMalloc((void **) &C_d, size);

        // Kernel invocation code, to be shown later
        …

        // Transfer C from device to host
        cudaMemcpy(C, C_d, size, cudaMemcpyDeviceToHost);

        // Free device memory for A, B, C
        cudaFree(A_d); cudaFree(B_d); cudaFree(C_d);
    }

How to launch a kernel?

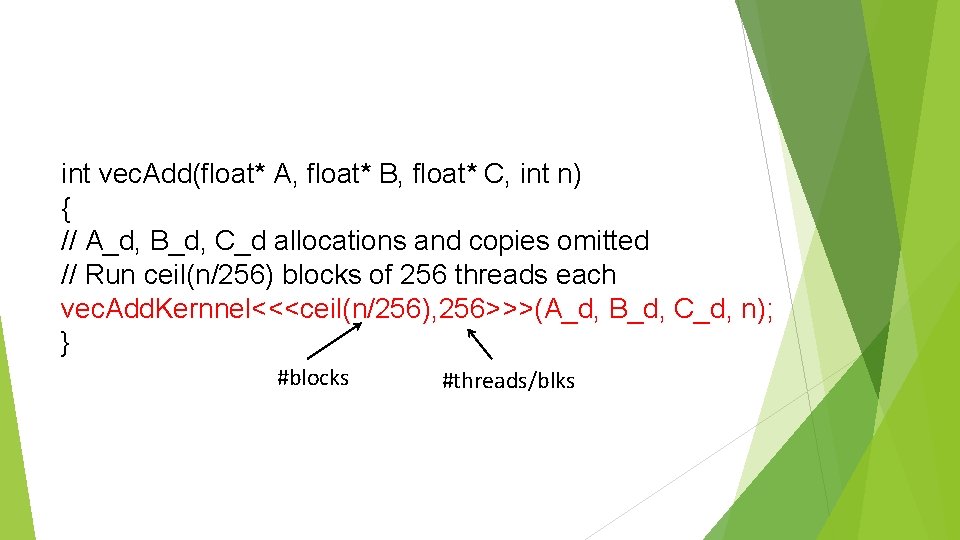

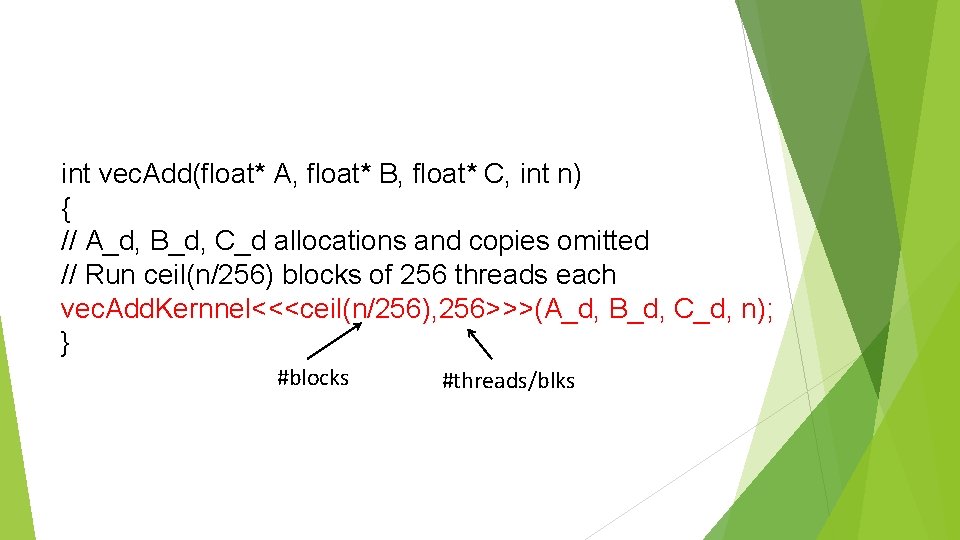

    void vecAdd(float* A, float* B, float* C, int n)
    {
        // A_d, B_d, C_d allocations and copies omitted
        // Run ceil(n/256.0) blocks of 256 threads each
        // First launch argument: #blocks; second: #threads per block
        vecAddKernel<<<ceil(n/256.0), 256>>>(A_d, B_d, C_d, n);
    }

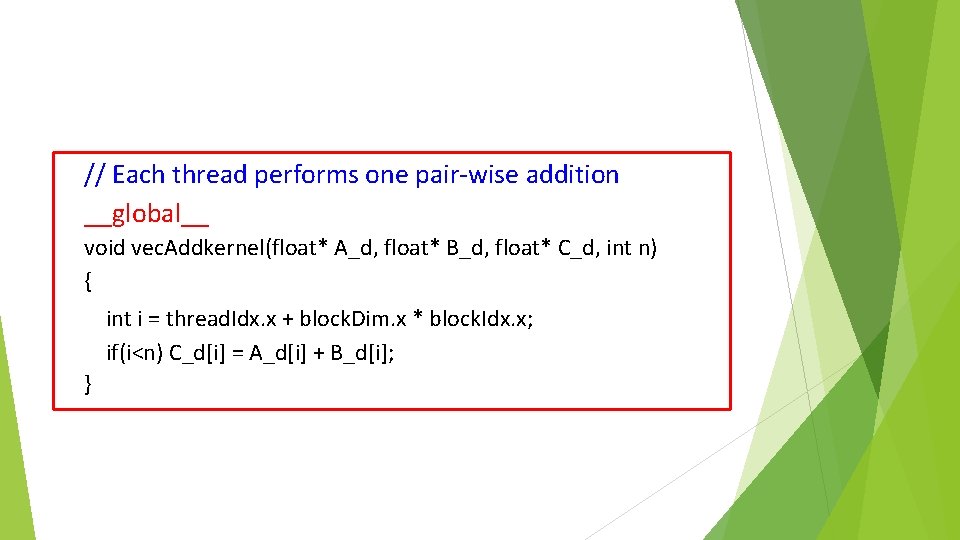

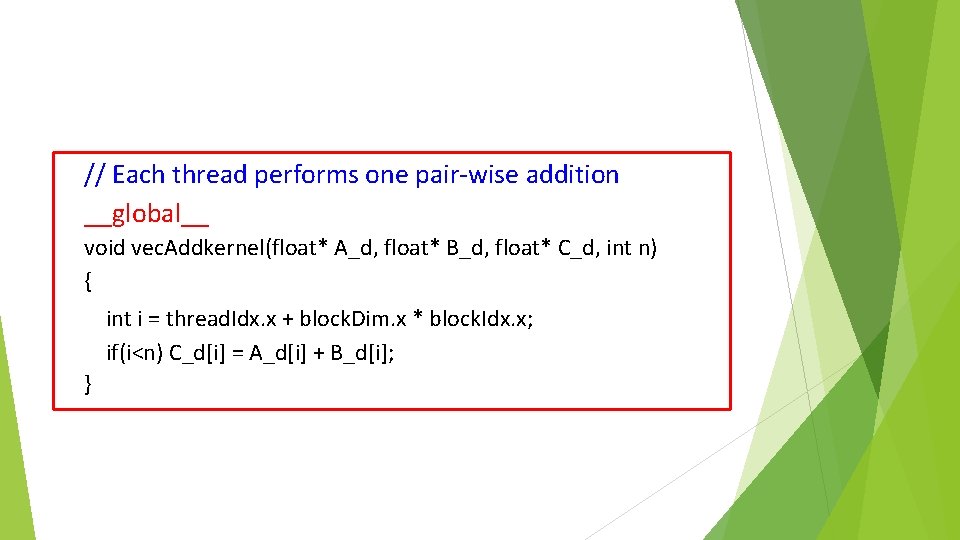

    // Each thread performs one pair-wise addition
    __global__
    void vecAddKernel(float* A_d, float* B_d, float* C_d, int n)
    {
        int i = threadIdx.x + blockDim.x * blockIdx.x;
        if (i < n) C_d[i] = A_d[i] + B_d[i];
    }
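Putting the three parts and the kernel together, a complete version might look like the sketch below. It follows the slides' structure, with a few assumptions of its own: the wrapper returns a `cudaError_t` instead of `void` so that failures are visible, the block count is computed with integer arithmetic instead of `ceil()`, and `n` is assumed to be positive.

```cuda
#include <cuda_runtime.h>

// Each thread performs one pair-wise addition.
__global__ void vecAddKernel(const float* A_d, const float* B_d,
                             float* C_d, int n)
{
    int i = threadIdx.x + blockDim.x * blockIdx.x;
    if (i < n) C_d[i] = A_d[i] + B_d[i];  // guard the last, partly used block
}

// Returns cudaSuccess, or the first CUDA error encountered.
cudaError_t vecAdd(const float* A, const float* B, float* C, int n)
{
    size_t size = n * sizeof(float);
    float *A_d = NULL, *B_d = NULL, *C_d = NULL;

    // Part 1: allocate device memory and copy the inputs.
    cudaError_t err = cudaMalloc((void**)&A_d, size);
    if (err == cudaSuccess) err = cudaMalloc((void**)&B_d, size);
    if (err == cudaSuccess) err = cudaMalloc((void**)&C_d, size);
    if (err == cudaSuccess)
        err = cudaMemcpy(A_d, A, size, cudaMemcpyHostToDevice);
    if (err == cudaSuccess)
        err = cudaMemcpy(B_d, B, size, cudaMemcpyHostToDevice);

    // Part 2: launch enough 256-thread blocks to cover n elements.
    if (err == cudaSuccess) {
        int threads = 256;
        int blocks = (n + threads - 1) / threads;  // integer ceil(n / 256)
        vecAddKernel<<<blocks, threads>>>(A_d, B_d, C_d, n);
        err = cudaGetLastError();  // reports launch configuration errors
    }

    // Part 3: copy the result back and free the device vectors.
    if (err == cudaSuccess)
        err = cudaMemcpy(C, C_d, size, cudaMemcpyDeviceToHost);

    cudaFree(A_d);
    cudaFree(B_d);
    cudaFree(C_d);
    return err;
}
```

The expression `(n + threads - 1) / threads` rounds up without floating point: for `n = 1000` it yields 4 blocks, so 1024 threads are launched and the `i < n` test keeps the last 24 idle.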

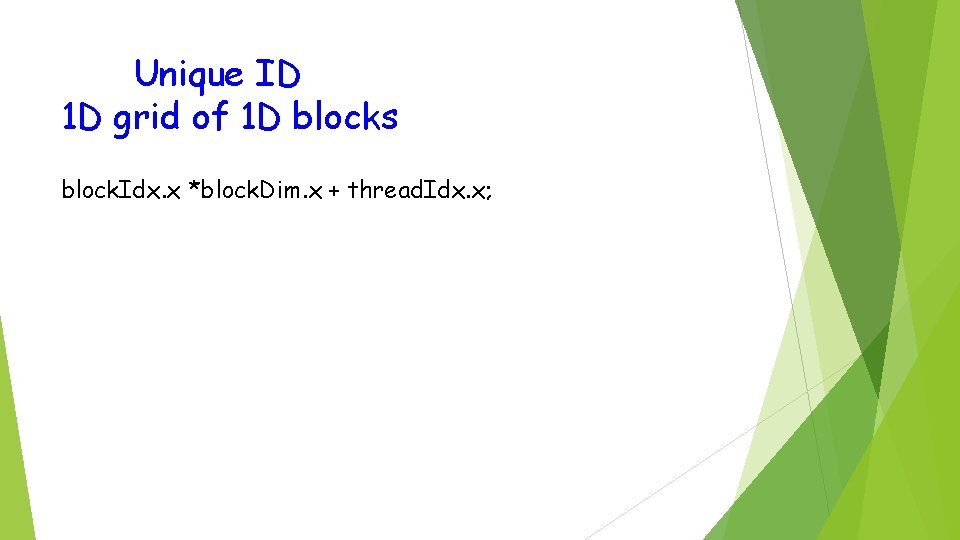

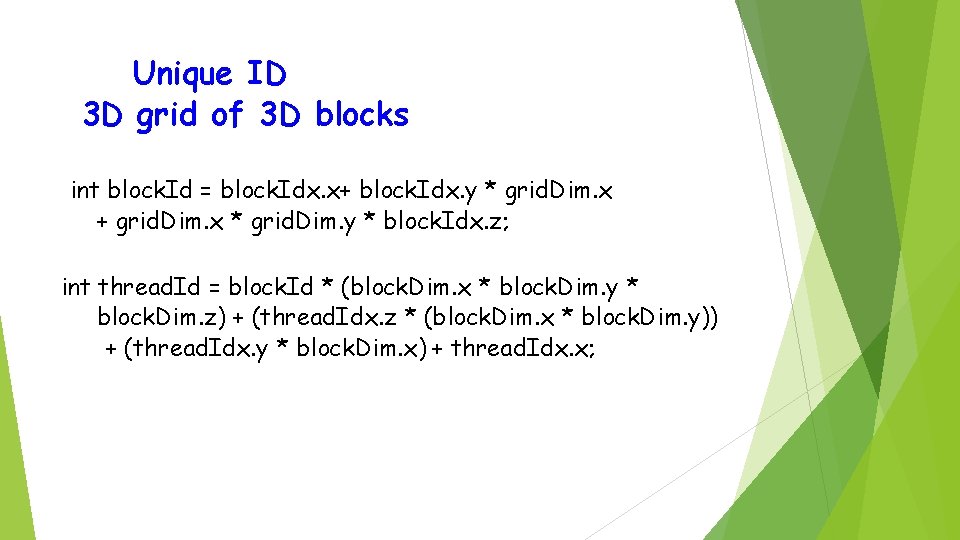

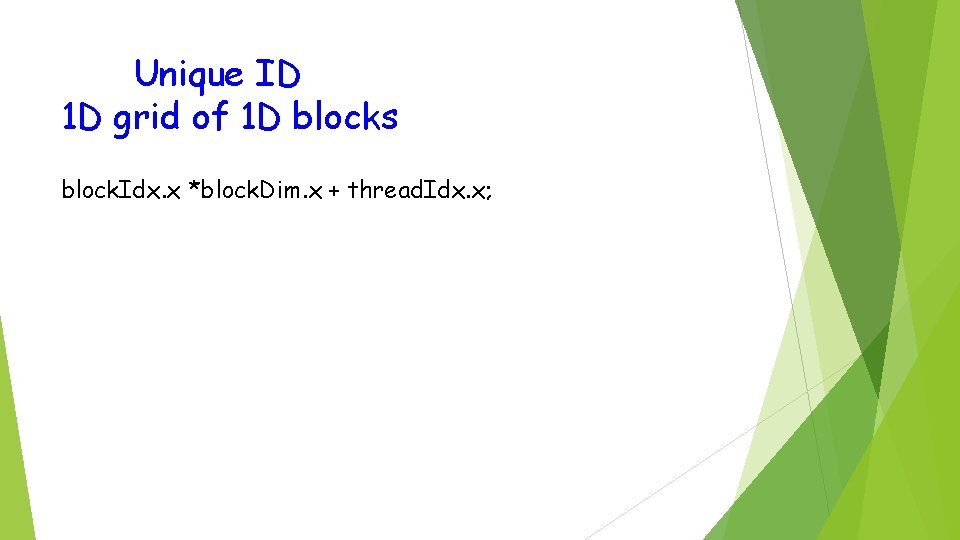

Unique ID: 1D grid of 1D blocks

    blockIdx.x * blockDim.x + threadIdx.x;

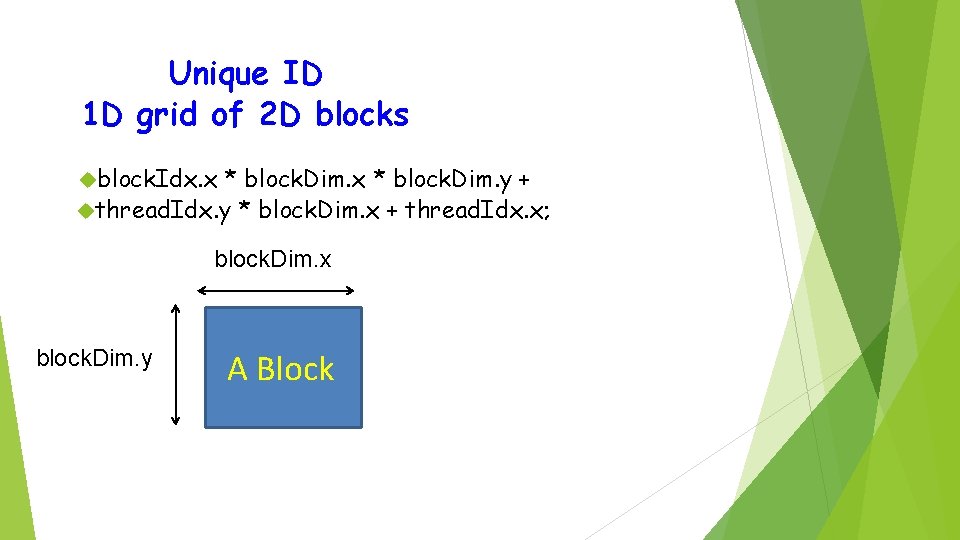

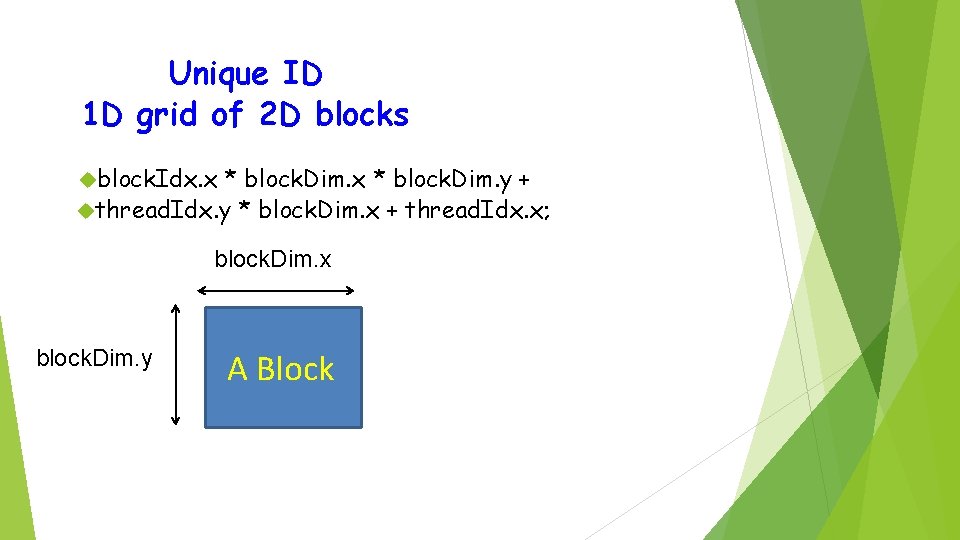

Unique ID: 1D grid of 2D blocks

    blockIdx.x * blockDim.x * blockDim.y
      + threadIdx.y * blockDim.x
      + threadIdx.x;

[Figure: a block that is blockDim.x wide and blockDim.y high.]

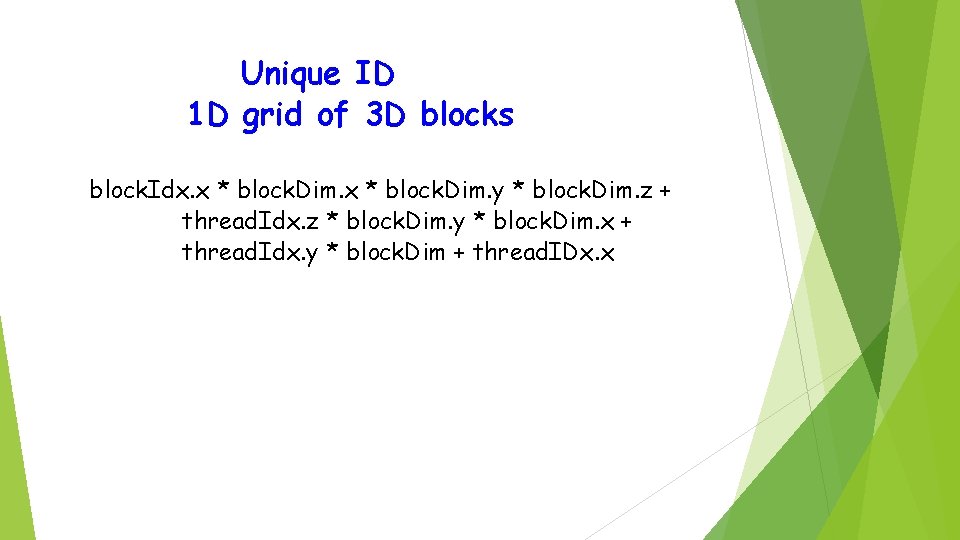

Unique ID: 1D grid of 3D blocks

    blockIdx.x * blockDim.x * blockDim.y * blockDim.z
      + threadIdx.z * blockDim.y * blockDim.x
      + threadIdx.y * blockDim.x
      + threadIdx.x;

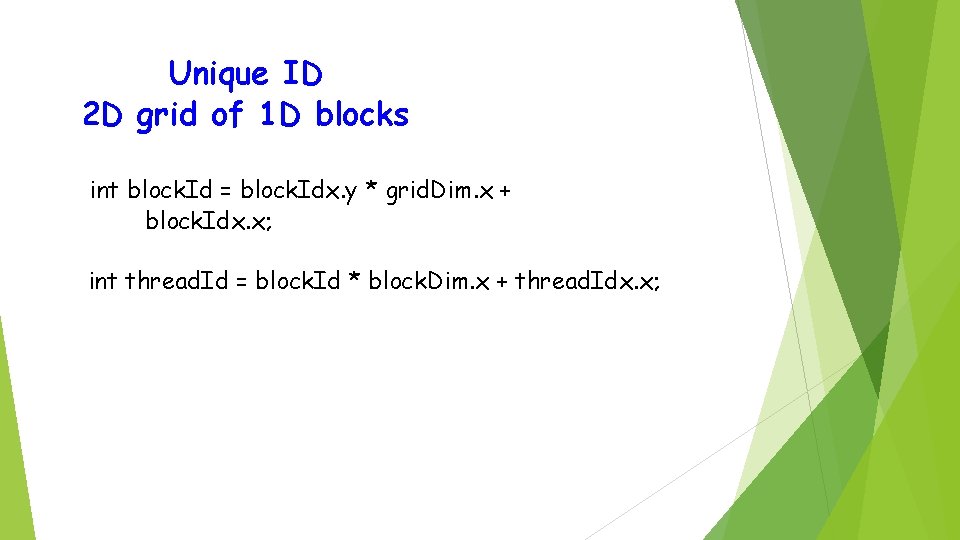

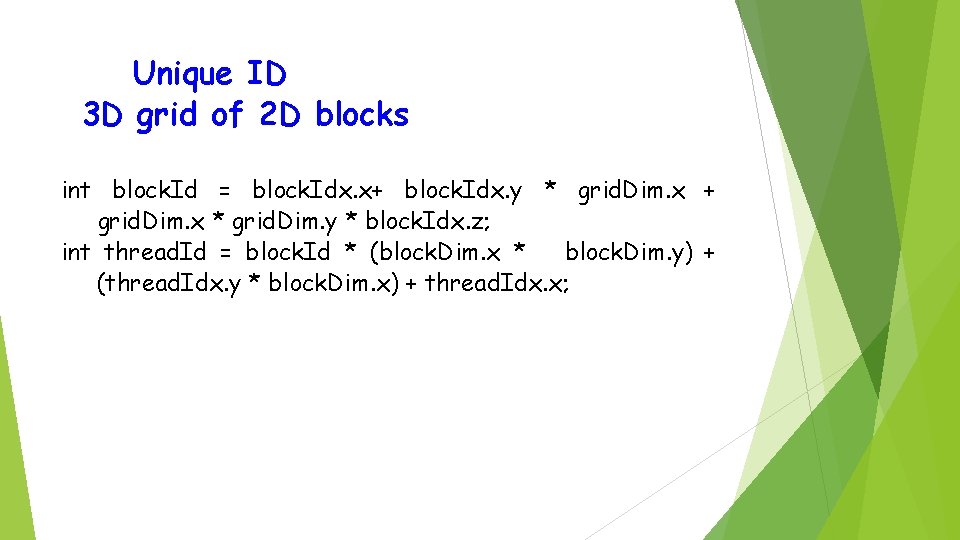

Unique ID: 2D grid of 1D blocks

    int blockId = blockIdx.y * gridDim.x + blockIdx.x;
    int threadId = blockId * blockDim.x + threadIdx.x;

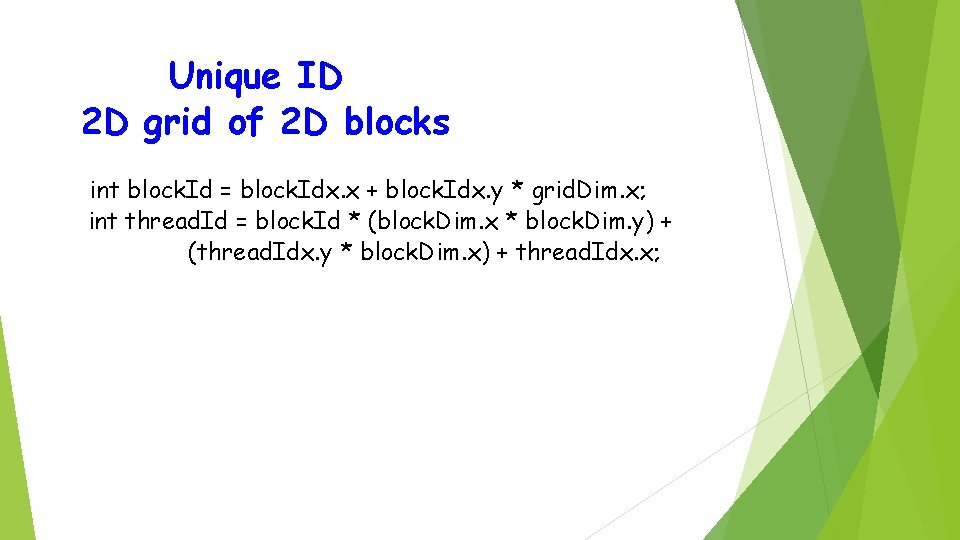

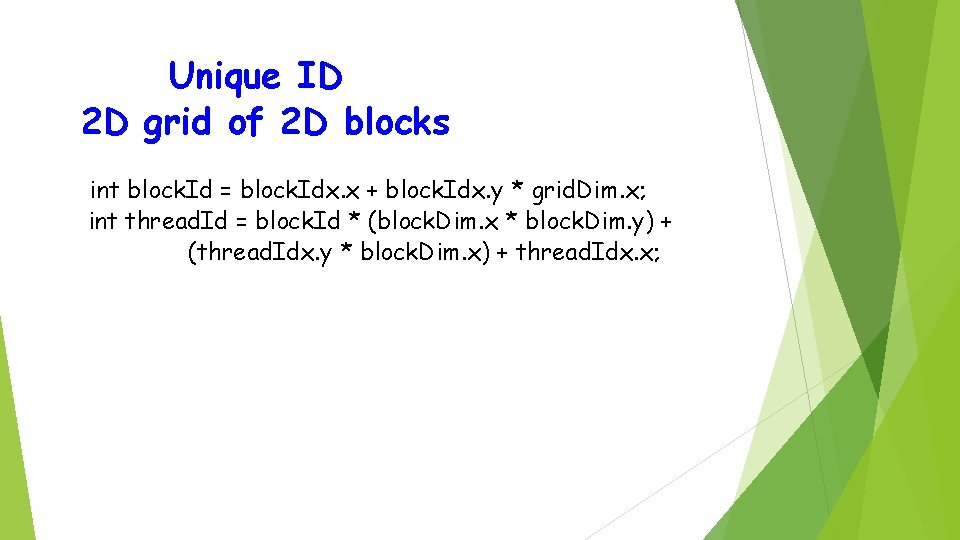

Unique ID: 2D grid of 2D blocks

    int blockId = blockIdx.x + blockIdx.y * gridDim.x;
    int threadId = blockId * (blockDim.x * blockDim.y)
                 + (threadIdx.y * blockDim.x)
                 + threadIdx.x;

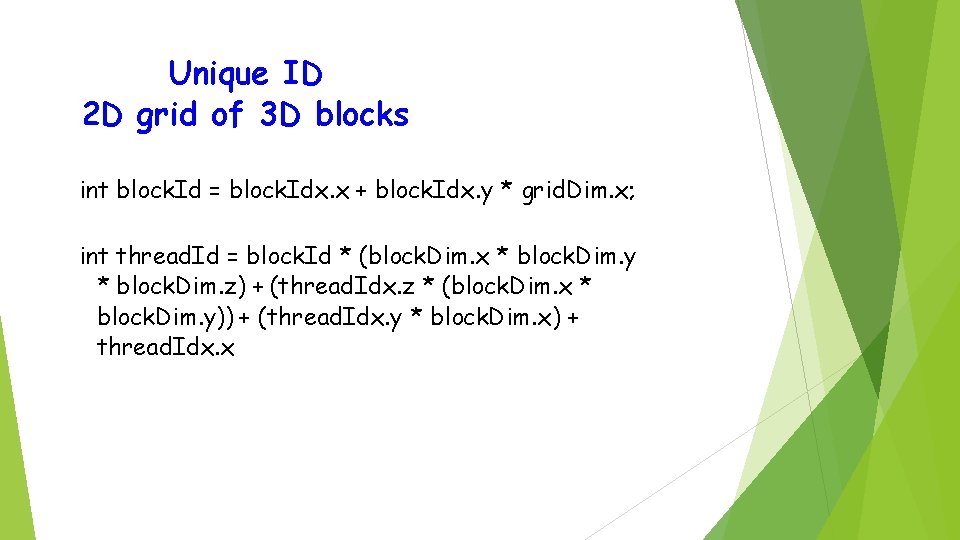

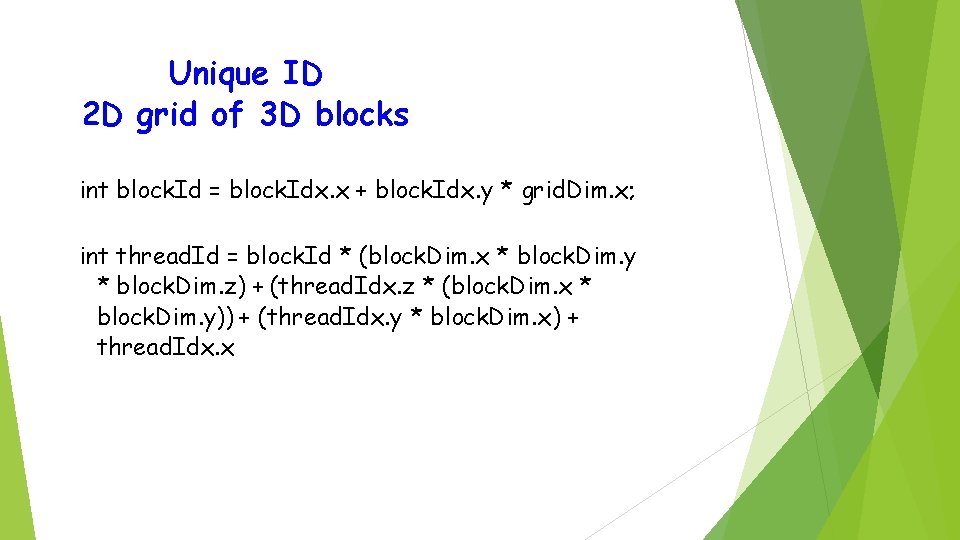

Unique ID: 2D grid of 3D blocks

    int blockId = blockIdx.x + blockIdx.y * gridDim.x;
    int threadId = blockId * (blockDim.x * blockDim.y * blockDim.z)
                 + (threadIdx.z * (blockDim.x * blockDim.y))
                 + (threadIdx.y * blockDim.x)
                 + threadIdx.x;

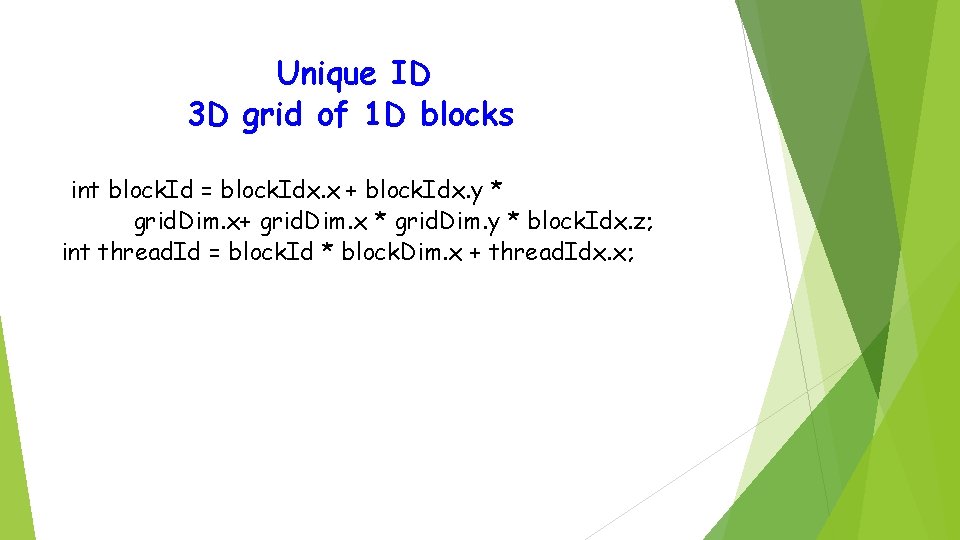

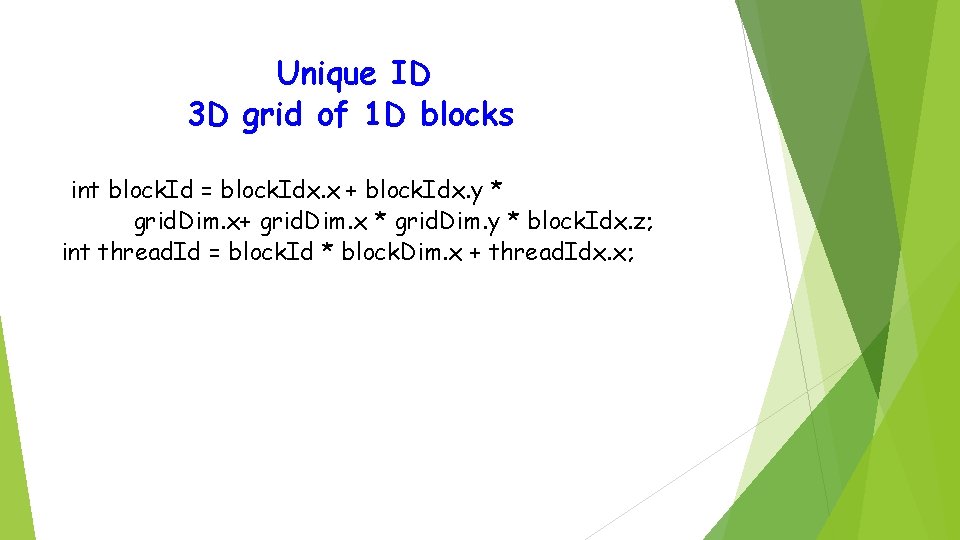

Unique ID: 3D grid of 1D blocks

    int blockId = blockIdx.x
                + blockIdx.y * gridDim.x
                + gridDim.x * gridDim.y * blockIdx.z;
    int threadId = blockId * blockDim.x + threadIdx.x;

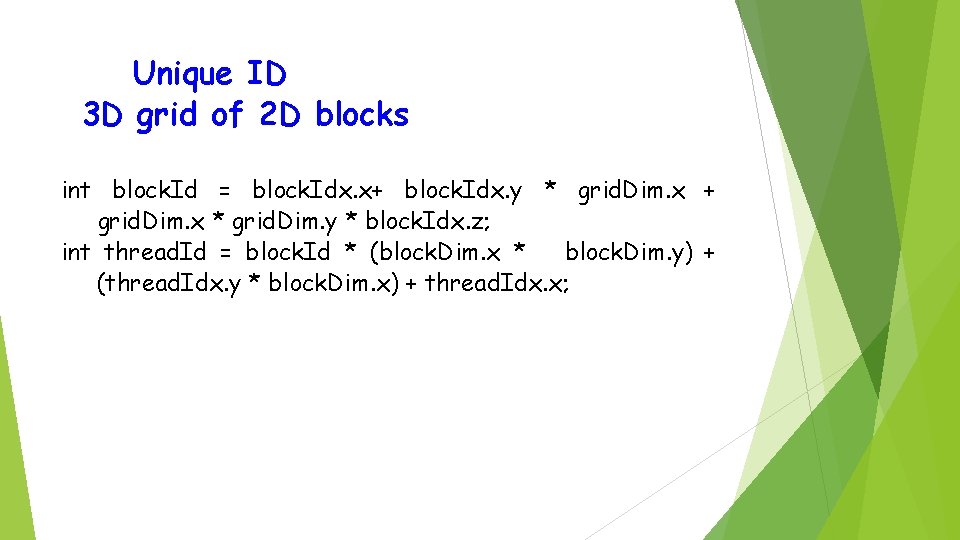

Unique ID: 3D grid of 2D blocks

    int blockId = blockIdx.x
                + blockIdx.y * gridDim.x
                + gridDim.x * gridDim.y * blockIdx.z;
    int threadId = blockId * (blockDim.x * blockDim.y)
                 + (threadIdx.y * blockDim.x)
                 + threadIdx.x;

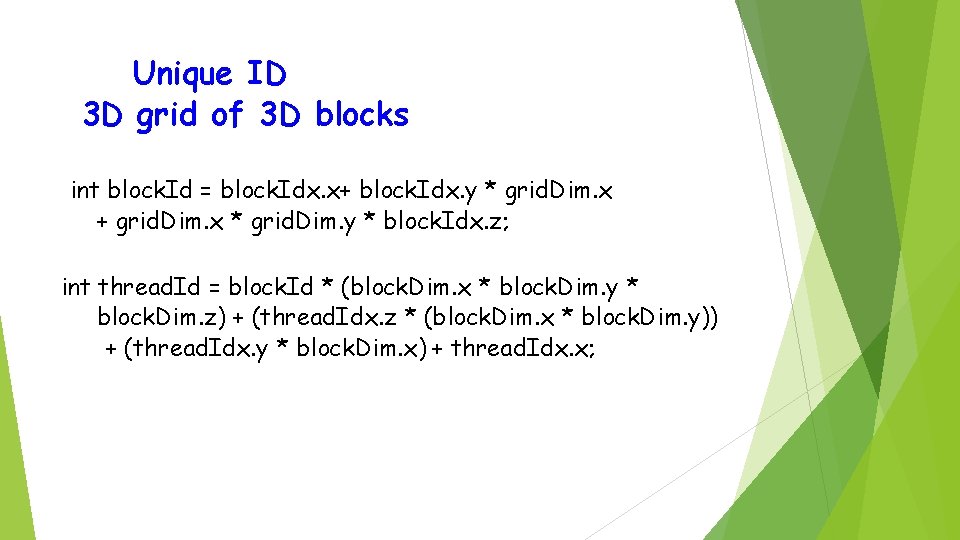

Unique ID: 3D grid of 3D blocks

    int blockId = blockIdx.x
                + blockIdx.y * gridDim.x
                + gridDim.x * gridDim.y * blockIdx.z;
    int threadId = blockId * (blockDim.x * blockDim.y * blockDim.z)
                 + (threadIdx.z * (blockDim.x * blockDim.y))
                 + (threadIdx.y * blockDim.x)
                 + threadIdx.x;
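A quick way to convince yourself that the most general formula really gives every thread a distinct ID is to let each thread count its own ID and then inspect the counters on the host. The sketch below does this for the 3D grid of 3D blocks case. The names `recordIdKernel` and `idsAreUnique` are hypothetical, and the check assumes the launch dimensions are within the device limits.

```cuda
#include <cuda_runtime.h>
#include <stdlib.h>

// Every thread computes its flattened ID and increments that slot.
__global__ void recordIdKernel(int* hits)
{
    int blockId = blockIdx.x
                + blockIdx.y * gridDim.x
                + gridDim.x * gridDim.y * blockIdx.z;
    int threadId = blockId * (blockDim.x * blockDim.y * blockDim.z)
                 + (threadIdx.z * (blockDim.x * blockDim.y))
                 + (threadIdx.y * blockDim.x)
                 + threadIdx.x;
    atomicAdd(&hits[threadId], 1);  // atomic, so a collision would show up as 2
}

// Hypothetical helper: returns 1 if every ID in [0, total) was produced
// exactly once, 0 otherwise (including on any CUDA error).
int idsAreUnique(dim3 grid, dim3 block)
{
    int total = (int)(grid.x * grid.y * grid.z * block.x * block.y * block.z);
    size_t size = total * sizeof(int);
    int* hits_h = (int*)malloc(size);
    int* hits_d = NULL;
    int ok = 0;

    if (hits_h != NULL &&
        cudaMalloc((void**)&hits_d, size) == cudaSuccess &&
        cudaMemset(hits_d, 0, size) == cudaSuccess) {
        recordIdKernel<<<grid, block>>>(hits_d);
        if (cudaMemcpy(hits_h, hits_d, size,
                       cudaMemcpyDeviceToHost) == cudaSuccess) {
            ok = 1;
            for (int i = 0; i < total; ++i)
                if (hits_h[i] != 1) ok = 0;
        }
    }

    cudaFree(hits_d);
    free(hits_h);
    return ok;
}
```

For example, `idsAreUnique(dim3(2, 3, 2), dim3(4, 2, 2))` launches 12 blocks of 16 threads and checks that IDs 0 through 191 each occur once. The lower-dimensional formulas are the same expression with the unused dimensions equal to 1.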

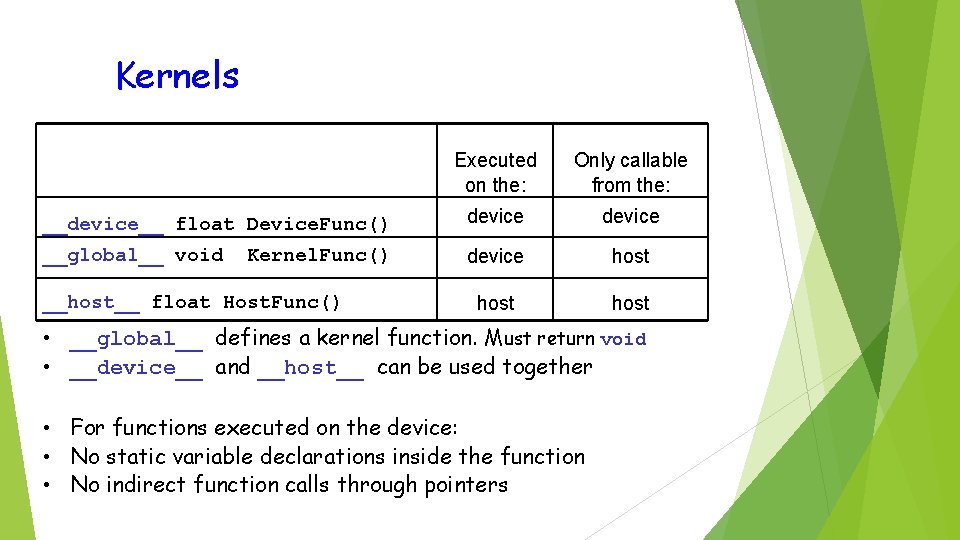

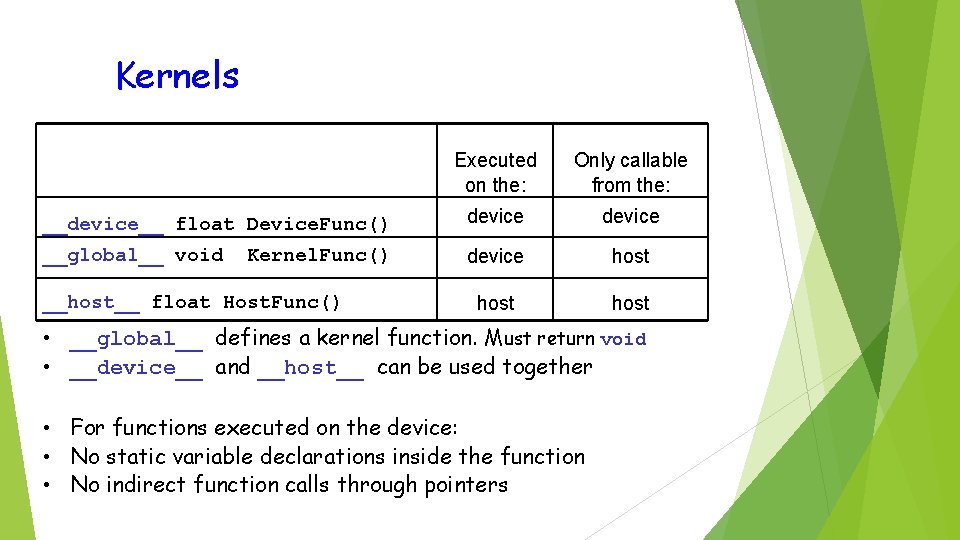

Kernels

| | Executed on the: | Only callable from the: |
|---|---|---|
| __device__ float DeviceFunc() | device | device |
| __global__ void KernelFunc() | device | host |
| __host__ float HostFunc() | host | host |

- __global__ defines a kernel function. Must return void
- __device__ and __host__ can be used together
- For functions executed on the device:
  - No static variable declarations inside the function
  - No indirect function calls through pointers
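The combination of `__host__` and `__device__` can be illustrated with a small sketch in which the same function is compiled for both sides. The names `square`, `squareKernel`, and `squareOnDevice` are hypothetical and chosen only for this example.

```cuda
#include <cuda_runtime.h>

// Compiled twice: once for the host and once for the device.
__host__ __device__ float square(float x)
{
    return x * x;
}

// A kernel: runs on the device, launched from the host, returns void.
__global__ void squareKernel(float* out, float x)
{
    *out = square(x);  // device-side call
}

// Hypothetical host helper: returns x * x computed on the device,
// or -1.0f if a CUDA call fails.
float squareOnDevice(float x)
{
    float* out_d = NULL;
    float out_h = -1.0f;

    if (cudaMalloc((void**)&out_d, sizeof(float)) == cudaSuccess) {
        squareKernel<<<1, 1>>>(out_d, x);
        if (cudaMemcpy(&out_h, out_d, sizeof(float),
                       cudaMemcpyDeviceToHost) != cudaSuccess)
            out_h = -1.0f;
    }

    cudaFree(out_d);
    return out_h;
}
```

Host code can call `square(3.0f)` directly, while `squareOnDevice(3.0f)` reaches the same function through a kernel. This avoids maintaining two copies of small utility routines.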

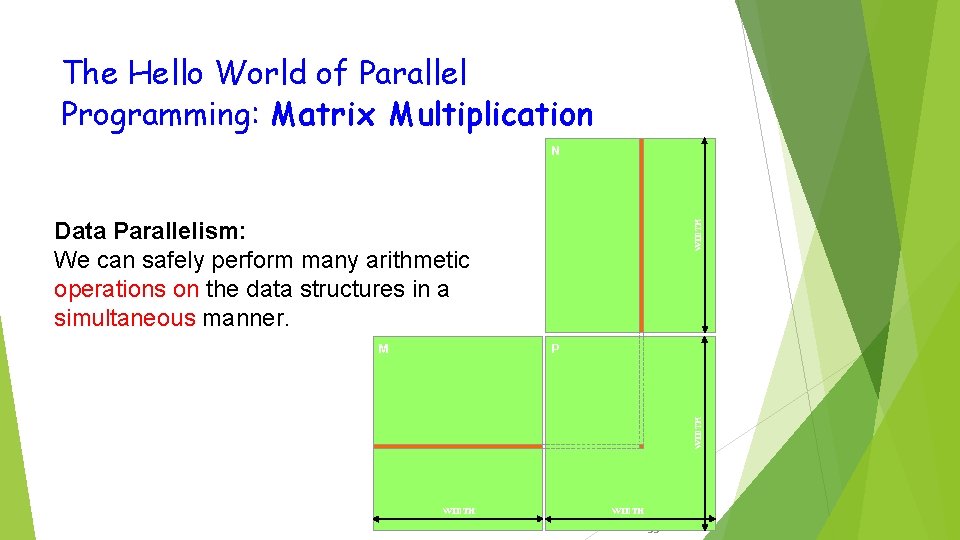

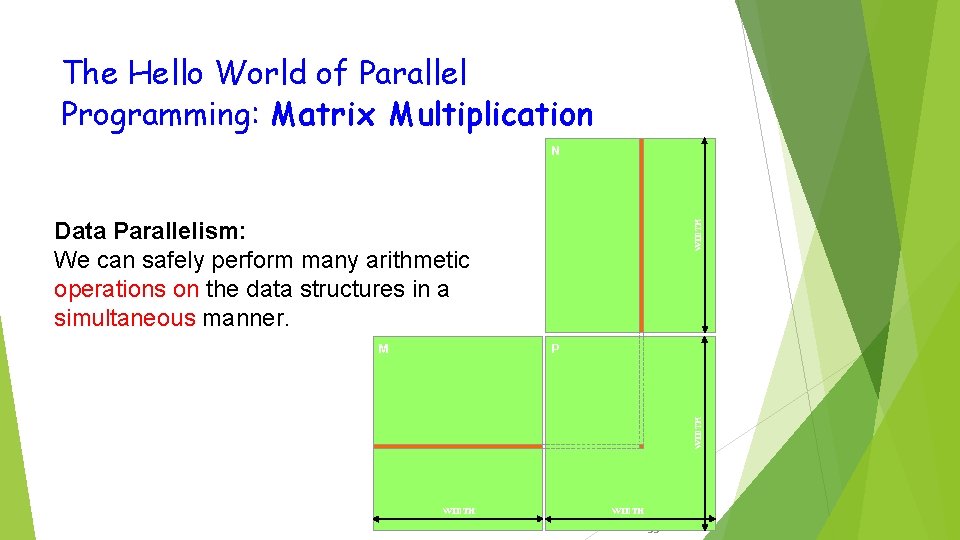

The Hello World of Parallel Programming: Matrix Multiplication

Data Parallelism: We can safely perform many arithmetic operations on the data structures in a simultaneous manner.

[Figure: matrices M, N, and P, each WIDTH × WIDTH.]

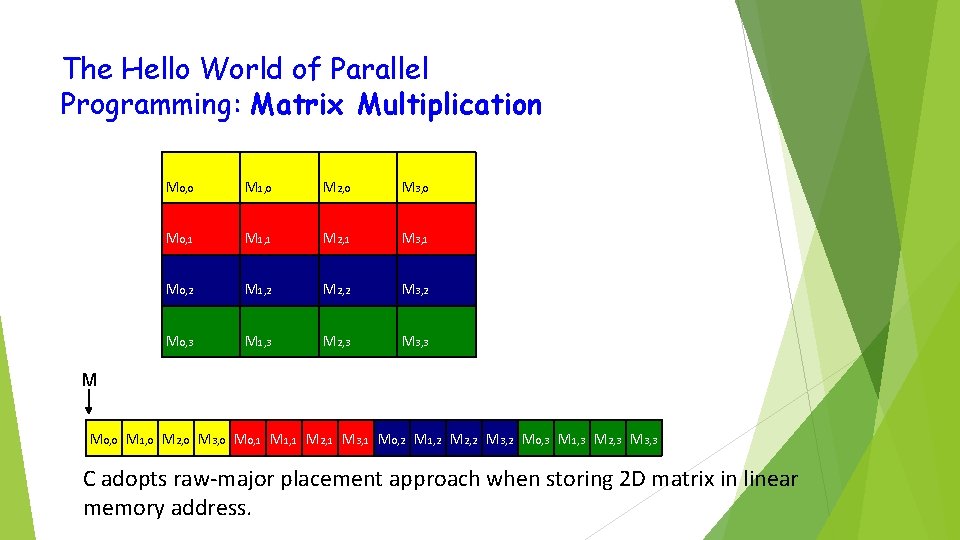

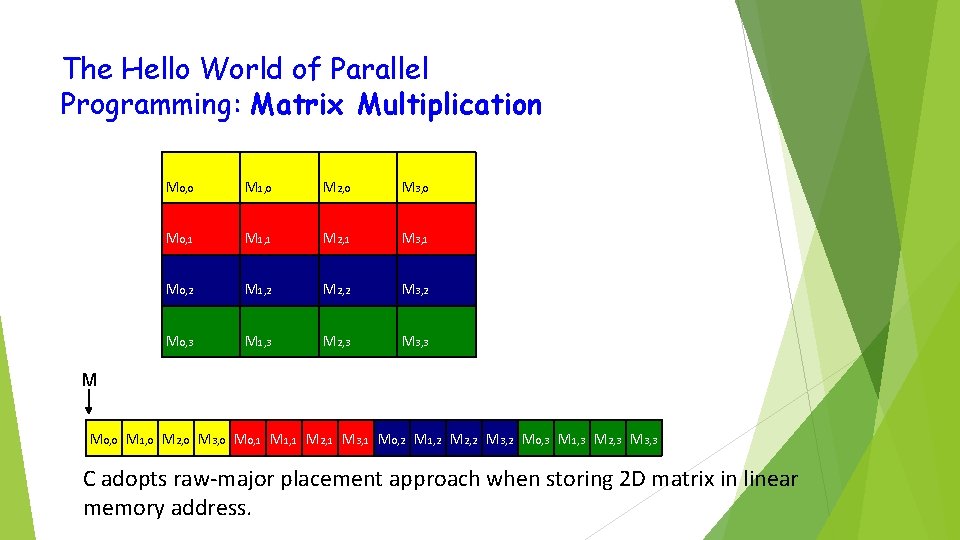

The Hello World of Parallel Programming: Matrix Multiplication

C adopts a row-major placement approach when storing a 2D matrix in linear memory.

[Figure: the 4 × 4 matrix M, with rows (M0,0 M1,0 M2,0 M3,0), (M0,1 M1,1 M2,1 M3,1), (M0,2 M1,2 M2,2 M3,2), and (M0,3 M1,3 M2,3 M3,3), is stored in linear memory in the order M0,0 M1,0 M2,0 M3,0 M0,1 M1,1 M2,1 M3,1 M0,2 M1,2 M2,2 M3,2 M0,3 M1,3 M2,3 M3,3.]

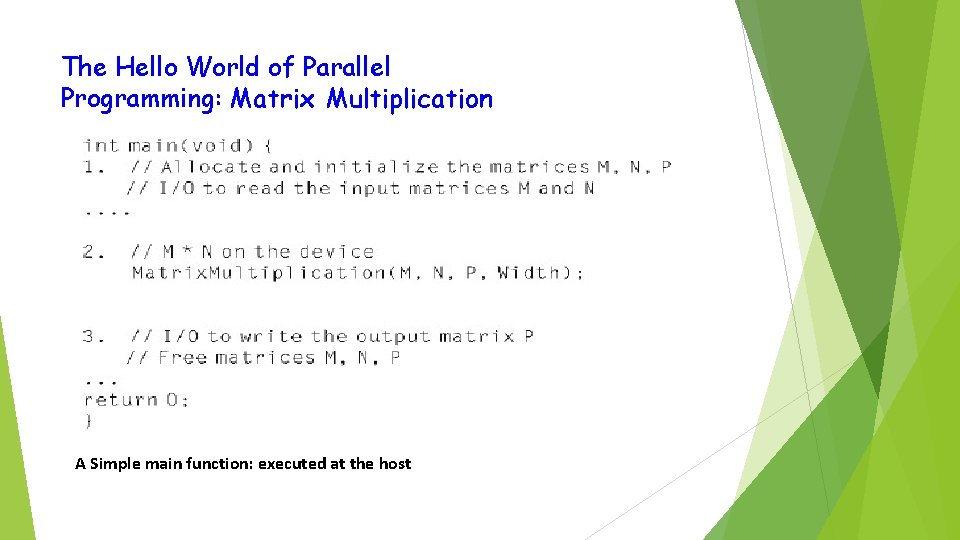

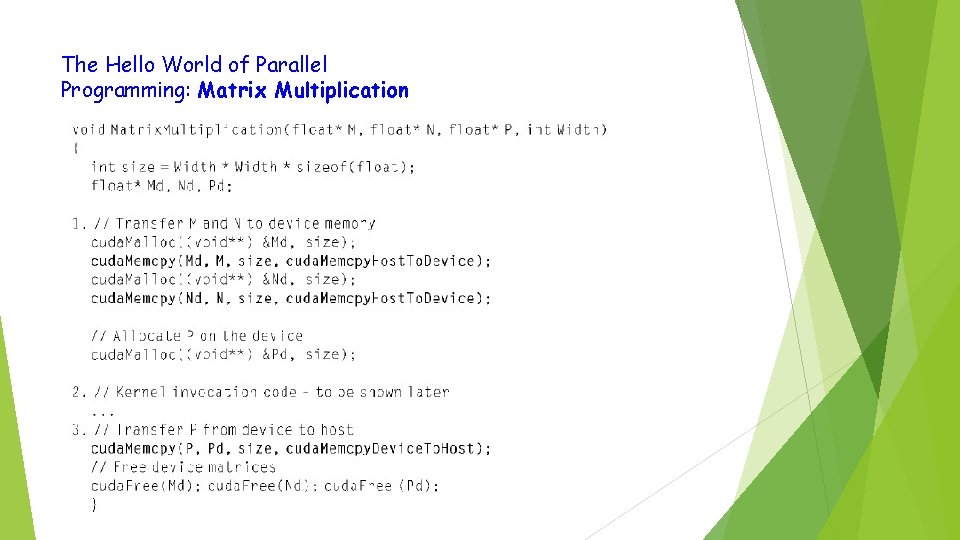

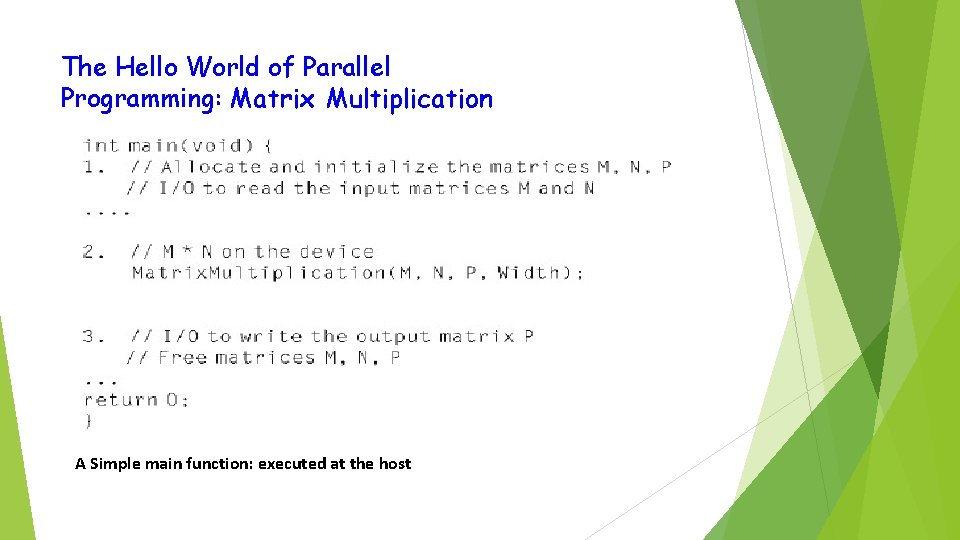

The Hello World of Parallel Programming: Matrix Multiplication A Simple main function: executed at the host

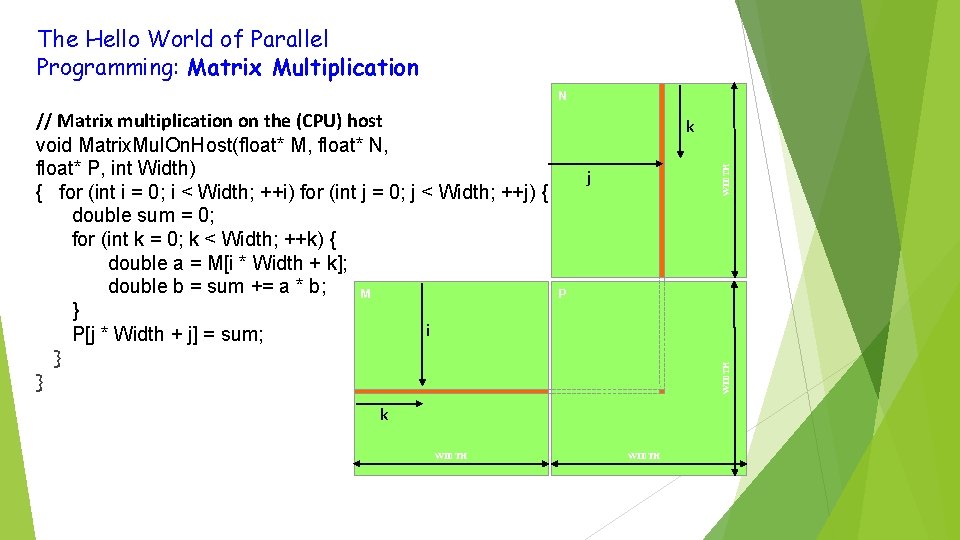

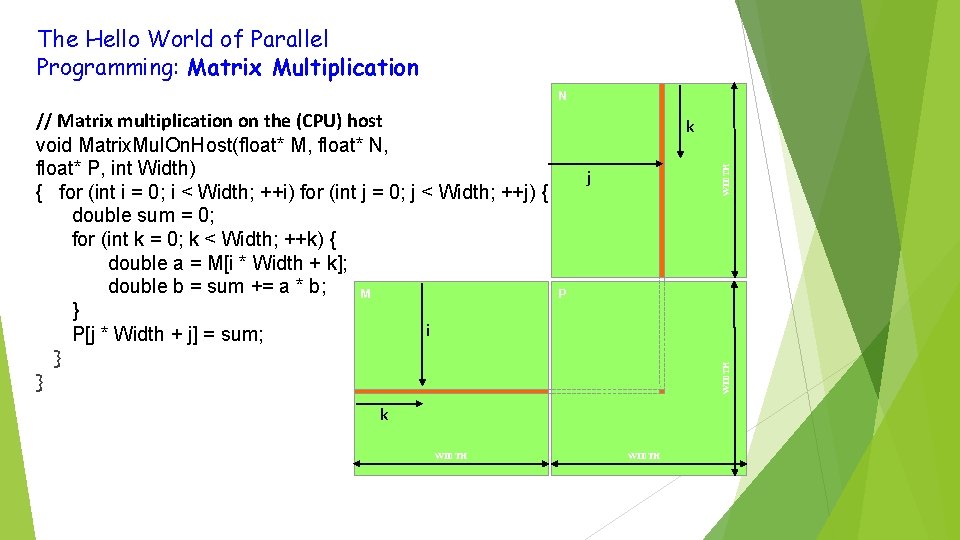

The Hello World of Parallel Programming: Matrix Multiplication

    // Matrix multiplication on the (CPU) host
    void MatrixMulOnHost(float* M, float* N, float* P, int Width)
    {
        for (int i = 0; i < Width; ++i)
            for (int j = 0; j < Width; ++j) {
                double sum = 0;
                for (int k = 0; k < Width; ++k) {
                    double a = M[i * Width + k];
                    double b = N[k * Width + j];
                    sum += a * b;
                }
                P[i * Width + j] = sum;
            }
    }

[Figure: row i of M and column j of N, both traversed by index k, produce element (i, j) of P. All matrices are WIDTH × WIDTH.]

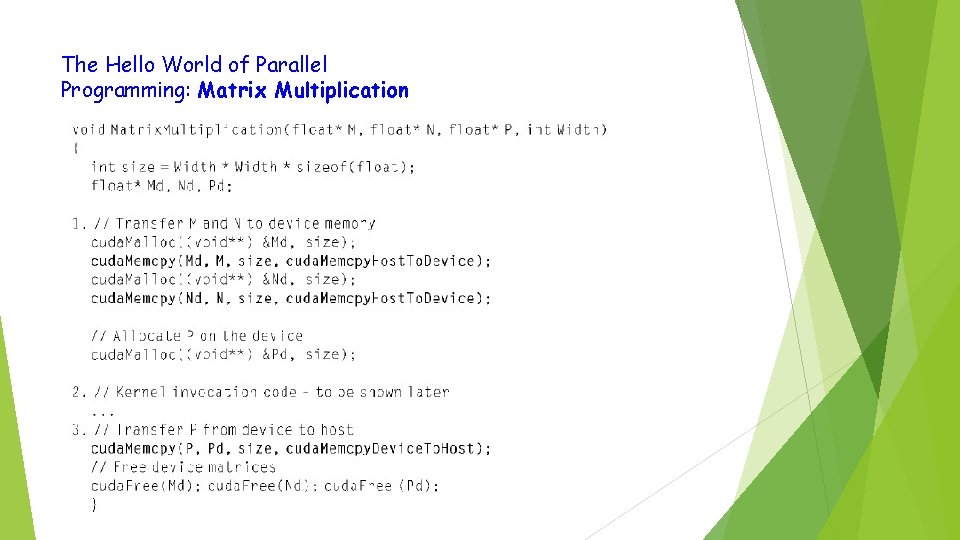

The Hello World of Parallel Programming: Matrix Multiplication

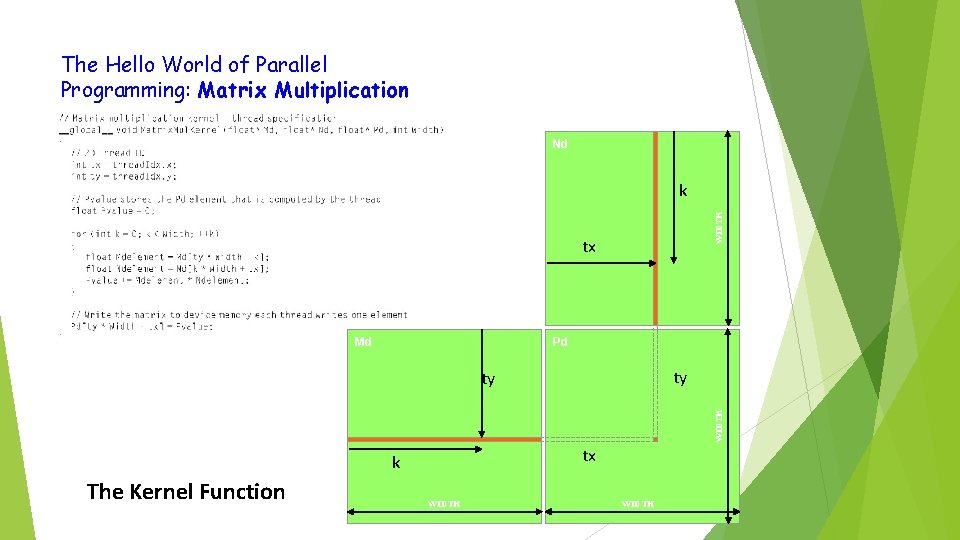

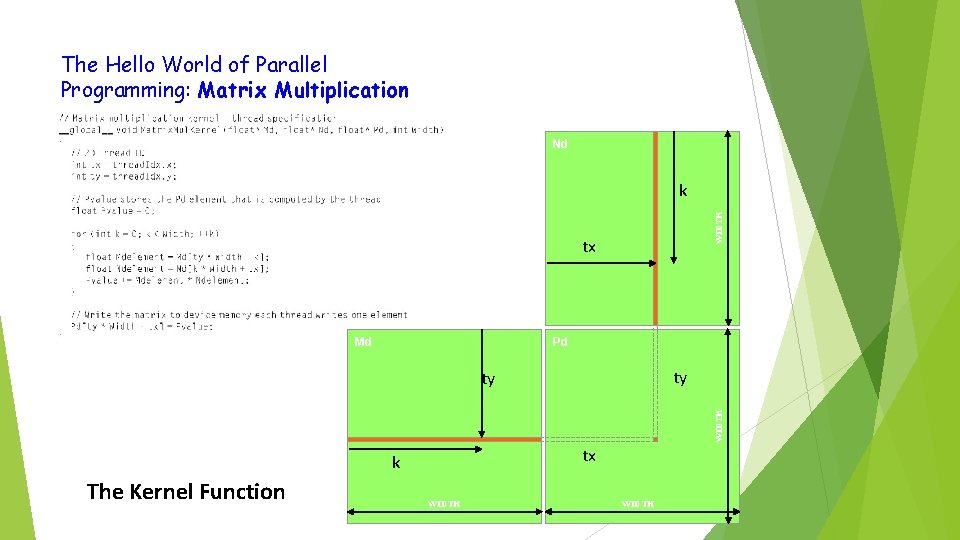

The Hello World of Parallel Programming: Matrix Multiplication

The Kernel Function

[Figure: matrices Md, Nd, and Pd, each WIDTH × WIDTH, with thread indices tx and ty and loop index k marked.]

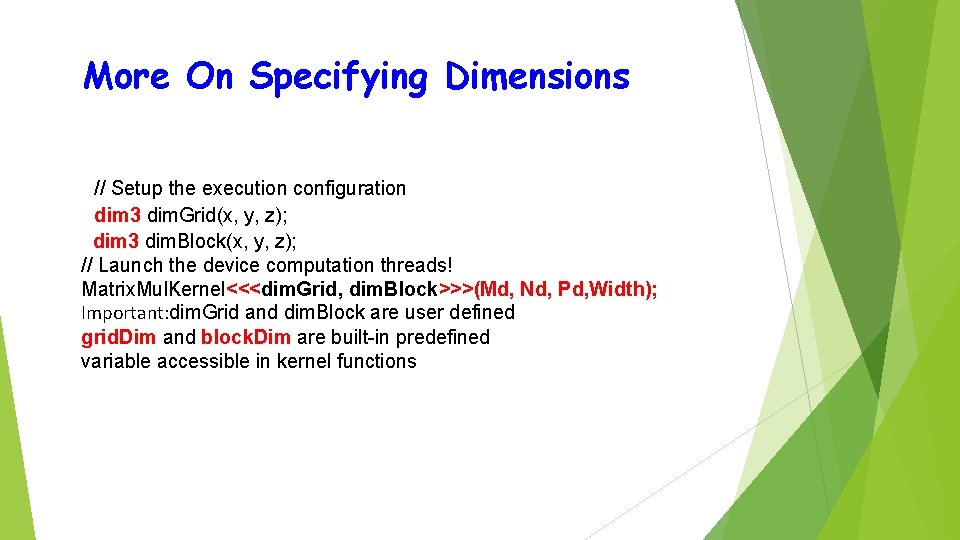

More On Specifying Dimensions

    // Setup the execution configuration
    dim3 dimGrid(x, y, z);
    dim3 dimBlock(x, y, z);

    // Launch the device computation threads!
    MatrixMulKernel<<<dimGrid, dimBlock>>>(Md, Nd, Pd, Width);

Important:

- dimGrid and dimBlock are user defined
- gridDim and blockDim are built-in predefined variables accessible in kernel functions
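The kernel body appears only in the slide images, so the following is a sketch under stated assumptions, not the lecture's own code. It assumes one thread per element of Pd, a 2D grid of 16 × 16 blocks (the `TILE` constant is hypothetical), and row-major storage. The wrapper `MatrixMulOnDevice` is likewise a hypothetical name.

```cuda
#include <cuda_runtime.h>

#define TILE 16  // assumed block edge: 16 x 16 = 256 threads per block

// One thread computes one element of Pd.
__global__ void MatrixMulKernel(const float* Md, const float* Nd,
                                float* Pd, int Width)
{
    int row = blockIdx.y * blockDim.y + threadIdx.y;
    int col = blockIdx.x * blockDim.x + threadIdx.x;

    if (row < Width && col < Width) {  // the grid may overshoot the matrix
        float sum = 0.0f;
        for (int k = 0; k < Width; ++k)
            sum += Md[row * Width + k] * Nd[k * Width + col];
        Pd[row * Width + col] = sum;
    }
}

// Returns cudaSuccess, or the first CUDA error encountered.
cudaError_t MatrixMulOnDevice(const float* M, const float* N,
                              float* P, int Width)
{
    size_t size = (size_t)Width * Width * sizeof(float);
    float *Md = NULL, *Nd = NULL, *Pd = NULL;

    cudaError_t err = cudaMalloc((void**)&Md, size);
    if (err == cudaSuccess) err = cudaMalloc((void**)&Nd, size);
    if (err == cudaSuccess) err = cudaMalloc((void**)&Pd, size);
    if (err == cudaSuccess)
        err = cudaMemcpy(Md, M, size, cudaMemcpyHostToDevice);
    if (err == cudaSuccess)
        err = cudaMemcpy(Nd, N, size, cudaMemcpyHostToDevice);

    if (err == cudaSuccess) {
        // Setup the execution configuration.
        int blocksPerSide = (Width + TILE - 1) / TILE;
        dim3 dimBlock(TILE, TILE);
        dim3 dimGrid(blocksPerSide, blocksPerSide);

        // Launch the device computation threads.
        MatrixMulKernel<<<dimGrid, dimBlock>>>(Md, Nd, Pd, Width);
        err = cudaGetLastError();
    }

    if (err == cudaSuccess)
        err = cudaMemcpy(P, Pd, size, cudaMemcpyDeviceToHost);

    cudaFree(Md);
    cudaFree(Nd);
    cudaFree(Pd);
    return err;
}
```

Inside the kernel, the built-in `blockDim` holds the values passed in `dimBlock`, which is how the user-defined configuration becomes visible to each thread.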

Be Sure To Know:

- Maximum dimensions of a block
- Maximum number of threads per block
- Maximum dimensions of a grid
- Maximum number of blocks per grid
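These limits depend on the GPU, and one way to look them up at run time is `cudaGetDeviceProperties()`. The sketch below prints the relevant fields of `cudaDeviceProp`; the function name `printLaunchLimits` is hypothetical. The structure has no separate field for the number of blocks per grid, so the per-dimension grid limits are printed instead.

```cuda
#include <cuda_runtime.h>
#include <stdio.h>

// Hypothetical helper: prints the launch limits of the given device.
// Returns 1 on success, 0 if the properties could not be read.
int printLaunchLimits(int device)
{
    cudaDeviceProp prop;
    if (cudaGetDeviceProperties(&prop, device) != cudaSuccess)
        return 0;

    printf("Device %d: %s\n", device, prop.name);
    printf("Max threads per block: %d\n", prop.maxThreadsPerBlock);
    printf("Max block dimensions:  %d x %d x %d\n",
           prop.maxThreadsDim[0], prop.maxThreadsDim[1],
           prop.maxThreadsDim[2]);
    printf("Max grid dimensions:   %d x %d x %d\n",
           prop.maxGridSize[0], prop.maxGridSize[1], prop.maxGridSize[2]);
    return 1;
}
```

Calling `printLaunchLimits(0)` reports the values for the first GPU in the system. Note that the block dimensions are limited individually and also by their product, which may not exceed the maximum number of threads per block.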

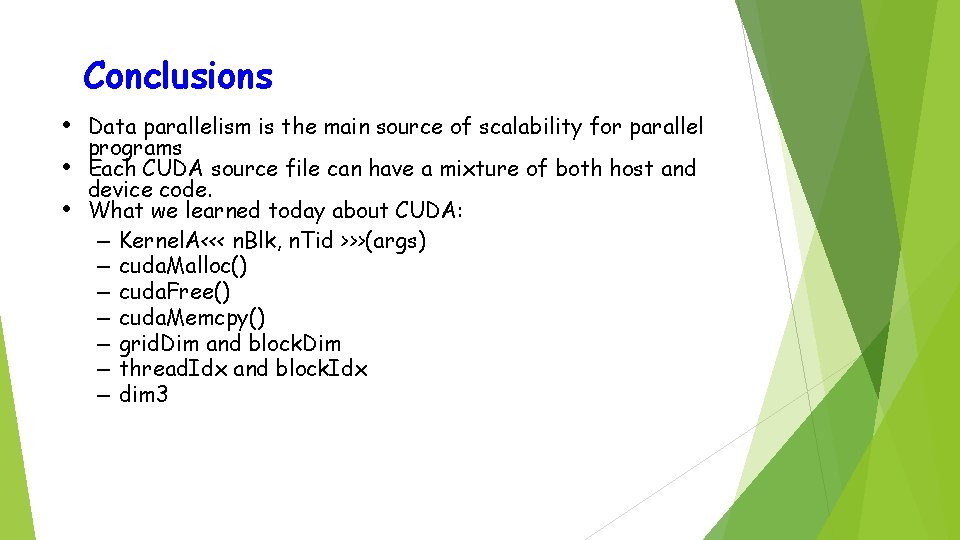

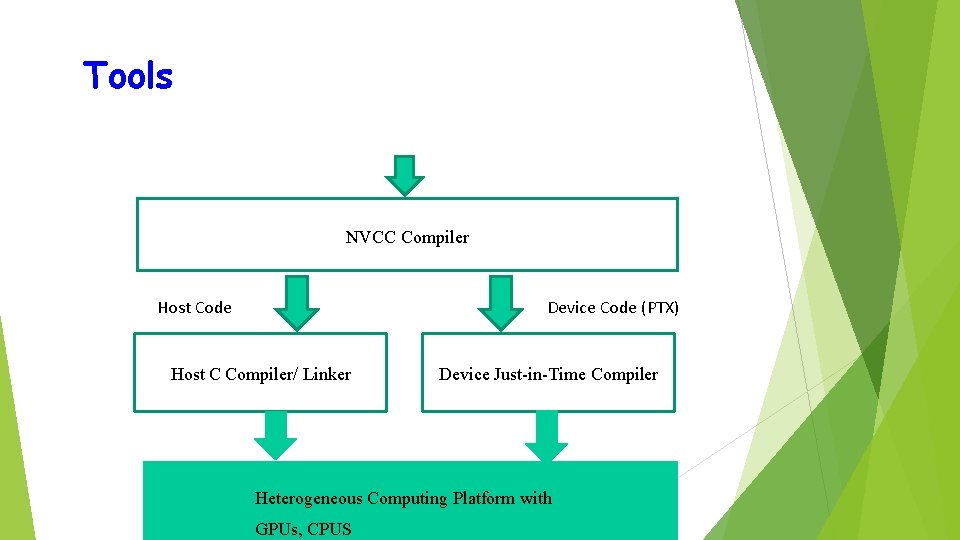

Tools

[Figure: the NVCC compiler splits a program into host code, which goes to the host C compiler/linker, and device code (PTX), which goes to the device just-in-time compiler. Both run on a heterogeneous computing platform with GPUs and CPUs.]

Conclusions

- Data parallelism is the main source of scalability for parallel programs
- Each CUDA source file can have a mixture of both host and device code.
- What we learned today about CUDA:
  - KernelA<<< nBlk, nTid >>>(args)
  - cudaMalloc()
  - cudaFree()
  - cudaMemcpy()
  - gridDim and blockDim
  - threadIdx and blockIdx
  - dim3