CUDA Lecture 4 CUDA Programming Basics Prepared 6222011

CUDA Lecture 4 CUDA Programming Basics Prepared 6/22/2011 by T. O’Neil for 3460: 677, Fall 2011, The University of Akron.

Parallel Programming Basics �Things we need to consider: � Control � Synchronization � Communication �Parallel programming languages offer different ways of dealing with above CUDA Programming Basics – Slide 2

Overview � CUDA programming model – basic concepts and data types � CUDA application programming interface - basic � Simple examples to illustrate basic concepts and functionalities � Performance features will be covered later CUDA Programming Basics – Slide 3

Outline of CUDA Basics � Basic kernels and execution on GPU � Basic memory management � Coordinating CPU and GPU execution � See the programming guide for the full API CUDA Programming Basics – Slide 4

CUDA – C with no shader limitations! � Integrated host + device application program in C � Serial or modestly parallel parts in host C code � Highly parallel parts in device SPMD kernel C code � Programming model � Parallel code (kernel) is launched and executed on a device by many threads � Launches are hierarchical � Threads are grouped into blocks � Blocks are grouped into grids � Familiar serial code is written for a thread � Each thread is free to execute a unique code path � Built-in thread and block ID variables CUDA Programming Basics – Slide 5

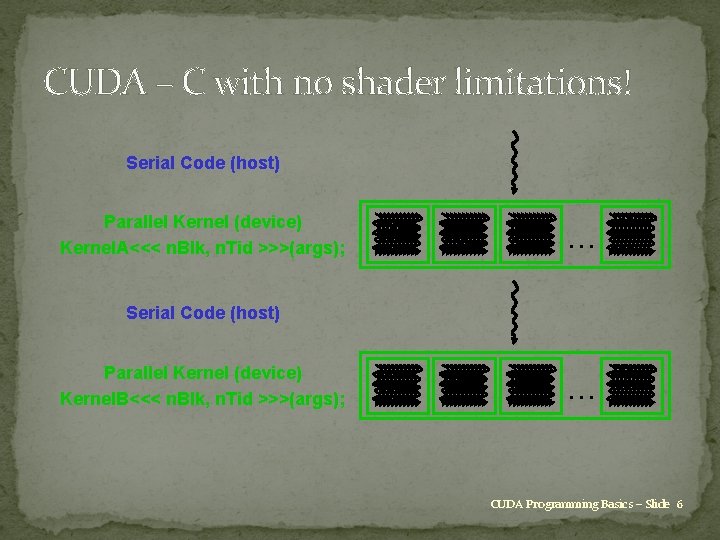

CUDA – C with no shader limitations! Serial Code (host) Parallel Kernel (device) Kernel. A<<< n. Blk, n. Tid >>>(args); . . . Serial Code (host) Parallel Kernel (device) Kernel. B<<< n. Blk, n. Tid >>>(args); . . . CUDA Programming Basics – Slide 6

CUDA Devices and Threads � A compute device � Is a coprocessor to the CPU or host � Has its own DRAM (device memory) � Runs many threads in parallel � Is typically a GPU but can also be another type of parallel processing device � Data-parallel portions of an application are expressed as device kernels which run on many threads CUDA Programming Basics – Slide 7

CUDA Devices and Threads � Differences between GPU and CPU threads � GPU threads are extremely lightweight � Very little creation overhead � GPU needs 1000 s of threads for full efficiency � Multi-core CPU needs only a few CUDA Programming Basics – Slide 8

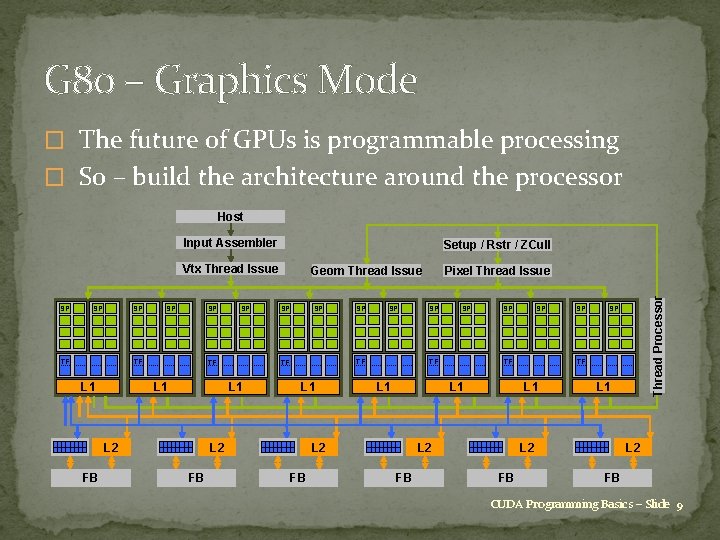

G 80 – Graphics Mode � The future of GPUs is programmable processing � So – build the architecture around the processor Host Input Assembler Setup / Rstr / ZCull SP SP SP TF TF L 1 SP SP L 1 L 2 FB SP SP TF TF L 1 L 2 FB SP Geom Thread Issue SP SP TF L 1 SP SP SP TF L 1 L 2 FB Pixel Thread Issue L 1 L 2 FB Thread Processor Vtx Thread Issue L 2 FB CUDA Programming Basics – Slide 9

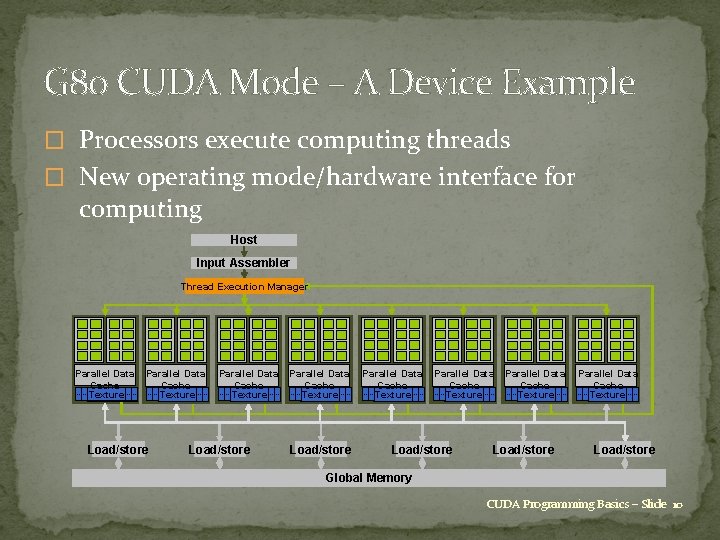

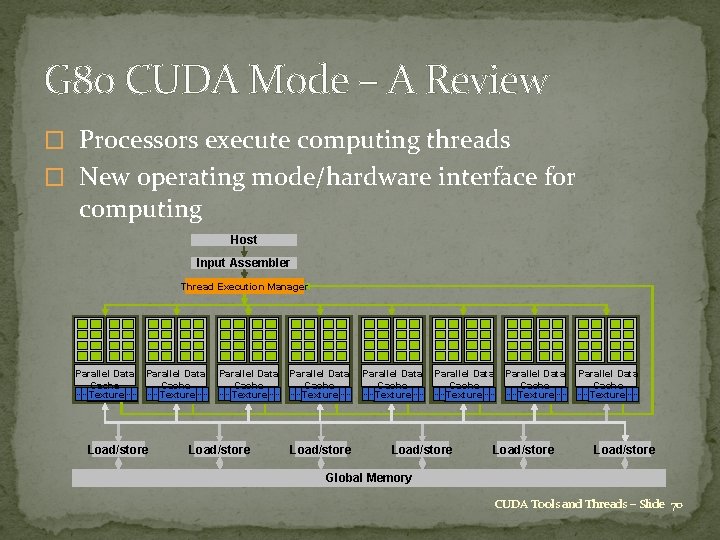

G 80 CUDA Mode – A Device Example � Processors execute computing threads � New operating mode/hardware interface for computing Host Input Assembler Thread Execution Manager Parallel Data Cache Texture Load/store Parallel Data Cache Texture Load/store Parallel Data Cache Texture Load/store Global Memory CUDA Programming Basics – Slide 10

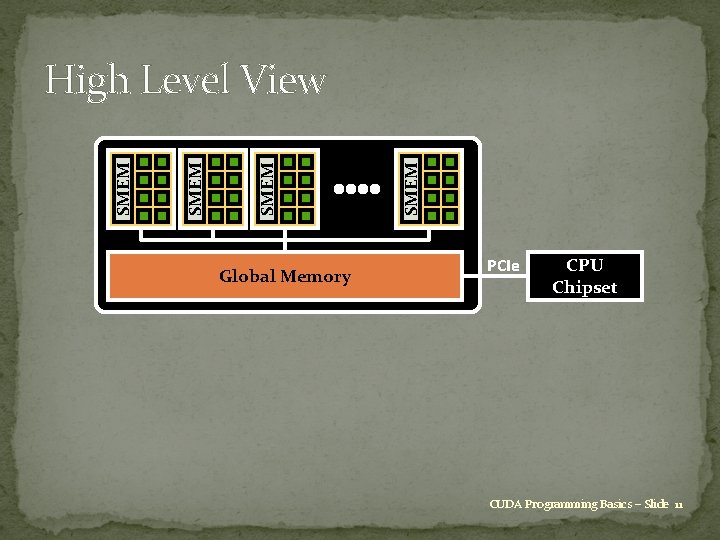

Global Memory SMEM High Level View PCIe CPU Chipset CUDA Programming Basics – Slide 11

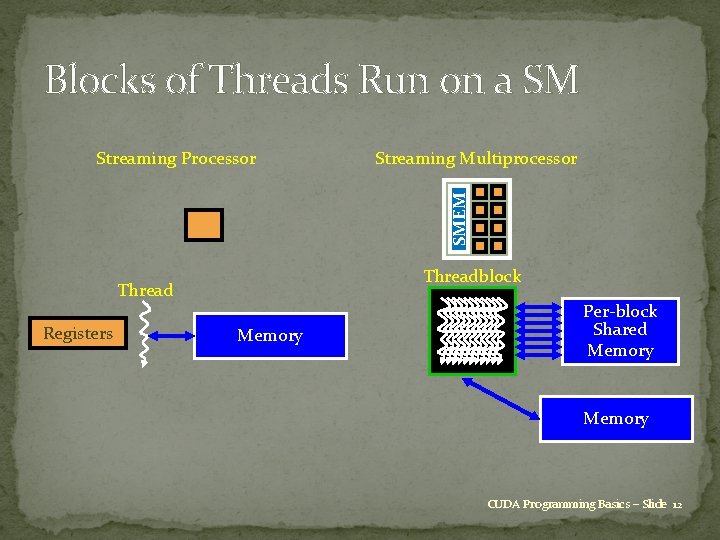

Blocks of Threads Run on a SM Streaming Multiprocessor SMEM Streaming Processor Threadblock Thread Registers Memory Per-block Shared Memory CUDA Programming Basics – Slide 12

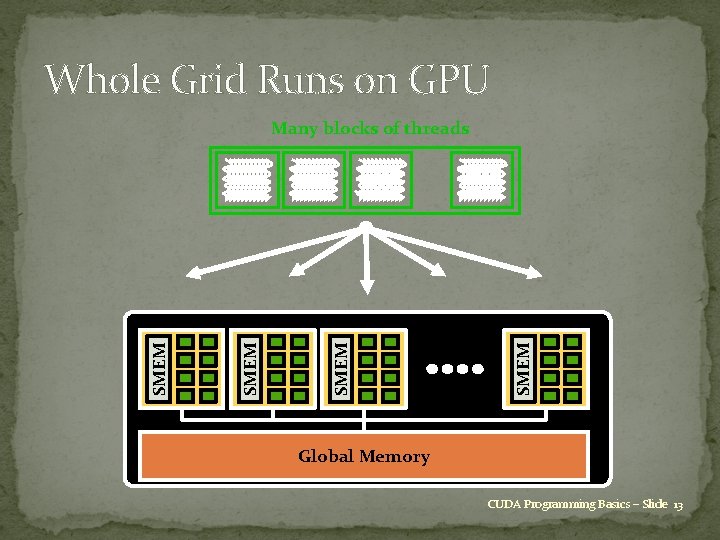

Whole Grid Runs on GPU Many blocks of threads SMEM . . . Global Memory CUDA Programming Basics – Slide 13

![Extended C Type Qualifiers global, device, shared, local, constant __device__ float filter[N]; __global__ void Extended C Type Qualifiers global, device, shared, local, constant __device__ float filter[N]; __global__ void](http://slidetodoc.com/presentation_image_h/9f5b508b562c283faee24796f4e02949/image-14.jpg)

Extended C Type Qualifiers global, device, shared, local, constant __device__ float filter[N]; __global__ void convolve (float *image) Keywords __shared__ float region[M]; . . . Intrinsics region[thread. Idx] = image[i]; Runtime API __syncthreads(). . . thread. Idx, block. Idx __syncthreads Memory, symbol, execution management Function launch { image[j] = result; } // Allocate GPU memory void *myimage = cuda. Malloc(bytes) // 100 blocks, 10 threads per block convolve<<<100, 10>>> (myimage); CUDA Programming Basics – Slide 14

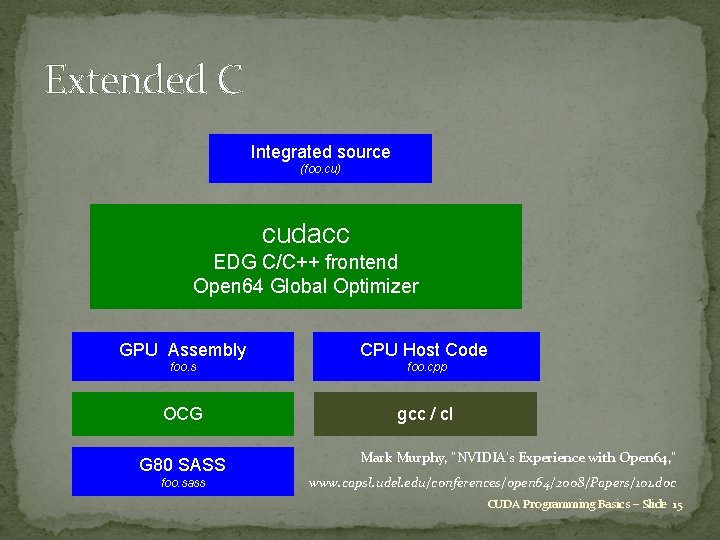

Extended C Integrated source (foo. cu) cudacc EDG C/C++ frontend Open 64 Global Optimizer GPU Assembly CPU Host Code foo. s foo. cpp OCG gcc / cl G 80 SASS foo. sass Mark Murphy, “NVIDIA’s Experience with Open 64, ” www. capsl. udel. edu/conferences/open 64/2008/Papers/101. doc CUDA Programming Basics – Slide 15

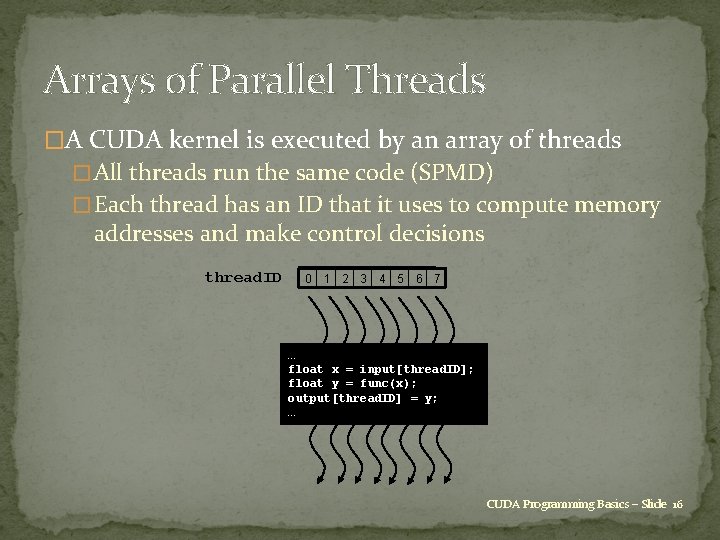

Arrays of Parallel Threads �A CUDA kernel is executed by an array of threads � All threads run the same code (SPMD) � Each thread has an ID that it uses to compute memory addresses and make control decisions thread. ID 0 1 2 3 4 5 6 7 … float x = input[thread. ID]; float y = func(x); output[thread. ID] = y; … CUDA Programming Basics – Slide 16

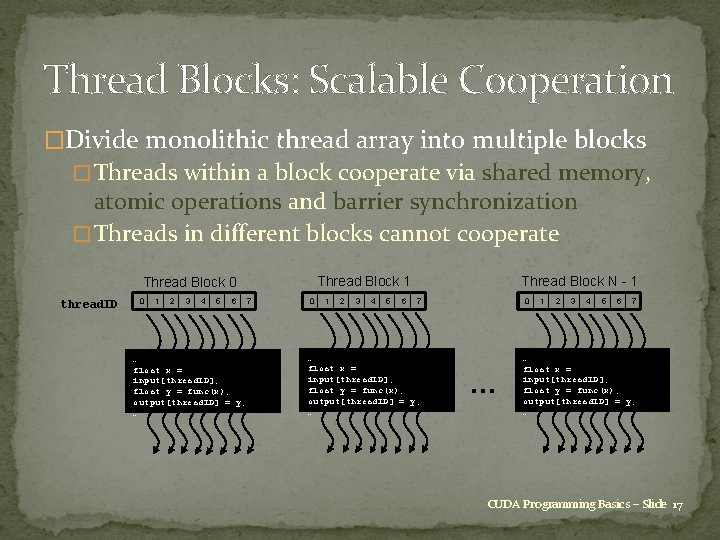

Thread Blocks: Scalable Cooperation �Divide monolithic thread array into multiple blocks � Threads within a block cooperate via shared memory, atomic operations and barrier synchronization � Threads in different blocks cannot cooperate Thread Block 1 Thread Block 0 thread. ID 0 1 2 3 4 5 6 … float x = input[thread. ID]; float y = func(x); output[thread. ID] = y; … 7 0 1 2 3 4 5 6 Thread Block N - 1 7 … float x = input[thread. ID]; float y = func(x); output[thread. ID] = y; … 0 … 1 2 3 4 5 6 7 … float x = input[thread. ID]; float y = func(x); output[thread. ID] = y; … CUDA Programming Basics – Slide 17

Thread Hierarchy �Threads launched for a parallel section are partitioned into thread blocks � Grid = all blocks for a given launch �Thread block is a group of threads that can � Synchronize their executions � Communicate via shared memory CUDA Programming Basics – Slide 18

Blocks Must Be Independent �Any possible interleaving of blocks should be valid � Presumed to run to completion without preemption � Can run in any order � Can run concurrently OR sequentially �Blocks may coordinate but not synchronize � Shared queue pointer: OK � Shared lock: BAD … can easily deadlock �Independence requirement gives scalability CUDA Programming Basics – Slide 19

Basics of CUDA Programming �A CUDA program has two pieces � Host code on the CPU which interfaces to the GPU � Kernel code which runs on the GPU �At the host level, there is a choice of 2 APIs (Application Programming Interfaces): � Runtime: simpler, more convenient � Driver: much more verbose, more flexible, closer to Open. CL �We will only use the Runtime API in this course CUDA Programming Basics – Slide 20

Basics of CUDA Programming �At the host code level, there are library routines for: � memory allocation on graphics card � data transfer to/from device memory �constants �texture arrays (useful for lookup tables) �ordinary data � error-checking � timing �There is also a special syntax for launching multiple copies of the kernel process on the GPU. CUDA Programming Basics – Slide 21

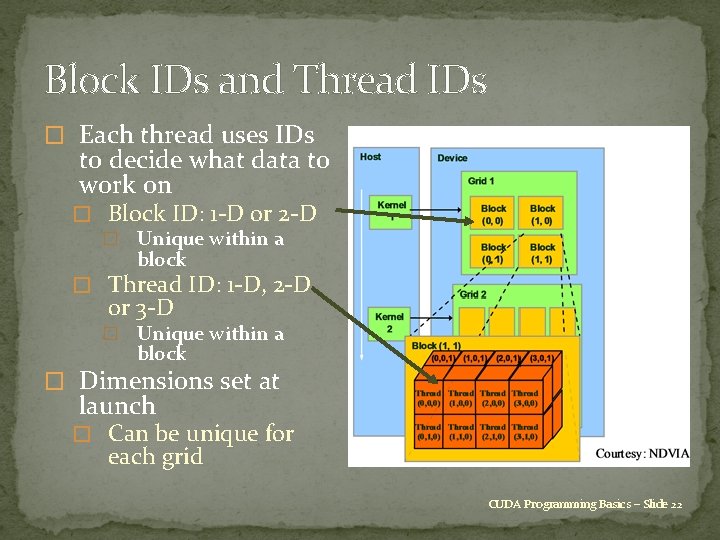

Block IDs and Thread IDs � Each thread uses IDs to decide what data to work on � Block ID: 1 -D or 2 -D � Unique within a block � Thread ID: 1 -D, 2 -D or 3 -D � Unique within a block � Dimensions set at launch � Can be unique for each grid CUDA Programming Basics – Slide 22

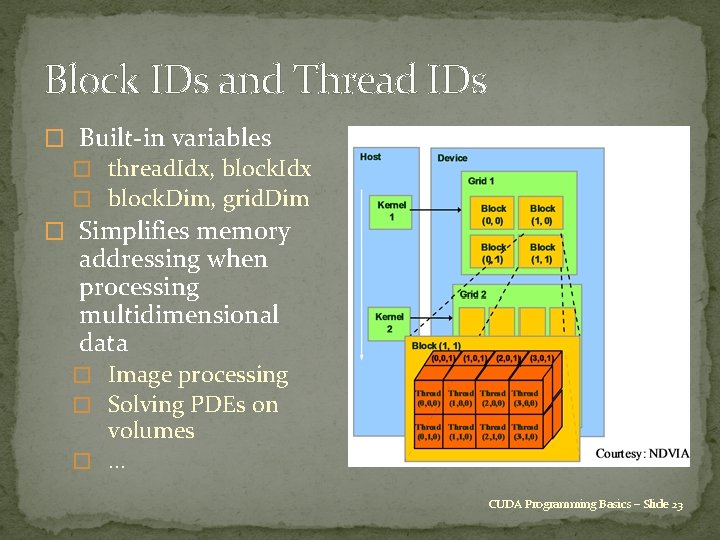

Block IDs and Thread IDs � Built-in variables � thread. Idx, block. Idx � block. Dim, grid. Dim � Simplifies memory addressing when processing multidimensional data � Image processing � Solving PDEs on volumes � … CUDA Programming Basics – Slide 23

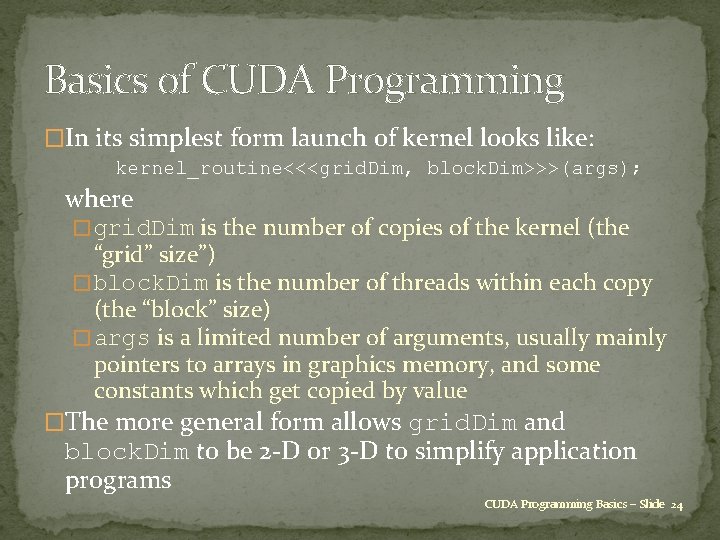

Basics of CUDA Programming �In its simplest form launch of kernel looks like: kernel_routine<<<grid. Dim, block. Dim>>>(args); where � grid. Dim is the number of copies of the kernel (the “grid” size”) � block. Dim is the number of threads within each copy (the “block” size) � args is a limited number of arguments, usually mainly pointers to arrays in graphics memory, and some constants which get copied by value �The more general form allows grid. Dim and block. Dim to be 2 -D or 3 -D to simplify application programs CUDA Programming Basics – Slide 24

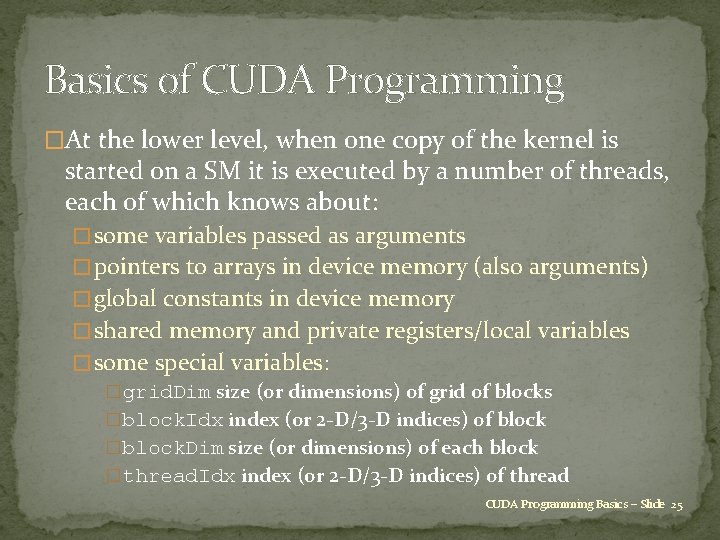

Basics of CUDA Programming �At the lower level, when one copy of the kernel is started on a SM it is executed by a number of threads, each of which knows about: � some variables passed as arguments � pointers to arrays in device memory (also arguments) � global constants in device memory � shared memory and private registers/local variables � some special variables: �grid. Dim size (or dimensions) of grid of blocks �block. Idx index (or 2 -D/3 -D indices) of block �block. Dim size (or dimensions) of each block �thread. Idx index (or 2 -D/3 -D indices) of thread CUDA Programming Basics – Slide 25

Basics of CUDA Programming �Suppose we have 1000 blocks, and each one has 128 threads – how does it get executed? �On current Tesla hardware, would probably get 8 blocks running at the same time on each SM, and each block has 4 warps => 32 warps running on each SM �Each clock tick, SM warp scheduler decides which warp to execute next, choosing from those not waiting for � data coming from device memory (memory latency) � completion of earlier instructions (pipeline delay) �Programmer doesn’t have to worry about this level of detail, just make sure there are lots of. CUDA threads /Basics warps Programming – Slide 26

Basics of CUDA Programming �In the simplest case, we have a 1 -D grid of blocks, and a 1 -D set of threads within each block. �If we want to use a 2 -D set of threads, then block. Dim. x, block. Dim. y give the dimensions, and thread. Idx. x, thread. Idx. y give thread indices �To launch the kernel we would use somthing like dim 3 nthreads(16, 4); my_new_kernel<<<nblocks, nthreads>>>(d_x); where dim 3 is a special CUDA datatype with 3 components. x, . y, . z each initialized to 1. CUDA Programming Basics – Slide 27

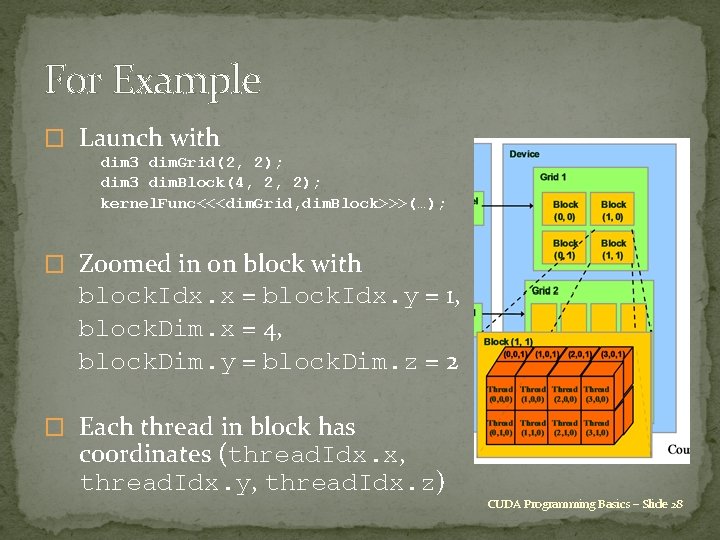

For Example � Launch with dim 3 dim. Grid(2, 2); dim 3 dim. Block(4, 2, 2); kernel. Func<<<dim. Grid, dim. Block>>>(…); � Zoomed in on block with block. Idx. x = block. Idx. y = 1, block. Dim. x = 4, block. Dim. y = block. Dim. z = 2 � Each thread in block has coordinates (thread. Idx. x, thread. Idx. y, thread. Idx. z) CUDA Programming Basics – Slide 28

Basics of CUDA Programming �A similar approach is used for 3 -D threads and/or 2 -D grids. This can be very useful in 2 -D / 3 -D finite difference applications. �How do 2 -D / 3 -D threads get divided into warps? � 1 -D thread ID defined by thread. Idx. x + thread. Idx. y * block. Dim. x + thread. Idx. z * block. Dim. x * block. Dim. y and this is then broken up into warps of size 32. CUDA Programming Basics – Slide 29

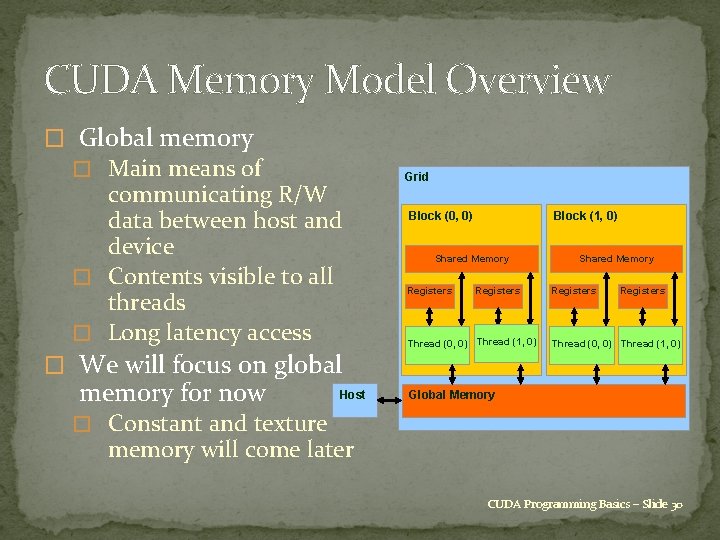

CUDA Memory Model Overview � Global memory � Main means of communicating R/W data between host and device � Contents visible to all threads � Long latency access � We will focus on global memory for now Host Grid Block (0, 0) Block (1, 0) Shared Memory Registers Thread (1, 0) 0) Thread (0, 0) Thread Shared Memory Registers Thread (0, 0) Thread (1, 0) Global Memory � Constant and texture memory will come later CUDA Programming Basics – Slide 30

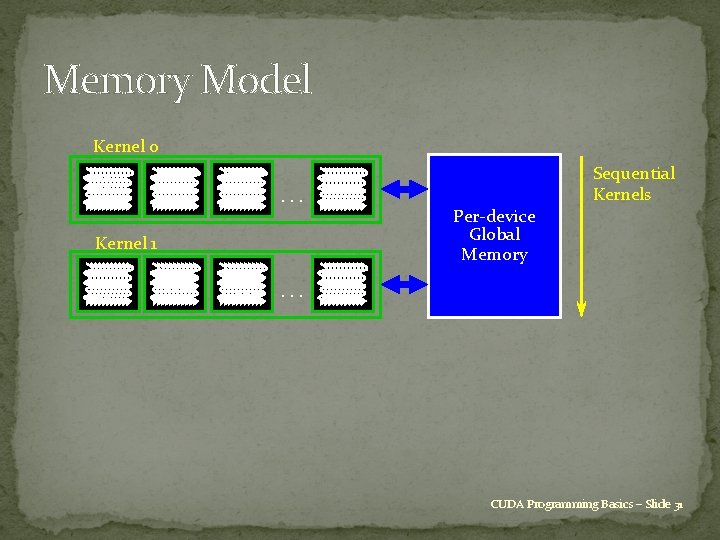

Memory Model Kernel 0. . . Kernel 1 Per-device Global Memory Sequential Kernels . . . CUDA Programming Basics – Slide 31

CUDA API Highlights: Easy and Lightweight �The API is an extension to the ANSI C programming language � Low learning curve �The hardware is designed to enable lightweight runtime and driver � High performance CUDA Programming Basics – Slide 32

Memory Spaces �CPU and GPU have separate memory spaces � Data is moved across the PCIe bus � Use functions to allocate/set/copy memory on GPU �Very similar to corresponding C functions �Pointers are just addresses � Can’t tell from the pointer value whether the address is on CPU or GPU � Must exercise care when dereferencing �Dereferencing CPU pointer on GPU will likely crash and vice -versa CUDA Programming Basics – Slide 33

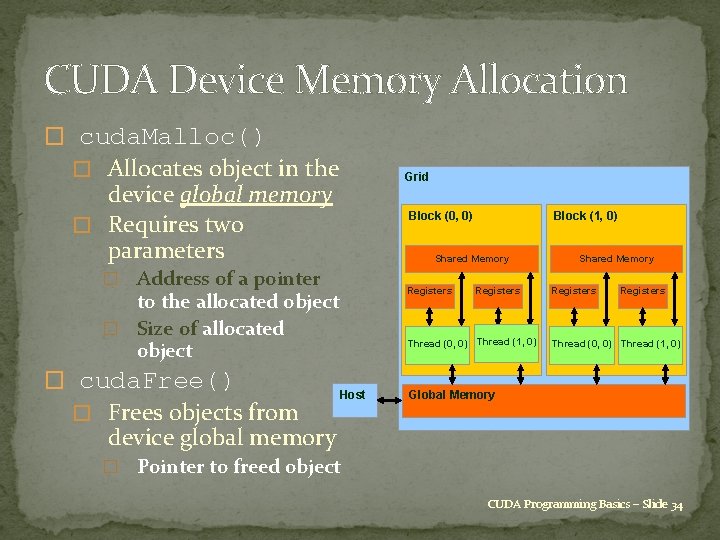

CUDA Device Memory Allocation � cuda. Malloc() � Allocates object in the Grid device global memory � Requires two parameters Block (0, 0) Shared Memory � Address of a pointer Registers to the allocated object � Size of allocated object � cuda. Free() � Frees objects from Block (1, 0) Registers Thread (1, 0) 0) Thread (0, 0) Thread Host Shared Memory Registers Thread (0, 0) Thread (1, 0) Global Memory device global memory � Pointer to freed object CUDA Programming Basics – Slide 34

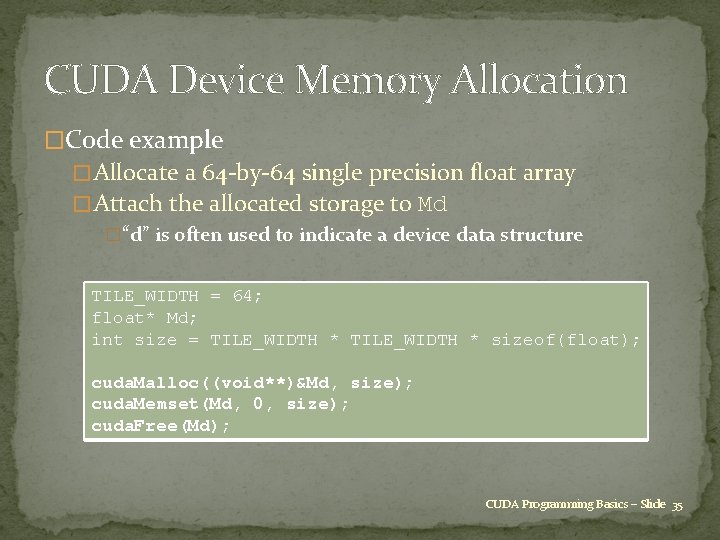

CUDA Device Memory Allocation �Code example � Allocate a 64 -by-64 single precision float array � Attach the allocated storage to Md �“d” is often used to indicate a device data structure TILE_WIDTH = 64; float* Md; int size = TILE_WIDTH * sizeof(float); cuda. Malloc((void**)&Md, size); cuda. Memset(Md, 0, size); cuda. Free(Md); CUDA Programming Basics – Slide 35

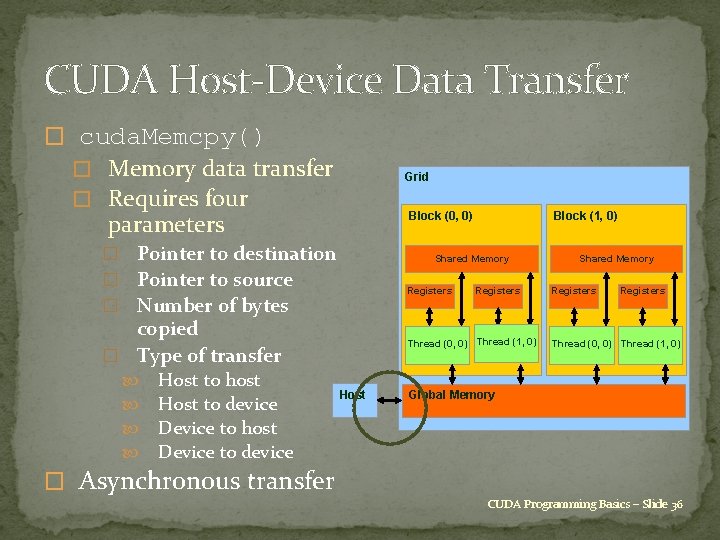

CUDA Host-Device Data Transfer � cuda. Memcpy() � Memory data transfer � Requires four Grid Block (0, 0) parameters � Pointer to destination � Pointer to source � Number of bytes Shared Memory Registers copied � Type of transfer Host to host Host to device Device to host Device to device Block (1, 0) Registers Thread (1, 0) 0) Thread (0, 0) Thread Host Shared Memory Registers Thread (0, 0) Thread (1, 0) Global Memory � Asynchronous transfer CUDA Programming Basics – Slide 36

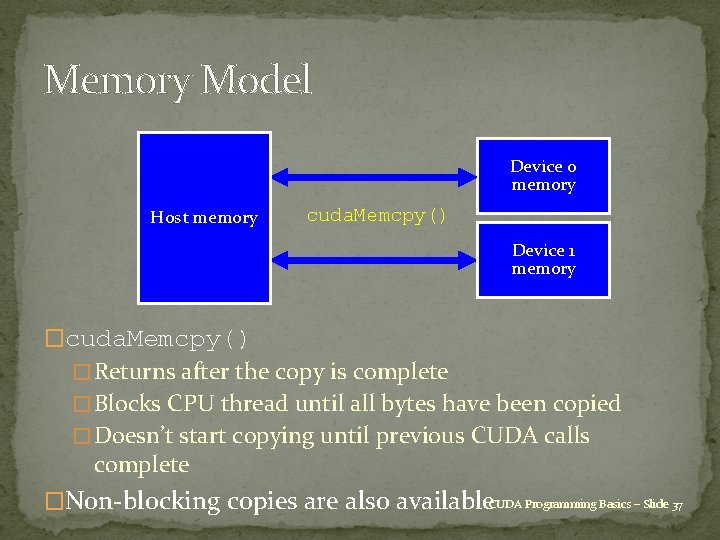

Memory Model Device 0 memory Host memory cuda. Memcpy() Device 1 memory �cuda. Memcpy() � Returns after the copy is complete � Blocks CPU thread until all bytes have been copied � Doesn’t start copying until previous CUDA calls complete �Non-blocking copies are also available. CUDA Programming Basics – Slide 37

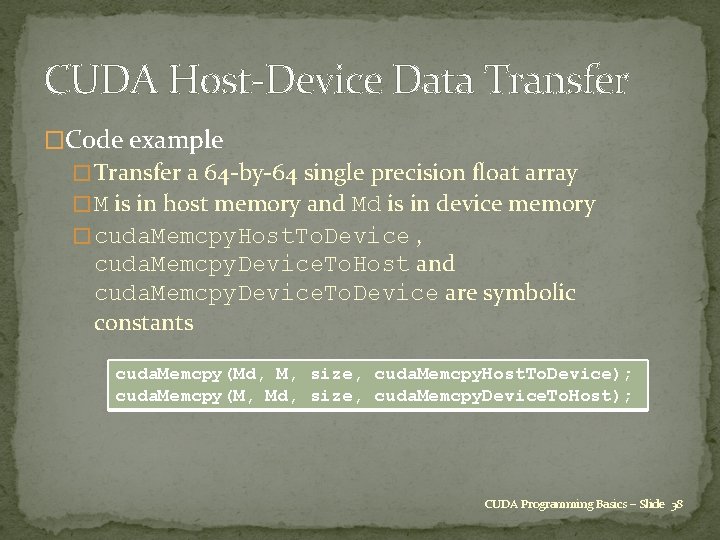

CUDA Host-Device Data Transfer �Code example � Transfer a 64 -by-64 single precision float array � M is in host memory and Md is in device memory � cuda. Memcpy. Host. To. Device , cuda. Memcpy. Device. To. Host and cuda. Memcpy. Device. To. Device are symbolic constants cuda. Memcpy(Md, M, size, cuda. Memcpy. Host. To. Device); cuda. Memcpy(M, Md, size, cuda. Memcpy. Device. To. Host); CUDA Programming Basics – Slide 38

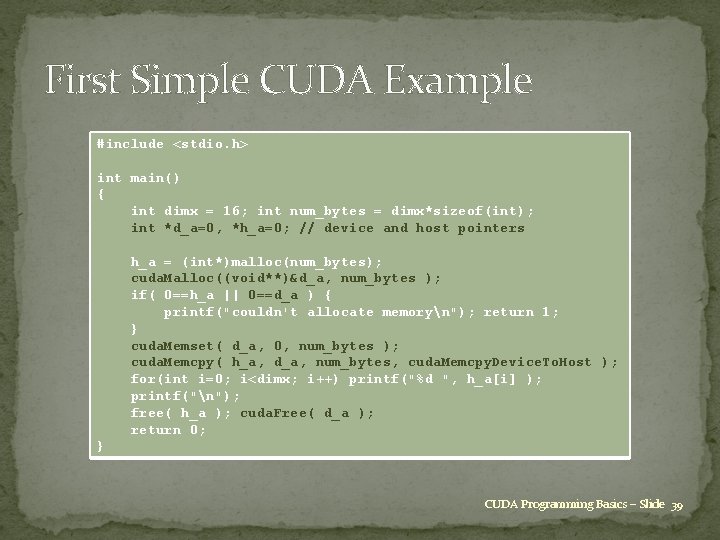

First Simple CUDA Example #include <stdio. h> int main() { int dimx = 16; int num_bytes = dimx*sizeof(int); int *d_a=0, *h_a=0; // device and host pointers h_a = (int*)malloc(num_bytes); cuda. Malloc((void**)&d_a, num_bytes ); if( 0==h_a || 0==d_a ) { printf("couldn't allocate memoryn"); return 1; } cuda. Memset( d_a, 0, num_bytes ); cuda. Memcpy( h_a, d_a, num_bytes, cuda. Memcpy. Device. To. Host ); for(int i=0; i<dimx; i++) printf("%d ", h_a[i] ); printf("n"); free( h_a ); cuda. Free( d_a ); return 0; } CUDA Programming Basics – Slide 39

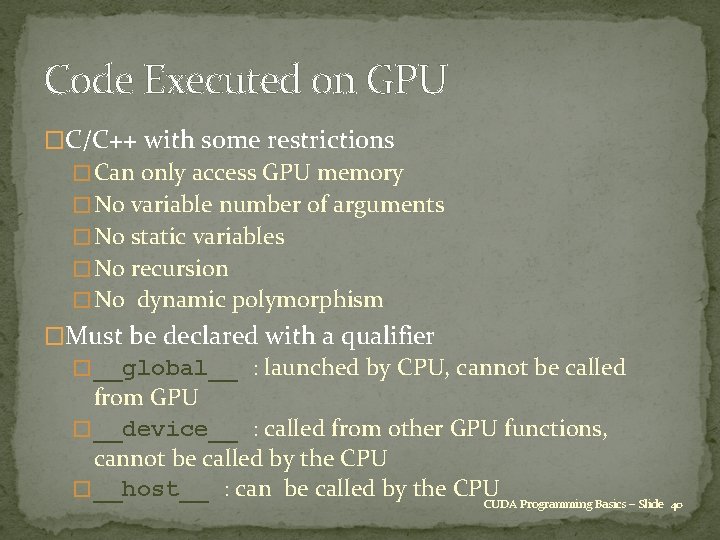

Code Executed on GPU �C/C++ with some restrictions � Can only access GPU memory � No variable number of arguments � No static variables � No recursion � No dynamic polymorphism �Must be declared with a qualifier � __global__ : launched by CPU, cannot be called from GPU � __device__ : called from other GPU functions, cannot be called by the CPU � __host__ : can be called by the CPU CUDA Programming Basics – Slide 40

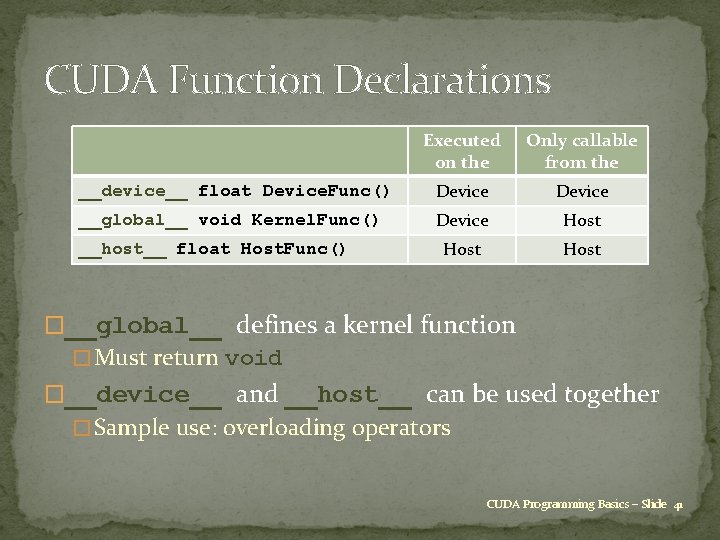

CUDA Function Declarations Executed on the Only callable from the __device__ float Device. Func() Device __global__ void Kernel. Func() Device Host __host__ float Host. Func() �__global__ defines a kernel function � Must return void �__device__ and __host__ can be used together � Sample use: overloading operators CUDA Programming Basics – Slide 41

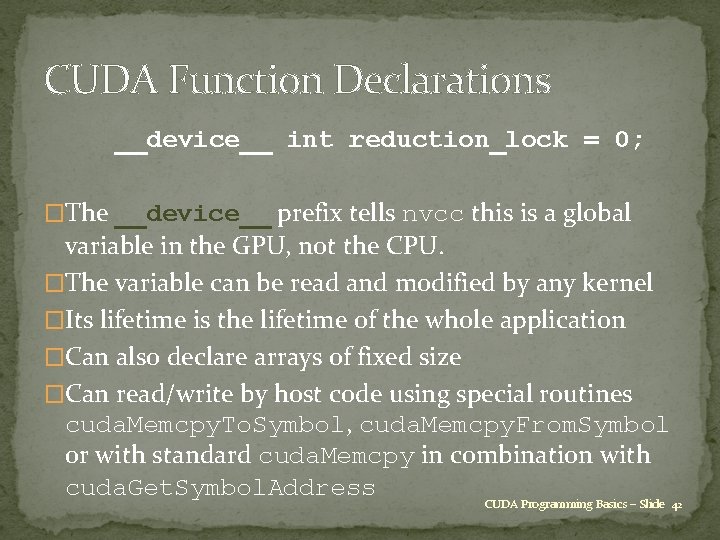

CUDA Function Declarations __device__ int reduction_lock = 0; �The __device__ prefix tells nvcc this is a global variable in the GPU, not the CPU. �The variable can be read and modified by any kernel �Its lifetime is the lifetime of the whole application �Can also declare arrays of fixed size �Can read/write by host code using special routines cuda. Memcpy. To. Symbol, cuda. Memcpy. From. Symbol or with standard cuda. Memcpy in combination with cuda. Get. Symbol. Address CUDA Programming Basics – Slide 42

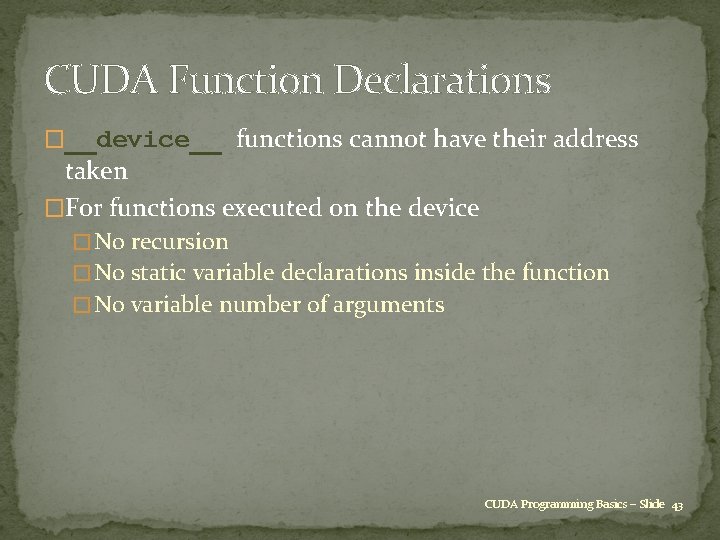

CUDA Function Declarations �__device__ functions cannot have their address taken �For functions executed on the device � No recursion � No static variable declarations inside the function � No variable number of arguments CUDA Programming Basics – Slide 43

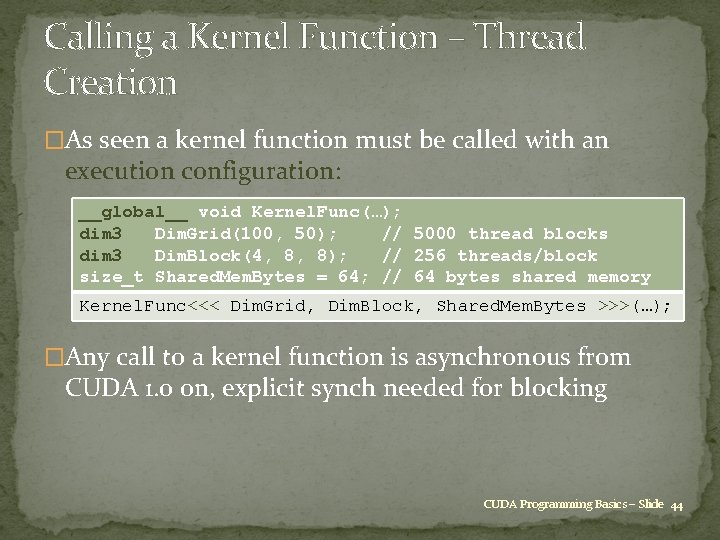

Calling a Kernel Function – Thread Creation �As seen a kernel function must be called with an execution configuration: __global__ void Kernel. Func(…); dim 3 Dim. Grid(100, 50); // 5000 thread blocks dim 3 Dim. Block(4, 8, 8); // 256 threads/block size_t Shared. Mem. Bytes = 64; // 64 bytes shared memory Kernel. Func<<< Dim. Grid, Dim. Block, Shared. Mem. Bytes >>>(…); �Any call to a kernel function is asynchronous from CUDA 1. 0 on, explicit synch needed for blocking CUDA Programming Basics – Slide 44

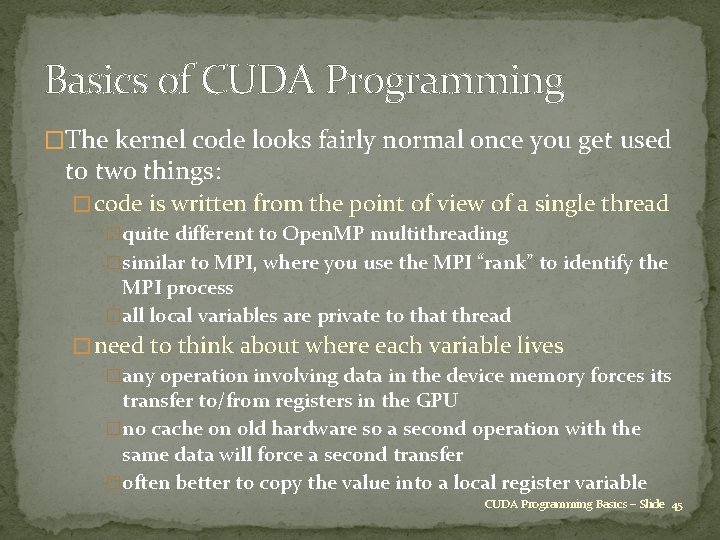

Basics of CUDA Programming �The kernel code looks fairly normal once you get used to two things: � code is written from the point of view of a single thread �quite different to Open. MP multithreading �similar to MPI, where you use the MPI “rank” to identify the MPI process �all local variables are private to that thread � need to think about where each variable lives �any operation involving data in the device memory forces its transfer to/from registers in the GPU �no cache on old hardware so a second operation with the same data will force a second transfer �often better to copy the value into a local register variable CUDA Programming Basics – Slide 45

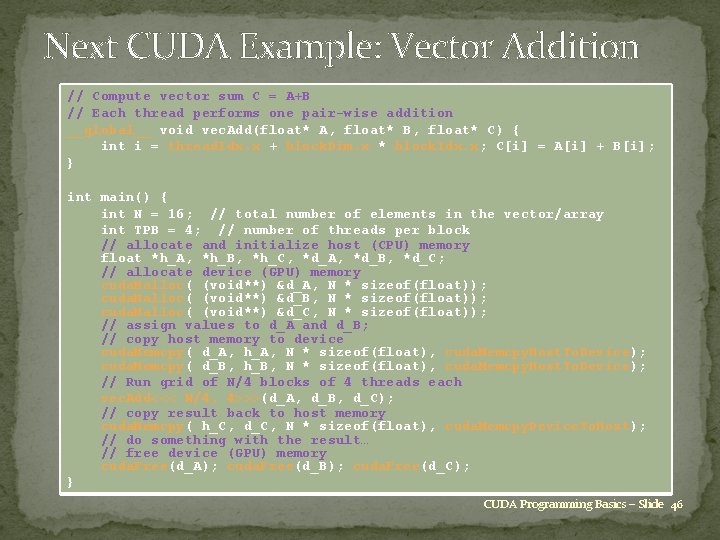

Next CUDA Example: Vector Addition // Compute vector sum C = A+B // Each thread performs one pair-wise addition __global__ void vec. Add(float* A, float* B, float* C) { int i = thread. Idx. x + block. Dim. x * block. Idx. x; C[i] = A[i] + B[i]; } int main() { int N = 16; // total number of elements in the vector/array int TPB = 4; // number of threads per block // allocate and initialize host (CPU) memory float *h_A, *h_B, *h_C, *d_A, *d_B, *d_C; // allocate device (GPU) memory cuda. Malloc( (void**) &d_A, N * sizeof(float)); cuda. Malloc( (void**) &d_B, N * sizeof(float)); cuda. Malloc( (void**) &d_C, N * sizeof(float)); // assign values to d_A and d_B; // copy host memory to device cuda. Memcpy( d_A, h_A, N * sizeof(float), cuda. Memcpy. Host. To. Device); cuda. Memcpy( d_B, h_B, N * sizeof(float), cuda. Memcpy. Host. To. Device); // Run grid of N/4 blocks of 4 threads each vec. Add<<< N/4, 4>>>(d_A, d_B, d_C); // copy result back to host memory cuda. Memcpy( h_C, d_C, N * sizeof(float), cuda. Memcpy. Device. To. Host); // do something with the result… // free device (GPU) memory cuda. Free(d_A); cuda. Free(d_B); cuda. Free(d_C); } CUDA Programming Basics – Slide 46

Next CUDA Example: Vector Addition �__global__ identifier says its a kernel function �Each thread sets one element of C[] array �Within each block of threads, thread. Idx. x ranges from 0 to block. Dim. x-1, so each thread has a unique value for i CUDA Programming Basics – Slide 47

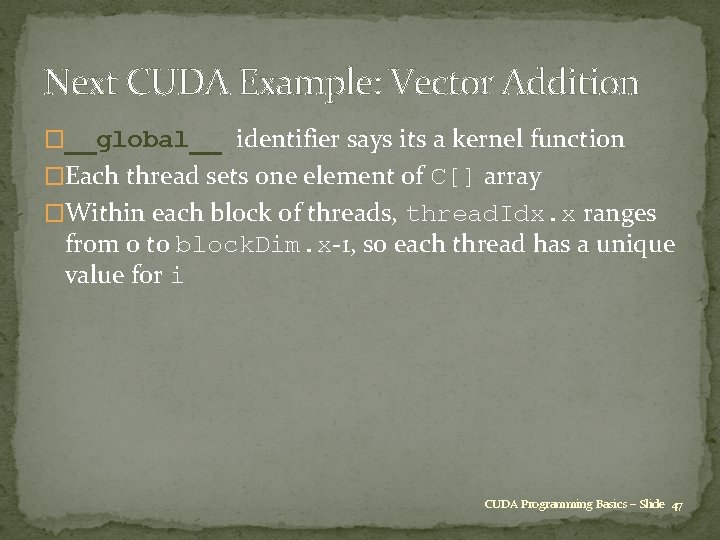

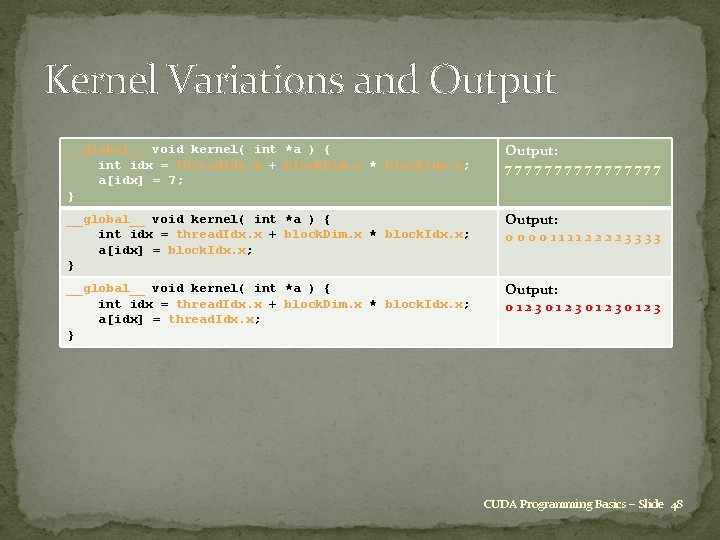

Kernel Variations and Output __global__ void kernel( int *a ) { int idx = thread. Idx. x + block. Dim. x * block. Idx. x; a[idx] = 7; } Output: 77777777 __global__ void kernel( int *a ) { int idx = thread. Idx. x + block. Dim. x * block. Idx. x; a[idx] = block. Idx. x; } Output: 0000111122223333 __global__ void kernel( int *a ) { int idx = thread. Idx. x + block. Dim. x * block. Idx. x; a[idx] = thread. Idx. x; } Output: 01230123 CUDA Programming Basics – Slide 48

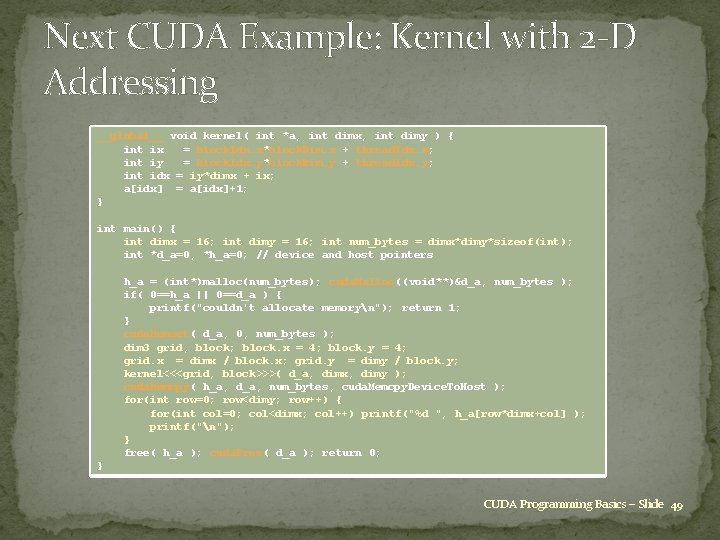

Next CUDA Example: Kernel with 2 -D Addressing __global__ void kernel( int *a, int dimx, int dimy ) { int ix = block. Idx. x*block. Dim. x + thread. Idx. x; int iy = block. Idx. y*block. Dim. y + thread. Idx. y; int idx = iy*dimx + ix; a[idx] = a[idx]+1; } int main() { int dimx = 16; int dimy = 16; int num_bytes = dimx*dimy*sizeof(int); int *d_a=0, *h_a=0; // device and host pointers h_a = (int*)malloc(num_bytes); cuda. Malloc((void**)&d_a, num_bytes ); if( 0==h_a || 0==d_a ) { printf("couldn't allocate memoryn"); return 1; } cuda. Memset( d_a, 0, num_bytes ); dim 3 grid, block; block. x = 4; block. y = 4; grid. x = dimx / block. x; grid. y = dimy / block. y; kernel<<<grid, block>>>( d_a, dimx, dimy ); cuda. Memcpy( h_a, d_a, num_bytes, cuda. Memcpy. Device. To. Host ); for(int row=0; row<dimy; row++) { for(int col=0; col<dimx; col++) printf("%d ", h_a[row*dimx+col] ); printf("n"); } free( h_a ); cuda. Free( d_a ); return 0; } CUDA Programming Basics – Slide 49

A Simple Running Example Matrix Multiplication �A simple matrix multiplication example that illustrates the basic features of memory and thread management in CUDA programs � Leave shared memory usage until later � Local, register usage � Thread ID usage � Memory data transfer API between host and device � Assume square matrix for simplicity CUDA Programming Basics – Slide 50

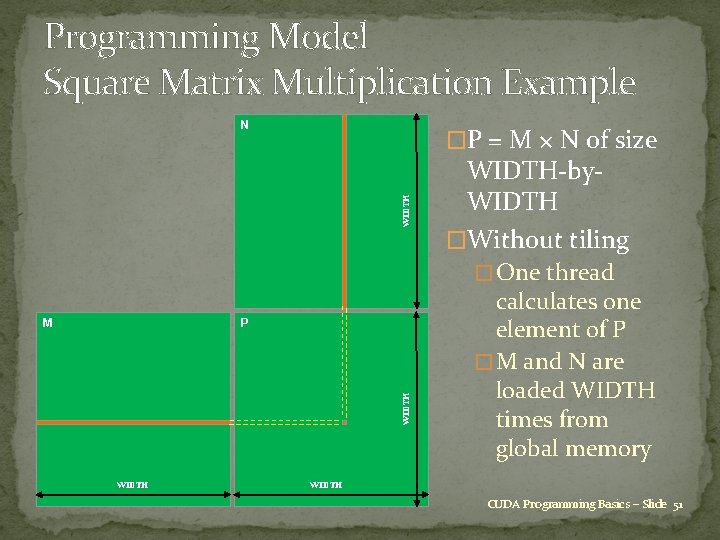

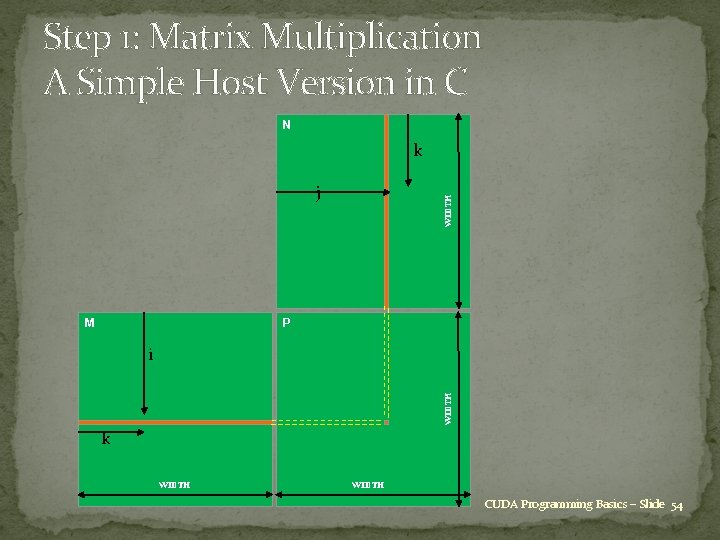

Programming Model Square Matrix Multiplication Example N WIDTH �P = M × N of size WIDTH-by. WIDTH �Without tiling � One thread P WIDTH M WIDTH calculates one element of P � M and N are loaded WIDTH times from global memory WIDTH CUDA Programming Basics – Slide 51

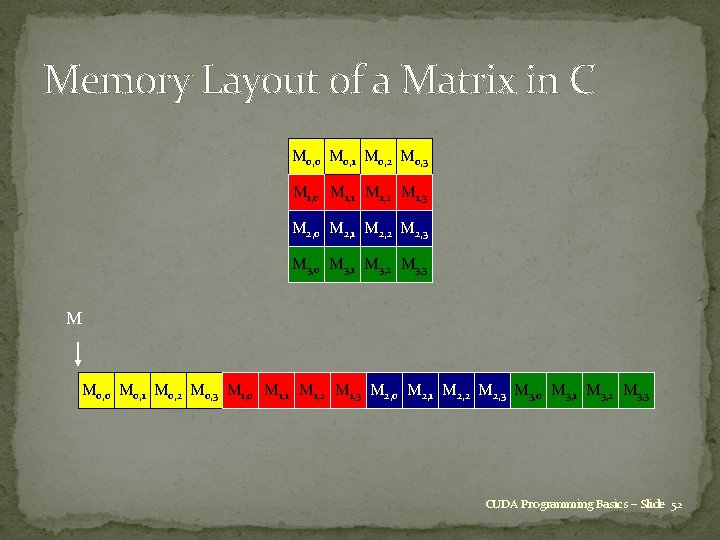

Memory Layout of a Matrix in C M 0, 0 M 0, 1 M 0, 2 M 0, 3 M 1, 0 M 1, 1 M 1, 2 M 1, 3 M 2, 0 M 2, 1 M 2, 2 M 2, 3 M 3, 0 M 3, 1 M 3, 2 M 3, 3 M M 0, 0 M 0, 1 M 0, 2 M 0, 3 M 1, 0 M 1, 1 M 1, 2 M 1, 3 M 2, 0 M 2, 1 M 2, 2 M 2, 3 M 3, 0 M 3, 1 M 3, 2 M 3, 3 CUDA Programming Basics – Slide 52

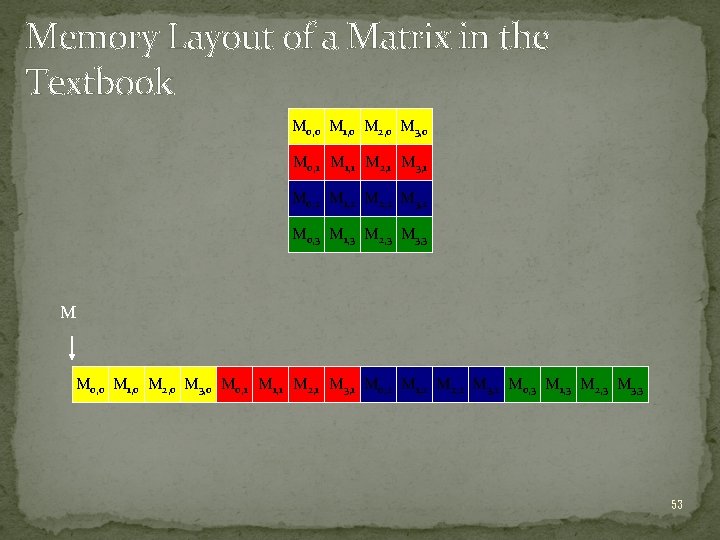

Memory Layout of a Matrix in the Textbook M 0, 0 M 1, 0 M 2, 0 M 3, 0 M 0, 1 M 1, 1 M 2, 1 M 3, 1 M 0, 2 M 1, 2 M 2, 2 M 3, 2 M 0, 3 M 1, 3 M 2, 3 M 3, 3 M M 0, 0 M 1, 0 M 2, 0 M 3, 0 M 0, 1 M 1, 1 M 2, 1 M 3, 1 M 0, 2 M 1, 2 M 2, 2 M 3, 2 M 0, 3 M 1, 3 M 2, 3 M 3, 3 53

Step 1: Matrix Multiplication A Simple Host Version in C N k M WIDTH j P WIDTH i k WIDTH CUDA Programming Basics – Slide 54

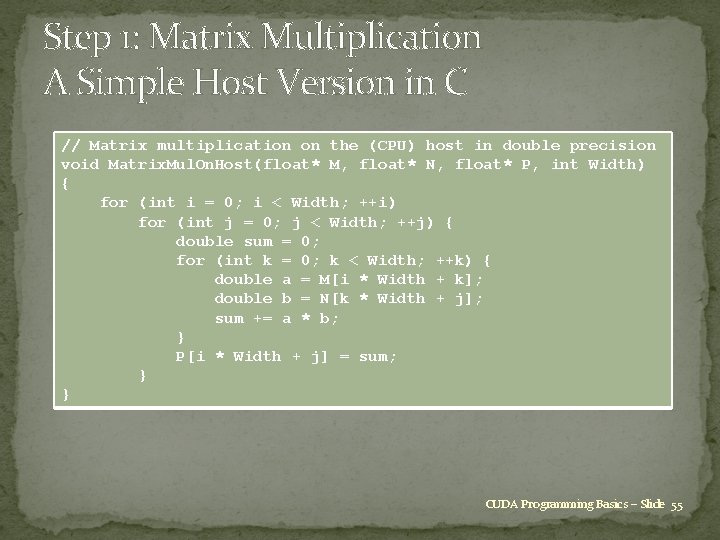

Step 1: Matrix Multiplication A Simple Host Version in C // Matrix multiplication on the (CPU) host in double precision void Matrix. Mul. On. Host(float* M, float* N, float* P, int Width) { for (int i = 0; i < Width; ++i) for (int j = 0; j < Width; ++j) { double sum = 0; for (int k = 0; k < Width; ++k) { double a = M[i * Width + k]; double b = N[k * Width + j]; sum += a * b; } P[i * Width + j] = sum; } } CUDA Programming Basics – Slide 55

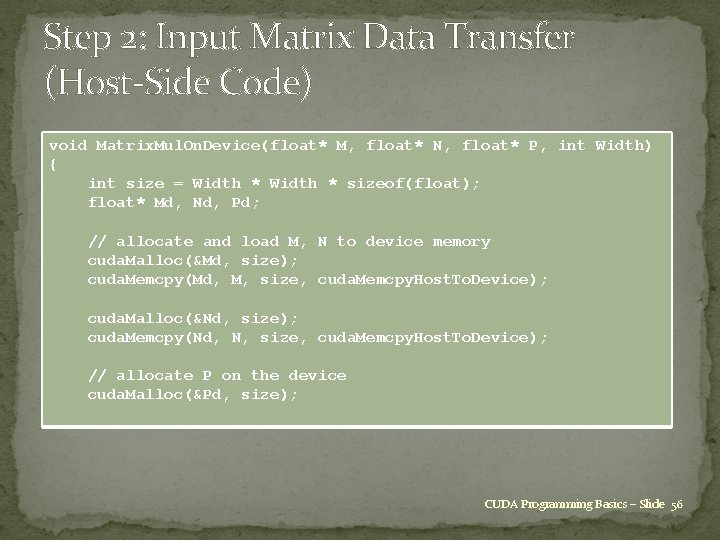

Step 2: Input Matrix Data Transfer (Host-Side Code) void Matrix. Mul. On. Device(float* M, float* N, float* P, int Width) { int size = Width * sizeof(float); float* Md, Nd, Pd; // allocate and load M, N to device memory cuda. Malloc(&Md, size); cuda. Memcpy(Md, M, size, cuda. Memcpy. Host. To. Device); cuda. Malloc(&Nd, size); cuda. Memcpy(Nd, N, size, cuda. Memcpy. Host. To. Device); // allocate P on the device cuda. Malloc(&Pd, size); CUDA Programming Basics – Slide 56

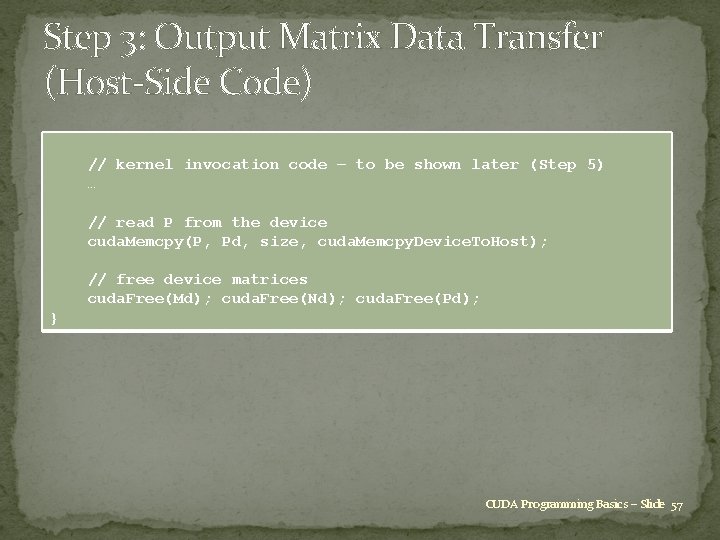

Step 3: Output Matrix Data Transfer (Host-Side Code) // kernel invocation code – to be shown later (Step 5) … // read P from the device cuda. Memcpy(P, Pd, size, cuda. Memcpy. Device. To. Host); // free device matrices cuda. Free(Md); cuda. Free(Nd); cuda. Free(Pd); } CUDA Programming Basics – Slide 57

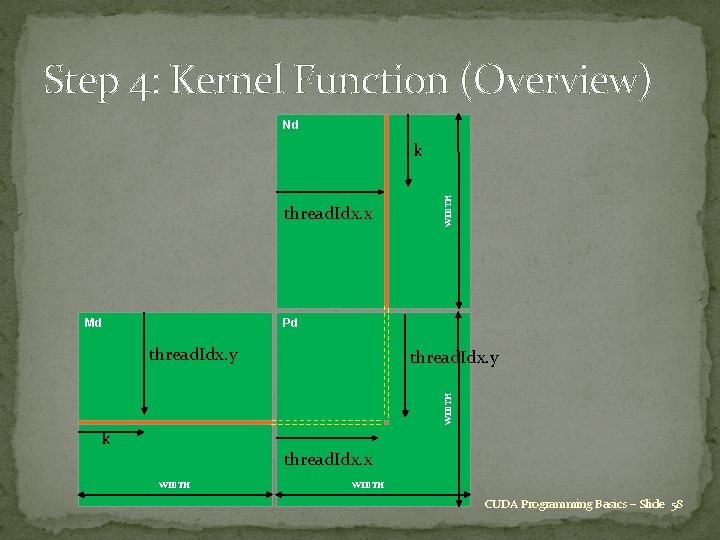

Step 4: Kernel Function (Overview) Nd thread. Idx. x Md WIDTH k Pd thread. Idx. y WIDTH thread. Idx. y k thread. Idx. x WIDTH CUDA Programming Basics – Slide 58

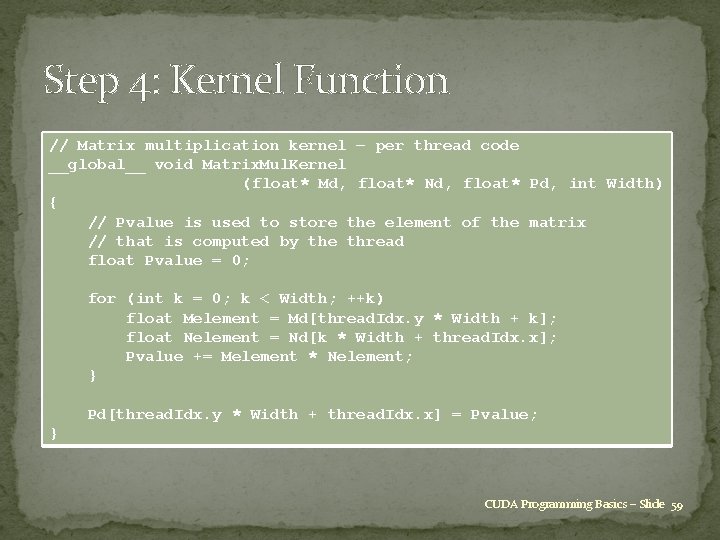

Step 4: Kernel Function // Matrix multiplication kernel – per thread code __global__ void Matrix. Mul. Kernel (float* Md, float* Nd, float* Pd, int Width) { // Pvalue is used to store the element of the matrix // that is computed by the thread float Pvalue = 0; for (int k = 0; k < Width; ++k) float Melement = Md[thread. Idx. y * Width + k]; float Nelement = Nd[k * Width + thread. Idx. x]; Pvalue += Melement * Nelement; } Pd[thread. Idx. y * Width + thread. Idx. x] = Pvalue; } CUDA Programming Basics – Slide 59

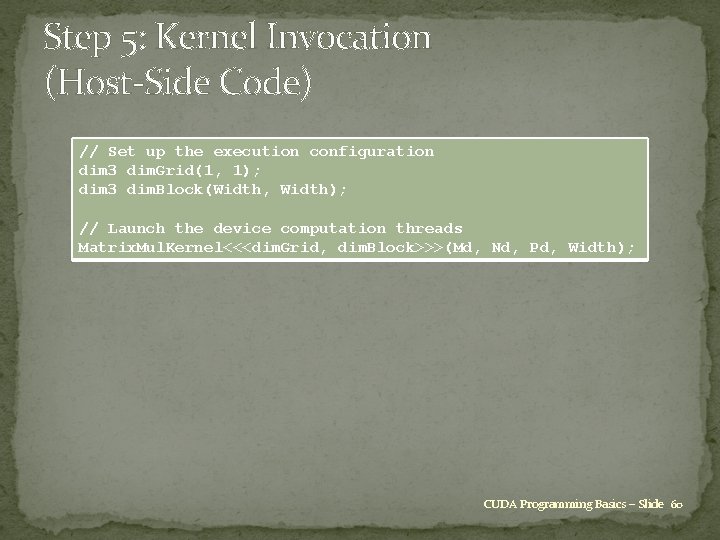

Step 5: Kernel Invocation (Host-Side Code) // Set up the execution configuration dim 3 dim. Grid(1, 1); dim 3 dim. Block(Width, Width); // Launch the device computation threads Matrix. Mul. Kernel<<<dim. Grid, dim. Block>>>(Md, Nd, Pd, Width); CUDA Programming Basics – Slide 60

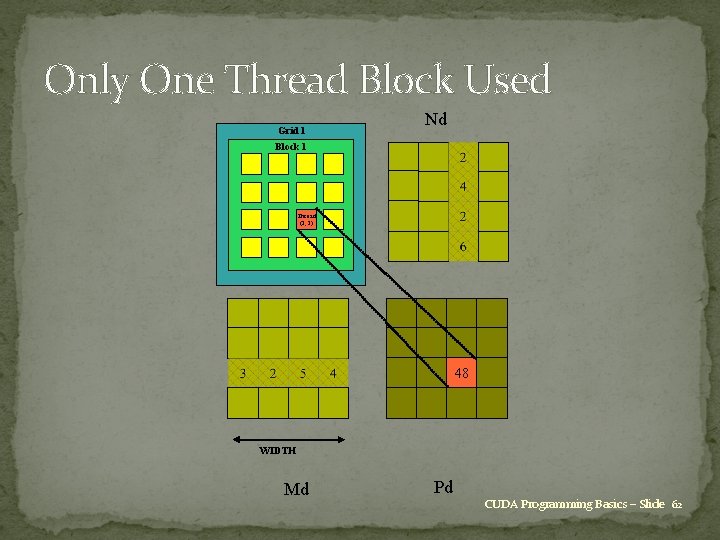

Only One Thread Block Used �One block of threads compute matrix Pd � Each thread computes one element of Pd �Each thread � Loads a row of matrix Md � Loads a column of matrix Nd � Performs one multiply and addition for each pair of Md and Nd elements � Compute to off-chip memory access ratio close to 1: 1 (not very high) �Size of matrix limited by the number of threads allowed in a thread block CUDA Programming Basics – Slide 61

Only One Thread Block Used Grid 1 Nd Block 1 Thread (2, 2) 48 WIDTH Md Pd CUDA Programming Basics – Slide 62

Handling Square Matrices with Arbitrary Size �Have each 2 -D thread block compute a (TILE_WIDTH)² sub-matrix (tile) of the result matrix � Each has (TILE_WIDTH)² threads �Generate a 2 -D grid of (WIDTH / TILE_WIDTH)² blocks �You still need to put a loop around the kernel call for cases where WIDTH / TILE_WIDTH is greater than the max grid size (64 K) CUDA Programming Basics – Slide 63

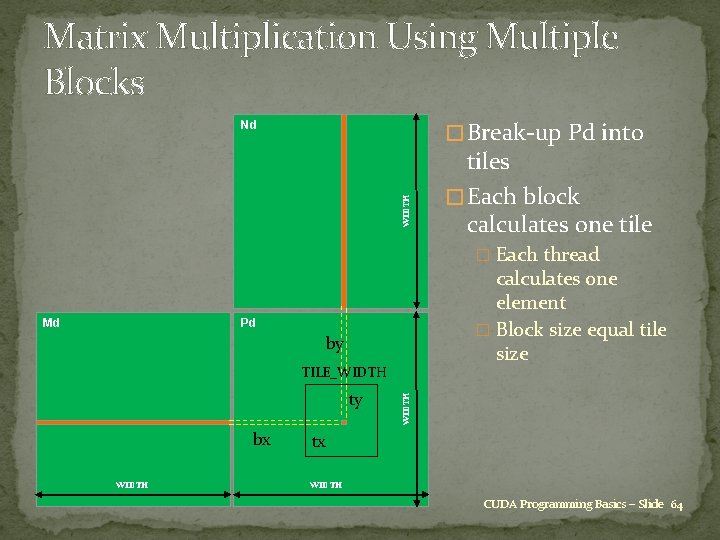

Matrix Multiplication Using Multiple Blocks � Break-up Pd into WIDTH Nd tiles � Each block calculates one tile � Each thread Md calculates one element � Block size equal tile size Pd by ty bx WIDTH TILE_WIDTH tx WIDTH CUDA Programming Basics – Slide 64

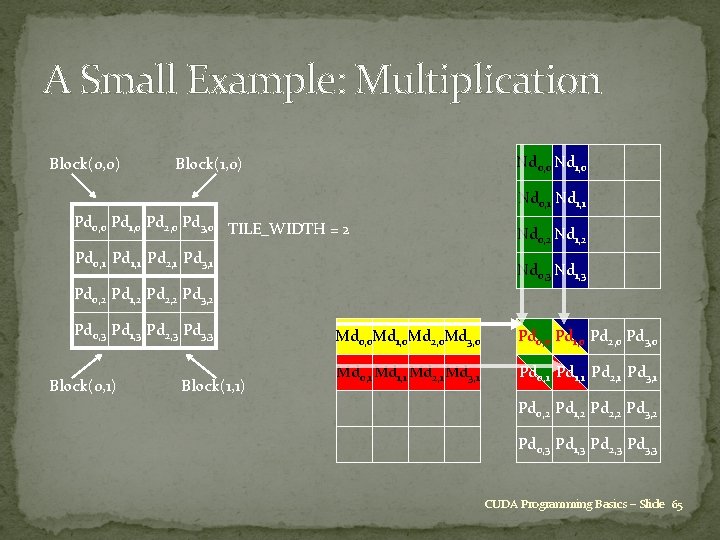

A Small Example: Multiplication Block(0, 0) Nd 0, 0 Nd 1, 0 Block(1, 0) Nd 0, 1 Nd 1, 1 Pd 0, 0 Pd 1, 0 Pd 2, 0 Pd 3, 0 TILE_WIDTH = 2 Pd 0, 1 Pd 1, 1 Pd 2, 1 Pd 3, 1 Nd 0, 2 Nd 1, 2 Nd 0, 3 Nd 1, 3 Pd 0, 2 Pd 1, 2 Pd 2, 2 Pd 3, 2 Pd 0, 3 Pd 1, 3 Pd 2, 3 Pd 3, 3 Block(0, 1) Block(1, 1) Md 0, 0 Md 1, 0 Md 2, 0 Md 3, 0 Pd 0, 0 Pd 1, 0 Pd 2, 0 Pd 3, 0 Md 0, 1 Md 1, 1 Md 2, 1 Md 3, 1 Pd 0, 1 Pd 1, 1 Pd 2, 1 Pd 3, 1 Pd 0, 2 Pd 1, 2 Pd 2, 2 Pd 3, 2 Pd 0, 3 Pd 1, 3 Pd 2, 3 Pd 3, 3 CUDA Programming Basics – Slide 65

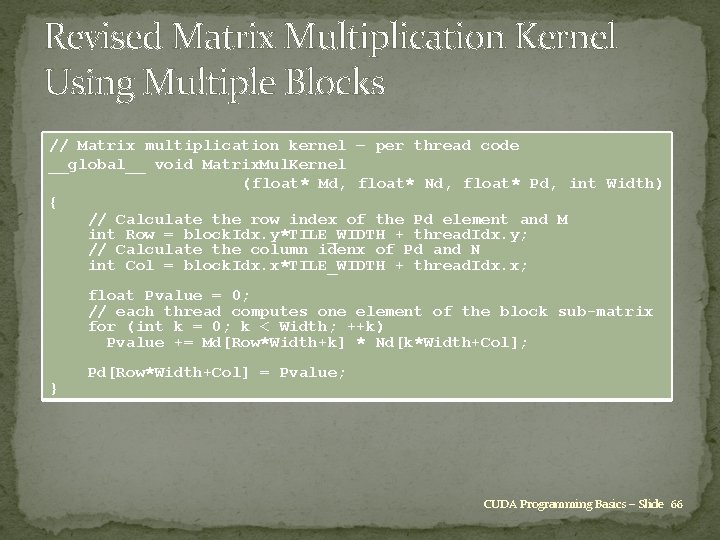

Revised Matrix Multiplication Kernel Using Multiple Blocks // Matrix multiplication kernel – per thread code __global__ void Matrix. Mul. Kernel (float* Md, float* Nd, float* Pd, int Width) { // Calculate the row index of the Pd element and M int Row = block. Idx. y*TILE_WIDTH + thread. Idx. y; // Calculate the column idenx of Pd and N int Col = block. Idx. x*TILE_WIDTH + thread. Idx. x; float Pvalue = 0; // each thread computes one element of the block sub-matrix for (int k = 0; k < Width; ++k) Pvalue += Md[Row*Width+k] * Nd[k*Width+Col]; } Pd[Row*Width+Col] = Pvalue; CUDA Programming Basics – Slide 66

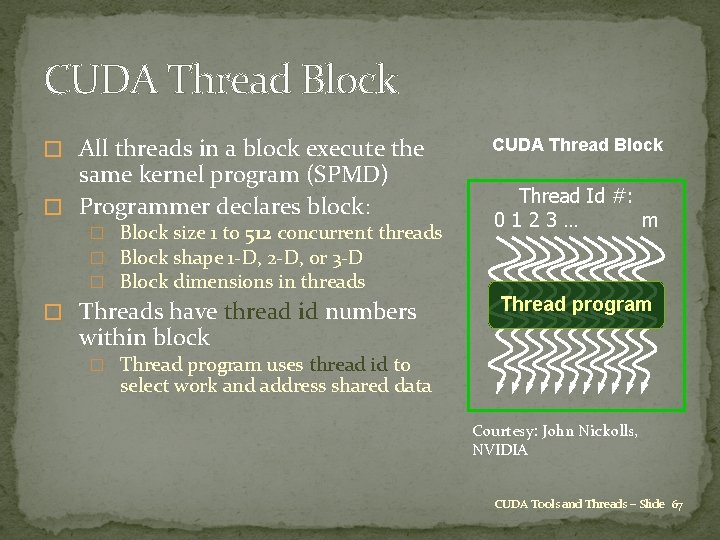

CUDA Thread Block � All threads in a block execute the same kernel program (SPMD) � Programmer declares block: � Block size 1 to 512 concurrent threads � Block shape 1 -D, 2 -D, or 3 -D � Block dimensions in threads � Threads have thread id numbers CUDA Thread Block Thread Id #: 0123… m Thread program within block � Thread program uses thread id to select work and address shared data Courtesy: John Nickolls, NVIDIA CUDA Tools and Threads – Slide 67

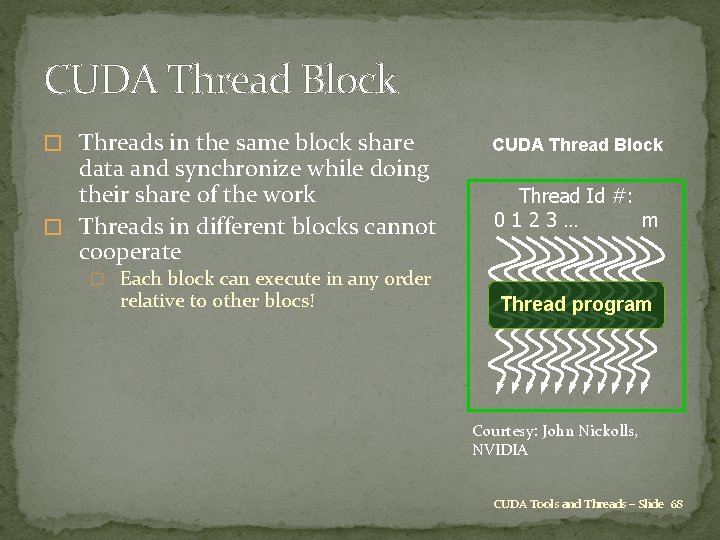

CUDA Thread Block � Threads in the same block share data and synchronize while doing their share of the work � Threads in different blocks cannot cooperate CUDA Thread Block Thread Id #: 0123… m � Each block can execute in any order relative to other blocs! Thread program Courtesy: John Nickolls, NVIDIA CUDA Tools and Threads – Slide 68

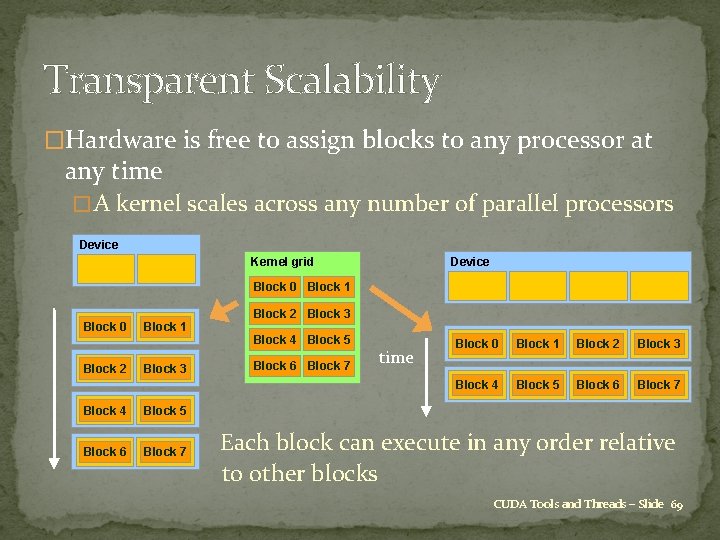

Transparent Scalability �Hardware is free to assign blocks to any processor at any time � A kernel scales across any number of parallel processors Device Kernel grid Block 0 Block 1 Block 2 Block 3 Block 0 Block 2 Block 1 Block 3 Block 4 Block 5 �Block 6 Block 7 Block 4 Block 5 Block 6 Block 7 time Block 0 Block 1 Block 2 Block 3 Block 4 Block 5 Block 6 Block 7 Each block can execute in any order relative to other blocks CUDA Tools and Threads – Slide 69

G 80 CUDA Mode – A Review � Processors execute computing threads � New operating mode/hardware interface for computing Host Input Assembler Thread Execution Manager Parallel Data Cache Texture Load/store Parallel Data Cache Texture Load/store Parallel Data Cache Texture Load/store Global Memory CUDA Tools and Threads – Slide 70

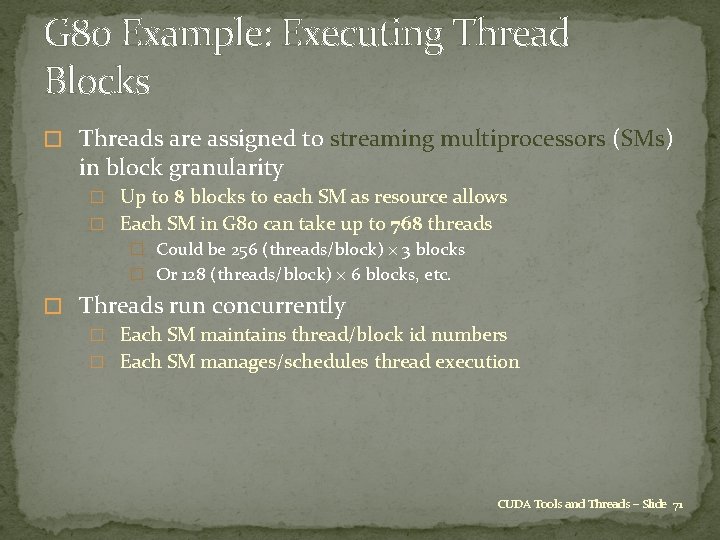

G 80 Example: Executing Thread Blocks � Threads are assigned to streaming multiprocessors (SMs) in block granularity � Up to 8 blocks to each SM as resource allows � Each SM in G 80 can take up to 768 threads � Could be 256 (threads/block) × 3 blocks � Or 128 (threads/block) × 6 blocks, etc. � Threads run concurrently � Each SM maintains thread/block id numbers � Each SM manages/schedules thread execution CUDA Tools and Threads – Slide 71

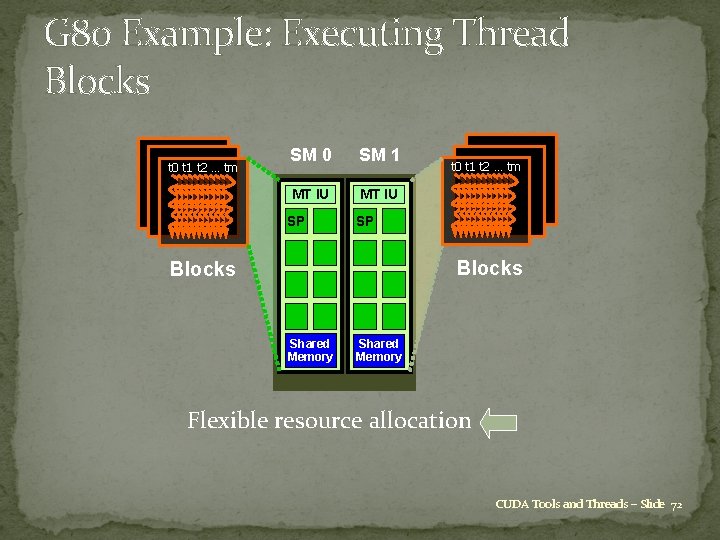

G 80 Example: Executing Thread Blocks t 0 t 1 t 2 … tm SM 0 SM 1 MT IU SP t 0 t 1 t 2 … tm SP Blocks Shared Memory Flexible resource allocation CUDA Tools and Threads – Slide 72

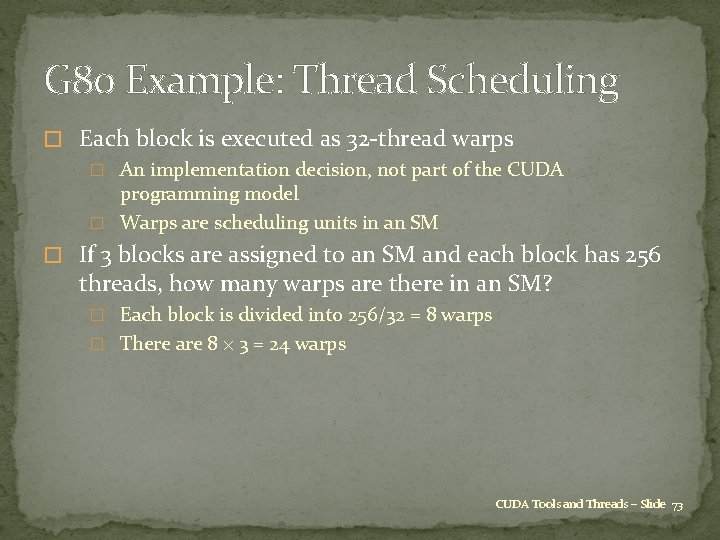

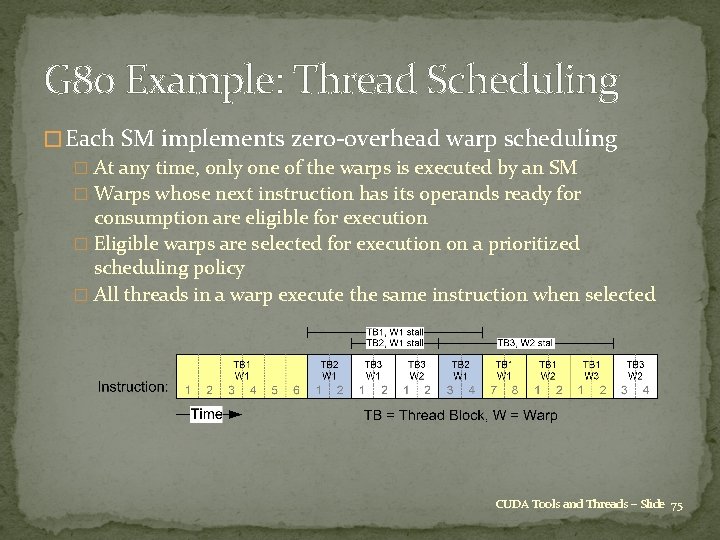

G 80 Example: Thread Scheduling � Each block is executed as 32 -thread warps � An implementation decision, not part of the CUDA programming model � Warps are scheduling units in an SM � If 3 blocks are assigned to an SM and each block has 256 threads, how many warps are there in an SM? � Each block is divided into 256/32 = 8 warps � There are 8 × 3 = 24 warps CUDA Tools and Threads – Slide 73

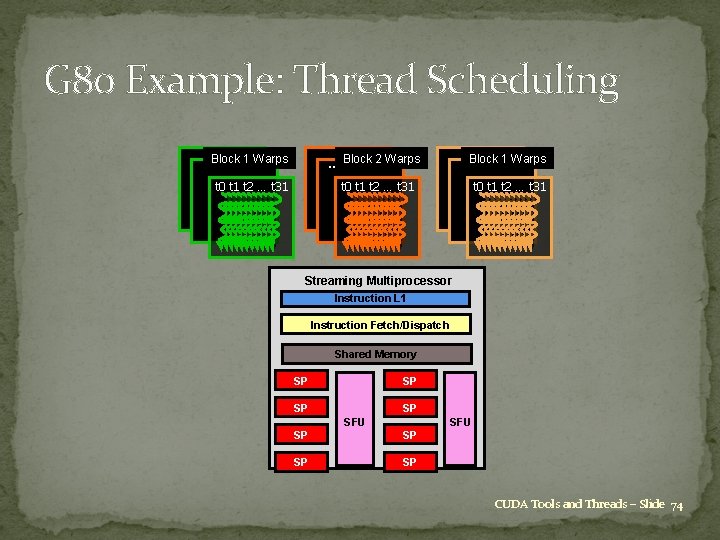

G 80 Example: Thread Scheduling …Block 1 Warps … Block 2 Warps t 0 t 1 t 2 … t 31 …Block 1 Warps t 0 t 1 t 2 … t 31 … … Streaming Multiprocessor Instruction L 1 Instruction Fetch/Dispatch Shared Memory SP SP SFU SP SP CUDA Tools and Threads – Slide 74

G 80 Example: Thread Scheduling � Each SM implements zero-overhead warp scheduling � At any time, only one of the warps is executed by an SM � Warps whose next instruction has its operands ready for consumption are eligible for execution � Eligible warps are selected for execution on a prioritized scheduling policy � All threads in a warp execute the same instruction when selected CUDA Tools and Threads – Slide 75

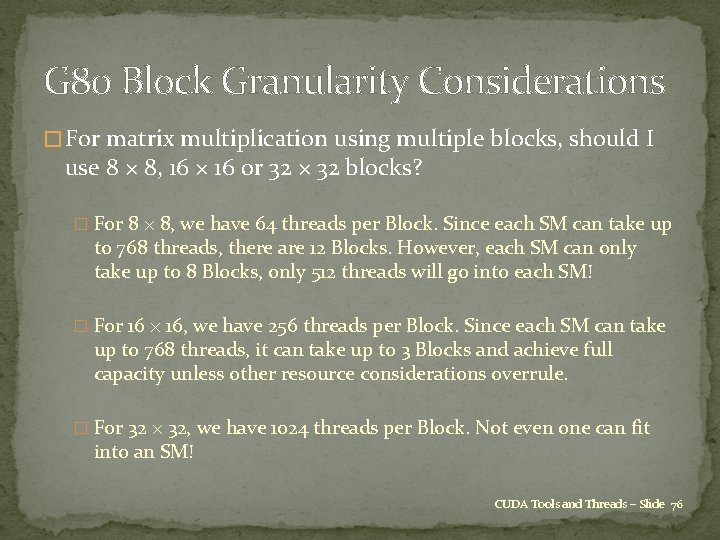

G 80 Block Granularity Considerations � For matrix multiplication using multiple blocks, should I use 8 × 8, 16 × 16 or 32 × 32 blocks? � For 8 × 8, we have 64 threads per Block. Since each SM can take up to 768 threads, there are 12 Blocks. However, each SM can only take up to 8 Blocks, only 512 threads will go into each SM! � For 16 × 16, we have 256 threads per Block. Since each SM can take up to 768 threads, it can take up to 3 Blocks and achieve full capacity unless other resource considerations overrule. � For 32 × 32, we have 1024 threads per Block. Not even one can fit into an SM! CUDA Tools and Threads – Slide 76

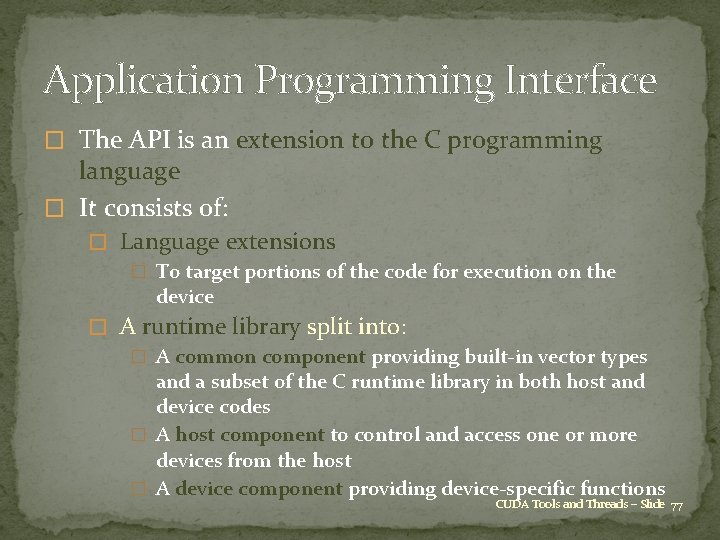

Application Programming Interface � The API is an extension to the C programming language � It consists of: � Language extensions � To target portions of the code for execution on the device � A runtime library split into: � A common component providing built-in vector types and a subset of the C runtime library in both host and device codes � A host component to control and access one or more devices from the host � A device component providing device-specific functions CUDA Tools and Threads – Slide 77

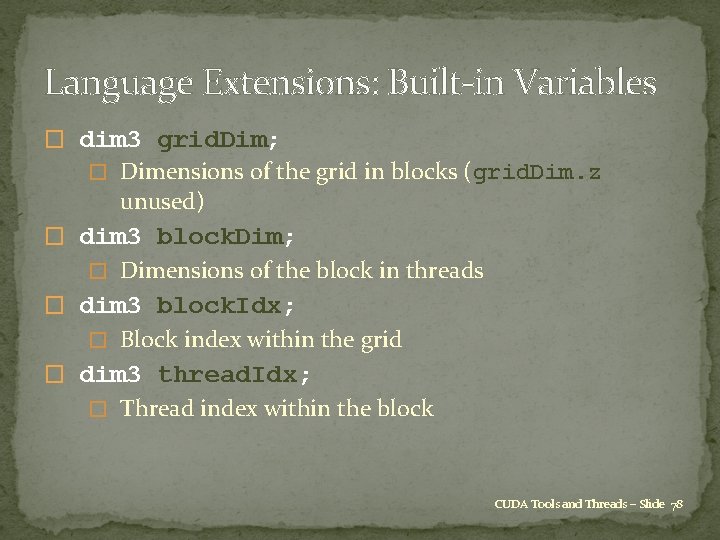

Language Extensions: Built-in Variables � dim 3 grid. Dim; � Dimensions of the grid in blocks (grid. Dim. z unused) � dim 3 block. Dim; � Dimensions of the block in threads � dim 3 block. Idx; � Block index within the grid � dim 3 thread. Idx; � Thread index within the block CUDA Tools and Threads – Slide 78

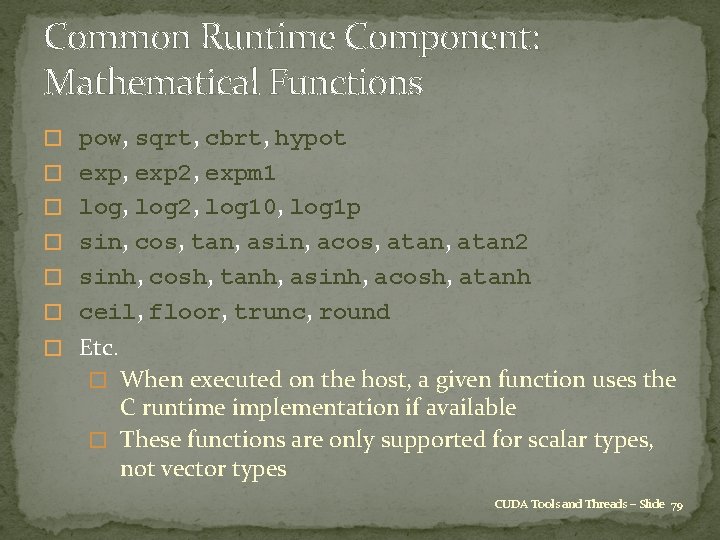

Common Runtime Component: Mathematical Functions � pow, sqrt, cbrt, hypot � exp, exp 2, expm 1 � log, log 2, log 10, log 1 p � sin, cos, tan, asin, acos, atan 2 � sinh, cosh, tanh, asinh, acosh, atanh � ceil, floor, trunc, round � Etc. � When executed on the host, a given function uses the C runtime implementation if available � These functions are only supported for scalar types, not vector types CUDA Tools and Threads – Slide 79

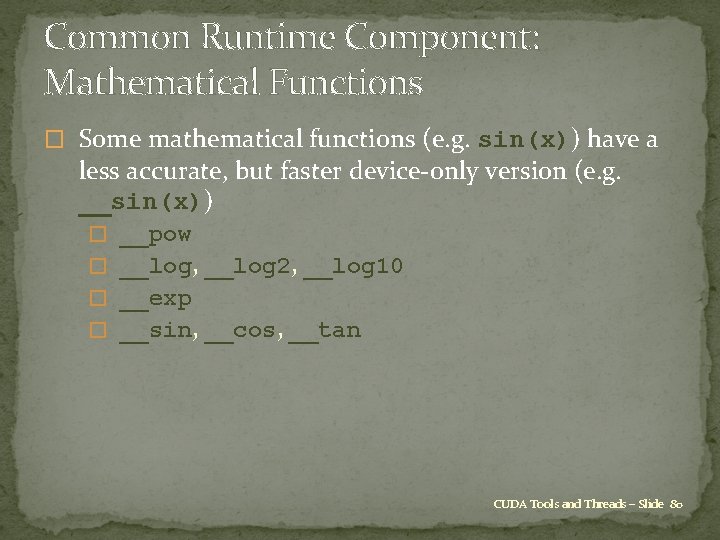

Common Runtime Component: Mathematical Functions � Some mathematical functions (e. g. sin(x)) have a less accurate, but faster device-only version (e. g. __sin(x)) � __pow � __log, __log 2, __log 10 � __exp � __sin, __cos, __tan CUDA Tools and Threads – Slide 80

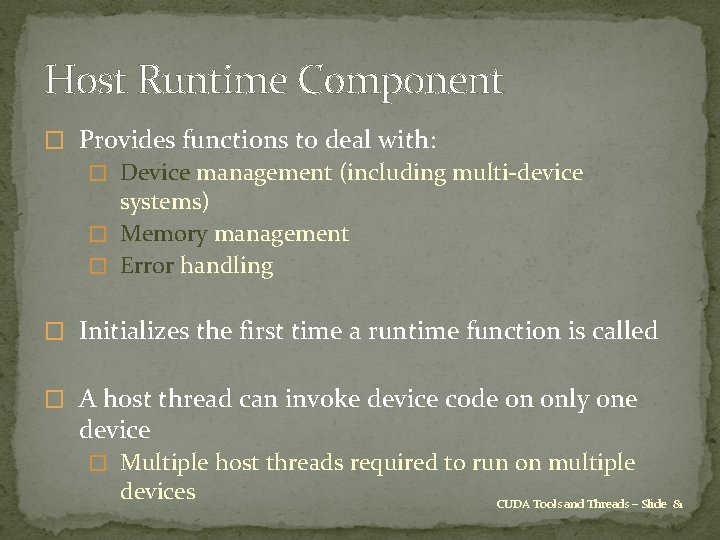

Host Runtime Component � Provides functions to deal with: � Device management (including multi-device systems) � Memory management � Error handling � Initializes the first time a runtime function is called � A host thread can invoke device code on only one device � Multiple host threads required to run on multiple devices CUDA Tools and Threads – Slide 81

Device Runtime Component: Synchronization Function � void __syncthreads(); � Synchronizes all threads in a block � Once all threads have reached this point, execution resumes normally � Used to avoid RAW / WAR / WAW hazards when accessing shared or global memory � Allowed in conditional constructs only if the conditional is uniform across the entire thread block CUDA Tools and Threads – Slide 82

Final Thoughts �memory allocation cuda. Malloc((void **)&xd, nbytes); �data copying cuda. Memcpy(xh, xd, nbytes, cuda. Memcpy. Device. To. Host); �reminder: d (h) to distinguish an array on the device (host) is not mandatory, just helpful labeling �kernel routine is declared by __global__ prefix, and is written from point of view of a single thread CUDA Programming Basics – Slide 83

End Credits �Reading: Chapters 3 and 4, “Programming Massively Parallel Processors” by Kirk and Hwu. �Based on original material from � The University of Illinois at Urbana-Champaign �David Kirk, Wen-mei W. Hwu � Oxford University: Mike Giles � Stanford University �Jared Hoberock, David Tarjan �Revision history: last updated 6/22/2011. CUDA Programming Basics – Slide 84

- Slides: 84