Natural Language Processing at NYU: the Proteus Project
Ralph Grishman
September 2009

Proteus Project Faculty
• Ralph Grishman
• Satoshi Sekine
• Adam Meyers

http://nlp.cs.nyu.edu/

‘Just the Facts’
• Vast amount of information is now available on-line in text form
• but getting ‘the facts’ can be very hard and slow
• Where has Secretary Clinton been over the last month?
• Which places on the East Coast have had swine flu outbreaks this month?
• To move from search to question answering we need more than a bag of words
• we need to figure out who-did-what-to-whom
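The bag-of-words limitation can be illustrated with a small Python sketch. The two sentences are invented for this illustration and do not come from the slides: they contain exactly the same words, yet they reverse who did what to whom.

```python
from collections import Counter


def bag_of_words(sentence: str) -> Counter:
    """Return word counts for a sentence, ignoring word order and case."""
    return Counter(sentence.lower().split())


# Hypothetical example sentences (assumed for illustration only).
a = "the rebels attacked the minister"
b = "the minister attacked the rebels"

# The bags are identical even though the roles of attacker and target differ.
same_bag = bag_of_words(a) == bag_of_words(b)
print(same_bag)
```

A system that only compares word counts cannot tell these two events apart, which is why some analysis of sentence structure is needed.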

Understanding natural language isn’t easy
• The rebels strafed the car …
  … with automatic weapons fire.
  … with the Minister and his deputy.
• They …
  … died instantly.
  … were promptly arrested.

Understanding language requires a lot of knowledge.

How to get all this knowledge?
• By hand … too expensive
• Use weakly supervised learning
• Give a few examples (‘seeds’)
• Use very large text corpus to learn similar examples

Knowledge Discovery: An Example
• Goal: want to keep track of all the hirings and departures of executives; need to find all the ways such events are described
• Method:
  – identify a few seed patterns
  – retrieve documents containing patterns
  – find subject-verb-object pattern with
    • high frequency in retrieved documents
    • relatively high frequency in retrieved docs vs. other docs
  – add pattern to seed set and repeat
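The method above can be sketched as a short bootstrapping loop in Python. This is a minimal sketch under several assumptions, not the Proteus implementation: each document is represented as a set of already-extracted pattern strings, the scoring formula (hits squared over total occurrences) is just one simple way to combine the two frequency criteria, and the names `discover_patterns` and `min_score` and the toy documents are hypothetical.

```python
from collections import Counter


def discover_patterns(docs, seeds, iterations=3, min_score=1.0):
    """Grow a pattern set from seed patterns by bootstrapping.

    docs: list of sets, each holding the patterns found in one document.
    seeds: initial patterns chosen by hand.
    """
    patterns = set(seeds)
    for _ in range(iterations):
        # Retrieve documents containing at least one known pattern.
        relevant = [d for d in docs if d & patterns]
        other = [d for d in docs if not (d & patterns)]

        # Count candidate patterns in relevant and in other documents.
        in_relevant = Counter(
            p for d in relevant for p in d if p not in patterns
        )
        in_other = Counter(p for d in other for p in d)

        def score(p):
            # Assumed score: frequent in relevant docs, rare elsewhere.
            hits = in_relevant[p]
            return hits * hits / (hits + in_other[p])

        candidates = sorted(in_relevant)  # sorted for deterministic ties
        if not candidates:
            break
        best = max(candidates, key=score)
        if score(best) < min_score:
            break  # no candidate is convincing enough; stop early
        patterns.add(best)
    return patterns


# Toy corpus (assumed data, loosely modeled on the executive example).
docs = [
    {"person retires", "person was named president"},
    {"person retires", "person was named president", "company reports earnings"},
    {"company reports earnings", "team wins game"},
    {"team wins game"},
]
learned = discover_patterns(docs, {"person retires"})
print(sorted(learned))
```

On this toy corpus the loop adds `person was named president` and then stops, because the remaining candidate appears as often outside the relevant documents as inside them. The `min_score` cutoff is one possible guard against drifting toward unrelated patterns.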

#1: pick seed pattern
Seed: < person retires >

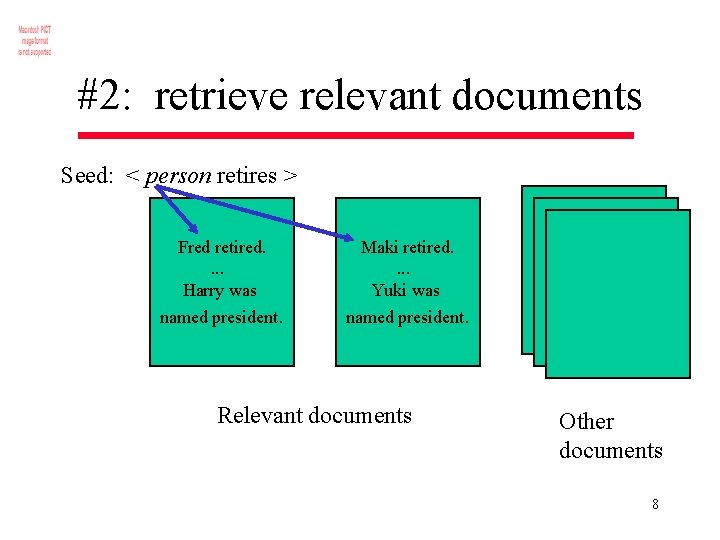

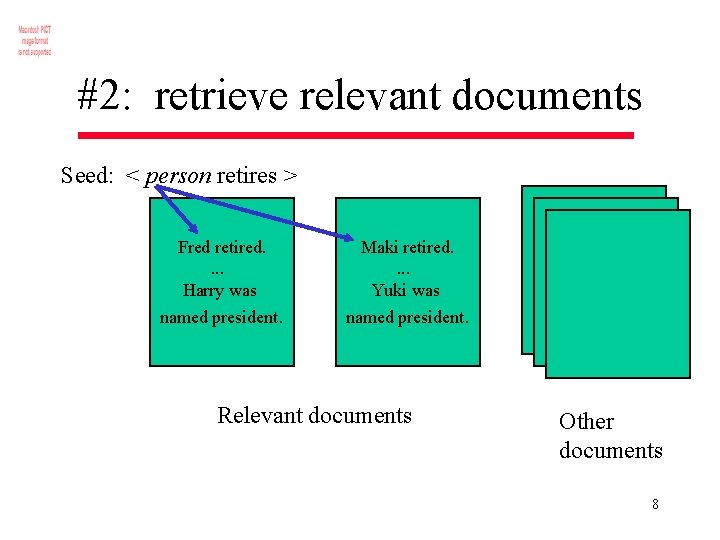

#2: retrieve relevant documents
Seed: < person retires >
Relevant documents:
• Fred retired. … Harry was …
• Maki retired. … Yuki was named president.
Other documents

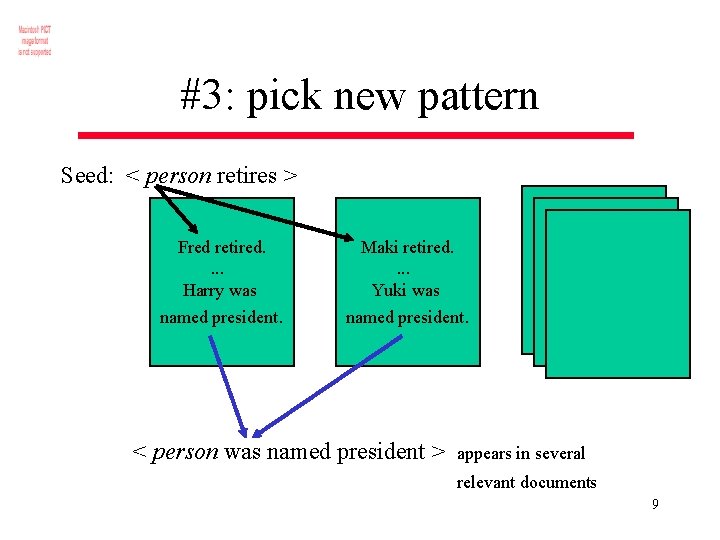

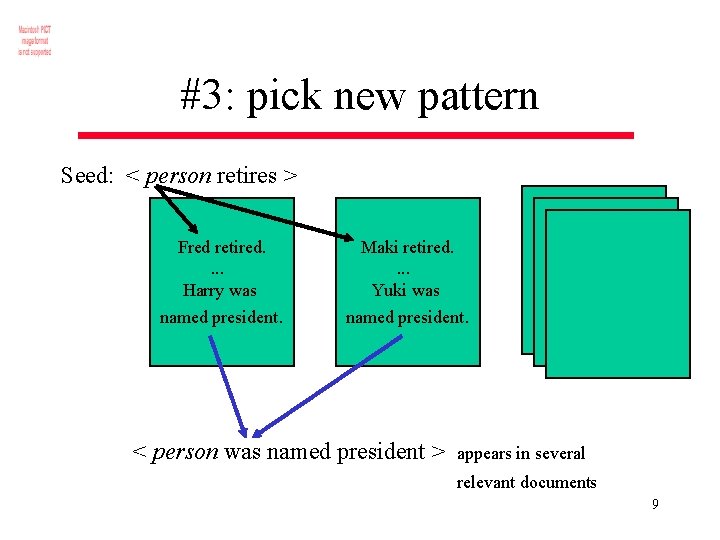

#3: pick new pattern
Seed: < person retires >
• Fred retired. … Harry was …
• Maki retired. … Yuki was named president.
< person was named president > appears in several relevant documents

#4: add new pattern to pattern set
Pattern set:
• < person retires >
• < person was named president >

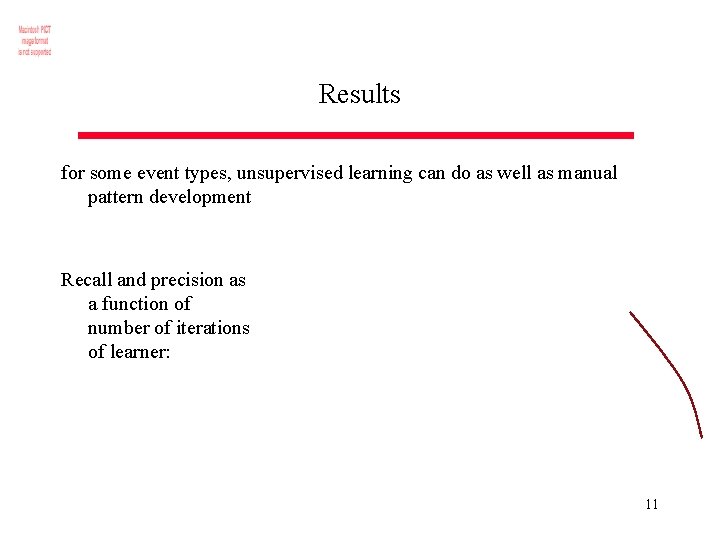

Results
• For some event types, unsupervised learning can do as well as manual pattern development
• Recall and precision as a function of number of iterations of learner
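For reference, recall and precision can be computed as in the following Python sketch. The event identifiers are invented for illustration and are not results from the project; `precision_recall` is a hypothetical helper name.

```python
def precision_recall(predicted, gold):
    """Return (precision, recall) for predicted items against a gold set."""
    predicted, gold = set(predicted), set(gold)
    correct = len(predicted & gold)
    # Precision: share of predicted items that are correct.
    precision = correct / len(predicted) if predicted else 0.0
    # Recall: share of gold items that were found.
    recall = correct / len(gold) if gold else 0.0
    return precision, recall


# Assumed toy data: events found by the learned patterns vs. a hand-built key.
found = {"e1", "e2", "e3", "e4"}
key = {"e1", "e2", "e3", "e5", "e6"}
p, r = precision_recall(found, key)
print(p, r)
```

Adding patterns over successive iterations typically finds more events, which tends to raise recall, while any incorrect patterns lower precision.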

Robust Learning
• Quality of learned patterns is uneven
• ambiguity of language leads us to learn incorrect patterns
• Need to identify cases of uncertainty
• Potential linguistic ambiguities
• With multiple classifiers using distinct features, cases where they disagree
• Query user for selected uncertain examples
• Weakly supervised learning + active learning → robust, rapid knowledge discovery
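The idea of querying the user where classifiers disagree can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the two keyword-based classifiers, the example sentences, and the `budget` parameter are all hypothetical stand-ins for real classifiers trained on distinct features.

```python
def select_uncertain(examples, classifiers, budget=2):
    """Return up to `budget` examples on which the classifiers disagree."""
    uncertain = []
    for example in examples:
        labels = {clf(example) for clf in classifiers}
        if len(labels) > 1:  # more than one distinct label means disagreement
            uncertain.append(example)
    return uncertain[:budget]


# Two toy classifiers using distinct features (assumed for illustration).
def by_verb(sentence):
    return any(v in sentence for v in ("retired", "named", "resigned"))


def by_title(sentence):
    return any(t in sentence for t in ("president", "CEO", "chairman"))


examples = [
    "Harry was named president",
    "The president visited Ohio",
    "Fred retired",
    "The team won the game",
]
to_ask = select_uncertain(examples, [by_verb, by_title])
print(to_ask)
```

Only the examples where the two views conflict are sent to the user, so labeling effort goes to the cases the learner is least sure about.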

For More Information
• Project web site: nlp.cs.nyu.edu
• Course G22.2590 - Natural Language Processing (Spring 2010)