ONLINE Q-LEARNER USING MOVING PROTOTYPES by Miguel Ángel Soto Santibáñez

Reinforcement Learning
What does it do? It tackles the problem of learning control strategies for autonomous agents.
What is the goal? The agent's goal is to learn an action policy that maximizes the total reward it will receive from any starting state.

Reinforcement Learning
What does it need? This method assumes that training information is available in the form of a real-valued reward signal given for each state-action transition, i.e. (s, a, r).
What problems? Very often, reinforcement learning fits a problem setting known as a Markov decision process (MDP).

Reinforcement Learning vs. Dynamic programming
• reward function: r(s, a) → r
• state transition function: δ(s, a) → s’

Q-learning An off-policy control algorithm. Advantage: Converges to an optimal policy in both deterministic and nondeterministic MDPs. Disadvantage: Only practical on a small number of problems.

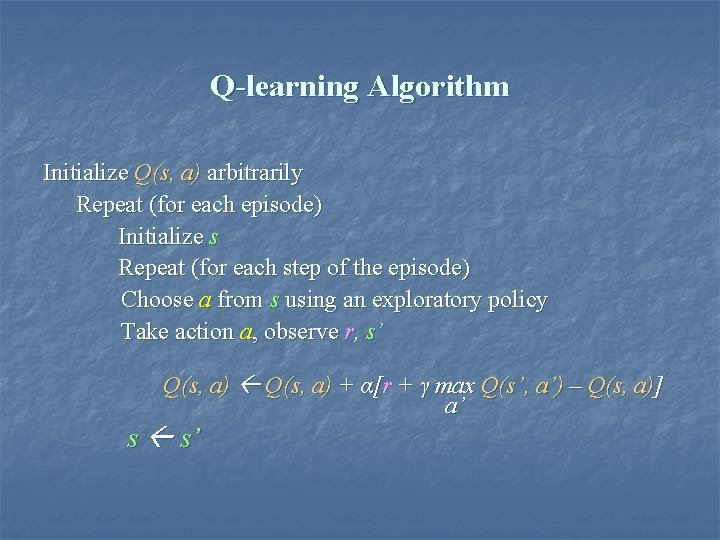

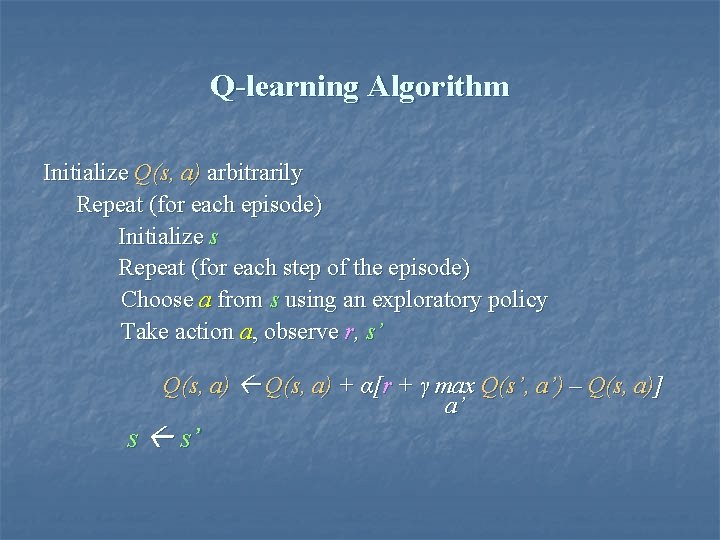

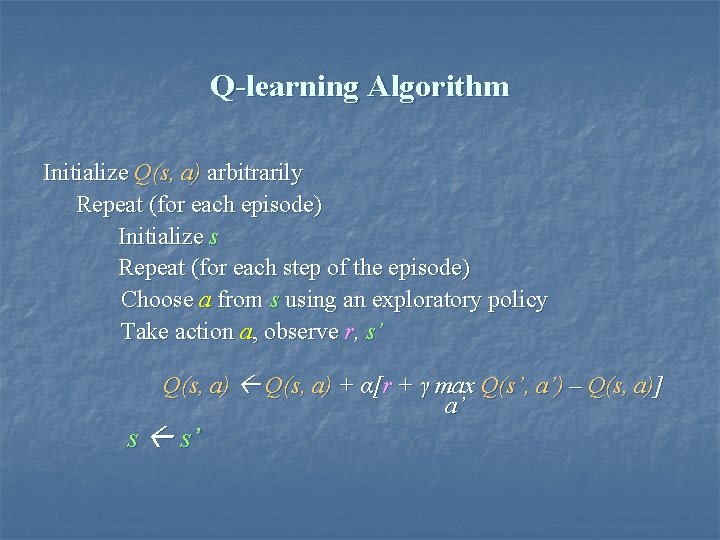

Q-learning Algorithm
Initialize Q(s, a) arbitrarily
Repeat (for each episode):
  Initialize s
  Repeat (for each step of the episode):
    Choose a from s using an exploratory policy
    Take action a, observe r, s’
    Q(s, a) ← Q(s, a) + α[r + γ max_a’ Q(s’, a’) - Q(s, a)]
    s ← s’
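The loop on this slide can be sketched in Python as follows. This is a minimal illustration, not the presenter's code: the function name `q_learning`, the `step` and `is_terminal` callables, the epsilon-greedy exploration rule, the default parameter values, and the small chain environment are all assumptions introduced here.

```python
import random
from collections import defaultdict

def q_learning(step, actions, start_state, is_terminal, episodes=100,
               alpha=0.5, gamma=0.9, epsilon=0.1, max_steps=1000, seed=0):
    """Tabular Q-learning. `step(s, a)` must return (reward, next_state)."""
    rng = random.Random(seed)
    Q = defaultdict(float)  # Q(s, a) starts at 0 for every pair
    for _ in range(episodes):
        s = start_state
        for _ in range(max_steps):
            if is_terminal(s):
                break
            # Exploratory policy (assumed here to be epsilon-greedy).
            if rng.random() < epsilon:
                a = rng.choice(actions)
            else:
                a = max(actions, key=lambda b: Q[(s, b)])
            r, s_next = step(s, a)
            # Q(s,a) <- Q(s,a) + alpha * [r + gamma * max_a' Q(s',a') - Q(s,a)]
            best_next = max(Q[(s_next, b)] for b in actions)
            Q[(s, a)] += alpha * (r + gamma * best_next - Q[(s, a)])
            s = s_next
    return Q

# Hypothetical 4-state chain (states 0..3) used only to exercise the sketch:
# moving right from state 2 reaches the terminal state 3 and pays reward 1.
def chain_step(s, a):
    s_next = min(max(s + a, 0), 3)
    return (1.0 if s_next == 3 else 0.0), s_next

Q = q_learning(chain_step, actions=(-1, 1), start_state=0,
               is_terminal=lambda s: s == 3, epsilon=1.0)
```

Because Q-learning is off-policy, the demo can explore purely at random (`epsilon=1.0`) and still learn that moving right from state 2 is worth more than moving left.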

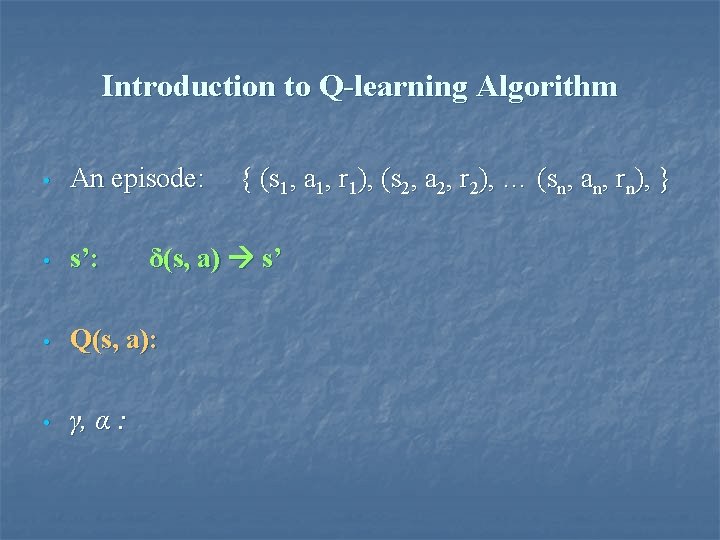

Introduction to Q-learning Algorithm
• An episode: { (s1, a1, r1), (s2, a2, r2), …, (sn, an, rn) }
• s’: δ(s, a) → s’
• Q(s, a):
• γ, α:

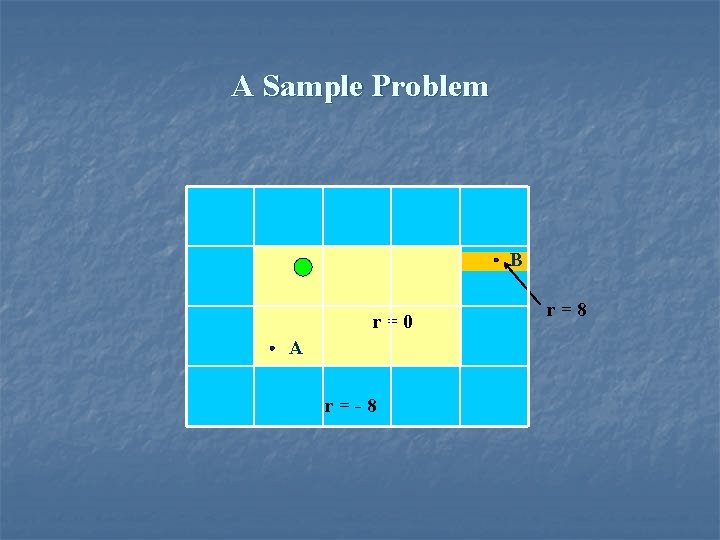

A Sample Problem (figure labels: A, B, r = 0, r = -8, r = 8)

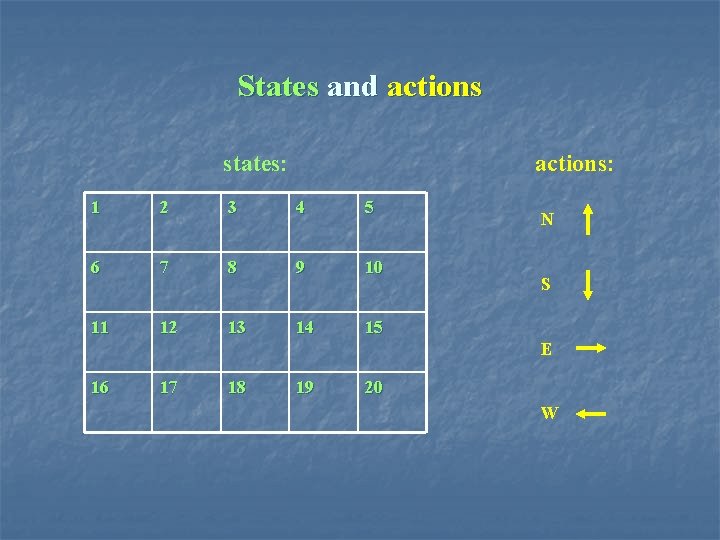

States and actions
• states: 1 to 20
• actions: N, S, E, W

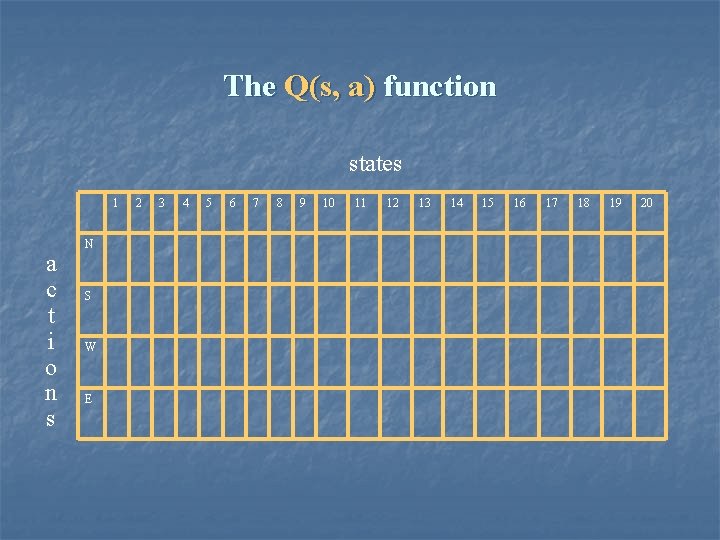

The Q(s, a) function (table: one column per state, 1 to 20; one row per action, N, S, W, E)

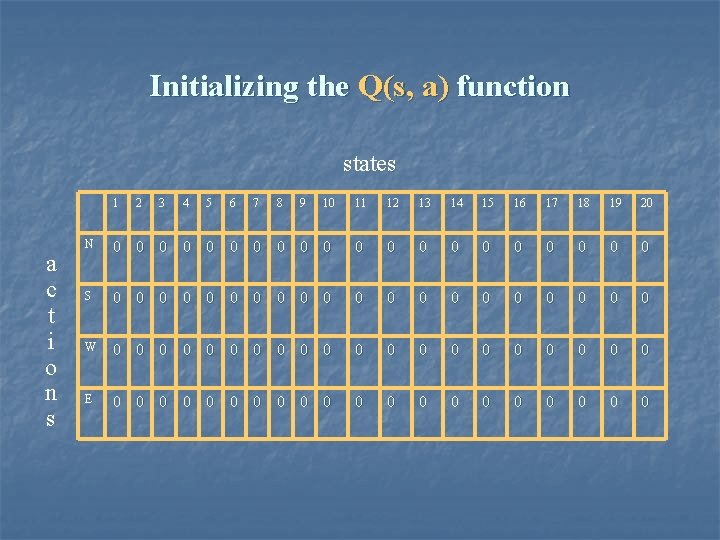

Initializing the Q(s, a) function (table: states 1 to 20 by actions N, S, W, E; every listed entry is 0)
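A table like this one can be held in a plain dictionary keyed by (state, action). The sketch below assumes all four actions are stored for every state; the names `STATES`, `ACTIONS`, and `Q` are illustrative and not taken from the slides.

```python
STATES = range(1, 21)            # states 1 to 20
ACTIONS = ("N", "S", "W", "E")   # the four actions named on the slide

# Every Q(s, a) entry starts at 0.
Q = {(s, a): 0.0 for s in STATES for a in ACTIONS}
```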

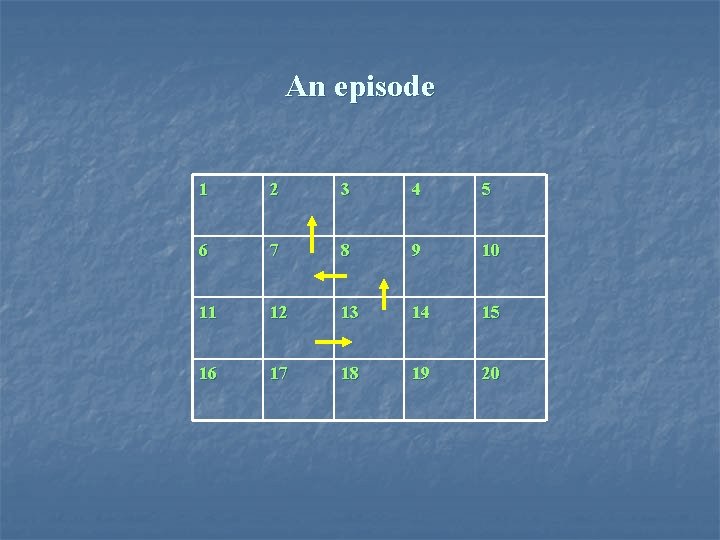

An episode (figure: states 1 to 20)

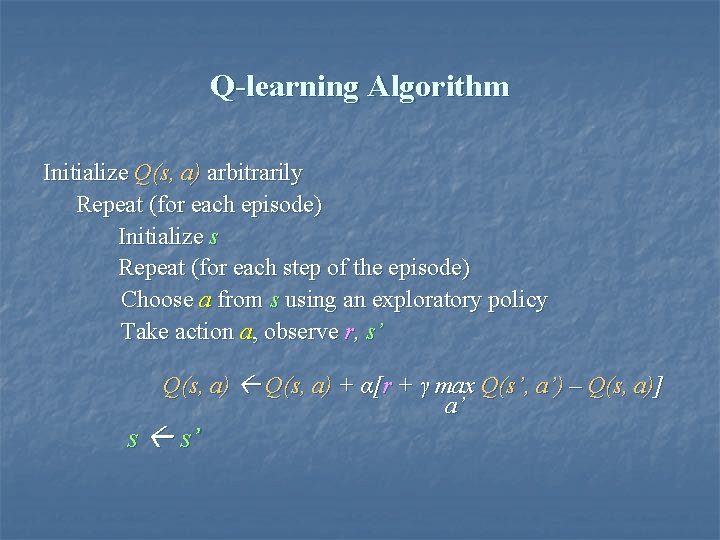

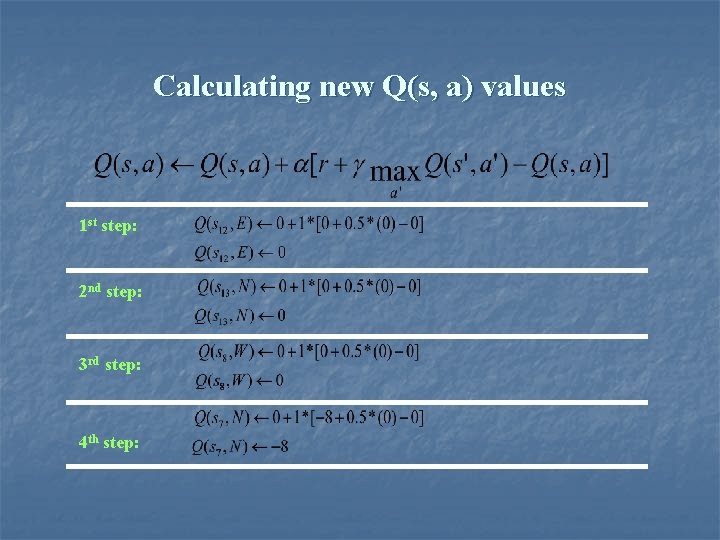

Calculating new Q(s, a) values (figure: the updates for the 1st, 2nd, 3rd, and 4th steps)
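As a worked instance of the update rule, assume a learning rate of α = 1 (an assumption; the slide text does not state it), a table that still holds only zeros, and a step that receives the reward r = -8. The discount γ then has no effect because the successor's values are all 0:

$$
Q(s,a) \leftarrow Q(s,a) + \alpha\left[r + \gamma \max_{a'} Q(s',a') - Q(s,a)\right]
= 0 + 1\cdot\left[-8 + \gamma\cdot 0 - 0\right] = -8
$$

Under the same assumptions, a step with r = 0 leaves its entry at 0, which would be consistent with a first episode that changes only one entry of the table.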

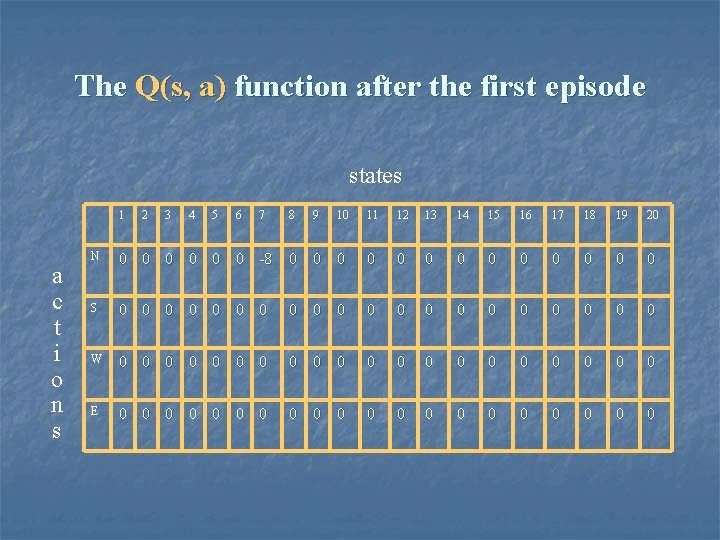

The Q(s, a) function after the first episode (table: states 1 to 20 by actions N, S, W, E; every listed entry is 0 except a single -8 in the N row)

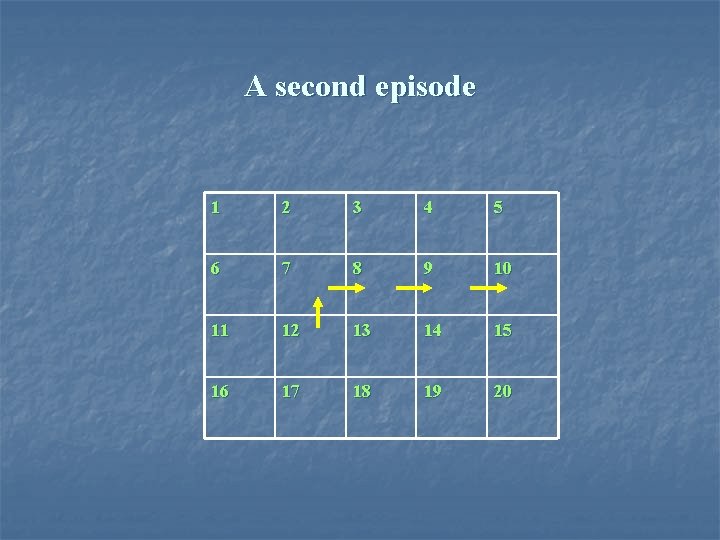

A second episode (figure: states 1 to 20)

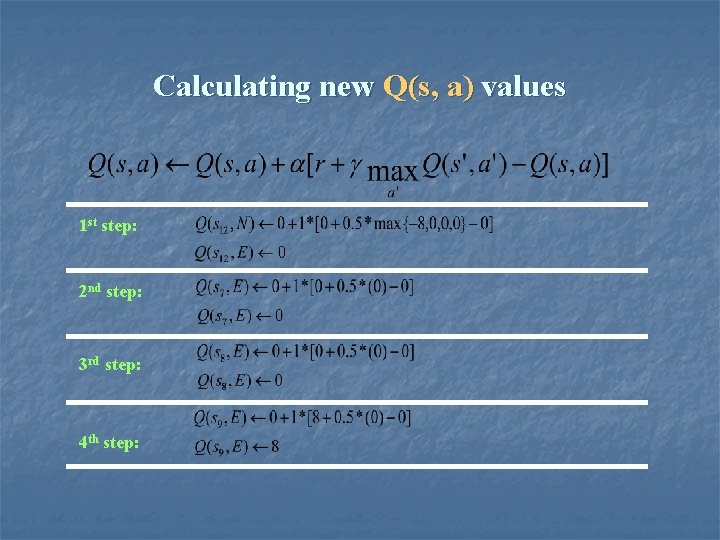

Calculating new Q(s, a) values (figure: the updates for the 1st, 2nd, 3rd, and 4th steps)

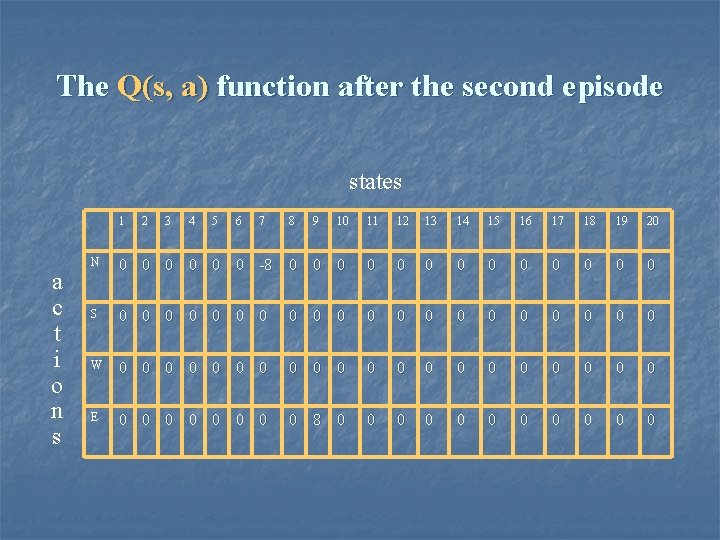

The Q(s, a) function after the second episode (table: states 1 to 20 by actions N, S, W, E; every listed entry is 0 except a -8 in the N row and an 8 in the E row)

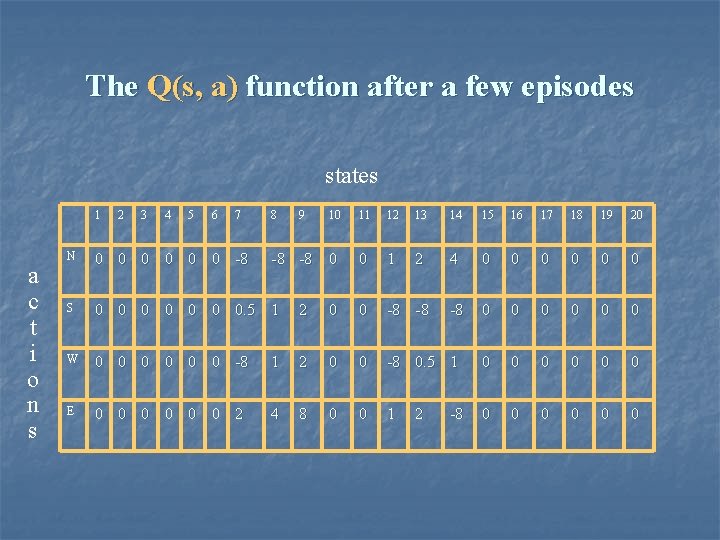

The Q(s, a) function after a few episodes (table: states 1 to 20 by actions N, S, W, E; the entries take the values -8, 0, 0.5, 1, 2, 4, and 8)

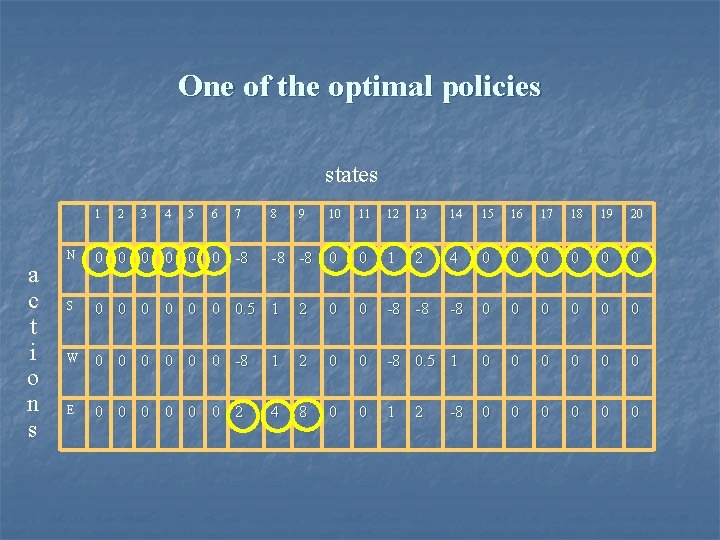

One of the optimal policies (shown on the Q(s, a) table: states 1 to 20 by actions N, S, W, E; entries -8, 0, 0.5, 1, 2, 4, and 8)
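A policy is read off a Q table by picking, in each state, an action with the largest Q value. When two actions tie, either choice is greedy, which is one way more than one optimal policy can arise from the same table. The sketch below illustrates this; the helper `greedy_actions` and the Q values for state 7 are made up for the example and are not the values from the slide.

```python
def greedy_actions(Q, state, actions, tol=1e-9):
    """Return every action whose Q value ties for the maximum in `state`."""
    best = max(Q[(state, a)] for a in actions)
    return [a for a in actions if abs(Q[(state, a)] - best) <= tol]

ACTIONS = ("N", "S", "W", "E")

# Illustrative values only: N and E tie for the best value in state 7.
Q_example = {(7, "N"): 2.0, (7, "S"): 0.5, (7, "W"): 1.0, (7, "E"): 2.0}

print(greedy_actions(Q_example, 7, ACTIONS))  # ['N', 'E']
```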

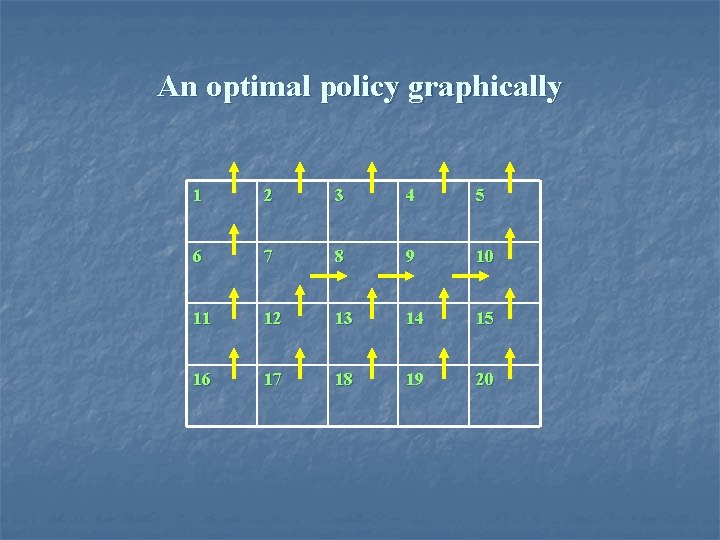

An optimal policy graphically (figure: states 1 to 20)

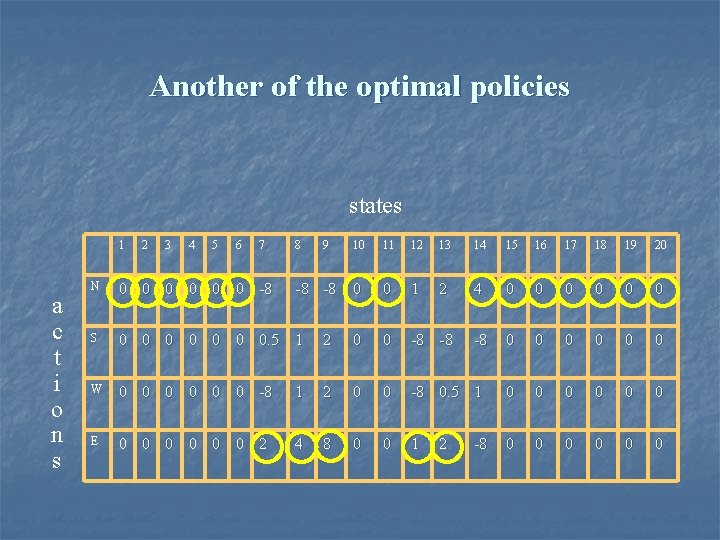

Another of the optimal policies (shown on the Q(s, a) table: states 1 to 20 by actions N, S, W, E; entries -8, 0, 0.5, 1, 2, 4, and 8)

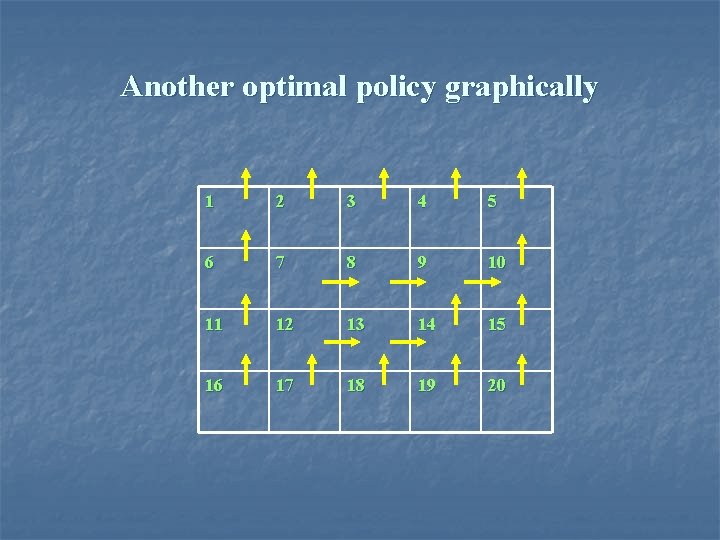

Another optimal policy graphically (figure: states 1 to 20)

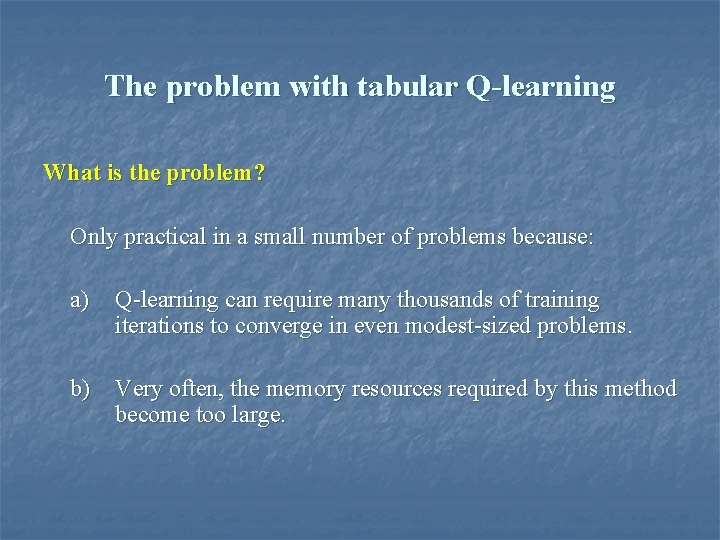

The problem with tabular Q-learning
What is the problem? It is only practical in a small number of problems because:
a) Q-learning can require many thousands of training iterations to converge, even in modest-sized problems.
b) Very often, the memory resources required by this method become too large.
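The memory problem can be made concrete with a little arithmetic. The sketch below assumes a state described by several variables, each discretized into the same number of levels; the function name and the numbers are illustrative only.

```python
def table_entries(levels_per_dimension, state_dimensions, num_actions):
    """Number of Q(s, a) entries in a full table over a discretized state space."""
    return (levels_per_dimension ** state_dimensions) * num_actions

# With 10 levels per state variable and 4 actions, the table grows
# exponentially with the number of state variables.
for d in (2, 4, 8):
    print(d, table_entries(10, d, 4))
```

With these assumed numbers, going from 2 to 8 state variables takes the table from 400 entries to 400,000,000.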

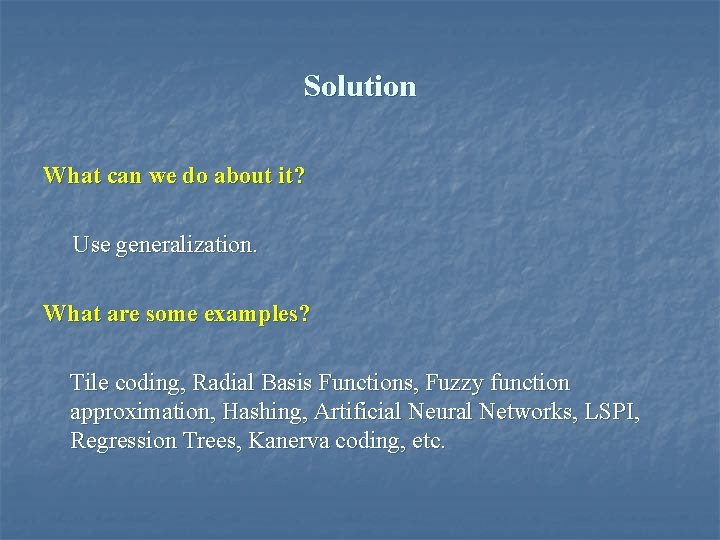

Solution What can we do about it? Use generalization. What are some examples? Tile coding, Radial Basis Functions, Fuzzy function approximation, Hashing, Artificial Neural Networks, LSPI, Regression Trees, Kanerva coding, etc.

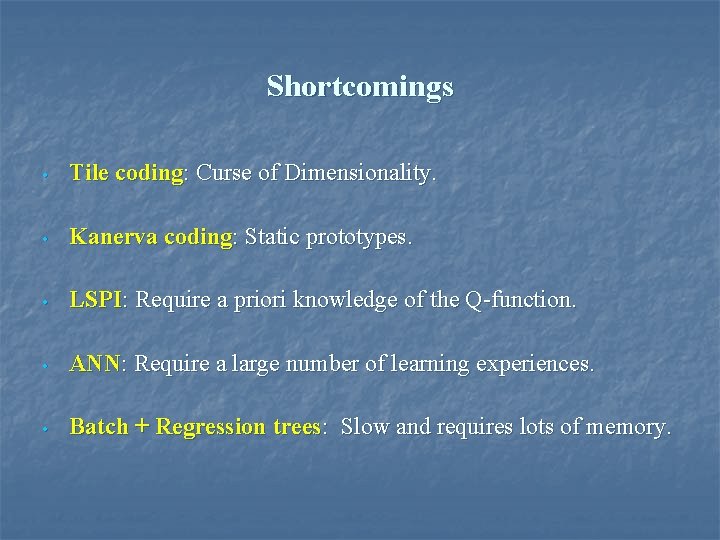

Shortcomings
• Tile coding: Curse of dimensionality.
• Kanerva coding: Static prototypes.
• LSPI: Requires a priori knowledge of the Q-function.
• ANN: Requires a large number of learning experiences.
• Batch + regression trees: Slow and requires lots of memory.

Needed properties 1) Memory requirements should not explode exponentially with the dimensionality of the problem. 2) It should tackle the pitfalls caused by the usage of “static prototypes”. 3) It should try to reduce the number of learning experiences required to generate an acceptable policy. NOTE: All this without requiring a priori knowledge of the Q-function.

Overview of the proposed method
1) The proposed method limits the number of prototypes available to describe the Q-function (as in Kanerva coding).
2) The Q-function is modeled using a regression tree (as in the batch method proposed by Sridharan and Tesauro).
3) The prototypes are not static, as they are in Kanerva coding, but dynamic.
4) The proposed method can update the Q-function once for every available learning experience (it can be an online learner).

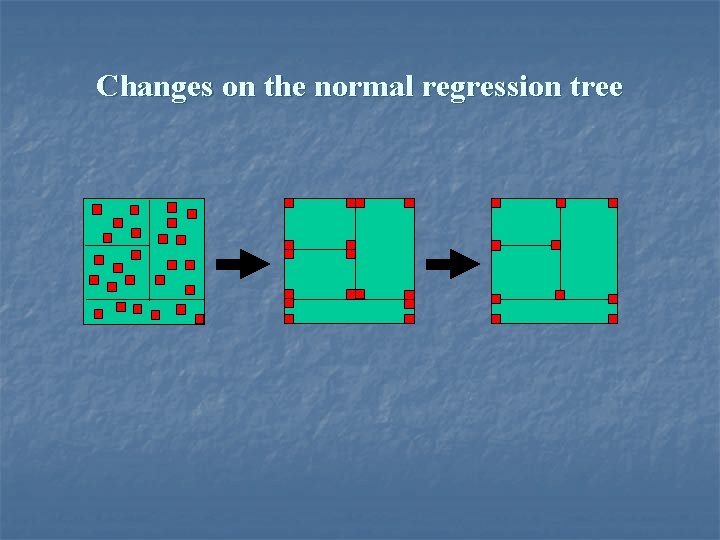

Changes to the normal regression tree

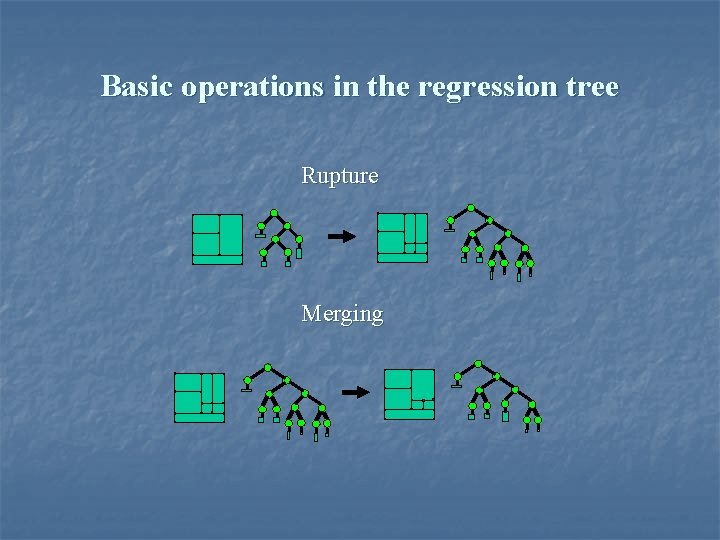

Basic operations in the regression tree: rupture and merging
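The slides show these two operations only as figures, so the following is a generic sketch of splitting and collapsing leaves in a one-dimensional partition tree, not the author's data structure. The midpoint split, the averaging rule in `merge`, and every name in the block are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Node:
    lo: float
    hi: float
    value: float = 0.0                 # value stored for the interval [lo, hi)
    split: Optional[float] = None      # split point; None for a leaf
    left: Optional["Node"] = None
    right: Optional["Node"] = None

    def is_leaf(self):
        return self.left is None

def find_leaf(node, x):
    """Descend to the leaf whose interval contains x."""
    while not node.is_leaf():
        node = node.left if x < node.split else node.right
    return node

def rupture(leaf):
    """Split a leaf at its midpoint; both children inherit its value (assumed rule)."""
    leaf.split = (leaf.lo + leaf.hi) / 2
    leaf.left = Node(leaf.lo, leaf.split, leaf.value)
    leaf.right = Node(leaf.split, leaf.hi, leaf.value)

def merge(parent):
    """Collapse two leaf children into their parent, averaging their values (assumed rule)."""
    if parent.is_leaf() or not (parent.left.is_leaf() and parent.right.is_leaf()):
        raise ValueError("only a node with two leaf children can be merged")
    parent.value = (parent.left.value + parent.right.value) / 2
    parent.left = parent.right = None
    parent.split = None

def count_leaves(node):
    return 1 if node.is_leaf() else count_leaves(node.left) + count_leaves(node.right)

root = Node(0.0, 1.0)
rupture(root)                          # two leaves: [0, 0.5) and [0.5, 1)
find_leaf(root, 0.75).value = 4.0      # refine the value on the right half
```

In a sketch like this, a rupture adds resolution where the function changes, and a merge frees a leaf so the total number of stored values stays bounded.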

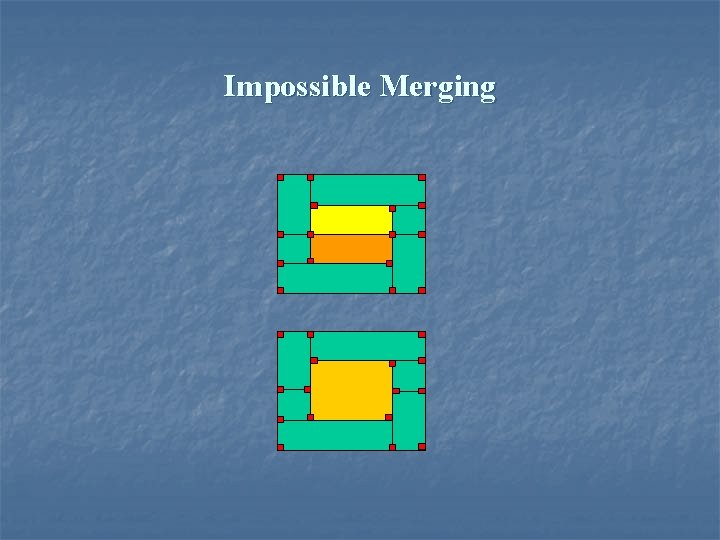

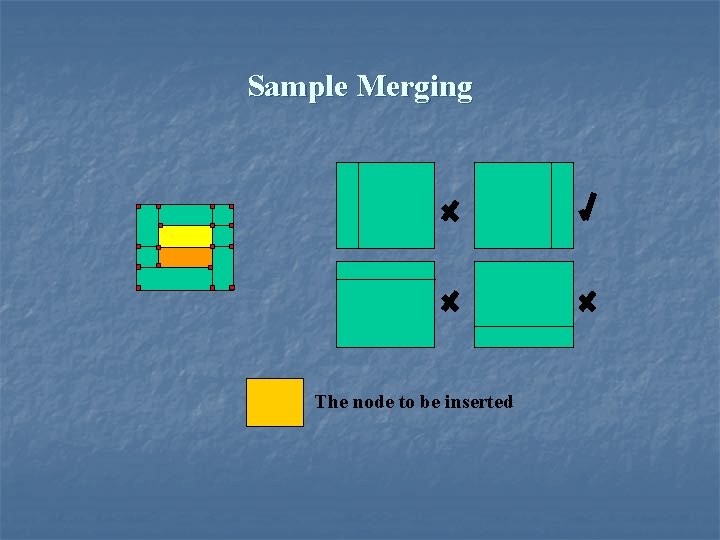

Impossible Merging

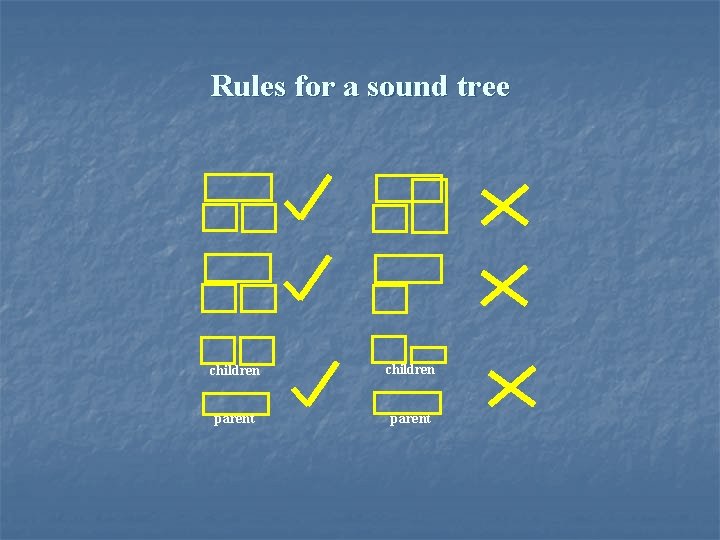

Rules for a sound tree (figure labels: parent, children)

Impossible Merging

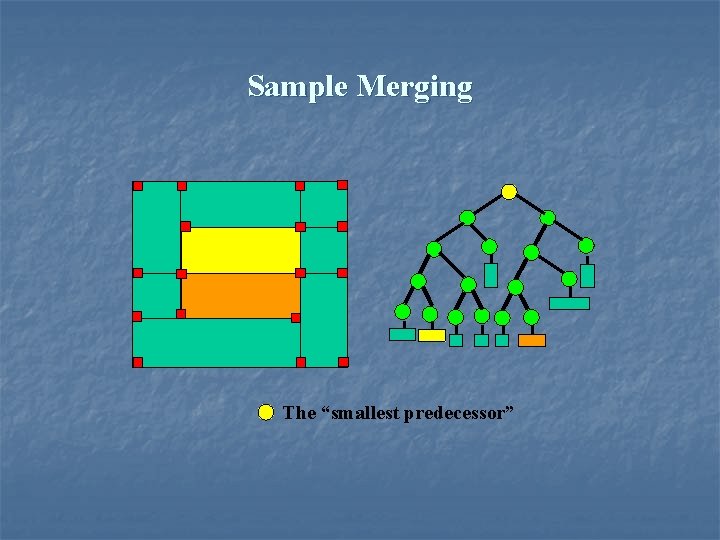

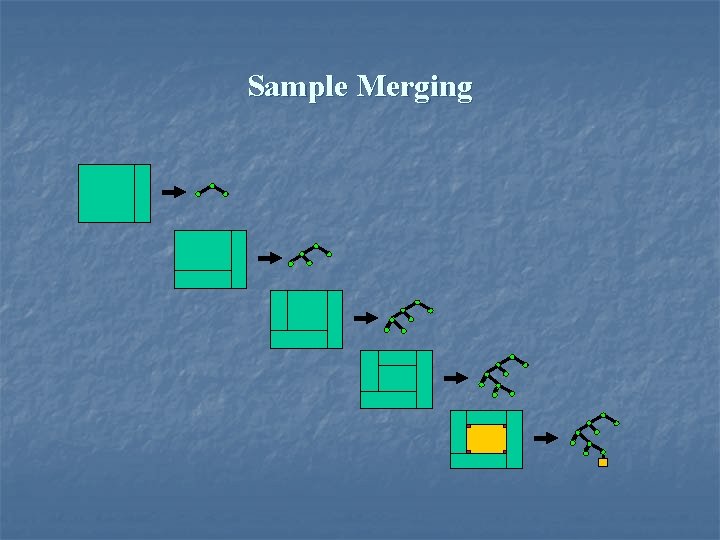

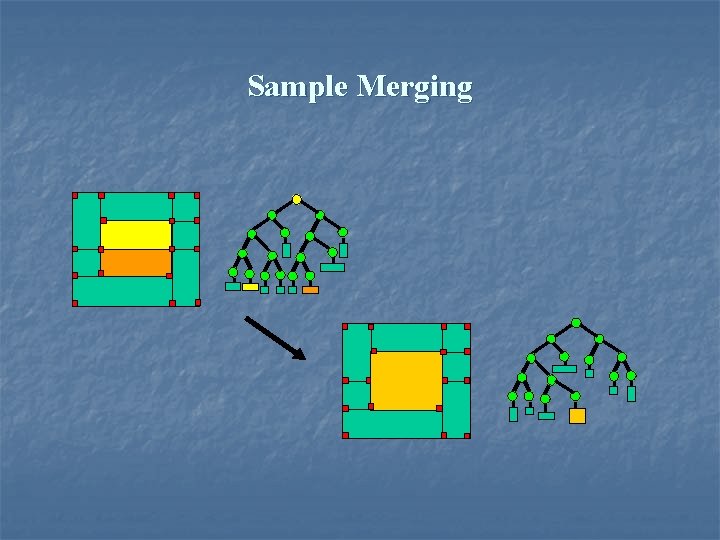

Sample Merging The “smallest predecessor”

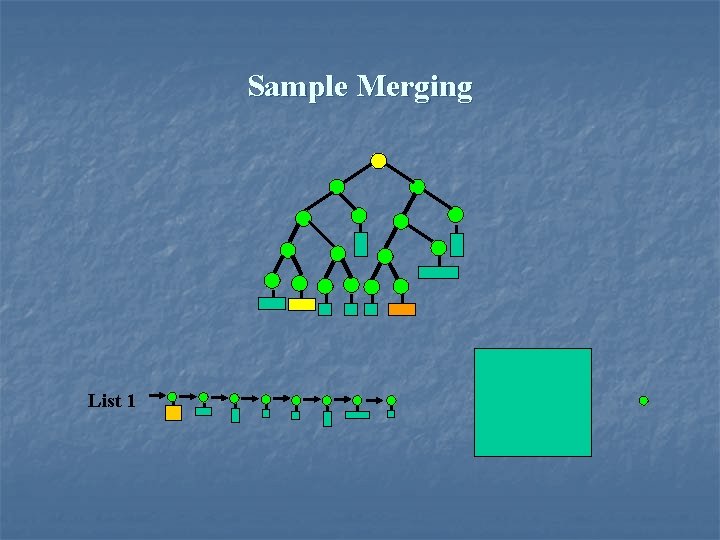

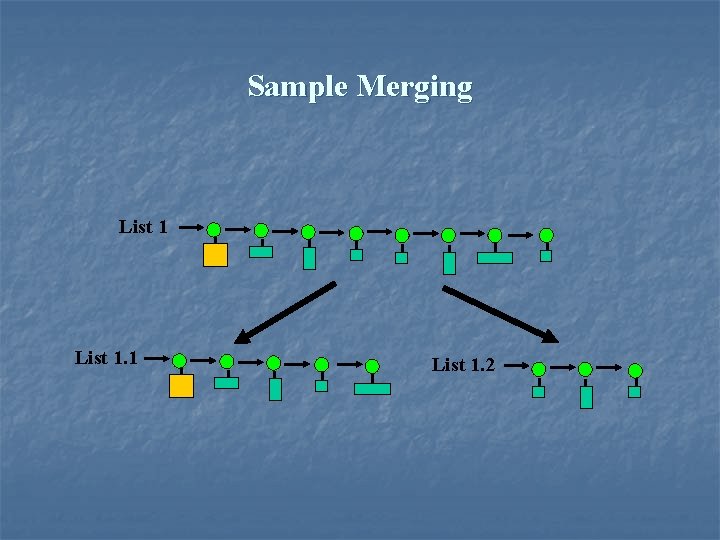

Sample Merging List 1

Sample Merging The node to be inserted

Sample Merging List 1.1, List 1.2

Sample Merging

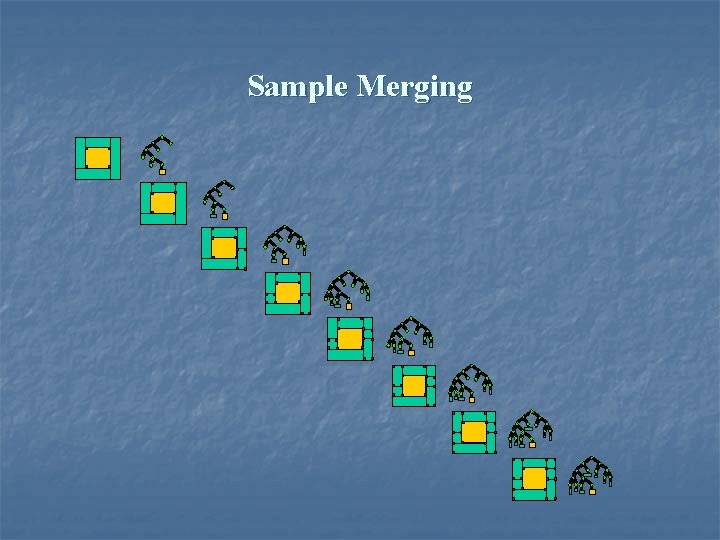

Sample Merging

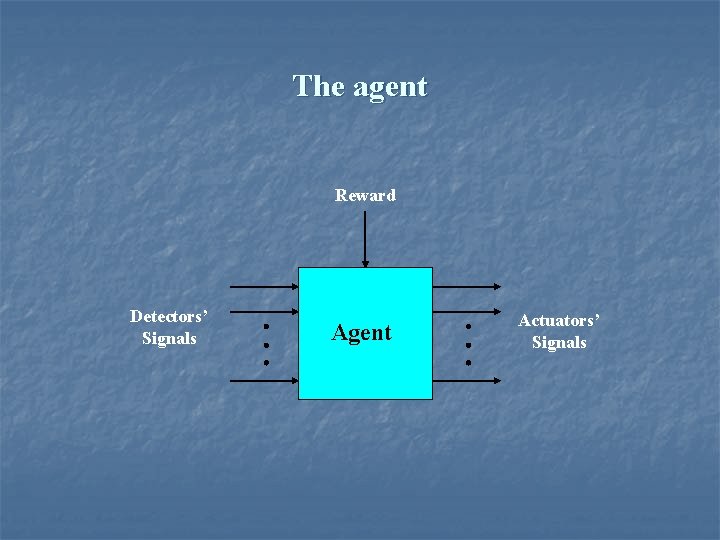

The agent (figure labels: Reward, Detectors’ Signals, Agent, Actuators’ Signals)

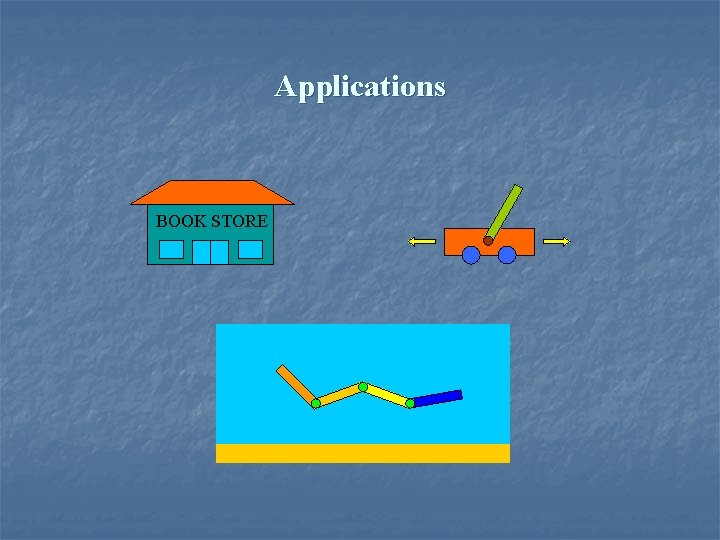

Applications (figure label: BOOK STORE)

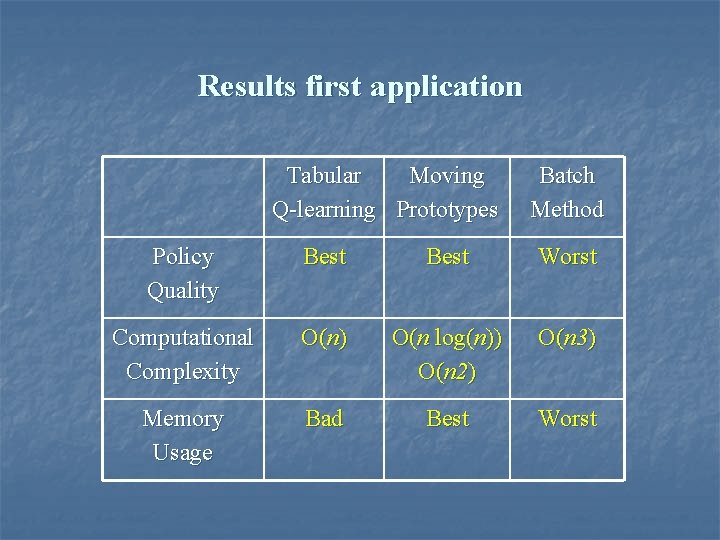

Results first application
Methods compared: Tabular Q-learning, Moving Prototypes, Batch Method
• Policy quality: Best, Worst
• Computational complexity: O(n), O(n log(n)), O(n^2), O(n^3)
• Memory usage: Bad (Tabular Q-learning), Best (Moving Prototypes), Worst (Batch Method)

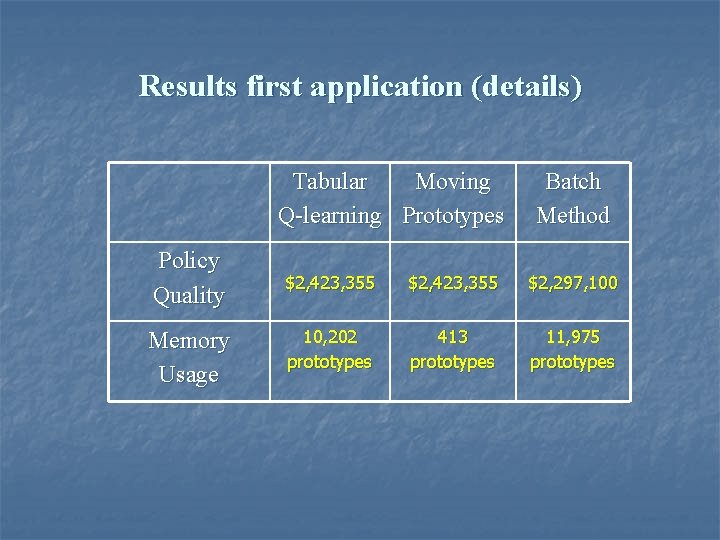

Results first application (details)
Methods compared: Tabular Q-learning, Moving Prototypes, Batch Method
• Policy quality: $2,423,355 and $2,297,100
• Memory usage: 10,202 prototypes (Tabular Q-learning), 413 prototypes (Moving Prototypes), 11,975 prototypes (Batch Method)

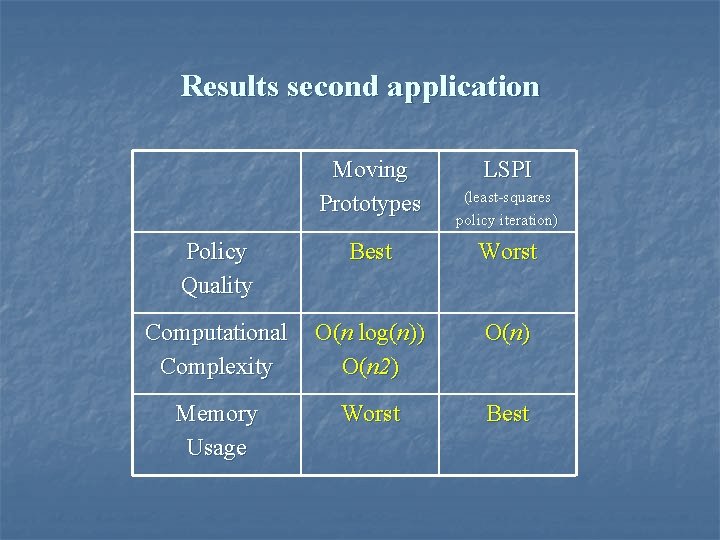

Results second application
Methods compared: Moving Prototypes, LSPI (least-squares policy iteration)
• Policy quality: Best, Worst
• Computational complexity: O(n log(n)), O(n^2), O(n)
• Memory usage: Worst (Moving Prototypes), Best (LSPI)

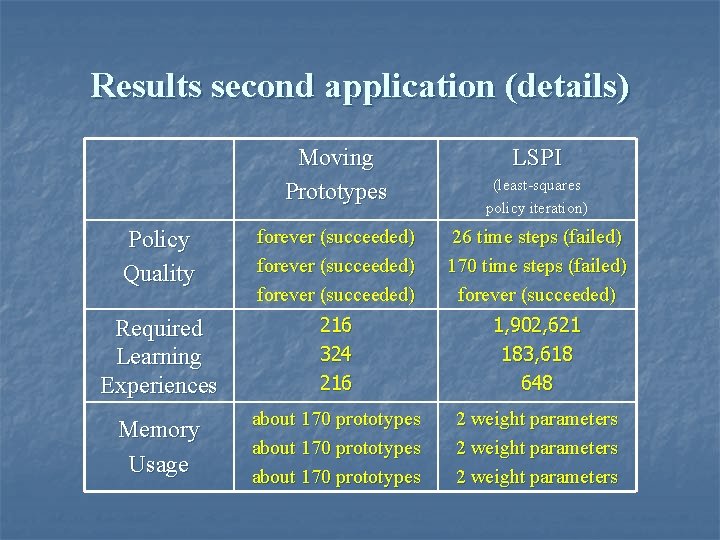

Results second application (details)
Methods compared: Moving Prototypes, LSPI (least-squares policy iteration)
• Policy quality: forever (succeeded), 26 time steps (failed), 170 time steps (failed), forever (succeeded)
• Required learning experiences: 216, 324, 216, 1,902,621, 183,618, 648
• Memory usage: about 170 prototypes (Moving Prototypes), 2 weight parameters (LSPI)

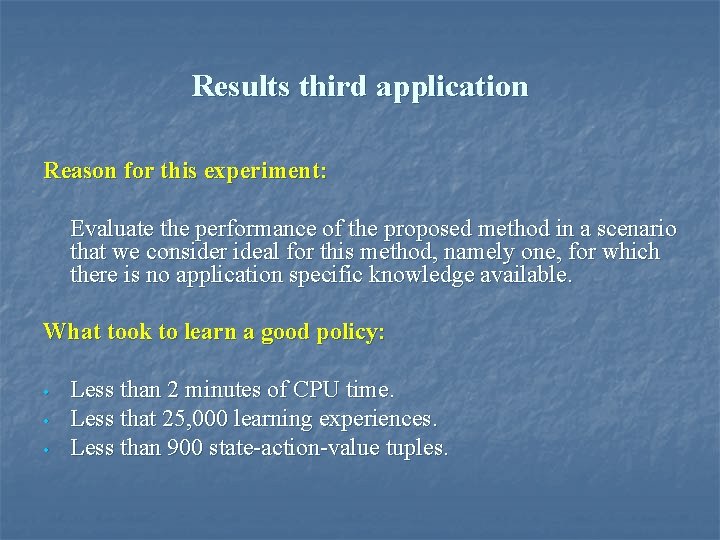

Results third application
Reason for this experiment: Evaluate the performance of the proposed method in a scenario that we consider ideal for it, namely one for which no application-specific knowledge is available.
What it took to learn a good policy:
• Less than 2 minutes of CPU time.
• Fewer than 25,000 learning experiences.
• Fewer than 900 state-action-value tuples.

Swimmer first movie

Swimmer second movie

Swimmer third movie

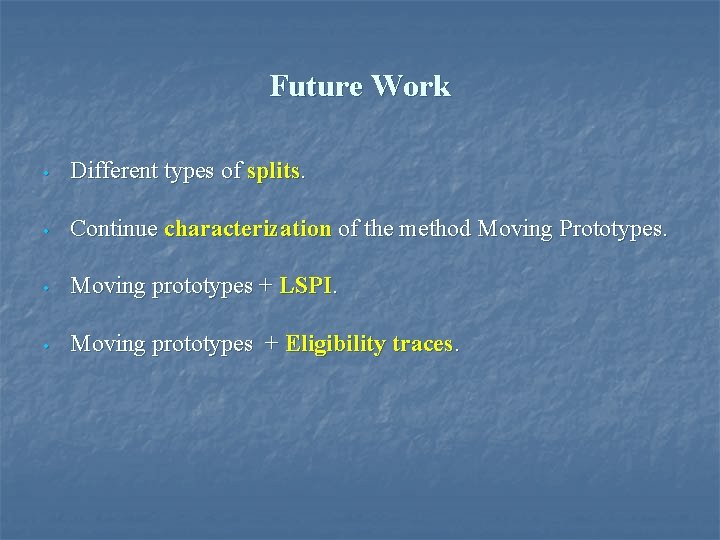

Future Work
• Different types of splits.
• Continue the characterization of the Moving Prototypes method.
• Moving Prototypes + LSPI.
• Moving Prototypes + eligibility traces.
