Modeling & Analysis

Modeling & Analysis
- Mathematical Modeling:
  - probability theory
  - queuing theory
  - application to network models
- Simulation:
  - topology models
  - traffic models
  - dynamic models/failure models
  - protocol models

Transport Layer 3 -1

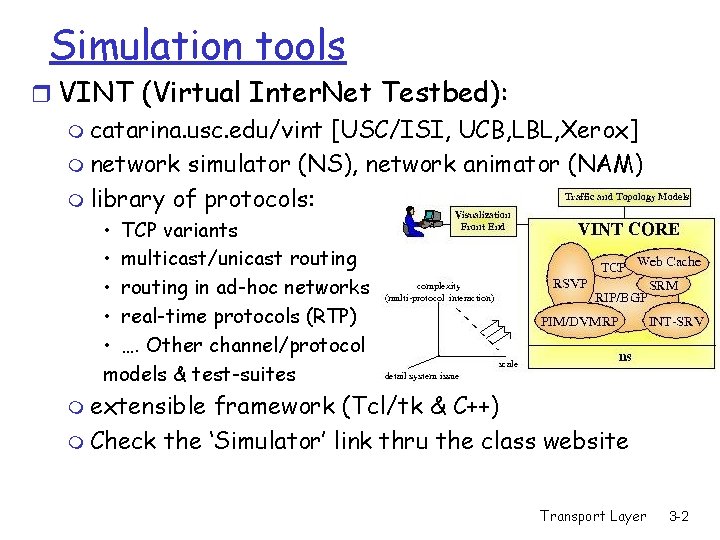

Simulation tools
- VINT (Virtual InterNetwork Testbed): catarina.usc.edu/vint [USC/ISI, UCB, LBL, Xerox]
  - network simulator (NS), network animator (NAM)
  - library of protocols:
    - TCP variants
    - multicast/unicast routing
    - routing in ad-hoc networks
    - real-time protocols (RTP)
    - …
  - other channel/protocol models & test-suites
  - extensible framework (Tcl/Tk & C++)
  - check the 'Simulator' link through the class website

- OPNET:
  - commercial simulator
  - strength in wireless channel modeling
- GloMoSim (QualNet): UCLA, PARSEC simulator
- Research resources:
  - ACM & IEEE journals and conferences
  - SIGCOMM, INFOCOM, Transactions on Networking (TON), MobiCom
  - IEEE Computer, IEEE Spectrum, Communications of the ACM
  - www.acm.org, www.ieee.org

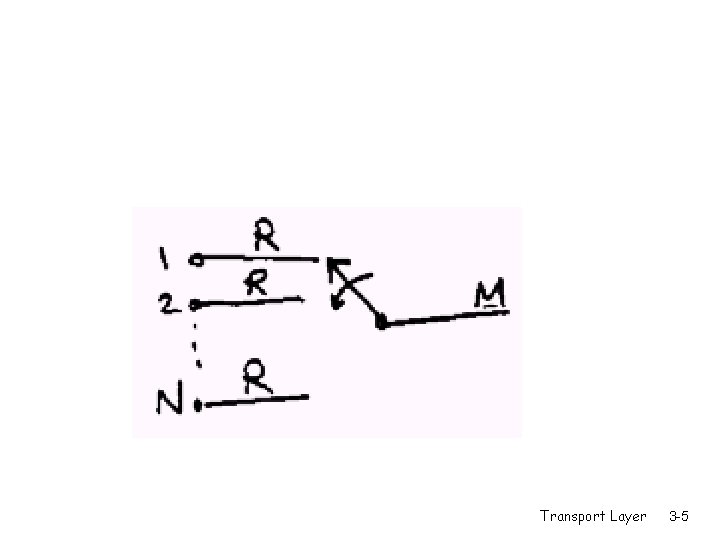

Modeling using queuing theory
Let:
- $N$ be the number of sources
- $M$ be the capacity of the multiplexed channel
- $R$ be the source data rate
- $\alpha$ be the mean fraction of time each source is active, where $0 < \alpha < 1$


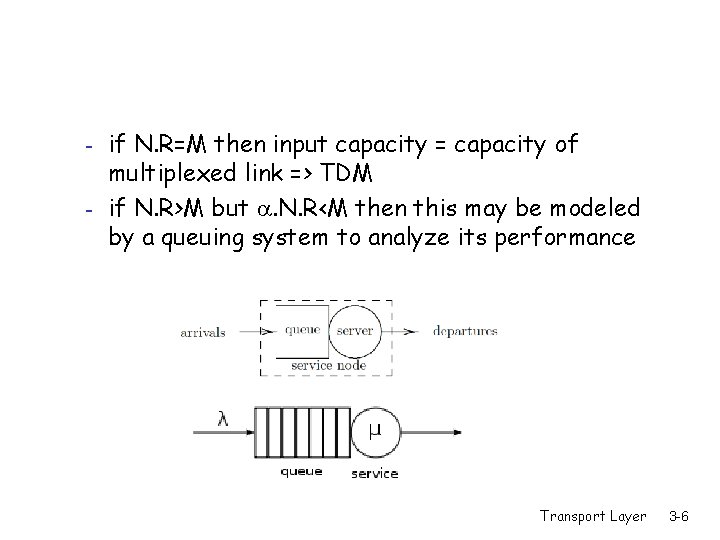

- If $N \cdot R = M$, the input capacity equals the capacity of the multiplexed link, i.e. TDM.
- If $N \cdot R > M$ but $\alpha \cdot N \cdot R < M$, the multiplexer may be modeled as a queuing system to analyze its performance.

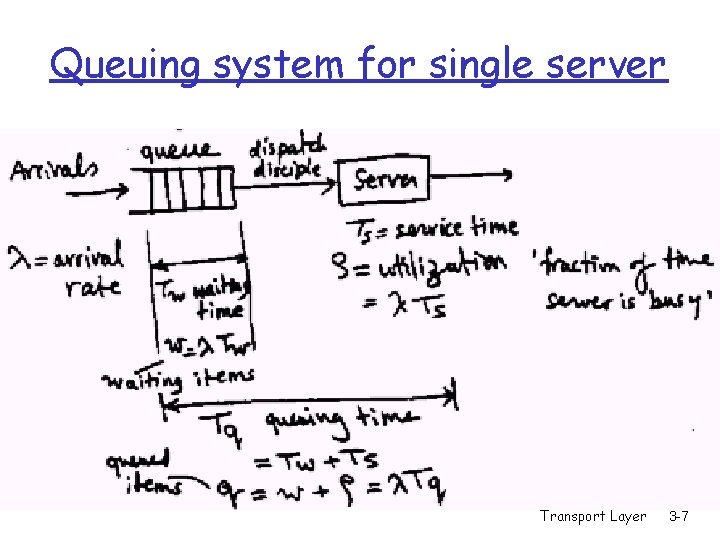

Queuing system for a single server

- $\lambda$ is the arrival rate
- $T_w$ is the waiting time
- the number of waiting items is $w = \lambda T_w$
- $T_s$ is the service time
- $\rho$ is the utilization, the fraction of time the server is busy: $\rho = \lambda T_s$
- the queuing time is $T_q = T_w + T_s$
- the number of queued items (i.e. the queue occupancy) is $q = w + \rho = \lambda T_q$
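As a quick consistency check of these relations, the sketch below plugs in illustrative numbers (λ = 400 items/s, $T_w$ = 4 ms, $T_s$ = 2 ms; these values are our assumption, not from the slides) and confirms that $q = w + \rho$ equals $\lambda T_q$. The function name `queue_metrics` is hypothetical.

```python
def queue_metrics(lam, t_w, t_s):
    """Single-server relations: rho = lam*Ts, w = lam*Tw, Tq = Tw + Ts, q = w + rho.

    lam: arrival rate (items/s); t_w: mean waiting time (s); t_s: mean service time (s).
    """
    rho = lam * t_s   # utilization: fraction of time the server is busy
    w = lam * t_w     # mean number of items waiting
    t_q = t_w + t_s   # queuing time = waiting time + service time
    q = w + rho       # mean queue occupancy (waiting + in service)
    return {"rho": rho, "w": w, "t_q": t_q, "q": q}


m = queue_metrics(lam=400.0, t_w=0.004, t_s=0.002)
print(m)
print("q == lam * Tq:", abs(m["q"] - 400.0 * m["t_q"]) < 1e-9)
```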

- $\lambda = \alpha N R$, $T_s = 1/M$
- $\rho = \lambda T_s = \alpha N R / M$
- Assume:
  - random arrival process (Poisson arrival process)
  - constant service time (packet lengths are constant)
  - no drops (the buffer is large enough to hold all traffic, basically infinite)
  - no priorities, FIFO queue

Inputs/Outputs of Queuing Theory
- Given:
  - arrival rate
  - service time
  - queuing discipline
- Output:
  - wait time and queuing delay
  - waiting items and queued items

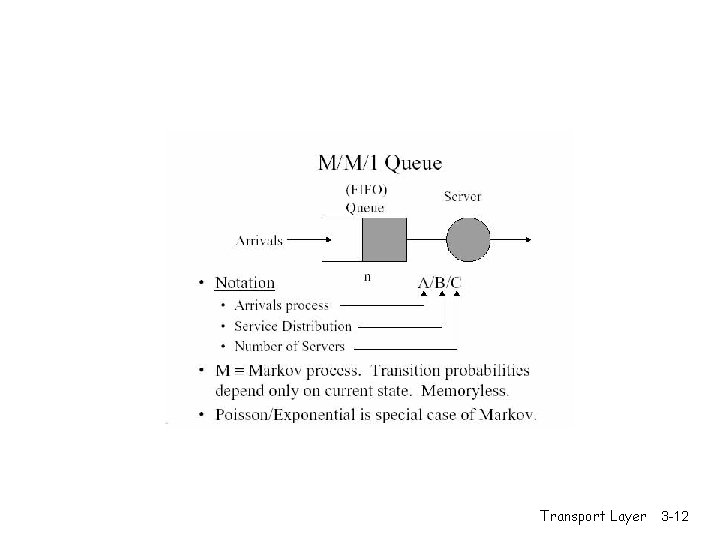

- Queue naming: X/Y/Z, where X is the distribution of arrivals, Y is the distribution of the service time, and Z is the number of servers
- G: general distribution
- M: negative exponential distribution (random arrivals, Poisson process, exponential inter-arrival times)
- D: deterministic arrivals (or fixed service time)
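To make the M/D/1 notation concrete, here is a small simulation sketch of our own (not from the slides): Poisson arrivals (M), a constant service time (D), and one FIFO server (1) with an infinite buffer. It uses Lindley's recursion for successive waiting times and compares the simulated mean wait with the standard M/D/1 result $T_w = \rho T_s / [2(1-\rho)]$, which is consistent with the M/D/1 $T_q$ formula below, since $T_q = T_w + T_s$. The function name and parameter values are illustrative assumptions.

```python
import random


def simulate_md1_wait(lam, t_s, n_customers, seed=1):
    """Mean waiting time in an M/D/1 FIFO queue via Lindley's recursion.

    M: Poisson arrivals (exponential inter-arrival times with rate lam)
    D: deterministic service time t_s
    1: a single server with an infinite buffer
    """
    rng = random.Random(seed)
    wait = 0.0    # waiting time of the current customer (queue starts empty)
    total = 0.0
    for _ in range(n_customers):
        total += wait
        gap = rng.expovariate(lam)             # time until the next arrival
        wait = max(0.0, wait + t_s - gap)      # W(n+1) = max(0, W(n) + S - A)
    return total / n_customers


lam, t_s = 0.5, 1.0                  # rho = lam * t_s = 0.5
rho = lam * t_s
sim = simulate_md1_wait(lam, t_s, 200_000)
theory = rho * t_s / (2 * (1 - rho))   # M/D/1 mean waiting time Tw
print(f"simulated Tw = {sim:.3f}, formula Tw = {theory:.3f}")
```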


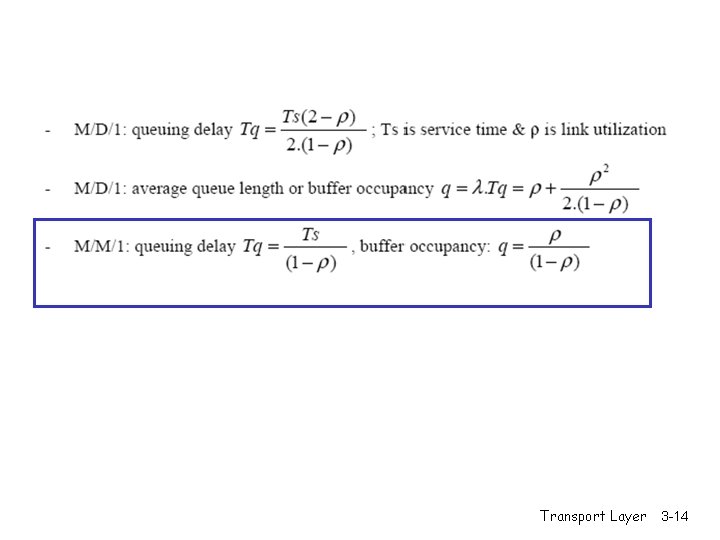

- M/D/1:
  - $T_q = \dfrac{T_s (2 - \rho)}{2 (1 - \rho)}$
  - $q = \lambda T_q = \rho + \dfrac{\rho^2}{2 (1 - \rho)}$
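The two M/D/1 expressions translate directly into code. This sketch (function name `md1` is hypothetical) returns $T_q$ and $q$ for a given utilization and sweeps ρ to show how quickly both grow as ρ approaches 1.

```python
def md1(rho, t_s=1.0):
    """Mean queuing time Tq and mean queue occupancy q for an M/D/1 queue."""
    if not 0 <= rho < 1:
        raise ValueError("M/D/1 is stable only for 0 <= rho < 1")
    t_q = t_s * (2 - rho) / (2 * (1 - rho))
    q = rho + rho**2 / (2 * (1 - rho))  # equals lam * Tq, since lam = rho / t_s
    return t_q, q


for rho in (0.2, 0.5, 0.8, 0.9, 0.95):
    t_q, q = md1(rho)
    print(f"rho={rho:.2f}  Tq/Ts={t_q:.2f}  q={q:.2f}")
```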


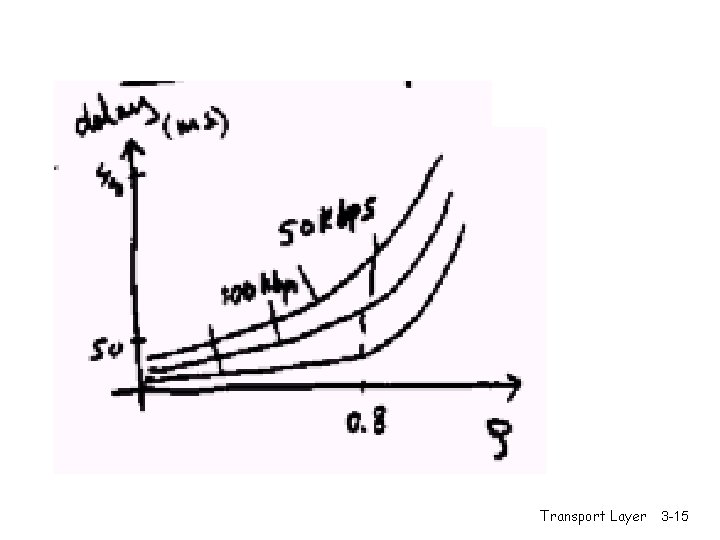

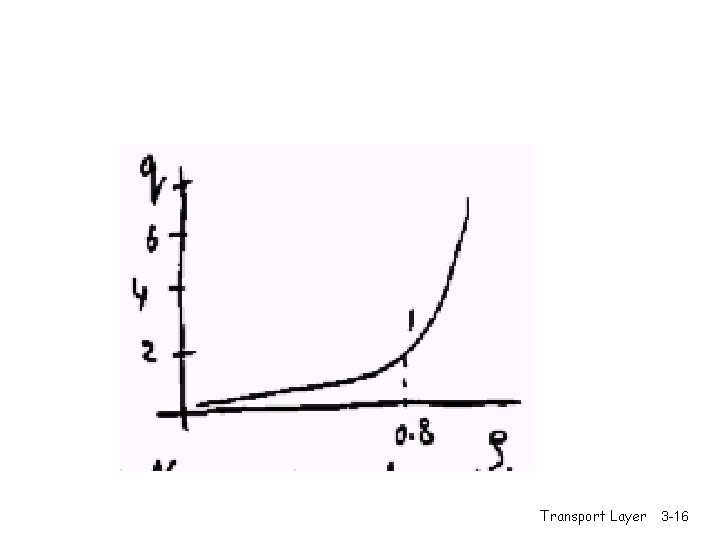

- As $\rho$ increases, so do buffer requirements and delay
- The buffer size $q$ depends only on $\rho$

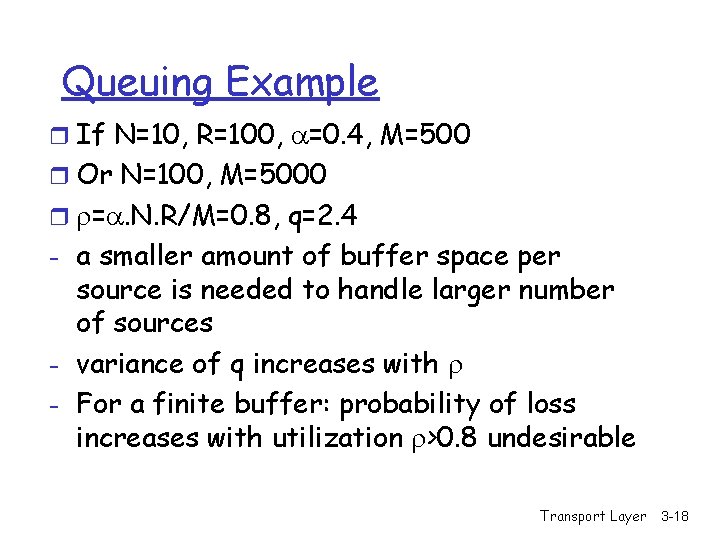

Queuing Example
- If $N = 10$, $R = 100$, $\alpha = 0.4$, $M = 500$
- or $N = 100$, $M = 5000$
- then in both cases $\rho = \alpha N R / M = 0.8$ and $q = 2.4$
  - a smaller amount of buffer space per source is needed to handle a larger number of sources
  - the variance of $q$ increases with $\rho$
  - for a finite buffer, the probability of loss increases with utilization; $\rho > 0.8$ is undesirable
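The example's arithmetic can be checked in a few lines. This sketch (hypothetical helper names `utilization` and `md1_occupancy`) computes ρ and the M/D/1 occupancy for both configurations, plus the buffer needed per source, which is what shrinks when N grows.

```python
def utilization(alpha, n, r, m):
    """rho = alpha * N * R / M: fraction of the multiplexed link's capacity in use."""
    return alpha * n * r / m


def md1_occupancy(rho):
    """Mean number of queued items q for an M/D/1 queue."""
    return rho + rho**2 / (2 * (1 - rho))


for n, m in ((10, 500), (100, 5000)):
    rho = utilization(alpha=0.4, n=n, r=100, m=m)
    q = md1_occupancy(rho)
    print(f"N={n:3d}  rho={rho:.2f}  q={q:.2f}  buffer per source={q / n:.3f}")
```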

Chapter 3: Transport Layer
Computer Networking: A Top Down Approach, 4th edition. Jim Kurose, Keith Ross. Addison-Wesley, July 2007.


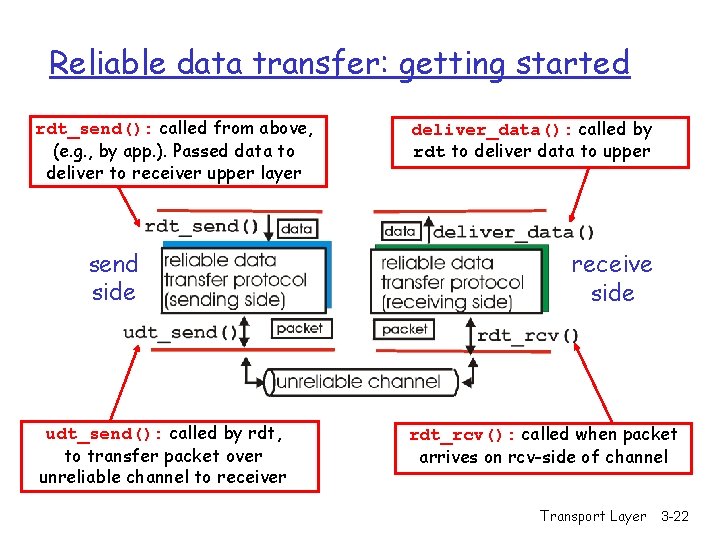

Reliable data transfer: getting started
- Send side:
  - rdt_send(): called from above (e.g., by the application); passes data to be delivered to the receiver's upper layer
  - udt_send(): called by rdt to transfer a packet over the unreliable channel to the receiver
- Receive side:
  - rdt_rcv(): called when a packet arrives on the receive side of the channel
  - deliver_data(): called by rdt to deliver data to the upper layer

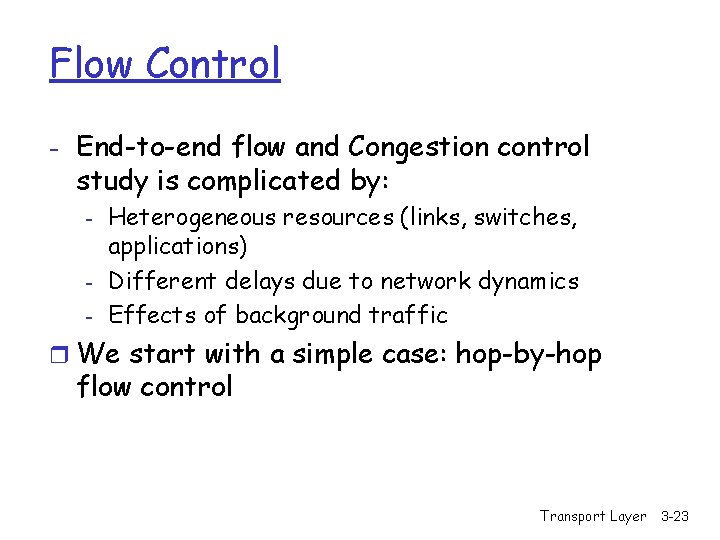

Flow Control
- The study of end-to-end flow and congestion control is complicated by:
  - heterogeneous resources (links, switches, applications)
  - different delays due to network dynamics
  - effects of background traffic
- We start with a simple case: hop-by-hop flow control

Hop-by-hop flow control
- Approaches/techniques for hop-by-hop flow control:
  - stop-and-wait
  - sliding window:
    - Go back N
    - selective reject

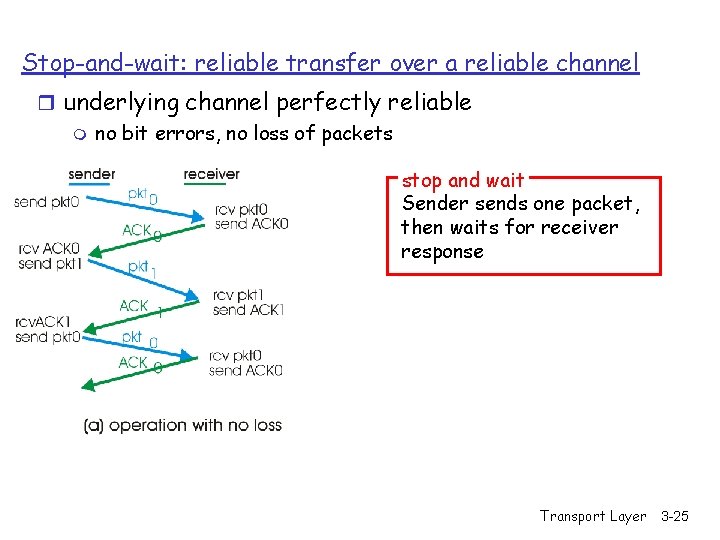

Stop-and-wait: reliable transfer over a reliable channel
- Underlying channel perfectly reliable:
  - no bit errors, no loss of packets
- Stop and wait: the sender sends one packet, then waits for the receiver's response

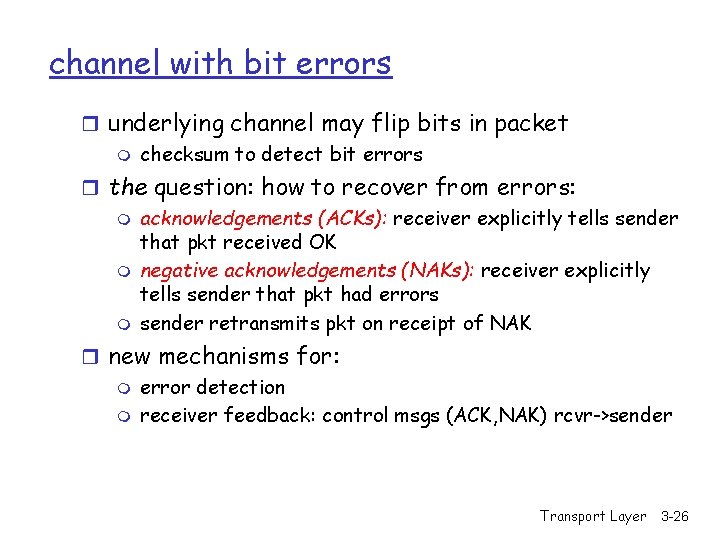

Channel with bit errors
- The underlying channel may flip bits in a packet:
  - checksum to detect bit errors
- The question: how to recover from errors?
  - acknowledgements (ACKs): the receiver explicitly tells the sender that the pkt was received OK
  - negative acknowledgements (NAKs): the receiver explicitly tells the sender that the pkt had errors
  - the sender retransmits the pkt on receipt of a NAK
- New mechanisms for:
  - error detection
  - receiver feedback: control msgs (ACK, NAK) rcvr->sender

Stop-and-wait operation: summary
- Stop and wait:
  - the sender waits for an ACK before sending another frame
  - the sender uses a timer to retransmit if no ACK arrives
  - if the ACK is lost:
    - A sends a frame, B's ACK gets lost
    - A times out and retransmits the frame; B receives a duplicate
  - sequence numbers are added (frames 0, 1; ACK 0, 1) so that B can detect duplicates
  - the timeout should be related to round-trip time estimates:
    - if too small: unnecessary retransmissions
    - if too large: long delays
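A minimal sketch of these rules, assuming an alternating-bit sequence number and a channel that drops frames and ACKs independently with a fixed probability (the loss model, the function name, and the "timeout = no ACK came back" shortcut are our assumptions). The point it shows: when an ACK is lost, the resent frame carries the old bit, so the receiver re-ACKs it but does not deliver it twice.

```python
import random


def stop_and_wait(messages, loss_prob=0.3, seed=7):
    """Alternating-bit stop-and-wait over a channel that may drop frames or ACKs.

    A timeout is modeled as "no ACK came back", which makes the sender resend.
    Returns (payloads delivered to the upper layer, number of frame transmissions).
    """
    rng = random.Random(seed)

    def lost():
        # Hypothetical loss model: each frame or ACK is dropped independently.
        return rng.random() < loss_prob

    delivered = []
    expected = 0      # receiver: sequence bit it expects next
    seq = 0           # sender: sequence bit of the current frame
    sends = 0
    for msg in messages:
        while True:
            sends += 1
            if lost():
                continue              # frame lost -> sender times out, resends
            if seq == expected:       # new frame: deliver it, flip expected bit
                delivered.append(msg)
                expected ^= 1
            # new or duplicate, the receiver ACKs the bit it just saw
            if lost():
                continue              # ACK lost -> timeout, resend; receiver drops the duplicate
            seq ^= 1                  # ACK for seq arrived: move on to the next frame
            break
    return delivered, sends


msgs = [f"frame-{i}" for i in range(10)]
delivered, sends = stop_and_wait(msgs)
print(delivered == msgs, sends)
```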

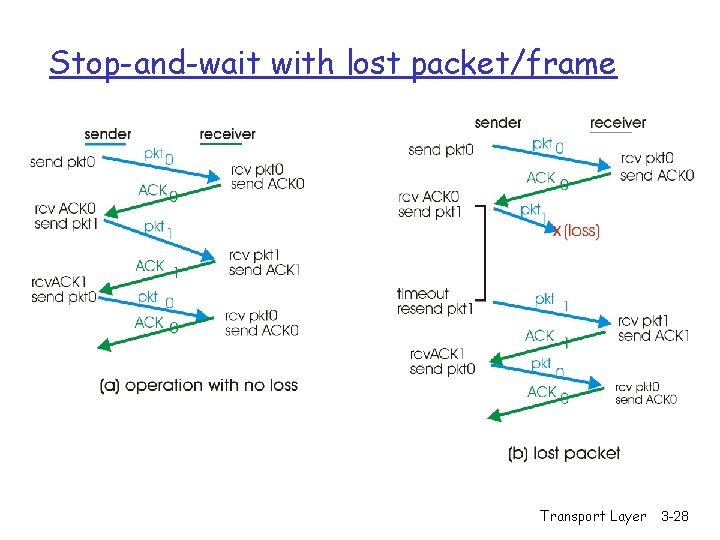

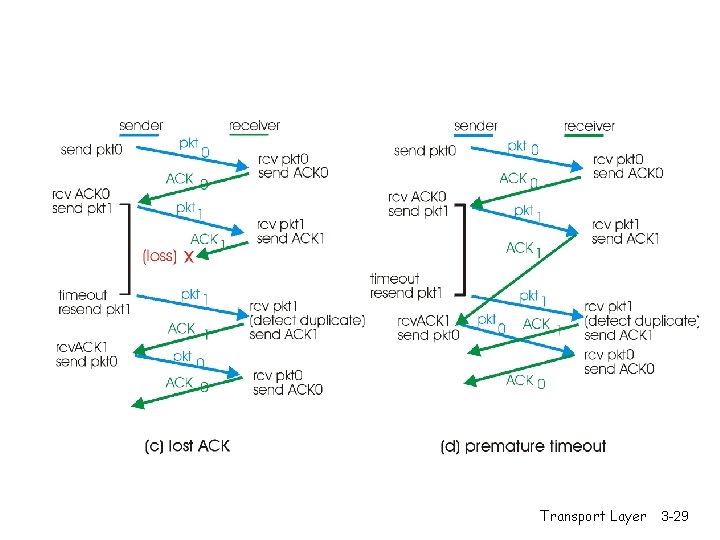

Stop-and-wait with lost packet/frame


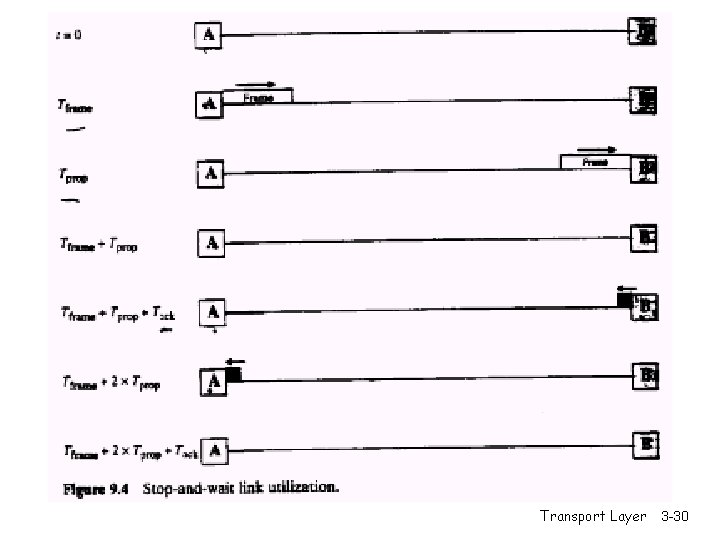


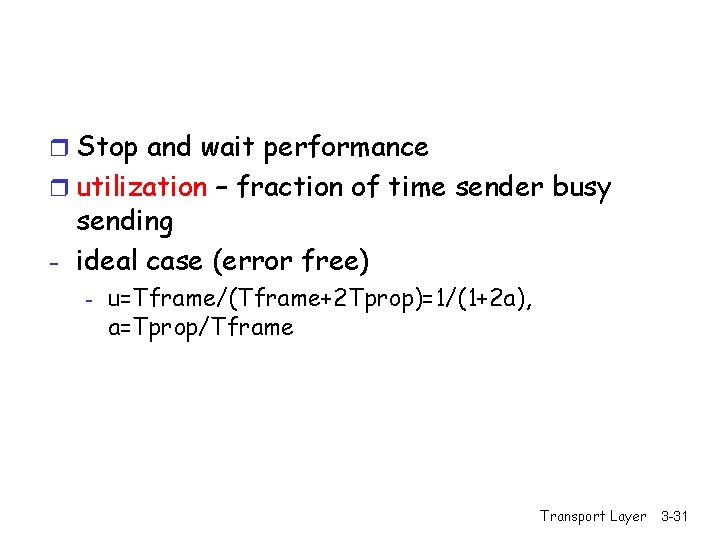

- Stop-and-wait performance
- Utilization: the fraction of time the sender is busy sending
  - ideal (error-free) case: $U = \dfrac{T_{frame}}{T_{frame} + 2T_{prop}} = \dfrac{1}{1 + 2a}$, where $a = T_{prop}/T_{frame}$

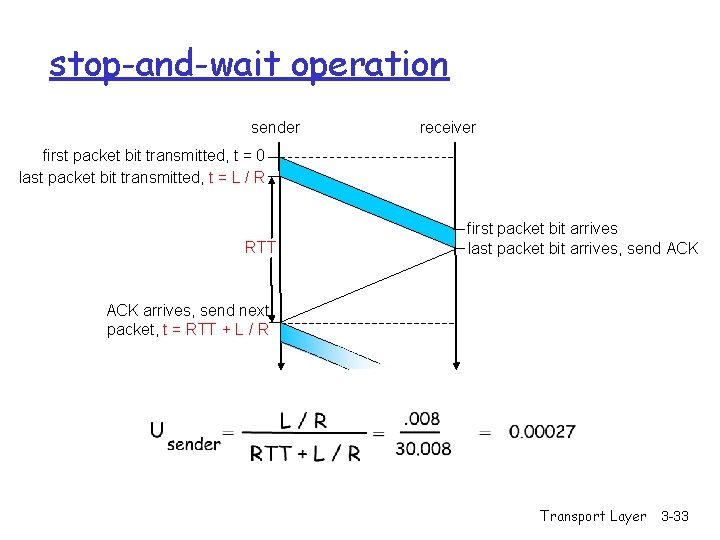

Performance of stop-and-wait
- Example: 1 Gbps link, 15 ms end-to-end propagation delay, 1 KB packet:
  - $T_{transmit} = \dfrac{L \text{ (packet length in bits)}}{R \text{ (transmission rate, bps)}} = \dfrac{8\ \text{kb/pkt}}{10^9\ \text{b/s}} = 8\ \mu\text{s}$
  - $U_{sender}$, the utilization (fraction of time the sender is busy sending): $U_{sender} = \dfrac{L/R}{RTT + L/R} = \dfrac{0.008}{30.008} = 0.00027$
  - one 1 KB pkt every 30 ms gives 33 kB/s throughput over a 1 Gbps link
  - the network protocol limits use of the physical resources!
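The numbers above can be reproduced directly, and they agree with the earlier formula $U = 1/(1+2a)$ once $a = T_{prop}/T_{frame}$ is computed from the same inputs. A sketch, assuming 1 KB = 8000 bits as in the example; the function name is hypothetical.

```python
def stop_and_wait_utilization(l_bits, r_bps, rtt_s):
    """U_sender = (L/R) / (RTT + L/R), i.e. 1/(1+2a) with a = Tprop/Tframe."""
    t_frame = l_bits / r_bps
    return t_frame / (rtt_s + t_frame)


L, R, RTT = 8000, 1e9, 0.030           # 1 KB packet, 1 Gbps link, 2 x 15 ms propagation
u = stop_and_wait_utilization(L, R, RTT)
a = (RTT / 2) / (L / R)                # a = Tprop / Tframe
throughput = (L / 8) / (RTT + L / R)   # bytes per second
print(f"T_transmit = {L / R * 1e6:.0f} us, U = {u:.5f}, 1/(1+2a) = {1 / (1 + 2 * a):.5f}")
print(f"throughput = {throughput / 1000:.1f} kB/s")
```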

Stop-and-wait operation (timeline figure, sender and receiver): the first packet bit is transmitted at t = 0 and the last at t = L/R; after the propagation delay the first packet bit arrives, and when the last bit arrives the receiver sends an ACK; the ACK arrives and the sender sends the next packet at t = RTT + L/R.

Sliding window techniques
- TCP is a variant of sliding window
- Includes Go back N (GBN) and selective repeat/reject
- Allows outstanding packets without ACK
- More complex than stop-and-wait
- Needs to buffer un-ACKed packets, with more bookkeeping than stop-and-wait

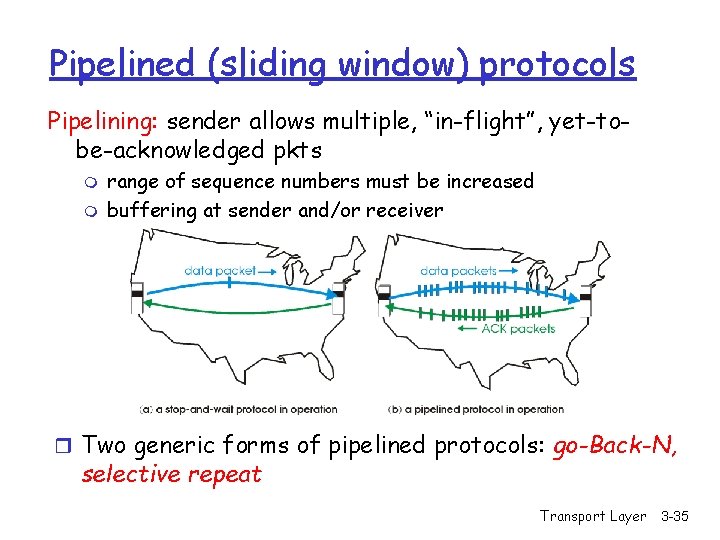

Pipelined (sliding window) protocols
- Pipelining: the sender allows multiple "in-flight", yet-to-be-acknowledged pkts
  - the range of sequence numbers must be increased
  - buffering at sender and/or receiver
- Two generic forms of pipelined protocols: Go-Back-N, selective repeat

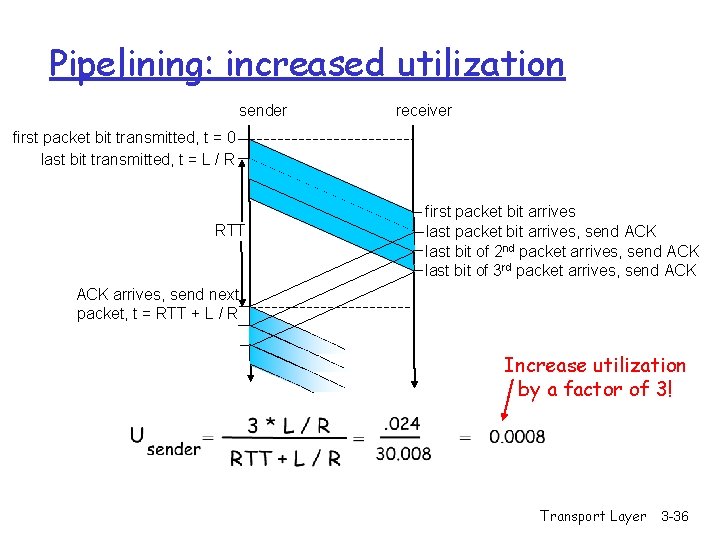

Pipelining: increased utilization (timeline figure, sender and receiver): the first packet bit is transmitted at t = 0 and the last bit at t = L/R; when the last bits of the 1st, 2nd and 3rd packets arrive, the receiver sends an ACK for each; the first ACK arrives and the sender sends the next packet at t = RTT + L/R.
- $U_{sender} = \dfrac{3L/R}{RTT + L/R} = \dfrac{0.024}{30.008} = 0.0008$
- Increase utilization by a factor of 3!

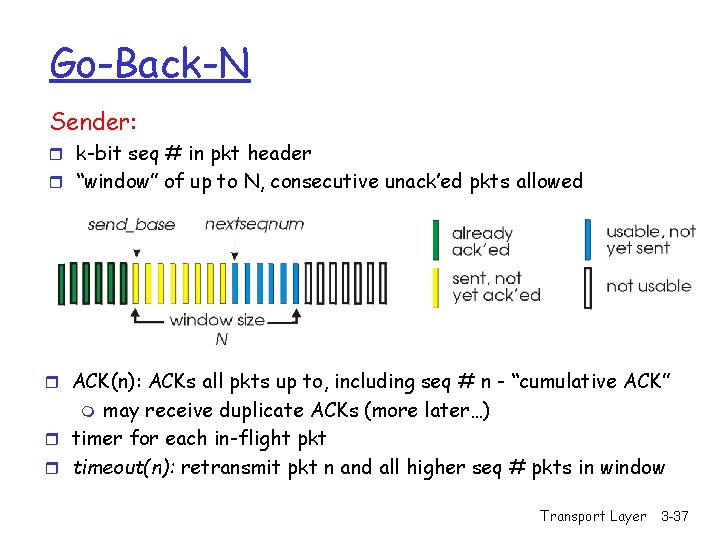

Go-Back-N
Sender:
- k-bit seq # in the pkt header
- "window" of up to N consecutive unACKed pkts allowed
- ACK(n): ACKs all pkts up to and including seq # n ("cumulative ACK")
  - may receive duplicate ACKs (more later…)
- timer for each in-flight pkt
- timeout(n): retransmit pkt n and all higher seq # pkts in the window

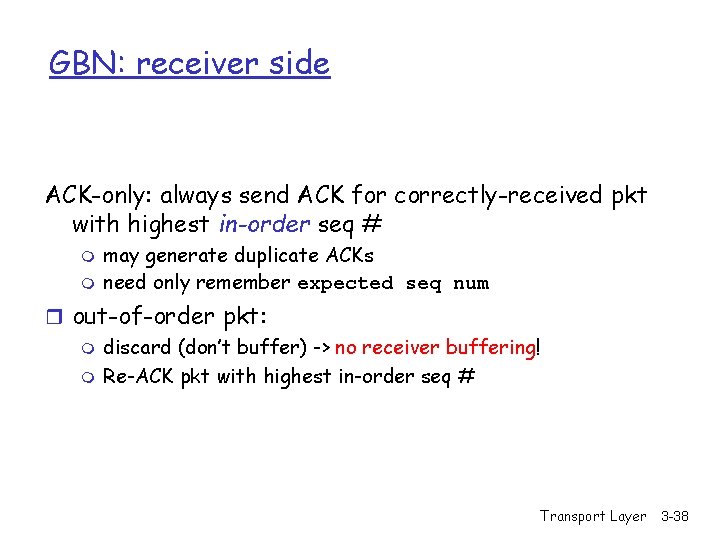

GBN: receiver side
- ACK-only: always send an ACK for the correctly received pkt with the highest in-order seq #
  - may generate duplicate ACKs
  - need only remember the expected seq #
- Out-of-order pkt:
  - discard (don't buffer) -> no receiver buffering!
  - re-ACK the pkt with the highest in-order seq #
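The sender and receiver rules above fit in a short round-based sketch. The simplifications are ours: time advances in "rounds" in which the whole window is sent, ACKs are never lost, and the end of a round stands in for the timeout that makes the sender go back to `base`. The function name and loss model are hypothetical.

```python
import random


def go_back_n(num_pkts, window, loss_prob=0.2, seed=3):
    """Round-based Go-Back-N sketch.

    Each round the sender sends every packet in [base, base + window); after
    the round, the cumulative ACK moves base and the next round goes back to
    base, resending everything after the first loss. ACKs are assumed never lost.
    """
    rng = random.Random(seed)
    base = 0                  # sender: oldest un-ACKed seq #
    expected = 0              # receiver: the only state it keeps
    delivered, transmissions = [], 0
    while base < num_pkts:
        last_ack = None
        for seq in range(base, min(base + window, num_pkts)):
            transmissions += 1
            if rng.random() < loss_prob:
                continue                  # packet lost in the channel
            if seq == expected:           # in order: deliver to upper layer
                delivered.append(seq)
                expected += 1
            # out-of-order packets are discarded (no receiver buffering);
            # either way, re-ACK the highest in-order seq # received so far
            last_ack = expected - 1
        if last_ack is not None:
            base = max(base, last_ack + 1)  # cumulative ACK slides the window
    return delivered, transmissions


delivered, tx = go_back_n(num_pkts=30, window=4)
print(delivered == list(range(30)), tx)
```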

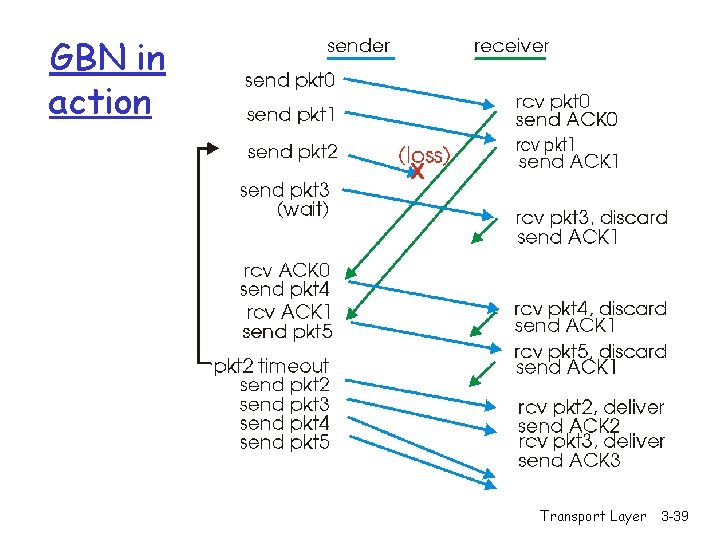

GBN in action

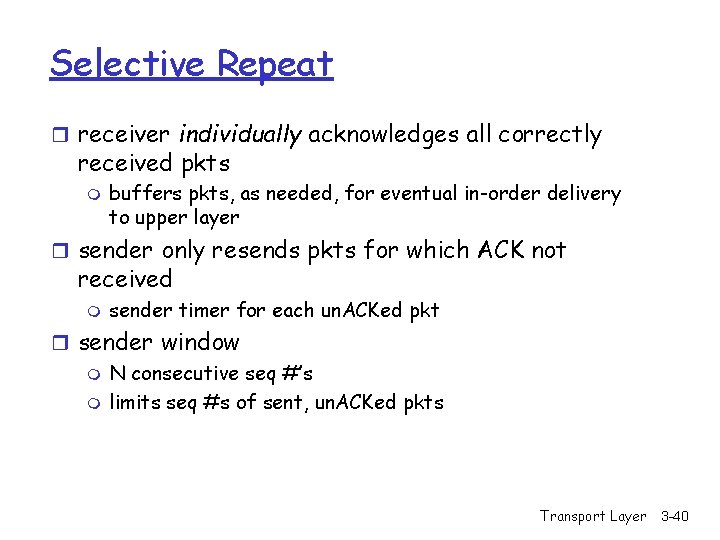

Selective Repeat
- The receiver individually acknowledges all correctly received pkts
  - buffers pkts, as needed, for eventual in-order delivery to the upper layer
- The sender only resends pkts for which no ACK was received
  - sender timer for each unACKed pkt
- Sender window:
  - N consecutive seq #s
  - limits the seq #s of sent, unACKed pkts
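For contrast with Go-Back-N, here is a sketch under the same round-based simplifications (our assumptions: ACKs never lost, one round per "timeout"; the function name is hypothetical). The differences are exactly those listed: the receiver buffers out-of-order packets and ACKs each one individually, and the sender resends only packets that were never ACKed.

```python
import random


def selective_repeat(num_pkts, window, loss_prob=0.2, seed=5):
    """Round-based Selective Repeat sketch.

    Each round the sender (re)sends only the un-ACKed packets in its window;
    the receiver ACKs every packet it gets, buffers out-of-order ones, and
    delivers in-order runs to the upper layer. ACKs are assumed never lost.
    """
    rng = random.Random(seed)
    send_base, acked = 0, set()        # sender state
    rcv_base, buffer = 0, {}           # receiver state
    delivered, transmissions = [], 0
    while send_base < num_pkts:
        for seq in range(send_base, min(send_base + window, num_pkts)):
            if seq in acked:
                continue                       # only un-ACKed packets are resent
            transmissions += 1
            if rng.random() < loss_prob:
                continue                       # lost: its own timer will expire
            if rcv_base <= seq < rcv_base + window:
                buffer[seq] = seq              # buffer it (payload = seq # here)
            acked.add(seq)                     # individual ACK for this packet
            while rcv_base in buffer:          # deliver any in-order run
                delivered.append(buffer.pop(rcv_base))
                rcv_base += 1
        while send_base in acked:              # slide past the ACKed prefix
            send_base += 1
    return delivered, transmissions


delivered, tx = selective_repeat(num_pkts=30, window=4)
print(delivered == list(range(30)), tx)
```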

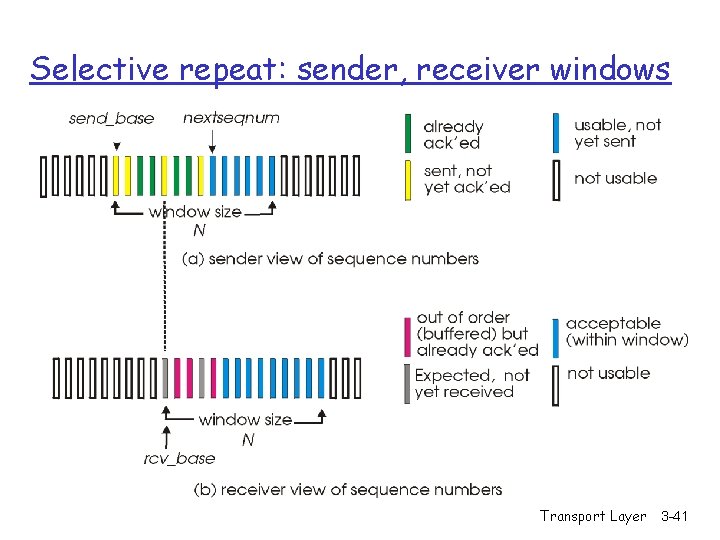

Selective repeat: sender, receiver windows

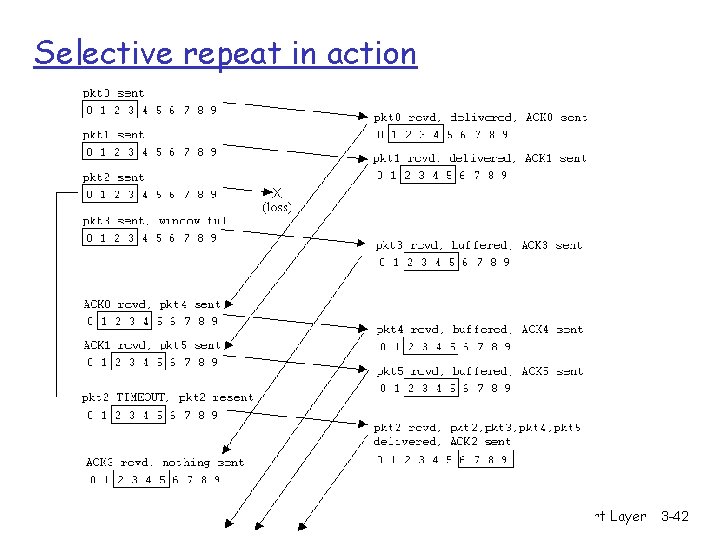

Selective repeat in action

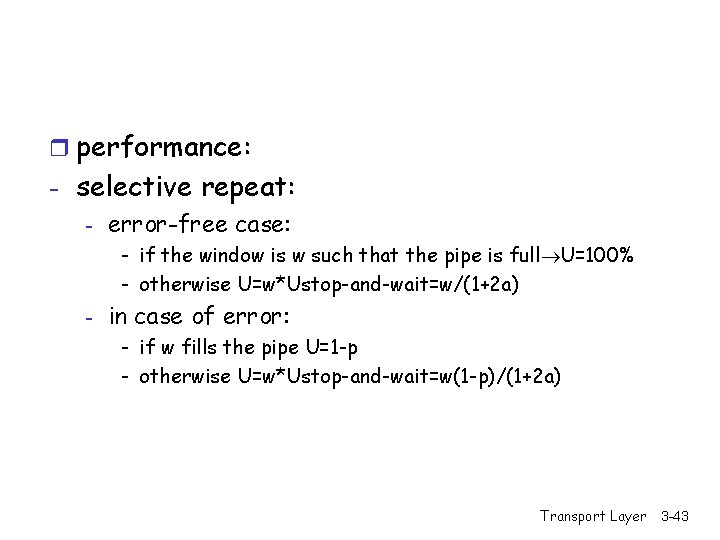

- Performance of selective repeat:
  - error-free case:
    - if the window $w$ is large enough to fill the pipe ($w \ge 1 + 2a$), $U = 100\%$
    - otherwise $U = w \cdot U_{stop\text{-}and\text{-}wait} = \dfrac{w}{1 + 2a}$
  - with frame error probability $p$:
    - if $w$ fills the pipe, $U = 1 - p$
    - otherwise $U = w \cdot U_{stop\text{-}and\text{-}wait} = \dfrac{w(1 - p)}{1 + 2a}$
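The four cases collapse into one function: the window fills the pipe exactly when $w \ge 1 + 2a$, and otherwise utilization is $w$ times the stop-and-wait value. A sketch with a hypothetical name; $w = 1$ recovers stop-and-wait itself.

```python
def window_utilization(w, a, p=0.0):
    """Sender utilization for a window of w frames.

    a = Tprop / Tframe; p = probability that a frame is in error.
    """
    if w >= 1 + 2 * a:                  # window covers the round trip: pipe is full
        return 1 - p
    return w * (1 - p) / (1 + 2 * a)    # w times the stop-and-wait utilization


for w in (1, 7, 21, 32):
    print(w, round(window_utilization(w, a=10, p=0.1), 3))
```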

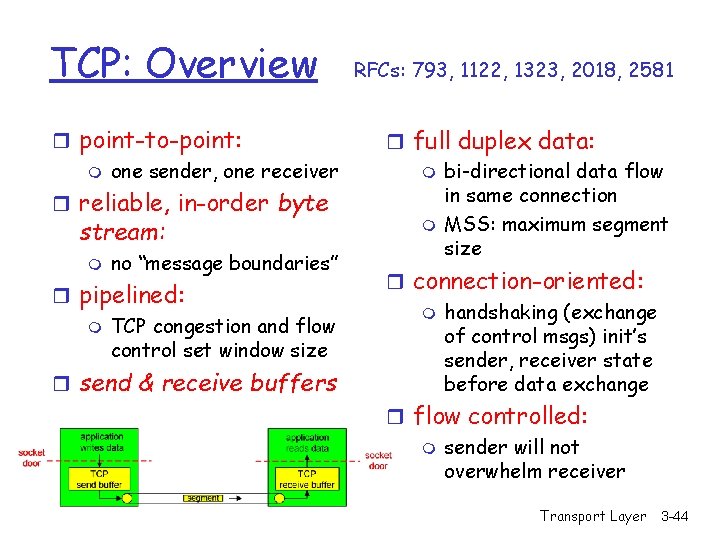

TCP: Overview (RFCs: 793, 1122, 1323, 2018, 2581)
- point-to-point:
  - one sender, one receiver
- reliable, in-order byte stream:
  - no "message boundaries"
- pipelined:
  - TCP congestion and flow control set the window size
- send & receive buffers
- full duplex data:
  - bi-directional data flow in the same connection
  - MSS: maximum segment size
- connection-oriented:
  - handshaking (exchange of control msgs) initializes sender and receiver state before data exchange
- flow controlled:
  - the sender will not overwhelm the receiver

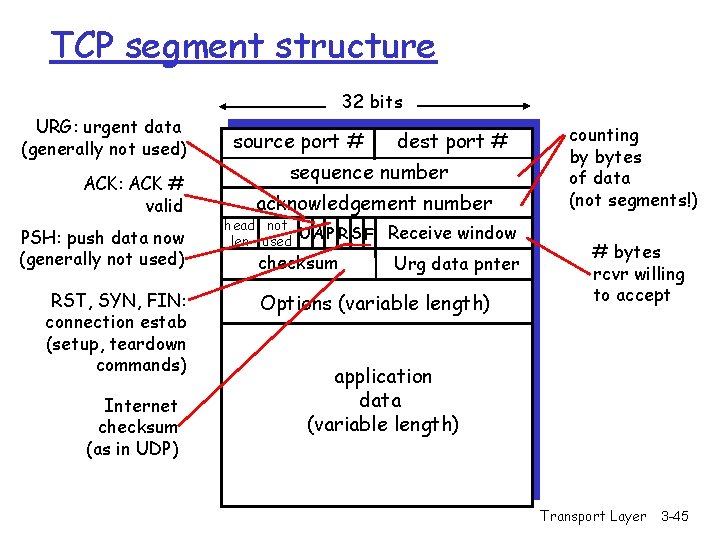

TCP segment structure (header figure, 32 bits wide), fields in order:
- source port #, dest port #
- sequence number (counting by bytes of data, not segments!)
- acknowledgement number
- header length, unused bits, flags U A P R S F, receive window (# bytes the rcvr is willing to accept)
- checksum (Internet checksum, as in UDP), urgent data pointer
- options (variable length)
- application data (variable length)

Flags:
- URG: urgent data (generally not used)
- ACK: ACK # valid
- PSH: push data now (generally not used)
- RST, SYN, FIN: connection establishment (setup, teardown commands)

- Receive window: the credit (in octets) that the receiver is willing to accept from the sender, starting from the ACK #
- Flags:
  - SYN: synchronization at initial connection time
  - FIN: end of sender data
  - PSH: when used at the sender, the data is transmitted immediately; at the receiver, it is accepted immediately
- Options:
  - window scale factor (WSF): actual window $= 2^F \times$ window field, where $F$ is the number in the WSF option
  - timestamp option: helps in RTT (round-trip time) calculations


Credit allocation scheme: (A = i, W = j) [A = ACK, W = window]: the receiver acks bytes up to i-1 and allows/anticipates bytes i up to i+j-1
- the receiver can use the cumulative ACK option and not respond immediately
- performance depends on:
  - transmission rate, propagation, window size, queuing delays
  - the retransmission strategy, which depends on RTT estimates that affect timeouts and are affected by network dynamics
  - the receive policy (ACK), background traffic, …
- it is complex!
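A tiny sketch of the credit bookkeeping, with hypothetical helper names: the range of byte numbers the sender may send after receiving (A = i, W = j), and how much of that credit is still unused given the next byte the sender would send. The byte numbers in the usage line are illustrative.

```python
def allowed_range(ack, window):
    """Credit (A=i, W=j): bytes up to i-1 are ACKed; sender may send bytes i .. i+j-1."""
    return range(ack, ack + window)


def usable_credit(ack, window, next_byte):
    """Bytes the sender may still send, given the number of its next unsent byte."""
    return max(0, ack + window - next_byte)


r = allowed_range(1001, 1400)
print(r[0], r[-1], usable_credit(1001, 1400, next_byte=2001))
```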

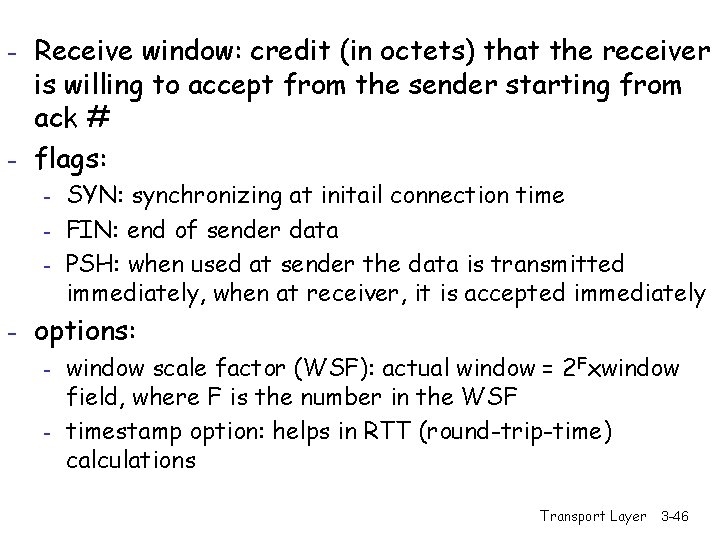

TCP seq. #'s and ACKs
- Seq. #'s:
  - byte stream "number" of the first byte in the segment's data
- ACKs:
  - seq # of the next byte expected from the other side
  - cumulative ACK
- Q: how does the receiver handle out-of-order segments?
  - A: the TCP spec doesn't say; it is up to the implementor

Simple telnet scenario (figure, Host A and Host B over time):
- the user types 'C': Host A sends Seq=42, ACK=79, data='C'
- Host B ACKs receipt of 'C' and echoes back 'C': Seq=79, ACK=43, data='C'
- Host A ACKs receipt of the echoed 'C': Seq=43, ACK=80

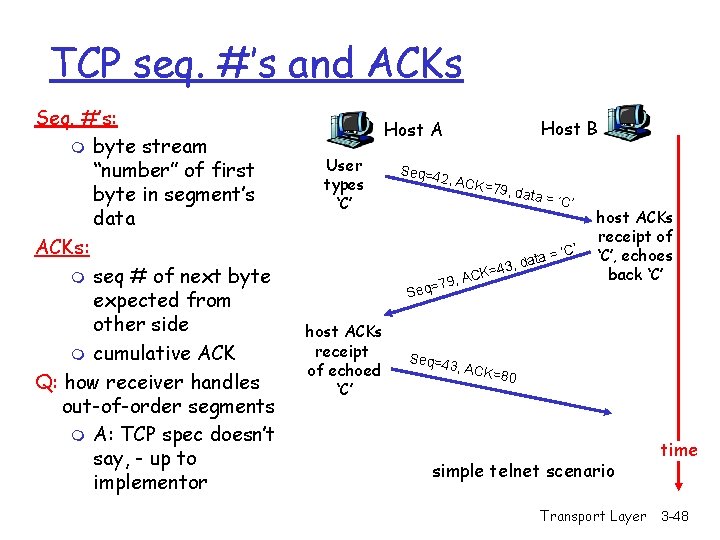

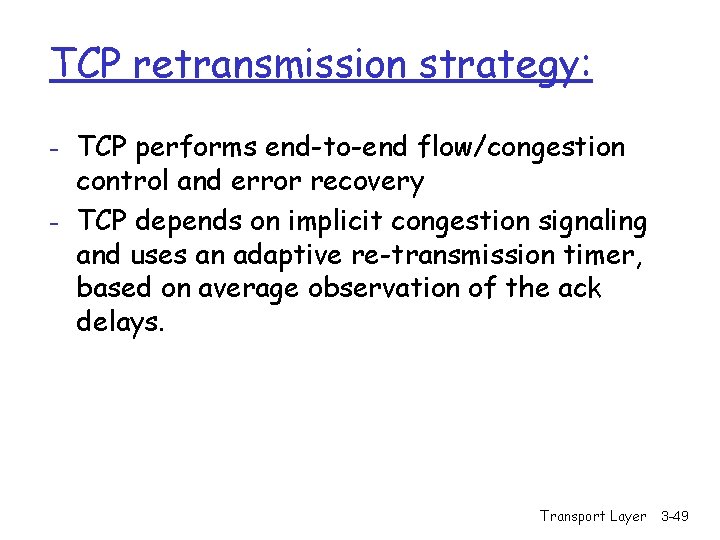

TCP retransmission strategy:
- TCP performs end-to-end flow/congestion control and error recovery
- TCP depends on implicit congestion signaling and uses an adaptive retransmission timer, based on the average observed ACK delays

- ACK delays may be misleading for the following reasons:
  - cumulative ACKs make this estimate inaccurate
  - abrupt changes in the network
  - if an ACK is received for a retransmitted packet, the sender cannot distinguish between the ACK for the original packet and the ACK for the retransmission

Reliability in TCP
- Components of reliability:
  1. Sequence numbers
  2. Retransmissions
  3. Timeout mechanism(s): a function of the round-trip time (RTT) between the two hosts (is it static?)

TCP Round Trip Time and Timeout
Q: how to set the TCP timeout value?
- longer than RTT
  - but RTT varies
- too short: premature timeout
  - unnecessary retransmissions
- too long: slow reaction to segment loss

Q: how to estimate RTT?
- SampleRTT: measured time from segment transmission until ACK receipt
  - ignore retransmissions
- SampleRTT will vary; we want the estimated RTT to be "smoother"
  - average several recent measurements, not just the current SampleRTT

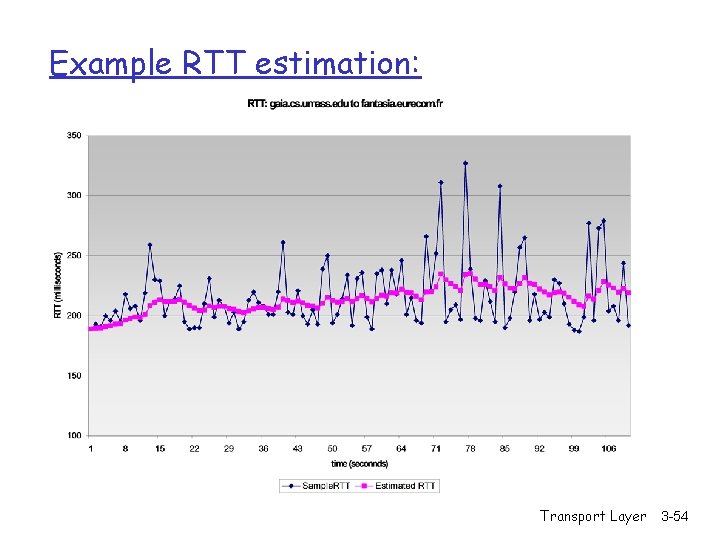

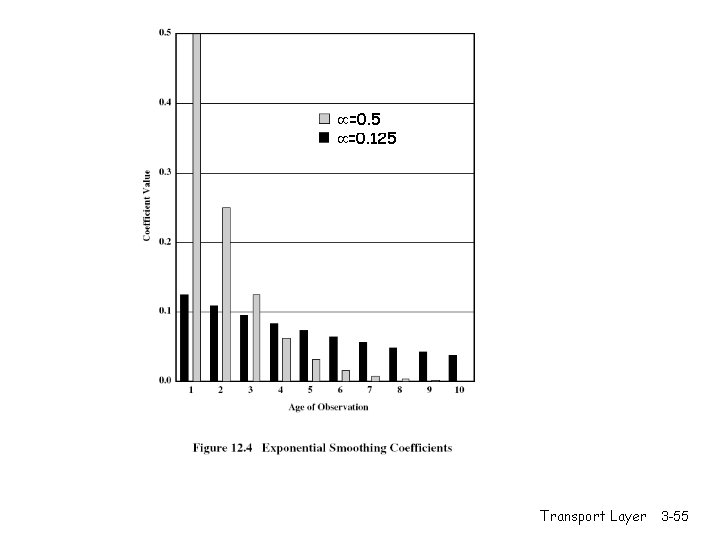

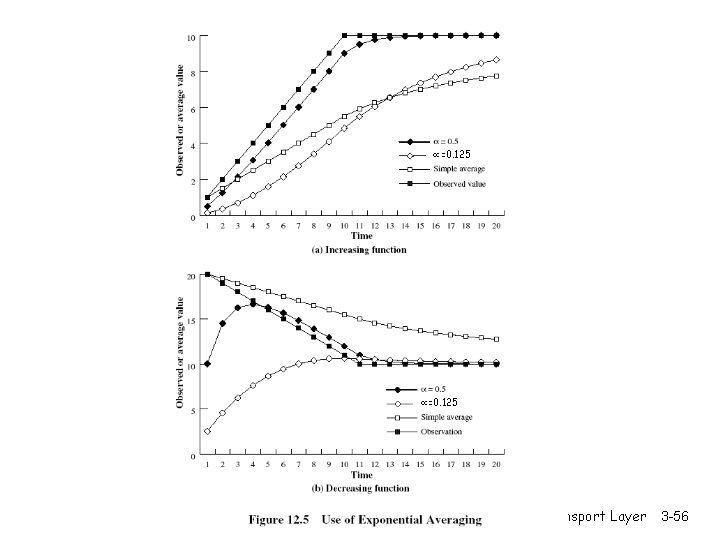

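TCP Round Trip Time and Timeout

$$
\begin{aligned}
\text{EstimatedRTT}(k) &= (1-\alpha)\,\text{EstimatedRTT}(k-1) + \alpha\,\text{SampleRTT}(k) \\
&= (1-\alpha)\big[(1-\alpha)\,\text{EstimatedRTT}(k-2) + \alpha\,\text{SampleRTT}(k-1)\big] + \alpha\,\text{SampleRTT}(k) \\
&= (1-\alpha)^k\,\text{SampleRTT}(0) + \alpha(1-\alpha)^{k-1}\,\text{SampleRTT}(1) + \dots + \alpha\,\text{SampleRTT}(k)
\end{aligned}
$$

(taking $\text{EstimatedRTT}(0) = \text{SampleRTT}(0)$)
- Exponential weighted moving average (EWMA)
- the influence of past samples decreases exponentially fast
- typical value: $\alpha = 0.125$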
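The recursive EWMA and its unrolled form should give the same number; this sketch (hypothetical function names, illustrative millisecond samples) computes both, which also makes visible how a larger α tracks recent samples more aggressively.

```python
def ewma(samples, alpha=0.125):
    """EstimatedRTT(k) = (1 - alpha) * EstimatedRTT(k-1) + alpha * SampleRTT(k),
    starting from EstimatedRTT(0) = SampleRTT(0). Returns every estimate."""
    est = samples[0]
    history = [est]
    for s in samples[1:]:
        est = (1 - alpha) * est + alpha * s
        history.append(est)
    return history


def ewma_unrolled(samples, alpha=0.125):
    """Closed form: (1-alpha)^k S(0) + sum_{j=1..k} alpha (1-alpha)^(k-j) S(j)."""
    k = len(samples) - 1
    total = (1 - alpha) ** k * samples[0]
    for j in range(1, k + 1):
        total += alpha * (1 - alpha) ** (k - j) * samples[j]
    return total


samples = [100.0, 120.0, 90.0, 300.0, 110.0]   # SampleRTT in ms (illustrative)
print(ewma(samples)[-1], ewma_unrolled(samples))
print(ewma(samples, alpha=0.5)[-1])
```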

Example RTT estimation:

(plot: $\alpha = 0.5$ and $\alpha = 0.125$)

(plot: $\alpha = 0.125$)

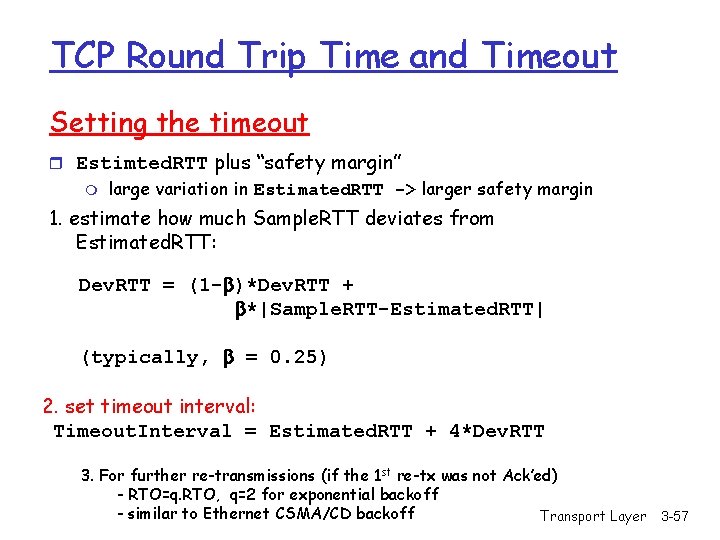

TCP Round Trip Time and Timeout
Setting the timeout:
- EstimatedRTT plus a "safety margin"
  - large variation in EstimatedRTT -> larger safety margin

1. Estimate how much SampleRTT deviates from EstimatedRTT:
   $\text{DevRTT} = (1-\beta)\,\text{DevRTT} + \beta\,|\text{SampleRTT} - \text{EstimatedRTT}|$ (typically $\beta = 0.25$)
2. Set the timeout interval:
   $\text{TimeoutInterval} = \text{EstimatedRTT} + 4\,\text{DevRTT}$
3. For further retransmissions (if the 1st retransmission was not ACKed):
   - $RTO = q \cdot RTO$, with $q = 2$ for exponential backoff
   - similar to the Ethernet CSMA/CD backoff
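Steps 1-3 can be combined into one small estimator. This is a sketch, not TCP's actual code: the class name is hypothetical, and two details are borrowed from RFC 6298 as assumptions (DevRTT starts at half the first sample, and DevRTT is updated with the EstimatedRTT from before the new sample). Samples from retransmitted segments should not be fed in, matching "ignore retransmissions" above.

```python
class RttEstimator:
    """EstimatedRTT / DevRTT / TimeoutInterval with alpha=0.125, beta=0.25."""

    def __init__(self, first_sample, alpha=0.125, beta=0.25):
        self.alpha, self.beta = alpha, beta
        self.est = first_sample
        self.dev = first_sample / 2   # assumption: initial DevRTT = sample/2 (RFC 6298)
        self.rto = self.timeout_interval()

    def timeout_interval(self):
        return self.est + 4 * self.dev

    def on_sample(self, sample):
        """Feed a SampleRTT taken from a segment that was NOT retransmitted."""
        # DevRTT uses the EstimatedRTT from before this sample (RFC 6298 ordering)
        self.dev = (1 - self.beta) * self.dev + self.beta * abs(sample - self.est)
        self.est = (1 - self.alpha) * self.est + self.alpha * sample
        self.rto = self.timeout_interval()

    def on_timeout(self, q=2):
        self.rto *= q                 # exponential backoff: RTO = q * RTO


e = RttEstimator(100.0)       # RTT values in ms (illustrative)
e.on_sample(100.0)
print(e.est, e.dev, e.rto)
e.on_timeout()
print(e.rto)
```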

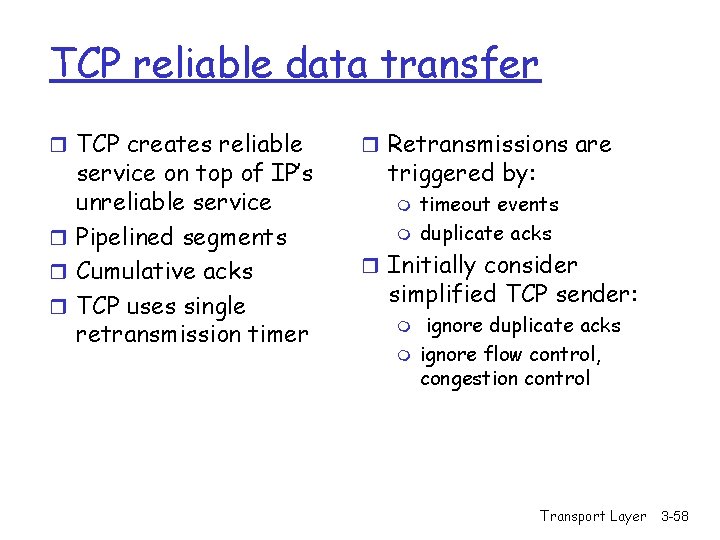

TCP reliable data transfer
- TCP creates a reliable service on top of IP's unreliable service
- pipelined segments
- cumulative ACKs
- TCP uses a single retransmission timer
- retransmissions are triggered by:
  - timeout events
  - duplicate ACKs
- Initially consider a simplified TCP sender:
  - ignore duplicate ACKs
  - ignore flow control, congestion control

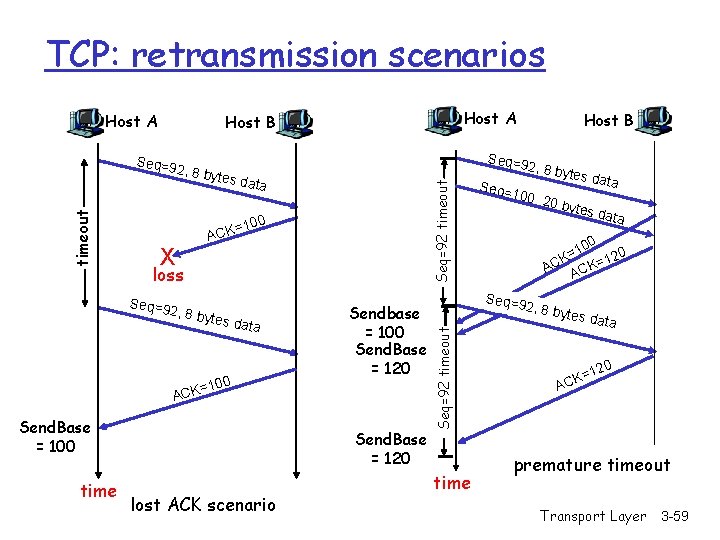

TCP: retransmission scenarios (figures, Host A and Host B over time):
- lost ACK scenario: A sends Seq=92 with 8 bytes of data; B's ACK=100 is lost (X); when the Seq=92 timeout fires, A resends Seq=92 with 8 bytes and B again replies ACK=100 (SendBase = 100)
- premature timeout: A sends Seq=92 (8 bytes) and Seq=100 (20 bytes); B replies ACK=100 and ACK=120, but the Seq=92 timer expires before they arrive, so A resends Seq=92 (8 bytes) and B replies ACK=120 (SendBase goes from 100 to 120)

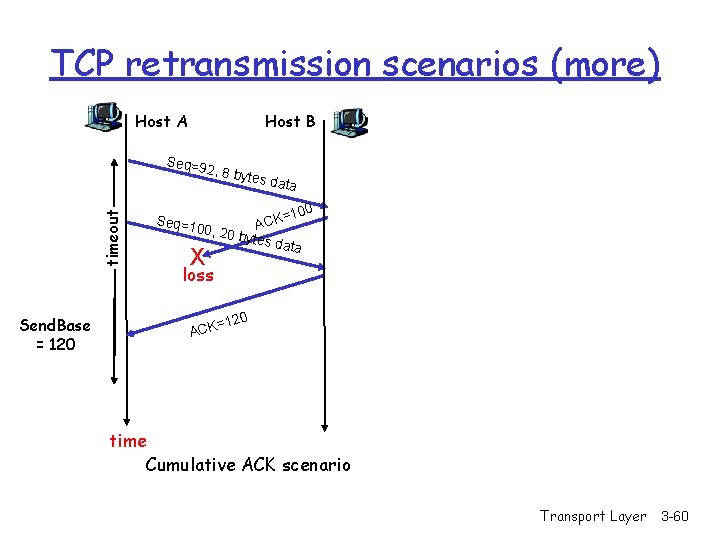

TCP retransmission scenarios (more): cumulative ACK scenario (figure): Host A sends Seq=92 (8 bytes) and Seq=100 (20 bytes); B's ACK=100 is lost (X), but ACK=120 arrives before the Seq=92 timeout, so nothing is retransmitted (SendBase = 120).

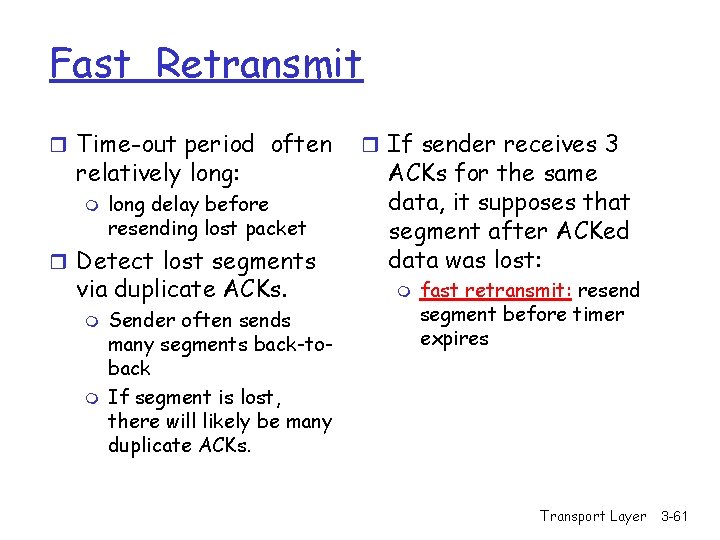

Fast Retransmit
- The timeout period is often relatively long:
  - long delay before resending a lost packet
- Detect lost segments via duplicate ACKs:
  - the sender often sends many segments back-to-back
  - if a segment is lost, there will likely be many duplicate ACKs
- If the sender receives 3 duplicate ACKs for the same data, it assumes that the segment after the ACKed data was lost:
  - fast retransmit: resend the segment before its timer expires

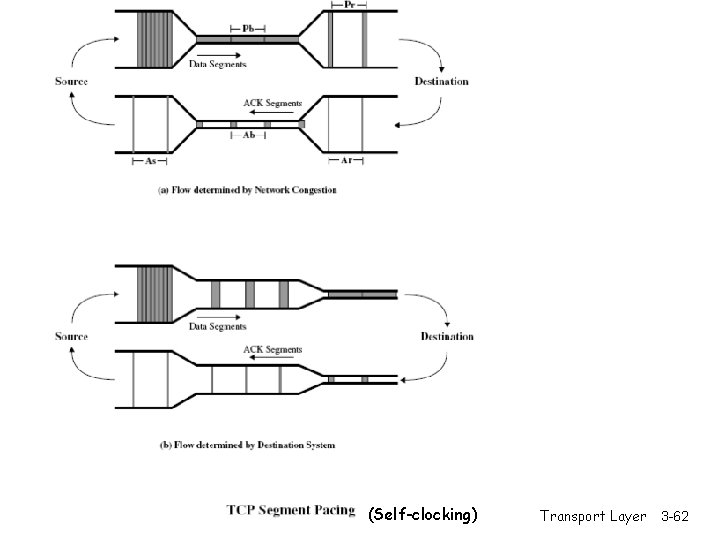

(Self-clocking)

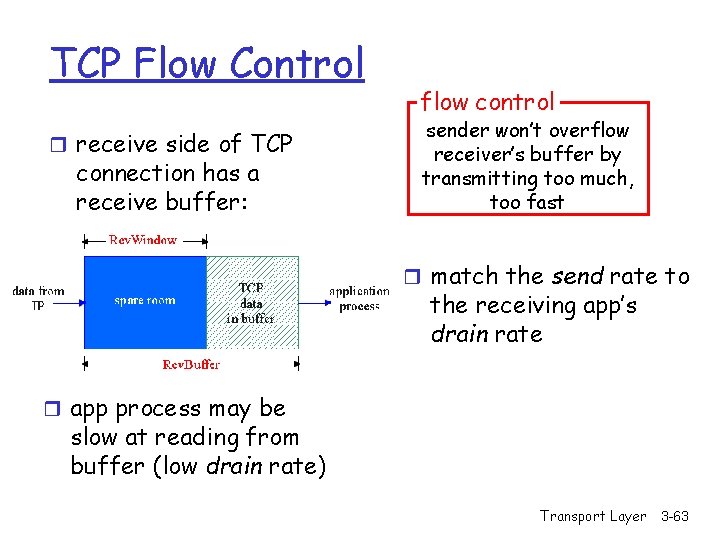

TCP Flow Control
- The receive side of a TCP connection has a receive buffer
- Flow control: the sender won't overflow the receiver's buffer by transmitting too much, too fast
- Match the send rate to the receiving app's drain rate
  - the app process may be slow at reading from the buffer (low drain rate)

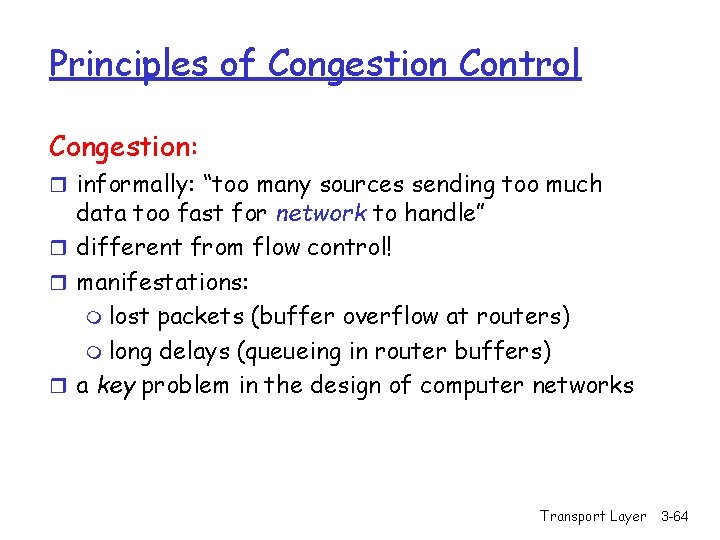

Principles of Congestion Control
Congestion:
- informally: "too many sources sending too much data too fast for the network to handle"
- different from flow control!
- manifestations:
  - lost packets (buffer overflow at routers)
  - long delays (queueing in router buffers)
- a key problem in the design of computer networks

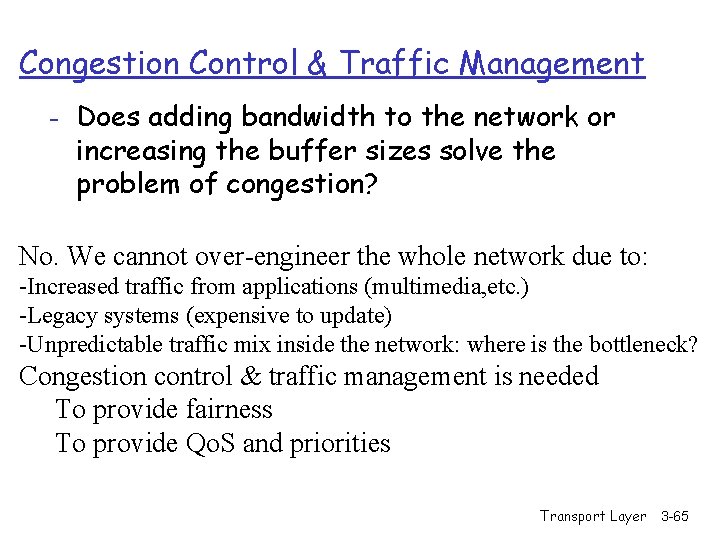

Congestion Control & Traffic Management
- Does adding bandwidth to the network or increasing buffer sizes solve the problem of congestion? No. We cannot over-engineer the whole network, due to:
  - increased traffic from applications (multimedia, etc.)
  - legacy systems (expensive to update)
  - an unpredictable traffic mix inside the network: where is the bottleneck?
- Congestion control & traffic management is needed:
  - to provide fairness
  - to provide QoS and priorities

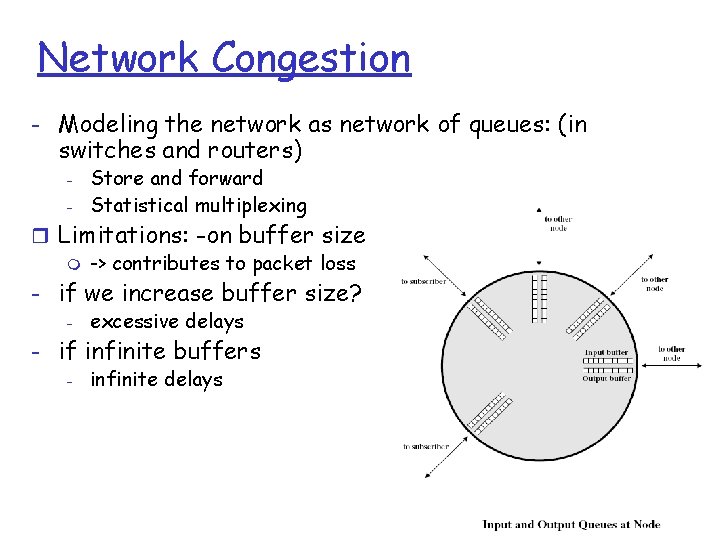

Network Congestion
- Modeling the network as a network of queues (in switches and routers):
  - store and forward
  - statistical multiplexing
- Limitations on buffer size:
  - contribute to packet loss
  - if we increase the buffer size? excessive delays
  - with infinite buffers: infinite delays

- Solutions:
  - policies for packet service and packet discard to limit delays
  - congestion notification and flow/congestion control to limit the arrival rate
  - buffer management: input buffers, output buffers, shared buffers

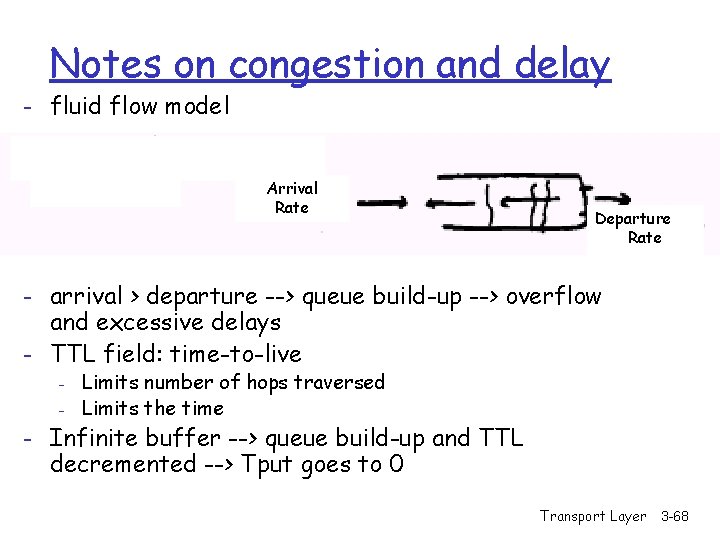

Notes on congestion and delay
- Fluid flow model (figure: arrival rate into a queue, departure rate out of it):
  - arrival > departure --> queue build-up --> overflow and excessive delays
- TTL field: time-to-live
  - limits the number of hops traversed
  - limits the time
- Infinite buffer --> queue build-up and TTL decremented --> Tput goes to 0

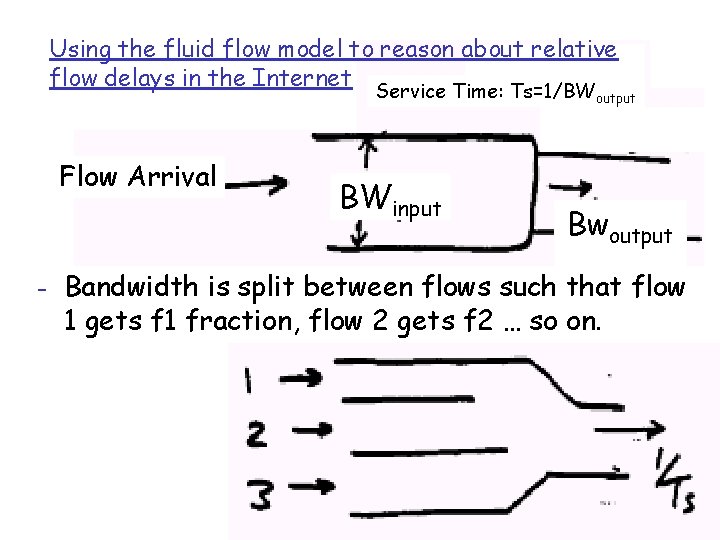

Using the fluid flow model to reason about relative flow delays in the Internet
- Service time: $T_s = 1/BW_{output}$ (figure: flow arrivals at $BW_{input}$, served at $BW_{output}$)
- Bandwidth is split between flows such that flow 1 gets fraction $f_1$, flow 2 gets $f_2$, and so on

- $f_1$ is the fraction of the bandwidth given to flow 1
- $f_2$ is the fraction of the bandwidth given to flow 2
- $\lambda_1$ is the arrival rate for flow 1
- $\lambda_2$ is the arrival rate for flow 2
- for M/D/1, the delay is $T_q = T_s\left[1 + \dfrac{\rho}{2(1-\rho)}\right]$
  - the total server utilization is $\rho = \lambda T_s$
  - the service time seen by flow $i$ is $T_i = T_s / f_i$
  - (equivalently, the bandwidth used by flow $i$ is $B_i = B_s f_i$, where $B_i = 1/T_i$ and $B_s = 1/T_s = M$ [the total bandwidth])
  - the utilization for flow $i$ is $\rho_i = \lambda_i T_i = \lambda_i / (B_s f_i)$

- $T_q$ and $q$ are functions of $\rho$
- If the utilization is the same, then the queuing delay is the same
- The delay for flow $i$ is a function of $\rho_i$:
  - $\rho_i = \lambda_i T_i = T_s \lambda_i / f_i$
- Condition for constant delay for all flows:
  - $\lambda_i / f_i$ is constant
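A numeric illustration of the condition, with made-up rates (the total bandwidth and per-flow arrival rates below are assumptions): splitting bandwidth equally between a heavy and a light flow gives them different $\rho_i$, while choosing $f_i$ proportional to $\lambda_i$ makes $\lambda_i / f_i$ constant and equalizes $\rho_i$, and with it the M/D/1 occupancy, which depends on $\rho_i$ alone. Function names are hypothetical.

```python
def per_flow_utilization(arrival_rates, fractions, b_s):
    """rho_i = lambda_i / (B_s * f_i): flow i sees a server of rate B_s * f_i."""
    return [lam / (b_s * f) for lam, f in zip(arrival_rates, fractions)]


def md1_occupancy(rho):
    """M/D/1 mean queue occupancy, a function of rho only."""
    return rho + rho**2 / (2 * (1 - rho))


B_S = 1000.0                 # total bandwidth in packets/s (illustrative)
lams = [300.0, 100.0]        # per-flow arrival rates (illustrative)
for fractions in ([0.5, 0.5], [0.75, 0.25]):
    rhos = per_flow_utilization(lams, fractions, B_S)
    print(fractions, [round(r, 2) for r in rhos],
          [round(md1_occupancy(r), 3) for r in rhos])
```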

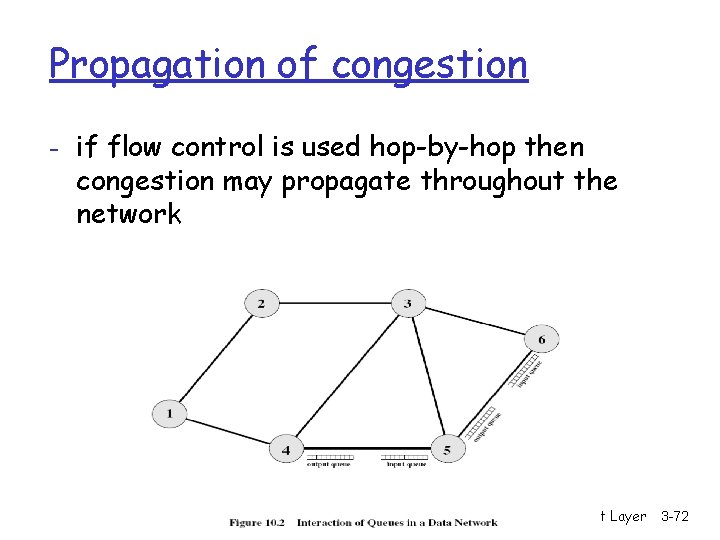

Propagation of congestion
- If flow control is used hop-by-hop, congestion may propagate throughout the network

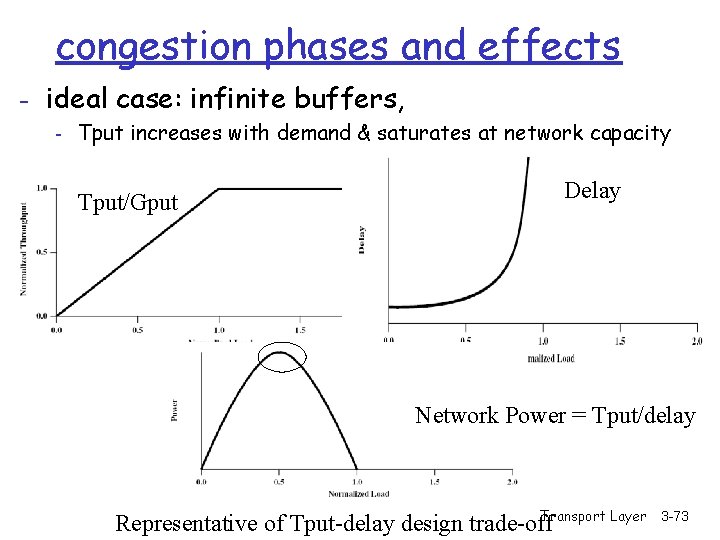

Congestion phases and effects
- Ideal case: infinite buffers
  - Tput increases with demand and saturates at network capacity
- (figures: Tput/Gput; delay; network power = Tput/delay, representative of the Tput-delay design trade-off)

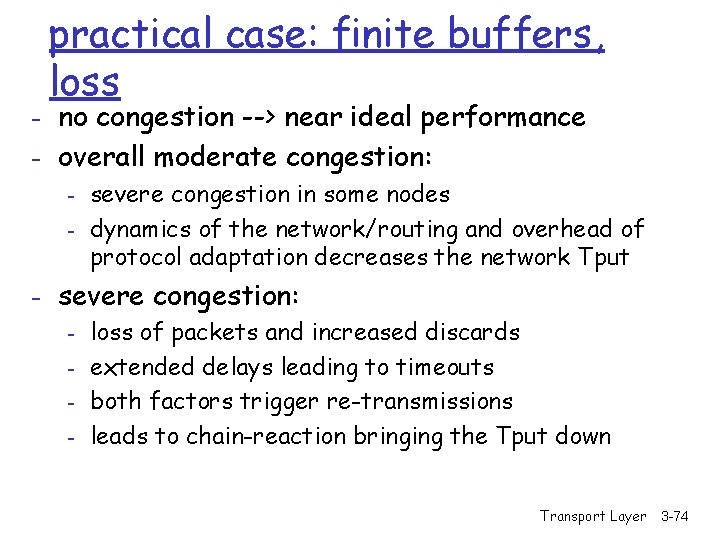

Practical case: finite buffers, loss
- no congestion --> near-ideal performance
- overall moderate congestion:
  - severe congestion in some nodes
  - the dynamics of the network/routing and the overhead of protocol adaptation decrease the network Tput
- severe congestion:
  - loss of packets and increased discards
  - extended delays leading to timeouts
  - both factors trigger retransmissions
  - this leads to a chain reaction bringing the Tput down

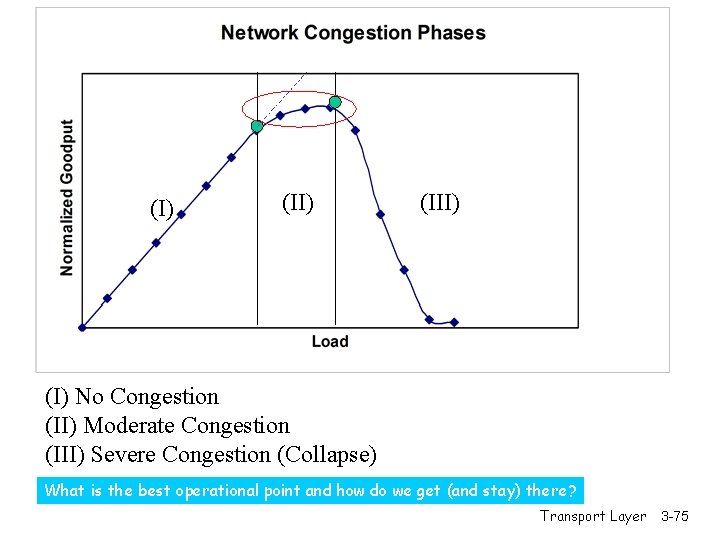

(figure: regions (I) No Congestion, (II) Moderate Congestion, (III) Severe Congestion (Collapse))
What is the best operational point, and how do we get (and stay) there?

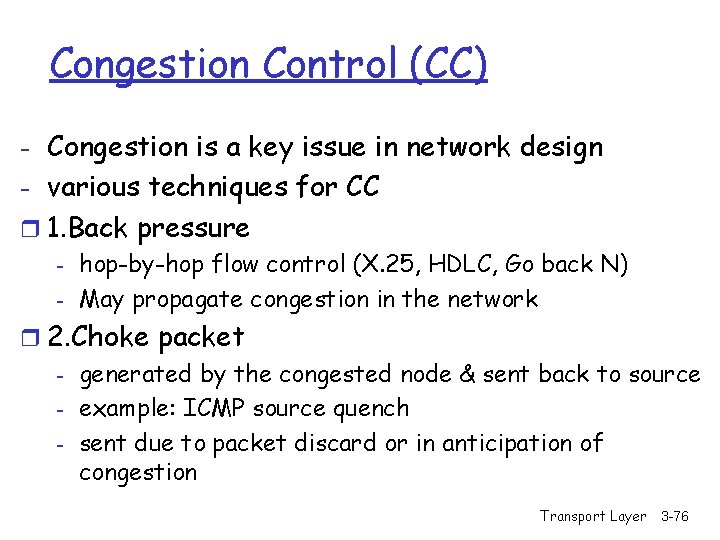

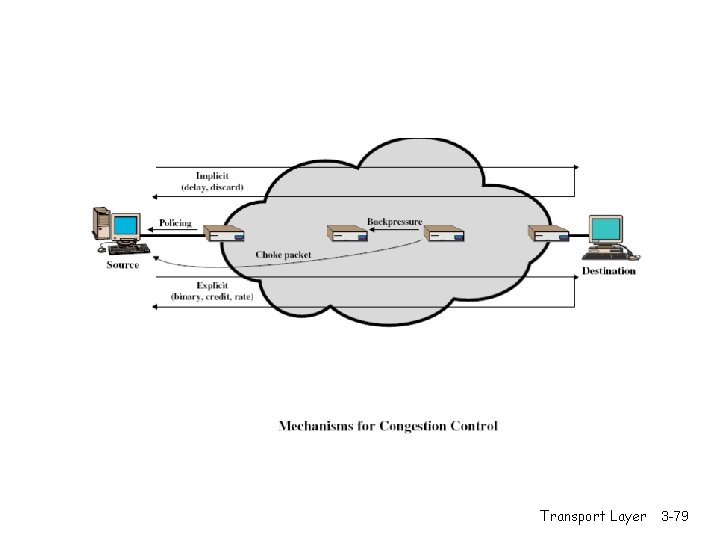

Congestion Control (CC)
- Congestion is a key issue in network design
- Various techniques for CC:

1. Back pressure
   - hop-by-hop flow control (X.25, HDLC, Go back N)
   - may propagate congestion in the network
2. Choke packet
   - generated by the congested node and sent back to the source
   - example: ICMP source quench
   - sent due to packet discard or in anticipation of congestion

Congestion Control (CC) (contd.)

3. Implicit congestion signaling
   - used in TCP
   - uses delay increase or packet discard to detect congestion
   - may erroneously signal congestion (i.e., not always reliable) [e.g., over wireless links]
   - done end-to-end without network assistance
   - TCP cuts down its window/rate

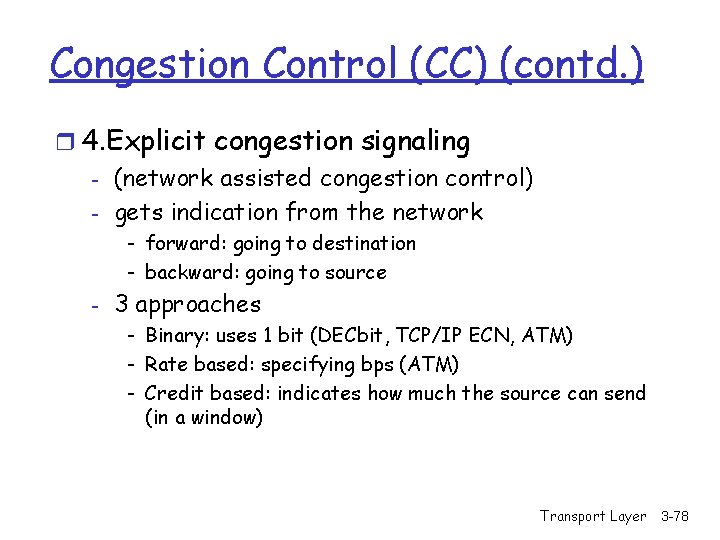

Congestion Control (CC) (contd.)

4. Explicit congestion signaling (network-assisted congestion control)
   - gets an indication from the network:
     - forward: going to the destination
     - backward: going to the source
   - 3 approaches:
     - binary: uses 1 bit (DECbit, TCP/IP ECN, ATM)
     - rate based: specifying bps (ATM)
     - credit based: indicates how much the source can send (in a window)


TCP congestion control: additive increase, multiplicative decrease
- Approach: increase the transmission rate (window size), probing for usable bandwidth, until loss occurs
  - additive increase: increase the rate (or congestion window) CongWin until loss is detected
  - multiplicative decrease: cut CongWin in half after a loss
- (figure: congestion window size vs. time, showing the saw tooth behavior of probing for bandwidth)
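The saw tooth can be reproduced with a per-RTT sketch. The loss model is our assumption: a hypothetical fixed bottleneck capacity (in MSS), where exceeding it counts as a loss event. Each RTT the window grows by 1 MSS until that happens, then it is halved.

```python
def aimd_trace(rtts, capacity, start=1.0):
    """Per-RTT congestion window (in MSS) under AIMD.

    A loss is assumed whenever cwnd exceeds a fixed, hypothetical bottleneck capacity.
    """
    cwnd, trace = start, []
    for _ in range(rtts):
        trace.append(cwnd)
        if cwnd > capacity:
            cwnd = cwnd / 2        # multiplicative decrease after loss
        else:
            cwnd = cwnd + 1        # additive increase: +1 MSS per RTT
    return trace


print(aimd_trace(rtts=30, capacity=16, start=8))
```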

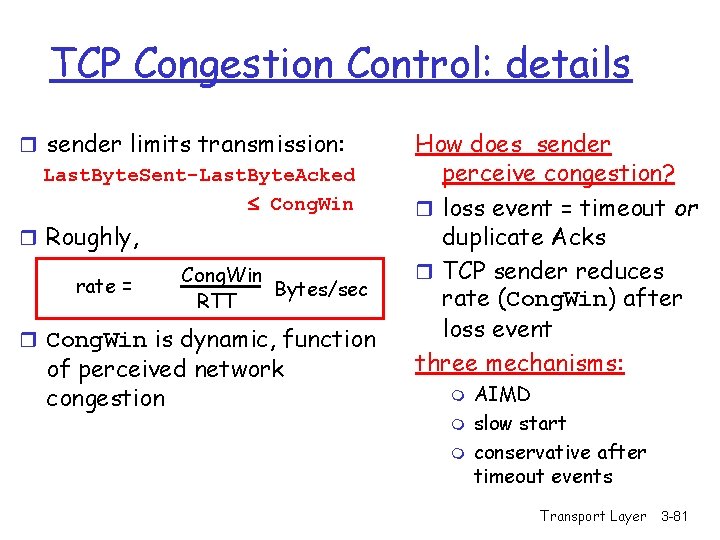

TCP Congestion Control: details
- The sender limits transmission: $\text{LastByteSent} - \text{LastByteAcked} \le \text{CongWin}$
- Roughly, $\text{rate} = \dfrac{\text{CongWin}}{RTT}$ bytes/sec
- CongWin is dynamic, a function of perceived network congestion

How does the sender perceive congestion?
- loss event = timeout or duplicate ACKs
- the TCP sender reduces its rate (CongWin) after a loss event

Three mechanisms:
- AIMD
- slow start
- conservative after timeout events

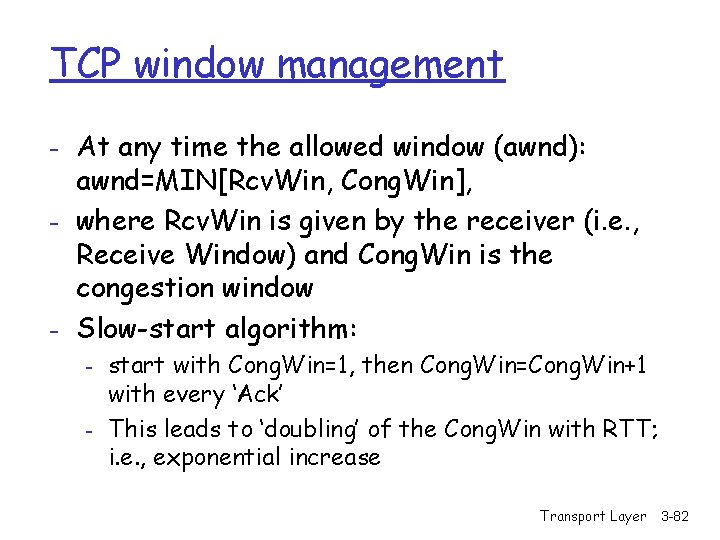

TCP window management
- At any time the allowed window (awnd) is $\text{awnd} = \min[\text{RcvWin}, \text{CongWin}]$,
  - where RcvWin is given by the receiver (i.e., the Receive Window) and CongWin is the congestion window
- Slow-start algorithm:
  - start with CongWin = 1, then CongWin = CongWin + 1 with every ACK
  - this leads to 'doubling' of CongWin every RTT, i.e. exponential increase

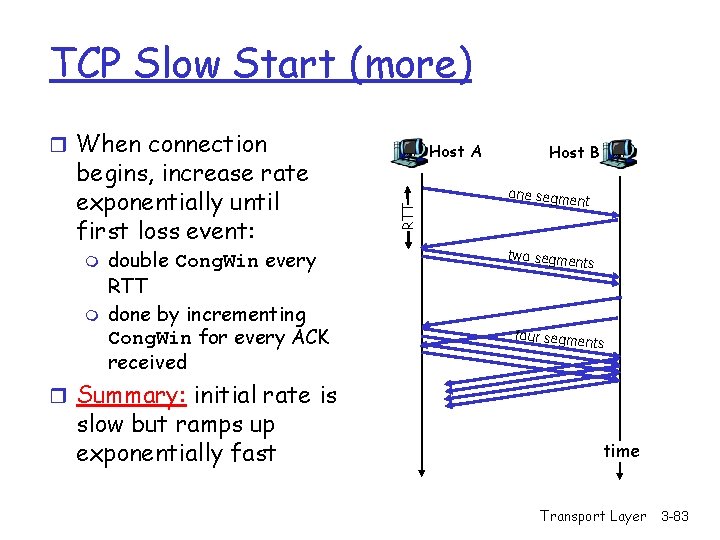

TCP Slow Start (more)
- When the connection begins, increase the rate exponentially until the first loss event:
  - double CongWin every RTT
  - done by incrementing CongWin for every ACK received
- Summary: the initial rate is slow but ramps up exponentially fast
- (figure: Host A sends one segment, then two segments, then four segments to Host B, one RTT apart)

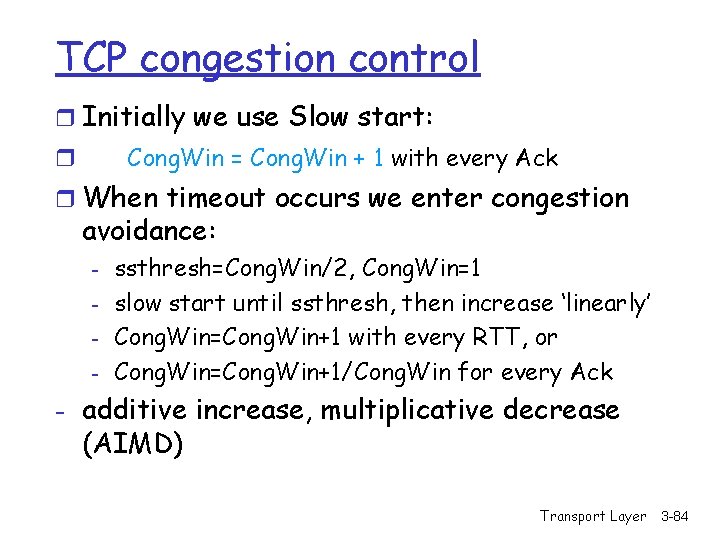

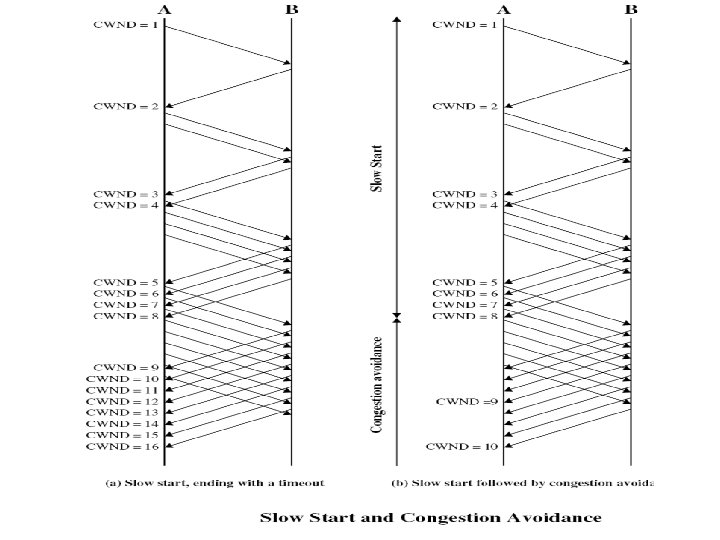

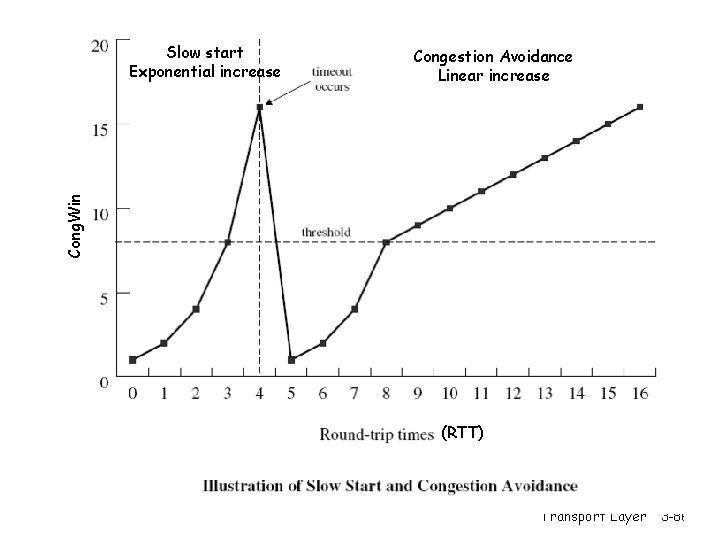

TCP congestion control
- Initially we use slow start: CongWin = CongWin + 1 with every ACK
- When a timeout occurs, we enter congestion avoidance:
  - ssthresh = CongWin/2, CongWin = 1
  - slow start until ssthresh, then increase 'linearly': CongWin = CongWin + 1 every RTT, or CongWin = CongWin + 1/CongWin for every ACK
- Additive increase, multiplicative decrease (AIMD)
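A per-RTT sketch of these rules, with simplifications of our own: slow start is modeled as doubling per RTT but capped at ssthresh, timeouts happen at hypothetical RTT indices given by the caller, and ssthresh has an assumed floor of 2 segments. It prints CongWin (in segments) at the start of each RTT.

```python
def tahoe_trace(rtts, timeouts, ssthresh=64):
    """Per-RTT CongWin (in segments) under slow start + congestion avoidance.

    timeouts: set of RTT indices at which a timeout is assumed to occur.
    """
    cwnd, trace = 1, []
    for t in range(rtts):
        trace.append(cwnd)
        if t in timeouts:
            ssthresh = max(cwnd // 2, 2)     # ssthresh = CongWin/2 (floor of 2 assumed)
            cwnd = 1                         # restart with slow start
        elif cwnd < ssthresh:
            cwnd = min(2 * cwnd, ssthresh)   # slow start: +1 per ACK ~ doubling per RTT
        else:
            cwnd += 1                        # congestion avoidance: +1 per RTT
    return trace


print(tahoe_trace(rtts=20, timeouts={8}, ssthresh=16))
```

With a timeout at RTT 8 (CongWin = 20), ssthresh drops to 10, so the second slow-start phase switches to linear growth at 10 instead of 16.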


(figure: CongWin vs. time in RTTs: slow start with exponential increase, followed by congestion avoidance with linear increase)

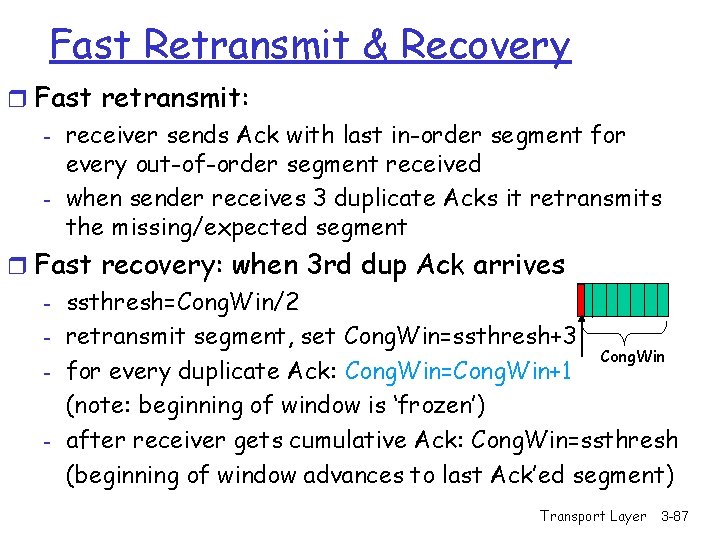

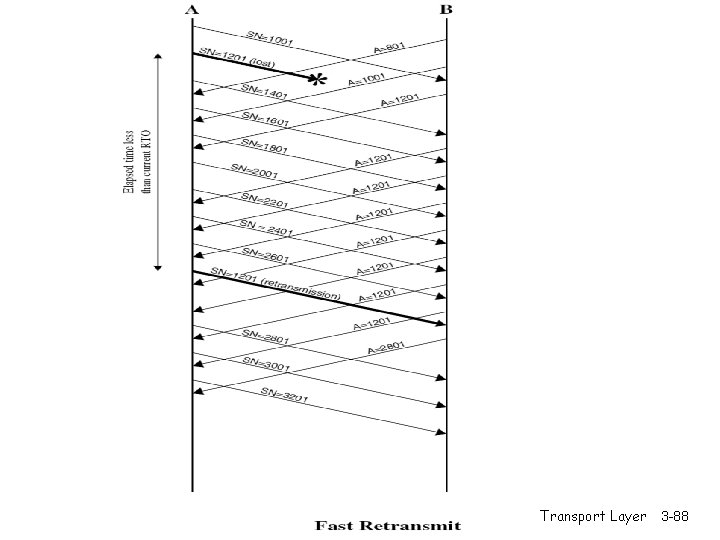

Fast Retransmit & Recovery
- Fast retransmit:
  - the receiver sends an ACK for the last in-order segment for every out-of-order segment received
  - when the sender receives 3 duplicate ACKs, it retransmits the missing/expected segment
- Fast recovery: when the 3rd duplicate ACK arrives:
  - ssthresh = CongWin/2
  - retransmit the segment, set CongWin = ssthresh + 3
  - for every further duplicate ACK: CongWin = CongWin + 1 (note: the beginning of the window is 'frozen')
  - after the sender gets a cumulative ACK: CongWin = ssthresh (the beginning of the window advances to the last ACKed segment)
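These rules can be written as an event handler on arriving ACK numbers. This is a sketch, not a full TCP sender: the class name is hypothetical, the window is counted in segments, "retransmit" just records the ACK number of the segment to resend, normal slow start/congestion avoidance growth on new ACKs is omitted, and the floor of 2 on ssthresh is an assumption borrowed from RFC 5681.

```python
class RenoSender:
    """Fast retransmit / fast recovery rules, driven by arriving ACK numbers."""

    DUP_THRESHOLD = 3

    def __init__(self, cwnd=10, ssthresh=64):
        self.cwnd, self.ssthresh = cwnd, ssthresh
        self.last_ack, self.dup_acks = None, 0
        self.in_recovery = False
        self.retransmitted = []   # segments resent (identified by ACK number)

    def on_ack(self, ack):
        if ack == self.last_ack:                      # duplicate ACK
            self.dup_acks += 1
            if self.in_recovery:
                self.cwnd += 1                        # inflate for each further dup ACK
            elif self.dup_acks == self.DUP_THRESHOLD:
                self.ssthresh = max(self.cwnd // 2, 2)
                self.retransmitted.append(ack)        # fast retransmit
                self.cwnd = self.ssthresh + 3
                self.in_recovery = True
            return
        # new cumulative ACK (normal window growth omitted in this sketch)
        self.last_ack, self.dup_acks = ack, 0
        if self.in_recovery:
            self.cwnd = self.ssthresh                 # deflate, leave fast recovery
            self.in_recovery = False


s = RenoSender(cwnd=10)
for ack in (1000, 1000, 1000, 1000, 1000, 1000, 5000):
    s.on_ack(ack)
    print(ack, s.cwnd, s.ssthresh, s.in_recovery)
```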


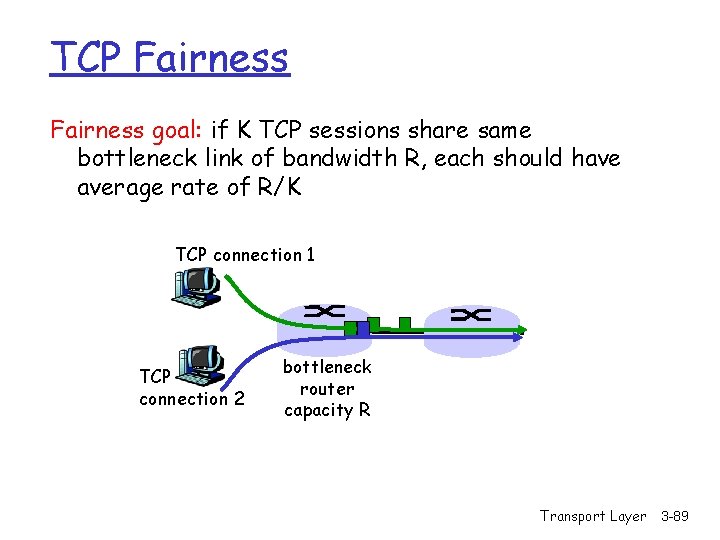

TCP Fairness
Fairness goal: if K TCP sessions share the same bottleneck link of bandwidth R, each should have an average rate of R/K
(figure: TCP connection 1 and TCP connection 2 sharing a bottleneck router of capacity R)

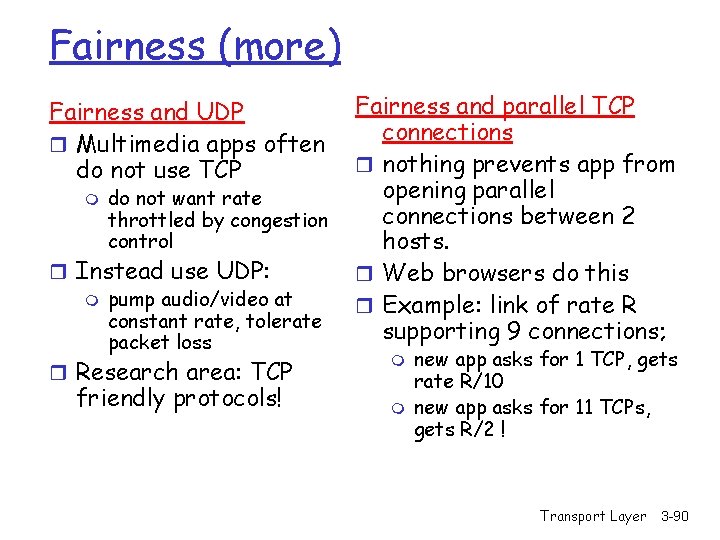

Fairness (more)

Fairness and UDP
- Multimedia apps often do not use TCP
  - they do not want their rate throttled by congestion control
- Instead they use UDP:
  - pump audio/video at a constant rate, tolerate packet loss
- Research area: TCP-friendly protocols!

Fairness and parallel TCP connections
- Nothing prevents an app from opening parallel connections between 2 hosts
- Web browsers do this
- Example: a link of rate R supporting 9 connections:
  - a new app asks for 1 TCP, gets rate R/10
  - a new app asks for 11 TCPs, gets R/2!
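The example's arithmetic, under the same idealization the slide uses (every connection on the bottleneck gets an equal share of R): an app holding k of n + k connections gets k/(n + k) of the link. Exact fractions keep the result readable; the function name is hypothetical.

```python
from fractions import Fraction


def share_of_new_app(existing, new_conns):
    """Fraction of R a new app gets if all connections share the bottleneck equally."""
    return Fraction(new_conns, existing + new_conns)


print(share_of_new_app(9, 1))    # 1/10
print(share_of_new_app(9, 11))   # 11/20, roughly R/2
```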

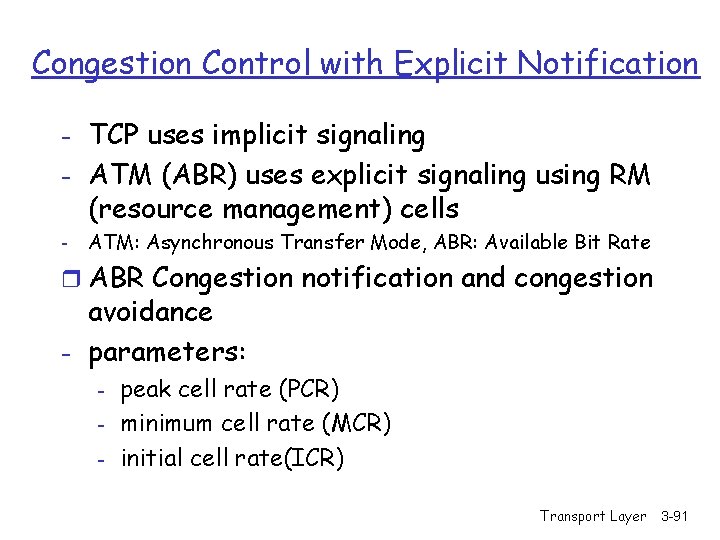

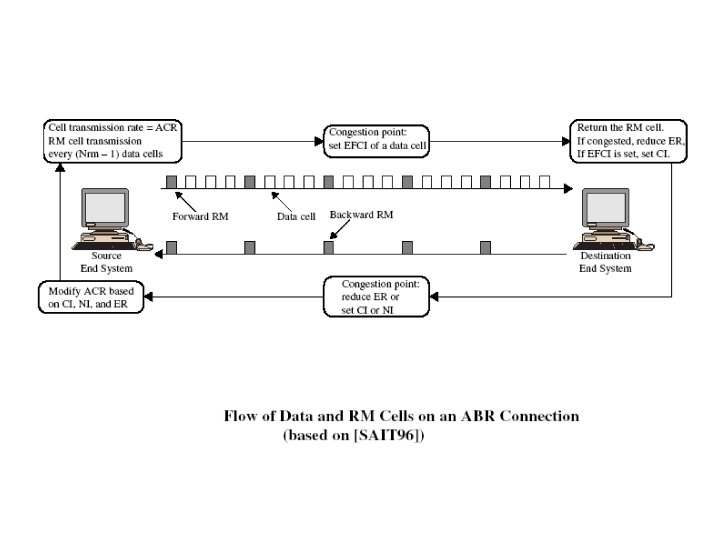

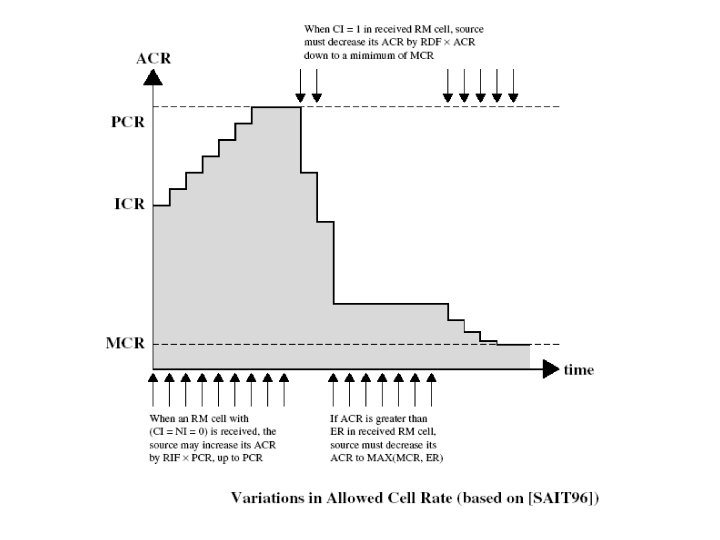

Congestion Control with Explicit Notification
- TCP uses implicit signaling
- ATM (ABR) uses explicit signaling using RM (resource management) cells
  - ATM: Asynchronous Transfer Mode, ABR: Available Bit Rate
- ABR congestion notification and congestion avoidance
  - parameters:
    - peak cell rate (PCR)
    - minimum cell rate (MCR)
    - initial cell rate (ICR)

- ABR uses the resource management cell (RM cell) with fields:
  - CI (congestion indication)
  - NI (no increase)
  - ER (explicit rate)
- Types of RM cells:
  - Forward RM (FRM)
  - Backward RM (BRM)


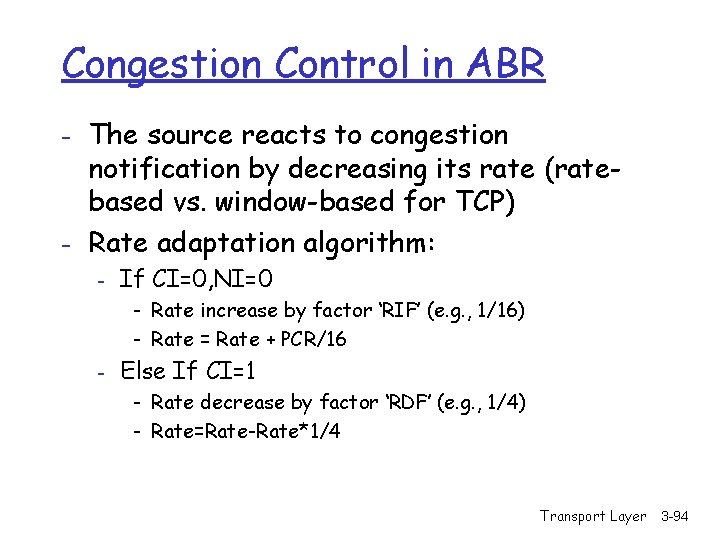

Congestion Control in ABR
- The source reacts to congestion notification by decreasing its rate (rate-based, vs. window-based for TCP)
- Rate adaptation algorithm:
  - if CI = 0 and NI = 0:
    - rate increase by factor RIF (e.g., 1/16)
    - Rate = Rate + PCR/16
  - else if CI = 1:
    - rate decrease by factor RDF (e.g., 1/4)
    - Rate = Rate - Rate × 1/4
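One step of this rate adaptation as a function, using the example factors RIF = 1/16 and RDF = 1/4. Two details are assumptions beyond the slide: with CI = 0 and NI = 1 the rate is simply held, and the result is clamped to the range [MCR, PCR]. The function name is hypothetical.

```python
def abr_update(rate, ci, ni, pcr, mcr, rif=1/16, rdf=1/4):
    """One source-rate adjustment on receipt of a backward RM cell."""
    if ci:
        rate = rate - rate * rdf      # congestion indicated: multiplicative decrease
    elif not ni:
        rate = rate + pcr * rif       # no congestion, increase allowed: +RIF * PCR
    # CI = 0, NI = 1: hold the current rate (assumption)
    return min(max(rate, mcr), pcr)   # keep the rate within [MCR, PCR] (assumption)


rate = 50.0
for ci, ni in ((0, 0), (0, 0), (1, 0), (0, 1)):
    rate = abr_update(rate, ci, ni, pcr=100.0, mcr=1.0)
    print(ci, ni, rate)
```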


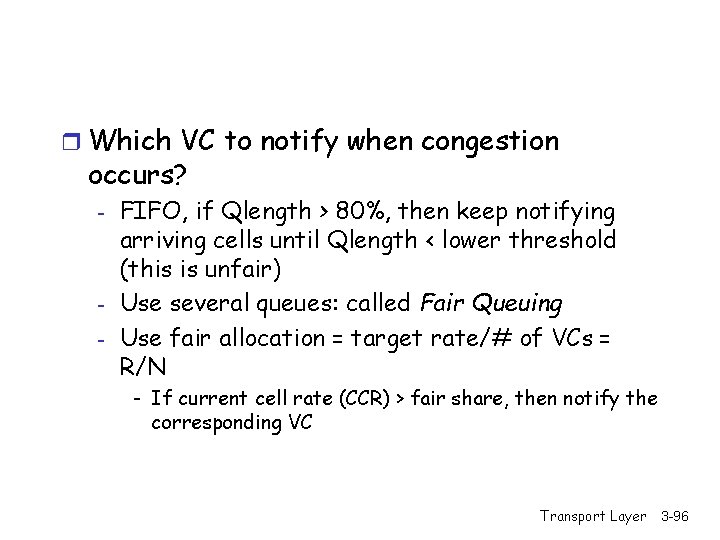

- Which VC to notify when congestion occurs?
  - FIFO: if Qlength > 80%, keep notifying arriving cells until Qlength < a lower threshold (this is unfair)
  - use several queues: called Fair Queuing
  - use fair allocation = target rate / # of VCs = R/N
    - if the current cell rate (CCR) > fair share, notify the corresponding VC
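The fair-allocation rule reduces to a one-line filter. In this sketch the VC names and rates are illustrative, and the function name is hypothetical: compute the fair share R/N and flag every VC whose current cell rate exceeds it.

```python
def vcs_to_notify(current_rates, target_rate):
    """Return the VCs whose current cell rate (CCR) exceeds the fair share R/N."""
    fair_share = target_rate / len(current_rates)
    return sorted(vc for vc, ccr in current_rates.items() if ccr > fair_share)


rates = {"vc1": 40.0, "vc2": 10.0, "vc3": 30.0}   # CCR per VC (illustrative)
print(vcs_to_notify(rates, target_rate=90.0))      # fair share = 30.0
```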

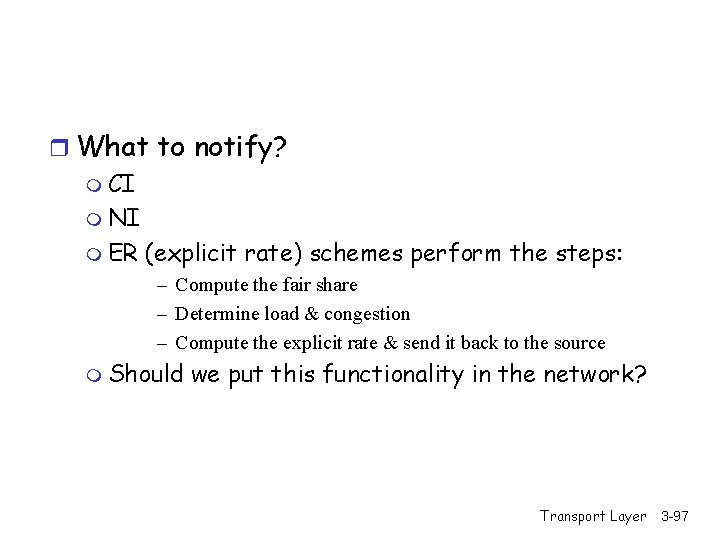

- What to notify?
  - CI
  - NI
  - ER (explicit rate): schemes perform the steps:
    - compute the fair share
    - determine load & congestion
    - compute the explicit rate & send it back to the source
  - Should we put this functionality in the network?
