Management Overview

9861 Broken Land Parkway, Fourth Floor
Columbia, Maryland 21046
800-638-6316
www.mccabe.com
support@mccabe.com
1-800-634-0150

Copyright McCabe & Associates 1999

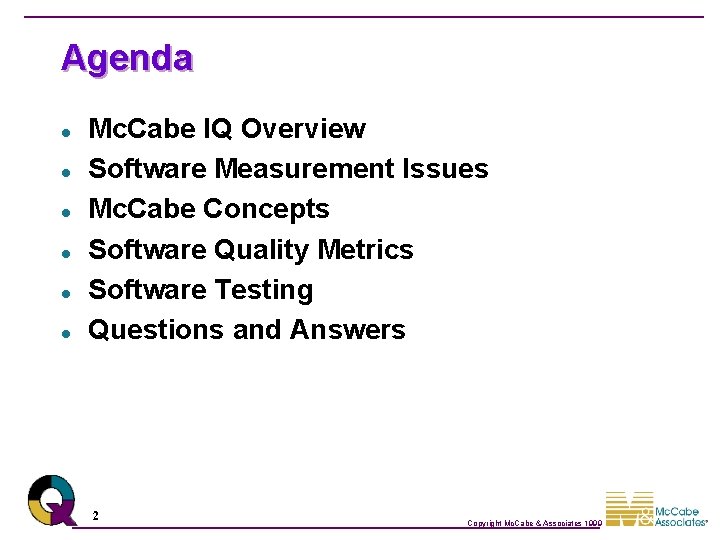

Agenda
• McCabe IQ Overview
• Software Measurement Issues
• McCabe Concepts
• Software Quality Metrics
• Software Testing
• Questions and Answers

About McCabe & Associates
• Global Presence
• 20 Years of Expertise
• Analyzed Over 25 Billion Lines of Code

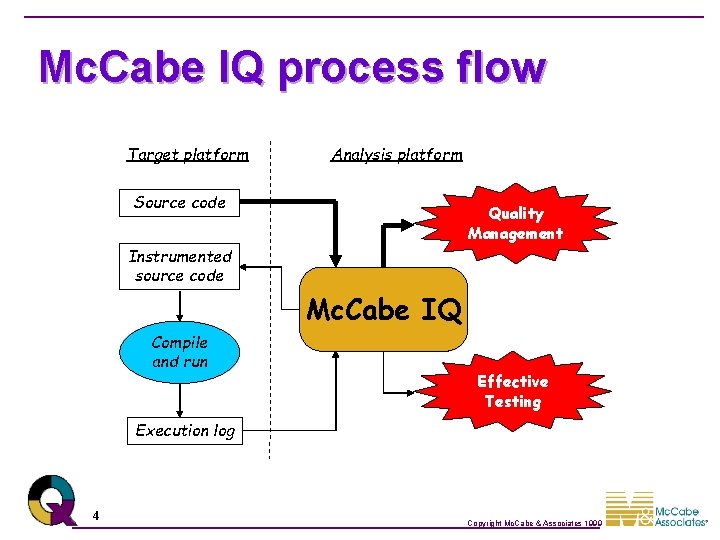

McCabe IQ process flow
[Diagram: on the analysis platform, McCabe IQ reads the source code and produces instrumented source code. On the target platform, the instrumented code is compiled and run, and the resulting execution log is returned to McCabe IQ. Outputs: Quality Management and Effective Testing.]

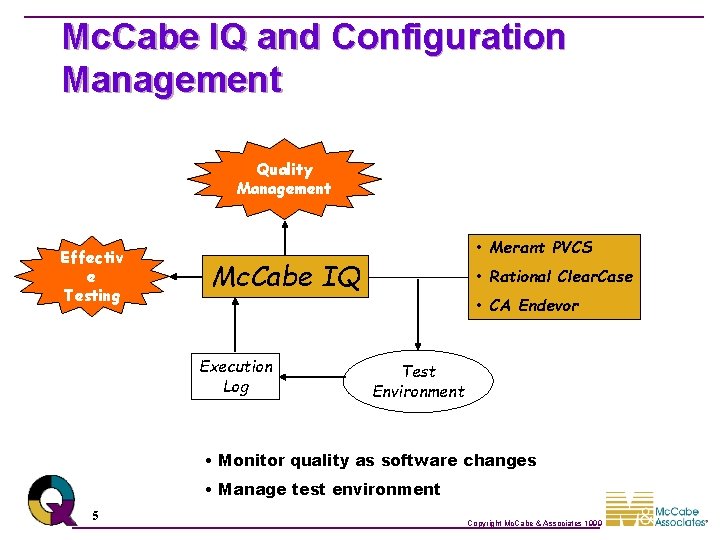

McCabe IQ and Configuration Management
• Monitor quality as software changes
• Manage test environment
Configuration management tools shown:
• Merant PVCS
• Rational ClearCase
• CA Endevor
[Diagram labels: McCabe IQ, Execution Log, Test Environment, Quality Management, Effective Testing]

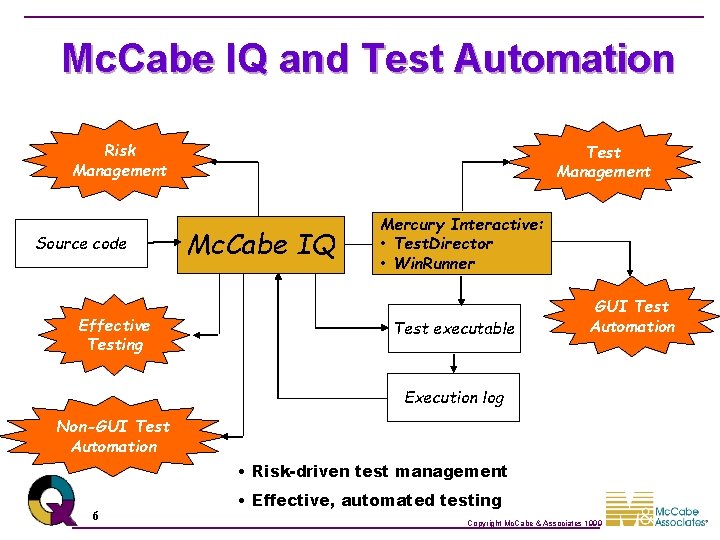

McCabe IQ and Test Automation
• Risk-driven test management
• Effective, automated testing
Mercury Interactive tools shown:
• TestDirector
• WinRunner
[Diagram labels: Source code, McCabe IQ, Test executable, Execution log, Risk Management, Test Management, Effective Testing, GUI Test Automation, Non-GUI Test Automation]

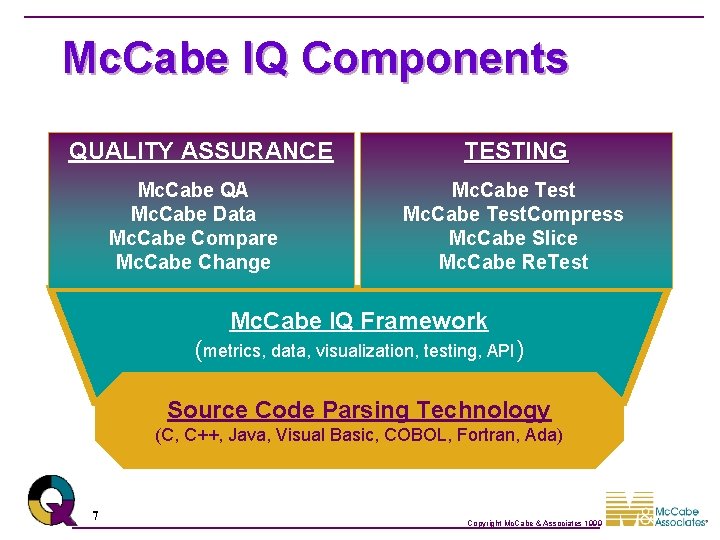

McCabe IQ Components
• Quality assurance: McCabe QA, McCabe Data, McCabe Compare, McCabe Change
• Testing: McCabe TestCompress, McCabe Slice, McCabe ReTest
• McCabe IQ Framework (metrics, data, visualization, testing, API)
• Source Code Parsing Technology (C, C++, Java, Visual Basic, COBOL, Fortran, Ada)

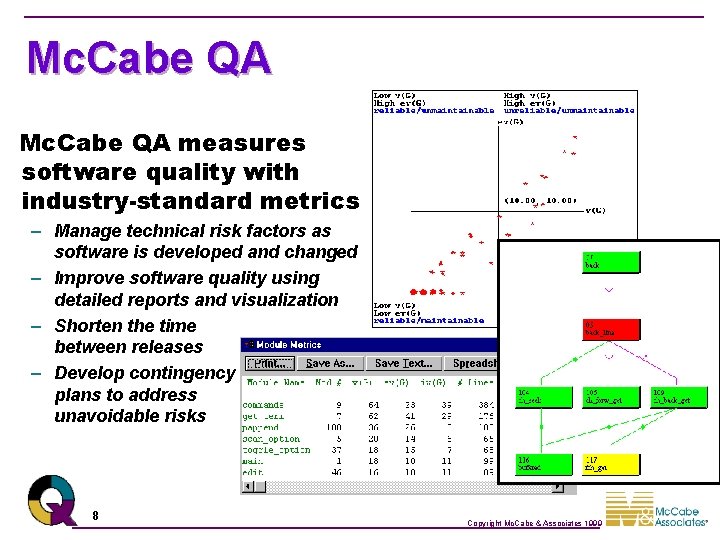

McCabe QA measures software quality with industry-standard metrics
– Manage technical risk factors as software is developed and changed
– Improve software quality using detailed reports and visualization
– Shorten the time between releases
– Develop contingency plans to address unavoidable risks

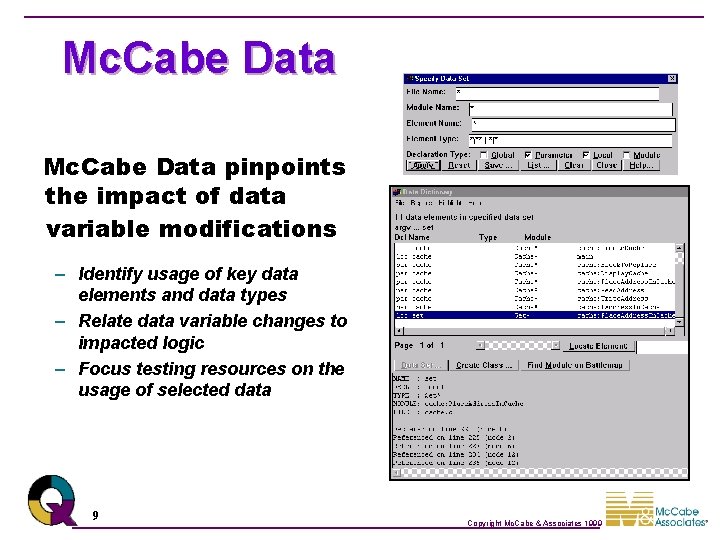

McCabe Data pinpoints the impact of data variable modifications
– Identify usage of key data elements and data types
– Relate data variable changes to impacted logic
– Focus testing resources on the usage of selected data

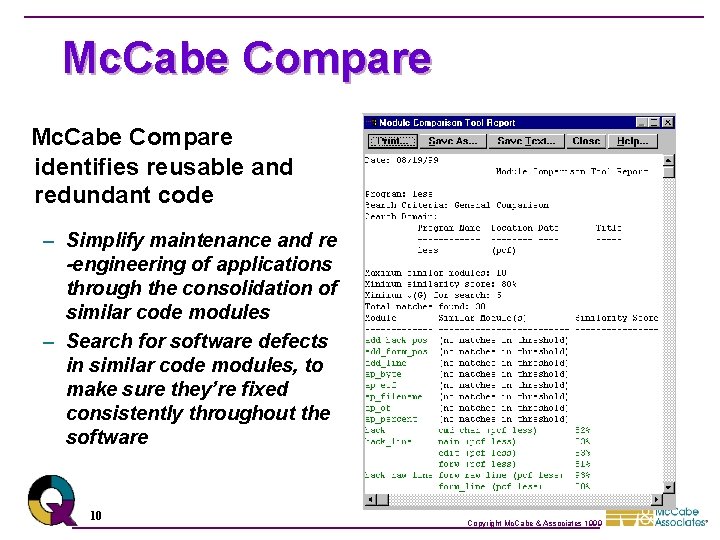

McCabe Compare identifies reusable and redundant code
– Simplify maintenance and re-engineering of applications through the consolidation of similar code modules
– Search for software defects in similar code modules, to make sure they're fixed consistently throughout the software

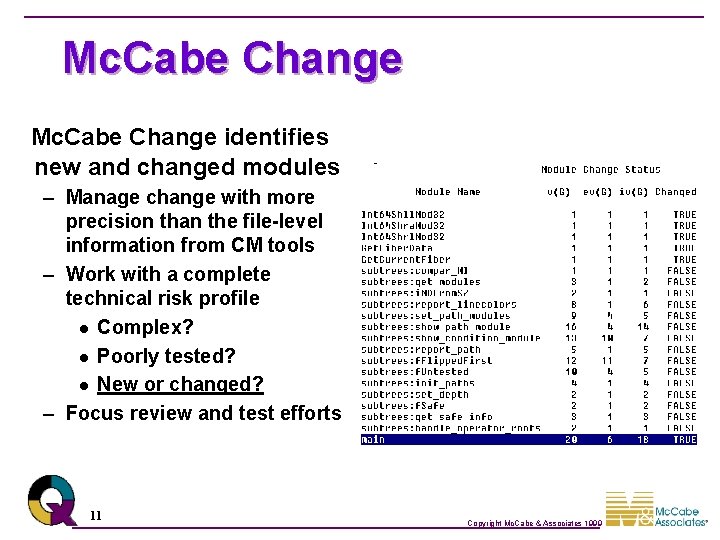

McCabe Change identifies new and changed modules
– Manage change with more precision than the file-level information from CM tools
– Work with a complete technical risk profile
  • Complex?
  • Poorly tested?
  • New or changed?
– Focus review and test efforts

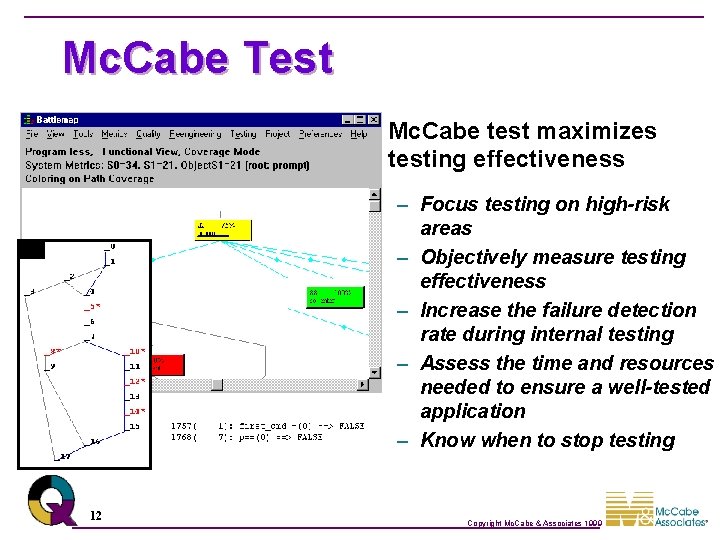

McCabe Test
McCabe Test maximizes testing effectiveness
– Focus testing on high-risk areas
– Objectively measure testing effectiveness
– Increase the failure detection rate during internal testing
– Assess the time and resources needed to ensure a well-tested application
– Know when to stop testing

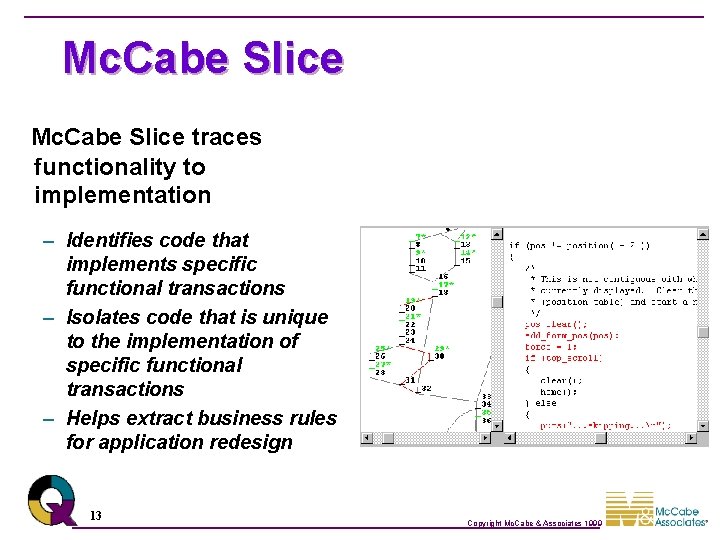

McCabe Slice traces functionality to implementation
– Identifies code that implements specific functional transactions
– Isolates code that is unique to the implementation of specific functional transactions
– Helps extract business rules for application redesign

McCabe IQ Components Summary
• McCabe QA: Improve quality with metrics
• McCabe Data: Analyze data impact
• McCabe Compare: Eliminate duplicate code
• McCabe Change: Focus on changed software
• McCabe Test: Increase test effectiveness
• McCabe TestCompress: Increase test efficiency
• McCabe Slice: Trace functionality to code
• McCabe ReTest: Automate regression testing

Software Measurement Issues
• Risk management
• Software metrics
• Complexity metric evaluation
• Benefits of complexity measurement

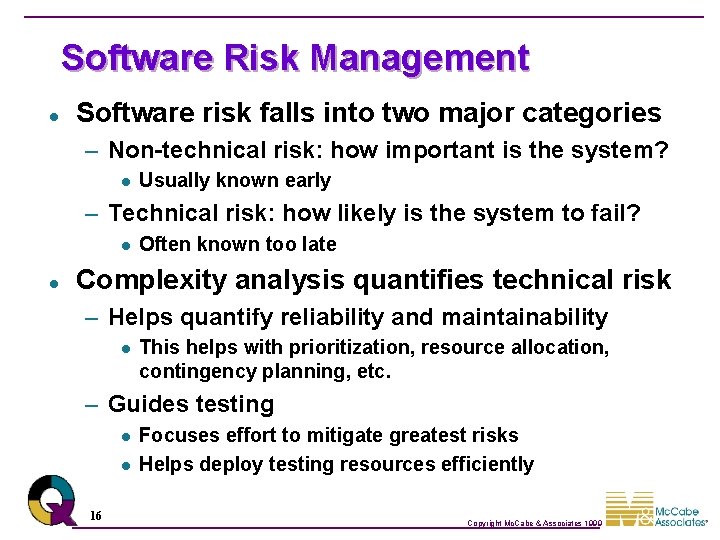

Software Risk Management
• Software risk falls into two major categories
  – Non-technical risk: how important is the system?
    • Usually known early
  – Technical risk: how likely is the system to fail?
    • Often known too late
• Complexity analysis quantifies technical risk
  – Helps quantify reliability and maintainability
    • This helps with prioritization, resource allocation, contingency planning, etc.
  – Guides testing
    • Focuses effort to mitigate greatest risks
    • Helps deploy testing resources efficiently

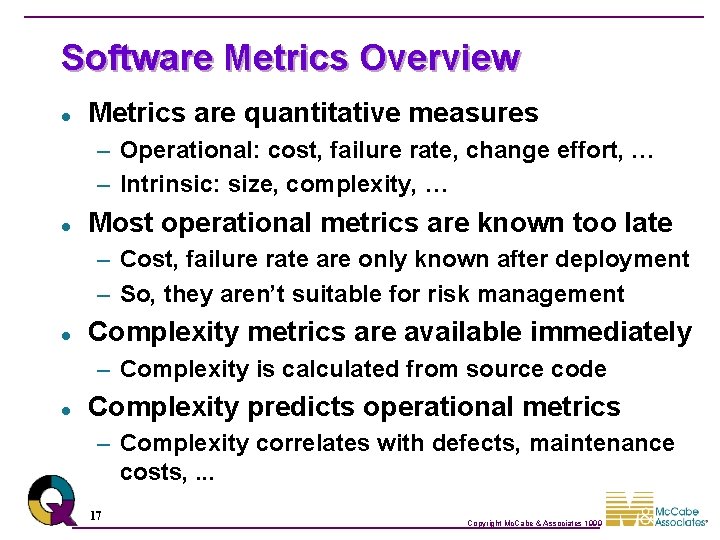

Software Metrics Overview
• Metrics are quantitative measures
  – Operational: cost, failure rate, change effort, ...
  – Intrinsic: size, complexity, ...
• Most operational metrics are known too late
  – Cost and failure rate are only known after deployment
  – So, they aren't suitable for risk management
• Complexity metrics are available immediately
  – Complexity is calculated from source code
• Complexity predicts operational metrics
  – Complexity correlates with defects, maintenance costs, ...

Complexity Metric Evaluation
• Good complexity metrics have three properties
  – Descriptive: objectively measure something
  – Predictive: correlate with something interesting
  – Prescriptive: guide risk reduction
• Consider lines of code
  – Descriptive: yes, measures software size
  – Predictive, Prescriptive: no
• Consider cyclomatic complexity
  – Descriptive: yes, measures decision logic
  – Predictive: yes, predicts errors and maintenance
  – Prescriptive: yes, guides testing and improvement

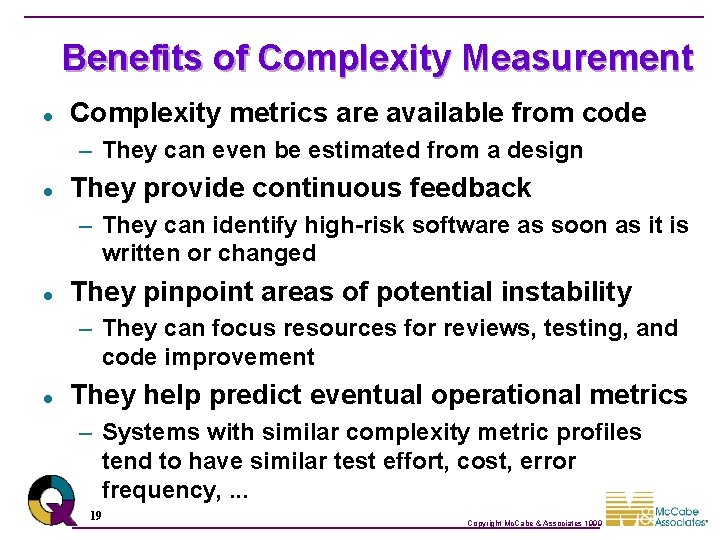

Benefits of Complexity Measurement
• Complexity metrics are available from code
  – They can even be estimated from a design
• They provide continuous feedback
  – They can identify high-risk software as soon as it is written or changed
• They pinpoint areas of potential instability
  – They can focus resources for reviews, testing, and code improvement
• They help predict eventual operational metrics
  – Systems with similar complexity metric profiles tend to have similar test effort, cost, error frequency, ...

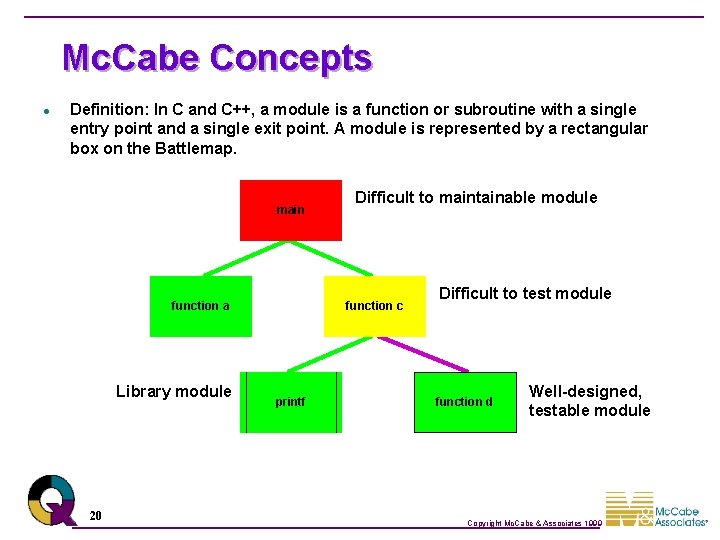

McCabe Concepts
• Definition: In C and C++, a module is a function or subroutine with a single entry point and a single exit point. A module is represented by a rectangular box on the Battlemap.
[Battlemap: main, function a, function c, function d, printf. Legend: library module; difficult-to-maintain module; difficult-to-test module; well-designed, testable module.]

Analyzing a Module
• For each module, an annotated source listing and a flowgraph are generated.
• Flowgraph: an architectural diagram of a software module's logic.

Stmt Number   Code
1             main()
2             {
3               printf("example");
4               if (y > 10)
5                 b();
6               else
7                 c();
8               printf("end");
9             }

[Battlemap: main calls b, c, and printf]
[Flowgraph of main: node 1-3; node 4 (condition); nodes 5 and 7; node 8-9 (end of condition)]
• node: statement or block of sequential statements
• edge: flow of control between nodes

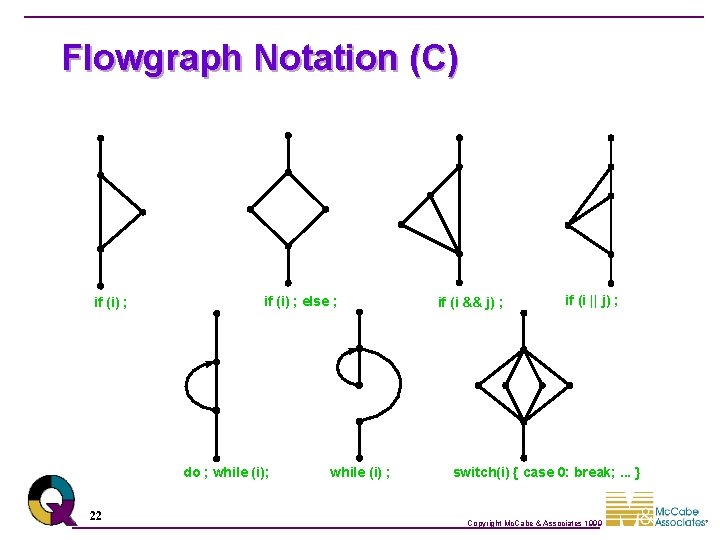

Flowgraph Notation (C)
[Figure: one flowgraph for each of the following constructs]
• if (i) ; else ;
• do ; while (i);
• while (i) ;
• if (i && j) ;
• if (i || j) ;
• switch(i) { case 0: break; ... }

Flowgraph and Its Annotated Source Listing
[Figure callouts: origin information, metric information, decision construct, node correspondence]

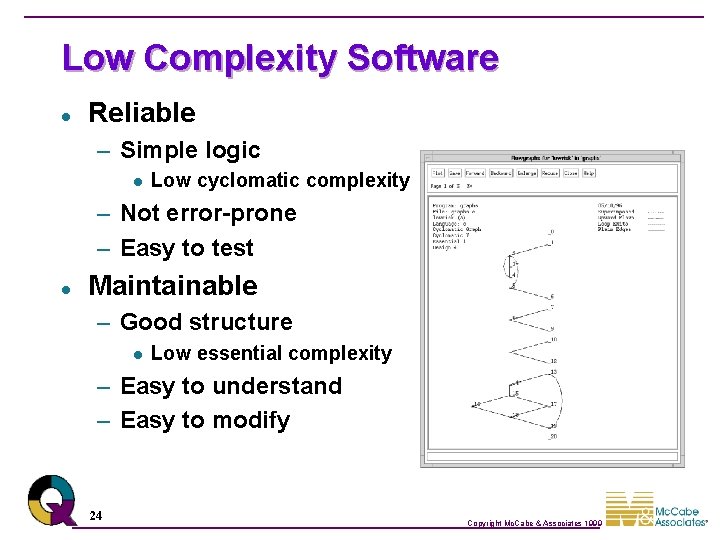

Low Complexity Software
• Reliable
  – Simple logic
    • Low cyclomatic complexity
  – Not error-prone
  – Easy to test
• Maintainable
  – Good structure
    • Low essential complexity
  – Easy to understand
  – Easy to modify

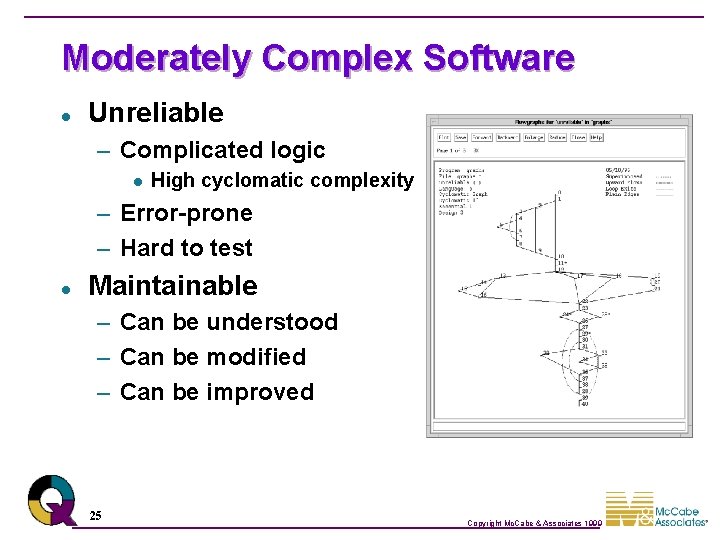

Moderately Complex Software
• Unreliable
  – Complicated logic
    • High cyclomatic complexity
  – Error-prone
  – Hard to test
• Maintainable
  – Can be understood
  – Can be modified
  – Can be improved

Highly Complex Software
• Unreliable
  – Error-prone
  – Very hard to test
• Unmaintainable
  – Poor structure
    • High essential complexity
  – Hard to understand
  – Hard to modify
  – Hard to improve

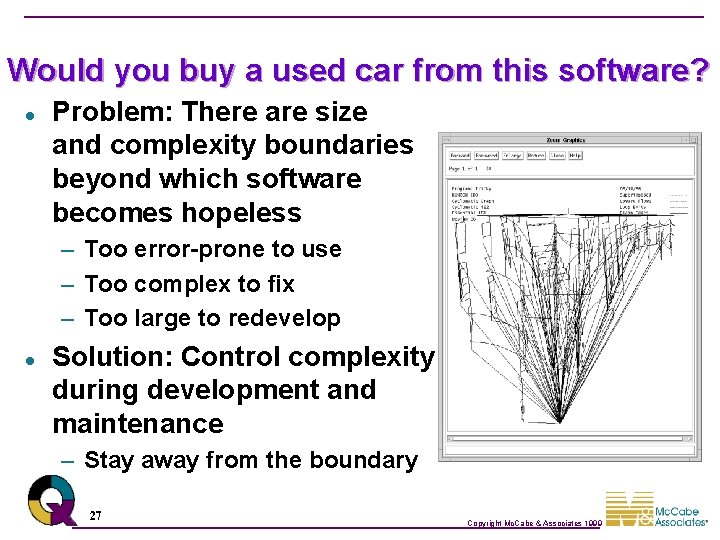

Would you buy a used car from this software?
• Problem: There are size and complexity boundaries beyond which software becomes hopeless
  – Too error-prone to use
  – Too complex to fix
  – Too large to redevelop
• Solution: Control complexity during development and maintenance
  – Stay away from the boundary

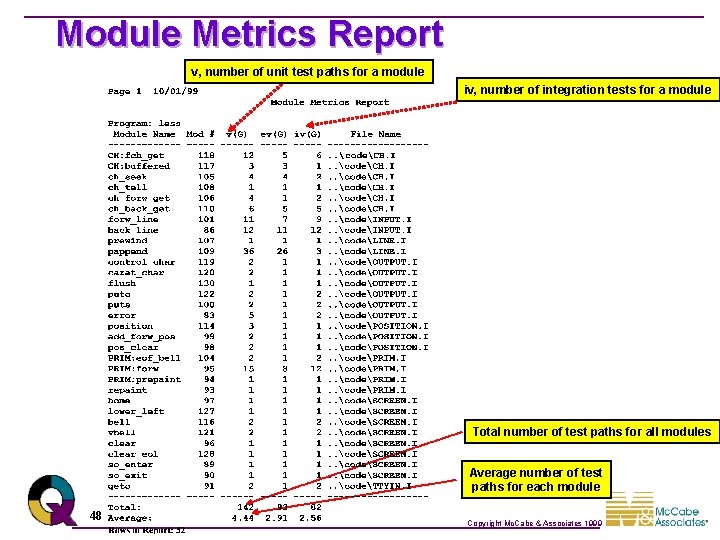

Important Complexity Measures
• Cyclomatic complexity: v(G)
  – Amount of decision logic
• Essential complexity: ev(G)
  – Amount of poorly-structured logic
• Module design complexity: iv(G)
  – Amount of logic involved with subroutine calls
• Data complexity: sdv
  – Amount of logic involved with selected data references

Cyclomatic Complexity
• The most famous complexity metric
• Measures amount of decision logic
• Identifies unreliable software, hard-to-test software
• Related test thoroughness metric, actual complexity, measures testing progress

Cyclomatic Complexity
• Cyclomatic complexity, v: a measure of the decision logic of a software module.
  – Applies to decision logic embedded within written code.
  – Is derived from predicates in decision logic.
  – Is calculated for each module in the Battlemap.
  – Grows from 1 to a high, finite number based on the amount of decision logic.
  – Is correlated with software quality and testing quantity; units with higher v (v > 10) are less reliable and require high levels of testing.

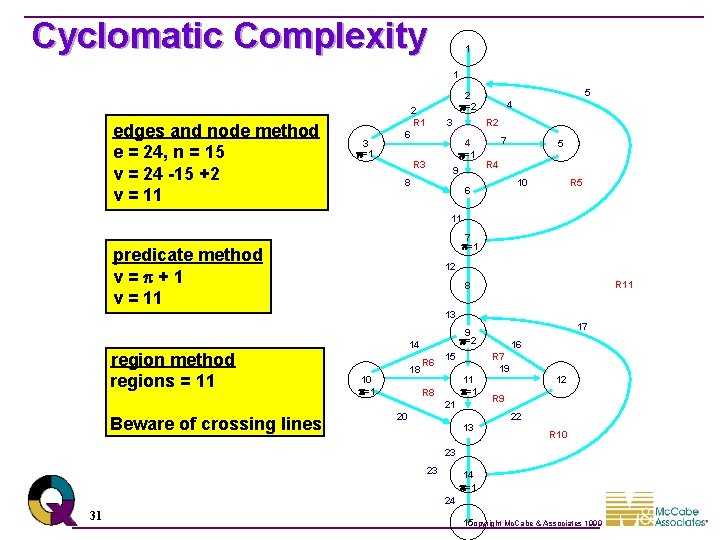

Cyclomatic Complexity
[Figure: one flowgraph with 15 nodes, 24 edges, and 11 regions (R1 to R11), annotated with predicate counts]
• Edges and nodes method: e = 24, n = 15, so v = 24 - 15 + 2 = 11
• Predicate method: v = (sum of predicate counts) + 1 = 11
• Region method: regions = 11, so v = 11 (beware of crossing lines)
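The counting methods on this slide can be sketched in a few lines of Python. This is a minimal illustration, not McCabe IQ's implementation: the function names are hypothetical, the predicate counts are the "=1" and "=2" annotations as they survive in the extracted figure, and the small if/else graph is invented for the example.

```python
def cyclomatic_from_counts(edges: int, nodes: int) -> int:
    """Edges-and-nodes method: v = e - n + 2 for one connected flowgraph."""
    return edges - nodes + 2


def cyclomatic_from_predicates(predicate_counts: list[int]) -> int:
    """Predicate method: v = (sum of predicate counts) + 1."""
    return sum(predicate_counts) + 1


def cyclomatic_from_graph(edge_list: list[tuple[str, str]]) -> int:
    """Derive e and n from an explicit edge list, then apply v = e - n + 2."""
    nodes = {node for edge in edge_list for node in edge}
    return cyclomatic_from_counts(len(edge_list), len(nodes))


# Counts stated on the slide: e = 24, n = 15.
slide_v = cyclomatic_from_counts(edges=24, nodes=15)

# Predicate annotations read from the extracted figure (assumed complete).
slide_predicates = [1, 2, 1, 1, 1, 1, 2, 1]

# Hypothetical flowgraph for a single if/else statement.
if_else = [
    ("entry", "if"),
    ("if", "then"),
    ("if", "else"),
    ("then", "exit"),
    ("else", "exit"),
]

print(slide_v, cyclomatic_from_predicates(slide_predicates), cyclomatic_from_graph(if_else))
```

Both methods agree on 11 for the slide's flowgraph, and the single if/else comes out at 2: one decision plus one.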

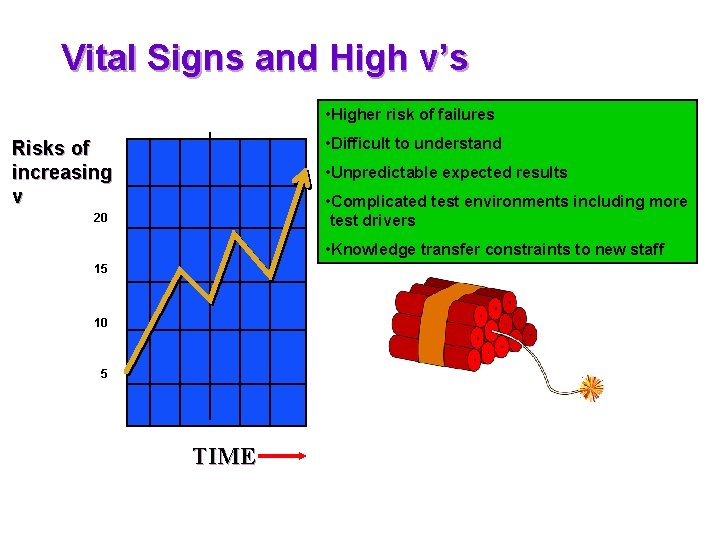

Vital Signs and High v's
Risks of increasing v:
• Higher risk of failures
• Difficult to understand
• Unpredictable expected results
• Complicated test environments including more test drivers
• Knowledge transfer constraints to new staff
[Chart: v rising over time; axis ticks at 5, 10, 15, 20]

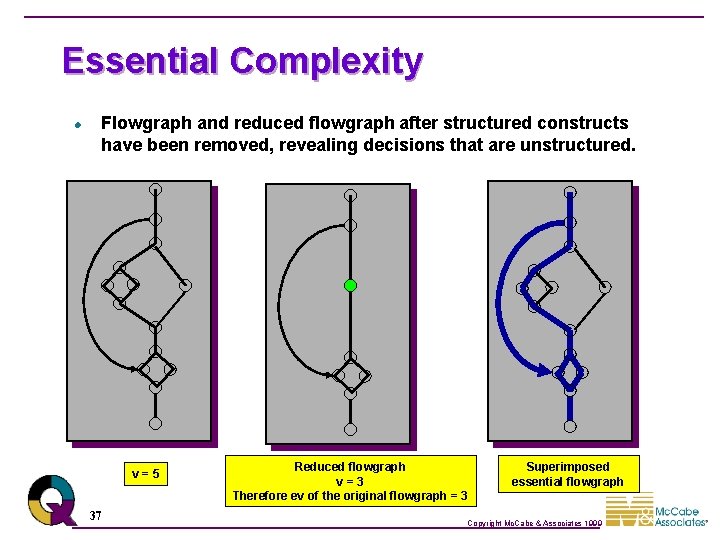

Essential Complexity
• Measures amount of poorly-structured logic
• Remove all well-structured logic, take cyclomatic complexity of what's left
• Identifies unmaintainable software
• Pathological complexity metric is similar
  – Identifies extremely unmaintainable software

Essential Complexity
• Essential complexity, ev: a measure of the "structuredness" of the decision logic of a software module.
  – Applies to decision logic embedded within written code.
  – Is calculated for each module in the Battlemap.
  – Grows from 1 to v based on the amount of "unstructured" decision logic.
  – Is associated with the ability to modularize complex modules.
  – If ev increases, then the coder is not using structured programming constructs.

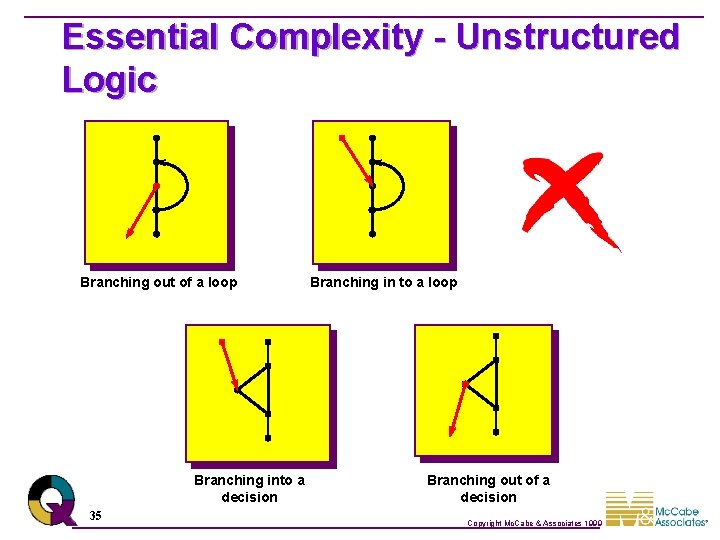

Essential Complexity - Unstructured Logic
[Figure: four flowgraphs]
• Branching out of a loop
• Branching into a loop
• Branching into a decision
• Branching out of a decision

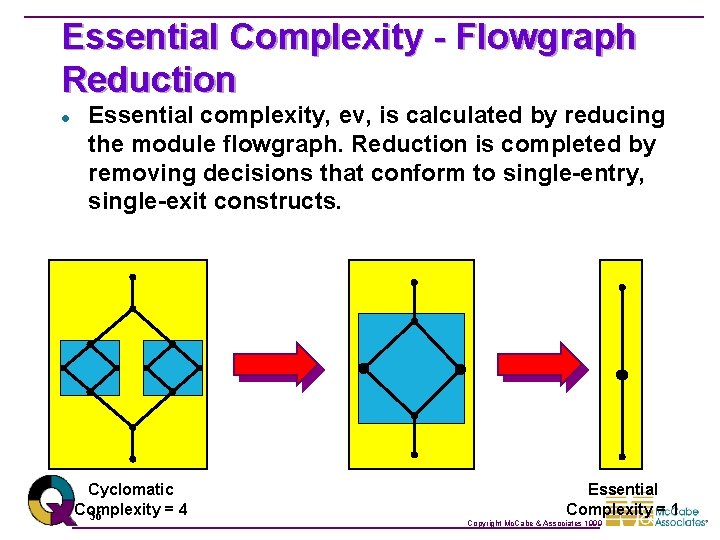

Essential Complexity - Flowgraph Reduction
• Essential complexity, ev, is calculated by reducing the module flowgraph. Reduction is completed by removing decisions that conform to single-entry, single-exit constructs.
[Figure: a flowgraph with cyclomatic complexity = 4 reduces to essential complexity = 1]

Essential Complexity
• Flowgraph and reduced flowgraph after structured constructs have been removed, revealing decisions that are unstructured.
[Figure: original flowgraph, v = 5, with the essential flowgraph superimposed; reduced flowgraph, v = 3]
Therefore, ev of the original flowgraph = 3

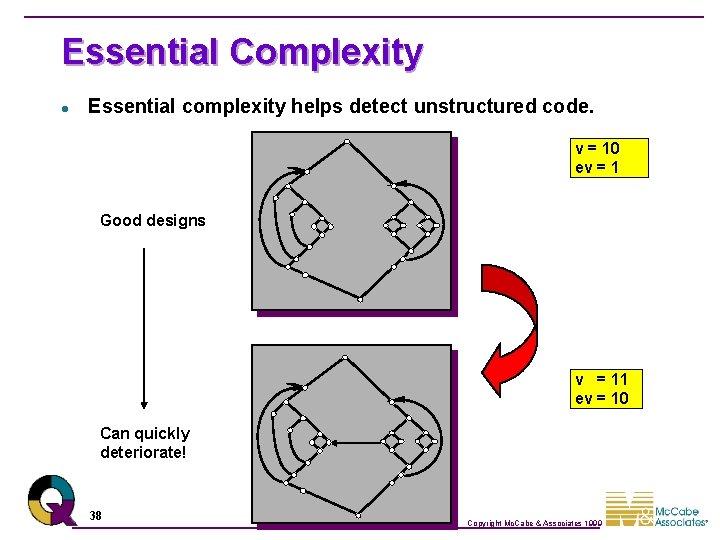

Essential Complexity
• Essential complexity helps detect unstructured code.
[Figure: two flowgraphs. Good designs (v = 10, ev = 1) can quickly deteriorate (v = 11, ev = 10)!]

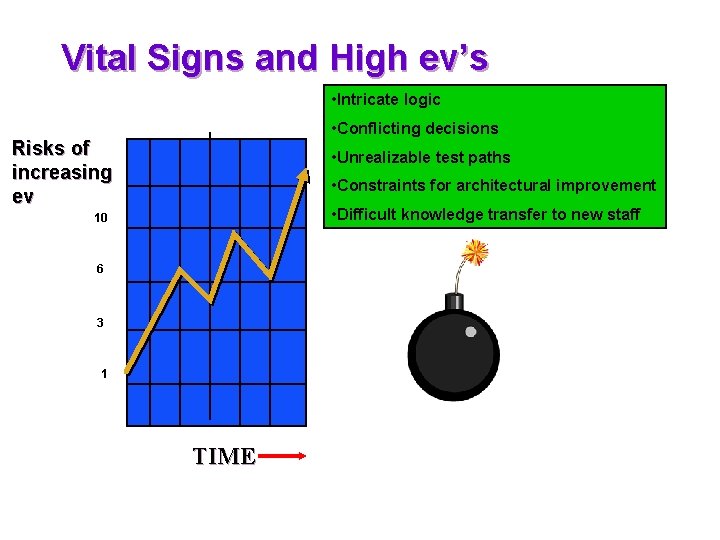

Vital Signs and High ev's
Risks of increasing ev:
• Intricate logic
• Conflicting decisions
• Unrealizable test paths
• Constraints for architectural improvement
• Difficult knowledge transfer to new staff
[Chart: ev rising over time; axis ticks at 1, 3, 6, 10]

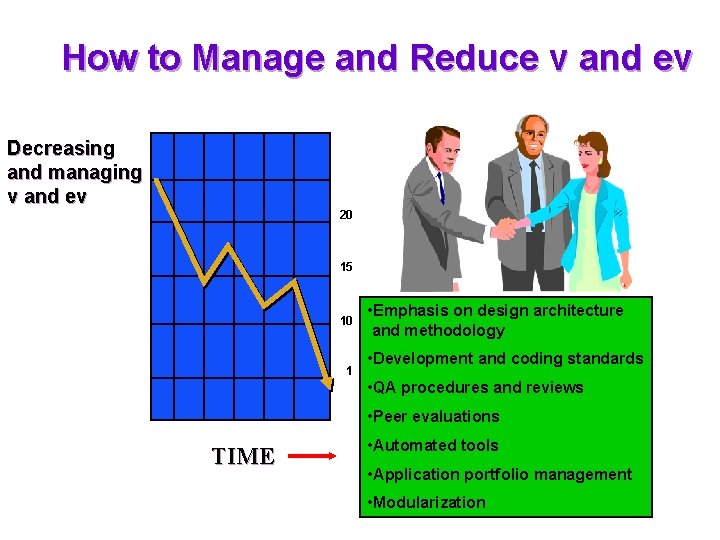

How to Manage and Reduce v and ev
Decreasing and managing v and ev:
• Emphasis on design architecture and methodology
• Development and coding standards
• QA procedures and reviews
• Peer evaluations
• Automated tools
• Application portfolio management
• Modularization
[Chart: v and ev falling over time; axis ticks at 1, 10, 15, 20]

Module Design Complexity
How Much Supervising Is Done?

Module Design Complexity
• Measures amount of decision logic involved with subroutine calls
• Identifies "managerial" modules
• Indicates design reliability, integration testability
• Related test thoroughness metric, tested design complexity, measures integration testing progress

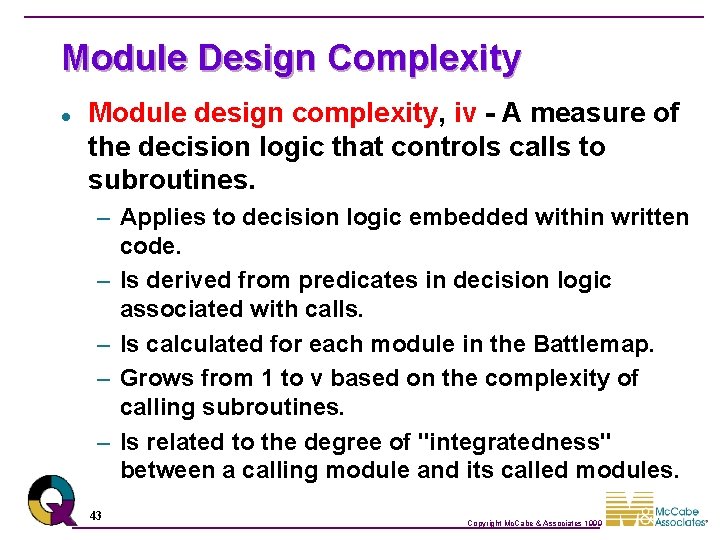

Module Design Complexity
• Module design complexity, iv: a measure of the decision logic that controls calls to subroutines.
  – Applies to decision logic embedded within written code.
  – Is derived from predicates in decision logic associated with calls.
  – Is calculated for each module in the Battlemap.
  – Grows from 1 to v based on the complexity of calling subroutines.
  – Is related to the degree of "integratedness" between a calling module and its called modules.

Module Design Complexity
• Module design complexity, iv, is calculated by reducing the module flowgraph. Reduction is completed by removing decisions and nodes that do not impact the calling control over a module's immediate subordinates.

Module Design Complexity
• Example:

    main()
    {
        if (a == b) progd();
        if (m == n) proge();
        switch (expression)
        {
            case value_1: statement 1; break;
            case value_2: statement 2; break;
            case value_3: statement 3;
        }
    }

[Battlemap: main (iv = 3) calls progd and proge]
[Flowgraph of main: v = 5. Reduced flowgraph: v = 3, because the switch decisions do not impact the calls to progd() and proge().]
Therefore, iv of the original flowgraph = 3

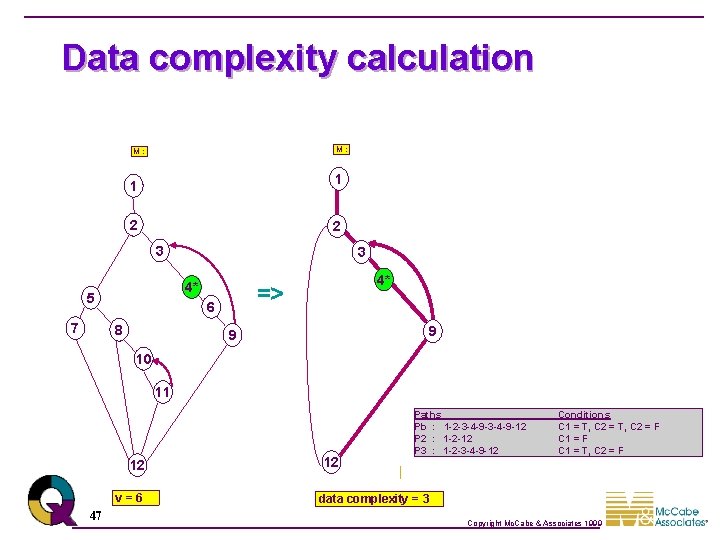

Data Complexity
• Actually, a family of metrics
  – Global data complexity (global and parameter), specified data complexity, date complexity
• Measures amount of decision logic involved with selected data references
• Indicates data impact, data testability
• Related test thoroughness metric, tested data complexity, measures data testing progress

Data Complexity Calculation
[Figure: flowgraph of module M (v = 6) with conditions C1 to C5, reduced to the logic that references Data A (node 4*)]
Paths:
• P1: 1-2-3-4-9-12
• P2: 1-2-12
• P3: 1-2-3-4-9-12
Conditions: C1 = T, C2 = F
Data complexity = 3

Module Metrics Report
[Report callouts]
• v: number of unit test paths for a module
• iv: number of integration tests for a module
• Total number of test paths for all modules
• Average number of test paths for each module

Common Testing Challenges
• Deriving Tests
  – Creating a "Good" Set of Tests
• Verifying Tests
  – Verifying that Enough Testing was Performed
  – Providing Evidence that Testing was Good Enough
• When to Stop Testing
• Prioritizing Tests
  – Ensuring that Critical or Modified Code is Tested First
• Reducing Test Duplication
  – Identifying Similar Tests That Add Little Value & Removing Them

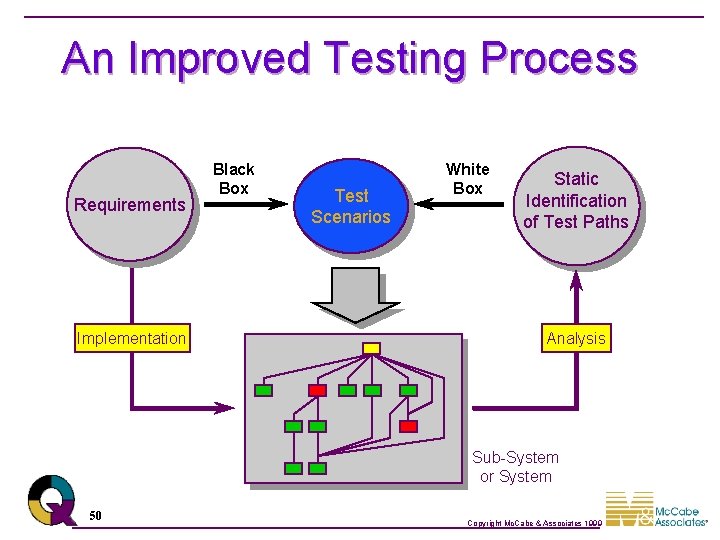

An Improved Testing Process
[Diagram: Requirements feed black box test scenarios; Implementation feeds white box static identification of test paths; both feed analysis of the sub-system or system]

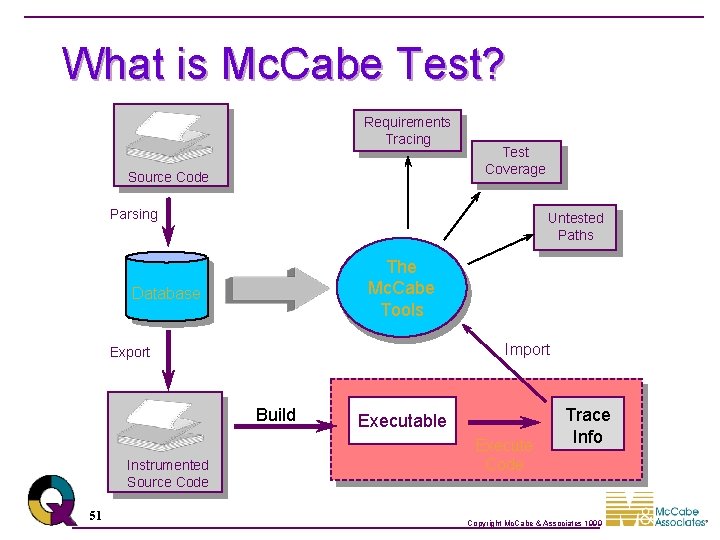

What is McCabe Test?
[Diagram labels: Source Code, Parsing, The McCabe Tools, Database, Import, Export, Instrumented Source Code, Build, Executable, Execute Code, Trace Info, Requirements Tracing, Test Coverage, Untested Paths]

Coverage Mode
• Color Scheme Represents Coverage
[Screenshot: no trace file imported]

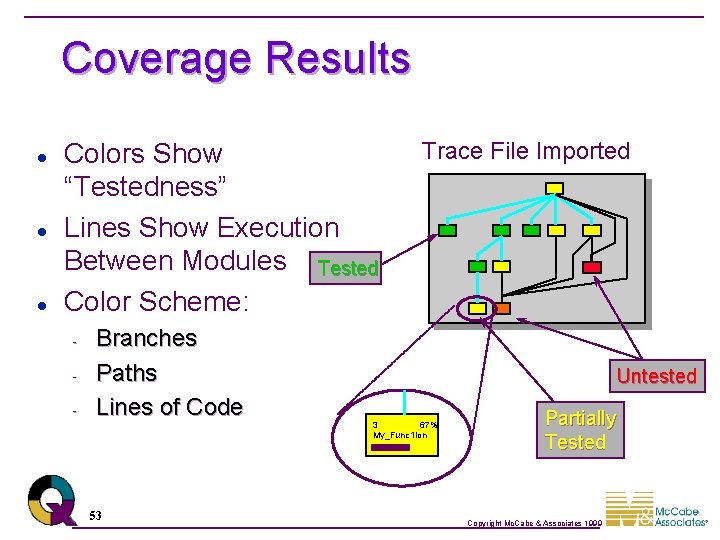

Coverage Results
• Colors Show "Testedness"
• Lines Show Execution Between Modules
• Color Scheme:
  - Branches
  - Paths
  - Lines of Code
[Screenshot: trace file imported. Legend: Tested, Partially Tested, Untested]

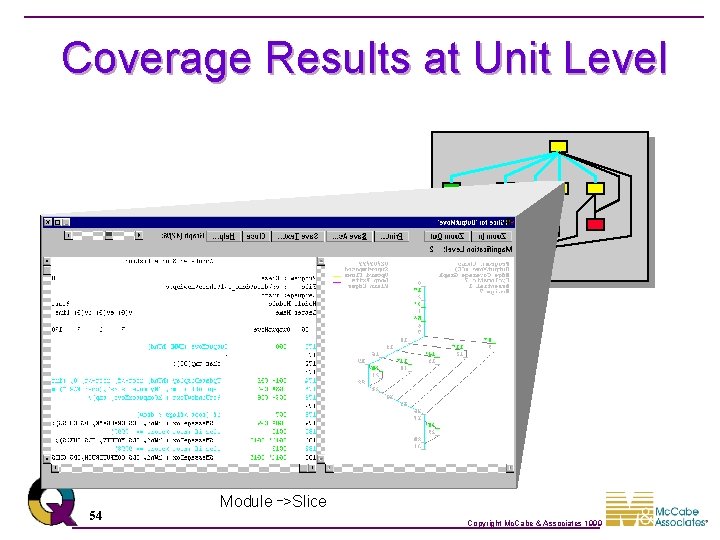

Coverage Results at Unit Level
[Screenshot: Module -> Slice]

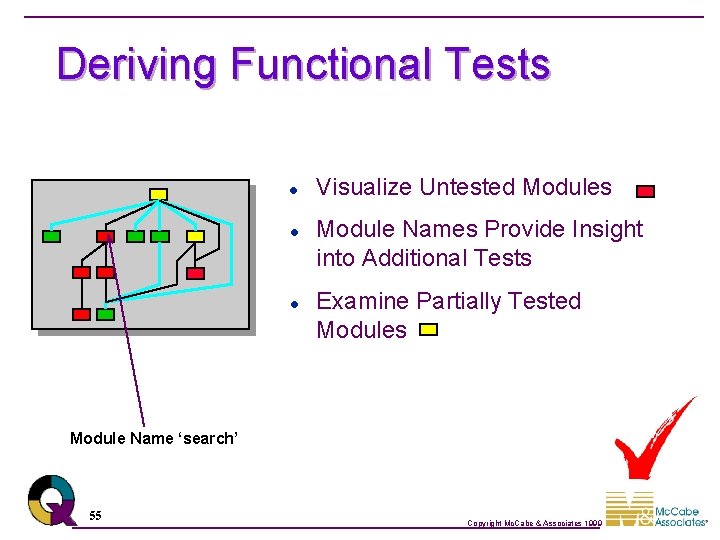

Deriving Functional Tests
• Visualize Untested Modules
• Module Names Provide Insight into Additional Tests
• Examine Partially Tested Modules
[Screenshot callout: module name 'search']

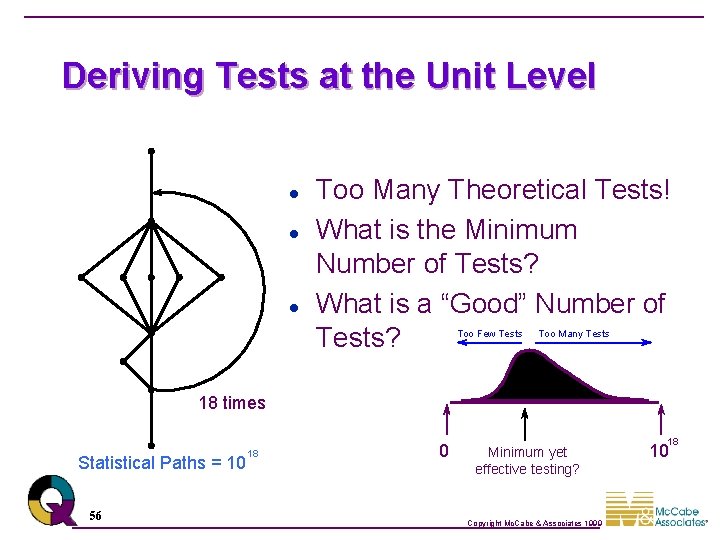

Deriving Tests at the Unit Level
• Too Many Theoretical Tests!
• What is the Minimum Number of Tests?
• What is a "Good" Number of Tests?
[Figure: a flowgraph whose loop runs 18 times, giving statistical paths = 10^18. Scale from 0 (too few tests) to 10^18 (too many tests). Minimum yet effective testing?]

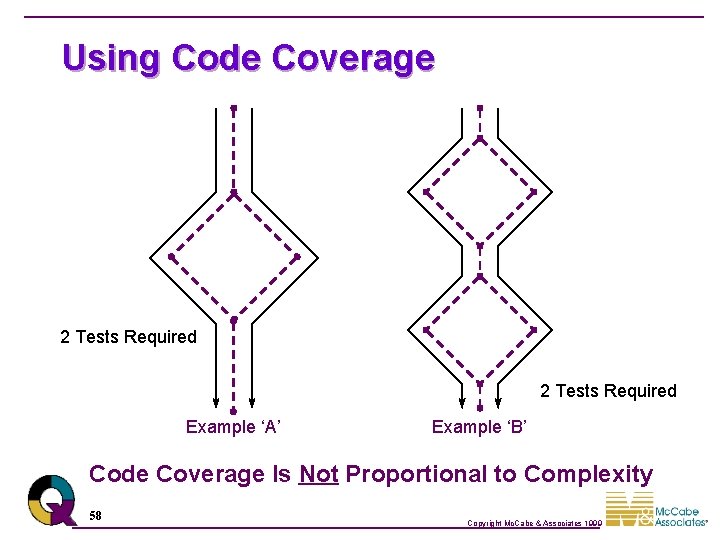

Code Coverage
[Figure: flowgraphs for Example 'A' and Example 'B']
Which Function Is More Complex?

Using Code Coverage
[Figure: flowgraphs for Example 'A' and Example 'B'; 2 tests required]
Code Coverage Is Not Proportional to Complexity

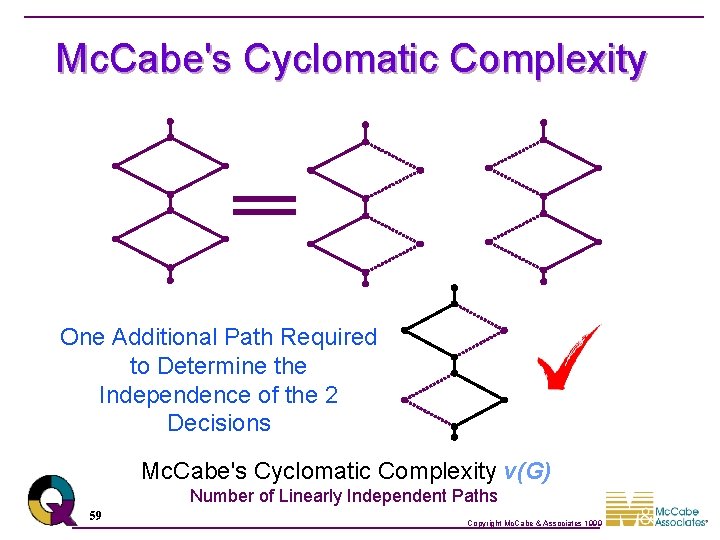

McCabe's Cyclomatic Complexity
• One Additional Path Required to Determine the Independence of the 2 Decisions
• McCabe's Cyclomatic Complexity v(G): Number of Linearly Independent Paths

Deriving Tests at the Unit Level
Complexity = 10
Minimum 10 Tests Will:
• Ensure Code Coverage
• Test Independence of Decisions
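A small sketch shows why the number of theoretical paths is impractical while v(G) stays manageable. It assumes a hypothetical module made of nine consecutive `if` statements (not a module from this deck), counts every entry-to-exit path, and compares that with v = e - n + 2.

```python
def sequential_ifs(k):
    """Flowgraph edges for k consecutive `if (c) stmt;` statements (hypothetical module)."""
    edges = []
    for i in range(k):
        decision, body, join = f"d{i}", f"s{i}", f"d{i + 1}"
        # Each decision either runs its body or skips straight to the next decision.
        edges += [(decision, body), (body, join), (decision, join)]
    return edges


def count_paths(edges, start, end):
    """Count distinct start-to-end paths in an acyclic flowgraph."""
    successors = {}
    for src, dst in edges:
        successors.setdefault(src, []).append(dst)
    memo = {}

    def walk(node):
        if node == end:
            return 1
        if node not in memo:
            memo[node] = sum(walk(nxt) for nxt in successors.get(node, []))
        return memo[node]

    return walk(start)


def cyclomatic(edges):
    """v = e - n + 2 for a single connected flowgraph."""
    nodes = {node for edge in edges for node in edge}
    return len(edges) - len(nodes) + 2


k = 9
graph = sequential_ifs(k)
total_paths = count_paths(graph, "d0", f"d{k}")
v = cyclomatic(graph)
print(f"all paths: {total_paths}, basis paths needed: {v}")
```

With nine decisions there are 512 distinct paths but only 10 basis paths, which matches the slide's "Complexity = 10, minimum 10 tests". Adding loops makes the path count grow far faster still.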

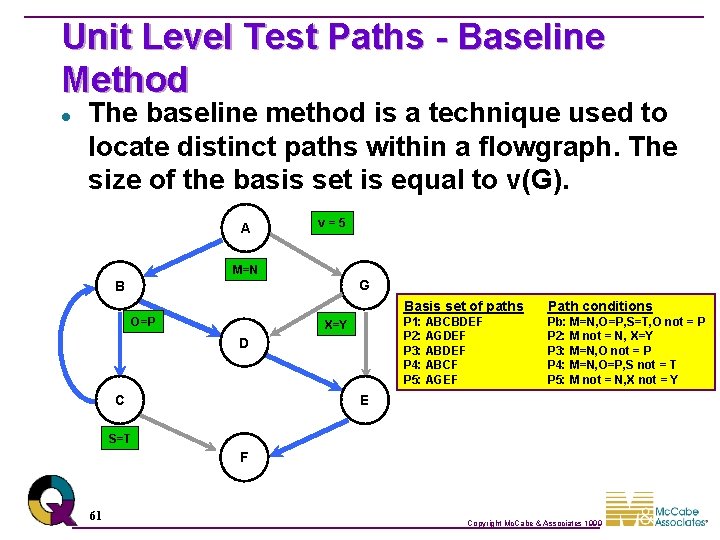

Unit Level Test Paths - Baseline Method
• The baseline method is a technique used to locate distinct paths within a flowgraph. The size of the basis set is equal to v(G).
[Flowgraph: nodes A to G, v = 5, with decisions M=N, O=P, S=T, and X=Y]
Basis set of paths and path conditions:
• P1: ABCBDEF (M=N, O=P, S=T, O not = P)
• P2: AGDEF (M not = N, X=Y)
• P3: ABDEF (M=N, O not = P)
• P4: ABCF (M=N, O=P, S not = T)
• P5: AGEF (M not = N, X not = Y)
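One way to check that a set of paths really is a basis is to treat each path as a vector of edge-traversal counts and confirm that the vectors are linearly independent and that there are v(G) of them. The sketch below does this for the five paths on the slide. The edge list is an assumption: it is reconstructed from the paths and path conditions, since the flowgraph image itself is not available.

```python
from fractions import Fraction

# Edges reconstructed from the slide's paths and conditions (an assumption).
EDGES = ["AB", "AG", "BC", "BD", "CB", "CF", "GD", "GE", "DE", "EF"]
BASIS = ["ABCBDEF", "AGDEF", "ABDEF", "ABCF", "AGEF"]


def edge_vector(path):
    """Count how many times the path traverses each edge of the flowgraph."""
    steps = [path[i:i + 2] for i in range(len(path) - 1)]
    assert all(step in EDGES for step in steps), f"path {path} leaves the graph"
    return [Fraction(steps.count(edge)) for edge in EDGES]


def rank(rows):
    """Matrix rank by Gauss-Jordan elimination over exact fractions."""
    rows = [row[:] for row in rows]
    found = 0
    for col in range(len(rows[0])):
        pivot = next((r for r in range(found, len(rows)) if rows[r][col] != 0), None)
        if pivot is None:
            continue
        rows[found], rows[pivot] = rows[pivot], rows[found]
        for r in range(len(rows)):
            if r != found and rows[r][col] != 0:
                factor = rows[r][col] / rows[found][col]
                rows[r] = [a - factor * b for a, b in zip(rows[r], rows[found])]
        found += 1
        if found == len(rows):
            break
    return found


nodes = set("".join(EDGES))
v = len(EDGES) - len(nodes) + 2
basis_rank = rank([edge_vector(path) for path in BASIS])
print(f"v(G) = {v}, independent paths in basis set = {basis_rank}")
```

Under the reconstructed edge list, v(G) is 10 - 7 + 2 = 5 and the five paths have rank 5, so no path in the set can be built from the others.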

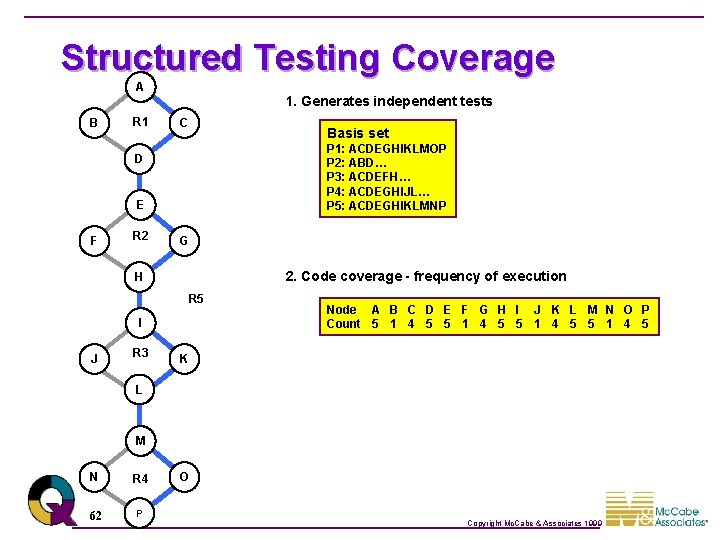

Structured Testing Coverage
[Flowgraph: nodes A to P, regions R1 to R5]
1. Generates independent tests
Basis set:
• P1: ACDEGHIKLMOP
• P2: ABD…
• P3: ACDEFH…
• P4: ACDEGHIJL…
• P5: ACDEGHIKLMNP
2. Code coverage - frequency of execution
[Table of execution counts per node, A to P; the counts that survive are A = 5, B = 1, C = 4, D = 5]

Other Baselines - Different Coverage
[Flowgraph: nodes A to P, regions R1 to R5]
1. Generates independent tests
Basis set:
• P1: ABDEFHIJLMNP
• P2: ACD…
• P3: ABDEGH…
• P4: ABDEGHIKL…
• P5: ABDEGHIKLMOP
2. Code coverage - frequency of execution
[Table of execution counts per node, A to P; the counts that survive are A = 5, B = 4, C = 1, D = 5]
Previous code coverage - frequency of execution
[Table of execution counts per node, A to P; the counts that survive are A = 5, B = 1, C = 4, D = 5]
Same number of tests; which coverage is more effective?

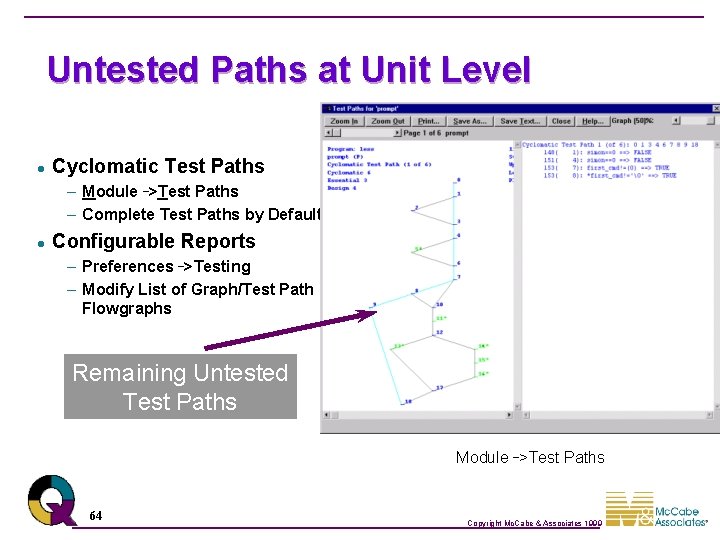

Untested Paths at Unit Level
• Cyclomatic Test Paths
  – Module -> Test Paths
  – Complete Test Paths by Default
• Configurable Reports
  – Preferences -> Testing
  – Modify List of Graph/Test Path Flowgraphs
[Screenshot: remaining untested test paths, from Module -> Test Paths]

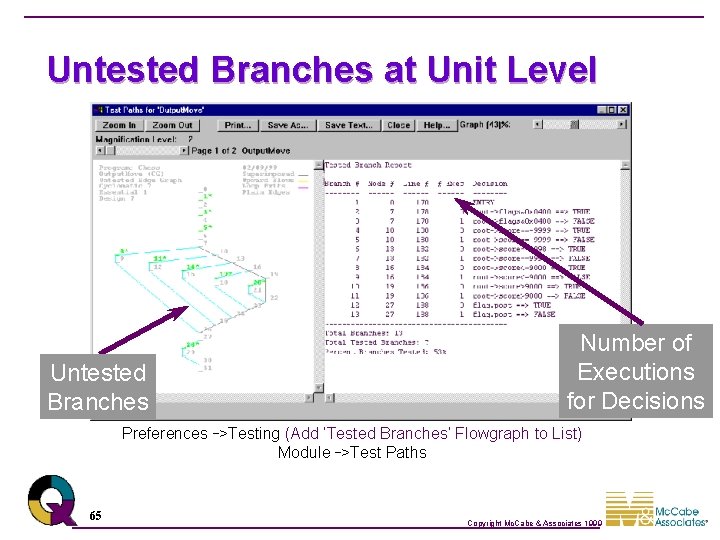

Untested Branches at Unit Level
• Preferences -> Testing (Add 'Tested Branches' Flowgraph to List)
• Module -> Test Paths
[Screenshot callouts: untested branches; number of executions for decisions]

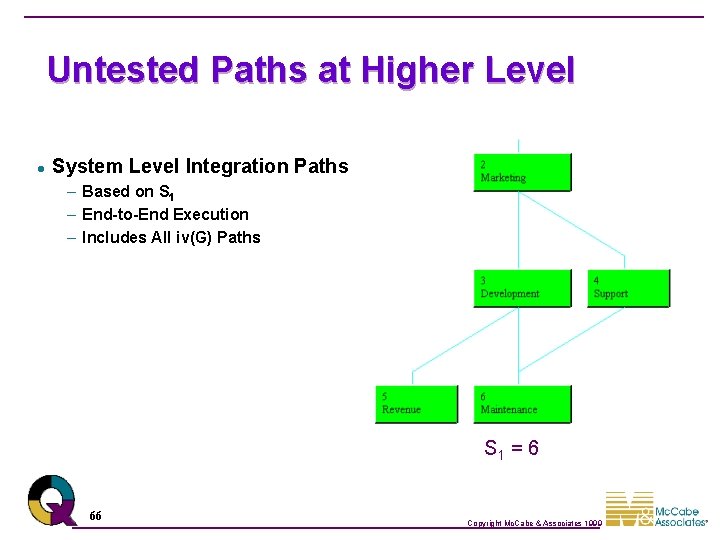

Untested Paths at Higher Level
• System Level Integration Paths
  – Based on S1
  – End-to-End Execution
  – Includes All iv(G) Paths
[Figure: S1 = 6]
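The slide gives S1 = 6 without showing how it is obtained. In McCabe's published work on design complexity, integration complexity is usually defined as S1 = (sum of iv(G) over all modules) - n + 1, where n is the number of modules. The sketch below assumes that definition; the module names and iv values are hypothetical and were chosen only so that the result matches the figure.

```python
def integration_complexity(module_iv):
    """S1 = sum of iv(G) over all modules - number of modules + 1 (assumed definition)."""
    return sum(module_iv.values()) - len(module_iv) + 1


# Hypothetical design: module name -> module design complexity iv(G).
design = {"main": 3, "parse": 2, "search": 2, "report": 2, "log": 1}

s1 = integration_complexity(design)
print(f"S1 = {s1} end-to-end integration test paths")
```

A module with iv = 1 makes no decisions about its calls, so it adds nothing to S1; only "managerial" modules increase the number of integration paths.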

Untested Paths at Higher Level
• System Level Integration Paths
  – Displayed Graphically
  – Textual Report
  – Theoretical Execution Paths
  – Show Only Untested Paths
[Figure: S1 = 6]

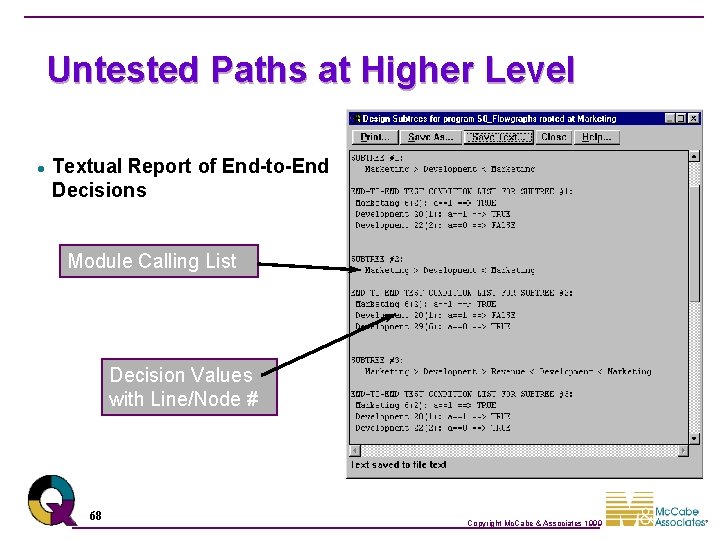

Untested Paths at Higher Level
• Textual Report of End-to-End Decisions
[Report callouts: module calling list; decision values with line/node number]

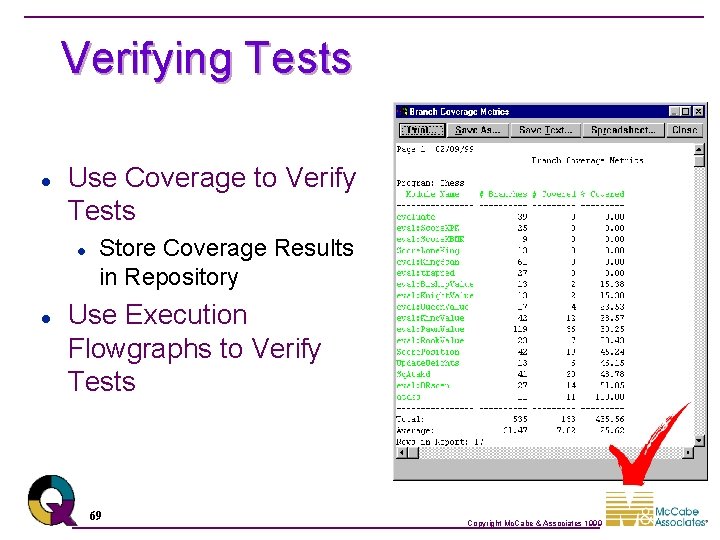

Verifying Tests
• Use Coverage to Verify Tests
• Store Coverage Results in Repository
• Use Execution Flowgraphs to Verify Tests

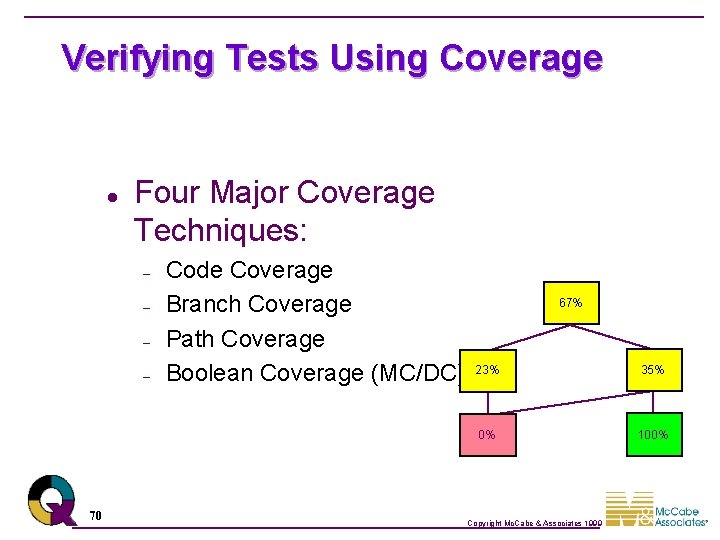

Verifying Tests Using Coverage
• Four Major Coverage Techniques:
  – Code Coverage
  – Branch Coverage
  – Path Coverage
  – Boolean Coverage (MC/DC)
[Chart: coverage percentages on a 0% to 100% scale]

When to Stop Testing
• Coverage to Assess Testing Completeness
  – Branch Coverage Reports
• Coverage Increments
  – How Much New Coverage for Each New Set of Tests?
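Coverage increments are easy to compute once each test's covered branches are known. The sketch below is a minimal illustration with invented test names and branch identifiers; it is not the format of a McCabe trace file.

```python
def coverage_increments(all_branches, tests):
    """For each test, in order, report new branches covered and cumulative percent."""
    covered = set()
    report = []
    for name, hit in tests:
        new = (hit & all_branches) - covered
        covered |= new
        percent = round(100 * len(covered) / len(all_branches))
        report.append((name, len(new), percent))
    return report


# Hypothetical module with ten branches and four tests.
branches = {f"b{i}" for i in range(1, 11)}
tests = [
    ("t1", {"b1", "b2", "b3", "b4"}),
    ("t2", {"b3", "b4", "b5"}),
    ("t3", {"b1", "b2"}),
    ("t4", {"b6", "b7", "b8"}),
]

report = coverage_increments(branches, tests)
for name, new, percent in report:
    print(f"{name}: +{new} branches, {percent}% cumulative")
```

Here `t3` adds no new coverage, which is the kind of duplicate test the deck suggests removing, and the curve flattening out is one signal for when to stop.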

When to Stop Testing
• Is All of the System Equally Important?
• Is All Code in an Application Used Equally?
  – 10% of Code Used 90% of Time
  – Remaining 90% Only Used 10% of Time
• Where Do We Need to Test Most?

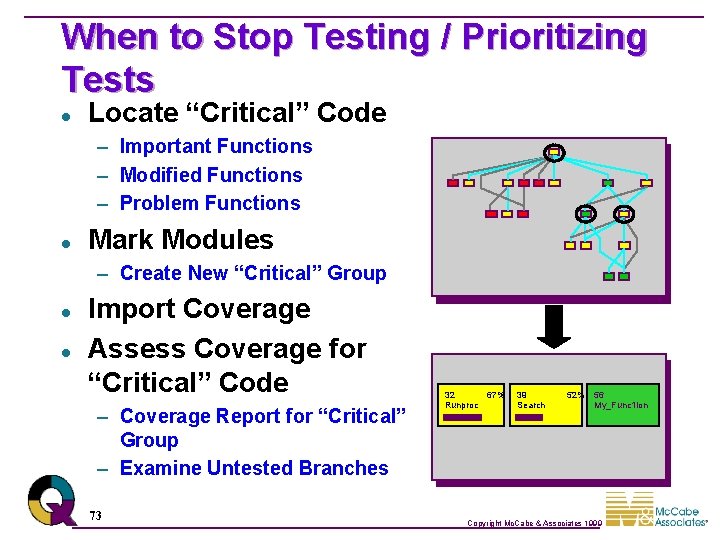

When to Stop Testing / Prioritizing Tests
• Locate "Critical" Code
  – Important Functions
  – Modified Functions
  – Problem Functions
• Mark Modules
  – Create New "Critical" Group
• Import Coverage
• Assess Coverage for "Critical" Code
  – Coverage Report for "Critical" Group
  – Examine Untested Branches
[Screenshot: Battlemap with per-module coverage percentages, including Runproc and Search]

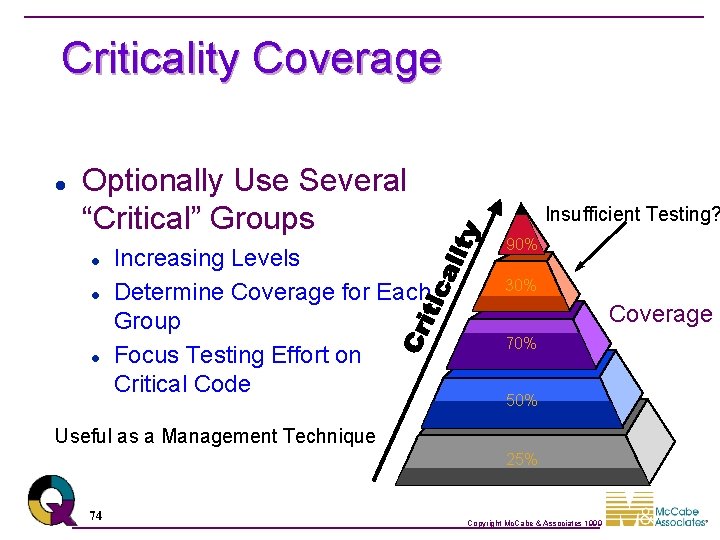

Criticality Coverage
• Optionally Use Several "Critical" Groups
  – Increasing Levels
  – Determine Coverage for Each Group
  – Focus Testing Effort on Critical Code
• Useful as a Management Technique
[Chart: coverage per group (90%, 70%, 50%, 30%, 25%). Insufficient testing?]
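Per-group coverage can be rolled up from per-module branch counts and compared with a target for each criticality level. The group names, module names, counts, and targets below are all hypothetical; the sketch only illustrates the bookkeeping.

```python
def group_coverage(groups, branch_counts):
    """Percent of branches tested for each named group of modules."""
    report = {}
    for group, modules in groups.items():
        tested = sum(branch_counts[m][0] for m in modules)
        total = sum(branch_counts[m][1] for m in modules)
        report[group] = round(100 * tested / total) if total else 0
    return report


def below_target(report, targets):
    """Groups whose coverage is under the target set for their criticality level."""
    return sorted(g for g, pct in report.items() if pct < targets.get(g, 0))


# Hypothetical data: module -> (tested branches, total branches).
branch_counts = {"billing": (9, 10), "search": (6, 10), "help": (3, 10), "about": (2, 10)}
groups = {"critical": ["billing", "search"], "normal": ["help", "about"]}
targets = {"critical": 90, "normal": 25}

report = group_coverage(groups, branch_counts)
flagged = below_target(report, targets)
print(report, "insufficient testing:", flagged)
```

In this example the critical group reaches 75% against a 90% target, so it is flagged even though the overall coverage figure might look acceptable.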

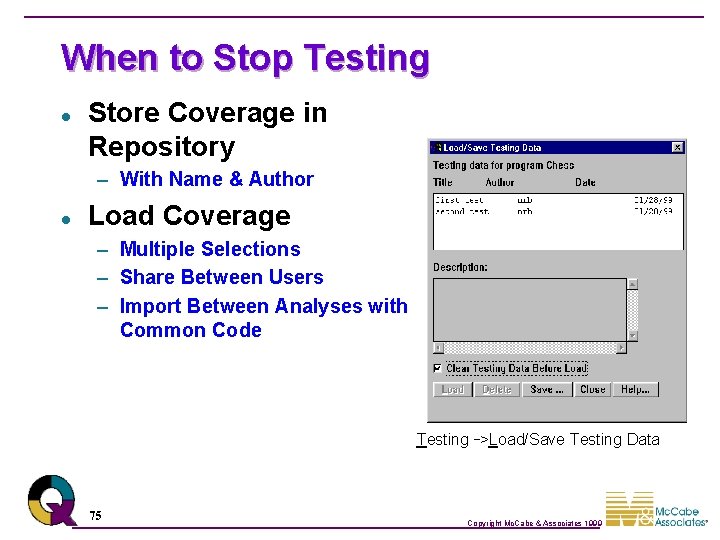

When to Stop Testing
• Store Coverage in Repository
  – With Name & Author
• Load Coverage
  – Multiple Selections
  – Share Between Users
  – Import Between Analyses with Common Code
[Menu: Testing -> Load/Save Testing Data]

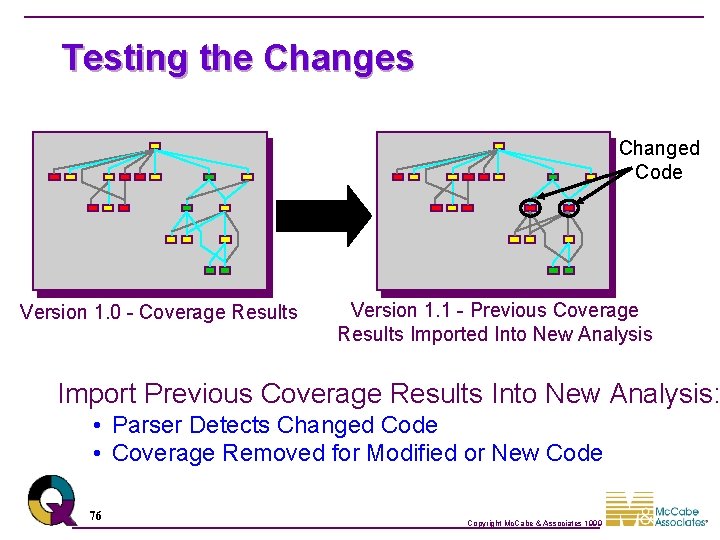

Testing the Changes
Import Previous Coverage Results Into New Analysis:
• Parser Detects Changed Code
• Coverage Removed for Modified or New Code
[Figure: Version 1.0 coverage results beside Version 1.1, where the previous coverage results have been imported into the new analysis and the changed code is marked]
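The carry-forward rule on this slide can be sketched as follows. McCabe IQ detects changes with its parser; this example substitutes a simple whitespace-insensitive text fingerprint, which is an assumption made for illustration. Module names, sources, and branch identifiers are invented.

```python
import hashlib


def fingerprint(source):
    """Hash of the source with blank lines and leading/trailing whitespace ignored."""
    normalized = "\n".join(line.strip() for line in source.splitlines() if line.strip())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def carry_forward(old_sources, new_sources, old_coverage):
    """Keep previous coverage for unchanged modules; clear it for modified or new ones."""
    carried = {}
    for module, source in new_sources.items():
        unchanged = (
            module in old_sources
            and fingerprint(old_sources[module]) == fingerprint(source)
        )
        carried[module] = set(old_coverage.get(module, ())) if unchanged else set()
    return carried


# Hypothetical version 1.0 and 1.1 of a small code base.
v1_0 = {
    "search": "int search(int k) {\n  return k + 1;\n}",
    "report": "void report(void) {\n  print_all();\n}",
}
v1_1 = {
    "search": "int search(int k) {\n    return k + 1;\n}\n",  # re-indented only
    "report": "void report(void) {\n  print_summary();\n}",   # modified
    "export": "void export_csv(void) {}",                      # new
}
coverage_1_0 = {"search": {"b1", "b2"}, "report": {"b1"}}

carried = carry_forward(v1_0, v1_1, coverage_1_0)
print(carried)
```

Only `search` keeps its earlier coverage; `report` and `export` start from zero, so retesting effort concentrates on the code that changed.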

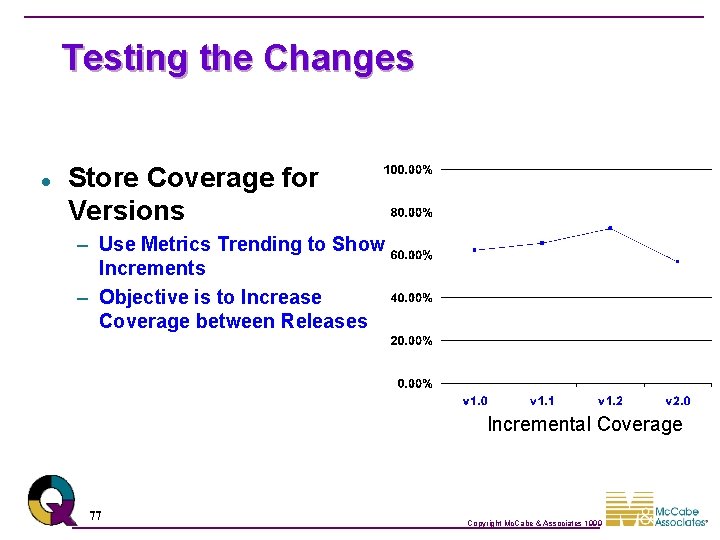

Testing the Changes
• Store Coverage for Versions
  – Use Metrics Trending to Show Increments
  – Objective is to Increase Coverage between Releases
[Chart: incremental coverage]

McCabe Change
• Marking Changed Code
  – Reports Showing Change Status
  – Coverage Reports for Changed Modules
• Configurable Change Detection
  – Standard Metrics
  – "String Comparison"
[Screenshot: changed code]

Manipulating Coverage
• Addition/Subtraction of slices
  – The technique: [figure not available]

Slice Manipulation
Slice Operations
• Manipulate Slices Using Set Theory
• Export Slice to File
  – List of Executed Lines
• Must be in Slice Mode
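If a slice is treated as the set of source lines executed by one functional transaction, the slice operations map directly onto set operations. The sketch below uses Python sets with invented file names and line numbers; the export format is likewise an assumption, not the McCabe Slice file format.

```python
# Hypothetical slices: (file, line) pairs executed by each functional transaction.
login = {("auth.c", 12), ("auth.c", 13), ("session.c", 40), ("log.c", 7)}
checkout = {("cart.c", 88), ("session.c", 40), ("log.c", 7)}

shared = login & checkout           # code common to both transactions
unique_to_login = login - checkout  # subtraction: code only login executes
combined = login | checkout         # addition: code executed by either


def export_slice(lines):
    """Render a slice as a sorted list of executed lines, one 'file:line' per row."""
    return "\n".join(f"{file}:{line}" for file, line in sorted(lines))


print(export_slice(unique_to_login))
```

Subtracting one slice from another isolates the code unique to a transaction, which is the starting point for extracting a business rule or targeting a test.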

Review
• McCabe IQ Products
• Metrics
  – cyclomatic complexity, v
  – essential complexity, ev
  – module design complexity, iv
• Testing
  – Deriving Tests
  – Verifying Tests
  – Prioritizing Tests
  – When is testing complete?
• Managing Change
