
Lecture 2: BSP & MapReduce
CS 848: Models and Applications of Distributed Data Processing Systems
Wed, Sep 14th 2016

Volunteers For Next Monday?
1. Pig Latin: High-level Dataflow Language on MapReduce
2. Hive: Data Warehouse on MapReduce
3. Pegasus: Graph Processing System on MapReduce

Outline For Today
1. Finish the Overview
2. Valiant’s 1990 BSP Paper
3. MapReduce


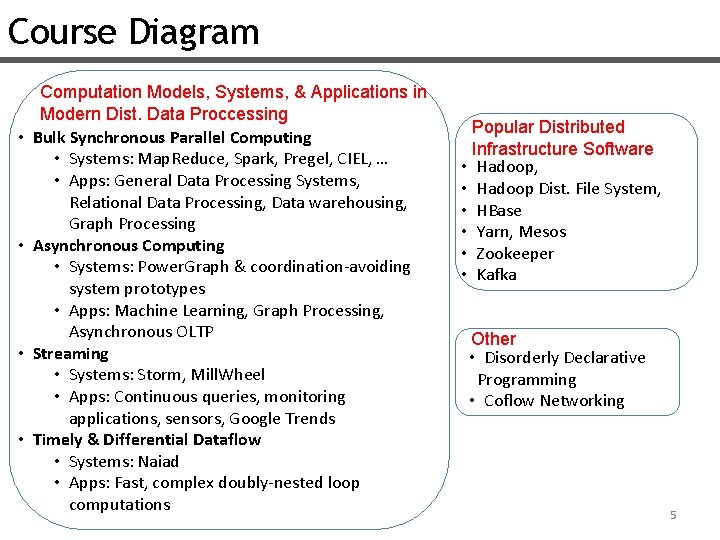

Course Diagram: Computation Models, Systems, & Applications in Modern Dist. Data Processing
- Bulk Synchronous Parallel Computing
  - Systems: MapReduce, Spark, Pregel, CIEL, …
  - Apps: General Data Processing Systems, Relational Data Processing, Data Warehousing, Graph Processing
- Asynchronous Computing
  - Systems: PowerGraph & coordination-avoiding system prototypes
  - Apps: Machine Learning, Graph Processing, Asynchronous OLTP
- Streaming
  - Systems: Storm, MillWheel
  - Apps: Continuous queries, monitoring applications, sensors, Google Trends
- Timely & Differential Dataflow
  - Systems: Naiad
  - Apps: Fast, complex doubly-nested loop computations
- Popular Distributed Infrastructure Software
  - Hadoop, Hadoop Dist. File System, HBase
  - Yarn, Mesos
  - ZooKeeper
  - Kafka
- Other
  - Disorderly Declarative Programming
  - Coflow Networking

Other Topics
- Programming Languages: Disorderly Declarative Programming
- Networks: Coflow Networking

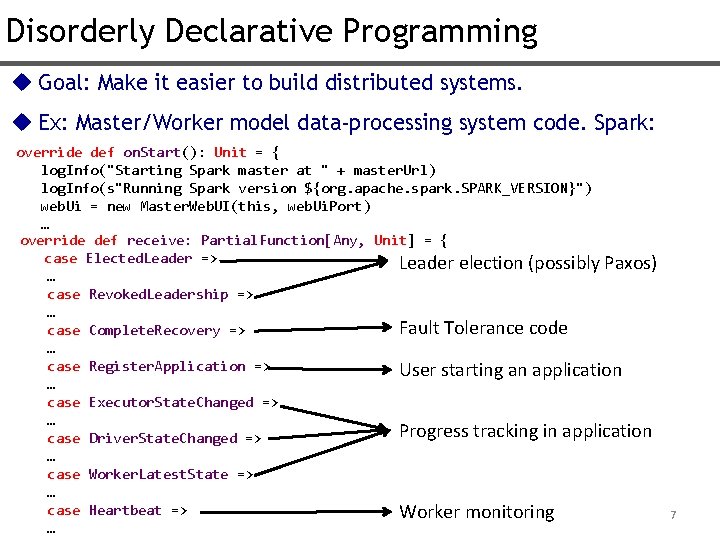

Disorderly Declarative Programming
- Goal: Make it easier to build distributed systems.
- Ex: Master/Worker model data-processing system code. Spark:

    override def onStart(): Unit = {
      logInfo("Starting Spark master at " + masterUrl)
      logInfo(s"Running Spark version ${org.apache.spark.SPARK_VERSION}")
      webUi = new MasterWebUI(this, webUiPort)
      …
    override def receive: PartialFunction[Any, Unit] = {
      case ElectedLeader => …          // leader election (possibly Paxos)
      case RevokedLeadership => …      // fault-tolerance code
      case CompleteRecovery => …
      case RegisterApplication => …    // user starting an application
      case ExecutorStateChanged => …   // progress tracking in application
      case DriverStateChanged => …
      case WorkerLatestState => …
      case Heartbeat => …              // worker monitoring
      …

Problems w/Java/C++/Scala in Distr. Systems
- Handling of (many) different states of the system is written one by one
- (Very) difficult to write & understand the overall protocol
- Difficult to test & debug

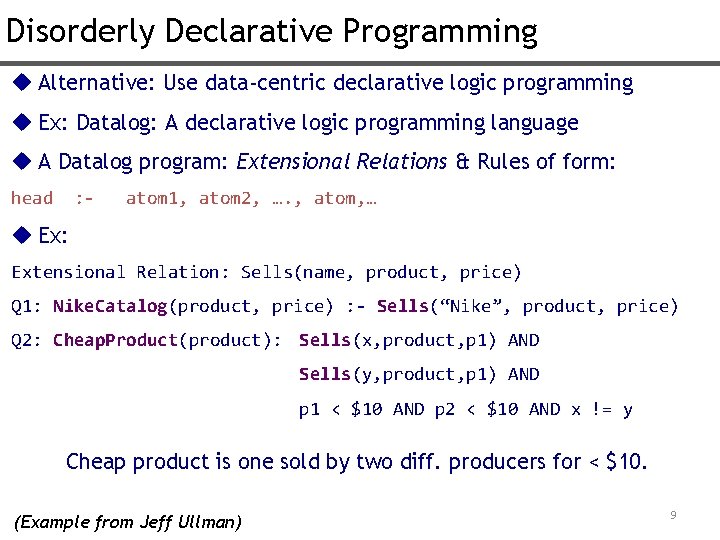

Disorderly Declarative Programming
- Alternative: Use data-centric declarative logic programming
- Ex: Datalog: A declarative logic programming language
- A Datalog program: Extensional Relations & Rules of the form:

    head :- atom1, atom2, …, atomn

- Ex: Extensional Relation: Sells(name, product, price)

    Q1: NikeCatalog(product, price) :- Sells(“Nike”, product, price)
    Q2: CheapProduct(product) :- Sells(x, product, p1) AND Sells(y, product, p2) AND p1 < $10 AND p2 < $10 AND x != y

- A cheap product is one sold by two different producers for < $10. (Example from Jeff Ullman)
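To make the two Datalog rules concrete, here is a minimal sketch that evaluates them with Python set comprehensions. The rows of `sells` are made-up sample data, and the variable names are illustrative; a real Datalog engine would evaluate the rules very differently.

```python
# Extensional relation Sells(name, product, price); the rows are made-up sample data.
sells = {
    ("Nike", "shoe", 80),
    ("Nike", "sock", 8),
    ("Adidas", "sock", 9),
    ("Puma", "cap", 7),
}

# Q1: NikeCatalog(product, price) :- Sells("Nike", product, price)
nike_catalog = {(product, price) for (name, product, price) in sells if name == "Nike"}

# Q2: CheapProduct(product) :- Sells(x, product, p1), Sells(y, product, p2),
#                              p1 < 10, p2 < 10, x != y
cheap_product = {
    prod1
    for (x, prod1, p1) in sells
    for (y, prod2, p2) in sells
    if prod1 == prod2 and p1 < 10 and p2 < 10 and x != y
}

print(sorted(nike_catalog))
print(sorted(cheap_product))
```

Each rule body becomes a join over the relation with a filter, and the rule head becomes the projected tuple. The cap is cheap but has only one seller, so it does not qualify under Q2.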

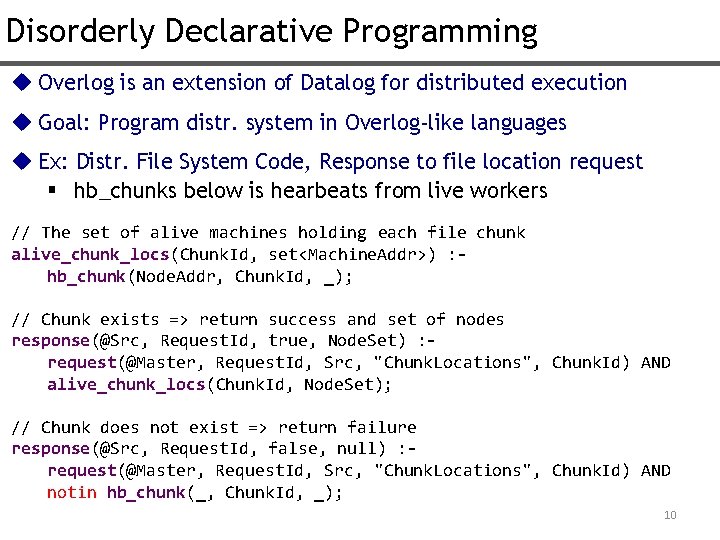

Disorderly Declarative Programming
- Overlog is an extension of Datalog for distributed execution
- Goal: Program distr. systems in Overlog-like languages
- Ex: Distr. File System code, response to a file location request
  - hb_chunk below holds heartbeats from live workers

    // The set of alive machines holding each file chunk
    alive_chunk_locs(ChunkId, set<NodeAddr>) :-
        hb_chunk(NodeAddr, ChunkId, _);

    // Chunk exists => return success and set of nodes
    response(@Src, RequestId, true, NodeSet) :-
        request(@Master, RequestId, Src, "ChunkLocations", ChunkId) AND
        alive_chunk_locs(ChunkId, NodeSet);

    // Chunk does not exist => return failure
    response(@Src, RequestId, false, null) :-
        request(@Master, RequestId, Src, "ChunkLocations", ChunkId) AND
        notin hb_chunk(_, ChunkId, _);
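For comparison, the same chunk-location logic can be sketched imperatively. This is only an illustration of what the three rules compute, not how an Overlog runtime executes them; the heartbeat facts, the meaning of the third `hb_chunk` field, and the function names are assumptions.

```python
from collections import defaultdict

# hb_chunk(NodeAddr, ChunkId, _): heartbeats from live workers (sample facts;
# the third field is ignored by the rules, so its content here is arbitrary).
hb_chunk = [("node1", "c1", 64), ("node2", "c1", 64), ("node3", "c2", 32)]

def alive_chunk_locs(heartbeats):
    """Group heartbeat facts into ChunkId -> set of node addresses."""
    locs = defaultdict(set)
    for node_addr, chunk_id, _ in heartbeats:
        locs[chunk_id].add(node_addr)
    return dict(locs)

def respond(request, heartbeats):
    """Answer a "ChunkLocations" request the way the two response rules do."""
    request_id, src, kind, chunk_id = request
    if kind != "ChunkLocations":
        raise ValueError("only ChunkLocations requests are sketched here")
    locs = alive_chunk_locs(heartbeats)
    if chunk_id in locs:                      # chunk exists => success + node set
        return (src, request_id, True, locs[chunk_id])
    return (src, request_id, False, None)     # notin hb_chunk => failure

found = respond((1, "client", "ChunkLocations", "c1"), hb_chunk)
missing = respond((2, "client", "ChunkLocations", "c9"), hb_chunk)
```

The imperative version already needs explicit grouping and branching; the declarative rules state only the conditions under which each response tuple exists.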

Disorderly Declarative Programming
You can really implement distributed systems with declarative logic programming!!!
- Ex: distributed file systems, distributed locking services, distributed data processing systems (e.g., MapReduce)
- Advantages:
  - Much more concise! Pseudocode-like
  - Easier to test/debug

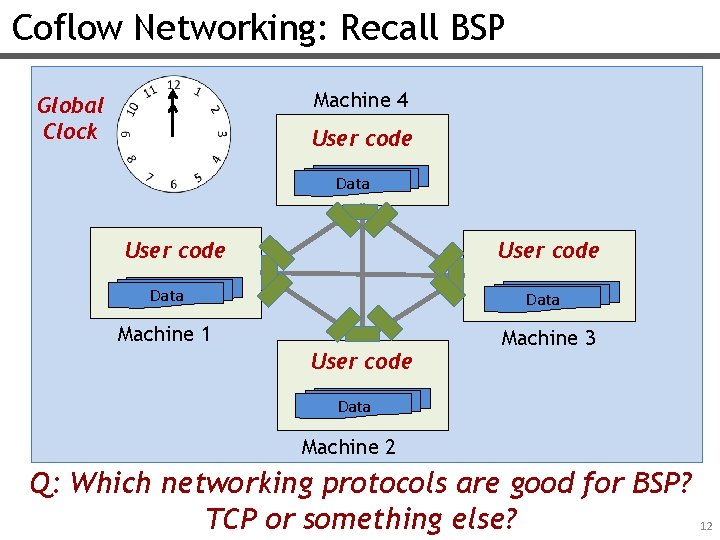

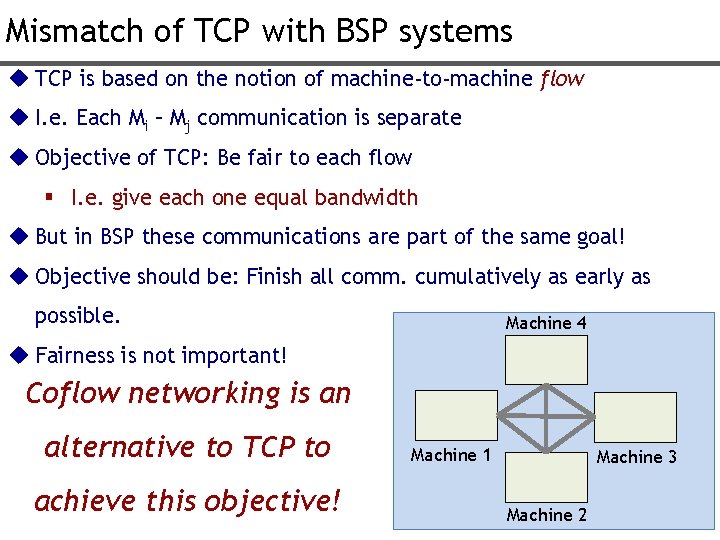

Coflow Networking: Recall BSP
[Figure: Machines 1 to 4, each holding user code and data, synchronized by a global clock.]
Q: Which networking protocols are good for BSP? TCP or something else?

Mismatch of TCP with BSP systems
- TCP is based on the notion of a machine-to-machine flow
  - I.e., each Mi – Mj communication is separate
- Objective of TCP: Be fair to each flow
  - I.e., give each one equal bandwidth
- But in BSP these communications are part of the same goal!
- Objective should be: Finish all comm. cumulatively as early as possible.
- Fairness is not important!
Coflow networking is an alternative to TCP to achieve this objective!
[Figure: Machines 1 to 4 exchanging data with one another.]

Outline For Today
1. Finish the Overview
2. Valiant’s 1990 BSP Paper
3. MapReduce

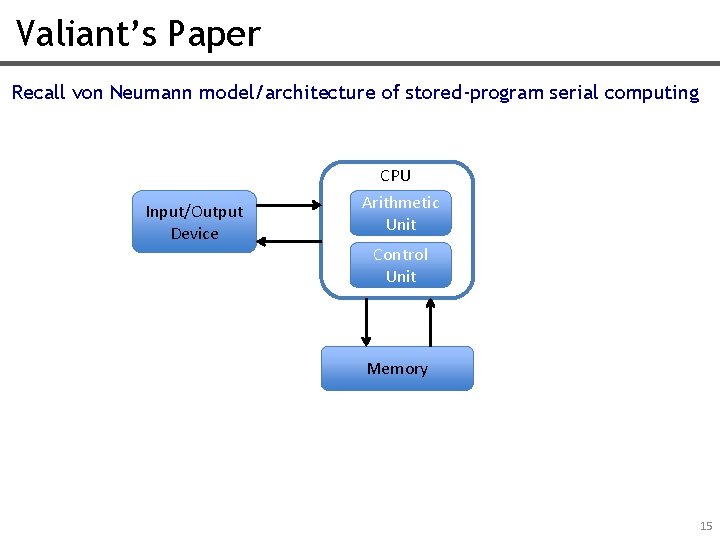

Valiant’s Paper
Recall the von Neumann model/architecture of stored-program serial computing.
[Figure: a CPU containing an Arithmetic Unit and a Control Unit, connected to Memory and to an Input/Output Device.]

Why was the von Neumann Model So Successful?
1. Universality: Can execute any program (perform any computation).
2. Efficiently realizable in hardware, w/ different technologies.
3. Easy to understand for software developers.
“Bridging gap between hardware & software”, i.e., a good conceptual map between:
- What the hardware is “roughly” doing.
- What the software can “roughly” assume hardware is doing.
**Enables software and hardware to be developed independently and transferred easily!**

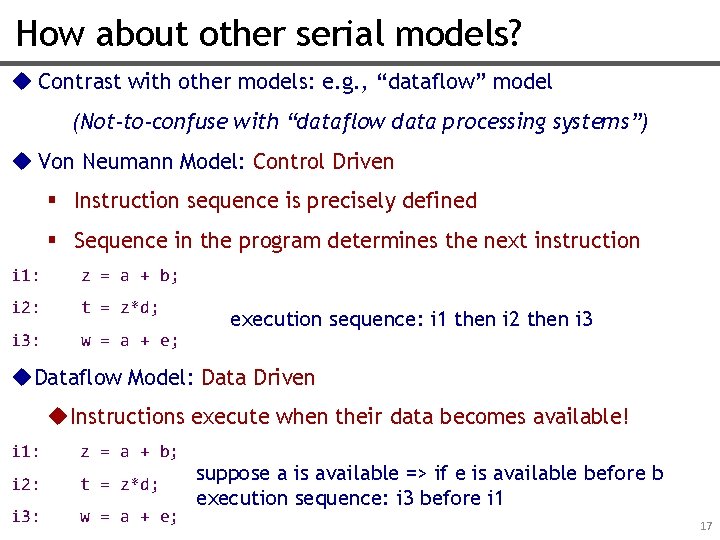

How about other serial models?
- Contrast with other models: e.g., the “dataflow” model (not to be confused with “dataflow data processing systems”)
- Von Neumann Model: Control Driven
  - Instruction sequence is precisely defined
  - Sequence in the program determines the next instruction

    i1: z = a + b;
    i2: t = z*d;
    i3: w = a + e;
    execution sequence: i1 then i2 then i3

- Dataflow Model: Data Driven
  - Instructions execute when their data becomes available!

    i1: z = a + b;
    i2: t = z*d;
    i3: w = a + e;
    suppose a is available => if e is available before b
    execution sequence: i3 before i1
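The difference between the two execution orders can be sketched with a toy scheduler. The instruction encoding and the `arrival_order` parameter are illustrative assumptions, not part of either model's formal definition.

```python
# Each instruction: (name, output variable, input variables).
program = [
    ("i1", "z", ("a", "b")),
    ("i2", "t", ("z", "d")),
    ("i3", "w", ("a", "e")),
]

def control_driven_order(program):
    """von Neumann style: program order alone fixes the execution sequence."""
    return [name for name, _, _ in program]

def data_driven_order(program, arrival_order):
    """Dataflow style: an instruction fires as soon as all its inputs exist."""
    available, fired = set(), []
    pending = list(program)
    for value in arrival_order:
        available.add(value)
        progress = True
        while progress:  # firing one instruction may enable another
            progress = False
            for instr in list(pending):
                name, out, inputs = instr
                if all(v in available for v in inputs):
                    fired.append(name)
                    available.add(out)
                    pending.remove(instr)
                    progress = True
    return fired

print(control_driven_order(program))
print(data_driven_order(program, ["a", "e", "b", "d"]))
```

With `e` arriving before `b`, the data-driven scheduler fires `i3` ahead of `i1`, while the control-driven order never changes.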

Shortcomings of Other Models
- Were not efficiently implementable on hardware
- Or were difficult to understand for software designers.

Valiant’s Question
What is the von Neumann model for parallel computing?
Finding it, Valiant thinks, can enable parallel software and hardware to be developed independently and transferred easily!

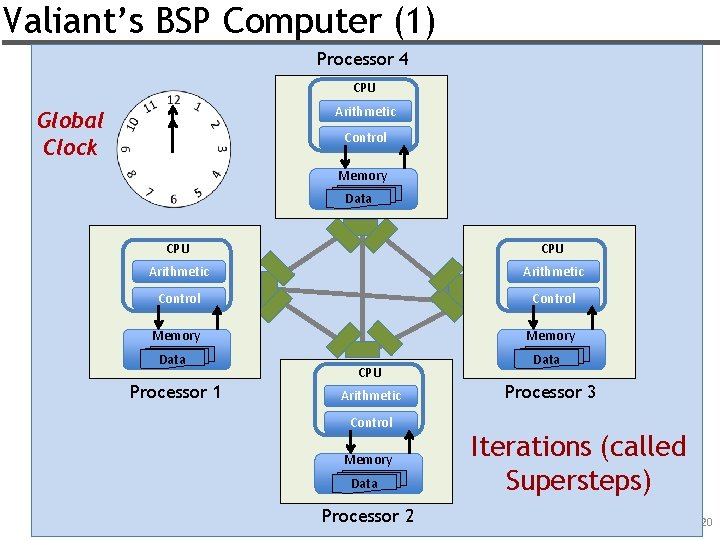

Valiant’s BSP Computer (1)
[Figure: Processors 1 to 4, each with a CPU (arithmetic and control units), memory, and data, synchronized by a global clock. Iterations are called supersteps.]

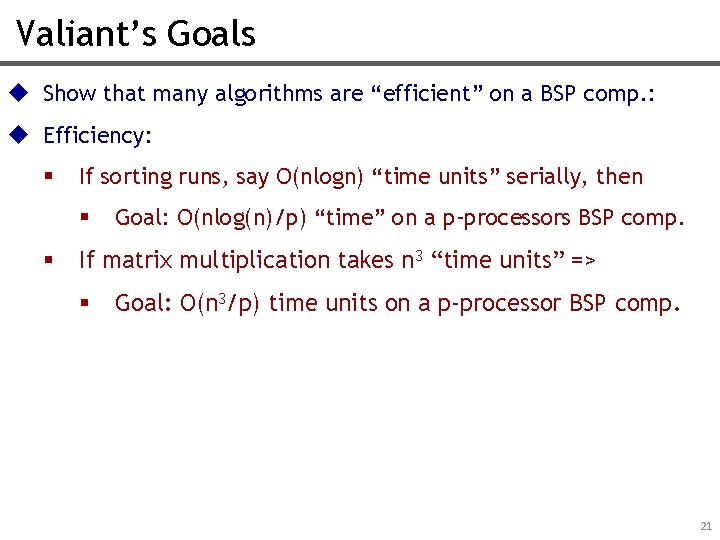

Valiant’s Goals
- Show that many algorithms are “efficient” on a BSP computer.
- Efficiency:
  - If sorting takes, say, O(n log n) “time units” serially, then
    - Goal: O(n log(n)/p) “time units” on a p-processor BSP computer.
  - If matrix multiplication takes O(n³) “time units” serially, then
    - Goal: O(n³/p) “time units” on a p-processor BSP computer.

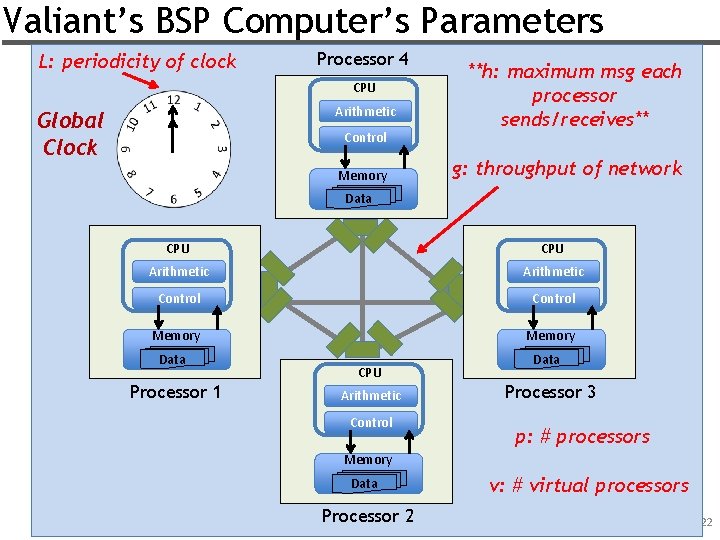

Valiant’s BSP Computer’s Parameters
- L: periodicity of the global clock
- **h: maximum number of messages each processor sends/receives**
- g: throughput of the network
- p: # processors
- v: # virtual processors
[Figure: Processors 1 to 4, each with a CPU (arithmetic and control units), memory, and data, synchronized by a global clock.]
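One way to see how these parameters interact is the superstep cost accounting commonly used in the BSP literature: local work, plus g times the largest number of messages any processor sends or receives, plus the synchronization period L. The sketch below assumes that formula and treats g as the time charged per message; the function name and sample numbers are illustrative and are not taken from the slides.

```python
def superstep_cost(work, sent, received, g, L):
    """Cost of one superstep under the assumed accounting w + g*h + L.

    work[i], sent[i], received[i] are per-processor counts for this superstep.
    """
    w = max(work)                          # slowest processor's local computation
    h = max(max(sent), max(received))      # largest number of messages at any processor
    return w + g * h + L

# Three processors, hypothetical counts.
cost = superstep_cost(work=[100, 80, 120], sent=[10, 5, 8], received=[7, 12, 4], g=2, L=50)
print(cost)
```

Under this accounting, a computation is efficient when the work term dominates, which is the intuition behind needing enough parallel slack per processor.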

Valiant’s Conditions For Efficiency
- Makes observations such as:
  - For efficiency v has to be O(p log p).
  - For efficiency L > O(log p) is enough.
  - …
- Essentially argues that if throughput is large enough and we have enough “slack parallelism”, we get efficiency for different computations.

Recall: Success of von Neumann Model
1. Universality: A “universal” machine, can execute any program
2. Efficiently realizable in hardware, w/ different technologies.
3. Easy to understand for software developers

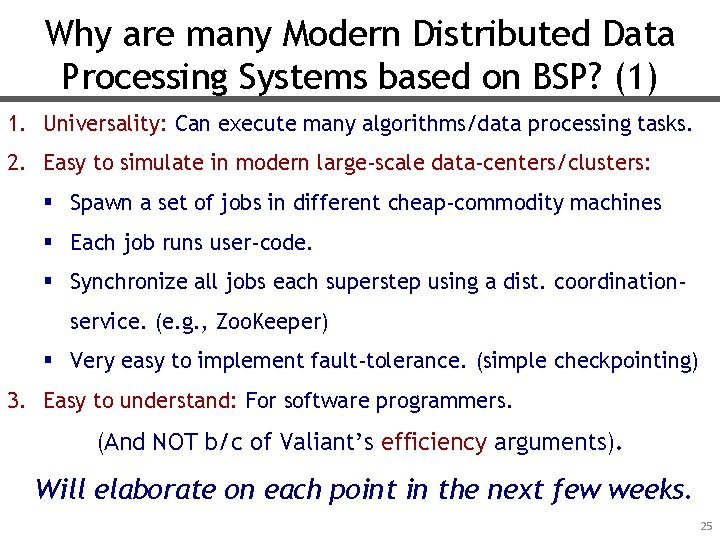

Why are many Modern Distributed Data Processing Systems based on BSP? (1)
1. Universality: Can execute many algorithms/data processing tasks.
2. Easy to simulate in modern large-scale data centers/clusters:
   - Spawn a set of jobs on different cheap commodity machines.
   - Each job runs user code.
   - Synchronize all jobs each superstep using a dist. coordination service (e.g., ZooKeeper).
   - Very easy to implement fault tolerance (simple checkpointing).
3. Easy to understand: For software programmers.
(And NOT b/c of Valiant’s efficiency arguments.)
Will elaborate on each point in the next few weeks.
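The superstep structure can be sketched on a single machine with threads. In this illustration a `threading.Barrier` stands in for the distributed coordination service, and `run_bsp` and `ring_sum` are hypothetical names invented for the example; real systems add partitioning, networking, and checkpointing on top.

```python
import threading

def run_bsp(num_workers, num_supersteps, compute):
    """Run compute(worker_id, superstep, inbox) -> {dest_worker: message}."""
    # inboxes[s][w] holds the messages worker w reads at the start of superstep s.
    inboxes = [[[] for _ in range(num_workers)] for _ in range(num_supersteps + 1)]
    barrier = threading.Barrier(num_workers)  # stand-in for a coordination service
    lock = threading.Lock()

    def worker(wid):
        for step in range(num_supersteps):
            outgoing = compute(wid, step, inboxes[step][wid])
            with lock:
                for dest, message in outgoing.items():
                    inboxes[step + 1][dest].append(message)
            barrier.wait()  # global synchronization ends the superstep

    threads = [threading.Thread(target=worker, args=(w,)) for w in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return inboxes[num_supersteps]

def ring_sum(wid, step, inbox, num_workers=3):
    """Hypothetical user code: pass own id plus everything received to the right."""
    return {(wid + 1) % num_workers: wid + sum(inbox)}

final_inboxes = run_bsp(3, 2, ring_sum)
print(final_inboxes)
```

Messages sent during superstep s become visible only in superstep s + 1, and no worker starts the next superstep until every worker has reached the barrier.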

Outline For Today
1. Finish the Overview
2. Valiant’s 1990 BSP Paper
3. MapReduce

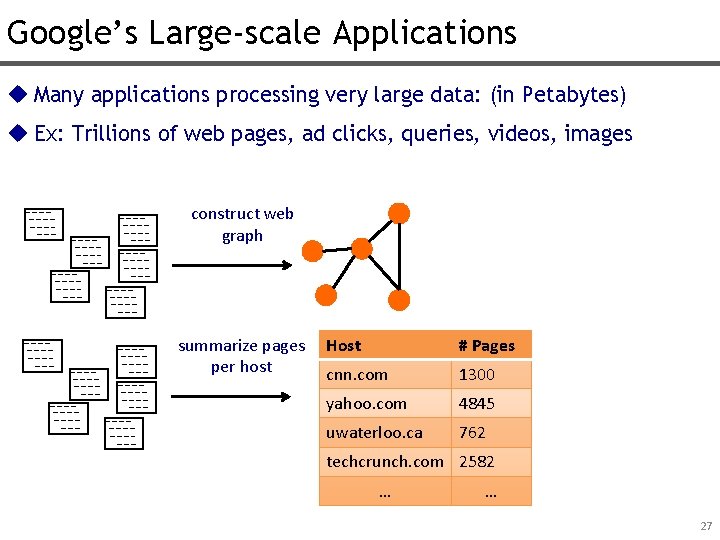

Google’s Large-scale Applications
- Many applications processing very large data (in petabytes)
- Ex: Trillions of web pages, ad clicks, queries, videos, images
  - Construct the web graph
  - Summarize pages per host:

| Host | # Pages |
| --- | --- |
| cnn.com | 1300 |
| yahoo.com | 4845 |
| uwaterloo.ca | 762 |
| techcrunch.com | 2582 |
| … | … |

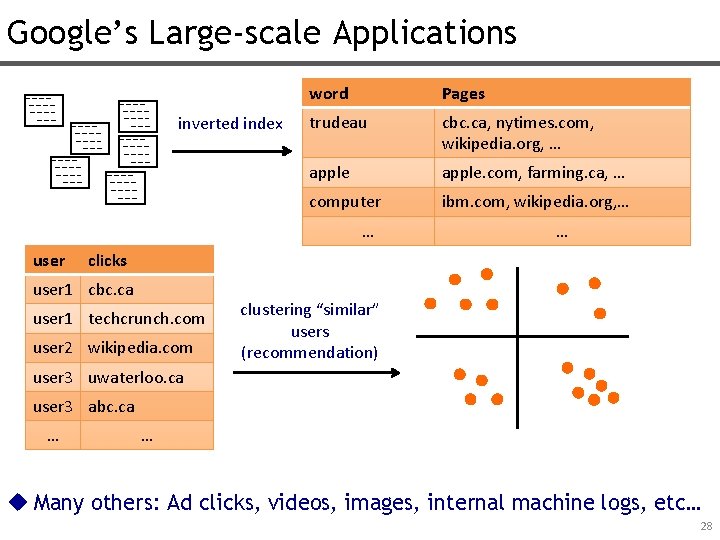

Google’s Large-scale Applications
- Inverted index:

| word | Pages |
| --- | --- |
| trudeau | cbc.ca, nytimes.com, wikipedia.org, … |
| … | apple.com, farming.ca, … |
| computer | ibm.com, wikipedia.org, … |
| … | … |

- Clustering “similar” users (recommendation):

| user | clicks |
| --- | --- |
| user1 | cbc.ca |
| user1 | techcrunch.com |
| user2 | wikipedia.com |
| user3 | uwaterloo.ca |
| user3 | abc.ca |
| … | … |

- Many others: Ad clicks, videos, images, internal machine logs, etc.

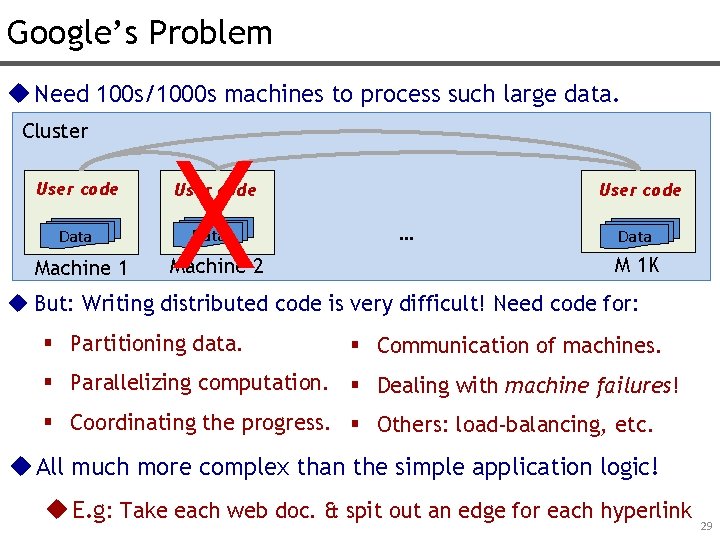

Google’s Problem
- Need 100s/1000s of machines to process such large data.
[Figure: a cluster of Machines 1, 2, …, 1K, each holding user code and data; Machine 1 is marked failed (X).]
- But: Writing distributed code is very difficult! Need code for:
  - Partitioning data.
  - Communication between machines.
  - Parallelizing computation.
  - Dealing with machine failures!
  - Coordinating the progress.
  - Others: load balancing, etc.
- All much more complex than the simple application logic!
- E.g.: Take each web doc. & spit out an edge for each hyperlink

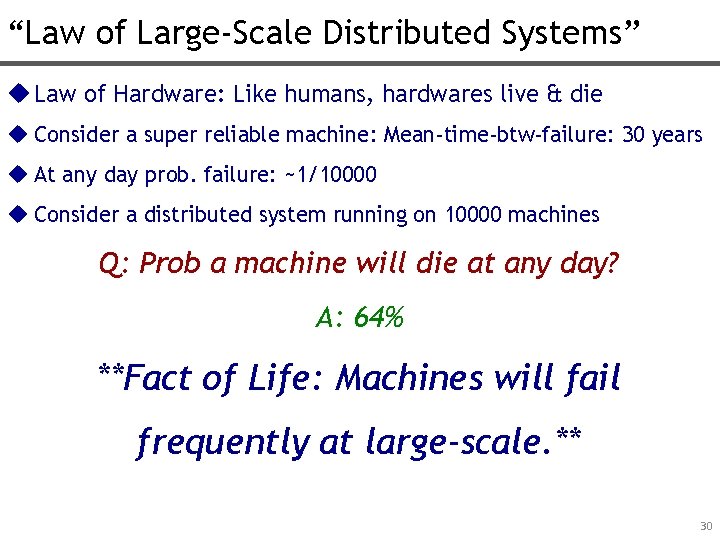

“Law of Large-Scale Distributed Systems”
- Law of Hardware: Like humans, hardware lives & dies
- Consider a super reliable machine: mean time between failures of 30 years
- On any given day, prob. of failure: ~1/10000
- Consider a distributed system running on 10000 machines
- Q: Prob. that at least one machine dies on any given day? A: 1 − (1 − 1/10000)^10000 ≈ 63%
**Fact of Life: Machines will fail frequently at large scale.**
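The arithmetic behind this slide can be checked directly. The sketch below assumes machine failures are independent and computes the chance that at least one of 10000 machines fails on a given day, once with the rounded per-day probability of 1/10000 and once with the unrounded 1/(30 × 365). Variable names are illustrative.

```python
machines = 10_000

# Rounded per-day failure probability used on the slide.
p_fail_rounded = 1 / 10_000
# Unrounded value implied by a 30-year mean time between failures.
p_fail_exact = 1 / (30 * 365)

def prob_any_failure(p_fail, n):
    """P(at least one of n independent machines fails) = 1 - P(none fails)."""
    return 1 - (1 - p_fail) ** n

p_any_rounded = prob_any_failure(p_fail_rounded, machines)
p_any_exact = prob_any_failure(p_fail_exact, machines)
print(round(p_any_rounded, 3), round(p_any_exact, 3))
```

With the rounded probability the result is about 0.63, close to 1 − 1/e; with the unrounded one it is about 0.60. Either way, a failure somewhere in the cluster is more likely than not on any given day.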

Google’s Previous Solution
- Special-purpose distributed program for each application.
- Problem: Not scalable
  - Difficult for many engineers to write parallel code
  - Development speed: Thousands of lines for simple applications.
  - Code repetition for common tasks:
    - Data partitioning
    - Code parallelizing, fault tolerance, etc.

Google’s New Solution: MapReduce
- Distr. Data Processing System with 3 fundamental properties:
  1. Transparent Parallelism
     - I.e., user-code parallelization & data partitioning are automatically done by the system
  2. Transparent Fault-tolerance
     - I.e., recovery from failures is automatic
  3. A Simple Programming API
     - map() & reduce()

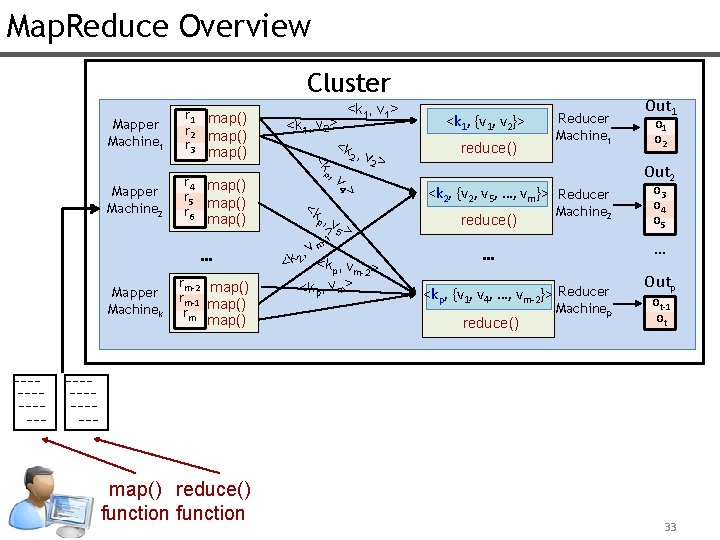

MapReduce Overview
[Figure: a cluster running the user's map() and reduce() functions. Mapper Machines 1 … k apply map() to input records r1 … rm and emit key-value pairs such as <k1, v1>, <k1, v2>, <k2, v2>, …, <kp, vm>. Pairs are grouped by key and routed to Reducer Machines 1 … p, which receive <k1, {v1, v2}>, <k2, {v2, v5, …, vm}>, …, <kp, {v1, v4, …, vm-2}>, call reduce() on each group, and write outputs o1 … ot to Out 1 … Out p.]

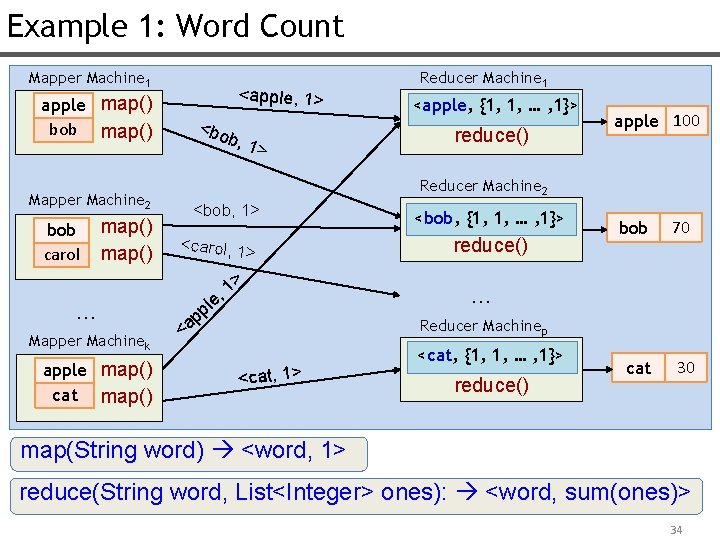

Example 1: Word Count
[Figure: Mapper Machines 1 … k read words (apple, bob, carol, cat, …) and call map() on each, emitting <apple, 1>, <bob, 1>, <carol, 1>, <cat, 1>, … Reducer Machine 1 receives <apple, {1, 1, …, 1}> and outputs apple 100; Reducer Machine 2 receives <bob, {1, 1, …, 1}> and outputs bob 70; Reducer Machine p receives <cat, {1, 1, …, 1}> and outputs cat 30.]

    map(String word): <word, 1>
    reduce(String word, List<Integer> ones): <word, sum(ones)>
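The word-count job can be sketched as a single-process simulation. `run_mapreduce` is a hypothetical helper that plays the role of the system (shuffle and grouping); only `map_fn` and `reduce_fn` correspond to the user code on the slide.

```python
from collections import defaultdict

def map_fn(word):
    """User map(): emit <word, 1> for each input word."""
    yield (word, 1)

def reduce_fn(word, ones):
    """User reduce(): sum the ones collected for a word."""
    return (word, sum(ones))

def run_mapreduce(records, map_fn, reduce_fn):
    """Toy stand-in for the system: map every record, group by key, reduce."""
    groups = defaultdict(list)
    for record in records:
        for key, value in map_fn(record):
            groups[key].append(value)   # shuffle: group values by key
    return [reduce_fn(key, values) for key, values in sorted(groups.items())]

words = ["apple", "bob", "bob", "carol", "apple", "cat"]
counts = run_mapreduce(words, map_fn, reduce_fn)
print(counts)
```

Everything inside `run_mapreduce` is what the real system parallelizes and makes fault tolerant; the user writes only the two small functions.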

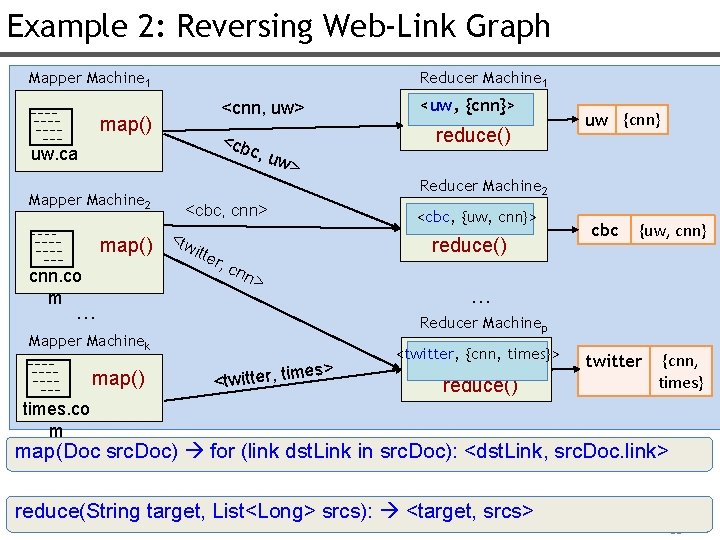

Example 2: Reversing Web-Link Graph
[Figure: Mapper Machine 1 reads uw.ca and emits <cnn, uw> and <cbc, uw>; Mapper Machine 2 reads cnn.com and emits <cbc, cnn> and <twitter, cnn>; Mapper Machine k reads times.com and emits <twitter, times>. Reducer Machine 1 receives <cnn, {uw}>; Reducer Machine 2 receives <cbc, {uw, cnn}> and outputs cbc {uw, cnn}; Reducer Machine p receives <twitter, {cnn, times}> and outputs twitter {cnn, times}.]

    map(Doc srcDoc):
        for (link dstLink in srcDoc): <dstLink, srcDoc.link>
    reduce(String target, List<Long> srcs): <target, srcs>
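The link-reversal job follows the same pattern. In this sketch the `docs` dictionary is made-up input that mirrors the pages in the figure, and the grouping loop again stands in for the system's shuffle.

```python
from collections import defaultdict

# Source page -> pages it links to (sample data mirroring the figure).
docs = {
    "uw.ca": ["cnn.com", "cbc.ca"],
    "cnn.com": ["cbc.ca", "twitter.com"],
    "times.com": ["twitter.com"],
}

def map_fn(src, out_links):
    """User map(): emit <dstLink, src> for every hyperlink in the source page."""
    for dst in out_links:
        yield (dst, src)

def reduce_fn(target, srcs):
    """User reduce(): the pages that link to target."""
    return (target, sorted(srcs))

groups = defaultdict(list)
for src, out_links in docs.items():
    for dst, s in map_fn(src, out_links):
        groups[dst].append(s)          # shuffle: group sources by target

reversed_graph = dict(reduce_fn(t, srcs) for t, srcs in groups.items())
print(reversed_graph)
```

The reduce function does almost nothing here; the grouping performed by the shuffle is what reverses the graph.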

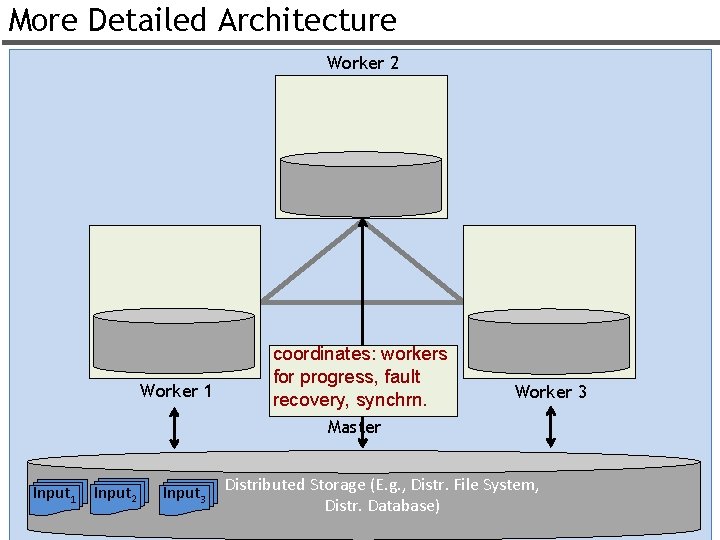

More Detailed Architecture
[Figure: a Master coordinates Workers 1 to 3 for progress, fault recovery, and synchronization. Input 1, Input 2, and Input 3 reside in distributed storage (e.g., a distr. file system or distr. database).]

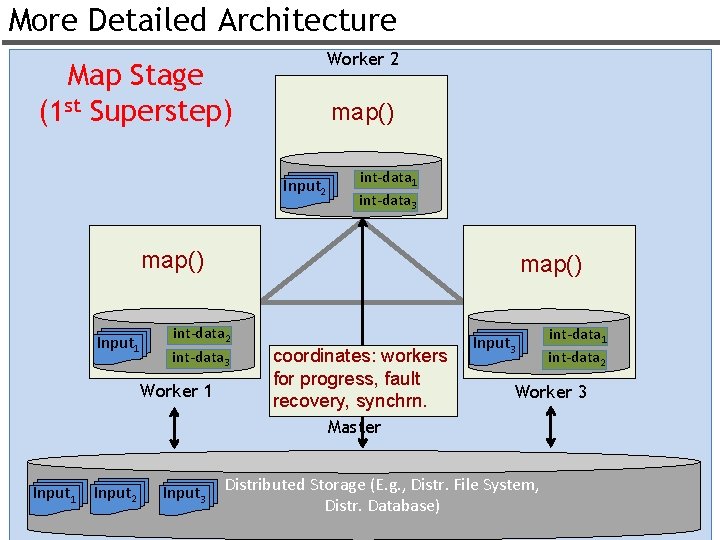

More Detailed Architecture: Map Stage (1st Superstep)
[Figure: Worker 1 runs map() on Input 1 and produces int-data 2 and int-data 3; Worker 2 runs map() on Input 2 and produces int-data 1 and int-data 3; Worker 3 runs map() on Input 3 and produces int-data 1 and int-data 2. The Master coordinates workers for progress, fault recovery, and synchronization. Inputs reside in distributed storage (e.g., a distr. file system or distr. database).]

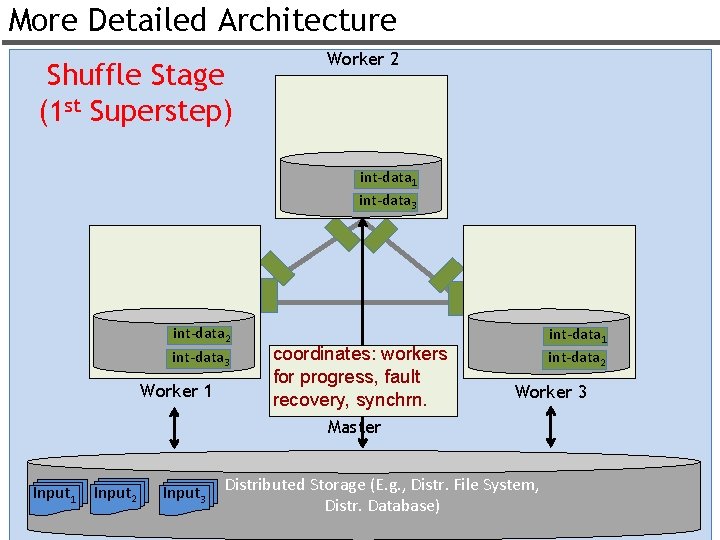

More Detailed Architecture: Shuffle Stage (1st Superstep)
[Figure: each worker sends its intermediate data to the worker responsible for it: int-data 1 to Worker 1, int-data 2 to Worker 2, int-data 3 to Worker 3. The Master coordinates workers for progress, fault recovery, and synchronization.]

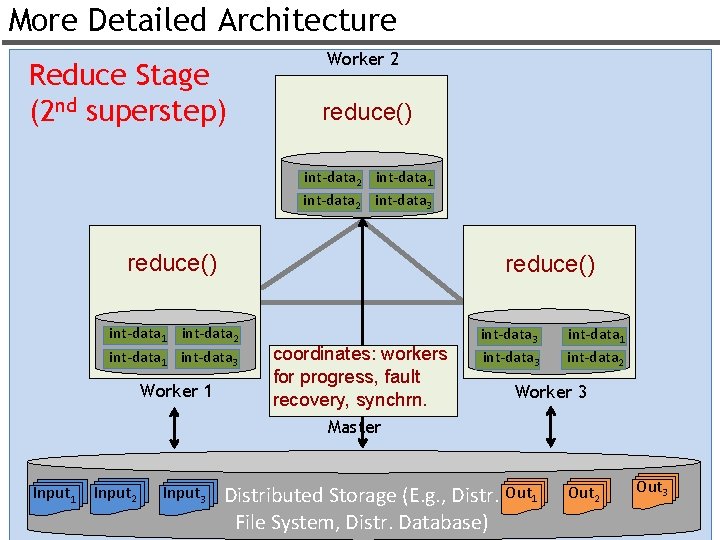

More Detailed Architecture: Reduce Stage (2nd Superstep)
[Figure: each worker runs reduce() on the intermediate data it received and writes its result (Out 1, Out 2, Out 3) to distributed storage. The Master coordinates workers for progress, fault recovery, and synchronization.]

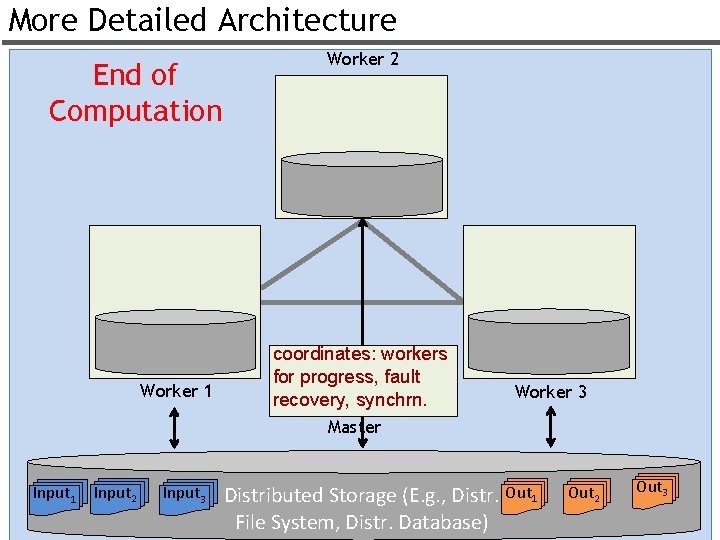

More Detailed Architecture: End of Computation
[Figure: Workers 1 to 3 are idle; distributed storage now holds Input 1 to 3 and Out 1 to 3.]

Fault Tolerance
- 3 Failure Scenarios
  1. Master Failure: Nothing is done. Computation fails.
  2. Mapper Failure (next slide)
  3. Reducer Failure (next slide)

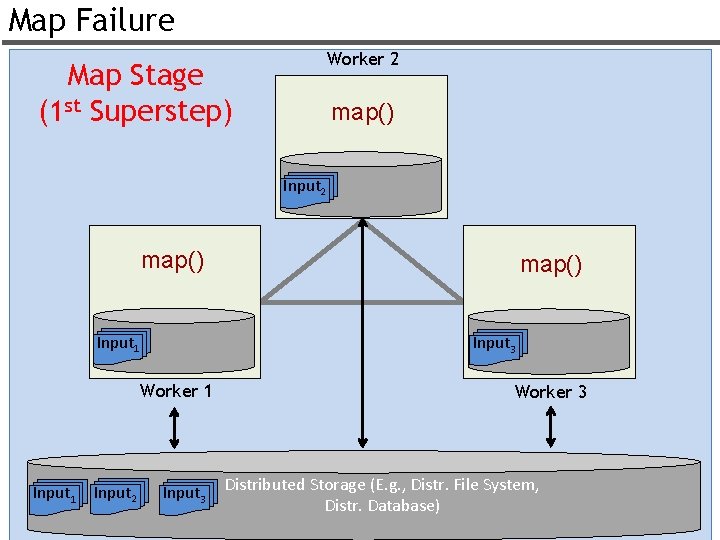

Map Failure: Map Stage (1st Superstep)
[Figure: Workers 1, 2, and 3 run map() on Input 1, Input 2, and Input 3, which are read from distributed storage (e.g., a distr. file system or distr. database).]

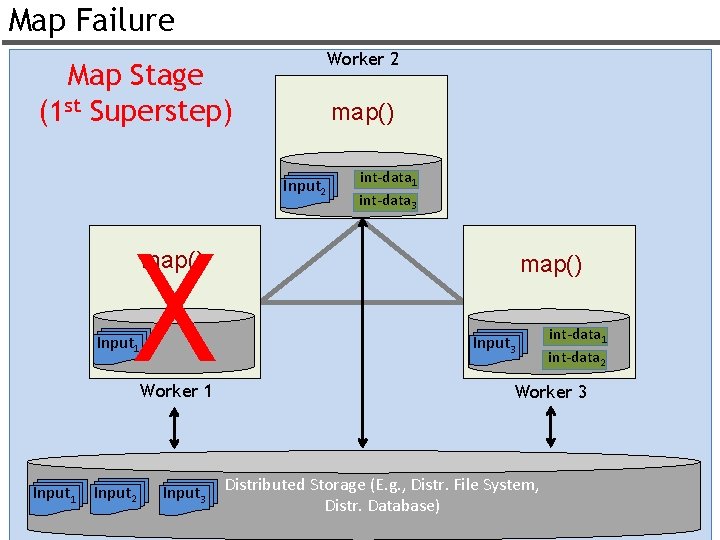

Map Failure: Map Stage (1st Superstep)
[Figure: Worker 1 fails (X) while running map() on Input 1. Worker 2 has produced int-data 1 and int-data 3; Worker 3 has produced int-data 1 and int-data 2.]

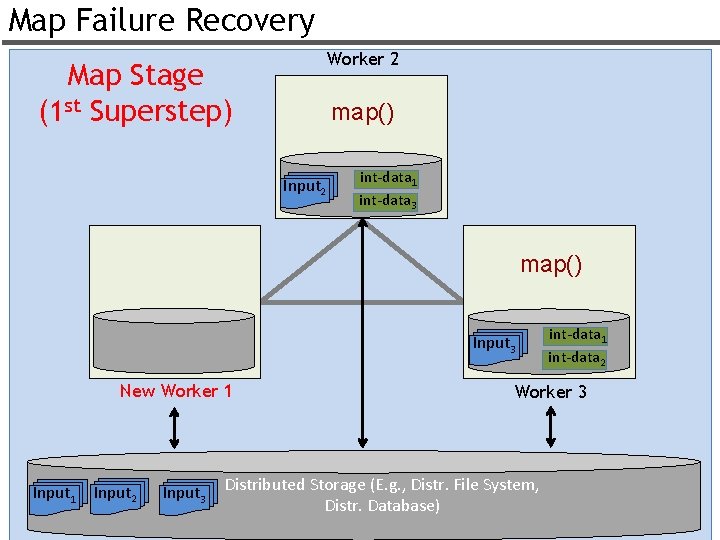

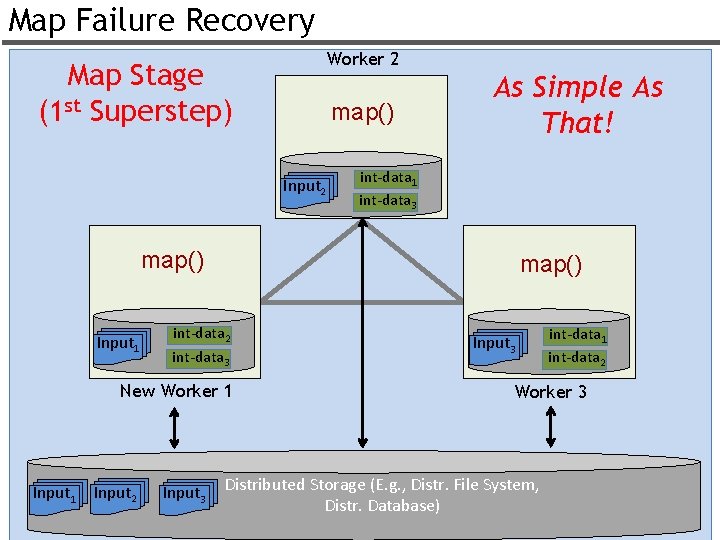

Map Failure Recovery: Map Stage (1st Superstep)
[Figure: a new Worker 1 is started in place of the failed one. Workers 2 and 3 keep the intermediate data they already produced.]

Map Failure Recovery: Map Stage (1st Superstep)
[Figure: the new Worker 1 re-runs map() on Input 1, read again from distributed storage, and regenerates int-data 2 and int-data 3.]
As Simple As That!
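Mapper recovery by re-execution can be sketched as follows. The sketch assumes that map() is deterministic, that inputs survive in distributed storage, and that a failed worker's intermediate output is lost with it; `run_map_stage` and the sample data are hypothetical.

```python
def run_map_stage(assignment, storage, map_fn, failed=frozenset()):
    """assignment: worker -> input split name; storage: split name -> records."""
    intermediate = {}
    for worker, split in assignment.items():
        # Assumption: a failed worker's intermediate data is lost, so its split
        # is read again from storage and re-mapped on a replacement worker.
        runner = f"new-{worker}" if worker in failed else worker
        intermediate[split] = (runner, [map_fn(r) for r in storage[split]])
    return intermediate

storage = {"Input 1": ["a b"], "Input 2": ["b c"], "Input 3": ["c"]}
assignment = {"Worker 1": "Input 1", "Worker 2": "Input 2", "Worker 3": "Input 3"}

def split_words(line):
    """Hypothetical user map(): split a line into words."""
    return line.split()

no_failure = run_map_stage(assignment, storage, split_words)
with_failure = run_map_stage(assignment, storage, split_words, failed={"Worker 1"})
```

Because the input is durable and the map function has no side effects, the intermediate data after recovery is identical to what the failed worker would have produced; only the machine that produced it differs.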

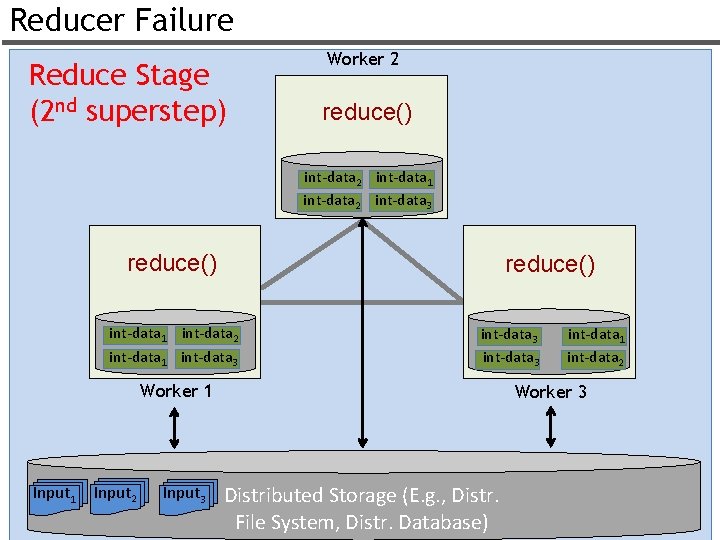

Reducer Failure: Reduce Stage (2nd Superstep)
[Figure: Workers 1, 2, and 3 run reduce() on the shuffled intermediate data (int-data 1, int-data 2, and int-data 3, respectively).]

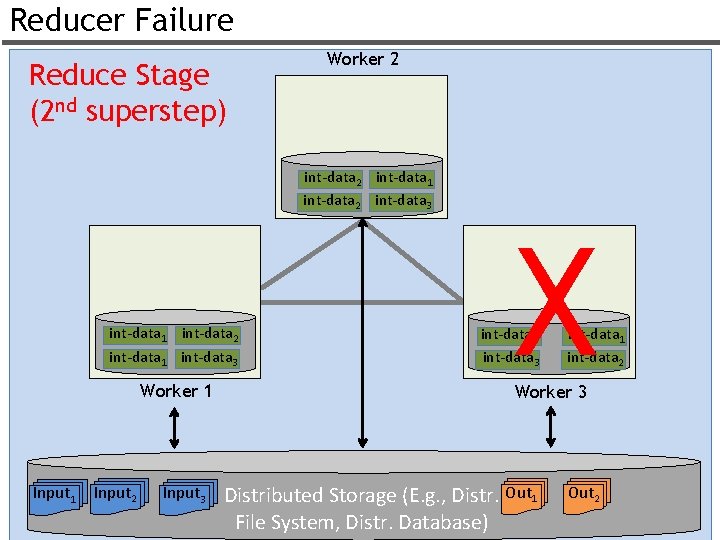

Reducer Failure: Reduce Stage (2nd Superstep)
[Figure: Worker 3 fails (X) before writing its output. Workers 1 and 2 have written Out 1 and Out 2 to distributed storage.]

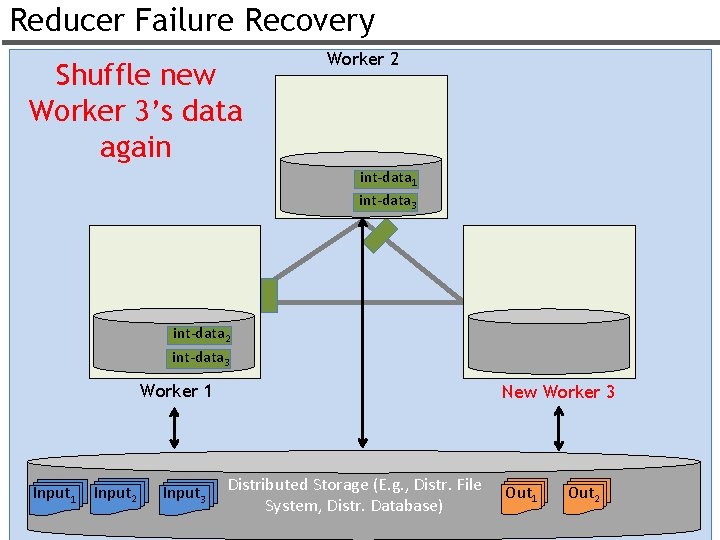

Reducer Failure Recovery: Shuffle new Worker 3’s data again
[Figure: Workers 1 and 2 re-send their int-data 3 to the new Worker 3. Out 1 and Out 2 are already in distributed storage.]

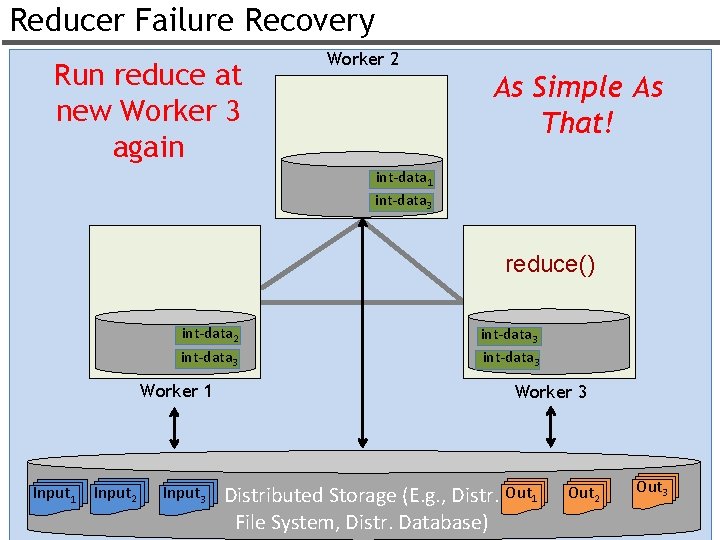

Reducer Failure Recovery: Run reduce at new Worker 3 again
[Figure: the new Worker 3 runs reduce() on the re-shuffled int-data 3 and writes Out 3 to distributed storage, next to Out 1 and Out 2.]
As Simple As That!

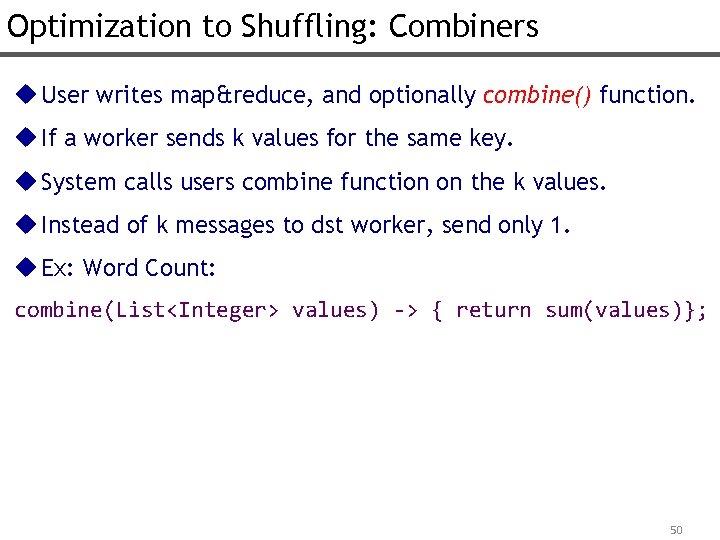

Optimization to Shuffling: Combiners
- User writes map() and reduce(), and optionally a combine() function.
- If a worker would send k values for the same key, the system calls the user’s combine function on the k values.
- Instead of k messages to the destination worker, it sends only 1.
- Ex: Word Count:

    combine(List<Integer> values) -> { return sum(values); }

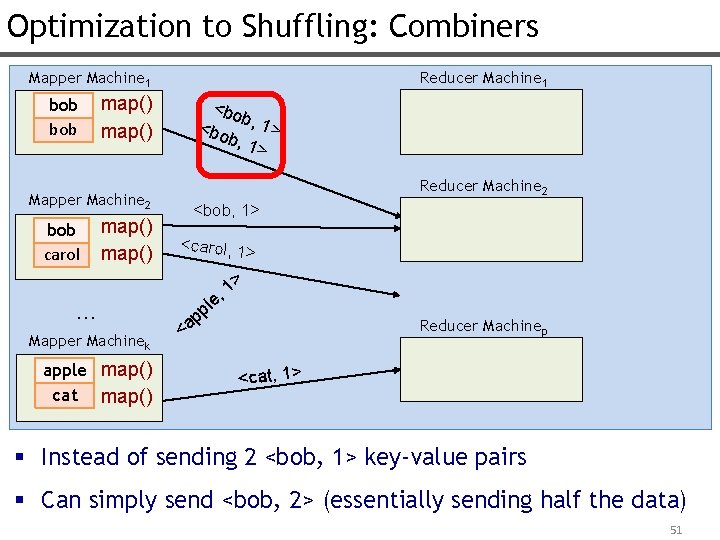

Optimization to Shuffling: Combiners
[Figure: the word-count job from Example 1. One mapper machine emits <bob, 1> twice toward Reducer Machine 2; other mappers emit <bob, 1>, <carol, 1>, <apple, 1>, and <cat, 1>.]
- Instead of sending two <bob, 1> key-value pairs
- Can simply send <bob, 2> (essentially sending half the data)
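A combiner for word count can be sketched as a local pre-aggregation step that runs on the mapper's output before anything is sent over the network. The function names and sample words are illustrative.

```python
from collections import defaultdict

def map_words(words):
    """Mapper output before combining: one <word, 1> pair per word."""
    return [(w, 1) for w in words]

def combine(pairs):
    """Local pre-aggregation: sum the values of each key on the mapper side."""
    partial = defaultdict(int)
    for key, value in pairs:
        partial[key] += value
    return sorted(partial.items())

mapper_output = map_words(["bob", "bob", "carol"])
combined = combine(mapper_output)
print(len(mapper_output), "pairs before,", len(combined), "pairs after:", combined)
```

This works for word count because addition is associative and commutative, so summing partial sums at the reducer gives the same total as summing the original ones.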

Other System Optimizations
- Stragglers: Workers that are slow for some reason
- Solution: Backup tasks
  - Run some tasks on multiple machines simultaneously (especially the last mappers & reducers)
  - Accept the output of the first one that finishes
- Q: What are some causes of stragglers?
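The effect of backup tasks on stage completion time can be sketched with a simple model: a stage ends when its slowest task ends, and a task with several copies ends when its fastest copy ends. The durations below are made-up numbers and `stage_time` is a hypothetical helper.

```python
def stage_time(task_durations):
    """task_durations[i] lists the run time of every copy of task i.

    A task finishes when its first copy finishes; the stage finishes when
    its last task finishes.
    """
    return max(min(copies) for copies in task_durations)

# Three tasks, the third one is a straggler (60 time units).
without_backup = stage_time([[10], [12], [60]])
# Same stage, but the straggling task also runs as a backup copy elsewhere.
with_backup = stage_time([[10], [12], [60, 11]])
print(without_backup, with_backup)
```

The model ignores the extra resources that backup copies consume, which is why such copies are typically launched only for the last few running tasks.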

Research Problems
- Skew: What can you do when data is skewed?
  - Q: Will backup tasks help with data skew? A: No
- Statelessness: If you have an iterative computation, you can’t keep things in memory across iterations
- How to schedule the mappers & reducers?
  - Does it matter?
  - E.g., schedule mappers where the dist. file system keeps the data?
- Is the programming API really easy? Or is it too generic for some applications?
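Why backup tasks do not help with skew can be seen by counting how many values each reducer receives under hash partitioning. The sketch below uses `zlib.crc32` as an illustrative partitioning function and a made-up key distribution in which one key dominates.

```python
import zlib
from collections import Counter

def reducer_loads(keys, num_reducers):
    """Count how many key-value pairs each reducer receives under hash partitioning."""
    loads = Counter()
    for key in keys:
        loads[zlib.crc32(key.encode()) % num_reducers] += 1
    return loads

# Skewed sample data: one very frequent key and a few rare ones.
keys = ["the"] * 1000 + ["apple", "bob", "carol", "cat"] * 5
loads = reducer_loads(keys, num_reducers=4)
print(dict(loads))
```

All values of a key must reach the same reducer, so whichever reducer owns the frequent key receives at least 1000 of the 1020 pairs. A backup copy of that reducer would receive exactly the same data and take just as long.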

***Don’t Forget The Fundamental Features of MapReduce***
1. Transparent Parallelism
2. Transparent Fault-tolerance
3. A Simple Programming API
