
Map Reduce Program, September 25th, 2017, Kyung Eun Park, D.Sc., kpark@towson.edu

Contents

1. MapReduce
2. Evolution
3. Features
4. MapReduce Workflows
5. Hadoop Cluster
6. MapReduce Architecture
7. Hadoop Data Types
8. MapReduce Functions
9. MapReduce Programming

Big, Large Dataset

• Various sources of large datasets
• Various types, even no format: unstructured, semi-structured
• But the expected analysis operations are simple and repetitive tasks

Working with large datasets:

1. Design data processing tasks for the large dataset. Can we build the data processing environment from hundreds or thousands of cheap commodity computers rather than one or a few almighty computers?
2. Find interesting patterns and unknown characteristics in the data. Which processing approach fits: batch, interactive, on-demand, or self-acting in real time?
3. Attack the data on the processing environment using the chosen approach. Can we make each computer execute a simple analysis operation on its own partition, and then aggregate the partial results from each node?

• Traditionally, parallel workers may need to communicate, which can cause integrity problems on shared data
• The general approach is to block concurrent access to the data by synchronizing the workers
• Synchronization is not easy to handle in current multi-core and cluster environments
• Hide how-to-handle and expose what-to-do (execution is handled by the execution framework)

Abstraction level elevation! Abstraction!!!

Key Ideas

• Scale “out”, beyond “up”
  • Rather than upgrading the capacity of an existing system, attach additional disk arrays or nodes to scale out horizontally
• Move processing to the data: move tasks to data nodes that may be geographically separated
  • Clusters have limited bandwidth
  • Each node includes storage, computing power, and I/O
  • Adding new resources (scaling out) means a performance increase
• Process data sequentially, avoid random access
  • Seeks are expensive, disk throughput is reasonable
• View the datacenter as a computer (Jimmy Lin)

Adapted from Jimmy Lin’s slide

The datacenter is the computer.

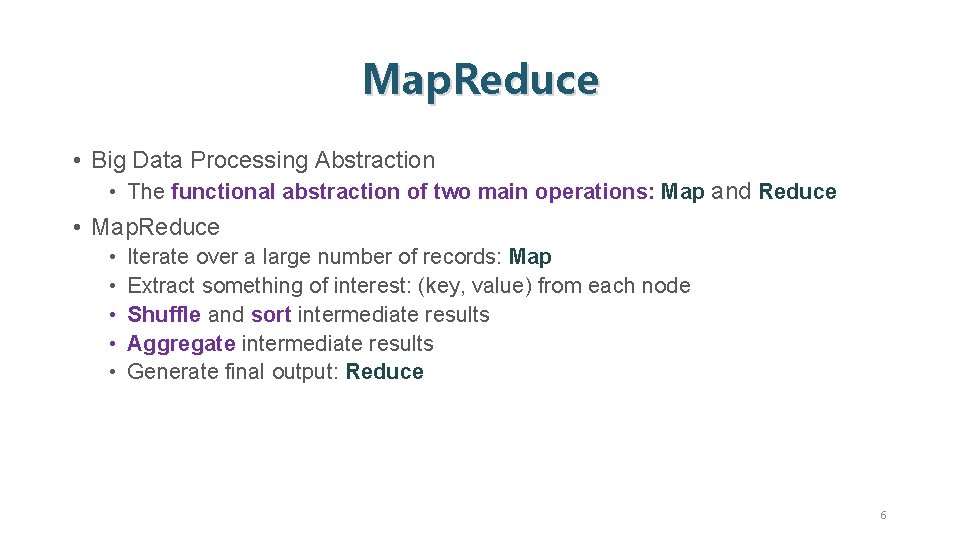

MapReduce

• Big data processing abstraction
• The functional abstraction of two main operations: Map and Reduce
• MapReduce:
  • Map: iterate over a large number of records
  • Map: extract something of interest, a (key, value) pair, from each node
  • Shuffle and sort intermediate results
  • Reduce: aggregate intermediate results
  • Reduce: generate final output

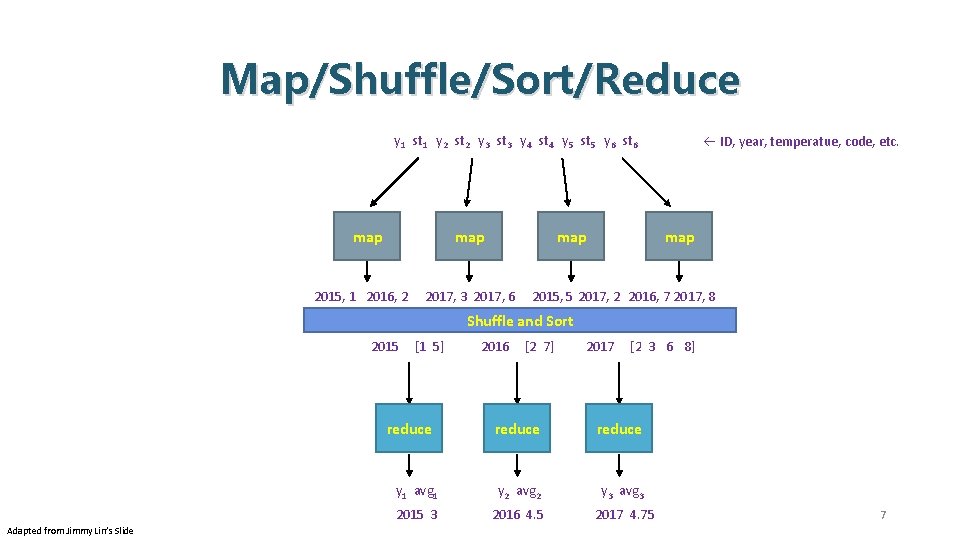

Map/Shuffle/Sort/Reduce

[Figure: input records (ID, year, temperature, code, etc.), labeled y1 st1 through y6 st6, pass through three map tasks, a shuffle-and-sort step, and reduce tasks that emit one average per year.]

• map output: (2015, 1), (2016, 2) | (2017, 3), (2017, 6) | (2015, 5), (2017, 2), (2016, 7), (2017, 8)
• shuffle and sort: 2015 → [1, 5]; 2016 → [2, 7]; 2017 → [2, 3, 6, 8]
• reduce output (year, average): (2015, 3), (2016, 4.5), (2017, 4.75)

Adapted from Jimmy Lin’s slide

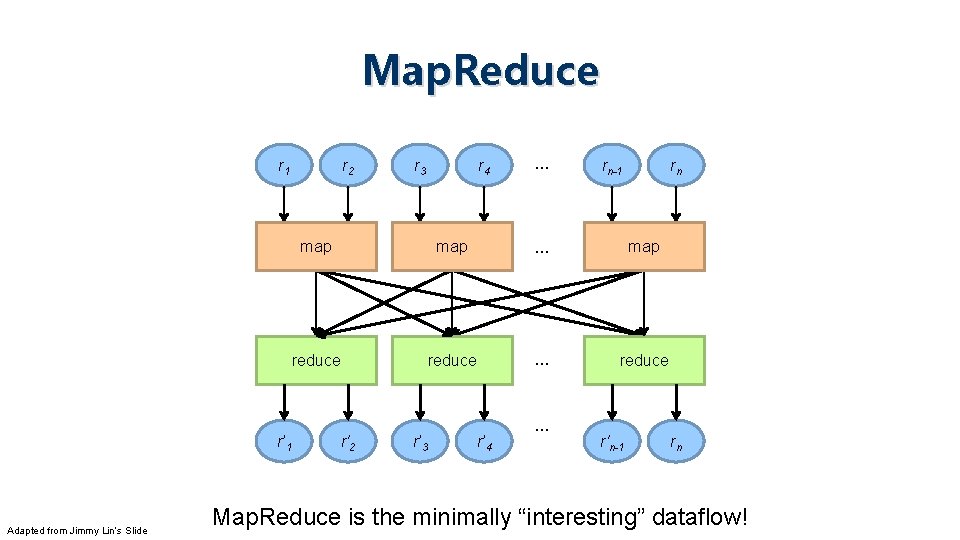

MapReduce

[Figure: records r1, r2, r3, r4, …, rn-1, rn flow through parallel map tasks and then parallel reduce tasks, producing r'1, r'2, r'3, r'4, …, r'n-1, r'n.]

MapReduce is the minimally “interesting” dataflow!

Adapted from Jimmy Lin’s slide

![MapReduce: type signatures of map and reduce](http://slidetodoc.com/presentation_image_h/f9c6cc54dee8eebf6a0e8fad8e5cfb18/image-9.jpg)

MapReduce

• Input: List[(K1, V1)]
• map f: (K1, V1) ⇒ List[(K2, V2)]
• reduce g: (K2, Iterable[V2]) ⇒ List[(K3, V3)]
• Output: List[(K3, V3)]

Worked example (note we’re abstracting the “data-parallel” part):

• f: extract (year, temperature)
  [(2015, 1), (2016, 2)] [(2017, 3), (2017, 6)] [(2015, 5), (2016, 7), (2017, 2), (2017, 8)]
• g1: shuffle/sort (year, temperatures)
  [(2015, 1), (2015, 5)] [(2016, 2), (2016, 7)] [(2017, 2), (2017, 3), (2017, 6), (2017, 8)]
  g1 (2015, [1, 5]), g1 (2016, [2, 7]), g1 (2017, [2, 3, 6, 8])
• g2: reduce (year, avg. temp.)
  [(2015, 3), (2016, 4.5), (2017, 4.75)]

Adapted from Jimmy Lin’s slide
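The map, shuffle/sort, and reduce signatures can be traced with a small in-memory sketch. It is plain Java with no Hadoop involved. The class name `YearlyAverageSketch`, the `"ID,year,temperature"` line format, and the station IDs in `SAMPLE` are assumptions made for illustration; only the (year, temperature) values come from the slide.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** In-memory sketch of map -> shuffle/sort -> reduce; not a Hadoop program. */
final class YearlyAverageSketch {

    // Hypothetical "ID,year,temperature" records; the station IDs are made up.
    static final List<String> SAMPLE = Arrays.asList(
        "st1,2015,1", "st2,2016,2", "st3,2017,3", "st4,2017,6",
        "st5,2015,5", "st6,2017,2", "st7,2016,7", "st8,2017,8");

    /** map f: one input line yields a list holding one (year, temperature) pair. */
    static List<Map.Entry<Integer, Integer>> map(String line) {
        String[] fields = line.split(",");
        List<Map.Entry<Integer, Integer>> out = new ArrayList<>();
        out.add(new SimpleEntry<Integer, Integer>(
            Integer.parseInt(fields[1]), Integer.parseInt(fields[2])));
        return out;
    }

    /** shuffle/sort: group values by key; the TreeMap keeps the keys sorted. */
    static Map<Integer, List<Integer>> shuffle(List<Map.Entry<Integer, Integer>> pairs) {
        Map<Integer, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<Integer, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    /** reduce g: (year, [temperatures]) yields the average temperature for that year. */
    static double reduce(int year, List<Integer> temperatures) {
        double sum = 0;
        for (int t : temperatures) {
            sum += t;
        }
        return sum / temperatures.size();
    }

    /** Runs the three phases one after another on a single machine. */
    static Map<Integer, Double> run(List<String> lines) {
        List<Map.Entry<Integer, Integer>> intermediate = new ArrayList<>();
        for (String line : lines) {
            intermediate.addAll(map(line));
        }
        Map<Integer, Double> averages = new TreeMap<>();
        for (Map.Entry<Integer, List<Integer>> group : shuffle(intermediate).entrySet()) {
            averages.put(group.getKey(), reduce(group.getKey(), group.getValue()));
        }
        return averages;
    }

    public static void main(String[] args) {
        System.out.println(run(SAMPLE)); // prints {2015=3.0, 2016=4.5, 2017=4.75}
    }
}
```

In a real cluster the calls to `map` and `reduce` would run on different nodes, and the grouping done by `shuffle` is the only step that needs data from all of them.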

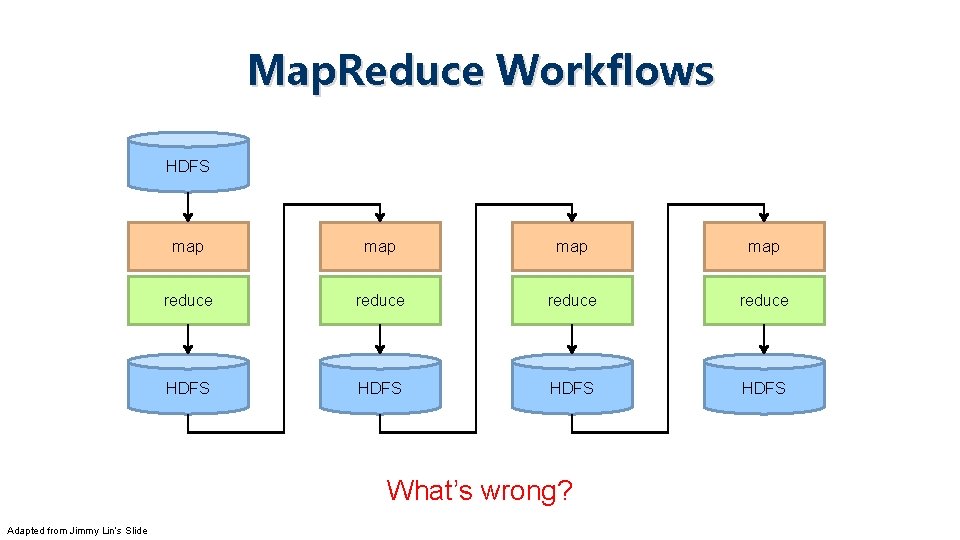

MapReduce Workflows

[Figure: a workflow diagram with the elements HDFS, map, map, reduce, HDFS.]

What’s wrong?

Adapted from Jimmy Lin’s slide

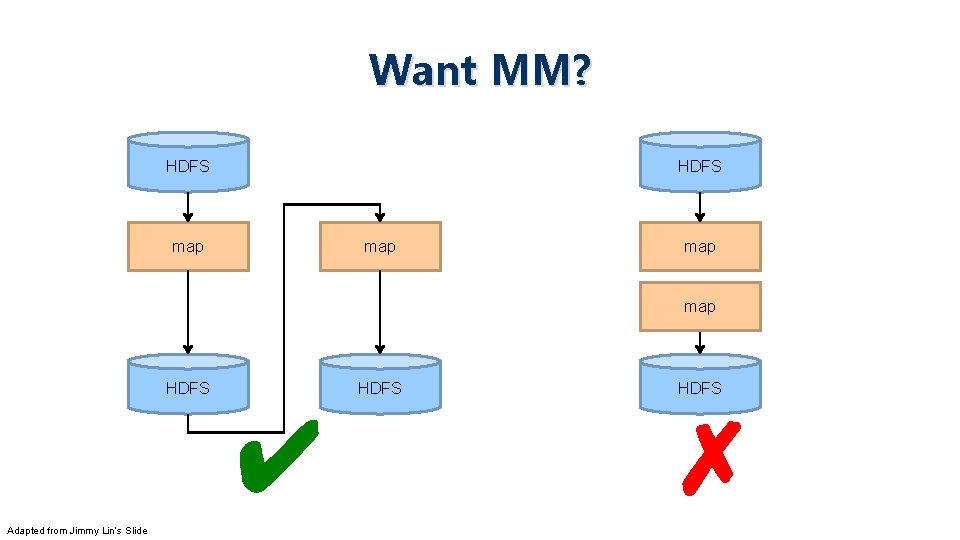

Want MM?

[Figure: two workflow diagrams that chain two map stages between HDFS reads and writes; one is marked ✔ and the other ✗.]

Adapted from Jimmy Lin’s slide

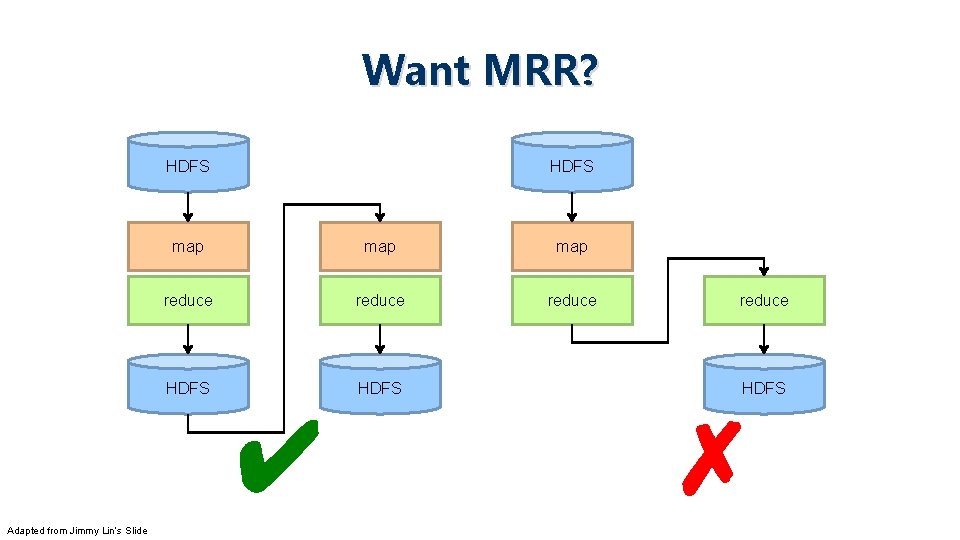

Want MRR?

[Figure: two workflow diagrams that follow map with two reduce stages between HDFS reads and writes; one is marked ✔ and the other ✗.]

Adapted from Jimmy Lin’s slide

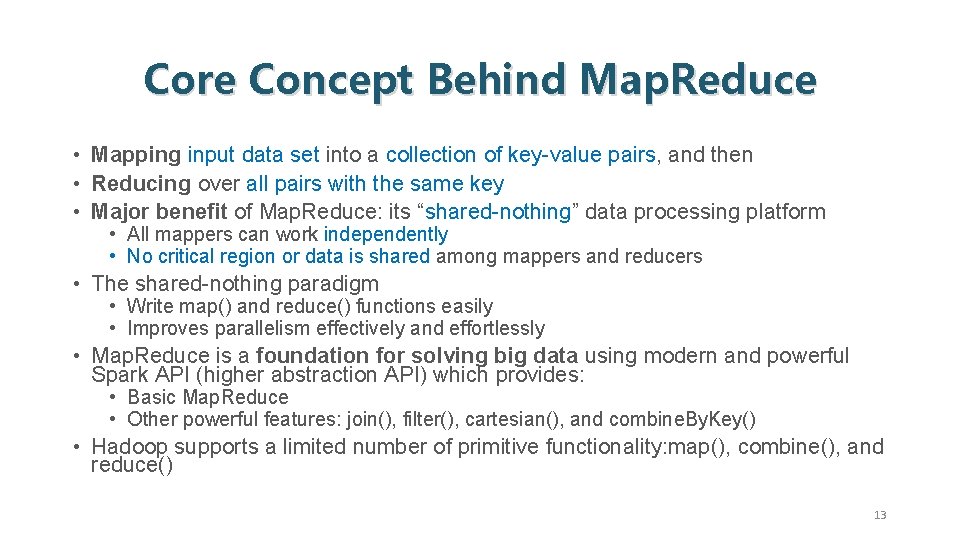

Core Concept Behind MapReduce

• Mapping the input data set into a collection of key-value pairs, and then
• Reducing over all pairs with the same key
• Major benefit of MapReduce: its “shared-nothing” data processing platform
  • All mappers can work independently
  • No critical region or data is shared among mappers and reducers
• The shared-nothing paradigm
  • Lets you write map() and reduce() functions easily
  • Improves parallelism effectively and effortlessly
• MapReduce is a foundation for solving big data problems with the modern and powerful Spark API (a higher-abstraction API), which provides:
  • Basic MapReduce
  • Other powerful features: join(), filter(), cartesian(), and combineByKey()
• Hadoop supports a limited number of primitive functions: map(), combine(), and reduce()

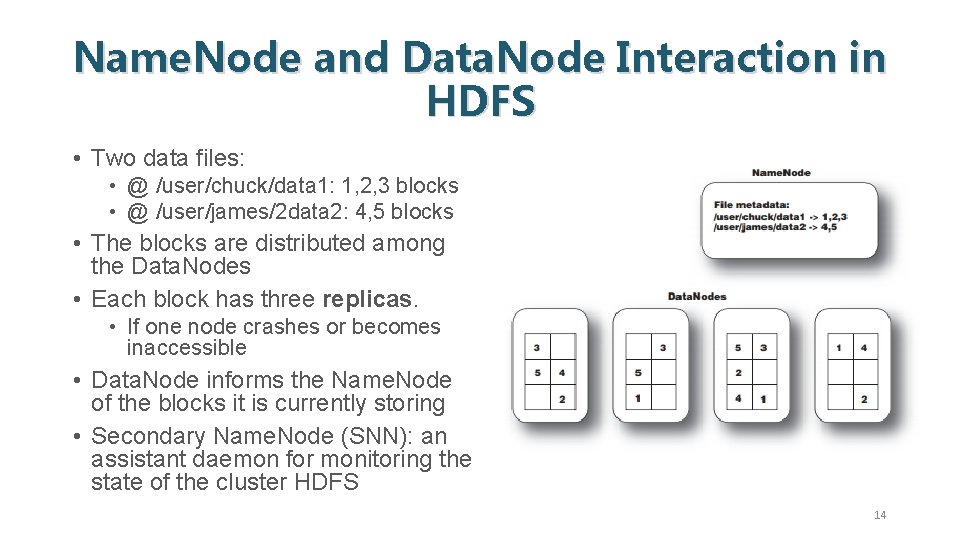

NameNode and DataNode Interaction in HDFS

• Two data files:
  • /user/chuck/data1: blocks 1, 2, 3
  • /user/james/data2: blocks 4, 5
• The blocks are distributed among the DataNodes
• Each block has three replicas, so the files stay readable if one node crashes or becomes inaccessible
• Each DataNode informs the NameNode of the blocks it is currently storing
• Secondary NameNode (SNN): an assistant daemon for monitoring the state of the cluster HDFS

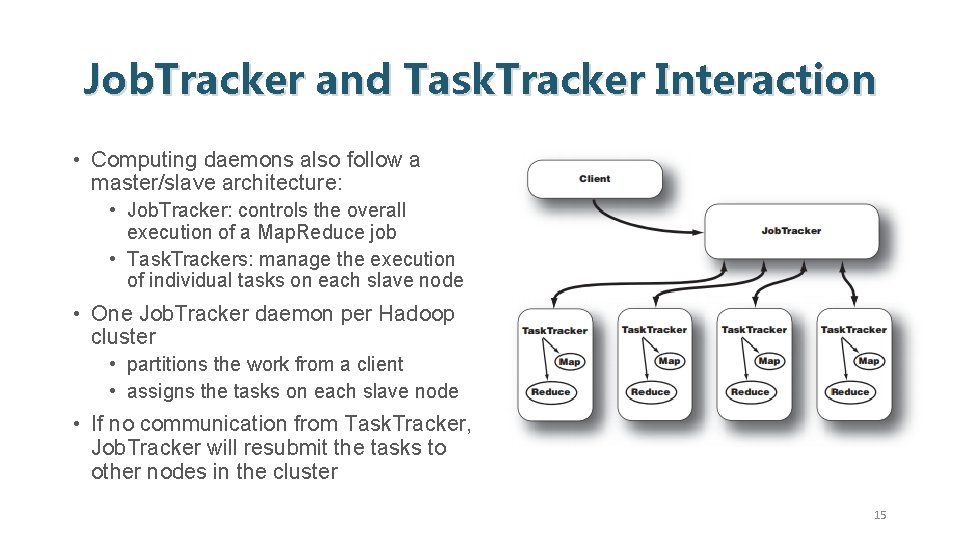

JobTracker and TaskTracker Interaction

• Computing daemons also follow a master/slave architecture:
  • JobTracker: controls the overall execution of a MapReduce job
  • TaskTrackers: manage the execution of individual tasks on each slave node
• One JobTracker daemon per Hadoop cluster
  • Partitions the work from a client
  • Assigns the tasks to each slave node
• If the JobTracker receives no communication from a TaskTracker, it resubmits that TaskTracker's tasks to other nodes in the cluster

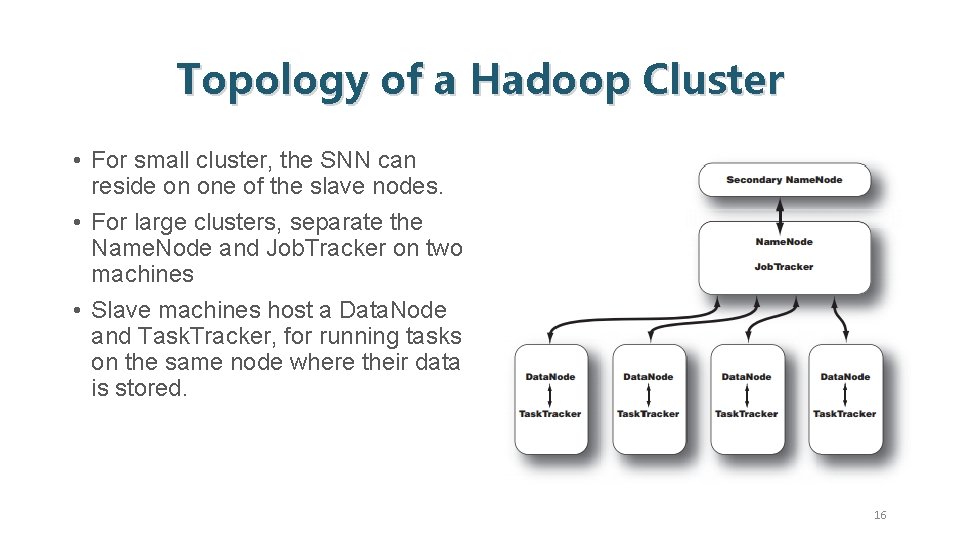

Topology of a Hadoop Cluster

• For a small cluster, the SNN can reside on one of the slave nodes.
• For large clusters, separate the NameNode and JobTracker onto two machines.
• Slave machines each host a DataNode and a TaskTracker, so tasks run on the same node where their data is stored.

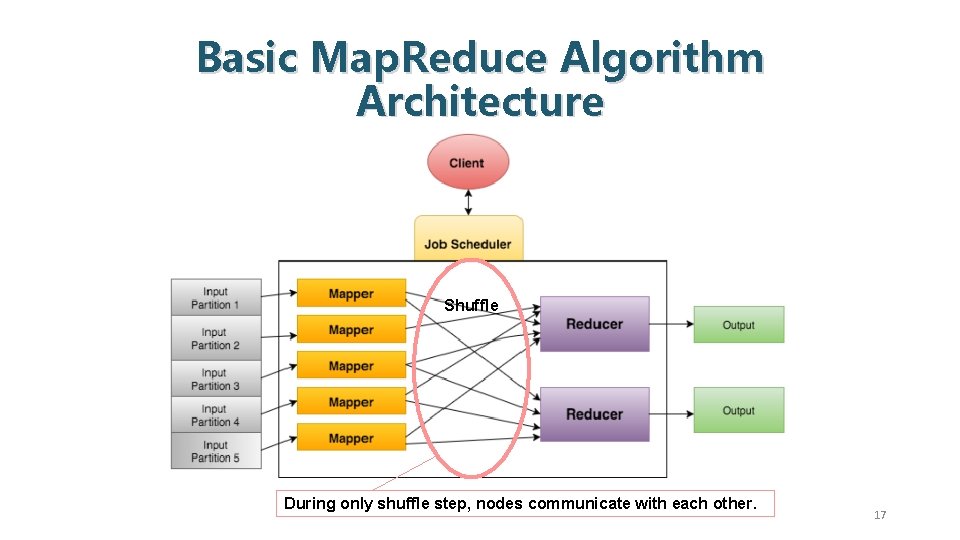

Basic MapReduce Algorithm Architecture

• Shuffle: nodes communicate with each other only during the shuffle step.

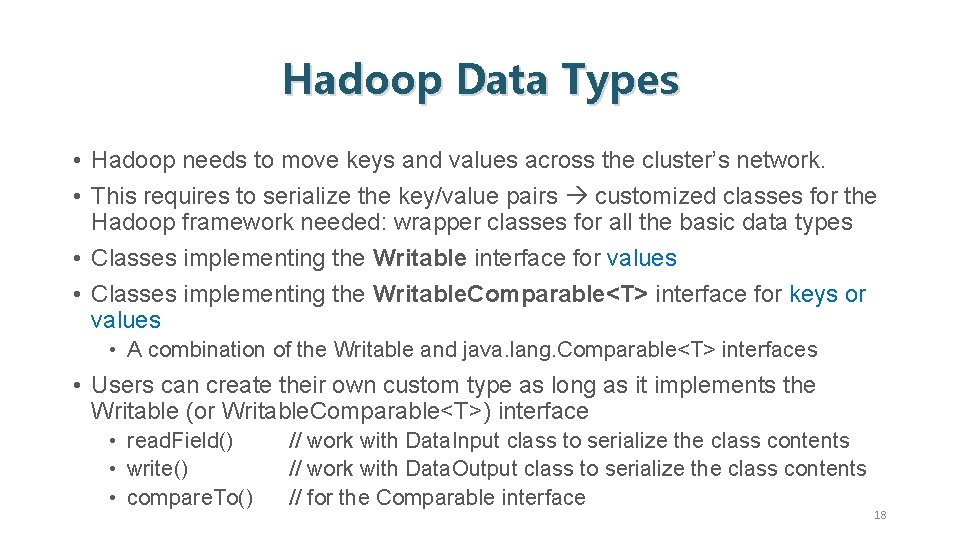

Hadoop Data Types

• Hadoop needs to move keys and values across the cluster’s network.
• This requires serializing the key/value pairs, so the Hadoop framework needs customized classes: wrapper classes for all the basic data types
  • Classes implementing the Writable interface, for values
  • Classes implementing the WritableComparable<T> interface, for keys or values
    • A combination of the Writable and java.lang.Comparable<T> interfaces
• Users can create their own custom type as long as it implements the Writable (or WritableComparable<T>) interface
  • readFields() // works with the DataInput class to deserialize the class contents
  • write() // works with the DataOutput class to serialize the class contents
  • compareTo() // for the Comparable interface
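The shape of a custom key type can be sketched as follows. So that the example runs without Hadoop on the classpath, it declares a local stand-in interface, `WritableComparableSketch`, with the same three methods the slide lists. The interface name, the `YearStationKey` class, and its fields are all hypothetical. In a real job the class would implement `org.apache.hadoop.io.WritableComparable<T>` instead, and a key class would normally also override `hashCode()` and `equals()`.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

/** Hypothetical composite key (year, stationId) in the Writable style. */
final class YearStationKey implements WritableComparableSketch<YearStationKey> {
    private int year;
    private String stationId = "";

    YearStationKey() { }  // no-arg constructor: an empty instance is filled by readFields()

    YearStationKey(int year, String stationId) {
        this.year = year;
        this.stationId = stationId;
    }

    @Override
    public void write(DataOutput out) throws IOException {
        out.writeInt(year);        // serialize fields in a fixed order
        out.writeUTF(stationId);
    }

    @Override
    public void readFields(DataInput in) throws IOException {
        year = in.readInt();       // deserialize in the same order as write()
        stationId = in.readUTF();
    }

    @Override
    public int compareTo(YearStationKey other) {
        int byYear = Integer.compare(year, other.year);
        return byYear != 0 ? byYear : stationId.compareTo(other.stationId);
    }

    @Override
    public String toString() {
        return year + ":" + stationId;
    }

    /** Serializes a key to bytes and reads it back, as a network transfer would. */
    static YearStationKey roundTrip(YearStationKey key) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            key.write(new DataOutputStream(bytes));
            YearStationKey copy = new YearStationKey();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(bytes.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(roundTrip(new YearStationKey(2017, "st3"))); // prints 2017:st3
    }
}

/** Local stand-in for Hadoop's WritableComparable<T>; an assumption for this sketch only. */
interface WritableComparableSketch<T> extends Comparable<T> {
    void write(DataOutput out) throws IOException;
    void readFields(DataInput in) throws IOException;
}
```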

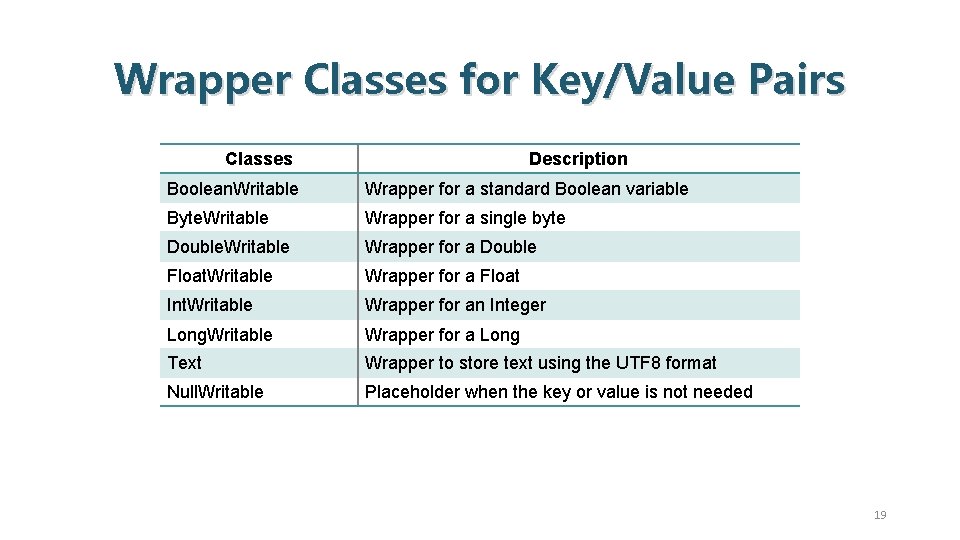

Wrapper Classes for Key/Value Pairs

| Class | Description |
|---|---|
| BooleanWritable | Wrapper for a standard Boolean variable |
| ByteWritable | Wrapper for a single byte |
| DoubleWritable | Wrapper for a Double |
| FloatWritable | Wrapper for a Float |
| IntWritable | Wrapper for an Integer |
| LongWritable | Wrapper for a Long |
| Text | Wrapper to store text using the UTF-8 format |
| NullWritable | Placeholder when the key or value is not needed |

Best-Fit for MapReduce

• Lots of input data
• Environment with parallel and distributed computing, data storage, and data locality
• Many independent tasks without synchronization
• Availability of sorting and shuffling mechanisms
• Demand for fault tolerance

MapReduce is

• NOT a programming language, but rather a framework for distributed applications
• NOT a complete replacement for a relational database
• NOT for real-time processing, but rather designed for batch processing
• NOT a solution for all software problems

Implementation of MapReduce

• Runs on a large cluster of commodity machines and is highly scalable
• A MapReduce application processes terabytes or petabytes of data on hundreds or thousands of machines
• Easy to use because it hides the details of parallelization, fault tolerance, data distribution, and load balancing
• Programmers can focus on writing the two key functions, map() and reduce()

![MapReduce: functions that programmers specify](http://slidetodoc.com/presentation_image_h/f9c6cc54dee8eebf6a0e8fad8e5cfb18/image-23.jpg)

MapReduce

• Programmers specify two functions:
  map (k, v) → [(k’, v’)]
  reduce (k’, [v’]) → [(k’, v’)]
  • All values with the same key are reduced together
• The execution framework (MapReduce runtime) handles everything else…
• Not quite… usually, programmers also specify:
  partition (k’, number of partitions) → partition for k’
  • Often a simple hash of the key, e.g., hash(k’) mod n
  • Divides up the key space for parallel reduce operations
  combine (k’, [v’]) → [(k’, v’’)]
  • Mini-reducers that run in memory after the map phase
  • Used as an optimization to reduce network traffic
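The "hash(k’) mod n" rule for `partition` can be written in a few lines. This is a minimal sketch in plain Java; the class name `HashPartitionSketch` is hypothetical. The sign bit is masked off because Java's `hashCode()` may be negative, and Hadoop's default `HashPartitioner` uses the same expression.

```java
/** Sketch of partition(k', n) -> partition index in [0, n). */
final class HashPartitionSketch {

    /** Masks the sign bit so that a negative hashCode() still maps to a valid index. */
    static int partition(Object key, int numPartitions) {
        return (key.hashCode() & Integer.MAX_VALUE) % numPartitions;
    }

    public static void main(String[] args) {
        // With two reducers, every pair for a given year lands on the same reducer.
        for (int year : new int[] {2015, 2016, 2017}) {
            System.out.println(year + " -> reducer " + partition(year, 2));
        }
        // prints:
        // 2015 -> reducer 1
        // 2016 -> reducer 0
        // 2017 -> reducer 1
    }
}
```

Because the result depends only on the key, all values for one key reach the same reducer, which is what lets "all values with the same key" be reduced together.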

MapReduce “Runtime”

• Handles scheduling
  • Assigns workers to map and reduce tasks
• Handles “data distribution”
  • Moves processes to data
• Handles synchronization
  • Gathers, sorts, and shuffles intermediate data
• Handles errors and faults
  • Detects worker failures and restarts
• Everything happens on top of a distributed FS (later)

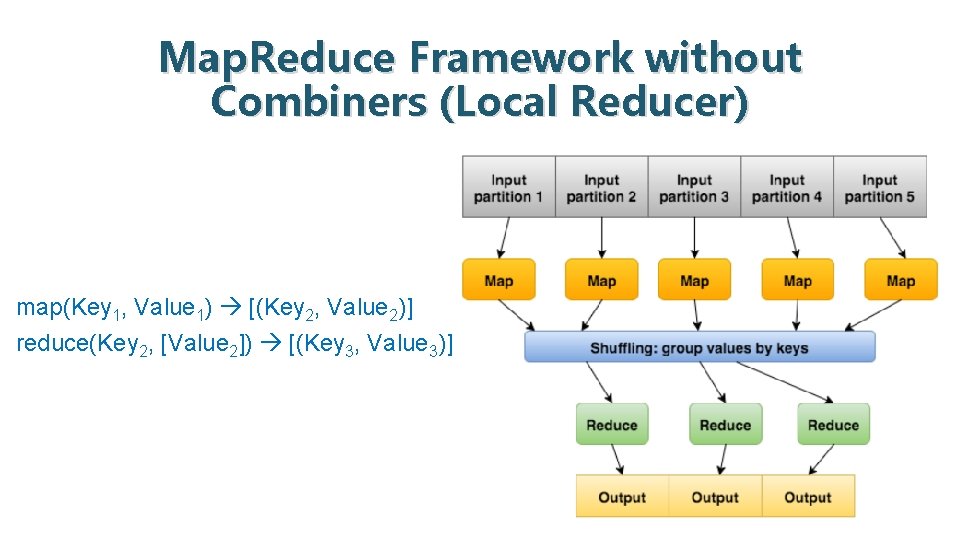

map() function

• The master (name) node takes the input data set, partitions it into smaller data chunks, and distributes them to worker (data) nodes.
• The worker nodes apply the same transformation function to each data chunk, then pass the results back to the master node.
• Mapper: map(): (Key1, Value1) → [(Key2, Value2)] // square brackets denote a list

reduce() function

• The master (name) node shuffles and clusters the received results by unique key; then, through another redistribution to the workers/slaves, the values for each key are combined via another type of transformation function.
• Reducer: reduce(): (Key2, [Value2]) → [(Key3, Value3)] // square brackets denote a list

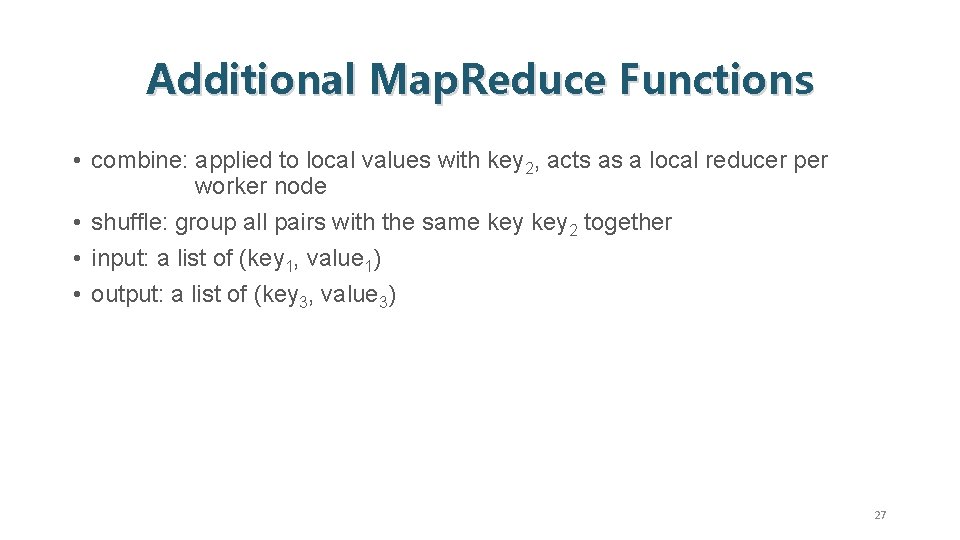

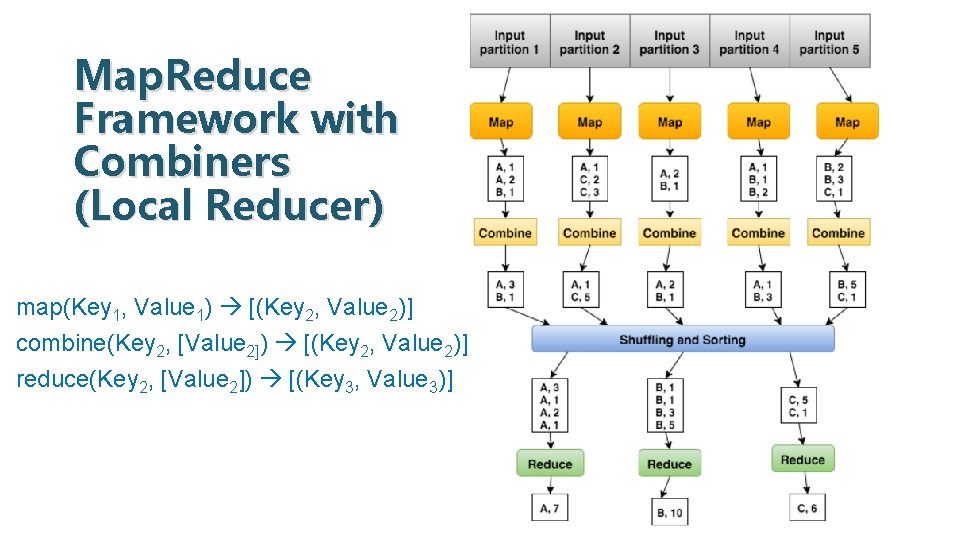

Additional MapReduce Functions

• combine: applied to local values with key2, acts as a local reducer per worker node
• shuffle: groups all pairs with the same key2 together
• input: a list of (key1, value1)
• output: a list of (key3, value3)

MapReduce Framework without Combiners (Local Reducer)

• map(Key1, Value1) → [(Key2, Value2)]
• reduce(Key2, [Value2]) → [(Key3, Value3)]

MapReduce Framework with Combiners (Local Reducer)

• map(Key1, Value1) → [(Key2, Value2)]
• combine(Key2, [Value2]) → [(Key2, Value2)]
• reduce(Key2, [Value2]) → [(Key3, Value3)]
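The effect of a combiner can be seen by counting how many pairs one mapper would hand to the shuffle with and without local aggregation. This is a plain-Java sketch, not Hadoop code; the class name `CombinerSketch` and the sample line are assumptions. It uses word counting because summing is safe to apply locally and then again in the reducer.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Sketch: a combiner shrinks one mapper's output before it crosses the network. */
final class CombinerSketch {

    /** map: one line yields a (word, 1) pair per token. */
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> out = new ArrayList<>();
        for (String word : line.trim().split("\\s+")) {
            if (!word.isEmpty()) {
                out.add(new SimpleEntry<String, Integer>(word, 1));
            }
        }
        return out;
    }

    /** combine: sums the counts per word within a single mapper's output. */
    static Map<String, Integer> combine(List<Map.Entry<String, Integer>> pairs) {
        Map<String, Integer> local = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            local.merge(pair.getKey(), pair.getValue(), Integer::sum);
        }
        return local;
    }

    public static void main(String[] args) {
        List<Map.Entry<String, Integer>> pairs = map("Hello World Hello Hadoop Hello");
        Map<String, Integer> combined = combine(pairs);
        System.out.println(pairs.size() + " pairs without a combiner, "
            + combined.size() + " with: " + combined);
        // prints: 5 pairs without a combiner, 3 with: {Hadoop=1, Hello=3, World=1}
    }
}
```

The reducer still receives a list of values per key; with a combiner that list holds one partial sum per mapper instead of one entry per occurrence.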

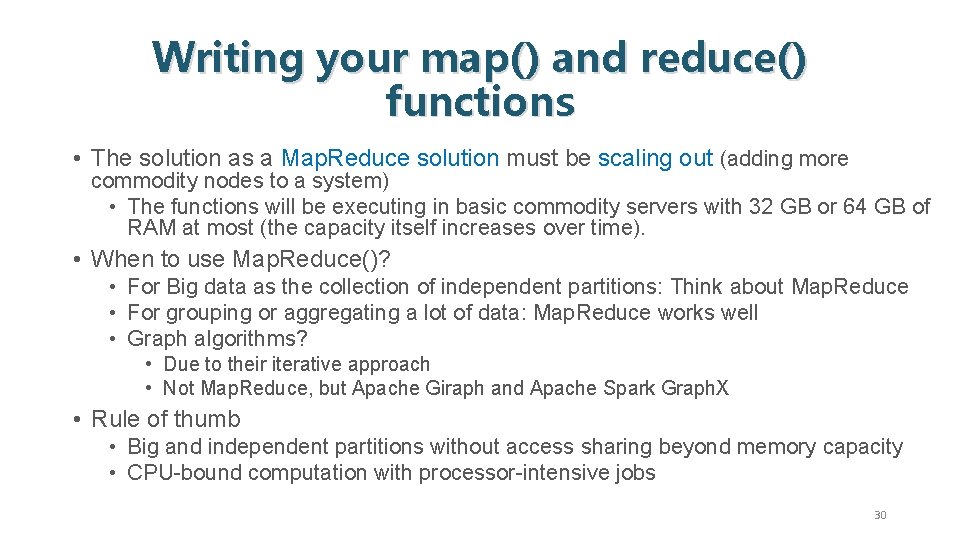

Writing your map() and reduce() functions

• A MapReduce solution must scale out (by adding more commodity nodes to the system)
• The functions will execute on basic commodity servers with 32 GB or 64 GB of RAM at most (the capacity itself increases over time)
• When to use MapReduce?
  • For big data that is a collection of independent partitions: think about MapReduce
  • For grouping or aggregating a lot of data: MapReduce works well
• Graph algorithms?
  • Because of their iterative approach, use Apache Giraph or Apache Spark GraphX rather than MapReduce
• Rule of thumb
  • Big, independent partitions, beyond memory capacity, with no shared access
  • CPU-bound computation with processor-intensive jobs

Hadoop MapReduce Program Components

• Driver Program
• Mapper Class
• Reducer Class

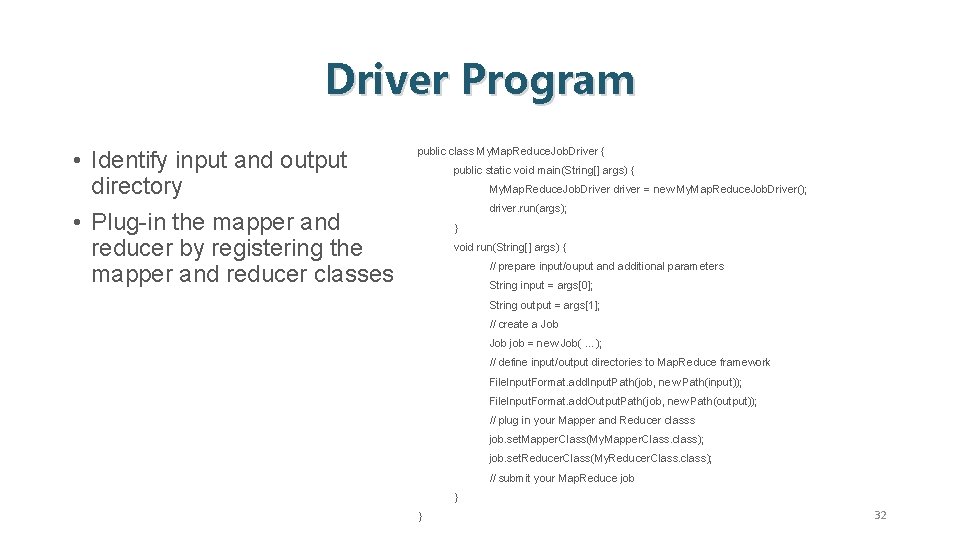

Driver Program

• Identify the input and output directories
• Plug in the mapper and reducer by registering the mapper and reducer classes

    public class MyMapReduceJobDriver {
        public static void main(String[] args) {
            MyMapReduceJobDriver driver = new MyMapReduceJobDriver();
            driver.run(args);
        }

        void run(String[] args) {
            // prepare input/output and additional parameters
            String input = args[0];
            String output = args[1];

            // create a job
            Job job = new Job( … );

            // define input/output directories to the MapReduce framework
            FileInputFormat.addInputPath(job, new Path(input));
            FileOutputFormat.setOutputPath(job, new Path(output));

            // plug in your Mapper and Reducer classes
            job.setMapperClass(MyMapperClass.class);
            job.setReducerClass(MyReducerClass.class);

            // submit your MapReduce job
        }
    }

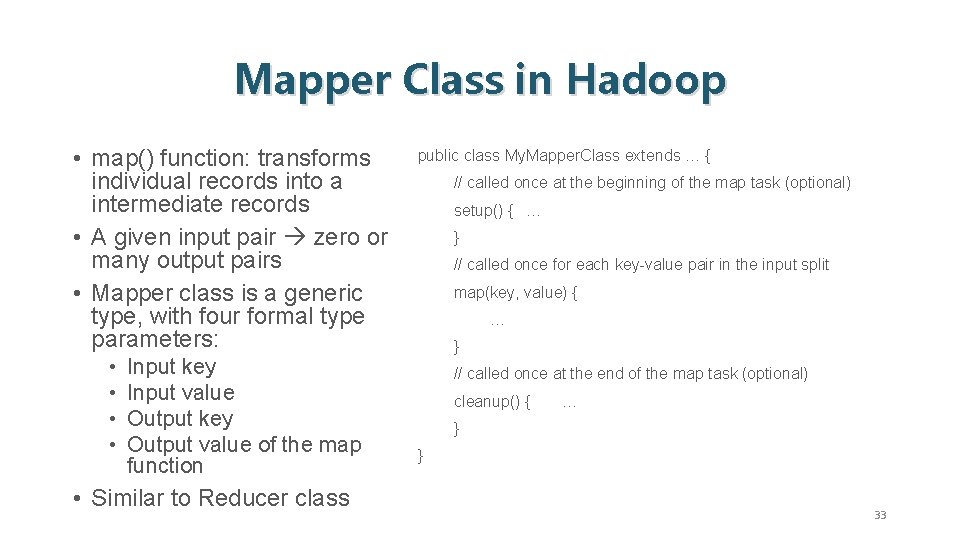

Mapper Class in Hadoop

• map() function: transforms individual input records into intermediate records
  • A given input pair maps to zero or many output pairs
• The Mapper class is a generic type with four formal type parameters of the map function:
  • Input key
  • Input value
  • Output key
  • Output value
• Similar to the Reducer class

    public class MyMapperClass extends … {
        // called once at the beginning of the map task (optional)
        setup() { … }

        // called once for each key-value pair in the input split
        map(key, value) { … }

        // called once at the end of the map task (optional)
        cleanup() { … }
    }

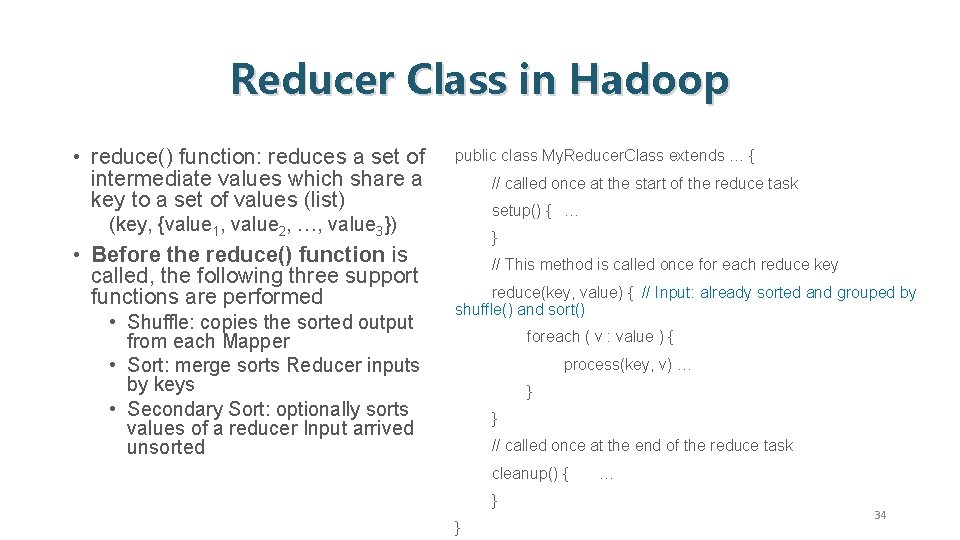

Reducer Class in Hadoop

• reduce() function: reduces a set of intermediate values which share a key to a set of values (list): (key, {value1, value2, …, value3})
• Before the reduce() function is called, the following three support functions are performed
  • Shuffle: copies the sorted output from each Mapper
  • Sort: merge-sorts Reducer inputs by keys
  • Secondary Sort: optionally sorts the values of a reducer input, which otherwise arrive unsorted

    public class MyReducerClass extends … {
        // called once at the start of the reduce task
        setup() { … }

        // called once for each reduce key
        reduce(key, value) {
            // input: already sorted and grouped by shuffle() and sort()
            foreach (v : value) {
                process(key, v) …
            }
        }

        // called once at the end of the reduce task
        cleanup() { … }
    }

![Hadoop script usage](http://slidetodoc.com/presentation_image_h/f9c6cc54dee8eebf6a0e8fad8e5cfb18/image-35.jpg)

Hadoop Script

• $HADOOP_HOME/bin/hadoop
• Usage: hadoop [--config confdir] COMMAND
• Where COMMAND is one of:

    namenode -format                          format the DFS filesystem
    secondarynamenode                         run the DFS secondary namenode
    namenode                                  run the DFS namenode
    datanode                                  run a DFS datanode
    dfsadmin                                  run a DFS admin client
    fsck                                      run a DFS filesystem checking utility
    fs                                        run a generic filesystem user client
    balancer                                  run a cluster balancing utility
    jobtracker                                run the MapReduce job Tracker node
    pipes                                     run a Pipes job
    tasktracker                               run a MapReduce task Tracker node
    job                                       manipulate MapReduce jobs
    version                                   print the version
    jar <jar>                                 run a jar file (a Java Hadoop program): bin/hadoop jar <jar>
    distcp <srcurl> <desturl>                 copy file or directories recursively
    archive -archiveName NAME <src> <dest>    create a Hadoop archive
    daemonlog                                 get/set the log level for each daemon
    or
    CLASSNAME                                 run the class named CLASSNAME

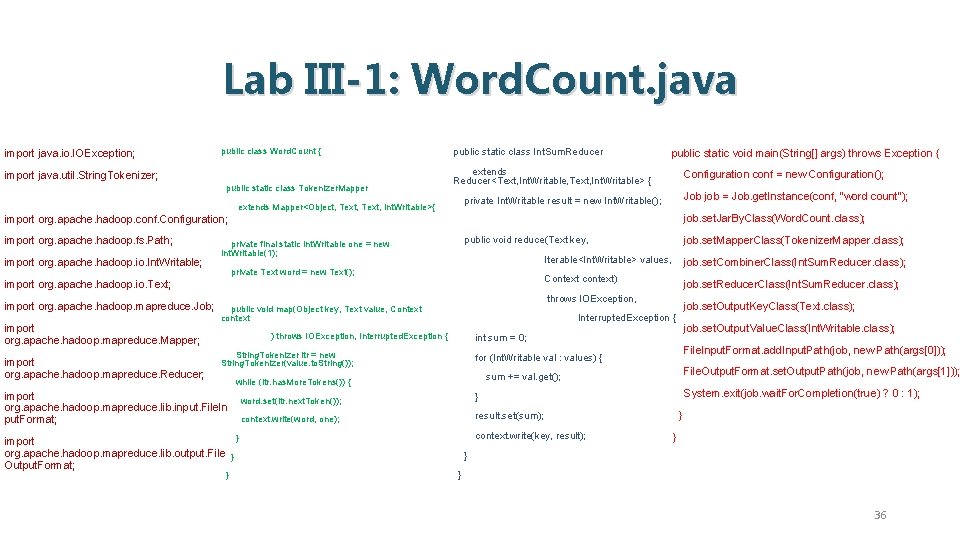

Lab III-1: WordCount.java

    import java.io.IOException;
    import java.util.StringTokenizer;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class WordCount {

        // Mapper: emits (word, 1) for every token in an input line
        public static class TokenizerMapper
                extends Mapper<Object, Text, Text, IntWritable> {

            private final static IntWritable one = new IntWritable(1);
            private Text word = new Text();

            public void map(Object key, Text value, Context context
                            ) throws IOException, InterruptedException {
                StringTokenizer itr = new StringTokenizer(value.toString());
                while (itr.hasMoreTokens()) {
                    word.set(itr.nextToken());
                    context.write(word, one);
                }
            }
        }

        // Reducer (also registered as the combiner): sums the counts for one word
        public static class IntSumReducer
                extends Reducer<Text, IntWritable, Text, IntWritable> {

            private IntWritable result = new IntWritable();

            public void reduce(Text key, Iterable<IntWritable> values,
                               Context context
                               ) throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable val : values) {
                    sum += val.get();
                }
                result.set(sum);
                context.write(key, result);
            }
        }

        public static void main(String[] args) throws Exception {
            Configuration conf = new Configuration();
            Job job = Job.getInstance(conf, "word count");
            job.setJarByClass(WordCount.class);
            job.setMapperClass(TokenizerMapper.class);
            job.setCombinerClass(IntSumReducer.class);
            job.setReducerClass(IntSumReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            FileInputFormat.addInputPath(job, new Path(args[0]));
            FileOutputFormat.setOutputPath(job, new Path(args[1]));
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }

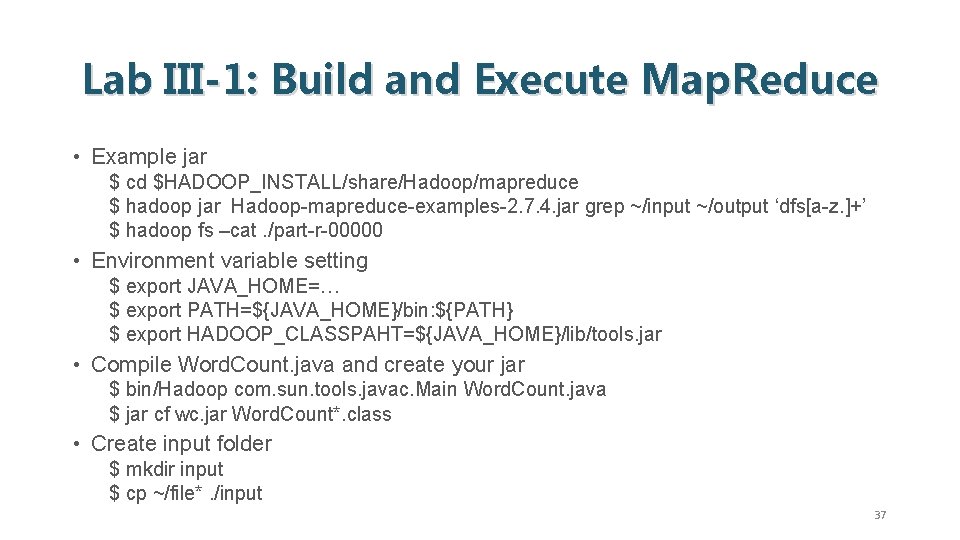

Lab III-1: Build and Execute MapReduce

• Example jar

    $ cd $HADOOP_INSTALL/share/hadoop/mapreduce
    $ hadoop jar hadoop-mapreduce-examples-2.7.4.jar grep ~/input ~/output 'dfs[a-z.]+'
    $ hadoop fs -cat ./part-r-00000

• Environment variable setting

    $ export JAVA_HOME=…
    $ export PATH=${JAVA_HOME}/bin:${PATH}
    $ export HADOOP_CLASSPATH=${JAVA_HOME}/lib/tools.jar

• Compile WordCount.java and create your jar

    $ bin/hadoop com.sun.tools.javac.Main WordCount.java
    $ jar cf wc.jar WordCount*.class

• Create input folder

    $ mkdir input
    $ cp ~/file* ./input

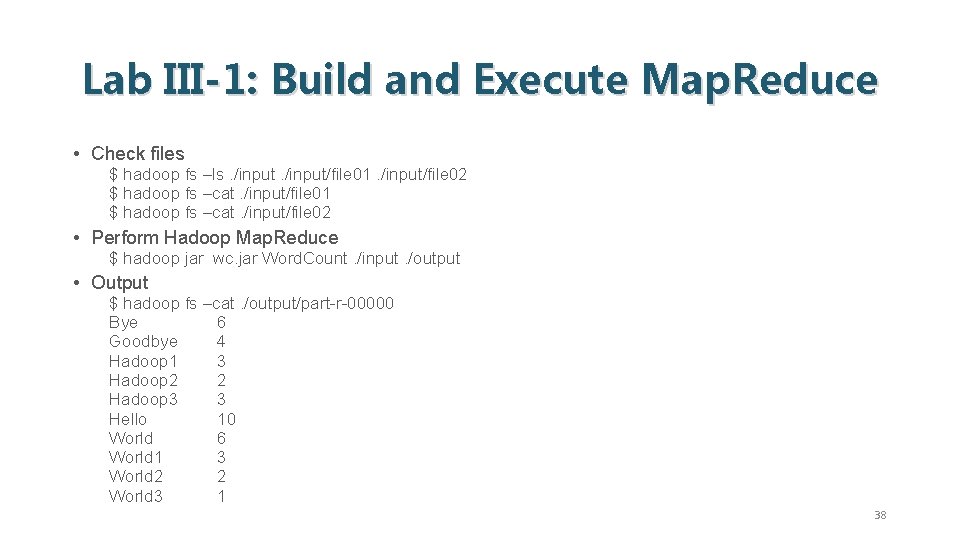

Lab III-1: Build and Execute MapReduce

• Check files

    $ hadoop fs -ls ./input/file01 ./input/file02
    $ hadoop fs -cat ./input/file01
    $ hadoop fs -cat ./input/file02

• Perform Hadoop MapReduce

    $ hadoop jar wc.jar WordCount ./input ./output

• Output

    $ hadoop fs -cat ./output/part-r-00000
    Bye 6
    Goodbye 4
    Hadoop1 3
    Hadoop2 2
    Hadoop3 3
    Hello 10
    World 6
    World1 3
    World2 2
    World3 1

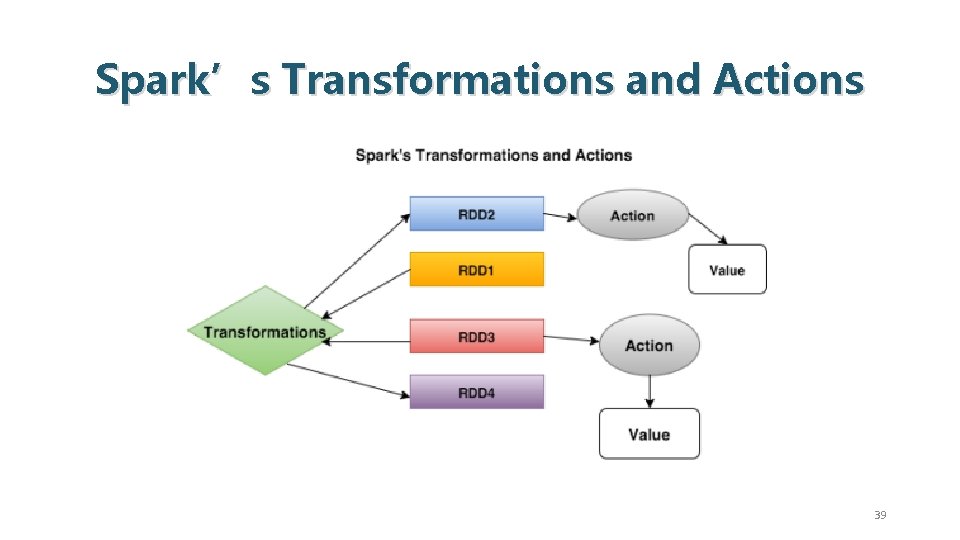

Spark’s Transformations and Actions

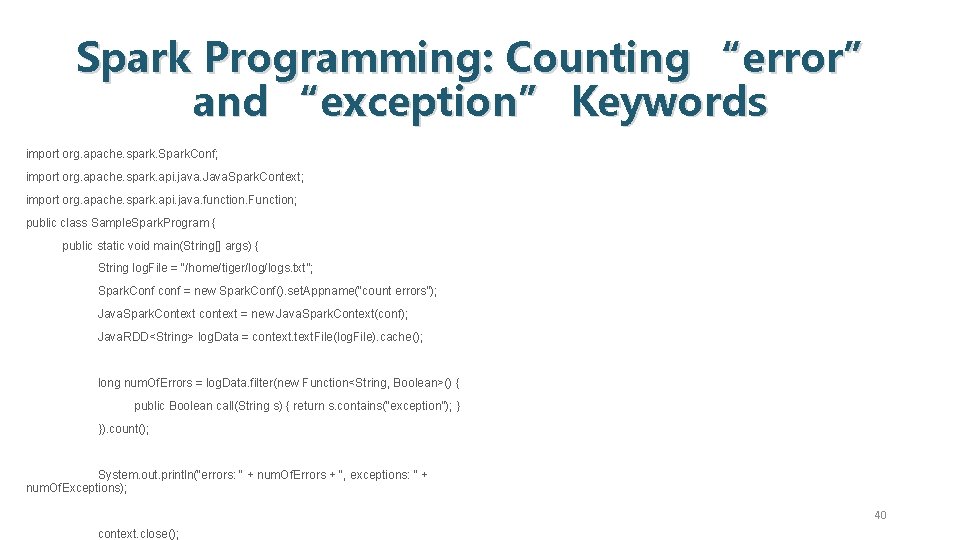

Spark Programming: Counting “error” and “exception” Keywords

    import org.apache.spark.SparkConf;
    import org.apache.spark.api.java.JavaRDD;
    import org.apache.spark.api.java.JavaSparkContext;
    import org.apache.spark.api.java.function.Function;

    public class SampleSparkProgram {
        public static void main(String[] args) {
            String logFile = "/home/tiger/logs.txt";
            SparkConf conf = new SparkConf().setAppName("count errors");
            JavaSparkContext context = new JavaSparkContext(conf);
            // cache the RDD because it is scanned twice below
            JavaRDD<String> logData = context.textFile(logFile).cache();

            // count the lines that contain "error"
            long numOfErrors = logData.filter(new Function<String, Boolean>() {
                public Boolean call(String s) { return s.contains("error"); }
            }).count();

            // count the lines that contain "exception"
            long numOfExceptions = logData.filter(new Function<String, Boolean>() {
                public Boolean call(String s) { return s.contains("exception"); }
            }).count();

            System.out.println("errors: " + numOfErrors + ", exceptions: " + numOfExceptions);
            context.close();
        }
    }

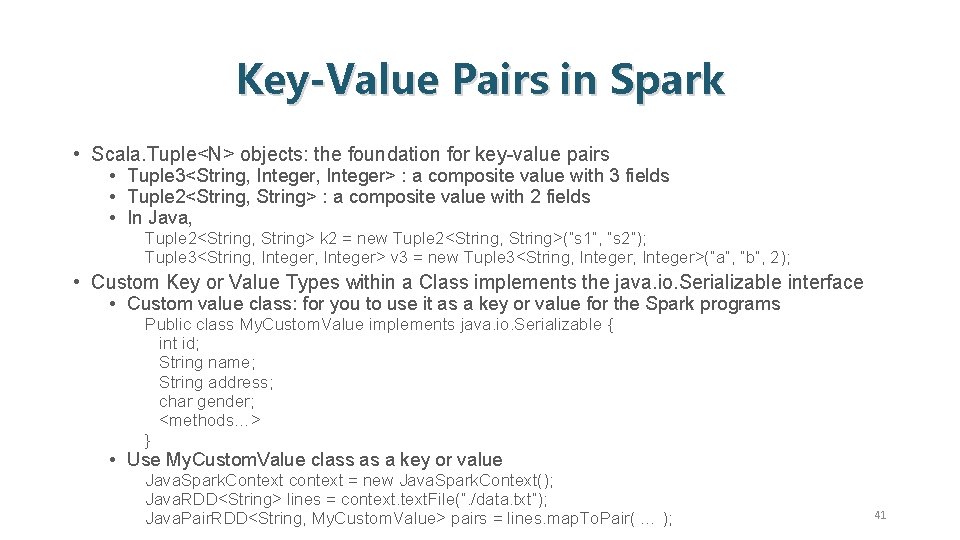

Key-Value Pairs in Spark

• scala.Tuple<N> objects: the foundation for key-value pairs
  • Tuple3<String, String, Integer>: a composite value with 3 fields
  • Tuple2<String, String>: a composite value with 2 fields
• In Java:

    Tuple2<String, String> k2 = new Tuple2<String, String>("s1", "s2");
    Tuple3<String, String, Integer> v3 = new Tuple3<String, String, Integer>("a", "b", 2);

• Custom key or value types: a class that implements the java.io.Serializable interface
  • Custom value class, usable as a key or value in Spark programs:

    public class MyCustomValue implements java.io.Serializable {
        int id;
        String name;
        String address;
        char gender;
        // methods …
    }

• Use the MyCustomValue class as a key or value:

    JavaSparkContext context = new JavaSparkContext();
    JavaRDD<String> lines = context.textFile("./data.txt");
    JavaPairRDD<String, MyCustomValue> pairs = lines.mapToPair( … );

Transformations

• Return a new, modified RDD based on the original
  • foldByKey()
  • map()
  • filter()
  • sample()
  • union()

Actions

• Return a value based on some computation being performed on an RDD
  • countByKey()
  • reduce()
  • count()
  • first()
  • foreach()
• Using transformations and actions, a complex DAG can be created to solve MapReduce problems and beyond.
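The split between operations that return a new dataset and operations that return a value can be illustrated without Spark. The sketch below uses `java.util.stream` as a stand-in, so it is an analogy only and not the Spark API: `filter` plays the role of a transformation and `count` the role of an action. The class name `TransformationActionSketch` and the sample log lines are assumptions.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Stream;

/** Analogy only: Java streams standing in for RDD transformations and actions. */
final class TransformationActionSketch {

    /** Transformation analog: returns a new stream and leaves the source list untouched. */
    static Stream<String> containing(List<String> lines, String keyword) {
        return lines.stream().filter(s -> s.contains(keyword));
    }

    public static void main(String[] args) {
        List<String> log = Arrays.asList(
            "error: disk full", "ok", "exception in thread main", "error: timeout");

        // Action analog: count() consumes the filtered stream and returns a value.
        long errors = containing(log, "error").count();
        long exceptions = containing(log, "exception").count();

        System.out.println("errors: " + errors + ", exceptions: " + exceptions);
        // prints: errors: 2, exceptions: 1
    }
}
```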

Reference

• Jimmy Lin (Univ. of Waterloo): https://lintool.github.io/bigdata-2017w/index.html
• Jimmy Lin and Chris Dyer, Data-Intensive Text Processing with MapReduce, 2010: https://lintool.github.io/MapReduceAlgorithms/
• Mahmoud Parsian, Data Algorithms: Recipes for Scaling Up with Hadoop and Spark, O’Reilly, 2015: http://mapreduce4hackers.com/
• Mahmoud Parsian, Introduction to MapReduce, 2016: https://github.com/mahmoudparsian/data-algorithms-book/blob/master/src/main/java/org/dataalgorithms/chapB09/charcount/Introduction-to-MapReduce.pdf
• Chuck Lam, Hadoop in Action, 2010: http://www.chinastor.org/upload/201311/13111115436557.pdf
• Tom White, Hadoop: The Definitive Guide, 4th Ed., O’Reilly, 2015.
• Apache Hadoop, MapReduce Tutorial: https://hadoop.apache.org/docs/stable/hadoop-mapreduce-client/hadoop-mapreduce-client-core/MapReduceTutorial.html
• Matthew Rathbone, Apache Spark Java Tutorial with Code Examples, 2015: https://blog.matthewrathbone.com/2015/12/28/java-spark-tutorial.html
