Hidden Markov Models (HMMs)

- Probabilistic automata
- Ubiquitous in speech/speaker recognition/verification
- Suitable for modelling phenomena which are dynamic in nature
- Can be used for handwriting and keystroke biometrics

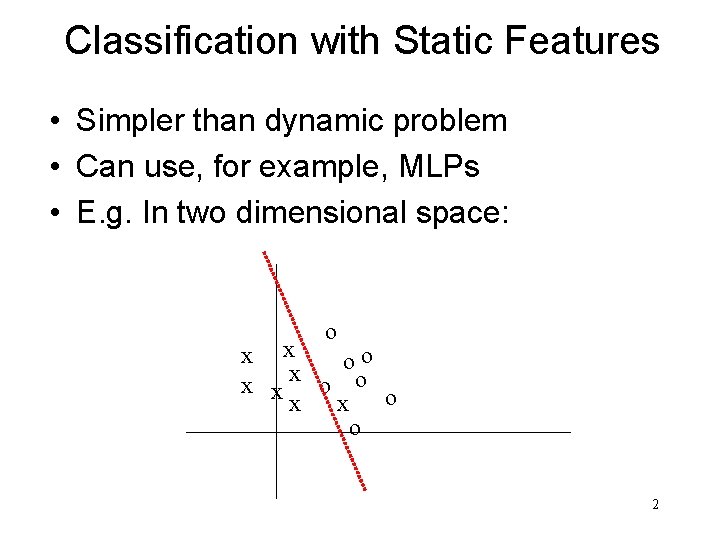

Classification with Static Features

- Simpler than the dynamic problem
- Can use, for example, MLPs
- E.g. in two-dimensional space:

[Figure: points from two classes, o and x, scattered in a two-dimensional feature space]

Hidden Markov Models (HMMs)

- First: Visible Markov Models (VMMs)
  - Formal Definition
  - Recognition
  - Training
- HMMs
  - Formal Definition
  - Recognition
  - Training
  - Trellis Algorithms
    - Forward-Backward
    - Viterbi

Visible Markov Models

- Probabilistic automaton
- $N$ distinct states $S = \{s_1, \dots, s_N\}$
- $M$-element output alphabet $K = \{k_1, \dots, k_M\}$
- Initial state probabilities $\Pi = \{\pi_i\}$, $i \in S$
- State transitions at $t = 1, 2, \dots$
- State transition probabilities $A = \{a_{ij}\}$, $i, j \in S$
- State sequence $X = \{X_1, \dots, X_T\}$, $X_t \in S$
- Output sequence $O = \{o_1, \dots, o_T\}$, $o_t \in K$

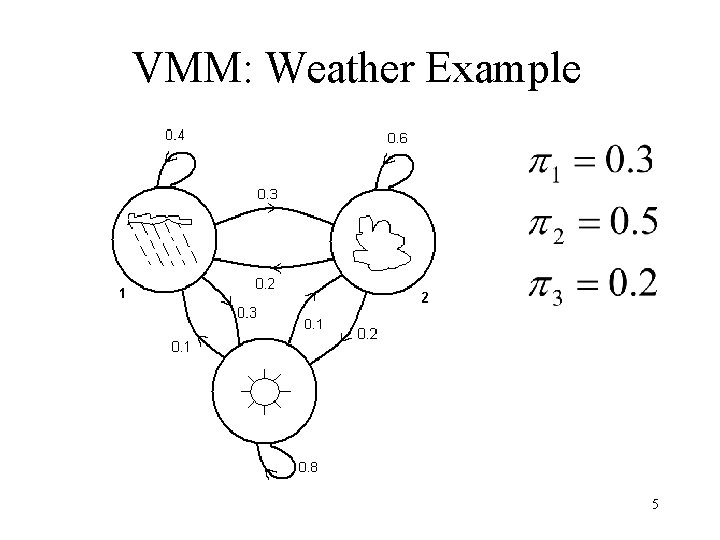

VMM: Weather Example

Generative VMM

- We choose the state sequence probabilistically…
- We could try this using:
  - the numbers 1 to 10
  - drawing from a hat
  - an ad-hoc assignment scheme
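The hat-drawing procedure above can also be sketched in Python. This is a minimal sketch, assuming states are numbered 0 to N-1 and `pi` and `A` are plain lists holding $\Pi$ and $A$; the function name `sample_vmm` and the two-state parameters are hypothetical (they are not the weather example from the slides).

```python
import random

def sample_vmm(pi, A, T, rng=random):
    """Sample a state sequence of length T from a visible Markov model.

    pi[i]:   initial probability of state i
    A[i][j]: probability of moving from state i to state j
    rng:     anything with a choices() method (the random module or a random.Random)
    """
    states = range(len(pi))
    # Draw the first state from the initial distribution Pi.
    x = rng.choices(states, weights=pi)[0]
    seq = [x]
    for _ in range(T - 1):
        # Draw the next state from row x of the transition matrix A.
        x = rng.choices(states, weights=A[x])[0]
        seq.append(x)
    return seq

# Hypothetical two-state model (0 = "sunny", 1 = "rainy"); the numbers are made up.
pi = [0.6, 0.4]
A = [[0.8, 0.2],
     [0.3, 0.7]]
print(sample_vmm(pi, A, T=10, rng=random.Random(0)))
```

Passing a seeded `random.Random` makes a run repeatable, which is handy when comparing samples against counts.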

2 Questions

- Training problem
  - Given an observation sequence $O$ and a “space” of possible models spanning the possible values of the model parameters $w = \{A, \Pi\}$, how do we find the model that best explains the observed data?
- Recognition (decoding) problem
  - Given a model $w_i = \{A, \Pi\}$, how do we compute how likely a certain observation is, i.e. $P(O \mid w_i)$?

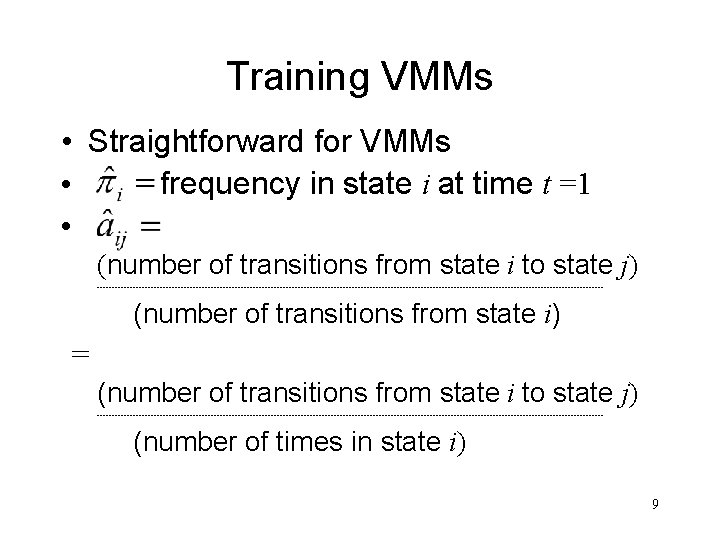

Training VMMs

- Given observation sequences $O$, we want to find the model parameters $w = \{A, \Pi\}$ that best explain the observations
- I.e. we want to find the values of $w = \{A, \Pi\}$ that maximise $P(O \mid w)$:

$$\{A, \Pi\}_{\text{chosen}} = \arg\max_{\{A, \Pi\}} P(O \mid \{A, \Pi\})$$

Training VMMs

- Straightforward for VMMs:

$$\pi_i = \text{relative frequency of being in state } i \text{ at time } t = 1$$

$$a_{ij} = \frac{\text{number of transitions from state } i \text{ to state } j}{\text{number of transitions from state } i} = \frac{\text{number of transitions from state } i \text{ to state } j}{\text{number of times in state } i \text{ (not counting the last time step of each sequence)}}$$
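The counting estimates above translate directly into code. A minimal sketch, assuming each training sequence is a list of 0-based state indices; the helper name `train_vmm` and the example sequences are hypothetical. Because the denominator of $a_{ij}$ counts transitions out of state $i$, the last time step of each sequence is excluded automatically.

```python
from collections import Counter

def train_vmm(sequences, n_states):
    """Maximum-likelihood estimates of Pi and A from observed state sequences."""
    starts = Counter(seq[0] for seq in sequences)
    trans = Counter()  # trans[(i, j)] = number of i -> j transitions
    out = Counter()    # out[i] = number of transitions leaving state i
    for seq in sequences:
        for i, j in zip(seq, seq[1:]):
            trans[(i, j)] += 1
            out[i] += 1
    # pi_i: fraction of sequences that start in state i
    pi = [starts[i] / len(sequences) for i in range(n_states)]
    # a_ij: transitions i -> j divided by all transitions out of i
    # (a row with no outgoing transitions is left as zeros: there is no data for it)
    A = [[trans[(i, j)] / out[i] if out[i] else 0.0 for j in range(n_states)]
         for i in range(n_states)]
    return pi, A

pi, A = train_vmm([[0, 0, 1, 1, 0], [1, 1, 1, 0]], n_states=2)
print(pi, A)
```

In this example state 1 is left five times, three of them back to itself, so `A[1][1]` is 3/5.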

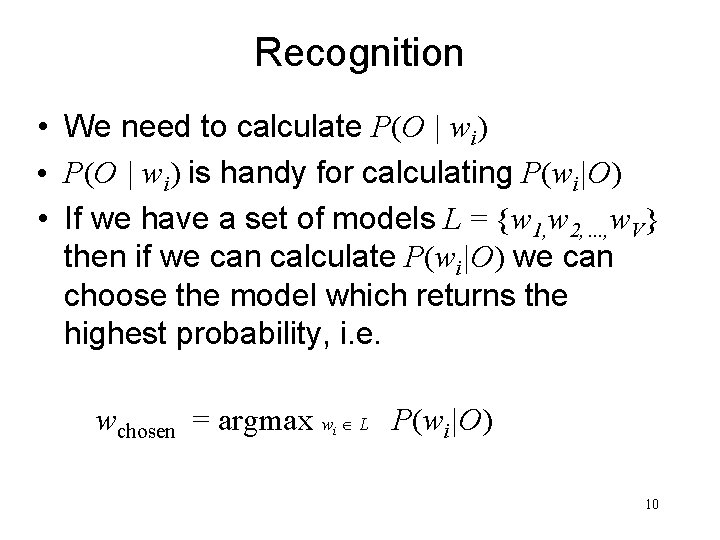

Recognition

- We need to calculate $P(O \mid w_i)$
- $P(O \mid w_i)$ is handy for calculating $P(w_i \mid O)$
- If we have a set of models $L = \{w_1, w_2, \dots, w_V\}$ and can calculate $P(w_i \mid O)$, we can choose the model which returns the highest probability, i.e.

$$w_{\text{chosen}} = \arg\max_{w_i \in L} P(w_i \mid O)$$

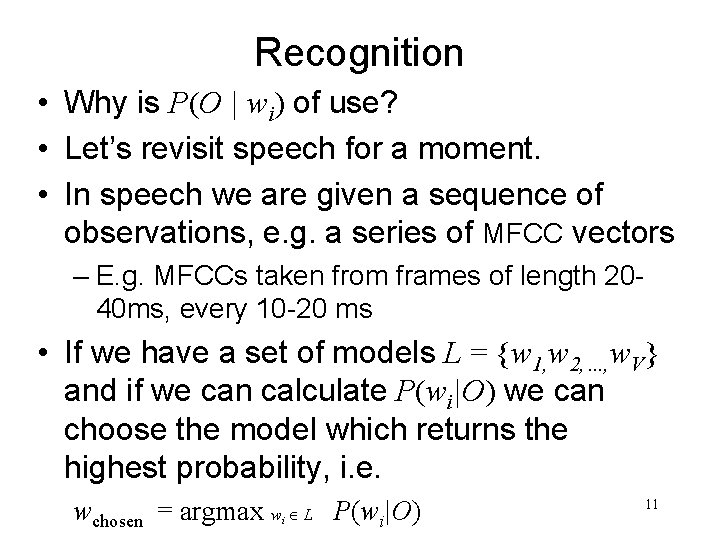

Recognition

- Why is $P(O \mid w_i)$ of use?
- Let's revisit speech for a moment.
- In speech we are given a sequence of observations, e.g. a series of MFCC vectors
  - E.g. MFCCs taken from frames of length 20-40 ms, every 10-20 ms
- If we have a set of models $L = \{w_1, w_2, \dots, w_V\}$ and can calculate $P(w_i \mid O)$, we can choose the model which returns the highest probability, i.e.

$$w_{\text{chosen}} = \arg\max_{w_i \in L} P(w_i \mid O)$$

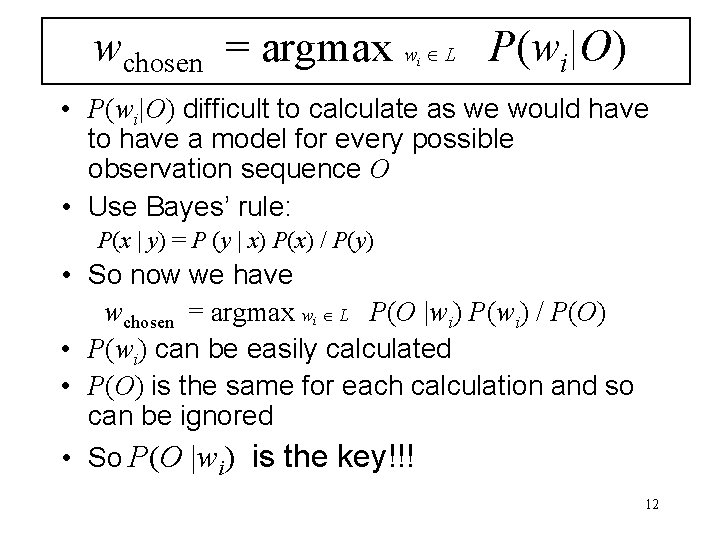

$w_{\text{chosen}} = \arg\max_{w_i \in L} P(w_i \mid O)$

- $P(w_i \mid O)$ is difficult to calculate, as we would have to have a model for every possible observation sequence $O$
- Use Bayes' rule: $P(x \mid y) = P(y \mid x)\, P(x) / P(y)$
- So now we have $w_{\text{chosen}} = \arg\max_{w_i \in L} P(O \mid w_i)\, P(w_i) / P(O)$
- $P(w_i)$ can be easily calculated
- $P(O)$ is the same for every model and so can be ignored
- So $P(O \mid w_i)$ is the key!
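The decision rule above can be sketched for VMMs, assuming that, as in a visible model, each output identifies its state, so an observation sequence can be scored directly with $\Pi$ and $A$. Working with log probabilities is an implementation choice (not from the slides) that avoids numerical underflow on long sequences; `vmm_log_likelihood`, `choose_model` and the two example models are hypothetical.

```python
import math

def _log(p):
    # log(0) is -inf: a zero-probability transition rules the model out.
    return math.log(p) if p > 0 else -math.inf

def vmm_log_likelihood(seq, pi, A):
    """log P(O | w) for a VMM, treating the observation O as the state sequence."""
    logp = _log(pi[seq[0]])
    for i, j in zip(seq, seq[1:]):
        logp += _log(A[i][j])
    return logp

def choose_model(seq, models, priors):
    """argmax_i [log P(O | w_i) + log P(w_i)]; P(O) is the same for every i, so it is dropped."""
    scores = [vmm_log_likelihood(seq, pi, A) + _log(p)
              for (pi, A), p in zip(models, priors)]
    return max(range(len(models)), key=lambda k: scores[k])

# Two hypothetical models: one prefers staying put, the other prefers switching.
sticky = ([0.5, 0.5], [[0.9, 0.1], [0.1, 0.9]])
switchy = ([0.5, 0.5], [[0.1, 0.9], [0.9, 0.1]])
print(choose_model([0, 0, 0, 1, 1, 1], [sticky, switchy], [0.5, 0.5]))
```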

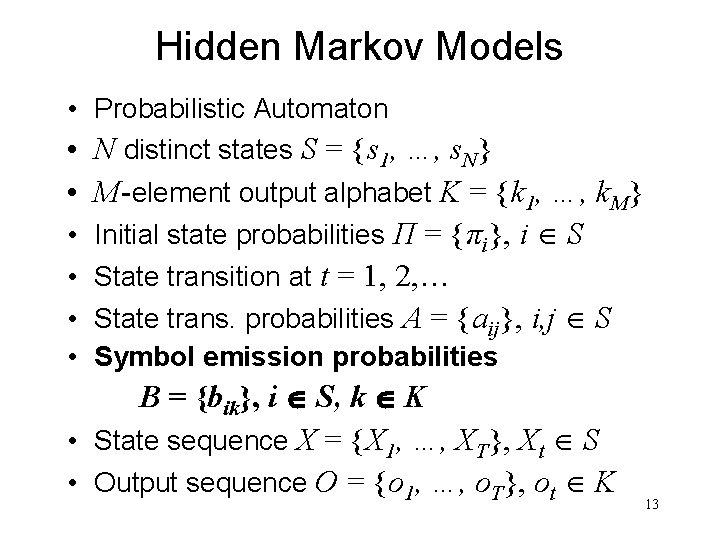

Hidden Markov Models

- Probabilistic automaton
- $N$ distinct states $S = \{s_1, \dots, s_N\}$
- $M$-element output alphabet $K = \{k_1, \dots, k_M\}$
- Initial state probabilities $\Pi = \{\pi_i\}$, $i \in S$
- State transitions at $t = 1, 2, \dots$
- State transition probabilities $A = \{a_{ij}\}$, $i, j \in S$
- Symbol emission probabilities $B = \{b_{ik}\}$, $i \in S$, $k \in K$
- State sequence $X = \{X_1, \dots, X_T\}$, $X_t \in S$
- Output sequence $O = \{o_1, \dots, o_T\}$, $o_t \in K$
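One way to hold $w = \{A, B, \Pi\}$ in code is a small container that checks the shapes and that $\Pi$ and every row of $A$ and $B$ sum to one. This is a sketch under assumptions: the class name `HMM`, the 0-based indexing of states and symbols, and the tolerance `1e-9` are illustrative choices, not part of the definition above.

```python
import math
from dataclasses import dataclass
from typing import List

@dataclass
class HMM:
    """Parameters w = {A, B, Pi} of a discrete HMM (states and symbols are 0-based)."""
    pi: List[float]        # pi[i]   = P(X_1 = s_i)
    A: List[List[float]]   # A[i][j] = P(X_{t+1} = s_j | X_t = s_i), N x N
    B: List[List[float]]   # B[i][k] = P(o_t = k-th symbol | X_t = s_i), N x M

    def __post_init__(self):
        n = len(self.pi)
        if len(self.A) != n or any(len(row) != n for row in self.A):
            raise ValueError("A must be N x N")
        if len(self.B) != n or len({len(row) for row in self.B}) != 1:
            raise ValueError("B must be N x M")
        # Pi and every row of A and B must be probability distributions.
        for dist in [self.pi, *self.A, *self.B]:
            if any(p < 0 for p in dist) or not math.isclose(sum(dist), 1.0, abs_tol=1e-9):
                raise ValueError(f"not a probability distribution: {dist}")

    @property
    def n_states(self):
        return len(self.pi)

    @property
    def n_symbols(self):
        return len(self.B[0])

# Hypothetical two-state, two-symbol model.
model = HMM(pi=[0.6, 0.4], A=[[0.7, 0.3], [0.4, 0.6]], B=[[0.5, 0.5], [0.1, 0.9]])
print(model.n_states, model.n_symbols)
```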

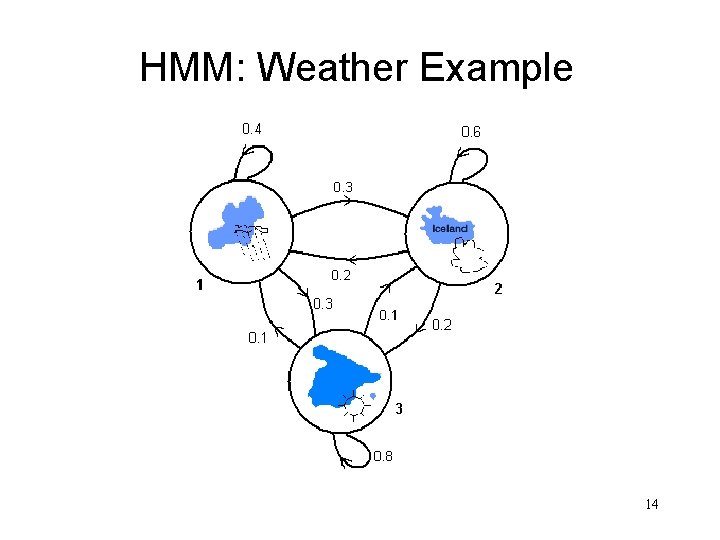

HMM: Weather Example

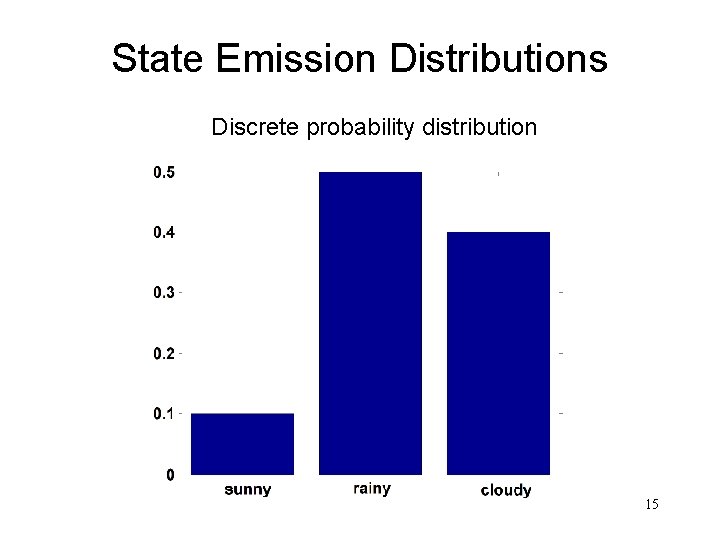

State Emission Distributions

Discrete probability distribution

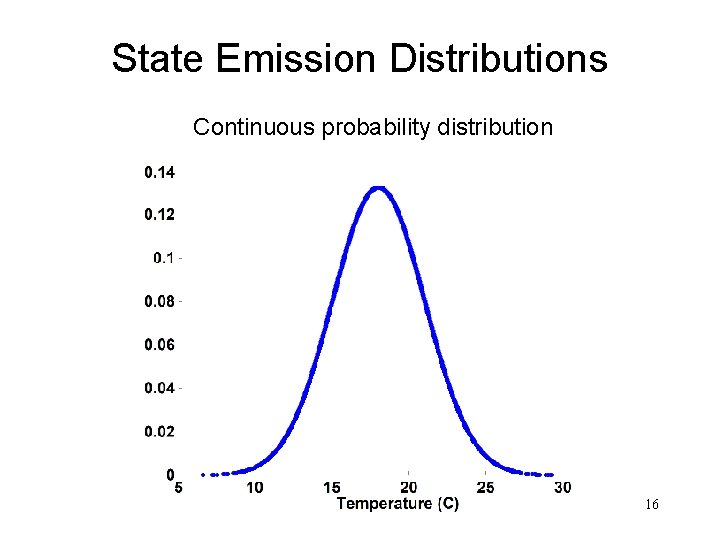

State Emission Distributions

Continuous probability distribution
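For continuous observations (such as MFCC vectors), the emission term for state $i$ becomes a probability density $b_i(o)$ rather than a table entry $b_{ik}$. A minimal one-dimensional sketch, assuming a single Gaussian per state with hypothetical means and variances; speech systems commonly use mixtures of multivariate Gaussians instead. Note that a density value can exceed 1.

```python
import math

def gaussian_emission(o, mean, var):
    """Density b_i(o) of a one-dimensional Gaussian emission for one state."""
    return math.exp(-(o - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

# Hypothetical per-state parameters: state 0 emits around 0.0, state 1 around 3.0.
means = [0.0, 3.0]
variances = [1.0, 0.5]
o = 2.5
print([gaussian_emission(o, m, v) for m, v in zip(means, variances)])
```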

Generative HMM

- Now we not only choose the state sequence probabilistically…
- …but also the state emissions
- Try this yourself using the numbers 1 to 10 and drawing from a hat...
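The same two-level drawing can be sketched in code: at each time step the sampler draws a state (from $\Pi$ at $t = 1$, then from the current row of $A$) and then draws a symbol from that state's row of $B$. A minimal sketch, assuming 0-based state and symbol indices; the function name `sample_hmm` and the parameters are hypothetical.

```python
import random

def sample_hmm(pi, A, B, T, rng=random):
    """Sample (state sequence X, output sequence O) of length T from a discrete HMM."""
    states = range(len(pi))
    symbols = range(len(B[0]))
    X, O = [], []
    for t in range(T):
        # State: from Pi at the first step, otherwise from the row of A for the previous state.
        weights = pi if t == 0 else A[X[-1]]
        x = rng.choices(states, weights=weights)[0]
        X.append(x)
        # Emission: draw a symbol from row x of B.
        O.append(rng.choices(symbols, weights=B[x])[0])
    return X, O

# Hypothetical two-state, two-symbol model.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]
print(sample_hmm(pi, A, B, T=8, rng=random.Random(1)))
```

Only `O` would be visible to an observer; `X` is returned here just to show which hidden states produced it.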

3 Questions

- Recognition (decoding) problem
  - Given a model $w_i = \{A, B, \Pi\}$, how do we compute how likely a certain observation is, i.e. $P(O \mid w_i)$?
- State sequence?
  - Given the observation sequence and a model, how do we choose a state sequence $X = \{X_1, \dots, X_T\}$ that best explains the observations?
- Training problem
  - Given an observation sequence $O$ and a “space” of possible models spanning the possible values of the model parameters $w = \{A, B, \Pi\}$, how do we find the model that best explains the observed data?

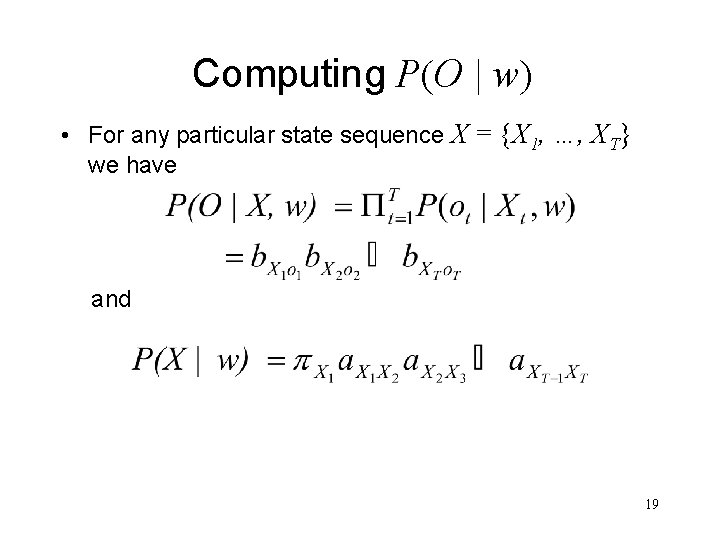

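Computing $P(O \mid w)$

- For any particular state sequence $X = \{X_1, \dots, X_T\}$ we have

$$P(O \mid X, w) = \prod_{t=1}^{T} b_{X_t o_t}$$

and

$$P(X \mid w) = \pi_{X_1} \prod_{t=1}^{T-1} a_{X_t X_{t+1}}$$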

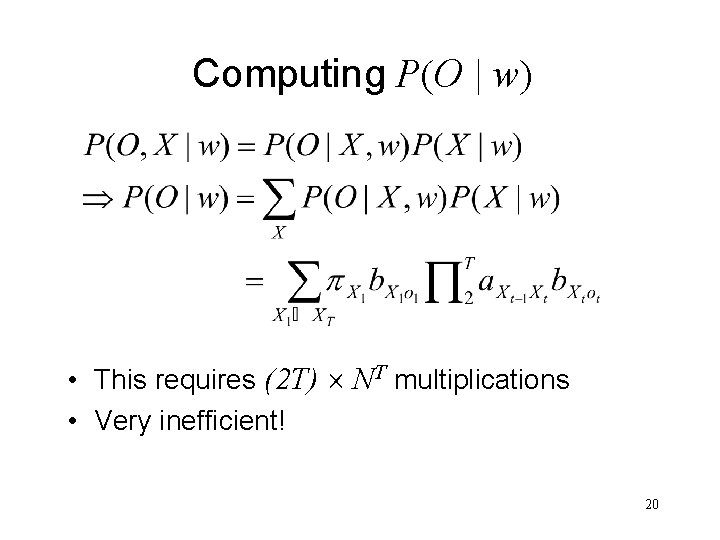

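Computing $P(O \mid w)$

- Summing over all possible state sequences, $P(O \mid w) = \sum_{X} P(O \mid X, w)\, P(X \mid w)$
- There are $N^T$ state sequences, each needing about $2T$ multiplications, so this requires on the order of $2T \cdot N^T$ multiplications
- Very inefficient!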
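To make the cost concrete, here is a direct implementation of the sum over all state sequences. It is a minimal sketch (hypothetical name `brute_force_likelihood`, 0-based indices, plain-list parameters) that is only practical for tiny $N$ and $T$, since `itertools.product` enumerates all $N^T$ sequences.

```python
from itertools import product

def brute_force_likelihood(O, pi, A, B):
    """P(O | w) = sum over all X of P(O | X, w) P(X | w), by explicit enumeration."""
    N, T = len(pi), len(O)
    total = 0.0
    for X in product(range(N), repeat=T):  # all N**T state sequences
        # pi_{X1} b_{X1 o1} * prod over t of a_{X(t-1) X(t)} b_{X(t) o(t)}
        p = pi[X[0]] * B[X[0]][O[0]]
        for t in range(1, T):
            p *= A[X[t - 1]][X[t]] * B[X[t]][O[t]]
        total += p
    return total

# Hypothetical two-state, two-symbol model.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]
print(brute_force_likelihood([0, 1], pi, A, B))
```

For $O = (0, 1)$ the four state sequences contribute 0.105, 0.081, 0.008 and 0.0216, giving $P(O \mid w) = 0.2156$.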

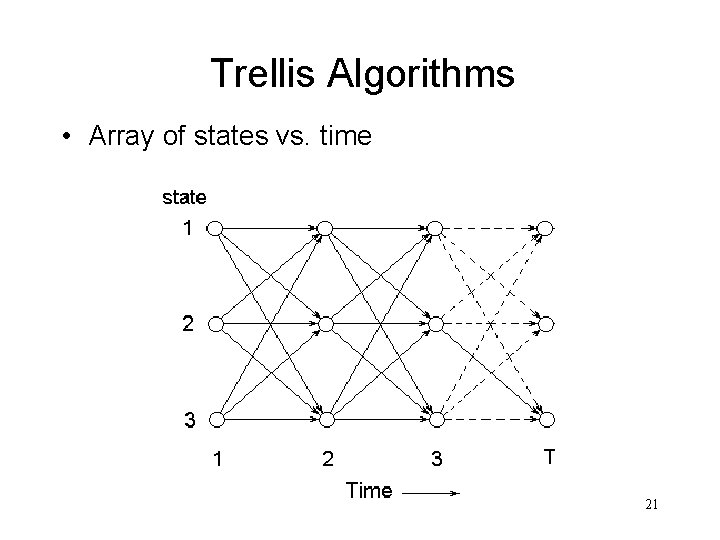

Trellis Algorithms

- Array of states vs. time

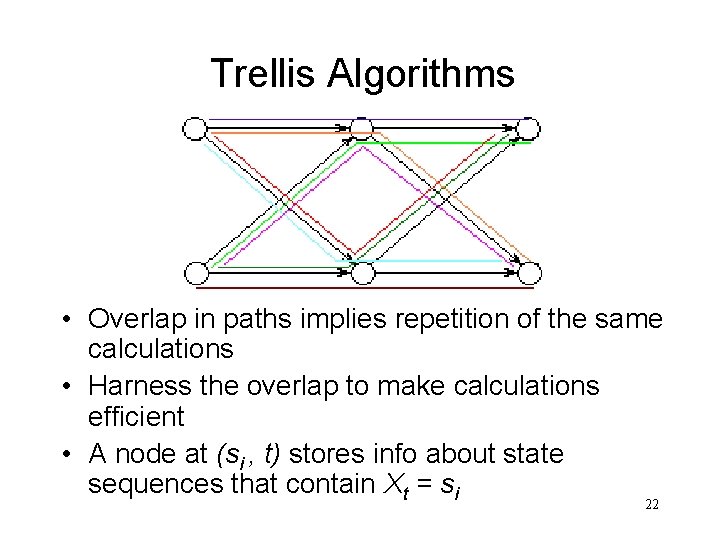

Trellis Algorithms

- Overlap in paths implies repetition of the same calculations
- Harness the overlap to make the calculations efficient
- A node at $(s_i, t)$ stores information about state sequences that contain $X_t = s_i$

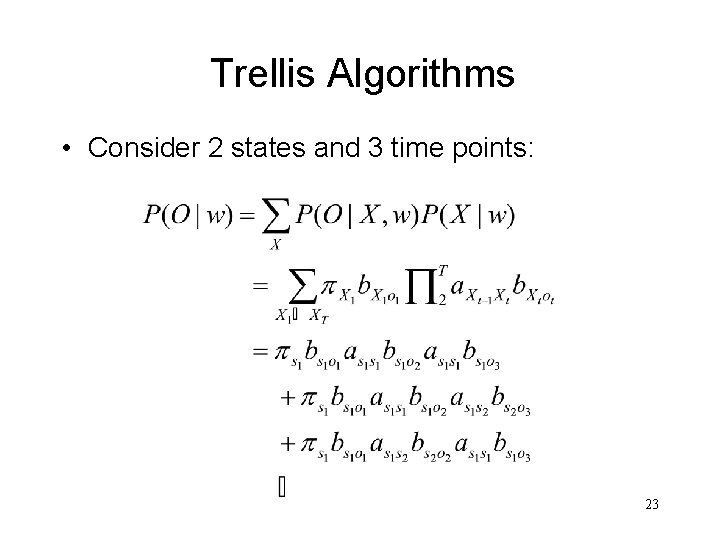

Trellis Algorithms

- Consider 2 states and 3 time points:

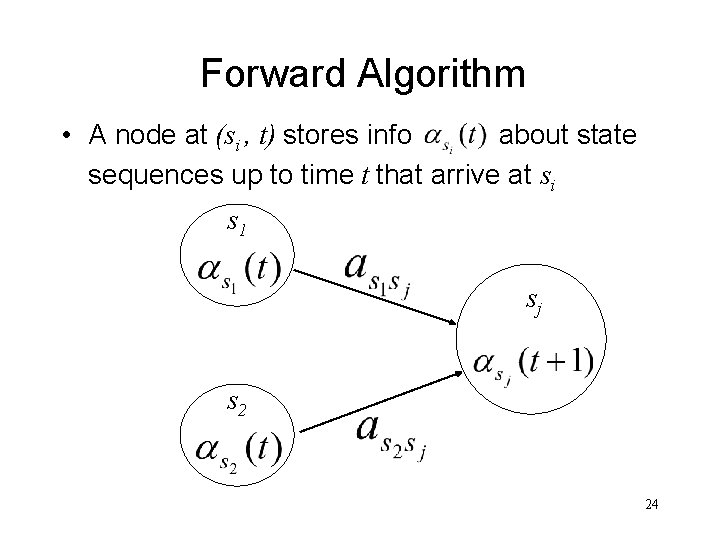

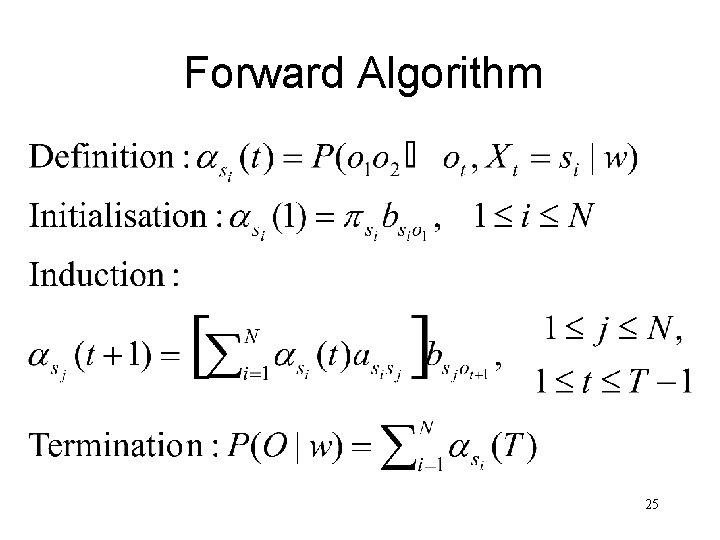

Forward Algorithm

- A node at $(s_i, t)$ stores information about state sequences up to time $t$ that arrive at $s_i$

Forward Algorithm

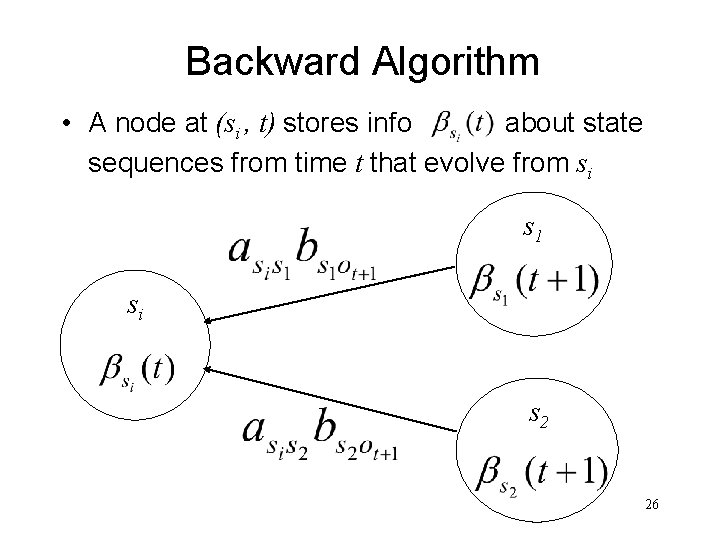

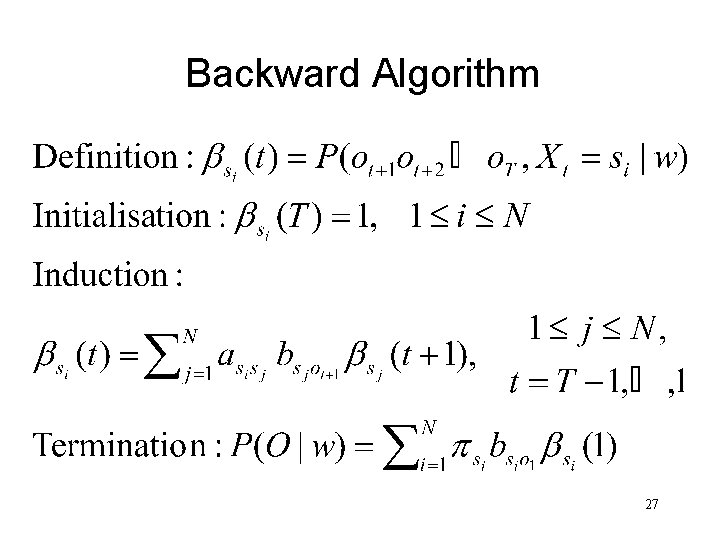
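A minimal sketch of the forward recursion, assuming 0-based time and state indices and plain-list parameters (the function name and the model are hypothetical). Each trellis node stores $\alpha_t(i) = P(o_1, \dots, o_t, X_t = s_i \mid w)$, so the cost is on the order of $N^2 T$ operations instead of $2T \cdot N^T$. It does no rescaling, so for long sequences the values underflow; practical implementations rescale at each step or work with logarithms.

```python
def forward(O, pi, A, B):
    """Forward algorithm: returns (P(O | w), alpha), alpha[t][i] = P(o_1..o_t, X_t = s_i | w).

    Time and state indices are 0-based, so alpha[0] corresponds to t = 1 on the slides.
    """
    N, T = len(pi), len(O)
    # Initialisation: alpha_1(i) = pi_i * b_{i o_1}
    alpha = [[pi[i] * B[i][O[0]] for i in range(N)]]
    # Induction: alpha_{t+1}(j) = [sum_i alpha_t(i) a_ij] * b_{j o_{t+1}}
    for t in range(1, T):
        prev = alpha[-1]
        alpha.append([sum(prev[i] * A[i][j] for i in range(N)) * B[j][O[t]]
                      for j in range(N)])
    # Termination: P(O | w) = sum_i alpha_T(i)
    return sum(alpha[-1]), alpha

# Hypothetical two-state, two-symbol model.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]
prob, alpha = forward([0, 1], pi, A, B)
print(prob)
```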

Backward Algorithm

- A node at $(s_i, t)$ stores information about state sequences from time $t$ that evolve from $s_i$

Backward Algorithm
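A matching sketch of the backward recursion, assuming 0-based indices, no rescaling, and a hypothetical model and function name. It stores $\beta_t(i) = P(o_{t+1}, \dots, o_T \mid X_t = s_i, w)$, starting from $\beta_T(i) = 1$, and recovers $P(O \mid w) = \sum_i \pi_i\, b_{i o_1}\, \beta_1(i)$. For the model below it gives $P(O \mid w) = 0.2156$, the same value obtained by summing over all four state sequences.

```python
def backward(O, pi, A, B):
    """Backward algorithm: returns (P(O | w), beta), beta[t][i] = P(o_{t+1}..o_T | X_t = s_i, w).

    Time and state indices are 0-based, so beta[-1] corresponds to t = T on the slides.
    """
    N, T = len(pi), len(O)
    beta = [[0.0] * N for _ in range(T)]
    # Initialisation: beta_T(i) = 1
    beta[T - 1] = [1.0] * N
    # Induction, backwards in time: beta_t(i) = sum_j a_ij b_{j o_{t+1}} beta_{t+1}(j)
    for t in range(T - 2, -1, -1):
        for i in range(N):
            beta[t][i] = sum(A[i][j] * B[j][O[t + 1]] * beta[t + 1][j] for j in range(N))
    # Termination: P(O | w) = sum_i pi_i b_{i o_1} beta_1(i)
    prob = sum(pi[i] * B[i][O[0]] * beta[0][i] for i in range(N))
    return prob, beta

# Hypothetical two-state, two-symbol model.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]
prob, beta = backward([0, 1], pi, A, B)
print(prob)
```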

Forward & Backward Algorithms

- $P(O \mid w)$ as calculated by the forward and backward algorithms should be the same
- The forward-backward (FB) algorithm is usually used in training
- The FB algorithm is not suited to recognition, as it considers all possible state sequences
- In reality, we would like to consider only the “best” state sequence (HMM problem 2)

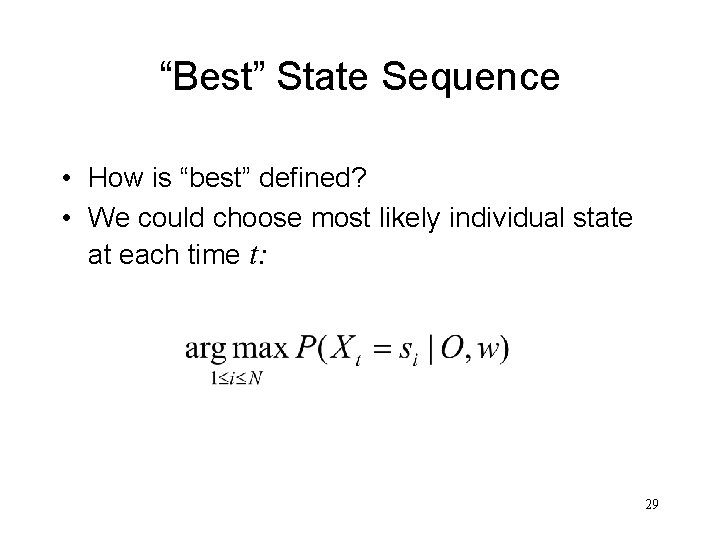

“Best” State Sequence

- How is “best” defined?
- We could choose the most likely individual state at each time $t$:

$$\hat{X}_t = \arg\max_{1 \le i \le N} P(X_t = s_i \mid O, w), \quad 1 \le t \le T$$

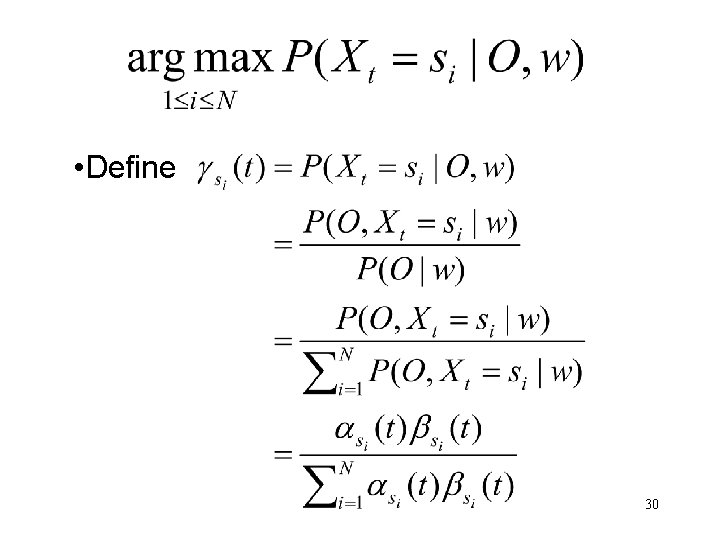

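- Define $\gamma_t(i) = P(X_t = s_i \mid O, w) = \dfrac{\alpha_t(i)\, \beta_t(i)}{P(O \mid w)}$, where $\alpha_t(i) = P(o_1, \dots, o_t, X_t = s_i \mid w)$ and $\beta_t(i) = P(o_{t+1}, \dots, o_T \mid X_t = s_i, w)$ are the forward and backward variables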

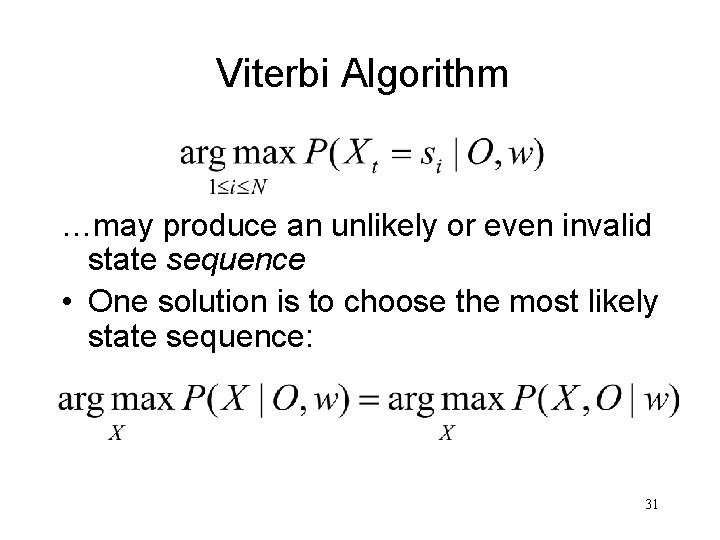
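Choosing $\arg\max_i \gamma_t(i)$, with $\gamma_t(i) = P(X_t = s_i \mid O, w)$, independently at each $t$ can be sketched by combining a forward and a backward pass. This is a minimal sketch with a hypothetical function name and a deliberately contrived model: it has a single output symbol, so $\gamma_t(i)$ reduces to the state marginals, and the decoder picks state 0 at $t = 1$ and state 1 at $t = 2$ even though the transition from state 0 to state 1 has probability zero, i.e. it returns an invalid sequence.

```python
def posterior_decode(O, pi, A, B):
    """Pick argmax_i gamma_t(i) separately at each t, where
    gamma_t(i) = alpha_t(i) * beta_t(i) / P(O | w). Indices are 0-based."""
    N, T = len(pi), len(O)
    # Forward pass: alpha[t][i]
    alpha = [[pi[i] * B[i][O[0]] for i in range(N)]]
    for t in range(1, T):
        alpha.append([sum(alpha[-1][i] * A[i][j] for i in range(N)) * B[j][O[t]]
                      for j in range(N)])
    # Backward pass: beta is built from the end, so beta[0] always holds the latest step
    beta = [[1.0] * N]
    for t in range(T - 1, 0, -1):
        beta.insert(0, [sum(A[i][j] * B[j][O[t]] * beta[0][j] for j in range(N))
                        for i in range(N)])
    p_obs = sum(alpha[-1])
    gamma = [[alpha[t][i] * beta[t][i] / p_obs for i in range(N)] for t in range(T)]
    # Individually most likely state at each time step (ties -> lowest index)
    states = [max(range(N), key=lambda i: g[i]) for g in gamma]
    return states, gamma

# Hypothetical 3-state model with one output symbol; note A[0][1] = 0.
pi = [0.4, 0.3, 0.3]
A = [[0.0, 0.0, 1.0],
     [0.0, 1.0, 0.0],
     [0.0, 1.0, 0.0]]
B = [[1.0], [1.0], [1.0]]
states, gamma = posterior_decode([0, 0], pi, A, B)
print(states, A[states[0]][states[1]])
```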

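Viterbi Algorithm

- Choosing the most likely individual state at each time may produce an unlikely or even invalid state sequence (e.g. one that uses a transition with $a_{ij} = 0$)
- One solution is to choose the most likely state sequence:

$$\hat{X} = \arg\max_{X} P(X \mid O, w) = \arg\max_{X} P(X, O \mid w)$$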

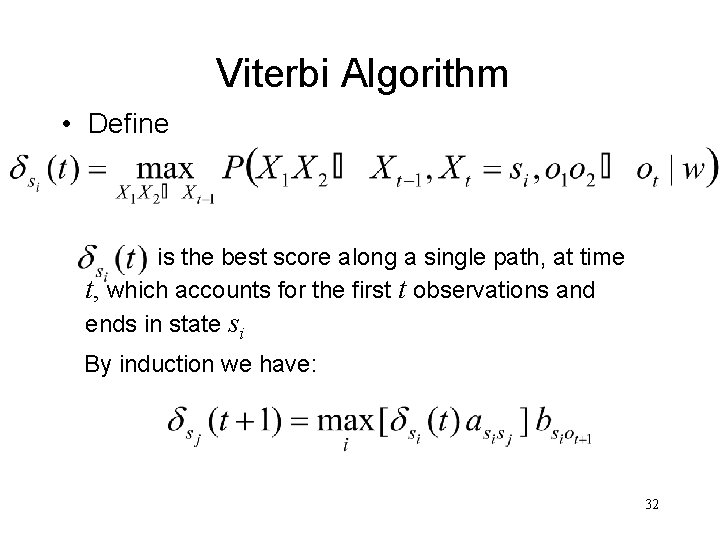

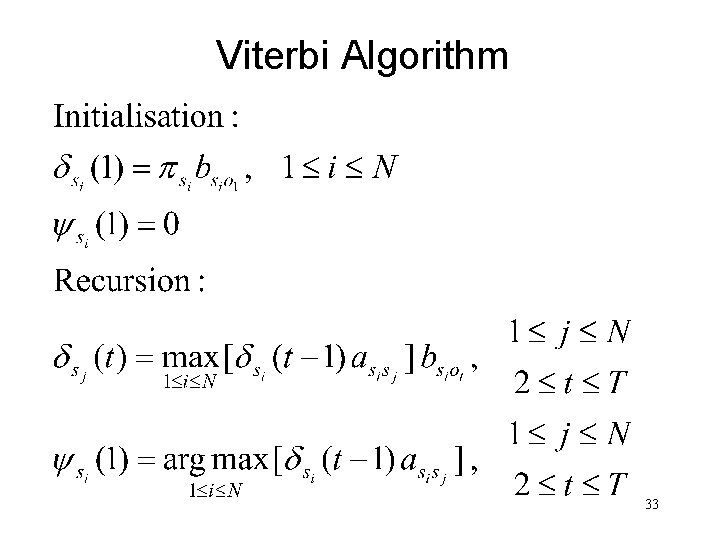

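Viterbi Algorithm

- Define

$$\delta_t(i) = \max_{X_1, \dots, X_{t-1}} P(X_1, \dots, X_{t-1}, X_t = s_i, o_1, \dots, o_t \mid w)$$

- $\delta_t(i)$ is the best score along a single path, at time $t$, which accounts for the first $t$ observations and ends in state $s_i$
- By induction we have:

$$\delta_{t+1}(j) = \left[\max_{i} \delta_t(i)\, a_{ij}\right] b_{j o_{t+1}}$$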

Viterbi Algorithm

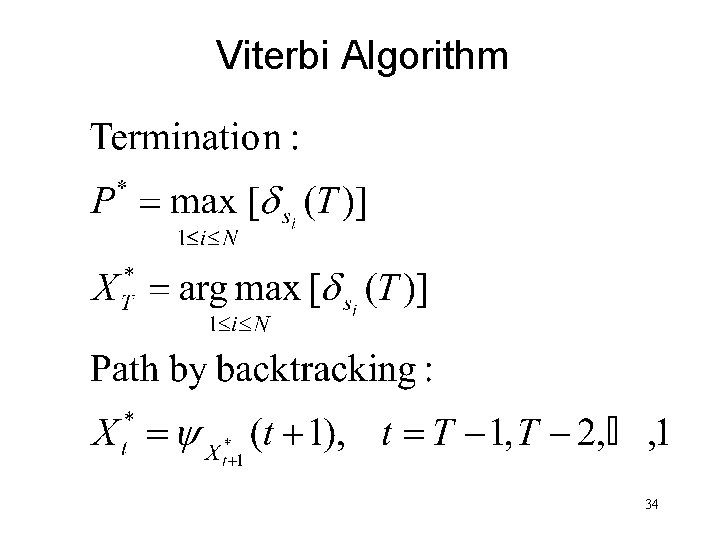

Viterbi Algorithm
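A minimal sketch of the full Viterbi algorithm: the $\delta$ recursion, where $\delta_t(i)$ is the best score of a single path ending in $s_i$ at time $t$, plus back-pointers that record the best predecessor of each node, followed by backtracking from the best final state. Assumptions: 0-based indices, plain probabilities rather than log probabilities (logs are the usual choice for long sequences), ties broken towards the lowest state index, and a hypothetical function name and model.

```python
def viterbi(O, pi, A, B):
    """Most likely state sequence argmax_X P(X, O | w) and its probability."""
    N, T = len(pi), len(O)
    delta = [pi[i] * B[i][O[0]] for i in range(N)]  # delta_1(i)
    back = []  # back[t-1][j] = best predecessor of state j at step t
    for t in range(1, T):
        new_delta, ptr = [], []
        for j in range(N):
            # max_i delta_t(i) a_ij, remembering which i achieved it
            best_i = max(range(N), key=lambda i: delta[i] * A[i][j])
            ptr.append(best_i)
            new_delta.append(delta[best_i] * A[best_i][j] * B[j][O[t]])
        delta = new_delta
        back.append(ptr)
    # Termination: best final state, then follow the back-pointers
    last = max(range(N), key=lambda i: delta[i])
    path = [last]
    for ptr in reversed(back):
        path.append(ptr[path[-1]])
    path.reverse()
    return path, delta[last]

# Hypothetical two-state, two-symbol model.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]
print(viterbi([0, 1], pi, A, B))
```

Unlike choosing states one time step at a time, the back-pointers guarantee that every transition on the returned path has non-zero probability whenever the path probability itself is non-zero.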

Viterbi vs. Forward Algorithm

- Similar in implementation
  - Forward sums over all incoming paths
  - Viterbi maximises
- Viterbi probability $\le$ Forward probability
- Both are efficiently implemented using a trellis structure
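The “sum versus max” relationship can be shown by writing one trellis pass with the combining operation as a parameter: `sum` gives the forward probability $P(O \mid w)$ and `max` gives the Viterbi probability $\max_X P(X, O \mid w)$. Since a maximum of non-negative terms never exceeds their sum, the Viterbi probability can never exceed the forward probability. The function name `trellis` and the model are hypothetical; indices are 0-based.

```python
def trellis(O, pi, A, B, combine):
    """One pass over the trellis; combine=sum is the forward algorithm,
    combine=max is the Viterbi score (without back-pointers)."""
    N = len(pi)
    score = [pi[i] * B[i][O[0]] for i in range(N)]
    for o in O[1:]:
        # Each node combines its incoming paths, then multiplies by the emission probability.
        score = [combine(score[i] * A[i][j] for i in range(N)) * B[j][o]
                 for j in range(N)]
    return combine(score)

# Hypothetical two-state, two-symbol model.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]
fwd = trellis([0, 1], pi, A, B, sum)
vit = trellis([0, 1], pi, A, B, max)
print(fwd, vit)
```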
