E-Science and the Grid
Marcel Kunze, Department of Grid Computing and e-Science, Forschungszentrum Karlsruhe (FZK)

Computational Science
- Traditional Empirical Science
  - Scientist gathers data by direct observation
  - Scientist analyzes data
- Computational Science
  - Data captured by instruments
  - Or data generated by simulator
  - Processed by software
  - Placed in a database
  - Scientist analyzes database
- tcl scripts on C programs on ASCII files
- Concern: Scalability

VO 2002 Marcel Kunze, FZK

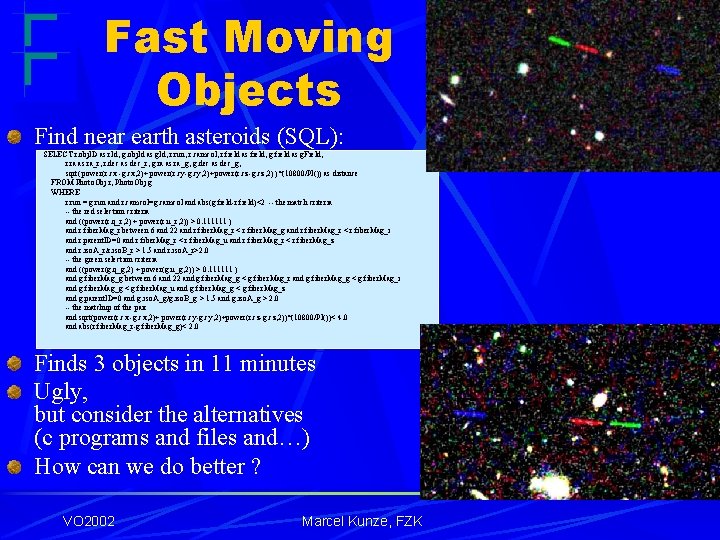

Fast Moving Objects
Find near earth asteroids (SQL):

    SELECT r.objID as rId, g.objId as gId,
           r.run, r.camcol, r.field as field, g.field as gField,
           r.ra as ra_r, r.dec as dec_r, g.ra as ra_g, g.dec as dec_g,
           sqrt( power(r.cx-g.cx,2) + power(r.cy-g.cy,2) + power(r.cz-g.cz,2) )*(10800/PI()) as distance
    FROM PhotoObj r, PhotoObj g
    WHERE r.run = g.run and r.camcol = g.camcol
      and abs(g.field-r.field) < 2             -- the match criteria
      -- the red selection criteria
      and ((power(r.q_r,2) + power(r.u_r,2)) > 0.111111)
      and r.fiberMag_r between 6 and 22
      and r.fiberMag_r < r.fiberMag_g
      and r.fiberMag_r < r.fiberMag_i
      and r.parentID = 0
      and r.fiberMag_r < r.fiberMag_u
      and r.fiberMag_r < r.fiberMag_z
      and r.isoA_r/r.isoB_r > 1.5
      and r.isoA_r > 2.0
      -- the green selection criteria
      and ((power(g.q_g,2) + power(g.u_g,2)) > 0.111111)
      and g.fiberMag_g between 6 and 22
      and g.fiberMag_g < g.fiberMag_r
      and g.fiberMag_g < g.fiberMag_i
      and g.fiberMag_g < g.fiberMag_u
      and g.fiberMag_g < g.fiberMag_z
      and g.parentID = 0
      and g.isoA_g/g.isoB_g > 1.5
      and g.isoA_g > 2.0
      -- the matchup of the pair
      and sqrt( power(r.cx-g.cx,2) + power(r.cy-g.cy,2) + power(r.cz-g.cz,2) )*(10800/PI()) < 4.0
      and abs(r.fiberMag_r-g.fiberMag_g) < 2.0

- Finds 3 objects in 11 minutes
- Ugly, but consider the alternatives (C programs and files and…)
- How can we do better?
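The `distance` expression in the query can be checked outside the database. The following is a minimal Python sketch, not part of the original slides. It assumes that `cx`, `cy`, `cz` are the Cartesian components of a unit vector pointing at the object, so that the chord length between two objects approximates their angular separation in radians for small angles, and the factor 10800/π converts radians to arcminutes. The helper names and the sample coordinates are hypothetical.

```python
import math

def unit_vector(ra_deg, dec_deg):
    """Convert right ascension/declination in degrees to a unit vector (cx, cy, cz)."""
    ra, dec = math.radians(ra_deg), math.radians(dec_deg)
    return (math.cos(dec) * math.cos(ra),
            math.cos(dec) * math.sin(ra),
            math.sin(dec))

def chord_distance_arcmin(a, b):
    """Same expression as in the query: Euclidean chord length times 10800/pi."""
    chord = math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))
    return chord * (10800 / math.pi)

# Two hypothetical detections that differ by 2 arcminutes in declination
r = unit_vector(180.0, 0.0)
g = unit_vector(180.0, 2.0 / 60.0)
d = chord_distance_arcmin(r, g)
print(f"separation: {d:.4f} arcmin, passes '< 4.0' cut: {d < 4.0}")
```

Under this assumption the `< 4.0` condition in the query reads as "the red and green detections lie within 4 arcminutes of each other".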

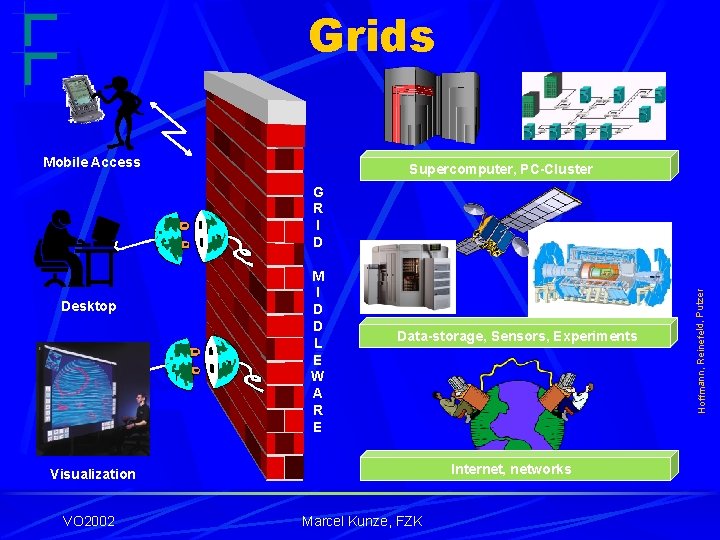

Grids
- Diagram: GRID MIDDLEWARE connecting mobile access, desktop, visualization, supercomputer and PC-cluster, data storage, sensors, experiments, and Internet/networks
- Credit: Hoffmann, Reinefeld, Putzer

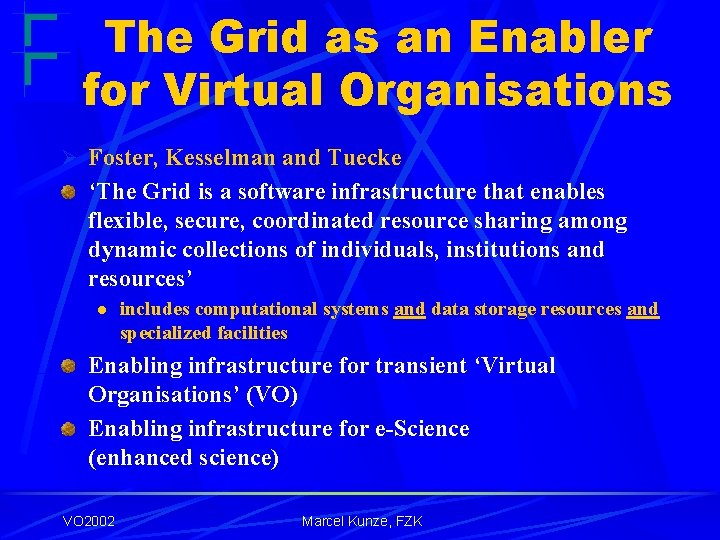

The Grid as an Enabler for Virtual Organisations
- Foster, Kesselman and Tuecke: ‘The Grid is a software infrastructure that enables flexible, secure, coordinated resource sharing among dynamic collections of individuals, institutions and resources’
  - includes computational systems and data storage resources and specialized facilities
- Enabling infrastructure for transient ‘Virtual Organisations’ (VO)
- Enabling infrastructure for e-Science (enhanced science)

E-Science
- ‘e-Science is about global collaboration in key areas of science, and the next generation of infrastructure that will enable it.’
- ‘e-Science will change the dynamic of the way science is undertaken.’
- John Taylor, Director General of UK Research Councils

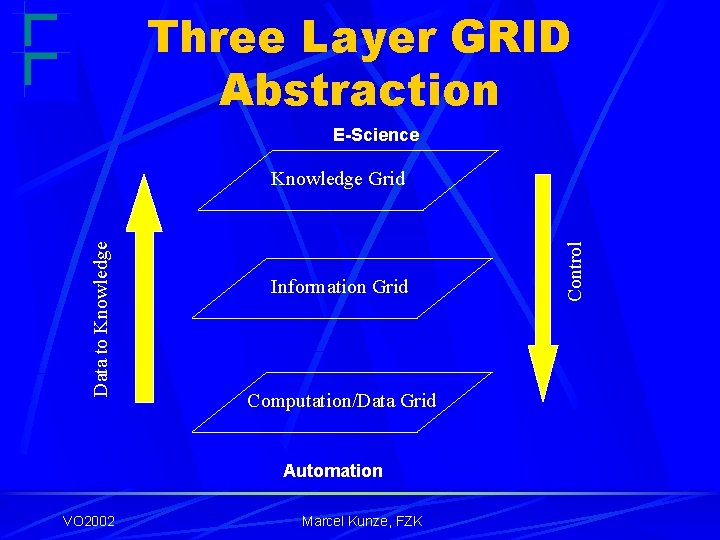

Three Layer GRID Abstraction (E-Science)
- Knowledge Grid
- Information Grid
- Computation/Data Grid
- Diagram labels: Data to Knowledge, Control, Automation

Research Challenges (I): Computation/Data Grid
- Performance
- Intelligent resource management
- Resilience
- Security and authentication
- Scalability

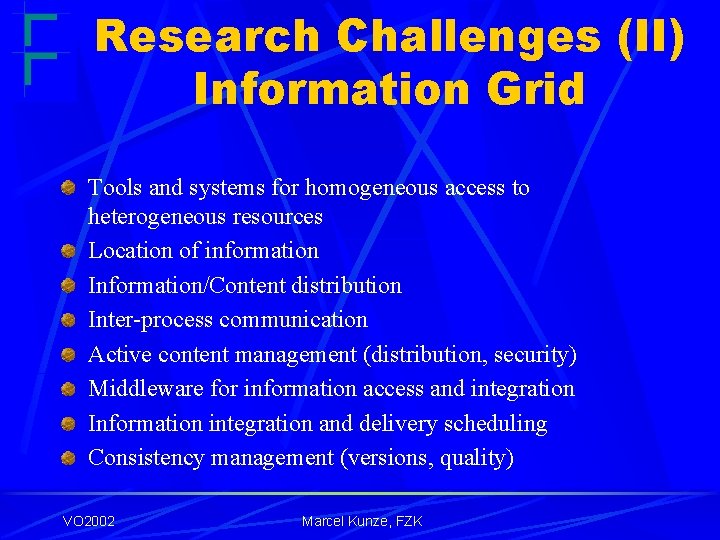

Research Challenges (II): Information Grid
- Tools and systems for homogeneous access to heterogeneous resources
- Location of information
- Information/Content distribution
- Inter-process communication
- Active content management (distribution, security)
- Middleware for information access and integration
- Information integration and delivery scheduling
- Consistency management (versions, quality)

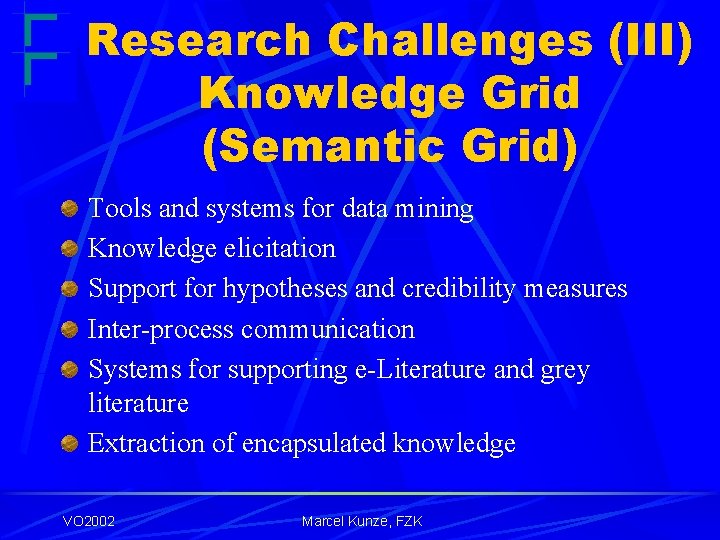

Research Challenges (III): Knowledge Grid (Semantic Grid)
- Tools and systems for data mining
- Knowledge elicitation
- Support for hypotheses and credibility measures
- Inter-process communication
- Systems for supporting e-Literature and grey literature
- Extraction of encapsulated knowledge

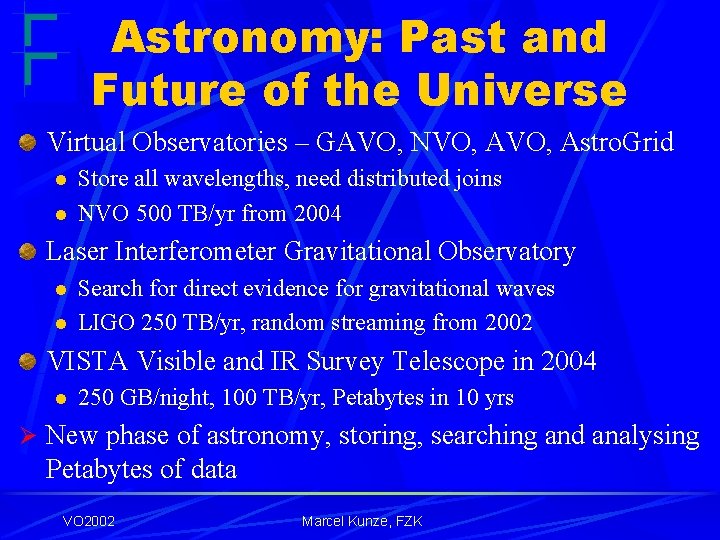

Astronomy: Past and Future of the Universe
- Virtual Observatories: GAVO, NVO, AstroGrid
  - Store all wavelengths, need distributed joins
  - NVO: 500 TB/yr from 2004
- Laser Interferometer Gravitational-Wave Observatory (LIGO)
  - Search for direct evidence for gravitational waves
  - LIGO: 250 TB/yr, random streaming from 2002
- VISTA: Visible and IR Survey Telescope in 2004
  - 250 GB/night, 100 TB/yr, Petabytes in 10 yrs
- New phase of astronomy: storing, searching and analysing Petabytes of data

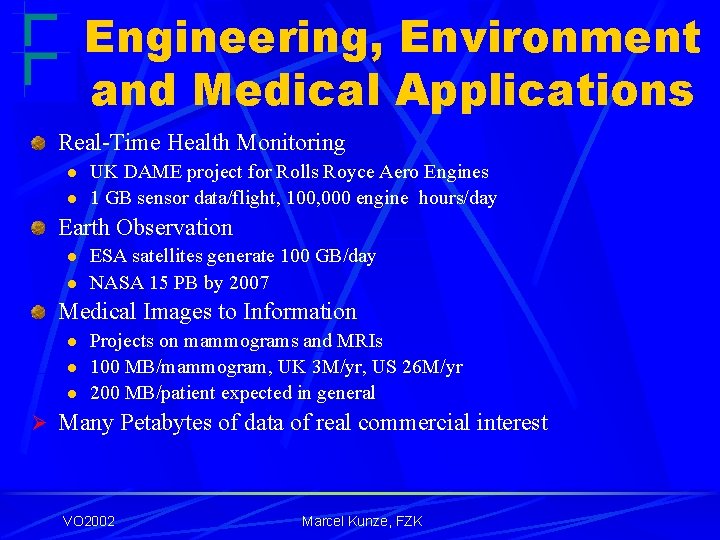

Engineering, Environment and Medical Applications
- Real-Time Health Monitoring
  - UK DAME project for Rolls Royce Aero Engines
  - 1 GB sensor data/flight, 100,000 engine hours/day
- Earth Observation
  - ESA satellites generate 100 GB/day
  - NASA 15 PB by 2007
- Medical Images to Information
  - Projects on mammograms and MRIs
  - 100 MB/mammogram, UK 3 M/yr, US 26 M/yr
  - 200 MB/patient expected in general
- Many Petabytes of data of real commercial interest
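The per-item sizes quoted on this slide translate into yearly volumes with simple arithmetic. The following is a minimal Python sketch, assuming decimal units (1 MB = 10^6 bytes) and reading "UK 3 M/yr, US 26 M/yr" as mammograms per year. The variable names are illustrative only.

```python
MB, GB, TB, PB = 10**6, 10**9, 10**12, 10**15  # decimal units (assumption)

mammogram_bytes = 100 * MB
uk_mammograms_per_year = 3_000_000    # "UK 3 M/yr"
us_mammograms_per_year = 26_000_000   # "US 26 M/yr"

uk_volume_tb = mammogram_bytes * uk_mammograms_per_year / TB
us_volume_pb = mammogram_bytes * us_mammograms_per_year / PB

# ESA satellites: 100 GB/day accumulated over a 365-day year
esa_volume_tb = 100 * GB * 365 / TB

print(f"UK mammograms: {uk_volume_tb:.0f} TB/yr")
print(f"US mammograms: {us_volume_pb:.1f} PB/yr")
print(f"ESA Earth observation: {esa_volume_tb:.1f} TB/yr")
```

Under these assumptions mammography alone reaches the petabyte scale per year in the US, which is consistent with the slide's closing remark about many petabytes of data.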

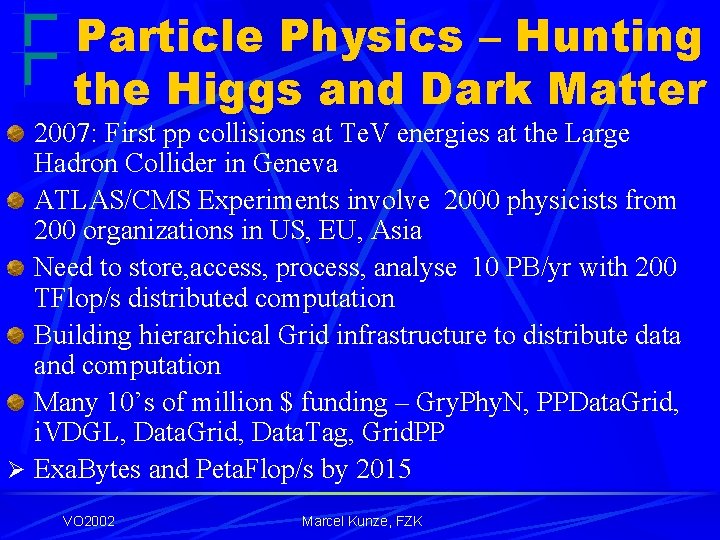

Particle Physics: Hunting the Higgs and Dark Matter
- 2007: First pp collisions at TeV energies at the Large Hadron Collider in Geneva
- ATLAS/CMS experiments involve 2000 physicists from 200 organizations in US, EU, Asia
- Need to store, access, process, analyse 10 PB/yr with 200 TFlop/s distributed computation
- Building hierarchical Grid infrastructure to distribute data and computation
- Many tens of millions of dollars in funding: GriPhyN, PPDataGrid, iVDGL, DataGrid, DataTag, GridPP
- ExaBytes and PetaFlop/s by 2015
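To get a feeling for the 10 PB/yr figure, it can be converted into an average sustained data rate. This is a rough Python sketch, assuming decimal units (1 PB = 10^15 bytes), a 365-day year, and data arriving evenly over the year, which real accelerator operation does not do.

```python
PB = 10**15
MB = 10**6

yearly_volume_bytes = 10 * PB
seconds_per_year = 365 * 24 * 3600  # 31,536,000 s

# Average rate needed to move the yearly volume if spread evenly (assumption)
sustained_mb_per_s = yearly_volume_bytes / seconds_per_year / MB

print(f"10 PB/yr is about {sustained_mb_per_s:.0f} MB/s sustained")
```

The result is on the order of a few hundred MB/s around the clock, before any replication of data between sites is counted.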

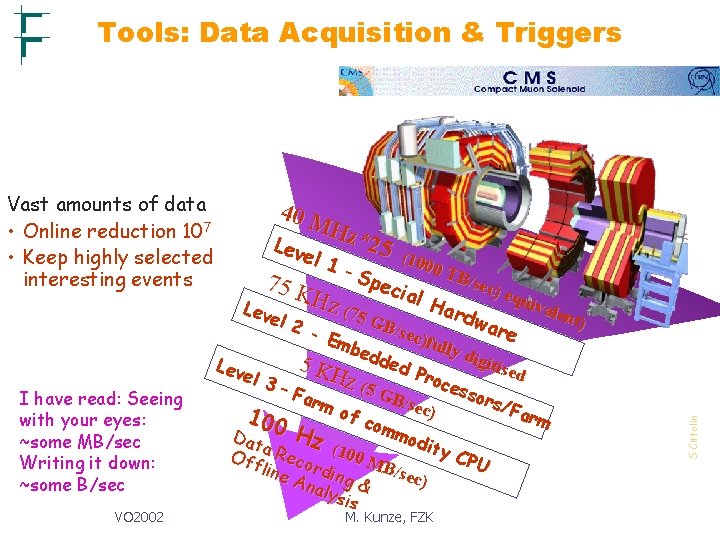

Tools: Data Acquisition & Triggers
- I have read:
  - Seeing with your eyes: ~some MB/sec
  - Writing it down: ~some B/sec
- Trigger chain (figure: S. Cittolin):
  - 40 MHz (1000 TB/sec equivalent)
  - Level 1: Special Hardware
  - 75 kHz (75 GB/sec fully digitised)
  - Level 2: Embedded Processors/Farm
  - 5 kHz (5 GB/sec)
  - Level 3: Farm of commodity CPU
  - 100 Hz (100 MB/sec)
  - Data Recording & Offline Analysis
- Vast amounts of data
  - Online reduction 10^7
  - Keep highly selected interesting events
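The reduction achieved by the trigger chain can be reproduced from the stage figures. The following is a minimal Python sketch, assuming the figure reads as 40 MHz (1000 TB/sec equivalent) at the detector, 75 kHz (75 GB/sec) after Level 1, 5 kHz (5 GB/sec) after Level 2 and 100 Hz (100 MB/sec) after Level 3, with decimal units. The stage labels and function name are illustrative.

```python
# (label, event rate in Hz, data rate in bytes/s), as read from the trigger figure
STAGES = [
    ("Detector output", 40e6, 1000e12),
    ("After Level 1", 75e3, 75e9),
    ("After Level 2", 5e3, 5e9),
    ("After Level 3", 100.0, 100e6),
]

def step_reductions(stages):
    """Return (label, event-rate factor, data-rate factor) for each trigger level."""
    factors = []
    for (_, rate_in, data_in), (label, rate_out, data_out) in zip(stages, stages[1:]):
        factors.append((label, rate_in / rate_out, data_in / data_out))
    return factors

overall_rate_factor = STAGES[0][1] / STAGES[-1][1]
overall_data_factor = STAGES[0][2] / STAGES[-1][2]

for label, rate_factor, data_factor in step_reductions(STAGES):
    print(f"{label}: events reduced x{rate_factor:.0f}, data reduced x{data_factor:.0f}")
print(f"Overall: events x{overall_rate_factor:.0e}, data x{overall_data_factor:.0e}")
```

With these inputs the overall data-rate ratio is 10^7, matching the "online reduction" quoted on the slide, while the event rate drops by a factor of 4 x 10^5.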

Three Theses
- GRID is seen as part of a wider vision for the future of computing
- Endorsed view of GRID as essential infrastructure for e-science (and future e-business)
- Particle Physics GRID seen as prototype for more generic GRID architecture

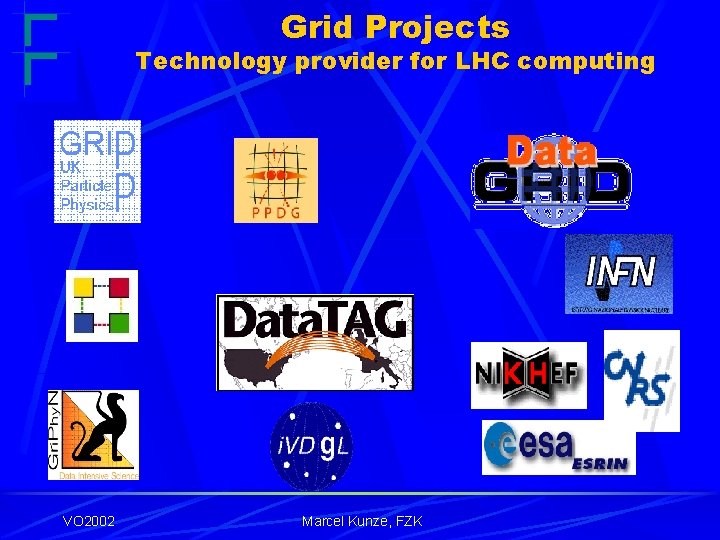

Grid Projects
- Technology provider for LHC computing (LCG)

The LHC Computing Grid Project (LCG): Deployment of the LHC Computing Grid Infrastructure
- LCG approved by CERN Council (Sept. 2001)
- LCG is the dedicated world-wide deployment project of the infrastructure for LHC Computing and the work place for the co-ordinated computing efforts of the experiments
- LCG is a global project, based on the LHC experiments, large software efforts and (inter-)national Grid project achievements
- LCG addresses many technological challenges and needs to solve them in the coming years (Phase 1)

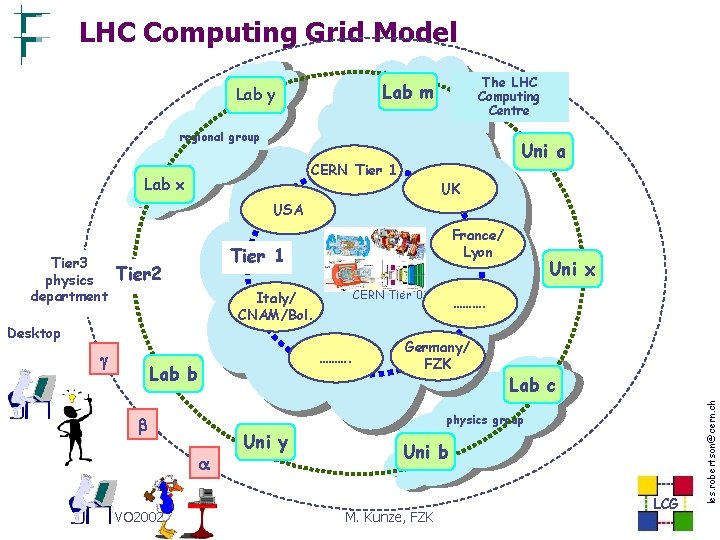

LHC Computing Grid Model
- Tier 0: Tier 0 Centre at CERN (The LHC Computing Centre)
- Tier 1: CERN Tier 1, UK, USA, Italy/CNAM/Bol., Germany/FZK, France/Lyon, ...
- Tier 2: regional labs and universities (Lab b, Lab c, Lab m, Lab x, Lab y, Uni a, Uni b, Uni x, Uni y, ...)
- Tier 3: physics department
- Desktop
- Also shown: ATLAS, CMS, LHCb; regional group; physics group
- Source: les.robertson@cern.ch

LHC Computing Grid Project: Milestones
- The first Level 1 Milestone, within one year (April 2003): deploy a Global Grid Service
  - sustained 24x7 service
  - including sites from three continents
  - identical or compatible Grid middleware and infrastructure
  - several times the capacity of the CERN facility
  - and as easy to use
- Having stabilized this base service, progressive evolution:
  - number of nodes, performance, capacity and quality of service
  - integrate new middleware functionality
  - migrate to de facto standards as soon as they emerge
- 2005: TDR, MoU final version, final ~1/10 prototype of total
- 2006, 2007, 2008: deployment of 1st production facility

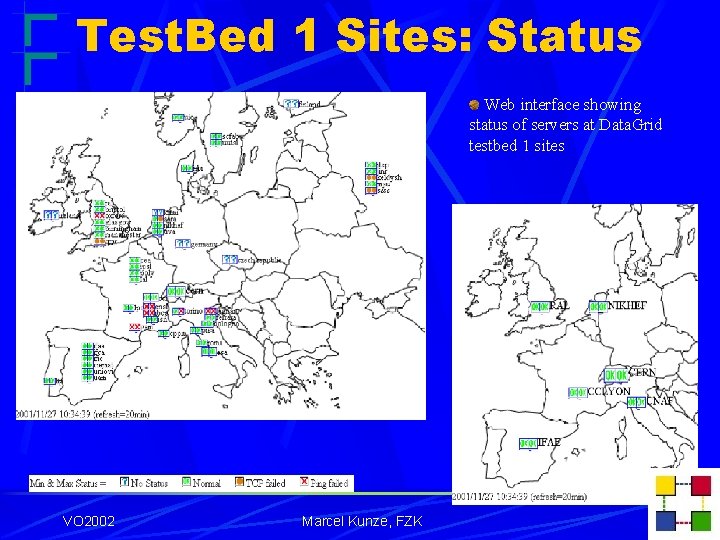

TestBed 1 Sites: Status
- Web interface showing status of servers at DataGrid testbed 1 sites

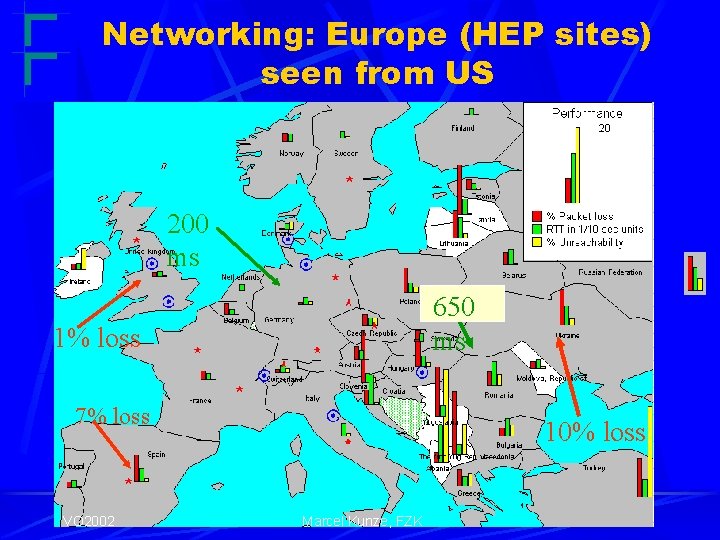

Networking: Europe (HEP sites) seen from US
- Figure labels: 200 ms, 650 ms; 1% loss, 7% loss, 10% loss

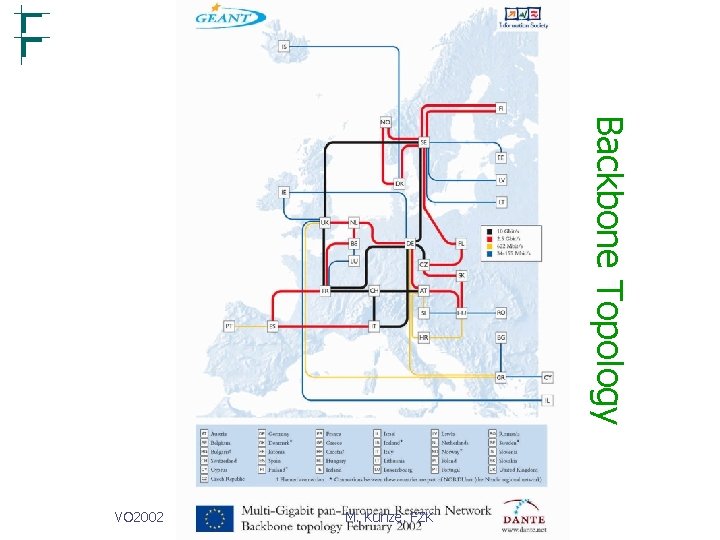

Backbone Topology

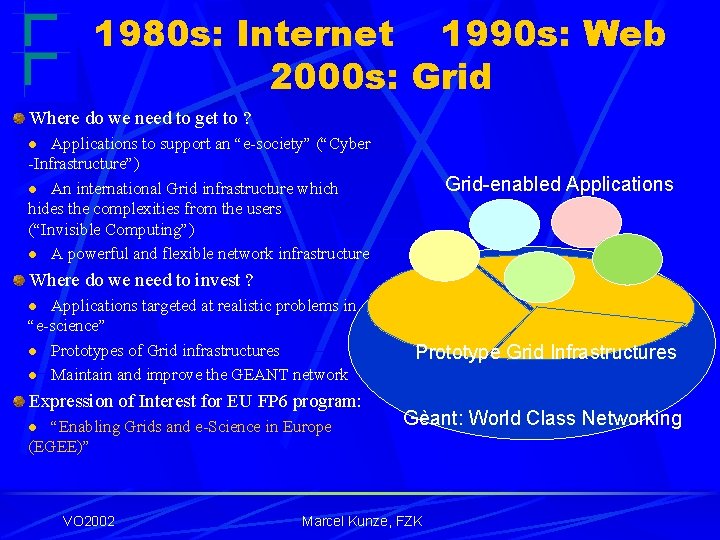

- 1980s: Internet. 1990s: Web. 2000s: Grid.
- Where do we need to get to?
  - Applications to support an “e-society” (“Cyber-Infrastructure”)
  - An international Grid infrastructure which hides the complexities from the users (“Invisible Computing”)
  - A powerful and flexible network infrastructure
- Where do we need to invest?
  - Applications targeted at realistic problems in “e-science”
  - Prototypes of Grid infrastructures
  - Maintain and improve the GÉANT network
  - Expression of Interest for EU FP6 program: “Enabling Grids and e-Science in Europe (EGEE)”
- Diagram layers: Grid-enabled Applications; Prototype Grid Infrastructures; GÉANT: World Class Networking

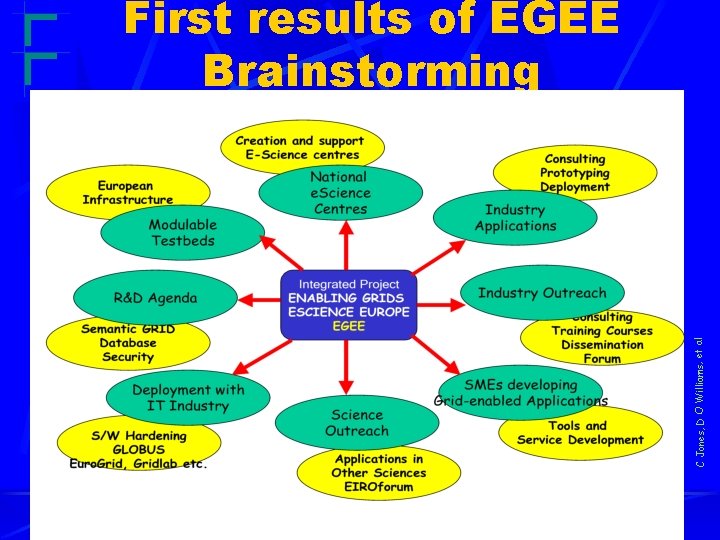

First results of EGEE Brainstorming (C. Jones, D. O. Williams, et al.)

- Slides: 24