Data Mining in e-Commerce Web-Based Information Architectures

Data Mining in e-Commerce Web-Based Information Architectures MSEC 20-760 Mini II Jaime Carbonell

General Topic: Data Mining
• Typology of Machine Learning
• Data Bases (review/intro)
• Data Mining (DM)
  – Supervised methods for DM
  – Applications (e.g. Text Mining)

Machine Learning
• Discovering useful patterns in data
  – Data: DB tables, text, time-series, …
  – Patterns: generalizable and predictive
• Learning methods are:
  – Deductive (e.g. cache implications)
  – Inductive (e.g. rules to summarize data)
  – Abductive (e.g. generative models)

Typology of Machine Learning Methods
• Learning by caching (remember key results)
• Learning from examples (“supervised learning”)
• Learning by experimentation (“active learning”)
• Learning from experience (“reinforcement and speedup learning”)
• Learning from time-series data
• Learning by discovery (“unsupervised learning”)

Data Bases in a Nutshell (1)
Ingredients
• A Data Base is a set of one or more rectangular tables (aka "matrices", "relational tables").
• Each table consists of m records (aka "tuples").
• Each of the m records consists of n values, one for each of the n attributes.
• Each column in the table consists of all the values for the attribute it represents.

Data Bases in a Nutshell (2)
Ingredients
• A data-table scheme is just the list of table column headers in their left-to-right order. Think of it as a table with no records.
• A data-table instance is the content of the table (i.e. a set of records) consistent with the scheme.
• For real data bases: m >> n.

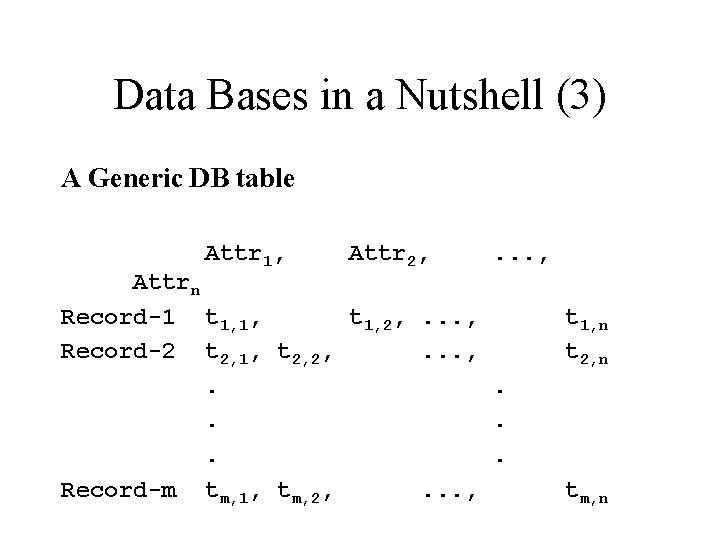

Data Bases in a Nutshell (3)
A Generic DB table

| | Attr 1 | Attr 2 | … | Attr n |
|---|---|---|---|---|
| Record-1 | $t_{1,1}$ | $t_{1,2}$ | … | $t_{1,n}$ |
| Record-2 | $t_{2,1}$ | $t_{2,2}$ | … | $t_{2,n}$ |
| … | | | | |
| Record-m | $t_{m,1}$ | $t_{m,2}$ | … | $t_{m,n}$ |

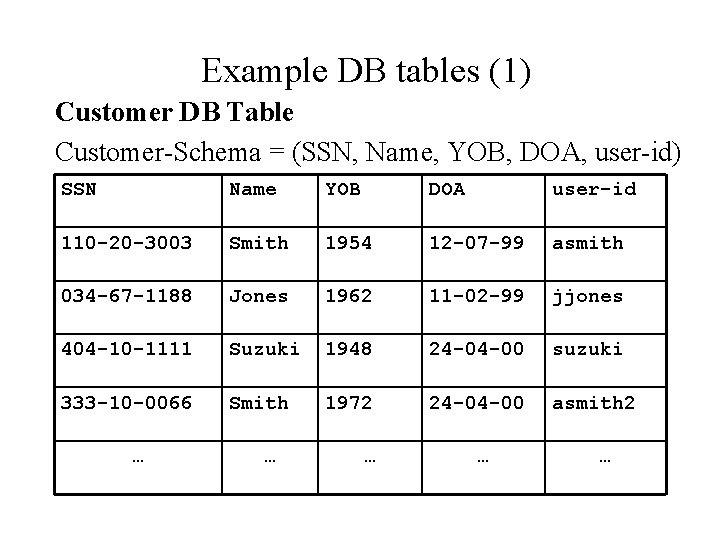

Example DB tables (1)
Customer DB Table
Customer-Schema = (SSN, Name, YOB, DOA, user-id)

| SSN | Name | YOB | DOA | user-id |
|---|---|---|---|---|
| 110-20-3003 | Smith | 1954 | 12-07-99 | asmith |
| 034-67-1188 | Jones | 1962 | 11-02-99 | jjones |
| 404-10-1111 | Suzuki | 1948 | 24-04-00 | suzuki |
| 333-10-0066 | Smith | 1972 | 24-04-00 | asmith2 |
| … | … | … | | |

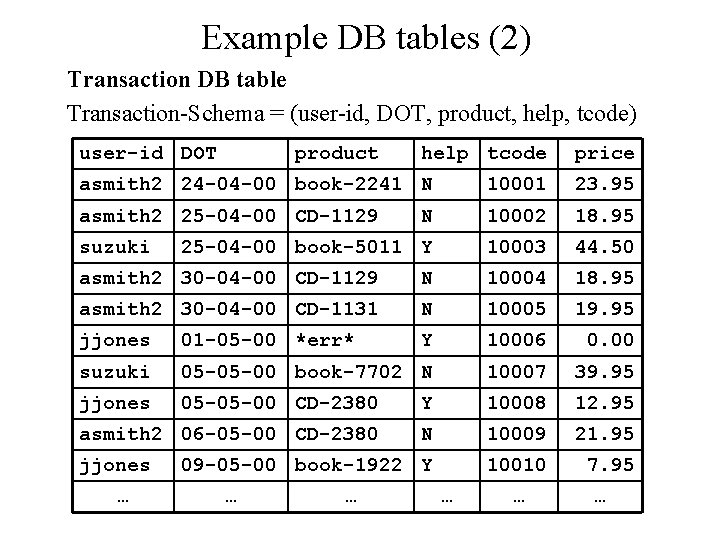

Example DB tables (2)
Transaction DB table
Transaction-Schema = (user-id, DOT, product, help, tcode, price)

| user-id | DOT | product | help | tcode | price |
|---|---|---|---|---|---|
| asmith2 | 24-04-00 | book-2241 | N | 10001 | 23.95 |
| asmith2 | 25-04-00 | CD-1129 | N | 10002 | 18.95 |
| suzuki | 25-04-00 | book-5011 | Y | 10003 | 44.50 |
| asmith2 | 30-04-00 | CD-1129 | N | 10004 | 18.95 |
| asmith2 | 30-04-00 | CD-1131 | N | 10005 | 19.95 |
| jjones | 01-05-00 | \*err\* | Y | 10006 | 0.00 |
| suzuki | 05-05-00 | book-7702 | N | 10007 | 39.95 |
| jjones | 05-05-00 | CD-2380 | Y | 10008 | 12.95 |
| asmith2 | 06-05-00 | CD-2380 | N | 10009 | 21.95 |
| jjones | 09-05-00 | book-1922 | Y | 10010 | 7.95 |
| … | … | … | | | |

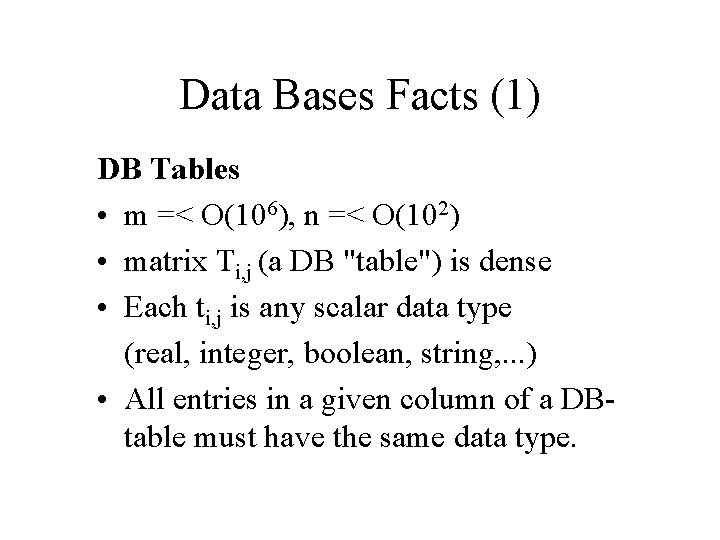

Data Bases Facts (1)
DB Tables
• $m \le O(10^6)$, $n \le O(10^2)$
• The matrix $T_{i,j}$ (a DB "table") is dense
• Each $t_{i,j}$ is any scalar data type (real, integer, boolean, string, ...)
• All entries in a given column of a DB table must have the same data type.
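The same-type-per-column rule can be checked mechanically. The sketch below is only an illustration: it assumes a table held as a list of Python tuples, and the helper names `column_types` and `is_well_typed` are hypothetical. The rows borrow three columns from the customer example.

```python
def column_types(table):
    """Return, for each column, the set of Python types found in it."""
    return [{type(value) for value in column} for column in zip(*table)]


def is_well_typed(table):
    """True when every column holds exactly one data type."""
    return all(len(types) == 1 for types in column_types(table))


# Three columns (SSN, Name, YOB) of the customer example.
customers = [
    ("110-20-3003", "Smith", 1954),
    ("034-67-1188", "Jones", 1962),
    ("404-10-1111", "Suzuki", 1948),
]
m, n = len(customers), len(customers[0])  # m records, n attributes

# A record whose YOB was entered as a string breaks the rule.
bad = customers + [("333-10-0066", "Smith", "1972")]

print(m, n, is_well_typed(customers), is_well_typed(bad))
```

A real table would also need a convention for missing values, which this sketch does not handle.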

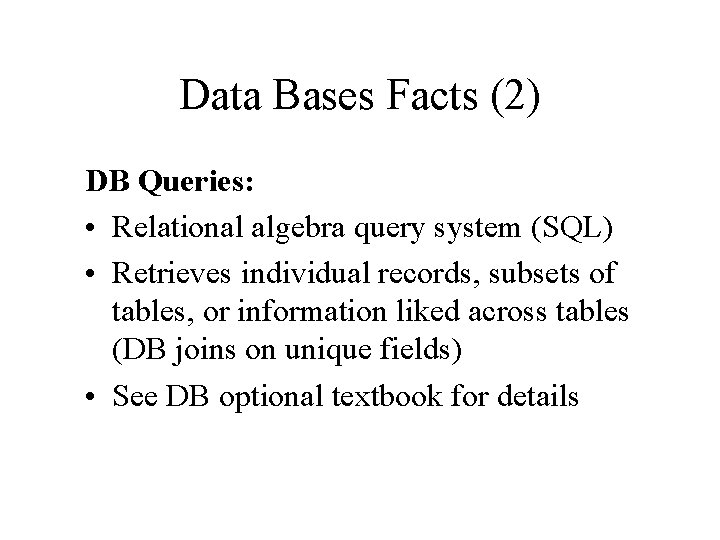

Data Bases Facts (2)
DB Queries:
• Relational algebra query system (SQL)
• Retrieves individual records, subsets of tables, or information linked across tables (DB joins on unique fields)
• See DB optional textbook for details
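As a rough illustration of a join on a unique field, the sketch below loads a few rows read from the example Customer and Transaction tables into an in-memory SQLite database. The table names `customer` and `trans` and the lowercase column names are assumptions made for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
cur = conn.cursor()
cur.execute(
    "CREATE TABLE customer (ssn TEXT, name TEXT, yob INTEGER, doa TEXT, user_id TEXT)"
)
cur.execute(
    "CREATE TABLE trans (user_id TEXT, dot TEXT, product TEXT, help TEXT,"
    " tcode INTEGER, price REAL)"
)
cur.executemany(
    "INSERT INTO customer VALUES (?, ?, ?, ?, ?)",
    [
        ("110-20-3003", "Smith", 1954, "12-07-99", "asmith"),
        ("034-67-1188", "Jones", 1962, "11-02-99", "jjones"),
        ("404-10-1111", "Suzuki", 1948, "24-04-00", "suzuki"),
        ("333-10-0066", "Smith", 1972, "24-04-00", "asmith2"),
    ],
)
cur.executemany(
    "INSERT INTO trans VALUES (?, ?, ?, ?, ?, ?)",
    [
        ("asmith2", "24-04-00", "book-2241", "N", 10001, 23.95),
        ("asmith2", "25-04-00", "CD-1129", "N", 10002, 18.95),
        ("suzuki", "25-04-00", "book-5011", "Y", 10003, 44.50),
        ("jjones", "05-05-00", "CD-2380", "Y", 10008, 12.95),
    ],
)

# Join the two tables on user_id, which is unique per customer.
rows = cur.execute(
    """
    SELECT c.name, c.yob, t.product, t.price
    FROM customer AS c
    JOIN trans AS t ON c.user_id = t.user_id
    ORDER BY t.tcode
    """
).fetchall()

for row in rows:
    print(row)
conn.close()
```

The join attaches each purchase to the demographic attributes of the buyer, which is the kind of combined record a data-mining method would then consume.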

Data Base Design Issues (1)
Design Issues
• What additional table(s) are needed?
• Why do we need multiple DB tables? Why not encode everything into one big table?
• How do we search a DB table? How about the full DB?
• How do we update a DB instance? How do we update a DB schema?

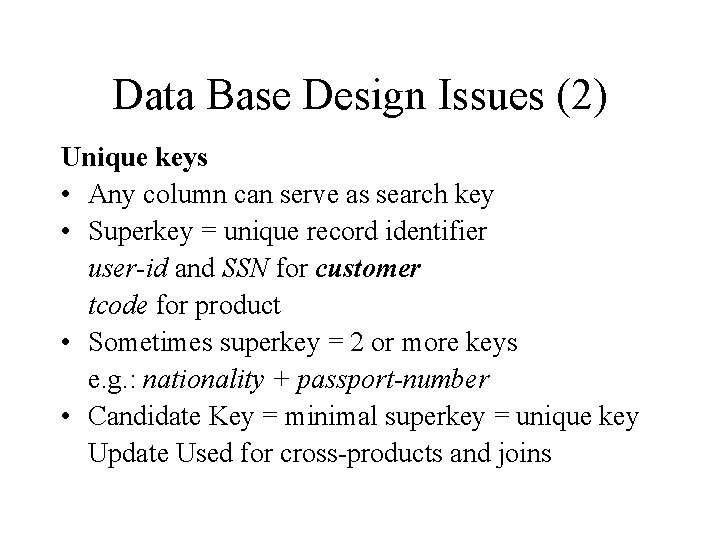

Data Base Design Issues (2)
Unique keys
• Any column can serve as search key
• Superkey = unique record identifier
  – user-id and SSN for customer
  – tcode for product
• Sometimes superkey = 2 or more keys, e.g.: nationality + passport-number
• Candidate Key = minimal superkey = unique key
  – Update
  – Used for cross-products and joins
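The superkey and candidate-key definitions can be tested against a table instance. The sketch below assumes records stored as dictionaries; the function names are hypothetical. It only checks uniqueness within the rows given, whereas a real key is a constraint on every possible instance of the schema.

```python
def is_superkey(records, attrs):
    """True if no two records share the same values on `attrs`."""
    seen = set()
    for rec in records:
        key = tuple(rec[a] for a in attrs)
        if key in seen:
            return False
        seen.add(key)
    return True


def is_candidate_key(records, attrs):
    """A candidate key is a superkey that stops being one if any attribute is dropped."""
    if not is_superkey(records, attrs):
        return False
    return all(
        not is_superkey(records, [a for a in attrs if a != dropped])
        for dropped in attrs
    )


customers = [
    {"ssn": "110-20-3003", "name": "Smith", "yob": 1954, "user_id": "asmith"},
    {"ssn": "034-67-1188", "name": "Jones", "yob": 1962, "user_id": "jjones"},
    {"ssn": "404-10-1111", "name": "Suzuki", "yob": 1948, "user_id": "suzuki"},
    {"ssn": "333-10-0066", "name": "Smith", "yob": 1972, "user_id": "asmith2"},
]

print(is_superkey(customers, ["name"]))              # two Smiths share a name
print(is_candidate_key(customers, ["ssn"]))          # unique and minimal
print(is_candidate_key(customers, ["ssn", "name"]))  # unique but not minimal
```

Dropping one attribute at a time is enough for the minimality test, because any superset of a superkey is itself a superkey.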

Data Base Design Issues (3)
Drops and errors
• Missing data -- always happens
• Erroneously entered data (type checking, range checking, consistency checking, ...)
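A minimal sketch of what type, range, and consistency checks might look like for a transaction record. The specific rules (positive price, `Y`/`N` help flag, product codes starting with `book-` or `CD-`) are assumptions inferred from the sample rows, not constraints stated in the slides, and `validate` is a hypothetical helper.

```python
def validate(rec):
    """Return a list of problems found in one transaction record."""
    problems = []
    # Missing data
    if rec.get("user_id") is None:
        problems.append("missing user_id")
    # Type check, then range check, on price
    if not isinstance(rec.get("price"), (int, float)):
        problems.append("price not numeric")
    elif rec["price"] <= 0:
        problems.append("price out of range")
    # Consistency checks on coded fields
    if rec.get("help") not in ("Y", "N"):
        problems.append("help not Y/N")
    product = rec.get("product") or ""
    if not product.startswith(("book-", "CD-")):
        problems.append("unknown product code")
    return problems


good = {"user_id": "suzuki", "product": "book-7702", "help": "N", "price": 39.95}
bad = {"user_id": "jjones", "product": "*err*", "help": "Y", "price": 0.00}

print(validate(good))
print(validate(bad))
```

Checks like these catch random entry errors; they do little against systematic errors that still produce plausible values.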

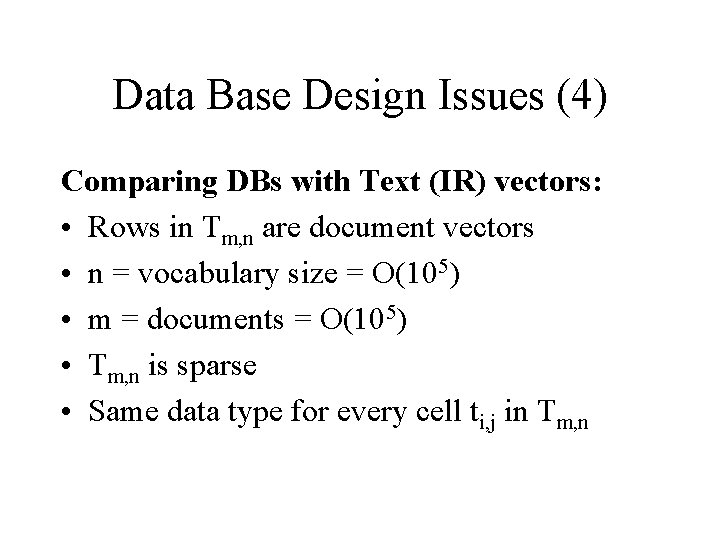

Data Base Design Issues (4)
Comparing DBs with Text (IR) vectors:
• Rows in $T_{m,n}$ are document vectors
• $n$ = vocabulary size = $O(10^5)$
• $m$ = documents = $O(10^5)$
• $T_{m,n}$ is sparse
• Same data type for every cell $t_{i,j}$ in $T_{m,n}$
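To make the sparsity point concrete, the sketch below builds term-count vectors for three made-up documents and stores only the nonzero cells. The documents and variable names are purely illustrative.

```python
from collections import Counter

# Three toy documents, invented for this example.
docs = [
    "data mining finds patterns in data",
    "text mining treats documents as vectors",
    "databases store records in tables",
]

# n = vocabulary size; each document is a row over the whole vocabulary.
vocab = sorted({word for doc in docs for word in doc.split()})

# Sparse rows: keep only the terms that actually occur in each document.
sparse_rows = [Counter(doc.split()) for doc in docs]

nonzero = sum(len(row) for row in sparse_rows)
density = nonzero / (len(docs) * len(vocab))

print(len(docs), len(vocab), nonzero, round(density, 3))
```

Even here most cells are zero; with a vocabulary on the order of $10^5$ terms a typical document touches only a tiny fraction of the columns, so a dense matrix would waste nearly all of its storage.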

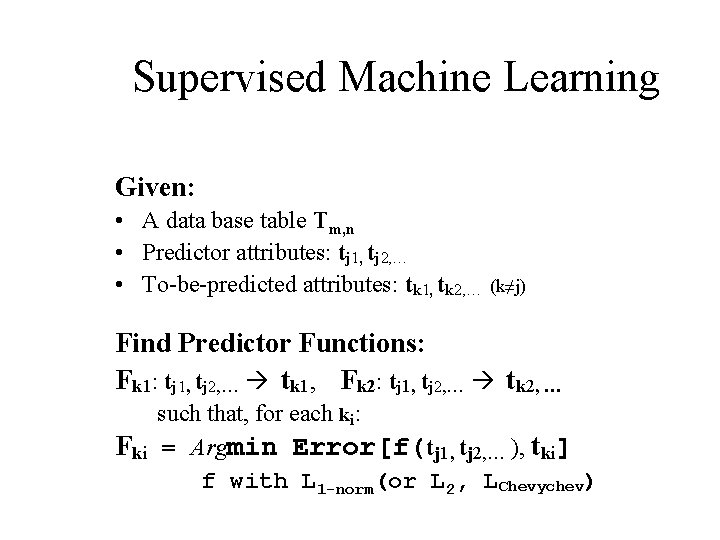

Supervised Machine Learning
Given:
• A data base table $T_{m,n}$
• Predictor attributes: $t_{j_1}, t_{j_2}, \ldots$
• To-be-predicted attributes: $t_{k_1}, t_{k_2}, \ldots$ ($k \ne j$)
Find Predictor Functions:
$F_{k_1}: t_{j_1}, t_{j_2}, \ldots \to t_{k_1}$, $F_{k_2}: t_{j_1}, t_{j_2}, \ldots \to t_{k_2}$, …
such that, for each $k_i$:
$F_{k_i} = \arg\min_{f} \mathrm{Error}[f(t_{j_1}, t_{j_2}, \ldots), t_{k_i}]$
with $L_1$-norm (or $L_2$, $L_{\text{Chebyshev}}$)
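The argmin over predictor functions can be illustrated with a finite set of candidates. This is only a sketch: real learners search a parameterized family rather than a hand-written list, and the data, the candidates `double` and `double_plus_four`, and the helper `best_predictor` are all invented for the example. It shows that the choice of norm can change which function wins.

```python
def l1(errors):
    """L1 norm: sum of absolute errors."""
    return sum(abs(e) for e in errors)


def l2(errors):
    """L2 norm: square root of the sum of squared errors."""
    return sum(e * e for e in errors) ** 0.5


def chebyshev(errors):
    """Chebyshev (L-infinity) norm: the largest absolute error."""
    return max(abs(e) for e in errors)


def best_predictor(candidates, inputs, targets, norm):
    """Return the candidate f minimizing norm(f(x) - t) over the data."""
    def total_error(f):
        return norm([f(x) - t for x, t in zip(inputs, targets)])
    return min(candidates, key=total_error)


def double(x):
    return 2 * x


def double_plus_four(x):
    return 2 * x + 4


inputs = [1, 2, 3, 4]
targets = [2, 4, 6, 20]  # the last point is an outlier
candidates = [double, double_plus_four]

for norm in (l1, l2, chebyshev):
    print(norm.__name__, best_predictor(candidates, inputs, targets, norm).__name__)
```

Here `double` fits three points exactly and misses the outlier badly, so it wins under $L_1$, while `double_plus_four` spreads its error and wins under $L_2$ and Chebyshev.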


DATA MINING [Supervised] (2)
Where typically:
• There is only one $t_k$ of interest and therefore only one $F_k(t_j)$
• $t_k$ may be boolean => $F_k$ is a binary classifier
• $t_k$ may be nominal (finite set) => $F_k$ is an n-ary classifier
• $t_k$ may be a real number => $F_k$ is an approximating function
• $t_k$ may be an arbitrary string (rare case) => $F_k$ is hard to formalize

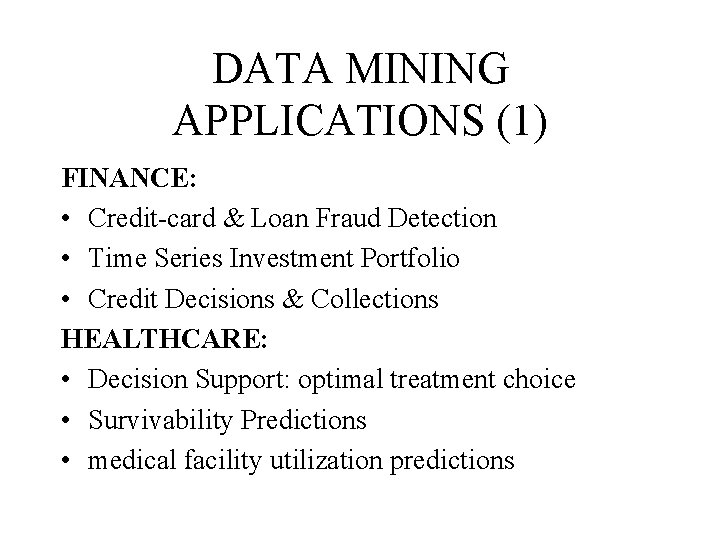

DATA MINING APPLICATIONS (1)
FINANCE:
• Credit-card & Loan Fraud Detection
• Time Series Investment Portfolio
• Credit Decisions & Collections
HEALTHCARE:
• Decision Support: optimal treatment choice
• Survivability Predictions
• Medical facility utilization predictions

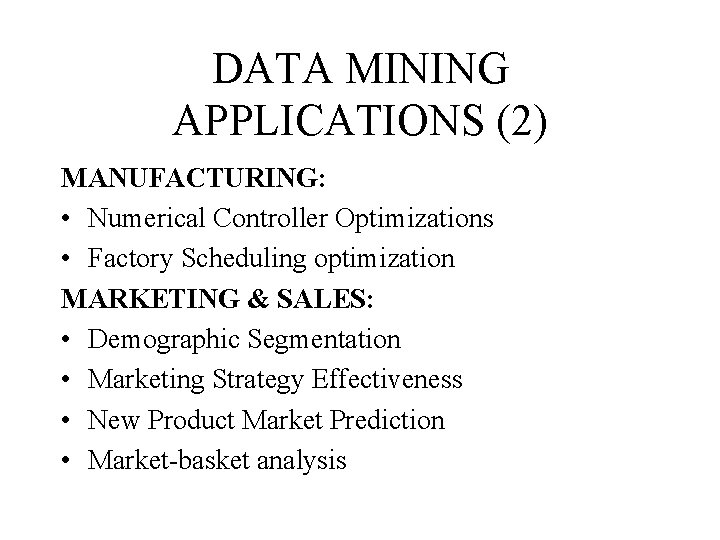

DATA MINING APPLICATIONS (2)
MANUFACTURING:
• Numerical Controller Optimizations
• Factory Scheduling optimization
MARKETING & SALES:
• Demographic Segmentation
• Marketing Strategy Effectiveness
• New Product Market Prediction
• Market-basket analysis

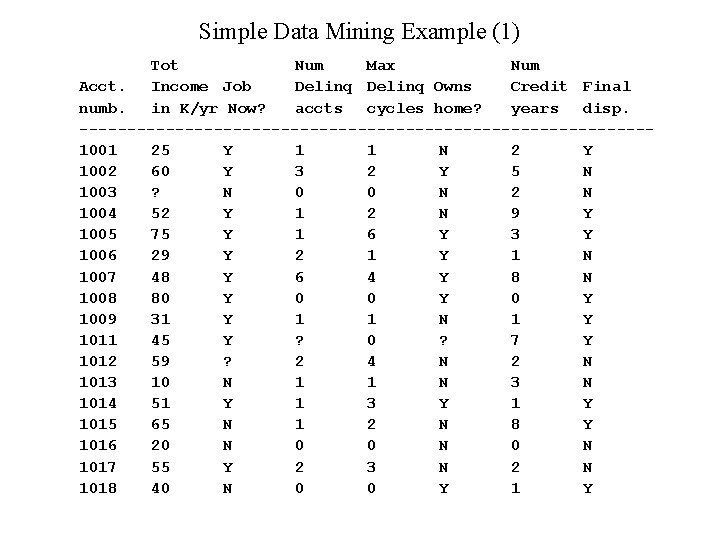

Simple Data Mining Example (1)
Acct. numb. | Income in K/yr | Job Now? | Tot Num Delinq accts | Max Num Delinq cycles | Owns home? | Credit years | Final disp.
------------------------------
1001 25 Y 1 1 N 2 Y
1002 60 Y 3 2 Y 5 N
1003 ? N 0 0 N 2 N
1004 52 Y 1 2 N 9 Y
1005 75 Y 1 6 Y 3 Y
1006 29 Y 2 1 Y 1 N
1007 48 Y 6 4 Y 8 N
1008 80 Y 0 Y
1009 31 Y 1 1 N 1 Y
1011 45 Y ? 0 ? 7 Y
1012 59 ? 2 4 N 2 N
1013 10 N 1 1 N 3 N
1014 51 Y 1 3 Y
1015 65 N 1 2 N 8 Y
1016 20 N 0 N
1017 55 Y 2 3 N 2 N
1018 40 N 0 0 Y 1 Y

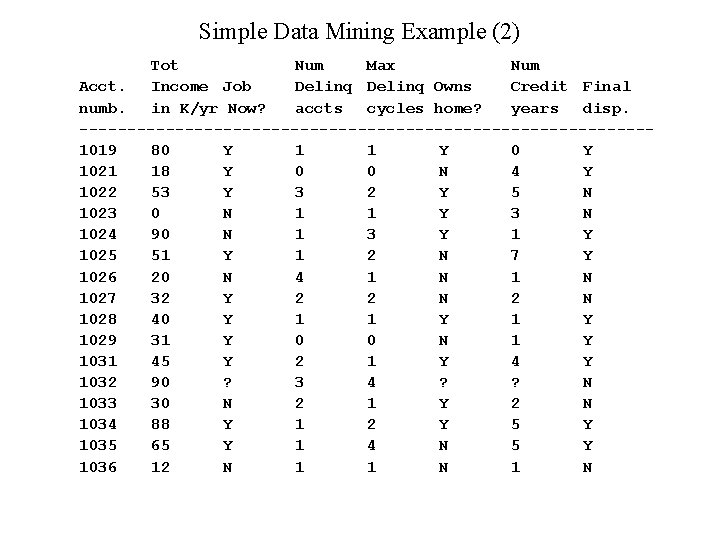

Simple Data Mining Example (2)
Acct. numb. | Income in K/yr | Job Now? | Tot Num Delinq accts | Max Num Delinq cycles | Owns home? | Credit years | Final disp.
------------------------------
1019 80 Y 1 1 Y 0 Y
1021 18 Y 0 0 N 4 Y
1022 53 Y 3 2 Y 5 N
1023 0 N 1 1 Y 3 N
1024 90 N 1 3 Y
1025 51 Y 1 2 N 7 Y
1026 20 N 4 1 N
1027 32 Y 2 2 N
1028 40 Y 1 1 Y
1029 31 Y 0 0 N 1 Y
1031 45 Y 2 1 Y 4 Y
1032 90 ? 3 4 ? ? N
1033 30 N 2 1 Y 2 N
1034 88 Y 1 2 Y 5 Y
1035 65 Y 1 4 N 5 Y
1036 12 N 1 1 N

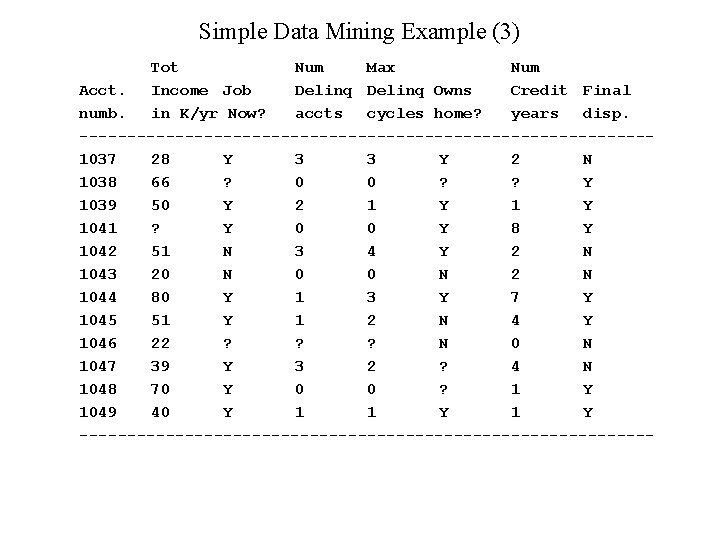

Simple Data Mining Example (3)
Acct. numb. | Income in K/yr | Job Now? | Tot Num Delinq accts | Max Num Delinq cycles | Owns home? | Credit years | Final disp.
------------------------------
1037 28 Y 3 3 Y 2 N
1038 66 ? 0 0 ? ? Y
1039 50 Y 2 1 Y
1041 ? Y 0 0 Y 8 Y
1042 51 N 3 4 Y 2 N
1043 20 N 0 0 N 2 N
1044 80 Y 1 3 Y 7 Y
1045 51 Y 1 2 N 4 Y
1046 22 ? ? ? N 0 N
1047 39 Y 3 2 ? 4 N
1048 70 Y 0 0 ? 1 Y
1049 40 Y 1 1 Y
------------------------------

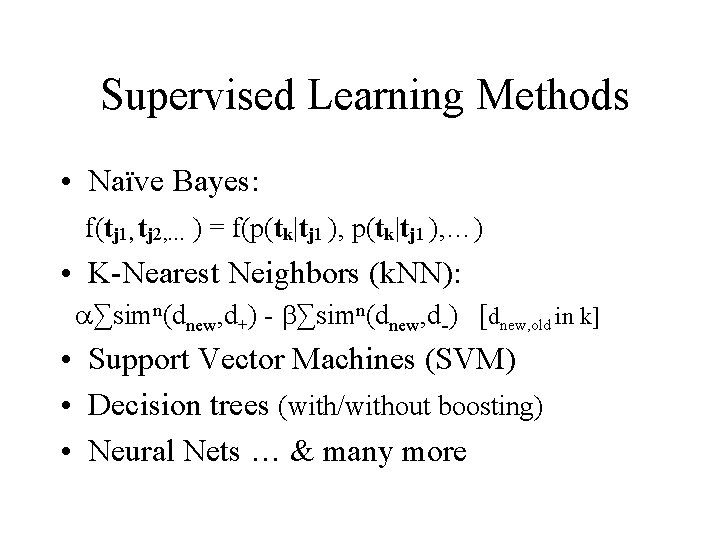

Supervised Learning Methods
• Naïve Bayes: $f(t_{j_1}, t_{j_2}, \ldots) = f(p(t_k \mid t_{j_1}), \ldots)$
• K-Nearest Neighbors (kNN): $\sum \mathrm{sim}_n(d_{new}, d^{+}) - \sum \mathrm{sim}_n(d_{new}, d^{-})$ [over the $k$ old instances nearest to $d_{new}$]
• Support Vector Machines (SVM)
• Decision trees (with/without boosting)
• Neural Nets
• … & many more
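The kNN score above (similarity to positive neighbors minus similarity to negative neighbors) might be sketched as follows. Cosine similarity, the toy vectors, and the function names are assumptions chosen for illustration; the slides do not fix a particular similarity measure.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length numeric vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def knn_score(d_new, labeled, k):
    """Sum of similarities to positive neighbors minus negatives, over the k nearest."""
    nearest = sorted(labeled, key=lambda ex: cosine(d_new, ex[0]), reverse=True)[:k]
    return sum(
        cosine(d_new, vec) if is_positive else -cosine(d_new, vec)
        for vec, is_positive in nearest
    )


# (vector, is_positive) pairs, made up for this example.
labeled = [
    ((1.0, 0.0), True),
    ((0.9, 0.1), True),
    ((0.0, 1.0), False),
    ((0.1, 0.9), False),
]

score_a = knn_score((1.0, 0.2), labeled, k=3)
score_b = knn_score((0.2, 1.0), labeled, k=3)
print(score_a > 0, score_b > 0)
```

A positive score votes for the positive class. Because the score is a real number rather than a yes/no answer, it also gives the "soft" decision that distinguishes kNN from rule-based methods.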

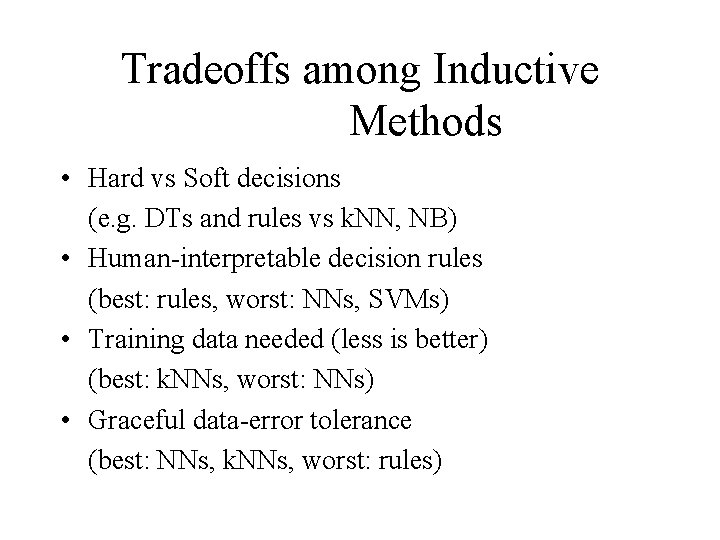

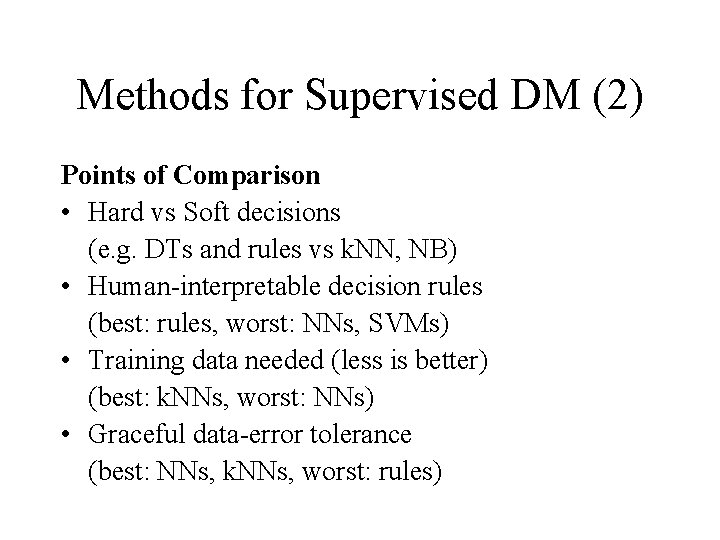

Tradeoffs among Inductive Methods
• Hard vs Soft decisions (e.g. DTs and rules vs kNN, NB)
• Human-interpretable decision rules (best: rules, worst: NNs, SVMs)
• Training data needed (less is better) (best: kNNs, worst: NNs)
• Graceful data-error tolerance (best: NNs, kNNs, worst: rules)

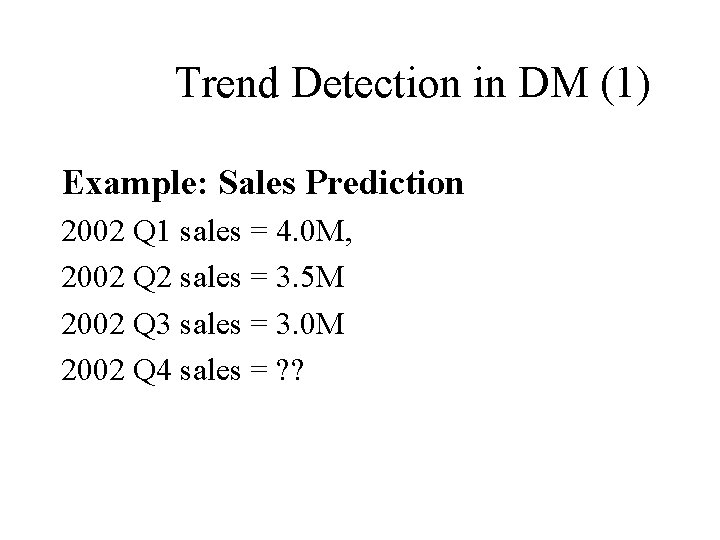

Trend Detection in DM (1)
Example: Sales Prediction
2002 Q1 sales = 4.0M
2002 Q2 sales = 3.5M
2002 Q3 sales = 3.0M
2002 Q4 sales = ??

Trend Detection in DM (2)
Now if we knew last year:
2001 Q1 sales = 3.5M
2001 Q2 sales = 3.1M
2001 Q3 sales = 2.8M
2001 Q4 sales = 4.5M
And if we knew previous year:
2000 Q1 sales = 3.2M
2000 Q2 sales = 2.9M
2000 Q3 sales = 2.5M
2000 Q4 sales = 3.7M

Trend Detection in DM (3)
What will 2002 Q4 sales be?
What if Christmas 2002 was cancelled?
What will 2003 Q4 sales be?

Time-Series Analysis
• Numerical series extrapolation
• Cyclical curve fitting
  – Find period of cycle (and super-cycle, …)
  – Fit curve for each period (often with $L_2$ or $L_\infty$ norm)
  – Find translation (series extrapolation)
  – Extrapolate to estimate desired values
• But, better to pre-classify data first (e.g. "recession" and "expansion" years)
• Combine with "standard" data mining
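One very simple way to act on the cyclical idea, using the quarterly sales figures from the trend-detection example, is to assume a period of four quarters and carry over the average Q3-to-Q4 jump seen in earlier years. This ratio method is an illustrative assumption, not the curve-fitting procedure the slide outlines, and `estimate_next_quarter` is a hypothetical helper.

```python
# Quarterly sales in millions; 2002 has only three quarters so far.
sales = {
    2000: [3.2, 2.9, 2.5, 3.7],
    2001: [3.5, 3.1, 2.8, 4.5],
    2002: [4.0, 3.5, 3.0],
}


def estimate_next_quarter(history, partial):
    """Scale the last known quarter by the mean ratio seen at the same
    point of the cycle in each complete earlier year."""
    q = len(partial)  # index of the quarter to predict (needs q >= 1)
    ratios = [year[q] / year[q - 1] for year in history]
    return partial[-1] * sum(ratios) / len(ratios)


estimate = estimate_next_quarter([sales[2000], sales[2001]], sales[2002])
print(round(estimate, 2))
```

With these numbers the estimate comes out at roughly 4.6M, well above what a straight-line extrapolation of the three falling 2002 quarters would give. Two prior years is very little evidence for the ratio, which is one reason to combine this with external knowledge.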

Trend Detection in DM II (2)
Thorny Problems
• How to use external knowledge to make up for limitations in the data?
• How to make longer-range extrapolations?
• How to cope with corrupted data?
  – Random point errors (easy)
  – Systematic error (hard)
  – Malicious errors (impossible)

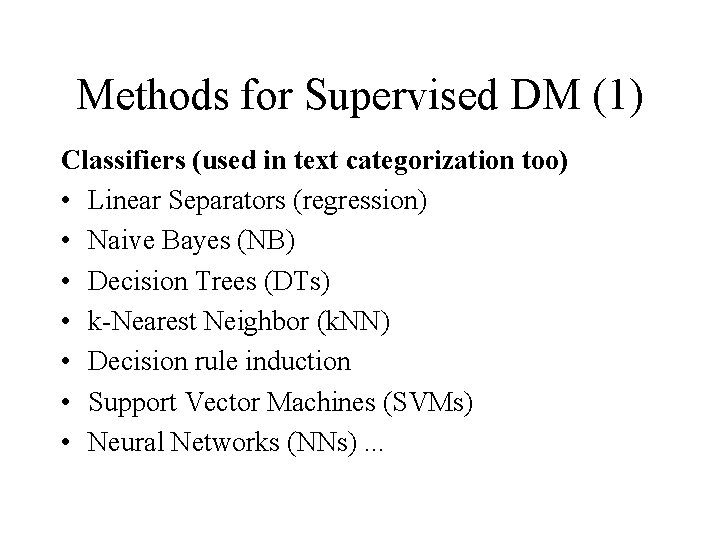

Methods for Supervised DM (1)
Classifiers (used in text categorization too)
• Linear Separators (regression)
• Naive Bayes (NB)
• Decision Trees (DTs)
• k-Nearest Neighbor (kNN)
• Decision rule induction
• Support Vector Machines (SVMs)
• Neural Networks (NNs)
• ...

Methods for Supervised DM (2)
Points of Comparison
• Hard vs Soft decisions (e.g. DTs and rules vs kNN, NB)
• Human-interpretable decision rules (best: rules, worst: NNs, SVMs)
• Training data needed (less is better) (best: kNNs, worst: NNs)
• Graceful data-error tolerance (best: NNs, kNNs, worst: rules)

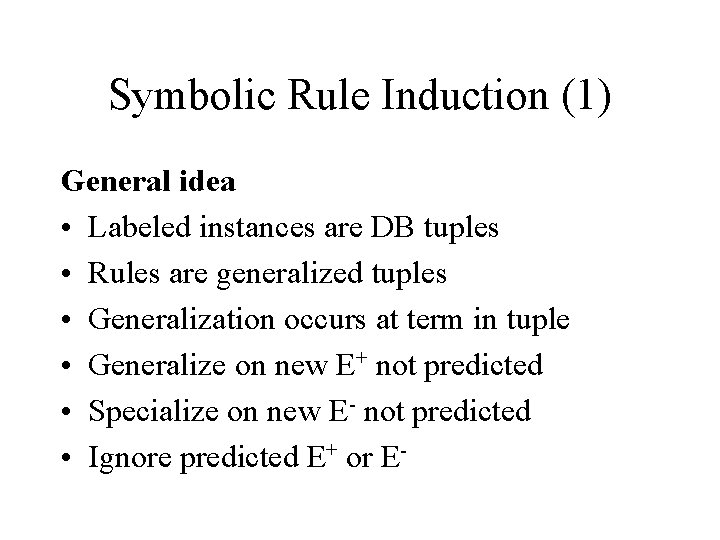

Symbolic Rule Induction (1)
General idea
• Labeled instances are DB tuples
• Rules are generalized tuples
• Generalization occurs at a term in the tuple
• Generalize on new E+ not predicted
• Specialize on new E- not predicted
• Ignore predicted E+ or E-

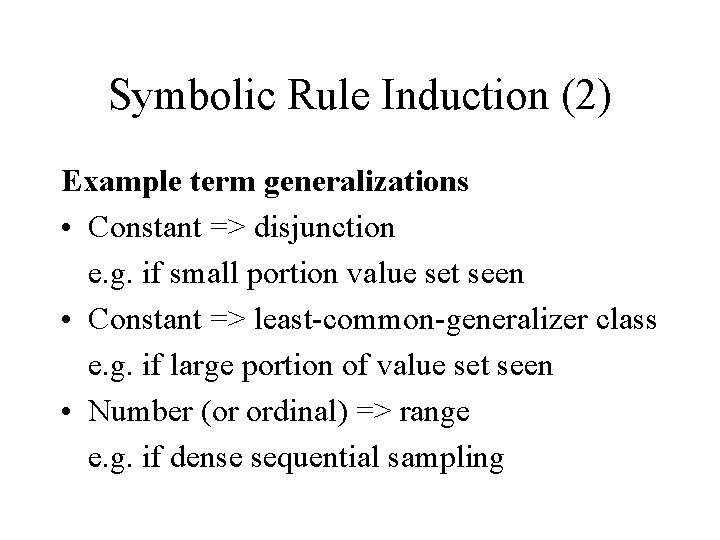

Symbolic Rule Induction (2)
Example term generalizations
• Constant => disjunction
  e.g. if small portion of value set seen
• Constant => least-common-generalizer class
  e.g. if large portion of value set seen
• Number (or ordinal) => range
  e.g. if dense sequential sampling
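Two of these generalizations, constant to disjunction and number to range, can be sketched as a function that widens one rule term just enough to cover a new positive example. The representation (a tuple for a range, a frozenset for a disjunction, the string `*any*` for a wildcard) and the function names are assumptions for this example. The least-common-generalizer case needs a class hierarchy and is left out.

```python
ANY = "*any*"  # wildcard term that matches every value


def generalize_term(term, value):
    """Widen one rule term just enough to cover a new positive value."""
    if term == ANY:
        return ANY
    if isinstance(term, tuple):  # numeric range (lo, hi)
        lo, hi = term
        return (min(lo, value), max(hi, value))
    if isinstance(term, frozenset):  # disjunction of constants
        return term | {value}
    if term == value:  # constant already covers the value
        return term
    if isinstance(term, (int, float)) and isinstance(value, (int, float)):
        return (min(term, value), max(term, value))  # number => range
    return frozenset({term, value})  # constant => disjunction


def generalize_rule(rule, example):
    """Generalize every term of a rule to cover a new positive example."""
    return {attr: generalize_term(term, example[attr]) for attr, term in rule.items()}


rule = {"age": 65, "loc": "USA", "skin": "normal"}
positive = {"age": 25, "loc": "CAN", "skin": "normal"}
wider = generalize_rule(rule, positive)
print(wider)
```

Starting from a rule that is a single tuple, each uncovered positive example widens only the terms that disagree with it, which keeps the rule as specific as the data allows.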

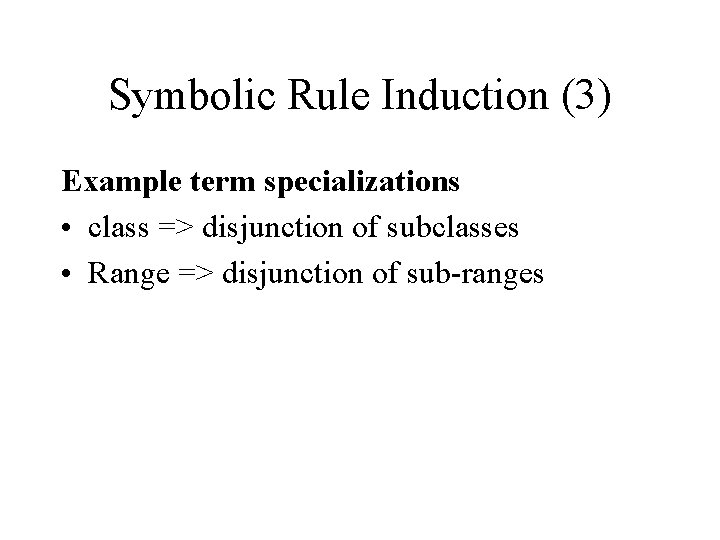

Symbolic Rule Induction (3)
Example term specializations
• Class => disjunction of subclasses
• Range => disjunction of sub-ranges

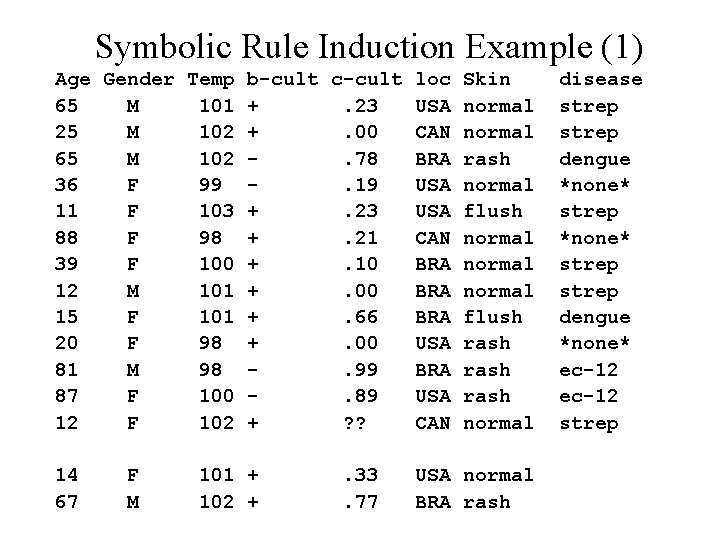

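Symbolic Rule Induction Example (1)

| Age | Gender | Temp | b-cult | c-cult | loc | Skin |
|---|---|---|---|---|---|---|
| 65 | M | 101 | + | .23 | USA | normal |
| 25 | M | 102 | + | .00 | CAN | normal |
| 65 | M | 102 | | .78 | BRA | rash |
| 36 | F | 99 | | .19 | USA | normal |
| 11 | F | 103 | + | .23 | USA | flush |
| 88 | F | 98 | + | .21 | CAN | normal |
| 39 | F | 100 | + | .10 | BRA | normal |
| 12 | M | 101 | + | .00 | BRA | normal |
| 15 | F | 101 | + | .66 | BRA | flush |
| 20 | F | 98 | + | .00 | USA | rash |
| 81 | M | 98 | | .99 | BRA | rash |
| 87 | F | 100 | | .89 | USA | rash |
| 12 | F | 102 | + | ?? | CAN | normal |
| 14 | F | 101 | + | .33 | USA | normal |
| 67 | M | 102 | + | .77 | BRA | rash |

disease: strep, dengue, \*none\*, strep, dengue, \*none\*, ec-12, strep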


Symbolic Rule Induction Example (2)
Candidate Rules:
IF age = [12, 65]
   gender = \*any\*
   temp = [100, 103]
   b-cult = +
   c-cult = [.00, .23]
   loc = \*any\*
   skin = (normal, flush)
THEN: strep
IF age = (15, 65)
   gender = \*any\*
   temp = [101, 102]
   b-cult = \*any\*
   c-cult = [.66, .78]
   loc = BRA
   skin = rash
THEN: dengue
Disclaimer: These are \*not\* real medical records
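A minimal sketch of how the two candidate rules could be applied to records. It assumes every range is inclusive at both ends (the slide writes one range with parentheses, so this is a guess), treats a missing value as failing any term other than the wildcard, and uses hypothetical names (`term_matches`, `classify`, underscore attribute keys).

```python
ANY = "*any*"  # wildcard term that matches every value


def term_matches(term, value):
    """Test one rule term against one attribute value."""
    if term == ANY:
        return True
    if value is None:  # missing value fails any non-wildcard term
        return False
    if isinstance(term, tuple):  # inclusive numeric range (lo, hi)
        lo, hi = term
        return lo <= value <= hi
    if isinstance(term, frozenset):  # disjunction of constants
        return value in term
    return term == value  # single constant


def classify(record, rules):
    """Return the label of the first rule whose terms all match, else None."""
    for conditions, label in rules:
        if all(term_matches(t, record.get(a)) for a, t in conditions.items()):
            return label
    return None


rules = [
    ({"age": (12, 65), "gender": ANY, "temp": (100, 103), "b_cult": "+",
      "c_cult": (0.00, 0.23), "loc": ANY,
      "skin": frozenset({"normal", "flush"})}, "strep"),
    ({"age": (15, 65), "gender": ANY, "temp": (101, 102), "b_cult": ANY,
      "c_cult": (0.66, 0.78), "loc": "BRA", "skin": "rash"}, "dengue"),
]

rec_a = {"age": 65, "gender": "M", "temp": 101, "b_cult": "+",
         "c_cult": 0.23, "loc": "USA", "skin": "normal"}
rec_b = {"age": 65, "gender": "M", "temp": 102, "b_cult": None,
         "c_cult": 0.78, "loc": "BRA", "skin": "rash"}
rec_c = {"age": 88, "gender": "F", "temp": 98, "b_cult": "+",
         "c_cult": 0.21, "loc": "CAN", "skin": "normal"}

print(classify(rec_a, rules), classify(rec_b, rules), classify(rec_c, rules))
```

Because each rule either fires or does not, the output is a hard decision, and a single wrong attribute value can flip it. That is the poor error tolerance attributed to rules in the comparison of methods.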
