Web-based Information Architectures Jian Zhang

Today’s Topics
• Term Weighting Scheme
• Vector Space Model & GVSM
• Evaluation of IR
• Rocchio Feedback
• Web Spider Algorithm
• Text Mining: Named Entity Identification
• Data Mining
• Text Categorization (kNN)

Term Weighting Scheme
• TW = TF * IDF
  – TF part = f1(tf(term, doc))
  – IDF part = f2(idf(term)) = f2(N/df(term))
  – E.g., f1(tf) = normalized_tf = tf/max_tf; f2(idf) = log2(idf)
  – E.g., f1(tf) = tf; f2(idf) = 1
NOTE: definition of DF!
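To make the first example weighting concrete, here is a minimal Python sketch using f1(tf) = tf/max_tf and f2(idf) = log2(N/df). It assumes that df(term) means the number of documents containing the term (not its total number of occurrences), which is one reading of the DF note on the slide. The function name `tf_idf` and the toy documents are made up for illustration.

```python
import math
from collections import Counter

def tf_idf(docs):
    """Return one {term: weight} dict per document.

    docs is a list of token lists. Uses f1(tf) = tf / max_tf and
    f2(idf) = log2(N / df).
    """
    n_docs = len(docs)
    # Assumption: df(term) = number of documents containing the term at least once.
    df = Counter(term for doc in docs for term in set(doc))
    weights = []
    for doc in docs:
        tf = Counter(doc)
        max_tf = max(tf.values()) if tf else 1
        weights.append({
            term: (count / max_tf) * math.log2(n_docs / df[term])
            for term, count in tf.items()
        })
    return weights

# Hypothetical toy collection, already tokenized.
docs = [
    ["rain", "rain", "wind"],
    ["wind", "goal"],
    ["goal", "goal", "team"],
    ["team", "wind"],
]
weights = tf_idf(docs)
```

In this toy collection "rain" occurs in only one of four documents, so it gets a higher weight in the first document than "wind", which occurs in three.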

Document & Query Representation
• Bag of words, Vector Space Model (VSM)
• Word Normalization
  – Stopword removal
  – Stemming
• Proximity phrases
• Each element of the vector is the term weight of that term w.r.t. the document/query.

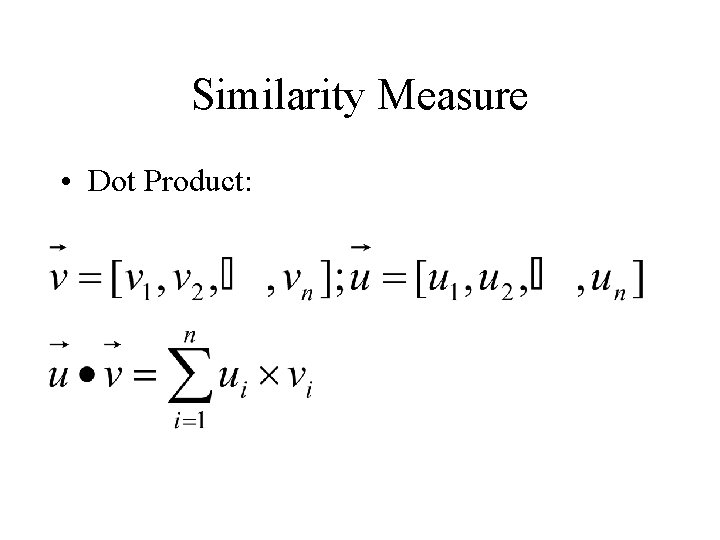

Similarity Measure • Dot Product:

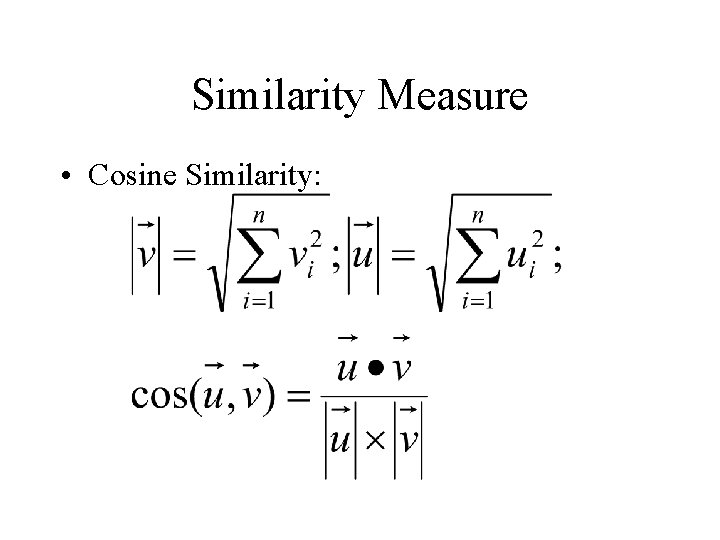

Similarity Measure • Cosine Similarity:

Information Retrieval
• Basic assumption: shared words between query and document
• Similarity measures
  – Dot product
  – Cosine similarity (normalized)
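The two similarity measures can be sketched in a few lines of Python. This is an illustrative sketch, not the course's code: vectors are stored as sparse `{term: weight}` dicts, and the example query and documents are hypothetical.

```python
import math

def dot(u, v):
    """Dot product of two sparse vectors stored as {term: weight} dicts."""
    if len(u) > len(v):
        u, v = v, u  # iterate over the shorter vector
    return sum(w * v.get(t, 0.0) for t, w in u.items())

def cosine(u, v):
    """Dot product normalized by the lengths of both vectors."""
    norm = math.sqrt(dot(u, u)) * math.sqrt(dot(v, v))
    return dot(u, v) / norm if norm else 0.0

# Hypothetical query and two documents; long_doc is short_doc repeated 3 times.
query = {"web": 1.0, "spider": 1.0}
short_doc = {"web": 1.0, "spider": 1.0, "graph": 1.0}
long_doc = {"web": 3.0, "spider": 3.0, "graph": 3.0}

dot_short, dot_long = dot(query, short_doc), dot(query, long_doc)
cos_short, cos_long = cosine(query, short_doc), cosine(query, long_doc)
```

The dot product favors the longer document even though its content is the same, while the cosine scores for the two documents are equal. That is the effect of the normalization.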

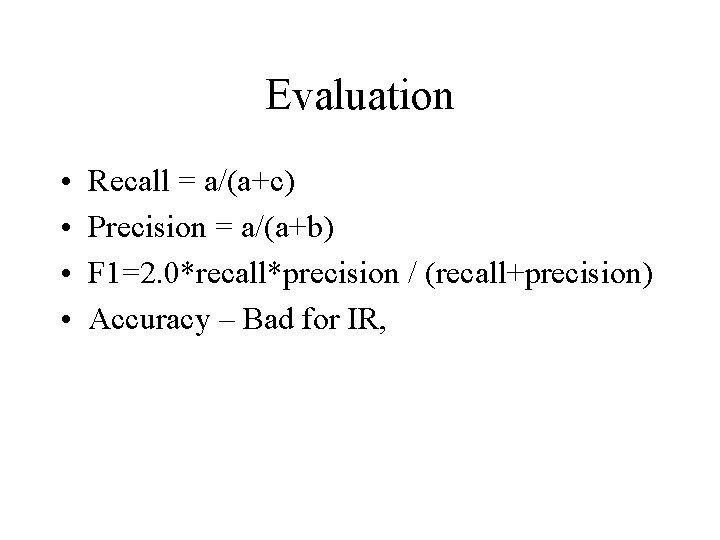

Evaluation
• Recall = a/(a+c)
• Precision = a/(a+b)
• F1 = 2.0*recall*precision / (recall+precision)
• Accuracy
  – Bad for IR
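A short Python sketch of these measures follows. The meaning of a, b, and c is inferred from the formulas rather than taken from the slide's table: a is assumed to count relevant documents that were retrieved, b retrieved documents that are not relevant, and c relevant documents that were missed. The function name and document IDs are hypothetical.

```python
def evaluate(retrieved, relevant):
    """Return (recall, precision, f1) for one query."""
    retrieved, relevant = set(retrieved), set(relevant)
    a = len(retrieved & relevant)  # assumed: relevant and retrieved
    b = len(retrieved - relevant)  # assumed: retrieved but not relevant
    c = len(relevant - retrieved)  # assumed: relevant but not retrieved
    recall = a / (a + c) if a + c else 0.0
    precision = a / (a + b) if a + b else 0.0
    total = recall + precision
    f1 = 2.0 * recall * precision / total if total else 0.0
    return recall, precision, f1

# Hypothetical result list and relevance judgments.
recall, precision, f1 = evaluate(
    retrieved=["d1", "d2", "d3", "d4"],
    relevant=["d1", "d2", "d5"],
)
```

Here two of the three relevant documents are found (recall 2/3) and two of the four retrieved documents are relevant (precision 1/2).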

Refinement of VSM
• Query expansion
• Relevance Feedback
  – Rocchio Formula: …
  – Alpha, beta, gamma and their meanings
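The slide's own formula is not reproduced in this transcript, so the sketch below uses the commonly cited form of Rocchio feedback: the new query is alpha times the original query, plus beta times the centroid of the relevant documents, minus gamma times the centroid of the non-relevant documents. The default values of alpha, beta, and gamma and the clipping of negative weights are assumptions for illustration, as are all names and data.

```python
from collections import defaultdict

def centroid(vectors):
    """Average a list of sparse {term: weight} vectors."""
    if not vectors:
        return {}
    total = defaultdict(float)
    for vec in vectors:
        for term, w in vec.items():
            total[term] += w
    return {t: w / len(vectors) for t, w in total.items()}

def rocchio(query, relevant, nonrelevant, alpha=1.0, beta=0.75, gamma=0.25):
    """Commonly cited Rocchio update; parameter defaults are hypothetical."""
    pos, neg = centroid(relevant), centroid(nonrelevant)
    new_query = {}
    for t in set(query) | set(pos) | set(neg):
        w = alpha * query.get(t, 0.0) + beta * pos.get(t, 0.0) - gamma * neg.get(t, 0.0)
        if w > 0:  # assumption: negative weights are dropped
            new_query[t] = w
    return new_query

# Hypothetical feedback: the user marked two documents relevant, one not.
new_q = rocchio(
    query={"jaguar": 1.0},
    relevant=[{"jaguar": 1.0, "cat": 1.0}, {"jaguar": 1.0, "jungle": 1.0}],
    nonrelevant=[{"jaguar": 1.0, "car": 1.0}],
)
```

In this reading, alpha controls how much of the original query is kept, beta how strongly the query moves toward relevant documents, and gamma how strongly it moves away from non-relevant ones.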

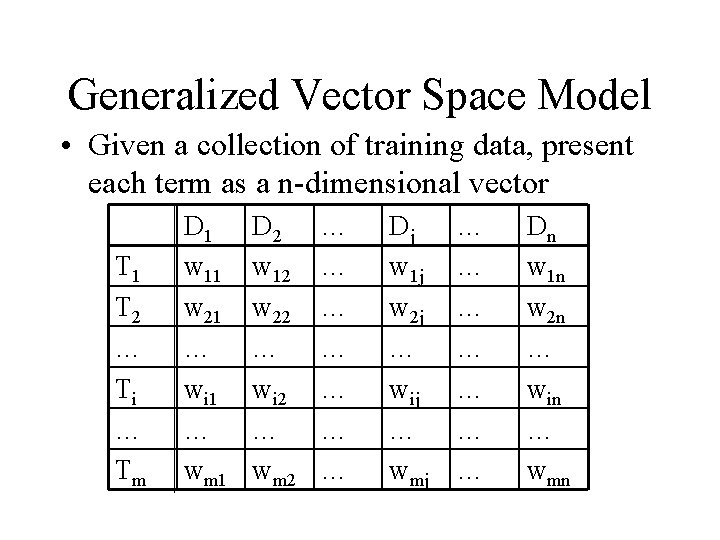

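Generalized Vector Space Model
• Given a collection of training data, represent each term as an n-dimensional vector

|    | T1  | T2  | … | Ti  | … | Tm  |
|----|-----|-----|---|-----|---|-----|
| D1 | w11 | w21 | … | wi1 | … | wm1 |
| D2 | w12 | w22 | … | wi2 | … | wm2 |
| …  | …   | …   | … | …   | … | …   |
| Dj | w1j | w2j | … | wij | … | wmj |
| …  | …   | …   | … | …   | … | …   |
| Dn | w1n | w2n | … | win | … | wmn |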

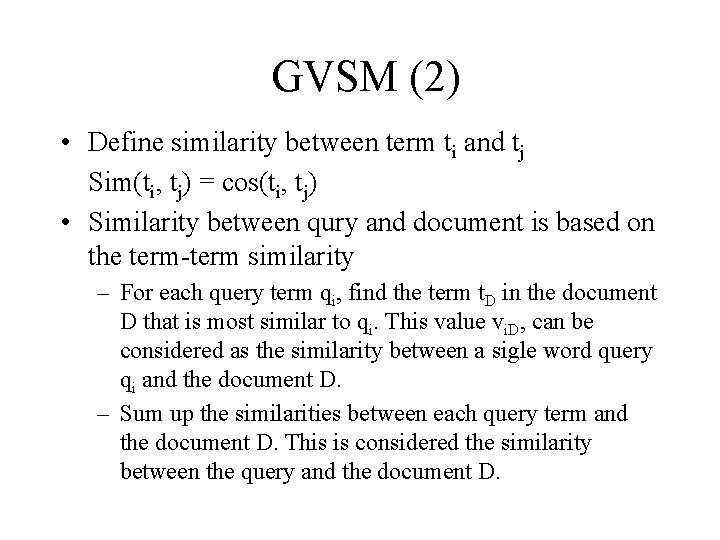

GVSM (2)
• Define the similarity between terms ti and tj: Sim(ti, tj) = cos(ti, tj)
• Similarity between query and document is based on the term-term similarity
  – For each query term qi, find the term tD in the document D that is most similar to qi. This value, viD, can be considered the similarity between a single-word query qi and the document D.
  – Sum up the similarities between each query term and the document D. This sum is considered the similarity between the query and the document D.

GVSM (3)
$$\mathrm{Sim}(Q, D) = \sum_i \max_j \, \mathrm{sim}(q_i, d_j)$$
or, normalizing for document & query length: Simnorm(Q, D) =

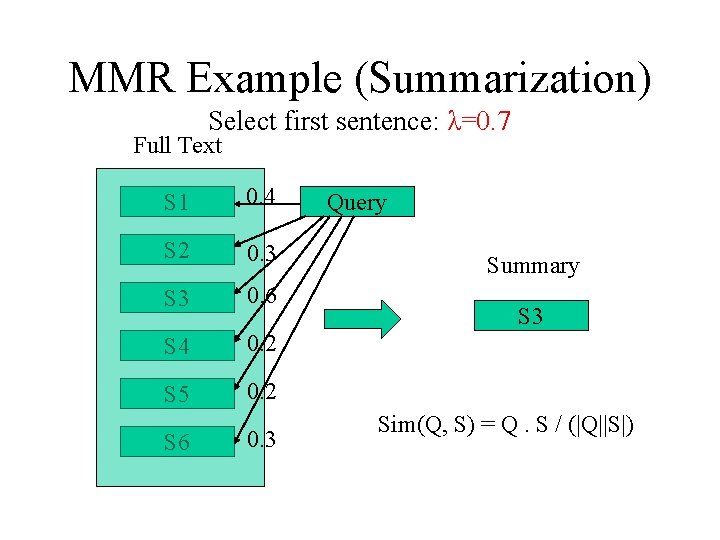
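The unnormalized GVSM similarity can be sketched as follows: each term is a vector of its weights across the training documents, term-term similarity is the cosine of those vectors, and the query-document score sums, over query terms, the best match among the document's terms. The term vectors below are hypothetical, and the length-normalized variant is not implemented because its formula is not reproduced in this transcript.

```python
import math

def cosine(u, v):
    """Cosine of two dense vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def gvsm_sim(query_terms, doc_terms, term_vectors):
    """Sum over query terms of the best term-term similarity in the document."""
    total = 0.0
    for q in query_terms:
        if q not in term_vectors:
            continue  # term never seen in the training data
        total += max(
            (cosine(term_vectors[q], term_vectors[d])
             for d in doc_terms if d in term_vectors),
            default=0.0,
        )
    return total

# Hypothetical term vectors: weights of each term in 3 training documents.
term_vectors = {
    "car":   [1.0, 1.0, 0.0],
    "auto":  [1.0, 1.0, 0.0],
    "river": [0.0, 0.0, 1.0],
}
score_related = gvsm_sim(["car"], ["auto"], term_vectors)
score_unrelated = gvsm_sim(["car"], ["river"], term_vectors)
```

Unlike the plain VSM, the query "car" matches a document containing only "auto", because the two terms co-occur in the same training documents.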

Maximal Marginal Relevance
• Redundancy reduction
• Getting more novel things
• Formula:
$$\mathrm{MMR}(Q, C, R) = \operatorname{Argmax}^{k}_{d_i \in C} \left[ \lambda\, S(Q, d_i) - (1 - \lambda) \max_{d_j \in R} S(d_i, d_j) \right]$$
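One way to read the formula is as a greedy loop: at each step, pick the candidate with the best trade-off between similarity to the query and similarity to what has already been selected. The Python sketch below follows that reading. The function name, the tie-breaking by sorted order, and the similarity values are all hypothetical and are not the numbers from the slides' summarization example.

```python
def mmr_select(query_sim, pair_sim, k, lam=0.7):
    """Greedy MMR selection of k items.

    query_sim[d]     -> S(Q, d)
    pair_sim[(d, e)] -> S(d, e), stored once per unordered pair
    """
    def sim(d, e):
        return pair_sim.get((d, e), pair_sim.get((e, d), 0.0))

    selected = []
    candidates = set(query_sim)
    while candidates and len(selected) < k:
        def score(d):
            # Redundancy is the highest similarity to anything already selected.
            redundancy = max((sim(d, s) for s in selected), default=0.0)
            return lam * query_sim[d] - (1 - lam) * redundancy
        best = max(sorted(candidates), key=score)  # sorted() makes ties deterministic
        selected.append(best)
        candidates.remove(best)
    return selected

# Hypothetical data: "a" and "b" are both relevant but nearly identical.
query_sim = {"a": 0.9, "b": 0.85, "c": 0.6}
pair_sim = {("a", "b"): 0.95, ("a", "c"): 0.1, ("b", "c"): 0.1}
picked = mmr_select(query_sim, pair_sim, k=2)
picked_relevance_only = mmr_select(query_sim, pair_sim, k=2, lam=1.0)
```

With λ = 0.7 the second pick is "c" rather than the near-duplicate "b"; with λ = 1.0 the redundancy term vanishes and the selection is by relevance alone.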

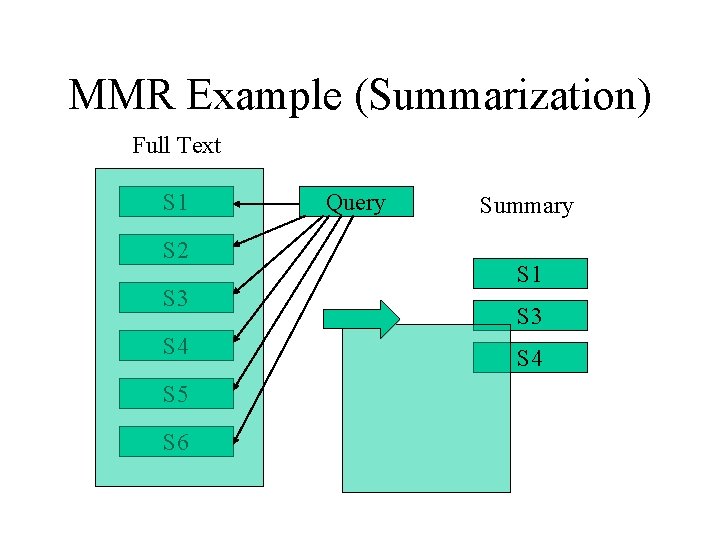

MMR Example (Summarization)
Full Text: S1 S2 S3 S4 S5 S6
Query
Summary: S1 S3 S4

MMR Example (Summarization)
Select first sentence: λ = 0.7
Full Text: S1 0.4, S2 0.3, S3 0.6, S4 0.2, S5 0.2, S6 0.3
Query
Summary: S3
Sim(Q, S) = Q·S / (|Q||S|)

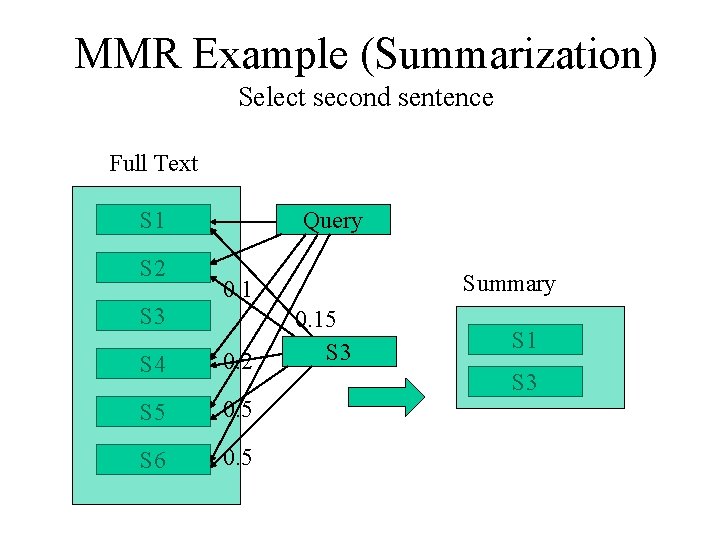

MMR Example (Summarization)
Select second sentence
[Figure: Full Text S1 to S6 with scores 0.1, 0.2, 0.5, 0.5, 0.15; Query; Summary: S1 S3]

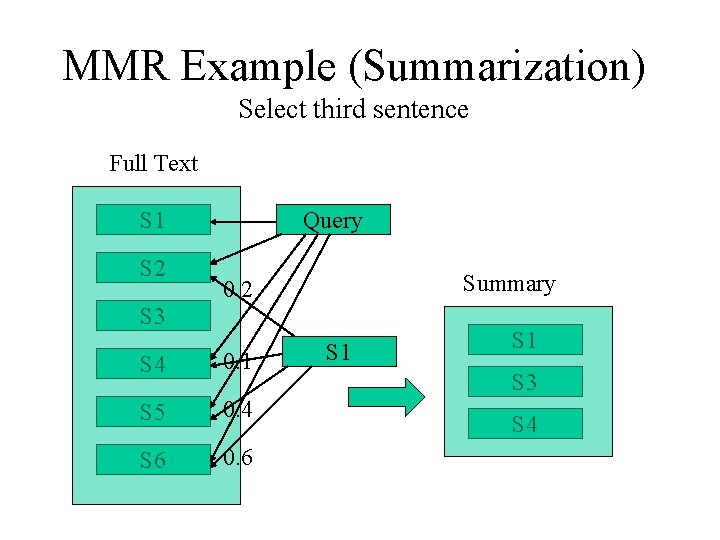

MMR Example (Summarization)
Select third sentence
[Figure: Full Text S1 to S6 with scores 0.2, 0.1, 0.4, 0.6; Query; Summary: S1 S3 S4]

Text Categorization Task
• You want to classify a document into one or more categories automatically, for example "weather" and "sport".
• To do that, you can use the kNN algorithm.
• To use kNN, you need a collection of documents, each labeled with its categories by a human.

Text Categorization Procedure
• Using VSM, represent each document in the training data.
• Using VSM, represent the document to be categorized (the new document).
• Using cosine similarity (other measures are possible, but cosine works well here; why?), find the top k documents (the k nearest neighbors) in the training data that are most similar to the new document.
• Decide from the k nearest neighbors which categories to assign to the new document.
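The procedure above can be sketched in Python as follows. The slides do not say how the k neighbors are combined into a decision, so this sketch assumes a similarity-weighted vote that returns a single category. Raw term counts stand in for term weights, and the training texts are hypothetical.

```python
import math
from collections import Counter

def to_vector(text):
    """Bag-of-words vector with raw term counts (a stand-in for TF*IDF)."""
    return Counter(text.lower().split())

def cosine(u, v):
    dot = sum(w * v[t] for t, w in u.items())  # Counter gives 0 for missing terms
    norm = (math.sqrt(sum(w * w for w in u.values()))
            * math.sqrt(sum(w * w for w in v.values())))
    return dot / norm if norm else 0.0

def knn_classify(new_text, training, k=3):
    """Return the label with the largest similarity-weighted vote among k neighbors."""
    new_vec = to_vector(new_text)
    scored = sorted(
        ((cosine(new_vec, to_vector(text)), label) for text, label in training),
        reverse=True,
    )
    votes = Counter()
    for sim, label in scored[:k]:
        votes[label] += sim  # assumption: neighbors vote with their similarity
    return votes.most_common(1)[0][0]

# Hypothetical human-labeled training data.
training = [
    ("rain and wind expected tomorrow", "weather"),
    ("sunny skies with light wind", "weather"),
    ("heavy rain floods the city", "weather"),
    ("the team scored a late goal", "sport"),
    ("coach praises team after the match", "sport"),
]
label = knn_classify("rain and strong wind tomorrow", training, k=3)
```

One likely answer to the "why cosine" question is that it normalizes for document length, so a long training document does not become a near neighbor merely because it contains more words.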

Web Spider
• The web graph at any instant of time contains k-connected subgraphs
• The spider algorithm given in class is a depth-first search through a web subgraph
• Avoid respidering the same page
• Completeness is not guaranteed. A partial solution is to choose seed URLs that are as diverse as possible.

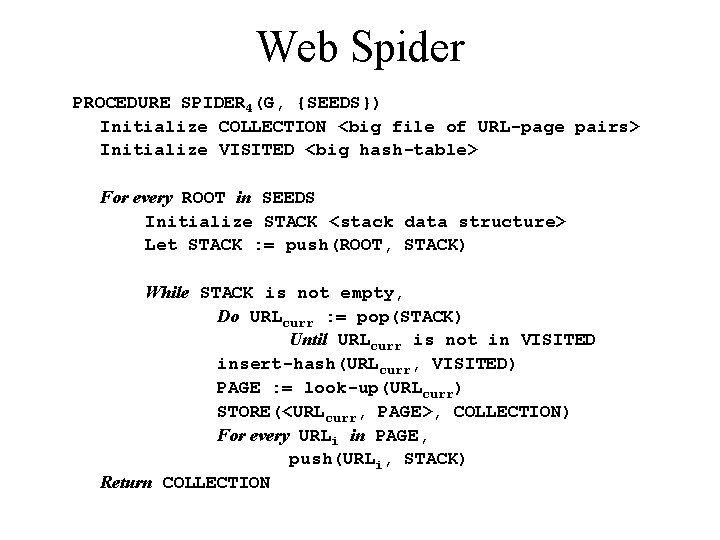

Web Spider

    PROCEDURE SPIDER4(G, {SEEDS})
      Initialize COLLECTION <big file of URL-page pairs>
      Initialize VISITED <big hash-table>
      For every ROOT in SEEDS
        Initialize STACK <stack data structure>
        Let STACK := push(ROOT, STACK)
        While STACK is not empty,
          Do URLcurr := pop(STACK)
          Until URLcurr is not in VISITED
          insert-hash(URLcurr, VISITED)
          PAGE := look-up(URLcurr)
          STORE(<URLcurr, PAGE>, COLLECTION)
          For every URLi in PAGE,
            push(URLi, STACK)
      Return COLLECTION
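A runnable Python sketch of the same procedure is given below. To stay self-contained it crawls an in-memory dict that maps each URL to its outgoing links instead of fetching real pages; that dict and its contents are hypothetical. One deliberate difference from the pseudocode: already-visited URLs are skipped with `continue`, which avoids popping from an empty stack when every remaining entry has been visited.

```python
def spider(web, seeds):
    """Depth-first crawl of `web`, a dict mapping URL -> list of outgoing URLs."""
    collection = []  # (url, page) pairs in crawl order
    visited = set()
    for root in seeds:
        stack = [root]
        while stack:
            url = stack.pop()
            if url in visited:
                continue  # avoid respidering the same page
            visited.add(url)
            page = web.get(url, [])  # stand-in for look-up(URLcurr)
            collection.append((url, page))
            for link in page:
                stack.append(link)
    return collection

# Hypothetical web graph with two disconnected components.
web = {
    "a": ["b", "c"],
    "b": ["a"],
    "c": ["d"],
    "d": [],
    "x": ["y"],  # not reachable from "a"
    "y": [],
}
crawled = [url for url, _ in spider(web, seeds=["a"])]
crawled_diverse = {url for url, _ in spider(web, seeds=["a", "x"])}
```

Starting from the single seed "a", pages "x" and "y" are never reached, which illustrates why completeness is not guaranteed and why diverse seeds help.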

Text Mining
Components of Text Mining
• Categorization by topic or genre
• Fact extraction from text
• Data mining from DBs or extracted facts

Fact extraction from text
• Named Entity Identification
  – FSA/FST, HMM
• Role-Situated Named Entities
  – Apply context information
• Information Extraction
  – Template matching

Named Entity Identification
Definition of a Finite State Acceptor (FSA)
• With an input source (e.g., string of words)
• Outputs "YES" or "NO"
Definition of a Finite State Transducer (FST)
• An FSA with variable binding
• Outputs "NO" or "YES"+variable-bindings
• Variable bindings encode the recognized entity, e.g. "YES <firstname Hideto> <lastname Suzuki>"
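As a rough illustration of the FST idea, the Python sketch below recognizes a first name followed by a last name and returns the bindings along with "YES". The two lexicons, the state names, and the function name are hypothetical; a real system would use much larger lexicons or learned models.

```python
FIRST_NAMES = {"Hideto", "Jian"}  # hypothetical lexicon
LAST_NAMES = {"Suzuki", "Zhang"}  # hypothetical lexicon

def name_fst(tokens):
    """Return ("YES", bindings) for <firstname> <lastname>, else ("NO", {})."""
    state, bindings = "start", {}
    for tok in tokens:
        if state == "start" and tok in FIRST_NAMES:
            bindings["firstname"] = tok  # bind a variable on this transition
            state = "first"
        elif state == "first" and tok in LAST_NAMES:
            bindings["lastname"] = tok
            state = "accept"
        else:
            return "NO", {}  # no transition for this token
    return ("YES", bindings) if state == "accept" else ("NO", {})

result = name_fst(["Hideto", "Suzuki"])
```

Dropping the `bindings` dict and returning only "YES" or "NO" turns the same machine into a plain acceptor.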

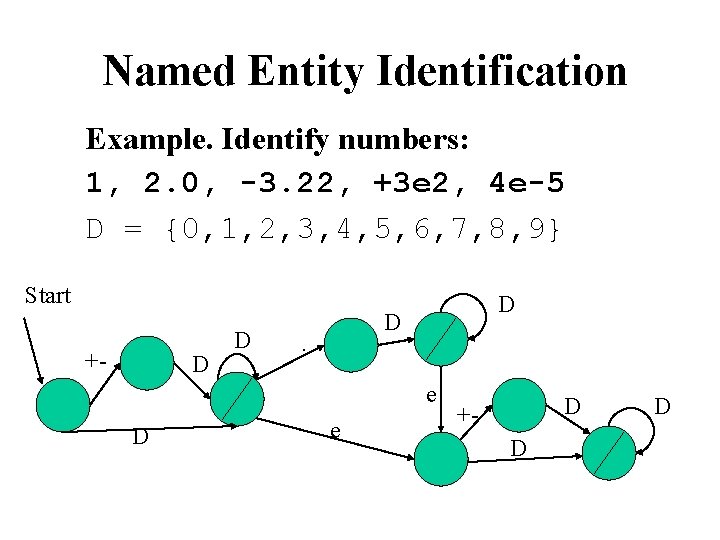

Named Entity Identification
Example: identify numbers: 1, 2.0, -3.22, +3e2, 4e-5
D = {0, 1, 2, 3, 4, 5, 6, 7, 8, 9}
[FSA diagram: from Start, transitions on a sign (+, -), a digit D, ".", and "e"]
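A table-driven acceptor for these number formats might look like the sketch below. The exact states of the slide's diagram are not recoverable from this transcript, so the state names and transition table are one plausible reading that accepts the five listed examples. In particular, rejecting a trailing "." (as in "3.") is an assumption.

```python
DIGITS = set("0123456789")

# (state, symbol class) -> next state; state names are hypothetical.
TRANSITIONS = {
    ("start", "sign"): "signed",
    ("start", "digit"): "int",
    ("signed", "digit"): "int",
    ("int", "digit"): "int",
    ("int", "dot"): "dot",
    ("int", "e"): "exp",
    ("dot", "digit"): "frac",
    ("frac", "digit"): "frac",
    ("frac", "e"): "exp",
    ("exp", "sign"): "exp_signed",
    ("exp", "digit"): "exp_int",
    ("exp_signed", "digit"): "exp_int",
    ("exp_int", "digit"): "exp_int",
}
ACCEPTING = {"int", "frac", "exp_int"}

def classify(ch):
    """Map a character to the symbol class used in the transition table."""
    if ch in DIGITS:
        return "digit"
    if ch in ("+", "-"):
        return "sign"
    if ch == ".":
        return "dot"
    if ch == "e":
        return "e"
    return "other"

def accepts(text):
    """Return True ("YES") if the FSA ends in an accepting state."""
    state = "start"
    for ch in text:
        state = TRANSITIONS.get((state, classify(ch)))
        if state is None:
            return False  # no transition: reject
    return state in ACCEPTING

results = {s: accepts(s) for s in ["1", "2.0", "-3.22", "+3e2", "4e-5"]}
```

All five example strings are accepted, while inputs such as "+", "e5", or "1e" end in a non-accepting state or have no valid transition.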

Data Mining
• Learning by caching
  – What/when to cache
  – When to use/invalidate/update cache
• Learning from Examples (a.k.a. "Supervised" learning)
  – Labeled examples for training
  – Learn the mapping from examples to labels
  – E.g.: Naive Bayes, Decision Trees, ...
  – Text Categorization (using kNN or other means) is a learning-from-examples task

Data Mining
• "Speedup" Learning
  – Tuning search heuristics from experience
  – Inducing explicit control knowledge
  – Analogical learning (generalized instances)
• Optimization "policy" learning
  – Predicting continuous objective function
  – E.g. Regression, Reinforcement, ...
• New Pattern Discovery (a.k.a. "Unsupervised" Learning)
  – Finding meaningful correlations in data
  – E.g. association rules, clustering, ...

Generalize vs. Specialize
• Generalize:
  – First, each record in your database is a RULE
  – Then, generalize (how? when to stop?)
• Specialize:
  – First, give a very general rule (almost useless)
  – Then, specialize (how? when to stop?)

Methods for Supervised DM
Classifiers
• Linear Separators (regression)
• Naive Bayes (NB)
• Decision Trees (DTs)
• k-Nearest Neighbor (kNN)
• Decision rule induction
• Support Vector Machines (SVMs)
• Neural Networks (NNs)
• ...
