CS 471 Lecture 9 Virtual Memory Ch 9

- Slides: 61

CS 471 - Lecture 9 Virtual Memory Ch. 9 George Mason University Fall 2009

Virtual Memory
- Basic Concepts
- Demand Paging
- Page Replacement Algorithms
- Working-Set Model

GMU – CS 571 9. 2

Last week in Memory Management…
- Assumed all of a process is brought into memory in order to run it.
  - Contiguous allocation
  - Paging
  - Segmentation
- What if we remove this assumption? What do we gain/lose?

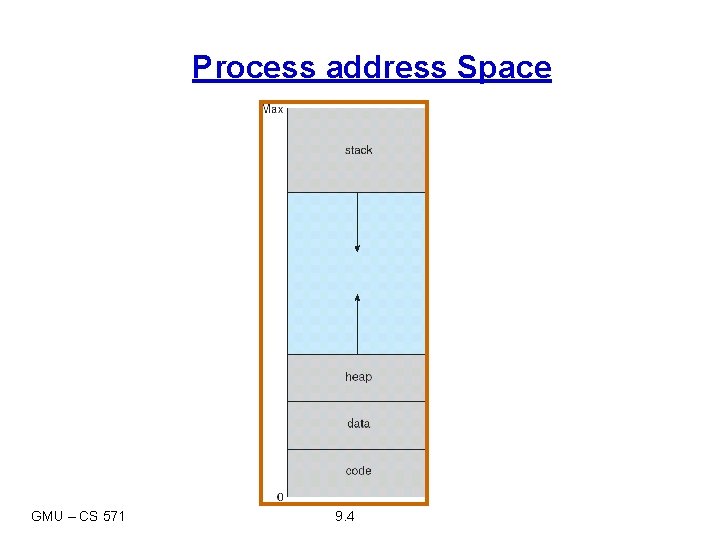

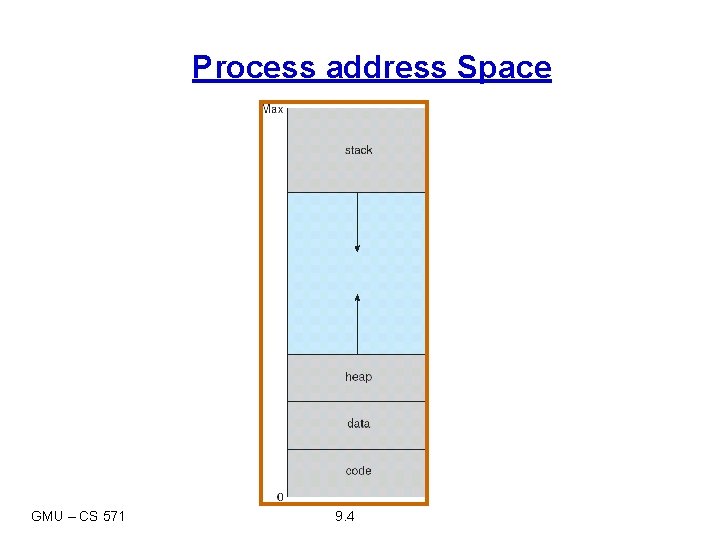

Process Address Space

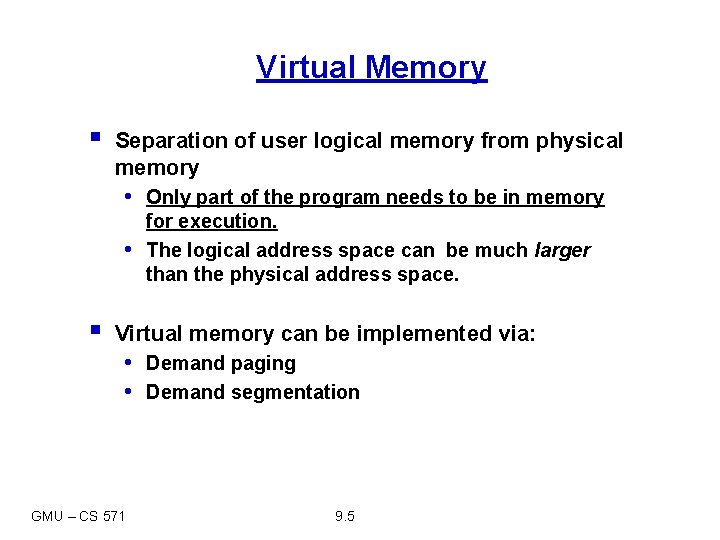

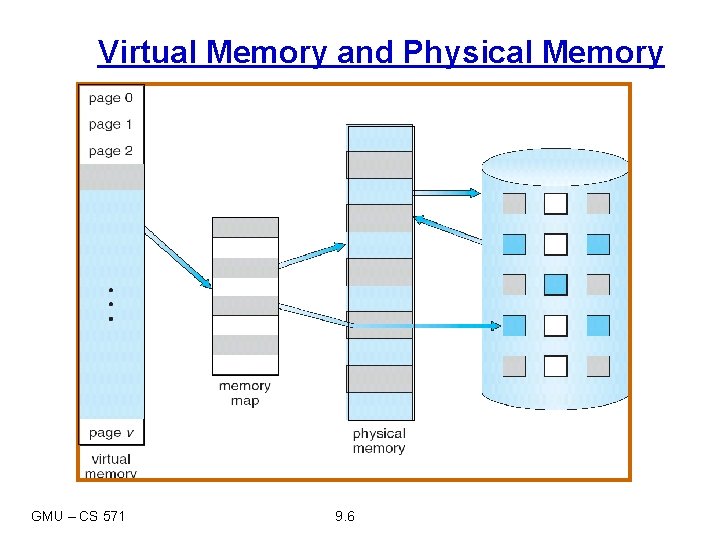

Virtual Memory
- Separation of user logical memory from physical memory
  - Only part of the program needs to be in memory for execution.
  - The logical address space can be much larger than the physical address space.
- Virtual memory can be implemented via:
  - Demand paging
  - Demand segmentation

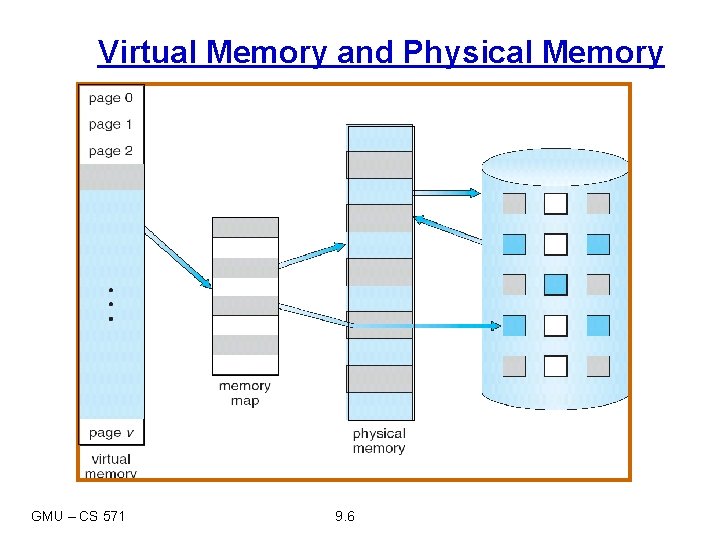

Virtual Memory and Physical Memory

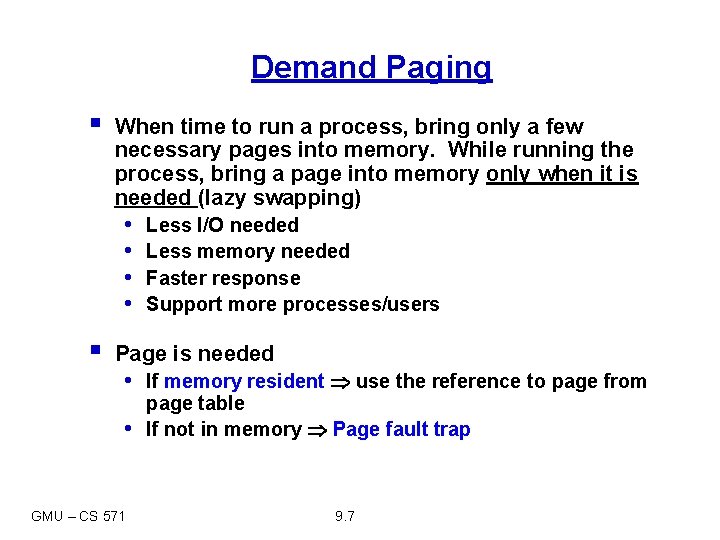

Demand Paging
- When it is time to run a process, bring only a few necessary pages into memory. While running the process, bring a page into memory only when it is needed (lazy swapping).
  - Less I/O needed
  - Less memory needed
  - Faster response
  - Support more processes/users
- Page is needed:
  - If memory resident ⇒ use the reference to the page from the page table
  - If not in memory ⇒ page fault trap

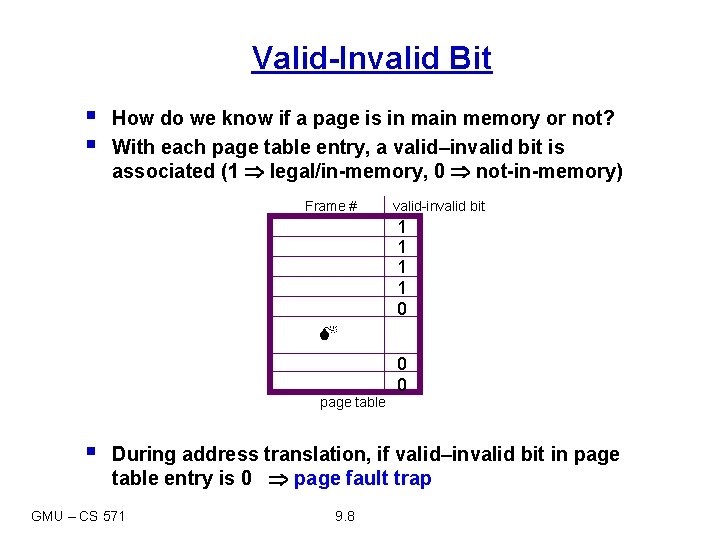

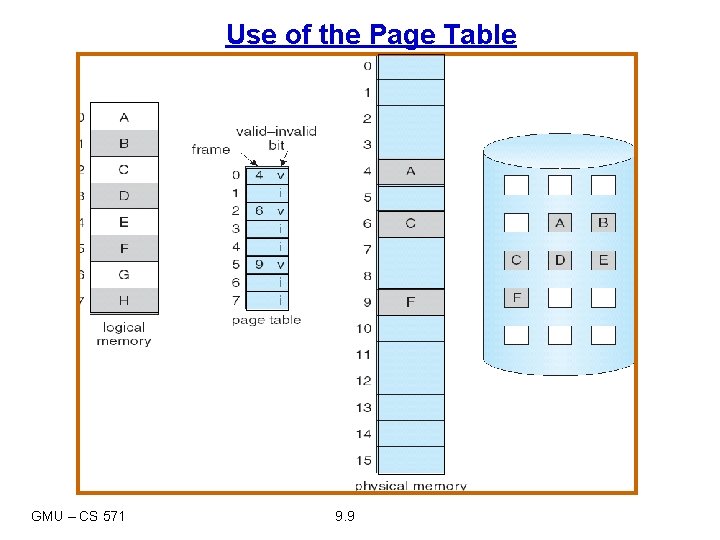

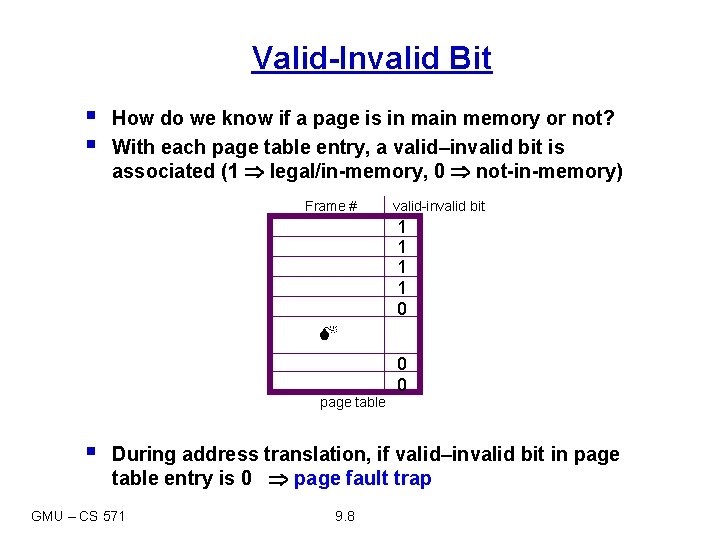

Valid-Invalid Bit
- How do we know if a page is in main memory or not?
- With each page table entry, a valid–invalid bit is associated (1 ⇒ legal/in-memory, 0 ⇒ not-in-memory).
- [Figure: page table with a frame-number column and a valid–invalid bit column; example bits 1, 1, 0, 0, 0]
- During address translation, if the valid–invalid bit in the page table entry is 0 ⇒ page fault trap.

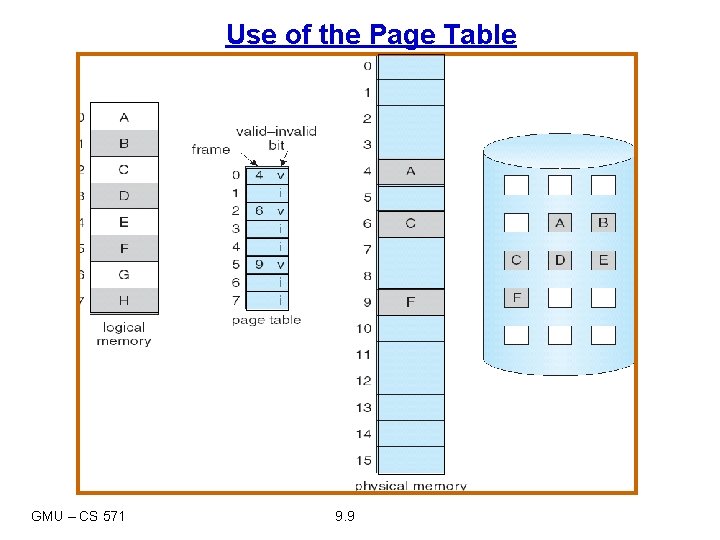

Use of the Page Table

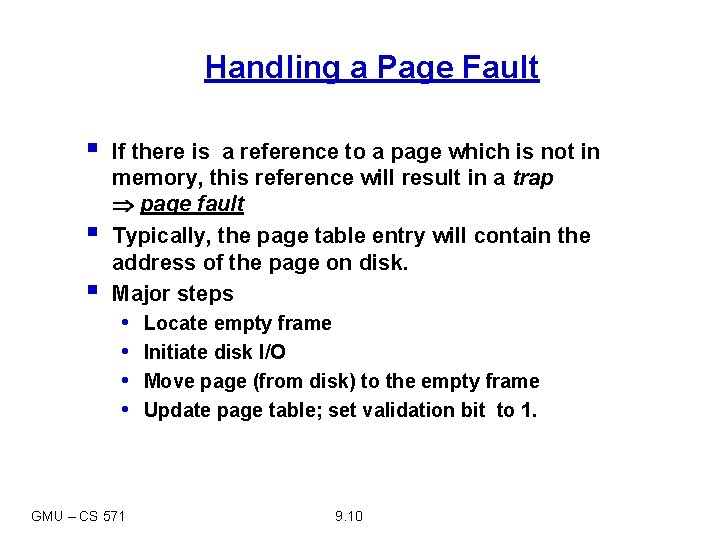

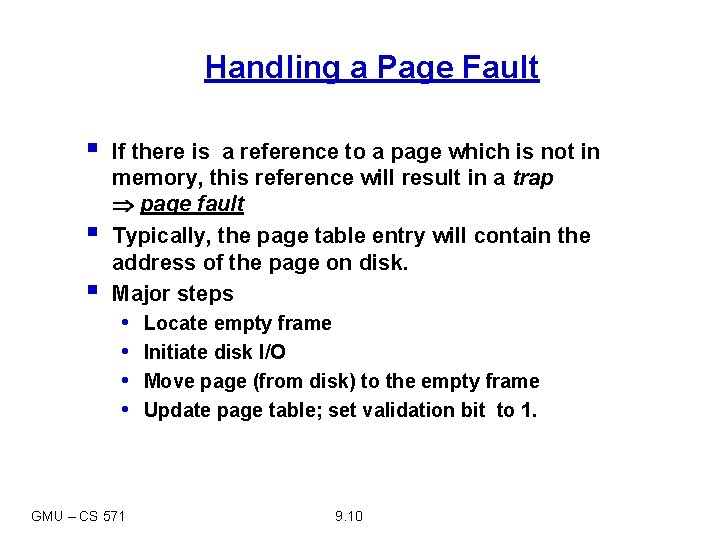

Handling a Page Fault
- If there is a reference to a page that is not in memory, this reference will result in a trap ⇒ page fault.
- Typically, the page table entry will contain the address of the page on disk.
- Major steps:
  - Locate an empty frame.
  - Initiate disk I/O.
  - Move the page (from disk) to the empty frame.
  - Update the page table; set the valid–invalid bit to 1.
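
The major steps of page-fault handling can be sketched as a toy simulation. This is only a minimal sketch under stated assumptions: the names `PageTableEntry` and `ToyMemory` are hypothetical, the disk read is indicated by a comment rather than performed, and the case with no free frame is left to page replacement.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageTableEntry:
    frame: Optional[int] = None  # frame number, meaningful only when valid
    valid: bool = False          # valid-invalid bit: True = in memory
    disk_addr: int = 0           # where the page lives on disk (hypothetical)


class ToyMemory:
    def __init__(self, num_pages, num_frames):
        self.table = [PageTableEntry(disk_addr=p) for p in range(num_pages)]
        self.free_frames = list(range(num_frames))
        self.faults = 0

    def access(self, page):
        """Translate a page number to a frame, faulting the page in if needed."""
        entry = self.table[page]
        if not entry.valid:          # bit is 0: page fault trap
            self._handle_fault(entry)
        return entry.frame

    def _handle_fault(self, entry):
        self.faults += 1
        if not self.free_frames:
            raise MemoryError("no free frame: page replacement would be needed")
        frame = self.free_frames.pop(0)  # locate an empty frame
        # A real OS would now start disk I/O and copy the page at
        # entry.disk_addr into the frame; this sketch skips the copy.
        entry.frame = frame              # update the page table
        entry.valid = True               # set the valid-invalid bit to 1


mem = ToyMemory(num_pages=4, num_frames=2)
first = mem.access(2)    # faults: page 2 is not yet resident
second = mem.access(2)   # no fault: the entry is now valid
```

The second access finds the valid bit set and returns the same frame without touching the fault path.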

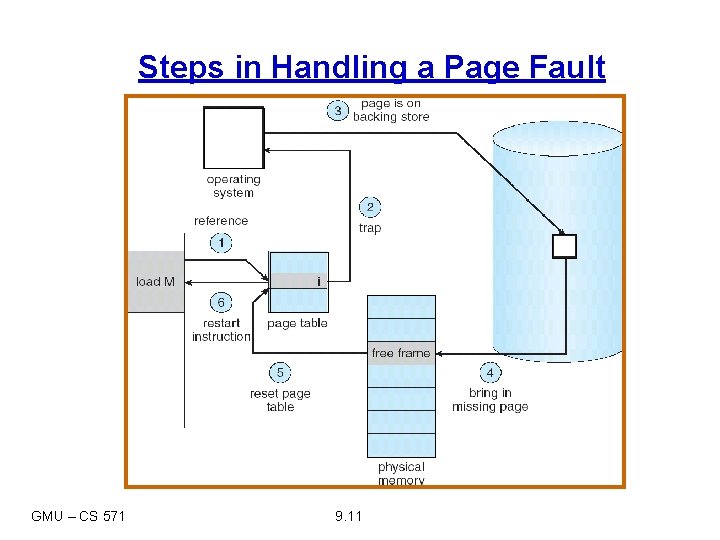

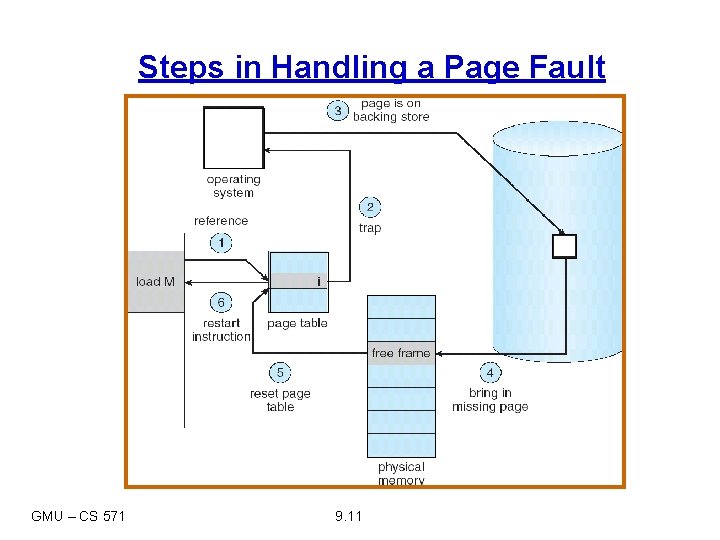

Steps in Handling a Page Fault

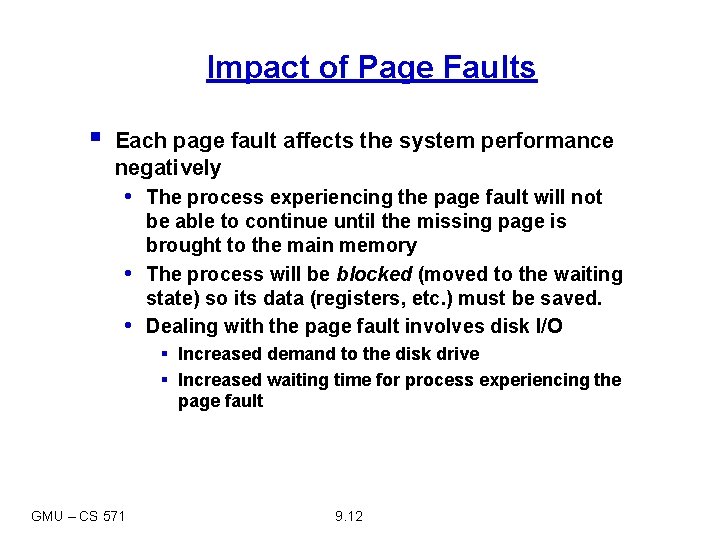

Impact of Page Faults
- Each page fault affects the system performance negatively.
  - The process experiencing the page fault will not be able to continue until the missing page is brought into main memory.
  - The process will be blocked (moved to the waiting state), so its data (registers, etc.) must be saved.
  - Dealing with the page fault involves disk I/O:
    - Increased demand on the disk drive
    - Increased waiting time for the process experiencing the page fault

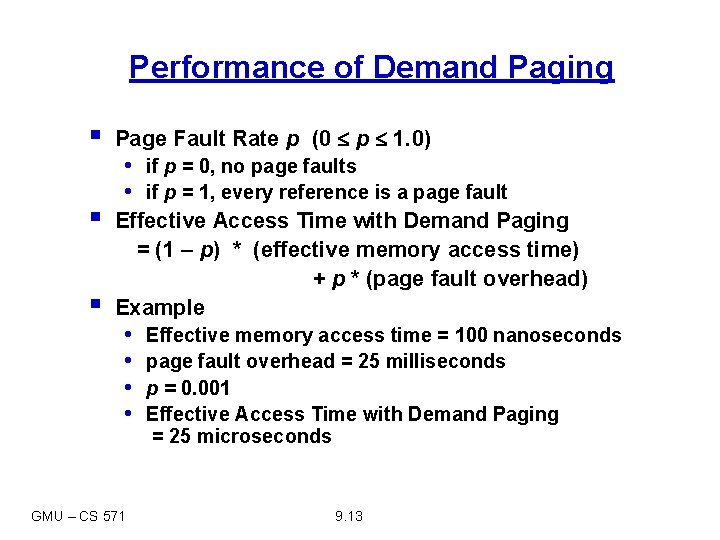

Performance of Demand Paging
- Page fault rate p (0 ≤ p ≤ 1.0)
  - if p = 0, no page faults
  - if p = 1, every reference is a page fault
- Effective Access Time with Demand Paging = (1 – p) × (effective memory access time) + p × (page fault overhead)
- Example
  - Effective memory access time = 100 nanoseconds
  - Page fault overhead = 25 milliseconds
  - p = 0.001
  - Effective Access Time with Demand Paging ≈ 25 microseconds
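
The arithmetic in the example can be checked with a few lines of code. This is a small sketch of the formula above; the function name and the choice of nanoseconds as the common unit are assumptions made for illustration.

```python
def effective_access_time_ns(p, mem_access_ns, fault_overhead_ns):
    """Effective access time = (1 - p) * memory access + p * fault overhead."""
    return (1 - p) * mem_access_ns + p * fault_overhead_ns


# Values from the slide: 100 ns memory access, 25 ms fault overhead, p = 0.001.
eat_ns = effective_access_time_ns(0.001, 100, 25_000_000)
print(f"{eat_ns / 1000:.1f} microseconds")  # prints "25.1 microseconds"
```

Even with one fault per thousand references, the fault term dominates: the effective access time is roughly 250 times the 100 ns memory access time.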

Page Replacement
- As we increase the degree of multiprogramming, over-allocation of memory becomes a problem.
- What if we are unable to find a free frame at the time of the page fault?
- One solution: swap out a process (writing it back to disk), free all its frames, and reduce the level of multiprogramming.
  - May be good under some conditions
- Another option: free a memory frame already in use.

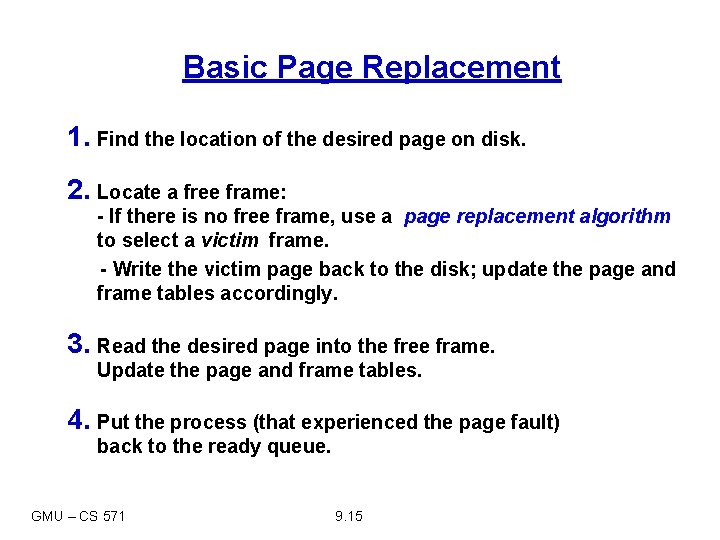

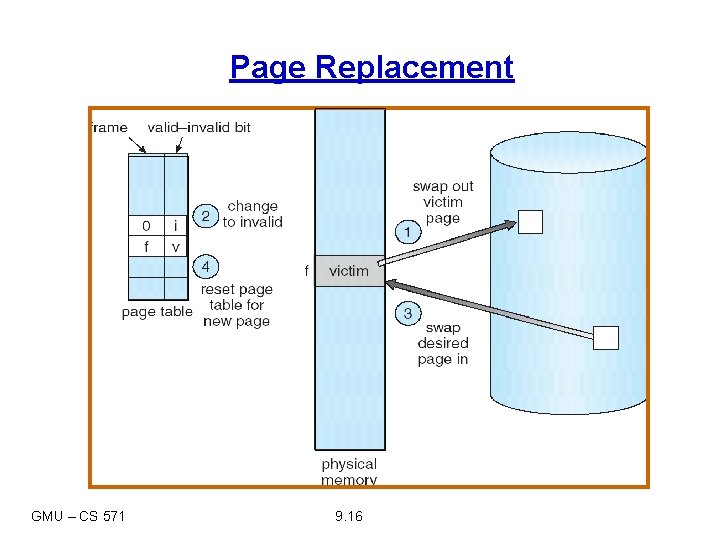

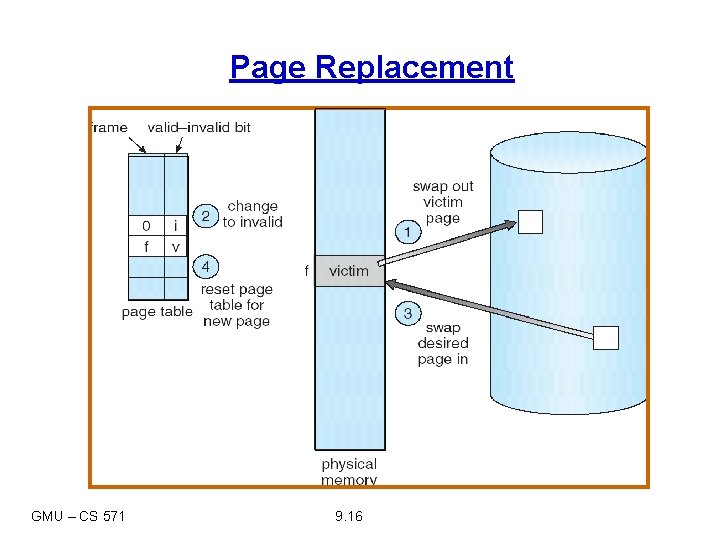

Basic Page Replacement
1. Find the location of the desired page on disk.
2. Locate a free frame:
   - If there is no free frame, use a page replacement algorithm to select a victim frame.
   - Write the victim page back to the disk; update the page and frame tables accordingly.
3. Read the desired page into the free frame. Update the page and frame tables.
4. Put the process (that experienced the page fault) back on the ready queue.

Page Replacement

Page Replacement
- Observe: if there are no free frames, two page transfers are needed at each page fault!
- We can use a modify (dirty) bit to reduce the overhead of page transfers: only modified pages are written back to disk.
- Page replacement completes the separation between logical memory and physical memory: a large virtual memory can be provided on a smaller physical memory.

Page Replacement Algorithms
- When page replacement is required, we must select the frames that are to be replaced.
- Primary objective: use the algorithm with the lowest page-fault rate.
  - Efficiency
  - Cost
- Evaluate an algorithm by running it on a particular string of memory references (reference string) and computing the number of page faults on that string.
- We can generate reference strings artificially or we can use specific traces.

First-In-First-Out (FIFO) Algorithm
- Simplest page replacement algorithm.
- The FIFO replacement algorithm chooses the “oldest” page in memory.
- Implementation: a FIFO queue holds identifiers of all the pages in memory.
  - We replace the page at the head of the queue.
  - When a page is brought into memory, it is inserted at the tail of the queue.
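
The queue-based implementation can be sketched in a few lines. This is an illustrative simulation rather than kernel code; the function name `fifo_faults` is hypothetical, and the reference string is an assumption reconstructed from the step-by-step FIFO walkthrough in this lecture (the slide that shows the string is an image).

```python
from collections import deque


def fifo_faults(refs, num_frames):
    """Count page faults under FIFO replacement."""
    queue = deque()   # left end = head (oldest page), right end = tail (newest)
    resident = set()  # pages currently in memory, for fast lookup
    faults = 0
    for page in refs:
        if page in resident:
            continue                  # hit: FIFO order is not changed by use
        faults += 1
        if len(queue) == num_frames:  # no free frame: evict the oldest page
            resident.remove(queue.popleft())
        queue.append(page)            # new page goes to the tail
        resident.add(page)
    return faults


# Reference string implied by the walkthrough slides (assumption).
REFS = [7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1]
print(fifo_faults(REFS, 3), fifo_faults(REFS, 4))  # prints "15 10"
```

The two counts agree with the totals reached in the walkthrough: 15 faults with three frames and 10 with four.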

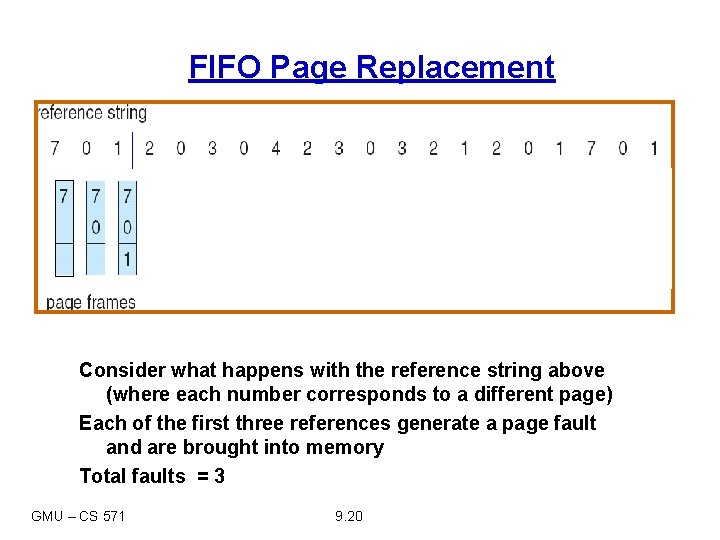

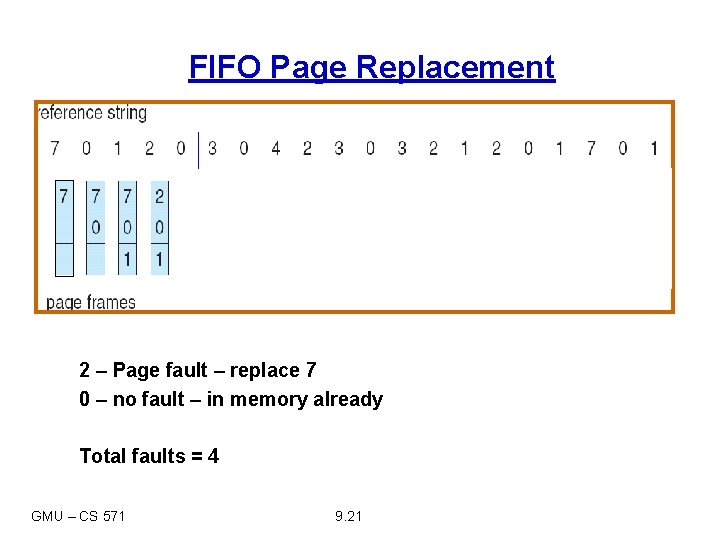

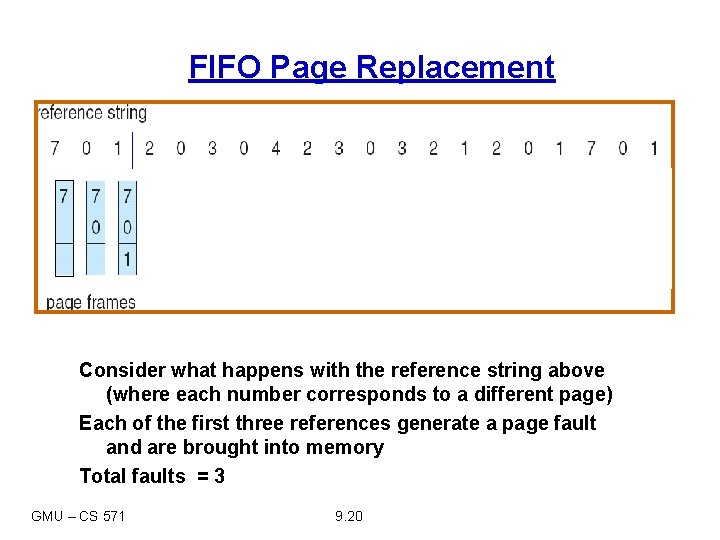

FIFO Page Replacement
- Consider what happens with the reference string above (where each number corresponds to a different page).
- Each of the first three references generates a page fault, and the referenced page is brought into memory.
- Total faults = 3

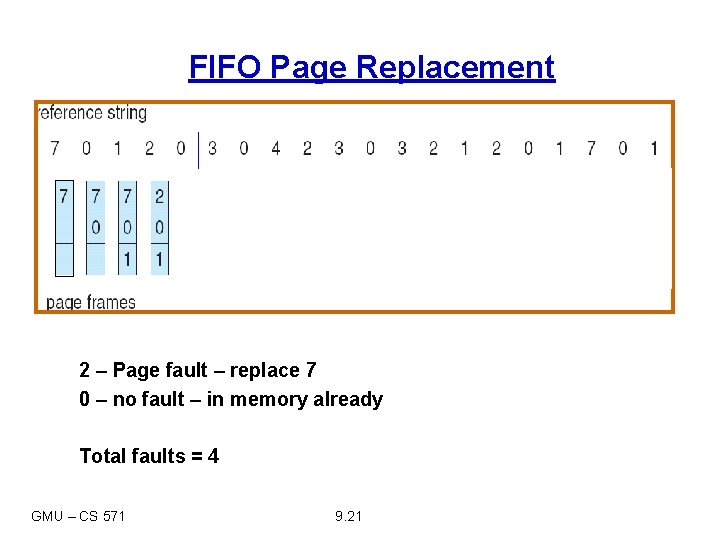

FIFO Page Replacement
- 2 – Page fault – replace 7
- 0 – no fault – in memory already
- Total faults = 4

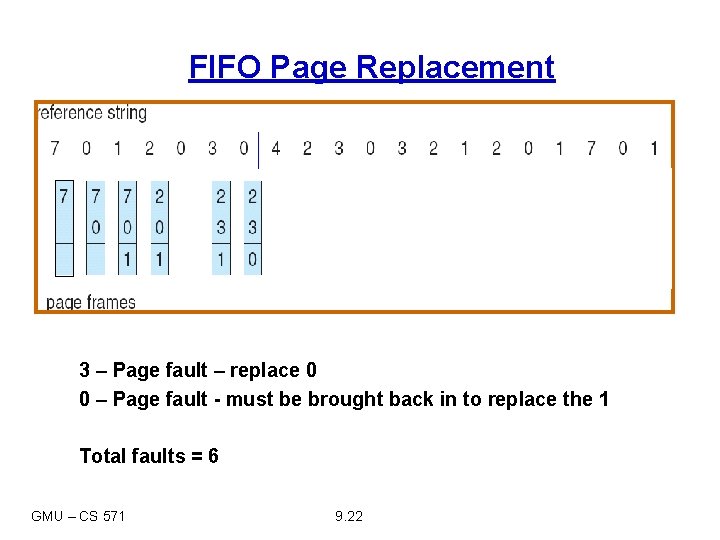

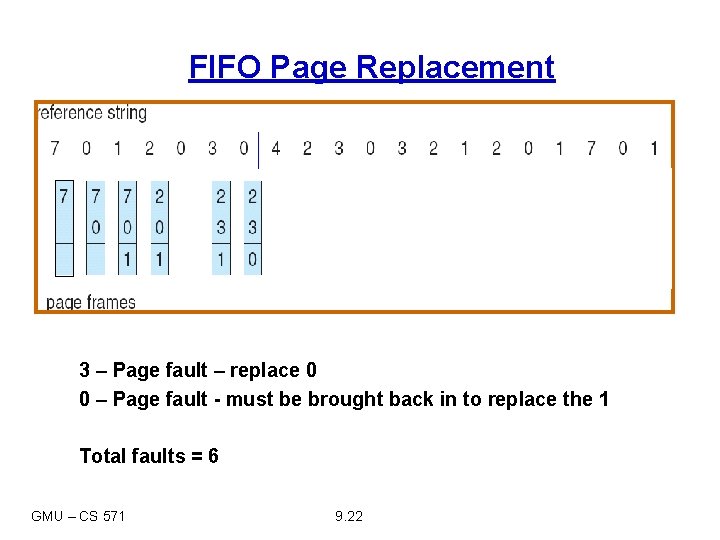

FIFO Page Replacement
- 3 – Page fault – replace 0
- 0 – Page fault – must be brought back in to replace the 1
- Total faults = 6

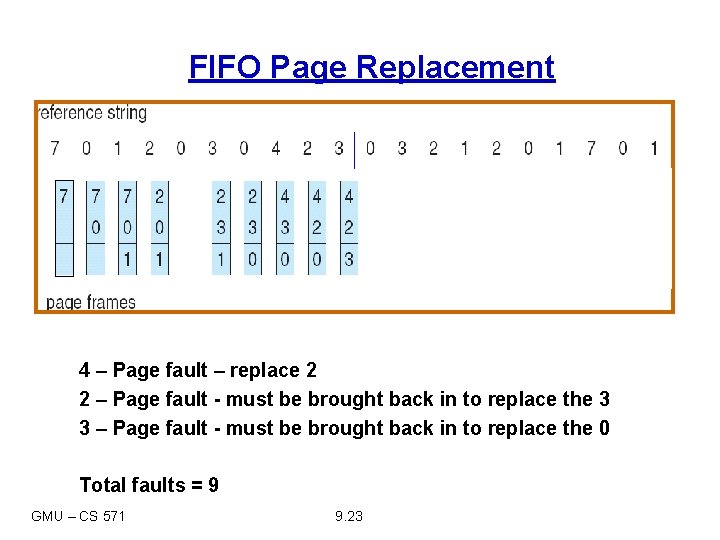

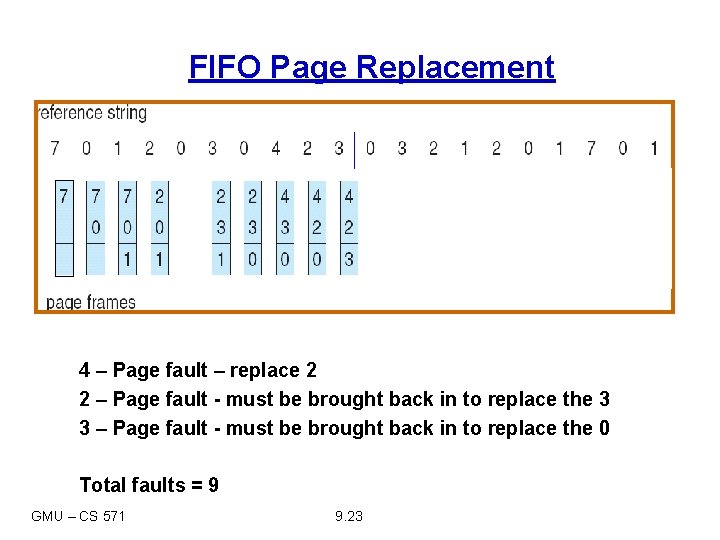

FIFO Page Replacement
- 4 – Page fault – replace 2
- 2 – Page fault – must be brought back in to replace the 3
- 3 – Page fault – must be brought back in to replace the 0
- Total faults = 9

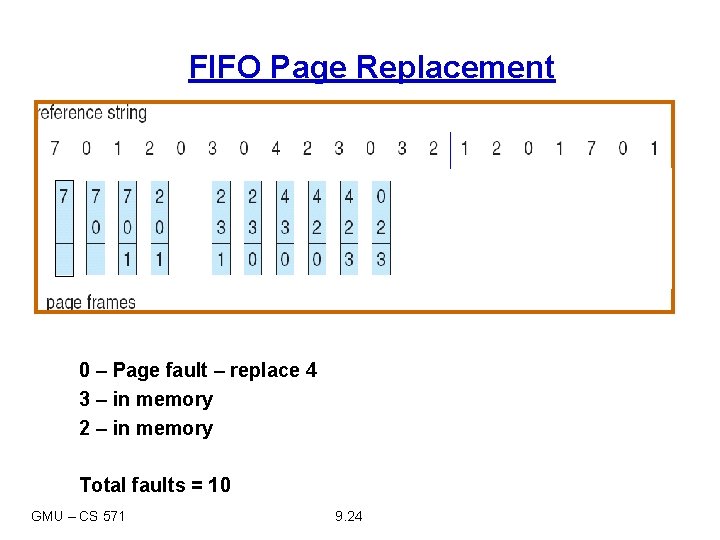

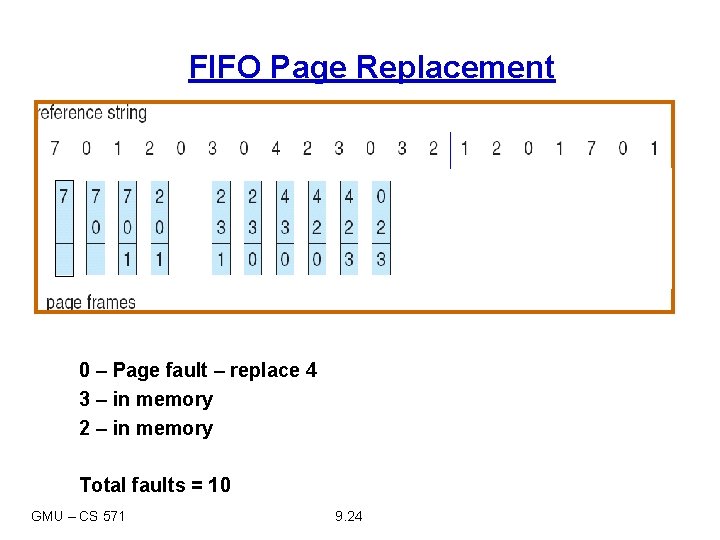

FIFO Page Replacement
- 0 – Page fault – replace 4
- 3 – in memory
- 2 – in memory
- Total faults = 10

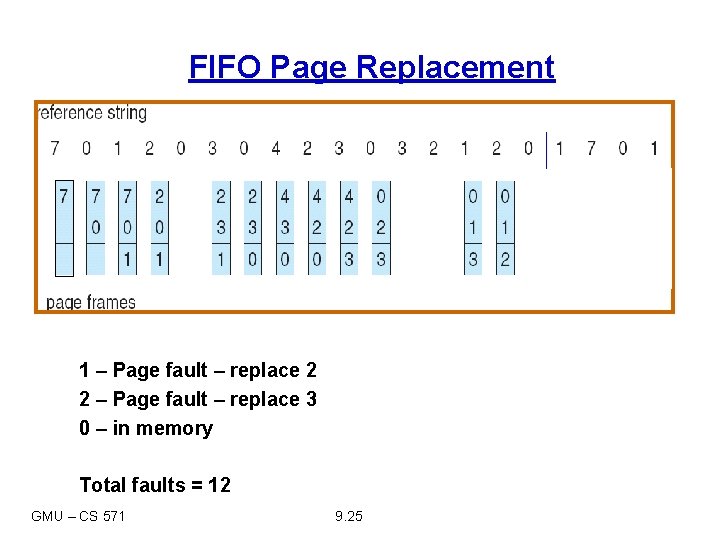

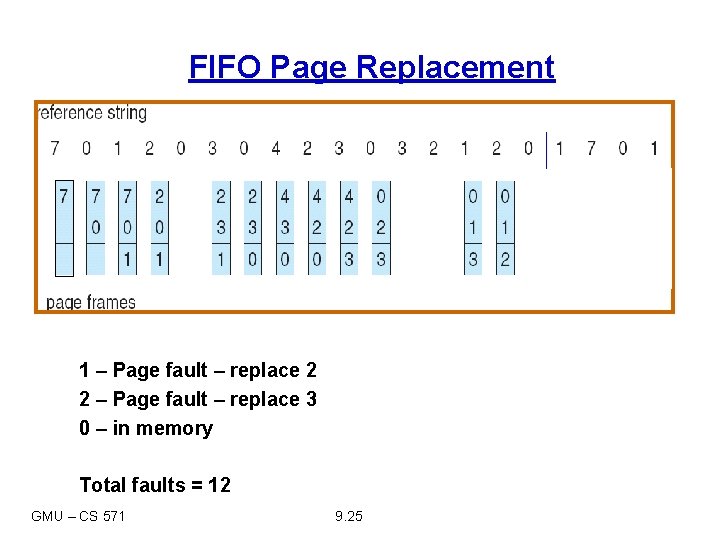

FIFO Page Replacement
- 1 – Page fault – replace 2
- 2 – Page fault – replace 3
- 0 – in memory
- Total faults = 12

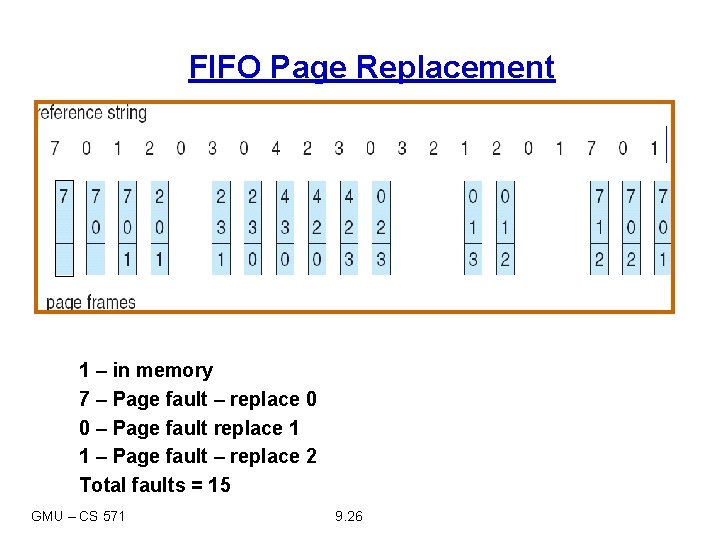

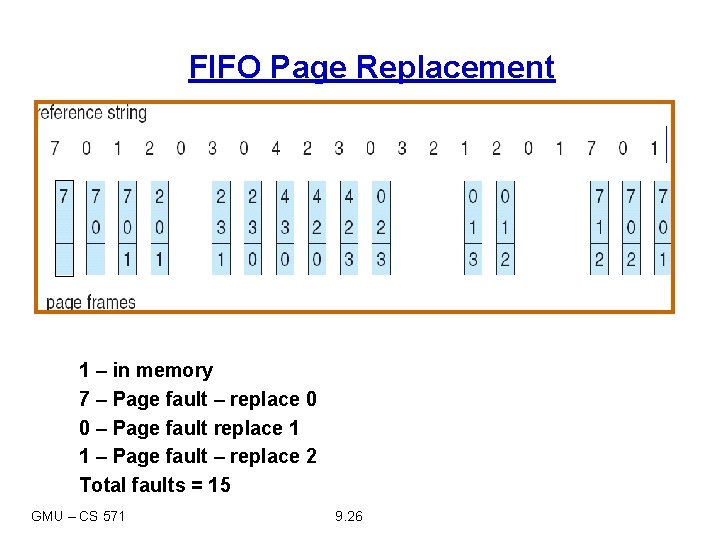

FIFO Page Replacement
- 1 – in memory
- 7 – Page fault – replace 0
- 0 – Page fault – replace 1
- 1 – Page fault – replace 2
- Total faults = 15

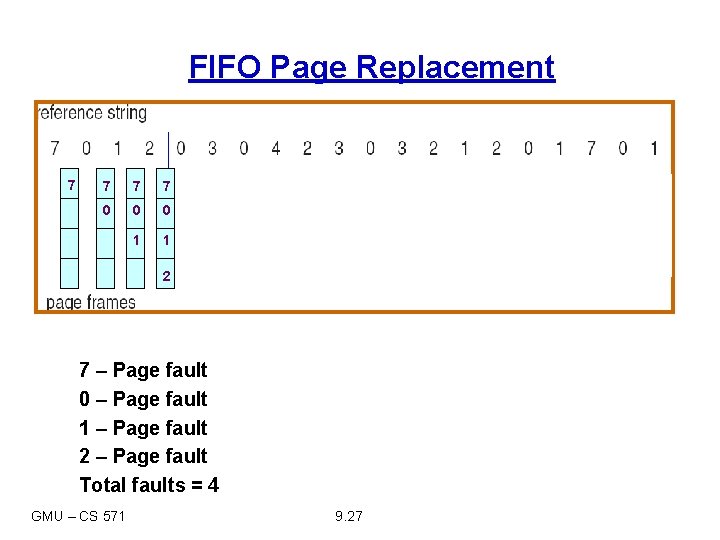

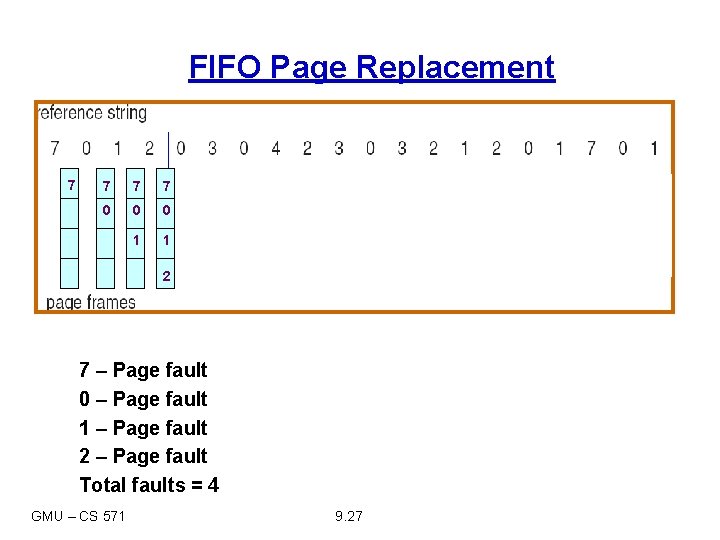

FIFO Page Replacement (4 frames)
- 7 – Page fault
- 0 – Page fault
- 1 – Page fault
- 2 – Page fault
- Total faults = 4

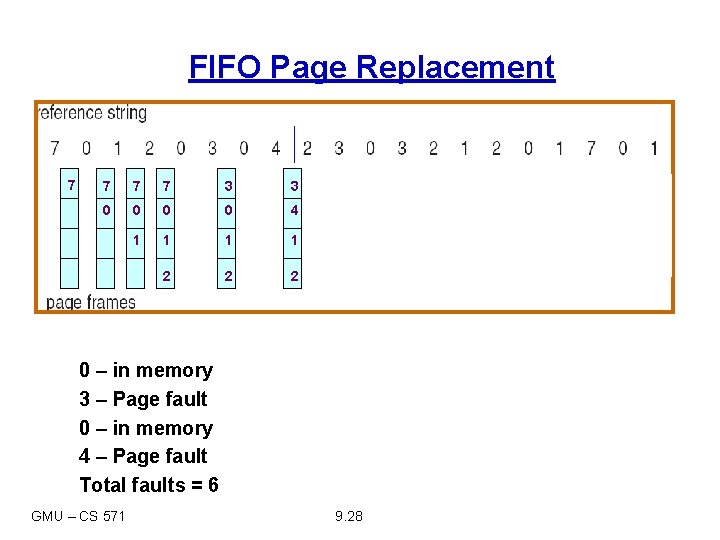

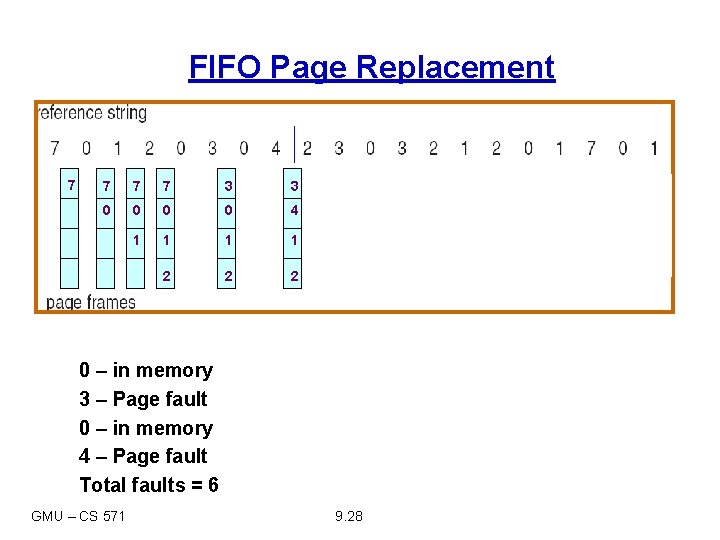

FIFO Page Replacement (4 frames)
- 0 – in memory
- 3 – Page fault
- 0 – in memory
- 4 – Page fault
- Total faults = 6

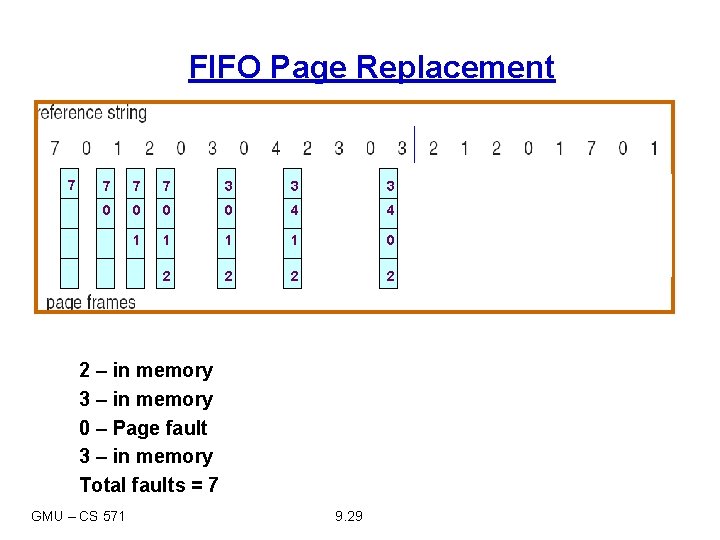

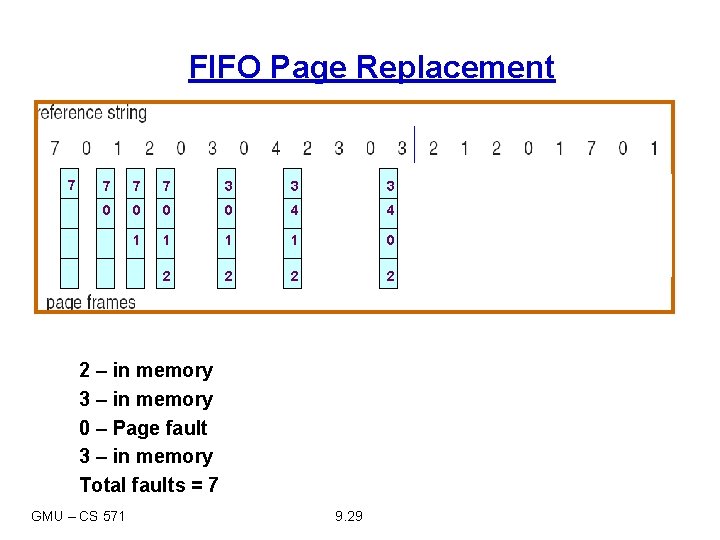

FIFO Page Replacement (4 frames)
- 2 – in memory
- 3 – in memory
- 0 – Page fault
- 3 – in memory
- Total faults = 7

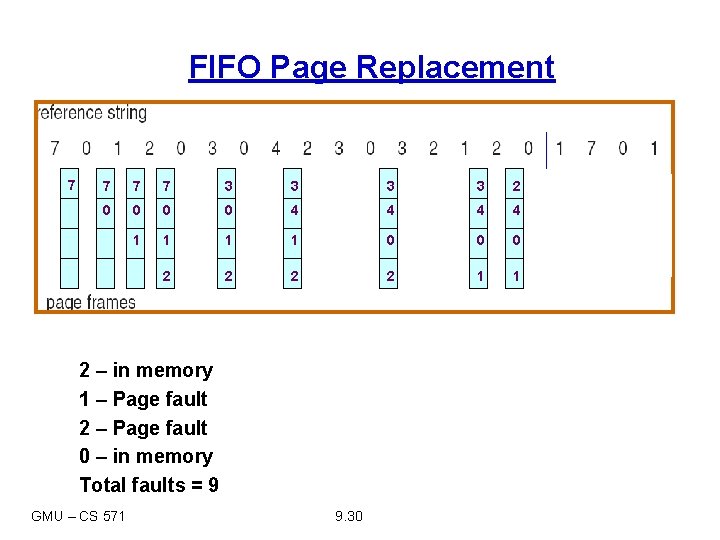

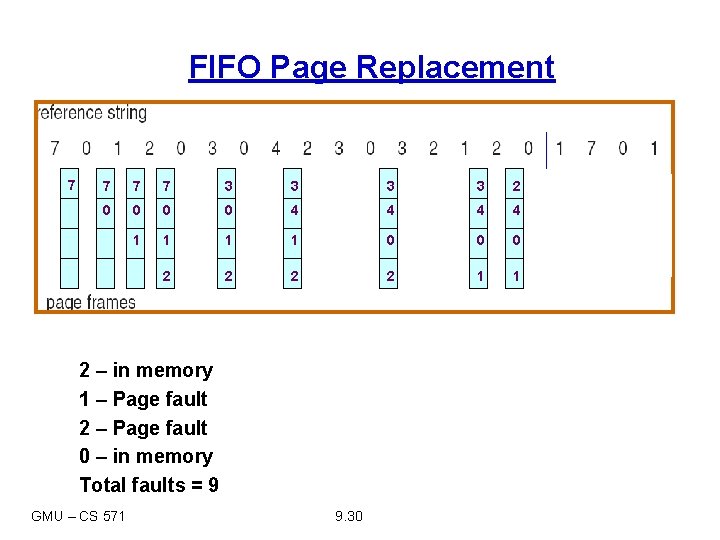

FIFO Page Replacement (4 frames)
- 2 – in memory
- 1 – Page fault
- 2 – Page fault
- 0 – in memory
- Total faults = 9

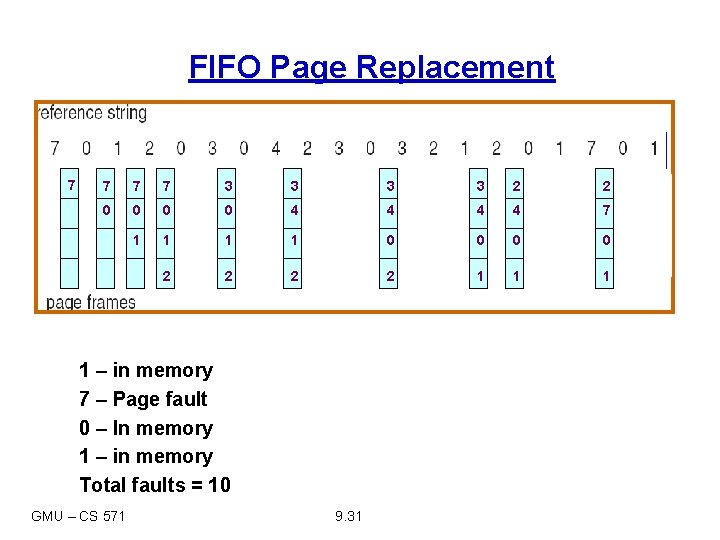

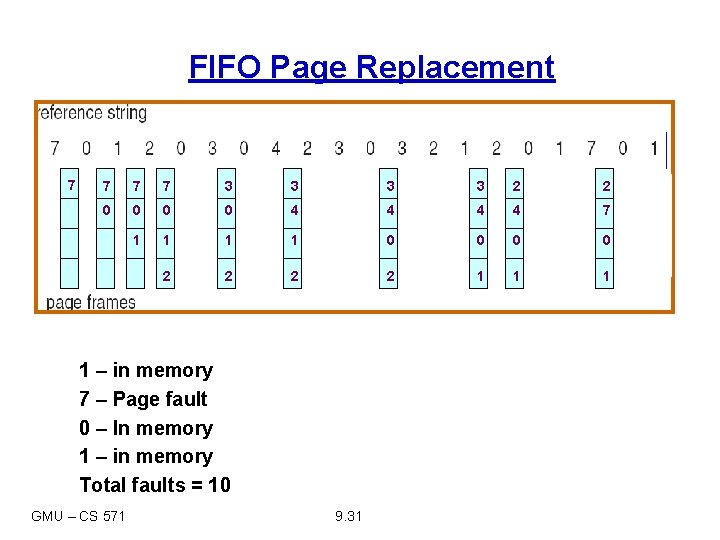

FIFO Page Replacement (4 frames)
- 1 – in memory
- 7 – Page fault
- 0 – in memory
- 1 – in memory
- Total faults = 10

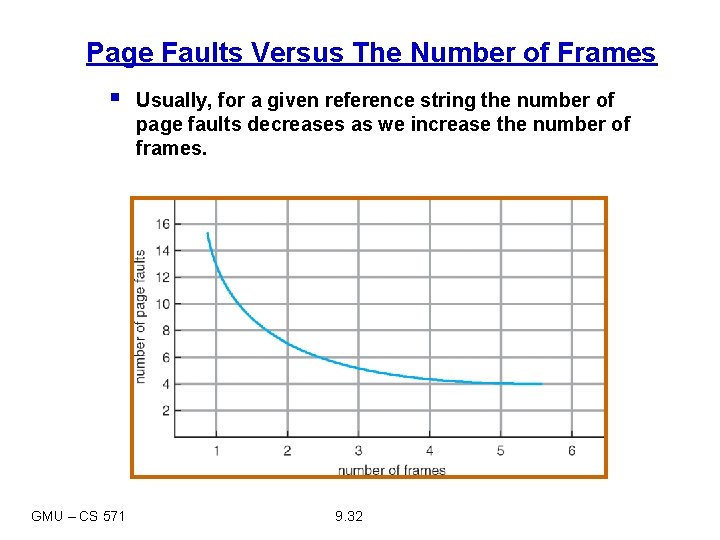

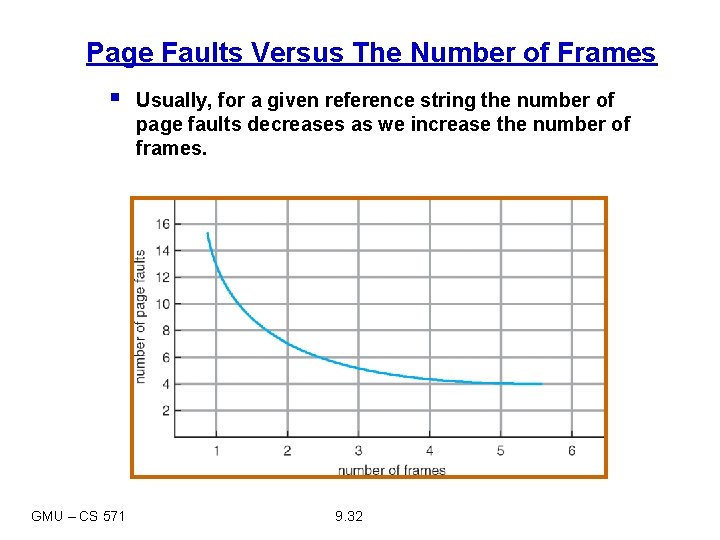

Page Faults Versus the Number of Frames
- Usually, for a given reference string, the number of page faults decreases as we increase the number of frames.

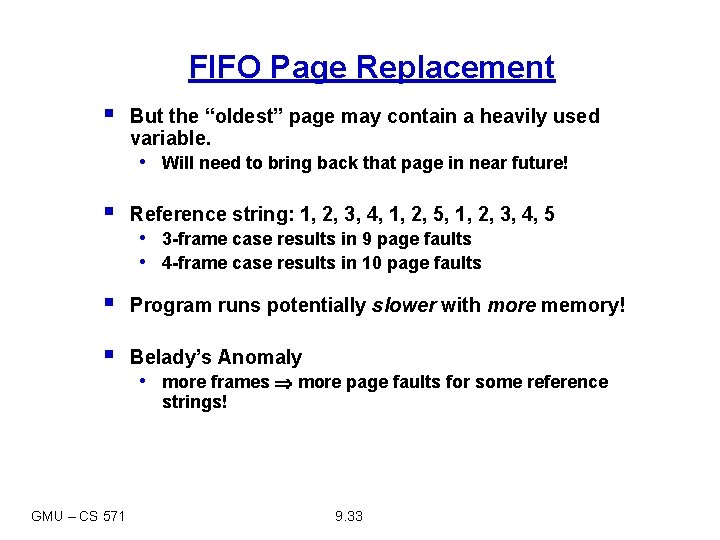

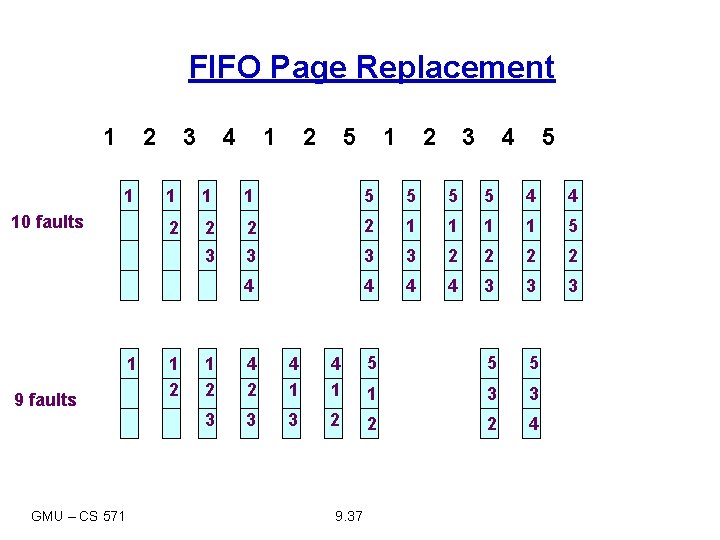

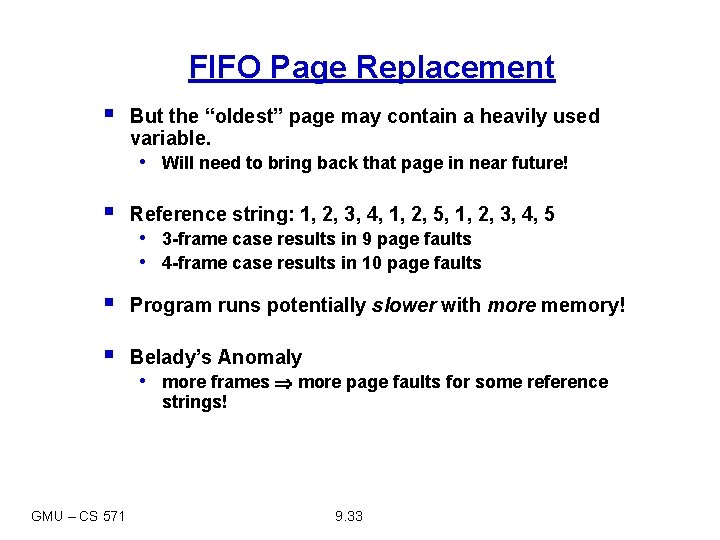

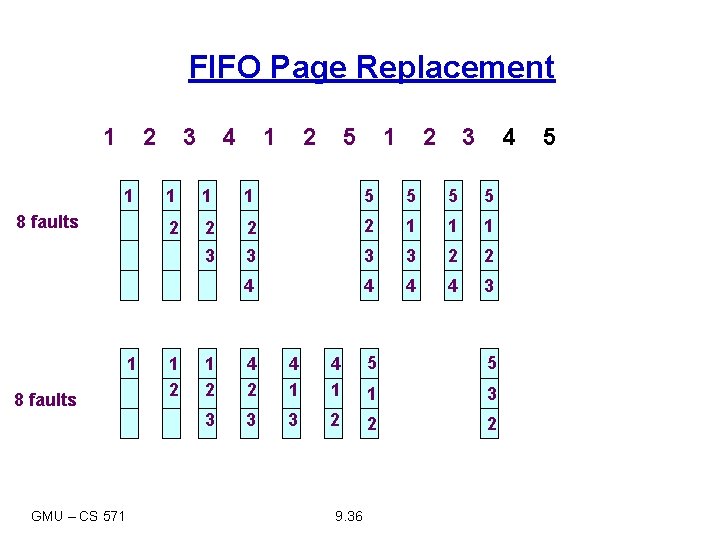

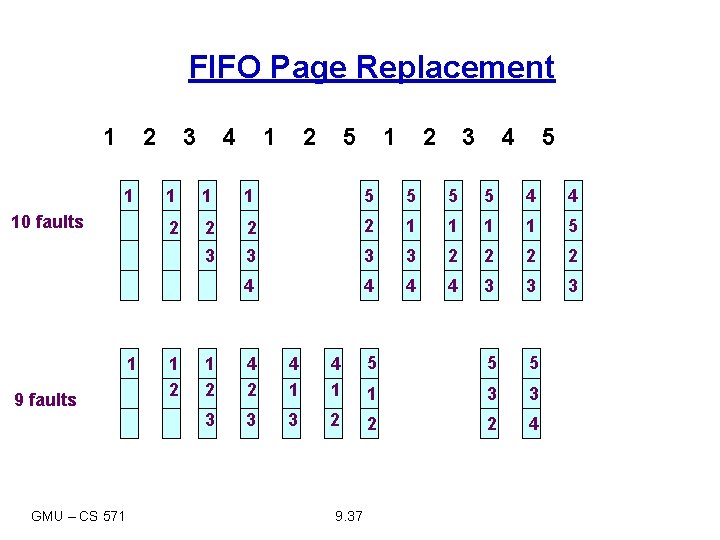

FIFO Page Replacement
- But the “oldest” page may contain a heavily used variable.
  - Will need to bring back that page in the near future!
- Reference string: 1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5
- Program potentially runs slower with more memory!
- Belady’s Anomaly
  - 3-frame case results in 9 page faults
  - 4-frame case results in 10 page faults
  - More frames ⇒ more page faults for some reference strings!
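
Belady's anomaly on this reference string can be reproduced with a short simulation. The sketch below uses a plain list as the FIFO queue; the function name `fifo_faults` and the sweep over frame counts are illustrative choices, not part of the slides.

```python
def fifo_faults(refs, num_frames):
    """Count page faults under FIFO replacement."""
    frames = []  # index 0 holds the oldest resident page
    faults = 0
    for page in refs:
        if page not in frames:
            faults += 1
            if len(frames) == num_frames:
                frames.pop(0)         # evict the oldest page
            frames.append(page)
    return faults


refs = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]
counts = {n: fifo_faults(refs, n) for n in range(1, 6)}
for n, faults in counts.items():
    print(f"{n} frames: {faults} faults")
```

Going from three frames to four raises the fault count from 9 to 10 on this string, which is exactly the anomaly described above.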

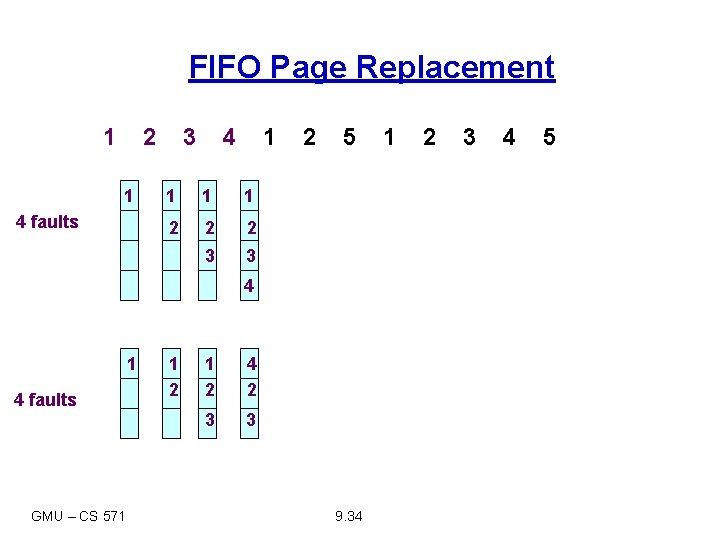

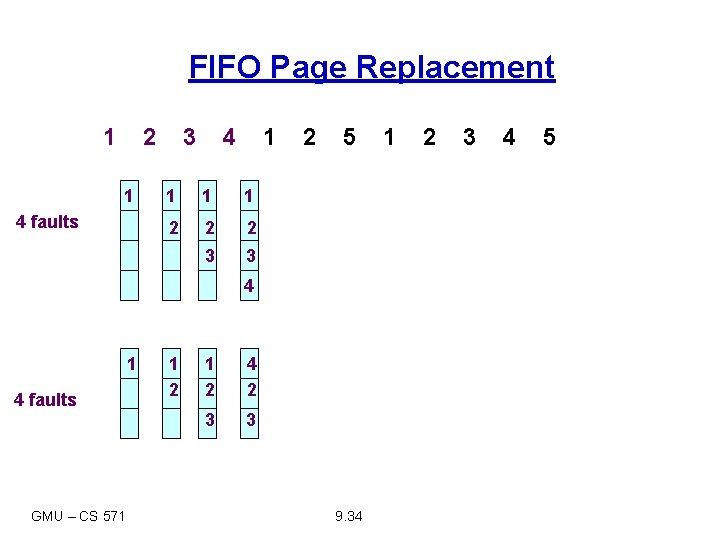

FIFO Page Replacement
- [Figure: FIFO frame tables for the 3-frame and 4-frame cases on the reference string 1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5, shown after the first four references; 4 faults in each case]

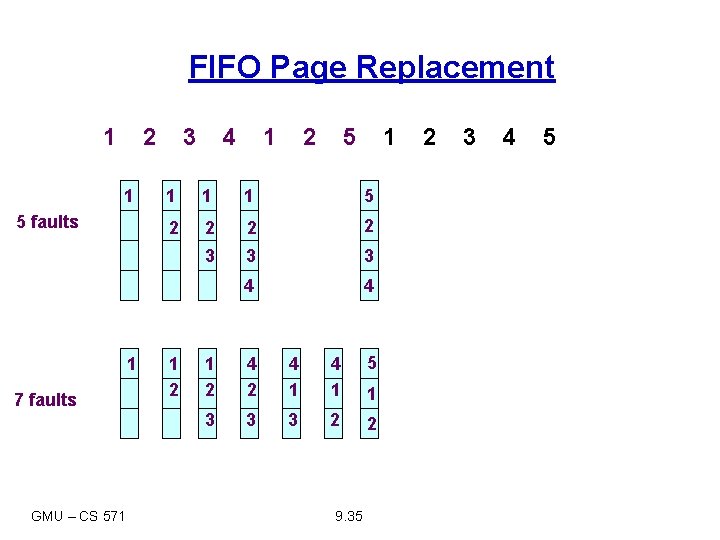

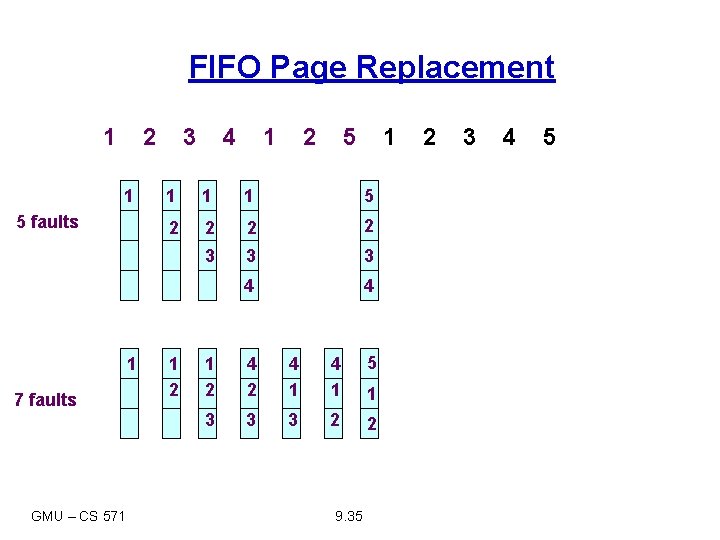

FIFO Page Replacement
- [Figure: FIFO frame tables, continued through the reference to page 5; running counts shown: 5 faults and 7 faults]

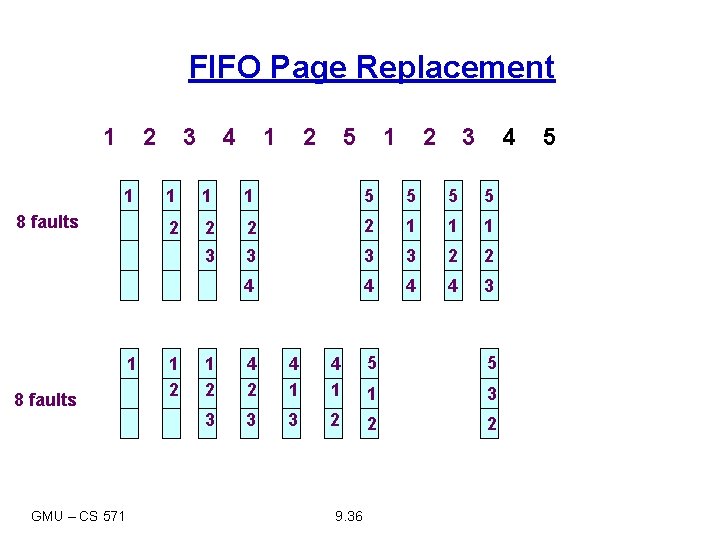

FIFO Page Replacement
- [Figure: FIFO frame tables, continued; running count shown: 8 faults]

FIFO Page Replacement
- [Figure: completed FIFO frame tables for the reference string 1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5; 4-frame case: 10 faults, 3-frame case: 9 faults]

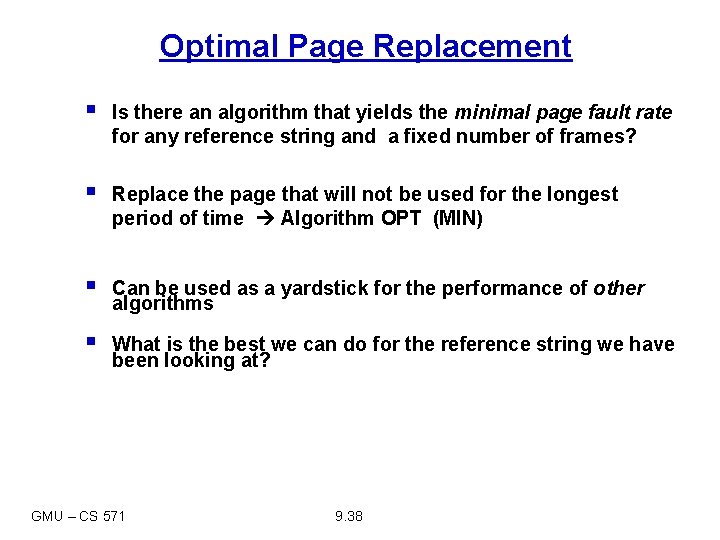

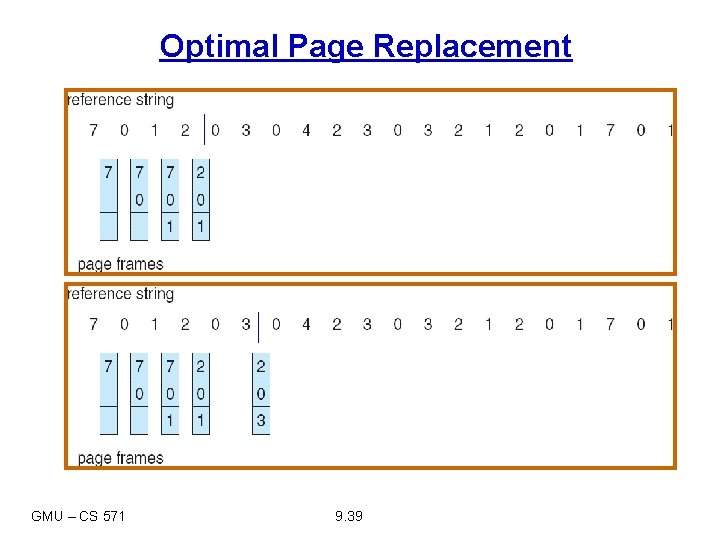

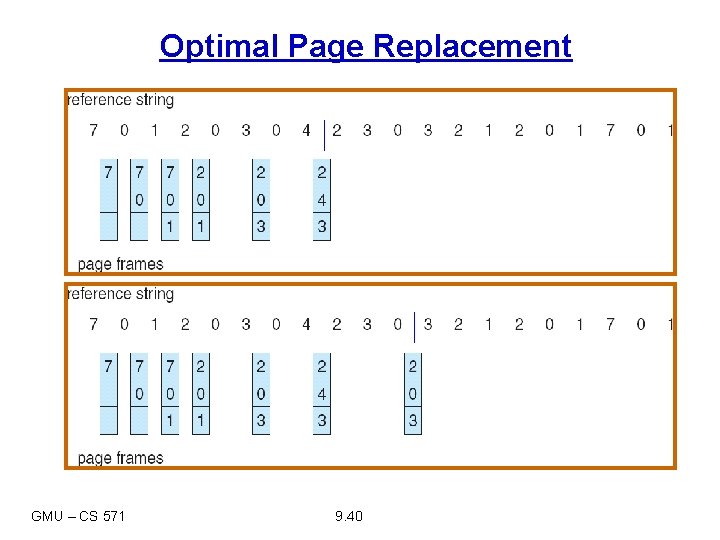

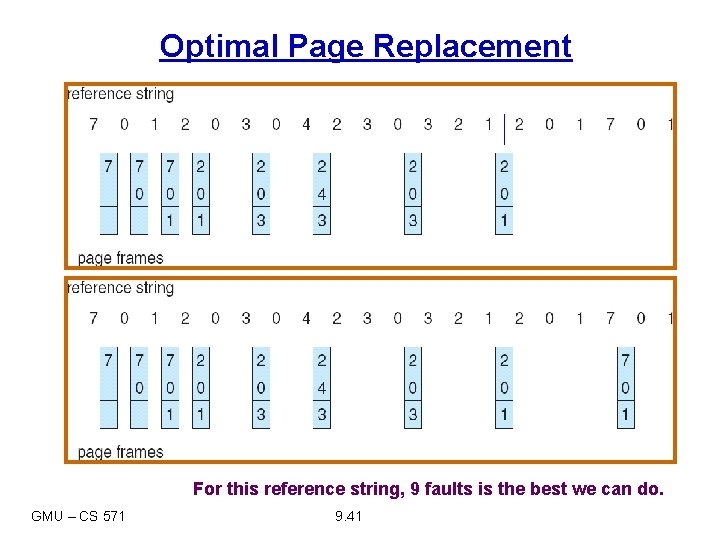

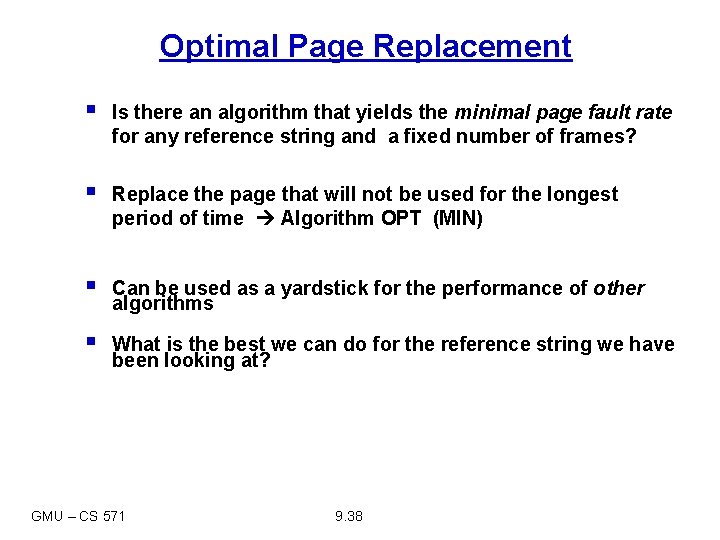

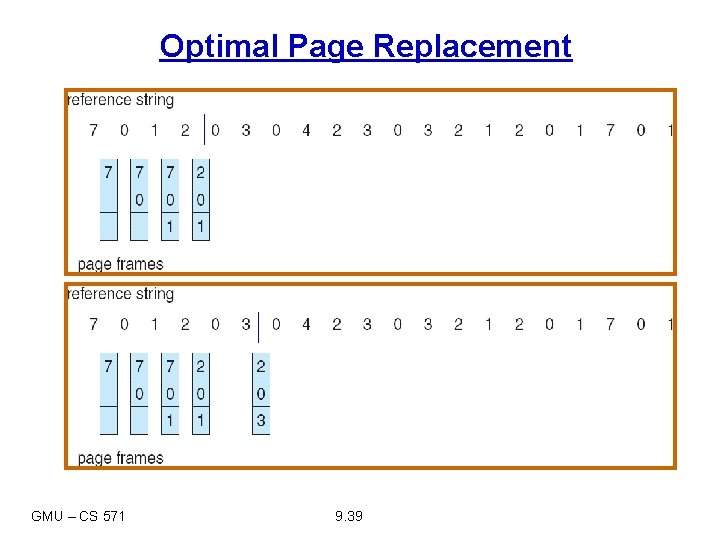

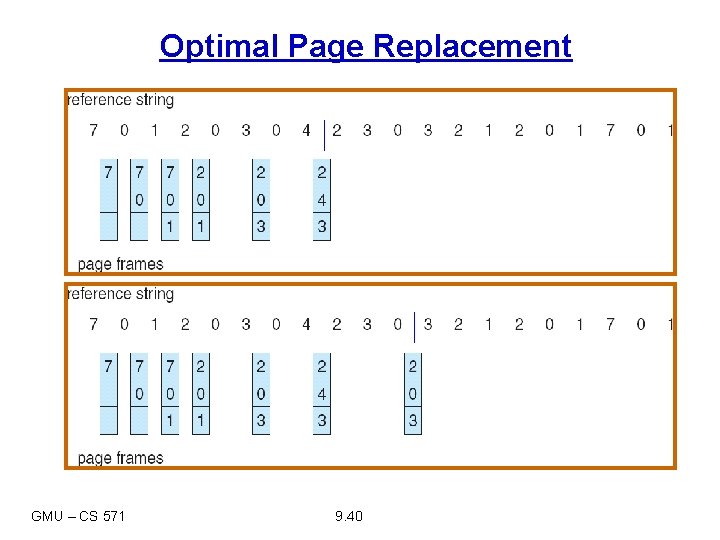

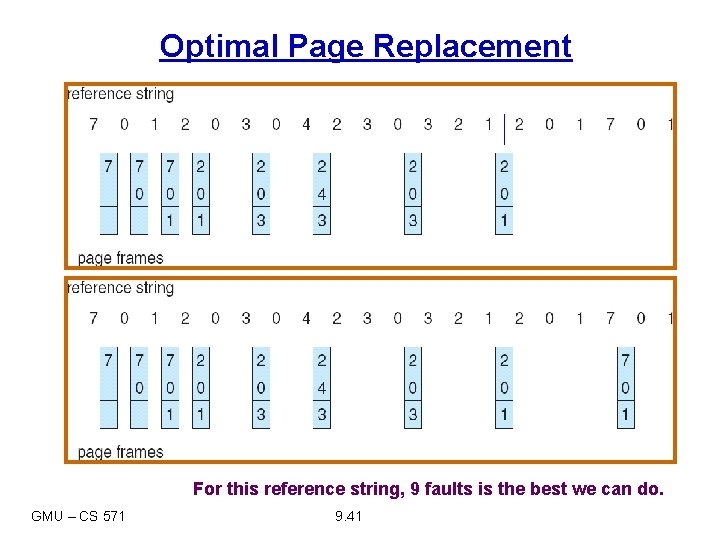

Optimal Page Replacement
- Is there an algorithm that yields the minimal page-fault rate for any reference string and a fixed number of frames?
- Replace the page that will not be used for the longest period of time ⇒ Algorithm OPT (MIN)
- Can be used as a yardstick for the performance of other algorithms
- What is the best we can do for the reference string we have been looking at?

Optimal Page Replacement
- For this reference string, 9 faults is the best we can do.
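
The figure of 9 faults can be checked by simulating OPT. A simulation can look ahead only because the whole reference string is known in advance; a real system cannot. The function name `opt_faults` is hypothetical, and the reference string is an assumption reconstructed from the FIFO walkthrough in this lecture, with three frames.

```python
def opt_faults(refs, num_frames):
    """Count page faults under OPT: evict the page whose next use is farthest away."""
    frames = []
    faults = 0
    for i, page in enumerate(refs):
        if page in frames:
            continue
        faults += 1
        if len(frames) < num_frames:
            frames.append(page)
            continue
        future = refs[i + 1:]

        def next_use(p):
            # Distance to the next reference; infinite if never used again.
            return future.index(p) if p in future else float("inf")

        victim = max(frames, key=next_use)
        frames[frames.index(victim)] = page
    return faults


# Reference string implied by the FIFO walkthrough slides (assumption).
REFS = [7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1]
print(opt_faults(REFS, 3))  # prints 9
```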

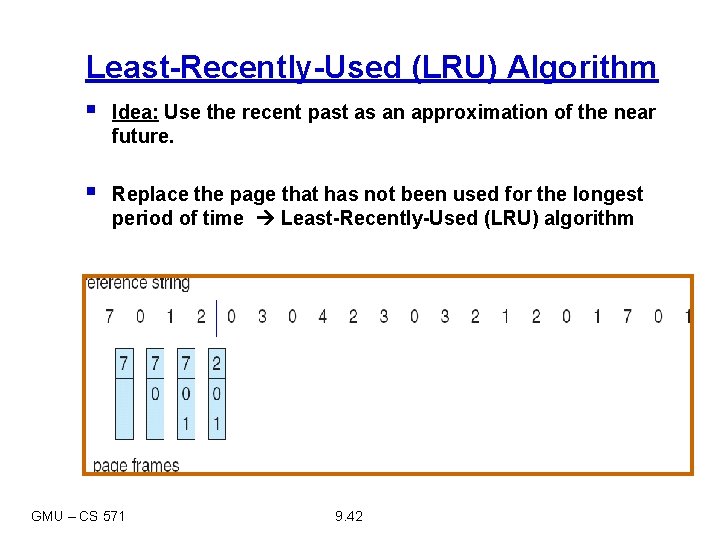

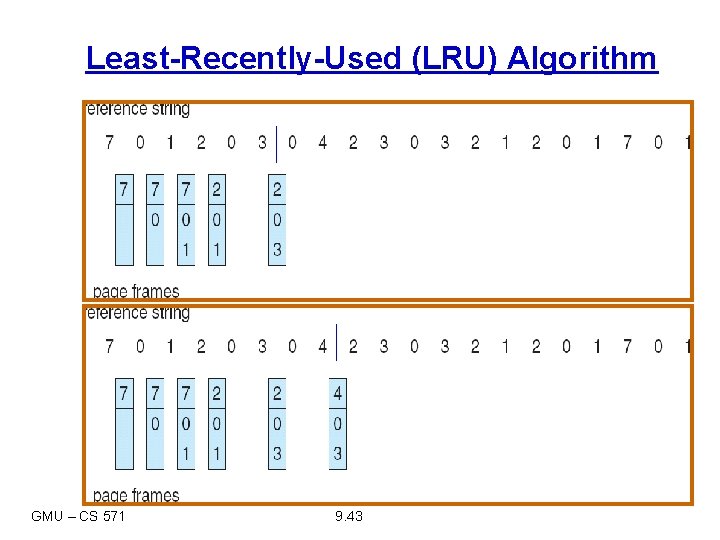

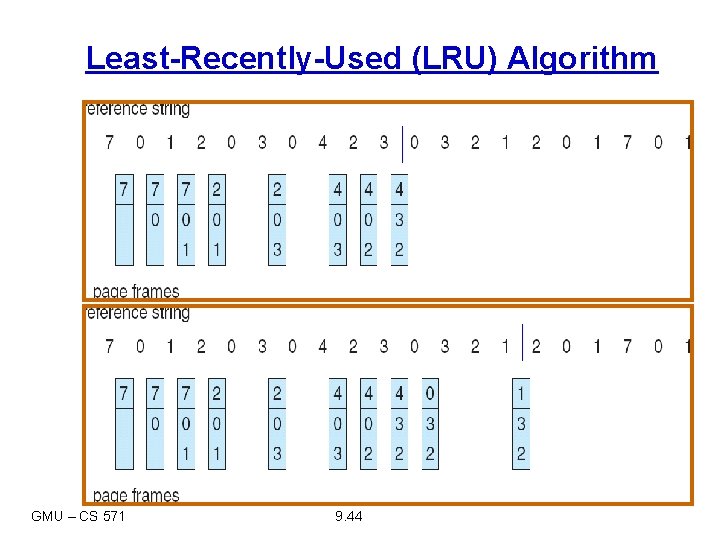

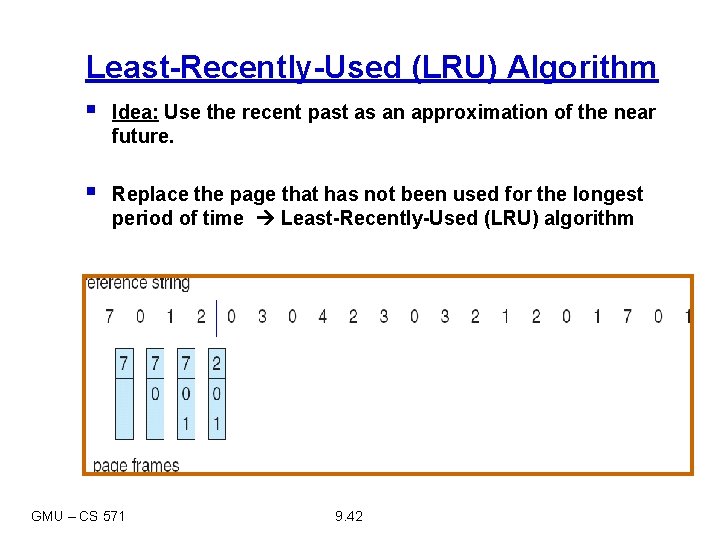

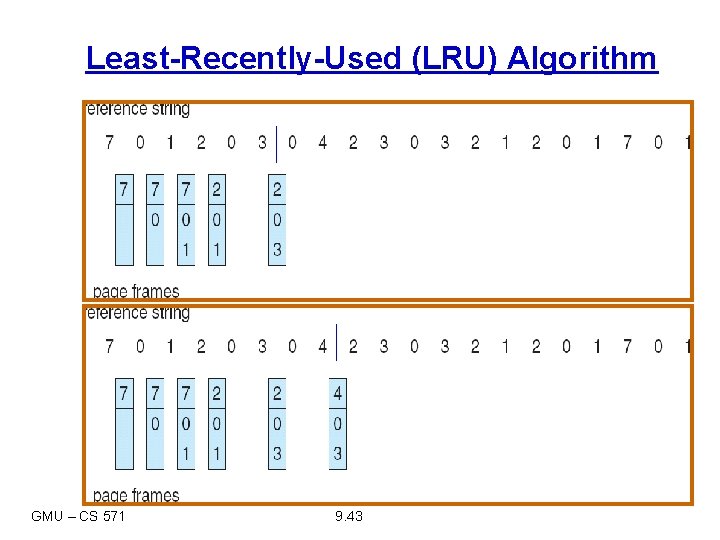

Least-Recently-Used (LRU) Algorithm
- Idea: use the recent past as an approximation of the near future.
- Replace the page that has not been used for the longest period of time ⇒ Least-Recently-Used (LRU) algorithm

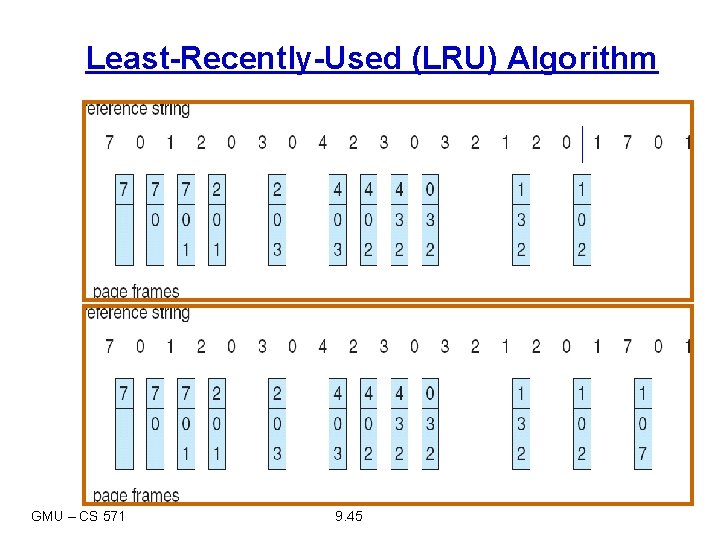

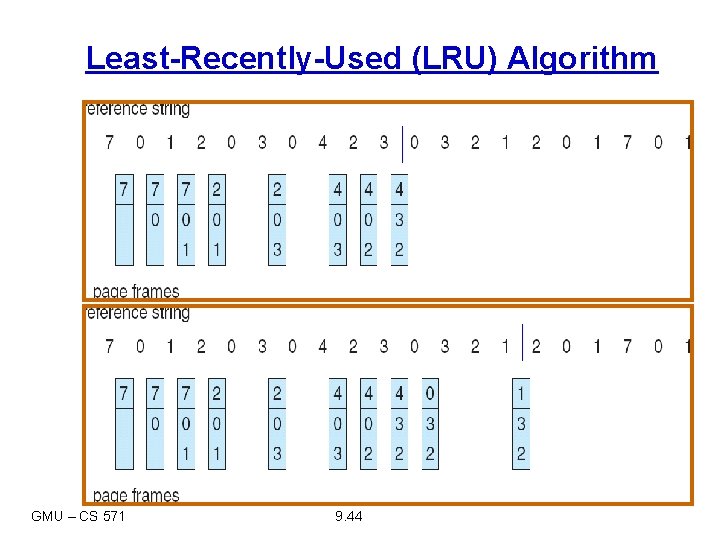

Least-Recently-Used (LRU) Algorithm

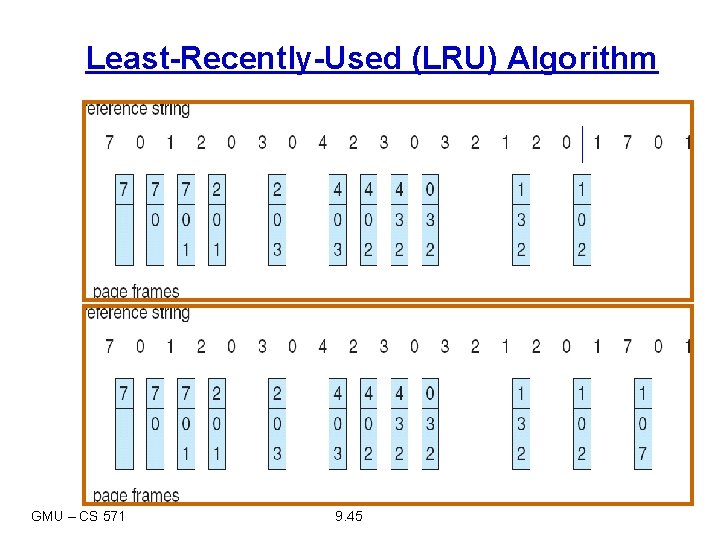

Least-Recently-Used (LRU) Algorithm

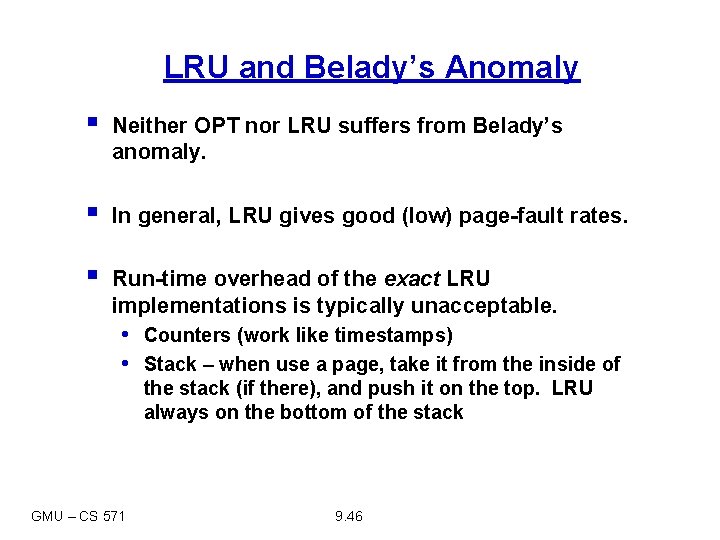

LRU and Belady’s Anomaly
- Neither OPT nor LRU suffers from Belady’s anomaly.
- In general, LRU gives good (low) page-fault rates.
- The run-time overhead of exact LRU implementations is typically unacceptable.
  - Counters (work like timestamps)
  - Stack: when a page is used, remove it from inside the stack (if it is there) and push it on top. The LRU page is always at the bottom of the stack.
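
The stack approach can be sketched in software with an ordered dictionary standing in for the stack. This only illustrates the bookkeeping, not how hardware or an OS would do it; the function name `lru_faults` is hypothetical, and the first reference string is an assumption reconstructed from the FIFO walkthrough in this lecture.

```python
from collections import OrderedDict


def lru_faults(refs, num_frames):
    """Count page faults under exact LRU replacement."""
    stack = OrderedDict()  # first key = bottom (LRU page), last key = top (MRU page)
    faults = 0
    for page in refs:
        if page in stack:
            stack.move_to_end(page)      # pull the page out and push it on top
            continue
        faults += 1
        if len(stack) >= num_frames:
            stack.popitem(last=False)    # evict the page at the bottom
        stack[page] = True
    return faults


# Reference string implied by the FIFO walkthrough slides (assumption).
REFS = [7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1]
BELADY = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]
print(lru_faults(REFS, 3))                          # prints 12
print(lru_faults(BELADY, 3), lru_faults(BELADY, 4)) # prints "10 8"
```

On the string that triggers Belady's anomaly under FIFO, LRU improves from 10 faults to 8 when a fourth frame is added.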

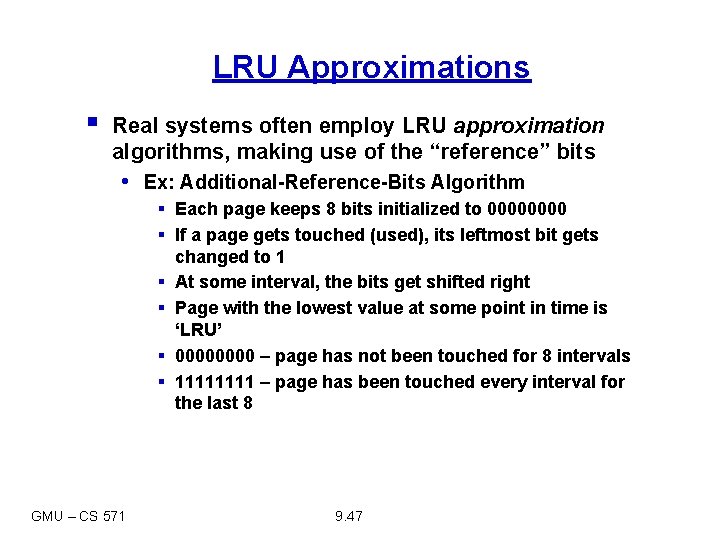

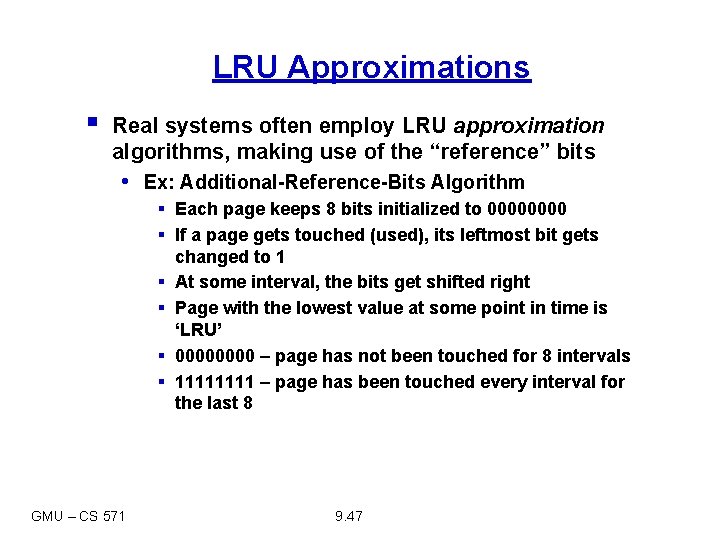

LRU Approximations
- Real systems often employ LRU approximation algorithms, making use of the “reference” bits.
  - Ex: Additional-Reference-Bits Algorithm
    - Each page keeps 8 bits, initialized to 00000000.
    - If a page gets touched (used), its leftmost bit gets changed to 1.
    - At some interval, the bits get shifted right.
    - The page with the lowest value at some point in time is the ‘LRU’ page.
    - 00000000 – page has not been touched for 8 intervals
    - 11111111 – page has been touched every interval for the last 8
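
The additional-reference-bits scheme as described on this slide can be sketched as follows. The class name `AgingTable` and its methods are hypothetical, and the sketch follows the slide's description (touch sets the leftmost bit, the timer shifts right) rather than any particular kernel.

```python
class AgingTable:
    BITS = 8  # history bits kept per page

    def __init__(self, pages):
        self.history = {page: 0 for page in pages}  # all bits start at 0

    def touch(self, page):
        """A use of the page sets its leftmost (most significant) bit."""
        self.history[page] |= 1 << (self.BITS - 1)

    def tick(self):
        """At each timer interval, shift every history right by one bit."""
        for page in self.history:
            self.history[page] >>= 1

    def victim(self):
        """The page with the lowest history value approximates the LRU page."""
        return min(self.history, key=self.history.get)


table = AgingTable("ABC")
table.touch("A"); table.touch("B"); table.tick()  # interval 1: A and B used
table.touch("A"); table.tick()                    # interval 2: only A used
table.touch("A"); table.touch("C")                # interval 3: A and C used
for page, bits in table.history.items():
    print(page, format(bits, "08b"))
print("victim:", table.victim())
```

Page B was used only in the oldest interval, so its history value is the smallest and it is chosen as the victim, ahead of C, which was never used until the current interval.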

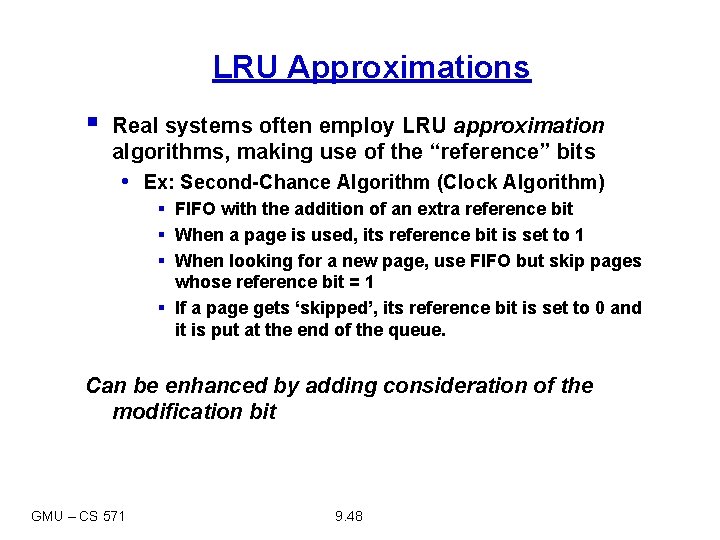

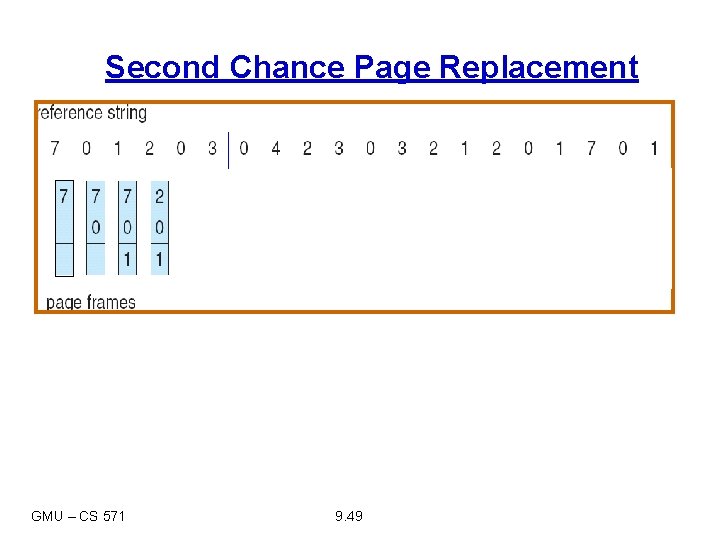

LRU Approximations
- Real systems often employ LRU approximation algorithms, making use of the “reference” bits.
  - Ex: Second-Chance Algorithm (Clock Algorithm)
    - FIFO with the addition of an extra reference bit
    - When a page is used, its reference bit is set to 1.
    - When looking for a page to replace, use FIFO but skip pages whose reference bit = 1.
    - If a page gets ‘skipped’, its reference bit is set to 0 and it is put at the end of the queue.
    - Can be enhanced by adding consideration of the modification bit.
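
A circular-buffer ("clock") version of second chance might look like the sketch below. Several details are assumptions made for illustration: the function name `clock_faults`, the short reference string, and the choice to set the reference bit to 1 when a page is loaded (on the grounds that the faulting instruction uses the page immediately).

```python
def clock_faults(refs, num_frames):
    """Count page faults under the second-chance (clock) algorithm."""
    frames = [None] * num_frames  # page held by each frame
    ref_bit = [0] * num_frames    # reference bit of each frame
    where = {}                    # page -> index of the frame holding it
    hand = 0                      # clock hand: next FIFO candidate
    faults = 0
    for page in refs:
        if page in where:
            ref_bit[where[page]] = 1     # page used: set its reference bit
            continue
        faults += 1
        while ref_bit[hand] == 1:        # skip referenced pages,
            ref_bit[hand] = 0            # clearing their bit (second chance)
            hand = (hand + 1) % num_frames
        victim = frames[hand]
        if victim is not None:
            del where[victim]
        frames[hand] = page
        where[page] = hand
        ref_bit[hand] = 1                # assumption: a loaded page counts as used
        hand = (hand + 1) % num_frames
    return faults


refs = [1, 2, 3, 4, 2, 5, 2]             # hypothetical reference string
print(clock_faults(refs, 3))             # prints 5
```

Plain FIFO incurs 6 faults on this string with three frames, because it evicts page 2 just before it is used again; second chance skips page 2 after its recent use and evicts page 3 instead.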

Second Chance Page Replacement

Page-Buffering Algorithm
- In addition to a specific page-replacement algorithm, other procedures are also used.
- Systems commonly keep a pool of free frames.
- When a page fault occurs, the desired page is read into a free frame first, allowing the process to restart as soon as possible. The victim page is written out to the disk later, and its frame is added to the free-frame pool.
- Other enhancements are also possible.

Allocation of Frames
- How do we allocate the fixed number of free frames among the various processes?
- In addition to performance considerations, each process needs a minimum number of pages, determined by the instruction-set architecture.
- Since a page fault causes an instruction to restart, consider an architecture that contains the instruction ADD M1, M2, M3 (with three operands).
- Consider also the case where indirect memory references are allowed.

Global vs. Local Allocation
- Page replacement algorithms can be implemented broadly in two ways.
- Global replacement – a process selects a replacement frame from the set of all frames; one process can take a frame from another.
  - Under global allocation algorithms, the page-fault rate of a given process depends also on the paging behavior of other processes.
- Local replacement – each process selects from only its own set of allocated frames.
  - Less used pages of memory are not made available to a process that may need them.

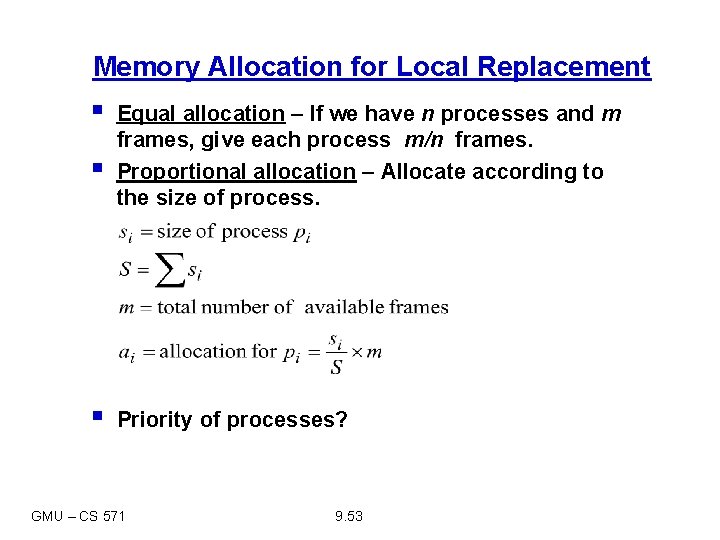

Memory Allocation for Local Replacement
- Equal allocation – If we have n processes and m frames, give each process m/n frames.
- Proportional allocation – Allocate according to the size of the process.
- Priority of processes?
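
The two allocation schemes can be compared with a small sketch. The function names, the rounding-down policy, and the example sizes (two processes of 10 and 127 pages sharing 62 frames) are assumptions chosen for illustration; frames lost to rounding are simply left unallocated here.

```python
def equal_allocation(num_processes, num_frames):
    """Give each of n processes m // n frames."""
    return [num_frames // num_processes] * num_processes


def proportional_allocation(sizes, num_frames):
    """Give process i a share of the frames proportional to its size s_i."""
    total = sum(sizes)
    return [size * num_frames // total for size in sizes]


sizes = [10, 127]                          # hypothetical process sizes in pages
print(equal_allocation(len(sizes), 62))    # prints [31, 31]
print(proportional_allocation(sizes, 62))  # prints [4, 57]
```

Equal allocation hands the 10-page process 31 frames, far more than it can use, while proportional allocation gives most of the memory to the larger process.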

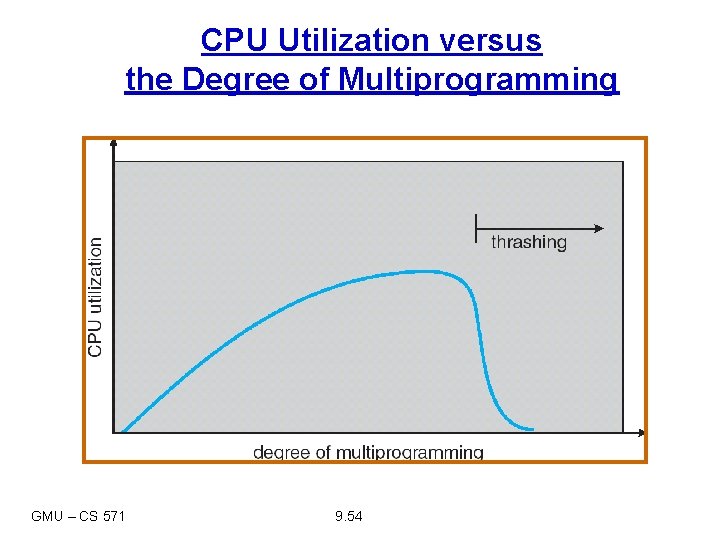

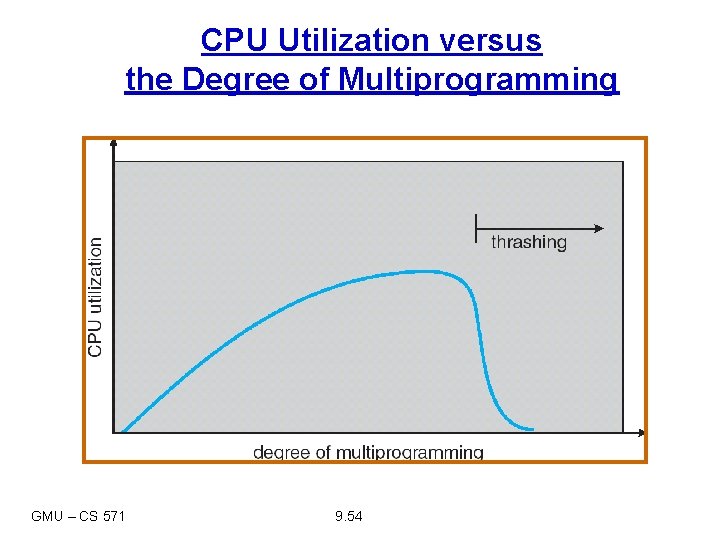

CPU Utilization versus the Degree of Multiprogramming

Thrashing
- High paging activity: the system is spending more time paging than executing.
- How can this happen?
  - The OS observes low CPU utilization and increases the degree of multiprogramming.
  - A global page-replacement algorithm is used; it takes away frames belonging to other processes.
  - But these processes need those pages, so they also cause page faults.
  - Many processes join the waiting queue for the paging device, and CPU utilization decreases further.
  - The OS introduces new processes, further increasing the paging activity.

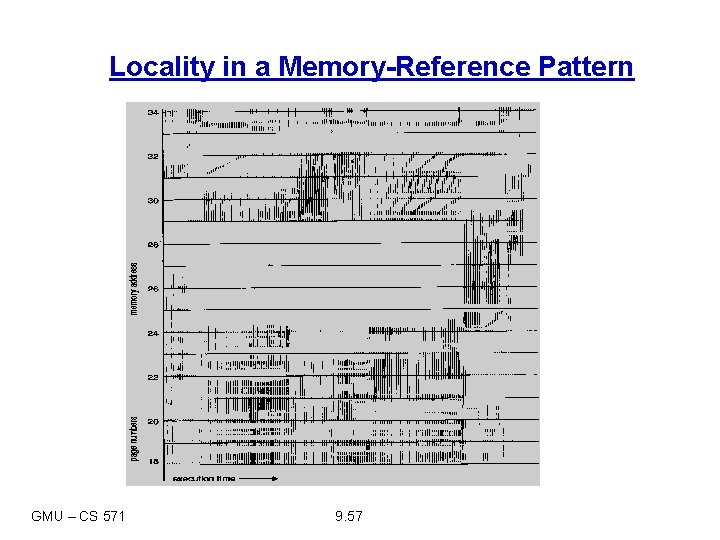

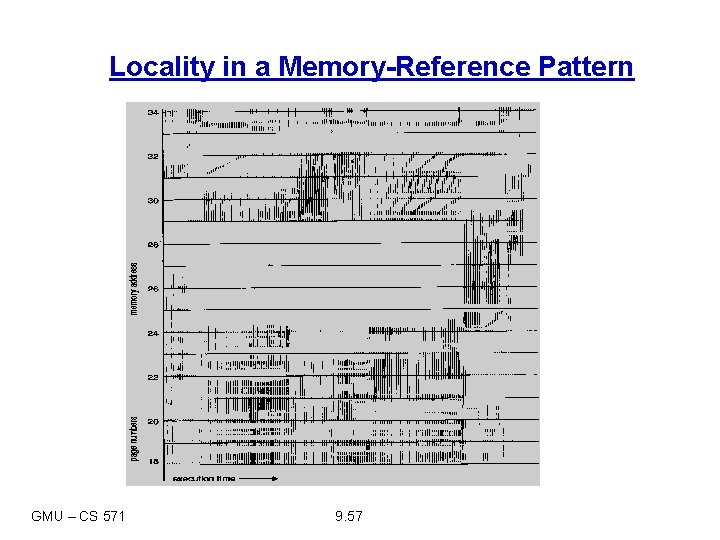

Thrashing
- To avoid thrashing, we must provide every process in memory with as many frames as it needs to run without an excessive number of page faults.
- Programs do not reference their address spaces uniformly/randomly.
- A locality is a set of pages that are actively used together.
- According to the locality model, as a process executes, it moves from locality to locality.
- A program is generally composed of several different localities, which may overlap.

Locality in a Memory-Reference Pattern

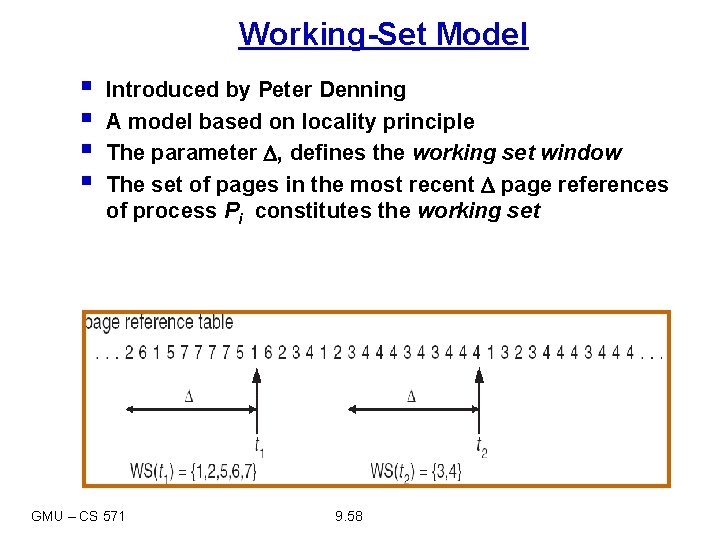

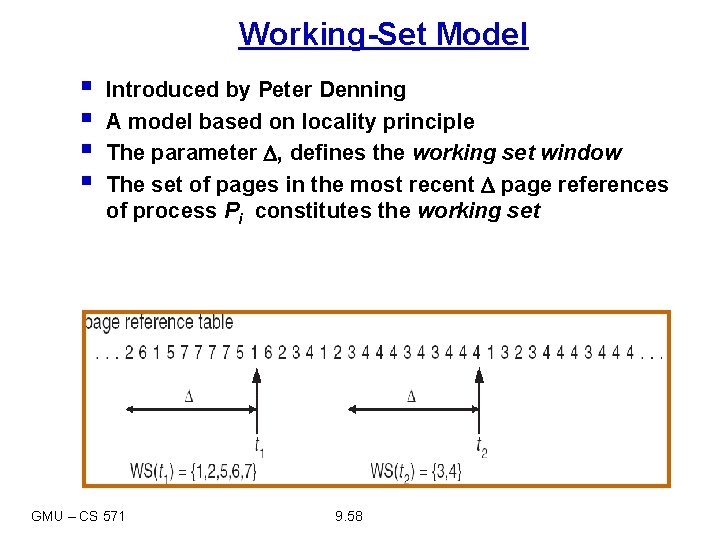

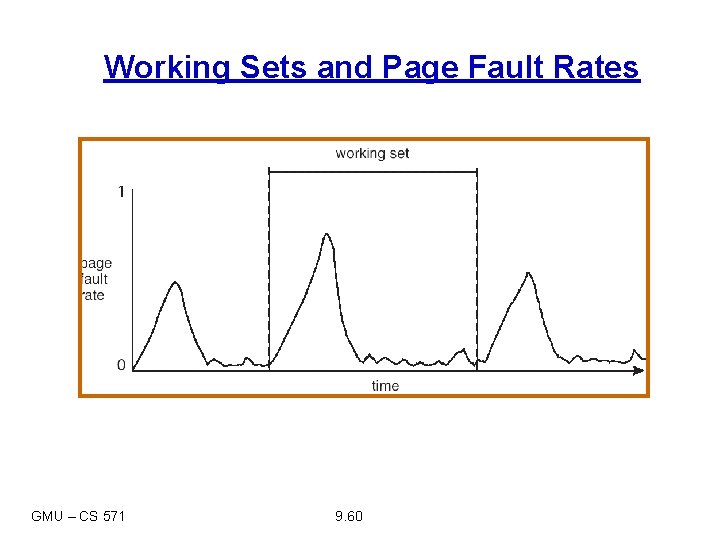

Working-Set Model
- Introduced by Peter Denning
- A model based on the locality principle
- The parameter Δ defines the working-set window.
- The set of pages in the most recent Δ page references of process Pi constitutes the working set.

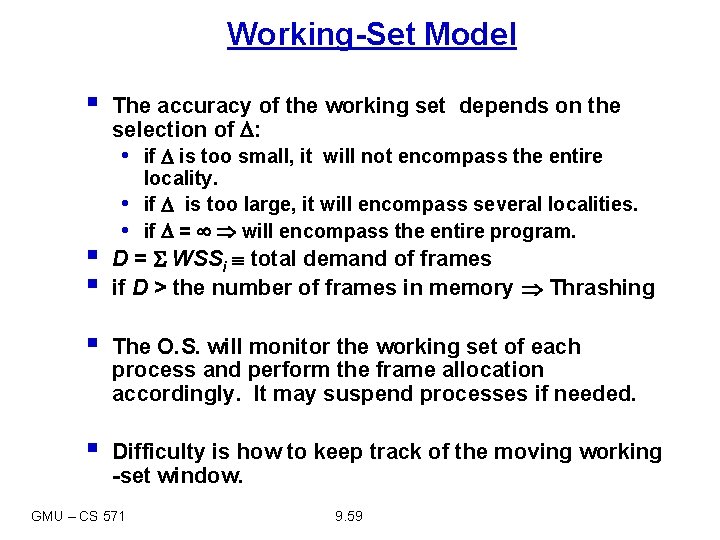

Working-Set Model
- The accuracy of the working set depends on the selection of Δ:
  - if Δ is too small, it will not encompass the entire locality.
  - if Δ is too large, it will encompass several localities.
  - if Δ = ∞, it will encompass the entire program.
- D = Σ WSSi ≡ total demand for frames, where WSSi is the working-set size of process Pi
- if D > the number of frames in memory ⇒ thrashing
- The OS will monitor the working set of each process and perform the frame allocation accordingly. It may suspend processes if needed.
- The difficulty is how to keep track of the moving working-set window.
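
The working-set definition and the thrashing test D > m can be illustrated with a short sketch. The function names, the reference string, and the frame count are hypothetical; a real OS would approximate the window with reference bits and a timer rather than store the full reference history.

```python
def working_set(refs, t, delta):
    """Pages referenced in the most recent `delta` references ending at index t."""
    start = max(0, t - delta + 1)
    return set(refs[start:t + 1])


def is_thrashing(working_set_sizes, num_frames):
    """Thrashing is expected when total demand D exceeds the available frames."""
    return sum(working_set_sizes) > num_frames


# Hypothetical reference string with two localities.
refs = [2, 6, 1, 5, 7, 7, 7, 7, 5, 1, 6, 2, 3, 4, 1, 2,
        3, 4, 4, 4, 3, 4, 3, 4, 4, 4]
ws_early = working_set(refs, t=9, delta=10)   # {1, 2, 5, 6, 7}
ws_late = working_set(refs, t=25, delta=10)   # {3, 4}
print(sorted(ws_early), sorted(ws_late))
print(is_thrashing([len(ws_early), len(ws_late)], num_frames=6))  # prints True
```

With Δ = 10 the working set shrinks from five pages to two as the process moves into a tighter locality. Treating the two sets as belonging to two different processes gives D = 7, which exceeds the 6 available frames.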

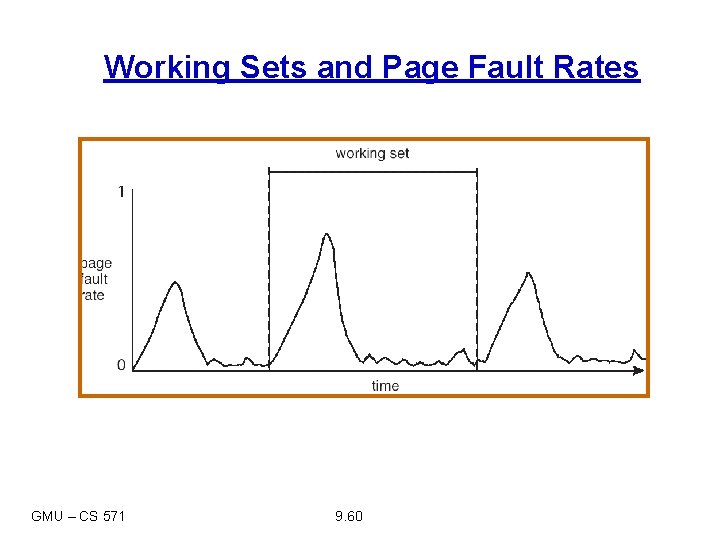

Working Sets and Page Fault Rates

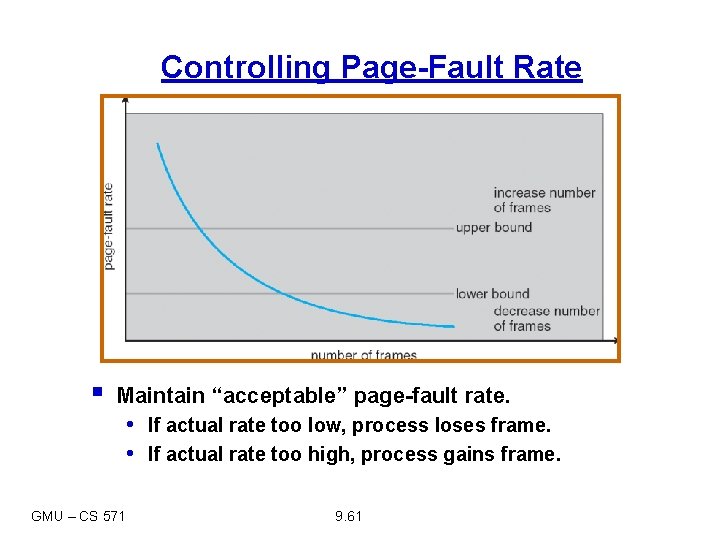

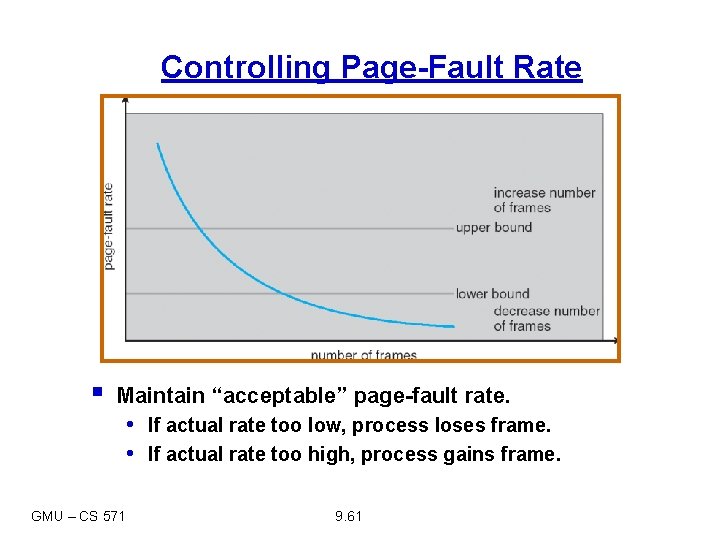

Controlling Page-Fault Rate
- Maintain an “acceptable” page-fault rate.
  - If the actual rate is too low, the process loses a frame.
  - If the actual rate is too high, the process gains a frame.
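
The control rule can be written as a small function. The name `adjust_allocation`, the threshold values, and the minimum of one frame are assumptions for illustration; the slide does not specify how the bounds are chosen.

```python
def adjust_allocation(frames, fault_rate, lower, upper, min_frames=1):
    """Return the new frame count for a process given its measured fault rate."""
    if fault_rate > upper:
        return frames + 1   # rate too high: the process gains a frame
    if fault_rate < lower and frames > min_frames:
        return frames - 1   # rate too low: the process loses a frame
    return frames           # rate acceptable: no change


# Hypothetical bounds: keep the fault rate between 2% and 10%.
print(adjust_allocation(10, 0.20, lower=0.02, upper=0.10))  # prints 11
print(adjust_allocation(10, 0.01, lower=0.02, upper=0.10))  # prints 9
print(adjust_allocation(10, 0.05, lower=0.02, upper=0.10))  # prints 10
```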