Abstractive Summarization of Reddit Posts with Multilevel Memory

![Abstractive Summarization of Reddit Posts with Multi-level Memory Networks [NAACL 2019] Group Presentation](https://slidetodoc.com/presentation_image_h/3740b84b4799be0263d13d0d06be8b7c/image-1.jpg)

Abstractive Summarization of Reddit Posts with Multi-level Memory Networks [NAACL 2019] Group Presentation WANG, Yue 04/15/2019 The Chinese University of Hong Kong

Outline • Background • Dataset • Method • Experiment • Conclusion

Background
• Challenge:
  • Previous abstractive summarization tasks focus on formal texts (e.g., news articles), whose summaries are not abstractive enough.
  • Prior approaches neglect the different levels of understanding of a document (i.e., sentence level, paragraph level, and document level).
• Contribution:
  1. Collect a new large-scale abstractive summarization dataset named Reddit TIFU (the first dataset of informal texts for abstractive summarization).
  2. Propose a novel model named multi-level memory networks (MMN), which considers multi-level abstraction of the document and outperforms existing state-of-the-art models.

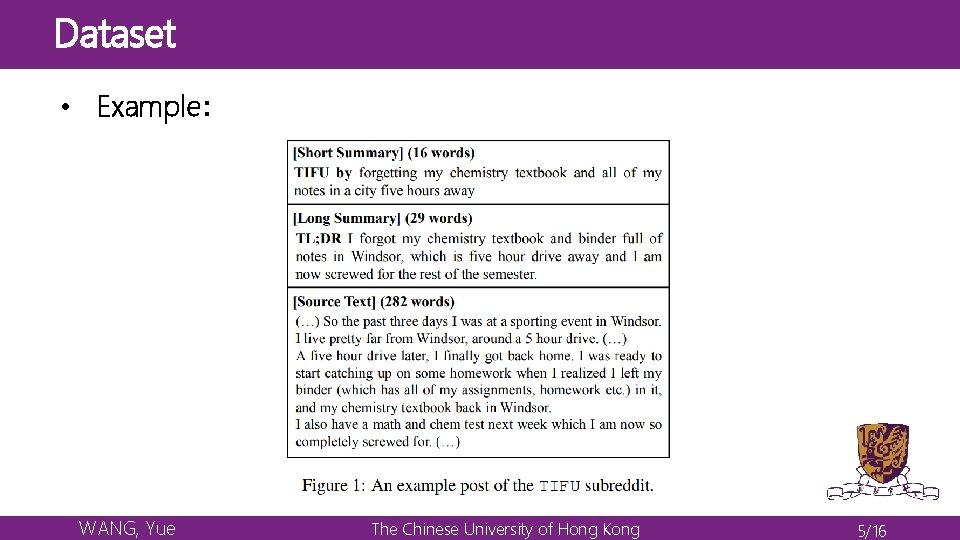

Dataset
• The dataset is crawled from the subreddit “/r/tifu”
  • https://www.reddit.com/r/tifu/
• The important rules of this subreddit:
  • The title must make an attempt to encapsulate the nature of your f***up
  • All posts must end with a TL;DR summary that is descriptive of your f***up and its consequences.
• Smart adaptation:
  • The title → short summary
  • The TL;DR summary → long summary
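The mapping from a post to its two reference summaries can be illustrated with a minimal sketch. The slides do not describe the actual preprocessing, so the marker pattern and the helper name `split_post` are assumptions made for illustration only.

```python
import re

# Assumed marker pattern (not taken from the slides): "TL;DR", "tldr",
# "tl dr", optionally followed by ':', '-' or ','.
TLDR_PATTERN = re.compile(r"\btl\s*;?\s*dr\b\s*[:\-,]?\s*", re.IGNORECASE)


def split_post(title, body):
    """Return (source_text, short_summary, long_summary) for one post.

    The title serves as the short summary and the text after the last
    TL;DR marker as the long summary; long_summary is None if no marker
    is found or nothing follows it.
    """
    matches = list(TLDR_PATTERN.finditer(body))
    if not matches:
        return body.strip(), title.strip(), None
    last = matches[-1]  # the subreddit rule says posts END with the TL;DR
    source_text = body[:last.start()].strip()
    long_summary = body[last.end():].strip()
    return source_text, title.strip(), long_summary or None


source, short, long_ = split_post(
    "TIFU by washing my phone",
    "I left my phone in my jeans. It went through the wash.\n\n"
    "TL;DR: washed my phone, now it is dead",
)
print(short)  # TIFU by washing my phone
print(long_)  # washed my phone, now it is dead
```

Taking the last marker rather than the first follows the subreddit rule that the TL;DR comes at the end of the post; a real pipeline would likely need extra filtering that this sketch leaves out.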

Dataset • Example:

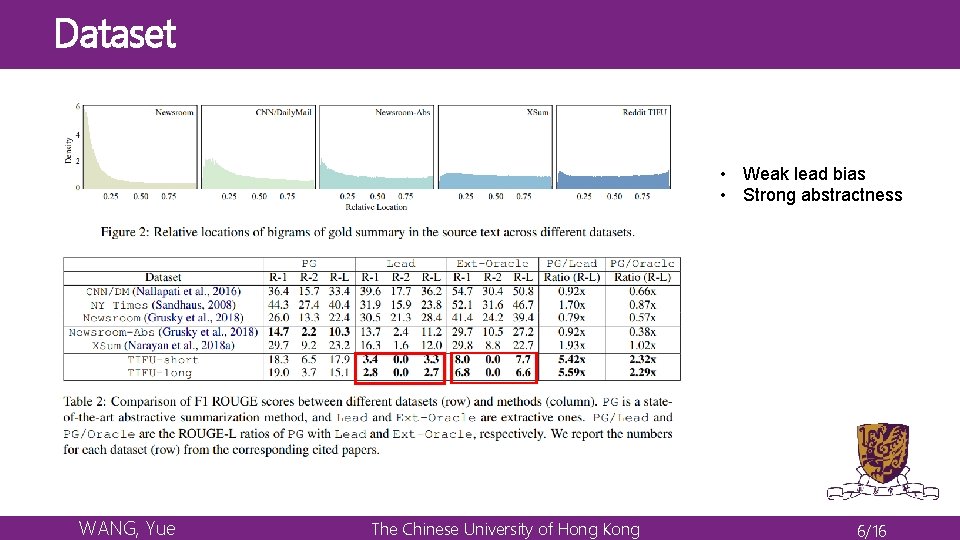

Dataset • Weak lead bias • Strong abstractness

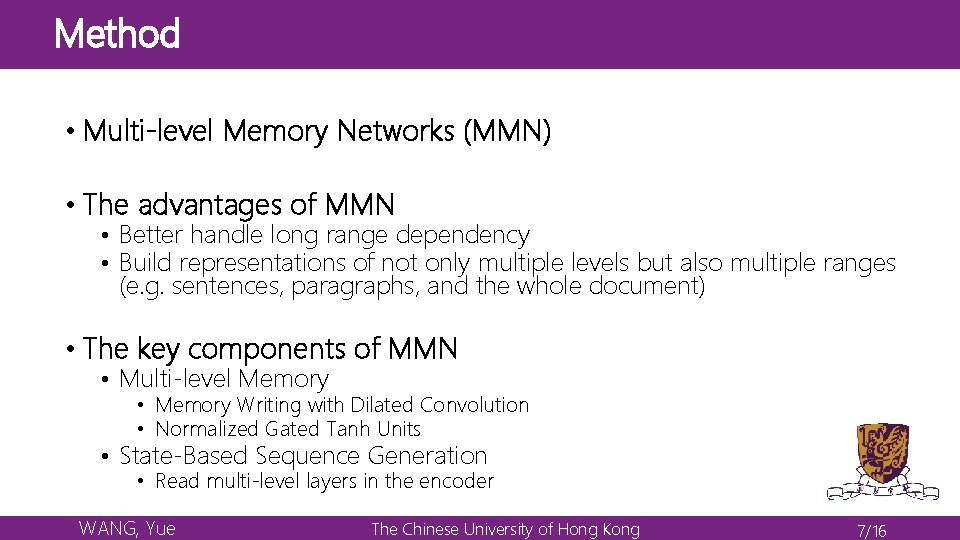

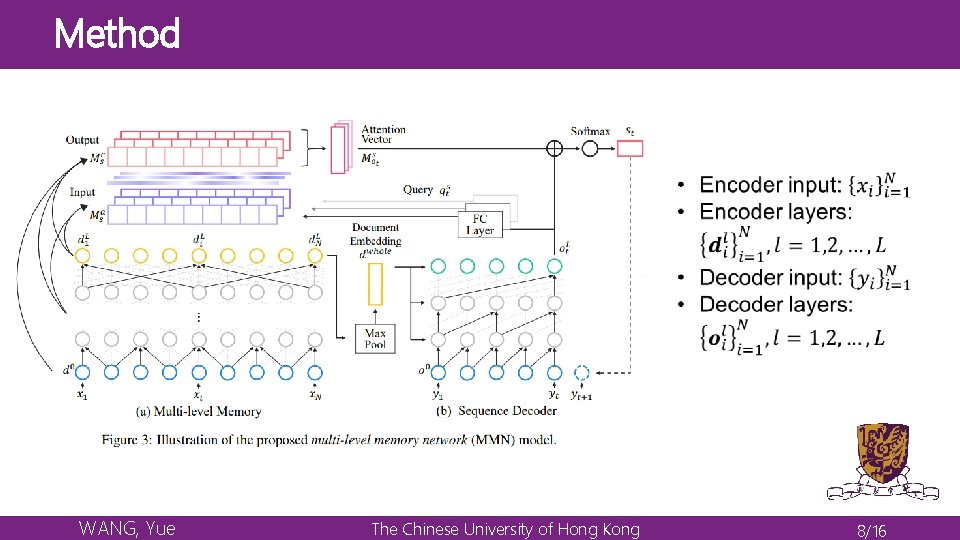

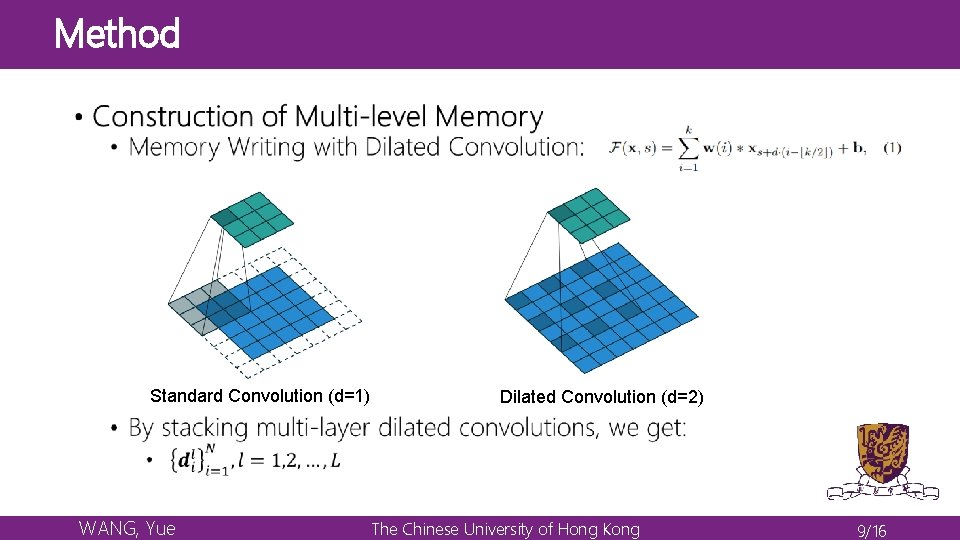

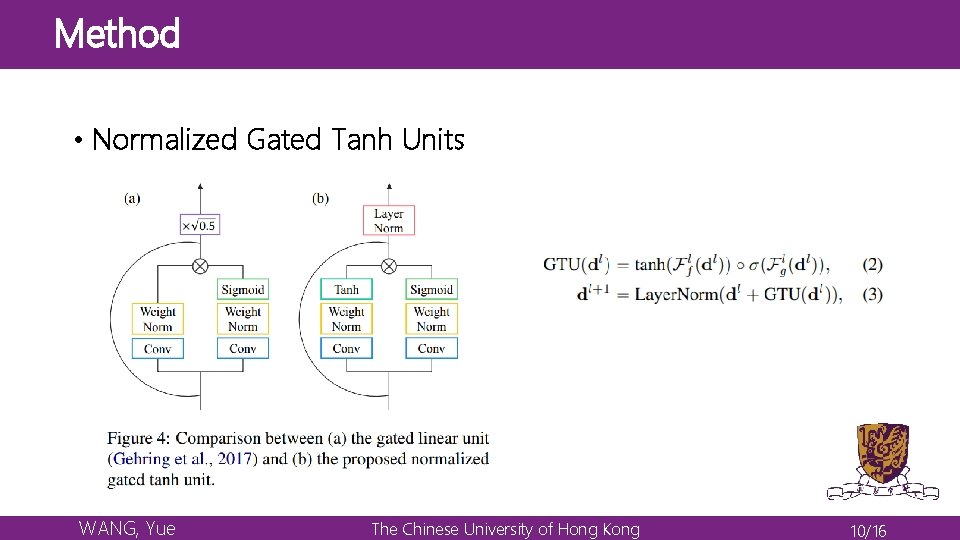

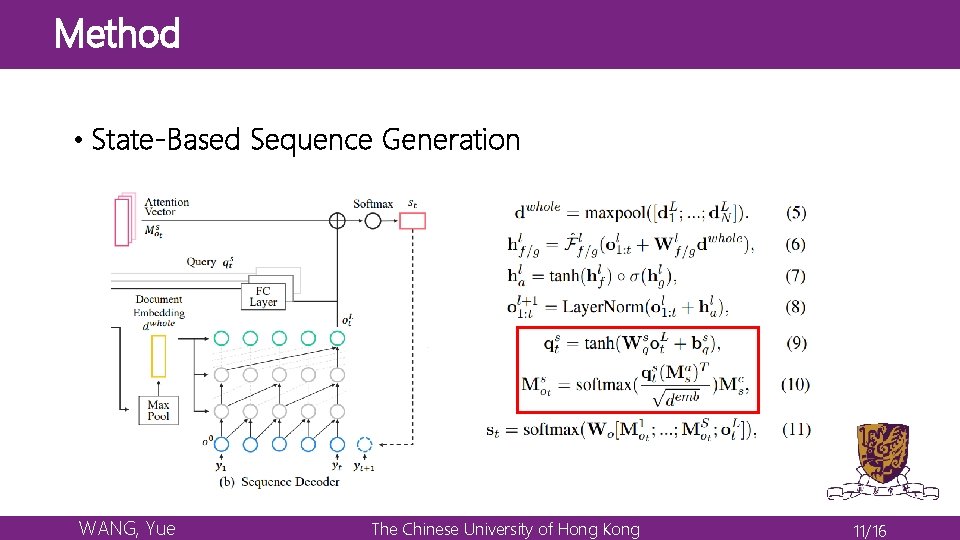

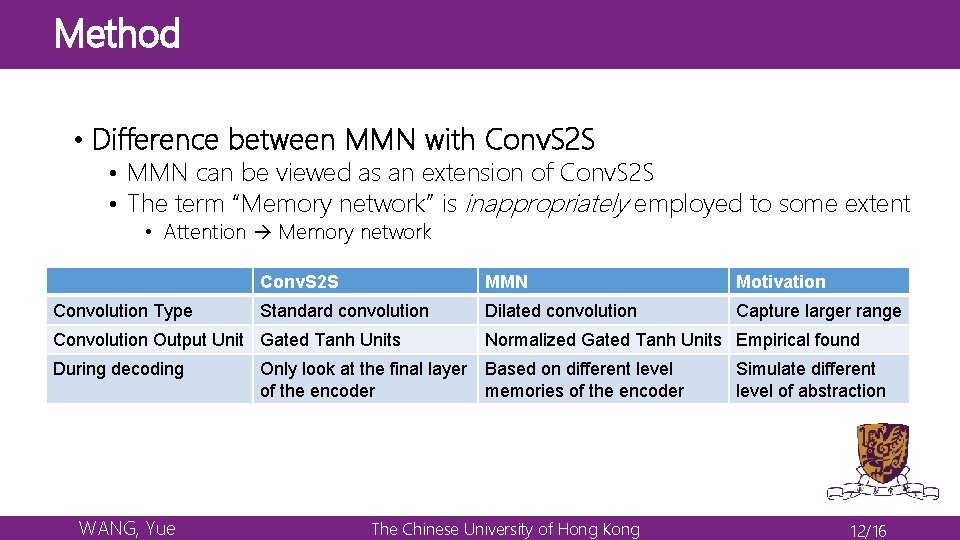

Method
• Multi-level Memory Networks (MMN)
• The advantages of MMN
  • Better handles long-range dependencies
  • Builds representations of not only multiple levels but also multiple ranges (e.g., sentences, paragraphs, and the whole document)
• The key components of MMN
  • Multi-level Memory
    • Memory Writing with Dilated Convolution
    • Normalized Gated Tanh Units
  • State-Based Sequence Generation
    • Read multi-level layers in the encoder

Method

Method • Standard Convolution (d=1) vs. Dilated Convolution (d=2)
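The contrast between d=1 and d=2 can be made concrete with a small 1-D sketch in plain Python. It is a generic illustration of dilation, not the model's encoder; the function names and the toy input are assumptions.

```python
def dilated_conv1d(x, kernel, dilation=1):
    """Valid (unpadded) 1-D convolution with `dilation - 1` skipped inputs
    between neighbouring kernel taps. No kernel flip, as in deep learning."""
    span = (len(kernel) - 1) * dilation + 1  # inputs covered by one output
    return [
        sum(w * x[start + k * dilation] for k, w in enumerate(kernel))
        for start in range(len(x) - span + 1)
    ]


def receptive_field(kernel_size, dilations):
    """Inputs seen by one output after stacking one layer per dilation rate."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)


x = [1, 2, 3, 4, 5, 6, 7]
print(dilated_conv1d(x, [1, 1, 1], dilation=1))  # [6, 9, 12, 15, 18]
print(dilated_conv1d(x, [1, 1, 1], dilation=2))  # [9, 12, 15]
print(receptive_field(3, [1, 1, 1]))             # 7
print(receptive_field(3, [1, 2, 4]))             # 15
```

With the same three-tap kernel and the same number of layers, growing the dilation rate per layer roughly doubles the covered range in this toy setting, which is the "capture larger range" motivation.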

Method • Normalized Gated Tanh Units
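The slide names the unit without giving its formula. As a rough sketch, assume it is a gated tanh unit, tanh(f) ⊙ σ(g), followed by layer normalization over the feature dimension. Here `filter_pre` and `gate_pre` stand for the outputs of two convolutions and are hypothetical names; learnable scale and shift parameters are omitted.

```python
import math


def gated_tanh(filter_pre, gate_pre):
    """Element-wise tanh(f) * sigmoid(g) for two pre-activation vectors."""
    return [
        math.tanh(f) * (1.0 / (1.0 + math.exp(-g)))
        for f, g in zip(filter_pre, gate_pre)
    ]


def layer_norm(values, eps=1e-5):
    """Normalize one feature vector to zero mean and (near) unit variance."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(var + eps) for v in values]


def normalized_gated_tanh(filter_pre, gate_pre):
    """Assumed form of the unit: layer norm applied to the gated tanh output."""
    return layer_norm(gated_tanh(filter_pre, gate_pre))


out = normalized_gated_tanh([0.5, -1.0, 2.0, 0.0], [1.0, 0.0, -1.0, 3.0])
print([round(v, 3) for v in out])
```

The normalization keeps the scale of each layer's output comparable across depth, which is a common reason for adding it to stacked gated convolutions.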

Method • State-Based Sequence Generation

Method
• Differences between MMN and ConvS2S
  • MMN can be viewed as an extension of ConvS2S
  • The term “memory network” is used somewhat loosely
  • Attention Memory network

| | ConvS2S | MMN | Motivation |
| --- | --- | --- | --- |
| Convolution type | Standard convolution | Dilated convolution | Capture a larger range |
| Convolution output unit | Gated Tanh Units | Normalized Gated Tanh Units | Found empirically |
| During decoding | Only looks at the final layer of the encoder | Based on different levels of memories of the encoder | Simulate different levels of abstraction |
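The decoding difference can be sketched as two ways of reading encoder states. The slides do not give the read equations, so this example assumes dot-product attention per level and a plain average of the per-level reads. All function names and the toy memories are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, memory):
    """Dot-product attention: weighted sum of the memory slots."""
    weights = softmax([sum(q * m for q, m in zip(query, slot)) for slot in memory])
    dim = len(memory[0])
    return [sum(w * slot[i] for w, slot in zip(weights, memory)) for i in range(dim)]


def read_final_layer(query, levels):
    """Read only the top encoder layer."""
    return attend(query, levels[-1])


def read_multi_level(query, levels):
    """Read every memory level and average the results (assumed combination)."""
    reads = [attend(query, level) for level in levels]
    return [sum(vals) / len(reads) for vals in zip(*reads)]


# Two toy memory levels, each with two slots of dimension 2.
levels = [
    [[1.0, 0.0], [0.0, 1.0]],  # lower level (short range)
    [[2.0, 2.0], [2.0, 2.0]],  # higher level (long range)
]
query = [0.0, 0.0]  # a zero query gives uniform attention weights
print(read_final_layer(query, levels))  # [2.0, 2.0]
print(read_multi_level(query, levels))  # [1.25, 1.25]
```

The multi-level read lets the decoder state draw on both short-range and long-range representations, whereas the single-layer read only sees the most abstract one.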

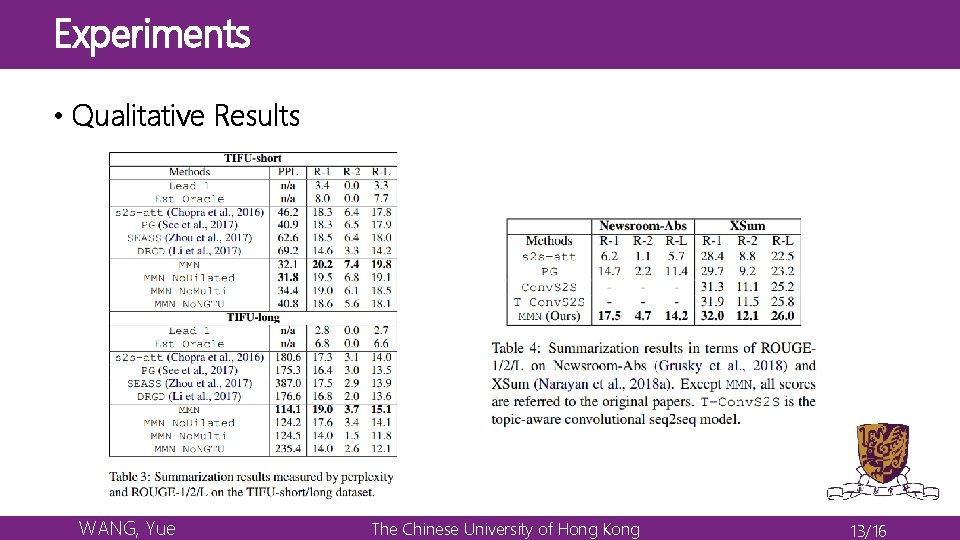

Experiments • Qualitative Results

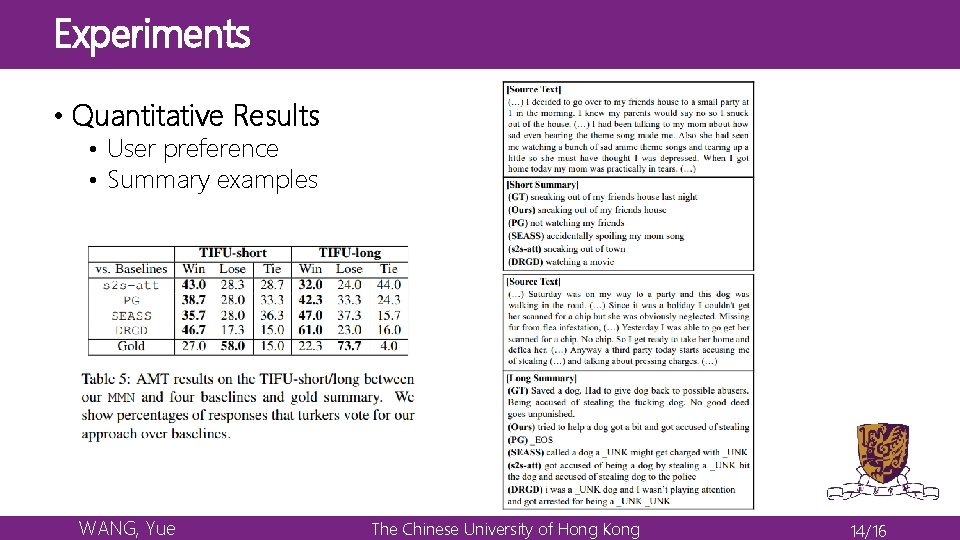

Experiments • Quantitative Results • User preference • Summary examples

Conclusion • A new dataset, Reddit TIFU, for abstractive summarization on informal online texts • A novel summarization model named multi-level memory networks (MMN)

Thanks
