Classification Problems in Bioinformatics 12/8/2020 Bafna/Ideker 1

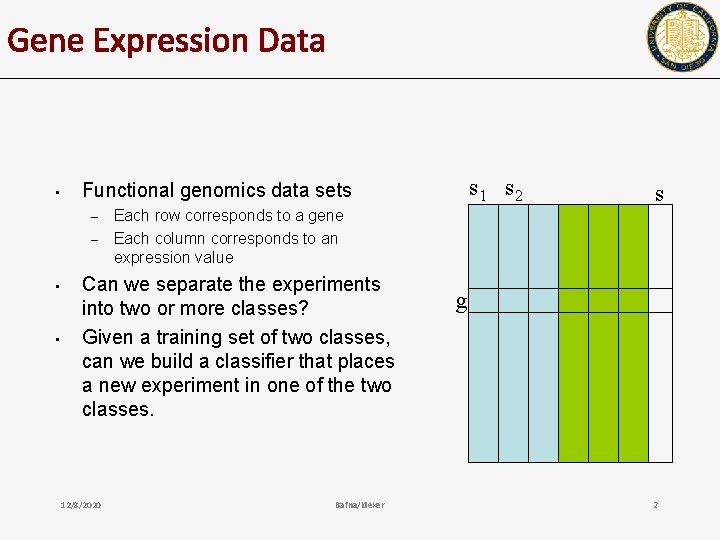

Gene Expression Data
- Functional genomics data sets
  - Each row corresponds to a gene
  - Each column corresponds to an experiment (sample) and holds its expression values
- Can we separate the experiments into two or more classes?
- Given a training set of two classes, can we build a classifier that places a new experiment in one of the two classes?

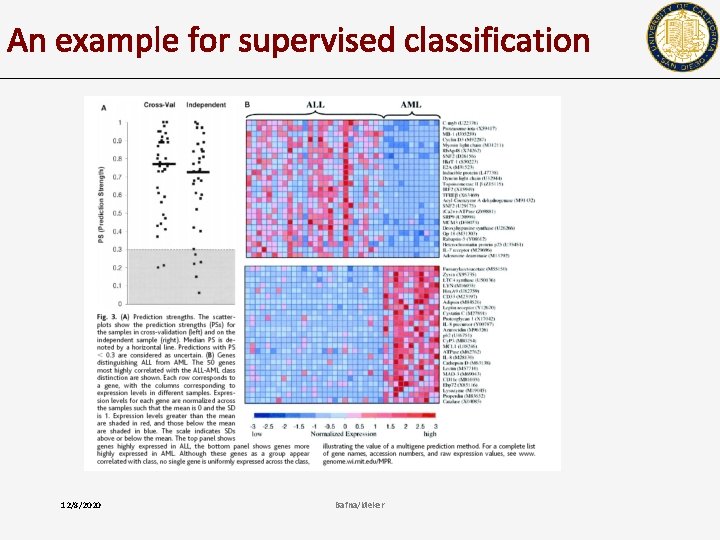

An example for supervised classification

Three types of analysis problems
- Cluster analysis/unsupervised learning (Week 3: L 5, 6)
- Classification into known classes (supervised)
- Identification of “marker” genes that characterize different tumor classes
  - Dimensionality reduction, feature selection
- Additional topics (NMF)

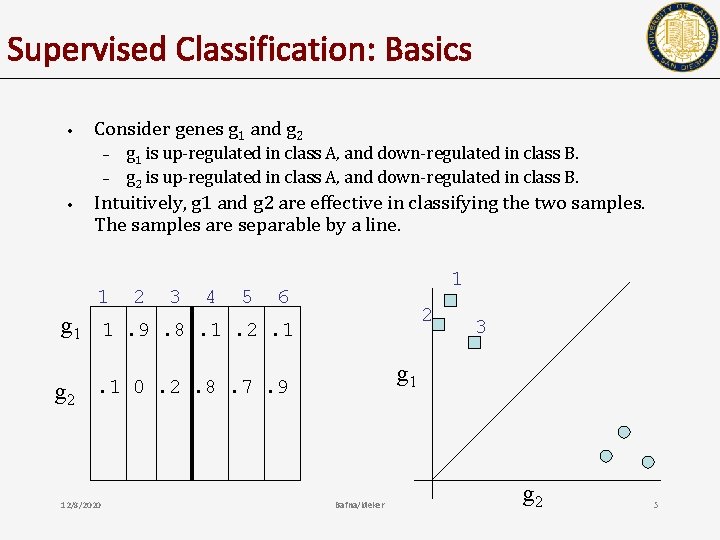

Supervised Classification: Basics
- Consider genes $g_1$ and $g_2$
  - $g_1$ is up-regulated in class A, and down-regulated in class B.
  - $g_2$ is down-regulated in class A, and up-regulated in class B.
- Intuitively, $g_1$ and $g_2$ are effective in classifying the two samples. The samples are separable by a line.

| Sample | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| $g_1$ | 1 | .9 | .8 | .1 | .2 | .1 |
| $g_2$ | .1 | 0 | .2 | .8 | .7 | .9 |

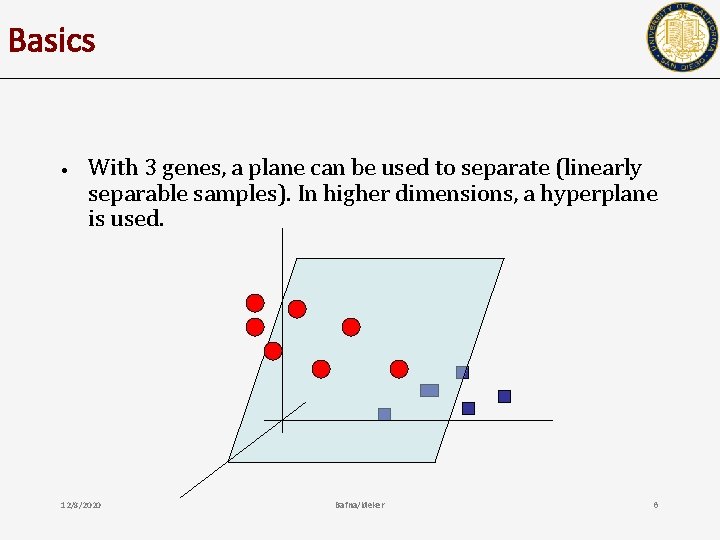

Basics
- With 3 genes, a plane can be used to separate (linearly separable) samples. In higher dimensions, a hyperplane is used.

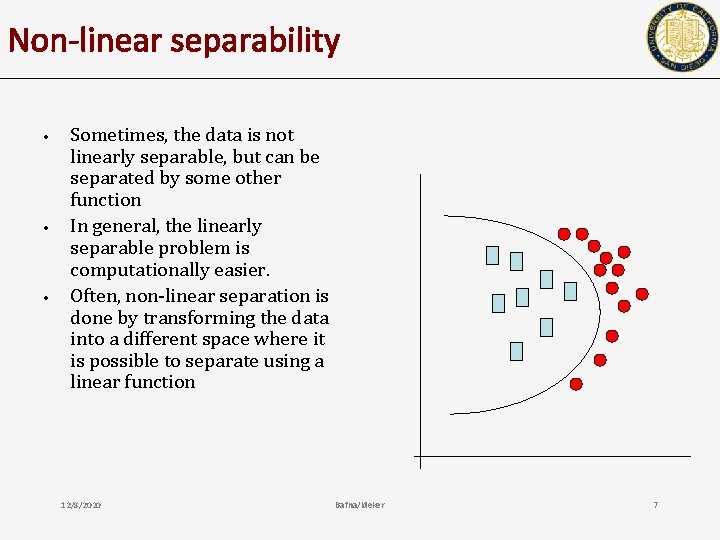

Non-linear separability
- Sometimes, the data is not linearly separable, but can be separated by some other function.
- In general, the linearly separable problem is computationally easier.
- Often, non-linear separation is done by transforming the data into a different space where it is possible to separate using a linear function.

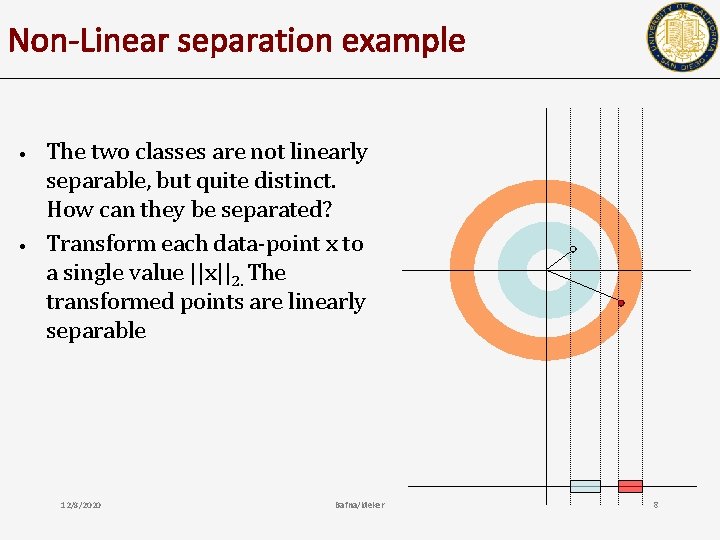

Non-Linear separation example
- The two classes are not linearly separable, but quite distinct. How can they be separated?
- Transform each data-point $x$ to a single value $\|x\|_2$. The transformed points are linearly separable.
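
The transformation can be sketched in a few lines of Python. This is only an illustration under assumed data: the two rings of radius 1 and 3, the names `inner` and `outer`, and the threshold 2.0 are invented for the example and do not come from the slides.

```python
import math

def norm(point):
    """Euclidean length ||x||_2 of a point."""
    return math.sqrt(sum(c * c for c in point))

# Hypothetical data: two concentric rings, which no straight line can separate.
angles = [k * math.pi / 4 for k in range(8)]
inner = [(math.cos(t), math.sin(t)) for t in angles]          # class 1, radius 1
outer = [(3 * math.cos(t), 3 * math.sin(t)) for t in angles]  # class 2, radius 3

# After mapping each point to its length, one threshold separates the classes.
threshold = 2.0
inner_values = [norm(p) for p in inner]
outer_values = [norm(p) for p in outer]
separable = max(inner_values) < threshold < min(outer_values)
print(separable)
```

In the transformed one-dimensional space the "hyperplane" is just the single point at the threshold.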

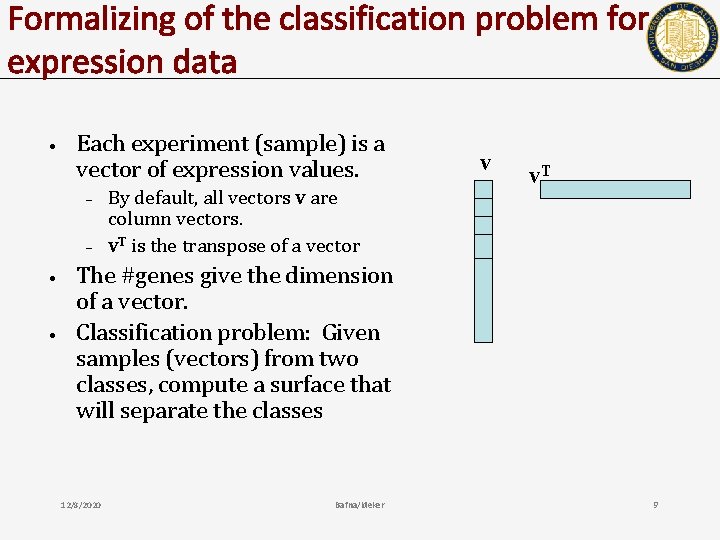

Formalizing the classification problem for expression data
- Each experiment (sample) is a vector of expression values.
  - By default, all vectors $v$ are column vectors.
  - $v^T$ is the transpose of a vector $v$.
- The number of genes gives the dimension of a vector.
- Classification problem: Given samples (vectors) from two classes, compute a surface that will separate the classes.

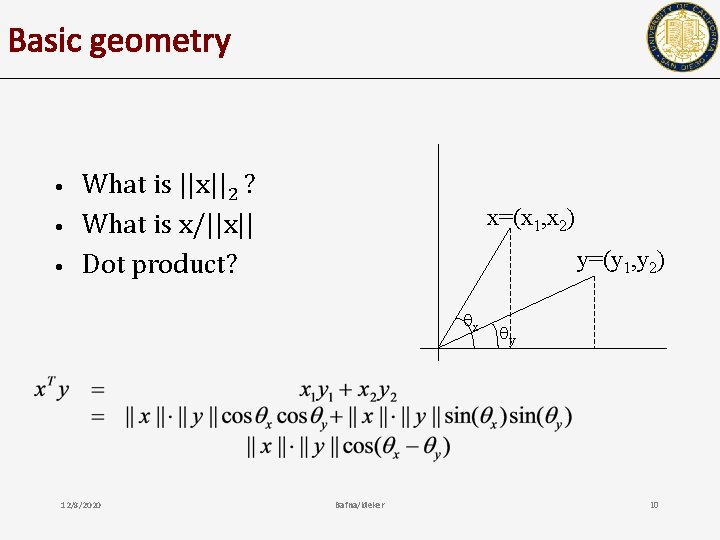

Basic geometry
- What is $\|x\|_2$?
- What is $x/\|x\|$?
- Dot product?
- (Figure: vectors $x = (x_1, x_2)$ and $y = (y_1, y_2)$.)

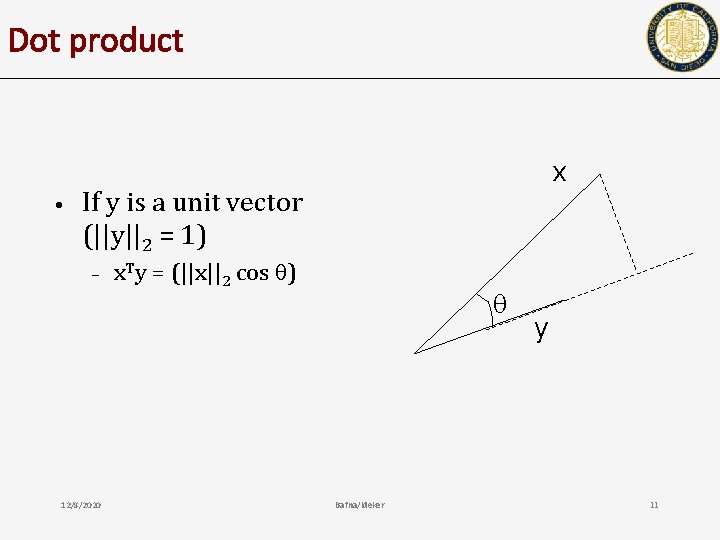

Dot product
- If $y$ is a unit vector ($\|y\|_2 = 1$)
  - $x^T y = \|x\|_2 \cos\theta$, where $\theta$ is the angle between $x$ and $y$
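
A small numeric check of these quantities, as a sketch only. The helper names `dot`, `norm`, and `unit` and the vectors used are assumptions made for the example.

```python
import math

def dot(x, y):
    """Dot product x^T y."""
    return sum(a * b for a, b in zip(x, y))

def norm(x):
    """Euclidean length ||x||_2."""
    return math.sqrt(dot(x, x))

def unit(x):
    """The unit vector x / ||x|| pointing in the direction of x."""
    n = norm(x)
    return [a / n for a in x]

x = [3.0, 4.0]
y = unit([2.0, 0.0])             # unit vector along the first axis

length = norm(x)                 # ||x||_2
projection = dot(x, y)           # x^T y = ||x||_2 cos(theta), since ||y||_2 = 1
cos_theta = projection / length
print(length, projection, cos_theta)
```

Because $y$ has unit length, the dot product is exactly the length of the projection of $x$ onto $y$.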

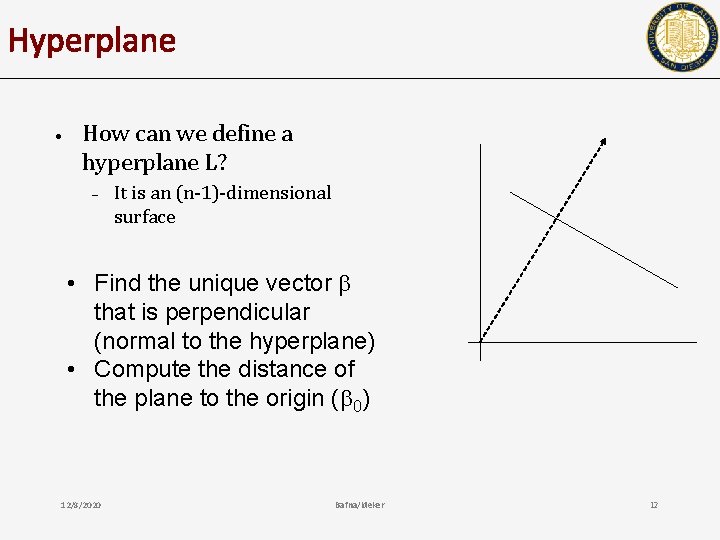

Hyperplane
- How can we define a hyperplane $L$?
  - It is an $(n-1)$-dimensional surface
- Find the unit vector $\beta$ that is perpendicular (normal) to the hyperplane; it is unique up to sign
- Compute the distance $\beta_0$ of the plane to the origin

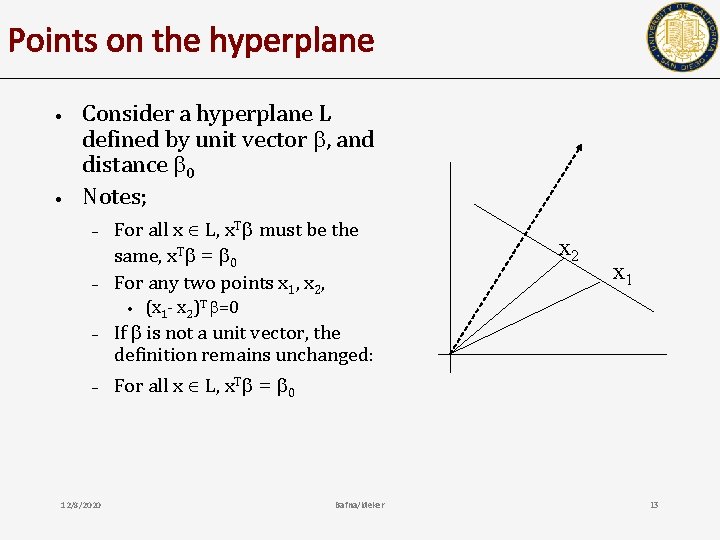

Points on the hyperplane
- Consider a hyperplane $L$ defined by unit vector $\beta$ and distance $\beta_0$.
- Notes:
  - For all $x \in L$, $x^T\beta$ must be the same: $x^T\beta = \beta_0$.
  - For any two points $x_1, x_2 \in L$, $(x_1 - x_2)^T\beta = 0$.
  - If $\beta$ is not a unit vector, the definition remains unchanged: for all $x \in L$, $x^T\beta = \beta_0$ (the distance of $L$ to the origin is then $\beta_0/\|\beta\|_2$).

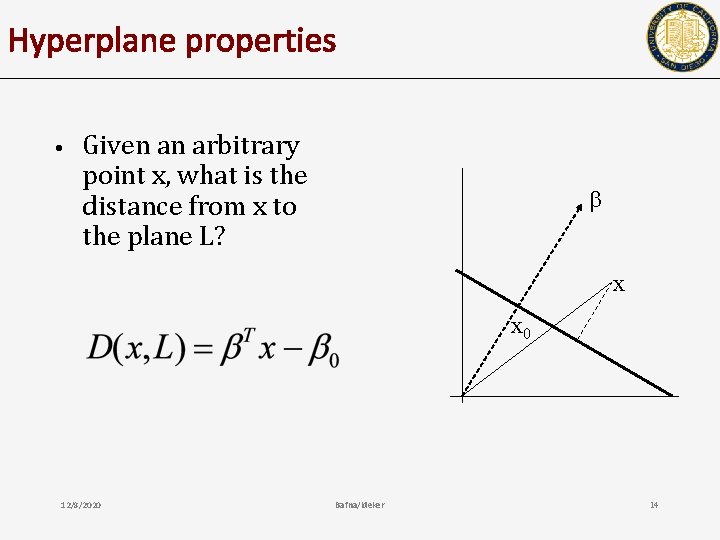

Hyperplane properties
- Given an arbitrary point $x$, what is the distance from $x$ to the plane $L$?
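
One way to work out the answer, writing $\beta$ for the unit normal of $L$ and $\beta_0$ for its distance from the origin (the symbols are assumed here, since the slide's own formula is not in the extracted text): decompose $x$ into a point on $L$ plus a step of signed length $d$ along the normal.

```latex
% Assumes \beta is the unit normal of L and x_L is the foot of the perpendicular from x.
\begin{aligned}
x &= x_L + d\,\beta, \qquad x_L \in L \ \ (\text{so } x_L^T\beta = \beta_0) \\
x^T\beta &= x_L^T\beta + d\,\beta^T\beta = \beta_0 + d \\
d &= x^T\beta - \beta_0
\end{aligned}
% If \beta is not a unit vector, normalize: d = (x^T\beta - \beta_0) / \|\beta\|_2
```

The sign of $d$ tells which side of $L$ the point lies on, which is what the classification rule uses.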

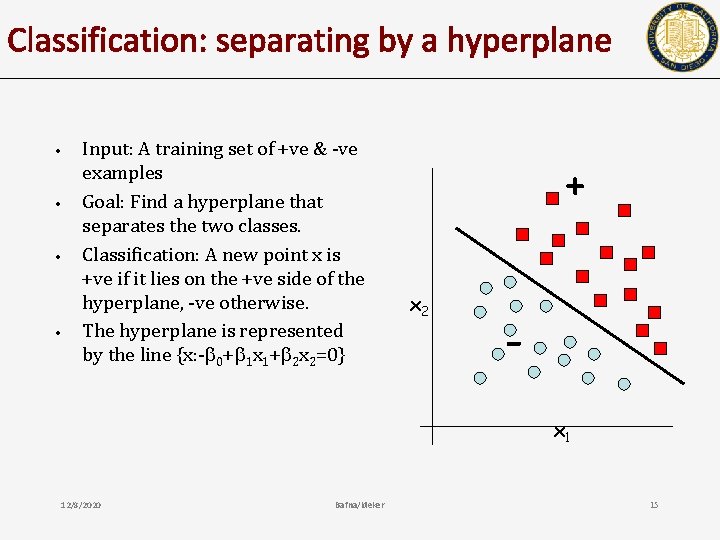

Classification: separating by a hyperplane
- Input: A training set of +ve & -ve examples
- Goal: Find a hyperplane that separates the two classes.
- Classification: A new point $x$ is +ve if it lies on the +ve side of the hyperplane, -ve otherwise.
- The hyperplane is represented by the line $\{x : -\beta_0 + \beta_1 x_1 + \beta_2 x_2 = 0\}$

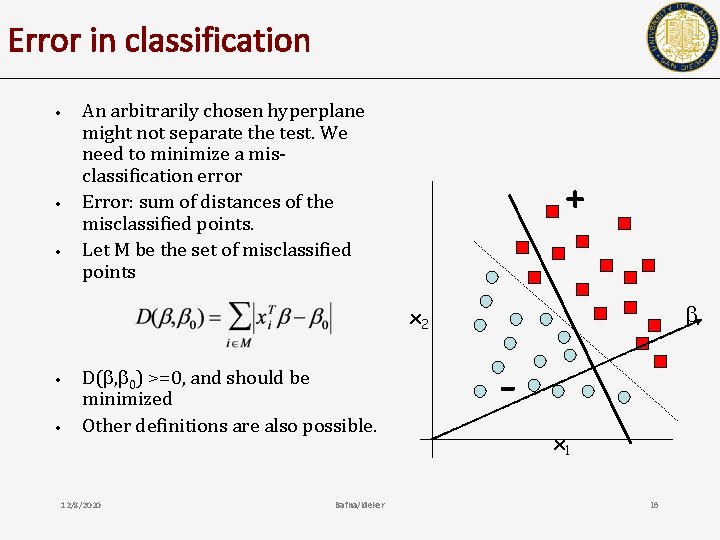

Error in classification
- An arbitrarily chosen hyperplane might not separate the two classes. We need to minimize a misclassification error.
- Error: sum of distances of the misclassified points.
- Let $M$ be the set of misclassified points.
- $D(\beta, \beta_0) \ge 0$, and should be minimized.
- Other definitions are also possible.

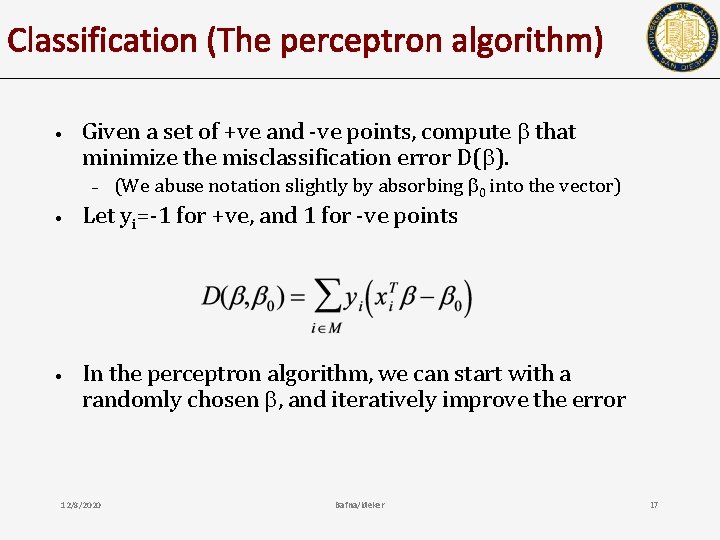

Classification (The perceptron algorithm)
- Given a set of +ve and -ve points, compute $\beta$ that minimizes the misclassification error $D(\beta)$.
  - (We abuse notation slightly by absorbing $\beta_0$ into the vector $\beta$.)
- Let $y_i = -1$ for +ve, and $1$ for -ve points.
- In the perceptron algorithm, we can start with a randomly chosen $\beta$, and iteratively reduce the error.

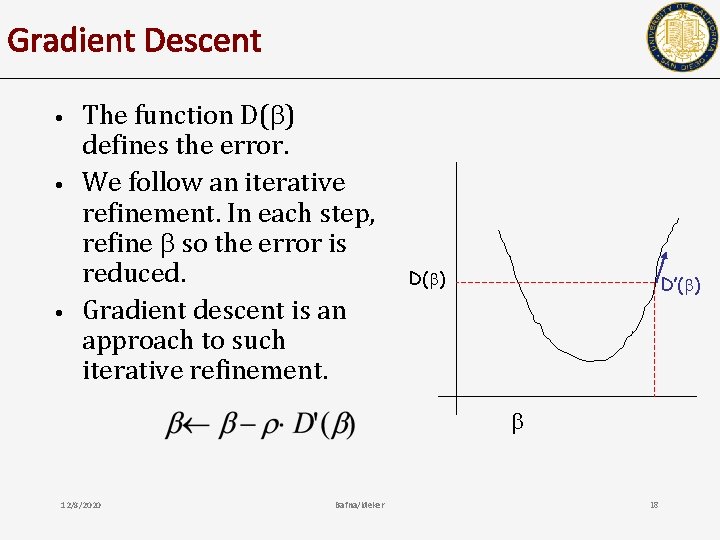

Gradient Descent
- The function $D(\beta)$ defines the error.
- We follow an iterative refinement. In each step, refine $\beta$ so the error is reduced.
- Gradient descent is an approach to such iterative refinement.
- (Figure: the error curve $D(\beta)$ and its slope $D'(\beta)$.)
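
As a hedged sketch of what one refinement step looks like: the slide's formula for $D$ is not in the extracted text, so the form below is an assumption. It follows the deck's sign convention ($y_i = -1$ for +ve points, $+1$ for -ve points, offset absorbed into $\beta$), and the step size $\rho$ is a name introduced here.

```latex
% Assumed perceptron criterion over the set M of misclassified points.
\begin{aligned}
D(\beta) &= \sum_{i \in M} y_i\, x_i^T \beta \;\ge\; 0 \\
\frac{\partial D}{\partial \beta} &= \sum_{i \in M} y_i\, x_i \\
\beta &\leftarrow \beta - \rho\, y_i\, x_i \qquad \text{(one step, for a single misclassified point } i\text{)}
\end{aligned}
```

Each step moves $\beta$ against the gradient, so the contribution of point $i$ to $D$ shrinks by $\rho\,\|x_i\|_2^2$.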

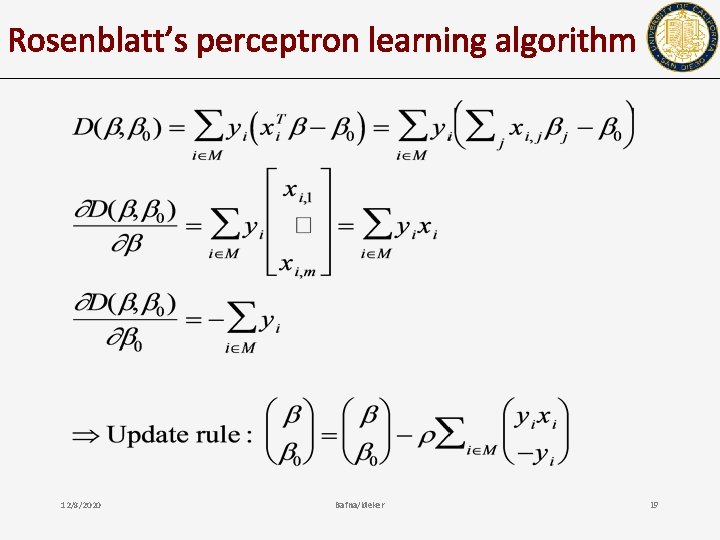

Rosenblatt’s perceptron learning algorithm

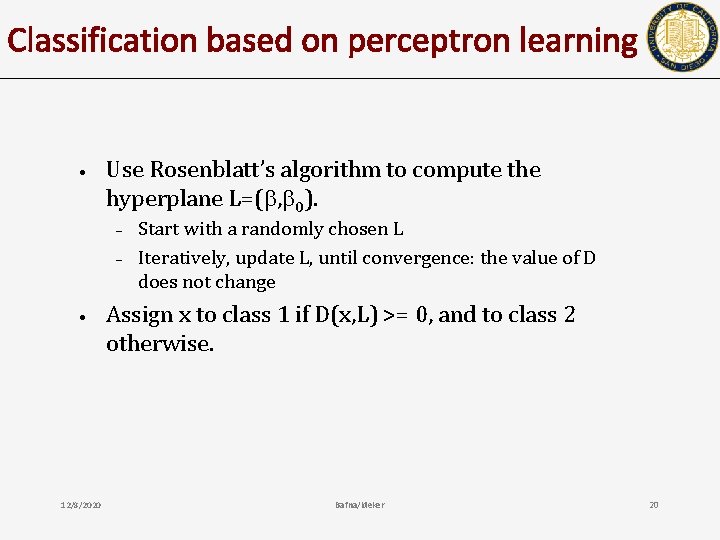

Classification based on perceptron learning
- Use Rosenblatt’s algorithm to compute the hyperplane $L = (\beta, \beta_0)$.
  - Start with a randomly chosen $L$
  - Iteratively update $L$ until convergence: the value of $D$ does not change
- Assign $x$ to class 1 if $D(x, L) \ge 0$, and to class 2 otherwise (here $D(x, L)$ denotes the signed distance of $x$ from $L$).

Perceptron learning
- If many solutions are possible, it does not choose between solutions.
- If separation is possible, then convergence is guaranteed, and the number of steps can be bounded.
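
A minimal sketch of perceptron learning in plain Python, not a transcription of the algorithm slide (whose content is an image). Assumptions: it uses the deck's labels ($y_i = -1$ for +ve, $+1$ for -ve), starts from zero instead of a random hyperplane so the run is reproducible, and the names `train_perceptron`, `classify`, and `rate` are invented here. The data are the six samples from the $g_1$/$g_2$ table.

```python
def score(x, beta, beta0):
    """Signed value x^T beta - beta0; positive on the +ve side of the hyperplane."""
    return sum(b * xi for b, xi in zip(beta, x)) - beta0

def train_perceptron(points, labels, rate=1.0, max_epochs=1000):
    """labels follow the slide convention: -1 for +ve points, +1 for -ve points."""
    beta = [0.0] * len(points[0])
    beta0 = 0.0
    for _ in range(max_epochs):
        updated = False
        for x, y in zip(points, labels):
            if y * score(x, beta, beta0) >= 0:       # misclassified (or on the boundary)
                # Step against the gradient of y * (x^T beta - beta0).
                beta = [b - rate * y * xi for b, xi in zip(beta, x)]
                beta0 += rate * y
                updated = True
        if not updated:                              # a full pass with no errors
            break
    return beta, beta0

def classify(x, beta, beta0):
    return "+ve" if score(x, beta, beta0) > 0 else "-ve"

# Samples 1-3 (class A, treated as +ve) and 4-6 (class B, treated as -ve).
points = [(1.0, 0.1), (0.9, 0.0), (0.8, 0.2), (0.1, 0.8), (0.2, 0.7), (0.1, 0.9)]
labels = [-1, -1, -1, 1, 1, 1]
beta, beta0 = train_perceptron(points, labels)
print([classify(x, beta, beta0) for x in points])
```

The loop stops at the first hyperplane that separates the training points, which is why the result depends on the starting point and the visiting order.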

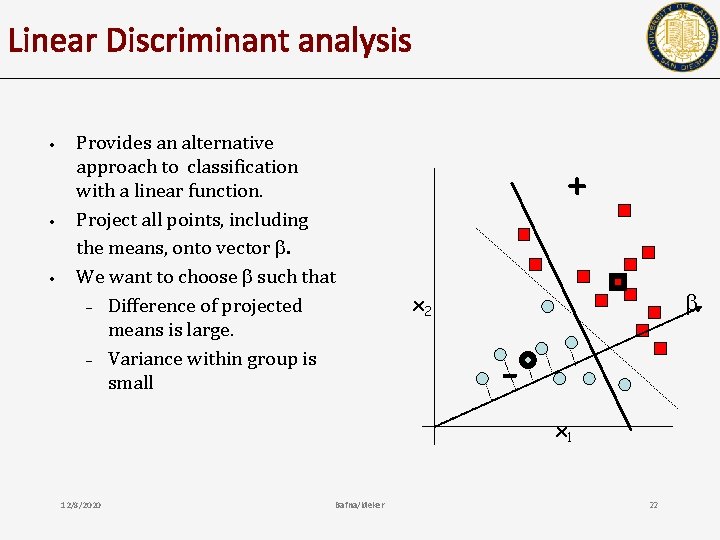

Linear Discriminant Analysis
- Provides an alternative approach to classification with a linear function.
- Project all points, including the means, onto vector $\beta$.
- We want to choose $\beta$ such that
  - Difference of projected means is large.
  - Variance within group is small.

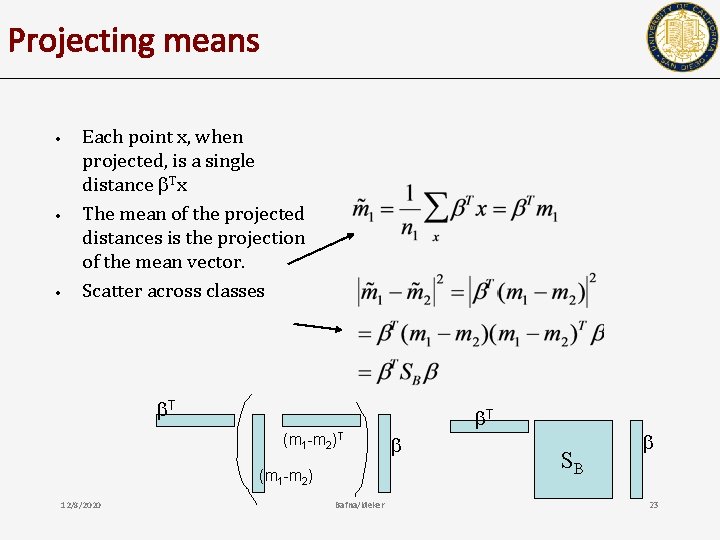

Projecting means
- Each point $x$, when projected, is a single distance $\beta^T x$.
- The mean of the projected distances is the projection of the mean vector.
- Scatter across classes: $\left(\beta^T (m_1 - m_2)\right)^2 = \beta^T S_B\, \beta$, where $S_B = (m_1 - m_2)(m_1 - m_2)^T$

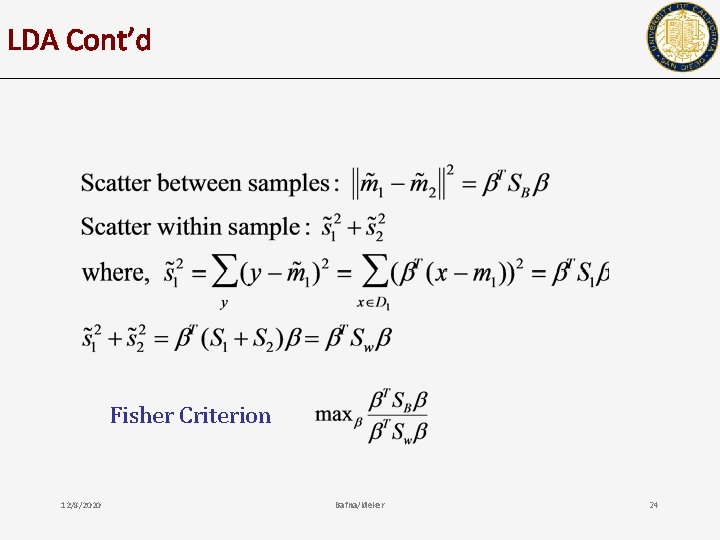

LDA Cont’d: Fisher Criterion

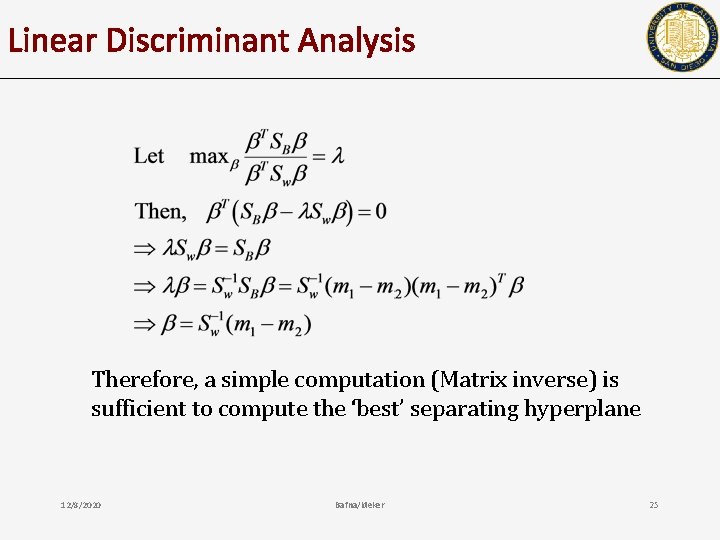

Linear Discriminant Analysis
- Therefore, a simple computation (matrix inverse) is sufficient to compute the ‘best’ separating hyperplane.
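
A two-gene sketch of that computation. The slide's formulas are images, so this assumes the standard Fisher solution $\beta \propto S_W^{-1}(m_1 - m_2)$, with $S_W$ the summed within-class scatter matrix. The function names are invented here, and the data are the six samples from the $g_1$/$g_2$ table.

```python
def mean(points):
    n = len(points)
    return [sum(p[k] for p in points) / n for k in range(2)]

def scatter(points, m):
    """2x2 within-class scatter: sum of (x - m)(x - m)^T over the class."""
    s = [[0.0, 0.0], [0.0, 0.0]]
    for p in points:
        d = [p[0] - m[0], p[1] - m[1]]
        for r in range(2):
            for c in range(2):
                s[r][c] += d[r] * d[c]
    return s

def fisher_direction(class1, class2):
    """beta = S_W^{-1} (m1 - m2), using the closed-form inverse of a 2x2 matrix."""
    m1, m2 = mean(class1), mean(class2)
    s1, s2 = scatter(class1, m1), scatter(class2, m2)
    sw = [[s1[r][c] + s2[r][c] for c in range(2)] for r in range(2)]
    det = sw[0][0] * sw[1][1] - sw[0][1] * sw[1][0]   # assumed non-zero
    inv = [[sw[1][1] / det, -sw[0][1] / det],
           [-sw[1][0] / det, sw[0][0] / det]]
    diff = [m1[0] - m2[0], m1[1] - m2[1]]
    return [inv[0][0] * diff[0] + inv[0][1] * diff[1],
            inv[1][0] * diff[0] + inv[1][1] * diff[1]]

class_a = [(1.0, 0.1), (0.9, 0.0), (0.8, 0.2)]
class_b = [(0.1, 0.8), (0.2, 0.7), (0.1, 0.9)]
beta = fisher_direction(class_a, class_b)

# Project every sample onto beta; the two classes should not overlap.
proj_a = [beta[0] * x + beta[1] * y for x, y in class_a]
proj_b = [beta[0] * x + beta[1] * y for x, y in class_b]
print(min(proj_a) > max(proj_b))
```

Any threshold between the two groups of projected values then defines the separating hyperplane.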

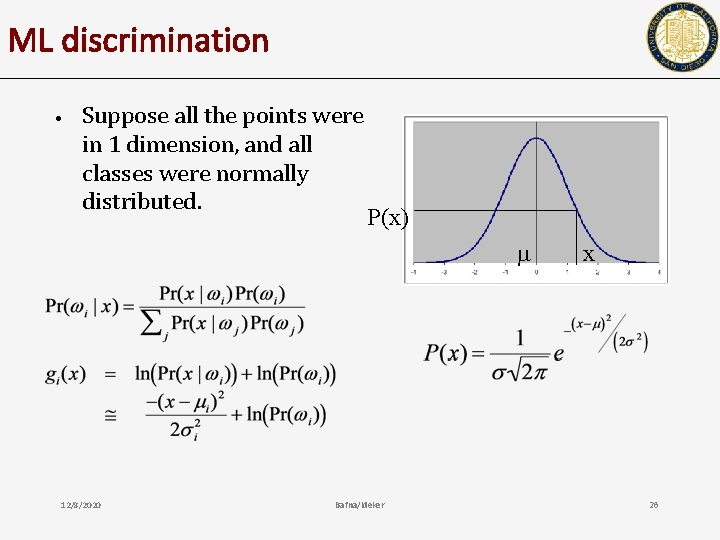

ML discrimination
- Suppose all the points were in 1 dimension, and all classes were normally distributed.

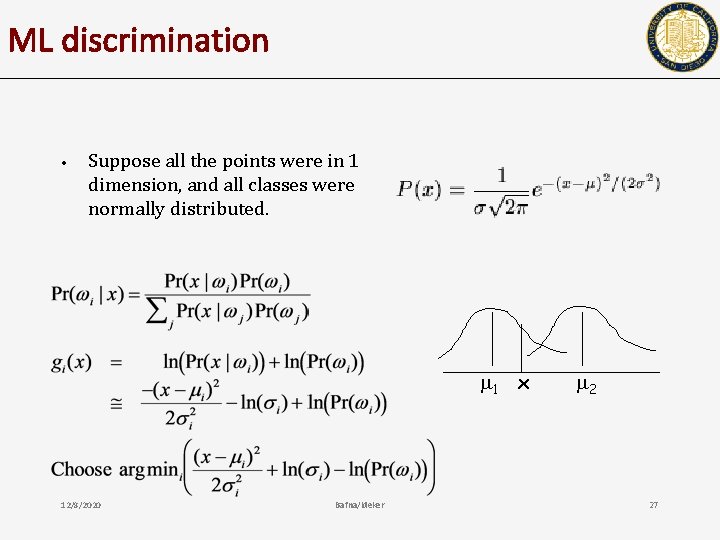

ML discrimination
- Suppose all the points were in 1 dimension, and all classes were normally distributed.

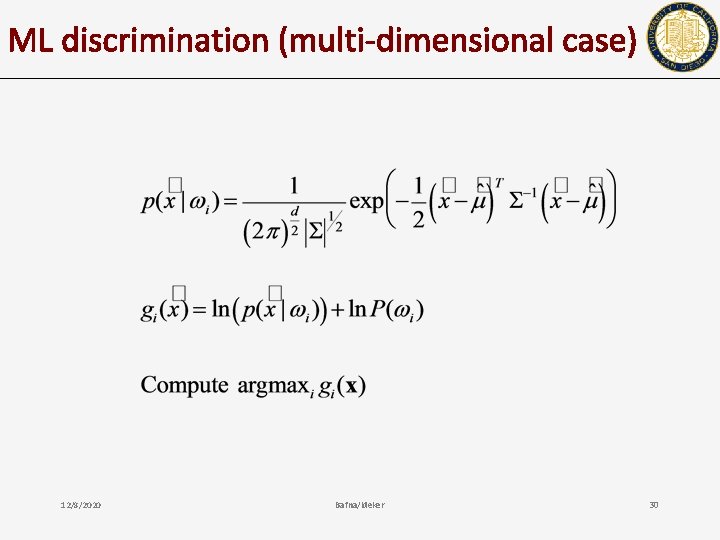

ML discrimination recipe (1 dimensional case)
- Estimate the mean and variance for each class.
- For a new point $x$, compute the discrimination function $g_i(x)$ for each class $i$.
- Choose $\arg\max_i g_i(x)$ as the class for $x$.
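
The recipe can be sketched as follows. The exact form of $g_i(x)$ on the slide is not in the extracted text, so this assumes it is the log of the normal density with the class's estimated mean and variance (equal class priors). The function names and the training values are hypothetical.

```python
import math

def fit_gaussian(values):
    """Maximum-likelihood estimates of the mean and variance of one class."""
    n = len(values)
    mu = sum(values) / n
    var = sum((v - mu) ** 2 for v in values) / n   # assumed non-zero
    return mu, var

def log_density(x, mu, var):
    """Assumed discrimination function g_i(x): log of the normal density."""
    return -0.5 * math.log(2 * math.pi * var) - (x - mu) ** 2 / (2 * var)

def classify(x, params):
    """Return the class label i that maximizes g_i(x)."""
    return max(params, key=lambda label: log_density(x, *params[label]))

# Hypothetical 1-dimensional training data for two classes.
training = {"A": [0.8, 0.9, 1.0, 1.1], "B": [2.9, 3.0, 3.2, 3.3]}
params = {label: fit_gaussian(values) for label, values in training.items()}
print(classify(1.05, params), classify(3.1, params))
```

With unequal variances the decision boundary is generally not the midpoint of the two means, which is one reason to compare $g_i(x)$ rather than distances to the means.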

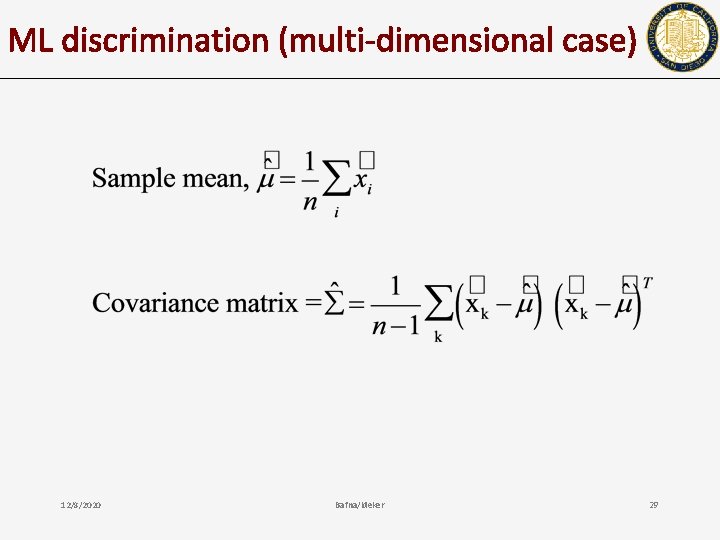

ML discrimination (multi-dimensional case)

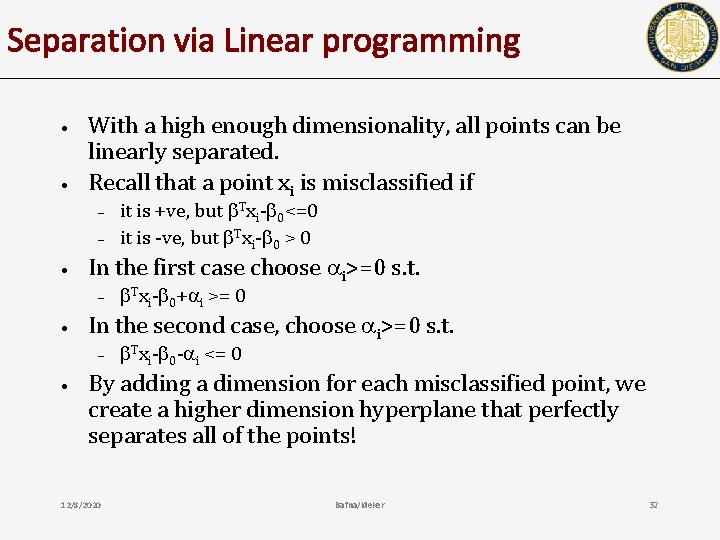

Separation via Linear programming
- With a high enough dimensionality, all points can be linearly separated.
- Recall that a point $x_i$ is misclassified if
  - it is +ve, but $\beta^T x_i - \beta_0 \le 0$
  - it is -ve, but $\beta^T x_i - \beta_0 > 0$
- In the first case, choose $\epsilon_i \ge 0$ s.t.
  - $\beta^T x_i - \beta_0 + \epsilon_i \ge 0$
- In the second case, choose $\epsilon_i \ge 0$ s.t.
  - $\beta^T x_i - \beta_0 - \epsilon_i \le 0$
- By adding a dimension for each misclassified point, we create a higher-dimensional hyperplane that perfectly separates all of the points!

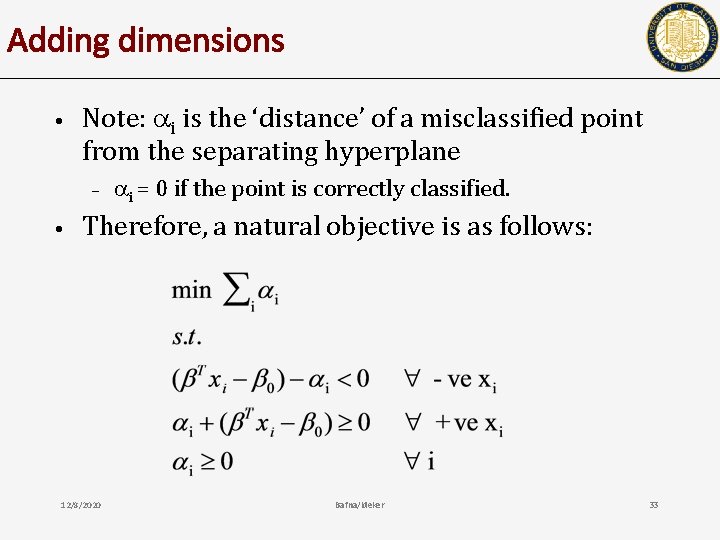

Adding dimensions
- Note: $\epsilon_i$ is the ‘distance’ of a misclassified point from the separating hyperplane
  - $\epsilon_i = 0$ if the point is correctly classified.
- Therefore, a natural objective is as follows:
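
To make the slack values concrete, the sketch below computes the smallest admissible slack for each point under a fixed hyperplane and sums them. The sum-of-slacks objective is an assumption (the slide's formula is an image), and the hyperplane, the points, and the name `slack` are made up for illustration.

```python
def slack(point, is_positive, beta, beta0):
    """Smallest non-negative slack that satisfies the point's constraint."""
    s = sum(b * x for b, x in zip(beta, point)) - beta0
    if is_positive:
        return max(0.0, -s)   # need s + slack >= 0
    return max(0.0, s)        # need s - slack <= 0

# Hypothetical hyperplane x1 - x2 = 0 and four labelled points.
beta, beta0 = (1.0, -1.0), 0.0
data = [((1.0, 0.1), True),    # +ve, correct side, slack 0
        ((0.2, 0.7), True),    # +ve, wrong side
        ((0.1, 0.9), False),   # -ve, correct side, slack 0
        ((0.9, 0.4), False)]   # -ve, wrong side

slacks = [slack(p, positive, beta, beta0) for p, positive in data]
total = sum(slacks)            # assumed objective: the sum of all slacks
print(slacks, total)
```

An LP solver would search over the hyperplane and the slacks together; here the hyperplane is held fixed so the slack of each point can be read off directly.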

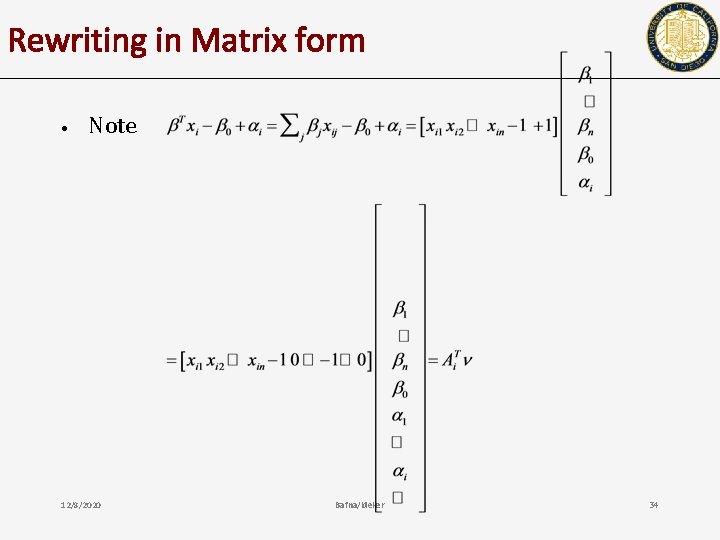

Rewriting in Matrix form
- Note

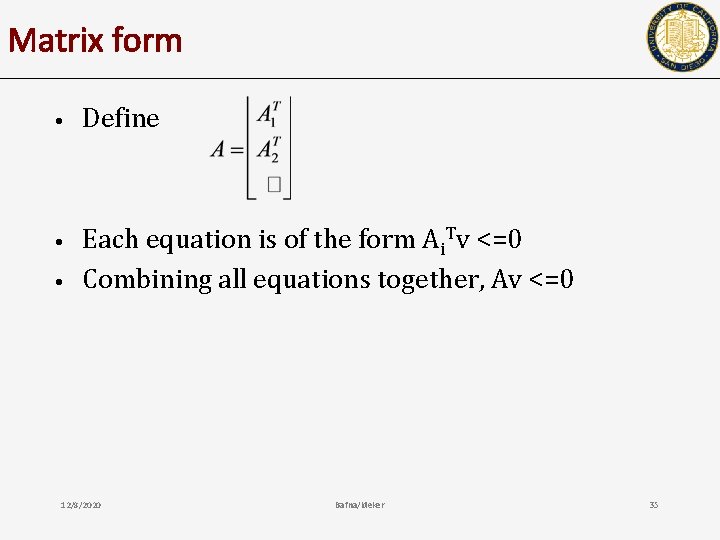

Matrix form
- Define
- Each equation is of the form $A_i^T v \le 0$
- Combining all equations together, $Av \le 0$

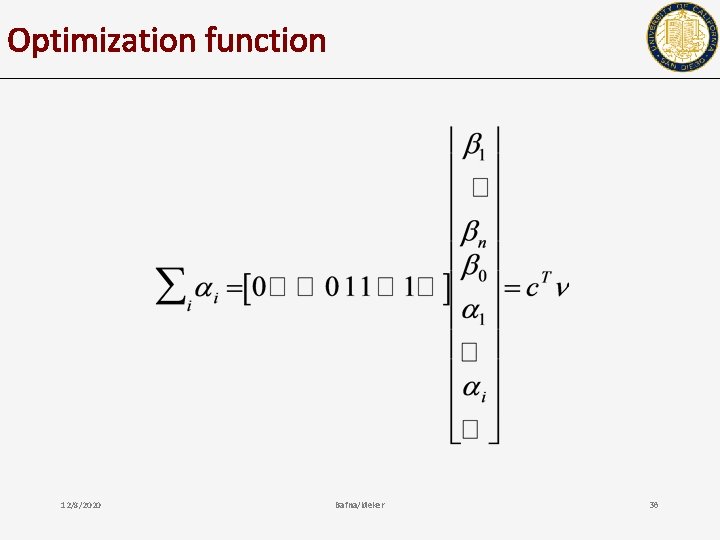

Optimization function

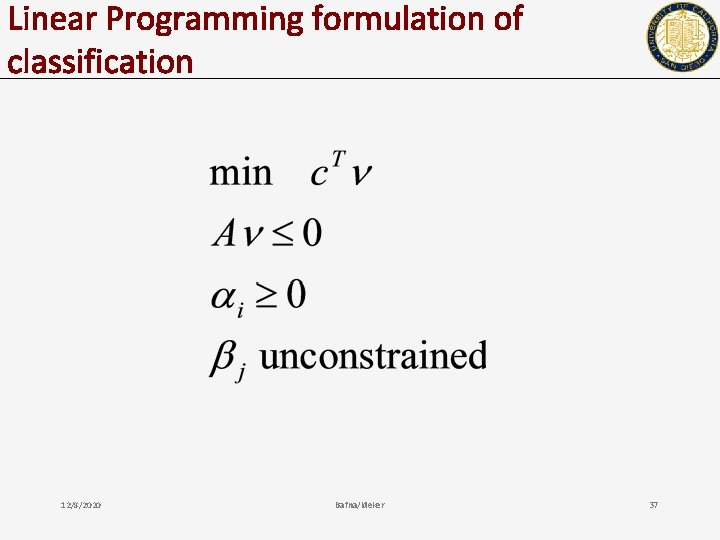

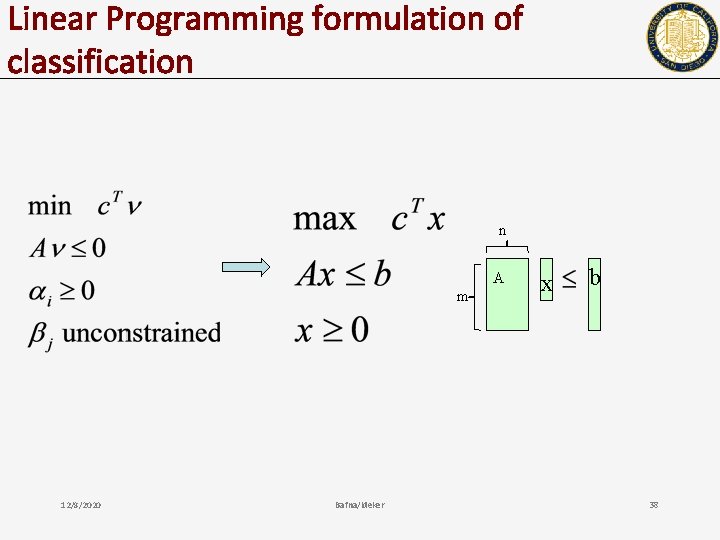

Linear Programming formulation of classification

Linear Programming formulation of classification
- (Figure: the constraint matrix $A$ with $m$ rows and $n$ columns, the variable vector $x$, and the right-hand side $b$.)

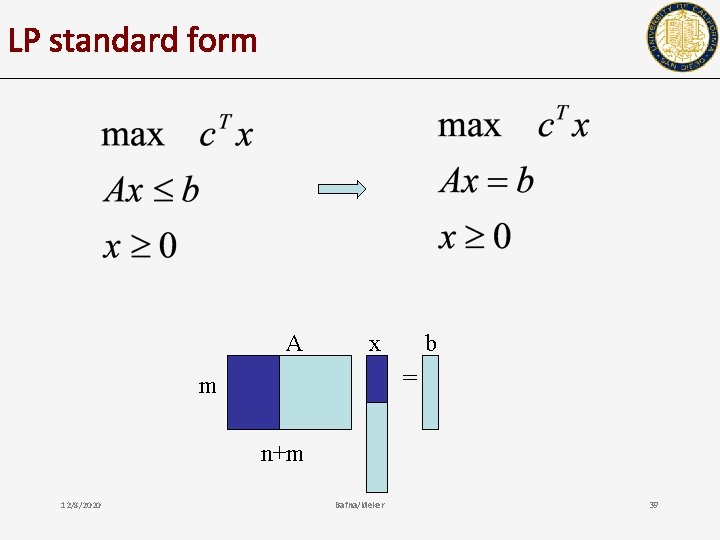

LP standard form
- (Figure: $Ax = b$, where $A$ has $m$ rows and $n+m$ columns.)

Basics: converting to standard form
- $Ax \ge b$ can be replaced by $-Ax \le -b$
- $x \le 0$ can be replaced by $-x'$ ($x' \ge 0$)
- $x$ unconstrained can be replaced by $x = y - z$, $y \ge 0$, $z \ge 0$
- To change to equality, we can always add non-negative slack variables.
- We will work with all of these equivalent forms.
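
The slack-variable step can be illustrated with a small helper. This is a sketch with invented names (`add_slack`, `slack_values`) and made-up numbers: it turns $Ax \le b$ with $m$ rows into the equality $[A \mid I]\,x' = b$ by appending one slack column per row.

```python
def add_slack(A, b):
    """Turn A x <= b (m rows, n columns) into [A | I] x' = b with m slack variables."""
    m = len(A)
    widened = [list(row) + [1.0 if i == j else 0.0 for j in range(m)]
               for i, row in enumerate(A)]
    return widened, list(b)

def slack_values(A, b, x):
    """Slack of each row at the point x: s_i = b_i - A_i^T x (>= 0 when x is feasible)."""
    return [bi - sum(a * xi for a, xi in zip(row, x)) for row, bi in zip(A, b)]

# Hypothetical constraints: x1 + 2*x2 <= 4 and 3*x1 + x2 <= 5.
A = [[1.0, 2.0], [3.0, 1.0]]
b = [4.0, 5.0]

A_eq, b_eq = add_slack(A, b)
s = slack_values(A, b, [1.0, 1.0])
print(A_eq, b_eq, s)
```

The widened matrix has $n+m$ columns, matching the shape of the standard form.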

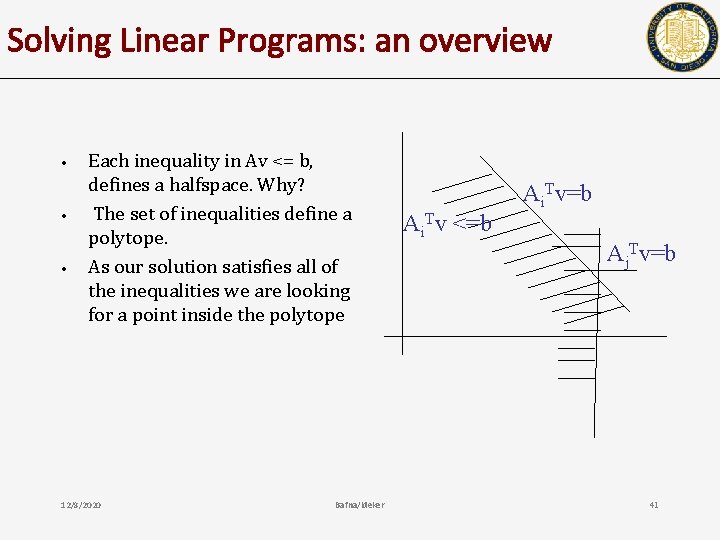

Solving Linear Programs: an overview
- Each inequality in $Av \le b$ defines a halfspace. Why?
- The set of inequalities defines a polytope.
- As our solution satisfies all of the inequalities, we are looking for a point inside the polytope.
- (Figure: the halfspace $A_i^T v \le b_i$ with its boundary $A_i^T v = b_i$, and a second boundary $A_j^T v = b_j$.)

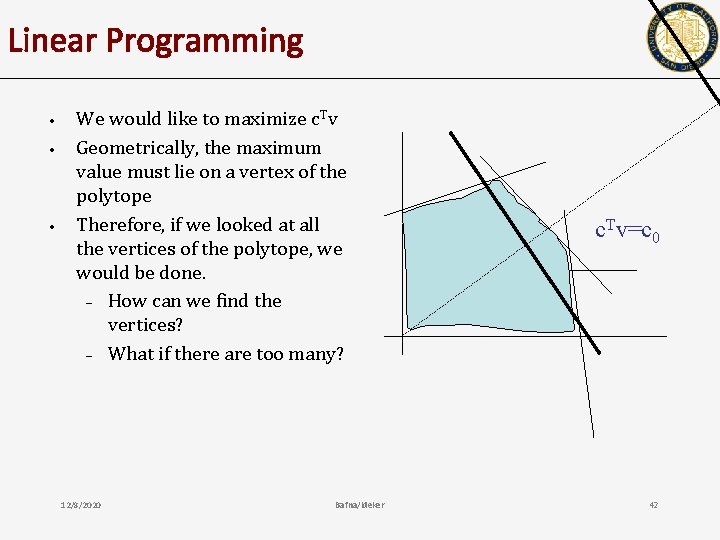

Linear Programming
- We would like to maximize $c^T v$.
- Geometrically, if a finite maximum exists, it is attained at a vertex of the polytope.
- Therefore, if we looked at all the vertices of the polytope, we would be done.
  - How can we find the vertices?
  - What if there are too many?
- (Figure: the level set $c^T v = c_0$ of the objective.)

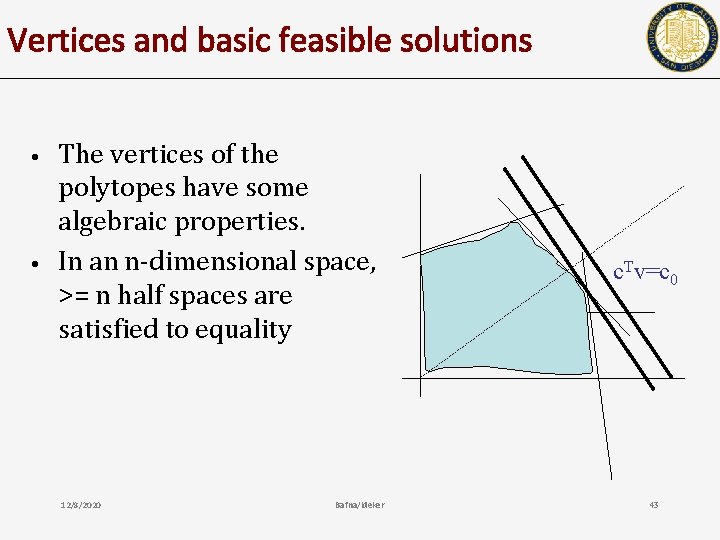

Vertices and basic feasible solutions
- The vertices of the polytope have some algebraic properties.
- In an $n$-dimensional space, at least $n$ of the half-space constraints are satisfied with equality at a vertex.

Vertex?
- In an $m$-dimensional space, a vertex is an intersection of the boundaries of $m$ half-spaces (all linearly independent): a basic (basis) solution.
- However, not all basis solutions lie within the polytope (they are not all feasible).
- If we find a basic feasible solution, a vertex of the polytope, we can move to a neighboring vertex.
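
For a two-variable problem, the vertex view can be tried out by brute force: intersect every pair of constraint boundaries, discard the infeasible intersections, and evaluate the objective at the rest. This is a sketch with hypothetical constraints and invented function names. It is not how a real solver works, since the number of candidate intersections grows quickly with the problem size.

```python
from itertools import combinations

def intersect(a1, b1, a2, b2):
    """Solve the 2x2 system a1.v = b1, a2.v = b2 by Cramer's rule (None if parallel)."""
    det = a1[0] * a2[1] - a1[1] * a2[0]
    if abs(det) < 1e-12:
        return None
    return ((b1 * a2[1] - a1[1] * b2) / det, (a1[0] * b2 - b1 * a2[0]) / det)

def vertices(A, b, tol=1e-9):
    """Basic solutions (pairwise intersections) that satisfy every inequality A v <= b."""
    found = []
    for i, j in combinations(range(len(A)), 2):
        v = intersect(A[i], b[i], A[j], b[j])
        if v is not None and all(r[0] * v[0] + r[1] * v[1] <= bi + tol
                                 for r, bi in zip(A, b)):
            found.append(v)
    return found

def best_vertex(A, b, c):
    """The vertex that maximizes the objective c^T v."""
    return max(vertices(A, b), key=lambda v: c[0] * v[0] + c[1] * v[1])

# Hypothetical LP: maximize 2*x + y subject to
# x + y <= 4, x <= 3, y <= 3, x >= 0, y >= 0 (the last two written as -x <= 0, -y <= 0).
A = [[1, 1], [1, 0], [0, 1], [-1, 0], [0, -1]]
b = [4, 3, 3, 0, 0]
feasible = vertices(A, b)
best = best_vertex(A, b, [2, 1])
print(len(feasible), best)
```

In this example the ten pairs of boundaries give eight intersection points, of which only five are feasible; the other three are basic solutions that fall outside the polytope.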

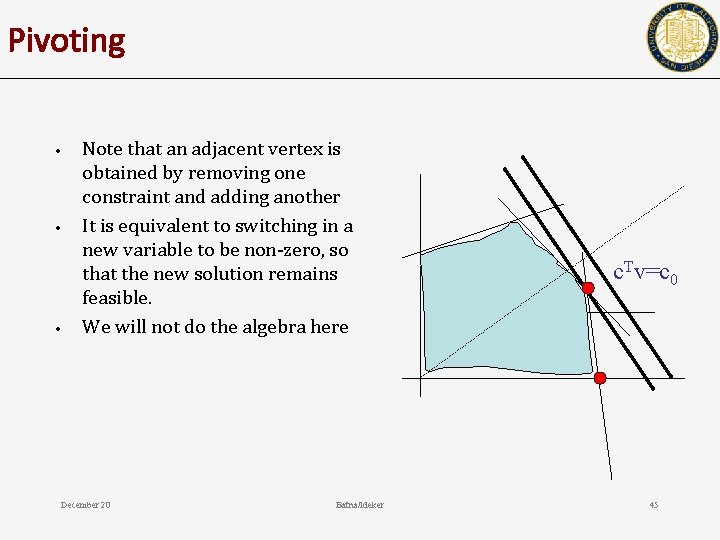

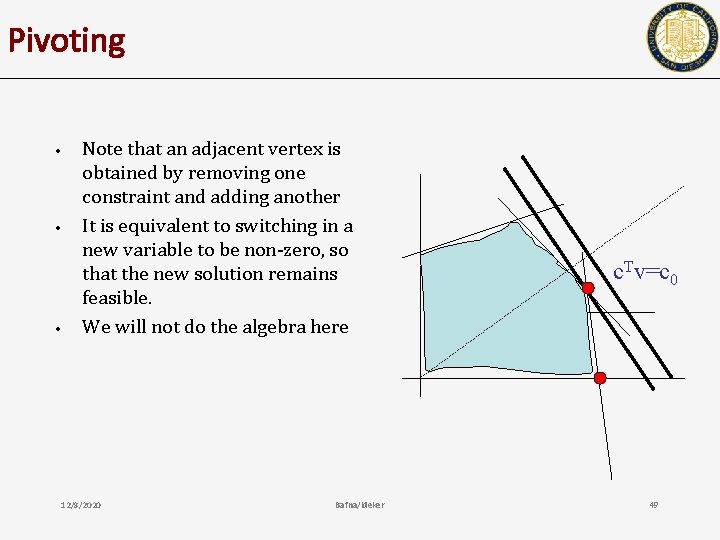

Pivoting
- Note that an adjacent vertex is obtained by removing one constraint and adding another.
- It is equivalent to switching in a new variable to be non-zero, so that the new solution remains feasible.
- We will not do the algebra here.

Reiterating
- Identify an initial Basic Feasible Solution (a vertex of the polytope).
- Compute the objective function.
- Move to a neighboring vertex on the polytope by pivoting (not covered).
- By choosing to pivot to a vertex that improves the objective, we eventually converge on the optimum.

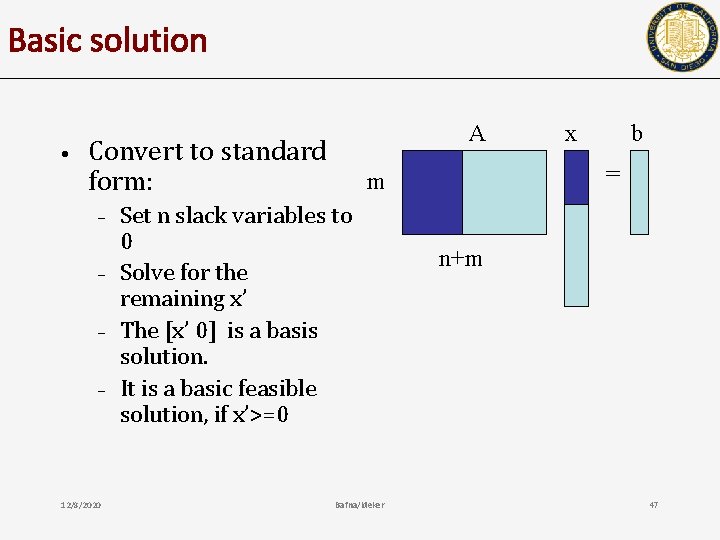

Basic solution
- Convert to standard form: $Ax = b$, where $A$ has $m$ rows and $n+m$ columns.
  - Set $n$ of the $n+m$ variables to 0
  - Solve for the remaining $m$ variables $x'$
- Then $[x'\ 0]$ is a basis solution. It is a basic feasible solution if $x' \ge 0$.

Basic Feasible solution
- It is easy to compute a basic (basis) solution $x$ s.t. $Ax = b$.
- However, the basis solution might not be feasible. How can we compute a basic feasible solution (BFS)?
- Assuming that we can find a vertex (BFS), how do we move to an adjacent vertex?

Pivoting
- Note that an adjacent vertex is obtained by removing one constraint and adding another.
- It is equivalent to switching in a new variable to be non-zero, so that the new solution remains feasible.
- We will not do the algebra here.

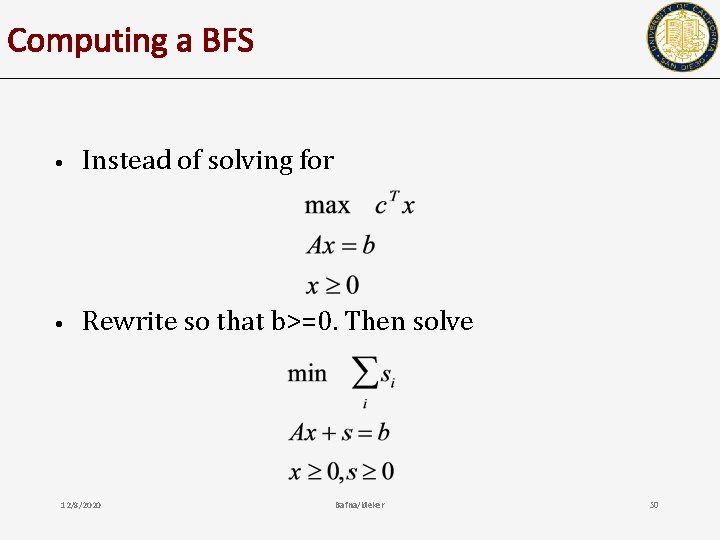

Computing a BFS
- Instead of solving for
- Rewrite so that $b \ge 0$. Then solve

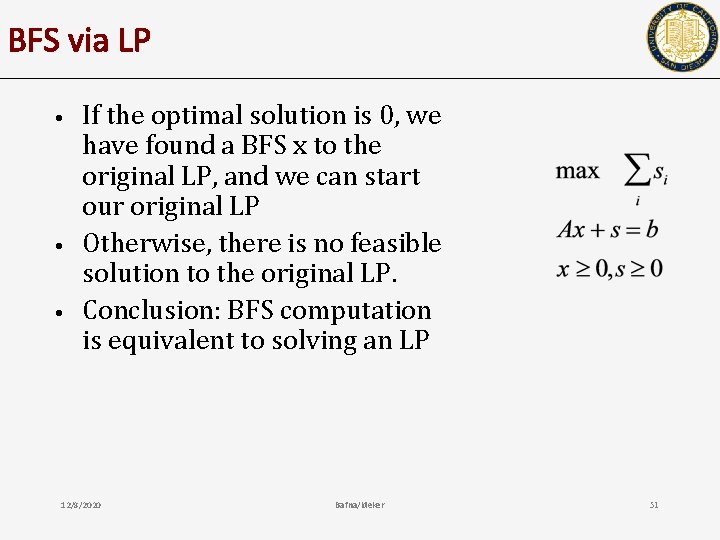

BFS via LP
- If the optimal value is 0, we have found a BFS $x$ to the original LP, and we can start our original LP.
- Otherwise, there is no feasible solution to the original LP.
- Conclusion: BFS computation is equivalent to solving an LP.
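
The auxiliary problem itself is not in the extracted text. A common way to set it up, given here as an assumption rather than the slide's exact formulation, is the "phase one" LP below, where $y$ is a vector of artificial variables (a name introduced here).

```latex
% Original constraints in standard form, with rows negated where needed so that b >= 0:
%   A x = b,  x >= 0
% Auxiliary LP with one artificial variable y_i per row:
\begin{aligned}
\min_{x,\,y}\quad & \sum_{i=1}^{m} y_i \\
\text{s.t.}\quad  & A x + y = b, \\
                  & x \ge 0,\ \ y \ge 0 .
\end{aligned}
% (x, y) = (0, b) is a basic feasible solution of the auxiliary LP because b >= 0.
% The optimal value is 0 exactly when y = 0, i.e. when x satisfies A x = b, x >= 0.
```

The auxiliary LP always has an obvious starting vertex, so the same vertex-to-vertex search can be used to find a starting vertex for the original problem.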

Reiterating
- Identify an initial Basic Feasible Solution (a vertex of the polytope).
- Compute the objective function.
- Move to a neighboring vertex on the polytope by pivoting (not covered).
- By choosing to pivot to a vertex that improves the objective, we eventually converge on the optimum.

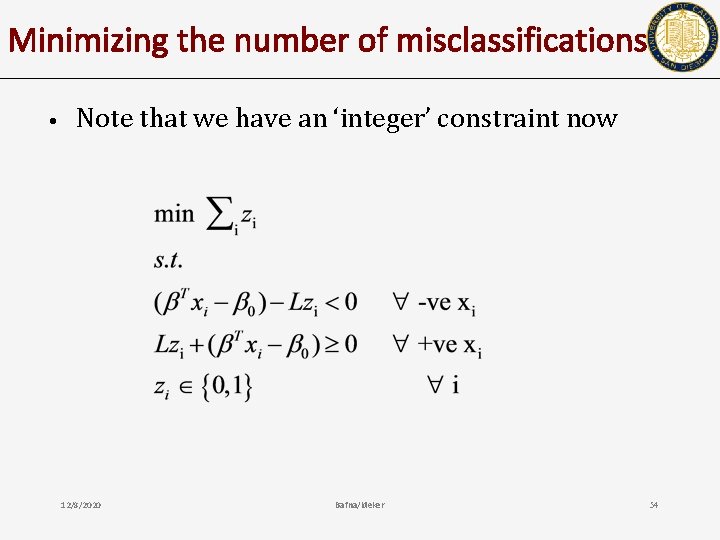

Working with ‘different’ objectives
- Suppose our goal was to minimize the number of misclassified points.
- Create indicator variables $z_i$ for each point $i$.
  - $z_i = 1$ if and only if point $i$ is misclassified; otherwise, set $z_i = 0$.
- How will you rewrite the LP?

Minimizing the number of misclassifications
- Note that we have an ‘integer’ constraint now.

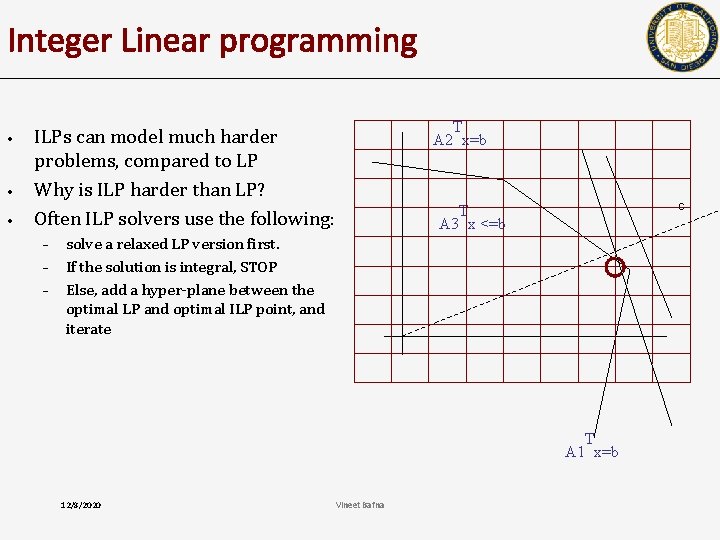

Integer Linear programming
- ILPs can model much harder problems, compared to LP.
- Why is ILP harder than LP?
- Often ILP solvers use the following:
  - Solve a relaxed LP version first.
  - If the solution is integral, STOP.
  - Else, add a hyperplane (a cutting plane) that separates the optimal LP point from the optimal ILP point, and iterate.

Summary of concepts
- Basic linear algebra (projections, inner, outer product)
- Concept of hyperplane, hyperplane separation, distance from hyperplane
- Computing a separating hyperplane
- Fisher’s LDA
- ML based separation
- LP to quantify the error
  - Geometric viewpoint of LP
  - Polytopes, vertices, ‘proof’ of optimality at a vertex
  - Computing vertices, and pivoting

Next
- How do we choose between several good solutions? Maximum margin hyperplanes.

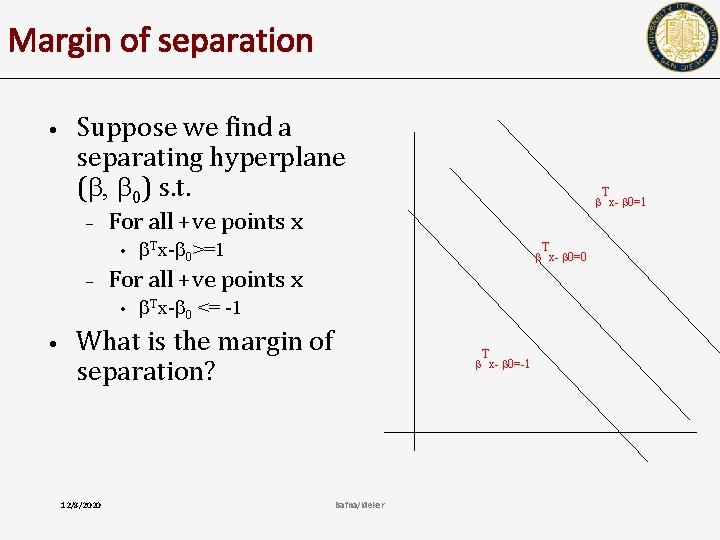

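Margin of separation
- Suppose we find a separating hyperplane $(\beta, \beta_0)$ s.t.
  - For all +ve points $x$: $\beta^T x - \beta_0 \ge 1$
  - For all -ve points $x$: $\beta^T x - \beta_0 \le -1$
- What is the margin of separation?
- (Figure: the parallel hyperplanes $\beta^T x - \beta_0 = 1$, $\beta^T x - \beta_0 = 0$, and $\beta^T x - \beta_0 = -1$.)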
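
One way to answer the question, writing $\beta$ for the normal vector and $\beta_0$ for the offset (symbols assumed here): take one point on each of the two outer hyperplanes and measure their separation along the unit normal.

```latex
% x_+ lies on \beta^T x - \beta_0 = 1 and x_- lies on \beta^T x - \beta_0 = -1.
\begin{aligned}
\beta^T x_+ - \beta_0 &= 1, \qquad \beta^T x_- - \beta_0 = -1 \\
\beta^T (x_+ - x_-) &= 2 \\
\text{margin} &= \frac{\beta^T (x_+ - x_-)}{\|\beta\|_2} = \frac{2}{\|\beta\|_2}
\end{aligned}
```

A wider margin therefore corresponds to a smaller $\|\beta\|_2$, with the two constraints held fixed.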

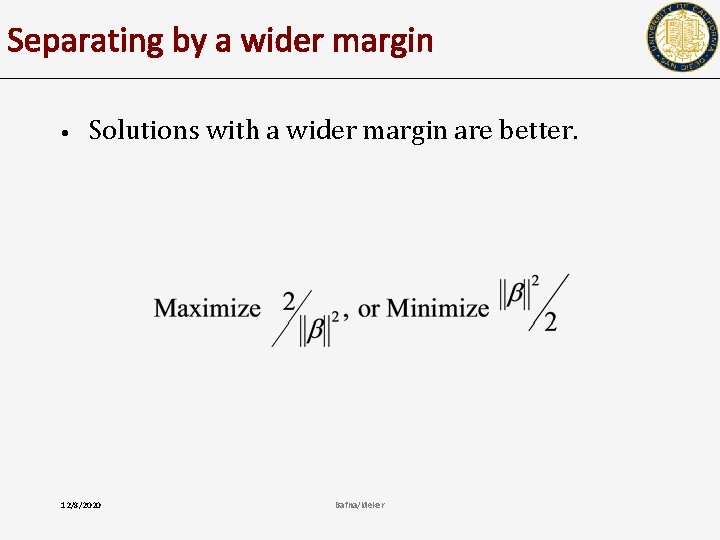

Separating by a wider margin
- Solutions with a wider margin are better.

- Slides: 58