Theory of Distributed Systems TDS Group Leaders Nancy

Theory of Distributed Systems (TDS) Group Leaders: Nancy Lynch, Idit Keidar Ph. D students: Victor Luchangco, Josh Tauber, Roger Khazan, Carl Livadas, Rui Fan Meng and UROP students: Laura Dean, Andrej Bogdanov, Michael Tsai, Chris Luhrs, Affiliates: Alex Shvartsman (U. Conn. ), Alan Fekete (U. Sydney), Paul Attie (Northeastern), Ziv Bar-Joseph 1

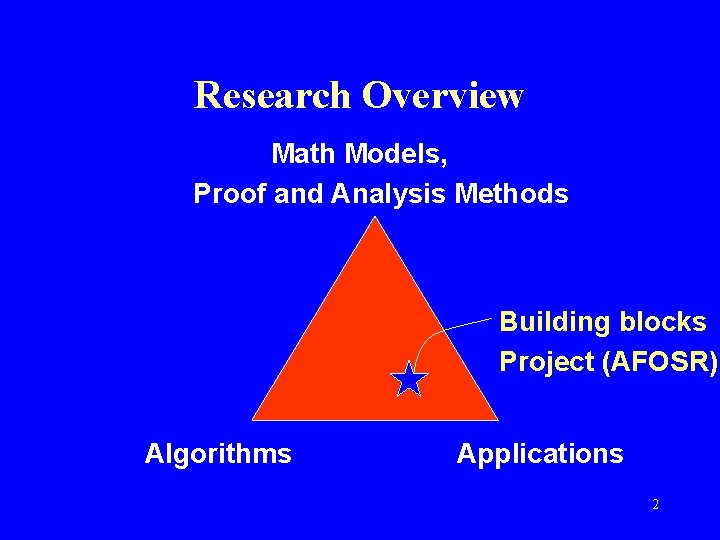

Research Overview Math Models, Proof and Analysis Methods Building blocks Project (AFOSR) Algorithms Applications 2

Math Models, Proof and Analysis Methods • I/O Automata – Mathematical model for asynchronously interacting system components. • Timed I/O Automata, Hybrid I/O Automata [Lynch, Segala, Vaandrager], Probabilistic [Segala], Dynamic [Lynch, Attie] • Invariant assertions • Levels of abstraction (simulation relations) • Compositional reasoning • Conditional performance analysis • Object inheritance [Keidar, Khazan, Shvartsman] 3

![IOA Language and Toolset • • • Language design [Garland, Lynch] Front end [Garland] IOA Language and Toolset • • • Language design [Garland, Lynch] Front end [Garland]](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-4.jpg)

IOA Language and Toolset • • • Language design [Garland, Lynch] Front end [Garland] Simulator [Chefter, Ramirez, Dean] Code generator [Tauber, Tsai] Theorem prover [Garland, Fan, Bogdanov] Test examples [Luhrs, Dean, Rosen, Karlovich] 4

![Algorithms • Communication [Keidar, Khazan, Livadas, Bar-Joseph, Lynch] • Distributed data management [Keidar, Shvartsman, Algorithms • Communication [Keidar, Khazan, Livadas, Bar-Joseph, Lynch] • Distributed data management [Keidar, Shvartsman,](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-5.jpg)

Algorithms • Communication [Keidar, Khazan, Livadas, Bar-Joseph, Lynch] • Distributed data management [Keidar, Shvartsman, De Prisco, Lynch] • Consensus [Bar-Joseph, Keidar] • Load balancing [Shvartsman, Khazan, Fekete] • Lower bounds, impossibility results • Distributed algorithms => Distributed systems – Generating code from models [Tauber, Tsai] 5

![Applications • Multiprocessor memories [Luchangco] • Hybrid systems – Automated transportation: Planes (TCAS), Trains Applications • Multiprocessor memories [Luchangco] • Hybrid systems – Automated transportation: Planes (TCAS), Trains](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-6.jpg)

Applications • Multiprocessor memories [Luchangco] • Hybrid systems – Automated transportation: Planes (TCAS), Trains (Raytheon PRT), and Automobiles (California PATH) [Livadas] – Mobile computing • Middleware (building blocks) for fault-tolerant distributed computing [Keidar, Shvartsman, Fekete, De Prisco, Khazan] • Communication [Livadas, Bar-Joseph] • Object-oriented programming [Fekete, Lynch] • Agents [Attie, Luchangco, Lynch, Keidar] 6

7

Reliable Group Communication: a Mathematical Approach Nancy Lynch Theory of Distributed Systems MIT LCS AFOSR Review November 15, 2000 … GC 8

Dynamic Distributed Systems • Modern distributed systems are dynamic. • Set of clients participating in an application changes, because of: – Network, processor failure, recovery – Changing client requirements • To cope with changes: ? ? ? ? – Use abstract groups of client processes with changing membership sets. – Processes communicate with group members by sending messages to the group as a whole. 9

Group Communication Services • • … Support management of groups GC Maintain membership info Manage communication Make guarantees about ordering, reliability of message delivery, e. g. : – Best-effort: IP Multicast – Strong consistency guarantees: Isis, Transis, Ensemble • Hide complexity of coping with changes 10

This Talk • Describe – Group communication systems – A mathematical approach to designing, modeling, analyzing GC systems. – Our accomplishments and ideas for future work. • Collaborators: Idit Keidar, Alan Fekete, Alex Shvartsman, Roger Khazan, Roberto De Prisco, Jason Hickey, Robert van Renesse, Carl Livadas, Ziv Bar. Joseph, Kyle Ingols, Igor Tarashchanskiy 11

Talk Outline I. Background: Group Communication II. Our Approach III. Projects and Results 1. View Synchrony 2. Ensemble 3. Dynamic Views 4. Scalable Group Communication IV. Conclusions 12

I. Background: Group Communication 13

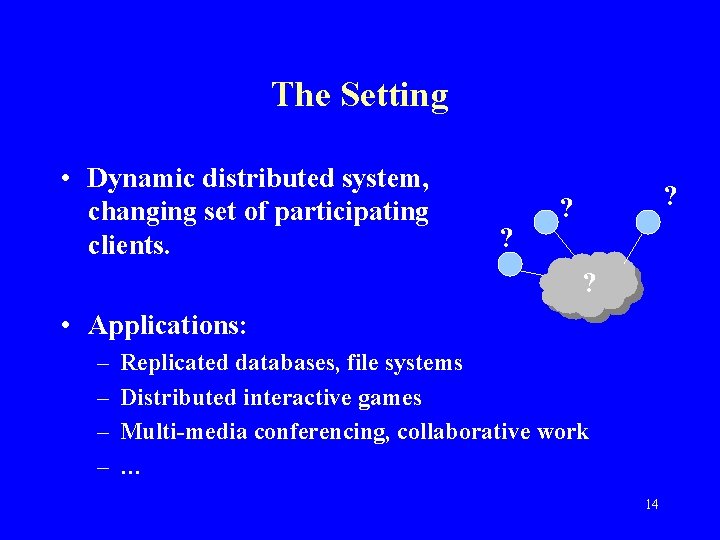

The Setting • Dynamic distributed system, changing set of participating clients. ? ? ? ? • Applications: – – Replicated databases, file systems Distributed interactive games Multi-media conferencing, collaborative work … 14

Groups • Abstract, named groups of client processes, changing membership. • Client processes send messages to the group (multicast). • Early 80 s: Group idea used in replicated data management system designs • Late 80 s: Separate group communication services. 15

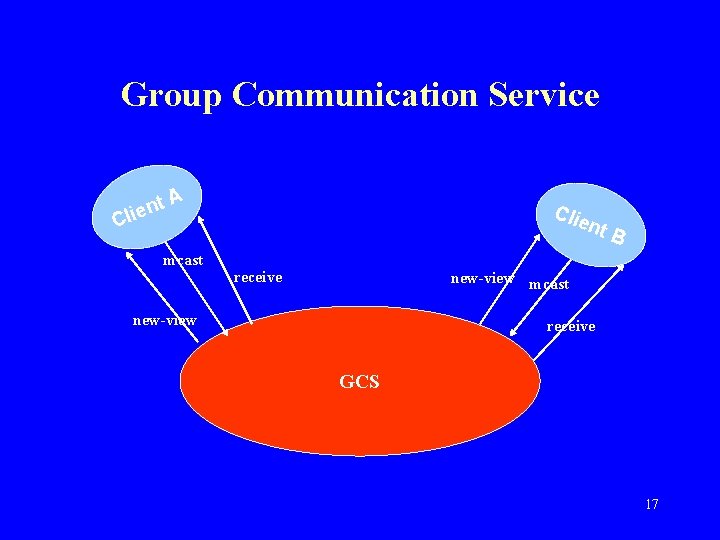

Group Communication Service • Communication middleware • Manages group membership, current views View = membership set + identifier B • Manages multicast communication A among group members … GC – Multicasts respect views – Guarantees within each view: • Reliability constraints • Ordering constraints, e. g. , FIFO from each sender, causal, common total order • Global service 16

Group Communication Service nt e i l C A mcast Clie receive nt B new-view mcast new-view receive GCS 17

![Isis [Birman, Joseph 87] • Primary component group membership • Several reliable multicast services, Isis [Birman, Joseph 87] • Primary component group membership • Several reliable multicast services,](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-18.jpg)

Isis [Birman, Joseph 87] • Primary component group membership • Several reliable multicast services, different ordering guarantees, e. g. : – Atomic Broadcast: Common total order, no gaps – Causal Broadcast: A B • When partition is repaired, primary processes send state information to rejoining processes. • Virtually Synchronous message delivery 18

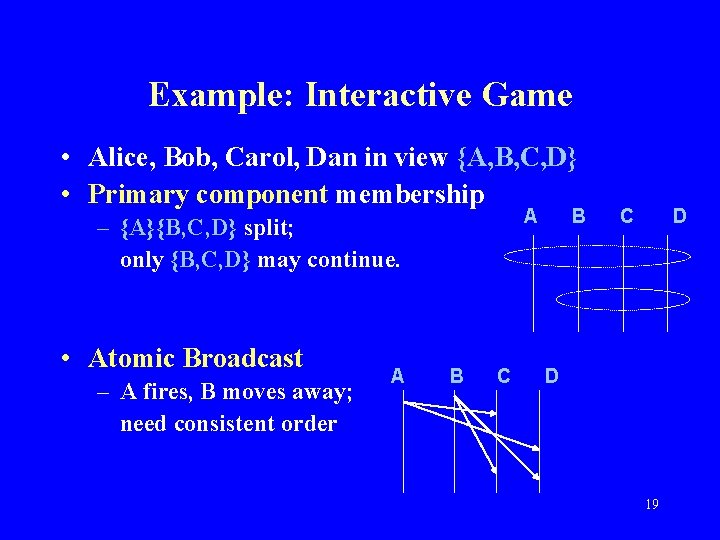

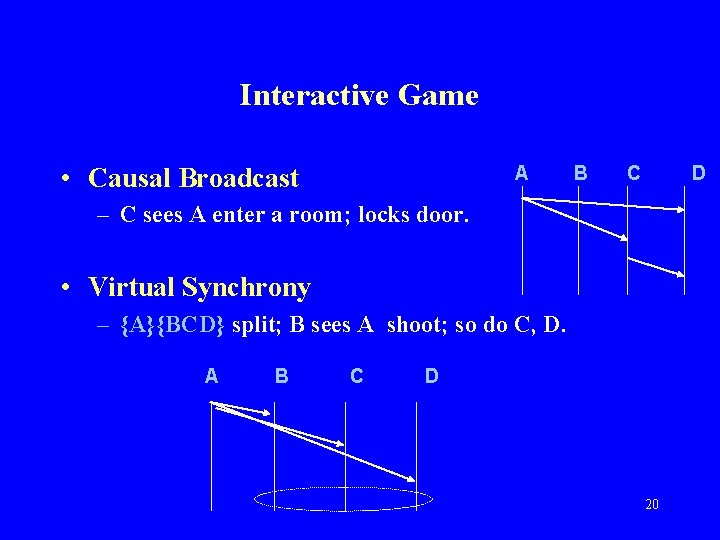

Example: Interactive Game • Alice, Bob, Carol, Dan in view {A, B, C, D} • Primary component membership A – {A}{B, C, D} split; only {B, C, D} may continue. • Atomic Broadcast – A fires, B moves away; need consistent order A B C D D 19

Interactive Game • Causal Broadcast A B C D – C sees A enter a room; locks door. • Virtual Synchrony – {A}{BCD} split; B sees A shoot; so do C, D. A B C D 20

![Applications • Replicated data management – State machine replication [Lamport 78] , [Schneider 90] Applications • Replicated data management – State machine replication [Lamport 78] , [Schneider 90]](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-21.jpg)

Applications • Replicated data management – State machine replication [Lamport 78] , [Schneider 90] – Atomic Broadcast provides support – Same sequence of actions performed everywhere. – Example: Interactive game state machine • Stock market • Air-traffic control 21

![Transis [Amir, Dolev, Kramer, Malkhi 92] • Partitionable group membership • When components merge, Transis [Amir, Dolev, Kramer, Malkhi 92] • Partitionable group membership • When components merge,](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-22.jpg)

Transis [Amir, Dolev, Kramer, Malkhi 92] • Partitionable group membership • When components merge, processes exchange state information. • Virtual synchrony reduces amount of data exchanged. • Applications – – – Highly available servers Collaborative computing, e. g. shared whiteboard Video, audio conferences Distributed jam sessions Replicated data management [Keidar , Dolev 96] 22

![Other Systems • Totem [Amir, Melliar-Smith, Moser, et al. , 95] – Transitional views, Other Systems • Totem [Amir, Melliar-Smith, Moser, et al. , 95] – Transitional views,](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-23.jpg)

Other Systems • Totem [Amir, Melliar-Smith, Moser, et al. , 95] – Transitional views, useful with virtual synchrony • Horus [Birman, van Renesse, Maffeis 96] • Ensemble [Birman, Hayden 97] – Layered architecture – Composable building blocks • Phoenix, Consul, RMP, Newtop, RELACS, … • Partitionable 23

Service Specifications • Precise specifications needed for GC services – Help application programmers write programs that use the services correctly, effectively – Help system maintainers make changes correctly – Safety, performance, fault-tolerance • But difficult: – Many different services; different guarantees about membership, reliability, ordering – Complicated – Specs based on implementations might not be optimal for application programmers. 24

![Early Work on GC Service Specs • • • [Ricciardi 92] [Jahanian, Fakhouri, Rajkumar Early Work on GC Service Specs • • • [Ricciardi 92] [Jahanian, Fakhouri, Rajkumar](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-25.jpg)

Early Work on GC Service Specs • • • [Ricciardi 92] [Jahanian, Fakhouri, Rajkumar 93] [Moser, Amir, Melliar-Smith, Agrawal 94] [Babaoglu et al. 95, 98] [Friedman, van Renesse 95] [Hiltunin, Schlichting 95] [Dolev, Malkhi, Strong 96] [Cristian 96] [Neiger 96] • Impossibility results [Chandra, Hadzilacos, et al. 96] • But still difficult… 25

II. Our Approach 26

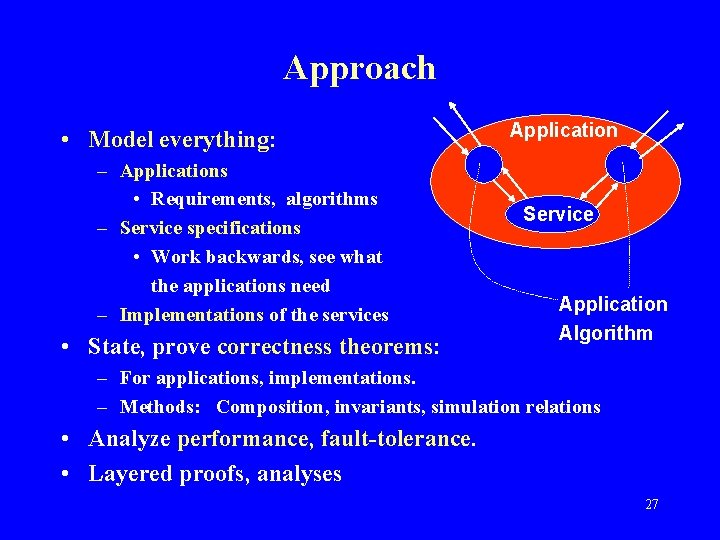

Approach • Model everything: – Applications • Requirements, algorithms – Service specifications • Work backwards, see what the applications need – Implementations of the services • State, prove correctness theorems: Application Service Application Algorithm – For applications, implementations. – Methods: Composition, invariants, simulation relations • Analyze performance, fault-tolerance. • Layered proofs, analyses 27

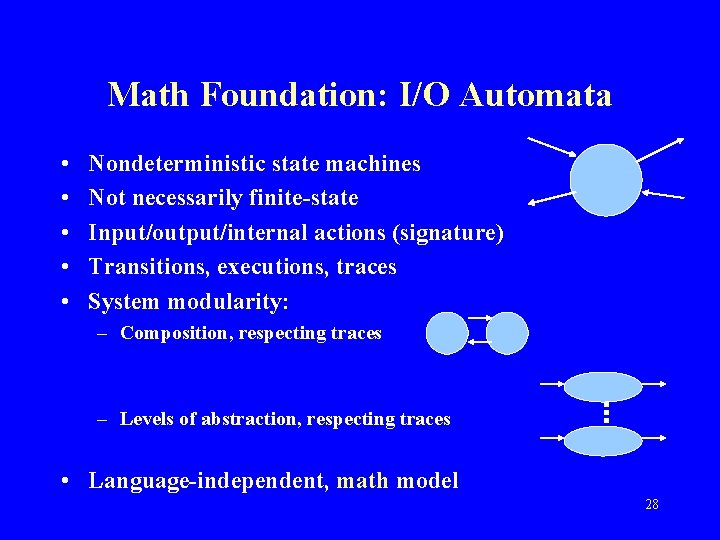

Math Foundation: I/O Automata • • • Nondeterministic state machines Not necessarily finite-state Input/output/internal actions (signature) Transitions, executions, traces System modularity: – Composition, respecting traces – Levels of abstraction, respecting traces • Language-independent, math model 28

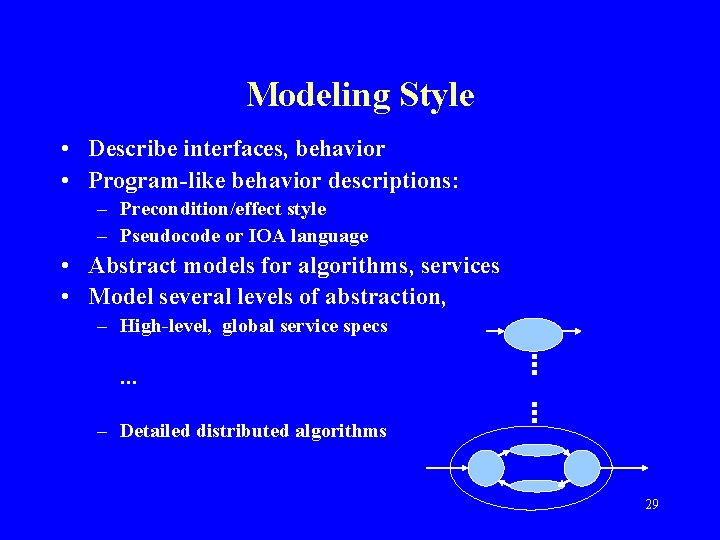

Modeling Style • Describe interfaces, behavior • Program-like behavior descriptions: – Precondition/effect style – Pseudocode or IOA language • Abstract models for algorithms, services • Model several levels of abstraction, – High-level, global service specs … – Detailed distributed algorithms 29

Modeling Style • Very nondeterministic: – Constrain only what must be constrained. – Simpler – Allows alternative implementations 30

![Describing Timing Features • TIOAs [Lynch, Vaandrager 93] – For describing: • Timeout-based algorithms. Describing Timing Features • TIOAs [Lynch, Vaandrager 93] – For describing: • Timeout-based algorithms.](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-31.jpg)

Describing Timing Features • TIOAs [Lynch, Vaandrager 93] – For describing: • Timeout-based algorithms. • Clocks, clock synchronization • Performance properties 31

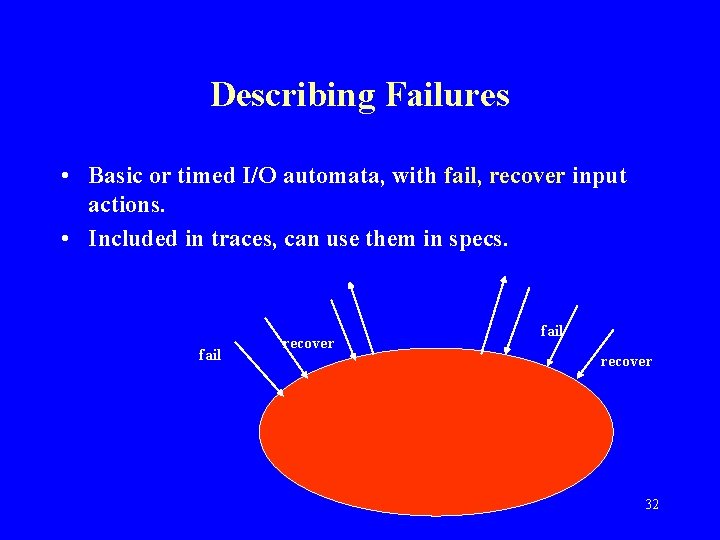

Describing Failures • Basic or timed I/O automata, with fail, recover input actions. • Included in traces, can use them in specs. fail recover 32

Using I/O Automata for Group Communication Systems • Use for global services + distributed algorithms • Define safety properties separately from performance/fault-tolerance properties. – Safety: • Basic I/O automata; trace properties – Performance/fault-tolerance: • Timed I/O automata with failure actions; timed trace properties 33

III. Projects and Results 34

Projects 1. View Synchrony 2. Ensemble 3. Dynamic Views 4. Scalable Group Communication 35

![1. View Synchrony (VS) [Fekete, Lynch, Shvartsman 97, 00] Goals: • Develop prototypes: – 1. View Synchrony (VS) [Fekete, Lynch, Shvartsman 97, 00] Goals: • Develop prototypes: –](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-36.jpg)

1. View Synchrony (VS) [Fekete, Lynch, Shvartsman 97, 00] Goals: • Develop prototypes: – – Specifications for typical GC services Descriptions for typical GC algorithms Correctness proofs Performance analyses • Design simple math foundation for the area. • Try out, evaluate our approach. 36

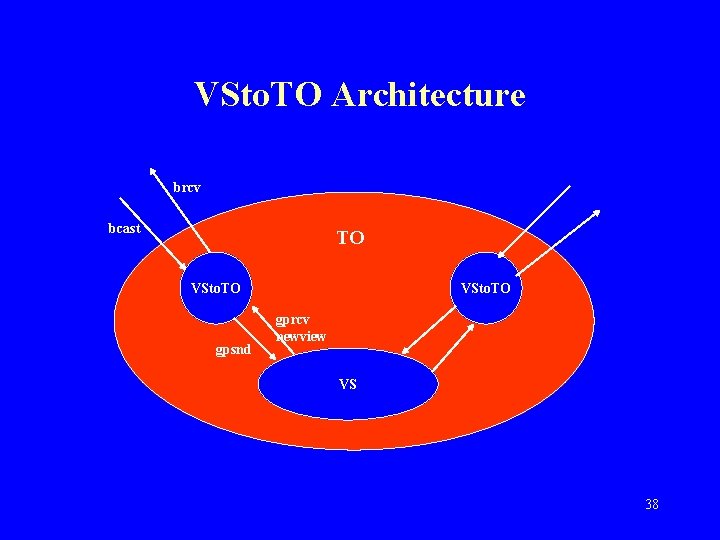

View Synchrony What we did: • Talked with system developers (Isis, Transis) • Defined I/O automaton models for: – VS, prototype partitionable GC service – TO, non-view-oriented totally ordered bcast service – VSto. TO, application algorithm based on [Amir, Dolev, Keidar, Melliar-Smith, Moser] • Proved correctness • Analyzed performance/ fault-tolerance. 37

VSto. TO Architecture brcv bcast TO VSto. TO gpsnd VSto. TO gprcv newview VS 38

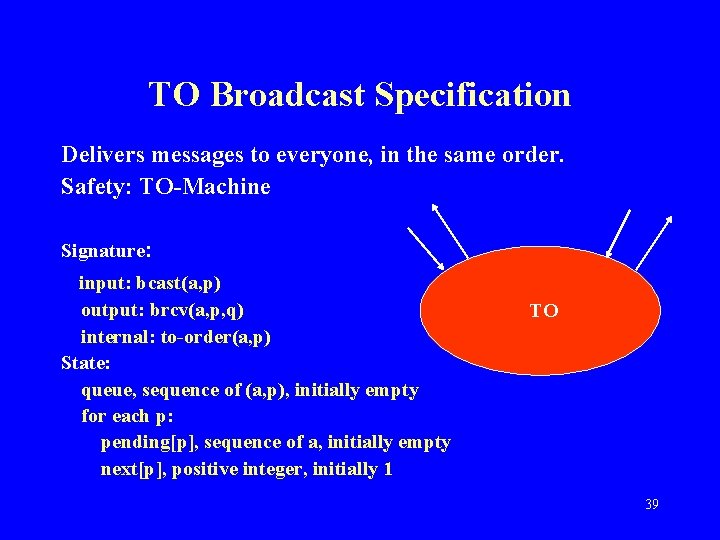

TO Broadcast Specification Delivers messages to everyone, in the same order. Safety: TO-Machine Signature: input: bcast(a, p) output: brcv(a, p, q) internal: to-order(a, p) State: queue, sequence of (a, p), initially empty for each p: pending[p], sequence of a, initially empty next[p], positive integer, initially 1 TO 39

![TO-Machine Transitions: bcast(a, p) Effect: append a to pending[p] to-order(a, p) Precondition: a is TO-Machine Transitions: bcast(a, p) Effect: append a to pending[p] to-order(a, p) Precondition: a is](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-40.jpg)

TO-Machine Transitions: bcast(a, p) Effect: append a to pending[p] to-order(a, p) Precondition: a is head of pending[p] Effect: remove head of pending[p] append (a, p) to queue brcv(a, p, q) Precondition: queue[next[q]] = (a, p) Effect: next[q] : = next[q] + 1 40

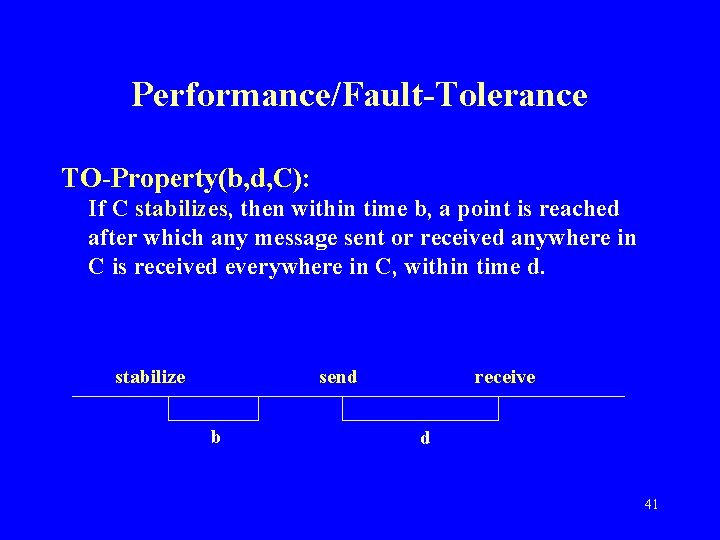

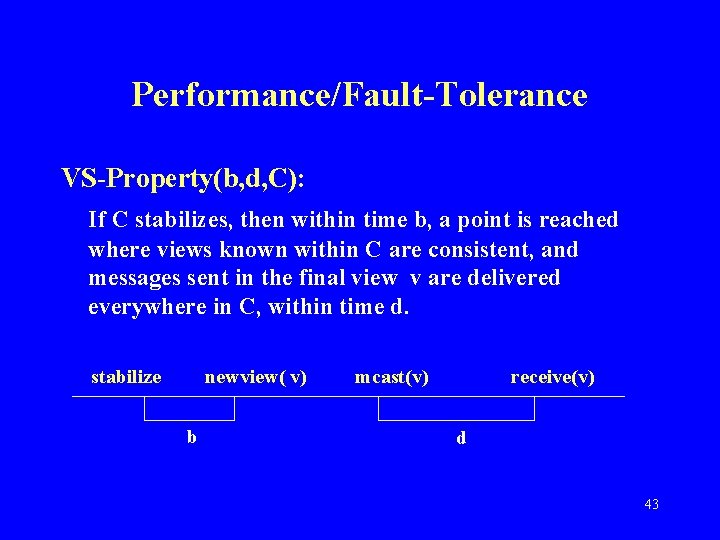

Performance/Fault-Tolerance TO-Property(b, d, C): If C stabilizes, then within time b, a point is reached after which any message sent or received anywhere in C is received everywhere in C, within time d. stabilize send b receive d 41

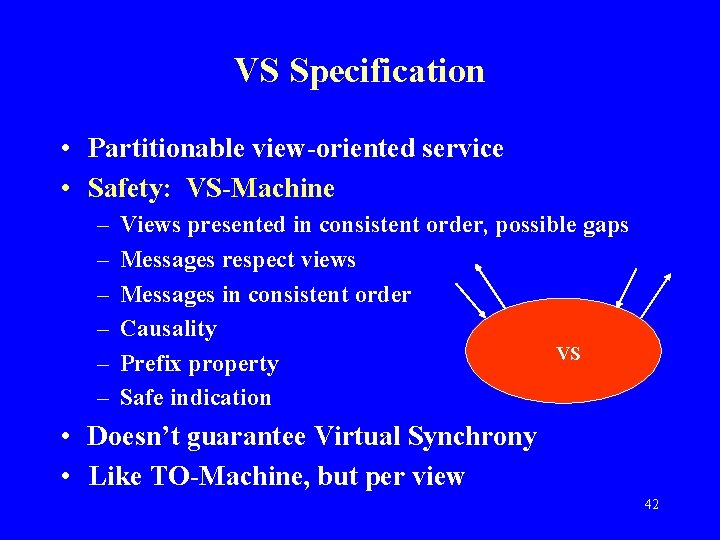

VS Specification • Partitionable view-oriented service • Safety: VS-Machine – – – Views presented in consistent order, possible gaps Messages respect views Messages in consistent order Causality VS Prefix property Safe indication • Doesn’t guarantee Virtual Synchrony • Like TO-Machine, but per view 42

Performance/Fault-Tolerance VS-Property(b, d, C): If C stabilizes, then within time b, a point is reached where views known within C are consistent, and messages sent in the final view v are delivered everywhere in C, within time d. stabilize newview( v) b mcast(v) receive(v) d 43

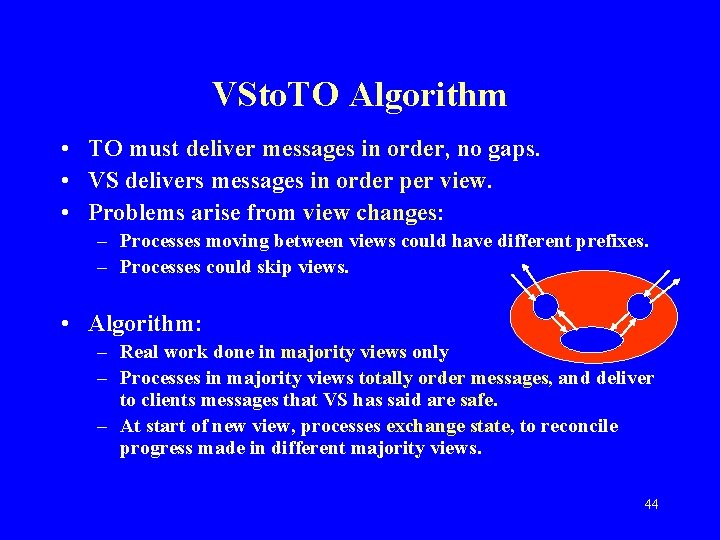

VSto. TO Algorithm • TO must deliver messages in order, no gaps. • VS delivers messages in order per view. • Problems arise from view changes: – Processes moving between views could have different prefixes. – Processes could skip views. • Algorithm: – Real work done in majority views only – Processes in majority views totally order messages, and deliver to clients messages that VS has said are safe. – At start of new view, processes exchange state, to reconcile progress made in different majority views. 44

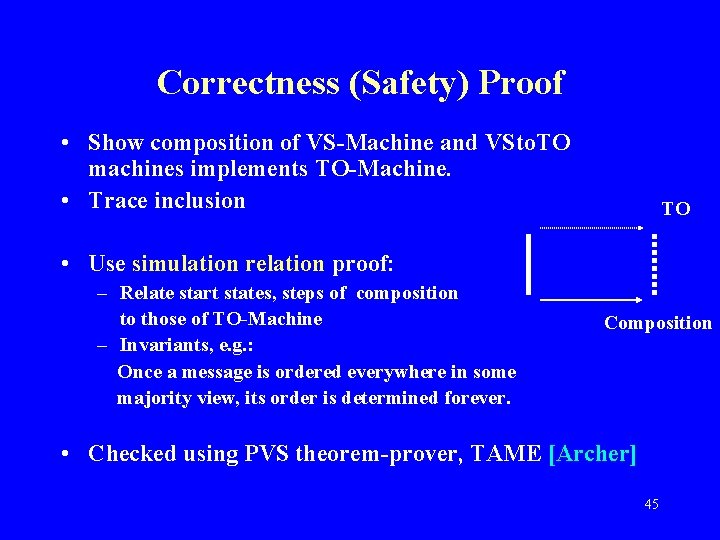

Correctness (Safety) Proof • Show composition of VS-Machine and VSto. TO machines implements TO-Machine. • Trace inclusion TO • Use simulation relation proof: – Relate start states, steps of composition to those of TO-Machine – Invariants, e. g. : Once a message is ordered everywhere in some majority view, its order is determined forever. Composition • Checked using PVS theorem-prover, TAME [Archer] 45

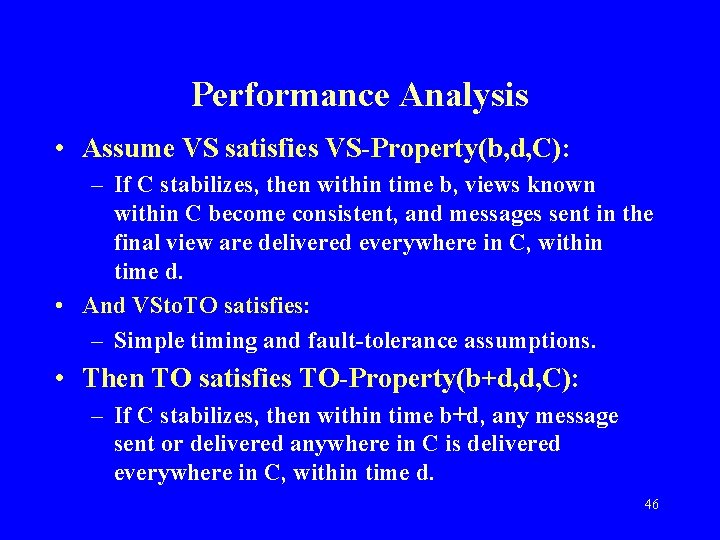

Performance Analysis • Assume VS satisfies VS-Property(b, d, C): – If C stabilizes, then within time b, views known within C become consistent, and messages sent in the final view are delivered everywhere in C, within time d. • And VSto. TO satisfies: – Simple timing and fault-tolerance assumptions. • Then TO satisfies TO-Property(b+d, d, C): – If C stabilizes, then within time b+d, any message sent or delivered anywhere in C is delivered everywhere in C, within time d. 46

Conclusions: VS • • • Models for VS, TO, VSto. TO Proofs, performance analysis Tractable, understandable, modular [PODC 97], [TOCS 00] Follow-on work: – Algorithm for VS [Fekete, Lesley] – Load balancing using VS [Khazan] – Models for other Transis algorithms [Chockler] • But: VS is only a prototype; lacks some key features, like Virtual Synchrony • Next: Try a real system! 47

![2. Ensemble [Hickey, Lynch, van Renesse 99] Goals: • Try, evaluate our approach on 2. Ensemble [Hickey, Lynch, van Renesse 99] Goals: • Try, evaluate our approach on](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-48.jpg)

2. Ensemble [Hickey, Lynch, van Renesse 99] Goals: • Try, evaluate our approach on a real system • Develop techniques for modeling, verifying, analyzing more features, of GC systems, including Virtual Synchrony • Improve on prior system validation methods 48

![Ensemble • Ensemble system [Birman, Hayden 97] – Virtual Synchrony – Layered design, building Ensemble • Ensemble system [Birman, Hayden 97] – Virtual Synchrony – Layered design, building](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-49.jpg)

Ensemble • Ensemble system [Birman, Hayden 97] – Virtual Synchrony – Layered design, building blocks – Coded in ML [Hayden] • Prior verification work for Ensemble and predecessors: – Proving local properties using Nuprl [Hickey] – [Ricciardi], [Friedman] 49

Ensemble • What we did: – Worked with developers – Followed VS example – Developed global specs for key layers: • Virtual Synchrony • Total Order with Virtual Synchrony – Modeled Ensemble algorithm spanning between layers – Attempted proof; found logical error in state exchange algorithm (repaired) – Developed models, proofs for repaired system 50

Conclusions: Ensemble • • • Models for two layers, algorithm Tractable, easily understandable by developers Error, proofs Low-level models similar to actual ML code (4 to 1) [TACAS 99] Follow-on: – Same error found in Horus. – Incremental models, proofs [Hickey] • Next: Use our approach to design new services. 51

![3. Dynamic Views [De Prisco, Fekete, Lynch, Shvartsman 98] Goals: • Define GC services 3. Dynamic Views [De Prisco, Fekete, Lynch, Shvartsman 98] Goals: • Define GC services](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-52.jpg)

3. Dynamic Views [De Prisco, Fekete, Lynch, Shvartsman 98] Goals: • Define GC services that cope with both: – Long-term changes: • Permanent failures, new joins • Changes in the “universe” of processes – Transient changes • Use these to design consistent total order and consistent replicated data algorithms that tolerate both long-term and transient changes. 52

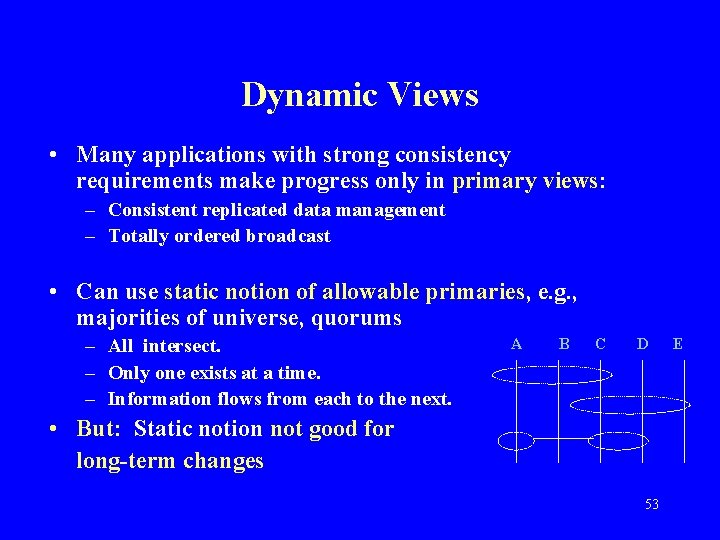

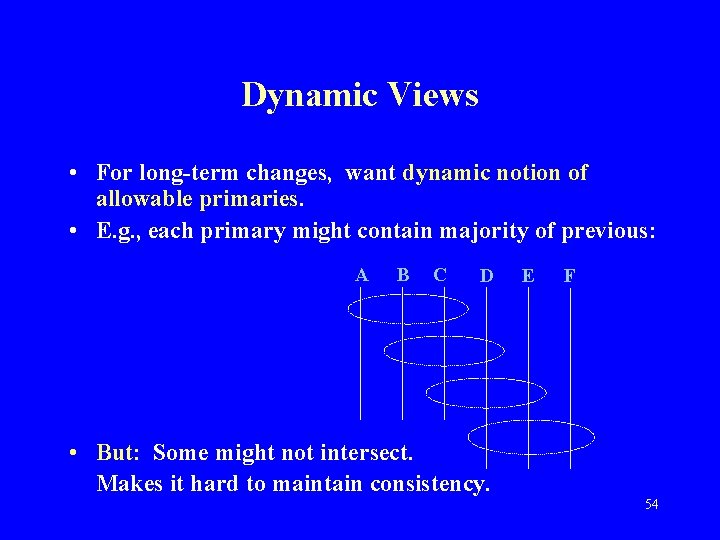

Dynamic Views • Many applications with strong consistency requirements make progress only in primary views: – Consistent replicated data management – Totally ordered broadcast • Can use static notion of allowable primaries, e. g. , majorities of universe, quorums – All intersect. – Only one exists at a time. – Information flows from each to the next. A B C D • But: Static notion not good for long-term changes 53 E

Dynamic Views • For long-term changes, want dynamic notion of allowable primaries. • E. g. , each primary might contain majority of previous: A B C D • But: Some might not intersect. Makes it hard to maintain consistency. E F 54

Dynamic Views • Key problem: – Processes may have different opinions about which is the previous primary – Could even be disjoint. • [Yeger-Lotem, Keidar, Dolev 97] algorithm – Keeps track of all possible previous primaries. – Ensures intersection with all of them. 55

![Dynamic Views What we did: • Defined Dynamic View Service, DVS, based on [YKD] Dynamic Views What we did: • Defined Dynamic View Service, DVS, based on [YKD]](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-56.jpg)

Dynamic Views What we did: • Defined Dynamic View Service, DVS, based on [YKD] • Designed to tolerate long-term failures • Membership: – Views delivered in consistent order, with possible gaps. – Ensures new primary intersects all possible previous primaries. • Communication: Similar to. VS – Messages delivered within views, – Prefix property, safe notifications. 56

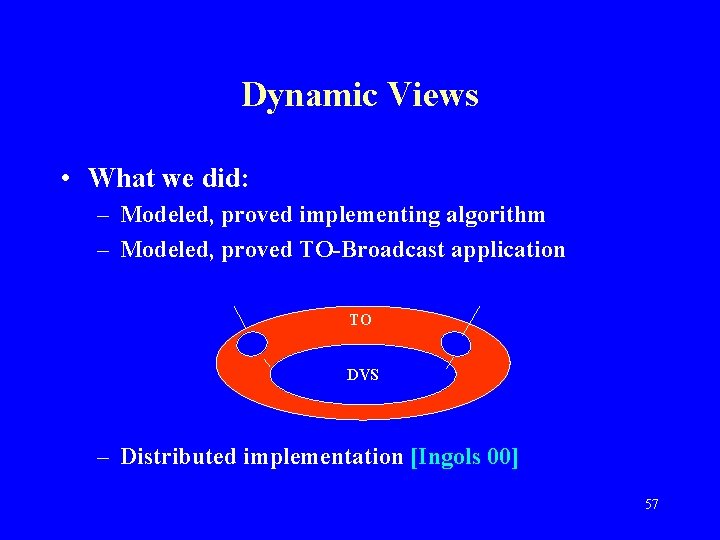

Dynamic Views • What we did: – Modeled, proved implementing algorithm – Modeled, proved TO-Broadcast application TO DVS – Distributed implementation [Ingols 00] 57

Handling Transient Failures: Dynamic Configurations • Configuration = Set of processes plus structure, e. g. , set of quorums, leader, … • Application: Highly available consistent replicated data management: – Paxos [Lamport], uses leader, quorums – [Attiya, Bar-Noy, Dolev], uses read quorums and write quorums • Quorums allow flexibility, availability in the face of transient failures. 58

![Dynamic Configurations [De Prisco, Fekete, Lynch, Shvartsman 99, 00] • Combine ideas/benefits of – Dynamic Configurations [De Prisco, Fekete, Lynch, Shvartsman 99, 00] • Combine ideas/benefits of –](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-59.jpg)

Dynamic Configurations [De Prisco, Fekete, Lynch, Shvartsman 99, 00] • Combine ideas/benefits of – Dynamic views, for long-term failures, and – Static configurations, for transient failures • Idea: – Allow configuration to change (reconfiguration). – Each configuration satisfies intersection properties with previous configuration • Example: – Config = (membership set, read quorums, write quorums) – Membership set of new configuration contains read quorum and write quorum of previous configuration 59

Dynamic Configurations What we did: • Defined dynamic configuration service DCS, guaranteeing intersection properties w. r. t. all possible previous configurations. • Designed implementing algorithm, extending [YKD] • Developed application: Replicated data – Dynamic version of [Paxos] – Dynamic version of [Attiya, Bar. Noy, Dolev] – Tolerate • Transient failures, using quorums • Longer-term failures, using reconfiguration 60

Conclusions: Dynamic Views • New DVS, DCS services for long-term changes in set of processes • Applications, implementations • Decomposed complex algorithms into tractable pieces: – Service specification, implementation, application – Static algorithm vs. reconfiguration • Couldn’t have done it without the formal framework. • [PODC 98], [DISC 99] 61

![4. Scalable Group Communication [Keidar, Khazan 99], [Keidar, Khazan, Lynch, Shvartsman 00] Goal: • 4. Scalable Group Communication [Keidar, Khazan 99], [Keidar, Khazan, Lynch, Shvartsman 00] Goal: •](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-62.jpg)

4. Scalable Group Communication [Keidar, Khazan 99], [Keidar, Khazan, Lynch, Shvartsman 00] Goal: • Make GC work in wide area networks What we did: • Defined desired properties for GC services • Defined spec for scalable group membership service [Keidar, Sussman, Marzullo, Dolev 00], implemented on small set of membership servers 62

Scalable Group Communication What we did: • Developed new, scalable GC algorithms: – Use scalable GM service – Multicast implemented on clients – Efficient: Algorithm for virtual synchrony uses only one round for state exchange, in parallel with membership service’s agreement on views. – Processes can join during reconfiguration. • Distributed implementation [Tarashchanskiy] 63

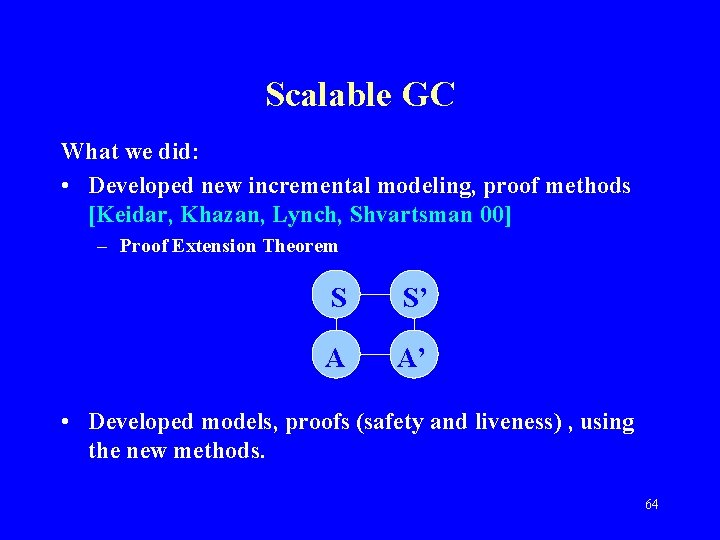

Scalable GC What we did: • Developed new incremental modeling, proof methods [Keidar, Khazan, Lynch, Shvartsman 00] – Proof Extension Theorem S S’ A A’ • Developed models, proofs (safety and liveness) , using the new methods. 64

Conclusions: Scalable GC • • Specs, new algorithms, proofs New incremental proof methods Couldn’t have done it without the formal framework. [ICDCS 99], [ICSE 00] 65

IV. Conclusions 66

![Practical GC Systems: Current Status [Birman 99] • Commercial successes: – Stock exchange (Zurich, Practical GC Systems: Current Status [Birman 99] • Commercial successes: – Stock exchange (Zurich,](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-67.jpg)

Practical GC Systems: Current Status [Birman 99] • Commercial successes: – Stock exchange (Zurich, New York) – Air-traffic control (France) • Problems: – Performance, for strong guarantees like Virtual Synchrony – Not integrated with OO programming technologies. • Trends: – Flexible services – Weaker guarantees; better performance – Integration with OO technologies 67

Summary GC • GC services help in programming dynamic distributed systems, though scalability, integration problems remain. • Our contributions: – Modeling style: Automata + performance properties – Techniques: Conditional performance analysis, incremental modeling/proof – Models, proofs for key services – Discovered errors – New services: Dynamic views, scalable GC • Mathematical framework makes it possible to design more complex systems correctly. 68

Conditional Performance Analysis • Make conditional claims about system behavior, under various assumptions about behavior of environment, network. • Include timing, performance, failures. • Benefits: – Formal performance predictions – Says when system makes specific guarantees • Normal case + failure cases • Parameters, sensitivity analysis – Composable – Get probabilistic claims as corollaries 69

CP Analysis: Typical Hypotheses • • • Stabilization of underlying network. Limited rate of change. Bounds on message delay. Limited amount of failure (number, density). Limit input arrivals (number, density). • Method allows focus on tractable cases. 70

Future Work • Consider more dynamic settings: – – – Join, leave requests Processor failures: Volatile memory, Byzantine behavior Communication failures Changing network topology Mobility • Design, model, analyze more services, applications – Communication, data sharing services – Other services • Lower bounds, impossibility results • Math foundations 71

Math Foundations • Models: – General models for timing behavior, hybrid continuous/discrete behavior, failures, probabilistic behavior, process creation, … – Models for mobility (based on Hybrid I/O Automata) – Combined models • Proof and performance analysis methods: – Measures – Conditional performance analysis – Incremental modeling, proof 72

Communication: View-oriented GC • Scalable GC – – GC Experiments CP analysis Compare predicted, observed performance Applications • Dynamic views, dynamic configurations – Experiments, Analysis, … • Self-stabilization • Lower bounds, impossibility results 73

![Scalable Reliable Multicast [Floyd, Jacobson, et al. 95] • IP Multicast+ retransmission protocol • Scalable Reliable Multicast [Floyd, Jacobson, et al. 95] • IP Multicast+ retransmission protocol •](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-74.jpg)

Scalable Reliable Multicast [Floyd, Jacobson, et al. 95] • IP Multicast+ retransmission protocol • Modeling, analysis [Livadas, Keidar, Lynch] SRM IPMcast 74

![Other communication services • Bimodal Multicast [Birman, Hayden, et al. 99] • Compare GC Other communication services • Bimodal Multicast [Birman, Hayden, et al. 99] • Compare GC](http://slidetodoc.com/presentation_image/bc97655bb99e0a6e7373d2ca9790d018/image-75.jpg)

Other communication services • Bimodal Multicast [Birman, Hayden, et al. 99] • Compare GC services with SRM, Bimodal Multicast • Study tradeoffs between strength of ordering and reliability guarantees vs. performance • Multicast with Qo. S [Bar-Joseph, Keidar, Anker, Lynch 00] – Models for: • Bandwidth reservation service • TO Multicast service with Qo. S (latency, bandwidth) – Algorithms implementing TO-Qo. S using reservation service 75

Data Sharing • Coherent – Analyze, compare implementations – Lower bounds, impossibility results • Less coherent • Tradeoffs between coherence guarantees and performance 76

Other services for dynamic systems: • • Registry/location services Leader election Consensus Resource allocation Establishing spanning trees, overlay networks Load-balancing … 77

Mobility • Mobile system models – Ad hoc, cellular, mixed (Oxygen) – Failure models – Formalized using Hybrid I/O Automata • Mobile system services – – Location services Group membership, group communication Data sharing Applications: Games, military operations 78

- Slides: 78