SWG Competitive Project Office Introduction to IBM’s z/OS The Operating System for System z

Defining characteristics of z/OS

- Uses address spaces to ensure isolation of private areas
- Ensures data integrity, regardless of how large the user population might be
- Can process a large number of concurrent batch jobs, with automatic workload balancing
- Allows security to be incorporated into applications, resources, and user profiles
- Allows multiple communications subsystems at the same time
- Provides extensive recovery, making unplanned system restarts very rare
- Can manage mixed workloads
- Can manage large I/O configurations of thousands of disk drives, automated tape libraries, large printers, networks of terminals, etc.
- Can be controlled from one or more operator terminals, or from application programming interfaces (APIs) that allow automation of routine operator functions
- 64-bit virtual address space

zCPO zClass Introduction to z/OS

What’s an Address Space?

- An execution environment in z/OS
  - Multiple units of work can be active within the address space (parallel execution)
  - These units of work are called TASKs
- How many address spaces can there be?
  - THOUSANDS
  - Remember z/OS runs in an LPAR (it is like a distributed server, a box on the floor)
- User address spaces are unique and run single applications
  - User address spaces do not communicate with each other
  - If one address space fails, the other user address spaces continue to run
- System address spaces
  - Execute system components (elements), e.g. DB2, CICS, SMF, RMF, DFSMS ... (more coming)
  - These components are called subsystems (like a system within a system)
  - System components communicate with each other
- Cloned or duplicate address spaces running as a subsystem communicate with each other
  - Multiple address spaces of a subsystem act as one component
  - If one address space fails, the component (e.g. a running DB2) continues to execute
  - This enables continuous platform availability

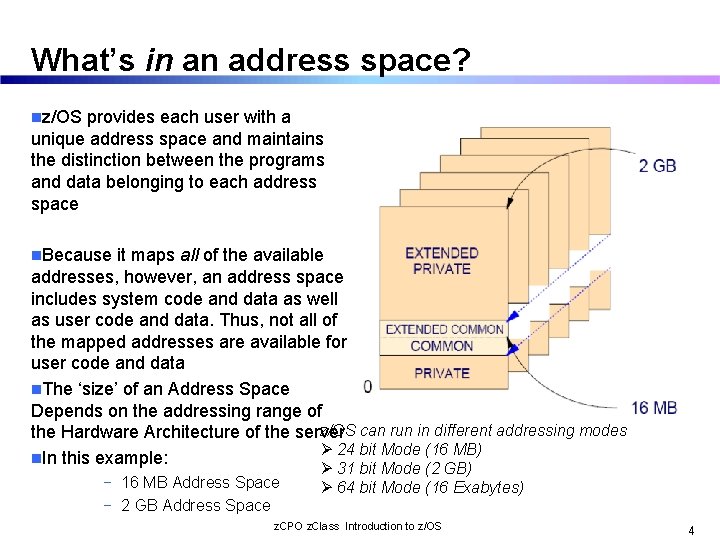

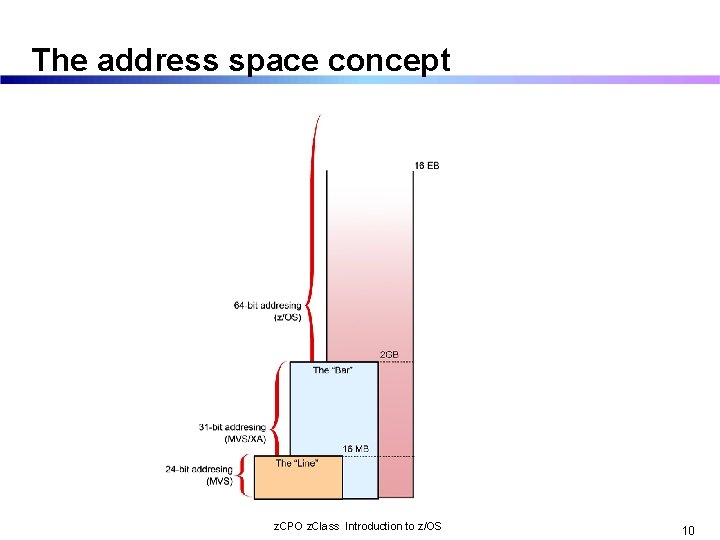

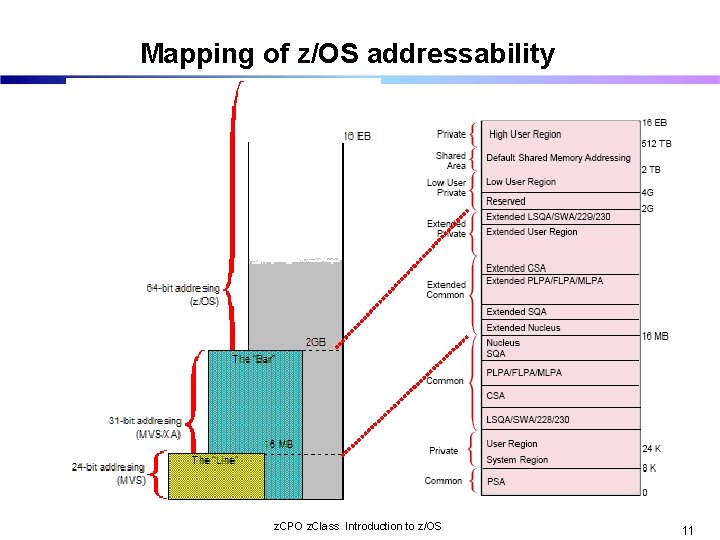

What’s in an address space?

- z/OS provides each user with a unique address space and maintains the distinction between the programs and data belonging to each address space.
- Because it maps all of the available addresses, however, an address space includes system code and data as well as user code and data. Thus, not all of the mapped addresses are available for user code and data.
- The "size" of an address space depends on the addressing range of the hardware architecture of the server.
- z/OS can run in different addressing modes:
  - 24-bit mode (16 MB)
  - 31-bit mode (2 GB)
  - 64-bit mode (16 exabytes)
- In this example:
  - 16 MB address space
  - 2 GB address space
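The sizes quoted for each addressing mode follow directly from the number of address bits. A minimal sketch of that arithmetic (the function and constant names are illustrative, not part of z/OS):

```python
# Addressable range for each addressing mode named on the slide.
# Assumption: binary units (1 KB = 1024 bytes), which is how the
# 16 MB / 2 GB / 16 EB figures are normally derived.
MODES = {"24-bit": 24, "31-bit": 31, "64-bit": 64}
UNITS = ["bytes", "KB", "MB", "GB", "TB", "PB", "EB"]


def address_space_size(bits: int) -> str:
    """Return 2**bits bytes expressed in the largest whole binary unit."""
    size = 2 ** bits
    unit = 0
    while size >= 1024 and unit < len(UNITS) - 1:
        size //= 1024
        unit += 1
    return f"{size} {UNITS[unit]}"


if __name__ == "__main__":
    for name, bits in MODES.items():
        print(name, "mode:", address_space_size(bits))
```

The 31-bit figure is 2 GB rather than 4 GB because only 31 of the 32 address bits are used for addressing.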

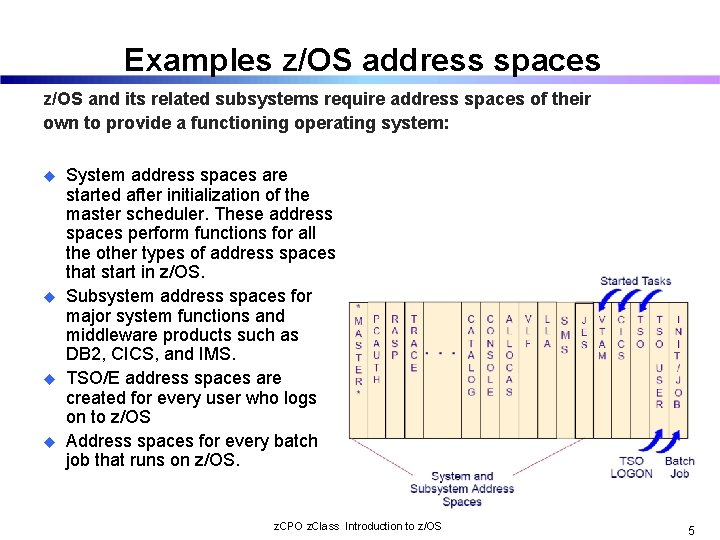

Examples of z/OS address spaces

z/OS and its related subsystems require address spaces of their own to provide a functioning operating system:

- System address spaces are started after initialization of the master scheduler. These address spaces perform functions for all the other types of address spaces that start in z/OS.
- Subsystem address spaces for major system functions and middleware products such as DB2, CICS, and IMS.
- TSO/E address spaces are created for every user who logs on to z/OS.
- Address spaces for every batch job that runs on z/OS.

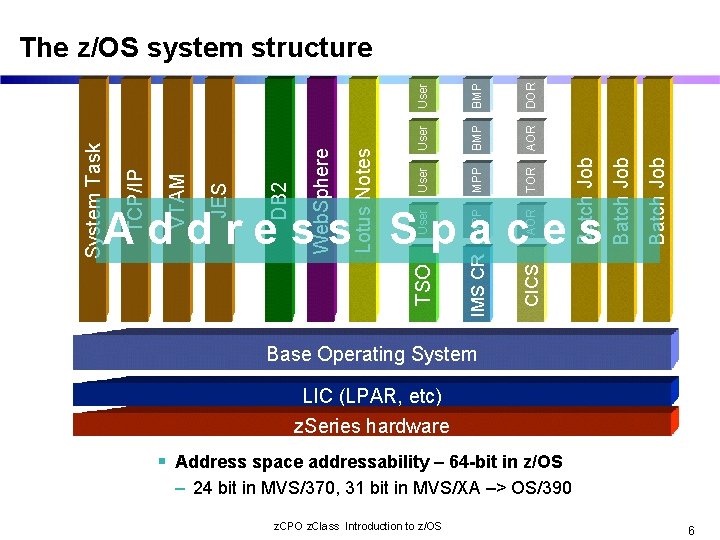

The z/OS system structure

[Figure: address spaces (CICS TOR/AOR/DOR regions, IMS CR/MPP/BMP regions, batch jobs, TSO users, Lotus Notes, WebSphere, DB2, JES, VTAM, TCP/IP, system tasks) running on the base operating system, which runs on LIC (LPAR, etc.) and zSeries hardware]

Address space addressability:
- 64-bit in z/OS
- 24-bit in MVS/370, 31-bit in MVS/XA through OS/390

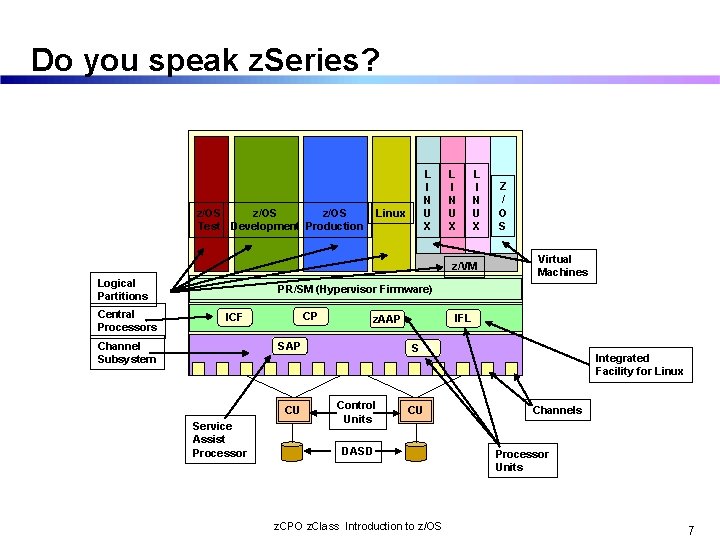

Do you speak zSeries?

[Figure: logical partitions (test, development, and production z/OS; Linux; z/VM hosting Linux and z/OS virtual machines) running on PR/SM (hypervisor firmware). Processor units: CP (central processors), ICF, IFL (Integrated Facility for Linux), zAAP, SAP (Service Assist Processor). Channel subsystem: channels, CU (control units), DASD]

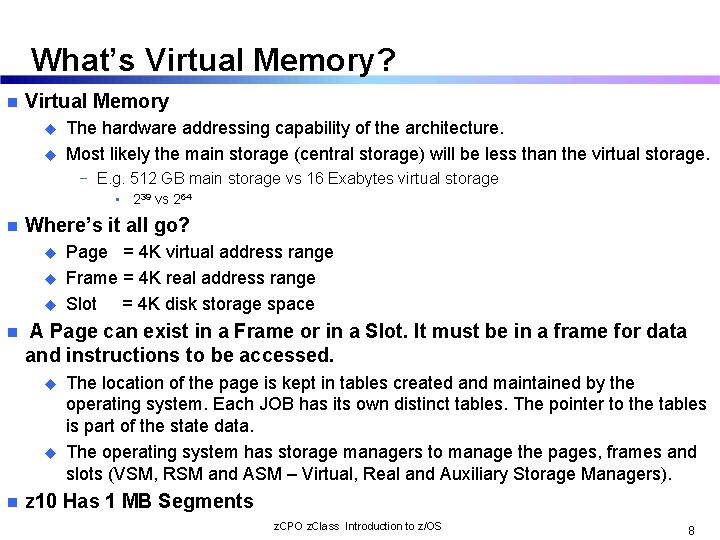

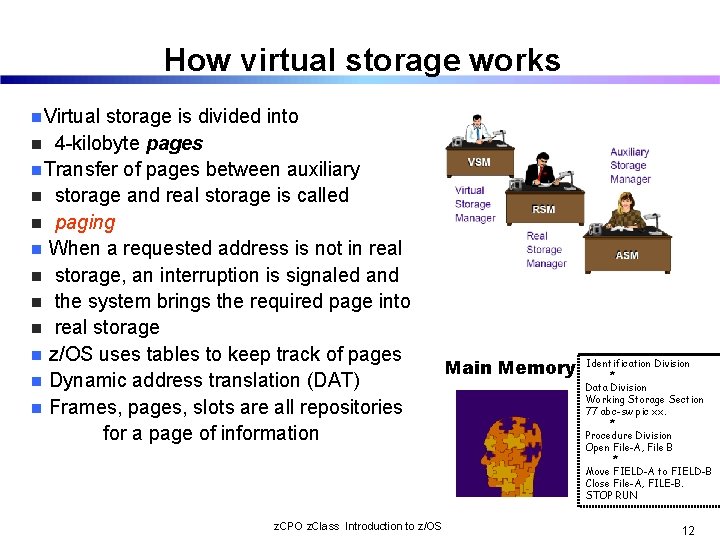

What’s Virtual Memory?

- Virtual memory
  - The hardware addressing capability of the architecture.
  - Most likely the main storage (central storage) will be less than the virtual storage.
    - E.g. 512 GB main storage vs 16 exabytes virtual storage (2^39 vs 2^64 bytes)
- Where’s it all go?
  - Page = 4 K virtual address range
  - Frame = 4 K real address range
  - Slot = 4 K disk storage space
- A page can exist in a frame or in a slot. It must be in a frame for data and instructions to be accessed.
- The location of the page is kept in tables created and maintained by the operating system.
  - Each JOB has its own distinct tables.
  - The pointer to the tables is part of the state data.
- The operating system has storage managers to manage the pages, frames and slots (VSM, RSM and ASM: Virtual, Real and Auxiliary Storage Managers).
- z10 has 1 MB segments

The address space concept

Mapping of z/OS addressability

How virtual storage works

- Virtual storage is divided into 4-kilobyte pages
- Transfer of pages between auxiliary storage and real storage is called paging
- When a requested address is not in real storage, an interruption is signaled and the system brings the required page into real storage
- z/OS uses tables to keep track of pages
- Dynamic address translation (DAT)
- Frames, pages, slots are all repositories for a page of information

[Figure: a COBOL program (Identification, Data, and Procedure divisions) shown loaded in main memory]
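The page, frame, and slot relationship above can be illustrated with a toy model. This is only a sketch under stated assumptions: the class and method names are hypothetical, the page table is a plain dictionary, and first-in-first-out eviction is chosen for simplicity (the slides do not say which replacement policy z/OS uses).

```python
PAGE_SIZE = 4096  # 4 K pages, frames, and slots, as on the slide


class ToyPager:
    """Toy model: a page lives either in a real-storage frame or in an auxiliary slot."""

    def __init__(self, num_frames: int):
        self.num_frames = num_frames
        self.frame_of = {}    # page table: page number -> frame number (resident pages)
        self.slots = set()    # page numbers currently paged out to auxiliary storage
        self.load_order = []  # resident pages, oldest first (FIFO eviction is an assumption)
        self.page_faults = 0

    def translate(self, virtual_address: int) -> int:
        """Translate a virtual address to a real address, paging in if needed."""
        page, offset = divmod(virtual_address, PAGE_SIZE)
        if page not in self.frame_of:
            self._page_in(page)  # stands in for the page-fault interruption
        return self.frame_of[page] * PAGE_SIZE + offset

    def _page_in(self, page: int) -> None:
        self.page_faults += 1
        if len(self.frame_of) < self.num_frames:
            frame = len(self.frame_of)        # a free frame is still available
        else:
            victim = self.load_order.pop(0)   # evict the oldest resident page
            frame = self.frame_of.pop(victim)
            self.slots.add(victim)            # the victim now lives in a slot
        self.slots.discard(page)
        self.frame_of[page] = frame
        self.load_order.append(page)


if __name__ == "__main__":
    pager = ToyPager(num_frames=2)
    for address in (0, 4101, 8192, 1):
        print(address, "->", pager.translate(address))
    print("page faults:", pager.page_faults, "paged out:", sorted(pager.slots))
```

With two frames, touching a third page forces the oldest page out to a slot, and touching that page again brings it back at the cost of another fault.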

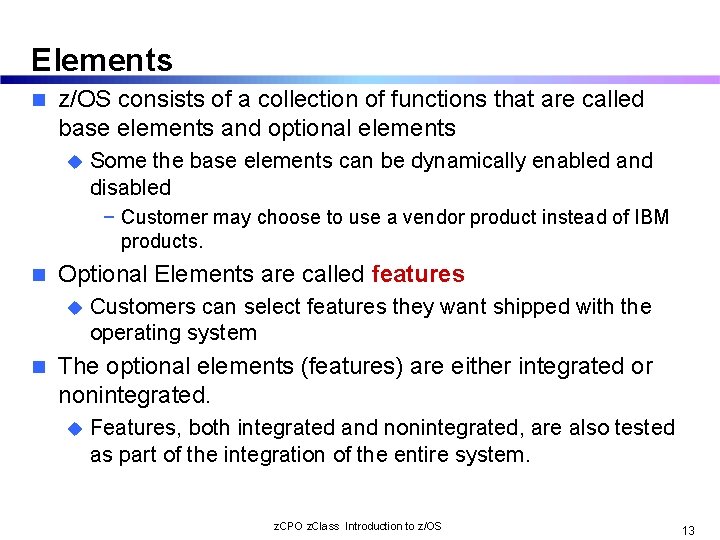

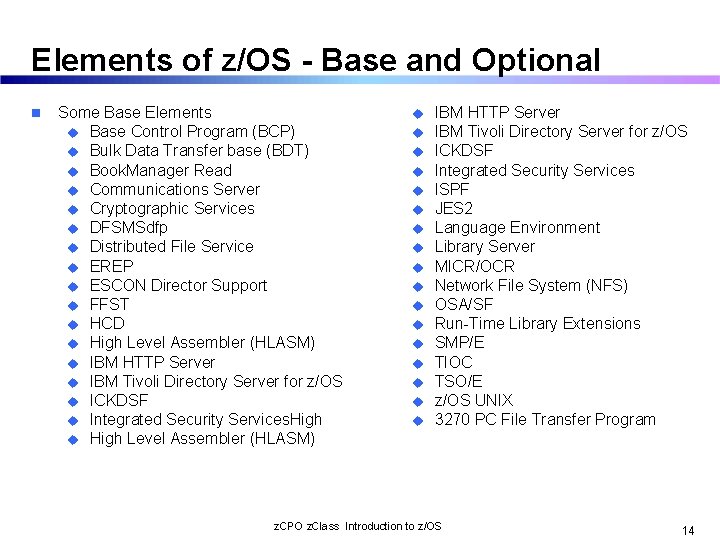

Elements

- z/OS consists of a collection of functions that are called base elements and optional elements
  - Some of the base elements can be dynamically enabled and disabled
    - The customer may choose to use a vendor product instead of IBM products.
- Optional elements are called features
  - Customers can select the features they want shipped with the operating system
- The optional elements (features) are either integrated or nonintegrated.
  - Features, both integrated and nonintegrated, are also tested as part of the integration of the entire system.

Elements of z/OS - Base and Optional

Some base elements:

- Base Control Program (BCP)
- Bulk Data Transfer base (BDT)
- BookManager READ
- Communications Server
- Cryptographic Services
- DFSMSdfp
- Distributed File Service
- EREP
- ESCON Director Support
- FFST
- HCD
- High Level Assembler (HLASM)
- IBM HTTP Server
- IBM Tivoli Directory Server for z/OS
- ICKDSF
- Integrated Security Services
- ISPF
- JES2
- Language Environment
- Library Server
- MICR/OCR
- Network File System (NFS)
- OSA/SF
- Run-Time Library Extensions
- SMP/E
- TIOC
- TSO/E
- z/OS UNIX
- 3270 PC File Transfer Program

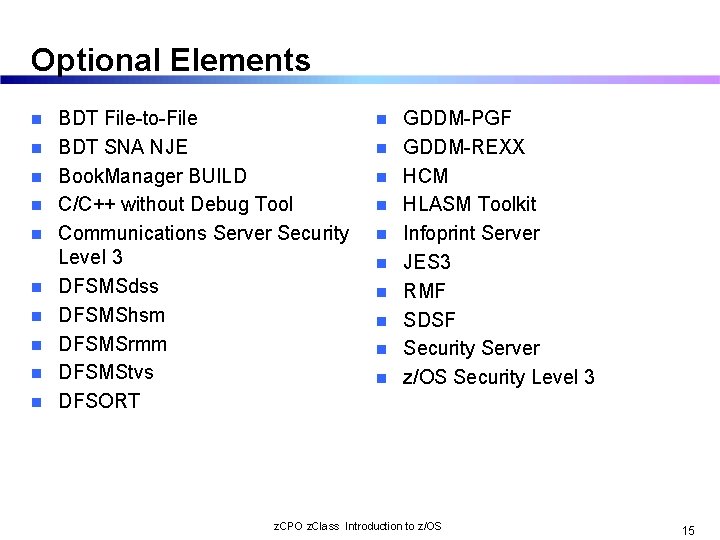

Optional Elements

- BDT File-to-File
- BDT SNA NJE
- BookManager BUILD
- C/C++ without Debug Tool
- Communications Server Security Level 3
- DFSMSdss
- DFSMShsm
- DFSMSrmm
- DFSMStvs
- DFSORT
- GDDM-PGF
- GDDM-REXX
- HCM
- HLASM Toolkit
- Infoprint Server
- JES3
- RMF
- SDSF
- Security Server
- z/OS Security Level 3

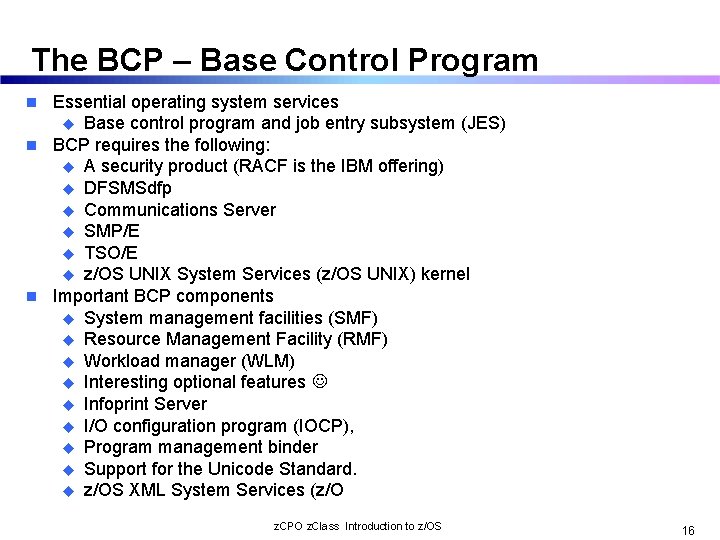

The BCP – Base Control Program

- Essential operating system services
  - Base control program and job entry subsystem (JES)
- BCP requires the following:
  - A security product (RACF is the IBM offering)
  - DFSMSdfp
  - Communications Server
  - SMP/E
  - TSO/E
  - z/OS UNIX System Services (z/OS UNIX) kernel
- Important BCP components
  - System Management Facilities (SMF)
  - Resource Measurement Facility (RMF)
  - Workload Manager (WLM)
- Interesting optional features
  - Infoprint Server
  - I/O configuration program (IOCP)
  - Program management binder
  - Support for the Unicode Standard
  - z/OS XML System Services

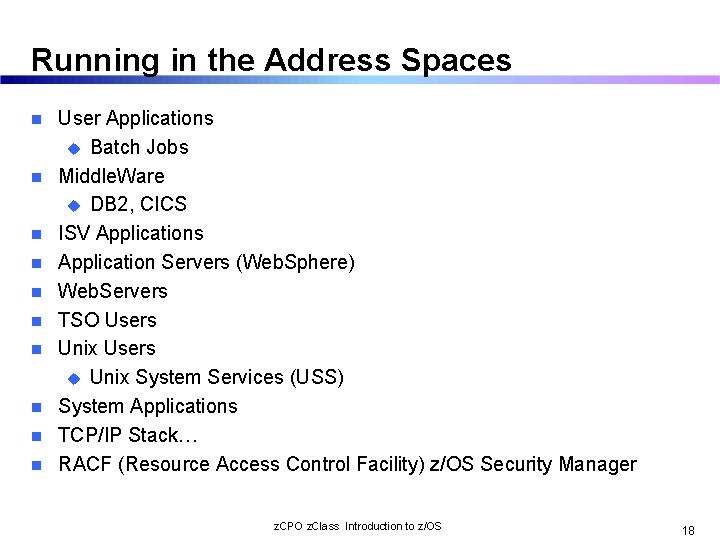

Running in the Address Spaces

- User applications
  - Batch jobs
- Middleware
  - DB2, CICS
- ISV applications
- Application servers (WebSphere)
- Web servers
- TSO users
- UNIX users
  - UNIX System Services (USS)
- System applications
  - TCP/IP stack ...
  - RACF (Resource Access Control Facility), the z/OS security manager

Who Makes an Address Space?

- When z/OS is "booted" (really IPLed, for Initial Program Load), a component called the Master Scheduler is built as the first address space.
  - The Master Scheduler creates other address spaces as needed:
    - When a TSO user logs on
    - When a USS user logs on
    - When a system task is started
    - When JES is started
    - When JES initiators are started (they pull jobs off the JES queues)
- More examples follow
  - SMF: System Management Facilities
  - RMF: Resource Measurement Facility
  - DFSMS: Data Facility Storage Management Subsystem

What Type of System Applications?

- GRS: Global Resource Serialization
  - Controls access to resources
- RACF: Resource Access Control Facility
  - Provides security services
- WLM: Workload Manager
  - Dynamically sends work to resources and resources to white space
- JES: Job Entry Subsystem
  - Queues up work for entry into z/OS
  - Queues up output for sending work to printers
- SMF: System Management Facilities
  - Gathers messages from system applications and writes them to disk: performance data, events ...
- RMF: Resource Measurement Facility
  - Provides reports on system and application activity
  - Graphical real-time operating system data

These components and subsystems communicate with each other across address spaces.

SMF – Part of BCP

- SMF: system message recording
  - Components write messages to SMF; SMF writes messages to a dataset
  - Every message has a specific record id associated with it
  - Record formats are different
  - The data is post-processed
  - System programmers configure which messages are and are not written
  - There are two SMF datasets. One is hot, and when that dataset is full, automation or the operator switches to the standby dataset and then dumps the data of the full dataset
- The data is used for various purposes
  - Performance analysis
  - Workload management behavior
  - Resource consumption: I/O, memory, CPU
  - Error analysis
- z/OS architects and developers use a system service to write SMF records
  - Developers determine if, where, and when in the code a record is written
  - There is an SMF developer/designer who assigns the record id and reviews the record format, structure, and content
- Data and record collection is event driven, e.g.
  - Start and stop of a job, with reason codes indicating why the job was stopped
  - Open and close of a dataset
  - Count of I/O records read/written
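The hot/standby dataset switch described above can be sketched as a toy recorder. Everything here is hypothetical (class name, capacity counted in records, record ids chosen arbitrarily); it only illustrates the switch-then-dump sequence, not the real SMF service or its record layouts.

```python
class ToySmfRecorder:
    """Toy model of two recording datasets: one hot, one standby."""

    def __init__(self, capacity: int):
        self.capacity = capacity  # records per dataset (illustrative unit)
        self.active = []          # the "hot" dataset
        self.standby = []         # the empty standby dataset
        self.dumped = []          # records offloaded for post-processing

    def write(self, record_id: int, payload: str) -> None:
        """Append a record, switching datasets first if the hot one is full."""
        if len(self.active) >= self.capacity:
            self._switch()
        self.active.append((record_id, payload))

    def _switch(self) -> None:
        # Recording moves to the standby dataset; the full dataset is then
        # dumped and cleared so it can serve as the next standby.
        full, self.active = self.active, self.standby
        self.dumped.extend(full)
        full.clear()
        self.standby = full


if __name__ == "__main__":
    smf = ToySmfRecorder(capacity=2)
    smf.write(1, "job start")          # record ids here are made up
    smf.write(2, "dataset open")
    smf.write(3, "job end")            # triggers the switch and dump
    print("dumped:", smf.dumped)
    print("active:", smf.active)
```

Because recording continues on the standby dataset while the full one is dumped, no record has to wait for the offload to finish.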

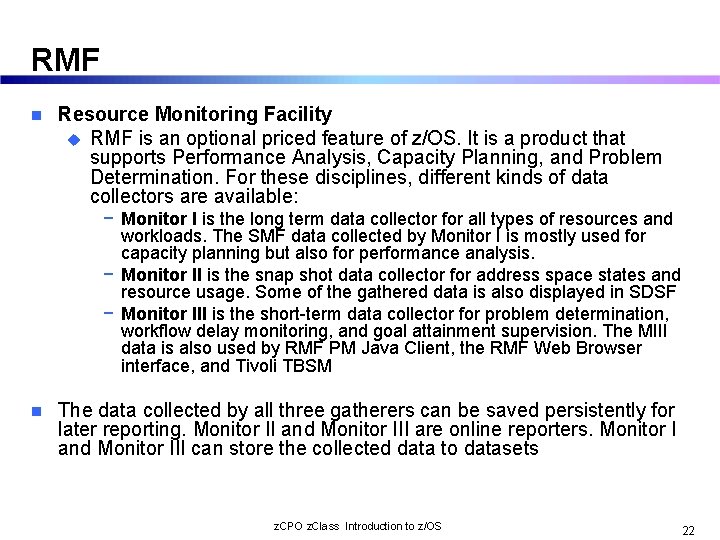

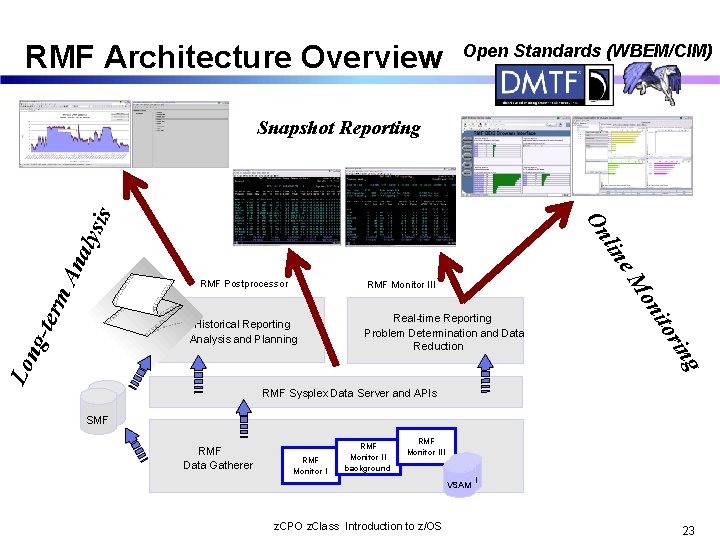

RMF

- Resource Measurement Facility
  - RMF is an optional priced feature of z/OS. It is a product that supports performance analysis, capacity planning, and problem determination. For these disciplines, different kinds of data collectors are available:
    - Monitor I is the long-term data collector for all types of resources and workloads. The SMF data collected by Monitor I is mostly used for capacity planning but also for performance analysis.
    - Monitor II is the snapshot data collector for address space states and resource usage. Some of the gathered data is also displayed in SDSF.
    - Monitor III is the short-term data collector for problem determination, workflow delay monitoring, and goal attainment supervision. The Monitor III data is also used by the RMF PM Java client, the RMF Web browser interface, and Tivoli TBSM.
- The data collected by all three gatherers can be saved persistently for later reporting. Monitor II and Monitor III are online reporters. Monitor I and Monitor III can store the collected data to datasets.

RMF Architecture Overview

[Figure: the RMF Data Gatherer (Monitor I, Monitor II background, Monitor III) writes to SMF and VSAM. The RMF Sysplex Data Server and APIs feed three reporters: RMF Monitor III (real-time reporting, problem determination, and data reduction), RMF Monitor II (snapshot reporting, online analysis), and the RMF Postprocessor (historical reporting, long-term analysis and planning). Open standards (WBEM/CIM) are also shown.]

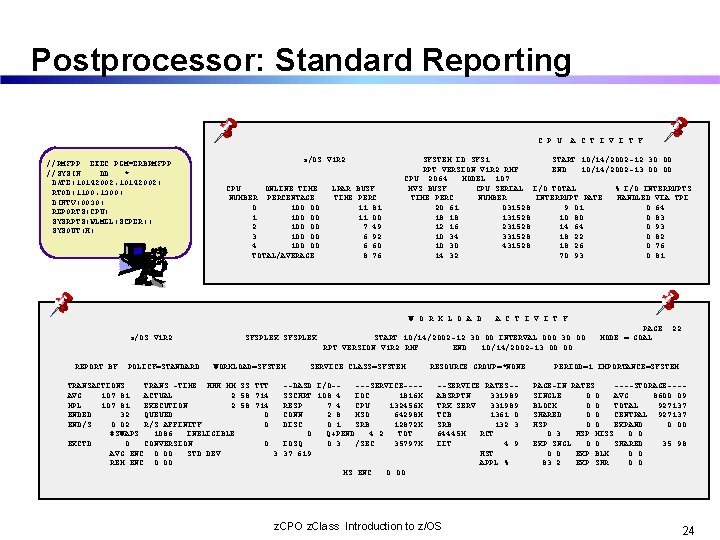

Postprocessor: Standard Reporting

Postprocessor job:

    //RMFPP EXEC PGM=ERBRMFPP
    //SYSIN DD *
      DATE(10142002,10142002)
      RTOD(1100,1300)
      DINTV(0030)
      REPORTS(CPU)
      SYSRPTS(WLMGL(SCPER))
      SYSOUT(H)

C P U report

z/OS V1R2, SYSTEM ID SYS1, RPT VERSION V1R2 RMF, START 10/14/2002-12.30.00, END 10/14/2002-13.00, CPU 2064 MODEL 107

    CPU      ONLINE TIME  LPAR BUSY  MVS BUSY   CPU SERIAL  I/O TOTAL       % I/O INTERRUPTS
    NUMBER   PERCENTAGE   TIME PERC  TIME PERC  NUMBER      INTERRUPT RATE  HANDLED VIA TPI
    0        100.00       11.81      20.61      031528       9.01           0.64
    1        100.00       11.00      18.18      131528      10.80           0.83
    2        100.00        7.49      12.16      231528      14.64           0.93
    3        100.00        6.92      10.34      331528      18.22           0.82
    4        100.00        6.60      10.30      431528      18.26           0.76
    TOTAL/AVERAGE          8.76      14.32                  70.93           0.81

W O R K L O A D   A C T I V I T Y report

z/OS V1R2, SYSPLEX, RPT VERSION V1R2 RMF, START 10/14/2002-12.30.00, INTERVAL 000.30.00, END 10/14/2002-13.00, MODE = GOAL

REPORT BY: POLICY=STANDARD, WORKLOAD=SYSTEM, SERVICE CLASS=SYSTEM, RESOURCE GROUP=*NONE, PERIOD=1, IMPORTANCE=SYSTEM

    TRANSACTIONS        TRANS.-TIME HHH.MM.SS.TTT   --DASD I/O--
    AVG      107.81     ACTUAL        2.58.714      SSCHRT  108.4
    MPL      107.81     EXECUTION     2.58.714      RESP      7.4
    ENDED        32     QUEUED               0      CONN      2.8
    END/S      0.02     R/S AFFINITY         0      DISC      0.1
    #SWAPS     1086     INELIGIBLE           0      Q+PEND    4.2
    EXCTD         0     CONVERSION           0      IOSQ      0.3
    AVG ENC    0.00     STD DEV       3.37.619
    REM ENC    0.00
    MS ENC     0.00

[The remaining report columns (SERVICE, SERVICE RATES, PAGE-IN RATES, STORAGE) are not legible in this copy of the slide.]

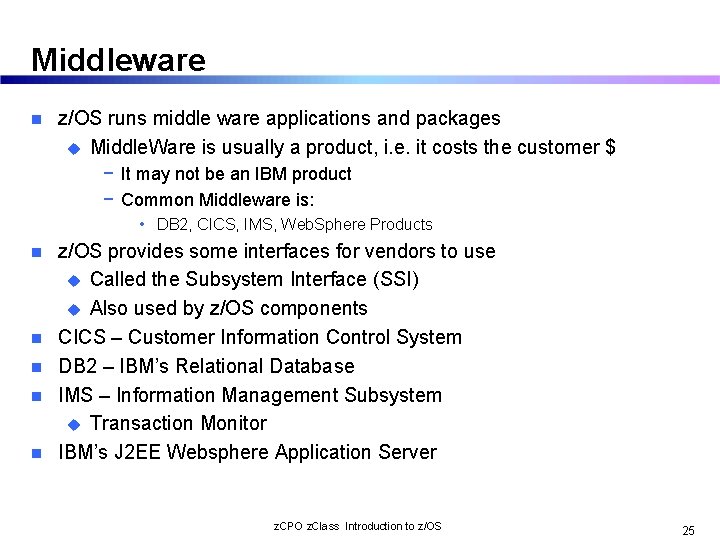

Middleware

- z/OS runs middleware applications and packages
  - Middleware is usually a product, i.e. it costs the customer money
    - It may not be an IBM product
    - Common middleware: DB2, CICS, IMS, WebSphere products
- z/OS provides some interfaces for vendors to use
  - Called the Subsystem Interface (SSI)
  - Also used by z/OS components
- CICS: Customer Information Control System
- DB2: IBM’s relational database
- IMS: Information Management System
  - Transaction monitor
- IBM’s J2EE WebSphere Application Server

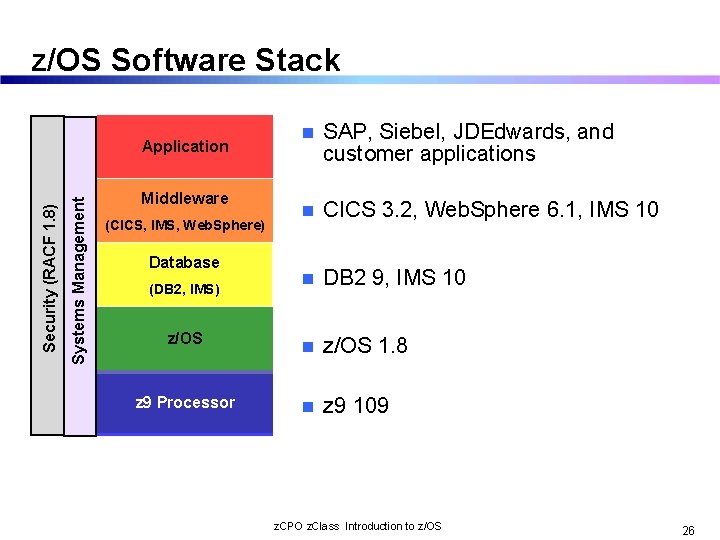

z/OS Software Stack

- Applications: SAP, Siebel, JDEdwards, and customer applications
- Application middleware (CICS, IMS, WebSphere): CICS 3.2, WebSphere 6.1, IMS 10
- Database (DB2, IMS): DB2 9, IMS 10
- z/OS: z/OS 1.8
- z9 processor: z9-109
- Alongside the stack: systems management and security (RACF 1.8)

Transactions and Data – the zSeries Application "Sweet Spot"

- Transaction monitor: manages a transaction
  - A program or subsystem that manages or oversees the sequence of events that are part of a transaction
  - Makes sure the ACID properties of a transaction are maintained
  - Includes functions such as interfacing to databases and networks and transaction commit/rollback coordination
  - Provides an API so applications can exploit the services of the transaction monitor
- IBM’s z/OS-based transaction monitors:
  - IMS: Information Management System
  - CICS: Customer Information Control System
  - WebSphere Application Server for z/OS
- A key strength of the z/OS platform is support for high-volume, high-performance transaction management using transaction monitors

IMS – Information Management System

"IMS Runs the World" since 1968:

- Most corporate data is managed by IMS
  - Over 95% of Fortune 1000 companies use IMS
  - IMS manages over 15 billion GB of production data
  - $2 trillion/day transferred through IMS by one customer
- Over 50 billion transactions a day run through IMS
  - IMS serves close to 200 million users per day
  - Over 79 million IMS transactions/day handled by one customer on a single production Sysplex, 30 million transactions/day on a single CEC
  - 120 million IMS transactions/day, 7 million per hour, handled by one customer
  - 4,000 transactions/sec (250 million/day) across TCP/IP to a single IMS
  - Over 3,000 days without an outage at one large customer
  - 21,000 transactions per second on a single z990, with 4 IMS servers

CICS – Customer Information Control System

- 30+ years of applications
- More than 30 billion transactions per day
- 5,000 packages / 2,000 ISVs
- 30 million CICS users
- 50,000 CICS/390 licenses, 16,000 customers
- 950,000 CICS application programmers
  - "It’s the programming model!"
- 490 of IBM’s top 500 customers
- What is it?
  - CICS provides an execution environment for concurrent program execution for multiple end users, who have access to multiple data types.
  - CICS will manage the operating environment to provide performance, scalability, security, and integrity

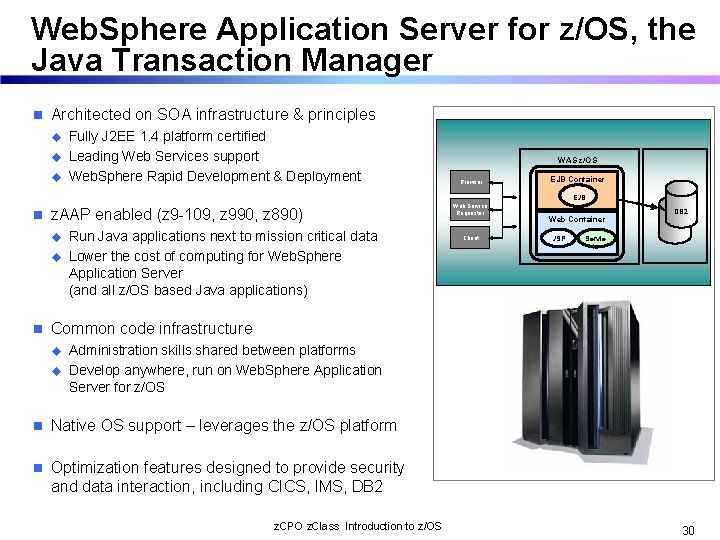

WebSphere Application Server for z/OS, the Java Transaction Manager

- Architected on SOA infrastructure and principles
  - Fully J2EE 1.4 platform certified
  - Leading Web Services support
  - WebSphere Rapid Development and Deployment
- zAAP enabled (z9-109, z990, z890)
  - Run Java applications next to mission-critical data
  - Lower the cost of computing for WebSphere Application Server (and all z/OS-based Java applications)
- Common code infrastructure
  - Administration skills shared between platforms
  - Develop anywhere, run on WebSphere Application Server for z/OS
- Native OS support: leverages the z/OS platform
- Optimization features designed to provide security and data interaction, including CICS, IMS, DB2

[Figure: browser and Web service requestor clients calling WAS z/OS, which contains a Web container (JSPs, servlets) and an EJB container (EJBs), runs on zAAP, and accesses DB2]

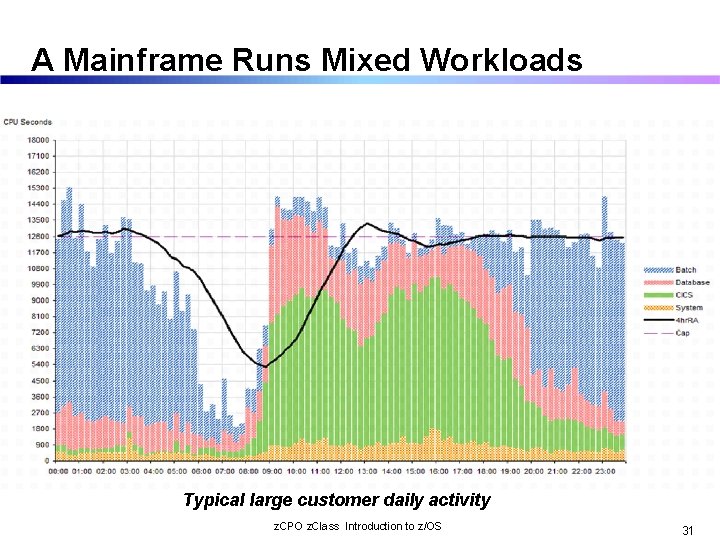

A Mainframe Runs Mixed Workloads

Typical large customer daily activity

DFSMS – The Premier Storage Management Suite

GOALS:

- Improve the use of the storage media; for example, by reducing out-of-space abends and providing a way to set a free-space requirement.
- Reduce the labor involved in storage management by centralizing control, automating tasks, and providing interactive or batch controls for storage administrators.
- Reduce the user's need to be concerned with the physical details of performance, space, and device management. Users can focus on using information instead of managing data.

http://www.ibm.com/systems/storage/software/sms/whatis_sms/

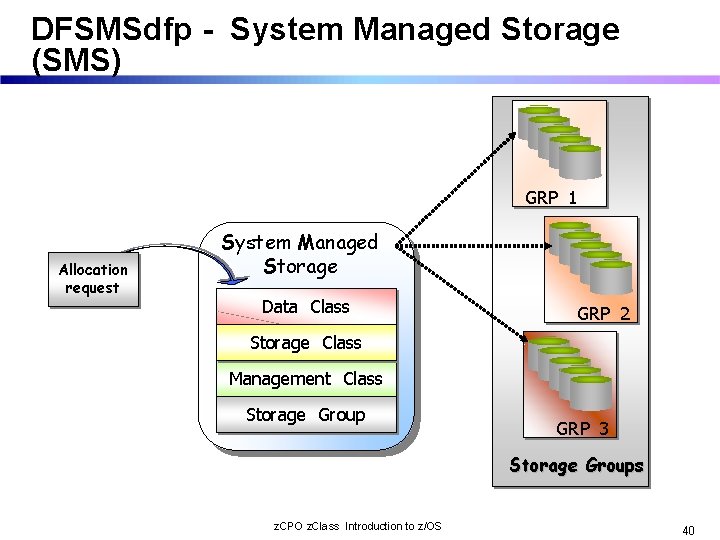

DFSMSdfp - System Managed Storage (SMS)

[Figure: an allocation request passes through System Managed Storage, which assigns a data class, storage class, management class, and storage group; the request is then placed in one of the storage groups GRP_1, GRP_2, or GRP_3]

Mitigating Management Costs…

- DFSMSdfp constructs are key to data placement and assigning goals, requirements, etc.
  - The operating system and subsystems understand the three user-specifiable constructs.
- DFSMShsm and ABARS are key to implementing the management policy.
  - Coherent backups
  - Data retirement
- DFSMSdss is key to moving and copying datasets.
- DFSMSrmm is key to tape management.

WLM DOES THE FOLLOWING

- Monitors the use of resources by various address spaces
- Monitors the system-wide use of resources to determine whether they are fully utilized
- Determines which address space to "swap" out (and when)
- Inhibits the creation of new address spaces or steals pages when certain shortages of real storage exist
- Changes the dispatching priority of address spaces to adjust the consumption of system resources
- Selects the devices to be allocated, if a choice of devices exists, to balance I/O devices

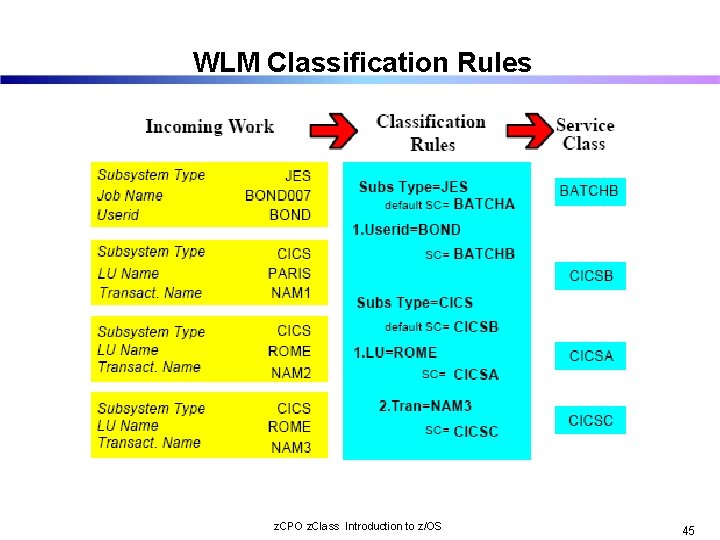

WLM Classification Rules

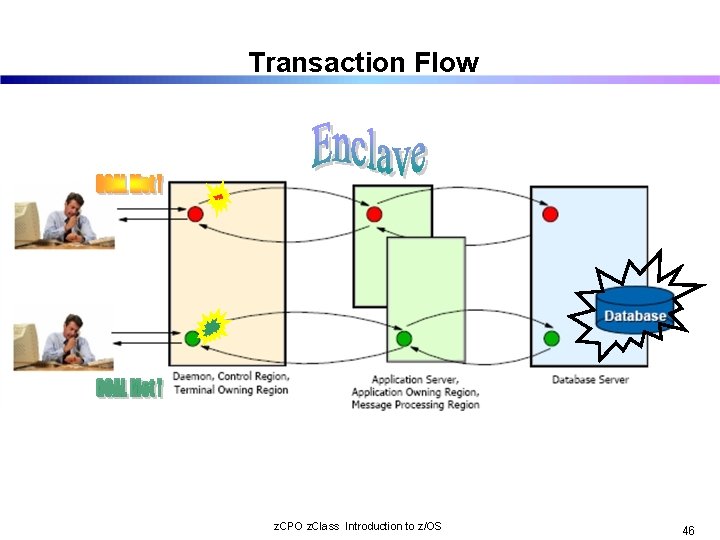

Transaction Flow

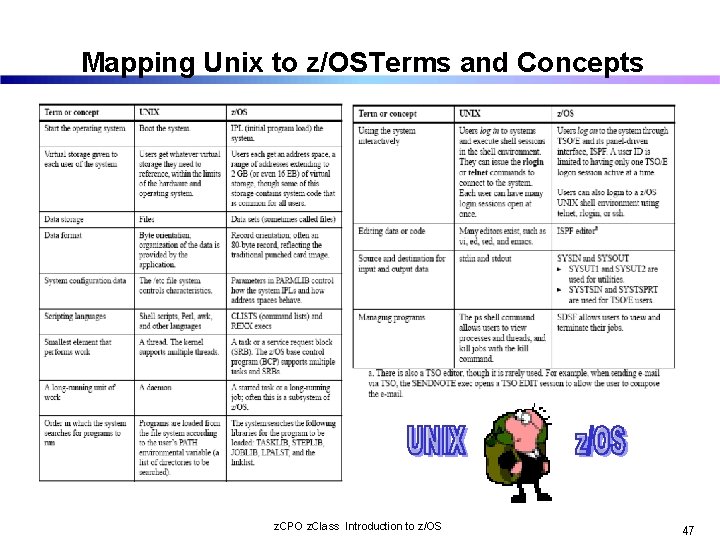

Mapping UNIX to z/OS Terms and Concepts

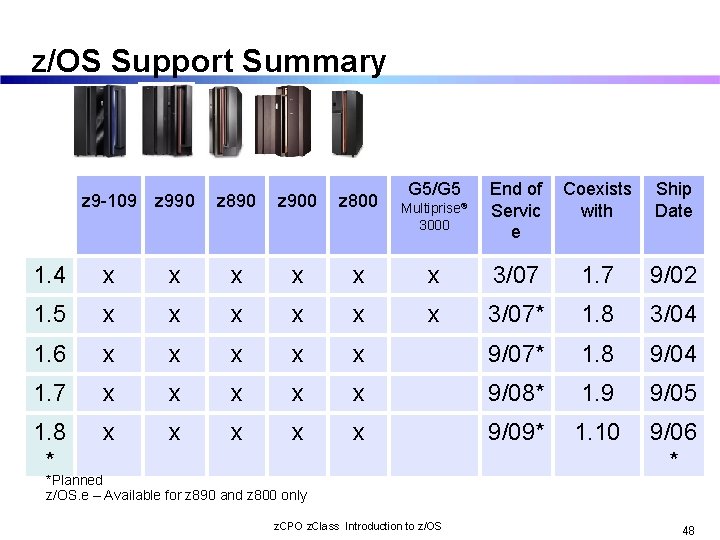

z/OS Support Summary

    z/OS release   End of service   Coexists with   Ship date
    1.4            3/07             1.7             9/02
    1.5            3/07*            1.8             3/04
    1.6            9/07*            1.8             9/04
    1.7            9/08*            1.9             9/05
    1.8*           9/09*            1.10            9/06*

    * Planned

[The original chart also marks, per release, which hardware is supported: G5/G6 and Multiprise® 3000, z800, z900, z890, z990, z9-109. The individual marks are not recoverable from this copy.]

z/OS.e: available for z890 and z800 only

Summary of z/OS facilities

- Address spaces and virtual storage for users and programs.
- Physical storage types available: real and auxiliary.
- Movement of programs and data between real storage and auxiliary storage through paging.
- Dispatching work for execution, based on priority and ability to execute.
- An extensive set of facilities for managing files stored on disk or tape.
- Operators use consoles to start and stop z/OS, enter commands, and manage the operating system.

SWG Competitive Project Office Introduction to IBM’s System z Clustering Technologies Parallel Sysplex And LPAR Cluster

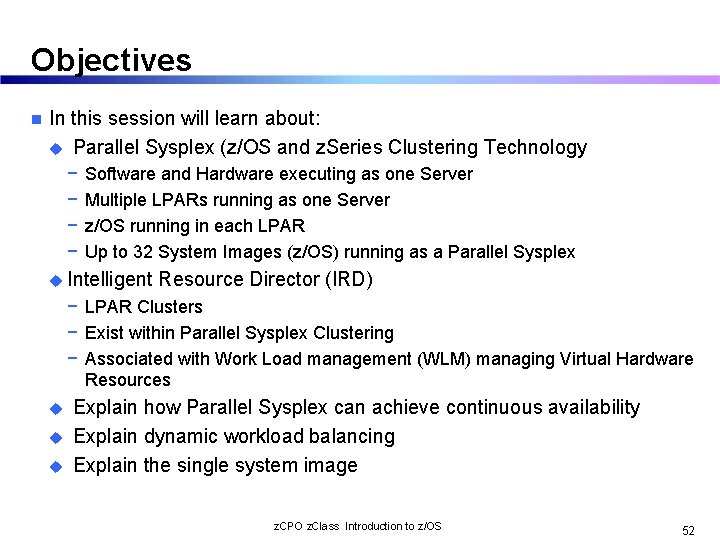

Objectives

In this session you will learn about:

- Parallel Sysplex (z/OS and zSeries clustering technology)
  - Software and hardware executing as one server
  - Multiple LPARs running as one server
  - z/OS running in each LPAR
  - Up to 32 system images (z/OS) running as a Parallel Sysplex
- Intelligent Resource Director (IRD)
  - LPAR clusters
  - Exist within Parallel Sysplex clustering
  - Associated with Workload Manager (WLM) managing virtual hardware resources
- How Parallel Sysplex can achieve continuous availability
- Dynamic workload balancing
- The single system image

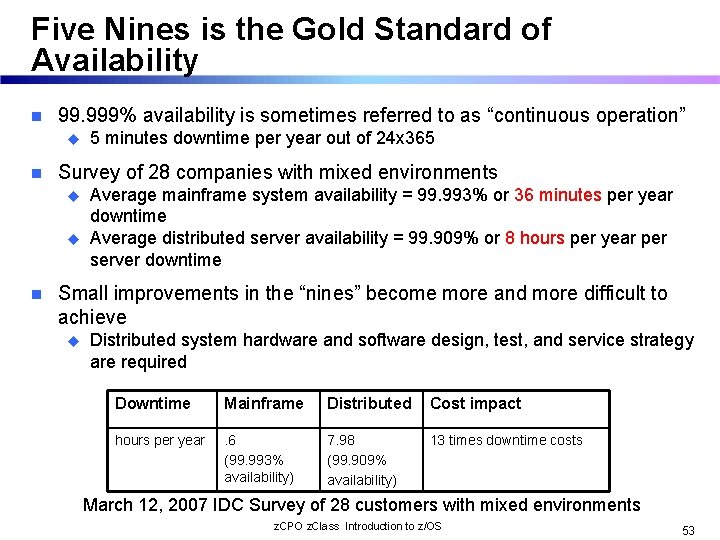

Five Nines is the Gold Standard of Availability

- 99.999% availability is sometimes referred to as "continuous operation"
  - 5 minutes downtime per year out of 24 x 365
- Survey of 28 companies with mixed environments
  - Average mainframe system availability = 99.993%, or 36 minutes per year downtime
  - Average distributed server availability = 99.909%, or 8 hours per year per server downtime
- Small improvements in the "nines" become more and more difficult to achieve
  - Distributed system hardware and software design, test, and service strategy are required

    Downtime         Mainframe                    Distributed                   Cost impact
    hours per year   0.6 (99.993% availability)   7.98 (99.909% availability)   13 times downtime costs

Source: March 12, 2007 IDC survey of 28 customers with mixed environments
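The downtime figures on this slide can be checked from the availability percentages. A minimal sketch, assuming a 365-day year as the slide does (the function name is illustrative):

```python
MINUTES_PER_YEAR = 24 * 365 * 60  # 24 x 365, as on the slide


def downtime_minutes_per_year(availability_percent: float) -> float:
    """Expected downtime per year, in minutes, for a given availability."""
    return (1 - availability_percent / 100) * MINUTES_PER_YEAR


if __name__ == "__main__":
    five_nines = downtime_minutes_per_year(99.999)
    mainframe = downtime_minutes_per_year(99.993)
    distributed = downtime_minutes_per_year(99.909)
    print(f"five nines:  {five_nines:.2f} minutes/year")
    print(f"mainframe:   {mainframe:.1f} minutes/year")
    print(f"distributed: {distributed / 60:.2f} hours/year")
    print(f"ratio:       {distributed / mainframe:.1f}x")
```

The ratio of distributed to mainframe downtime comes out at 13, which matches the "13 times" cost impact if cost is taken to scale with downtime.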

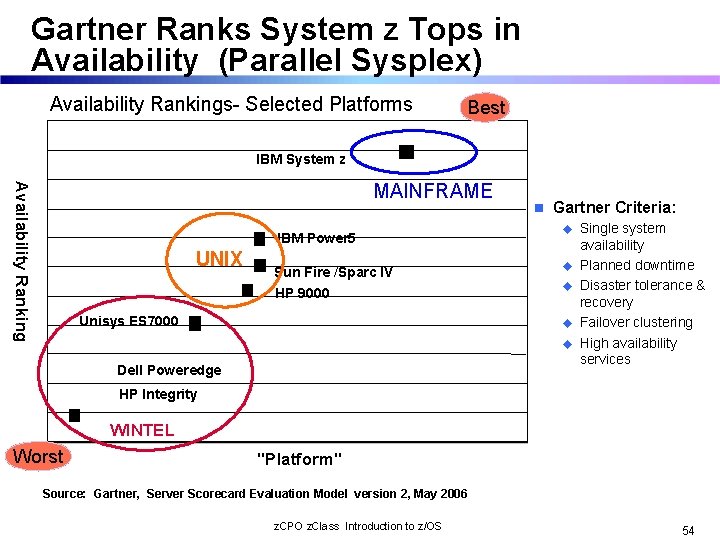

Gartner Ranks System z Tops in Availability (Parallel Sysplex)

Availability rankings, selected platforms, as listed on the chart from Best to Worst:

- MAINFRAME: IBM System z
- UNIX: IBM Power5, Sun Fire / Sparc IV, HP 9000
- WINTEL: Unisys ES7000, Dell PowerEdge, HP Integrity

Gartner criteria:

- Single system availability
- Planned downtime
- Disaster tolerance and recovery
- Failover clustering
- High availability services

Source: Gartner, Server Scorecard Evaluation Model version 2, May 2006

What is a Parallel Sysplex = Continuous Availability

- Builds on the strength of zSeries servers by linking up to 32 images to create the industry’s most powerful commercial processing clustered system
- Innovative multi-system data-sharing technology
- Direct concurrent read/write access to shared data from all processing nodes
- No loss of data integrity, no performance hit
- Transactions and queries can be distributed for parallel execution based on available capacity and not restricted to a single node
- Every "cloned" application can run on every image
- Hardware and software can be maintained non-disruptively
- Within a Parallel Sysplex cluster, it is possible to construct an environment with no single point of failure
- Peer instances of a failing subsystem can take over recovery of resources held by the failing instance, OR the failing subsystem can be automatically restarted on still-healthy systems
- In a Parallel Sysplex the loss of a server may be transparent to the application, and the server workload redistributed automatically with little performance degradation
- Software upgrades can be rolled through one system at a time on a sensible timescale for the business

Consider the Power

- Parallel Sysplex: up to 32 system images
- z10 server: up to 64 processors per image
- MIPS: up to 920 per processor

Up to 1,884,160 MIPS in a Parallel Sysplex
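The headline figure is simply the product of the three maxima on the slide. A short check (constant names are illustrative; this is a theoretical ceiling and ignores any multiprocessing or coupling overhead):

```python
MAX_IMAGES = 32                # system images per Parallel Sysplex
MAX_PROCESSORS_PER_IMAGE = 64  # z10 processors per image
MAX_MIPS_PER_PROCESSOR = 920   # per-processor figure quoted on the slide


def peak_sysplex_mips() -> int:
    """Theoretical ceiling: images x processors x MIPS per processor."""
    return MAX_IMAGES * MAX_PROCESSORS_PER_IMAGE * MAX_MIPS_PER_PROCESSOR


if __name__ == "__main__":
    print(f"{peak_sysplex_mips():,} MIPS")
```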

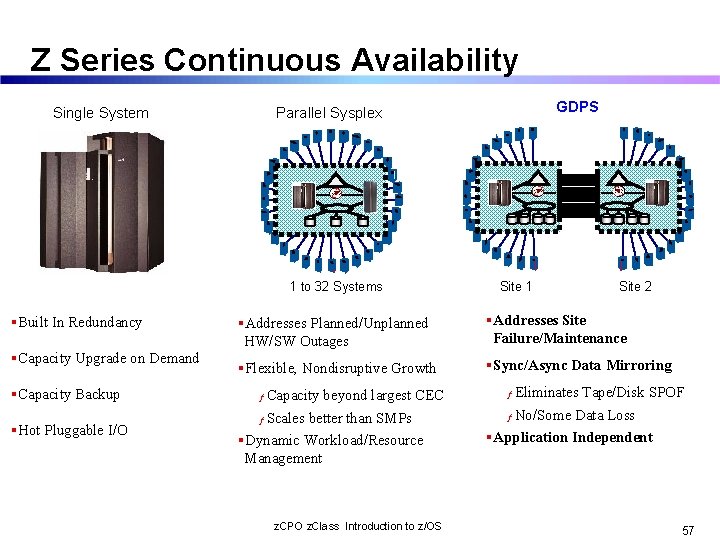

zSeries Continuous Availability

Single system:
- Built-in redundancy
- Capacity Upgrade on Demand
- Capacity Backup
- Hot pluggable I/O

Parallel Sysplex (1 to 32 systems):
- Addresses planned/unplanned HW/SW outages
- Flexible, nondisruptive growth
  - Capacity beyond largest CEC
  - Scales better than SMPs
- Dynamic workload/resource management

GDPS (Site 1, Site 2):
- Addresses site failure/maintenance
- Sync/async data mirroring
  - Eliminates tape/disk SPOF
  - No/some data loss
- Application independent

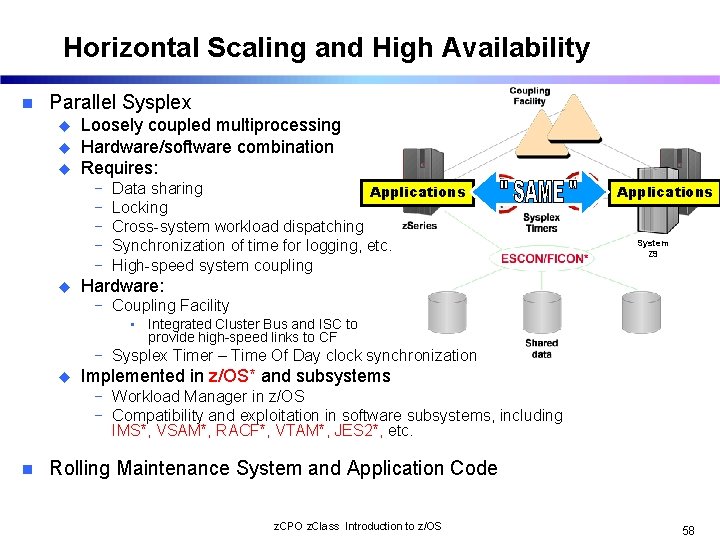

Horizontal Scaling and High Availability

- Parallel Sysplex
  - Loosely coupled multiprocessing
  - Hardware/software combination
  - Requires:
    - Data sharing
    - Locking
    - Cross-system workload dispatching
    - Synchronization of time for logging, etc.
    - High-speed system coupling
  - Hardware:
    - Coupling Facility
      - Integrated Cluster Bus and ISC to provide high-speed links to the CF
    - Sysplex Timer: time-of-day clock synchronization
  - Implemented in z/OS* and subsystems
    - Workload Manager in z/OS
    - Compatibility and exploitation in software subsystems, including IMS*, VSAM*, RACF*, VTAM*, JES2*, etc.
- Rolling maintenance of system and application code

[Figure: applications running across System z9 servers]

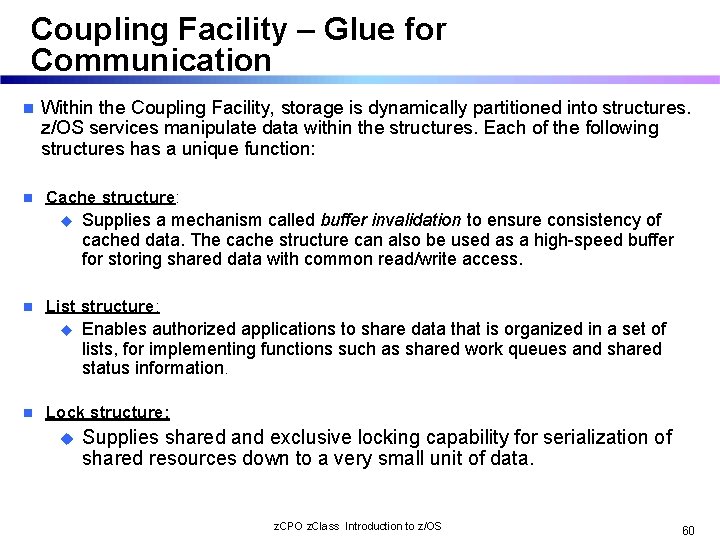

Coupling Facility – Glue for Communication

Within the Coupling Facility, storage is dynamically partitioned into structures. z/OS services manipulate data within the structures. Each of the following structures has a unique function:

- Cache structure:
  - Supplies a mechanism called buffer invalidation to ensure consistency of cached data. The cache structure can also be used as a high-speed buffer for storing shared data with common read/write access.
- List structure:
  - Enables authorized applications to share data that is organized in a set of lists, for implementing functions such as shared work queues and shared status information.
- Lock structure:
  - Supplies shared and exclusive locking capability for serialization of shared resources down to a very small unit of data.

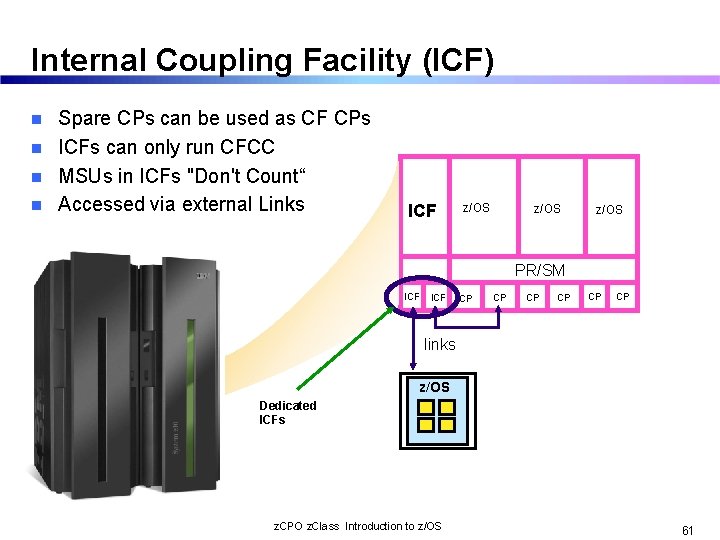

Internal Coupling Facility (ICF)

- Spare CPs can be used as CF CPs
- ICFs can only run CFCC
- MSUs in ICFs "don't count"
- Accessed via external links

[Figure: z/OS images on CPs and dedicated ICFs under PR/SM, connected by links]

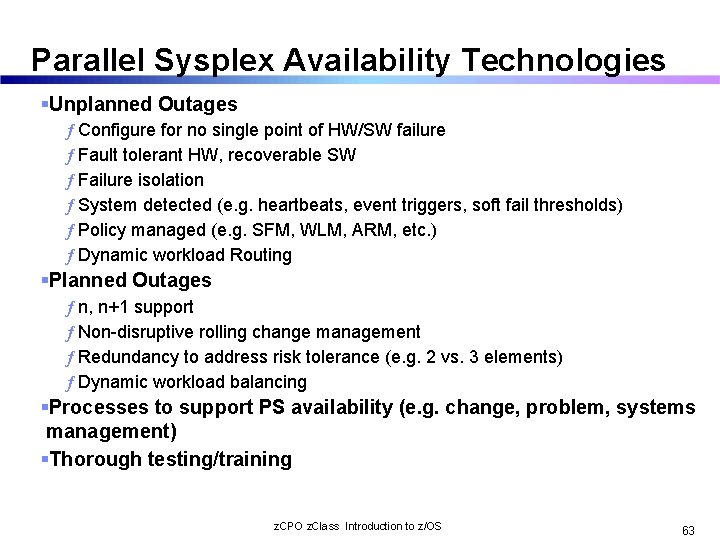

Parallel Sysplex Availability Technologies

Unplanned outages:
- Configure for no single point of HW/SW failure
- Fault-tolerant HW, recoverable SW
- Failure isolation
- System detected (e.g. heartbeats, event triggers, soft fail thresholds)
- Policy managed (e.g. SFM, WLM, ARM, etc.)
- Dynamic workload routing

Planned outages:
- n, n+1 support
- Non-disruptive rolling change management
- Redundancy to address risk tolerance (e.g. 2 vs. 3 elements)
- Dynamic workload balancing

Processes to support Parallel Sysplex availability (e.g. change, problem, systems management)

Thorough testing/training

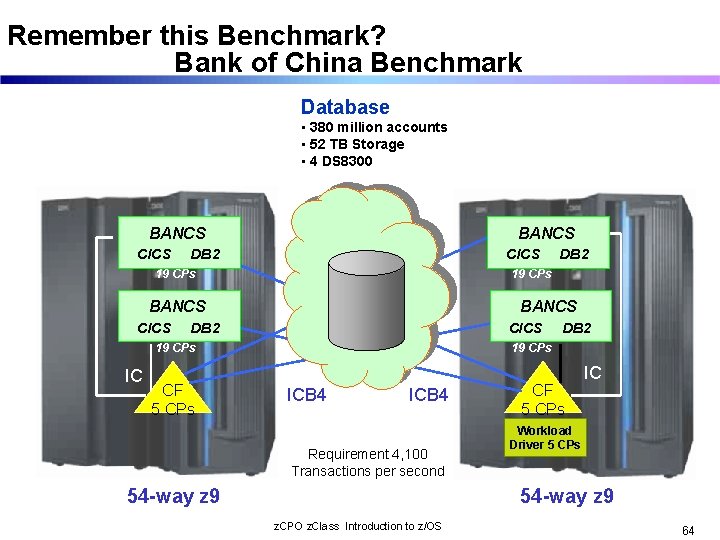

Remember this Benchmark?

Bank of China benchmark

- Database
  - 380 million accounts
  - 52 TB storage
  - 4 DS8300
- Requirement: 4,100 transactions per second

[Figure: two 54-way z9 servers, each running BANCS on CICS and DB2 with 19 CPs and a coupling facility (CF) with 5 CPs, connected by IC and ICB4 links; a workload driver with 5 CPs]

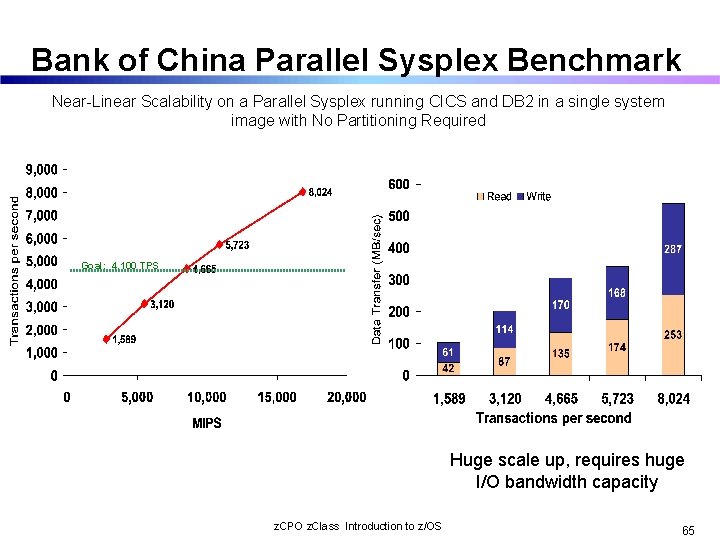

Bank of China Parallel Sysplex Benchmark

Near-linear scalability on a Parallel Sysplex running CICS and DB2 in a single system image, with no partitioning required

- Goal: 4,100 TPS
- Huge scale-up requires huge I/O bandwidth capacity

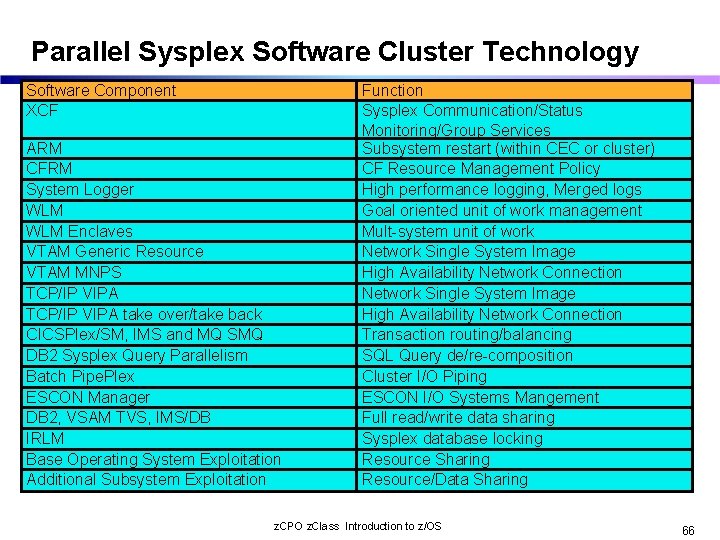

Parallel Sysplex Software Cluster Technology

Base operating system exploitation (resource sharing):

    Software component                        Function
    XCF                                       Sysplex communication / status monitoring / group services
    ARM                                       Subsystem restart (within CEC or cluster)
    CFRM                                      CF resource management policy
    System Logger                             High-performance logging, merged logs
    WLM                                       Goal-oriented unit of work management
    Enclaves                                  Multi-system unit of work
    VTAM Generic Resource                     Network single system image
    VTAM MNPS, TCP/IP VIPA takeover/takeback  High-availability network connection

Additional subsystem exploitation (resource/data sharing):

    Software component                        Function
    CICSPlex/SM, IMS and MQ SMQ               Transaction routing/balancing
    DB2 Sysplex Query Parallelism             SQL query de/re-composition
    Batch PipePlex                            Cluster I/O piping
    ESCON Manager                             ESCON I/O systems management
    DB2, VSAM TVS, IMS/DB                     Full read/write data sharing
    IRLM                                      Sysplex database locking

Failure Recovery enabled by Sysplex & ARM

- z/OS Workload Manager
  - Sysplex-wide workload management to one policy
- Sysplex Failure Manager
  - Specify failure detection and recovery actions
- Automatic Restart Manager
  - Fast recovery of critical subsystems
- Cloning and symbolics
  - Used to replicate applications across the nodes

zSeries Parallel Sysplex Resource Sharing

- This is not to be confused with application data sharing
- This is sharing of physical system resources such as tape drives, catalogs, consoles
- This exploitation is built into z/OS
- Benefits
  - System management
  - Performance
  - Reduced hardware requirements ($$$)

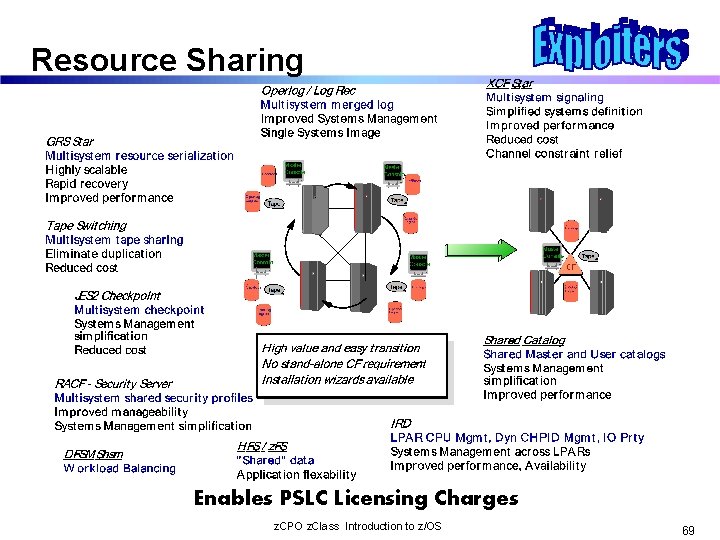

Resource Sharing Enables PSLC Licensing Charges

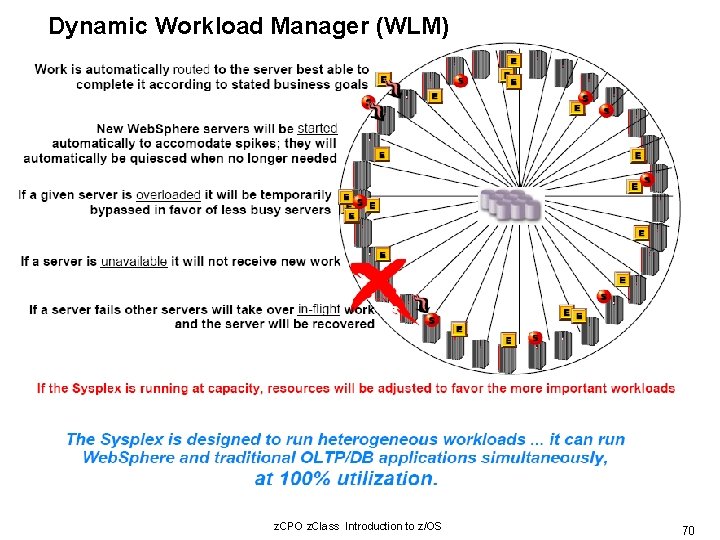

Dynamic Workload Manager (WLM)

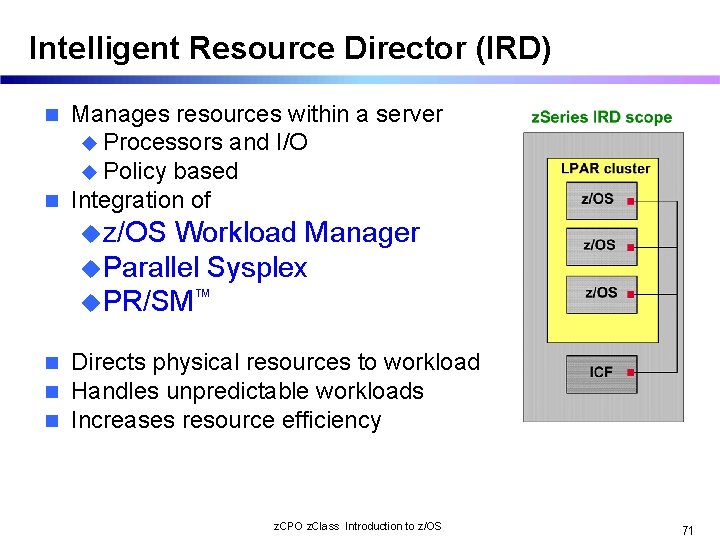

Intelligent Resource Director (IRD)

- Manages resources within a server
  - Processors and I/O
  - Policy based
- Integration of
  - z/OS Workload Manager
  - Parallel Sysplex
  - PR/SM™
- Directs physical resources to workload
- Handles unpredictable workloads
- Increases resource efficiency

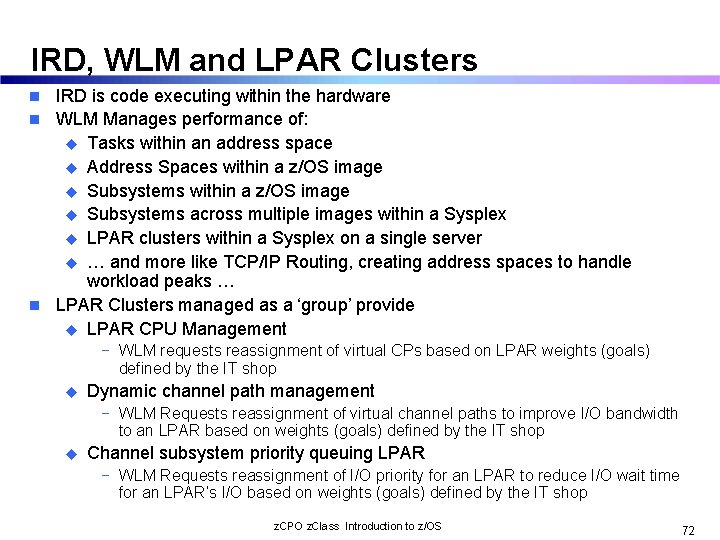

IRD, WLM and LPAR Clusters

- IRD is code executing within the hardware
- WLM manages performance of:
  - Tasks within an address space
  - Address spaces within a z/OS image
  - Subsystems across multiple images within a Sysplex
  - LPAR clusters within a Sysplex on a single server
  - ... and more, like TCP/IP routing and creating address spaces to handle workload peaks
- LPAR clusters managed as a "group" provide:
  - LPAR CPU management
    - WLM requests reassignment of virtual CPs based on LPAR weights (goals) defined by the IT shop
  - Dynamic channel path management
    - WLM requests reassignment of virtual channel paths to improve I/O bandwidth to an LPAR based on weights (goals) defined by the IT shop
  - Channel subsystem priority queuing
    - WLM requests reassignment of I/O priority for an LPAR to reduce I/O wait time for that LPAR’s I/O based on weights (goals) defined by the IT shop

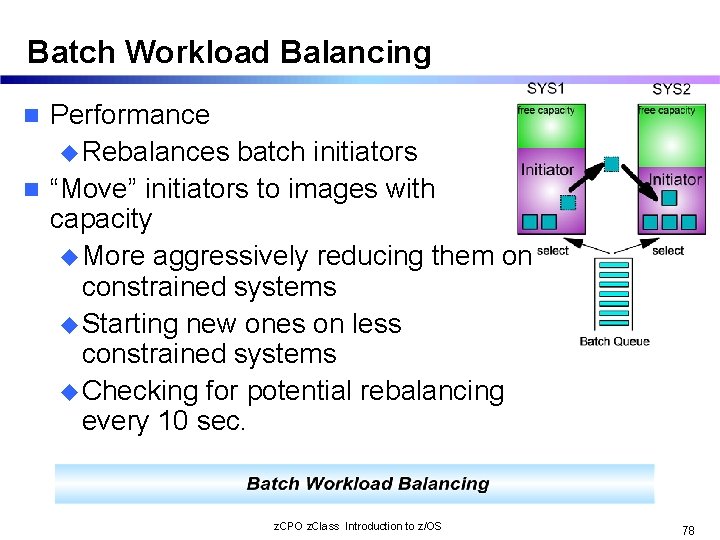

Batch Workload Balancing
- Performance
  - Rebalances batch initiators
- “Move” initiators to images with capacity
  - More aggressively reducing them on constrained systems
  - Starting new ones on less constrained systems
  - Checking for potential rebalancing every 10 sec.
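
The "move" of initiators described on this slide can be pictured as one pass of a periodic check. The following is a minimal sketch under stated assumptions, not WLM's actual algorithm: the `Image` class, the `cpu_busy` figure, and the 90% "constrained" threshold are hypothetical and chosen only for illustration. Only the 10-second interval comes from the slide.

```python
from dataclasses import dataclass

REBALANCE_INTERVAL_SEC = 10  # from the slide: rebalancing is checked every 10 sec.


@dataclass
class Image:
    """A z/OS image in the sysplex (hypothetical model for illustration)."""
    name: str
    cpu_busy: float   # assumed utilisation figure, 0.0 to 1.0
    initiators: int   # number of started batch initiators


def rebalance(images, constrained_at=0.90, step=1):
    """One rebalancing pass.

    Reduces initiators on constrained images and starts the same number on
    the least busy image that still has capacity. The threshold and step
    size are assumptions, not WLM parameters.
    """
    constrained = [i for i in images if i.cpu_busy >= constrained_at and i.initiators > 0]
    targets = [i for i in images if i.cpu_busy < constrained_at]
    moves = []
    for src in constrained:
        if not targets:
            break  # nowhere to move work to
        dst = min(targets, key=lambda i: i.cpu_busy)
        n = min(step, src.initiators)
        src.initiators -= n
        dst.initiators += n
        moves.append((src.name, dst.name, n))
    return moves


images = [Image("SYSA", 0.97, 5), Image("SYSB", 0.40, 2), Image("SYSC", 0.60, 3)]
moves = rebalance(images)
```

In a running system a pass like this would be repeated every `REBALANCE_INTERVAL_SEC` seconds; the sketch performs a single pass so that its effect is easy to inspect.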

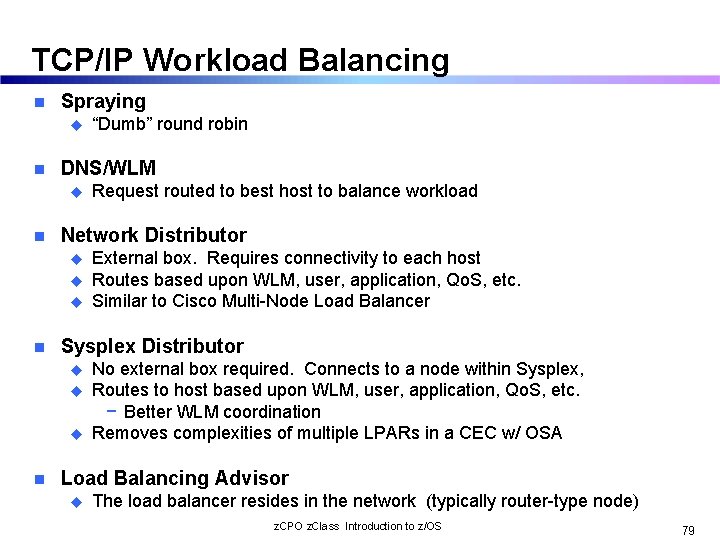

TCP/IP Workload Balancing
- Spraying
  - “Dumb” round robin
- DNS/WLM
  - Request routed to best host to balance workload
- Network Distributor
  - External box. Requires connectivity to each host
  - Routes based upon WLM, user, application, QoS, etc.
  - Similar to Cisco Multi-Node Load Balancer
- Sysplex Distributor
  - No external box required. Connects to a node within Sysplex
  - Routes to host based upon WLM, user, application, QoS, etc.
    - Better WLM coordination
  - Removes complexities of multiple LPARs in a CEC w/ OSA
- Load Balancing Advisor
  - The load balancer resides in the network (typically router-type node)
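
The difference between "dumb" round robin and routing that takes a capacity recommendation into account can be sketched in a few lines. This is an illustration only: the host names and capacity weights are hypothetical, and the real distributors also weigh user, application, and QoS information that is not modelled here.

```python
import itertools


def route_round_robin(hosts, n_requests):
    """'Spraying': hand requests to hosts in fixed rotation, ignoring load."""
    placed = {h: 0 for h in hosts}
    for _, host in zip(range(n_requests), itertools.cycle(hosts)):
        placed[host] += 1
    return placed


def route_weighted(weights, n_requests):
    """Capacity-aware routing (assumed model).

    `weights` maps host -> available-capacity score. Each request goes to
    the host with the most remaining capacity and consumes one unit of it.
    """
    remaining = dict(weights)
    placed = {h: 0 for h in weights}
    for _ in range(n_requests):
        host = max(remaining, key=remaining.get)
        placed[host] += 1
        remaining[host] -= 1
    return placed


# Hypothetical capacity scores for three z/OS images.
capacity = {"ZOS1": 6, "ZOS2": 3, "ZOS3": 1}
sprayed = route_round_robin(list(capacity), 6)
balanced = route_weighted(capacity, 6)
```

With these numbers, round robin gives every host the same share regardless of capacity, while the weighted router sends most requests to the host with the most headroom.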

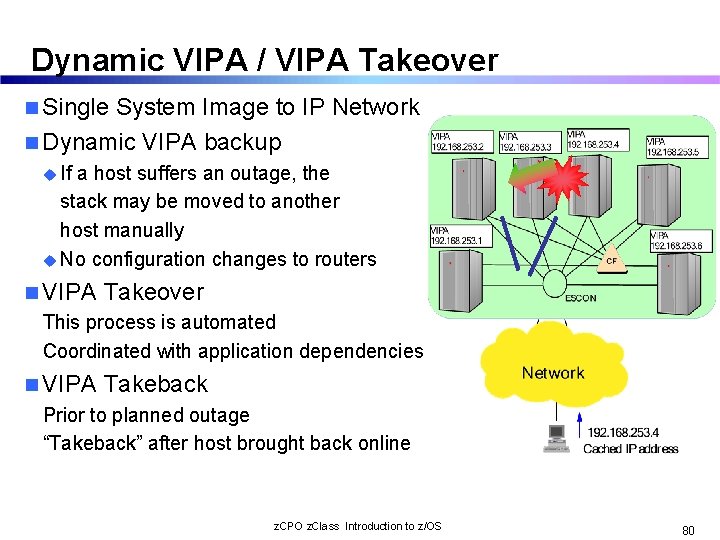

Dynamic VIPA / VIPA Takeover
- Single system image to IP network
- Dynamic VIPA backup
  - If a host suffers an outage, the stack may be moved to another host manually
  - No configuration changes to routers
- VIPA Takeover
  - This process is automated
  - Coordinated with application dependencies
- VIPA Takeback
  - Prior to planned outage
  - “Takeback” after host brought back online
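
Takeover and takeback can be modelled as an ownership election over a preference-ordered list of hosts. The sketch below is a simplified illustration, not the TCP/IP stack's implementation: the `VipaGroup` class, the host names, and the address are hypothetical, and application dependencies are not modelled.

```python
class VipaGroup:
    """Tracks which host currently owns a virtual IP address (assumed model)."""

    def __init__(self, vipa, primary, backups):
        self.vipa = vipa                          # address seen by the network; never changes
        self.preference = [primary, *backups]     # primary first, then backups in order
        self.up = set(self.preference)
        self.owner = primary

    def _elect(self):
        # The owner is the most preferred host that is currently up.
        self.owner = next((h for h in self.preference if h in self.up), None)

    def host_down(self, host):
        """Takeover: if the owner fails, the next available backup takes the VIPA."""
        self.up.discard(host)
        self._elect()

    def host_up(self, host):
        """Takeback: a more preferred host reclaims the VIPA once it is back online."""
        self.up.add(host)
        self._elect()


group = VipaGroup("192.168.253.4", "ZOS1", ["ZOS2", "ZOS3"])
group.host_down("ZOS1")
owner_after_failure = group.owner
group.host_up("ZOS1")
owner_after_return = group.owner
```

Because clients and routers only ever see `group.vipa`, the change of owner requires no router configuration change, which is the point the slide makes.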

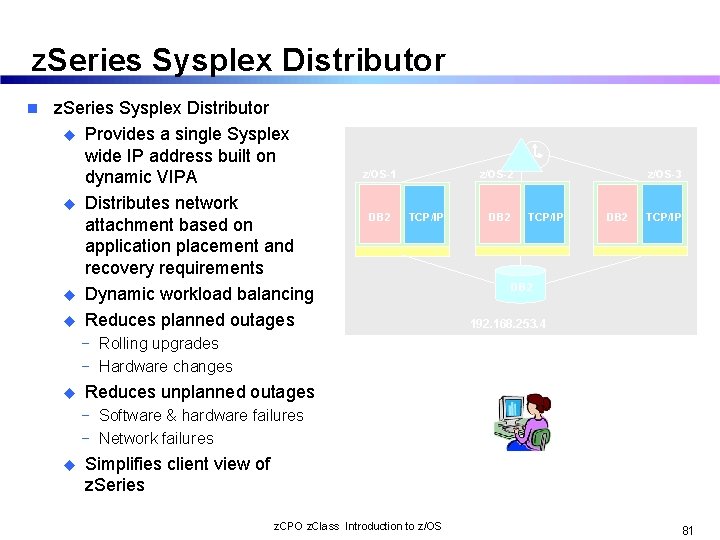

zSeries Sysplex Distributor
- Provides a single Sysplex-wide IP address built on dynamic VIPA
- Distributes network attachment based on application placement and recovery requirements
- Dynamic workload balancing
- Reduces planned outages
  - Rolling upgrades
  - Hardware changes
- Reduces unplanned outages
  - Software & hardware failures
  - Network failures
- Simplifies client view of zSeries
[Diagram: z/OS-1, z/OS-2 and z/OS-3 images running TCP/IP and DB2, reached through the address 192.168.253.4]

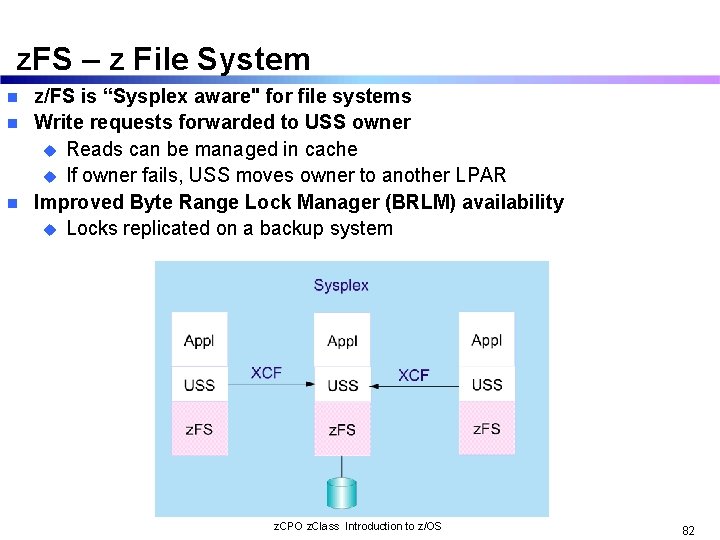

zFS – z File System
- zFS is “Sysplex aware” for file systems
  - Write requests forwarded to USS owner
  - Reads can be managed in cache
  - If owner fails, USS moves owner to another LPAR
- Improved Byte Range Lock Manager (BRLM) availability
  - Locks replicated on a backup system

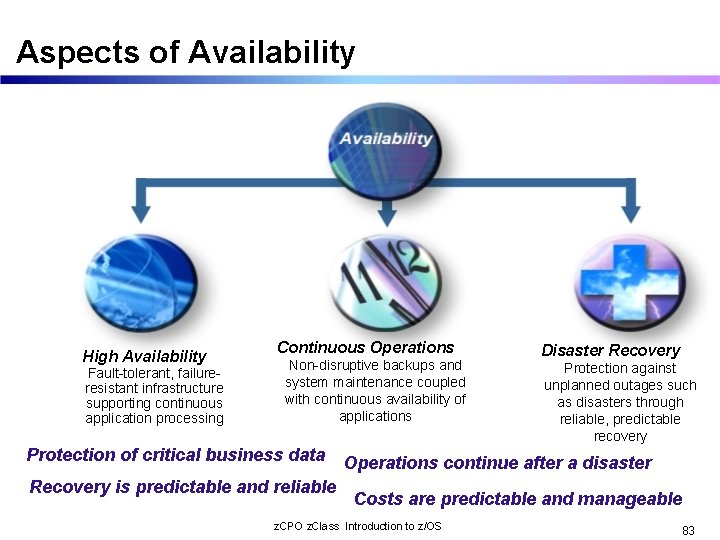

Aspects of Availability
- High Availability: fault-tolerant, failure-resistant infrastructure supporting continuous application processing
- Continuous Operations: non-disruptive backups and system maintenance coupled with continuous availability of applications
- Disaster Recovery: protection against unplanned outages such as disasters through reliable, predictable recovery
- Protection of critical business data
- Recovery is predictable and reliable
- Operations continue after a disaster
- Costs are predictable and manageable

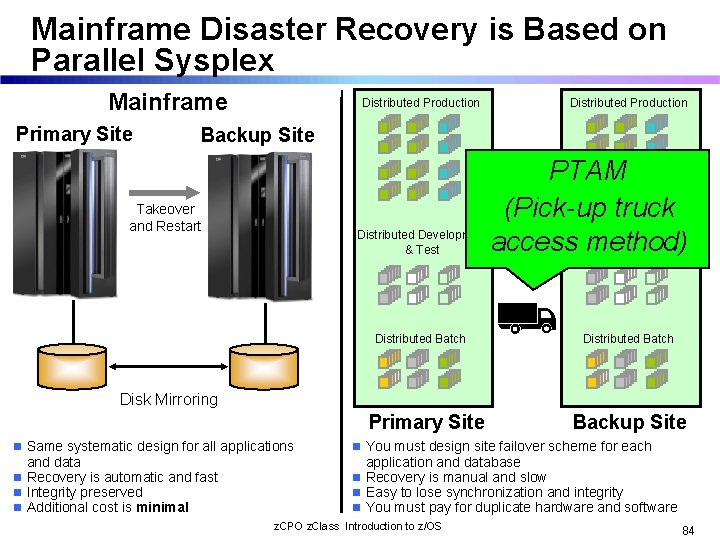

Mainframe Disaster Recovery is Based on Parallel Sysplex
[Diagram labels: Mainframe; Primary Site; Backup Site; Disk Mirroring; Takeover and Restart; Distributed Production; Distributed Development & Test; Distributed Batch; PTAM (pick-up truck access method)]
- Mainframe
  - Same systematic design for all applications and data
  - Recovery is automatic and fast
  - Integrity preserved
  - Additional cost is minimal
- Distributed
  - You must design site failover scheme for each application and database
  - Recovery is manual and slow
  - Easy to lose synchronization and integrity
  - You must pay for duplicate hardware and software

GDPS/PPRC Experience: Bank Austria Creditanstalt
- Recovery window reduced from 48 hours to less than two hours
- Planned site switch completed in the two hour target
- Significant reduction of on-site manpower and skill level required to manage planned and unplanned reconfigurations
- Dynamic switchover of disk subsystems takes between 32 and 95 seconds
- No loss of committed data

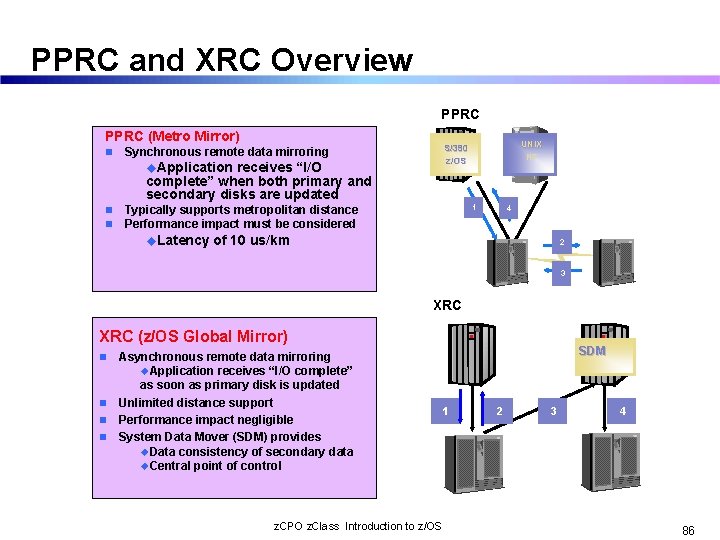

PPRC and XRC Overview
- PPRC (Metro Mirror)
  - Synchronous remote data mirroring
    - Application receives “I/O complete” when both primary and secondary disks are updated
  - Typically supports metropolitan distance
  - Performance impact must be considered
    - Latency of 10 µs/km
- XRC (z/OS Global Mirror)
  - Asynchronous remote data mirroring
    - Application receives “I/O complete” as soon as primary disk is updated
  - Unlimited distance support
  - Performance impact negligible
  - System Data Mover (SDM) provides
    - Data consistency of secondary data
    - Central point of control
[Diagram: numbered I/O steps 1 to 4 for PPRC and for XRC with the SDM; hosts shown are UNIX, NT, S/390 and z/OS]
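
The latency figure of 10 µs/km quoted for PPRC lends itself to a quick worked calculation. The sketch below assumes the figure is the extra delay added to each synchronous write per kilometre between sites; the slide does not say how it is measured, and the 500 µs local service time is a hypothetical number used only to make the comparison concrete.

```python
LATENCY_US_PER_KM = 10  # figure quoted on the slide for synchronous mirroring


def sync_write_penalty_us(distance_km):
    """Extra delay per write when the secondary must be updated first (assumed linear in distance)."""
    return distance_km * LATENCY_US_PER_KM


def write_response_us(local_service_us, distance_km, synchronous):
    """Write response time seen by the application.

    Synchronous (PPRC-style): waits for the remote update, so distance adds delay.
    Asynchronous (XRC-style): "I/O complete" is returned once the primary is updated.
    """
    penalty = sync_write_penalty_us(distance_km) if synchronous else 0
    return local_service_us + penalty


LOCAL_US = 500  # hypothetical local disk write time
pprc_40km = write_response_us(LOCAL_US, 40, synchronous=True)
xrc_4000km = write_response_us(LOCAL_US, 4000, synchronous=False)
```

Under these assumptions a 40 km separation adds 400 µs to every synchronous write, while the asynchronous case is unaffected by distance. That is why the slide pairs PPRC with metropolitan distance and XRC with unlimited distance.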

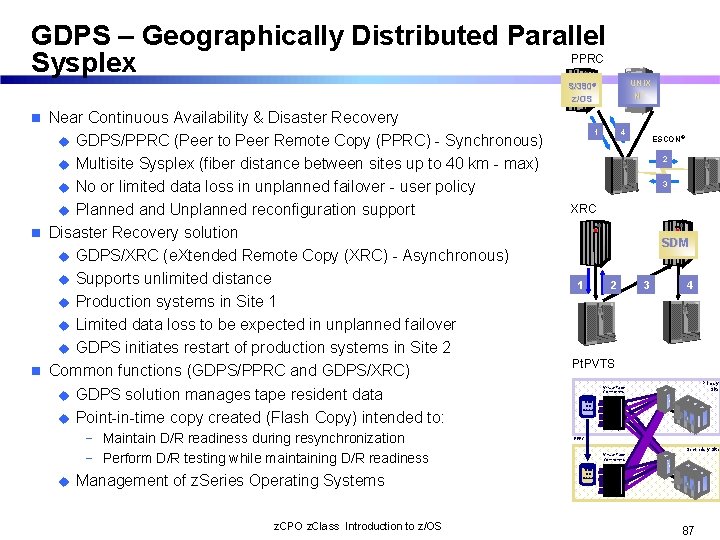

GDPS – Geographically Dispersed Parallel Sysplex
- Near Continuous Availability & Disaster Recovery
  - GDPS/PPRC (Peer to Peer Remote Copy (PPRC) - Synchronous)
  - Multisite Sysplex (fiber distance between sites up to 40 km max)
  - No or limited data loss in unplanned failover - user policy
  - Planned and unplanned reconfiguration support
- Disaster Recovery solution
  - GDPS/XRC (eXtended Remote Copy (XRC) - Asynchronous)
  - Supports unlimited distance
  - Production systems in Site 1
  - Limited data loss to be expected in unplanned failover
  - GDPS initiates restart of production systems in Site 2
- Common functions (GDPS/PPRC and GDPS/XRC)
  - GDPS solution manages tape resident data
  - Point-in-time copy created (FlashCopy) intended to:
    - Maintain D/R readiness during resynchronization
    - Perform D/R testing while maintaining D/R readiness
  - Management of zSeries operating systems
[Diagram: primary and secondary sites with hosts (UNIX, NT, S/390®, z/OS), ESCON® links, PPRC and XRC (SDM) mirroring, PtP VTS virtual tape controllers, and TCDB, TMC and catalog at each site]

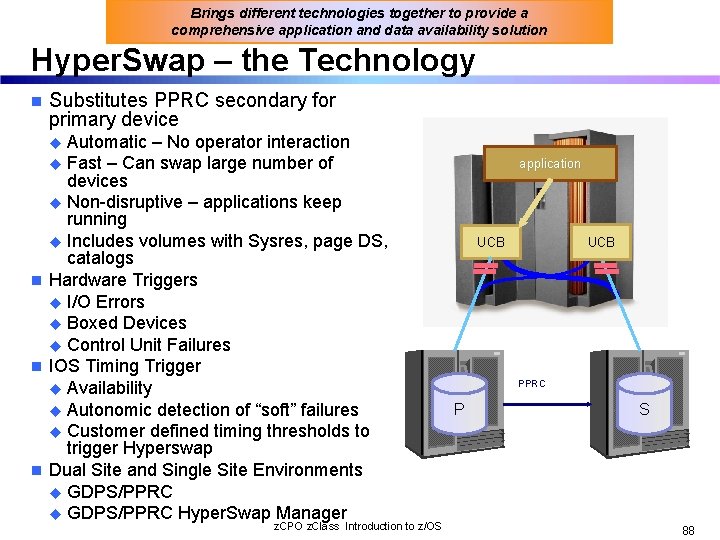

Brings different technologies together to provide a comprehensive application and data availability solution
HyperSwap – the Technology
- Substitutes PPRC secondary for primary device
  - Automatic – no operator interaction
  - Fast – can swap large number of devices
  - Non-disruptive – applications keep running
  - Includes volumes with Sysres, page DS, catalogs
- Hardware triggers
  - I/O errors
  - Boxed devices
  - Control unit failures
- IOS timing trigger
  - Availability
  - Autonomic detection of “soft” failures
  - Customer defined timing thresholds to trigger HyperSwap
- Dual site and single site environments
  - GDPS/PPRC HyperSwap Manager
[Diagram: application and UCB, with PPRC between primary (P) and secondary (S) devices]
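
The substitution of the PPRC secondary for the primary can be pictured as repointing each UCB while the application keeps using the same volume. The sketch below is a conceptual illustration only: the `Ucb` class, the event names, and the threshold values are hypothetical and do not reflect actual z/OS control block layouts or GDPS interfaces.

```python
from dataclasses import dataclass

# Hardware triggers listed on the slide, under assumed event names.
HARDWARE_TRIGGERS = {"io_error", "boxed_device", "control_unit_failure"}


@dataclass
class Ucb:
    """Simplified stand-in for a unit control block."""
    volume: str
    active: str    # device the application's I/O currently reaches
    standby: str   # its PPRC partner device


def should_swap(event, io_time_ms=0.0, timing_threshold_ms=None):
    """Decide whether an event triggers a swap.

    Hardware triggers always qualify. The timing trigger qualifies only when
    a customer-defined threshold exists and the observed I/O time exceeds it.
    """
    if event in HARDWARE_TRIGGERS:
        return True
    return (
        event == "io_timing"
        and timing_threshold_ms is not None
        and io_time_ms > timing_threshold_ms
    )


def hyperswap(ucbs):
    """Swap every UCB to its partner device; volume names seen by applications do not change."""
    for ucb in ucbs:
        ucb.active, ucb.standby = ucb.standby, ucb.active


ucbs = [Ucb("SYSRES", "P1", "S1"), Ucb("PAGE01", "P2", "S2")]
if should_swap("io_timing", io_time_ms=150, timing_threshold_ms=100):
    hyperswap(ucbs)
```

The swap is applied to all devices together, which mirrors the slide's point that system residence, page, and catalog volumes are included rather than handled one at a time.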

What a Sysplex can do for YOU…
- It will address any of the following types of work
  - Large business problems that involve hundreds of end users, or deal with volumes of work that can be counted in millions of transactions per day.
  - Work that consists of small work units, such as online transactions, or large work units that can be subdivided into smaller work units, such as queries.
  - Concurrent applications on different systems that need to directly access and update a single database without jeopardizing data integrity and security.
- Provides reduced cost through
  - Cost effective processor technology
  - IBM software licensing charges in Parallel Sysplex
  - Continued use of large-system data processing skills without re-education
  - Protection of z/OS application investments
  - The ability to manage a large number of systems more easily than other comparably performing multisystem environments

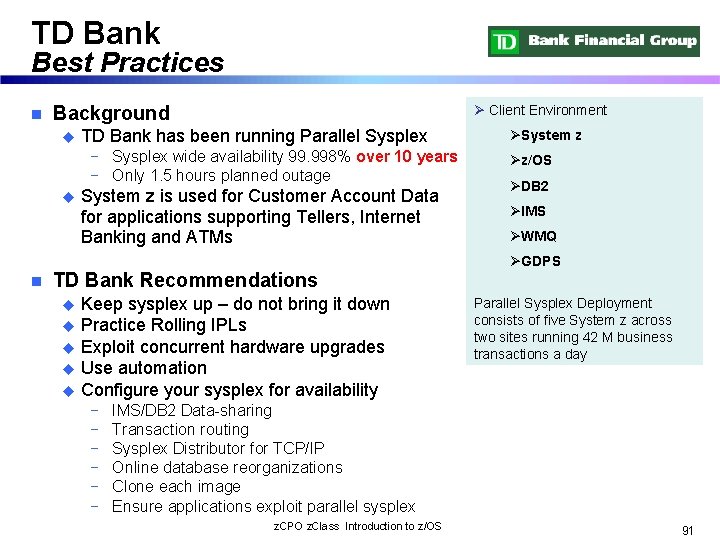

TD Bank Best Practices
- Client Environment Background
  - TD Bank has been running Parallel Sysplex
    - Sysplex wide availability 99.998% over 10 years
    - Only 1.5 hours planned outage
  - System z is used for Customer Account Data for applications supporting Tellers, Internet Banking and ATMs
  - Parallel Sysplex deployment consists of five System z across two sites running 42 million business transactions a day
  - System z, z/OS, DB2, IMS, WMQ, GDPS
- TD Bank Recommendations
  - Keep sysplex up – do not bring it down
  - Practice rolling IPLs
  - Exploit concurrent hardware upgrades
  - Use automation
  - Configure your sysplex for availability
    - IMS/DB2 data sharing
    - Transaction routing
    - Sysplex Distributor for TCP/IP
    - Online database reorganizations
  - Clone each image
  - Ensure applications exploit Parallel Sysplex
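
To put the 99.998% availability figure in perspective, it can be converted into hours of unavailability over the 10-year period. This is plain arithmetic rather than anything taken from TD Bank's own reporting; it assumes 365-day years and does not say how the slide's 1.5 hours of planned outage was counted against the percentage.

```python
HOURS_PER_YEAR = 365 * 24  # assumption: non-leap years


def downtime_hours(availability_pct, years):
    """Total hours of unavailability implied by an availability percentage over a period."""
    return (1 - availability_pct / 100) * years * HOURS_PER_YEAR


implied = downtime_hours(99.998, 10)
print(f"{implied:.3f} hours over 10 years")
```

The result is about 1.75 hours in total across ten years, the same order of magnitude as the 1.5 hours of planned outage quoted on the slide.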

Summary
- Reduce cost compared to previous offerings of comparable function and performance
- Continuous availability even during change
- Dynamic addition and change
- Parallel Sysplex builds on the strengths of the z/OS platform to bring even greater availability, serviceability and reliability
- Scales out at low overhead, near linear scaling

Additional Information
- GDPS
  - The Ultimate e-business Availability Solution – GF22-5114
  - www.ibm.com/systems/z/gdps
- Parallel Sysplex
  - www.ibm.com/systems/z/pso

- Slides: 73