
sdbi – winter 2001
Data Mining For Hypertext: A Tutorial Survey
- Based on a paper by Soumen Chakrabarti, Indian Institute of Technology Bombay (soumen@cse.iitb.ernet.in)
- Lecture by Noga Kashti and Efrat Daum, 11/11/01

Let's Start with Definitions…
- Hypertext: a collection of documents (or "nodes") containing cross-references or "links" which, with the aid of an interactive browser program, allow the reader to move easily from one document to another.
- Data mining: analysis of data in a database using tools that look for trends or anomalies without knowledge of the meaning of the data.

Two Ways of Getting Information from the Web:
- Clicking on hyperlinks
- Searching via keyword queries

Some History…
- Before the popular Web, hypertext was studied by the ACM SIGIR, SIGLINK/SIGWEB, and digital libraries communities.
- Traditional IR (information retrieval) deals with documents, whereas the Web deals with semi-structured data.

Some Numbers…
- The Web exceeds 800 million HTML pages on about three million servers.
- Almost a million pages are added daily.
- A typical page changes in a few months.
- Several hundred gigabytes change every month.

Difficulties with Accessing Information on the Web:
- The usual problems of text search (synonymy, polysemy, context sensitivity) become much more severe.
- Semi-structured data.
- Sheer size and flux.
- No consistent standard or style.

The Old Search Process Is Often Unsatisfactory!
- Deficiency of scale.
- Poor accuracy (low recall and low precision).

Better Solutions: Data Mining and Machine Learning
- NL (natural language) techniques.
- Statistical techniques for learning structure in various forms from text, hypertext, and semi-structured data.

Issues We'll Discuss
- Models
- Supervised learning
- Unsupervised learning
- Semi-supervised learning
- Social network analysis

Models for Text
- Representation of text for statistical analysis only (bag of words):
  - The vector space model
  - The binary model
  - The multinomial model

Models for Text (cont.)
- The vector space model:
  - Documents → tokens → canonical forms.
  - Each canonical token is an axis in a Euclidean space.
  - The $t$-th coordinate of $d$ is $n(d, t)$, where $t$ is a term and $d$ is a document.

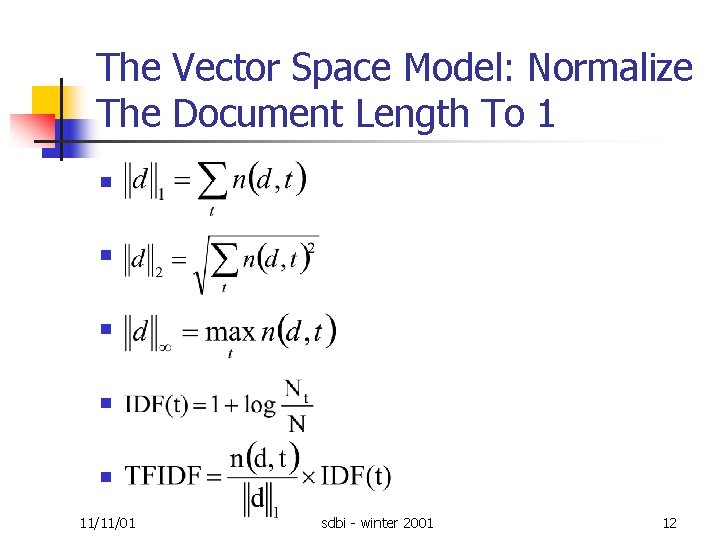

The Vector Space Model: Normalize the Document Length to 1
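The vector space model and the length normalization can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: a lowercase regex tokenizer stands in for the "canonical form" step (no stemming or stopword removal), and "length 1" is taken to mean Euclidean (L2) length.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Crude canonicalization (an assumption): lowercase, keep alphanumeric runs."""
    return re.findall(r"[a-z0-9]+", text.lower())

def term_counts(text):
    """Sparse vector n(d, t): term -> number of occurrences of t in document d."""
    return Counter(tokenize(text))

def l2_normalize(vec):
    """Scale a sparse vector so that its Euclidean length is 1."""
    length = math.sqrt(sum(v * v for v in vec.values()))
    return {t: v / length for t, v in vec.items()} if length else {}

d = term_counts("the car gearbox and the car engine")
unit = l2_normalize(d)
print(d["car"], sum(v * v for v in unit.values()))
```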

More Models for Text
- The binary model: a document is a set of terms, which is a subset of the lexicon. Word counts are not significant.
- The multinomial model: a die with $|T|$ faces. Every face $t$ has a probability $\theta_t$ of showing up when tossed. Having decided on the total word count, the author repeatedly tosses the die and writes down the term that shows up.
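A minimal sketch contrasting the two models. The die probabilities in `theta` are hypothetical, and the seeded `random.Random` only makes the sampled document repeatable.

```python
import random

def binary_representation(tokens):
    """Binary model: a document is just the set of terms it contains; counts are dropped."""
    return frozenset(tokens)

def generate_multinomial(theta, length, rng):
    """Multinomial model: toss a |T|-faced die `length` times; face t shows up with probability theta[t]."""
    terms = list(theta)
    weights = [theta[t] for t in terms]
    return rng.choices(terms, weights=weights, k=length)

theta = {"car": 0.5, "gearbox": 0.3, "auto": 0.2}   # hypothetical die for one topic
doc = generate_multinomial(theta, length=8, rng=random.Random(0))
print(doc, binary_representation(doc))
```

The multinomial sample keeps repeated terms; the binary view of the same document keeps only which terms occurred.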

Models for Hypertext
- Hypertext: text with hyperlinks, which can be modeled at varying levels of detail.
- Example: a directed graph $(D, L)$:
  - $D$: the set of nodes/documents/pages
  - $L$: the set of links
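One simple way to hold the directed graph $(D, L)$ in memory is a pair of adjacency maps. The class name `HypertextGraph` and the page names are illustrative, not from the source.

```python
from collections import defaultdict

class HypertextGraph:
    """Directed graph (D, L): D is the set of pages, L the set of links (u, v), meaning u links to v."""

    def __init__(self):
        self.nodes = set()                 # D
        self.out_links = defaultdict(set)  # u -> pages that u links to
        self.in_links = defaultdict(set)   # v -> pages that link to v

    def add_link(self, u, v):
        self.nodes.update((u, v))
        self.out_links[u].add(v)
        self.in_links[v].add(u)

    def links(self):
        """Return L as a set of (source, target) pairs."""
        return {(u, v) for u, vs in self.out_links.items() for v in vs}

g = HypertextGraph()
g.add_link("home.html", "papers.html")
g.add_link("home.html", "talks.html")
g.add_link("talks.html", "papers.html")
```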

Models for Semi-structured Data
- A point of convergence for the Web (documents) and database (data) communities.

Models for Semi-structured Data (cont.)
- Topic directories with tree-structured hierarchies, such as the Open Directory Project and Yahoo!
- Another representation: XML.

Supervised Learning (Classification)
- Initialization: training data, where each item is marked with a label or class from a discrete, finite set.
- Input: unlabeled data.
- The algorithm's role: guess the labels of the data.

Supervised Learning (cont.)
- Example: topic directories.
- Advantages: they help structure the data, restrict keyword search, and can enable powerful searches.

Probabilistic Models for Text Learning
- Let $c_1, \dots, c_m$ be $m$ classes or topics, each with some training documents $D_c$.
- Prior probability of a class: $\Pr(c) = |D_c| / \sum_{c'} |D_{c'}|$
- $T$: the universe of terms in all the training documents.

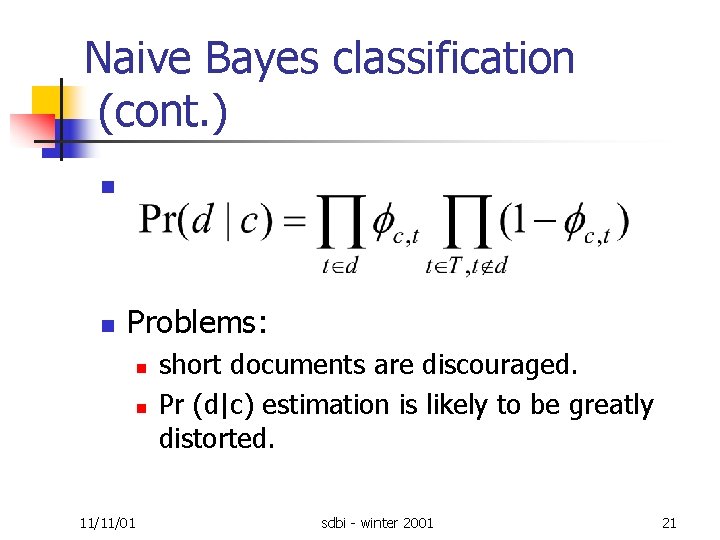

Probabilistic Models for Text Learning (cont.)
- Naive Bayes classification:
  - Assumption: for each class $c$, there is a binary text generator model.
  - Model parameters: $\Phi_{c,t}$, the probability that a document in class $c$ will mention term $t$ at least once.
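A minimal sketch of the binary naive Bayes classifier, assuming $\Pr(d|c) = \prod_{t \in d} \Phi_{c,t} \prod_{t \in T,\, t \notin d} (1 - \Phi_{c,t})$ and priors $|D_c| / \sum_{c'} |D_{c'}|$. The Laplace smoothing of $\Phi_{c,t}$ and the toy training set are assumptions added so the example runs.

```python
import math

def train_bernoulli_nb(docs_by_class):
    """docs_by_class: class -> list of token lists. Returns (vocab, priors, phi)."""
    vocab = {t for docs in docs_by_class.values() for d in docs for t in d}
    total = sum(len(docs) for docs in docs_by_class.values())
    priors, phi = {}, {}
    for c, docs in docs_by_class.items():
        priors[c] = len(docs) / total
        sets = [set(d) for d in docs]
        # Laplace-smoothed estimate (an assumption) of Phi_{c,t} = Pr(t appears at least once | c)
        phi[c] = {t: (1 + sum(t in s for s in sets)) / (2 + len(docs)) for t in vocab}
    return vocab, priors, phi

def log_score(doc, c, vocab, priors, phi):
    present = set(doc)
    s = math.log(priors[c])
    for t in vocab:  # every lexicon term contributes, whether present or absent
        p = phi[c][t]
        s += math.log(p if t in present else 1 - p)
    return s

def classify_bernoulli(doc, model):
    vocab, priors, phi = model
    return max(priors, key=lambda c: log_score(doc, c, vocab, priors, phi))

training = {  # hypothetical toy corpus
    "autos": [["car", "engine"], ["car", "gearbox"]],
    "music": [["guitar", "song"], ["song", "band"]],
}
model = train_bernoulli_nb(training)
print(classify_bernoulli(["car"], model))
```

Working in log space avoids underflow when the lexicon is large, since every term in $T$ contributes a factor.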

Naive Bayes Classification (cont.)
- Problems:
  - Short documents are discouraged.
  - The estimate of $\Pr(d|c)$ is likely to be greatly distorted.

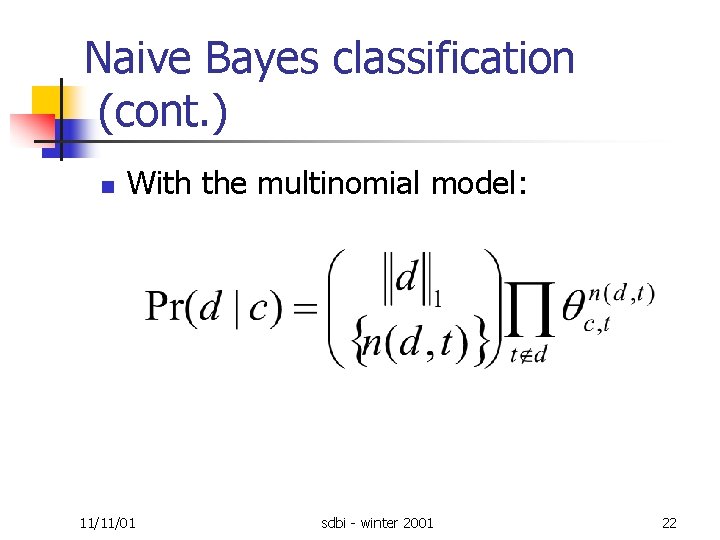

Naive Bayes Classification (cont.)
- With the multinomial model:
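Under the multinomial model, a document's log score for class $c$ can be taken as $\log \Pr(c) + \sum_t n(d,t) \log \theta_{c,t}$, because the multinomial coefficient depends only on $d$ and is the same for every class. The sketch below assumes Laplace smoothing for $\theta_{c,t}$ and uses a toy training set; neither comes from the slides.

```python
import math
from collections import Counter

def train_multinomial_nb(docs_by_class):
    """docs_by_class: class -> list of token lists. Returns (vocab, priors, log_theta)."""
    vocab = {t for docs in docs_by_class.values() for d in docs for t in d}
    total_docs = sum(len(docs) for docs in docs_by_class.values())
    priors, log_theta = {}, {}
    for c, docs in docs_by_class.items():
        priors[c] = len(docs) / total_docs
        counts = Counter(t for d in docs for t in d)
        # Laplace smoothing (an assumption): theta_{c,t} = (count + 1) / (total count + |T|)
        denom = sum(counts.values()) + len(vocab)
        log_theta[c] = {t: math.log((counts[t] + 1) / denom) for t in vocab}
    return vocab, priors, log_theta

def classify_multinomial(doc, model):
    vocab, priors, log_theta = model
    n = Counter(t for t in doc if t in vocab)   # n(d, t); terms never seen in training are ignored
    def score(c):
        # log Pr(c) + sum_t n(d, t) * log theta_{c,t}; the multinomial coefficient is dropped
        return math.log(priors[c]) + sum(k * log_theta[c][t] for t, k in n.items())
    return max(priors, key=score)

training = {  # hypothetical toy corpus
    "autos": [["car", "engine"], ["car", "gearbox"]],
    "music": [["guitar", "song"], ["song", "band"]],
}
model = train_multinomial_nb(training)
print(classify_multinomial(["car", "car", "song"], model))
```

Unlike the binary model, repeated occurrences of a term multiply its $\theta_{c,t}$ into the score again.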

Naive Bayes Classification (cont.)
- Problems:
  - Again, short documents are discouraged.
  - Inter-term correlation is ignored.
  - Multiplicative $\Phi_{c,t}$ "surprise" factor.
- Conclusion:
  - Both models are effective.

More Probabilistic Models for Text Learning
- Parameter smoothing and feature selection.
- Limited dependence modeling.
- The maximum entropy technique.
- Support vector machines (SVMs).
- Hierarchies over class labels.

Learning Relations
- Classification extension: a combination of statistical and relational learning.
  - Improves accuracy.
  - Has the ability to invent predicates.
  - Can represent the hyperlink graph structure and the word statistics of neighboring documents.
  - Learned rules will not depend on specific keywords.

Unsupervised Learning
- Hypertext documents
- A hierarchy among the documents
- What is a good clustering?

Basic Clustering Techniques
- Techniques for clustering:
  - k-means
  - hierarchical agglomerative clustering

Basic Clustering Techniques (cont.)
- Documents are represented in:
  - an unweighted vector space, or
  - a TFIDF vector space.
- Similarity between two documents:
  - $\cos(\theta)$, where $\theta$ is the angle between their corresponding vectors
  - the distance between the (length-normalized) vectors
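A sketch of TFIDF weighting and cosine similarity. The weight $n(d,t)\log(N/\mathrm{df}(t))$, where $N$ is the number of documents and $\mathrm{df}(t)$ the number of documents containing $t$, is one common TFIDF variant assumed here; the slide does not fix a formula. With it, a term that occurs in every document gets weight 0.

```python
import math
from collections import Counter

def tfidf_vectors(docs):
    """docs: list of token lists. Weight of t in d = n(d, t) * log(N / df(t))."""
    N = len(docs)
    df = Counter(t for d in docs for t in set(d))
    return [{t: k * math.log(N / df[t]) for t, k in Counter(d).items()} for d in docs]

def cosine(u, v):
    """Cosine of the angle between two sparse vectors (0.0 if either is all zeros)."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    nu = math.sqrt(sum(w * w for w in u.values()))
    nv = math.sqrt(sum(w * w for w in v.values()))
    return dot / (nu * nv) if nu and nv else 0.0

docs = [["car", "gearbox", "the"], ["car", "engine", "the"], ["song", "band", "the"]]
vecs = tfidf_vectors(docs)
print(cosine(vecs[0], vecs[1]), cosine(vecs[0], vecs[2]))
```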

k-Means Clustering
- The k-means algorithm:
  - Input:
    - $d_1, \dots, d_n$: a set of $n$ documents
    - $k$: the number of clusters desired ($k \le n$)
  - Output:
    - $C_1, \dots, C_k$: $k$ clusters into which the $n$ documents are classified

k-Means Clustering (cont.)
- The k-means algorithm (cont.):
  - Initialization: guess $k$ initial means $m_1, \dots, m_k$.
  - Until there are no changes in any mean:
    - For each document $d$: $d$ is in $C_i$ if $\|d - m_i\|$ is the minimum of all $k$ distances.
    - For $1 \le i \le k$: replace $m_i$ with the mean of all the documents in $C_i$.
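The k-means loop above, as a minimal Python sketch. Assumptions: documents are dense tuples, the initial means are $k$ distinct documents chosen at random, and an empty cluster keeps its old mean. The loop stops when no document changes cluster, which implies that no mean changes either.

```python
import random

def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def mean(vectors):
    n = len(vectors)
    return tuple(sum(xs) / n for xs in zip(*vectors))

def kmeans(points, k, seed=0, max_iter=100):
    """points: list of equal-length tuples. Returns (means, assignment), assignment[j] = cluster of points[j]."""
    rng = random.Random(seed)
    means = rng.sample(points, k)   # initial guess: k distinct input points (an assumption)
    assignment = None
    for _ in range(max_iter):
        # Assignment step: d goes to C_i if ||d - m_i|| is the smallest of the k distances
        new_assignment = [min(range(k), key=lambda i: squared_distance(p, means[i])) for p in points]
        if new_assignment == assignment:
            break   # no document moved, so recomputing the means would change nothing
        assignment = new_assignment
        # Update step: replace m_i with the mean of the documents in C_i (keep m_i if C_i is empty)
        for i in range(k):
            members = [p for p, a in zip(points, assignment) if a == i]
            if members:
                means[i] = mean(members)
    return means, assignment

points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
means, assignment = kmeans(points, k=2)
print(means, assignment)
```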

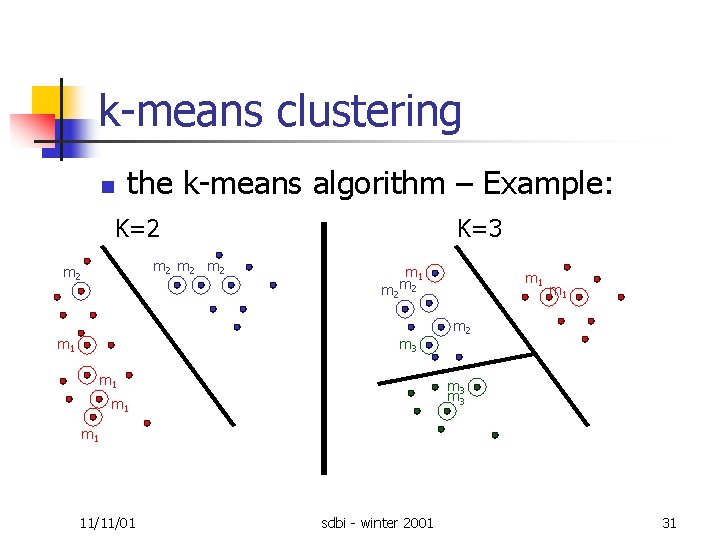

k-Means Clustering: Example
[Figure: the k-means algorithm run with K = 2 (means $m_1, m_2$) and with K = 3 (means $m_1, m_2, m_3$)]

k-Means Clustering (cont.)
- Problem: high dimensionality.
  - E.g., if each of 30,000 dimensions has only two possible values, the vector space contains $2^{30000}$ distinct vectors.
- Solution: projecting out some dimensions.

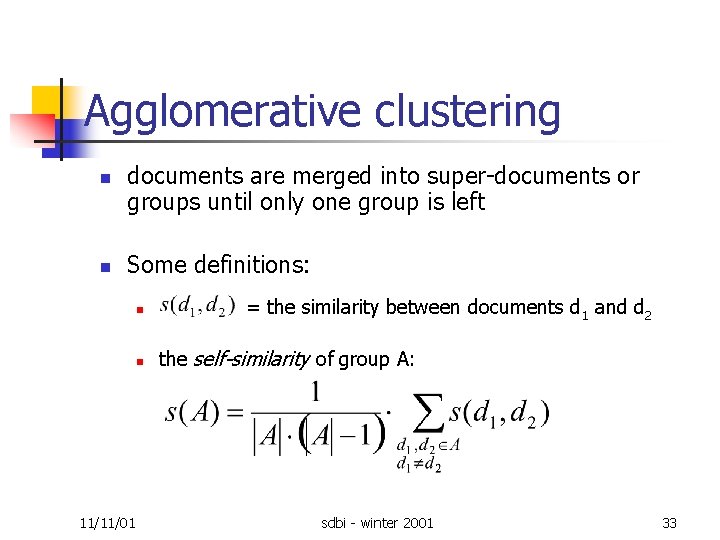

Agglomerative Clustering
- Documents are merged into super-documents, or groups, until only one group is left.
- Some definitions:
  - $s(d_1, d_2)$ = the similarity between documents $d_1$ and $d_2$
  - the self-similarity of group $A$, $s(A)$:

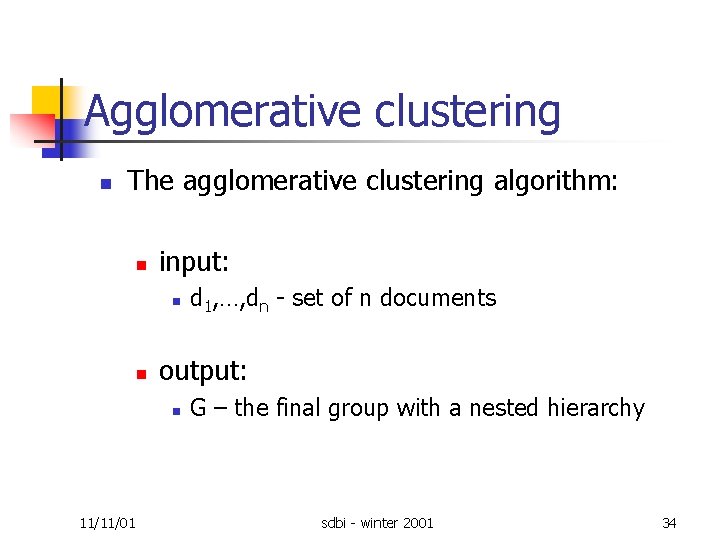

Agglomerative Clustering (cont.)
- The agglomerative clustering algorithm:
  - Input: $d_1, \dots, d_n$, a set of $n$ documents
  - Output: $G$, the final group, with a nested hierarchy

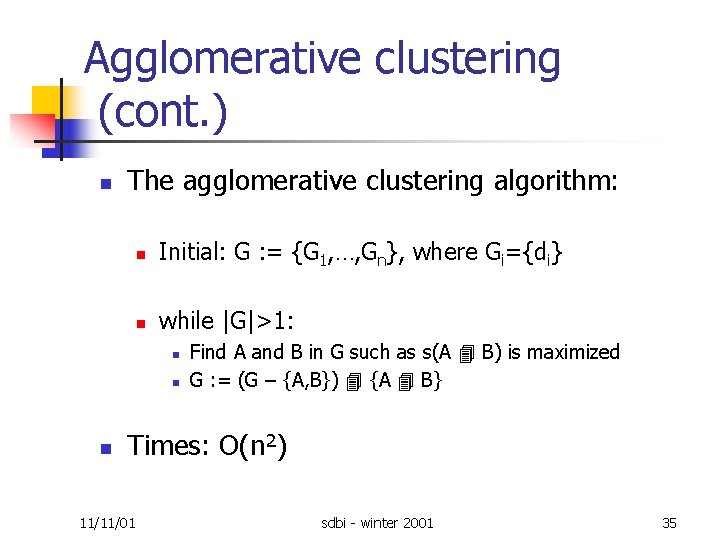

Agglomerative Clustering (cont.)
- The agglomerative clustering algorithm:
  - Initialization: $G := \{G_1, \dots, G_n\}$, where $G_i = \{d_i\}$
  - While $|G| > 1$:
    - Find $A$ and $B$ in $G$ such that $s(A \cup B)$ is maximized.
    - $G := (G - \{A, B\}) \cup \{A \cup B\}$
  - Time: $O(n^2)$
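A deliberately naive sketch of the agglomerative loop. It assumes the self-similarity $s(A)$ of a group is the average similarity over all pairs of distinct documents in it (one common choice), and takes the pairwise similarity as a caller-supplied function; the similarity table `S` is hypothetical. Because it re-scores every candidate merge at every step, it is much slower than a careful implementation and only shows the control flow.

```python
from itertools import combinations

def self_similarity(group, sim):
    """Assumed s(A): average of sim(d1, d2) over all pairs of distinct documents in A."""
    pairs = list(combinations(group, 2))
    return sum(sim(a, b) for a, b in pairs) / len(pairs)

def agglomerative(docs, sim):
    """Return the merges (A, B, s(A ∪ B)) in the order they happen."""
    groups = [frozenset([d]) for d in docs]   # G := {G_1, ..., G_n}, G_i = {d_i}
    merges = []
    while len(groups) > 1:
        # Find A, B in G such that s(A ∪ B) is maximized
        a, b = max(combinations(groups, 2), key=lambda ab: self_similarity(ab[0] | ab[1], sim))
        merges.append((a, b, self_similarity(a | b, sim)))
        groups = [g for g in groups if g not in (a, b)] + [a | b]   # G := (G - {A, B}) ∪ {A ∪ B}
    return merges

S = {("a", "b"): 0.9, ("a", "c"): 0.1, ("a", "d"): 0.1,
     ("b", "c"): 0.2, ("b", "d"): 0.1, ("c", "d"): 0.8}

def sim(x, y):
    return S[(x, y)] if (x, y) in S else S[(y, x)]

merges = agglomerative(["a", "b", "c", "d"], sim)
for a, b, s in merges:
    print(sorted(a), sorted(b), round(s, 3))
```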

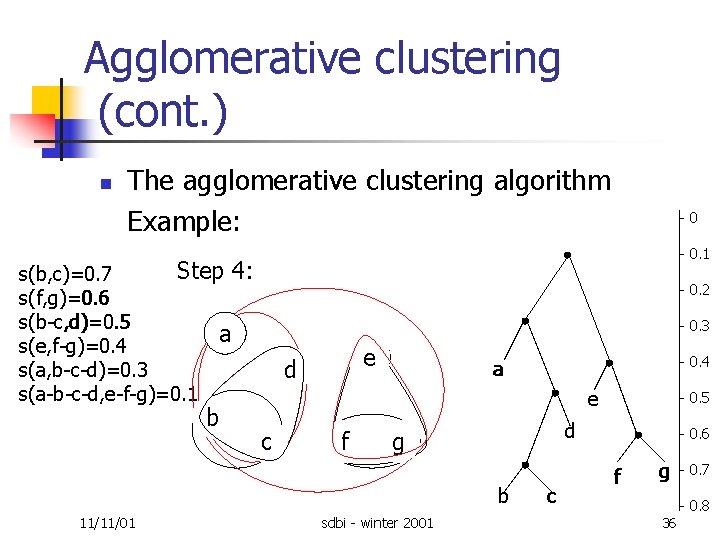

Agglomerative Clustering (cont.)
- The agglomerative clustering algorithm, example with documents a–g:
  - Initial: each document is its own group
  - Step 1: s(b, c) = 0.7
  - Step 2: s(f, g) = 0.6
  - Step 3: s(bc, d) = 0.5
  - Step 4: s(e, fg) = 0.4
  - Step 5: s(a, bcd) = 0.3
  - Step 6: s(abcd, efg) = 0.1
[Figure: dendrogram of documents a–g against a similarity axis from 0 to 0.8]

Techniques from Linear Algebra
- Documents and terms are represented by vectors in Euclidean space.
- Applications of linear algebra to text analysis:
  - Latent semantic indexing (LSI)
  - Random projections

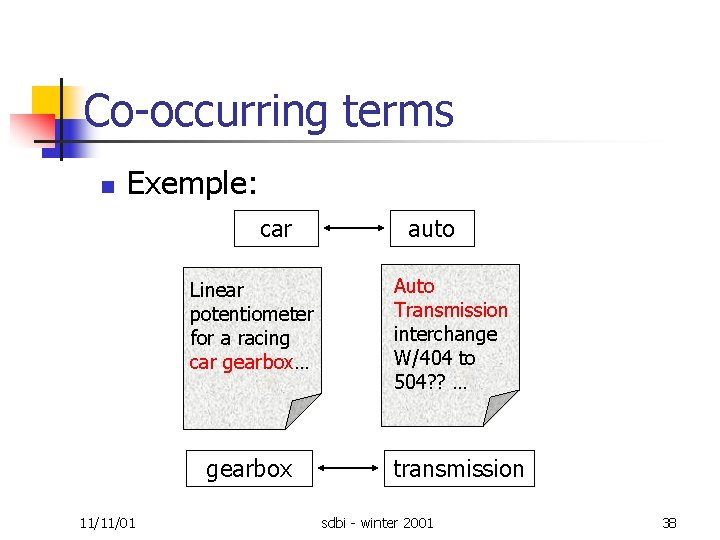

Co-occurring Terms
- Example:
  - "Linear potentiometer for a racing car gearbox…" (terms: car, gearbox)
  - "Auto Transmission interchange W/404 to 504??…" (terms: auto, transmission)

Latent Semantic Indexing (LSI)
- Vector space model of documents:
  - Let $m = |T|$, the lexicon size.
  - Let $n$ = the number of documents.
  - Define $A_{m \times n}$, the term-by-document matrix, where $a_{ij}$ = the number of occurrences of term $i$ in document $j$.
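Building $A$ from tokenized documents is straightforward. This sketch (the function name is hypothetical) sorts the lexicon so that the row order is deterministic.

```python
def term_document_matrix(docs):
    """docs: list of token lists. Returns (terms, A) with A[i][j] = occurrences of terms[i] in docs[j]."""
    terms = sorted({t for d in docs for t in d})
    index = {t: i for i, t in enumerate(terms)}
    A = [[0] * len(docs) for _ in terms]   # m x n: m = |T| (lexicon size), n = number of documents
    for j, d in enumerate(docs):
        for t in d:
            A[index[t]][j] += 1
    return terms, A

terms, A = term_document_matrix([["car", "gearbox", "car"], ["auto", "transmission"]])
print(terms, A)
```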

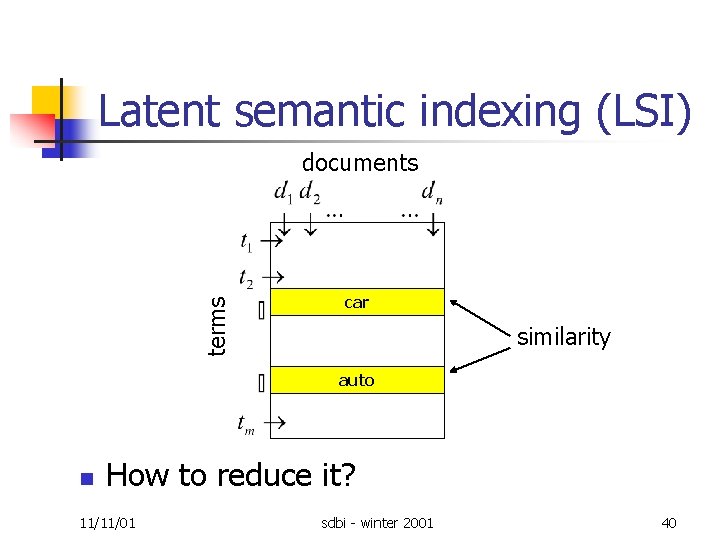

Latent Semantic Indexing (LSI)
[Figure: term-by-document matrix, with terms (e.g., car, auto) as rows and documents as columns, illustrating the similarity between car and auto]
- How can the matrix be reduced?

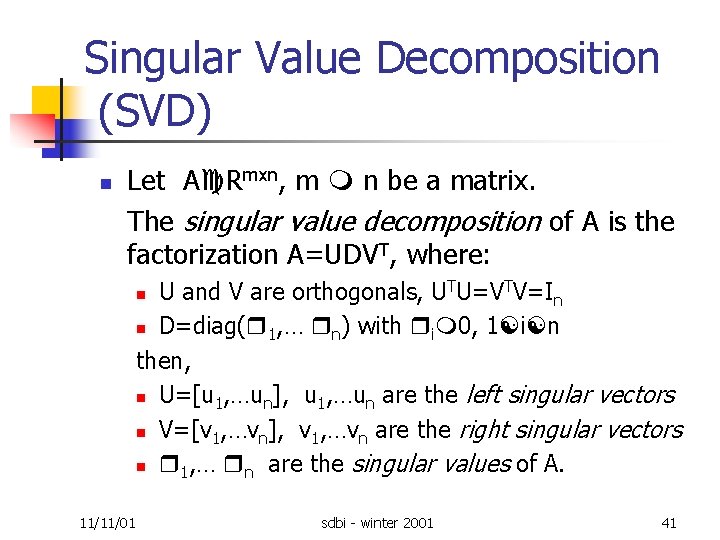

Singular Value Decomposition (SVD)
- Let $A \in \mathbb{R}^{m \times n}$, $m \ge n$, be a matrix. The singular value decomposition of $A$ is the factorization $A = UDV^T$, where:
  - $U$ and $V$ have orthonormal columns: $U^TU = V^TV = I_n$
  - $D = \mathrm{diag}(\sigma_1, \dots, \sigma_n)$, with $\sigma_i \ge 0$ for $1 \le i \le n$
- Then:
  - $U = [u_1, \dots, u_n]$; $u_1, \dots, u_n$ are the left singular vectors
  - $V = [v_1, \dots, v_n]$; $v_1, \dots, v_n$ are the right singular vectors
  - $\sigma_1, \dots, \sigma_n$ are the singular values of $A$.

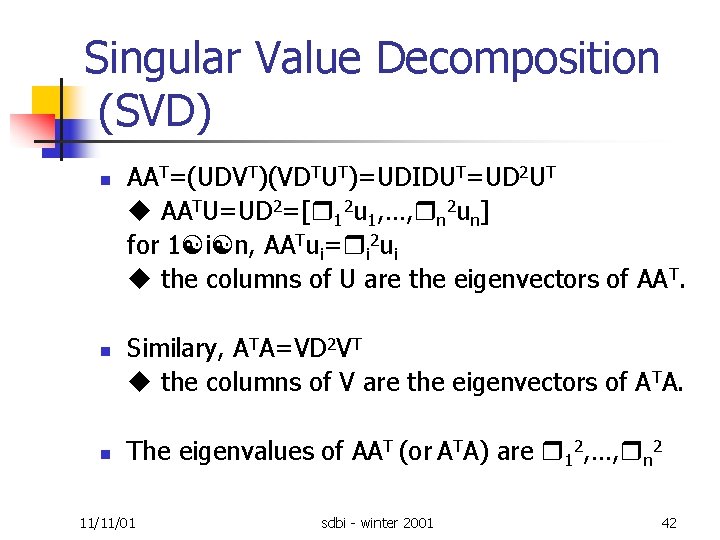

Singular Value Decomposition (SVD)
- $AA^T = (UDV^T)(VD^TU^T) = UDIDU^T = UD^2U^T$
- $AA^TU = UD^2 = [\sigma_1^2 u_1, \dots, \sigma_n^2 u_n]$, i.e., $AA^Tu_i = \sigma_i^2 u_i$ for $1 \le i \le n$
- Hence the columns of $U$ are eigenvectors of $AA^T$.
- Similarly, $A^TA = VD^2V^T$, so the columns of $V$ are eigenvectors of $A^TA$.
- The eigenvalues of $A^TA$ are $\sigma_1^2, \dots, \sigma_n^2$; these are also eigenvalues of $AA^T$, whose remaining $m - n$ eigenvalues are 0.

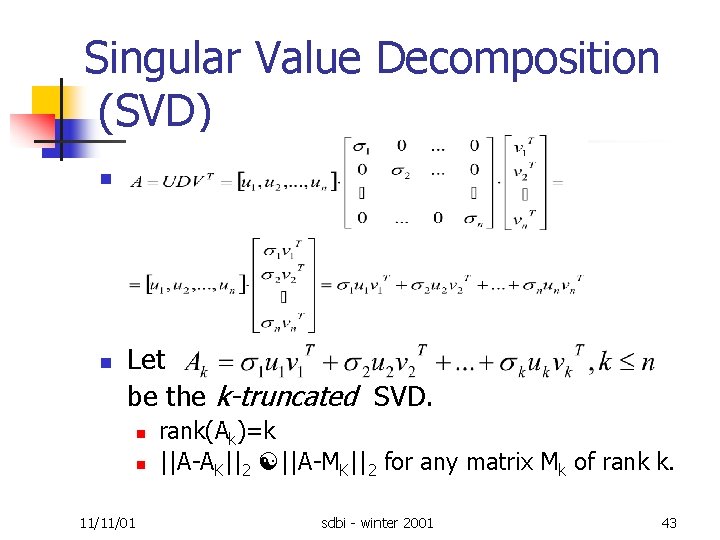

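Singular Value Decomposition (SVD)
- With $\sigma_1 \ge \dots \ge \sigma_n$, let $A_k = \sum_{i=1}^{k} \sigma_i u_i v_i^T$ be the $k$-truncated SVD. Then:
  - $\mathrm{rank}(A_k) = k$ (assuming $\sigma_k > 0$)
  - $\|A - A_k\|_2 \le \|A - M_k\|_2$ for any matrix $M_k$ of rank $k$.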
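The truncated SVD and its optimality can be checked numerically with NumPy's `numpy.linalg.svd`. The random test matrix is arbitrary; the check uses the known fact that the 2-norm error of the best rank-$k$ approximation equals $\sigma_{k+1}$.

```python
import numpy as np

def truncated_svd(A, k):
    """Return A_k = sum_{i<=k} sigma_i u_i v_i^T (numpy returns singular values in descending order)."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    return U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]

rng = np.random.default_rng(0)
A = rng.random((6, 4))                     # arbitrary 6 x 4 test matrix
A2 = truncated_svd(A, 2)
s = np.linalg.svd(A, compute_uv=False)
print(np.linalg.matrix_rank(A2))           # rank of the truncation
print(np.linalg.norm(A - A2, 2), s[2])     # spectral-norm error vs. sigma_3
```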

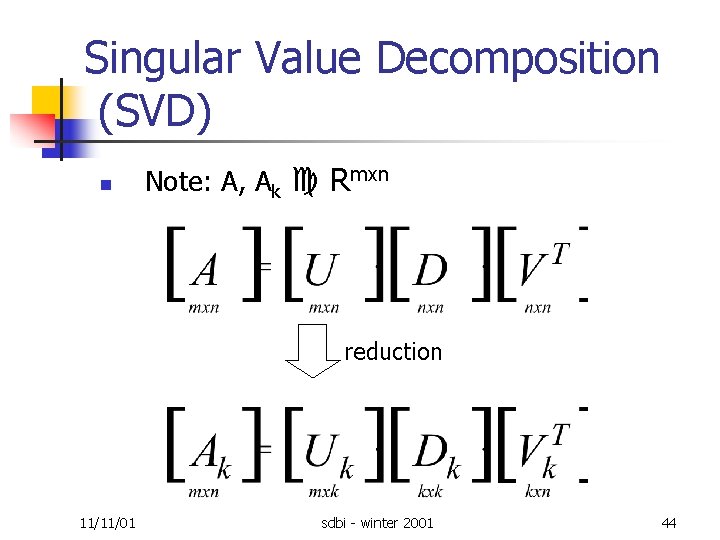

Singular Value Decomposition (SVD)
- Note: $A, A_k \in \mathbb{R}^{m \times n}$; the reduction is in rank, not in size.

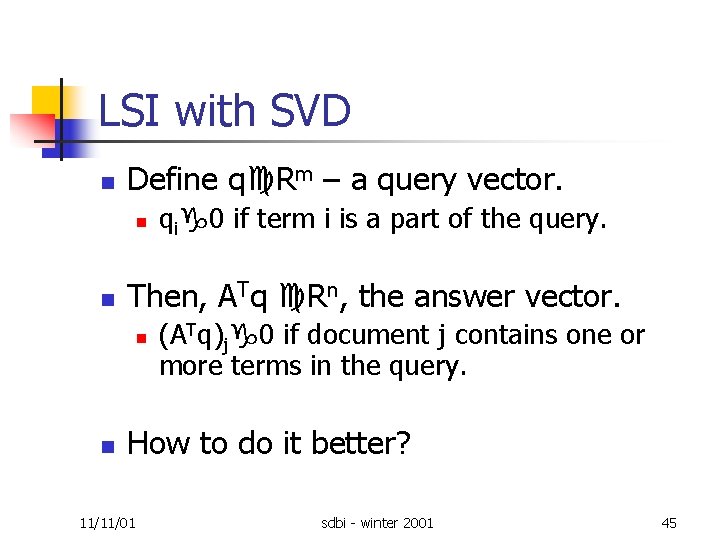

LSI with SVD
- Define $q \in \mathbb{R}^m$, a query vector:
  - $q_i \ne 0$ if term $i$ is part of the query.
- Then $A^Tq \in \mathbb{R}^n$ is the answer vector:
  - $(A^Tq)_j \ne 0$ if document $j$ contains one or more terms in the query.
- How can we do better?

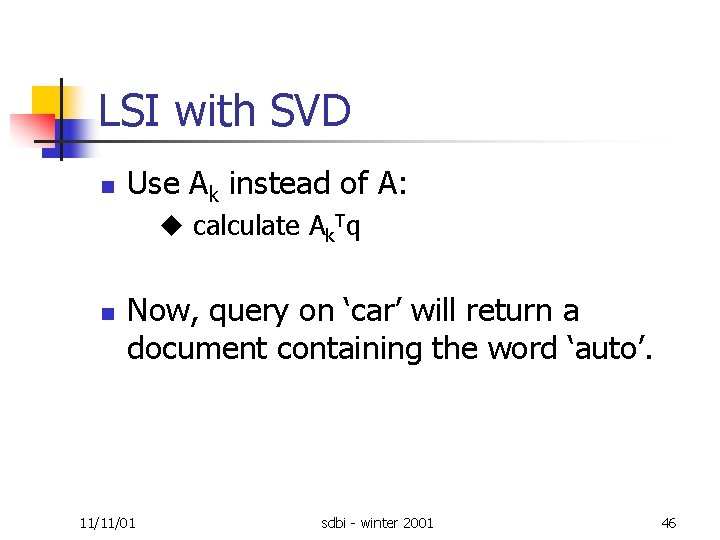

LSI with SVD (cont.)
- Use $A_k$ instead of $A$: calculate $A_k^Tq$.
- Now a query on "car" will also return a document containing the word "auto".
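A small numerical illustration of the car/auto effect, assuming a hand-made 4-term, 3-document matrix (not from the source) and $k = 1$. Document d1 never mentions "car", but it shares "transmission" with d2, which does. The plain answer vector $A^Tq$ gives d1 a score of 0, while $A_k^Tq$ gives it a positive score.

```python
import numpy as np

terms = ["car", "auto", "gearbox", "transmission"]
# Hypothetical term-by-document matrix: rows are terms, columns are documents d0, d1, d2
A = np.array([
    [1, 0, 1],   # car
    [0, 1, 0],   # auto
    [1, 0, 0],   # gearbox
    [0, 1, 1],   # transmission
], dtype=float)

def lsi_scores(A, q, k):
    """Answer vector A_k^T q, where A_k is the rank-k truncated SVD of A."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    A_k = U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]
    return A_k.T @ q

q = np.array([1.0, 0.0, 0.0, 0.0])   # query: "car"
exact = A.T @ q                      # plain vector-space answer vector
approx = lsi_scores(A, q, k=1)
print(exact)
print(approx)
```

The choice $k = 1$ is only for this tiny example; in practice $k$ is a tuning parameter, and too small a $k$ blurs unrelated documents together.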

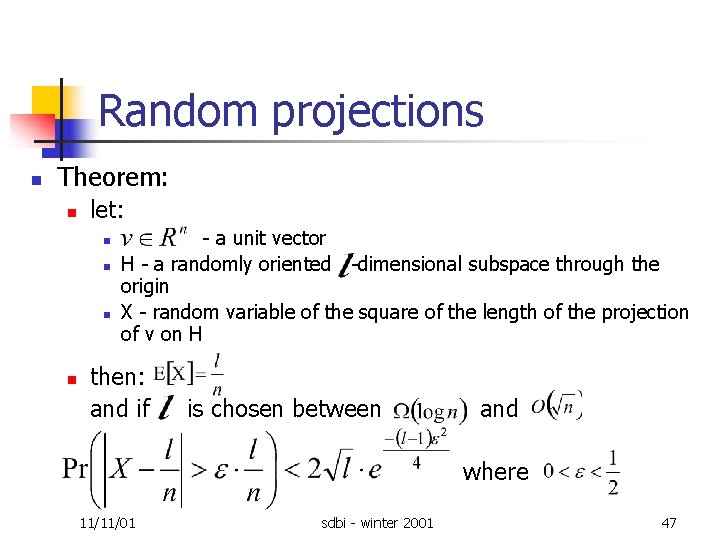

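Random Projections
- Theorem: let
  - $v \in \mathbb{R}^n$ be a unit vector,
  - $H$ be a randomly oriented $\ell$-dimensional subspace through the origin,
  - $X$ be the random variable equal to the square of the length of the projection of $v$ on $H$.
- Then $E[X] = \ell/n$, and, for a suitably small $\epsilon$ and sufficiently large $\ell$, $X$ lies within $(1 \pm \epsilon)\,\ell/n$ with high probability.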

Random Projections (cont.)
- A projection of a set of points onto a randomly oriented subspace.
- Small distortion in inter-point distances.
- The technique:
  - reduces the dimensionality of the points
  - speeds up the distance computations
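A pure-Python sketch of the technique. Instead of an orthogonal projection onto a randomly oriented subspace, it uses a Gaussian random matrix scaled by $1/\sqrt{\ell}$, a common practical substitute that preserves squared lengths in expectation (so no separate $\sqrt{n/\ell}$ rescaling is needed). The dimensions, seeds, and points are arbitrary.

```python
import math
import random

def random_projection_matrix(n, ell, seed=0):
    """ell x n matrix of N(0, 1) entries scaled by 1/sqrt(ell): E[|Rx|^2] = |x|^2."""
    rng = random.Random(seed)
    scale = 1 / math.sqrt(ell)
    return [[rng.gauss(0.0, 1.0) * scale for _ in range(n)] for _ in range(ell)]

def project(R, x):
    return [sum(r_i * x_i for r_i, x_i in zip(row, x)) for row in R]

def dist(a, b):
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))

n, ell = 1000, 200
rng = random.Random(1)
points = [[rng.random() for _ in range(n)] for _ in range(5)]
R = random_projection_matrix(n, ell)
projected = [project(R, p) for p in points]
# Ratio of projected to original distance for every pair of points
ratios = [dist(projected[i], projected[j]) / dist(points[i], points[j])
          for i in range(5) for j in range(i + 1, 5)]
print(min(ratios), max(ratios))
```

Distances are now computed on 200 coordinates instead of 1,000, at the cost of a small distortion.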

Semi-supervised Learning
- Real-life applications have:
  - labeled documents
  - unlabeled documents
- Between supervised and unsupervised learning.

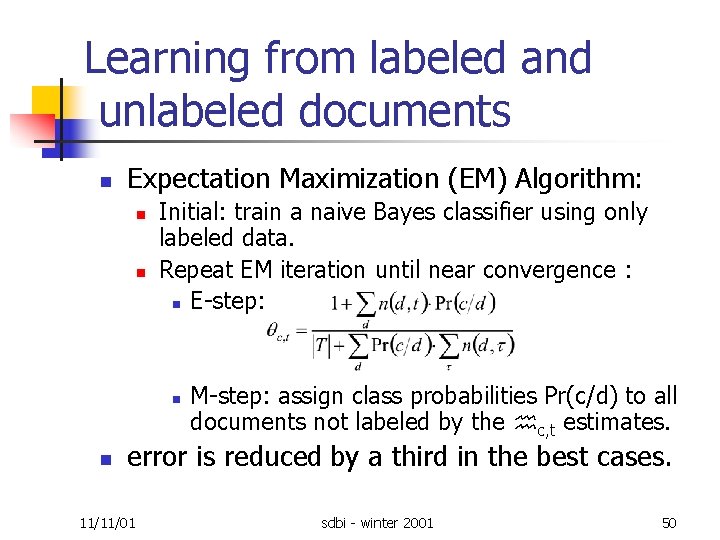

Learning from Labeled and Unlabeled Documents
- Expectation Maximization (EM) algorithm:
  - Initialization: train a naive Bayes classifier using only the labeled data.
  - Repeat EM iterations until near convergence:
    - E step: assign class probabilities $\Pr(c|d)$ to all unlabeled documents, using the current term-probability estimates.
    - M step: re-estimate the term probabilities from all documents, weighting each unlabeled document by its class probabilities.
- Error is reduced by a third in the best cases.
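A compact sketch of EM with a multinomial naive Bayes model. Assumptions not taken from the slides: Laplace smoothing, a fixed number of iterations in place of a convergence test, and a toy two-class corpus. Labeled documents keep their one-hot class weights; only the unlabeled ones receive soft weights in the E step.

```python
import math
from collections import Counter

def m_step(docs, weights, classes, vocab):
    """weights[j][c] = Pr(c | d_j). Re-estimate priors and Laplace-smoothed theta_{c,t}."""
    priors, theta = {}, {}
    for c in classes:
        priors[c] = sum(w[c] for w in weights) / len(docs)
        counts = Counter()
        for d, w in zip(docs, weights):
            for t in d:
                counts[t] += w[c]          # fractional counts from soft labels
        denom = sum(counts.values()) + len(vocab)
        theta[c] = {t: (counts[t] + 1) / denom for t in vocab}
    return priors, theta

def e_step(doc, priors, theta):
    """Posterior Pr(c | d) under the multinomial naive Bayes model (computed in log space)."""
    logs = {c: math.log(priors[c]) + sum(math.log(theta[c][t]) for t in doc) for c in priors}
    top = max(logs.values())
    unnorm = {c: math.exp(v - top) for c, v in logs.items()}
    z = sum(unnorm.values())
    return {c: v / z for c, v in unnorm.items()}

def em(labeled, unlabeled, classes, iterations=10):
    """labeled: list of (tokens, class); unlabeled: list of token lists."""
    docs = [d for d, _ in labeled] + unlabeled
    vocab = {t for d in docs for t in d}
    fixed = [{c: float(c == y) for c in classes} for _, y in labeled]
    # Initialization: train on the labeled documents only
    priors, theta = m_step([d for d, _ in labeled], fixed, classes, vocab)
    for _ in range(iterations):
        soft = [e_step(d, priors, theta) for d in unlabeled]          # E step
        priors, theta = m_step(docs, fixed + soft, classes, vocab)    # M step
    return [e_step(d, priors, theta) for d in unlabeled]

labeled = [(["car", "gearbox"], "autos"), (["song", "band"], "music")]
unlabeled = [["car", "engine"], ["engine", "gearbox"], ["band", "guitar"], ["guitar", "song"]]
posteriors = em(labeled, unlabeled, ["autos", "music"])
print(posteriors)
```

Terms such as "engine" and "guitar" never occur in a labeled document; EM learns their class association from the unlabeled ones.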

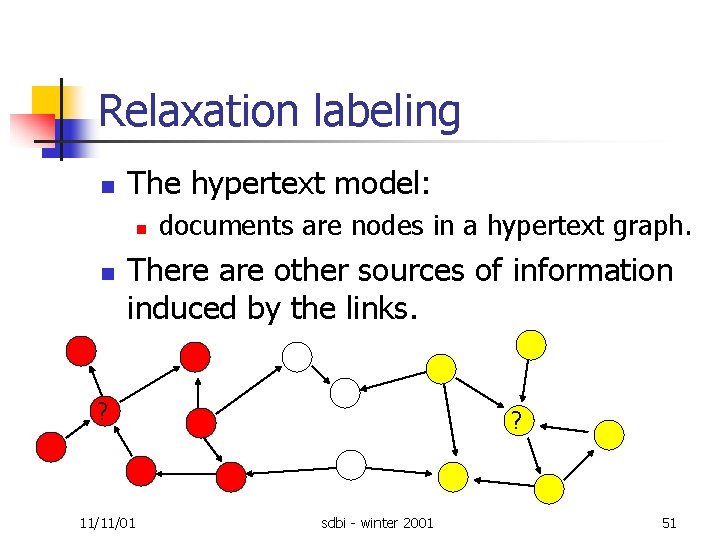

Relaxation Labeling
- The hypertext model:
  - Documents are nodes in a hypertext graph.
  - The links induce other sources of information.

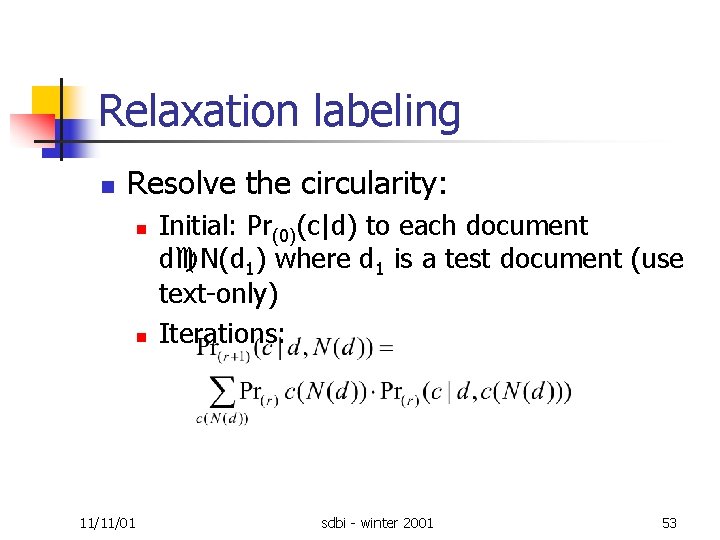

Relaxation Labeling (cont.)
- $c$ = class, $t$ = term, $N$ = neighbors
- In supervised learning: $\Pr(t|c)$
- In hypertext, using the neighbors' terms: $\Pr(t(d), t(N(d)) \mid c)$
- A better model, using the neighbors' classes: $\Pr(t(d), c(N(d)) \mid c)$
- Circularity: the neighbors' classes are themselves unknown.

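Relaxation Labeling (cont.)
- Resolving the circularity:
  - Initialization: assign $\Pr^{(0)}(c|d)$ to each document $d \in N(d_1)$, where $d_1$ is a test document (using text only).
  - Iterations: re-estimate $\Pr^{(r+1)}(c|d)$ from the text of $d$ and the current estimates $\Pr^{(r)}$ for its neighbors.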
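One simplified way to realize the iteration, offered as a sketch rather than the exact update from the paper. Each node's class distribution is its text-only estimate multiplied, for every neighbor, by the expected compatibility with that neighbor's current class distribution, then renormalized. The `coupling` table (how likely a neighbor of a class-$c$ page is to be in each class) is a hypothetical parameter.

```python
def relaxation_labeling(text_probs, neighbors, coupling, iterations=10):
    """
    text_probs: node -> {class: Pr(c | text of node)}, from a text-only classifier
    neighbors:  node -> list of neighboring nodes N(d); every neighbor must appear in text_probs
    coupling:   coupling[c_nb][c] = hypothetical Pr(neighbor has class c_nb | d has class c)
    """
    probs = {d: dict(p) for d, p in text_probs.items()}   # Pr^(0)(c|d): text only
    for _ in range(iterations):
        new = {}
        for d, p_text in text_probs.items():
            scores = {}
            for c, p in p_text.items():
                s = p
                for nb in neighbors.get(d, []):
                    # Expected compatibility with the neighbor's current (uncertain) class
                    s *= sum(probs[nb][c_nb] * coupling[c_nb][c] for c_nb in probs[nb])
                scores[c] = s
            z = sum(scores.values())
            new[d] = {c: s / z for c, s in scores.items()}
        probs = new   # synchronous update: Pr^(r+1) is computed only from Pr^(r)
    return probs

text_probs = {"x": {"A": 0.5, "B": 0.5}, "y": {"A": 0.9, "B": 0.1}, "z": {"A": 0.8, "B": 0.2}}
neighbors = {"x": ["y", "z"], "y": ["x"], "z": ["x"]}
coupling = {"A": {"A": 0.8, "B": 0.2}, "B": {"A": 0.2, "B": 0.8}}
result = relaxation_labeling(text_probs, neighbors, coupling)
print(result["x"])
```

Node "x" has uninformative text, but its neighbors lean toward class A, so the iteration pulls "x" toward A as well.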

Social Network Analysis
- Social networks:
  - between academics, by co-authoring and advising
  - between movie personnel, by directing and acting
  - between people, by making phone calls
  - between web pages, by hyperlinking to other web pages
- Applications:
  - Google
  - HITS

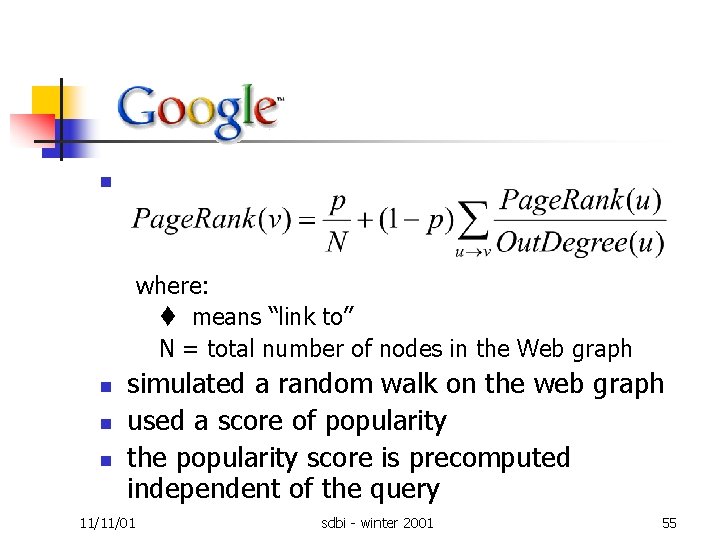

Google's PageRank
- Where $u \to v$ means "$u$ links to $v$", and $N$ = the total number of nodes in the Web graph.
- Simulates a random walk on the Web graph.
- Uses a popularity score.
- The popularity score is precomputed, independent of the query.
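A power-iteration sketch of the random-walk popularity score. It assumes the common parametrization $p(v) = (1-\alpha)/N + \alpha \sum_{u \to v} p(u)/\mathrm{outdeg}(u)$ with $\alpha = 0.85$, and spreads the score of pages without out-links uniformly over all pages; the slide's own formula may be parametrized differently. The toy graph is hypothetical.

```python
def pagerank(out_links, damping=0.85, iterations=100):
    """
    out_links: node -> list of nodes it links to.
    p(v) = (1 - damping)/N + damping * sum_{u -> v} p(u)/outdeg(u)
    Pages with no out-links spread their score uniformly (an assumption).
    """
    nodes = set(out_links) | {v for vs in out_links.values() for v in vs}
    N = len(nodes)
    p = {v: 1.0 / N for v in nodes}
    for _ in range(iterations):
        dangling = sum(p[u] for u in nodes if not out_links.get(u))
        new = {v: (1 - damping) / N + damping * dangling / N for v in nodes}
        for u, vs in out_links.items():
            for v in vs:
                new[v] += damping * p[u] / len(vs)   # u passes its score equally to each page it links to
        p = new
    return p

graph = {"a": ["b", "c"], "b": ["c"], "c": ["a"], "d": ["c"]}
p = pagerank(graph)
print(sorted(p.items(), key=lambda kv: -kv[1]))
```

Because the scores depend only on the link graph, they can be computed once, offline, and reused for every query.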

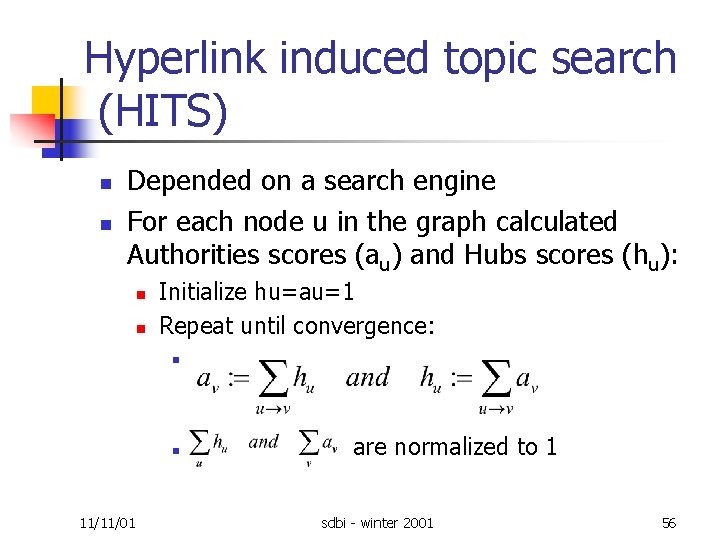

Hyperlink-Induced Topic Search (HITS)
- Depends on a search engine.
- For each node $u$ in the graph, compute authority scores ($a_u$) and hub scores ($h_u$):
  - Initialize $h_u = a_u = 1$.
  - Repeat until convergence:
    - $a_u := \sum_{v \to u} h_v$ and $h_u := \sum_{u \to v} a_v$
    - $a$ and $h$ are normalized to unit length.
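The HITS iteration as a short sketch, assuming unit Euclidean (L2) normalization and a fixed iteration count instead of a convergence test. In a real system the graph would be the query-dependent neighborhood built from search-engine results; here it is a hand-made toy graph.

```python
import math

def hits(out_links, iterations=50):
    """out_links: node -> list of nodes it links to. Returns (hub, authority) score dicts."""
    nodes = set(out_links) | {v for vs in out_links.values() for v in vs}
    hub = {u: 1.0 for u in nodes}
    auth = {u: 1.0 for u in nodes}
    for _ in range(iterations):
        # a_u = sum of the hub scores of pages linking to u
        auth = {u: 0.0 for u in nodes}
        for u, vs in out_links.items():
            for v in vs:
                auth[v] += hub[u]
        # h_u = sum of the authority scores of pages u links to
        hub = {u: sum(auth[v] for v in out_links.get(u, ())) for u in nodes}
        for scores in (auth, hub):   # normalize each vector to unit Euclidean length
            norm = math.sqrt(sum(x * x for x in scores.values())) or 1.0
            for u in scores:
                scores[u] /= norm
    return hub, auth

graph = {"h1": ["a1", "a2"], "h2": ["a1", "a2"], "h3": ["a1"]}
hub, auth = hits(graph)
print(max(auth, key=auth.get), max(hub, key=hub.get))
```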

HITS (cont.)
- Interesting pages include links to other interesting pages.
- The goal:
  - many relevant pages
  - few irrelevant pages
  - fast

Conclusion
- Supervised learning
  - Probabilistic models
- Unsupervised learning
  - Techniques for clustering:
    - k-means (top-down)
    - agglomerative (bottom-up)
  - Techniques for dimensionality reduction:
    - LSI with SVD
    - Random projections
- Semi-supervised learning
  - The EM algorithm
  - Relaxation labeling

