
Operating System ecs 251 Spring 2016 #2: Distributed File Systems
Dr. S. Felix Wu, Computer Science Department, University of California, Davis
http://www.facebook.com/group.php?gid=29670204725
http://cyrus.cs.ucdavis.edu/sfelixwu/ecs251
04/16/2016, ecs 251, spring 2016

Distributed FS
- Distributed File System
  - NFS (Network File System)
  - AFS (Andrew File System)
  - GFS (Google File System), Hadoop
  - CODA

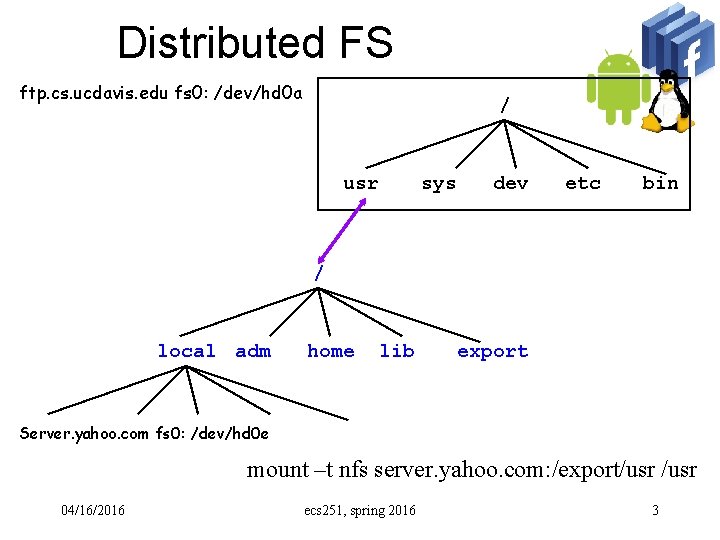

Distributed FS
- Hosts in the figure: ftp.cs.ucdavis.edu (fs0: /dev/hd0a) and server.yahoo.com (fs0: /dev/hd0e)
- Directory trees in the figure: / with usr, sys, dev, etc, bin; / with local, adm, home, lib, export
- mount -t nfs server.yahoo.com:/export/usr

Distributed File System
- Transparency and Location Independence
- Reliability and Crash Recovery
- Scalability and Efficiency
- Correctness and Consistency
- Security and Safety

Correctness
- One-copy Unix Semantics??

Correctness
- One-copy Unix Semantics
  - every modification to every byte of a file has to be immediately and permanently visible to every client.

Correctness
- One-copy Unix Semantics
  - every modification to every byte of a file has to be immediately and permanently visible to every client.
  - Conceptually, FS sequential access
    - Makes sense in a local file system
    - Single processor versus shared memory
- Is this necessary?

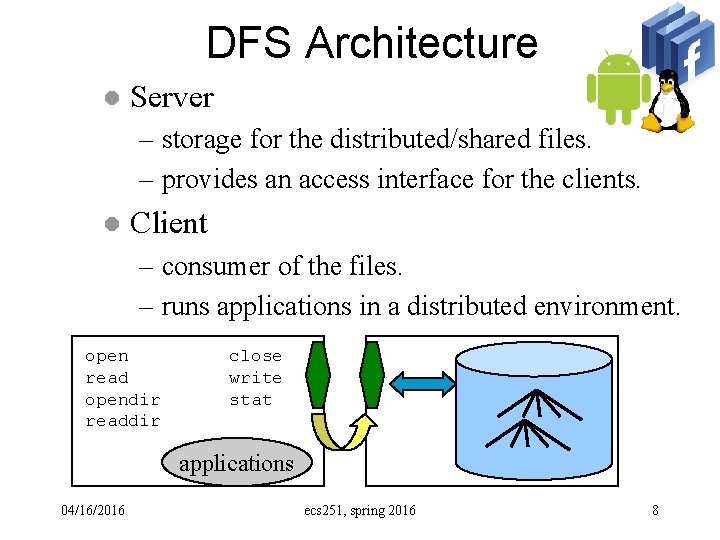

DFS Architecture
- Server
  - storage for the distributed/shared files.
  - provides an access interface for the clients.
- Client
  - consumer of the files.
  - runs applications in a distributed environment.
- Figure labels: applications issuing open, read, opendir, readdir, close, write, stat

NFS (SUN, 1985)
- Based on RPC (Remote Procedure Call) and XDR (External Data Representation)
- Server maintains no state
  - a READ on the server opens, seeks, reads, and closes
  - a WRITE is similar, but the buffer is flushed to disk before closing
- Server crash: client continues to try until server reboots; no loss
- Client crash: client must rebuild its own state; no effect on server

RPC - XDR
- RPC: standard protocol for calling procedures on another machine
- Procedure is packaged with authorization and admin info
- XDR: standard format for data, because manufacturers of computers cannot agree on byte ordering.

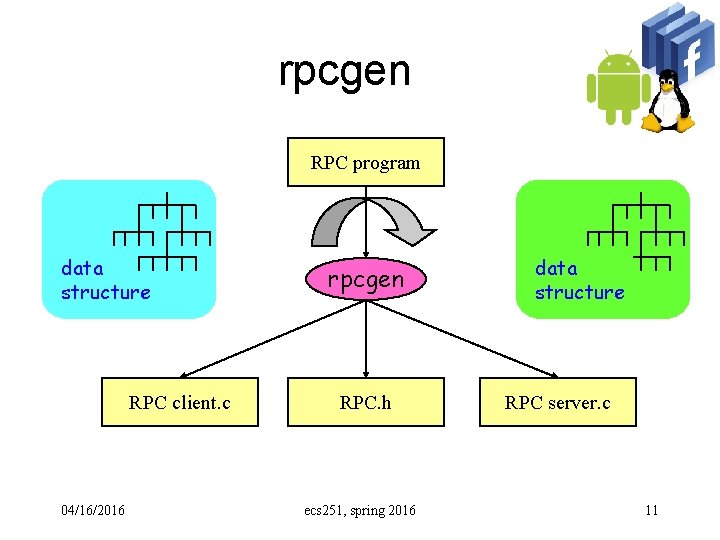

rpcgen
- Figure labels: RPC program, data structure, rpcgen, RPC client.c, RPC.h, RPC server.c

NFS Operations
- Every operation is independent: server opens file for every operation
- File identified by handle: no state information retained by server
- Client maintains mount table, v-node, offset in file table, etc.
- What do these imply???

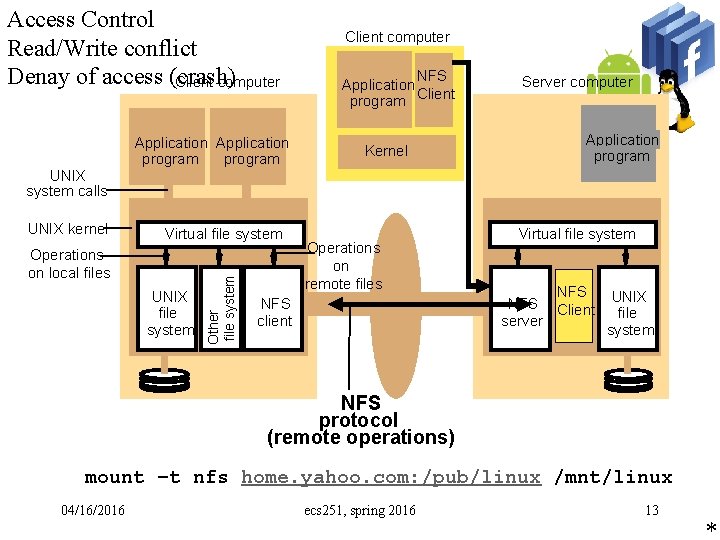

- Access Control
- Read/Write conflict
- Denial of access (crash)
- Figure (NFS architecture): on the client computer, application programs make UNIX system calls into the UNIX kernel, where the virtual file system sends operations on local files to the UNIX file system (or another file system) and operations on remote files to the NFS client. The NFS client talks to the NFS server on the server computer through the NFS protocol (remote operations), and the NFS server reaches the server's UNIX file system through its virtual file system.
- mount -t nfs home.yahoo.com:/pub/linux /mnt/linux


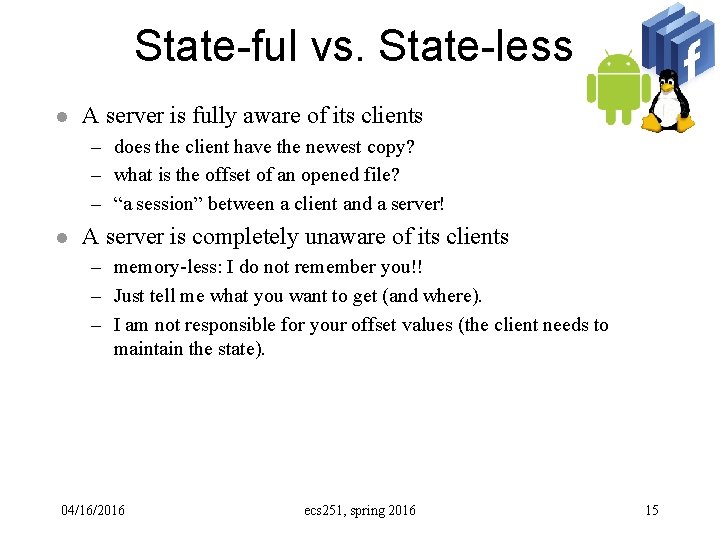

State-ful vs. State-less
- A server is fully aware of its clients
  - does the client have the newest copy?
  - what is the offset of an opened file?
  - “a session” between a client and a server!
- A server is completely unaware of its clients
  - memory-less: I do not remember you!!
  - Just tell me what you want to get (and where).
  - I am not responsible for your offset values (the client needs to maintain the state).
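The state-less case can be sketched in a few lines of Python. This is only an illustration under assumed names (`StatelessServer`, `Client`, and the handle `"fh1"` are hypothetical, not NFS APIs): every request carries the handle, offset, and length, so the server can be replaced mid-session without the client noticing.

```python
class StatelessServer:
    """Keeps only file contents; remembers nothing about clients."""

    def __init__(self, files):
        self.files = files  # handle -> bytes

    def read(self, handle, offset, count):
        # Every request is self-describing: handle, offset, and length.
        return self.files[handle][offset:offset + count]


class Client:
    """Holds the per-open-file state (the offset) on the client side."""

    def __init__(self, server, handle):
        self.server = server
        self.handle = handle
        self.offset = 0

    def read(self, count):
        data = self.server.read(self.handle, self.offset, count)
        self.offset += len(data)  # the client, not the server, advances the offset
        return data


server = StatelessServer({"fh1": b"hello world"})
client = Client(server, "fh1")
first = client.read(5)

# Simulate a server reboot: a fresh server object with no memory of the client.
client.server = StatelessServer({"fh1": b"hello world"})
second = client.read(6)
```

Because the offset lives in `Client`, the second read continues where the first stopped even though the server was replaced in between.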

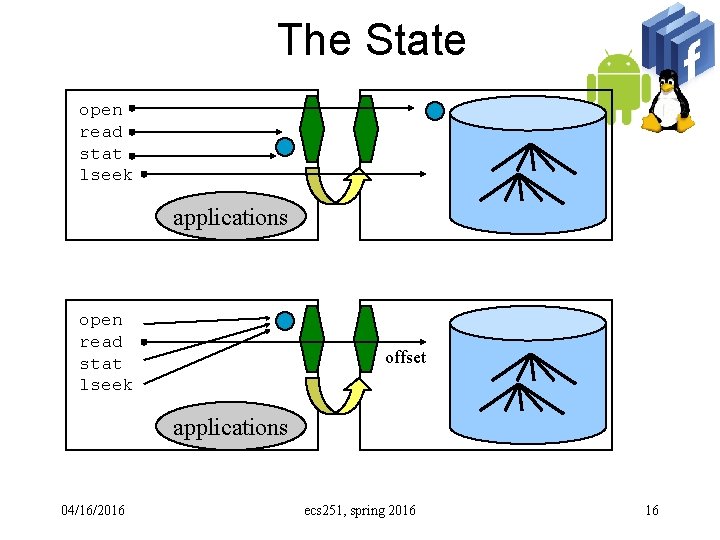

The State
- Figure labels: applications issuing open, read, stat, lseek; offset

Network File Sharing
- Server side:
  - rpcbind (portmap)
  - mountd: responds to mount requests (sometimes called rpc.mountd).
    - Relies on several files: /etc/dfstab, /etc/exports, /etc/netgroup
  - nfsd: serves files; actually a call to kernel level code.
  - lockd: file locking daemon.
  - statd: manages locks for lockd.
  - rquotad: manages quotas for exported file systems.

Network File Sharing
- Client side:
  - biod: client side caching daemon
  - mount must understand the hostname:directory convention.
  - Filesystem entries in /etc/[v]fstab tell the client what filesystems to mount.

Unix file semantics
- NFS:
  - open a file with read-write mode
  - later, the server’s copy becomes read-only mode
  - now, the application tries to write it!!

Problems with NFS
- Performance is not scalable:
  - maybe it is OK for a local office.
  - will be horrible with large scale systems.

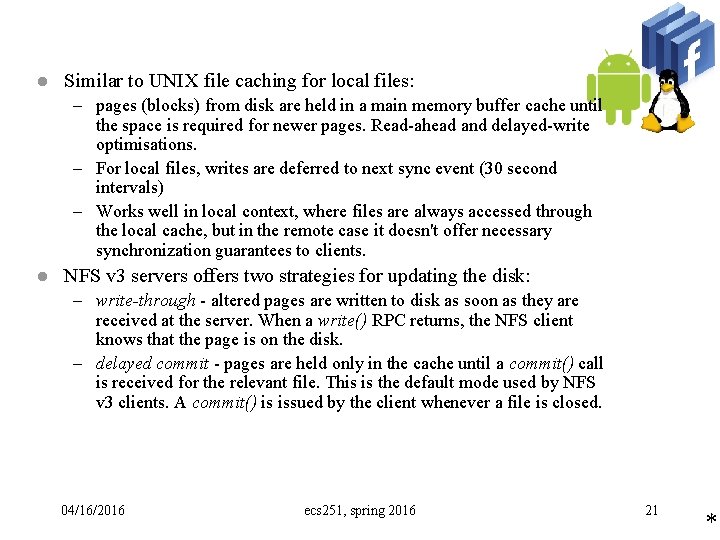

- Similar to UNIX file caching for local files:
  - pages (blocks) from disk are held in a main memory buffer cache until the space is required for newer pages. Read-ahead and delayed-write optimisations.
  - For local files, writes are deferred to the next sync event (30 second intervals)
  - Works well in the local context, where files are always accessed through the local cache, but in the remote case it doesn't offer the necessary synchronization guarantees to clients.
- NFS v3 servers offer two strategies for updating the disk:
  - write-through: altered pages are written to disk as soon as they are received at the server. When a write() RPC returns, the NFS client knows that the page is on the disk.
  - delayed commit: pages are held only in the cache until a commit() call is received for the relevant file. This is the default mode used by NFS v3 clients. A commit() is issued by the client whenever a file is closed.

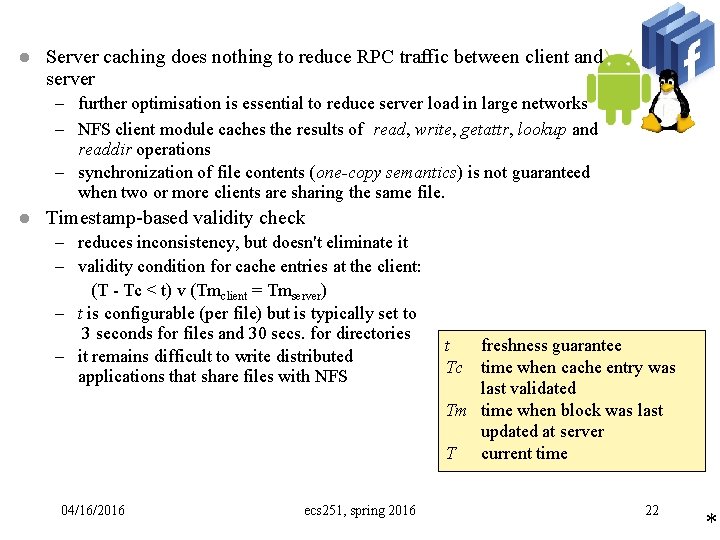

- Server caching does nothing to reduce RPC traffic between client and server
  - further optimisation is essential to reduce server load in large networks
  - NFS client module caches the results of read, write, getattr, lookup and readdir operations
  - synchronization of file contents (one-copy semantics) is not guaranteed when two or more clients are sharing the same file.
- Timestamp-based validity check
  - reduces inconsistency, but doesn't eliminate it
  - validity condition for cache entries at the client: $(T - T_c < t) \lor (T_{m,\mathrm{client}} = T_{m,\mathrm{server}})$
    - $t$: freshness guarantee
    - $T_c$: time when cache entry was last validated
    - $T_m$: time when block was last updated at server
    - $T$: current time
  - $t$ is configurable (per file) but is typically set to 3 seconds for files and 30 seconds for directories
  - it remains difficult to write distributed applications that share files with NFS
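The validity condition can be read as a short-circuit check: the server is contacted only when the freshness interval has expired. A minimal sketch, assuming a hypothetical `get_tm_server` callable that stands in for a getattr RPC (the names `CacheEntry` and `is_valid` are illustrative, not part of any NFS client):

```python
from dataclasses import dataclass


@dataclass
class CacheEntry:
    tc: float         # Tc: time the entry was last validated
    tm_client: float  # Tm as recorded by the client


def is_valid(entry, now, freshness, get_tm_server):
    """Return True if the cached entry may still be used."""
    if now - entry.tc < freshness:
        return True                  # first clause: T - Tc < t, no RPC needed
    tm_server = get_tm_server()      # second clause costs one round trip
    if entry.tm_client == tm_server:
        entry.tc = now               # revalidated; restart the freshness interval
        return True
    return False                     # stale: the caller must refetch the data
```

Between validations another client's write can go unseen for up to `freshness` seconds, which is why the check reduces inconsistency without eliminating it.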

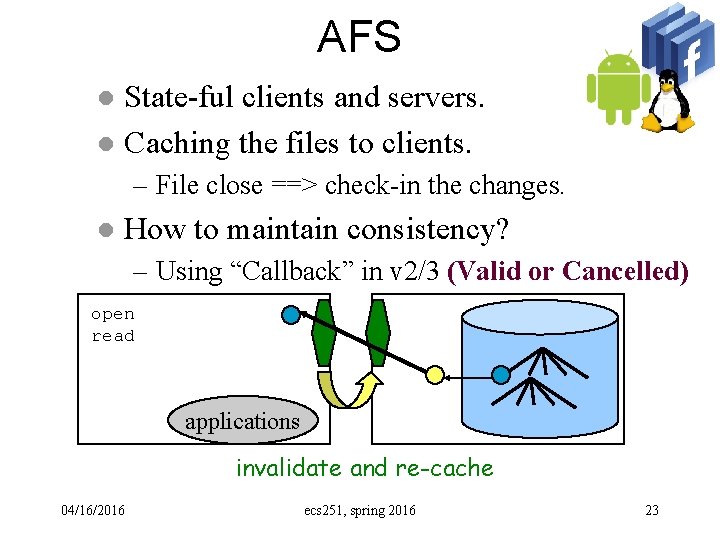

AFS
- State-ful clients and servers.
- Caching the files to clients.
  - File close ==> check-in the changes.
- How to maintain consistency?
  - Using “Callback” in v2/3 (Valid or Cancelled)
- Figure labels: applications issuing open, read; invalidate and re-cache

Why AFS?
- Shared files are infrequently updated
- Local cache of a few hundred megabytes
  - Now 50~100 gigabytes
- Unix workload:
  - Files are small, read operations dominate, sequential access is common, files are read/written by one user, references come in bursts.
  - Are these still true?

Fault Tolerance in AFS
- a server crashes
- a client crashes
  - check for call-back tokens first.

Problems with AFS
- Availability
- What happens if the call-back itself is lost??

Atomic commit protocols
- one-phase atomic commit protocol
  - the coordinator tells the participants whether to commit or abort
  - what is the problem with that?
  - this does not allow one of the servers to decide to abort: it may have discovered a deadlock or it may have crashed and been restarted
- two-phase atomic commit protocol
  - is designed to allow any participant to choose to abort a transaction
  - phase 1: each participant votes. If it votes to commit, it is prepared. It cannot change its mind. In case it crashes, it must save updates in permanent store
  - phase 2: the participants carry out the joint decision. The decision could be commit or abort; participants record it in permanent store

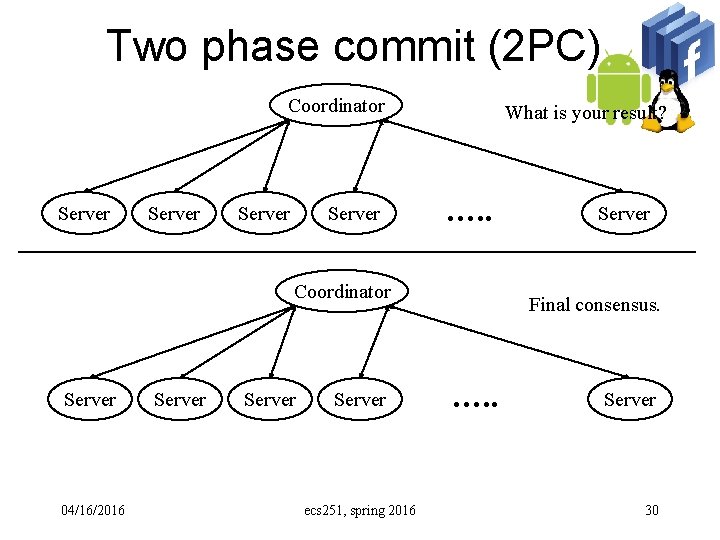

Two-phase commit (2PC)
- Figure, first panel: the coordinator asks each server, “What is your result?”
- Figure, second panel: the coordinator sends the final consensus to each server.

Failure model
- Commit protocols are designed to work in
  - an asynchronous system (e.g. messages may take a very long time)
  - servers/coordinator may crash
  - messages may be lost.
  - assume corrupt and duplicated messages are removed.
  - no byzantine faults: servers either crash or they obey their requests
- 2PC is an example of a protocol for reaching a consensus.
  - It can do so because crash failures of processes are masked by replacing a crashed process with a new process whose state is set from information saved in permanent storage and information held by other processes.

2PC
- voting phase: coordinator asks all servers if they can commit
  - if yes, server records updates in permanent storage and then votes
- completion phase: coordinator tells all servers to commit or abort
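The two phases can be sketched as follows. This is a failure-free, single-process illustration under assumed names (`Participant`, `two_phase_commit`, and the in-memory `log` list standing in for permanent storage are all hypothetical); it shows the message order only, not crash recovery or timeouts.

```python
class Participant:
    def __init__(self, name, can_commit=True):
        self.name = name
        self.can_commit = can_commit
        self.log = []          # stands in for permanent storage
        self.state = "INIT"

    def vote(self, updates):
        if self.can_commit:
            self.log.append(("prepared", updates))  # save updates before voting yes
            self.state = "READY"
            return True
        self.state = "ABORT"
        return False

    def finish(self, decision):
        self.log.append((decision, None))           # record the joint decision
        self.state = "COMMIT" if decision == "commit" else "ABORT"


def two_phase_commit(participants, updates):
    # Voting phase: ask every participant (a list, so nobody is skipped).
    votes = [p.vote(updates) for p in participants]
    decision = "commit" if all(votes) else "abort"
    # Completion phase: tell every participant the joint decision.
    for p in participants:
        p.finish(decision)
    return decision
```

A single "no" vote aborts the whole transaction, including at participants that had already prepared.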

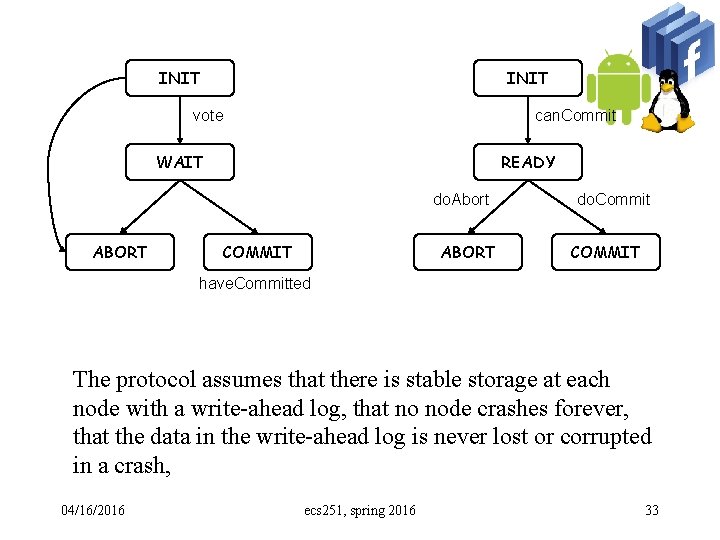

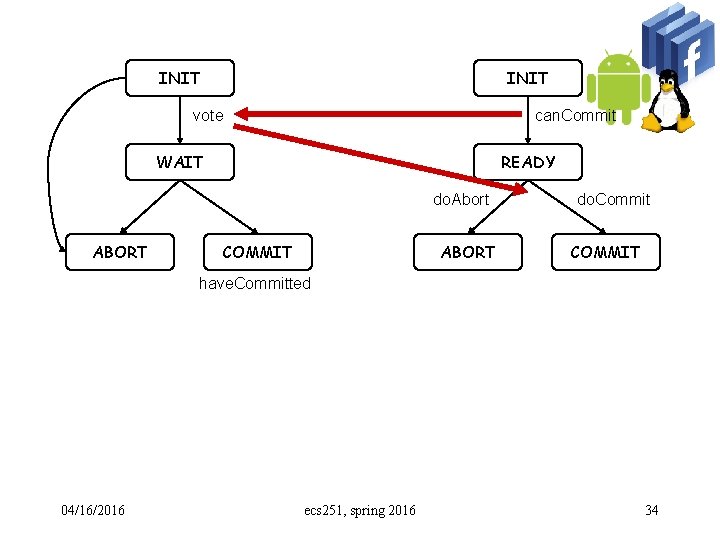

- 2PC state diagram. States: INIT, WAIT, READY, ABORT, COMMIT. Messages: canCommit, vote, doAbort, doCommit, haveCommitted.
- The protocol assumes that there is stable storage at each node with a write-ahead log, that no node crashes forever, and that the data in the write-ahead log is never lost or corrupted in a crash.


Failures
- Some servers missed “canCommit”.
- Coordinator missed some “votes”.
- Some servers missed “doAbort” or “doCommit”.

Failures/Crashes
- Some servers crashed before/after “canCommit”.
- Coordinator crashed before/after receiving some “votes”.
- Some servers crashed before/after receiving “doAbort” or “doCommit”.

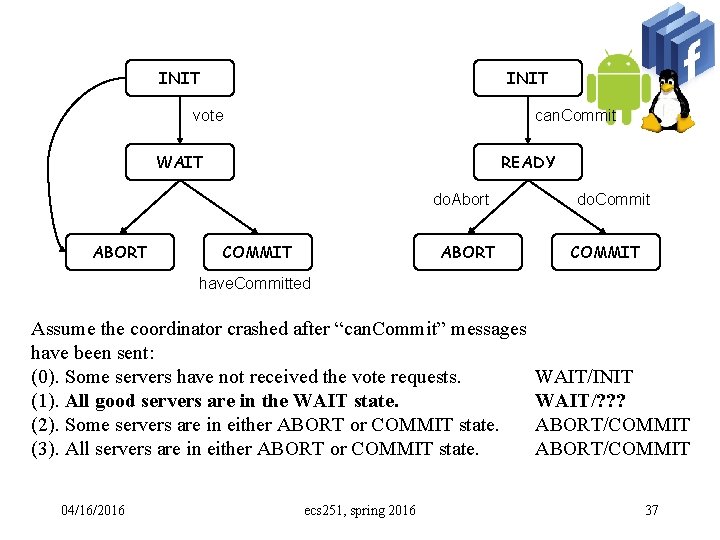

- 2PC state diagram. States: INIT, WAIT, READY, ABORT, COMMIT. Messages: canCommit, vote, doAbort, doCommit, haveCommitted.
- Assume the coordinator crashed after “canCommit” messages have been sent:
  - (0) Some servers have not received the vote requests.
  - (1) All good servers are in the WAIT state.
  - (2) Some servers are in either ABORT or COMMIT state.
  - (3) All servers are in either ABORT or COMMIT state.
- Figure labels: WAIT/INIT, WAIT/???, ABORT/COMMIT

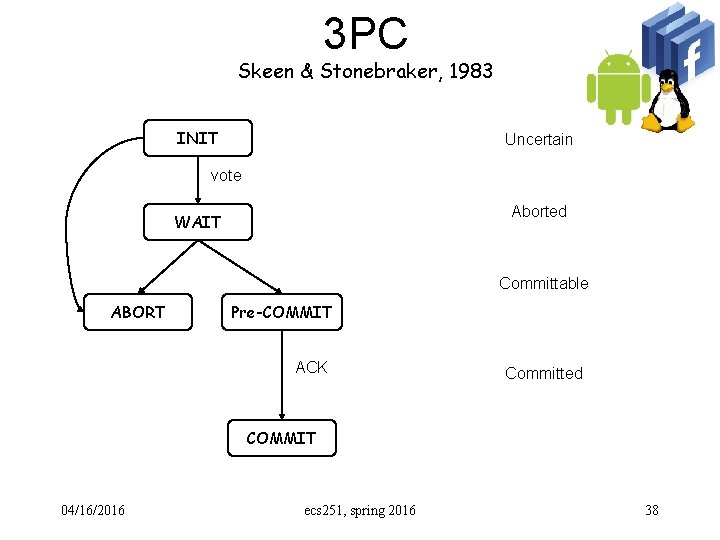

3PC (Skeen & Stonebraker, 1983)
- State diagram labels: INIT, WAIT, ABORT, Pre-COMMIT, COMMIT; Uncertain, Aborted, Committable, Committed; messages vote, ACK

Consistency
- Read: are we reading the fresh copy?
- Write: have we updated all the copies?
- Failure/Partition

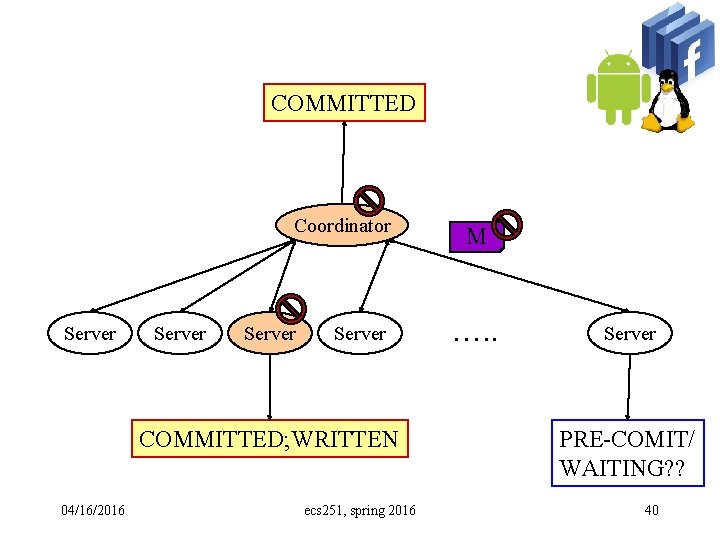

- Figure labels: Coordinator, Server … Server M; states COMMITTED; COMMITTED, WRITTEN; PRE-COMMIT/WAITING??

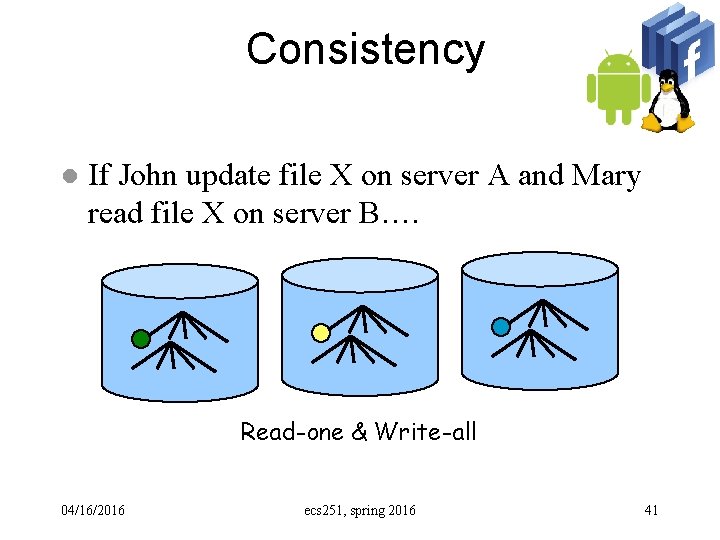

Consistency
- If John updates file X on server A and Mary reads file X on server B…
- Read-one & Write-all

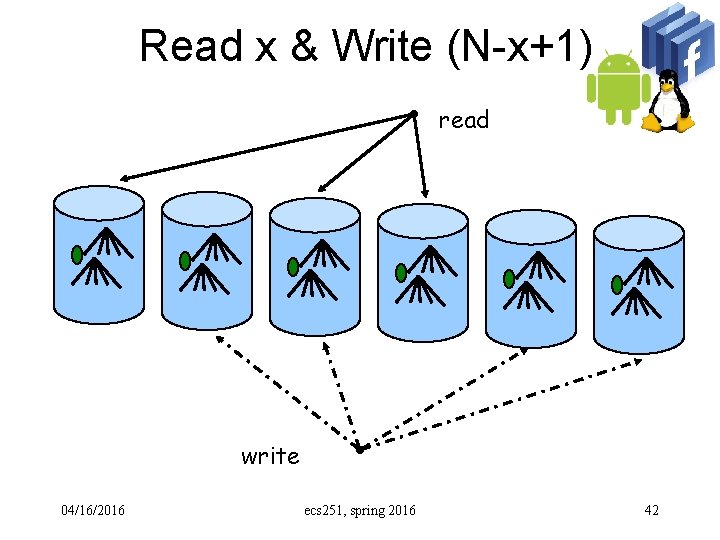

Read x & Write (N-x+1)
- Figure labels: read, write

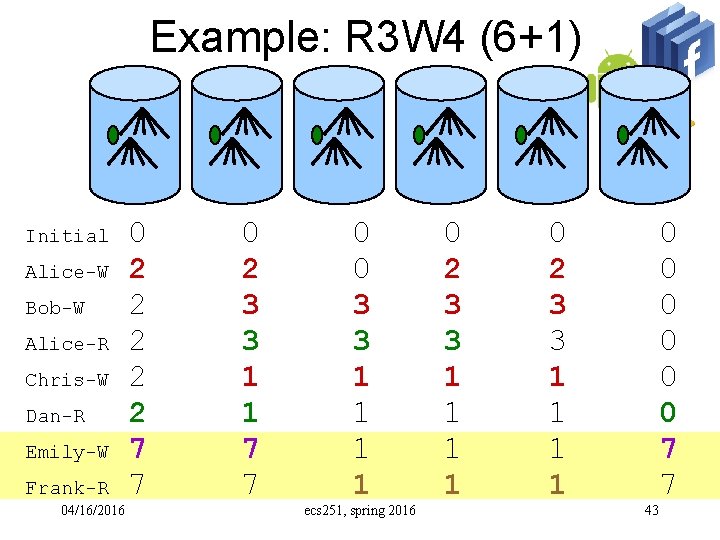

Example: R = 3, W = 4 (N = 6, R + W = 6 + 1)
- Operations, in order: Initial, Alice-W, Bob-W, Alice-R, Chris-W, Dan-R, Emily-W, Frank-R
- [table of per-replica values after each operation]
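The R = 3, W = 4, N = 6 example works because any read set and any write set must share at least one replica. A minimal sketch, assuming sequential (non-concurrent) operations and version numbers attached to each replica; the `Replica`, `read`, and `write` names are hypothetical, and choosing quorum members at random is an illustrative choice only:

```python
import random


class Replica:
    def __init__(self):
        self.version = 0
        self.value = None


def read(replicas, r, rng):
    """Contact any r replicas and return the newest (version, value) seen."""
    quorum = rng.sample(replicas, r)
    newest = max(quorum, key=lambda rep: rep.version)
    return newest.version, newest.value


def write(replicas, r, w, value, rng):
    """Learn the latest version from a read quorum, then update any w replicas."""
    version, _ = read(replicas, r, rng)
    for rep in rng.sample(replicas, w):
        rep.version = version + 1
        rep.value = value


rng = random.Random(0)
replicas = [Replica() for _ in range(6)]   # N = 6
write(replicas, 3, 4, "alice", rng)        # W = 4
latest = read(replicas, 3, rng)            # R = 3
```

With R + W = N + 1, the read quorum always overlaps the most recent write quorum, so the highest version in the read set is the latest write.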

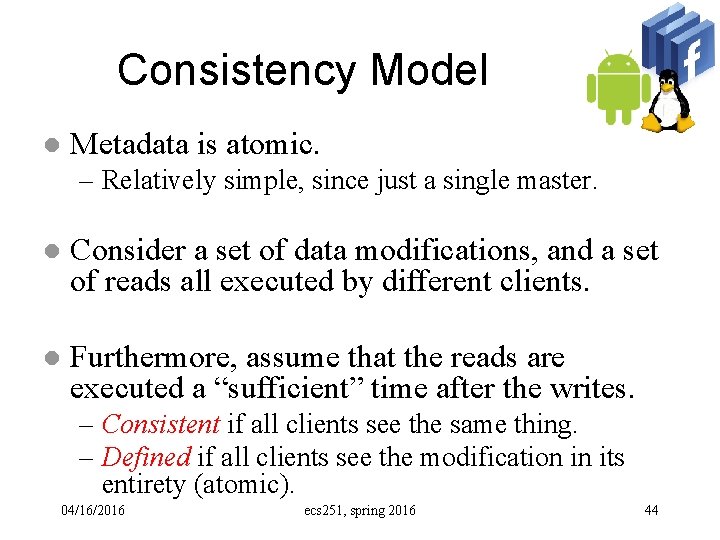

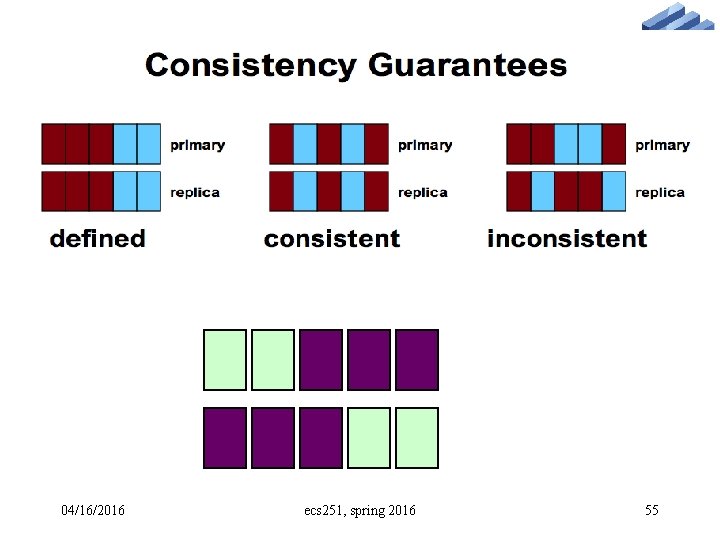

Consistency Model
- Metadata is atomic.
  - Relatively simple, since just a single master.
- Consider a set of data modifications, and a set of reads all executed by different clients.
- Furthermore, assume that the reads are executed a “sufficient” time after the writes.
  - Consistent if all clients see the same thing.
  - Defined if all clients see the modification in its entirety (atomic).

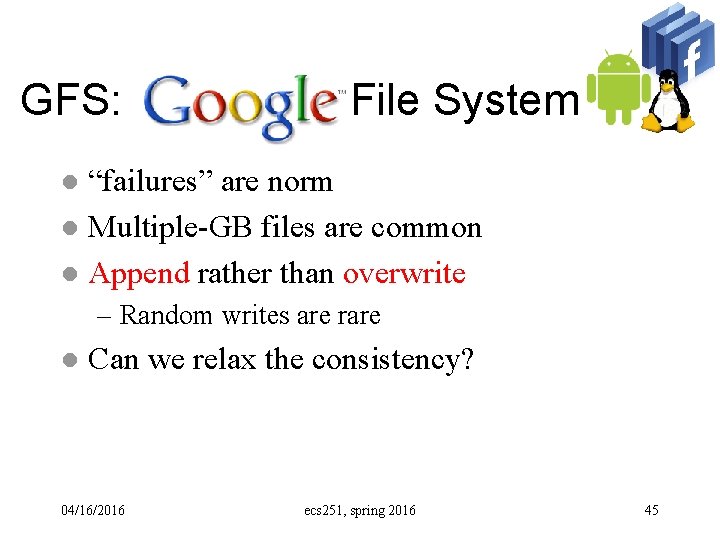

GFS: Google File System
- “Failures” are the norm
- Multiple-GB files are common
- Append rather than overwrite
  - Random writes are rare
- Can we relax the consistency?

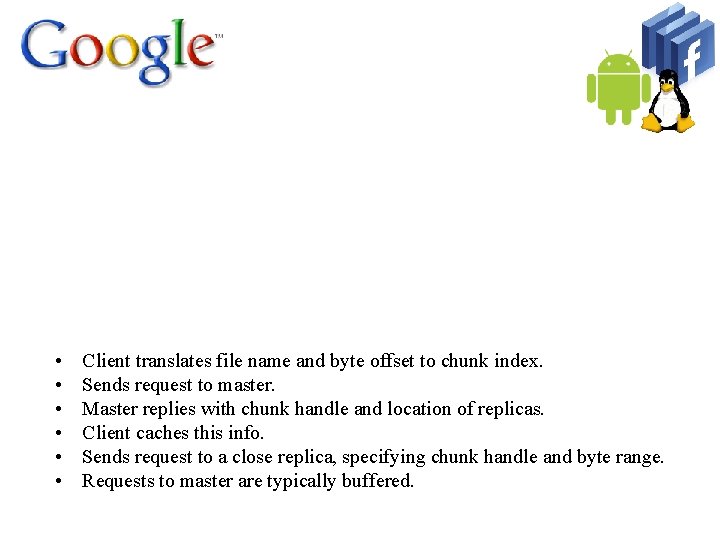

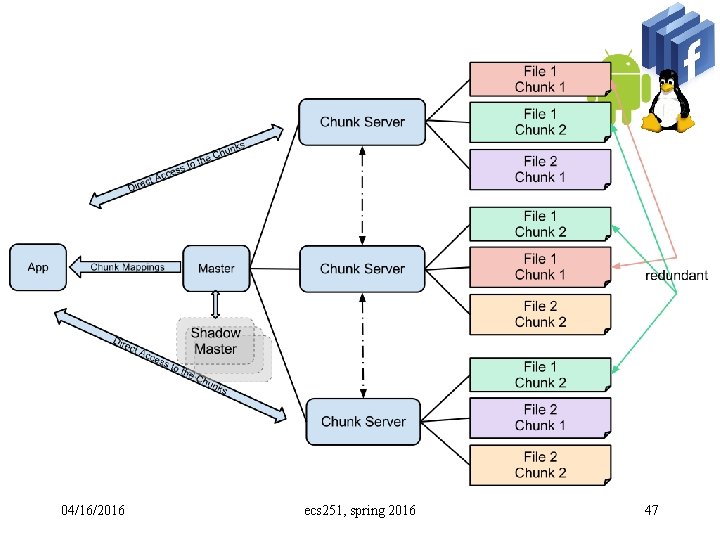

- Client translates file name and byte offset to chunk index. Sends request to master.
- Master replies with chunk handle and location of replicas. Client caches this info.
- Client sends request to a close replica, specifying chunk handle and byte range. Requests to master are typically buffered.
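The first step, translating a byte offset into a chunk index, is plain integer arithmetic. A minimal sketch, assuming the 64 MB chunk size stated later in these slides; `chunk_requests` is a hypothetical helper name, not part of any GFS client library:

```python
CHUNK_SIZE = 64 * 1024 * 1024  # 64 MB


def chunk_requests(offset, length):
    """Split a byte range into (chunk_index, start_in_chunk, nbytes) pieces."""
    pieces = []
    end = offset + length
    while offset < end:
        index = offset // CHUNK_SIZE          # which chunk holds this byte
        start = offset % CHUNK_SIZE           # position inside that chunk
        nbytes = min(CHUNK_SIZE - start, end - offset)
        pieces.append((index, start, nbytes))
        offset += nbytes
    return pieces
```

A read that crosses a chunk boundary yields one piece per chunk, and each piece may be served by a different chunkserver.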


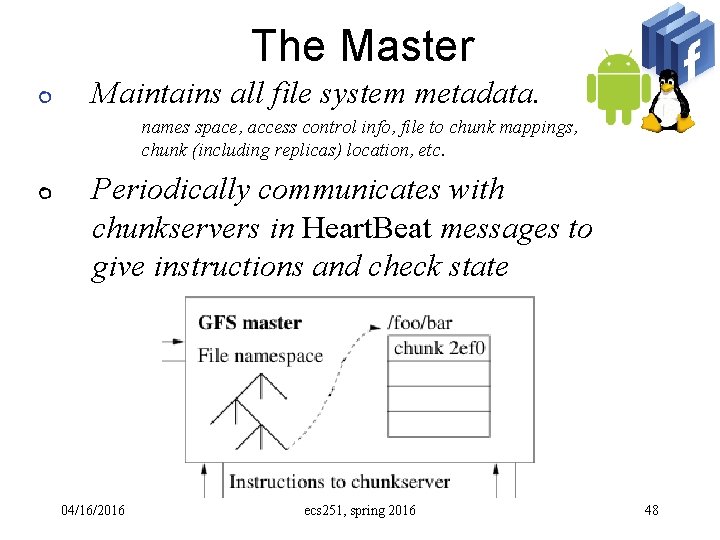

The Master
- Maintains all file system metadata: namespace, access control info, file-to-chunk mappings, chunk (including replica) locations, etc.
- Periodically communicates with chunkservers in HeartBeat messages to give instructions and check state

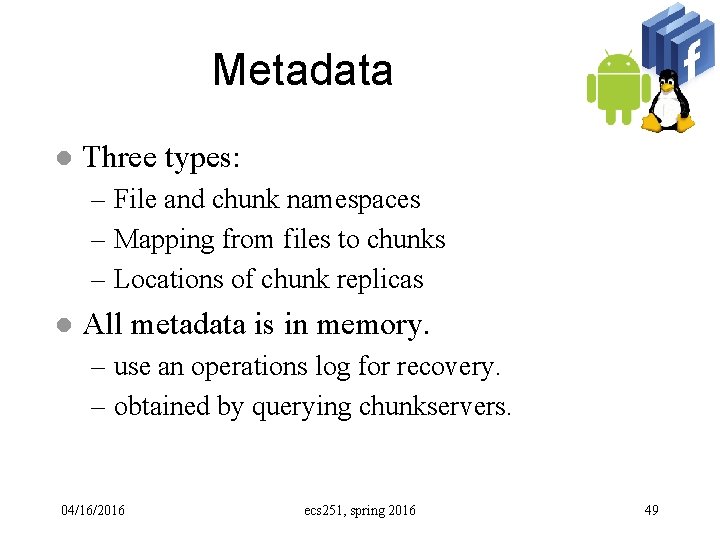

Metadata
- Three types:
  - File and chunk namespaces
  - Mapping from files to chunks
  - Locations of chunk replicas
- All metadata is in memory.
  - Namespaces and file-to-chunk mappings use an operation log for recovery.
  - Chunk replica locations are obtained by querying chunkservers.

Operation Log
- Historical record of metadata changes.
- Replicated on remote machines; operations are logged synchronously.
- Checkpoints used to bound startup time.
- Checkpoints created in background.

The Master
- Helps make sophisticated chunk placement and replication decisions, using global knowledge
- For reading and writing, client contacts Master to get chunk locations, then deals directly with chunkservers
- Master is not a bottleneck for reads/writes

Single Master
- General disadvantages for distributed systems:
  - Single point of failure
  - Bottleneck (scalability)
- Solution?
  - Clients use master only for metadata, not reading/writing.

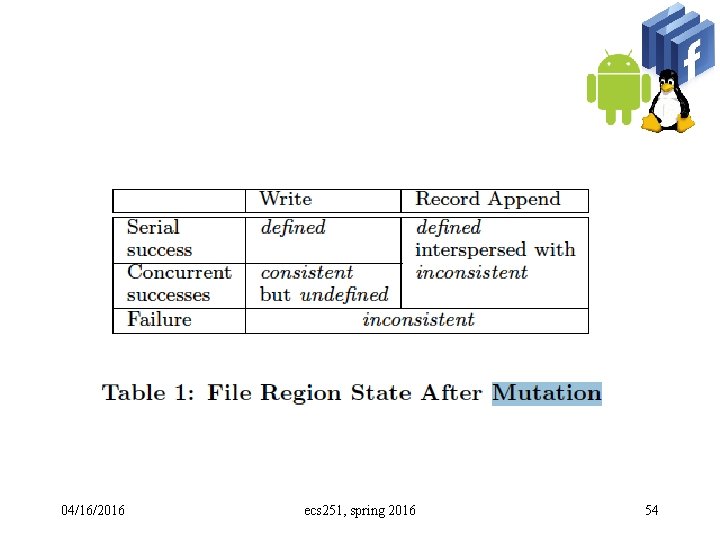




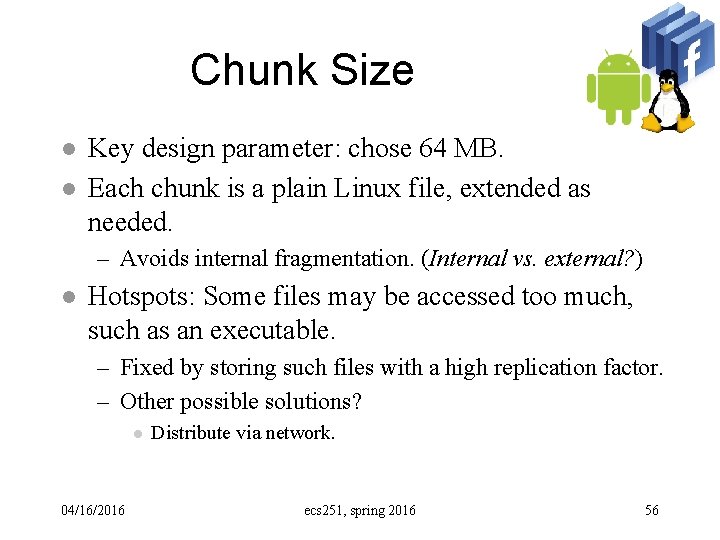

Chunk Size
- Key design parameter: chose 64 MB.
- Each chunk is a plain Linux file, extended as needed.
  - Avoids internal fragmentation. (Internal vs. external?)
- Hotspots: some files may be accessed too much, such as an executable.
  - Fixed by storing such files with a high replication factor.
  - Other possible solutions?
- Distribute via network.

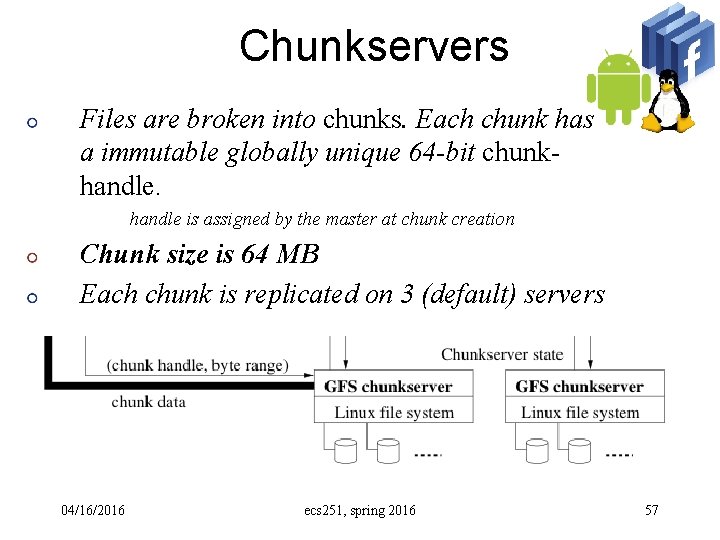

Chunkservers
- Files are broken into chunks.
- Each chunk has an immutable, globally unique 64-bit chunk handle, assigned by the master at chunk creation
- Chunk size is 64 MB
- Each chunk is replicated on 3 (default) servers

Clients
- Linked to apps using the file system API.
- Communicates with master and chunkservers for reading and writing
  - Master interactions only for metadata
  - Chunkserver interactions for data
- Only caches metadata information
  - Data is too large to cache.

Chunk Locations
- Master does not keep a persistent record of locations of chunks and replicas.
- Polls chunkservers for this at startup, and when chunkservers join or leave.
- Stays up to date by controlling placement of new chunks and through HeartBeat messages (when monitoring chunkservers)

Chunk Locations
- No persistent state
  - Polls chunkservers at startup
  - Uses heartbeat messages to monitor servers
  - Simplicity
  - On-demand approach vs. coordination
- On-demand wins when changes (failures) are frequent

Read/Write/Append
- Read???

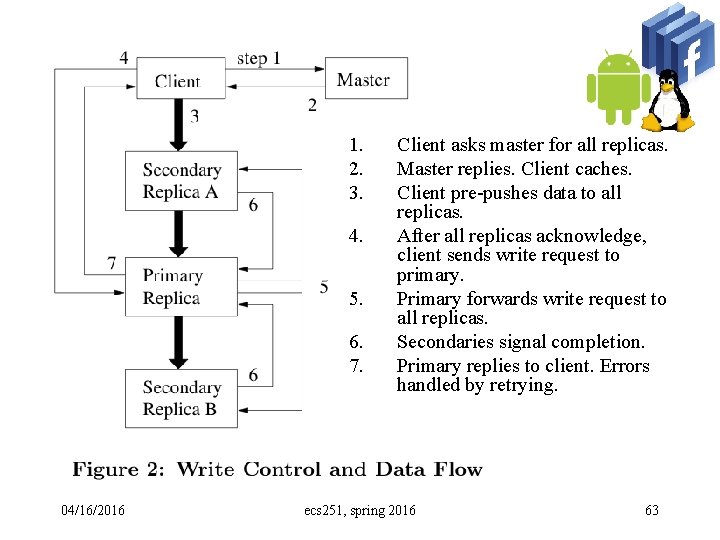

Leases and Mutation Order
- Each modification is performed at all replicas.
- Maintain consistent order by having a single primary chunkserver specify the order.
- Primary chunkservers are maintained with leases (60 seconds default).

1. Client asks master for all replicas.
2. Master replies. Client caches.
3. Client pre-pushes data to all replicas.
4. After all replicas acknowledge, client sends write request to primary.
5. Primary forwards write request to all replicas.
6. Secondaries signal completion.
7. Primary replies to client. Errors handled by retrying.

System Interactions
- The master grants a chunk lease to a replica
- The replica holding the lease determines the order of updates to all replicas
- Lease
  - 60 second timeouts
  - Can be extended indefinitely
  - Extension requests are piggybacked on heartbeat messages
  - After a timeout expires, the master can grant new leases
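The lease rules above can be sketched as a small table kept by the master. This is an illustration only: `LeaseTable` and its methods are hypothetical names, time is passed in explicitly as a number of seconds, and real lease handling also has to account for clock drift and message delay.

```python
class LeaseTable:
    LEASE_SECONDS = 60

    def __init__(self):
        self.leases = {}  # chunk handle -> (primary replica, expiry time)

    def grant(self, chunk, replica, now):
        holder = self.leases.get(chunk)
        if holder and holder[1] > now and holder[0] != replica:
            return False  # another primary's lease is still live
        self.leases[chunk] = (replica, now + self.LEASE_SECONDS)
        return True

    def extend(self, chunk, replica, now):
        # Called when a heartbeat from `replica` carries an extension request.
        holder = self.leases.get(chunk)
        if holder and holder[0] == replica and holder[1] > now:
            self.leases[chunk] = (replica, now + self.LEASE_SECONDS)
            return True
        return False

    def primary(self, chunk, now):
        holder = self.leases.get(chunk)
        return holder[0] if holder and holder[1] > now else None
```

Waiting for the timeout before granting a new lease is what keeps two replicas from acting as primary for the same chunk at once, even if the old primary is unreachable.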

Snapshot
- A “snapshot” is a copy of a system at a moment in time.
  - When are snapshots useful?
  - Does “cp -r” generate snapshots?
- Handled using copy-on-write (COW).
  - First revoke all leases.
  - Then duplicate the metadata, but point to the same chunks.
  - When a client requests a write, the master allocates a new chunk handle.
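Copy-on-write can be sketched with reference counts on chunk handles. This is a single-process illustration under assumed names (`Master`, `snapshot`, and the in-memory `chunks` dictionary standing in for chunkservers are hypothetical), and it omits the lease revocation step:

```python
class Master:
    def __init__(self):
        self.files = {}      # file name -> list of chunk handles
        self.refcount = {}   # chunk handle -> number of files pointing at it
        self.chunks = {}     # chunk handle -> bytearray (stands in for chunkservers)
        self.next_handle = 0

    def _new_chunk(self, data=b""):
        handle = self.next_handle
        self.next_handle += 1
        self.chunks[handle] = bytearray(data)  # bytearray() copies its argument
        self.refcount[handle] = 1
        return handle

    def create(self, name, data):
        self.files[name] = [self._new_chunk(data)]

    def snapshot(self, src, dst):
        # Duplicate only the metadata; both files point to the same chunks.
        self.files[dst] = list(self.files[src])
        for handle in self.files[dst]:
            self.refcount[handle] += 1

    def write(self, name, index, pos, data):
        handle = self.files[name][index]
        if self.refcount[handle] > 1:
            # Shared chunk: copy it under a new handle before modifying.
            self.refcount[handle] -= 1
            handle = self._new_chunk(self.chunks[handle])
            self.files[name][index] = handle
        self.chunks[handle][pos:pos + len(data)] = data

    def read(self, name, index):
        return bytes(self.chunks[self.files[name][index]])
```

The snapshot itself costs only a metadata copy; chunk data is duplicated lazily, on the first write after the snapshot.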

NFS, AFS, & GFS
- Read the newest copy
  - NFS: as long as we don’t cache…
  - AFS: the read callback might be broken…
  - GFS: we can check the master…

NFS, AFS, & GFS
- Write to the master copy
  - NFS: write through
  - AFS: you have to have the callback…
  - GFS: we will update all three replicas, but what happens if a read occurs while we are updating the copies? (name space and file-to-chunk mapping)

Pessimistic versus Optimistic
- Locking the world while I am updating it…
  - Scalable?
  - “Serializable schedule”
  - Assuming a closed system: “you can NOT fail at this moment as I am updating this particular transaction”; we can use a “log” to handle the issue of “atomicity” (all or nothing).

Soft Update
- Create X (t1) and Delete Y (t2)
- T1, T2, T1
- We will enforce it when we resolve the problem of circular dependency!

SU & Background FSCK
- Soft Update guarantees that the file system will be in a “consistent” state at all times.
- Essentially, Soft Update prevents any chance of inconsistency!

An Alternative Approach: “Optimistic”
- The chance for certain bad things to occur is very small (depending on what you are doing).
- And it is very expensive to pessimistically prevent them.
- “Let it happen, and we try to detect and recover from that…”

The Optimistic Approach
- Regular execution with “recording”
- Conflict detection based on what was “recorded”
- Conflict resolution
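One common way to realise these three steps is version-based validation at commit time. The sketch below is an assumption for illustration, not a scheme prescribed by the slides: `Store` and `Transaction` are hypothetical names, the "recording" is the version each transaction saw, and resolution is left to the caller (for example, retry).

```python
class Store:
    def __init__(self):
        self.data = {}  # key -> (version, value)

    def read(self, key):
        return self.data.get(key, (0, None))


class Transaction:
    def __init__(self, store):
        self.store = store
        self.read_versions = {}  # the "recording": key -> version first seen
        self.writes = {}         # buffered updates, invisible until commit

    def read(self, key):
        version, value = self.store.read(key)
        self.read_versions.setdefault(key, version)
        return self.writes.get(key, value)

    def write(self, key, value):
        self.read(key)  # record the version this write is based on
        self.writes[key] = value

    def commit(self):
        # Conflict detection: did anything we read change since we read it?
        for key, seen in self.read_versions.items():
            if self.store.read(key)[0] != seen:
                return False  # conflict; the caller resolves it, e.g. by retrying
        for key, value in self.writes.items():
            self.store.data[key] = (self.read_versions[key] + 1, value)
        return True
```

No locks are held during regular execution; the cost of a conflict is paid only when one is actually detected.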

Example
- Allowing “inconsistencies”
  - Without soft update, we have to do FSCK in the foreground (before we can use it).
  - I.e., we try to eliminate “inconsistencies”
- But do we really need a “perfectly consistent FS” for all the applications?
  - Why not take a snapshot and then do background FSCK anyway!

Optimistic?
- NFS
- AFS
- GFS

Optimistic?
- NFS
  - If the server changes the access mode in the middle of an open session from a client…
- AFS
  - “Callback” is the check for inconsistencies.
- GFS
  - Failure in the middle of a write/append

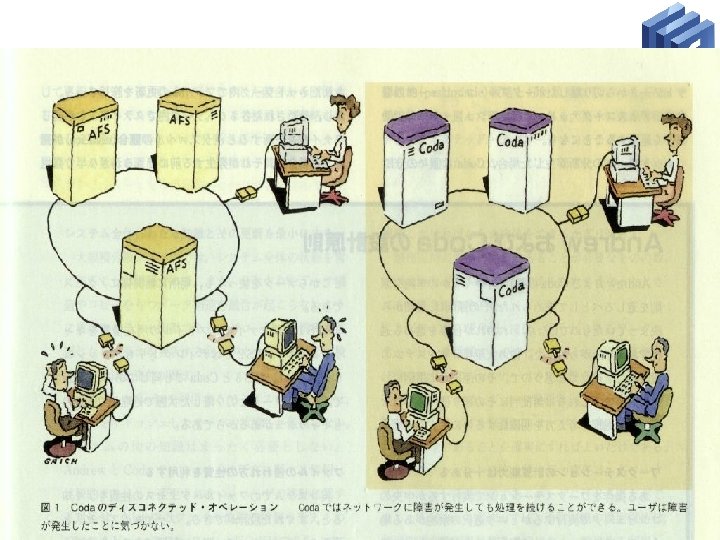

CODA
- Server Replication:
  - if one server goes down, I can get another.
- Disconnected Operation:
  - if all go down, I will use my own cache.


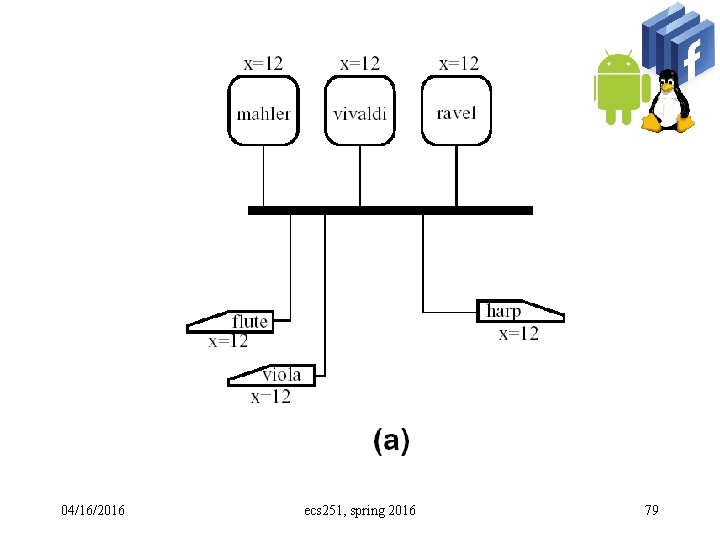

Disconnected Operation
- Continue critical work when that repository is inaccessible.
- Key idea: caching data.
  - Performance
  - Availability
- Server Replication


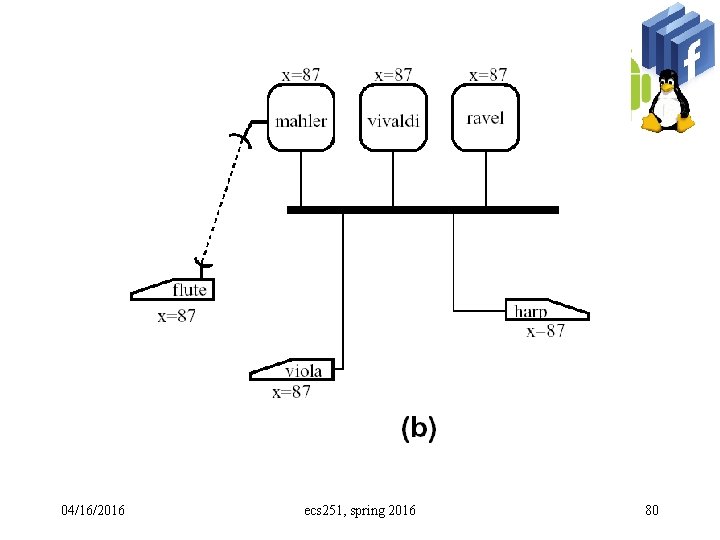


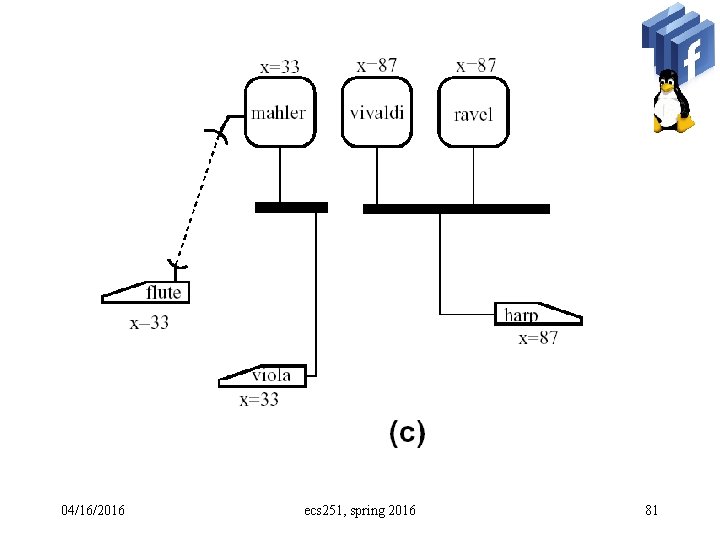


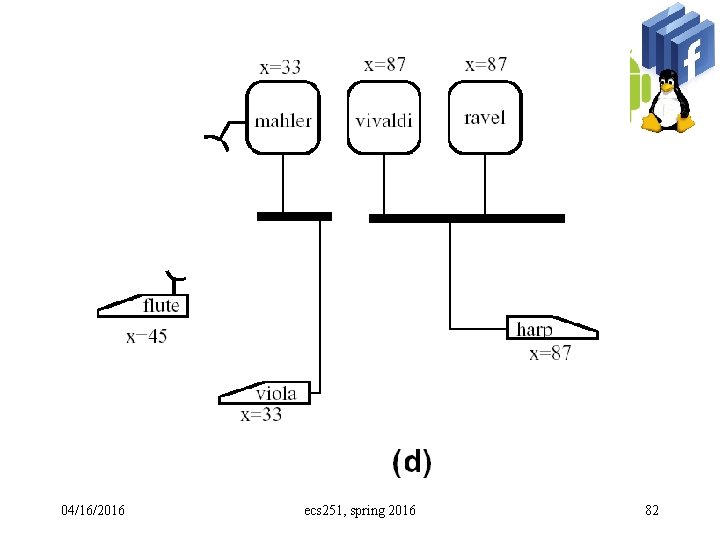


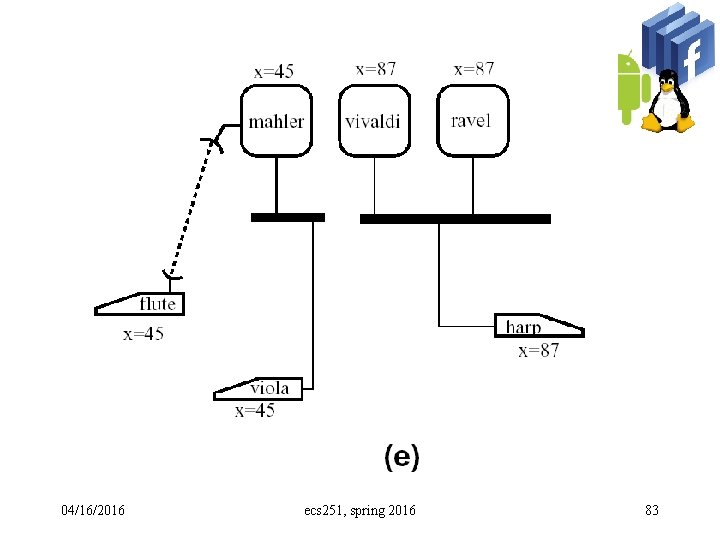


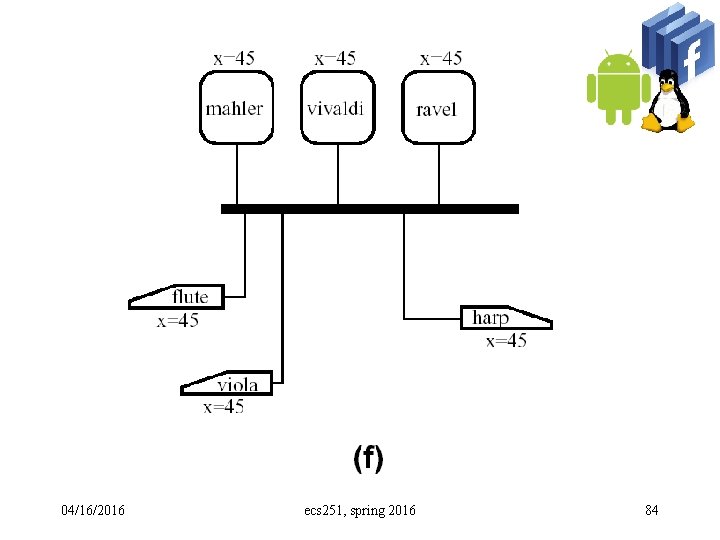


Sequence #, Logical TS
- Monotonically increasing and finite
- Sequence # wrap-around problem
  - Self-Stabilization
  - Global Reset
