MACHINE LEARNING AND NEURAL NETWORKS Eleonora Barelli eleonora.barelli2@unibo.it

What is Machine Learning?
"ML is the science that gives computers the ability to learn without being explicitly programmed" (Arthur Samuel, 1959)
• What kind of "learning"?
• A bottom-up approach based on examples (a database)

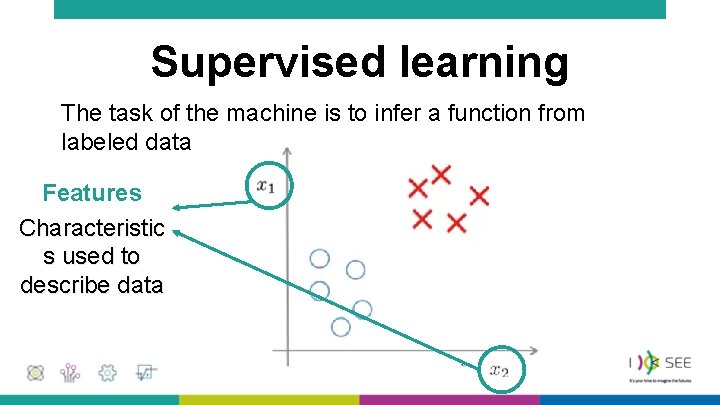

Supervised learning
The task of the machine is to infer a function from labeled data.
Features: characteristics used to describe the data.

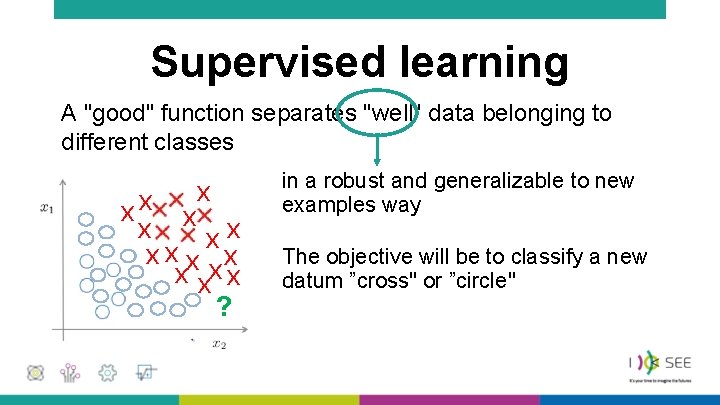

Supervised learning
A "good" function separates "well" the data belonging to different classes, in a way that is robust and generalizes to new examples.
The objective will be to classify a new datum as "cross" or "circle".

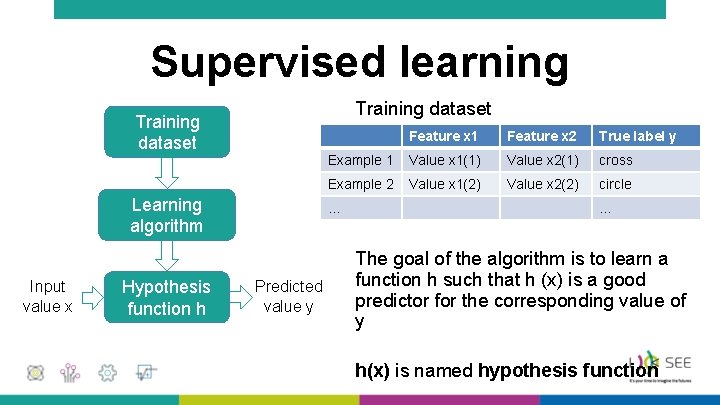

Supervised learning
Training dataset → Learning algorithm → Hypothesis function h
Input value x → Hypothesis function h → Predicted value y

| | Feature $x_1$ | Feature $x_2$ | True label y |
|---|---|---|---|
| Example 1 | Value $x_1^{(1)}$ | Value $x_2^{(1)}$ | cross |
| Example 2 | Value $x_1^{(2)}$ | Value $x_2^{(2)}$ | circle |
| … | … | … | … |

The goal of the algorithm is to learn a function h such that h(x) is a good predictor for the corresponding value of y. h(x) is called the hypothesis function.
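The table and the role of h can be sketched in Python. This is only an illustration: the feature values, the linear form of `h`, and the parameters `w` and `b` are assumptions, not the hypothesis function used in the lesson.

```python
import numpy as np

# Hypothetical training dataset: two features per example, label "cross" or "circle".
X_train = np.array([[1.0, 1.2],
                    [0.8, 1.5],
                    [3.0, 3.1],
                    [3.4, 2.7]])
y_train = np.array(["cross", "cross", "circle", "circle"])

def h(x, w, b):
    """Hypothesis function: predicts a label from the features x (assumed linear form)."""
    return "circle" if np.dot(w, x) + b > 0 else "cross"

# Parameters chosen by hand for illustration; a learning algorithm would fit them.
w = np.array([1.0, 1.0])
b = -4.0

predictions = [h(x, w, b) for x in X_train]

# Classifying a new datum that is not in the training dataset.
new_datum = np.array([2.9, 2.8])
new_prediction = h(new_datum, w, b)
```

With these hand-picked parameters, h reproduces all four training labels; the learning algorithm's job is to find such parameters automatically.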

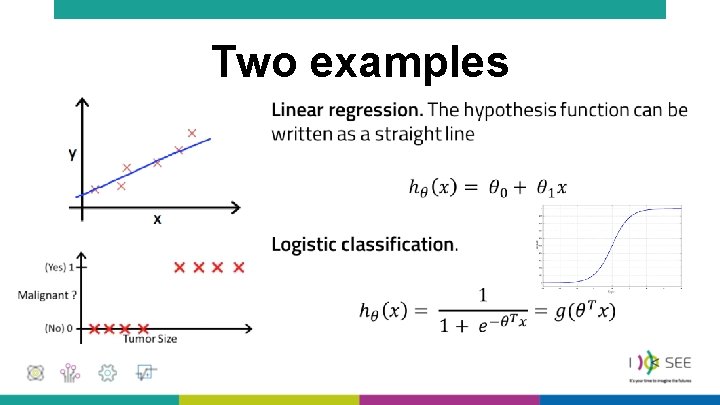

Two examples •


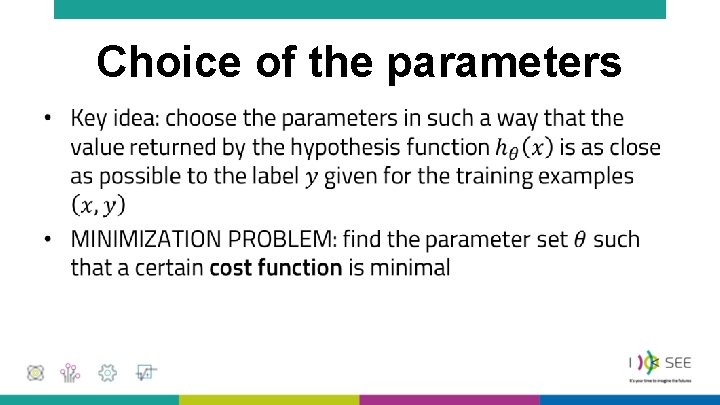

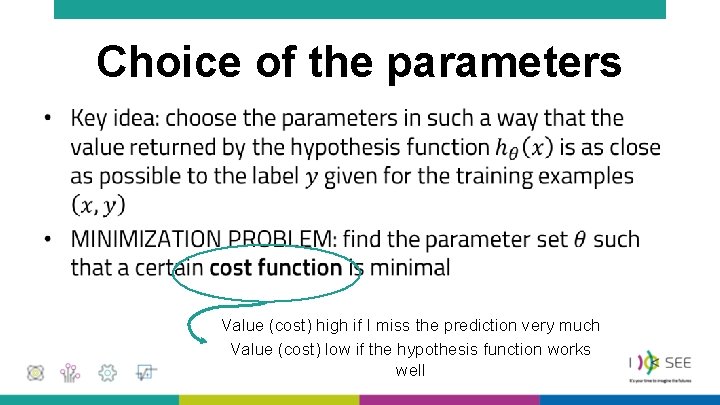

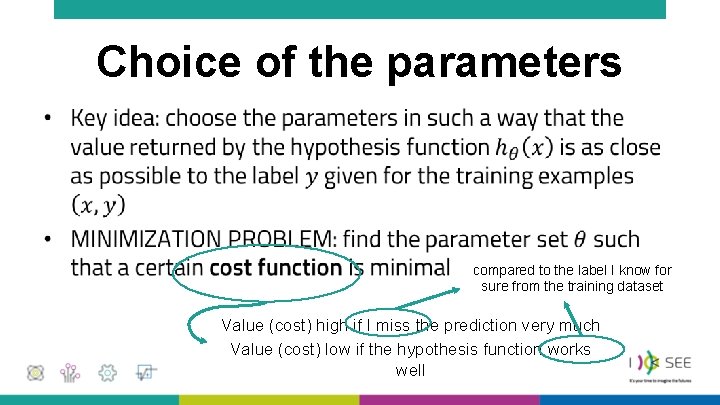

Choice of the parameters
• The cost compares the prediction of the hypothesis function with the label I know for sure from the training dataset
• Value (cost) high if the prediction is far from the label
• Value (cost) low if the hypothesis function works well
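A possible cost with this behavior is sketched below. The formula is not shown on the slide; as an assumption, the sketch uses the cross-entropy cost of logistic regression (which the lesson mentions later), with hypothetical data and parameter values.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def cost(theta, X, y):
    """Mean cross-entropy between the predictions h(x) and the known labels y (0 or 1)."""
    h = sigmoid(X @ theta)
    return float(-np.mean(y * np.log(h) + (1 - y) * np.log(1 - h)))

# Hypothetical data: the first column is a constant 1 (bias), the second is one feature.
X = np.array([[1.0, -2.0], [1.0, -1.0], [1.0, 1.0], [1.0, 2.0]])
y = np.array([0.0, 0.0, 1.0, 1.0])

good_theta = np.array([0.0, 3.0])   # predictions agree with the labels
bad_theta = np.array([0.0, -3.0])   # predictions are the opposite of the labels

low_cost = cost(good_theta, X, y)
high_cost = cost(bad_theta, X, y)
```

Choosing the parameters then means searching for the `theta` that makes this value as small as possible.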

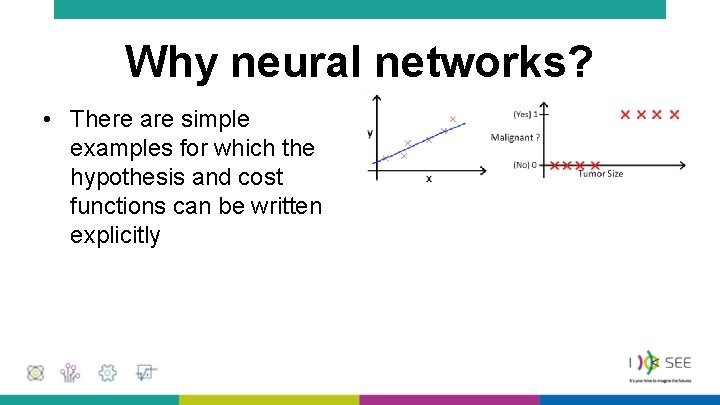

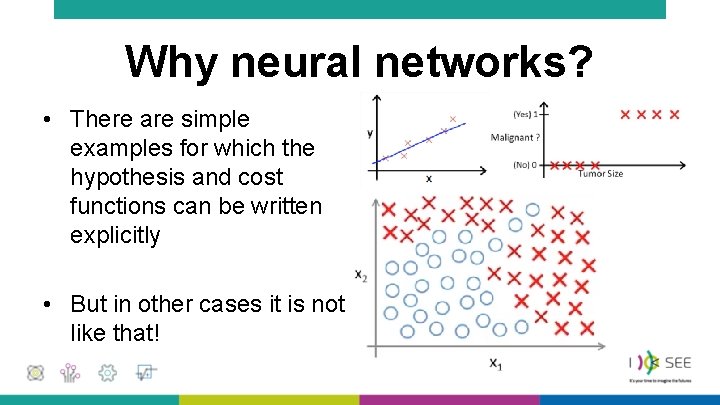

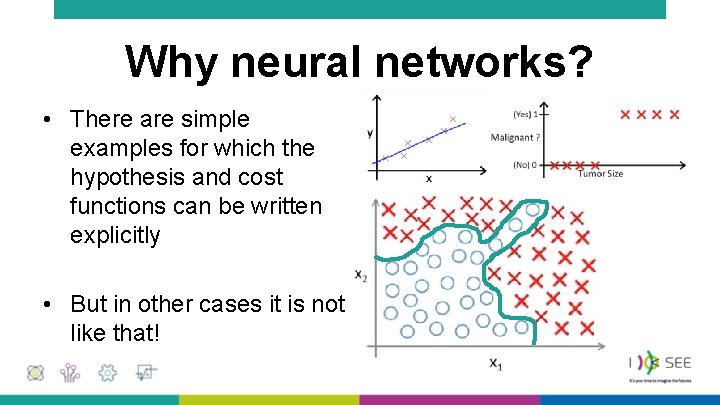

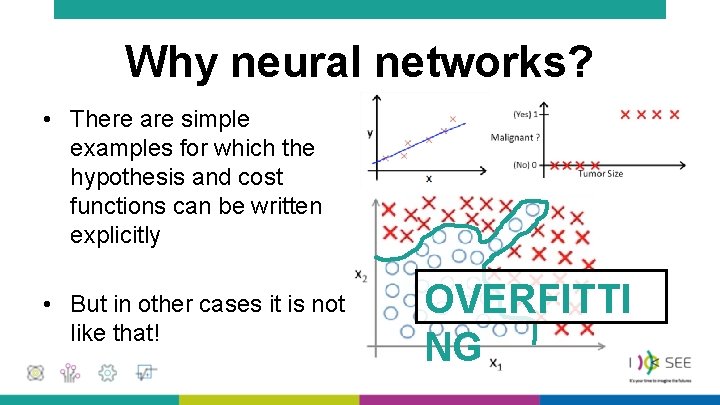

Why neural networks?
• There are simple examples for which the hypothesis and cost functions can be written explicitly
• But in other cases this is not possible!
OVERFITTING

Why neural networks? • We find a similar difficulty if we are dealing with data described by "many" features

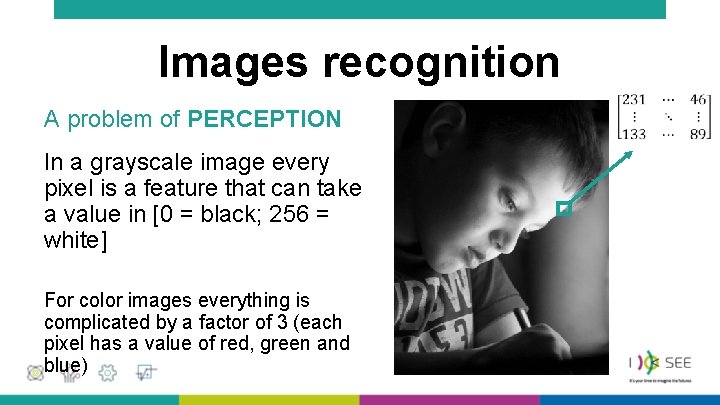

Image recognition
A problem of PERCEPTION
• In a grayscale image every pixel is a feature that can take a value in [0 = black; 255 = white]
• For color images the number of features is multiplied by 3 (each pixel has a red, a green and a blue value)
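To get a feeling for how many features an image has, here is a small sketch assuming NumPy, 8-bit pixel values (0 to 255), and a hypothetical 50×50 image size.

```python
import numpy as np

height, width = 50, 50  # hypothetical image size

# Grayscale image: one intensity per pixel (0 = black, 255 = white).
gray = np.zeros((height, width), dtype=np.uint8)
gray_features = gray.reshape(-1)      # flatten: one feature per pixel

# Color image: three intensities (red, green, blue) per pixel.
color = np.zeros((height, width, 3), dtype=np.uint8)
color_features = color.reshape(-1)

n_gray = gray_features.size     # 50 * 50 = 2500 features
n_color = color_features.size   # 50 * 50 * 3 = 7500 features
```

Even a very small image is described by thousands of features, which is why writing the hypothesis function by hand is not practical.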

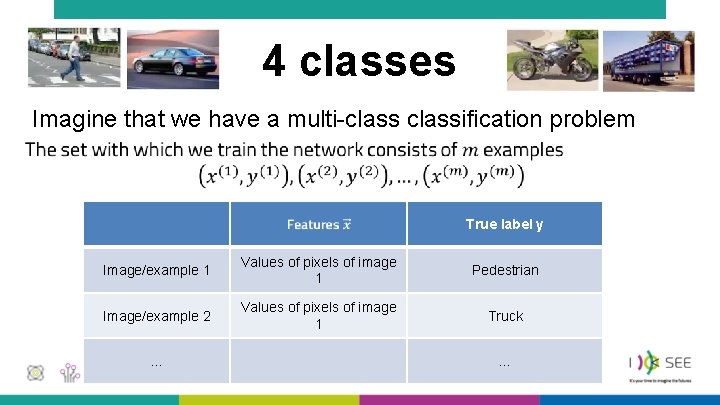

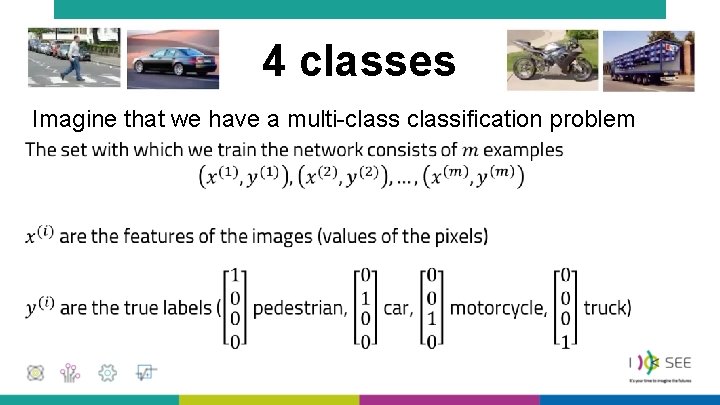

4 classes
Imagine that we have a multi-class classification problem

| | Features (pixels) | True label y |
|---|---|---|
| Image/example 1 | Values of pixels of image 1 | Pedestrian |
| Image/example 2 | Values of pixels of image 2 | Truck |
| … | … | … |


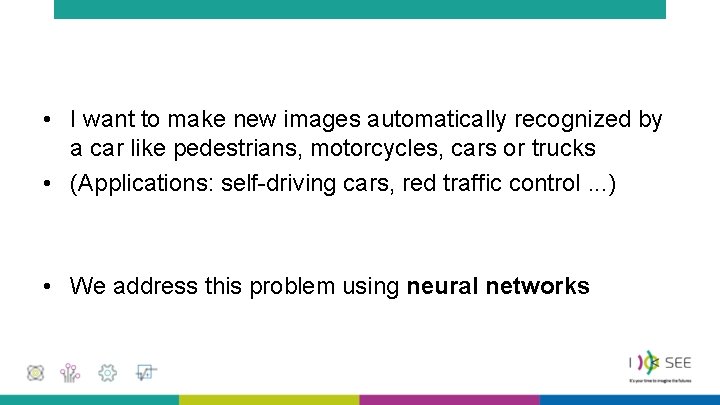

• I want a car to automatically recognize new images as pedestrians, motorcycles, cars or trucks
• (Applications: self-driving cars, red-light traffic control...)
• We address this problem using neural networks
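One common way to present the four classes to a network is sketched here. The one-hot encoding, the class order, and the output values are assumptions for illustration; the lesson does not specify how labels are encoded.

```python
import numpy as np

CLASSES = ["pedestrian", "motorcycle", "car", "truck"]  # assumed order

def one_hot(label):
    """Return a vector with 1 in the position of the class and 0 elsewhere."""
    vector = np.zeros(len(CLASSES))
    vector[CLASSES.index(label)] = 1.0
    return vector

def decode(output):
    """Return the class whose output value is the largest."""
    return CLASSES[int(np.argmax(output))]

y_truck = one_hot("truck")
predicted = decode(np.array([0.1, 0.2, 0.1, 0.6]))  # hypothetical network output
```

Under this assumption the network has one output neuron per class, and the prediction is the class with the strongest output.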

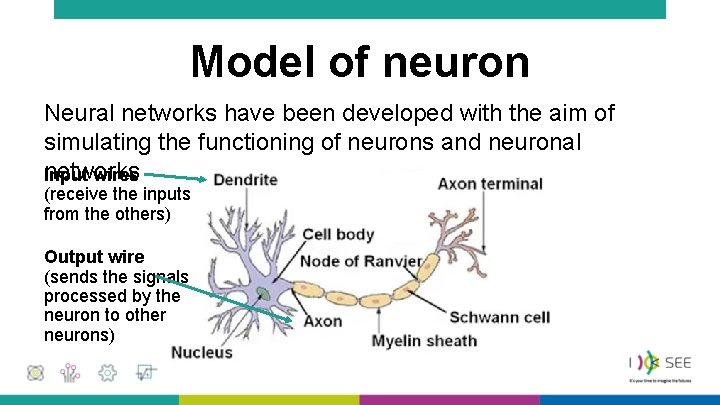

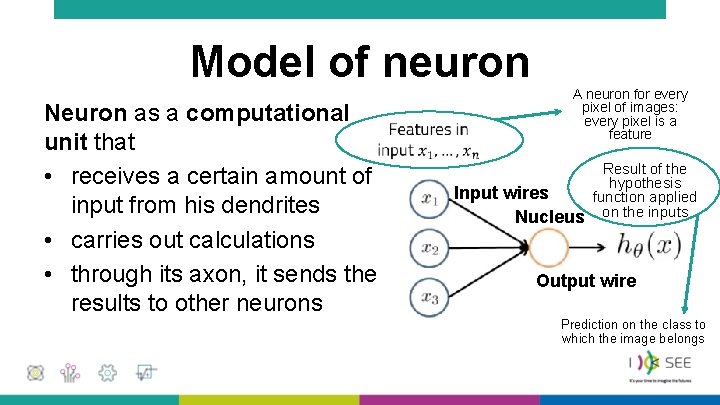

Model of neuron
Neural networks have been developed with the aim of simulating the functioning of neurons and neuronal networks.
• Input wires (receive the inputs from the other neurons)
• Output wire (sends the signals processed by the neuron to other neurons)

Model of neuron
Neuron as a computational unit that
• receives a certain number of inputs through its dendrites
• carries out calculations
• sends the results to other neurons through its axon
A neuron for every pixel of the images: every pixel is a feature
• Input wires
• Nucleus: result of the hypothesis function applied to the inputs
• Output wire: prediction of the class to which the image belongs
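A single artificial neuron of this kind can be sketched as follows. The weighted sum followed by a sigmoid activation is an assumption (a common choice, not confirmed by the slide), and the pixel values and weights are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def neuron(inputs, weights, bias):
    """Input wires -> nucleus (weighted sum + activation) -> output wire."""
    return sigmoid(np.dot(weights, inputs) + bias)

pixels = np.array([0.0, 0.5, 1.0])     # hypothetical pixel values scaled to [0, 1]
weights = np.array([2.0, -1.0, 0.5])   # hypothetical weights, one per input wire
activation = neuron(pixels, weights, bias=0.0)
```

The output is a number between 0 and 1 that says how strongly the neuron is activated by these inputs.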

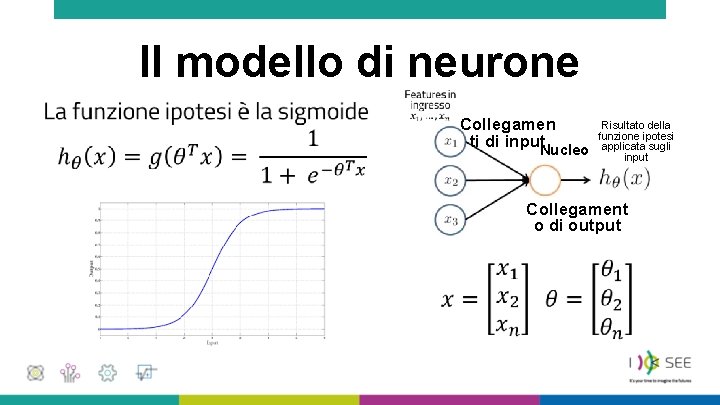

The model of neuron
• Input connections
• Nucleus: result of the hypothesis function applied to the inputs
• Output connection

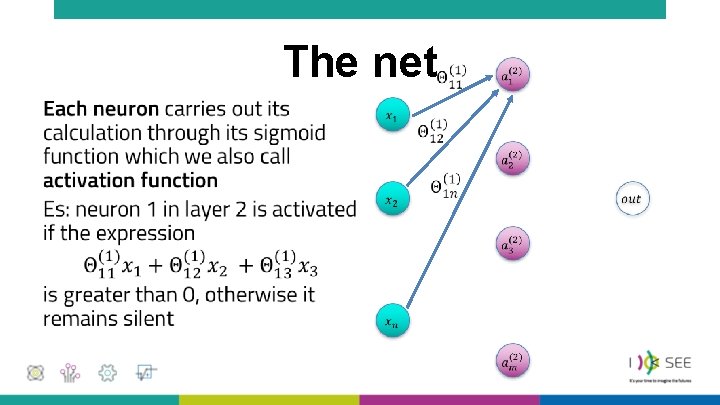

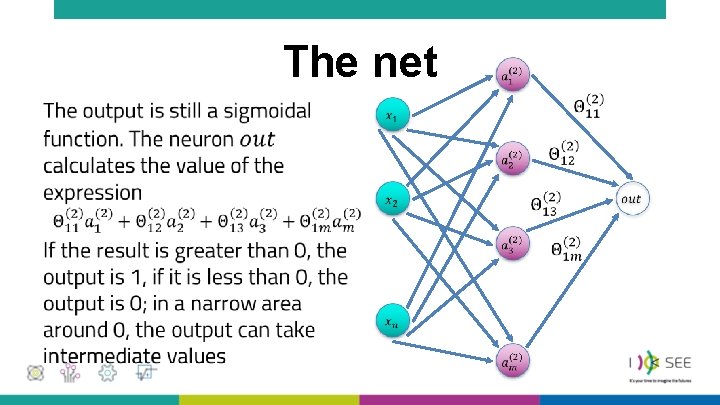

The net •

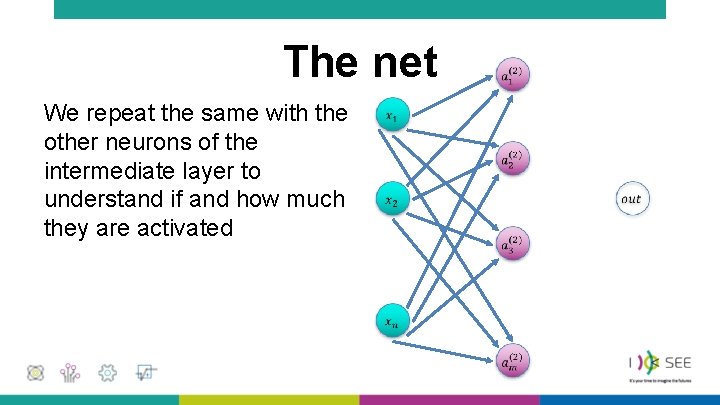

The net
We repeat the same calculation for the other neurons of the intermediate layer to understand whether and how strongly they are activated
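Repeating the calculation for every neuron of a layer amounts to a matrix product. The sketch below assumes a network with 6 input features, 3 intermediate neurons, 4 output classes, and sigmoid activations; the sizes, names, and random weights are all hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(x, W1, b1, W2, b2):
    """Propagate the features x through one intermediate layer to the output layer."""
    hidden = sigmoid(W1 @ x + b1)        # activation of every intermediate neuron
    output = sigmoid(W2 @ hidden + b2)   # one value per class
    return hidden, output

rng = np.random.default_rng(0)
x = rng.random(6)                                # 6 hypothetical pixel features
W1, b1 = rng.normal(size=(3, 6)), np.zeros(3)    # 3 intermediate neurons
W2, b2 = rng.normal(size=(4, 3)), np.zeros(4)    # 4 output classes
hidden, output = forward(x, W1, b1, W2, b2)
```

Each row of `W1` holds the weights of one intermediate neuron, so a single product computes all activations of the layer at once.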


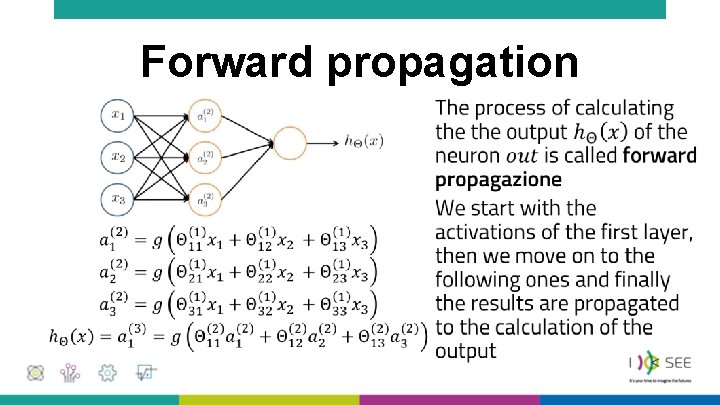

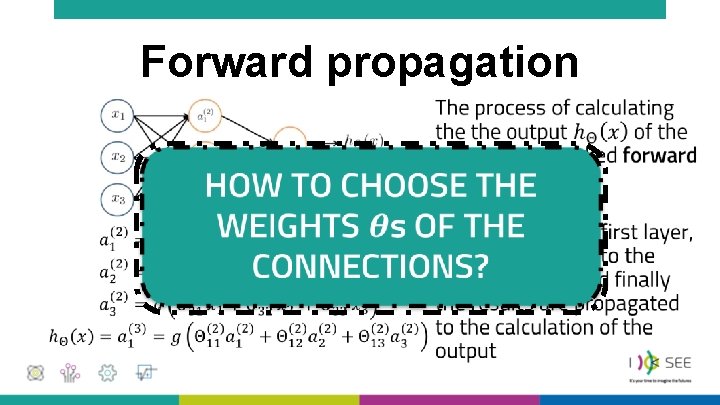

Forward propagation • •


Choice of weights •

Cost function for neural networks
• It is a generalization of the cost function used for logistic regression
• It takes high values (high cost) if the calculated output deviates from the label in the dataset
• It takes low values (low cost) if the calculated output is close to the label containing the truth value
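One common way of writing such a cost is given below (without regularization). The notation is an assumption: $m$ is the number of training examples, $K$ the number of output classes, $y_k^{(i)}$ the $k$-th component of the label of example $i$, and $h_\Theta(x^{(i)})_k$ the $k$-th output of the network with weights $\Theta$.

$$
J(\Theta) = -\frac{1}{m} \sum_{i=1}^{m} \sum_{k=1}^{K} \left[ y_k^{(i)} \log\big(h_\Theta(x^{(i)})_k\big) + \big(1 - y_k^{(i)}\big) \log\big(1 - h_\Theta(x^{(i)})_k\big) \right]
$$

For $K = 1$ this reduces to the cost of logistic regression: each term is close to 0 when the output agrees with the label and grows without bound when the output approaches the opposite value.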

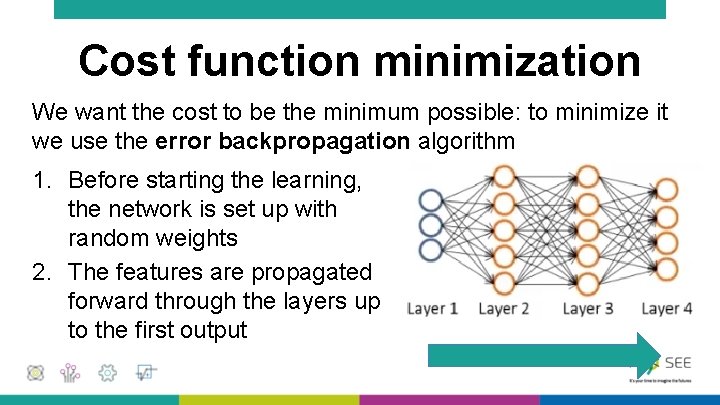

Cost function minimization
We want the cost to be as low as possible: to minimize it we use the error backpropagation algorithm
1. Before learning starts, the network is initialized with random weights
2. The features are propagated forward through the layers until a first output is obtained

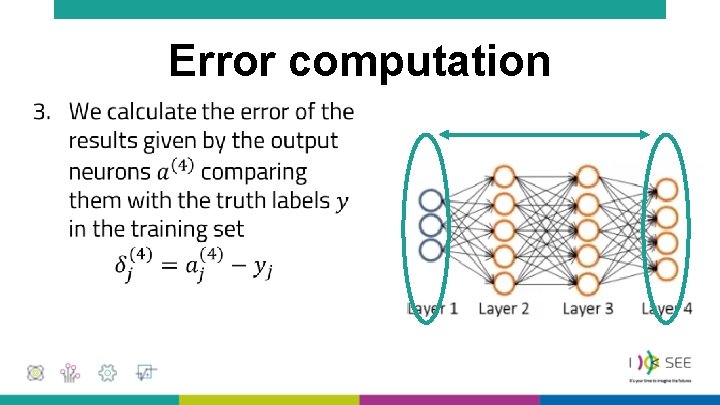

Error computation •

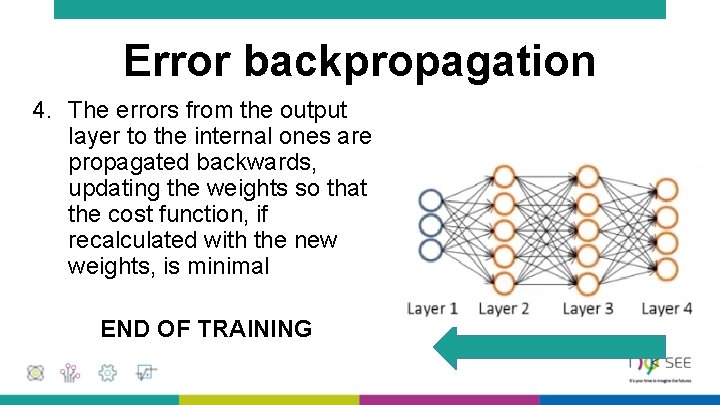

Error backpropagation
4. The errors are propagated backwards from the output layer to the internal ones, updating the weights so that the cost function, recalculated with the new weights, decreases towards its minimum
END OF TRAINING
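The whole training cycle can be sketched on a toy problem. Everything here is an assumption made for illustration: the dataset, the network size (2 features, 3 intermediate neurons, 1 output), the sigmoid activations, the cross-entropy cost, the learning rate, and the number of repetitions. Step 3 is written as the difference between output and label, which is one usual way of computing the error.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def cost(A2, Y):
    return float(-np.mean(Y * np.log(A2) + (1 - Y) * np.log(1 - A2)))

# Hypothetical toy dataset: 4 examples (rows), 2 features, binary label.
X = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
Y = np.array([[0.0], [1.0], [1.0], [1.0]])

rng = np.random.default_rng(0)
# Step 1: random initial weights.
W1, b1 = rng.normal(scale=0.5, size=(2, 3)), np.zeros(3)
W2, b2 = rng.normal(scale=0.5, size=(3, 1)), np.zeros(1)

learning_rate = 0.5
costs = []
for _ in range(2000):
    # Step 2: forward propagation through the layers.
    A1 = sigmoid(X @ W1 + b1)
    A2 = sigmoid(A1 @ W2 + b2)
    costs.append(cost(A2, Y))
    # Step 3: error at the output layer (output compared with the label).
    delta2 = A2 - Y
    # Step 4: propagate the error backwards, then update the weights.
    delta1 = (delta2 @ W2.T) * A1 * (1 - A1)
    W2 -= learning_rate * A1.T @ delta2 / len(X)
    b2 -= learning_rate * delta2.mean(axis=0)
    W1 -= learning_rate * X.T @ delta1 / len(X)
    b1 -= learning_rate * delta1.mean(axis=0)

# Predictions of the trained network on the training examples.
predictions = (sigmoid(sigmoid(X @ W1 + b1) @ W2 + b2) > 0.5).astype(float)
```

The list `costs` records the cost at every repetition, so plotting it shows how the cost goes down as the weights are adjusted.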

Validation and Test
• The network model trained on the examples of the training dataset must now be validated and then tested
• Validation = the network is used to predict the classification of new examples while the network hyperparameters are varied (number of layers, number of neurons in each layer)
• Test = the resulting network is used to evaluate the effectiveness of the model on further new examples
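In practice the available examples are divided into three separate groups. The sketch below shows one possible split; the 60/20/20 proportions and the function name `split_dataset` are assumptions, not values given in the lesson.

```python
import numpy as np

def split_dataset(n_examples, fractions=(0.6, 0.2, 0.2), seed=0):
    """Shuffle the example indices and split them into training, validation and test sets."""
    rng = np.random.default_rng(seed)
    indices = rng.permutation(n_examples)
    n_train = int(fractions[0] * n_examples)
    n_val = int(fractions[1] * n_examples)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]   # everything that is left
    return train, val, test

train_idx, val_idx, test_idx = split_dataset(100)
```

The three groups do not overlap, so validation and test always use examples the network has never seen during training.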

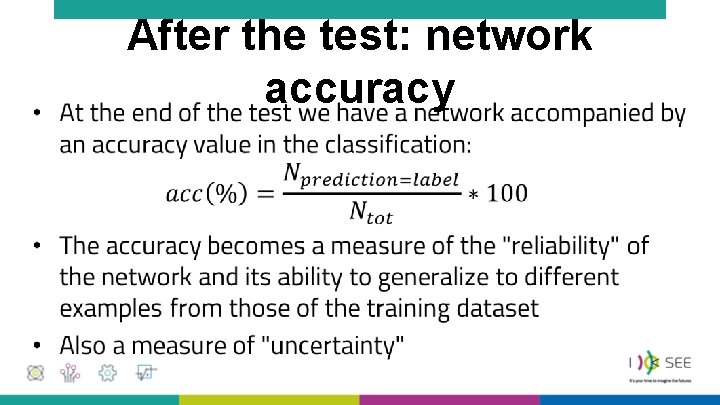

• After the test: network accuracy
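Accuracy is usually computed as the fraction of test examples that the network classifies correctly. A minimal sketch with hypothetical labels:

```python
import numpy as np

def accuracy(predicted, true):
    """Fraction of examples whose predicted label equals the true label."""
    predicted = np.asarray(predicted)
    true = np.asarray(true)
    return float(np.mean(predicted == true))

true_labels = ["pedestrian", "truck", "car", "motorcycle", "car"]
predicted_labels = ["pedestrian", "truck", "car", "car", "car"]
test_accuracy = accuracy(predicted_labels, true_labels)  # 4 correct out of 5
```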

We have solved a problem that was NOT describable a priori (we do not know the ALGORITHM with which our brain distinguishes trucks from pedestrians).
The solution is a probabilistic one (each network comes with its own accuracy).

Are machines intelligent? What do we mean by intelligence?
Machines that execute (imperative paradigm), derive/infer/deduce (logical paradigm), learn (machine learning paradigm)

What is new in neural networks? What is new in the machine learning paradigm? What makes a neural network a complex system?
Some highlights from the lesson of Prof. Zanarini

We can interpret the neural network as a complex system because it shows some typical properties of these systems

Emergent property
The ability of the network to distinguish pedestrians from vehicles, to learn to perform a certain type of task. It is not a competence dictated a priori: nobody has taught it "what and how" to recognize
• Roundabout… A flow ("dance") of vehicles that move without a rule explicitly imposed from outside (traffic light)
• Cellular automata… Triangular shapes and "spaceships" emerge from very simple rules

Rules on the agents
The individual agents (network nodes, neurons) act (activate) on the basis of a few simple rules (activation function) described in a precise way
Birds… Each bird moves based on simple rules:
• stay close to the nearest neighbors, but without bumping into them (distance)
• go at the same speed as the nearest neighbors (speed)
• move towards the center of the group (density)
Roundabout… Rules relating to the individual (give way to the near left, safety distance)
Cellular automata… Very simple rules for activating individuals
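The cellular automaton case is easy to try out. The sketch below uses the elementary automaton known as Rule 90 as an assumed example (the lesson does not say which automaton it refers to): each cell looks only at its two neighbors, yet a triangular pattern emerges.

```python
def step(cells):
    """Rule 90: a cell becomes active if exactly one of its two neighbors is active."""
    n = len(cells)
    return [cells[(i - 1) % n] ^ cells[(i + 1) % n] for i in range(n)]

width = 31
cells = [0] * width
cells[width // 2] = 1          # a single active cell in the middle

rows = [cells]
for _ in range(15):
    cells = step(cells)
    rows.append(cells)

# Draw one line per generation: '#' for active cells, '.' for inactive ones.
picture = "\n".join("".join("#" if c else "." for c in row) for row in rows)
print(picture)
```

No cell "knows" about triangles: the shape is a property of the whole system, not of the individual rule.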

Small changes and big effects
• When a "critical" weight changes, even if I do not know why (subsymbolic approach), the predicted classification category can change
• When various "less critical" weights change, the predicted classification category may not change
Birds… A change in one bird can lead to a change in the motion of the entire flock
Flute… Blowing slightly above or just below the mouthpiece may not produce any sound. Blowing too hard may not produce any sound either
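The difference between "critical" and "less critical" weights can be illustrated with a deliberately tiny example. The single linear neuron, the input, and the weight values are hypothetical and chosen by hand so that the effect is visible.

```python
import numpy as np

def classify(x, w):
    """Assumed rule: 'circle' if the weighted sum is positive, otherwise 'cross'."""
    return "circle" if np.dot(w, x) > 0 else "cross"

x = np.array([1.0, 0.01])   # hypothetical input: the first feature dominates
w = np.array([0.05, 1.0])   # hypothetical weights

base = classify(x, w)                                    # 0.05 + 0.01 = 0.06 > 0
critical = classify(x, w + np.array([-0.1, 0.0]))        # -0.05 + 0.01 = -0.04 < 0
less_critical = classify(x, w + np.array([0.0, -0.5]))   # 0.05 + 0.005 = 0.055 > 0
```

A change of 0.1 in the first weight flips the prediction, while a change five times larger in the second weight leaves it untouched.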

Circularity
The weights are readjusted example after example through the error backpropagation process: the output modifies the structure of the network itself, through comparison with the label of the input example
We will see!

- Slides: 42