
Lecture 7: Nonlinear Recursions
- Time series prediction
- Hill-Valley classification
- Lorenz series

Numerical Methods (數值方法), 2008, Applied Mathematics, NDHU

![Nonlinear recursion slide](https://slidetodoc.com/presentation_image_h/a3edc7855bd46b87033226fa6f48476f/image-2.jpg)

Nonlinear recursion
- Post-nonlinear combination of predecessors
- $z[t]=\tanh\left(a_1 z[t-1]+a_2 z[t-2]+\cdots+a_\tau z[t-\tau]\right)+e[t],\quad t=\tau,\ldots,N$

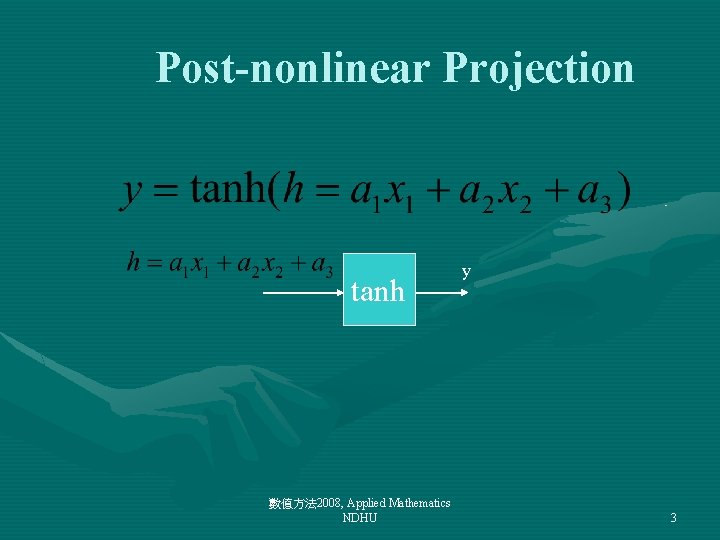

Post-nonlinear projection
(figure: tanh, output y)

![Data generation slide](https://slidetodoc.com/presentation_image_h/a3edc7855bd46b87033226fa6f48476f/image-4.jpg)

Data generation
- $z[t]=\tanh\left(a_1 z[t-1]+a_2 z[t-2]+\cdots+a_\tau z[t-\tau]\right)+e[t],\quad t=\tau,\ldots,N$
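A minimal MATLAB sketch of this data-generation step follows. It assumes a lag order τ = 2 (the variable `tau`), coefficients `a = [0.9, -0.6]`, Gaussian noise with standard deviation 0.05 and random initial values; all of these are illustrative choices, not the lecture's settings. Because MATLAB indexes from 1, the loop starts at t = τ+1.

```matlab
% Sketch: simulate z[t] = tanh(a_1 z[t-1] + ... + a_tau z[t-tau]) + e[t].
% All numeric values are illustrative assumptions, not the lecture's settings.
tau   = 2;              % lag order (assumed)
a     = [0.9, -0.6];    % coefficients a_1, ..., a_tau (assumed)
N     = 500;            % length of the series
sigma = 0.05;           % standard deviation of the noise e[t] (assumed)

z = zeros(1, N);
z(1:tau) = 0.5 * randn(1, tau);          % random initial values
for t = tau+1:N
    past = z(t-1:-1:t-tau);              % [z(t-1), z(t-2), ..., z(t-tau)]
    z(t) = tanh(a * past') + sigma * randn;
end
% plot(1:N, z);                          % uncomment to view the series
```

The post-nonlinear structure is visible in the loop body: a linear combination of the τ predecessors is squashed by `tanh` before the noise is added.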

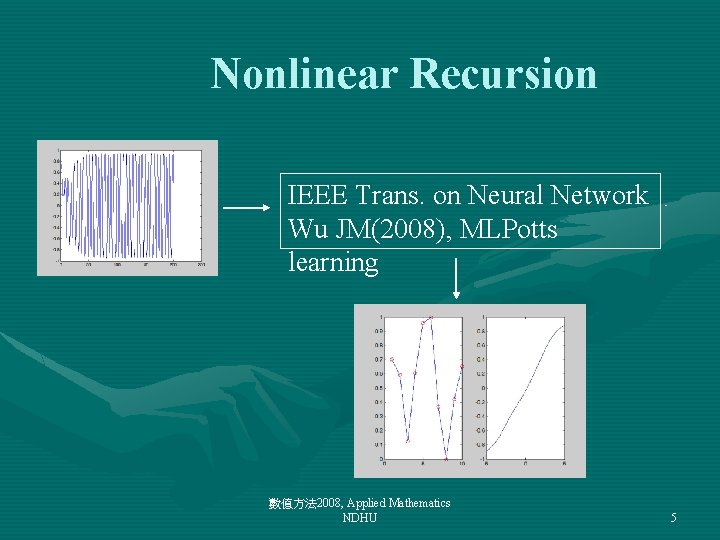

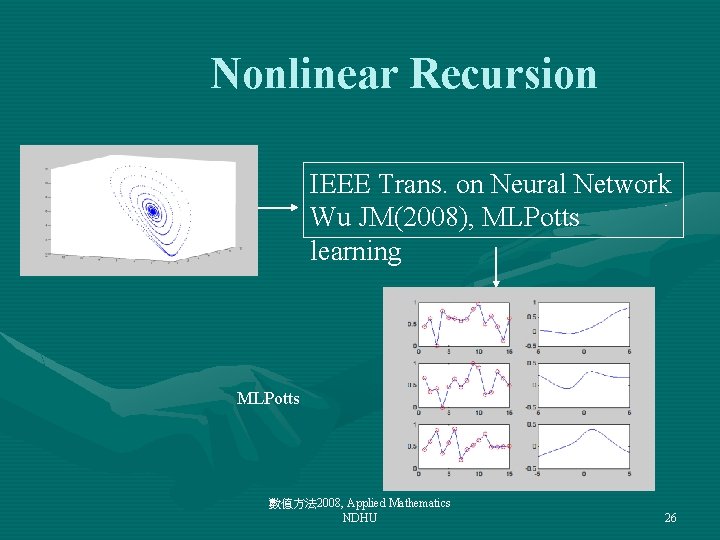

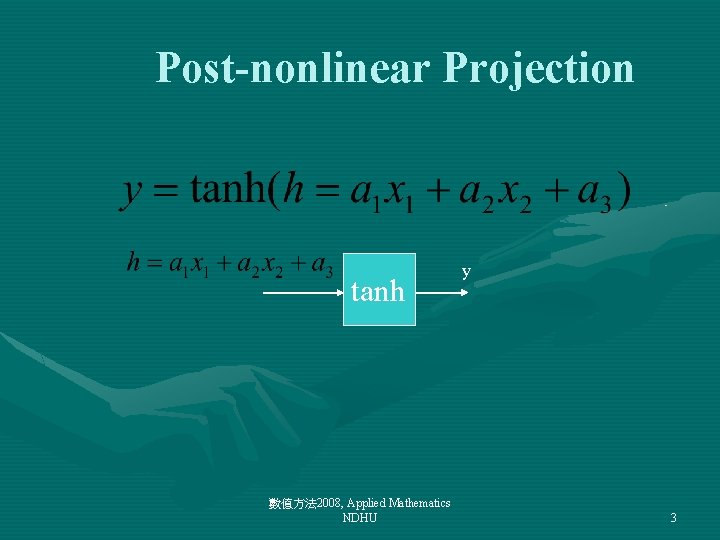

Nonlinear recursion: MLPotts learning (Wu J.M., IEEE Trans. on Neural Networks, 2008)

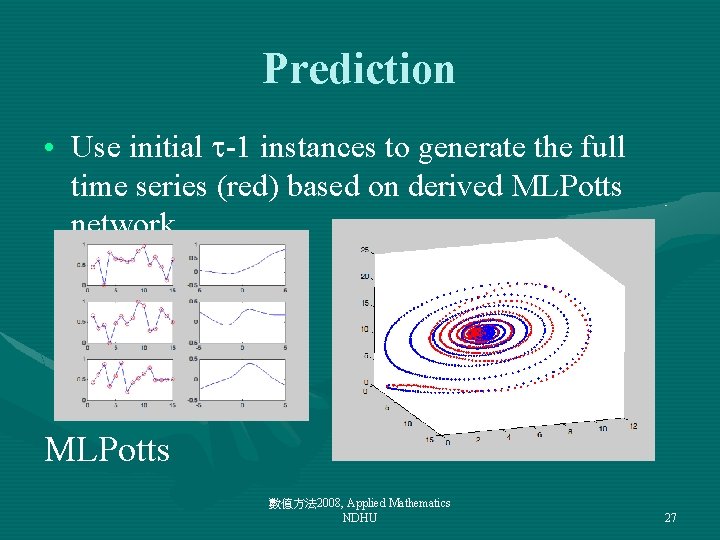

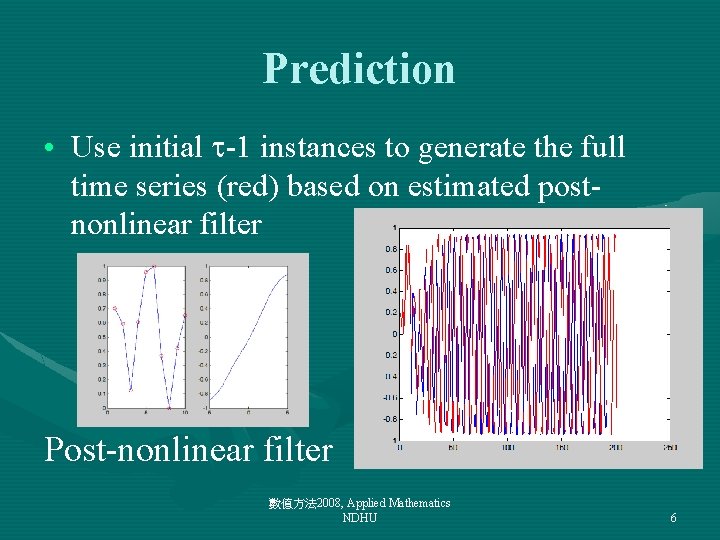

Prediction
- Use the initial τ−1 instances to generate the full time series (red) with the estimated post-nonlinear filter.
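The prediction step can be sketched as a noise-free free run of the recursion: after seeding, every new value is computed from previously generated values, never from the data. In this sketch `ahat` stands for the estimated coefficients and `z0` for the observed values that seed the loop; both are hypothetical placeholders, and the loop is seeded with as many values as the lag order requires.

```matlab
% Sketch: free-run prediction with an estimated post-nonlinear filter.
% ahat and z0 are hypothetical placeholders for the estimated
% coefficients and the observed initial values.
ahat = [0.9, -0.6];          % estimated a_1, ..., a_tau (assumed)
tau  = numel(ahat);          % lag order
z0   = [0.3, -0.2];          % observed initial values (assumed)
N    = 200;                  % length of the generated series

zhat = zeros(1, N);
zhat(1:tau) = z0;
for t = tau+1:N
    % noise-free recursion driven only by previously generated values
    zhat(t) = tanh(ahat * zhat(t-1:-1:t-tau)');
end
```

Plotting `zhat` over the observed series would give the red curve described on the slide.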

Nonlinear recursions: Hill-Valley
- Classify hills and valleys (Hill-Valley data set, UCI Machine Learning Repository)

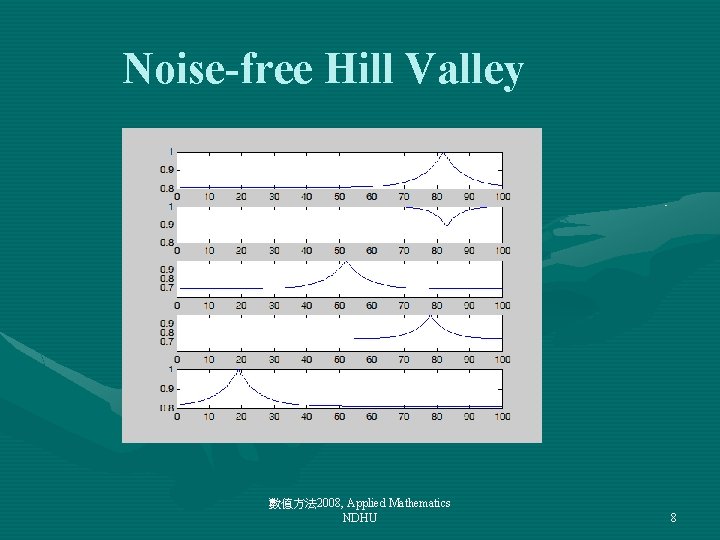

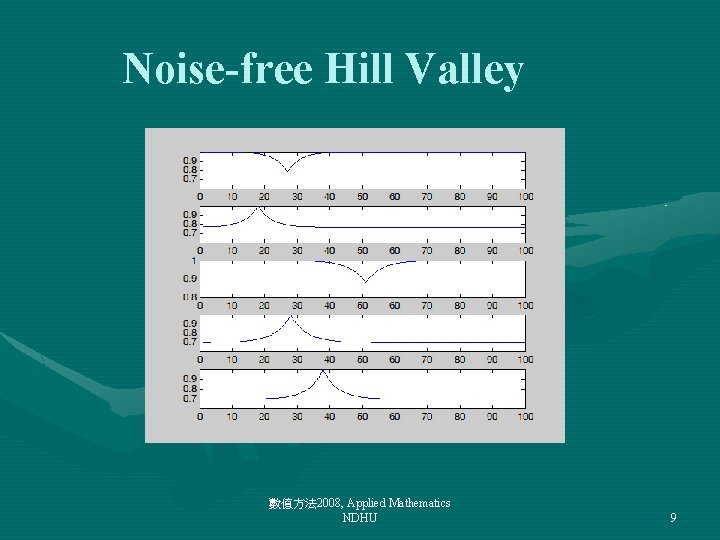

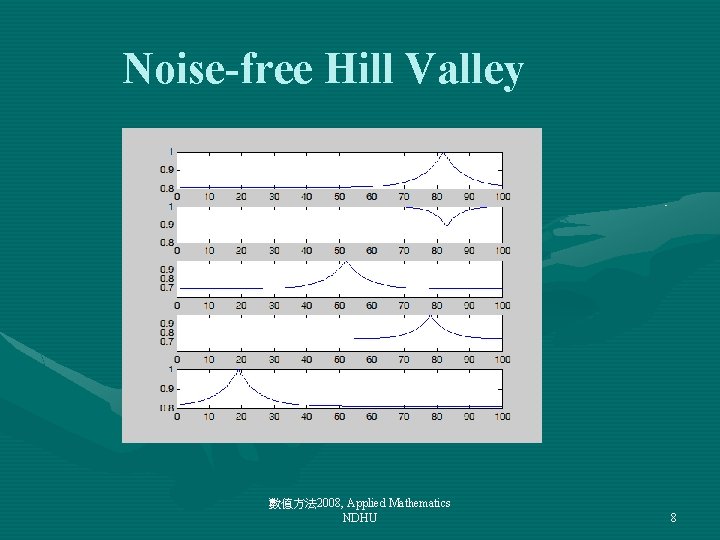

Noise-free Hill-Valley

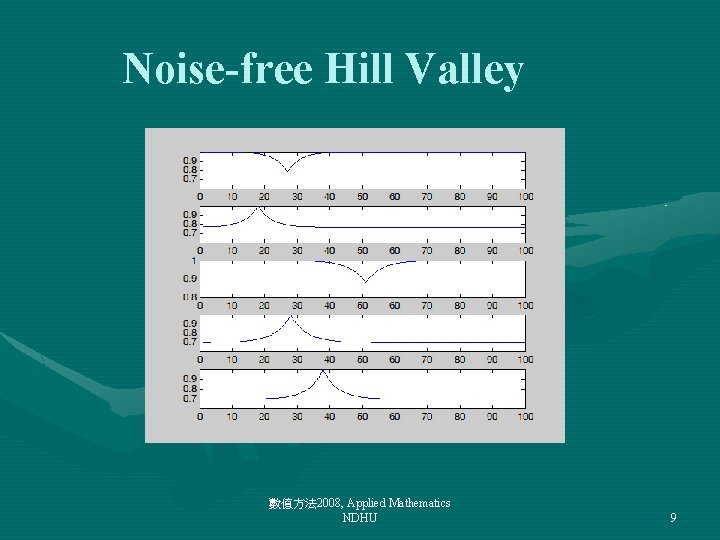

Noise-free Hill-Valley

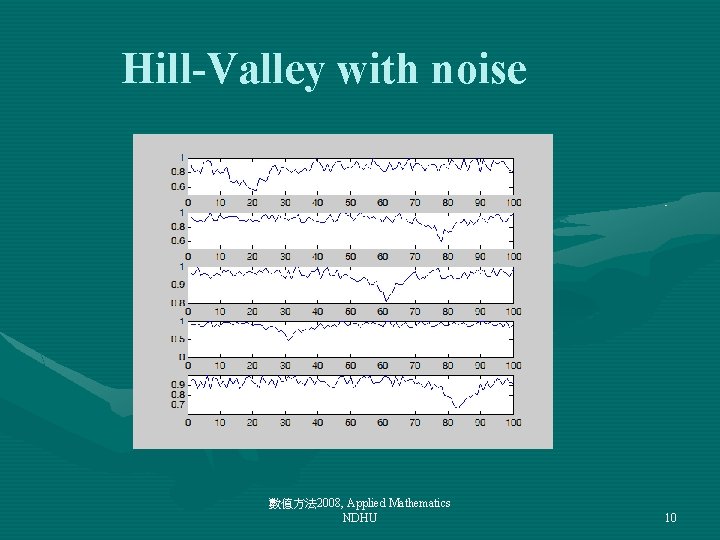

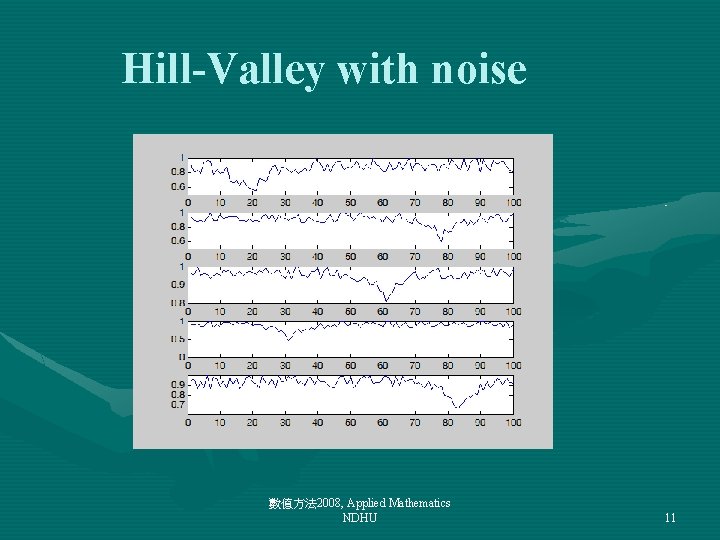

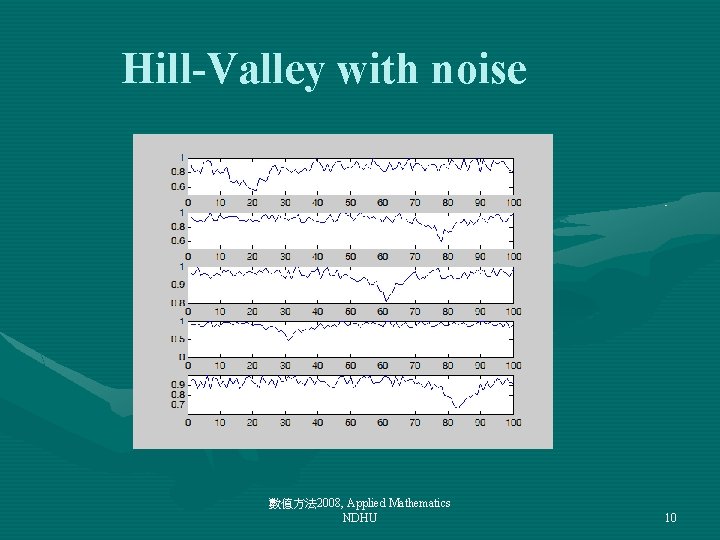

Hill-Valley with noise

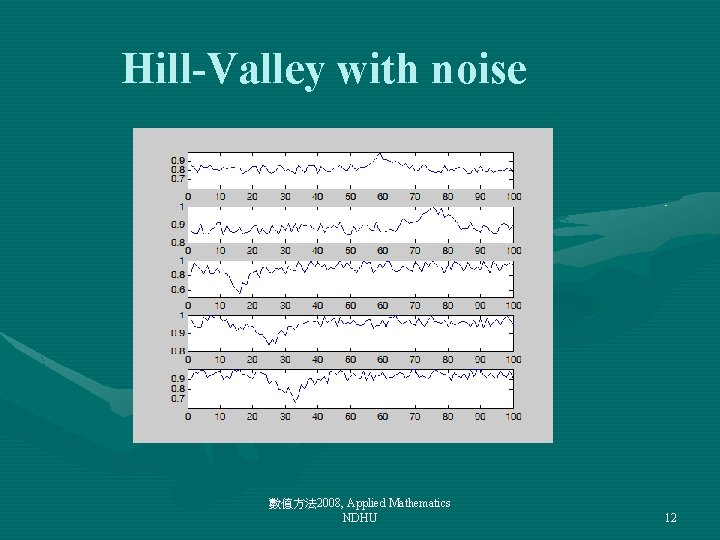

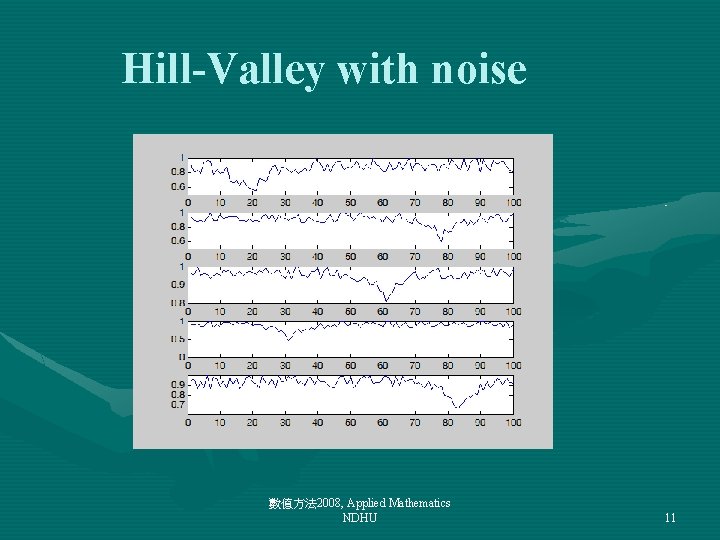

Hill-Valley with noise

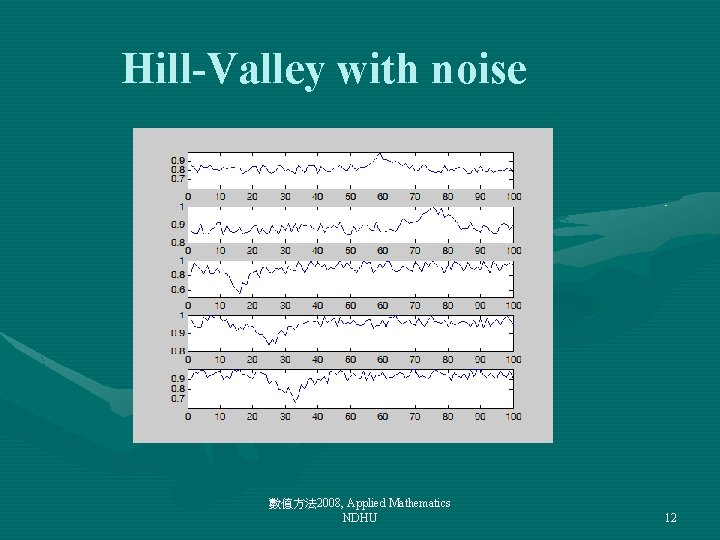

Hill-Valley with noise

![Nonlinear recursion (MLPotts) slide](https://slidetodoc.com/presentation_image_h/a3edc7855bd46b87033226fa6f48476f/image-13.jpg)

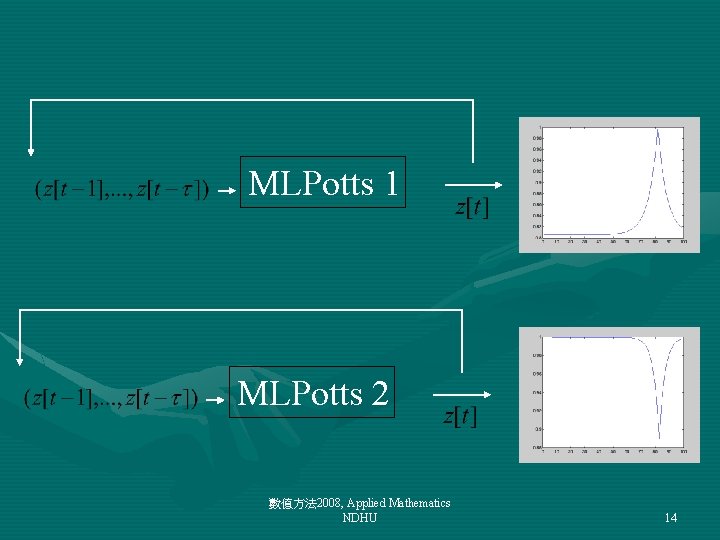

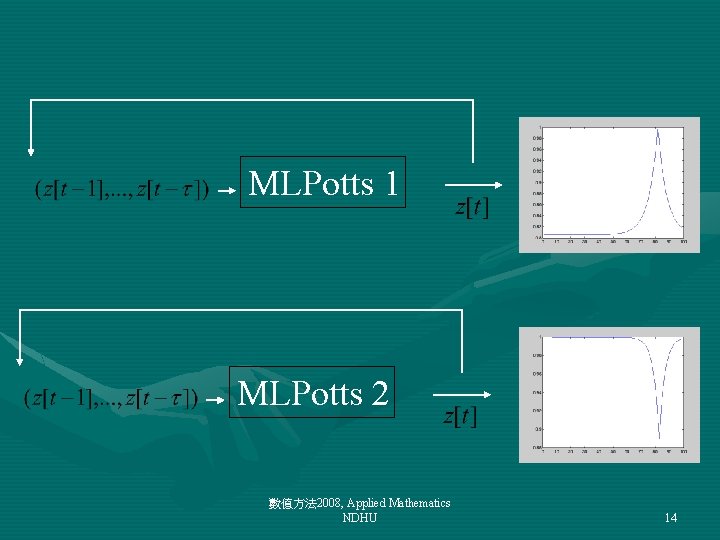

Nonlinear recursion
- MLPotts: $z[t]=F\left(z[t-1],z[t-2],\ldots,z[t-\tau]\right)+e[t],\quad t=\tau,\ldots,N$

(figure: MLPotts 1)

(figures: MLPotts 1, MLPotts 2)

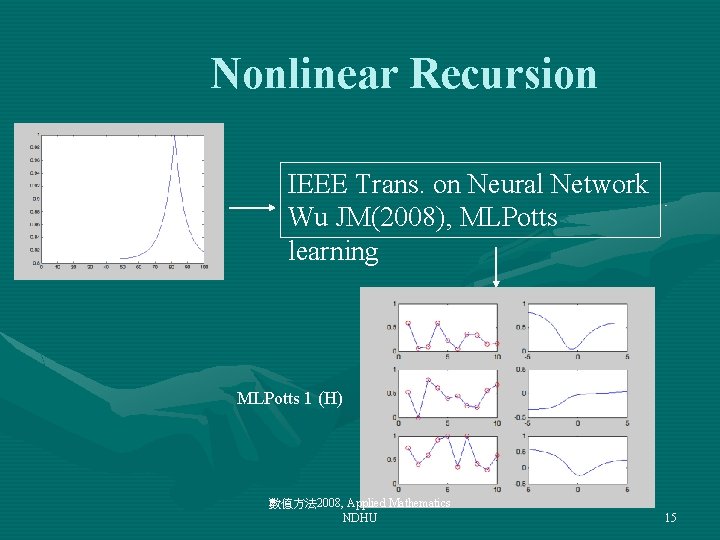

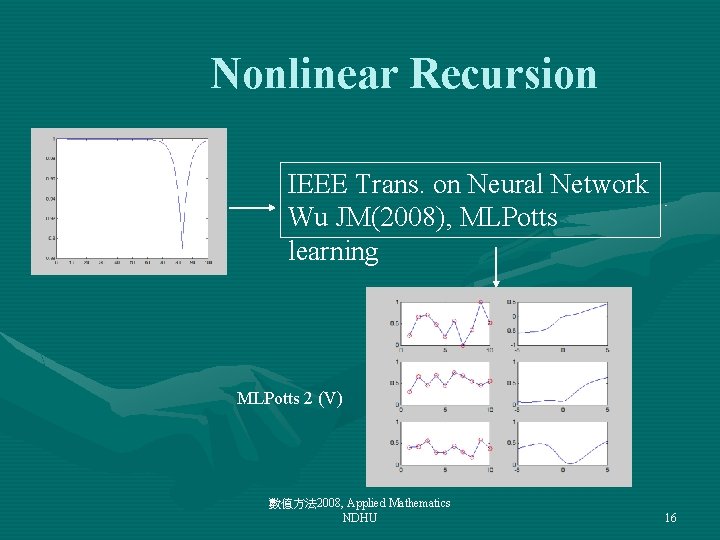

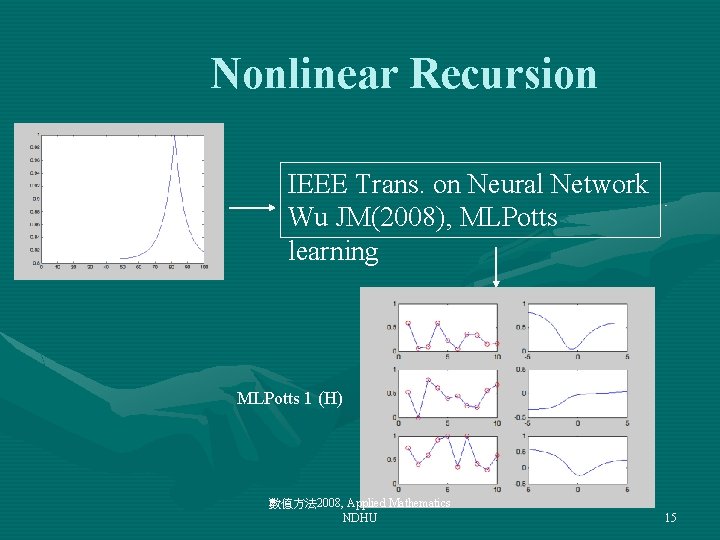

Nonlinear recursion: MLPotts learning (Wu J.M., IEEE Trans. on Neural Networks, 2008)
(figure: MLPotts 1 (H))

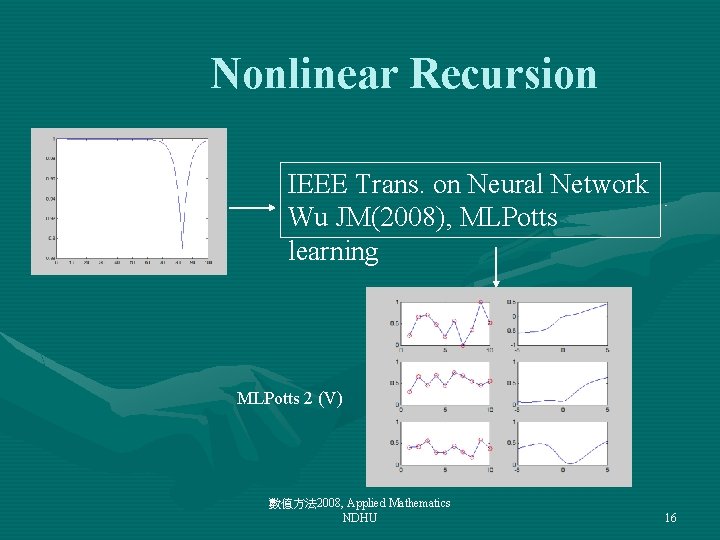

Nonlinear recursion: MLPotts learning (Wu J.M., IEEE Trans. on Neural Networks, 2008)
(figure: MLPotts 2 (V))

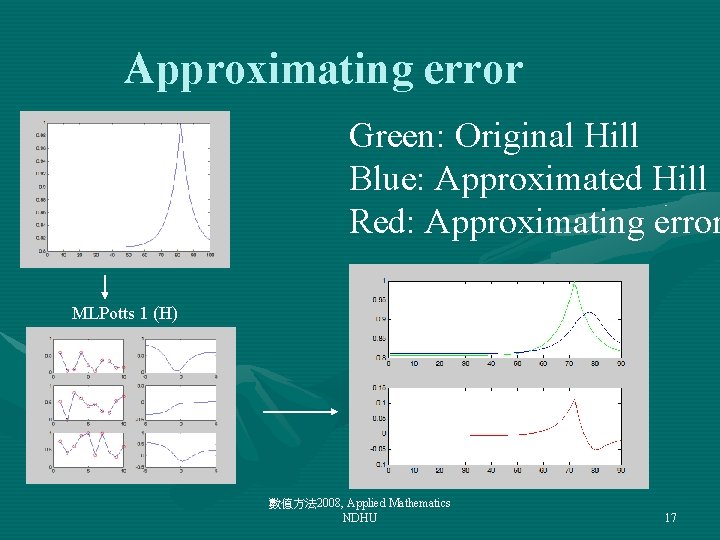

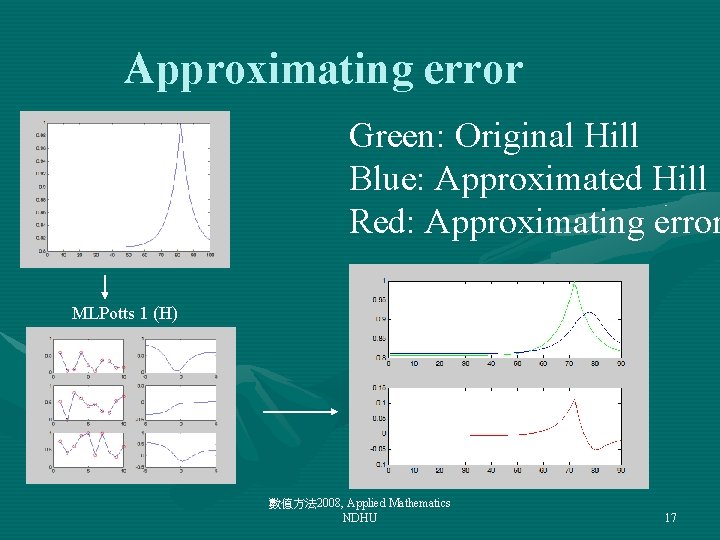

Approximating error, MLPotts 1 (H)
- Green: original hill
- Blue: approximated hill
- Red: approximating error

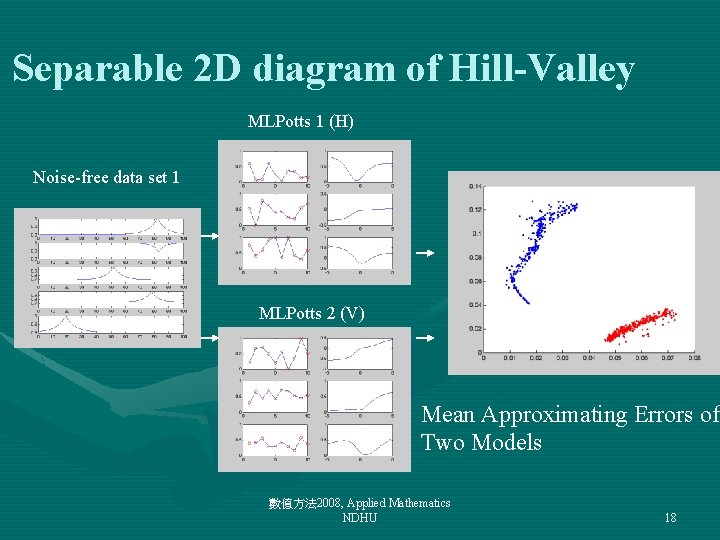

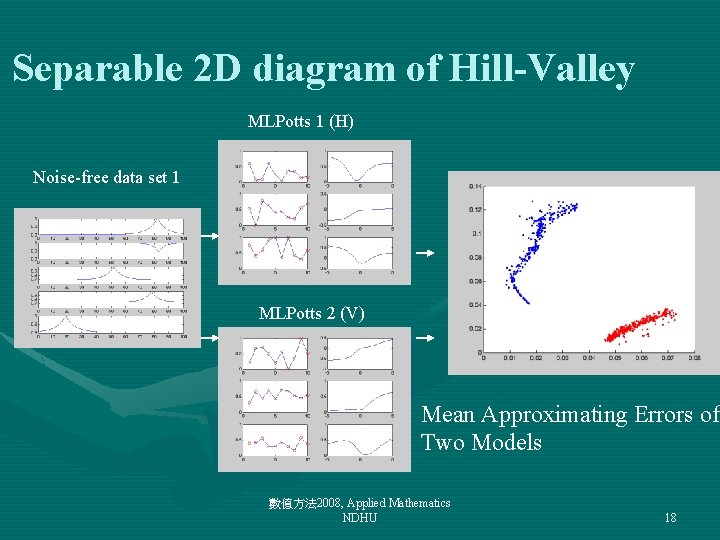

Separable 2D diagram of Hill-Valley: noise-free data set 1
(axes: mean approximating errors of the two models, MLPotts 1 (H) and MLPotts 2 (V))

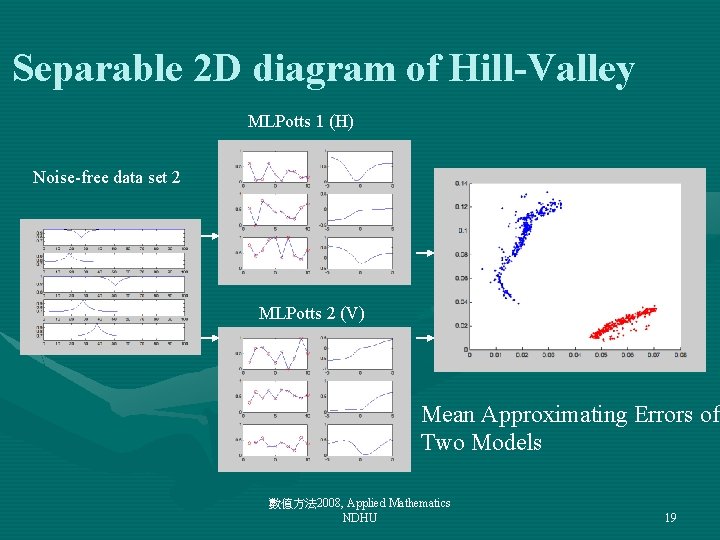

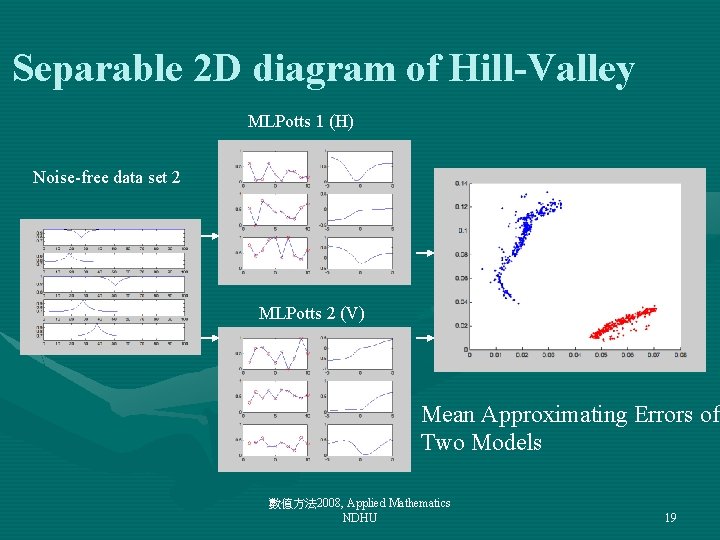

Separable 2D diagram of Hill-Valley: noise-free data set 2
(axes: mean approximating errors of the two models, MLPotts 1 (H) and MLPotts 2 (V))

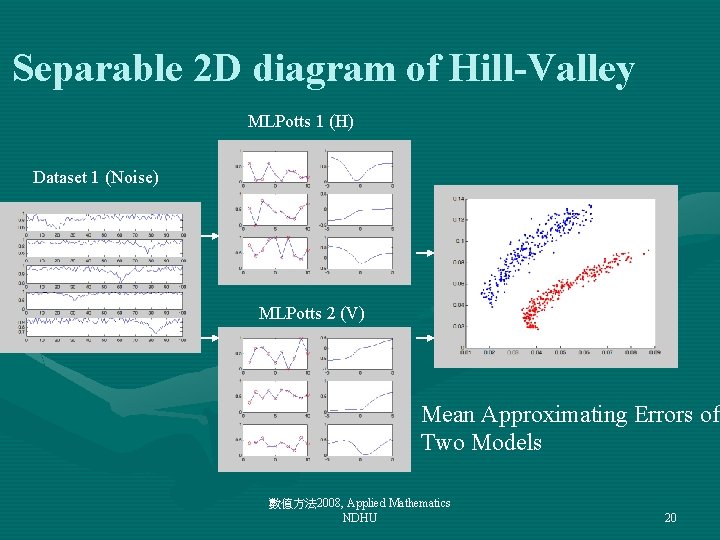

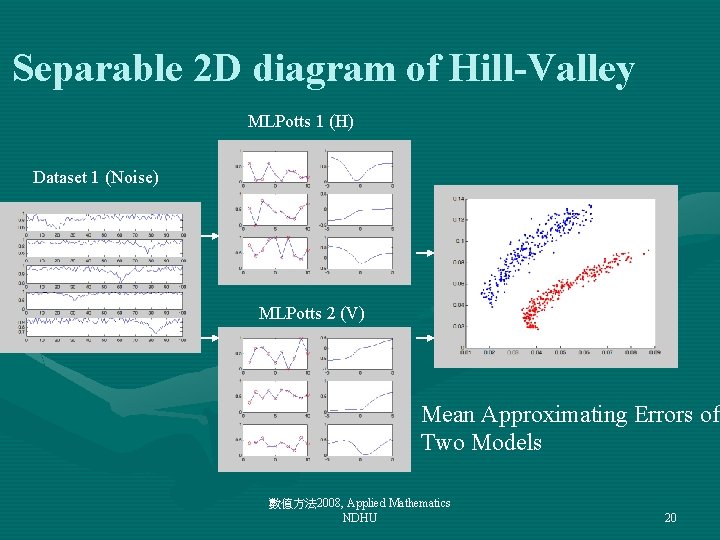

Separable 2D diagram of Hill-Valley: data set 1 (with noise)
(axes: mean approximating errors of the two models, MLPotts 1 (H) and MLPotts 2 (V))

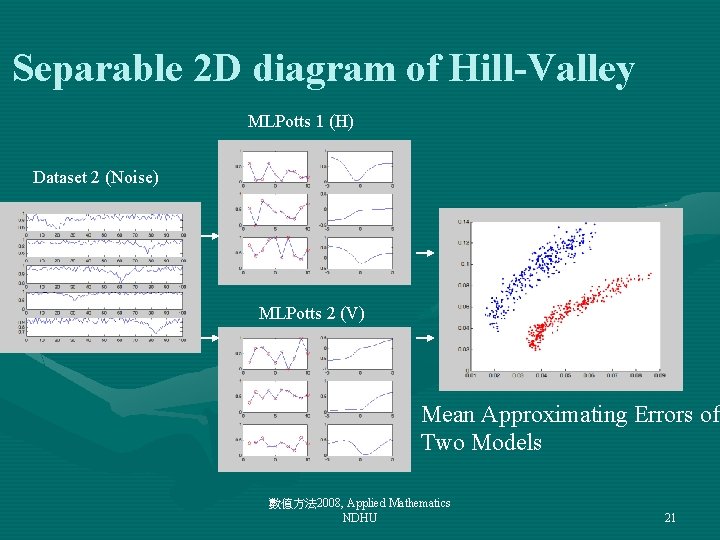

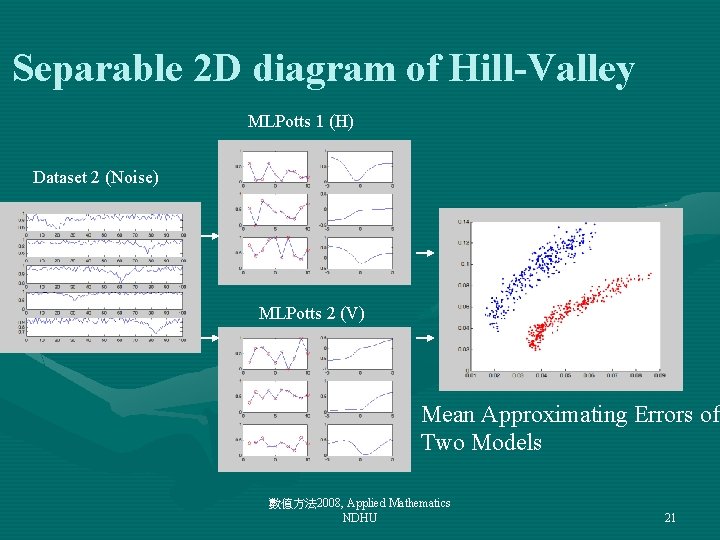

Separable 2D diagram of Hill-Valley: data set 2 (with noise)
(axes: mean approximating errors of the two models, MLPotts 1 (H) and MLPotts 2 (V))
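One way to read these 2D diagrams is sketched below: each sequence becomes a point (mean error under model H, mean error under model V), and it is labelled by the model with the smaller error. The decision rule, the use of the mean absolute one-step error, and the toy predictors `FH` and `FV` are all assumptions standing in for the trained MLPotts 1 (H) and MLPotts 2 (V) networks; the toy predictors have nothing to do with real hill or valley shapes.

```matlab
% Sketch: place one sequence in the (error_H, error_V) plane and label it
% by the model with the smaller mean approximating error.
% FH and FV are hypothetical toy predictors, not trained MLPotts networks.
tau = 2;
FH  = @(past) 0.9 * past(1);      % toy model standing in for MLPotts 1 (H)
FV  = @(past) 1.1 * past(1);      % toy model standing in for MLPotts 2 (V)
seq = 1.1 .^ (0:20);              % toy sequence: each value is 1.1 times the previous one

errH = 0;
errV = 0;
for t = tau+1:numel(seq)
    past = seq(t-1:-1:t-tau);     % [seq(t-1), ..., seq(t-tau)]
    errH = errH + abs(seq(t) - FH(past));
    errV = errV + abs(seq(t) - FV(past));
end
nT   = numel(seq) - tau;
errH = errH / nT;                 % mean absolute one-step error under model H
errV = errV / nT;                 % mean absolute one-step error under model V

if errH < errV
    label = 'H';
else
    label = 'V';
end
```

Here `FV` reproduces the toy sequence exactly, so its error is essentially zero and the point lands on the V side of the diagram.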

Chaos time series
- Eric's Home Page

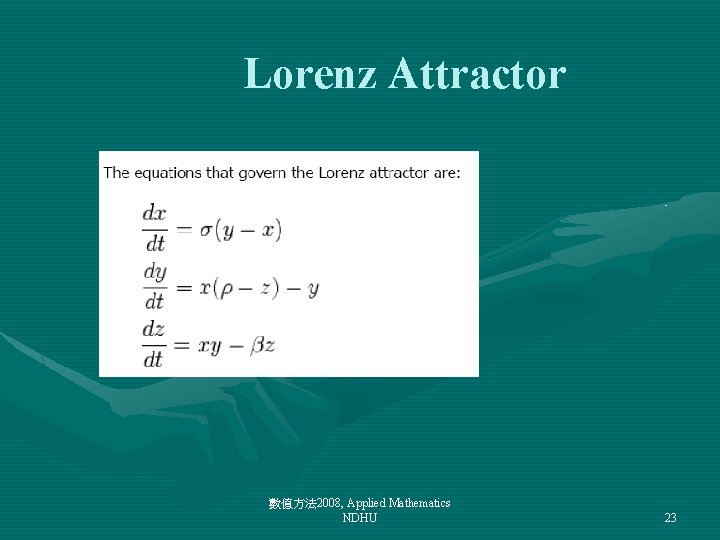

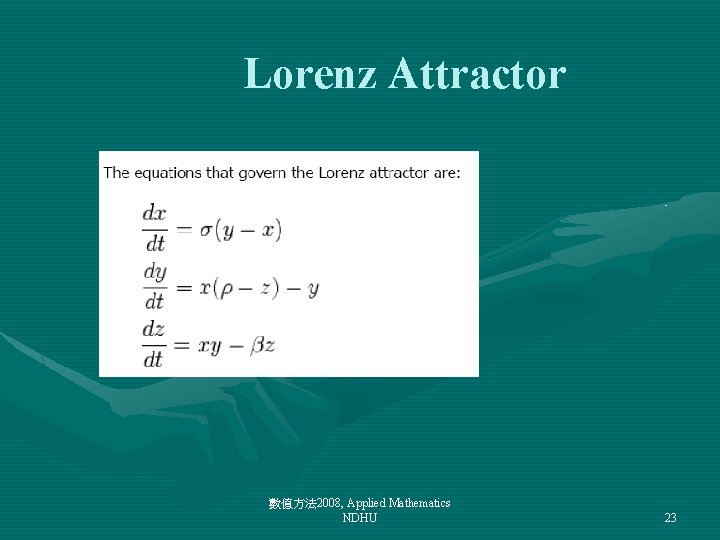

Lorenz Attractor

![Lorenz slide](https://slidetodoc.com/presentation_image_h/a3edc7855bd46b87033226fa6f48476f/image-24.jpg)

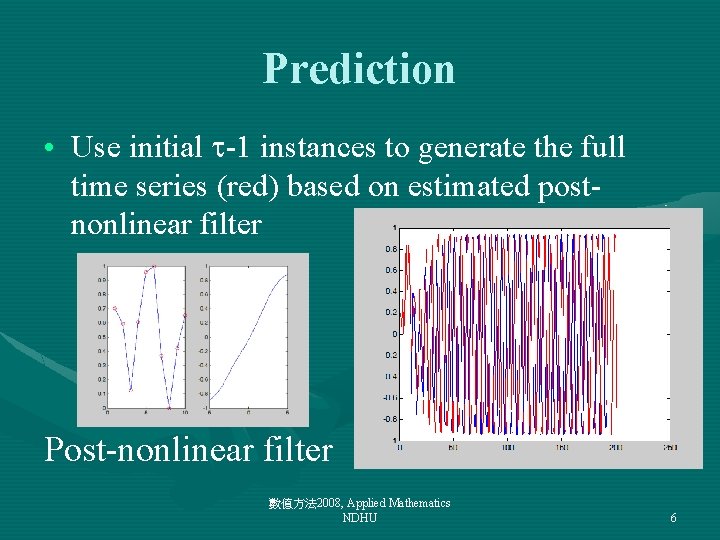

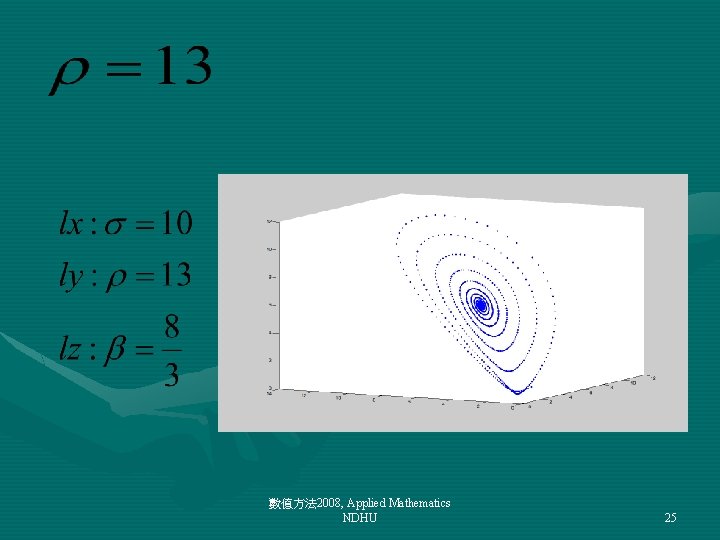

Lorenz

```matlab
NN = 20000;
[x, y, z] = lorenz(NN);   % lorenz(NN): course routine returning NN samples of the Lorenz trajectory
plot3(x, y, z, '.');      % one dot per sample
```

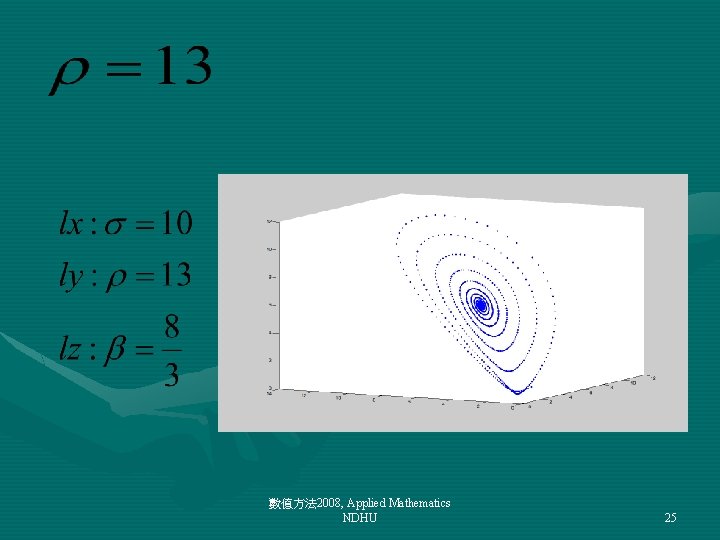


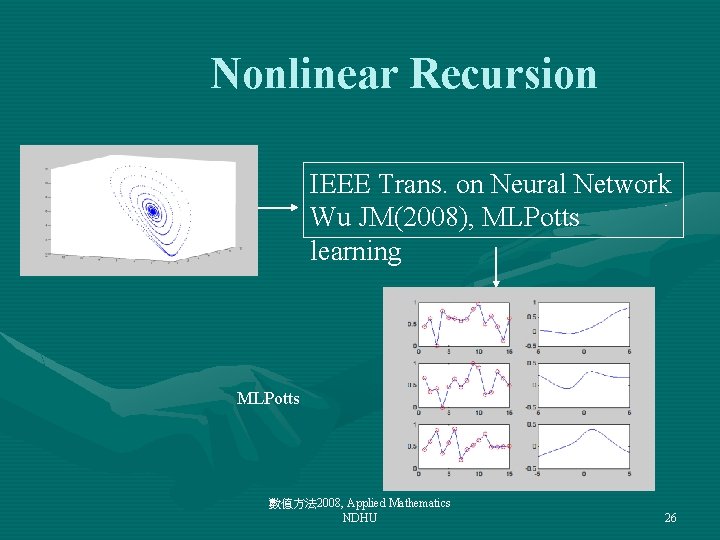

Nonlinear recursion: MLPotts learning (Wu J.M., IEEE Trans. on Neural Networks, 2008)
(figure: MLPotts)

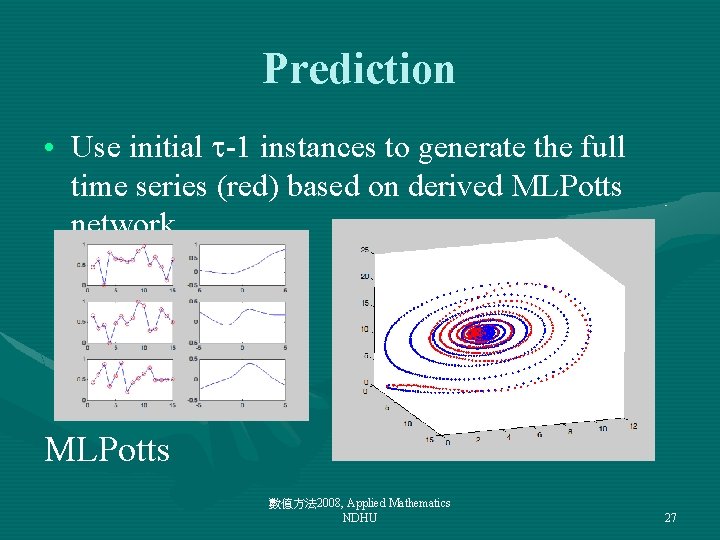

Prediction
- Use the initial τ−1 instances to generate the full time series (red) with the derived MLPotts network.

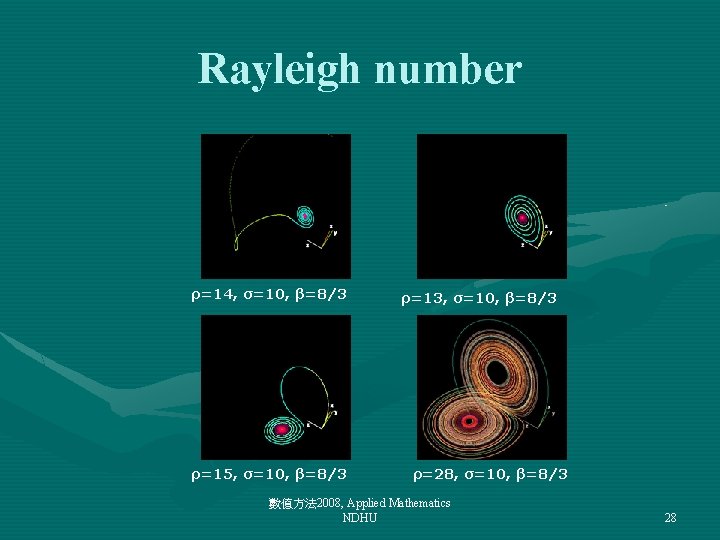

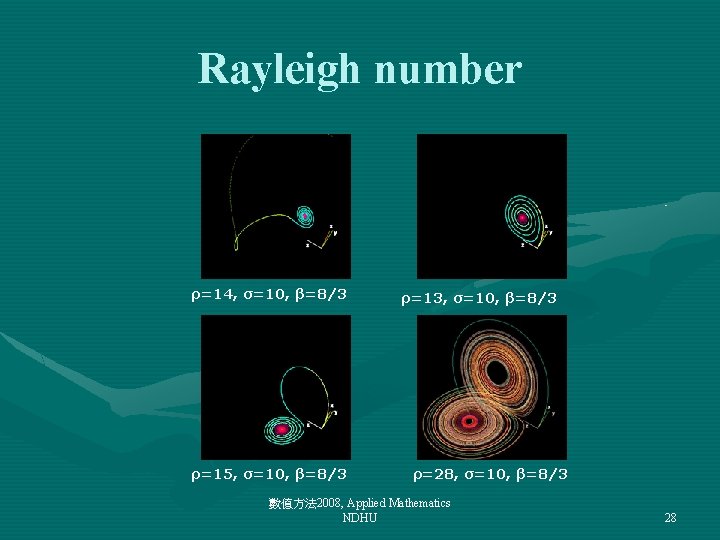

Rayleigh number (panels, each with σ=10, β=8/3): ρ=14, ρ=15, ρ=13, ρ=28
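The slides call a course routine `lorenz(NN)` whose source is not shown. As a hedged stand-in, the sketch below integrates the standard Lorenz equations $\dot x=\sigma(y-x)$, $\dot y=x(\rho-z)-y$, $\dot z=xy-\beta z$ with MATLAB's `ode45`, using σ = 10, β = 8/3, ρ = 28. The initial state [1, 1, 1] and the time span 0 to 50 are assumptions.

```matlab
% Sketch: integrate the Lorenz system with ode45.
% Hypothetical stand-in for the course's lorenz(NN); the initial state
% and time span are assumptions.
sigma = 10; beta = 8/3; rho = 28;        % try rho = 13, 14, 15 as in the panels above
f = @(t, s) [sigma * (s(2) - s(1)); ...
             s(1) * (rho - s(3)) - s(2); ...
             s(1) * s(2) - beta * s(3)];
NN = 20000;                              % number of output samples
tspan = linspace(0, 50, NN);             % solution is returned at these times
[t, S] = ode45(f, tspan, [1; 1; 1]);
x = S(:, 1); y = S(:, 2); z = S(:, 3);
% plot3(x, y, z, '.');                   % uncomment to draw the trajectory
```

Passing a `tspan` with more than two entries makes `ode45` report the solution at exactly those times, which gives a fixed number of samples comparable to `lorenz(NN)`.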

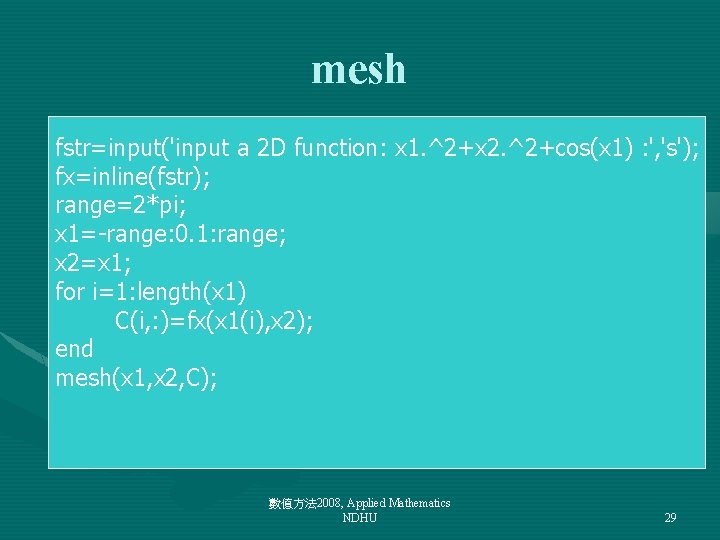

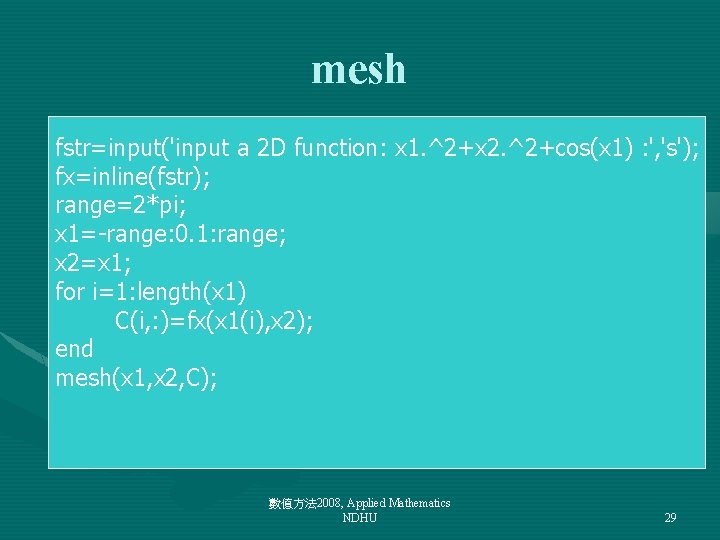

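Mesh

```matlab
% Enter an expression in x1 and x2, e.g. x1.^2+x2.^2+cos(x1)
fstr = input('input a 2D function, e.g. x1.^2+x2.^2+cos(x1): ', 's');
fx = str2func(['@(x1, x2) ' fstr]);   % replaces the deprecated inline()
range = 2*pi;
x1 = -range:0.1:range;
x2 = x1;
C = zeros(length(x1), length(x2));
for i = 1:length(x1)
    C(i, :) = fx(x1(i), x2);          % row i holds f(x1(i), x2)
end
mesh(x1, x2, C');                     % transpose: mesh expects rows of C to follow x2
```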

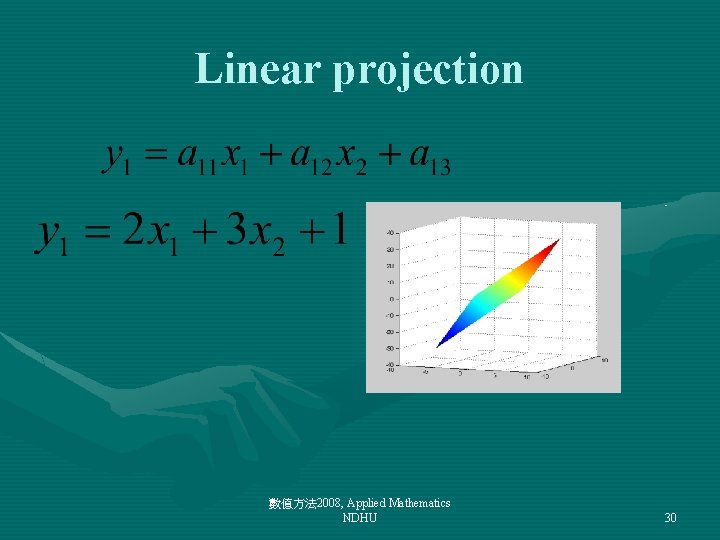

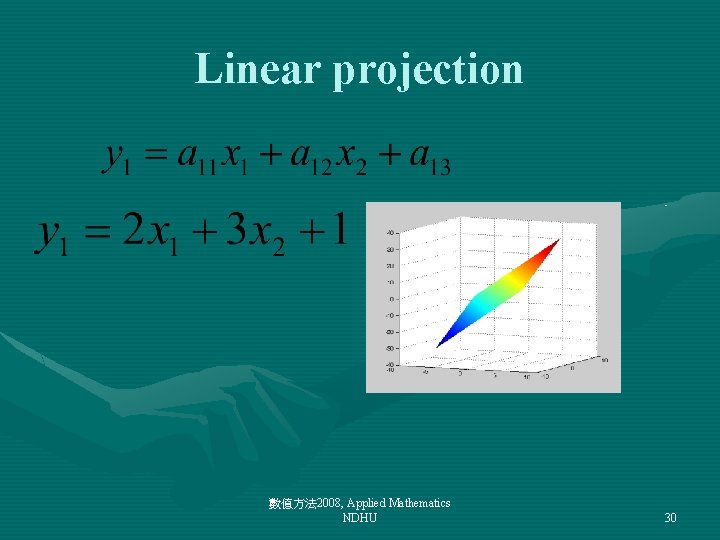

Linear projection

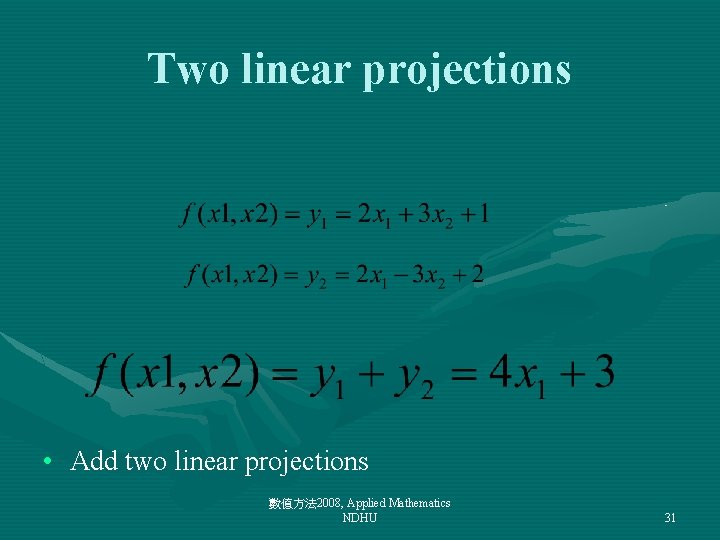

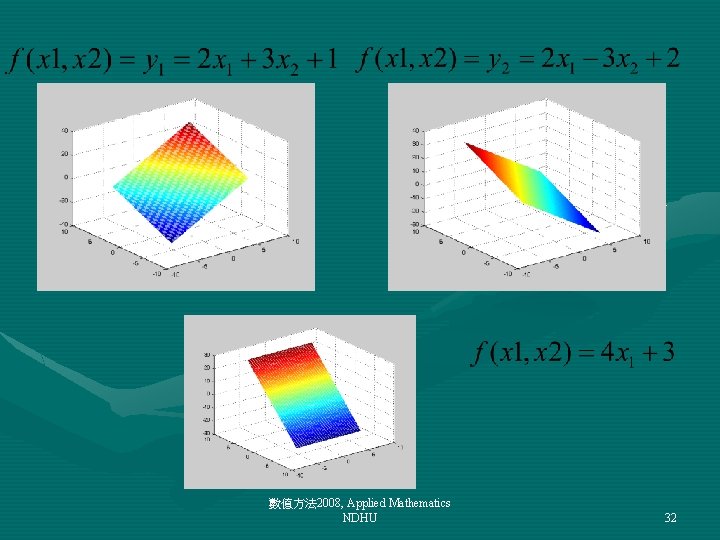

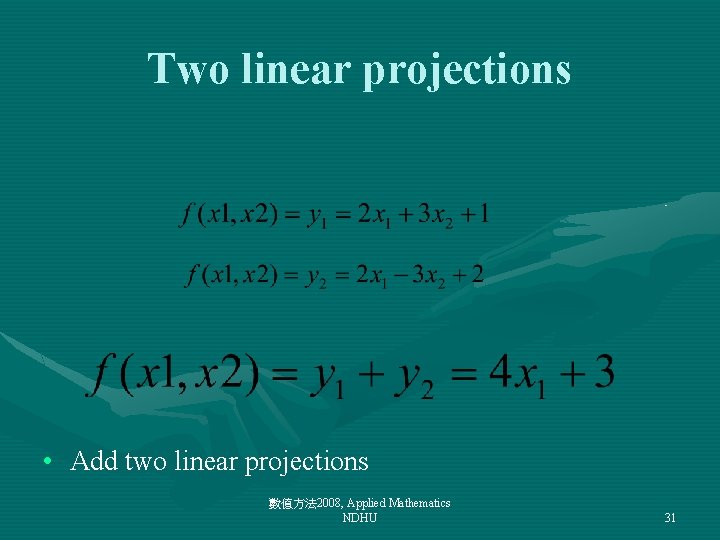

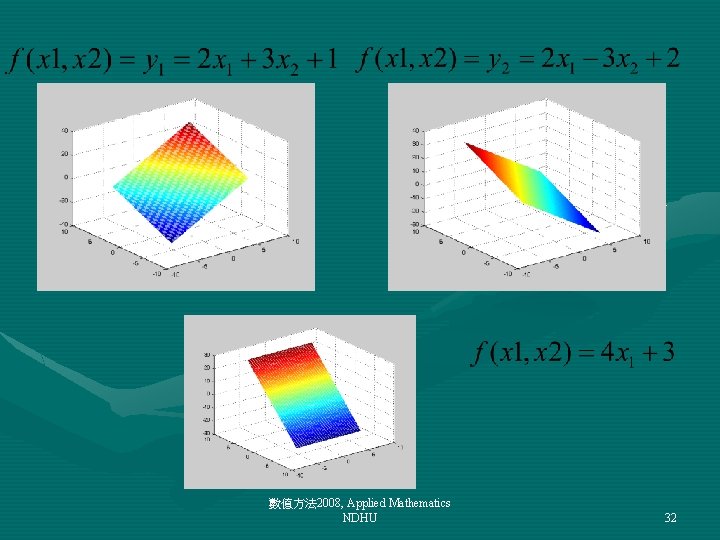

Two linear projections
- Add two linear projections

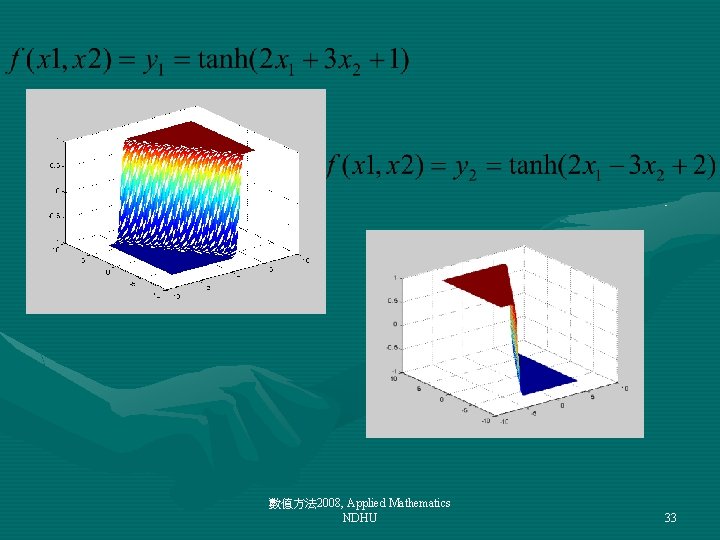


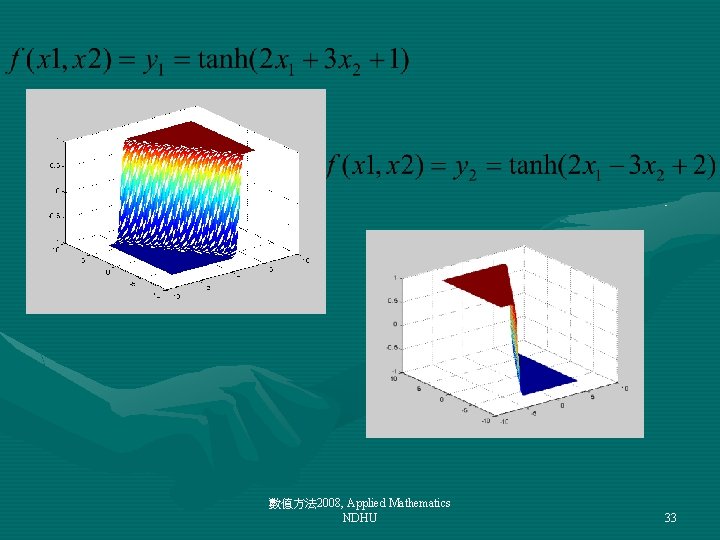


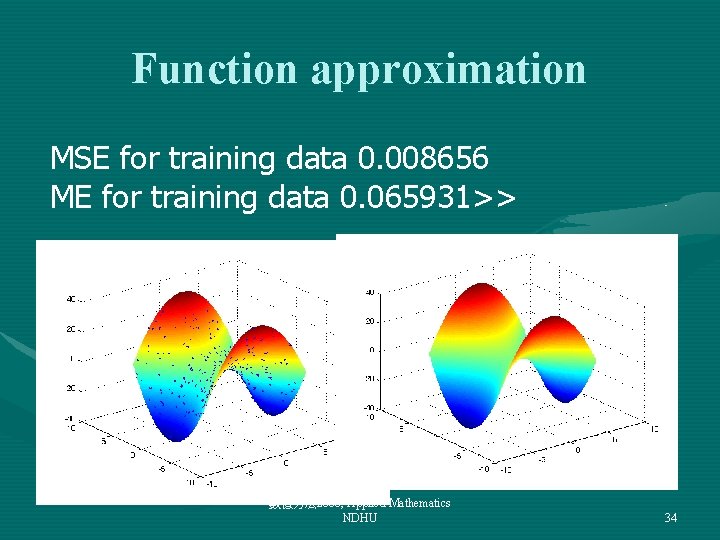

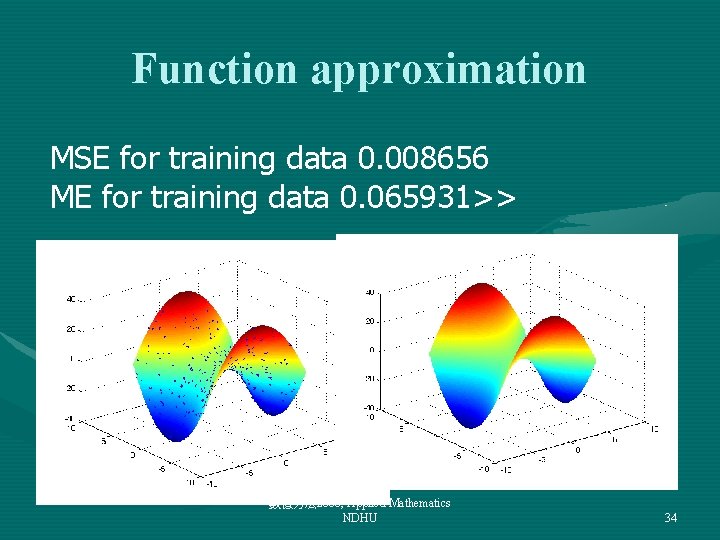

Function approximation
- MSE for training data: 0.008656
- ME for training data: 0.065931
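The two error figures can be computed as sketched below. MSE is the mean squared error; the slides do not define ME, so this sketch assumes it is the mean absolute error. The target and output vectors are made-up illustrative numbers.

```matlab
% Sketch: training-error measures. MSE is the mean squared error; "ME"
% is assumed here to be the mean absolute error (not defined on the slides).
y    = [0.10, 0.40, 0.35, 0.80];      % targets (illustrative)
yhat = [0.12, 0.37, 0.40, 0.70];      % network outputs (illustrative)
err  = y - yhat;
MSE  = mean(err .^ 2);
ME   = mean(abs(err));
fprintf('MSE for training data %f\n', MSE);
fprintf('ME for training data %f\n', ME);
```

For these numbers the errors are -0.02, 0.03, -0.05 and 0.10, giving MSE = 0.00345 and ME = 0.05.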

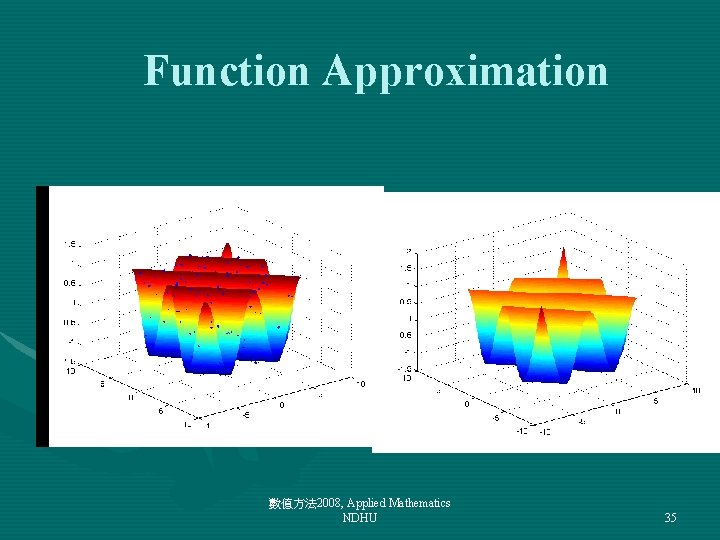

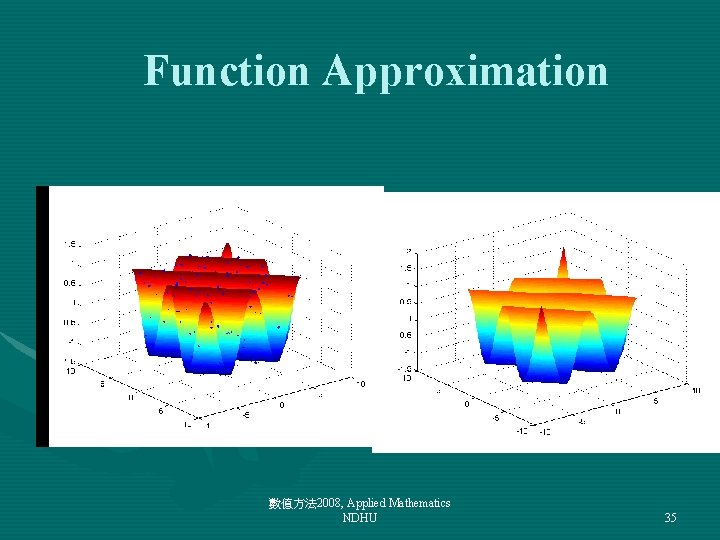

Function Approximation

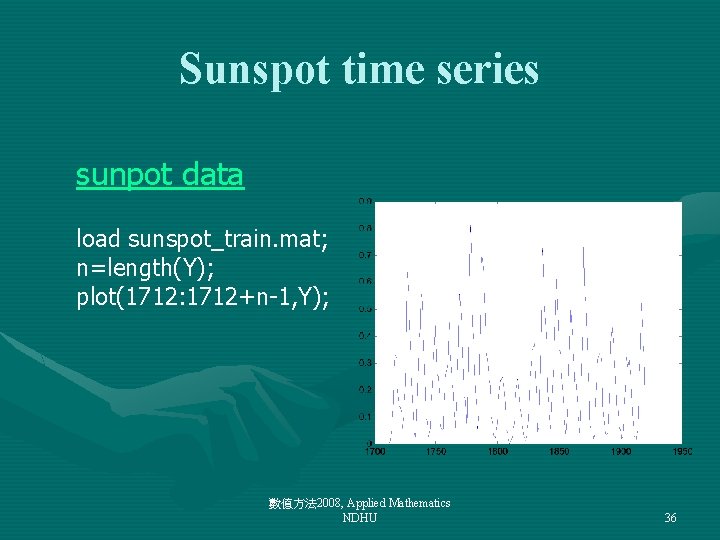

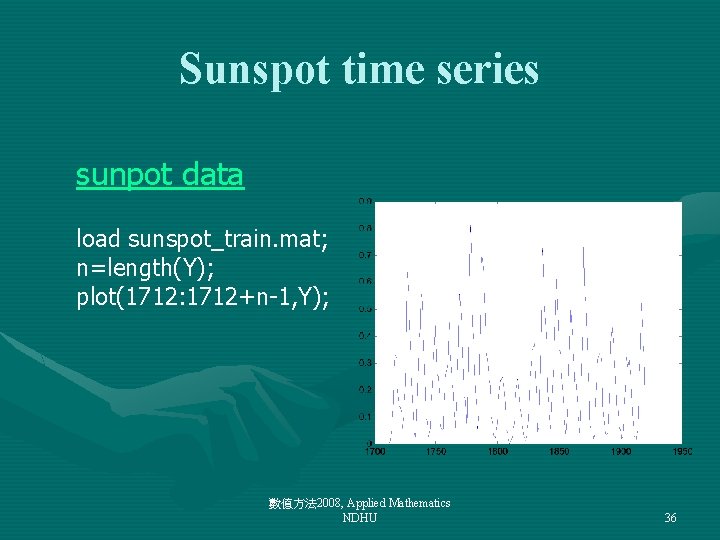

Sunspot time series
- Sunspot data

```matlab
load sunspot_train.mat;     % assumed to define the series Y
n = length(Y);
plot(1712:1712+n-1, Y);     % one value per year, starting in 1712
```

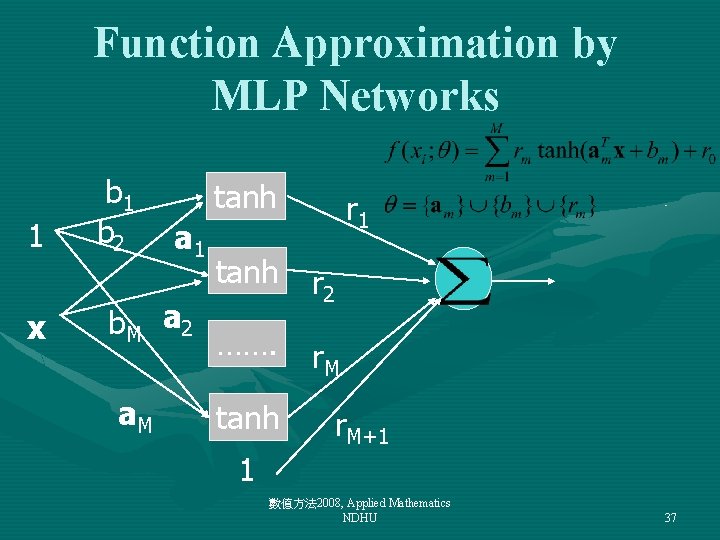

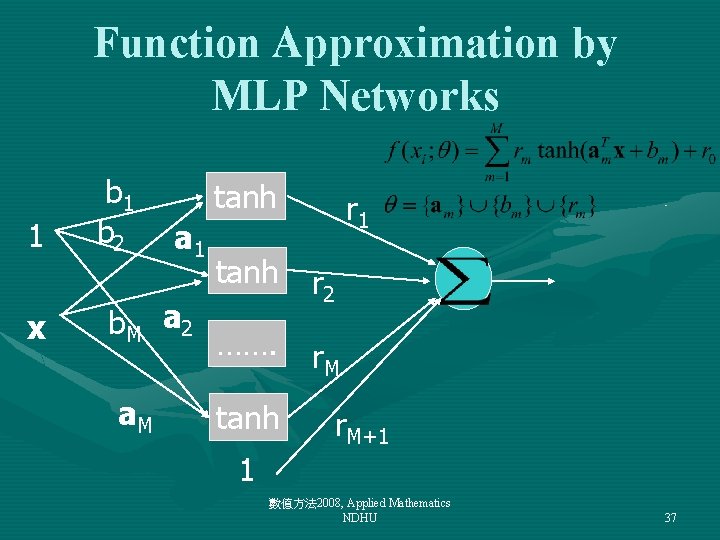

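Function approximation by MLP networks

$y=\sum_{m=1}^{M} r_m \tanh\left(a_m^{T}x+b_m\right)+r_{M+1}$

(diagram: input x and a constant 1 feed M tanh units with weights $a_1,\ldots,a_M$ and biases $b_1,\ldots,b_M$; the unit outputs are weighted by $r_1,\ldots,r_M$ and summed with the bias $r_{M+1}$)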
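Reading the diagram as $y=\sum_{m=1}^{M} r_m\tanh(a_m^{T}x+b_m)+r_{M+1}$, the network output can be evaluated for many inputs at once. The sketch below is vectorized over the columns of `x`; the storage layout (`a` as a d-by-M matrix whose m-th column is $a_m$, `b` as an M-by-1 vector, `r` as an (M+1)-by-1 vector ending with the bias) is an assumption and need not match the course's `eval_MLP2`.

```matlab
% Sketch: y = sum_{m=1}^{M} r_m * tanh(a_m' * x + b_m) + r_{M+1}
% for every column of x. Weight layout is an assumption:
%   a: d-by-M (column m is a_m), b: M-by-1, r: (M+1)-by-1 with bias last.
M = 3;
a = [1 0 -1;
     0 1  1];                         % d = 2 inputs, M = 3 hidden units
b = [0; 0.5; -0.5];
r = [1; -1; 0.5; 0.2];                % r_1, ..., r_M, then r_{M+1}
x = [0 1 -1;
     0 2 0.5];                        % three input patterns, one per column

K = size(x, 2);
H = tanh(a' * x + b * ones(1, K));    % M-by-K hidden-unit outputs
y = r(1:M)' * H + r(M+1);             % 1-by-K network outputs
```

For the first pattern x = [0; 0], the hidden outputs are tanh(b), so y(1) = 0.2 − 1.5·tanh(0.5).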

![Paired data slide](https://slidetodoc.com/presentation_image_h/a3edc7855bd46b87033226fa6f48476f/image-38.jpg)

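Paired data

```matlab
len = 3;                     % number of predecessors in each input pattern
x = zeros(len, n-len);       % column i is the window Y(i), ..., Y(i+len-1)
y = zeros(1, n-len);         % target i is the next value Y(i+len)
for i = 1:n-len
    x(:, i) = Y(i:i+len-1);
    y(i) = Y(i+len);
end
figure;
plot3(x(2, :), x(1, :), y, '.');
hold on;
```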
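The same input/target pairs can also be built without a loop using MATLAB's `hankel`, whose result has the window Y(i:i+len-1) as its i-th column when called with the first `len` values and the values Y(len:n-1). In this sketch the toy series `Y = 1:10` is only a stand-in for the sunspot data.

```matlab
% Sketch: loop-free construction of the paired data with hankel.
% Y = 1:10 is a toy series standing in for the sunspot data.
Y   = 1:10;
Y   = Y(:);                           % work with a column vector
n   = length(Y);
len = 3;
x = hankel(Y(1:len), Y(len:n-1));     % len-by-(n-len); column i is Y(i:i+len-1)
y = Y(len+1:n)';                      % y(i) = Y(i+len), as a row vector
```

This produces the same `x` and `y` as the loop, which can matter when `n` is large.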

![Learning and prediction slide](https://slidetodoc.com/presentation_image_h/a3edc7855bd46b87033226fa6f48476f/image-39.jpg)

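Learning and prediction

```matlab
M = input('key in the number of hidden units: ');
[a, b, r] = learn_MLP(x', y', M);    % course routine: train an MLP with M tanh units
yhat = eval_MLP2(x, r, a, b, M);     % course routine: network outputs for the inputs x
hold on;
% yhat(i) predicts Y(i+len), which belongs to year 1712+len+i-1
plot(1712+len : 1712+len+length(yhat)-1, yhat, 'r');
```

- MSE for training data: 0.005029
- ME for training data: 0.052191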

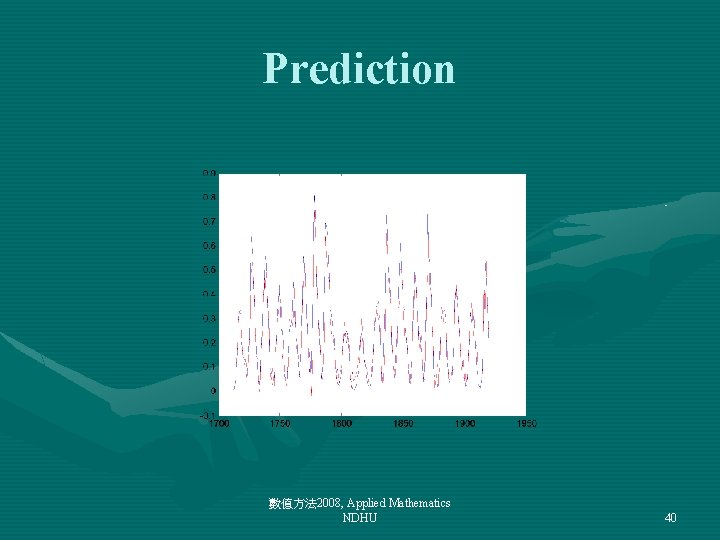

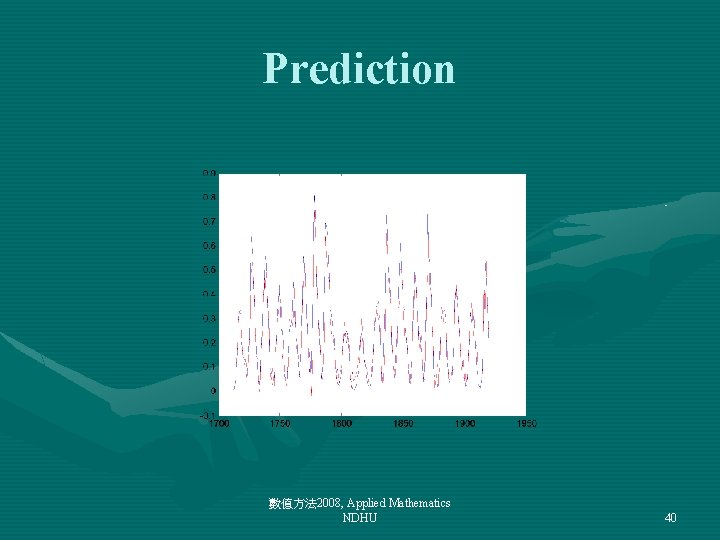

Prediction

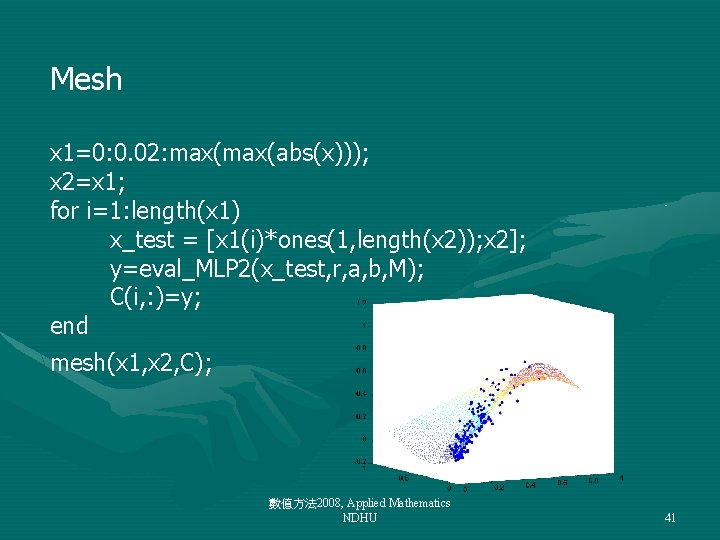

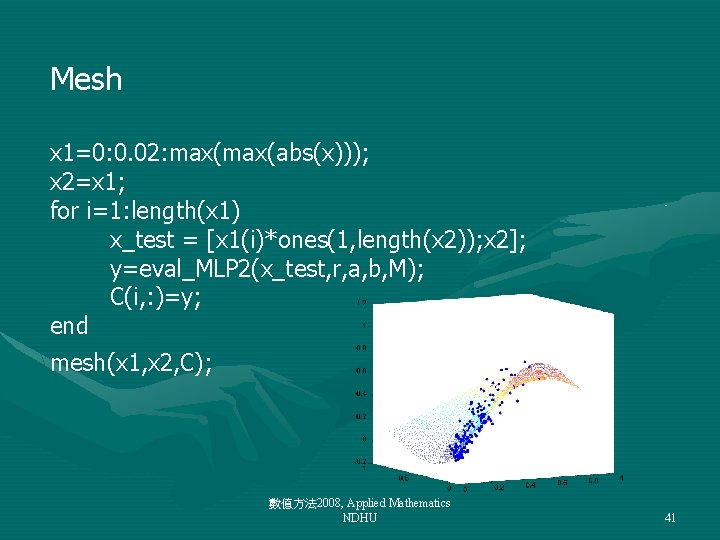

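Mesh

```matlab
% x_test has two rows, so this surface needs a network trained with two inputs (len = 2)
x1 = 0:0.02:max(abs(x(:)));
x2 = x1;
C = zeros(length(x1), length(x2));
for i = 1:length(x1)
    x_test = [x1(i)*ones(1, length(x2)); x2];   % pair x1(i) with every x2
    C(i, :) = eval_MLP2(x_test, r, a, b, M);
end
mesh(x1, x2, C');   % transpose: mesh expects rows of C to follow x2
```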

Sunspot Source codes

![Paired data (len = 5) slide](https://slidetodoc.com/presentation_image_h/a3edc7855bd46b87033226fa6f48476f/image-43.jpg)

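Paired data

```matlab
len = 5;                     % number of predecessors in each input pattern
x = zeros(len, n-len);       % column i is the window Y(i), ..., Y(i+len-1)
y = zeros(1, n-len);         % target i is the next value Y(i+len)
for i = 1:n-len
    x(:, i) = Y(i:i+len-1);
    y(i) = Y(i+len);
end
```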

![Learning and prediction slide](https://slidetodoc.com/presentation_image_h/a3edc7855bd46b87033226fa6f48476f/image-44.jpg)

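Learning and prediction

```matlab
M = input('key in the number of hidden units: ');
[a, b, r] = learn_MLP(x', y', M);    % course routine: train an MLP with M tanh units
yhat = eval_MLP2(x, r, a, b, M);     % course routine: network outputs for the inputs x
hold on;
% yhat(i) predicts Y(i+len), which belongs to year 1712+len+i-1
plot(1712+len : 1712+len+length(yhat)-1, yhat, 'r');
```

? ? ?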

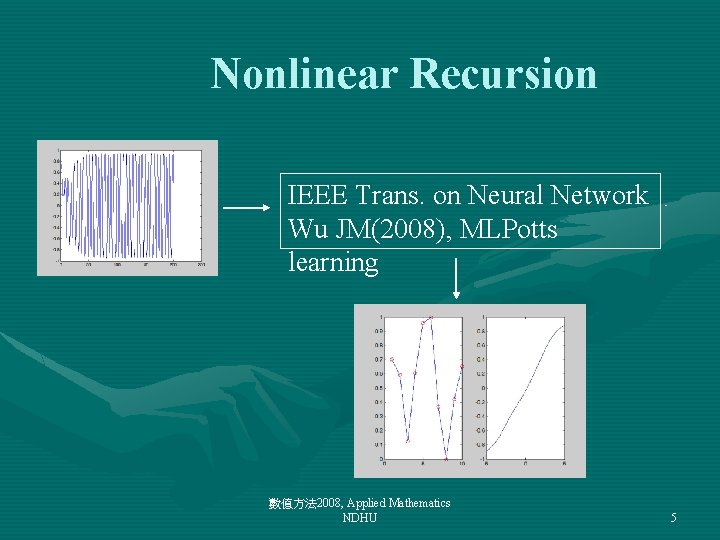

Example
- Repeat the numerical experiments of slide #42 (Sunspot source codes) separately using
- Use a table to summarize your numerical results.