Introduction to Data Mining for Business Intelligence
Katy Azoury, Julia Miyaoka
April 12, 2011

What is Data Mining?
• “Extracting useful information from large data sets.” - Hand et al.
• “Statistics at scale and speed” - Darryl Pregibon
• “Data mining is the process of discovering meaningful new correlations, patterns and trends by sifting through large amounts of data stored in repositories, using pattern recognition technologies as well as statistical and mathematical techniques.” - Gartner Group

Data Mining: Many names
• Data Mining
• Machine Learning
• Statistical Learning
• Knowledge Discovery
• Predictive Analytics

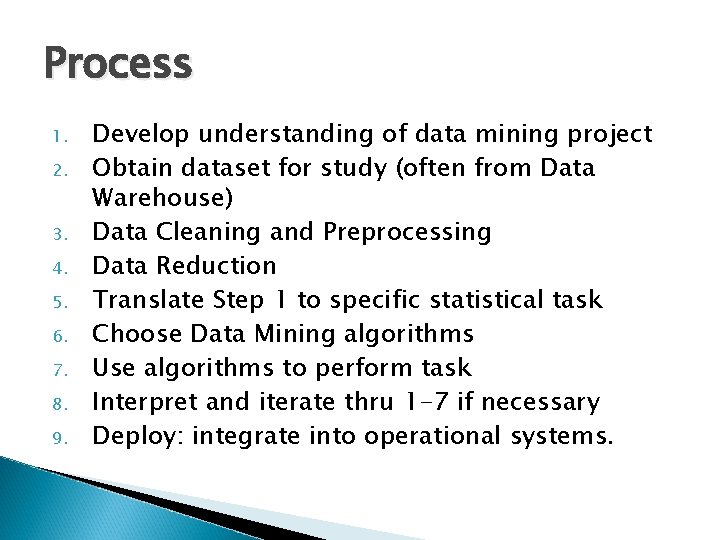

Process
1. Develop understanding of data mining project
2. Obtain dataset for study (often from Data Warehouse)
3. Data Cleaning and Preprocessing
4. Data Reduction
5. Translate Step 1 to specific statistical task
6. Choose Data Mining algorithms
7. Use algorithms to perform task
8. Interpret and iterate through 1-7 if necessary
9. Deploy: integrate into operational systems

Terminology
• Observation = record = case = example = instance
• Independent variable = input = attribute = field = feature = predictor
• Dependent variable = output = response = target = outcome = label
• Learning data = training data
• Loss function = error
• Validation data = evaluation data = hold-out sample

Types of Data
• Numeric
  ◦ Continuous: ratio (true numeric) and interval (such as dates)
  ◦ Discrete
• Categorical
  ◦ Ordered (like quality levels Excellent, Good, Fair, Poor)
  ◦ Unordered (like marital status Single, Married, Divorced, Widowed)
  ◦ Binary (Approve or Disapprove loan application)

Supervised vs Unsupervised Learning
• Supervised Learning
  ◦ The goal is to classify an output or predict a value for an output based on a number of input (independent) variables.
  ◦ Example: Simple linear regression, where the Y variable is the outcome and the X variable is the predictor.
• Unsupervised Learning
  ◦ No output variable (measure); the goal is to describe associations and patterns in the input variables.
  ◦ Example: Association rules, like Amazon’s personalized recommendations.

Error or Loss for supervised learning
• Evaluation of supervised learning results is done by comparing predictions to actual results.
  ◦ If predicting, the typical measurement is mean squared error (MSE).
  ◦ If classifying, the measurement is the number or percent misclassified.
• Partition data into training and validation sets
  ◦ Use the training set to fit the model. Loss measured on the training set underestimates the true loss.
  ◦ Use the validation set to assess how well the model performs. Loss measured on the validation set is a fair estimate, because those records were not used to fit the model.
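The two loss measures and the training/validation partition can be sketched in a few lines of Python. This is a minimal illustration, not part of the original slides: the function names, the 60/40 split fraction, and the sample values are all assumptions chosen for the example.

```python
import random

def mean_squared_error(actual, predicted):
    """Average squared difference between actual and predicted values."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)

def misclassification_rate(actual, predicted):
    """Fraction of records whose predicted class differs from the actual class."""
    wrong = sum(1 for a, p in zip(actual, predicted) if a != p)
    return wrong / len(actual)

def partition(records, train_fraction=0.6, seed=1):
    """Shuffle a copy of the records and split it into training and validation sets."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

# Hypothetical values, used only to exercise the functions.
mse = mean_squared_error([10.0, 12.0, 14.0], [11.0, 12.0, 12.0])
error_rate = misclassification_rate(
    ["owner", "owner", "non-owner", "owner"],
    ["owner", "non-owner", "non-owner", "owner"],
)
train, valid = partition(range(10))
```

The model would be fitted on `train` only, and the loss reported from `valid`.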

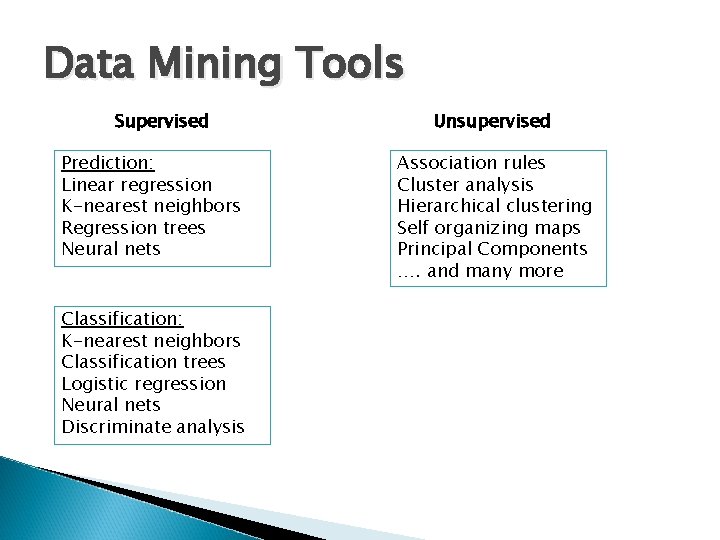

Data Mining Tools
• Supervised
  ◦ Prediction: Linear regression, K-nearest neighbors, Regression trees, Neural nets
  ◦ Classification: K-nearest neighbors, Classification trees, Logistic regression, Neural nets, Discriminant analysis
• Unsupervised
  ◦ Association rules, Cluster analysis, Hierarchical clustering, Self organizing maps, Principal Components, … and many more

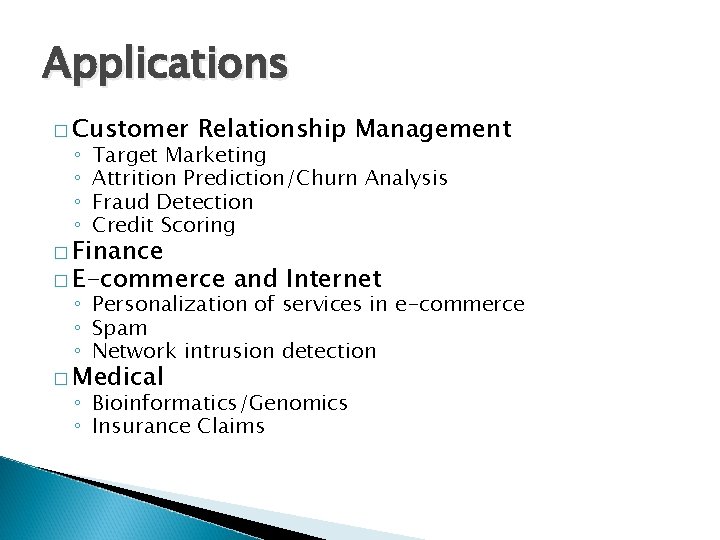

Applications
• Customer Relationship Management
  ◦ Target Marketing
  ◦ Attrition Prediction/Churn Analysis
• Finance
  ◦ Fraud Detection
  ◦ Credit Scoring
• E-commerce and Internet
  ◦ Personalization of services in e-commerce
  ◦ Spam
  ◦ Network intrusion detection
• Medical
  ◦ Bioinformatics/Genomics
  ◦ Insurance Claims

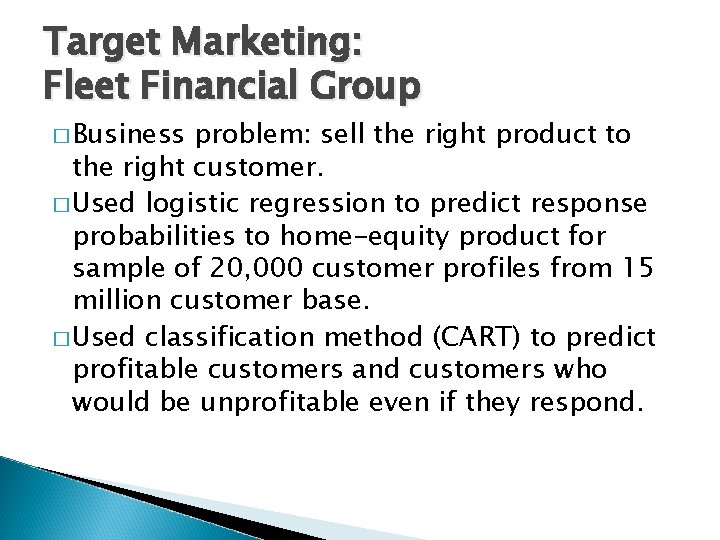

Target Marketing: Fleet Financial Group
• Business problem: sell the right product to the right customer.
• Used logistic regression to predict response probabilities to a home-equity product for a sample of 20,000 customer profiles from a 15 million customer base.
• Used a classification method (CART) to predict profitable customers and customers who would be unprofitable even if they respond.

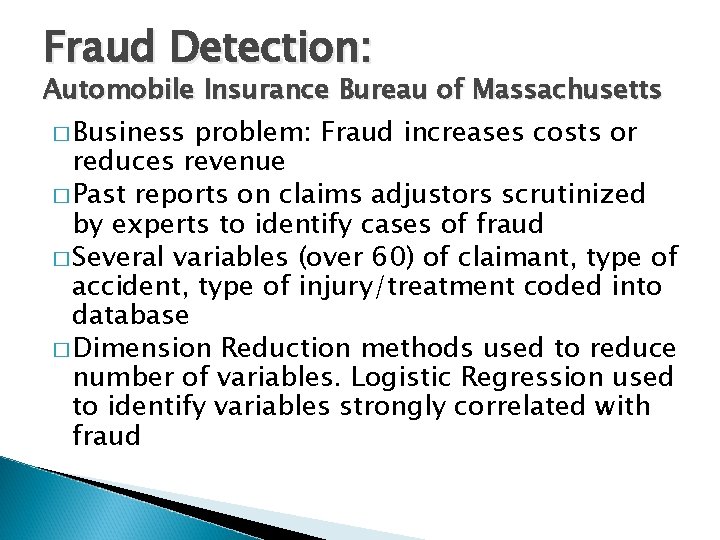

Fraud Detection: Automobile Insurance Bureau of Massachusetts
• Business problem: fraud increases costs or reduces revenue.
• Past reports on claims adjustors scrutinized by experts to identify cases of fraud.
• Several variables (over 60) on the claimant, type of accident, and type of injury/treatment coded into a database.
• Dimension reduction methods used to reduce the number of variables. Logistic regression used to identify variables strongly correlated with fraud.

Personalization of Services: Pandora
• Business problem: allow users to build customized “stations” that play music that the user likes.
• Uses a k-nearest neighbor approach called the Music Genome Project to choose new songs or artists that are close to the user-specified song or artist.
• A song is represented by a list of approximately 400 attributes (gender of lead vocalist, type of background vocals, etc.).
• Given the attributes of one or more songs, a list of other similar songs is constructed using a distance function.

E-commerce: Dell Computer
• Business problem: 50% of Dell’s clients order their computer through the web. However, only 0.5% of visitors to Dell’s web page become customers.
• Solution approach: cluster customers by the sequence of their clicks and design website interventions to maximize the number of customers who eventually buy.
• Benefit: increased revenues

Data Mining Software
• Enterprise Miner (SAS)
• R, The R Foundation for Statistical Computing. R is a free, open-source statistical package that implements the S language, which originated at Bell Labs.
• Darwin (Oracle)
• Clementine (SPSS)
• XLMiner, an Excel add-in; easy to learn, menu driven.
• Teradata
• Insightful Miner

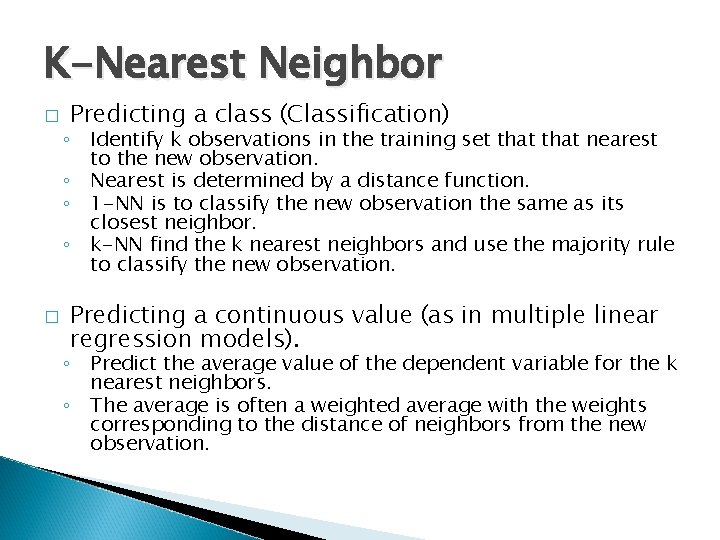

K-Nearest Neighbor
• Predicting a class (classification)
  ◦ Identify the k observations in the training set that are nearest to the new observation.
  ◦ Nearest is determined by a distance function.
  ◦ 1-NN classifies the new observation the same as its closest neighbor.
  ◦ k-NN finds the k nearest neighbors and uses the majority rule to classify the new observation.
• Predicting a continuous value (as in multiple linear regression models)
  ◦ Predict the average value of the dependent variable for the k nearest neighbors.
  ◦ The average is often a weighted average, with weights that decrease as a neighbor's distance from the new observation grows.

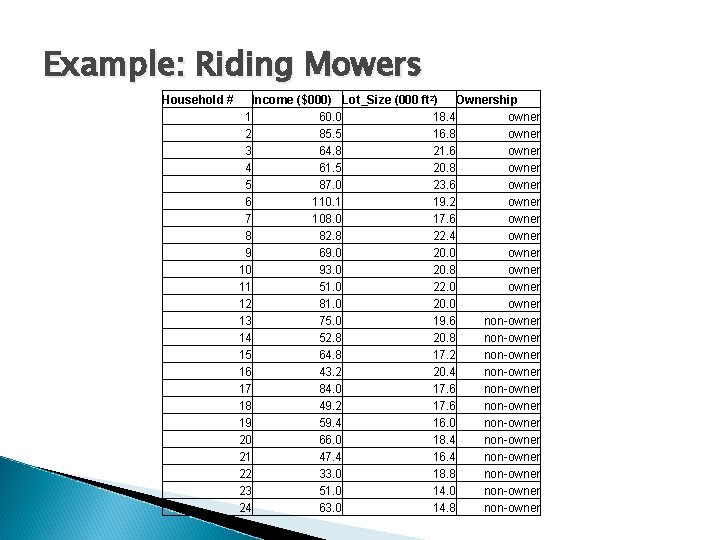

Example: Riding Mowers

| Household # | Income ($000) | Lot_Size (000 ft²) | Ownership |
|---|---|---|---|
| 1 | 60.0 | 18.4 | owner |
| 2 | 85.5 | 16.8 | owner |
| 3 | 64.8 | 21.6 | owner |
| 4 | 61.5 | 20.8 | owner |
| 5 | 87.0 | 23.6 | owner |
| 6 | 110.1 | 19.2 | owner |
| 7 | 108.0 | 17.6 | owner |
| 8 | 82.8 | 22.4 | owner |
| 9 | 69.0 | 20.0 | owner |
| 10 | 93.0 | 20.8 | owner |
| 11 | 51.0 | 22.0 | owner |
| 12 | 81.0 | 20.0 | owner |
| 13 | 75.0 | 19.6 | non-owner |
| 14 | 52.8 | 20.8 | non-owner |
| 15 | 64.8 | 17.2 | non-owner |
| 16 | 43.2 | 20.4 | non-owner |
| 17 | 84.0 | 17.6 | non-owner |
| 18 | 49.2 | 17.6 | non-owner |
| 19 | 59.4 | 16.0 | non-owner |
| 20 | 66.0 | 18.4 | non-owner |
| 21 | 47.4 | 16.4 | non-owner |
| 22 | 33.0 | 18.8 | non-owner |
| 23 | 51.0 | 14.0 | non-owner |
| 24 | 63.0 | 14.8 | non-owner |
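The k-nearest-neighbor majority rule can be sketched on the 24 riding-mower households. The sketch below is illustrative only and rests on several assumptions: it uses plain Euclidean distance on the raw, unstandardized values, the function name `knn_classify` is made up for this example, and the new household (income 60, lot size 20) is hypothetical rather than taken from the slides.

```python
import math
from collections import Counter

# (income in $000, lot size in 000 sq ft, ownership) for the 24 households in the table
HOUSEHOLDS = [
    (60.0, 18.4, "owner"), (85.5, 16.8, "owner"), (64.8, 21.6, "owner"),
    (61.5, 20.8, "owner"), (87.0, 23.6, "owner"), (110.1, 19.2, "owner"),
    (108.0, 17.6, "owner"), (82.8, 22.4, "owner"), (69.0, 20.0, "owner"),
    (93.0, 20.8, "owner"), (51.0, 22.0, "owner"), (81.0, 20.0, "owner"),
    (75.0, 19.6, "non-owner"), (52.8, 20.8, "non-owner"), (64.8, 17.2, "non-owner"),
    (43.2, 20.4, "non-owner"), (84.0, 17.6, "non-owner"), (49.2, 17.6, "non-owner"),
    (59.4, 16.0, "non-owner"), (66.0, 18.4, "non-owner"), (47.4, 16.4, "non-owner"),
    (33.0, 18.8, "non-owner"), (51.0, 14.0, "non-owner"), (63.0, 14.8, "non-owner"),
]

def knn_classify(new_point, k, data=HOUSEHOLDS):
    """Classify new_point by majority vote among its k nearest training records."""
    # Sort training records by Euclidean distance to the new point.
    by_distance = sorted(data, key=lambda row: math.dist(new_point, row[:2]))
    votes = Counter(label for _, _, label in by_distance[:k])
    return votes.most_common(1)[0][0]

# Hypothetical new household: income $60,000, lot size 20,000 sq ft.
new_household = (60.0, 20.0)
predicted = knn_classify(new_household, k=3)
```

Because income and lot size are on different scales, a real analysis would usually standardize both variables before computing distances. An odd k avoids tied votes with two classes.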

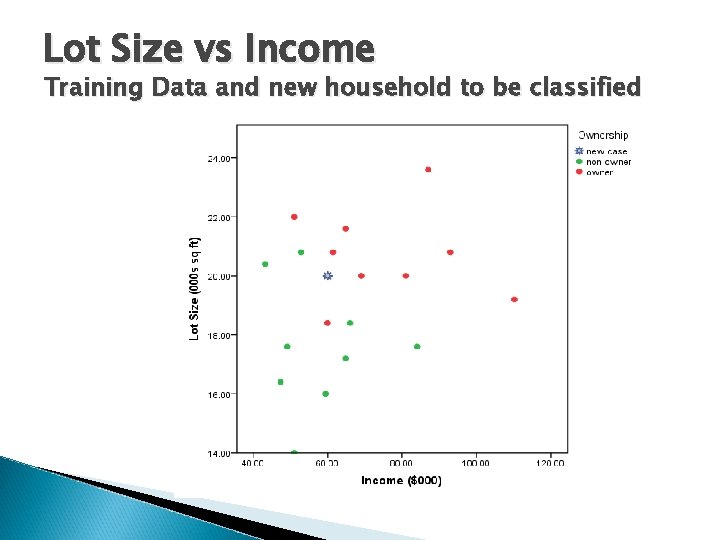

Lot Size vs Income Training Data and new household to be classified

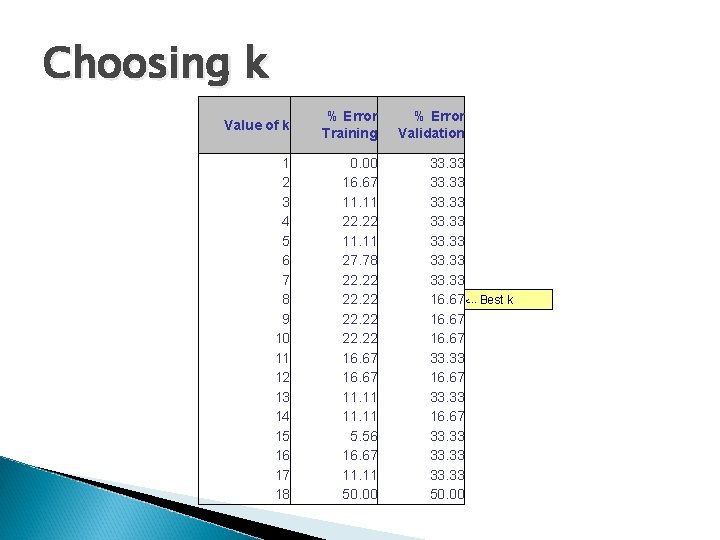

Choosing k
Table: % error on the training data and on the validation data for each value of k from 1 to 18. The best k is the one with the lowest validation error (16.67%).

Classification and Regression Trees (CART)
• Trees are based on separating observations into subgroups by creating splits on predictors.
• Intuitive and easy to understand
• Good performance with many kinds of data
• Developed by Breiman, Friedman, Olshen and Stone (1984)

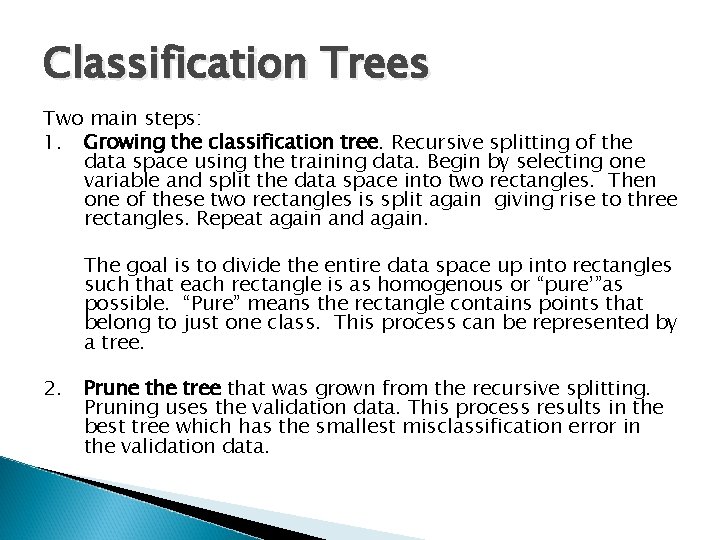

Classification Trees
Two main steps:
1. Grow the classification tree by recursively splitting the data space using the training data. Begin by selecting one variable and splitting the data space into two rectangles. Then one of these two rectangles is split again, giving three rectangles. Repeat again and again. The goal is to divide the entire data space into rectangles such that each rectangle is as homogeneous or “pure” as possible. “Pure” means the rectangle contains points that belong to just one class. This process can be represented by a tree.
2. Prune the tree that was grown from the recursive splitting. Pruning uses the validation data. This process results in the best tree: the one with the smallest misclassification error on the validation data.
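One step of the growing phase, choosing a single split that makes the two resulting rectangles as pure as possible, can be sketched as follows. The slides do not name an impurity measure; this sketch assumes the Gini index, which is a common choice in CART. The function names and the toy data are hypothetical.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 0 for a pure node, 0.5 for an even two-class mix."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def best_split(values, labels):
    """Return (threshold, weighted impurity) of the best split 'value <= threshold'."""
    best = None
    points = sorted(set(values))
    # Candidate thresholds are midpoints between consecutive distinct values.
    for low, high in zip(points, points[1:]):
        threshold = (low + high) / 2
        left = [lab for v, lab in zip(values, labels) if v <= threshold]
        right = [lab for v, lab in zip(values, labels) if v > threshold]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if best is None or score < best[1]:
            best = (threshold, score)
    return best

# Toy data (hypothetical): one predictor and a two-class label.
lot_size = [14.0, 16.0, 17.0, 21.0, 22.0, 23.0]
owner = ["non-owner", "non-owner", "non-owner", "owner", "owner", "owner"]
threshold, impurity = best_split(lot_size, owner)
```

A full tree repeats this search over every predictor and then recurses into each resulting rectangle; pruning against the validation data is a separate step not shown here.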

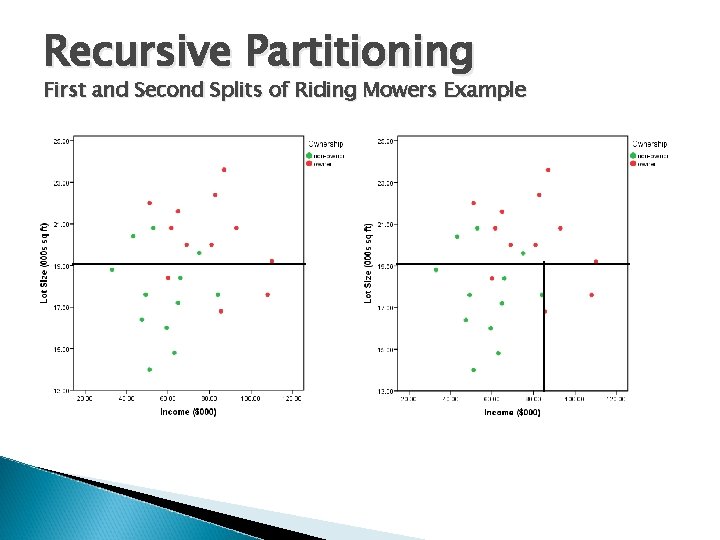

Recursive Partitioning First and Second Splits of Riding Mowers Example

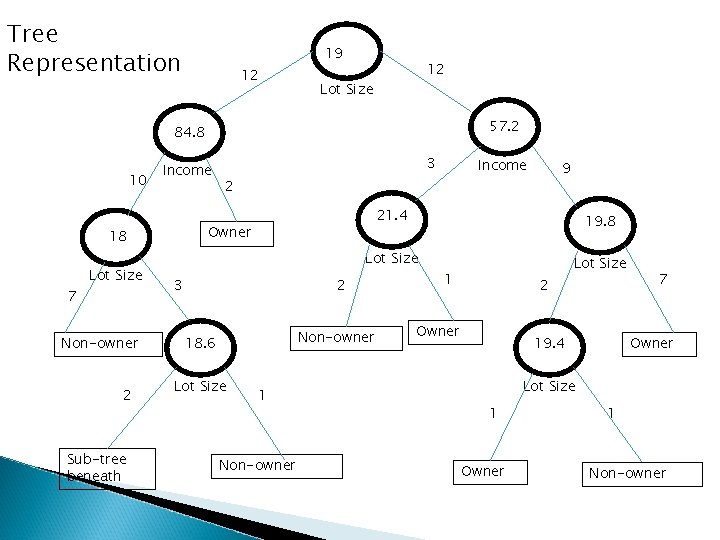

Tree Representation
[Figure: classification tree for the riding mowers example, with splits on Lot Size and Income and leaves labeled Owner or Non-owner.]

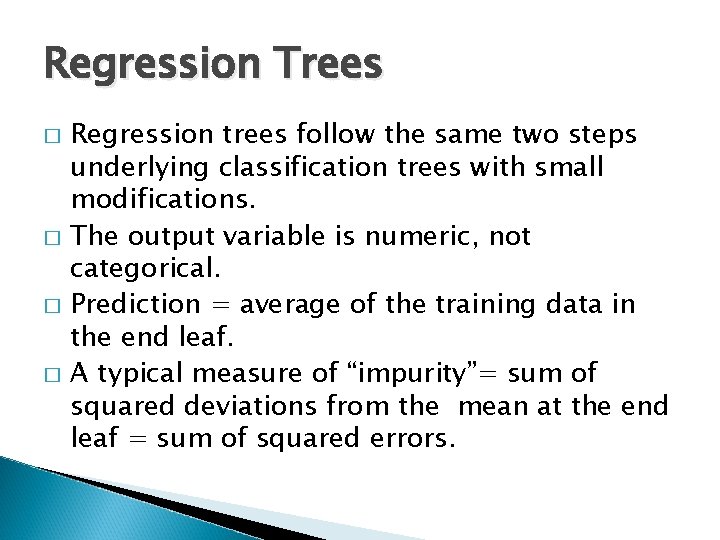

Regression Trees
• Regression trees follow the same two steps underlying classification trees, with small modifications.
• The output variable is numeric, not categorical.
• Prediction = average of the training data in the end leaf.
• A typical measure of “impurity” = sum of squared deviations from the mean at the end leaf = sum of squared errors.
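The regression-tree impurity measure and leaf prediction can be sketched for a single split. This is an illustrative sketch under assumptions: the function names are invented, and the age/price values are toy numbers, not the Toyota Corolla data.

```python
def sse(values):
    """Sum of squared deviations from the mean (the leaf's impurity)."""
    if not values:
        return 0.0
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)

def best_regression_split(x, y):
    """Find the threshold on x that minimizes the total SSE of the two leaves."""
    best = None
    points = sorted(set(x))
    for low, high in zip(points, points[1:]):
        threshold = (low + high) / 2
        left = [yi for xi, yi in zip(x, y) if xi <= threshold]
        right = [yi for xi, yi in zip(x, y) if xi > threshold]
        total = sse(left) + sse(right)
        if best is None or total < best[1]:
            # Each leaf predicts the average of its training values.
            best = (threshold, total, sum(left) / len(left), sum(right) / len(right))
    return best

# Toy data (hypothetical): car age in months and price.
age = [10, 20, 30, 50, 60, 70]
price = [18000.0, 17000.0, 16000.0, 10000.0, 9000.0, 8000.0]
threshold, total_sse, left_mean, right_mean = best_regression_split(age, price)
```

On this toy data the best split separates the three newer cars from the three older ones, and each leaf predicts the mean price of its group.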

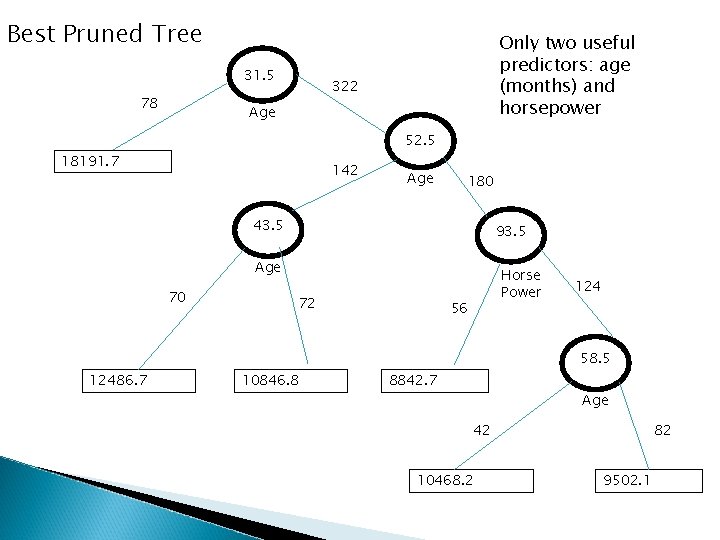

Example: Predicting the Price of Used Toyota Corolla
• Goal is to find a predictive model of price (euros) as a function of 10 predictors (age, kilometers, horsepower, weight, fuel-type, power-steering, CD-player, etc.).
• Data set has 1000 sold cars.
• Regression tree built using a training set of 600 cases.
• Pruning of the tree used a validation set of 400 cases.

Best Pruned Tree
Only two useful predictors: age (months) and horsepower.
[Figure: pruned regression tree with splits on Age and Horse Power. Leaf values shown in the figure: 18191.7, 12486.7, 10846.8, 8842.7, 10468.2, 9502.1.]

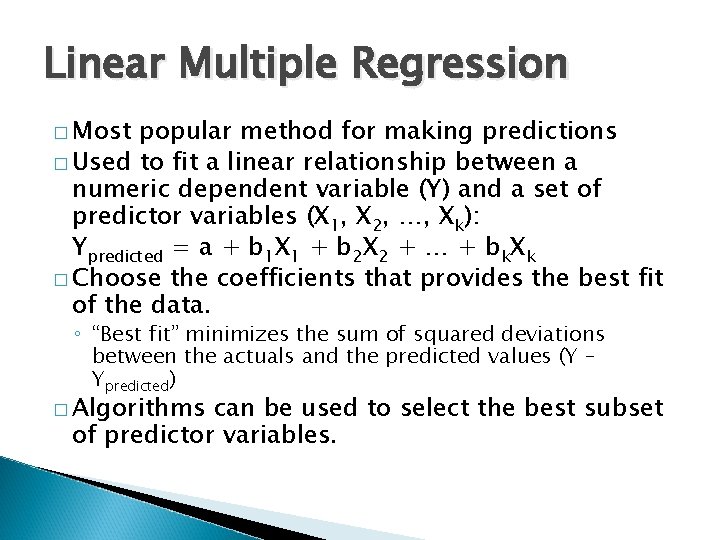

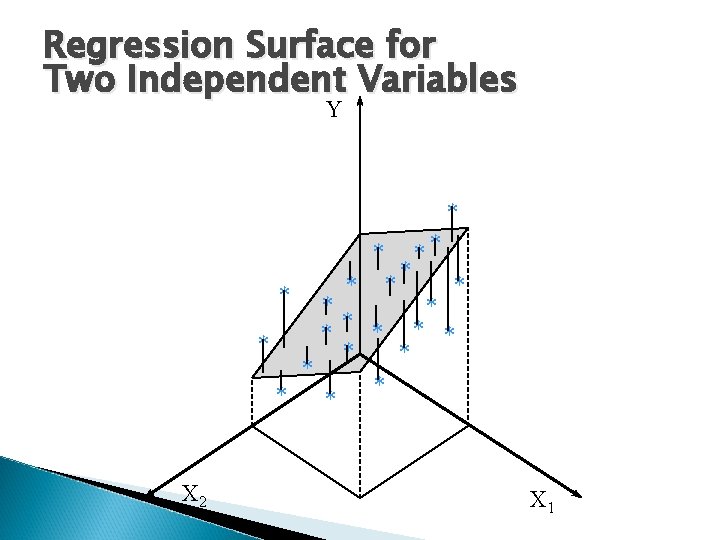

Linear Multiple Regression
• Most popular method for making predictions
• Used to fit a linear relationship between a numeric dependent variable ($Y$) and a set of predictor variables ($X_1, X_2, \dots, X_k$):
  $Y_{\text{predicted}} = a + b_1 X_1 + b_2 X_2 + \dots + b_k X_k$
• Choose the coefficients that provide the best fit to the data.
  ◦ “Best fit” minimizes the sum of squared deviations between the actual and predicted values, $\sum (Y - Y_{\text{predicted}})^2$.
• Algorithms can be used to select the best subset of predictor variables.

Regression Surface for Two Independent Variables
[Figure: data points and fitted regression plane, with Y on the vertical axis and X1 and X2 on the horizontal axes.]

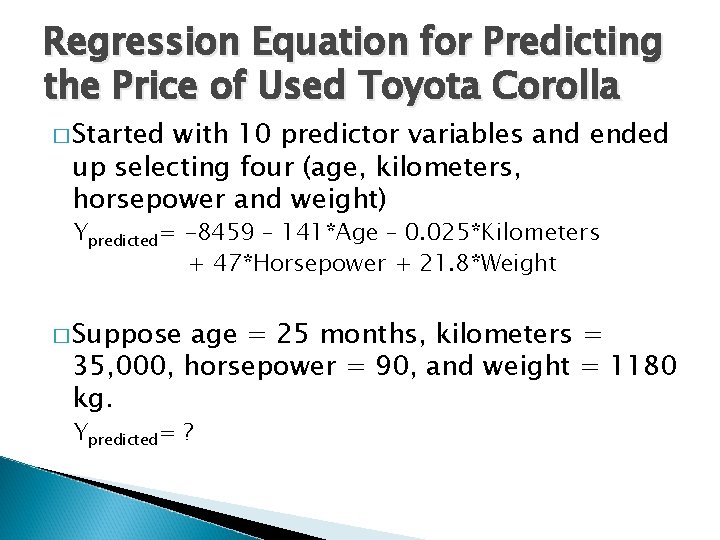

Regression Equation for Predicting the Price of Used Toyota Corolla
• Started with 10 predictor variables and ended up selecting four (age, kilometers, horsepower and weight):
  $Y_{\text{predicted}} = -8459 - 141 \cdot \text{Age} - 0.025 \cdot \text{Kilometers} + 47 \cdot \text{Horsepower} + 21.8 \cdot \text{Weight}$
• Suppose age = 25 months, kilometers = 35,000, horsepower = 90, and weight = 1180 kg. $Y_{\text{predicted}} = ?$
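The exercise can be worked by plugging the four values into the fitted equation. The short sketch below does only that arithmetic; the function name `predict_price` and its parameter names are assumptions made for the example, while the coefficients are the ones on the slide.

```python
def predict_price(age_months, kilometers, horsepower, weight_kg):
    """Evaluate the fitted regression equation from the slide (price in euros)."""
    return (-8459
            - 141 * age_months
            - 0.025 * kilometers
            + 47 * horsepower
            + 21.8 * weight_kg)

price = predict_price(age_months=25, kilometers=35_000, horsepower=90, weight_kg=1180)
print(round(price))  # 17095
```

Term by term: -8459 - 3525 - 875 + 4230 + 25724 = 17095, so the predicted price is about 17,095 euros.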

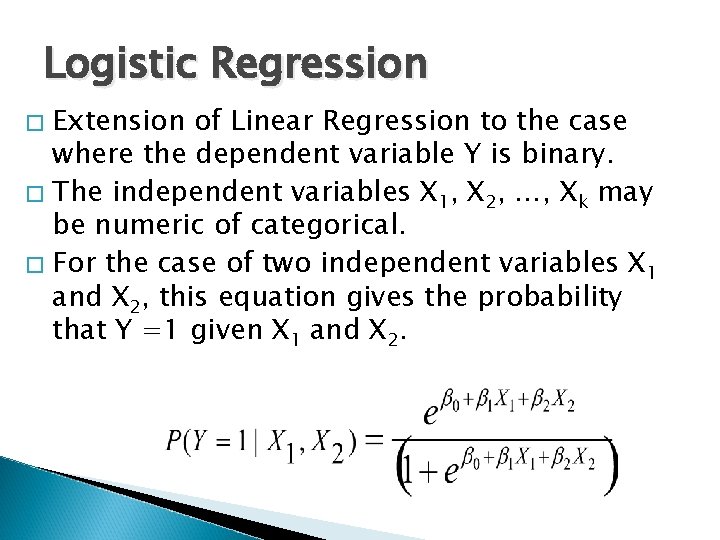

Logistic Regression
• Extension of linear regression to the case where the dependent variable Y is binary.
• The independent variables X1, X2, …, Xk may be numeric or categorical.
• For the case of two independent variables X1 and X2, the equation shown gives the probability that Y = 1 given X1 and X2.

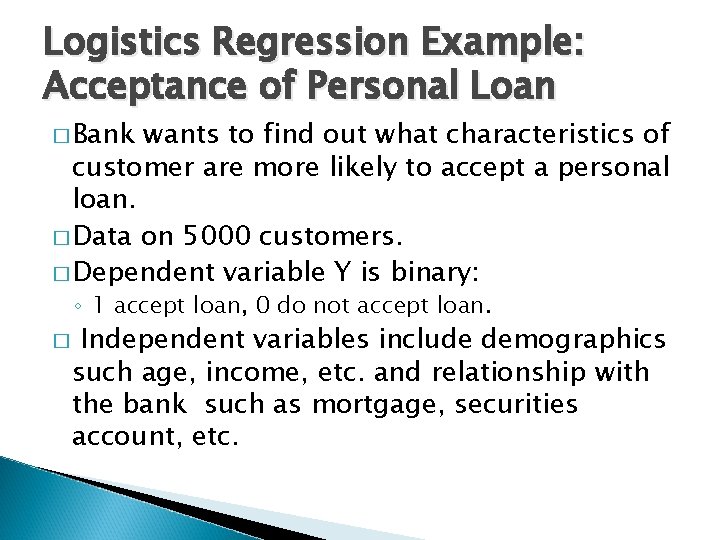

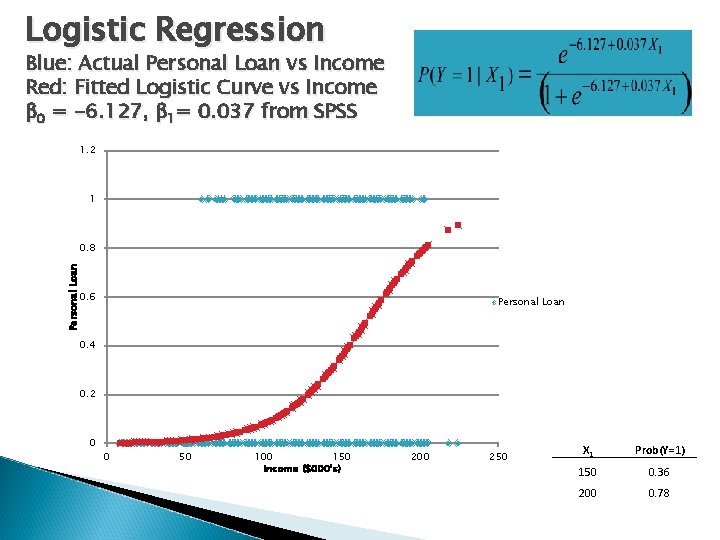

Logistic Regression Example: Acceptance of Personal Loan
• Bank wants to find out which customer characteristics make acceptance of a personal loan more likely.
• Data on 5000 customers.
• Dependent variable Y is binary:
  ◦ 1 = accept loan, 0 = do not accept loan.
• Independent variables include demographics such as age, income, etc. and relationship with the bank such as mortgage, securities account, etc.

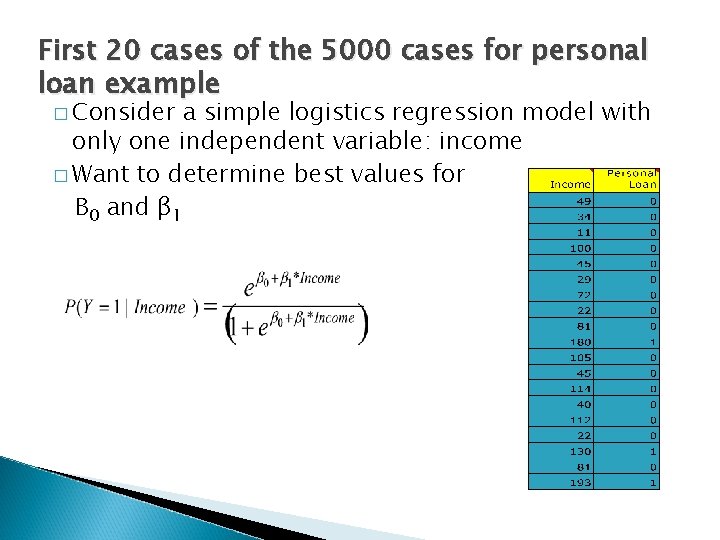

First 20 cases of the 5000 cases for personal loan example
• Consider a simple logistic regression model with only one independent variable: income
• Want to determine the best values for β0 and β1

Logistic Regression
[Figure: Personal Loan on the vertical axis vs Income ($000's, 0 to 250) on the horizontal axis. Blue: actual Personal Loan vs Income. Red: fitted logistic curve vs Income.]
β0 = -6.127, β1 = 0.037 (from SPSS)

| X1 (Income, $000's) | Prob(Y=1) |
|---|---|
| 150 | 0.36 |
| 200 | 0.78 |
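The two probabilities reported for incomes of 150 and 200 can be reproduced from the fitted coefficients. The sketch assumes the standard one-predictor logistic form, P(Y=1) = 1 / (1 + e^-(β0 + β1·X1)); the function and constant names are made up for the example.

```python
import math

BETA_0 = -6.127  # intercept reported on the slide
BETA_1 = 0.037   # income coefficient reported on the slide

def prob_accept(income_thousands):
    """P(Y = 1 | income) under the one-predictor logistic model."""
    z = BETA_0 + BETA_1 * income_thousands
    return 1.0 / (1.0 + math.exp(-z))

for income in (150, 200):
    print(income, round(prob_accept(income), 2))
# 150 0.36
# 200 0.78
```

The fitted curve crosses 0.5 where β0 + β1·X1 = 0, which is at an income of roughly 166 ($000's).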

Discriminant Analysis
• Classification method
• Popular technique is Fisher’s linear classification function, which is used to express each class variable as a linear combination of variables (like in regression)
• Goal: obtain classes that are homogeneous but differ the most from each other
• Common uses:
  ◦ Classifying organisms into species
  ◦ Classifying applications for insurance into low and high risk
  ◦ Classifying bonds into bond rating categories

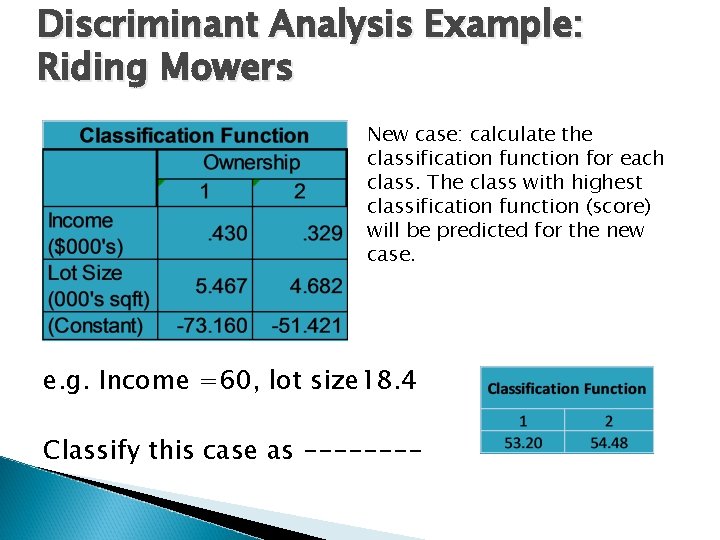

Discriminant Analysis Example: Riding Mowers
• New case: calculate the classification function for each class. The class with the highest classification function (score) is predicted for the new case.
• E.g., Income = 60, lot size = 18.4. Classify this case as ----

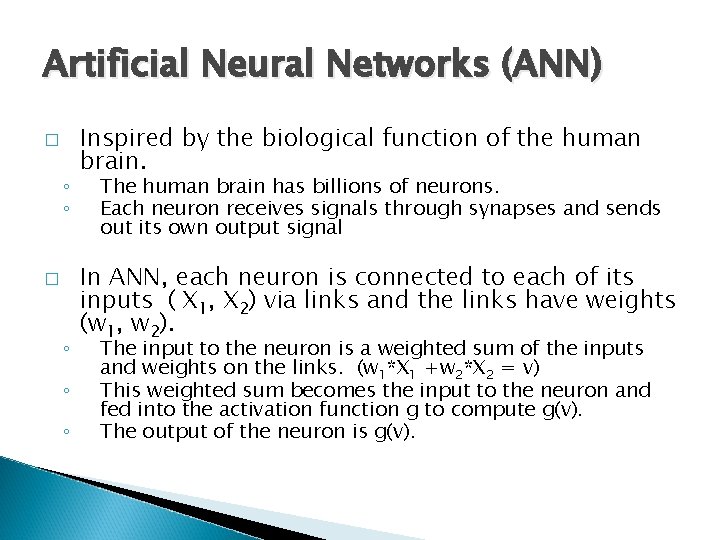

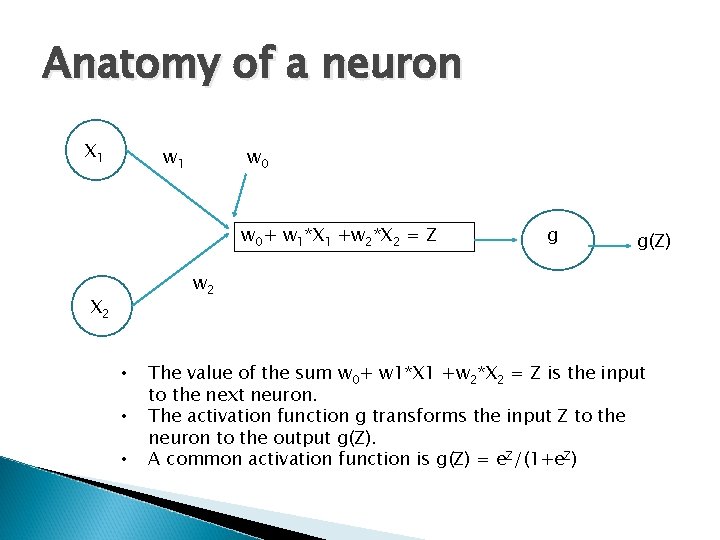

Artificial Neural Networks (ANN)
• Inspired by the biological function of the human brain.
  ◦ The human brain has billions of neurons.
  ◦ Each neuron receives signals through synapses and sends out its own output signal.
• In an ANN, each neuron is connected to each of its inputs ($X_1$, $X_2$) via links, and the links have weights ($w_1$, $w_2$).
  ◦ The input to the neuron is the weighted sum of the inputs, using the weights on the links: $v = w_1 X_1 + w_2 X_2$.
  ◦ This weighted sum is fed into the activation function g to compute g(v).
  ◦ The output of the neuron is g(v).

Anatomy of a neuron
[Figure: inputs X1 and X2, with weights w1 and w2 and a constant weight w0, feed a neuron that computes Z and outputs g(Z).]
• The value of the sum $Z = w_0 + w_1 X_1 + w_2 X_2$ is the input to the next neuron.
• The activation function g transforms the input Z to the neuron into the output g(Z).
• A common activation function is $g(Z) = e^{Z} / (1 + e^{Z})$.
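The computation performed by a single neuron, a weighted sum followed by the logistic activation, can be sketched directly. The function names, and the weights and inputs used at the end, are hypothetical and chosen only to illustrate the arithmetic.

```python
import math

def logistic(z):
    """Activation g(Z) = e^Z / (1 + e^Z), written in the equivalent form 1 / (1 + e^-Z)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, w0):
    """Compute g(w0 + w1*X1 + w2*X2 + ...) for one neuron."""
    z = w0 + sum(w * x for w, x in zip(weights, inputs))
    return logistic(z)

# Hypothetical weights and inputs: Z = 0 + 0.5*1 - 0.25*2 = 0, so g(Z) = 0.5.
output = neuron_output(inputs=[1.0, 2.0], weights=[0.5, -0.25], w0=0.0)
```

In a multilayer network, the outputs of one layer's neurons become the inputs to the next layer's neurons, each with its own set of weights.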

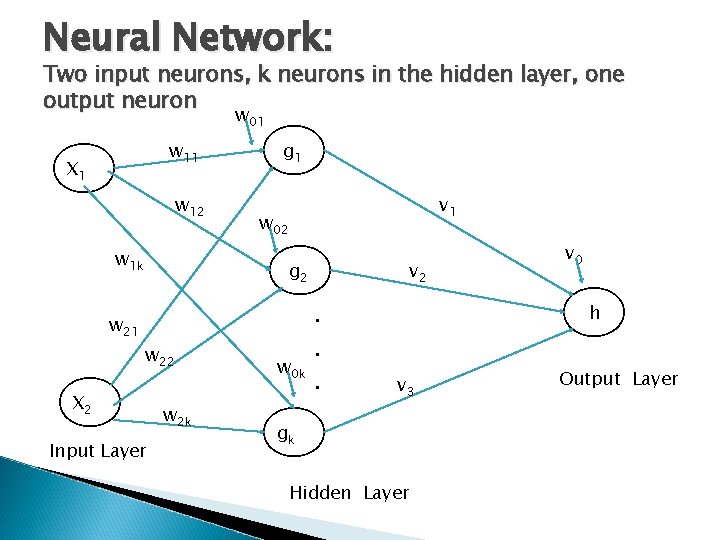

Neural Networks
• Many neural network algorithms
• Most common algorithms are multilayer feedforward networks.
• Input layer nodes accept values for the input (independent) variables.
• Last layer has the output neuron.
• Layer(s) between the input and the output are called hidden layers.

Neural Network: Two input neurons, k neurons in the hidden layer, one output neuron
[Figure: input layer (X1, X2) connected by weights w11 … w2k, with constants w01 … w0k, to hidden-layer neurons g1 … gk, which connect by weights v0, v1, v2, v3, … to a single output neuron h.]

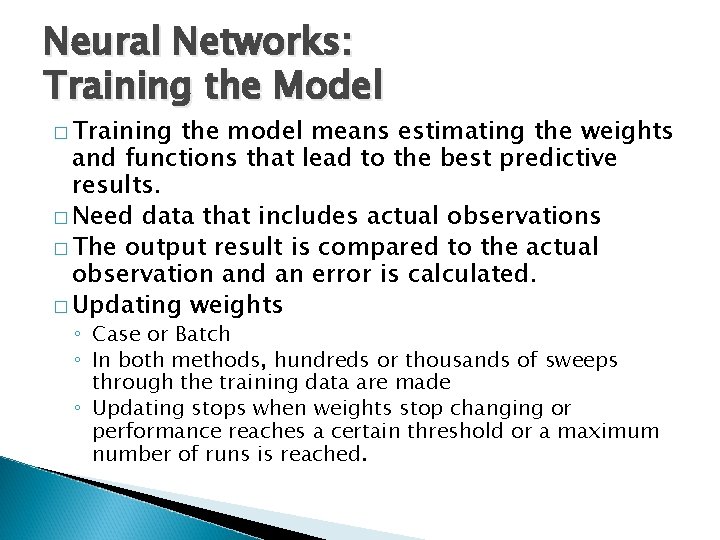

Neural Networks: Training the Model
• Training the model means estimating the weights and functions that lead to the best predictive results.
• Need data that includes actual observations.
• The output result is compared to the actual observation and an error is calculated.
• Updating weights
  ◦ Case updating or batch updating
  ◦ In both methods, hundreds or thousands of sweeps through the training data are made.
  ◦ Updating stops when the weights stop changing, performance reaches a certain threshold, or a maximum number of runs is reached.

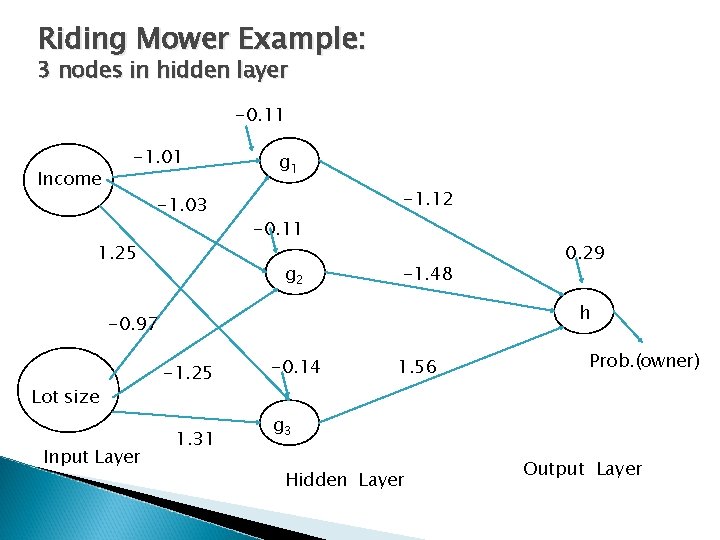

Riding Mower Example: 3 nodes in hidden layer
[Figure: network with inputs Income and Lot size, hidden-layer nodes g1, g2 and g3, and an output node h giving Prob.(owner); the fitted weights are shown on the links.]

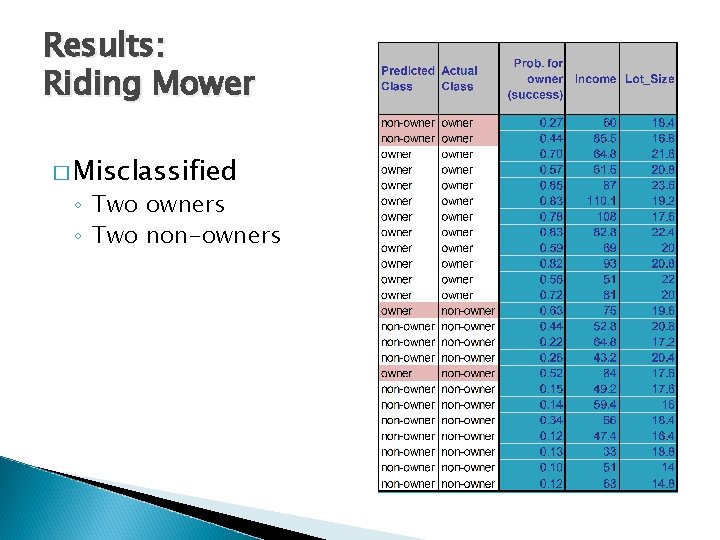

Results: Riding Mower
• Misclassified
  ◦ Two owners
  ◦ Two non-owners

Unsupervised Learning
• Association rules
• Cluster Analysis
• Hierarchical clustering
• Self organizing maps
• Principal Components
• … and many more

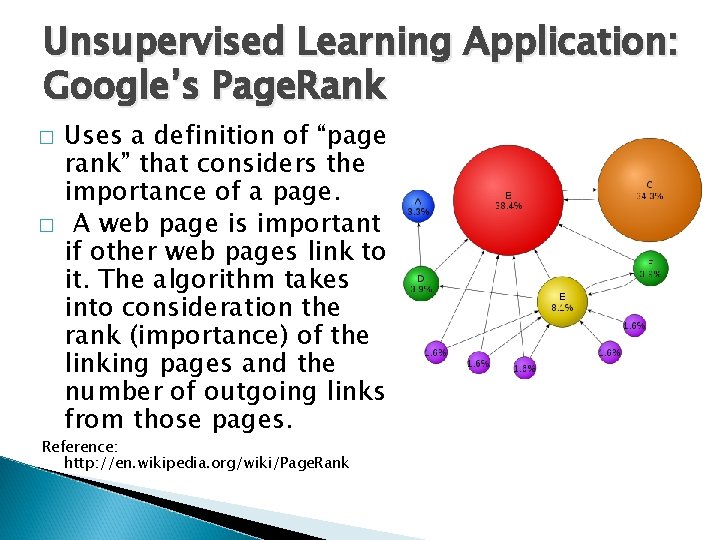

Unsupervised Learning Application: Google’s PageRank
• Uses a definition of “page rank” that considers the importance of a page.
• A web page is important if other web pages link to it.
• The algorithm takes into consideration the rank (importance) of the linking pages and the number of outgoing links from those pages.
• Reference: http://en.wikipedia.org/wiki/PageRank
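The idea that a page's rank depends on the rank of the pages linking to it, divided by their number of outgoing links, can be sketched as a simple iteration. This is a simplified illustration and not Google's implementation: the damping factor of 0.85, the fixed number of iterations, the function name, and the four-page web are all assumptions.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Iteratively compute ranks for a dict mapping each page to the pages it links to."""
    pages = list(links)
    rank = {page: 1.0 / len(pages) for page in pages}
    for _ in range(iterations):
        new_rank = {page: (1.0 - damping) / len(pages) for page in pages}
        for page, outgoing in links.items():
            if not outgoing:
                # A page with no outgoing links spreads its rank evenly over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / len(pages)
            else:
                # Each linked page receives an equal share of this page's rank.
                for target in outgoing:
                    new_rank[target] += damping * rank[page] / len(outgoing)
        rank = new_rank
    return rank

# Hypothetical four-page web: A, B and C all link to D; D links back to A.
web = {"A": ["D"], "B": ["D"], "C": ["D"], "D": ["A"]}
ranks = pagerank(web)
```

In this toy web, D ranks highest because three pages link to it, and A ranks second because its single incoming link comes from the highly ranked D. Every linked page must also appear as a key in the dictionary.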

Association Rules
• Association rules find interesting relationships in data.
• Market Basket Analysis is a popular application.
• Vocabulary:
  ◦ Itemset = set of items, e.g. {cereal, cookies}
  ◦ Support: the probability of an itemset = #{cereal, cookies} / # observations
  ◦ Association rule: e.g. itemset {cereal, cookies} implies {milk}. {milk} is the consequent and {cereal, cookies} is the antecedent.
  ◦ Confidence of rule = #{milk, cereal, cookies} / #{cereal, cookies} (similar to conditional probability)
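Support and confidence as defined in the vocabulary can be computed by counting baskets. The sketch below uses a handful of invented market baskets; the function names and the data are hypothetical.

```python
def support(itemset, transactions):
    """Fraction of transactions that contain every item in itemset."""
    itemset = set(itemset)
    hits = sum(1 for basket in transactions if itemset <= set(basket))
    return hits / len(transactions)

def confidence(antecedent, consequent, transactions):
    """Support of antecedent and consequent together, divided by support of the antecedent."""
    both = set(antecedent) | set(consequent)
    return support(both, transactions) / support(antecedent, transactions)

# Hypothetical market baskets, invented for illustration.
baskets = [
    {"cereal", "cookies", "milk"},
    {"cereal", "cookies", "milk"},
    {"cereal", "cookies"},
    {"milk", "bread"},
    {"cereal", "milk"},
]
s = support({"cereal", "cookies"}, baskets)               # 3 of 5 baskets
c = confidence({"cereal", "cookies"}, {"milk"}, baskets)  # 2 of those 3 also contain milk
```

Here the rule {cereal, cookies} implies {milk} has support 3/5 for its antecedent and confidence 2/3. The `confidence` function assumes the antecedent appears in at least one basket; otherwise it would divide by zero.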

Association Rules
• The goal is to find rules that have high confidence.
• This problem is very hard and computationally intensive.
• Most popular algorithm: the Apriori algorithm due to Agrawal et al. (1995)
• The Apriori algorithm can quickly analyze very large data sets.
• “Association rules are among data mining’s biggest successes.” - Hastie, Tibshirani, and Friedman
