HPVM: Performance, Portability, Programmability and Approximation for Heterogeneous Parallel Systems. Vikram Adve. With: Maria Kotsifakou, Hashim Sharif, ♣Prakalp Srivastava, Adel Ejjeh, Akash Kothari, Benjamin Schreiber, Yifan Zhou, Sarita Adve and Sasa Misailovic. University of Illinois at Urbana-Champaign; ♣Google Brain. Supported by: NSF, DARPA, SRC, ONR, Intel, Amazon.

Heterogeneous SoCs are Ubiquitous. Heterogeneous parallelism and specialization are critical for energy efficiency and performance.

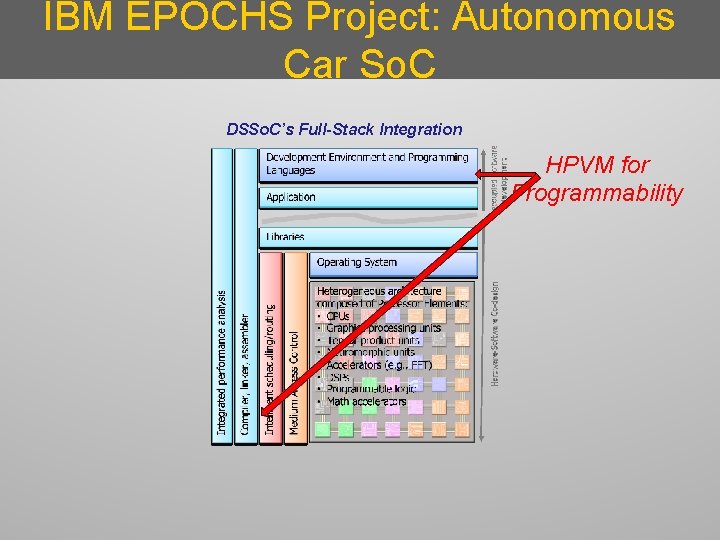

IBM EPOCHS Project: Autonomous Car SoC. DSSoC's Full-Stack Integration. HPVM for Programmability.

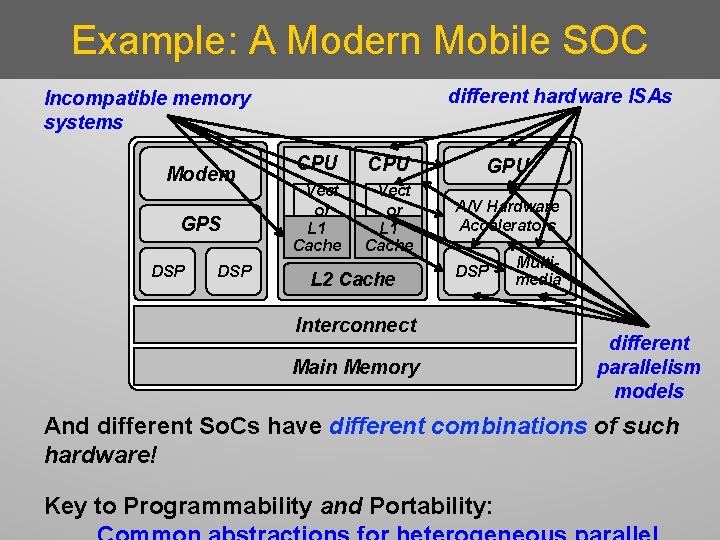

Example: A Modern Mobile SoC. Components: Modem, GPS, DSP, CPU, GPU, Vector, L1 Cache, A/V Hardware Accelerators, L2 Cache, DSP, Multimedia, Interconnect, Main Memory. Challenges: different hardware ISAs, incompatible memory systems, different parallelism models. And different SoCs have different combinations of such hardware! Key to Programmability and Portability:

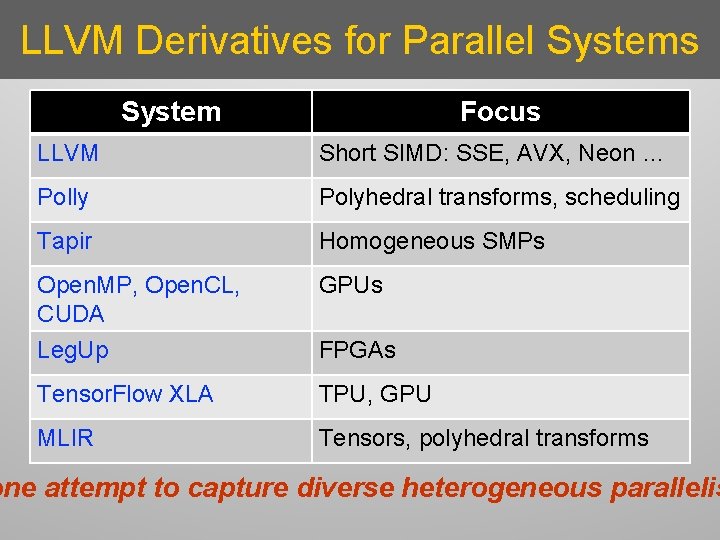

LLVM Derivatives for Parallel Systems

| System | Focus |
|---|---|
| LLVM | Short SIMD: SSE, AVX, Neon … |
| Polly | Polyhedral transforms, scheduling |
| Tapir | Homogeneous SMPs |
| OpenMP, OpenCL, CUDA | GPUs |
| LegUp | FPGAs |
| TensorFlow XLA | TPU, GPU |
| MLIR | Tensors, polyhedral transforms |

One attempt to capture diverse heterogeneous parallelism

The HPVM Program Representation • A common parallel abstraction • Compiler IR + Virtual ISA + Run-time scheduling. hpvm.cs.illinois.edu

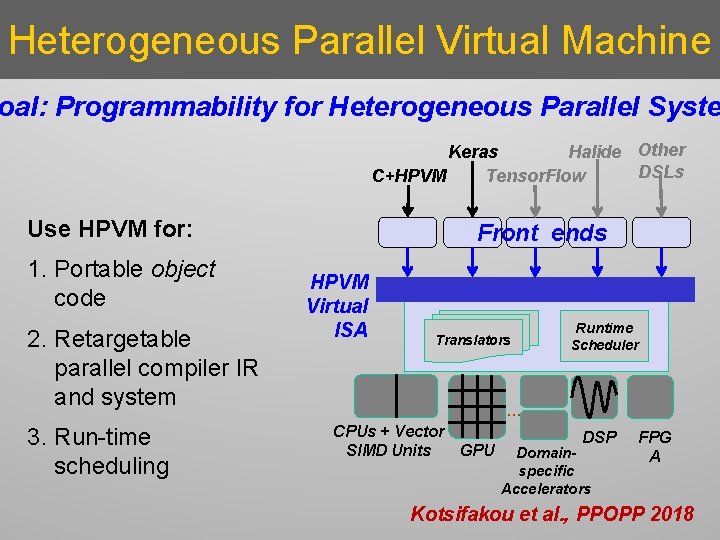

Heterogeneous Parallel Virtual Machine. Goal: Programmability for Heterogeneous Parallel Systems. Front ends: Keras, Halide, TensorFlow, C+HPVM, other DSLs. Middle layer: HPVM Virtual ISA, with Translators and a Runtime Scheduler. Targets: CPUs + Vector SIMD Units, GPU, DSP, Domain-specific Accelerators, FPGA, … Use HPVM for: 1. Portable object code 2. Retargetable parallel compiler IR and system 3. Run-time scheduling. Kotsifakou et al., PPoPP 2018.

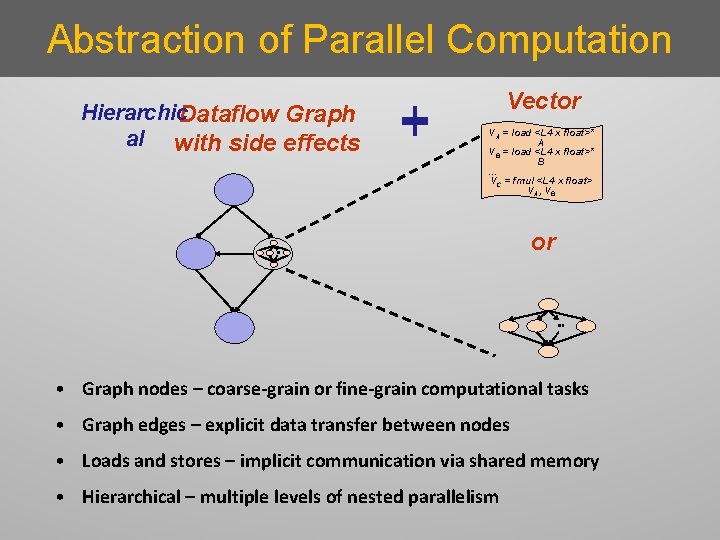

Abstraction of Parallel Computation: Hierarchical Dataflow Graph with side effects. Vector: `VA = load <4 x float>* A`; `VB = load <4 x float>* B`; …; `VC = fmul <4 x float> VA, VB` • Graph nodes – coarse-grain or fine-grain computational tasks • Graph edges – explicit data transfer between nodes • Loads and stores – implicit communication via shared memory • Hierarchical – multiple levels of nested parallelism

![HPVM Node Parallelism](http://slidetodoc.com/presentation_image_h2/6a4719e78c88b5f3468099c1e495e571/image-9.jpg)

HPVM Node Parallelism. Static Dataflow Graph vs. Dynamic Dataflow Graph (conceptual). Node instantiation: a static node replicated [N] times yields dynamic instances 1, 2, …, N. Similar to instances of a CUDA or OpenCL kernel body. HPVM supports 1-3 dimensional grids: 1D, 2D, 3D, …
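As a rough illustration of node instantiation, the sketch below enumerates the dynamic instances of one static node replicated over a 1-D to 3-D grid. It is a plain Python model, not HPVM code: the function name `node_instances` and the choice to let x vary fastest are assumptions made here for illustration only.

```python
def node_instances(dims):
    """Enumerate (x, y, z) indices of the dynamic instances of one static node.

    dims: tuple of 1 to 3 positive ints, the replication factor per dimension.
    Hypothetical helper for illustration; not part of HPVM.
    """
    if not 1 <= len(dims) <= 3:
        raise ValueError("this sketch models 1-D to 3-D grids only")
    if any(n <= 0 for n in dims):
        raise ValueError("each dimension needs at least one instance")
    # Pad missing dimensions with size 1 so every instance has an (x, y, z) id.
    nx, ny, nz = tuple(dims) + (1,) * (3 - len(dims))
    # Ordering is an arbitrary choice of this sketch: x varies fastest.
    return [(x, y, z) for z in range(nz) for y in range(ny) for x in range(nx)]


# A node replicated 4 times in one dimension has 4 dynamic instances.
print(len(node_instances((4,))))
# A 2 x 3 x 4 grid has 24 dynamic instances.
print(len(node_instances((2, 3, 4))))
```

Each tuple plays the role of the per-dimension index that an instance would query for itself, in the way a CUDA or OpenCL work-item queries its own id.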

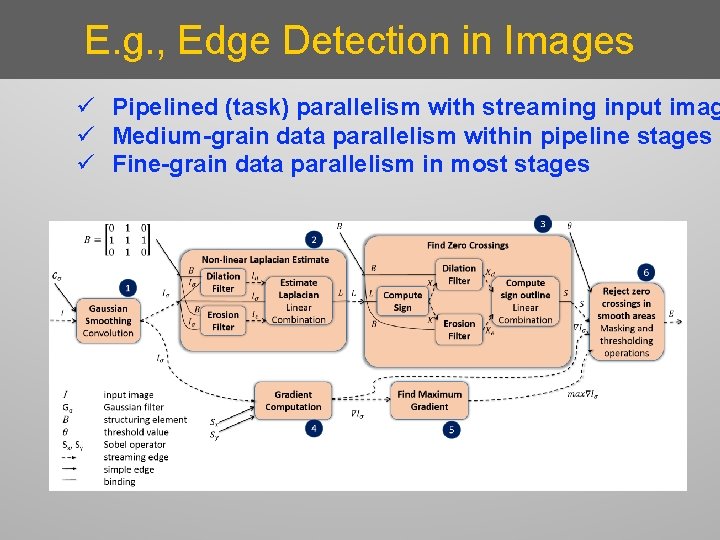

E.g., Edge Detection in Images ✓ Pipelined (task) parallelism with streaming input images ✓ Medium-grain data parallelism within pipeline stages ✓ Fine-grain data parallelism in most stages

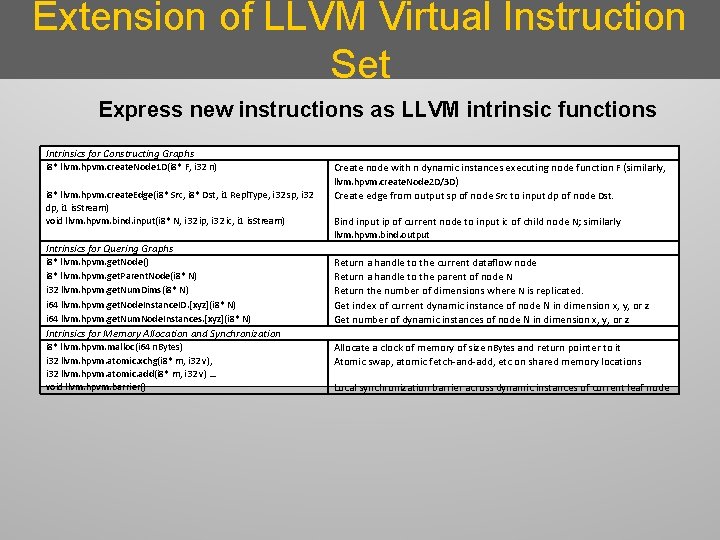

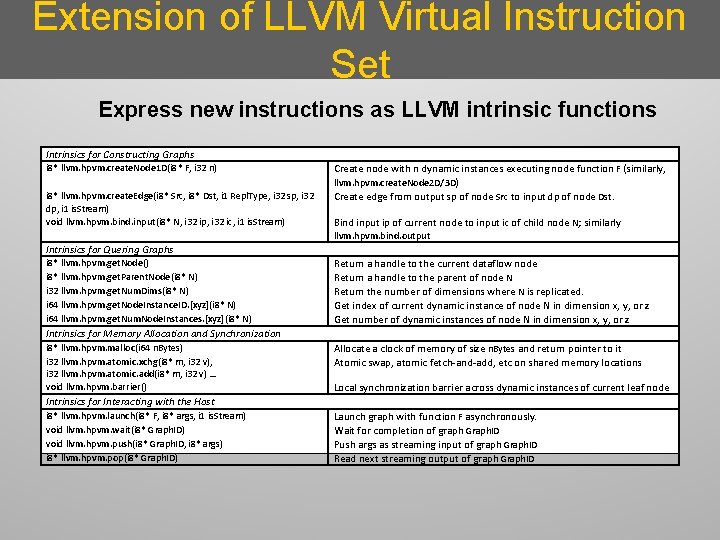

Extension of LLVM Virtual Instruction Set. Express new instructions as LLVM intrinsic functions.

Intrinsics for Constructing Graphs

- `i8* llvm.hpvm.createNode1D(i8* F, i32 n)`: Create node with n dynamic instances executing node function F (similarly, `llvm.hpvm.createNode2D`/`3D`).
- `i8* llvm.hpvm.createEdge(i8* Src, i8* Dst, i1 ReplType, i32 sp, i32 dp, i1 isStream)`: Create edge from output sp of node Src to input dp of node Dst.
- `void llvm.hpvm.bind.input(i8* N, i32 ip, i32 ic, i1 isStream)`: Bind input ip of current node to input ic of child node N; similarly `llvm.hpvm.bind.output`.

Intrinsics for Querying Graphs

- `i8* llvm.hpvm.getNode()`: Return a handle to the current dataflow node.
- `i8* llvm.hpvm.getParentNode(i8* N)`: Return a handle to the parent of node N.
- `i32 llvm.hpvm.getNumDims(i8* N)`: Return the number of dimensions where N is replicated.
- `i64 llvm.hpvm.getNodeInstanceID.[xyz](i8* N)`: Get index of current dynamic instance of node N in dimension x, y, or z.
- `i64 llvm.hpvm.getNumNodeInstances.[xyz](i8* N)`: Get number of dynamic instances of node N in dimension x, y, or z.

Intrinsics for Memory Allocation and Synchronization

- `i8* llvm.hpvm.malloc(i64 nBytes)`: Allocate a block of memory of size nBytes and return a pointer to it.
- `i32 llvm.hpvm.atomic.xchg(i8* m, i32 v)`, `i32 llvm.hpvm.atomic.add(i8* m, i32 v)`, …: Atomic swap, atomic fetch-and-add, etc. on shared memory locations.
- `void llvm.hpvm.barrier()`: Local synchronization barrier across dynamic instances of current leaf node.

Intrinsics for Interacting with the Host

- `i8* llvm.hpvm.launch(i8* F, i8* args, i1 isStream)`: Launch graph with function F asynchronously.
- `void llvm.hpvm.wait(i8* GraphID)`: Wait for completion of graph GraphID.
- `void llvm.hpvm.push(i8* GraphID, i8* args)`: Push args as streaming input of graph GraphID.
- `i8* llvm.hpvm.pop(i8* GraphID)`: Read next streaming output of graph GraphID.
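To make the graph-construction intrinsics more concrete, here is a toy Python model of an internal node that records child nodes, edges, and input bindings. It only mirrors the argument lists shown on the slide; the class names, method names, and the stage functions `blur` and `sobel` are hypothetical, and the meaning of `repl_type` is not stated on the slide, so the sketch stores it without interpreting it.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    func: object      # node function, F in createNode1D
    n: int            # number of dynamic instances


@dataclass
class Edge:
    src: Node
    dst: Node
    repl_type: bool   # carried through uninterpreted (semantics not on the slide)
    sp: int           # output position on src
    dp: int           # input position on dst
    is_stream: bool


@dataclass
class InternalNode:
    """Toy stand-in for a node whose body builds a child dataflow graph."""
    children: list = field(default_factory=list)
    edges: list = field(default_factory=list)
    input_binds: list = field(default_factory=list)

    def create_node_1d(self, func, n):
        node = Node(func, n)
        self.children.append(node)
        return node

    def create_edge(self, src, dst, repl_type, sp, dp, is_stream=False):
        # Both endpoints must be children of this node in this model.
        for end in (src, dst):
            if not any(child is end for child in self.children):
                raise ValueError("edge endpoint is not a child of this node")
        edge = Edge(src, dst, repl_type, sp, dp, is_stream)
        self.edges.append(edge)
        return edge

    def bind_input(self, child, ip, ic, is_stream=False):
        # Forward input ip of this node to input ic of the child.
        self.input_binds.append((ip, child, ic, is_stream))


def blur(pixels):     # hypothetical stage functions
    return pixels


def sobel(pixels):
    return pixels


root = InternalNode()
blur_node = root.create_node_1d(blur, 8)
sobel_node = root.create_node_1d(sobel, 8)
root.bind_input(blur_node, 0, 0)
root.create_edge(blur_node, sobel_node, False, 0, 0)
print(len(root.children), len(root.edges))
```

The model keeps graph structure separate from node functions, which is the property that lets the same graph be mapped to different devices later.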

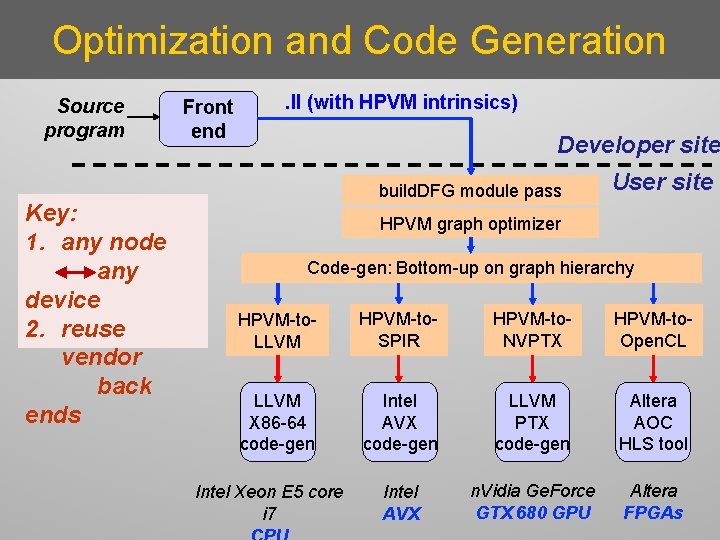

Optimization and Code Generation. Key: 1. any node, any device 2. reuse vendor back ends. Flow: source program; front end; .ll (with HPVM intrinsics); buildDFG module pass; HPVM graph optimizer; code-gen (bottom-up on graph hierarchy). The flow is split between the developer site and the user site. Translators: HPVM-to-LLVM, HPVM-to-SPIR, HPVM-to-NVPTX, HPVM-to-OpenCL. Back ends: LLVM x86-64 code-gen, Intel AVX code-gen, LLVM PTX code-gen, Altera AOC HLS tool. Targets: Intel Xeon E5 core i7, Intel AVX, nVidia GeForce GTX 680 GPU, Altera FPGAs.

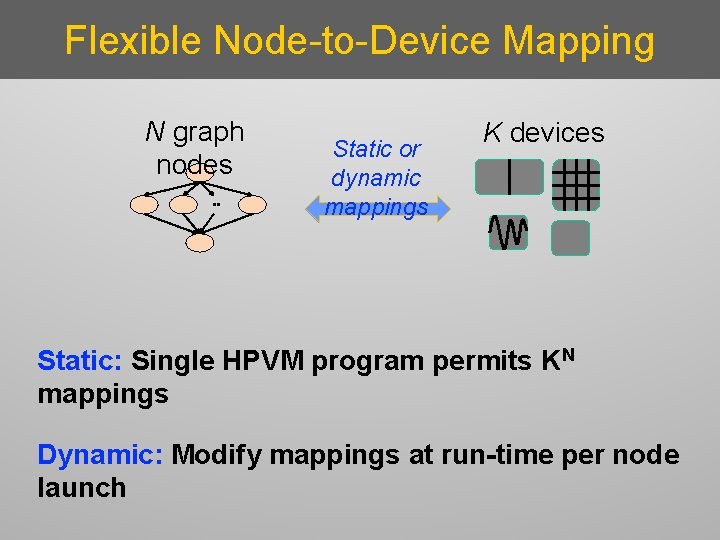

Flexible Node-to-Device Mapping. N graph nodes, K devices; static or dynamic mappings. Static: a single HPVM program permits K^N mappings. Dynamic: modify mappings at run-time, per node launch.
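The K^N count follows from each of the N nodes independently choosing one of K devices. A minimal Python sketch of that counting argument is below; the stage names are placeholders invented for illustration, and the helper `static_mappings` is not part of HPVM.

```python
from itertools import product


def static_mappings(nodes, devices):
    """Return every assignment of one device to each node (K**N in total)."""
    return [dict(zip(nodes, choice))
            for choice in product(devices, repeat=len(nodes))]


# Six pipeline stages (placeholder names) and three hardware units.
stages = ["stage%d" % i for i in range(1, 7)]
units = ["Scalar", "GPU", "Vector"]

mappings = static_mappings(stages, units)
print(len(mappings))   # 3**6 configurations
print(mappings[0])     # first mapping: every stage on "Scalar"
```

A dynamic mapping would change one entry of such a dictionary between node launches rather than fixing the whole assignment ahead of time.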

Evaluation: Goals and Methodology. Multicore: Intel Xeon E5 core i7. Vector ISA: AVX. GPU: nVidia GeForce GTX 680 GPU; 1536 cores; 2 GB. 1. Comparisons with separate hand-tuned codes ➢ OpenCL (Parboil): SPMV, sgemm, stencil, lbm, bfs, tpacf, cutcp ➢ Hand-tuned for GPU: opencl_nvidia (5), opencl_base (2) ➢ Hand-tuned for AVX: Same version as GPU except stencil, bfs

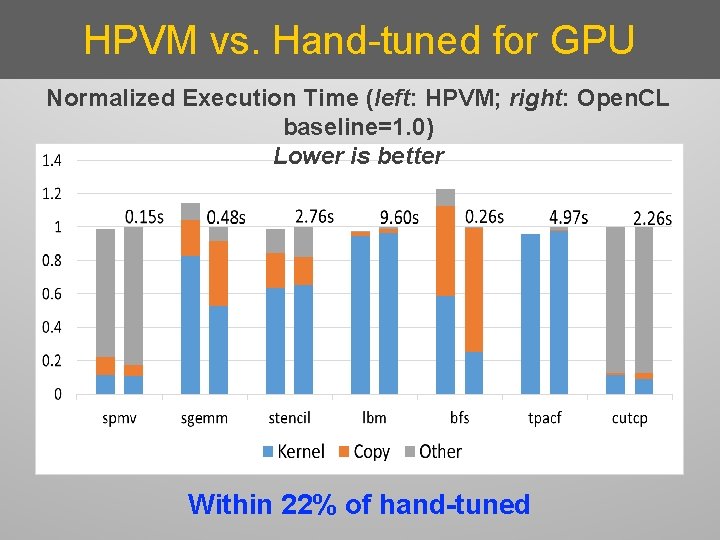

HPVM vs. Hand-tuned for GPU. Normalized Execution Time (left: HPVM; right: OpenCL, baseline = 1.0). Lower is better. Within 22% of hand-tuned.

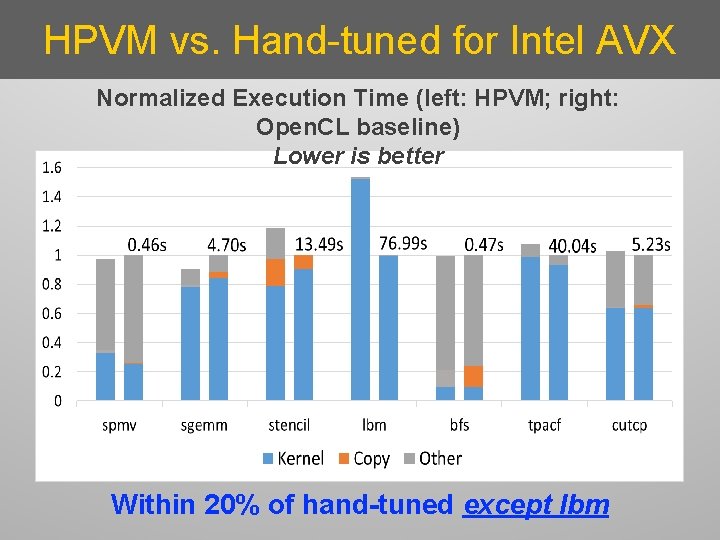

HPVM vs. Hand-tuned for Intel AVX. Normalized Execution Time (left: HPVM; right: OpenCL baseline). Lower is better. Within 20% of hand-tuned except lbm.

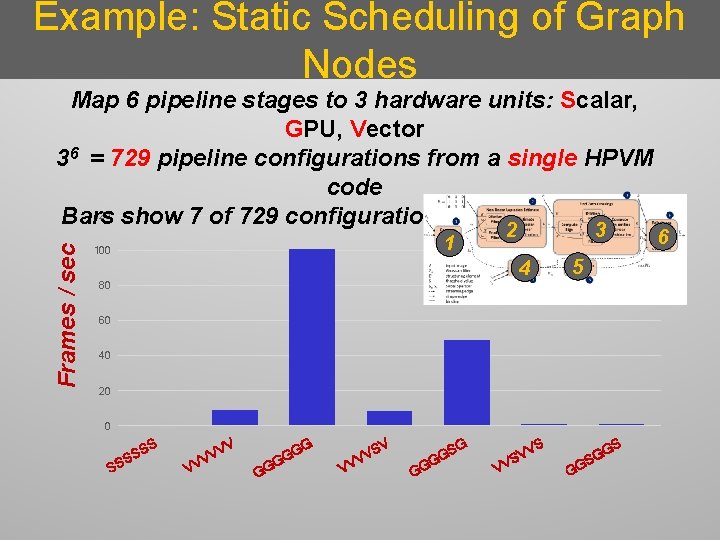

Evaluation: Goals and Methodology (same hardware as above). 1. Comparisons with separate hand-tuned codes 2. Flexible static scheduling ➢ Edge detection in grayscale images ➢ Map 6 pipeline stages to 3 PEs: Scalar, GPU, Vector

Example: Static Scheduling of Graph Nodes. Map 6 pipeline stages to 3 hardware units: Scalar (S), GPU (G), Vector (V). 3^6 = 729 pipeline configurations from a single HPVM code. [Bar chart: frames/sec for 7 of the 729 configurations. Higher is better.]

Evaluation: Summary. Vector ISA: Intel Xeon E5 core i7 + AVX. GPU: nVidia GeForce GTX 680 GPU; 1536 cores; 2 GB. Benchmarks: Parboil: SPMV, sgemm, stencil, lbm, bfs, tpacf, cutcp. Baseline: Separately hand-tuned OpenCL versions. 1. HPVM performance comparable to hand-tuned codes 2. Flexible static scheduling: P^N static mappings for HPVM program of N nodes 3. Flexible dynamic scheduling: Can reassign DFG nodes flexibly under changing load

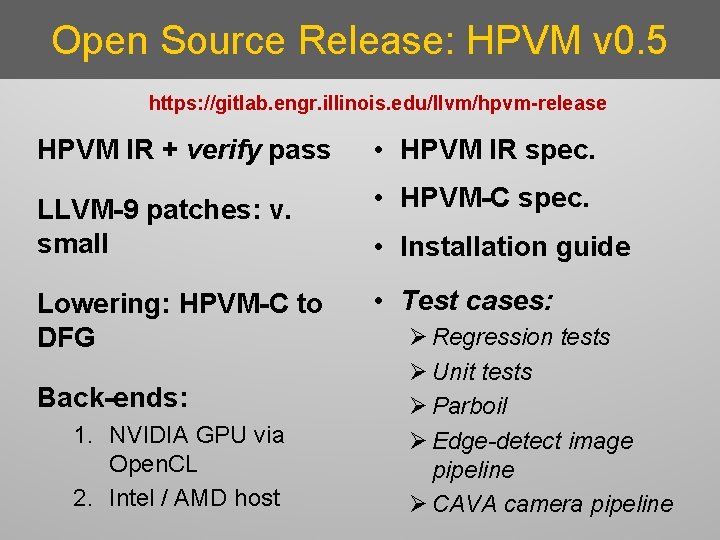

Open Source Release: HPVM v0.5. https://gitlab.engr.illinois.edu/llvm/hpvm-release

Components:

- HPVM IR + verify pass
- LLVM-9 patches: very small
- Lowering: HPVM-C to DFG
- Back-ends: 1. NVIDIA GPU via OpenCL 2. Intel / AMD host

Documentation and tests:

- HPVM IR spec.
- HPVM-C spec.
- Test cases: regression tests, unit tests, Parboil, edge-detect image pipeline, CAVA camera pipeline
- Installation guide

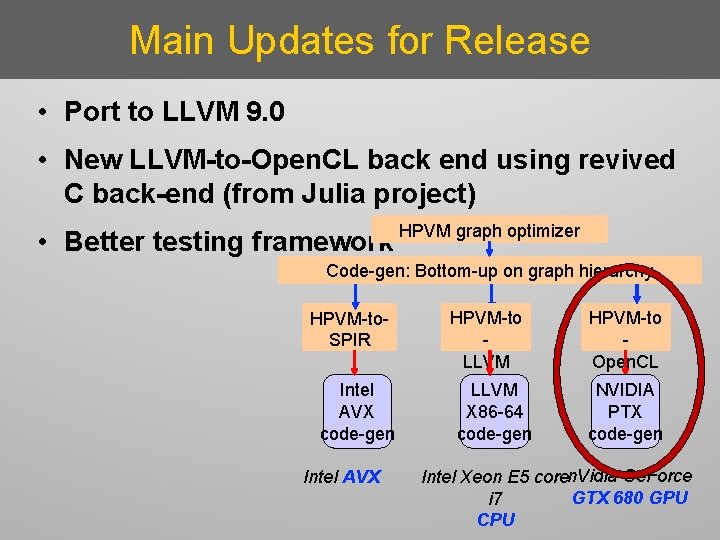

Main Updates for Release • Port to LLVM 9.0 • New LLVM-to-OpenCL back end using revived C back-end (from Julia project) • Better testing framework. [Diagram: HPVM graph optimizer; code-gen, bottom-up on graph hierarchy; HPVM-to-SPIR with Intel AVX code-gen targeting Intel AVX; HPVM-to-LLVM with x86-64 code-gen targeting Intel Xeon E5 core i7 CPU; HPVM-to-OpenCL with NVIDIA PTX code-gen targeting nVidia GeForce GTX 680 GPU.]

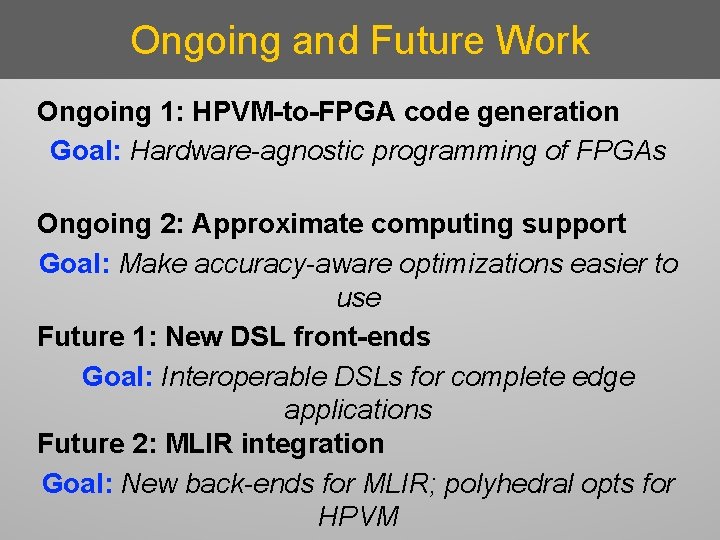

Ongoing and Future Work. Ongoing 1: HPVM-to-FPGA code generation. Goal: Hardware-agnostic programming of FPGAs. Ongoing 2: Approximate computing support. Goal: Make accuracy-aware optimizations easier to use. Future 1: New DSL front-ends. Goal: Interoperable DSLs for complete edge applications. Future 2: MLIR integration. Goal: New back-ends for MLIR; polyhedral opts for HPVM.

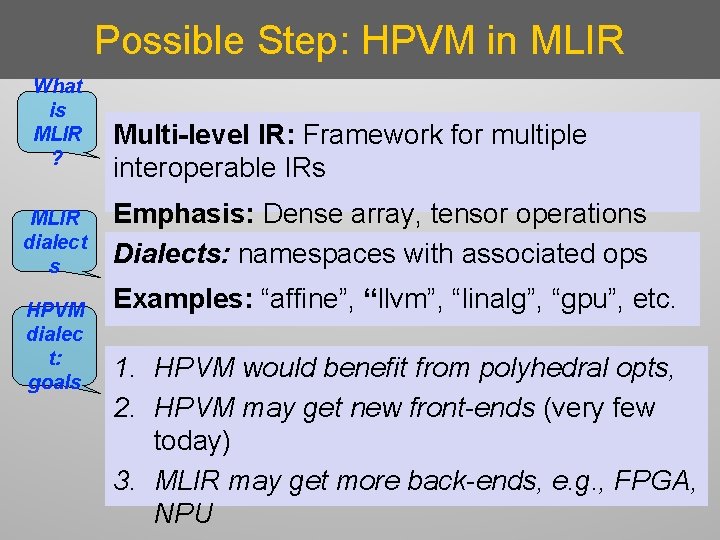

Possible Step: HPVM in MLIR. What is MLIR? Multi-level IR: Framework for multiple interoperable IRs. Emphasis: Dense array, tensor operations. MLIR dialects: namespaces with associated ops. Examples: “affine”, “llvm”, “linalg”, “gpu”, etc. HPVM dialect goals: 1. HPVM would benefit from polyhedral opts 2. HPVM may get new front-ends (very few today) 3. MLIR may get more back-ends, e.g., FPGA, NPU

Summary. HPVM: portability + performance for heterogeneous systems ➢ virtual ISA ➢ compiler IR ➢ runtime representation. Questions?

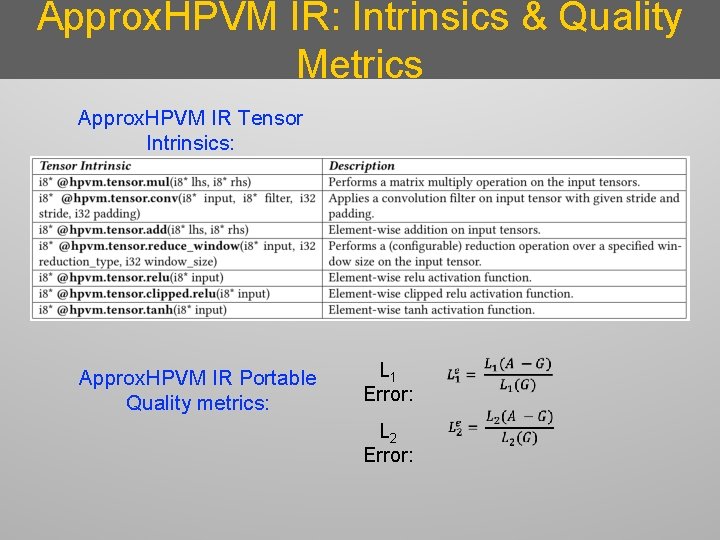

ApproxHPVM IR: Intrinsics & Quality Metrics. ApproxHPVM IR tensor intrinsics. ApproxHPVM IR portable quality metrics: L1 error, L2 error.
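The slide's own definitions of the L1 and L2 error metrics did not survive extraction, so the sketch below falls back on the textbook L1 and L2 norms of the difference between an exact and an approximate output. Whether ApproxHPVM normalizes or otherwise scales these values is not known from the slide; the function names are hypothetical.

```python
import math


def l1_error(exact, approx):
    """Sum of absolute differences (textbook L1 norm of the error vector)."""
    if len(exact) != len(approx):
        raise ValueError("outputs must have the same length")
    return sum(abs(e - a) for e, a in zip(exact, approx))


def l2_error(exact, approx):
    """Euclidean length of the error vector (textbook L2 norm)."""
    if len(exact) != len(approx):
        raise ValueError("outputs must have the same length")
    return math.sqrt(sum((e - a) ** 2 for e, a in zip(exact, approx)))


exact_out = [0.0, 0.0]
approx_out = [3.0, 4.0]
print(l1_error(exact_out, approx_out))
print(l2_error(exact_out, approx_out))
```

Metrics of this kind depend only on program outputs, not on the hardware that produced them, which is what makes a quality bound portable across targets.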

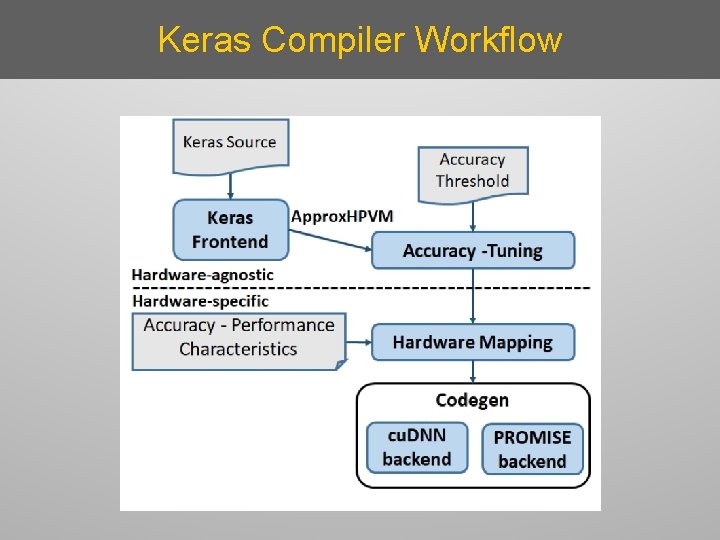

Keras Compiler Workflow
