Graphics Hardware Joshua Barczak CMSC 435 UMBC


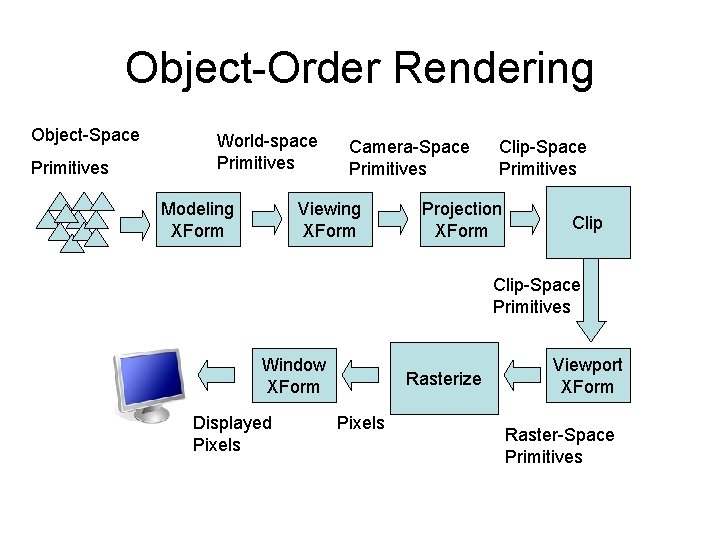

Object-Order Rendering: Object-space primitives → Modeling xform → World-space primitives → Viewing xform → Camera-space primitives → Projection xform → Clip-space primitives → (clipping) → Clip-space primitives → Viewport xform → Raster-space primitives → Rasterize → Pixels → Window xform → Displayed pixels
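To make the chain concrete, here is a minimal C sketch that pushes one vertex from object space to raster space. It assumes row-major 4x4 matrices applied to column vectors, an OpenGL-style [-1, 1] normalized device range, and a deliberately stripped-down projection matrix in the usage example; the names (Mat4, mat4_mul, object_to_raster) are made up for illustration, and clipping and the window xform are left out.

```c
#include <assert.h>

/* Row-major 4x4 matrix, applied to column vectors: out = M * v. */
typedef struct { float m[4][4]; } Mat4;
typedef struct { float x, y, z, w; } Vec4;

static Vec4 mat4_mul(const Mat4 *a, Vec4 v)
{
    const float in[4] = { v.x, v.y, v.z, v.w };
    float out[4];
    for (int r = 0; r < 4; r++) {
        out[r] = 0.0f;
        for (int c = 0; c < 4; c++)
            out[r] += a->m[r][c] * in[c];
    }
    Vec4 res = { out[0], out[1], out[2], out[3] };
    return res;
}

/* Object space -> raster space for a single vertex. */
static Vec4 object_to_raster(const Mat4 *model, const Mat4 *view, const Mat4 *proj,
                             Vec4 p_object, float width, float height)
{
    Vec4 p_world  = mat4_mul(model, p_object);  /* modeling xform   */
    Vec4 p_camera = mat4_mul(view, p_world);    /* viewing xform    */
    Vec4 p_clip   = mat4_mul(proj, p_camera);   /* projection xform */
    /* Clipping against the view volume would happen here, before the divide. */
    assert(p_clip.w != 0.0f);
    float nx = p_clip.x / p_clip.w;             /* normalized device coords in [-1, 1] */
    float ny = p_clip.y / p_clip.w;
    float nz = p_clip.z / p_clip.w;
    /* Viewport xform: [-1, 1] -> [0, width] x [0, height]. */
    Vec4 raster = { (nx + 1.0f) * 0.5f * width, (ny + 1.0f) * 0.5f * height, nz, 1.0f };
    return raster;
}
```

With a model matrix that translates by (1, 1, -2), an identity view, and a projection whose last row sets w = -z, the object-space origin lands at pixel (480, 360) of a 640x480 viewport.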

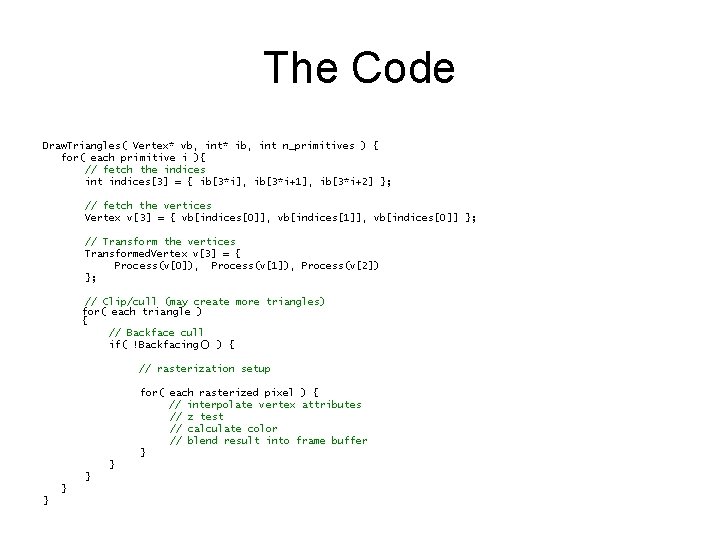

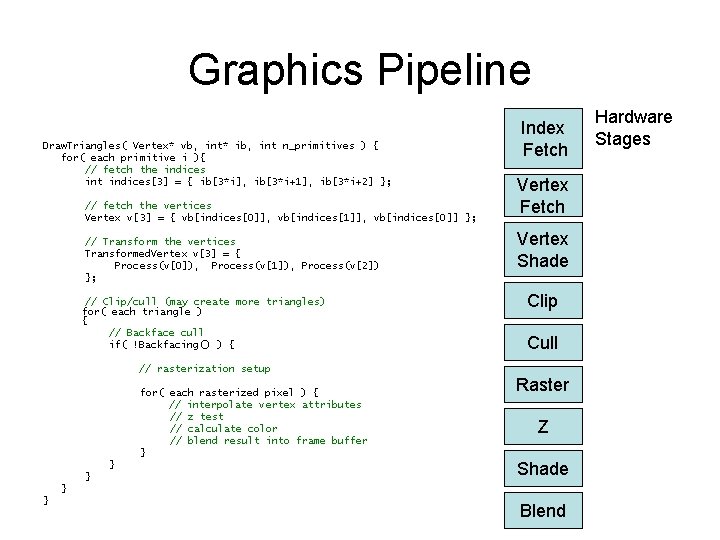

The Code

```c
void DrawTriangles( Vertex* vb, int* ib, int n_primitives )
{
    for( int i = 0; i < n_primitives; i++ )
    {
        // fetch the indices
        int indices[3] = { ib[3*i], ib[3*i+1], ib[3*i+2] };
        // fetch the vertices
        Vertex v[3] = { vb[indices[0]], vb[indices[1]], vb[indices[2]] };
        // transform the vertices
        TransformedVertex tv[3] = { Process(v[0]), Process(v[1]), Process(v[2]) };
        // clip/cull (may create more triangles)
        for( each clipped triangle )
        {
            // backface cull
            if( !Backfacing() )
            {
                // rasterization setup
                for( each rasterized pixel )
                {
                    // interpolate vertex attributes
                    // z test
                    // calculate color
                    // blend result into frame buffer
                }
            }
        }
    }
}
```

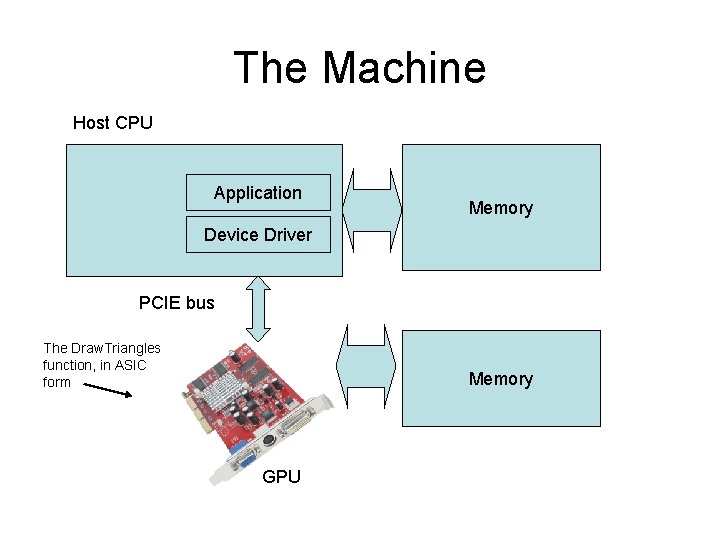

The Machine: Host (CPU running the application and device driver, with its own memory) ↔ PCIe bus ↔ GPU (the DrawTriangles function, in ASIC form, with its own memory)

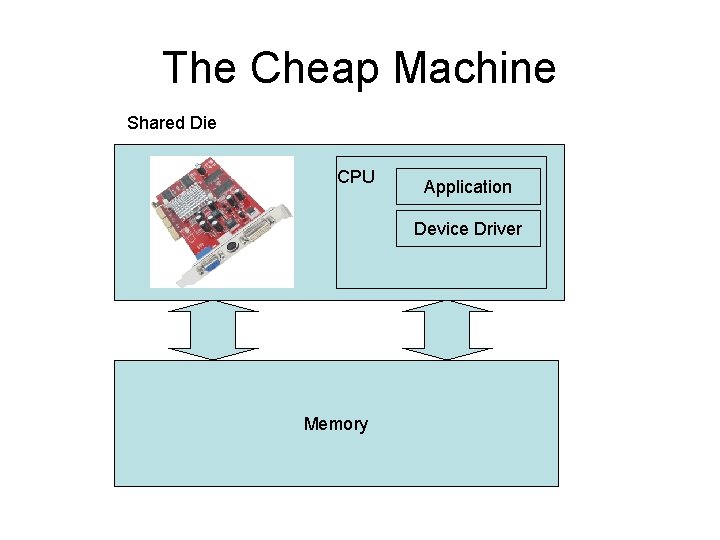

The Cheap Machine: a shared die holding the CPU (application, device driver) and the GPU, with one shared memory

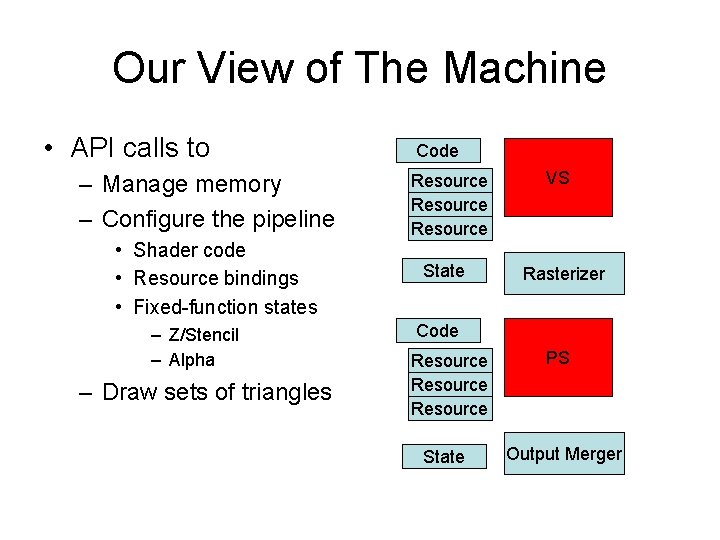

Our View of The Machine • API calls to: – Manage memory – Configure the pipeline (shader code, resource bindings, fixed-function states such as Z/stencil and alpha) – Draw sets of triangles • Pipeline: VS (code, resources, state) → Rasterizer → PS (code, resources, state) → Output Merger

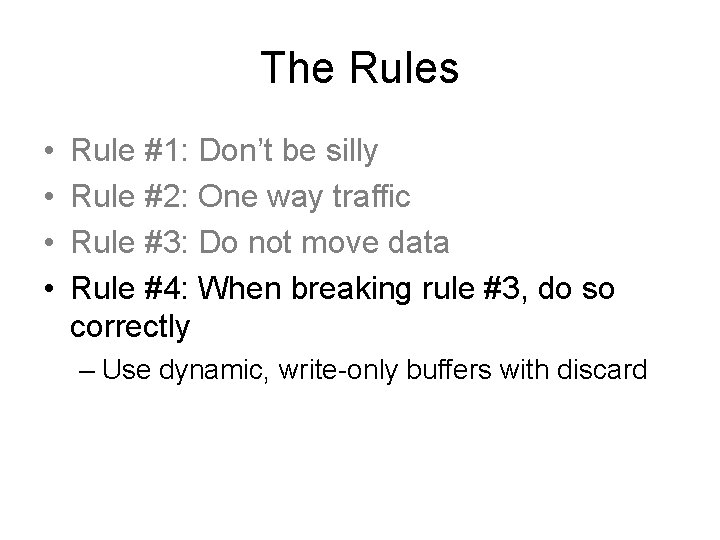

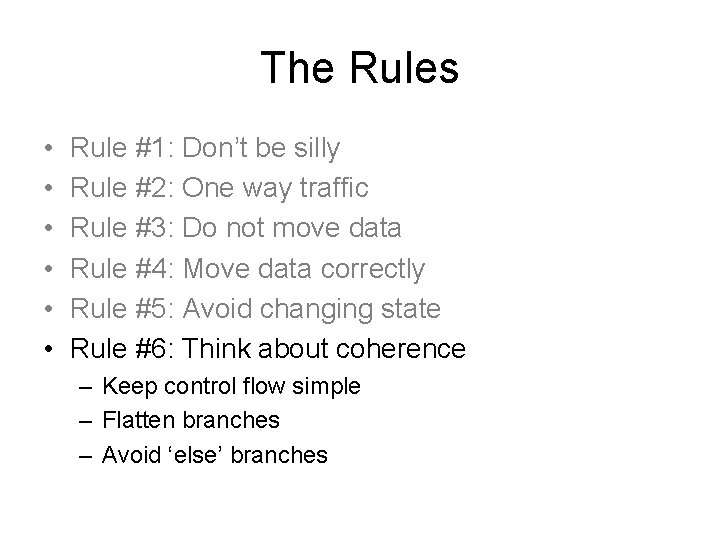

The Rules • Rule #1: Don’t be silly – If you have 1000 triangles, do not make 1000 API calls • glBegin/glEnd are evil

The Rules • Rule #1: Don’t be silly – Compute at the correct rate • Per-vertex work is cheaper than per-pixel work (in general) – Simplify uniform expressions (CONST is an expression of uniforms, const is its precomputed value): • x = CONST * CONST → x = const • x < sqrt(CONST) → x*x < const (for x ≥ 0) • x = y / CONST → x = y * (1/CONST) = y * const • x = pow(CONST, y) = exp(log(CONST) * y) → exp(const * y)
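The "simplify uniform expressions" bullet, sketched in C under the assumption that CONST is a per-draw uniform: fold the constant math once on the CPU and leave only a multiply or a compare in the per-pixel code. The struct and function names are hypothetical. The pow rewrite follows the same pattern (precompute log(CONST) once, evaluate exp(k*y) per pixel) and is omitted to keep the sketch free of libm.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical per-draw constants, folded once on the CPU instead of per pixel. */
typedef struct {
    float k_squared;   /* CONST * CONST                                   */
    float inv_k;       /* 1 / CONST, so per-pixel code multiplies instead */
    float limit;       /* the CONST inside "x < sqrt(CONST)"              */
} DrawConstants;

static DrawConstants fold_constants(float k, float limit)
{
    assert(k != 0.0f && limit >= 0.0f);
    DrawConstants c = { k * k, 1.0f / k, limit };
    return c;
}

/* Per-pixel: y / CONST becomes y * (1 / CONST). */
static float scale_value(const DrawConstants *c, float y)
{
    return y * c->inv_k;
}

/* Per-pixel: x < sqrt(CONST) becomes x*x < CONST. The squared test only
   holds for x >= 0, so negative x (always below a square root) is handled first. */
static bool below_limit(const DrawConstants *c, float x)
{
    return x < 0.0f || x * x < c->limit;
}
```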

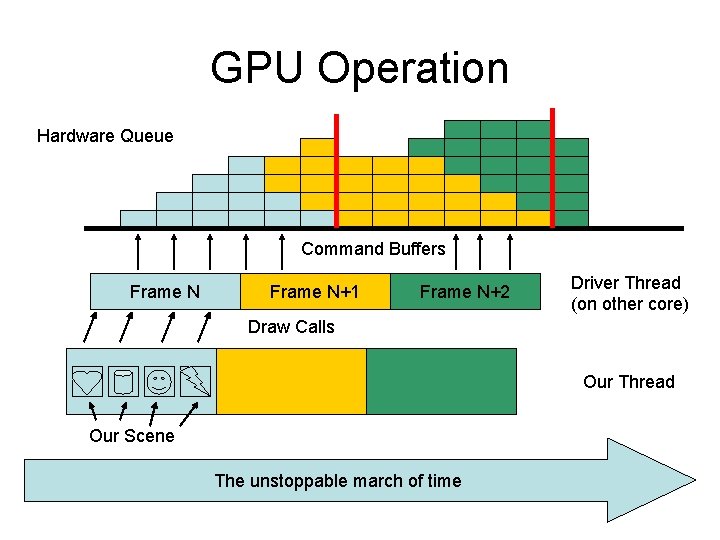

GPU Operation: our thread walks our scene and issues draw calls; a driver thread (on another core) turns them into command buffers; command buffers for frames N+1 and N+2 wait in the hardware queue for the GPU. (Time axis: the unstoppable march of time.)

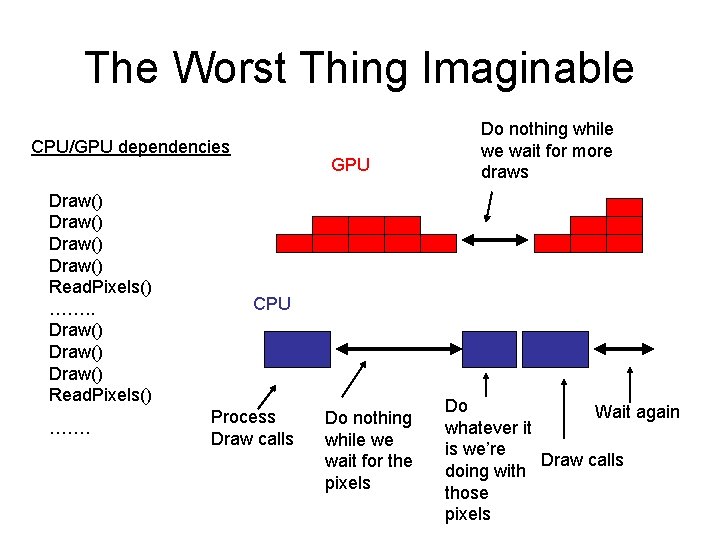

The Worst Thing Imaginable: CPU/GPU dependencies. The CPU calls Draw(), then ReadPixels()…, does nothing while it waits for the pixels, does whatever it is we’re doing with those pixels, issues more draw calls, and waits again. Meanwhile the GPU processes the draw calls, then does nothing while it waits for more draws.

The Rules • Rule #1: Don’t be silly • Rule #2: One way traffic – Don’t read the frame buffer – If you use occlusion queries, wait a few frames before reading them
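One way to follow the occlusion-query advice: keep a small ring of queries and read a slot back only when you are about to reuse it, a few frames after it was issued. The C below simulates just the bookkeeping; QUERY_LATENCY, QueryRing, and begin_frame are assumptions, and a real renderer would still check availability without blocking (in OpenGL, glGetQueryObjectuiv with GL_QUERY_RESULT_AVAILABLE) before asking for the result.

```c
#include <assert.h>
#include <stdbool.h>

#define QUERY_LATENCY 3   /* hypothetical: read a query this many frames after issuing it */

typedef struct {
    unsigned long issued_frame[QUERY_LATENCY];  /* frame that issued each slot's query */
    bool          in_use[QUERY_LATENCY];        /* slot holds an unread query          */
} QueryRing;

/* Call once per frame before issuing this frame's query. If the slot about to be
   reused holds an old query, report which frame it came from (read it back now,
   it is QUERY_LATENCY frames old), then hand the slot to the current frame. */
static bool begin_frame(QueryRing *ring, unsigned long frame, unsigned long *ready_frame)
{
    int slot = (int)(frame % QUERY_LATENCY);
    bool have_result = ring->in_use[slot];
    if (have_result)
        *ready_frame = ring->issued_frame[slot];
    ring->issued_frame[slot] = frame;
    ring->in_use[slot] = true;
    return have_result;
}
```

The CPU never asks for a result from the frame it just submitted, so the traffic stays one way.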

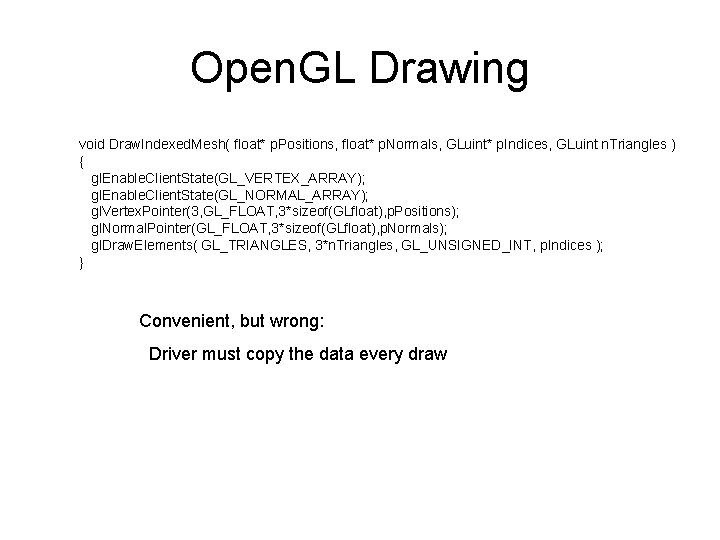

OpenGL Drawing

```c
void DrawIndexedMesh( float* pPositions, float* pNormals, GLuint* pIndices, GLuint nTriangles )
{
    glEnableClientState( GL_VERTEX_ARRAY );
    glEnableClientState( GL_NORMAL_ARRAY );
    glVertexPointer( 3, GL_FLOAT, 3*sizeof(GLfloat), pPositions );
    glNormalPointer( GL_FLOAT, 3*sizeof(GLfloat), pNormals );
    glDrawElements( GL_TRIANGLES, 3*nTriangles, GL_UNSIGNED_INT, pIndices );
}
```

Convenient, but wrong: the driver must copy the data on every draw.

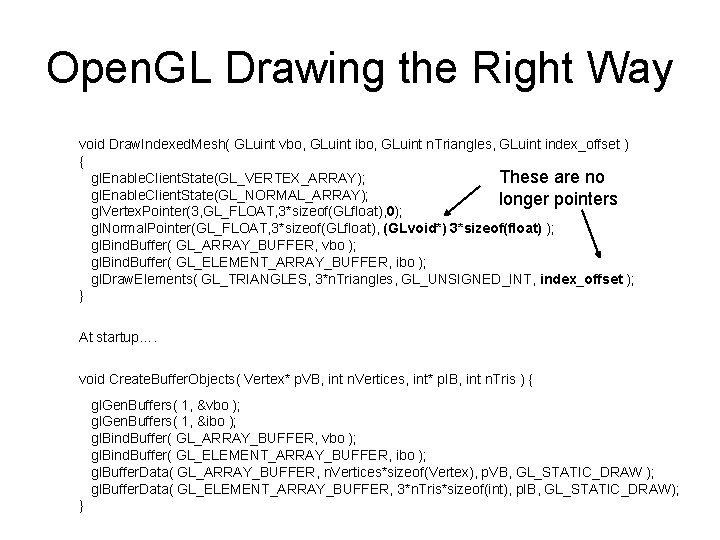

OpenGL Drawing the Right Way

```c
// Assumed vertex layout: positions and normals interleaved, 24 bytes per vertex
typedef struct { GLfloat position[3]; GLfloat normal[3]; } Vertex;

void DrawIndexedMesh( GLuint vbo, GLuint ibo, GLuint nTriangles, GLuint index_offset )
{
    // Bind first: gl*Pointer records whichever buffer is bound to GL_ARRAY_BUFFER
    glBindBuffer( GL_ARRAY_BUFFER, vbo );
    glBindBuffer( GL_ELEMENT_ARRAY_BUFFER, ibo );
    glEnableClientState( GL_VERTEX_ARRAY );
    glEnableClientState( GL_NORMAL_ARRAY );
    // These are no longer pointers: they are byte offsets into the bound VBO,
    // and the stride spans the whole interleaved vertex
    glVertexPointer( 3, GL_FLOAT, sizeof(Vertex), (const GLvoid*) 0 );
    glNormalPointer( GL_FLOAT, sizeof(Vertex), (const GLvoid*) (3*sizeof(GLfloat)) );
    // index_offset is likewise a byte offset into the bound IBO
    glDrawElements( GL_TRIANGLES, 3*nTriangles, GL_UNSIGNED_INT, (const GLvoid*) (size_t) index_offset );
}
```

At startup…

```c
GLuint vbo, ibo;

void CreateBufferObjects( Vertex* pVB, int nVertices, GLuint* pIB, int nTris )
{
    glGenBuffers( 1, &vbo );
    glGenBuffers( 1, &ibo );
    glBindBuffer( GL_ARRAY_BUFFER, vbo );
    glBindBuffer( GL_ELEMENT_ARRAY_BUFFER, ibo );
    glBufferData( GL_ARRAY_BUFFER, nVertices*sizeof(Vertex), pVB, GL_STATIC_DRAW );
    glBufferData( GL_ELEMENT_ARRAY_BUFFER, 3*nTris*sizeof(GLuint), pIB, GL_STATIC_DRAW );
}
```
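When positions and normals are interleaved in one vertex struct, the stride handed to the gl*Pointer calls is the size of the whole vertex, and each attribute's "pointer" is a byte offset into the buffer. offsetof from <stddef.h> computes these without hand arithmetic. A small sketch, assuming a position-then-normal layout and 32-bit indices; the helper names are made up:

```c
#include <assert.h>
#include <stddef.h>

/* Assumed interleaved layout: position, then normal. */
typedef struct {
    float position[3];
    float normal[3];
} Vertex;

/* Distance in bytes from one vertex to the next: the whole struct. */
static size_t vertex_stride(void)   { return sizeof(Vertex); }

/* Byte offsets of each attribute inside the bound vertex buffer. */
static size_t position_offset(void) { return offsetof(Vertex, position); }
static size_t normal_offset(void)   { return offsetof(Vertex, normal); }

/* Byte offset of the first index of triangle first_tri in a 32-bit index buffer. */
static size_t index_byte_offset(size_t first_tri)
{
    return 3 * first_tri * sizeof(unsigned int);
}
```

Deriving the numbers from the struct keeps the draw code correct if someone later adds a field to the vertex.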

The Rules • Rule #1: Don’t be silly • Rule #2: One way traffic • Rule #3: Do not move data

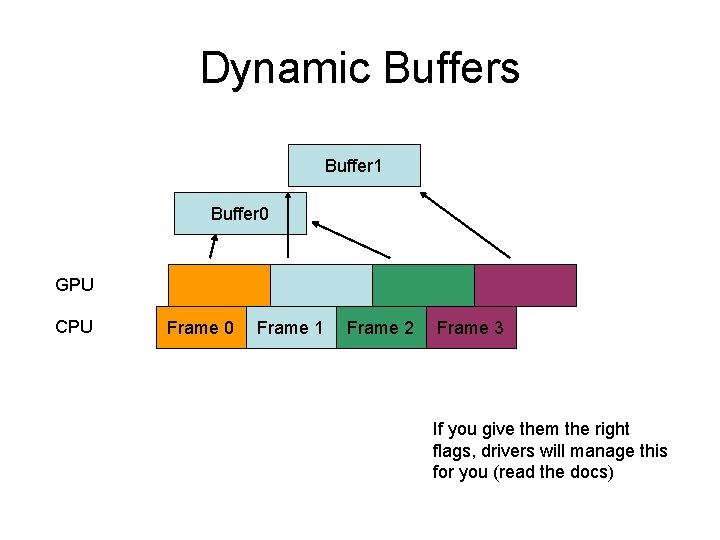

Dynamic Buffers • But… I NEED to move data – Do you really? • Sometimes you do: – Particles – CPU animation • You need to double-buffer to avoid stalls

Dynamic Buffers: over frames 0 to 3, the CPU fills one buffer while the GPU reads the other (buffer 0 and buffer 1), and they swap every frame. If you give them the right flags, drivers will manage this for you (read the docs).
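The double-buffering diagram boils down to picking, each frame, the buffer the GPU is not reading. The sketch below uses plain arrays as stand-ins for GPU buffers and hypothetical helper names; with real APIs you would normally let the driver do the renaming, for example by orphaning with glBufferData(..., NULL, GL_STREAM_DRAW) in OpenGL, or by calling Map with D3D11_MAP_WRITE_DISCARD on a D3D11_USAGE_DYNAMIC buffer in Direct3D 11.

```c
#include <assert.h>
#include <string.h>

#define NUM_BUFFERS   2   /* double buffering */
#define MAX_PARTICLES 4   /* hypothetical, tiny for illustration */

typedef struct { float x, y, z; } Particle;

/* Plain arrays standing in for two GPU vertex buffers. */
typedef struct {
    Particle data[NUM_BUFFERS][MAX_PARTICLES];
} DynamicBuffers;

/* The CPU writes one buffer per frame... */
static int cpu_write_index(unsigned long frame)
{
    return (int)(frame % NUM_BUFFERS);
}

/* ...while the GPU reads the one filled on the previous frame. */
static int gpu_read_index(unsigned long frame)
{
    return (int)((frame + NUM_BUFFERS - 1) % NUM_BUFFERS);
}

/* Copy this frame's particles into the buffer the GPU is not using. */
static void upload_particles(DynamicBuffers *b, unsigned long frame, const Particle *src, int n)
{
    assert(n >= 0 && n <= MAX_PARTICLES);
    memcpy(b->data[cpu_write_index(frame)], src, (size_t)n * sizeof(Particle));
}
```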

The Rules • Rule #1: Don’t be silly • Rule #2: One way traffic • Rule #3: Do not move data • Rule #4: When breaking rule #3, do so correctly – Use dynamic, write-only buffers with discard

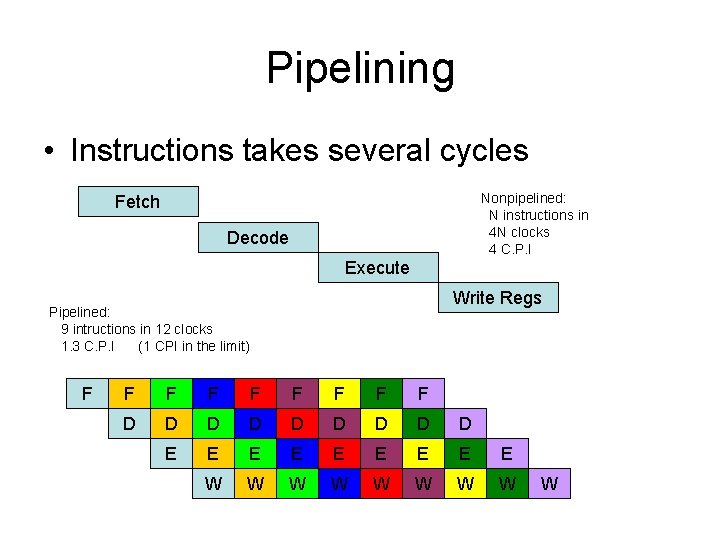

Pipelining • Instructions take several cycles, one per stage: Fetch, Decode, Execute, Write Regs • Nonpipelined: N instructions in 4N clocks (4 CPI) • Pipelined: 9 instructions in 12 clocks, 1.3 CPI (1 CPI in the limit)
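The clock counts on the slide follow from a simple model: without pipelining each instruction occupies all S stages alone, so N instructions cost N*S clocks; with pipelining the first instruction takes S clocks and every later one finishes one clock after its predecessor. A small C sketch of that arithmetic (the function names are illustrative):

```c
#include <assert.h>

/* Without pipelining, each instruction occupies every stage before the next starts. */
static unsigned long clocks_unpipelined(unsigned long n, unsigned long stages)
{
    return n * stages;
}

/* With pipelining, the first instruction fills the pipe and each later one
   finishes one clock after the previous one. */
static unsigned long clocks_pipelined(unsigned long n, unsigned long stages)
{
    assert(n > 0 && stages > 0);
    return stages + (n - 1);
}

/* Clocks per instruction. */
static double cpi(unsigned long clocks, unsigned long n)
{
    assert(n > 0);
    return (double)clocks / (double)n;
}
```

With N = 9 and S = 4 this gives 36 versus 12 clocks, the slide's 1.3 CPI; as N grows, S + N - 1 over N approaches 1.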

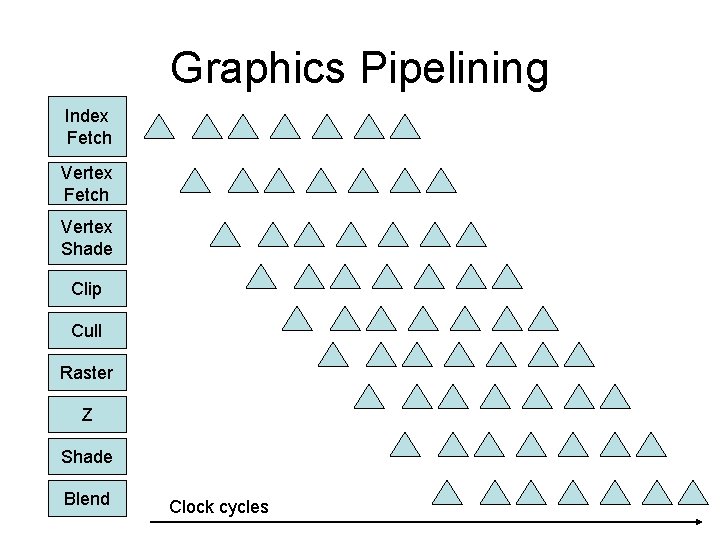

Graphics Pipeline

```c
void DrawTriangles( Vertex* vb, int* ib, int n_primitives )
{
    for( int i = 0; i < n_primitives; i++ )
    {
        // [Index Fetch] fetch the indices
        int indices[3] = { ib[3*i], ib[3*i+1], ib[3*i+2] };
        // fetch the vertices
        Vertex v[3] = { vb[indices[0]], vb[indices[1]], vb[indices[2]] };
        // [Vertex Shade] transform the vertices
        TransformedVertex tv[3] = { Process(v[0]), Process(v[1]), Process(v[2]) };
        // [Clip] clip (may create more triangles)
        for( each clipped triangle )
        {
            // [Cull] backface cull
            if( !Backfacing() )
            {
                // [Raster] rasterization setup
                for( each rasterized pixel )
                {
                    // interpolate vertex attributes
                    // [Z] z test
                    // [Shade] calculate color
                    // [Blend] blend result into frame buffer
                }
            }
        }
    }
}
```

Hardware stages: Index Fetch → Vertex Shade → Clip → Cull → Raster → Z → Shade → Blend

Graphics Pipelining: Index Fetch → Vertex Shade → Clip → Cull → Raster → Z → Shade → Blend, with the stages overlapped across clock cycles

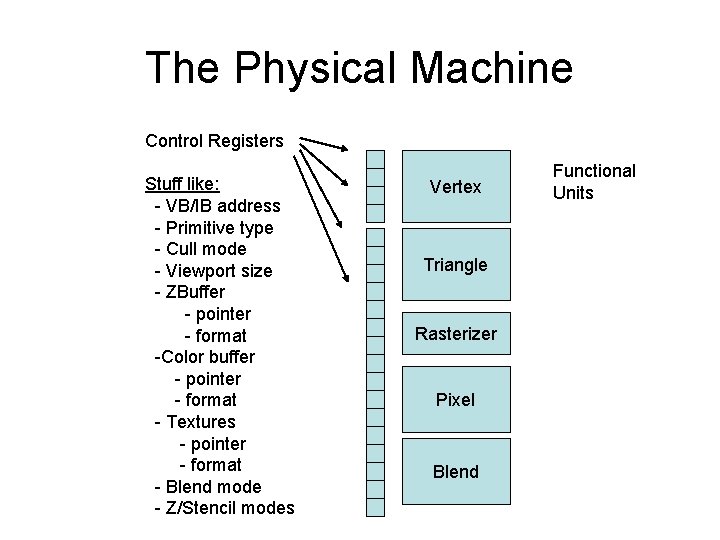

The Physical Machine: control registers drive the functional units (Vertex, Triangle, Rasterizer, Pixel, Blend). The registers hold stuff like: VB/IB address; primitive type; cull mode; viewport size; Z buffer (pointer, format); color buffer (pointer, format); textures (pointer, format); blend mode; Z/stencil modes.

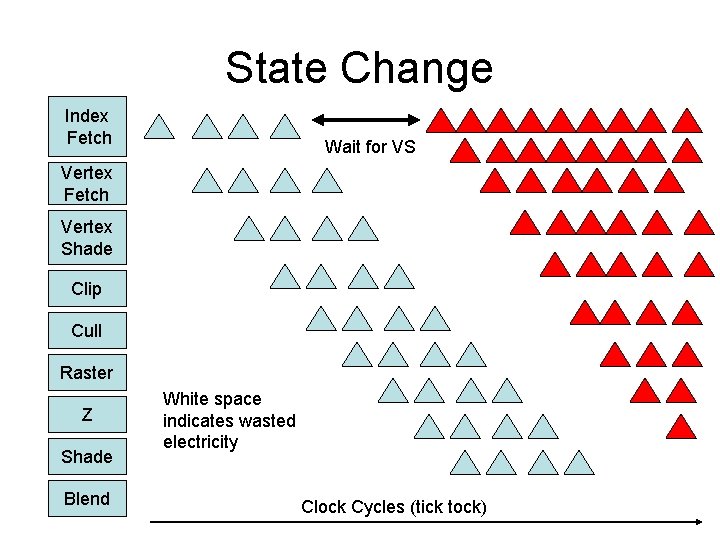

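State Change: after a state change, stages must wait for earlier work to drain (e.g., index fetch waits for the VS), and the bubble ripples down through vertex fetch, vertex shade, clip, cull, raster, Z, shade, and blend. White space in the diagram indicates wasted electricity; the horizontal axis is clock cycles (tick tock).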

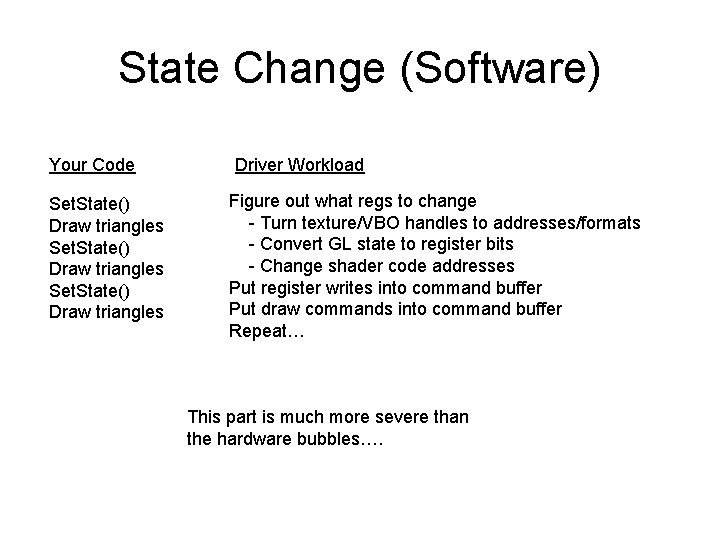

State Change (Software): your code calls SetState(), then draws triangles. Driver workload: figure out which registers to change (turn texture/VBO handles into addresses and formats, convert GL state to register bits, change shader code addresses); put register writes into the command buffer; put draw commands into the command buffer; repeat… This part is much more severe than the hardware bubbles.

The Rules • Rule #1: Don’t be silly • Rule #2: One way traffic • Rule #3: Do not move data • Rule #4: Move data correctly • Rule #5: Avoid changing state – Sort objects by state – Pack meshes together
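"Sort objects by state" in practice: give each draw a sort key built from its most expensive state first, sort, and the number of state changes between consecutive draws drops. A minimal C sketch using qsort; the DrawItem fields and the shader-before-texture priority are assumptions, not a rule from the slides:

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical draw record: the state it needs, plus which mesh to draw. */
typedef struct {
    unsigned shader;
    unsigned texture;
    unsigned mesh;
} DrawItem;

/* Order by the (assumed) most expensive state first: shader, then texture. */
static int compare_state(const void *a, const void *b)
{
    const DrawItem *x = a, *y = b;
    if (x->shader != y->shader)   return x->shader < y->shader ? -1 : 1;
    if (x->texture != y->texture) return x->texture < y->texture ? -1 : 1;
    return 0;
}

static void sort_by_state(DrawItem *items, int n)
{
    qsort(items, (size_t)n, sizeof(DrawItem), compare_state);
}

/* Count how often the shader or texture changes while walking the list in order. */
static int count_state_changes(const DrawItem *items, int n)
{
    int changes = 0;
    for (int i = 1; i < n; i++)
        if (items[i].shader != items[i - 1].shader ||
            items[i].texture != items[i - 1].texture)
            changes++;
    return changes;
}
```

For four draws alternating between two shaders, sorting cuts the changes from 3 to 1.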

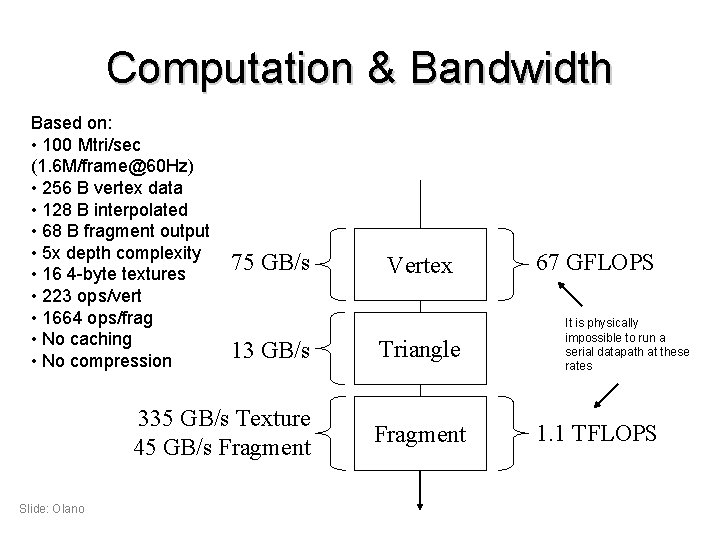

Computation & Bandwidth (slide: Olano) • Based on: 100 Mtri/sec (1.6 M/frame @ 60 Hz), 256 B vertex data, 128 B interpolated, 68 B fragment output, 5x depth complexity, 16 4-byte textures, 223 ops/vert, 1664 ops/frag, no caching, no compression • Vertex: 75 GB/s, 67 GFLOPS • Triangle: 13 GB/s • Texture: 335 GB/s • Fragment: 45 GB/s, 1.1 TFLOPS • It is physically impossible to run a serial datapath at these rates
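The vertex-side and triangle-side figures can be reproduced from the listed assumptions if each triangle processes three vertices of its own (no vertex reuse, matching "no caching") and the 128 B of interpolated data is counted per triangle. Both of those readings are assumptions of this sketch; the fragment-side figures also depend on a screen resolution the slide does not state, so they are not recomputed here.

```c
#include <assert.h>

/* Assumption: every triangle processes 3 vertices of its own (no reuse). */
#define VERTS_PER_TRI 3.0

static double vertex_bytes_per_sec(double tris_per_sec, double bytes_per_vertex)
{
    return tris_per_sec * VERTS_PER_TRI * bytes_per_vertex;
}

static double vertex_ops_per_sec(double tris_per_sec, double ops_per_vertex)
{
    return tris_per_sec * VERTS_PER_TRI * ops_per_vertex;
}

/* Assumption: interpolated data is counted once per triangle. */
static double triangle_bytes_per_sec(double tris_per_sec, double interp_bytes)
{
    return tris_per_sec * interp_bytes;
}

static double tris_per_frame(double tris_per_sec, double hz)
{
    return tris_per_sec / hz;
}
```

100 M x 3 x 256 B is about 76.8 GB/s, 100 M x 3 x 223 ops is about 67 GFLOPS, and 100 M x 128 B is 12.8 GB/s, in line with the slide's rounded 75 GB/s, 67 GFLOPS, and 13 GB/s.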

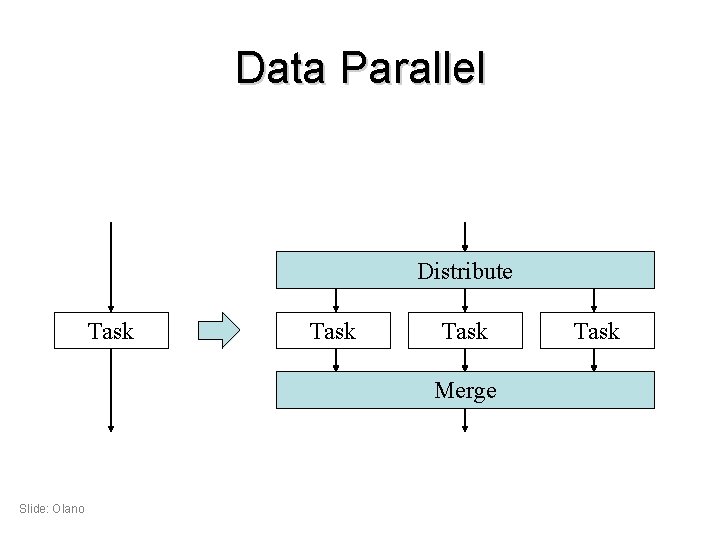

Data Parallel (slide: Olano): distribute → task, task, … (in parallel) → merge

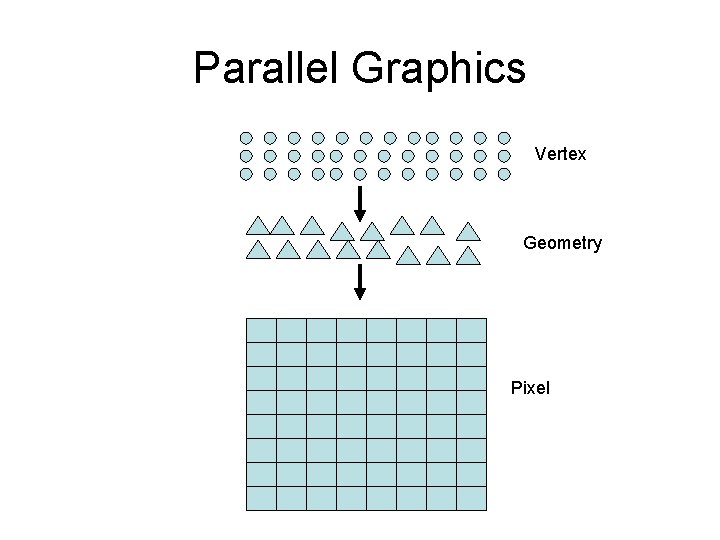

Parallel Graphics Vertex Geometry Pixel

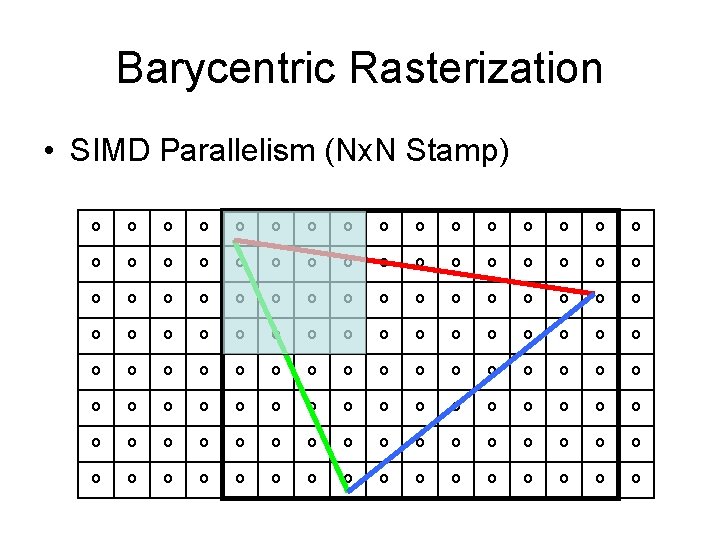

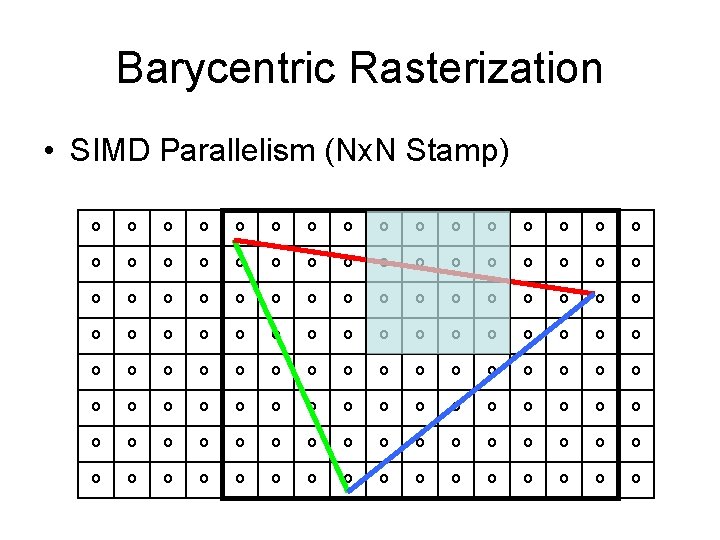

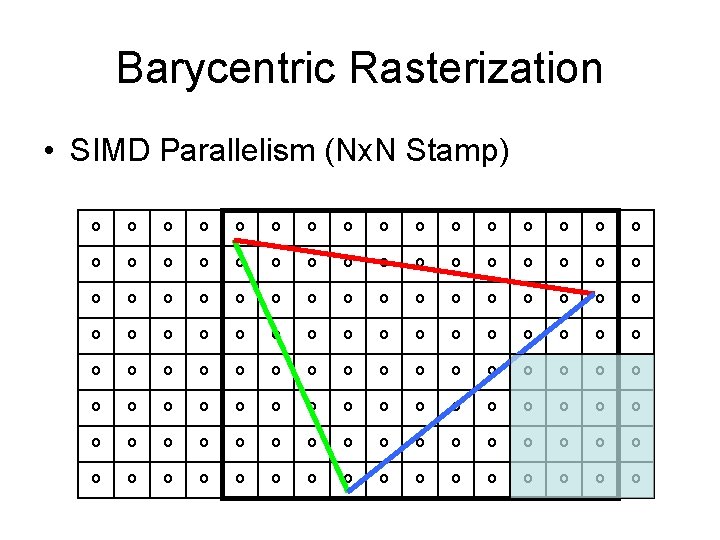

Barycentric Rasterization • SIMD Parallelism (NxN Stamp)


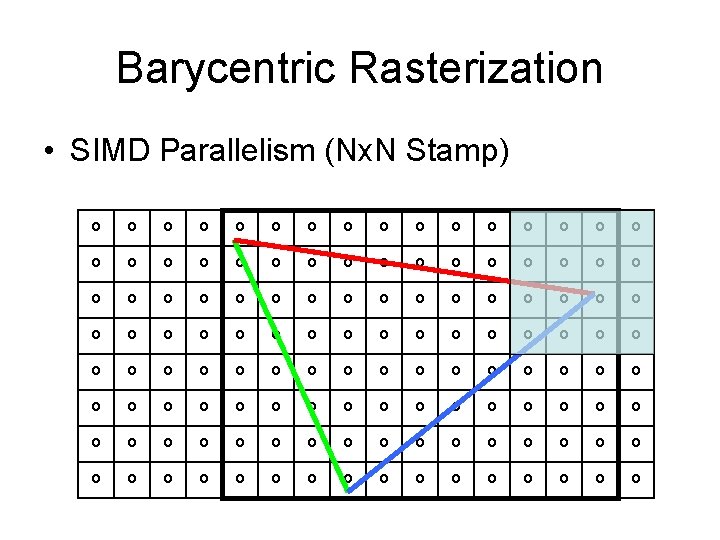


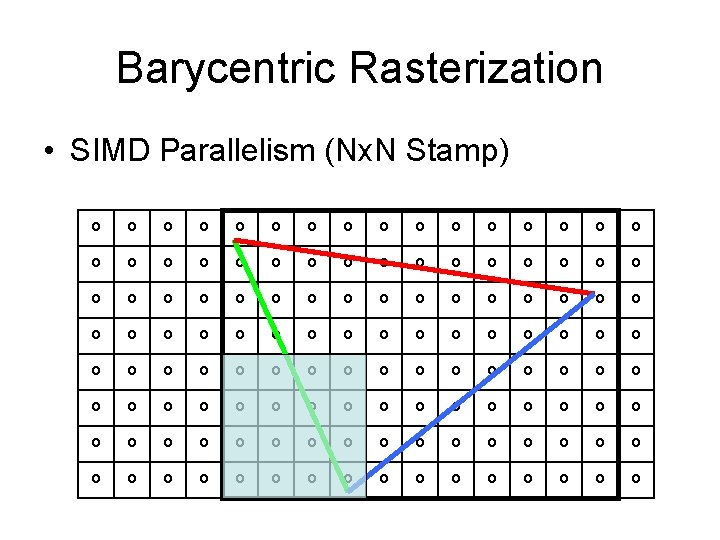

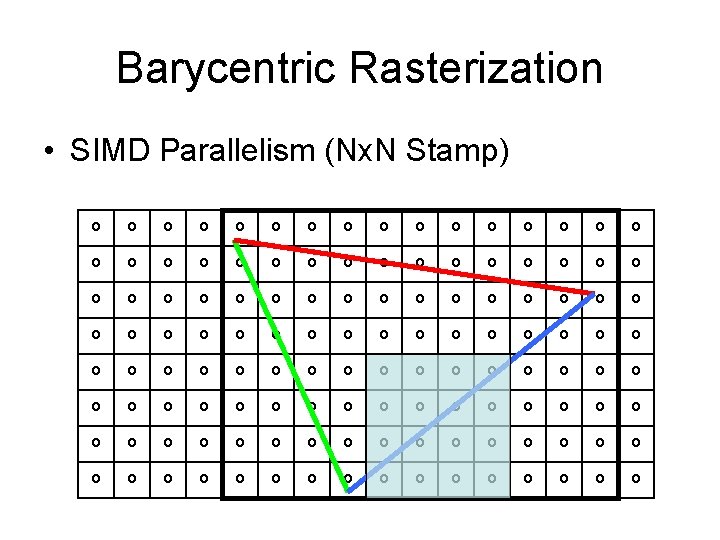


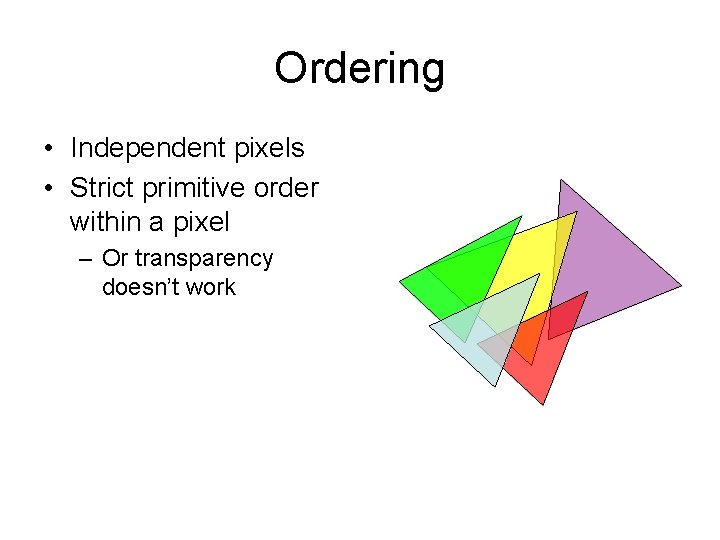
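Barycentric (edge-function) rasterization maps well onto SIMD because every pixel in an NxN stamp runs the same three tests. A C sketch of evaluating one stamp, assuming counter-clockwise triangles in a y-up coordinate system and pixel centers at +0.5; STAMP and the function names are hypothetical, and it uses a simple ">= 0" inside test where real hardware applies a tie-breaking fill rule so pixels on shared edges are not shaded twice.

```c
#include <assert.h>

#define STAMP 4   /* hypothetical N for an N x N stamp */

typedef struct { float x, y; } Point2;

/* Edge function: positive when p lies to the left of a->b. For a counter-clockwise
   triangle, the three values are unnormalized barycentric coordinates. */
static float edge(Point2 a, Point2 b, Point2 p)
{
    return (b.x - a.x) * (p.y - a.y) - (b.y - a.y) * (p.x - a.x);
}

/* Evaluate one N x N stamp whose top-left pixel is (x0, y0). Every pixel is
   tested independently, which is what maps onto SIMD lanes. Sets bit i of *mask
   for covered pixel i (row-major) and returns the number of covered pixels. */
static int rasterize_stamp(Point2 v0, Point2 v1, Point2 v2, int x0, int y0, unsigned *mask)
{
    int count = 0;
    *mask = 0;
    for (int i = 0; i < STAMP * STAMP; i++) {
        Point2 p = { x0 + (i % STAMP) + 0.5f, y0 + (i / STAMP) + 0.5f };  /* pixel center */
        float w0 = edge(v1, v2, p);
        float w1 = edge(v2, v0, p);
        float w2 = edge(v0, v1, p);
        if (w0 >= 0.0f && w1 >= 0.0f && w2 >= 0.0f) {
            *mask |= 1u << i;
            count++;
        }
    }
    return count;
}
```

For the triangle (0,0), (4,0), (0,4), the 4x4 stamp at the origin covers the 10 pixels whose centers lie on or inside the hypotenuse.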

Ordering • Independent pixels • Strict primitive order within a pixel – Or transparency doesn’t work

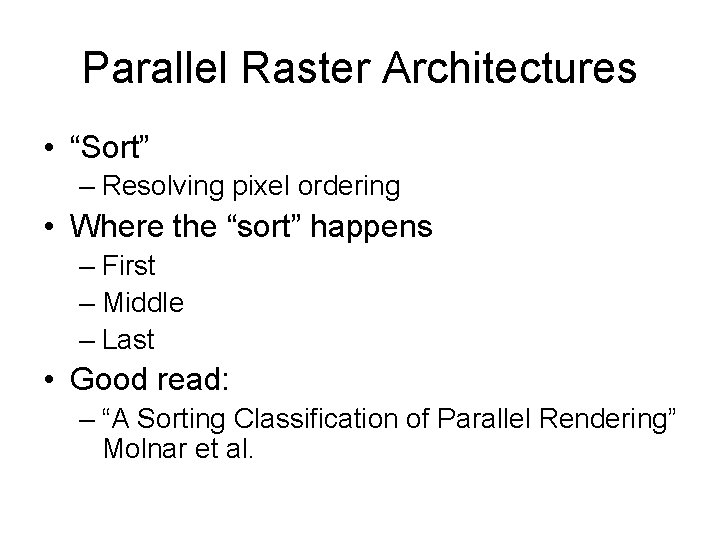

Parallel Raster Architectures • “Sort” – Resolving pixel ordering • Where the “sort” happens – First – Middle – Last • Good read: – “A Sorting Classification of Parallel Rendering” Molnar et al.

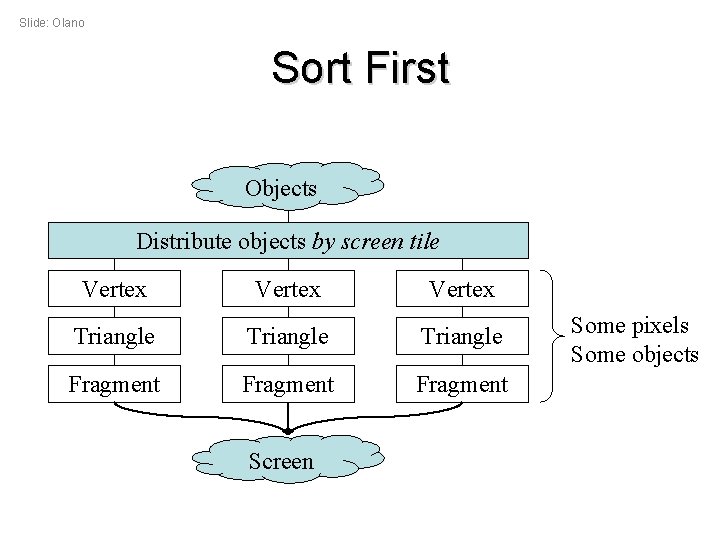

Sort First (slide: Olano): objects are distributed by screen tile before vertex processing; each Vertex → Triangle → Fragment pipeline handles some objects and owns some pixels of the screen

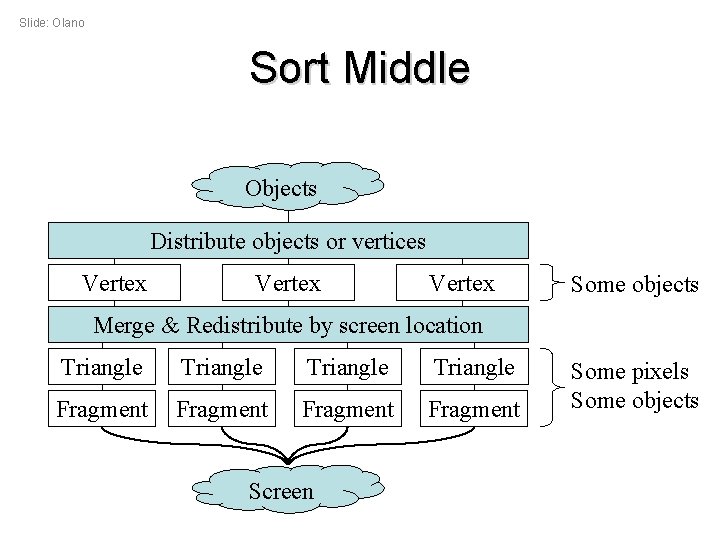

Sort Middle (slide: Olano): objects (or vertices) are distributed across vertex units, each handling some objects; their output is merged and redistributed by screen location to Triangle → Fragment units, each owning some pixels of the screen

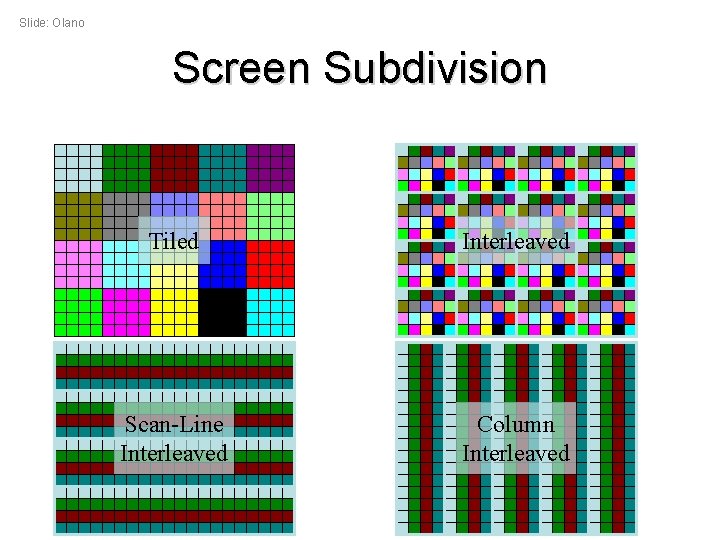

Slide: Olano Screen Subdivision Tiled Interleaved Scan-Line Interleaved Column Interleaved
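The screen-subdivision patterns amount to different ownership functions that map a pixel to one of several rasterizer or fragment units. A hypothetical C sketch of three of them, assuming non-negative pixel coordinates; the tile size, unit counts, and the particular tile-to-unit interleave are assumptions:

```c
#include <assert.h>

/* Scan-line interleaved: unit k owns every n-th row. */
static int owner_scanline(int x, int y, int n_units)
{
    (void)x;
    return y % n_units;
}

/* Column interleaved: unit k owns every n-th column. */
static int owner_column(int x, int y, int n_units)
{
    (void)y;
    return x % n_units;
}

/* Tiled interleaved: the screen is cut into tile x tile squares, and a
   grid x grid pattern of units repeats across the tiles (grid*grid units total). */
static int owner_tiled(int x, int y, int tile, int grid)
{
    int tx = x / tile, ty = y / tile;
    return (ty % grid) * grid + (tx % grid);
}
```

Interleaving keeps any one screen region from piling all its work onto a single unit, while tiles keep each unit's pixels contiguous for caching.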

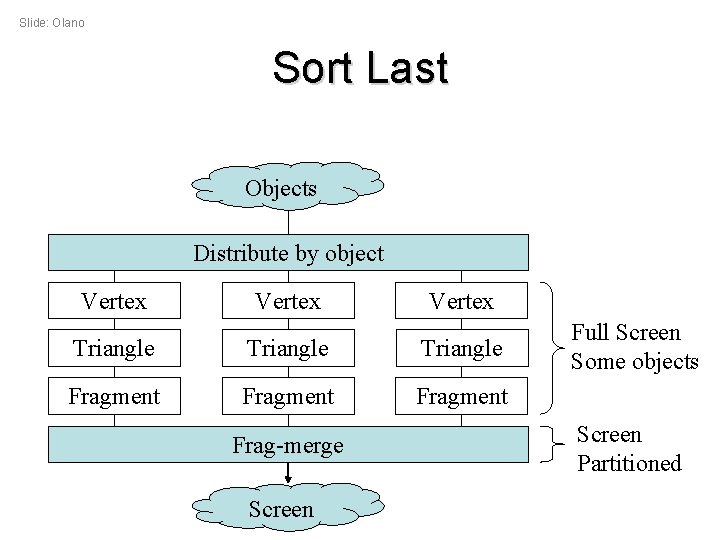

Sort Last (slide: Olano): work is distributed by object; each Vertex → Triangle → Fragment pipeline handles some objects over the full screen, and a fragment-merge stage, with the screen partitioned, combines the results

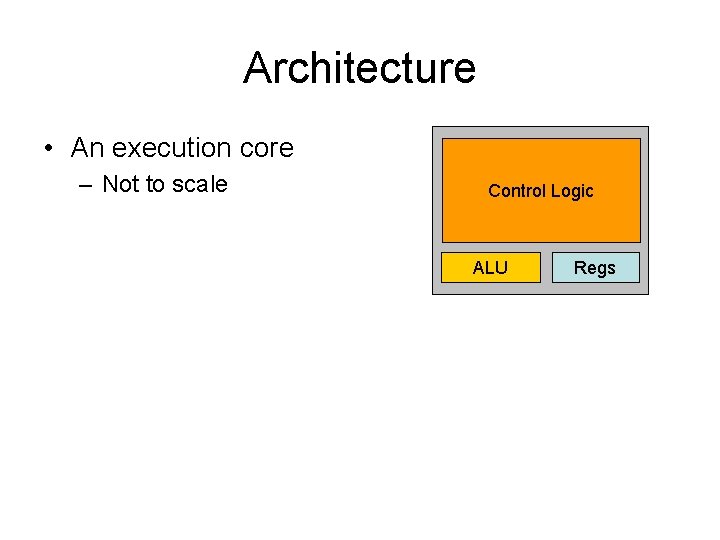

Architecture • An execution core: control logic, ALU, registers (not to scale)

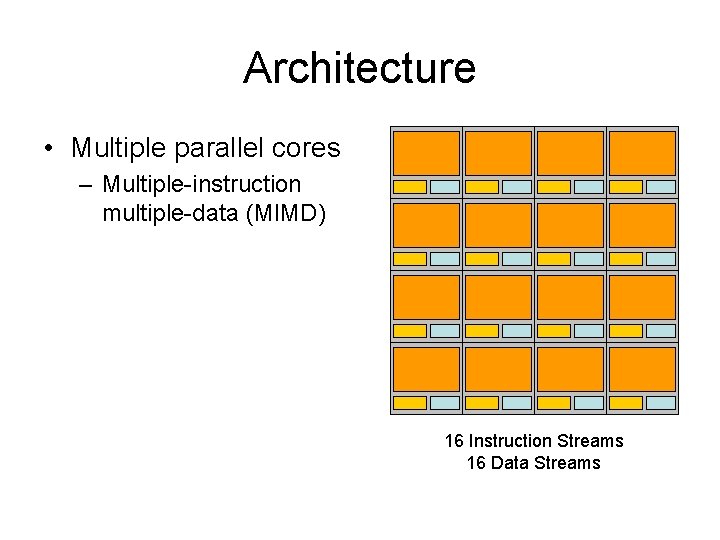

Architecture • Multiple parallel cores – Multiple-instruction multiple-data (MIMD): 16 instruction streams, 16 data streams

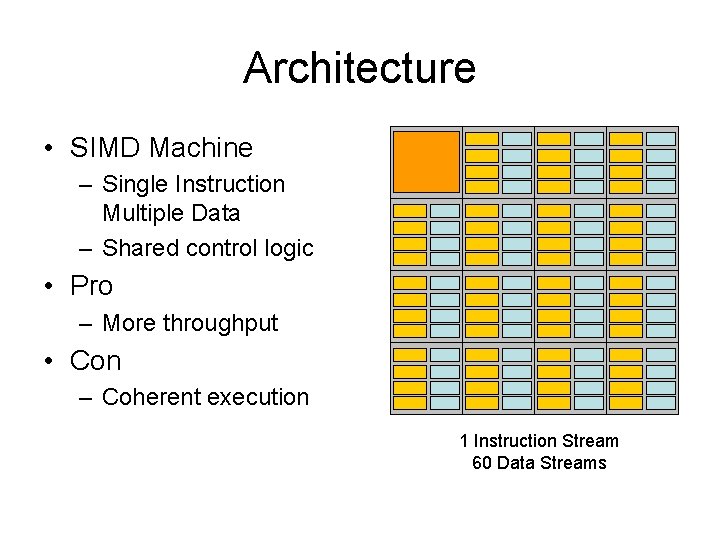

Architecture • SIMD Machine – Single Instruction Multiple Data – Shared control logic • Pro: more throughput • Con: requires coherent execution • 1 instruction stream, 60 data streams

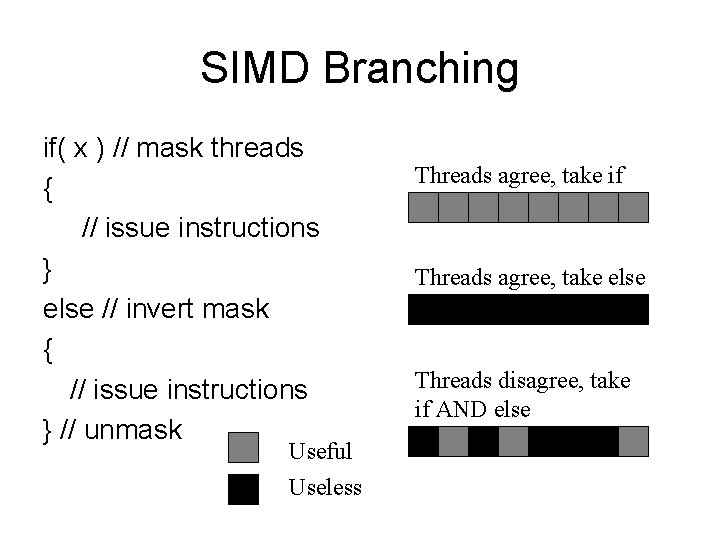

SIMD Branching

```c
if( x )        // mask threads
{
    // issue instructions
}
else           // invert mask
{
    // issue instructions
}
               // unmask
```

Threads agree: take the 'if' only. Threads agree: take the 'else' only. Threads disagree: take the 'if' AND the 'else', and the masked-off lanes in each branch do useless work.
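The mask mechanics can be emulated in scalar C to count what a divergent branch costs. This is a hypothetical model, not any vendor's hardware: LANES, the per-branch instruction counts, and simd_if_else are assumptions.

```c
#include <assert.h>
#include <stdbool.h>

#define LANES 8   /* hypothetical SIMD width */

/* Emulates one if/else on a SIMD machine. Each branch body is issued if ANY
   lane needs it; masked-off lanes idle. Returns the instruction slots issued,
   given that the bodies cost if_cost and else_cost instructions. */
static int simd_if_else(const bool cond[LANES], int if_cost, int else_cost,
                        int result[LANES], int if_value, int else_value)
{
    const unsigned all = (1u << LANES) - 1u;
    unsigned mask = 0;
    for (int i = 0; i < LANES; i++)
        if (cond[i]) mask |= 1u << i;            /* mask threads */

    int issued = 0;
    if (mask != 0) {                              /* some lane takes 'if' */
        for (int i = 0; i < LANES; i++)
            if (mask & (1u << i)) result[i] = if_value;
        issued += if_cost;
    }
    unsigned inv = ~mask & all;                   /* invert mask */
    if (inv != 0) {                               /* some lane takes 'else' */
        for (int i = 0; i < LANES; i++)
            if (inv & (1u << i)) result[i] = else_value;
        issued += else_cost;
    }
    return issued;                                /* unmask */
}
```

When all eight lanes agree, only one body is issued; alternating lanes pay for both.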

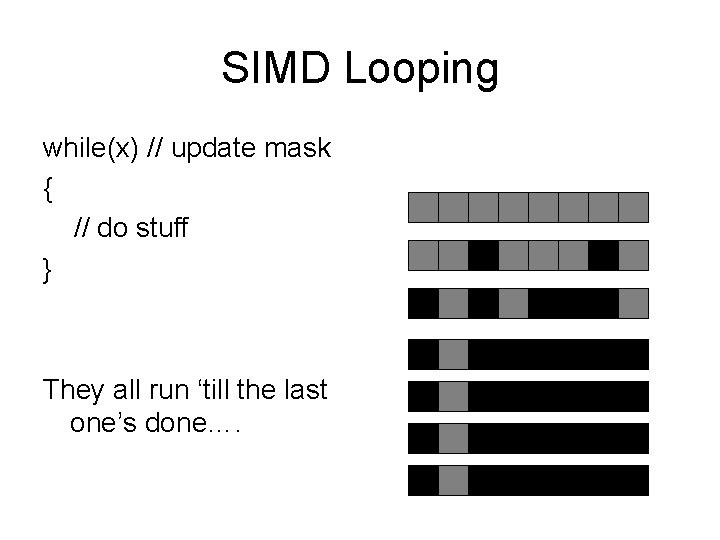

SIMD Looping

```c
while( x )     // update mask
{
    // do stuff
}
```

They all run ’til the last one’s done…
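The same idea for loops: the body keeps being issued while any lane's mask bit is set, so the issue count is the maximum trip count across lanes. A hypothetical C model (LANES and the function name are assumptions):

```c
#include <assert.h>

#define LANES 8   /* hypothetical SIMD width */

/* Each lane wants trip_count[i] iterations. The SIMD unit issues the body until
   the mask is empty, i.e. until the slowest lane is done. Returns the number of
   body issues and reports how many lane-iterations did useful work. */
static int simd_loop_issues(const int trip_count[LANES], int *useful_lane_iterations)
{
    int remaining[LANES];
    int issued = 0, useful = 0;
    for (int i = 0; i < LANES; i++) remaining[i] = trip_count[i];
    for (;;) {
        unsigned mask = 0;
        for (int i = 0; i < LANES; i++)
            if (remaining[i] > 0) mask |= 1u << i;   /* update mask */
        if (mask == 0) break;                         /* every lane finished */
        for (int i = 0; i < LANES; i++)
            if (mask & (1u << i)) { remaining[i]--; useful++; }
        issued++;                                     /* one issue for the whole SIMD */
    }
    *useful_lane_iterations = useful;
    return issued;
}
```

One lane needing 9 iterations while the other seven need 1 costs 9 issues, i.e. 72 lane slots for 16 useful lane-iterations.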

The Rules • Rule #1: Don’t be silly • Rule #2: One way traffic • Rule #3: Do not move data • Rule #4: Move data correctly • Rule #5: Avoid changing state • Rule #6: Think about coherence – Keep control flow simple – Flatten branches – Avoid ‘else’ branches

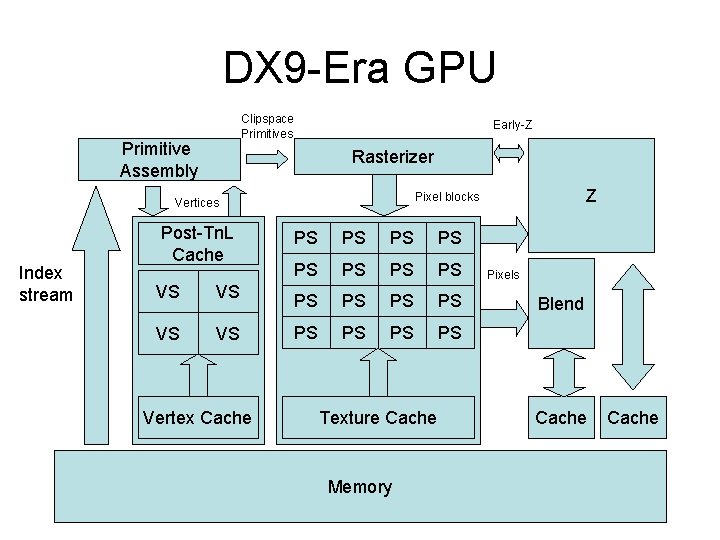

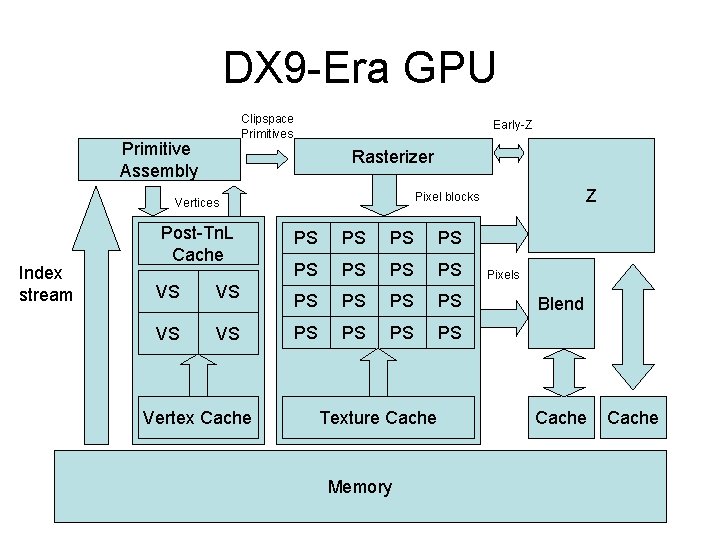

DX9-Era GPU (diagram): index stream, vertex cache, VS units, post-T&L cache, primitive assembly, clip-space primitives, rasterizer with early-Z, pixel blocks, PS units with a texture cache, Z and blend units with their cache, memory

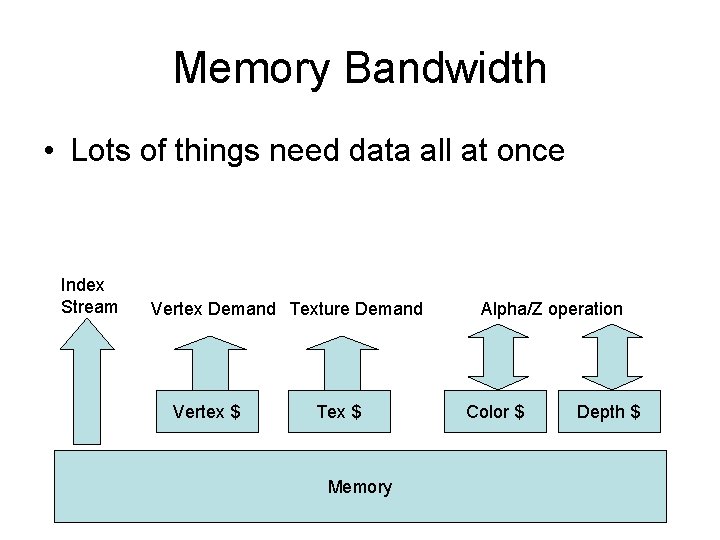

Memory Bandwidth • Lots of things need data all at once: the index stream and vertex demand (vertex $), texture demand (tex $), and alpha/Z operations (color $, depth $) all compete for memory

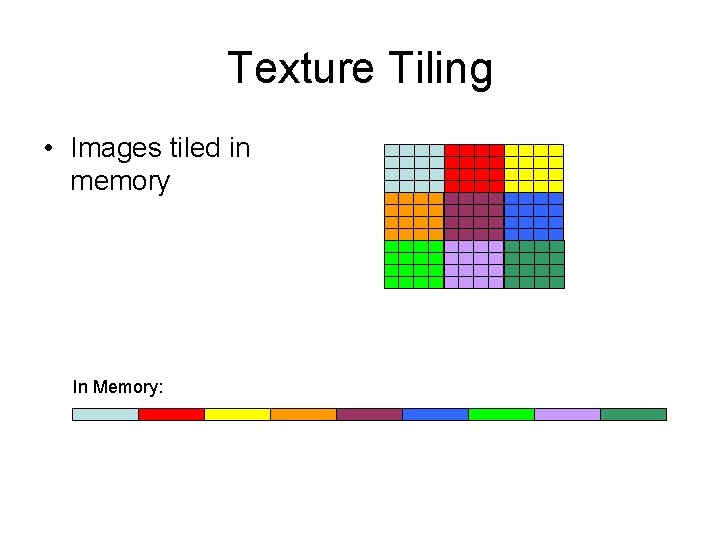

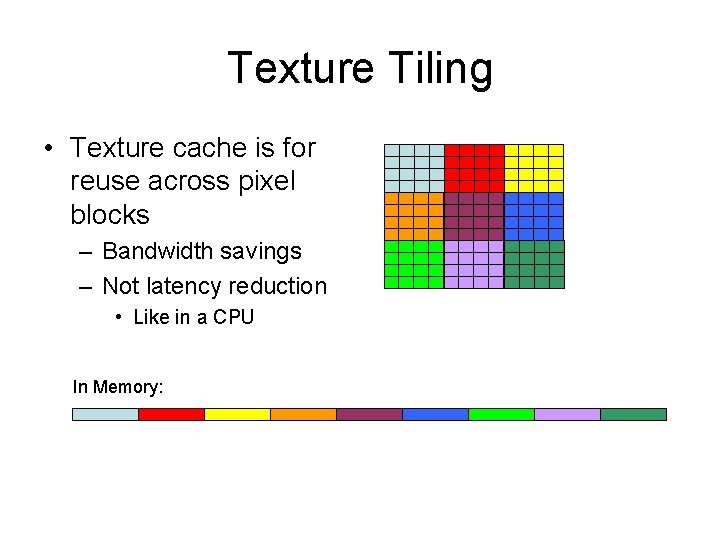

Texture Tiling • Images are tiled in memory (figure: in-memory layout)

Texture Tiling • The texture cache is for reuse across pixel blocks – Bandwidth savings – Not latency reduction, as in a CPU (figure: in-memory layout)

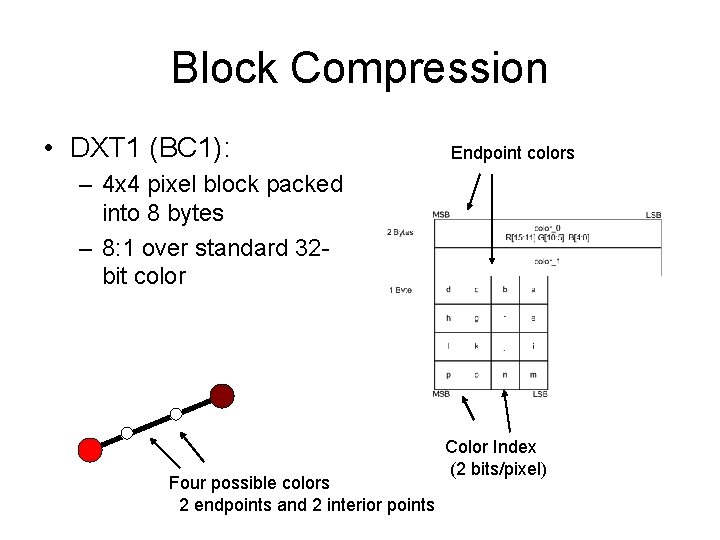

Block Compression • DXT1 (BC1): endpoint colors – 4x4 pixel block packed into 8 bytes – 8:1 over standard 32-bit color • Four possible colors: 2 endpoints and 2 interior points • Color index: 2 bits/pixel
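A BC1 block decoder fits in a few dozen lines of C. This sketch follows the commonly documented layout (two little-endian RGB565 endpoints, then 32 bits of 2-bit indices with texel 0 in the lowest bits) and includes the three-color mode used when the first endpoint is not greater than the second. The integer rounding of the interior colors is an approximation, since decoders differ slightly there, and the names are made up.

```c
#include <assert.h>
#include <stdint.h>

typedef struct { uint8_t r, g, b, a; } RGBA8;

/* Expand a 5:6:5 color to 8 bits per channel by bit replication. */
static RGBA8 expand565(uint16_t c)
{
    uint8_t r5 = (uint8_t)((c >> 11) & 0x1F);
    uint8_t g6 = (uint8_t)((c >> 5) & 0x3F);
    uint8_t b5 = (uint8_t)(c & 0x1F);
    RGBA8 out = { (uint8_t)((r5 << 3) | (r5 >> 2)),
                  (uint8_t)((g6 << 2) | (g6 >> 4)),
                  (uint8_t)((b5 << 3) | (b5 >> 2)), 255 };
    return out;
}

/* (2a + b) / 3: the interior point one third of the way from a to b. */
static uint8_t lerp_third(uint8_t a, uint8_t b) { return (uint8_t)((2 * a + b) / 3); }

/* Decode one 8-byte BC1 block into 16 RGBA8 texels (row-major 4x4). */
static void decode_bc1(const uint8_t block[8], RGBA8 out[16])
{
    uint16_t c0 = (uint16_t)(block[0] | (block[1] << 8));
    uint16_t c1 = (uint16_t)(block[2] | (block[3] << 8));
    uint32_t idx = (uint32_t)block[4] | ((uint32_t)block[5] << 8) |
                   ((uint32_t)block[6] << 16) | ((uint32_t)block[7] << 24);
    RGBA8 p[4];
    p[0] = expand565(c0);
    p[1] = expand565(c1);
    if (c0 > c1) {   /* four-color mode: 2 endpoints + 2 interior points */
        p[2] = (RGBA8){ lerp_third(p[0].r, p[1].r), lerp_third(p[0].g, p[1].g),
                        lerp_third(p[0].b, p[1].b), 255 };
        p[3] = (RGBA8){ lerp_third(p[1].r, p[0].r), lerp_third(p[1].g, p[0].g),
                        lerp_third(p[1].b, p[0].b), 255 };
    } else {         /* three-color mode: midpoint + transparent black */
        p[2] = (RGBA8){ (uint8_t)((p[0].r + p[1].r) / 2), (uint8_t)((p[0].g + p[1].g) / 2),
                        (uint8_t)((p[0].b + p[1].b) / 2), 255 };
        p[3] = (RGBA8){ 0, 0, 0, 0 };
    }
    for (int i = 0; i < 16; i++)
        out[i] = p[(idx >> (2 * i)) & 3];   /* 2 bits per texel */
}
```

With endpoints white (0xFFFF) and black (0x0000) and a first index byte of 0xE4, the first row decodes to 255, 0, 170, 85 in each channel.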

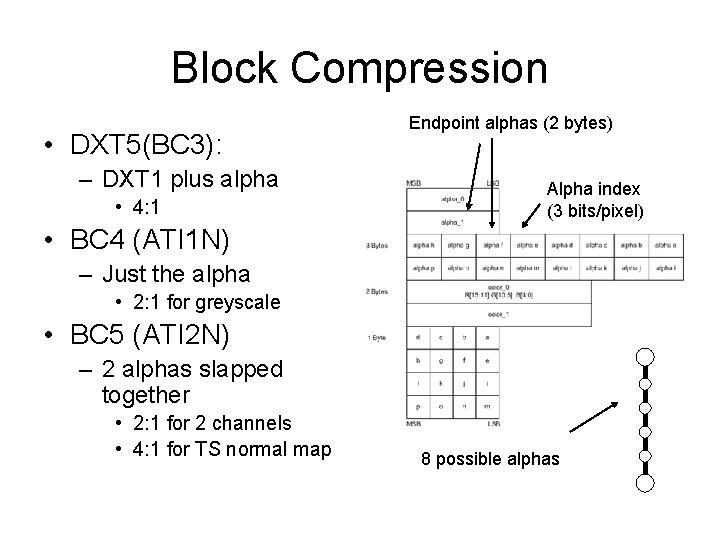

Block Compression • DXT5 (BC3): DXT1 plus alpha, 4:1 – Endpoint alphas (2 bytes), 8 possible alphas – Alpha index (3 bits/pixel) • BC4 (ATI1N): just the alpha block – 2:1 for greyscale • BC5 (ATI2N): 2 alpha blocks slapped together – 2:1 for 2 channels – 4:1 for a tangent-space (TS) normal map
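The "endpoint alphas + 3-bit index" scheme used by BC3's alpha half (and by each channel of BC4/BC5) can be sketched the same way. The palette rules follow the widely documented format (six interpolated values when a0 > a1, otherwise four plus explicit 0 and 255); integer division stands in for the exact rounding, which varies between decoders, and the function names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Build the 8-entry alpha palette from the two endpoint bytes. */
static void alpha_palette(uint8_t a0, uint8_t a1, uint8_t pal[8])
{
    pal[0] = a0;
    pal[1] = a1;
    if (a0 > a1) {                      /* 6 interpolated values */
        for (int k = 1; k <= 6; k++)
            pal[k + 1] = (uint8_t)(((7 - k) * a0 + k * a1) / 7);
    } else {                            /* 4 interpolated values + explicit 0 and 255 */
        for (int k = 1; k <= 4; k++)
            pal[k + 1] = (uint8_t)(((5 - k) * a0 + k * a1) / 5);
        pal[6] = 0;
        pal[7] = 255;
    }
}

/* Extract the 3-bit index of texel i (0..15) from the 6 index bytes (little-endian). */
static int alpha_index(const uint8_t bytes[6], int i)
{
    uint64_t bits = 0;
    for (int b = 5; b >= 0; b--)
        bits = (bits << 8) | bytes[b];
    return (int)((bits >> (3 * i)) & 7);
}
```

Two endpoint bytes plus 16 x 3 index bits make the 8-byte block: 4 bits/pixel, hence 2:1 against an 8-bit greyscale channel.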

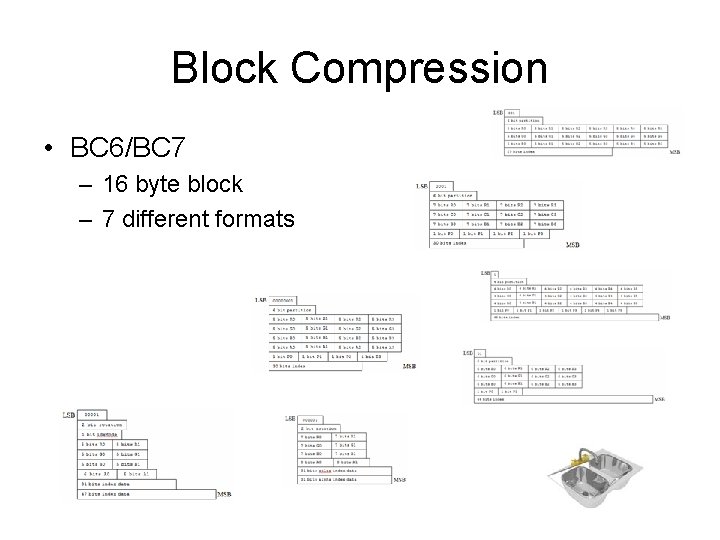

Block Compression • BC6H/BC7 – 16-byte blocks – Several block modes per format (BC7 has 8)

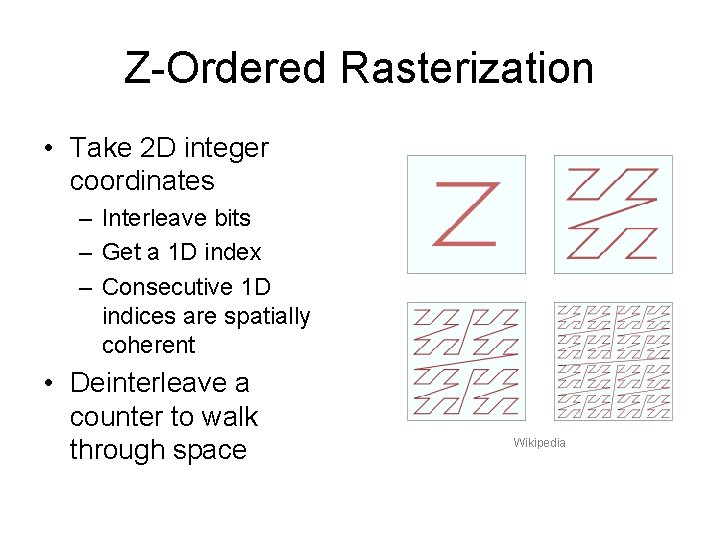

Z-Ordered Rasterization • Take 2D integer coordinates – Interleave bits – Get a 1D index – Consecutive 1D indices are spatially coherent • Deinterleave a counter to walk through space (figure: Wikipedia)
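Bit interleaving is usually done with the classic shift-and-mask "spread the bits" trick. A C sketch for 16-bit coordinates, with x in the even bits and y in the odd bits (that convention is an assumption; some code puts y first):

```c
#include <assert.h>
#include <stdint.h>

/* Spread the low 16 bits of v so a zero bit sits between each pair. */
static uint32_t part1by1(uint32_t v)
{
    v &= 0x0000FFFF;
    v = (v | (v << 8)) & 0x00FF00FF;
    v = (v | (v << 4)) & 0x0F0F0F0F;
    v = (v | (v << 2)) & 0x33333333;
    v = (v | (v << 1)) & 0x55555555;
    return v;
}

/* Inverse of part1by1: gather every other bit back into the low 16 bits. */
static uint32_t compact1by1(uint32_t v)
{
    v &= 0x55555555;
    v = (v | (v >> 1)) & 0x33333333;
    v = (v | (v >> 2)) & 0x0F0F0F0F;
    v = (v | (v >> 4)) & 0x00FF00FF;
    v = (v | (v >> 8)) & 0x0000FFFF;
    return v;
}

/* Interleave: x in the even bits, y in the odd bits. */
static uint32_t morton_encode(uint32_t x, uint32_t y)
{
    return part1by1(x) | (part1by1(y) << 1);
}

/* Deinterleave a 1D index back into 2D coordinates. */
static void morton_decode(uint32_t code, uint32_t *x, uint32_t *y)
{
    *x = compact1by1(code);
    *y = compact1by1(code >> 1);
}
```

Decoding a running counter 0, 1, 2, 3, 4, ... visits (0,0), (1,0), (0,1), (1,1), (2,0), ..., finishing each 2x2 block before moving on.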

The Rules • Rule #1: Don’t be silly • Rule #2: One way traffic • Rule #3: Do not move data • Rule #4: Move data correctly • Rule #5: Avoid changing state • Rule #6: Think about coherence • Rule #7: Save bandwidth – Small vertex formats: 16-bit float, 8-bit fixed – Small texture formats: compress; don’t use four 8-bit channels for a greyscale image! – See Rules 1 and 6
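What "small vertex formats" buys, in bytes: the C below compares an all-float vertex with a hypothetical slimmer one that stores the normal as 8-bit signed normalized values and the UV as 16-bit unsigned normalized values (a 16-bit float UV would be the same size but needs a float-to-half conversion not shown here). The layouts and helper names are illustrative.

```c
#include <assert.h>
#include <stdint.h>

/* Naive layout: everything in 32-bit floats. */
typedef struct {
    float position[3];
    float normal[3];
    float uv[2];
} FatVertex;                 /* 32 bytes */

/* Hypothetical compact layout. */
typedef struct {
    float    position[3];
    int8_t   normal[4];      /* xyz + padding, each in [-127, 127] */
    uint16_t uv[2];          /* [0, 1] mapped to [0, 65535]        */
} SlimVertex;                /* 20 bytes */

/* Map [-1, 1] to an 8-bit signed normalized value, rounding to nearest. */
static int8_t pack_snorm8(float v)
{
    if (v > 1.0f) v = 1.0f;
    if (v < -1.0f) v = -1.0f;
    float s = v * 127.0f;
    return (int8_t)(s >= 0.0f ? s + 0.5f : s - 0.5f);
}

/* Map [0, 1] to a 16-bit unsigned normalized value, rounding to nearest. */
static uint16_t pack_unorm16(float v)
{
    if (v > 1.0f) v = 1.0f;
    if (v < 0.0f) v = 0.0f;
    return (uint16_t)(v * 65535.0f + 0.5f);
}
```

32 versus 20 bytes per vertex is a 37.5% cut in vertex fetch bandwidth before any cache effects.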


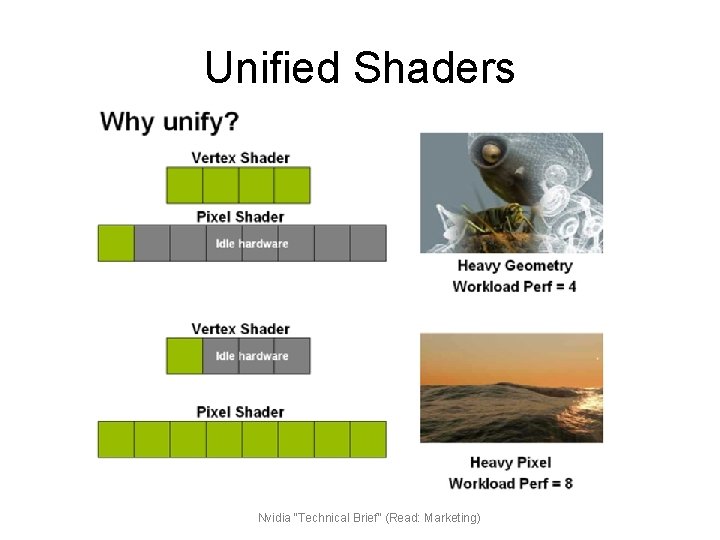

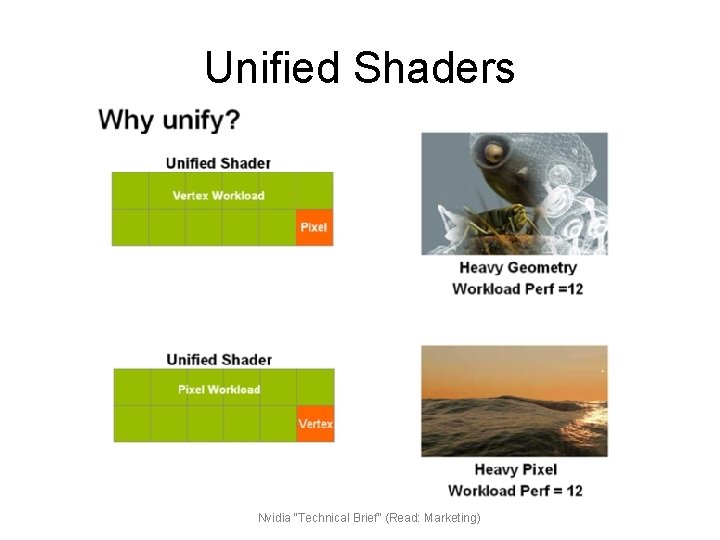

Unified Shaders Nvidia “Technical Brief” (Read: Marketing)


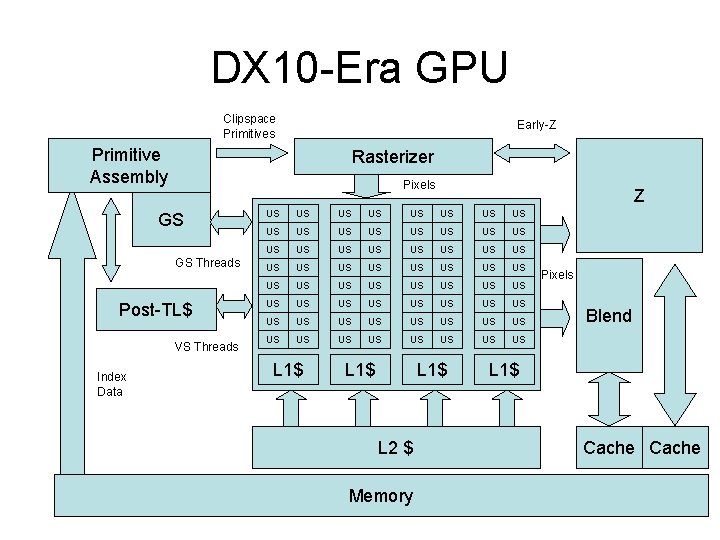

DX10-Era GPU (diagram): index data, post-T&L cache, VS threads, GS threads, primitive assembly, clip-space primitives, rasterizer with early-Z, pixels, an array of 16 unified shader (US) cores with L1 caches and a shared L2 cache, blend with its cache, memory

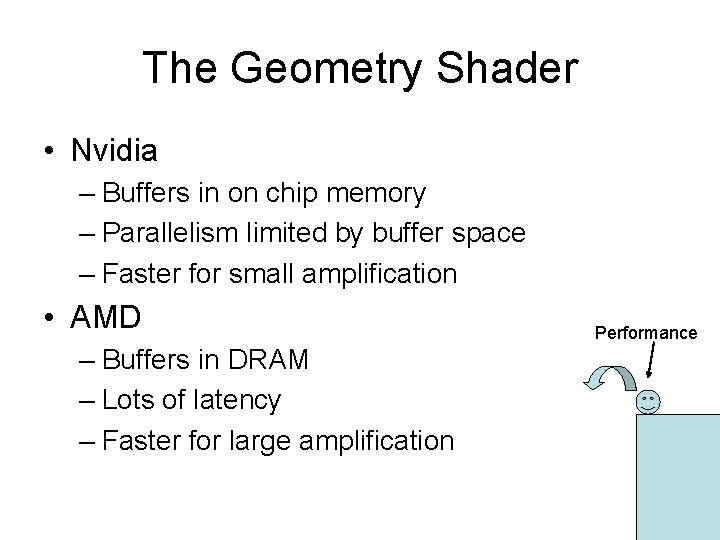

The Geometry Shader • One Primitive In – Point/Line/Triangle – Up to N primitives out • Point/Line/Triangle – Unpredictable data amplification – Order MUST be preserved

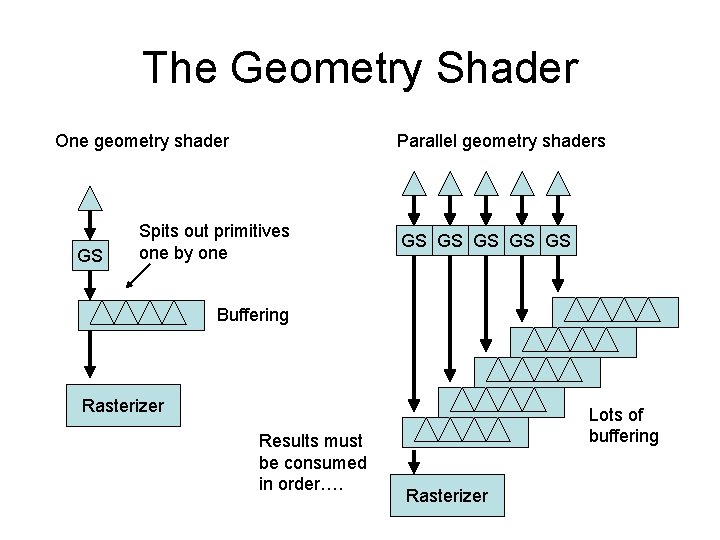

The Geometry Shader: a single geometry shader spits out primitives one by one to the rasterizer. With parallel geometry shaders, results must be consumed in order… so there is lots of buffering between the GS units and the rasterizer.

The Geometry Shader • Nvidia – Buffers in on-chip memory – Parallelism limited by buffer space – Faster for small amplification • AMD – Buffers in DRAM – Lots of latency – Faster for large amplification (chart: performance)

The Rules • Rule #1: Don’t be silly • Rule #2: One way traffic • Rule #3: Do not move data • Rule #4: Move data correctly • Rule #5: Avoid changing state • Rule #6: Think about coherence • Rule #7: Save bandwidth • Rule #8: Geometry shaders are slooooow

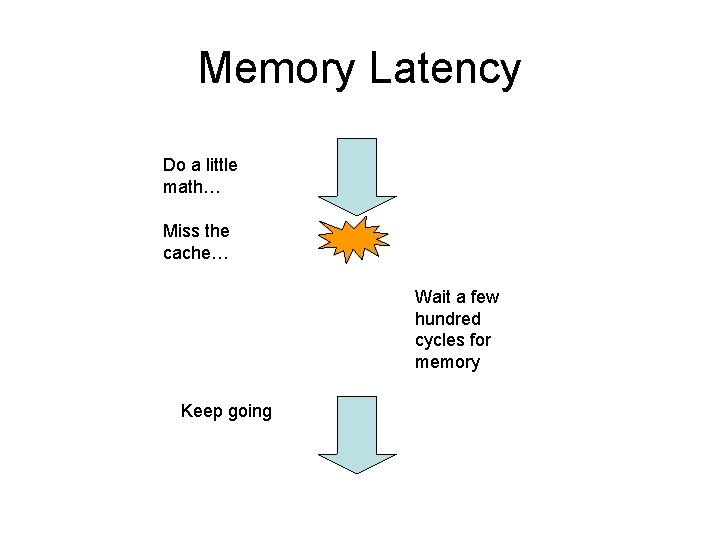

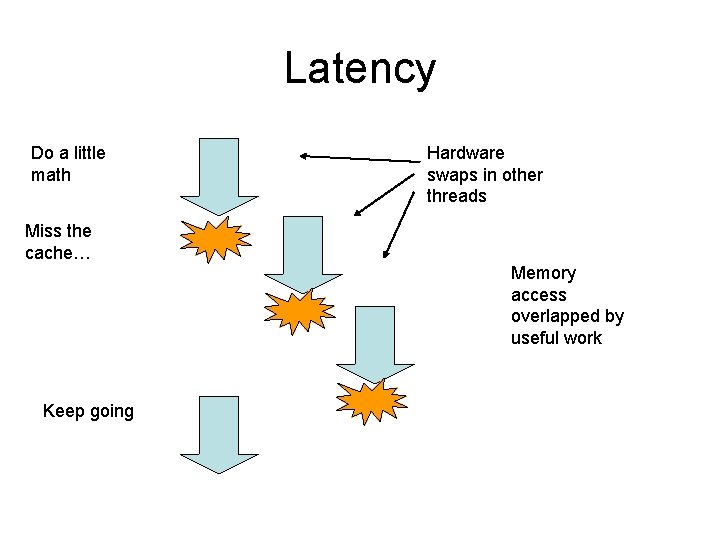

Memory Latency: do a little math… miss the cache… wait a few hundred cycles for memory… keep going

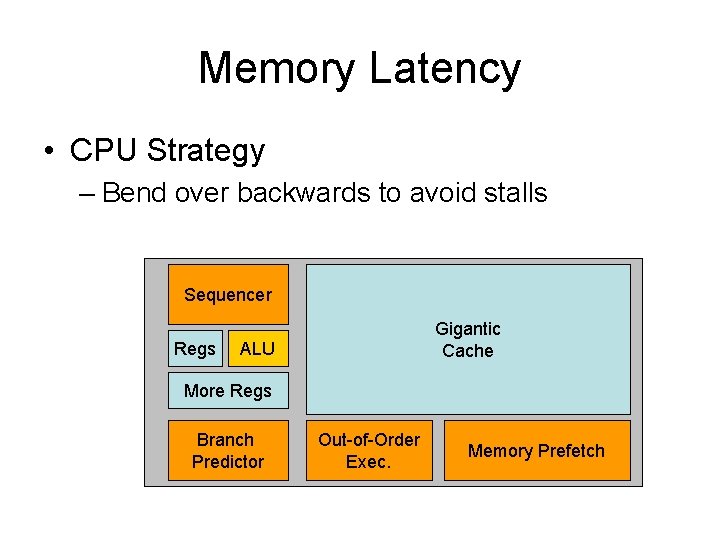

Memory Latency • CPU Strategy – Bend over backwards to avoid stalls (diagram: sequencer, registers, gigantic cache, ALU, more registers, branch predictor, out-of-order execution, memory prefetch)

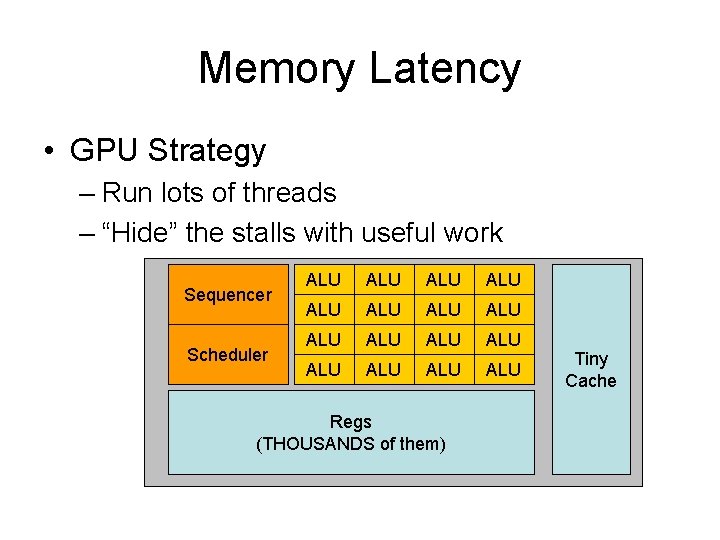

Memory Latency • GPU Strategy – Run lots of threads – “Hide” the stalls with useful work (diagram: sequencer, scheduler, many ALUs, registers (THOUSANDS of them), tiny cache)

Latency: do a little math, miss the cache… the hardware swaps in other threads, so the memory access is overlapped by useful work; keep going

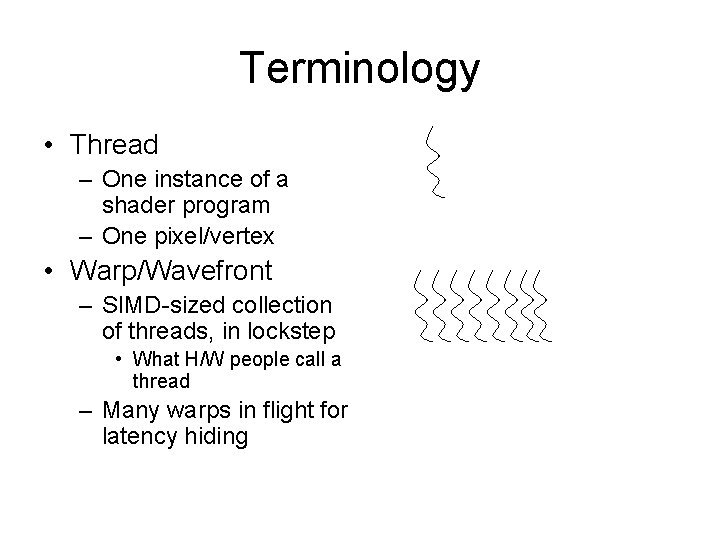

Terminology • Thread – One instance of a shader program – One pixel/vertex • Warp/Wavefront – SIMD-sized collection of threads, in lockstep • What H/W people call a thread – Many warps in flight for latency hiding

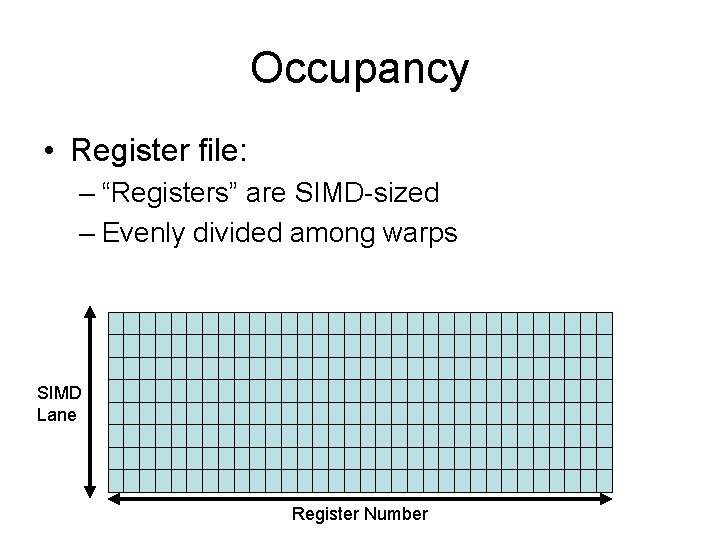

Occupancy • Register file: – “Registers” are SIMD-sized – Evenly divided among warps (figure: SIMD lane vs. register number)

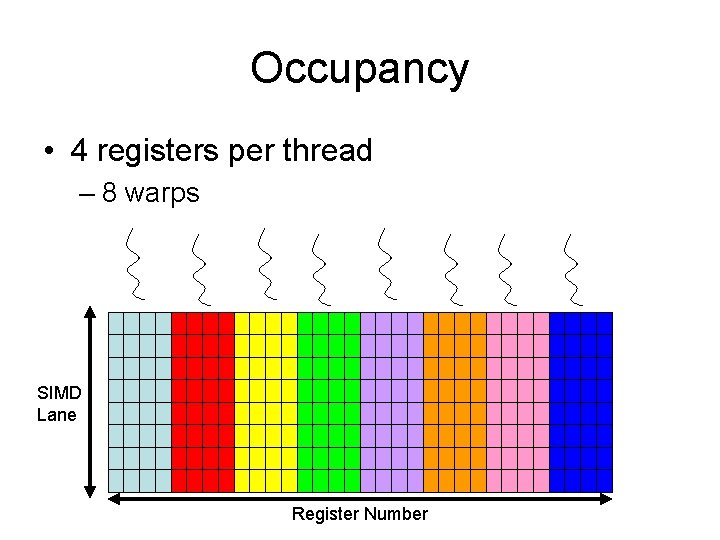

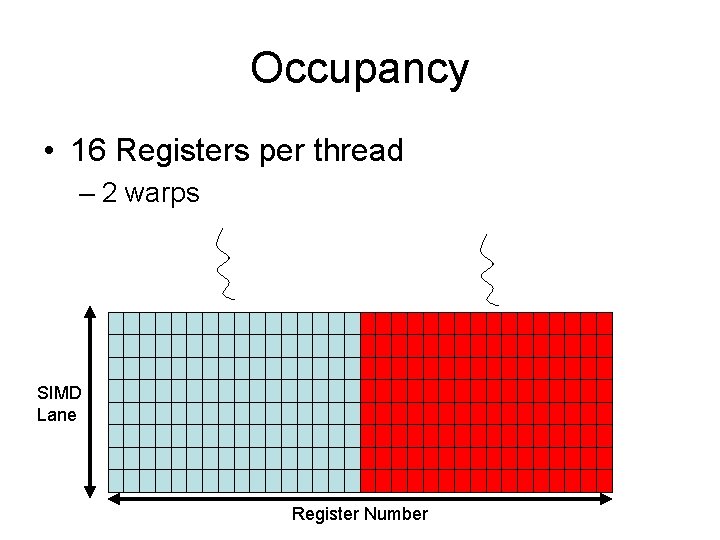

Occupancy • 4 registers per thread – 8 warps (figure: SIMD lane vs. register number)

Occupancy • 16 registers per thread – 2 warps (figure: SIMD lane vs. register number)
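The occupancy pictures follow from dividing the register file: if each thread needs R registers, every warp claims R SIMD-wide registers, and only file_size / R warps fit. The sketch also includes a back-of-the-envelope estimate of how many warps it takes to cover a memory stall. Both are simplified models (real GPUs have other limits, such as warp slots and shared memory), the function names are hypothetical, and the 32-register file in the usage example is chosen to match the slide's diagrams.

```c
#include <assert.h>

/* Warps that fit when the register file holds file_regs SIMD-wide registers and
   each thread needs regs_per_thread of them (each warp claims that many rows).
   max_warps is the scheduler's hard cap; other limits are ignored here. */
static int warps_resident(int file_regs, int regs_per_thread, int max_warps)
{
    assert(regs_per_thread > 0);
    int w = file_regs / regs_per_thread;
    return w < max_warps ? w : max_warps;
}

/* Rough warps needed so that while one warp waits `latency` cycles on memory,
   the others have enough ALU work (`work` cycles each between loads) to fill the gap. */
static int warps_to_hide(int latency, int work)
{
    assert(work > 0);
    return 1 + (latency + work - 1) / work;
}
```

With a 400-cycle miss and 40 cycles of math between loads, about 11 warps are needed; a shader using 16 registers per thread on the 32-register file gets only 2, so the stall shows.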

The Rules • Rule #1: Don’t be silly • Rule #2: One way traffic • Rule #3: Do not move data • Rule #4: Move data correctly • Rule #5: Avoid changing state • Rule #6: Think about coherence • Rule #7: Save bandwidth • Rule #8: Geometry shaders are slooooow • Rule #9: Keep the machine full

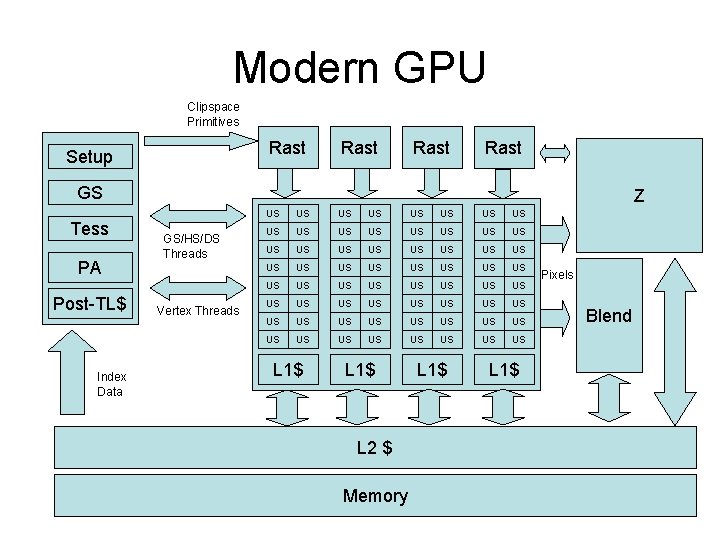

Modern GPU (diagram): index data, post-T&L cache, primitive assembly (PA), tessellator, GS, clip-space primitives, raster setup, rasterizer, Z, vertex threads and GS/HS/DS threads running on 16 unified shader (US) cores with L1 caches and a shared L2 cache, pixels, blend, memory

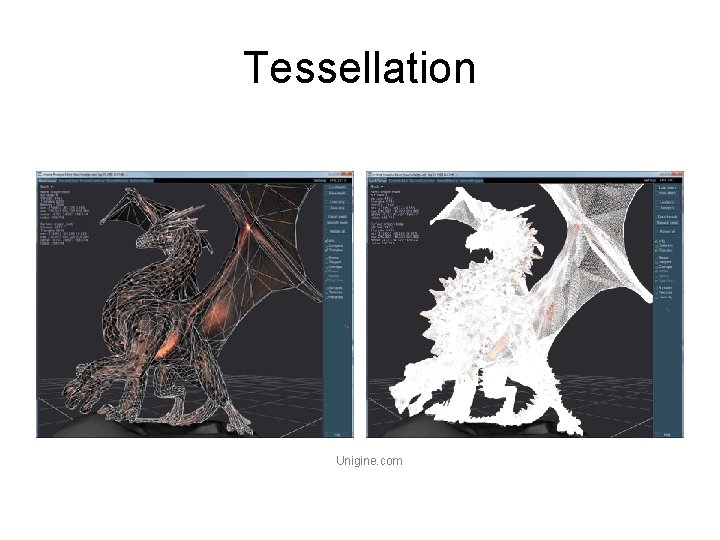

Tessellation (image: Unigine.com)

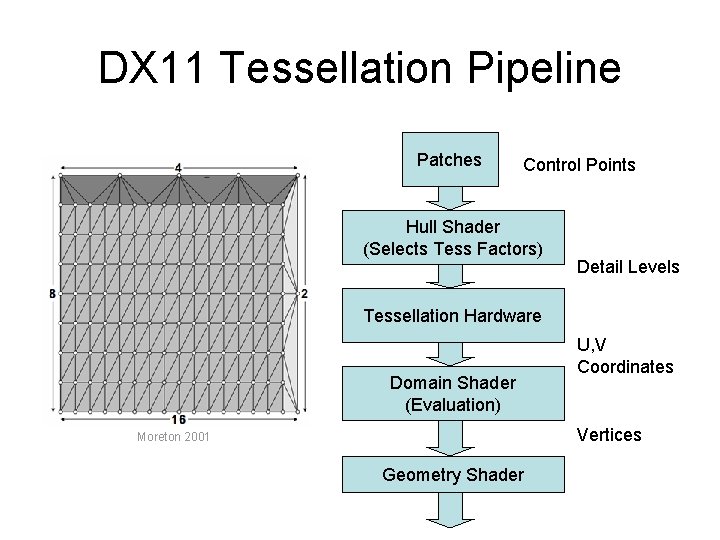

DX11 Tessellation Pipeline: patches (control points) → hull shader (selects tess factors) → detail levels → tessellation hardware → U,V coordinates → domain shader (evaluation) → vertices → geometry shader (figure: Moreton 2001)

Tessellation • Tessellation pitfalls: – Backface culling happens after tessellation, so there is LOTS of wasted DS work – The 2x2 quad utilization problem

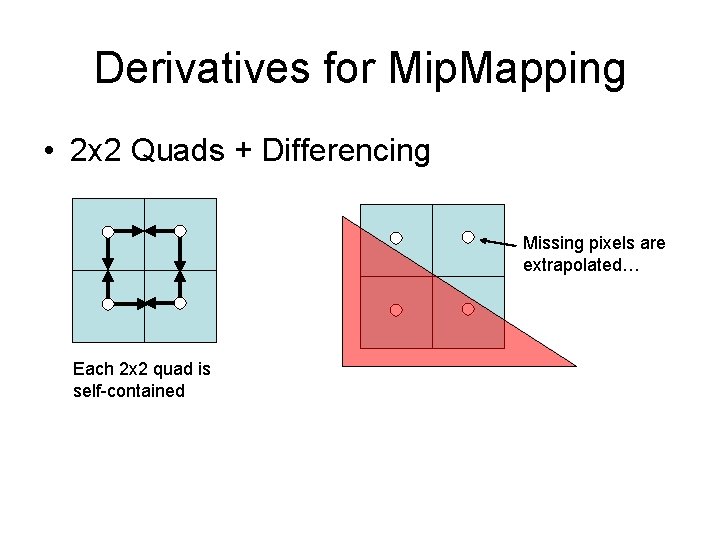

Derivatives for MipMapping • 2x2 quads + differencing • Missing pixels are extrapolated… • Each 2x2 quad is self-contained
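How the 2x2 quad turns into a mip level: differencing neighbors inside the quad gives the texture-coordinate change per pixel in x and y, and the level is roughly log2 of the larger footprint. A C sketch assuming texture coordinates already scaled to texels; it returns the integer (floor) level and works on squared lengths to avoid sqrt/log2, whereas real hardware computes a fractional level of detail for trilinear filtering. The names are hypothetical.

```c
#include <assert.h>

/* Texture coordinate in texels. */
typedef struct { float u, v; } TexCoord;

/* Finite differences inside one 2x2 quad laid out as q[y][x].
   This is why pixels are shaded in 2x2 groups: each needs its neighbors. */
static void quad_derivatives(TexCoord q[2][2], TexCoord *ddx, TexCoord *ddy)
{
    ddx->u = q[0][1].u - q[0][0].u;
    ddx->v = q[0][1].v - q[0][0].v;
    ddy->u = q[1][0].u - q[0][0].u;
    ddy->v = q[1][0].v - q[0][0].v;
}

/* floor(log2(max footprint length)), computed on squared lengths:
   each mip level halves the length, i.e. quarters the square. */
static int mip_level(TexCoord ddx, TexCoord ddy)
{
    float lx = ddx.u * ddx.u + ddx.v * ddx.v;
    float ly = ddy.u * ddy.u + ddy.v * ddy.v;
    float s = lx > ly ? lx : ly;
    int level = 0;
    while (s >= 4.0f) {
        s *= 0.25f;
        level++;
    }
    return level;
}
```

One texel per pixel selects level 0, two texels per pixel level 1, four texels per pixel level 2.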

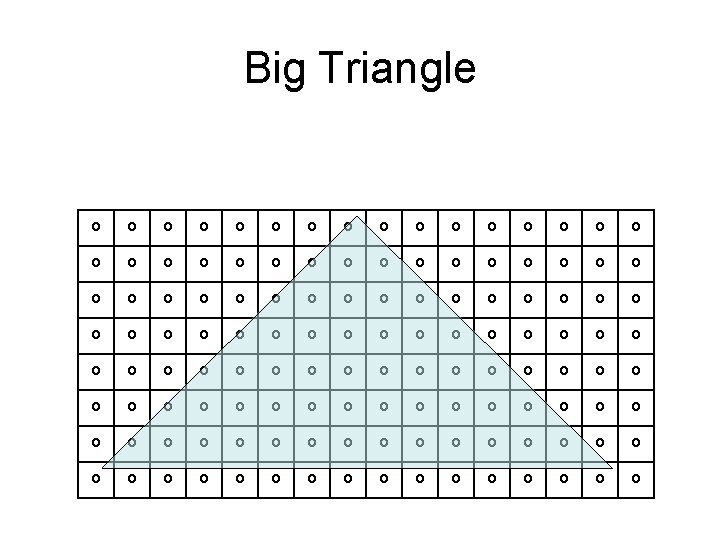

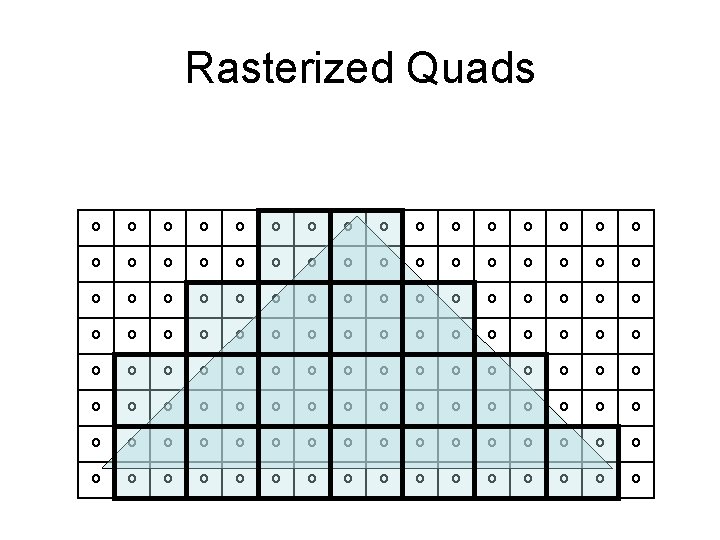

Big Triangle

Rasterized Quads

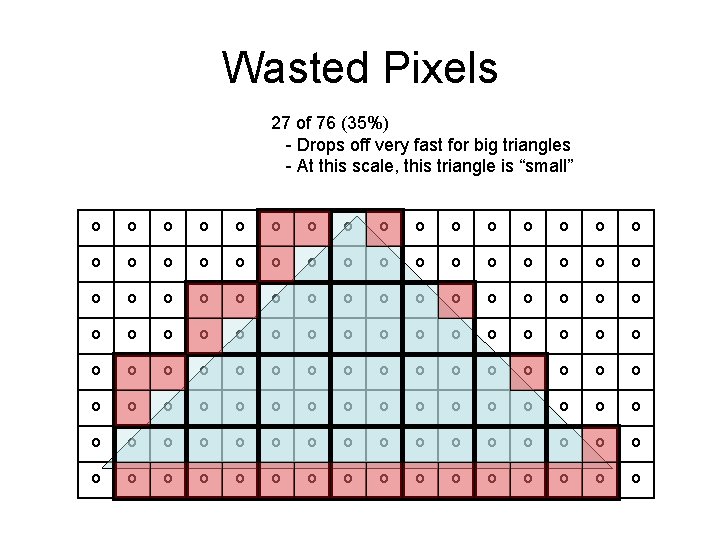

Wasted Pixels 27 of 76 (35%) - Drops off very fast for big triangles - At this scale, this triangle is “small”

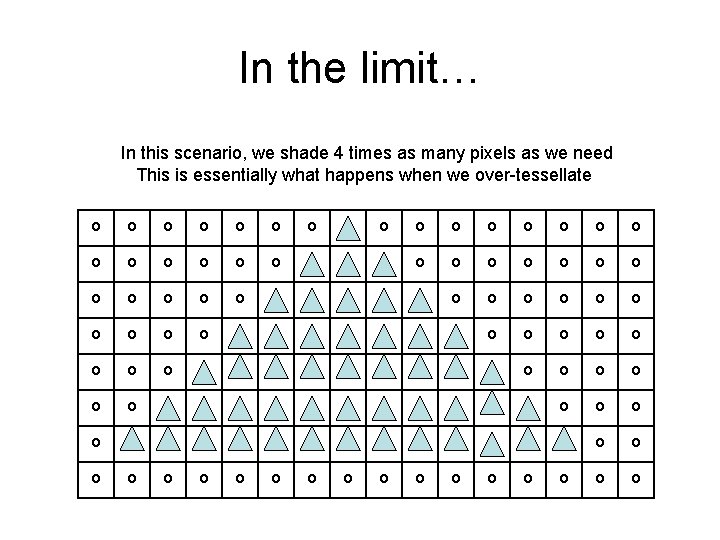

In the limit… In this scenario, we shade 4 times as many pixels as we need. This is essentially what happens when we over-tessellate.
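The wasted-pixel count can be reproduced by scanning a triangle in 2x2 quads: any quad that touches the triangle is shaded in full. A C sketch assuming counter-clockwise triangles, pixel centers at +0.5, and quads aligned to even coordinates; the names are hypothetical and the ">= 0" coverage test ignores fill-rule tie-breaking.

```c
#include <assert.h>

typedef struct { float x, y; } Pt;

/* Positive when (px, py) lies to the left of a->b. */
static float edge_fn(Pt a, Pt b, float px, float py)
{
    return (b.x - a.x) * (py - a.y) - (b.y - a.y) * (px - a.x);
}

/* Does the triangle cover the center of pixel (x, y)? */
static int covers(Pt v0, Pt v1, Pt v2, int x, int y)
{
    float px = x + 0.5f, py = y + 0.5f;
    return edge_fn(v1, v2, px, py) >= 0.0f &&
           edge_fn(v2, v0, px, py) >= 0.0f &&
           edge_fn(v0, v1, px, py) >= 0.0f;
}

/* Scan a width x height grid in 2x2 quads. A quad with any covered pixel is
   shaded in full, so shaded = 4 * touched quads and wasted = shaded - covered. */
static void quad_stats(Pt v0, Pt v1, Pt v2, int width, int height, int *covered, int *shaded)
{
    *covered = 0;
    *shaded = 0;
    for (int qy = 0; qy < height; qy += 2)
        for (int qx = 0; qx < width; qx += 2) {
            int in_quad = 0;
            for (int i = 0; i < 4; i++)
                in_quad += covers(v0, v1, v2, qx + (i & 1), qy + (i >> 1));
            if (in_quad > 0) {
                *covered += in_quad;
                *shaded += 4;
            }
        }
}
```

A sliver covering one pixel shades a whole quad (4 shaded for 1 covered, the "in the limit" case), while the triangle (0,0), (8,0), (0,8) covers 36 pixels and shades 40.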


The Rules • Rule #1: Don’t be silly • Rule #2: One way traffic • Rule #3: Do not move data • Rule #4: Move data correctly • Rule #5: Avoid changing state • Rule #6: Think about coherence • Rule #7: Save bandwidth • Rule #8: Geometry shaders are slooooow • Rule #9: Keep the machine full • Rule #10: Small triangles are slow • Rule #11: The rules are subject to change at any time and without notice…

![NVIDIA GeForce 6](http://slidetodoc.com/presentation_image_h2/b4cd0286a06d7af12fe0c22bee4a6b15/image-83.jpg)

NVIDIA GeForce 6 [Kilgariff and Fernando, GPU Gems 2]

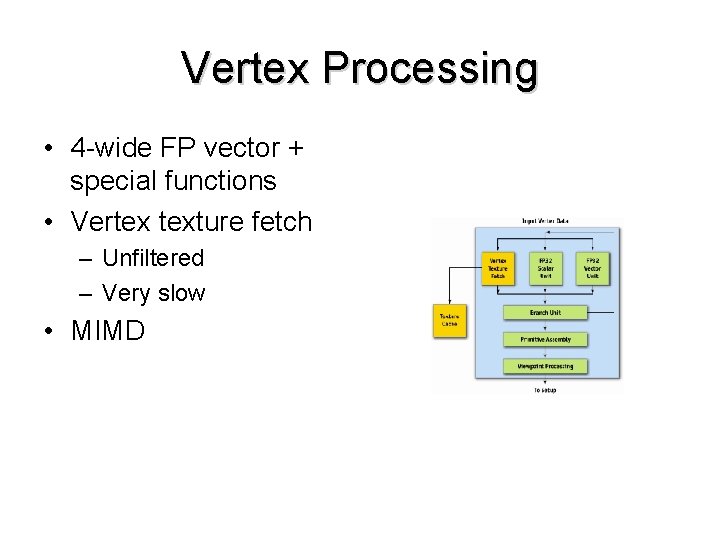

Vertex Processing • 4-wide FP vector + special functions • Vertex texture fetch – Unfiltered – Very slow • MIMD

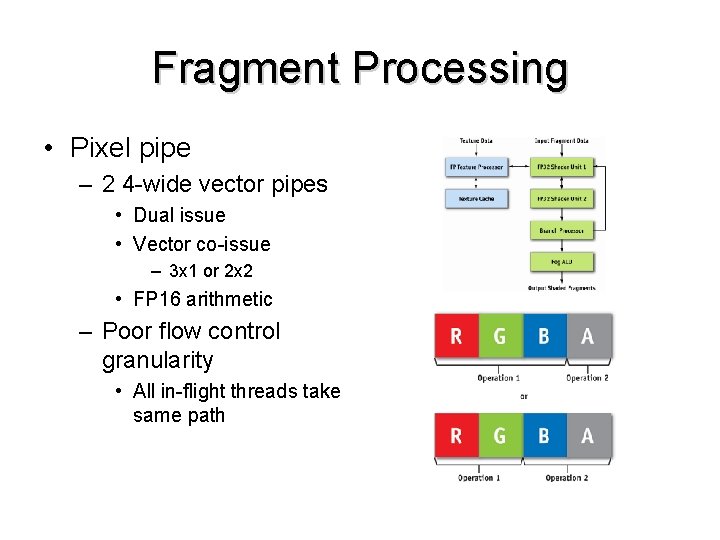

Fragment Processing • Pixel pipe – Two 4-wide vector pipes • Dual issue • Vector co-issue – 3+1 or 2+2 split • FP16 arithmetic – Poor flow-control granularity • All in-flight threads take the same path

![AMD/ATI R600](http://slidetodoc.com/presentation_image_h2/b4cd0286a06d7af12fe0c22bee4a6b15/image-86.jpg)

AMD/ATI R600 [Tom’s Hardware]

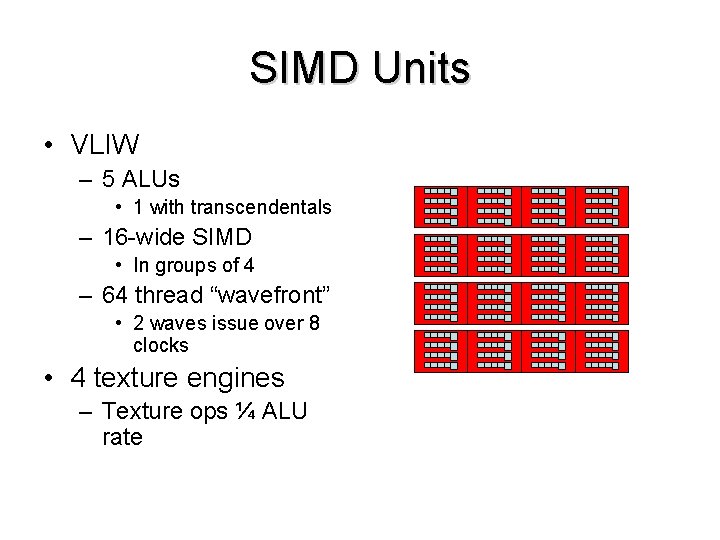

SIMD Units • VLIW – 5 ALUs • 1 with transcendentals – 16-wide SIMD • In groups of 4 – 64-thread “wavefront” • 2 waves issue over 8 clocks • 4 texture engines – Texture ops at ¼ ALU rate

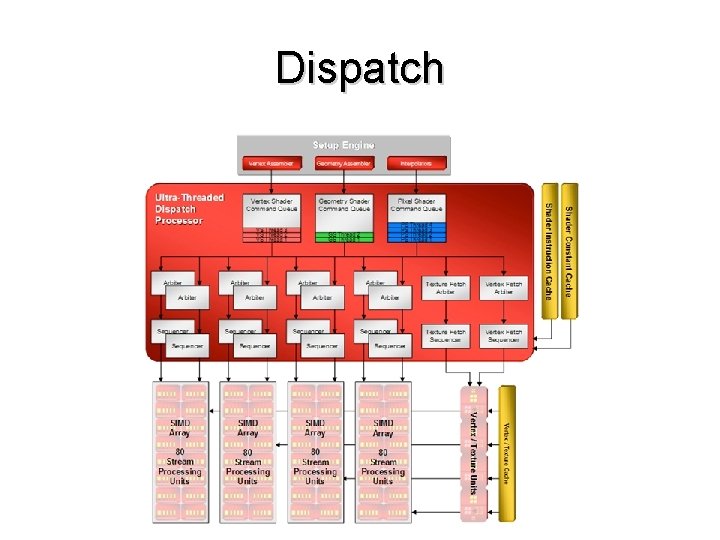

Dispatch

Demo

![NVIDIA G80](http://slidetodoc.com/presentation_image_h2/b4cd0286a06d7af12fe0c22bee4a6b15/image-90.jpg)

NVIDIA G80 [NVIDIA 8800 Architectural Overview, NVIDIA TB-02787-001_v01, November 2006]

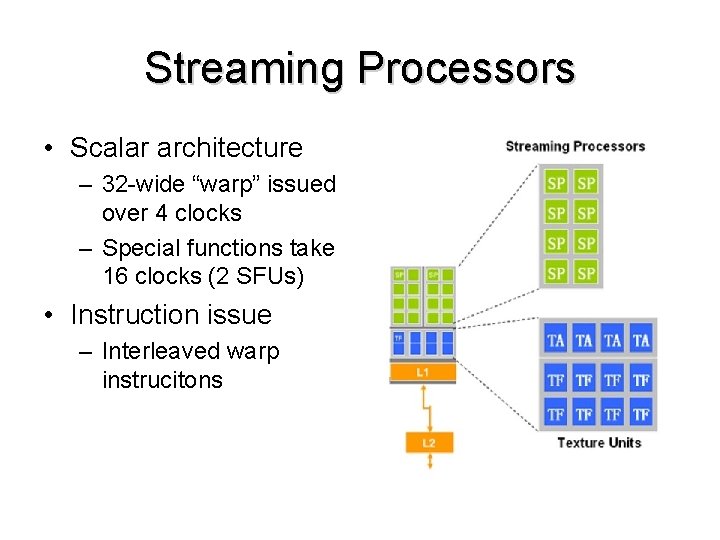

Streaming Processors • Scalar architecture – 32-wide “warp” issued over 4 clocks – Special functions take 16 clocks (2 SFUs) • Instruction issue – Interleaved warp instructions
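The issue-rate numbers on these slides come from dividing the group width by the lanes available for that instruction type. A trivial C helper, checked against the slides' figures: a 32-wide warp over 8 lanes, the same warp over 2 SFUs, and a 64-wide wavefront on a 16-wide SIMD. The 8-lane figure is inferred from "32-wide warp issued over 4 clocks" rather than stated on the slide.

```c
#include <assert.h>

/* Clocks to issue one instruction for a whole warp/wavefront when only `units`
   lanes of that kind exist: the group is fed through in ceil(width / units) passes. */
static int issue_clocks(int group_width, int units)
{
    assert(group_width > 0 && units > 0);
    return (group_width + units - 1) / units;
}
```

A multi-clock issue per instruction is also why interleaving instructions from different warps keeps the pipes busy.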

![NVIDIA Fermi](http://slidetodoc.com/presentation_image_h2/b4cd0286a06d7af12fe0c22bee4a6b15/image-92.jpg)

NVIDIA Fermi [Beyond3D, NVIDIA Fermi GPU and Architecture Analysis, 2010]

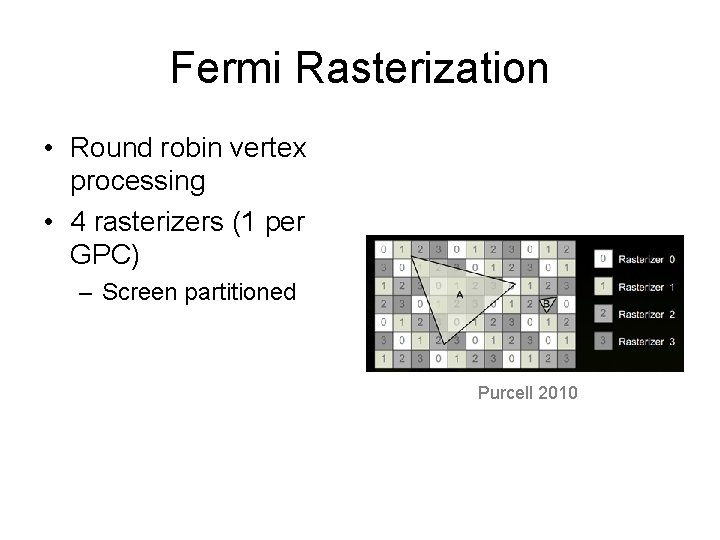

Fermi Rasterization • Round-robin vertex processing • 4 rasterizers (1 per GPC) – Screen partitioned (figure: Purcell 2010)

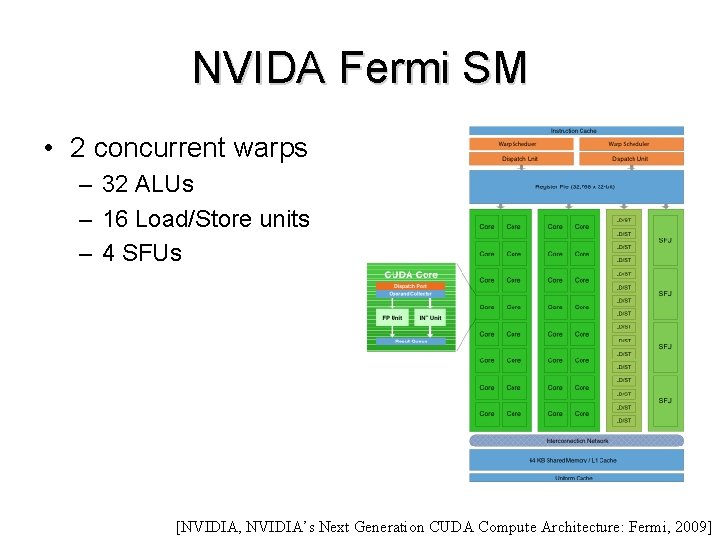

NVIDIA Fermi SM • 2 concurrent warps – 32 ALUs – 16 load/store units – 4 SFUs [NVIDIA, NVIDIA’s Next Generation CUDA Compute Architecture: Fermi, 2009]

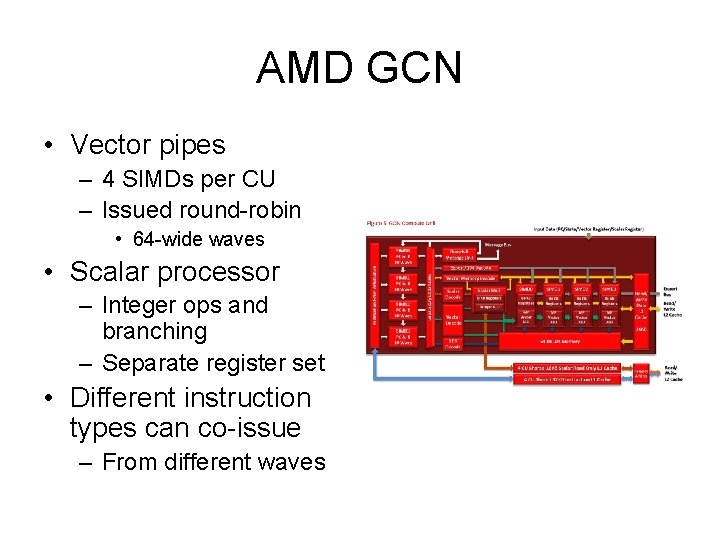

AMD GCN • Vector pipes – 4 SIMDs per CU – Issued round-robin • 64-wide waves • Scalar processor – Integer ops and branching – Separate register set • Different instruction types can co-issue – From different waves
