
Gaia: Geo-Distributed Machine Learning Approaching LAN Speeds

Kevin Hsieh, Aaron Harlap, Nandita Vijaykumar, Dimitris Konomis, Gregory R. Ganger, Phillip B. Gibbons, Onur Mutlu

Machine Learning and Big Data
• Machine learning is widely used to derive useful information from large-scale data, e.g., pictures (image classification), videos (video analytics), and user activities (preference prediction)

Big Data is Geo-Distributed
• A large amount of data is generated rapidly, all over the world


Centralizing Data is Infeasible [1, 2, 3]
• Moving data over wide-area networks (WANs) can be extremely slow
• It is also subject to data sovereignty laws
1. Vulimiri et al., NSDI’15
2. Pu et al., SIGCOMM’15
3. Viswanathan et al., OSDI’16

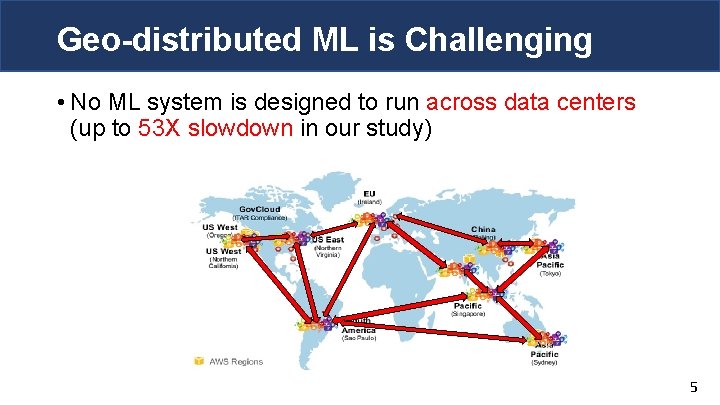

Geo-distributed ML is Challenging
• No ML system is designed to run across data centers (up to 53× slowdown in our study)

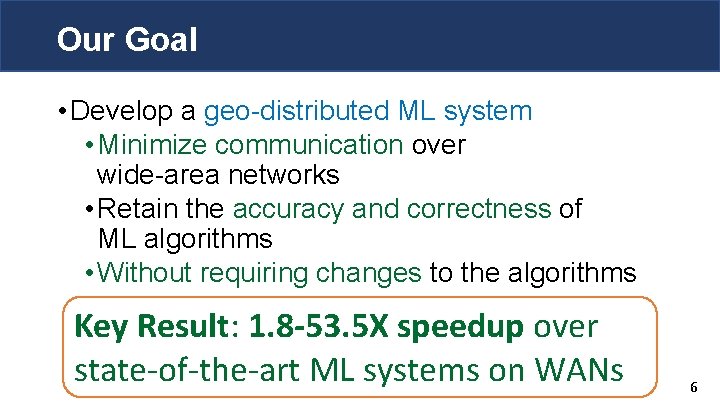

Our Goal
• Develop a geo-distributed ML system
• Minimize communication over wide-area networks
• Retain the accuracy and correctness of ML algorithms
• Without requiring changes to the algorithms
Key Result: 1.8-53.5× speedup over state-of-the-art ML systems on WANs

Outline
• Problem & Goal
• Background & Motivation
• Gaia System Overview
• Approximate Synchronous Parallel
• System Implementation
• Evaluation
• Conclusion

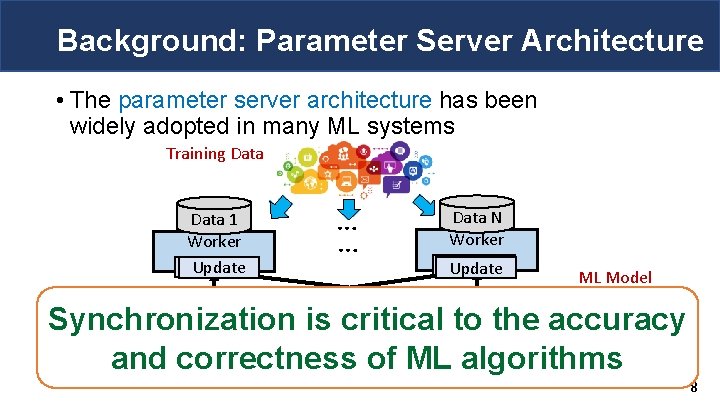

Background: Parameter Server Architecture
• The parameter server architecture has been widely adopted in many ML systems
[Figure: worker machines, each holding a shard of the training data (Data 1 … Data N), send updates (Update 1 … Update N) to a parameter server that holds the ML model, and read parameters back from it]
• Synchronization is critical to the accuracy and correctness of ML algorithms
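To make the read/update cycle in the figure concrete, here is a minimal single-process sketch of a parameter server, assuming a toy one-parameter model (`w`) fit to the mean of each worker's data shard. The names `ParameterServer`, `worker_update`, and `run_bsp_rounds` are hypothetical, and each round is fully synchronized (every worker reads the same snapshot before any update is applied); real systems shard parameters across servers and communicate over the network.

```python
class ParameterServer:
    """Hypothetical in-memory parameter server holding the shared ML model."""

    def __init__(self, params):
        self.params = dict(params)

    def read(self, key):
        # Workers read the current parameter value.
        return self.params[key]

    def push(self, key, delta):
        # Workers send additive updates; the server applies them.
        self.params[key] += delta


def worker_update(w, shard, lr):
    """One worker's update for the toy loss 0.5 * mean((w - y)^2) over its shard."""
    grad = sum(w - y for y in shard) / len(shard)
    return -lr * grad


def run_bsp_rounds(ps, shards, rounds, lr=0.1):
    for _ in range(rounds):
        w = ps.read("w")  # every worker reads the same snapshot
        updates = [worker_update(w, shard, lr) for shard in shards]
        for delta in updates:  # synchronization point: apply all updates
            ps.push("w", delta)


ps = ParameterServer({"w": 0.0})
run_bsp_rounds(ps, shards=[[1.0, 2.0, 3.0], [5.0, 6.0, 7.0]], rounds=200)
```

With two equal-sized shards, `w` converges to 4.0, the mean of all the data, because the server combines every worker's update each round.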

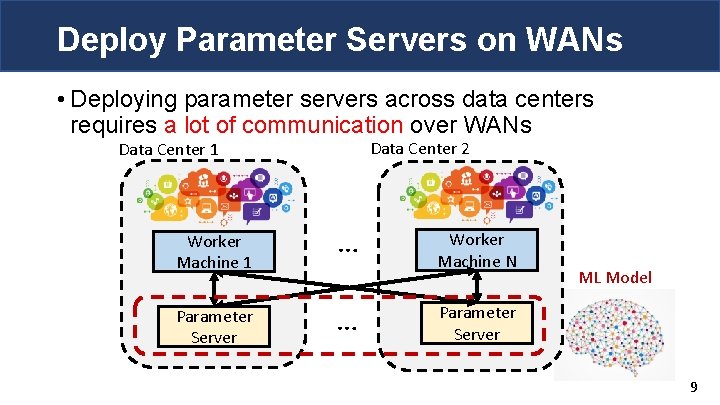

Deploy Parameter Servers on WANs
• Deploying parameter servers across data centers requires a lot of communication over WANs
[Figure: Data Center 1 and Data Center 2, each with worker machines 1 … N and a parameter server holding the ML model; the parameter servers communicate over the WAN]

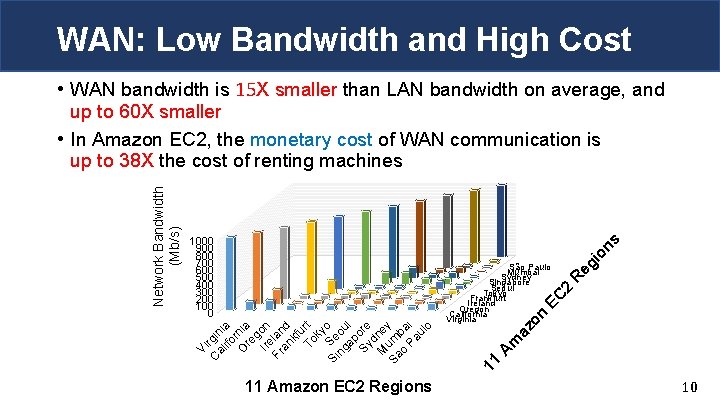

WAN: Low Bandwidth and High Cost
[Figure: network bandwidth (Mb/s) between 11 Amazon EC2 regions: Virginia, California, Oregon, Ireland, Frankfurt, Tokyo, Seoul, Singapore, Sydney, Mumbai, São Paulo]
• WAN bandwidth is on average 15× lower than LAN bandwidth, and up to 60× lower
• In Amazon EC2, the monetary cost of WAN communication is up to 38× the cost of renting machines

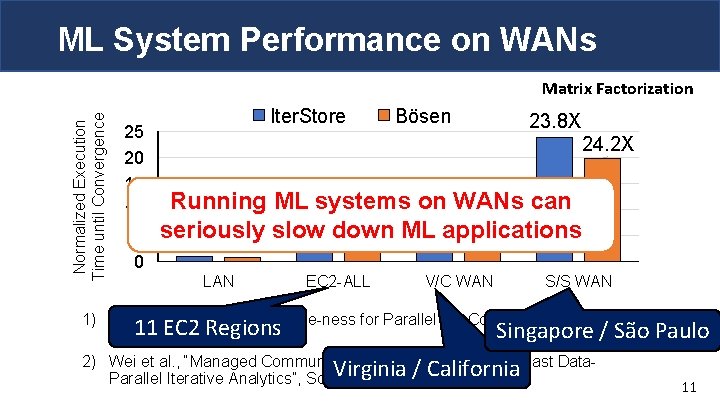

ML System Performance on WANs
[Figure: normalized execution time until convergence of Matrix Factorization with IterStore [1] and Bösen [2] on LAN, EC2-ALL (11 EC2 regions), V/C WAN (Virginia/California), and S/S WAN (Singapore/São Paulo); the labeled slowdowns range from 3.5× to 24.2×]
• Running ML systems on WANs can seriously slow down ML applications
1) Cui et al., “Exploiting Iterative-ness for Parallel ML Computations”, SoCC’14
2) Wei et al., “Managed Communication and Consistency for Fast Data-Parallel Iterative Analytics”, SoCC’15


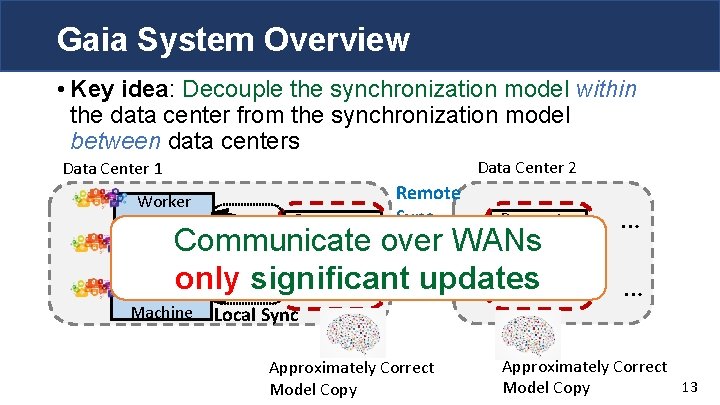

Gaia System Overview
• Key idea: Decouple the synchronization model within the data center from the synchronization model between data centers
[Figure: in each data center, worker machines perform local sync with a parameter server; parameter servers in different data centers perform remote sync, and each holds an approximately correct model copy]
• Communicate only significant updates over WANs

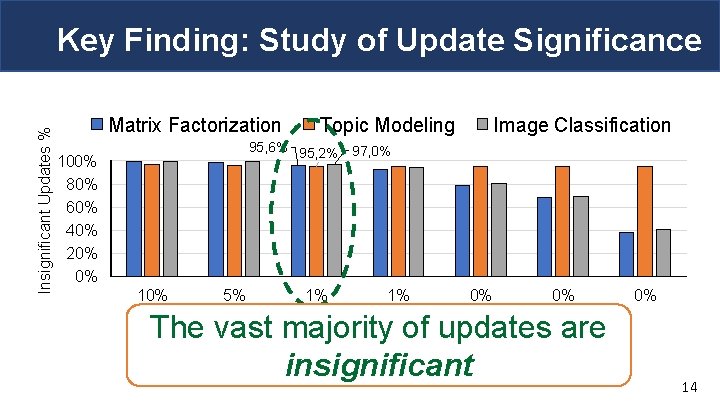

Key Finding: Study of Update Significance
[Figure: percentage of insignificant updates as a function of the threshold of significant updates (from 10% down to 0%) for Matrix Factorization, Topic Modeling, and Image Classification; the annotated values are 95.6%, 95.2%, and 97.0%]
• The vast majority of updates are insignificant
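The metric behind this study can be stated as a short function. This is a sketch assuming that an update's significance is its relative magnitude |Δ/value|, the same kind of measure the significance filter uses; the function name `insignificant_fraction` and the toy inputs are illustrative, not the study's data.

```python
def insignificant_fraction(updates, values, threshold):
    """Fraction of updates whose relative magnitude |delta / value| does not
    exceed the significance threshold (e.g. 0.01 for 1%).

    Updates to a parameter whose value is 0 are counted as significant,
    since their relative magnitude is unbounded (an assumption of this sketch).
    """
    if len(updates) != len(values) or not updates:
        raise ValueError("need equally sized, non-empty sequences")
    small = sum(
        1 for delta, value in zip(updates, values)
        if value != 0 and abs(delta / value) <= threshold
    )
    return small / len(updates)


# Toy numbers: four updates to parameters whose current value is 10.0.
updates = [0.001, -0.02, 0.3, 1.5]
values = [10.0, 10.0, 10.0, 10.0]
for t in (0.10, 0.05, 0.01, 0.0):
    print(f"threshold {t:.0%}: {insignificant_fraction(updates, values, t):.0%} insignificant")
```

Lowering the threshold can only reclassify updates as significant, so the fraction is non-increasing as the threshold shrinks toward 0%.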


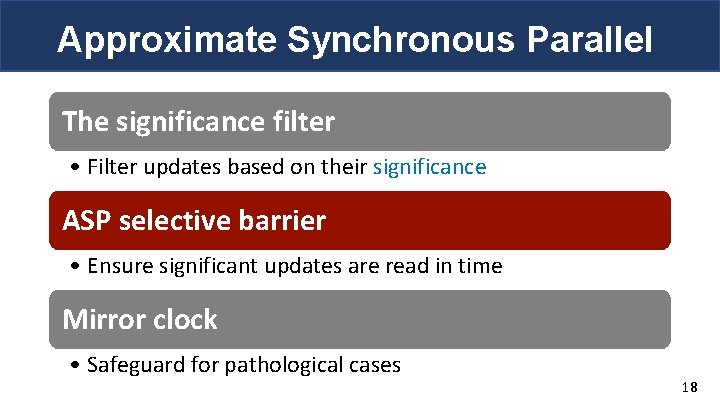

Approximate Synchronous Parallel
• The significance filter: filter updates based on their significance
• ASP selective barrier: ensure significant updates are read in time
• Mirror clock: safeguard for pathological cases

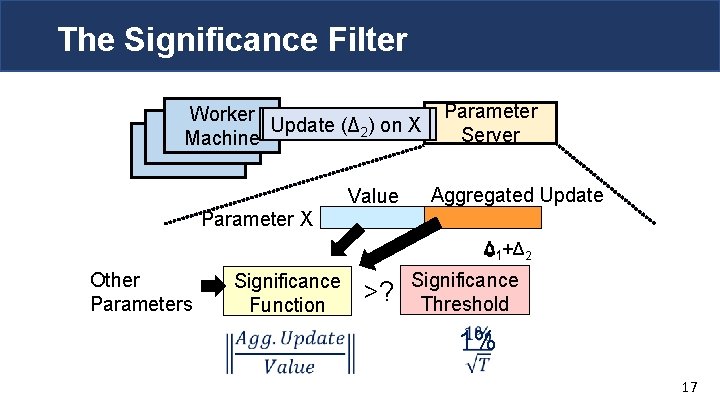

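The Significance Filter
[Figure: a worker machine sends an update (Δ2) on parameter X to the parameter server, which stores X's value and the aggregated update (Δ1 + Δ2) alongside other parameters; a significance function checks whether the aggregated update exceeds the significance threshold (e.g., 1%)]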
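The filter in the figure can be sketched as follows, assuming the significance function is the relative magnitude of the aggregated update, |(Δ1 + Δ2 + …) / value|, with a 1% threshold. `SignificanceFilter` and its methods are hypothetical names; the sketch only decides what to send over the WAN, not how it is sent.

```python
class SignificanceFilter:
    """Hypothetical per-server filter: apply updates locally, and release an
    aggregated update for the WAN only once it becomes significant."""

    def __init__(self, initial_values, threshold=0.01):
        self.values = dict(initial_values)  # local model copy in this data center
        self.pending = {key: 0.0 for key in initial_values}  # not yet sent over the WAN
        self.threshold = threshold

    def update(self, key, delta):
        """Apply a worker's update; return (key, aggregated_delta) if it should be
        sent to other data centers now, otherwise None."""
        self.values[key] += delta   # local sync: visible in this data center at once
        self.pending[key] += delta  # remote sync: accumulate until significant
        aggregated = self.pending[key]
        value = self.values[key]
        significance = abs(aggregated / value) if value != 0 else float("inf")
        if aggregated != 0 and significance > self.threshold:
            self.pending[key] = 0.0  # reset after releasing the aggregate
            return key, aggregated
        return None


f = SignificanceFilter({"x": 100.0}, threshold=0.01)
sent = [f.update("x", 0.4) for _ in range(3)]
```

Each 0.4 update to a parameter near 100 is too small to send on its own, but the aggregate crosses the 1% threshold on the third update and is released as a single WAN message, while the local model copy reflects all three immediately.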


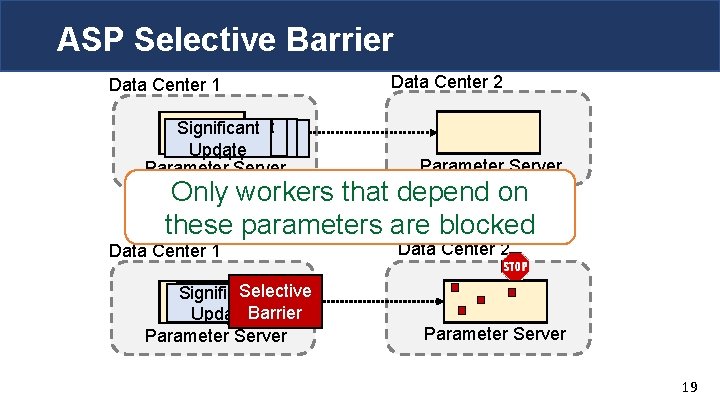

ASP Selective Barrier
[Figure, top: a significant update sent from Data Center 1's parameter server may arrive at Data Center 2's parameter server too late. Bottom: Data Center 1 first sends a selective barrier, then the significant update]
• Only workers that depend on these parameters are blocked
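On the receiving side, the selective barrier can be modeled as a set of blocked parameter keys. The sketch below assumes the barrier names exactly the parameters covered by the upcoming significant update; `RemoteReceiver` and its methods are hypothetical, and a blocked read is modeled as returning `None` rather than actually suspending the worker.

```python
from dataclasses import dataclass, field


@dataclass
class RemoteReceiver:
    """Hypothetical receiving side of one data center's parameter server."""

    params: dict
    blocked: set = field(default_factory=set)

    def on_selective_barrier(self, keys):
        # The small barrier arrives before the significant update and blocks
        # reads of only the parameters that update will change.
        self.blocked.update(keys)

    def on_significant_update(self, deltas):
        # Apply the update, then unblock exactly the parameters it covered.
        for key, delta in deltas.items():
            self.params[key] += delta
            self.blocked.discard(key)

    def try_read(self, key):
        # Readers of unaffected parameters proceed immediately; a reader of a
        # blocked parameter would have to wait (modeled here as None).
        if key in self.blocked:
            return None
        return self.params[key]


dc2 = RemoteReceiver(params={"x": 1.0, "y": 2.0})
dc2.on_selective_barrier(["x"])
reads_during_barrier = (dc2.try_read("x"), dc2.try_read("y"))
dc2.on_significant_update({"x": 0.5})
read_after_update = dc2.try_read("x")
```

Workers reading `y` never stall, which is the point of making the barrier selective rather than global.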


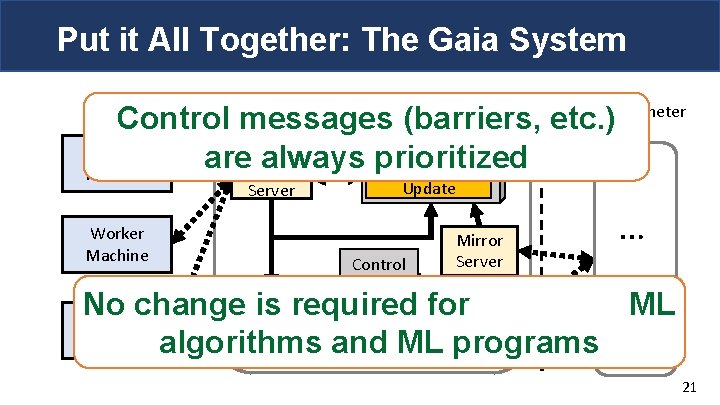

Put it All Together: The Gaia System
[Figure: within a data center boundary, worker machines run ML programs through a Gaia data client and send updates to Gaia parameter servers. Each server contains a local server (parameter store and aggregated updates), a significance filter, a selective barrier, a mirror server and mirror client, and separate data and control queues]
• Control messages (barriers, etc.) are always prioritized
• No change is required for ML algorithms and ML programs
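The rule that control messages are always prioritized can be sketched with two FIFO queues, where the sender drains the control queue first. This is an assumption-level model (the `WanSender` name and string messages are hypothetical); it shows why a small barrier enqueued after a large update still goes out first, so it can reach the remote data center ahead of the update it protects.

```python
from collections import deque


class WanSender:
    """Hypothetical outbound WAN channel with separate control and data queues."""

    def __init__(self):
        self.control_queue = deque()  # barriers, clock messages, etc.
        self.data_queue = deque()     # significant parameter updates

    def enqueue(self, message, is_control):
        (self.control_queue if is_control else self.data_queue).append(message)

    def next_message(self):
        # Control messages always go first; data only when no control is waiting.
        if self.control_queue:
            return self.control_queue.popleft()
        if self.data_queue:
            return self.data_queue.popleft()
        return None


sender = WanSender()
sender.enqueue("update x", is_control=False)
sender.enqueue("barrier x", is_control=True)
sender.enqueue("update y", is_control=False)
sender.enqueue("barrier y", is_control=True)
order = []
while (msg := sender.next_message()) is not None:
    order.append(msg)
```

Within each queue the order is preserved, so updates to the same parameter are still sent in the order they were released.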

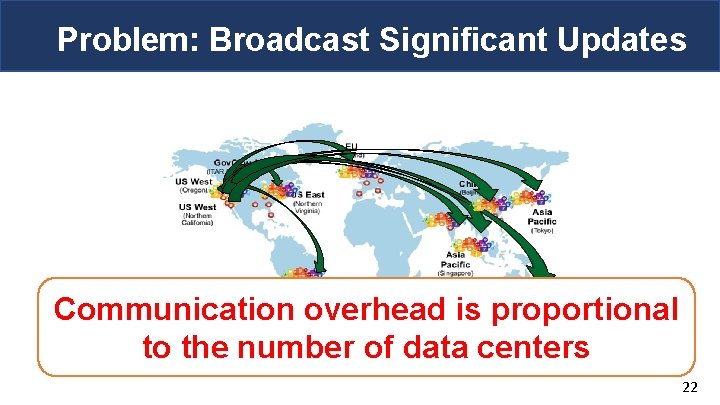

Problem: Broadcast Significant Updates
• Communication overhead is proportional to the number of data centers

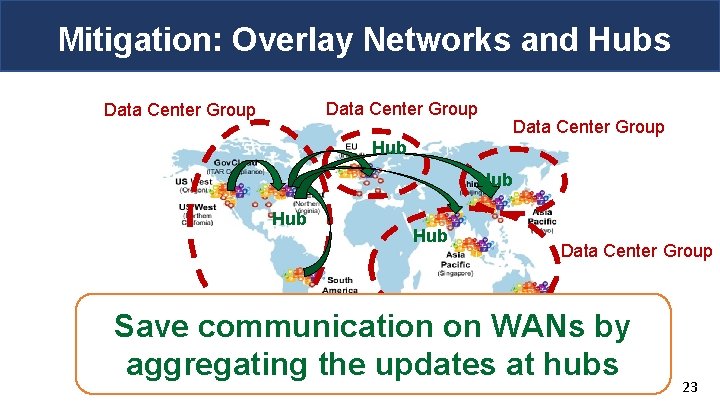

Mitigation: Overlay Networks and Hubs
[Figure: data centers are organized into data center groups, each with a hub; hubs communicate with each other]
• Save communication on WANs by aggregating the updates at hubs


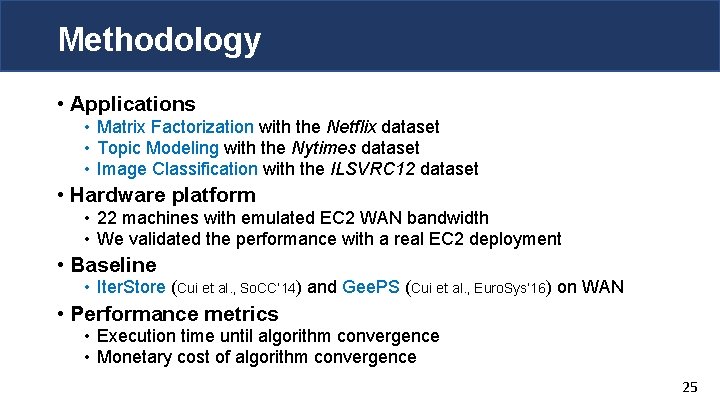

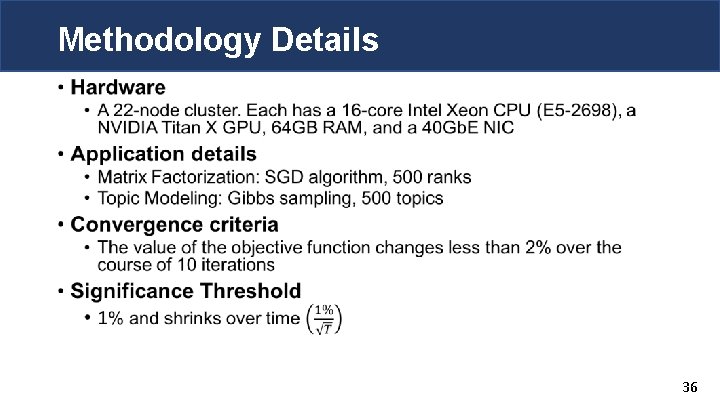

Methodology
• Applications
  • Matrix Factorization with the Netflix dataset
  • Topic Modeling with the NYTimes dataset
  • Image Classification with the ILSVRC12 dataset
• Hardware platform
  • 22 machines with emulated EC2 WAN bandwidth
  • We validated the performance with a real EC2 deployment
• Baseline
  • IterStore (Cui et al., SoCC’14) and GeePS (Cui et al., EuroSys’16) on WAN
• Performance metrics
  • Execution time until algorithm convergence
  • Monetary cost of algorithm convergence

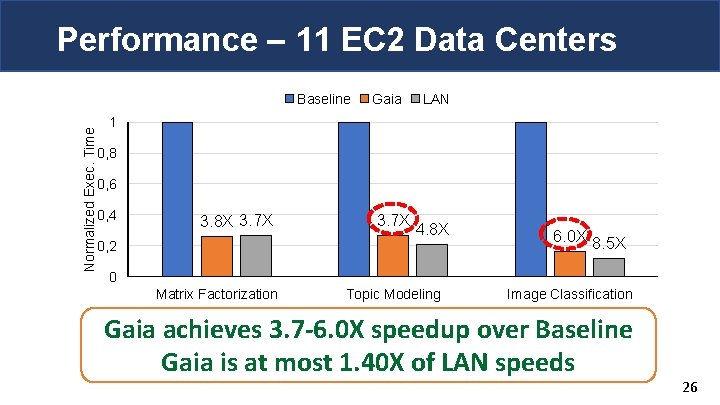

Performance – 11 EC2 Data Centers
[Figure: normalized execution time of Baseline, Gaia, and LAN for Matrix Factorization, Topic Modeling, and Image Classification]
• Gaia achieves 3.7-6.0× speedup over Baseline
• Gaia is within 1.40× of LAN speeds

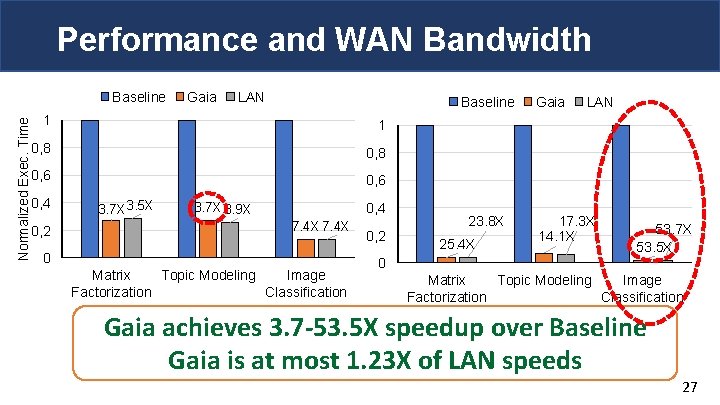

Performance and WAN Bandwidth
[Figure: normalized execution time of Baseline, Gaia, and LAN for Matrix Factorization, Topic Modeling, and Image Classification on V/C WAN (Virginia/California) and S/S WAN (Singapore/São Paulo)]
• Gaia achieves 3.7-53.5× speedup over Baseline
• Gaia is within 1.23× of LAN speeds

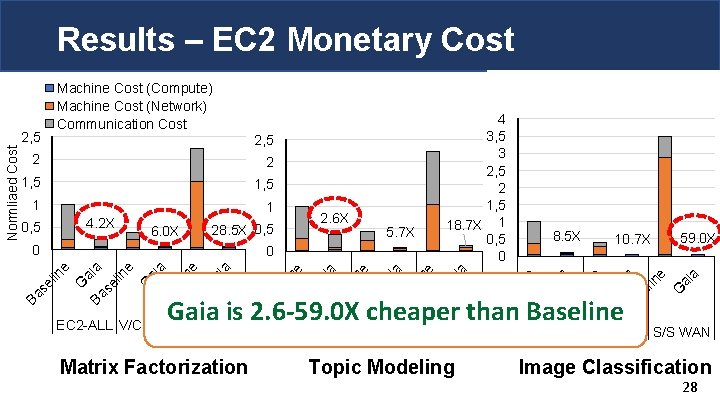

Results – EC2 Monetary Cost
[Figure: normalized cost of Baseline and Gaia for Matrix Factorization, Topic Modeling, and Image Classification on EC2-ALL, V/C WAN, and S/S WAN, broken down into machine cost (compute), machine cost (network), and communication cost]
• Gaia is 2.6-59.0× cheaper than Baseline

More in the Paper
• Convergence proof of Approximate Synchronous Parallel (ASP)
• ASP vs. fully asynchronous
• Gaia vs. the centralizing-data approach

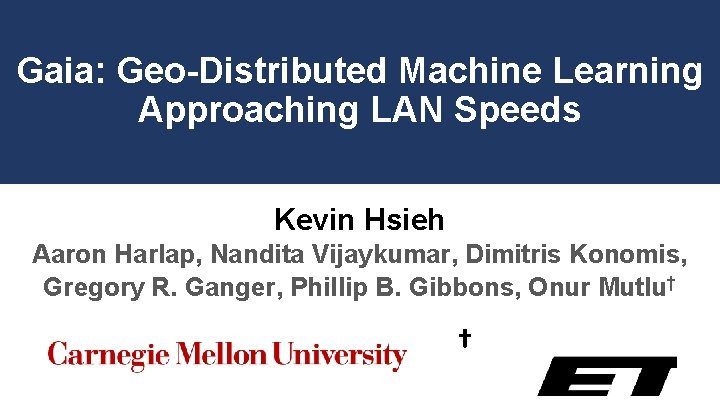

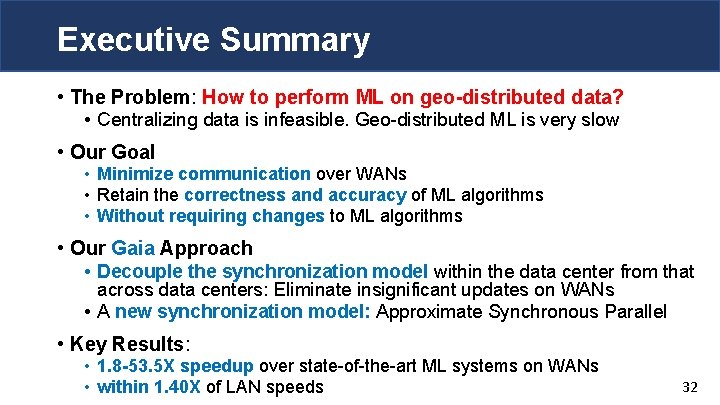

Key Takeaways
• The Problem: How to perform ML on geo-distributed data?
  • Centralizing data is infeasible. Geo-distributed ML is very slow
• Our Gaia Approach
  • Decouple the synchronization model within the data center from that across data centers
  • Eliminate insignificant updates across data centers
  • A new synchronization model: Approximate Synchronous Parallel
  • Retain the correctness and accuracy of ML algorithms
• Key Results:
  • 1.8-53.5× speedup over state-of-the-art ML systems on WANs
  • within 1.40× of LAN speeds
  • without requiring changes to algorithms


Executive Summary
• The Problem: How to perform ML on geo-distributed data?
  • Centralizing data is infeasible. Geo-distributed ML is very slow
• Our Goal
  • Minimize communication over WANs
  • Retain the correctness and accuracy of ML algorithms
  • Without requiring changes to ML algorithms
• Our Gaia Approach
  • Decouple the synchronization model within the data center from that across data centers: eliminate insignificant updates on WANs
  • A new synchronization model: Approximate Synchronous Parallel
• Key Results:
  • 1.8-53.5× speedup over state-of-the-art ML systems on WANs
  • within 1.40× of LAN speeds

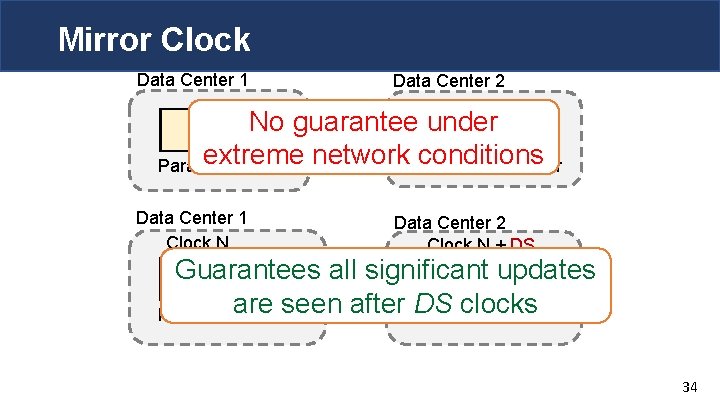


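Mirror Clock
[Figure, top: with only the barrier, Data Center 1 and Data Center 2 have no guarantee under extreme network conditions. Bottom: Data Center 1 is at clock N while Data Center 2 reaches clock N + DS]
• The ASP selective barrier alone gives no guarantee under extreme network conditions
• Mirror clock: a data center that reaches clock N + DS while another is still at clock N waits, which guarantees that all significant updates are seen within DS clocks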
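A minimal sketch of the mirror-clock check, assuming each data center's parameter server records the latest clock it has heard from every other data center and refuses to advance when it would get more than DS clocks ahead of the slowest one. `MirrorClock` is a hypothetical name, with `ds` playing the role of DS on the slide.

```python
class MirrorClock:
    """Hypothetical mirror-clock state kept by one data center's parameter server."""

    def __init__(self, remote_dcs, ds):
        self.clock = 0
        self.ds = ds  # maximum allowed lead over the slowest data center
        self.mirror = {dc: 0 for dc in remote_dcs}  # latest clock heard from each

    def on_remote_clock(self, dc, clock):
        # Clock messages may arrive late or out of order; keep the newest one.
        self.mirror[dc] = max(self.mirror[dc], clock)

    def can_advance(self):
        # Block if moving to the next clock would put this data center more
        # than DS clocks ahead of the slowest remote data center.
        return self.clock + 1 - min(self.mirror.values()) <= self.ds

    def advance(self):
        if not self.can_advance():
            return False
        self.clock += 1
        return True


dc1 = MirrorClock(remote_dcs=["dc2", "dc3"], ds=2)
progress = [dc1.advance() for _ in range(3)]  # third call blocks
dc1.on_remote_clock("dc2", 1)
blocked_on_dc3 = not dc1.advance()  # dc3 is still at clock 0
dc1.on_remote_clock("dc3", 1)
resumed = dc1.advance()
```

The check only involves clocks, not parameter contents, so it stays cheap even though it must cover the pathological case where significant updates are delayed indefinitely.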

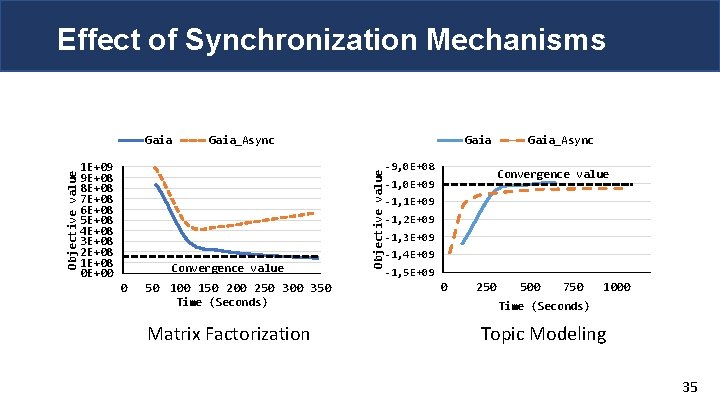

Effect of Synchronization Mechanisms
[Figure: objective value vs. time (seconds) for Gaia and Gaia_Async, with the convergence value marked; left panel: Matrix Factorization, right panel: Topic Modeling]



ML System Performance Comparison
• IterStore [Cui et al., SoCC’14] shows a 10× performance improvement over PowerGraph [Gonzalez et al., OSDI’12] for Matrix Factorization
• PowerGraph matches the performance of GraphX [Gonzalez et al., OSDI’14], a Spark-based system

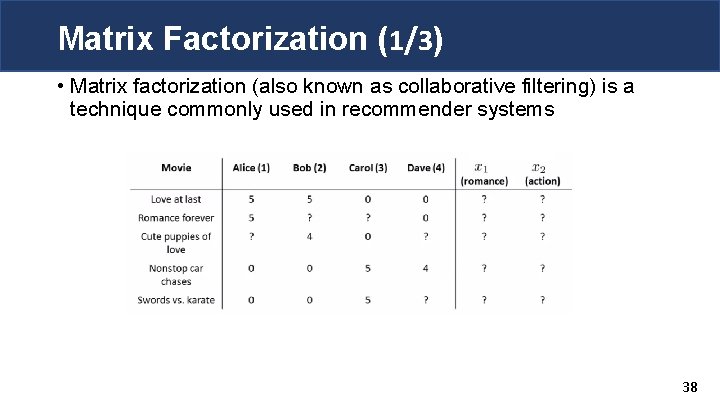

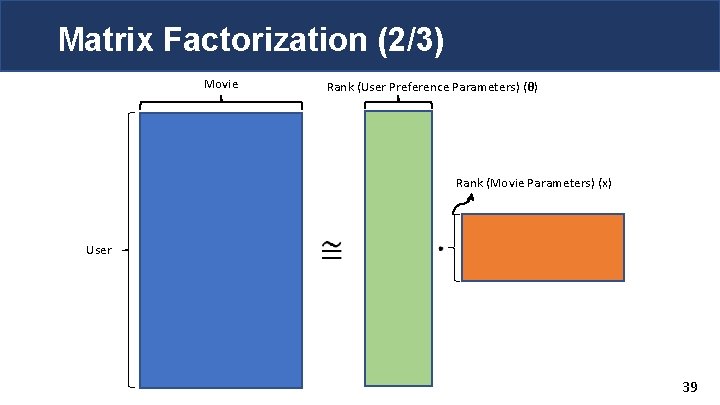

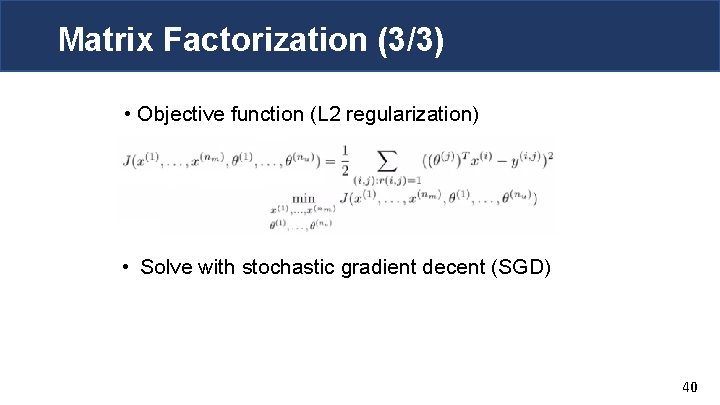

Matrix Factorization (1/3)
• Matrix factorization, a collaborative filtering technique, is commonly used in recommender systems

Matrix Factorization (2/3)
[Figure: the user × movie rating matrix is approximated by the product of user preference parameters (θ) and movie parameters (x), each of a given rank]

Matrix Factorization (3/3)
• Objective function (L2 regularization)
• Solve with stochastic gradient descent (SGD)
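The objective itself did not survive extraction, so this sketch assumes the standard L2-regularized form: the sum over observed ratings r_ij of (r_ij − θ_i·x_j)² plus λ(Σ‖θ_i‖² + Σ‖x_j‖²), with user parameters θ and movie parameters x as in the previous slide. The SGD loop uses the common per-rating update (the factor of 2 is folded into the learning rate, and regularization is applied at every visit). `mf_sgd`, `objective`, and all hyperparameters are illustrative assumptions, not the configuration used in the evaluation.

```python
import random


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def objective(ratings, theta, x, lam=0.01):
    """L2-regularized squared error over observed (user, movie, rating) triples."""
    squared_error = sum((r - dot(theta[i], x[j])) ** 2 for i, j, r in ratings)
    penalty = lam * (sum(t * t for row in theta for t in row)
                     + sum(v * v for row in x for v in row))
    return squared_error + penalty


def mf_sgd(ratings, n_users, n_movies, rank=2, lr=0.01, lam=0.01, epochs=200, seed=0):
    """Fit user parameters theta and movie parameters x with plain SGD."""
    rng = random.Random(seed)
    theta = [[rng.uniform(0, 0.1) for _ in range(rank)] for _ in range(n_users)]
    x = [[rng.uniform(0, 0.1) for _ in range(rank)] for _ in range(n_movies)]
    order = list(ratings)
    for _ in range(epochs):
        rng.shuffle(order)  # visit ratings in a random order each epoch
        for i, j, r in order:
            err = r - dot(theta[i], x[j])
            for k in range(rank):
                t, v = theta[i][k], x[j][k]  # use pre-update values for both steps
                theta[i][k] += lr * (err * v - lam * t)
                x[j][k] += lr * (err * t - lam * v)
    return theta, x


ratings = [(0, 0, 5.0), (0, 1, 3.0), (1, 0, 4.0), (1, 2, 1.0), (2, 1, 2.0), (2, 2, 5.0)]
theta0, x0 = mf_sgd(ratings, n_users=3, n_movies=3, epochs=0)  # initialization only
theta, x = mf_sgd(ratings, n_users=3, n_movies=3)
```

Each SGD step touches one user row and one movie row, which is why MF updates to a given parameter are frequent but individually small, the pattern the significance filter exploits.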

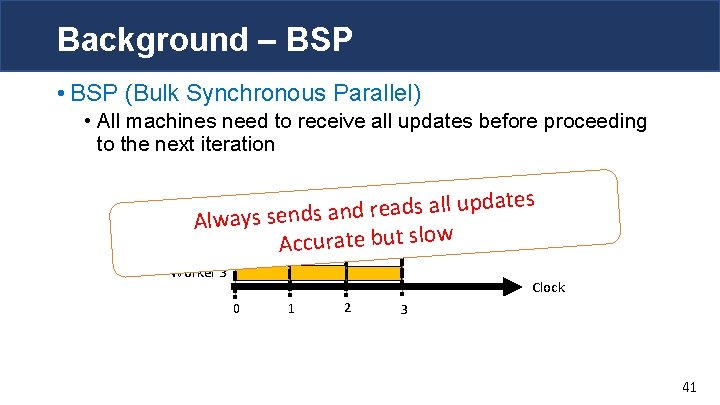

Background – BSP
• BSP (Bulk Synchronous Parallel)
  • All machines need to receive all updates before proceeding to the next iteration
[Figure: Workers 1-3 over clocks 0-3; each worker always sends and reads all updates: accurate but slow]

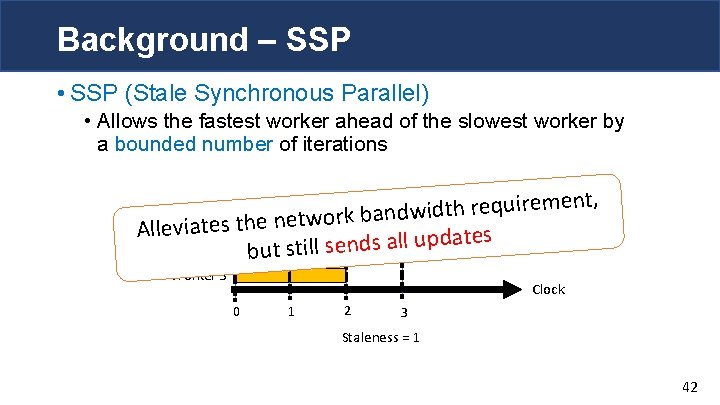

Background – SSP
• SSP (Stale Synchronous Parallel)
  • Allows the fastest worker to run ahead of the slowest worker by a bounded number of iterations
[Figure: Workers 1-3 over clocks 0-3 with staleness = 1; SSP alleviates the network bandwidth requirement, but still sends all updates]
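The difference between the two models reduces to one check. The sketch below assumes clocks count completed iterations and that a worker about to start its next iteration may do so only if it is at most `staleness` clocks ahead of the slowest worker; BSP is the special case with staleness 0. The function name `can_proceed` is hypothetical.

```python
def can_proceed(clock, all_clocks, staleness):
    """SSP rule: proceed only if at most `staleness` clocks ahead of the slowest worker."""
    return clock - min(all_clocks) <= staleness


clocks = [3, 2, 2]  # worker 1 is one clock ahead of workers 2 and 3
print(can_proceed(clocks[0], clocks, staleness=1))  # SSP with staleness 1: True
print(can_proceed(clocks[0], clocks, staleness=0))  # BSP: False
```

Neither rule reduces how much is sent, only when workers must wait, which is why both still send all updates over the WAN.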

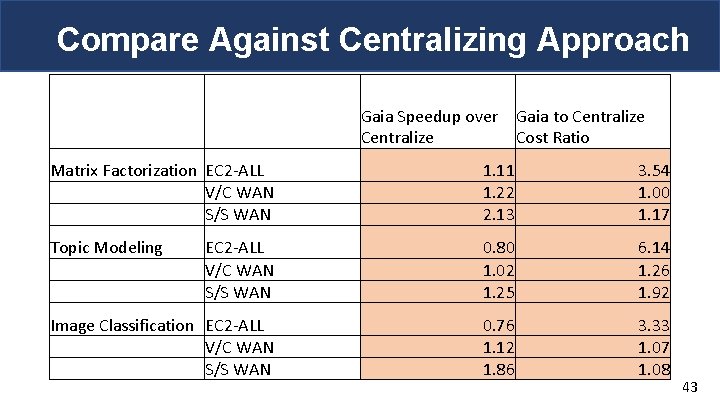

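Compare Against Centralizing Approach

| Application | Setting | Gaia speedup over Centralize | Gaia to Centralize cost ratio |
|---|---|---|---|
| Matrix Factorization | EC2-ALL | 1.11 | 3.54 |
| Matrix Factorization | V/C WAN | 1.22 | 1.00 |
| Matrix Factorization | S/S WAN | 2.13 | 1.17 |
| Topic Modeling | EC2-ALL | 0.80 | 6.14 |
| Topic Modeling | V/C WAN | 1.02 | 1.26 |
| Topic Modeling | S/S WAN | 1.25 | 1.92 |
| Image Classification | EC2-ALL | 0.76 | 3.33 |
| Image Classification | V/C WAN | 1.12 | 1.07 |
| Image Classification | S/S WAN | 1.86 | 1.08 |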

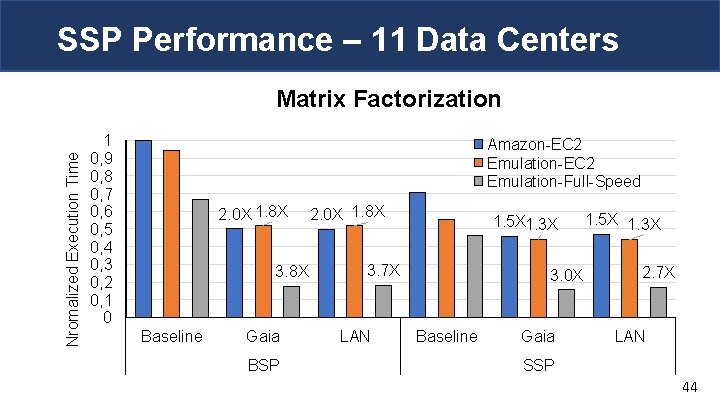

SSP Performance – 11 Data Centers
[Figure: Matrix Factorization; normalized execution time of Baseline, Gaia, and LAN under BSP and SSP, on Amazon-EC2 and Emulation-Full-Speed]

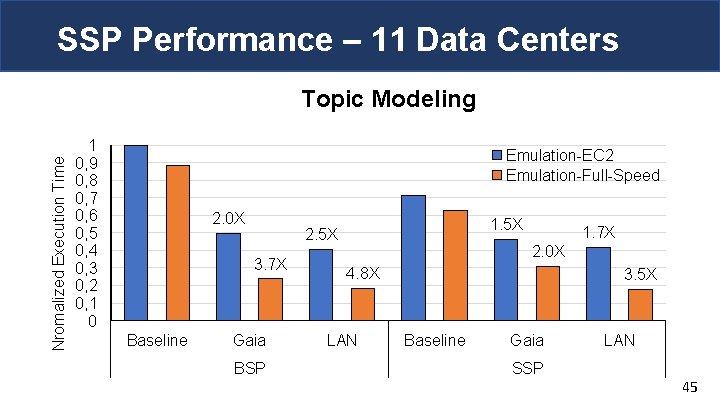

SSP Performance – 11 Data Centers
[Figure: Topic Modeling; normalized execution time of Baseline, Gaia, and LAN under BSP and SSP, on Emulation-EC2 and Emulation-Full-Speed]

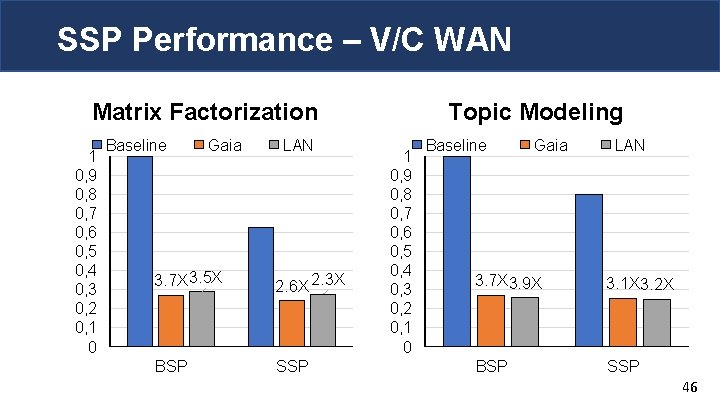

SSP Performance – V/C WAN
[Figure: normalized execution time of Baseline, Gaia, and LAN under BSP and SSP; left panel: Matrix Factorization, right panel: Topic Modeling]

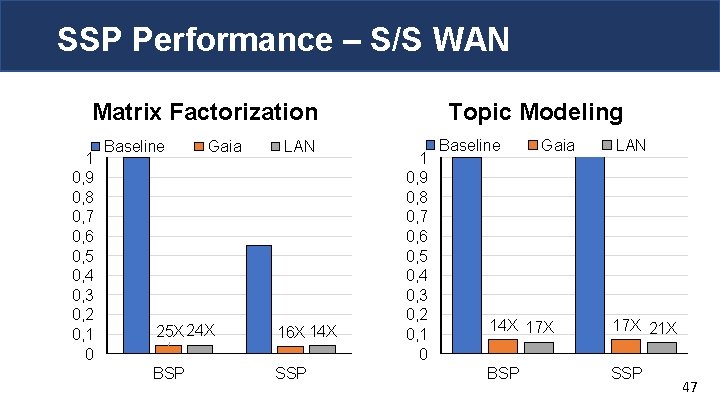

SSP Performance – S/S WAN
[Figure: normalized execution time of Baseline, Gaia, and LAN under BSP and SSP; left panel: Matrix Factorization, right panel: Topic Modeling]
