CSCE 713 Advanced Computer Architecture Lecture 5 MPI

- Slides: 59

MPI Introduction

Topics

- …

Readings

January 19, 2012

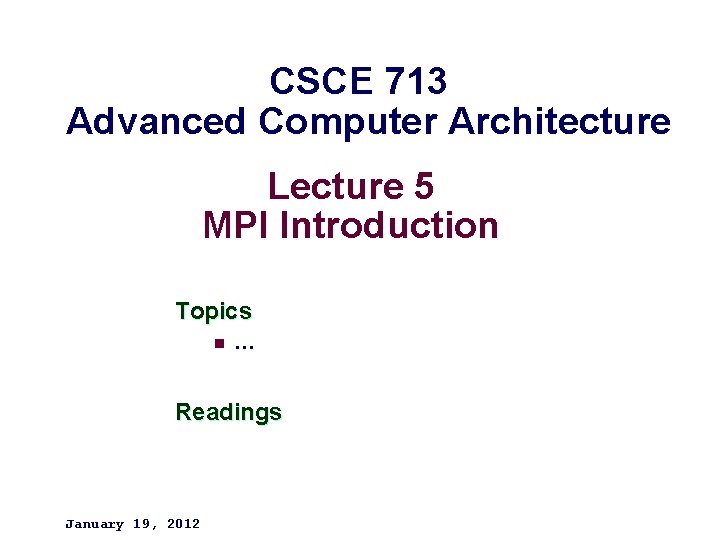

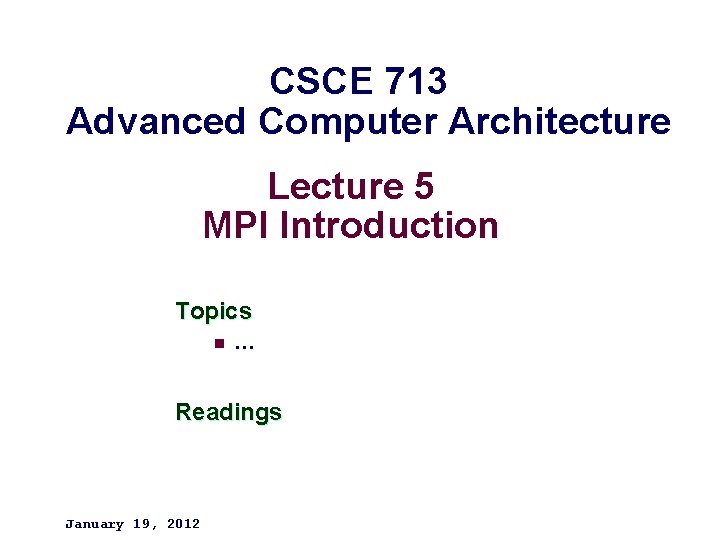

A distributed memory system – 2– Copyright © 2010, Elsevier Inc. All rights Reserved CSCE 713 Spring 2012

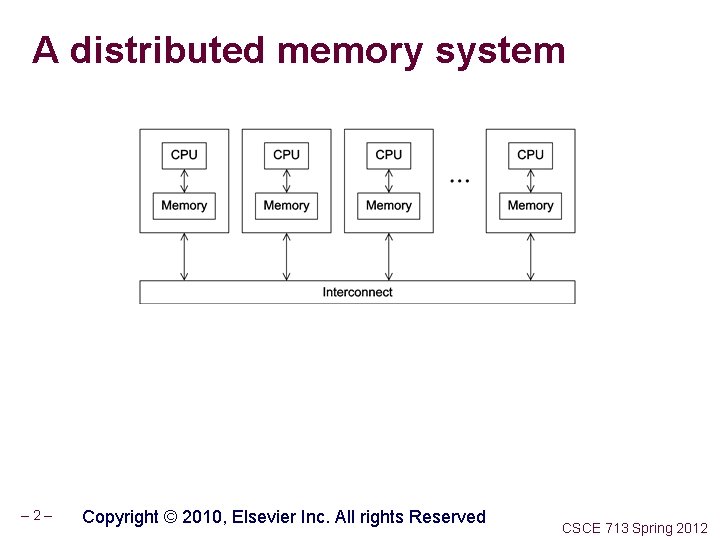

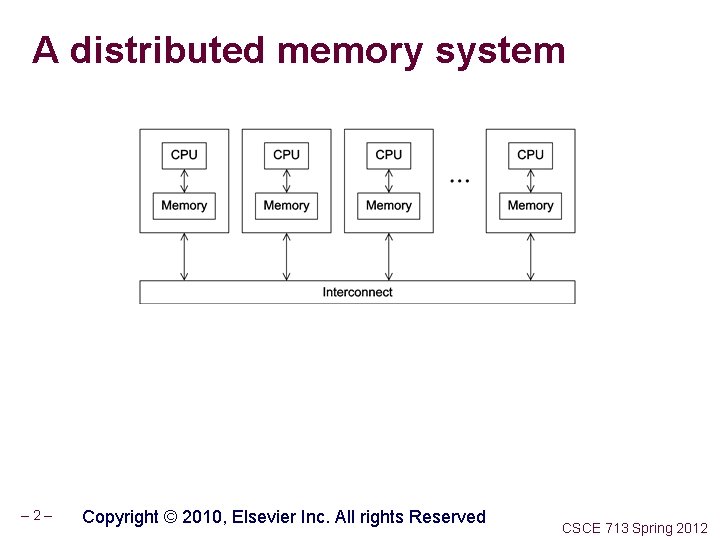

A shared memory system

Identifying MPI processes

It is common practice to identify processes by nonnegative integer ranks: p processes are numbered 0, 1, 2, ..., p-1.

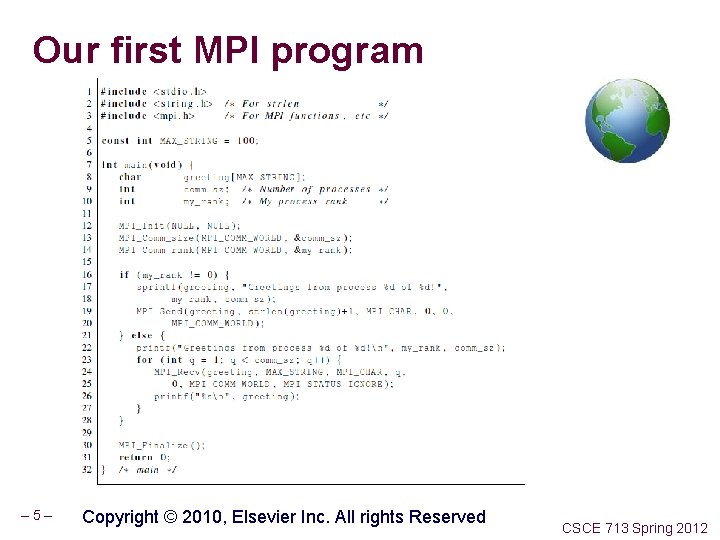

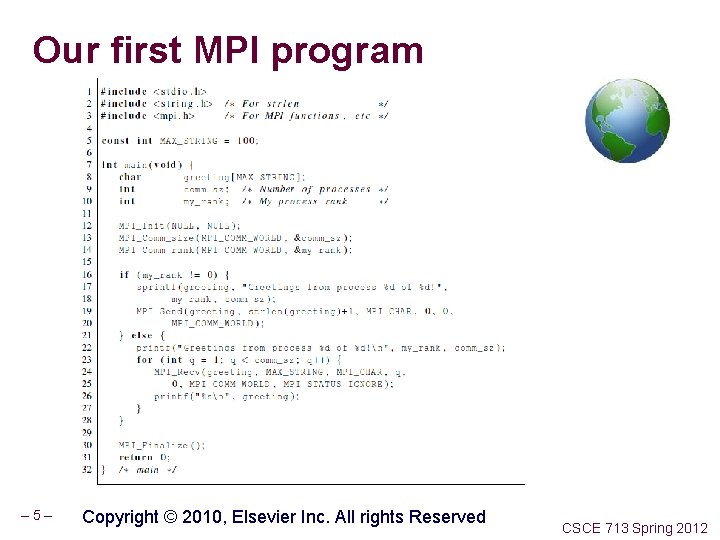

Our first MPI program

Compilation

mpicc -g -Wall -o mpi_hello mpi_hello.c

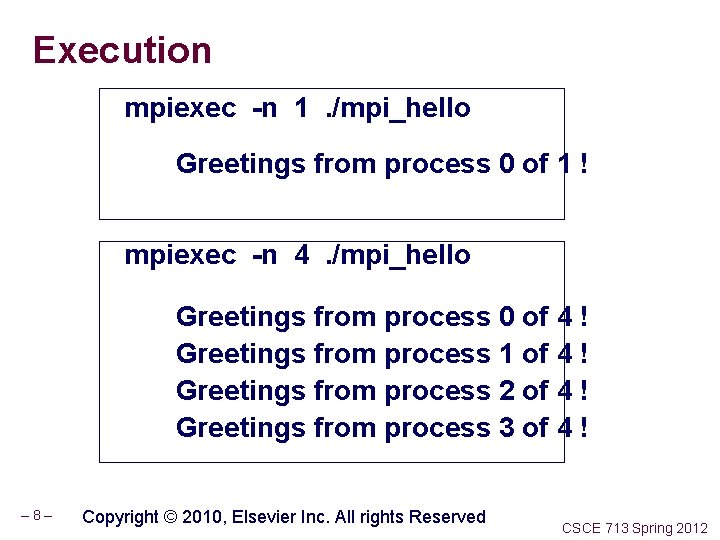

Execution

mpiexec -n <number of processes> <executable>

- mpiexec -n 1 ./mpi_hello (run with 1 process)
- mpiexec -n 4 ./mpi_hello (run with 4 processes)

Execution

mpiexec -n 1 ./mpi_hello

- Greetings from process 0 of 1 !

mpiexec -n 4 ./mpi_hello

- Greetings from process 0 of 4 !
- Greetings from process 1 of 4 !
- Greetings from process 2 of 4 !
- Greetings from process 3 of 4 !

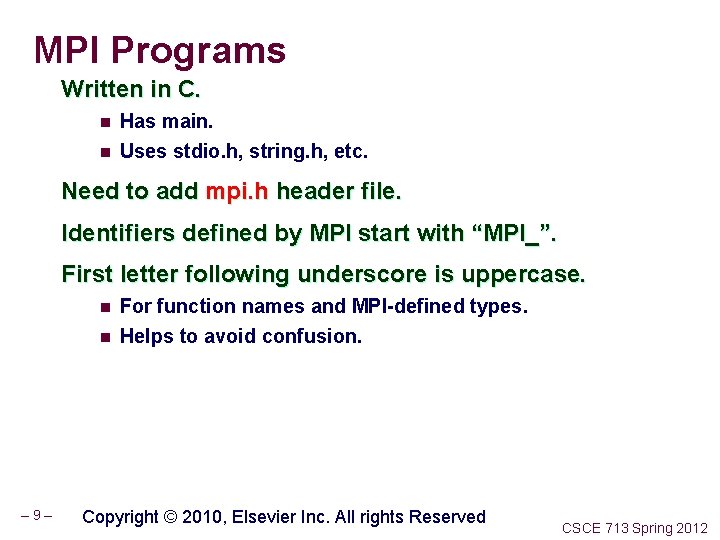

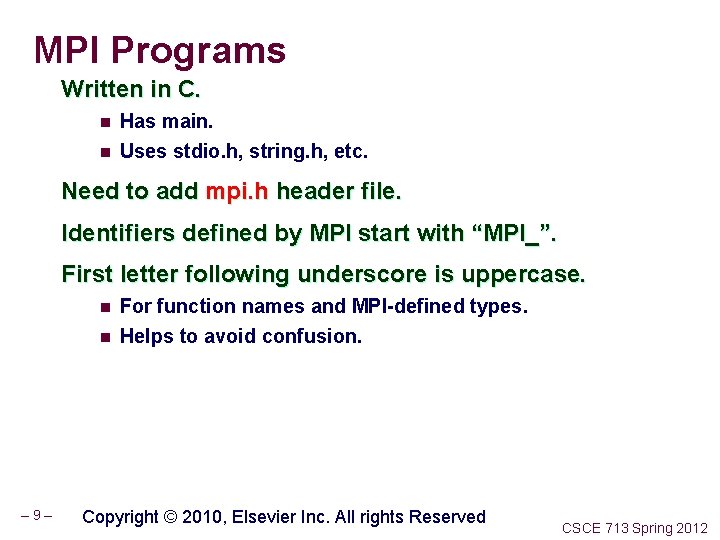

MPI Programs

- Written in C.
  - Has main.
  - Uses stdio.h, string.h, etc.
- Need to add mpi.h header file.
- Identifiers defined by MPI start with "MPI_".
- First letter following underscore is uppercase.
  - For function names and MPI-defined types.
  - Helps to avoid confusion.

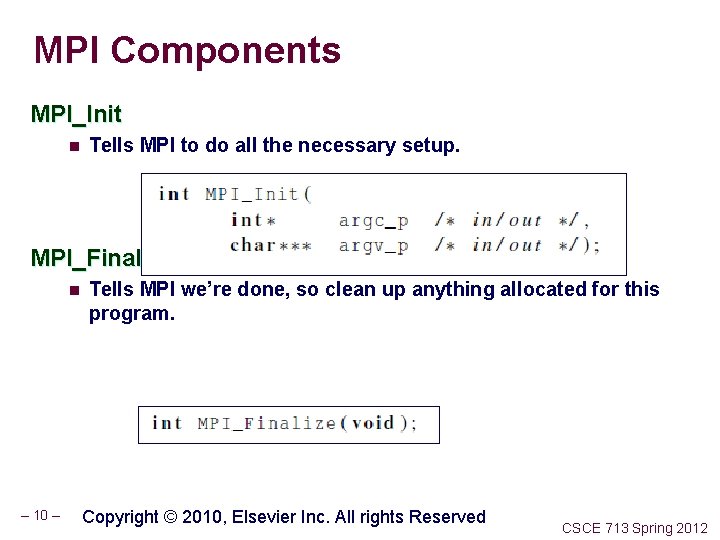

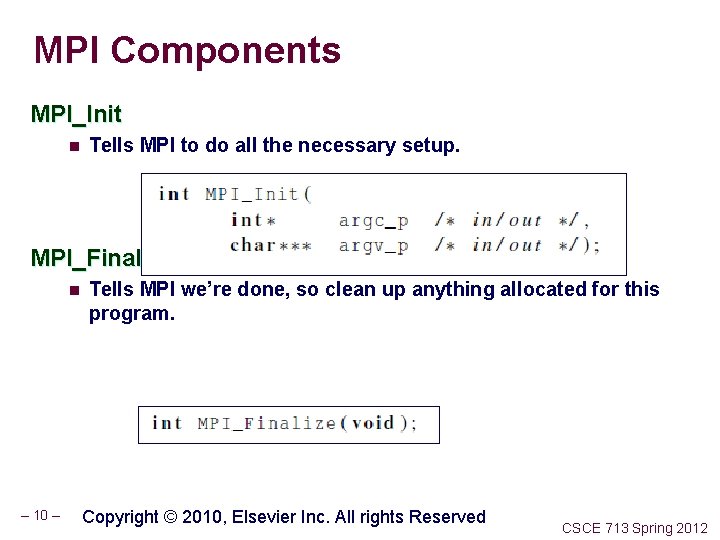

MPI Components

- MPI_Init
  - Tells MPI to do all the necessary setup.
- MPI_Finalize
  - Tells MPI we're done, so clean up anything allocated for this program.

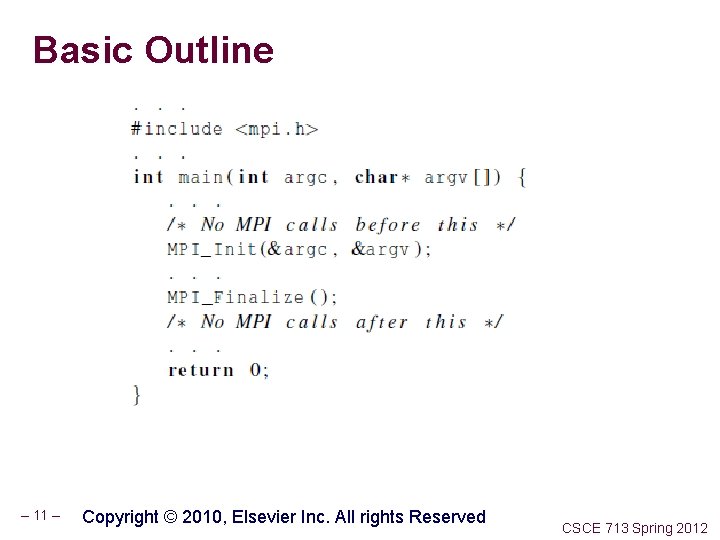

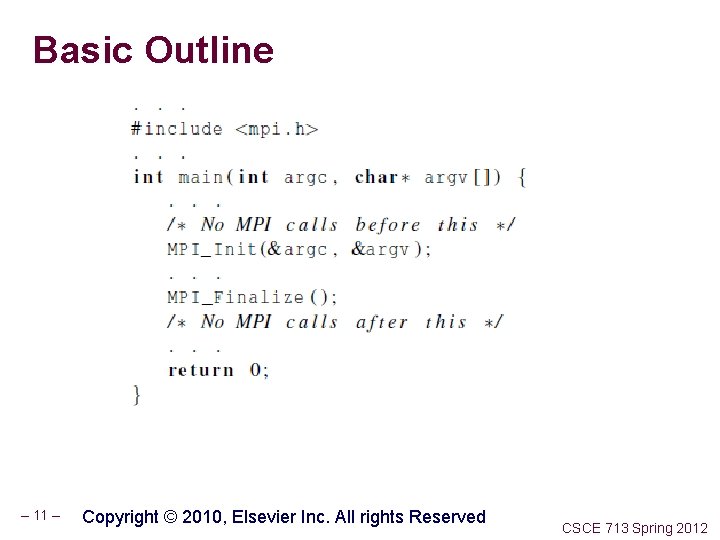

Basic Outline

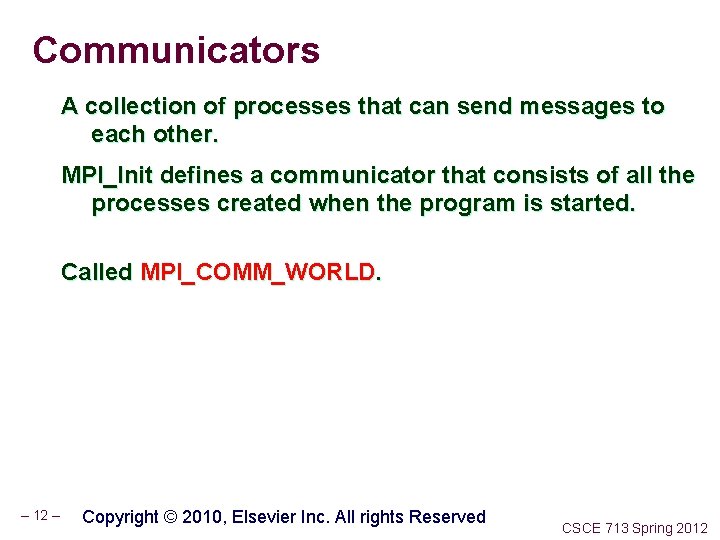

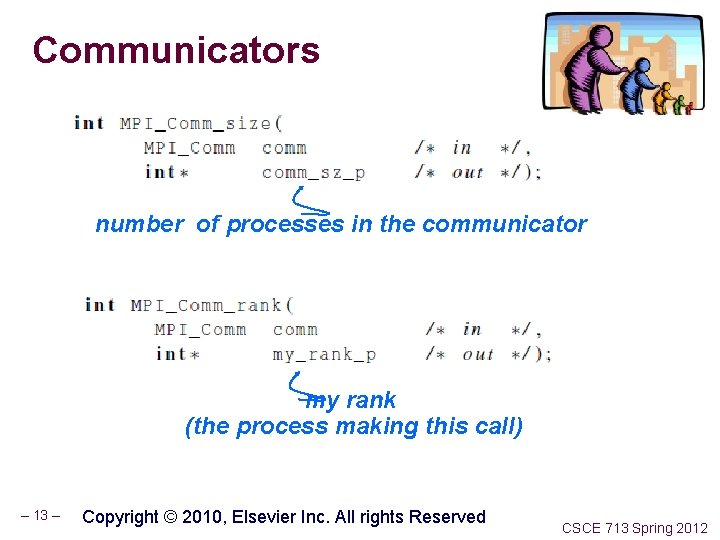

Communicators

A communicator is a collection of processes that can send messages to each other. MPI_Init defines a communicator that consists of all the processes created when the program is started. It is called MPI_COMM_WORLD.

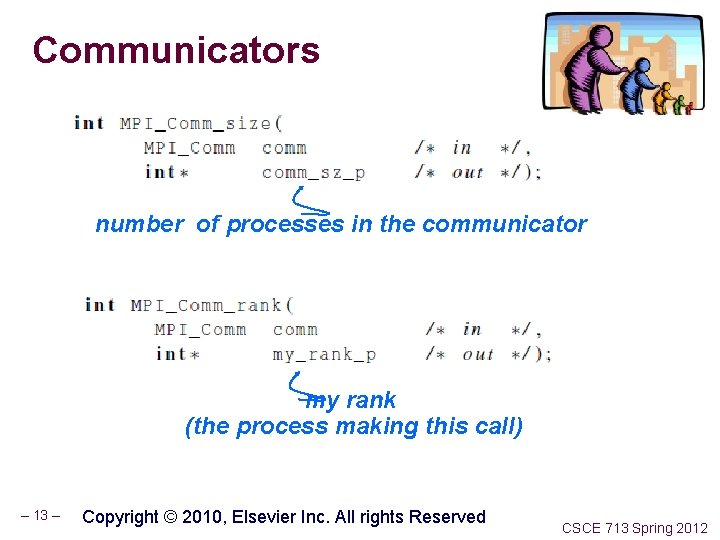

Communicators

- MPI_Comm_size: number of processes in the communicator
- MPI_Comm_rank: my rank (the process making this call)

SPMD

- Single-Program Multiple-Data
- We compile one program.
- Process 0 does something different.
  - Receives messages and prints them while the other processes do the work.
- The if-else construct makes our program SPMD.

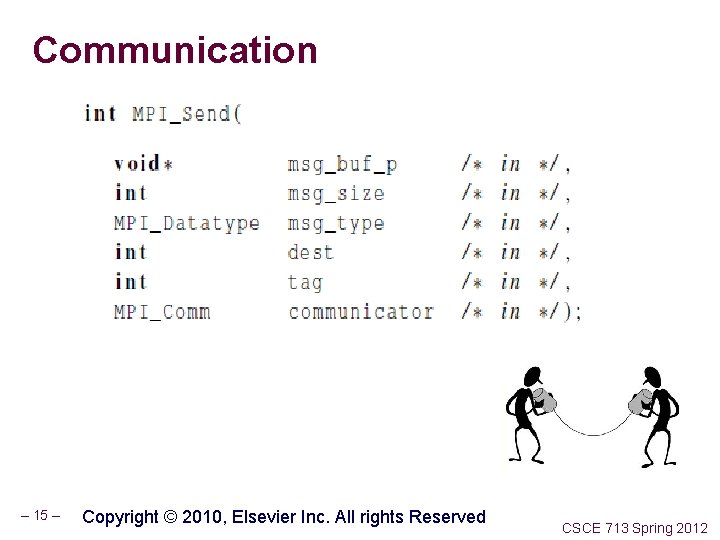

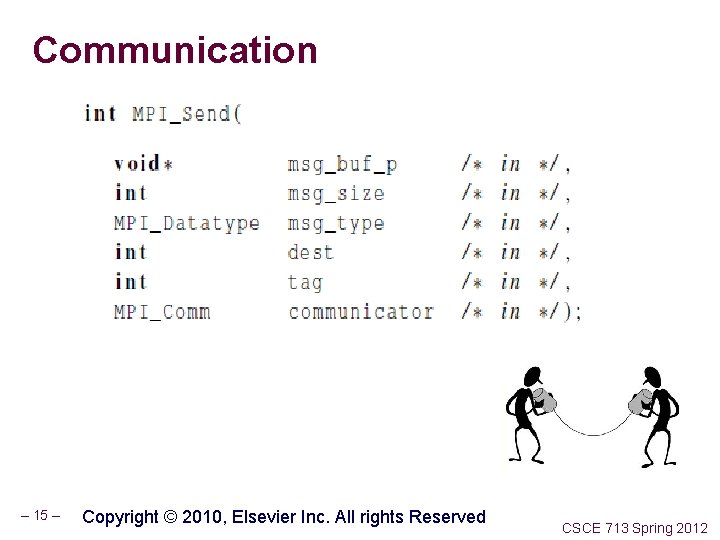

Communication

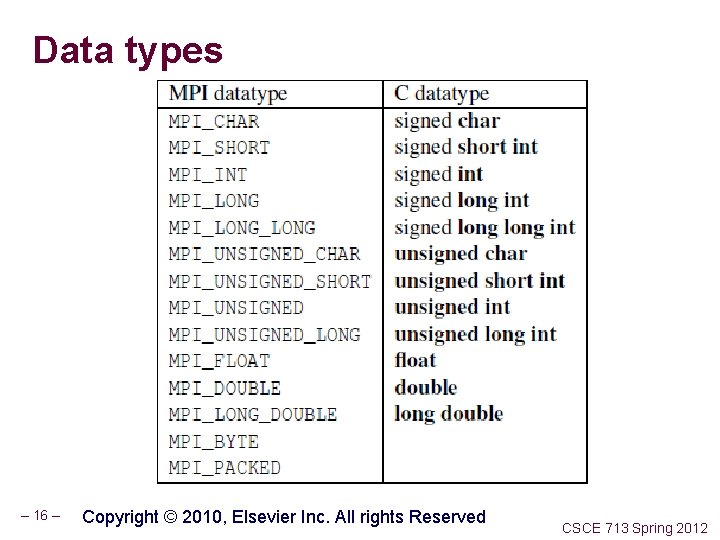

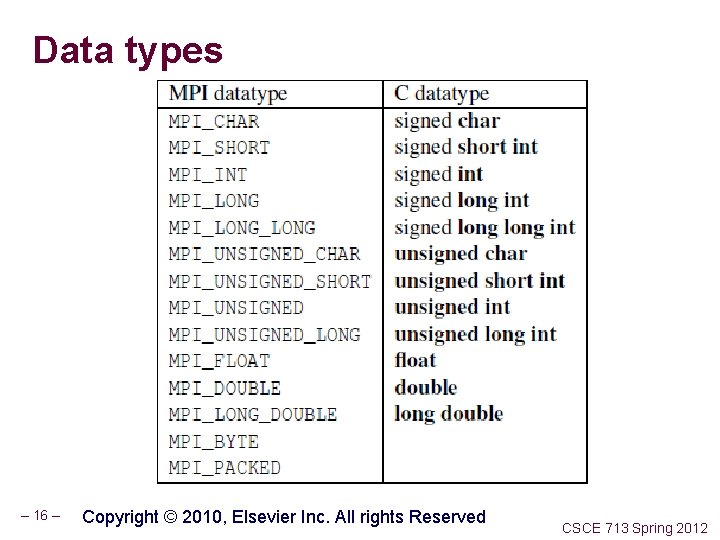

Data types

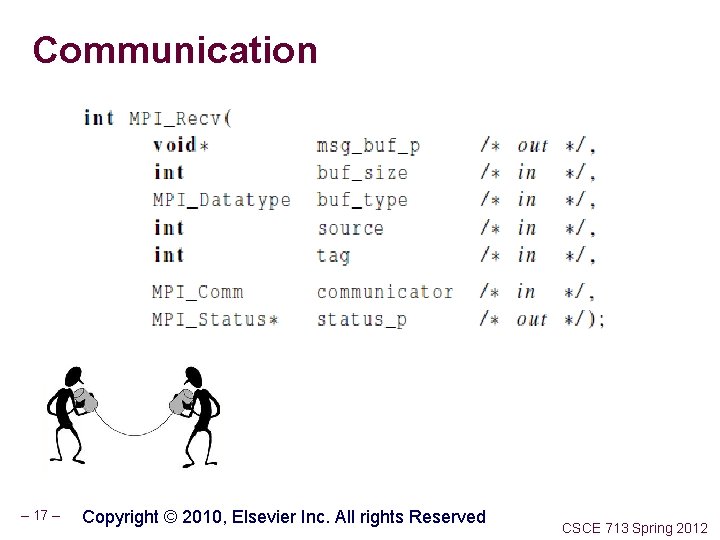

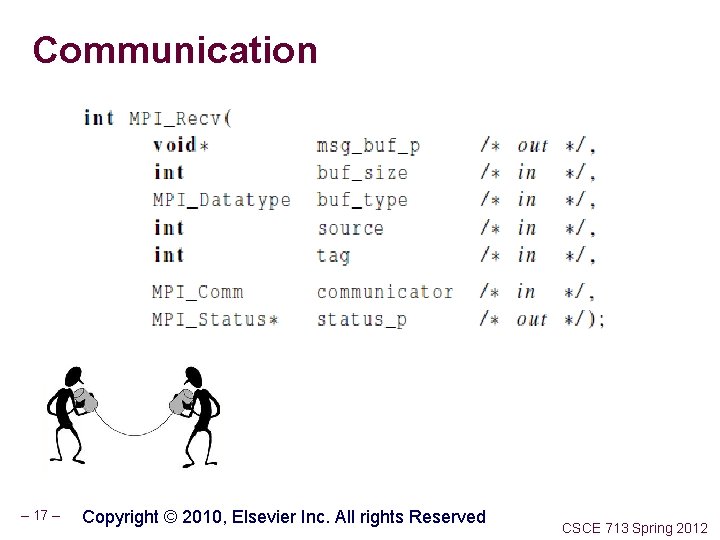

Communication

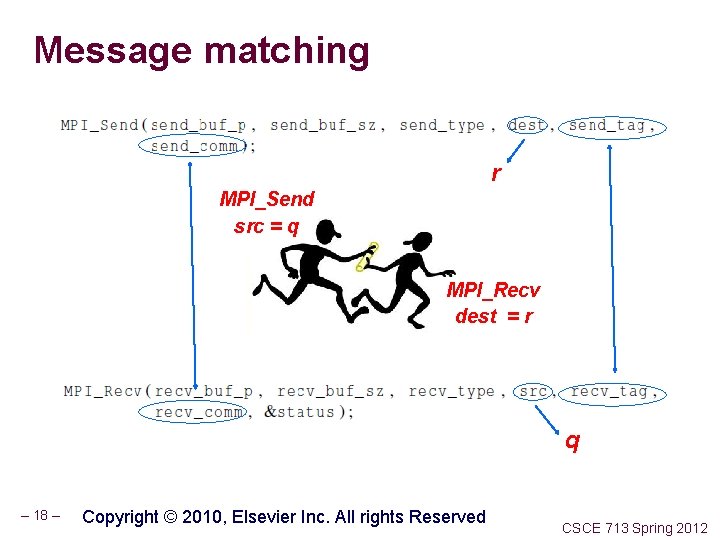

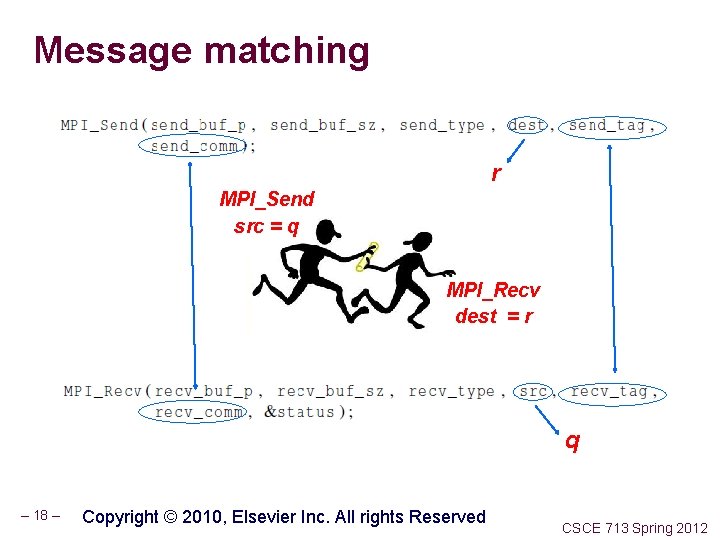

Message matching

Process q calls MPI_Send with dest = r; process r calls MPI_Recv with src = q.

Receiving messages

A receiver can get a message without knowing:

- the amount of data in the message,
- the sender of the message, or
- the tag of the message.

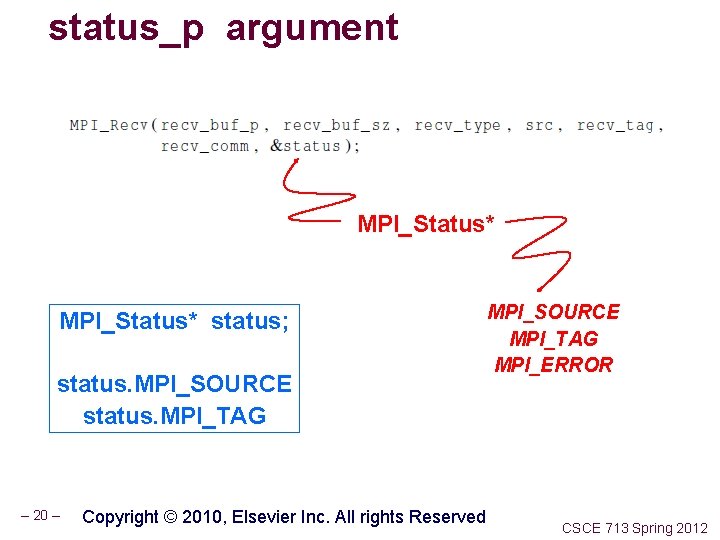

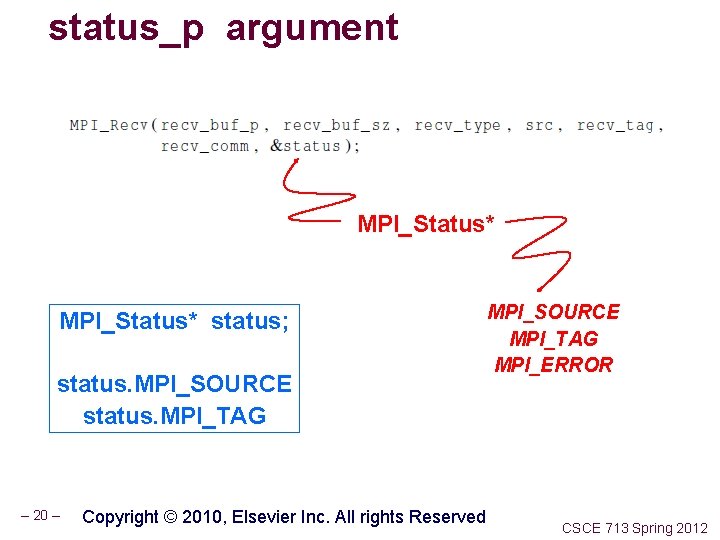

status_p argument

The status_p argument of MPI_Recv has type MPI_Status*. Declare MPI_Status status; and pass &status. The struct has the members MPI_SOURCE, MPI_TAG, and MPI_ERROR, so after the receive the sender and tag can be read as status.MPI_SOURCE and status.MPI_TAG.

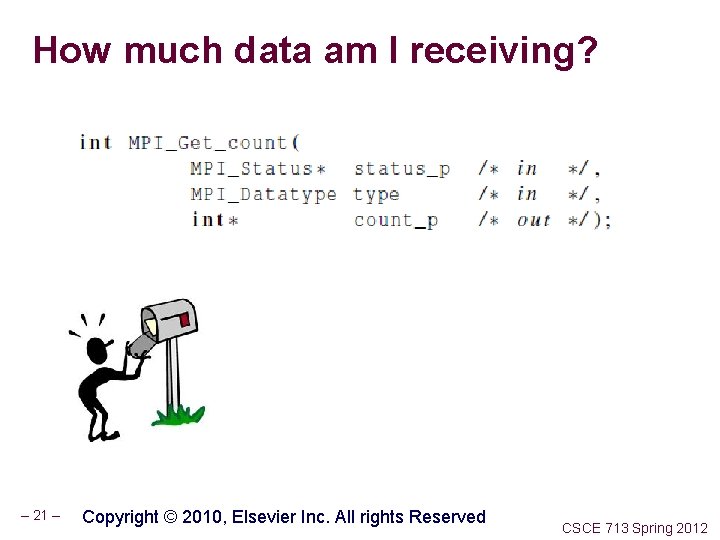

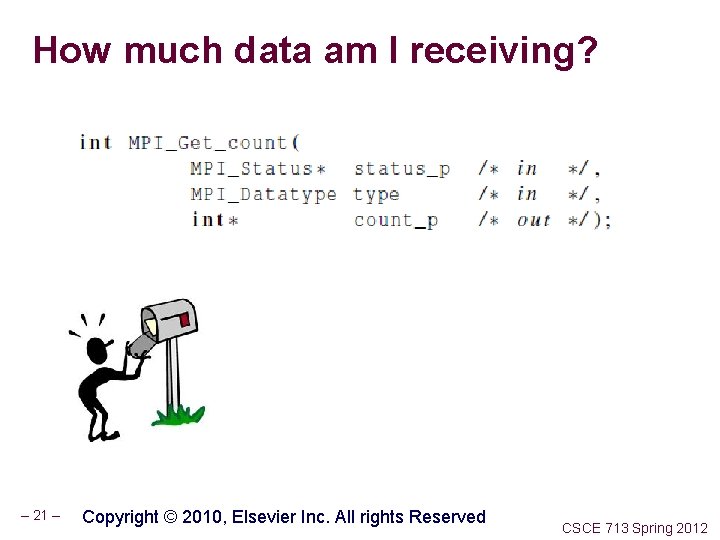

How much data am I receiving?

Issues with send and receive

- Exact behavior is determined by the MPI implementation.
- MPI_Send may behave differently with regard to buffer size, cutoffs and blocking.
- MPI_Recv always blocks until a matching message is received.
- Know your implementation; don't make assumptions!

TRAPEZOIDAL RULE IN MPI

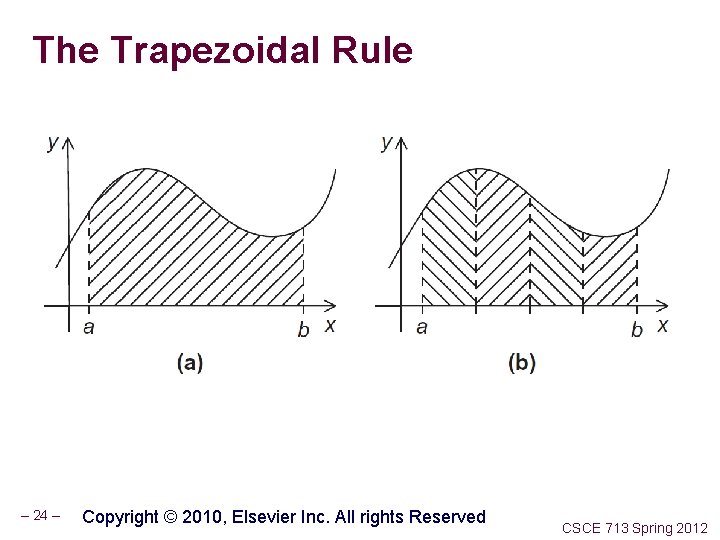

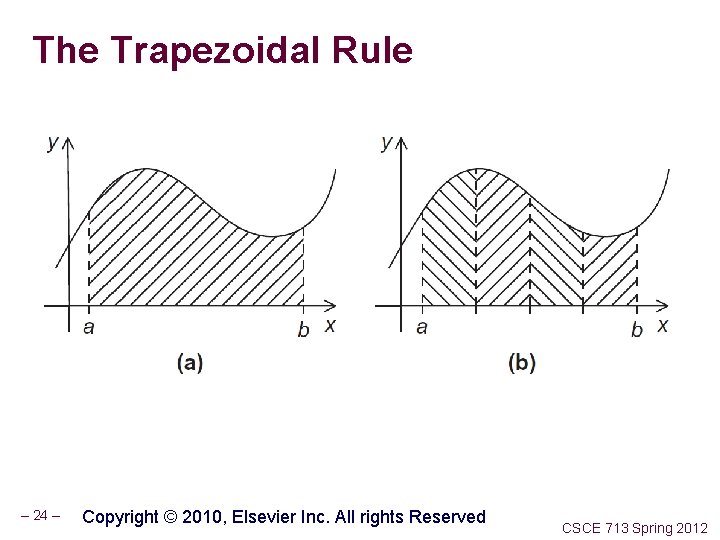

The Trapezoidal Rule

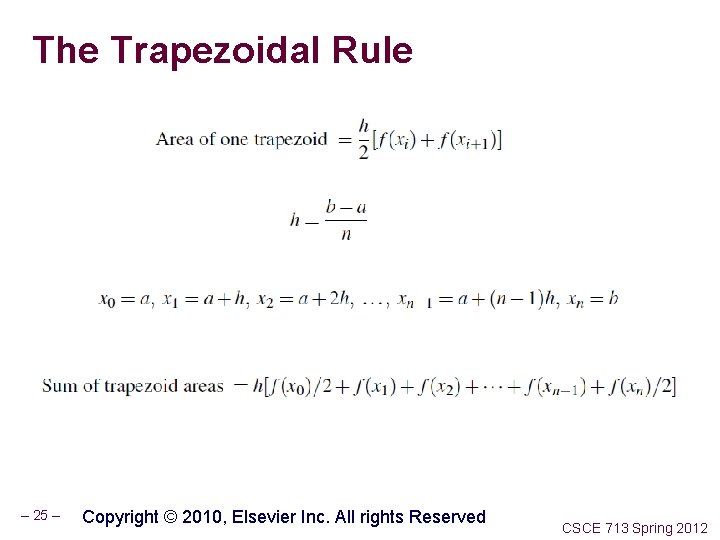

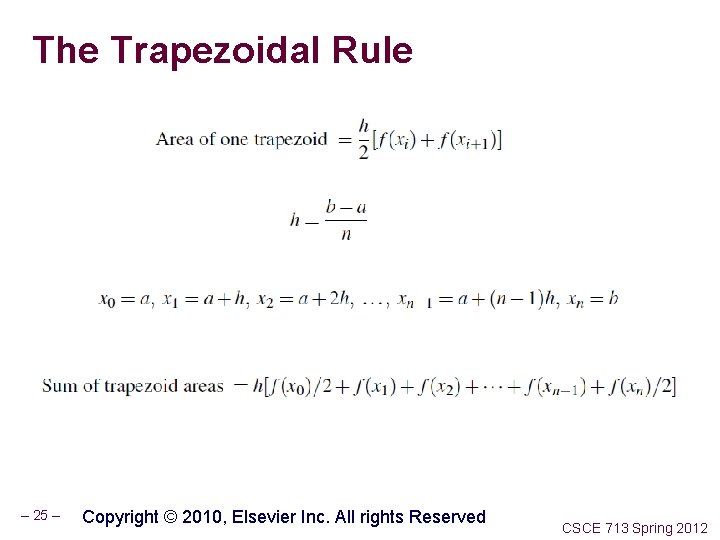

The Trapezoidal Rule

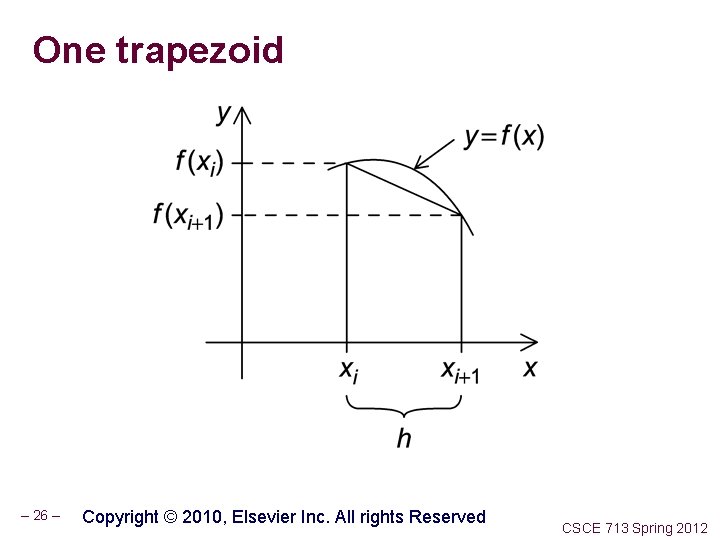

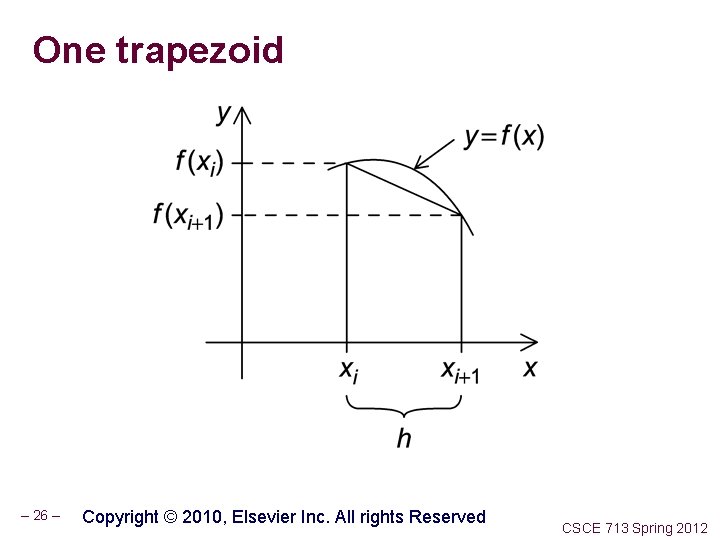

One trapezoid

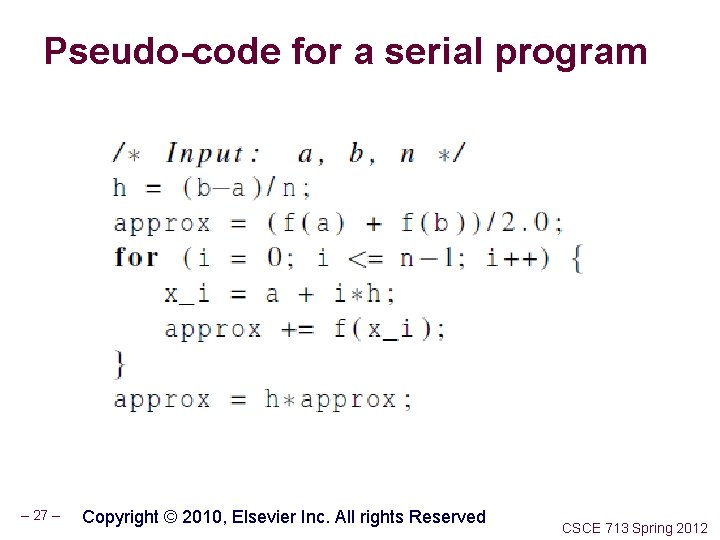

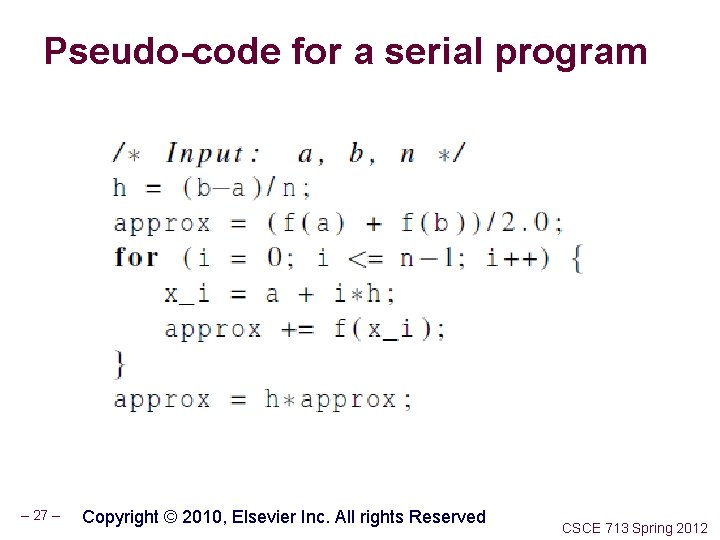

Pseudo-code for a serial program

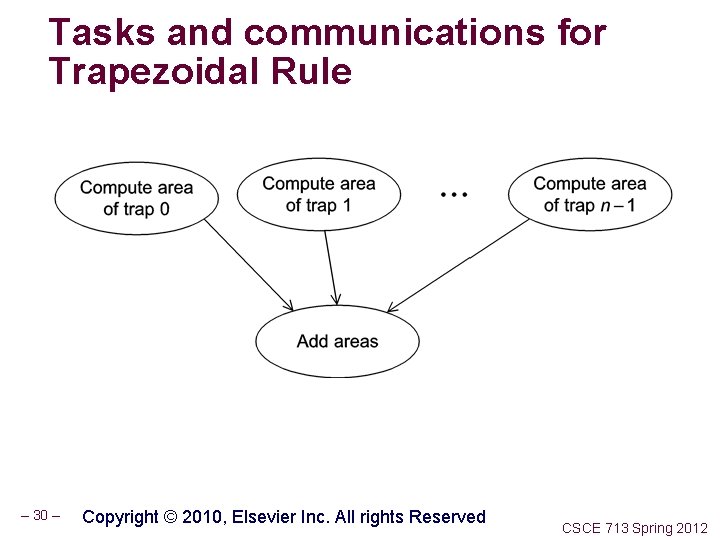
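
The serial trapezoidal rule can be sketched in C roughly as follows. This is an illustrative version, not the code from the slide image: the integrand `f` (here x squared) and the function name `trap` are assumptions chosen for the example.

```c
#include <assert.h>

/* Hypothetical integrand used only for illustration: f(x) = x^2. */
double f(double x) {
    return x * x;
}

/* Approximate the integral of f over [a, b] with n trapezoids of equal width. */
double trap(double a, double b, int n) {
    assert(n > 0);
    double h = (b - a) / n;               /* width of each trapezoid */
    double approx = (f(a) + f(b)) / 2.0;  /* the two endpoints get weight 1/2 */
    for (int i = 1; i <= n - 1; i++) {
        approx += f(a + i * h);           /* interior points get weight 1 */
    }
    return h * approx;
}
```

For example, `trap(0.0, 1.0, 1000)` approximates the integral of x squared over [0, 1], whose exact value is 1/3.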

Parallelizing the Trapezoidal Rule

1. Partition problem solution into tasks.
2. Identify communication channels between tasks.
3. Aggregate tasks into composite tasks.
4. Map composite tasks to cores.

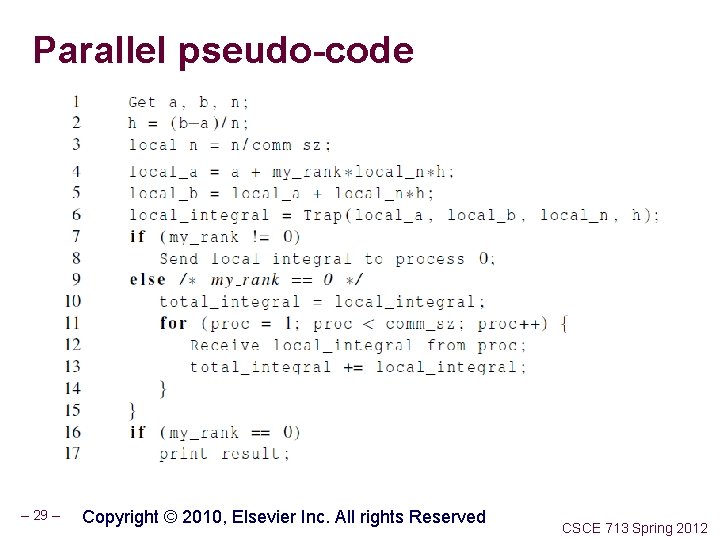

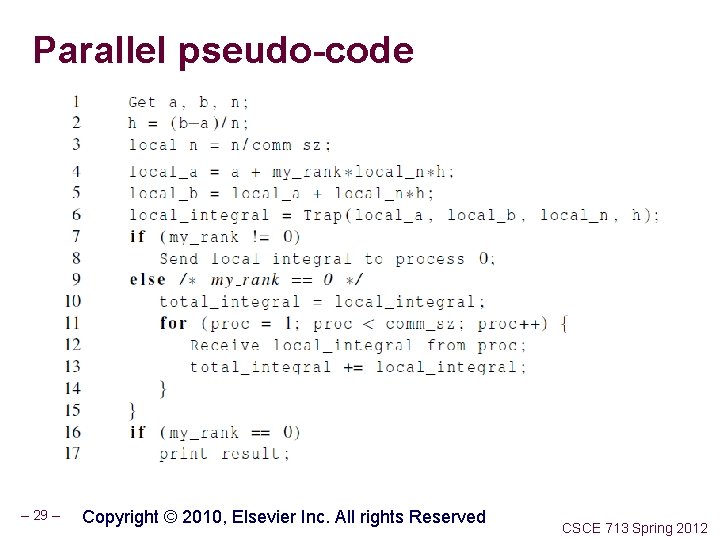

Parallel pseudo-code

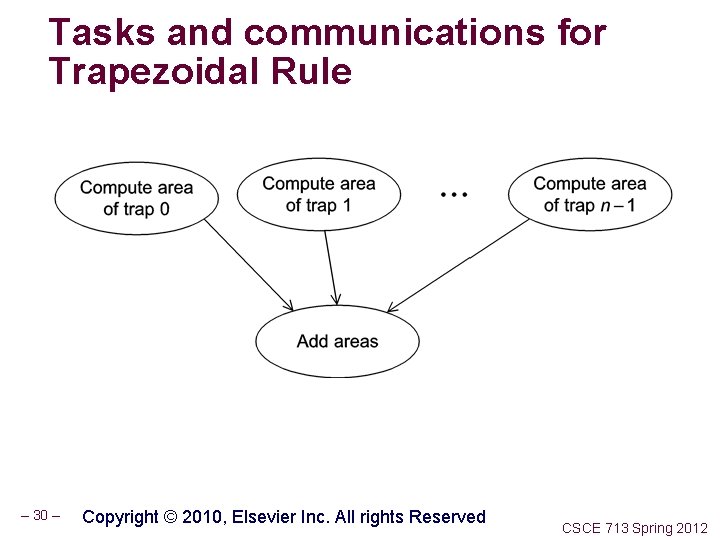
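
One way to map the trapezoids to processes is a block partition in which each rank gets a contiguous sub-interval. The sketch below shows only that arithmetic in plain C, with the rank and process count passed in as ordinary integers so it runs without MPI. The struct and function names are hypothetical, and it assumes the number of processes divides n evenly.

```c
#include <assert.h>

/* Sub-interval and trapezoid count assigned to one process (hypothetical type). */
struct local_work {
    double local_a;  /* left endpoint for this rank */
    double local_b;  /* right endpoint for this rank */
    int    local_n;  /* number of trapezoids for this rank */
};

/* Block partition of [a, b] with n trapezoids among comm_sz processes.
 * Assumption: comm_sz evenly divides n. */
struct local_work partition(double a, double b, int n, int my_rank, int comm_sz) {
    assert(comm_sz > 0 && my_rank >= 0 && my_rank < comm_sz);
    assert(n % comm_sz == 0);
    struct local_work w;
    double h = (b - a) / n;                   /* same width on every process */
    w.local_n = n / comm_sz;
    w.local_a = a + my_rank * w.local_n * h;  /* skip the ranks below us */
    w.local_b = w.local_a + w.local_n * h;
    return w;
}
```

In an MPI program, `my_rank` and `comm_sz` would come from MPI_Comm_rank and MPI_Comm_size; each process would integrate over its own sub-interval, and every process other than 0 would send its partial result to process 0 to be added up.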

Tasks and communications for Trapezoidal Rule

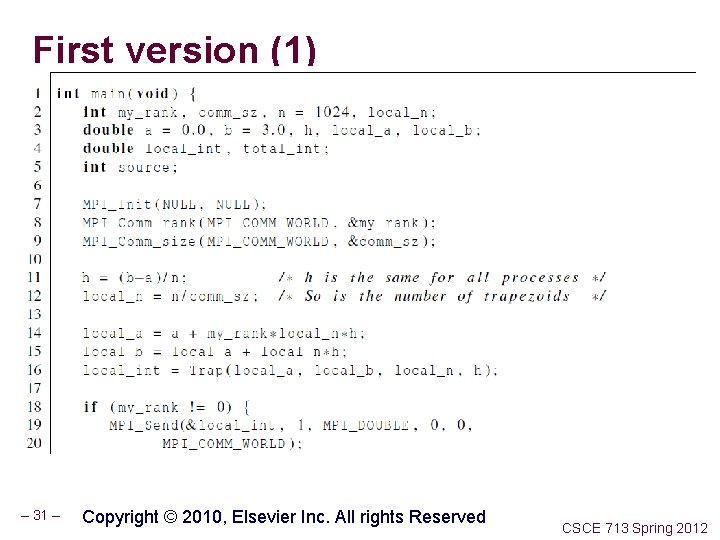

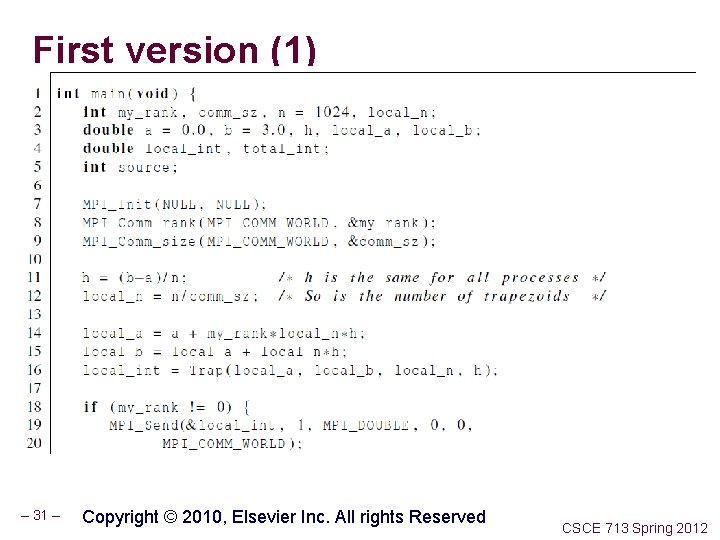

First version (1)

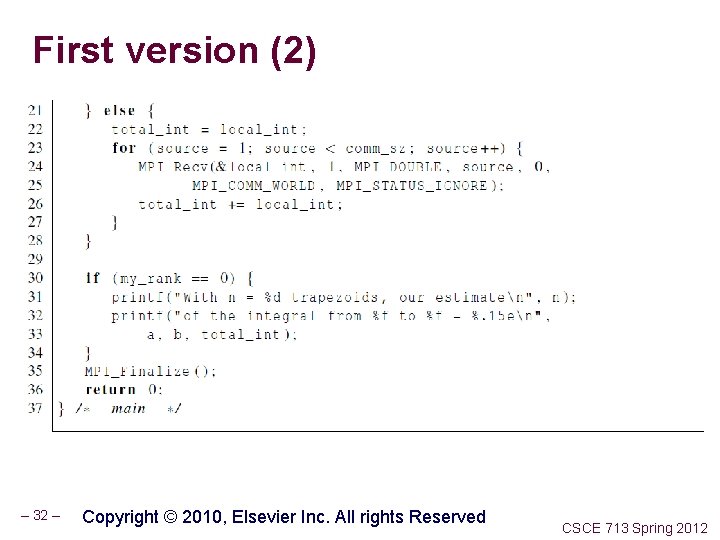

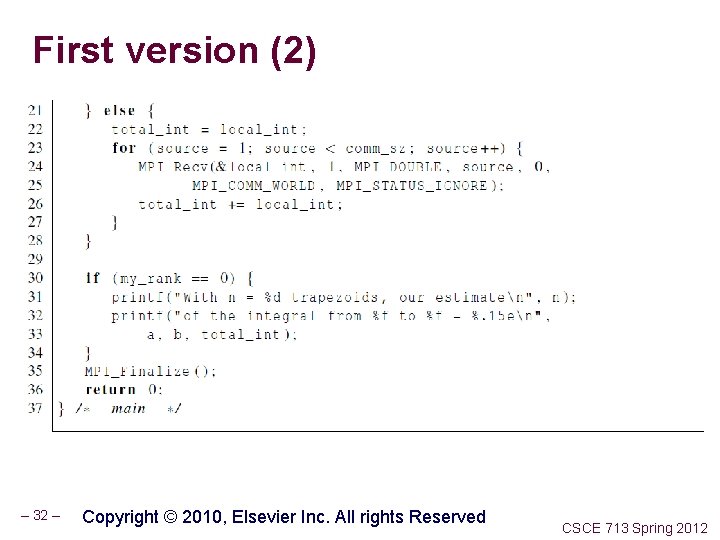

First version (2)

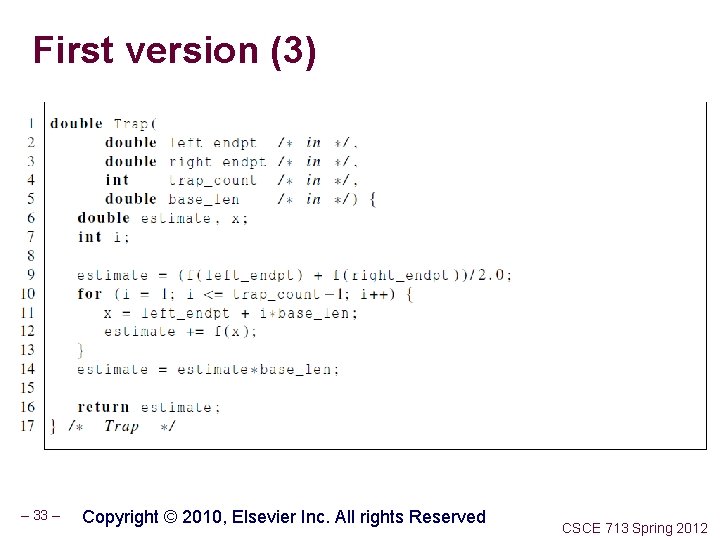

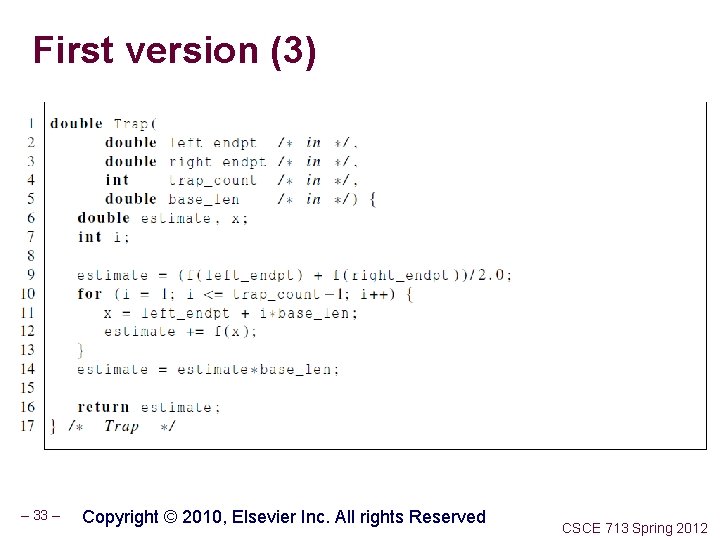

First version (3)

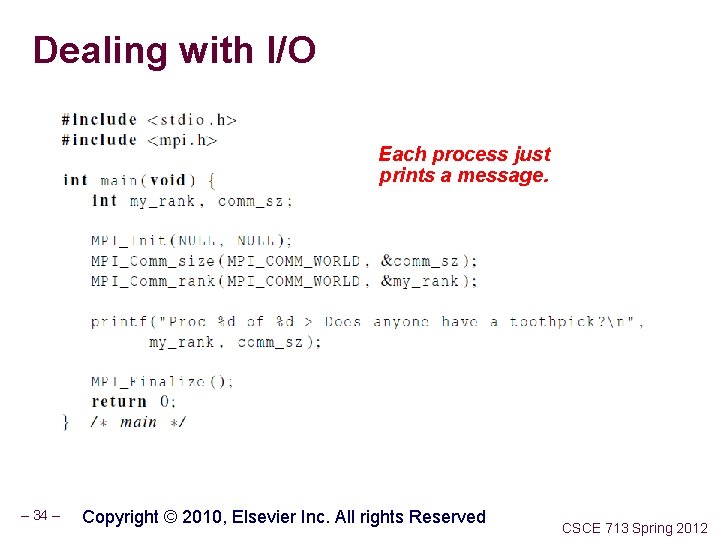

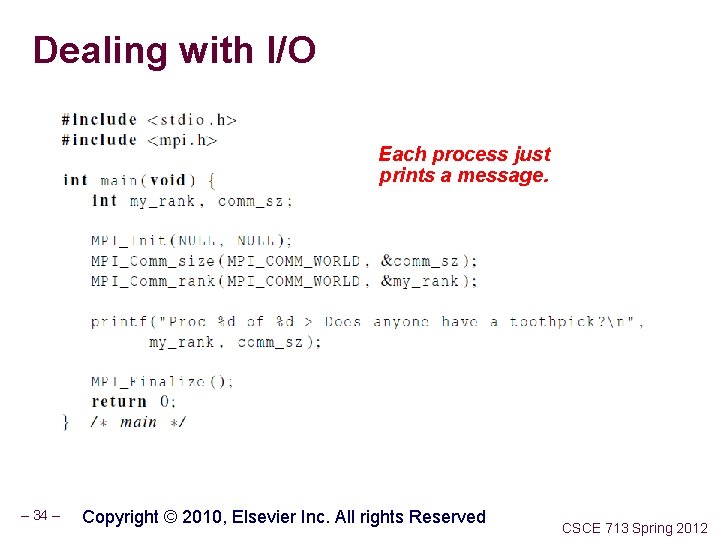

Dealing with I/O

Each process just prints a message.

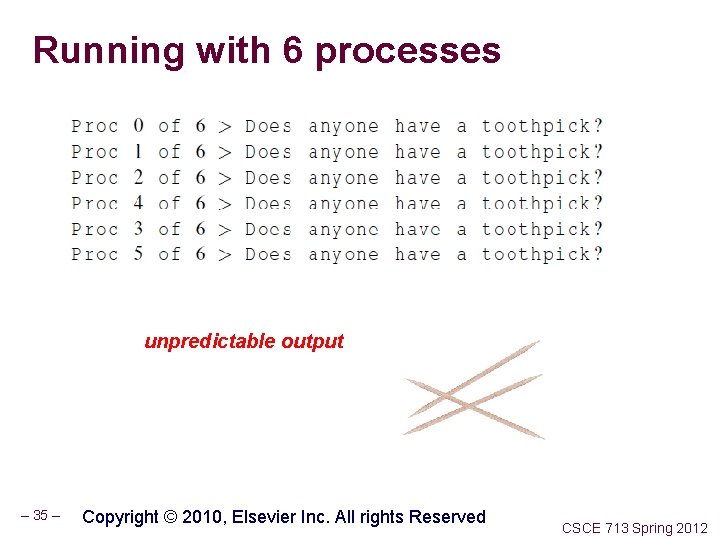

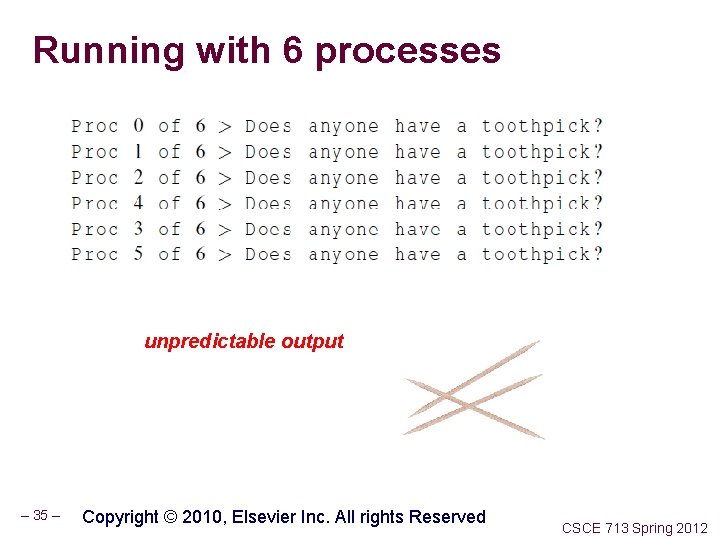

Running with 6 processes: unpredictable output

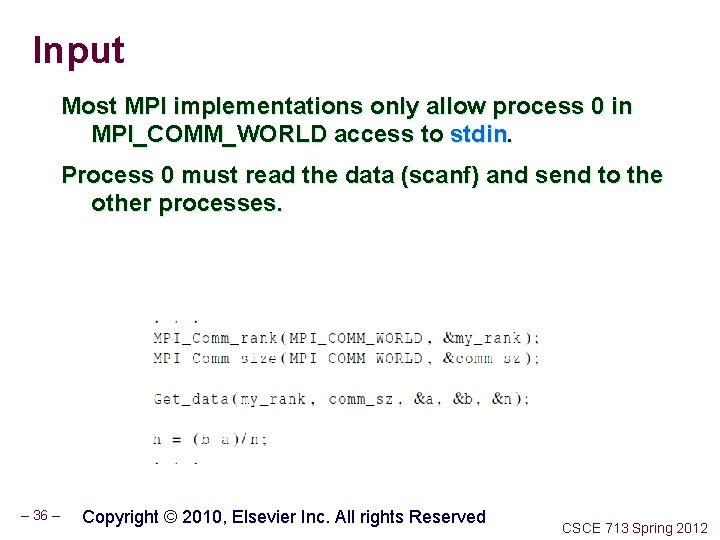

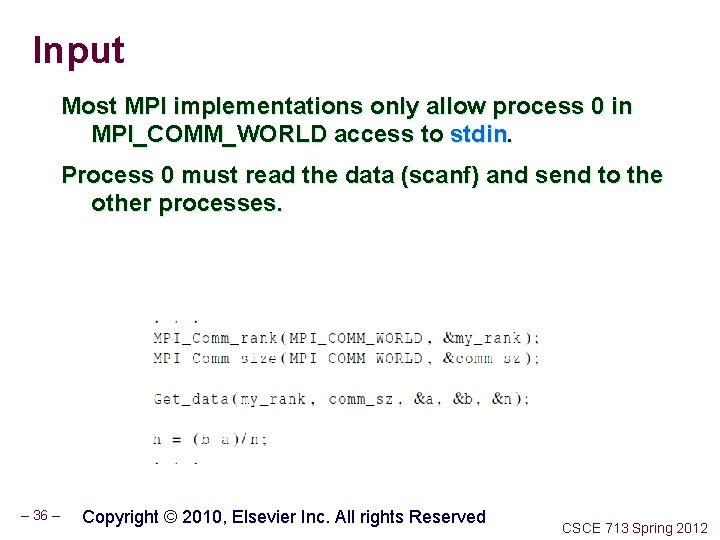

Input

- Most MPI implementations only allow process 0 in MPI_COMM_WORLD access to stdin.
- Process 0 must read the data (scanf) and send it to the other processes.

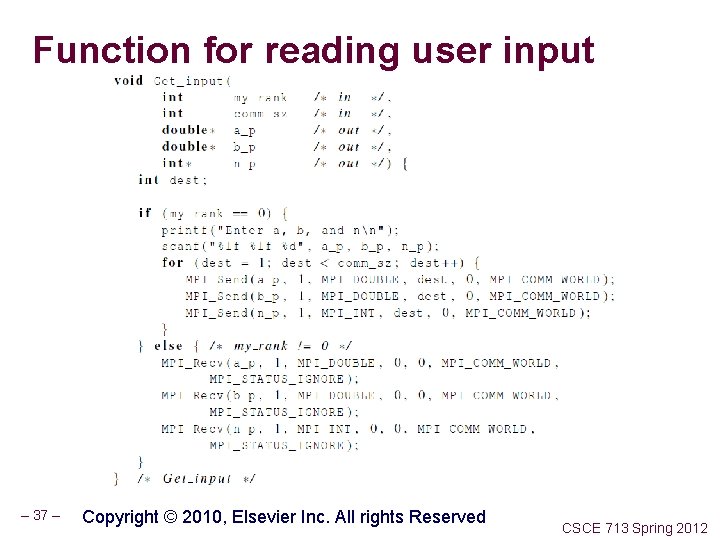

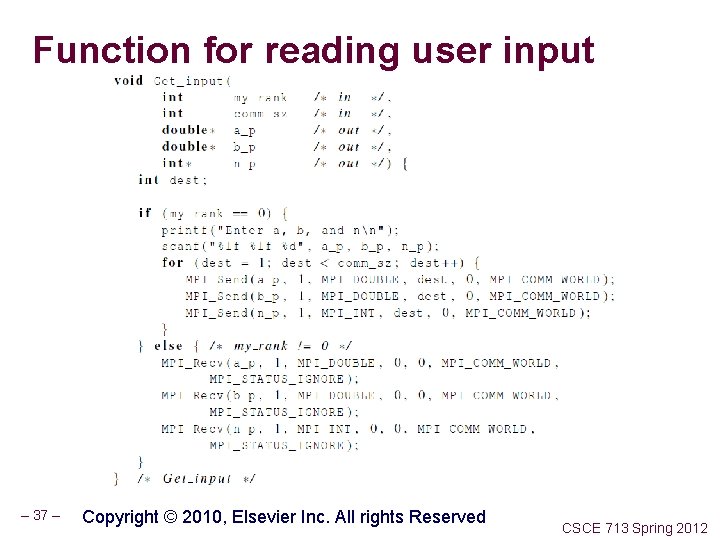

Function for reading user input

COLLECTIVE COMMUNICATION

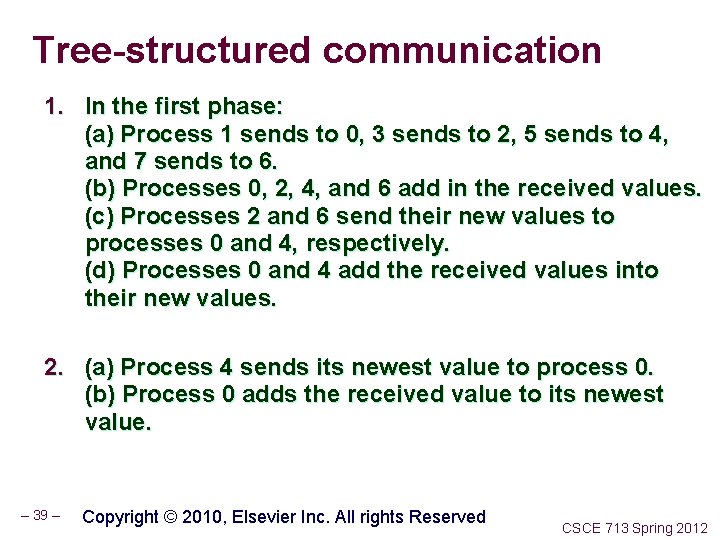

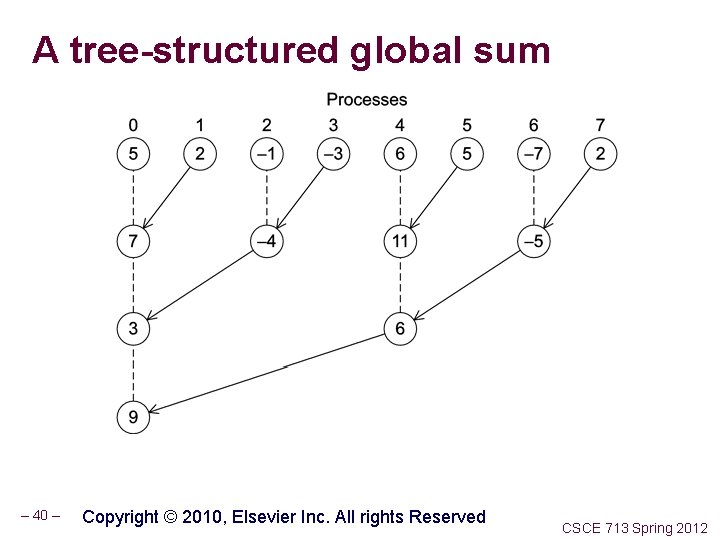

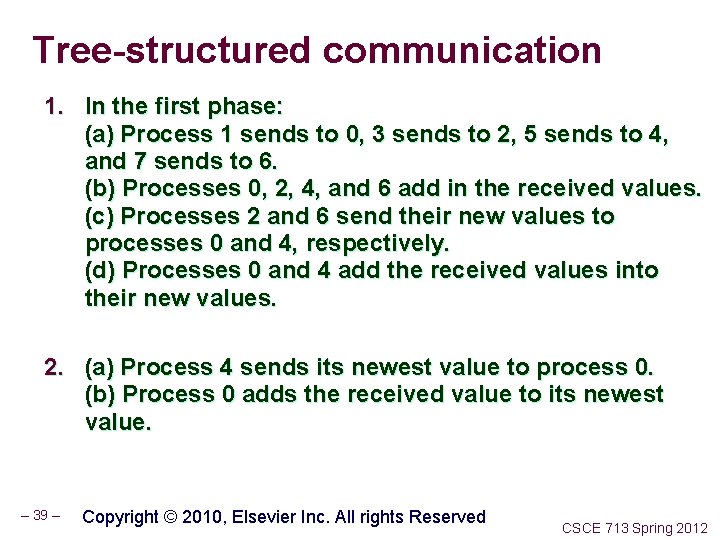

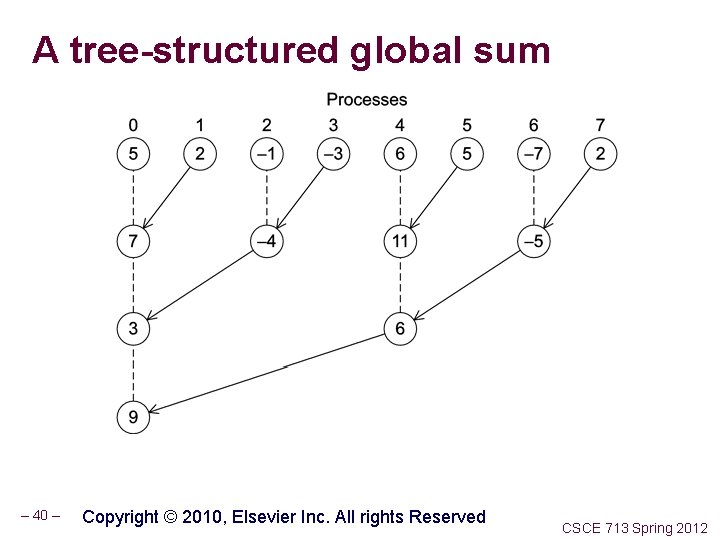

Tree-structured communication

1. In the first phase:
   - (a) Process 1 sends to 0, 3 sends to 2, 5 sends to 4, and 7 sends to 6.
   - (b) Processes 0, 2, 4, and 6 add in the received values.
   - (c) Processes 2 and 6 send their new values to processes 0 and 4, respectively.
   - (d) Processes 0 and 4 add the received values into their new values.
2. In the second phase:
   - (a) Process 4 sends its newest value to process 0.
   - (b) Process 0 adds the received value to its newest value.
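
The send-and-add pattern above can be simulated in plain C with an array standing in for the per-process values. This is a sketch of the communication schedule only, with no real message passing; the function name `tree_sum` is hypothetical, and `vals[i]` is assumed to hold the value owned by process i.

```c
#include <assert.h>

/* Simulate a tree-structured global sum over p per-process values.
 * On return vals[0] holds the total, as process 0 would.
 * Returns the number of send/add rounds that were needed. */
int tree_sum(double vals[], int p) {
    assert(p > 0);
    int rounds = 0;
    for (int stride = 1; stride < p; stride *= 2) {
        /* Receivers are the ranks that are multiples of 2*stride. */
        for (int recv = 0; recv + stride < p; recv += 2 * stride) {
            /* "process recv+stride sends to process recv", which adds it in */
            vals[recv] += vals[recv + stride];
        }
        rounds++;
    }
    return rounds;
}
```

With 8 processes this takes three send/add rounds (1 to 0, 3 to 2, ...; then 2 to 0 and 6 to 4; then 4 to 0), compared with the seven receives process 0 would perform if every other process sent to it directly.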

A tree-structured global sum

Overview

- Last Time
  - Posix Pthreads: create, join, exit, mutexes
  - /class/csce713-006 Code and Data
- Readings for today
  - http://csapp.cs.cmu.edu/public/1e/public/ch9-preview.pdf
- New
  - Website alive and kicking; dropbox too!
  - From Last Lecture's slides: Gauss-Seidel Method, Barriers, Threads Assignment
- Next time
  - performance evaluation, barriers and MPI intro

Threads programming Assignment

1. Matrix addition (embarrassingly parallel)
2. Versions
   - a. Sequential with blocking factor
   - b. Sequential
   - c. Read without conversions
   - d. Multi-threaded, passing number of threads as command line argument (args.c code should be distributed as an example)
3. Plot of several runs
4. Next time

Next few slides are from Parallel Programming by Pacheco

/class/csce713-006/Code/Pacheco contains the code (again, only for use with this course; do not distribute)

Examples

1. Simple use of mutex in calculating sum
2. Semaphore

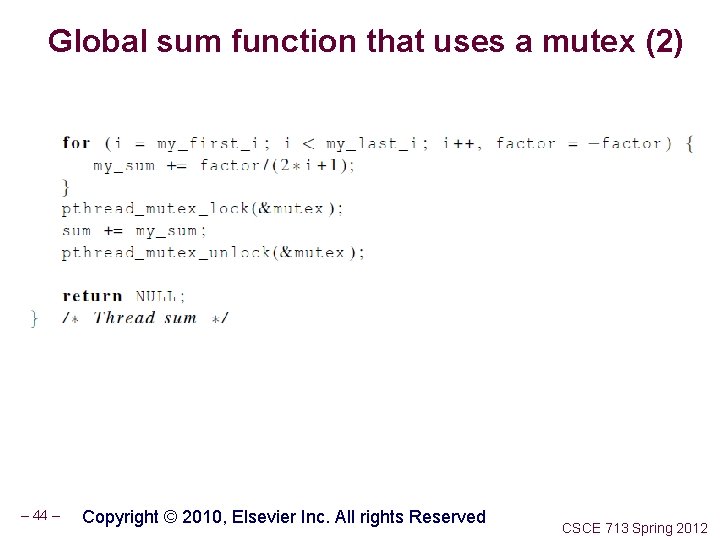

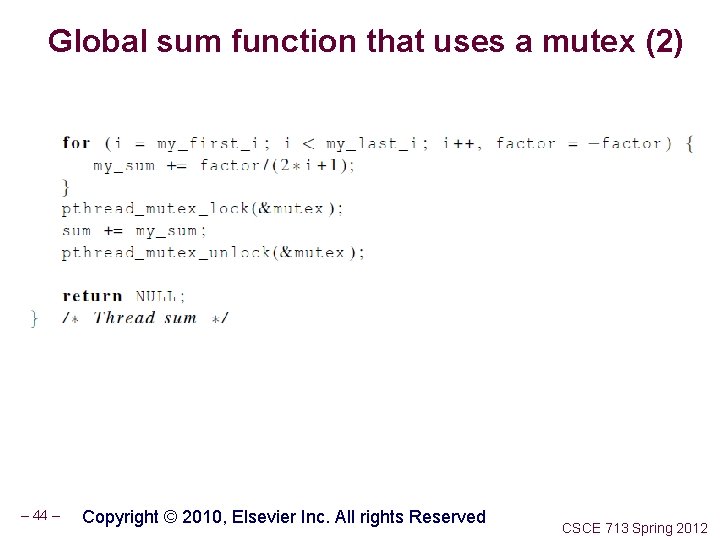

Global sum function that uses a mutex (2)

Barriers - synchronizing threads

Synchronizing threads after a period of computation

- No thread proceeds until all others have reached the barrier
- E.g., last time: iteration of Gauss-Seidel, sync check for convergence

Examples of barrier uses and implementations

1. Using barriers for testing convergence, i.e. satisfying a completion criterion
2. Using barriers to time the slowest thread
3. Using barriers for debugging

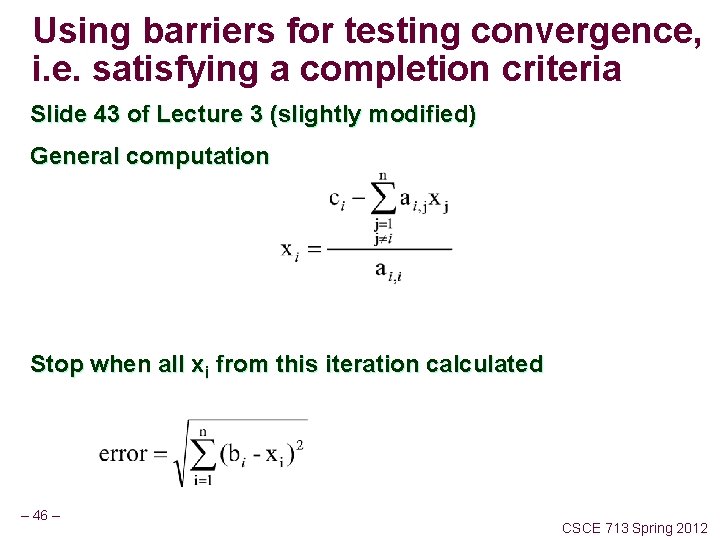

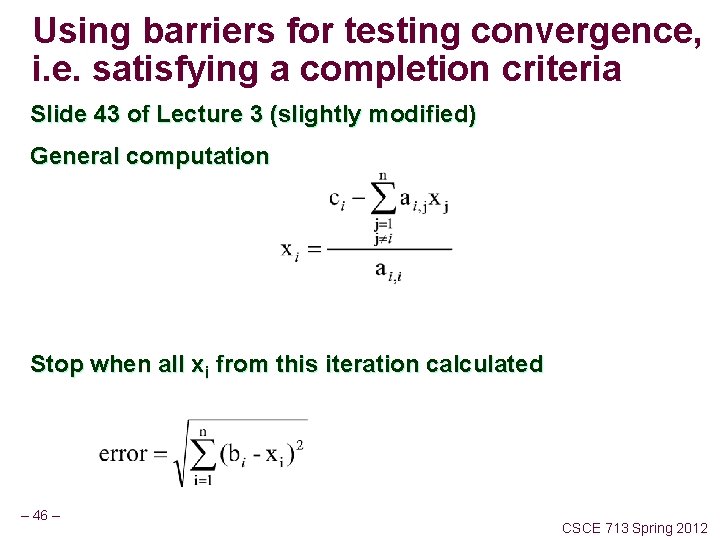

Using barriers for testing convergence, i.e. satisfying a completion criterion

Slide 43 of Lecture 3 (slightly modified)

- General computation
- Stop when all xi from this iteration calculated

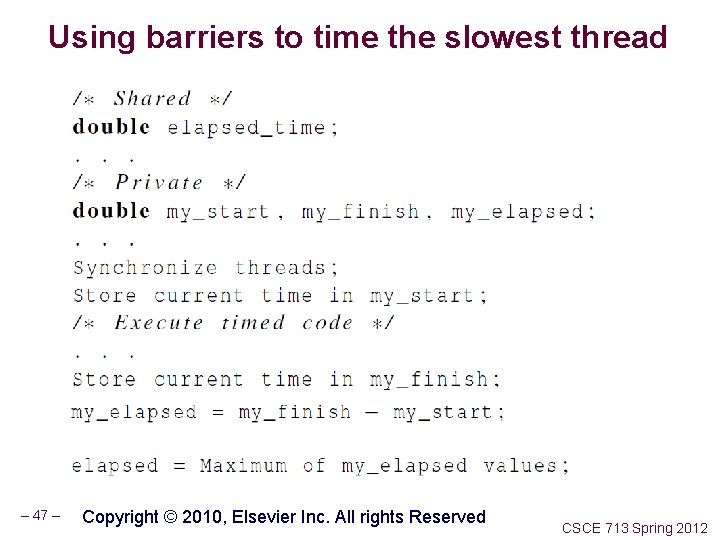

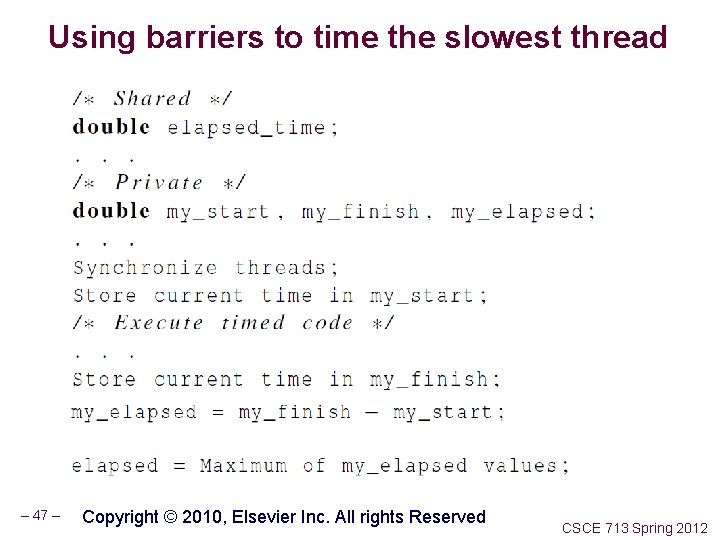

Using barriers to time the slowest thread

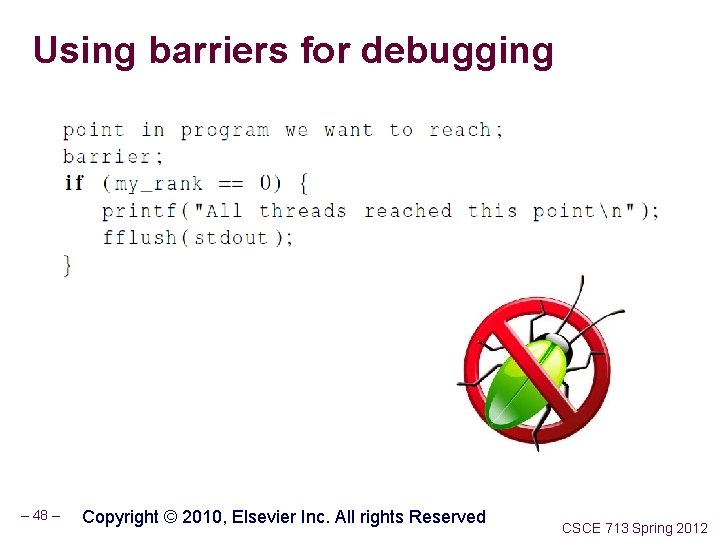

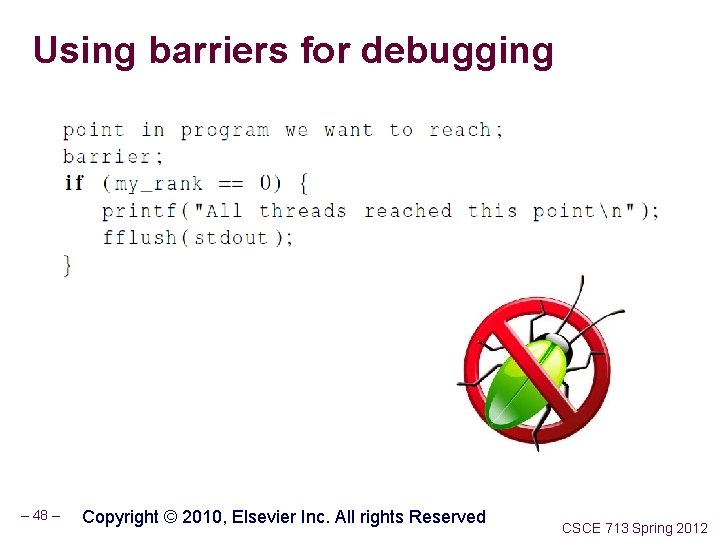

Using barriers for debugging

Barrier Implementations

1. Mutex, thread counter, busy-waits
2. Mutex plus barrier semaphore

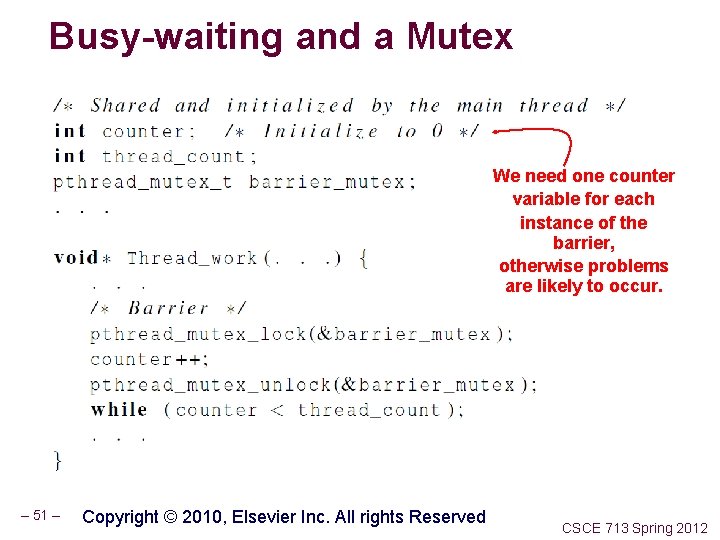

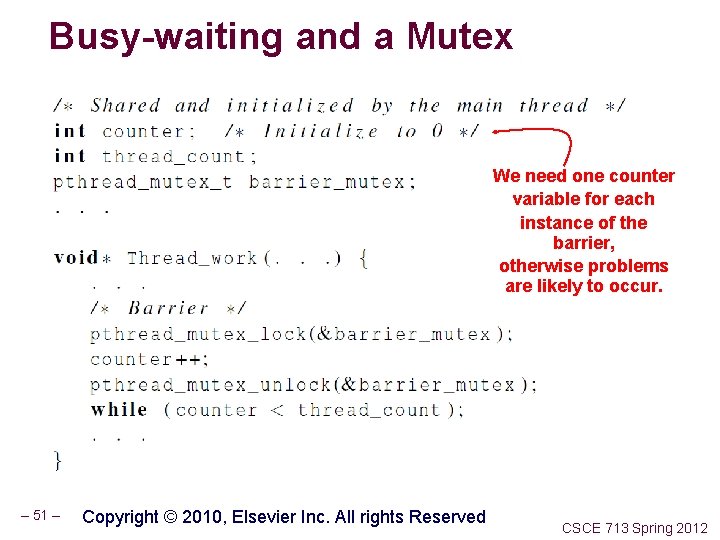

Busy-waiting and a Mutex

- Implementing a barrier using busy-waiting and a mutex is straightforward.
- We use a shared counter protected by the mutex.
- When the counter indicates that every thread has entered the critical section, threads can leave the critical section.

Busy-waiting and a Mutex

We need one counter variable for each instance of the barrier; otherwise problems are likely to occur.
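
A busy-waiting barrier along these lines might look like the sketch below. It is not the code from the slide images: the names (`busy_wait_barrier`, `run_busy_barrier_demo`, and the globals) are hypothetical, and the spin loop reads the counter through C11 atomics so the compiler cannot hoist the load out of the loop. Note that the counter is never reset inside the barrier, which is why a second barrier in the same run would need its own counter.

```c
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>
#include <stddef.h>

#define MAX_THREADS 64

static int thread_count;             /* number of threads using the barrier */
static atomic_int counter;           /* how many threads have arrived */
static pthread_mutex_t barrier_mutex = PTHREAD_MUTEX_INITIALIZER;
static int arrivals_seen[MAX_THREADS];

static void busy_wait_barrier(void) {
    pthread_mutex_lock(&barrier_mutex);
    counter++;                       /* counter update protected by the mutex */
    pthread_mutex_unlock(&barrier_mutex);
    while (atomic_load(&counter) < thread_count) {
        /* spin until every thread has arrived */
    }
}

static void *worker(void *arg) {
    long rank = (long)arg;
    busy_wait_barrier();
    /* After the barrier every thread should observe all arrivals. */
    arrivals_seen[rank] = atomic_load(&counter);
    return NULL;
}

/* Runs n threads through the barrier once; returns how many saw all n arrivals. */
int run_busy_barrier_demo(int n) {
    assert(n > 0 && n <= MAX_THREADS);
    pthread_t threads[MAX_THREADS];
    thread_count = n;
    atomic_store(&counter, 0);       /* fresh counter for this barrier instance */
    for (long t = 0; t < n; t++)
        pthread_create(&threads[t], NULL, worker, (void *)t);
    int ok = 0;
    for (int t = 0; t < n; t++) {
        pthread_join(threads[t], NULL);
        if (arrivals_seen[t] == n) ok++;
    }
    return ok;
}
```

Spinning wastes CPU cycles while threads wait, which is one reason the semaphore and condition-variable versions are usually preferred.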

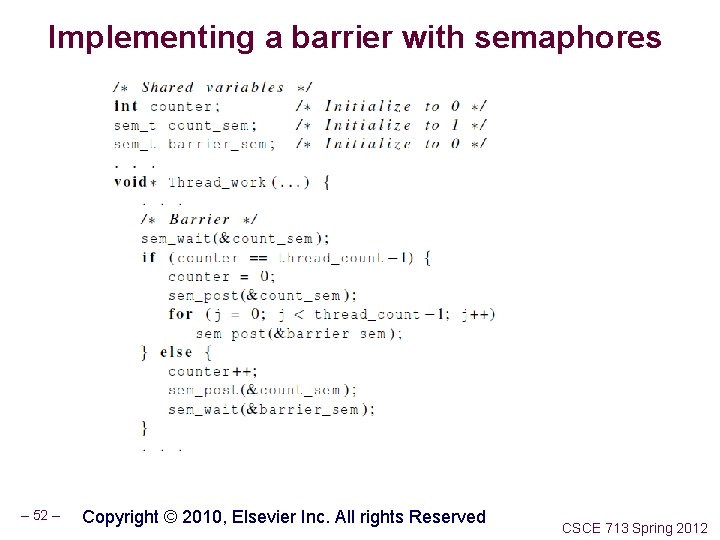

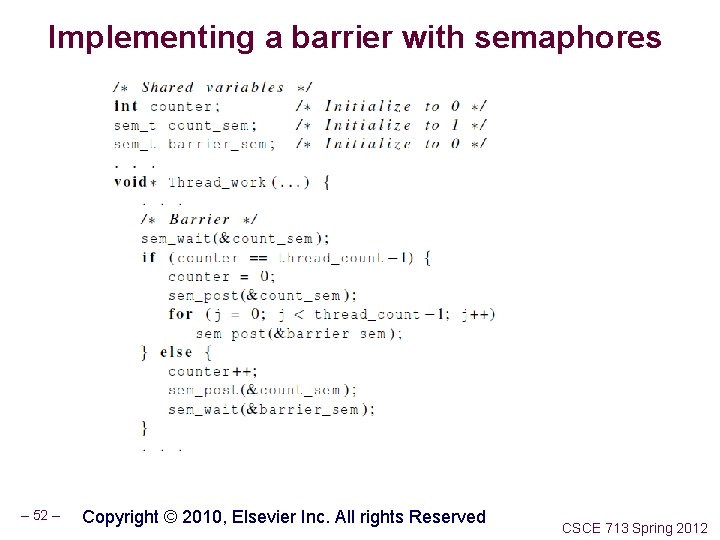

Implementing a barrier with semaphores

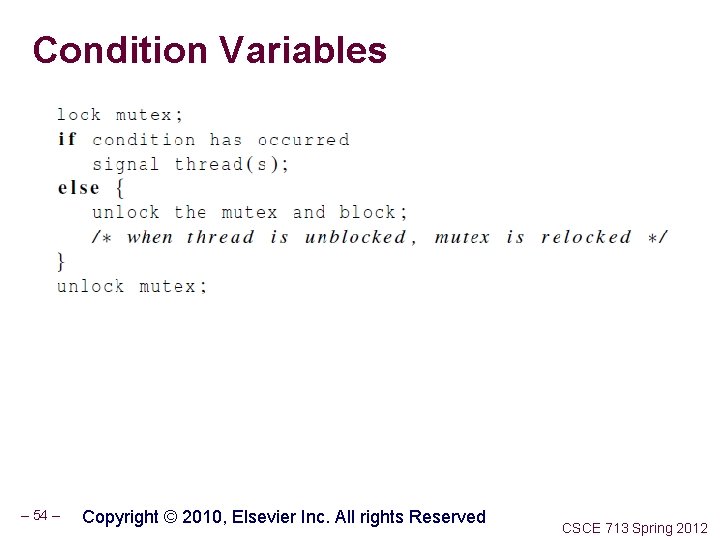

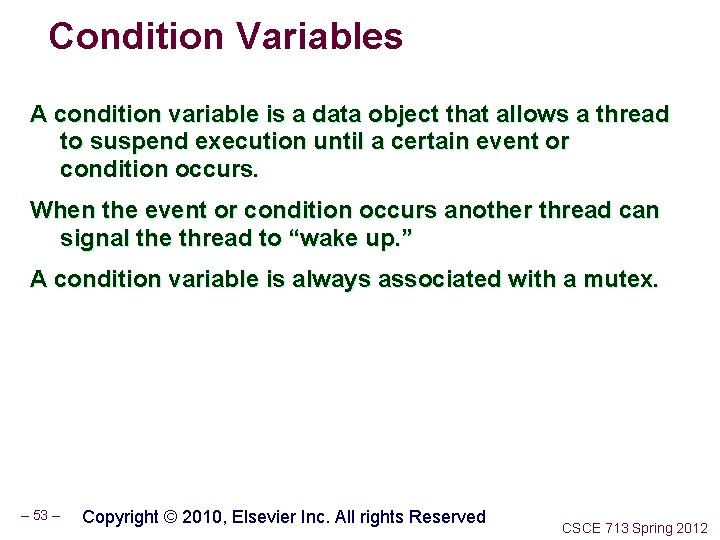
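
A semaphore-based barrier can be sketched with POSIX unnamed semaphores as follows. This is an illustrative version under stated assumptions, not a transcription of the slide images: it uses one semaphore as a lock on the counter (a mutex would serve equally well in that role) and a second semaphore on which early arrivals block. All names are hypothetical, and unnamed semaphores via `sem_init` are assumed to be available, as they are on Linux.

```c
#include <assert.h>
#include <pthread.h>
#include <semaphore.h>
#include <stdatomic.h>
#include <stddef.h>

#define SB_MAX_THREADS 64

static int sb_thread_count;    /* threads taking part in the barrier */
static int sb_counter;         /* arrivals so far; protected by count_sem */
static sem_t count_sem;        /* used as a lock on sb_counter, starts at 1 */
static sem_t barrier_sem;      /* early arrivals block here, starts at 0 */
static atomic_int sb_arrived;  /* demo only: threads that reached the barrier */
static int sb_seen[SB_MAX_THREADS];

static void semaphore_barrier(void) {
    sem_wait(&count_sem);
    if (sb_counter == sb_thread_count - 1) {
        /* Last thread in: reset the counter and release everyone else. */
        sb_counter = 0;
        sem_post(&count_sem);
        for (int j = 0; j < sb_thread_count - 1; j++)
            sem_post(&barrier_sem);
    } else {
        sb_counter++;
        sem_post(&count_sem);
        sem_wait(&barrier_sem);  /* sleep instead of spinning */
    }
}

static void *sb_worker(void *arg) {
    long rank = (long)arg;
    atomic_fetch_add(&sb_arrived, 1);
    semaphore_barrier();
    sb_seen[rank] = atomic_load(&sb_arrived);  /* should equal thread count */
    return NULL;
}

/* Runs n threads through the barrier once; returns how many saw all n arrivals. */
int run_semaphore_barrier_demo(int n) {
    assert(n > 0 && n <= SB_MAX_THREADS);
    pthread_t threads[SB_MAX_THREADS];
    sb_thread_count = n;
    sb_counter = 0;
    atomic_store(&sb_arrived, 0);
    sem_init(&count_sem, 0, 1);
    sem_init(&barrier_sem, 0, 0);
    for (long t = 0; t < n; t++)
        pthread_create(&threads[t], NULL, sb_worker, (void *)t);
    int ok = 0;
    for (int t = 0; t < n; t++) {
        pthread_join(threads[t], NULL);
        if (sb_seen[t] == n) ok++;
    }
    sem_destroy(&count_sem);
    sem_destroy(&barrier_sem);
    return ok;
}
```

Unlike the busy-waiting version, blocked threads do not consume CPU cycles. Reusing `barrier_sem` for a second barrier immediately afterwards can still go wrong if a fast thread consumes a post intended for a slow one, so this sketch is meant for a single barrier instance.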

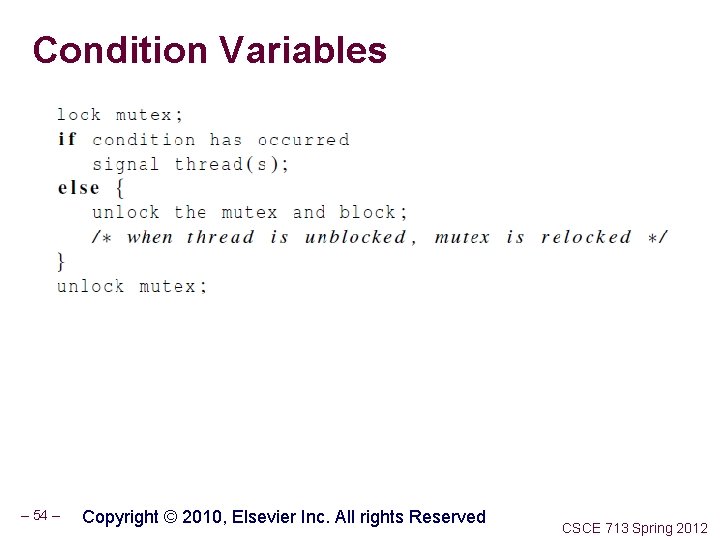

Condition Variables

- A condition variable is a data object that allows a thread to suspend execution until a certain event or condition occurs.
- When the event or condition occurs, another thread can signal the thread to "wake up."
- A condition variable is always associated with a mutex.
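
A barrier is one common use of a condition variable. The sketch below is an illustrative Pthreads version, not the code from the slide images: the `cv_barrier` type and function names are hypothetical, and a round counter is added so that waiting threads re-check a real condition after each wakeup (`pthread_cond_wait` may return spuriously) and so the barrier can be reused.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

#define CV_MAX_THREADS 64

struct cv_barrier {
    pthread_mutex_t mutex;  /* the mutex associated with cond */
    pthread_cond_t  cond;
    int thread_count;       /* threads expected at the barrier */
    int waiting;            /* threads that have arrived in this round */
    unsigned round;         /* incremented each time the barrier opens */
};

void cv_barrier_init(struct cv_barrier *b, int thread_count) {
    assert(thread_count > 0);
    pthread_mutex_init(&b->mutex, NULL);
    pthread_cond_init(&b->cond, NULL);
    b->thread_count = thread_count;
    b->waiting = 0;
    b->round = 0;
}

void cv_barrier_wait(struct cv_barrier *b) {
    pthread_mutex_lock(&b->mutex);
    unsigned my_round = b->round;
    if (++b->waiting == b->thread_count) {
        b->waiting = 0;                      /* last thread opens the barrier */
        b->round++;
        pthread_cond_broadcast(&b->cond);
    } else {
        while (b->round == my_round)         /* guards against spurious wakeups */
            pthread_cond_wait(&b->cond, &b->mutex);
    }
    pthread_mutex_unlock(&b->mutex);
}

static struct cv_barrier demo_barrier;
static int demo_n;
static int demo_slots[CV_MAX_THREADS];
static int demo_ok[CV_MAX_THREADS];

static void *cv_worker(void *arg) {
    long rank = (long)arg;
    demo_slots[rank] = 1;                    /* "work" done before the barrier */
    cv_barrier_wait(&demo_barrier);
    int filled = 0;
    for (int i = 0; i < demo_n; i++) filled += demo_slots[i];
    demo_ok[rank] = (filled == demo_n);      /* everyone's work must be visible */
    return NULL;
}

/* Runs n threads through the barrier; returns how many saw all n slots filled. */
int run_cv_barrier_demo(int n) {
    assert(n > 0 && n <= CV_MAX_THREADS);
    pthread_t threads[CV_MAX_THREADS];
    demo_n = n;
    for (int i = 0; i < n; i++) demo_slots[i] = 0;
    cv_barrier_init(&demo_barrier, n);
    for (long t = 0; t < n; t++)
        pthread_create(&threads[t], NULL, cv_worker, (void *)t);
    int ok = 0;
    for (int t = 0; t < n; t++) {
        pthread_join(threads[t], NULL);
        ok += demo_ok[t];
    }
    pthread_mutex_destroy(&demo_barrier.mutex);
    pthread_cond_destroy(&demo_barrier.cond);
    return ok;
}
```

The waiting thread releases the mutex while it sleeps inside `pthread_cond_wait` and holds it again when the call returns, which is why the condition variable must always be paired with a mutex.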

Condition Variables

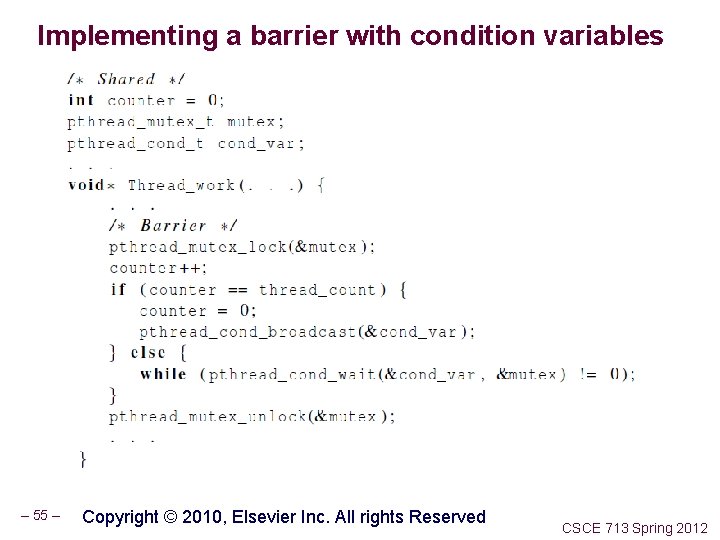

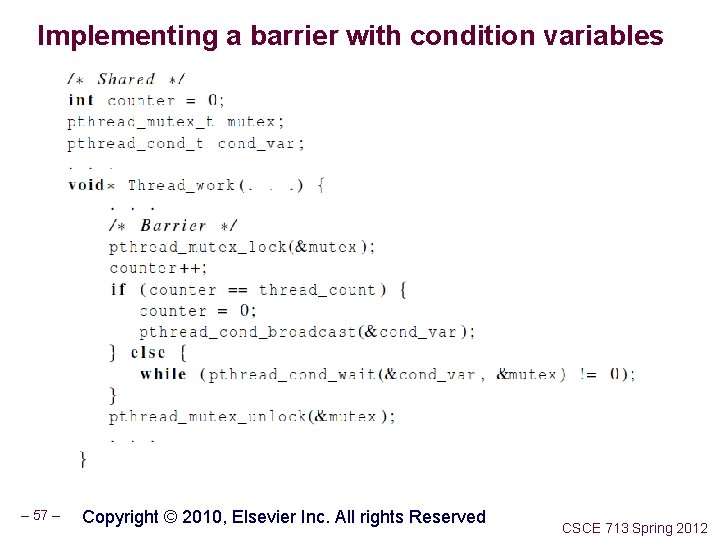

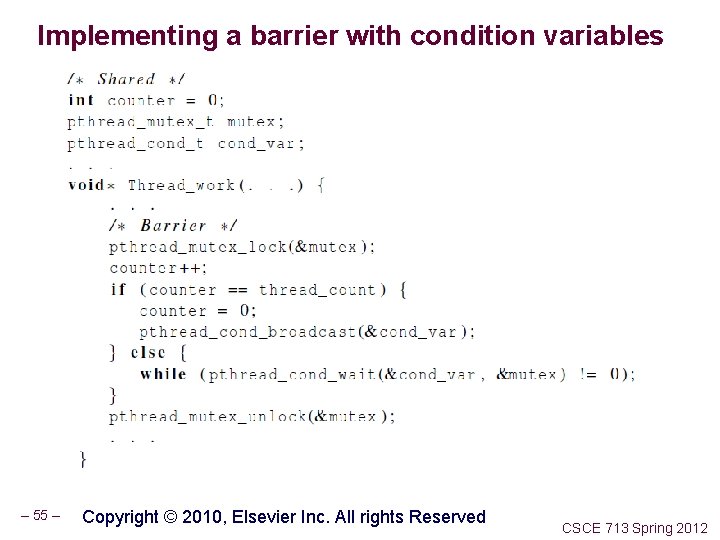

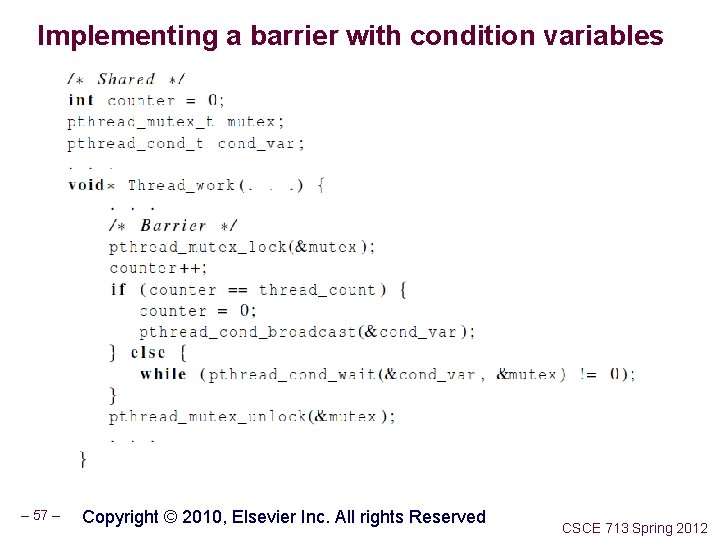

Implementing a barrier with condition variables

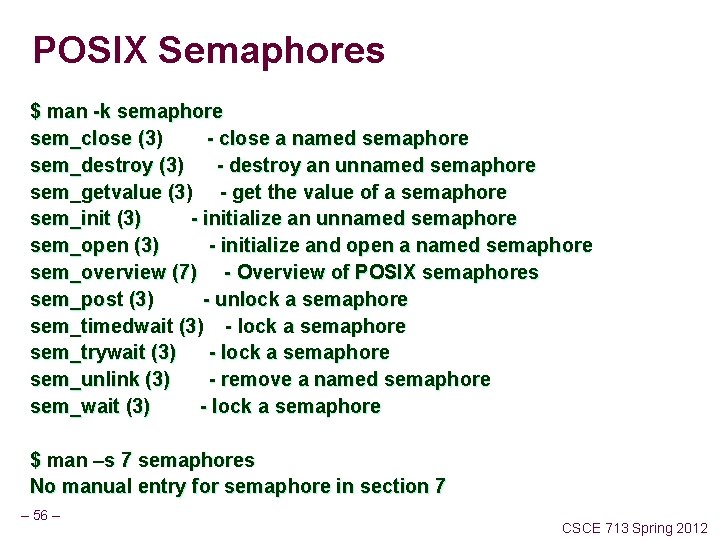

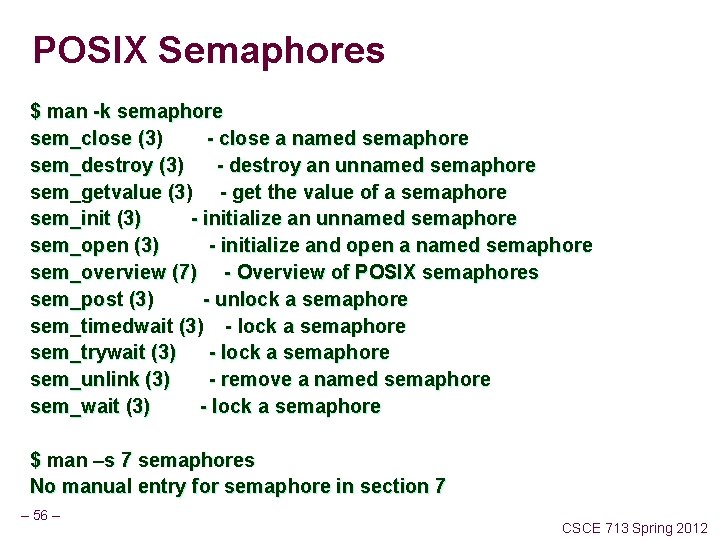

POSIX Semaphores

$ man -k semaphore

- sem_close (3) - close a named semaphore
- sem_destroy (3) - destroy an unnamed semaphore
- sem_getvalue (3) - get the value of a semaphore
- sem_init (3) - initialize an unnamed semaphore
- sem_open (3) - initialize and open a named semaphore
- sem_overview (7) - Overview of POSIX semaphores
- sem_post (3) - unlock a semaphore
- sem_timedwait (3) - lock a semaphore
- sem_trywait (3) - lock a semaphore
- sem_unlink (3) - remove a named semaphore
- sem_wait (3) - lock a semaphore

$ man -s 7 semaphores

- No manual entry for semaphore in section 7

Implementing a barrier with condition variables

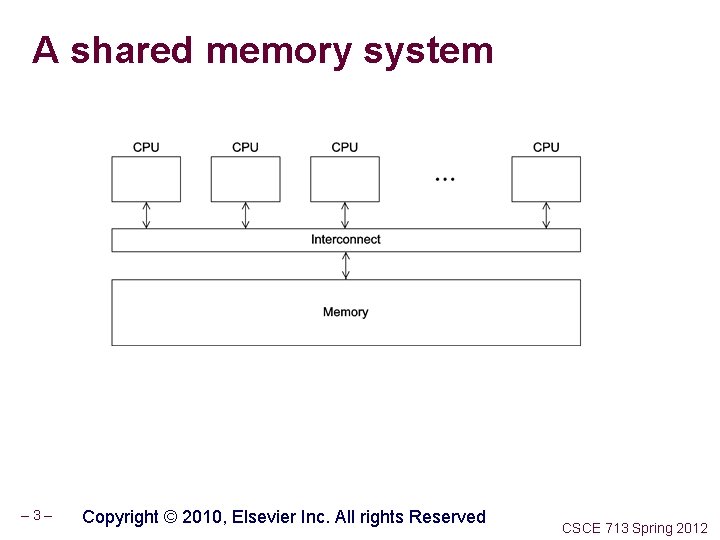

System V Semaphores (Sets)

In System V IPC there are semaphore sets.

Commands

- ipcs - provide information on ipc facilities
- ipcrm - remove a message queue, semaphore set or shared memory segment etc.

System Calls

- semctl (2) - semaphore control operations
- semget (2) - get a semaphore set identifier
- semop (2) - semaphore operations
- semtimedop (2) - semaphore operations
