What is MPI? MPI = Message Passing Interface


- Slides: 44

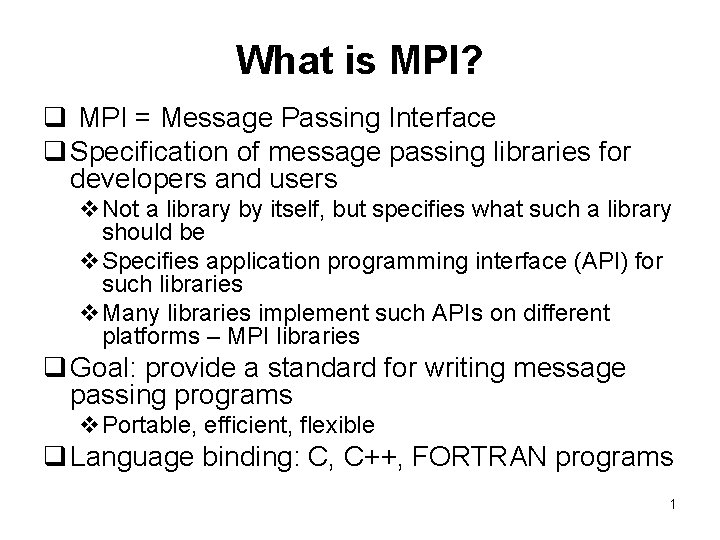

What is MPI?

- MPI = Message Passing Interface
- A specification for message-passing libraries, aimed at developers and users
  - Not a library itself; it specifies what such a library should provide
  - Specifies the application programming interface (API) for such libraries
  - Many libraries implement this API on different platforms (the MPI libraries)
- Goal: provide a standard for writing message-passing programs
  - Portable, efficient, flexible
- Language bindings: C, C++, and FORTRAN programs

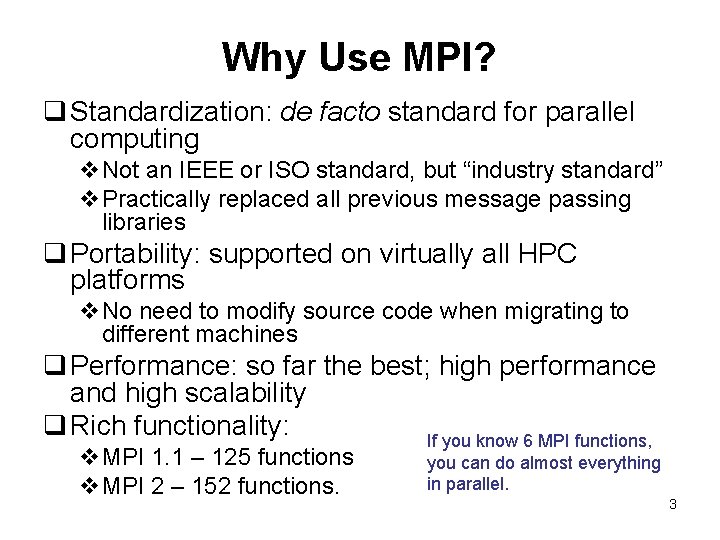

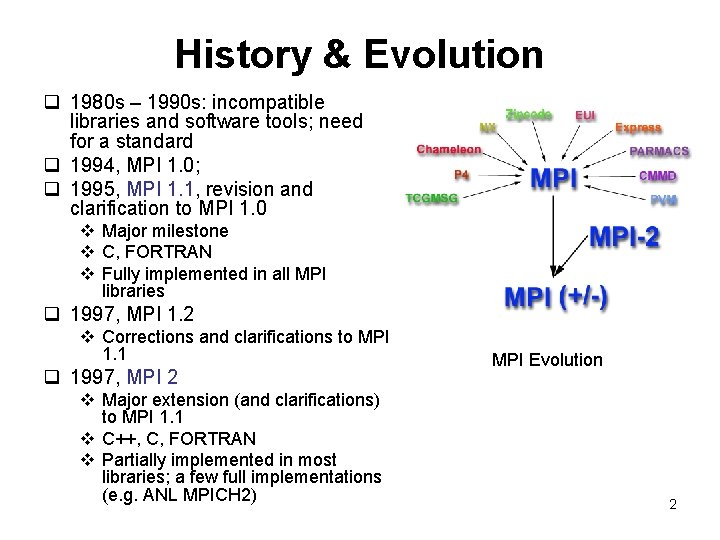

History & Evolution

- 1980s to 1990s: incompatible libraries and software tools; need for a standard
- 1994: MPI 1.0
- 1995: MPI 1.1, a revision and clarification of MPI 1.0
  - Major milestone
  - C, FORTRAN
  - Fully implemented in all MPI libraries
- 1997: MPI 1.2
  - Corrections and clarifications to MPI 1.1
- 1997: MPI 2
  - Major extension (and clarifications) to MPI 1.1
  - C++, C, FORTRAN
  - Partially implemented in most libraries; a few full implementations (e.g. ANL MPICH2)

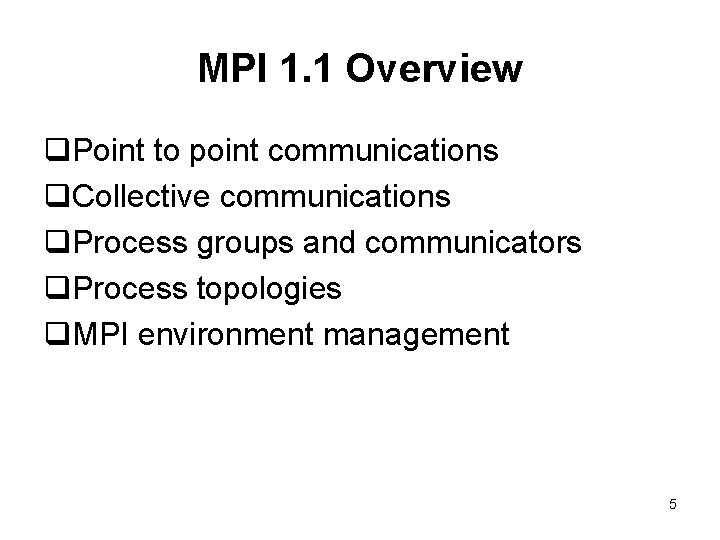

Why Use MPI?

- Standardization: the de facto standard for parallel computing
  - Not an IEEE or ISO standard, but an "industry standard"
  - Has practically replaced all previous message-passing libraries
- Portability: supported on virtually all HPC platforms
  - No need to modify source code when migrating to a different machine
- Performance: high performance and high scalability; the best available so far
- Rich functionality:
  - MPI 1.1: 125 functions
  - MPI 2: 152 functions

If you know 6 MPI functions, you can do almost everything in parallel.

Programming Model

- Message-passing model: data is exchanged through explicit communication.
- Works on distributed-memory as well as shared-memory parallel machines.
- The user has full control (data partitioning, distribution) and must identify the parallelism and implement parallel algorithms using MPI function calls.
- The number of CPUs in a computation is static.
  - New tasks cannot be spawned dynamically at run time (MPI 1.1).
  - MPI 2 specifies dynamic process creation and management, but it is not available in most implementations.
  - Not necessarily a disadvantage.
- General assumption: one-to-one mapping of MPI processes to processors (although this is not always true).

MPI 1.1 Overview

- Point-to-point communications
- Collective communications
- Process groups and communicators
- Process topologies
- MPI environment management

MPI 2 Overview

- Dynamic process creation and management
- One-sided communications
- MPI Input/Output (parallel I/O)
- Extended collective communications
- C++ binding

MPI Resources

- MPI Standard:
  - http://www.mpi-forum.org/
- MPI web sites, tutorials, etc.: see the class web site
- Public domain (free) MPI implementations
  - MPICH and MPICH2 (from ANL)
  - LAM/MPI

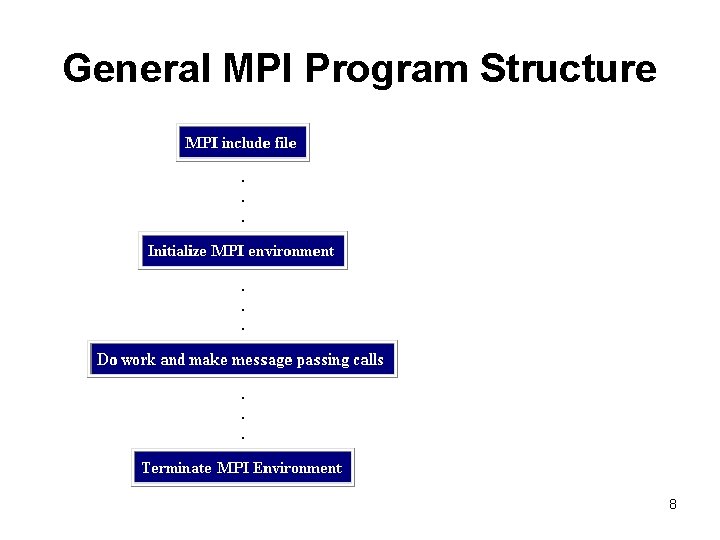

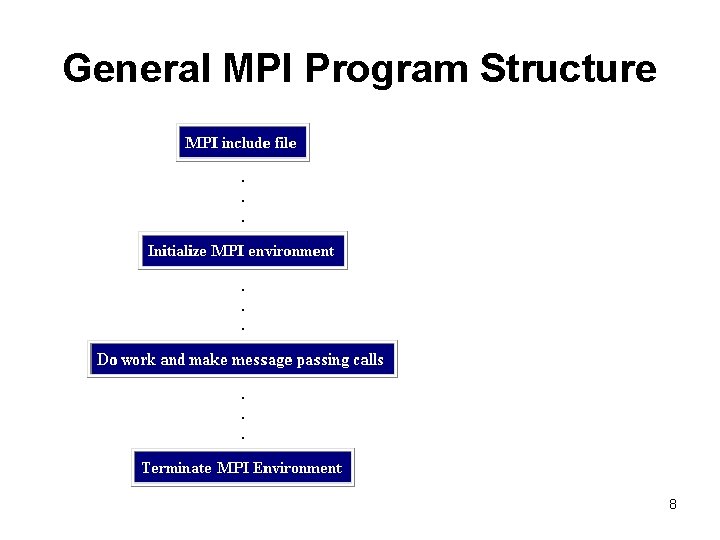

General MPI Program Structure

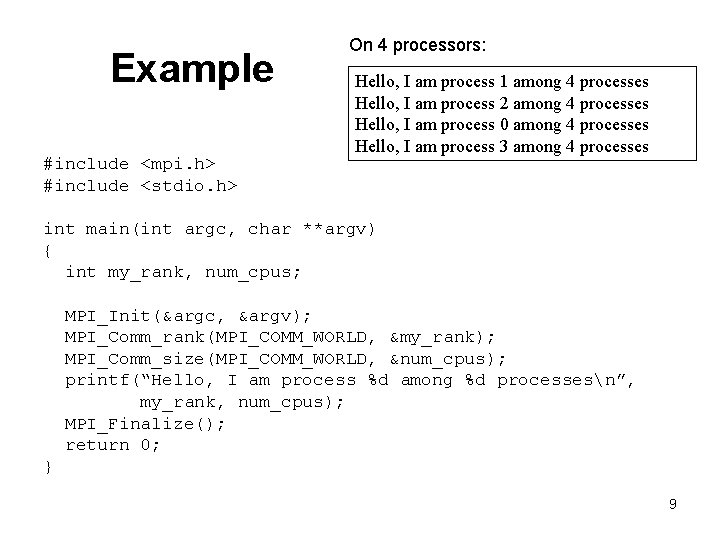

Example

    #include <mpi.h>
    #include <stdio.h>

    int main(int argc, char **argv)
    {
        int my_rank, num_cpus;

        MPI_Init(&argc, &argv);
        MPI_Comm_rank(MPI_COMM_WORLD, &my_rank);
        MPI_Comm_size(MPI_COMM_WORLD, &num_cpus);

        printf("Hello, I am process %d among %d processes\n", my_rank, num_cpus);

        MPI_Finalize();
        return 0;
    }

On 4 processors (the order of the lines may vary from run to run):

    Hello, I am process 1 among 4 processes
    Hello, I am process 2 among 4 processes
    Hello, I am process 0 among 4 processes
    Hello, I am process 3 among 4 processes

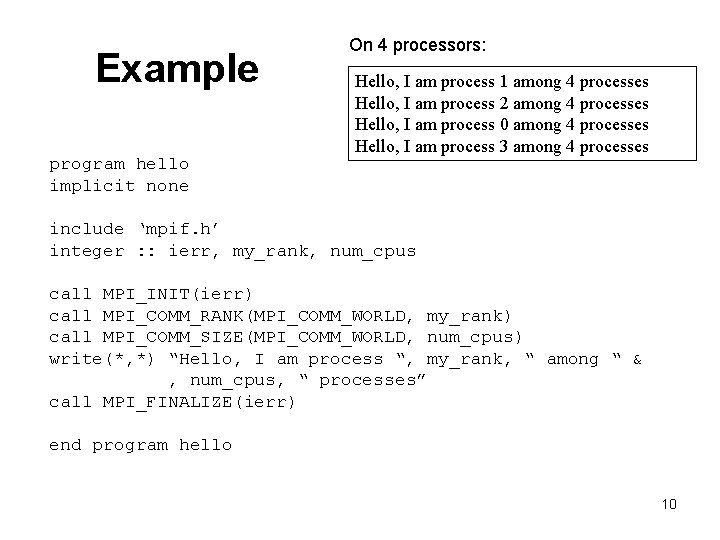

Example

    program hello
    implicit none
    include 'mpif.h'
    integer :: ierr, my_rank, num_cpus

    call MPI_INIT(ierr)
    ! In FORTRAN the return code is always the last argument
    call MPI_COMM_RANK(MPI_COMM_WORLD, my_rank, ierr)
    call MPI_COMM_SIZE(MPI_COMM_WORLD, num_cpus, ierr)

    write(*,*) "Hello, I am process ", my_rank, " among " &
             , num_cpus, " processes"

    call MPI_FINALIZE(ierr)
    end program hello

On 4 processors:

    Hello, I am process 1 among 4 processes
    Hello, I am process 2 among 4 processes
    Hello, I am process 0 among 4 processes
    Hello, I am process 3 among 4 processes

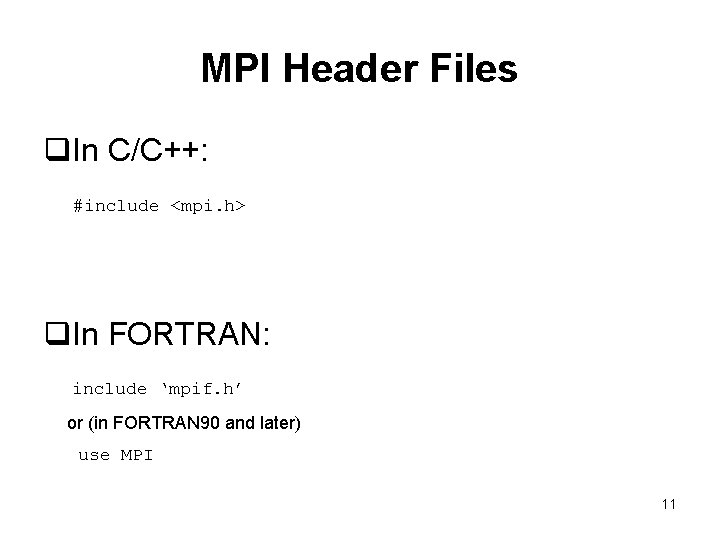

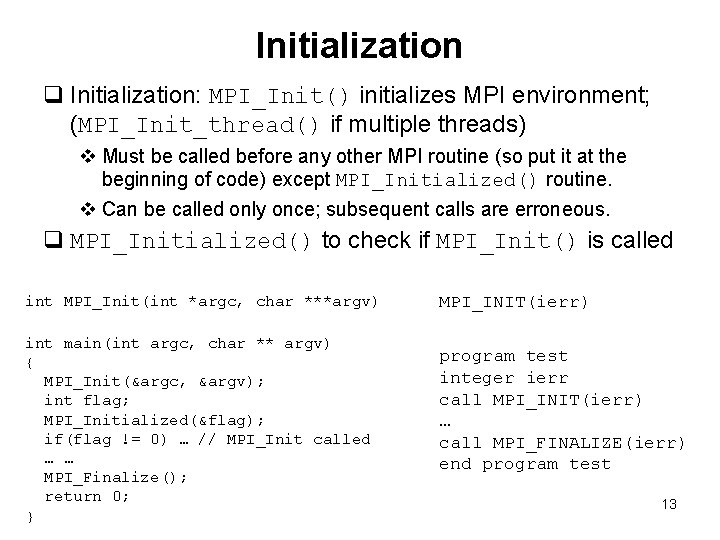

MPI Header Files

- In C/C++: #include <mpi.h>
- In FORTRAN: include 'mpif.h', or (in FORTRAN 90 and later) use mpi

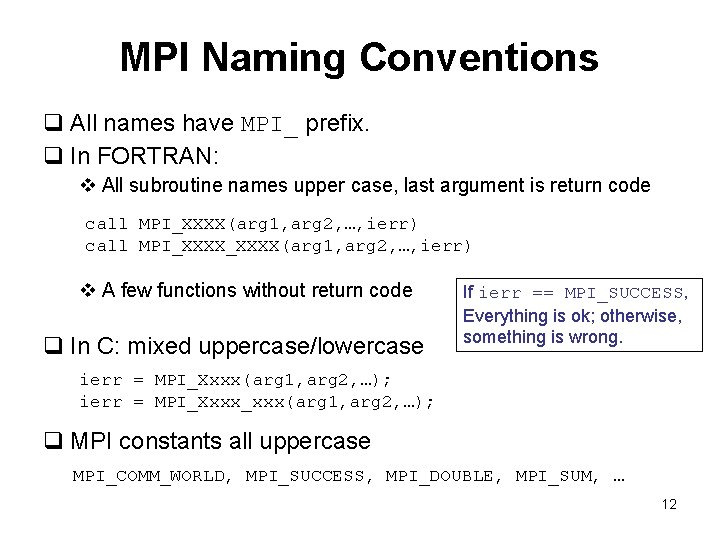

MPI Naming Conventions

- All names have the MPI_ prefix.
- In FORTRAN:
  - All subroutine names are upper case; the last argument is the return code: call MPI_XXXX(arg1, arg2, …, ierr)
  - A few functions have no return code.
- In C: mixed uppercase/lowercase, with the return code as the function value: ierr = MPI_Xxxx(arg1, arg2, …); ierr = MPI_Xxxx_xxx(arg1, arg2, …);
- If ierr == MPI_SUCCESS, everything is OK; otherwise, something is wrong.
- MPI constants are all uppercase: MPI_COMM_WORLD, MPI_SUCCESS, MPI_DOUBLE, MPI_SUM, …

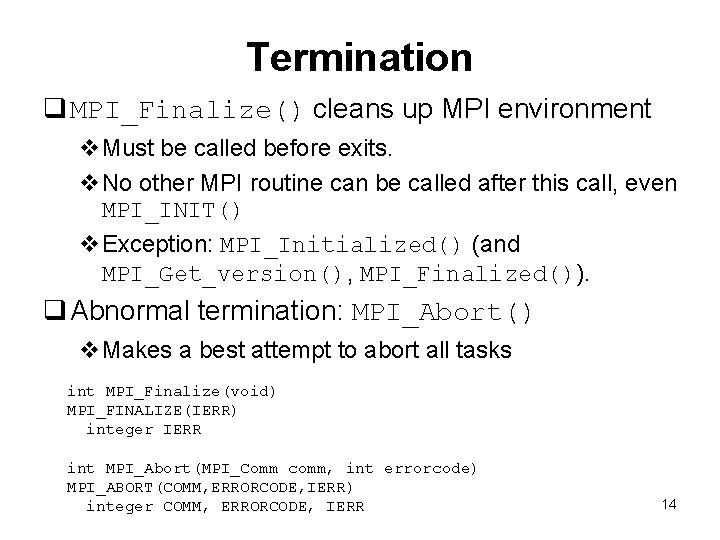

Initialization

- MPI_Init() initializes the MPI environment (use MPI_Init_thread() if the program is multithreaded).
  - Must be called before any other MPI routine except MPI_Initialized(), so put it at the beginning of the code.
  - Can be called only once; subsequent calls are erroneous.
- MPI_Initialized() checks whether MPI_Init() has been called.

In C:

    int MPI_Init(int *argc, char ***argv)

    int main(int argc, char **argv)
    {
        MPI_Init(&argc, &argv);
        int flag;
        MPI_Initialized(&flag);
        if (flag != 0) … // MPI_Init called
        … …
        MPI_Finalize();
        return 0;
    }

In FORTRAN:

    MPI_INIT(ierr)

    program test
    integer ierr
    call MPI_INIT(ierr)
    …
    call MPI_FINALIZE(ierr)
    end program test

Termination

- MPI_Finalize() cleans up the MPI environment.
  - Must be called before the program exits.
  - No other MPI routine can be called after this call, not even MPI_INIT().
  - Exceptions: MPI_Initialized() (and MPI_Get_version(), MPI_Finalized()).
- Abnormal termination: MPI_Abort()
  - Makes a best attempt to abort all tasks.

In C:

    int MPI_Finalize(void)
    int MPI_Abort(MPI_Comm comm, int errorcode)

In FORTRAN:

    MPI_FINALIZE(IERR)
    integer IERR

    MPI_ABORT(COMM, ERRORCODE, IERR)
    integer COMM, ERRORCODE, IERR

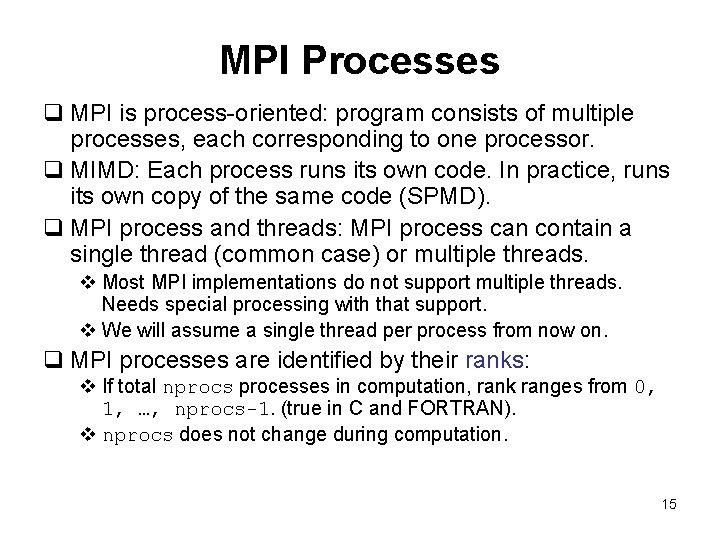

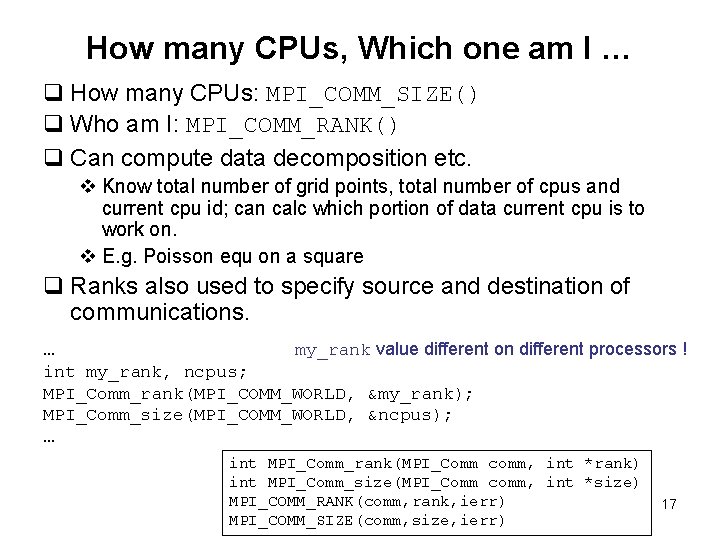

MPI Processes

- MPI is process-oriented: a program consists of multiple processes, each corresponding to one processor.
- MIMD: each process runs its own code. In practice, each runs its own copy of the same code (SPMD).
- MPI processes and threads: an MPI process can contain a single thread (the common case) or multiple threads.
  - Most MPI implementations do not support multiple threads; where threads are supported, they need special handling.
  - We will assume a single thread per process from now on.
- MPI processes are identified by their ranks:
  - With nprocs processes in the computation, ranks run over 0, 1, …, nprocs-1 (in both C and FORTRAN).
  - nprocs does not change during the computation.

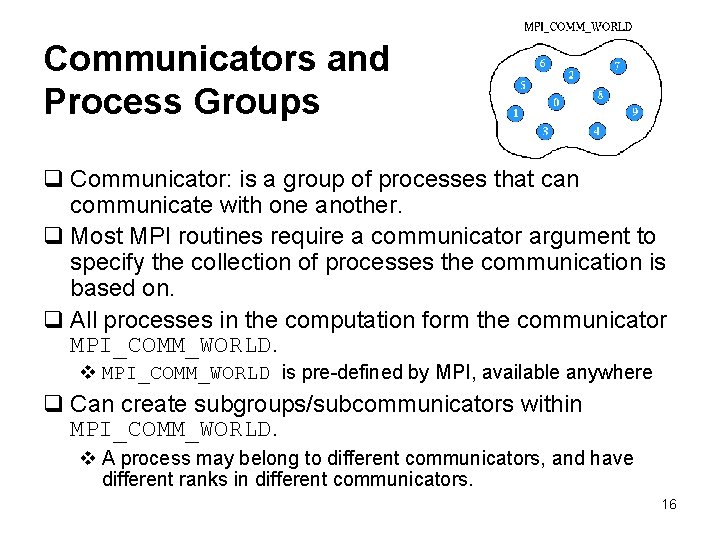

Communicators and Process Groups

- Communicator: a group of processes that can communicate with one another.
- Most MPI routines require a communicator argument to specify the collection of processes the communication is based on.
- All processes in the computation form the communicator MPI_COMM_WORLD.
  - MPI_COMM_WORLD is pre-defined by MPI and available anywhere.
- Subgroups/subcommunicators can be created within MPI_COMM_WORLD.
  - A process may belong to different communicators, and have different ranks in different communicators.

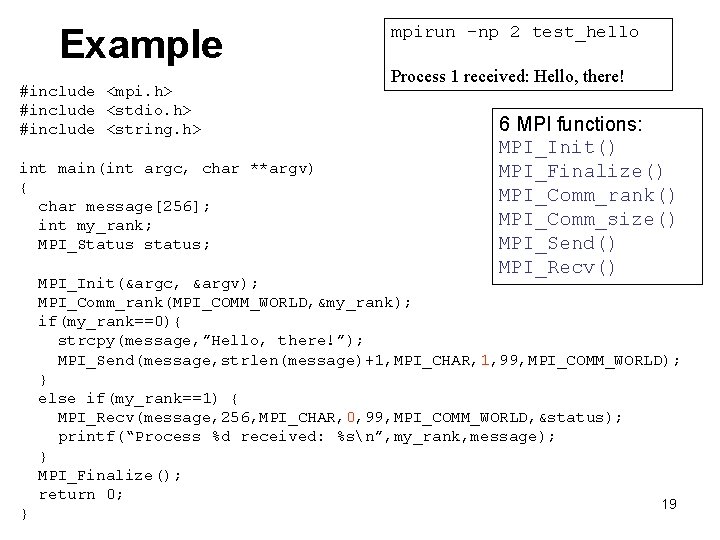

How many CPUs, Which one am I …

- How many CPUs: MPI_COMM_SIZE()
- Who am I: MPI_COMM_RANK()
- With these, a process can compute the data decomposition.
  - Knowing the total number of grid points, the total number of CPUs, and the current CPU id, it can calculate which portion of the data the current CPU is to work on.
  - E.g. the Poisson equation on a square
- Ranks are also used to specify the source and destination of communications.

In C:

    …
    int my_rank, ncpus;
    MPI_Comm_rank(MPI_COMM_WORLD, &my_rank);
    MPI_Comm_size(MPI_COMM_WORLD, &ncpus);
    …

The value of my_rank is different on different processors!

    int MPI_Comm_rank(MPI_Comm comm, int *rank)
    int MPI_Comm_size(MPI_Comm comm, int *size)

In FORTRAN:

    MPI_COMM_RANK(comm, rank, ierr)
    MPI_COMM_SIZE(comm, size, ierr)

Compiling, Running

- The MPI standard does not specify how to start a program.
  - Compiling and running MPI code is implementation dependent.
- MPI implementations provide utilities/commands for compiling and running MPI codes.
- Compile: mpicc, mpiCC, mpif77, mpif90, mpCC, mpxlf …
- Run: mpirun, poe, prun, ibrun …

Compile examples:

    mpiCC -o myprog myfile.C                          (cluster)
    mpif90 -o myprog myfile.f90                       (cluster)
    CC -Ipath_mpi_include -o myprog myfile.C -lmpi    (SGI)
    mpCC -o myprog myfile.C                           (IBM)

Run examples:

    mpirun -np 2 myprog                               (cluster)
    mpiexec -np 2 myprog                              (cluster)
    poe myprog -node 1 -tasks_per_node 2 …            (IBM)

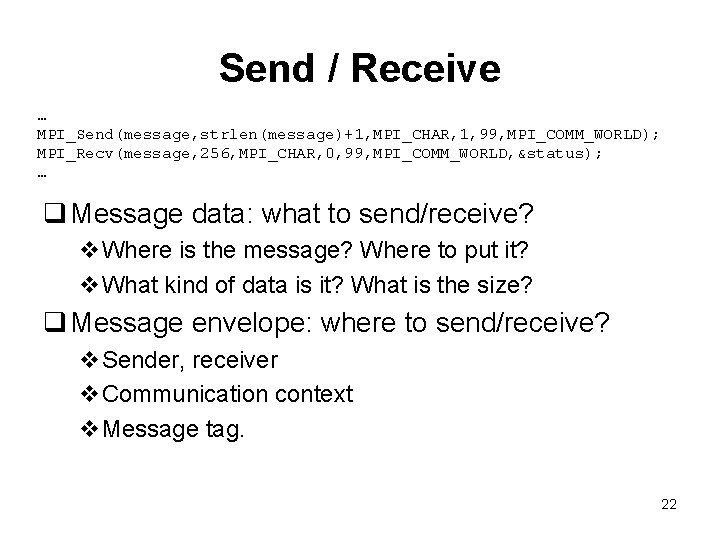

Example

    #include <mpi.h>
    #include <stdio.h>
    #include <string.h>

    int main(int argc, char **argv)
    {
        char message[256];
        int my_rank;
        MPI_Status status;

        MPI_Init(&argc, &argv);
        MPI_Comm_rank(MPI_COMM_WORLD, &my_rank);

        if (my_rank == 0) {
            strcpy(message, "Hello, there!");
            /* +1 so that the terminating '\0' is sent too */
            MPI_Send(message, strlen(message)+1, MPI_CHAR, 1, 99, MPI_COMM_WORLD);
        } else if (my_rank == 1) {
            MPI_Recv(message, 256, MPI_CHAR, 0, 99, MPI_COMM_WORLD, &status);
            printf("Process %d received: %s\n", my_rank, message);
        }

        MPI_Finalize();
        return 0;
    }

Run and output:

    mpirun -np 2 test_hello
    Process 1 received: Hello, there!

6 MPI functions: MPI_Init(), MPI_Finalize(), MPI_Comm_rank(), MPI_Comm_size(), MPI_Send(), MPI_Recv()

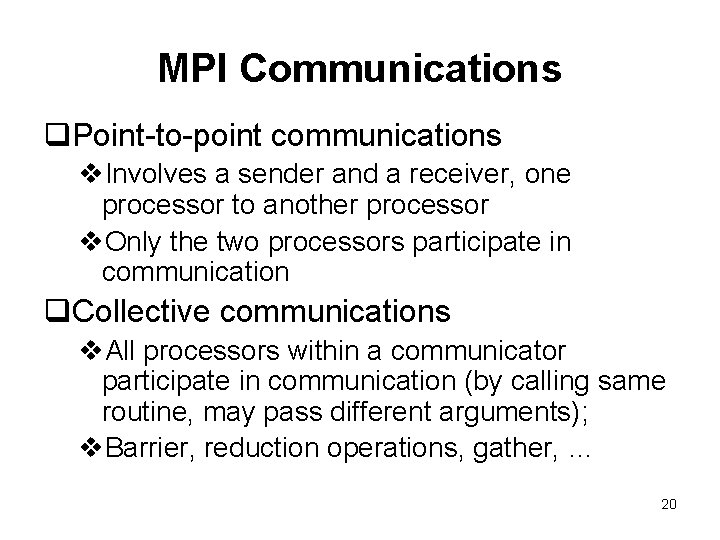

MPI Communications

- Point-to-point communications
  - Involve a sender and a receiver: one processor to another processor
  - Only the two processors participate in the communication
- Collective communications
  - All processors within a communicator participate in the communication (by calling the same routine; they may pass different arguments)
  - Barrier, reduction operations, gather, …

Point-to-Point Communications

Send / Receive

    …
    MPI_Send(message, strlen(message)+1, MPI_CHAR, 1, 99, MPI_COMM_WORLD);
    MPI_Recv(message, 256, MPI_CHAR, 0, 99, MPI_COMM_WORLD, &status);
    …

- Message data: what to send/receive?
  - Where is the message? Where to put it?
  - What kind of data is it? What is its size?
- Message envelope: where to send/receive?
  - Sender, receiver
  - Communication context
  - Message tag

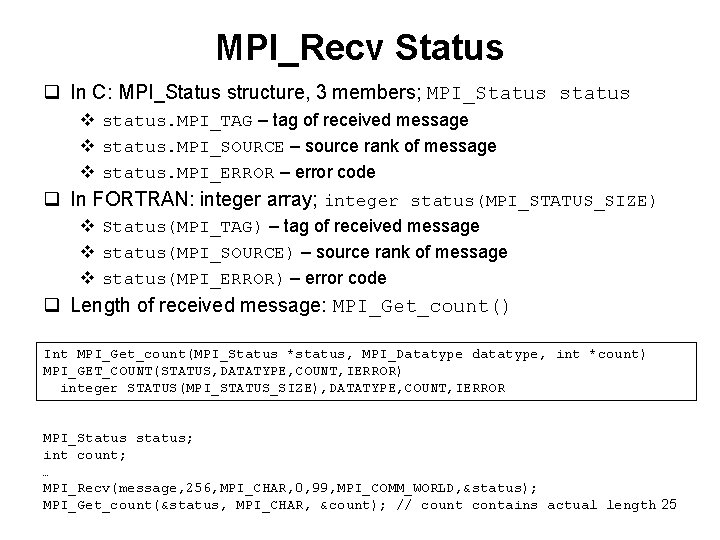

Send

In C:

    int MPI_Send(void *buf, int count, MPI_Datatype datatype,
                 int dest, int tag, MPI_Comm comm)

In FORTRAN:

    MPI_SEND(BUF, COUNT, DATATYPE, DEST, TAG, COMM, IERROR)
    <type> BUF(*)
    integer COUNT, DATATYPE, DEST, TAG, COMM, IERROR

- buf: memory address of the start of the message
- count: number of data items
- datatype: the type of each data item (integer, character, double, float …)
- dest: rank of the receiving process
- tag: additional identification of the message
- comm: communicator, usually MPI_COMM_WORLD

Example:

    char message[256];
    MPI_Send(message, strlen(message)+1, MPI_CHAR, 1, 99, MPI_COMM_WORLD);

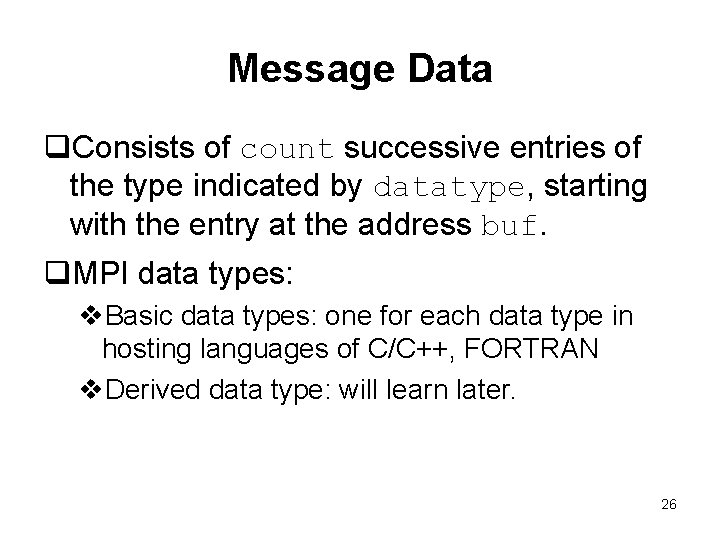

Receive

In C:

    int MPI_Recv(void *buf, int count, MPI_Datatype datatype,
                 int source, int tag, MPI_Comm comm, MPI_Status *status)

In FORTRAN:

    MPI_RECV(BUF, COUNT, DATATYPE, SOURCE, TAG, COMM, STATUS, IERROR)
    <type> BUF(*)
    integer COUNT, DATATYPE, SOURCE, TAG, COMM, STATUS(MPI_STATUS_SIZE), IERROR

- buf: initial address of the receive buffer
- count: number of elements in the receive buffer (size of the receive buffer)
  - May not equal the count of items actually received
  - The actual number of data items received can be obtained by calling MPI_Get_count().
- datatype: data type in the receive buffer
- source: rank of the sending process
- tag: additional identification for the message
- comm: communicator, usually MPI_COMM_WORLD
- status: object containing additional information about the received message
- ierror: return code

Example:

    char message[256];
    MPI_Recv(message, 256, MPI_CHAR, 0, 99, MPI_COMM_WORLD, &status);

The actual number of data items received can be queried from the status object; it may be smaller than count, but cannot be larger (a larger message causes an overflow error).

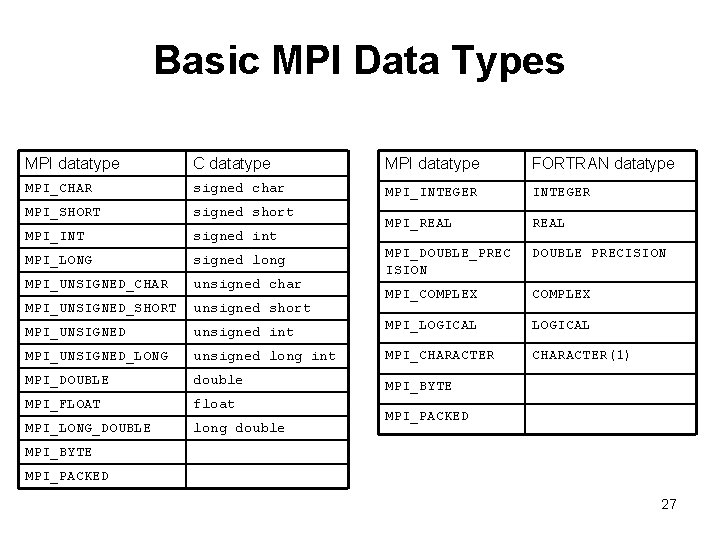

MPI_Recv Status

- In C: an MPI_Status structure with 3 public members, declared as MPI_Status status
  - status.MPI_TAG: tag of the received message
  - status.MPI_SOURCE: source rank of the message
  - status.MPI_ERROR: error code
- In FORTRAN: an integer array, declared as integer status(MPI_STATUS_SIZE)
  - status(MPI_TAG): tag of the received message
  - status(MPI_SOURCE): source rank of the message
  - status(MPI_ERROR): error code
- Length of the received message: MPI_Get_count()

In C:

    int MPI_Get_count(MPI_Status *status, MPI_Datatype datatype, int *count)

In FORTRAN:

    MPI_GET_COUNT(STATUS, DATATYPE, COUNT, IERROR)
    integer STATUS(MPI_STATUS_SIZE), DATATYPE, COUNT, IERROR

Example:

    MPI_Status status;
    int count;
    …
    MPI_Recv(message, 256, MPI_CHAR, 0, 99, MPI_COMM_WORLD, &status);
    MPI_Get_count(&status, MPI_CHAR, &count); // count contains actual length

Message Data

- Consists of count successive entries of the type indicated by datatype, starting with the entry at the address buf.
- MPI data types:
  - Basic data types: one for each data type in the host languages C/C++ and FORTRAN
  - Derived data types: covered later

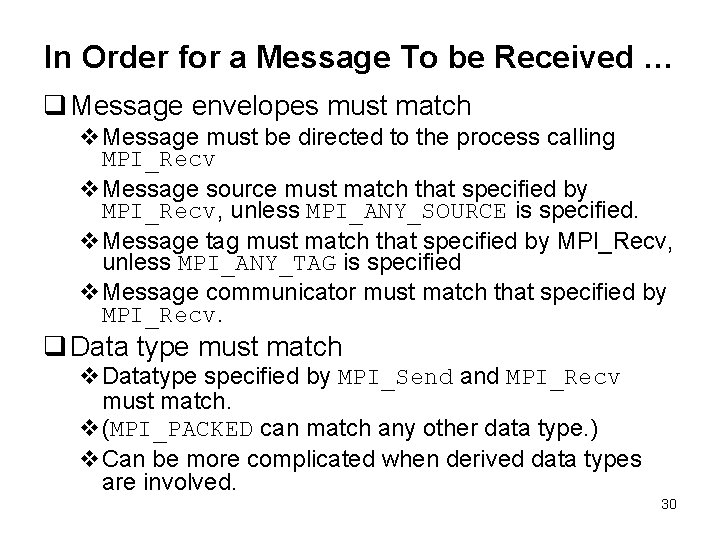

Basic MPI Data Types

| MPI datatype | C datatype |
| --- | --- |
| MPI_CHAR | signed char |
| MPI_SHORT | signed short |
| MPI_INT | signed int |
| MPI_LONG | signed long |
| MPI_UNSIGNED_CHAR | unsigned char |
| MPI_UNSIGNED_SHORT | unsigned short |
| MPI_UNSIGNED | unsigned int |
| MPI_UNSIGNED_LONG | unsigned long int |
| MPI_DOUBLE | double |
| MPI_FLOAT | float |
| MPI_LONG_DOUBLE | long double |
| MPI_BYTE | |
| MPI_PACKED | |

| MPI datatype | FORTRAN datatype |
| --- | --- |
| MPI_INTEGER | INTEGER |
| MPI_REAL | REAL |
| MPI_DOUBLE_PRECISION | DOUBLE PRECISION |
| MPI_COMPLEX | COMPLEX |
| MPI_LOGICAL | LOGICAL |
| MPI_CHARACTER | CHARACTER(1) |
| MPI_BYTE | |
| MPI_PACKED | |

![Example int numstudents numstudents 0 1 double grade10 note 0 1 char note1024 grade Example int num_students; num_students: 0 1 double grade[10]; note: 0 1 char note[1024]; grade:](https://slidetodoc.com/presentation_image_h/965796e341c0255ce80ad32b8345c57d/image-28.jpg)

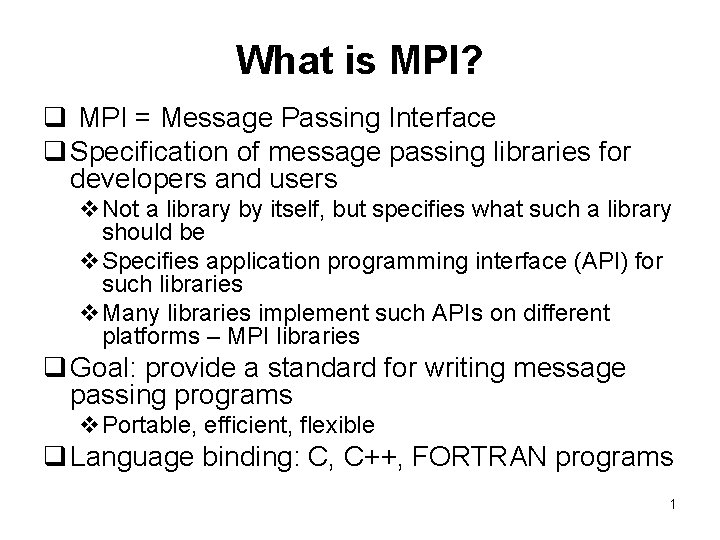

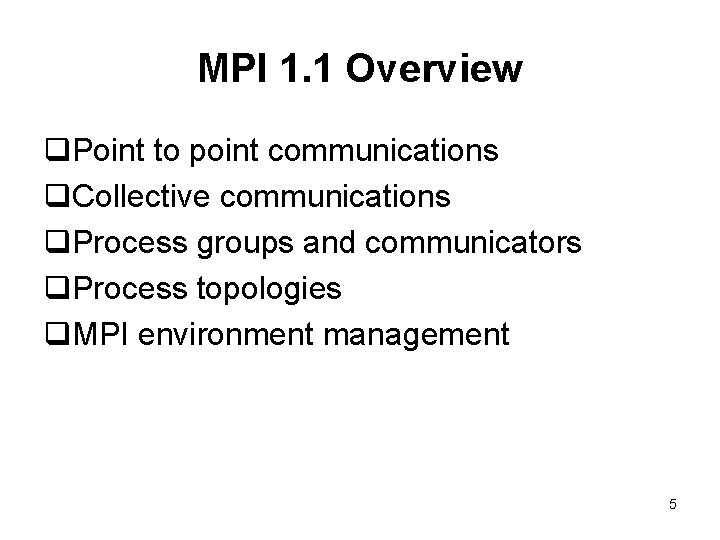

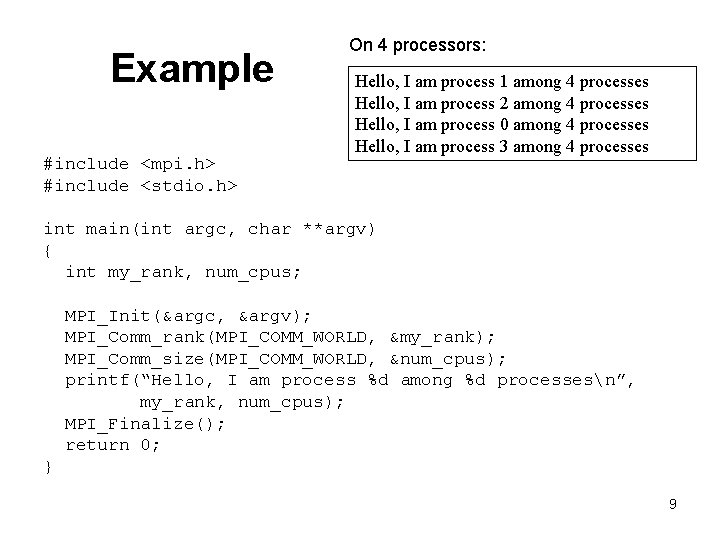

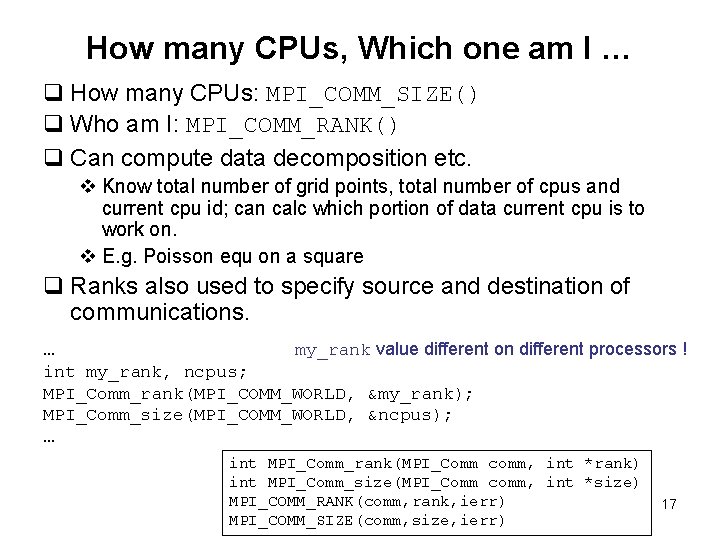

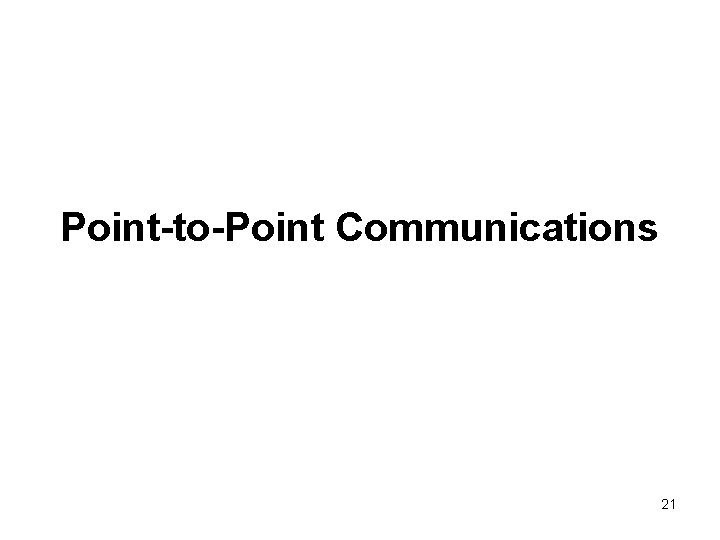

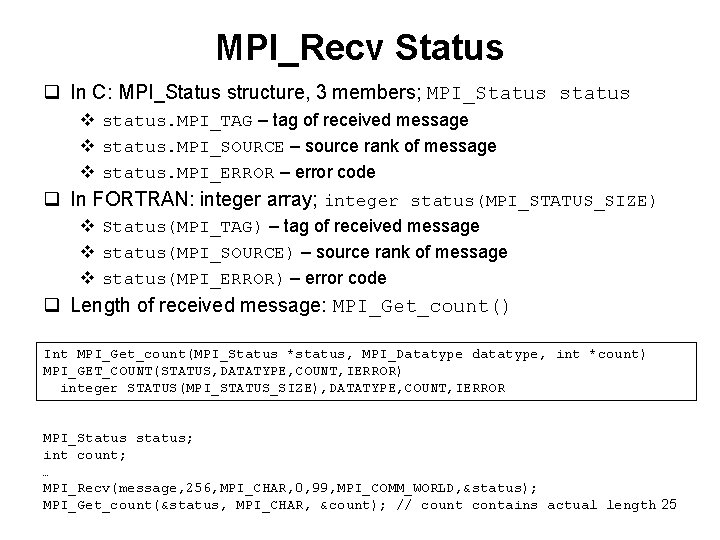

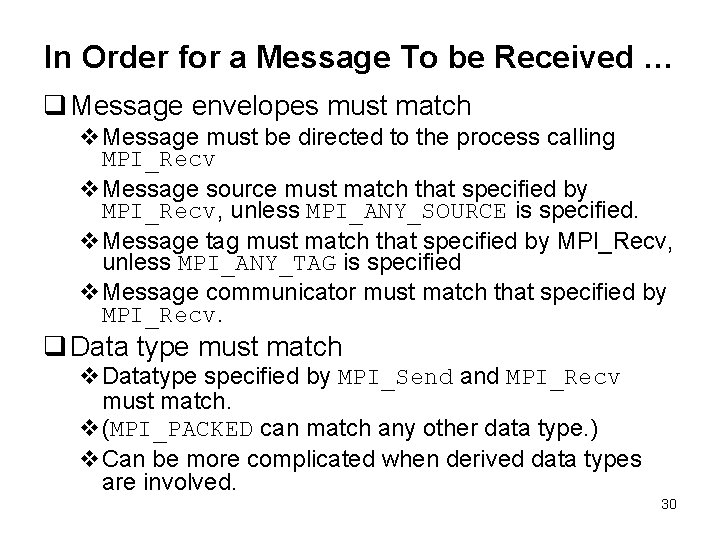

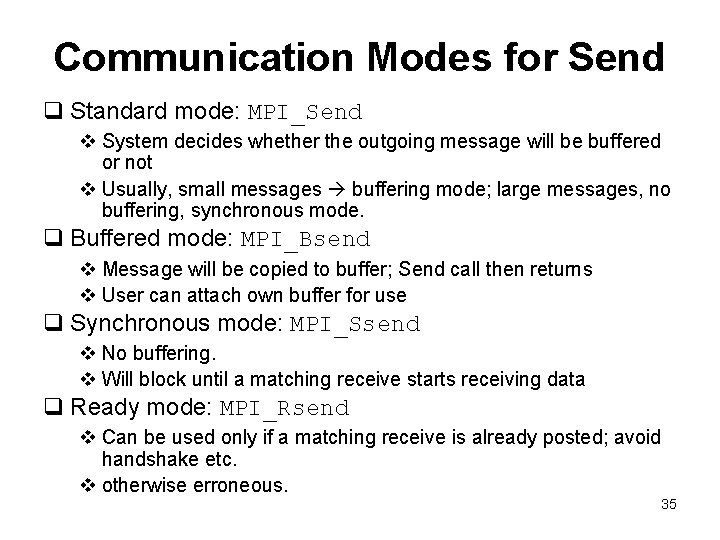

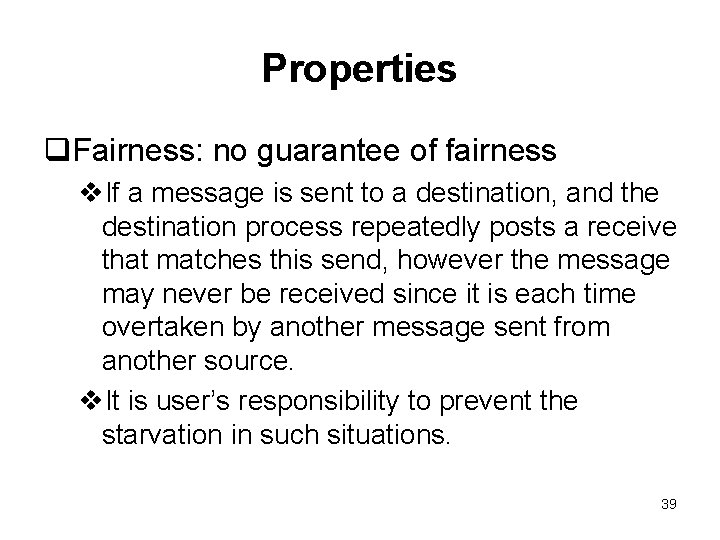

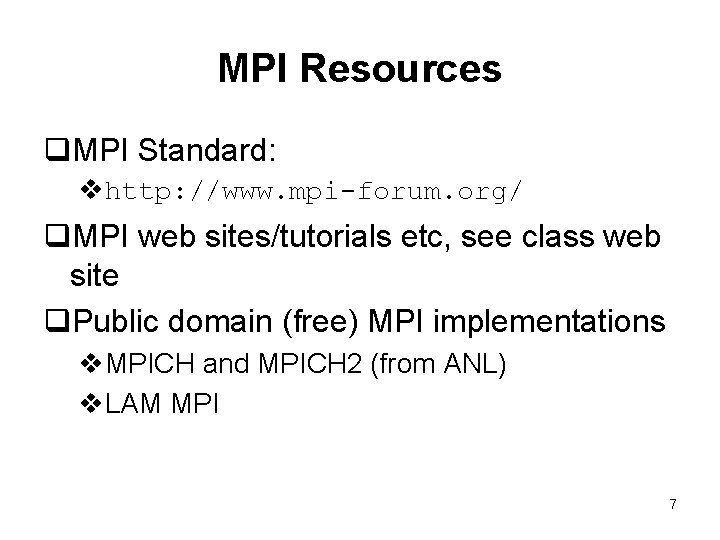

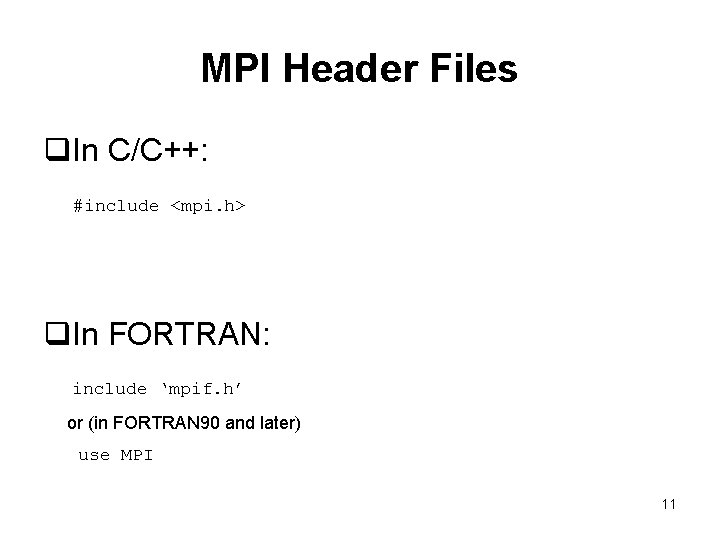

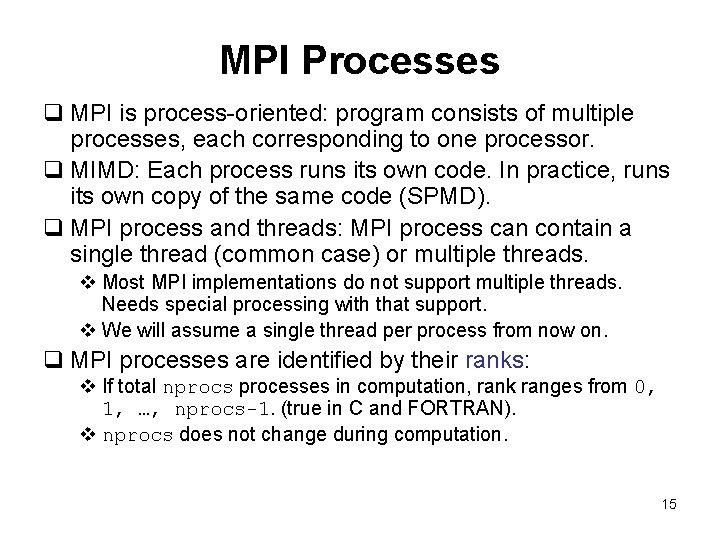

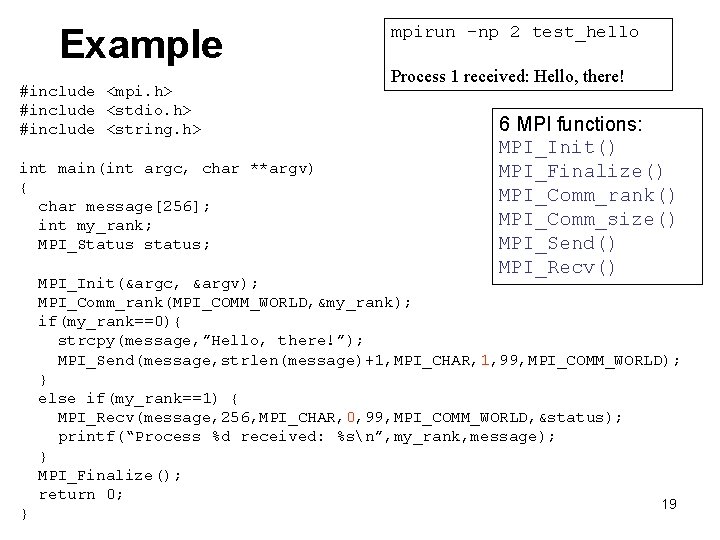

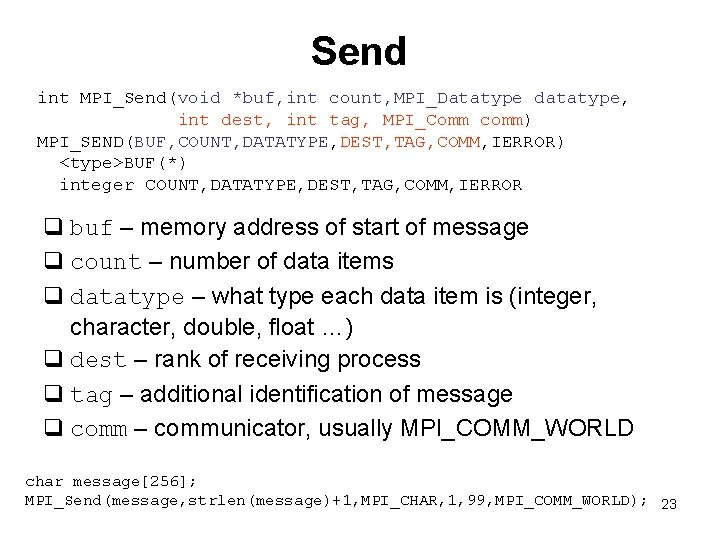

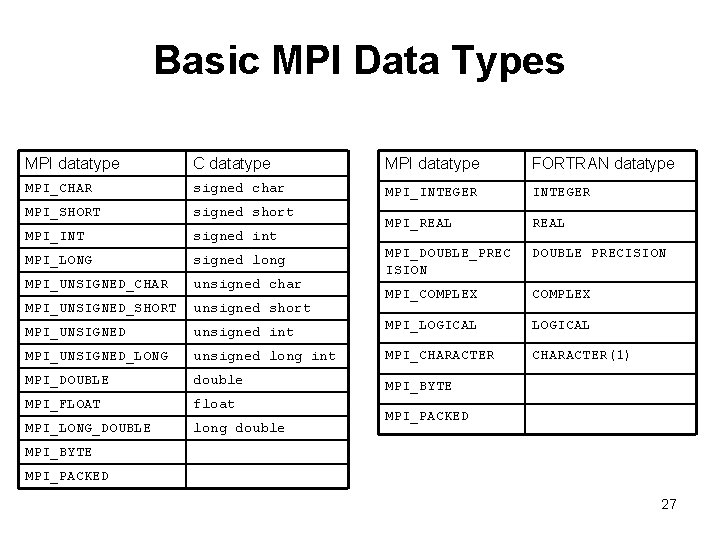

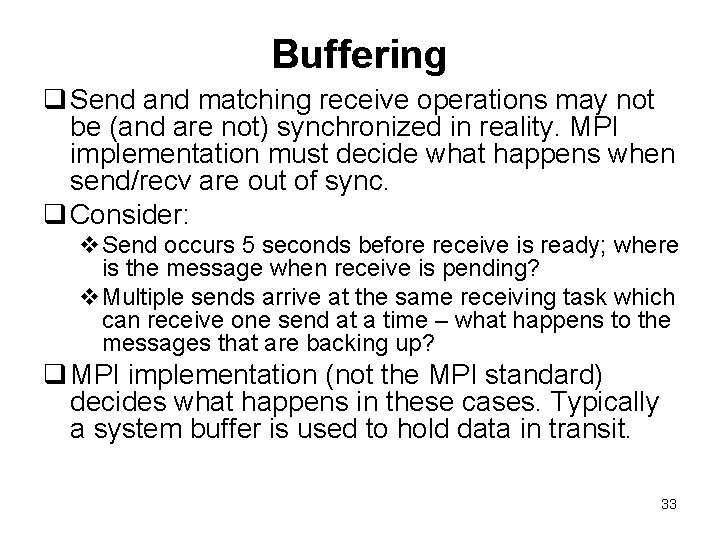

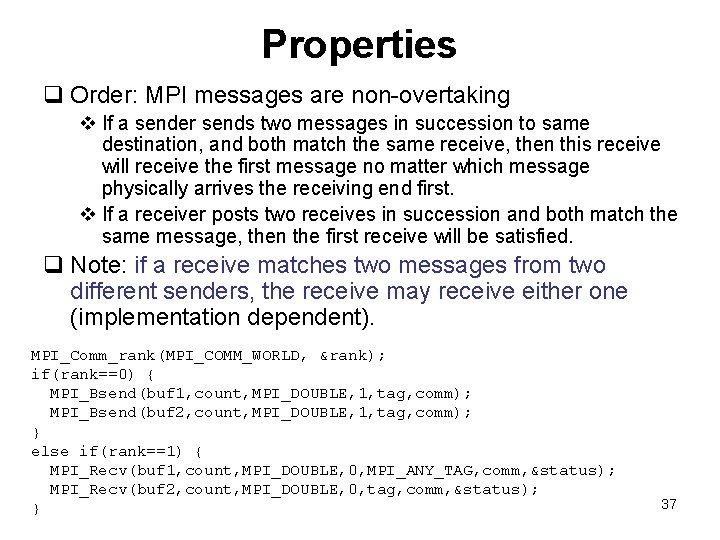

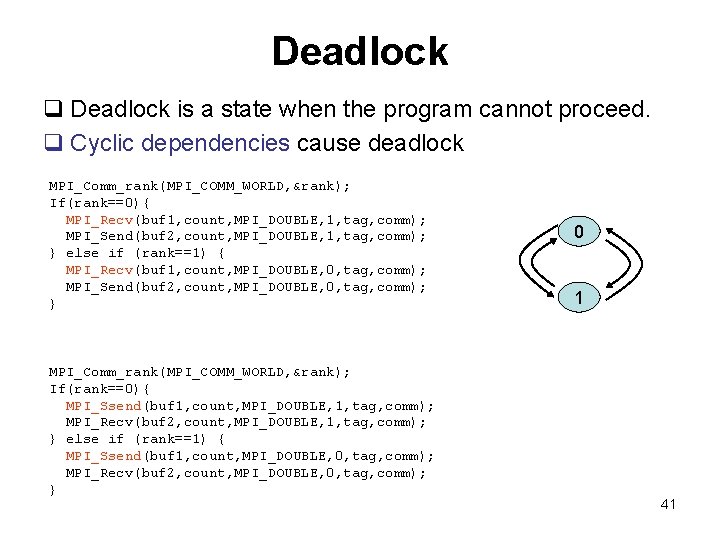

Example

Data movement: num_students goes from rank 0 to rank 1, note from rank 0 to rank 1, grade from rank 0 to rank 2.

    int num_students;
    double grade[10];
    char note[1024];
    int tag1=1001, tag2=1002;
    int rank, ncpus;
    MPI_Status status;
    …
    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    … // set num_students, grade, note in rank=0 cpu
    if (rank==0) {
        MPI_Send(&num_students, 1, MPI_INT, 1, tag1, MPI_COMM_WORLD);
        MPI_Send(grade, 10, MPI_DOUBLE, 2, tag1, MPI_COMM_WORLD);
        MPI_Send(note, strlen(note)+1, MPI_CHAR, 1, tag2, MPI_COMM_WORLD);
    }
    if (rank==1) {
        MPI_Recv(&num_students, 1, MPI_INT, 0, tag1, MPI_COMM_WORLD, &status);
        MPI_Recv(note, 1024, MPI_CHAR, 0, tag2, MPI_COMM_WORLD, &status);
    }
    if (rank==2) {
        MPI_Recv(grade, 10, MPI_DOUBLE, 0, tag1, MPI_COMM_WORLD, &status);
    }
    …

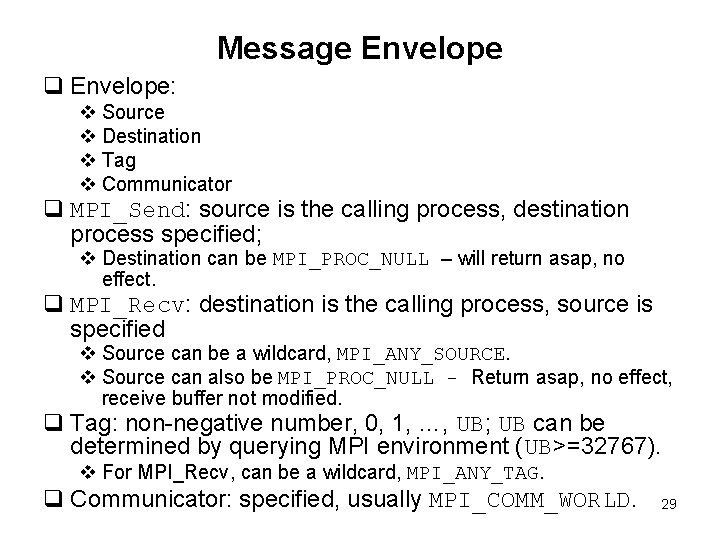

Message Envelope

- Envelope:
  - Source
  - Destination
  - Tag
  - Communicator
- MPI_Send: the source is the calling process; the destination process is specified.
  - The destination can be MPI_PROC_NULL: the call returns as soon as possible and has no effect.
- MPI_Recv: the destination is the calling process; the source is specified.
  - The source can be a wildcard, MPI_ANY_SOURCE.
  - The source can also be MPI_PROC_NULL: the call returns as soon as possible, has no effect, and does not modify the receive buffer.
- Tag: a non-negative number, 0, 1, …, UB; UB can be determined by querying the MPI environment (UB >= 32767).
  - For MPI_Recv, the tag can be a wildcard, MPI_ANY_TAG.
- Communicator: specified, usually MPI_COMM_WORLD.

In Order for a Message To be Received …

- Message envelopes must match
  - The message must be directed to the process calling MPI_Recv.
  - The message source must match that specified by MPI_Recv, unless MPI_ANY_SOURCE is specified.
  - The message tag must match that specified by MPI_Recv, unless MPI_ANY_TAG is specified.
  - The message communicator must match that specified by MPI_Recv.
- Data types must match
  - The datatypes specified by MPI_Send and MPI_Recv must match.
  - (MPI_PACKED can match any other data type.)
  - Matching can be more complicated when derived data types are involved.

![Example A 0 1 double A10 B15 int rank tag 1001 tag 11002 Example A: 0 1 double A[10], B[15]; int rank, tag = 1001, tag 1=1002;](https://slidetodoc.com/presentation_image_h/965796e341c0255ce80ad32b8345c57d/image-31.jpg)

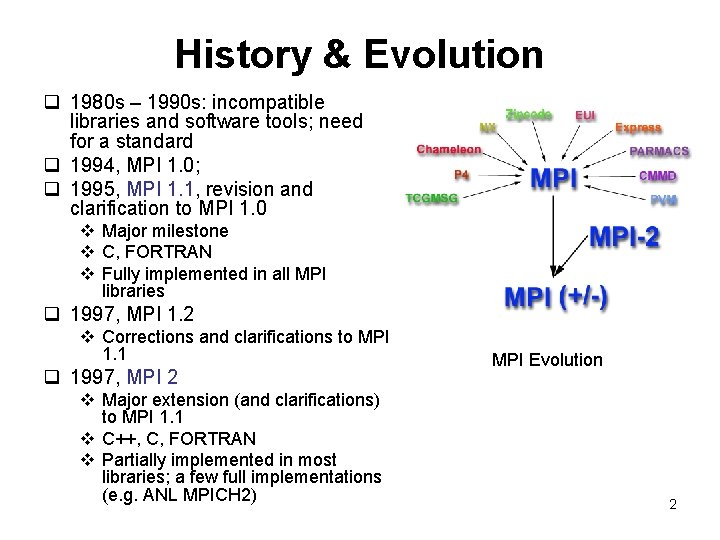

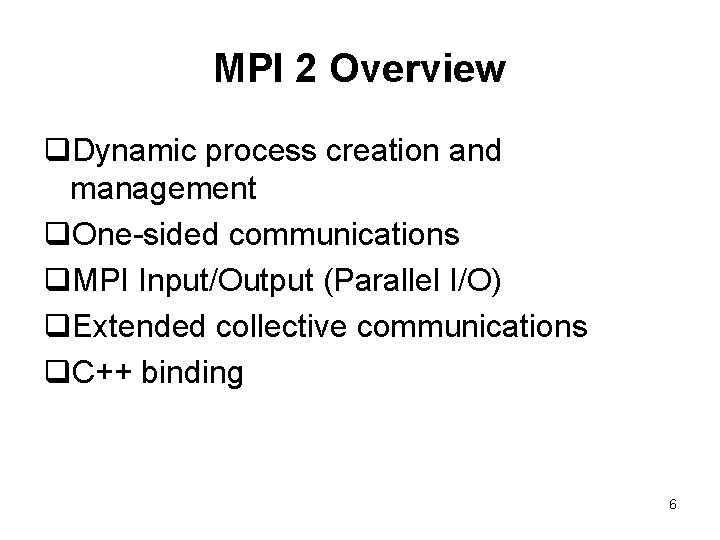

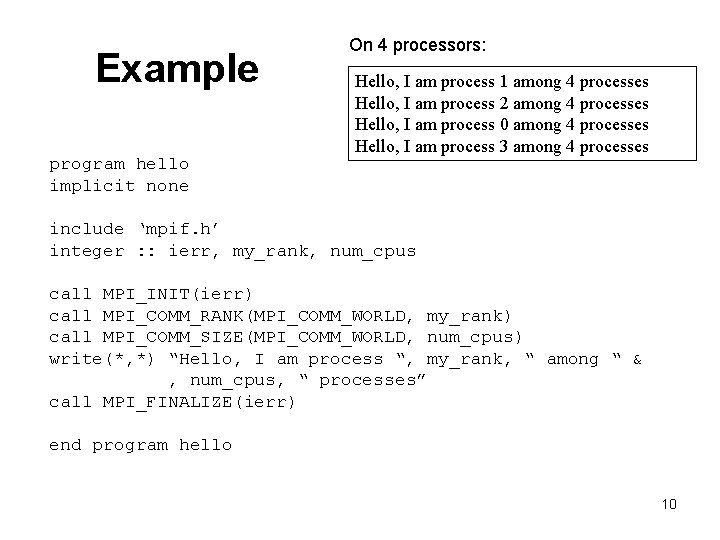

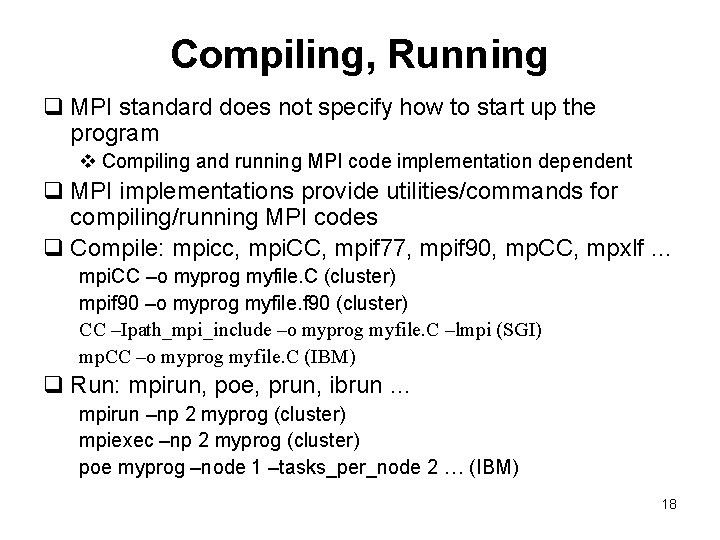

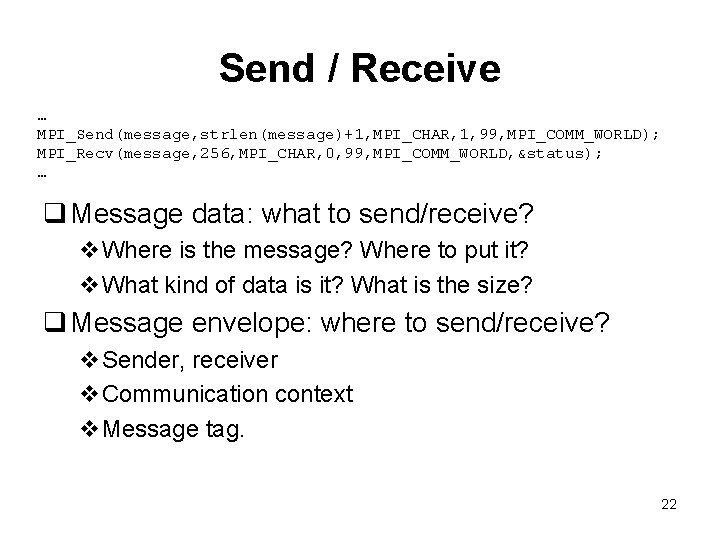

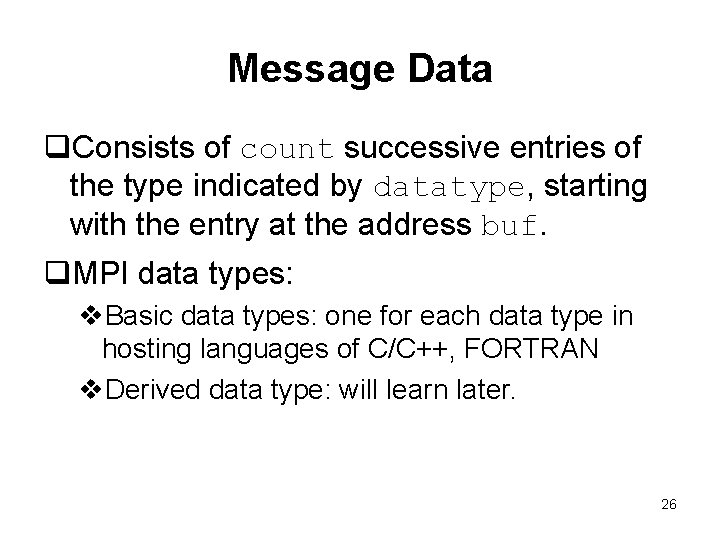

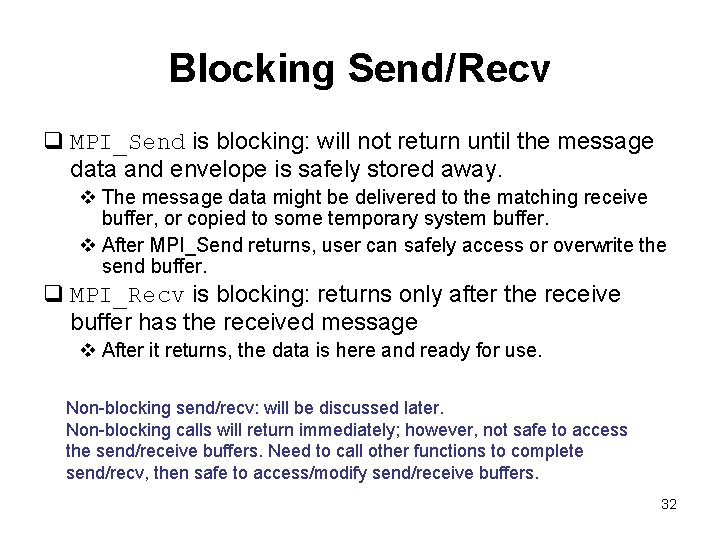

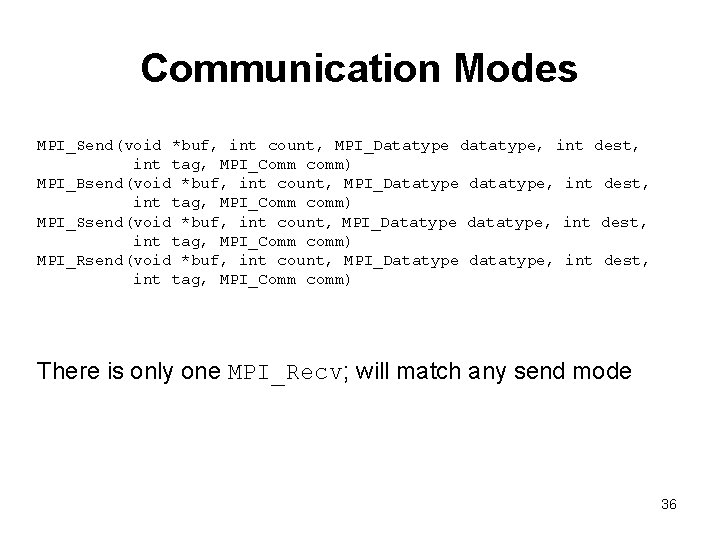

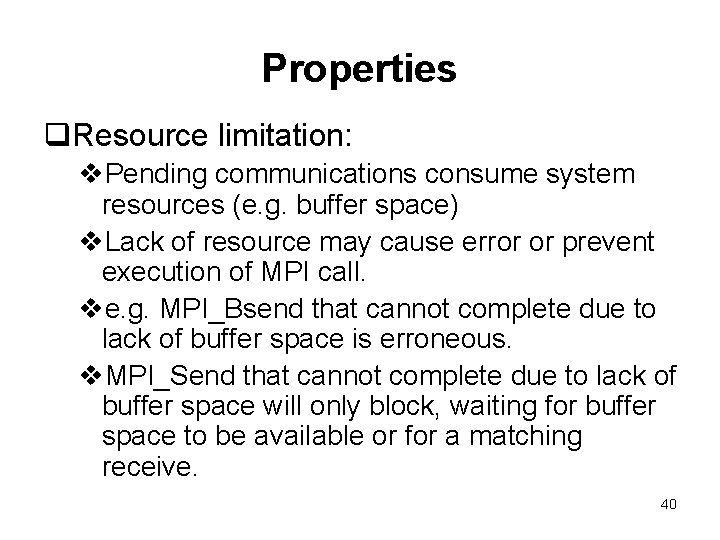

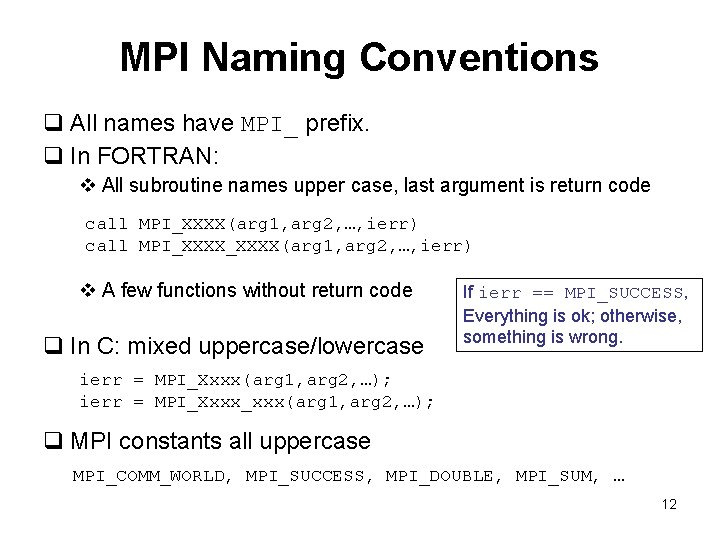

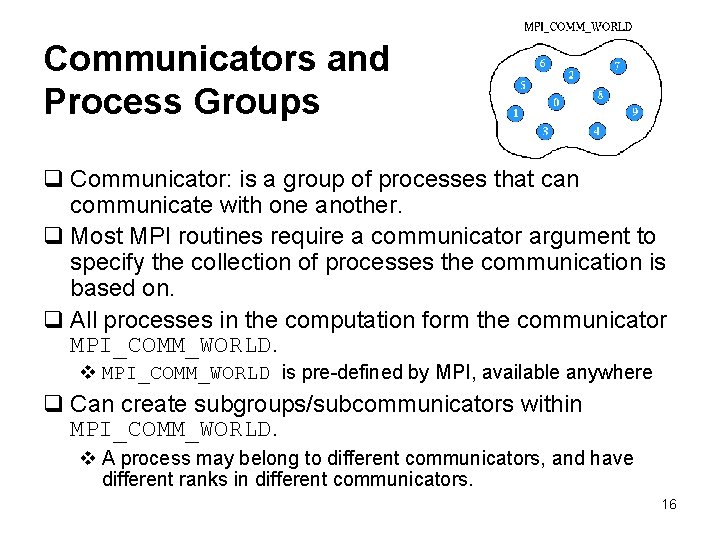

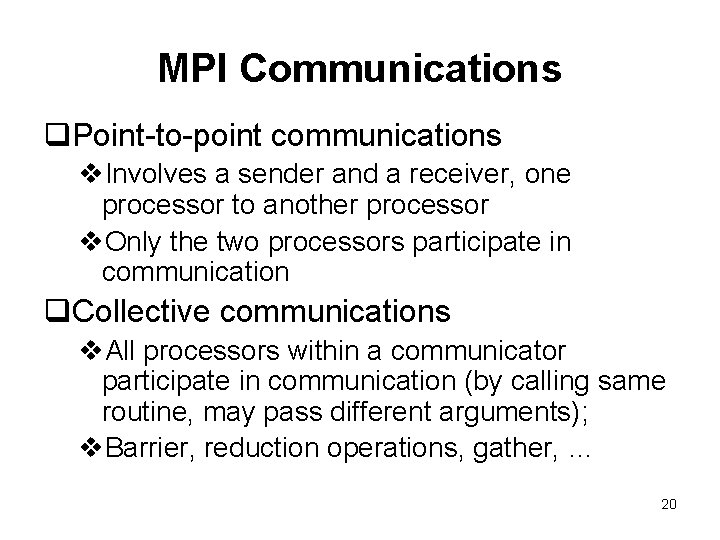

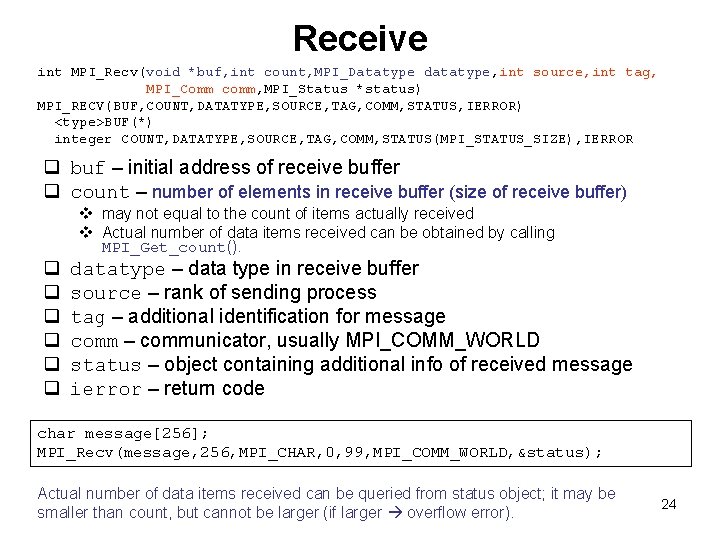

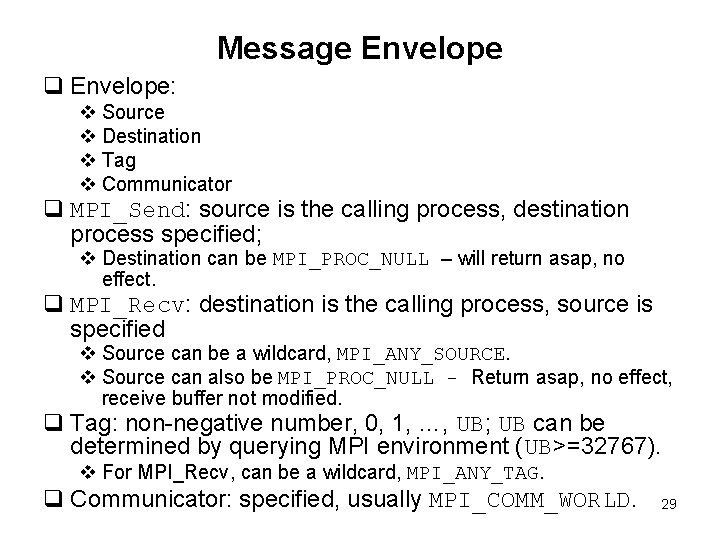

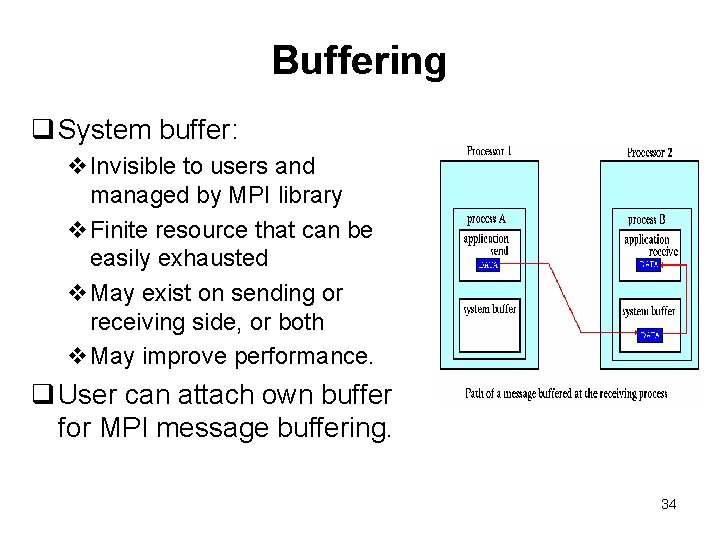

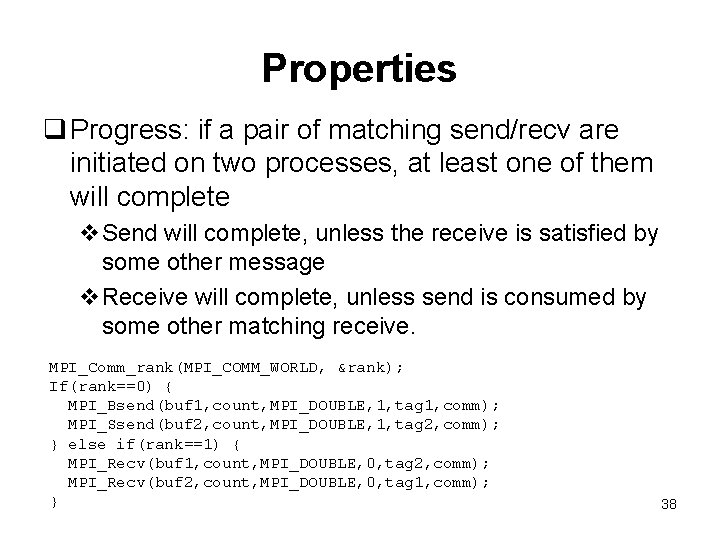

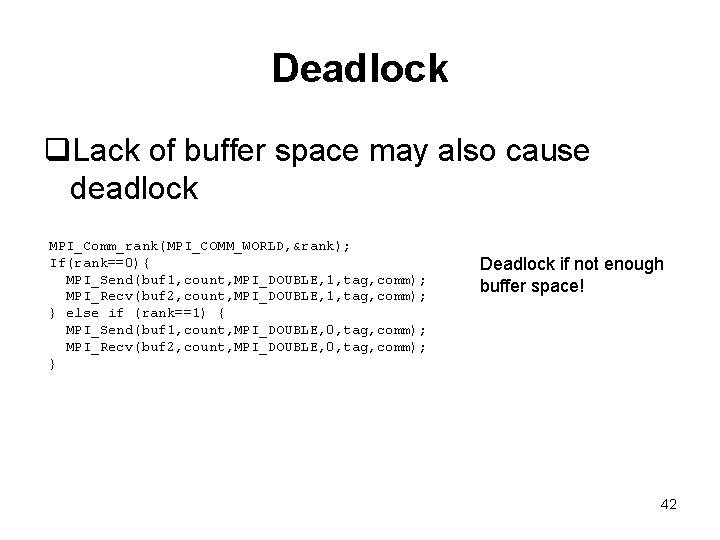

Example

Data movement: A goes from rank 0 to rank 1.

    double A[10], B[15];
    int rank, tag = 1001, tag1 = 1002;
    MPI_Status status;

    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    …
    if (rank==0)
        MPI_Send(A, 10, MPI_DOUBLE, 1, tag, MPI_COMM_WORLD);
    else if (rank==1) {
        MPI_Recv(B, 15, MPI_DOUBLE, 0, tag, MPI_COMM_WORLD, &status);  // ok
        // MPI_Recv(B, 15, MPI_FLOAT, 0, tag, MPI_COMM_WORLD, &status);   wrong: datatype differs
        // MPI_Recv(B, 15, MPI_DOUBLE, 0, tag1, MPI_COMM_WORLD, &status); no match: tag differs
        // MPI_Recv(B, 15, MPI_DOUBLE, 1, tag, MPI_COMM_WORLD, &status);  no match: source differs
        // MPI_Recv(B, 15, MPI_DOUBLE, MPI_ANY_SOURCE, tag, MPI_COMM_WORLD, &status);  ok
        // MPI_Recv(B, 15, MPI_DOUBLE, 0, MPI_ANY_TAG, MPI_COMM_WORLD, &status);       ok
    }

Blocking Send/Recv

- MPI_Send is blocking: it will not return until the message data and envelope are safely stored away.
  - The message data might be delivered to the matching receive buffer, or copied to some temporary system buffer.
  - After MPI_Send returns, the user can safely access or overwrite the send buffer.
- MPI_Recv is blocking: it returns only after the receive buffer contains the received message.
  - After it returns, the data is there and ready for use.

Non-blocking send/recv will be discussed later. Non-blocking calls return immediately, but it is then not safe to access the send/receive buffers. Other functions must be called to complete the send/recv; only then is it safe to access or modify the buffers.

Buffering

- A send and its matching receive are generally not synchronized in practice. The MPI implementation must decide what happens when send and receive are out of sync.
- Consider:
  - A send occurs 5 seconds before the receive is ready; where is the message while the receive is pending?
  - Multiple sends arrive at the same receiving task, which can accept only one send at a time; what happens to the messages that are backing up?
- The MPI implementation (not the MPI standard) decides what happens in these cases. Typically a system buffer is used to hold data in transit.

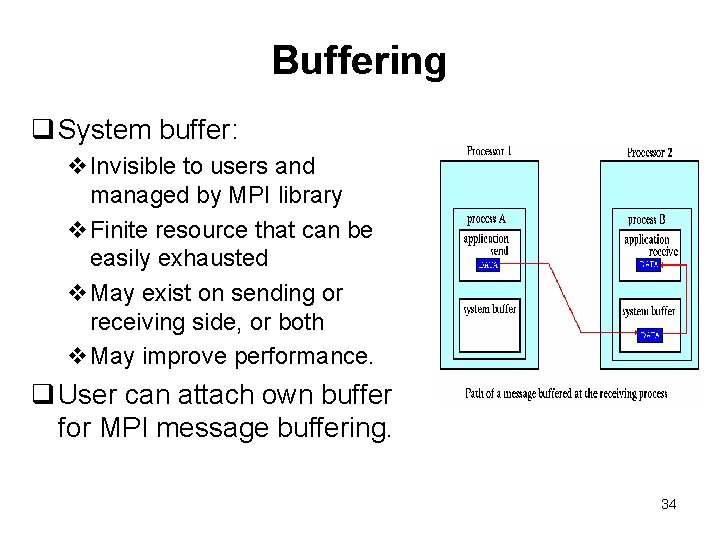

Buffering

- System buffer:
  - Invisible to users and managed by the MPI library
  - A finite resource that can easily be exhausted
  - May exist on the sending side, the receiving side, or both
  - May improve performance
- The user can attach their own buffer for MPI message buffering.

Communication Modes for Send

- Standard mode: MPI_Send
  - The system decides whether the outgoing message will be buffered or not.
  - Usually, small messages are buffered; large messages are not buffered and are sent synchronously.
- Buffered mode: MPI_Bsend
  - The message is copied to a buffer; the send call then returns.
  - The user can attach their own buffer for this purpose.
- Synchronous mode: MPI_Ssend
  - No buffering.
  - Blocks until a matching receive starts receiving data.
- Ready mode: MPI_Rsend
  - Can be used only if a matching receive is already posted (avoids the handshake etc.).
  - Otherwise the call is erroneous.

Communication Modes

    int MPI_Send(void *buf, int count, MPI_Datatype datatype,
                 int dest, int tag, MPI_Comm comm)
    int MPI_Bsend(void *buf, int count, MPI_Datatype datatype,
                  int dest, int tag, MPI_Comm comm)
    int MPI_Ssend(void *buf, int count, MPI_Datatype datatype,
                  int dest, int tag, MPI_Comm comm)
    int MPI_Rsend(void *buf, int count, MPI_Datatype datatype,
                  int dest, int tag, MPI_Comm comm)

There is only one MPI_Recv; it will match any send mode.

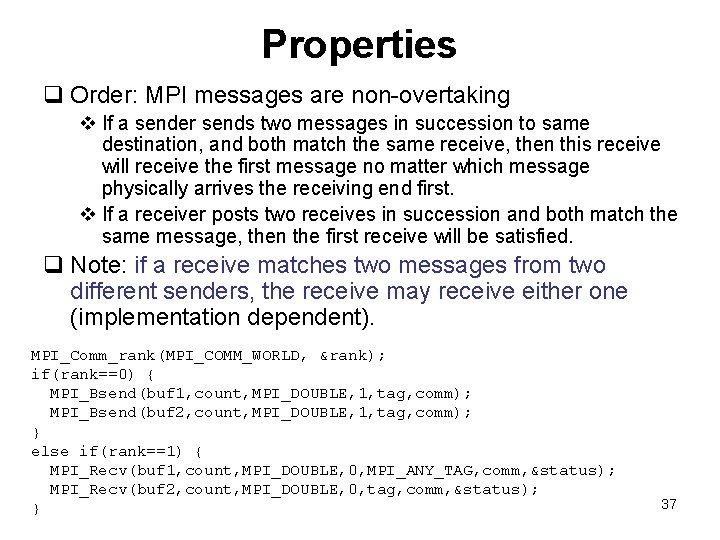

Properties

- Order: MPI messages are non-overtaking.
  - If a sender sends two messages in succession to the same destination, and both match the same receive, then this receive will receive the first message, no matter which message physically arrives at the receiving end first.
  - If a receiver posts two receives in succession and both match the same message, then the first receive will be satisfied.
- Note: if a receive matches two messages from two different senders, the receive may receive either one (implementation dependent).

Example:

    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    if (rank==0) {
        MPI_Bsend(buf1, count, MPI_DOUBLE, 1, tag, comm);
        MPI_Bsend(buf2, count, MPI_DOUBLE, 1, tag, comm);
    } else if (rank==1) {
        MPI_Recv(buf1, count, MPI_DOUBLE, 0, MPI_ANY_TAG, comm, &status);
        MPI_Recv(buf2, count, MPI_DOUBLE, 0, tag, comm, &status);
    }

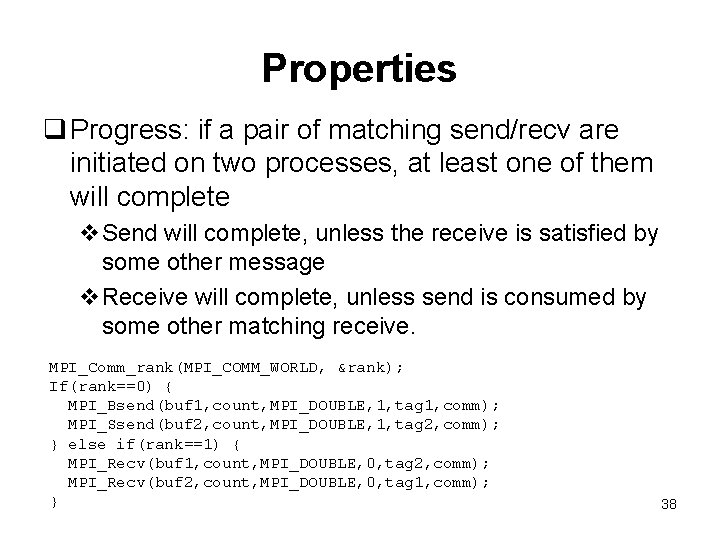

Properties

- Progress: if a pair of matching send/recv are initiated on two processes, at least one of them will complete.
  - The send will complete, unless the receive is satisfied by some other message.
  - The receive will complete, unless the send is consumed by some other matching receive.

Example:

    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    if (rank==0) {
        MPI_Bsend(buf1, count, MPI_DOUBLE, 1, tag1, comm);
        MPI_Ssend(buf2, count, MPI_DOUBLE, 1, tag2, comm);
    } else if (rank==1) {
        MPI_Recv(buf1, count, MPI_DOUBLE, 0, tag2, comm, &status);
        MPI_Recv(buf2, count, MPI_DOUBLE, 0, tag1, comm, &status);
    }

Properties

- Fairness: there is no guarantee of fairness.
  - Suppose a message is sent to a destination, and the destination process repeatedly posts a receive that matches this send. The message may still never be received, because each time it can be overtaken by another message sent from another source.
  - It is the user's responsibility to prevent starvation in such situations.

Properties

- Resource limitation:
  - Pending communications consume system resources (e.g. buffer space).
  - Lack of resources may cause an error or prevent execution of an MPI call.
  - E.g. an MPI_Bsend that cannot complete due to lack of buffer space is erroneous.
  - An MPI_Send that cannot complete due to lack of buffer space will only block, waiting for buffer space to become available or for a matching receive.

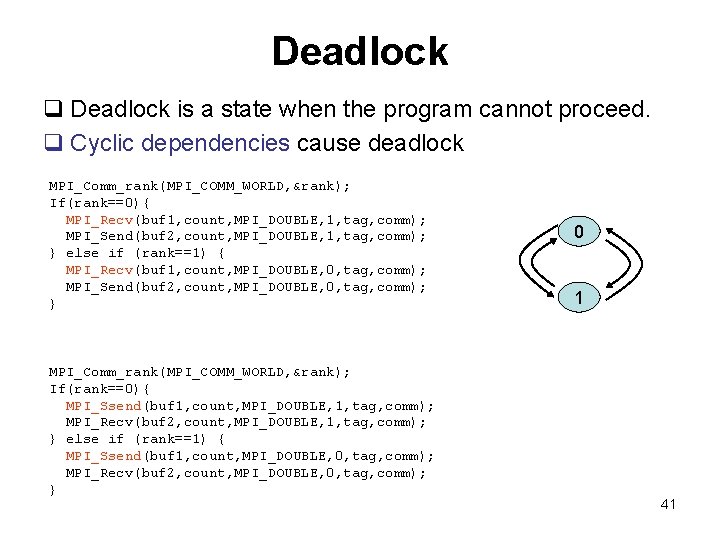

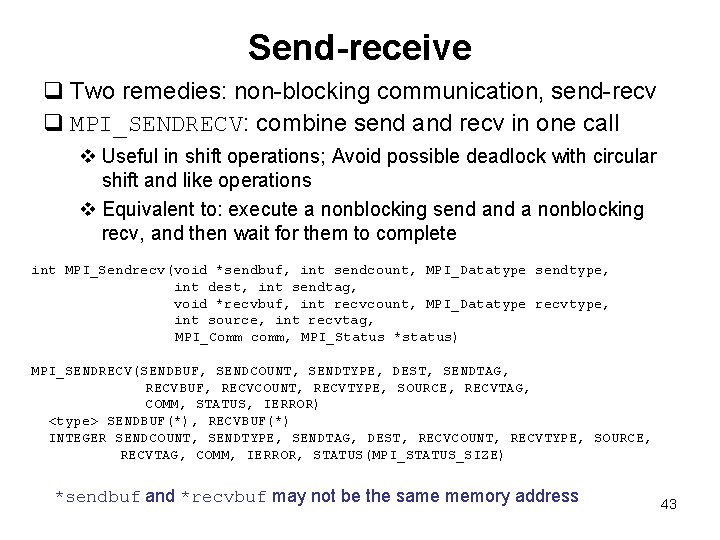

Deadlock

- Deadlock is a state in which the program cannot proceed.
- Cyclic dependencies cause deadlock.

Both processes receive first, so neither ever reaches its send:

    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    if (rank==0) {
        MPI_Recv(buf1, count, MPI_DOUBLE, 1, tag, comm, &status);
        MPI_Send(buf2, count, MPI_DOUBLE, 1, tag, comm);
    } else if (rank==1) {
        MPI_Recv(buf1, count, MPI_DOUBLE, 0, tag, comm, &status);
        MPI_Send(buf2, count, MPI_DOUBLE, 0, tag, comm);
    }

Both processes do a synchronous send first, so neither ever reaches its receive:

    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    if (rank==0) {
        MPI_Ssend(buf1, count, MPI_DOUBLE, 1, tag, comm);
        MPI_Recv(buf2, count, MPI_DOUBLE, 1, tag, comm, &status);
    } else if (rank==1) {
        MPI_Ssend(buf1, count, MPI_DOUBLE, 0, tag, comm);
        MPI_Recv(buf2, count, MPI_DOUBLE, 0, tag, comm, &status);
    }

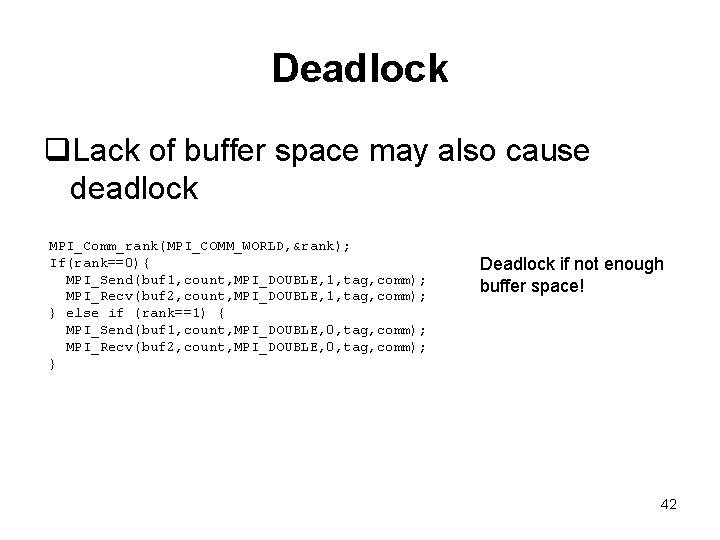

Deadlock

- Lack of buffer space may also cause deadlock.

Example:

    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    if (rank==0) {
        MPI_Send(buf1, count, MPI_DOUBLE, 1, tag, comm);
        MPI_Recv(buf2, count, MPI_DOUBLE, 1, tag, comm, &status);
    } else if (rank==1) {
        MPI_Send(buf1, count, MPI_DOUBLE, 0, tag, comm);
        MPI_Recv(buf2, count, MPI_DOUBLE, 0, tag, comm, &status);
    }

Deadlock if there is not enough buffer space!

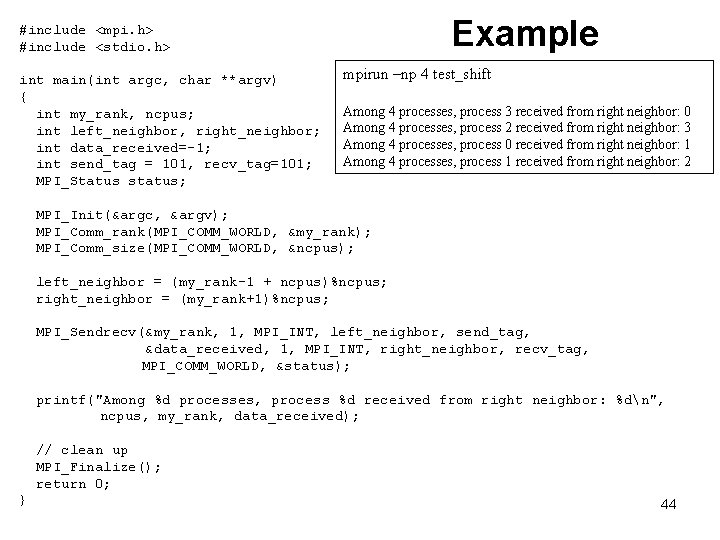

Send-receive

- Two remedies for deadlock: non-blocking communication, and send-recv
- MPI_SENDRECV: combines a send and a receive in one call
  - Useful in shift operations; avoids possible deadlock in circular shifts and similar operations
  - Equivalent to: execute a non-blocking send and a non-blocking receive, then wait for both to complete

In C:

    int MPI_Sendrecv(void *sendbuf, int sendcount, MPI_Datatype sendtype,
                     int dest, int sendtag,
                     void *recvbuf, int recvcount, MPI_Datatype recvtype,
                     int source, int recvtag,
                     MPI_Comm comm, MPI_Status *status)

In FORTRAN:

    MPI_SENDRECV(SENDBUF, SENDCOUNT, SENDTYPE, DEST, SENDTAG,
                 RECVBUF, RECVCOUNT, RECVTYPE, SOURCE, RECVTAG,
                 COMM, STATUS, IERROR)
    <type> SENDBUF(*), RECVBUF(*)
    INTEGER SENDCOUNT, SENDTYPE, SENDTAG, DEST, RECVCOUNT, RECVTYPE,
            SOURCE, RECVTAG, COMM, IERROR, STATUS(MPI_STATUS_SIZE)

sendbuf and recvbuf must not be the same memory address.

Example

    #include <mpi.h>
    #include <stdio.h>

    int main(int argc, char **argv)
    {
        int my_rank, ncpus;
        int left_neighbor, right_neighbor;
        int data_received = -1;
        int send_tag = 101, recv_tag = 101;
        MPI_Status status;

        MPI_Init(&argc, &argv);
        MPI_Comm_rank(MPI_COMM_WORLD, &my_rank);
        MPI_Comm_size(MPI_COMM_WORLD, &ncpus);

        left_neighbor = (my_rank-1 + ncpus)%ncpus;
        right_neighbor = (my_rank+1)%ncpus;

        /* send my rank to the left, receive from the right */
        MPI_Sendrecv(&my_rank, 1, MPI_INT, left_neighbor, send_tag,
                     &data_received, 1, MPI_INT, right_neighbor, recv_tag,
                     MPI_COMM_WORLD, &status);

        printf("Among %d processes, process %d received from right neighbor: %d\n",
               ncpus, my_rank, data_received);

        // clean up
        MPI_Finalize();
        return 0;
    }

Run and output:

    mpirun -np 4 test_shift
    Among 4 processes, process 3 received from right neighbor: 0
    Among 4 processes, process 2 received from right neighbor: 3
    Among 4 processes, process 0 received from right neighbor: 1
    Among 4 processes, process 1 received from right neighbor: 2