Convolutional Neural Networks in Medical Image Classification

- Slides: 54

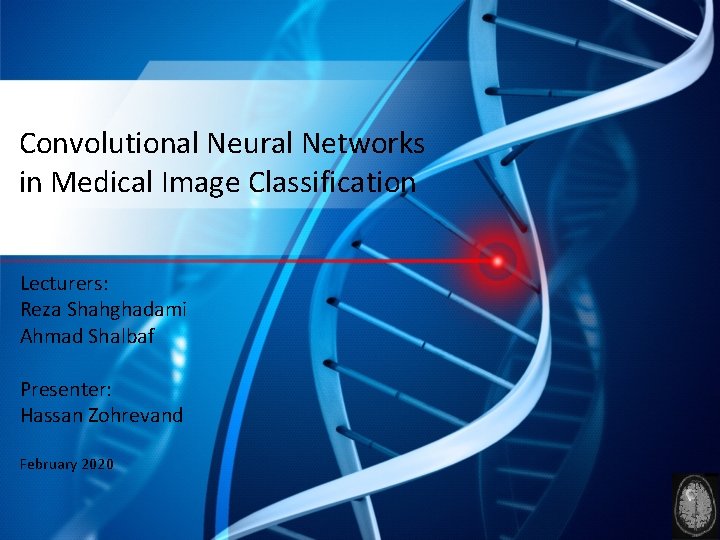

Convolutional Neural Networks in Medical Image Classification

- Lecturers: Reza Shahghadami, Ahmad Shalbaf
- Presenter: Hassan Zohrevand
- February 2020

Outline

- Introduction to deep learning
- Literature review
- Convolutional neural networks
- Transfer learning
- Some examples
- Conclusion

Deep Learning: Revolution of Artificial Intelligence

- Machine learning: the field of study that gives computers the ability to learn without being explicitly programmed.
- Traditional machine learning: hand-crafted features are extracted from labeled data and fed to a trainable classifier (e.g. SVM, random forest), which produces the output (e.g. outdoor: yes or no).
- Hand-crafted feature extraction is very tedious and costly to develop, and usually highly dependent on the application.
- Deep learning has a built-in, automatic, multi-stage feature learning process that learns rich hierarchical representations (i.e. features): low-level, mid-level and high-level features feed a trainable classifier (e.g. outdoor vs. indoor).

[Figure: labeled data → training (machine learning algorithm) → learned model → prediction]

Deep Learning

- A subfield of machine learning concerned with learning representations of data.
- It learns (multiple levels of) representation by using a hierarchy of multiple layers.
- It learns from tons of information.
- "Deep learning doesn't do different things, it does things differently."

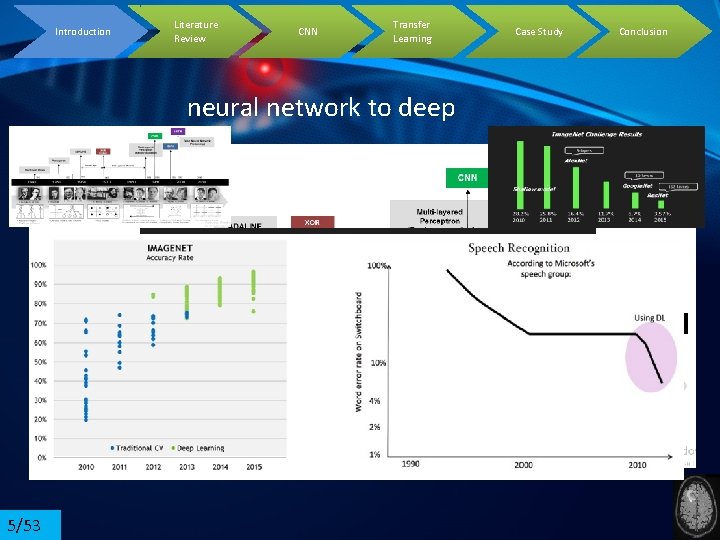

From Neural Networks to Deep Learning

Why did deep learning only start to flourish more than two decades after CNN concepts were introduced?

1) Lack of sufficiently large databases
2) Lack of powerful hardware
3) Lack of suitable training algorithms (vanishing gradient)
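The vanishing-gradient problem in point 3 can be made concrete with a little arithmetic. The derivative of the logistic sigmoid, σ'(x) = σ(x)(1 − σ(x)), is at most 0.25, and backpropagation multiplies one such factor per layer. The sketch below is a deliberate simplification (a chain of sigmoid units with every weight equal to 1, evaluated where the derivative is largest), not a model of any real network:

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x: float) -> float:
    s = sigmoid(x)
    return s * (1.0 - s)  # maximum value 0.25, reached at x = 0


def chained_gradient(depth: int, x: float = 0.0) -> float:
    """Gradient reaching the first unit of a chain of `depth` sigmoid units,
    assuming every weight is 1 and every pre-activation equals x (illustrative only)."""
    grad = 1.0
    for _ in range(depth):
        grad *= sigmoid_grad(x)
    return grad


for depth in (1, 5, 10, 20):
    print(f"{depth:2d} layers: {chained_gradient(depth):.3e}")
```

Even in this best case the gradient after 20 layers is 0.25^20, roughly 1e-12. ReLU, whose derivative is 1 for positive inputs, is one of the changes that eased this problem.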

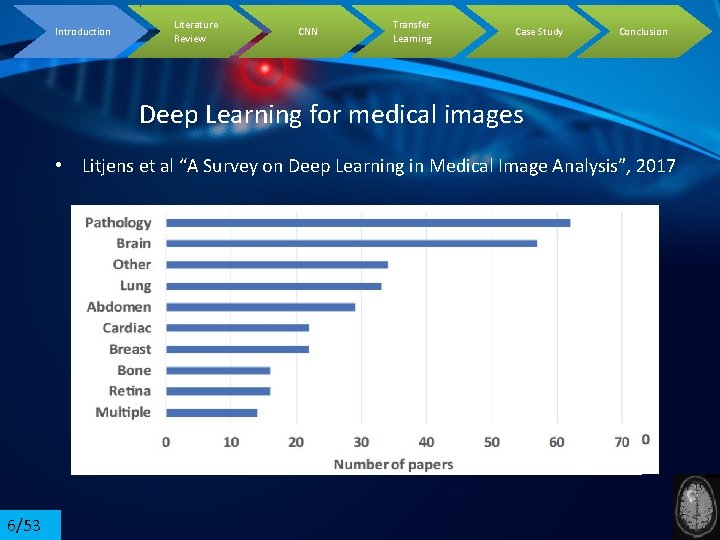

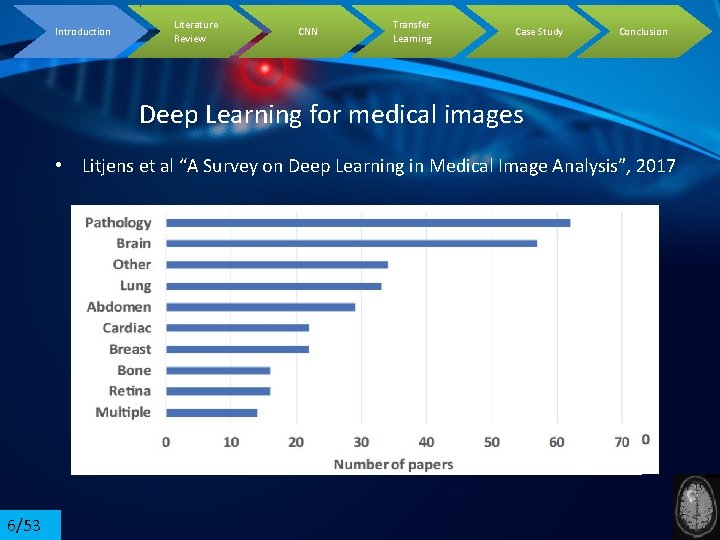

Deep Learning for Medical Images

- Litjens et al., "A Survey on Deep Learning in Medical Image Analysis", 2017

Evolution of Convolutional Neural Networks

- AlexNet: "ImageNet Classification with Deep Convolutional Neural Networks", 2012; winner of the ImageNet challenge in 2012
- VGGNet: "Very Deep Convolutional Networks for Large-Scale Image Recognition", 2014; second place in the ImageNet challenge in 2014
- GoogLeNet: "Going Deeper with Convolutions", 2014; winner of the ImageNet challenge in 2014

Evolution of Convolutional Neural Networks

- 2019: "Classification of Brain Tumor from MRI Using CNN": 3,064 T1-weighted images from 233 patients with 3 types of tumor
- 2018: "Using deep convolutional neural networks to identify and classify tumor-associated stroma in diagnostic breast biopsies": 2,387 benign and malignant tissue sections from 882 individuals
- 2018: "Deep learning enables automated scoring of liver fibrosis stages": uses the AlexNet architecture

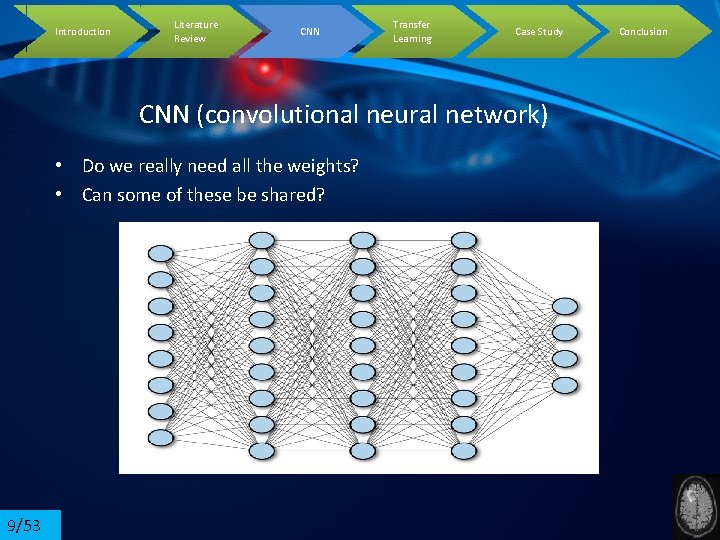

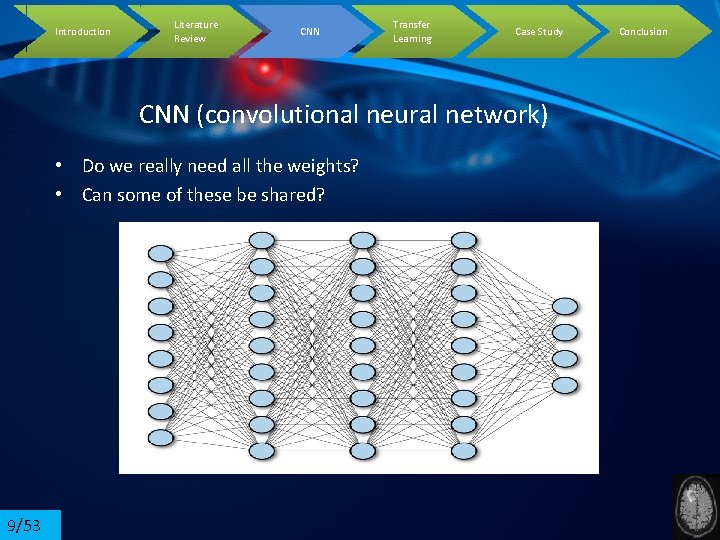

CNN (Convolutional Neural Network)

- Do we really need all the weights?
- Can some of them be shared?

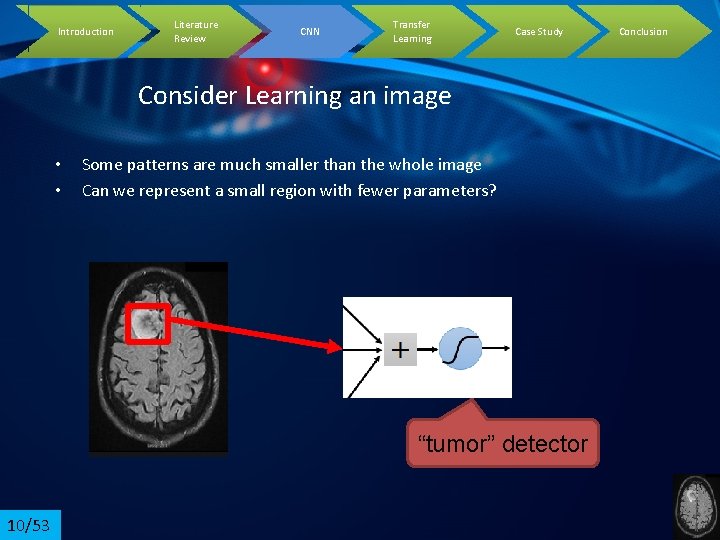

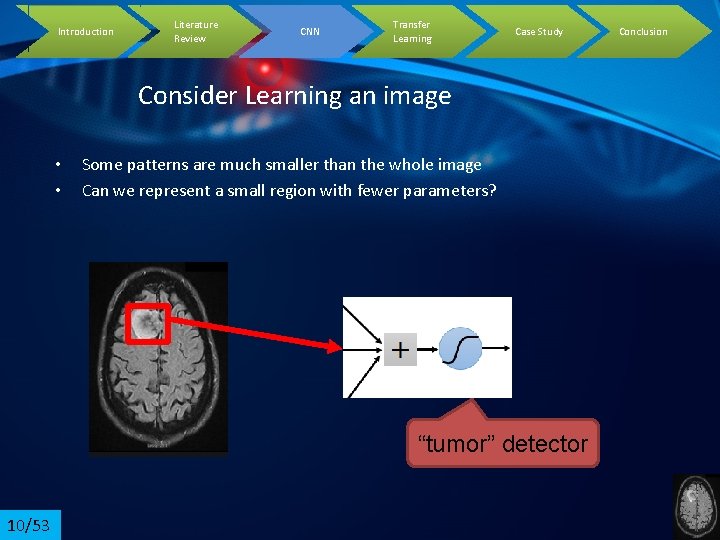

Consider Learning an Image

- Some patterns are much smaller than the whole image.
- Can we represent a small region with fewer parameters?

[Figure: a "tumor" detector that looks at only a small region of the image]

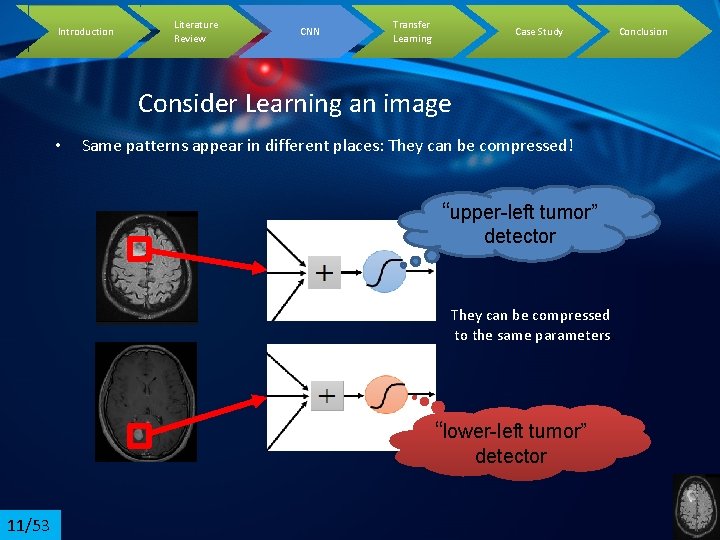

Consider Learning an Image

- The same patterns appear in different places, so they can be compressed: an "upper-left tumor" detector and a "lower-left tumor" detector can use the same parameters.

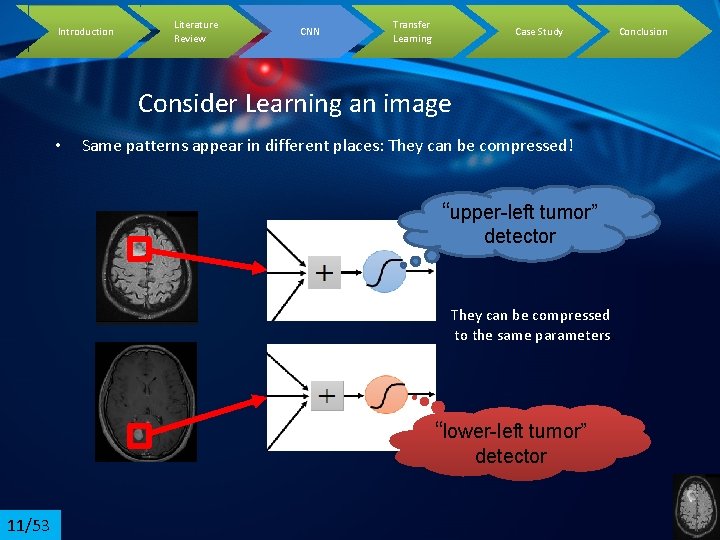

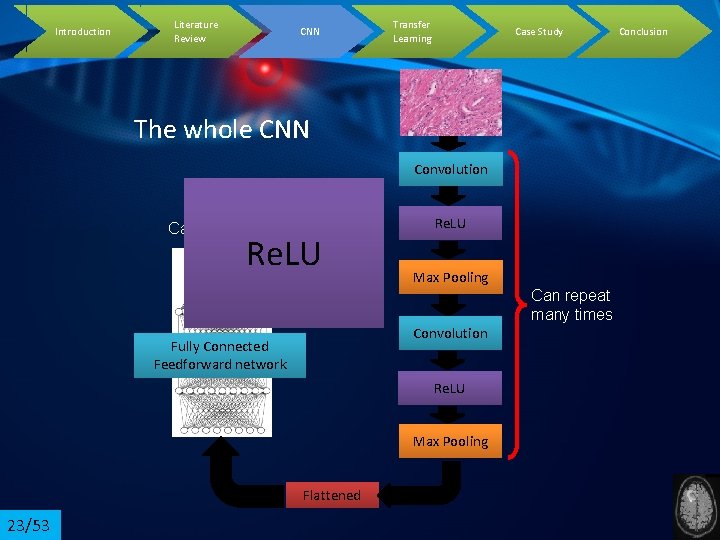

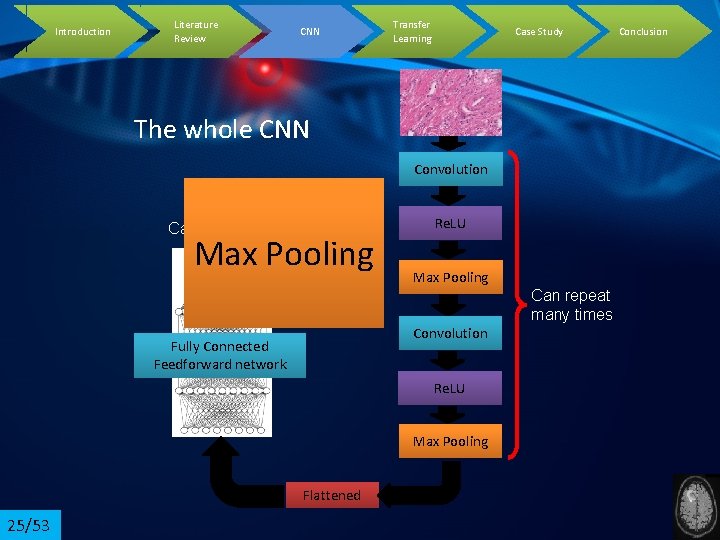

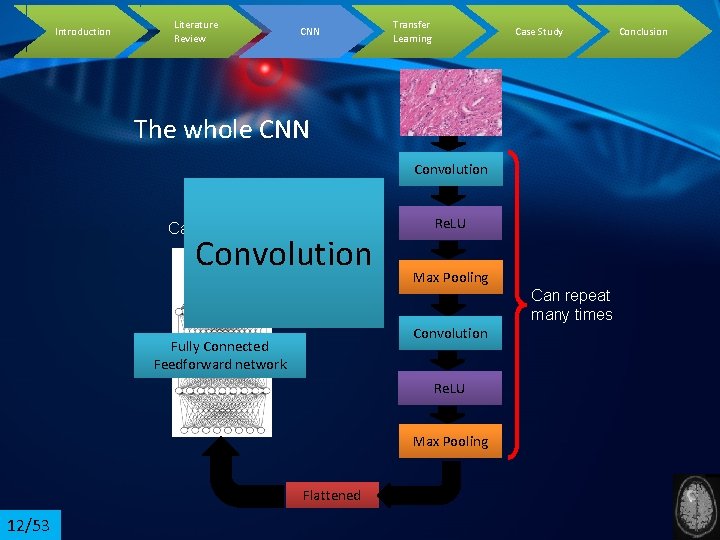

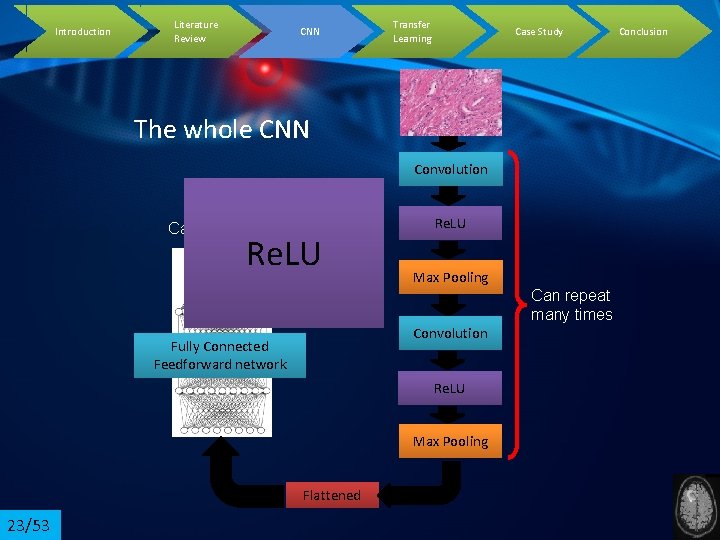

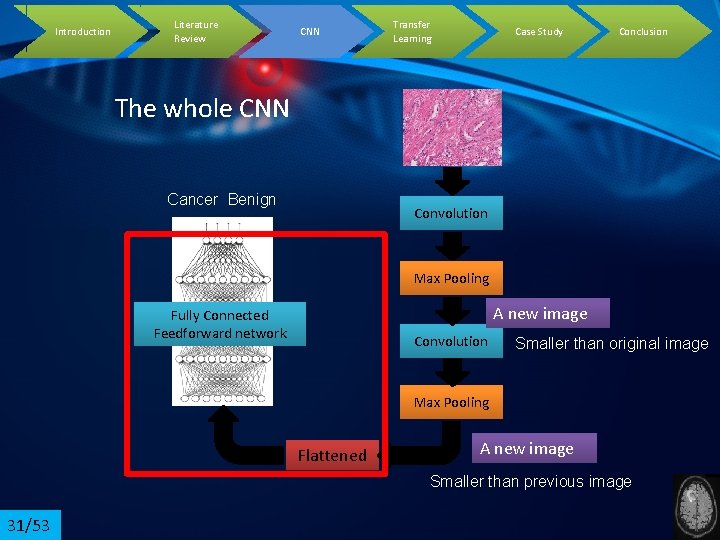

The Whole CNN

Convolution → ReLU → Max Pooling (can repeat many times) → Flattened → Fully connected feedforward network → Cancer / Benign

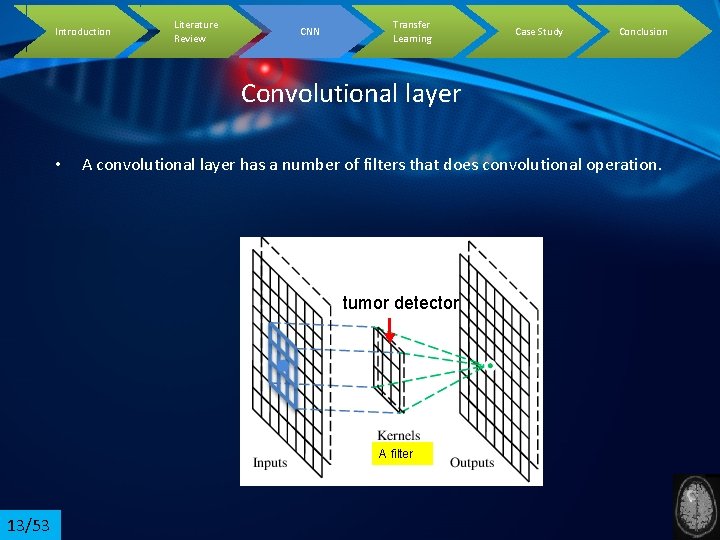

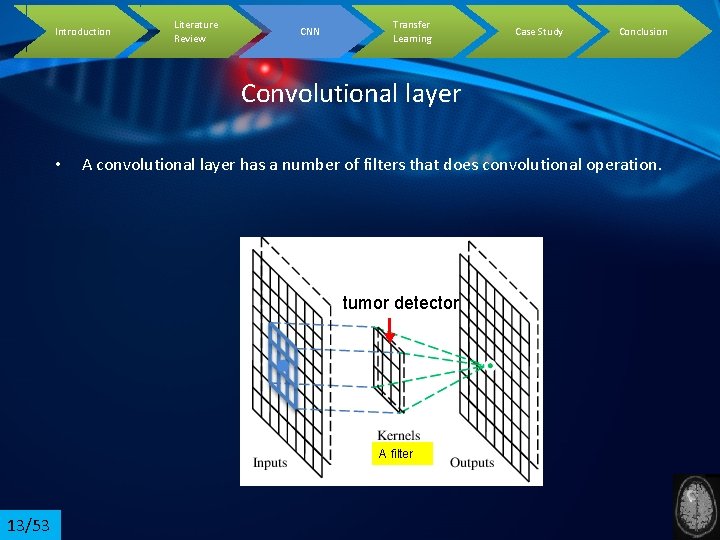

Convolutional Layer

- A convolutional layer has a number of filters, each of which performs the convolution operation. A filter acts as a pattern detector (e.g. a "tumor detector").

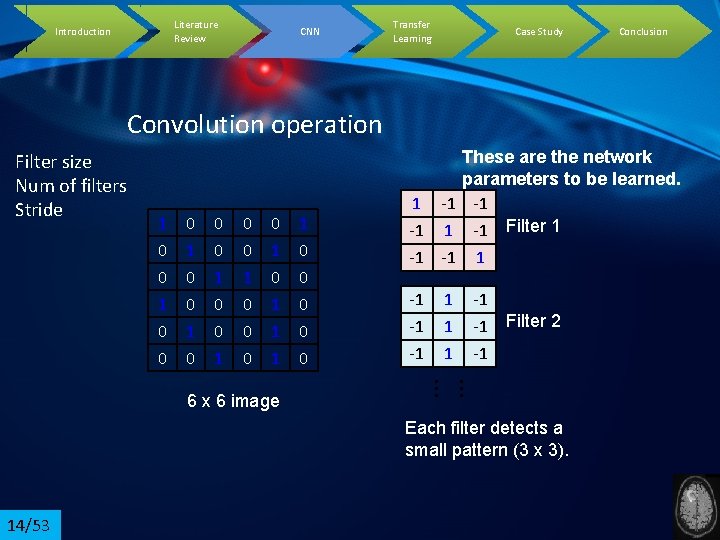

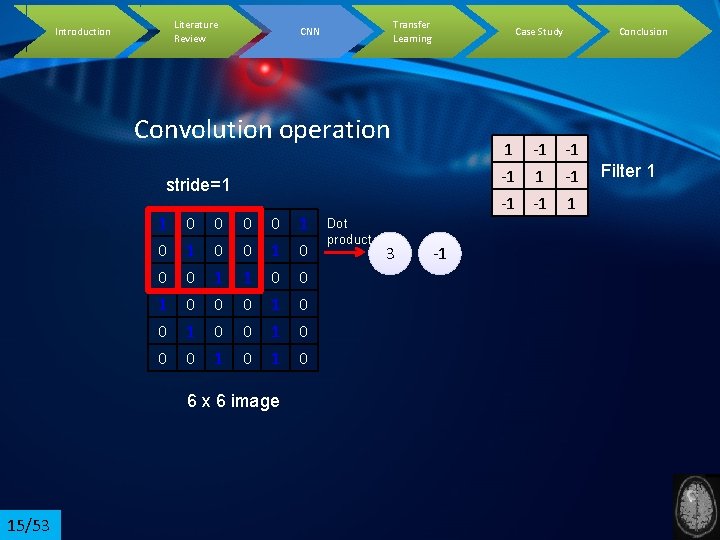

Convolution Operation

- Hyperparameters: filter size, number of filters, stride.
- The filter values (Filter 1, Filter 2, …) are the network parameters to be learned.
- Each filter detects a small (3 × 3) pattern in the 6 × 6 image.

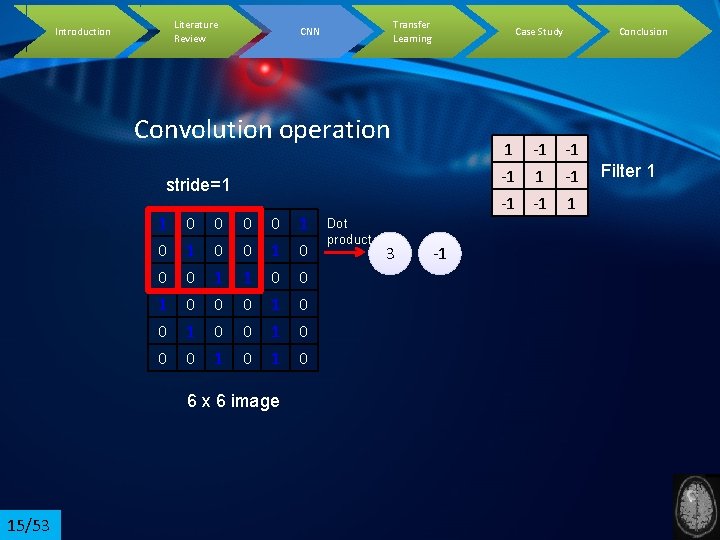

Convolution Operation (stride = 1)

- Filter 1 is placed on the top-left 3 × 3 patch of the 6 × 6 image; the dot product of the filter and the patch is 3.
- Moving the filter one pixel to the right gives -1.
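The dot product at a single filter position can be reproduced numerically. The 6 × 6 image and Filter 1 values below are an assumption: they are reconstructed so that they reproduce the feature-map numbers printed in these slides, since the extracted slide text is partly garbled.

```python
import numpy as np

# 6 x 6 binary image, reconstructed from the slides' feature-map values (assumption)
image = np.array([
    [1, 0, 0, 0, 0, 1],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 1, 1, 0, 0],
    [1, 0, 0, 0, 1, 0],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 1, 0, 1, 0],
])

# Filter 1: +1 on the main diagonal, -1 elsewhere (assumed values)
filter1 = np.array([
    [ 1, -1, -1],
    [-1,  1, -1],
    [-1, -1,  1],
])


def response_at(img, kernel, row, col):
    """Dot product of the kernel with the patch whose top-left corner is (row, col)."""
    k = kernel.shape[0]
    patch = img[row:row + k, col:col + k]
    return int(np.sum(patch * kernel))


print(response_at(image, filter1, 0, 0))  # 3: the patch contains a diagonal line
print(response_at(image, filter1, 0, 1))  # -1: filter moved one pixel right (stride 1)
```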

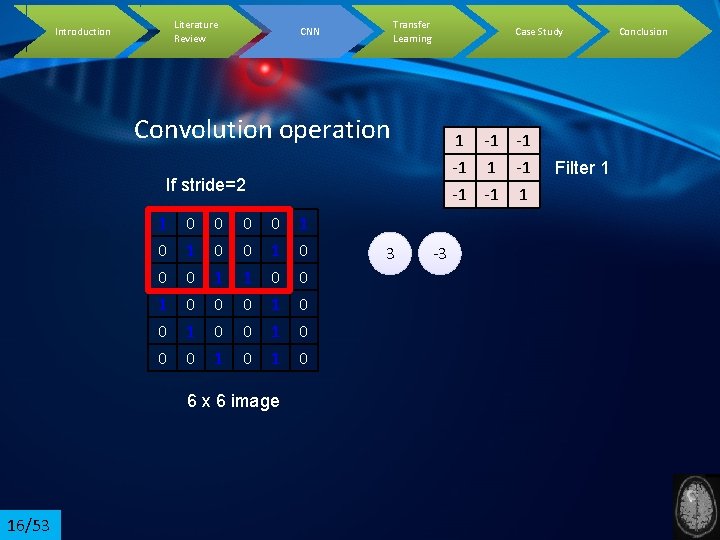

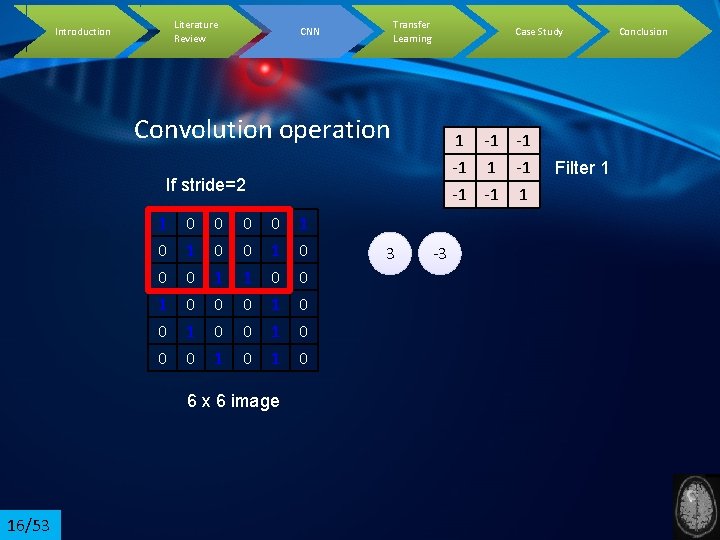

Convolution Operation (stride = 2)

- If the stride is 2, the filter moves two pixels at a time, so the first two outputs of Filter 1 are 3 and -3.
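Stride only changes where the filter is placed. For an n × n image, a k × k filter and stride s with no padding, the output side is ⌊(n − k)/s⌋ + 1: 4 for stride 1 and 2 for stride 2 in this example. A minimal NumPy sketch, assuming the same reconstructed 6 × 6 image and Filter 1 values (an assumption consistent with the outputs 3 and -3 shown on the slide):

```python
import numpy as np

# Reconstructed slide example (assumption)
image = np.array([
    [1, 0, 0, 0, 0, 1],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 1, 1, 0, 0],
    [1, 0, 0, 0, 1, 0],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 1, 0, 1, 0],
])
filter1 = np.array([[1, -1, -1], [-1, 1, -1], [-1, -1, 1]])


def conv2d(img, kernel, stride=1):
    """'Valid' 2-D convolution as used in CNNs (strictly, cross-correlation):
    no padding, the kernel moves `stride` pixels at a time.
    Assumes a square image and a square kernel."""
    n, k = img.shape[0], kernel.shape[0]
    out = (n - k) // stride + 1  # output side length
    result = np.zeros((out, out), dtype=img.dtype)
    for i in range(out):
        for j in range(out):
            r, c = i * stride, j * stride
            result[i, j] = np.sum(img[r:r + k, c:c + k] * kernel)
    return result


print(conv2d(image, filter1, stride=1).shape)  # (4, 4)
print(conv2d(image, filter1, stride=2))        # values: [[3, -3], [-3, 0]]
```

A larger stride shrinks the output and the computation, at the cost of examining fewer positions.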

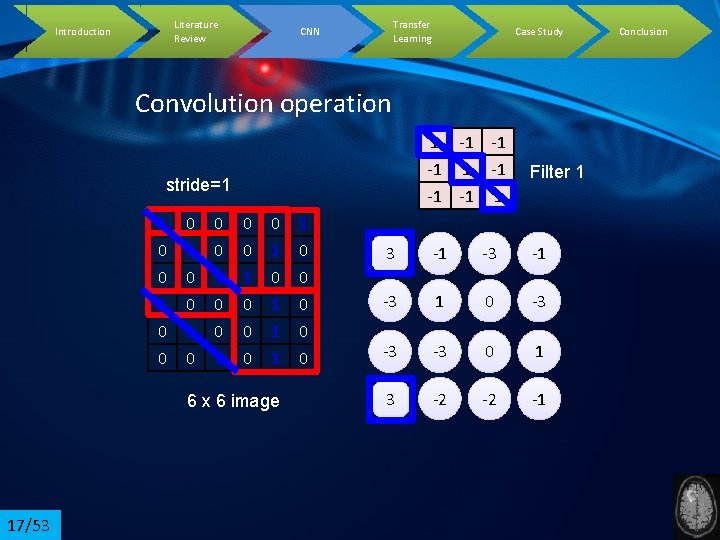

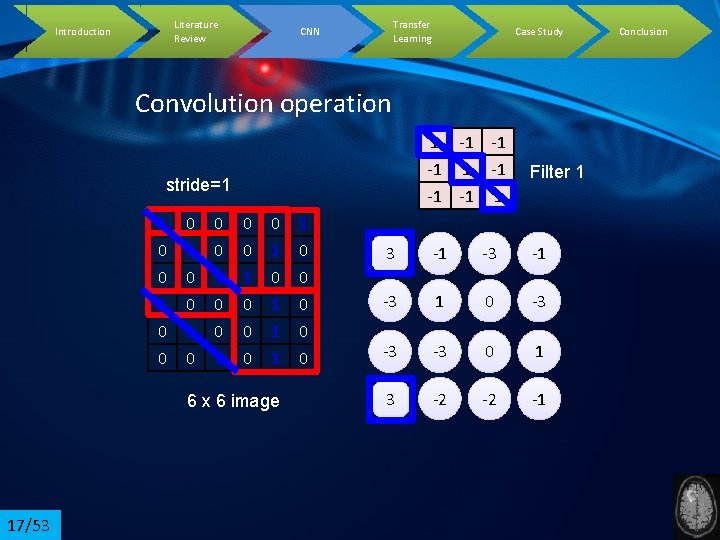

Convolution Operation (stride = 1)

- Sliding Filter 1 over the whole 6 × 6 image with stride 1 gives a 4 × 4 output, row by row: (3, -1, -3, -1), (-3, 1, 0, -3), (-3, -3, 0, 1), (3, -2, -2, -1).

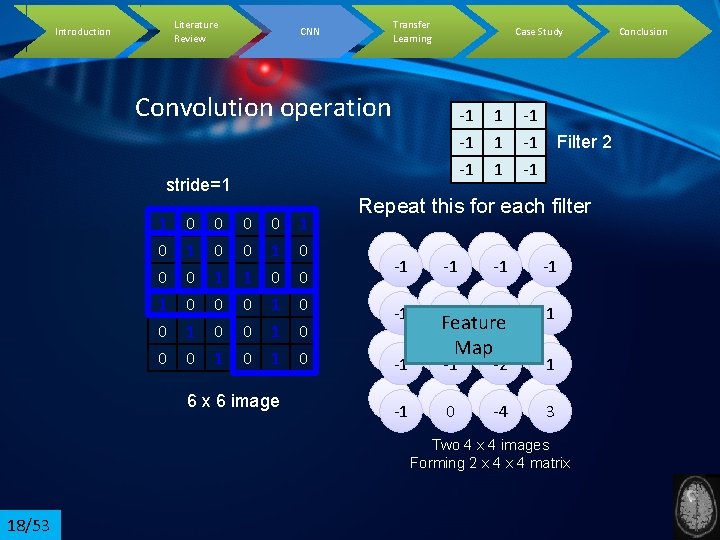

Convolution Operation (stride = 1)

- Repeat this for each filter: Filter 2 gives the 4 × 4 map (-1, -1, -1, -1), (-1, -1, -2, 1), (-1, -1, -2, 1), (-1, 0, -4, 3).
- The outputs of all filters together form the feature map: two 4 × 4 images, i.e. a 2 × 4 × 4 array.
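Applying several filters at once yields one 4 × 4 map per filter, stacked into a 2 × 4 × 4 array. The vectorized sketch below uses NumPy's `sliding_window_view` and `einsum`; the image and both filter values are assumptions reconstructed from the feature-map numbers on these slides.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

# Reconstructed slide example (assumption)
image = np.array([
    [1, 0, 0, 0, 0, 1],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 1, 1, 0, 0],
    [1, 0, 0, 0, 1, 0],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 1, 0, 1, 0],
])

# Filters stacked along the first axis: shape (num_filters, 3, 3) (assumed values)
filters = np.array([
    [[ 1, -1, -1], [-1,  1, -1], [-1, -1,  1]],  # Filter 1: diagonal line
    [[-1,  1, -1], [-1,  1, -1], [-1,  1, -1]],  # Filter 2: vertical line
])


def conv_layer(img, kernels):
    """Stride-1 'valid' convolution of one 2-D image with several kernels.
    Returns an array of shape (num_filters, H - k + 1, W - k + 1)."""
    k = kernels.shape[-1]
    windows = sliding_window_view(img, (k, k))  # (H-k+1, W-k+1, k, k)
    return np.einsum("ijkl,fkl->fij", windows, kernels)


feature_map = conv_layer(image, filters)
print(feature_map.shape)  # (2, 4, 4)
print(feature_map[1])     # Filter 2's 4 x 4 map
```

Each slice `feature_map[f]` is one "image" in the stack; the number of filters becomes the depth of the next layer's input.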

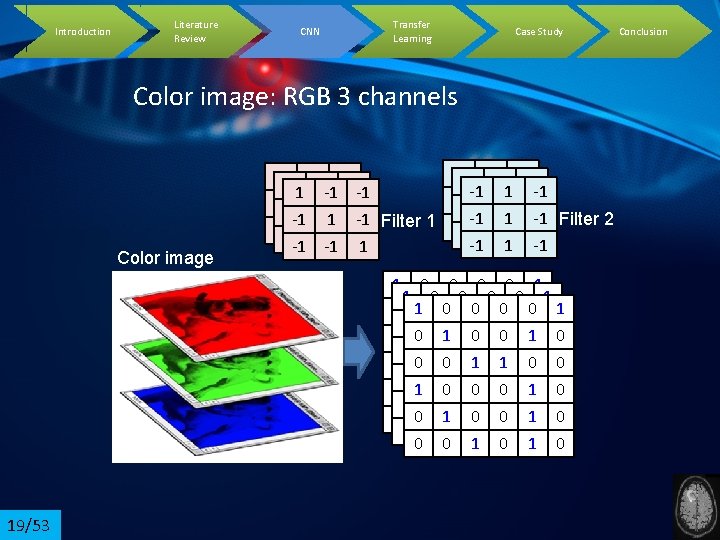

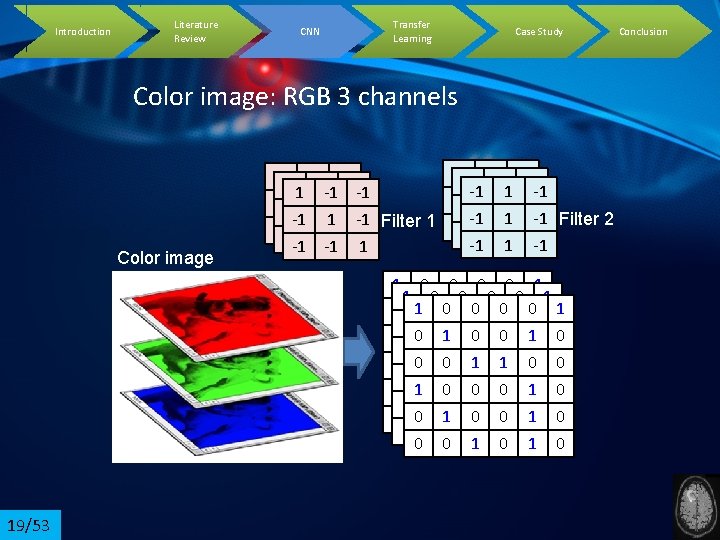

Color Image: RGB, 3 Channels

- A color image has three channels, so each filter (Filter 1, Filter 2) also has three channels: a 3 × 3 × 3 stack of weights applied to the 6 × 6 × 3 image.
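For a color image the products are summed over all three channels, so each filter still produces a single 2-D map. In this sketch the RGB input is hypothetical: the reconstructed grayscale slide image is copied into R, G and B, and Filter 1 is copied into three channel slices. The result should then be exactly three times the grayscale map, which makes a convenient sanity check.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def conv_multichannel(img, kernel):
    """img: (C, H, W); kernel: (C, k, k).
    Products are summed over all C channels, so one filter gives ONE 2-D map."""
    c, k, _ = kernel.shape
    windows = sliding_window_view(img, (c, k, k))[0]  # (H-k+1, W-k+1, C, k, k)
    return np.einsum("ijckl,ckl->ij", windows, kernel)


# Reconstructed grayscale slide image and Filter 1 (assumption)
gray = np.array([
    [1, 0, 0, 0, 0, 1],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 1, 1, 0, 0],
    [1, 0, 0, 0, 1, 0],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 1, 0, 1, 0],
])
filter1 = np.array([[1, -1, -1], [-1, 1, -1], [-1, -1, 1]])

# Hypothetical RGB input: the same pattern in every channel
rgb = np.stack([gray, gray, gray])     # (3, 6, 6)
filter1_rgb = np.stack([filter1] * 3)  # (3, 3, 3): one 3 x 3 slice per channel

out = conv_multichannel(rgb, filter1_rgb)
print(out.shape)  # (4, 4)
```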

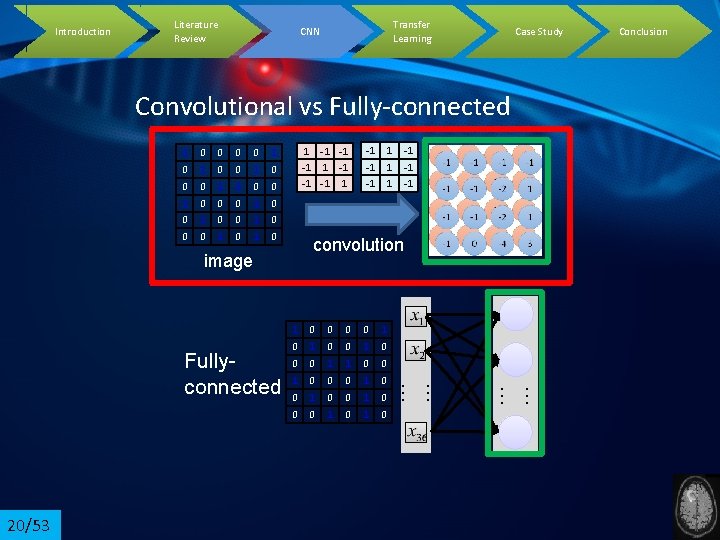

Convolution vs. Fully Connected

- Convolution slides a small filter over the image, whereas a fully connected layer flattens the 6 × 6 image into 36 inputs and connects every input to every neuron.

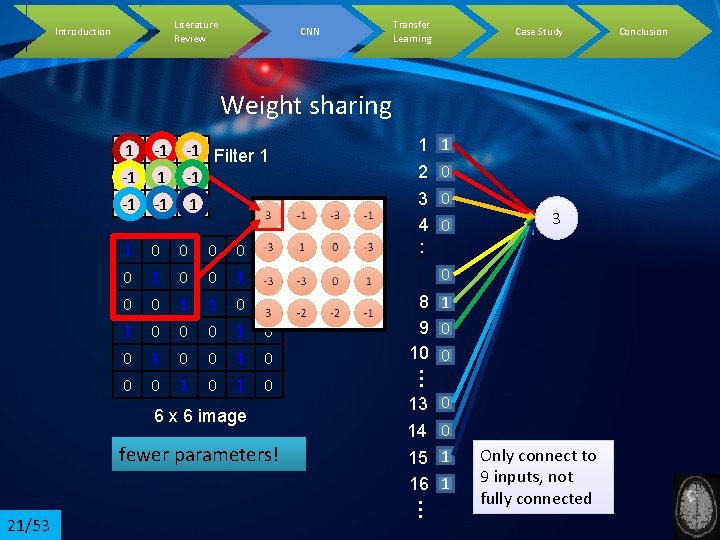

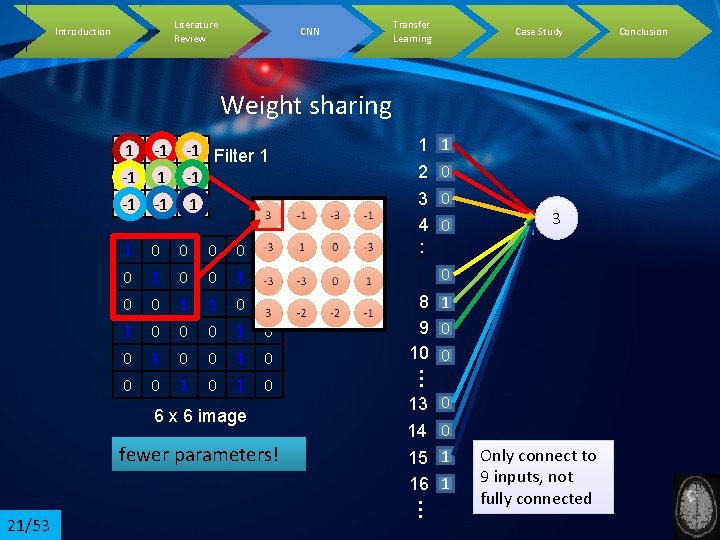

Weight Sharing

- Number the pixels of the 6 × 6 image from 1 to 36. The first output of Filter 1 (value 3) connects only to inputs 1, 2, 3, 7, 8, 9, 13, 14 and 15: 9 inputs, not all 36, so it is not fully connected (fewer parameters!).

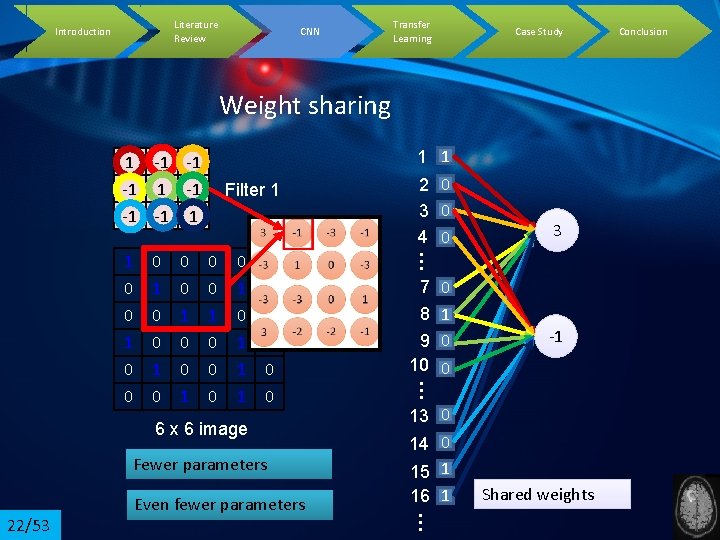

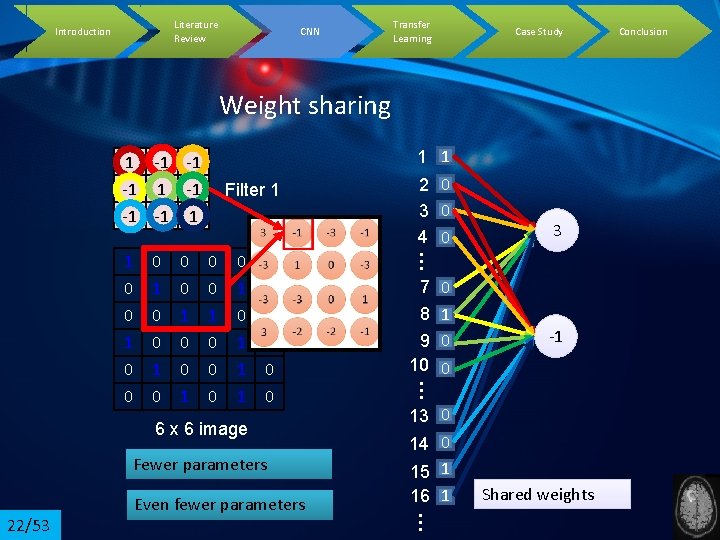

Weight Sharing

- The next output (value -1) connects to inputs 2, 3, 4, 8, 9, 10, 14, 15 and 16, using the same nine weights of Filter 1 (shared weights): even fewer parameters.
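The saving from local connectivity and shared weights can be counted directly. This sketch ignores biases and assumes a single-channel input with stride 1 and no padding; the second call uses a hypothetical larger layer to show how quickly the gap grows.

```python
def weight_counts(image_side=6, kernel_side=3, n_filters=2):
    """Number of weights (biases ignored) needed to map an image_side x image_side
    single-channel image to n_filters stride-1 'valid' output maps."""
    out_side = image_side - kernel_side + 1
    n_inputs = image_side ** 2
    n_outputs = n_filters * out_side ** 2
    return {
        # every output neuron connected to every pixel
        "fully_connected": n_inputs * n_outputs,
        # each output sees only a k x k patch but has its own weights
        "local_no_sharing": n_outputs * kernel_side ** 2,
        # all outputs of one filter reuse the same k x k weights
        "convolution_shared": n_filters * kernel_side ** 2,
    }


print(weight_counts())            # slide example: 6 x 6 image, two 3 x 3 filters
print(weight_counts(224, 3, 64))  # hypothetical larger layer
```

For the slide example this gives 1152 weights when fully connected, 288 with local connections only, and 18 with shared weights.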

The Whole CNN (recap)

Convolution → ReLU → Max Pooling (can repeat many times) → Flattened → Fully connected feedforward network → Cancer / Benign

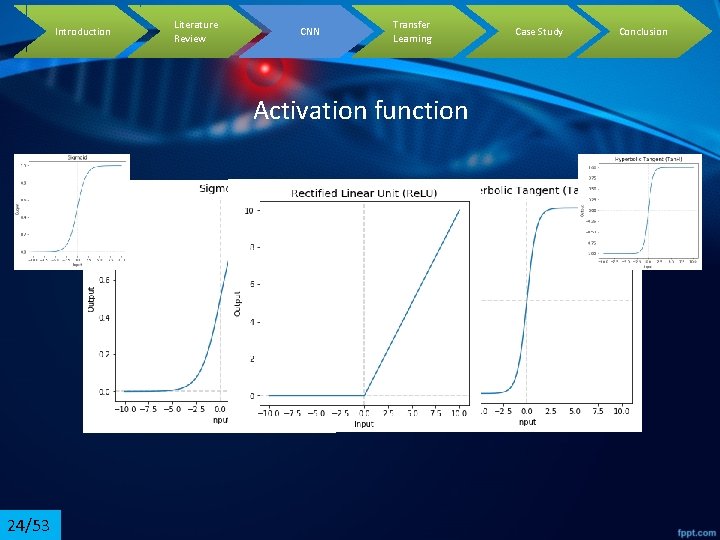

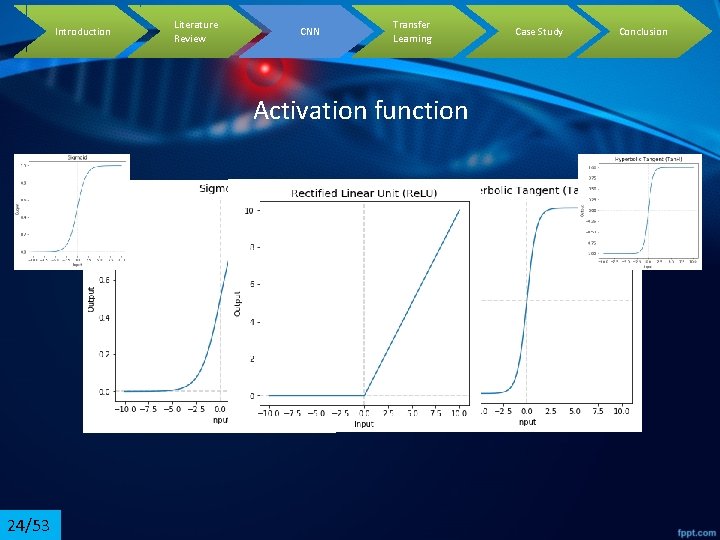

Activation Function
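The activation used in this deck's CNN pipeline is ReLU, f(x) = max(0, x), applied element-wise after each convolution. A minimal sketch applying it to Filter 1's 4 × 4 feature map from the convolution slides (values as printed there):

```python
import numpy as np


def relu(x):
    """Rectified linear unit: max(0, x), element-wise."""
    return np.maximum(x, 0)


def relu_grad(x):
    """Derivative of ReLU: 1 where x > 0, else 0 (0 is chosen at x == 0)."""
    return (x > 0).astype(x.dtype)


# Filter 1's feature map from the convolution slides
feature_map = np.array([
    [ 3, -1, -3, -1],
    [-3,  1,  0, -3],
    [-3, -3,  0,  1],
    [ 3, -2, -2, -1],
])
print(relu(feature_map))
```

Negative responses become 0 while positive ones pass through unchanged, and the gradient is exactly 1 wherever the unit is active.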

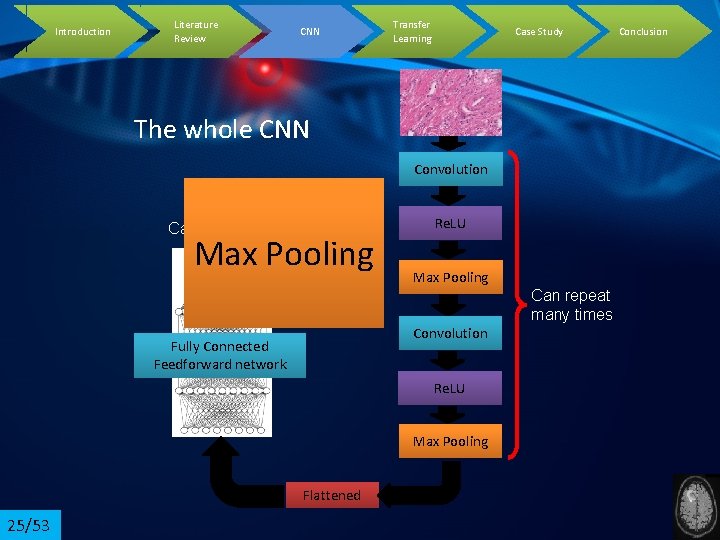

The Whole CNN (recap)

Convolution → ReLU → Max Pooling (can repeat many times) → Flattened → Fully connected feedforward network → Cancer / Benign

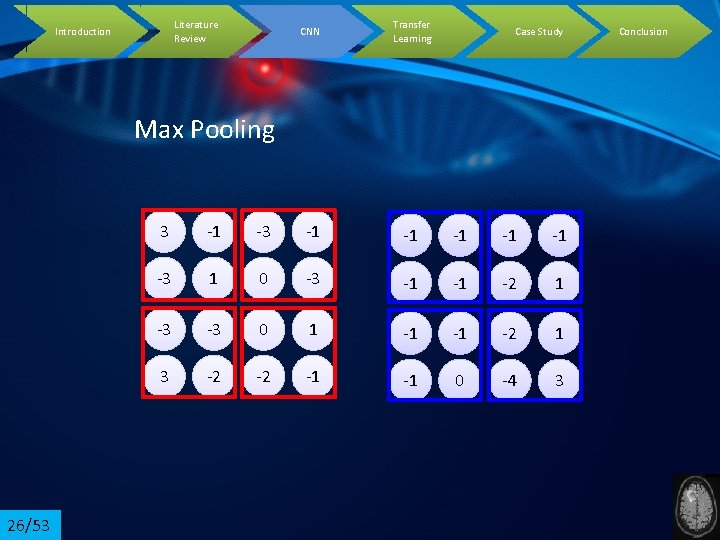

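Max Pooling

- Each 4 × 4 feature map is divided into 2 × 2 blocks, and only the maximum value of each block is kept.
- Filter 1's map (3, -1, -3, -1), (-3, 1, 0, -3), (-3, -3, 0, 1), (3, -2, -2, -1) becomes (3, 0), (3, 1).
- Filter 2's map (-1, -1, -1, -1), (-1, -1, -2, 1), (-1, -1, -2, 1), (-1, 0, -4, 3) becomes (-1, 1), (0, 3).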
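Non-overlapping 2 × 2 max pooling can be written with a single reshape: each block gets its own pair of axes, and the maximum is taken over them. A minimal sketch using the two feature maps printed on this slide; it assumes both spatial sides are divisible by the pool size.

```python
import numpy as np


def max_pool(x, size=2):
    """Non-overlapping size x size max pooling over the last two axes.
    Assumes both spatial sides are divisible by `size`."""
    *lead, h, w = x.shape
    blocks = x.reshape(*lead, h // size, size, w // size, size)
    return blocks.max(axis=(-3, -1))


feature_maps = np.array([
    [[ 3, -1, -3, -1], [-3,  1,  0, -3], [-3, -3,  0,  1], [ 3, -2, -2, -1]],  # Filter 1
    [[-1, -1, -1, -1], [-1, -1, -2,  1], [-1, -1, -2,  1], [-1,  0, -4,  3]],  # Filter 2
])
pooled = max_pool(feature_maps)
print(pooled.shape)  # (2, 2, 2)
```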

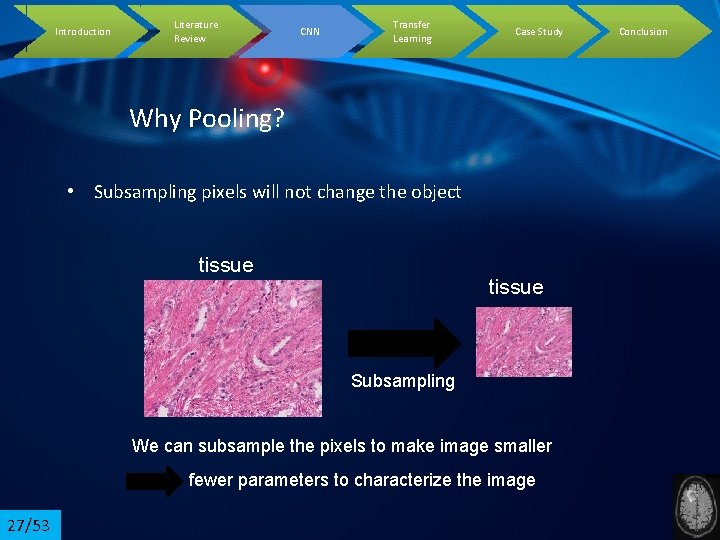

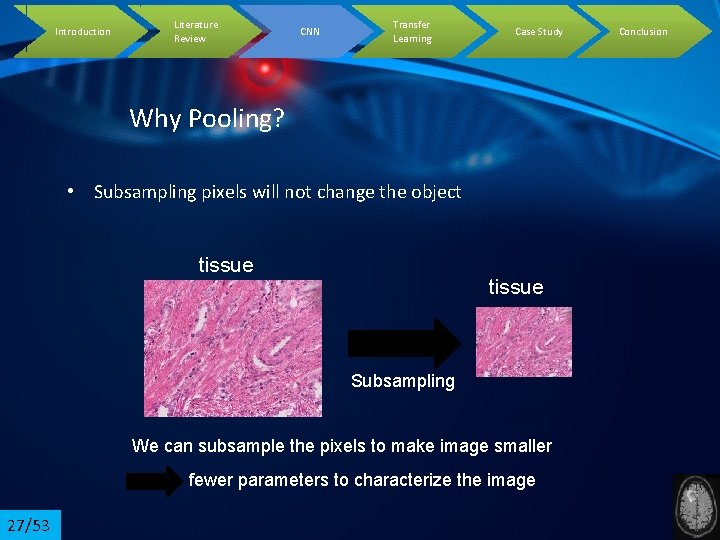

Why Pooling?

- Subsampling the pixels does not change the object (tissue).
- We can subsample the pixels to make the image smaller, so fewer parameters are needed to characterize the image.

A CNN compresses a fully connected network in three ways:

- Reducing the number of connections
- Shared weights
- Max pooling

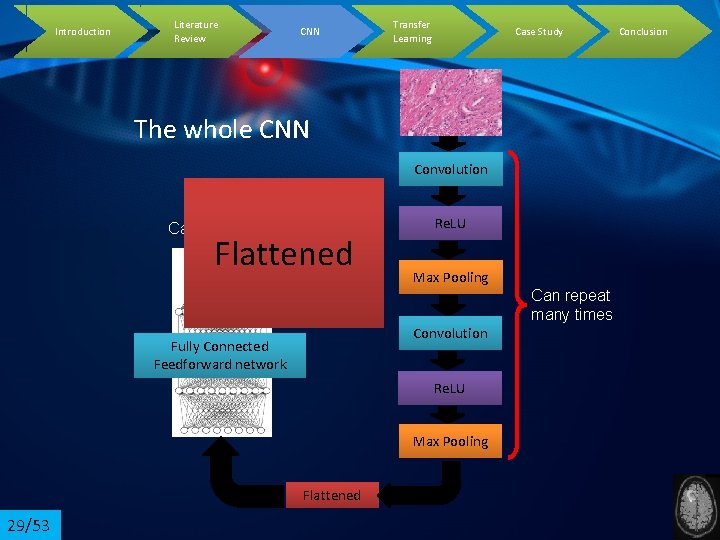

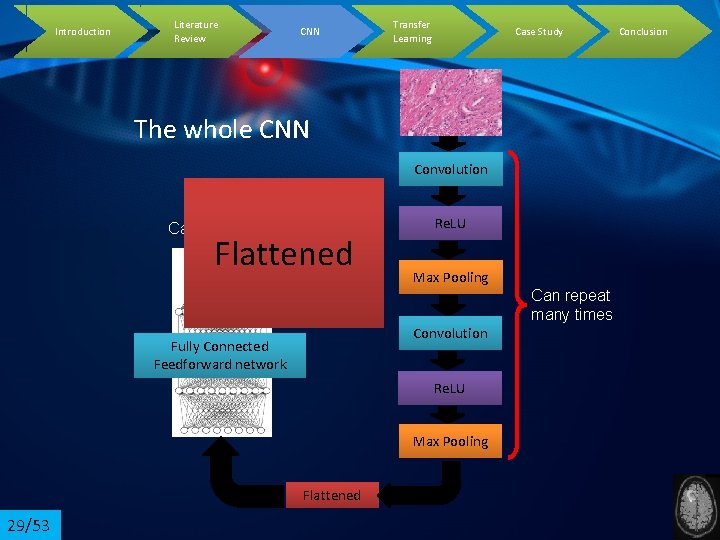

The Whole CNN (recap)

Convolution → ReLU → Max Pooling (can repeat many times) → Flattened → Fully connected feedforward network → Cancer / Benign

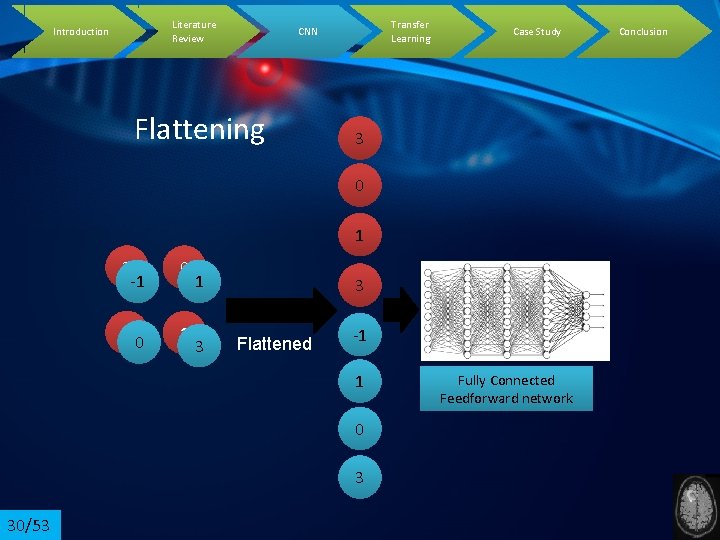

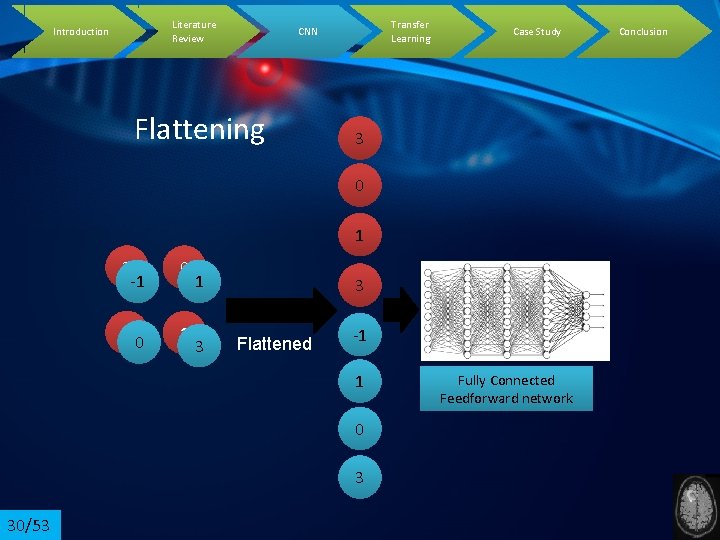

Flattening

- The pooled output (two 2 × 2 maps) is flattened into a single vector, which becomes the input of the fully connected feedforward network.
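Flattening only reshapes the data; no values change. The order of the resulting vector is a convention, and two common layouts give the same 8 numbers in different orders. In the sketch below, the pooled values are obtained by max pooling the two feature maps from the previous slides, and the "channels-last" layout corresponds to Keras' default data format.

```python
import numpy as np

# Max-pooled output for the two filters, shape (channels, height, width)
pooled = np.array([
    [[ 3, 0], [3, 1]],  # Filter 1
    [[-1, 1], [0, 3]],  # Filter 2
])

# Channels-first: all of Filter 1's values, then all of Filter 2's
flat_cf = pooled.reshape(-1)
# Channels-last: both channels for each spatial position in turn
flat_cl = pooled.transpose(1, 2, 0).reshape(-1)

print(flat_cf)  # values: 3 0 3 1 -1 1 0 3
print(flat_cl)  # values: 3 -1 0 1 3 0 1 3
```

The ordering matters only when weights trained in one layout are loaded into a model that uses the other.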

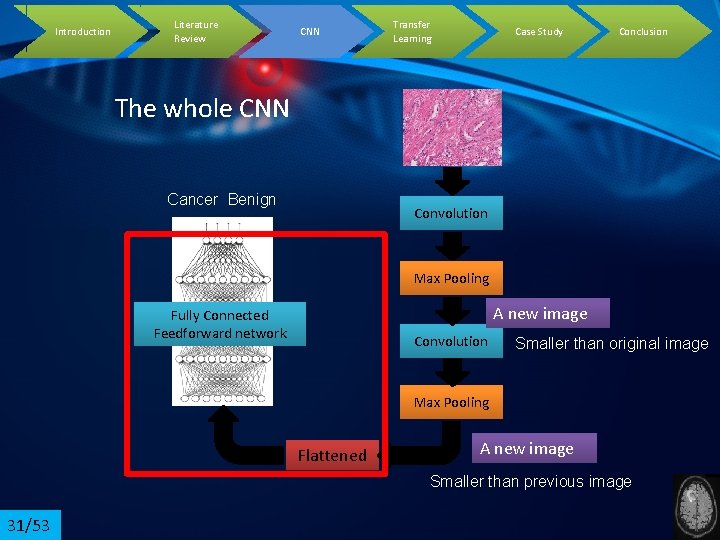

The Whole CNN

- Convolution → Max Pooling: a new image, smaller than the original image
- Convolution → Max Pooling: a new image, smaller than the previous image
- Flattened → Fully connected feedforward network → Cancer / Benign
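The whole pipeline fits in a few lines of NumPy. This is a minimal forward-pass sketch with one convolution/pooling stage and hypothetical, untrained random parameters; a real model learns the filters and fully connected weights from labeled images, and the two outputs are only labeled "cancer" and "benign" for illustration.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def conv_layer(x, filters):
    """x: (H, W); filters: (F, k, k) -> (F, H-k+1, W-k+1), stride 1, no padding."""
    k = filters.shape[-1]
    return np.einsum("ijkl,fkl->fij", sliding_window_view(x, (k, k)), filters)


def relu(x):
    return np.maximum(x, 0)


def max_pool(x, size=2):
    f, h, w = x.shape
    return x.reshape(f, h // size, size, w // size, size).max(axis=(2, 4))


def softmax(z):
    e = np.exp(z - z.max())  # subtract the max for numerical stability
    return e / e.sum()


def forward(image, filters, fc_weights, fc_bias):
    features = max_pool(relu(conv_layer(image, filters)))  # feature extractor
    flat = features.reshape(-1)                             # flattening
    logits = fc_weights @ flat + fc_bias                    # fully connected layer
    return softmax(logits)                                  # e.g. [P(cancer), P(benign)]


# Hypothetical, untrained parameters
rng = np.random.default_rng(seed=0)
image = rng.integers(0, 2, size=(6, 6))       # random 6 x 6 binary image
filters = rng.normal(size=(2, 3, 3))          # two 3 x 3 filters
fc_weights = rng.normal(size=(2, 2 * 2 * 2))  # 8 flattened features -> 2 classes
fc_bias = np.zeros(2)

probs = forward(image, filters, fc_weights, fc_bias)
print(probs, probs.sum())
```

Deeper networks simply repeat the convolution, ReLU and pooling stage before flattening, exactly as the slide's "can repeat many times" indicates.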

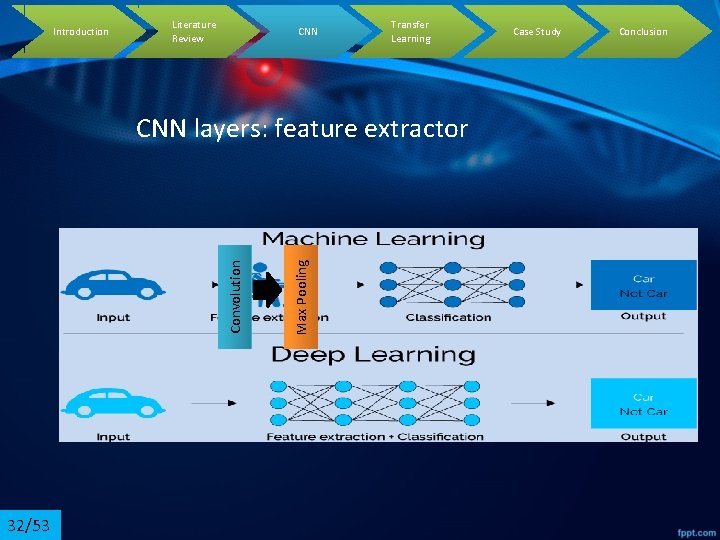

CNN Layers as Feature Extractor

- The convolution and max pooling layers act as a feature extractor.

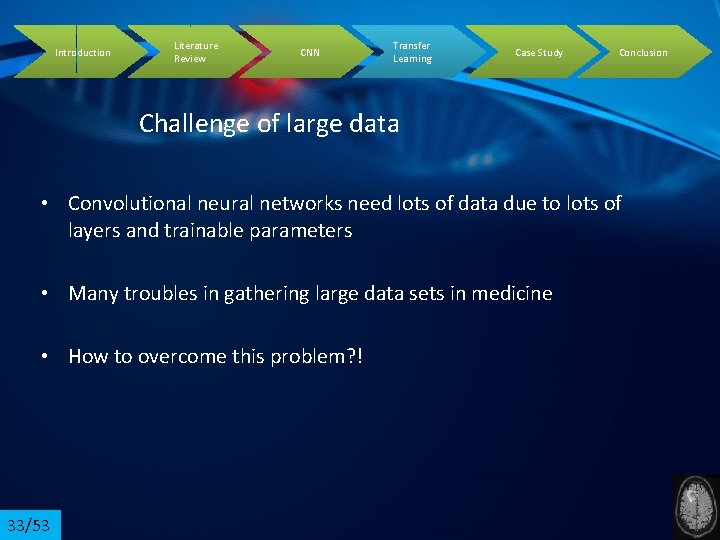

Challenge of Large Data

- Convolutional neural networks need lots of data because they have many layers and trainable parameters.
- Gathering large data sets in medicine is difficult.
- How can this problem be overcome?

Challenge of Large Data

- Can we imitate this picture?

Transfer Learning

- Humans have an inherent ability to transfer knowledge across tasks.
- The knowledge we acquire while learning one task is reused in the same way to solve related tasks.
- The more related the tasks, the easier the transfer.

Challenge of Large Data

- Know how to ride a motorbike → learn how to drive a car
- Know how to play classical piano → learn how to play jazz piano
- Know math and statistics → learn machine learning

Challenge of Large Data

- Transfer learning is a machine learning method in which a model developed for one task is reused as the starting point for a model on a second task.
- ImageNet database: millions of images from 1,000 classes.

![AlexNet (Krizhevsky et al. 2012)](https://slidetodoc.com/presentation_image_h2/96017af25c46bf17677bf4f3db19ac57/image-38.jpg)

AlexNet [Krizhevsky et al. 2012]

![VGGNet (Simonyan and Zisserman 2014)](https://slidetodoc.com/presentation_image_h2/96017af25c46bf17677bf4f3db19ac57/image-39.jpg)

VGGNet [Simonyan and Zisserman 2014]

![GoogLeNet (Szegedy et al. 2014)](https://slidetodoc.com/presentation_image_h2/96017af25c46bf17677bf4f3db19ac57/image-40.jpg)

GoogLeNet [Szegedy et al. 2014]

- Inception module

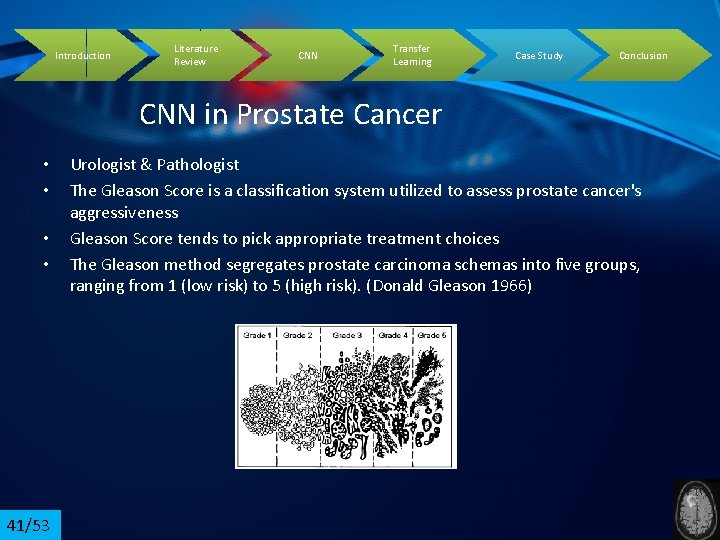

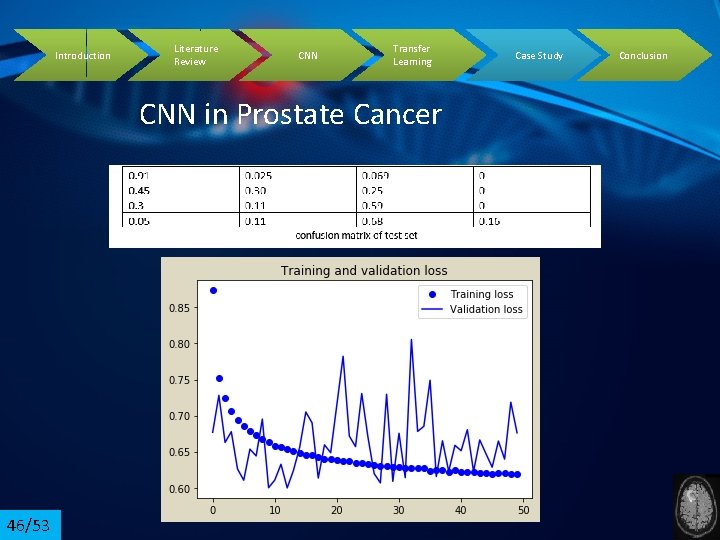

CNN in Prostate Cancer

- Urologist and pathologist
- The Gleason score is a grading system used to assess the aggressiveness of prostate cancer.
- The Gleason score helps in choosing appropriate treatment options.
- The Gleason system classifies prostate carcinoma patterns into five grades, from 1 (low risk) to 5 (high risk) (Donald Gleason, 1966).

CNN in Prostate Cancer

- The score is the sum of the grade of the most common pattern and the grade of the highest secondary pattern.
- The Gleason score therefore ranges from 2 to 10.
- In clinical practice, Gleason scores range from 6 to 10.
- The problem: inter-observer variability.
- 235 images; high resolution: 5120*3
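The scoring rule is simple arithmetic: two pattern grades, each from 1 to 5, are added, which is why the score ranges from 2 to 10. A minimal sketch with input validation (the function name is hypothetical):

```python
def gleason_score(primary: int, secondary: int) -> int:
    """Gleason score = primary pattern grade + secondary pattern grade (each 1-5)."""
    for grade in (primary, secondary):
        if not 1 <= grade <= 5:
            raise ValueError(f"Gleason pattern grade must be 1-5, got {grade}")
    return primary + secondary


print(gleason_score(3, 4))  # 7
print(gleason_score(4, 3))  # 7
```

The sum hides the order (3 + 4 and 4 + 3 both give 7), which is why reports usually list both patterns.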

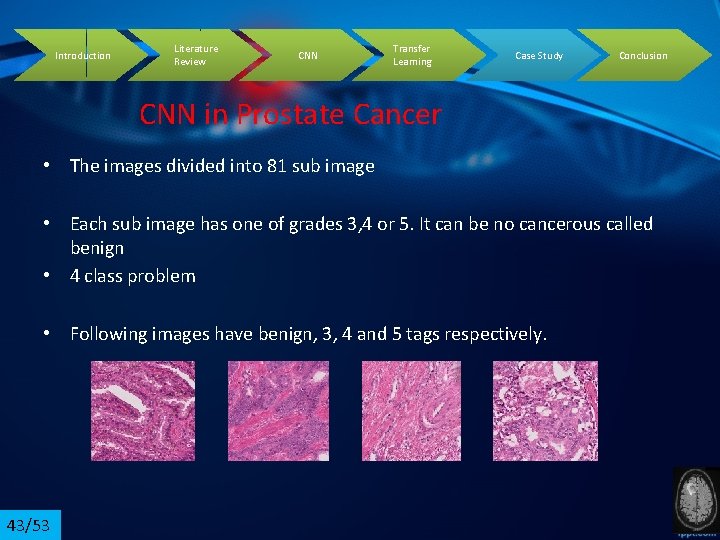

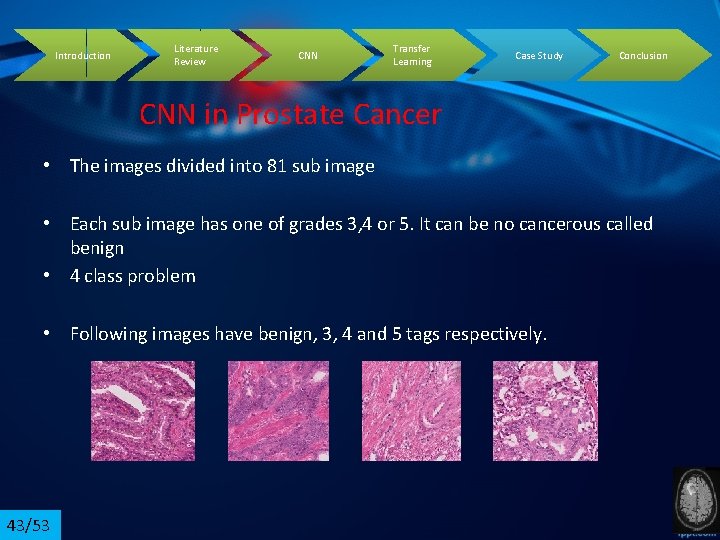

CNN in Prostate Cancer

- Each image was divided into 81 sub-images.
- Each sub-image has one of the grades 3, 4 or 5, or is non-cancerous (benign).
- This gives a 4-class problem.
- The following images are labeled benign, 3, 4 and 5, respectively.
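Splitting each image into 81 sub-images can be sketched as a tiling step. This assumes a 9 × 9 grid of equal, non-overlapping tiles (81 = 9 × 9; the slides do not state the grid) and uses a hypothetical image size, since the original resolution is not fully recoverable from the slide text. Each tile would then receive one of the four labels.

```python
import numpy as np


def split_into_tiles(image, grid=9):
    """Split an (H, W) or (H, W, C) image into grid x grid non-overlapping tiles,
    returned in row-major order. Rows/columns that do not divide evenly are cropped."""
    h, w = image.shape[:2]
    th, tw = h // grid, w // grid          # tile height and width
    image = image[:th * grid, :tw * grid]  # drop any remainder
    tiles = [image[r * th:(r + 1) * th, c * tw:(c + 1) * tw]
             for r in range(grid) for c in range(grid)]
    return np.stack(tiles)


# Hypothetical 900 x 900 RGB image
tiles = split_into_tiles(np.zeros((900, 900, 3), dtype=np.uint8))
print(tiles.shape)  # (81, 100, 100, 3)
```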

CNN in Prostate Cancer

- Transfer learning was applied with MobileNet, VGG-16, ResNet, GoogLeNet and DenseNet.
- Best performance: VGG-16.
- The input is a 224 × 224 RGB image.
- The fully connected layers of VGG-16 were removed.
- A max pooling layer with kernel size 4 × 4 and stride 4 was added to compress the features.
- A softmax layer with 4 neurons was used as the classifier.
- RMSprop was selected as the optimizer, with learning rate 0.001 and a cross-entropy loss function.
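The shapes through this transfer-learning head can be checked by hand. VGG-16's 3 × 3 convolutions preserve spatial size, and its five 2 × 2, stride-2 pooling layers reduce 224 to 7, with 512 channels in the last block. The sketch below assumes the added 4 × 4, stride-4 pooling uses no padding (the slides do not say; with "same" padding the output would be 2 × 2 instead of 1 × 1).

```python
def pool_out(n: int, kernel: int, stride: int) -> int:
    """Output side length of a max-pooling layer without padding."""
    return (n - kernel) // stride + 1


side = 224  # input: 224 x 224 RGB image
# VGG-16's convolutional base: 'same'-padded 3x3 convolutions keep the size,
# and each of its five 2x2, stride-2 max-pooling layers halves it.
for _ in range(5):
    side = pool_out(side, 2, 2)
channels = 512               # filters in VGG-16's last convolutional block
base_side = side             # 7
side = pool_out(side, 4, 4)  # added max pooling: kernel 4 x 4, stride 4
n_features = side * side * channels
n_classes = 4                # benign, grade 3, grade 4, grade 5
head_params = n_features * n_classes + n_classes  # softmax layer: weights + biases

print(base_side, side, n_features, head_params)  # 7 1 512 2052
```

In Keras, for example, this head could be expressed as `tf.keras.applications.VGG16(include_top=False, weights="imagenet", input_shape=(224, 224, 3))` followed by `MaxPooling2D(pool_size=4, strides=4)`, `Flatten()` and `Dense(4, activation="softmax")`, compiled with `RMSprop(learning_rate=0.001)` and categorical cross-entropy. The slides do not say which framework the authors used or whether the convolutional base was frozen.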

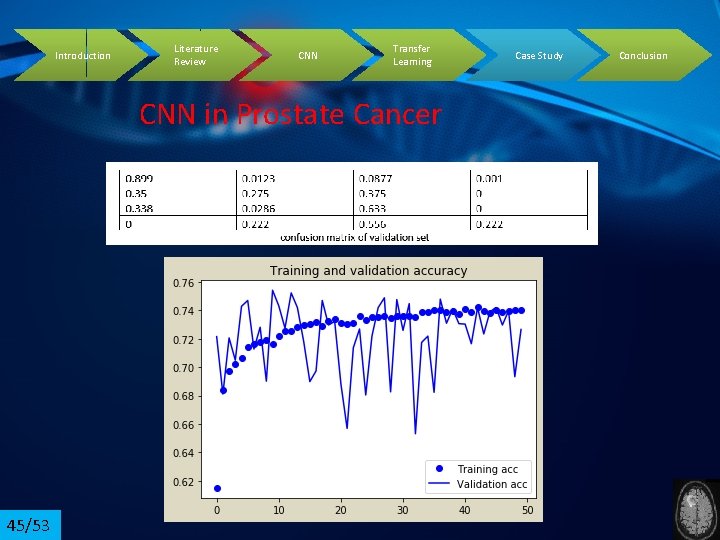

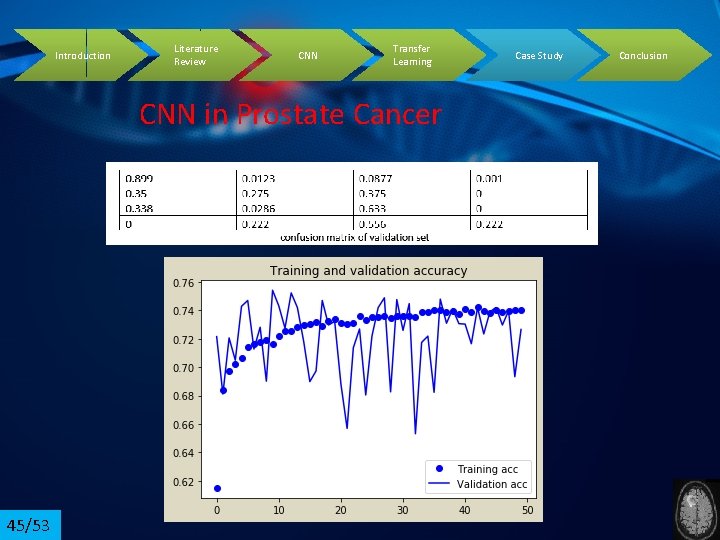

CNN in Prostate Cancer

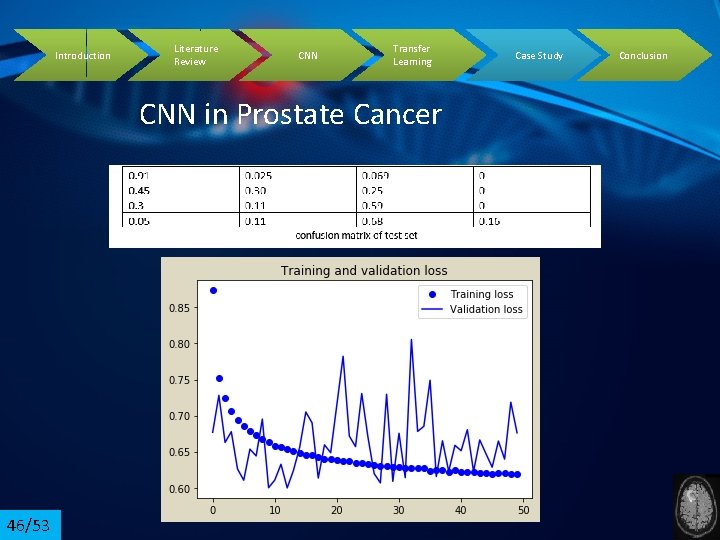

CNN in Prostate Cancer

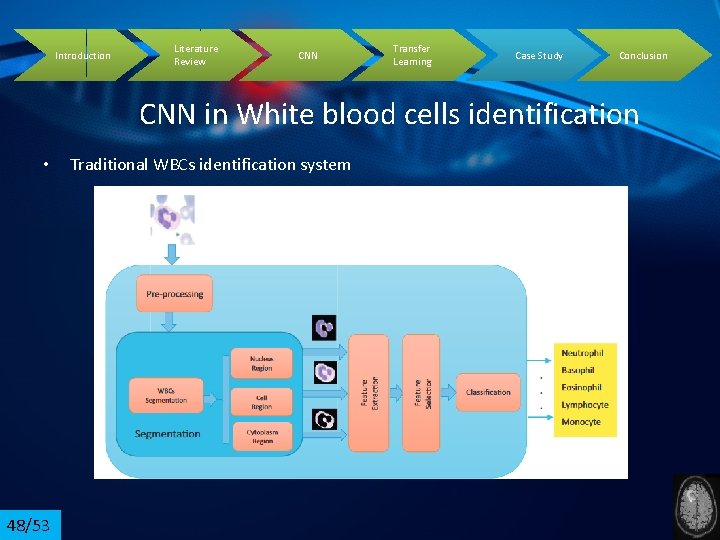

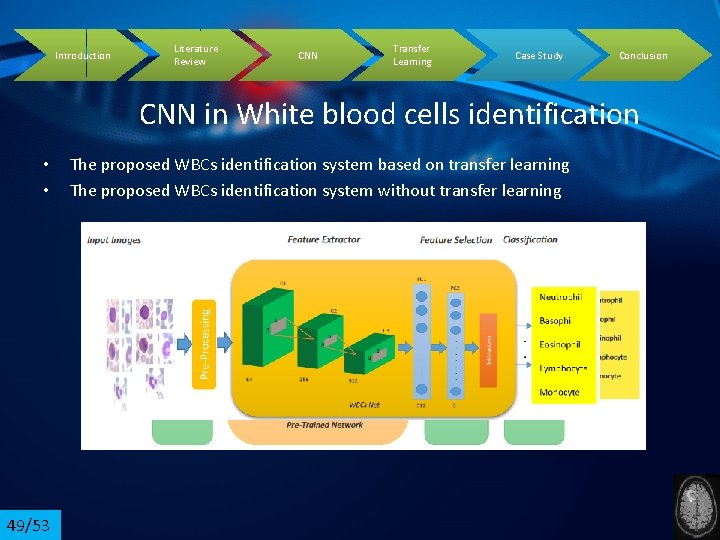

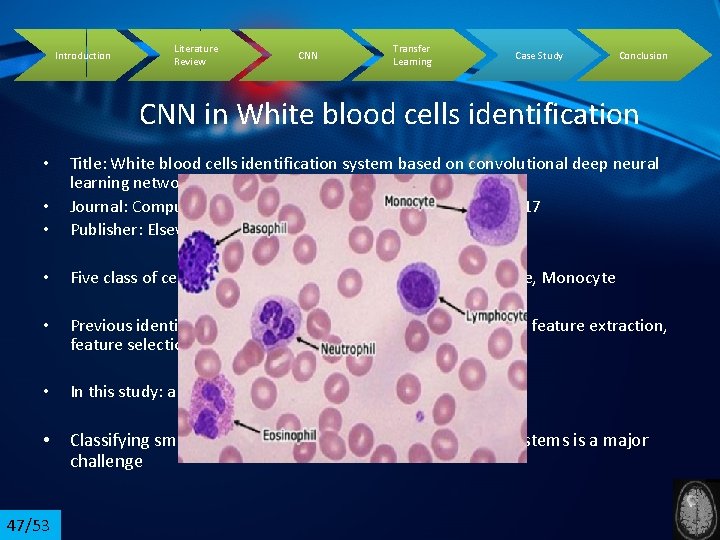

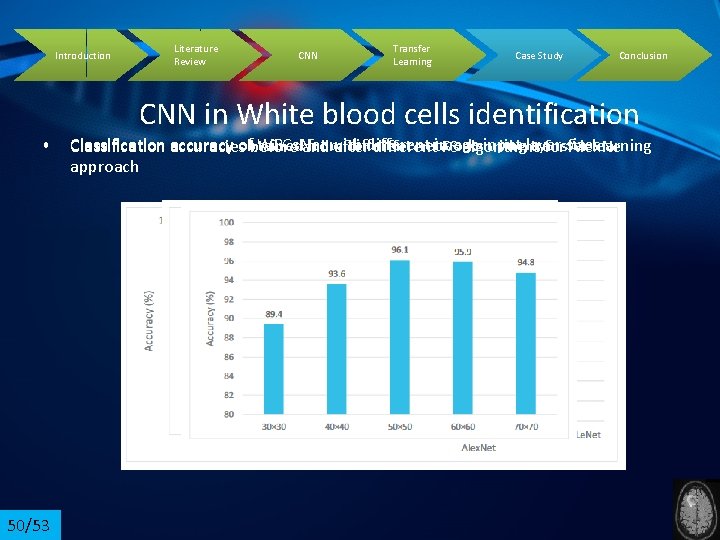

CNN in White Blood Cells Identification

- Title: White blood cells identification system based on convolutional deep neural learning networks
- Journal: Computer Methods and Programs in Biomedicine, 2017; publisher: Elsevier
- Five classes of cells: neutrophil, basophil, eosinophil, lymphocyte, monocyte
- Previous identification systems: pre-processing, segmentation, feature extraction, feature selection and classification
- In this study: a novel identification system based on CNN
- Classifying small, limited data sets with deep learning systems is a major challenge.

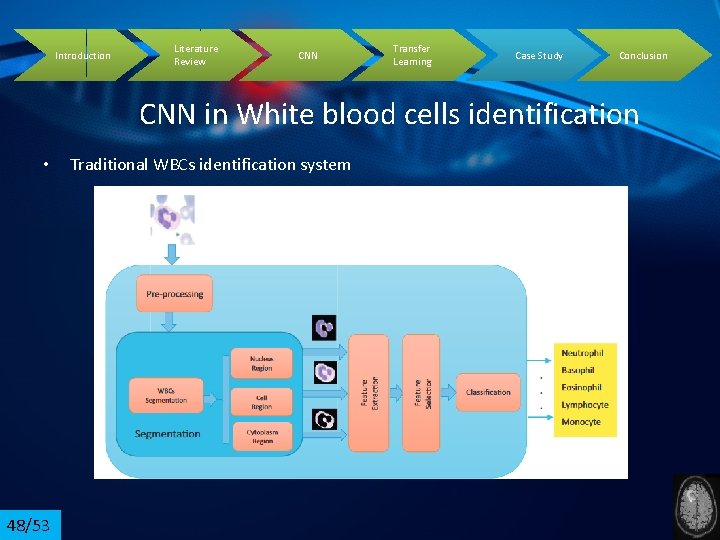

CNN in White Blood Cells Identification

- Traditional WBC identification system

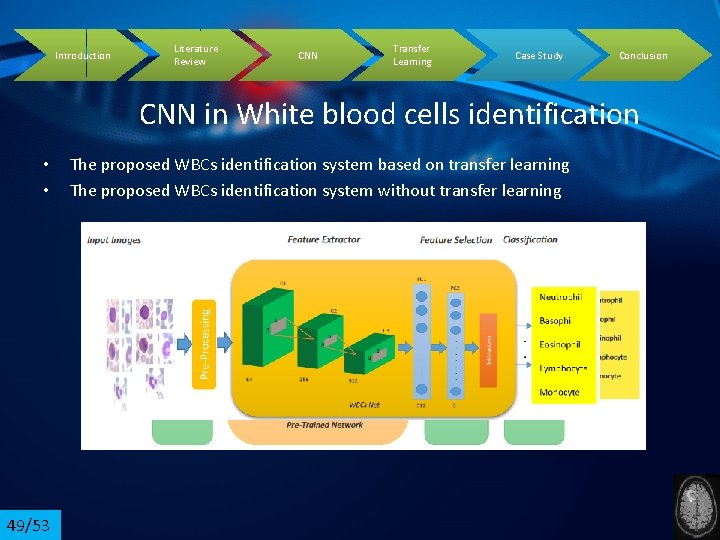

CNN in White Blood Cells Identification

- The proposed WBC identification system based on transfer learning
- The proposed WBC identification system without transfer learning

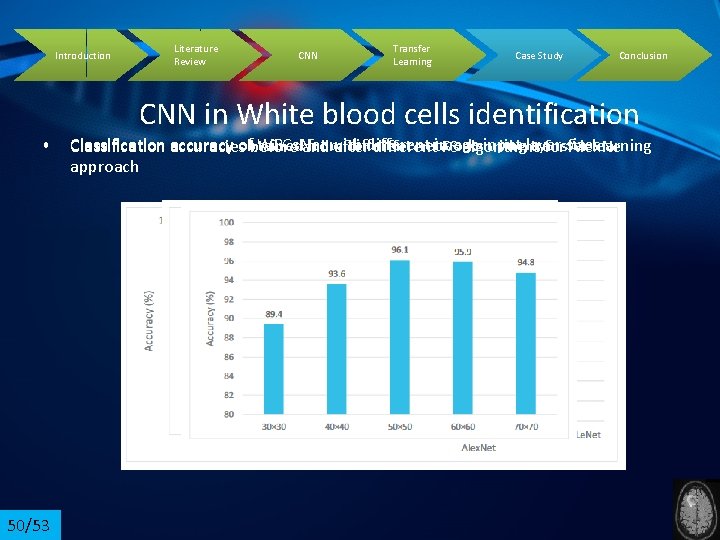

CNN in White Blood Cells Identification

- [Figure: classification accuracy of WBCsNet with different image input sizes]
- [Figure: classification accuracies of layers obtained from different networks (including AlexNet) using the transfer learning approach, before and after different feature selection (FS) algorithms]

Advantages:

1) Automatic feature learning
2) Multiple layers
3) High accuracy
4) High generalization
5) Available software and hardware
6) Potential for greater capability

Disadvantages:

1) Weak theoretical foundation
2) High computational cost
3) Need for large data sets
4) Hyperparameter tuning
5) Training problems

![References](https://slidetodoc.com/presentation_image_h2/96017af25c46bf17677bf4f3db19ac57/image-52.jpg)

References

- [1] Krizhevsky A, Sutskever I, Hinton GE. ImageNet classification with deep convolutional neural networks. In: Advances in Neural Information Processing Systems 2012 (pp. 1097-1105).
- [2] Simonyan K, Zisserman A. Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556. 2014 Sep 4.
- [3] Szegedy C, Liu W, Jia Y, Sermanet P, Reed S, Anguelov D, Erhan D, Vanhoucke V, Rabinovich A. Going deeper with convolutions. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition 2015 (pp. 1-9).
- [4] Yu Y, Wang J, Ng CW, Ma Y, Mo S, Fong EL, Xing J, Song Z, Xie Y, Si K, Wee A. Deep learning enables automated scoring of liver fibrosis stages. Scientific Reports. 2018 Oct 30; 8(1).

- [5] Bejnordi BE, Mullooly M, Pfeiffer RM, Fan S, Vacek PM, Weaver DL, Herschorn S, Brinton LA, van Ginneken B, Karssemeijer N, Beck AH. Using deep convolutional neural networks to identify and classify tumor-associated stroma in diagnostic breast biopsies. Modern Pathology. 2018 Oct; 31(10): 1502-12.
- [6] Zyad MA. Classification of brain tumor from magnetic resonance imaging using convolutional neural networks. International Journal of Advanced Science and Technology. 2019.

Thank you for your attention.