
IV. Convolutional Codes

Introduction

Block codes: codewords are produced on a block-by-block basis, so the encoder must buffer an entire block before generating the associated codeword.

Some applications have bits arriving serially rather than in large blocks. Convolutional codes operate on the incoming message sequence continuously, in a serial manner.

© Tallal Elshabrawy

Convolutional Codes Specification

A convolutional code is specified by three parameters (n, k, K), where:

- k/n is the coding rate and determines the number of data bits per coded bit
- K is the constraint length of the encoder, where the encoder has K-1 memory elements
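As a quick check of these definitions, the sketch below computes the rate and memory size for a given (n, k, K). The helper name `describe_code` is illustrative only, and the state count 2^(K-1) is an assumption that holds for a binary code with k = 1, such as the rate ½ example used in these slides.

```python
from fractions import Fraction

def describe_code(n: int, k: int, K: int) -> dict:
    """Summarise an (n, k, K) convolutional code (illustrative helper)."""
    return {
        "rate": Fraction(k, n),       # data bits per coded bit
        "memory_elements": K - 1,     # shift-register cells
        "states": 2 ** (K - 1),       # assumes binary input with k = 1
    }

params = describe_code(n=2, k=1, K=3)
print(params["rate"], params["memory_elements"], params["states"])  # 1/2 2 4
```

With (n, k, K) = (2, 1, 3) this gives two memory elements and four states, which agrees with the four-state diagram in the slides.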

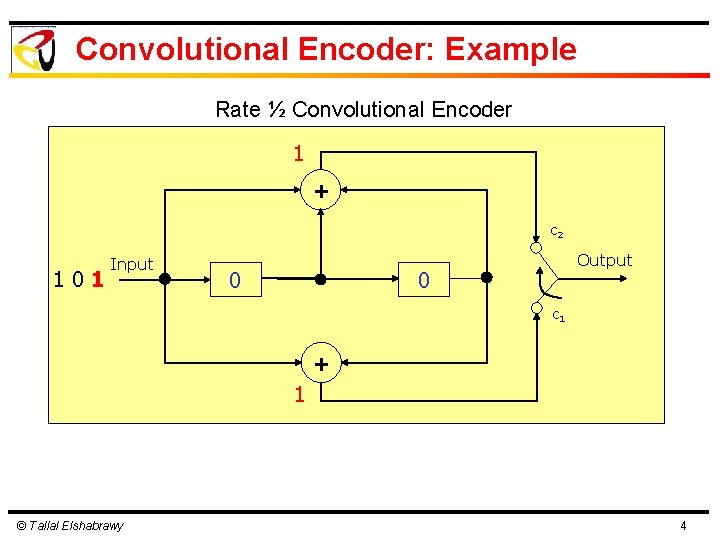

Convolutional Encoder: Example

Rate ½ convolutional encoder.

[Figure: shift-register encoder with two modulo-2 adders producing the outputs c1 and c2. Input sequence: 101.]

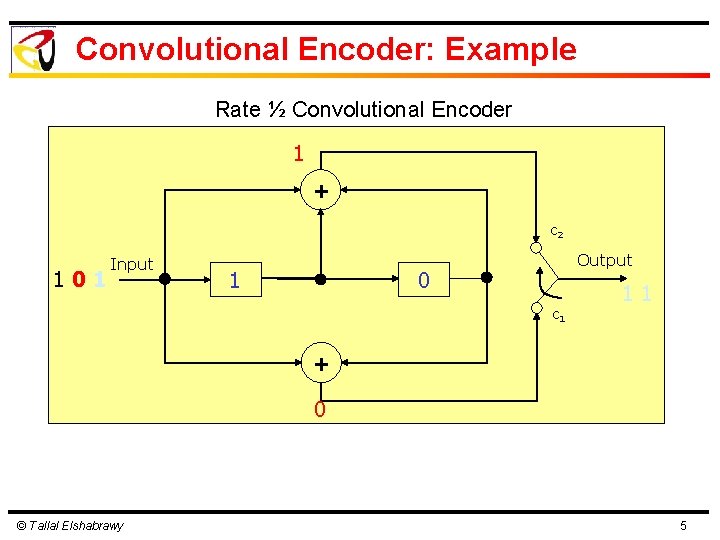

Convolutional Encoder: Example

[Figure: rate ½ encoder, input 101. Output so far: 11.]

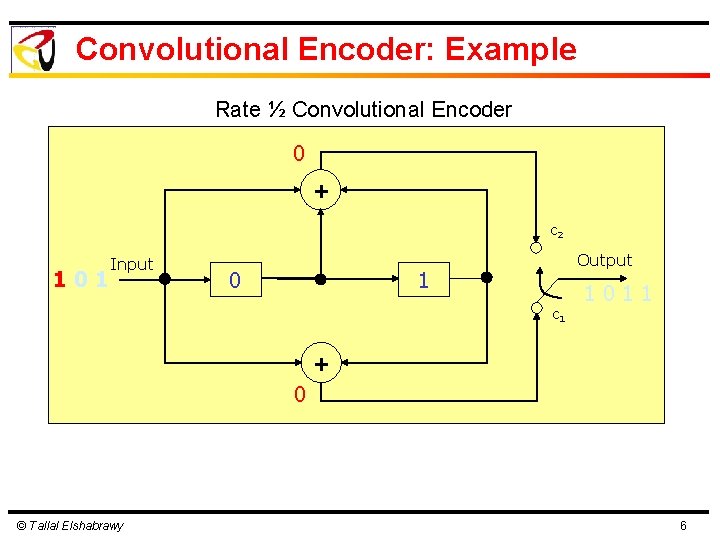

Convolutional Encoder: Example

[Figure: rate ½ encoder, input 101. Output so far: 1011.]

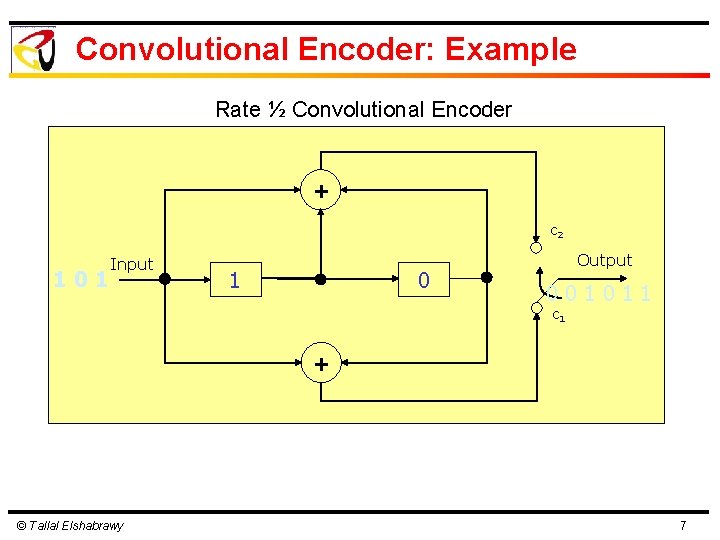

Convolutional Encoder: Example

[Figure: rate ½ encoder after the full input 101. Output: 001011.]
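The encoding steps can be sketched as a two-cell shift register with two modulo-2 adders. The tap connections below are an assumption, chosen so that the branch outputs agree with the input/output labels of the state diagram in these slides. The text does not show which output is c1 and which is c2, so the code calls them `first` and `second`. The sketch also assumes the slide writes the output stream with the earliest bits on the right.

```python
from typing import List, Sequence

def conv_encode(bits: Sequence[int]) -> List[int]:
    """Rate-1/2, K = 3 encoder sketch; register (b0, b1) starts at (0, 0)."""
    b0 = b1 = 0
    out: List[int] = []
    for u in bits:
        first = u ^ b1          # assumed taps: input and oldest cell
        second = u ^ b0 ^ b1    # assumed taps: input and both cells
        out += [first, second]
        b0, b1 = u, b0          # shift: new bit enters b0, old b0 moves to b1
    return out

emitted = conv_encode([1, 0, 1])
print(emitted)                               # [1, 1, 0, 1, 0, 0]
print("".join(map(str, reversed(emitted))))  # 001011 (earliest bit on the right)
```

Under these assumptions the encoder emits the pairs 11, 01, 00 in time order. Written right to left, that is the 001011 shown on the slide.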

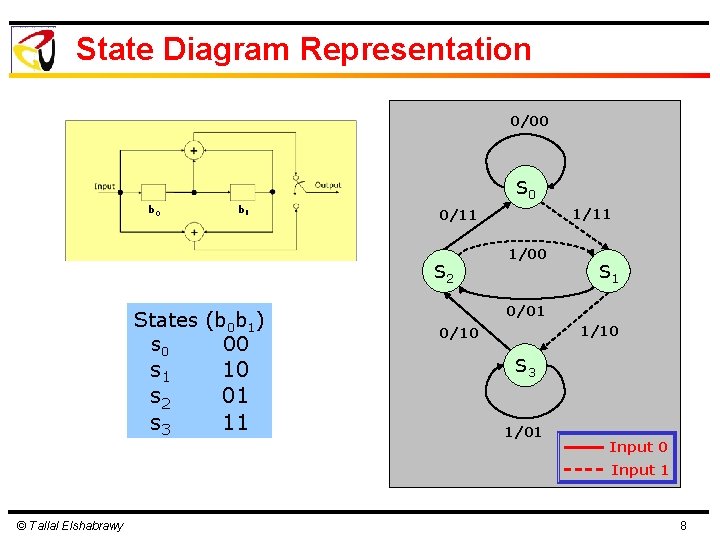

State Diagram Representation

States (b0 b1): S0 = 00, S1 = 10, S2 = 01, S3 = 11.

Branch labels (input/output) leaving each state:

- S0: 0/00, 1/11
- S1: 0/01, 1/10
- S2: 0/11, 1/00
- S3: 0/10, 1/01
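A state diagram like this one can be generated by enumerating every (state, input) pair of the encoder. The sketch below does that under two assumptions that the slide text does not state: the new input bit shifts into b0 while b0 moves to b1, and the two outputs are u⊕b1 and u⊕b0⊕b1. The names `step` and `transition_table` are illustrative.

```python
from itertools import product

STATE_NAMES = {(0, 0): "S0", (1, 0): "S1", (0, 1): "S2", (1, 1): "S3"}

def step(state, u):
    """Return (next_state, output_pair) for input bit u."""
    b0, b1 = state
    output = (u ^ b1, u ^ b0 ^ b1)   # assumed adder connections
    return (u, b0), output           # assumed shift direction

def transition_table():
    table = {}
    for state, u in product(STATE_NAMES, (0, 1)):
        nxt, out = step(state, u)
        table[(STATE_NAMES[state], u)] = (STATE_NAMES[nxt], f"{out[0]}{out[1]}")
    return table

for (state, u), (nxt, label) in transition_table().items():
    print(f"{state} --{u}/{label}--> {nxt}")
```

The generated labels match the eight input/output labels listed for S0 to S3. The destination states come from the assumed shift direction.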

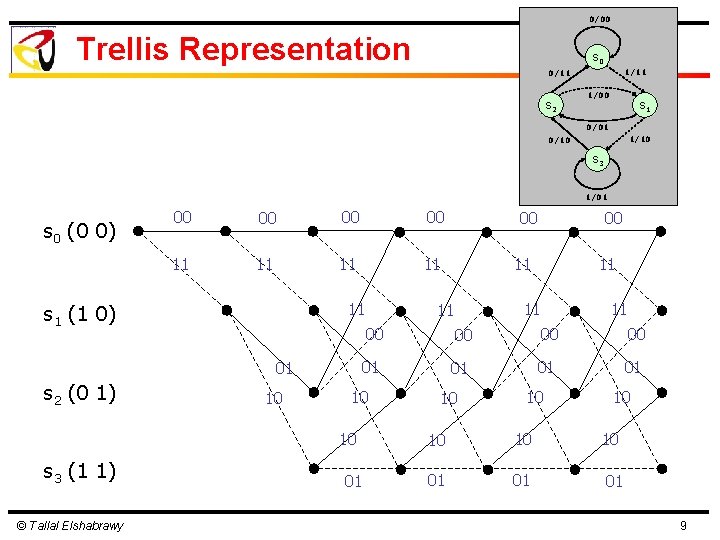

Trellis Representation

[Figure: the state diagram beside the corresponding trellis. Trellis rows are the states s0 (0 0), s1 (1 0), s2 (0 1), s3 (1 1); each branch is labeled with its two output bits.]

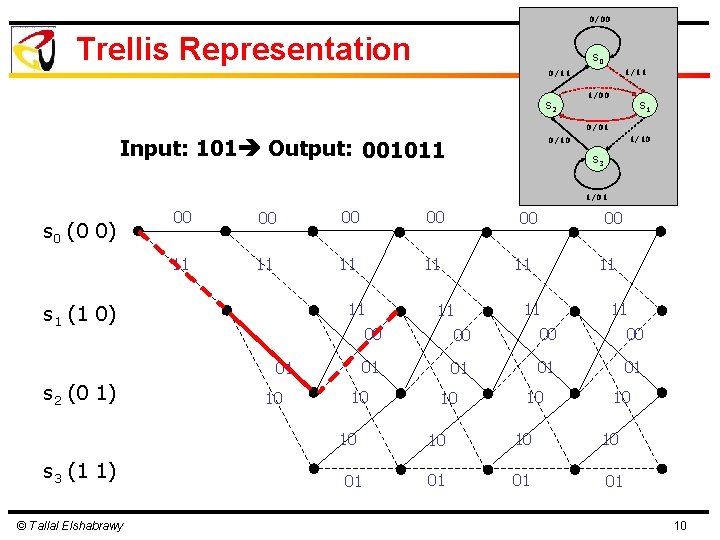

Trellis Representation

Input: 101, output: 001011.

[Figure: the state diagram and trellis, rows s0 (0 0), s1 (1 0), s2 (0 1), s3 (1 1), shown for this input.]
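Encoding with a trellis amounts to following one branch per input bit and reading off its label. The sketch below traces the input 101 through a table that copies the input/output labels of the state diagram. The destination state of each branch is an assumption (standard shift-register behaviour), and `trace_path` is an illustrative name.

```python
# (state, input bit) -> (next state, branch output label)
TRELLIS = {
    ("S0", 0): ("S0", "00"), ("S0", 1): ("S1", "11"),
    ("S1", 0): ("S2", "01"), ("S1", 1): ("S3", "10"),
    ("S2", 0): ("S0", "11"), ("S2", 1): ("S1", "00"),
    ("S3", 0): ("S2", "10"), ("S3", 1): ("S3", "01"),
}

def trace_path(bits, start="S0"):
    """Follow the trellis from `start`, returning visited states and branch labels."""
    state, states, labels = start, [start], []
    for u in bits:
        state, label = TRELLIS[(state, u)]
        states.append(state)
        labels.append(label)
    return states, labels

states, labels = trace_path([1, 0, 1])
print(" -> ".join(states))  # S0 -> S1 -> S2 -> S1
print(" ".join(labels))     # 11 01 00
```

Read with the earliest bit on the right, the slide's output 001011 corresponds to these branch labels 11, 01, 00. That reading is an interpretation, not something the slides state.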

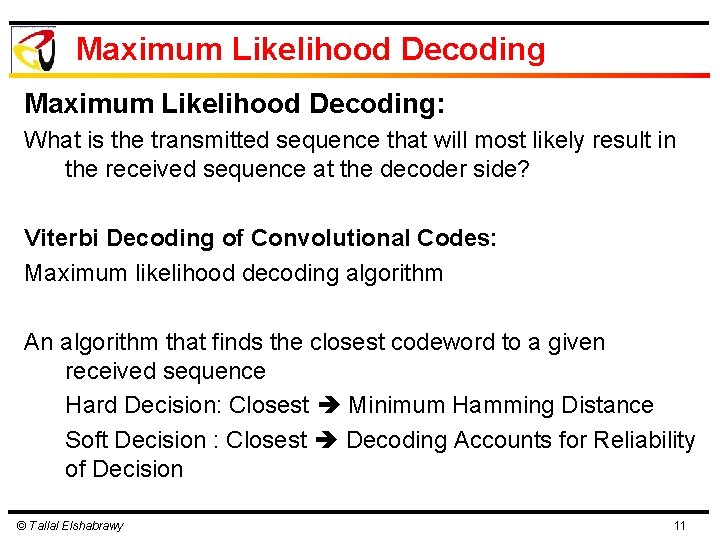

Maximum Likelihood Decoding

What is the transmitted sequence that will most likely result in the received sequence at the decoder side?

Viterbi Decoding of Convolutional Codes

- Maximum likelihood decoding algorithm
- An algorithm that finds the closest codeword to a given received sequence
- Hard decision: closest means minimum Hamming distance
- Soft decision: closest accounts for the reliability of each decision
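To make "closest codeword" concrete, the sketch below performs hard-decision maximum likelihood decoding by brute force: it encodes every possible message and keeps the one whose codeword has the smallest Hamming distance to the received bits. The encoder taps are assumed (chosen to agree with the state-diagram labels), and the function names are illustrative. The Viterbi algorithm is not implemented here; this is only the exhaustive search it replaces.

```python
from itertools import product

def encode(bits):
    """Rate-1/2, K = 3 encoder with assumed taps, starting in state (0, 0)."""
    b0 = b1 = 0
    out = []
    for u in bits:
        out += [u ^ b1, u ^ b0 ^ b1]
        b0, b1 = u, b0
    return out

def hamming(a, b):
    """Number of positions in which two equal-length bit lists differ."""
    return sum(x != y for x, y in zip(a, b))

def ml_decode_exhaustive(received, length):
    """Try all 2**length messages and return the one with the closest codeword."""
    return min(product((0, 1), repeat=length),
               key=lambda msg: hamming(encode(msg), received))

received = [0, 1, 0, 1, 0, 0]   # codeword of 1 0 1 with its first bit flipped
print(ml_decode_exhaustive(received, 3))  # (1, 0, 1)
```

The search cost doubles with every extra message bit, which is why a trellis-based algorithm is preferred in practice.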

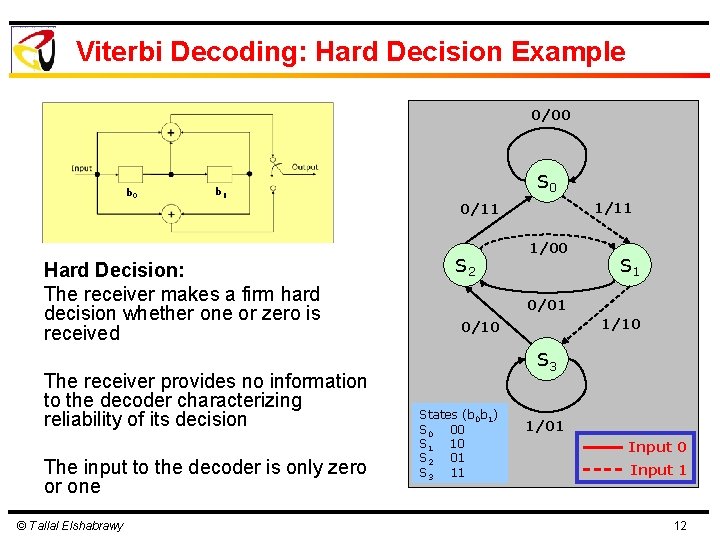

Viterbi Decoding: Hard Decision Example

Hard decision:

- The receiver makes a firm (hard) decision on whether a one or a zero was received
- The receiver provides no information to the decoder characterizing the reliability of its decision
- The input to the decoder is only zero or one

[Figure: state diagram of the rate ½ encoder with states (b0 b1): S0 = 00, S1 = 10, S2 = 01, S3 = 11.]
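A hard decision can be sketched as a simple threshold on each received sample. The signal mapping below (bit 0 sent as +1.0, bit 1 sent as -1.0, threshold at 0) is an assumption for illustration; the slides do not specify a modulation.

```python
from typing import List, Sequence

def hard_decision(samples: Sequence[float], threshold: float = 0.0) -> List[int]:
    """Map each noisy sample to a bit, discarding its distance from the threshold."""
    # Assumed mapping: bit 0 is sent as +1.0 and bit 1 as -1.0
    return [0 if s > threshold else 1 for s in samples]

samples = [0.9, -0.1, -1.2, 0.05]
print(hard_decision(samples))   # [0, 1, 1, 0]
```

The samples -0.1 and 0.05 sit very close to the threshold, yet the decoder receives them as firm bits. That discarded reliability information is what a soft-decision decoder would use.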

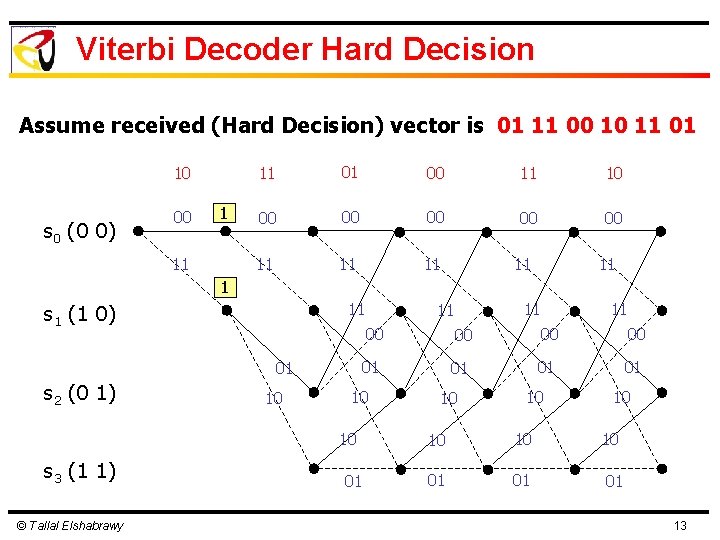

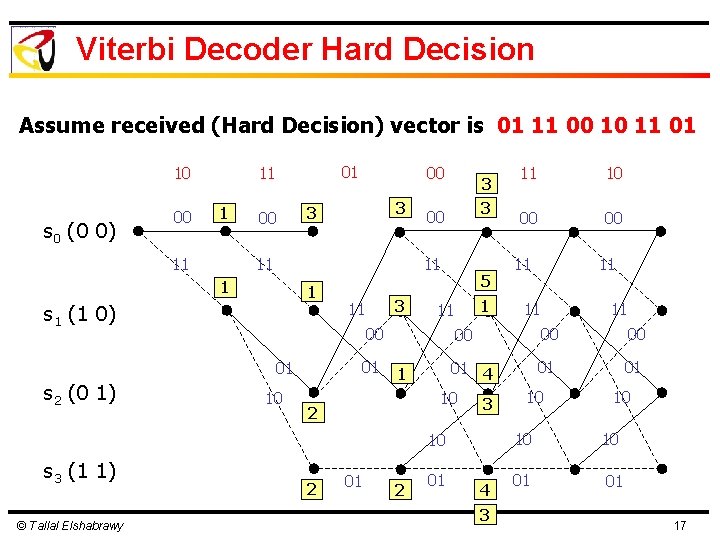

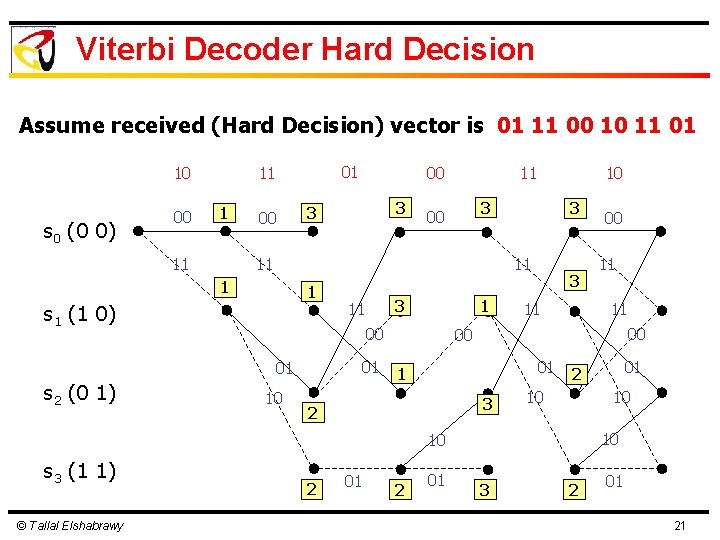

Viterbi Decoder Hard Decision

Assume the received (hard-decision) vector is 01 11 00 10 11 01 10.

[Figure: four-state trellis with rows s0 (0 0), s1 (1 0), s2 (0 1), s3 (1 1), annotated with path metrics.]

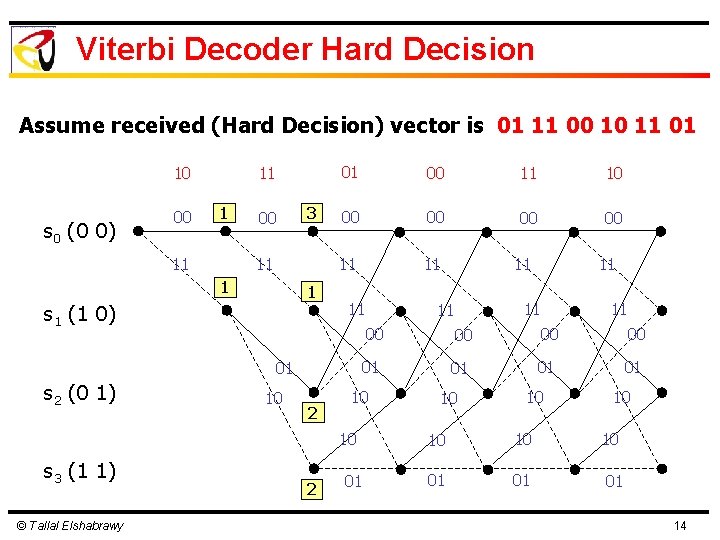

Viterbi Decoder Hard Decision

[Figure: trellis for the received vector 01 11 00 10 11 01 10. Path metrics after the first received pair: 1 at s0 and 1 at s1. After the second pair: 3 at s0, 1 at s1, 2 at s2, 2 at s3.]

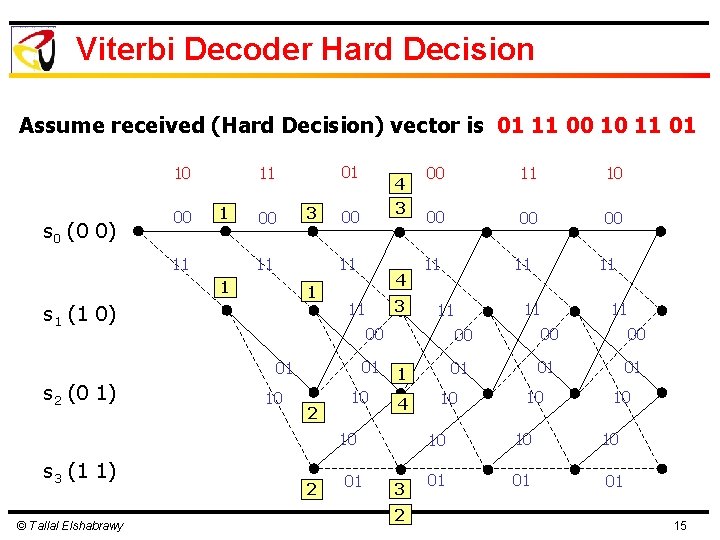


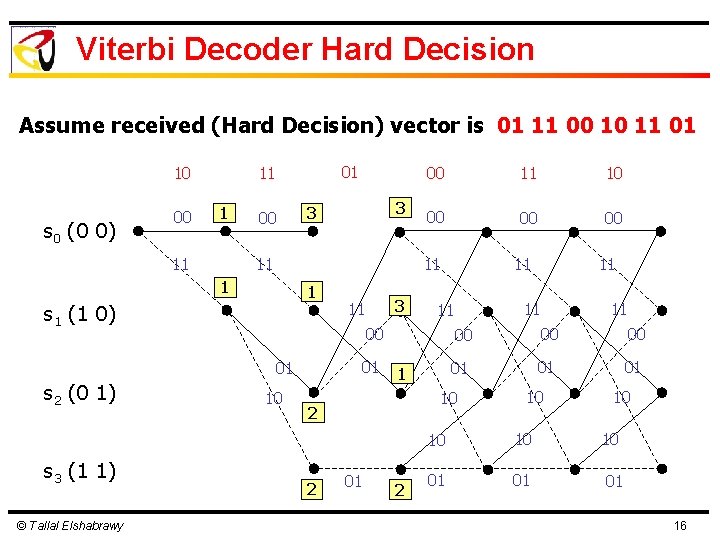


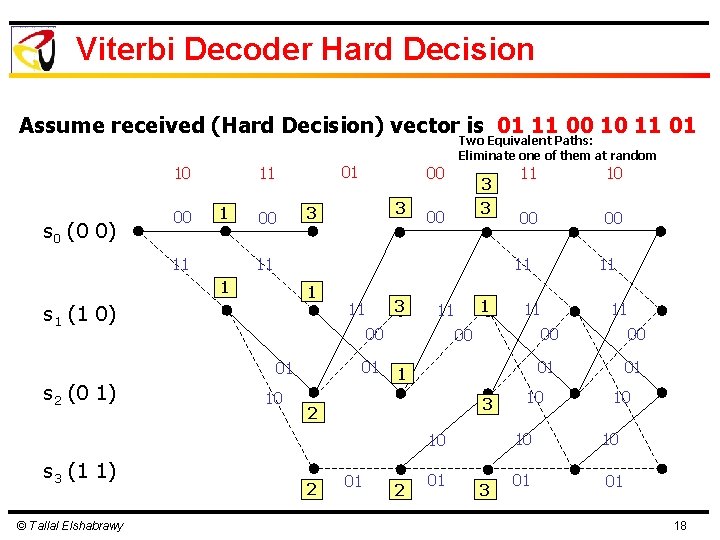

Viterbi Decoder Hard Decision

Two equivalent paths: eliminate one of them at random.

[Figure: trellis for the received vector 01 11 00 10 11 01 10, at a step where two paths entering the same state have equal path metrics.]

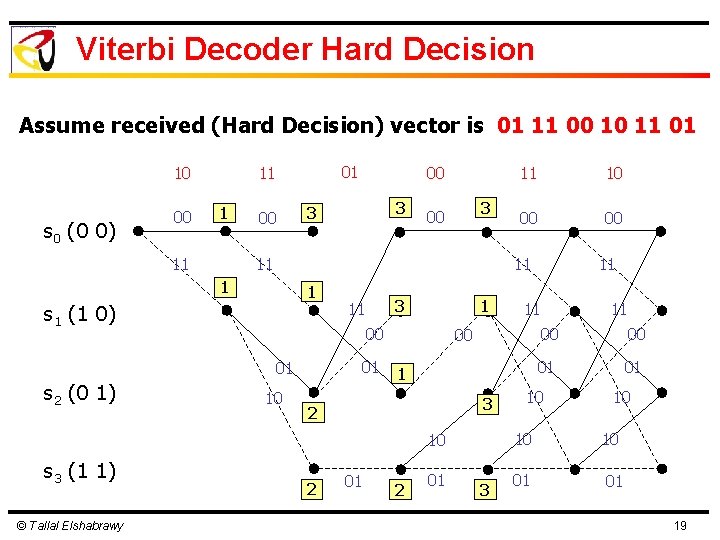


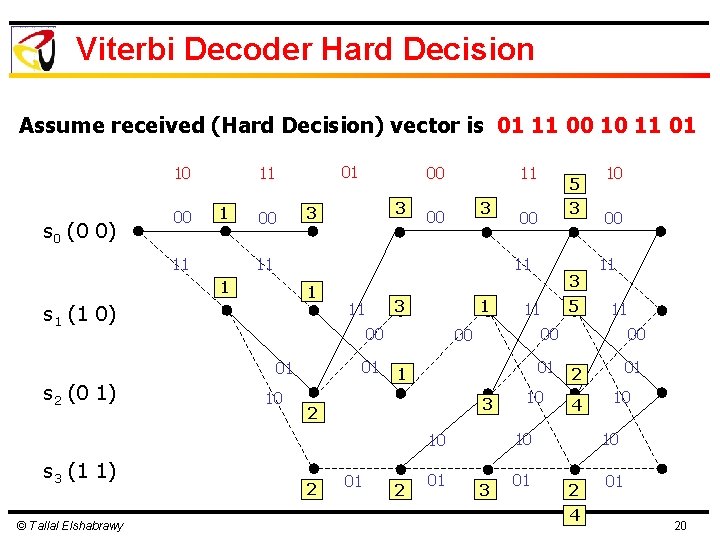

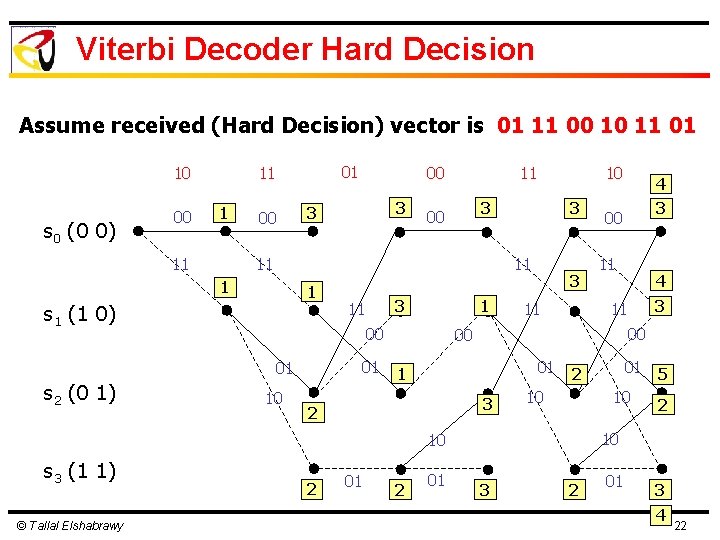


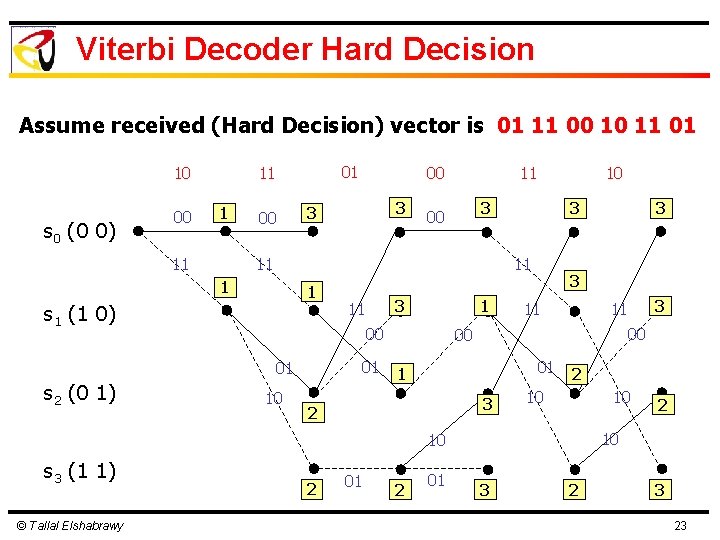


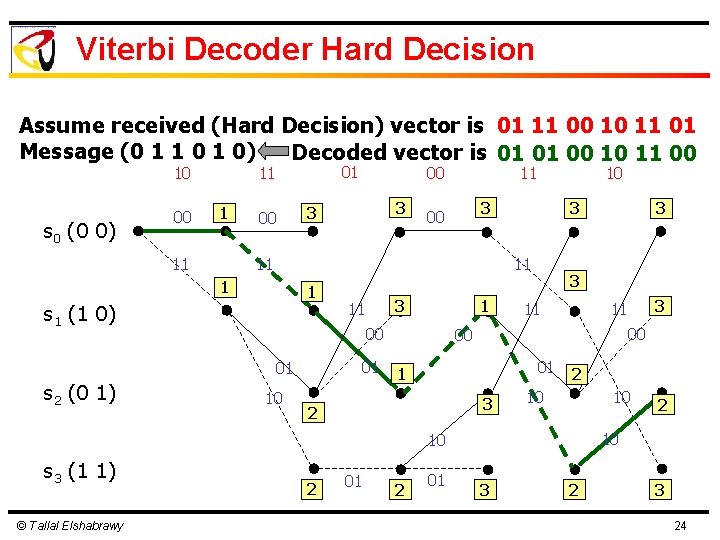

Viterbi Decoder Hard Decision

Assume the received (hard-decision) vector is 01 11 00 10 11 01

Message (0 1 1 0)

Decoded vector is 01 01 00 10 11 00 10

[Figure: trellis with the surviving path and final path metrics.]
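The add-compare-select procedure walked through on the trellis can be sketched as follows. This is a minimal hard-decision Viterbi decoder under stated assumptions, not the slides' own implementation. The branch labels are copied from the state diagram; the destination states are assumed from standard shift-register behaviour; decoding starts in S0; the path with the smallest final metric is returned whatever its end state. Where the slides eliminate one of two equivalent paths at random, this sketch deterministically keeps the first one it finds.

```python
# (state, input bit) -> (next state, output pair); states 0..3 stand for S0..S3
TRELLIS = {
    (0, 0): (0, (0, 0)), (0, 1): (1, (1, 1)),
    (1, 0): (2, (0, 1)), (1, 1): (3, (1, 0)),
    (2, 0): (0, (1, 1)), (2, 1): (1, (0, 0)),
    (3, 0): (2, (1, 0)), (3, 1): (3, (0, 1)),
}
INF = float("inf")

def encode(bits):
    """Encode by walking the trellis from S0; returns a list of output pairs."""
    state, pairs = 0, []
    for u in bits:
        state, pair = TRELLIS[(state, u)]
        pairs.append(pair)
    return pairs

def viterbi_hard(received):
    """Return (decoded bits, Hamming distance of the best path)."""
    metrics = [0, INF, INF, INF]      # only S0 is reachable at the start
    paths = [[], [], [], []]
    for r in received:
        new_metrics, new_paths = [INF] * 4, [None] * 4
        for (state, bit), (nxt, out) in TRELLIS.items():
            if metrics[state] == INF:
                continue              # state not reachable yet
            # add: branch metric is the Hamming distance to the received pair
            cand = metrics[state] + (out[0] != r[0]) + (out[1] != r[1])
            # compare/select: keep the smaller metric; ties keep the first path
            if cand < new_metrics[nxt]:
                new_metrics[nxt] = cand
                new_paths[nxt] = paths[state] + [bit]
        metrics, paths = new_metrics, new_paths
    best = min(range(4), key=lambda s: metrics[s])
    return paths[best], metrics[best]

msg = [1, 0, 1, 1, 0, 0]
noisy = encode(msg)
noisy[1] = (1, 1)                     # flip one bit of the second pair (was (0, 1))
print(viterbi_hard(noisy))            # ([1, 0, 1, 1, 0, 0], 1)
```

The received vector from the slides can be passed in the same way, as a list of pairs such as `[(0, 1), (1, 1), (0, 0), ...]`.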

Puncturing of Convolutional Codes

- Puncturing increases the code rate by removing some of the outputs of the convolutional encoder
- The puncturing rule selects the outputs that are eliminated
- A punctured convolutional code is constructed so that its trellis maintains the same state and transition structure as the base code
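The rate increase follows from counting bits over one period of the puncturing rule. The sketch below does that count. The representation of the rule as a keep/drop pattern per trellis stage, and the specific pattern used, are assumptions for illustration.

```python
from fractions import Fraction

def punctured_rate(base_rate: Fraction, keep_pattern) -> Fraction:
    """keep_pattern[i][j] is 1 if output j of trellis stage i is transmitted."""
    stages = len(keep_pattern)
    outputs_per_stage = len(keep_pattern[0])
    data_bits = base_rate * stages * outputs_per_stage   # data bits per period
    sent_bits = sum(sum(row) for row in keep_pattern)    # coded bits actually sent
    return Fraction(data_bits) / sent_bits

pattern = [[1, 1], [1, 0]]   # assumed rule: drop one output in every second stage
print(punctured_rate(Fraction(1, 2), pattern))   # 2/3
```

Over two stages the rate ½ base code takes 2 data bits and produces 4 coded bits. Dropping one of them leaves 3, giving rate 2/3.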

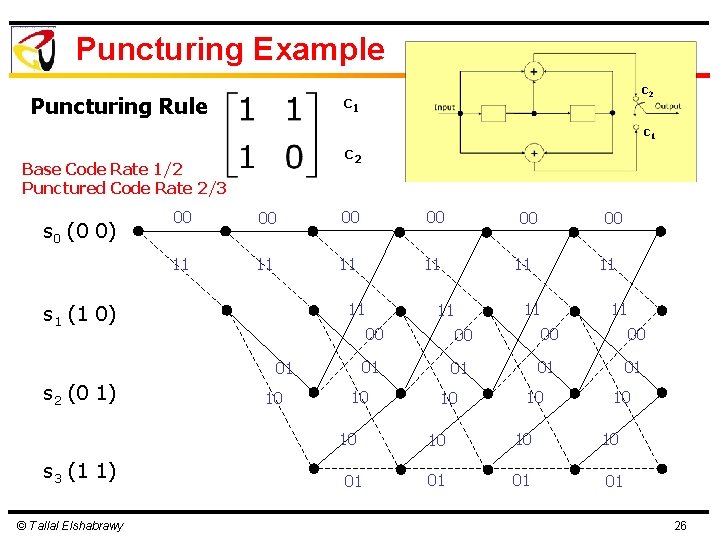

Puncturing Example

Base code rate: 1/2. Punctured code rate: 2/3.

[Figure: encoder outputs c1 and c2 passing through the puncturing rule, beside the base-code trellis with rows s0 (0 0), s1 (1 0), s2 (0 1), s3 (1 1).]

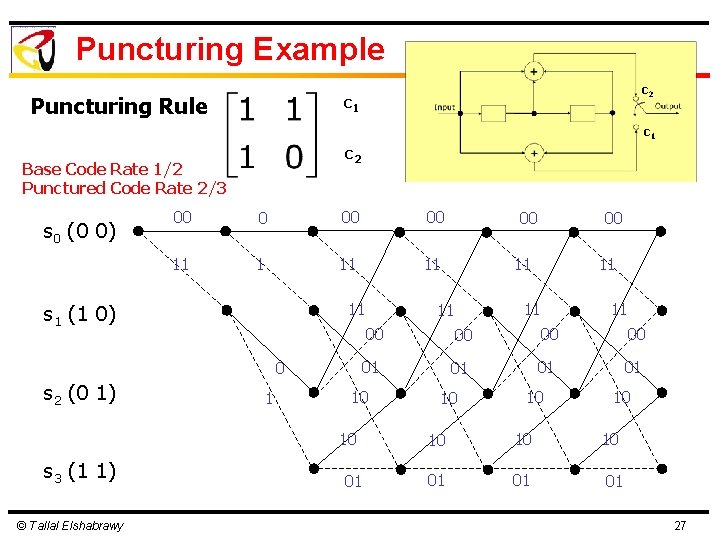


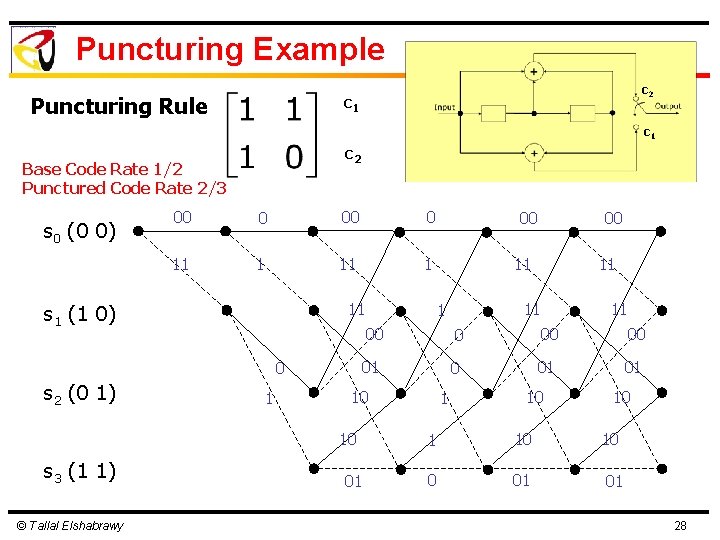

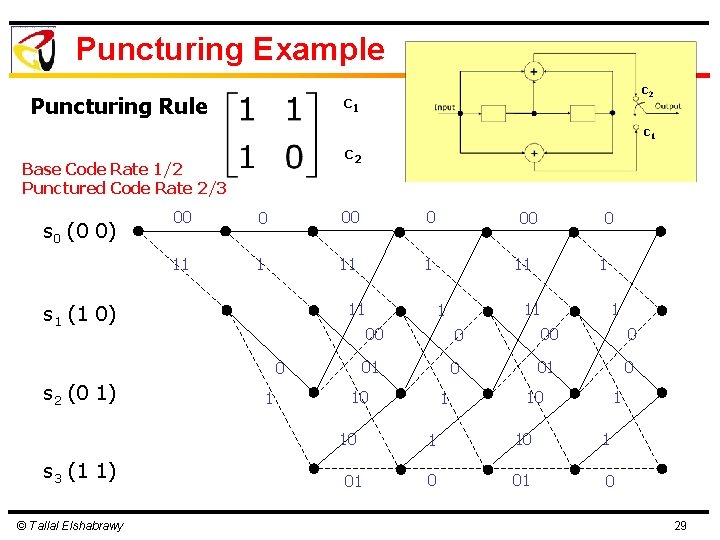

Puncturing Example

[Figure: punctured trellis for the rate 2/3 code. It has the same states and transitions as the base-code trellis, but the branches in alternate stages carry a single output bit instead of two.]

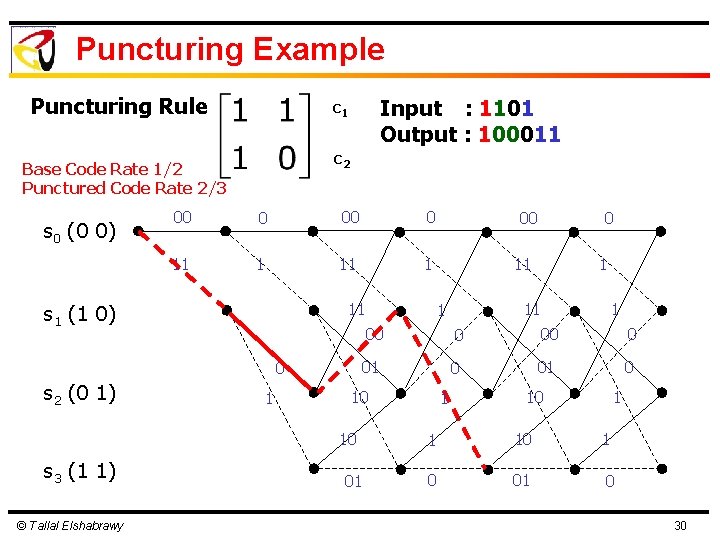

Puncturing Example

Base code rate: 1/2. Punctured code rate: 2/3.

Input: 1101, output: 100011.

[Figure: puncturing rule and punctured trellis for this input.]
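This example can be reproduced with a short sketch that encodes with the base code and then applies a keep/drop pattern. Three things here are assumptions rather than statements from the slides: the encoder taps (chosen to agree with the state-diagram labels), the rule itself (keep both outputs in one stage, then only the first output in the next), and the reading of the slide's bit strings with the earliest bit on the right.

```python
def encode_pairs(bits):
    """Rate-1/2, K = 3 base encoder with assumed taps, starting in state (0, 0)."""
    b0 = b1 = 0
    pairs = []
    for u in bits:
        pairs.append((u ^ b1, u ^ b0 ^ b1))
        b0, b1 = u, b0
    return pairs

def puncture(pairs, keep_pattern):
    """Drop the output bits whose keep flag is 0, cycling through the pattern."""
    sent = []
    for i, pair in enumerate(pairs):
        keep = keep_pattern[i % len(keep_pattern)]
        sent += [bit for bit, k in zip(pair, keep) if k]
    return sent

KEEP = [(1, 1), (1, 0)]                            # assumed puncturing rule
slide_input = "1101"
bits = [int(c) for c in reversed(slide_input)]     # earliest bit on the right
sent = puncture(encode_pairs(bits), KEEP)
print("".join(map(str, reversed(sent))))           # 100011
```

Four input bits produce six transmitted bits, as expected for rate 2/3, and under these assumptions the result matches the output shown on the slide.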

- Slides: 30