
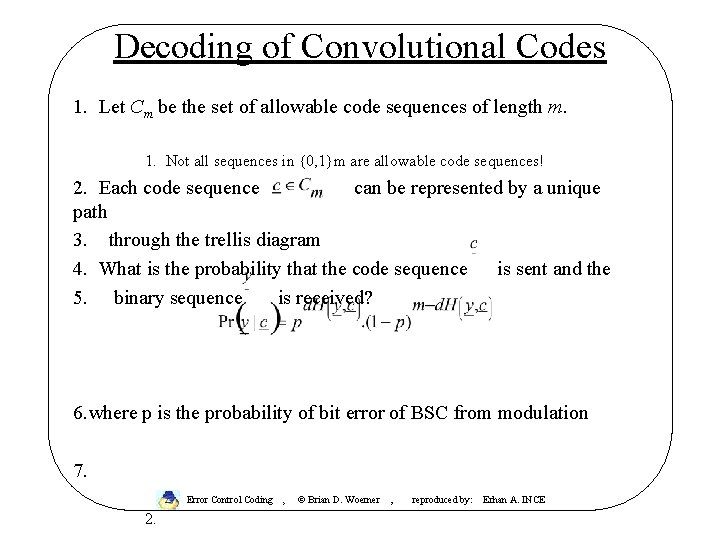

Decoding of Convolutional Codes

- Let $C_m$ be the set of allowable code sequences of length $m$.
- Not all sequences in $\{0, 1\}^m$ are allowable code sequences!
- Each code sequence can be represented by a unique path through the trellis diagram.
- What is the probability that a given code sequence is sent and a given binary sequence is received? It is expressed in terms of $p$, the bit error probability of the BSC that results from modulation.

Error Control Coding, © Brian D. Woerner, reproduced by: Erhan A. INCE

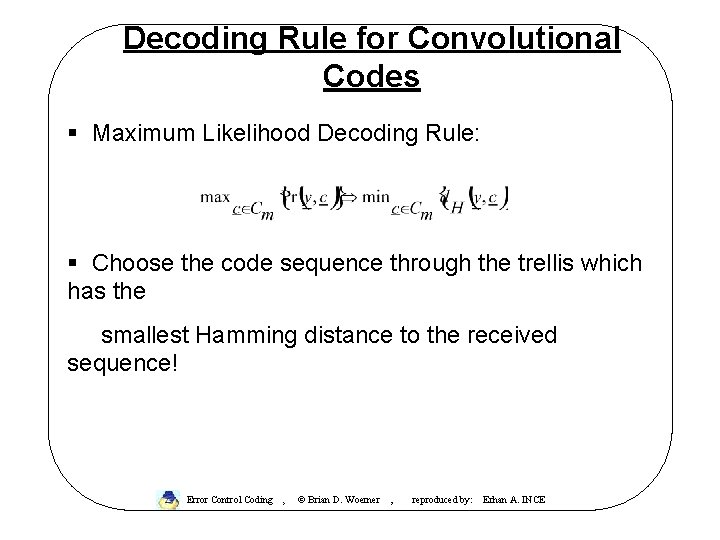

Decoding Rule for Convolutional Codes

- Maximum Likelihood Decoding Rule:
  - Choose the code sequence through the trellis which has the smallest Hamming distance to the received sequence!
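The step from the channel model to this rule can be written out as a short derivation. The symbols $\mathbf{x}$ (code sequence), $\mathbf{y}$ (received sequence) and $d_H$ (Hamming distance) are notation assumed here, since the slide text does not preserve its own symbols. The BSC is assumed to be memoryless with bit error probability $p < 1/2$.

$$
P(\mathbf{y} \mid \mathbf{x}) = p^{\,d_H(\mathbf{x},\mathbf{y})}\,(1-p)^{\,m - d_H(\mathbf{x},\mathbf{y})}
$$

$$
\log P(\mathbf{y} \mid \mathbf{x}) = m \log(1-p) \;-\; d_H(\mathbf{x},\mathbf{y}) \,\log\frac{1-p}{p}
$$

Because $\log\frac{1-p}{p} > 0$ when $p < 1/2$, the likelihood decreases as $d_H(\mathbf{x},\mathbf{y})$ grows, so maximizing the likelihood over $C_m$ is the same as minimizing the Hamming distance.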

The Viterbi Algorithm

- The Viterbi Algorithm (Viterbi, 1967) is a clever way of implementing Maximum Likelihood Decoding. Computer scientists will recognize the Viterbi Algorithm as an example of a CS technique called "Dynamic Programming".
- Reference: G. D. Forney, "The Viterbi Algorithm", Proceedings of the IEEE, 1973
- Chips which implement the Viterbi Algorithm for K < 10 are available from many manufacturers.
- Can be used for either hard or soft decision decoding
  - We consider hard decision decoding initially

Basic Idea of Viterbi Algorithm

- There are $2^{rm}$ code sequences in $C_m$.
- This number of sequences approaches infinity as $m$ becomes large.
- Instead of searching through all possible sequences, find the best code sequence "one stage at a time".

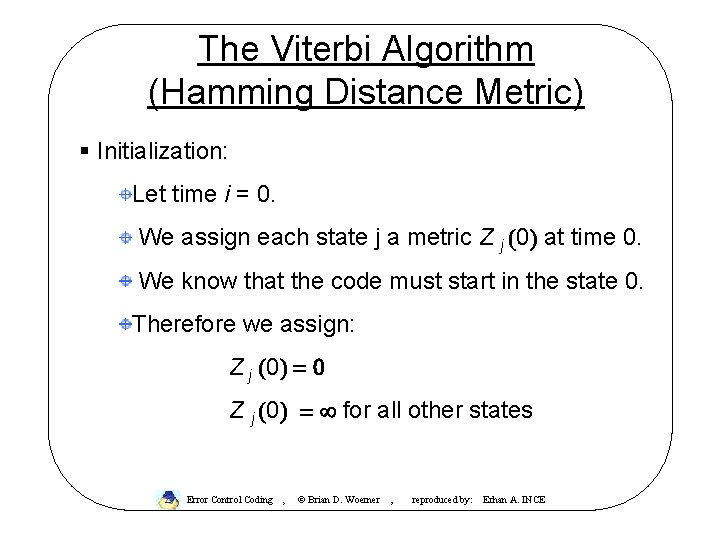

The Viterbi Algorithm (Hamming Distance Metric)

- Initialization: Let time $i = 0$.
- We assign each state $j$ a metric $Z_j(0)$ at time 0.
- We know that the code must start in state 0. Therefore we assign:
  - $Z_0(0) = 0$
  - $Z_j(0) = \infty$ for all other states

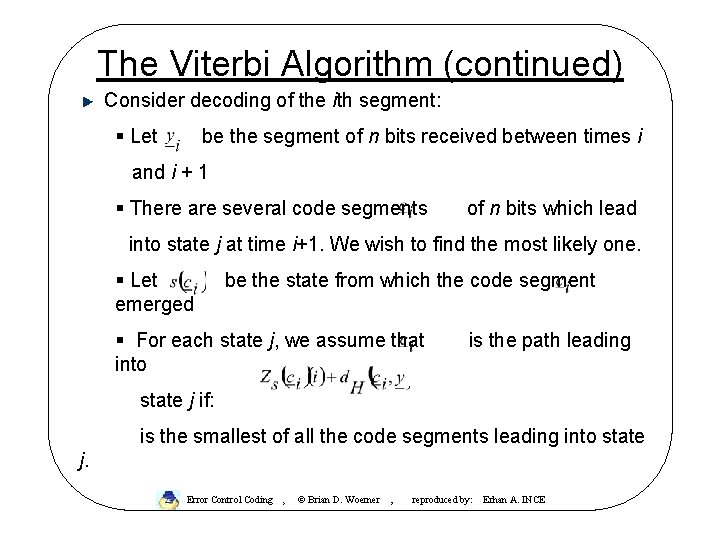

The Viterbi Algorithm (continued)

Consider decoding of the $i$th segment:

- Take the segment of $n$ bits received between times $i$ and $i + 1$.
- There are several code segments of $n$ bits which lead into state $j$ at time $i + 1$. We wish to find the most likely one.
- For each such code segment, note the state from which it emerged.
- For each state $j$, we take a code segment as the path leading into state $j$ if the metric of the state it emerged from, plus its Hamming distance to the received segment, is the smallest among all the code segments leading into state $j$.
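The selection step can be summarized as one recursion. Apart from the state metric $Z_j(i)$, the notation is assumed for illustration: $\mathbf{y}_i$ is the received segment, $\mathbf{x}_{k \to j}$ is the code segment on the trellis branch from state $k$ to state $j$, and $d_H$ is the Hamming distance.

$$
Z_j(i+1) \;=\; \min_{k \,:\, k \to j} \Big[\, Z_k(i) + d_H\big(\mathbf{x}_{k \to j},\, \mathbf{y}_i\big) \Big]
$$

The branch that achieves the minimum is kept as the survivor into state $j$, and the other branches into $j$ are discarded. With the initialization $Z_0(0) = 0$ and $Z_j(0) = \infty$ otherwise, only paths that start in state 0 can ever survive.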

The Viterbi Algorithm (continued)

- Iteration:
  - Let $i = i + 1$
  - Repeat the previous step
  - Incorrect paths drop out as $i$ approaches infinity.

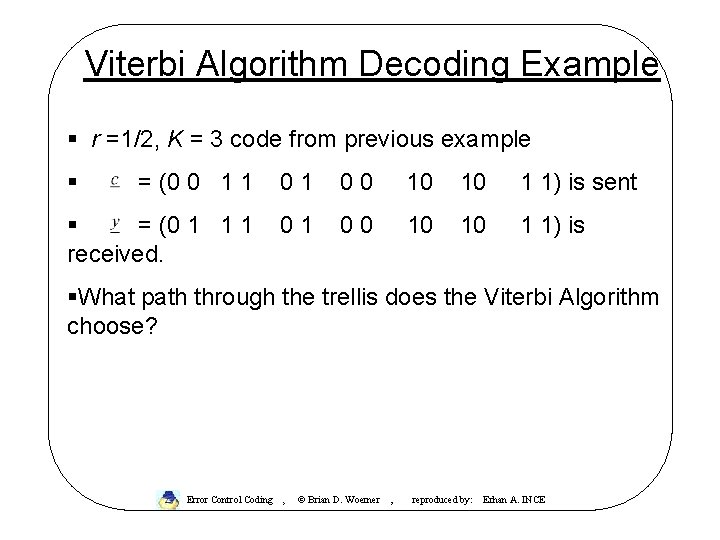

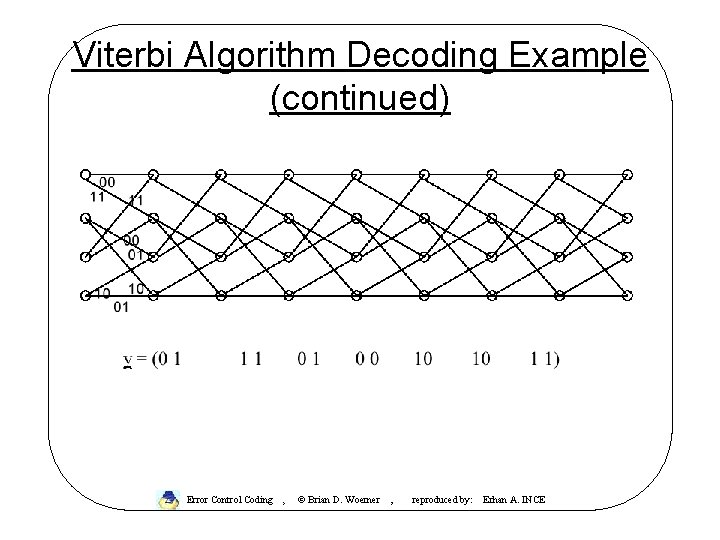

Viterbi Algorithm Decoding Example

- r = 1/2, K = 3 code from previous example
- The code sequence (00 11 01 00 10 10 11) is sent.
- The sequence (01 11 01 00 10 10 11) is received.
- What path through the trellis does the Viterbi Algorithm choose?
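The example can be checked with a minimal hard-decision Viterbi decoder in Python. This is a sketch under stated assumptions, not the decoder from the slides: the generator polynomials are not given in the text, so the pair $1 + D^2$ and $1 + D + D^2$ (octal 5, 7) is assumed because it reproduces the sent sequence above from the input bits 0 1 0 1 1 0 0. The function names and the choice to store a full survivor path per state (rather than tracing back through stored decisions) are also illustrative.

```python
# Assumed generators (octal 5 and 7) for an r = 1/2, K = 3 code.
# They are NOT stated in the slides; they were chosen because they
# reproduce the sent sequence of the example.
G = (0b101, 0b111)
K = 3


def encode_step(state, u, generators=G, K=K):
    """Return (next_state, output_bits) for input bit u from the given state.

    The state holds the previous K-1 input bits, most recent bit in the MSB.
    """
    reg = (u << (K - 1)) | state  # shift register contents, newest bit first
    out = tuple(bin(reg & g).count("1") % 2 for g in generators)
    return reg >> 1, out


def encode(bits, generators=G, K=K):
    """Encode a list of input bits, starting from the all-zeros state."""
    state, out = 0, []
    for u in bits:
        state, branch = encode_step(state, u, generators, K)
        out.extend(branch)
    return out


def viterbi_decode(received, generators=G, K=K):
    """Hard-decision Viterbi decoding with the Hamming distance metric.

    Returns (decoded_input_bits, hamming_distance_of_chosen_path).
    """
    n = len(generators)
    n_states = 1 << (K - 1)
    INF = float("inf")
    # Initialization: the code starts in state 0.
    metrics = [0] + [INF] * (n_states - 1)
    paths = [[] for _ in range(n_states)]
    for i in range(0, len(received), n):
        segment = received[i:i + n]
        new_metrics = [INF] * n_states
        new_paths = [None] * n_states
        for state in range(n_states):
            if metrics[state] == INF:
                continue  # state not reachable yet
            for u in (0, 1):
                nxt, out = encode_step(state, u, generators, K)
                # Add: previous metric plus branch Hamming distance.
                cand = metrics[state] + sum(a != b for a, b in zip(out, segment))
                # Compare and select: keep the smaller metric into nxt.
                if cand < new_metrics[nxt]:
                    new_metrics[nxt] = cand
                    new_paths[nxt] = paths[state] + [u]
        metrics, paths = new_metrics, new_paths
    best = min(range(n_states), key=lambda s: metrics[s])
    return paths[best], metrics[best]


received = [0, 1, 1, 1, 0, 1, 0, 0, 1, 0, 1, 0, 1, 1]
decoded, distance = viterbi_decode(received)
print(decoded, distance)  # prints [0, 1, 0, 1, 1, 0, 0] 1
```

Under these assumptions the decoder picks the path at Hamming distance 1 from the received sequence, which is the path of the sent sequence, so the single bit error is corrected.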

Viterbi Algorithm Decoding Example (continued)

Viterbi Decoding Examples

- The company Alantro has an example Viterbi decoder on the web, made available to promote their website: http://www.alantro.com/viterbi/workshop.html
- Your browser must be Java-enabled.

Summary of Encoding and Decoding of Convolutional Codes

- Convolutional codes are encoded using a finite state machine.
- The optimal decoder for convolutional codes will find the path through the trellis which lies at the shortest distance to the received signal.
- The Viterbi algorithm reduces the complexity of this search by finding the optimal path one stage at a time.
- The complexity of the Viterbi algorithm is proportional to the number of states.

Implementation of Viterbi Decoder

- Complexity is proportional to the number of states
  - increases exponentially with constraint length K: $2^K$
- Very well suited to parallel implementation
  - Each state has two transitions into it
  - Each state has two transitions out of it
  - Each node must compute two branch metrics, add them to the previous path metrics, and compare
  - Much analysis has gone into optimizing the implementation of this "Butterfly" calculation
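The butterfly structure can be sketched in a few lines of Python. The state numbering is an assumption made for illustration: a state is the previous K-1 input bits with the most recent bit in the most significant position, so states $2j$ and $2j+1$ both feed states $j$ and $j + 2^{K-2}$. The function names `butterflies` and `acs` are hypothetical.

```python
def butterflies(K):
    """List the butterflies of a rate-1/n trellis with constraint length K >= 2.

    Each entry is (predecessor_states, successor_states). Under the assumed
    state numbering, predecessors 2j and 2j+1 share successors j and j + 2**(K-2).
    """
    half = 1 << (K - 2)  # number of butterflies is half the number of states
    return [((2 * j, 2 * j + 1), (j, j + half)) for j in range(half)]


def acs(metric_a, metric_b, branch_a, branch_b):
    """Add-compare-select for one successor state.

    Adds each branch metric to its predecessor's path metric, compares the
    two candidates, and returns (surviving_metric, index_of_survivor).
    """
    cand_a = metric_a + branch_a
    cand_b = metric_b + branch_b
    return (cand_a, 0) if cand_a <= cand_b else (cand_b, 1)


print(butterflies(3))  # prints [((0, 1), (0, 2)), ((2, 3), (1, 3))]
```

Each butterfly touches only two predecessor metrics and two successor metrics, which is why the butterflies of one trellis stage can be evaluated independently and in parallel.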

Other Applications of Viterbi Algorithm

- Any problem that can be framed in terms of sequence detection can be solved with the Viterbi Algorithm
- MLSE Equalization
- Decoding of continuous phase modulation
- Multiuser detection

Continuous Operation

- When continuous operation is desired, the decoder will automatically synchronize with the transmitted signal without knowing the state.
- Optimal decoding requires waiting until all bits are received to trace back the path.
- In practice, it is usually safe to assume that all paths have merged after approximately 5K time intervals
  - diminishing returns after a delay of 5K

Frame Operation of Convolutional Codes

- Frequently, we desire to transfer short (e.g., 192 bit) frames with convolutional codes.
- When we do this, we must find a way to terminate the code trellis:
  - Truncation
  - Zero-Padding
  - Tail-biting
- Note that the trellis code is serving as a 'block' code in this application.

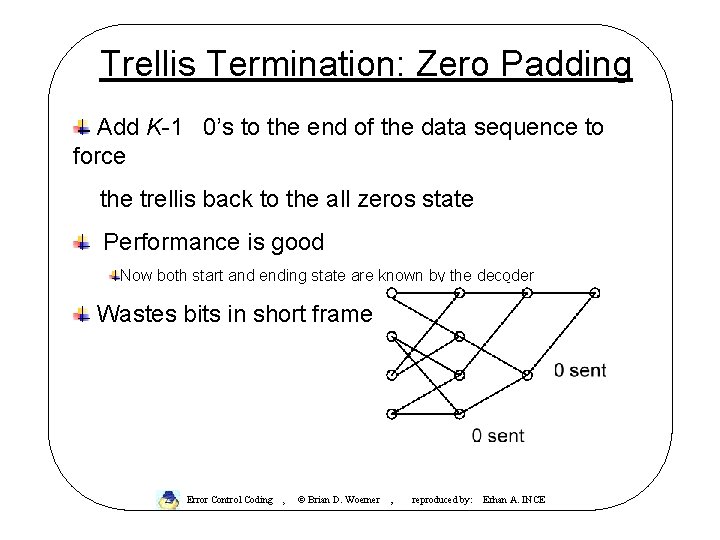

Trellis Termination: Zero Padding

- Add $K-1$ zeros to the end of the data sequence to force the trellis back to the all-zeros state
- Performance is good
  - Now both the starting and ending states are known by the decoder
- Wastes bits in short frames
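The cost of the padding bits can be quantified with a short worked equation. The symbols $L$ (data bits per frame) and $R_{\text{eff}}$ (effective rate) are assumed notation, and the values $r = 1/2$ and $K = 3$ are taken from the decoding example purely for illustration, combined with the 192-bit frame length mentioned for frame operation.

$$
R_{\text{eff}} \;=\; r \cdot \frac{L}{L + K - 1}
\qquad\Rightarrow\qquad
R_{\text{eff}} \;=\; \frac{1}{2}\cdot\frac{192}{192 + 2} \;=\; \frac{96}{194} \;\approx\; 0.495
$$

For this short constraint length the loss is small. With a longer constraint length such as $K = 9$ (again an assumed value), the same frame gives $\frac{1}{2}\cdot\frac{192}{200} = 0.48$, and the loss grows further as frames get shorter.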

Performance of Convolutional Codes

- When the decoder chooses a path through the trellis which diverges from the correct path, this is called an "error event".
- The probability that an error event begins during the current time interval is the "first-event error probability" $P_e$.
- The minimum Hamming distance separating any two distinct paths through the trellis is called the "free distance" $d_{free}$.

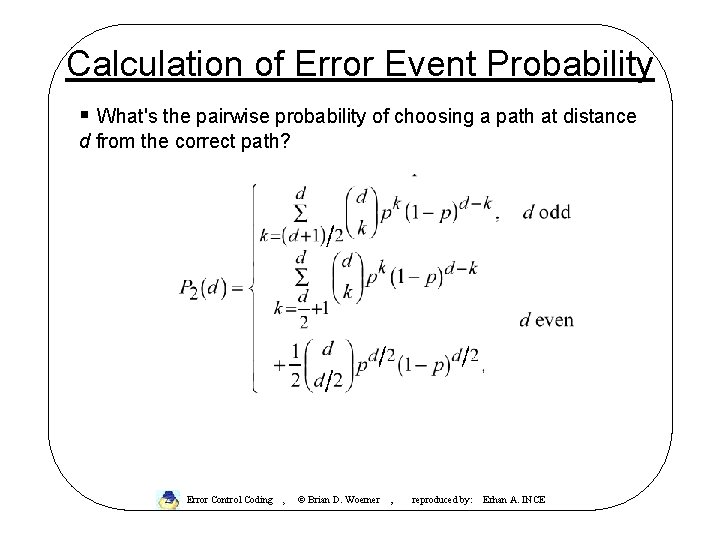

Calculation of Error Event Probability

- What's the pairwise probability of choosing a path at distance $d$ from the correct path?
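For hard-decision decoding over a BSC with bit error probability $p$, the standard textbook answer is given below. The notation $P_2(d)$ is assumed here and may differ from the expression in the slide image. The decoder prefers the wrong path when more than half of the $d$ positions in which the two paths differ are received in error, and a tie (possible only for even $d$) is counted as an error half of the time.

$$
P_2(d) =
\begin{cases}
\displaystyle\sum_{k=(d+1)/2}^{d} \binom{d}{k}\, p^{k} (1-p)^{d-k}, & d \text{ odd} \\[2ex]
\displaystyle\sum_{k=d/2+1}^{d} \binom{d}{k}\, p^{k} (1-p)^{d-k} \;+\; \frac{1}{2}\binom{d}{d/2}\, p^{d/2} (1-p)^{d/2}, & d \text{ even}
\end{cases}
$$

A simpler upper bound that is often used in its place is $P_2(d) < \big[4p(1-p)\big]^{d/2}$.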

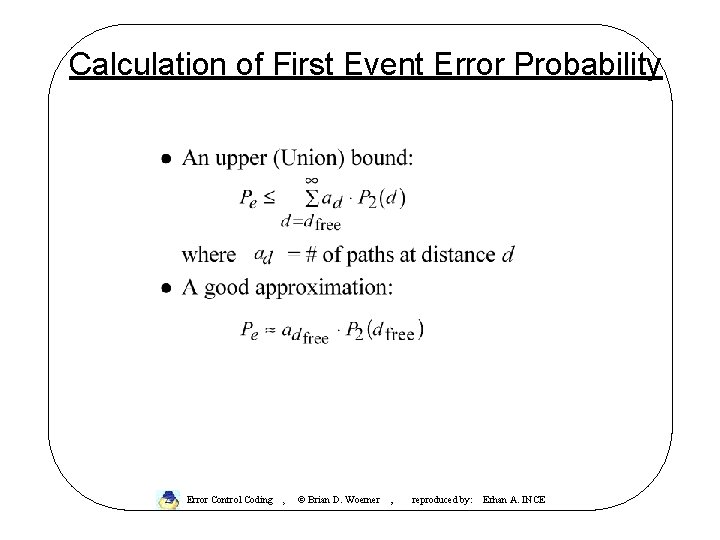

Calculation of First Event Error Probability

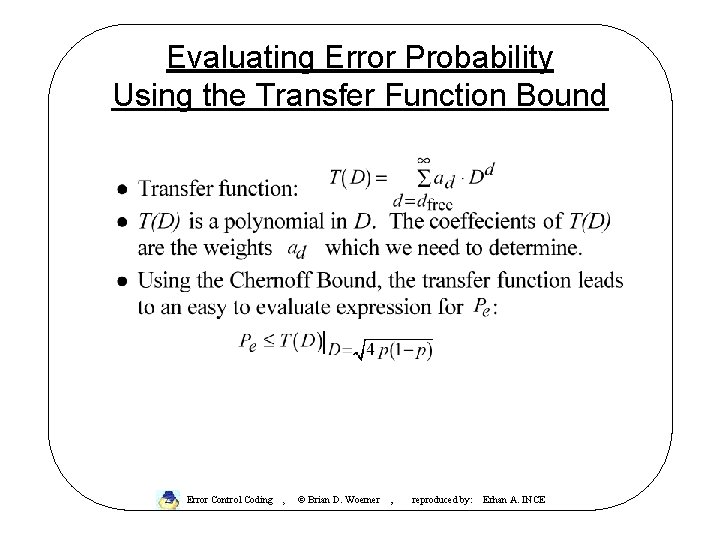

Evaluating Error Probability Using the Transfer Function Bound
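In its usual textbook form, and possibly in different notation from the slide image, the bound follows from a union bound over all error events. Here $a_d$ is assumed to denote the number of paths at distance $d$ from the correct path, $P_2(d)$ the pairwise error probability for such a path, and $T(D) = \sum_d a_d D^d$ the transfer function of the code.

$$
P_e \;\le\; \sum_{d = d_{free}}^{\infty} a_d\, P_2(d)
\;<\; \sum_{d = d_{free}}^{\infty} a_d \big[4p(1-p)\big]^{d/2}
\;=\; T(D)\Big|_{D = 2\sqrt{p(1-p)}}
$$

The second inequality uses the bound $P_2(d) < \big[4p(1-p)\big]^{d/2}$ for hard decisions on a BSC with bit error probability $p$.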

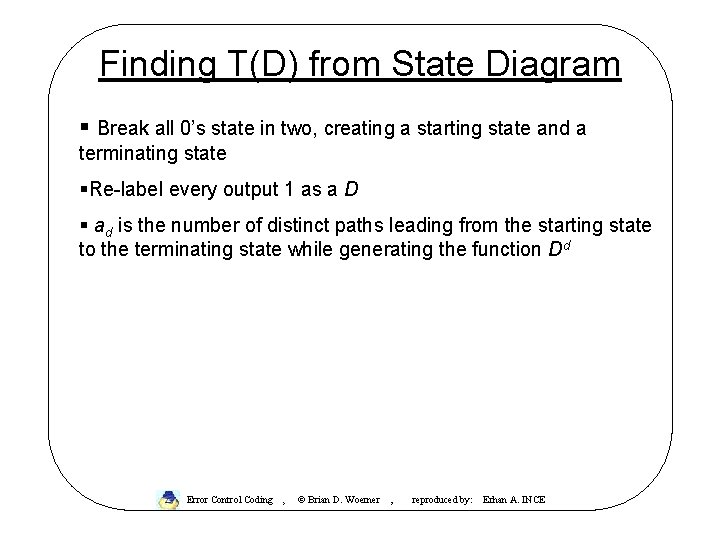

Finding T(D) from State Diagram

- Break the all-zeros state in two, creating a starting state and a terminating state
- Re-label every output 1 as a $D$
- $a_d$ is the number of distinct paths leading from the starting state to the terminating state while generating the function $D^d$
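Written as a series, the procedure above yields the transfer function below. As an illustration only, the closed form shown is the well-known result for the r = 1/2, K = 3 code with generators $1 + D^2$ and $1 + D + D^2$; that this is the code drawn in the slides is an assumption, since the generators are not stated in the text.

$$
T(D) \;=\; \sum_{d = d_{free}}^{\infty} a_d\, D^{d},
\qquad
T(D) \;=\; \frac{D^{5}}{1 - 2D} \;=\; D^{5} + 2D^{6} + 4D^{7} + \cdots
$$

Reading off the series for that code gives $d_{free} = 5$ with $a_5 = 1$, $a_6 = 2$, $a_7 = 4$, and in general $a_d = 2^{\,d-5}$ for $d \ge 5$.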

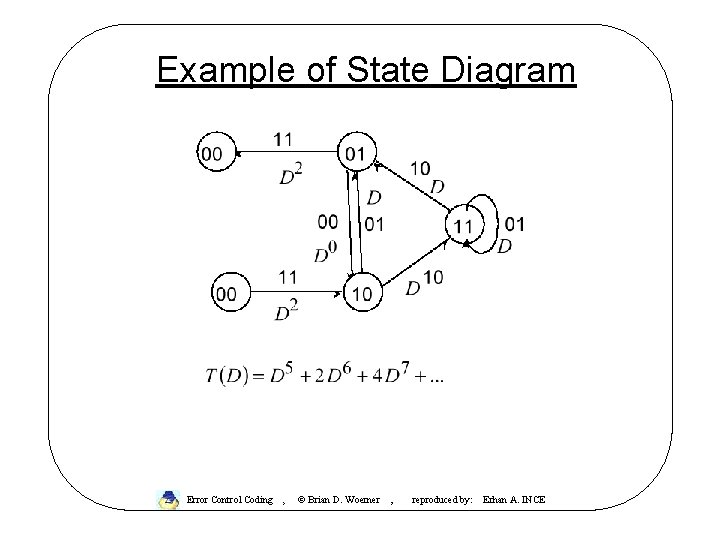

Example of State Diagram

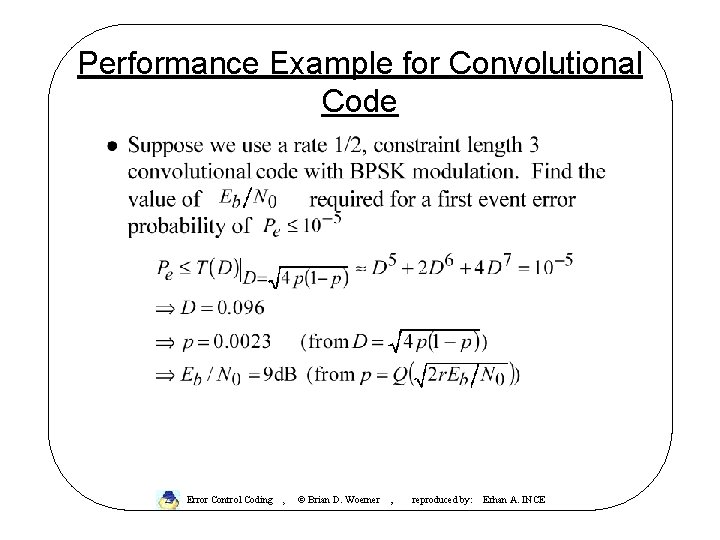

Performance Example for Convolutional Code

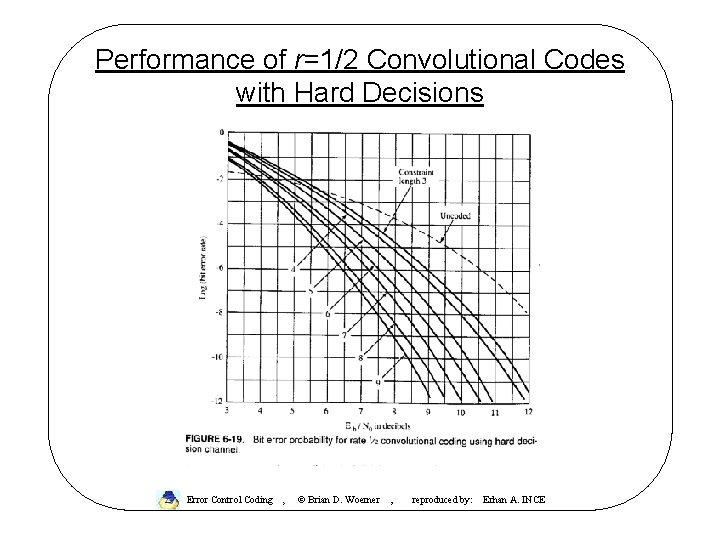

Performance of r=1/2 Convolutional Codes with Hard Decisions

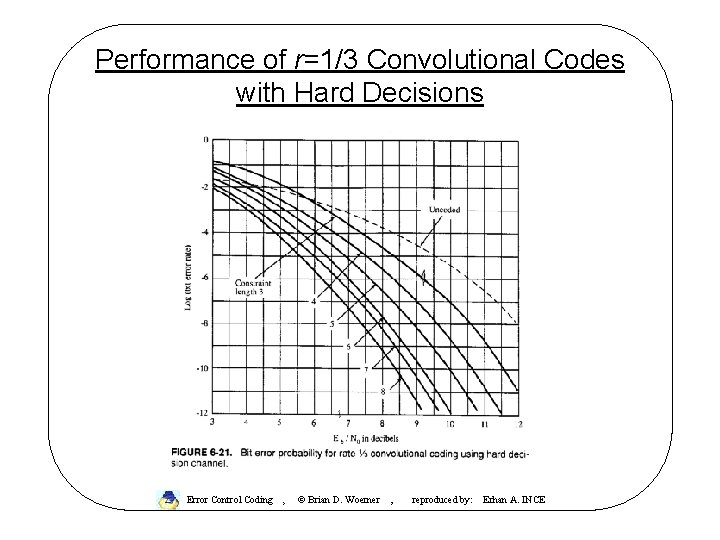

Performance of r=1/3 Convolutional Codes with Hard Decisions

Punctured Convolutional Codes

Practical Examples of Convolutional Codes
